A Modern Protocol for Phylogenetically Informed Prediction: From Evolutionary Theory to Biomedical Application

Abstract

This article provides a comprehensive protocol for phylogenetically informed prediction, a powerful methodological framework that leverages evolutionary relationships to accurately infer biological traits. Tailored for researchers and drug development professionals, we first explore the foundational principles establishing phylogeny as a predictive tool, supported by recent evidence of its superior performance over traditional predictive equations. The core of the guide details methodological workflows for diverse applications, from microbial growth rate estimation to drug discovery from medicinal plants. We further address critical troubleshooting and optimization strategies for real-world data challenges and present a rigorous validation framework comparing predictive performance across methods and case studies. This integrated resource aims to equip scientists with the practical knowledge to implement these advanced techniques, thereby enhancing the accuracy and efficiency of predictive analyses in evolutionary biology, ecology, and biomedical research.

The Evolutionary Foundation: Why Phylogeny is a Powerful Predictive Tool

Phylogenetically informed prediction is a statistical technique that uses the evolutionary relationships among species (phylogeny) to predict unknown trait values. Because of common descent, closely related organisms tend to have more similar trait values than distant relatives, creating a phylogenetic signal in trait data [1]. The method has been shown to outperform traditional predictive equations derived from ordinary least squares (OLS) or phylogenetic generalized least squares (PGLS) regression, which ignore the specific phylogenetic position of the predicted taxon [1]. By explicitly incorporating shared ancestry, phylogenetically informed prediction provides a powerful tool for reconstructing ancestral states, imputing missing data in comparative analyses, and testing evolutionary hypotheses across diverse fields including ecology, palaeontology, epidemiology, and drug development [1].

The core principle hinges on models that use a phylogenetic variance-covariance matrix to account for the non-independence of species data. These models can be implemented through methods such as independent contrasts, phylogenetic generalized least squares, or phylogenetic generalized linear mixed models, all of which yield equivalent results by treating phylogeny as a fundamental component of the statistical model [1]. Bayesian implementations further advance this approach by enabling the sampling of predictive distributions for subsequent analysis [1].
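The core computation can be sketched in a few lines. The Python/NumPy sketch below is an illustration only (the protocols in this article use R, and nothing here is the API of a cited package). It assumes Brownian motion and a simple regression y = β₀ + β₁x, fits the GLS coefficients on the species with known values, and then contrasts the plain PGLS predictive equation, x_new·β̂, with the phylogenetically informed prediction, which adds the term c′C⁻¹(y − Xβ̂) carrying information from close relatives. The tree, trait values, and function names are hypothetical.

```python
import numpy as np


def gls_fit(X, y, C):
    """GLS coefficients: beta = (X' C^-1 X)^-1 X' C^-1 y."""
    XtCi = X.T @ np.linalg.inv(C)
    return np.linalg.solve(XtCi @ X, XtCi @ y)


def predict_missing(C_full, X_full, y_obs, obs_idx, new_idx):
    """Return (PGLS predictive-equation value, phylogenetically informed value)
    for one tip whose response is unknown, assuming Brownian motion."""
    C_oo = C_full[np.ix_(obs_idx, obs_idx)]   # covariances among known tips
    c_no = C_full[new_idx, obs_idx]           # covariances of target with known tips
    X_o = X_full[obs_idx]
    beta = gls_fit(X_o, y_obs, C_oo)
    equation = X_full[new_idx] @ beta         # ignores phylogenetic position
    resid = y_obs - X_o @ beta
    informed = equation + c_no @ np.linalg.solve(C_oo, resid)  # borrows from relatives
    return float(equation), float(informed)


# Hypothetical ultrametric tree of three cherries (A,B), (C,D), (E,F):
# every tip is 2.0 from the root and sister tips share 1.5 of that path.
n = 6
C = np.array([[2.0 if i == j else (1.5 if i // 2 == j // 2 else 0.0)
               for j in range(n)] for i in range(n)])
x = np.array([1.0, 1.2, 2.0, 2.1, 3.0, 3.3])
X = np.column_stack([np.ones(n), x])
y = 1.0 + x
y[0] += 0.8              # species A lies well above the line
obs = [0, 2, 3, 4, 5]    # species B (index 1) is the prediction target
eq, pip = predict_missing(C, X, y[obs], obs, 1)
print(f"predictive equation: {eq:.3f}, phylogenetically informed: {pip:.3f}")
```

Because B's sister A sits above the regression line, the informed prediction for B is pulled upward, whereas the predictive equation returns the same value wherever B sits in the tree.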

Core Principle and Quantitative Superiority

Performance Advantage Over Traditional Methods

Simulation studies based on thousands of ultrametric phylogenies have consistently demonstrated the superior performance of phylogenetically informed predictions. When predicting unknown trait values in a bivariate framework, phylogenetically informed methods showed roughly a four- to five-fold improvement in performance over calculations derived from OLS and PGLS predictive equations [1]. Performance is measured by the variance (σ²) of the prediction error distribution: a smaller variance indicates consistently greater accuracy across simulations.

Table 1: Performance Comparison of Prediction Methods on Ultrametric Trees

| Method | Trait Correlation Strength | Variance of Prediction Error (σ²) | Error Variance Relative to PIP |
|---|---|---|---|
| Phylogenetically Informed Prediction (PIP) | r = 0.25 | 0.007 | 1x (baseline) |
| Ordinary Least Squares (OLS) Predictive Equation | r = 0.25 | 0.030 | ~4.3x |
| Phylogenetic GLS (PGLS) Predictive Equation | r = 0.25 | 0.033 | ~4.7x |
| Phylogenetically Informed Prediction (PIP) | r = 0.75 | 0.002 | 1x (baseline) |
| Ordinary Least Squares (OLS) Predictive Equation | r = 0.75 | 0.014 | ~7x |
| Phylogenetic GLS (PGLS) Predictive Equation | r = 0.75 | 0.015 | ~7.5x |

A striking finding is that predictions made using phylogenetically informed methods with only weakly correlated traits (r=0.25) are roughly twice as accurate as predictions made using strongly correlated traits (r=0.75) via traditional PGLS or OLS predictive equations [1]. In direct comparisons, phylogenetically informed predictions were more accurate than PGLS-based estimates in 96.5–97.4% of simulated trees and more accurate than OLS-based estimates in 95.7–97.1% of trees [1].
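The ratios quoted above follow directly from Table 1. A minimal Python check, using only the σ² values reported in that table (the dictionary and function names are illustrative):

```python
# Error variances (sigma^2) reported in Table 1, keyed by trait correlation r
TABLE1 = {
    0.25: {"PIP": 0.007, "OLS": 0.030, "PGLS": 0.033},
    0.75: {"PIP": 0.002, "OLS": 0.014, "PGLS": 0.015},
}


def variance_ratio(method, r_method, r_pip):
    """Error variance of `method` at correlation r_method divided by the error
    variance of phylogenetically informed prediction (PIP) at correlation r_pip."""
    return TABLE1[r_method][method] / TABLE1[r_pip]["PIP"]


for method in ("OLS", "PGLS"):
    same_r = variance_ratio(method, 0.25, 0.25)
    strong_eq_vs_weak_pip = variance_ratio(method, 0.75, 0.25)
    print(f"{method}: {same_r:.1f}x at r = 0.25; "
          f"r = 0.75 equation vs r = 0.25 PIP: {strong_eq_vs_weak_pip:.1f}x")
```

A ratio of about 2 in the second comparison is the "roughly twice as accurate" result described above.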

Conceptual Workflow of Phylogenetically Informed Prediction

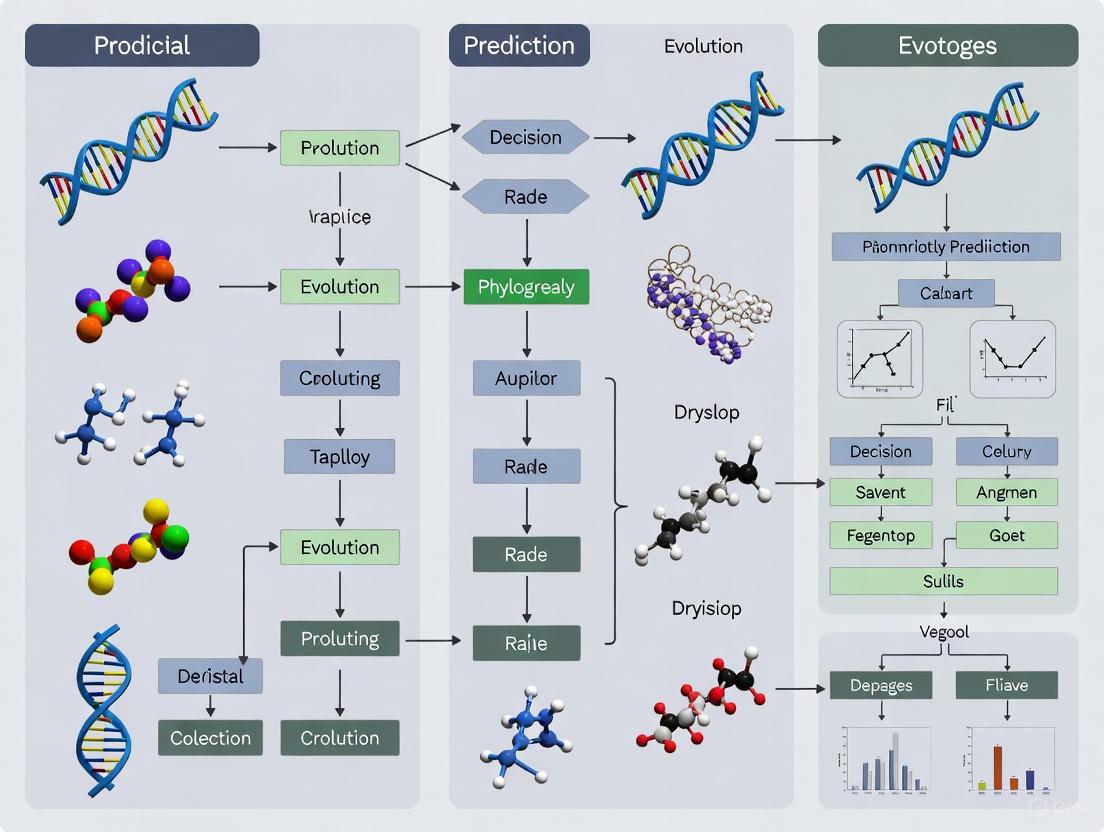

The following diagram illustrates the logical workflow and key decision points for implementing a phylogenetically informed prediction study, from data preparation to model validation.

Application Notes and Protocols

Protocol 1: Basic Phylogenetically Informed Prediction for Univariate Trait Imputation

This protocol details the steps for predicting a single unknown trait value using a phylogenetic tree and trait data from related species.

Research Reagent Solutions

Table 2: Essential Materials and Software for Basic Phylogenetic Prediction

| Item Name | Function/Benefit | Example/Format |

|---|---|---|

| Phylogenetic Tree | Represents evolutionary relationships; provides variance-covariance structure. | Newick format (.nwk or .tree) or Nexus format (.nex). |

| Trait Dataset | Contains known trait values for related species used to build the predictive model. | CSV file with species names matching tree tips. |

| R Statistical Environment | Primary platform for statistical analysis and implementation of comparative methods. | R version 4.1.0 or higher. |

| Comparative Method R Packages | Provides functions for phylogenetic regression, model fitting, and prediction. | ape, nlme, phytools, MCMCglmm, brms. |

| Bayesian Inference Engine | Enables sampling from posterior predictive distributions for robust uncertainty estimation. | Stan (via brms) or JAGS. |

Step-by-Step Procedure

Data Preparation and Integration

- Import the phylogenetic tree into R using a package such as ape (e.g., the `read.tree()` function).

- Import and clean the trait data, ensuring species names exactly match the tip labels in the phylogenetic tree.

- Merge the tree and trait data. Species lacking a value for the focal trait are excluded when fitting the model, but any species whose value is to be predicted must remain in the tree.

Model Specification

- A basic phylogenetic model can be specified as a Phylogenetic Generalized Least Squares (PGLS) model or a Bayesian phylogenetic mixed model. The core structure accounts for the phylogenetic non-independence via a covariance matrix derived from the tree.

Model Fitting and Prediction

- Fit the model to the data of species with known trait values.

- For the target species with an unknown trait value, its phylogenetic position is incorporated into the covariance matrix. The model then predicts the value based on the evolutionary model and the data from related species.

- In a Bayesian framework, this generates a posterior predictive distribution for the unknown trait, from which the mean, median, and credible intervals can be derived.

Validation

- Perform leave-one-out cross-validation: mask each known value in turn, predict it from the remaining data, and compare the prediction with the observed value to quantify the average prediction error [2].
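Leave-one-out cross-validation is straightforward once a prediction function exists. The Python/NumPy sketch below assumes a Brownian motion model with a single predictor; the toy tree, data, and function names are hypothetical, and the helper re-implements phylogenetically informed prediction so the block runs on its own. It returns the error of every held-out prediction for both the PGLS predictive equation and the phylogenetically informed prediction.

```python
import numpy as np


def predict_one(C, X, y, target):
    """PGLS-equation and phylogenetically informed predictions for `target`,
    fitted on all other tips (Brownian motion covariance C)."""
    obs = [i for i in range(len(y)) if i != target]
    C_oo = C[np.ix_(obs, obs)]
    XtCi = X[obs].T @ np.linalg.inv(C_oo)
    beta = np.linalg.solve(XtCi @ X[obs], XtCi @ y[obs])
    equation = X[target] @ beta
    informed = equation + C[target, obs] @ np.linalg.solve(C_oo, y[obs] - X[obs] @ beta)
    return equation, informed


def loo_errors(C, X, y):
    """Leave-one-out errors (prediction minus observed value) for each method."""
    errs = np.array([np.array(predict_one(C, X, y, i)) - y[i] for i in range(len(y))])
    return {"equation": errs[:, 0], "informed": errs[:, 1]}


# Hypothetical tree: three cherries, tips 2.0 from the root, sisters share 1.5.
n = 6
C = np.array([[2.0 if i == j else (1.5 if i // 2 == j // 2 else 0.0)
               for j in range(n)] for i in range(n)])
x = np.array([1.0, 1.2, 2.0, 2.1, 3.0, 3.3])
X = np.column_stack([np.ones(n), x])
y = 1.0 + x + np.array([0.5, 0.6, -0.4, -0.5, 0.1, 0.0])  # sisters share deviations
errors = loo_errors(C, X, y)
for method, e in errors.items():
    print(f"{method}: mean squared LOO error = {np.mean(e ** 2):.3f}")
```

With real data, comparing the two mean squared errors gives a dataset-specific estimate of how much the phylogenetic term helps.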

Protocol 2: Multi-Response Phylogenetic Mixed Models for Complex Trait Networks

For analyses involving multiple, potentially correlated traits, Multi-Response Phylogenetic Mixed Models (MR-PMMs) offer a more powerful approach. They explicitly decompose covariances between traits into phylogenetic and species-specific (residual) components, providing a richer framework for understanding trait coevolution and improving prediction accuracy [2].

Workflow for Multivariate Trait Prediction and Covariance Decomposition

The following diagram outlines the extended workflow for implementing a Multi-Response Phylogenetic Mixed Model (MR-PMM), highlighting the key advantage of modeling the phylogenetic and residual covariance structures between traits.

Step-by-Step Procedure

Model Conceptualization

- Define the set of response traits to be analyzed jointly. MR-PMMs are particularly beneficial when these traits are expected to be correlated due to shared evolutionary history or constrained development [2].

Model Formulation

- The MR-PMM is specified to include a G-matrix (phylogenetic variance-covariance matrix) and an R-matrix (residual variance-covariance matrix). This allows the model to estimate how much of the correlation between traits results from shared evolutionary history (phylogenetic effect) versus independent evolution or other non-phylogenetic factors (residual effect) [2].
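To make the G/R decomposition concrete, here is a hypothetical Python/NumPy sketch for two traits on a three-species tree. It assumes the common Kronecker form of a multi-response phylogenetic model, V = G ⊗ C + R ⊗ I (all species for trait 1 stacked before trait 2), where C is the phylogenetic correlation matrix; it is not code from MCMCglmm or brms. It also shows how a missing value of trait 1 can borrow information from trait 2, assuming known zero trait means for simplicity.

```python
import numpy as np


def mr_pmm_covariance(G, R, C):
    """Covariance of the stacked vector (trait 1 for all species, then trait 2):
    V = G kron C + R kron I."""
    return np.kron(G, C) + np.kron(R, np.eye(C.shape[0]))


def correlation(M):
    """Correlation between the two traits implied by a 2 x 2 covariance matrix."""
    return M[0, 1] / np.sqrt(M[0, 0] * M[1, 1])


def conditional_mean(V, y, missing):
    """E[y_missing | y_observed] for a zero-mean Gaussian vector with covariance V."""
    obs = [i for i in range(len(y)) if i not in missing]
    V_mo = V[np.ix_(missing, obs)]
    V_oo = V[np.ix_(obs, obs)]
    return V_mo @ np.linalg.solve(V_oo, y[obs])


# Hypothetical tree ((A:0.5,B:0.5):0.5,C:1.0), unit depth
C = np.array([[1.0, 0.5, 0.0],
              [0.5, 1.0, 0.0],
              [0.0, 0.0, 1.0]])
G = np.array([[1.0, 0.8], [0.8, 1.0]])   # phylogenetic (co)variances
R = np.array([[0.5, 0.0], [0.0, 0.5]])   # residual (co)variances
V = mr_pmm_covariance(G, R, C)
print("phylogenetic correlation:", correlation(G), "residual correlation:", correlation(R))

# Order: [t1_A, t1_B, t1_C, t2_A, t2_B, t2_C]; t1_A (index 0) is missing.
y = np.array([np.nan, 0.0, 0.0, 1.0, 0.0, 0.0])
print("predicted trait 1 for A:", conditional_mean(V, y, [0]))
```

Setting the off-diagonal of G to zero (with zero residual correlation) removes any pull from trait 2, which is a quick way to see that the cross-trait covariance is what carries the extra information.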

Implementation and Inference

- Fit the model using Bayesian software such as `MCMCglmm` or `brms` in R. These packages can handle the complex covariance structures and provide posterior distributions for all parameters, including the correlations within the G and R matrices [2].

- Assess model convergence using diagnostics such as the Gelman-Rubin statistic and trace plots.

Prediction and Application

- Predict missing values for any of the response traits. A key strength of MR-PMMs is that information from all correlated traits is used to inform the prediction of a single missing value, leading to greater accuracy [2].

- Use the decomposed covariance structure to test hypotheses about evolutionary constraints and trade-offs.

The Scientist's Toolkit

Critical Computational Tools and Data Standards

Successful implementation of phylogenetically informed prediction requires specific computational tools and careful attention to data standards.

Table 3: Computational Tools and Data Standards for Phylogenetic Prediction

| Tool/Category | Specific Software/Packages | Role in the Workflow |
|---|---|---|
| Programming Environments | R statistical environment, Python | Primary platforms for data manipulation, analysis, and visualization. |
| Core Phylogenetic R Packages | ape, nlme, caper, phytools | Perform foundational phylogenetic comparative methods, including PGLS and independent contrasts. |
| Advanced Mixed Model R Packages | MCMCglmm, brms | Implement sophisticated Bayesian multi-response phylogenetic mixed models (MR-PMMs). |
| Tree Visualization & Editing | FigTree, ggtree, iTOL | Visualize and annotate phylogenetic trees to communicate results and check data alignment. |
| Data & Tree Formats | Newick (.nwk), Nexus (.nex), CSV | Standardized file formats for exchanging tree and trait data. |

Interpretation of Prediction Intervals

A critical aspect of phylogenetically informed prediction is the accurate communication of uncertainty. Prediction intervals widen as the branch length separating the predicted species from the rest of the tree increases [1]. Predictions for evolutionarily isolated species on long branches therefore have wider intervals, reflecting greater uncertainty, whereas predictions for species with many close relatives have narrower intervals. Always report point estimates (e.g., the posterior mean) alongside prediction or credible intervals to convey the precision of your estimates.
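The link between branch length and interval width can be checked with the conditional variance of a Gaussian vector. The Python/NumPy sketch below is a simplification: it assumes Brownian motion with a known rate σ² and ignores uncertainty in the estimated mean or regression coefficients, so real intervals will be somewhat wider. A hypothetical new species splits from observed species A at height h on a unit-depth ultrametric tree, so a smaller h means a longer branch leading to the new species.

```python
import numpy as np


def prediction_variance(c_nn, c_no, C_oo, sigma2=1.0):
    """Conditional variance of a new tip under BM: sigma2 * (c_nn - c_no' C_oo^-1 c_no)."""
    return sigma2 * (c_nn - c_no @ np.linalg.solve(C_oo, c_no))


# Observed species A and B diverged at the root of a unit-depth tree.
C_oo = np.eye(2)
for h in (0.9, 0.5, 0.1):
    c_no = np.array([h, 0.0])   # new tip shares a path of length h with A only
    var = prediction_variance(1.0, c_no, C_oo)
    print(f"h = {h}: 95% interval half-width = {1.96 * np.sqrt(var):.3f}")
```

In this configuration the variance reduces to σ²(1 − h²), so the interval widens steadily as the new species' own branch (length 1 − h) grows.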

The inference of unknown trait values is a ubiquitous task across biological sciences, essential for reconstructing evolutionary history, imputing missing data for analysis, and understanding adaptive processes. For over 25 years, phylogenetic comparative methods have provided a principled framework for these predictions by explicitly incorporating shared evolutionary ancestry among species. These phylogenetically informed predictions account for the fundamental biological reality that closely related organisms share more similar traits due to common descent, thereby overcoming the statistical limitations of pseudo-replication and spurious correlations that plague traditional methods.

Despite the long-established theoretical superiority of phylogenetic prediction, the scientific community continues to heavily rely on predictive equations derived from ordinary least squares and phylogenetic generalized least squares regression models. This persistence occurs even as phylogenetic methods have demonstrated substantially improved accuracy in trait reconstruction. The following application notes provide a comprehensive quantitative framework and experimental protocols for implementing phylogenetically informed predictions, offering researchers across evolutionary biology, ecology, palaeontology, and drug development a standardized approach for achieving superior predictive performance.

Quantitative Performance Comparison

Table 1: Comparative performance of prediction methods across simulation studies using ultrametric phylogenies. Performance measured by variance in prediction error distributions (σ²) across 1000 simulated trees with n = 100 taxa.

| Correlation Strength (r) | Phylogenetically Informed Prediction (σ²) | PGLS Predictive Equations (σ²) | OLS Predictive Equations (σ²) | Error-Variance Ratio (PGLS/PIP, OLS/PIP) |
|---|---|---|---|---|
| 0.25 | 0.007 | 0.033 | 0.030 | 4.7× / 4.3× |
| 0.50 | 0.004 | 0.017 | 0.016 | 4.3× / 4.0× |
| 0.75 | 0.002 | 0.008 | 0.007 | 4.0× / 3.5× |

Accuracy Advantage in Real-World Contexts

Table 2: Comparative accuracy rates across biological datasets. Values represent percentage of predictions where method outperformed alternatives.

| Biological Dataset | PIP vs PGLS Predictive Equations | PIP vs OLS Predictive Equations | Phylogenetic Signal Strength |
|---|---|---|---|
| Primate Neonatal Brain Size | 96.8% | 97.1% | High |
| Avian Body Mass | 95.9% | 96.3% | Moderate |
| Bush-cricket Calling Frequency | 97.2% | 96.8% | High |
| Non-avian Dinosaur Neuron Number | 96.5% | 95.7% | Moderate |

The performance advantage of phylogenetically informed prediction remains consistent across correlation strengths and tree sizes. Notably, predictions using weakly correlated traits (r = 0.25) in a phylogenetic framework demonstrate roughly equivalent or superior performance to predictive equations using strongly correlated traits (r = 0.75), highlighting the substantial information content embedded within phylogenetic relationships themselves.

Experimental Protocols

Protocol 1: Implementing Phylogenetically Informed Prediction

Materials and Equipment

- Computing Environment: R statistical environment (version 4.0 or higher)

- Required R Packages: ape, nlme, phytools, phylolm, MASS

- Data Requirements: Time-calibrated phylogeny (ultrametric or non-ultrametric), trait dataset with missing values designated appropriately

Step-by-Step Procedure

Phylogeny Preparation and Validation

- Import phylogenetic tree in Newick or Nexus format

- Verify tree is rooted and properly calibrated

- Check for ultrametric properties if analyzing contemporary taxa

- Resolve polytomies into bifurcations (e.g., with `multi2di()` in ape) if the chosen method requires a fully bifurcating tree

Trait Data Alignment and Standardization

- Match species names between trait dataset and phylogeny tip labels

- Log-transform continuous traits when appropriate to meet normality assumptions

- Standardize continuous traits to mean = 0 and standard deviation = 1 for comparative analyses

- Identify missing values designated for prediction

Evolutionary Model Selection

- Evaluate phylogenetic signal using Pagel's λ or Blomberg's K

- Compare fit of Brownian motion, Ornstein-Uhlenbeck, and Early-burst models via AICc

- Select optimal evolutionary model for trait covariance structure
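AICc comparison is simple arithmetic once each model's maximized log-likelihood is available from the fitting software. The Python sketch below uses made-up log-likelihoods purely for illustration; k counts all estimated parameters (here, hypothetically, two for BM and three each for OU and Early-burst), and n is the number of species.

```python
import math


def aicc(loglik, k, n):
    """Small-sample corrected AIC: -2 logL + 2k + 2k(k + 1) / (n - k - 1)."""
    return -2.0 * loglik + 2 * k + 2 * k * (k + 1) / (n - k - 1)


def akaike_weights(scores):
    """Relative support for each model from its AICc score."""
    best = min(scores.values())
    rel = {m: math.exp(-0.5 * (s - best)) for m, s in scores.items()}
    total = sum(rel.values())
    return {m: v / total for m, v in rel.items()}


n_species = 20
fits = {"BM": (-10.0, 2), "OU": (-9.5, 3), "EB": (-9.9, 3)}   # hypothetical (logLik, k)
scores = {m: aicc(ll, k, n_species) for m, (ll, k) in fits.items()}
weights = akaike_weights(scores)
for m in scores:
    print(f"{m}: AICc = {scores[m]:.2f}, weight = {weights[m]:.2f}")
print("preferred model:", min(scores, key=scores.get))
```

In this made-up case, the slightly higher likelihood of OU does not offset its extra parameter, so BM is retained; the small-sample penalty matters most when n is small relative to k.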

Phylogenetically Informed Prediction Implementation

- Construct phylogenetic variance-covariance matrix from tree

- Implement prediction algorithm using selected evolutionary model

- Generate point estimates and prediction intervals for missing values

- Use a Bayesian implementation if subsequent analyses require samples from the predictive distribution

Validation and Performance Assessment

- Implement cross-validation by iteratively removing known values

- Calculate prediction error as difference between predicted and actual values

- Compare performance against traditional predictive equations

- Assess prediction interval coverage probabilities
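Interval coverage is the fraction of held-out true values that fall inside their prediction intervals; for well-calibrated 95% intervals it should be close to 0.95. A small stdlib-only Python sketch, assuming symmetric normal intervals of the form prediction ± z·SE (the values below are hypothetical):

```python
def coverage(predictions, std_errors, truths, z=1.96):
    """Proportion of true values inside prediction +/- z * standard error."""
    hits = sum(abs(t - p) <= z * s for p, s, t in zip(predictions, std_errors, truths))
    return hits / len(truths)


preds = [0.0, 0.0, 0.0, 0.0]
ses = [1.0, 1.0, 1.0, 1.0]
truth = [0.5, -1.5, 2.5, 0.0]
print(coverage(preds, ses, truth))  # 0.75: one of four values falls outside +/-1.96
```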

Protocol 2: Performance Benchmarking Against Traditional Methods

Materials and Equipment

- Reference Datasets: Simulated datasets with known trait values, empirical datasets with complete trait information

- Validation Framework: k-fold cross-validation protocol, Monte Carlo simulation procedures

Step-by-Step Procedure

Experimental Design Configuration

- Define simulation parameters: tree size (50, 100, 250, 500 taxa), balance indices, trait correlation strengths (0.25, 0.50, 0.75)

- Specify missing data mechanisms: completely at random, phylogenetically structured

- Set replication levels: minimum 1000 iterations per parameter combination

Data Simulation Process

- Generate phylogenetic trees under birth-death processes

- Simulate correlated trait evolution under Brownian motion model

- Induce missing data according to specified mechanism

- Replicate across parameter space
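Correlated Brownian motion on a fixed tree can be simulated by transforming independent normal draws with Cholesky factors of the phylogenetic matrix C and of the 2 × 2 trait correlation matrix. The Python/NumPy sketch below takes the tree as given through its C matrix (simulating the birth-death tree itself is not shown), and its settings are hypothetical. The resulting traits satisfy Cov(trait a at tip i, trait b at tip j) = R[a, b]·C[i, j].

```python
import numpy as np


def simulate_correlated_bm(C, r, rng):
    """Draw two traits for all tips; rows are species, columns are traits."""
    L_C = np.linalg.cholesky(C)
    L_R = np.linalg.cholesky(np.array([[1.0, r], [r, 1.0]]))
    Z = rng.standard_normal((C.shape[0], 2))
    return L_C @ Z @ L_R.T


rng = np.random.default_rng(42)
# Sanity check on a star tree (C = I), where tips are independent replicates.
traits = simulate_correlated_bm(np.eye(2000), 0.75, rng)
print("empirical trait correlation:", np.corrcoef(traits[:, 0], traits[:, 1])[0, 1])
```

On a real tree, substituting its C matrix for the identity produces phylogenetically structured traits with the same evolutionary correlation r.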

Method Comparison Execution

- Apply phylogenetically informed prediction to simulated datasets

- Compute predictions using PGLS and OLS predictive equations

- Calculate absolute prediction errors for each method

- Record computational requirements and convergence statistics

Performance Quantification

- Compute variance of prediction error distributions for each method

- Calculate proportion of iterations where each method demonstrates superiority

- Assess statistical significance of performance differences using linear mixed models

- Quantify improvement factors across parameter space
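A compact Monte Carlo version of this benchmark can be sketched in Python/NumPy. To stay short and self-contained it departs from the full design above in several assumed ways: a single fixed, hypothetical ultrametric tree made of sister pairs instead of birth-death trees, one held-out tip per replicate, and Brownian-motion traits with correlation r. It reports the variance of prediction errors for each method and the proportion of replicates in which PIP beats the PGLS predictive equation.

```python
import numpy as np


def sister_pair_cov(n_pairs, shared=0.9):
    """Hypothetical unit-depth ultrametric tree of sister pairs:
    sisters share `shared` units of path, other tips share none."""
    C = np.eye(2 * n_pairs)
    for k in range(n_pairs):
        C[2 * k, 2 * k + 1] = C[2 * k + 1, 2 * k] = shared
    return C


def gls(X, y, V):
    """Generalized least squares coefficients for covariance V."""
    XtVi = X.T @ np.linalg.inv(V)
    return np.linalg.solve(XtVi @ X, XtVi @ y)


def benchmark(n_pairs=25, r=0.5, reps=500, seed=0):
    rng = np.random.default_rng(seed)
    C = sister_pair_cov(n_pairs)
    n = C.shape[0]
    L_C = np.linalg.cholesky(C)
    L_R = np.linalg.cholesky(np.array([[1.0, r], [r, 1.0]]))
    obs = np.arange(1, n)            # tip 0 is the held-out species
    C_oo = C[np.ix_(obs, obs)]
    errors = {"PIP": [], "PGLS": [], "OLS": []}
    for _ in range(reps):
        traits = L_C @ rng.standard_normal((n, 2)) @ L_R.T   # correlated BM traits
        X = np.column_stack([np.ones(n), traits[:, 0]])
        y = traits[:, 1]
        b_ols = gls(X[obs], y[obs], np.eye(n - 1))
        b_pgls = gls(X[obs], y[obs], C_oo)
        pgls_pred = X[0] @ b_pgls
        pip_pred = pgls_pred + C[0, obs] @ np.linalg.solve(C_oo, y[obs] - X[obs] @ b_pgls)
        errors["OLS"].append(X[0] @ b_ols - y[0])
        errors["PGLS"].append(pgls_pred - y[0])
        errors["PIP"].append(pip_pred - y[0])
    errors = {m: np.array(e) for m, e in errors.items()}
    variances = {m: float(np.var(e)) for m, e in errors.items()}
    pip_better = float(np.mean(np.abs(errors["PIP"]) < np.abs(errors["PGLS"])))
    return variances, pip_better


variances, pip_better = benchmark()
print("error variance:", {m: round(v, 3) for m, v in variances.items()})
print("share of replicates where PIP beats the PGLS equation:", pip_better)
```

Looping `benchmark` over tree sizes and values of r would extend this toward the full parameter grid described above.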

Workflow Visualization

Figure 1: Complete workflow for implementing and validating phylogenetically informed predictions.

Figure 2: Performance benchmarking protocol against traditional predictive equations.

Research Reagent Solutions

Table 3: Essential computational tools and resources for phylogenetically informed prediction research.

| Research Reagent | Specification | Application Context | Implementation Source |
|---|---|---|---|
| R Statistical Environment | Version 4.0+ | Primary computing platform for phylogenetic comparative methods | Comprehensive R Archive Network (CRAN) |
| ape Package | Version 5.0+ | Phylogenetic tree manipulation, reading/writing phylogenetic formats | CRAN |
| nlme Package | Version 3.1+ | Implementation of phylogenetic generalized least squares (PGLS) | CRAN |
| phytools Package | Version 1.0+ | Phylogenetic simulation, visualization, and comparative methods | CRAN |
| phylolm Package | Version 2.6+ | Phylogenetic linear models with efficient computation | CRAN |
| Time-Calibrated Phylogenies | Ultrametric or non-ultrametric | Evolutionary framework for trait prediction | TreeBASE, Open Tree of Life |
| Phylogenetic Signal Metrics | Pagel's λ, Blomberg's K | Quantification of phylogenetic dependence in traits | R packages: phytools, picante |
| Model Selection Framework | AICc, Likelihood Ratio Tests | Evolutionary model selection for trait covariance | Standard statistical practice |

Discussion and Implementation Guidelines

Interpretation of Performance Metrics

The consistent improvement in prediction performance demonstrated by phylogenetically informed methods stems from their explicit accommodation of phylogenetic non-independence in species data. This advantage manifests as substantially narrower prediction error distributions, with phylogenetically informed predictions showing roughly 3.5-4.7× smaller error variance than traditional predictive equations in the simulations summarized above. The performance ratio remains broadly similar across trait correlation strengths, although absolute performance improves with stronger trait correlations.

The accuracy advantage of phylogenetic prediction proves most pronounced in datasets with strong phylogenetic signal, where traditional methods particularly suffer from pseudoreplication. However, even in weakly structured traits, the phylogenetic approach demonstrates superior performance by appropriately weighting evolutionary information. Researchers should note that predictive equations from PGLS models, while incorporating phylogeny for parameter estimation, still fail to leverage phylogenetic position for individual predictions, resulting in substantially reduced accuracy compared to full phylogenetic prediction.

Best Practices for Implementation

Successful implementation of phylogenetically informed prediction requires careful attention to several critical factors. First, phylogenetic scale and branch length accuracy directly impact prediction interval width, with increasing phylogenetic distance between predicted taxa and reference species resulting in appropriately wider prediction intervals. Second, researchers should prioritize Bayesian implementations when subsequent analysis requires sampling from predictive distributions, particularly for paleontological applications where prediction uncertainty propagates through further analysis.

For drug development applications focusing on evolutionary relationships among pathogens or protein families, non-ultrametric trees require special consideration, because the units of branch length directly shape the trait covariance structure. In these contexts, researchers should check whether branch lengths (often expressed in substitutions per site rather than time) are an appropriate proxy for the opportunity for trait change, since the Brownian motion model assumes that trait variance accumulates in proportion to branch length.

The study of trait evolution across species requires specialized statistical models that account for shared evolutionary history. Species are not independent data points; their phylogenetic relationships create a structure of expected correlation, where closely related species are likely to be more similar than distantly related ones due to their shared ancestry. Brownian Motion (BM) serves as a fundamental null model in phylogenetic comparative methods, portraying trait evolution as a random walk through time where variance accumulates proportionally with branch lengths. This framework provides the essential statistical foundation for testing evolutionary hypotheses, estimating ancestral states, and identifying patterns of adaptation across the tree of life. More complex models, including bounded Brownian motion, extend this basic framework to incorporate evolutionary constraints and other selective pressures, offering a powerful toolkit for understanding the tempo and mode of trait evolution.

Foundational Models and Theoretical Framework

Brownian Motion (BM) Model

Brownian Motion represents the simplest and most widely used model for continuous trait evolution. It assumes that trait changes along a branch are random and unbiased, modeled as a Gaussian process with a mean change of zero and a variance that increases linearly with elapsed time [3]. Under this model, the covariance between the trait values of two species is given by:

$$\mathrm{Cov}[x_i, x_j] = \sigma^2 \, t_{ij}$$

where σ² is the evolutionary rate parameter and tᵢⱼ is the length of the evolutionary path shared by species i and j, measured from the root to their most recent common ancestor [3]. For i = j this is the total root-to-tip length, so Var[xᵢ] = σ² tᵢᵢ. Assembling these terms for all pairs of species gives a variance-covariance matrix that can be used in Generalized Least Squares (GLS) analyses to account for phylogenetic non-independence.
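The covariance formula can be applied directly to a tree. The stdlib-only Python sketch below stores a hypothetical tree, ((A:1,B:1):1,C:2), as a child → (parent, branch length) map, collects the edges on each tip's path to the root, and sums the lengths of the edges two tips share; the function and node names are illustrative.

```python
def bm_covariance(tips, parent, sigma2=1.0):
    """sigma2 * t_ij, where t_ij is the summed length of edges shared by the
    root-to-tip paths of tips i and j (each edge is named by its child node)."""
    def edges_to_root(node):
        edges = set()
        while node in parent:          # the root has no parent entry
            edges.add(node)
            node = parent[node][0]
        return edges

    paths = {t: edges_to_root(t) for t in tips}
    return [[sigma2 * sum(parent[e][1] for e in paths[a] & paths[b]) for b in tips]
            for a in tips]


# Hypothetical tree ((A:1,B:1):1,C:2) with internal node "n1"
parent = {"n1": ("root", 1.0), "A": ("n1", 1.0), "B": ("n1", 1.0), "C": ("root", 2.0)}
for row in bm_covariance(["A", "B", "C"], parent):
    print(row)
```

A and B share only the edge leading to n1, so their covariance is σ² × 1, while C shares nothing with either and has covariance 0 with both.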

Bounded Brownian Motion (BBM)

Bounded Brownian Motion represents a significant extension of the basic BM model by incorporating upper and lower reflective bounds on trait values [4]. This model is particularly relevant for traits subject to physiological, biophysical, or ecological constraints that prevent unlimited divergence. The model can be conceptualized as a particle undergoing Brownian motion within a confined space, with the bounds representing evolutionary constraints. The mathematical formulation connects BBM to discrete Markov models through the relationship:

$$\sigma^2 = 2q\left(\frac{w}{k}\right)^2$$

where q is the transition rate between adjacent discrete states, w is the width of the interval between the lower and upper bounds, and k is the number of discrete trait categories used to approximate the likelihood [4]. Dividing the bounded interval into k bins of width w/k in this way allows researchers to fit bounded evolutionary models using modified discrete-character analysis frameworks.
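The relationship can be inverted to find the transition rate implied by a given Brownian rate and discretization. A small Python sketch; the bounds and σ² below are hypothetical, and w is taken as the distance between the lower and upper bounds:

```python
def sigma2_from_q(q, w, k):
    """Brownian rate implied by rate q of moving to an adjacent bin of width w / k."""
    return 2.0 * q * (w / k) ** 2


def q_from_sigma2(sigma2, w, k):
    """Transition rate between adjacent bins needed to reproduce rate sigma2."""
    return sigma2 * k ** 2 / (2.0 * w ** 2)


lower, upper = -2.0, 3.0          # hypothetical bounds on a trait
w = upper - lower
for k in (50, 200):
    print(f"k = {k}: q = {q_from_sigma2(0.4, w, k):.1f}")
```

Doubling k (halving the bin width) quadruples the q required for the same σ², one reason finer discretizations are more expensive to compute.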

Phylogenetic Signal Quantification

Phylogenetic signal measures the extent to which related species resemble each other and can be quantified using metrics such as Pagel's λ [3]. λ typically ranges from 0 to 1: λ = 1 indicates trait covariances consistent with Brownian motion along the specified phylogeny, whereas λ = 0 indicates no phylogenetic dependence (equivalent to a star phylogeny). This metric is essential for understanding the relative importance of phylogenetic history versus other evolutionary forces in shaping trait distributions across species.
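Pagel's λ works by multiplying the off-diagonal (shared-history) elements of the phylogenetic covariance matrix by λ while leaving the diagonal unchanged, and λ is usually estimated by maximum likelihood. The Python/NumPy sketch below does this with a simple grid search over [0, 1] and a profile likelihood in which the mean and σ² are replaced by their estimates; it is an illustration with hypothetical data, not the optimizer used by phytools or other packages.

```python
import numpy as np


def lambda_transform(C, lam):
    """Scale off-diagonal covariances by lam; keep the diagonal (tip heights)."""
    C = np.asarray(C, dtype=float)
    C_lam = lam * C
    np.fill_diagonal(C_lam, np.diag(C))
    return C_lam


def profile_loglik(y, C):
    """Gaussian log-likelihood with the GLS mean and ML sigma^2 plugged in."""
    n = len(y)
    ones = np.ones(n)
    Ci = np.linalg.inv(C)
    mu = (ones @ Ci @ y) / (ones @ Ci @ ones)
    resid = y - mu
    sigma2 = (resid @ Ci @ resid) / n
    _, logdet = np.linalg.slogdet(C)
    return -0.5 * (n * np.log(2 * np.pi * sigma2) + logdet + n)


def estimate_lambda(y, C, grid=None):
    """Grid-search maximum-likelihood estimate of Pagel's lambda."""
    grid = np.linspace(0.0, 1.0, 101) if grid is None else grid
    logliks = [profile_loglik(y, lambda_transform(C, lam)) for lam in grid]
    return float(grid[int(np.argmax(logliks))])


# Hypothetical tree: two cherries, unit depth, sisters share 0.8
C = np.array([[1.0, 0.8, 0.0, 0.0],
              [0.8, 1.0, 0.0, 0.0],
              [0.0, 0.0, 1.0, 0.8],
              [0.0, 0.0, 0.8, 1.0]])
y = np.array([1.0, 1.1, -0.9, -1.2])   # sisters resemble each other
print("lambda estimate:", estimate_lambda(y, C))
```

With four species the likelihood surface is very flat, so in practice λ estimates need reasonably large trees to be informative.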

Table 1: Key Models of Trait Evolution and Their Applications

| Model | Core Principle | Key Parameters | Typical Applications |
|---|---|---|---|
| Brownian Motion (BM) | Traits evolve as an unbiased random walk through time | σ² (evolutionary rate), x₀ (root state) | Neutral evolution benchmark, ancestral state reconstruction |
| Bounded Brownian Motion (BBM) | BM with reflective upper and lower bounds | σ², x₀, upper/lower bounds | Constrained trait evolution, traits with physiological limits |
| Ornstein-Uhlenbeck (OU) | BM with a central tendency (pull toward optimum) | σ², α (strength of selection), θ (optimum) | Adaptive evolution, stabilizing selection, niche-filling |
| Pagel's λ | Scales phylogenetic correlations from 0 to 1 | λ (phylogenetic signal strength) | Hypothesis testing for phylogenetic inertia, model fitting |

Practical Implementation and Protocols

Data Preparation and Phylogenetic Alignment

Proper data organization is essential for phylogenetic comparative analyses. The protocol begins with ensuring exact correspondence between species names in the trait dataset and the phylogenetic tree tip labels [3].

Protocol 3.1.1: Data-Tree Alignment

- Import the phylogenetic tree in Newick or Nexus format using the `read.tree()` or `read.nexus()` functions [3]

- Import trait data from a CSV file into a data frame using `read.csv()` [3]

- Verify that species names in the trait data (`mydata$species`) match the tree tip labels (`mytree$tip.label`) exactly, including case [3]

- Set the row names of the data frame to the species names: `rownames(mydata) <- mydata$species` [3]

- Reorder the data frame rows to match the tree tip order: `mydata <- mydata[match(mytree$tip.label, rownames(mydata)), ]` [3]
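For readers working outside R, the same alignment checks can be sketched in Python. This stdlib-only sketch is an analogue of the R steps above, not a replacement for them; the function name, record layout, and species names are hypothetical. Dictionary lookups are case-sensitive, matching the "exactly, including case" requirement.

```python
def align_to_tips(tip_labels, records, key="species"):
    """Return trait records reordered to follow the tree's tip order.
    Raises ValueError if any name is present in only one of the two sources."""
    by_name = {rec[key]: rec for rec in records}
    tips = set(tip_labels)
    not_in_data = [t for t in tip_labels if t not in by_name]
    not_in_tree = [name for name in by_name if name not in tips]
    if not_in_data or not_in_tree:
        raise ValueError(f"unmatched tips: {not_in_data}; unmatched data rows: {not_in_tree}")
    return [by_name[t] for t in tip_labels]


tips = ["sp_C", "sp_A", "sp_B"]
rows = [{"species": "sp_A", "value": 1.0},
        {"species": "sp_B", "value": 2.0},
        {"species": "sp_C", "value": 3.0}]
print([r["species"] for r in align_to_tips(tips, rows)])
```

Failing loudly on any mismatch, rather than silently dropping rows, mirrors the advice to verify names before reordering.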

Phylogenetic Independent Contrasts (PIC)

The PIC method transforms species data into independent comparisons, each representing an evolutionary divergence event, thereby correcting for phylogenetic non-independence [3].

Protocol 3.2.1: Computing and Analyzing Contrasts

- Ensure data and tree alignment (Protocol 3.1.1) and check for zero branch lengths [3]

- Compute contrasts for traits x and y, stored as `x1` and `y1` (e.g., with the `pic()` function in ape) [3]

- Fit a linear model through the origin: `z <- lm(y1 ~ x1 - 1)` [3]

- Calculate the phylogenetic correlation from the contrasts [3]

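Troubleshooting: If PIC calculation produces NaN or Inf values, inspect branch lengths with `mytree$edge.length` and `range(mytree$edge.length)`. Zero-length branches are the usual cause; add a small constant (e.g., 0.001) to all branches with `mytree$edge.length <- mytree$edge.length + 0.001` [3]. Note that adding a constant to every edge can make an ultrametric tree slightly non-ultrametric.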
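The contrast algorithm itself is short. The stdlib-only Python sketch below follows Felsenstein's pruning recursion on a binary tree written as nested tuples (a hypothetical representation, not ape's `phylo` object). Each contrast is the difference between two sister values divided by the square root of the sum of their (adjusted) branch lengths, which is why zero-length sister branches make it undefined. It then computes the slope and correlation of a regression through the origin.

```python
import math


def pic(node, values, out):
    """Return (node value, adjusted branch length) and append contrasts to `out`.
    A tip is (name, length); an internal node is ((left, right), length)."""
    label, length = node
    if isinstance(label, str):
        return values[label], length
    (x1, v1), (x2, v2) = (pic(child, values, out) for child in label)
    if v1 + v2 <= 0:
        raise ValueError("zero-length sister branches: contrast undefined")
    out.append((x1 - x2) / math.sqrt(v1 + v2))
    node_value = (x1 * v2 + x2 * v1) / (v1 + v2)   # weights favor the shorter branch
    return node_value, length + v1 * v2 / (v1 + v2)


def contrasts(tree, values):
    out = []
    pic(tree, values, out)
    return out


def through_origin(cx, cy):
    """Slope and correlation of contrasts, with the regression forced through 0."""
    sxy = sum(a * b for a, b in zip(cx, cy))
    sxx = sum(a * a for a in cx)
    syy = sum(b * b for b in cy)
    return sxy / sxx, sxy / math.sqrt(sxx * syy)


# Hypothetical tree ((A:1,B:1):1,(C:1,D:1):1)
ab = ((("A", 1.0), ("B", 1.0)), 1.0)
cd = ((("C", 1.0), ("D", 1.0)), 1.0)
tree = ((ab, cd), 0.0)
cx = contrasts(tree, {"A": 1.0, "B": 3.0, "C": 4.0, "D": 8.0})
cy = contrasts(tree, {"A": 2.0, "B": 5.0, "C": 5.0, "D": 11.0})
print("slope, correlation:", through_origin(cx, cy))
```

A tree with n tips yields n − 1 contrasts, one per internal node, which is the sample size for the regression through the origin.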

Generalized Least Squares (GLS) with Phylogenetic Correlation

GLS incorporates the phylogenetic variance-covariance matrix directly into linear models, providing a more flexible framework for phylogenetic correction [3].

Protocol 3.3.1: Phylogenetic GLS Implementation

- Compute the phylogenetic correlation matrix under Brownian motion [3]

- Fit the GLS model using the `nlme` package [3]

- Extract and interpret coefficients, standard errors, and p-values using `summary(model_gls)`
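The quantities a phylogenetic GLS fit reports can be sketched with matrix algebra. In the Python/NumPy sketch below, β̂ = (XᵀC⁻¹X)⁻¹XᵀC⁻¹y and Var(β̂) = σ̂²(XᵀC⁻¹X)⁻¹, with σ̂² = rᵀC⁻¹r/(n − p) for residuals r. These are the standard GLS formulas under an assumed Brownian motion C; package output (e.g., nlme's REML fit) may differ in how σ² is scaled or estimated. The tree and data are hypothetical.

```python
import numpy as np


def phylo_gls(X, y, C):
    """GLS coefficients, standard errors, and residual variance for covariance C."""
    Ci = np.linalg.inv(C)
    XtCiX = X.T @ Ci @ X
    beta = np.linalg.solve(XtCiX, X.T @ Ci @ y)
    resid = y - X @ beta
    n, p = X.shape
    sigma2 = (resid @ Ci @ resid) / (n - p)
    se = np.sqrt(np.diag(sigma2 * np.linalg.inv(XtCiX)))
    return beta, se, sigma2


# Hypothetical four-species tree ((A:1,B:1):1,(C:1,D:1):1)
C = np.array([[2.0, 1.0, 0.0, 0.0],
              [1.0, 2.0, 0.0, 0.0],
              [0.0, 0.0, 2.0, 1.0],
              [0.0, 0.0, 1.0, 2.0]])
x = np.array([0.5, 1.0, 2.0, 2.5])
y = np.array([1.9, 3.2, 5.8, 7.6])
X = np.column_stack([np.ones(4), x])
beta, se, sigma2 = phylo_gls(X, y, C)
print("coefficients:", beta, "standard errors:", se)
```

Replacing C with the identity matrix recovers ordinary least squares, which is a convenient check that the phylogenetic structure is what changes the estimates.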

Implementing Bounded Brownian Motion

The BBM model can be fitted using the bounded_bm function in the phytools package, which implements the Boucher & Démery (2016) approach [4].

Protocol 3.4.1: Fitting Bounded Brownian Motion

- Install the development version of `phytools` from GitHub [4]

- Fit the bounded model with appropriate parameters [4]

- Examine the model output: `print(mammal_bounded)`

- Compare with unbounded BM using likelihood ratio tests or AIC

Table 2: Comparison of Phylogenetic Comparative Methods

| Method | Key Assumptions | Advantages | Limitations |
|---|---|---|---|
| Phylogenetic Independent Contrasts (PIC) | Brownian motion evolution; known phylogeny with branch lengths | Intuitive interpretation; computationally simple | Limited to simple regression; assumes BM model |
| Generalized Least Squares (GLS) | Specified evolutionary model (e.g., BM, OU) | Flexible framework; accommodates multiple predictors | Requires matrix inversion; computationally intensive for large trees |
| Bounded Brownian Motion (BBM) | BM with reflective bounds; discretization adequately approximates continuous trait | Models evolutionary constraints; more realistic for many traits | Computationally demanding; requires large matrix exponentiation |
| Maximum Likelihood (ML) | Specified model of evolution; phylogenetic tree | Allows direct model comparison; estimates all parameters simultaneously | Computationally intensive; potential convergence issues |

Advanced Applications and Integration

Pharmacophylogeny in Drug Discovery

The integration of phylogenetic comparative methods with drug discovery has catalyzed the emerging field of pharmacophylogeny, which exploits evolutionary relationships to predict phytochemical composition and bioactivity [5]. Closely related plant taxa often share conserved metabolic pathways, enabling targeted bioprospecting based on phylogenetic position. For instance, the distribution of palmatine—an isoquinoline alkaloid with multi-target activity against inflammation, infection, and metabolic disorders—across Ranunculales lineages demonstrates how pharmacophylogeny predicts alkaloid-rich taxa for drug development [5]. Similarly, phylogenetic "hot nodes" in Fabaceae have successfully predicted phytoestrogen-rich lineages, including Glycyrrhiza and Glycine, by integrating ethnomedicinal data with evolutionary relationships [5].

Multi-Omics Integration (Pharmacophylomics)

Pharmacophylomics represents the cutting-edge integration of phylogenomics, transcriptomics, and metabolomics to decode biosynthetic pathways and predict therapeutic utilities [5]. This approach links the triad of phylogeny, chemistry, and efficacy through several key strategies:

- Phylogeny-Guided Metabolomics: mapping metabolomic divergence across newly identified species, as demonstrated in Paris species (Melanthiaceae), where terpenoids and steroidal saponins dominated chemoprofiles, with novel metabolites linked to anticancer and anti-inflammatory activities [5]

- Chloroplast Genomics and DNA Barcoding: resolving phylogenetic ambiguities among morphologically similar species, as applied to Tetrastigma hemsleyanum (Vitaceae), establishing species-specific markers to prevent adulteration and identifying flavonoid biosynthesis genes under positive selection [5]

- Network Pharmacology: elucidating synergistic regulation of multiple pathways, exemplified by schaftoside in C. nutans, which simultaneously modulates NF-κB and MAPK pathways to produce anti-inflammatory effects [5]

Diagram 1: Workflow for Phylogenetic Comparative Analysis

The Scientist's Toolkit

Research Reagent Solutions

Table 3: Essential Tools for Phylogenetic Comparative Methods

| Tool/Resource | Function | Application Context |
|---|---|---|
| ape package (R) | Reads/writes phylogenetic trees; implements PIC and basic comparative methods | Fundamental data handling and tree manipulation; phylogenetic independent contrasts [3] |
| phytools package (R) | Implements advanced methods including bounded Brownian motion, phylogenetic signal, trait visualization | Complex model fitting; specialized visualizations; simulation studies [3] [4] |
| geiger package (R) | Fits continuous trait evolution models; model comparison and simulation | Standard Brownian motion fitting; model selection [4] |
| nlme package (R) | Fits generalized least squares models with correlation structures | Phylogenetic GLS analysis; flexible linear modeling with phylogenetic correction [3] |
| Bounded BM Software (Boucher) | Specialized implementation of bounded Brownian motion models | Testing evolutionary constraints; reflective boundary models [4] |
| Newick Tree Format | Standard text representation of phylogenetic trees | Tree storage and exchange between applications [3] |
| Nexus Tree Format | Extended format with metadata support | Complex phylogenetic data with associated information [3] |

Computational Optimization Strategies

Implementation of computationally intensive methods like bounded Brownian motion requires strategic optimization. The bounded_bm function in phytools addresses this through parallel computing, calculating large matrix exponentials for all tree edges simultaneously using the foreach package rather than serially during pruning [4]. For most applications, a discretization level of levs = 200 provides sufficient accuracy without excessive computation, balancing precision and practical runtime [4].
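A small NumPy sketch can illustrate the discretization behind `levs`. It approximates Brownian motion with rate σ² on a bounded interval as a random walk among `levs` equal bins with reflecting ends. It then computes the transition matrix for one branch as a matrix exponential. The argument name `levs` mirrors the phytools argument mentioned above. Everything else is our own illustrative assumption and is not the phytools source code: the nearest-neighbour generator, and the use of `numpy.linalg.eigh`, which is valid here because the generator is symmetric.

```python
import numpy as np

def bounded_bm_generator(sigma2, lower, upper, levs=200):
    """Rate matrix of a reflecting random walk approximating Brownian motion
    with rate sigma2 confined to [lower, upper], discretized into levs bins."""
    dx = (upper - lower) / levs           # bin width
    rate = sigma2 / (2.0 * dx ** 2)       # jump rate to each neighbouring bin
    Q = np.zeros((levs, levs))
    for i in range(levs):
        if i > 0:
            Q[i, i - 1] = rate
        if i < levs - 1:
            Q[i, i + 1] = rate
        Q[i, i] = -Q[i].sum()             # rows sum to zero; edge bins reflect
    return Q

def branch_transition(Q, t):
    """P(t) = expm(Q * t) via eigendecomposition (Q is symmetric here)."""
    w, V = np.linalg.eigh(Q)
    return (V * np.exp(w * t)) @ V.T
```

Every edge of the tree needs one such matrix. This is why it pays to compute them up front, in parallel, when the tree is large or the discretization is fine.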

Diagram 2: Computational Framework for Phylogenetic Model Fitting

Future Directions and Innovations

The field of phylogenetic comparative methods continues to evolve with several emerging frontiers. Horizontal expansion into neglected taxonomic lineages (e.g., algae, lichens) and fermentation-modified phytometabolites offers untapped biosynthetic diversity for drug discovery [5]. Vertical integration through synthetic biology enables engineering of high-yield metabolites by leveraging phylogenomics-predicted biosynthetic routes, such as those for palmatine in Ranunculales [5]. Climate resilience research explores metabolomic plasticity in medicinal plants under environmental stress, potentially harnessing cold-adaptation mechanisms from species like Saussurea to engineer drought-tolerant medicinal crops [5]. Finally, ecophylogenetic conservation combines IUCN Red List assessments with pharmacophylogenetic hotspots to establish "pharmaco-sanctuaries" for critically endangered medicinal taxa, balancing therapeutic discovery with environmental stewardship [5].

Phylogenetic Signal, Conservatism, and Independent Contrasts

Application Note: Quantifying and Interpreting Phylogenetic Signal

Conceptual Foundation

Phylogenetic signal describes the statistical dependence among species' trait values resulting from their evolutionary relationships [6]. In practical terms, it measures the tendency for related species to resemble each other more than they resemble species drawn randomly from a phylogenetic tree [6]. This pattern arises because traits are inherited from common ancestors, creating evolutionary conservatism where closely related species typically share similar characteristics across morphological, ecological, life-history, and behavioral dimensions [6].

Understanding phylogenetic signal has critical applications across biological research. It helps researchers determine the degree to which traits are correlated, reconstruct how and when traits evolved, identify processes driving community assembly, assess niche conservatism across phylogenies, and evaluate relationships between vulnerability to climate change and phylogenetic history [6]. For drug development professionals, phylogenetic signal analysis may help reveal evolutionary constraints on molecular targets and inform expectations about compound efficacy across related species.

Measurement Indices and Selection Criteria

Table 1: Statistical Measures for Phylogenetic Signal Analysis

| Statistic | Data Type | Statistical Framework | Key Application |
|---|---|---|---|
| Blomberg's K | Continuous | Permutation test | Measures signal relative to the Brownian motion expectation: K = 1 matches Brownian motion, K < 1 indicates less signal than expected, and K > 1 indicates more (strong conservatism) [6] [7] |
| Pagel's λ | Continuous | Maximum likelihood | Scales the off-diagonal phylogenetic covariances: λ = 0 indicates no signal and λ = 1 indicates signal as expected under Brownian motion [6] [7] |
| Moran's I | Continuous | Permutation test | Spatial autocorrelation measure applied to phylogenetic distances [6] |
| Abouheif's C~mean~ | Continuous | Permutation test | Tests for phylogenetic independence in comparative data [6] [7] |
| D Statistic | Categorical (binary) | Permutation test | Assesses phylogenetic signal in binary traits [6] |
| δ Statistic | Categorical | Bayesian/Likelihood | Uses Shannon entropy to measure signal; accounts for tree uncertainty [6] [8] |

Selection of the appropriate metric depends on several factors. Continuous traits (e.g., body size, expression levels) are best analyzed with Blomberg's K or Pagel's λ. Categorical traits (e.g., presence/absence of pathways, drug response categories) require specialized statistics such as the D or δ statistics [6]. Blomberg's K is well suited to assessing deviation from Brownian motion expectations. Pagel's λ multiplies the off-diagonal elements of the phylogenetic variance-covariance matrix, and its estimate can be tested against specific evolutionary models (e.g., λ = 0 versus λ = 1) [6]. For studies with tree uncertainty, the δ statistic incorporates phylogenetic error by sampling from posterior tree distributions [8].
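Because Pagel's λ only rescales off-diagonal elements, it can be sketched directly. The NumPy example below makes three assumptions. First, the phylogenetic variance-covariance matrix C is already available (for example, exported from R with `ape::vcv`). Second, the root state and Brownian rate are profiled out. Third, λ is estimated by a simple grid search over [0, 1]. The function names are hypothetical. The grid search also stands in for the numerical optimizers that packages such as phylolm or phytools use.

```python
import numpy as np

def lambda_transform(C, lam):
    """Pagel's lambda: multiply off-diagonal covariances by lam, keep the diagonal."""
    C = np.asarray(C, dtype=float)
    C_lam = lam * C
    np.fill_diagonal(C_lam, np.diag(C))
    return C_lam

def bm_loglik(C, x):
    """Profile log-likelihood of x under BM with covariance sigma2 * C;
    the GLS root state and sigma2 are set to their ML estimates."""
    x = np.asarray(x, dtype=float)
    n = len(x)
    Cinv = np.linalg.inv(C)
    ones = np.ones(n)
    a = ones @ Cinv @ x / (ones @ Cinv @ ones)   # GLS root-state estimate
    e = x - a
    sigma2 = e @ Cinv @ e / n                    # ML rate estimate
    _, logdet = np.linalg.slogdet(C)
    return -0.5 * (n * np.log(2 * np.pi * sigma2) + logdet + n)

def fit_pagel_lambda(C, x, grid=np.linspace(0.0, 1.0, 101)):
    """Grid-search maximum-likelihood estimate of lambda in [0, 1]."""
    lls = [bm_loglik(lambda_transform(C, lam), x) for lam in grid]
    best = int(np.argmax(lls))
    return grid[best], lls[best]
```

A likelihood-ratio test for phylogenetic signal compares the log-likelihood at the estimated λ with the value at λ = 0.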

Protocol: Implementing Phylogenetic Signal Analysis

Workflow Visualization

Step-by-Step Procedure

Step 1: Data Preparation and Curation

Collect trait data and a phylogenetic tree, ensuring that taxon labels are identical across datasets. For genomic applications, align sequences and reconstruct the phylogeny using appropriate models. For drug development studies, compile compound sensitivity data (IC~50~ values) or target receptor characteristics across species. Format trait data as a vector whose names match the tip labels in the phylogeny. Follow data-sharing best practices: include README files, use meaningful taxon labels, and apply CC0 waivers to maximize reuse [9].

Step 2: Metric Selection and Implementation

Select the appropriate phylogenetic signal metric based on trait type (continuous vs. categorical) and research question. For continuous traits (e.g., protein expression levels), compute Blomberg's K in R with the picante package, or estimate Pagel's λ with phylolm. For categorical traits (e.g., presence/absence of adverse effects), use the δ statistic, whose Python implementation accounts for tree uncertainty [8].
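Blomberg's K can also be computed outside R from the same ingredients in a few lines. The sketch below follows the usual definition. The raw mean squared error is divided by the phylogenetically corrected one, and that ratio is divided by its expectation under Brownian motion. The sketch assumes the variance-covariance matrix C has already been derived from the tree. `blomberg_k` is a hypothetical helper and is not part of picante.

```python
import numpy as np

def blomberg_k(C, x):
    """Blomberg's K for trait vector x given the phylogenetic VCV matrix C."""
    C = np.asarray(C, dtype=float)
    x = np.asarray(x, dtype=float)
    n = len(x)
    Cinv = np.linalg.inv(C)
    ones = np.ones(n)
    a = ones @ Cinv @ x / (ones @ Cinv @ ones)   # phylogenetic (GLS) mean
    e = x - a
    mse0 = e @ e / (n - 1)                       # ignores the tree
    mse = e @ Cinv @ e / (n - 1)                 # accounts for the tree
    expected = (np.trace(C) - n / Cinv.sum()) / (n - 1)  # MSE0/MSE expected under BM
    return (mse0 / mse) / expected
```

On a star phylogeny the observed and expected ratios coincide, so K = 1 for any non-constant trait. When similar values cluster within clades, K rises above 1.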

Step 3: Statistical Testing and Validation

Calculate the observed test statistic and compare it against a null distribution generated by randomly reassigning trait values among tip labels (e.g., n = 1000 permutations). For the δ statistic, account for phylogenetic uncertainty by computing the metric across trees sampled from a posterior distribution (approximately 600-800 trees for convergence) [8]. Treat p < 0.05 as evidence of significant phylogenetic signal.
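The tip-randomization test works the same way for any statistic. The sketch below pairs it with Moran's I from Table 1. As proximity weights, it uses the branch length each pair of species shares, which is the off-diagonal of C with the diagonal set to zero. This weighting choice and the helper names are assumptions for illustration. The p-value counts the observed arrangement as one member of the null distribution.

```python
import numpy as np

def morans_i(W, x):
    """Moran's I autocorrelation of trait x for a proximity matrix W."""
    z = np.asarray(x, dtype=float) - np.mean(x)
    return len(z) / W.sum() * (z @ W @ z) / (z @ z)

def tip_permutation_test(stat, W, x, n_perm=1000, seed=1):
    """One-sided test: shuffle trait values across tips to build the null."""
    rng = np.random.default_rng(seed)
    observed = stat(W, x)
    null = np.array([stat(W, rng.permutation(x)) for _ in range(n_perm)])
    p = (np.sum(null >= observed) + 1) / (n_perm + 1)  # observed counted once
    return observed, p
```

The four-taxon example in the test has only 24 distinct arrangements, so the test has almost no power there. Real analyses need considerably more taxa.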

Step 4: Interpretation and Application

Interpret results in biological context. Strong phylogenetic signal is consistent with trait conservatism arising from slow evolutionary rates or stabilizing selection. Weak signal may reflect convergence, rapid evolution, or adaptive divergence [6]. For drug development, these findings can help predict efficacy in untested species based on their phylogenetic proximity to sensitive species, or identify evolutionary constraints on drug targets.

Application Note: Phylogenetic Independent Contrasts

Theoretical Foundation

Phylogenetic Independent Contrasts (PICs) provide a methodological framework for estimating evolutionary correlations between characters while accounting for non-independence of species data due to shared ancestry [10] [11]. Developed by Felsenstein (1985), PICs transform trait values into statistically independent comparisons representing evolutionary changes at each node in the phylogeny [10]. This approach effectively controls for phylogenetic relationships that would otherwise violate statistical assumptions of standard regression and correlation analyses.

The method operates under a Brownian motion model of evolution, in which the expected variance of trait divergence increases linearly with time. PICs are "contrasts", that is, differences between sister taxa or estimated node values, each standardized by its expected standard deviation under Brownian motion [10]. If the model and branch lengths are adequate, these standardized contrasts are independent, identically distributed data points. They can then be analyzed with conventional statistical methods without phylogenetic bias.

Protocol: Implementing Phylogenetic Independent Contrasts

Workflow Visualization

Step-by-Step Computational Procedure

Step 1: Data Preparation and Tree Validation

Obtain or reconstruct a phylogenetic tree with branch lengths proportional to time or molecular divergence. Prepare trait datasets with identical taxon labels. For multivariate analyses, ensure all traits are measured across the same species. PICs do not require an ultrametric tree, but they do assume branch lengths are proportional to expected trait variance under Brownian motion [10]. If you use a time-calibrated tree, verify that it is ultrametric (equal root-to-tip distances).
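Ultrametricity can be checked by comparing root-to-tip distances; in R, `ape::is.ultrametric` does this. The sketch below stands in for a real tree parser with a hypothetical nested-tuple encoding. A tip is `(name, length)` and an internal node is `((children...), length)`. A small tolerance absorbs rounding in branch lengths.

```python
def root_to_tip(node, depth=0.0, out=None):
    """Map each tip name to its distance from the root.
    Tips are (name, length); internal nodes are ((children...), length)."""
    if out is None:
        out = {}
    label, length = node
    depth += length
    if isinstance(label, str):          # tip
        out[label] = depth
    else:                               # internal node: recurse into children
        for child in label:
            root_to_tip(child, depth, out)
    return out

def is_ultrametric(tree, tol=1e-8):
    """True if all root-to-tip distances agree within tol."""
    depths = root_to_tip(tree).values()
    return max(depths) - min(depths) <= tol
```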

Step 2: Contrast Calculation Algorithm

Compute contrasts in a single tips-to-root (post-order) traversal of the tree, following Felsenstein (1985) [10]:

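- Identify two adjacent tips (i, j) that descend from a common ancestor (k)

- Compute the raw contrast: c~ij~ = x~i~ - x~j~

- Calculate its expected variance under Brownian motion: v~i~ + v~j~, where v~i~ and v~j~ are the branch lengths from k to tips i and j

- Standardize the contrast by its expected standard deviation: s~ij~ = c~ij~ / √(v~i~ + v~j~)

- Estimate the ancestral state: x~k~ = (x~i~/v~i~ + x~j~/v~j~) / (1/v~i~ + 1/v~j~)

- Lengthen the branch leading to k by v~i~v~j~ / (v~i~ + v~j~) to reflect the uncertainty in the estimate of x~k~

- Treat k as a new tip and repeat until the root is reached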
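These steps translate into a short recursive function. The sketch uses a hypothetical nested-tuple tree encoding: `(name, length)` for tips and `((left, right), length)` for internal nodes. It assumes a fully bifurcating tree with positive branch lengths. In R, `ape::pic` provides a production implementation.

```python
import math

def contrasts(node, traits, out):
    """Felsenstein's independent contrasts on a bifurcating tree.

    Appends standardized contrasts to `out` and returns (x_k, v_k): the
    node's estimated trait value and its (lengthened) branch length."""
    label, length = node
    if isinstance(label, str):                       # tip: observed value
        return traits[label], length
    left, right = label
    x_i, v_i = contrasts(left, traits, out)
    x_j, v_j = contrasts(right, traits, out)
    out.append((x_i - x_j) / math.sqrt(v_i + v_j))   # standardized contrast
    x_k = (x_i / v_i + x_j / v_j) / (1.0 / v_i + 1.0 / v_j)
    v_k = length + v_i * v_j / (v_i + v_j)           # extra variance from estimating x_k
    return x_k, v_k
```

For n tips the function produces n - 1 contrasts. The value returned for the root equals the phylogenetically weighted (GLS) mean of the trait.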

Step 3: Regression and Correlation Analysis

Regress the standardized contrasts of the dependent variable (Y) on those of the independent variable (X), forcing the regression through the origin.
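Regression through the origin on contrasts reduces to a ratio of sums. The sketch takes matched lists of standardized contrasts for X and Y, for example computed with `ape::pic` for each trait. Contrast signs are arbitrary, so by convention each pair is flipped to make the X contrast positive; this does not change the slope. The standard error uses n - 1 degrees of freedom because only one parameter is estimated. The helper name is hypothetical.

```python
import math

def rto_slope(cx, cy):
    """Least-squares slope through the origin for paired contrasts, with its
    standard error (n - 1 degrees of freedom for n contrasts)."""
    # Orient each pair so the X contrast is positive (plotting convention;
    # flipping both signs leaves x*y and x*x, and hence the slope, unchanged).
    pairs = [(-x, -y) if x < 0 else (x, y) for x, y in zip(cx, cy)]
    sxx = sum(x * x for x, _ in pairs)
    sxy = sum(x * y for x, y in pairs)
    b = sxy / sxx
    rss = sum((y - b * x) ** 2 for x, y in pairs)
    se = math.sqrt(rss / (len(pairs) - 1) / sxx)
    return b, se
```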

Compare PIC results with non-phylogenetic analyses to assess phylogenetic effects. For the centrarchid fish example, PIC analysis revealed a significant but weaker relationship (slope = 0.59, p = 0.028) compared to standard regression (slope = 1.07, p = 0.010) between buccal length and gape width [11].

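Step 4: Diagnostic Testing and Interpretation

Use diagnostic plots to check that the standardized contrasts are approximately normally distributed and show no residual structure. Check for a correlation between the absolute values of the standardized contrasts and their standard deviations, which are the square roots of their summed branch lengths. A significant correlation indicates that the branch lengths do not adequately standardize the contrasts, a violation of the Brownian motion model. Interpret results in evolutionary context: significant relationships indicate correlated evolution between traits after accounting for phylogenetic history.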
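The standardization diagnostic is a simple correlation. The sketch assumes you have kept each standardized contrast together with its standard deviation, √(v~i~ + v~j~), from the traversal. The helper name is hypothetical.

```python
import numpy as np

def contrast_sd_diagnostic(standardized, sds):
    """Pearson correlation between |standardized contrasts| and their standard
    deviations; values far from zero suggest inadequate standardization."""
    return float(np.corrcoef(np.abs(standardized), sds)[0, 1])
```

A formal test would compare this correlation against its null distribution. When the correlation is strong, one commonly suggested remedy is to transform the branch lengths (for example, log-transform them) and recompute the contrasts.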

Research Reagent Solutions

Table 2: Essential Resources for Phylogenetic Comparative Methods

| Resource Category | Specific Tool/Database | Function and Application |
|---|---|---|
| Molecular Databases | NCBI GenBank, Ensembl, OrthoMAM | Source for gene sequences, annotated genomes, and orthologous gene alignments for phylogenetic reconstruction [12] [8] |
| Protein Databases | UniProt, Pfam, CATH, PDB | Protein sequence, functional annotation, domain architecture, and structural information for evolutionary analyses [12] |
| Tree Visualization | Archaeopteryx, TreeGraph2, Creately | Visualization and manipulation of phylogenetic trees for analysis and publication [13] [14] |
| Comparative Methods Software | R packages: ape, phytools, picante | Implementation of phylogenetic signal metrics, independent contrasts, and other comparative methods [11] |
| Bayesian Evolutionary Analysis | RevBayes, BEAST2 | Bayesian phylogenetic inference with relaxed clock models and tree uncertainty estimation for δ statistic applications [8] |
| Data Repositories | TreeBASE, Dryad, MorphoBank | Public archives for phylogenetic trees, character matrices, and alignments supporting reproducible research [9] |

Advanced Applications and Integration

Integration with Genomic and Drug Discovery Pipelines

Phylogenetic comparative methods have become increasingly relevant in translational research, particularly in target validation and compound prioritization. The δ statistic's recent implementation in Python enables genome-scale applications, allowing researchers to test phylogenetic signal across thousands of genes simultaneously [8]. This approach can identify evolutionarily constrained genomic regions that may represent promising drug targets with lower likelihood of resistance development.

For drug development professionals, phylogenetic signal analysis can predict cross-species compound efficacy by identifying conserved biological pathways. PICs can further elucidate correlated evolution between target expression and sensitivity patterns, informing animal model selection and translational potential. Integration of these methods with protein structure databases (e.g., PDB, CATH) enables structural phylogenetics approaches that map evolutionary constraints onto drug binding sites [12].

Accounting for Phylogenetic Uncertainty

Recent methodological advances address tree uncertainty in phylogenetic comparative methods. The δ statistic now incorporates tree uncertainty by sampling from posterior tree distributions, with convergence typically achieved after 600-840 trees depending on trait complexity [8]. This approach provides more accurate assessments of phylogenetic associations compared to single-tree methods, particularly for genomic datasets where gene trees may differ from species trees due to incomplete lineage sorting or hybridization.

Data Management and Reporting Standards

Effective implementation of phylogenetic comparative methods requires adherence to data management best practices [9]. Researchers should:

- Use consistent taxon labels across trees, trait data, and alignments

- Include README files documenting data provenance and formatting

- Apply CC0 waivers to maximize data reuse

- Archive data in public repositories (e.g., Dryad, TreeBASE) before publication

- Report complete methodological details including software versions and analysis parameters

- Share phylogenetic trees as machine-readable files (Nexus, Newick) rather than just figures

Building Your Predictive Pipeline: A Step-by-Step Methodological Guide

Phylogenetically informed prediction represents a paradigm shift in evolutionary biology, enabling researchers to infer unknown trait values by explicitly incorporating the evolutionary relationships among species. Despite the demonstrated superiority of these methods, predictive equations derived from ordinary least squares (OLS) or phylogenetic generalized least squares (PGLS) regression models persist in common practice [1]. This protocol provides a comprehensive framework for implementing phylogenetically informed predictions, which have been shown to outperform traditional predictive equations by two- to three-fold in real and simulated data [1]. These methods are particularly valuable for applications ranging from imputing missing values in trait databases to reconstructing phenotypic traits in extinct species for evolutionary studies and drug discovery research.

Key Concepts and Performance Advantages

Phylogenetically informed prediction operates on the fundamental principle that due to common descent, data from closely related organisms are more similar than data from distant relatives. This phylogenetic signal creates structured relationships that can be leveraged to make more accurate predictions than methods ignoring evolutionary history [1]. The performance advantages are substantial across multiple dimensions.

Quantitative Performance Comparison

Recent simulation studies demonstrate the significant advantage of phylogenetically informed prediction over equation-based approaches. The following table summarizes key performance metrics from comprehensive simulations using ultrametric trees with n = 100 taxa [1]:

Table 1: Performance Comparison of Prediction Methods Across Trait Correlations

| Method | Correlation Strength | Error Variance (σ²) | Error Variance Relative to PIP | Trees Where PIP Was More Accurate |
|---|---|---|---|---|
| Phylogenetically Informed Prediction (PIP) | r = 0.25 | 0.007 | 1.0× (baseline) | Baseline |
| PGLS Predictive Equations | r = 0.25 | 0.033 | 4.7× larger | 96.5-97.4% |
| OLS Predictive Equations | r = 0.25 | 0.030 | 4.3× larger | 95.7-97.1% |
| Phylogenetically Informed Prediction (PIP) | r = 0.75 | 0.002 | 1.0× (baseline) | Baseline |
| PGLS Predictive Equations | r = 0.75 | 0.015 | 7.5× larger | >95% |
| OLS Predictive Equations | r = 0.75 | 0.014 | 7.0× larger | >95% |

Notably, phylogenetically informed predictions using weakly correlated traits (r = 0.25) achieve roughly equivalent or better performance than predictive equations using strongly correlated traits (r = 0.75) [1]. This demonstrates the considerable information content inherent in phylogenetic relationships themselves.

Methodological Foundations

The theoretical foundation of phylogenetically informed prediction rests on several core approaches: calculating independent contrasts, using a phylogenetic variance-covariance matrix to weight data in PGLS, or creating random effects in phylogenetic generalized linear mixed models (PGLMMs) [1]. Each incorporates phylogeny as a fundamental component, yielding equivalent results. Bayesian implementations have further advanced the field by enabling sampling of predictive distributions for subsequent analysis [1].
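All of these approaches start from the phylogenetic variance-covariance matrix. Under Brownian motion, its entry for species i and j is the branch length shared by their root-to-tip paths. The sketch below builds it from a hypothetical nested-tuple tree encoding: `(name, length)` for tips and `((children...), length)` for internal nodes. In R, `ape::vcv` serves the same purpose.

```python
import numpy as np

def vcv_from_tree(tree):
    """Return (sorted tip names, VCV matrix of shared root-to-tip path lengths)."""
    paths = {}  # tip name -> list of (edge key, branch length) from the root

    def walk(node, key, path):
        label, length = node
        path = path + [(key, length)]   # key = child indices leading to this edge
        if isinstance(label, str):
            paths[label] = path
        else:
            for i, child in enumerate(label):
                walk(child, key + (i,), path)

    walk(tree, (), [])
    tips = sorted(paths)
    C = np.zeros((len(tips), len(tips)))
    for a, ta in enumerate(tips):
        keys_a = {k for k, _ in paths[ta]}
        for b, tb in enumerate(tips):
            # shared edges are exactly the edge keys common to both paths
            C[a, b] = sum(length for k, length in paths[tb] if k in keys_a)
    return tips, C
```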

Experimental Protocols and Workflows

Core Prediction Workflow

The following workflow diagram outlines the primary steps for conducting phylogenetically informed prediction:

Phylogenomic Tree Construction Protocol

For researchers beginning with genomic data, phylogenomic tree construction represents a critical first step. The GToTree workflow provides a user-friendly approach for this process [15]:

Input Preparation: Compile National Center for Biotechnology Information (NCBI) assembly accessions, GenBank files, nucleotide fasta files, and/or amino acid fasta files (compressed or uncompressed).

Single-Copy Gene Identification: Identify single-copy genes (SCGs) suitable for phylogenomic analysis using one of 15 included SCG-sets or a user-provided set. The selection depends on the breadth of organisms being analyzed.

Quality Assessment: Review genome completion and redundancy estimates generated by the workflow.

Filtering: Apply adjustable parameters to filter genomes and target genes. By default, if a genome has multiple hits to a specific HMM profile, GToTree excludes sequences for that target gene (inserting gap sequences). Alternatively, use "best-hit" mode (-B flag) to retain the best hit based on HMMER3 e-value.

Alignment and Trimming: Align and trim each group of target genes before concatenation into a single alignment. A partitions file describing individual gene positions is generated for potential mixed-model tree construction.

Annotation: Optionally replace or append to initial genome labels with taxonomy or user-specific information using TaxonKit for easier exploration of final outputs.

Tree Generation: Generate a phylogenomic tree using available construction methods.

This workflow supports diverse research applications, including visualizing trait distribution across bacterial domains and placing newly recovered genomes into phylogenomic context [15].

Integrated Data Analysis Platform

The Arbor platform provides an alternative workflow for comparative analyses, integrating phylogenetic, geospatial, and trait data through a visual interface [16]. Key capabilities include:

Workflow Design: Create custom analysis workflows visually by connecting data manipulation and analysis steps.

Data Integration: Combine phylogenetic trees with character data (traits, biogeography, ecological associations) using the dataIntegrator module.

Tree-Based Operations: Perform sophisticated selection and query operations through the treeManipulator module, such as locating species co-occurring in specific places and times.

Scalable Analysis: Execute workflows on computational resources ranging from personal computers to large-scale clusters for tree-of-life-scale analyses.

Modular Extension: Incorporate new analytical tools as modular plugins written in R, Python, Perl, C, or C++.

Table 2: Key Research Reagents and Computational Tools for Phylogenetic Prediction

| Tool/Resource | Type | Primary Function | Application Context |
|---|---|---|---|
| GToTree [15] | Software Workflow | Phylogenomic tree construction from genomic data | Building de novo phylogenies from genomes; placing new genomes in phylogenetic context |
| Arbor [16] | Software Platform | Visual workflow design for comparative analysis | Integrating phylogenetic, spatial, and trait data; scalable tree-of-life analyses |
| PhyloControl [17] | Visualization Platform | Phylogenetic risk analysis with integrated data | Biocontrol research; combining phylogenetics with species distribution modeling |
| Single-Copy Gene Sets [15] | Biological Reference | Target genes for phylogenomic analysis | Identifying appropriate phylogenetic markers for specific taxonomic groups |
| Interactive Tree of Life (iToL) [15] | Visualization Tool | Tree visualization and annotation | Exploring and presenting phylogenetic trees with associated data |
| Phylogenetic Variance-Covariance Matrix [1] | Mathematical Framework | Accounting for phylogenetic non-independence | Core component of PGLS and phylogenetically informed prediction models |
| R packages (ape, GEIGER, picante, diversitree) [16] | Software Libraries | Comparative phylogenetic analyses | Implementing diverse comparative methods; foundational for Arbor's infrastructure |

Advanced Implementation Considerations

Data Integration and Visualization Workflow

For complex analyses integrating multiple data types, the following workflow illustrates the data synthesis process:

Prediction Interval Estimation

A critical aspect of phylogenetically informed prediction involves quantifying uncertainty. Prediction intervals increase with phylogenetic branch length, reflecting greater uncertainty when predicting traits for species distantly related to reference taxa in the tree [1]. Bayesian implementations are particularly valuable for generating predictive distributions that can be sampled for subsequent analysis [1].
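The widening of intervals follows from the Brownian-motion conditional variance of an unmeasured tip u given the measured ones: σ²(c~uu~ - c~u~ᵀC⁻¹c~u~). Here c~u~ holds the branch lengths u shares with each measured species. For simplicity, the sketch below treats the root state as known; this is an assumption, and estimating the root adds a further variance term. It compares a species that shares most of its history with its measured relative against one that shares little.

```python
import numpy as np

def bm_prediction_variance(C_full, u, sigma2=1.0):
    """Conditional variance of tip u's trait under BM given all other tips
    (root state treated as known)."""
    C_full = np.asarray(C_full, dtype=float)
    k = [i for i in range(len(C_full)) if i != u]   # measured tips
    c_u = C_full[k, u]                              # shared history with u
    C_kk = C_full[np.ix_(k, k)]
    return sigma2 * (C_full[u, u] - c_u @ np.linalg.solve(C_kk, c_u))
```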

Method Selection Guidelines

When implementing phylogenetic predictions, consider these evidence-based guidelines:

For weakly correlated traits (r < 0.3): Phylogenetically informed prediction is essential, as it leverages phylogenetic signal to compensate for weak trait correlations.

For missing data imputation: Phylogenetically informed imputation provides more accurate missing value estimation for subsequent analyses.

For extinct species prediction: Bayesian phylogenetic prediction enables sampling from predictive distributions for uncertain fossil specimens.

For large-scale comparative analyses: Integrated platforms like Arbor provide scalable solutions for tree-of-life-scale datasets.

This workflow overview provides a comprehensive framework for implementing phylogenetically informed prediction in evolutionary biology and related fields. The substantial performance advantages over traditional equation-based approaches—with two- to three-fold improvement in prediction accuracy—make these methods essential for contemporary comparative research [1]. By following the protocols outlined for phylogenomic tree construction, data integration, and predictive modeling, researchers can leverage the full informational content of evolutionary relationships to make more accurate biological predictions. The continued development of user-friendly workflows and integrated visualization platforms is making these powerful methods increasingly accessible to researchers across biological disciplines.

Phylogenetic Generalized Least Squares (PGLS) represents a core statistical framework in evolutionary biology, ecology, and comparative medicine for analyzing species data while accounting for shared evolutionary history. The method addresses a fundamental challenge in comparative biology: species cannot be treated as independent data points due to their phylogenetic relationships. By incorporating the phylogenetic variance-covariance matrix into regression analyses, PGLS controls for non-independence in species data, thereby preventing spurious results and misleading error rates that can occur with ordinary least squares (OLS) approaches [1]. This framework has revolutionized our ability to test evolutionary hypotheses, impute missing trait values, and reconstruct ancestral states across diverse fields including drug development, where understanding evolutionary constraints can inform target selection and toxicity prediction.

Recent advances have demonstrated the superior performance of full phylogenetically informed prediction over traditional predictive equations derived from PGLS or OLS models. Simulations using ultrametric trees with varying degrees of balance have shown that phylogenetically informed predictions perform about 4-4.7 times better than calculations derived from OLS and PGLS predictive equations across different correlation strengths [1]. This performance advantage makes phylogenetically informed prediction particularly valuable when working with weakly correlated traits, where predictions using the phylogenetic relationship between two weakly correlated (r = 0.25) traits can outperform predictive equations for strongly correlated traits (r = 0.75) [1].
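The "4-4.7 times" figure is consistent with the error variances reported for r = 0.25 in the earlier performance table. Those are 0.007 for phylogenetically informed prediction, 0.030 for OLS predictive equations, and 0.033 for PGLS predictive equations. The snippet below simply takes their ratios.

```python
# Prediction-error variances reported for r = 0.25 (ultrametric trees, n = 100)
error_variance = {"PIP": 0.007, "OLS equation": 0.030, "PGLS equation": 0.033}

# Ratio of each equation-based method's error variance to that of PIP
ratios = {m: v / error_variance["PIP"] for m, v in error_variance.items() if m != "PIP"}
for method, ratio in ratios.items():
    print(f"{method}: {ratio:.1f}x the error variance of PIP")
```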

Theoretical Foundation of PGLS

Mathematical Framework

The PGLS approach modifies the standard regression variance matrix (V) according to the formula:

V = (1 - ϕ)[(1 - λ)I + λΣ] + ϕW

Where:

- λ represents the size of the shared ancestry effect

- ϕ represents the contribution of spatial effects

- Σ is an n × n matrix comprising the shared path lengths on the phylogeny, proportional to the expected variances and covariances under a Brownian motion model of evolution along the branches of a phylogeny [18]

- I is the identity matrix

- W is the spatial matrix comprising pairwise great-circle distances between sites in the sample

In practical applications, researchers often report λ′ = (1 - ϕ)λ, the proportional contribution of phylogeny to variance, and ϕ, the proportional contribution of spatial effects to variance [18]. The proportion of variance independent of phylogeny and space is represented by γ = (1 - ϕ)(1 - λ) [18].
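The variance structure and the reported shares λ′, ϕ and γ can be written out directly. The sketch assumes Σ and W are already on comparable scales, for example both with unit diagonal. It also assumes W has been converted from raw great-circle distances into a covariance-like proximity matrix; that conversion is a study-specific choice not covered here. By construction, λ′ + ϕ + γ = 1.

```python
import numpy as np

def pgls_spatial_v(Sigma, W, lam, phi):
    """V = (1 - phi) * [(1 - lam) * I + lam * Sigma] + phi * W."""
    Sigma = np.asarray(Sigma, dtype=float)
    I = np.eye(len(Sigma))
    return (1 - phi) * ((1 - lam) * I + lam * Sigma) + phi * np.asarray(W, dtype=float)

def variance_shares(lam, phi):
    """Return lambda' (phylogeny), phi (space) and gamma (neither)."""
    lam_prime = (1 - phi) * lam
    gamma = (1 - phi) * (1 - lam)
    return lam_prime, phi, gamma
```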

Model Fit and Evaluation

The overall model fit in PGLS is typically evaluated using R² calculated with the formula:

R² = 1 - SS~reg~/SS~tot~

Where SS~reg~ is the residual sum of squares in the PGLS fitted model accounting for spatial and phylogenetic non-independence, and SS~tot~ is the total sum of squares accounting for spatial and phylogenetic non-independence in a PGLS model with no predictors [18]. It is important to note that R² values in a Generalized Least Squares framework are not directly comparable with those from Ordinary Least Squares, and because residuals are not orthogonal, partitioning variance across independent variables presents challenges [18].
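Both sums of squares in this R² are generalized ones, e′V⁻¹e. They are computed under the same V, once for the full model and once for an intercept-only model. The sketch below assumes V has already been estimated (for example, from the λ and ϕ fit) and is held fixed. When V is the identity matrix, it reduces to the ordinary R².

```python
import numpy as np

def gls_rss(X, y, V):
    """Generalized residual sum of squares e' V^-1 e after a GLS fit."""
    Vinv = np.linalg.inv(V)
    beta = np.linalg.solve(X.T @ Vinv @ X, X.T @ Vinv @ y)
    e = y - X @ beta
    return float(e @ Vinv @ e)

def gls_r2(X, y, V):
    """R^2 = 1 - SS_reg / SS_tot, with SS_tot from an intercept-only GLS model."""
    ones = np.ones((len(y), 1))
    return 1.0 - gls_rss(X, y, V) / gls_rss(ones, y, V)
```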

For model selection and variable importance assessment, researchers often evaluate all possible combinations of ecological predictors, using model averaging based on the corrected Akaike Information Criterion (AICc). The relative variable importance (RVI) of each candidate predictor is the sum of the AICc weights of all models that include that variable [18].
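The model-averaging arithmetic is compact. Compute AICc for each candidate model, convert each model's difference from the best model into an Akaike weight, and sum the weights of the models containing each predictor. In the sketch, the predictor names and scores are hypothetical, and each model is represented simply by the set of predictors it includes.

```python
import math

def aicc(loglik, k, n):
    """Small-sample corrected AIC for k estimated parameters and n observations."""
    return -2.0 * loglik + 2.0 * k + 2.0 * k * (k + 1) / (n - k - 1)

def akaike_weights(scores):
    """Convert AICc scores into weights that sum to 1."""
    best = min(scores)
    rel = [math.exp(-0.5 * (s - best)) for s in scores]
    total = sum(rel)
    return [r / total for r in rel]

def relative_importance(models, weights):
    """RVI: summed Akaike weights of the models that contain each predictor."""
    rvi = {}
    for predictors, w in zip(models, weights):
        for p in predictors:
            rvi[p] = rvi.get(p, 0.0) + w
    return rvi
```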

Comparative Performance Analysis

Simulation Studies

Comprehensive simulations comparing phylogenetically informed prediction against traditional predictive equations have demonstrated significant performance advantages. These simulations utilized 1000 ultrametric trees with n = 100 taxa and varying degrees of balance, with continuous bivariate data simulated with different correlation strengths (r = 0.25, 0.5, and 0.75) using a bivariate Brownian motion model [1].
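Correlated Brownian-motion traits of this kind can be simulated by drawing from a multivariate normal distribution with covariance R ⊗ C. Here C is the tree's variance-covariance matrix and R is the 2 × 2 evolutionary rate matrix with correlation r. The sketch below uses Cholesky factors of both matrices. It illustrates the simulation model and is not the code used in the cited study; the function name is hypothetical.

```python
import numpy as np

def simulate_bivariate_bm(C, r, sigma=(1.0, 1.0), seed=None):
    """Draw two traits on a tree with covariance kron(R, C), where R is the
    2 x 2 evolutionary rate matrix with correlation r."""
    rng = np.random.default_rng(seed)
    s1, s2 = sigma
    R = np.array([[s1 ** 2, r * s1 * s2], [r * s1 * s2, s2 ** 2]])
    L_C = np.linalg.cholesky(C)          # among-species (phylogenetic) covariance
    L_R = np.linalg.cholesky(R)          # between-trait covariance
    Z = rng.standard_normal((C.shape[0], 2))
    return L_C @ Z @ L_R.T               # n x 2 array: columns are the two traits
```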

Table 1: Performance Comparison of Prediction Methods on Ultrametric Trees

| Method | Correlation Strength (r) | Error Variance (σ²) | Relative Performance | Accuracy Advantage |
|---|---|---|---|---|
| Phylogenetically Informed Prediction | 0.25 | 0.007 | 4-4.7× better than OLS/PGLS equations | 95.7-97.4% of trees |
| Phylogenetically Informed Prediction | 0.50 | 0.004 | 4-4.7× better than OLS/PGLS equations | 95.7-97.4% of trees |
| Phylogenetically Informed Prediction | 0.75 | 0.002 | 4-4.7× better than OLS/PGLS equations | 95.7-97.4% of trees |
| PGLS Predictive Equations | 0.25 | 0.033 | Reference | Reference |
| OLS Predictive Equations | 0.25 | 0.030 | Reference | Reference |

The simulations revealed that all three approaches (phylogenetically informed prediction, OLS predictive equations, and PGLS predictive equations) had median prediction errors close to zero, suggesting low bias across methods. However, the variance in prediction error distributions was substantially smaller for phylogenetically informed predictions, indicating consistently greater accuracy across simulations [1]. The performance advantage was maintained across different tree sizes (50, 250, and 500 taxa) and correlation strengths.

Real-World Applications

The superior performance of phylogenetically informed prediction extends to real-world datasets across diverse biological domains:

Table 2: Application of Phylogenetically Informed Prediction in Biological Research

| Field | Application Example | Key Finding | Reference |
|---|---|---|---|
| Palaeontology | Primate neonatal brain size reconstruction | Phylogenetically informed predictions provided more accurate reconstructions of extinct species traits | [1] |
| Ecology | Avian body mass imputation | Improved missing data estimation for functional diversity analyses | [1] |
| Entomology | Bush-cricket calling frequency prediction | Enhanced understanding of evolutionary relationships in communication systems | [1] |
| Evolutionary Neuroscience | Non-avian dinosaur neuron number estimation | More reliable inference of cognitive capabilities from endocast data | [1] |
| Forest Ecology | Deforestation and forest replacement predictors | Identified ecological and cultural predictors while controlling for phylogenetic and spatial effects | [18] |

Experimental Protocols for PGLS Analysis

Core PGLS-Spatial Protocol

Objective: To estimate independent effects of ecological and cultural predictors on forest outcomes while quantifying and controlling for non-independence due to geographic proximity and shared cultural ancestry [18].

Materials and Software Requirements:

- Phylogenetic tree data (e.g., posterior distribution of trees from Bayesian analysis)

- Geographical coordinates for all sampling sites

- Trait data for all species/populations

- Statistical software with PGLS capabilities (R packages such as ape, nlme, phylolm)

- Spatial analysis tools for calculating great-circle distances

Procedure:

- Compute Σ matrices: Generate variance-covariance matrices from phylogenetic trees, proportional to expected variances and covariances under Brownian motion evolution [18].

- Calculate spatial matrix W: Compute pairwise great-circle distances between all sites using the Haversine formula [18].

- Model specification: Implement the PGLS-spatial model incorporating both phylogenetic (λ) and spatial (ϕ) effects.

- Parameter estimation: Use maximum likelihood or restricted maximum likelihood to estimate model parameters.

- Model averaging: Fit models for all possible combinations of ecological predictors (e.g., 1,024 models, i.e., every subset of ten candidate predictors) and average across them using the small-sample-corrected Akaike Information Criterion (AICc), with listwise deletion of missing data [18].

- Calculate relative variable importance (RVI): For each predictor, sum the AICc weights of all models that include it [18].

- Significance testing: Use likelihood ratio tests to compare models with both λ and ϕ, only λ, only ϕ, or neither to determine if phylogenetic and spatial parameters significantly improve model fit [18].

- Account for phylogenetic uncertainty: Repeat analysis across multiple trees from posterior distribution and average results [18].
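Once every candidate model has been fitted, model averaging and RVI reduce to simple arithmetic. The Python sketch below assumes that the maximised log-likelihood and parameter count of each PGLS-spatial fit are already available; the fitting itself, for example in R, is not shown. All function names, predictor names and numbers are hypothetical. Listwise deletion keeps the number of complete cases n the same across models, which makes their AICc values comparable.

```python
import math
from itertools import combinations


def all_subsets(predictors: list[str]) -> list[frozenset]:
    """Every subset of the candidate predictors, including the empty (intercept-only) model."""
    return [frozenset(c) for r in range(len(predictors) + 1) for c in combinations(predictors, r)]


def aicc(log_lik: float, k: int, n: int) -> float:
    """Small-sample corrected AIC; k counts all estimated parameters, n the complete cases."""
    return -2.0 * log_lik + 2.0 * k + (2.0 * k * (k + 1)) / (n - k - 1)


def akaike_weights(scores: dict[frozenset, float]) -> dict[frozenset, float]:
    """Turn {model: AICc} into normalised Akaike weights that sum to 1."""
    best = min(scores.values())
    rel = {m: math.exp(-(s - best) / 2.0) for m, s in scores.items()}
    total = sum(rel.values())
    return {m: r / total for m, r in rel.items()}


def relative_importance(weights: dict[frozenset, float], predictors: list[str]) -> dict[str, float]:
    """RVI: summed Akaike weight of every model that contains the predictor."""
    return {p: sum(w for m, w in weights.items() if p in m) for p in predictors}


# Hypothetical (log-likelihood, parameter count) pairs from already-fitted models, n = 40.
# The null model is assumed to estimate intercept, variance, lambda and phi (k = 4).
fits = {
    frozenset(): (-60.0, 4),
    frozenset({"rainfall"}): (-55.0, 5),
    frozenset({"elevation"}): (-59.5, 5),
    frozenset({"rainfall", "elevation"}): (-54.8, 6),
}
weights = akaike_weights({m: aicc(ll, k, n=40) for m, (ll, k) in fits.items()})
rvi = relative_importance(weights, ["rainfall", "elevation"])
```

With ten candidate predictors, `all_subsets` yields 2^10 = 1,024 models, the count given in the model-averaging step.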

Validation:

- Calculate R² using a formula appropriate for PGLS models rather than the ordinary least-squares R²

- Examine residuals for phylogenetic and spatial autocorrelation

- Compare with null models using likelihood ratio tests
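Each likelihood ratio test takes twice the difference in maximised log-likelihoods and compares it with a chi-square distribution. The degrees of freedom equal the number of parameters dropped: one when comparing the λ + ϕ model with a λ-only or ϕ-only model, and two when comparing it with the model that has neither. The minimal Python sketch below uses closed-form tail probabilities that are valid only for 1 or 2 degrees of freedom. Its function names and log-likelihood values are hypothetical. Because λ = 0 and ϕ = 0 lie on the boundary of their permitted ranges, the plain chi-square reference tends to be conservative, so borderline p-values deserve caution.

```python
import math


def chi2_sf(x: float, df: int) -> float:
    """Upper-tail chi-square probability; closed forms exist for df = 1 and df = 2."""
    if x <= 0:
        return 1.0
    if df == 1:
        return math.erfc(math.sqrt(x / 2.0))
    if df == 2:
        return math.exp(-x / 2.0)
    raise ValueError("this sketch only handles df = 1 or df = 2")


def likelihood_ratio_test(loglik_full: float, loglik_reduced: float, df: int) -> tuple[float, float]:
    """LR statistic 2 * (logL_full - logL_reduced) and its chi-square p-value."""
    lr = 2.0 * (loglik_full - loglik_reduced)
    return lr, chi2_sf(lr, df)


# Hypothetical maximised log-likelihoods: lambda + phi model vs. lambda-only (df = 1)
# and vs. the model with neither parameter (df = 2).
print(likelihood_ratio_test(-100.0, -103.0, df=1))
print(likelihood_ratio_test(-100.0, -104.0, df=2))
```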

Phylogenetically Informed Prediction Protocol

Objective: To predict unknown trait values incorporating phylogenetic relationships and evolutionary models [1].

Procedure:

- Data preparation: Compile trait data and phylogenetic tree with branch lengths reflecting evolutionary time.

- Model selection: Choose appropriate evolutionary model (Brownian motion, Ornstein-Uhlenbeck, etc.) based on data characteristics.

- Parameter estimation: Estimate phylogenetic signal and other model parameters using known data points.

- Prediction generation: Calculate predicted values for unknown taxa using the full phylogenetic information.

- Prediction intervals: Generate prediction intervals that account for phylogenetic branch lengths; these intervals widen as the phylogenetic distance between the predicted taxon and the taxa with known values increases [1].

- Validation: Compare prediction accuracy with observed values where possible using cross-validation.

Key Advantage: This approach enables prediction of unknown values from only a single trait using shared evolutionary history, which is impossible with traditional predictive equations [1].
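To make the prediction steps concrete, here is a minimal Python sketch of phylogenetically informed prediction for a single trait under Brownian motion. It assumes a small rooted tree stored in the hypothetical `PARENT` and `LENGTH` dictionaries. The sketch builds Σ from shared root-to-ancestor path lengths, estimates the root state by GLS, and predicts the missing tip from the conditional multivariate normal distribution. For simplicity, it treats the estimated root state and rate as known when computing the prediction variance, so the resulting interval is somewhat too narrow. All names and trait values are illustrative.

```python
import math

# Hypothetical rooted tree ((A:1,B:1):1,(C:0.5,D:0.5):1.5); each edge is named by its child node.
PARENT = {"A": "n1", "B": "n1", "C": "n2", "D": "n2", "n1": "root", "n2": "root"}
LENGTH = {"A": 1.0, "B": 1.0, "C": 0.5, "D": 0.5, "n1": 1.0, "n2": 1.5}


def solve(a: list[list[float]], b: list[float]) -> list[float]:
    """Solve a @ x = b by Gaussian elimination with partial pivoting (fine for small matrices)."""
    n = len(a)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(col + 1, n):
            factor = m[r][col] / m[col][col]
            for c in range(col, n + 1):
                m[r][c] -= factor * m[col][c]
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (m[i][n] - sum(m[i][j] * x[j] for j in range(i + 1, n))) / m[i][i]
    return x


def root_path(node: str) -> set[str]:
    """Edges (named by child node) on the path from node up to the root."""
    edges = set()
    while node in PARENT:
        edges.add(node)
        node = PARENT[node]
    return edges


def bm_covariance(tips: list[str]) -> list[list[float]]:
    """Sigma[i][j] = length of the path shared by tips i and j (Brownian motion, unit rate)."""
    paths = {t: root_path(t) for t in tips}
    return [[sum(LENGTH[e] for e in paths[a] & paths[b]) for b in tips] for a in tips]


def predict_tip(target: str, known: dict[str, float]) -> tuple[float, float]:
    """Predicted value and prediction variance for an unobserved tip under Brownian motion."""
    names = list(known)
    y = [known[t] for t in names]
    sigma = bm_covariance(names + [target])
    s_kk = [row[:-1] for row in sigma[:-1]]   # covariances among observed tips
    s_ku = sigma[-1][:-1]                     # covariances between target and observed tips
    s_uu = sigma[-1][-1]                      # target's own variance (root-to-tip length)
    mu = sum(solve(s_kk, y)) / sum(solve(s_kk, [1.0] * len(y)))  # GLS estimate of root state
    resid = [v - mu for v in y]
    rate = sum(r * z for r, z in zip(resid, solve(s_kk, resid))) / (len(y) - 1)  # REML-style BM rate
    w = solve(s_kk, s_ku)                     # Sigma_kk^-1 Sigma_ku
    pred = mu + sum(wi * ri for wi, ri in zip(w, resid))
    var = rate * (s_uu - sum(wi * si for wi, si in zip(w, s_ku)))
    return pred, var


pred, var = predict_tip("A", {"B": 10.0, "C": 4.0, "D": 6.0})
print(f"{pred:.3f} +/- {1.96 * math.sqrt(var):.3f}")  # 8.667 +/- 5.544
```

The prediction is pulled from the GLS root estimate towards the value of the sister taxon B. The variance term Σ_uu − Σ_uK Σ_KK⁻¹ Σ_Ku grows as the target tip sits farther from its nearest observed relative, which is why prediction intervals widen with phylogenetic distance.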

Visualization and Data Integration with ggtree

Tree Visualization Fundamentals

The ggtree package for R provides a robust platform for visualizing phylogenetic trees with associated data, addressing the critical need for integrating diverse data types in evolutionary analysis [19] [20]. Unlike earlier tools with limited annotation capabilities, ggtree enables researchers to combine multiple layers of annotations using the grammar of graphics implementation in ggplot2 [19].

Supported Layouts:

- Rectangular (default) and slanted phylograms

- Circular and fan layouts

- Unrooted (equal angle and daylight methods)

- Time-scaled and two-dimensional layouts

- Cladograms (without branch length scaling)

Visualization Workflow:

- Parse the tree file into R using the treeio package

- Create a basic tree visualization with ggtree(tree_object)

- Add annotation layers using the + operator

- Customize appearance using standard ggplot2 syntax

Visualization Workflow: Sequential steps for creating annotated phylogenetic trees with ggtree

Advanced Annotation Features

ggtree provides specialized geometric layers for phylogenetic annotation:

- geom_treescale(): Add a legend for the tree branch scale (genetic distance, divergence time)

- geom_range(): Display uncertainty in branch lengths (confidence intervals)

- geom_tiplab(), geom_tippoint(), geom_nodepoint(): Add taxa labels and symbols

- geom_hilight(): Highlight clades with rectangles

- geom_cladelabel(): Annotate selected clades with bars and text labels

The package supports visual manipulation of trees through collapsing, scaling, and rotating clades, as well as transformation between different layouts. The %<% operator allows transferring complex tree figures with multiple annotation layers to new tree objects without step-by-step re-creation [19].

Table 3: Essential Research Reagents and Computational Tools for PGLS Analysis

| Category | Item | Function/Application | Implementation Notes |
|---|---|---|---|
| Statistical Software | R Programming Environment | Primary platform for phylogenetic comparative methods | Required for PGLS implementation and customization |
| Core R Packages | ape, nlme, phylolm | PGLS model fitting and parameter estimation | Foundation for basic to advanced PGLS analyses |
| Specialized R Packages | ggtree, treeio | Phylogenetic tree visualization and data integration | Essential for visualizing results and complex data integration |
| Tree Handling | phytools, phylobase | Additional phylogenetic comparative methods | Extends analytical capabilities |
| Data Types | Phylogenetic Variance-Covariance Matrix (Σ) | Quantifies expected species similarity under Brownian motion | Derived from phylogenetic tree with branch lengths |
| Data Types | Spatial Matrix (W) | Captures geographic non-independence | Calculated from geographical coordinates |
| Model Parameters | Phylogenetic Signal (λ) | Measures phylogenetic dependence in trait data | Ranges from 0 (no signal) to 1 (Brownian motion) |
| Model Parameters | Spatial Autocorrelation (ϕ) | Quantifies geographic effect on trait similarity | Important for spatially structured data |
| Validation Metrics | AICc Weights | Model selection and averaging | Basis for Relative Variable Importance (RVI) calculation |
| Validation Metrics | Likelihood Ratio Tests | Compare nested models with different parameters | Tests significance of λ and ϕ parameters |
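The λ entry in the table can be read as a transformation of Σ. In the Python sketch below, the function name and example matrix are hypothetical. The off-diagonal elements encode shared evolutionary history, so the sketch multiplies them by λ and leaves the diagonal unchanged. Setting λ = 1 recovers the Brownian-motion matrix, and λ = 0 makes the tips independent. Some implementations allow λ slightly above 1; this sketch does not.

```python
def pagel_lambda(sigma: list[list[float]], lam: float) -> list[list[float]]:
    """Pagel's lambda: scale off-diagonal (shared-history) covariances, keep tip variances."""
    if not 0.0 <= lam <= 1.0:
        raise ValueError("this sketch restricts lambda to [0, 1]")
    n = len(sigma)
    return [[sigma[i][j] if i == j else lam * sigma[i][j] for j in range(n)] for i in range(n)]


# Hypothetical Brownian-motion covariance for three tips of an ultrametric tree of depth 2.
SIGMA = [[2.0, 1.0, 0.0],
         [1.0, 2.0, 0.0],
         [0.0, 0.0, 2.0]]

star = pagel_lambda(SIGMA, 0.0)   # independent tips: GLS reduces to OLS on this ultrametric tree
half = pagel_lambda(SIGMA, 0.5)   # shared history counts half as much as under Brownian motion
```

On an ultrametric tree, λ = 0 yields a constant diagonal matrix, so PGLS with λ = 0 gives the same coefficient estimates as OLS. Intermediate values interpolate between the two.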

Advanced Applications and Future Directions

Integration with High-Throughput Data

The increasing availability of large-scale omics data presents both challenges and opportunities for phylogenetic comparative methods. Modern sequencing technologies have made large-scale evolutionary studies more feasible, creating demand for visualization tools that can handle trees with thousands of nodes [13]. ggtree addresses this need by providing a flexible platform that can integrate diverse data types, including evolutionary rates, ancestral sequences, and geographical information [19] [20].

Future developments will likely focus on enhancing the scalability of PGLS approaches to handle increasingly large phylogenetic trees and high-dimensional data. As noted in a review of tree visualization tools, "the major challenge remains: the creation of the biggest possible phylogenetic tree of life that will classify all species showing their detailed evolutionary relationships" [13].

Methodological Innovations

Recent work on phylogenetically informed prediction has exposed the limitations of traditional predictive equations, which remain common even though phylogenetically informed prediction was introduced 25 years ago [1]. The superior performance of full phylogenetic prediction, particularly for weakly correlated traits, suggests that these methods should become standard practice in comparative biology.

Emerging approaches include:

- Bayesian implementations for sampling predictive distributions

- Integration with machine learning methods

- Development of phylogenetic prediction intervals that account for branch length uncertainty

- Applications to novel fields such as oncology and epidemiology

Future Directions: Emerging trends and methodological innovations in phylogenetic comparative methods

Phylogenetic Generalized Least Squares and associated phylogenetically informed prediction methods represent powerful frameworks for evolutionary analysis that explicitly account for shared ancestry. The demonstrated superiority of these approaches over traditional predictive equations highlights the importance of incorporating phylogenetic information directly into predictive models rather than relying solely on regression coefficients.

The integration of robust statistical frameworks with advanced visualization tools like ggtree enables researchers to explore complex evolutionary questions while integrating diverse data types. As phylogenetic comparative methods continue to evolve, their application across increasingly diverse fields from ecology to drug development promises to enhance our understanding of evolutionary processes and patterns.

The protocols and applications outlined in this article provide a foundation for implementing these methods in research practice, with particular attention to practical considerations for experimental design, analysis, and visualization. By adopting these phylogenetically informed approaches, researchers can achieve more accurate predictions and deeper insights into evolutionary relationships across the tree of life.

Pharmacophylogeny is an emerging discipline that leverages the evolutionary relationships between plant species to predict their phytochemical composition and medicinal potential [5]. This approach is grounded in the principle that phylogenetically proximate taxa often share conserved metabolic pathways, leading to the production of similar bioactive compounds [5]. The integration of modern omics technologies with phylogenetic analysis has given rise to pharmacophylomics, a powerful framework that accelerates plant-based drug discovery by identifying promising candidates more efficiently and sustainably [5]. This protocol details the practical application of phylogenetically informed prediction for bioactivity assessment in plant lineages, providing a standardized methodology for researchers in natural product drug discovery.

Key Principles and Quantitative Validation

The foundational principle of pharmacophylogeny is that evolutionary kinship begets chemical kinship [5]. Closely related plant species frequently employ conserved enzymes and biosynthetic pathways, resulting in the production of structurally similar specialized metabolites [5]. This chemical conservation allows for predictive bioactivity profiling across taxonomic groups.

Table 1: Performance Comparison of Prediction Methods

| Method | Key Characteristic | Relative Performance | Key Advantage |
|---|---|---|---|
| Phylogenetically Informed Prediction | Explicitly models shared evolutionary ancestry | 2- to 3-fold improvement over OLS/PGLS [21] | High accuracy even with weakly correlated traits (r = 0.25) [21] |
| Predictive Equations (PGLS) | Accounts for phylogeny in regression model | Baseline | Standard comparative method |
| Predictive Equations (OLS) | Ignores phylogenetic structure | Lower accuracy | Simplicity |

Furthermore, phylogenetically informed models using weakly correlated traits (r = 0.25) can achieve accuracy equivalent to, or even surpassing, predictive equations applied to strongly correlated traits (r = 0.75) [21]. This highlights the exceptional predictive power gained from incorporating evolutionary history.

Experimental Protocols

Phylogenomic Analysis and Taxon Selection

Purpose: To reconstruct robust evolutionary relationships and identify target lineages for bioactivity prediction.

- Step 1: Sequence Data Acquisition: Gather genomic or transcriptomic data for target taxa and appropriate outgroups. Chloroplast genomes and specific barcoding regions (e.g., matK, rbcL) are often used for plants [5].

- Step 2: Multiple Sequence Alignment (MSA): Align sequences using tools such as MAFFT or ClustalW. Critically assess the resulting MSA for accuracy, because misaligned regions are a potential source of error in the inferred phylogeny [22].

- Step 3: Phylogenetic Inference: Reconstruct phylogenetic trees using model-based methods (Maximum Likelihood or Bayesian Inference). Select a substitution model that best fits the data using model testing software (e.g., ModelTest-NG) [22].

- Step 4: Identify Phylogenetic "Hot Nodes": Pinpoint clades (monophyletic groups) that are either known to produce valuable compounds or are evolutionarily proximate to such lineages. These clades represent high-priority candidates for bioprospecting [5].
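One way to prototype Step 4 is to locate the most recent common ancestor (MRCA) of the taxa already known to produce a compound and then list the unscreened members of that clade. The Python sketch below is a simplification for illustration, and the tree, taxon names and screening status are all hypothetical. Before calling a clade a hot node, a real analysis would also weigh clade support and how widely the known producers are spread across the tree.

```python
# Hypothetical rooted tree (((sp1,sp2)c1,sp3)c2,(sp4,sp5)c3)root as child -> parent links.
PARENT = {"sp1": "c1", "sp2": "c1", "c1": "c2", "sp3": "c2",
          "c2": "root", "sp4": "c3", "sp5": "c3", "c3": "root"}


def ancestors(node: str) -> list[str]:
    """The node itself followed by each ancestor up to the root."""
    path = [node]
    while node in PARENT:
        node = PARENT[node]
        path.append(node)
    return path


def mrca(taxa: list[str]) -> str:
    """Most recent common ancestor: first node on taxa[0]'s path that lies on every path."""
    paths = [ancestors(t) for t in taxa]
    shared = set(paths[0]).intersection(*paths[1:])
    return next(n for n in paths[0] if n in shared)


def descendant_tips(node: str) -> list[str]:
    """Tips (nodes that are nobody's parent) whose path to the root passes through node."""
    internal = set(PARENT.values())
    return sorted(t for t in PARENT if t not in internal and node in ancestors(t))


producers = ["sp1", "sp3"]          # hypothetical taxa known to yield the compound
screened = {"sp1", "sp3", "sp4"}    # hypothetical taxa already assayed
hot_node = mrca(producers)
candidates = [t for t in descendant_tips(hot_node) if t not in screened]
print(hot_node, candidates)  # c2 ['sp2']
```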

Metabolomic Profiling and Compound Identification

Purpose: To comprehensively characterize the phytochemical profiles of selected plant taxa.

- Step 1: Sample Preparation: Extract plant materials (e.g., leaves, roots) using standardized solvents (e.g., methanol, water) to capture a wide range of metabolites.

- Step 2: Metabolomic Analysis: Analyze extracts using high-resolution techniques such as UHPLC-Q-TOF MS (Ultra-High Performance Liquid Chromatography Quadrupole Time-of-Flight Mass Spectrometry) [5].

- Step 3: Metabolite Annotation: Identify and quantify key metabolite classes (e.g., terpenoids, alkaloids, flavonoids) by comparing mass spectra and retention times to existing databases and authentic standards [5].
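As a rough illustration of the mass-matching part of Step 3, the Python sketch below computes theoretical monoisotopic m/z values from elemental formulas and keeps the library entries that fall within a ppm tolerance. The 5 ppm tolerance, the function names and the two-entry library are assumptions made for illustration. The formulas are those of the permanently charged cations, so no proton is added. Confident annotation also requires matching retention times and MS/MS spectra against databases or authentic standards, which this sketch does not do.

```python
import re

# Monoisotopic masses (u) of the most abundant isotope of each element used below.
MONO = {"C": 12.0, "H": 1.00782503207, "N": 14.0030740048, "O": 15.99491461956}
ELECTRON = 0.00054858  # electron mass (u)


def ion_mz(formula: str, charge: int = 1) -> float:
    """m/z of an ion whose formula already includes any adduct atoms."""
    if charge == 0:
        raise ValueError("a neutral species has no m/z")
    mass = 0.0
    for element, count in re.findall(r"([A-Z][a-z]?)(\d*)", formula):
        mass += MONO[element] * (int(count) if count else 1)
    return (mass - charge * ELECTRON) / abs(charge)


def ppm_error(observed: float, theoretical: float) -> float:
    """Mass error in parts per million."""
    return (observed - theoretical) / theoretical * 1e6


def annotate(observed_mz: float, library: dict[str, str], tol_ppm: float = 5.0) -> list[str]:
    """Library entries whose theoretical m/z lies within tol_ppm of the observed value."""
    return [name for name, formula in library.items()
            if abs(ppm_error(observed_mz, ion_mz(formula))) <= tol_ppm]


# Toy library of two protoberberine alkaloid cations (formulas of the charged species).
LIBRARY = {"palmatine": "C21H22NO4", "berberine": "C20H18NO4"}
print(annotate(352.1550, LIBRARY))  # ['palmatine']
```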

Integrated Pharmacophylomic Analysis

Purpose: To correlate phylogenetic data with metabolomic findings and predict bioactivity.

- Step 1: Map Chemical Traits: Superimpose the metabolomic data (e.g., presence/absence or abundance of specific compounds) onto the phylogenetic tree.

- Step 2: Assess Phylogenetic Signal: Test whether the distribution of key metabolites shows phylogenetic signal, that is, whether closely related taxa share these metabolites more often than expected by chance.

- Step 3: Bioactivity Prediction: Predict that closely related, yet unscreened, species within a "hot node" clade will possess similar bioactive compounds. For example, the identification of palmatine in Coptis (Ranunculales) predicts its presence and similar bioactivity in phylogenetically related genera like Berberis [5].
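A simple way to check whether a metabolite is phylogenetically clustered (Step 2) is to compute the mean pairwise phylogenetic distance (MPD) among producing taxa. That value is then compared with the MPD of every equally sized set of taxa. The Python sketch below performs this comparison exactly by enumeration, which is feasible only for small trees; larger trees would call for random sampling instead. The distances and taxon labels are hypothetical. Dedicated statistics for binary traits, such as the D statistic of Fritz and Purvis, also exist. A small p-value here means the producers are more closely related than expected by chance.

```python
from itertools import combinations

# Hypothetical patristic distances from the tree ((A:1,B:2):2,(C:1,D:3):1).
PAIRS = {("A", "B"): 3.0, ("A", "C"): 5.0, ("A", "D"): 7.0,
         ("B", "C"): 6.0, ("B", "D"): 8.0, ("C", "D"): 4.0}
TAXA = sorted({t for pair in PAIRS for t in pair})


def distance(a: str, b: str) -> float:
    """Symmetric lookup in the pairwise distance table."""
    return PAIRS[(a, b)] if (a, b) in PAIRS else PAIRS[(b, a)]


def mean_pairwise_distance(taxa) -> float:
    """Average distance over all pairs in a set of at least two taxa."""
    pairs = list(combinations(sorted(taxa), 2))
    return sum(distance(a, b) for a, b in pairs) / len(pairs)


def clustering_test(producers) -> tuple[float, float]:
    """Exact one-sided test: share of same-sized taxon sets whose MPD is <= the observed MPD."""
    observed = mean_pairwise_distance(producers)
    null = [mean_pairwise_distance(s) for s in combinations(TAXA, len(producers))]
    p_value = sum(v <= observed + 1e-12 for v in null) / len(null)
    return observed, p_value


print(clustering_test({"A", "B"}))  # (3.0, 0.16666666666666666)
```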