A Practical Guide to Selecting the Best-Fit Nucleotide Substitution Model for Robust Phylogenetic Analysis

Abstract

Selecting an appropriate nucleotide substitution model is a critical, yet often overlooked, step in phylogenetic analysis that directly impacts the accuracy of inferred evolutionary relationships, divergence times, and population histories. This article provides a comprehensive guide for researchers and scientists, covering the foundational principles of substitution models, current methodologies and software for model selection, strategies for troubleshooting complex datasets, and advanced techniques for model validation. By synthesizing the latest evidence and best practices, this guide empowers professionals in drug development and biomedical research to make informed decisions, avoid common pitfalls, and enhance the reliability of their molecular evolutionary analyses.

Understanding Nucleotide Substitution Models: The Foundation of Molecular Evolution

What is a Substitution Model? Defining the Core Markov Process

A substitution model is a mathematical description of the process by which one character in a molecular sequence (such as a nucleotide or amino acid) replaces another over evolutionary time. These models are fundamental to probabilistic methods in molecular evolutionary analysis, including phylogenetic tree reconstruction, ancestral sequence reconstruction, and estimating evolutionary parameters [1]. At their core, substitution models are based on Markov processes, which describe systems that transition between states with probabilities dependent only on the current state [2].

Core Concepts and Definitions

What is a Markov Process?

A Markov process is a stochastic process that satisfies the Markov property, meaning that the future state of the system depends only on its present state, not on the sequence of events that preceded it [2].

In molecular evolution, the "states" are the character states in a sequence. For nucleotide sequences, the four states are A, C, G, and T/U. The Markov process describes the probability of a nucleotide substituting for another at a given site over a time period [2].

The Instantaneous Rate Matrix

The core of a substitution model is the instantaneous rate matrix $Q$. Its off-diagonal elements $Q_{ij}$ ($i \neq j$) give the instantaneous rate of change from state $i$ to state $j$, and the diagonal elements are defined so that each row sums to zero: $Q_{ii} = -\sum_{j \neq i} Q_{ij}$.
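To make the definition concrete, the following Python sketch builds a rate matrix from exchangeability parameters and equilibrium frequencies (using the common GTR convention $Q_{ij} = s_{ij}\pi_j$), sets each diagonal entry so its row sums to zero, and computes transition probabilities $P(t) = e^{Qt}$ with a simple scaling-and-squaring Taylor series. Function names such as `gtr_rate_matrix` are illustrative and not taken from any phylogenetics package; real programs use eigendecomposition or optimized matrix-exponential routines.

```python
import math

BASES = "ACGT"

def gtr_rate_matrix(exch, pi):
    """Build a normalized rate matrix Q (rows/columns ordered A, C, G, T).

    exch: dict mapping a sorted base pair such as "AG" to its exchangeability.
    pi:   equilibrium frequencies of A, C, G, T (summing to 1).
    """
    q = [[0.0] * 4 for _ in range(4)]
    for i in range(4):
        for j in range(4):
            if i != j:
                pair = "".join(sorted(BASES[i] + BASES[j]))
                q[i][j] = exch[pair] * pi[j]                  # rate i -> j
        q[i][i] = -sum(q[i][j] for j in range(4) if j != i)   # row sums to zero
    # Rescale so the mean substitution rate at equilibrium is 1, which makes
    # branch lengths interpretable as expected substitutions per site.
    mean_rate = -sum(pi[i] * q[i][i] for i in range(4))
    return [[x / mean_rate for x in row] for row in q]

def mat_mul(a, b):
    n = len(a)
    return [[sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]

def expm(m, terms=20):
    """Matrix exponential by scaling and squaring with a truncated Taylor series."""
    n = len(m)
    norm = max(sum(abs(x) for x in row) for row in m)
    s = max(0, math.ceil(math.log2(norm)) + 1) if norm > 0 else 0
    scaled = [[x / 2 ** s for x in row] for row in m]
    result = [[float(i == j) for j in range(n)] for i in range(n)]
    term = [row[:] for row in result]
    for k in range(1, terms + 1):
        term = [[x / k for x in row] for row in mat_mul(term, scaled)]
        result = [[r + t for r, t in zip(rr, tr)] for rr, tr in zip(result, term)]
    for _ in range(s):  # undo the scaling: exp(M) = exp(M / 2^s) ** (2^s)
        result = mat_mul(result, result)
    return result

def transition_matrix(q, t):
    """P(t) = exp(Q t); row i holds the probabilities of ending in each state from i."""
    return expm([[x * t for x in row] for row in q])

# Example: HKY85-style exchangeabilities (transitions A<->G and C<->T at rate kappa).
kappa = 4.0
hky = {"AC": 1.0, "AG": kappa, "AT": 1.0, "CG": 1.0, "CT": kappa, "GT": 1.0}
q = gtr_rate_matrix(hky, [0.3, 0.2, 0.2, 0.3])
p = transition_matrix(q, 0.1)
```

Setting every exchangeability to 1 and every frequency to 0.25 recovers JC69, whose closed-form probabilities make a convenient correctness check.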

Key Parameters in Nucleotide Substitution Models

- Nucleotide frequencies ($\pi$): The equilibrium frequencies of A, C, G, and T.

- Transition/transversion ratio (κ): The relative rate of transitions (changes between purines, A↔G, or between pyrimidines, C↔T) compared with transversions (changes between a purine and a pyrimidine).
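A small helper makes the purine/pyrimidine distinction explicit. This Python sketch (function names are illustrative) counts observed transitions and transversions between two aligned sequences, skipping gaps and ambiguity codes. A raw count ratio is not κ itself: there are twice as many possible transversion pairings as transition pairings, and repeated substitutions at a site are hidden, so κ has to be estimated under a model.

```python
PURINES = {"A", "G"}
PYRIMIDINES = {"C", "T"}
NUCLEOTIDES = PURINES | PYRIMIDINES

def classify_difference(x, y):
    """Return 'transition', 'transversion', or None for identical or non-ACGT pairs."""
    x, y = x.upper(), y.upper()
    if x == y or x not in NUCLEOTIDES or y not in NUCLEOTIDES:
        return None
    if {x, y} <= PURINES or {x, y} <= PYRIMIDINES:
        return "transition"      # purine <-> purine or pyrimidine <-> pyrimidine
    return "transversion"        # purine <-> pyrimidine

def count_ts_tv(seq1, seq2):
    """Count observed transitions and transversions between two aligned sequences."""
    if len(seq1) != len(seq2):
        raise ValueError("sequences must be aligned to the same length")
    counts = {"transition": 0, "transversion": 0}
    for x, y in zip(seq1, seq2):
        kind = classify_difference(x, y)
        if kind is not None:
            counts[kind] += 1
    return counts

print(count_ts_tv("ACGTACGT", "GCATACGA"))  # {'transition': 2, 'transversion': 1}
```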

Frequently Asked Questions (FAQs)

What is the practical purpose of a substitution model in phylogenetic analysis?

Substitution models provide the statistical foundation for calculating the probability of observing sequence data given a phylogenetic tree. They are essential for obtaining accurate phylogenetic inferences, as they directly influence the reliability of resulting trees and downstream analyses [3] [1]. Using an inappropriate model can lead to incorrect evolutionary conclusions.

How do I select the best-fit substitution model for my dataset?

The statistical selection of the best-fit model is routine in phylogenetics. The standard methodology involves [4] [3]:

- Using software to fit a set of candidate models to your multiple sequence alignment.

- Applying statistical criteria (like AIC, AICc, or BIC) to compare model fit.

- Selecting the model that best balances goodness-of-fit with model complexity.
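The comparison step can be sketched in Python using the standard definitions AIC = 2k - 2 ln L, AICc = AIC + 2k(k+1)/(n - k - 1), and BIC = k ln n - 2 ln L, where k is the number of free parameters and n the sample size. Taking n as the number of alignment sites is a common convention, not a universal one. The log-likelihoods below are hypothetical values for illustration, and each k includes the 17 branch lengths of a hypothetical 10-taxon unrooted tree.

```python
import math

def information_criteria(log_lik, k, n):
    """AIC, AICc and BIC for a model with maximized log-likelihood log_lik,
    k free parameters and sample size n (lower is better for all three)."""
    aic = 2 * k - 2 * log_lik
    aicc = aic + 2 * k * (k + 1) / (n - k - 1)
    bic = k * math.log(n) - 2 * log_lik
    return {"AIC": aic, "AICc": aicc, "BIC": bic}

def best_models(fits, n):
    """fits maps model name -> (log-likelihood, k); returns the best model per criterion."""
    scores = {name: information_criteria(lnl, k, n) for name, (lnl, k) in fits.items()}
    return {crit: min(scores, key=lambda m: scores[m][crit])
            for crit in ("AIC", "AICc", "BIC")}

# Hypothetical fits for a 1,000-site alignment of 10 taxa
# (k = substitution and rate-variation parameters + 17 branch lengths).
fits = {
    "JC+G":  (-5100.0, 0 + 1 + 17),
    "HKY+G": (-5000.0, 4 + 1 + 17),
    "GTR+G": (-4990.0, 8 + 1 + 17),
}
print(best_models(fits, n=1000))
# {'AIC': 'GTR+G', 'AICc': 'GTR+G', 'BIC': 'HKY+G'}
```

Here AIC and AICc favor GTR+G, while BIC's heavier penalty (about 4 × ln 1000 ≈ 27.6 for GTR's four extra parameters, versus 8 under AIC) outweighs the 10-unit log-likelihood gain and favors HKY+G.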

Which model selection criterion should I use: AIC, AICc, or BIC?

Comparative studies have demonstrated that the Bayesian Information Criterion (BIC) consistently outperforms both AIC and the corrected Akaike Information Criterion (AICc) in accurately identifying the true nucleotide substitution model [3]. BIC more heavily penalizes extra parameters, which helps avoid overfitting. The choice of software (jModelTest2, ModelTest-NG, or IQ-TREE) does not significantly affect the accuracy of model identification [3].
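The difference in penalties is easy to quantify. With k free parameters and n sites, adding one parameter raises AIC by 2 but raises BIC by ln n, so BIC's penalty is the heavier one for any alignment longer than e² ≈ 7.4 sites. For an alignment of 10,000 sites, for example:

```latex
\begin{aligned}
\mathrm{AIC} &= 2k - 2\ln L, \qquad \mathrm{BIC} = k\ln n - 2\ln L \\
\Delta\mathrm{AIC}_{\text{per parameter}} &= 2, \qquad \Delta\mathrm{BIC}_{\text{per parameter}} = \ln n \\
n = 10\,000:\quad \ln(10\,000) &\approx 9.21 \approx 4.6 \times 2
\end{aligned}
```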

Does the choice of substitution model really affect my results?

The effect depends on your dataset's genetic diversity [1]. For datasets with low genetic diversity, model selection has less impact because evolutionary trajectories are clearly defined. For datasets with high genetic diversity, model selection plays a more important role in determining past evolutionary states, especially among states with similar probabilities under neutral evolution [1].
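One way to see why divergence level matters (an illustration of the general point, not the ancestral-reconstruction analysis in [1]): at low divergence the uncorrected proportion of differing sites p and the JC69-corrected distance d = -(3/4) ln(1 - 4p/3) nearly coincide, so the model barely changes the answer, whereas at high divergence the correction for unseen multiple substitutions becomes large. A minimal Python sketch:

```python
import math

def jc69_distance(p):
    """JC69 distance (expected substitutions per site) from the proportion p of
    differing sites; undefined once p reaches the saturation value 0.75."""
    if not 0 <= p < 0.75:
        raise ValueError("p must lie in [0, 0.75)")
    return -0.75 * math.log(1 - 4 * p / 3)

for p in (0.01, 0.10, 0.30, 0.50):
    d = jc69_distance(p)
    print(f"p = {p:.2f}  JC69 d = {d:.4f}  correction = {100 * (d / p - 1):.0f}%")
```

The correction grows from under 1% at p = 0.01 to roughly 65% at p = 0.5, which is why the choice among models matters far more for divergent data.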

What is the difference between nucleotide and protein substitution models?

Nucleotide substitution models typically have fewer parameters (e.g., transition/transversion ratio, nucleotide frequencies) that are estimated directly from the study data [1]. Protein substitution models are predominantly empirical; they incorporate pre-estimated parameters (like exchangeability matrices and equilibrium amino acid frequencies) derived from large databases of protein sequences [1].

Experimental Protocols and Methodologies

Protocol 1: Selecting the Best-Fit Nucleotide Substitution Model

This protocol describes how to statistically select a best-fit model using standard software tools [3].

Materials and Equipment

- Multiple Sequence Alignment (MSA): The aligned nucleotide sequence dataset in a standard format (e.g., FASTA, PHYLIP).

- Computational Software: jModelTest2, ModelTest-NG, or IQ-TREE installed on a computer or server.

Procedure

- Prepare Input Data: Ensure your MSA is properly aligned and free of errors. The format should be compatible with your chosen software.

- Run Model Selection Software: Execute the software with commands appropriate for your dataset.

- Example Command for ModelTest-NG:

- Apply Selection Criteria: The software will fit a set of candidate models to your data. Analyze the output, paying primary attention to the model ranked highest by BIC [3].

- Report Results: Document the selected model and its parameters (e.g., GTR+I+G) in your methodology.

Protocol 2: Evaluating Model Fit and Performance

This methodology is used in simulation studies to assess how well model selection procedures recover known true models [3].

Procedure

- Dataset Simulation: Simulate DNA sequence datasets using a known nucleotide substitution model and a known phylogenetic tree. Tools like AliSim can be used for this purpose [3].

- Blind Model Selection: Apply model selection procedures (using software like jModelTest2, ModelTest-NG, or IQ-TREE) to the simulated datasets as if the true model were unknown.

- Accuracy Assessment: Record how often the model selection procedure correctly identifies the true, known simulation model. Compare the performance of different selection criteria (AIC, AICc, BIC) and different software.
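The three steps can be illustrated end to end with a deliberately small Python toy (not AliSim, and restricted to two candidate models): pairs of sequences are simulated under K80 (with κ = 1 it reduces to JC69) and summarized as counts of identical sites, transitions, and transversions; JC69 and K80 are then compared by BIC, and the fraction of replicates recovering the true model is recorded. The closed-form K80 probabilities are standard; the replicate count, distance d = 0.3, and alignment length are arbitrary assumptions.

```python
import math
import random

def k80_site_probs(d, kappa):
    """Probabilities that a site is identical, differs by a transition, or differs
    by a transversion between two sequences at distance d under K80."""
    a = math.exp(-4 * d / (kappa + 2))
    b = math.exp(-2 * d * (kappa + 1) / (kappa + 2))
    p_ts = 0.25 + 0.25 * a - 0.5 * b
    p_tv = 0.5 - 0.5 * a                     # both transversion partners combined
    return (1 - p_ts - p_tv, p_ts, p_tv)

def simulate_counts(n_sites, d, kappa, rng):
    """Step 1: simulate site-pattern counts (same, transitions, transversions)."""
    draws = rng.choices((0, 1, 2), weights=k80_site_probs(d, kappa), k=n_sites)
    return (draws.count(0), draws.count(1), draws.count(2))

def xlogy(x, y):
    return 0.0 if x == 0 else x * math.log(y)

def max_loglik(model, same, ts, tv):
    """Maximized log-likelihood, omitting the ln(1/4) term shared by both models."""
    n = same + ts + tv
    if model == "JC69":                      # every specific change has probability p/3
        p = (ts + tv) / n
        return xlogy(same, 1 - p) + xlogy(ts + tv, p / 3)
    # K80: observed proportions are the MLEs (assumes K80 can reach them);
    # a transversion pattern is one of two equally likely partners.
    return xlogy(same, same / n) + xlogy(ts, ts / n) + xlogy(tv, tv / (2 * n))

def select_by_bic(counts):
    """Step 2: 'blind' selection between JC69 (k = 1) and K80 (k = 2)."""
    n = sum(counts)
    bic = {m: k * math.log(n) - 2 * max_loglik(m, *counts)
           for m, k in (("JC69", 1), ("K80", 2))}
    return min(bic, key=bic.get)

def recovery_rate(true_model, kappa, n_reps=50, n_sites=1000, d=0.3, seed=1):
    """Step 3: fraction of replicates in which BIC picks the true model."""
    rng = random.Random(seed)
    hits = sum(select_by_bic(simulate_counts(n_sites, d, kappa, rng)) == true_model
               for _ in range(n_reps))
    return hits / n_reps

print("K80 (kappa = 5) recovered:", recovery_rate("K80", kappa=5.0))
print("JC69 (kappa = 1) recovered:", recovery_rate("JC69", kappa=1.0))
```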

Comparative Data and Analysis

Table 1: Performance of Model Selection Criteria in Recovering True Models

Summary of results from an analysis of 88 simulated datasets, ranking the criteria by how often each correctly identified the true model [3].

| Selection Criterion | Accuracy in Identifying True Model | Key Characteristics |
|---|---|---|
| Bayesian Information Criterion (BIC) | Consistently Highest | Most heavily penalizes extra parameters, most effective at avoiding overfitting [3]. |
| Akaike Information Criterion (AIC) | Lower than BIC | Derived from frequentist probability; less stringent penalty for complexity [3]. |
| Corrected Akaike Information Criterion (AICc) | Lower than BIC | A corrected version of AIC for small sample sizes; performance similar to AIC [3]. |

Table 2: Common Phylogenetic Software for Model Selection

Key software tools used for statistically selecting best-fit models of nucleotide substitution [3].

| Software | Primary Function | Notable Features |
|---|---|---|
| jModelTest2 | Nucleotide substitution model selection | Implements AIC, AICc, and BIC for model comparison [3]. |
| ModelTest-NG | Nucleotide and protein model selection | One to two orders of magnitude faster than jModelTest2; comprehensive model set [3]. |
| IQ-TREE | Integrated model selection and tree inference | Performs model selection simultaneously with tree search; very fast [3]. |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Model Selection

| Tool / 'Reagent' | Function in Analysis |
|---|---|
| Multiple Sequence Alignment (MSA) | The fundamental input data; the molecular sequences to be analyzed. |
| ModelTest-NG Software | A fast and comprehensive program for selecting the best-fit substitution model [3]. |
| BIC (Bayesian Information Criterion) | The statistical criterion shown to most accurately identify the true model [3]. |
| Rate Matrix (Q) | The core of the model: instantaneous substitution rates between character states, built from exchangeability parameters and equilibrium frequencies [1]. |
| Equilibrium Frequency Parameters (π) | Model parameters for the stationary frequencies of nucleotides or amino acids [1]. |

In molecular evolutionary genetics, a nucleotide substitution model is a mathematical description of how DNA sequences change over evolutionary time. It specifies the rates of substitution between all pairs of nucleotides (A, C, G, T) and the frequencies of each nucleotide in the sequence [5]. The selection of an appropriate substitution model is crucial for obtaining accurate phylogenetic inferences, as it directly influences the reliability of resulting evolutionary trees and all downstream analyses [3].

Substitution models range from simple to highly parameterized complex models. A simple model may assume all nucleotide substitutions are equally likely and that all nucleotides have the same frequency, while complex models account for biological realities such as different rates for transitions versus transversions, variation in nucleotide frequencies, and rate variation across sites [3] [6]. This technical guide explores the spectrum of available models and provides practical guidance for researchers navigating model selection in their phylogenetic analyses.

The Model Spectrum: From JC69 to GTR

The following table summarizes the most common nucleotide substitution models, ordered roughly from simplest to most parameter-rich:

Table 1: Common DNA Substitution Models and Their Characteristics

| Model Name | Free Parameters | Explanation | Key Reference |
|---|---|---|---|
| JC69 (Jukes-Cantor) | 0 | Equal substitution rates and equal base frequencies. | Jukes and Cantor (1969) [7] |
| F81 | 3 | Equal substitution rates but unequal base frequencies. | Felsenstein (1981) [7] |
| K80/K2P (Kimura 2-parameter) | 1 | Distinguishes transition and transversion rates; equal base frequencies. | Kimura (1980) [7] [8] |
| HKY85 | 4 | Different transition and transversion rates and unequal base frequencies. | Hasegawa et al. (1985) [7] [8] |
| TN93 (Tamura-Nei) | 5 | Extends HKY85 with separate rates for the two types of transitions (A↔G vs. C↔T). | Tamura and Nei (1993) [7] [8] |
| SYM | 5 | Symmetric model with unequal substitution rates but equal base frequencies. | Zharkikh (1994) [7] |
| GTR (General Time Reversible) | 8 | Most general time-reversible model with unequal rates and unequal base frequencies. | Tavaré (1986) [5] [7] |

Understanding Rate Matrices and Model Parameterization

Each substitution model is defined by its instantaneous rate matrix (Q), which describes the relative rates of change between nucleotide states [5] [9]. For the GTR model, this matrix takes the form:
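Assuming the common convention that rows and columns are ordered A, C, G, T and that a = A↔C, b = A↔G, c = A↔T, d = C↔G, e = C↔T, and f = G↔T, the GTR rate matrix is usually written as follows, with each off-diagonal entry equal to an exchangeability multiplied by the equilibrium frequency of the target base:

```latex
Q =
\begin{pmatrix}
-(a\pi_C + b\pi_G + c\pi_T) & a\pi_C & b\pi_G & c\pi_T \\
a\pi_A & -(a\pi_A + d\pi_G + e\pi_T) & d\pi_G & e\pi_T \\
b\pi_A & d\pi_C & -(b\pi_A + d\pi_C + f\pi_T) & f\pi_T \\
c\pi_A & e\pi_C & f\pi_G & -(c\pi_A + e\pi_C + f\pi_G)
\end{pmatrix}
```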

Here, parameters a through f represent the relative exchangeability rates between nucleotide pairs, and $\pi_A$, $\pi_C$, $\pi_G$, and $\pi_T$ are the equilibrium base frequencies [5]. The diagonal elements are set so that each row sums to zero, which keeps the total probability across the four states constant over time [5] [6].

Simpler models place equality constraints on these parameters. For example, the JC69 model assumes that all exchangeability parameters (a through f) are equal and that all base frequencies are equal (0.25 each) [9]. The HKY85 model distinguishes only between transitions (A↔G, C↔T) and transversions (all other changes), giving two rate classes whose ratio is the transition/transversion parameter κ [8].
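These equality constraints can be written down directly. In this Python sketch each model is described by a rate-class label for each of the six exchangeabilities a through f (equal labels mean the rates are constrained to be equal) plus a flag for free base frequencies; counting free parameters from that description reproduces the parameter column of the table above. The encoding is an illustration, not a format used by any particular program.

```python
# Exchangeabilities in the order a..f: A<->C, A<->G, A<->T, C<->G, C<->T, G<->T.
PAIRS = ("AC", "AG", "AT", "CG", "CT", "GT")

# model -> (rate-class label for each pair in PAIRS, are base frequencies free?)
MODELS = {
    "JC69":  ((0, 0, 0, 0, 0, 0), False),
    "F81":   ((0, 0, 0, 0, 0, 0), True),
    "K80":   ((0, 1, 0, 0, 1, 0), False),   # transitions A<->G and C<->T share a rate
    "HKY85": ((0, 1, 0, 0, 1, 0), True),
    "TN93":  ((0, 1, 0, 0, 2, 0), True),    # the two transition types get separate rates
    "SYM":   ((0, 1, 2, 3, 4, 5), False),
    "GTR":   ((0, 1, 2, 3, 4, 5), True),
}

def free_parameters(rate_classes, free_freqs):
    # Rates are relative, so one rate class serves as the reference (hence -1);
    # four frequencies constrained to sum to 1 add 3 free parameters.
    return (len(set(rate_classes)) - 1) + (3 if free_freqs else 0)

for name, (classes, freqs) in MODELS.items():
    print(f"{name:6s}{free_parameters(classes, freqs)}")
```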

Experimental Protocols for Model Selection

Standard Model Selection Workflow

Detailed Methodology from Comparative Studies

A comprehensive 2025 study analyzed model selection across 34 real datasets and 88 simulated datasets using three widely used phylogenetic programs: jModelTest2, ModelTest-NG, and IQ-TREE [3]. The experimental protocol involved:

Dataset Preparation: The 34 real datasets contained multilocus DNA alignments from mitochondrial, nuclear, and chloroplast genomes of diverse animals and plants, with 13 to 2,872 taxa and alignment lengths of 823 to 25,919 sites. The 88 simulated datasets each contained 100 taxa and 10,000 nucleotide sites, generated with AliSim on 88 random trees under different nucleotide substitution models [3].

Model Selection Execution: For each dataset, statistical selection of the best-fit nucleotide substitution model was performed using AIC, AICc, and BIC criteria in all three programs, testing all substitution models offered by each software [3].

Consistency Evaluation: The researchers evaluated whether different substitution models selected using different criteria within the same software were similar, with models differing by four or fewer parameters considered similar, and those differing by five or more considered dissimilar [3].
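The similarity rule can be sketched in Python. The counting convention is an assumption: the sketch counts only substitution-model parameters from the table of common models, adds one parameter each for +I and +G, and ignores branch lengths (identical across candidate models on the same tree); [3] may count differently, and the set of model-name aliases is a hypothetical subset.

```python
# Free substitution parameters of base models (aliases map to the same count).
BASE_PARAMS = {"JC": 0, "JC69": 0, "F81": 3, "K80": 1, "K2P": 1, "HKY": 4,
               "HKY85": 4, "TN93": 5, "TN": 5, "TrN": 5, "SYM": 5, "GTR": 8}

def model_parameters(name):
    """Parameter count for names such as 'GTR+I+G' or 'HKY+G4'."""
    base, *extensions = name.split("+")
    count = BASE_PARAMS[base]
    for ext in extensions:
        if ext == "I" or ext.startswith("G"):  # +I: invariant proportion; +G: gamma shape
            count += 1
        else:
            raise ValueError(f"unsupported model extension: +{ext}")
    return count

def similar(model_a, model_b, max_difference=4):
    """'Similar' if the models differ by four or fewer parameters, as in the study."""
    return abs(model_parameters(model_a) - model_parameters(model_b)) <= max_difference

print(similar("GTR+G", "TN93+G"))    # True  (9 vs 6 parameters)
print(similar("GTR+I+G", "HKY+G"))   # False (10 vs 5 parameters)
```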

Accuracy Assessment: For simulated datasets with known true models, analysis determined whether best-fit models identified by each program and selection criterion were consistent with the known true model [3].

Statistical Analysis: Chi-squared tests of independence were employed to determine if significant associations existed between programs, selection criteria, and model selection consistency. All statistical analyses were conducted in RStudio using various packages including 'rcompanion' for post hoc analysis [3].

Key Findings and Data Presentation

Quantitative Comparison of Selection Criteria Performance

Table 2: Performance of Information Criteria in Identifying True Model (Based on 88 Simulated Datasets)

| Information Criterion | Accuracy in Identifying True Model | Model Complexity Preference | Key Characteristics |
|---|---|---|---|
| BIC (Bayesian Information Criterion) | Consistently outperformed AIC and AICc | Prefers simpler models with fewer parameters | Derived from Bayesian probability; most heavily penalizes extra parameters [3] |
| AIC (Akaike Information Criterion) | Lower accuracy than BIC | Prefers more complex models | Derived from frequentist probability; moderate parameter penalty [3] |
| AICc (Corrected AIC) | Lower accuracy than BIC; nearly identical to AIC | Prefers more complex models | Corrected version of AIC for small sample sizes; similar performance to AIC [3] |

Software Consistency Findings

The comparative analysis demonstrated that the choice of phylogenetic program (jModelTest2, ModelTest-NG, or IQ-TREE) did not significantly affect the ability to accurately identify the true nucleotide substitution model [3]. This indicates researchers can confidently rely on any of these programs for model selection, as they offer comparable accuracy without substantial differences [3].

The study also found that AIC and AICc selected nearly identical models across most datasets; their choices differed in only one real dataset ('Devitt_2013'), in ModelTest-NG and IQ-TREE [3].
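The near-identity of AIC and AICc follows from the size of the AICc correction term. As a hypothetical example, take n = 10,000 sites and a 100-taxon unrooted tree (197 branch lengths), so that GTR+I+G has k = 207 and HKY+G has k = 202 (treating n as the number of sites is an assumption; programs differ):

```latex
\begin{aligned}
\mathrm{AICc} &= \mathrm{AIC} + \frac{2k(k+1)}{n-k-1} \\
k = 207:\quad \frac{2 \cdot 207 \cdot 208}{10\,000 - 208} &= \frac{86\,112}{9\,792} \approx 8.79 \\
k = 202:\quad \frac{2 \cdot 202 \cdot 203}{10\,000 - 203} &= \frac{82\,012}{9\,797} \approx 8.37
\end{aligned}
```

The correction differs between the two models by only about 0.42, whereas their AIC penalties differ by 2 × 5 = 10, so AICc can change AIC's ranking only when two models are nearly tied.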

Troubleshooting Guides and FAQs

Frequently Asked Questions

Q: When the best-fit model selected by BIC is inconsistent with that selected by AIC/AICc, which should I use?

A: Based on empirical evidence, BIC should be preferred when it conflicts with AIC or AICc. The 2025 study found BIC consistently outperformed both AIC and AICc in accurately identifying the true model, regardless of the program used [3]. BIC's heavier penalty for additional parameters helps prevent overparameterization.

Q: Are the best-fit models selected by BIC typically simpler or more complex than those selected by AIC and AICc?

A: BIC typically selects simpler models with fewer parameters because it more heavily penalizes the addition of extra parameters compared to AIC and AICc [3]. This parsimonious approach often leads to better performance in identifying the true underlying model.

Q: When different software programs suggest different best-fit models, which should I trust?

A: The research indicates that choice of program (jModelTest2, ModelTest-NG, or IQ-TREE) does not significantly affect accurate model identification [3]. You can confidently use results from any of these programs. If they disagree, consider using Bayesian model averaging approaches that account for model uncertainty [10] [11].

Q: How important is model selection for phylogenetic analysis?

A: Extremely important. Different substitution models can change the outcome of phylogenetic analyses [3]. Appropriate model selection is crucial for obtaining accurate phylogenetic inferences, as it directly influences the reliability of resulting trees and downstream analyses [3].

Common Problems and Solutions

Problem: Inconsistent model selection between criteria.

- Solution: Prioritize BIC over AIC and AICc, as empirical evidence shows BIC more accurately identifies the true model [3].

Problem: Computational limitations with complex models.

- Solution: For large datasets where computational efficiency is needed, BIC's preference for simpler models with fewer parameters can be advantageous [3].

Problem: Uncertainty in model selection.

- Solution: Consider Bayesian model averaging approaches implemented in tools like BEAST2's bModelTest, which treat the substitution model as a nuisance parameter and integrate over all available models [10] [11].

Problem: Rate variation across sites.

- Solution: Many software packages allow incorporating gamma-distributed rate variation (+G) and/or a proportion of invariant sites (+I) to account for heterogeneity in substitution rates across alignment sites [10] [9].
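How +I and +G combine can be sketched in Python. Under +G, site rates follow a gamma distribution with shape α and mean 1; programs usually discretize it into a few equal-probability categories using exact gamma quantiles, whereas this sketch approximates the category means by Monte Carlo with `random.gammavariate`. Adding +I introduces a zero-rate class of weight p_inv and rescales the gamma categories by 1/(1 - p_inv) so the overall mean rate stays 1. Function names are illustrative.

```python
import random

def discrete_gamma_rates(alpha, n_categories=4, n_draws=200_000, seed=0):
    """Monte Carlo approximation of mean-rate discrete-gamma categories.

    Rates are drawn from Gamma(shape=alpha, scale=1/alpha), which has mean 1;
    sorting the draws and averaging equal-sized blocks approximates the mean
    rate of each equal-probability category."""
    rng = random.Random(seed)
    draws = sorted(rng.gammavariate(alpha, 1.0 / alpha) for _ in range(n_draws))
    size = n_draws // n_categories
    return [sum(draws[k * size:(k + 1) * size]) / size for k in range(n_categories)]

def invariant_plus_gamma(alpha, p_inv, n_categories=4):
    """(rate, weight) classes for +I+G: an invariant class with rate 0 and weight
    p_inv, plus gamma categories rescaled so the overall mean rate stays 1."""
    classes = [(0.0, p_inv)]
    for rate in discrete_gamma_rates(alpha, n_categories):
        classes.append((rate / (1.0 - p_inv), (1.0 - p_inv) / n_categories))
    return classes

for rate, weight in invariant_plus_gamma(alpha=0.5, p_inv=0.2):
    print(f"rate {rate:.3f}  weight {weight:.2f}")
```

A small α (such as 0.5) produces a very slow first category and a fast last one, which is the across-site heterogeneity that +G is meant to capture.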

Advanced Topics

Bayesian Model Averaging Approach

An alternative to selecting a single best-fit model is Bayesian model averaging, which treats the substitution model as a nuisance parameter and integrates over all available models [11]. This approach, implemented in BEAST2's bModelTest package, uses reversible jump MCMC (rjMCMC) to jump between states representing different possible substitution models [11].

This method allows simultaneous estimation of phylogeny while integrating over 203 possible time-reversible nucleotide substitution models (or 31 models when considering only transitions/transversions splits) [11]. Parameter estimates are effectively averaged over different substitution models, weighted by each model's support. The proportion of time the Markov chain spends in a particular model state can be interpreted as the posterior support for that model [11].
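The model counts quoted above can be reproduced by enumeration. Each time-reversible model corresponds to a way of grouping the six exchangeabilities into classes of equal rate, that is, a set partition; there are 203 such partitions (the Bell number B6). Assuming the 31-model set consists of the partitions that never mix transitions with transversions (2 × 15 = 30) plus the single-rate JC69/F81-type model, the count of 31 follows as well. A Python sketch:

```python
PAIRS = ("AC", "AG", "AT", "CG", "CT", "GT")
TRANSITIONS = {"AG", "CT"}

def set_partitions(items):
    """Yield every partition of `items` into non-empty blocks (lists of lists)."""
    if not items:
        yield []
        return
    first, rest = items[0], items[1:]
    for partition in set_partitions(rest):
        for i in range(len(partition)):         # add `first` to an existing block ...
            yield partition[:i] + [[first] + partition[i]] + partition[i + 1:]
        yield [[first]] + partition             # ... or give it a block of its own

def respects_ts_tv_split(partition):
    """True if no block mixes transition and transversion exchangeabilities."""
    return all(set(block) <= TRANSITIONS or not set(block) & TRANSITIONS
               for block in partition)

all_models = list(set_partitions(list(PAIRS)))
split_models = [p for p in all_models if respects_ts_tv_split(p) or len(p) == 1]
print(len(all_models), len(split_models))  # 203 31
```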

Accounting for Across-Site Heterogeneity

More advanced approaches account for heterogeneity in the substitution process across sites in an alignment. The Substitution Dirichlet Mixture Model (SDPM) uses Dirichlet process mixture models to simultaneously estimate the number of partitions, assignment of sites to partitions, and substitution model for each partition [10].

This method addresses the limitation of traditional approaches that assume a homogeneous substitution process across all sites or require a priori partitioning of the alignment [10]. Studies have shown that as many as nine partitions may be required to explain heterogeneity in nucleotide substitution processes across sites in single-gene analyses [10].
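The Dirichlet process prior behind this idea can be illustrated with its sequential form, the Chinese restaurant process: each site joins an existing partition with probability proportional to that partition's size, or opens a new one with probability proportional to a concentration parameter α, so the number of partitions is itself random. This Python sketch samples from the prior only; the SDPM described in [10] additionally assigns a substitution model to each partition and samples assignments from the posterior, which is not shown. Function names are illustrative.

```python
import random

def crp_assignments(n_sites, alpha, seed=None):
    """Assign sites to partitions under a Chinese restaurant process prior.

    Site i joins existing partition k with probability size_k / (i + alpha)
    or starts a new partition with probability alpha / (i + alpha)."""
    rng = random.Random(seed)
    labels, sizes = [], []
    for _ in range(n_sites):
        weights = sizes + [alpha]
        k = rng.choices(range(len(weights)), weights=weights)[0]
        if k == len(sizes):
            sizes.append(1)          # open a new partition
        else:
            sizes[k] += 1
        labels.append(k)
    return labels, sizes

def expected_partitions(n_sites, alpha):
    """Prior expected number of partitions: sum of alpha / (alpha + i), i = 0..n-1."""
    return sum(alpha / (alpha + i) for i in range(n_sites))

labels, sizes = crp_assignments(n_sites=500, alpha=1.0, seed=42)
print(len(sizes), "partitions; prior expectation", round(expected_partitions(500, 1.0), 2))
```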

The Scientist's Toolkit

Table 3: Essential Software Tools for Nucleotide Substitution Model Selection

| Tool/Resource | Function | Key Features |
|---|---|---|
| IQ-TREE | Phylogenetic inference and model selection | Implements ModelFinder for automatic model selection; supports wide range of substitution models including Lie Markov models [7] |
| jModelTest2 | Nucleotide substitution model selection | Statistical selection of best-fit models using AIC, AICc, and BIC [3] |
| ModelTest-NG | Nucleotide substitution model selection | One to two orders of magnitude faster than jModelTest; uses same statistical criteria [3] |
| BEAST2 + bModelTest | Bayesian phylogenetic analysis with model averaging | Uses reversible jump MCMC to average over 203 time-reversible substitution models [11] |
| RevBayes | Bayesian phylogenetic inference | Flexible platform for implementing various substitution models and conducting MCMC analyses [9] |
| RStudio + 'rcompanion' | Statistical analysis | Post hoc analysis of model selection consistency and performance [3] |

The spectrum of nucleotide substitution models ranges from the highly simplistic JC69 to the parameter-rich GTR model. Empirical research demonstrates that while choice of software platform (jModelTest2, ModelTest-NG, or IQ-TREE) has minimal impact on model selection accuracy, the choice of statistical criterion is crucial, with BIC consistently outperforming AIC and AICc in identifying the true model [3].

For practitioners, this suggests a workflow beginning with automated model selection in established software, prioritizing BIC when criteria conflict, and considering Bayesian model averaging approaches when model uncertainty remains high. As phylogenetic analyses continue to evolve with larger datasets and more complex evolutionary questions, proper model selection remains a fundamental step in ensuring accurate and reliable phylogenetic inference.

In molecular evolutionary genetics, the rate matrix (Q) and equilibrium frequencies (π) are fundamental components of nucleotide substitution models. These parameters define the mathematical framework for describing how DNA sequences evolve over time, influencing the accuracy of phylogenetic inference, detection of natural selection, and estimation of evolutionary distances [6] [12]. Proper specification and understanding of these components are crucial for researchers and drug development professionals selecting best-fit models for their analyses.

Troubleshooting Guide: Common Issues and Solutions

| Problem Area | Specific Issue | Possible Causes | Recommended Solutions |
|---|---|---|---|
| Model Selection & Fitting | Inconsistent best-fit model selection | Using different information criteria (AIC, BIC, AICc) or software [3] | Use BIC for model selection; validate with multiple criteria [3]. |
| | Poor model fit to data | Overly simple model; ignoring rate heterogeneity; incorrect equilibrium frequencies [4] | Use model testing software (jModelTest, ModelTest-NG, IQ-TREE); consider +G or +I extensions [3] [12]. |
| Parameter Estimation | Unstable or biased parameter estimates (e.g., κ, π) | Small sample size; insufficient MCMC convergence; model misspecification [13] | Increase sequence data; run MCMC longer; check prior distributions in Bayesian analysis [13]. |
| | Estimates of π do not match observed base frequencies | Violation of model assumptions (e.g., selection, non-stationarity) [14] | Test for stationarity; consider models that do not assume equilibrium [14]. |
| Technical Implementation | Poor phylogenetic inference (e.g., wrong tree) | Model misspecification; inadequate handling of rate variation across sites [12] | Use GTR+G+I model as starting point; perform rigorous model selection [12]. |
| | Computational bottlenecks with complex models | Large datasets; highly parameterized models (e.g., GTR) [3] | For large datasets, use BIC to select simpler models; use efficient software (IQ-TREE, ModelTest-NG) [3]. |

Frequently Asked Questions (FAQs)

General Concepts

What is the biological interpretation of the f parameter in +gwF models? The f parameter in generalized weighted frequency (+gwF) models determines the relative influence of the starting versus ending nucleotide states on substitution rates [14]. While a value of f=0.5 is expected under a nearly neutral model with weak selection and drift, evolutionary simulations show this parameter is highly sensitive to violations of model assumptions, including population disequilibrium and mutation rate biases [14]. Consequently, f should typically be estimated as a free parameter rather than fixed a priori, as its biological interpretation is complex and context-dependent [14].

How do equilibrium frequencies (π) relate to the Hardy-Weinberg principle? The Hardy-Weinberg principle describes a state of equilibrium in population genetics where allele and genotype frequencies remain constant from generation to generation in the absence of evolutionary forces [15]. In substitution models, the equilibrium frequencies (π) represent a similar steady-state distribution of nucleotides expected under the model over long evolutionary timescales [6]. Both concepts describe stable distributions, though Hardy-Weinberg applies to genotype frequencies in a population, while π applies to nucleotide frequencies in a sequence evolution model.

Model Selection and Application

Which information criterion (AIC, AICc, or BIC) should I use for model selection? A 2025 comparative analysis demonstrates that the Bayesian Information Criterion (BIC) consistently outperforms AIC and AICc in accurately identifying the true underlying nucleotide substitution model [3]. BIC's stronger penalty for extra parameters helps avoid overfitting. While the choice of software (jModelTest2, ModelTest-NG, IQ-TREE) does not significantly impact accuracy, the choice of criterion does [3].

When should I use a reversible versus non-reversible model? Most common substitution models assume time reversibility ($\pi_i Q_{ij} = \pi_j Q_{ji}$), which simplifies calculations and is often biologically reasonable [6]. However, non-reversible models are crucial for detecting certain evolutionary events like horizontal gene transfer, as they can capture changes in the substitution rate matrix that occur when a gene moves to a new genomic context with different mutational biases [16].
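Both properties can be checked numerically. In this Python sketch (function names are illustrative) a rate matrix is built as Q_ij = s_ij π_j; with symmetric exchangeabilities s, as in GTR and its special cases, it satisfies both stationarity (πQ = 0) and detailed balance. A cyclic A → C → G → T → A process with uniform π shows that a model can be stationary without being reversible.

```python
def rate_matrix(s, pi):
    """Q[i][j] = s[i][j] * pi[j] off the diagonal (order A, C, G, T); rows sum to zero."""
    q = [[s[i][j] * pi[j] if i != j else 0.0 for j in range(4)] for i in range(4)]
    for i in range(4):
        q[i][i] = -sum(q[i])
    return q

def is_stationary(q, pi, tol=1e-12):
    """pi is stationary when pi Q = 0: base frequencies no longer change."""
    return all(abs(sum(pi[i] * q[i][j] for i in range(4))) < tol for j in range(4))

def is_reversible(q, pi, tol=1e-12):
    """Detailed balance: pi_i Q_ij == pi_j Q_ji for every pair of states."""
    return all(abs(pi[i] * q[i][j] - pi[j] * q[j][i]) < tol
               for i in range(4) for j in range(i + 1, 4))

# HKY85-like symmetric exchangeabilities (kappa = 4 for A<->G and C<->T).
pi = [0.1, 0.2, 0.3, 0.4]
s_hky = [[0, 1, 4, 1], [1, 0, 1, 4], [4, 1, 0, 1], [1, 4, 1, 0]]
q_hky = rate_matrix(s_hky, pi)
print(is_stationary(q_hky, pi), is_reversible(q_hky, pi))          # True True

# Non-symmetric rate weights: a cyclic process with uniform frequencies.
uniform = [0.25] * 4
s_cyc = [[0, 1, 0, 0], [0, 0, 1, 0], [0, 0, 0, 1], [1, 0, 0, 0]]
q_cyc = rate_matrix(s_cyc, uniform)
print(is_stationary(q_cyc, uniform), is_reversible(q_cyc, uniform))  # True False
```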

Technical Implementation

How do I implement and test different substitution models in practice? Practical implementation involves using phylogenetic software that supports model specification and parameter estimation [13] [12]. The workflow typically involves: (1) specifying priors for model parameters (e.g., κ ~ Uniform(0,10)), (2) defining the Q matrix as a deterministic function of these parameters, (3) using MCMC moves to explore the parameter space, and (4) analyzing posterior distributions to obtain parameter estimates and assess convergence [13].
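Steps (1) to (4) can be mirrored in a self-contained Python toy rather than in a phylogenetics package: K80 is fitted by Metropolis-Hastings to hypothetical pairwise counts (760 identical sites, 160 transitions, 80 transversions). The κ ~ Uniform(0, 10) prior follows the example above; the Exponential(1) prior on the distance d, the starting values, and the tuning constant are assumptions. Each parameter is updated with a multiplicative scale move, whose Hastings ratio is the multiplier m.

```python
import math
import random

def k80_loglik(d, kappa, same, ts, tv):
    """Log-likelihood of pairwise site-pattern counts under K80 (constants omitted)."""
    a = math.exp(-4 * d / (kappa + 2))
    b = math.exp(-2 * d * (kappa + 1) / (kappa + 2))
    p_ts = 0.25 + 0.25 * a - 0.5 * b        # the one transition partner
    p_tv = 0.25 - 0.25 * a                  # each of the two transversion partners
    p_same = 1 - p_ts - 2 * p_tv
    if min(p_same, p_ts, p_tv) <= 0:
        return -math.inf
    return same * math.log(p_same) + ts * math.log(p_ts) + tv * math.log(p_tv)

def log_prior(d, kappa):
    """Step 1: kappa ~ Uniform(0, 10) as in the text; d ~ Exponential(1) (assumed)."""
    if not 0 < kappa < 10 or d <= 0:
        return -math.inf
    return -d

def mcmc_k80(same, ts, tv, n_iter=20000, burn_in=2000, tuning=0.4, seed=1):
    """Steps 2-3: the K80 probabilities are a deterministic function of (d, kappa);
    scale moves explore the posterior."""
    rng = random.Random(seed)
    state = {"d": 0.1, "kappa": 2.0}

    def log_post(s):
        return log_prior(s["d"], s["kappa"]) + k80_loglik(s["d"], s["kappa"], same, ts, tv)

    current = log_post(state)
    samples = []
    for it in range(n_iter):
        for name in ("d", "kappa"):
            m = math.exp(tuning * (rng.random() - 0.5))      # multiplier
            proposal = dict(state, **{name: state[name] * m})
            new = log_post(proposal)
            # accept with probability min(1, posterior ratio * Hastings ratio m)
            if math.log(1.0 - rng.random()) < new - current + math.log(m):
                state, current = proposal, new
        if it >= burn_in:
            samples.append((state["d"], state["kappa"]))
    return samples

# Step 4: summarize the posterior (hypothetical counts).
samples = mcmc_k80(760, 160, 80)
mean_d = sum(d for d, _ in samples) / len(samples)
mean_kappa = sum(k for _, k in samples) / len(samples)
print(f"posterior mean d = {mean_d:.3f}, kappa = {mean_kappa:.2f}")
```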

What are the key extensions to basic substitution models? Two critical extensions are the +I (proportion of invariable sites) and +G (rate variation across sites) parameters [12]. The GTR+G+I model, which incorporates both general time-reversible rates and these extensions, often provides the best fit to real data, reflecting the complexity of actual evolutionary processes [12].

Experimental Protocols and Workflows

Protocol 1: Selecting the Best-Fit Nucleotide Substitution Model

Purpose: To systematically identify the most appropriate nucleotide substitution model for a given DNA sequence alignment to ensure accurate phylogenetic inference [4] [3].

Materials:

- Multiple sequence alignment (FASTA, NEXUS, or PHYLIP format)

- Computational resources (standard desktop or high-performance computing cluster)

- Software: jModelTest2, ModelTest-NG, or IQ-TREE [3]

Procedure:

- Prepare Input Data: Ensure your multiple sequence alignment is properly formatted and free of errors.

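- Run Model Selection Software: Execute one or more model selection programs. Example with IQ-TREE: `iqtree -s alignment.phy -m TEST`. This command runs standard model selection over IQ-TREE's default set of nucleotide models and then infers a tree under the selected model. IQ-TREE selects by BIC by default (add `-AIC` or `-AICc` to select by those criteria instead) and reports AIC, AICc, and BIC scores for the candidate models [3].

- Identify Best-Fit Model: Examine the output to find the model with the lowest BIC score, as BIC most accurately identifies the true model [3].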

- Validate Results: Check that the selected model makes biological sense for your data (e.g., an estimated κ well above 1, reflecting the transition bias found in most nuclear and mitochondrial DNA).

- Proceed with Phylogenetic Analysis: Use the selected model and its estimated parameters in subsequent phylogenetic inferences.

Protocol 2: Implementing and Comparing Substitution Models in a Bayesian Framework

Purpose: To estimate parameters of different substitution models and assess their impact on phylogenetic tree inference using Bayesian methods [13].

Materials:

- Sequence alignment (e.g., NEXUS format)

- Bayesian phylogenetic software (e.g., RevBayes, MrBayes, BEAST) [13] [12]

- Computing resource capable of running MCMC simulations

Procedure:

- Specify the Model: Define the substitution model (e.g., JC, T92, HKY, GTR) in the script, including priors for all parameters. Example priors: `kappa ~ dnUniform(0,10)` for T92 and `pi ~ dnDirichlet(v(1,1,1,1))` for HKY [13].

- Define the Rate Matrix: Construct the Q matrix as a deterministic function of the parameters (e.g., `Q := fnHKY(kappa=kappa, baseFrequencies=pi)`).

- Set Up MCMC: Configure the Markov chain Monte Carlo sampler with appropriate moves (e.g., `mvBetaSimplex` or `mvDirichletSimplex` for the frequencies, `mvScale` for κ) and monitors.

- Run Analysis: Execute the MCMC for a sufficient number of generations to achieve convergence (assess using ESS > 200).

- Compare Models: Analyze posterior distributions of parameters (e.g., κ, π) and trees to understand model fit and sensitivity.
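The ESS > 200 rule of thumb can be checked without extra software. This Python sketch estimates ESS as N / (1 + 2 Σ ρ_k), summing sample autocorrelations ρ_k until the first non-positive one; this simple truncation rule is an assumption, and tools such as Tracer use more careful estimators, so values will differ somewhat. The AR(1) trace stands in for a slowly mixing MCMC parameter.

```python
import random

def effective_sample_size(chain):
    """ESS = N / (1 + 2 * sum of autocorrelations), truncated at the first
    non-positive autocorrelation."""
    n = len(chain)
    mean = sum(chain) / n
    centered = [x - mean for x in chain]
    var = sum(c * c for c in centered) / n
    if var == 0:
        raise ValueError("chain is constant; ESS is undefined")
    total = 0.0
    for lag in range(1, n // 2):
        rho = sum(centered[i] * centered[i + lag] for i in range(n - lag)) / (n * var)
        if rho <= 0:
            break
        total += rho
    return n / (1 + 2 * total)

# AR(1) trace with phi = 0.9; theory gives ESS of roughly N * (1 - phi) / (1 + phi),
# about 1,050 for N = 20,000.
rng = random.Random(7)
trace, x = [], 0.0
for _ in range(20000):
    x = 0.9 * x + rng.gauss(0.0, 1.0)
    trace.append(x)
print(round(effective_sample_size(trace)))
```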

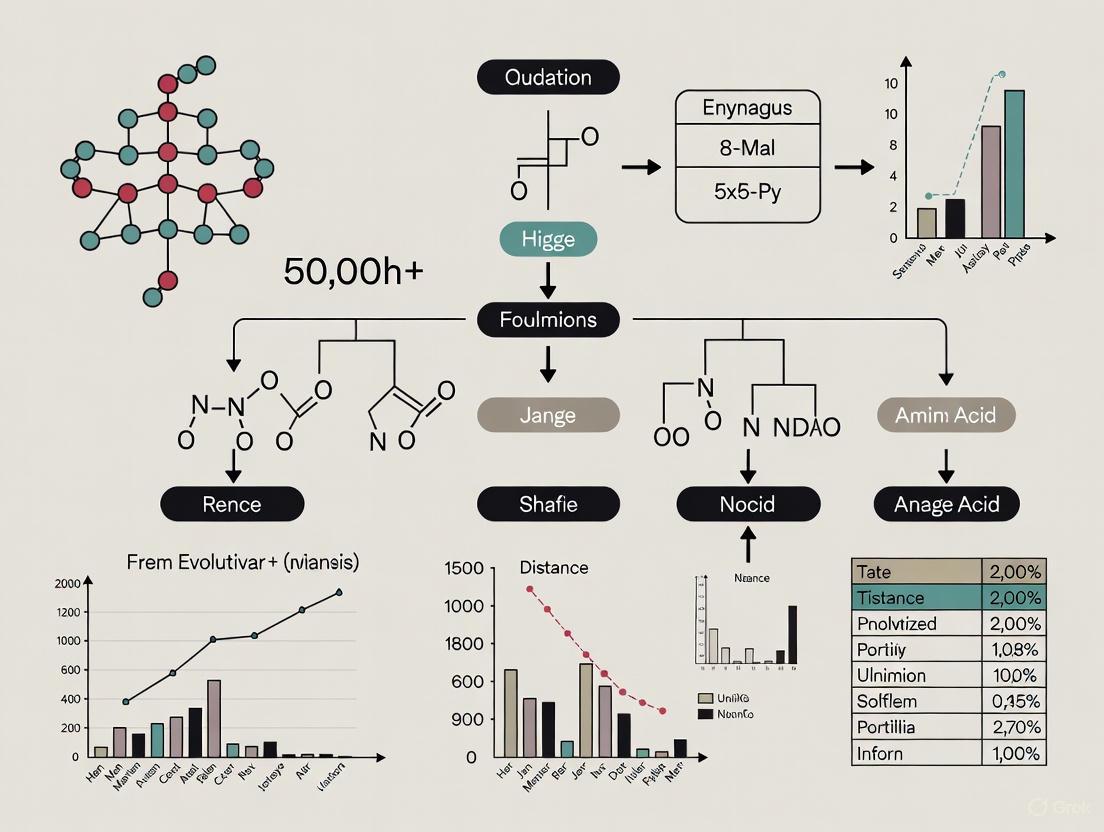

Visualization of Model Components and Workflows

Diagram 1: Relationship Between Key Components of a Nucleotide Substitution Model

Diagram 2: Workflow for Model Selection and Phylogenetic Analysis

| Category | Item/Software | Specific Function in Analysis |
|---|---|---|
| Model Selection Software | jModelTest2, ModelTest-NG, IQ-TREE [3] | Statistically compares fit of different nucleotide substitution models to a given DNA alignment using AIC, AICc, and BIC. |
| Phylogenetic Inference Packages | RevBayes [13], BEAST [12], RAxML [12], PhyML [12] | Performs phylogenetic tree inference under specified substitution models, often with Bayesian or Maximum Likelihood methods. |
| Sequence Simulation Tools | Seq-Gen, INDELible, EVOLVER [12] | Generates synthetic DNA sequence alignments under a known substitution model for method testing and validation. |
| Model Components | Rate Matrix (Q) [6] [13] | Encodes instantaneous rates of change between nucleotides; the core of any substitution model. |
| | Equilibrium Frequencies (π) [6] [14] | Represents the stable, long-term distribution of nucleotide frequencies the model converges to. |
| | Γ-distributed Rate Variation (+G) [12] | Accounts for the fact that different sites in a sequence evolve at different rates. |
| | Proportion of Invariable Sites (+I) [12] | Accounts for a fraction of sites in a sequence that are completely unable to change. |

The Importance of Time-Reversibility and Stationarity Assumptions

Frequently Asked Questions

What do time-reversibility and stationarity mean in molecular evolution models? A model is time-reversible when, at equilibrium, the expected flow of substitutions from state i to state j equals the flow from j to i; that is, it satisfies detailed balance, $\pi_i Q_{ij} = \pi_j Q_{ji}$ [5]. Stationarity means that the substitution process parameters, particularly the equilibrium base frequencies (π), remain constant across the entire phylogenetic tree and over evolutionary time [5] [17].

Why are these assumptions practically important for my phylogenetic analysis? These assumptions dramatically simplify the mathematical complexity and computational burden of phylogenetic inference [5]. Under time-reversibility, the likelihood does not depend on where the root is placed, so the ancestral (root) sequence does not need to be located. You can therefore analyze unrooted trees and re-root them without changing the likelihood score [5]. Stationarity allows the same evolutionary parameters to be applied across all lineages, which keeps statistical inference tractable.
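This root-position invariance, often called Felsenstein's pulley principle, can be seen on the smallest possible tree: two taxa joined at a root. The sketch below uses the reversible F81 model with hypothetical base frequencies. It slides the root along the path between the two taxa while keeping the total path length fixed and shows that the site likelihood does not change. NumPy and SciPy are assumed.

```python
import numpy as np
from scipy.linalg import expm

def f81_rate_matrix(pi):
    """F81 model: the rate of change to base j is proportional to pi_j."""
    pi = np.asarray(pi, dtype=float)
    q = np.tile(pi, (4, 1))
    np.fill_diagonal(q, 0.0)
    np.fill_diagonal(q, -q.sum(axis=1))
    q /= -np.dot(pi, np.diag(q))  # scale to a mean rate of 1
    return q

def two_taxon_site_likelihood(q, pi, x, y, t1, t2):
    """Likelihood of observing state x in taxon 1 and state y in taxon 2.

    The root lies t1 above taxon 1 and t2 above taxon 2, and the root
    state is drawn from the equilibrium frequencies pi.
    """
    p1, p2 = expm(q * t1), expm(q * t2)
    return float(np.sum(np.asarray(pi) * p1[:, x] * p2[:, y]))

pi = [0.40, 0.10, 0.20, 0.30]  # hypothetical frequencies (A, C, G, T)
Q = f81_rate_matrix(pi)
A, G = 0, 2
near_taxon_1 = two_taxon_site_likelihood(Q, pi, A, G, 0.05, 0.35)
near_taxon_2 = two_taxon_site_likelihood(Q, pi, A, G, 0.35, 0.05)
midpoint = two_taxon_site_likelihood(Q, pi, A, G, 0.20, 0.20)
print(np.allclose([near_taxon_1, near_taxon_2], midpoint))  # True
```

All three root placements give the same value, $\pi_A P_{AG}(0.4)$. For a non-reversible model this equality generally fails, which is why the root position becomes a parameter in that case.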

What are the consequences of violating these assumptions in real data analysis? Violating stationarity assumptions (non-stationarity) can lead to systematic errors in tree inference, particularly when base composition differs significantly among lineages [17]. Non-stationary processes can create homoplasy that may be misinterpreted as phylogenetic signal. For time-reversibility violations, the major practical consequence is the inability to use standard modeling approaches, requiring more complex non-reversible models that are computationally intensive [5].

How can I detect violations in my dataset? Statistical tests for stationarity include testing for homogeneity of base composition across taxa with a χ² test or more sophisticated approaches. Note that the simple χ² test treats sequences as independent samples and ignores their shared phylogenetic history, which limits its power. For time-reversibility, specialized tests exist that compare observed site patterns with those expected under reversible models. Visualizing base composition trends across lineages can also reveal non-stationarity.
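As a quick first check, the simple contingency-table χ² test can be run in a few lines. The sketch below assumes SciPy's `chi2_contingency` and a hypothetical toy alignment stored as a dictionary of aligned sequences. It counts A, C, G and T per taxon, ignoring gaps and ambiguity codes, and tests whether all taxa share the same base composition. It inherits the limitation noted above, so a non-significant result does not rule out compositional heterogeneity.

```python
import numpy as np
from scipy.stats import chi2_contingency

BASES = "ACGT"

def base_count_table(alignment):
    """Rows = taxa, columns = counts of A, C, G, T (gaps and ambiguity codes ignored)."""
    return np.array([[seq.upper().count(b) for b in BASES] for seq in alignment.values()])

def composition_chi2_test(alignment):
    """Chi-squared test of homogeneity of base composition across taxa."""
    counts = base_count_table(alignment)
    stat, p_value, dof, _expected = chi2_contingency(counts)
    return stat, p_value, dof

# Hypothetical toy alignment (taxon name -> aligned sequence)
alignment = {
    "taxon_1": "ATGCGCGCATGCGGCCGCAT",
    "taxon_2": "ATGCGCGCATGCAGCCGCAT",
    "taxon_3": "ATATATACATTAAGTAATAT",
}
stat, p_value, dof = composition_chi2_test(alignment)
print(f"chi2 = {stat:.2f}, df = {dof}, p = {p_value:.4f}")
```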

Troubleshooting Guides

Problem: Suspected Non-Stationarity in Multi-Species Alignment

Symptoms

- Significant heterogeneity in base composition among taxa

- Poor model fit or anomalous tree topologies

- Long-branch attraction, or clustering of taxa that share similar base composition rather than close ancestry

Diagnostic Steps

- Calculate Base Frequencies: Compute and compare nucleotide or amino acid frequencies across all taxa

- Statistical Testing: Perform matched-pairs tests of homogeneity

- Visual Inspection: Plot compositional trends across the tree
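One widely used matched-pairs test is Bowker's test of symmetry, applied to a pair of aligned sequences. The sketch below is a minimal implementation that assumes SciPy for the χ² tail probability. It builds the 4×4 divergence matrix N, where N[i, j] counts sites with base i in the first sequence and base j in the second. Under a stationary, reversible, homogeneous process, N is expected to be symmetric.

```python
import numpy as np
from scipy.stats import chi2

def divergence_matrix(seq1, seq2, alphabet="ACGT"):
    """4x4 matrix N where N[i, j] counts sites with base i in seq1 and base j in seq2."""
    index = {base: i for i, base in enumerate(alphabet)}
    n = np.zeros((len(alphabet), len(alphabet)), dtype=int)
    for a, b in zip(seq1.upper(), seq2.upper()):
        if a in index and b in index:  # skip gaps and ambiguity codes
            n[index[a], index[b]] += 1
    return n

def bowker_symmetry_test(seq1, seq2):
    """Bowker's matched-pairs test of symmetry for two aligned sequences."""
    n = divergence_matrix(seq1, seq2)
    stat, df = 0.0, 0
    for i in range(n.shape[0]):
        for j in range(i + 1, n.shape[0]):
            off_diagonal = n[i, j] + n[j, i]
            if off_diagonal > 0:  # cell pairs with no changes carry no information
                stat += (n[i, j] - n[j, i]) ** 2 / off_diagonal
                df += 1
    p_value = float(chi2.sf(stat, df)) if df > 0 else 1.0
    return float(stat), df, p_value

# Hypothetical pair of aligned sequences
print(bowker_symmetry_test("ACGTACGTAAGGACGT", "ACGTGCGTGAGGACAT"))
```

In a multi-taxon alignment the test is usually run for every pair of sequences, so the resulting p-values should be interpreted with multiple testing in mind.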

Solutions

- Apply Non-Stationary Models: Use models that allow different equilibrium frequencies across lineages

- Data Recoding: Recode sequences to fewer character states to minimize compositional heterogeneity

- Remove Problematic Taxa: In severe cases, consider removing extremely compositionally biased sequences
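One common recoding scheme for nucleotide data is RY-coding. It is given here as an example; the text above does not name a specific scheme. RY-coding collapses the purines (A, G) into R and the pyrimidines (C, T) into Y, so transitions, which are changes within a class, become invisible. The sketch below uses only the Python standard library. Because two states carry less information per site than four, recoded analyses trade resolution for robustness.

```python
# Purines (A, G) -> R; pyrimidines (C, T/U) -> Y; all other characters unchanged
RY_TABLE = str.maketrans({"A": "R", "G": "R", "C": "Y", "T": "Y", "U": "Y"})

def ry_recode(seq):
    """Recode a nucleotide sequence into the two-state R/Y alphabet."""
    return seq.upper().translate(RY_TABLE)

print(ry_recode("ACGT-N"))  # RYRY-N
```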

Table 1: Comparison of Substitution Model Performance Under Stationary vs. Non-Stationary Conditions

| Data Characteristic | Stationary Model Performance | Non-Stationary Model Performance |
|---|---|---|
| Homogeneous base composition | Optimal | Similar to stationary |
| Heterogeneous base composition | Potentially misleading | More accurate |
| Computational requirements | Lower | Significantly higher |
| Parameter count | Fewer | Increased (more complex) |
| Implementation availability | Widespread | Limited |

Problem: Inaccurate Divergence Time Estimation

Background: A 2020 study surprisingly demonstrated that even extremely simple substitution models (like JC69) can produce divergence time estimates similar to those from complex models (like GTR+Γ) in many practical phylogenomic datasets [18].

Diagnostic Checklist

- Compare pairwise distances under simple and complex models

- Check for linear relationship between branch length estimates

- Evaluate calibration constraint effectiveness
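The first two checks can be prototyped with pairwise distances. The sketch below uses only NumPy and the standard library and computes uncorrected differences, JC69 distances and K80 (Kimura two-parameter) distances for a set of hypothetical sequence pairs. It then reports the correlation between the two distance sets as a rough indicator of linearity. Distances here stand in for branch lengths; in practice you would compare branch lengths estimated on the same tree under each model.

```python
import math
import numpy as np

PURINES = set("AG")

def difference_proportions(seq1, seq2):
    """Return (P, Q): proportions of transition and transversion differences."""
    transitions = transversions = compared = 0
    for a, b in zip(seq1.upper(), seq2.upper()):
        if a not in "ACGT" or b not in "ACGT":
            continue  # skip gaps and ambiguity codes
        compared += 1
        if a != b:
            if (a in PURINES) == (b in PURINES):
                transitions += 1
            else:
                transversions += 1
    return transitions / compared, transversions / compared

def jc69_distance(p):
    """JC69 distance from the overall proportion of differing sites p."""
    return -0.75 * math.log(1.0 - 4.0 * p / 3.0)

def k80_distance(P, Q):
    """K80 distance from transition (P) and transversion (Q) proportions."""
    return -0.5 * math.log(1.0 - 2.0 * P - Q) - 0.25 * math.log(1.0 - 2.0 * Q)

def compare_distances(pairs):
    """JC69 and K80 distances for each sequence pair, plus their Pearson correlation."""
    jc, k80 = [], []
    for s1, s2 in pairs:
        P, Q = difference_proportions(s1, s2)
        jc.append(jc69_distance(P + Q))
        k80.append(k80_distance(P, Q))
    return jc, k80, float(np.corrcoef(jc, k80)[0, 1])

# Hypothetical sequence pairs
ref = "ACGTACGTACGTACGTACGT"
pairs = [(ref, ref),
         (ref, "GCGTACGTACGTACGTACGT"),
         (ref, "CCGTACATACGTACGTACGA")]
jc, k80, r = compare_distances(pairs)
print(jc, k80, r)
```

When transitions and transversions occur in the JC69 ratio of 1:2, the two distances coincide exactly. Departures from a straight line indicate that the simpler model is compressing some distances more than others.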

Solutions and Recommendations

- Use Multiple Calibrations: Incorporate several reliable fossil calibrations to reduce model dependency [18]

- Validate with Relaxed Clocks: Implement relaxed clock methods that can automatically adjust for the branch-length underestimation typical of simpler models [18]

- Benchmark Models: Compare time estimates across model complexities for your specific dataset

Table 2: Factors Affecting Robustness of Time Estimates to Model Complexity

| Factor | Effect on Time Estimate Robustness | Practical Implication |
|---|---|---|
| Number of calibrations | Increases robustness | Include multiple high-quality calibrations |
| Taxon sampling | Moderate effect | Dense sampling recommended |
| Sequence length | Limited effect beyond sufficient data | Focus on quality over quantity |
| Rate variation among lineages | Significant effect | Use relaxed clock methods |
| Tree depth | Variable effect | Test sensitivity for your data |

Experimental Protocols

Protocol: Testing Time-Reversibility and Stationarity Assumptions

Purpose: To empirically evaluate whether your data violates time-reversibility or stationarity assumptions and determine the impact on phylogenetic inference.

Materials and Reagents

- Molecular sequences: Multi-sequence alignment in FASTA or PHYLIP format

- Computational tools: Phylogenetic software (RevBayes, IQ-TREE, PhyloBayes)

- Statistical packages: R with phylogenetic libraries (ape, phangorn)

Procedure

Data Preparation

- Align sequences using appropriate methods (MAFFT, MUSCLE)

- Verify alignment quality and remove ambiguously aligned regions

Stationarity Testing

- Calculate base frequencies for each taxon

- Perform compositional homogeneity test (χ² or Fisher's exact test)

- Visualize compositional differences using principal components analysis

Model Comparison

- Fit both reversible and non-reversible models to your data

- Compare model fit using likelihood ratio tests or information criteria

- Evaluate topological differences between reversible and non-reversible analyses

Impact Assessment

- Compare posterior probabilities or bootstrap support across models

- Assess branch length and divergence time differences

- Evaluate phylogenetic relationships for stability
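For the Model Comparison step, the likelihood ratio test (LRT) applies only when one model is a special case of the other. The sketch below uses SciPy's χ² distribution and hypothetical log-likelihoods. The degrees of freedom are an assumption: 8 free parameters for GTR (5 exchangeabilities and 3 base frequencies) versus 11 for an unrestricted 12-rate non-reversible model. For non-nested comparisons, use information criteria instead.

```python
from scipy.stats import chi2

def likelihood_ratio_test(lnl_simple, lnl_complex, extra_params):
    """LRT for two nested models fitted to the same alignment and tree.

    Returns the statistic 2 * (lnL_complex - lnL_simple) and its p-value under
    the chi-squared approximation with `extra_params` degrees of freedom.
    """
    stat = 2.0 * (lnl_complex - lnl_simple)
    return stat, float(chi2.sf(stat, extra_params))

# Hypothetical log-likelihoods: reversible GTR (simple) vs. a non-reversible
# unrestricted model (complex); 11 - 8 = 3 extra free parameters (assumed counts)
stat, p = likelihood_ratio_test(lnl_simple=-10234.6, lnl_complex=-10229.1, extra_params=3)
print(f"LRT statistic = {stat:.1f}, p = {p:.4f}")
```

The χ² approximation can be unreliable when a parameter sits on the boundary of its range, so a parametric bootstrap is a more conservative alternative.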

Troubleshooting Notes

- For large datasets, consider approximate methods to reduce computation time

- Non-reversible models may require substantially longer MCMC runs for convergence

- Extreme compositional heterogeneity may require specialized models

Research Reagent Solutions

Table 3: Essential Computational Tools for Testing Evolutionary Assumptions

| Tool/Software | Primary Function | Application Context |
|---|---|---|
| RevBayes | Bayesian phylogenetic inference | Flexible model testing including non-reversible models [9] |
| IQ-TREE | Maximum likelihood phylogenetics | Efficient model testing and comparison |
| PhyloBayes | Bayesian phylogenetics | Non-stationary and non-reversible model implementation |
| PAUP* | Phylogenetic analysis | Compositional homogeneity testing |
| R + phangorn | Statistical analysis | Custom tests and visualization |

Workflow Visualization

Workflow for Testing Evolutionary Assumptions

Troubleshooting Guides and FAQs

Frequently Asked Questions

Q1: What are the practical consequences if I use a standard site-independent model on data that has evolved with epistasis?

Using a standard site-independent model on data with underlying epistasis (where the evolutionary change at one site depends on the state of another site) is a form of model misspecification. Its consequences can take two forms [19]:

- Potential for Systematic Error (Bias): Unmodeled epistasis can introduce bias into the phylogenetic estimate, meaning the inferred tree is expected to be less accurate. In this scenario, identifying and removing interacting sites could improve inference.

- Increased Variance: Epistasis can reduce the effective number of independent sites in your alignment. This does not necessarily introduce bias but increases the variance of your estimator, making the results less stable. In this case, removing paired sites would remove information and worsen inference. Simulation studies show that accuracy of Bayesian phylogenetic inference generally increases with alignment length, even when the additional sites are epistatically coupled. However, the accuracy is lower compared to an alignment of the same size where all sites were truly independent [19].

Q2: How can I detect if my dataset suffers from model misspecification due to unmodeled epistasis?

A powerful method for detecting unmodeled features like epistasis is the use of Posterior Predictive Checks [19]. This Bayesian methodology involves:

- Generating simulated datasets based on the model and parameters sampled from the posterior distribution of your original analysis.

- Calculating a specially designed test statistic that is sensitive to the misspecification you are testing for (in this case, pairwise epistasis) on both the real and the simulated datasets.

- Comparing the distribution of the test statistic from the simulated data with the value from your real data. If the real data's value is an extreme outlier relative to the simulated distribution, your model (here, the site-independent model) is failing to capture patterns present in your data.

Researchers have developed alignment-based test statistics that act as diagnostics for pairwise epistasis for this exact purpose [19].
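The comparison in the last step is usually summarized as a posterior predictive p-value: the fraction of simulated statistics at least as extreme as the observed one. The sketch below assumes NumPy and that the test statistic has already been computed for the real alignment and for each simulated alignment. The choice of tail depends on whether large or small values of the statistic signal misfit.

```python
import numpy as np

def posterior_predictive_p_value(observed, simulated, tail="upper"):
    """Fraction of posterior predictive statistics at least as extreme as the observed one.

    tail="upper": large values indicate misfit (e.g., excess correlation between sites).
    tail="lower": small values indicate misfit.
    tail="two-sided": twice the smaller tail probability, capped at 1.
    """
    sims = np.asarray(simulated, dtype=float)
    upper = float(np.mean(sims >= observed))
    lower = float(np.mean(sims <= observed))
    if tail == "upper":
        return upper
    if tail == "lower":
        return lower
    if tail == "two-sided":
        return min(1.0, 2.0 * min(upper, lower))
    raise ValueError(f"unknown tail: {tail!r}")

# Hypothetical statistics from 1,000 posterior predictive simulations
rng = np.random.default_rng(1)
simulated_stats = rng.normal(loc=0.0, scale=1.0, size=1000)
print(posterior_predictive_p_value(3.5, simulated_stats))
```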

Q3: When selecting a best-fit nucleotide substitution model, does the choice of software or statistical criterion significantly impact the result?

A recent comparative analysis provides clear guidance [3] [20]:

- Software: The choice between three widely used programs—jModelTest2, ModelTest-NG, and IQ-TREE—did not significantly affect the ability to accurately identify the true underlying nucleotide substitution model. You can confidently use any of these tools.

- Statistical Criterion: The choice of information criterion is critical. The study found that the Bayesian Information Criterion (BIC) consistently outperformed both the Akaike Information Criterion (AIC) and the Corrected Akaike Information Criterion (AICc) in accurately identifying the true model, regardless of the software used. BIC more heavily penalizes model complexity, which helps prevent overfitting.

Q4: What are the core problems with the current standard protocol for phylogenetic analysis?

The standard protocol is missing two critical steps that allow model misspecification and researcher confirmation bias to influence results [21]:

- Assessment of Phylogenetic Assumptions: The protocol does not mandate an explicit check of whether the core assumptions of the chosen phylogenetic method (e.g., stationarity, reversibility, homogeneity, and site independence) are violated by the data.

- Tests of Goodness of Fit: After model selection and tree inference, there is no step to evaluate how well the chosen model actually fits the specific dataset. Without this check, there is no way to know if the model is adequate for drawing robust conclusions.

Q5: What is the "relative worth" of an epistatic site compared to an independent site?

The concept of "relative worth" (r) quantifies the informational value of an epistatic site analyzed under a misspecified (site-independent) model, relative to a site that truly evolved independently [19]. The value of r defines two scenarios:

- Best-case (r > 0): The epistatic site still contributes useful phylogenetic signal that improves inference.

- Worst-case (r < 0): The inclusion of the epistatically paired site actively makes the phylogenetic estimate worse than if it had been omitted.

The actual value of r depends on the strength of the epistatic interaction and the specific evolutionary context.

Table 1: Impact of Model Selection Criteria on Identification of True Model

| Information Criterion | Penalization of Parameters | Performance in Identifying True Model | Recommended Use Case |
|---|---|---|---|
| BIC (Bayesian Information Criterion) | Heaviest | Consistently outperformed AIC and AICc [3] [20] | Default choice for model selection |
| AICc (Corrected Akaike IC) | Moderate | Similar to AIC, but less accurate than BIC [3] | Consider for very small sample sizes |
| AIC (Akaike Information Criterion) | Lightest | Less accurate than BIC in simulations [3] | Less recommended for phylogenetic model selection |

Table 2: Consequences of Model Misspecification from Unmodeled Pairwise Epistasis

| Scenario | Impact on Phylogenetic Inference | Effect of Removing Epistatic Sites | Relative Worth (r) |
|---|---|---|---|
| Epistasis introduces bias | Inferred tree is less accurate (systematic error) [19] | Inference improves [19] | r < 0 |
| Epistasis increases variance | Estimator is less stable; effective sites are fewer [19] | Inference worsens (information is lost) [19] | r > 0 |

Experimental Protocols

Protocol 1: Assessing Model Adequacy Using Posterior Predictive Checks for Epistasis

This protocol is used to evaluate whether a standard site-independent model is a good fit for your data or if it fails to account for pairwise epistasis [19].

- Phylogenetic Analysis: Perform a Bayesian phylogenetic analysis on your original sequence alignment using a standard site-independent model (e.g., GTR+Γ). Save the posterior distribution of trees and model parameters.

- Generate Posterior Predictive Datasets: Use the samples from the posterior distribution to simulate a large number of new sequence alignments (e.g., 500-1000). These are the posterior predictive datasets.

- Calculate Test Statistics: For your original alignment and for each of the simulated alignments, calculate one or more test statistics that are sensitive to pairwise epistasis. Examples include statistics designed to detect correlated substitutions across sites.

- Compare Distributions: Create a histogram of the test statistic values from the simulated datasets. Plot the value of the test statistic from your original data on this distribution.

- Interpretation: If the original data's value lies in the tails of the distribution (e.g., outside the 95% interval of the simulated values), it indicates that the model cannot replicate the patterns in your real data, suggesting the presence of unmodeled epistasis.
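For the test-statistic step, one simple statistic that responds to correlated substitutions is the largest mutual information (MI) between any two alignment columns. This is a hypothetical illustrative statistic, not the diagnostic developed in [19]. Shared ancestry alone also produces MI between columns, which is exactly why the observed value must be judged against the posterior predictive distribution rather than against zero. The sketch uses only the Python standard library and takes the alignment as a list of equal-length sequences.

```python
import math
from collections import Counter
from itertools import combinations

def column_mutual_information(col_a, col_b):
    """Mutual information (in nats) between two alignment columns."""
    n = len(col_a)
    joint = Counter(zip(col_a, col_b))
    count_a, count_b = Counter(col_a), Counter(col_b)
    # p_ab * log(p_ab / (p_a * p_b)), with p_ab / (p_a * p_b) = c * n / (n_a * n_b)
    return sum((c / n) * math.log(c * n / (count_a[a] * count_b[b]))
               for (a, b), c in joint.items())

def max_pairwise_mutual_information(alignment):
    """Hypothetical test statistic: the largest MI over all pairs of columns.

    alignment: list of equal-length aligned sequences (one per taxon).
    """
    columns = list(zip(*alignment))
    return max(column_mutual_information(a, b) for a, b in combinations(columns, 2))

# Two perfectly coupled columns across four taxa give MI = ln(4)
print(max_pairwise_mutual_information(["AA", "CC", "GG", "TT"]))
```

In a full check, this function would be applied to the original alignment and to each posterior predictive alignment, and the results passed to a tail-probability calculation such as the posterior predictive p-value.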

Protocol 2: A Revised Phylogenetic Protocol to Minimize Bias

This updated protocol adds critical steps to the standard workflow to guard against model misspecification and confirmation bias [21].

- Obtain Sequence Data and perform Multiple Sequence Alignment.

- Conduct an Assessment of Phylogenetic Assumptions: Before model selection, explicitly test your data for violations of common assumptions like stationarity, reversibility, homogeneity, and site independence. This may involve specialized tests or exploratory data analysis.

- Perform Model Selection using a recommended criterion like BIC.

- Infer the Phylogeny using your chosen method and model.

- Conduct Tests of Goodness of Fit: Use procedures like the Posterior Predictive Check (Protocol 1) to evaluate the absolute fit of the selected model to your specific data. If the model shows a poor fit, consider alternative models or methods.

- Interpret the Results only after the model has been validated as having an adequate fit.

Visualizations

Revised Phylogenetic Protocol

Posterior Predictive Check for Epistasis

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software and Statistical Tools for Phylogenetic Model Evaluation

| Tool Name | Type / Category | Primary Function in Model Assessment |
|---|---|---|
| jModelTest2 [3] | Software Package | Statistical selection of best-fit nucleotide substitution model using AIC, AICc, and BIC. |
| ModelTest-NG [3] | Software Package | Faster implementation for nucleotide and amino acid substitution model selection. |
| IQ-TREE [3] | Software Package | Integrated model selection and phylogeny inference, also supports AIC, AICc, and BIC. |
| BIC (Bayesian Information Criterion) [3] [20] | Statistical Criterion | Model selection criterion that penalizes complexity; recommended for its accuracy in identifying the true model. |
| Posterior Predictive Checks [19] | Statistical Methodology | A Bayesian method to assess the absolute goodness-of-fit of a model to a specific dataset. |
| Epistasis Diagnostic Test Statistics [19] | Diagnostic Tool | Alignment-based statistics designed to detect signatures of pairwise interactions for use in posterior predictive checks. |

Model Selection in Practice: Methods, Software, and Step-by-Step Application

Selecting the best-fit nucleotide substitution model is a critical step in phylogenetic analysis, as the choice of model can directly influence the reliability of the resulting evolutionary trees and downstream conclusions [22] [3]. For molecular evolutionary genetic research, particularly in fields like drug development where accurate phylogenetic inference can inform understanding of pathogen evolution or drug target relationships, this step is indispensable. Three software tools are currently widely used for this purpose: IQ-TREE, jModelTest2, and ModelTest-NG [22] [3] [23]. This technical support center provides a comparative overview of these tools, detailed troubleshooting guides, and frequently asked questions to assist researchers in implementing robust model selection protocols within their research workflows.

Software Comparison and Quantitative Data

Understanding the performance characteristics and output of each software tool is the first step in selecting and troubleshooting them. The following sections provide a detailed comparison based on recent empirical evaluations.

Key Performance and Accuracy Metrics

A comprehensive 2025 study analyzing 34 real and 88 simulated datasets provides key quantitative metrics for comparing these tools. The findings indicate that the choice of software itself does not significantly impact the accuracy of identifying the true model, but the choice of statistical criterion is crucial [22] [3].

Table 1: Software Performance and Characteristics Comparison

| Feature | IQ-TREE (ModelFinder) | jModelTest2 | ModelTest-NG |
|---|---|---|---|
| Primary Use Case | Integrated model selection & tree inference | Dedicated model selection | Dedicated model selection |
| Speed (Relative to jModelTest2) | Very Fast (Faster than ModelTest-NG on synthetic data) [23] | Baseline (Slowest) [23] | Very Fast (1-2 orders of magnitude faster than jModelTest2) [23] |
| Best-Fit Model Accuracy (on simulated DNA) | 70% [23] | 81% [23] | 81% [23] |
| Key Strength | High integration & speed; user-friendly [24] | High accuracy [23] | High speed & accuracy; scalable for large datasets [23] |

Table 2: Impact of Statistical Criterion on Model Selection (2025 Study)

| Information Criterion | Model Identification Accuracy | Tendency in Model Selection | Recommendation |
|---|---|---|---|
| Akaike Information Criterion (AIC) | Lower than BIC [22] | Prefers more complex models [22] | -- |
| Corrected AIC (AICc) | Nearly identical to AIC [22] | Prefers more complex models [22] | Can be used interchangeably with AIC |
| Bayesian Information Criterion (BIC) | Consistently outperforms AIC and AICc [22] [3] | Prefers simpler models; heavier penalty for extra parameters [22] | Preferred for accurate model identification |

Model Selection Workflow

The following diagram illustrates the general workflow for statistical selection of the best-fit nucleotide substitution model, applicable to all three software tools.

Troubleshooting Guides

Common Errors and Solutions

Table 3: Troubleshooting Common Software Issues

| Problem | Possible Cause | Solution |
|---|---|---|
| IQ-TREE refuses to run, citing a finished checkpoint. | A previous analysis completed successfully, and IQ-TREE prevents overwriting outputs [24]. | Use the -redo option: iqtree -s alignment.phy -m MFP -redo [24]. |
| ModelTest-NG generates an invalid IQ-TREE command. | Model naming conventions differ between software (e.g., JTT-DCMUT+G4 vs. JTTDCMut+G4) [25]. | Manually translate the model name to the syntax required by the target software [25]. |
| Model selection fails or is inconsistent on a large dataset. | jModelTest2 is computationally intensive and may not be optimized for very large alignments [23]. | Switch to ModelTest-NG or IQ-TREE, which are designed for better scalability and speed [23] [24]. |
| AIC and AICc select a more complex model than BIC. | This is expected behavior: BIC penalizes model complexity more heavily [22]. | Prefer the model selected by BIC, as it has been shown to identify the true generating model more accurately [22] [3]. |

Model Selection Consistency and Decision Framework

The flowchart below helps troubleshoot situations where different software or criteria yield different results, guiding you toward the most reliable outcome.

Frequently Asked Questions (FAQs)

Q1: I have limited computational resources. Which software should I use? For speed and efficiency, ModelTest-NG or IQ-TREE are the best choices. ModelTest-NG is one to two orders of magnitude faster than jModelTest2 [23]. IQ-TREE's ModelFinder is also known for its computational efficiency [24].

Q2: The model selected by BIC is simpler than the one selected by AIC. Which one should I trust for my analysis? You should trust the model selected by BIC. Empirical evidence shows that BIC consistently outperforms AIC and AICc in accurately identifying the true nucleotide substitution model, even though it tends to select simpler models [22] [3].

Q3: Is it necessary to run both AIC and AICc selection? No, it is generally not necessary. Research shows that the best-fit model selected by AIC and AICc is the same in the vast majority of cases. Their results are nearly identical [22].

Q4: My thesis requires the most accurate model selection possible, not just the fastest. What is the recommended strategy? Based on current evidence, the most robust strategy is to use ModelTest-NG (or jModelTest2) with the BIC criterion. In one study, these dedicated tools recovered the true model with 81% accuracy on simulated DNA data, compared with 70% for IQ-TREE's ModelFinder [23]. Furthermore, BIC has been independently validated as the most accurate criterion [22] [3].

Q5: How do I handle a situation where ModelTest-NG suggests a model that IQ-TREE does not recognize?

This is a known issue due to differences in model naming conventions between software packages [25]. The solution is to consult the documentation of your phylogenetic inference tool (e.g., IQ-TREE, RAxML) to find the correct model name string and manually adjust the command. For example, translate JTT-DCMUT+G4 to JTTDCMut+G4 for IQ-TREE [25].

Experimental Protocols and Reagents

Standard Protocol for Model Selection

This protocol is adapted for use with ModelTest-NG but can be easily modified for other tools.

- Input Preparation: Prepare your multiple sequence alignment (MSA) in a supported format (e.g., PHYLIP, FASTA, NEXUS). Ensure sequence names use only alphanumeric characters, underscores, dashes, or dots to avoid parsing errors [24].

- Software Execution: Run ModelTest-NG from the command line, specifying the input alignment and the desired criteria.

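Options: -i (input alignment file); -t ml (use maximum likelihood tree reconstruction); -h ugf (candidate rate-heterogeneity variants to evaluate: u = uniform rates, g = +G, f = +I+G); -c 4 (number of discrete gamma rate categories; the number of parallel processes is set with -p); -r 100 (seed for the random number generator).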

- Result Interpretation: Examine the output file to find the best-fit model according to AIC, AICc, and BIC. Prioritize the model recommended by BIC for your final phylogenetic analysis [22] [3].

- Downstream Analysis: Use the selected model in your phylogenetic software (e.g., IQ-TREE, RAxML). ModelTest-NG can generate template commands for various tools to minimize errors [23].

Essential Research Reagent Solutions

Table 4: Key Software and Data "Reagents" for Model Selection Experiments

| Item Name | Function/Description | Example/Note |
|---|---|---|
| Multiple Sequence Alignment (MSA) | The primary input data; a matrix of aligned nucleotide or amino acid sequences. | Can be generated from raw sequences using aligners like MAFFT or ClustalW [24]. |
| ModelTest-NG Software | Dedicated tool for fast and accurate statistical selection of best-fit substitution models. | Preferred for its balance of high speed and high accuracy [23]. |
| IQ-TREE Software | Integrated tool for model selection (ModelFinder) and subsequent tree inference. | Ideal for a seamless workflow from model selection to tree building [24]. |
| Information Criteria (BIC) | Statistical metrics used to compare and select the best-fit model by balancing fit and complexity. | BIC is the recommended criterion due to its superior performance in model identification [22] [3]. |
| Template Configurations | Pre-defined settings that ensure model selection results are compatible with specific tree-building tools. | ModelTest-NG can output commands for RAxML, IQ-TREE, etc., reducing syntax errors [23] [25]. |

Selecting the correct nucleotide substitution model is a critical step in molecular evolutionary analysis. The right model accurately captures how DNA sequences change over time, influencing the reliability of the resulting phylogenetic tree, while a poor model can lead to incorrect evolutionary inferences [3]. Researchers often rely on information criteria like the Akaike Information Criterion (AIC), its small-sample correction (AICc), and the Bayesian Information Criterion (BIC) to choose the best-fit model from a set of candidates [26] [27].

This guide is designed as a technical support center to help you navigate this selection process. A foundational 2025 study by Li et al. demonstrated that BIC consistently outperforms AIC and AICc in accurately identifying the true nucleotide substitution model [3]. We will delve into the evidence behind this conclusion, provide troubleshooting guides for common issues, and outline best practices for your research.

Quantitative Evidence: How BIC, AIC, and AICc Compare

A comprehensive 2025 analysis of 122 datasets (34 real and 88 simulated) provides the strongest recent evidence for the performance of these criteria [3]. The table below summarizes the key findings.

Table 1: Performance of Information Criteria in Identifying the True Model (Li et al., 2025)

| Information Criterion | Performance in True Model Identification | Key Characteristic | Typical Model Selection Tendency |
|---|---|---|---|
| BIC | Consistently outperformed AIC and AICc [3] | Heavier penalty for model complexity, especially with large n [26] [27] | Simpler, more parsimonious models |
| AIC | Less accurate than BIC in model identification [3] | Focuses on predictive accuracy, lighter penalty [26] [28] | More complex models with more parameters |
| AICc | Less accurate than BIC in model identification [3] | Corrects AIC for small sample size; converges with AIC as n increases [29] | More complex models with more parameters |

The study concluded that the choice of software (jModelTest2, ModelTest-NG, or IQ-TREE) did not significantly affect the ability to identify the true model. The critical factor was the choice of information criterion, with BIC providing superior accuracy [3].

Theoretical Foundations: Why BIC Excels at Model Identification

The superior performance of BIC in identifying the true model is not accidental; it is rooted in its mathematical formulation and theoretical goal.

Table 2: Core Theoretical Differences Between AIC and BIC

| Aspect | Akaike Information Criterion (AIC) | Bayesian Information Criterion (BIC) |
|---|---|---|
| Mathematical Formula | AIC = 2k - 2ln(L) [26] | BIC = ln(n)k - 2ln(L) [26] |
| Primary Goal | Selects the model that best approximates reality (best predictive accuracy) [28] | Attempts to identify the true data-generating model [28] |
| Penalty Term | 2k (linear penalty for parameters) [26] | ln(n)k (penalty grows with sample size) [26] |
| Implied Assumption | The "true model" is not in the candidate set; all models are approximations [28] | The "true model" is among the candidates being evaluated [28] |

The key is the penalty term. BIC charges ln(n) per parameter, whereas AIC charges a constant 2. BIC's penalty is therefore the more severe one whenever n > e² ≈ 7.4 (any dataset with at least eight observations), and the gap widens as your dataset grows. This stronger penalty discourages overfitting by making it harder to justify unnecessary parameters. In phylogenetic simulations where the true model is known, this property allows BIC to arrive at the correct answer more reliably [3].
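The formulas in Table 2 can be applied directly to the maximized log-likelihoods reported by any model-selection program. The minimal sketch below uses hypothetical log-likelihoods. It assumes that the sample size n is the number of alignment sites, a common convention, although programs may differ. It also assumes that k counts only substitution-model parameters. On a fixed tree, branch-length parameters are shared by all candidates and shift every AIC and BIC score equally, but they do change AICc. The example shows AIC and BIC disagreeing on the same pair of fits.

```python
import math

def information_criteria(lnl, k, n):
    """AIC, AICc and BIC for a model with log-likelihood lnl, k free parameters, n observations."""
    aic = 2 * k - 2 * lnl
    aicc = aic + (2 * k * (k + 1)) / (n - k - 1)
    bic = math.log(n) * k - 2 * lnl
    return {"AIC": aic, "AICc": aicc, "BIC": bic}

def select_model(candidates, n, criterion="BIC"):
    """Return the candidate with the lowest score; candidates maps name -> (lnL, k)."""
    scores = {name: information_criteria(lnl, k, n)[criterion]
              for name, (lnl, k) in candidates.items()}
    return min(scores, key=scores.get), scores

# Hypothetical fits to a 1,000-site alignment on a fixed tree.
# k = substitution-model parameters only: HKY+G (kappa, 3 frequencies, alpha) = 5;
# GTR+G (5 exchangeabilities, 3 frequencies, alpha) = 9.
candidates = {"HKY+G": (-5000.0, 5), "GTR+G": (-4994.0, 9)}
for criterion in ("AIC", "AICc", "BIC"):
    best, _ = select_model(candidates, n=1000, criterion=criterion)
    print(criterion, best)
# AIC GTR+G
# AICc GTR+G
# BIC HKY+G
```

Here GTR+G improves lnL by 6 units at the cost of 4 extra parameters. That gain outweighs AIC's penalty of 2 per parameter (8 in total) but not BIC's ln(1000) ≈ 6.9 per parameter (about 27.6 in total).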

Diagram 1: Decision workflow for selecting between AIC and BIC

The Scientist's Toolkit: Essential Research Reagent Solutions

When conducting nucleotide substitution model selection, you will interact with several key software tools and statistical concepts. The table below lists these essential "research reagents" and their functions.

Table 3: Key Reagents and Tools for Nucleotide Substitution Model Selection

| Tool / Concept | Function in Model Selection | Key Feature |
|---|---|---|
| jModelTest2 | Calculates best-fit model using AIC, AICc, BIC, etc. [3] | User-friendly GUI and command-line interface [30] |
| ModelTest-NG | Calculates best-fit model using AIC, AICc, BIC, etc. [3] | 1-2 orders of magnitude faster than jModelTest2 [3] |
| IQ-TREE | Performs model selection and tree inference simultaneously [3] | Integrated workflow, often faster [3] |
| BIC (Formula) | BIC = ln(n)k - 2ln(L); Selects models by penalizing complexity [26] | Stronger penalty term than AIC, prefers simpler models [27] |
| AIC (Formula) | AIC = 2k - 2ln(L); Selects models balancing fit and complexity [26] | Tends to favor more complex models than BIC [28] |
| AICc (Formula) | AICc = AIC + (2k(k+1))/(n-k-1); Corrects AIC for small n [29] | Recommended when n/k < 40 [29] |

Experimental Protocols: Key Methodologies from Cited Studies

To help you reproduce this work, or critically evaluate similar studies, the methodology of the pivotal 2025 study by Li et al. is summarized below.

Objective: To determine the impact of software and information criteria on the accuracy of selecting the true nucleotide substitution model [3].

Datasets:

- 34 Real Datasets: Multilocus DNA alignments from mitochondrial, nuclear, and chloroplast genomes. Taxa ranged from 13 to 2,872; alignment length ranged from 823 to 25,919 sites [3].

- 88 Simulated Datasets: Generated with known true nucleotide substitution models using AliSim. Each dataset contained 100 taxa and was 10,000 nucleotides long [3].

Software & Commands:

- Software Used: jModelTest2 v2.1.10, ModelTest-NG v0.1.7, and IQ-TREE v2.2.0.

- Execution: The statistical selection of the best-fit nucleotide substitution model was performed in each software using AIC, AICc, and BIC and all substitution models offered by the software [3].

Analysis:

- For each dataset and software, the best-fit model identified by each criterion was recorded.

- The selected models were compared across criteria and software to assess consistency.

- For simulated data, the selected model was compared against the known true model to assess accuracy [3].

- A Chi-squared test of independence was used to determine if significant associations existed between programs, criteria, and model selection consistency [3].
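The accuracy and association steps for the simulated data can be sketched as follows. The records below are hypothetical toy values, not the study's data. Each record holds the criterion used, the model it selected and the true generating model. The code tallies correct and incorrect selections per criterion and runs a χ² test of independence on the resulting contingency table using SciPy's `chi2_contingency`. With counts as small as these, Fisher's exact test would be the more appropriate choice.

```python
from collections import defaultdict
import numpy as np
from scipy.stats import chi2_contingency

def tally_by_criterion(records):
    """records: iterable of (criterion, selected_model, true_model) tuples.

    Returns {criterion: [n_correct, n_incorrect]}.
    """
    tally = defaultdict(lambda: [0, 0])
    for criterion, selected, true_model in records:
        tally[criterion][0 if selected == true_model else 1] += 1
    return dict(tally)

def accuracy_and_association(records):
    """Per-criterion accuracy plus a chi-squared test of criterion vs. correctness."""
    tally = tally_by_criterion(records)
    accuracy = {c: correct / (correct + wrong) for c, (correct, wrong) in tally.items()}
    stat, p_value, dof, _expected = chi2_contingency(np.array(list(tally.values())))
    return accuracy, stat, p_value, dof

# Hypothetical toy records: (criterion, selected model, true model)
records = [
    ("BIC", "HKY+G", "HKY+G"), ("BIC", "GTR+G", "GTR+G"), ("BIC", "K80", "K80"),
    ("AIC", "GTR+G", "HKY+G"), ("AIC", "GTR+G", "GTR+G"), ("AIC", "HKY+G", "K80"),
]
accuracy, stat, p_value, dof = accuracy_and_association(records)
print(accuracy, round(stat, 3), round(p_value, 3), dof)
```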

Diagram 2: Experimental protocol for comparing model selection criteria

Troubleshooting Guide & FAQs

FAQ 1: The best-fit model selected by BIC is different from the one selected by AIC. Which one should I trust for my phylogenetic analysis?

- Answer: This is a common scenario. Your choice should align with your research goal.

- If the primary goal of your analysis is to identify the true evolutionary model that generated your data (often the case in phylogenetic inference), you should trust the BIC result. Empirical evidence shows it is more accurate for this purpose [3].

- If your goal is maximizing the predictive accuracy of your model for forecasting, even if it is more complex, then AIC might be preferred [28].

- Recommendation: In the context of nucleotide substitution model selection for phylogenetics, the evidence strongly supports using BIC [3].

FAQ 2: My dataset is relatively small. Should I use AICc instead of AIC or BIC?

- Answer: For small sample sizes, AICc is indeed recommended over AIC because it adds a correction term that increases the penalty for additional parameters, mitigating overfitting. A common rule of thumb is to use AICc when the ratio of your sample size (n) to the number of parameters (k) is less than 40 [29]. However, remember that the 2025 study found BIC still outperformed AICc in identifying the true model across diverse dataset sizes [3]. You should run all three criteria and compare the results.

FAQ 3: Does the software I use for model selection (jModelTest2, ModelTest-NG, or IQ-TREE) significantly impact the outcome?

- Answer: According to the most recent research, no. The 2025 study found that the choice of program did not significantly affect the ability to accurately identify the true model. The far more critical factor was the choice of information criterion [3]. You can confidently use any of these programs and choose based on computational speed (ModelTest-NG is considerably faster than jModelTest2) or on integration with your workflow (e.g., IQ-TREE for combined model selection and tree building).

FAQ 4: BIC tends to select simpler models than AIC. Is it prone to underfitting?

- Answer: BIC's stronger penalty (ln n per parameter, where n is the sample size, versus 2 per parameter for AIC) is designed to guard against overfitting, which is the more frequent concern in model selection. As a result, for any sample size above seven, BIC never selects a model with more parameters than the AIC choice, although the two may agree. Underfitting remains a theoretical risk. However, empirical evidence from phylogenetic studies suggests that BIC's preference for simpler models is, on balance, more reliable at recovering the true data-generating model [3]. The consistency of BIC's performance across many datasets indicates that it effectively balances fit and complexity.
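The claim that BIC never prefers a more complex model than AIC follows directly from the two penalty terms. In the notation below, ℓ is a model's maximized log-likelihood, k is its number of free parameters, and n is the sample size. A denotes the AIC-selected model and B the BIC-selected model:

```latex
\begin{aligned}
\mathrm{AIC}_A \le \mathrm{AIC}_B &\;\Rightarrow\; 2k_A - 2\ell_A \le 2k_B - 2\ell_B \\
\mathrm{BIC}_B \le \mathrm{BIC}_A &\;\Rightarrow\; k_B \ln n - 2\ell_B \le k_A \ln n - 2\ell_A \\
\text{adding both} &\;\Rightarrow\; 2k_A + k_B \ln n \le 2k_B + k_A \ln n \\
&\;\Rightarrow\; (\ln n - 2)(k_B - k_A) \le 0
\end{aligned}
```

Since ln n > 2 whenever n ≥ 8, it follows that k_B ≤ k_A. The BIC choice therefore has at most as many parameters as the AIC choice, and the two may coincide.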

Key Takeaways

- BIC is Superior for Identification: When the goal is to find the true nucleotide substitution model, BIC is consistently more accurate than AIC or AICc [3].

- The Penalty is Key: BIC's stricter penalty on model complexity, which increases with sample size, helps it avoid overfitting and select more parsimonious, correct models [26] [27].

- Software is Less Critical: You can rely on jModelTest2, ModelTest-NG, or IQ-TREE for model selection, as their results are largely consistent. The choice of criterion (BIC) is what matters most [3].

Troubleshooting Guides and FAQs

Model Selection and Interpretation

Q1: The model selection process is taking a very long time for my large alignment. How can I speed it up?

You can reduce the computational burden by restricting the set of models tested. Use the -mset option to test only a specified subset of base models (e.g., -mset HKY,GTR). For amino acid data, the -msub option restricts testing to empirical models suited to the origin of your sequences (e.g., -msub nuclear for nuclear-encoded proteins or -msub viral for viral proteins) [24]. Also make sure you are using the multicore version of IQ-TREE, and specify the number of CPU cores with the -nt option (-T in IQ-TREE 2). Using more cores significantly speeds up the analysis of large alignments. You can use -nt AUTO to let IQ-TREE determine the best number of threads automatically [31].
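As a sketch, the Python helper below assembles a ModelFinder command restricted to three base models, without running it. The alignment name and the model list are placeholders. The binary name (iqtree) and flags follow the IQ-TREE 1.x syntax used in this guide.

```python
import shlex


def restricted_modelfinder_cmd(alignment, base_models=None, aa_source=None, threads="AUTO"):
    """Build (but do not run) an IQ-TREE 1.x ModelFinder command over a reduced model set."""
    cmd = ["iqtree", "-s", alignment, "-m", "MFP", "-nt", str(threads)]
    if base_models:
        # Comma-separated list of base models to test instead of the full set.
        cmd += ["-mset", ",".join(base_models)]
    if aa_source:
        # Protein data only: restrict to empirical models for e.g. "nuclear" or "viral" proteins.
        cmd += ["-msub", aa_source]
    return cmd


cmd = restricted_modelfinder_cmd("my_alignment.phy", base_models=["HKY", "TN", "GTR"])
print(shlex.join(cmd))
# To execute: subprocess.run(cmd, check=True)
```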

Q2: How do I interpret the support values from ultrafast bootstrap (UFBoot) in my analysis?

UFBoot support values are less biased than standard bootstrap supports. A UFBoot support value of 95% corresponds roughly to a 95% probability that the clade is true, so you should generally rely on branches with UFBoot support ≥ 95%. It is also recommended to perform the SH-aLRT test (e.g., by adding -alrt 1000). You can be more confident in a clade if it has SH-aLRT ≥ 80% and UFBoot ≥ 95% [31]. Note that these thresholds are recommended for single-gene trees and may not hold for phylogenomic analyses of concatenated data.
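When both -alrt and -bb are used, the branch labels in the output tree can be screened automatically. This sketch assumes each internal-node label has the form SH-aLRT/UFBoot (e.g., 98.5/100); check your own tree file before relying on that format. It applies the combined thresholds given above.

```python
def parse_support(label):
    """Split a node label such as '98.5/100' into (SH-aLRT, UFBoot) percentages."""
    sh_alrt, ufboot = (float(value) for value in label.split("/"))
    return sh_alrt, ufboot


def is_well_supported(label, sh_alrt_min=80.0, ufboot_min=95.0):
    """Apply the combined SH-aLRT >= 80% and UFBoot >= 95% rule of thumb."""
    sh_alrt, ufboot = parse_support(label)
    return sh_alrt >= sh_alrt_min and ufboot >= ufboot_min


for label in ["98.5/100", "85/93", "72.1/96"]:
    print(label, is_well_supported(label))
```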

Q3: ModelFinder selected a complex model with many parameters. Is it safe to use a simpler model for computational efficiency?

While a simpler model might be computationally faster, your primary goal should be model accuracy for robust phylogenetic inference. Recent research indicates that the Bayesian Information Criterion (BIC), which ModelFinder uses by default, consistently outperforms AIC and AICc in accurately identifying the true underlying nucleotide substitution model [3]. BIC more heavily penalizes unnecessary parameters, helping to avoid overfitting. Therefore, it is recommended to trust the ModelFinder selection based on BIC. If computational resources are a constraint for subsequent analyses, you can consider the model's specific parameters and consult the literature for potential simplifications, but this should be done cautiously.

Q4: What does the composition chi-square test at the beginning of the run mean, and what should I do if sequences "fail" this test?

IQ-TREE performs a composition chi-square test for each sequence to check for significant deviation from the average character composition of the entire alignment [31]. A "failed" sequence has a composition that is statistically different from the average. This test is an explorative tool. You should not automatically remove failing sequences. However, if your final tree shows an unexpected topology, this test might help identify sequences causing potential problems. For phylogenomic protein data, you can also try profile mixture models (e.g., C10 to C60), which account for different amino-acid compositions across the sequences and sites [31].
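The idea behind the test can be sketched in Python. For each sequence, the observed A/C/G/T counts are compared with the counts expected from the pooled alignment frequencies, using a Pearson chi-square statistic with 3 degrees of freedom. This is an illustration, not IQ-TREE's exact implementation. The handling of gaps and ambiguity codes is an assumption, and the toy alignment is hypothetical.

```python
import math
from collections import Counter

BASES = "ACGT"


def chi2_sf_df3(x):
    """Upper-tail p-value of a chi-squared variable with 3 degrees of freedom."""
    return math.erfc(math.sqrt(x / 2)) + math.sqrt(2 * x / math.pi) * math.exp(-x / 2)


def composition_test(alignment, alpha=0.05):
    """Return {name: (chi2, p_value, passed)} comparing each sequence with pooled base frequencies."""
    # Only unambiguous A/C/G/T are counted; gaps and ambiguity codes are skipped.
    pooled = Counter(c for seq in alignment.values() for c in seq.upper() if c in BASES)
    if any(pooled[b] == 0 for b in BASES):
        raise ValueError("all four bases must occur in the alignment")
    total = sum(pooled.values())
    freqs = {b: pooled[b] / total for b in BASES}
    results = {}
    for name, seq in alignment.items():
        counts = Counter(c for c in seq.upper() if c in BASES)
        n = sum(counts.values())
        if n == 0:
            continue  # nothing to test in an all-gap sequence
        chi2 = sum((counts[b] - n * freqs[b]) ** 2 / (n * freqs[b]) for b in BASES)
        p_value = chi2_sf_df3(chi2)
        results[name] = (chi2, p_value, p_value >= alpha)
    return results


toy_alignment = {  # hypothetical sequences
    "seq1": "ACGTACGTACGTACGTACGT",
    "seq2": "ACGTACGTACGTACGTAC-T",
    "seq3": "ACGTACGAACGTACGTACGT",
    "seq4": "GGGGCCCCGGGGCCCCGGGC",
}
for name, (chi2, p_value, passed) in composition_test(toy_alignment).items():
    print(f"{name}: chi2 = {chi2:.2f}, p = {p_value:.3f}, {'passed' if passed else 'failed'}")
```

Here the GC-only seq4 fails, while the balanced sequences pass. As the answer above notes, a failure is a prompt for closer inspection, not an automatic reason to remove the sequence.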

Q5: Can I use ModelFinder for mixed data types in a partitioned analysis?

Yes. IQ-TREE allows partitioned analysis with mixed data types (e.g., DNA, protein, codon) specified via a NEXUS partition file [31]. In the partition file, you can define subsets (charsets) from different alignment files and assign them different models. When mixing codon and DNA data, note that the branch lengths are interpreted as the number of nucleotide substitutions per nucleotide site [31].
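A partition file of this kind can be generated programmatically. The sketch below writes one in the charset/charpartition layout described in the IQ-TREE documentation. The file names (dna.phy, prot.phy), site ranges, and models are hypothetical. Check the IQ-TREE manual for the option that loads a partition file in your version.

```python
def nexus_partition_file(charsets, models, partition_name="mine"):
    """Return NEXUS partition-file text.

    charsets: {subset_name: (alignment_file, site_ranges)}
    models:   {subset_name: model_string}
    """
    lines = ["#nexus", "begin sets;"]
    for subset, (aln_file, sites) in charsets.items():
        # Site ranges refer to positions within the named alignment file.
        lines.append(f"    charset {subset} = {aln_file}: {sites};")
    assignments = ", ".join(f"{models[subset]}:{subset}" for subset in charsets)
    lines.append(f"    charpartition {partition_name} = {assignments};")
    lines.append("end;")
    return "\n".join(lines) + "\n"


text = nexus_partition_file(
    {
        "part1": ("dna.phy", "1-100 201-300"),
        "part2": ("dna.phy", "101-200"),
        "part3": ("prot.phy", "1-400"),
    },
    {"part1": "HKY+G", "part2": "GTR+I+G", "part3": "WAG+I+G"},
)
print(text)
```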

Performance and Technical Issues

Q6: My analysis was interrupted. Do I have to start from the beginning?

No. IQ-TREE periodically writes checkpoint files (e.g., .ckp.gz). Simply re-run the same command, and IQ-TREE will resume from the last checkpoint [24]. If the run successfully completed, re-running the command will result in an error to prevent overwriting outputs. Use the -redo option to force IQ-TREE to overwrite all previous output files and start anew [24].

Q7: I want to perform a more thorough model selection that searches tree-space for each model. How can I do this?

For a more accurate but computationally intensive analysis, you can use the -mtree option with ModelFinder. This instructs IQ-TREE to perform a full tree search for each model considered, rather than comparing all models on a single initial parsimony tree [32] [24]. This can lead to a better fit between the tree, model, and data, especially if the initial tree is suboptimal [32].

Workflow and Methodology

The following diagram illustrates the logical workflow for automating model selection with IQ-TREE's ModelFinder, integrating key decision points from the troubleshooting guides.

Research Reagent Solutions

The table below details key software and methodological "reagents" essential for conducting automated model selection with IQ-TREE.

Table 1: Essential Research Reagents for Model Selection with IQ-TREE

| Item Name | Type | Function / Purpose | Key Implementation Notes |
|---|---|---|---|
| ModelFinder | Algorithm | Automatically determines the best-fit substitution model for a given alignment by comparing models using AIC, AICc, or BIC [32] [24]. | Activated with -m MFP in IQ-TREE. Default is BIC, which has been shown to accurately identify the true model [3]. |
| Probability-Distribution-Free (PDF) Model | Rate Heterogeneity Model | A key feature of ModelFinder that models rate variation across sites without assuming a Gamma distribution, providing a more flexible fit to the data [32]. | Labeled as Rk (e.g., R5) in model output. Often leads to a better fit than traditional +G or +I+G models [32]. |
| Ultrafast Bootstrap (UFBoot) | Branch Support Method | Approximates standard bootstrap supports with much less computational time, yielding less biased support values [31]. | Implemented with -bb option. Support values ≥ 95% are considered significant. |
| SH-aLRT Test | Branch Support Method | A fast likelihood-based test for branch support [31]. | Used with -alrt option. Supports ≥ 80% are considered significant. Often reported alongside UFBoot. |
| NEXUS Partition File | Data Specification Format | Enables complex partitioned analyses, including mixing of different data types (DNA, protein, codon) in a single analysis [31]. | Required for mixing data types. Each partition's site indices are specified independently. |

Experimental Protocols and Data Presentation

Detailed Protocol: Automating Model Selection and Tree Inference

This protocol outlines the steps for finding the best-fit model and reconstructing a maximum-likelihood phylogeny for a nucleotide alignment using IQ-TREE.

- Input Preparation: Prepare your multiple sequence alignment in a supported format (PHYLIP, FASTA, or NEXUS). Ensure sequence names use only alphanumeric characters, underscores, dashes, or dots [24].

- Initial Run with ModelFinder: Execute the core command to perform simultaneous model selection and tree reconstruction: iqtree -s your_alignment.phy -m MFP -bb 1000 -alrt 1000 -nt AUTO (IQ-TREE 1.x syntax; IQ-TREE 2 uses -B and -T in place of -bb and -nt). The -m MFP flag activates ModelFinder Plus. The options are:

  - -s your_alignment.phy: Specifies the input alignment file.

  - -m MFP: Triggers ModelFinder to select the best-fit model and then uses it for the subsequent tree search.

  - -bb 1000: Performs 1000 ultrafast bootstrap replicates.

  - -alrt 1000: Performs the SH-like approximate likelihood ratio test with 1000 replicates.

  - -nt AUTO: Automatically determines the optimal number of CPU threads to use.

- Output Interpretation: Upon completion, IQ-TREE generates several output files. The main report file (your_alignment.phy.iqtree) contains the selected model, model parameters, log-likelihood scores, and the final tree with support values. The best-fit model (e.g., TIM2+F+I+G4) is clearly stated in this file [24].

- Final Analysis (Optional): If you ran model selection only (-m MF), or wish to build the final tree with a specific model, pass the model name identified in the previous step to -m (e.g., -m TIM2+F+I+G4).
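The output-interpretation and final-analysis steps above can be scripted. This sketch assumes the report contains a line of the form "Best-fit model according to BIC: <model>"; verify that against your own .iqtree file. It builds the follow-up command with the model fixed but does not execute it. File names are placeholders.

```python
import re


def best_fit_model(report_text):
    """Return (criterion, model) from a line like 'Best-fit model according to BIC: TIM2+F+I+G4'."""
    match = re.search(r"Best-fit model according to (\w+): (\S+)", report_text)
    if match is None:
        raise ValueError("no best-fit model line found in the report")
    return match.group(1), match.group(2)


def fixed_model_tree_cmd(alignment, model, ufboot=1000, alrt=1000):
    """Build (but do not run) an IQ-TREE 1.x tree search with a pre-selected model."""
    return ["iqtree", "-s", alignment, "-m", model,
            "-bb", str(ufboot), "-alrt", str(alrt), "-nt", "AUTO"]


# In practice: report = open("your_alignment.phy.iqtree").read()
report = "Best-fit model according to BIC: TIM2+F+I+G4\n"
criterion, model = best_fit_model(report)
print(criterion, model)
print(" ".join(fixed_model_tree_cmd("your_alignment.phy", model)))
```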

Table 2: Impact of Model Selection Criterion on Identification of True Model (Based on Simulated Data)

| Selection Criterion | Performance in Identifying True Model | Key Characteristics |
|---|---|---|
| BIC (Bayesian Information Criterion) | Consistently outperforms AIC and AICc in accurately identifying the true model, regardless of the software used (IQ-TREE, jModelTest2, ModelTest-NG) [3]. | Heavily penalizes extra parameters, favoring simpler models when appropriate, which helps prevent overfitting. |
| AICc (Corrected Akaike Information Criterion) | Shows a 2-3% bias towards selecting more parameter-rich models of rate heterogeneity (Rk) compared to the true model [32]. | Provides a correction for small sample sizes. Results are often identical to AIC for large datasets [3]. |
| AIC (Akaike Information Criterion) | Performance similar to AICc, but may select overly complex models compared to BIC [3]. | Derived from information theory (an estimate of Kullback-Leibler divergence); less punitive against model complexity than BIC. |

Frequently Asked Questions

What do the 6-digit codes in my model selection output mean?

These codes represent equality constraints on the six relative substitution rates between nucleotide pairs (A-C, A-G, A-T, C-G, C-T, G-T). Identical digits indicate that the corresponding rates are set equal to each other. For example, the code 010010 means the A-G rate equals the C-T rate (both coded as 1), while the other four rates are equal to each other (coded as 0). The code 012345 indicates a General Time Reversible (GTR) model where all six rates are free to vary independently [7].
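The decoding can be automated. This Python sketch assumes the pair order A-C, A-G, A-T, C-G, C-T, G-T given above. It reports the rate classes and the number of free relative-rate parameters, which is the number of distinct classes minus one, since one rate serves as the reference.

```python
PAIRS = ["A-C", "A-G", "A-T", "C-G", "C-T", "G-T"]


def rate_groups(code):
    """Group the six nucleotide-pair rates by the digit they share in a 6-digit code."""
    if len(code) != 6 or not code.isdigit():
        raise ValueError("expected six digits, e.g. '010010'")
    groups = {}
    for pair, digit in zip(PAIRS, code):
        groups.setdefault(digit, []).append(pair)
    return groups


def free_rate_parameters(code):
    """Distinct rate classes minus one (one rate is fixed as the reference)."""
    return len(set(code)) - 1


print(rate_groups("010010"))
print(free_rate_parameters("010010"), free_rate_parameters("012345"))
```

For 010010, the A-G and C-T rates fall in one class and the other four in another, leaving one free parameter (the transition/transversion ratio). For 012345 (GTR), five parameters are free.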

AIC and AICc selected one model, but BIC selected a simpler one. Which should I trust?

Your observation is a common and important outcome. Research indicates that the Bayesian Information Criterion (BIC) consistently outperforms both AIC and AICc in accurately identifying the true underlying nucleotide substitution model [3]. BIC more heavily penalizes model complexity, which helps prevent overfitting. For robust phylogenetic analysis, particularly with large datasets, trusting the BIC selection is generally recommended [3].

The same criterion selects different best-fit models in jModelTest2, ModelTest-NG, and IQ-TREE. Is this normal?

Some disagreement is expected, because the programs do not offer identical candidate model sets. IQ-TREE's ModelFinder, for example, also evaluates FreeRate (Rk) models of rate heterogeneity. Nevertheless, a comparative analysis has demonstrated that the choice of software (jModelTest2, ModelTest-NG, or IQ-TREE) does not significantly affect the accuracy of model selection [3]. The key factor is the statistical criterion chosen (AIC, AICc, or BIC). You can confidently rely on results from any of these three programs, as they offer comparable performance [3].

What is the practical difference between +F, +FO, and +FQ in model names?

These suffixes specify how base frequencies are handled [7]:

- +F: Uses empirical base frequencies counted directly from your alignment. This is often the default for models that allow unequal base frequencies.

- +FO: Optimizes base frequencies by maximum likelihood, which can provide a better fit but is more computationally intensive.

- +FQ: Forces equal base frequencies.
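For +F, the frequencies are simply the proportions of each base pooled across the alignment. Below is a minimal sketch of that calculation. It assumes gaps and ambiguity codes are ignored, and software may treat them differently. The toy sequences are hypothetical.

```python
from collections import Counter


def empirical_base_frequencies(sequences):
    """Proportions of A, C, G and T pooled across all sequences (+F-style frequencies)."""
    counts = Counter(c for seq in sequences for c in seq.upper() if c in "ACGT")
    total = sum(counts.values())
    if total == 0:
        raise ValueError("no unambiguous nucleotides found")
    return {base: counts[base] / total for base in "ACGT"}


freqs = empirical_base_frequencies(["ACGTAC", "ACG-AA"])  # hypothetical toy alignment
print({base: round(value, 3) for base, value in freqs.items()})
# +FQ would instead fix every frequency at 0.25; +FO would estimate them by maximum likelihood.
```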

Model Codes and Common DNA Substitution Models

The table below summarizes common DNA substitution models, their parameters, and their standard 6-digit codes to help you interpret software output [7].