Accounting for Population Structure in Genomic Analysis: Methods, Challenges, and Best Practices for Researchers

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on the critical importance of accounting for population structure in genomic analyses. It explores the foundational concepts of genetic relatedness and its role as a confounder in studies ranging from Genome-Wide Association Studies (GWAS) to demographic inference and genomic selection. The content details parametric, nonparametric, and modern deep learning methodologies, alongside practical strategies for troubleshooting biased results and optimizing training populations. Through validation frameworks and comparative analyses of tools and techniques, this resource offers actionable insights for improving the accuracy and reliability of genomic findings in biomedical and clinical research.

Understanding Population Structure: Why Genetic Relatedness is a Fundamental Confounder

FAQs: Understanding and Identifying Population Structure

Q1: What is population structure, and why is it a confounder in genetic association studies?

Population structure refers to systematic ancestry differences in a study cohort. It arises from variations in allele frequencies across subpopulations due to differing demographic histories. If a trait of interest is also unevenly distributed across these subpopulations, it can create spurious genetic associations, inflating test statistics and leading to false positives [1].

Q2: What is the difference between ancestry differences and cryptic relatedness?

- Ancestry Differences (Population Structure): These are consequences of ancient relatedness, reflecting broad-scale ancestry patterns across populations [1].

- Cryptic Relatedness: This refers to recent, unknown familial relationships (kinship) between individuals within a study sample. Both are forms of relatedness that violate the assumption of sample independence in statistical analyses [1].

Q3: How does population structure confound meta-analysis of genome-wide association studies (GWAS)?

Meta-analysis assumes that the included studies are independent. However, when studies sample individuals from the same population, or when individuals in different studies are related, cryptic relatedness arises between studies. This violates the independence assumption, leading to correlated errors and inflated type I error rates in the meta-analysis results, even if the individual studies are properly controlled [1].

Q4: What are the typical signals that population structure is confounding my analysis?

The primary signal is inflation of test statistics, usually measured by the genomic inflation factor (λ). A λ value above about 1.05 suggests potential confounding due to population structure or relatedness [1]. Quantile-Quantile (Q-Q) plots in which the observed statistics deviate from the null diagonal line also indicate inflation.
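As a minimal sketch (Python with NumPy and SciPy, which the original does not prescribe), λ can be computed from a vector of GWAS p-values as the median of the corresponding 1-df χ² statistics divided by the expected median under the null (about 0.455). The same sketch applies the genomic control correction, dividing each χ² statistic by λ. The function names are illustrative, not from any particular package.

```python
import numpy as np
from scipy import stats

def genomic_inflation(pvalues):
    """lambda_GC: median observed 1-df chi-square divided by its null median (~0.455)."""
    chisq = stats.chi2.isf(np.asarray(pvalues, dtype=float), df=1)  # p-values back to chi-square
    return np.median(chisq) / stats.chi2.ppf(0.5, df=1)

def gc_correct(pvalues, lam):
    """Genomic control: divide chi-square statistics by lambda (never deflate when lambda < 1)."""
    chisq = stats.chi2.isf(np.asarray(pvalues, dtype=float), df=1)
    return stats.chi2.sf(chisq / max(lam, 1.0), df=1)

rng = np.random.default_rng(0)
null_p = rng.uniform(size=100_000)                                       # well-calibrated test
inflated_p = stats.chi2.sf(1.2 * rng.chisquare(1, size=100_000), df=1)   # statistics inflated by 20%
print(round(genomic_inflation(null_p), 2))      # close to 1.0
print(round(genomic_inflation(inflated_p), 2))  # close to 1.2
```

Note that genomic control rescales every statistic uniformly, which is why it can restore calibration without recovering power.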

Q5: What methods can I use to correct for population structure in my analysis?

Standard methods include using Principal Components (PCs) as covariates to adjust for broad-scale ancestry [1]. For recent and fine-scale population structure, more advanced methods like SPectral Components (SPCs) have been shown to outperform PCs by leveraging identity-by-descent graphs to capture non-linear patterns [2]. Linear Mixed Models (LMMs) can also simultaneously account for both population structure and cryptic relatedness [1].

Troubleshooting Guides

Problem: Inflated test statistics (High Genomic Inflation Factor) in GWAS

| Observation | Potential Cause | Corrective Action |
|---|---|---|
| Genomic inflation factor (λ) > 1.05 [1] | Population stratification: Uneven ancestry distribution correlated with the trait. | Apply PCA covariates: Include top principal components from genetic data as covariates in association model [1]. |
| | Cryptic relatedness: Unknown familial relationships within sample. | Use Linear Mixed Models: Employ LMMs that incorporate a genetic relationship matrix (GRM) to account for relatedness [1]. |
| | Fine-scale structure: Recent demographic events not captured by standard PCs. | Use SPCs: Apply SPectral Components, which are better at adjusting for recent, fine-scale population structure [2]. |

Problem: Confounding in Meta-Analysis Due to Relatedness Between Studies

| Observation | Potential Cause | Corrective Action |
|---|---|---|
| Severe inflation in sex-stratified or subpopulation meta-analysis [1]. | Cryptic relatedness between studies: Non-independence from analyzing related groups (e.g., men/women from same population). | Prefer joint analysis: Perform a single GWAS on the combined dataset (mega-analysis) when individual-level data is available [1]. |
| | | Apply Genomic Control (GC): Correct summary statistics by dividing the 1-df chi-square test statistics by the inflation factor (λ). Note: This may not restore lost power [1]. |
| | | Avoid non-independent designs: Do not meta-analyze studies that share the same underlying populations [1]. |

Experimental Protocols for Accounting for Population Structure

Protocol 1: Standard Adjustment using Principal Components (PCs)

This is a widely used method to correct for broad-scale population structure.

- Quality Control (QC): Perform standard QC on the genotype data (e.g., variant and sample call rate, Hardy-Weinberg equilibrium).

- LD Pruning: Prune SNPs in high linkage disequilibrium (LD) so that the retained SNPs are approximately independent when calculating population structure.

- PCA Calculation: Compute principal components on the pruned genotype matrix.

- Covariate Selection: Determine the number of top PCs that capture significant population structure, often by inspecting a scree plot or using a predefined number (e.g., 10 PCs) [1].

- Association Testing: Run the GWAS, including the selected PCs as covariates in the regression model alongside other relevant covariates (e.g., sex, age).
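The protocol can be sketched in NumPy under simplifying assumptions: a complete (no missing calls), QC-passed and LD-pruned genotype matrix coded 0/1/2, a quantitative trait, and ordinary least squares for the per-SNP test. Dedicated tools such as PLINK do this at scale; the helper names here are hypothetical.

```python
import numpy as np

def genotype_pcs(G, n_pcs=10):
    """PC scores of an (individuals x SNPs) 0/1/2 matrix; assumes QC, LD pruning, no missing data."""
    G = np.asarray(G, dtype=float)
    p = G.mean(axis=0) / 2.0                          # allele frequency per SNP
    keep = (p > 0) & (p < 1)                          # drop monomorphic SNPs
    Z = (G[:, keep] - 2 * p[keep]) / np.sqrt(2 * p[keep] * (1 - p[keep]))  # standardize each SNP
    U, s, _ = np.linalg.svd(Z, full_matrices=False)   # left singular vectors give the PC axes
    return U[:, :n_pcs] * s[:n_pcs]

def snp_test_with_pcs(y, snp, covariates):
    """OLS effect and t statistic for one SNP, adjusting for covariates (PCs, sex, age, ...)."""
    X = np.column_stack([np.ones(len(y)), snp, covariates])
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    resid = y - X @ beta
    sigma2 = resid @ resid / (len(y) - X.shape[1])    # residual variance
    se = np.sqrt(sigma2 * np.linalg.inv(X.T @ X)[1, 1])
    return beta[1], beta[1] / se
```

In a simulated two-population sample where the trait mean differs by population, a non-causal SNP with different allele frequencies shows a large unadjusted t statistic that largely disappears once the top PCs are included as covariates.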

Protocol 2: Advanced Adjustment using SPectral Components (SPCs)

SPCs are designed to better capture fine-scale, non-linear population structure that PCs might miss [2].

- IBD Segment Detection: Identify segments of identity-by-descent (IBD) shared between all pairs of individuals in the cohort.

- IBD Graph Construction: Construct a graph where nodes represent individuals, and edges are weighted by the proportion of the genome shared IBD.

- Spectral Analysis: Perform a spectral decomposition (eigen-analysis) of the IBD graph's Laplacian matrix.

- Component Selection: The top eigenvectors of this matrix are the SPCs, which provide a continuous representation of the fine-scale population structure.

- Association Testing: Use the selected SPCs as covariates in the association model. Studies show SPCs can reduce genomic inflation more effectively than PCs for traits influenced by local environmental effects [2].
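A generic spectral embedding of an IBD graph can be sketched as below. It assumes a precomputed symmetric matrix `W` of pairwise IBD sharing (for example, the fraction of the genome shared IBD, produced by an external IBD caller) and uses the leading non-trivial eigenvectors of the normalized adjacency D^(-1/2) W D^(-1/2), which correspond to the smallest non-trivial eigenvalues of the normalized Laplacian. This illustrates the idea only; the exact construction and scaling used for SPCs in [2] may differ, and the function name is hypothetical.

```python
import numpy as np

def spectral_components(W, n_components=2):
    """Spectral embedding of an IBD-sharing graph (illustrative, not the exact SPC method)."""
    W = np.asarray(W, dtype=float)
    W = (W + W.T) / 2.0                        # enforce symmetry
    np.fill_diagonal(W, 0.0)                   # no self-edges
    d = W.sum(axis=1)                          # weighted degree of each individual
    if np.any(d <= 0):
        raise ValueError("every individual needs non-zero IBD sharing with someone")
    d_inv_sqrt = 1.0 / np.sqrt(d)
    S = d_inv_sqrt[:, None] * W * d_inv_sqrt[None, :]   # D^-1/2 W D^-1/2
    evals, evecs = np.linalg.eigh(S)                    # eigenvalues in ascending order
    order = np.argsort(evals)[::-1]                     # largest first
    idx = order[1:n_components + 1]                     # skip the trivial top eigenvector
    return evecs[:, idx] * d_inv_sqrt[:, None]          # rescale to random-walk eigenvectors
```

With two groups that share far more IBD within than between, the first returned component takes opposite signs in the two groups, which is the kind of fine-scale split the SPC approach aims to capture.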

Logical Workflow for Addressing Population Structure

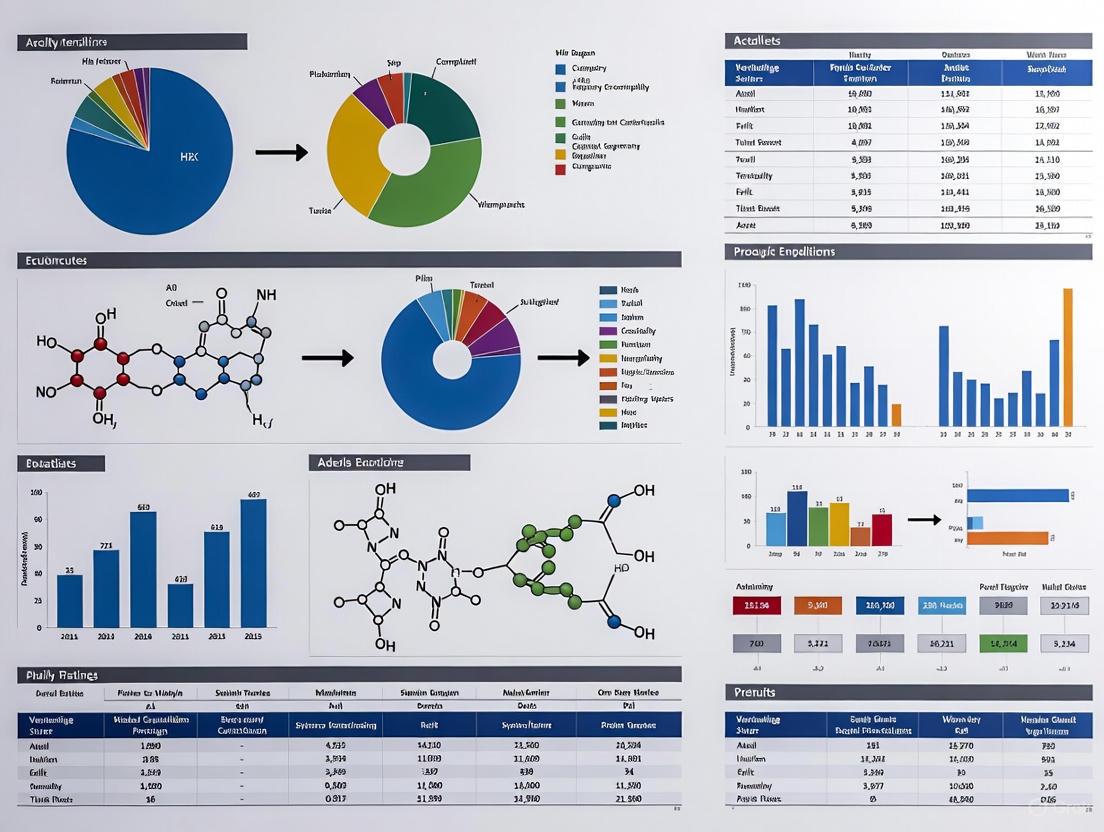

The diagram below outlines a decision workflow for diagnosing and correcting for population structure in genetic analyses.

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Analysis |
|---|---|
| Principal Components (PCs) | Continuous covariates derived from genetic data to capture and adjust for broad-scale population ancestry in association models [1]. |
| Genetic Relationship Matrix (GRM) | A matrix quantifying genetic similarity between all pairs of individuals, used in Linear Mixed Models to account for structure and relatedness [1]. |
| SPectral Components (SPCs) | Continuous covariates derived from Identity-by-Descent (IBD) graphs, designed to better capture fine-scale, recent population structure than PCs [2]. |
| Genomic Control (GC) | A post-hoc correction method applied to GWAS summary statistics, which scales test statistics by the genomic inflation factor (λ) to reduce inflation [1]. |
| SAIGE Software | A tool for performing GWAS that controls for unbalanced case-control ratios and sample relatedness using mixed models [1]. |
| METAL Software | A widely used tool for performing fixed-effects meta-analysis of GWAS summary statistics [1]. |

The Problem of Spurious Associations in Genome-Wide Association Studies (GWAS)

Frequently Asked Questions (FAQs)

What causes a spurious association in a GWAS?

A spurious association, or false positive, occurs when a genetic variant appears to be statistically associated with a trait not because of a true biological relationship, but because of confounding factors in the study design or population. The primary cause is population structure—the presence of systematic ancestry differences or cryptic relatedness among individuals in your sample [3] [4]. If a trait is also correlated with that ancestry (population stratification), it can create false signals [3] [4]. Other common causes include batch effects and genotyping artifacts, where the processing of cases and controls is not randomized, causing technical variability to be mistaken for a genetic signal [5].

How can I quickly check if my GWAS results are inflated by spurious associations?

The most common diagnostic tool is the Genomic Control (GC) method, which calculates an inflation factor (λ) [4]. A λ value significantly greater than 1.0 suggests a systematic inflation of test statistics, often due to population structure or other confounding. This is easily visualized on a Quantile-Quantile (Q-Q) plot, where a deviation of the observed p-values from the expected uniform distribution indicates potential inflation [4] [6].
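The Q-Q diagnostic reduces to comparing sorted observed −log10(p) values with the quantiles expected under a uniform null. A minimal NumPy sketch (function name hypothetical) returns both coordinate arrays; plotting `observed` against `expected`, together with the line y = x, gives the usual Q-Q plot.

```python
import numpy as np

def qq_coordinates(pvalues):
    """Expected vs observed -log10(p) for a Q-Q plot against the uniform null."""
    p = np.sort(np.asarray(pvalues, dtype=float))          # smallest p-value first
    n = p.size
    expected = -np.log10((np.arange(1, n + 1) - 0.5) / n)  # uniform quantiles, same order
    observed = -np.log10(p)
    return expected, observed
```

Under the null the points hug the diagonal; population stratification typically lifts the whole cloud above it, not only the few largest values.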

What is the difference between Principal Component Analysis (PCA) and Mixed Models for correction?

Both methods adjust for population structure, but they operate on different principles and are optimal in different scenarios. The table below summarizes the key differences.

| Method | Principle | Best Used For | Key Assumptions |
|---|---|---|---|
| Principal Component Analysis (PCA) [3] [4] [6] | Uses top principal components from genotype data as covariates in regression to model continuous ancestry differences. | Correcting for environmental stratification (e.g., where an environmental factor correlated with ancestry also affects the trait). | The effects of population structure on the phenotype are linear and are captured by the top PCs. |
| Mixed Models [3] [4] [6] | Incorporates a genetic relationship matrix (GRM or kinship matrix) as a random effect to account for the genetic similarity between all pairs of individuals. | Correcting for polygenic background and assortative mating. It is powerful when the genetic background itself influences the trait. | The genetic background effects are normally distributed and follow the genomic similarity defined by the GRM (infinitesimal model). |

My GWAS uses whole exome sequencing data. Are standard correction methods still effective?

Yes, research indicates that exonic variants can be sufficient for population stratification adjustment [6]. Studies have shown that PCs and GRMs computed from high-quality exonic variants are highly correlated with those from genome-wide data. Association tests using exome-computed PCs and GRMs effectively control type I error rates for common variants, performing nearly as well as genome-wide variants [6].

What are the best practices in experimental design to prevent spurious associations?

Prevention is the most effective strategy. Key practices include:

- Uniform Protocols: Use consistent DNA sources (e.g., all blood or all saliva) and the same genotyping platform for all samples [5].

- Randomization: Randomize cases and controls across genotyping plates and batches to avoid confounding case-control status with batch effects [5].

- Avoid Borrowing Controls: If you must use controls from a separate study, ensure that the data collection and processing protocols are perfectly matched, or use statistical methods designed to integrate such data safely [5].
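Block randomization of plate assignment can be sketched in a few lines of Python. The helper below is hypothetical: it shuffles cases and controls separately and deals them round-robin across plates, so every plate receives a near-equal number of each. Real plate layouts also have to respect plate capacity and control wells, which this sketch ignores.

```python
import random

def block_randomize(case_ids, control_ids, n_plates, seed=None):
    """Hypothetical helper: balance cases and controls across genotyping plates."""
    rng = random.Random(seed)
    plates = {k: [] for k in range(n_plates)}
    offset = 0
    for group in (list(case_ids), list(control_ids)):
        rng.shuffle(group)                                  # random order within each group
        for i, sample in enumerate(group):
            plates[(offset + i) % n_plates].append(sample)  # deal round-robin across plates
        offset += len(group)                                # continue where the last group stopped
    for members in plates.values():
        rng.shuffle(members)                                # randomize well order within a plate
    return plates
```

Continuing the round-robin from where the cases stopped keeps total plate sizes balanced as well as the case-control ratio.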

Troubleshooting Guides

Guide 1: Diagnosing and Correcting Population Stratification

Symptoms:

- Genomic inflation factor (λ) > 1.05

- Q-Q plot departs upward from the diagonal early, across much of the p-value range rather than only in the extreme tail.

- Manhattan plot shows an excess of significant SNPs across many chromosomes, especially in regions known to be associated with ancestry.

Solution: Apply a Standard PCA Correction Workflow

This workflow outlines the key steps to correct for population structure using Principal Component Analysis.

Detailed Methodology:

- Quality Control (QC) & LD Pruning: Start with genotype data that has passed standard QC (call rate, HWE). Then, prune variants to remove those in high linkage disequilibrium (LD). A common practice is to use a 50-SNP window, sliding by 5 SNPs each time, and removing one of any pair of SNPs with an r² > 0.5 [6]. This ensures the PCA is not dominated by regions of long-range LD.

- Compute Principal Components: Perform PCA on the pruned genotype matrix. Software like PLINK is commonly used for this step [7].

- Select Top PCs: Include the top principal components (e.g., the first 5-20) as covariates in your association model. The number can be determined by examining the scree plot or using a formal testing procedure [3] [6].

- Re-run & Re-diagnose: Execute the GWAS again, including the selected PCs as covariates. Re-inspect the genomic inflation factor (λ) and Q-Q plot to confirm the inflation has been controlled.
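PLINK implements the pruning step as `--indep-pairwise 50 5 0.5` (window size, step, r² threshold). The NumPy sketch below mimics that idea in simplified form and drops the later SNP of any pair above the threshold; PLINK's rules for which SNP to drop, and its handling of missing data, may differ, so treat it only as an illustration of the mechanics. It assumes SNPs are in genomic order, polymorphic, and without missing calls.

```python
import numpy as np

def ld_prune(G, window=50, step=5, r2_max=0.5):
    """Indices of SNPs kept by a simplified sliding-window pairwise LD prune."""
    G = np.asarray(G, dtype=float)                 # (individuals x SNPs), genomic order
    n_snps = G.shape[1]
    keep = np.ones(n_snps, dtype=bool)
    for start in range(0, n_snps, step):           # slide the window by `step` SNPs
        idx = [j for j in range(start, min(start + window, n_snps)) if keep[j]]
        for pos, a in enumerate(idx):
            if not keep[a]:
                continue
            for b in idx[pos + 1:]:
                if not keep[b]:
                    continue
                r = np.corrcoef(G[:, a], G[:, b])[0, 1]
                if r * r > r2_max:
                    keep[b] = False                # drop the later SNP of the correlated pair
    return np.flatnonzero(keep)
```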

Guide 2: Addressing Severe Batch Effects

Symptoms:

- Extreme test statistics (e.g., p-values < 10⁻²⁰⁰) for multiple SNPs, which are often biologically implausible [5].

- Association signals are strongly correlated with plating order or DNA source.

Solution:

The only robust solution is to go back to the laboratory and re-design the experiment.

- Re-extract and Re-genotype: If possible, re-process the samples using a fully randomized or block-randomized design where cases and controls are evenly distributed across all plates and batches [5].

- If Re-processing is Impossible: As a last resort, you can attempt to use the batch as a covariate in the model. However, this is a palliative measure and cannot fully correct for deeply confounded data [5]. The results should be interpreted with extreme caution.
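Before falling back on a batch covariate, it helps to quantify how strongly case-control status is tied to plate. A minimal check, assuming per-sample plate labels and a boolean case indicator are available, is a chi-square test of independence on the plate-by-status table using SciPy's `chi2_contingency`. The helper name is hypothetical; a very small p-value means plate and status are confounded, so plate effects cannot be cleanly separated from disease effects.

```python
import numpy as np
from scipy.stats import chi2_contingency

def plate_status_balance(plate_ids, is_case):
    """Chi-square test of independence between genotyping plate and case-control status."""
    plates = np.asarray(plate_ids)
    status = np.asarray(is_case, dtype=bool)
    labels = np.unique(plates)
    table = np.array([[np.sum((plates == p) & status),     # cases on this plate
                       np.sum((plates == p) & ~status)]    # controls on this plate
                      for p in labels])
    chi2, pvalue, dof, _ = chi2_contingency(table)
    return chi2, pvalue
```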

| Category | Item / Software | Primary Function | Key Consideration |
|---|---|---|---|
| Data Quality Control | PLINK [8] [7] | A whole-genome association toolset used for data management, QC, and basic association analysis. | The industry standard for processing genotype data before advanced analysis. |
| | GWASTools [9] | Bioconductor package for quality control and analysis of GWAS data from microarray studies. | Particularly useful for handling and cleaning large-scale GWAS data sets in R. |
| Population Structure Correction | PLINK [8] [7] | Performs PCA on genotype data to generate ancestry components for use as covariates. | A straightforward and integrated way to compute PCs within a common pipeline. |
| | EMMAX / BOLT-LMM [3] [4] | Efficient mixed-model association tools that use a GRM to account for relatedness and structure. | Preferred for large biobank-scale datasets and when correcting for polygenic background. |
| Summary Statistics Visualization | gwaslab [7] | A Python package for visualizing GWAS summary statistics, including Manhattan and Q-Q plots. | Enables rapid diagnostic visualization and figure generation in a Python environment. |
| Advanced Association Methods | QTCAT [10] | A multi-marker association method that clusters correlated markers, potentially avoiding the need for explicit population structure correction. | Useful for exploring data with extreme population structure where standard methods may fail. |

Effective Population Size (Ne) is a fundamental concept in population genetics, defined as the size of an idealized Wright-Fisher population that would experience the same amount of genetic drift or inbreeding as the real population under study [11] [12]. This parameter has gained significant importance in conservation genetics, evolutionary biology, and biodiversity monitoring, and it now underpins a key indicator in the Kunming-Montreal Global Biodiversity Framework of the UN Convention on Biological Diversity [11].

The accurate estimation of Ne is particularly challenging in structured populations, where violations of the idealized assumptions can lead to significant biases [11]. Most estimation methods rely on assumptions including no immigration, panmixia (random mating), random sampling, absence of spatial genetic structure, and mutation-drift equilibrium [11]. In reality, however, many species are characterized by fragmented populations experiencing changing environmental conditions and anthropogenic pressures, meaning these assumptions are seldom met [11]. This technical guide addresses the specific biases introduced by population structure in Ne estimation and provides troubleshooting approaches for researchers working in this domain.

Understanding Different Types of Effective Population Size

Before addressing biases, it is crucial to recognize that Ne can be defined in different ways, each with distinct interpretations and applications. The main types include:

Table 1: Types of Effective Population Size and Their Applications

| Type of Ne | Definition | Typical Applications | Temporal Focus |
|---|---|---|---|
| Inbreeding Effective Size | Size of an ideal population with the same rate of inbreeding | Conservation genetics, assessing extinction risk | Contemporary |
| Variance Effective Size | Size of an ideal population with the same variance in allele frequency change | Evolutionary studies, measuring genetic drift | Contemporary |
| Eigenvalue Effective Size | Derived from the largest non-unit eigenvalue of the transition matrix of allele frequencies | Complex demographic scenarios, dioecious populations | Asymptotic |
| Coalescent Effective Size | Based on the expected time to coalescence of gene copies | Molecular evolution, phylogenetic studies | Historical |

These different types of Ne converge to the same value under ideal Wright-Fisher conditions but can diverge substantially when population structure is present [13] [12]. The spatial and temporal scales also affect how Ne is interpreted. Estimates can refer to historical Ne (a long-term average over many generations, classically the harmonic mean of per-generation values) or contemporary Ne (current or recent generations), each with different implications for conservation and management [11].
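Because long-term Ne is classically approximated by the harmonic mean of per-generation values, a single small generation can dominate it. The numbers below are hypothetical and serve only to show the arithmetic with Python's standard `statistics` module.

```python
from statistics import harmonic_mean

per_generation_ne = [1000, 1000, 50, 1000, 1000]   # hypothetical: one bottleneck generation
arithmetic = sum(per_generation_ne) / len(per_generation_ne)
long_term_ne = harmonic_mean(per_generation_ne)
print(arithmetic)              # 810.0
print(round(long_term_ne, 1))  # 208.3
```

One bottleneck generation pulls the long-term value to roughly a quarter of the arithmetic average, which is one reason historical and contemporary estimates can disagree.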

Common Biases in Ne Estimation from Population Structure

Spatial Scale Mismatch and Sampling Issues

One of the most prevalent sources of bias occurs when the sampling scheme does not align with the actual biological population structure [11]. Depending on how sampling is conducted relative to the population range, researchers might inadvertently estimate the Ne of a subpopulation rather than the entire metapopulation, or vice versa [11].

Troubleshooting Guide:

- Problem: Inconsistent Ne estimates across studies of the same species.

- Diagnosis: Sampling schemes may be targeting different spatial scales (subpopulation vs. metapopulation).

- Solution: Conduct preliminary population structure analysis using methods like PCA, STRUCTURE, or ADMIXTURE before designing Ne estimation studies [14] [15] [16].

- Preventive Measures: Use geographical and ecological data to inform sampling designs and ensure samples represent the appropriate biological unit for the research question.

Violation of Panmixia Assumption

Most Ne estimation methods assume random mating within populations, but many natural populations exhibit assortative mating, isolation-by-distance, or other non-random mating patterns [11] [17].

FAQ: How does non-random mating affect Ne estimates? Non-random mating, including assortative mating and isolation-by-distance, increases the variance in reproductive success and reduces the effective population size. This can lead to overestimation of genetic diversity and underestimation of extinction risk if not properly accounted for [17]. Methods that assume panmixia will produce biased estimates in such cases.

Immigration and Gene Flow

Many Ne estimation methods assume populations are closed, but real populations often experience immigration and gene flow [11]. This can profoundly affect genetic diversity and drift rates.

Table 2: Impact of Gene Flow on Ne Estimation

| Gene Flow Scenario | Effect on Ne Estimation | Recommended Approach |
|---|---|---|
| Recent Immigration | Can artificially inflate Ne estimates | Use methods that account for recent migrants |
| Historical Gene Flow | Affects coalescent-based estimates | Consider metapopulation models |
| Asymmetric Migration | Creates source-sink dynamics in Ne | Implement spatially explicit methods |
| Barriers to Gene Flow | Leads to underestimation of regional Ne | Analyze hierarchical population structure |

Methodological Approaches to Account for Population Structure

Population Structure Analysis Tools

Before estimating Ne, researchers should characterize population structure using specialized software:

- STRUCTURE: Uses Bayesian clustering to assign individuals to populations based on genetic similarity [14] [18]. Key parameters include the admixture model and LOCPRIOR when sampling locations are known.

- ADMIXTURE: Implements maximum likelihood estimation of ancestry proportions [15] [16]. Faster than STRUCTURE for large datasets.

- PopMLvis: An interactive platform that supports multiple algorithms (PCA, t-SNE, K-means) and provides visualization tools [15].

- Principal Component Analysis (PCA): A dimensionality reduction technique that reveals genetic similarities among individuals [15] [16].

Integrating Population Structure in Ne Estimation

Experimental Protocol: Comprehensive Ne Estimation Accounting for Population Structure

Step 1: Initial Quality Control

- Filter genetic markers to remove those under selection or with poor quality

- Assess linkage disequilibrium and prune markers if necessary [16]

- Verify marker independence and neutrality assumptions [14]

Step 2: Population Structure Analysis

- Perform PCA to identify major axes of genetic variation [15] [16]

- Run ADMIXTURE or STRUCTURE with multiple K values (assumed number of populations) [14] [16]

- Use cross-validation to select the optimal K value [16]

- Visualize results using tools like distruct, CLUMPP, or PopMLvis [14] [15]
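Selecting K from ADMIXTURE's cross-validation output can be scripted. ADMIXTURE run with `--cv` writes lines of the form `CV error (K=2): 0.54321` to its log; the parser below assumes that format and picks the K with the lowest error. Treat the minimum as a guide rather than a rule, and inspect neighbouring K values too. The function name is hypothetical.

```python
import re

# Assumed log format: "CV error (K=2): 0.54321"
CV_LINE = re.compile(r"CV error \(K=(\d+)\):\s*([0-9.eE+-]+)")

def best_k_from_logs(log_text):
    """Return (K with the lowest CV error, {K: cv_error}) parsed from ADMIXTURE log text."""
    errors = {int(k): float(v) for k, v in CV_LINE.findall(log_text)}
    if not errors:
        raise ValueError("no CV error lines found")
    return min(errors, key=errors.get), errors
```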

Step 3: Sampling Group Definition

- Define biological populations based on genetic structure results

- Ensure sampling units match inference goals (subpopulation vs. metapopulation)

- Document potential admixed individuals for separate analysis

Step 4: Ne Estimation with Appropriate Methods

- For clearly defined, discrete populations: Use single-population Ne estimators

- For admixed individuals or continuous populations: Implement spatial or clustering-based methods

- Compare multiple estimation approaches for consistency

Step 5: Interpretation and Reporting

- Report the spatial and temporal context of estimates

- Acknowledge limitations and assumptions

- Provide details of population structure findings alongside Ne estimates

Ne Estimation Workflow with Population Structure Assessment

Quantitative Data on Ne Biases from Population Structure

Research has demonstrated systematic biases in Ne estimation when population structure is ignored:

Table 3: Documented Biases in Ne Estimation from Population Structure

| Study System | Structure Type | Bias Direction | Magnitude of Bias | Reference |
|---|---|---|---|---|
| Florida Sand Skink | Habitat fragmentation | Overestimation without fire disturbance | 25-40% difference | [19] |
| Type D Killer Whales | Distinct ecotype with long-term isolation | Underestimation of historical Ne | 60% lower than expected | [19] |
| Theoretical Dioecious Populations | Sexual structure | Underestimation with improper sex ratio accounting | 10-30% depending on equations | [13] [12] |
| Ginkgo Biloba | Subtle population differentiation | Misassignment of individuals to populations | CV error lowest at K=2-3 | [16] |
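The sex-structure row can be illustrated with Wright's classic approximation for a dioecious population with N_m breeding males and N_f breeding females, Ne = 4 N_m N_f / (N_m + N_f). This textbook formula is offered for intuition only; it is not necessarily one of the equations compared in [13] [12].

```python
def sex_ratio_ne(n_males, n_females):
    """Wright's approximation for Ne with unequal numbers of breeding males and females."""
    return 4.0 * n_males * n_females / (n_males + n_females)

print(sex_ratio_ne(50, 50))  # 100.0  (equal sex ratio: Ne equals the 100 breeders)
print(sex_ratio_ne(10, 90))  # 36.0   (same 100 breeders, skewed ratio)
```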

Research Reagent Solutions and Tools

Table 4: Essential Tools for Population Structure and Ne Analysis

| Tool/Reagent | Function | Application Context | Considerations |
|---|---|---|---|
| STRUCTURE | Bayesian population assignment | Identifying genetic clusters, detecting migrants | Computationally intensive; requires careful parameter setting [14] [18] |
| ADMIXTURE | Maximum likelihood ancestry estimation | Large SNP datasets, ancestry proportions | Faster than STRUCTURE but similar applications [15] [16] |
| PopMLvis | Interactive visualization platform | Multiple algorithm integration, publication-ready figures | Web-based and standalone versions available [15] |
| fastSTRUCTURE | Variant for large SNP datasets | Genome-wide association studies, big data | Optimized for computational efficiency [18] |
| BCFtools | Variant calling and filtering | Processing raw sequencing data to variant calls | Often used in preprocessing pipelines [16] |
| PLINK | Genome data management | Quality control, stratification analysis | Standard format conversion [15] |

Advanced Topics and Future Directions

Temporal Analysis in Structured Populations

Many populations experience changes in structure over time, creating additional challenges for Ne estimation. Historical Ne estimates based on coalescent theory reflect long-term averages, while contemporary methods (e.g., based on linkage disequilibrium or sibship frequency) capture recent dynamics [11] [12]. In structured populations, these temporal perspectives may yield dramatically different values, each providing valid but distinct information about population history and current status.

Metapopulation Effective Size

For species with strong population subdivision, the concept of metapopulation Ne becomes relevant [11]. This considers the entire network of subpopulations connected by migration. The global effective size of a metapopulation depends on the local effective sizes of subpopulations, migration rates, and the extent of synchrony in population fluctuations [11]. Specialized estimation approaches are needed for these complex scenarios.
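As a worked illustration of how local size and migration combine, Wright's island model (n equal-sized demes of effective size N exchanging migrants at rate m, with no extinction or recolonization) gives a metapopulation Ne of roughly nN / (1 − F_ST), with equilibrium F_ST ≈ 1 / (1 + 4Nm) when the number of demes is large. The sketch uses only these idealized approximations; it ignores synchrony of fluctuations and extinction-recolonization dynamics, which can push metapopulation Ne well below the census total. The function name is hypothetical.

```python
def island_model_ne(n_demes, deme_ne, m):
    """Idealized metapopulation Ne and equilibrium F_ST under Wright's island model."""
    if m <= 0:
        raise ValueError("migration rate m must be positive")
    fst = 1.0 / (1.0 + 4.0 * deme_ne * m)       # many-demes approximation for F_ST
    meta_ne = n_demes * deme_ne / (1.0 - fst)    # exceeds n * N when F_ST > 0
    return meta_ne, fst

meta_ne, fst = island_model_ne(n_demes=10, deme_ne=100, m=0.01)
print(round(fst, 2), round(meta_ne))   # 0.2 1250
```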

How Sampling Scheme Affects Ne Estimation in Structured Populations

Frequently Asked Questions (FAQs)

FAQ: What is the most appropriate Ne estimation method for slightly structured populations? For populations with weak structure, the linkage disequilibrium method often performs well, provided sample sizes are adequate (≥100 individuals). However, preliminary structure analysis is still recommended using tools like PCA or ADMIXTURE to identify potential subgroups. If structure is detected, consider using methods that explicitly account for it or analyze subgroups separately [11] [12].
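The logic of the LD method can be sketched with Hill's (1981) approximation for unlinked loci, E[r²] ≈ 1/(3Ne) + 1/S, where S is the number of sampled individuals; solving for Ne gives Ne ≈ 1 / (3(r² − 1/S)). This is deliberately simplified: programs such as NeEstimator apply further bias corrections, and LD created by admixture or substructure inflates r² and pushes this estimate downward. The function name is hypothetical.

```python
def ld_ne(mean_r2, sample_size):
    """Hill-style Ne from mean r^2 between unlinked loci, with a 1/S sampling correction."""
    excess = mean_r2 - 1.0 / sample_size     # r^2 beyond what finite sampling alone produces
    if excess <= 0:
        return float("inf")                  # no detectable drift signal at this sample size
    return 1.0 / (3.0 * excess)

print(round(ld_ne(0.012, 100)))   # 167
print(ld_ne(0.009, 100))          # inf
```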

FAQ: How many genetic markers are needed for reliable Ne estimation in structured populations? The requirement depends on the level of structure and the specific method. Sample size matters as well: for microsatellites, early guidelines recommended 500 samples [14], whereas smaller sample sizes may suffice for SNPs [14]. As a general rule, more markers are needed when population structure is weak or when dealing with admixed populations. High-density SNP arrays or whole-genome sequencing are increasingly feasible options [15].

FAQ: Can we estimate Ne for admixed populations? Yes, but with important caveats. Recent admixture creates linkage disequilibrium that can bias methods based on this signal. Specialized approaches that first estimate local ancestry may be required. The ADMIXTURE tool can help characterize admixture proportions, which can then inform appropriate Ne estimation strategies [15] [16].

FAQ: How does ignoring population structure affect conservation decisions? Underestimating population structure can lead to serious conservation errors. For example, managing a metapopulation as a single unit might allow unique local adaptations to be lost through swamping or might miss critical source-sink dynamics. Proper characterization of structure ensures that conservation resources target appropriate biological units and maintain important evolutionary processes [11] [19].

Accurate estimation of effective population size requires careful attention to population structure throughout the research process—from sampling design to method selection and interpretation. The biases introduced by ignoring structure can lead to substantially misleading conclusions about population viability, evolutionary potential, and conservation priorities. By implementing the troubleshooting approaches and methodological recommendations outlined in this guide, researchers can significantly improve the reliability of their Ne estimates and make better-informed decisions in both basic research and applied conservation contexts.

The field continues to evolve with new computational approaches and genomic technologies, promising increasingly sophisticated methods for accounting for population structure in demographic inference. Researchers should stay informed about these developments while applying current best practices to minimize biases in their work with effective population size estimation.

Frequently Asked Questions (FAQs)

FAQ 1: How does population structure lead to inflated prediction accuracies in genomic selection? Population structure, such as the presence of distinct subpopulations, can create spurious associations between markers and traits that are not due to causal genetic effects. When a model fails to account for this structure, it may mistake these population-level correlations for true genetic signals. This can artificially inflate the apparent accuracy of genomic predictions within the training data. However, this inflation often does not hold up when the model is applied to new, unrelated populations, leading to a significant drop in predictive performance and a false sense of confidence in the breeding values [20].

FAQ 2: Why do biased breeding values occur in structured populations? In populations undergoing selection, the mean breeding value of genotyped individuals often deviates from zero. If this is not accounted for in the genomic prediction model, it introduces bias. Specifically, when genotypes are centered incorrectly (e.g., using the mean of a selected group rather than the unselected founders), the genomic breeding values become biased. Fitting the mean of unselected individuals (µg) as a fixed effect in the model has been shown to effectively correct for this selection bias and prevent the resulting inaccuracies in estimated breeding values [21].

FAQ 3: Are rare variants or common variants more problematic for population structure in genomic analysis? The level of population structure embedded in rare variant data is different from that in common variant data. Analyses using rare functional variants require a much larger number of principal components (532 in one study) to account for 90% of the population structure compared to common variants (388 PCs). Rare variants can reveal fine-scale substructure but are less efficient for assigning individuals to broad ancestral populations, potentially adding a layer of complexity that can confound genomic analyses if not properly handled [22].
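To make this kind of comparison on your own data, you can count how many PCs are needed to reach a cumulative variance threshold. The sketch below assumes a genotype matrix already scaled as you intend (the choice of scaling strongly affects results for rare variants) and defaults to the 90% threshold from the cited study; the function name is hypothetical.

```python
import numpy as np

def n_pcs_for_variance(Z, target=0.9):
    """Smallest number of PCs whose cumulative explained variance reaches `target`."""
    Z = np.asarray(Z, dtype=float)
    s = np.linalg.svd(Z - Z.mean(axis=0), compute_uv=False)  # singular values, descending
    frac = np.cumsum(s ** 2) / np.sum(s ** 2)                # cumulative variance explained
    return int(np.searchsorted(frac, target) + 1)            # first index reaching target, 1-based
```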

FAQ 4: What is the practical impact of ignoring population structure on genomic prediction accuracy? Ignoring population subdivision often leads to an underestimation of the effective population size (Ne), which is a key parameter for understanding genetic drift and inbreeding. More broadly, unaccounted-for structure can reduce the accuracy and increase the bias of genomic predictions. For instance, in strawberry breeding, models that explicitly accounted for population structure and genotype-by-environment interactions achieved a prediction accuracy of up to r=0.8 for soluble solids content, significantly outperforming standard models that did not [23] [20].

Troubleshooting Guides

Problem 1: Inflated Genomic Prediction Accuracies

Symptoms:

- High prediction accuracy within the training population, but a severe drop in accuracy when applied to a validation set from a different genetic background or environment.

- Principal Component Analysis (PCA) of the genomic data shows clear clustering of individuals according to origin, breed, or geographic location.

Diagnosis and Solution: The genomic prediction model is likely capturing the population structure itself rather than the true genetic effects of the trait of interest.

Action 1: Incorporate Population Structure into the Model.

- Method: Use a mixed model that includes fixed effects to account for structure, such as principal components (PCs) or a categorical covariate for subpopulations. Alternatively, use a genomic relationship matrix (GRM) constructed using population-specific allele frequencies [20].

- Protocol:

- Perform a PCA on the genotype data (e.g., using PLINK or R).

- Determine the significant PCs that correlate with population clusters.

- Include these PCs as fixed covariates in your genomic prediction model (e.g., GBLUP).

- Note: To avoid "double-counting" genetic information, consider using a re-parameterized GBLUP model that partitions genetic variance across and within subpopulations using PCA eigenvalues [20].
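The PCA step of this protocol can be sketched in Python with NumPy. This is a minimal illustration, not the method of [20]. It assumes a complete 0/1/2 genotype matrix with individuals in rows and uses a hypothetical helper, `pc_covariates`. The choice of `k = 3` is only for the demo. In practice the PCs would usually come from PLINK or R, and `k` from the significance step above.

```python
import numpy as np


def pc_covariates(genotypes: np.ndarray, k: int) -> np.ndarray:
    """Return an n x (k + 1) fixed-effect design matrix: [intercept | PC1..PCk].

    genotypes: n individuals x m SNPs, coded 0/1/2, with no missing values.
    """
    g = genotypes.astype(float)
    p = g.mean(axis=0) / 2.0                      # sample allele frequency per SNP
    keep = (p > 0) & (p < 1)                      # drop monomorphic SNPs
    # Center and scale each SNP by its binomial standard deviation.
    z = (g[:, keep] - 2 * p[keep]) / np.sqrt(2 * p[keep] * (1 - p[keep]))
    # Left singular vectors of z give the principal axes across individuals.
    u, s, _ = np.linalg.svd(z, full_matrices=False)
    pcs = u[:, :k] * s[:k]                        # PC scores
    return np.column_stack([np.ones(g.shape[0]), pcs])


# Toy data: two simulated subpopulations with independent allele frequencies.
rng = np.random.default_rng(1)
freqs = rng.uniform(0.1, 0.9, size=(2, 500))
labels = np.repeat([0, 1], 100)
geno = rng.binomial(2, freqs[labels])
X = pc_covariates(geno, k=3)
print(X.shape)  # (200, 4)
```

`X` could then serve as the fixed-effect design matrix of a GBLUP fit. The random genetic effect would still be modeled through the GRM.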

Action 2: Use Multi-Population Genomic Relationship Matrices.

- Method: Fit a model that uses separate genomic relationship matrices for different sub-populations. This approach is particularly effective when causal variants may segregate in only one population [20].

Problem 2: Biased Genomic Breeding Values

Symptoms:

- Genomic Estimated Breeding Values (GEBVs) show a systematic over- or under-prediction for individuals from certain genetic lines or time periods, especially in breeding programs with a history of selection.

- Predictions for younger selection candidates are consistently biased compared to their subsequent performance.

Diagnosis and Solution: The model is not properly accounting for the changes in allele frequencies and mean breeding values caused by selection.

- Action: Fit a Fixed Effect for the Mean of Unselected Individuals (µg).

- Method: In single-step genomic analyses, explicitly fit µg as a fixed effect in the model. This corrects for the bias introduced by using genotypes from selected individuals [21].

- Protocol:

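- In your statistical model, include a fixed covariate vector \( J \) whose regression coefficient estimates µg.

- For genotyped individuals, this covariate is typically -1.

- For non-genotyped individuals, the covariate is derived from the pedigree-based additive relationship matrix as \( J_n = -A_{ng} A_{gg}^{-1} \mathbf{1} \). Here \( A_{ng} \) contains the pedigree relationships between non-genotyped and genotyped individuals, and \( A_{gg} \) contains the relationships among genotyped individuals.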

- Evidence: Simulation studies have shown that fitting µg in the model maintains high accuracy (~99.4%) even under selection, while models that do not fit µg can see accuracy drop to ~90.2% [21].
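The covariate described above can be computed directly from a pedigree. The sketch below is illustrative only. It assumes a tiny hypothetical four-animal pedigree and builds the pedigree relationship matrix A with the standard tabular method. The helper names `pedigree_relationship` and `mu_g_covariate` are made up for this example. Production single-step software works with sparse inverses rather than dense matrices.

```python
import numpy as np


def pedigree_relationship(sires, dams):
    """Pedigree (numerator) relationship matrix A by the tabular method.

    Animals are indexed 0..n-1 with parents listed before offspring;
    -1 marks an unknown parent.
    """
    n = len(sires)
    A = np.zeros((n, n))
    for i in range(n):
        s, d = sires[i], dams[i]
        for j in range(i):
            a_js = A[j, s] if s >= 0 else 0.0
            a_jd = A[j, d] if d >= 0 else 0.0
            A[i, j] = A[j, i] = 0.5 * (a_js + a_jd)
        # Diagonal: 1 plus the inbreeding coefficient (half the parents' relationship).
        A[i, i] = 1.0 + (0.5 * A[s, d] if s >= 0 and d >= 0 else 0.0)
    return A


def mu_g_covariate(A, genotyped):
    """Covariate J: -1 for genotyped animals, -A_ng A_gg^{-1} 1 for the rest."""
    g = np.asarray(genotyped)
    ng = np.setdiff1d(np.arange(A.shape[0]), g)
    A_gg = A[np.ix_(g, g)]
    A_ng = A[np.ix_(ng, g)]
    J = np.empty(A.shape[0])
    J[g] = -1.0
    # Solve A_gg x = 1 instead of forming the inverse explicitly.
    J[ng] = -A_ng @ np.linalg.solve(A_gg, np.ones(len(g)))
    return J


# Hypothetical pedigree: 0 and 1 are founders, 2 = 0 x 1, 3 = 0 x 2.
A = pedigree_relationship(sires=[-1, -1, 0, 0], dams=[-1, -1, 1, 2])
J = mu_g_covariate(A, genotyped=[2, 3])
print(J)  # J = [-7/11, -5/11, -1, -1]
```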

Problem 3: Low Accuracy Due to Rare Variants and Fine-Scale Structure

Symptoms:

- Despite using a high density of markers, including many rare variants, the model fails to achieve robust prediction accuracy across the entire population.

- Population structure analysis with rare variants reveals a very high number of significant principal components.

Diagnosis and Solution: Rare variants can capture fine-scale population structure that common variants miss, but they also introduce more noise and require careful handling.

- Action: Optimize Variant Selection and Model Complexity.

- Method: Perform linkage disequilibrium (LD) pruning to reduce the number of redundant and highly correlated rare variants. Alternatively, use methods that aggregate rare variants or apply specific models designed for rare variants.

- Protocol:

- Filter your genotype data (e.g., a VCF file) to remove markers with very low minor allele frequency, unless rare variants are the focus of the study.

- Use tools like BCFtools or PLINK for LD-based pruning to create a subset of independent markers.

- Re-run the population structure analysis (e.g., with ADMIXTURE) and genomic prediction on the pruned dataset [16].

- Note: Be aware that accurately accounting for population structure with rare variants may require a much larger number of principal components in the model compared to using common variants alone [22].
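The MAF filter and LD pruning steps can be sketched as follows. This is a simplified greedy variant written for illustration. It follows the spirit of PLINK's `--indep-pairwise` (window, step, r² threshold) but is not its exact algorithm. The window here counts SNP columns rather than base pairs, and the thresholds and toy data are assumptions.

```python
import numpy as np


def maf_filter(geno: np.ndarray, min_maf: float) -> np.ndarray:
    """Boolean mask of SNP columns whose minor allele frequency is >= min_maf."""
    p = geno.mean(axis=0) / 2.0
    return np.minimum(p, 1.0 - p) >= min_maf


def ld_prune(geno: np.ndarray, window: int, r2_max: float) -> list:
    """Greedy pruning: keep SNP j only if its r^2 with every kept SNP among the
    previous `window` columns is <= r2_max. Returns kept column indices."""
    kept = []
    for j in range(geno.shape[1]):
        independent = True
        for i in reversed(kept):
            if j - i > window:                # kept SNPs further back are out of range
                break
            r = np.corrcoef(geno[:, i], geno[:, j])[0, 1]
            if r * r > r2_max:
                independent = False
                break
        if independent:
            kept.append(j)
    return kept


rng = np.random.default_rng(0)
base = rng.binomial(2, 0.3, size=(300, 5))
rare = np.zeros(300, dtype=int)
rare[:2] = 1                                  # MAF = 2/600, below 1%
# Columns 1 and 4 duplicate columns 0 and 3 (r^2 = 1); the last column is rare.
geno = np.column_stack([base[:, 0], base[:, 0], base[:, 1], base[:, 2],
                        base[:, 2], base[:, 3], base[:, 4], rare])
mask = maf_filter(geno, min_maf=0.01)
kept = ld_prune(geno[:, mask], window=50, r2_max=0.2)
print(mask, kept)
```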

Table 1: Impact of Accounting for Population Structure on Prediction Accuracy

| Scenario / Model | Accuracy | Metric | Notes | Source |
|---|---|---|---|---|
| Strawberry SSC (Standard GBLUP) | Lower than Pfa/Wfa | Prediction accuracy (r) | Baseline model | [20] |
| Strawberry SSC (Pfa/Wfa Models) | ~0.8 | Prediction accuracy (r) | Accounted for structure & G×E | [20] |
| Simulation (Fitting µg under selection) | 99.4% | Accuracy | Panel included QTL | [21] |
| Simulation (Not fitting µg under selection) | 90.2% | Accuracy | Panel included QTL | [21] |
| Population Assignment (Common Variants) | 98% | Assignment accuracy | Required 400 SNPs | [22] |
| Population Assignment (Rare Variants) | 98% | Assignment accuracy | Required 1000 SNPs | [22] |

Table 2: Population Structure Characteristics of Different Variant Types

| Variant Type | PCs for 90% of Structure | Key Finding | Source |
|---|---|---|---|
| Common Functional Variants | 388 | Clear distinction of major geographic origins (Europe, Asia, Africa). | [22] |
| Rare Functional Variants | 532 | Detected fine-scale substructure and outliers within geographically close populations. | [22] |

Experimental Protocols

Protocol 1: Population Structure Analysis with ADMIXTURE in OmicsBox

This protocol outlines the steps for conducting a population structure analysis, which is a critical first step in diagnosing potential issues in genomic selection [16].

- Input Data Preparation: Begin with a filtered VCF (Variant Call Format) file containing the genotype data for all individuals.

- Phasing and Imputation: Phase and impute the VCF file using a tool like Beagle to estimate missing genotypes, so that downstream steps work on a complete genotype matrix.

- LD Pruning: Perform linkage disequilibrium pruning using BCFtools to remove highly correlated SNPs. This reduces redundancy and computational load.

- Run ADMIXTURE: Run ADMIXTURE once for each value in a range of candidate numbers of ancestral populations (K), with cross-validation enabled (the --cv option).

- Model Selection: Analyze the output cross-validation (CV) errors for different K values. The model with the lowest CV error is typically the most appropriate.

- Visualization: Use the output to create bar plots showing the ancestral proportions of each individual.
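When ADMIXTURE is run with cross-validation, each log reports a line of the form `CV error (K=...): ...`. The model-selection step compares these values. The helper below is a hypothetical sketch that parses such lines, averages repeated runs per K, and returns the K with the lowest mean CV error. The log text is made up for the example.

```python
import re
from collections import defaultdict

# Matches lines such as "CV error (K=3): 0.52981" printed by `admixture --cv`.
CV_LINE = re.compile(r"CV error \(K=(\d+)\):\s*([-+0-9.eE]+)")


def best_k(log_text: str):
    """Return (K with lowest mean CV error, {K: mean CV error})."""
    runs = defaultdict(list)
    for k, err in CV_LINE.findall(log_text):
        runs[int(k)].append(float(err))
    if not runs:
        raise ValueError("no 'CV error' lines found")
    mean_err = {k: sum(v) / len(v) for k, v in runs.items()}
    return min(mean_err, key=mean_err.get), mean_err


# Hypothetical, concatenated log output from runs with K = 2, 3, 4.
logs = """CV error (K=2): 0.5412
CV error (K=3): 0.5298
CV error (K=4): 0.5317"""
print(best_k(logs))  # (3, {2: 0.5412, 3: 0.5298, 4: 0.5317})
```

Averaging over several seeds per K guards against a single run stuck in a local optimum.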

Protocol 2: Implementing a PCA-Enhanced GBLUP Model (Pfa)

This methodology describes how to integrate population structure directly into the genomic prediction model to improve accuracy and reduce bias [20].

- Genotypic Data: Obtain a matrix of SNP genotypes for all individuals in the reference and target populations.

- Principal Component Analysis: Perform PCA on the genomic relationship matrix (GRM) derived from the genotype data.

- Model Reparameterization: Partition the genetic variance into components across and within subpopulations using the eigenvalues from the PCA. This creates a PCA-derived relationship matrix.

- Model Fitting: Fit the GBLUP model using the new PCA-derived relationship matrix. The model should also include terms for genotype-by-environment (G×E) interactions if data from multiple environments are available.

- Validation: Evaluate the model by comparing the predicted breeding values to a validation set of individuals that were not used in training the model.
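The GRM and eigendecomposition steps can be sketched with NumPy. This is an illustrative assumption about how an eigenvalue-based partition might look, not the exact Pfa/Wfa formulation of [20]. The GRM follows VanRaden's first method with allele frequencies estimated from the sample. The hypothetical `split_grm` assigns the top-k eigenvectors to an "across subpopulations" matrix and the remainder to a "within" matrix.

```python
import numpy as np


def vanraden_grm(geno: np.ndarray) -> np.ndarray:
    """VanRaden (2008) method-1 GRM from 0/1/2 genotypes (sample allele frequencies)."""
    p = geno.mean(axis=0) / 2.0
    z = geno - 2.0 * p
    return z @ z.T / (2.0 * np.sum(p * (1.0 - p)))


def split_grm(G: np.ndarray, k: int):
    """Split G into the part spanned by its top-k eigenvectors and the remainder."""
    vals, vecs = np.linalg.eigh(G)               # eigenvalues in ascending order
    order = np.argsort(vals)[::-1]
    vals, vecs = vals[order], vecs[:, order]
    G_across = (vecs[:, :k] * vals[:k]) @ vecs[:, :k].T
    return G_across, G - G_across


# Toy data: two simulated subpopulations of 60 individuals each.
rng = np.random.default_rng(7)
freqs = rng.uniform(0.1, 0.9, size=(2, 400))
labels = np.repeat([0, 1], 60)
geno = rng.binomial(2, freqs[labels]).astype(float)
G = vanraden_grm(geno)
G_across, G_within = split_grm(G, k=1)
```

Under this assumption, `G_across` and `G_within` could enter a GBLUP model as two separate random-effect kernels.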

Conceptual Workflow Diagram

Diagram: Troubleshooting Workflow for Genomic Selection Issues.

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for Genomic Selection Studies

| Tool / Reagent | Primary Function | Application Context | Source / Example |
|---|---|---|---|
| ADMIXTURE | Model-based estimation of ancestry proportions from SNP data. | Identifying and quantifying population structure in a dataset. | [16] |
| GONE2 & currentNe2 | Infer recent and contemporary effective population size (Ne) from SNP data, accounting for population structure. | Understanding population history and genetic drift, which is critical for model calibration. | [23] |
| GBLUP (Pfa/Wfa Models) | Genomic Best Linear Unbiased Prediction using PCA-derived or within-population relationship matrices. | Performing genomic prediction while accounting for population structure to reduce bias. | [20] |
| PCA (Principal Component Analysis) | Dimensionality reduction to identify major axes of genetic variation. | Visualizing population structure and generating covariates for statistical models. | [22] [20] |
| High-Density SNP Arrays | Genome-wide genotyping of thousands of single nucleotide polymorphisms. | Generating the primary genomic data for constructing relationship matrices and estimating breeding values. | IStraw35 Axiom array [20] |

Conceptual Foundations & Definitions

What is Linkage Disequilibrium (LD) and how does it impact my association study? Linkage Disequilibrium (LD) is the non-random association of alleles at different loci in a population [24] [25]. This means that knowing the allele at one locus provides information about the allele at another linked locus. It is fundamentally measured by the coefficient \( D \), which is the difference between the observed haplotype frequency (\( p_{AB} \)) and the frequency expected under independence (\( p_A \times p_B \)): \( D = p_{AB} - p_A p_B \) [24] [25]. In practice, standardized measures like \( r^2 \) (the squared correlation coefficient between loci) are more commonly used, because they indicate how well one marker can predict another and are critical for tag SNP selection [26].
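The definitions translate directly into code. This minimal sketch uses the standard identity \( r^2 = D^2 / [p_A(1-p_A)p_B(1-p_B)] \) with illustrative frequencies. The function name is hypothetical.

```python
def ld_stats(p_ab: float, p_a: float, p_b: float):
    """Return (D, r^2) for two biallelic loci from haplotype and allele frequencies."""
    d = p_ab - p_a * p_b                                  # deviation from independence
    r2 = d * d / (p_a * (1 - p_a) * p_b * (1 - p_b))      # squared correlation
    return d, r2


# Example: alleles A and B each at frequency 0.5, AB haplotype at 0.4.
d, r2 = ld_stats(0.4, 0.5, 0.5)
print(round(d, 3), round(r2, 3))  # 0.15 0.36
```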

LD is crucial for association studies because:

- Gene Mapping: It enables genome-wide association studies (GWAS) and haplotype mapping by allowing researchers to use known SNPs as proxies for ungenotyped causal variants [24] [25].

- Study Design: The extent of LD in a genome determines the density of markers required for a successful study. In regions with slow LD decay, fewer markers may be needed [27].

- Signal Interpretation: Association signals often cluster in haplotype blocks—genomic regions with high LD and low recombination [25]. A significant association with one SNP typically implicates a whole block of correlated variants, necessitating fine-mapping to identify the true causal variant.

How is FST interpreted and what does it tell me about my populations? The Fixation Index (FST) is a measure of genetic differentiation between two or more populations [28] [29]. Its values range from 0 to 1, where 0 indicates no differentiation (panmixia) and 1 indicates complete differentiation [28] [29].

One common formulation, based on heterozygosity, is: \[ F_{ST} = \frac{H_T - H_S}{H_T} \] where \( H_T \) is the expected heterozygosity of the total (meta-)population and \( H_S \) is the average expected heterozygosity within the individual subpopulations [28].
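The heterozygosity formula can be evaluated for a single biallelic locus as follows. This sketch treats the supplied frequencies as known population values, weighted equally unless weights are given. The function name is hypothetical. For real samples, estimators that correct for sample size, such as Weir and Cockerham's, are generally preferred.

```python
from typing import Optional, Sequence


def fst_heterozygosity(freqs: Sequence[float],
                       weights: Optional[Sequence[float]] = None) -> float:
    """F_ST = (H_T - H_S) / H_T for one biallelic locus.

    freqs: allele frequency in each subpopulation; weights: relative sizes.
    """
    k = len(freqs)
    w = [1.0 / k] * k if weights is None else [x / sum(weights) for x in weights]
    p_bar = sum(wi * p for wi, p in zip(w, freqs))
    h_t = 2 * p_bar * (1 - p_bar)                               # total expected heterozygosity
    h_s = sum(wi * 2 * p * (1 - p) for wi, p in zip(w, freqs))  # mean within-subpopulation
    return (h_t - h_s) / h_t


print(round(fst_heterozygosity([0.2, 0.8]), 3))  # 0.36
```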

FST is a sensitive indicator of population structure. A high FST value between populations in your study sample indicates that genetic variation is partitioned between them, which can confound association analyses if not accounted for. For context, FST between human continental populations (e.g., Europeans vs. East Asians) is often around 0.1-0.15, while values between closely related European populations can be less than 0.01 [29].

What is the critical difference between Identity by Descent (IBD) and Identity by State (IBS)? This is a fundamental distinction for relatedness inference.

- Identity by Descent (IBD): A DNA segment is IBD in two or more individuals if it was inherited from a common ancestor without recombination [30]. Only IBD segments provide information about recent genealogical connections.

- Identity by State (IBS): Two alleles or haplotypes are IBS if they are identical in sequence, regardless of whether they were inherited from a common ancestor [31]. IBS is a simple observation of matching and does not necessarily imply recent shared ancestry.

The key is that all IBD segments are IBS, but not all IBS segments are IBD. IBS can occur simply by chance, especially for short segments or common alleles. Modern IBD detection methods are designed to distinguish true IBD segments from segments that are merely IBS [31].
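For biallelic SNPs coded as 0/1/2 allele copies, the number of alleles two individuals share IBS at a site is simply 2 - |g1 - g2|. The sketch below uses a hypothetical helper name and simulated data. It shows why IBS alone says little about relatedness: two independently simulated, unrelated individuals share at least one allele IBS at the large majority of sites.

```python
import numpy as np


def ibs_counts(g1, g2) -> np.ndarray:
    """Alleles shared identical by state (0, 1 or 2) at each biallelic SNP."""
    return 2 - np.abs(np.asarray(g1) - np.asarray(g2))


print(ibs_counts([0, 1, 2, 2], [2, 1, 1, 2]))  # [0 2 1 2]

# Two unrelated individuals drawn independently from the same allele frequencies.
rng = np.random.default_rng(11)
p = rng.uniform(0.05, 0.95, 10_000)
a, b = rng.binomial(2, p), rng.binomial(2, p)
share_any = np.mean(ibs_counts(a, b) >= 1)
print(f"fraction of SNPs sharing >= 1 allele IBS: {share_any:.3f}")
```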

Troubleshooting Common Analytical Problems

How can I account for population structure to avoid spurious associations? Population structure can create LD between unlinked loci, leading to false positives in association studies [25] [26]. To mitigate this:

- Calculate FST: Estimate FST between your subpopulations to quantify the level of genetic differentiation [29].

- Correct with Principal Components: Include the top principal components (PCs) of genetic variation as covariates in your association model to adjust for broad-scale population structure.

- Use IBD-Based Methods: Identify and account for cryptic relatedness by detecting pairs of individuals who share long IBD segments, as these can also create spurious associations [30] [31]. PLINK can compute a genetic relatedness matrix (GRM), which mixed-model association software can then use to model this relatedness [27].
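A small simulation makes the mechanism concrete. It generates a SNP with no effect on the trait but with different frequencies in two subpopulations. That SNP appears associated with the trait until PC1 is added as a covariate. All data are simulated. The function name and parameter values are hypothetical, and a real analysis would use dedicated association software with several PCs.

```python
import numpy as np


def snp_t_stat(y, snp, covariates=None):
    """OLS t-statistic of the SNP coefficient in y ~ 1 + snp (+ covariates)."""
    cols = [np.ones(len(y)), snp]
    if covariates is not None:
        cols.append(covariates)                  # n x k matrix of covariates
    X = np.column_stack(cols)
    beta, *_ = np.linalg.lstsq(X, y, rcond=None)
    resid = y - X @ beta
    sigma2 = resid @ resid / (len(y) - X.shape[1])
    se = np.sqrt(sigma2 * np.linalg.inv(X.T @ X)[1, 1])
    return beta[1] / se


rng = np.random.default_rng(42)
n, m = 2000, 500
pop = np.repeat([0, 1], n // 2)                  # two subpopulations
# Background SNPs with subpopulation-specific frequencies, used only for PCA.
p0 = rng.uniform(0.05, 0.95, m)
p1 = np.clip(p0 + rng.normal(0, 0.2, m), 0.05, 0.95)
background = rng.binomial(2, np.vstack([p0, p1])[pop]).astype(float)
# Null test SNP: no effect on the trait, but its frequency differs by subpopulation.
test_snp = rng.binomial(2, np.where(pop == 0, 0.1, 0.5)).astype(float)
# The trait mean differs between subpopulations for non-genetic reasons.
y = 1.0 * pop + rng.normal(0, 1, n)

# PC1 of the standardized background genotypes.
z = (background - background.mean(axis=0)) / background.std(axis=0)
u, s, _ = np.linalg.svd(z, full_matrices=False)
pc1 = u[:, 0] * s[0]

t_naive = snp_t_stat(y, test_snp)                # typically far from 0: spurious
t_adj = snp_t_stat(y, test_snp, pc1[:, None])    # typically close to 0
print(f"unadjusted t = {t_naive:.1f}, PC1-adjusted t = {t_adj:.1f}")
```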

My IBD detection tool is slow or has low recall on low-coverage data. What can I do? The performance of IBD detection methods is highly dependent on data quality and the algorithm used [32].

- For Large, Phased, High-Quality Data: Use fast, haplotype-based methods like hap-IBD [33] or GERMLINE [30]. These are optimized for speed and accuracy in biobank-scale datasets.

- For Low-Coverage or Ancient DNA: Standard methods fail due to uncertain genotypes and high phasing errors. Instead, use specialized tools like ancIBD, which leverages imputed genotype probabilities via a hidden Markov model (HMM) and is robust for data with coverage as low as 0.25x (WGS) or 1x (1240k capture data) [32].

- If Precision is Paramount: Methods like IBIS prioritize precision over recall, which may be suitable for specific applications where false positives are a major concern [32].

How do I choose the right LD metric (D', r²) for my analysis? The choice of LD metric depends on your analytical goal. The two most common metrics have different properties and uses [26]:

- \( r^2 \) (Squared correlation coefficient): Ranges from 0 to 1. It directly measures how well one marker predicts another. This is the preferred metric for tag SNP selection and imputation, as an \( r^2 \geq 0.8 \) typically indicates that one SNP is a strong proxy for another [26].

- \( D' \) (Normalized D): Also ranges from 0 to 1. It is more sensitive to historical recombination events and is less influenced by allele frequency. However, it can be inflated when alleles are rare. It is often used in evolutionary studies to infer historical recombination [25] [26].

For most applied genetic studies focused on marker selection and association follow-up, ( r^2 ) is the more practical and informative metric.
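A short calculation shows why the two metrics can disagree. In this illustrative example with hypothetical frequencies, a rare allele occurs only on one haplotype background. It gives \( D' = 1 \), yet \( r^2 \) is about 0.02. The SNP would therefore be a poor tag despite "complete" LD by \( D' \).

```python
def d_prime_and_r2(p_ab: float, p_a: float, p_b: float):
    """Lewontin's |D'| and r^2 for two biallelic loci."""
    d = p_ab - p_a * p_b
    if d >= 0:
        d_max = min(p_a * (1 - p_b), (1 - p_a) * p_b)
    else:
        d_max = min(p_a * p_b, (1 - p_a) * (1 - p_b))
    r2 = d * d / (p_a * (1 - p_a) * p_b * (1 - p_b))
    return abs(d) / d_max, r2


# Rare allele A (2%) always found with allele B (50%): p_AB equals p_A.
dp, r2 = d_prime_and_r2(0.02, 0.02, 0.5)
print(round(dp, 3), round(r2, 3))  # 1.0 0.02
```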

Essential Workflow Visualizations

Analysis Workflow Integrating LD, FST, and IBD

Quantitative Data Reference

Table 1: Common FST Values in Human Populations

| Population 1 | Population 2 | FST Value | Interpretation |
|---|---|---|---|
| Danish | English | 0.0021 [29] | Very low differentiation |
| European American | Druze (at LCT locus) | 0.59 [28] | Extremely high (due to strong selection) |
| Europeans (CEU) | East Asians (JPT) | 0.111 [29] | Moderate differentiation |
| Europeans (CEU) | Sub-Saharan Africans (YRI) | 0.153 [29] | Moderate differentiation |
| Mbuti Pygmies | Papua New Guineans | 0.4573 [29] | Very high differentiation |

Table 2: Comparison of Key Genetic Concepts

| Concept | Definition | Primary Use in Analysis | Key Measures |
|---|---|---|---|
| Linkage Disequilibrium (LD) | Non-random association of alleles at different loci [24] [25]. | Gene mapping, GWAS, tag SNP selection, imputation [24] [26]. | D, D', r² [25] [26] |
| Fixation Index (FST) | Proportion of total genetic variance due to differences between subpopulations [28] [29]. | Quantifying population structure and genetic differentiation [28] [29]. | FST (0 to 1) [28] |
| Identity by Descent (IBD) | A DNA segment inherited from a common ancestor without recombination [30]. | Inferring relatedness, demographic history, IBD mapping [30] [31]. | Segment length (cM), number of segments [30] |

Research Reagent Solutions: Software Tools

Table 3: Essential Software Tools for LD, FST, and IBD Analysis

| Tool Name | Function | Best Used For |
|---|---|---|
| PLINK [30] [27] | Whole-genome association analysis, basic IBD/relatedness estimation. | A versatile and standard toolset for a wide range of population-based genetic analyses. |
| hap-IBD [33] | Rapid detection of IBD segments in phased genotype data. | Analyzing large-scale datasets (like biobanks) for both long and short (>2 cM) IBD segments. |
| ancIBD [32] | Accurate IBD detection in low-coverage and ancient DNA (aDNA). | Specialized analysis of aDNA or other low-coverage sequencing data. |
| GERMLINE [30] | Genome-wide IBD detection in linear time. | General-purpose IBD segment detection in large cohorts. |
| BEAGLE [30] | Phasing, imputation, and IBD detection (RefinedIBD, fastIBD). | A comprehensive suite for haplotype estimation and subsequent IBD analysis. |
| GLIMPSE [32] | Imputation of genotype probabilities from low-coverage data. | Preprocessing step for IBD detection (e.g., with ancIBD) in low-coverage data. |

Methodologies for Accounting for Population Structure: From Traditional Statistics to Deep Learning

Core Assumptions and Conceptual Frameworks

What are the fundamental assumptions of the STRUCTURE/ADMIXTURE model? These models operate on several key assumptions about population genetics and the nature of ancestry:

- Discrete Ancestral Populations: The model assumes that an individual's genome originates from \( K \) discrete, distinct ancestral populations, each characterized by a unique set of allele frequencies [34].

- Hardy-Weinberg Equilibrium (HWE): Each ancestral population is assumed to be in HWE, meaning that genotypes are formed by random combinations of alleles according to their frequencies [34].

- Linkage Equilibrium (LE): Markers (loci) are assumed to be independent, meaning that the alleles at one locus do not influence the alleles at another locus within an ancestral population. This assumption can be relaxed in some extensions of the model [35] [34].

- Mutation Model: For specific data types like microsatellites, a mutation model (e.g., the Generalized Stepwise Mutation model) may be incorporated, with its own parameters such as mutation rate and stepwise probability [35] [36].

- Underlying Genetic Model: Many implementations use a likelihood framework, where the probability of observing the genotype data is calculated given the admixture proportions and ancestral allele frequencies [37] [34]. Some Bayesian approaches, like Approximate Bayesian Computation (ABC), use simulations to approximate the likelihood, which can incorporate more complex scenarios like mutations and linked markers [35] [36].
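The likelihood in the last point can be written compactly for biallelic SNPs. Under HWE and linkage equilibrium, individual i's genotype at SNP j is binomial, with 2 trials and success probability \( \sum_k q_{ik} f_{kj} \). The sketch below evaluates that log-likelihood, omitting the constant binomial coefficients, for hypothetical toy matrices. It shows the objective that likelihood-based tools such as ADMIXTURE maximize, not their optimization code.

```python
import numpy as np


def admixture_loglik(G, Q, F):
    """Binomial log-likelihood (up to a constant) of genotypes G (n x m, 0/1/2)
    given ancestry proportions Q (n x K, rows sum to 1) and ancestral allele
    frequencies F (K x m), assuming HWE and linkage equilibrium."""
    P = Q @ F                                   # expected allele frequency per individual and SNP
    return float(np.sum(G * np.log(P) + (2 - G) * np.log(1 - P)))


# Hypothetical toy values: 2 individuals, 2 SNPs, K = 2.
Q = np.array([[1.0, 0.0], [0.5, 0.5]])
F = np.array([[0.2, 0.9], [0.6, 0.1]])
G = np.array([[0, 2], [1, 1]])
print(admixture_loglik(G, Q, F))
```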

Common Pitfalls and Model Violations

My ADMIXTURE bar plot shows the same pattern for different historical scenarios. Why is this misleading? The ADMIXTURE/STRUCTURE algorithm will always produce a bar plot for any dataset, even when the underlying model is incorrect. Critically, different demographic histories can produce visually identical bar plots [38]. For example, a plot showing a population with mixed ancestry can be indistinguishable from scenarios involving:

- Ghost Admixture: The population is admixed with an unsampled ("ghost") population.

- Recent Bottleneck: The population underwent a severe recent reduction in size, causing strong genetic drift [38].

The algorithm forces the data to fit a simple admixture narrative, using ancestry components to approximate patterns caused by other evolutionary forces. Therefore, the bar plot should never be interpreted literally without validation [38].

How can I assess the goodness-of-fit of the admixture model for my data?

The badMIXTURE method has been developed specifically to test whether a simple admixture model adequately explains the patterns in your genetic data [38]. It works by:

- Using chromosome painting (e.g., with CHROMOPAINTER) to create "palettes" that show how each individual's genome is shared with others in the sample.

- Assuming the admixture proportions from STRUCTURE/ADMIXTURE are correct, it calculates the expected painting palettes for each individual.

- It then compares the observed palettes to the expected ones. Systematic patterns in the residuals (differences between observed and expected) indicate a poor fit, suggesting the simple admixture model is an inadequate description of the history [38].

What is the "label switching" problem in Bayesian analyses, and how is it managed?

Label switching occurs when the labels of the \( K \) ancestral populations are swapped during an MCMC run without affecting the model's likelihood. This can make posterior distributions for individual population parameters difficult to interpret. Standard practice involves post-processing MCMC chains to align population labels across iterations, often using methods implemented in software like PopCluster [37].
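The same issue arises across independent runs, not only within one MCMC chain. Matching cluster columns between two \( Q \) matrices illustrates the idea. The brute-force sketch below tries all \( K! \) column orders and keeps the one closest to a reference run. It is a simplified stand-in, similar in spirit to dedicated tools such as CLUMPP, and not the procedure used by PopCluster. The function name is hypothetical.

```python
from itertools import permutations

import numpy as np


def align_q(reference: np.ndarray, other: np.ndarray) -> np.ndarray:
    """Reorder the K columns of `other` to best match `reference` (both n x K
    ancestry-proportion matrices), trying all K! permutations (small K only)."""
    k = reference.shape[1]
    best = min(permutations(range(k)),
               key=lambda perm: np.sum((reference - other[:, list(perm)]) ** 2))
    return other[:, list(best)]


ref = np.array([[0.9, 0.1, 0.0],
                [0.2, 0.7, 0.1],
                [0.0, 0.1, 0.9]])
# A second run that found the same clusters under different labels.
other_run = ref[:, [2, 0, 1]]
aligned = align_q(ref, other_run)
print(np.allclose(aligned, ref))  # True
```

Brute force is only practical for small K, because the number of permutations grows factorially.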

Troubleshooting Guide: Experimental Design and Parameter Selection

How do I choose the correct number of ancestral populations (\( K \))? Selecting \( K \) is a model selection problem. The most robust approach is not to rely on a single method but a combination of strategies:

- Likelihood-based Criteria: Use the cross-validation (CV) procedure implemented in ADMIXTURE. The value of \( K \) with the lowest CV error is typically chosen [37].

- Bayesian Methods: For Bayesian sampling methods like AdmixtureBayes, the model can select \( K \) based on the posterior distribution, providing a more automated and statistically principled selection [39] [40].

- Multiple Runs: Always run the analysis multiple times for each \( K \) to check for consistency of results.

- Biological Plausibility: The inferred structure should be interpreted in the context of known biological and historical information. A "true" \( K \) does not always exist; the goal is to find the most informative level of population subdivision for your research question.

My analysis will not converge. What steps can I take? Non-convergence is a common issue in high-dimensional optimization. You can address it with the following steps:

- Increase Runs and Iterations: Perform many more independent runs with different random seeds and substantially increase the number of MCMC generations or EM iterations.

- Use Sophisticated Optimization: Employ software that uses advanced algorithms to escape local optima. For example, PopCluster uses simulated annealing in its initial clustering phase to better explore the parameter space [37], and Ohana uses a new optimization method that often finds solutions with higher likelihoods than ADMIXTURE in comparable time [34].

- Validate with Simple Cases: Test your pipeline on a smaller, well-understood subset of your data or simulated data where the true structure is known.

- Check Data Quality: Ensure your genotype data has been filtered for missing data and has passed other quality-control checks.

How should I handle linked markers (Linkage Disequilibrium) in my dataset? The standard model assumes markers are independent. Violating this assumption with linked markers can lead to overconfident estimates (confidence intervals that are too narrow) if not accounted for [35] [36].

- Thinning Markers: Prune your SNP set to reduce linkage disequilibrium (LD) before analysis.

- Use LD-aware Models: Some software, like Ohana, can account for the covariance in allele frequencies among populations, which can help mitigate issues caused by underlying population structure that contributes to LD [34]. Coalescent-based ABC frameworks can also explicitly simulate and account for linked markers [35] [36].

Experimental Protocols for Validation and Inference

Protocol 1: Validating Inferred Structure with badMIXTURE This protocol helps test if your data fits a simple admixture model [38].

- Run ADMIXTURE/STRUCTURE: Infer admixture proportions (the \( Q \)-matrix) and ancestral allele frequencies for your chosen \( K \).

- Chromosome Painting with CHROMOPAINTER: Use this tool to paint all individuals in your sample against each other, generating a "palette" of DNA sharing for each individual.

- Run badMIXTURE: Provide the admixture proportions (\( Q \)) and the painting palettes to the software.

- Interpret Results: Examine the residual plots. The presence of strong, systematic patterns in the residuals indicates that the simple admixture model is a poor fit for your data, and the inferred "ancestry" components may represent other demographic processes.

Protocol 2: Inferring Population History with Admixture Graphs For a more robust historical inference beyond bar plots, you can use admixture graphs.

- Estimate Ancestry Components: Use ADMIXTURE or Ohana to obtain estimated allele frequencies for \( K \) ancestral components [34].

- Model Covariance: Use the Gaussian approximation (as in Ohana or TreeMix) to model the covariance of allele frequencies among these components as a function of genetic drift [34] [40].

- Graph Search: Use a search algorithm to find the graph (tree with admixture events) that best explains the observed covariance matrix. Greedy search (TreeMix) is fast, but for a more thorough exploration with confidence estimates, use a Bayesian MCMC sampler like AdmixtureBayes [39] [40].

Protocol 3: Ancient Admixture Inference using ABC For complex models involving ancient admixture, mutations, and linked markers, an Approximate Bayesian Computation (ABC) framework is powerful [35] [36].

- Define a Demographic Model: Specify your admixture model with parameters (e.g., admixture proportion \( \lambda \), time of admixture \( t_{ADM} \), effective population sizes \( N \)).

- Simulate Data: Use a coalescent simulator (e.g., SIMCOAL2) to generate a vast number of pseudo-datasets by drawing parameters from prior distributions.

- Calculate Summary Statistics: Compute relevant summary statistics (e.g., FST, genetic diversity) for both the observed and simulated data.

- Rejection-Regression: Retain the simulated datasets whose summary statistics are closest to the observed data and use them to estimate the posterior distribution of the model parameters.
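The rejection-regression logic can be illustrated end to end with a deliberately simple toy model. Everything here is an assumption for illustration. Instead of a coalescent simulator such as SIMCOAL2, admixed allele frequencies are mixed linearly from two known source populations and then sampled binomially. The single summary statistic is a projection of the admixed frequencies onto the source difference. The regression step is unweighted, whereas standard implementations typically weight accepted simulations by their distance.

```python
import numpy as np

rng = np.random.default_rng(3)
n_loci, n_chrom = 50, 100                      # toy values
p1 = rng.uniform(0.1, 0.9, n_loci)             # source population 1 frequencies
p2 = rng.uniform(0.1, 0.9, n_loci)             # source population 2 frequencies
delta = p1 - p2


def simulate_summary(lam: np.ndarray) -> np.ndarray:
    """Toy simulator: admixed frequencies lam*p1 + (1-lam)*p2, binomial sampling of
    n_chrom chromosomes, summarized by projecting (freq - p2) onto (p1 - p2)."""
    freq = lam[:, None] * p1 + (1.0 - lam[:, None]) * p2
    sample = rng.binomial(n_chrom, freq) / n_chrom
    return (sample - p2) @ delta / (delta @ delta)


true_lambda = 0.3
s_obs = simulate_summary(np.array([true_lambda]))[0]   # stands in for real data

# 1. Draw admixture proportions from a uniform prior and simulate.
prior = rng.uniform(0.0, 1.0, 20_000)
s_sim = simulate_summary(prior)
# 2. Rejection: keep the 1% of simulations closest to the observed statistic.
dist = np.abs(s_sim - s_obs)
accepted = dist <= np.quantile(dist, 0.01)
lam_acc, s_acc = prior[accepted], s_sim[accepted]
# 3. Regression adjustment: remove the linear trend of lambda on the statistic.
slope = np.polyfit(s_acc, lam_acc, 1)[0]
lam_adj = lam_acc - slope * (s_acc - s_obs)
print(f"posterior mean {lam_adj.mean():.3f}, "
      f"95% interval {np.quantile(lam_adj, [0.025, 0.975]).round(3)}")
```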

The Scientist's Toolkit: Key Research Reagents

Table 1: Essential Software Tools for Admixture Analysis

| Software Name | Primary Function | Key Features | Best Used For |
|---|---|---|---|
| STRUCTURE [37] [38] | Bayesian admixture inference | MCMC sampling; flexible models (correlated frequencies, null alleles) | Small datasets; analyses requiring complex genotype models. |
| ADMIXTURE / FRAPPE [37] [34] | Fast likelihood-based admixture inference | Maximum Likelihood with EM algorithm; very fast. | Large-scale SNP datasets (thousands of individuals, millions of SNPs). |
| Ohana [34] | Admixture inference & population trees | Fast QP optimization; models genotype uncertainty from NGS data. | Inferring admixture and subsequent phylogenetic relationships between components. |
| AdmixtureBayes [39] [40] | Bayesian admixture graph inference | Reversible-jump MCMC to sample graph space; provides confidence. | Inferring population splits and admixture events with posterior probabilities. |
| badMIXTURE [38] | Goodness-of-fit test for admixture models | Compares chromosome painting data to model expectations. | Validating whether a simple admixture model is appropriate for the data. |
| PopCluster [37] | Two-step admixture inference | Simulated annealing for clustering + EM for admixture; avoids local optima. | Difficult situations (unbalanced sampling, low differentiation) for robust convergence. |

Visualizing Analysis Workflows and Model Logic

Admixture Analysis Decision Workflow

Model Assumptions and Violations

Frequently Asked Questions (FAQs)

Q1: What are the main advantages of using nonparametric methods like PCA and clustering for population structure analysis? Nonparametric approaches are highly valued for analyzing large genetic datasets because they do not rely on strict statistical model assumptions (like Hardy-Weinberg equilibrium) that parametric methods require [41]. Their key advantages include low computational cost, the ability to handle high-dimensional data such as genome-wide single nucleotide polymorphisms (SNPs), and no requirement for pre-specified population models, making them more practical and viable for large-scale studies [41].

Q2: My PCA plot shows clusters, but the scree plot indicates the first two PCs explain very little variance. What should I do? This is a common issue. The scree plot shows how much variance (information) each principal component captures from your dataset [42]. If the first two PCs explain a low cumulative variance (e.g., less than 50-60%), it means you are losing a significant amount of information when visualizing the data in 2D [42]. To address this:

- Include More Components: Do not rely solely on the 2D plot. Examine higher-order principal components (PC3, PC4, etc.) to see if they capture the population structure.

- Check Scree Plot First: Always consult the scree plot to determine the optimal number of components to retain for analysis. A good target is to keep enough components to explain around 80-90% of the total variance, if possible [42].

- Consider Other Methods: Use the PCA results to inform other analyses. For example, you can use the first n principal components that capture sufficient variance as input for a subsequent clustering analysis, which can help delineate population groups more reliably.
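The scree-plot quantities can be computed from a singular value decomposition, along with the number of components needed for a target share of variance. This sketch uses a hypothetical helper and random toy genotypes. With real genome-wide SNP data, the leading PCs often explain only a small share of total variance, so the 80-90% target may require many components.

```python
import numpy as np


def n_components_for(z: np.ndarray, target: float = 0.9):
    """Return (number of PCs reaching `target` cumulative variance explained,
    per-PC variance proportions as plotted in a scree plot)."""
    zc = z - z.mean(axis=0)
    s = np.linalg.svd(zc, compute_uv=False)
    explained = s**2 / np.sum(s**2)
    n = int(np.searchsorted(np.cumsum(explained), target)) + 1
    return min(n, len(explained)), explained


rng = np.random.default_rng(5)
geno = rng.binomial(2, rng.uniform(0.1, 0.9, 300), size=(120, 300)).astype(float)
z = (geno - geno.mean(axis=0)) / geno.std(axis=0)
n_pc, explained = n_components_for(z, target=0.9)
print(n_pc, explained[:3].round(3))
```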

Q3: How do I choose between K-means and hierarchical clustering for my population data? The choice depends on your data characteristics and research goals. The table below compares the two methods:

| Feature | K-Means | Hierarchical Clustering |
|---|---|---|
| Method Type | Partitional | Hierarchical [43] |
| Cluster Shape | Tends to find spherical clusters of similar size | Can find clusters of arbitrary shapes [43] |
| Prior Knowledge | Requires you to specify the number of clusters (K) in advance | Does not require pre-specifying the number of clusters [43] |
| Output | A single set of clusters | A dendrogram (tree structure) showing nested clusters [43] |
| Best Use Case | Large datasets where you have a hypothesis about the number of subpopulations | Exploring data to discover unknown hierarchical relationships or when you want to see multiple potential clustering levels [43] |

Q4: Population structure is a known confounder in genetic association studies. How do nonparametric methods help account for it? Population structure can cause spurious associations in genetic studies because allele frequencies can differ between subpopulations for reasons unrelated to the trait of interest [41]. Nonparametric methods help in two key ways:

- Detection: PCA and clustering are first used to identify and visualize the underlying population substructure within the sample [41].

- Adjustment: The principal components (PCs) that capture this structure can then be included as covariates in association models (like linear or logistic regression) to statistically adjust for ancestry differences, thereby reducing false positive findings [41].

Q5: What are the critical data preprocessing steps before performing PCA or clustering on genetic data? Data preprocessing is essential to ensure the quality and reliability of your analysis. The key steps for genetic data (typically SNP data) are summarized below [41]:

| Preprocessing Step | Description | Typical Threshold |
|---|---|---|
| SNP Call Rate | Proportion of genotypes with non-missing data per marker. Removes poorly genotyped SNPs. | > 95% [41] |
| Individual Call Rate | Proportion of genotypes with non-missing data per individual. Removes poorly genotyped samples. | > 95% [41] |
| Minor Allele Frequency (MAF) | Frequency of the less common allele. Removes uninformative, rare variants. | > 1-5% [41] |
| Hardy-Weinberg Equilibrium (HWE) | Statistical test for deviation from expected genotype frequencies. Removes markers with potential genotyping errors. | p-value ≥ 0.05 [41] |
| Identity by Descent (IBD) | Assesses relatedness between individuals. Prevents bias from including closely related samples. | Exclude related individuals (e.g., closer than second-degree relatives) [41] |
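The first four filters in the table can be sketched in NumPy, assuming a genotype matrix with individuals in rows, SNPs in columns, 0/1/2 coding, and `np.nan` for missing calls. The HWE check here is the simple 1-degree-of-freedom chi-square test, not the exact test that PLINK uses; the function names and default thresholds are illustrative assumptions, and IBD-based relatedness filtering is left to dedicated tools.

```python
import math

import numpy as np


def hwe_chisq_pvalue(genotypes):
    """1-df chi-square test of Hardy-Weinberg equilibrium for one SNP (NaNs ignored)."""
    g = genotypes[~np.isnan(genotypes)]
    n = g.size
    if n == 0:
        return float("nan")
    observed = np.array([(g == k).sum() for k in (0, 1, 2)], dtype=float)
    p = (observed[1] + 2.0 * observed[2]) / (2.0 * n)  # frequency of the allele counted by the code
    q = 1.0 - p
    if p == 0.0 or q == 0.0:
        return 1.0  # monomorphic SNP: nothing to test (the MAF filter removes it)
    expected = n * np.array([q * q, 2.0 * p * q, p * p])
    chi2 = float(((observed - expected) ** 2 / expected).sum())
    # Upper tail of a chi-square with 1 df: P(X > x) = erfc(sqrt(x / 2))
    return math.erfc(math.sqrt(chi2 / 2.0))


def qc_filter(G, min_call_rate=0.95, min_maf=0.01, min_hwe_p=0.05):
    """Return boolean masks (keep_individuals, keep_snps) for a 0/1/2 matrix with NaN = missing."""
    G = np.asarray(G, dtype=float)
    keep_ind = (~np.isnan(G)).mean(axis=1) > min_call_rate  # individual call rate
    Gk = G[keep_ind]  # SNP statistics are computed on the retained individuals
    call_rate = (~np.isnan(Gk)).mean(axis=0)
    freq = np.nanmean(Gk, axis=0) / 2.0
    maf = np.minimum(freq, 1.0 - freq)
    hwe_p = np.array([hwe_chisq_pvalue(Gk[:, j]) for j in range(Gk.shape[1])])
    keep_snp = (call_rate > min_call_rate) & (maf > min_maf) & (hwe_p >= min_hwe_p)
    return keep_ind, keep_snp
```

Applying the individual filter first and then computing SNP statistics on the remaining samples is one reasonable ordering; pipelines differ on this.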

Troubleshooting Guides

Issue 1: Poor Clustering Results in PCA or Distance-Based Methods

Problem: The resulting PCA plot or cluster assignments are unclear, do not match known population labels, or show no discernible grouping.

Solutions:

- Verify Data Preprocessing: Re-check that all quality control (QC) steps from the FAQ above have been rigorously applied. Low-quality data or the inclusion of related individuals can severely obscure true population signals [41].

- Standardize Your Data: PCA is sensitive to the scale of variables. Ensure that your data is standardized (mean of zero, standard deviation of one) before performing the analysis so that all SNPs contribute equally [44] [45].

- Try Different Distance Metrics: The choice of distance metric can greatly affect clustering. For genetic data, consider using an allele-sharing distance. Experiment with other metrics (e.g., Euclidean, Manhattan) to see which best captures the biological reality of your data [41].

- Adjust the Number of Clusters (K): For K-means, use methods like the elbow method or silhouette analysis on your principal components to determine a more appropriate value for K, rather than relying on a guess.

- Investigate Other Clustering Algorithms: If K-means fails, try density-based algorithms like DBSCAN, which can find clusters of arbitrary shape and identify outliers, or hierarchical clustering to explore data structure at different levels [43].
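The allele-sharing distance mentioned above can be computed directly from 0/1/2 genotypes: at each SNP, two individuals differ by |g_i − g_j| / 2 (0 for identical genotypes, 1 for opposite homozygotes), and the distance averages this over SNPs, which makes it a rescaled Manhattan distance. A minimal sketch, assuming no missing genotypes; the function name is hypothetical.

```python
import numpy as np


def allele_sharing_distance(G):
    """Pairwise allele-sharing distance (ASD) between the rows of a 0/1/2 genotype matrix.

    Per SNP the distance is |g_i - g_j| / 2; ASD is its mean over SNPs,
    i.e. the Manhattan distance divided by twice the number of SNPs.
    """
    G = np.asarray(G, dtype=float)
    # Broadcasting builds an (n, n, m) array, so use blocks of individuals for large data
    diffs = np.abs(G[:, None, :] - G[None, :, :]) / 2.0
    return diffs.mean(axis=2)
```

The resulting matrix can be passed to any clustering routine that accepts precomputed distances, such as hierarchical clustering.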

Issue 2: Handling Admixed Individuals in Population Structure Analysis

Problem: Some individuals in the dataset do not clearly belong to a single cluster, showing mixed ancestry, which makes hard clustering assignments misleading.

Solutions:

- Use Fuzzy Clustering: Instead of hard-assignment methods like K-means, apply fuzzy clustering (e.g., Fuzzy C-Means). This allows data points to have partial membership in multiple clusters, which is more biologically realistic for admixed individuals [43].

- Leverage Hierarchical Clustering Dendrograms: The dendrogram produced by hierarchical clustering allows you to visualize admixture at different levels of granularity. You can observe how admixed individuals branch between major clusters [43].

- Treat PCA Output as Continuous Axes: Interpret the principal components as continuous axes of variation. An individual's position along PC1 or PC2 reflects their ancestry composition, and admixed individuals will often plot intermediately between "pure" clusters. These PC scores can be used as quantitative covariates in downstream analyses [41].
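Fuzzy C-means can be sketched in a few lines of NumPy, assuming it is run on a few top PC scores per individual rather than on raw genotypes. It alternates between membership-weighted cluster centers and the standard membership update u_ij = 1 / Σ_k (d_ij / d_ik)^(2/(m−1)), where m > 1 is the fuzzifier. The function name, the default m = 2, and the random initialization are illustrative choices, not those of any particular package.

```python
import numpy as np


def fuzzy_c_means(X, c, m=2.0, n_iter=300, tol=1e-8, seed=0):
    """Fuzzy C-means on the rows of X (e.g. individuals' top PC scores).

    Returns (centers, U): U[i, j] is the membership of individual i in cluster j,
    and every row of U sums to 1. m > 1 is the fuzzifier.
    """
    X = np.asarray(X, dtype=float)
    rng = np.random.default_rng(seed)
    U = rng.random((X.shape[0], c))
    U /= U.sum(axis=1, keepdims=True)  # random initial memberships
    for _ in range(n_iter):
        W = U ** m
        centers = (W.T @ X) / W.sum(axis=0)[:, None]  # membership-weighted means
        d = np.linalg.norm(X[:, None, :] - centers[None, :, :], axis=2)
        d = np.fmax(d, 1e-12)  # avoid division by zero when a point sits on a center
        # u_ij = 1 / sum_k (d_ij / d_ik)^(2 / (m - 1))
        ratio = (d[:, :, None] / d[:, None, :]) ** (2.0 / (m - 1.0))
        U_new = 1.0 / ratio.sum(axis=2)
        converged = np.abs(U_new - U).max() < tol
        U = U_new
        if converged:
            break
    return centers, U
```

An individual lying midway between two clusters receives memberships near 0.5 for each, mirroring the intermediate position of admixed individuals on a PCA plot.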

Issue 3: High-Dimensional Data Causes Computational Slowdown or Memory Issues

Problem: The analysis of a large SNP dataset (e.g., hundreds of thousands of markers) is computationally intensive, slow, or fails due to memory limitations.

Solutions:

- Feature Selection: Before PCA or clustering, perform a feature selection step to identify a subset of informative, independent SNPs that are highly representative of the genome-wide variation. This can dramatically reduce the dimensionality of the problem [41].

- Utilize Efficient Software: Ensure you are using software and packages specifically optimized for large genomic data (e.g., PLINK, GCTA). These tools use efficient algorithms and memory management for operations on genetic data [41].

- Two-Step Dimensionality Reduction: First, use PCA to reduce the dimensionality of the SNP data to a manageable number of principal components (e.g., the first 20-50 PCs). Then, perform your clustering analysis on these PCs instead of the original thousands of SNPs. This is both computationally efficient and effective [41].
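The two-step strategy can be sketched as follows: standardize the genotypes, take the top principal components from a thin SVD, then run k-means on those few coordinates instead of on every SNP. This is a minimal NumPy illustration with a plain Lloyd's k-means and hypothetical function names; optimized tools such as PLINK use more memory-efficient algorithms on real data.

```python
import numpy as np


def top_pcs(G, n_pcs):
    """Scores of individuals on the top principal components of a 0/1/2 genotype matrix."""
    G = np.asarray(G, dtype=float)
    Z = G - G.mean(axis=0)
    sd = Z.std(axis=0)
    Z = Z[:, sd > 0] / sd[sd > 0]  # standardize, dropping monomorphic SNPs
    U, S, _ = np.linalg.svd(Z, full_matrices=False)
    return U[:, :n_pcs] * S[:n_pcs]


def kmeans(X, k, n_iter=100, seed=0):
    """Plain Lloyd's k-means on the rows of X; returns (labels, centers)."""
    rng = np.random.default_rng(seed)
    centers = X[rng.choice(len(X), size=k, replace=False)]
    for _ in range(n_iter):
        # Assign each point to its nearest center (squared Euclidean distance)
        labels = np.argmin(((X[:, None, :] - centers[None, :, :]) ** 2).sum(axis=2), axis=1)
        # Move each center to the mean of its points; keep empty clusters in place
        new = np.array([X[labels == j].mean(axis=0) if np.any(labels == j) else centers[j]
                        for j in range(k)])
        if np.allclose(new, centers):
            break
        centers = new
    return labels, centers
```

Clustering on, say, 20 PC scores per individual instead of hundreds of thousands of genotypes shrinks every distance computation accordingly.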

Experimental Protocols

Protocol 1: Standard Workflow for Population Structure Inference Using PCA and Clustering

This protocol outlines a standard methodology for detecting and interpreting population structure from genetic SNP data.

1. Objective: To infer population substructure from a large genomic dataset using a combination of PCA and clustering.

2. Research Reagent Solutions

| Item | Function in the Protocol |
|---|---|
| PLINK Software | A standard toolset for whole-genome association and population-based analysis. Used for data quality control (QC) and format conversion [41]. |
| SNP Genotype Data | The raw input data, typically in formats like VCF or PLINK's .bed/.bim/.fam. Encodes genetic variation (AA, AB, BB) as 0, 1, 2 [41]. |
| Standardized Data Matrix | A numerical matrix where rows are individuals, columns are SNPs, and values are genotype codes that have been mean-centered and scaled [44] [45]. |
| Covariance Matrix | A symmetric matrix computed from the standardized data, summarizing how every pair of SNPs varies together [44]. |
| Eigenvectors (PCs) | The output of PCA, representing the directions of maximum variance in the data. Used to project samples into a lower-dimensional space [42] [44]. |
| Eigenvalues | Numerical values indicating the amount of variance explained by each corresponding eigenvector (PC). Used to create a scree plot [42] [44]. |

3. Step-by-Step Procedure:

1. Data Preprocessing: Perform rigorous QC on the raw genotype data using a tool like PLINK. Apply filters for SNP and individual call rate, MAF, and HWE deviation as detailed in the FAQ table above [41].

2. Data Standardization: Standardize the cleaned genotype data so that each SNP has a mean of zero and a standard deviation of one. This ensures all variables contribute equally to the PCA [44] [45].

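3. Compute Covariance Matrix: Calculate the covariance matrix of the standardized data. This matrix captures the relationships between all pairs of SNPs [44]. Because this SNP-by-SNP matrix becomes very large for genome-wide panels, software typically decomposes the equivalent individual-by-individual matrix instead, which has the same nonzero eigenvalues and yields the same sample projections up to scaling and sign.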

4. Perform PCA: Calculate the eigenvectors (principal components) and eigenvalues of the covariance matrix. The eigenvectors define the new axes, and the eigenvalues quantify the variance each PC explains [42] [44].

5. Visualization and Scree Plot:

* Create a PCA plot using the first two PCs (PC1 on the x-axis, PC2 on the y-axis). Each point represents an individual. Look for clusters, trends, or outliers [42].

* Generate a scree plot to visualize the proportion of total variance explained by each PC. This helps decide how many PCs to retain for further analysis [42].

6. Cluster Analysis: Using the first n PCs (where n is determined from the scree plot), perform cluster analysis. You can use K-means to assign individuals to K groups or hierarchical clustering to build a dendrogram and cut it to form clusters [43].

7. Interpretation: Correlate the clustering results with known geographic, ethnic, or breed origins of the samples. Use the inferred clusters to control for population structure in downstream genetic analyses.
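Steps 2 through 5 can be sketched in NumPy for a small, already-QC'd genotype matrix. The sketch follows the protocol literally by building the SNP-by-SNP covariance matrix, which is only practical for a modest number of SNPs; the function name is hypothetical.

```python
import numpy as np


def pca_from_covariance(G, n_pcs=2):
    """Protocol steps 2-5 on a QC'd (individuals x SNPs) 0/1/2 matrix.

    Returns (scores, explained): PC scores for the PCA plot and the
    proportion of variance per PC for the scree plot.
    """
    G = np.asarray(G, dtype=float)
    # Step 2: standardize each SNP to mean 0, sd 1 (monomorphic SNPs are dropped)
    sd = G.std(axis=0, ddof=1)
    poly = sd > 0
    Z = (G[:, poly] - G[:, poly].mean(axis=0)) / sd[poly]
    # Step 3: SNP-by-SNP covariance matrix
    C = np.cov(Z, rowvar=False)
    # Step 4: eigendecomposition; eigh returns ascending eigenvalues, so reorder
    eigvals, eigvecs = np.linalg.eigh(C)
    order = np.argsort(eigvals)[::-1]
    eigvals, eigvecs = eigvals[order], eigvecs[:, order]
    # Step 5: project individuals onto the leading PCs and compute variance explained
    scores = Z @ eigvecs[:, :n_pcs]
    explained = eigvals / eigvals.sum()
    return scores, explained
```

Plotting `scores[:, 0]` against `scores[:, 1]` gives the PC1/PC2 plot, and plotting `explained` against PC index gives the scree plot.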

Protocol 2: Selecting Informative SNP Markers for Efficient Analysis

1. Objective: To reduce the dimensionality of a genetic dataset by selecting a subset of informative SNPs that retain the signal of population structure.

2. Step-by-Step Procedure:

1. LD Pruning: Remove SNPs that are in high linkage disequilibrium (highly correlated) with each other. This reduces redundancy and retains approximately independent markers. In PLINK this can be done with --indep-pairwise 50 5 0.2, which slides a window of 50 SNPs along the genome, shifting it by 5 SNPs at each step, and marks one SNP of any pair with r² > 0.2 for removal; the retained SNPs are written to a .prune.in file that can then be passed to --extract [41].

2. FST-based Selection: FST is a measure of population differentiation. Calculate FST for each SNP between putative populations or across the entire sample. Select the top SNPs with the highest FST values, as these are the markers that show the largest allele frequency differences between groups and are most informative for structure [41].

3. Validation: Perform PCA on the reduced SNP panel and compare the results (e.g., clustering patterns, variance explained) to the analysis with the full dataset to ensure population signals are preserved.
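Both selection steps can be sketched in NumPy. The LD pruning below is a simplified greedy version of the sliding-window idea (window of 50 SNPs, step of 5, r² threshold of 0.2) that always drops the later SNP of a correlated pair; it is not a reimplementation of PLINK's --indep-pairwise. The F_ST function uses the simple two-group formula F_ST = (H_T − H_S) / H_T without sample-size correction. All function names are hypothetical.

```python
import numpy as np


def ld_prune(G, window=50, step=5, r2_max=0.2):
    """Simplified sliding-window LD pruning; returns a boolean mask of retained SNPs.

    Within each window, if two retained SNPs have r^2 > r2_max, the later one is dropped.
    Monomorphic SNPs are skipped here and left for the MAF filter.
    """
    G = np.asarray(G, dtype=float)
    n_snps = G.shape[1]
    sd = G.std(axis=0)
    keep = np.ones(n_snps, dtype=bool)
    for start in range(0, n_snps, step):
        stop = min(start + window, n_snps)
        for a in range(start, stop):
            if not keep[a] or sd[a] == 0:
                continue
            for b in range(a + 1, stop):
                if keep[b] and sd[b] > 0:
                    r = np.corrcoef(G[:, a], G[:, b])[0, 1]
                    if r * r > r2_max:
                        keep[b] = False
    return keep


def fst_two_groups(G, labels):
    """Per-SNP F_ST = (H_T - H_S) / H_T between groups labeled 0 and 1."""
    G = np.asarray(G, dtype=float)
    p1 = G[labels == 0].mean(axis=0) / 2.0
    p2 = G[labels == 1].mean(axis=0) / 2.0
    p_bar = (p1 + p2) / 2.0
    h_t = 2.0 * p_bar * (1.0 - p_bar)  # expected heterozygosity of the pooled sample
    h_s = p1 * (1.0 - p1) + p2 * (1.0 - p2)  # mean within-group expected heterozygosity
    with np.errstate(divide="ignore", invalid="ignore"):
        return np.where(h_t > 0, (h_t - h_s) / h_t, 0.0)


def top_fst_snps(G, labels, n_top):
    """Indices of the n_top SNPs with the highest F_ST."""
    return np.argsort(fst_two_groups(G, labels))[::-1][:n_top]
```

In practice the F_ST ranking would be computed on the LD-pruned SNPs, so that the selected panel is both informative and non-redundant.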

Frequently Asked Questions (FAQs)

1. What is the primary function of a Linear Mixed Model (LMM) in a GWAS? LMMs are primarily used to account for population stratification and cryptic relatedness among samples in a GWAS. They incorporate a random effect, typically based on a Genetic Similarity Matrix (GSM) or Genetic Relatedness Matrix (GRM), which models the genetic similarity between individuals. This adjustment helps to control for false positives (spurious associations) that can arise from these confounding structures [46].
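A genetic relatedness matrix of the kind used as the LMM random effect can be sketched as follows, assuming complete 0/1/2 genotypes: each SNP is centered by twice its allele frequency and scaled by the binomial standard deviation, and the GRM is the average cross-product over SNPs, A = Z Zᵀ / m. This is one common construction (similar in form to the one used by GCTA); LMM software may weight SNPs differently, and the function name is hypothetical.

```python
import numpy as np


def genetic_relatedness_matrix(G):
    """GRM from a complete (individuals x SNPs) 0/1/2 matrix: A = Z Z^T / m.

    Each SNP is centered by 2p and scaled by sqrt(2p(1-p)), where p is the
    sample frequency of the counted allele; monomorphic SNPs are skipped.
    """
    G = np.asarray(G, dtype=float)
    p = G.mean(axis=0) / 2.0
    poly = (p > 0) & (p < 1)
    Z = (G[:, poly] - 2.0 * p[poly]) / np.sqrt(2.0 * p[poly] * (1.0 - p[poly]))
    return Z @ Z.T / Z.shape[1]
```

Duplicate samples produce an off-diagonal entry equal to their diagonal entry, while pairs of unrelated individuals scatter around zero.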

2. What are the main computational challenges associated with using LMMs in large-scale studies? The key computational bottleneck in traditional LMMs stems from large-scale operations on the genetic similarity matrix (GSM), particularly the inversion of the covariance matrix. The time complexity for these operations can be cubic (𝒪(n³)) relative to the cohort size (n), making analysis prohibitive for biobank-scale datasets with hundreds of thousands of individuals [46].

3. For multi-ancestry studies, which method generally offers better statistical power: pooled analysis or meta-analysis? Simulation and real-data studies have demonstrated that pooled analysis generally exhibits better statistical power compared to meta-analysis and other methods like MR-MEGA. By combining individuals from all genetic backgrounds into a single dataset and adjusting for population stratification (e.g., using principal components), pooled analysis maximizes sample size and power while maintaining controlled type I error rates in realistic scenarios [47].
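For reference, a common form of meta-analysis combines per-cohort effect estimates with inverse-variance weights (a fixed-effect model). A minimal sketch, assuming each ancestry group has already been analyzed separately and reports an effect estimate and its standard error; it illustrates only the meta-analysis side of the comparison, and the function name is hypothetical.

```python
import numpy as np


def fixed_effect_meta(betas, ses):
    """Inverse-variance-weighted fixed-effect meta-analysis.

    Returns (combined effect, its standard error, z-score).
    """
    betas = np.asarray(betas, dtype=float)
    weights = 1.0 / np.asarray(ses, dtype=float) ** 2  # w_i = 1 / SE_i^2
    beta = float((weights * betas).sum() / weights.sum())
    se = float(np.sqrt(1.0 / weights.sum()))
    return beta, se, beta / se


beta, se, z = fixed_effect_meta([0.2, 0.4], [0.1, 0.1])
```

Two cohorts with estimates 0.2 and 0.4, each with standard error 0.1, give a combined estimate of 0.3 with standard error of about 0.071.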

4. How can I control for population stratification in a GWAS using PLINK?

In PLINK 2.0, you can use the --pca flag to compute the top principal components (PCs) of your genotype data. The PCs are written to an .eigenvec file, which can be supplied as covariates to the association analysis by combining the --glm flag with --covar. This corrects for broad-scale population structure. Performing LD pruning and removing very-low-MAF variants before computing PCs is recommended for more accurate results [48] [49].

5. My GWAS involves longitudinal traits (repeated measures) and related samples. What methods are available? SPAGRM is a scalable and accurate analysis framework designed for this purpose. It controls for sample relatedness by approximating the joint distribution of genotypes and can be applied to various statistical models for longitudinal traits, including linear mixed models and generalized estimating equations. A hybrid version, SPAGRM(CCT), aggregates results from multiple models for a robust solution [50].

Troubleshooting Common Problems

Problem 1: Inflated Type I Error Rates

Possible Cause: Inadequate control for population stratification or cryptic relatedness among samples. Standard linear regression assumes that individuals are unrelated; when this assumption is violated, test statistics become inflated and spurious associations appear [46] [50].

Solution:

- Implement an LMM that includes a genetic relatedness matrix as a random effect [46].

- For multi-ancestry populations, ensure careful adjustment with principal components in a pooled analysis [47].

- For related samples in longitudinal studies, use specialized methods like SPAGRM that effectively model the correlation structure [50].

Problem 2: Computationally Expensive LMMs

Possible Cause: Traditional LMMs perform dense matrix operations on large genetic similarity matrices (GSMs) [46].

Solution: Use optimized methods and software that rely on approximations.

- NExt-LMM: A near-exact linear mixed model that uses a Hierarchical Off-Diagonal Low-Rank (HODLR) format to approximate the GSM, dramatically reducing computation time from 𝒪(n³) to nearly linear [46].

- RHE-mc: A randomized method-of-moments estimator efficient for variance components analysis across millions of genomes, requiring only a single pass over the genotype data [51].

- PLINK 2.0's --pca approx: Uses a randomized algorithm for faster principal component analysis on large sample sizes (>50,000) [48].
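The general idea behind randomized PCA can be sketched with a generic randomized SVD: project the standardized genotype matrix onto a small random subspace, refine that subspace with a few power iterations, and compute an exact SVD inside it. This illustrates the technique class only, with hypothetical parameter names and defaults; it is not PLINK's implementation.

```python
import numpy as np


def randomized_pcs(Z, k, oversample=10, power_iters=2, seed=0):
    """Approximate top-k PC scores of a standardized (individuals x SNPs) matrix Z."""
    rng = np.random.default_rng(seed)
    # Sketch the column space of Z with a random Gaussian test matrix
    Q, _ = np.linalg.qr(Z @ rng.standard_normal((Z.shape[1], k + oversample)))
    # Power iterations (re-orthonormalized) sharpen the sketch toward the leading directions
    for _ in range(power_iters):
        Q, _ = np.linalg.qr(Z @ (Z.T @ Q))
    # Exact SVD of the small projected matrix, then map back to individuals
    U_small, S, _ = np.linalg.svd(Q.T @ Z, full_matrices=False)
    return (Q @ U_small[:, :k]) * S[:k]
```

The expensive operations are products of Z with thin matrices, so the cost grows roughly linearly with the number of individuals and SNPs for a fixed k.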

Problem 3: Low Power in Multi-Ancestry GWAS

Possible Cause: Using a meta-analysis approach, which may handle admixed individuals poorly and can be less powerful when allele frequencies vary across ancestry groups [47].

Solution: Prefer a pooled analysis strategy. Combine all individuals into a single dataset and use a mixed-model framework that adjusts for population structure using principal components (PCs). This approach maximizes sample size and has been shown to yield higher power for discovery [47].

Comparison of Multi-Ancestry GWAS Strategies

The table below summarizes the core characteristics of the two primary strategies for multi-ancestry GWAS, based on a large-scale evaluation [47].

| Feature | Pooled Analysis | Meta-Analysis |
|---|---|---|