Active Learning-Assisted Directed Evolution vs Traditional DE: A Revolutionary Approach for Accelerated Biomedical Research

Abstract

This article provides a comprehensive comparison between traditional Directed Evolution (DE) and the emerging paradigm of Active Learning-assisted Directed Evolution (ALDE) for researchers and professionals in drug development and biomedical sciences. It explores the foundational principles of both approaches, detailing the methodological shift from static, pre-planned experiments to dynamic, data-adaptive frameworks. The scope includes practical guidance on implementation, strategies for troubleshooting common pitfalls, and a rigorous validation of ALDE's advantages in improving efficiency, predictive accuracy, and resource optimization. By synthesizing evidence from computational and early biomedical applications, this article serves as a guide for adopting ALDE to streamline R&D pipelines and enhance decision-making in complex experimental landscapes.

Understanding the Paradigm Shift: From Static Traditional DE to Dynamic ALDE

Directed Evolution (DE) stands as a rigorously developed methodology for engineering biomolecules, embodying core scientific principles that ensure robust and reliable outcomes. Pioneered by Nobel laureate Frances Arnold, traditional DE mimics natural evolution in the laboratory through iterative rounds of mutagenesis and screening. This article examines the core principles that make traditional DE a paradigm of scientific rigor and explores how they provide a trustworthy framework for protein engineering, while comparing its performance and methodology to modern Active Learning-assisted Directed Evolution (ALDE). By understanding traditional DE's systematic approach and its role in establishing scientific validity, researchers can better appreciate its enduring value in the field of protein engineering.

Core Principles of Traditional DE and Scientific Rigor

Traditional DE exemplifies scientific rigor through methodical implementation of established scientific methods. Scientific rigor broadly means good experimental practice, ensuring other researchers can replicate your work and understand exactly what you did [1]. The National Institutes of Health (NIH) defines scientific rigor as "the strict application of the scientific method to ensure robust and unbiased experimental design, methodology, analysis, interpretation and reporting of results" [2].

Traditional DE embodies five core principles of rigorous science that align with the "pentateuch for scientific rigor" framework: redundancy in experimental design, sound statistical analysis, recognition of error, avoidance of logical traps, and intellectual honesty [2].

Redundancy in Experimental Design

Traditional DE incorporates redundancy through massive mutant library generation and comprehensive screening. This approach encompasses replication (testing numerous independent mutants), validation (confirming hits through multiple assays), and generalization (assessing performance across various conditions) [2]. This multi-layered redundancy enhances confidence in identified variants and ensures discoveries are not artifacts of specific experimental conditions.

Sound Statistical Analysis

The statistical power of traditional DE stems from its large sample sizes. While specific statistical methods vary, the fundamental principle remains: measuring enough replicates to distinguish genuine improvements from experimental noise. This becomes particularly important when evaluating small fitness gains, which are easily masked by assay variability yet can compound over successive rounds.
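A minimal sketch of replicate-based hit calling, assuming a variant is compared with its parent using Welch's t-statistic plus a minimum fold change. The cutoffs `min_fold` and `min_t` are hypothetical predetermined criteria, not values from the cited sources, and the statistic is not converted to a p-value here.

```python
import math
import statistics

def welch_t(variant, parent):
    """Welch's t-statistic for the difference in means (variant minus parent)."""
    m1, m2 = statistics.fmean(variant), statistics.fmean(parent)
    v1, v2 = statistics.variance(variant), statistics.variance(parent)
    se = math.sqrt(v1 / len(variant) + v2 / len(parent))
    return (m1 - m2) / se

def is_hit(variant, parent, min_fold=1.2, min_t=3.0):
    """Call a hit only if the improvement is both large and clearly above replicate noise.

    min_fold and min_t are illustrative, predetermined cutoffs (assumptions).
    """
    fold = statistics.fmean(variant) / statistics.fmean(parent)
    return fold >= min_fold and welch_t(variant, parent) >= min_t

parent = [1.00, 0.95, 1.05, 1.02]     # hypothetical replicate activities
variant_a = [1.40, 1.35, 1.45, 1.38]  # consistent gain
variant_b = [1.35, 0.85, 1.75, 1.05]  # similar mean gain, but noisy replicates
print(is_hit(variant_a, parent), is_hit(variant_b, parent))
```

Both variants clear the fold-change cutoff, but only the one whose replicates agree clears the noise cutoff, which is the distinction the paragraph above describes.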

Recognition of Error

Traditional DE explicitly acknowledges potential errors through controlled experimental designs. It incorporates systematic processes to identify and account for errors in screening, measurement, and selection. This recognition manifests in the use of appropriate controls, replicate measurements, and validation steps to distinguish true improvements from experimental artifacts.

Avoidance of Logical Traps

The traditional DE workflow is structured to minimize logical fallacies such as confirmation bias. By employing blind screening approaches and predetermined selection criteria, researchers reduce the risk of selectively favoring expected outcomes. The methodology emphasizes falsification—iteratively testing and refining hypotheses through successive rounds of evolution.

Intellectual Honesty

This principle manifests in traditional DE through comprehensive reporting of all experimental details, including the size and diversity of mutant libraries, precise screening conditions, and complete results—not just successful variants. This transparency enables other researchers to reproduce and extend the findings, a hallmark of rigorous science [2].

Traditional DE vs. ALDE: Performance Comparison

The emergence of ALDE represents a paradigm shift in protein engineering. ALDE incorporates machine learning into the DE process, using uncertainty quantification to guide exploration of protein sequence space more efficiently than traditional DE [3]. The table below summarizes key differences in their approaches and performance.

| Aspect | Traditional DE | ALDE (FolDE) |
|---|---|---|
| Core Approach | Empirical exploration through large libraries | Computational prediction with focused experimentation |
| Typical Mutants per Round | Thousands to millions | Dozens (e.g., 16 per round) |
| Selection Method | Random or semi-random mutagenesis | Model-predicted high-value mutants |
| Information Utilization | Limited to selected variants | Incorporates all tested variants into predictive models |
| Key Strengths | Unbiased exploration; proven track record; requires no specialized computational knowledge | High efficiency with limited budgets; excels at finding top performers |
| Key Limitations | Resource-intensive; lower efficiency in low-N scenarios | Risk of over-exploitation; model dependency |
| Success Metrics | Broad improvements through cumulative mutations | Targeted discovery of elite performers |

Quantitative benchmarks from FolDE development reveal compelling performance differences. In simulations across 20 protein targets, FolDE, an ALDE method, discovered 23% more top 10% mutants than the best baseline method and was 55% more likely to find a top 1% mutant [4]. The baselines included random selection, which stands in for traditional DE, and a random-forest ALDE method.

Experimental Protocols & Methodologies

Traditional DE Workflow

The traditional directed evolution protocol follows a systematic, iterative process that has proven effective across numerous protein engineering campaigns:

- Library Generation: Create genetic diversity through random mutagenesis (error-prone PCR) or homologous recombination (DNA shuffling)
- Expression & Screening: Express mutant libraries in suitable host systems and screen for desired properties using high-throughput assays
- Variant Selection: Identify improved variants based on screening data
- Iteration: Use improved variants as templates for subsequent rounds of evolution

This workflow continues until desired functionality is achieved, often requiring multiple rounds (typically 3-8) with cumulative mutations [5].
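The workflow above can be simulated in a few lines to make its greedy logic explicit. This is a minimal sketch under stated assumptions: error-prone PCR is modeled as independent amino-acid substitutions at a fixed per-position rate (real epPCR acts on DNA and has biased mutational spectra), and the fitness function is a hypothetical additive landscape standing in for a real screen.

```python
import random

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"

def mutate(seq, rng, rate=0.02):
    """Substitute each position with probability `rate` (a crude stand-in for error-prone PCR)."""
    return "".join(
        rng.choice(AMINO_ACIDS.replace(aa, "")) if rng.random() < rate else aa
        for aa in seq
    )

def directed_evolution(parent, fitness, rounds=5, library_size=1000, seed=0):
    """Greedy DE: diversify, screen the whole library, keep the best variant as the next template."""
    rng = random.Random(seed)
    history = [fitness(parent)]
    for _ in range(rounds):
        library = [mutate(parent, rng) for _ in range(library_size)]
        best = max(library, key=fitness)
        if fitness(best) > fitness(parent):  # accept only strict improvements
            parent = best
        history.append(fitness(parent))
    return parent, history

TARGET = "MKTAYIAKQRQISFVKSHFSRQ"  # hypothetical optimum

def score(seq):
    """Hypothetical additive fitness: number of positions matching TARGET."""
    return sum(a == b for a, b in zip(seq, TARGET))

best, history = directed_evolution("MKTAYLAKQRQLSFVESHFSAQ", score)
print(history)  # best fitness after each round; never decreases
```

Because only the single best variant is carried forward, information from every other screened mutant is discarded, which is the "limited information utilization" noted in the comparison table.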

ALDE Experimental Protocol

ALDE methods like FolDE employ a more integrated computational-experimental workflow:

- Initial Selection: Round 1 uses naturalness-based zero-shot selection with protein language models (PLMs) like ESM-family models [4]
- Activity Prediction: In subsequent rounds, train neural networks with ranking loss on collected data to predict mutant activities
- Naturalness Warm-Start: Augment limited experimental data with PLM outputs to improve activity prediction
- Batch Selection: Use constant-liar batch selection with diversity parameter (α=6) to balance exploration and exploitation [4]
- Iteration: Repeat prediction and testing cycles (typically 3 rounds with 16 mutants each)

This protocol specifically addresses the exploration-exploitation tradeoff inherent in data-limited protein optimization [4].
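The constant-liar idea can be sketched in a few lines. In this minimal sketch, the surrogate model (a Hamming-distance kernel average with a distance-based uncertainty), the upper-confidence-bound weight `beta`, and the choice of the lowest observed activity as the "lie" are all illustrative assumptions; this is not FolDE's implementation, and FolDE's diversity parameter α is not modeled here.

```python
import itertools
import math

def hamming(a, b):
    """Number of positions at which two equal-length sequences differ."""
    return sum(x != y for x, y in zip(a, b))

def surrogate(observed, seq, length_scale=1.0):
    """Toy surrogate (assumption): kernel-weighted mean plus a distance-based uncertainty."""
    weights = [math.exp(-hamming(s, seq) / length_scale) for s, _ in observed]
    mean = sum(w * y for w, (_, y) in zip(weights, observed)) / sum(weights)
    uncertainty = 1.0 - max(weights)  # 0 at a measured sequence, approaches 1 far from all data
    return mean, uncertainty

def ucb(observed, seq, beta):
    """Upper confidence bound: predicted mean plus beta times uncertainty."""
    mean, uncertainty = surrogate(observed, seq)
    return mean + beta * uncertainty

def constant_liar_batch(observed, candidates, batch_size, beta=2.0):
    """Pick a batch one variant at a time, pretending each pick was measured at a constant 'lie'."""
    lie = min(y for _, y in observed)  # pessimistic lie (assumption)
    measured = {s for s, _ in observed}
    pool = [c for c in candidates if c not in measured]
    fantasy = list(observed)
    batch = []
    for _ in range(min(batch_size, len(pool))):
        pick = max((c for c in pool if c not in batch), key=lambda c: ucb(fantasy, c, beta))
        batch.append(pick)
        fantasy.append((pick, lie))  # the fake observation shrinks uncertainty near `pick`
    return batch

observed = [("AKT", 0.2), ("VKT", 0.9)]  # hypothetical measured variants
candidates = ["".join(p) for p in itertools.product("AV", "KR", "TS")]
print(constant_liar_batch(observed, candidates, batch_size=3))
```

Each pick is temporarily recorded as if it had been measured at the pessimistic lie value, which lowers both the predicted mean and the uncertainty of its close neighbours, so later picks in the same batch tend to land elsewhere in sequence space.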

Experimental Data and Performance Metrics

Rigorous comparison of protein engineering methods requires standardized benchmarks and appropriate metrics. The table below summarizes quantitative performance data from ProteinGym benchmarks, which evaluated methods across 17 single-mutation and 3 multi-mutation datasets [4].

| Method | Top 10% Mutants Found | Probability of Finding Top 1% Mutant | Key Advantages |
|---|---|---|---|
| Traditional DE (Random) | Baseline | Baseline | Unbiased exploration; No computational requirements |
| Zero-shot Naturalness | 3.8× more than random in round 1 [4] | 3.6× higher chance in round 1 [4] | Strong first-round performance; No experimental data required |
| ALDE (FolDE) | 23% more than best baseline [4] | 55% more likely than best baseline [4] | Balanced exploration-exploitation; Naturalness warm-starting |

These benchmarks measured success through two primary metrics that directly reflect protein optimization goals: the cumulative number of top 10% mutants discovered and the probability of finding at least one top 1% mutant within three rounds [4]. These metrics capture both overall batch quality and success at discovering exceptional mutants, making them more relevant to practical protein optimization than correlation-based metrics.
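The two metrics can be computed from a simulated campaign as follows. In this sketch, `landscape` is an assumed complete map from each mutant to its measured activity (as in a benchmark dataset); the probability of finding a top 1% mutant would then be estimated as the fraction of repeated simulated campaigns for which `found_top1` is true. Ties at a threshold count as hits.

```python
def top_fraction_threshold(all_values, fraction):
    """Activity value at or above which a mutant is in the top `fraction` of the landscape."""
    ranked = sorted(all_values, reverse=True)
    k = max(1, int(len(ranked) * fraction))
    return ranked[k - 1]

def campaign_metrics(landscape, tested_per_round):
    """Cumulative top-10% hits after each round, and whether any top-1% mutant was found."""
    values = list(landscape.values())
    top10 = top_fraction_threshold(values, 0.10)
    top1 = top_fraction_threshold(values, 0.01)
    seen, cumulative = set(), []
    for batch in tested_per_round:
        seen.update(batch)  # re-testing a mutant does not count twice
        cumulative.append(sum(landscape[m] >= top10 for m in seen))
    found_top1 = any(landscape[m] >= top1 for m in seen)
    return cumulative, found_top1

# Hypothetical 200-mutant landscape and three rounds of testing.
landscape = {f"m{i}": float(i) for i in range(200)}
tested = [["m5", "m185", "m190"], ["m199", "m10"], ["m185", "m50"]]
print(campaign_metrics(landscape, tested))  # → ([2, 3, 3], True)
```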

The Scientist's Toolkit: Research Reagent Solutions

Successful implementation of either traditional DE or ALDE requires specific research reagents and materials. The table below details essential components for conducting rigorous directed evolution campaigns.

| Reagent/Material | Function in DE | Application Notes |
|---|---|---|
| Mutagenesis Kits | Generate genetic diversity | Error-prone PCR kits for random mutagenesis; DNA shuffling reagents for recombination |
| Expression Vectors | Protein production | Plasmid systems with tunable promoters for controlled expression in model organisms |
| Host Organisms | Protein expression & screening | E. coli, yeast, or other suitable hosts with high transformation efficiency |
| Selection/Screening Assays | Identify improved variants | High-throughput assays (colorimetric, fluorescent, growth-based); microtiter plate formats |
| Protein Language Models | Predict mutant naturalness | ESM-family models for zero-shot prediction; requires computational infrastructure [4] |
| Activity Prediction Models | Guide ALDE mutant selection | Neural networks with ranking loss; random forest alternatives [4] |

Workflow Visualization

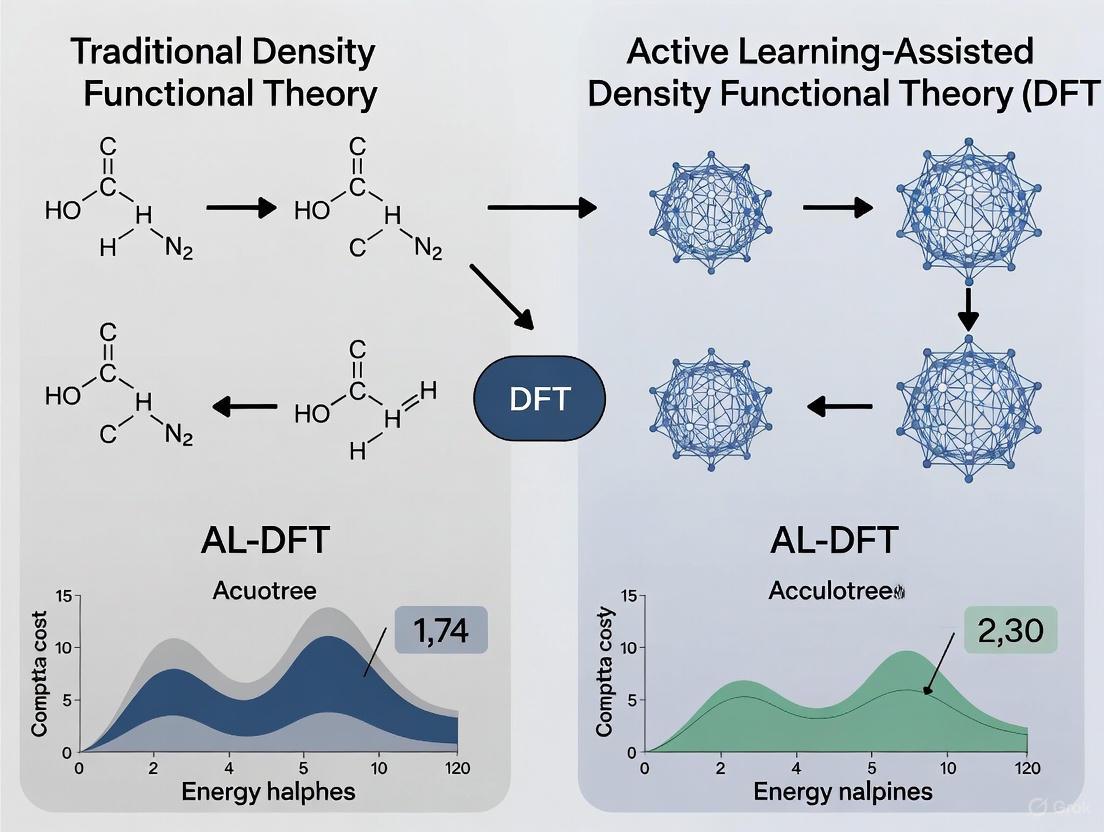

The following diagram illustrates the key decision points and process flows in traditional DE versus ALDE approaches, highlighting their fundamental strategic differences.

Traditional DE remains a pillar of scientific rigor in protein engineering, embodying time-tested principles that ensure robust and reproducible results. Its systematic approach to creating and evaluating diversity provides a trustworthy methodology that has produced numerous successes. ALDE approaches demonstrate superior efficiency in data-limited scenarios: in simulated benchmarks, FolDE found 23% more top 10% mutants than the best baseline and was 55% more likely to discover a top 1% mutant. Traditional DE, however, continues to offer advantages in comprehensive exploration and requires no specialized computational infrastructure [4].

The most effective protein engineering strategies often combine both approaches, leveraging the methodological rigor of traditional DE with the predictive power of ALDE. This integration represents the future of rigorous protein engineering, where established principles guide the application of emerging technologies to accelerate discovery while maintaining scientific validity.

Directed evolution (DE) stands as a cornerstone of modern protein engineering, enabling the optimization of biomolecules for therapeutic, industrial, and research applications by mimicking natural evolution in a laboratory setting. However, its efficiency is frequently hampered by the vastness of sequence space and the prevalence of epistatic interactions, where the effect of one mutation depends on the presence of others, making the fitness landscape rugged and difficult to navigate. The emergence of Active Learning-assisted Directed Evolution (ALDE) represents a paradigm shift, integrating machine learning (ML) with experimental biology to create an adaptive, intelligent framework that dramatically accelerates the protein optimization process.

This guide provides an objective comparison between traditional DE and ALDE, detailing the methodologies, presenting supporting experimental data, and outlining the essential tools required for implementation.

Understanding the Fundamental Workflows

The core distinction between traditional and active learning-assisted directed evolution lies in their approach to exploring the fitness landscape.

Traditional Directed Evolution

Traditional DE is a heuristic, iterative process that relies on generating diversity and screening for improved variants. It follows a linear path of diversification and selection, often requiring immense experimental effort to sample a sufficiently large portion of the sequence space to find beneficial mutations, especially when they are non-additive [6].

Active Learning-Assisted Directed Evolution (ALDE)

ALDE introduces a closed-loop feedback system where machine learning models guide the experimental process. The model learns from experimental data, quantifies its own predictive uncertainty, and proactively selects the most informative variants to test next. This creates an efficient exploration-exploitation balance, focusing costly experiments on sequences that maximize learning or performance gains [7] [6].

The following diagram illustrates the core iterative workflow of the ALDE framework:

Comparative Analysis: Traditional DE vs. ALDE

The practical advantages of ALDE are illustrated by a study that applied it to optimizing five epistatic active-site residues in an enzyme for a non-native cyclopropanation reaction [6]. The table below contrasts the two approaches and reports the starting yield alongside the yield reached after three ALDE rounds.

Table 1: Performance Comparison of Traditional DE vs. ALDE on a Challenging Epistatic Landscape

| Feature | Traditional Directed Evolution | Active Learning-Assisted DE (ALDE) |
|---|---|---|
| General Approach | Heuristic; relies on random diversification and high-throughput screening. | Adaptive; uses ML to model fitness landscape and guide diversification. |
| Handling of Epistasis | Inefficient; struggles with non-additive mutations, often getting stuck in local optima. | Effective; ML models can capture non-linear, epistatic relationships between mutations. |
| Experimental Efficiency | Lower; requires screening large libraries to find rare improvements. | Higher; focuses experiments on the most promising or informative variants. |
| Data Utilization | Limited; data from one round primarily serves to select hits for the next. | Comprehensive; all data is used to iteratively refine a predictive model. |
| Reported Outcome | Initial yield: 12% (Starting point) | Final yield after 3 ALDE rounds: 93% [6] |
| Key Enabler | High-throughput screening capacity. | Machine learning with uncertainty quantification [7]. |

Detailed Experimental Protocol for ALDE

The following section details the methodology derived from the successful application of ALDE as documented in the primary literature [6]. This serves as a template for researchers aiming to implement this framework.

Step 1: Initial Library Creation and Baselines

- Objective: Generate a small, diverse set of protein sequence variants to create an initial training dataset for the ML model.
- Protocol:
  - Site Selection: Identify target residues for mutation (e.g., active site residues known to influence function).
  - Diversification: Use mutagenesis techniques (e.g., error-prone PCR, site-saturation mutagenesis) to create a library of variants.
  - Baseline Testing: Express, purify, and assay this initial library to measure fitness (e.g., enzymatic yield, binding affinity, fluorescence). This establishes the initial dataset \( \mathcal{D} = \{(\text{sequence}_i, \text{fitness}_i)\} \).

Step 2: Machine Learning Model Training and Uncertainty Quantification

- Objective: Train a model that can predict fitness from sequence and, crucially, know when it is uncertain.
- Protocol:
  - Feature Representation: Convert protein sequences into a numerical format (e.g., one-hot encoding, physicochemical property vectors).
  - Model Selection: Choose a model capable of uncertainty quantification (UQ). A common and powerful approach is using an ensemble of neural networks or Gaussian Process regression [7].
  - Model Training: Train the model on the current dataset \( \mathcal{D} \). In an ensemble, the disagreement (variance) between individual model predictions serves as a measure of epistemic uncertainty.
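To make ensemble-based uncertainty concrete, here is a minimal sketch in which each ensemble member is a k-nearest-neighbour regressor (Hamming distance) fit to a bootstrap resample of the dataset. The k-NN members stand in for the neural networks mentioned above and are an assumption chosen so the example needs only the standard library; the standard deviation across members plays the role of epistemic uncertainty.

```python
import random
import statistics

def hamming(a, b):
    """Number of positions at which two equal-length sequences differ."""
    return sum(x != y for x, y in zip(a, b))

def knn_predict(train, seq, k=3):
    """Average fitness of the k training sequences closest to `seq` in Hamming distance."""
    nearest = sorted(train, key=lambda item: hamming(item[0], seq))[:k]
    return statistics.fmean(y for _, y in nearest)

def ensemble_predict(data, seq, n_models=25, k=3, seed=0):
    """Bootstrap ensemble: mean prediction plus the spread across members as uncertainty."""
    rng = random.Random(seed)
    preds = []
    for _ in range(n_models):
        resample = [rng.choice(data) for _ in data]  # sample with replacement
        preds.append(knn_predict(resample, seq, k))
    return statistics.fmean(preds), statistics.pstdev(preds)

# Hypothetical (sequence, fitness) pairs at three targeted positions.
D = [("VKT", 0.9), ("AKT", 0.2), ("VRT", 0.7), ("ARS", 0.1), ("VKS", 0.8)]
print(ensemble_predict(D, "VRS"))  # (predicted fitness, ensemble standard deviation)
```

When the members agree (for example, when every training label is the same) the spread is zero; it grows where different resamples lead to different nearest neighbours.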

Step 3: In-Silico Variant Selection via Acquisition Function

- Objective: Use the trained model to select the most promising variants for the next round of experimentation.
- Protocol:
  - Variant Proposal: Generate a large virtual library of candidate sequences (e.g., all possible combinations within a defined mutational space).
  - Acquisition Scoring: Score each candidate using an acquisition function. A common strategy rewards candidates with high predicted fitness, high predictive uncertainty, or both. This balances exploitation (testing variants expected to be good) and exploration (testing variants that will improve the model).
  - Candidate Selection: Rank candidates by their acquisition score and select the top \( N \) variants (e.g., 50-100) for experimental validation, constrained by laboratory capacity.
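Variant proposal and acquisition scoring can be sketched as below. The upper confidence bound (UCB), the predicted mean plus `beta` times the predicted standard deviation, is one common acquisition function; `beta = 2.0` and the `predict` interface (any callable returning a mean and a standard deviation, such as an ensemble as described in Step 2) are assumptions. For five sites the library has 20^5 = 3.2 million members, which is still enumerable but slow to score in pure Python.

```python
import heapq
import itertools

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"

def combinatorial_library(n_sites):
    """Yield every combination of the 20 amino acids across the targeted sites."""
    for combo in itertools.product(AMINO_ACIDS, repeat=n_sites):
        yield "".join(combo)

def select_batch_ucb(candidates, predict, n, beta=2.0):
    """Score candidates by UCB = mean + beta * std and keep the top n."""
    def ucb(seq):
        mean, std = predict(seq)
        return mean + beta * std
    return heapq.nlargest(n, candidates, key=ucb)

def toy_predict(seq):
    """Hypothetical predictor: favours tryptophan and is less certain about alanine."""
    return float(seq.count("W")), 0.1 * seq.count("A")

print(select_batch_ucb(combinatorial_library(2), toy_predict, n=3))
```

`heapq.nlargest` keeps only `n` items in memory while streaming over the generator, so the full library never has to be materialized as a list.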

Step 4: Experimental Validation and Model Iteration

- Objective: Test the ML-selected variants and use the new data to refine the model.
- Protocol:
  - Wet-Lab Testing: Build (synthesize genes) and test (express and assay) the selected variants.
  - Data Integration: Add the new sequence-fitness data pairs to the existing training dataset \( \mathcal{D} \).
  - Model Retraining: Retrain the ML model on the expanded dataset. This iterative loop (Steps 2-4) is repeated until a performance target is reached or experimental resources are exhausted.
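The iterative loop of Steps 2-4 and its two stopping rules can be captured in a small driver. In this sketch `propose` and `assay` are hypothetical callables: `propose` would retrain the model and apply the acquisition function, while `assay` stands in for the wet-lab build-and-test step. The toy pool and activities in the usage example are made up.

```python
def run_campaign(propose, assay, initial_data, target, max_rounds=3, batch_size=16):
    """Repeat propose -> assay -> integrate until the target is hit or the round budget runs out.

    propose(data, batch_size) -> list of sequences (retrains the model internally)
    assay(sequences) -> list of measured fitness values (the wet-lab step)
    """
    data = list(initial_data)
    for round_number in range(1, max_rounds + 1):
        batch = propose(data, batch_size)
        data.extend(zip(batch, assay(batch)))  # data integration
        best_seq, best_fit = max(data, key=lambda item: item[1])
        if best_fit >= target:  # stopping rule 1: performance target reached
            return best_seq, best_fit, round_number
    best_seq, best_fit = max(data, key=lambda item: item[1])
    return best_seq, best_fit, max_rounds  # stopping rule 2: resources exhausted

# Toy usage: propose untested sequences in a fixed order (no real model).
POOL = ["AA", "AB", "BA", "BB"]
TRUTH = {"AA": 0.1, "AB": 0.4, "BA": 0.6, "BB": 0.9}

def propose(data, k):
    tested = {s for s, _ in data}
    return [s for s in POOL if s not in tested][:k]

def assay(seqs):
    return [TRUTH[s] for s in seqs]

print(run_campaign(propose, assay, [("AA", 0.1)], target=0.8, batch_size=1))
```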

The following diagram maps this protocol, highlighting the cyclical nature of the ALDE process:

The Scientist's Toolkit: Essential Research Reagents and Solutions

Implementing ALDE requires a combination of wet-lab reagents and computational tools. The table below lists key solutions and their functions based on the reviewed methodologies [6] [7] [8].

Table 2: Key Research Reagent Solutions for an ALDE Pipeline

| Category | Item / Solution | Primary Function in ALDE Workflow |
|---|---|---|
| Wet-Lab Components | Mutagenesis Kit (e.g., for SDM or epPCR) | Creates genetic diversity for the initial library and for subsequent synthesis of ML-selected variants. |
| | Expression Vector & Host Cells (e.g., E. coli) | Provides the system for expressing the protein variants. |
| | Assay Reagents | Measures the fitness function (e.g., substrate for an enzyme, antigen for a binder). |
| Computational Components | ML Model with UQ (e.g., Ensemble NN, Gaussian Process) | The core predictive engine; maps sequence to fitness and reports confidence. |
| | Acquisition Function (e.g., Upper Confidence Bound) | Algorithm for scoring and selecting the most informative variants to test next. |
| | Feature Representation (e.g., One-Hot Encoding) | Converts biological sequences (AA/DNA) into numerical data for the ML model. |

Discussion and Outlook

The comparative data and detailed protocol underscore ALDE's transformative potential. Its primary strength lies in turning protein engineering from a largely empirical screening process into a principled, data-driven search. By leveraging uncertainty quantification, ALDE efficiently navigates complex fitness landscapes that are prohibitively expensive to explore with traditional methods, as demonstrated by the improvement in product yield from 12% to 93% in just three rounds [6].

Future developments in ALDE will likely involve tighter integration with high-throughput automation systems and the adoption of more powerful foundation models pre-trained on broad biological data, which could further reduce the initial data requirement [7]. Furthermore, human-in-the-loop frameworks, where domain experts provide feedback on generated molecules, are emerging as a powerful way to incorporate prior knowledge and refine predictions [8]. For researchers in drug development, where optimizing biologics like enzymes and antibodies is critical, adopting the ALDE framework represents a strategic advantage in accelerating the design of novel and enhanced therapeutics.

In the field of protein engineering, directed evolution (DE) stands as a fundamental methodology for optimizing protein fitness. This process involves iterative cycles of mutagenesis and screening to accumulate beneficial mutations. The experimental strategies employed to navigate the vast sequence-function landscape can be broadly categorized into two distinct philosophies: deterministic and probabilistic experimentation. Within the context of a broader thesis comparing traditional DE with active learning-assisted DE (ALDE), this guide provides an objective comparison of these two approaches. We define deterministic methods as those relying on precise, rule-based, and objective measurements that produce the same outcome from a given input consistently. In contrast, probabilistic methods depend on statistical inference, human interpretation, and often yield results expressed as likelihoods or confidence scores, making them inherently variable and subjective [9] [10] [11]. This analysis details their performance, supported by experimental data and methodologies, to guide researchers and drug development professionals in selecting the optimal strategy for their specific applications.

Core Conceptual Differences

The choice between deterministic and probabilistic models fundamentally shapes the design, execution, and interpretation of experiments in protein engineering. Their core differences are rooted in how they handle data, uncertainty, and decision-making.

Deterministic approaches provide binary, yes/no decisions based on hard-coded rules or exact matches. They are characterized by their consistency, transparency, and precision, making them easily auditable and explainable. In practice, this could involve a fixed rule that flags a protein variant for further study only if its predicted stability change exceeds a specific threshold [11].

Probabilistic approaches, on the other hand, return confidence scores and estimate the likelihood of different outcomes. They are designed to handle incomplete or noisy data by using statistical inference and can adapt as new data becomes available. For instance, a probabilistic model might analyze a protein variant's sequence features, structural data, and partial experimental results to determine a 92% confidence that it belongs to a high-fitness class [11] [12].
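The contrast can be illustrated with two toy decision functions. The stability threshold, the feature values, and the logistic weights below are hypothetical; the point is only that the first returns a yes/no answer from a fixed rule, while the second returns a probability that could be recalibrated as data accumulate.

```python
import math

def deterministic_flag(stability_gain, threshold=1.0):
    """Rule-based decision: flag the variant only if predicted stabilization exceeds a fixed cutoff."""
    return stability_gain > threshold

def probabilistic_score(features, weights, bias):
    """Logistic model: probability that the variant belongs to the high-fitness class."""
    z = bias + sum(w * x for w, x in zip(weights, features))
    return 1.0 / (1.0 + math.exp(-z))

variant = [1.3, 0.4]  # hypothetical features, e.g. predicted stabilization and a sequence score
print(deterministic_flag(variant[0]))                            # yes/no answer
print(round(probabilistic_score(variant, [1.5, 0.8], -0.5), 2))  # confidence between 0 and 1
```

The units of `stability_gain` are whatever the upstream predictor reports; that choice, like the threshold, is an assumption of the sketch.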

The following table summarizes the key conceptual distinctions:

Table 1: Fundamental Differences Between Deterministic and Probabilistic Models

| Factor | Deterministic Models | Probabilistic Models |
|---|---|---|
| Output | Binary (yes/no) | Probability score (e.g., 87% match confidence) |
| Data Quality Requirements | Requires complete, clean data | Tolerates incomplete or noisy data |
| Flexibility & Adaptability | Rigid, requires manual updates | Learns and adapts from new data |
| Transparency & Explainability | Easy to audit and explain | May require additional tools for explainability (e.g., SHAP values) |
| Primary Strength | Precision and predictability in known scenarios | Pattern recognition and flexibility in uncertain environments [11] |

Application in Directed Evolution and Active Learning

The deterministic-probabilistic dichotomy is clearly manifested in the evolution of protein engineering methodologies, from traditional Directed Evolution (DE) to modern Active Learning-assisted Directed Evolution (ALDE).

Traditional Directed Evolution as a Probabilistic Process

Traditional DE often operates as a probabilistic, "greedy hill-climbing" process. It is empirical and relies on statistical probabilities to identify improved variants through iterative mutagenesis and screening. This approach can be inefficient, especially on rugged fitness landscapes rich in epistasis—non-additive interactions between mutations [12]. In such landscapes, beneficial mutations in the context of the initial sequence may not be beneficial in combination with others, making it easy for the search to become trapped in local optima. The reliance on limited screening data and human interpretation further introduces subjectivity and limits its ability to explore the sequence space broadly [6].
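A two-site toy landscape with reciprocal sign epistasis shows how greedy single-mutation climbing can stall. The fitness values are invented for illustration: each single mutant is worse than the starting sequence, but the double mutant is best.

```python
import itertools

# Hypothetical two-site landscape with reciprocal sign epistasis.
LANDSCAPE = {"AA": 1.0, "AB": 0.6, "BA": 0.5, "BB": 2.0}
ALPHABET = "AB"

def single_mutants(seq):
    """All sequences exactly one substitution away from `seq`."""
    for i, aa in itertools.product(range(len(seq)), ALPHABET):
        if seq[i] != aa:
            yield seq[:i] + aa + seq[i + 1:]

def greedy_hill_climb(start):
    """Move to the best single mutant until no single mutation improves fitness."""
    current = start
    while True:
        best = max(single_mutants(current), key=LANDSCAPE.__getitem__)
        if LANDSCAPE[best] <= LANDSCAPE[current]:
            return current  # local optimum
        current = best

print(greedy_hill_climb("AA"))            # → AA (stuck at the starting sequence)
print(max(LANDSCAPE, key=LANDSCAPE.get))  # → BB (global optimum of the combinatorial space)
```

A model that scores the whole combinatorial space, rather than only single steps from the current parent, can propose the double mutant directly.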

Machine Learning-Assisted DE as a Deterministic Framework

Machine Learning-assisted Directed Evolution (MLDE) and Active Learning-assisted Directed Evolution (ALDE) incorporate deterministic principles to navigate fitness landscapes more efficiently. These methods use supervised machine learning models trained on sequence-fitness data to capture complex, non-additive effects [12]. Once trained, a model provides a deterministic, quantitative framework in the sense that it assigns every variant in the combinatorial space a reproducible predicted fitness, enabling the identification of high-fitness variants with fewer experimental rounds [12].

Active Learning-assisted Directed Evolution (ALDE) represents a more advanced, iterative application of this deterministic framework. ALDE uses uncertainty quantification to select the most informative variants for the next round of wet-lab experimentation, effectively balancing exploration of the search space with exploitation of promising regions [6]. For example, in one application, ALDE optimized five epistatic residues in an enzyme's active site, improving the yield of a non-native cyclopropanation reaction from 12% to 93% in just three rounds of experimentation [6]. This demonstrates a deterministic, data-driven workflow that systematically reduces uncertainty.

Comparative Workflow Visualization

The diagram below illustrates the key differences between the traditional DE workflow and the more deterministic ALDE workflow.

Quantitative Performance Comparison

Systematic studies across diverse protein fitness landscapes provide quantitative evidence of the advantages offered by deterministic-inspired MLDE and ALDE approaches over traditional probabilistic methods.

A comprehensive evaluation of multiple MLDE strategies across 16 combinatorial protein fitness landscapes found that MLDE consistently matched or exceeded the performance of traditional DE [12]. The study revealed that the advantages of MLDE become more pronounced on landscapes that are challenging for traditional DE, specifically those with fewer active variants and more local optima—hallmarks of epistatic interactions. The research also highlighted that combining focused training (using zero-shot predictors) with active learning provided the greatest efficiency gains [12].

Specific experimental results further demonstrate this performance gap. In one benchmark, an advanced ALDE method named FolDE was tested against baselines representing random selection (traditional DE) and a random forest ALDE method. The results, summarized in the table below, show a clear and significant improvement in discovering high-fitness variants [4].

Table 2: Performance Benchmark of FolDE vs. Baselines in Protein Optimization

| Method | Cumulative Top 10% Mutants Discovered (Rounds 1-3) | Probability of Finding a Top 1% Mutant |
|---|---|---|
| Random Selection (Traditional DE) | Baseline | Baseline |
| Zero-shot Naturalness Selection | 3.8x more than Random [4] | 3.6x higher chance than Random [4] |
| Random Forest ALDE (e.g., EVOLVEpro) | Improved over Random | Improved over Random |
| FolDE (Advanced ALDE) | 23% more than best baseline (p=0.005) [4] | 55% more likely than best baseline [4] |

Detailed Experimental Protocols

To ensure reproducibility and provide a clear technical roadmap, this section outlines the detailed methodologies for key experiments cited in this guide.

Protocol: Active Learning-Assisted Directed Evolution (ALDE)

This protocol is adapted from Yang et al. (2025) and Roberts et al. (2025) [6] [4].

1. Problem Formulation: Define the protein engineering goal (e.g., improve enzymatic activity, stability, or binding affinity) and establish a reliable assay for quantifying fitness.
2. Initial Library Construction:
   - Generate a comprehensive in silico library of protein variants, typically focusing on 3-5 target residues known to be functionally important or epistatic.
   - For the first round of experimentation, variants can be selected either randomly or via zero-shot selection using a protein language model (PLM) like ESM-2 to rank variants by their "naturalness" (wild-type marginal likelihood) [4].
3. Wet-Lab Experimentation & Data Generation:
   - Synthesize and clone the selected variant sequences.
   - Express the proteins and measure their fitness using the predefined assay. This constitutes one round of wet-lab experimentation.
4. Model Training (Active Learning Loop):
   - Architecture: Use a PLM to convert protein sequences into fixed-length vector embeddings. Feed these embeddings into a top-layer predictor, such as a neural network trained with ranking loss or a random forest regressor [4].
   - Training: Train the model on the accumulated dataset of variant sequences and their experimentally measured fitness values.
   - Naturalness Warm-Start (FolDE): To improve performance with limited data, weights can be warm-started by pre-training the predictor to replicate the PLM's naturalness scores on all possible single mutants before fine-tuning on the experimental activity data [4].
5. Variant Selection for Next Round:
   - Use the trained model to predict the fitness of all candidates in the in silico library.
   - Employ an active learning strategy that selects the next batch of variants not solely based on the highest predicted fitness, but by balancing exploitation (high predictions) with exploration (high model uncertainty). The constant-liar algorithm can be used to improve batch diversity [4].
6. Iteration and Validation:
   - Repeat steps 3-5 for multiple rounds (typically 3-5).
   - Validate the final top-predicted variants, which may be distant from the wild-type sequence, through comprehensive biochemical and biophysical characterization.
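The "naturalness" ranking used for first-round zero-shot selection can be made concrete. One common formulation, the wild-type marginal score described for ESM-family models, compares the language model's log-probabilities of the mutant and wild-type residues at each mutated position while conditioning on the unmasked wild-type sequence; whether FolDE uses exactly this variant is an assumption.

$$
s_{\mathrm{wt}}\left(\mathbf{x}^{\mathrm{mut}}\right) = \sum_{i \in M} \Big[ \log p\big(x_i = x_i^{\mathrm{mut}} \mid \mathbf{x}^{\mathrm{wt}}\big) - \log p\big(x_i = x_i^{\mathrm{wt}} \mid \mathbf{x}^{\mathrm{wt}}\big) \Big]
$$

Here \( M \) is the set of mutated positions and \( p(x_i \mid \mathbf{x}^{\mathrm{wt}}) \) is the model's predicted amino-acid distribution at position \( i \) from a single forward pass over the wild-type sequence. A positive score means the model finds the substitutions more "natural" than the wild-type residues, so ranking the in silico library by \( s_{\mathrm{wt}} \) and taking the top of the list yields a zero-shot first-round batch.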

Protocol: Inversion of AlphaFold2 for De Novo Protein Design

This protocol, based on the work of L. et al. (2023), illustrates a deterministic, computation-driven design approach [13].

- Target Backbone Definition: Select a target protein backbone topology for design, which may be novel or derived from a known fold.

- Sequence Initialization: Initialize a random or seed amino acid sequence as a starting point.

- Structure Prediction with AF2: Use AlphaFold2 (AF2) in single-sequence mode (without multiple sequence alignments or templates) to predict the 3D structure of the initialized sequence.

- Loss Calculation: Compute the structural loss (e.g., Frame Aligned Point Error, FAPE) between the AF2-predicted structure and the target backbone. FAPE measures position errors (here, of C-alpha atoms) after expressing coordinates in each residue's local frame, which makes it independent of the overall orientation of the structure [13].

- Error Backpropagation and Sequence Optimization: Backpropagate the structural loss through the AF2 network to calculate the error gradient with respect to the input sequence. This generates an N × 20 matrix (for a sequence of length N) showing how each residue position contributes to the loss.

- Iterative Refinement: Apply a gradient descent or Markov Chain Monte Carlo (MCMC) optimization to update the input sequence, minimizing the structural loss. This step is repeated iteratively.

- In Silico Validation: Analyze the final designed sequences for correct fold, surface hydrophilicity, and a densely packed hydrophobic core using computational tools.

- In Vitro Validation: Synthesize the top-ranking designs in vitro and characterize them experimentally (e.g., via circular dichroism, X-ray crystallography, thermal stability assays) to confirm they adopt the target fold [13].
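The loss-calculation step can be made concrete with a simplified FAPE. The sketch below is an illustration under stated assumptions rather than AlphaFold2's code. It builds each residue's rigid frame from its N, C-alpha, and C atoms by Gram-Schmidt orthogonalization. It then expresses every C-alpha position in every residue frame and averages the clamped distance between predicted and target local coordinates. The 10 Å clamp and 10 Å scale follow the AlphaFold2 paper; other details of the full loss are omitted. The backbone coordinates and helper names are hypothetical.

```python
import math

def sub(a, b):
    return [x - y for x, y in zip(a, b)]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def unit(a):
    n = math.sqrt(dot(a, a))
    return [x / n for x in a]

def cross(a, b):
    return [a[1] * b[2] - a[2] * b[1], a[2] * b[0] - a[0] * b[2], a[0] * b[1] - a[1] * b[0]]

def residue_frame(n_atom, ca, c_atom):
    """Rigid frame (axes e1, e2, e3 and origin CA) from N, CA, C via Gram-Schmidt."""
    e1 = unit(sub(c_atom, ca))
    v2 = sub(n_atom, ca)
    e2 = unit(sub(v2, [dot(e1, v2) * x for x in e1]))
    e3 = cross(e1, e2)
    return (e1, e2, e3), ca

def to_local(frame, point):
    """Coordinates of `point` in a residue frame, i.e. R^T (point - t)."""
    (e1, e2, e3), origin = frame
    d = sub(point, origin)
    return [dot(e1, d), dot(e2, d), dot(e3, d)]

def fape(pred, target, d_clamp=10.0, z_scale=10.0):
    """Simplified C-alpha FAPE; `pred`/`target` are lists of (N, CA, C) coordinates in Å."""
    pred_frames = [residue_frame(*res) for res in pred]
    target_frames = [residue_frame(*res) for res in target]
    total, count = 0.0, 0
    for fp, ft in zip(pred_frames, target_frames):   # every residue frame i ...
        for rp, rt in zip(pred, target):             # ... against every C-alpha j
            error = math.dist(to_local(fp, rp[1]), to_local(ft, rt[1]))
            total += min(error, d_clamp)             # clamp large errors
            count += 1
    return total / (count * z_scale)

def rotate_z(p, theta):
    c, s = math.cos(theta), math.sin(theta)
    return [c * p[0] - s * p[1], s * p[0] + c * p[1], p[2]]

# Hypothetical 5-residue backbone (invented coordinates, not a real protein).
target_bb = [([3.8 * i, 1.0, 0.0], [3.8 * i, 0.0, 0.0], [3.8 * i + 1.5, 0.2 * i, 0.5])
             for i in range(5)]
# Same backbone after a global rotation and translation: FAPE should be ~0.
moved_bb = [tuple([u + v for u, v in zip(rotate_z(atom, 0.7), (5.0, -2.0, 1.0))] for atom in res)
            for res in target_bb]
# One C-alpha displaced by 2 Å: FAPE becomes positive.
bent_bb = [res if i != 2 else (res[0], [res[1][0], 2.0, res[1][2]], res[2])
           for i, res in enumerate(target_bb)]
```

Because both structures are compared in their own local frames, no global superposition step is needed before computing the loss.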

The Scientist's Toolkit: Essential Research Reagents & Solutions

This section details key computational and experimental resources essential for implementing the deterministic methodologies discussed in this guide.

Table 3: Essential Tools for Machine Learning-Assisted Protein Engineering

| Tool / Reagent | Type | Function & Application |
|---|---|---|
| Protein Language Models (PLMs), e.g., ESM-2 | Computational Model | Provides sequence embeddings for machine learning models and enables zero-shot variant ranking via "naturalness" scores, serving as a powerful prior [4]. |
| AlphaFold2 (AF2) | Computational Model | A structure prediction network that can be inverted for de novo protein design by optimizing sequences to fit a target backbone [13]. |
| Random Forest / Neural Network (Ranking Loss) | Computational Model | Top-layer predictors that map PLM embeddings to functional fitness values; ranking loss outperforms regression for activity prediction [4]. |
| FolDE Software | Software Workflow | An open-source ALDE package that implements naturalness warm-starting and diversity-aware batch selection for efficient protein optimization [4]. |
| High-Throughput Screening Assay | Experimental Reagent | A reliable biochemical or cell-based assay (e.g., for enzyme activity or binding affinity) used to generate quantitative fitness data for model training in wet-lab rounds [12] [4]. |

The comparative analysis clearly demonstrates a paradigm shift in protein engineering from probabilistic, resource-intensive experimentation towards deterministic, data-driven strategies. Deterministic approaches, embodied by MLDE and ALDE, offer superior precision, efficiency, and the ability to navigate complex epistatic landscapes that are challenging for traditional DE. While probabilistic methods have historical significance, the future of protein engineering for drug development and biotechnology lies in the integration of deterministic computational frameworks with targeted wet-lab experimentation. This hybrid methodology enables researchers to systematically explore the vast protein sequence space, unlocking the potential to design novel therapeutics and enzymes with unprecedented speed and success.

The Role of Machine Learning in Transforming Experimental Design

The field of experimental design is undergoing a fundamental shift, moving from static, human-planned experiments to dynamic, adaptive processes guided by machine learning (ML). In industrial and research contexts, particularly in drug development, this translates to a transition from Traditional Design of Experiments (DE) to Active Learning-Assisted Design of Experiments (ALDE). Traditional DE relies on pre-defined, often one-shot statistical designs (e.g., full factorial, Response Surface Methodology) to explore a parameter space. While statistically sound, this approach can be inefficient, resource-intensive, and slow to converge on optimal conditions, especially in high-dimensional spaces common in biology and chemistry [14].

ALDE, in contrast, uses ML algorithms to guide an iterative discovery loop. An initial small-scale experiment is conducted, the data is used to train a model, and this model then intelligently selects the most promising or informative experiments to run next. This creates a closed-loop system that minimizes the number of experiments needed to achieve a goal, whether it's optimizing a reaction yield, discovering a new material, or identifying a potent drug candidate [15] [16]. This article provides a comparative analysis of these two paradigms, focusing on their application, performance, and practical implementation.

Performance Comparison: Traditional DE vs. ALDE

The theoretical advantages of ALDE are borne out in quantitative performance metrics across key areas such as efficiency, cost, and success rates. The table below summarizes a comparative analysis based on recent literature and industry data [14] [15] [17].

Table 1: Performance Comparison between Traditional DE and ALDE

| Performance Metric | Traditional DE | ALDE | Context & Notes |
|---|---|---|---|
| Experimental Efficiency | Relies on full or fractional factorial designs; high number of runs. | 40-60% reduction in experiments needed [17]. | ALDE focuses on the most informative experiments, avoiding redundant trials. |
| Resource Utilization | High consumption of reagents, man-hours, and equipment time. | 25-40% improvement in data engineering productivity [15]. | Reduced experimental load directly translates to lower resource use. |
| Success Rate/Accuracy | Limited by pre-defined model assumptions; prone to missing optima. | Better accuracy and insights from complex patterns [17]. | ML models detect non-linear and interactive effects that are hard to pre-specify. |
| Process Duration | Linear, sequential process; can take weeks to months. | 40% reduction in operational costs and time [15]. | Iterative, automated cycles drastically shorten the "run-analyze-decide" loop. |
| Adaptability to Complexity | Effective for low-dimensional problems (e.g., 2-4 factors). | Suitable for high-dimensional spaces (e.g., 100s of molecular descriptors). | ALDE scales to explore vast parameter spaces intractable for traditional DE [14]. |
| Cost Implications | High per-project cost due to extensive experimentation. | 189% to 335% ROI over three years reported [15]. | Major cost savings are achieved through efficiency gains and higher success rates. |

Experimental Protocols and Methodologies

Protocol for Traditional Design of Experiments (DE)

The traditional DE workflow is a linear, sequential process that relies heavily on upfront statistical planning and human oversight.

- Problem Formulation: Clearly define the objective (e.g., "maximize reaction yield") and identify the input factors (e.g., temperature, concentration, catalyst amount) and response variables (e.g., yield, purity).

- Design Selection: Choose an appropriate statistical design based on the objective and number of factors. Common designs include:

- Full Factorial Design: Studies all possible combinations of factor levels. Provides comprehensive data but becomes prohibitively large with many factors.

- Response Surface Methodology (RSM): Typically uses a central composite (or Box-Behnken) design to fit a quadratic model of the response and locate optimal conditions.

- Experimental Execution: Run all experiments as specified by the chosen design matrix. The order is often randomized to avoid confounding from lurking variables.

- Data Analysis & Modeling: Fit a regression model (e.g., a linear or quadratic polynomial) that relates the factors to the response, and use Analysis of Variance (ANOVA) to assess which factors and interactions are statistically significant.

- Optimization & Validation: Use the model to predict the optimal factor settings. Run a final confirmation experiment at these predicted settings to validate the model.
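The run counts behind the design choices above follow from simple combinatorics. A full factorial with L levels on k factors requires L^k runs. A central composite design needs 2^k corner points, 2k axial points, and one or more center points. The sketch below generates both designs and a randomized run sheet. It is written in standard-library Python and assumes coded factor levels, a rotatable axial distance α = (2^k)^(1/4), and a single center point, all chosen for illustration. The subsequent regression and ANOVA step would typically be done in a statistics package.

```python
import itertools
import random

def full_factorial(levels_per_factor):
    """Every combination of factor levels; one tuple per experimental run."""
    return list(itertools.product(*levels_per_factor))

def central_composite(k, n_center=1):
    """Rotatable central composite design for k factors, in coded units."""
    alpha = (2 ** k) ** 0.25                                   # axial distance for rotatability
    corners = list(itertools.product((-1.0, 1.0), repeat=k))    # 2^k factorial points
    axial = []
    for i in range(k):                                         # 2k axial ("star") points
        for value in (-alpha, alpha):
            point = [0.0] * k
            point[i] = value
            axial.append(tuple(point))
    return corners + axial + [tuple([0.0] * k)] * n_center     # plus center point(s)

def randomized_run_order(design, seed=None):
    """Shuffle the run order to guard against confounding by lurking variables."""
    runs = list(design)
    random.Random(seed).shuffle(runs)
    return runs

three_levels = (-1, 0, 1)
print(len(full_factorial([three_levels] * 3)), len(central_composite(3)))  # 27 15
print(len(full_factorial([three_levels] * 6)), len(central_composite(6)))  # 729 77
run_sheet = randomized_run_order(full_factorial([three_levels] * 3), seed=42)
```

The gap between 729 and 77 runs at six factors illustrates why full factorial designs become prohibitively large as the number of factors grows.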

Protocol for Active Learning-Assisted DE (ALDE)

The ALDE workflow is an iterative, closed-loop cycle that leverages machine learning to guide the experimental path dynamically [16].

- Initial Design & Data Collection: Start with a small, space-filling set of initial experiments (e.g., a Latin Hypercube Sample) to gather baseline data across the factor space.

- Model Training: Train a machine learning model on all data collected so far. Suitable models include:

- Gaussian Process (GP) Regression: A powerful non-parametric model that provides uncertainty estimates alongside predictions.

- Random Forests or Gradient Boosting Machines: For handling complex, non-linear relationships.

- Deep Neural Networks: For very high-dimensional data, such as molecular structures represented as graphs [14] [16].

- Acquisition Function Optimization: Use an acquisition function to decide the next most valuable experiment to run. This function balances exploration (sampling areas of high uncertainty) and exploitation (sampling areas predicted to be high-performing). Common acquisition functions include Expected Improvement (EI) and Upper Confidence Bound (UCB).

- Iterative Experimentation: Execute the experiment(s) proposed by the acquisition function.

- Model Update & Loop: Add the new data to the training set and update the ML model. Repeat steps 2-4 until a stopping criterion is met (e.g., budget exhausted, performance target reached, or convergence).
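The five-step ALDE loop above can be sketched in plain Python (standard library only). This is a toy illustration under stated assumptions, not a production implementation. The GP uses a fixed squared-exponential kernel with hypothetical hyperparameters (`length_scale=0.2`, `noise=1e-4`) rather than fitted ones. The acquisition function (Expected Improvement) is maximized by random search over candidate points. `toy_yield` is an invented response surface standing in for a real experiment. Libraries such as BoTorch or Ax typically handle hyperparameter fitting and acquisition optimization far more robustly.

```python
import math
import random

def lhs(n, dims, rng):
    """Latin hypercube sample in [0, 1)^dims: one point per stratum in every dimension."""
    columns = []
    for _ in range(dims):
        strata = list(range(n))
        rng.shuffle(strata)
        columns.append([(s + rng.random()) / n for s in strata])
    return [list(point) for point in zip(*columns)]

def rbf(a, b, length_scale=0.2):
    """Squared-exponential kernel; rbf(x, x) == 1."""
    sq = sum((x - y) ** 2 for x, y in zip(a, b))
    return math.exp(-sq / (2.0 * length_scale ** 2))

def cholesky(m):
    """Lower-triangular L with L L^T = m (m symmetric positive definite)."""
    n = len(m)
    L = [[0.0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1):
            s = sum(L[i][k] * L[j][k] for k in range(j))
            L[i][j] = math.sqrt(m[i][i] - s) if i == j else (m[i][j] - s) / L[j][j]
    return L

def solve_lower(L, b):
    """Forward substitution for L x = b."""
    x = []
    for i, bi in enumerate(b):
        x.append((bi - sum(L[i][k] * x[k] for k in range(i))) / L[i][i])
    return x

def solve_upper_t(L, b):
    """Back substitution for L^T x = b."""
    n = len(b)
    x = [0.0] * n
    for i in reversed(range(n)):
        x[i] = (b[i] - sum(L[k][i] * x[k] for k in range(i + 1, n))) / L[i][i]
    return x

def fit_gp(xs, ys, noise=1e-4):
    """Step 2: GP regression on mean-centred data; returns predict(x) -> (mean, std)."""
    xs = [list(x) for x in xs]
    mu0 = sum(ys) / len(ys)
    K = [[rbf(a, b) + (noise if i == j else 0.0) for j, b in enumerate(xs)]
         for i, a in enumerate(xs)]
    L = cholesky(K)
    alpha = solve_upper_t(L, solve_lower(L, [y - mu0 for y in ys]))

    def predict(x):
        k = [rbf(x, a) for a in xs]
        v = solve_lower(L, k)
        var = max(1.0 - sum(t * t for t in v), 0.0)  # prior variance rbf(x, x) = 1
        return mu0 + sum(ki * ai for ki, ai in zip(k, alpha)), math.sqrt(var)

    return predict

def expected_improvement(mean, std, best, xi=0.01):
    """Step 3: EI for maximisation; larger xi favours exploration."""
    if std <= 0.0:
        return 0.0
    gap = mean - best - xi
    z = gap / std
    cdf = 0.5 * math.erfc(-z / math.sqrt(2.0))
    pdf = math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)
    return gap * cdf + std * pdf

def alde_loop(objective, dims, n_init=8, n_iter=15, n_candidates=200, seed=0):
    rng = random.Random(seed)
    xs = lhs(n_init, dims, rng)               # step 1: space-filling initial design
    ys = [objective(x) for x in xs]
    for _ in range(n_iter):                   # stopping criterion: fixed budget
        predict = fit_gp(xs, ys)              # step 2: retrain on all data so far
        best = max(ys)
        pool = [[rng.random() for _ in range(dims)] for _ in range(n_candidates)]
        nxt = max(pool, key=lambda x: expected_improvement(*predict(x), best))  # step 3
        xs.append(nxt)                        # step 4: run the proposed experiment
        ys.append(objective(nxt))             # step 5: add the result and repeat
    return xs, ys

def toy_yield(x):
    """Hypothetical response surface (invented, not real data); optimum at (0.3, 0.7)."""
    return math.exp(-((x[0] - 0.3) ** 2 + (x[1] - 0.7) ** 2) / 0.05)

history_x, history_y = alde_loop(toy_yield, dims=2, n_init=6, n_iter=10)
best_index = max(range(len(history_y)), key=history_y.__getitem__)
```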

Workflow Visualization

The following diagram illustrates the logical flow and key differences between the Traditional DE and ALDE protocols, highlighting the linear nature of the former and the adaptive loop of the latter.

The Scientist's Toolkit: Essential Research Reagents & Solutions

Implementing ALDE requires a combination of computational tools and experimental infrastructure. The following table details key resources for building an ALDE pipeline [14] [15] [16].

Table 2: Key Research Reagent Solutions for ALDE

| Tool/Reagent Category | Specific Examples | Function & Role in ALDE |
|---|---|---|
| ML Frameworks & Libraries | TensorFlow, PyTorch, Scikit-learn [14] | Provides the core algorithms for building, training, and deploying models like GPs and DNNs for predictive tasks. |
| Specialized Drug Discovery Toolkits | Therapeutics Data Commons (TDC), DeepPurpose, MolDesigner [16] | Offer curated datasets, benchmarks, and pre-built models specifically for molecular property prediction and de novo drug design. |
| Active Learning & Optimization Libs | Bayesian Optimization libraries (e.g., BoTorch, Ax) | Implement acquisition functions and provide frameworks for managing the iterative ALDE loop. |
| High-Throughput Experimentation (HTE) | Automated liquid handlers, microplate readers, robotic synthesizers. | Enables the rapid execution of the small-scale, parallel experiments proposed by the ALDE algorithm. |
| Data Management Platforms | Cloud databases (AWS, GCP, Azure), MLOps platforms (MLflow, Weights & Biases). | Handles the storage, versioning, and processing of large, complex datasets generated during the iterative process. |
| Molecular Descriptors & Representations | SMILES strings, Molecular fingerprints, Graph representations [16]. | Standardized ways to represent chemical structures as input for ML models, enabling predictions of properties and activities. |

The evidence demonstrates that Active Learning-Assisted Design of Experiments represents a superior paradigm for modern experimental challenges, particularly in complex fields like drug development. While Traditional DE provides a foundational and reliable approach for well-understood, low-dimensional problems, ALDE offers transformative gains in efficiency, cost-effectiveness, and the ability to navigate high-dimensional search spaces. The integration of machine learning into the experimental core creates a powerful, adaptive system that accelerates the pace of discovery and optimization. As ML tools become more accessible and integrated into laboratory instrumentation, the adoption of ALDE is poised to become a standard practice, empowering researchers and scientists to solve problems that were previously considered intractable.

Directed evolution (DE) has long been a cornerstone of protein engineering, enabling researchers to optimize proteins for therapeutic, industrial, and research applications through iterative cycles of mutagenesis and screening. This empirical "hill-climbing" approach, while powerful, operates with limited knowledge of the complex fitness landscape that maps protein sequence to function. The inherent challenge lies in the high-dimensional sequence space and epistatic interactions, where the effect of one mutation depends on the presence of others, creating rugged fitness landscapes difficult to traverse with traditional methods [12]. These landscapes are particularly challenging when rich in epistasis, which is frequently observed between mutations in close structural proximity and enriched at binding surfaces or enzyme active sites due to direct interactions between residues, substrates, and/or cofactors [12].

The emergence of machine learning-assisted directed evolution (MLDE), particularly active learning-assisted directed evolution (ALDE), represents a paradigm shift in protein engineering methodology. These approaches leverage computational forecasting and data-driven exploration to navigate fitness landscapes more efficiently than traditional DE. Where DE operates through experimental brute force, ALDE employs iterative model refinement to predict high-fitness variants, fundamentally changing the exploration-exploitation balance in protein optimization [6] [4]. This comparison guide examines the key performance differentiators between these methodologies, providing experimental validation and implementation frameworks for researchers considering adoption of ALDE strategies.

Performance Comparison: Traditional DE vs. ALDE

Table 1: Comprehensive Performance Metrics of Traditional DE vs. ALDE

| Performance Metric | Traditional DE | ALDE | Experimental Context |
|---|---|---|---|
| Screening Efficiency | Requires testing of thousands to millions of variants [4] | Effective with batches of 16-48 variants over 3 rounds [4] | Low-throughput optimization campaigns |
| Success Rate for Top 1% Mutants | Baseline (reference) | 55% more likely to find top 1% mutants [4] | Simulation across 20 protein targets |
| Yield Improvement | 12% yield at the start of the campaign [6] | 93% final yield (681% relative improvement) [6] | Cyclopropanation reaction optimization |
| Handling of Epistatic Landscapes | Inefficient due to greedy hill-climbing [12] | Superior navigation of rugged landscapes [12] [6] | 5 epistatic residues in enzyme active site |
| Top 10% Mutant Discovery | Baseline (reference) | 23% more top 10% mutants discovered (p=0.005) [4] | Multi-mutation benchmark datasets |
| Dependence on High-Throughput Screening | Required for practical implementation | Not required; optimized for low-throughput settings [4] | Targets lacking high-throughput screens |

Table 2: Advantages of Advanced ALDE Implementations (FolDE)

| Feature | Standard ALDE | FolDE Implementation | Impact on Performance |
|---|---|---|---|
| Initial Variant Selection | Random sampling or top-N predictions | Naturalness-based warm-starting [4] | 3.8× more top 10% mutants in round 1 [4] |
| Training Data Diversity | Prone to homogeneous batches | Constant-liar batch selection [4] | Improved exploration of sequence space |
| Activity Prediction Model | Random forest with PLM embeddings [4] | Neural network with ranking loss + ensemble [4] | Better identification of top performers |
| Exploration-Exploitation Balance | Suboptimal tradeoff | Managed through specialized policies [4] | 55% higher success for top 1% mutants [4] |

Experimental Evidence: Validating ALDE Performance

Case Study: Enzyme Engineering for Cyclopropanation

In a rigorous application to enzyme engineering, ALDE was deployed to optimize five epistatic residues in the active site of an enzyme for a non-native cyclopropanation reaction [6]. The experimental protocol involved:

- Library Design: Targeting five residues in the enzyme active site known to exhibit strong epistatic interactions

- Expression & Screening: Three rounds of wet-lab experimentation with iterative model refinement

- Analytical Methods: Product yield quantification to determine catalytic efficiency

The results demonstrated a dramatic improvement from 12% to 93% yield of the desired cyclopropanation product in just three rounds of experimentation [6]. This case highlights ALDE's particular strength for challenging optimization tasks where traditional DE struggles with epistatic constraints. The ALDE workflow successfully navigated a rugged fitness landscape that would have been difficult to traverse using conventional greedy hill-climbing approaches [6].

Large-Scale Computational Validation

To address reproducibility concerns, researchers conducted comprehensive computational simulations across 16 diverse combinatorial protein fitness landscapes spanning six protein systems and two function types (protein binding and enzyme activity) [12]. The experimental framework included:

- Landscape Diversity: Selection of landscapes with varying statistical attributes and ruggedness

- Algorithm Testing: Multiple MLDE strategies (including active learning and focused training)

- Performance Benchmarking: Comparison against traditional DE using standardized metrics

The study revealed that MLDE strategies consistently matched or exceeded DE performance across all 16 landscapes, with advantages becoming more pronounced as landscape difficulty increased [12]. Specifically, MLDE provided greater relative benefits on landscapes with fewer active variants and more local optima, characteristics that pose significant challenges for traditional directed evolution [12].

Methodological Comparison: Workflows and Implementation

Traditional Directed Evolution Workflow

The following diagram illustrates the iterative experimental process of traditional directed evolution:

Traditional DE Workflow Diagram Description: This process follows a repetitive cycle of diversification (mutagenesis) and selection (screening) without computational guidance between rounds. Each cycle depends on experimental throughput rather than intelligent forecasting.

Active Learning-Assisted Directed Evolution (ALDE) Workflow

The following diagram illustrates the integrated computational-experimental process of ALDE:

ALDE Workflow Diagram Description: This iterative feedback loop combines computational forecasting with experimental validation. The model improves with each round as newly tested variants enrich the training data, enabling progressively more accurate predictions.

Key Methodological Differences

The transition from traditional DE to ALDE involves several fundamental shifts in approach:

- Exploration Strategy: Traditional DE relies on experimental brute force with limited strategic direction, while ALDE employs guided exploration based on model predictions and uncertainty quantification [6] [4]

- Data Utilization: Traditional DE uses experimental data only for immediate variant selection, while ALDE accumulates knowledge across rounds to build increasingly accurate landscape models [12]

- Epistasis Management: Traditional DE struggles with epistatic interactions, while ALDE models can capture non-additive effects and identify combinations of mutations that work synergistically [12]

- Resource Allocation: Traditional DE requires massive screening campaigns, while ALDE optimizes experimental resources by prioritizing informative variants [4]

Research Reagent Solutions Toolkit

Table 3: Essential Research Tools for ALDE Implementation

| Tool Category | Specific Examples | Function in ALDE Workflow |
|---|---|---|
| Protein Language Models | ESM-family models [4] | Generate sequence embeddings and naturalness scores for zero-shot prediction |
| Activity Prediction Models | Random Forest, Neural Networks with ranking loss [4] | Predict variant fitness from sequence embeddings and experimental data |
| Experimental Assays | Isothermal Titration Calorimetry, Surface Plasmon Resonance [18] | Measure binding affinity and protein-ligand interactions for training data |
| Computational Infrastructure | GPUs for model training, Sequence embedding pipelines [4] | Enable efficient model training and inference on large sequence spaces |
| Focused Training Enhancers | Zero-shot predictors leveraging evolutionary, structural, and stability knowledge [12] | Enrich training sets with informative variants to improve model performance |

Discussion and Implementation Guidelines

When to Adopt ALDE: Key Decision Factors

Based on comprehensive benchmarking studies, ALDE provides the greatest advantages over traditional DE under these conditions:

- Epistatic Landscapes: ALDE significantly outperforms traditional DE on fitness landscapes with substantial epistatic interactions, which are challenging for greedy hill-climbing approaches [12]

- Limited Screening Capacity: When high-throughput screening is unavailable or impractical, ALDE achieves superior results with orders of magnitude fewer variants tested [4]

- Complex Functions: For optimizing multidimensional protein functions (e.g., enzyme activity with multiple substrate specificities) where simple fitness functions are inadequate [12]

- Resource Constraints: ALDE reduces experimental costs by minimizing the number of variants requiring synthesis and characterization [6]

Implementation Best Practices

Successful ALDE implementation requires careful attention to several critical factors:

- Training Set Design: Focused training using zero-shot predictors that leverage evolutionary, structural, and stability knowledge consistently outperforms random sampling [12]

- Model Selection: Neural networks with ranking loss slightly outperform random forests for activity prediction in batch selection contexts [4]

- Exploration-Exploitation Balance: Naturalness-based warm-starting improves early-round performance while maintaining diversity for subsequent model training [4]

- Multi-round Planning: Design campaigns with at least 3-4 rounds to fully leverage ALDE's iterative improvement capabilities [4]

The adoption of ALDE is driven by its demonstrated ability to overcome fundamental limitations of traditional directed evolution. Quantitative evidence across diverse protein systems reveals substantial improvements in efficiency (55% higher success rate for top 1% mutants), efficacy (681% relative yield improvement in challenging enzyme engineering), and capability (effective navigation of epistatic landscapes). While traditional DE remains effective for simpler optimization tasks, ALDE provides a superior approach for the most challenging protein engineering problems, particularly those involving epistatic interactions, limited screening capacity, or complex fitness landscapes. The availability of open-source ALDE implementations like FolDE now makes these advanced capabilities accessible to any research laboratory [4].

Implementing ALDE: A Step-by-Step Framework for Biomedical Research

Directed evolution (DE) has long been a cornerstone of protein engineering, enabling researchers to optimize protein fitness for specific applications through iterative rounds of mutagenesis and screening. This approach mimics natural evolution in the laboratory, accumulating beneficial mutations to enhance protein performance. However, traditional DE methods face significant limitations when navigating complex protein fitness landscapes where mutations exhibit non-additive, or epistatic, behavior. In such landscapes, the effect of a mutation depends on the genetic background in which it occurs, causing simple greedy hill-climbing optimization to become trapped at local optima. The vastness of protein sequence space – with 20^N possible sequences for a protein of length N – makes comprehensive exploration experimentally intractable.

Active Learning-assisted Directed Evolution (ALDE) represents a paradigm shift in protein engineering, integrating machine learning with traditional directed evolution to navigate these complex fitness landscapes more efficiently. By leveraging uncertainty quantification and iterative model updating, ALDE enables more intelligent exploration of sequence space, requiring fewer experimental rounds to identify high-fitness variants. This guide provides a comprehensive comparison of traditional DE versus ALDE methodologies, examining their experimental workflows, performance metrics, and practical implementation strategies for researchers and drug development professionals.

Experimental Foundations: Methodologies Compared

Traditional Directed Evolution Workflow

Traditional DE follows a systematic, though computationally naive, approach to protein optimization:

- Library Generation: Create genetic diversity through random mutagenesis or site-specific saturation targeting single or multiple residues.

- Screening/Selection: Assay variants for the desired fitness property (e.g., enzymatic activity, binding affinity, stability).

- Variant Selection: Identify and isolate top-performing variants based on experimental measurements.

- Iteration: Use the best variant(s) as templates for subsequent rounds of mutagenesis and screening.

This process resembles greedy hill-climbing optimization, where each step aims to immediately improve fitness. While effective on smooth fitness landscapes with additive mutation effects, this approach struggles with epistatic interactions where the beneficial effect of a mutation combination isn't predictable from individual mutations. In such cases, DE may require numerous experimental rounds and screening of thousands to millions of variants to locate global optima.

Active Learning-Assisted Directed Evolution (ALDE) Framework

ALDE introduces a computational intelligence layer to the directed evolution process, creating a closed-loop system between experimental measurement and machine learning prediction. The core ALDE workflow, as described by Yang et al., comprises several key stages [19]:

- Design Space Definition: Select k target residues for optimization, defining a design space of 20^k possible sequences.

- Initial Data Collection: Screen an initial library of variants mutated at all k positions.

- Model Training: Use collected sequence-fitness data to train a supervised ML model that predicts fitness from sequence.

- Uncertainty Quantification: Leverage model uncertainty estimates to balance exploration and exploitation.

- Variant Prioritization: Apply an acquisition function to rank all sequences in the design space.

- Iterative Testing: Experimentally test top-ranked variants and update the model with new data.

This workflow alternates between wet-lab experimentation and computational modeling, with each round of experimental data improving the model's understanding of the fitness landscape [19]. FolDE, a recently developed ALDE method, enhances this framework further through naturalness warm-starting (using protein language model outputs to augment limited activity measurements) and diversity-aware batch selection to improve exploration [4].
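The naturalness warm-start described for FolDE amounts to initializing the activity model from weights pre-trained on a cheap proxy target, then fine-tuning on the scarce measurements. The sketch below shows only that two-stage idea, under heavy simplifying assumptions. A linear model on one-hot features trained with squared error stands in for FolDE's neural network with ranking loss on PLM embeddings. The naturalness scores are invented placeholders rather than ESM2 outputs. The three-residue "wild type", the assay values, and all function names are hypothetical.

```python
import random

ALPHABET = "ACDEFGHIKLMNPQRSTVWY"

def one_hot(seq):
    """Flattened one-hot encoding: 20 features per position."""
    return [1.0 if aa == a else 0.0 for aa in seq for a in ALPHABET]

def sgd_fit(features, targets, weights=None, lr=0.05, epochs=200, seed=0):
    """Linear least-squares model trained by SGD; pass `weights` to warm-start."""
    rng = random.Random(seed)
    w = list(weights) if weights is not None else [0.0] * (len(features[0]) + 1)
    order = list(range(len(features)))
    for _ in range(epochs):
        rng.shuffle(order)
        for i in order:
            x = features[i] + [1.0]                   # append bias feature
            err = sum(wi * xi for wi, xi in zip(w, x)) - targets[i]
            w = [wi - lr * err * xi for wi, xi in zip(w, x)]
    return w

def predict(w, features):
    return sum(wi * xi for wi, xi in zip(w, features + [1.0]))

WILD_TYPE = "ACD"   # hypothetical 3-residue design region
singles = [WILD_TYPE[:i] + a + WILD_TYPE[i + 1:]
           for i in range(len(WILD_TYPE)) for a in ALPHABET if a != WILD_TYPE[i]]
# Stage 1: pre-train on naturalness scores for every single mutant.
# Placeholder scores; a real pipeline would compute these with a PLM such as ESM2.
naturalness = [1.0 if s[0] == "W" else 0.0 for s in singles]
w_pretrained = sgd_fit([one_hot(s) for s in singles], naturalness)
# Stage 2: fine-tune the pre-trained weights on a few (hypothetical) activity measurements.
assays = {"WCD": 0.9, "ECD": 0.1, "AWD": 0.3}
w_finetuned = sgd_fit([one_hot(s) for s in assays], list(assays.values()),
                      weights=w_pretrained, epochs=50)
```

The design point is that stage 2 starts from `w_pretrained` instead of zeros, so the few activity measurements refine an already informative model rather than training one from scratch.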

Table 1: Core Components of ALDE Workflows

| Component | Function | Implementation Examples |
|---|---|---|
| Protein Language Models | Generate sequence embeddings and naturalness scores | ESM2 [4] |
| Uncertainty Quantification | Balance exploration vs. exploitation | Frequentist methods, Bayesian optimization [19] |
| Acquisition Function | Rank variants for experimental testing | Expected improvement, upper confidence bound [19] |
| Activity Prediction Model | Map sequence/embeddings to fitness | Random forest, neural networks with ranking loss [4] |
| Batch Selection Strategy | Ensure diversity in selected variants | Constant-liar algorithm, stratified sampling [4] |

Workflow Visualization: ALDE in Practice

The following diagram illustrates the integrated experimental-computational workflow of Active Learning-assisted Directed Evolution:

Case Study: Experimental Protocol & Implementation

ALDE Application to Protoglobin Engineering

A recent study by Yang et al. demonstrates a practical implementation of ALDE for optimizing a challenging epistatic system [19]. The research aimed to engineer the active site of a protoglobin from Pyrobaculum arsenaticum (ParPgb) for improved non-native cyclopropanation activity.

Experimental Protocol:

Target Identification: Five epistatic residues (W56, Y57, L59, Q60, and F89) in the ParPgb active site were selected based on previous studies indicating their impact on non-native activity and potential for negative epistasis.

Initial Library Construction: Researchers synthesized an initial library of ParLQ (ParPgb W59L Y60Q) variants mutated at all five positions using PCR-based mutagenesis with NNK degenerate codons.
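The choice of NNK codons (N = A/C/G/T, K = G/T) can be checked directly against the genetic code. The short script below assumes the standard codon table. It confirms that NNK uses 32 codons, still encodes all 20 amino acids, and retains only one stop codon (TAG), compared with 64 codons and three stops for fully random NNN codons. It also compares the codon-level library size for five NNK sites with the 20^5 protein sequences those sites encode.

```python
from itertools import product

BASES = "TCAG"
# Standard genetic code, codons enumerated in TCAG order at each position; '*' = stop.
AMINO_ACIDS = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {"".join(codon): aa for codon, aa in zip(product(BASES, repeat=3), AMINO_ACIDS)}
IUPAC = {"A": "A", "C": "C", "G": "G", "T": "T", "N": "ACGT", "K": "GT"}

def expand(degenerate_codon):
    """All concrete codons matched by a degenerate codon such as 'NNK'."""
    return ["".join(c) for c in product(*(IUPAC[b] for b in degenerate_codon))]

def summarize(degenerate_codon):
    encoded = [CODON_TABLE[c] for c in expand(degenerate_codon)]
    return {"codons": len(encoded),
            "amino_acids": len({aa for aa in encoded if aa != "*"}),
            "stop_codons": encoded.count("*")}

nnk = summarize("NNK")   # {'codons': 32, 'amino_acids': 20, 'stop_codons': 1}
nnn = summarize("NNN")   # {'codons': 64, 'amino_acids': 20, 'stop_codons': 3}
# Codon-level library size for five NNK sites vs. the protein-level design space.
nnk_library_5_sites = nnk["codons"] ** 5   # 33,554,432
protein_space_5_sites = 20 ** 5            # 3,200,000
```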

Fitness Assay: Variants were screened for cyclopropanation of 4-vinylanisole using ethyl diazoacetate as a carbene precursor. The fitness objective was defined as the difference between yield of cis-2a and trans-2a cyclopropane products.

Machine Learning Integration:

- Model Training: Sequence-fitness data was used to train supervised ML models mapping sequence to fitness.

- Uncertainty Quantification: Both frequentist and Bayesian approaches were evaluated for balancing exploration and exploitation.

- Variant Selection: Acquisition functions ranked all sequences in the 20^5 design space to prioritize variants for subsequent rounds.

Iterative Rounds: Three rounds of ALDE were performed, with each round's experimental results updating the predictive model for the next selection cycle [19].

Reagent Solutions for ALDE Implementation

Table 2: Essential Research Reagents for ALDE Workflows

| Reagent/Resource | Function in ALDE Workflow | Implementation Example |
|---|---|---|
| NNK Degenerate Codons | Library generation with reduced codon redundancy | ParPgb active site mutagenesis [19] |
| Protein Language Models | Generate sequence embeddings & naturalness priors | ESM2 for naturalness warm-starting [4] |
| Active Learning Algorithms | Select informative variants for testing | Batch Bayesian optimization with uncertainty quantification [19] |
| Fitness Assay Systems | Quantitative measurement of target property | GC analysis of cyclopropanation products [19] |
| MLDE Software Platforms | Implement active learning workflows | ALDE codebase (https://github.com/jsunn-y/ALDE) [19] |

Performance Comparison: Quantitative Analysis

Efficiency Metrics and Experimental Outcomes

Direct comparison of traditional DE versus ALDE approaches reveals significant differences in optimization efficiency and success rates:

Table 3: Performance Comparison of DE vs. ALDE Methodologies

| Performance Metric | Traditional DE | ALDE | FolDE |
|---|---|---|---|
| Rounds to Optimization | Multiple (4+) rounds of greedy hill-climbing | 3 rounds for ParPgb optimization [19] | 3 rounds in simulation benchmarks [4] |
| Variants Screened | Typically thousands to millions | ~0.01% of design space explored [19] | 48 variants total (16 per round) [4] |
| Yield Improvement | Incremental improvements per round | 12% to 93% yield in 3 rounds [19] | N/A (simulation study) |
| Top 10% Mutants Found | Limited by local optimization | Significantly enhanced vs. DE [19] | 23% more than best baseline [4] |
| Success with Epistasis | Becomes trapped at local optima | Effectively navigates epistatic landscapes [19] | 55% more likely to find top 1% mutants [4] |
| Key Advantage | Simple implementation | Efficient exploration of complex landscapes | Naturalness warm-starting improves prediction |

The ParPgb case study exemplifies ALDE's efficiency gains. While traditional DE approaches like single-site saturation mutagenesis and recombination of beneficial mutations failed to produce variants with high yield and selectivity, ALDE identified an optimal variant with 99% total yield and 14:1 diastereoselectivity after just three rounds while exploring only approximately 0.01% of the total design space [19].
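For scale, the five-residue ParPgb design space and the stated 0.01% fraction work out as follows. This is a back-of-envelope calculation from the figures above, not a count reported by the study:

$$20^{5} = 3{,}200{,}000 \ \text{sequences}, \qquad 10^{-4} \times 3.2 \times 10^{6} = 320 \ \text{sequences}$$

In other words, the campaign sampled on the order of a few hundred variants out of several million possible sequences.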

Computational Benchmarking Across Diverse Protein Targets

FolDE's performance has been systematically evaluated across multiple protein targets through computational simulations. Using datasets from ProteinGym, researchers benchmarked FolDE against three baseline methods: random selection (traditional DE), zero-shot naturalness-based selection, and random forest with ESM2 embeddings [4]:

- FolDE discovered 23% more top 10% mutants than the best baseline method (p=0.005)

- FolDE was 55% more likely to find top 1% mutants compared to baselines

- The method was particularly effective on multi-mutation landscapes, which better approximate real engineering campaigns
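The two headline metrics can be made concrete with a small helper. The sketch below assumes, as in retrospective simulations on fully measured ProteinGym-style datasets, that the true fitness of every variant is known; the function name `top_fraction_hits` is hypothetical and is not taken from the FolDE code.

```python
import numpy as np


def top_fraction_hits(all_fitness, selected_idx, top_frac=0.10):
    """Count selected variants whose true fitness lies in the top `top_frac` of the landscape.

    Hypothetical helper: assumes ground-truth fitness is known for every variant,
    which is only true in retrospective (simulated) benchmarks.
    """
    all_fitness = np.asarray(all_fitness, dtype=float)
    # Fitness at the (1 - top_frac) quantile marks the cutoff for the top fraction.
    cutoff = np.quantile(all_fitness, 1.0 - top_frac)
    # De-duplicate so a variant screened twice is only counted once.
    selected = np.unique(np.asarray(selected_idx, dtype=int))
    return int(np.sum(all_fitness[selected] >= cutoff))


# Toy landscape of 100 variants with fitness 0..99; four variants were screened.
fitness = np.arange(100)
screened = [95, 50, 99, 89]
n_top10 = top_fraction_hits(fitness, screened, top_frac=0.10)  # counts 95 and 99
found_top1 = top_fraction_hits(fitness, screened, top_frac=0.01) > 0  # 99 is in the top 1%
```

Under this framing, a statement like "23% more top 10% mutants" would compare such counts for one method against the best baseline, aggregated over many simulated campaigns; the exact aggregation used in the benchmark is not reproduced here.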

These improvements are primarily attributed to FolDE's naturalness warm-starting approach, which augments limited activity measurements with protein language model outputs to improve activity prediction [4]. The constant-liar batch selection strategy also contributed to batch diversity, though its effect was more limited in the benchmarks.

Discussion: Implementation Considerations and Future Directions

Practical Guidelines for ALDE Deployment

Based on the examined studies, successful ALDE implementation requires careful consideration of several factors:

Design Space Selection: The choice of k target residues balances epistasis consideration against combinatorial complexity. Larger k values capture more epistatic effects but require more data for effective modeling [19].

Initial Library Strategy: While random initial selection is common, naturalness-based warm-starting (as in FolDE) provides better initial variants but may limit diversity. This tension between round-1 performance and round-2 model training must be carefully managed [4].
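One way to manage this tension is to rank the candidate library by a zero-shot score and then enforce a minimum sequence distance within the initial batch. The sketch below is a generic illustration, not FolDE's actual procedure: the zero-shot scores are assumed to be precomputed (for example, from a protein language model), and `warm_start_batch` and its `min_hamming` parameter are hypothetical.

```python
def hamming(a, b):
    """Number of positions at which two equal-length variants differ."""
    return sum(x != y for x, y in zip(a, b))


def warm_start_batch(variants, zero_shot_scores, batch_size, min_hamming=2):
    """Greedily pick high-scoring variants while keeping them mutually diverse.

    Hypothetical helper: `zero_shot_scores` is assumed to be precomputed
    (e.g., a language-model naturalness score); higher means more promising.
    """
    # Visit candidates from the highest zero-shot score to the lowest.
    order = sorted(range(len(variants)), key=lambda i: zero_shot_scores[i], reverse=True)
    chosen = []
    for i in order:
        # Skip candidates too similar to something already in the batch.
        if all(hamming(variants[i], variants[j]) >= min_hamming for j in chosen):
            chosen.append(i)
        if len(chosen) == batch_size:
            break
    return [variants[i] for i in chosen]


library = ["WYLQF", "WYLQA", "WYAAF", "GYLQF", "AAAAA"]
scores = [5.0, 4.9, 3.0, 2.0, 1.0]
batch = warm_start_batch(library, scores, batch_size=3)
```

Setting `min_hamming=1` reduces this to plain top-k selection by score, which favors round-1 performance; larger values trade some of that for the diversity the round-2 model needs.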

Model Selection and Training: In the FolDE benchmarks, neural networks trained with a ranking loss outperformed both regression-trained networks and random forests for activity prediction, and ensembling the models further improved performance by providing uncertainty estimates [4].
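The intuition behind a ranking loss is that variant selection only needs the model to order variants correctly, not to predict their absolute activity. The sketch below shows a generic pairwise logistic (RankNet-style) loss in NumPy; it is an illustrative assumption, since the exact loss used in FolDE is not specified here.

```python
import numpy as np


def pairwise_logistic_ranking_loss(pred, fitness):
    """Mean log(1 + exp(-(s_i - s_j))) over all pairs where fitness_i > fitness_j.

    The loss is small when predicted scores order variants the same way as the
    measured fitness, and grows when the predicted order is inverted.
    """
    pred = np.asarray(pred, dtype=float)
    fitness = np.asarray(fitness, dtype=float)
    # diff[i, j] = s_i - s_j for every ordered pair of variants.
    diff = pred[:, None] - pred[None, :]
    # Keep only pairs where variant i was measured as fitter than variant j.
    better = fitness[:, None] > fitness[None, :]
    if not better.any():
        return 0.0
    # np.logaddexp(0, x) evaluates log(1 + exp(x)) without overflow.
    return float(np.mean(np.logaddexp(0.0, -diff[better])))


measured = np.array([0.1, 0.4, 0.9])
well_ordered = np.array([0.0, 10.0, 20.0])
inverted = well_ordered[::-1]
```

Because the loss depends only on score differences, adding a constant to every prediction leaves it unchanged, whereas a regression loss such as mean squared error would penalize that offset even though the ranking is identical.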

Batch Selection Strategy: Diversity-aware selection methods like the constant-liar algorithm help prevent over-concentration on slight variants of known top performers, ensuring continued exploration of the fitness landscape [4].
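The constant-liar idea can be sketched with a small Gaussian-process model: after each pick, the chosen candidate is temporarily added to the training data with a pessimistic "lie" (here, the lowest fitness observed so far), the model is refit, and the next candidate is chosen. This shrinks the predicted upside of near-duplicates of earlier picks. The kernel, the upper-confidence-bound acquisition, the choice of lie, and all function names below are assumptions for illustration, not the configuration used in FolDE.

```python
import numpy as np


def rbf_kernel(A, B, length_scale=1.0):
    """Squared-exponential kernel on feature rows (e.g., one-hot encodings)."""
    sq_dist = ((A[:, None, :] - B[None, :, :]) ** 2).sum(axis=-1)
    return np.exp(-sq_dist / (2.0 * length_scale**2))


def gp_posterior(X_train, y_train, X_query, noise=1e-2, length_scale=1.0):
    """Posterior mean and standard deviation of a GP with a constant (mean-of-y) prior."""
    y_mean = y_train.mean()
    K = rbf_kernel(X_train, X_train, length_scale) + noise * np.eye(len(X_train))
    K_star = rbf_kernel(X_query, X_train, length_scale)
    L = np.linalg.cholesky(K)
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y_train - y_mean))
    mean = y_mean + K_star @ alpha
    v = np.linalg.solve(L, K_star.T)
    # Prior variance is 1 because rbf_kernel(x, x) == 1.
    var = np.clip(1.0 - (v**2).sum(axis=0), 1e-12, None)
    return mean, np.sqrt(var)


def constant_liar_batch(X_train, y_train, X_pool, batch_size, beta=2.0):
    """Select `batch_size` pool indices by UCB, inserting a pessimistic lie after each pick."""
    X_aug = np.asarray(X_train, dtype=float)
    y_aug = np.asarray(y_train, dtype=float)
    X_pool = np.asarray(X_pool, dtype=float)
    lie = float(y_aug.min())  # pessimistic placeholder for not-yet-measured picks
    chosen = []
    for _ in range(batch_size):
        mean, std = gp_posterior(X_aug, y_aug, X_pool)
        ucb = mean + beta * std
        ucb[chosen] = -np.inf  # never select the same candidate twice
        pick = int(np.argmax(ucb))
        chosen.append(pick)
        # Pretend the pick was measured at the lie value; the model is refit next pass.
        X_aug = np.vstack([X_aug, X_pool[pick]])
        y_aug = np.append(y_aug, lie)
    return chosen


# 1-D toy example: two identical candidates near the best measured point, one far away.
X_train = np.array([[0.0], [3.0]])
y_train = np.array([0.0, 1.0])
X_pool = np.array([[3.5], [3.5], [8.0]])
batch = constant_liar_batch(X_train, y_train, X_pool, batch_size=2, beta=0.5)
```

In this toy case, plain top-2 selection by UCB would take both identical candidates, while the constant-liar batch takes one of them plus the unexplored far point.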

Comparative Advantages and Limitations

ALDE represents a significant advancement over traditional DE, particularly for challenging optimization problems with substantial epistasis. The methodology's key advantage lies in its data efficiency: it achieves superior results with far fewer experimental measurements. This makes previously intractable engineering problems feasible, especially for targets lacking high-throughput screening methods.

However, ALDE introduces additional complexity in experimental design and requires computational expertise. The need for well-defined fitness assays and quantitative measurements remains, and model performance depends on the quality and representation of initial training data. Traditional DE may still be preferable for simpler optimization tasks with minimal epistasis or when computational resources are limited.

As protein language models and active learning algorithms continue to advance, ALDE methodologies are likely to become more accessible and effective. The integration of structural information, improved uncertainty quantification, and adaptive experimental design will further enhance ALDE's capabilities, solidifying its role as a powerful tool for protein engineers and drug development professionals.

Data Requirements and Preparation for Effective Active Learning

Directed evolution (DE), the cornerstone of modern protein engineering, operates as a greedy hill-climbing optimization across vast protein fitness landscapes [19]. This process involves accumulating beneficial mutations through iterative cycles of mutagenesis and screening. However, its efficiency is severely hampered by epistasis—non-additive interactions between mutations—which creates rugged fitness landscapes rich in local optima that trap conventional DE [19] [12]. In such landscapes, beneficial mutations in isolation often fail to combine productively, making successful navigation contingent on exploring complex, high-order sequence combinations.
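How epistasis traps a greedy walk can be shown with a two-site toy landscape exhibiting reciprocal sign epistasis: each single mutation from the parent is deleterious, yet the double mutant is the global optimum. The fitness values and function names below are invented purely for illustration.

```python
# Hypothetical two-site landscape: "0" = parent residue, "1" = mutant residue.
# Each single mutant is worse than the parent, but the double mutant is best.
FITNESS = {"00": 1.0, "10": 0.5, "01": 0.5, "11": 2.0}


def single_mutants(seq):
    """All variants that differ from `seq` at exactly one position."""
    return [seq[:i] + ("1" if c == "0" else "0") + seq[i + 1:] for i, c in enumerate(seq)]


def greedy_hill_climb(start):
    """Accept the best single mutation while it improves fitness (greedy DE-style walk)."""
    current = start
    while True:
        best_neighbor = max(single_mutants(current), key=FITNESS.__getitem__)
        if FITNESS[best_neighbor] <= FITNESS[current]:
            return current  # no improving single mutation: a local optimum
        current = best_neighbor


local = greedy_hill_climb("00")  # stuck at the parent
global_best = max(FITNESS, key=FITNESS.__getitem__)
```

Starting from the parent, the walk stops immediately at fitness 1.0, even though the double mutant reaches 2.0; only a strategy that evaluates the combination directly can find it.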

Active Learning-assisted Directed Evolution (ALDE) represents a paradigm shift, embedding machine learning within the experimental cycle to model epistatic interactions explicitly and guide exploration more efficiently [19]. This integration fundamentally transforms data from a mere record of screened variants into a strategic asset that trains models to predict fitness across the uncharted sequence space. The subsequent sections compare how traditional DE and ALDE differ in their data utilization, detail the specific data requirements and preparation for ALDE, and provide experimental evidence of its performance advantages in challenging protein engineering tasks.

Comparative Workflows: Data Handling in DE vs. ALDE

The core distinction between traditional Directed Evolution and Active Learning-assisted Directed Evolution lies in their data lifecycle.

Figure: Traditional Directed Evolution workflow vs. Active Learning-Assisted Directed Evolution (ALDE) workflow.

Data Requirements and Preparation for ALDE

Successful implementation of ALDE hinges on meticulous data preparation and strategic sampling. The initial phase involves defining a combinatorial design space, typically focusing on 3-5 residues known or suspected to influence function, such as active-site residues [19]. For a 5-residue library, this creates a theoretical space of 20^5 (3.2 million) possible sequences, of which only a tiny fraction (e.g., ~0.01%, or roughly 320 variants) will be experimentally sampled [19]. The quality of the initial data is paramount: ALDE performance is significantly enhanced by focused training, which uses zero-shot predictors to enrich initial training sets with higher-fitness variants and avoid uninformative regions of sequence space [12].
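The size of the combinatorial space, and how small a 0.01% sample of it is, can be checked directly. The sketch assumes the 20 canonical amino acids are allowed at each of k positions; the helper names are hypothetical.

```python
import itertools

# The 20 canonical amino acids, one-letter codes.
AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"


def design_space_size(k, alphabet=AMINO_ACIDS):
    """Number of distinct variants when all k positions can take any residue."""
    return len(alphabet) ** k


def enumerate_design_space(k, alphabet=AMINO_ACIDS):
    """Lazily yield every k-residue combination ("AA...A", "AA...C", ...)."""
    for combo in itertools.product(alphabet, repeat=k):
        yield "".join(combo)


size_k5 = design_space_size(5)        # 20**5
sampled_k5 = round(size_k5 * 0.0001)  # ~0.01% of the space
# How the space grows with the number of targeted residues.
growth = {k: design_space_size(k) for k in range(1, 6)}
```

Because the five-residue space is only a few million sequences, a model can enumerate and score all of it each round, while only a few hundred variants are ever measured.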

Key Data Dimensions for ALDE

Table: Critical Data Components in an ALDE Campaign

| Data Component | Description | Role in ALDE | Considerations |
|---|---|---|---|
| Combinatorial Design Space | Pre-defined set of k residues to be mutated (e.g., 5 residues = 20^5 variants) [19]. | Defines the universe of possible variants the ML model will explore. | Choice of k balances epistasis consideration and experimental feasibility. |
| Initial Training Set | First round of experimentally screened variants (tens to hundreds) [19]. | Provides the foundational labeled data for initial model training. | Quality over quantity; focused training with zero-shot predictors is beneficial [12]. |
| Sequence Encodings | Numerical representations of protein sequences (e.g., from Protein Language Models) [19] [4]. | Enables the ML model to process amino acid sequences. | ESM2 embeddings are a common, powerful choice [4]. |
| Fitness Labels | Quantitative experimental measurements of protein function (e.g., yield, selectivity, activity). | The target variable for the supervised ML model to learn. | Must be reliable, reproducible, and relevant to the engineering goal. |
| Uncertainty Estimates | Quantification of model prediction uncertainty, often from ensemble methods [19] [4]. | Informs the acquisition function to balance exploration and exploitation. | Frequentist methods can be more consistent than Bayesian approaches [19]. |
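As a minimal illustration of the "Sequence Encodings" row, the sketch below one-hot encodes variants over the k targeted positions. One-hot features are a simple stand-in chosen so the example is self-contained; protein language model embeddings such as ESM2 would replace these vectors with learned ones and are not shown. The function name is hypothetical.

```python
import numpy as np

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"
AA_INDEX = {aa: i for i, aa in enumerate(AMINO_ACIDS)}


def one_hot_encode(variants):
    """Encode equal-length k-residue variant strings as flat one-hot vectors of length 20*k."""
    k = len(variants[0])
    X = np.zeros((len(variants), k * len(AMINO_ACIDS)), dtype=np.float32)
    for row, seq in enumerate(variants):
        if len(seq) != k:
            raise ValueError("all variants must have the same length")
        for pos, aa in enumerate(seq):
            # Block `pos` holds 20 slots; set the one for this residue.
            X[row, pos * len(AMINO_ACIDS) + AA_INDEX[aa]] = 1.0
    return X


# The WYLQF combination and a variant differing only at the first targeted position.
X = one_hot_encode(["WYLQF", "AYLQF"])
```

With this encoding, the squared Euclidean distance between two variants equals twice their Hamming distance, which is why distance-based kernels behave sensibly on it.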

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table: Key Reagents and Materials for ALDE Experiments

| Item | Function in ALDE Workflow | Example from ParPgb Case Study [19] |
|---|---|---|
| Protein Scaffold | The base protein to be engineered. | Pyrobaculum arsenaticum protoglobin (ParPgb) ParLQ (W59L Y60Q) variant. |
| Defined Residue Positions | Specific amino acid locations to mutate, defining the combinatorial space. | Five epistatic active-site residues: W56, Y57, L59, Q60, and F89 (WYLQF). |
| Mutagenesis Kit/Resources | Tools for generating the mutant libraries. | PCR-based mutagenesis methods utilizing NNK degenerate codons. |
| Wet-Lab Assay | Experimental platform for high-throughput fitness quantification. | Gas chromatography assay for cyclopropanation yield and diastereomer selectivity. |
| Transition-State Analogue | Molecule for structural studies to validate active-site organization. | 6-nitrobenzotriazole (6NBT) for X-ray crystallography [5]. |
| ML Software Framework | Computational tools for model training and variant prioritization. | Custom ALDE codebase (e.g., https://github.com/jsunn-y/ALDE) [19]. |

Experimental Protocols & Performance Comparison

Case Study: Optimizing a ParPgb Cyclopropanase via ALDE

Experimental Objective: To optimize five epistatic active-site residues (W56, Y57, L59, Q60, F89) in ParPgb for a non-native cyclopropanation reaction, aiming to maximize the yield of the desired cis-cyclopropane product [19].

Methodology:

- Library Design & Initial Sampling: A combinatorial library of ParLQ variants mutated at all five positions was synthesized using NNK codon-based mutagenesis. An initial dataset was generated by random screening from this library.

- Machine Learning Model: A supervised ML model was trained to map protein sequence to fitness (defined as the difference between cis- and trans- product yields). The model used frequentist uncertainty quantification.

- Active Learning Loop: The trained model was used with an acquisition function to rank all sequences in the design space. The top N predicted variants were synthesized and screened experimentally.

- Iteration: The new sequence-fitness data were added to the training set, and model training and variant selection were repeated, for a total of three rounds of wet-lab experimentation [19].
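The loop described in this methodology can be sketched end to end on a synthetic landscape. Everything in this block is an assumption for illustration: a random additive-plus-pairwise landscape stands in for the wet-lab assay, a bootstrap ensemble of ridge regressors on one-hot features stands in for the published model, and an upper confidence bound (ensemble mean plus `beta` times the ensemble standard deviation) is used as one common acquisition function. It is not the ALDE codebase, and k is reduced to 3 so it runs quickly.

```python
import itertools

import numpy as np

ALPHABET = "ACDEFGHIKLMNPQRSTVWY"


def one_hot(seqs):
    """Flat one-hot encoding (20 features per targeted position)."""
    index = {aa: i for i, aa in enumerate(ALPHABET)}
    X = np.zeros((len(seqs), 20 * len(seqs[0])))
    for row, seq in enumerate(seqs):
        for pos, aa in enumerate(seq):
            X[row, 20 * pos + index[aa]] = 1.0
    return X


def toy_fitness(X, k, rng):
    """Hypothetical landscape: random additive effects plus random pairwise epistasis.

    In the ParPgb campaign, fitness was instead cis yield minus trans yield.
    """
    f = X @ rng.normal(size=X.shape[1])
    residues = X.reshape(len(X), k, 20).argmax(axis=2)  # residue index at each position
    for p, q in itertools.combinations(range(k), 2):
        coupling = rng.normal(scale=2.0, size=(20, 20))
        f += coupling[residues[:, p], residues[:, q]]
    return f


def fit_ridge(X, y, lam=1.0):
    """Closed-form ridge regression with an unpenalized intercept (via centering)."""
    x_mean, y_mean = X.mean(axis=0), y.mean()
    Xc = X - x_mean
    w = np.linalg.solve(Xc.T @ Xc + lam * np.eye(X.shape[1]), Xc.T @ (y - y_mean))
    return lambda Z: (Z - x_mean) @ w + y_mean


def ensemble_mean_std(X_train, y_train, X_query, n_models, rng):
    """Bootstrap ensemble: the spread of member predictions serves as uncertainty."""
    preds = []
    for _ in range(n_models):
        boot = rng.integers(0, len(y_train), size=len(y_train))
        preds.append(fit_ridge(X_train[boot], y_train[boot])(X_query))
    preds = np.array(preds)
    return preds.mean(axis=0), preds.std(axis=0)


def run_alde(k=3, batch=24, rounds=3, beta=2.0, n_models=5, seed=0):
    """Hypothetical ALDE-style loop: one random round, then (rounds - 1) model-guided rounds."""
    rng = np.random.default_rng(seed)
    seqs = ["".join(c) for c in itertools.product(ALPHABET, repeat=k)]
    X = one_hot(seqs)
    fitness = toy_fitness(X, k, rng)  # stands in for the wet-lab assay
    # Round 1: random sample from the combinatorial library.
    measured = [int(i) for i in rng.choice(len(seqs), size=batch, replace=False)]
    for _ in range(rounds - 1):
        # Retrain on everything measured so far and score the whole design space.
        mean, std = ensemble_mean_std(X[measured], fitness[measured], X, n_models, rng)
        ucb = mean + beta * std
        ucb[measured] = -np.inf  # never re-screen a measured variant
        # "Screen" the top-`batch` predicted variants and add them to the training set.
        measured += [int(i) for i in np.argsort(ucb)[::-1][:batch]]
    best = max(measured, key=lambda i: fitness[i])
    return seqs[best], float(fitness[best]), float(fitness.max()), measured


best_seq, best_fit, global_max, measured = run_alde()
```

With these defaults only 72 of the 8,000 toy variants are ever "measured". Setting `k=5` reproduces the 3.2-million-variant scale of the case study, but the dense one-hot matrix then needs considerably more memory.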

Results: The ALDE campaign successfully navigated the rugged fitness landscape, improving the yield of the desired product from 12% to 93% in just three rounds of wet-lab experimentation, also achieving high diastereoselectivity (14:1) [19]. The final optimal variant contained a mutation combination not predictable from initial single-mutation scans, underscoring ALDE's ability to overcome epistatic constraints.

Quantitative Performance Comparison

Table: Benchmarking ALDE and Related Methods Against Traditional DE

| Method | Key Principle | Typical Experimental Scale | Reported Performance Advantage |
|---|---|---|---|
| Traditional DE | Greedy hill-climbing based on recombination of beneficial single mutations [19]. | Large libraries (thousands to millions). | Baseline. Becomes inefficient or fails on highly epistatic landscapes [19] [12]. |
| MLDE | One-shot training of an ML model on a large initial dataset to predict optimal variants [12]. | Single large screening round. | Outperforms DE but limited by static training data. |
| ALDE | Iterative retraining of ML model with batches of new, strategically selected data [19]. | Small batches (tens to hundreds) over multiple rounds. | ~0.01% of search space explored; 12% to 93% yield in one case [19]. More effective than DE on challenging landscapes [12]. |
| FolDE (ALDE variant) | Incorporates naturalness-based warm-starting and diversity-aware batch selection [4]. | Batches of 16 variants over 3 rounds (48 total). | In simulation benchmarks, discovers 23% more top 10% mutants than the best baseline method and is 55% more likely to find a top 1% mutant than the baselines (random, zero-shot, and random forest selection) [4]. |

The experimental evidence demonstrates that ALDE requires a fundamentally different approach to data than traditional DE. While DE relies on large-scale, often random, sampling, ALDE leverages smaller, strategically acquired datasets informed by machine learning models. The critical preparation involves defining a sensible combinatorial space and generating an informative initial dataset, sometimes augmented by zero-shot predictors [12]. The subsequent power of ALDE derives from its closed-loop nature, where each round of data collection directly refines the model's understanding of the complex, epistatic fitness landscape.

The comparative data shows that ALDE and its advanced variants like FolDE [4] offer substantial efficiency gains, discovering superior mutants with fewer experimental measurements. This makes them particularly valuable for optimizing protein functions where high-throughput assays are unavailable or expensive. The future of protein engineering lies in these hybrid approaches that tightly integrate computation and experimentation, treating data not as a passive byproduct but as a strategic resource for navigating the complexity of biological sequence space.

Selecting and Tuning Active Learning Query Strategies for Drug Screening

In the field of drug discovery, the imperative to rapidly identify promising therapeutic candidates from vast chemical spaces has catalyzed a shift from traditional Directed Evolution (DE) methods toward machine learning-driven approaches. Traditional DE operates as a greedy hill-climbing optimization, accumulating beneficial mutations step-by-step within a local region of the protein fitness landscape [19]. While successful, this process can become trapped at local optima, especially when mutations exhibit epistatic behavior (non-additive interactions), making the optimization inefficient for complex targets [19].