Addressing Sampling Bias in Viral Phylogenies: Impacts on Research and Clinical Translation


Abstract

Sampling bias presents a critical challenge in viral phylogenetics, threatening the validity of evolutionary reconstructions, epidemiological models, and public health interventions. This article synthesizes foundational concepts, methodological innovations, and validation frameworks for identifying and mitigating sampling bias. We explore how biased spatial, temporal, and host-based sampling distorts phylogenetic inference and provide actionable strategies for study design, data analysis, and interpretation. By integrating perspectives from recent genomic studies and epidemiological models, this resource equips researchers and drug development professionals with tools to enhance the reliability of viral genomic data for robust science and effective clinical outcomes.

Defining the Problem: How Sampling Bias Skews Viral Evolutionary Inference

FAQ: What is sampling bias in the context of viral phylogenetics?

In viral phylogenetics, sampling bias occurs when the genetic sequences used to reconstruct a virus's evolutionary history and spread do not accurately represent the true, underlying viral population [1] [2].

This is not simply about having too few samples, but about their composition. If samples are collected in a way that over-represents certain geographic locations, time periods, or host populations, the resulting phylogenetic and phylogeographic trees will reflect these sampling patterns rather than the true biological reality [1] [3]. This can lead to incorrect conclusions about a virus's origin, spread, and population dynamics.

Troubleshooting Guide: Identifying and Diagnosing Sampling Bias

| Symptom | Potential Cause | Recommended Diagnostic Check |

|---|---|---|

| Inferred origin contradicts epidemiological data | The phylogenetic analysis points to a geographic origin that is known to have intensive sequencing efforts, but not necessarily where the outbreak started [1] [2]. | Check the distribution of sampled locations. Compare the number of sequences per location against reported case counts to identify over/under-represented areas. |

| Overestimation of specific migration routes | The model suggests frequent movement between two regions, but this may be an artifact of frequent travel-related testing and sequencing between them [1] [4]. | Review the sampling strategy: were travelers intentionally oversampled? Analyze the data with a structured coalescent model (e.g., BASTA, MASCOT) to see if the pattern holds [2]. |

| Unexpectedly low confidence in ancestral node locations | The statistical support (e.g., posterior probability) for the location of key ancestral nodes, including the root, is low [2]. | Map the spatiotemporal coverage of your samples. Identify large gaps in time or space, or "ghost demes" (locations with known transmission but no sequences) [2]. |

| Sensitivity of results to dataset composition | The key conclusions of your analysis change significantly when you add or remove a small number of sequences from a particular location [3]. | Perform a subsampling analysis. If inferences are unstable with minor changes to the sample set, it strongly indicates underlying sampling bias. |
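The first diagnostic check in the table, comparing sequences per location against reported case counts, can be sketched as follows. This is a minimal illustration under stated assumptions: the function names, the toy counts, and the five-fold threshold relative to the median are hypothetical choices, not values taken from the cited studies.

```python
from statistics import median

def sequences_per_case(seq_counts, case_counts):
    """Sequences per reported case for each location with at least one case."""
    return {loc: seq_counts.get(loc, 0) / cases
            for loc, cases in case_counts.items() if cases > 0}

def flag_imbalance(ratios, fold=5.0):
    """Flag locations whose ratio is more than `fold` times above or below the median.

    The fold threshold is an arbitrary illustrative cut-off.
    """
    mid = median(ratios.values())
    flags = {}
    for loc, ratio in ratios.items():
        if mid > 0 and ratio > fold * mid:
            flags[loc] = "over-represented"
        elif ratio * fold < mid:
            flags[loc] = "under-represented"
    return flags

# Toy numbers (hypothetical): location A is heavily sequenced relative to its cases.
seq_counts = {"A": 700, "B": 200, "C": 10}
case_counts = {"A": 1000, "B": 4000, "C": 5000}

ratios = sequences_per_case(seq_counts, case_counts)
flags = flag_imbalance(ratios)
print(flags)
```

A location flagged here is only a candidate for closer inspection; reported case counts are themselves subject to surveillance bias.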

Experimental Protocols: Methodologies for Assessing Bias

Protocol 1: Simulating to Quantify Bias Impact

This protocol uses simulated outbreaks with a known "ground truth" to measure how sampling bias distorts phylogenetic inference [1] [2].

Key Research Reagent Solutions:

- Software for Simulation: R package diversitree [1] or specialized phylogenetic simulators within frameworks like BEAST 2 [2]. These tools generate viral phylogenies under controlled parameters.

- Software for Phylogeographic Inference: BEAST 2 [2]. This is the standard software for performing Bayesian phylogenetic and phylogeographic analysis.

- Computing Cluster: Essential for handling the computationally intensive Markov Chain Monte Carlo (MCMC) analyses required for Bayesian phylogenetics.

Workflow:

- Simulate a "True" Outbreak: Use simulation software to generate a viral phylogeny and the spread of the virus between several discrete locations (e.g., Location A, B, C). This gives you a known history to compare against [1].

- Create Biased Samples: Subsample sequences from the simulated outbreak in a biased manner. For example, you might take 70% of your sequences from Location A, 20% from B, and 10% from C, regardless of the true prevalence in each location [1] [2].

- Reconstruct with Biased Data: Run a standard discrete phylogeographic analysis (e.g., a Continuous-Time Markov Chain model) in BEAST 2 using the biased sample [2].

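- Compare to Ground Truth: Compare the reconstruction from the biased sample to the known history of the simulated outbreak. Metrics for comparison can include the accuracy of the inferred locations of individual ancestral nodes, the inferred root location, and the number of estimated migration events [1].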

This simulation-based approach allows researchers to understand the specific impact of bias on their analytical methods before applying them to real, messy data.
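The biased-sampling step of this workflow can be sketched in a few lines of Python. This is a minimal illustration, not the procedure used in the cited studies: the `biased_subsample` function, the `(tip_id, location)` tuple layout, and the simulated tip list are assumptions chosen to mirror the 70/20/10 example in the workflow.

```python
import random

def biased_subsample(tips, fractions, n_total, seed=0):
    """Draw a sample whose composition follows `fractions`, ignoring true prevalence.

    tips:      list of (tip_id, location) pairs from a simulated outbreak
    fractions: location -> desired share of the final sample
    """
    rng = random.Random(seed)
    by_location = {}
    for tip_id, location in tips:
        by_location.setdefault(location, []).append(tip_id)

    sample = []
    for location, share in fractions.items():
        pool = by_location.get(location, [])
        n_wanted = min(round(share * n_total), len(pool))  # cannot exceed what exists
        sample.extend((tip_id, location) for tip_id in rng.sample(pool, n_wanted))
    return sample

# Hypothetical simulated outbreak: 100 tips in each of three locations.
tips = [(f"tip{i}", "ABC"[i % 3]) for i in range(300)]
sample = biased_subsample(tips, {"A": 0.7, "B": 0.2, "C": 0.1}, n_total=100)
```

Fixing the seed keeps the biased sample reproducible, so the same subsample can be analyzed under several phylogeographic models.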

Protocol 2: Implementing Mitigation Strategies in Analysis

This protocol outlines steps to mitigate the effects of sampling bias during the analysis of real-world viral sequence data [2].

Key Research Reagent Solutions:

- Structured Coalescent Models: Software implementations like BASTA (Bayesian Structured Coalescent Approximation) or MASCOT (Marginal Approximation of the Structured Coalescent) within BEAST 2. These models are explicitly designed to be more robust to uneven sampling across locations [2].

- Epidemiological Data: Case count data per location over time. This external data can be used to inform population sizes in models like MASCOT, moving beyond the assumption of constant population size and improving accuracy [2].

- Downsampling Scripts: Custom scripts (e.g., in Python or R) to create a more balanced dataset that maximizes spatiotemporal coverage rather than sheer sequence volume [2].

Workflow:

- Audit Sample Composition: Create a table or map visualizing the number of sequences per location and per month. Identify obvious gaps and over-represented areas.

- Apply a Robust Model: Analyze your data using a structured coalescent model (BASTA or MASCOT). If available, integrate reliable case count data to inform deme sizes in the model [2].

- Compare with CTMC Model: Run the same analysis using the standard Continuous-Time Markov Chain (CTMC) model for discrete traits.

- Evaluate Consensus: If the results from the structured coalescent and CTMC models are consistent, you can have greater confidence in your findings. If they diverge, the results from the structured coalescent are likely more reliable, and the CTMC results are probably biased by sampling [2].

- Consider Strategic Downsampling: If the dataset is very large and biased, create a subsample that intentionally increases the geographic and temporal evenness of the data. Re-run analyses to see if key conclusions stabilize [2].
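The audit step at the start of this workflow can be sketched as a simple tabulation of sequences per location and month. The record layout (a `location` field and an ISO `YYYY-MM-DD` `date` field) and the function names are assumptions for illustration; real metadata will need cleaning first.

```python
from collections import Counter

def audit_coverage(records):
    """Count sequences per (location, year-month) cell."""
    return Counter((r["location"], r["date"][:7]) for r in records)

def find_gaps(counts, locations, months):
    """List (location, month) cells with no sequences at all."""
    return [(loc, month) for loc in locations for month in months
            if counts[(loc, month)] == 0]

# Hypothetical metadata records.
records = [
    {"location": "A", "date": "2021-01-05"},
    {"location": "A", "date": "2021-01-20"},
    {"location": "A", "date": "2021-02-11"},
    {"location": "B", "date": "2021-02-03"},
]

counts = audit_coverage(records)
gaps = find_gaps(counts, ["A", "B"], ["2021-01", "2021-02"])
print(gaps)
```

Empty cells in a location with known transmission are candidates for the "ghost deme" problem described earlier.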

Comparative Table: Phylogeographic Models and Their Sensitivity to Sampling Bias

| Model | Core Principle | Robustness to Sampling Bias | Best Use Case Scenario |

|---|---|---|---|

| Discrete Trait Analysis (DTA/CTMC) | Models location as a trait evolving on the tree, akin to a nucleotide substitution [1] [2]. | Low. Treats sampling proportions as data, strongly biasing migration rates and ancestral state reconstruction toward over-sampled locations [1] [2]. | Quick, initial exploration of large datasets where computational cost is a primary concern. |

| Structured Coalescent (BASTA, MASCOT) | A tree-generating model that explicitly models how lineages coalesce within and migrate between subpopulations [2]. | High. Does not use sampling proportions to inform migration parameters, leading to more accurate estimates under biased sampling [2]. | When robustness to uneven sampling is critical. Requires more computational power and can be sensitive to unsampled "ghost" locations [2]. |

| Continuous (Brownian Motion) | Models spatial spread as a random walk in continuous space (latitude/longitude) [3]. | Low. Geographically biased sampling can strongly distort the inferred dispersal history and root location [3]. | When precise spatial pathways within a continuous, well-sampled landscape are of interest. |

| Spatial Λ-Fleming-Viot Process (ΛFV) | An alternative continuous model designed to avoid equilibrium assumptions of other models [3]. | High. Demonstrates inherent robustness to spatial sampling biases [3]. | Scenarios of endemic spread within a population, rather than recent outbreaks or colonizations [3]. |

Frequently Asked Questions

Q1: What is geographic sampling bias in viral phylogenies and why is it a problem? Geographic sampling bias occurs when the number of viral sequences collected and shared varies significantly between different locations. This non-uniform sampling can severely distort phylogeographic reconstructions, leading to incorrect inferences about a virus's historical locations and movement patterns. For instance, an area with intense sequencing efforts might be incorrectly identified as the source of an outbreak simply because more data is available from there, potentially misdirecting public health responses [1].

Q2: What was a key finding from simulations about sampling bias and migration rates? Simulation studies have demonstrated that the overall accuracy of phylogeographic reconstruction is generally high, particularly when the underlying viral migration rate is low. However, sampling bias can have a large impact on the numbers and nature of estimated migration events. The relative sampling intensities of different locations can be mistakenly interpreted as actual migration rates, creating a false picture of viral spread [1].

Q3: How can researchers mitigate the effects of sampling bias? Methods to mitigate bias are in development and include:

- Structured Coalescent Models: Approaches like the BAyesian STructured coalescent Approximation (BASTA) can account for different sampling intensities between locations, providing better estimates of migration rates than methods that treat location like a mutation trait [1].

- Incorporating Travel History: Integrating individual travel history data for sequenced cases can help overcome biases introduced by the over-representation of traveler samples in some datasets [1].

- Analytical Adjustments: Other approaches involve using generalized linear models to account for bias or adding "empty" viral sequences in continuous-space models, though these do not always eliminate bias entirely [1].

Q4: Can you provide a real-world example where phylogeography was used successfully despite sampling challenges? During the 2014-2016 West African Ebola virus epidemic, phylogeographic analysis was used to understand transmission dynamics in space and time. It formed part of the genomic surveillance system that informed the public health response in real time, helping to track the virus's spread even with the inherent sampling limitations of an epidemic in a resource-limited setting [1].

Quantitative Impacts of Sampling Bias

The table below summarizes key quantitative findings on how sampling bias affects phylogeographic reconstruction, based on simulation studies [1].

| Aspect of Reconstruction | Impact of Sampling Bias | Key Finding |

|---|---|---|

| Overall Accuracy | High when migration rate is low | Reconstruction remains robust under specific conditions. |

| Root State Estimation | Can be biased | The inferred point of origin can be incorrect. |

| Migration Event Count | Large impact | The number of cross-location transmissions can be misestimated. |

| Relative Sampling Intensity | Mistaken for migration rate | High sampling in one location can appear as a migration source. |

Experimental Protocol: Assessing Sampling Bias with Simulated Phylogenies

This protocol outlines a methodology to quantify the effect of geographic sampling bias on phylogeographic inference, using simulations with a known geographic history [1].

1. Simulation of Phylogenetic Trees:

- Model: Use a state-dependent diversification model, such as the Binary-State Speciation and Extinction (BiSSE) model.

- Parameters: Simulate pathogen diversification with two geographic locations (A and B). Key parameters include:

- Speciation rate (λ): Coincident with transmission events.

- Extinction rate (μ): Coincident with the end of the infectious period.

- Migration rate (α): The rate of viral movement between locations A and B.

- Assumptions: For a focused experiment, assume speciation and extinction rates are independent of location (a neutral character) and that migration is symmetrical.

2. Introduction of Sampling Bias:

- After generating the phylogenetic tree, impose a sampling scheme where sequences are collected from locations A and B at different intensities (e.g., 80% from A and 20% from B).

3. Phylogeographic Reconstruction:

- Method: Apply a maximum-likelihood phylogeographic method to the biased sample. This involves first constructing a phylogeny and then reconstructing the geographic locations of the ancestral nodes on that fixed tree.

- Software: Tools such as those implemented in the R package diversitree can be used for this purpose.

4. Accuracy Assessment:

- Compare the estimated ancestral locations and migration events from the reconstruction against the known history from the simulation.

- Quantify the error in the location of individual nodes, the inference of the root location, and the number of estimated migration events.
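The accuracy assessment can be sketched as a comparison between true and estimated node states. The data layout (dictionaries of node states and a list of parent-child edges) and the function names are assumptions. Counting a migration whenever a parent and child differ in state is also a simplification, since it misses multiple movements along a single branch.

```python
def node_accuracy(true_states, est_states, nodes):
    """Fraction of the given nodes whose estimated location matches the truth."""
    return sum(true_states[n] == est_states[n] for n in nodes) / len(nodes)

def count_migrations(edges, states):
    """Count edges whose parent and child are in different locations (a lower bound)."""
    return sum(states[parent] != states[child] for parent, child in edges)

# Hypothetical four-tip tree: root -> (n1, n2), n1 -> (t1, t2), n2 -> (t3, t4).
edges = [("root", "n1"), ("root", "n2"),
         ("n1", "t1"), ("n1", "t2"), ("n2", "t3"), ("n2", "t4")]
tips = {"t1": "A", "t2": "A", "t3": "B", "t4": "B"}

true_states = {"root": "A", "n1": "A", "n2": "B", **tips}
est_states = {"root": "A", "n1": "A", "n2": "A", **tips}   # n2 misassigned to A

internal = ["root", "n1", "n2"]
accuracy = node_accuracy(true_states, est_states, internal)
root_correct = true_states["root"] == est_states["root"]
true_events = count_migrations(edges, true_states)
est_events = count_migrations(edges, est_states)
```

In this toy case a single misassigned internal node leaves the root correct but doubles the number of inferred migration events, which is the kind of discrepancy the protocol is designed to expose.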

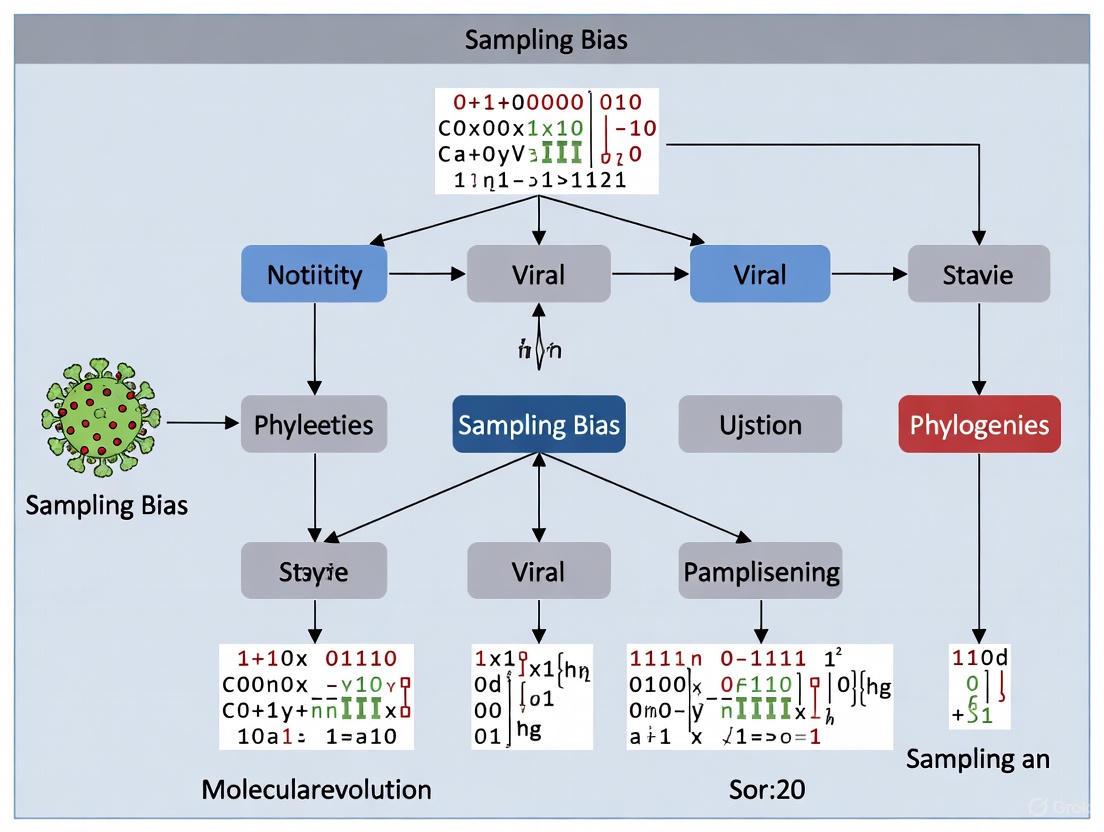

Visualizing the Workflow: From Simulation to Bias Assessment

The diagram below illustrates the logical workflow for the experimental protocol on assessing sampling bias.

Research Reagent Solutions

The table below lists key resources for conducting phylogeographic analysis and mitigating sampling bias.

| Item | Function in Research |

|---|---|

| Pathogen Genomic Sequences | The primary raw data for analysis; shared via repositories like GISAID and GenBank. |

| Computational Phylogenetic Software | Tools for building phylogenetic trees and estimating evolutionary relationships from sequence data. |

| Phylogeographic Analysis Tools | Software packages for reconstructing historical locations and migration patterns on phylogenetic trees. |

| State-Dependent Diversification Models | Models for simulating evolution under specified parameters, used for testing method accuracy. |

| High-Performance Computing Cluster | Essential for handling the large datasets and computationally intensive analyses common in genomic epidemiology. |

Troubleshooting Guides & FAQs

Spatial Sampling Bias

Q: Our phylogeographic analysis suggests a specific region is the source of a viral outbreak. How can I determine if this is a true origin or an artifact of spatial sampling bias?

A: A result showing a specific region as the source may be biased if that region had disproportionately higher sequencing effort compared to neighboring areas. Spatial sampling bias occurs when sampling intensity is not representative of the true viral population distribution across geography, often due to factors like better healthcare infrastructure, concentrated research efforts, or socioeconomic factors in specific areas [1] [5].

- Impact: This bias can distort the inferred historical locations and movements of the virus, misrepresenting migration events and the estimated root location (origin) of the outbreak [1] [6].

- Diagnosis:

- Review the sampling distribution by plotting the number of sequenced genomes per geographic region against the reported incidence of the virus in those regions. A significant mismatch suggests potential bias.

- Check if the inferred source region has a much higher number of submissions per reported case in databases like GISAID compared to other plausible source regions.

- Mitigation:

- Balanced Sampling: Where possible, design genomic surveillance to maximize spatial coverage. A study on rabies virus in Morocco found that alternative sampling strategies that improved spatiotemporal coverage greatly improved inference for some models [7].

- Model Choice: For discrete phylogeography, consider using models that explicitly account for uneven sampling, such as the structured coalescent approximations (e.g., BASTA, MASCOT) [1] [7]. Be aware that these models can yield biased estimates even when the samples themselves are unbiased, though informing them with case count data can improve robustness [7].

- Downsampling: In a research setting, you can create a more spatially balanced dataset by downsampling over-represented areas, though this sacrifices data [1].

Temporal Sampling Bias

Q: Our case-control study identified strong predictive biomarkers for severe viral infection. Why did these predictors fail when applied prospectively in a clinical setting?

A: This is a classic symptom of temporal bias. It occurs when data for cases (e.g., severe infection) are collected at or near the time of the outcome event. This "oversamples" the end-stage trajectory of the disease, over-emphasizing features that are strong close to the outcome but may not be predictive further in advance [8].

- Impact: Exaggerated effect sizes, false-negative predictions when deployed in real-time, and a general failure to replicate because the study design uses future information (the known outcome) not available during prospective prediction [8].

- Diagnosis:

- Identify the timing of data collection for your cases. If feature measurement (e.g., biomarker level) is intrinsically linked to the time of diagnosis or severe outcome, the study is vulnerable to temporal bias.

- In one analysis, the odds ratio for a myocardial infarction predictor (Lp(a)) was significantly lower in simulated prospective trials compared to the biased case-control observation [8].

- Mitigation:

- Density-Based Sampling: Use a nested case-control design with incidence density sampling, where controls are selected from the at-risk population at the time each case occurs [8].

- Lead-Time Analysis: When designing the study, establish a "lead time" and measure features in cases from a time point well before the outcome, mimicking the real-world predictive scenario [8].
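Incidence density sampling can be sketched as follows: for each case, controls are drawn from subjects still at risk at the time that case occurs. This is a simplified illustration that assumes everyone enters follow-up at time 0 and that each subject has a single exit time (event or censoring). The cohort layout and function name are hypothetical.

```python
import random

def incidence_density_sample(cohort, n_controls=1, seed=0):
    """Match each case to controls drawn from its risk set.

    cohort: subject_id -> (exit_time, is_case). A subject is at risk at time t
    if their exit time is later than t, so future cases can serve as controls.
    """
    rng = random.Random(seed)
    matched = {}
    for case_id, (event_time, is_case) in cohort.items():
        if not is_case:
            continue
        risk_set = [sid for sid, (exit_time, _) in cohort.items()
                    if sid != case_id and exit_time > event_time]
        matched[case_id] = rng.sample(risk_set, min(n_controls, len(risk_set)))
    return matched

# Hypothetical cohort: p1 and p2 become cases at times 5 and 10.
cohort = {
    "p1": (5, True),
    "p2": (10, True),
    "p3": (3, False),    # left follow-up before either event
    "p4": (12, False),
    "p5": (8, False),
}
matched = incidence_density_sample(cohort)
```

Because controls are selected at the case's event time, their features can be measured at the same point in follow-up, which avoids comparing end-stage cases against arbitrary snapshots of controls.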

Host-Based Sampling Bias

Q: We are using machine learning to predict the host (e.g., mammalian, insect) of newly discovered viruses from metavirome data. How does our training data affect the model's performance on truly novel viruses?

A: The predictive efficiency of host prediction models is highly dependent on dataset composition [9]. Bias arises when the training data over-represents certain virus families or known host-virus relationships, causing the model to perform poorly on viruses from novel genera or families not seen during training.

- Impact: Models may achieve high accuracy on viruses related to those in the training set but fail to generalize to genuinely novel viruses, limiting their utility for analyzing metaviromes from potential emerging infection reservoirs [9].

- Diagnosis:

- Evaluate your model's performance under different train-test splits:

- "Closely related": Families are equally represented in train and test sets.

- "Non-overlapping genera": All genera in the test set are absent from the training set.

- A significant drop in performance (e.g., in F1-score) from the "closely related" split to a non-overlapping split indicates susceptibility to this bias [9].

- Mitigation:

- Strategic Train-Test Splits: Always validate your model using a "non-overlapping genera" or "non-overlapping families" split to simulate the prediction of hosts for truly novel viruses [9].

- Feature Selection: Using short k-mer frequencies (e.g., 4-mers for nucleotides) has been shown to be effective for predicting hosts of novel virus genera, improving over baseline homology-based methods [9].

- Data Curation: Actively manage training sets to reduce overrepresentation of common virus families (e.g., Picornaviridae, Coronaviridae) and exclude overly similar genomes [9].

Quantitative Data on Bias Impacts

Table 1: Impact of Sampling Bias on Phylogeographic Reconstruction Accuracy

| Bias Type | Impact on Parameter | Effect Size / Impact | Key Condition |

|---|---|---|---|

| Spatial Sampling Bias | Accuracy of past location estimation | Overall accuracy remains high, but bias can have a "large impact" [1]. | Impact is most pronounced on the number and nature of estimated migration events [1]. |

| Spatial Sampling Bias | Accuracy of root state (origin) estimation | Can lead to erroneous inference of origin [1] [7]. | Strongly non-representative sampling [1]. |

| Temporal Sampling Bias | Observed Effect Size (Odds Ratio) | Can be significantly inflated compared to a prospective scenario [8]. | Analysis of the INTERHEART study showed lower simulated prospective odds ratios for an MI predictor [8]. |

| Host-Based Sampling | Host Prediction Performance (Weighted F1-Score) | Median score of 0.79 for novel genera, vs. 0.68 for baseline method [9]. | Using Support Vector Machine and 4-mer frequencies on a "non-overlapping genera" test split [9]. |

Table 2: Comparison of Phylogeographic Models Under Sampling Bias

| Model / Approach | Key Strength / Weakness in Biased Conditions | Mitigation Strategy |

|---|---|---|

| Discrete Trait Analysis (DTA/CTMC) | Sensitive to sampling bias; treats sampling proportions as data, which can lead to erroneously small uncertainties [1] [7]. | Increasing sample size; maximizing spatiotemporal coverage of samples [7]. |

| Structured Coalescent (BASTA, MASCOT) | Designed to be less sensitive to sampling bias by integrating over migration histories, but can still yield biased estimates even when samples are unbiased [1] [7]. | Inform the models with reliable case count data [7]. |

Experimental Protocols for Bias Assessment

Protocol 1: Assessing Spatial Sampling Bias in Phylogeography Using Simulations

This protocol allows researchers to quantify the potential impact of spatial sampling bias on their specific phylogeographic inference.

- Define a Ground Truth: Use a tree simulation tool like the diversitree R package to generate a known phylogenetic history under a controlled model of viral spread. Use a Binary-State Speciation and Extinction (BiSSE) model where states represent geographic locations (e.g., Location A and B). Set known parameters for speciation (transmission) rate (λ), extinction (recovery) rate (μ), and symmetrical migration rate (α). The root location should be predefined [1] [10].

- Introduce Sampling Bias: From the simulated "complete" dataset, create a biased sample by subsampling tips from each location at different intensities (e.g., sample 80% of tips from Location A and only 20% from Location B).

- Reconstruct Phylogeography: Perform a discrete phylogeographic reconstruction (e.g., using maximum likelihood or Bayesian methods) on both the complete and the biased datasets.

- Quantify Impact: Compare the results against the known "ground truth" from step 1. Key metrics include:

- Accuracy of ancestral node location state estimation, especially the root.

- The number and directionality of inferred migration events [1].

- This simulation-based approach was used to demonstrate that sampling bias can have a large impact on migration event estimates, even when overall accuracy is high [1].

Protocol 2: Evaluating Host Prediction Robustness to Novel Viruses

This protocol tests the real-world utility of a machine learning model for predicting virus hosts, ensuring it doesn't just memorize training data.

- Data Curation: Obtain a comprehensive set of virus genomes from a database like Virus-Host DB. Exclude arboviruses due to their complex host cycles. To avoid model overfitting, remove sequences that are overly similar (e.g., >92% identity) within overrepresented families [9].

- Create Strategic Data Splits: Partition the data into training and testing sets in multiple ways to assess generalization [9]:

- Random Split: Shuffle all genomes and split randomly (e.g., 70/30). This tests basic learning.

- Non-overlapping Genera Split: Ensure that every viral genus present in the test set is completely absent from the training set. This is the gold standard for testing prediction of hosts for novel viruses.

- Feature Extraction & Model Training: Convert nucleotide sequences into numerical feature vectors using k-mer frequency counts (e.g., k=4). Train multiple machine learning models (e.g., Support Vector Machine, Random Forest) on the training set.

- Validation: Evaluate all models on the different test sets. A robust model will maintain a high performance metric (e.g., weighted F1-score) on the "Non-overlapping Genera" split. A study using this method achieved a median F1-score of 0.79 with an SVM model, a significant improvement over baseline methods [9].
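The feature extraction and the non-overlapping genera split can be sketched with the standard library alone. This is a minimal illustration, not the pipeline of the cited study: the function names, the record layout with a `genus` key, and the choice to apply the test fraction to genera rather than genomes are assumptions. Model training is left out.

```python
import random
from itertools import product

def kmer_frequencies(seq, k=4):
    """Relative frequencies of all 4**k nucleotide k-mers, in a fixed order."""
    kmers = ["".join(p) for p in product("ACGT", repeat=k)]
    counts = dict.fromkeys(kmers, 0)
    seq = seq.upper().replace("U", "T")          # treat RNA and DNA alphabets alike
    for i in range(len(seq) - k + 1):
        window = seq[i:i + k]
        if window in counts:                     # skip windows with N or ambiguity codes
            counts[window] += 1
    total = sum(counts.values())
    return [counts[km] / total if total else 0.0 for km in kmers]

def genus_disjoint_split(records, test_fraction=0.3, seed=0):
    """Assign whole genera to either train or test, never both."""
    rng = random.Random(seed)
    genera = sorted({r["genus"] for r in records})
    rng.shuffle(genera)
    n_test = max(1, round(test_fraction * len(genera)))
    test_genera = set(genera[:n_test])
    train = [r for r in records if r["genus"] not in test_genera]
    test = [r for r in records if r["genus"] in test_genera]
    return train, test

features = kmer_frequencies("ACGTACGT")
records = [{"id": i, "genus": g}
           for i, g in enumerate(["G1", "G1", "G2", "G3", "G3", "G4"])]
train, test = genus_disjoint_split(records)
```

Splitting by genus before any feature scaling or model selection keeps information about held-out genera from leaking into training.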

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Resources for Mitigating Sampling Bias in Viral Phylogenetics

| Item / Resource | Function in Bias Mitigation | Key Consideration |

|---|---|---|

| BEAST 2 (Bayesian Evolutionary Analysis) [7] | A software platform for Bayesian phylogenetic and phylogeographic analysis. Includes models like BASTA and MASCOT that are less sensitive to sampling bias. | Computationally intensive for large datasets (>1000 sequences). Model selection is critical [7]. |

| R package diversitree [1] | Enables simulation of phylogenetic trees under defined models (e.g., BiSSE). Used to create ground truth datasets for assessing bias impacts. | Simulation parameters (migration, sampling rates) must be carefully chosen to reflect the real system [1]. |

| Virus-Host Database | A curated database of virus-host taxonomic links. Provides reliable data for building robust host prediction models and avoiding annotation errors. | Requires active data curation (e.g., removing redundant sequences, excluding arboviruses) before use in ML [9]. |

| GISAID / NCBI Virus | Primary repositories for sharing virus genome sequences. Critical for assessing the existing spatial and temporal distribution of available data. | The metadata on sampling location and date is as important as the sequence data itself for bias assessment. |

| Structured Coalescent Models (e.g., BASTA) [1] [7] | A phylogeographic model that accounts for population structure and can correct for the effect of sampling bias on migration rate estimates. | May still produce biased estimates of ancestral locations if sampling is extremely biased or if model assumptions are violated [7]. |

| Support Vector Machine (SVM) with k-mer features | A machine learning algorithm effective for predicting hosts of novel RNA viruses from short k-mer frequencies in genome sequences. | Performance is dependent on dataset composition; requires rigorous validation with non-overlapping test sets [9]. |

Troubleshooting Guide: Sampling Bias in Viral Phylogenies

Q1: My phylogenetic tree shows strong geographical clustering. Could this be due to sampling bias? A1: It can be. Strong geographic clustering may reflect genuine local transmission, but it is also a classic sign that sequences were not collected proportionally from all transmission chains. To troubleshoot:

- Investigate Your Metadata: Compare the number of sequences from each location against the known or estimated incidence of the virus in those areas. A significant mismatch suggests bias.

- Run a Discrete Traits Analysis: Use software like BEAST to model the location-state evolution. If the inferred rates of movement between locations are implausibly low or zero, it may be due to a lack of sequences from key intermediate locations, a consequence of biased sampling.

- Mitigation Strategy: If possible, augment your dataset with sequences from underrepresented regions. In your analysis, explicitly model the sampling heterogeneity.

Q2: My molecular clock analysis is producing an unrealistically slow or fast evolutionary rate. What is the issue? A2: Anomalous evolutionary rates can be caused by several factors, with sampling bias being a prime suspect.

- Check for Over-sampling of Recent Outbreaks: If your dataset is heavily skewed toward very recent cases from a single, fast-growing outbreak, you may be missing the deeper, slower evolutionary history, inflating the estimated rate.

- Check for Temporal Bias: Ensure your sequences are evenly distributed across the time period of interest. A lack of older sequences can make the virus appear to evolve faster than it does.

- Protocol: Re-run your molecular clock analysis (e.g., in BEAST 2) with a subsampled dataset that is temporally balanced. Compare the resulting rate with the previously estimated one and with published rates for the virus.

Q3: I suspect my dataset has significant sampling bias. How can I quantify its impact before I begin my analysis? A3: You can perform a simple randomization test to gauge the robustness of your findings.

- Experimental Protocol:

- Define your key analysis (e.g., estimating the time to the most recent common ancestor - TMRCA).

- Randomly subsample your full dataset 100 times, ensuring each subsample is balanced by a key variable (e.g., geography or time period).

- Run your key analysis on each of the 100 subsampled datasets.

- Calculate the mean and 95% confidence interval of your key statistic (e.g., TMRCA) from these 100 runs.

- Interpretation: If the confidence interval is wide or does not include the estimate from your full, biased dataset, your main result is highly sensitive to sampling bias and should be interpreted with extreme caution.
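The randomization test above can be sketched as a repeated balanced subsampling loop. This is an illustration under stated assumptions: `statistic` stands in for the key analysis (for example a TMRCA estimate from a dating run), here replaced by a trivial mean over a hypothetical `value` field, and the interval is a simple 2.5% to 97.5% percentile range across replicates rather than a formal confidence interval.

```python
import random
from statistics import mean, quantiles

def balanced_subsample(records, key, per_group, rng):
    """Draw up to `per_group` records from each level of `key`."""
    groups = {}
    for r in records:
        groups.setdefault(r[key], []).append(r)
    sample = []
    for members in groups.values():
        sample.extend(rng.sample(members, min(per_group, len(members))))
    return sample

def subsampling_interval(records, statistic, key="location",
                         per_group=10, n_rep=100, seed=0):
    """Mean and percentile interval of `statistic` over balanced subsamples."""
    rng = random.Random(seed)
    values = [statistic(balanced_subsample(records, key, per_group, rng))
              for _ in range(n_rep)]
    cuts = quantiles(values, n=40)       # cut points at 2.5%, 5%, ..., 97.5%
    return mean(values), (cuts[0], cuts[-1])

def toy_statistic(sample):
    """Placeholder for the real analysis; averages a hypothetical 'value' field."""
    return mean(r["value"] for r in sample)

# Hypothetical dataset: 90 records from A (value 1.0), 10 from B (value 3.0).
records = ([{"location": "A", "value": 1.0}] * 90
           + [{"location": "B", "value": 3.0}] * 10)

full_estimate = toy_statistic(records)
avg, (low, high) = subsampling_interval(records, toy_statistic)
```

In this toy case the estimate from the full dataset falls outside the interval from balanced subsamples, which is the pattern the interpretation step treats as a warning sign.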

Experimental Protocols for Addressing Sampling Bias

Protocol 1: Designing a Prospective Sequencing Study to Minimize Bias

Objective: To establish a framework for collecting viral sequence data that minimizes geographical and temporal sampling bias.

Methodology:

- Stratified Sampling: Define strata based on known outbreak hotspots, regions with low surveillance, and major travel hubs. Allocate sequencing efforts proportionally to the population size and incidence rate within each stratum, not merely to case load.

- Continuous, Time-Structured Collection: Implement a system for sequencing a fixed, random subset of positive tests each week, rather than batching requests from specific outbreaks. This ensures a steady flow of data across the entire time period.

- Metadata Standardization: Use a standardized form to collect essential metadata (e.g., sample date, location, patient age, travel history, suspected transmission link) at the point of collection.

Protocol 2: Correcting for Bias in Existing Datasets using Downsampling

Objective: To analyze a publicly available dataset (e.g., from GISAID) while mitigating known sampling biases.

Methodology:

- Bias Audit: Visualize the available metadata to identify over-represented and under-represented groups (e.g., a specific country in a particular month).

- Define a Quota: Set a maximum number of sequences allowed from any single group to prevent it from dominating the analysis.

- Randomized Selection: From each group, randomly select sequences up to the defined quota, creating a more balanced "downsampled" dataset.

- Comparative Analysis: Perform all phylogenetic analyses (tree building, phylogeography) on both the full and downsampled datasets. Report results from both, with the downsampled results presented as a bias-corrected estimate.
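The quota and randomized-selection steps can be sketched as below. The grouping by country and month, the record fields, and the quota of three are illustrative assumptions. In practice the quota should be chosen with the smallest informative groups in mind.

```python
import random

def quota_downsample(records, group_key, quota, seed=0):
    """Keep at most `quota` randomly chosen records per group."""
    rng = random.Random(seed)
    groups = {}
    for r in records:
        groups.setdefault(group_key(r), []).append(r)
    kept = []
    for group in sorted(groups):                 # sorted for reproducible output order
        members = groups[group]
        if len(members) <= quota:
            kept.extend(members)                 # small groups are kept whole
        else:
            kept.extend(rng.sample(members, quota))
    return kept

def country_month(record):
    """Group by country and year-month (assumes ISO YYYY-MM-DD dates)."""
    return (record["country"], record["date"][:7])

# Hypothetical metadata: one country-month dominates the dataset.
records = (
    [{"id": f"x{i}", "country": "X", "date": "2021-03-10"} for i in range(50)]
    + [{"id": f"y{i}", "country": "Y", "date": "2021-03-15"} for i in range(2)]
)
downsampled = quota_downsample(records, country_month, quota=3)
```

Running the same analyses on `records` and `downsampled` gives the two result sets that the comparative step asks to report side by side.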

Visualizing the Research Workflow

The following diagram outlines a standard workflow for viral phylogenetics, highlighting key points where sampling bias can be introduced and must be checked.

Diagram: Viral Phylogenetic Analysis & Bias Check Workflow

Research Reagent Solutions

The table below details key reagents, tools, and software essential for conducting robust viral phylogenetic analysis while accounting for sampling bias.

| Item Name | Function/Application in Research |

|---|---|

| Next-Generation Sequencing Platforms | Generate the raw genomic sequence data from viral samples. Essential for building the primary dataset. |

| BEAST 2 / BEAST 1 | Bayesian evolutionary analysis software. Used to infer phylogenetic trees, evolutionary rates, and population dynamics while incorporating sampling dates. |

| IQ-TREE | Software for maximum likelihood phylogenetic inference. Fast and useful for building initial trees and conducting hypothesis tests. |

| R Package treedater | A tool for estimating phylogenetic trees and divergence times in the presence of heterogeneous sampling. Directly addresses sampling bias. |

| GISAID Database | A global repository for sharing influenza and coronavirus sequences. The primary source of data, but requires careful assessment for sampling bias. |

| FigTree | A graphical viewer for phylogenetic trees. Used to visualize and annotate results, helping to identify potential clusters driven by bias. |

| Audacity-Aligned Genomic Sequences | A tool for visualizing and editing multiple sequence alignments. Critical for ensuring data quality before analysis. |

Connecting Sampling Bias to Broader Epidemiological Research Challenges

Frequently Asked Questions

Q1: Why do my phylogenetic tree visualizations lack clarity when exported for publication? A1: This is often due to insufficient color contrast between tree elements (like branch lines or node labels) and their background. Text legibility is governed by the luminance contrast ratio. To meet the enhanced (WCAG AAA) level, regular text needs a contrast ratio of at least 7:1, and large text (18pt, or 14pt and bold) needs at least 4.5:1 [11] [12]. Tools like the Acquia Color Contrast Checker can help validate your color choices.

Q2: How can I programmatically ensure text is readable on colored backgrounds in my automated plotting scripts? A2: You can calculate the background color's perceived brightness using the YIQ formula or the W3C luminance formula. Based on the result, automatically set the text color to either white or black for maximum contrast [13] [14].

- Example Formula (YIQ): Brightness = (R*299 + G*587 + B*114) / 1000. If the result is greater than 128, use black text; otherwise, use white text [13].

- R packages like prismatic offer functions like best_contrast() to automatically choose the most readable text color [15].
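For pipelines that are not written in R, the YIQ rule quoted above is easy to reproduce directly. The following Python sketch assumes 8-bit RGB channels (0 to 255); the function name and the `threshold` parameter are illustrative choices, not part of any library.

```python
def yiq_text_color(r, g, b, threshold=128):
    """Return "black" or "white" as the text color for an RGB background.

    Implements the YIQ brightness rule: brightness above the threshold
    means a light background, so dark text is used.
    """
    brightness = (r * 299 + g * 587 + b * 114) / 1000
    return "black" if brightness > threshold else "white"


# Example: pick label colors for a light-yellow and a dark-blue clade fill.
label_on_yellow = yiq_text_color(255, 255, 0)  # light background
label_on_navy = yiq_text_color(0, 0, 128)      # dark background
```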

Q3: What defines "large text" in the context of contrast requirements? A3: According to WCAG guidelines, "large text" is defined as text that is at least 18 points (typically 24 CSS pixels) or 14 points (approximately 18.66 CSS pixels) in a bold font weight [12].

Q4: A collaborator uses Windows High Contrast Mode and reports that my tree figure is unusable. How can I fix this?

A4: In high contrast modes, browsers force a limited color palette and override author styles. Use the forced-colors CSS media feature to make targeted adjustments. For instance, if box-shadow (which is forced to none) was used for contrast, replace it with a solid border in the forced-colors style sheet [16].

Troubleshooting Guides

Issue: Low Contrast Rendering Tree Labels Illegible

Problem: Text labels on your phylogenetic tree (e.g., tip labels, clade labels) are difficult to read against the background or the node's fill color.

Solution:

- Manual Check and Adjustment:

  - Use a color contrast analyzer tool (e.g., the Colour Contrast Analyser) to check the ratio between your text color (fontcolor) and your node's fillcolor [12].

  - Adjust one of the colors until the ratio meets the required 7:1 or 4.5:1 threshold. Prefer darker shades of gray for text against light backgrounds and light shades against dark backgrounds [14].

- Programmatic Fix in ggtree/R:

  - Use the prismatic::best_contrast() function within your geom_text or geom_tiplab layers to dynamically set the text color. This ensures the best contrast is chosen automatically based on the fill color [15].

Issue: Inconsistent Visuals Across User Environments

Problem: A tree visualization that looks good on your machine appears with poor contrast or different colors when viewed by a collaborator.

Solution:

- Check for User Overrides: The issue may stem from custom user stylesheets or operating-system-level high contrast settings. Be aware that if you do not specify a background color, the user's default background (which may not be white) will be used, potentially breaking your contrast calculations [11].

- Use Semantic System Colors (For Web): If publishing to the web, using CSS system colors (e.g., Canvas, CanvasText, ButtonText) can help your visualization integrate better with the user's chosen theme [16].

- Provide Multiple Formats: When sharing, consider providing the figure in multiple formats (e.g., PNG, PDF) and explicitly document the color scheme used in the figure legend.

Experimental Protocols & Data Presentation

Protocol: Automating High-Contrast Label Placement in ggtree

This protocol ensures text labels on colored nodes or bars remain legible in automated R analysis pipelines.

- Prepare Data and Tree: Organize your phylogenetic tree and associated metadata in a treedata object.

- Create Base Tree: Generate the initial tree plot using ggtree().

- Map Colors to Metadata: Use the scale_fill_* functions to map a metadata variable to the node colors.

- Add Labels with Automatic Contrast: Employ geom_text or geom_tiplab in combination with prismatic::best_contrast() and after_scale() to dynamically set the text color based on the underlying fill color.

- Export and Verify: Save the plot and run a final contrast check with an accessibility tool.

Relevant R Packages: ggtree, treeio, and prismatic (see Table 2).

Table 1: Key Color Contrast Requirements for Scientific Figures

| Element Type | WCAG Level | Minimum Contrast Ratio | Text Size Definition |

|---|---|---|---|

| Normal Text | AAA (Enhanced) | 7:1 [11] | Less than 18pt/24px (not bold) |

| Large Text | AAA (Enhanced) | 4.5:1 [11] | 18pt/24px or larger, or 14pt/18.66px and bold [12] |

| User Interface Components | AA (Minimum) | 3:1 [17] | Applies to visual information identifying UI states |
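The thresholds in Table 1 can be checked programmatically. The sketch below computes the WCAG contrast ratio from the standard relative-luminance definition for sRGB colors; the function names are illustrative, and the AA text thresholds (4.5:1 for normal text, 3:1 for large text) are included from the WCAG specification for comparison even though Table 1 lists only the AAA values for text.

```python
def relative_luminance(rgb):
    """WCAG relative luminance of an sRGB color given as (R, G, B) in 0-255."""
    def linearize(channel):
        c = channel / 255
        return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4

    r, g, b = (linearize(c) for c in rgb)
    return 0.2126 * r + 0.7152 * g + 0.0722 * b


def contrast_ratio(foreground, background):
    """Contrast ratio between two colors, from 1 (identical) to 21."""
    l1 = relative_luminance(foreground)
    l2 = relative_luminance(background)
    lighter, darker = max(l1, l2), min(l1, l2)
    return (lighter + 0.05) / (darker + 0.05)


# Minimum ratios keyed by (WCAG level, is_large_text).
TEXT_THRESHOLDS = {
    ("AA", False): 4.5,
    ("AA", True): 3.0,
    ("AAA", False): 7.0,
    ("AAA", True): 4.5,
}


def meets_threshold(foreground, background, large_text=False, level="AAA"):
    """True if the color pair satisfies the chosen WCAG text threshold."""
    return contrast_ratio(foreground, background) >= TEXT_THRESHOLDS[(level, large_text)]
```

A check like `meets_threshold(label_rgb, fill_rgb)` can be run over every label and fill pair in a figure before export, which complements a manual check with a contrast analyzer.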

Table 2: Research Reagent Solutions for Phylogenetic Visualization

| Reagent / Tool | Function in Analysis | Key Parameter / Metric |

|---|---|---|

| ggtree (R Package) [18] | A primary tool for visualizing and annotating phylogenetic trees with associated data. It extends ggplot2, allowing for layered annotations. | Supports multiple layouts (rectangular, circular, fan, etc.) and the integration of diverse data types. |

| treeio (R Package) [18] | Parses and manages phylogenetic data and trees from various software outputs into R, preparing them for visualization in ggtree. | Handles file formats from BEAST, EPA, PAML, etc., creating S4 objects for consistent data handling. |

| prismatic (R Package) [15] | Provides tools for manipulating and analyzing colors, including calculating the best contrasting color for legibility. | The best_contrast() function automatically selects the most readable text color from a palette against a given background. |

| Color Contrast Analyzer | A standalone tool or browser extension to manually verify the contrast ratio between foreground and background colors. | Outputs a numerical contrast ratio and indicates pass/fail against WCAG 2.2 AA/AAA criteria [12]. |

Visualizations

Diagram: High-Contrast Labeling Logic

Diagram: Phylogenetic Workflow with Contrast Control

Building Robust Frameworks: Methodologies to Detect and Correct for Bias

Frequently Asked Questions (FAQs)

Q1: What is the main risk of using an "unsampled" or convenience dataset for phylodynamic analysis? Using an unsampled dataset, where sequences are analyzed without a structured sampling strategy, is highly discouraged. Research has shown that this approach results in the most biased estimates of key epidemiological parameters like the time-varying effective reproduction number (Rₜ) and growth rate (rₜ) [19]. This bias can misrepresent the true transmission dynamics of the virus.

Q2: How does the choice of sampling strategy impact the estimation of different epidemiological parameters? The sensitivity to sampling strategy varies by parameter. Studies on SARS-CoV-2 have found that while the time-varying effective reproduction number (Rₜ) and growth rate (rₜ) are highly sensitive to the sampling scheme, other parameters like the basic reproduction number (R₀) and the date of origin (TMRCA) are relatively robust across different sampling strategies [19].

Q3: Why is geographic sampling bias a problem in phylogeography? Phylogeographic methods can be biased by disparities in sampling intensity between different locations. When one region sequences and shares a much higher proportion of its cases than another, the reconstruction of the virus's historical locations and movements can be skewed. This can lead to incorrect inferences about migration routes and the origin of outbreaks [1].

Q4: What is a key consideration when designing a proportional sampling scheme? A key consideration is the trade-off between sampling intensity and temporal spread. A dataset with sequences collected over a wider time interval often produces a stronger temporal signal for analysis, which can be more valuable than a very large number of sequences from a short period [19].

Troubleshooting Common Experimental Issues

Issue 1: Biased Phylogeographic Reconstructions

- Problem: The reconstructed ancestral locations of the virus are concentrated in specific areas, likely reflecting uneven sampling efforts rather than true transmission patterns.

- Diagnosis: Compare the number of sequences from each geographic region in your dataset to the actual reported case numbers for those regions. A significant mismatch indicates potential sampling bias.

- Solution: If possible, re-weight your analysis or use structured model approaches like the BAyesian STructured coalescent Approximation (BASTA), which are designed to be less sensitive to uneven sampling [1]. When designing a study, aim for a sampling rate proportional to case incidence across regions.
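The diagnosis step above amounts to comparing each region's share of sequences with its share of reported cases. A minimal Python sketch follows; the function names and the two-fold flagging threshold are assumptions chosen for illustration, not values taken from the cited studies.

```python
def sampling_representation(seq_counts, case_counts):
    """Compare each region's share of sequences with its share of cases.

    A ratio near 1 means the region is sampled roughly in proportion to
    its reported cases; above 1 is over-sampled, below 1 under-sampled.
    """
    total_seq = sum(seq_counts.values())
    total_cases = sum(case_counts.values())
    if total_seq <= 0 or total_cases <= 0:
        raise ValueError("need at least one sequence and one reported case")

    report = {}
    for region, cases in case_counts.items():
        seq_share = seq_counts.get(region, 0) / total_seq
        case_share = cases / total_cases
        ratio = seq_share / case_share if case_share > 0 else float("inf")
        report[region] = {
            "seq_share": seq_share,
            "case_share": case_share,
            "ratio": ratio,
        }
    return report


def flag_mismatches(report, fold=2.0):
    """List regions whose sequence share differs from case share by > `fold`."""
    return sorted(
        region
        for region, row in report.items()
        if row["ratio"] > fold or row["ratio"] < 1.0 / fold
    )
```

Reported case counts are themselves affected by testing effort, so the ratios are best read as a screening tool rather than a precise measure of bias.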

Issue 2: Inconsistent or Biased Estimates of Rₜ

- Problem: Estimates of the effective reproduction number from genomic data do not align with estimates from case or death data.

- Diagnosis: Review your sampling scheme. Analysis using an unsampled dataset is a known source of bias for Rₜ [19].

- Solution: Implement a structured sampling strategy. The table below compares different approaches based on a study of SARS-CoV-2, using estimates from case data as a benchmark [19].

Issue 3: Weak Temporal Signal in the Phylogenetic Tree

- Problem: The root-to-tip regression of genetic divergence against sampling time shows a weak correlation (low R² value), making accurate evolutionary rate and date estimation difficult.

- Diagnosis: A weak temporal signal can result from a dataset with sequences collected over a too-narrow time interval [19].

- Solution: Widen your sampling window where possible. When sub-sampling from a larger dataset, ensure the selected sequences are distributed across the entire duration of the epidemic wave of interest.
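To make the root-to-tip diagnostic concrete, the sketch below fits an ordinary least-squares line to root-to-tip distances against sampling dates and reports the slope, the x-intercept, and R². It assumes the distances have already been extracted from a rooted tree (for example with a tool such as TempEst) and that dates are decimal years; the function name is illustrative.

```python
def root_to_tip_regression(dates, distances):
    """Least-squares regression of root-to-tip distance on sampling date.

    Returns (rate, root_date, r_squared), where `rate` is the slope
    (substitutions per site per unit time) and `root_date` is the
    x-intercept, a rough estimate of the date of the root.
    """
    n = len(dates)
    if n != len(distances) or n < 3:
        raise ValueError("need at least three paired observations")

    mean_x = sum(dates) / n
    mean_y = sum(distances) / n
    sxx = sum((x - mean_x) ** 2 for x in dates)
    syy = sum((y - mean_y) ** 2 for y in distances)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(dates, distances))
    if sxx == 0 or syy == 0:
        raise ValueError("dates and distances must both vary")

    rate = sxy / sxx
    intercept = mean_y - rate * mean_x
    r_squared = (sxy * sxy) / (sxx * syy)
    root_date = -intercept / rate if rate != 0 else None
    return rate, root_date, r_squared
```

A low R² or a negative slope from this quick check suggests that the sampling window is too narrow, or that the tree is poorly rooted, before any time is spent on full Bayesian dating.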

Comparison of Genomic Sampling Strategies

The following table summarizes findings from a study that estimated SARS-CoV-2 epidemiological parameters under different sampling schemes for genomic data in Hong Kong and the Amazonas state, Brazil [19].

Table 1: Impact of Sampling Strategy on Epidemiological Parameter Estimation from Genomic Data

| Sampling Strategy | Description | Key Impact on Parameter Estimation | Best Use Case |

|---|---|---|---|

| Unsampled | Using all available sequences without a structured scheme. | Leads to the most biased estimates of Rₜ and rₜ [19]. | Not recommended. |

| Proportional | Sampling in direct proportion to the number of cases per time period. | Can produce biased estimates if case data is incomplete [19]. | When case reporting is highly reliable and complete. |

| Uniform | Selecting a near-equal number of sequences from each time period. | Reduces bias compared to unsampled data; effective for capturing dynamics across phases [19]. | When aiming to capture transmission dynamics evenly across distinct epidemic phases. |

| Reciprocal-Proportional | Sampling more sequences from periods with fewer cases. | Can help mitigate bias from under-reporting by ensuring coverage during low-incidence periods [19]. | When case detection is suspected to be highly variable or inconsistent over time. |
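The three structured schemes in the table differ only in how sampling weight is spread across time periods. The sketch below expresses each as a normalized weight per period. The exact weighting used in the cited study is not reproduced here: in particular, using 1/cases for the reciprocal-proportional scheme and giving zero weight to periods with no cases are assumptions made for illustration.

```python
def scheme_weights(cases_per_period, scheme):
    """Return the fraction of sequences to draw from each time period.

    scheme: "proportional" (weight ~ cases), "uniform" (equal weight),
    or "reciprocal" (weight ~ 1 / cases, assumed form).
    """
    if scheme == "proportional":
        raw = {p: float(c) for p, c in cases_per_period.items()}
    elif scheme == "uniform":
        raw = {p: 1.0 for p in cases_per_period}
    elif scheme == "reciprocal":
        # Periods with zero reported cases get no weight (assumption).
        raw = {p: (1.0 / c if c > 0 else 0.0) for p, c in cases_per_period.items()}
    else:
        raise ValueError("unknown scheme: %r" % scheme)

    total = sum(raw.values())
    if total <= 0:
        raise ValueError("no period has positive weight")
    return {p: w / total for p, w in raw.items()}
```

Multiplying these fractions by the intended dataset size gives per-period sampling targets, which makes it straightforward to run the same phylodynamic analysis under each scheme and compare the resulting estimates.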

Detailed Experimental Protocol: Implementing a Proportional Sampling Design

This protocol outlines the steps for sub-sampling a viral genomic dataset using a proportional strategy to minimize bias in subsequent phylodynamic analysis.

1. Objective

To create a representative sub-sample of viral genomic sequences where the number of sequences from each time period is proportional to the officially reported case incidence for that period.

2. Materials and Research Reagent Solutions

Table 2: Essential Materials for Sampling and Analysis

| Item | Function / Explanation |

|---|---|

| Viral Genomic Sequences | Primary data, ideally with associated metadata (sample date, location). |

| Epidemiological Case Data | Reported case incidence (e.g., daily or weekly cases) for the population and time period of interest. Used as the reference for proportional allocation. |

| Computational Scripting Environment | (e.g., Python with Pandas, R). Used to automate the calculation of sampling targets and randomly select sequences. |

| Phylodynamic Software Suite | (e.g., BEAST, BEAST2). Used for the final analysis to estimate parameters like Rₜ, TMRCA, and evolutionary rates. |

3. Step-by-Step Methodology

Step 1: Data Collation and Alignment

- Gather all available viral genomic sequences and their associated metadata for the outbreak.

- Collate the official epidemiological case data (e.g., weekly new cases) for the same geographic region and time period.

Step 2: Define Temporal Bins

- Divide the total time period of the study into meaningful intervals (e.g., weeks or months). The choice of bin size should reflect the tempo of the epidemic and the resolution of the case data.

Step 3: Calculate Sampling Targets

- For each temporal bin, calculate the proportion of total cases that occurred during that interval.

Proportion_of_Casesᵢ = (Cases in Binᵢ) / (Total Cases in all Bins)

- Determine the total number of sequences (N) to be included in the final analysis based on computational constraints.

- Calculate the target number of sequences to sample from each bin.

Target_Samplesᵢ = Proportion_of_Casesᵢ × N

Step 4: Random Sub-sampling

- Within each temporal bin, randomly select the calculated target number of sequences. If the number of available sequences in a bin is fewer than the target, include all available sequences.

- This random selection within bins is critical to avoid introducing additional sampling bias.
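Steps 3 and 4 can be sketched together in Python. This is one possible implementation under stated assumptions: sequences are already grouped by temporal bin, targets are rounded to the nearest integer (so the total may differ slightly from N), and the function name and data layout are hypothetical.

```python
import random


def proportional_subsample(sequences_by_bin, cases_by_bin, n_total, seed=0):
    """Randomly sub-sample sequences in proportion to cases per temporal bin.

    sequences_by_bin: bin label -> list of sequence identifiers
    cases_by_bin:     bin label -> reported case count
    n_total:          intended size N of the final dataset
    """
    rng = random.Random(seed)
    total_cases = sum(cases_by_bin.values())
    if total_cases <= 0:
        raise ValueError("total reported cases must be positive")

    selected = {}
    for bin_id, cases in cases_by_bin.items():
        # Target_Samples_i = Proportion_of_Cases_i * N, rounded to an integer.
        target = round(cases / total_cases * n_total)
        available = sequences_by_bin.get(bin_id, [])
        if len(available) <= target:
            # Fewer sequences than the target: include all of them.
            selected[bin_id] = list(available)
        else:
            selected[bin_id] = rng.sample(available, target)
    return selected
```

Bins that fall short of their target leave the final dataset smaller than N and tilt it away from strict proportionality, so it is worth reporting the achieved counts per bin next to the targets.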

Step 5: Validation and Analysis

- Assemble the final sub-sampled dataset.

- Perform a root-to-tip regression to check for a temporal signal before proceeding with complex phylodynamic inference [19].

- Proceed with phylodynamic analysis using software like BEAST2.

The workflow for this protocol is summarized in the following diagram:

Advanced Methodologies and Visual Guide

Optimizing Sampling with Markov Decision Processes

Emerging research proposes the use of Markov Decision Processes (MDPs) to model sampling as a sequential decision-making problem [20]. This framework can predict the expected informational value of sequencing a particular sample at a given time, allowing for the identification of sampling strategies that maximize information gain (e.g., for estimating growth rates or migration rates) while minimizing costs [20].

The diagram below illustrates the logical relationship between sampling bias, its consequences, and the methodological solutions discussed in this guide.

Computational Corrections and Statistical Weights in Phylogenetic Analysis

FAQs: Addressing Sampling Bias in Viral Phylogenies

1. How does geographic sampling bias affect phylogeographic reconstruction of viral movements?

Geographic sampling bias, where viruses from different locations are sequenced at different rates, significantly impacts phylogeographic reconstructions. While overall accuracy remains high, especially when viral migration rates are low, sampling bias greatly affects the number and nature of estimated migration events [1]. When some regions are over-sampled compared to others, methods like Discrete Trait Analysis (DTA) can produce erroneously small apparent uncertainties and misleading estimates of ancestral viral locations. This occurs because relative sampling intensities are treated as data that inform migration estimates in some phylogenetic models [1].

2. What computational methods can correct for sampling bias in phylogenetic analysis?

Several approaches can mitigate sampling bias:

- Structured Coalescent Models: Methods like BASTA (BAyesian STructured coalescent Approximation) model population structure and migration more accurately than DTA when locations are non-representatively sampled [1].

- Phylogenetic Novelty Scores: This weighting scheme assigns weights to sequences based on their evolutionary novelty, calculated as the expected inverse of the number of sequences "phylogenetically identical by descent" at any alignment column. This approach is robust to uneven sampling and works well across different divergence levels [21].

- Incorporating Travel History: Including travel history data for sequenced samples and accounting for "empty" locations in continuous-space models can partially overcome sampling bias [1].

3. Why has my tree structure collapsed after adding new sequences, and how can I fix it?

The sudden collapse of tree structure after adding sequences, where diverse strains appear artificially similar, can result from several issues [22]:

- Low coverage in new strains: This increases ignored positions and reduces the effective core genome size used for tree building.

- Technical artifacts: Concatenating divergent samples can create artificial heterozygous positions that are ignored by some tree-building algorithms.

- Algorithm limitations: Some fast tree-building methods ignore positions not present in all samples.

Solution: Use more computationally intensive but accurate methods like RAxML that can utilize positions not present at high quality in all strains. RAxML is optimized for accuracy rather than speed and can handle missing data more effectively, often restoring the correct tree structure [22].

4. How do I choose appropriate sequence weighting schemes for my analysis?

Different weighting schemes have distinct strengths and applications:

Table: Sequence Weighting Schemes in Phylogenetics

| Method | Approach | Best For | Limitations |

|---|---|---|---|

| Henikoff & Henikoff (HH94) | Weights based on character rarity at alignment columns [21] | General purpose, fast computation | May not fully capture evolutionary relationships |

| Gerstein et al. (GSC94) | Iterative weight assignment along phylogeny from tips to root [21] | Ultrametric trees | Can yield inaccurate results on non-ultrametric trees |

| Phylogenetic Novelty Scores | Weight based on probability sequences are "phylogenetically identical by descent" [21] | Uneven sampling scenarios, various divergence levels | Computationally more intensive than some heuristic methods |
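As a concrete reference point for the simplest scheme in the table, the sketch below implements position-based weights in the style of Henikoff & Henikoff: at each column a sequence earns 1 / (k × n), where k is the number of distinct characters in the column and n is how many sequences share that sequence's character. Treating gap characters like any other symbol and normalizing the weights to sum to 1 are simplifications assumed here.

```python
from collections import Counter


def henikoff_weights(alignment):
    """Position-based sequence weights for an alignment of equal-length strings.

    Sequences carrying rare characters at many columns receive larger
    weights; near-duplicate sequences share their weight.
    """
    if not alignment:
        raise ValueError("alignment is empty")
    length = len(alignment[0])
    if any(len(seq) != length for seq in alignment):
        raise ValueError("all sequences must have the same aligned length")

    weights = [0.0] * len(alignment)
    for col in range(length):
        column = [seq[col] for seq in alignment]
        counts = Counter(column)
        k = len(counts)  # number of distinct characters in this column
        for idx, ch in enumerate(column):
            weights[idx] += 1.0 / (k * counts[ch])

    total = sum(weights)
    return [w / total for w in weights]
```

With two identical sequences and one divergent sequence, the divergent one receives half of the total weight, which shows how the scheme counteracts over-sampled lineages without needing a tree.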

5. What do low bootstrap values indicate about my phylogenetic tree?

Bootstrap values below roughly 0.8-0.9 (depending on the method) indicate weak support for the branching pattern at that node [22]. This means that resampling the alignment columns produces different tree topologies, suggesting that your dataset lacks sufficient signal to confidently resolve that particular evolutionary relationship. Low bootstrap values can result from insufficient informative sites, model misspecification, or conflicting signals in the data [22].

Methodological Protocols

Protocol 1: Implementing Phylogenetic Novelty Scores for Sequence Weighting

Purpose: To calculate evolutionarily meaningful weights that mitigate the effects of non-independence in homologous sequences and uneven taxon sampling [21].

Workflow:

Input Preparation:

- Multiple sequence alignment (amino acid or nucleotide)

- Phylogenetic tree relating the sequences (optional for basic calculation)

Weight Calculation:

- For each sequence (tip) in the tree, compute the probability distribution of how many tips are phylogenetically identical by descent (PIBD) at a generic alignment column

- Calculate the weight for sequence s as $w_s = \sum_{i=1}^{N} p_s(i)/i$, where $p_s(i)$ is the probability that exactly i tips are PIBD to s [21]
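Once the PIBD distribution for a tip is available, the weight itself is a short sum. The sketch below covers only that final step; computing the distribution from a tree and substitution model is the substantive part of the method in [21] and is not attempted here. The function name and the list-based input format are assumptions.

```python
def novelty_weight(pibd_probs, tol=1e-9):
    """Expected inverse of the number of tips that are PIBD to a sequence.

    pibd_probs[i - 1] is the probability that exactly i tips (counting the
    sequence itself) are phylogenetically identical by descent, for i = 1..N.
    """
    if any(p < 0 for p in pibd_probs):
        raise ValueError("probabilities must be non-negative")
    if abs(sum(pibd_probs) - 1.0) > tol:
        raise ValueError("probabilities must sum to 1")
    return sum(p / i for i, p in enumerate(pibd_probs, start=1))
```

A sequence that is certainly unique gets weight 1, while a sequence that is certainly identical by descent to all N tips gets weight 1/N, so redundant sequences from heavily sampled clades contribute less.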

Application:

- Use weights for character frequency estimation in protein family profiling

- Apply to sequence alignment evaluation

- Use for conservation score calculation

Diagram: Workflow for Identifying and Correcting Sampling Bias in Phylogenetic Analysis

Protocol 2: Assessing Geographic Sampling Bias in Viral Phylogeography

Purpose: To quantify and mitigate the effects of uneven geographic sampling on reconstruction of viral migration history [1].

Procedure:

Simulation Setup:

- Simulate pathogen diversification under a binary-state speciation and extinction (BiSSE) model

- Model two geographic locations (A and B) with symmetrical migration

- Parameterize with speciation rate (λ), extinction rate (μ), and migration rate (α)

Bias Introduction:

- Apply different sampling intensities between locations (e.g., 80% from A, 20% from B)

- Compare with uniform sampling as control

Reconstruction Accuracy Assessment:

- Reconstruct ancestral locations using maximum likelihood discrete trait analysis

- Compare inferred root location and migration events with known simulation history

- Quantify error rates for ancestral state reconstruction under different bias conditions

Bias Correction:

- Apply structured coalescent approaches (e.g., BASTA)

- Compare corrected vs. uncorrected results

Research Reagent Solutions

Table: Essential Computational Tools for Addressing Phylogenetic Sampling Bias

| Tool/Resource | Function | Application Context |

|---|---|---|

| BASTA (BAyesian STructured coalescent Approximation) | Approximates structured coalescent to correct migration rate estimates | Geographic sampling bias correction in discrete phylogeography [1] |

| RAxML | Maximum likelihood tree inference using positions with missing data | Restoring tree structure when adding new sequences [22] |

| Phylogenetic Novelty Score Algorithms | Calculate sequence weights based on evolutionary novelty | Mitigating effects of uneven taxon sampling [21] |

| diversitree R package | Simulate diversification under BiSSE model | Testing bias impact with known evolutionary history [1] |

| FastTree | Rapid approximate maximum likelihood tree inference | Initial tree building; bootstrap support evaluation [22] |

Advanced Troubleshooting Guide

Problem: Inferred viral migration patterns show implausibly high rates from certain locations.

Diagnosis: This may reflect sampling bias rather than true biological patterns. Over-sampled locations can appear as sources of migration due to detection bias [1].

Solutions:

- Apply structured coalescent methods that explicitly model sampling proportions

- Incorporate travel history data where available to distinguish true migration from sampling artifacts

- Use phylogenetic novelty scores to downweight sequences from over-sampled clades

Problem: Root location inference conflicts with historical records.

Diagnosis: Extreme sampling bias can distort root state estimation, particularly in maximum likelihood discrete trait analysis [1].

Solutions:

- Implement sampling-aware models that account for different sampling intensities across locations

- Include appropriate outgroups to improve root positioning

- Validate with simulations using known parameters to assess method performance under your specific sampling conditions

Diagram: Decision Tree for Troubleshooting Phylogenetic Analysis Problems

The Spatial Transmission Count Statistic is a computational framework designed to efficiently summarize geographic transmission patterns from viral phylogenies and quantify geographic bias in outbreak dynamics [23] [24]. This method translates the evolutionary relationships and geographic imprints within viral genome sequences into actionable epidemiological insights, specifically addressing the critical challenge of sampling bias in genomic epidemiology [23] [1].

The statistic operates by analyzing a time-scaled phylogenetic tree with inferred ancestral trait states to identify and categorize spatial transmission linkages [23]. These linkages are classified into three distinct types:

- Imports: Introductions of the virus into a focal region from an external source

- Local Transmissions: Sustained transmission chains within the focal region

- Exports: Spread of the virus from the focal region to other areas [23]

This categorization enables researchers to construct a comprehensive epidemic profile for any region of interest, moving beyond simple case counts to understand the underlying dynamics of disease spread [23] [24].

Key Methodological Components

Experimental Workflow and Protocol

The implementation of the Spatial Transmission Count Statistic follows a structured pipeline with two major components [23]:

1. Phylogenetic Reconstruction

- Sequence Alignment: Perform multiple sequence alignment using NextAlign [23]

- Tree Building: Construct maximum likelihood phylogenies with IQ-TREE using a GTR substitution model [23]

- Time Scaling: Apply TreeTime to produce time-scaled phylogenies and infer ancestral node states [23]

- Rooting: Root the phylogeny using early reference samples (e.g., Wuhan-Hu-1/2019) [23]

- Migration Modeling: Infer migration patterns between geographic regions using time-reversible models [23]

2. Characterization of Spatial Transmission Linkages

- Tree Processing: Import and structure phylogeny data using the 'treeio' and 'tidytree' packages in R [23]

- Linkage Identification: Designate shorter branches in the phylogeny as spatial transmission linkages, excluding branches with durations exceeding 15 days [23]

- Trait State Analysis: Categorize linkages as imports, local transmissions, or exports based on trait states [23]

- Trend Analysis: Summarize time series of spatial transmission counts by type to reveal epidemic trends [23]
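The linkage identification and categorization steps can be illustrated with a small Python sketch. The original pipeline works on tree objects in R; here each branch is assumed to have been flattened into a dict with hypothetical keys `parent_state`, `child_state`, and `duration_days`, purely for illustration.

```python
def classify_linkage(parent_state, child_state, focal_region):
    """Label one branch relative to a focal region, or None if unrelated."""
    if parent_state == focal_region and child_state == focal_region:
        return "local"
    if parent_state != focal_region and child_state == focal_region:
        return "import"
    if parent_state == focal_region and child_state != focal_region:
        return "export"
    return None  # branch does not touch the focal region


def count_linkages(branches, focal_region, max_days=15):
    """Count imports, local transmissions, and exports on short branches.

    Branches longer than `max_days` are excluded, mirroring the 15-day
    cutoff described in the workflow.
    """
    counts = {"import": 0, "local": 0, "export": 0}
    for branch in branches:
        if branch["duration_days"] > max_days:
            continue
        kind = classify_linkage(
            branch["parent_state"], branch["child_state"], focal_region
        )
        if kind is not None:
            counts[kind] += 1
    return counts
```

Running `count_linkages` separately on the branches that fall in each week or month yields the time series of spatial transmission counts used for trend analysis.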

Workflow Diagram

Quantitative Metrics for Geographic Bias Assessment

The framework introduces two primary quantitative scores to systematically assess geographic bias and transmission patterns [23]:

Core Metrics Table

| Metric Name | Calculation Formula | Interpretation | Epidemiological Significance |

|---|---|---|---|

| Local Import Score | Ct(Import) / [Ct(Import) + Ct(LocalTrans)] [23] | Estimates proportion of new cases due to external introductions versus local transmission [23] | Higher scores indicate outbreaks maintained by repeated introductions; lower scores suggest sustained local transmission [23] |

| Source Sink Score | Comparative analysis of export versus import linkages [23] | Determines whether a region acts as a source (net exporter) or sink (net importer) of viral lineages [23] | Identifies transmission hubs that drive regional spread versus areas dependent on external introductions [23] |
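The Local Import Score formula in the table translates directly into code. The sketch below computes it for a single time window and for a series of windows; the function names and the choice to return None when a window has no import or local linkages are assumptions. The Source Sink Score is not sketched because its exact formula is not given here.

```python
def local_import_score(imports, local_transmissions):
    """Ct(Import) / [Ct(Import) + Ct(LocalTrans)] for one time window.

    Returns None when there are no linkages, since the ratio is undefined.
    """
    total = imports + local_transmissions
    if total == 0:
        return None
    return imports / total


def local_import_series(counts_by_window):
    """Apply the score to each window.

    counts_by_window: window label -> {"import": int, "local": int}
    """
    return {
        window: local_import_score(counts["import"], counts["local"])
        for window, counts in counts_by_window.items()
    }
```

For example, a window with 3 imports and 9 local transmissions scores 0.25, indicating that local transmission dominates in that period.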

Application Findings from Texas SARS-CoV-2 Study

A comprehensive demonstration using over 12,000 SARS-CoV-2 genomes from Texas revealed distinct transmission patterns highlighting geographic bias [23] [24]:

| Region Type | Transmission Pattern | Local Import Score Profile | Source Sink Status |

|---|---|---|---|

| Urban Centers | Locally maintained outbreaks connected to global epidemics [23] | Lower scores indicating dominant local transmission [23] | Source – Net exporters seeding other regions [23] |

| Rural Areas | Driven by repeated external introductions [23] | Higher scores indicating dependency on imports [23] | Sink – Net importers dependent on external sources [23] |

Troubleshooting Common Experimental Issues

FAQ: Addressing Methodological Challenges

Q1: How does sampling bias specifically affect phylogeographic reconstruction, and how can the Spatial Transmission Count Statistic mitigate this?

Sampling bias significantly impacts phylogeographic reconstruction in multiple ways. When specific geographic areas are overrepresented in sequencing datasets, this can lead to overrepresentation of the same areas at inferred internal nodes, creating a false impression of transmission importance [23] [1]. In extreme cases, sampling bias can cause posterior distributions to exclude the true origin location of the root node [23]. The Spatial Transmission Count Statistic addresses this through proportional sampling schemes that weight genomic sampling by case counts, and by explicitly quantifying the directionality of transmission linkages to distinguish true sources from sampling artifacts [23].

Q2: What are the best practices for optimizing sampling strategies to minimize geographic bias?

Implement proportional sampling based on reported case counts to ensure representative geographic coverage [23]. The "Subsamplerr" R package referenced in the original study provides tools for implementing such sampling schemes [23]. When designing surveillance, prioritize balanced representation across both urban and rural areas, as under-sampling either can dramatically alter inferred transmission patterns [1]. For discrete phylogeographic analysis, ensure that no single region constitutes an extreme majority of sequences (>80%) to prevent reconstruction artifacts [1].

Q3: How reliable are ancestral location inferences in large phylogenies, and what factors affect their accuracy?

Ancestral location inferences should be considered highly uncertain, particularly in regions with sparse sampling [25]. Accuracy depends on multiple factors including sampling density, migration rates between regions, and temporal distribution of samples [1]. Studies have shown that reconstruction accuracy is generally higher when migration rates are low, as this creates clearer geographic signal in phylogenies [1]. The Spatial Transmission Count Statistic improves reliability by focusing on shorter branches (excluding those >15 days) which provide more definitive spatial linkage information [23].

Q4: How can researchers distinguish between genuine sources of transmission and sampling artifacts?

The framework provides two analytical approaches. First, calculate both Local Import and Source Sink Scores simultaneously – genuine sources typically show low Local Import Scores but high export activity [23]. Second, analyze the consistency of patterns across multiple time windows; true sources maintain their export role over time, while sampling artifacts may show inconsistent patterns [23]. Additionally, validate phylogenetic findings with epidemiological correlation – genuine sources should correlate with early case detection and high reproduction numbers [23].

Research Reagent Solutions

Essential Computational Tools and Packages

| Tool Name | Primary Function | Application in Spatial Transmission Analysis |
|---|---|---|
| Nextstrain Pipeline | Phylogenetic reconstruction and ancestral state inference [23] | Core framework for building time-scaled trees with geographic traits [23] |
| Subsamplerr R Package | Proportional sampling based on case counts [23] | Mitigates sampling bias by ensuring representative geographic coverage [23] |
| TreeTime | Molecular clock dating and ancestral reconstruction [23] | Inferring historical states and time-scaling phylogenies [23] |
| IQ-TREE | Maximum likelihood phylogenetic inference [23] | Constructing robust trees from sequence alignments [23] |
| treeio & tidytree | Phylogenetic data processing and manipulation in R [23] | Importing and structuring tree data for transmission linkage analysis [23] |

Interpretation Framework for Spatial Transmission Patterns

Analytical Decision Pathway

This technical framework provides researchers with a comprehensive toolkit for identifying, quantifying, and addressing geographic sampling bias in viral phylogenies, enabling more accurate reconstruction of transmission dynamics and better-informed public health interventions.

Integrating Genomic Data with Epidemiological and Environmental Metadata

Frequently Asked Questions (FAQs)

Q1: The phylogenetic tree I generated seems to be heavily influenced by the sampling locations of the sequences, not their true evolutionary relationships. How can I determine if this is sampling bias?

A1: This is a classic sign of sampling bias. To diagnose it, you can:

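- Correlate Traits with Geography: Statistically test (e.g., using a Mantel test) whether the genetic distance between sequences is correlated with the geographical distance between their collection sites. A strong correlation indicates pronounced spatial structure, which may reflect spatial sampling bias rather than genuine isolation by distance and should be checked against how the samples were collected.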

- Visualize Metadata on the Tree: Map the collection date (e.g., via a timescale) or location (e.g., via tip colors) directly onto the phylogenetic tree. If clades correspond perfectly to these metadata categories rather than known biological classifications, bias is likely.

- Analyze Sequence Distribution: Check if your sequences are overwhelmingly from one specific region or time period, leaving other areas poorly represented.
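The last check can be done in a few lines. The sketch below tabulates the share of sequences per region and flags any region above a threshold; the 80% default mirrors the extreme-majority figure quoted earlier for discrete phylogeography, and the region labels are made up.

```python
from collections import Counter


def region_shares(locations):
    """Return the fraction of sequences contributed by each region."""
    counts = Counter(locations)
    total = sum(counts.values())
    return {region: n / total for region, n in counts.items()}


def dominant_regions(locations, threshold=0.8):
    """List regions whose share of the dataset exceeds the threshold."""
    return [r for r, share in region_shares(locations).items() if share > threshold]


# Hypothetical tip locations for a 100-sequence dataset.
tips = ["North"] * 85 + ["South"] * 10 + ["East"] * 5
for region, share in region_shares(tips).items():
    print(f"{region}: {share:.0%}")
print("Over-represented:", dominant_regions(tips))
```

The same tabulation works for collection month or host species by passing those labels instead of locations.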

Q2: When I integrate environmental data like temperature or rainfall with my genomic sequences, the data formats are incompatible. What is the best way to combine them for analysis?

A2: The most robust method is to create a unified metadata file. Structure your data in a tab-delimited or CSV format where each row represents a viral sequence and columns contain all associated data.

Example Metadata Table Structure:

| Sequence ID | Collection Date | Latitude | Longitude | Average Temperature (°C) | Rainfall (mm) | Host Species |
|---|---|---|---|---|---|---|
| Virus_001 | 2023-03-15 | 40.7128 | -74.0060 | 12.5 | 85.2 | Homo sapiens |
| Virus_002 | 2023-04-01 | 34.0522 | -118.2437 | 18.3 | 12.1 | Avian |

This table can then be read by phylogenetic software (e.g., BEAST, Nextstrain) to integrate the environmental and epidemiological context directly into the evolutionary model.
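One way to build such a unified file is a keyed join between the sequence metadata and the environmental records. The sketch below uses only the standard library and joins on collection date and coordinates; the column names and the in-memory inputs are illustrative assumptions, not a format required by any particular tool.

```python
import csv
import io

# Hypothetical inputs: sequence metadata (TSV) and environmental records (CSV).
SEQ_TSV = (
    "strain\tdate\tlatitude\tlongitude\thost\n"
    "Virus_001\t2023-03-15\t40.7128\t-74.0060\tHomo sapiens\n"
    "Virus_002\t2023-04-01\t34.0522\t-118.2437\tAvian\n"
)
ENV_CSV = (
    "date,latitude,longitude,avg_temp_c,rainfall_mm\n"
    "2023-03-15,40.7128,-74.0060,12.5,85.2\n"
    "2023-04-01,34.0522,-118.2437,18.3,12.1\n"
)


def read_rows(text, delimiter):
    """Parse delimited text into a list of dicts keyed by column name."""
    return list(csv.DictReader(io.StringIO(text), delimiter=delimiter))


def merge_metadata(seq_rows, env_rows, keys=("date", "latitude", "longitude")):
    """Left-join environmental columns onto each sequence row."""
    # Keys are matched as exact strings, so both files must format them alike.
    env_index = {tuple(r[k] for k in keys): r for r in env_rows}
    env_fields = [f for f in env_rows[0] if f not in keys] if env_rows else []
    merged = []
    for row in seq_rows:
        env = env_index.get(tuple(row[k] for k in keys), {})
        out = dict(row)
        for field in env_fields:
            out[field] = env.get(field, "")  # blank when no record matches
        merged.append(out)
    return merged


def to_tsv(rows):
    """Serialize merged rows as tab-delimited text with a header line."""
    buf = io.StringIO()
    writer = csv.DictWriter(
        buf, fieldnames=list(rows[0]), delimiter="\t", lineterminator="\n"
    )
    writer.writeheader()
    writer.writerows(rows)
    return buf.getvalue()


merged = merge_metadata(read_rows(SEQ_TSV, "\t"), read_rows(ENV_CSV, ","))
print(to_tsv(merged))
```

Leaving unmatched environmental fields blank, rather than dropping the sequence, keeps the gap visible so it can be reviewed instead of silently shrinking the dataset.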

Q3: My analysis pipeline involves multiple tools, and the color schemes in my final diagrams have poor contrast, making them difficult to read in publications. How can I ensure my figures are accessible?

A3: Adhere to established color contrast guidelines. For all graphical elements, especially text in diagrams and data points in plots, ensure a minimum contrast ratio (WCAG 2.x level AA calls for at least 4.5:1 for normal-size text). Use automated checking tools to validate your color choices. For nodes in diagrams, explicitly set the `fontcolor` to be high-contrast against the `fillcolor` (e.g., dark text on a light background or vice versa).
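A contrast check is easy to automate. The sketch below implements the WCAG 2.x relative luminance and contrast ratio formulas for six-digit hex colors; the `passes` helper and its 4.5 default (the AA value for normal-size text) are conveniences assumed here.

```python
def _linear(channel):
    """Convert an 8-bit sRGB channel to its linear-light value (WCAG 2.x)."""
    c = channel / 255
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color):
    """Relative luminance of a color given as '#RRGGBB'."""
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linear(r) + 0.7152 * _linear(g) + 0.0722 * _linear(b)


def contrast_ratio(foreground, background):
    """Contrast ratio between two colors, from 1 (identical) to 21."""
    lighter, darker = sorted(
        (relative_luminance(foreground), relative_luminance(background)),
        reverse=True,
    )
    return (lighter + 0.05) / (darker + 0.05)


def passes(foreground, background, minimum=4.5):
    """True when the pair meets the chosen minimum contrast ratio."""
    return contrast_ratio(foreground, background) >= minimum


# Example: candidate font and fill colors for a diagram node.
for fg, bg in [("#000000", "#FFFFFF"), ("#777777", "#888888")]:
    print(fg, bg, round(contrast_ratio(fg, bg), 2), passes(fg, bg))
```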

Troubleshooting Guides

Issue: Illogical or Poorly Supported Clades in Phylogenetic Tree

Problem: The branching pattern (topology) of your phylogenetic tree shows clusters that are inconsistent with established knowledge, often with low statistical support (e.g., low bootstrap values).

Diagnosis: This is frequently caused by incomplete or biased sequence data.

Solution:

- Re-check Data Composition: Ensure your multiple sequence alignment is of high quality and does not contain an overrepresentation of sequences from a single outbreak or location.

- Subsample the Data: If your dataset is heavily biased, create a subsampled dataset that more evenly represents different time periods, geographic regions, or host species.

- Use a Different Evolutionary Model: Run the phylogenetic inference with a different nucleotide substitution model. Model misspecification can lead to incorrect topologies.

- Add More Data: Incorporate additional sequences from under-sampled regions or time points to fill in the gaps and provide a more balanced evolutionary signal.
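The subsampling step can be sketched as a cap on the number of sequences kept per stratum. Here a stratum is a (region, year-month) pair, which is one reasonable choice among several; the record fields and the cap value are hypothetical.

```python
import random
from collections import defaultdict


def cap_per_stratum(records, max_per_stratum, seed=0):
    """Keep at most max_per_stratum records per (region, year-month) stratum."""
    rng = random.Random(seed)
    strata = defaultdict(list)
    for rec in records:
        # rec["date"] is assumed to be an ISO date string such as "2023-01-15".
        strata[(rec["region"], rec["date"][:7])].append(rec)
    kept = []
    for key in sorted(strata):
        pool = strata[key]
        if len(pool) <= max_per_stratum:
            kept.extend(pool)  # sparse strata are retained in full
        else:
            kept.extend(rng.sample(pool, max_per_stratum))
    return kept


# Hypothetical dataset dominated by one outbreak in region A.
records = (
    [{"id": f"a{i}", "region": "A", "date": "2023-01-10"} for i in range(50)]
    + [{"id": f"b{i}", "region": "B", "date": "2023-01-20"} for i in range(3)]
    + [{"id": f"c{i}", "region": "A", "date": "2023-02-05"} for i in range(20)]
)
kept = cap_per_stratum(records, max_per_stratum=5)
print(len(records), "->", len(kept))
```

Repeating the draw with several seeds and comparing the resulting trees gives a sense of how much the topology depends on which sequences happened to be retained.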

Issue: Failure to Detect Significant Association in Phylodynamic Analysis

Problem: A statistical analysis (e.g., a discrete trait analysis in BEAST) finds no significant association between a genetic clade and a particular metadata trait (e.g., host species or location).

Diagnosis: The lack of signal can stem from low statistical power or incorrect model parameterization.

Solution:

- Increase Sample Size: The most common solution is to add more sequences to the analysis, particularly from the trait categories of interest.

- Check for Sparse Data: Ensure that the trait you are testing is not too rare in your dataset. If a category has very few sequences, the analysis may be unable to detect a signal.

- Validate the Model: Test the analysis on a simulated dataset where the association is known to ensure your model and settings are correct. Adjust the clock model or tree prior settings if necessary.

Issue: Inaccurate Divergence Time Estimates

Problem: The estimated time to the most recent common ancestor (tMRCA) of your viral sequences seems biologically implausible (e.g., far too old or too young).

Diagnosis: This is often due to incorrect calibration or violation of model assumptions.

Solution:

- Verify Calibration Points: Re-check any internal or external calibration points (e.g., known sample dates) used to calibrate the molecular clock. Ensure they are accurate and appropriate.

- Assess Clock-Like Signal: Perform a root-to-tip regression (e.g., in TempEst) to check if your data behaves in a clock-like manner. A low correlation suggests a weak molecular clock signal, making time estimates unreliable.

- Evaluate Clock Model: Try running the analysis under both a strict and a relaxed molecular clock model to see which is a better fit for your data.
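To make the root-to-tip check concrete, the sketch below fits an ordinary least-squares line to root-to-tip distance against sampling date. It assumes the distances have already been extracted from a rooted tree and is not TempEst's implementation; the input values are invented.

```python
def root_to_tip_regression(dates, distances):
    """Least-squares fit of root-to-tip distance against sampling date.

    dates: decimal years; distances: substitutions per site from the root.
    Returns the slope (rate), the x-intercept (implied root date), and R^2.
    """
    n = len(dates)
    mean_x = sum(dates) / n
    mean_y = sum(distances) / n
    sxx = sum((x - mean_x) ** 2 for x in dates)
    syy = sum((y - mean_y) ** 2 for y in distances)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(dates, distances))
    if sxx == 0 or syy == 0:
        raise ValueError("dates and distances must both vary")
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return {
        "rate": slope,
        # The x-intercept is only interpretable when the slope is positive.
        "root_date": -intercept / slope if slope > 0 else None,
        "r_squared": sxy ** 2 / (sxx * syy),
    }


# Invented example: four tips that follow a perfect clock.
dates = [2020.0, 2020.5, 2021.0, 2021.5]
distances = [0.0005, 0.0010, 0.0015, 0.0020]
fit = root_to_tip_regression(dates, distances)
print(fit)
```

A negative slope or a low R² in real data is a warning that dating estimates from any clock model should be treated with caution.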

Experimental Protocols for Key Methodologies

Protocol 1: Constructing a Spatially-Explicit Phylogenetic Tree

Objective: To visualize and analyze the geographic spread of a virus alongside its evolutionary history.

Materials: See "Research Reagent Solutions" table.

Methodology:

- Data Curation: Compile a FASTA file of viral genomic sequences and a corresponding metadata file with, at minimum, sequence ID and geographic coordinates (latitude/longitude).

- Sequence Alignment: Use MAFFT or Clustal Omega to create a multiple sequence alignment.

- Phylogenetic Inference: Construct a maximum-likelihood tree using IQ-TREE.

- Spatial Visualization: Input the resulting tree and the metadata file into SPREAD4 or a similar tool to generate a spatially-embedded phylogenetic tree for visualization and analysis.
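The alignment and tree-building steps can be scripted as below. This is a minimal sketch using the MAFFT and IQ-TREE flags listed in the toolkit table; the file names are placeholders, the executable may be called `iqtree2` depending on the installed version, and the final visualization step is left to the chosen tool.

```python
import shutil
import subprocess


def mafft_command(fasta_path):
    """MAFFT call with automatic strategy selection; alignment goes to stdout."""
    return ["mafft", "--auto", fasta_path]


def iqtree_command(alignment_path, executable="iqtree"):
    """IQ-TREE call with model selection and 1000 ultrafast bootstraps."""
    return [executable, "-s", alignment_path, "-m", "TEST", "-bb", "1000"]


def run_pipeline(fasta_path, alignment_path, iqtree_executable="iqtree"):
    """Run both steps if the tools are installed; return False otherwise."""
    if shutil.which("mafft") is None or shutil.which(iqtree_executable) is None:
        return False
    with open(alignment_path, "w") as out:
        subprocess.run(mafft_command(fasta_path), stdout=out, check=True)
    subprocess.run(iqtree_command(alignment_path, iqtree_executable), check=True)
    return True


# Show the commands that would be run for placeholder file names.
print(" ".join(mafft_command("sequences.fasta")), "> aligned.fasta")
print(" ".join(iqtree_command("aligned.fasta")))
```

Building the argument lists separately from running them makes the exact commands easy to log alongside the results.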

Protocol 2: Testing for Sampling Bias with a Mantel Test

Objective: To statistically determine if the genetic structure of a virus is significantly influenced by its geographic distribution.

Materials: See "Research Reagent Solutions" table.

Methodology:

- Calculate Genetic Distance Matrix: From your multiple sequence alignment, generate a pairwise genetic distance matrix (e.g., p-distance, Tamura-Nei) using IQ-TREE or the `dist.dna` function in R.

- Calculate Geographic Distance Matrix: Using the latitude and longitude for each sequence, compute a pairwise geographic distance matrix (e.g., in kilometers).

- Perform Mantel Test: Use the `mantel.test` function in R (package `ape`) or a similar implementation to calculate the correlation between the two matrices and assess its statistical significance via permutation.
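For readers who want to see the logic of the three steps in one place, here is a self-contained sketch of a Pearson-correlation Mantel test with a one-sided permutation p-value. It is an illustrative variant rather than a reimplementation of the R function, and the toy sequences and coordinates are invented.

```python
import math
import random


def p_distance(a, b):
    """Proportion of differing sites, ignoring positions with a gap."""
    pairs = [(x, y) for x, y in zip(a, b) if x != "-" and y != "-"]
    return sum(x != y for x, y in pairs) / len(pairs)


def haversine_km(p, q):
    """Great-circle distance in km between two (lat, lon) points in degrees."""
    lat1, lon1, lat2, lon2 = map(math.radians, (p[0], p[1], q[0], q[1]))
    h = (math.sin((lat2 - lat1) / 2) ** 2
         + math.cos(lat1) * math.cos(lat2) * math.sin((lon2 - lon1) / 2) ** 2)
    return 2 * 6371.0 * math.asin(math.sqrt(h))


def distance_matrix(items, metric):
    return [[metric(a, b) for b in items] for a in items]


def upper(matrix, order):
    """Upper-triangle values after reordering rows and columns together."""
    n = len(order)
    return [matrix[order[i]][order[j]] for i in range(n) for j in range(i + 1, n)]


def pearson(xs, ys):
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / math.sqrt(sxx * syy)


def mantel(genetic, geographic, permutations=999, seed=0):
    """Return (r, p): matrix correlation and one-sided permutation p-value."""
    rng = random.Random(seed)
    identity = list(range(len(genetic)))
    gen_vec = upper(genetic, identity)
    observed = pearson(gen_vec, upper(geographic, identity))
    hits = 0
    for _ in range(permutations):
        order = identity[:]
        rng.shuffle(order)  # relabel samples in the geographic matrix only
        if pearson(gen_vec, upper(geographic, order)) >= observed:
            hits += 1
    return observed, (hits + 1) / (permutations + 1)


# Toy data: genetic distance increases with distance along the equator.
seqs = ["AAAAAAAA", "AAAAAAAT", "AAAAAATT", "AAAAATTT", "AAAATTTT"]
coords = [(0.0, 0.0), (0.0, 1.0), (0.0, 2.0), (0.0, 3.0), (0.0, 4.0)]
r, p = mantel(distance_matrix(seqs, p_distance), distance_matrix(coords, haversine_km))
print(f"r = {r:.3f}, p = {p:.3f}")
```

Rows and columns are permuted together because the entries of a distance matrix are not independent observations, which is why an ordinary correlation test would overstate significance.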

The Scientist's Toolkit: Research Reagent Solutions

| Item/Software | Primary Function | Key Parameter / Use Case |
|---|---|---|
| MAFFT | Multiple sequence alignment | Use `--auto` for automatic strategy selection; essential for creating the input for phylogenetic trees. |
| IQ-TREE | Phylogenetic inference | Use `-m TEST` to automatically find the best substitution model; `-bb 1000` for ultrafast bootstrap. |
| BEAST2 | Bayesian evolutionary analysis | Infers timed phylogenies and trait evolution; uses XML files to define complex evolutionary models. |
| Nextstrain | Real-time pathogen tracking | Integrates phylogeny, geography, and time via `augur` and `auspice` tools for visualization. |
| R (ape, adegenet) | Statistical computing and graphics | The `ape` package performs Mantel tests; `adegenet` handles population genetic data. |
| SPREAD4 | Spatially-explicit phylogenetic analysis | Visualizes the spatial diffusion of pathogens along branches of a phylogeny. |
| TempEst | Assess temporal signal | Checks for a clock-like signal in data via root-to-tip regression before dating analysis. |

This guide provides a structured approach to identifying, troubleshooting, and mitigating sampling bias in viral phylogenomic studies. Sampling bias—the systematic error introduced when some members of a population are more likely to be included in a dataset than others—can significantly distort phylogenetic reconstructions and phylogeographic inferences, leading to erroneous conclusions about viral origins, spread, and evolution [1] [26]. The following FAQs, workflows, and tools are designed to help researchers maintain the integrity of their research from study design through to data analysis.

Frequently Asked Questions (FAQs) & Troubleshooting

Q1: Our phylogeographic analysis suggests a specific geographic origin for a virus, but epidemiological data seems to contradict this. Could sampling bias be the cause?

A: Yes, this is a classic symptom of sampling bias. Phylogeographic reconstruction can be heavily influenced by disparate sampling efforts among locations [1]. If one region sequences and shares a much higher proportion of its cases, ancestral state reconstruction algorithms may be biased toward that well-sampled location, even if the virus emerged elsewhere.

- Troubleshooting Steps:

  - Audit Sampling Proportion: Compare the number of sequenced genomes from each candidate region against the total number of confirmed cases in that region. A large disparity indicates potential bias.

  - Perform Sensitivity Analysis: Re-run your phylogeographic analysis using subsampled datasets that equalize sampling effort across regions. If the inferred origin changes, your initial result was likely biased.

  - Incorporate Travel History: If available, use patient travel history data to inform the location states of tips in the tree, which can help correct for biased static location assignments [1].
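The sampling-proportion audit reduces to a per-region ratio. The sketch below computes the fraction of confirmed cases that were sequenced in each region and the ratio between the best and worst sampled regions; the counts are invented, and what counts as a large disparity is left to the analyst.

```python
def sampling_fractions(sequenced, cases):
    """Fraction of confirmed cases that were sequenced, per region."""
    return {
        region: sequenced.get(region, 0) / n_cases
        for region, n_cases in cases.items()
        if n_cases > 0
    }


def disparity(fractions):
    """Ratio of the highest to the lowest non-zero sampling fraction."""
    positive = [f for f in fractions.values() if f > 0]
    return max(positive) / min(positive) if positive else float("nan")


# Invented counts for three candidate origin regions.
sequenced = {"A": 900, "B": 50, "C": 10}
cases = {"A": 10000, "B": 10000, "C": 5000}

fractions = sampling_fractions(sequenced, cases)
for region, frac in sorted(fractions.items()):
    print(f"{region}: {frac:.2%} of cases sequenced")
print(f"Best-to-worst sampling ratio: {disparity(fractions):.0f}x")
```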

Q2: We suspect selection bias in our sequence dataset. How can we quantify this before beginning phylogenetic analysis?

A: Quantifying selection bias involves assessing how well your genomic sample represents the true population.

- Troubleshooting Steps:

  - Create a Metadata Comparison Table: Compare the demographics (e.g., age, sex), clinical outcomes (e.g., disease severity), and geographic distribution of your sequenced cases against all reported cases. Significant differences indicate selection bias.

  - Check for Temporal Gaps: Plot the collection dates of your sequences against the epidemic curve. Large gaps during specific periods can introduce temporal bias.

  - Use Bias Assessment Tools: Employ tools like the Prediction model Risk Of Bias ASsessment Tool (PROBAST) to structure your evaluation of the dataset's representativeness [27].
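The temporal-gap check can also be done numerically before plotting. The sketch below bins sequence collection dates by ISO week and lists weeks in which cases were reported but nothing was sequenced; the dates, weekly case counts, and the `min_cases` cutoff are illustrative assumptions.

```python
from collections import Counter
from datetime import date


def iso_week(iso_date):
    """Map an ISO date string to its (ISO year, ISO week) pair."""
    year, week, _ = date.fromisoformat(iso_date).isocalendar()
    return (year, week)


def temporal_gaps(sequence_dates, weekly_cases, min_cases=1):
    """Weeks with at least min_cases reported cases but no sequences."""
    seq_per_week = Counter(iso_week(d) for d in sequence_dates)
    return [
        week
        for week, n_cases in sorted(weekly_cases.items())
        if n_cases >= min_cases and seq_per_week[week] == 0
    ]


# Invented example: sequencing paused during the epidemic peak.
sequence_dates = ["2023-01-02", "2023-01-03", "2023-01-24"]
weekly_cases = {(2023, 1): 100, (2023, 2): 250, (2023, 3): 400, (2023, 4): 300}

gaps = temporal_gaps(sequence_dates, weekly_cases)
print("Weeks with cases but no sequences:", gaps)
```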

Q3: During sequence analysis, we see a strong phylogenetic cluster linked to a specific demographic group. How do we determine if this is a real transmission pattern or a result of biased sampling?

A: Distinguishing real signal from sampling artifact is critical.

- Troubleshooting Steps:

  - Test for Association: Use a structured statistical test, such as a permutation test, to assess whether the observed clustering is stronger than would be expected by chance given the uneven sampling of the different demographic groups.

  - Review Sequencing Strategy: Investigate if there was a targeted sequencing effort focused on that specific demographic group (e.g., outbreak investigation in a particular community). If so, the cluster's strength may be inflated.

  - Contextualize with Epidemiological Data: Corroborate the finding with independent line-list or contact tracing data. A true transmission cluster should be supported by multiple data sources.
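A very simple form of the permutation test asks whether a cluster contains more tips from the focal group than random draws of the same size from all sampled tips. The sketch below does this; it deliberately ignores tree structure, so it is a first-pass screen under stated assumptions and not a substitute for tree-aware association statistics. All identifiers and group labels are hypothetical.

```python
import random


def cluster_enrichment_test(groups, cluster_ids, focal_group,
                            permutations=1999, seed=0):
    """One-sided permutation test for over-representation of a group.

    groups: dict mapping tip id -> group label for every sampled tip.
    cluster_ids: tip ids belonging to the cluster of interest.
    Returns (observed count of focal-group tips in the cluster, p-value).
    """
    rng = random.Random(seed)
    all_ids = list(groups)
    size = len(cluster_ids)
    observed = sum(groups[i] == focal_group for i in cluster_ids)
    hits = 0
    for _ in range(permutations):
        # Null model: the cluster is a random draw from the sampled tips,
        # so an over-sampled group is expected to dominate by chance.
        draw = rng.sample(all_ids, size)
        if sum(groups[i] == focal_group for i in draw) >= observed:
            hits += 1
    return observed, (hits + 1) / (permutations + 1)


# Hypothetical dataset: group G1 makes up 70 of 100 sampled tips.
groups = {f"t{i}": ("G1" if i < 70 else "G2") for i in range(100)}
cluster = [f"t{i}" for i in range(8)] + ["t70", "t71"]  # 8 of 10 tips are G1

observed, p_value = cluster_enrichment_test(groups, cluster, "G1")
print(f"{observed} of {len(cluster)} cluster tips in G1, p = {p_value:.3f}")
```

In this example the cluster looks dominated by G1, yet the p-value is large because G1 is heavily over-sampled, which is the situation the question describes.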

Quantitative Data on Bias Impact

The following table summarizes key quantitative findings on how sampling bias impacts phylogeographic inference, based on simulation studies [1].

Table 1: Impact of Sampling Bias on Phylogeographic Reconstruction Accuracy

| Migration Rate Between Populations | Level of Sampling Bias | Accuracy of Root State (Origin) Inference | Impact on Detection of Migration Events |

|---|---|---|---|

| Low | Low | High | Minimal; most key events detected. |