Bayesian Phylodynamics: Modeling Epidemic Spread from Genomic Data


Abstract

This article provides a comprehensive overview of Bayesian phylodynamic methods for analyzing epidemic spread, tailored for researchers, scientists, and drug development professionals. It covers foundational concepts, from basic Bayesian principles to advanced model selection, and details methodological applications using leading software like BEAST 2 and BEAST X. The content addresses critical troubleshooting aspects, including MCMC diagnostics and managing model identifiability, and presents validation through comparative case studies on pathogens like SARS-CoV-2 and PRRSV. By synthesizing theory and practical application, this guide aims to equip professionals with the knowledge to implement robust phylodynamic analyses for improving epidemic surveillance and informing public health interventions.

Bayesian Phylodynamics Foundations: From Core Concepts to Evolutionary Models

Bayesian statistics provides a powerful probabilistic framework for updating beliefs based on new evidence, making it particularly valuable for modeling complex evolutionary processes. Unlike frequentist approaches that calculate the probability of observing data given a hypothesis [1], Bayesian inference solves the "inverse probability" problem, enabling direct calculation of the probability of a hypothesis being true given the observed data [1]. This fundamental difference makes Bayesian methods exceptionally well-suited for phylogenetic analysis and phylodynamic modeling of epidemic spread, where researchers combine prior knowledge with new genetic data to infer evolutionary relationships and transmission dynamics.

The core of Bayesian inference revolves around three components: the prior distribution representing initial beliefs about parameters, the likelihood function describing the probability of observing the data given certain parameter values, and the posterior distribution representing updated beliefs after considering the evidence [2]. This Bayesian framework allows evolutionary biologists and epidemiologists to incorporate existing knowledge—such as previously estimated mutation rates or transmission parameters—while rigorously accounting for uncertainties in model parameters and phylogenetic tree topologies.

Core Principles: Prior, Likelihood, and Posterior

Mathematical Foundation

Bayesian inference is built upon Bayes' theorem, which formally describes the relationship between prior beliefs and posterior conclusions:

Posterior ∝ Likelihood × Prior

Expressed mathematically:

P(θ|X) = [P(X|θ) × P(θ)] / P(X)

Where:

- P(θ|X) is the posterior distribution of parameters θ given observed data X

- P(X|θ) is the likelihood of observing data X given parameters θ

- P(θ) is the prior distribution of parameters θ

- P(X) is the marginal likelihood of the data (a normalizing constant) [2]

In evolutionary biology, parameters θ might include evolutionary rates, divergence times, or tree topologies, while data X typically represents molecular sequences (DNA, RNA, or amino acids).
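As a worked illustration of Bayes' theorem, the sketch below approximates a posterior on a grid. It assumes a deliberately simple, hypothetical setting that is not taken from the cited sources: θ is the per-site probability p that two aligned sequences differ, the data are k differing sites out of n, and the prior is flat. The function name `grid_posterior` is illustrative only.

```python
import math

def grid_posterior(k, n, grid_size=1001, prior=lambda p: 1.0):
    """Approximate P(p | k, n) on a uniform grid over [0, 1]."""
    grid = [i / (grid_size - 1) for i in range(grid_size)]
    # Unnormalised posterior = likelihood x prior at every grid point.
    unnorm = [math.comb(n, k) * p**k * (1 - p) ** (n - k) * prior(p) for p in grid]
    # The sum plays the role of the marginal likelihood P(X) on the grid.
    evidence = sum(unnorm)
    return grid, [u / evidence for u in unnorm]

# Hypothetical data: 12 differing sites in a 100-site alignment.
grid, post = grid_posterior(k=12, n=100)
post_mean = sum(p * w for p, w in zip(grid, post))
print(f"posterior mean of p: {post_mean:.3f}")
```

With a flat prior the exact posterior is Beta(k + 1, n − k + 1), so the grid result can be checked against the analytic mean (k + 1)/(n + 2). Grid approximation only works for one or two parameters, which is why tree-valued posteriors require MCMC.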

The Prior Distribution (P(θ))

The prior distribution encapsulates existing knowledge or assumptions about parameters before observing new data. Priors can range from uninformative (expressing minimal knowledge) to strongly informative (incorporating substantial previous evidence) [2]. In phylogenetic dating analyses, priors might include fossil calibration points or previously estimated mutation rates. For epidemic spread research, priors could incorporate known transmission dynamics from similar pathogens.

Table 1: Common Prior Distributions in Evolutionary Biology

| Distribution | Common Use Cases | Parameters |
|---|---|---|
| Beta(α,β) | Modeling probabilities, evolutionary rates | α, β > 0 |
| Gamma(k,θ) | Rate parameters, branch lengths | Shape k, scale θ |
| Dirichlet(α) | Stationary frequencies, substitution rates | Concentration vector α |
| Exponential(λ) | Simple priors for positive parameters | Rate λ |
| Uniform(a,b) | Uninformative priors for bounded parameters | Lower a, upper b |
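To make the parameterizations in the table concrete, the following sketch draws one value from each distribution using only Python's standard `random` module. The parameter names in the dictionary and the numeric hyperparameters are hypothetical choices for illustration, not recommendations from the cited sources.

```python
import random

rng = random.Random(42)  # fixed seed so the draws are reproducible

def sample_dirichlet(alpha, rng):
    """Draw from Dirichlet(alpha) by normalising independent Gamma(alpha_i, 1) draws."""
    draws = [rng.gammavariate(a, 1.0) for a in alpha]
    total = sum(draws)
    return [d / total for d in draws]

prior_draws = {
    # Beta(alpha, beta): a probability in (0, 1)
    "sampling_proportion": rng.betavariate(2.0, 2.0),
    # Gamma(shape k, scale theta): gammavariate takes (shape, scale)
    "gamma_shape": rng.gammavariate(2.0, 0.5),
    # Dirichlet(alpha): four base frequencies that sum to 1
    "base_frequencies": sample_dirichlet([1.0, 1.0, 1.0, 1.0], rng),
    # Exponential(rate lambda): expovariate takes the rate, not the mean
    "migration_rate": rng.expovariate(1.0),
    # Uniform(a, b): bounded, flat prior
    "clock_rate": rng.uniform(1e-4, 1e-2),
}

for name, value in prior_draws.items():
    print(name, value)
```

Drawing from a prior before running an analysis is a cheap way to check that it places mass on biologically plausible values.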

The Likelihood Function (P(X|θ))

The likelihood function quantifies how probable the observed data are under different parameter values. In phylogenetics, this typically involves models of sequence evolution such as the General Time Reversible (GTR) model or its extensions [3]. For a phylogenetic tree τ with branch lengths t and substitution model parameters θ, the likelihood is calculated assuming sites evolve independently:

P(X|τ,t,θ) = Π P(X_i|τ,t,θ)

Where X_i represents the data at site i [4] [3]. Modern phylogenetic approaches often incorporate complex models accounting for rate variation across sites (Gamma distribution), heterotachy (lineage-specific rate variation), and other biological realities [3].
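The site-independence product can be illustrated with the smallest possible case: two aligned sequences and the Jukes-Cantor (JC69) model, which is swapped in here as a simpler stand-in for GTR. This is a minimal sketch under those assumptions, not how phylogenetic software evaluates likelihoods on full trees (that requires Felsenstein's pruning algorithm). The sequences are made up.

```python
import math

def jc69_log_likelihood(seq_a, seq_b, distance):
    """Log-likelihood of two aligned sequences under JC69.

    `distance` is the expected number of substitutions per site
    separating the two sequences (must be > 0 if any site differs).
    """
    if len(seq_a) != len(seq_b):
        raise ValueError("sequences must be aligned to equal length")
    decay = math.exp(-4.0 * distance / 3.0)
    p_same = 0.25 + 0.75 * decay   # P(no net change at a site)
    p_diff = 0.25 - 0.25 * decay   # P(change to one specific other base)
    log_lik = 0.0
    for a, b in zip(seq_a, seq_b):
        # Site likelihood: stationary frequency 1/4 times transition probability.
        site_prob = 0.25 * (p_same if a == b else p_diff)
        # Independence across sites turns the product into a sum of logs.
        log_lik += math.log(site_prob)
    return log_lik

seq_a = "ACGTACGTACGTACGTACGT"
seq_b = "ACGTACGAACGTACGTACCT"  # differs from seq_a at 2 of 20 sites
for d in (0.05, 0.10, 0.20):
    print(d, round(jc69_log_likelihood(seq_a, seq_b, d), 3))
```

Working in log space avoids numerical underflow, which would otherwise occur quickly when thousands of site probabilities are multiplied.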

The Posterior Distribution (P(θ|X))

The posterior distribution represents the complete updated belief state about parameters after considering both prior knowledge and new evidence. This distribution is typically complex and high-dimensional, requiring computational methods like Markov Chain Monte Carlo (MCMC) for approximation [5] [3]. In phylodynamics, the posterior distribution might include joint estimates of transmission trees, evolutionary rates, and population dynamics.
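The idea behind MCMC approximation can be sketched with a random-walk Metropolis sampler on a one-parameter toy problem. The setting is an assumption made for illustration: hypothetical event counts with a Poisson likelihood and an Exponential(1) prior on the rate. Real phylodynamic samplers use the same accept/reject logic but propose changes to trees and many parameters at once.

```python
import math
import random

def log_posterior(rate, counts, prior_rate=1.0):
    """Unnormalised log posterior: Exponential prior + Poisson likelihood."""
    if rate <= 0:
        return -math.inf  # outside the support of the prior
    log_prior = math.log(prior_rate) - prior_rate * rate
    log_lik = sum(c * math.log(rate) - rate - math.lgamma(c + 1) for c in counts)
    return log_prior + log_lik

def metropolis(counts, n_steps=20000, step=0.5, start=1.0, seed=1):
    """Random-walk Metropolis sampler with a symmetric Gaussian proposal."""
    rng = random.Random(seed)
    current, current_lp = start, log_posterior(start, counts)
    samples = []
    for _ in range(n_steps):
        proposal = current + rng.gauss(0.0, step)
        proposal_lp = log_posterior(proposal, counts)
        # Accept with probability min(1, posterior ratio); the normalising
        # constant P(X) cancels, so it never has to be computed.
        accept_prob = math.exp(min(0.0, proposal_lp - current_lp))
        if rng.random() < accept_prob:
            current, current_lp = proposal, proposal_lp
        samples.append(current)
    return samples

counts = [3, 5, 4, 6, 2]            # hypothetical data
samples = metropolis(counts)[2000:]  # discard the first 2000 steps as burn-in
print("posterior mean:", sum(samples) / len(samples))
```

For this conjugate toy case the exact posterior is Gamma(shape 21, rate 6), with mean 3.5, so the sampler's output can be checked against a known answer.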

Workflow and Computational Implementation

Bayesian Phylogenetic Analysis Pipeline

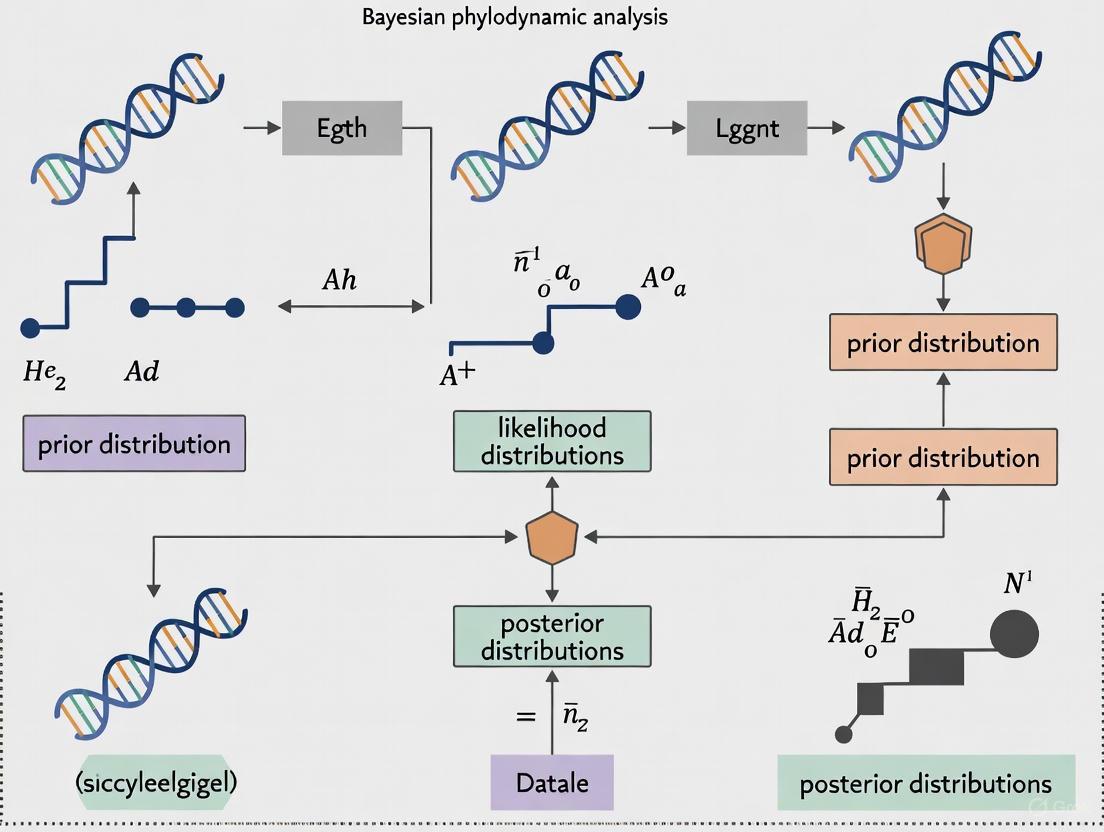

Figure 1: Bayesian phylogenetic inference workflow, showing the progression from data input to posterior distribution summarization.

MCMC Sampling and Convergence Diagnostics

Bayesian phylogenetic analysis typically employs Markov Chain Monte Carlo (MCMC) sampling to approximate the posterior distribution. Key considerations include:

- Chain Convergence: Assessing whether chains have reached the target distribution using statistics like Potential Scale Reduction Factor (PSRF) [3]

- Effective Sample Size (ESS): Ensuring sufficient independent samples from the posterior

- Burn-in: Discarding initial samples before chain convergence

- Proposal Mechanisms: Designing efficient moves for tree topology and parameter space exploration

Table 2: Essential Convergence Diagnostics for Bayesian Phylogenetics

| Diagnostic | Target Value | Interpretation |
|---|---|---|
| Potential Scale Reduction Factor (PSRF) | < 1.05 | Chains have converged to same distribution |
| Effective Sample Size (ESS) | > 200 | Sufficient independent samples |
| Trace Plot Inspection | Stationary and well-mixed | Visual confirmation of convergence |
| Autocorrelation Time | Low values preferred | Samples are sufficiently independent |
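The first two diagnostics can be computed by hand on any numeric trace. The sketch below implements the basic Gelman-Rubin PSRF and a simple autocorrelation-based ESS that truncates at the first non-positive lag. This is one of several possible ESS estimators, and it should not be expected to reproduce the values reported by Tracer or other packages exactly. The AR(1) test chains are synthetic.

```python
import random
from statistics import mean, variance

def psrf(chains):
    """Gelman-Rubin potential scale reduction factor for equal-length chains."""
    n = len(chains[0])
    within = mean(variance(chain) for chain in chains)          # W
    between = n * variance([mean(chain) for chain in chains])   # B
    pooled = (n - 1) / n * within + between / n
    return (pooled / within) ** 0.5

def effective_sample_size(chain):
    """ESS = n / (1 + 2 * sum of positive-lag autocorrelations)."""
    n = len(chain)
    mu = mean(chain)
    centred = [x - mu for x in chain]
    var0 = sum(c * c for c in centred) / n
    if var0 == 0:
        return float(n)
    rho_sum = 0.0
    for lag in range(1, n // 2):
        rho = sum(centred[i] * centred[i + lag] for i in range(n - lag)) / (n * var0)
        if rho <= 0:  # truncate at the first non-positive autocorrelation
            break
        rho_sum += rho
    return n / (1.0 + 2.0 * rho_sum)

def ar1_chain(n, phi, rng, offset=0.0):
    """Synthetic autocorrelated trace: x_t = phi * x_{t-1} + noise."""
    x, out = 0.0, []
    for _ in range(n):
        x = phi * x + rng.gauss(0.0, 1.0)
        out.append(x + offset)
    return out

rng = random.Random(7)
agreeing = [ar1_chain(2000, 0.5, rng) for _ in range(2)]
disagreeing = [ar1_chain(2000, 0.5, rng), ar1_chain(2000, 0.5, rng, offset=3.0)]
print("PSRF, chains agree:   ", round(psrf(agreeing), 3))
print("PSRF, chains disagree:", round(psrf(disagreeing), 3))
print("ESS of sticky chain:  ", round(effective_sample_size(ar1_chain(2000, 0.9, rng))))
```

A PSRF near 1 only shows that the chains agree with each other; it cannot rule out that all of them are stuck in the same local region, which is why trace inspection remains in the table.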

Application to Phylodynamic Analysis of Epidemic Spread

Integrating Epidemiological and Evolutionary Models

Phylodynamics combines epidemiological dynamics with phylogenetic inference to reconstruct transmission history and population dynamics of pathogens [5]. In this framework, Bayesian methods enable:

- Estimation of Key Epidemiological Parameters: Including reproduction numbers (R₀), rate of spread, and population sizes [5]

- Incorporation of Sampling Information: Accounting for uneven sampling across time and geography

- Uncertainty Quantification: Providing credible intervals for all estimated parameters

- Real-time Analysis: Updating estimates as new sequence data becomes available during outbreaks
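Credible intervals are usually reported as highest posterior density (HPD) intervals computed from MCMC samples. A minimal sketch of the standard sample-based approach follows: sort the samples and take the narrowest window that contains the requested fraction. The lognormal draws stand in for a skewed posterior and are purely synthetic.

```python
import math
import random

def hpd_interval(samples, mass=0.95):
    """Narrowest interval containing `mass` of the samples (unimodal case)."""
    ordered = sorted(samples)
    n = len(ordered)
    window = max(1, math.ceil(mass * n))  # number of samples inside the interval
    best_low, best_high = ordered[0], ordered[window - 1]
    for start in range(n - window + 1):
        low, high = ordered[start], ordered[start + window - 1]
        if high - low < best_high - best_low:
            best_low, best_high = low, high
    return best_low, best_high

rng = random.Random(0)
# Synthetic, right-skewed "posterior", e.g. for a reproduction number.
posterior = [rng.lognormvariate(0.0, 0.5) for _ in range(5000)]
low, high = hpd_interval(posterior)
print(f"95% HPD: {low:.2f} to {high:.2f}")
```

For skewed posteriors the HPD interval is shorter than the equal-tailed interval and shifted toward the mode; for multimodal posteriors a single interval can be misleading.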

The 2014 West African Ebola epidemic demonstrated the value of phylodynamic approaches, where Bayesian analysis of viral genomes provided insights into transmission dynamics and informed public health interventions [5].

Protocol: Bayesian Phylodynamic Analysis for Epidemic Research

Materials and Software Requirements

- Molecular sequence data (VCF or FASTA format)

- Sampling dates for tip-dating analysis

- Computational resources (high-performance computing for large datasets)

- Bayesian phylogenetic software (BEAST2, MrBayes, RevBayes)
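Tip-dating needs each sequence's sampling date in a numeric form. The sketch below converts ISO dates to decimal years and extracts them from FASTA headers. The header layout (`>name|YYYY-MM-DD`, date as the last `|`-separated field) is an assumption made for this example; real datasets use many different conventions and often contain incomplete dates that need separate handling.

```python
from datetime import date

def decimal_year(iso_date):
    """Convert 'YYYY-MM-DD' to a decimal year, accounting for leap years."""
    d = date.fromisoformat(iso_date)
    year_start = date(d.year, 1, 1)
    year_end = date(d.year + 1, 1, 1)
    return d.year + (d - year_start).days / (year_end - year_start).days

def tip_dates_from_fasta(fasta_text, sep="|"):
    """Map each FASTA record name to its sampling date as a decimal year.

    Assumes (hypothetically) that the date is the last `sep`-separated
    field of the header line.
    """
    dates = {}
    for line in fasta_text.splitlines():
        if line.startswith(">"):
            name = line[1:].strip()
            dates[name] = decimal_year(name.rsplit(sep, 1)[-1])
    return dates

example = """>sampleA|2020-01-01
ACGT
>sampleB|2020-07-02
ACGA
"""
for name, when in tip_dates_from_fasta(example).items():
    print(name, when)
```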

Step-by-Step Procedure

- Data Preparation and Alignment
  - Compile viral genome sequences with sampling dates
  - Perform multiple sequence alignment (MAFFT, MUSCLE)
  - Assess sequence quality and potential recombination
- Substitution Model Selection
  - Compare nucleotide substitution models (HKY, GTR, etc.)
  - Test for rate variation among sites (invariant sites, Gamma distribution)
  - Select best-fitting model using Bayesian Information Criterion or similar metrics
- Clock Model Specification
  - Choose among strict, relaxed, and random local clock models
  - Assess clock-like behavior using root-to-tip regression
  - Specify priors for clock rates based on previous estimates when available
- Tree Prior Selection
  - Select appropriate population model (Coalescent, Birth-Death)
  - Parameterize priors based on epidemiological context
  - For epidemic spread, consider structured coalescent or birth-death skyline models
- MCMC Configuration
  - Set appropriate chain length (typically 10⁷-10⁹ generations)
  - Configure sampling frequency (every 1000-10000 generations)
  - Specify initial values and proposal mechanisms
- Analysis and Diagnostics
  - Run multiple independent MCMC chains
  - Assess convergence using Tracer or similar tools
  - Combine posterior samples after confirming convergence
- Posterior Summarization
  - Generate maximum clade credibility trees
  - Calculate posterior probabilities for tree topologies
  - Compute highest posterior density intervals for parameters
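The root-to-tip regression mentioned in the clock model step can be sketched as an ordinary least-squares fit of genetic distance from the root against sampling date. The slope is a rough estimate of the clock rate and the x-intercept a rough estimate of the root date. The input values below are synthetic, and the function assumes the root-to-tip distances have already been extracted from a rooted, non-clock tree by other software.

```python
def root_to_tip_regression(dates, distances):
    """Least-squares fit of root-to-tip distance on sampling date.

    Returns (rate, root_date, r_squared). Dates are decimal years and
    distances are substitutions per site.
    """
    n = len(dates)
    mean_t = sum(dates) / n
    mean_d = sum(distances) / n
    sxx = sum((t - mean_t) ** 2 for t in dates)
    syy = sum((d - mean_d) ** 2 for d in distances)
    sxy = sum((t - mean_t) * (d - mean_d) for t, d in zip(dates, distances))
    rate = sxy / sxx                      # slope: substitutions/site/year
    intercept = mean_d - rate * mean_t
    root_date = -intercept / rate         # date at which distance is zero
    r_squared = sxy * sxy / (sxx * syy)   # strength of the temporal signal
    return rate, root_date, r_squared

# Synthetic tips: true rate 1e-3, root at 2019.9, small made-up deviations.
dates = [2020.0, 2020.1, 2020.2, 2020.3, 2020.4, 2020.5]
noise = [1e-5, -2e-5, 1.5e-5, -1e-5, 2e-5, -1.5e-5]
distances = [1e-3 * (t - 2019.9) + e for t, e in zip(dates, noise)]
rate, root_date, r2 = root_to_tip_regression(dates, distances)
print(f"rate={rate:.2e}  root date={root_date:.2f}  R^2={r2:.3f}")
```

Because tips share ancestry, the points are not independent, so this regression is a diagnostic for temporal signal rather than a substitute for the Bayesian clock estimate. A negative or near-zero slope suggests the data cannot calibrate a clock.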

Research Reagent Solutions for Bayesian Phylodynamics

Table 3: Essential Computational Tools for Bayesian Phylodynamic Analysis

| Tool/Software | Primary Function | Application Context |
|---|---|---|
| BEAST2 | Bayesian evolutionary analysis | Comprehensive phylodynamic inference with modular architecture |
| Tracer | MCMC diagnostics | Assessing convergence, effective sample sizes, parameter estimates |
| TreeAnnotator | Posterior tree summarization | Generating maximum clade credibility trees from posterior samples |
| FigTree | Tree visualization | Displaying time-scaled trees with posterior probabilities |
| MrBayes | Bayesian phylogenetic inference | MCMC sampling of phylogenetic trees and evolutionary parameters |
| RevBayes | Probabilistic graphical models | Flexible modeling for complex evolutionary hypotheses |
| IQ-TREE | Model selection and rapid inference | Efficient phylogeny inference with model testing capabilities [6] |

Advanced Modeling Considerations

Addressing Model Misspecification

Bayesian phylogenetic inference typically assumes characters evolve independently, though real morphological characters often exhibit correlations. Simulation studies demonstrate that Bayesian methods remain robust to violations of character independence for topological inference, though branch lengths may be underestimated under extreme rate heterogeneity [4]. Model extensions that integrate over character-state heterogeneity can partially correct these biases [4].

Incorporating Complex Evolutionary Patterns

Modern Bayesian phylogenetic approaches can accommodate various biological complexities:

- Heterotachy: Lineage-specific rate variation over time through covarion-style models [3]

- Rate Variation Across Sites: Gamma-distributed rate heterogeneity [3]

- Site-specific Selection: Branch-site models detecting episodic selection

- Recombination and Horizontal Gene Transfer: Phylogenetic network approaches

Protocol: Handling Character Correlation in Morphological Data

Background: Morphological characters often exhibit logical, functional, or developmental correlations, violating the independence assumption of standard phylogenetic models [4].

Procedure

- Simulation Testing: Assess potential bias using parametric bootstrap or posterior predictive simulation

- Model Extension: Consider composite likelihood approaches or Markov models for character correlation

- Sensitivity Analysis: Compare results under independent and correlated models

- Prior Specification: Use conservative priors that account for potential model inadequacy

Validation: Recent simulations show Bayesian inference assuming character independence accurately recovers tree topologies even when characters are strongly correlated, supporting continued use in morphological phylogenetics [4].

Future Directions and Methodological Advances

Bayesian methods in evolutionary biology continue to evolve, with promising research directions including:

- Integration with Machine Learning: Combining phylogenetic models with neural networks for improved parameter estimation [6]

- Multi-omic Data Integration: Joint analysis of genomic, transcriptomic, and phenotypic data within phylogenetic frameworks

- Real-time Phylodynamics: Developing efficient algorithms for near real-time analysis of emerging outbreaks

- Improved Computational Efficiency: Advanced MCMC techniques and variational inference for larger datasets

Despite these advances, challenges remain in model specification, computational efficiency, and interpretation of complex posterior distributions, particularly for high-dimensional phylogenetic models [3]. Ongoing methodological development focuses on addressing these limitations while expanding the biological realism of Bayesian phylogenetic models.

Phylodynamics, the study of the interaction between epidemiological and pathogen evolutionary processes, has become a fundamental building block for investigating the temporal and geographical origins, evolutionary history, and ecological risk factors associated with infectious disease spread [7]. Two well-established structured phylodynamic methodologies—based on the coalescent model and the birth-death model—are frequently employed to estimate viral spread between populations, operating under distinct assumptions that impact the accuracy of migration rate inference [8]. These models enable researchers to quantify past population dynamics from phylogenetic trees derived from molecular data, providing insights into epidemic spread that complement traditional epidemiological approaches.

The Bayesian phylodynamic inference framework BEAST2 is one of the primary software platforms within which such analyses are carried out, allowing researchers to jointly infer tree topologies, phylodynamic parameters, molecular clock rates, and substitution models via Markov Chain Monte Carlo (MCMC) sampling [9]. This integration provides a powerful approach for understanding and combating pandemics, as demonstrated during the SARS-CoV-2 pandemic, where phylogenetic and phylodynamic approaches were essential for quantifying international virus spread, identifying outbreaks and transmission chains, estimating growth rates and reproduction numbers, and tracking emerging variants [10].

Theoretical Foundation of Phylodynamic Models

The Structured Coalescent Model

The coalescent is a mathematical model of genealogies that describes the structure of genealogies generated by different demographic processes [11]. Under the neutral coalescent, the time between consecutive common ancestry events is modeled as a point process with a hazard rate that depends on the effective population size Nₑ(t) and the number of extant lineages A(t) at time t in the past. With time in units of the generation interval τ, the hazard is the number of lineage pairs divided by the effective population size, A(t)(A(t) − 1) / (2Nₑ(t)) [11].
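The coalescent hazard can be made concrete by simulation. The sketch below assumes the simplest case, a constant effective population size and all samples taken at the same time, and uses the standard Kingman rate of k(k − 1)/2 divided by Nₑ while k lineages remain. Function and variable names are illustrative.

```python
import random
from math import comb

def simulate_coalescent_times(n_samples, ne, rng):
    """Simulate coalescent event times (looking backward from the present).

    While k lineages remain, the waiting time to the next coalescence is
    exponential with rate C(k, 2) / ne. Time is in generations.
    """
    times, t = [], 0.0
    for k in range(n_samples, 1, -1):
        t += rng.expovariate(comb(k, 2) / ne)
        times.append(t)
    return times  # the last entry is the time to the most recent common ancestor

rng = random.Random(2024)
n_samples, ne = 10, 100.0
tmrcas = [simulate_coalescent_times(n_samples, ne, rng)[-1] for _ in range(2000)]
expected = 2.0 * ne * (1.0 - 1.0 / n_samples)  # analytic expectation of the TMRCA
print(f"simulated mean TMRCA: {sum(tmrcas) / len(tmrcas):.1f}")
print(f"analytic expectation: {expected:.1f}")
```

Because the rate scales with the number of lineage pairs, most coalescences happen quickly near the tips, and the final two lineages account for roughly half of the expected tree height. Inference reverses this logic: short intervals between coalescences imply a small Nₑ at that time.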

The coalescent model operates under the assumption that we have a small random sample from a large population, making it particularly suitable for analyzing endemic diseases with stable population dynamics [8] [12]. Originally, coalescent models were based on restrictive assumptions about the proportion of the population sampled and when taxa are sampled, but these assumptions have been relaxed since the coalescent was first introduced [11]. The model has been extended to consider heterogeneous structured populations, allowing researchers to investigate migration patterns between subpopulations [11].

A key advantage of coalescent approaches is that they require no explicit model of the sampling process, making them more robust when the sampling process deviates from simplistic assumptions [11]. However, estimates based on coalescent models may be biased by noisy demographic processes, and the model's performance can be compromised when population sizes change rapidly, such as during epidemic outbreaks [8].

The Multi-Type Birth-Death Model

The multi-type birth-death model is a linear birth-death process accounting for structured populations where individuals are classified into different types [9]. In this model, the probability density of a phylogenetic tree can be calculated by numerically integrating a system of differential equations. The process begins at time 0 with one individual of a specific type and evolves through time with individuals giving birth to additional individuals, migrating between types, dying, or being sampled according to specified rates [9].

Formally, the model with d types defines the process over time interval (0,T), partitioned into n segments through time points 0 < t₁ < ... < tₙ₋₁ < T. Each individual of type i at time t (where tₖ₋₁ ≤ t < tₖ) can [9]:

- Give birth to an additional individual of type j with birth rate λᵢⱼ,ₖ

- Migrate to type j with migration rate mᵢⱼ,ₖ (with mᵢᵢ,ₖ = 0)

- Die with death rate μᵢ,ₖ

- Be sampled with sampling rate ψᵢ,ₖ

At specific sampling times tₖ, each individual of type i is sampled with probability ρᵢ,ₖ. Upon sampling, the individual is removed from the infectious pool with probability rᵢ,ₖ [9]. This comprehensive framework allows for flexible modeling of complex population dynamics with changing parameters over time.
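The event structure of the process can be sketched as a Gillespie-style forward simulation. This is a simplified illustration, not the bdmm implementation: it assumes a single time interval with constant rates, births only within a type (λᵢⱼ = 0 for i ≠ j), and no ρ-sampling events. It tracks population counts rather than the tree, and all rate values are hypothetical.

```python
import random

def simulate_multitype_bd(birth, death, sampling, migration, removal_prob,
                          start_type, t_max, rng, max_events=100_000):
    """Forward-simulate counts of infected individuals per type.

    birth[i], death[i], sampling[i]: per-individual rates for type i.
    migration[i][j]: per-individual rate of moving from type i to type j.
    removal_prob[i]: probability of removal upon sampling.
    Returns (counts, n_sampled, elapsed_time).
    """
    d = len(birth)
    counts = [0] * d
    counts[start_type] = 1
    n_sampled = [0] * d
    t = 0.0
    for _ in range(max_events):
        events, weights = [], []
        for i in range(d):
            n = counts[i]
            if n == 0:
                continue
            candidates = [("birth", i, i, birth[i]), ("death", i, i, death[i]),
                          ("sample", i, i, sampling[i])]
            candidates += [("migrate", i, j, migration[i][j])
                           for j in range(d) if j != i]
            for kind, src, dst, rate in candidates:
                events.append((kind, src, dst))
                weights.append(n * rate)
        total = sum(weights)
        if total == 0:          # population extinct or all rates zero
            return counts, n_sampled, t
        t += rng.expovariate(total)
        if t > t_max:
            return counts, n_sampled, t_max
        kind, i, j = rng.choices(events, weights=weights)[0]
        if kind == "birth":
            counts[i] += 1
        elif kind == "death":
            counts[i] -= 1
        elif kind == "migrate":
            counts[i] -= 1
            counts[j] += 1
        else:  # sampling, followed by removal with probability removal_prob[i]
            n_sampled[i] += 1
            if rng.random() < removal_prob[i]:
                counts[i] -= 1
    return counts, n_sampled, t

rng = random.Random(11)
counts, n_sampled, elapsed = simulate_multitype_bd(
    birth=[2.0, 1.5], death=[0.5, 0.5], sampling=[0.5, 0.2],
    migration=[[0.0, 0.1], [0.05, 0.0]], removal_prob=[1.0, 1.0],
    start_type=0, t_max=3.0, rng=rng)
print("infected per type:", counts, "sampled per type:", n_sampled)
```

Simulating from the model under the chosen priors is a useful sanity check before inference, since it shows whether the implied outbreak sizes and sample counts are plausible for the dataset at hand.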

The birth-death model incorporates a model of the sampling process, which provides additional statistical power when the sampling process is correctly specified but may lead to bias if the sampling process is misspecified [11]. Recent improvements to birth-death model implementation in software packages like BEAST2's bdmm have dramatically increased the number of genetic samples that can be analyzed, improved numerical robustness, and extended model flexibility to include piecewise-constant changes in migration rates through time and sampling at multiple time points [9].

Quantitative Model Comparison

Table 1: Performance comparison of birth-death and coalescent models across epidemiological scenarios

| Epidemiological Scenario | Model Performance | Migration Rate Accuracy | Source Location Estimation |
|---|---|---|---|
| Epidemic Outbreaks | Birth-death model superior | More accurate regardless of migration rate | Comparable between models |
| Endemic Diseases | Comparable performance | Comparable accuracy; coalescent more precise | Comparable between models |
| Varying Population Sizes | Coalescent with constant size performs poorly | Inaccurate estimates | Robust in both models |
| Structured Populations | Multi-type birth-death excels | Accurate migration rate estimation | Both models perform well |

Recent research comparing these phylodynamic methodologies reveals distinct performance characteristics across different scenarios [8]. For epidemic outbreaks, the birth-death model exhibits a superior ability to retrieve accurate migration rates compared to the coalescent model, regardless of the actual migration rate. This advantage stems from the birth-death model's explicit accounting for population dynamics, which is crucial during exponential growth phases typical of outbreaks [8].

For endemic disease scenarios, where population sizes remain relatively constant, both models produce comparable coverage and accuracy of migration rates, with the coalescent model generating more precise estimates [8]. Regardless of the specific scenario, both models similarly estimate the source location of the disease, indicating robustness in identifying the geographical origin of outbreaks [8].

The choice between models also depends on how much is known about the sampling process. The structured birth-death model requires an explicit model of sampling, whereas the coalescent makes no assumptions about how lineages are sampled through time [11]. As a result, birth-death models may be biased by misspecification of the sampling process, while coalescent models may be biased by noisy demographic processes [11].

Application Protocols for Epidemic Research

Model Selection Workflow

Table 2: Decision framework for selecting appropriate phylodynamic models

| Research Objective | Recommended Model | Key Considerations | Software Implementation |
|---|---|---|---|
| Estimating migration rates during outbreaks | Multi-type birth-death | Accounts for exponential growth dynamics | BEAST2 with bdmm package |
| Endemic disease dynamics | Structured coalescent | Provides more precise estimates | BEAST2 with structured coalescent |
| Analyzing large datasets (>250 samples) | Improved birth-death models | Recent algorithmic improvements prevent numerical instability | BEAST2 with updated bdmm |
| Incorporating known sampling process | Birth-death with sampling model | Leverages additional information from sampling times | BEAST2 with appropriate sampling priors |
| Uncertain sampling process | Coalescent model | Robust to misspecification of sampling | BEAST2 with coalescent priors |

Protocol 1: Implementing Multi-Type Birth-Death Analysis

Purpose: To estimate migration rates and population dynamics during epidemic outbreaks using the multi-type birth-death model.

Materials and Reagents:

- Genetic sequence data (FASTA format): Minimum of 50 sequences per population for reliable estimates

- Temporal information: Sample collection dates for tip dating

- Population metadata: Geographic or host population labels for discrete traits

- Computational resources: BEAST2 software package with bdmm extension

- Reference files: Outgroup sequences for rooting if required

Procedure:

- Data Preparation:
  - Align sequences using MAFFT or MUSCLE
  - Partition data appropriately if using multi-gene datasets
  - Format metadata in tab-separated files matching sequence names
- Model Specification in BEAST2:
  - Define population structure based on discrete traits
  - Set birth rate prior: Exponential(1) or LogNormal(1,1.5)
  - Set death rate prior: Often fixed based on external epidemiological data
  - Specify sampling proportion prior: Beta(2,2) if unknown
  - Configure migration rate prior: Exponential(1) for asymmetric migration
- MCMC Configuration:
  - Set chain length to at least 10⁷ iterations for complex models
  - Log parameters every 10,000 iterations
  - Set up path sampling for model comparison if needed
  - Enable state logging for diagnostic purposes
- Analysis Execution:
  - Run multiple independent replicates to assess convergence
  - Use BEAGLE library if available to accelerate computation
  - Monitor effective sample sizes (ESS > 200 for all parameters)
- Result Interpretation:
  - Check convergence using Tracer software
  - Summarize trees using TreeAnnotator
  - Visualize migration rates using SpreaD3 or other visualization tools

Troubleshooting Tips:

- If ESS values remain low, increase chain length or adjust operator weights

- If numerical instability occurs with large datasets, use the updated bdmm implementation [9]

- If migration rates are poorly identified, consider simplifying population structure
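The priors in the model specification step mix rates (birth, death) with a proportion (sampling), so it helps to see how the two parameterizations relate. The sketch below uses the relationships commonly employed for birth-death skyline models and assumes that every sampled individual is removed (removal probability 1): reproduction number R = λ/(μ + ψ), become-uninfectious rate δ = μ + ψ, and sampling proportion s = ψ/(μ + ψ). Whether a given package uses exactly this parameterization should be checked in its documentation. The numeric values are hypothetical.

```python
def rates_to_epi(birth, death, sampling):
    """Convert (lambda, mu, psi) to (R, delta, s), assuming removal on sampling."""
    become_uninfectious = death + sampling           # delta = mu + psi
    reproduction_number = birth / become_uninfectious
    sampling_proportion = sampling / become_uninfectious
    return reproduction_number, become_uninfectious, sampling_proportion

def epi_to_rates(reproduction_number, become_uninfectious, sampling_proportion):
    """Inverse conversion: (R, delta, s) back to (lambda, mu, psi)."""
    birth = reproduction_number * become_uninfectious
    sampling = sampling_proportion * become_uninfectious
    death = become_uninfectious - sampling
    return birth, death, sampling

# Hypothetical rates per year: infectious period of about 10 days,
# one in five cases sequenced.
r_number, delta, s = rates_to_epi(birth=73.0, death=29.2, sampling=7.3)
print(f"R = {r_number:.2f}, delta = {delta:.1f}/year, s = {s:.2f}")
print("mean infectious period (days):", round(365.0 / delta, 1))
```

Thinking in terms of R, δ, and s often makes it easier to choose informative priors, because external epidemiological data usually constrain the infectious period and the fraction of cases sequenced rather than the raw rates.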

Protocol 2: Structured Coalescent Analysis for Endemic Diseases

Purpose: To estimate population history and migration rates in endemic scenarios with stable population sizes.

Materials and Reagents:

- Genetic sequence data: Representative samples from each subpopulation

- Population structure definition: Clear criteria for subpopulation assignment

- Molecular clock model: Strict or relaxed clock based on evolutionary rate

- Substitution model: Selected through model testing (e.g., jModelTest)

Procedure:

- Data Preparation:
  - Remove recombinant sequences using RDP or similar tools
  - Select representative sequences to avoid oversampling clusters
  - Verify temporal signal using root-to-tip regression
- Model Specification in BEAST2:
  - Select structured coalescent prior (constant or exponential growth)
  - Define demographic model based on population history
  - Set up discrete trait analysis for migration reconstruction
  - Configure molecular clock model (strict or relaxed)
- MCMC Configuration:
  - Set appropriate chain length based on dataset size
  - Adjust operators for efficient exploration of parameter space
  - Configure tree and parameter logging intervals
- Analysis Execution:
  - Run analysis with appropriate computational resources
  - Monitor convergence through multiple runs
  - Validate results with complementary approaches
- Result Interpretation:
  - Summarize population size changes through time
  - Estimate migration rates between subpopulations
  - Identify source-sink dynamics if present

Validation Steps:

- Compare results with epidemiological surveillance data

- Assess robustness through sensitivity analyses

- Validate migration patterns with independent data sources
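One simple way to implement the "select representative sequences" step from the data preparation stage is stratified random subsampling, capping the number of sequences per location and calendar month. This is only one of several possible schemes, and the record fields (`name`, `location`, `date`) and the cap are hypothetical.

```python
import random
from collections import defaultdict

def stratified_subsample(records, max_per_stratum, seed=0):
    """Keep at most `max_per_stratum` records per (location, year-month).

    Each record is a dict with 'name', 'location', and 'date' ('YYYY-MM-DD').
    """
    rng = random.Random(seed)
    strata = defaultdict(list)
    for rec in records:
        strata[(rec["location"], rec["date"][:7])].append(rec)
    kept = []
    for key in sorted(strata):  # sorted for reproducible output order
        group = strata[key]
        if len(group) > max_per_stratum:
            group = rng.sample(group, max_per_stratum)
        kept.extend(group)
    return kept

# Hypothetical metadata: one heavily sampled cluster and one sparse location.
records = (
    [{"name": f"A{i}", "location": "RegionA", "date": "2020-03-15"} for i in range(10)]
    + [{"name": f"B{i}", "location": "RegionB", "date": "2020-03-20"} for i in range(2)]
)
subset = stratified_subsample(records, max_per_stratum=3)
print([rec["name"] for rec in subset])
```

Subsampling changes the effective sampling process, so the chosen scheme should be reported and, where possible, the analysis repeated with different random subsets as part of the sensitivity analyses.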

Case Studies in Epidemic Spread Research

SARS-CoV-2 Pandemic Investigations

Phylodynamic approaches played a crucial role in understanding and combating the early SARS-CoV-2 pandemic [10]. During the first year of the pandemic, nearly 400,000 full or partial SARS-CoV-2 genomes were generated and shared publicly, enabling unprecedented insights into viral spread dynamics.

Molecular clock dating of SARS-CoV-2 lineages using coalescent models indicated multiple introductions from Wuhan to Guangdong in early January 2020, with a subsequent fall in lineage diversity suggesting that within-country travel restrictions combined with comprehensive tracing and isolation were effective in controlling transmission [10]. Similarly, a birth-death skyline approach applied to Australian data estimated a national fall in the effective reproduction number (Rₜ) from 1.63 to 0.48 after the introduction of travel restrictions and social distancing on 27 March 2020 [10].

Phylogeographic studies investigating the impact of international travel restrictions consistently showed reduced numbers of introductions along international routes covered by travel restrictions [10]. For example, in South Africa, international introductions plummeted after travel restrictions began on 26 March 2020, while in Brazil, at least 104 international introductions were estimated during March and April 2020, arriving too early for subsequent restrictions to prevent domestic transmission establishment [10].

Table 3: Phylodynamic parameters estimated from early SARS-CoV-2 analyses

| Parameter | Estimate | 95% Interval | Model Used | Data Source |
|---|---|---|---|---|
| Evolutionary Rate (substitutions/site/year) | 0.80×10⁻³ | 0.14×10⁻³ – 1.31×10⁻³ | Coalescent exponential growth | 86 genomes [12] |
| TMRCA | 17-Nov-2019 | 27-Aug-2019 – 19-Dec-2019 | Coalescent exponential growth | 86 genomes [12] |
| Growth Rate (per year) | 35.38 | 15.49 – 53.47 | Coalescent exponential growth | 86 genomes [12] |
| Doubling Time (days) | 7.2 | 4.7 – 16.3 | Coalescent exponential growth | 86 genomes [12] |
| Rₜ in Australia | 1.63 to 0.48 | N/A | Birth-death skyline | National data [10] |
| Rₜ in New Zealand | 7.0 to 0.2 | N/A | Birth-death model | Transmission cluster [10] |
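The growth rate and doubling time rows of the table are two views of the same quantity: under exponential growth at rate r, the doubling time is ln(2)/r. The short sketch below reproduces the tabulated doubling times from the tabulated growth rates, assuming a year of 365.25 days.

```python
import math

def doubling_time_days(growth_rate_per_year, days_per_year=365.25):
    """Doubling time in days for exponential growth at the given yearly rate."""
    return math.log(2) / growth_rate_per_year * days_per_year

for label, rate in [("estimate", 35.38), ("lower rate bound", 15.49),
                    ("upper rate bound", 53.47)]:
    print(f"{label}: r = {rate}/year -> {doubling_time_days(rate):.1f} days")
```

Note that the interval endpoints swap: the lower bound on the growth rate gives the upper bound on the doubling time, and vice versa.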

Influenza A Virus Global Migration Patterns

The improved multi-type birth-death model algorithm was applied to two datasets of Influenza A virus HA sequences—one with 500 samples and another with only 175—to compare global migration patterns and seasonal dynamics [9]. This analysis demonstrated the information gain from analyzing larger datasets, which became possible with algorithmic changes to bdmm that dramatically increased the number of genetic samples that could be analyzed while improving numerical robustness and efficiency [9].

The study highlighted how birth-death models with sampling can quantify past population dynamics in structured populations based on phylogenetic trees, enabling researchers to infer rates at which species migrate between geographic regions or gain/lose traits of interest [9]. The comparison between datasets of different sizes showed how larger sample sizes led to improved precision of parameter estimates, particularly for structured models with a high number of inferred parameters [9].

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential computational tools and resources for phylodynamic analysis

| Tool/Resource | Function | Application Context | Access Method |
|---|---|---|---|
| BEAST2 | Bayesian evolutionary analysis | Primary platform for phylodynamic inference | Open source [9] [12] |
| bdmm package | Multi-type birth-death model implementation | Structured population analysis | BEAST2 package [9] |
| MASTER | Birth-death process simulation | Model validation and simulation studies | Open source [11] |
| Tracer | MCMC diagnostic analysis | Assessing convergence and effective sample sizes | Open source [12] |
| TreeAnnotator | Tree summarization | Generating maximum clade credibility trees | Part of BEAST package [12] |
| FigTree | Tree visualization | Viewing and annotating phylogenetic trees | Open source [12] |

Advanced Methodological Considerations

Approximate Bayesian Computation for Complex Models

As phylodynamic models increase in complexity, traditional MCMC approaches can become computationally demanding and impractical for real-time outbreak analysis [13]. Recent advances in approximate Bayesian inference methods aim to balance inferential accuracy with scalability, including Approximate Bayesian Computation (ABC), Bayesian Synthetic Likelihood (BSL), Integrated Nested Laplace Approximation (INLA), and Variational Inference (VI) [13].

These approaches are particularly relevant for epidemiological applications where rapid updates are required during ongoing outbreaks. The development of hybrid exact-approximate inference methods represents a promising frontier that combines methodological rigor with the scalability needed for outbreak response [13]. Epidemiologists can use these approaches to navigate the trade-off between statistical accuracy and computational feasibility in contemporary disease modeling.
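Of the methods listed, ABC is the easiest to sketch because it replaces likelihood evaluation with simulation. The example below is a minimal rejection sampler on a toy problem chosen for illustration: inferring a Poisson rate from hypothetical daily counts, with a Uniform(0, 10) prior and the sample mean as the summary statistic. Phylodynamic applications would simulate trees or outbreaks instead, and the tolerance and summary statistics are modeling choices.

```python
import math
import random

def poisson_draw(rate, rng):
    """Draw one Poisson variate using Knuth's multiplication method."""
    threshold = math.exp(-rate)
    k, product = 0, rng.random()
    while product > threshold:
        k += 1
        product *= rng.random()
    return k

def abc_rejection(observed, n_draws=20000, tolerance=0.25, prior_max=10.0, seed=3):
    """ABC rejection sampling for a Poisson rate.

    Keeps prior draws whose simulated data have a mean within `tolerance`
    of the observed mean.
    """
    rng = random.Random(seed)
    n = len(observed)
    observed_mean = sum(observed) / n
    accepted = []
    for _ in range(n_draws):
        rate = rng.uniform(0.0, prior_max)            # 1. draw from the prior
        simulated = [poisson_draw(rate, rng) for _ in range(n)]  # 2. simulate data
        simulated_mean = sum(simulated) / n           # 3. summarise
        if abs(simulated_mean - observed_mean) <= tolerance:     # 4. accept/reject
            accepted.append(rate)
    return accepted

observed = [3, 5, 4, 6, 2]  # hypothetical counts
accepted = abc_rejection(observed)
print("accepted draws:", len(accepted))
print("approximate posterior mean:", round(sum(accepted) / len(accepted), 2))
```

The tolerance controls the accuracy versus cost trade-off described above: a smaller tolerance gives a better approximation to the posterior but rejects more simulations.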

Addressing Sampling Biases and Model Misspecification

Both birth-death and coalescent models face challenges related to sampling biases and model misspecification. Birth-death models require a model of the sampling process, which can lead to bias if incorrectly specified [11]. Coalescent models, while not requiring explicit sampling models, may be biased by noisy demographic processes [11].

Research has shown that much of the statistical power of birth-death model approaches is derived from information in the sequence of sample times rather than the genealogy itself [11]. This finding suggests an enhancement to coalescent models: if the sampling process is known, researchers can augment the coalescent likelihood with a separate likelihood for the sequence of sample times, potentially improving precision [11].

For birth-death models, the BEAST2 package bdmm now allows for more flexible sampling scenarios, including homochronous sampling events at multiple time points (not only the present), individuals that are not necessarily removed upon sampling, and piecewise-constant changes in migration rates through time [9]. These enhancements address some of the limitations of earlier implementations and expand the range of empirical datasets that can be appropriately modeled.

Birth-death and coalescent models represent essential phylodynamic components for understanding population dynamics in infectious disease research. The choice between these modeling frameworks depends on the specific research question, epidemiological context, and data characteristics. For epidemic outbreaks with rapidly changing population sizes, multi-type birth-death models generally provide more accurate estimates of migration rates, while for endemic diseases with stable population dynamics, structured coalescent models offer comparable accuracy with greater precision [8].

Recent methodological advances, including improved algorithms for birth-death model inference [9] and enhanced coalescent approaches [11], have expanded the range of questions that can be addressed using phylodynamic methods. The continued development of approximate Bayesian computation techniques [13] promises to further increase the applicability of these approaches to complex, large-scale datasets characteristic of modern pathogen genomic surveillance.

As demonstrated during the SARS-CoV-2 pandemic [10], the integration of phylodynamic methods with traditional epidemiological approaches provides powerful insights into disease spread, intervention effectiveness, and spatial dynamics, making these tools indispensable for contemporary infectious disease research and public health response.

In the field of epidemic spread research, accurately quantifying key evolutionary parameters is essential for understanding pathogen dynamics and informing public health interventions. Two of the most critical metrics for tracking infectious disease evolution and spread are the effective population size (Nₑ) and the reproductive number (R). The effective population size represents the number of individuals in an idealized population that would exhibit the same amount of genetic drift or inbreeding as the actual population, providing insights into genetic diversity and adaptive potential [14]. The reproductive number, particularly the time-varying effective reproductive number (Rₜ), indicates the average number of secondary infections generated per infectious case at time t, serving as a crucial indicator of transmission intensity [15] [16].

Bayesian phylodynamic analysis has emerged as a powerful framework for integrating genetic, epidemiological, and evolutionary data to estimate these parameters. This approach leverages pathogen genomes to reconstruct evolutionary relationships and population dynamics, enabling researchers to uncover patterns of epidemic spread that are difficult to detect using traditional epidemiological methods alone [5] [17]. When evolutionary and epidemiological processes occur on similar timescales, as with rapidly evolving RNA viruses, genomic data carry a distinctive signature of epidemic dynamics that can be extracted through phylodynamic methods [5].

The following application notes and protocols provide detailed methodologies for estimating Nₑ and Rₜ within a Bayesian phylodynamic framework, specifically designed for researchers, scientists, and drug development professionals working on epidemic surveillance and control.

Estimating Effective Population Size (Nₑ)

Conceptual Foundation and Biological Significance

The effective population size (Nₑ) is a fundamental parameter in population genetics and evolutionary biology, originally introduced by Sewall Wright in the 1930s [18]. Nₑ quantifies the magnitude of genetic drift and inbreeding within populations, with important implications for understanding adaptive potential and genetic diversity loss. In pathogen evolution, Nₑ reflects the number of viruses effectively contributing to the next generation, which influences the rate of antigenic evolution, drug resistance development, and adaptive potential [14].

For epidemics, tracking changes in Nₑ through time can reveal important aspects of outbreak dynamics, including population bottlenecks during transmission events and expansion phases as epidemics spread through susceptible populations. Unlike the census population size, Nₑ accounts for variance in reproductive success among individuals, which typically makes it much smaller than the total number of infected hosts [14].

Methodological Approaches for Nₑ Estimation

Table 1: Methods for Estimating Effective Population Size (Nₑ) from Genomic Data

| Method Class | Principle | Software Tools | Temporal Scope | Key Considerations |

|---|---|---|---|---|

| Linkage Disequilibrium (LD) | Measures non-random association of alleles at different loci | NeEstimator2, SPEEDNe, GONE | Contemporary/Recent | Sensitive to sample size, marker density, population structure [14] [18] |

| Allele Frequency Spectrum (AFS) | Compares observed site frequency spectrum to theoretical expectations | ∂a∂i (dadi), GADMA | Historical/Contemporary | Requires large sample sizes; sensitive to demographic model specification [14] |

| Coalescent-based | Models the time to most recent common ancestor (TMRCA) of sequences | PHLASH, SMC++, MSMC2 | Historical | Computational intensity varies; PHLASH shows improved accuracy for whole-genome data [19] |

| Phylogenetic | Estimates Nₑ from pathogen phylogenies | BEAST, MrBayes | Varies with timescale | Integrates evolutionary and epidemiological parameters [20] [17] |

Protocol: LD-based Nₑ Estimation Using NeEstimator2

Principle: This method estimates contemporary Nₑ from the observed linkage disequilibrium (LD) between unlinked loci, as LD accumulates more rapidly through genetic drift in smaller populations [14] [18].
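
As a rough illustration of this principle, the sketch below applies the classical approximation E[r²] ≈ 1/(3Nₑ) + 1/S for unlinked loci under random mating, where S is the number of sampled individuals. That approximation is an assumption introduced here for illustration; NeEstimator2 applies additional bias corrections, so its estimates will differ. The function name `ld_ne` and the input values are hypothetical.

```python
import math


def ld_ne(mean_r2, sample_size):
    """Approximate Ne from the mean r^2 between unlinked loci (random mating).

    Uses E[r^2] ~ 1/(3*Ne) + 1/S and returns math.inf when the sampling
    term 1/S already accounts for all of the observed LD.
    """
    drift_r2 = mean_r2 - 1.0 / sample_size  # subtract the sampling contribution
    if drift_r2 <= 0:
        return math.inf
    return 1.0 / (3.0 * drift_r2)


# Hypothetical input: mean r^2 of 0.025 measured in 50 sampled individuals.
print(round(ld_ne(0.025, 50), 1))
```

An infinite estimate signals that the sample cannot distinguish the population from a very large one, which is one way the upper detection limit of LD-based methods shows up in practice.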

Materials:

- Genotype data (SNP array or sequencing data)

- High-performance computing resources

- Population genetic software packages

Procedure:

Data Preparation and Quality Control

- Format genotype data in appropriate formats (e.g., PLINK, GENEPOP)

- Perform quality control filtering: remove loci with high missingness (>10%), significant deviation from Hardy-Weinberg equilibrium (p < 0.001), and minor allele frequency below 0.01 [18]

- For LD-based methods, prune markers in high linkage disequilibrium (r² > 0.5) to ensure locus independence [18]
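
The missingness and allele-frequency thresholds above can be illustrated with a minimal Python sketch. It assumes genotypes are coded as 0, 1, or 2 copies of the alternate allele, with `None` for missing calls; the function name `filter_loci` and the toy data are hypothetical, and the Hardy-Weinberg test and LD pruning are left out for brevity.

```python
def filter_loci(genotypes, max_missing=0.10, min_maf=0.01):
    """Return indices of loci that pass the missingness and MAF filters.

    genotypes: list of per-locus lists; each call is 0, 1, 2 or None (missing).
    """
    kept = []
    for idx, calls in enumerate(genotypes):
        observed = [g for g in calls if g is not None]
        missing_rate = 1 - len(observed) / len(calls)
        if not observed or missing_rate > max_missing:
            continue  # too many missing calls at this locus
        alt_freq = sum(observed) / (2 * len(observed))
        maf = min(alt_freq, 1 - alt_freq)
        if maf < min_maf:
            continue  # (near-)monomorphic locus
        kept.append(idx)
    return kept


# Hypothetical toy data: three loci typed in ten individuals.
toy = [
    [0, 1, 2, 1, 0, 1, 2, 0, 1, 1],        # polymorphic, complete
    [0, 0, 0, 0, 0, 0, 0, 0, 0, 0],        # monomorphic
    [0, 1, None, None, 2, 1, 0, 1, 1, 0],  # 20% missing
]
print(filter_loci(toy))
```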

Parameter Configuration

- Set critical allele frequency threshold (default: 0.05) to minimize bias from rare alleles

- Specify random mating model for epidemic pathogens

- Define confidence interval method (jackknifing recommended)

Execution

- Run NeEstimator2 with prepared input files

- Use command:

Ne2 -i input_file.gen -s 0 -o output_file.txt

- For large datasets, utilize high-performance computing clusters

Interpretation of Results

- Examine point estimate and confidence intervals for Nₑ

- Compare estimates across different allele frequency thresholds to assess robustness

- Consider biological plausibility given epidemiological context

Troubleshooting:

- If confidence intervals are excessively wide, increase sample size or marker density

- If estimates are implausibly small, check for population stratification or sampling artifacts

- For very large populations, consider alternative methods as LD-based approaches have upper detection limits [14]

Determining Optimal Sample Size for Nₑ Estimation

Empirical studies indicate that a sample size of approximately 50 individuals often provides a reasonable approximation of the true Nₑ value, balancing cost and precision [18]. However, this should be adjusted based on:

- Population characteristics: Larger samples are needed for more diverse populations

- Marker density: Higher density SNP arrays or whole genome sequences may allow smaller sample sizes

- Research question: Higher precision required for detecting subtle changes over time

Estimating Reproductive Numbers (R)

Conceptual Framework

The reproductive number represents the transmissibility of an infectious pathogen in a population. Several related metrics are used in epidemiological practice:

- Basic reproduction number (R₀): The average number of secondary cases generated by a single infectious individual in a fully susceptible population

- Effective reproduction number (Rₜ): The time-varying counterpart to R₀, accounting for accumulating immunity and interventions [15] [16]

- Spatiotemporal reproduction number: An extension that incorporates spatial heterogeneity in transmission [15]

When Rₜ > 1, epidemic growth is expected, while Rₜ < 1 indicates declining transmission. Accurate estimation of Rₜ is therefore crucial for assessing intervention effectiveness and predicting future case trajectories [15] [21].

Methodological Approaches for R Estimation

Table 2: Methods for Estimating Reproductive Numbers from Epidemiological and Genetic Data

| Method Category | Data Requirements | Software/Tools | Advantages | Limitations |

|---|---|---|---|---|

| Incidence-based | Case counts, serial interval distribution | EpiEstim, custom Bayesian models | Intuitive, rapid computation | Sensitive to case ascertainment, reporting delays [15] |

| Wastewater-based | SARS-CoV-2 RNA concentrations in wastewater | Percent change, GLM models | Unbiased by clinical testing availability; early outbreak detection [16] | Requires calibration with case data; sewershed population definition [16] |

| Phylodynamic | Pathogen genomes, sampling dates | BEAST, BDMM | Incorporates evolutionary history; estimates historical dynamics [22] [17] | Computational intensity; requires sufficient sequence data [20] |

| Spatiotemporal | Georeferenced case data, serial interval | Bayesian model selection/averaging | Captures local heterogeneity; identifies spatial hotspots [15] | Complex implementation; requires fine-scale data [15] |

Protocol: Bayesian Estimation of Rₜ from Case Data

Principle: This approach estimates the time-varying effective reproduction number using case incidence data and the serial interval distribution within a Bayesian framework, allowing incorporation of prior knowledge and quantification of uncertainty [15] [21].

Materials:

- Time-stamped case incidence data

- Serial interval distribution estimates (mean and standard deviation)

- Statistical software (R, Python) with appropriate packages

Procedure:

Data Preparation

- Compile daily or weekly case counts, ensuring consistent reporting periods

- Obtain serial interval distribution from literature or prior studies

- For example, a mean serial interval of 1 year was used for tuberculosis in Iran [21]

- Adjust for reporting delays and back-projection if necessary

Model Specification

- Choose likelihood model: Poisson distribution for case counts

- Specify prior distributions for parameters:

- Rₜ ~ Gamma(mean = 1, SD = 0.5) for mildly informative prior

- Account for uncertainty in serial interval estimates

- Select sliding window size for Rₜ estimation (typically 5-14 days depending on reporting frequency)

Implementation

- Code model in Bayesian framework (Stan, PyMC, or use specialized packages like EpiEstim)

- Run Markov Chain Monte Carlo (MCMC) sampling:

- Minimum 10,000 iterations after burn-in of 2,000

- Multiple chains to assess convergence

- For respiratory pathogens with limited data, consider Bayesian model averaging to account for serial interval uncertainty [15]
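
The model specification above can be sketched in a few lines of Python. This is an illustration under stated assumptions, not the procedure of any cited study: it replaces MCMC with the closed-form Gamma-Poisson conjugate update on a sliding window, treats the serial interval as known (no uncertainty is propagated), and uses hypothetical names (`estimate_rt`, `total_infectiousness`) and toy incidence data. A Gamma prior with mean 1 and SD 0.5 corresponds to shape 4 and scale 0.25.

```python
import random


def total_infectiousness(incidence, si_weights):
    """Lambda_t = sum over k >= 1 of incidence[t - k] * w_k."""
    return [
        sum(incidence[t - k] * w
            for k, w in enumerate(si_weights, start=1) if t - k >= 0)
        for t in range(len(incidence))
    ]


def estimate_rt(incidence, si_weights, window=7,
                prior_mean=1.0, prior_sd=0.5, draws=4000, seed=1):
    """Return (day, median, lower 2.5%, upper 97.5%) of R_t per window end."""
    shape0 = (prior_mean / prior_sd) ** 2   # Gamma shape from mean and SD
    scale0 = prior_sd ** 2 / prior_mean     # Gamma scale from mean and SD
    lam = total_infectiousness(incidence, si_weights)
    rng = random.Random(seed)
    results = []
    for end in range(window, len(incidence)):
        days = range(end - window + 1, end + 1)
        # Conjugate update: Poisson likelihood with a Gamma prior on R_t.
        shape = shape0 + sum(incidence[s] for s in days)
        scale = 1.0 / (1.0 / scale0 + sum(lam[s] for s in days))
        sample = sorted(rng.gammavariate(shape, scale) for _ in range(draws))
        results.append((end, sample[draws // 2],
                        sample[int(0.025 * draws)], sample[int(0.975 * draws)]))
    return results


# Hypothetical daily case counts and a 3-day discretised serial interval.
cases = [5, 6, 8, 10, 13, 16, 20, 25, 31, 38, 47, 58]
serial_interval = [0.2, 0.5, 0.3]
for day, median, low, high in estimate_rt(cases, serial_interval):
    print(day, round(median, 2), round(low, 2), round(high, 2))
```

Because the update is conjugate, posterior quantiles are obtained by drawing directly from the Gamma posterior; a full MCMC implementation becomes necessary once the serial interval or reporting process is also given a prior.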

Output and Interpretation

- Extract posterior median and credible intervals for Rₜ

- Identify time points when Rₜ crosses the epidemic threshold (Rₜ = 1)

- Correlate changes in Rₜ with intervention timing

- Validate model fit using posterior predictive checks

Application Note: In a study of pulmonary tuberculosis in Iran (2018-2022), this approach estimated Rₜ = 1.06 ± 0.05, indicating persistent transmission with each case causing approximately one new infection over time [21].

Integrated Phylodynamic Framework

Protocol: Combined Estimation of Nₑ and R using Pathogen Genomes

Principle: This integrated approach simultaneously estimates effective population size and reproductive numbers from pathogen genomic sequences within a Bayesian phylodynamic framework, leveraging the relationship between genetic diversity and epidemic growth [17].

Figure 1: Workflow for Integrated Phylodynamic Analysis of Nₑ and R

Materials:

- Pathogen whole genome sequences with sampling dates

- Computational resources for Bayesian phylogenetic inference

- Phylodynamic software (BEAST, BEAST2 with appropriate packages)

Procedure:

Data Preparation

- Perform multiple sequence alignment (MAFFT, MUSCLE)

- Assess temporal signal using root-to-tip regression (TempEst)

- Curate metadata: sampling dates, locations if available
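
The root-to-tip check mentioned above amounts to a linear regression of genetic distance from the root on sampling date. The sketch below assumes the root-to-tip distances have already been extracted from a rooted tree (the step TempEst performs from a tree file); the function name and the toy values are hypothetical.

```python
def root_to_tip_regression(dates, distances):
    """Least-squares fit of root-to-tip distance on sampling date.

    Returns (rate, tmrca, r_squared). With dates in decimal years the rate is
    in substitutions/site/year; tmrca is the x-intercept of the fitted line.
    """
    n = len(dates)
    mean_x = sum(dates) / n
    mean_y = sum(distances) / n
    sxx = sum((x - mean_x) ** 2 for x in dates)
    syy = sum((y - mean_y) ** 2 for y in distances)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(dates, distances))
    rate = sxy / sxx
    intercept = mean_y - rate * mean_x
    r_squared = (sxy ** 2) / (sxx * syy) if syy > 0 else 0.0
    tmrca = -intercept / rate if rate > 0 else None  # undefined without signal
    return rate, tmrca, r_squared


# Hypothetical sampling dates (decimal years) and root-to-tip distances.
sample_dates = [2020.10, 2020.25, 2020.40, 2020.60, 2020.85]
tip_distances = [0.00018, 0.00027, 0.00043, 0.00055, 0.00079]
print(root_to_tip_regression(sample_dates, tip_distances))
```

A positive slope with a reasonably high R² suggests enough temporal signal to calibrate a molecular clock; a flat or negative slope is a warning that tip dates alone may not inform the rate.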

Substitution Model Selection

- Test nucleotide substitution models (HKY, GTR) with and without gamma distributed rate heterogeneity

- Use model selection tools (jModelTest, bModelTest) [20]

- Select best-fitting model based on marginal likelihood estimation

Clock Model and Tree Prior Specification

- Choose molecular clock model (strict vs. relaxed clock)

- For epidemics, an uncorrelated lognormal relaxed clock is often appropriate

- Select tree prior based on epidemic context:

- For integrated analysis, birth-death skyline models can estimate both parameters

MCMC Configuration and Execution

- Configure MCMC chain length (typically 10⁷-10⁹ steps depending on dataset size)

- Set appropriate sampling frequency (every 10,000 steps)

- Specify log parameters (trees, Nₑ, R)

- Run multiple independent replicates to assess convergence

Analysis and Diagnostics

- Assess MCMC convergence (ESS > 200 for all parameters)

- Combine replicates using LogCombiner

- Summarize parameter estimates using Tracer [20]

- Annotate maximum clade credibility tree using TreeAnnotator
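
To make the ESS criterion concrete, the sketch below estimates the effective sample size of a single parameter trace from its autocorrelation, using ESS = N / (1 + 2 Σ ρₖ) with the sum truncated at the first non-positive autocorrelation. This is a simple textbook estimator chosen for illustration; Tracer uses its own implementation and may report different values. The function name and simulated trace are hypothetical.

```python
import random


def effective_sample_size(trace):
    """Estimate ESS as N / (1 + 2 * sum of positive-lag autocorrelations)."""
    n = len(trace)
    mean = sum(trace) / n
    centered = [x - mean for x in trace]
    var = sum(c * c for c in centered) / n
    if var == 0:
        return float(n)
    rho_sum = 0.0
    for lag in range(1, n):
        acov = sum(centered[i] * centered[i + lag] for i in range(n - lag)) / n
        rho = acov / var
        if rho <= 0:  # truncate once the autocorrelation dies out
            break
        rho_sum += rho
    return n / (1 + 2 * rho_sum)


# Simulated, strongly autocorrelated trace standing in for a logged parameter.
rng = random.Random(42)
value, trace = 0.0, []
for _ in range(2000):
    value = 0.9 * value + rng.gauss(0, 1)
    trace.append(value)
print(round(effective_sample_size(trace)))
```

With 10⁷ steps logged every 10,000 steps, a run yields 1,000 samples per parameter, so an ESS above 200 requires that logged samples be only weakly autocorrelated.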

Application Example: In a study of West Nile Virus spread in North America, this phylodynamic approach revealed a mean lineage dispersal velocity of ~1200 km/year, with higher dispersal velocities during the early expansion phase of the epidemic [17]. The analysis further identified temperature as a significant environmental predictor of viral genetic diversity through time.

Research Reagent Solutions

Table 3: Essential Research Tools and Resources for Bayesian Phylodynamic Analysis

| Tool/Resource | Application | Key Features | Implementation Considerations |

|---|---|---|---|

| BEAST/BEAST2 | Bayesian evolutionary analysis | Comprehensive model selection; phylodynamics; coalescent inference | Steep learning curve; computational intensity [20] |

| NeEstimator2 | Effective population size estimation | LD-based methods; user-friendly interface; confidence intervals | Limited to contemporary Nₑ; sample size constraints [14] [18] |

| PHLASH | Historical population size inference | Non-parametric estimation; uncertainty quantification; GPU acceleration | New method; requires whole-genome data [19] |

| EpiEstim | Reproduction number estimation | Time-varying Rₜ; simple implementation; rapid results | Sensitive to serial interval specification [15] |

| BDMM | Multi-population transmission dynamics | Structured birth-death model; species transition rates | Complex setup; appropriate for structured populations [22] |

| Tracer | MCMC diagnostics | Parameter convergence assessment; ESS calculation; model comparison | Essential for all Bayesian analyses [20] |

Bayesian phylodynamic methods provide a powerful framework for simultaneously estimating effective population size and reproductive numbers from pathogen genomic data. The protocols outlined here offer researchers standardized approaches for deriving these key evolutionary parameters, with applications ranging from real-time epidemic monitoring to historical reconstruction of outbreak dynamics.

As methodological advancements continue, emerging approaches like PHLASH for population size history inference [19] and wastewater-based reproductive number estimation [16] are expanding the toolkit available to researchers. By following these standardized protocols and selecting appropriate methods based on research questions and data availability, scientists can generate robust estimates of these fundamental parameters to inform public health decision-making and drug development strategies.

The integration of genomic and epidemiological data within a Bayesian framework remains a rapidly evolving field, with ongoing developments in modeling complexity, computational efficiency, and incorporation of additional data sources promising to further enhance the accuracy and utility of these approaches for epidemic research.

Bayesian phylodynamic analysis has become an indispensable tool for reconstructing the evolutionary and transmission history of viral epidemics, providing critical insights into pathogen spread, intervention effectiveness, and emergence of variants. The reliability of these sophisticated statistical models is fundamentally constrained by the quality, completeness, and strategic collection of the underlying data. The integration of genetic sequence alignments with precisely structured metadata forms the empirical foundation upon which all subsequent phylogenetic inferences are built. Furthermore, the temporal and spatial distribution of samples directly determines the analytical resolution achievable in reconstructing epidemic dynamics. This protocol outlines the essential data requirements, preparation methodologies, and sampling strategies necessary for conducting robust Bayesian phylodynamic analyses focused on epidemic spread, providing researchers with a structured framework for generating phylogenetically informative datasets.

Data Types and Requirements

Phylodynamic investigations rely on the synergistic integration of genetic sequence data and associated contextual information. Each data type contributes distinct but complementary information to the analysis.

Genetic Sequence Alignments

Genetic sequence alignments represent the core molecular data for phylogenetic inference, providing the character matrices that record evolutionary changes across lineages.

- Sequence Acquisition: Viral genome sequences are typically generated through high-throughput sequencing technologies from clinical samples. The portability and rapid deployment of modern sequencers enable near real-time generation of complete genome data, which is crucial for outbreak investigation [23].

- Alignment Generation: Raw sequences are aligned against a reference genome or to each other using multiple sequence alignment algorithms (e.g., MAFFT, MUSCLE) to establish positional homology across taxa.

- Quality Control: Alignments must be inspected for sequencing errors, recombination, and misalignment, which can introduce artifacts into phylogenetic reconstruction. For SARS-CoV-2, the limited genetic diversity in early pandemic stages presented particular challenges for phylogenetic resolution [24].

- Format Specifications: Standard formats include FASTA and NEXUS for alignments and PhyloXML for annotated trees; FASTA stores sequences only, whereas NEXUS and PhyloXML can also carry trees and annotations alongside the sequence data [25].

Essential Metadata

Metadata provides the essential contextual framework for interpreting phylogenetic patterns biologically and epidemiologically. The table below summarizes critical metadata categories and their phylodynamic applications.

Table 1: Essential Metadata Categories for Phylodynamic Analysis

| Metadata Category | Specific Data Fields | Phylodynamic Application | Example Use Case |

|---|---|---|---|

| Temporal | Sample collection date, Time of symptom onset | Molecular clock calibration, Estimation of evolutionary rates and TMRCA | Estimating time of most recent common ancestor (TMRCA) of SARS-CoV-2 lineages [10] |

| Spatial | Geographic coordinates, Country/Region of origin, Location of sampling | Phylogeographic reconstruction of diffusion pathways, Identification of spatial clusters | Mapping global spread of H1N1pdm from Mexico to worldwide locations [24] |

| Epidemiological | Host species, Clinical status, Transmission setting, Exposure history | Characterizing transmission dynamics, Identifying risk factors | Linking SARS-CoV-2 transmission clusters to specific settings or populations [10] |

| Administrative | Sample identifier, Sequencing platform, Data curator, Access restrictions | Data provenance, Access control, Resource management | Managing data sharing and usage rights in public health emergencies [26] |

Descriptive metadata enables discovery and identification of data resources, while structural metadata describes containers and relationships between data elements [26] [27]. Administrative metadata provides critical instructions for data management, including access restrictions and preservation information, which is particularly important for sensitive public health data [26].

Data Integration Challenges

The integration of genetic alignments with metadata presents several technical challenges that require careful consideration:

- Data Harmonization: Inconsistent formatting, missing values, and taxonomic inconsistencies between data sources can compromise analytical integrity. Automated validation pipelines are essential for large-scale analyses.

- Temporal Resolution: Heterogeneous sampling intervals can create biases in molecular clock estimates and reconstruction of population dynamics [23].

- Spatial Granularity: Variation in geographic resolution (e.g., country-level vs. municipal-level) affects the precision of phylogeographic inference and interpretation of spatial spread.

Sampling Strategy Framework

The strategic collection of samples across relevant dimensions fundamentally determines the analytical scope and inferential power of phylodynamic studies.

Temporal Sampling

Temporal distribution of samples directly impacts the accuracy of evolutionary rate estimation and molecular dating:

- Sampling Density: Higher sampling frequency improves resolution of evolutionary rates and population dynamics. During the SARS-CoV-2 pandemic, intensive genomic surveillance enabled near real-time tracking of variant emergence [10].

- Temporal Span: Longer sampling periods allow for more robust estimation of long-term evolutionary trends and seasonality patterns.

- Representativeness: Samples should proportionally represent incidence dynamics rather than being clustered in short time windows, unless targeting specific transmission events.
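
One way to make sampling proportional to incidence is to split a fixed sequencing budget across time windows according to case counts. The sketch below uses largest-remainder rounding; the function name, the week labels, and the case counts are hypothetical, and it assumes the budget does not exceed the total number of cases.

```python
def allocate_sequencing(cases_per_week, budget):
    """Split a sequencing budget across weeks in proportion to case counts."""
    total = sum(cases_per_week.values())
    if budget > total:
        raise ValueError("budget exceeds the number of available cases")
    raw = {wk: budget * n / total for wk, n in cases_per_week.items()}
    alloc = {wk: int(share) for wk, share in raw.items()}  # round down first
    leftover = budget - sum(alloc.values())
    # Hand the remaining slots to the weeks with the largest fractional parts.
    by_remainder = sorted(raw, key=lambda wk: raw[wk] - alloc[wk], reverse=True)
    for wk in by_remainder[:leftover]:
        alloc[wk] += 1
    return alloc


# Hypothetical weekly case counts and a budget of 25 genomes.
weekly_cases = {"2020-W10": 12, "2020-W11": 35, "2020-W12": 53}
print(allocate_sequencing(weekly_cases, 25))
```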

Spatial Sampling

The geographic distribution of samples constrains the ability to reconstruct spatial dispersal patterns:

- Global Scale: Analyses of international spread require broadly representative sampling across affected regions. The early SARS-CoV-2 pandemic demonstrated how sampling biases toward certain countries limited understanding of initial transmission routes [10].

- Regional Scale: Dense sampling within regions allows identification of local transmission networks and sources of introduction. For example, phylogeographic analysis of H1N1pdm revealed complex patterns of spatial spread within North America following initial emergence in Mexico [24].

- Sampling Bias Correction: Uneven spatial sampling requires explicit modeling through structured coalescent or birth-death models to avoid erroneous conclusions about transmission dynamics [10].

Phylogenetically Informed Sampling

Strategic sampling based on emerging phylogenetic patterns can optimize resource allocation:

- Oversampling of Emerging Lineages: Targeted sequencing of variants with concerning mutations or epidemiological associations enhances monitoring capacity.

- Bridge Sampling: Focusing on geographic or temporal gaps in existing phylogenies can resolve uncertainties in transmission pathways.

- Outlier Sampling: Including phylogenetic outliers helps delineate the full diversity of circulating lineages and identify previously unrecognized introductions.

Table 2: Impact of Sampling Strategies on Phylodynamic Inference

| Sampling Dimension | Inadequate Approach | Optimal Strategy | Impact on Inference |

|---|---|---|---|

| Temporal Distribution | Clustered sampling in short time periods | Proportional to incidence with regular intervals | Accurate evolutionary rate estimation; Precise dating of emergence events |

| Spatial Distribution | Concentrated in accessible locations | Representative across affected regions; Higher density at suspected sources | Reduced bias in reconstructing spatial spread; Identification of source-sink dynamics |

| Phylogenetic Coverage | Random without consideration of genetic diversity | Strategic targeting of divergent lineages and emerging variants | Comprehensive characterization of diversity; Early detection of novel lineages |

| Metadata Completeness | Inconsistent or incomplete metadata | Standardized collection of temporal, spatial and epidemiological data | Enhanced interpretation of phylogenetic patterns; Robust testing of epidemiological hypotheses |

Experimental Protocols

Protocol 1: Data Collection and Metadata Management

Objective: Establish standardized procedures for collection, documentation, and curation of genetic sequences and associated metadata to ensure phylogenetic utility.

Materials:

- Clinical specimens (swabs, serum, tissue)

- RNA/DNA extraction kits

- High-throughput sequencing platform

- Metadata recording forms (electronic preferred)

- Data management system with version control

Procedure:

Sample Collection:

- Collect clinical specimens using appropriate methods for the target pathogen

- Record essential contextual data at point of collection using standardized forms

- Implement unique identifiers that link specimens to metadata

Laboratory Processing:

- Extract genetic material using validated protocols

- Perform quality control assessment (e.g., spectrophotometry, electrophoresis)

- Prepare sequencing libraries using appropriate enrichment strategies

Metadata Curation:

- Implement a metadata strategy that defines scope, ownership, and standards [28]

- Establish a metadata vocabulary with formal definitions for consistent communication [27]

- Map metadata to track relationships and ensure traceability to original sources [27]

- Automate metadata processes where possible to reduce errors and improve efficiency [27]

Data Integration:

Troubleshooting:

- Incomplete metadata: Implement validation checks requiring essential fields

- Taxonomic inconsistencies: Apply automated name resolution services

- Temporal anomalies: Flag collection dates outside plausible ranges
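
The validation checks listed above can be sketched as a small record validator. The required field names, the ISO 8601 date convention, and the plausible date range are assumptions made for illustration rather than a prescribed schema.

```python
from datetime import date

# Hypothetical minimum set of fields every record must carry.
REQUIRED_FIELDS = ("sample_id", "collection_date", "location")


def validate_record(record, earliest, latest):
    """Return a list of problems found in one metadata record (empty if clean)."""
    problems = [f"missing {field}" for field in REQUIRED_FIELDS
                if not record.get(field)]
    raw_date = record.get("collection_date")
    if raw_date:
        try:
            collected = date.fromisoformat(raw_date)
        except ValueError:
            problems.append("collection_date is not a valid YYYY-MM-DD date")
        else:
            if not earliest <= collected <= latest:
                problems.append("collection_date outside plausible range")
    return problems


# Hypothetical record with a collection date that predates the outbreak window.
record = {"sample_id": "S001", "collection_date": "2018-03-01", "location": "Region A"}
print(validate_record(record, date(2019, 12, 1), date(2020, 12, 31)))
```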

Protocol 2: Sequence Alignment and Quality Control

Objective: Generate high-quality multiple sequence alignments suitable for phylogenetic analysis through rigorous quality control procedures.

Materials:

- Raw sequence data (FASTQ format)

- Reference genome(s)

- Computing infrastructure with adequate processing capacity

- Alignment software (e.g., MAFFT, MUSCLE)

- Quality assessment tools (e.g., FastQC, trimAl)

Procedure:

Sequence Preprocessing:

- Quality filtering and adapter trimming of raw reads

- Assembly using reference-based or de novo approaches

- Verification of complete coding regions for protein-coding genes

Multiple Sequence Alignment:

- Select appropriate alignment algorithm based on data type and size

- Execute alignment with optimized parameters

- Visually inspect alignment for obvious errors using visualization tools

Alignment Refinement:

- Trim poorly aligned regions while preserving phylogenetically informative sites

- Verify reading frames for protein-coding sequences

- Mask hypervariable regions prone to alignment ambiguity

Format Conversion:

- Convert alignment to formats compatible with downstream phylogenetic software (NEXUS, PHYLIP)

- Preserve metadata association through sequence identifiers
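
As a minimal sketch of the conversion step, the code below reads an aligned FASTA string and writes a NEXUS DATA block, keeping the full sequence identifier so that metadata encoded in it stays attached. The `id|location|date` identifier convention and the function names are hypothetical; established libraries provide more complete converters.

```python
def read_fasta(text):
    """Parse FASTA text into a list of (identifier, sequence) tuples."""
    records, name, chunks = [], None, []
    for line in text.splitlines():
        line = line.strip()
        if not line:
            continue
        if line.startswith(">"):
            if name is not None:
                records.append((name, "".join(chunks)))
            name, chunks = line[1:].strip(), []
        else:
            chunks.append(line)
    if name is not None:
        records.append((name, "".join(chunks)))
    return records


def to_nexus(records):
    """Write aligned records as a NEXUS DATA block with quoted taxon labels."""
    lengths = {len(seq) for _, seq in records}
    if len(lengths) != 1:
        raise ValueError("sequences must be aligned to equal length")
    lines = [
        "#NEXUS",
        "BEGIN DATA;",
        f"  DIMENSIONS NTAX={len(records)} NCHAR={lengths.pop()};",
        "  FORMAT DATATYPE=DNA MISSING=? GAP=-;",
        "  MATRIX",
    ]
    for name, seq in records:
        label = name.replace("'", "''")  # escape quotes inside quoted labels
        lines.append(f"  '{label}' {seq}")
    lines += ["  ;", "END;"]
    return "\n".join(lines)


# Hypothetical two-sequence alignment with metadata in the identifiers.
fasta = ">A|Mexico|2009-04-01\nACGT-CGT\n>B|USA|2009-04-15\nACGTACGT\n"
print(to_nexus(read_fasta(fasta)))
```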

Validation:

- Assess alignments using phylogenetic likelihood under simple models

- Compare multiple alignment methods for consistency

- Verify that alignment properties match evolutionary expectations

Visualization and Workflow Diagrams

The following diagrams illustrate the key procedural workflows and data relationships in phylodynamic data management and analysis.

Phylodynamic Data Management Workflow

Metadata Integration Framework

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Reagents and Computational Tools for Phylodynamic Studies

| Tool Category | Specific Tool/Reagent | Function | Application Note |

|---|---|---|---|

| Sequencing Technology | Illumina NovaSeq, Oxford Nanopore, PacBio | Generation of raw sequence data from specimens | Portable sequencers enable rapid genomic surveillance in outbreak settings [23] |

| Alignment Software | MAFFT, MUSCLE, Clustal Omega | Multiple sequence alignment from raw sequences | Critical for establishing positional homology before phylogenetic inference |

| Metadata Management | Collibra, Data Catalogs, Custom Databases | Organization and curation of contextual metadata | Essential for maintaining data integrity and enabling reproducible research [26] [28] |

| Phylogenetic Software | BEAST, BEAST2, MrBayes | Bayesian phylogenetic inference with molecular clock | Enables joint estimation of evolutionary and population dynamics [10] [24] |

| Visualization Platforms | PhyloScape, Microreact, Nextstrain | Interactive visualization of annotated phylogenies | PhyloScape supports customizable visualization features with flexible metadata annotation [25] |

| Computational Infrastructure | High-performance computing clusters, Cloud computing | Handling computationally intensive Bayesian analyses | BEAGLE library exploits many-core computing for massive datasets [24] |

Bayesian statistical methods have revolutionized the study of pathogen evolution, enabling researchers to reconstruct transmission pathways, estimate evolutionary parameters, and quantify uncertainty from molecular sequence data. This framework integrates prior knowledge with current epidemiological and genetic data to generate posterior distributions of phylogenetic trees and key parameters, providing a powerful approach for phylodynamic analysis during epidemics. The adoption of Markov Chain Monte Carlo (MCMC) algorithms has been instrumental in making these computationally intensive methods accessible for practical research applications in infectious disease dynamics [29]. This protocol outlines the fundamental concepts, experimental workflows, and analytical tools for implementing Bayesian phylogenetic inference in pathogen evolution research.

Conceptual Foundations of Bayesian Phylogenetics

Bayesian inference in phylogenetics operates on the principle of Bayes' theorem, which calculates the posterior probability of a phylogenetic tree (the hypothesis) given the observed molecular sequence data [29]. The theorem is expressed as:

P(Tree | Data) = [P(Data | Tree) × P(Tree)] / P(Data)

Where:

- P(Tree | Data) is the posterior probability - the probability that the tree is correct given the data, prior, and model

- P(Data | Tree) is the likelihood - the probability of observing the data given a specific tree and evolutionary model

- P(Tree) is the prior probability - represents previous knowledge or assumptions about the tree before analyzing the data

- P(Data) is the marginal probability of the data - often computationally intractable but constant across trees [30]

This framework allows for coherent integration of uncertainty in parameter estimation, making it particularly valuable for complex evolutionary models where multiple explanations may be consistent with the data. The frequencies of trees in the posterior sample have a clear statistical interpretation as probabilities, unlike bootstrap support values from maximum likelihood analyses [30].
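
A toy calculation helps make the theorem concrete. The sketch below applies it to the three possible rooted topologies for three taxa, using a uniform prior and made-up log-likelihood values; all numbers and names are hypothetical. Enumerating every tree like this is only feasible for tiny problems, which is why realistic analyses sample the posterior instead.

```python
import math


def tree_posteriors(log_likelihoods, priors):
    """Posterior probability of each tree from log-likelihoods and priors."""
    log_joint = {t: log_likelihoods[t] + math.log(priors[t]) for t in priors}
    peak = max(log_joint.values())  # subtract the maximum to avoid underflow
    weights = {t: math.exp(v - peak) for t, v in log_joint.items()}
    total = sum(weights.values())   # proportional to P(Data)
    return {t: w / total for t, w in weights.items()}


# Hypothetical log-likelihoods for the three rooted trees on taxa A, B, C.
log_lik = {"((A,B),C)": -1000.0, "((A,C),B)": -1002.0, "((B,C),A)": -1005.0}
prior = {tree: 1 / 3 for tree in log_lik}
for tree, prob in tree_posteriors(log_lik, prior).items():
    print(tree, round(prob, 4))
```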

Table 1: Comparison of Phylogenetic Inference Frameworks

| Feature | Bayesian Inference | Maximum Likelihood |

|---|---|---|

| Statistical Basis | Inverse probability (Bayes' theorem) | Optimality criterion |

| Output | Sample of trees with posterior probabilities | Single best tree with bootstrap supports |

| Uncertainty Quantification | Direct probability statements | Frequency under data resampling |

| Prior Information | Explicitly incorporated | Not incorporated |

| Computational Approach | Markov Chain Monte Carlo (MCMC) | Heuristic search algorithms |

| Interpretation of Support | Probability tree is correct given data | Frequency branch is found in resampled data |

Key Methodological Components

Markov Chain Monte Carlo (MCMC) Sampling

Since calculating the posterior distribution analytically is computationally prohibitive for phylogenetic problems, Bayesian inference relies on MCMC sampling to approximate the posterior distribution of trees [30]. The Metropolis-Hastings algorithm, a common MCMC approach, follows these steps:

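- An initial tree, Ti, is selected at random

- A neighboring tree, Tj, is proposed by making a small random change to Ti

- The ratio R of the posterior densities of Tj and Ti is computed: R = f(Tj)/f(Ti), where f is the likelihood multiplied by the prior; the intractable P(Data) cancels in this ratio, and for asymmetric proposals R is additionally multiplied by the ratio of the proposal probabilities

- If R ≥ 1, Tj is accepted as the current tree

- If R < 1, Tj is accepted as the current tree with probability R; otherwise Ti is kept

- The process repeats for thousands or millions of iterations [29]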

Provided the proposals allow every tree to be reached, the Markov chain has the posterior as its stationary distribution, so after an initial burn-in the sampled trees approximate the true posterior distribution. The Metropolis-coupled MCMC (MC³) variant runs multiple chains in parallel at different "temperatures" and periodically proposes swaps between them, which improves mixing and helps the chain escape local optima in complex tree spaces [29].
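The accept/reject rule described above can be sketched on a toy state space. In this illustrative example the "trees" are just five labelled states arranged in a ring, and the weights standing in for f are invented; the proposal is symmetric (each state has exactly two equally likely neighbors), so no proposal-ratio correction is needed. It is a sketch of the Metropolis rule under those assumptions, not the sampler used by any particular phylogenetics package.

```python
import random

def metropolis_hastings(f, neighbors, start, n_iter, seed=0):
    """Sample states with probability proportional to f, using symmetric proposals."""
    rng = random.Random(seed)
    current = start
    samples = []
    for _ in range(n_iter):
        proposal = rng.choice(neighbors(current))  # propose a neighboring state
        ratio = f(proposal) / f(current)           # R = f(Tj) / f(Ti)
        if ratio >= 1 or rng.random() < ratio:     # accept with probability min(1, R)
            current = proposal
        samples.append(current)                    # a rejected move repeats the current state
    return samples

# Hypothetical unnormalized posterior weights for five toy "trees" on a ring.
weights = [1.0, 2.0, 4.0, 2.0, 1.0]

def f(state):
    return weights[state]

def neighbors(state):
    return [(state - 1) % 5, (state + 1) % 5]

samples = metropolis_hastings(f, neighbors, start=0, n_iter=200_000, seed=1)
freqs = [samples.count(s) / len(samples) for s in range(5)]
target = [w / sum(weights) for w in weights]
print("sampled:", [round(x, 3) for x in freqs])
print("target: ", target)
```

The sampler only ever evaluates ratios of f, which is why the normalizing constant P(Data) never has to be computed.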

Substitution Models and Priors

Selecting an appropriate substitution model is critical for accurate phylogenetic inference. For nucleotide sequences, models range from the simple Jukes-Cantor model (JC69), which assumes equal base frequencies and a single substitution rate, to the General Time Reversible model with gamma-distributed rate variation among sites (GTR+Γ), which often provides a good balance of complexity and biological realism [20]. Programs such as jModelTest and PartitionFinder can assist in model selection, though in Bayesian phylogenetics it is generally better to over-specify than to under-specify the model [20].
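To make the role of a substitution model concrete, the sketch below implements the transition probabilities of the simplest model mentioned above, JC69, and uses them to score two aligned sequences at a given evolutionary distance. The two short sequences are invented for illustration, and the function names are hypothetical; richer models such as GTR+Γ replace the closed-form probabilities with a matrix exponential and a mixture over rate categories.

```python
import math

def jc69_transition_prob(same, distance):
    """JC69 probability of ending in the same (or one specific different) base.

    `distance` is the expected number of substitutions per site.
    """
    decay = math.exp(-4.0 * distance / 3.0)
    return 0.25 + 0.75 * decay if same else 0.25 - 0.25 * decay

def pairwise_log_likelihood(seq_a, seq_b, distance):
    """Log-likelihood of two aligned sequences separated by `distance` (> 0)."""
    total = 0.0
    for a, b in zip(seq_a, seq_b):
        # 0.25 is the stationary frequency of the base observed in seq_a.
        total += math.log(0.25 * jc69_transition_prob(a == b, distance))
    return total

def jc69_distance(seq_a, seq_b):
    """Closed-form maximum likelihood distance under JC69."""
    p = sum(a != b for a, b in zip(seq_a, seq_b)) / len(seq_a)
    return -0.75 * math.log(1.0 - 4.0 * p / 3.0)

# Hypothetical aligned sequences differing at 2 of 10 sites.
seq_a = "ACGTACGTAC"
seq_b = "ACGTACGTTT"
d_hat = jc69_distance(seq_a, seq_b)
print(f"ML distance: {d_hat:.4f}")
print(f"log-likelihood at ML distance: {pairwise_log_likelihood(seq_a, seq_b, d_hat):.4f}")
```

The estimated distance is larger than the observed proportion of differing sites because the model accounts for multiple substitutions at the same site.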

Prior distributions must be specified for all model parameters, including tree topology, branch lengths, and substitution parameters. Common choices include:

- Uniform priors on topologies

- Exponential or gamma distributions on branch lengths

- Dirichlet priors on substitution rates

- Proper (integrable) priors for all parameters, which keep the posterior well defined and support model identifiability [20]
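The prior choices listed above combine additively on the log scale when the priors are independent. The sketch below evaluates such a joint log-prior for a hypothetical four-taxon rooted tree; the branch lengths, the prior mean of 0.1, and the flat Dirichlet on six relative rates are illustrative assumptions rather than recommended settings.

```python
import math

def log_uniform_topology_prior(n_taxa):
    """Log-probability of any one rooted binary labelled topology: 1 / (2n-3)!!."""
    count = 1
    for k in range(3, 2 * n_taxa - 2, 2):  # 3 * 5 * ... * (2n - 3)
        count *= k
    return -math.log(count)

def log_exponential_prior(branch_lengths, mean):
    """Sum of independent exponential log-densities with the given mean."""
    rate = 1.0 / mean
    return sum(math.log(rate) - rate * b for b in branch_lengths)

def log_dirichlet_prior(x, alpha):
    """Dirichlet log-density for proportions x that sum to one."""
    log_norm = math.lgamma(sum(alpha)) - sum(math.lgamma(a) for a in alpha)
    return log_norm + sum((a - 1.0) * math.log(v) for a, v in zip(alpha, x))

# Hypothetical state: 4 taxa, a few branch lengths, six relative substitution rates.
branch_lengths = [0.02, 0.05, 0.01, 0.03, 0.04, 0.02]
relative_rates = [1.0 / 6.0] * 6

log_prior = (
    log_uniform_topology_prior(4)
    + log_exponential_prior(branch_lengths, mean=0.1)
    + log_dirichlet_prior(relative_rates, [1.0] * 6)
)
print(f"joint log-prior: {log_prior:.4f}")
```

In an MCMC run this quantity is added to the log-likelihood at every iteration to obtain the unnormalized log-posterior.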

Application Protocol: Transmission Tree Reconstruction

Experimental Workflow

The following protocol outlines the application of Bayesian inference for reconstructing pathogen transmission trees during outbreaks, integrating genetic and epidemiological data [31].

Figure 1: Bayesian workflow for pathogen transmission inference

Step-by-Step Procedure

Step 1: Data Collection and Preparation

- Obtain whole-genome sequences of the pathogen from infected individuals/premises

- Collect associated epidemiological data:

- Align sequences using appropriate multiple sequence alignment software

- Assess data quality and check for recombination

Step 2: Model Specification

- Select appropriate substitution model (e.g., GTR+Γ) using model testing software

- Specify clock model to relate genetic divergence to time (strict vs. relaxed clocks)

- Define prior distributions for parameters:

- For transmission tree inference, incorporate epidemiological parameters such as generation time distribution and infectious period

Step 3: MCMC Implementation and Diagnostics

- Configure MCMC parameters:

  - Chain length (typically millions of generations)

  - Sampling frequency (every 1,000 to 10,000 generations)

  - Number of parallel chains (at least 2, typically 4)

  - Heating parameters for MC³ if used [29]

- Monitor convergence using:

  - Effective sample sizes (ESS > 200 for all parameters)

  - Potential scale reduction factors (≈1.0)

  - Trace plots showing good mixing

- If convergence criteria are not met, increase chain length or adjust proposal mechanisms
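The two numerical diagnostics named in this step can be sketched in a few lines. The example below uses one simple ESS estimator (autocorrelations summed until the first non-positive lag) and the classic Gelman-Rubin form of the potential scale reduction factor; dedicated tools such as Tracer may use different estimators, so the numbers will not match exactly. The traces are simulated, not taken from a real analysis.

```python
import random
import statistics

def effective_sample_size(trace):
    """ESS = N / (1 + 2 * sum of autocorrelations), truncated at the first non-positive lag."""
    n = len(trace)
    mean = statistics.fmean(trace)
    centered = [v - mean for v in trace]
    var = sum(c * c for c in centered) / n
    if var == 0.0:
        return float(n)
    tau = 1.0  # integrated autocorrelation time
    for lag in range(1, n):
        acov = sum(centered[i] * centered[i + lag] for i in range(n - lag)) / n
        rho = acov / var
        if rho <= 0.0:
            break
        tau += 2.0 * rho
    return n / tau

def potential_scale_reduction(chains):
    """Classic Gelman-Rubin statistic for equal-length chains of one parameter."""
    n = len(chains[0])
    chain_means = [statistics.fmean(c) for c in chains]
    within = statistics.fmean([statistics.variance(c) for c in chains])
    between = n * statistics.variance(chain_means)
    pooled = (n - 1) / n * within + between / n
    return (pooled / within) ** 0.5

rng = random.Random(42)
iid = [rng.gauss(0.0, 1.0) for _ in range(2000)]  # well-mixed trace
sticky = [0.0]                                    # strongly autocorrelated trace
for _ in range(1999):
    sticky.append(0.9 * sticky[-1] + rng.gauss(0.0, 1.0))

ess_iid = effective_sample_size(iid)
ess_sticky = effective_sample_size(sticky)
rhat_same = potential_scale_reduction([iid[:1000], iid[1000:]])
rhat_shifted = potential_scale_reduction([iid[:1000], [v + 5.0 for v in iid[1000:]]])
print(f"ESS well-mixed: {ess_iid:.0f}, ESS autocorrelated: {ess_sticky:.0f}")
print(f"PSRF agreeing chains: {rhat_same:.3f}, PSRF disagreeing chains: {rhat_shifted:.3f}")
```

The autocorrelated trace has the same length as the well-mixed one but far fewer effective samples, which is the situation where a longer chain or better proposals are needed.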

Step 4: Posterior Analysis

- Combine post-burn-in samples from multiple runs

- Summarize tree samples using maximum clade credibility trees

- Calculate posterior probabilities for specific transmission pairs or phylogenetic clusters

- Estimate key parameters: reproductive numbers, mutation rates, transmission distances [31]

- Identify the likely presence of unsampled populations from unexpectedly long branches [31]
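Posterior probabilities for transmission pairs are simply the fraction of sampled transmission trees in which a given "who infected whom" link appears. The sketch below assumes each posterior sample has already been reduced to a mapping from infectee to infector (with `None` marking the index case); this representation and the four sample trees are hypothetical and do not correspond to the output format of any specific package.

```python
from collections import Counter

def transmission_pair_support(sampled_trees):
    """Posterior support for each (infector, infectee) pair across sampled trees."""
    counts = Counter()
    for tree in sampled_trees:
        for infectee, infector in tree.items():
            if infector is not None:  # skip the index case, which has no infector
                counts[(infector, infectee)] += 1
    n = len(sampled_trees)
    return {pair: c / n for pair, c in counts.items()}

# Hypothetical post-burn-in samples for an outbreak with three cases.
sampled_trees = [
    {"A": None, "B": "A", "C": "B"},
    {"A": None, "B": "A", "C": "A"},
    {"A": None, "B": "A", "C": "B"},
    {"A": None, "B": "C", "C": "A"},
]

support = transmission_pair_support(sampled_trees)
for (infector, infectee), p in sorted(support.items(), key=lambda kv: -kv[1]):
    print(f"{infector} -> {infectee}: {p:.2f}")
```

For each infectee, the supports over all candidate infectors sum to the probability that the case was not the index case, which makes ambiguous links easy to spot.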

Step 5: Interpretation and Validation

- Annotate trees with epidemiological metadata for visualization

- Compare inferred transmission patterns with independent epidemiological observations

- Assess robustness through sensitivity analyses (different priors, models)

- Estimate the contribution of different transmission routes using structured contact data when available [32]

Table 2: Key Parameters in Bayesian Phylodynamic Inference

| Parameter | Description | Typical Prior | Epidemiological Interpretation |
|---|---|---|---|
| R0 (Basic Reproduction Number) | Average number of secondary cases per infection in a fully susceptible population | Gamma(2,1) | Transmission potential |
| Mutation Rate | Substitutions per site per year | Lognormal(empirical mean) | Evolutionary rate |
| Clock Rate | Molecular evolutionary rate | Lognormal or Gamma | Rate of genetic change |
| Population Size | Effective number of infections | Coalescent priors | Genetic diversity |
| Transmission Rate | Between-host transmission probability | Beta(1,1) | Infectivity |
| Migration Rate | Between-population transmission | Exponential(1) | Spatial spread |

Advanced Applications in Pathogen Phylogeography

Structured Coalescent Framework

For pathogens spreading across multiple geographic locations, the structured coalescent model provides a framework for inferring migration rates and effective population sizes in different locations [33]. The method models how the geographical structure affects genetic similarity, with genomes from the same location being more similar on average than those from different locations.

Implementation Protocol:

- Precompute a dated phylogeny using software such as BEAST, BEAST 2, or treedater

- Define the discrete geographic structure (e.g., countries, regions)

- Specify the structured coalescent model with migration rates between locations

- Use reversible jump MCMC to sample migration histories efficiently

- Estimate location-specific effective population sizes and directed migration rates [33]
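The quantities estimated in this protocol enter the structured coalescent through event rates. Looking backward in time, two lineages in the same location coalesce at a rate inversely proportional to that location's effective population size, and each lineage migrates at its location's backward migration rate. The sketch below computes these rates for a hypothetical two-location configuration; the parameterization (pairwise coalescence rate 1/N, time measured in the same units as N) and all numerical values are assumptions made for illustration.

```python
def structured_coalescent_rates(lineages, pop_sizes, migration):
    """Backward-in-time event rates for the current lineage configuration.

    lineages:  {location: number of lineages currently in that location}
    pop_sizes: {location: effective population size N}
    migration: {source: {destination: backward migration rate per lineage}}
    """
    rates = {}
    for loc, k in lineages.items():
        # k * (k - 1) / 2 pairs, each coalescing at rate 1 / N.
        rates[("coalescence", loc)] = k * (k - 1) / (2.0 * pop_sizes[loc])
        for dest, m in migration.get(loc, {}).items():
            rates[("migration", loc, dest)] = k * m
    return rates

# Hypothetical configuration: three lineages in X, one in Y.
lineages = {"X": 3, "Y": 1}
pop_sizes = {"X": 10.0, "Y": 5.0}
migration = {"X": {"Y": 0.1}, "Y": {"X": 0.2}}

rates = structured_coalescent_rates(lineages, pop_sizes, migration)
total_rate = sum(rates.values())
expected_wait = 1.0 / total_rate  # mean of the exponential waiting time to the next event
for event, r in rates.items():
    print(event, f"rate={r:.3f}", f"P(next event)={r / total_rate:.3f}")
```

Smaller populations and more co-located lineages raise the coalescence rate, which is why genomes sampled from the same location tend to be more similar.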

Quantifying Transmission Routes

Recent extensions incorporate structured contact data to quantify the contribution of different transmission routes:

- Extend phylodynamic models (e.g., phybreak) to include data on contact types

- Estimate the fraction of transmission events attributable to different contact types (e.g., shared personnel, veterinary visits, environmental transmission)

- Combine genetic sequences, sampling times, and contact data in a unified Bayesian framework [32]

Table 3: Research Reagent Solutions for Bayesian Phylodynamic Analysis

| Tool/Reagent | Function | Application Context |
|---|---|---|
| BEAST/BEAST 2 | Bayesian evolutionary analysis | Divergence dating, phylogeography, species tree estimation |
| MrBayes | Bayesian phylogenetic inference | Estimation of species phylogenies and divergence times |
| StructCoalescent | Phylogeographic inference | Exact structured coalescent analysis on precomputed trees |
| phybreak | Transmission tree inference | Outbreak reconstruction combining genetic and epidemiological data |
| jModelTest/PartitionFinder | Substitution model selection | Identifying best-fit evolutionary models for nucleotide data |
| Tracer | MCMC diagnostics | Assessing convergence, effective sample sizes, parameter estimates |
| MultiTypeTree | Structured coalescent implementation | Joint inference of phylogeny and ancestral locations |

Troubleshooting and Optimization

Common Issues and Solutions:

- Poor MCMC Mixing: Increase chain length; adjust proposal mechanisms; use Metropolis-coupled MCMC (MC³) with heated chains

- Low Effective Sample Sizes: Run longer chains; reparameterize model; adjust operator weights

- Prior Sensitivity: Conduct sensitivity analyses with different prior distributions; use empirical priors when justified

- Model Misspecification: Compare alternative models using Bayes factors or posterior predictive checks

- Computational Limitations: Use BEAGLE library to accelerate likelihood calculations; reduce model complexity; utilize cloud computing
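For the model comparison step, a Bayes factor is the ratio of two models' marginal likelihoods, usually handled on the log scale. The sketch below assumes log marginal likelihood estimates are already available (for example from path sampling or stepping-stone sampling); the two values and the model names are invented for illustration.

```python
import math

def log_bayes_factor(log_ml_a, log_ml_b):
    """Log Bayes factor in favor of model A over model B."""
    return log_ml_a - log_ml_b

def posterior_model_probabilities(log_mls):
    """Posterior model probabilities assuming equal prior probability for each model."""
    shift = max(log_mls.values())  # subtract the maximum for numerical stability
    weights = {name: math.exp(v - shift) for name, v in log_mls.items()}
    total = sum(weights.values())
    return {name: w / total for name, w in weights.items()}

# Hypothetical log marginal likelihood estimates for two clock models.
log_mls = {"strict_clock": -10250.0, "relaxed_clock": -10245.0}

log_bf = log_bayes_factor(log_mls["relaxed_clock"], log_mls["strict_clock"])
model_probs = posterior_model_probabilities(log_mls)
print(f"log Bayes factor (relaxed vs strict): {log_bf:.1f}")
print({name: round(p, 4) for name, p in model_probs.items()})
```

Marginal likelihood estimates carry their own Monte Carlo error, so small differences between models should be interpreted cautiously.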

Validation Approaches:

- Simulate datasets under known parameters to assess statistical identifiability

- Compare inferences with independent epidemiological observations

- Use cross-validation approaches when possible

- Conduct posterior predictive simulations to assess model fit [31] [33]

The Bayesian framework provides a powerful, coherent approach for inferring pathogen evolutionary and transmission dynamics from genetic and epidemiological data. When properly implemented with appropriate model checking and validation, it offers unique insights into the processes shaping pathogen populations during epidemics.

Methodological Workflow and Practical Applications in Pathogen Genomic Surveillance

Bayesian phylodynamics has become an indispensable methodology for reconstructing the evolutionary and population dynamics of pathogens directly from genetic sequence data. The approach integrates molecular sequences with epidemiological and temporal data within a single statistical framework to infer time-scaled phylogenetic trees, population sizes, and reproductive numbers. For researchers studying epidemic spread, selecting an appropriate software toolkit is crucial for generating robust, actionable insights. This protocol considers three software platforms (BEAST 2, BEAST X, and MrBayes), compares their capabilities, provides structured guidance for their application, and details experimental workflows for phylodynamic inference. MrBayes is a cornerstone of Bayesian phylogenetics, but its phylodynamic capabilities are not detailed here; the protocol therefore focuses predominantly on the BEAST ecosystem, for which comprehensive phylodynamic model information is available.

The BEAST (Bayesian Evolutionary Analysis by Sampling Trees) platform specializes in Bayesian phylogenetic inference using Markov chain Monte Carlo (MCMC), with a core focus on rooted, time-measured phylogenies [34] [35]. Its success stems from an integrative approach that combines sequence, phenotypic, and epidemiological data along time-scaled trees, making it particularly valuable for analyzing rapidly evolving pathogens [36]. The BEAST project has evolved into two main parallel branches: BEAST 2, a modular, extensible software platform first released in 2014 [34], and the newer BEAST X, which introduces significant advances in flexibility and scalability for evolutionary analysis [36] [37]. These tools collectively enable researchers to address critical questions about the origins, spread, and persistence of viral outbreaks, as demonstrated in studies of Ebola, SARS-CoV-2, and mpox virus lineages [36].

Table 1: Core Software Platforms for Bayesian Phylodynamic Analysis

| Software Platform | Primary Focus | Key Strengths | Model Extensibility |
|---|---|---|---|
| BEAST 2 | Bayesian evolutionary analysis of molecular sequences using MCMC [34] [35] | Modular package architecture, active community, wide model variety [38] [34] | High (via BEAST 2 Package Manager) [38] [34] |
| BEAST X | Phylogenetic, phylogeographic, and phylodynamic inference [36] | Advanced algorithms (HMC), state-of-science models, computational efficiency [36] [37] | Built-in high-end models and scalable inference |
| MrBayes | Bayesian phylogenetic inference [35] | Not detailed in this protocol | Not detailed in this protocol |

BEAST 2 Platform

BEAST 2 is a comprehensive software platform for Bayesian evolutionary analysis, serving as a complete rewrite of the original BEAST 1.x series. Its primary design goals focus on usability, openness, and—crucially—extensibility [34]. The software provides a core framework for phylogenetic inference, which can be significantly expanded through a dedicated package management system. This system allows third-party developers to create additional functionality that users can install directly without requiring new software releases [38] [34]. The core BEAST 2 distribution includes several standalone applications: BEAUti (a graphical user interface for setting up analyses), the BEAST engine (for running MCMC analyses), and post-processing tools including LogAnalyser, LogCombiner, TreeAnnotator, and DensiTree [38] [39].

A key innovation in BEAST 2 is its ability to model extended phylogenetic structures beyond classic binary rooted time trees. These include:

- Population and transmission trees where branches represent entire populations and branching events represent population splits or transmission events [38]

- Sampled ancestors where fossils may be direct ancestors of other fossils or extant species [38]