Benchmarking Evolutionary Algorithms for Protein Folding Prediction: A Guide for Biomedical Research and Drug Development

Abstract

The revolution in protein structure prediction, led by deep learning tools like AlphaFold, has created a new landscape for computational biology. This article provides a comprehensive benchmark for researchers and drug development professionals on the role and performance of evolutionary algorithms (EAs) within this field. We explore the foundational principles of evolution-based protein design, examining how algorithms leverage co-evolutionary signals from multiple sequence alignments. The review details methodological advances, including hybrid EA-AI frameworks and their application to complex challenges like predicting protein-protein interactions and multimeric structures. A critical troubleshooting section addresses optimization strategies and inherent limitations, such as handling shallow MSAs and avoiding hydrophobic aggregation. Finally, we establish a rigorous validation framework, comparing EA performance against state-of-the-art AI predictors using metrics like pLDDT, PAE, and RMSD, offering a decisive guide for selecting the right tool for biomedical and clinical research applications.

Evolutionary Principles in Protein Design: From Co-evolution to Computational Frameworks

Recent progress in protein structure prediction rests on one core hypothesis: that evolutionary constraints, captured through the analysis of homologous sequences, carry enough information to determine a protein's three-dimensional structure. The principle is that residues in contact within a folded protein co-evolve to maintain functional and structural integrity. By using deep learning models to extract these co-evolutionary signals from multiple sequence alignments (MSAs), computational methods can now predict protein structures with unprecedented accuracy. The AlphaFold2 paper stated the motivation directly, noting that "accurate computational approaches are needed to address this gap and to enable large-scale structural bioinformatics" [1]. Its results marked a paradigm shift in the field, establishing evolutionary constraints as the primary source of information for state-of-the-art prediction tools.

This guide provides an objective comparison of contemporary protein structure prediction methods, with a specific focus on how they implement the core hypothesis of leveraging evolutionary constraints. We benchmark the performance of leading algorithms including AlphaFold2, AlphaFold3, ESMFold, and the Boltz series, analyzing their architectural approaches to evolutionary data, their accuracy across diverse protein classes, and their limitations. The analysis is framed within the context of benchmarking evolutionary algorithms for protein folding predictions, providing researchers with validated experimental protocols and quantitative performance data to inform methodological selection for specific research applications.

Methodological Comparison: Architectural Approaches to Evolutionary Constraints

Core Algorithmic Frameworks

Current protein structure prediction methods vary significantly in their architectural implementation of evolutionary principles, particularly in their dependency on and processing of multiple sequence alignments:

AlphaFold2 employs a novel neural network architecture that jointly embeds MSAs and pairwise features through Evoformer blocks, which enable "continuous communication from the evolving MSA representation to the pair representation" [1]. This design explicitly reasons about spatial and evolutionary relationships through attention mechanisms and triangular multiplicative updates that enforce geometric consistency.

ESMFold represents a distinct approach that leverages a protein language model (ESM) pre-trained on millions of protein sequences without explicit structural information. While it bypasses the computationally intensive MSA generation step, it implicitly captures evolutionary patterns through its training corpus, effectively trading some accuracy for dramatically increased prediction speed [2].

Boltz methods incorporate physical principles and evolutionary constraints, attempting to bridge the gap between purely evolution-based and physics-based approaches. However, benchmarks report that these methods show "the highest occurrence of structures with severe geometry issues, including overlapping atoms and unlikely bond angles" [3].

AlphaFold3 extends the evolutionary framework beyond single proteins to complexes with ligands, nucleic acids, and other proteins, using a diffusion-based architecture that "de-emphasises the importance of protein evolutionary data and opts for a more generalized, atomic interaction layer" [4].

Quantitative Performance Benchmarking

Table 1: Accuracy Benchmarks Across Protein Classes (CASP14 Metrics)

| Method | Backbone Accuracy (Median Cα RMSD₉₅) | All-Atom Accuracy (RMSD₉₅) | Global Fold Accuracy (TM-score) | Speed (Predictions/Day) |
|---|---|---|---|---|
| AlphaFold2 | 0.96 Å | 1.5 Å | >0.7 (High confidence) | 10-20 |
| ESMFold | 1.5-3.0 Å* | 2.5-4.0 Å* | 0.5-0.7 (Medium confidence) | 1000+ |
| AlphaFold3 | 0.9-1.2 Å* | 1.3-1.8 Å* | >0.7 (High confidence) | 5-10 (complexes) |
| Boltz-1 | 2.8-4.0 Å* | 3.5-4.5 Å* | 0.4-0.6 (Variable confidence) | 50-100 |
| Boltz-2 | 2.5-3.5 Å* | 3.2-4.2 Å* | 0.5-0.65 (Variable confidence) | 50-100 |

*Estimated ranges based on comparative studies [3] [2]

Table 2: Performance on Specialized Protein Categories

| Method | Proteins Lacking Homologs | Fold-Switching Proteins | Plant Proteins | Membrane Proteins |
|---|---|---|---|---|
| AlphaFold2 | Moderate accuracy drop (pLDDT: 70-80) | Limited (single conformation) | High accuracy for conserved domains | Good accuracy for soluble domains |
| ESMFold | Significant accuracy drop (pLDDT: 60-70) | Limited (single conformation) | 25-43% lower confidence scores [3] | Variable accuracy |
| AlphaFold3 | Moderate accuracy drop (pLDDT: 70-85) | Improved via modified sampling | Limited published data | Improved ligand binding sites |
| Boltz-1/2 | Severe accuracy drop (pLDDT: <60) | Limited (single conformation) | High geometry issues [3] | Limited published data |

The benchmarking data reveals a fundamental trade-off between evolutionary depth and predictive accuracy. AlphaFold2 achieves its remarkable precision through "a novel machine learning approach that incorporates physical and biological knowledge about protein structure, leveraging multi-sequence alignments" [1], but this comes at the computational cost of generating deep MSAs. ESMFold offers dramatically faster predictions by leveraging pre-trained evolutionary knowledge but with reduced accuracy, particularly for proteins with few homologs. The Boltz series demonstrates that incorporating physical principles without sufficient evolutionary context can lead to stereochemical inaccuracies, highlighting the continued importance of evolutionary constraints even in hybrid models.

Experimental Protocols for Method Benchmarking

Standardized Assessment Framework

Robust benchmarking of protein structure prediction methods requires standardized experimental protocols that control for evolutionary information availability and protein characteristics:

Protocol 1: CASP-Style Blind Assessment

- Dataset Curation: Collect recently solved structures not publicly available during model training periods (post-dating training cutoffs)

- MSA Generation: Use consistent MSA generation pipelines (Jackhmmer/MMseqs2) with standardized databases (UniRef, MGnify)

- Evaluation Metrics: Calculate global metrics (TM-score, GDT_TS) and local metrics (lDDT, RMSD) with residue-wise confidence estimates (pLDDT)

- Control Groups: Stratify targets by evolutionary information (number of effective sequences, phylogenetic diversity)
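RMSD, one of the local metrics listed in Protocol 1, is only meaningful after the predicted and experimental coordinates have been optimally superimposed. The following is a minimal sketch of that step using the Kabsch algorithm. It is not the scoring code used by CASP or by any tool cited here; the function name `kabsch_rmsd` and the assumption that both inputs are already residue-matched Cα coordinate arrays are illustrative.

```python
import numpy as np


def kabsch_rmsd(P, Q):
    """RMSD between two residue-matched (N, 3) coordinate sets after
    optimal rigid-body superposition (Kabsch algorithm)."""
    P = np.asarray(P, dtype=float)
    Q = np.asarray(Q, dtype=float)
    if P.shape != Q.shape or P.ndim != 2 or P.shape[1] != 3:
        raise ValueError("P and Q must both have shape (N, 3)")

    # Remove translation by centring both sets on their centroids.
    Pc = P - P.mean(axis=0)
    Qc = Q - Q.mean(axis=0)

    # The SVD of the 3x3 covariance matrix yields the optimal rotation.
    H = Pc.T @ Qc
    U, _, Vt = np.linalg.svd(H)

    # Flip the last axis if needed so the result is a proper rotation,
    # not a reflection.
    d = 1.0 if np.linalg.det(Vt.T @ U.T) >= 0 else -1.0
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T

    # Rotate P onto Q and measure the remaining deviation.
    P_rot = Pc @ R.T
    return float(np.sqrt(np.mean(np.sum((P_rot - Qc) ** 2, axis=1))))
```

Superposition-free scores such as lDDT do not need this step, which is one reason benchmarks usually report both kinds of metric.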

Protocol 2: Alternative Conformation Prediction

For assessing performance on proteins with multiple biologically relevant conformations:

- Dataset: Curate experimentally characterized fold-switching proteins (e.g., 92 proteins from [5] [6])

- Sampling Method: Implement CF-random protocol with "very shallow random input MSAs with as few as 3 sequences" to explore alternative conformations [5]

- Evaluation: Compare TM-scores of fold-switching regions specifically, as "this has been shown to discriminate between fold switchers better than overall TM-score" [5]

- Multimer Context: Test with AF2-multimer model when biological context suggests oligomeric states influence conformation
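The sampling step in Protocol 2 relies on feeding the predictor very shallow, randomly chosen MSAs. The sketch below illustrates only that idea: keep the query and draw a handful of homologs at random. It is not the CF-random implementation, and the function name `subsample_msa`, the list-of-strings MSA representation, and the convention that the first row is the query are all assumptions made for illustration.

```python
import random


def subsample_msa(msa, depth, seed=None):
    """Return a shallow MSA: the query plus (depth - 1) random homologs.

    msa   -- list of aligned sequences; msa[0] is assumed to be the query
    depth -- total number of sequences to keep (e.g. 3 for a very shallow MSA)
    seed  -- optional seed so that a given sample can be reproduced
    """
    if depth < 1:
        raise ValueError("depth must be at least 1")
    rng = random.Random(seed)
    query, homologs = msa[0], msa[1:]
    # Never ask for more homologs than the alignment contains.
    k = min(depth - 1, len(homologs))
    return [query] + rng.sample(homologs, k)


# Usage: draw several independent shallow samples and predict from each one.
full_msa = ["MKTAYIAK", "MKSAYLAK", "MRTAYIGK", "MKTGYIAR", "MQTAYVAK"]
samples = [subsample_msa(full_msa, depth=3, seed=s) for s in range(5)]
```

Running the predictor on many such samples and clustering the resulting models is what allows alternative conformations to surface.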

Protocol 3: Orthogonal Validation Through Adversarial Testing

Recent physical validation studies employ "adversarial examples based on established physical, chemical, and biological principles" [4]:

- Binding Site Mutagenesis: Systematically mutate binding site residues to glycine or phenylalanine, assessing ligand placement robustness

- Ligand Perturbation: Modify ligand structures to disrupt key interactions, evaluating pose conservation

- Steric Analysis: Quantify atom clashes and bond geometry violations using molecular mechanics tools
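The steric analysis step can be approximated, at its simplest, by counting atom pairs that sit closer together than a distance cutoff. The sketch below does only that. It is a stand-in for the molecular mechanics tools mentioned above, not a replacement for them: the 2.0 Å default cutoff, the function name `count_clashes`, and the way bonded pairs are excluded are assumptions chosen for illustration.

```python
import numpy as np


def count_clashes(coords, min_dist=2.0, bonded_pairs=()):
    """Count non-bonded atom pairs closer than min_dist (in Å).

    coords       -- (N, 3) array of atomic coordinates
    min_dist     -- assumed clash cutoff; real tools use element-specific radii
    bonded_pairs -- iterable of (i, j) index pairs to ignore (covalent bonds)
    """
    coords = np.asarray(coords, dtype=float)
    # Full pairwise distance matrix; fine for a sketch, O(N^2) in memory.
    diff = coords[:, None, :] - coords[None, :, :]
    dist = np.sqrt((diff ** 2).sum(axis=-1))

    # Look at each unordered pair once (upper triangle, no diagonal).
    i_idx, j_idx = np.triu_indices(len(coords), k=1)
    too_close = dist[i_idx, j_idx] < min_dist

    skip = {tuple(sorted(pair)) for pair in bonded_pairs}
    return sum(
        1
        for i, j in zip(i_idx[too_close], j_idx[too_close])
        if (int(i), int(j)) not in skip
    )
```

For full structures a neighbour-list or k-d tree search would replace the dense distance matrix.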

[Figure: Algorithm Selection Workflow. Choosing prediction methods based on sequence characteristics and research goals.]

Limitations and Boundary Conditions

Evolutionary Information Gaps

Despite their remarkable success, evolutionary constraint-based methods face fundamental limitations in specific biological contexts:

Proteins with Sparse Evolutionary Information

Plant proteins are particularly challenging, as they are "underrepresented in sequence and structural datasets used to train these programs" [3]. Benchmarking across 417 Zea mays genes revealed that "proteins lacking conserved sequence and/or structural domains had on average 25% to 43% lower confidence scores than proteins having both domains" [3]. This performance drop extends to species-specific proteins identified through "proteome-wide phylostratigraphy" which "had substantially lower confidence scores than proteins conserved amongst angiosperms and Eukaryotes" [3].

Alternative Conformations and Dynamics

Fold-switching proteins represent a significant challenge, as standard MSA sampling typically produces only one dominant conformation. The CF-random method addresses this by "randomly subsampling input MSAs at depths too shallow for robust coevolutionary inference" [5], successfully predicting both conformations for 32 of 92 fold-switchers. This suggests that deep MSAs may over-constrain predictions to single conformations, while "very shallow sequence sampling was a key to CF-random's success: 23 conformations (72%) were successfully predicted at sampling depths of 4:8 sequences or below" [5].

Physical Plausibility Violations

Recent adversarial testing reveals that co-folding models like AlphaFold3 and RoseTTAFold All-Atom "demonstrate notable discrepancies in protein-ligand structural predictions when subjected to biologically and chemically plausible perturbations" [4]. In binding site mutagenesis experiments, these models continued to place ligands in mutated binding sites despite the loss of favorable interactions, indicating potential overfitting to statistical correlations rather than learning underlying physical principles.

Method-Specific Limitations

Table 3: Critical Limitations and Boundary Conditions

| Method | Primary Limitations | Recommended Mitigations |
|---|---|---|
| AlphaFold2 | Single conformation prediction; Computational cost; Template leakage concerns | Use CF-random for alternative conformations; Implement training cutoffs |
| ESMFold | Reduced accuracy for orphan proteins; Limited functional site precision | Reserve for high-throughput screening; Verify with AF2 for important targets |
| AlphaFold3 | Potential memorization of training complexes; Limited explainability | Adversarial testing; Experimental validation for critical applications |
| Boltz Series | Stereochemical inaccuracies; High computational cost | Post-prediction energy minimization; Structural validation |

The Scientist's Toolkit: Essential Research Reagents

Table 4: Critical Resources for Protein Structure Prediction Research

| Resource | Type | Function | Access |
|---|---|---|---|
| AlphaFold Protein Structure Database | Database | ~214 million predicted structures for reference | Public |
| ColabFold | Software | Efficient AF2 implementation with MMseqs2 | Public |
| CF-random | Algorithm | Alternative conformation prediction | Public [5] |
| ESM Metagenomic Atlas | Database | ~600 million structures from language model | Public |
| PDBe API | Tool | Conservation score annotation for masking | Public |
| Foldseek | Algorithm | Fast structural similarity search | Public |
| SafeProtein-Bench | Benchmark | Red-teaming dataset for safety evaluation | Public [7] |
| PoseBusterV2 Dataset | Benchmark | Protein-ligand complexes for validation | Public |

[Figure: AlphaFold2 Architecture. Core computational workflow for structure prediction.]

The core hypothesis of leveraging evolutionary constraints for protein structure prediction has been overwhelmingly validated by the accuracy of current methods, particularly AlphaFold2. However, benchmarking reveals significant variation in how different algorithms implement this principle, with trade-offs between accuracy, speed, and physical plausibility. Evolutionary constraint-based methods excel for proteins with rich phylogenetic information but struggle with evolutionarily unique proteins, conformational dynamics, and adherence to physical principles in adversarial scenarios.

Future methodological development should focus on integrating evolutionary constraints with physical modeling more robustly, improving performance on underrepresented protein classes, and developing standardized benchmarking frameworks that assess physical plausibility alongside accuracy. The introduction of red-teaming frameworks like SafeProtein, which "combines multimodal prompt engineering and heuristic beam search to systematically design red-teaming methods" [7], represents an important step toward more robust evaluation. As the field progresses, the successful interpretation of predictive models will require careful consideration of both the power and limitations of evolutionary constraints in determining protein structure.

The computational prediction and design of proteins represent one of the most significant frontiers in molecular biology and biotechnology. Currently, two distinct paradigms dominate the field: evolution-based approaches, which learn from the vast archive of natural protein sequences and structures generated over billions of years of biological evolution, and physics-based approaches, which leverage fundamental biophysical principles and molecular simulations to engineer protein functions. While evolution-based methods draw inferences from patterns in natural sequence variation, physics-based methods attempt to computationally model the underlying physical forces that govern protein folding, stability, and function [8] [9]. This comparison guide objectively examines both paradigms, focusing on their methodological foundations, performance characteristics, and suitability for different protein engineering scenarios, providing researchers with a framework for selecting appropriate strategies for their specific applications.

The distinction between these approaches mirrors a long-standing dichotomy in scientific modeling: whether to prioritize empirical patterns observed in natural data or to build from first-principles understanding of physical mechanisms. Evolution-based protein language models (PLMs), such as Evolutionary Scale Modeling (ESM) and UniRep, are trained on millions of natural protein sequences, implicitly capturing evolutionary constraints on protein structure and function [8] [1]. In contrast, physics-based approaches like the Mutational Effect Transfer Learning (METL) framework employ molecular simulations to explicitly model relationships between protein sequence, structure, and energetics, incorporating decades of research into biophysical factors governing protein function [8]. Understanding the relative strengths and limitations of each paradigm is essential for advancing protein engineering applications across therapeutics, enzyme design, and synthetic biology.

Methodological Foundations: Core Principles and Implementation

Evolution-Based Protein Design

Fundamental Principle: Evolution-based methods operate on the core premise that amino acid sequences observed in nature contain implicit information about protein structure and function encoded through evolutionary selection pressures. The central hypothesis is that residues that co-evolve across homologous proteins are likely to be in spatial proximity within the folded structure, creating molecular constraints that can be extracted through statistical analysis [10] [1].

Technical Implementation: Modern evolution-based approaches typically begin by constructing deep multiple sequence alignments (MSAs) from homologous protein sequences. Advanced statistical methods, particularly deep learning architectures, then analyze these alignments to identify evolutionary couplings between residues. AlphaFold2 exemplifies this approach with its innovative Evoformer module, a specialized transformer architecture that jointly processes MSAs and residue pair representations to generate accurate 3D structural models [10] [1]. Protein language models like ESM-2 represent a related approach, training on millions of sequences using self-supervised learning objectives to capture evolutionary patterns without explicitly requiring MSAs for inference [8] [11].

Physics-Based Protein Design

Fundamental Principle: Physics-based methods rely on molecular modeling and biophysical simulations to predict how amino acid sequences fold into three-dimensional structures and perform functions based on fundamental physical principles. These approaches explicitly calculate energetic contributions from various molecular forces, including van der Waals interactions, hydrogen bonding, electrostatics, and solvation effects [8] [9].

Technical Implementation: The METL framework exemplifies the modern physics-based approach, implementing a three-stage workflow: (1) generating synthetic training data through molecular modeling of protein sequence variants using tools like Rosetta; (2) pretraining transformer-based neural networks to predict biophysical attributes (e.g., solvation energies, molecular surface areas) from sequences; and (3) fine-tuning the pretrained models on experimental sequence-function data [8]. This strategy explicitly incorporates biophysical knowledge through both the training data (molecular simulations) and the model architecture, which uses protein structure-based relative positional embeddings that consider three-dimensional distances between residues rather than merely their sequential positions [8].

Table 1: Methodological Comparison Between Evolution-Based and Physics-Based Approaches

| Aspect | Evolution-Based Approaches | Physics-Based Approaches |
|---|---|---|
| Primary Data Source | Natural protein sequences and structures from databases | Molecular simulations and biophysical calculations |
| Core Modeling Principle | Statistical patterns in evolutionary record | Physical laws and energetic calculations |
| Key Assumption | Evolutionary correlations reflect structural/functional constraints | Energy minimization determines structure and function |
| Representative Methods | AlphaFold2, ESM-2, EVE | METL, Rosetta-based design |
| Training Objective | Masked token prediction, next-token prediction | Biophysical attribute prediction, energy minimization |
| Positional Encoding | Sequential position in amino acid chain | 3D spatial relationships between residues |

Experimental Performance: Quantitative Comparative Analysis

Performance Across Diverse Protein Engineering Tasks

Rigorous evaluation of both paradigms across 11 experimental datasets representing proteins of varying sizes, folds, and functions (including GFP, GB1, TEM-1, and others) reveals distinct performance profiles suited to different application scenarios [8]. Evolution-based methods typically excel when deep multiple sequence alignments are available and when the target proteins share significant evolutionary relationships with those in training databases. In contrast, physics-based approaches demonstrate particular advantages in challenging protein engineering scenarios involving limited experimental data and extrapolation beyond training distributions.

A critical performance differentiator emerges in data-efficient learning scenarios. Protein-specific physics-based models (METL-Local) consistently outperform general protein representation models (including evolution-based ESM-2) when trained on small datasets, with METL-Local demonstrating particularly strong performance on GFP and GB1 with limited training examples [8]. This advantage diminishes as training set size increases, with evolution-based models becoming increasingly competitive with larger datasets. The best-performing method on small training sets tends to be either METL-Local or Linear-EVE (which combines evolutionary features with linear models), with their relative performance partly depending on the respective correlations of Rosetta total score and EVE with the experimental data [8].

Generalization Capabilities and Extrapolation Performance

Protein engineering frequently requires models to generalize beyond their training data—predicting the effects of mutations not represented in experimental libraries or at positions with limited variation. Four challenging extrapolation tasks systematically evaluated in recent research illuminate key differences between the paradigms [8]:

- Mutation Extrapolation: Predicting effects of specific amino acid substitutions not present in training data

- Position Extrapolation: Generalizing to mutations at sequence positions not represented in training variants

- Regime Extrapolation: Generalizing from single-substitution variants to higher-order combinations

- Score Extrapolation: Predicting outcomes for variants with functional scores outside the training distribution (for example, training on variants that score below wild type and predicting those that score above it)
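Each of these tasks is defined by how the data are split rather than by the model. As an illustration, position extrapolation can be set up by holding out a random subset of sequence positions and keeping training and test variants strictly on opposite sides of that boundary. The sketch below is one way to do this under stated assumptions: variants are represented as lists of `(position, new_amino_acid)` tuples, variants that mix held-out and training positions are discarded, and the name `position_split` is hypothetical. It is not the splitting code from [8].

```python
import random


def position_split(variants, n_positions, test_fraction=0.2, seed=0):
    """Split variants so that test mutations occur only at held-out positions.

    variants    -- list of variants, each a list of (position, new_aa) tuples
    n_positions -- length of the protein sequence
    Returns (train, test, held_out_positions).
    """
    rng = random.Random(seed)
    positions = list(range(n_positions))
    rng.shuffle(positions)
    n_test = max(1, int(round(test_fraction * n_positions)))
    held_out = set(positions[:n_test])

    train, test = [], []
    for variant in variants:
        mutated = {pos for pos, _ in variant}
        if not mutated:
            continue  # skip wild type: it has no mutated positions
        if mutated <= held_out:
            test.append(variant)   # every mutation is at a held-out position
        elif mutated.isdisjoint(held_out):
            train.append(variant)  # no mutation touches a held-out position
        # Variants mixing both kinds of position are dropped in this sketch.
    return train, test, held_out
```

Mutation extrapolation follows the same pattern with held-out `(position, new_aa)` pairs instead of held-out positions.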

Physics-based approaches, particularly the METL framework, demonstrate superior capabilities in these extrapolation scenarios, attributable to their foundation in biophysical principles that generalize across sequence space rather than statistical patterns derived from observed evolutionary sequences [8]. This advantage makes physics-based methods particularly valuable for engineering tasks requiring exploration beyond natural sequence neighborhoods.

Table 2: Performance Comparison Across Protein Engineering Tasks

| Engineering Task | Evolution-Based Leaders | Physics-Based Leaders | Key Performance Differentiators |
|---|---|---|---|
| Small Data Learning | Linear-EVE | METL-Local | Physics-based superior on smallest datasets (<100 examples) |
| Large Data Learning | ESM-2, EVE | METL-Global | Evolution-based gains advantage with increasing data |
| Mutation Extrapolation | Moderate performance | METL frameworks | Physics-based significantly outperforms |
| Position Extrapolation | Limited capability | METL frameworks | Physics-based demonstrates strong advantage |
| Stability Prediction | EVE, ESM-2 | Rosetta-based methods | Physics-based captures energetic contributions |
| Functional Design | ProteinNPT | METL with fine-tuning | Context-dependent on target function |

Experimental Protocols and Methodologies

METL Framework Implementation Protocol

The METL framework exemplifies modern physics-based protein design, implementing a standardized protocol that can be adapted for various protein engineering applications [8]:

Synthetic Data Generation:

- Select base protein(s) of interest (single protein for METL-Local; diverse set for METL-Global)

- Generate millions of sequence variants introducing random amino acid substitutions (typically up to 5 mutations)

- Model 3D structures of variants using Rosetta molecular modeling software

- Compute 55 biophysical attributes for each modeled structure, including molecular surface areas, solvation energies, van der Waals interactions, and hydrogen bonding networks
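The variant-generation step of this protocol is straightforward to prototype. The sketch below draws a random number of substitutions (between one and five, matching the limit stated above) at distinct positions of a base sequence. It covers only sequence sampling; the Rosetta modeling and attribute calculation steps are not shown. The function name `random_variant` and the uniform choice of positions and residues are assumptions, not details taken from [8].

```python
import random

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"


def random_variant(wild_type, max_mutations=5, rng=random):
    """Return a copy of wild_type with 1..max_mutations random substitutions.

    rng may be the random module or a random.Random instance (for seeding).
    """
    n_mut = rng.randint(1, min(max_mutations, len(wild_type)))
    # Choose distinct positions so that substitutions never overwrite each other.
    positions = rng.sample(range(len(wild_type)), n_mut)
    seq = list(wild_type)
    for pos in positions:
        # Exclude the wild-type residue so every chosen site really changes.
        choices = [aa for aa in AMINO_ACIDS if aa != wild_type[pos]]
        seq[pos] = rng.choice(choices)
    return "".join(seq)


# Usage: a small, reproducible batch of variants for a toy base sequence.
rng = random.Random(0)
variants = [random_variant("MKTAYIAKQR", rng=rng) for _ in range(10)]
```

At the scale of millions of variants, duplicates become likely and would normally be removed before structure modeling.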

Pretraining Phase:

- Train transformer encoder architectures to predict biophysical attributes from protein sequences

- Implement structure-based relative positional embeddings incorporating 3D residue distances

- Continue training until high predictive accuracy for biophysical attributes is achieved (mean Spearman correlation of 0.91 for Rosetta's total score in METL-Local)

Fine-Tuning Phase:

- Initialize model with pretrained biophysical representations

- Fine-tune on experimental sequence-function data specific to target application

- Employ standard supervised learning with appropriate loss functions for regression or classification tasks

Evolution-Based Protein Language Model Protocol

Standard implementation of evolution-based methods follows this general protocol [8] [1]:

Data Curation and Preprocessing:

- Collect large-scale databases of natural protein sequences (UniProt, MGnify)

- Optionally generate multiple sequence alignments for target proteins using tools like MMseqs2

Model Training:

- Train transformer architectures using self-supervised objectives: masked token prediction or autoregressive next-token prediction

- Process sequences through numerous layers to build contextual representations

- For structure prediction: jointly embed MSA and pairwise features using specialized modules (Evoformer in AlphaFold2)

Adaptation to Engineering Tasks:

- Extract learned representations (embeddings) from pretrained models

- Fine-tune on specific protein engineering datasets

- Alternatively, use embeddings as features in traditional machine learning models
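The last option, using fixed features in a traditional model, can be as simple as ridge regression. The sketch below shows the pattern with a closed-form solver in NumPy. Because loading a real language model is outside the scope of a short example, a one-hot encoding stands in for the embeddings; that substitution, along with the helper names `one_hot`, `fit_ridge`, and `predict`, is an assumption made for illustration.

```python
import numpy as np

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"


def one_hot(seq):
    """Flattened one-hot encoding; a stand-in for a learned embedding."""
    x = np.zeros((len(seq), len(AMINO_ACIDS)))
    for i, aa in enumerate(seq):
        x[i, AMINO_ACIDS.index(aa)] = 1.0
    return x.ravel()


def fit_ridge(X, y, alpha=1.0):
    """Closed-form ridge regression with an unpenalized intercept."""
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    x_mean, y_mean = X.mean(axis=0), y.mean()
    Xc = X - x_mean
    # Solve (Xc^T Xc + alpha * I) w = Xc^T (y - mean(y)).
    A = Xc.T @ Xc + alpha * np.eye(X.shape[1])
    w = np.linalg.solve(A, Xc.T @ (y - y_mean))
    b = y_mean - x_mean @ w
    return w, b


def predict(X, w, b):
    return np.asarray(X, dtype=float) @ w + b


# Usage on toy data: features for four two-residue "variants" and their scores.
seqs = ["AC", "AD", "CC", "CD"]
scores = [0.0, 1.0, 2.0, 3.0]
X = np.stack([one_hot(s) for s in seqs])
w, b = fit_ridge(X, scores, alpha=1e-6)
```

With real embeddings, only `one_hot` would change; the regression step stays the same.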

Research Reagent Solutions: Essential Tools for Protein Design

Table 3: Key Research Reagents and Computational Tools for Protein Design

| Tool/Resource | Type | Primary Function | Paradigm |
|---|---|---|---|
| Rosetta | Software Suite | Molecular modeling and structure prediction | Physics-Based |
| AlphaFold2 | AI Model | Protein structure prediction from sequence | Evolution-Based |
| ESM-2 | Protein Language Model | Sequence representation learning | Evolution-Based |
| METL | Framework | Biophysics-informed protein engineering | Physics-Based |
| EVE | Evolutionary Model | Variant effect prediction | Evolution-Based |
| Protein Data Bank | Database | Experimentally determined structures | Both |
| UniProt | Database | Protein sequence and functional information | Both |
| ColabFold | Platform | Accessible protein structure prediction | Evolution-Based |

Workflow Visualization: Comparative Engineering Approaches

Application Guidelines: Selecting the Appropriate Paradigm

Context-Dependent Method Selection

Choosing between physics-based and evolution-based approaches requires careful consideration of the specific protein engineering context, available data, and performance requirements:

Select Evolution-Based Methods When:

- Working with proteins having rich evolutionary histories and deep multiple sequence alignments

- Experimental training data is abundant (>1000 examples)

- The goal is prediction of natural protein structures or variant effects within observed evolutionary variation

- Computational resources for molecular simulations are limited

Select Physics-Based Methods When:

- Experimental training data is limited (<100 examples)

- Engineering tasks require extrapolation beyond natural sequence variation

- Working with novel protein scaffolds or de novo designs with limited evolutionary history

- Biophysical interpretability is valuable for guiding engineering decisions

- The protein engineering application benefits from explicit structural and energetic reasoning
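The two checklists above can be condensed into a small triage helper for screening many projects at once. The sketch below encodes only the criteria stated in the lists (the 100 and 1000 example thresholds, MSA depth, extrapolation needs, and de novo scaffolds). The function name `suggest_paradigm`, the order in which criteria are checked, and the fallback answer for cases the lists do not cover are assumptions; it is a starting point for discussion, not a validated decision rule.

```python
def suggest_paradigm(n_training_examples, deep_msa_available,
                     needs_extrapolation=False, de_novo_scaffold=False):
    """Return a coarse recommendation based on the guideline checklists.

    Physics-based criteria are checked first; this ordering is an assumption.
    """
    if needs_extrapolation or de_novo_scaffold or n_training_examples < 100:
        return "physics-based"
    if n_training_examples > 1000 and deep_msa_available:
        return "evolution-based"
    # Between 100 and 1000 examples, or abundant data without a deep MSA:
    # the guidelines do not clearly favour either paradigm.
    return "compare both or use a hybrid"


# Usage
choice = suggest_paradigm(n_training_examples=5000, deep_msa_available=True)
```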

Emerging Hybrid Approaches

The most advanced protein engineering pipelines increasingly combine elements of both paradigms, leveraging their complementary strengths [9] [12] [13]. Evolution-guided atomistic design represents one such hybrid approach, where natural sequence diversity is analyzed to eliminate rare mutations before atomistic design calculations, implementing negative design while focusing the sequence space on regions more likely to fold stably [9]. Similarly, methods that incorporate evolutionary features as inputs to physics-informed models or that use physical constraints to regularize evolution-based predictions demonstrate promising performance across diverse protein engineering benchmarks [8] [9].

The future of protein design lies not in exclusive commitment to one paradigm, but in strategic integration of both evolutionary wisdom and physical principles. As protein language models increasingly incorporate physical constraints [11] and physics-based models leverage evolutionary data for pretraining [8], the distinction between these approaches is likely to blur, giving rise to more powerful unified frameworks for protein engineering that transcend the limitations of either paradigm alone.

The Critical Role of Multiple Sequence Alignments (MSAs) and Evolutionary Couplings

In the field of computational structural biology, multiple sequence alignments (MSAs) and the evolutionary couplings derived from them serve as the foundational data for accurate protein structure prediction. The revolutionary success of deep learning-based protein structure prediction tools, most notably AlphaFold2, is deeply rooted in their ability to leverage co-evolutionary information extracted from MSAs. These alignments, which consist of homologous protein sequences gathered from diverse organisms, contain evolutionary constraints that reflect the structural and functional necessities of the protein family. When properly analyzed, these constraints reveal residue-residue contacts—amino acid pairs that must maintain spatial proximity despite sequence variations over evolutionary time. This article provides a comprehensive comparison of methodologies that utilize MSAs and evolutionary couplings, evaluating their performance across different protein types and structural scenarios, with direct implications for drug discovery and protein engineering applications.

Fundamental Concepts: From MSAs to Structural Constraints

The Theoretical Foundation of Evolutionary Couplings

The core principle underlying modern protein structure prediction is that amino acid co-evolution reflects structural and functional constraints. The concept is biologically intuitive: when two residues form a critical contact in the three-dimensional structure, a mutation at one position often necessitates a compensatory mutation at the other to maintain structural integrity and function. This phenomenon creates statistically detectable correlations in evolutionary patterns across homologous sequences. While simple correlation metrics initially showed promise for identifying such relationships, they often captured indirect connections. Advanced statistical methods, including direct coupling analysis (DCA) and pseudolikelihood maximization, were subsequently developed to distinguish direct from indirect evolutionary couplings, significantly improving the quality of predicted residue contacts [10].
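To make the idea of a "simple correlation metric" concrete, the sketch below computes mutual information (MI) between every pair of alignment columns and then applies the average product correction (APC), a widely used background correction. This is deliberately the simple baseline described above, not DCA or pseudolikelihood maximization, and it does not separate direct from indirect couplings. The function names and the list-of-strings MSA representation are illustrative assumptions.

```python
import math
from collections import Counter

import numpy as np


def column_mi(col_a, col_b):
    """Mutual information (in nats) between two alignment columns."""
    n = len(col_a)
    count_a = Counter(col_a)
    count_b = Counter(col_b)
    count_ab = Counter(zip(col_a, col_b))
    mi = 0.0
    for (a, b), c in count_ab.items():
        p_ab = c / n
        mi += p_ab * math.log(p_ab / ((count_a[a] / n) * (count_b[b] / n)))
    return mi


def mi_with_apc(msa):
    """Return (raw MI matrix, APC-corrected MI matrix) for an aligned MSA."""
    length = len(msa[0])
    cols = [[seq[i] for seq in msa] for i in range(length)]
    mi = np.zeros((length, length))
    for i in range(length):
        for j in range(i + 1, length):
            mi[i, j] = mi[j, i] = column_mi(cols[i], cols[j])

    # APC: subtract (row mean x column mean) / overall mean, which removes
    # background signal shared by highly variable columns.
    row_mean = mi.sum(axis=1) / (length - 1)
    overall_mean = mi.sum() / (length * (length - 1))
    corrected = mi.copy()
    if overall_mean > 0:
        corrected -= np.outer(row_mean, row_mean) / overall_mean
    np.fill_diagonal(corrected, 0.0)
    return mi, corrected


# Usage: columns 0 and 1 covary perfectly in this toy alignment.
toy_msa = ["AKGL", "AKGV", "DEGL", "DEGV"]
raw, corrected = mi_with_apc(toy_msa)
```

In the toy alignment, the highest-scoring pair is the one that mutates together; real alignments additionally need sequence reweighting and pseudocounts before the scores are usable.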

Multiple Sequence Alignment Construction and Challenges

The quality of evolutionary coupling analysis is fundamentally constrained by the quality of the input MSA. Constructing an optimal MSA involves several challenges:

- Sequence Diversity vs. Alignment Accuracy: An MSA must balance sequence diversity with alignment accuracy. Highly similar sequences produce accurate alignments but provide limited evolutionary information, while overly diverse sequences may introduce alignment errors that generate noise in coupling analysis [14].

- Depth and Breadth Considerations: The MSA depth (number of sequences) significantly impacts prediction quality. Proteins with abundant homologs (deep MSAs) typically yield more accurate structures than those with few homologs (shallow MSAs). This creates a performance gap for "orphan proteins" with limited evolutionary information [15] [16].

- Optimal Filtering Strategies: Determining the optimal sequence identity thresholds for MSA construction remains challenging. Heuristic approaches using fixed thresholds may not be optimal across different protein families, necessitating more adaptive methods [14].
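The fixed-threshold heuristic described in the list above can be sketched as follows. The thresholds (30% to 90% identity to the query, 75% coverage) are illustrative assumptions rather than recommended values, and the helper names are hypothetical. The first row of the alignment is assumed to be the query.

```python
def sequence_identity(a, b):
    """Fraction of positions aligned in both sequences that carry the same residue."""
    aligned = [(x, y) for x, y in zip(a, b) if x != "-" and y != "-"]
    if not aligned:
        return 0.0
    return sum(x == y for x, y in aligned) / len(aligned)

def coverage(query, hit):
    """Fraction of query residues that are aligned to a residue (not a gap) in the hit."""
    query_positions = [k for k, x in enumerate(query) if x != "-"]
    return sum(hit[k] != "-" for k in query_positions) / len(query_positions)

def filter_msa(msa, min_identity=0.3, max_identity=0.9, min_coverage=0.75):
    """Keep the query plus hits inside the identity window with enough coverage."""
    query = msa[0]
    kept = [query]
    for hit in msa[1:]:
        ident = sequence_identity(query, hit)
        if min_identity <= ident <= max_identity and coverage(query, hit) >= min_coverage:
            kept.append(hit)
    return kept

toy_msa = [
    "ACDEFGHIKL",  # query
    "ACDEFGHIKL",  # identical copy: adds no evolutionary information
    "ACDEYGHLKV",  # 70% identity: kept
    "AC------KL",  # short fragment: rejected
    "WWWWWWWWWW",  # unrelated: rejected
    "ACDEFG--RV",  # 75% identity over 80% of the query: kept
]
filtered = filter_msa(toy_msa)
```

A single window like this is exactly what may fail to transfer between protein families, which motivates the adaptive selection methods discussed later.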

Table 1: Key MSA Quality Metrics and Their Structural Implications

| Metric | Description | Impact on Structure Prediction |
|---|---|---|
| Neff (Effective Sequences) | Measure of sequence diversity in MSA | Higher Neff typically improves contact prediction accuracy |
| Coverage | Proportion of query sequence aligned | Low coverage may indicate alignment errors or fragmented homologs |
| Sequence Identity Distribution | Range of similarities to query | Balanced distribution often provides optimal evolutionary information |
| Alignment Consistency | Agreement between different alignment methods | Higher consistency correlates with more reliable co-evolution signals |
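As an illustration of the Neff metric in Table 1, the sketch below uses one common definition: each sequence is weighted by the inverse of the number of sequences at or above an identity threshold to it (itself included), and the weights are summed. The 80% threshold and the function names are assumptions, and other definitions of Neff are also in use.

```python
def pairwise_identity(a, b):
    """Fraction of alignment columns with identical characters (equal-length rows)."""
    return sum(x == y for x, y in zip(a, b)) / len(a)

def effective_sequences(msa, threshold=0.8):
    """Sum of per-sequence weights 1 / (number of neighbors at >= threshold identity)."""
    neff = 0.0
    for s in msa:
        # The count includes s itself, so it is always at least 1.
        neighbors = sum(pairwise_identity(s, t) >= threshold for t in msa)
        neff += 1.0 / neighbors
    return neff

# Three near-identical sequences collapse to one effective sequence;
# the divergent fourth contributes a full unit.
toy_msa = ["AAAAA", "AAAAA", "AAAAC", "CCCCC"]
neff = effective_sequences(toy_msa)
```

The toy alignment has four rows but an effective count of two, which is why raw MSA depth can overstate the amount of independent evolutionary information.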

Methodological Comparison: MSA-Dependent Structure Prediction Approaches

MSA-Enhanced Deep Learning Architectures

Advanced protein structure prediction pipelines have developed sophisticated methods for extracting and utilizing evolutionary information from MSAs:

- AlphaFold2's Integrated Approach: AlphaFold2 employs a novel architecture where the Evoformer module jointly processes MSA and pair representations, allowing co-evolutionary information to directly inform geometric constraints. This end-to-end differentiable model achieves near-experimental accuracy by effectively translating evolutionary statistics into physically plausible structures [10].

- RoseTTAFold's Three-Track System: This approach similarly leverages MSAs but implements a three-track system that simultaneously processes sequence, distance, and coordinate information, enabling robust structure prediction through iterative refinement [15].

- AttentiveDist's Multi-MSA Strategy: Unlike single-MSA approaches, AttentiveDist utilizes four distinct MSAs generated with different E-value cutoffs (0.001, 0.1, 1, and 10) and employs an attention mechanism to dynamically weight the importance of each MSA for different residue pairs. This approach recognizes that optimal E-value thresholds vary across protein families and structural contexts [17].

MSA Processing and Enhancement Techniques

- AF-Cluster for Conformational Diversity: AF-Cluster addresses AlphaFold2's limitation in predicting single structures by clustering MSAs based on sequence similarity. This method enables sampling of alternative conformational states, successfully predicting both ground and fold-switched states of metamorphic proteins like KaiB. By separating evolutionary signals corresponding to different conformations, AF-Cluster demonstrates that MSAs contain information about multiple biologically relevant states [18].

- DeepContact's CNN Enhancement: DeepContact applies convolutional neural networks to raw evolutionary couplings, learning structural interaction motifs from experimentally solved structures. This approach effectively re-weights evolutionary couplings using contextual information, down-weighting unlikely contacts and up-weighting plausible ones. The method converts arbitrary coupling scores into calibrated probabilities, enabling more reliable template-free modeling, particularly for proteins with limited homologous sequences [19].

- SAMMI's Mutual Information Optimization: The SAMMI (Selection of Alignment by Maximal Mutual Information) approach automatically selects optimal MSAs by maximizing the average mutual information among MSA column pairs. This strategy identifies MSAs that balance sequence diversity with functional homogeneity, outperforming manual curation for functional site prediction [14].

Diagram 1: MSA Processing Workflows for Structure Prediction

Emerging Paradigms: MSA-Free and MSA-Augmentation Approaches

Protein Language Models as Implicit MSA Repositories

While traditional methods explicitly search databases for homologous sequences, protein language models (pLMs) like ESM-2 offer an alternative approach by training on millions of sequences to learn evolutionary statistics implicitly:

- HelixFold-Single: This method combines a large-scale pLM with AlphaFold2's geometric learning capability, achieving competitive accuracy with MSA-based methods on targets with large homologous families while dramatically reducing computation time. The pLM serves as a compressed knowledge base, with model size directly correlating with prediction accuracy [15].

- ESMFold Limitations: Analysis reveals that ESM-2 appears to store pairwise co-evolutionary statistics analogous to simpler models like Markov Random Fields, rather than learning fundamental protein folding physics. This is evidenced by its tendency to incorrectly predict nonphysical structures for protein isoforms and its performance correlation with the number of sequence neighbors in training data [20].

MSA Augmentation for Low-Homology Proteins

- MSA-Augmenter: This generative language model creates novel protein sequences that retain co-evolutionary information, effectively supplementing shallow MSAs to improve structure prediction quality. By generating de novo sequences not found in databases, it addresses the fundamental limitation of MSA-dependent methods for orphan proteins [21].

- PLAME Framework: PLAME leverages pretrained language models to generate enhanced MSAs, incorporating a conservation-diversity loss function to maintain biological plausibility while optimizing for structural prediction. The framework includes the HiFiAD (High-Fidelity Appropriate Diversity) selection method to identify MSAs that balance sequence fidelity and diversity [16].

Table 2: Performance Comparison of MSA-Dependent and MSA-Free Methods

| Method | Input Type | CASP14 TM-score | CAMEO TM-score | Inference Time | Low-Homology Performance |
|---|---|---|---|---|---|
| AlphaFold2 (MSA) | MSA | 0.89 | 0.91 | ~Hours | Excellent with deep MSAs |
| RoseTTAFold (MSA) | MSA | 0.84 | 0.86 | ~Hours | Good with deep MSAs |
| HelixFold-Single | Single Sequence | 0.82 | 0.85 | ~Seconds | Competitive with deep homologs |
| ESMFold | Single Sequence | 0.79 | 0.81 | ~Seconds | Superior for very shallow MSAs |
| AlphaFold2-Single | Single Sequence | 0.72 | 0.75 | ~Hours | Poor without PLM enhancement |

Advanced Applications: Predicting Conformational Diversity

Capturing Multiple Biological States with MSA Manipulation

Proteins frequently adopt multiple conformational substates with biological significance, a challenge for standard structure prediction methods:

- CF-Random for Fold-Switching Proteins: This method randomly subsamples MSAs at extremely shallow depths (as few as 3 sequences), directing the AF2 network to predict structures from sparse evolutionary information. CF-random successfully predicts both conformations for 35% of tested fold-switching proteins, significantly outperforming other AF2-based methods while generating 89% fewer structures [6].

- Mechanistic Insights: Very shallow sampling appears to work through sequence association, relating patterns from homologous sequences to a learned structural landscape rather than robust co-evolutionary inference. This approach successfully predicts both global and local fold-switching events, including human XCL1 and TRAP1-N, which had eluded other methods [6].
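The random shallow subsampling described above can be illustrated with a short sketch. This is not the CF-random implementation. It only shows the sampling step, under the assumptions that the query is the first row and is always retained. The function names, the depth schedule, and the number of samples per depth are hypothetical.

```python
import random

def subsample_msa(msa, depth, rng):
    """Keep the query (first row) and draw depth - 1 other rows without replacement."""
    query, others = msa[0], msa[1:]
    k = min(depth - 1, len(others))
    return [query] + rng.sample(others, k)

def shallow_ensembles(msa, depths=(3, 6, 12), samples_per_depth=2, seed=0):
    """Map each depth to several independently drawn shallow alignments."""
    rng = random.Random(seed)  # seeded so the sampling is reproducible
    return {
        depth: [subsample_msa(msa, depth, rng) for _ in range(samples_per_depth)]
        for depth in depths
    }

toy_msa = ["QUERY"] + ["SEQ%02d" % i for i in range(19)]
ensembles = shallow_ensembles(toy_msa, depths=(3, 6))
```

Each shallow alignment would then be passed to the structure predictor separately, and the resulting models compared for distinct conformations.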

Diagram 2: MSA Subsampling Strategies for Conformational Diversity

Experimental Protocols for Method Validation

Benchmarking Datasets and Metrics:

- CASP14 and CAMEO: Standard benchmarks using template modeling score (TM-score) for overall structural accuracy [15]

- Fold-Switching Protein Datasets: Specialized benchmarks evaluating ability to predict multiple distinct conformations, using metrics like fold-switched region TM-score [6]

- Low-Homology Protein Sets: Curated datasets of orphan proteins and those with shallow MSAs to test method robustness [16]
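Because TM-score anchors most of the benchmarks listed above, a minimal sketch of its scoring formula may help. The function below evaluates the score for one given superposition, taking the distances between aligned residue pairs as input. Real TM-score tools additionally search for the superposition that maximizes the score and treat very short chains specially, both of which are omitted here. The function names are hypothetical.

```python
def tm_d0(target_length):
    """Length-dependent distance scale d0 in angstroms (meaningful for lengths well above 15)."""
    return 1.24 * (target_length - 15) ** (1.0 / 3.0) - 1.8

def tm_score(distances, target_length):
    """TM-score for a fixed superposition.

    distances: distances in angstroms between aligned residue pairs.
    Unaligned target residues contribute nothing to the sum, but the sum is
    still normalized by the full target length.
    """
    d0 = tm_d0(target_length)
    return sum(1.0 / (1.0 + (d / d0) ** 2) for d in distances) / target_length

perfect = tm_score([0.0] * 100, 100)       # every residue superimposed exactly
half_aligned = tm_score([0.0] * 50, 100)   # only half of the target is aligned
```

Normalizing by target length rather than by the number of aligned pairs is what makes the score penalize partial models, as the second example shows.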

Validation Methodologies:

- Experimental Cross-Validation: Successful predictions are validated through experimental methods such as NMR spectroscopy, as demonstrated for AF-Cluster predictions of KaiB variants [18]

- Computational Saturation: Methods like CF-random perform extensive sampling across MSA depths (e.g., 3-192 sequences) to ensure conformational space is adequately explored [6]

- Ablation Studies: Systematic removal of components (e.g., PLAME's conservation-diversity loss) to quantify individual contributions to prediction accuracy [16]

Table 3: Key Research Tools for MSA-Based Structure Prediction

| Tool/Resource | Type | Primary Function | Application Context |
|---|---|---|---|
| AlphaFold2 | End-to-end Structure Predictor | 3D structure prediction from MSAs | High-accuracy monomer prediction with sufficient homologs |
| ColabFold | Efficient AF2 Implementation | Rapid MSA generation and structure prediction | Accessible prototyping with MMseqs2 integration |
| DeepMSA/MMseqs2 | MSA Generation Pipeline | Homology search and MSA construction | Input preparation for AF2 and related tools |
| ESM-2 | Protein Language Model | Single-sequence structure prediction | Fast inference for high-homology targets |
| Foldseek | Structural Search Tool | Rapid structural similarity search | Database mining and structural classification |
| AF-Cluster | MSA Processing Algorithm | Conformational diversity prediction | Identifying alternative protein states |
| CF-random | MSA Subsampling Method | Alternative conformation prediction | Fold-switching protein analysis |
| PLAME | MSA Enhancement Framework | MSA generation for low-homology proteins | Orphan protein structure prediction |
| SAMMI | MSA Selection Tool | Optimal MSA identification | Functional site prediction |

The critical role of MSAs and evolutionary couplings in protein structure prediction remains undisputed, though the methodologies for leveraging this information continue to evolve. For researchers and drug development professionals, method selection should be guided by specific use cases:

- High-Accuracy Monomer Prediction: Traditional MSA-based approaches like AlphaFold2 with comprehensive homology searching remain the gold standard for proteins with sufficient evolutionary information [10].

- Conformational Ensemble Prediction: MSA subsampling methods like AF-Cluster and CF-random show remarkable success in capturing alternative states, essential for understanding allosteric mechanisms and fold-switching behavior [18] [6].

- Low-Homology and Orphan Proteins: MSA-augmentation approaches like PLAME and MSA-Augmenter, along with protein language models, offer promising pathways for structural insights where traditional methods fail [21] [16].

Future methodological development will likely focus on integrating explicit evolutionary information with physical principles, creating hybrid models that leverage the strengths of both approaches. As these tools become more sophisticated and accessible, they will increasingly drive discoveries in basic biology and accelerate therapeutic development through improved understanding of protein structure-function relationships across diverse biological contexts.

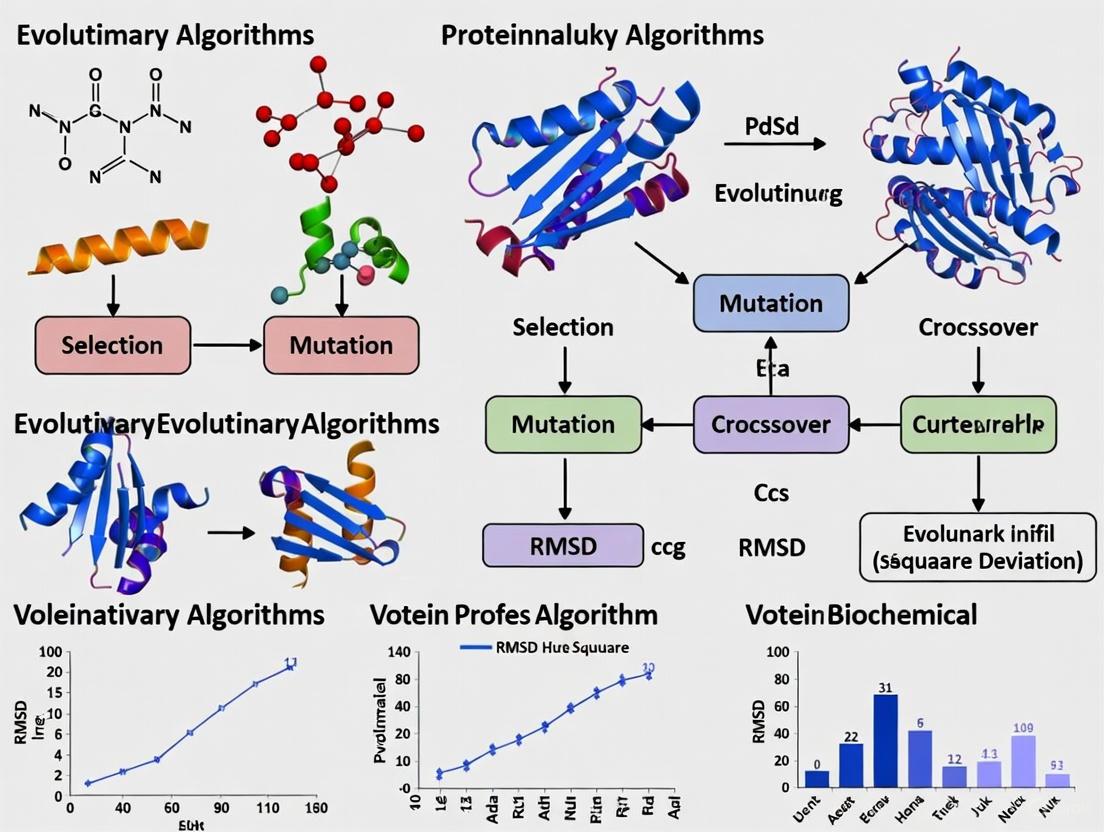

Evolutionary algorithms represent a class of optimization techniques inspired by natural selection processes, and their application to protein structure prediction and design has significantly advanced computational structural biology. These methods leverage principles of mutation, selection, and recombination to navigate the vast conformational space of protein folds and the even larger sequence space of possible amino acid arrangements. Within this domain, three distinct algorithmic approaches have demonstrated particular utility: EvoDesign, which utilizes evolutionary profiles from structurally similar proteins; Genetic Algorithms (GAs), which employ population-based stochastic search operators; and Evolution Strategies (ES), which focus on self-adaptive mutation strategies for continuous parameter optimization. The integration of these evolutionary computing paradigms has enabled researchers to tackle complex problems in protein folding, de novo protein design, and functional protein engineering that would be computationally intractable through exhaustive search methods or purely physics-based simulations alone.

The fundamental challenge in protein structure prediction lies in the astronomical size of the conformational search space, a phenomenon famously articulated by Levinthal's paradox, which highlights the impossibility of proteins exhaustively sampling all possible conformations during folding [22]. Evolutionary algorithms address this challenge through biologically inspired search strategies that efficiently explore these vast spaces. These methods have evolved from early simple implementations to sophisticated hybrid approaches that combine evolutionary operators with knowledge from structural databases and physical energy functions. As the field progresses, benchmarking these algorithms against standardized datasets and through community-wide assessments like CASP (Critical Assessment of Protein Structure Prediction) provides critical insights into their relative strengths, limitations, and optimal application domains [23] [1].

Algorithmic Frameworks and Methodologies

EvoDesign: Evolutionary Profile-Guided Design

EvoDesign employs an evolution-based methodology that leverages conserved structural patterns from nature to guide protein design. Unlike physics-based approaches that rely solely on atomic-level energy calculations, EvoDesign utilizes evolutionary profiles derived from multiple sequence alignments (MSAs) of proteins with structurally similar folds [24] [25]. The algorithm begins by identifying structurally analogous proteins from the Protein Data Bank (PDB) using the TM-align structural alignment tool, creating a position-specific scoring matrix that encapsulates the amino acid preferences at each position in the target structure [25].

The core energy function in EvoDesign combines evolutionary information with physical constraints:

$$E = w_4 \frac{E_{\text{evolution}} - \langle E_{\text{evolution}} \rangle}{\delta E_{\text{evolution}}} + w_5 \frac{E_{\text{FoldX}} - \langle E_{\text{FoldX}} \rangle}{\delta E_{\text{FoldX}}}$$ [25]

Where $E_{\text{evolution}}$ represents the evolutionary potential derived from structural profiles, $E_{\text{FoldX}}$ denotes physics-based energy terms, and $w_4$, $w_5$ are weighting factors. For protein-protein interaction design, EvoDesign extends this framework by incorporating interface evolutionary profiles constructed from structurally similar protein-protein interfaces identified through tools like iAlign [24]. The sequence search employs replica-exchange Monte Carlo (REMC) simulation, with subsequent clustering of sequence decoys using SPICKER based on BLOSUM62 sequence similarity [24].
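The normalization in the energy function above can be illustrated with a short sketch. It assumes that the angle-bracket terms are means and the delta terms are standard deviations taken over a reference set of decoy energies. The source does not spell this out, so treat it as an interpretation. The function name, default weights, and example values are hypothetical.

```python
from statistics import mean, pstdev

def combined_energy(e_evo, e_phys, ref_evo, ref_phys, w_evo=1.0, w_phys=1.0):
    """Weighted sum of two energy terms, each standardized against reference decoys.

    Standardizing puts the evolutionary and physics-based terms on a common,
    unitless scale before the weights are applied. The reference sets must not
    be constant, otherwise the standard deviation is zero.
    """
    z_evo = (e_evo - mean(ref_evo)) / pstdev(ref_evo)
    z_phys = (e_phys - mean(ref_phys)) / pstdev(ref_phys)
    return w_evo * z_evo + w_phys * z_phys

# Reference energies from a hypothetical decoy set, on very different scales.
ref_evo = [1.0, 2.0, 3.0]
ref_phys = [10.0, 20.0, 30.0]

average_decoy = combined_energy(2.0, 20.0, ref_evo, ref_phys)
good_design = combined_energy(1.0, 10.0, ref_evo, ref_phys)
```

Without the standardization, the term with the larger numerical range would dominate the sum regardless of the chosen weights.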

Genetic Algorithms: Population-Based Stochastic Search

Genetic Algorithms (GAs) approach protein structure prediction as an optimization problem where a population of candidate conformations evolves through iterative application of genetic operators. In typical implementations, each individual in the population represents a specific protein conformation encoded using either internal coordinates (dihedral angles) or Cartesian coordinates [26]. The fitness function evaluates how well each conformation minimizes a specified energy function or satisfies spatial constraints.

The GA workflow applies selection, crossover, and mutation operators to drive population improvement. Selection favors higher-fitness individuals for reproduction, while crossover recombines structural features from parent conformations to create offspring. Mutation introduces structural variations through local perturbations to dihedral angles or atomic positions. Early GA implementations for protein structure prediction demonstrated the method's ability to explore complex conformational spaces, though with limitations in consistently achieving atomic-level accuracy [26]. Protein representation varied significantly between implementations, ranging from full all-atom representations to simplified Cα-trace models that enabled more rapid exploration at the cost of structural detail [23].

Evolution Strategies: Self-Adaptive Continuous Optimization

Evolution Strategies (ES) specialize in continuous parameter optimization problems, making them particularly suited for protein structure prediction approaches that employ real-value parameterizations of molecular geometry. Unlike GAs that emphasize recombination, ES typically focus on mutation as the primary variation operator, with strategy parameters that self-adapt during the optimization process to balance exploration and exploitation. In protein structure prediction applications, ES operate on direct representations of dihedral angles or atomic coordinates, using Gaussian mutation operators with adaptive step sizes.

The selection mechanism in ES is typically deterministic, choosing the best μ individuals from λ offspring to form the next generation. This (μ,λ)-selection strategy enables continuous improvement through gradual refinement of solution quality. For protein structure prediction, ES have been applied to both ab initio folding and homology modeling scenarios, with the adaptive mutation parameters allowing efficient navigation of rough energy landscapes that challenge gradient-based optimization methods.
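A minimal sketch of a (μ,λ) evolution strategy with a self-adaptive step size is given below. It is a generic textbook-style formulation rather than any published protein folding implementation. The single step size per individual, the log-normal update, the learning rate, and the toy quadratic objective standing in for a force field are all assumptions made for illustration.

```python
import math
import random

def sphere(x):
    """Toy objective standing in for an energy function: minimum of 0 at the origin."""
    return sum(v * v for v in x)

def evolve_mu_comma_lambda(energy, n_params, mu=5, lam=30, generations=80, seed=0):
    """Run a (mu, lambda)-ES; each individual carries its own mutation step size."""
    rng = random.Random(seed)
    tau = 1.0 / math.sqrt(n_params)  # learning rate of the log-normal step-size update
    parents = [([rng.uniform(-math.pi, math.pi) for _ in range(n_params)], 0.5)
               for _ in range(mu)]
    for _ in range(generations):
        offspring = []
        for _ in range(lam):
            x, sigma = rng.choice(parents)
            # Mutate the strategy parameter first, then use it to mutate the solution.
            new_sigma = sigma * math.exp(tau * rng.gauss(0.0, 1.0))
            child = [xi + new_sigma * rng.gauss(0.0, 1.0) for xi in x]
            offspring.append((child, new_sigma))
        # Comma selection: the best mu offspring replace the parents entirely.
        offspring.sort(key=lambda ind: energy(ind[0]))
        parents = offspring[:mu]
    return parents[0]

best_x, best_sigma = evolve_mu_comma_lambda(sphere, n_params=4)
```

Because parents are discarded every generation, the best energy is not guaranteed to decrease monotonically. That is the price paid for letting poorly adapted step sizes die out.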

Comparative Performance Analysis

Table 1: Key Characteristics of Evolutionary Algorithms in Protein Structure Prediction

| Algorithm | Core Methodology | Search Mechanism | Representation | Energy Function |
|---|---|---|---|---|
| EvoDesign | Evolutionary profile guidance | Replica-exchange Monte Carlo | All-atom with rotamer library | Evolutionary potential + physical terms (EvoEF) |
| Genetic Algorithms | Population-based stochastic search | Selection, crossover, mutation | Varies (all-atom to Cα-trace) | Physics-based or knowledge-based |
| Evolution Strategies | Self-adaptive continuous optimization | Mutation with adaptive step sizes | Continuous parameters (dihedral angles, coordinates) | Physics-based force fields |

Table 2: Performance Characteristics on Protein Structure Prediction Tasks

| Algorithm | Typical Application Domain | Reported Accuracy Metrics | Computational Demand | Key Limitations |
|---|---|---|---|---|
| EvoDesign | Monomer design, protein-protein interaction design | Significant advantage over physics-based approaches [24] | Moderate (enhanced by EvoEF energy function) | Limited to scaffolds with evolutionary analogs |
| Genetic Algorithms | Ab initio folding, loop modeling | Varies widely (normalized RMSD 11.17 to 3.48) [23] | High (depends on representation and population size) | Difficulty achieving atomic accuracy |
| Evolution Strategies | Continuous optimization in homology modeling | Not specifically reported in the cited literature | Moderate to high (depends on parameterization) | Limited application to full de novo folding |

The benchmarking of evolutionary algorithms for protein structure prediction reveals distinct performance patterns across different problem domains. EvoDesign demonstrates particular strength in designing stable protein sequences that adopt desired target folds, showing significant advantages over purely physics-based approaches according to large-scale design and folding experiments [24]. This performance advantage stems from its use of evolutionary constraints that implicitly capture subtle structural determinants difficult to model explicitly through physical energy functions.

Genetic Algorithms exhibit highly variable performance depending on their specific implementation details, particularly the protein representation scheme and energy function. As noted in a comparative study of 18 prediction algorithms, reported performance ranged from normalized RMSD scores of 11.17 to 3.48, with the best-performing algorithms incorporating fragment assembly and sophisticated search strategies [23]. The performance of GAs was also influenced by the balance between exploration and exploitation, with excessive exploration leading to slow convergence and excessive exploitation resulting in premature convergence to suboptimal folds.

Direct comparative studies between these evolutionary approaches in standardized benchmarks like CASP are limited in the available literature. However, the consistent outperformance of methods incorporating evolutionary information (as in EvoDesign) suggests the critical importance of leveraging natural sequence constraints. The rise of deep learning methods like AlphaFold2, which also leverages evolutionary information through MSAs, has further validated this approach while setting new standards for accuracy [1].

Experimental Protocols and Methodologies

EvoDesign Workflow for Protein Design

The standard experimental protocol for EvoDesign-based protein design follows a structured workflow with distinct stages:

Scaffold Preparation and Structural Alignment: The process begins with a target scaffold structure, which is structurally aligned against the PDB using TM-align to identify proteins with similar folds (for monomer design) or iAlign to identify similar interfaces (for protein-protein interaction design) [24] [25].

Evolutionary Profile Construction: Multiple sequence alignments are generated from the structurally analogous proteins, and position-specific scoring matrices are constructed to capture amino acid preferences at each structural position [24].

Sequence Optimization via REMC: Replica-exchange Monte Carlo simulations generate sequence decoys guided by the composite energy function combining evolutionary and physical terms. The simulation typically includes 10 independent runs starting from random sequences [25].

Sequence Clustering and Selection: Generated sequences are clustered using SPICKER with BLOSUM62-based distance metrics. The final designs are selected from the largest clusters with the lowest free energy sequences rather than solely the lowest energy sequence [24].

Validation through Structure Prediction: Computational validation involves predicting the structure of designed sequences using protein structure prediction methods like I-TASSER to verify they adopt the target fold [25].
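The replica-exchange step in the workflow above relies on periodically attempting swaps between replicas run at different temperatures. The sketch below shows the standard Metropolis-style swap criterion in reduced units (Boltzmann constant set to 1). It is a generic formulation, not code from EvoDesign, and the function names are hypothetical.

```python
import math
import random

def swap_probability(e_i, e_j, t_i, t_j):
    """Probability of exchanging configurations between replicas i and j.

    e_i, e_j: current energies of the two replicas.
    t_i, t_j: their temperatures in reduced units.
    """
    delta = (1.0 / t_i - 1.0 / t_j) * (e_i - e_j)
    if delta >= 0:
        return 1.0  # also avoids overflow in exp() for large positive delta
    return math.exp(delta)

def attempt_swap(e_i, e_j, t_i, t_j, rng):
    """Return True if the swap is accepted for this random draw."""
    return rng.random() < swap_probability(e_i, e_j, t_i, t_j)

# The cold replica (T = 1) holds the higher energy: the swap is always accepted.
always = swap_probability(e_i=-10.0, e_j=-12.0, t_i=1.0, t_j=2.0)
# The cold replica already holds the lower energy: the swap is accepted only sometimes.
sometimes = swap_probability(e_i=-12.0, e_j=-10.0, t_i=1.0, t_j=2.0)
```

Swaps of this kind let low-energy sequences found at high temperature migrate to the low-temperature replicas, which helps the search escape local minima.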

EvoDesign Methodology Workflow

Genetic Algorithm Protocol for Structure Prediction

A typical experimental protocol for GA-based protein structure prediction includes:

Population Initialization: Generate an initial population of candidate structures using fragment assembly, random torsion angles, or homology-based modeling.

Fitness Evaluation: Calculate the fitness of each individual using knowledge-based potentials, physics-based force fields, or hybrid scoring functions.

Genetic Operations:

- Selection: Implement tournament selection or fitness-proportional selection to choose parents for reproduction.

- Crossover: Apply geometric crossover operators that blend structural features from parent conformations.

- Mutation: Introduce structural diversity through local moves in dihedral angle space or Cartesian coordinate adjustments.

Termination Check: Evaluate convergence criteria based on fitness improvement, structural similarity, or generation count.

Ensemble Refinement: Select multiple top-performing structures for further refinement using local optimization methods.
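The protocol steps above can be sketched as a toy genetic algorithm over dihedral angles. The energy function is a hypothetical stand-in that rewards matching a known target angle set, not a real force field. One-point crossover is used here in place of the geometric crossover mentioned above. Population size, mutation rate, and the fixed generation count used for termination are arbitrary illustrative choices.

```python
import math
import random

def toy_energy(angles, target):
    """Hypothetical stand-in for a force field: 0 when every angle matches the target."""
    return sum(1.0 - math.cos(a - t) for a, t in zip(angles, target))

def tournament(pop, energies, rng, k=3):
    """Pick k random individuals and return the one with the lowest energy."""
    picks = rng.sample(range(len(pop)), k)
    return pop[min(picks, key=lambda idx: energies[idx])]

def one_point_crossover(p1, p2, rng):
    """Join the N-terminal angles of one parent to the C-terminal angles of the other."""
    cut = rng.randrange(1, len(p1))
    return p1[:cut] + p2[cut:]

def mutate(angles, rng, rate=0.1, sigma=0.3):
    """Perturb each angle with probability `rate` by Gaussian noise (radians)."""
    return [a + rng.gauss(0.0, sigma) if rng.random() < rate else a for a in angles]

def run_ga(target, pop_size=40, generations=60, seed=1):
    rng = random.Random(seed)
    n = len(target)
    pop = [[rng.uniform(-math.pi, math.pi) for _ in range(n)] for _ in range(pop_size)]
    history = []
    for _ in range(generations):
        energies = [toy_energy(ind, target) for ind in pop]
        best = pop[min(range(pop_size), key=lambda idx: energies[idx])]
        history.append(min(energies))
        children = [best]  # elitism: the best individual survives unchanged
        while len(children) < pop_size:
            child = one_point_crossover(tournament(pop, energies, rng),
                                        tournament(pop, energies, rng), rng)
            children.append(mutate(child, rng))
        pop = children
    return best, history

target_angles = [-1.0, -0.8, 2.4, 2.6, -1.1, -0.7, 2.3, 2.5]
best, history = run_ga(target_angles)
```

With elitism, the best energy per generation never increases. The refinement step of the protocol would start from the returned individual, or from several low-energy individuals of the final population.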

The specific implementation details, particularly the protein representation scheme and energy function, significantly influence algorithm performance. Simplified representations like Cα-trace or CABS models enable more extensive conformational sampling but may lack atomic-level precision [23].

Table 3: Key Research Resources for Evolutionary Algorithm Implementation

| Resource Category | Specific Tools | Primary Function | Application Context |
|---|---|---|---|
| Structural Alignment | TM-align, iAlign | Identify structurally similar folds/interfaces | EvoDesign profile construction |
| Evolutionary Analysis | GREMLIN, MSA Transformer | Detect co-evolved residue pairs | Evolutionary constraint identification |
| Energy Functions | EvoEF, FoldX | Calculate physical interaction energies | Fitness evaluation in all algorithms |
| Structure Prediction | I-TASSER, AlphaFold2 | Validate designed sequences | Computational validation of designs |
| Sequence-Structure Databases | PDB, COTH interface library | Source of evolutionary constraints | Profile construction in EvoDesign |

The effective implementation of evolutionary algorithms for protein structure prediction requires access to specialized computational resources and databases. Structural alignment tools like TM-align and iAlign enable the identification of evolutionarily related structural templates by comparing three-dimensional protein folds rather than just sequence similarity [24] [25]. These tools form the foundation of EvoDesign's profile construction phase.

Evolutionary coupling analysis through methods like GREMLIN (Generative Regularized ModeLs of proteINs) and MSA Transformer detects co-evolved residue pairs from multiple sequence alignments, providing critical constraints for structure prediction [27]. These coevolutionary signals have been shown to significantly enhance prediction accuracy across all evolutionary algorithms.

Energy functions like EvoEF (EvoDesign Energy Function) and FoldX provide physics-based scoring for evaluating conformational energy and stability [24]. The development of EvoEF specifically addressed computational efficiency concerns in EvoDesign, replacing the external FoldX calls with an integrated energy function that maintains accuracy while significantly speeding up the design process.

Structure prediction tools serve dual purposes in the workflow: as validation mechanisms for designed sequences (I-TASSER) and as sources of methodological insights (AlphaFold2) [23] [1]. The revolutionary accuracy of AlphaFold2, which also leverages evolutionary information through its Evoformer module, provides both a benchmark for evolutionary algorithms and potential components for future hybrid approaches.

Emerging Frontiers and Future Directions

The landscape of evolutionary algorithms in protein science is rapidly evolving, particularly with the emergence of deep learning methods that have demonstrated remarkable accuracy in structure prediction. AlphaFold2's performance in CASP14 demonstrated that neural network approaches can regularly predict protein structures with atomic accuracy, significantly outperforming existing methods [1]. However, evolutionary algorithms continue to offer unique advantages in specific domains, particularly de novo protein design and the prediction of alternative conformations.

Recent research has highlighted the challenge of predicting fold-switching proteins that adopt multiple stable structures, with most algorithms, including evolutionary methods, typically predicting only a single conformation [27]. Novel approaches like the Alternative Contact Enhancement (ACE) method have been developed to address this limitation by enhancing coevolutionary signals from alternative folds [27]. Similarly, the CF-random method leverages AlphaFold2 with shallow multiple sequence alignments to predict alternative conformations, successfully identifying both conformations in 35% of fold-switching proteins tested [6].

The integration of evolutionary algorithms with deep learning approaches represents a promising direction for future research. Evolutionary operators could enhance the sampling diversity of neural network approaches, while learned representations could inform more efficient search strategies in evolutionary algorithms. As these hybrid approaches mature, benchmarking against standardized datasets and through community-wide assessments will remain essential for evaluating progress and identifying the most productive research directions.

Evolutionary algorithms have established themselves as powerful tools for protein structure prediction and design, with EvoDesign, Genetic Algorithms, and Evolution Strategies each offering distinct advantages for specific problem domains. EvoDesign's evolutionary profile-based approach demonstrates particular strength in designing stable proteins with native-like folding properties, while Genetic Algorithms provide flexible frameworks for exploring complex conformational spaces, and Evolution Strategies offer efficient continuous optimization for parameterized structural representations.

The comparative analysis presented in this guide provides researchers with a foundation for selecting appropriate algorithmic strategies based on their specific protein engineering objectives. As the field advances, the integration of evolutionary principles with emerging deep learning methodologies promises to further expand the frontiers of computational protein design, enabling more sophisticated applications in therapeutic development, enzyme engineering, and functional biomaterial design. The continued benchmarking of these approaches through standardized assessments will ensure rigorous evaluation of new methodologies and facilitate the systematic advancement of the field.

Predicting the three-dimensional (3D) structure of a protein from its amino acid sequence has long been one of the most important challenges in biochemistry and molecular biology. A protein's structure is directly correlated with its biological function, and determining it is critical for understanding biological processes and enabling rational drug design [28]. For decades, experimental techniques such as X-ray crystallography, nuclear magnetic resonance (NMR), and cryo-electron microscopy (cryo-EM) have been the primary methods for determining protein structures. However, these methods are often complex, time-consuming, and expensive, creating a significant gap between the number of known protein sequences and those with resolved structures [28] [29]. This disparity fueled the need for accurate computational methods to predict protein structures at scale.

Before the advent of deep learning systems like AlphaFold, computational methods were broadly divided into two categories: physical interaction-based approaches and evolutionary history-based approaches [1]. Physical approaches integrated understanding of molecular driving forces into thermodynamic or kinetic simulations. While theoretically appealing, they proved computationally intractable for many proteins due to the massive complexity involved [1] [30]. In contrast, evolutionary approaches leveraged the growing databases of protein sequences and structures, using bioinformatics analysis to derive structural constraints from evolutionary patterns [1]. This review will explore how the power of co-evolutionary information, particularly through the analysis of correlated mutations in multiple sequence alignments (MSAs), established a foundational principle that enabled dramatic progress in protein structure prediction, ultimately paving the way for the AlphaFold breakthrough.

Key Methodological Foundations in the Pre-AlphaFold Era

The integration of co-evolutionary information into structure prediction was a gradual process, with several key methodologies establishing its value.

Co-evolution and Contact Prediction

A fundamental insight driving evolutionary approaches was the observation that the 3D structure of a protein is more conserved than its amino acid sequence across evolutionary time [28]. When mutations occur at one residue in a protein, compensatory mutations often arise at an interacting residue to preserve the protein's structural integrity and function. These correlated mutations manifest as statistical covariation within multiple sequence alignments of homologous proteins. Computational methods were developed to detect these covariation signals to predict which amino acid residues are in spatial proximity, even if they are far apart in the linear sequence. This produced a contact map—a 2D representation of a 3D protein structure—which served as a powerful restraint to guide structure prediction algorithms [29].
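The contact map described above can be derived from known coordinates with a simple distance threshold. The sketch below assumes one representative atom per residue, an 8 Å cutoff, and a minimum sequence separation of three residues. These are common conventions but are assumptions here, as are the function name and the toy coordinates.

```python
import math

def contact_map(coords, cutoff=8.0, min_separation=3):
    """Binary residue-residue contact map from one 3D point per residue.

    coords: list of (x, y, z) tuples in angstroms.
    Pairs closer in sequence than min_separation are ignored, since they are
    trivially near each other along the chain.
    """
    n = len(coords)
    cmap = [[0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + min_separation, n):
            if math.dist(coords[i], coords[j]) <= cutoff:
                cmap[i][j] = cmap[j][i] = 1  # contacts are symmetric
    return cmap

# Toy hairpin: two antiparallel strands 5 angstroms apart, 3.8 angstroms per residue.
hairpin = [(0.0, 0.0, 0.0), (3.8, 0.0, 0.0), (7.6, 0.0, 0.0), (11.4, 0.0, 0.0),
           (11.4, 5.0, 0.0), (7.6, 5.0, 0.0), (3.8, 5.0, 0.0), (0.0, 5.0, 0.0)]
cmap = contact_map(hairpin)
```

In the toy hairpin, the first and last residues are in contact despite being seven positions apart in sequence, which is the kind of long-range restraint that covariation analysis aims to recover without any coordinates.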

The HP Lattice Model: A Simplified Arena for Algorithm Development

To manage the immense computational complexity of protein folding, simplified models like the Hydrophobic-Polar (HP) lattice model were widely used to investigate general principles of protein folding [30]. This model reduces the 20 amino acids to two types: H (hydrophobic) and P (polar, i.e., hydrophilic). The protein chain is folded onto a lattice (e.g., 2D square or 3D Face-Centered Cubic), and the goal is to find a conformation that maximizes the number of H-H contacts, representing the driving force of the hydrophobic effect [30]. While these models did not achieve high resolution, they provided a tractable system for developing and testing optimization algorithms, including Evolutionary Algorithms (EAs), which were robust and could handle various energy functions [30]. Table 1 summarizes the main categories of pre-AlphaFold prediction methods, including EA-based optimization on HP models.
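The HP objective can be sketched in a few lines. The example below assumes a 2D square lattice, a conformation encoded as a string of moves (`U`, `D`, `L`, `R`), and an energy of -1 per H-H contact between residues that are not chain neighbours. The function name `hp_energy` and the move encoding are hypothetical choices for illustration.

```python
def hp_energy(sequence, moves):
    """Energy of a 2D square-lattice HP conformation.

    Returns -1 per non-bonded H-H contact, or None if the walk revisits
    a lattice site (i.e., it is not self-avoiding).
    """
    steps = {"U": (0, 1), "D": (0, -1), "L": (-1, 0), "R": (1, 0)}
    if len(moves) != len(sequence) - 1:
        raise ValueError("need exactly len(sequence) - 1 moves")
    positions = [(0, 0)]
    for move in moves:
        dx, dy = steps[move]
        x, y = positions[-1]
        positions.append((x + dx, y + dy))
    if len(set(positions)) != len(positions):
        return None
    index = {pos: i for i, pos in enumerate(positions)}
    energy = 0
    for i, (x, y) in enumerate(positions):
        if sequence[i] != "H":
            continue
        # Look only right and up so that each lattice pair is counted once.
        for dx, dy in ((1, 0), (0, 1)):
            j = index.get((x + dx, y + dy))
            if j is not None and sequence[j] == "H" and abs(i - j) > 1:
                energy -= 1
    return energy

print(hp_energy("HPPH", "RUL"))  # -1: the two H residues touch on the lattice
print(hp_energy("HPPH", "RRR"))  # 0: fully extended chain, no contacts
```

Maximizing H-H contacts is equivalent to minimizing this energy, which is the form most optimization algorithms work with.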

Table 1: Performance Overview of Key Pre-AlphaFold Prediction Method Categories

| Method Category | Core Principle | Representative Tools | Key Strength | Primary Limitation |
|---|---|---|---|---|
| Ab Initio / Free Modeling | Predicts structure based on physical laws & thermodynamics to achieve lowest free energy [28]. | QUARK [28] | Capacity to predict novel, unknown protein folds without templates. | Computationally demanding; infeasible for long sequences. |
| Threading / Fold Recognition | Aligns target sequence to a library of known folds based on a scoring function [28]. | GenTHREADER [28] | Leverages limited number of natural protein folds; useful when sequence similarity is low. | Limited by the completeness of the fold library; cannot predict new folds. |
| Homology Modeling | Builds a model based on a template from a closely related homologous protein [28]. | SWISS-MODEL [28] | Highest accuracy among classical methods when a good template exists. | Completely dependent on the availability of a suitable template. |
| EA-based HP Model Optimization | Uses genetic algorithms and local searches to find energy-minimizing conformations on a lattice [30]. | (Various custom implementations) [30] | Robust, can handle arbitrary energy functions; provides macro-scale optimized structure. | Low resolution due to model simplification; often fails on complex chains. |

Benchmarking and the CASP Competition

The Critical Assessment of protein Structure Prediction (CASP) competition, launched in 1994, has been the gold-standard blind assessment of the state of the art in protein structure prediction [28] [29]. It provided an objective platform for benchmarking new methods. Before AlphaFold, progress was steady but slow. For instance, in the rounds preceding AlphaFold's debut at CASP13 in 2018, the best methods achieved a Global Distance Test (GDT) score of only about 40 for the most difficult proteins, where GDT measures the similarity between a prediction and the experimental structure and 100 represents a perfect match [29]. This environment of rigorous benchmarking was crucial for objectively establishing the progressive improvements delivered by co-evolutionary methods.
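As a rough illustration of how a GDT-style score is computed, the sketch below averages the fraction of residues whose Cα atoms lie within 1, 2, 4, and 8 Å of their experimental positions (the cutoffs used by GDT_TS). It assumes the per-residue distances have already been obtained from a single superposition; the official metric searches over many superpositions to maximize each fraction, so this simplified version can only underestimate it. The function name `gdt_ts` and the example distances are hypothetical.

```python
def gdt_ts(distances):
    """Simplified GDT_TS from per-residue C-alpha distances (angstroms).

    Assumes a single, fixed superposition; the full metric optimizes the
    superposition separately for each cutoff.
    """
    if not distances:
        raise ValueError("at least one distance is required")
    cutoffs = (1.0, 2.0, 4.0, 8.0)
    fractions = [sum(d <= c for d in distances) / len(distances) for c in cutoffs]
    return 100.0 * sum(fractions) / len(cutoffs)

# Hypothetical distances for a five-residue model.
score = gdt_ts([0.5, 1.5, 3.0, 6.0, 10.0])
print(round(score, 1))  # 50.0
```

A score near 40 on this scale therefore means that, averaged over the four cutoffs, well under half of the residues were placed close to their true positions.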

Experimental Protocols: Establishing Co-evolution's Power

The validation of co-evolution's power was not a single event but a process cemented through specific experimental workflows and benchmarks.

Protocol for Residue Co-evolution Analysis

The standard protocol for deriving structural constraints from evolution involved several key steps, which are visualized in Figure 1.

Figure 1: Workflow for Co-evolution Based Contact Prediction

- Sequence Homology Search: The amino acid sequence of the target protein was used to search large genomic and metagenomic databases (e.g., UniRef, BFD) to identify homologous sequences [29].

- Multiple Sequence Alignment (MSA) Construction: The identified homologous sequences were aligned to create an MSA, representing the evolutionary history of the protein family.

- Covariance Analysis: Statistical methods (e.g., direct coupling analysis, DCA) were applied to the MSA to distinguish direct, evolutionarily coupled residue pairs from indirect correlations [1] [29].

- Contact Map Generation: The strongest coupled pairs were interpreted as being in spatial contact, generating a probabilistic distance map (distogram) or a binary contact map.

- Structure Generation: These distance restraints were then used to guide physics-based molecular dynamics simulations, fragment assembly, or other conformational search algorithms to generate all-atom 3D models [1].
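The covariance-analysis and contact-map steps above can be sketched with a much simpler statistic than DCA. The example below scores every pair of MSA columns by mutual information and reports the strongest pairs as candidate contacts. Mutual information is a swapped-in stand-in: unlike DCA, it does not separate direct couplings from indirect correlations. The function names and the toy alignment are hypothetical.

```python
import math
from collections import Counter

def mutual_information(msa, i, j):
    """Mutual information (in nats) between columns i and j of an MSA."""
    n = len(msa)
    count_i = Counter(seq[i] for seq in msa)
    count_j = Counter(seq[j] for seq in msa)
    count_ij = Counter((seq[i], seq[j]) for seq in msa)
    mi = 0.0
    for (a, b), c in count_ij.items():
        p_ab = c / n
        mi += p_ab * math.log(p_ab / ((count_i[a] / n) * (count_j[b] / n)))
    return mi

def top_coupled_pairs(msa, k=1, min_separation=1):
    """Return the k column pairs with the highest mutual information."""
    length = len(msa[0])
    scores = {
        (i, j): mutual_information(msa, i, j)
        for i in range(length)
        for j in range(i + min_separation, length)
    }
    return sorted(scores, key=scores.get, reverse=True)[:k]

# Toy alignment: columns 0 and 3 mutate together (D with K, E with R).
msa = ["DGAK", "DGAK", "EGAR", "EGAR", "DGCK", "EGCR"]
print(top_coupled_pairs(msa, k=1))  # [(0, 3)]
```

In a real pipeline the ranked pairs would be converted into distance restraints for the structure-generation step, and a correction for phylogenetic and entropic bias would typically be applied first.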

Protocol for Benchmarking Complex Prediction

To assess the accuracy of methods in predicting protein-protein interactions, studies followed rigorous benchmarking protocols, such as the one used to evaluate early versions of AlphaFold on complexes [31].

- Curated Benchmark Set Creation: A diverse set of protein complexes (e.g., 152 heterodimers) was curated, ensuring availability of high-resolution experimental structures as ground truth [31].

- Method Comparison: Different prediction methods, including unbound protein-protein docking and co-evolution-informed models, were run on the same benchmark set.

- Accuracy Metric Calculation: Predictions were compared to experimental structures using metrics like Root-Mean-Square Deviation (RMSD) and the Critical Assessment of Predicted Interactions (CAPRI) accuracy criteria, which classify models as Incorrect, Acceptable, Medium, or High quality [31] [32].

- Feature Correlation Analysis: Failed and successful predictions were analyzed to identify sequence and structural features (e.g., MSA depth, interface properties) that determined accuracy [31].
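The accuracy-metric step of this protocol can be sketched as a small classifier plus a success-rate calculation. The thresholds below are the commonly cited CAPRI cutoffs on the fraction of native contacts (fnat), ligand RMSD, and interface RMSD; they are stated here from general knowledge rather than from the benchmark in [31], so they should be checked against the CAPRI assessment criteria before use. The function names and example values are hypothetical.

```python
def capri_class(fnat, lrmsd, irmsd):
    """Classify a docking model using commonly cited CAPRI cutoffs.

    fnat: fraction of native interface contacts reproduced (0 to 1)
    lrmsd: ligand RMSD in angstroms; irmsd: interface RMSD in angstroms
    """
    if fnat >= 0.5 and (lrmsd <= 1.0 or irmsd <= 1.0):
        return "High"
    if fnat >= 0.3 and (lrmsd <= 5.0 or irmsd <= 2.0):
        return "Medium"
    if fnat >= 0.1 and (lrmsd <= 10.0 or irmsd <= 4.0):
        return "Acceptable"
    return "Incorrect"

def success_rate(top_models):
    """Fraction of targets whose top-ranked model is Acceptable or better."""
    hits = sum(capri_class(*model) != "Incorrect" for model in top_models)
    return hits / len(top_models)

# Hypothetical (fnat, lrmsd, irmsd) values for the top model of four targets.
top_models = [(0.60, 0.8, 0.9), (0.35, 6.0, 1.8), (0.15, 9.0, 5.0), (0.05, 3.0, 1.0)]
print([capri_class(*m) for m in top_models])
# ['High', 'Medium', 'Acceptable', 'Incorrect']
print(success_rate(top_models))  # 0.75
```

Reporting the success rate on the top-ranked model only, as in this sketch, is one convention; benchmarks also commonly report success within the top five models.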

The quantitative results from such a benchmark are shown in Table 2, highlighting the performance gap that co-evolution helped to narrow.

Table 2: Benchmarking Results for Protein Complex Prediction (Pre-AlphaFold & Early AlphaFold)

| Prediction Method | Benchmark Set | Near-Native Success Rate (Top Model) | Key Determinants of Success | Notable Limitations |
|---|---|---|---|---|
| Unbound Protein-Protein Docking [31] | 152 diverse heterodimers | 9% | Shape complementarity, electrostatics. | Poor performance on flexible targets and interfaces without clear co-evolution. |
| AlphaFold (Initial Multimer) [31] | 152 diverse heterodimers | 43% | Depth & quality of input MSA; co-evolutionary signals across the interface. | Low success on antibody-antigen complexes (0-11%) and T-cell receptor-antigen complexes. |
| AlphaFold-Multimer (v2.3) [32] | 254 DB5.5 targets (bound/unbound) | ~43% (overall) | Similar to AlphaFold, but trained on complexes. | Performance worsens with conformational flexibility; struggles with antibody-antigen (20% success). |

The Scientist's Toolkit: Essential Research Reagents

The experiments that established co-evolution's power relied on a suite of key computational and data resources.

Table 3: Essential Research Reagents for Co-evolution Based Structure Prediction

| Research Reagent / Resource | Type | Function in Experimental Protocol |
|---|---|---|
| Protein Data Bank (PDB) [1] [28] | Database | Primary repository of experimentally solved protein structures; used for training algorithms and as a source of templates and ground truth for benchmarking. |
| UniProt Knowledgebase (UniProtKB) [4] | Database | Central hub for protein sequence and functional information; provides the target sequences for prediction and is a source for finding homologs. |
| Multiple Sequence Alignment (MSA) [1] | Data Structure | The core input representing the evolutionary history of a protein family; the source from which co-evolutionary signals are extracted. |
| HP Lattice Model [30] | Computational Model | A simplified model that reduces computational complexity, allowing for the development and testing of optimization algorithms like Evolutionary Algorithms. |
| CASP/CAPRI Datasets [31] [32] | Benchmarking Resource | Curated sets of protein structures and complexes with held-out experimental structures; provide a blind, objective standard for comparing method accuracy. |
| Evolutionary Algorithm (EA) [30] | Computational Algorithm | A robust, population-based optimization method used to search the conformational space for low-energy structures, often guided by co-evolutionary restraints. |

Prior to AlphaFold, the field of computational protein structure prediction had firmly established the power of co-evolution. The key principle, that evolutionary covariation in multiple sequence alignments contains a strong signal of 3D structural proximity, was proven and quantitatively validated through rigorous benchmarking. Methodologies evolved from simplified lattice models to sophisticated integration of co-evolutionary restraints into physics-based and knowledge-based modeling pipelines. While these pre-AlphaFold methods were groundbreaking, they had clear limitations: performance was highly dependent on the depth and breadth of available homologous sequences, and they often fell short of experimental accuracy, especially for targets with few homologs or for complex assemblies such as antibody-antigen complexes. Nevertheless, by demonstrating that evolutionary data could powerfully constrain the protein folding problem, this era laid the essential groundwork for the deep learning revolution that would follow.

Methodologies and Real-World Applications: Implementing EAs for Complex Folding Problems

The field of computational protein structure prediction and design has undergone a revolutionary transformation, marked by a convergence of traditional evolutionary algorithms (EAs) and modern deep learning approaches. Evolutionary algorithms, inspired by biological evolution principles, have long been employed to navigate the complex conformational landscape of protein folding through mechanisms of mutation, selection, and recombination [33] [30]. These methods excel at exploring vast search spaces without requiring gradient information, making them particularly suitable for complex optimization problems where the relationship between sequence and structure is poorly understood [33]. Meanwhile, the recent emergence of neural network predictors such as AlphaFold2, RoseTTAFold, and ESMFold has demonstrated remarkable accuracy in predicting protein structures from amino acid sequences alone, often achieving results comparable to experimental methods [34] [35] [36].

The integration of these methodologies represents a paradigm shift in computational structural biology. Modern EA architectures increasingly incorporate structural profiles generated by neural networks to guide the evolutionary search more efficiently. This hybrid approach combines the explorative power of population-based evolutionary methods with the precise structural insights provided by deep learning models [35] [2]. The resulting frameworks can address both the "protein folding problem" (predicting structure from sequence) and the "inverse folding problem" (designing sequences that fold into specified structures) with unprecedented efficiency and accuracy [34] [2]. This comparative guide examines the architectural foundations, performance characteristics, and practical implementation considerations of these integrated approaches, providing researchers with the analytical framework needed to select appropriate methodologies for specific protein engineering challenges.

Theoretical Foundations: From Traditional EAs to Neural Integrations

Core Principles of Evolutionary Algorithms in Protein Folding

Evolutionary algorithms applied to protein folding typically employ simplified models to make the computationally complex problem tractable. The HP lattice model represents one such simplification, where amino acids are classified as hydrophobic (H) or polar (P), and the protein chain is modeled as a self-avoiding walk on a discrete lattice [30]. The objective is to find conformations that maximize hydrophobic contacts, mimicking the hydrophobic effect driving protein folding in nature. EAs navigate this conformational space using several key components:

- Population initialization: Generating an initial set of candidate conformations

- Fitness evaluation: Assessing conformations based on contact energy functions

- Selection: Preferring conformations with better fitness (lower energy)

- Variation operators: Applying crossover and mutation to create new conformations

- Local search: Refining conformations through moves like pull-move and k-site rotation [30]

The strength of traditional EAs lies in their robustness and ability to handle arbitrary energy functions without requiring differentiable objective functions [30]. They perform particularly well on complex optimization landscapes where gradient-based methods struggle, though they may suffer from slow convergence and computational intensity for large-scale problems [33] [37].
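The components listed above can be assembled into a minimal genetic algorithm for the 2D HP model. This is a sketch under stated assumptions, not the implementation from [30]: conformations are encoded as move strings over `U`, `D`, `L`, `R`; fitness is the number of non-bonded H-H contacts, with -1 assigned to walks that are not self-avoiding; selection uses size-3 tournaments with two elites; and the local search step (pull-move, k-site rotation) is omitted for brevity. All names and parameter values are hypothetical.

```python
import random

STEPS = {"U": (0, 1), "D": (0, -1), "L": (-1, 0), "R": (1, 0)}

def fitness(sequence, moves):
    """Count non-bonded H-H contacts; return -1 if the walk overlaps itself."""
    positions = [(0, 0)]
    for move in moves:
        dx, dy = STEPS[move]
        positions.append((positions[-1][0] + dx, positions[-1][1] + dy))
    if len(set(positions)) != len(positions):
        return -1
    index = {pos: i for i, pos in enumerate(positions)}
    contacts = 0
    for i, (x, y) in enumerate(positions):
        if sequence[i] != "H":
            continue
        for dx, dy in ((1, 0), (0, 1)):  # right and up only: count each pair once
            j = index.get((x + dx, y + dy))
            if j is not None and sequence[j] == "H" and abs(i - j) > 1:
                contacts += 1
    return contacts

def evolve(sequence, pop_size=60, generations=80, mutation_rate=0.1, seed=0):
    """Minimal GA for sequences of length >= 3; returns (best_moves, best_fitness)."""
    rng = random.Random(seed)
    n_moves = len(sequence) - 1

    def score(moves):
        return fitness(sequence, moves)

    # Population initialization: random walks plus one valid extended chain.
    population = ["".join(rng.choice("UDLR") for _ in range(n_moves))
                  for _ in range(pop_size - 1)]
    population.append("R" * n_moves)
    for _ in range(generations):
        # Elitism: carry the two fittest conformations over unchanged.
        next_gen = sorted(population, key=score, reverse=True)[:2]
        while len(next_gen) < pop_size:
            # Selection: two size-3 tournaments.
            parent_a = max(rng.sample(population, 3), key=score)
            parent_b = max(rng.sample(population, 3), key=score)
            # Variation: one-point crossover followed by per-move mutation.
            cut = rng.randrange(1, n_moves)
            child = parent_a[:cut] + parent_b[cut:]
            child = "".join(rng.choice("UDLR") if rng.random() < mutation_rate else m
                            for m in child)
            next_gen.append(child)
        population = next_gen
    best = max(population, key=score)
    return best, score(best)

sequence = "HPHPPHHPHH"
best_moves, best_fitness = evolve(sequence)
print(best_moves, best_fitness)
```

Because the fitness function is only ever called, never differentiated, it could be replaced by any other scoring scheme without changing the search loop, which is the robustness property described above.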

Neural Network Predictors as Fitness Landscapes

Modern neural network-based protein structure predictors have transformed the field by leveraging patterns learned from the Protein Data Bank (PDB). These models function as sophisticated fitness evaluators within EA frameworks, providing accurate structural assessments that guide the evolutionary process: