Benchmarking Evolutionary Analysis vs. Machine Learning for Protein Folding: A Comprehensive Overview for Biomedical Research

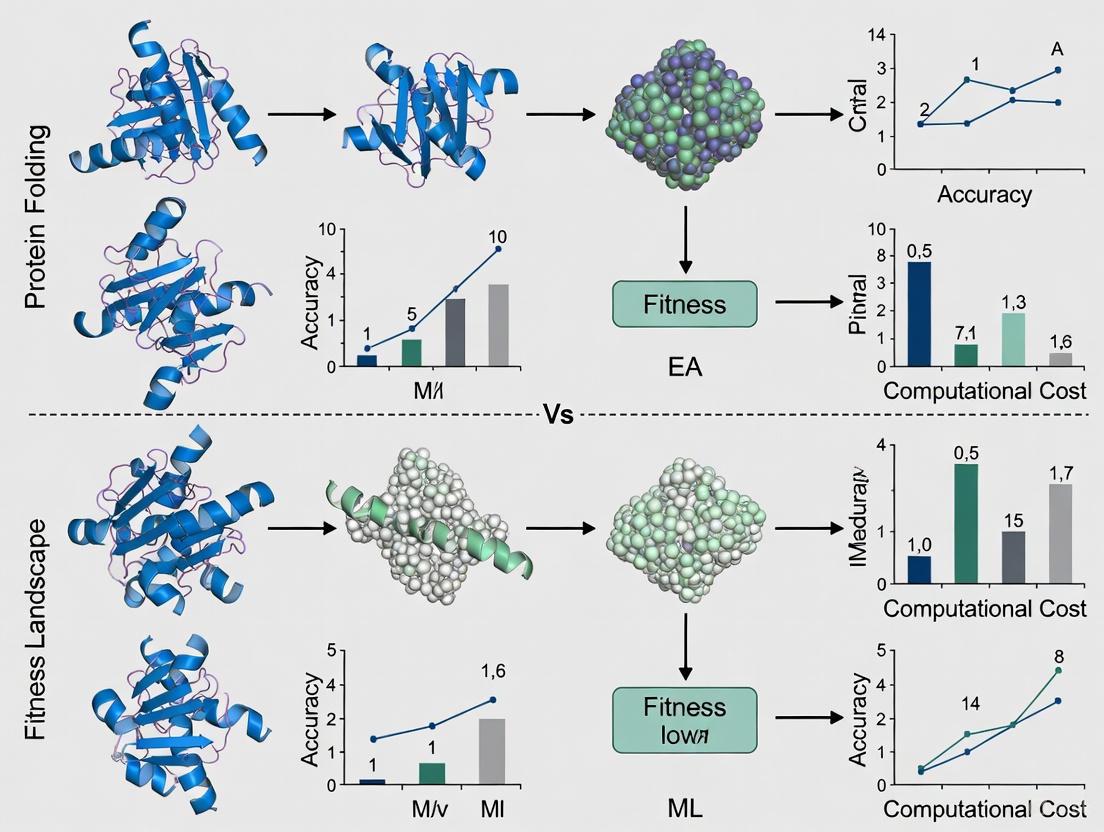

This article provides a systematic comparison of Evolutionary Analysis (EA) and Machine Learning (ML) methodologies for protein structure prediction, a critical task in drug discovery and synthetic biology.

Benchmarking Evolutionary Analysis vs. Machine Learning for Protein Folding: A Comprehensive Overview for Biomedical Research

Abstract

This article provides a systematic comparison of Evolutionary Analysis (EA) and Machine Learning (ML) methodologies for protein structure prediction, a critical task in drug discovery and synthetic biology. It explores the foundational principles of both approaches, detailing key algorithms and their real-world applications in areas like de novo protein design and drug-target interaction prediction. The content addresses significant challenges, including the poor performance of ML tools like AlphaFold on fold-switching proteins and the limitations of molecular docking, while presenting optimization strategies such as the ACE (Alternative Contact Enhancement) method and machine learning-rescored docking. Finally, it offers a rigorous validation framework, benchmarking the performance of leading ML models like AlphaFold, ESMFold, and OmegaFold on metrics of accuracy, speed, and resource consumption to guide researchers in selecting the optimal tool for their specific needs.

The Foundational Paradigms: Unpacking Evolutionary Signals and AI-Driven Prediction in Protein Folding

The Protein Folding Problem and Its Critical Role in Biotechnology and Medicine

The protein folding problem represents one of the most enduring challenges in structural biology. It centers on predicting the precise three-dimensional native structure of a protein from its linear amino acid sequence—a process fundamental to all biological function [1] [2]. This problem has profound implications, as a protein's structure directly determines its function; misfolded proteins are implicated in numerous neurodegenerative diseases, including Alzheimer's, Parkinson's, and ALS [2]. For decades, the scientific community has pursued two complementary computational approaches to tackle this problem: evolutionary algorithm (EA)-based methods, which often leverage co-evolutionary information and physical principles, and machine learning (ML)-based methods, which learn structure-prediction patterns from vast datasets of known protein structures [3] [4]. Understanding the relative strengths, limitations, and optimal applications of these paradigms is critical for researchers and drug development professionals aiming to harness computational power for biological discovery and therapeutic innovation.

Theoretical Framework and the Energy Landscape

The conceptual framework for understanding protein folding is the free energy landscape [5]. In this model, the folding process is visualized as a stochastic search across a multidimensional surface, where the protein spontaneously progresses from an ensemble of unfolded states (U) toward the native conformation (N)—the global free energy minimum [6] [5]. Evolution has selected for amino acid sequences whose energy landscapes are funnel-shaped, efficiently guiding the protein toward its functional structure while avoiding misfolding, aggregation, and long-lived metastable traps [5]. This landscape perspective provides a unified theoretical foundation for both EA and ML strategies, which can be understood as different methods for navigating this conformational space to identify the native state.

Benchmarking Computational Approaches: EA vs. ML

Machine Learning-Assisted Directed Evolution (MLDE)

Directed evolution (DE), a mainstay of protein engineering, mimics natural selection by iteratively applying mutagenesis and functional screening to accumulate beneficial mutations. However, its efficiency is severely hampered by epistasis—non-additive interactions between mutations that create rugged fitness landscapes with multiple local optima [7]. Machine learning-assisted directed evolution (MLDE) strategies address this by using supervised ML models trained on sequence-fitness data to predict high-fitness variants across the entire combinatorial landscape.

A systematic evaluation of MLDE across 16 diverse protein fitness landscapes demonstrated that MLDE consistently outperforms conventional DE [7]. The study found that the advantage of MLDE is most pronounced on landscapes that are challenging for traditional DE, particularly those with fewer active variants and more local optima. Key strategies include:

- Active Learning DE (ALDE): Uses iterative rounds of model prediction and experimental validation to refine exploration [7].

- Focused Training MLDE (ftMLDE): Enhances training set quality using zero-shot predictors, which leverage evolutionary, structural, and stability knowledge to prioritize informative variants without experimental data [7].

Table 1: Performance of MLDE Strategies Across Challenging Landscapes

| MLDE Strategy | Key Mechanism | Advantage over DE | Ideal Use Case |

|---|---|---|---|

| Standard MLDE | Single-round prediction using models trained on random sampling | Moderate | Landscapes with moderate epistasis |

| Active Learning (ALDE) | Iterative model refinement with experimental feedback | High | Resource-intensive screens; highly epistatic landscapes |

| Focused Training (ftMLDE) | Training set enriched by zero-shot predictors | Highest | Landscapes with sparse high-fitness variants |

Deep Learning for Structure Prediction

In the domain of structure prediction, deep learning models have set new standards for accuracy. These systems are trained on massive datasets of known structures from the Protein Data Bank (PDB) and leverage two key information sources: evolutionary history through Multiple Sequence Alignments (MSAs) and physico-chemical constraints [4].

Table 2: Benchmarking of Leading ML Protein Folding Tools

| Model | Key Innovation | Typical PLDDT* (Short Seq) | Inference Time (400 aa) | GPU Memory Use |

|---|---|---|---|---|

| AlphaFold | Transformer network integrating MSAs & physics | 0.89 [8] | ~210 sec [8] | ~10 GB [8] |

| ESMFold | Single-sequence inference using protein language model | 0.93 [8] | ~20 sec [8] | ~18 GB [8] |

| OmegaFold | Balanced design for accuracy & efficiency | 0.76 [8] | ~110 sec [8] | ~10 GB [8] |

| *PLDDT (Predicted Local Distance Difference Test): Confidence score (0-1) where >90 is high, <50 is low. |

A critical limitation of these ML models is their performance on intrinsically disordered proteins (IDPs) and regions (IDRs) [4]. Because they are trained predominantly on structured proteins from the PDB, they are biased toward single, stable conformations. When encountering IDPs, they often output low-confidence scores or unrealistic stable structures, highlighting a fundamental gap in their training data and design [4].

Inverse Folding and Non-Autoregressive Decoding

The inverse folding problem—designing sequences that fold into a target structure—is a critical task for protein engineering. Traditional autoregressive models generate sequences token-by-token, leading to teacher-forcing discrepancies and low efficiency [3]. The DIProT toolkit implements a non-autoregressive generative model that generates and refines the entire sequence in parallel [3]. This approach addresses the teacher-forcing problem and significantly improves generation efficiency, achieving a sequence recovery rate of 54.4% on the TS50 dataset and 50.6% on CATH4.2 [3]. DIProT integrates this model with a user-friendly interface and in-silico evaluation using ESMFold, forming a virtual design loop that allows researchers to incorporate prior knowledge and human feedback [3].

Experimental Protocols and Methodologies

Protocol for Equilibrium Unfolding

This classical experimental method determines the conformational stability of a protein by measuring its unfolding under denaturing conditions [6].

Materials:

- Protein Purification System: For producing pure, concentrated protein sample.

- Urea Stock Solution: High-purity (e.g., 10M) urea in the chosen buffer.

- Spectrofluorometer: Instrument for measuring fluorescence emission (e.g., PTI C-61).

- Circular Dichroism (CD) Spectropolarimeter: For validating secondary structure changes.

- Quartz Cuvettes: High-quality, matched cuvettes for UV spectroscopy.

Procedure:

- Prepare Denaturant Series: Create a series of solutions with incrementally increasing urea concentrations (e.g., 0 M to 8 M), ensuring constant buffer, salt, and protein concentration across all samples.

- Equilibration: Incubate all samples until equilibrium is reached. The required time must be determined empirically for each protein via time-course experiments.

- Reversibility Check: Confirm that the unfolding process is reversible by comparing the unfolding (native -> denatured) and refolding (denatured -> native) curves. The data should overlay.

- Data Collection:

- Fluorescence Emission: Acquire emission spectra (300-400 nm) following excitation at 280 nm (Trp/Tyr) or 295 nm (Trp-specific). The signal at a wavelength showing maximal change between native and unfolded states is plotted against urea concentration.

- Circular Dichroism: Measure CD signals (e.g., at 222 nm for alpha-helix content) across the same denaturant range.

- Data Analysis: Fit the resulting sigmoidal curve to a two-state or multi-state unfolding model to calculate the conformational free energy (ΔG) of unfolding.

Protocol for MLDE and Focused Training

This protocol outlines a computational workflow for implementing machine learning-assisted directed evolution.

Materials:

- Initial Variant Library: Experimental fitness data for a limited set of protein variants (training data).

- Zero-Shot Predictors: Computational tools (e.g., based on evolutionary, structural, or stability knowledge) to score sequences without experimental data.

- MLDE Software: Platforms like those described in [7] for model training and prediction.

Procedure:

- Initial Training Set Construction: Instead of random sampling, use one or more zero-shot predictors to select a focused training set of variants predicted to be high-fitness or informative.

- Model Training: Train a supervised machine learning model (e.g., regression) on the initial training set of sequences and their experimentally measured fitness values.

- Variant Prediction & Selection: Use the trained model to predict the fitness of all possible variants within the defined sequence space.

- Experimental Validation: Synthesize and experimentally test the top in silico predicted variants.

- Active Learning Loop (Optional): Incorporate the new experimental data into the training set and retrain the model for iterative rounds of prediction and validation.

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Reagents and Tools for Protein Folding Research

| Item / Reagent | Function / Application | Example / Key Property |

|---|---|---|

| Chaotropic Denaturants | Induce protein unfolding for equilibrium/kinetic studies. | Urea, Guanidinium HCl (GdmHCl) [6] |

| Reducing Agents | Prevent spurious disulfide bond formation during refolding. | Dithiothreitol (DTT), TCEP, β-mercaptoethanol [6] |

| Proteases | Probe native vs. non-native structure via specific cleavage patterns. | Used in large-scale refolding assays [2] |

| Molecular Chaperones | Assist in proper protein folding in cellular and in-vitro contexts. | Identify proteins unable to refold spontaneously [2] |

| Zero-Shot Predictors | Prioritize variant libraries for MLDE without experimental data. | Tools using evolutionary, structural, or stability data [7] |

| Structure Prediction Servers | In-silico validation of designed protein sequences. | AlphaFold, ESMFold, OmegaFold [8] [3] |

Workflow and Pathway Visualizations

EA vs. ML for Protein Engineering

ML Protein Folding Prediction Pipeline

The benchmarking of evolutionary algorithms and machine learning for solving the protein folding problem reveals a future of complementary integration rather than outright replacement. ML methods, particularly deep learning for structure prediction, have demonstrated unprecedented speed and accuracy for structured proteins, revolutionizing the field [8] [4]. Similarly, MLDE provides a powerful advantage over naive directed evolution on complex, epistatic fitness landscapes [7]. However, EA-based methods and physical principles remain crucial, especially in areas where ML currently fails, such as designing entirely novel folds, modeling intrinsically disordered proteins, and predicting the effects of mutations in de novo designed sequences [4] [2]. The most promising path forward lies in hybrid approaches that leverage the pattern-recognition power of ML with the principled exploration of EA and physical models. This synergistic strategy will be essential for unlocking the next frontier: not just predicting structures, but reliably designing novel proteins for therapeutic and biotechnological applications.

Evolutionary Analysis (EA) represents a powerful, principles-based approach for deciphering the language of protein sequences to infer structure and function. At its core, EA operates on the fundamental biological premise that evolutionary constraints preserve functionally important relationships within and between proteins. When applied to multiple sequence alignments (MSAs), EA can detect co-evolutionary signals—patterns of correlated mutations between residue positions—that reveal which amino acids interact to maintain structural stability and biological function. These signals provide a critical source of information for protein structure prediction, function annotation, and understanding molecular recognition in signaling networks.

The resurgence of EA in structural biology is particularly notable when benchmarked against emerging machine learning (ML) methods. While ML approaches like AlphaFold2 have demonstrated remarkable accuracy, they remain profoundly dependent on the evolutionary information encoded in MSAs as primary inputs [9] [10]. This dependency underscores that EA provides the foundational biological constraints that enable modern ML systems to achieve unprecedented performance, establishing EA not as a competing methodology but as an essential component in the computational structural biology toolkit.

Theoretical Foundations: From Sequences to Structural Constraints

Multiple Sequence Alignments as Evolutionary Records

A Multiple Sequence Alignment is a computational reconstruction of evolutionary history, arranging homologous protein sequences to highlight conserved and variable regions. The construction of informative MSAs begins with searching sequence databases (e.g., UniClust30, UniProt) using tools such as HHblits or Jackhmmer to collect homologous sequences [11] [12]. The quality and depth (number of sequences) of an MSA directly impacts the strength of detectable co-evolutionary signals; alignments with hundreds or thousands of diverse sequences typically yield more reliable predictions [10].

MSAs encode two primary types of evolutionary information:

- Conservation patterns: Residues critical for function or structural stability exhibit lower evolutionary variability.

- Correlation patterns: Pairs of residues that mutate in a coordinated manner often share spatial proximity or functional linkage.

The latter pattern forms the basis for detecting co-evolution and inferring structural constraints.

The Physical Basis of Co-evolution

Coevolution occurs when mutations at one residue position necessitate compensatory mutations at another position to maintain protein fitness. In structural contexts, this frequently arises from physical interactions between residues that form stabilizing contacts. When two residues interact closely—such as in hydrogen bonding, salt bridges, or hydrophobic packing—a mutation that alters side-chain properties at one position may require complementary changes at the interacting position to preserve the interaction geometry and stability [13]. Similarly, in protein-protein interactions, co-evolution maintains complementary surfaces for specific molecular recognition [13].

From an information theory perspective, co-evolving residue pairs contain mutual information about structural constraints. The computational challenge lies in distinguishing direct couplings (which reflect physical constraints) from indirect correlations (which arise from phylogenetic relationships or other confounding factors) [12].

Methodological Approaches: Detecting Co-evolution

Computational Frameworks for Co-evolution Detection

Several computational approaches have been developed to detect co-evolution from MSAs, each with distinct theoretical foundations and implementation strategies:

Table 1: Key Computational Methods for Detecting Co-evolution

| Method | Underlying Principle | Key Tools | Strengths |

|---|---|---|---|

| Direct Coupling Analysis (DCA) | Maximum entropy model estimating direct probabilities of residue pairs | mfDCA, plmDCA, GREMLIN, CCMPred | Direct estimation of coupling parameters; avoids indirect correlations [12] |

| Inverse Covariance Methods | Sparse inverse covariance estimation to identify conditional dependencies | PSICOV | Effectively filters out transitive correlations [12] |

| Meta-Predictors | Machine learning consensus from multiple methods | metaPSICOV, PConsC, PConsC2 | Improved precision by combining orthogonal prediction sets [12] |

| Evolutionary Trace | Phylogenetic tree-based identification of functionally important residues | Evolutionary Trace | Identifies specificity-determining residues; maps functional surfaces [13] |

Experimental Protocols for Method Validation

Protocol 1: Benchmarking Co-evolution Methods for Contact Prediction

- Dataset Curation: Select a diverse set of protein domains with known structures and minimal sequence similarity (e.g., <30% identity) [12].

- MSA Generation: For each target, build an MSA using HHblits (3 iterations, 99% sequence identity threshold, 60% coverage with master sequence) against the UniProt20 database [12].

- Contact Prediction: Apply co-evolution methods (e.g., metaPSICOV, PSICOV, GREMLIN) to predict residue-residue contacts.

- Performance Assessment:

- Define structural contacts as Cβ atoms (Cα for Gly) within 8Å [12].

- Exclude trivial contacts (sequence separation <5).

- Calculate precision as: Precision = True Positives / (True Positives + False Positives)

- Focus evaluation on long-range contacts (sequence separation >23) which are most informative for structure [12].

Protocol 2: De Novo Structure Prediction Using Co-evolution Constraints

- Contact Prediction: Generate top L/5 long-range contact predictions (where L is protein length) using the optimal co-evolution method [12].

- Fragment Assembly: Use contact restraints alongside secondary structure predictions in fragment-based structure prediction software (e.g., FRAGFOLD, Rosetta) [12].

- Decoy Generation: Generate thousands of structural decoys satisfying the contact constraints.

- Model Selection: Identify best models using:

- Satisfaction of predicted contacts

- Knowledge-based energy functions

- Structural quality assessment tools

Benchmarking studies have demonstrated that this approach can generate correct folds for a substantial proportion of targets when reliable MSAs are available [12].

Quantitative Benchmarking of Co-evolution Methods

Performance Comparison Across Protein Classes

Systematic evaluation of co-evolution methods reveals significant variation in performance across different protein structural classes:

Table 2: Performance Comparison of Co-evolution Methods Across SCOP Classes

| Method | All α (%) | All β (%) | α/β (%) | Membrane Proteins (%) | Overall Average Precision (%) |

|---|---|---|---|---|---|

| metaPSICOV Stage 2 | 38.5 | 61.2 | 59.8 | 32.1 | 52.9 |

| PConsC2 | 36.8 | 59.7 | 58.3 | 30.5 | 50.8 |

| GREMLIN | 35.2 | 57.4 | 56.1 | 28.9 | 48.9 |

| PSICOV | 33.7 | 55.8 | 54.6 | 27.3 | 47.1 |

| FreeContact | 30.4 | 52.1 | 51.3 | 24.8 | 43.2 |

Precision values represent the percentage of correct contacts among the top L/5 predictions for each protein class [12].

Key observations from these benchmarks include:

- All-β and α/β proteins generally yield higher precision predictions, likely due to stronger co-evolutionary signals from extensive residue contacts.

- All-α and membrane proteins present greater challenges, with precision values typically 10-20% lower [12].

- Consensus methods (metaPSICOV, PConsC2) consistently outperform individual methods by leveraging complementary strengths.

MSA Depth Requirements for Reliable Prediction

The relationship between MSA depth (number of effective sequences) and prediction accuracy follows a nonlinear pattern:

- Minimum threshold: Approximately 5×L effective sequences (where L is protein length) are needed for basic signal detection [12].

- Saturation point: Diminishing returns observed beyond 50×L sequences for most methods.

- Quality dependence: MSA diversity (sequence variation) proves equally important as raw sequence count.

EA in Modern Protein Structure Prediction Pipelines

Integration with Deep Learning: The AlphaFold2 Paradigm

AlphaFold2 represents the most successful integration of EA principles with deep learning architecture. Its neural network explicitly reasons about evolutionary relationships through several key components:

- Evoformer Architecture: A novel neural network block that jointly processes MSA and pair representations, enabling continuous information exchange between evolutionary and spatial reasoning [9].

- Triangle Attention Mechanisms: Enforce geometric consistency in pairwise predictions through triangle multiplicative updates and triangle attention [9].

- Iterative Refinement: The recycling process allows multiple rounds of structural refinement using the same network [10].

The critical role of MSAs in AlphaFold2's performance is evidenced by:

- Strong correlation between MSA depth and prediction accuracy (pLDDT score) [10].

- Dramatic performance reduction when using single sequences instead of MSAs [14].

- The system's ability to identify co-evolving residue pairs and translate them into spatial constraints [10].

MSA-Free Approaches: The Emerging Role of Protein Language Models

Recent advances in protein language models (pLMs) like ESM represent a shift toward implicit evolutionary learning. These models:

- Pre-training: Learn statistical patterns from billions of protein sequences using self-supervised objectives (masked language modeling) [14] [15].

- Embeddings: Encode evolutionary constraints in dense vector representations without explicit MSAs [15].

- Performance: Approach MSA-based methods for targets with many homologs but excel at speed, processing sequences in seconds rather than minutes [14].

Hybrid approaches like HelixFold-Single combine pLMs with geometric learning components from AlphaFold2, demonstrating competitive accuracy on targets with large homologous families while being significantly faster than MSA-based methods [14].

Research Reagents and Computational Tools

Table 3: Essential Research Reagents and Computational Tools for Evolutionary Analysis

| Tool/Resource | Type | Function | Application Context |

|---|---|---|---|

| HHblits | Software | Rapid MSA generation via iterative hidden Markov model searches | Building deep MSAs from sequence databases [12] |

| UniProt20/UniClust30 | Database | Curated protein sequence databases with clustered sequences | Source of homologous sequences for MSA construction [11] [12] |

| metaPSICOV | Software | Meta-predictor combining multiple co-evolution methods | High-precision contact prediction from MSAs [12] |

| GREMLIN/CCMPred | Software | plmDCA implementation for direct coupling analysis | Residue-residue contact prediction [12] |

| AlphaFold2 | Software | End-to-end deep learning structure prediction | Protein 3D structure prediction using MSA inputs [9] [10] |

| ESMFold | Software | Protein language model for structure prediction | Fast structure prediction without explicit MSA generation [14] |

Evolutionary Analysis remains an indispensable methodology for protein structure and function prediction, even as machine learning approaches dominate recent advancements. The core principles of co-evolution—detecting evolutionarily coupled residues to infer structural constraints—provide biologically grounded signals that enhance the interpretability and reliability of computational predictions. Rather than being supplanted by ML, EA has been productively integrated into state-of-the-art systems where it continues to provide the evolutionary context essential for accurate structure prediction.

Future directions will likely focus on:

- Hybrid approaches combining explicit co-evolution analysis with protein language models.

- Improved contact precision for challenging protein classes (membrane proteins, all-α).

- Integration with experimental data to validate functional predictions from co-evolution analysis.

For researchers benchmarking EA against pure ML approaches, the critical insight is that these methodologies are increasingly synergistic rather than competitive, with EA providing the fundamental biological constraints that guide and validate ML-based predictions.

Workflow Visualization

Evolutionary Analysis Workflow

The field of protein structure prediction has undergone a profound transformation, shifting from a reliance on physics-based simulations to the dominance of deep learning methodologies. This revolution, catalyzed by artificial intelligence (AI), has not only solved a decades-old scientific challenge but has also fundamentally reshaped the toolkit available to researchers and drug development professionals. This technical guide examines this paradigm shift within the context of benchmarking evolutionary algorithm (EA)-inspired methods against machine learning (ML) approaches. We detail the core architectures, provide quantitative performance comparisons, and outline experimental protocols that highlight how ML has overcome the inherent limitations of classical physics-based and EA-driven protein folding models.

The Classical Era: Physics-Based Models and Evolutionary Principles

Before the rise of deep learning, computational protein structure prediction relied heavily on physics-based principles and evolutionary information. These methods were grounded in the paradigm that a protein's native state corresponds to its global free energy minimum [16]. A key breakthrough was the development of fragment assembly, an approach pioneered by methods like Rosetta and QUARK [17]. These methods operated on the principle that local sequence segments prefer local structures found in the database of known proteins. They would identify short (3-9 residue) structural fragments from experimentally solved structures based on sequence and local predicted structure similarity, and then assemble these fragments into full-length models using Monte Carlo or Replica-Exchange Monte Carlo (REMC) simulations guided by knowledge-based or physics-based force fields [17].

Another cornerstone of pre-ML methods was the use of evolutionary coupling analysis derived from Multiple Sequence Alignments (MSAs). The hypothesis was that pairs of residues in contact within a protein's structure would exhibit correlated mutations across evolution. Early methods used simple metrics like mutual information, but accuracy was low due to an inability to distinguish direct from indirect couplings. The introduction of global statistical models, particularly Direct Coupling Analysis (DCA) and Markov Random Fields (MRFs), represented a significant advance by simultaneously considering all pairwise interactions to infer direct contacts, thereby improving prediction accuracy [17].

Despite their ingenuity, these classical approaches faced fundamental limitations. Physics-based force fields were approximations, and accurate computation of the full energy landscape was challenging, often leading to misfolded designs in vitro [16]. Furthermore, the conformational sampling required by methods like fragment assembly was computationally intensive and time-consuming, restricting throughput and the exploration of novel protein folds [16] [17].

Table 1: Comparison of Classical Physics-Based/EA and Modern ML Protein Folding Methods

| Feature | Classical Physics-Based/EA Methods | Modern ML Methods |

|---|---|---|

| Core Principle | Free energy minimization, fragment assembly, evolutionary coupling [16] [17] | Learning sequence-structure mappings from data using deep neural networks [16] [18] |

| Key Algorithms | Rosetta, QUARK, I-TASSER, DCA [17] | AlphaFold2, RoseTTAFold, ESMFold, ProteinMPNN [18] [19] |

| Primary Input | Amino acid sequence, MSAs, predicted local features [17] | Amino acid sequence (and MSAs for some models) [18] [20] |

| Sampling Method | Monte Carlo, REMC, gradient descent [17] | End-to-end forward pass, inference [18] [21] |

| Computational Cost | High (hours to days per target) [16] | Relatively low (seconds to minutes per target) [20] |

| Accuracy (on single domains) | Moderate, struggled with distant homology [17] | High, often approaching experimental accuracy [18] [21] |

| Strength | Physics-based rationale, ability to explore de novo folds | Speed, accuracy, ability to leverage evolutionary scale data |

Diagram 1: Classical protein folding workflow, illustrating the iterative, sampling-based approach.

The Deep Learning Revolution: Architectures and Breakthroughs

The application of deep learning to protein folding represents a fundamental shift from iterative simulation to direct prediction. This transition was marked by the critical assessment of protein structure prediction (CASP) competitions, where ML methods demonstrated unprecedented accuracy.

Key Architectural Innovations

The success of modern ML models stems from several key architectural innovations:

Attention Mechanisms and Transformers: AlphaFold2's core innovation was the Evoformer, a specialized transformer module that processes both the MSA and a pair representation of residues [21]. This allows the model to reason about long-range interactions and co-evolutionary signals simultaneously and globally, overcoming the limitations of earlier local and pairwise statistics like DCA.

End-to-End Differentiable Learning: Unlike classical pipelines with separate stages for feature generation, sampling, and scoring, models like AlphaFold2 are trained end-to-end [18] [17]. This means the entire network is optimized for the final task—producing accurate atomic coordinates—allowing it to learn complex, implicit mappings from sequence to structure that were previously manually engineered.

Equivariance: RoseTTAFold and other models incorporate principles of equivariance, ensuring that their predictions are transformationally invariant (e.g., rotating the input sequence should not change the predicted structure, only its orientation in space). This built-in geometric awareness is crucial for robust structure prediction [18].

The impact of these innovations is best illustrated by the dramatic performance leap in CASP. As shown in Diagram 2, AlphaFold2 achieved a score nearly three times that of the top-tier methods from just six years prior, a milestone considered to have largely solved the single-domain protein folding problem [21].

Diagram 2: Simplified AlphaFold2-style architecture highlighting the Evoformer and end-to-end learning.

From Structure Prediction to Protein Design

The revolution quickly expanded from prediction to design. The inverse problem—finding a sequence that folds into a desired structure—has been tackled by new deep learning models. Inverse folding methods, such as ProteinMPNN and ESM-IF, take a backbone structure as input and generate sequences that are likely to fold into it [18]. This has dramatically improved the success rate and efficiency of de novo protein design.

Furthermore, structure prediction models themselves have been repurposed as generative models. Tools like RFdiffusion use diffusion models, trained on the principles of AF2, to generate novel protein structures either unconditionally or conditioned on specific functional motifs, opening the door to designing proteins not seen in nature [18].

Table 2: Key ML Models in Protein Structure Prediction and Design

| Model | Primary Function | Core Innovation | Typical Use Case |

|---|---|---|---|

| AlphaFold2 [18] [21] | Structure Prediction | Evoformer, end-to-end learning | High-accuracy single-structure prediction from sequence |

| RoseTTAFold [18] | Structure Prediction | 3-track network (sequence, distance, 3D) | Accurate structure prediction, basis for design tools |

| ESMFold [18] [19] | Structure Prediction | Protein language model (single-sequence) | Fast prediction for orphan sequences, high-throughput |

| SimpleFold [20] | Structure Prediction | Flow-matching with standard transformers | Challenges need for complex, domain-specific architectures |

| ProteinMPNN [18] | Inverse Folding/Design | Message-Passing Neural Network | Robust sequence design for given backbones |

| RFdiffusion [18] | De Novo Design | Diffusion model based on RoseTTAFold | Generating novel protein structures and binders |

Experimental Protocols and Benchmarking

Benchmarking the performance of ML against classical methods requires rigorous experimental protocols. The community-wide standard is the CASP (Critical Assessment of protein Structure Prediction) experiment, a biennial blind trial where groups predict the structures of recently solved but unpublished proteins [21].

CASP Benchmarking Protocol

- Target Selection: Organizers release amino acid sequences of proteins whose structures have been experimentally determined but not published.

- Model Submission: Research teams worldwide submit their predicted 3D models within a set timeframe.

- Accuracy Assessment: Predictions are compared to the experimental ground truth using metrics like the Global Distance Test (GDT_TS), which measures the percentage of residues placed within a threshold distance of their correct position [21] [17].

The results from CASP13 (2018) and CASP14 (2020) quantitatively demonstrated ML's supremacy. As shown in Diagram 3, AlphaFold2's median GDT_TS score of ~92 for the hardest targets was comparable to experimental methods, far exceeding the best classical methods [21].

Diagram 3: Qualitative performance leap of ML models in CASP.

Protocol for Iterative Protein Optimization

Beyond static structure prediction, ML guides functional protein optimization. The DeepDE algorithm provides a protocol for directed evolution guided by deep learning [22]:

- Initial Library Construction: Generate a diverse library of ~1,000 protein mutants (e.g., triple mutants) and measure their fitness (e.g., fluorescence for GFP).

- Model Training: Train a supervised deep learning model on the sequence-fitness data from the initial library.

- In Silico Exploration: Use the trained model to virtually screen a vast space of triple mutants and select top candidates for synthesis.

- Experimental Validation: Synthesize and test the predicted high-performance mutants.

- Iteration: Incorporate the new experimental data into the training set and repeat steps 2-4.

This protocol, applied to GFP, achieved a 74.3-fold increase in activity in just four rounds, far surpassing conventional directed evolution [22]. It demonstrates how ML mitigates the "combinatorial explosion" of sequence space by learning a predictive fitness landscape from limited but smartly chosen data.

The Scientist's Toolkit: Research Reagent Solutions

The modern computational protein folding and design workflow relies on a suite of software tools and databases that function as essential "research reagents."

Table 3: Essential Research Reagents for ML-Driven Protein Science

| Item Name | Type | Function / Application | Access |

|---|---|---|---|

| AlphaFold2 [18] [21] | Software Model | High-accuracy protein structure prediction from sequence. | Open source; also via AlphaFold DB |

| RoseTTAFold [18] | Software Model | Accurate structure prediction; base network for design tools like RFdiffusion. | Open source |

| ProteinMPNN [18] | Software Model | Inverse folding for designing sequences that fold into a given backbone. | Open source |

| ESMFold [18] [19] | Software Model | Fast, single-sequence-based structure prediction using a protein language model. | Open source |

| AlphaFold DB [16] | Database | Repository of pre-computed AlphaFold2 predictions for over 200 million sequences. | Publicly accessible |

| PDB | Database | Primary repository for experimentally determined protein structures; used for training and validation. | Publicly accessible |

| FiveFold Framework [19] | Ensemble Method | Generates conformational ensembles by combining five algorithms; useful for disordered proteins and drug discovery. | Methodological framework |

Future Directions and Challenges

Despite its triumphs, the ML revolution continues to confront significant challenges. A primary limitation is the prediction of multiple conformational states. Most models, including AlphaFold2, predict a single, static structure, missing the intrinsic dynamics essential for the function of many proteins, such as enzymes and intrinsically disordered proteins (IDPs) [19]. Emerging ensemble methods like FiveFold, which aggregate predictions from multiple algorithms (AF2, RoseTTAFold, ESMFold, etc.), represent a promising approach to modeling conformational diversity and have shown utility in studying IDPs like alpha-synuclein [19].

Another frontier is the accurate modeling of protein complexes and interactions. While progress is being made, predicting the precise 3D structure of large multi-protein assemblies remains a active area of research. Finally, the "inverse folding" problem, while advanced by tools like ProteinMPNN, is not fully solved. Ensuring that designed sequences are highly designable (i.e., fold reliably into the target structure) and functional requires robust metrics and often iterative experimental validation [18]. The fusion of physics-based principles with deep learning models may hold the key to creating generative models that more accurately characterize the full energy landscape of proteins [18].

The Vast and Constrained Protein Functional Universe

The prediction of a protein's three-dimensional structure from its amino acid sequence stands as a fundamental challenge in structural biology, essential for understanding biological function and accelerating drug discovery. For decades, two distinct computational philosophies have addressed this problem: Evolutionary Algorithms (EA), which leverage physical principles and global optimization to explore conformational space, and Machine Learning (ML), which infers structural patterns from evolutionary information and known protein structures. The recent revolutionary success of deep learning models like AlphaFold2 has dramatically shifted the landscape, establishing a new benchmark for accuracy [9]. However, the core question remains: to what extent can purely physical, search-based methods (EA) compete with or complement data-driven, inference-based methods (ML) in providing accurate, generalizable, and functionally insightful protein models? This review provides a comprehensive benchmarking overview of these competing paradigms, dissecting their methodologies, accuracies, computational demands, and applicability to challenging protein classes like fold-switching proteins and complexes.

Table 1: Core Paradigms in Protein Structure Prediction

| Feature | Evolutionary Algorithm (EA) Approach | Machine Learning (ML) Approach |

|---|---|---|

| Core Philosophy | Physical search-based optimization | Data-driven pattern inference |

| Primary Input | Amino acid sequence & force fields | Amino acid sequence & Multiple Sequence Alignments (MSAs) |

| Representative Method | USPEX [23] | AlphaFold2 [9], ESMFold, OmegaFold [8] |

| Key Strength | Physical realism; potential for novel fold discovery | Unprecedented speed and accuracy for single domains |

| Key Limitation | Computationally intractable for large proteins; force field inaccuracy [23] | Limited by training data; struggles with multiple conformations [24] |

Methodological Deep Dive: Experimental Protocols

The Machine Learning (ML) Pipeline

Modern ML methods, such as AlphaFold2, employ a sophisticated end-to-end neural network architecture. The process begins with input preparation, where the primary amino acid sequence is used to generate a Multiple Sequence Alignment (MSA) and a set of homologous sequences [9]. These are fed into the Evoformer module, a novel neural network block that acts as the system's "engine." The Evoformer processes the inputs through attention-based mechanisms to reason about the spatial and evolutionary relationships between residues, producing a rich representation of the protein's potential structure [9]. This representation is then passed to the structure module, which introduces an explicit 3D structure. Starting from a trivial initial state, this module iteratively refines the atomic coordinates of all heavy atoms through a process called "recycling," resulting in a highly accurate protein structure with precise atomic details [9]. The network is trained end-to-end using a combination of structural losses, including those that emphasize the orientational correctness of residues.

Figure 1: The core workflow of an ML-based protein structure prediction pipeline, as exemplified by AlphaFold2.

The Evolutionary Algorithm (EA) Pipeline

In contrast, the Evolutionary Algorithm approach, as implemented in methods like USPEX, treats structure prediction as a global optimization problem. The algorithm starts with an initial population of random protein conformations. Each structure in this population is then relaxed using molecular mechanics force fields (e.g., Amber, CHARMM, or Oplsaal) via molecular dynamics engines like Tinker or Rosetta to locally minimize its energy [23]. The fitness of each individual in the population is evaluated based on its potential energy or scoring function. A selection process then favors the lowest-energy (fittest) structures to proceed to the next generation. To create new candidate structures, USPEX employs specialized variation operators that generate "offspring" through operations mimicking genetic evolution, such as crossover and mutation. This cycle of selection, variation, and fitness evaluation is repeated for numerous generations, allowing the population to evolve toward conformations with progressively lower energy, ideally converging on the native protein structure [23].

Figure 2: The iterative workflow of an Evolutionary Algorithm (EA) for protein structure prediction.

Benchmarking Performance: Quantitative Comparisons

Accuracy and Reliability Metrics

The performance of protein structure prediction methods is quantitatively assessed using several key metrics. The Global Distance Test (GDT) is a common measure, with a GDT_TS score above 90 generally considered competitive with experimental methods [8]. The predicted Local Distance Difference Test (pLDDT) is a per-residue confidence score where values above 90 indicate high accuracy [9]. For protein complexes, interface-specific metrics like ipTM (interface predicted Template Modeling score) and pDockQ (predicted DockQ score) are used, with higher scores indicating more reliable protein-protein interactions [25].

Table 2: Benchmarking of ML-based Protein Folding Tools [8]

| Protein Length | Method | Running Time (s) | PLDDT | GPU Memory |

|---|---|---|---|---|

| 50 | ESMFold | 1 | 0.84 | 16 GB |

| OmegaFold | 3.66 | 0.86 | 6 GB | |

| AlphaFold (ColabFold) | 45 | 0.89 | 10 GB | |

| 400 | ESMFold | 20 | 0.93 | 18 GB |

| OmegaFold | 110 | 0.76 | 10 GB | |

| AlphaFold (ColabFold) | 210 | 0.82 | 10 GB | |

| 800 | ESMFold | 125 | 0.66 | 20 GB |

| OmegaFold | 1425 | 0.53 | 11 GB | |

| AlphaFold (ColabFold) | 810 | 0.54 | 10 GB |

The benchmarking data reveals a critical trade-off between speed, accuracy, and resource consumption. For shorter sequences (e.g., 50 residues), OmegaFold provides an optimal balance of high accuracy (PLDDT 0.86) and resource efficiency [8]. For longer sequences (e.g., 400 residues), ESMFold demonstrates remarkable speed (20s) and high accuracy (PLDDT 0.93), while AlphaFold remains robust but computationally heavier. In direct performance tests, the EA method USPEX successfully found low-energy conformations for proteins up to 100 residues, with energies comparable to or lower than those generated by the established physical method Rosetta Abinitio [23]. However, the study concluded that current force fields remain a limiting factor for accurate blind prediction via EA.

Performance on Complexes and Alternative Folds

While ML methods excel at predicting single, stable domains, they exhibit significant limitations when proteins adopt multiple conformations. A systematic study found that AlphaFold2 predicts only one conformation for 92% of known dual-folding proteins [24]. This is a critical constraint in the "protein functional universe," as fold-switching proteins are involved in key biological processes like circadian rhythms and transcription regulation [24]. The underlying issue is that standard ML models are trained to output a single, static structure. In contrast, EA methods, by their nature, can sample a diverse landscape of conformations, potentially capturing metastable states. To address this, new methods like Alternative Contact Enhancement (ACE) have been developed, which uncover coevolutionary signatures for both conformations of fold-switching proteins, successfully revealing dual-fold coevolution in 56 out of 56 tested proteins [24].

For protein complexes, AlphaFold3 and ColabFold with templates perform similarly, both outperforming the template-free ColabFold. In assessments of heterodimeric complexes, AlphaFold3 produced the highest fraction of 'high-quality' models (39.8%) and the lowest fraction of 'incorrect' models (19.2%) [25]. The ipTM score and Model Confidence were identified as the most reliable metrics for evaluating these complex predictions [25].

Table 3: Key Resources for Protein Structure Prediction and Validation

| Resource / Reagent | Type | Function / Application |

|---|---|---|

| AlphaFold Database / AlphaFold3 | Software/Web Server | Predicts protein structures and complexes with high accuracy [9] [25] |

| ColabFold | Software | Accessible, cloud-based implementation of AlphaFold2 [25] |

| ESMFold & OmegaFold | Software | Alternative ML tools offering speed/resource advantages [8] |

| USPEX | Software | Evolutionary Algorithm for ab initio protein structure prediction [23] |

| GREMLIN | Software | Infers co-evolved amino acid contacts from MSAs for fold-switching analysis [24] |

| Rosetta (REF2015) | Software Suite | Force field & algorithms for structure prediction & design; used for relaxation & scoring [23] |

| Tinker (Amber/CHARMM) | Software Suite | Molecular dynamics package for structure relaxation & energy calculation [23] |

| GPCRmd, ATLAS | Database | Specialized MD databases for validating dynamics of specific protein families [26] |

| DockQ, pDockQ | Metric | Standardized scores for evaluating quality of protein-protein interfaces [25] |

The benchmarking of Evolutionary Algorithms and Machine Learning for protein folding reveals a nuanced landscape. ML methods, particularly AlphaFold2 and its successors, have achieved unprecedented accuracy for predicting single-domain protein structures, largely solving this aspect of the problem [9] [27]. However, the functional universe of proteins is vast and constrained not by single states but by dynamic conformational landscapes. Here, current ML models show a significant blind spot, often failing to predict functionally critical alternative folds and dynamic conformational changes [24] [26].

EA methods offer a fundamentally different approach based on physical principles and conformational search, proving capable of finding deep energy minima and potentially capturing structural diversity [23]. Their performance, however, is currently limited by computational cost for large proteins and the accuracy of existing force fields. The future of the field lies not in a winner-takes-all outcome but in the integration of both paradigms. ML models can provide powerful starting points and energy surrogates, while EA and physical simulations can be used to refine structures and explore conformational ensembles. Overcoming current limitations will require developing next-generation models that natively predict ensembles, better integrating biophysical constraints into ML, and creating richer training datasets that capture structural diversity, ultimately unlocking a deeper understanding of the vast and constrained protein functional universe.

Protein folding represents one of the most fundamental challenges in computational biology, standing at the intersection of physics, biology, and computer science. The process by which a linear amino acid chain spontaneously folds into a precise three-dimensional structure remains only partially understood, despite decades of research. Two conceptual frameworks—Evolutionary Algorithms (EA) and Machine Learning (ML)—offer distinct approaches to navigating this complex problem space. This technical guide examines the core challenges of combinatorial explosion and evolutionary myopia that constrain both methodologies, providing researchers with experimental protocols, analytical frameworks, and benchmarking data essential for advancing protein folding research.

The protein folding problem is intrinsically linked to astronomical combinatorial complexity. For a typical 100-amino acid protein, the theoretical sequence space encompasses 20^100 possible configurations—a number that exceeds the count of atoms in the observable universe [28]. This combinatorial explosion presents an insurmountable computational barrier for exhaustive search algorithms. Meanwhile, evolutionary myopia describes the limited predictive generalizability of models trained on narrow biological contexts, failing to capture the full diversity of protein structural principles across the tree of life.

Combinatorial Explosion in Protein Folding

The Fundamental Combinatorial Challenge

Combinatorial explosion manifests throughout protein structure prediction and design. The astronomical size of protein sequence spaces makes comprehensive exploration computationally intractable. As Levitt noted in his seminal review, protein folding's "endgame" involves the ordering of amino acid side-chains into a well-defined, closely packed configuration, a process hampered by combinatorial explosion in the number of possible configurations [29]. This challenge is not merely theoretical; it directly impacts the feasibility of computational protein design and structure prediction.

Recent research demonstrates that the genetic architecture of protein stability is remarkably simple despite this combinatorial complexity. Energy models reveal that protein genotypes can be accurately predicted using additive free energy changes with only a small contribution from pairwise energetic couplings [28]. This simplification enables navigation of high-dimensional sequence spaces that would otherwise be computationally prohibitive.

Table 1: Scale of Combinatorial Challenges in Protein Folding

| Aspect | Combinatorial Complexity | Computational Implications |

|---|---|---|

| Sequence Space for 100-aa Protein | 20^100 possible sequences | Exhaustive search impossible; requires heuristic methods |

| Side-chain Packing Configurations | Exponential growth with protein size | Endgame folding requires sophisticated search algorithms [29] |

| Mutational Combinations | 2^34 ≈ 1.7×10^10 for 34 mutation sites | Experimental exploration of high-order mutants extremely challenging [28] |

| Functional Sequence Fraction | <0.2% of 10-aa variants folded (additive model) | Random sampling yields mostly non-functional proteins [28] |

Thermodynamic Frameworks and Dimensional Hardness

A novel thermodynamic theory of intelligence frames combinatorial explosion as the central computational bottleneck in high-dimensional systems. This framework introduces a dimensional hardness parameter (Hd = Γ·τ / C(ρ)·log₂ Deff), where Γ represents entropy flow, τ is the coherence timescale, C(ρ) is the system's coherence, and Deff is the effective dimensionality of the configuration space [30]. Systems maintain structure and adaptivity when Hd <1 but collapse under combinatorial explosion when Hd >1.

This theoretical model has practical implications for protein folding simulations. All-atom molecular dynamics simulations face exponential growth in computational requirements as protein size increases. Recent simulations of protein misfolding reveal that entanglement status changes—where protein sections loop around each other incorrectly—represent a persistent class of misfolding that evades cellular quality control systems [31]. These misfolds are particularly stable and difficult to correct, requiring backtracking and unfolding several steps to correct entanglement status.

Diagram 1: Protein Folding and Misfolding Pathways

Evolutionary Myopia in Biological Systems

Conceptual Framework and Definition

Evolutionary myopia describes the phenomenon where biological systems optimized for immediate fitness advantages develop limitations in long-term adaptability. In protein science, this manifests as limited generalizability of structural principles across phylogenetic boundaries and path dependencies in evolutionary trajectories that constrain future adaptive potential.

This concept finds parallels in human vision research, where myopia development involves complex gene-environment interactions shaped by evolutionary history. Studies of myopia-related genes have detected signatures of adaptation in vision and light perception pathways, with evidence that local adaptation to different light environments during human migration diversified the genetic basis of myopia [32]. This evolutionary specialization potentially contributes to discrepancies in myopia prevalence across modern populations.

Experimental Evidence from Multi-Omics Studies

Integrative transcriptome and proteome analyses of lens-induced myopia in mouse models reveal the molecular basis of this evolutionary mismatch. Researchers identified 175 differentially expressed genes and 646 differentially expressed proteins between treated and control eyes, with insulin-like growth factor 2 mRNA binding protein 1 (Igf2bp1) emerging as a convincing biomarker [33]. The low correlation between transcriptomic and proteomic data highlights the complex regulatory layers between genetic predisposition and phenotypic expression.

Proteomic profiling of form-deprivation myopia in guinea pigs further elucidated 348 differentially expressed proteins in the vitreous body, with calcium signaling pathways playing a critical role in mediating eye changes [34]. These findings demonstrate how evolutionary adaptations to ancient light environments manifest as vulnerabilities under modern conditions.

Table 2: Evolutionary Myopia Signatures in Protein-Related Systems

| System | Evolutionary Adaptation | Modern Vulnerability | Molecular Mechanism |

|---|---|---|---|

| Human Vision | Rhodopsin molecular diversity for different light environments [32] | High myopia prevalence in altered light conditions | Phototransduction pathway genetic variants |

| Protein Fold Stability | Additive energy models with sparse couplings [28] | Misfolding diseases in aging populations | Entanglement errors evading quality control [31] |

| Cellular Quality Control | Efficient degradation of most misfolded proteins | Persistent entanglement misfolds | Buried misfolds invisible to surveillance [31] |

Experimental Methodologies and Benchmarks

High-Dimensional Sequence Space Sampling

Confronting combinatorial explosion requires sophisticated experimental designs that enrich for functional protein sequences. Methodologies for sampling high-dimensional sequence spaces include:

Library Design and Synthesis: Researchers constructed a library containing all combinations of 34 selected mutants (2^34 ≈ 1.7×10^10 genotypes) using a heuristic technique that enriches for conserved fold and function. For each possible starting single amino acid substitution, selections iteratively identified further substitutions that simultaneously maximize the resulting combinatorial mutant's predicted abundance and binding to an interaction partner [28].

AbundancePCA Measurement: Cellular abundance of sampled genotypes was quantified using highly validated pooled selection and abundance protein fragment complementation assays. This approach enabled triplicate abundance measurements for 129,320 variants (0.0007% of sequence space) with high reproducibility (Pearson's r > 0.91) [28].

Energy Model Inference: Additive free energy models were trained on abundance and ligand binding selections quantifying effects of single and double amino acid mutants. Model parameters included Gibbs free energy terms for wild type (ΔGf) and single substitutions (ΔΔGf), with a two-parameter transformation relating folded fraction to AbundancePCA fitness [28].

Diagram 2: High-Throughput Protein Stability Mapping

Classification Benchmarking Frameworks

Large-scale benchmark studies assessing tools for classifying protein-coding and non-coding transcripts reveal systematic challenges in biological sequence analysis. A comprehensive evaluation of 24 tools producing >55 models on 135 datasets identified key bottlenecks [35]:

- Lack of standardized training sets and reliance on homogeneous training data

- Gradual changes in annotated data and absence of gold standards

- Lower performance of end-to-end deep learning models compared to hybrid approaches

- Presence of false positives and negatives in benchmark datasets

These limitations directly impact the assessment of EA versus ML approaches for protein folding. Benchmarking studies must account for dataset bias, with performance metrics contextualized against training data composition and evolutionary distance between training and test cases.

EA vs ML: Comparative Analysis for Protein Folding

Performance Benchmarks and Metrics

The revolutionary success of AlphaFold2 since its 2020 debut demonstrates ML's transformative potential for protein structure prediction [36] [37]. However, evolutionary algorithms maintain distinct advantages for specific protein design challenges. Benchmarking reveals complementary strengths:

Generalization Capability: ML models like AlphaFold achieve remarkable accuracy when predicting structures homologous to training examples but face challenges with entirely novel folds. Evolutionary algorithms employing energy-based scoring functions can explore genuinely novel regions of protein space, albeit at higher computational cost.

Interpretability Trade-offs: EA approaches typically leverage physically interpretable energy models with additive free energy changes and sparse pairwise couplings [28]. In contrast, deep neural networks constitute extremely complicated models with millions of fitted parameters that function as "black boxes" [28].

Data Efficiency: Evolutionary algorithms can navigate high-dimensional sequence spaces with relatively sparse experimental data, as demonstrated by energy models explaining half the fitness variance in combinatorial multi-mutants using only single and double mutant training data [28].

Table 3: EA vs ML Benchmarking for Protein Folding Challenges

| Metric | Evolutionary Algorithms | Machine Learning | Representative Tools |

|---|---|---|---|

| Combinatorial Search | Energy-guided heuristic search | Pattern recognition in known folds | Rosetta, AlphaFold [37] |

| Novel Fold Design | Strong (energy-based exploration) | Limited by training data | – |

| Computational Efficiency | Lower (requires many evaluations) | Higher (after training) | – |

| Experimental Validation Success | 2-8% of 5-aa variants folded [28] | High for structure prediction | AlphaFold (CASP14 winner) [36] |

| Handling Evolutionary Myopia | Physical principles generalize | Limited by training data diversity | – |

Integrated Approaches and Future Directions

The most promising research directions leverage hybrid methodologies that combine physical principles with data-driven pattern recognition. Several integrative strategies show particular promise:

Energy-Based Priors in ML Architectures: Incorporating physicochemical constraints as inductive biases in neural network architectures, combining EA's interpretability with ML's pattern recognition power.

Transfer Learning Across Evolutionary Distance: Using EA-generated synthetic protein families to augment training data for ML models, addressing evolutionary myopia by expanding structural diversity beyond naturally occurring proteins.

Active Learning Frameworks: Iteratively cycling between ML-based predictions and EA-guided experimental validation to rapidly explore high-value regions of sequence space while minimizing experimental burden.

Research Reagent Solutions

Table 4: Essential Research Reagents and Computational Tools

| Reagent/Tool | Function | Application Context |

|---|---|---|

| AbundancePCA | Pooled selection and abundance measurement | High-throughput protein stability quantification [28] |

| 3D-printed lens mounts | Controlled visual form deprivation | Murine myopia induction for evolutionary studies [33] |

| AlphaFold Database | Protein structure predictions | ML-based structure inference benchmark [36] [37] |

| All-atom molecular dynamics | Atomic-scale folding simulation | Protein misfolding mechanism studies [31] |

| RNAChallenge dataset | Standardized classification benchmark | Tool performance evaluation [35] |

| HPLC-EC detection | Neurotransmitter quantification | Dopamine level measurement in myopia studies [33] |

Combinatorial explosion and evolutionary myopia represent fundamental challenges that constrain both evolutionary algorithms and machine learning approaches to protein folding. Combinatorial explosion necessitates sophisticated search strategies and energy-based heuristics to navigate astronomically large sequence spaces. Evolutionary myopia manifests as limited generalizability across evolutionary distances, constraining the predictive power of models trained on narrow biological contexts.

Benchmarking reveals complementary strengths: EA approaches provide physically interpretable models and better novel fold exploration, while ML delivers unprecedented accuracy for structure prediction within its training domain. The most promising research directions integrate these methodologies, combining physical principles with data-driven pattern recognition to overcome both combinatorial explosion and evolutionary myopia.

Future progress will depend on continued development of experimental methods for high-throughput stability mapping, standardized benchmarking datasets that account for evolutionary diversity, and hybrid algorithms that leverage the respective strengths of both evolutionary computation and deep learning. Such integrated approaches offer the greatest potential for unlocking protein folding's remaining mysteries and harnessing this knowledge for therapeutic applications.

From Theory to Practice: Key Algorithms and Transformative Applications in Biopharma

The prevailing paradigm in structural biology has long been that a single amino acid sequence encodes for one stable three-dimensional structure. However, fold-switching proteins challenge this assumption by adopting distinct secondary and tertiary structures, often in response to cellular stimuli [38]. These structural remodelling events play critical biological roles across all kingdoms of life, from regulating the cyanobacterial circadian clock to suppressing human innate immunity during SARS-CoV-2 infection [39] [38]. Despite their biological importance, state-of-the-art deep learning methods like AlphaFold2 systematically fail to predict fold switching, accurately predicting only one conformation for 92% of known dual-folding proteins [39]. This limitation stems from a fundamental challenge: these methods infer structure from evolutionary conservation patterns but appear to miss the coevolutionary signatures specific to alternative folds.

This technical guide explores how Evolutionary Analysis (EA) approaches, specifically Markov Random Fields (MRFs) and the GREMLIN algorithm, address this gap through the novel Alternative Contact Enhancement (ACE) methodology. Unlike machine learning methods that often predict single static structures, ACE successfully revealed coevolution of amino acid pairs corresponding to both conformations in 56 out of 56 tested fold-switching proteins from distinct families [39]. By leveraging evolutionary principles rather than pattern recognition alone, EA provides a powerful complementary approach to ML for predicting protein conformational diversity.

Theoretical Foundation: Evolutionary Analysis for Fold Switching

The Coevolutionary Principle in Protein Structure

The foundation of evolutionary analysis for structure prediction rests on the observation that amino acid pairs that physically interact within a protein structure tend to coevolve over natural selection [40]. When a mutation occurs at one position, compensatory mutations often arise at contacting positions to maintain structural and functional integrity. These evolutionary couplings can be detected through statistical analysis of multiple sequence alignments (MSAs) and used to infer which residues are likely in direct physical contact [39] [40].

Modern implementations use Markov Random Fields (MRFs) to distinguish direct from indirect couplings, addressing the challenge that residues can appear correlated simply because both interact with a third residue [39]. The GREMLIN (Generative Regularized ModeLs of proteINs) algorithm implements an MRF-based approach with several advantages for coevolutionary analysis: it converges to a global minimum as MSA depth increases, generates reasonable predictions from relatively shallow MSAs, and accounts for noncausal correlations through its MRF formalism [39].

Why ML Fails Where EA Succeeds for Dual-Fold Proteins

Machine learning methods like AlphaFold2 rely heavily on the same coevolutionary principle but make different structural assumptions. These systems are trained on static protein structures from the PDB and learn to predict the most thermodynamically stable conformation [41] [42]. For fold-switching proteins, this often results in prediction of only one fold—typically the one with stronger coevolutionary signatures in deep multiple sequence alignments [39].

The key insight behind the ACE approach is that coevolutionary signatures for alternative folds are not absent but are often masked in standard analyses. Single-fold variants within protein superfamilies can dominate the evolutionary signal, drowning out the subtler signatures of fold switching [39]. By strategically analyzing sequence subfamilies with more fold-switching variants, ACE successfully uncovers these hidden coevolutionary patterns.

The ACE Methodology: A Technical Deep Dive

The Alternative Contact Enhancement (ACE) approach employs a sophisticated workflow designed to unmask coevolutionary signals for alternative folds that are typically missed by conventional analyses.

The diagram below illustrates the comprehensive ACE workflow for detecting dual-fold coevolution:

Core Components and Procedures

MSA Generation and Strategic Pruning

The ACE methodology begins by generating a deep multiple sequence alignment using the query sequence known to adopt two distinct folds. Unlike standard approaches that use the deepest possible MSA, ACE strategically prunes this alignment to create successively shallower MSAs with sequences increasingly identical to the query [39]. This systematic pruning creates nested MSAs ranging from diverse superfamilies to specific subfamilies, intentionally unmasking coevolutionary couplings for alternative conformations that are strengthened in specific evolutionary contexts [39].

Dual-Algorithm Coevolutionary Analysis

Each MSA in the nested hierarchy undergoes parallel coevolutionary analysis using two complementary methods:

- GREMLIN: An MRF-based approach that identifies coevolved amino acid pairs through maximum entropy modeling and regularized inference [39]

- MSA Transformer: A language model that uses attention mechanisms to analyze evolutionary patterns both within MSA columns and across individual sequences [39]

This dual-algorithm approach leverages the complementary strengths of both methodologies, with GREMLIN offering robust performance across MSA depths and MSA Transformer sometimes providing superior accuracy for single-fold proteins [39].

Contact Prediction Integration and Filtering

Predictions from all MSAs and both algorithms are combined and superimposed on a single contact map. The contact map uses an asymmetric design to maximize information content, separately displaying contacts unique to each fold [39]. Finally, density-based scanning filters remove noisy predictions while preserving legitimate contacts corresponding to both folds [39].

Contact Categorization Framework

Predicted contacts are systematically categorized into four distinct types:

- Dominant Fold Contacts: Unique contacts corresponding to the experimentally determined structure with greatest overlap to predictions from the deepest MSA

- Alternative Fold Contacts: Unique contacts corresponding to the other experimentally determined structure

- Common Contacts: Predicted contacts overlapping experimentally determined contacts shared by both folds

- Unobserved Contacts: Predicted contacts not overlapping any experimentally determined contacts, which may represent folding intermediates or noise [39]

Table 1: Contact Categorization in ACE Analysis

| Category | Description | Structural Significance |

|---|---|---|

| Dominant Fold Contacts | Unique to the conformation best predicted by deep MSAs | Often but not always the lowest energy state (33% of cases) |

| Alternative Fold Contacts | Unique to the other experimentally determined conformation | Functionally critical alternative state |

| Common Contacts | Shared between both experimentally determined structures | Structural core preserved during fold switching |

| Unobserved Contacts | Not matching any experimental contacts | Potential folding intermediates or prediction errors |

Quantitative Performance Assessment

Enhanced Prediction of Alternative Fold Contacts

The ACE methodology demonstrates substantial improvements over standard coevolutionary analysis approaches that use only deep superfamily MSAs. When applied to 56 fold-switching proteins with sufficiently deep MSAs, ACE achieved mean and median increases of 201% and 187%, respectively, in correctly predicted amino acid contacts uniquely corresponding to alternative conformations [39].

Table 2: Performance Comparison of ACE vs. Standard Approach

| Metric | Standard Approach | ACE Methodology | Improvement |

|---|---|---|---|

| Alternative Fold Contact Prediction | Baseline | 201% mean increase | Substantial enhancement |

| Proteins with Dual-Fold Coevolution | Not detected | 56/56 proteins | 100% success in test set |

| False Positive Rate | Not specified | 0/181 in blind prediction | High specificity |

Validation and Extension to Blind Prediction

The dual-fold coevolution discovered through ACE provides evolutionary evidence that fold-switching has been preserved by natural selection, implying these functionalities provide adaptive advantages [39]. Researchers successfully leveraged ACE-derived contacts to predict two experimentally consistent conformations of a candidate protein with unsolved structure and developed a blind prediction pipeline that correctly identified 13 out of 56 fold-switching proteins (23%) with no false positives (0/181) [39].

Experimental Protocol for ACE Implementation

Step-by-Step Methodology

For researchers seeking to implement the ACE approach, the following detailed protocol provides a practical roadmap:

Input Preparation

- Obtain the amino acid sequence of the protein of interest

- Collect experimentally determined structures for both conformations (if available for validation)

- Format structures with consistent residue numbering

MSA Generation and Processing

Coevolutionary Analysis

- Run GREMLIN analysis on each MSA with default parameters

- Run MSA Transformer on each MSA with attention to both row and column patterns

- Extract top-scoring contacts from each analysis based on coupling scores

Contact Integration and Mapping

- Combine all predicted contacts into unified contact map

- Use asymmetric representation to separate fold-specific contacts

- Map predictions to experimental structures using 8Å heavy atom distance cutoff [39]

Density-Based Filtering

- Implement scanning window approach to identify high-density contact regions

- Filter out low-density predictions likely to be noise

- Retain contacts forming spatially proximate clusters

Validation and Classification

- Categorize contacts as dominant, alternative, common, or unobserved

- Quantify overlap with experimental structures

- Calculate performance metrics for each fold separately

Research Reagent Solutions

Table 3: Essential Research Reagents and Computational Tools for ACE Implementation

| Resource | Type | Function in ACE Protocol | Availability |

|---|---|---|---|

| GREMLIN | Algorithm | MRF-based coevolutionary analysis | Publicly available |

| MSA Transformer | Algorithm | Language model-based contact prediction | Publicly available |

| MMSeq2 | Software | Rapid MSA generation | Publicly available |

| ColabFold | Platform | Integrated MSA generation and structure prediction | Publicly available [40] |

| Protein Data Bank | Database | Experimental structures for validation | Publicly available |

| AlphaFold Database | Database | Structural predictions for comparison | Publicly available [40] |

Comparative Advantages in the EA vs. ML Landscape

When benchmarking Evolutionary Analysis against Machine Learning approaches for protein structure prediction, each methodology demonstrates distinct strengths and limitations:

EA approaches, particularly the ACE methodology, excel where ML methods face fundamental challenges:

- Detection of multiple native states from single sequences [39]

- Identification of coevolutionary patterns specific to subfamilies [39]

- Revealing evolutionary selection for dual-fold functionality [39]

- Blind prediction of fold-switching capability without prior structural knowledge [39]

ML approaches maintain advantages in:

- Speed and scalability for proteome-wide prediction [40]

- Accuracy for single-fold globular proteins [19]

- Accessibility through user-friendly interfaces [42]

- Integration of multiple data types through end-to-end architectures [40]

The most powerful future framework likely combines strengths of both approaches, using EA principles to guide ML models beyond single-structure predictions toward conformational ensembles and dynamic landscapes [42] [19].

Future Directions and Integration Opportunities

The demonstrated success of ACE for identifying dual-fold coevolution suggests several promising research directions:

- Integration with ensemble prediction methods like FiveFold, which combines predictions from five algorithms to model conformational diversity [19]

- Hybrid EA-ML pipelines that use ACE-derived contacts as constraints for deep learning models

- Extension to condition-dependent folding by analyzing MSAs from specific environmental contexts

- Application to drug discovery for targeting alternative conformations in therapeutic development [19]

As the field progresses, the integration of evolutionary analysis with machine learning represents the most promising path toward comprehensively understanding and predicting protein structural diversity, moving beyond single static structures to capture the dynamic reality of proteins in their native biological environments [41].

The prediction of protein three-dimensional structures from amino acid sequences represents a monumental challenge in computational biology. For decades, this "protein folding problem" remained largely unsolved, bottlenecking advancements in fields ranging from drug discovery to fundamental biology. The landscape transformed dramatically with the advent of sophisticated machine learning (ML) methods, particularly deep learning architectures that have achieved unprecedented accuracy. These ML approaches now stand in contrast to earlier methodologies that heavily relied on evolutionary analysis (EA) through multiple sequence alignments (MSAs) and physical energy functions.