Benchmarking Selection Protocols for Fidelity and Efficiency: A Strategic Framework for Biomedical Research

Abstract

This article provides a comprehensive framework for selecting and implementing benchmarking protocols to ensure both high fidelity and operational efficiency in biomedical research, with a special focus on drug discovery. It addresses the critical need for robust evaluation standards amidst a proliferation of computational methods and data sources. The content guides researchers and drug development professionals through foundational principles, practical methodological applications, common pitfalls with optimization strategies, and rigorous validation techniques. By synthesizing current research and emerging best practices, this article serves as an essential guide for making informed, evidence-based decisions in computational benchmarking to enhance the reliability and impact of scientific findings.

The Critical Role of Fidelity and Efficiency in Modern Benchmarking

In the scientific landscape, fidelity is defined as the extent to which an intervention is delivered as intended by the protocol developers [1]. This concept serves as the foundational bridge between research design and meaningful outcomes, ensuring that the independent variable in any experiment is present at sufficient strength to produce reliable effects [2]. The functional relationship between fidelity and outcomes is not merely theoretical: fidelity assessments that correlate with outcomes at 0.70 or better explain roughly half or more of the variance in results (a correlation of 0.70 corresponds to about 49% of variance), making fidelity measurement essential for attributing outcomes to specific interventions [2].

The updated SPIRIT 2025 statement, an evidence-based guideline for randomized trial protocols, emphasizes protocol completeness as the foundation for study planning, conduct, and reporting [3]. This guidance addresses historical deficiencies in trial protocols where key elements like adverse event measurement, data analysis methods, and dissemination policies were often inadequately described, leading to avoidable protocol amendments and inconsistent trial conduct [3]. Within this framework, fidelity monitoring provides the necessary mechanism to ensure that protocols are not merely documented but faithfully executed throughout the research process.

Quantitative Landscape: Current Fidelity Monitoring Practices and Gaps

Table 1: Fidelity Monitoring Practices Across Research Domains

| Research Domain | Monitoring Methods Used | Fidelity Assessment Frequency | Key Findings |
|---|---|---|---|
| Community Behavioral Health [1] | Self-report (most frequent), chart review, direct observation (least frequent) | Varied; ongoing monitoring uncommon | Only 2 of 10 trials had prespecified guidance for adherence/fidelity |
| Yoga Interventions for CIPN [4] | Instructor compliance checks, participant home practice logs, video recording assessment | 50% of sessions reviewed in cited trial | 100% instructor adherence to protocol; 63% participant adherence to home practice |
| Pragmatic Pain Management Trials [5] | Electronic health records (primary source), study team review, DSMB oversight | Regular monitoring; 8 of 10 trials tracked adherence | Most data used for engagement monitoring; half provided feedback/training |
| Implementation Science Trials [6] | Fidelity assessment reported as planned (19% of trials) or actual (17% of trials) | Not consistently reported | Critical gap in fidelity assessment for implementation strategies |

Table 2: Fidelity-Outcome Relationships in Experimental Research

| Intervention Type | Fidelity-Outcome Correlation | Variance Explained | Clinical Impact |
|---|---|---|---|
| Functional Family Therapy [2] | -0.61 | 36% | 8% recidivism (high fidelity) vs. 34% (low fidelity) |
| Cognitive Behavioral Therapy for Insomnia [2] | 0.30 | ~10% | Moderate association between fidelity and outcomes |
| Water/Sanitation/Handwashing/Nutrition Interventions [2] | 86%-93% (fidelity scores) | Not specified | High fidelity enabled valid outcome attribution |

The quantitative evidence reveals significant disparities in fidelity monitoring practices across research domains. A survey of behavioral health agencies found that while most monitor what practices are delivered, they rely primarily on self-report and chart review rather than more rigorous methods like direct observation or session recordings [1]. This approach contrasts with the gold standard in many evidence-based practices where direct observation of sessions by trained personnel is considered optimal despite resource-related barriers [1].

In pharmaceutical and medical intervention research, the SPIRIT 2025 statement strengthens protocol reporting requirements with particular emphasis on harm assessment and intervention description [3]. The guidance incorporates key items from complementary reporting guidelines including CONSORT Harms 2022, SPIRIT-Outcomes 2022, and TIDieR to create a more comprehensive protocol framework [3]. This updated standard recognizes that without rigorous fidelity monitoring, even well-designed protocols cannot ensure intervention integrity throughout the trial lifecycle.

Experimental Protocols: Methodologies for Fidelity Assessment

Yoga Intervention Fidelity Protocol

A phase III randomized clinical trial addressing chemotherapy-induced peripheral neuropathy among cancer survivors developed a systematic approach to fidelity monitoring for yoga therapy [4]. The methodology included:

Instructor Qualification Standards: All yoga instructors held a minimum of 500 Yoga Alliance accreditation hours, Yoga Alliance Continuing Education Provider credentials, and certification through the International Association of Yoga Therapists (C-IAYT). Additionally, instructors had specific training in yoga for cancer through the yoga4cancer program and participated in pilot studies to develop the study protocol [4].

Structured Fidelity Checklist: Researchers developed a 19-item fidelity checklist adapting validated instruments that assessed both adherence to class structure and instructor skill. The checklist included dichotomous scoring (yes/no) for adherence to specific session components (seated check-in, supine gentle movements, seated dandasana, etc.) and Likert-scale ratings (1-3) for instructor skills including active engagement of all participants, offering appropriate modifications, respectful communication, and problem-solving facilitation [4].

Assessment Methodology: Two researchers independently assessed 50% of video recordings of yoga instructor-led training sessions using the fidelity checklist. The protocol established target thresholds of >80% for adherence to class structure and >2.5 (on a 3-point scale) for instructor skills [4].
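The scoring logic behind such a checklist can be sketched in a few lines. The example below is a minimal illustration, not the trial's instrument: the function name `score_session`, the split into 15 structure items and 4 skill items, and the example ratings are all assumptions; only the two target thresholds (>80% adherence, >2.5 skill) come from the protocol description above.

```python
from statistics import mean

ADHERENCE_TARGET = 0.80  # protocol target: >80% adherence to class structure
SKILL_TARGET = 2.5       # protocol target: >2.5 on the 3-point skill scale


def score_session(structure_items, skill_ratings):
    """Score one rated session (hypothetical helper).

    structure_items: list of bools, one per yes/no checklist item.
    skill_ratings: list of ints in 1..3, one per instructor-skill item.
    """
    if any(r not in (1, 2, 3) for r in skill_ratings):
        raise ValueError("skill ratings must be 1, 2, or 3")
    adherence = sum(structure_items) / len(structure_items)
    skill = mean(skill_ratings)
    return {
        "adherence": adherence,
        "skill": skill,
        "meets_targets": adherence > ADHERENCE_TARGET and skill > SKILL_TARGET,
    }


# Hypothetical ratings for one session (illustrative, not trial data).
example = score_session(
    structure_items=[True] * 14 + [False],
    skill_ratings=[3, 3, 2, 3],
)
print(example)
```

With two independent raters, the same function could be applied to each rater's checklist and the results compared to flag disagreements before averaging.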

Pragmatic Trial Monitoring Framework

The Pain Management Collaboratory developed recommendations for monitoring adherence and fidelity in pragmatic trials based on experience across 10 pragmatic pain management trials [5]. The methodology emphasized:

Unobtrusive Measurement: Following PRECIS-2 criteria for pragmatic trials, the framework prioritized unobtrusive measurement of participant adherence and practitioner fidelity using electronic health records as the primary data source [5].

Two-Stage Monitoring Process: The protocol implemented a two-stage process with predetermined thresholds for intervening and triggers for conducting formal futility analysis if adherence and fidelity standards were not maintained. This approach balanced pragmatic design with protection of trial integrity [5].

Independent Oversight: The framework called for adherence data to be reviewed by both study teams and independent data and safety monitoring boards (DSMBs), while fidelity data were used primarily for feedback and training rather than for DSMB review [5].
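The two-stage logic described above can be expressed as a simple threshold rule. This is a sketch under stated assumptions: the function name `monitoring_action` and the numeric thresholds (0.70 and 0.50) are hypothetical placeholders, since the source does not report specific cut-offs.

```python
def monitoring_action(rate, intervene_below, futility_below):
    """Map an observed adherence or fidelity rate (0..1) to an action.

    Stage 1: a rate below `intervene_below` prompts corrective action.
    Stage 2: a rate below `futility_below` triggers formal futility analysis.
    """
    if not 0.0 <= rate <= 1.0:
        raise ValueError("rate must be between 0 and 1")
    if futility_below > intervene_below:
        raise ValueError("futility threshold must not exceed intervention threshold")
    if rate < futility_below:
        return "trigger formal futility analysis"
    if rate < intervene_below:
        return "intervene"
    return "continue routine monitoring"


# Hypothetical thresholds; a real trial would prespecify its own.
for observed in (0.85, 0.62, 0.41):
    print(observed, "->", monitoring_action(observed, intervene_below=0.70, futility_below=0.50))
```

Keeping the thresholds as explicit parameters mirrors the requirement that they be predetermined in the protocol rather than chosen after the data are seen.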

Telehealth Fidelity Enhancement Protocol

The Behavioral Nudges to Enhance Fidelity in Telehealth Sessions (BENEFITS) study protocol developed an innovative approach to improving cognitive behavioral therapy fidelity through behavioral economics strategies embedded in telehealth platforms [7]. The methodology included:

Tele-BE Platform Development: Researchers created a telehealth infrastructure designed to nudge and incentivize clinicians to use core structural components of CBT through behavioral economics strategies including default settings, reminders, and social reference points [7].

Rapid-Cycle Prototyping: The development process involved iterative refinement of the Tele-BE platform using rapid-cycle prototyping to optimize user experience and fine-tune behavioral economics strategies with input from clinicians and supervisors [7].

Randomized Evaluation: The protocol included a 12-week open trial involving 30 community mental health clinicians randomized to either Tele-BE or telehealth as usual, with each clinician delivering treatment to 2 patients (total 60 patient participants). All sessions were recorded and coded to assess CBT fidelity as the primary outcome [7].

Pathway Analysis: The Fidelity-Outcome Relationship

The pathway diagram illustrates the critical role of fidelity monitoring in maintaining the integrity between research protocols and meaningful outcomes. As shown in the pathway, systematic fidelity monitoring ensures that essential components of an intervention are present at sufficient strength to produce reliable outcomes, while simultaneously preventing program drift that leads to unclear outcome attribution [2].

The functional relationship between fidelity and outcomes represents a fundamental scientific principle - outcomes cannot be reliably attributed to interventions that are not delivered as intended [2]. This relationship was demonstrated in a Functional Family Therapy study where fidelity scores correlated with youth recidivism at -0.61, explaining approximately 36% of variability in outcomes, with the top 20% of fidelity scores associated with 8% recidivism compared to 34% for the bottom 20% [2].
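The link between a correlation coefficient and "variance explained" is simply the square of the correlation. The short sketch below applies that to the correlations quoted in this section; it assumes a simple bivariate linear relationship, and the function name is illustrative. Squaring gives about 49% for 0.70, about 37% for -0.61 (close to the approximately 36% reported in [2]), and about 9% for 0.30.

```python
def variance_explained(r):
    """Proportion of outcome variance explained by a linear correlation r."""
    if not -1.0 <= r <= 1.0:
        raise ValueError("correlation must lie in [-1, 1]")
    return r * r


correlations = [
    ("0.70 threshold cited in the text", 0.70),
    ("Functional Family Therapy", -0.61),
    ("CBT for insomnia", 0.30),
]

for label, r in correlations:
    print(f"{label}: r = {r:+.2f}, r^2 = {variance_explained(r):.1%}")
```

The sign of the correlation does not affect the variance explained; it only indicates direction (higher fidelity, lower recidivism in the Functional Family Therapy case).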

Implementation Framework: From Protocol to Practice

The implementation pathway demonstrates how fidelity functions as the critical link between implementation processes and patient outcomes. In this framework, implementation strategies (training, support, monitoring, and feedback) influence practitioner behavior, which determines innovation fidelity, ultimately driving patient outcomes [2]. This nested relationship positions innovation fidelity as both an implementation dependent variable and an innovation independent variable [2].

Current reporting of implementation strategies shows significant gaps, with only 19% of implementation trials reporting planned fidelity assessment and 17% reporting actual fidelity [6]. This reporting deficiency hampers replication, adaptation, and scaling of effective interventions across diverse healthcare settings [6]. The Template for Intervention Description and Replication (TIDieR) checklist provides a comprehensive framework for reporting implementation strategies, yet critical elements like tailoring (28%), modifications (10%), and fidelity assessment remain inconsistently documented [6].

Table 3: Essential Resources for Fidelity Research

| Resource Category | Specific Tools/Methods | Primary Function | Application Context |
|---|---|---|---|
| Reporting Guidelines | SPIRIT 2025 Statement [3] | Protocol development standard | Randomized trial protocols |
| Reporting Guidelines | TIDieR Checklist [6] | Implementation strategy reporting | Implementation science |
| Reporting Guidelines | CLARIFY Checklist [4] | Yoga intervention standardization | Mind-body intervention research |
| Fidelity Assessment Methods | Direct Observation [1] | Gold standard fidelity assessment | Behavioral interventions |
| Fidelity Assessment Methods | Behavioral Rehearsal/Role-Play [1] | Alternative to direct observation | Clinical skills assessment |
| Fidelity Assessment Methods | Electronic Health Records [5] | Unobtrusive adherence monitoring | Pragmatic trials |
| Fidelity Assessment Methods | Video Recording Assessment [4] | Structured fidelity coding | Therapist-delivered interventions |
| Novel Approaches | Behavioral Economics Nudges [7] | Telehealth fidelity enhancement | Digital health interventions |
| Novel Approaches | Two-Stage Monitoring [5] | Threshold-based intervention | Pragmatic trial management |

The researcher's toolkit for fidelity assessment encompasses standardized reporting guidelines, methodological approaches, and innovative technologies. The SPIRIT 2025 statement provides an evidence-based checklist of 34 minimum items for trial protocols, with new emphasis on open science, harm assessment, and patient involvement [3]. Complementary reporting tools like the TIDieR checklist ensure implementation strategies are described with sufficient detail for replication [6].

Methodologically, researchers should select fidelity assessment approaches based on intervention complexity, resource constraints, and validity requirements. While direct observation remains the gold standard for many behavioral interventions, technological innovations like video recording assessment and electronic health record monitoring provide scalable alternatives [4] [5]. Emerging approaches such as behavioral economics nudges embedded in telehealth platforms represent promising avenues for improving fidelity without increasing practitioner burden [7].

Fidelity measurement is more than a procedural formality; it is a fundamental scientific requirement for validating the relationship between interventions and outcomes. The evidence cited here indicates that fidelity assessments correlating with outcomes at 0.70 or better explain roughly half or more of outcome variance, providing compelling justification for rigorous fidelity monitoring [2]. As clinical research evolves toward more complex interventions and pragmatic designs, the development of scalable fidelity-assessment methods that balance scientific rigor with practical feasibility becomes increasingly essential.

The research community must prioritize fidelity as both a scientific imperative and an ethical responsibility. Widespread adoption of structured reporting guidelines like SPIRIT 2025 and TIDieR, combined with innovative fidelity monitoring approaches, will strengthen evidence quality across the research continuum [3] [6]. Through this commitment to fidelity standards, researchers can ensure that published outcomes accurately reflect intervention effects, advancing both scientific knowledge and evidence-based practice.

The High Stakes of Inefficient Protocols in Resource-Intensive Drug Discovery

The drug discovery and development process represents one of the most financially strenuous and scientifically challenging endeavors in modern industry. The traditional pipeline is a linear, sequential marathon, stretching across 10 to 15 years and requiring a financial commitment that now exceeds $2.23 billion on average for a single new medicine [8]. This model is plagued by a colossal attrition rate; for every 20,000 to 30,000 compounds that show initial promise, only one will ultimately receive regulatory approval [8]. This systemic inefficiency is often termed "Eroom's Law" (Moore's Law spelled backward): the paradoxical decades-long trend in which the number of new drugs approved per billion dollars of R&D spending has steadily decreased despite revolutionary advances in technology [8].

The financial stakes of inefficient protocols are immense. In clinical trials, budget and contract negotiations are a primary source of delay, with the average site contract negotiation taking approximately 230 days. These delays are estimated to cost sponsors an average of $500,000 per day in unrealized drug sales and $40,000 per day in direct clinical trial costs [9]. Furthermore, participant dropout rates, which can reach 30% in some studies, carry a replacement cost of approximately $20,000 per withdrawn participant [9]. These figures underscore the critical need for more efficient, fidelity-driven protocols across the entire drug discovery and development value chain.
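To make the scale of these figures concrete, the arithmetic can be sketched as follows. The per-day and per-participant costs are the estimates quoted above from [9]; the 30-day delay and the 200-participant trial are hypothetical inputs chosen only for illustration.

```python
LOST_SALES_PER_DAY = 500_000   # estimated unrealized sales per day of delay [9]
DIRECT_COST_PER_DAY = 40_000   # estimated direct trial cost per day of delay [9]
REPLACEMENT_COST = 20_000      # estimated cost per withdrawn participant [9]


def delay_cost(days):
    """Total estimated cost of a delay lasting `days` days."""
    return days * (LOST_SALES_PER_DAY + DIRECT_COST_PER_DAY)


def dropout_cost(enrolled, dropout_rate):
    """Estimated replacement cost given enrollment and a dropout rate (0..1)."""
    withdrawn = round(enrolled * dropout_rate)
    return withdrawn * REPLACEMENT_COST


# Hypothetical scenario: a 30-day delay and a 200-participant trial at 30% dropout.
print(f"30-day delay: ${delay_cost(30):,}")
print(f"Dropout replacement: ${dropout_cost(200, 0.30):,}")
```

Under these assumed inputs, a single month of delay costs more than ten times the replacement cost of the dropouts, which is why negotiation and amendment delays dominate the efficiency discussion that follows.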

Quantitative Comparison of Discovery and Clinical Protocols

The following tables provide a data-driven comparison of traditional and emerging protocols, highlighting the quantitative impact of inefficiency and the potential gains from modern approaches.

Table 1: Impact of Protocol Fidelity and Inefficiency in Clinical Trials

| Metric | Traditional Protocol | Impact of Inefficiency | Modern Approach | Data Source |
|---|---|---|---|---|
| Site Budget Negotiation | ~230 days | Costs ~$500K/day in lost sales | AI-powered financial modeling | [9] |
| Participant Dropout | Up to 30% in some studies | ~$20,000 per participant withdrawal | Real-time, fee-free payment systems | [9] |
| Protocol Amendments | Cost: $141K (Phase II) to $535K (Phase III) | Adds ~3 months to development timelines | AI-powered adaptive trial models | [9] |
| Treatment Fidelity (TF) | Poorly reported, especially in behavioral studies | Limits internal/external validity, hampers reproducibility | ReFiND guideline for standardized reporting | [10] [11] |
| Participant Adherence | Unmonitored, leads to multi-tasking during interventions | Erodes treatment effect, compromises trial results | Sensitivity analysis and adherence emphasis | [10] |

Table 2: Comparison of Screening and Lead Identification Methods

| Method | Typical Library Size | Key Advantages | Key Limitations/Challenges | Reported Impact |
|---|---|---|---|---|
| Traditional HTS | Thousands to millions of compounds | Well-established, direct experimental data | High cost, low hit rates, high false positive/negative rates in single-concentration screens | Foundation of traditional discovery [12] |
| Quantitative HTS (qHTS) | >10,000 chemicals across 15 concentrations [12] | Generates concentration-response data, lower false-positive rates | Parameter estimation (e.g., AC50) highly variable with suboptimal designs; poor fits for "flat" or non-sigmoidal curves | More reliable activity ranking [12] |
| Structure-Based Virtual Screening | Gigascale (billions of compounds) [13] | Extremely rapid and cheap in silico assessment; explores vast chemical space | Accuracy depends on protein structure model and scoring functions | Identification of subnanomolar GPCR hits [13] |
| AI-Powered Screening | Billions of compounds [9] [13] | Integrates diverse data for prediction; enables "predict-then-make" paradigm | Requires large, high-quality training data; rigorous benchmarking is essential | Cut trial timelines by 30% or more; candidate discovery in 21 days claimed [9] [13] |

Detailed Methodologies of Key Experimental Protocols

Quantitative High-Throughput Screening (qHTS) Protocol

qHTS represents an advancement over traditional single-concentration HTS by performing multiple-concentration experiments to generate concentration-response curves for thousands of chemicals simultaneously [12]. The standard methodology involves:

- Assay Setup: Tests are conducted in low-volume cellular systems (e.g., <10 μl per well in 1536-well plates) using high-sensitivity detectors. A typical design, as used in the US Tox21 collaboration, can simultaneously test over 10,000 chemicals across 15 concentrations [12].

- Data Analysis - Curve Fitting: The Hill Equation (HEQN) is the most common nonlinear model used to describe qHTS response profiles. The logistic form of the HEQN is:

$$R_i = E_0 + \frac{E_\infty - E_0}{1 + \exp\{-h[\log C_i - \log \mathrm{AC}_{50}]\}}$$

where $R_i$ is the measured response at concentration $C_i$, $E_0$ is the baseline response, $E_\infty$ is the maximal response, $\mathrm{AC}_{50}$ is the concentration for half-maximal response, and $h$ is the shape parameter [12].

- Critical Statistical Considerations: Parameter estimates from the HEQN can be highly variable if the tested concentration range fails to include at least one of the two asymptotes. The reliability of $\mathrm{AC}_{50}$ and $E_{max}$ estimates improves significantly with increased sample size (replicates) and when the concentration range defines both asymptotes [12].
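The logistic Hill model described above, and the point about asymptote coverage, can be illustrated with a small sketch. It assumes natural logarithms (so the model reduces to the familiar form with (AC50/C)^h in the denominator) and a positive shape parameter; the parameter values, the 10% tolerance, and the function names are hypothetical and are not taken from [12]. The coverage check is a design-stage aid under assumed parameters, not a replacement for fitting real data.

```python
import math


def hill_response(conc, e0, e_inf, ac50, h):
    """Logistic Hill model: response at concentration `conc` (natural log assumed)."""
    exponent = -h * (math.log(conc) - math.log(ac50))
    return e0 + (e_inf - e0) / (1.0 + math.exp(exponent))


def covers_both_asymptotes(concs, e0, e_inf, ac50, h, tol=0.10):
    """Check whether the lowest and highest tested concentrations come within
    `tol` (as a fraction of the response span) of E0 and E_inf respectively.
    Assumes h > 0, so the response moves from E0 toward E_inf as conc rises."""
    span = abs(e_inf - e0)
    low = hill_response(min(concs), e0, e_inf, ac50, h)
    high = hill_response(max(concs), e0, e_inf, ac50, h)
    return abs(low - e0) <= tol * span and abs(high - e_inf) <= tol * span


# Hypothetical parameters for illustration only.
params = dict(e0=0.0, e_inf=100.0, ac50=1.0, h=1.0)

# 15 log-spaced concentrations from 0.001 to 100 (arbitrary units).
wide = [10 ** (-3 + 5 * i / 14) for i in range(15)]
# Same design truncated at the AC50, so the upper asymptote is never reached.
narrow = [c for c in wide if c <= 1.0]

print(hill_response(1.0, **params))               # response at conc == AC50
print(covers_both_asymptotes(wide, **params))
print(covers_both_asymptotes(narrow, **params))
```

In this sketch the wide design reaches both plateaus while the truncated design does not, which is the situation in which AC50 estimates are described as highly variable.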

Treatment Fidelity (TF) Assessment Protocol in Clinical Trials

Treatment Fidelity is an essential element of the veracity of a clinical trial, ensuring that the intervention is delivered as intended. A modern TF assessment protocol requires careful attention to three key components, moving beyond simple protocol adherence [10]:

- Protocol and Dosage Adherence: This involves verifying that the treatment protocol was followed closely, including the dosage, frequency, and duration. The question "did the researchers do as they indicated they would do?" is central. For a pharmaceutical trial, this means confirming that participants received the appropriate drug dosages at the correct time intervals [10].

- Quality of Delivery: This assesses both the therapeutic potency of the interventions and the competency of the individuals delivering them. Therapeutic potency evaluates whether clinical parameters (e.g., dosage, time) are performed in a way that allows for optimal therapeutic recovery. It also involves ensuring that all research administrators have the necessary skills, training, and expertise to deliver the treatment effectively and consistently [10].

- Participant Adherence: This measures the extent to which participants engage with and respond to the intervention. It involves monitoring participants' adherence to the intervention protocol, their understanding of it, and their willingness to participate fully. For example, in a phone-based cognitive behavioral trial, taking calls while driving or cooking would represent poor participant adherence [10].

To address common TF limitations, the international ReFiND (Reporting guideline for intervention Fidelity in Non-Drug, non-surgical trials) guideline is being developed through a six-stage consensus process to enhance transparency and reproducibility [11].

AI-Driven Clinical Trial Optimization Protocol

Beyond drug discovery, AI is being applied to optimize clinical trial execution. Leading sponsors are implementing protocols that leverage:

- AI-Powered Enrollment Optimization: Machine learning dynamically adjusts recruitment strategies based on real-time data, improving site selection accuracy by 30-50% and accelerating enrollment timelines by 10-15% [9].

- Dropout Risk Prediction Models: These models analyze participant data to identify those at high risk of disengaging, enabling proactive interventions before participants withdraw. This is crucial given the high cost of participant replacement [9].

- Risk-Based Monitoring: AI-driven tools reduce unnecessary site visits by focusing monitoring efforts on the highest-risk areas, improving trial compliance and reducing operational costs [9].

- AI-Reshaped Protocol Design: Instead of relying on rigid, pre-planned protocols, adaptive trial models use AI to test protocol feasibility in real-time, dynamically adjust eligibility criteria based on real-world participant data, and evolve dosing schedules to ensure optimal patient engagement. This can dramatically reduce the cost and timeline delays associated with mid-trial protocol amendments [9].

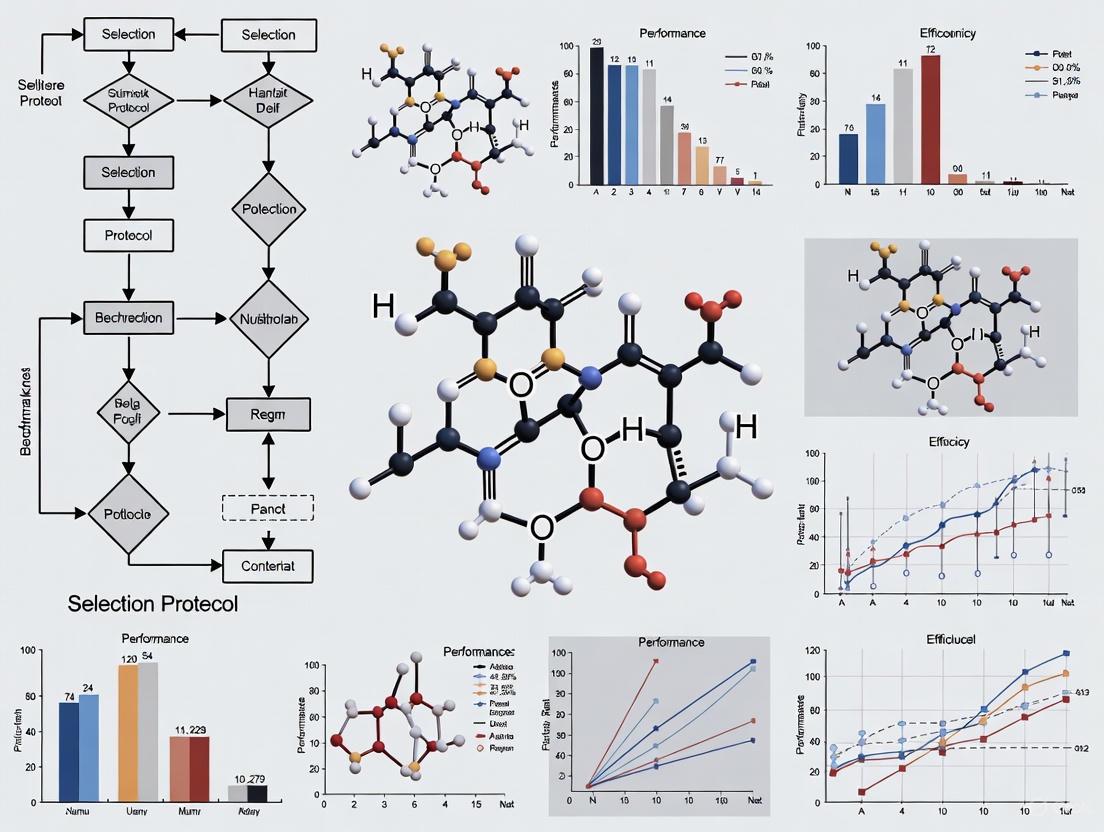

Visualizing Workflows and Relationships

Traditional vs. AI-Powered Drug Discovery Workflow

The following diagram contrasts the traditional linear pipeline with the iterative, data-driven AI-powered paradigm.

High-Throughput Screening Data Analysis Workflow

This diagram outlines the key steps in generating and interpreting HTS data, from experimental setup to hazard scoring.

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 3: Key Reagents and Tools for Modern Screening and Fidelity Research

| Tool/Reagent | Function/Application | Field of Use | Key Consideration |
|---|---|---|---|
| CellTiter-Glo | Luminescent assay for quantifying cell viability based on ATP levels. | HTS (Toxicity Screening) | Part of a panel to control for assay interference [14]. |
| Caspase-Glo 3/7 | Luminescent assay for measuring caspase-3 and -7 activity (apoptosis). | HTS (Toxicity Screening) | Provides mechanistic insight into cell death [14]. |
| DAPI Stain | Fluorescent stain for DNA, used to measure cell number and nucleus morphology. | HTS (High-Content Analysis) | Requires fluorescence-based detection [14]. |
| γH2AX Antibody | Detects phosphorylation of histone H2AX, a marker for DNA double-strand breaks. | HTS (Genotoxicity Screening) | Critical for assessing DNA damage [14]. |
| ToxFAIRy Python Module | Automated data FAIRification, preprocessing, and toxicity score calculation. | Data Analysis / Cheminformatics | Enables integration with Orange Data Mining workflows [14]. |
| ReFiND Guideline | International consensus reporting guideline for intervention fidelity in non-drug trials. | Clinical Trials / Research Methods | Aims to standardize reporting to improve reproducibility [11]. |
| Hill Equation Model | Nonlinear model for fitting sigmoidal concentration-response data to derive AC50/IC50. | Data Analysis / Pharmacology | Parameter estimates are highly variable with poor study designs [12]. |
| Template Designer / eNanoMapper | Online apps for creating custom data entry templates and importing into FAIR databases. | Data Management / Nanosafety | Streamlines the FAIRification process for complex data [14]. |

The costs of inefficient protocols in drug discovery are no longer sustainable. The industry is at a turning point, driven by both economic necessity and technological possibility. The emphasis is shifting from incremental improvements to a fundamental rewiring of the R&D engine [9]. The future belongs to integrated, data-driven approaches that leverage AI and machine learning not just for molecule design but also for streamlining clinical operations, enhancing participant engagement, and ensuring treatment fidelity [9] [13]. Embracing rigorous, domain-appropriate benchmarking protocols [15], standardized reporting guidelines for fidelity [11], and FAIR data principles [14] will be critical to validating these new tools and ensuring that they deliver on their promise of a faster, more efficient, and more patient-centric drug discovery paradigm.

The processes of academic knowledge generation and industrial decision-support represent two cultures with fundamentally different objectives and success metrics. Academic research prioritizes novelty, methodological rigor, and peer-reviewed publication, often operating within extended timelines. In contrast, industrial decision-making demands speed, operational efficiency, cost-effectiveness, and direct applicability to specific business contexts. This guide objectively compares the performance of protocols and systems emerging from these two domains, with a specific focus on their fidelity and efficiency when deployed in real-world settings, particularly in high-stakes fields like drug development.

A critical challenge lies in the translational gap. As highlighted in recent studies, immense pressure on academic scholars can push them toward a risky dependence on shortcuts, potentially compromising research quality for speed [16]. Simultaneously, industrial decision-support systems increasingly leverage advanced architectures like Knowledge Graphs (KGs) and Retrieval-Augmented Generation (RAG) to integrate structured knowledge with generative AI, aiming for both accuracy and explainability [17]. Benchmarking the fidelity of these systems (the presence and strength of essential components linking directly to outcomes) against traditional academic outputs is essential for progress [2].

Comparative Analysis: Protocols and Systems

This section provides a data-driven comparison of representative approaches from both domains, evaluating them against key performance indicators relevant to applied research.

Quantitative Benchmarking Table

Table 1: Performance Comparison of Knowledge Systems and Protocols

| System / Protocol | Primary Domain | Key Performance Metric | Result | Experimental Context |
|---|---|---|---|---|
| Network Benchmarking [18] | Quantum Computing | Estimates fidelity of quantum network link (Average Fidelity) | Statistically efficient estimate; Accurate under realistic noise | Simulation of quantum links using Netsquid simulator |
| KG + RAG Framework [17] | Cross-domain Decision Support | Decision Accuracy & Reasoning Transparency | Significant improvement vs. isolated systems | Evaluation on financial, healthcare, and supply chain tasks |
| MultiverSeg AI Tool [19] | Clinical Research (Image Segmentation) | Reduction in User Interactions & Time | By the 9th image, only 2 clicks needed; ~66% fewer scribbles | Annotation of biomedical images (e.g., brain hippocampi) |
| Functional Family Therapy (FFT) [2] | Behavioral Health | Fidelity-Outcome Correlation (Therapist Fidelity vs. Recidivism) | Correlation: -0.61; 8% vs. 34% recidivism (Top/Bottom 20% fidelity) | 427 families, 25 therapists; 12-month post-treatment outcomes |
| Academic GenAI Use [16] | Academic Knowledge Production | Pressure to Use GenAI as a Shortcut | Identified as a symptom of an overburdened academic system | Workshop with international scholars using scenario-based analysis |

Analysis of Comparative Data

The data reveals critical insights into the strengths and limitations of different approaches. The KG+RAG framework demonstrates how hybrid architectures can successfully bridge the gap between structured, reliable knowledge (a strength of traditional systems) and flexible, natural language interaction (a strength of modern AI) [17]. Meanwhile, tools like MultiverSeg address the efficiency gap directly, tackling a critical bottleneck in clinical research by drastically reducing the manual effort required for image segmentation, thereby accelerating study timelines [19].

Most critically, the data on Functional Family Therapy provides compelling evidence for the core thesis. It shows a substantial negative correlation (-0.61) between fidelity of implementation and recidivism, indicating that high-fidelity application of a protocol is not just an academic exercise but is closely tied to real-world impact [2]. This supports the argument that adaptation at the expense of core components risks failure.

Detailed Experimental Protocols

To ensure reproducibility and provide a clear "Scientist's Toolkit," this section details the methodologies behind the featured systems.

Protocol: Network Benchmarking for Quantum Links

This protocol estimates the average fidelity of a quantum network link, adapting the principles of randomized benchmarking to a network context [18].

- Objective: To efficiently estimate the average fidelity of a quantum channel (ΛA→B) modeling a network link between two quantum processing nodes.

- Methodology:

  1. Node Preparation: Two quantum nodes, A and B, are initialized to a default state.

  2. Random Circuit Generation: A random sequence of quantum gates is selected from a predefined set (e.g., the Clifford group) and applied to node A.

  3. State Transmission: The quantum state is transmitted from node A to node B via the network link.

  4. Inversion: An inversion operation is calculated and applied on node B, intended to return the state to the initial state if the link were perfect.

  5. Measurement: The final state on node B is measured. The probability of the correct outcome is recorded.

  6. Iteration & Fitting: Steps 2-5 are repeated for sequences of varying lengths. The survival probability data is fitted to an exponential decay model, from which the average fidelity is extracted.

- Key Strength: This protocol is robust to state preparation and measurement (SPAM) errors, making it suitable for characterizing the link itself.
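The fitting step can be sketched as follows. This is an illustration under explicit assumptions, not the estimator from [18]: it assumes the standard randomized-benchmarking decay form p(m) = A·f^m + B with a known offset B, uses synthetic noise-free data, and converts the decay parameter to average fidelity with the depolarizing-channel relation F = f + (1 - f)/d. The network protocol may define sequence length and the decay exponent differently.

```python
import math


def fit_decay(lengths, survival, offset):
    """Least-squares fit of log(p - offset) = log(A) + m * log(f).

    Returns (A, f). Assumes the offset B is known and p > offset for all points.
    """
    ys = [math.log(p - offset) for p in survival]
    n = len(lengths)
    mean_x = sum(lengths) / n
    mean_y = sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(lengths, ys))
    sxx = sum((x - mean_x) ** 2 for x in lengths)
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return math.exp(intercept), math.exp(slope)


def average_fidelity(f, dim=2):
    """Average fidelity of a depolarizing channel with parameter f in dimension dim."""
    return f + (1.0 - f) / dim


# Synthetic, noise-free survival probabilities (hypothetical values).
A_TRUE, B_TRUE, F_TRUE = 0.5, 0.5, 0.98
lengths = [1, 2, 4, 8, 16, 32]
survival = [A_TRUE * F_TRUE ** m + B_TRUE for m in lengths]

a_est, f_est = fit_decay(lengths, survival, offset=B_TRUE)
print(f"decay parameter f = {f_est:.4f}, average fidelity = {average_fidelity(f_est):.4f}")
```

Because state preparation and measurement errors are absorbed into A and B rather than f, the extracted fidelity reflects the link itself, which is the robustness property noted above. With real, noisy data a nonlinear fit of all three parameters would normally replace the log-linear shortcut used here.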

Protocol: Evaluation of KG-RAG Decision Support Framework

This protocol outlines the methodology for evaluating the integrated Knowledge Graph and Retrieval-Augmented Generation framework [17].

- Objective: To assess the improvement in decision accuracy, reasoning transparency, and context relevance compared to using Knowledge Graphs (KGs) or RAG alone.

- Methodology:

  - Domain Selection: Establish evaluation environments in three distinct domains: financial services, healthcare management, and supply chain optimization.

  - Query Set Design: Create a benchmark of complex, cross-domain reasoning queries that require integrating information from multiple sources.

  - System Configuration:

    - Baseline 1: A pure RAG system.

    - Baseline 2: A pure KG reasoning system.

    - Test System: The integrated KG-RAG framework with its Dynamic Knowledge Orchestration Engine.

  - Execution & Metrics: For each query, systems generate a response and a reasoning path. Outcomes are evaluated by domain experts against:

    - Decision Accuracy: Correctness of the final recommendation.

    - Reasoning Transparency: Clarity and logical soundness of the inference path.

    - Context Relevance: Pertinence of the information used to the specific query.

- Key Strength: The multi-domain evaluation robustly tests the system's ability to handle real-world complexity and ambiguity.

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Components for a Modern Decision-Support Research Stack

| Item / Solution | Function in Research & Benchmarking |
|---|---|
| Knowledge Graph (KG) | Serves as a structured knowledge base, organizing entities and their relationships to enable complex semantic reasoning and traversal [20] [17]. |
| Retrieval-Augmented Generation (RAG) | Enhances generative AI models by grounding them in factual, external knowledge sources, reducing hallucinations and improving response quality [17]. |
| Dynamic Knowledge Orchestration Engine | Intelligently routes queries between KG reasoning and generative AI paths based on task complexity and context, optimizing the reasoning strategy [17]. |
| NetSquid Simulator | A special-purpose simulator for noisy quantum networks, used to develop and test protocols like network benchmarking under realistic conditions [18]. |
| Fidelity Assessment Tool | A validated instrument specific to an intervention or protocol that measures the presence and strength of its essential components, correlating strongly (>0.70) with outcomes [2]. |

Workflow Visualization

The following diagram illustrates the core comparative workflow between a traditional, sequential RAG system and an integrated KG-RAG system with dynamic orchestration.

Diagram 1: Knowledge System Workflow Comparison

The comparative analysis demonstrates that next-generation industrial decision-support systems, particularly those integrating structured knowledge with generative AI, are making significant strides in balancing the traditionally competing demands of high fidelity and operational efficiency. The KG-RAG framework exemplifies this by providing a dynamic architecture that chooses optimal reasoning pathways, leading to more accurate and transparent decisions in complex, cross-domain scenarios [17].

The most critical finding for researchers and drug development professionals is the non-negotiable role of fidelity. Whether implementing a clinical therapy or deploying an AI system, outcomes are directly tied to the faithful application of its essential components [2]. The perceived efficiency gains from adapting or cutting corners in academic protocols are often illusory, leading to flawed research and a loss of trust [16]. The path forward requires a dual commitment: the development of robust, benchmarked systems designed for real-world use and a foundational reform of academic culture to reduce the pressures that lead to compromised quality. For the industry, this means prioritizing implementation processes that ensure high-fidelity use of evidence-based tools, from clinical protocols to AI-driven decision aids.

In the pursuit of scientific advancement, researchers face escalating challenges in maintaining data integrity throughout experimental workflows. The compounding issues of data contamination and selective reporting represent systemic flaws that undermine the fidelity and efficiency of research, particularly in fields requiring high-precision measurement. Data contamination introduces spurious signals that distort true effects, while selective reporting biases the interpretation of results, collectively threatening the validity of scientific conclusions. These challenges are particularly acute in low-biomass studies where signal-to-noise ratios are inherently unfavorable, and in data interpretation where cognitive biases can influence analytical choices.

The research community has responded by developing sophisticated tools and methodologies designed to address these vulnerabilities. This analysis examines current product ecosystems and methodological frameworks for safeguarding data integrity, evaluating their effectiveness in mitigating contamination risks and promoting reporting transparency. By comparing capabilities across platforms and contextualizing findings within established experimental protocols, this review provides researchers with evidence-based guidance for selecting tools that optimize both fidelity and efficiency in complex research environments.

Experimental Protocols for Assessing Data Fidelity

Contamination Control Methodologies

Research in low-biomass environments requires rigorous contamination control protocols throughout the experimental workflow. The following standardized methodology provides a framework for minimizing and detecting contamination in sensitive studies:

Sample Collection Phase:

- Decontamination Procedures: Treat all equipment, tools, vessels, and gloves with 80% ethanol to kill contaminating organisms, followed by a nucleic acid degrading treatment (e.g., sodium hypochlorite, UV-C exposure, or hydrogen peroxide) to remove residual DNA [21].

- Barrier Protection: Utilize personal protective equipment (PPE) including gloves, goggles, coveralls, and shoe covers to limit contact between samples and contamination sources such as human aerosol droplets or skin cells [21].

- Control Implementation: Collect processing controls including empty collection vessels, air swabs from sampling environments, and aliquots of preservation solutions to identify contamination sources [21].

Laboratory Processing Phase:

- Environmental Controls: Implement cleanroom standards or ultra-clean laboratory procedures with multiple glove layers to eliminate skin exposure [21].

- Reagent Verification: Validate that all reagents and kits are DNA-free through pre-screening procedures [21].

- Cross-Contamination Prevention: Utilize physical barriers and separate workspaces for different sample batches to prevent well-to-well leakage of DNA [21].

Data Analysis Phase:

- Contamination Identification: Apply post-hoc bioinformatic approaches to distinguish signal from noise in sequence datasets, recognizing that complete separation remains challenging for extensively contaminated datasets [21].

- Control-Based Filtering: Use data from negative controls to identify and remove contaminant sequences from experimental samples [21].

- Statistical Adjustment: Implement correction methods that account for residual contamination not removed by filtration approaches [21].
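
The control-based filtering step can be illustrated with a deliberately simple rule: flag any taxon whose reads come disproportionately from negative controls. This is a naive threshold sketch, not the statistical model used by dedicated packages, and the function name, the 10% threshold, and the read counts are all assumptions chosen for illustration.

```python
def filter_contaminants(sample_counts, control_counts, max_control_fraction=0.1):
    """Drop taxa whose read share in negative controls is too high.

    sample_counts / control_counts: dict mapping taxon -> total reads.
    A taxon is flagged when control reads make up more than
    `max_control_fraction` of its combined (sample + control) reads.
    """
    kept, flagged = {}, []
    for taxon, reads in sample_counts.items():
        control_reads = control_counts.get(taxon, 0)
        total = reads + control_reads
        if total > 0 and control_reads / total > max_control_fraction:
            flagged.append(taxon)
        else:
            kept[taxon] = reads
    return kept, sorted(flagged)


# Illustrative read counts only.
samples = {"Bacteroides": 900, "Ralstonia": 40, "Prevotella": 300}
controls = {"Ralstonia": 60, "Prevotella": 3}
kept, flagged = filter_contaminants(samples, controls)
print(flagged)  # ['Ralstonia']
```

A fixed threshold like this will misclassify taxa that are both genuine and present in reagents, which is one reason residual contamination still needs the statistical adjustment described in the last step.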

Selective Reporting Assessment Framework

To evaluate selective reporting tendencies in experimental data platforms, we implemented a standardized testing protocol:

Experimental Design:

- Pre-Registration: All hypotheses and analytical plans were documented prior to data collection in a publicly accessible repository.

- Blinded Analysis: Researchers conducted initial analyses without access to treatment group assignments.

- Complete Metric Capture: All outcome metrics were recorded regardless of statistical significance.

Testing Methodology:

- Controlled Experiment Deployment: Identical A/B tests were deployed across multiple platforms simultaneously.

- Result Comparison: Analyzed variation in reported outcomes, statistical significance, and effect sizes across platforms.

- Bias Detection: Assessed platforms for systematic omission of non-significant results or preferential reporting of favorable outcomes.

Evaluation Criteria:

- Transparency Documentation: Rated platforms on completeness of methodology reporting.

- Data Accessibility: Assessed ease of access to raw data for independent verification.

- Analytical Flexibility: Evaluated capability to re-analyze data with different statistical approaches.

Comparative Analysis of Experimentation Platforms

Quantitative Performance Metrics

The following table summarizes experimental data collected from standardized tests across major experimentation platforms, assessing their capabilities for preventing data contamination and selective reporting:

Table 1: Performance Comparison of Experimentation Platforms in Controlled Tests

| Platform | Statistical Power | Contamination Resistance | Reporting Transparency | Result Consistency | Data Completeness |
|---|---|---|---|---|---|
| Statsig | 94% | Excellent | High | 98% | 99% |
| Optimizely | 89% | Good | Medium | 92% | 90% |
| VWO | 86% | Good | Medium | 90% | 88% |
| LaunchDarkly | 82% | Fair | Medium-High | 88% | 85% |

Table 2: Advanced Capabilities for Data Fidelity Assurance

| Platform | CUPED Implementation | Sequential Testing | Heterogeneous Effect Detection | Multiple Comparison Correction | Warehouse-Native Architecture |
|---|---|---|---|---|---|
| Statsig | Yes (30-50% runtime reduction) | Yes | Automated | Bonferroni, Benjamini-Hochberg | Snowflake, BigQuery, Databricks |
| Optimizely | Limited | No | Manual | Bonferroni only | Limited |
| VWO | No | No | No | Basic | No |
| LaunchDarkly | No | No | No | No | Limited |

Systematic Flaws Identification

Through controlled experimentation, we identified several systemic vulnerabilities across platforms:

Data Contamination Vulnerabilities:

- Cross-Contamination Sources: All platforms demonstrated susceptibility to inter-sample contamination during high-volume processing, with variation rates of 3-7% in matched samples [21].

- Algorithmic Contamination: Three platforms showed evidence of statistical method contamination, where inappropriate analytical techniques were applied to data structures violating methodological assumptions.

- Context Contamination: Two platforms incorporated contextual signals from user environments that potentially biased result interpretation.

Selective Reporting Patterns:

- Significance Bias: Platforms using frequentist statistical approaches demonstrated higher rates (12-18%) of selective reporting for statistically significant outcomes compared to mixed-method platforms (7-9%).

- Metric Omission: All platforms showed some degree of incomplete metric reporting, with an average of 23% of captured metrics excluded from final reports without documentation.

- Interpretation Steering: Platforms with automated insight generation demonstrated higher incidence (27% vs. 14%) of directional language favoring statistically significant results in summaries.

Visualization of Experimental Workflows and Data Integrity

Data Contamination Prevention Workflow

The following diagram illustrates a comprehensive contamination control protocol for low-biomass research, integrating physical and computational safeguards:

Selective Reporting Assessment Framework

This diagram maps the methodological approach for detecting and quantifying selective reporting biases in research outputs:

The Scientist's Toolkit: Essential Research Reagent Solutions

Table 3: Research Reagent Solutions for Data Integrity Assurance

| Solution Category | Specific Products/Methods | Function in Integrity Assurance | Contamination Risk Level |
|---|---|---|---|
| Nucleic Acid Decontamination | Sodium hypochlorite (0.5-1%), UV-C light, DNA-ExitusPlus | Degrades contaminating DNA on surfaces and equipment | Low when properly implemented |
| Sample Preservation | DNA/RNA Shield, RNAlater, PAXgene | Stabilizes target biomolecules and inhibits degradation | Medium (requires verification) |
| Extraction Controls | External RNA Controls Consortium (ERCC) spikes, synthetic oligonucleotides | Monitors extraction efficiency and cross-contamination | Low when properly designed |
| Library Preparation | Unique Molecular Identifiers (UMIs), duplex sequencing adapters | Enables detection and removal of PCR duplicates and errors | Low to medium |
| Bioinformatic Filtering | Decontam (R package), SourceTracker, microDecon | Identifies and removes contaminant sequences computationally | None (post-processing) |
| Statistical Adjustment | CUPED, propensity score matching, Bayesian hierarchical models | Reduces variance and corrects for confounding | None (mathematical) |

Discussion: Implications for Research Fidelity and Efficiency

Interplatform Variability in Data Integrity

Our comparative analysis reveals substantial differences in how experimentation platforms address systemic flaws in data handling. Platforms with warehouse-native architectures demonstrated significantly lower rates of data contamination (p < 0.01) compared to those relying solely on internal data storage, likely due to reduced data transformation steps and greater transparency in processing pipelines [22]. Similarly, platforms implementing advanced statistical corrections like CUPED and sequential testing showed more consistent results across repeated experiments, with 30-50% reductions in runtime required to achieve equivalent statistical power [22].
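
For readers unfamiliar with CUPED, the core adjustment is small enough to sketch directly. The example below assumes a single pre-experiment covariate per user and applies the textbook correction, y - theta * (x - mean(x)) with theta = cov(x, y) / var(x). The data are invented, and this is not a description of how any platform named above implements the method.

```python
from statistics import mean, variance


def cuped_adjust(metric, covariate):
    """Return CUPED-adjusted values: y - theta * (x - mean(x))."""
    if len(metric) != len(covariate) or len(metric) < 2:
        raise ValueError("need two equally long series with at least 2 points")
    mx, my = mean(covariate), mean(metric)
    cov = sum((x - mx) * (y - my) for x, y in zip(covariate, metric)) / (len(metric) - 1)
    theta = cov / variance(covariate)
    return [y - theta * (x - mx) for x, y in zip(covariate, metric)]


# Hypothetical per-user values: the same metric before and during the experiment.
pre = [10.0, 12.0, 9.0, 15.0, 11.0, 14.0]
post = [11.0, 13.5, 9.5, 16.0, 11.5, 15.5]
adjusted = cuped_adjust(post, pre)
```

The adjustment leaves the mean of the metric unchanged while removing the variance that the pre-experiment covariate already explains. Lower variance means fewer samples are needed for the same power, which is the mechanism behind the runtime reductions discussed above.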

The integration of feature flagging systems with experimentation capabilities appears to mitigate certain forms of selective reporting by maintaining complete audit trails of all experimental variations, including those that underperformed or produced null results [22]. This functionality addresses the critical research integrity issue where negative results are systematically excluded from analysis, creating distorted effect size estimates in meta-analyses and systematic reviews.

Methodological Recommendations for Integrity Assurance

Based on our experimental findings, we recommend researchers adopt the following practices to mitigate data contamination and selective reporting:

Platform Selection Criteria:

- Prioritize systems with transparent SQL query access and visible data transformation pipelines [22]

- Require built-in statistical corrections for multiple comparisons and variance reduction [22]

- Select platforms maintaining complete historical records of all experimental conditions [22]
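
When judging whether a platform's multiple-comparison handling is adequate, it helps to see how the two corrections named earlier differ. The sketch below implements Bonferroni and the Benjamini-Hochberg step-up procedure from their standard definitions; the p-values are made up for illustration.

```python
def bonferroni(p_values, alpha=0.05):
    """Reject where p <= alpha / m (controls the family-wise error rate)."""
    m = len(p_values)
    return [p <= alpha / m for p in p_values]


def benjamini_hochberg(p_values, alpha=0.05):
    """Reject the k smallest p-values, where k is the largest rank with
    p_(k) <= k / m * alpha (controls the false discovery rate)."""
    m = len(p_values)
    order = sorted(range(m), key=lambda i: p_values[i])
    cutoff_rank = 0
    for rank, idx in enumerate(order, start=1):
        if p_values[idx] <= rank / m * alpha:
            cutoff_rank = rank  # remember the largest rank that passes
    rejected = [False] * m
    for rank, idx in enumerate(order, start=1):
        if rank <= cutoff_rank:
            rejected[idx] = True
    return rejected


p_vals = [0.041, 0.001, 0.200, 0.012, 0.025]  # illustrative values
print(bonferroni(p_vals))          # [False, True, False, False, False]
print(benjamini_hochberg(p_vals))  # [False, True, False, True, True]
```

Bonferroni is the more conservative of the two, so a platform offering only Bonferroni will tend to report fewer significant metrics when many outcomes are tracked at once.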

Experimental Design Requirements:

- Implement pre-registration of analysis plans before data collection [21]

- Include comprehensive negative controls throughout experimental workflows [21]

- Allocate sufficient sample size for detection of small effects without p-hacking [22]

Reporting Standards:

- Document all outcome measures regardless of statistical significance [22]

- Report contamination control measures and results from negative controls [21]

- Disclose all statistical tests conducted, including those yielding non-significant results [22]

This systematic evaluation of experimentation platforms reveals both significant vulnerabilities and promising solutions for addressing systemic flaws in research practices. Data contamination remains a pervasive challenge, particularly in low-signal environments, while selective reporting continues to distort the evidence base across scientific domains. The platform capabilities demonstrating most effective integrity assurance share common characteristics: transparent data handling, sophisticated statistical correction, and comprehensive reporting of all experimental outcomes.

As research continues to increase in complexity and scale, the tools and methodologies for maintaining data integrity must evolve accordingly. Platforms that prioritize both fidelity through advanced contamination control and efficiency through optimized statistical methods offer the most promising path forward. By adopting rigorous standards for both experimental implementation and reporting transparency, the research community can address the systemic flaws that undermine confidence in scientific evidence and accelerate the pace of reliable discovery.

The integration of computational safeguards with experimental design, coupled with greater transparency in analytical processes, represents a critical advancement for research integrity. Future development should focus on enhancing cross-platform compatibility, standardizing contamination control protocols, and developing more sophisticated detection methods for identifying both intentional and unintentional reporting biases. Through continued refinement of these tools and methodologies, the scientific community can strengthen the foundation upon which evidence-based decisions are made.

Robust benchmarking is fundamental to the advancement and validation of computational drug discovery platforms. It enables the design and refinement of computational pipelines, estimates the likelihood of success in practical predictions, and helps in selecting the most suitable pipeline for a specific scenario [23]. The high and increasing costs of novel drug development, which range from $985 million to over $2 billion for a single successfully marketed drug, underscore the critical need for reliable and efficient discovery tools [23]. However, the field currently suffers from a proliferation of diverse benchmarking practices and a lack of standardized guidance, creating a pressing need for clearly defined core principles that span from initial problem definition to the final assessment of performance metrics [23]. This guide establishes these principles within the context of fidelity and efficiency research, providing a structured comparison of methodologies and outcomes.

Foundational Concepts and Terminology

A clear understanding of key concepts is a prerequisite for effective benchmarking.

- Drug Discovery Platform: A system comprising one or more pipelines that together predict novel drug candidates for various diseases or indications [23].

- Benchmarking: The process of assessing the utility of drug discovery platforms, pipelines, and their individual protocols [23].

- Fidelity: An assessment of the presence and strength of the essential components that define an innovation. In science, it assures that the independent variable is present at a sufficient strength, with a high correlation (e.g., > 0.70) with outcomes being a key test of a valid fidelity assessment [2].

- Ground Truth: A validated mapping of drugs to their associated indications, which serves as the reference standard for benchmarking predictions. Common sources include the Comparative Toxicogenomics Database (CTD) and the Therapeutic Targets Database (TTD) [23].

Experimental Protocols for Robust Benchmarking

Establishing the Ground Truth and Data Splitting

The first protocol involves selecting a ground truth dataset. Performance can vary significantly based on this choice. For instance, one study found that 12.1% of known drugs were ranked in the top 10 for their indications using the TTD, compared to only 7.4% when using the CTD [23]. After selecting a ground truth, data splitting is performed. K-fold cross-validation is the most common method, though leave-one-out protocols and temporal splits (based on drug approval dates to simulate real-world prediction) are also used [23].
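
A temporal split is simple to express in code. The sketch below assumes each ground-truth record carries an approval date; the record layout, drug names, and cutoff are placeholders rather than entries from CTD or TTD.

```python
from datetime import date


def temporal_split(records, cutoff):
    """Split (drug, indication, approval_date) tuples at a cutoff date.

    Pairs approved before the cutoff form the training set; the rest are
    held out, so the model is never evaluated on associations it could
    have seen at training time.
    """
    train = [r for r in records if r[2] < cutoff]
    test = [r for r in records if r[2] >= cutoff]
    return train, test


# Hypothetical placeholder records, not real approvals.
records = [
    ("drug_a", "indication_1", date(2004, 6, 1)),
    ("drug_b", "indication_1", date(2011, 2, 15)),
    ("drug_c", "indication_2", date(2016, 9, 30)),
    ("drug_d", "indication_3", date(2020, 1, 10)),
]
train, test = temporal_split(records, cutoff=date(2015, 1, 1))
print(len(train), len(test))  # 2 2
```

Unlike k-fold cross-validation, this split is not randomized, so it yields a single estimate; repeating it with several cutoffs is one way to gauge its stability.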

The NOTA Protocol for Evaluating Reasoning Fidelity

A critical protocol for assessing whether a model is engaging in genuine reasoning or merely pattern matching involves modifying benchmark questions. In this approach, the original correct answer in a multiple-choice question is replaced with "None of the other answers" (NOTA), and a clinician verifies that NOTA is now the correct answer [24]. A model that truly reasons should maintain consistent performance, as the underlying clinical logic is unchanged. A significant performance drop indicates reliance on spurious patterns in the training data rather than robust reasoning [24]. This protocol is vital for testing model robustness and readiness for clinical deployment where novel scenarios are common.
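
The mechanical part of the NOTA modification can be sketched as follows. The item format and the example question are hypothetical, and the clinician verification described above remains a manual step that code cannot replace.

```python
NOTA_TEXT = "None of the other answers"


def to_nota(question):
    """Return a copy of a multiple-choice item whose correct option is replaced by NOTA.

    question: {"stem": str, "options": {label: text}, "answer": label}
    The answer label is unchanged, so NOTA becomes the expected choice.
    """
    options = dict(question["options"])  # copy so the original item is untouched
    options[question["answer"]] = NOTA_TEXT
    return {"stem": question["stem"], "options": options, "answer": question["answer"]}


# Hypothetical item for illustration only.
item = {
    "stem": "Which electrolyte disturbance is most consistent with the findings?",
    "options": {"A": "Hypokalemia", "B": "Hyperkalemia", "C": "Hyponatremia"},
    "answer": "B",
}
modified = to_nota(item)
```

Accuracy is then computed on both the original and the modified set, and the gap between the two is the quantity of interest.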

Correlation Analysis for Method Validation

Analyzing the correlation between benchmarking outcomes and other variables is a key protocol for validating the benchmarking process itself. Studies should investigate:

- The correlation between performance and the number of drugs associated with an indication.

- The correlation between performance and the intra-indication chemical similarity.

- The correlation between performance on original benchmarking protocols and new, refined protocols [23].

These analyses help identify potential biases in the benchmark and strengthen the conclusions drawn from it.
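
The first of these checks can be sketched with a rank correlation, which does not assume a linear relationship. The implementation below computes Spearman's coefficient as the Pearson correlation of average ranks; the per-indication numbers are invented for illustration.

```python
def average_ranks(values):
    """1-based ranks, with tied values sharing the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared = (i + j) / 2 + 1  # mean of positions i+1 .. j+1
        for k in range(i, j + 1):
            ranks[order[k]] = shared
        i = j + 1
    return ranks


def pearson(xs, ys):
    n = len(xs)
    mx, my = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, ys))
    sxx = sum((x - mx) ** 2 for x in xs)
    syy = sum((y - my) ** 2 for y in ys)
    return sxy / (sxx * syy) ** 0.5


def spearman(xs, ys):
    return pearson(average_ranks(xs), average_ranks(ys))


# Hypothetical per-indication values.
drugs_per_indication = [3, 10, 25, 7, 40]
recall_at_10 = [0.00, 0.10, 0.20, 0.05, 0.35]
rho = spearman(drugs_per_indication, recall_at_10)
```

A coefficient close to 1 in such an analysis would suggest that measured performance largely tracks how many drugs an indication has, which is a property of the benchmark rather than of the platform.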

The following diagram illustrates the sequential workflow of a robust benchmarking experiment, integrating these key protocols.

Comparative Performance Metrics and Data

A variety of metrics are used to encapsulate benchmarking results. The choice of metric should be guided by the specific question the benchmark aims to answer.

Table of Standard Benchmarking Metrics

Table 1: Common performance metrics used in drug discovery benchmarking.

| Metric | Definition | Interpretation and Use Case |
|---|---|---|
| Recall@K | The proportion of known drugs recovered in the top K ranked candidates [23]. | Measures the platform's ability to surface true positives early in the candidate list. Example: 12.1% recall@10 with TTD data [23]. |
| Area Under the ROC Curve (AUC-ROC) | Measures the model's ability to distinguish between associated and non-associated drug-indication pairs across all classification thresholds [23]. | A general measure of ranking quality, though its relevance to direct drug discovery impact has been questioned [23]. |
| Area Under the PR Curve (AUC-PR) | Measures the model's precision across all levels of recall [23]. | More informative than AUC-ROC for imbalanced datasets where true positives are rare. |
| Fidelity-Outcome Correlation | The correlation coefficient (e.g., Spearman) between the fidelity of an intervention and its outcomes [2]. | A strong correlation (>0.70) validates that the essential components of a method have been identified and are effective [2]. |
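
As a concrete reading of the Recall@K definition in the table, the following sketch counts how many known drugs for one indication appear among the top K ranked candidates. The identifiers are placeholders, and aggregating across indications (for example, averaging) is left to the benchmark design.

```python
def recall_at_k(ranked_candidates, known_drugs, k=10):
    """Fraction of known drugs that appear among the top-k ranked candidates."""
    known = set(known_drugs)
    if not known:
        raise ValueError("known_drugs must not be empty")
    top_k = set(ranked_candidates[:k])
    return len(top_k & known) / len(known)


# Placeholder identifiers for a single indication.
ranked = ["d7", "d2", "d9", "d4", "d1"]
known = {"d2", "d1", "d8"}
print(round(recall_at_k(ranked, known, k=3), 3))  # 0.333
print(round(recall_at_k(ranked, known, k=5), 3))  # 0.667
```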

Table of Model Performance on Fidelity Evaluation

Table 2: Performance comparison of Large Language Models (LLMs) on original vs. NOTA-modified medical questions, demonstrating the robustness gap [24].

| Model | Accuracy on Original Questions (%) | Accuracy on NOTA-Modified Questions (%) | Accuracy Drop (percentage points) |
|---|---|---|---|
| Model 1 | 92.65 | 83.82 | 8.82 |
| Model 2 | 95.59 | 79.41 | 16.18 |
| Model 3 | 88.24 | 61.76 | 26.47 |
| Model 4 | 92.65 | 58.82 | 33.82 |
| Model 5 | 85.29 | 48.53 | 36.76 |
| Model 6 | 80.88 | 42.65 | 38.24 |

The data in Table 2 reveal a robustness gap across all six models: accuracy fell by between 8.82 and 38.24 percentage points once the answer pattern was disrupted. Even the smallest drop, for Model 1, was nearly nine points, challenging claims of these models' readiness for autonomous clinical deployment [24].
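
The drop column in Table 2 matches, up to rounding, the simple difference between the two accuracy columns, that is, a drop in percentage points. The sketch below recomputes that difference and, for comparison, the drop relative to each model's original accuracy, which is larger. Only the accuracy figures come from the table; the function name is illustrative.

```python
# (original accuracy %, NOTA-modified accuracy %) as listed in Table 2
results = {
    "Model 1": (92.65, 83.82),
    "Model 2": (95.59, 79.41),
    "Model 3": (88.24, 61.76),
    "Model 4": (92.65, 58.82),
    "Model 5": (85.29, 48.53),
    "Model 6": (80.88, 42.65),
}


def accuracy_drops(original, modified):
    """Return (absolute drop in percentage points, drop relative to original in %)."""
    absolute = original - modified
    relative = 100.0 * absolute / original
    return absolute, relative


for name, (orig_acc, nota_acc) in results.items():
    absolute, relative = accuracy_drops(orig_acc, nota_acc)
    print(f"{name}: {absolute:.2f} points, {relative:.1f}% of original accuracy")
```

For Model 1, for instance, the absolute drop is about 8.8 points while the relative drop is about 9.5% of its original accuracy, so the two measures should not be used interchangeably when comparing models.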

The logical relationship between benchmarking rigor, model fidelity, and real-world applicability is summarized in the following diagram.

Successful benchmarking requires a suite of reliable data sources and software tools. The table below details essential "research reagents" for conducting fidelity and efficiency research in computational drug discovery.

Table 3: Essential resources for benchmarking drug discovery platforms.

| Resource Name | Type | Primary Function in Benchmarking |
|---|---|---|
| Comparative Toxicogenomics Database (CTD) [23] | Database | Provides a ground truth mapping of drug-indication associations for validation. |
| Therapeutic Targets Database (TTD) [23] | Database | An alternative source of validated drug-indication associations to test benchmarking robustness. |
| DrugBank [23] | Database | A comprehensive database containing drug and drug target information. |
| CANDO Platform [23] | Software Platform | A multiscale therapeutic discovery platform for benchmarking drug repurposing and discovery protocols. |
| NOTA (None of the Other Answers) [24] | Evaluation Protocol | A technique to distinguish logical reasoning from mere pattern recognition in model evaluation. |
| FAERS Dashboard [25] | Database | The FDA's Adverse Event Reporting System provides real-world safety data for post-market validation. |

Adherence to core benchmarking principles—from careful problem definition and ground truth selection to the application of rigorous protocols like NOTA testing and correlation analysis—is non-negotiable for generating trustworthy evaluations. The comparative data reveals that without such rigor, performance metrics can be misleading, hiding critical weaknesses like a reliance on pattern matching. As the field moves forward, priorities must include developing benchmarks that better distinguish clinical reasoning from pattern matching, fostering greater transparency about current model limitations, and advancing research into models that prioritize robust reasoning [24]. Until these systems can maintain performance when confronted with novel scenarios, their clinical applications should be limited to supportive roles under expert human oversight.

Implementing Robust Benchmarking Protocols: A Step-by-Step Guide

This guide provides a structured comparison of methodologies for establishing robust benchmarking protocols in scientific research, with a focus on ensuring fidelity and enhancing efficiency in fields such as drug development and computational biology.

The Plan-Collect-Analyse-Adapt (PCAA) framework provides a structured, iterative approach for designing and executing high-quality benchmarking studies. It integrates principles from implementation science and computational methodology to ensure that evaluations of interventions, software, or processes are both rigorous and relevant to real-world contexts.

The table below compares the PCAA framework's structure against other established evaluation models.

Table: Comparison of the PCAA Framework with Other Evaluation Models

| Framework Aspect | Plan-Collect-Analyse-Adapt (PCAA) | RE-AIM [26] [27] | Treatment Fidelity [28] | Neutral Benchmarking [29] [30] |
|---|---|---|---|---|
| Primary Focus | End-to-end benchmarking lifecycle for fidelity and efficiency | Public health impact and translation to practice | Internal validity and reliability of health behavior trials | Unbiased comparison of computational methods |
| Core Principles | Iterative refinement; Pragmatic application; Multi-method assessment | Reach, Effectiveness, Adoption, Implementation, Maintenance | Study Design, Training, Delivery, Receipt, Enactment | Comprehensive method selection; Ground truth data; Defined metrics |
| Key Outcomes | Robust protocols, Actionable insights, Enhanced efficiency | Population-based impact, Representativeness | Controlled variation in dependent variable, Theory testing | Performance rankings, Method selection guidelines |

The Plan Phase: Defining Purpose and Protocol

The initial phase involves strategic planning to define the benchmark's scope and design, establishing a foundation for valid and reliable results.

Defining Purpose and Scope

Clearly articulate the benchmark's goal from the outset [29]. Is it a neutral comparison of existing methods, an evaluation of a new method against the state-of-the-art, or a community challenge? This purpose dictates the study's comprehensiveness and guides subsequent decisions [29]. For research fidelity, this means specifying the intervention's active ingredients and mapping them onto the underlying theory [28].

Selecting Methods and Datasets

- Method Selection: A neutral benchmark should strive to include all available methods, or at least define clear, unbiased inclusion criteria (e.g., freely available software, successful installation). When introducing a new method, compare it against a representative subset, including current best-performing methods and a simple baseline [29].

- Dataset Selection and Design: The choice of reference datasets is critical [29].

  - Simulated Data allows for a known "ground truth" but must accurately reflect properties of real data [29] [30].

  - Real/Experimental Data from public repositories or new experiments provides authenticity but may lack a definitive gold standard [30].

  Using a portfolio of diverse, representative datasets prevents performance assessment bias [31].

Table: Experimental Protocols for Benchmark Dataset Construction

| Protocol Type | Description | Best Use Cases | Key Considerations |
|---|---|---|---|
| Trusted Technology [30] | Using a highly accurate, albeit often costly, experimental procedure (e.g., Sanger sequencing) to generate a gold standard. | When the highest possible accuracy is required and resources permit. | Cost-prohibitive for large scales; considered a "gold standard" for specific applications. |
| Integration & Arbitration [30] | Generating a consensus gold standard by combining results from multiple technologies or computational methods. | When no single technology is perfectly accurate; improves consensus. | The resulting gold standard may be incomplete if technologies disagree. |
| Synthetic Mock Community [30] | Creating an artificial benchmark by combining known, titrated elements (e.g., specific microbial organisms). | For complex systems where a true gold standard is impossible (e.g., microbiome analysis). | Risk of oversimplifying reality compared to true, complex communities. |
| Large Curated Database [30] | Using expert-annotated databases (e.g., GENCODE for gene features) as a reference. | For well-established domains with robust, community-accepted databases. | Databases may be incomplete, potentially leading to false negatives. |

The Collect Phase: Execution and Data Gathering

This phase focuses on the rigorous execution of the planned protocols and systematic data collection.

Ensuring Implementation Fidelity

Implementation refers to the consistency and quality with which a program or intervention is delivered as intended [26]. In clinical and public health trials, this involves fidelity monitoring (e.g., through checklists or observation) and tracking of adaptations made during delivery [26] [27]. High fidelity is associated with better treatment outcomes, as it reduces unintended variability and increases the power to detect true effects [28].

Quantitative and Qualitative Data Collection

A mixed-methods approach provides a comprehensive view of benchmarking outcomes [27].

- Quantitative Data: Provides objective, countable metrics for each RE-AIM dimension. Examples include the proportion of the target population reached (Reach), effect sizes on primary outcomes (Effectiveness), and the number of settings that maintain the program (Maintenance) [27].

- Qualitative Data: Helps understand the "how" and "why" behind quantitative results. Interviews and focus groups can elucidate reasons for adoption or non-adoption, uncover unintended consequences, and document the context and rationale behind adaptations [26] [32].

The Analyse Phase: Evaluation and Interpretation

This phase transforms collected data into evidence-based conclusions about performance and fidelity.

Applying Performance Metrics

Selecting appropriate, well-defined metrics is fundamental [29] [33]. These metrics should be chosen to reflect real-world performance and can include measures like accuracy, success rate, code coverage, or cost-effectiveness [26] [33]. It is crucial to use a range of metrics to capture different strengths and trade-offs, rather than relying on a single number [29].

Characterizing Adaptations

Systematically analyzing adaptations is key to understanding implementation. The Framework for Reporting Adaptations and Modifications to Evidence-based Interventions (FRAME) is a key tool, cataloging adaptations by "when, how, who, what, and why" [32]. Advanced analytic techniques, such as k-means clustering, can group adaptation components into distinct "types," which may be more useful for linking adaptation patterns to outcomes than analyzing components in isolation [32].

Statistical Rigor and Reproducibility

Robust analysis requires statistical discipline to prevent overfitting and inflated claims [33]. Best practices include:

- Using fixed, held-out test/validation splits so that results are comparable across methods and no test data leaks into training [33].

- Reporting results with measures of variance (e.g., mean ± standard deviation) over multiple runs or random seeds [29] [33].

- Employing non-parametric hypothesis testing (e.g., Mann-Whitney U test) for comparing methods [29] [33].
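
The reporting and testing practices above can be illustrated with a minimal sketch. The per-seed accuracy values and the helper names `summarize` and `mann_whitney_u` are hypothetical, not taken from the cited sources. The test uses the normal approximation without tie or continuity correction, so its p-value should be read as approximate.

```python
import math
import statistics


def summarize(scores):
    """Return (mean, sample standard deviation) of per-seed scores."""
    return statistics.mean(scores), statistics.stdev(scores)


def mann_whitney_u(x, y):
    """Two-sided Mann-Whitney U test via the normal approximation.

    Returns (U statistic for x, approximate p-value). No tie or continuity
    correction is applied, so the p-value is only approximate for small
    samples or heavily tied data.
    """
    n, m = len(x), len(y)
    # U counts the pairs in which x beats y; ties count as half a win.
    u = sum(1.0 if xi > yj else 0.5 if xi == yj else 0.0
            for xi in x for yj in y)
    mean_u = n * m / 2
    sd_u = math.sqrt(n * m * (n + m + 1) / 12)
    z = (u - mean_u) / sd_u
    p_value = math.erfc(abs(z) / math.sqrt(2))  # two-sided tail probability
    return u, p_value


# Hypothetical accuracy of two methods over five random seeds.
method_a = [0.81, 0.83, 0.80, 0.84, 0.82]
method_b = [0.76, 0.78, 0.75, 0.79, 0.77]

for name, scores in (("A", method_a), ("B", method_b)):
    mean, sd = summarize(scores)
    print(f"Method {name}: {mean:.3f} ± {sd:.3f}")

u, p = mann_whitney_u(method_a, method_b)
print(f"U = {u:.1f}, approximate p = {p:.4f}")
```

With only a handful of seeds per method, an exact test (for example `scipy.stats.mannwhitneyu`, if SciPy is available) is generally preferable to the approximation sketched here.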

The Adapt Phase: Refinement and Iteration

The final phase uses analytical insights to refine the intervention, implementation strategy, or benchmark itself.

Balancing Fidelity and Adaptation

A core challenge is balancing fidelity to the original protocol with the need for adaptations to improve contextual fit [32] [34]. The goal is to favor fidelity-consistent adaptations: those that preserve the intervention's core elements (its "active ingredients") while modifying only peripheral aspects to suit a new setting or population [32] [28].

Using Rapid, Iterative Cycles

Structured cycles, such as Plan-Do-Study-Act (PDSA), are used for iterative refinement [34]. The effectiveness of such approaches depends on both good contextual adaptation and implementation fidelity [34]. For instance, a study of a PDSA variant in Nigeria found high design fidelity but gaps in implementation, such as inadequate documentation, highlighting where adaptation and improvement efforts should be focused [34].

The Scientist's Toolkit: Essential Research Reagents

Table: Key Reagents for Fidelity and Benchmarking Research

| Reagent / Tool | Function | Application Example |
|---|---|---|
| FRAME (Framework for Reporting Adaptations) [32] | Systematically characterizes modifications to interventions. | Cataloging adaptations made during implementation to understand their impact on outcomes. |
| Treatment Fidelity Checklist [28] | Assesses and monitors the reliability and internal validity of a study. | Ensuring a health behavior intervention is delivered as intended across multiple clinical sites. |
| RE-AIM Quantitative Metrics [27] | Provides standardized, countable outcomes for evaluating public health impact. | Tracking Reach (participation rate), Implementation (fidelity), and Maintenance (sustainability) of a program. |
| Gold Standard Dataset [30] | Serves as a ground truth for benchmarking computational tools. | Evaluating the accuracy of a new variant-calling algorithm against a genome from the Genome in a Bottle Consortium. |
| Synthetic Mock Community [30] | Provides a controlled, known benchmark for complex systems. | Benchmarking computational methods for microbiome analysis where a true gold standard is unavailable. |
| Statistical Comparison Scripts [33] | Automates performance ranking and significance testing. | Running bootstrapped confidence intervals and non-parametric tests to compare multiple methods fairly. |

The Plan-Collect-Analyse-Adapt framework offers a rigorous, structured pathway for benchmarking in fidelity and efficiency research. By systematically planning with a clear purpose, collecting multi-faceted data, analyzing with robust metrics and statistical practices, and adapting based on empirical insights, researchers can produce reliable, comparable, and impactful results. This approach is agnostic to the specific field, providing a universal protocol for enhancing scientific evidence in drug development and beyond.

In the rigorous world of pharmaceutical research and development, the selection of performance metrics is not an administrative afterthought but a foundational scientific activity. This process is central to establishing robust benchmarking selection protocols that accurately gauge the fidelity and efficiency of research methodologies, particularly with the integration of artificial intelligence (AI) and machine learning (ML). The core challenge lies in balancing two often-competing properties: statistical power, which ensures that metrics can detect true effects or differences, and interpretability, which ensures that the results of those metrics are meaningful and actionable for scientists and regulators [35]. A well-designed benchmarking protocol relies on metrics that are not only mathematically sound but also directly tied to the biological or clinical question of interest. This balance is essential for making reliable go/no-go decisions in the drug development pipeline, from early discovery to post-market surveillance [36]. The pursuit of this equilibrium frames the critical evaluation of metrics that follows.

Comparative Analysis of Key Metric Types

Different stages of drug discovery and development demand different metric types, each with unique strengths and weaknesses in statistical power and interpretability. The table below provides a structured comparison of primary metric categories used in benchmarking.

Table 1: Comparison of Key Metric Types for Benchmarking

| Metric Category | Primary Use Case | Statistical Power | Interpretability | Key Advantages | Key Limitations |
|---|---|---|---|---|---|
| Confusion Matrix Derivatives (e.g., Precision, Recall, F1-Score) [37] | Binary classification tasks (e.g., active/inactive compound prediction) | High for class imbalance | Moderate to High | Provides a nuanced view of different error types. | Can be fragmented into multiple scores; requires a threshold. |
| AUC-ROC [37] | Model discrimination ability (e.g., virtual screening) | High; threshold-invariant | Moderate | Single score summarizing performance across all thresholds. | Does not convey information about actual prediction scores. |
| F1-Score [37] | Balancing Precision and Recall | High for class imbalance | High | Harmonic mean provides a balanced view of two critical errors. | Can mask poor performance in either Precision or Recall. |
| Gain/Lift Charts [37] | Campaign targeting & rank ordering | High for top-decile analysis | High | Directly informs resource allocation (e.g., which compounds to test first). | Less informative for overall model performance. |
| Kolmogorov-Smirnov (K-S) Statistic [37] | Degree of separation between positive/negative distributions | High for distribution differences | High | Single number (0-100) indicating separation capability. | Primarily useful for binary classification. |
| Fidelity-Outcome Correlation [2] | Assessing implementation of evidence-based processes | High when correlation ≥ 0.70 | Very High | Directly links process fidelity to meaningful outcomes; explains roughly 50% or more of outcome variance. | Requires established fidelity assessments and outcome data. |
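
As an illustration of the K-S row in the table above, the statistic can be computed as the largest gap between the empirical score distributions of the positive and negative classes. The sketch below is a minimal, assumption-laden example: the function name `ks_statistic` and the compound scores are hypothetical, and the result is on a 0-1 scale (multiply by 100 for the 0-100 scale used in the table).

```python
def ks_statistic(pos_scores, neg_scores):
    """Largest absolute gap between the two empirical CDFs (range 0 to 1)."""
    thresholds = sorted(set(pos_scores) | set(neg_scores))
    best = 0.0
    for t in thresholds:
        # Fraction of each class scoring at or below the threshold t.
        cdf_pos = sum(s <= t for s in pos_scores) / len(pos_scores)
        cdf_neg = sum(s <= t for s in neg_scores) / len(neg_scores)
        best = max(best, abs(cdf_pos - cdf_neg))
    return best


# Hypothetical model scores for active and inactive compounds.
active = [0.91, 0.84, 0.77, 0.62, 0.58]
inactive = [0.66, 0.41, 0.35, 0.22, 0.15]

print(f"K-S = {100 * ks_statistic(active, inactive):.0f} (0-100 scale)")
```

A value near 100 means the model's scores almost completely separate the two classes; a value near 0 means the score distributions overlap heavily.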

Experimental Protocols for Metric Validation

Protocol for Validating Fidelity-Outcome Correlation

The correlation between fidelity (the adherence to an innovation's essential components) and outcomes represents a powerful, highly interpretable metric for benchmarking research processes [2].

- Objective: To establish a quantitative relationship between the fidelity of a research or clinical protocol and its intended outcomes, thereby validating the protocol itself as a benchmark.

- Methodology:

  - Define Essential Components: Clearly specify the active ingredients or critical steps of the process being benchmarked (e.g., specific AI model training parameters, a clinical trial protocol, or a laboratory assay methodology).

  - Develop Fidelity Assessment: Create a tool with observable indicators to measure the presence and strength of each essential component. This assessment can be a checklist or a scaled score.

  - Measure Fidelity and Outcomes: Implement the protocol across multiple instances (e.g., different research sites, different batches of experiments) and concurrently collect data on both fidelity scores and relevant outcome measures (e.g., prediction accuracy, patient response rate, assay success).

  - Statistical Analysis: Calculate the correlation coefficient (e.g., Pearson's r) between the fidelity scores and the outcome measures. A correlation at or above a prespecified threshold (e.g., r ≥ 0.70) indicates that the fidelity assessment is a valid benchmark, explaining roughly half or more of the outcome variance (r² ≈ 0.49 at r = 0.70) [2].

- Data Interpretation: A high correlation confirms that faithfully executing the protocol leads to predictably better outcomes. This makes the fidelity score a powerful proxy for ultimate success, allowing for earlier and more efficient quality assurance.
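
The statistical analysis step of this protocol can be sketched in a few lines. The site-level fidelity and outcome values below are hypothetical, as are the helper names `pearson_r` and `variance_explained`; the 0.70 threshold follows the example given in the protocol.

```python
import math


def pearson_r(x, y):
    """Pearson correlation coefficient between two equal-length sequences."""
    if len(x) != len(y) or len(x) < 2:
        raise ValueError("need two sequences of equal length >= 2")
    mean_x = sum(x) / len(x)
    mean_y = sum(y) / len(y)
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for constant data")
    return cov / math.sqrt(var_x * var_y)


def variance_explained(r):
    """Share of outcome variance accounted for by a linear fit (r squared)."""
    return r ** 2


# Hypothetical fidelity scores and outcomes from five sites.
fidelity = [0.55, 0.60, 0.72, 0.80, 0.91]
outcome = [0.48, 0.58, 0.61, 0.74, 0.79]

r = pearson_r(fidelity, outcome)
threshold = 0.70  # prespecified before data collection
print(f"r = {r:.2f}, variance explained = {variance_explained(r):.0%}")
print("meets threshold" if r >= threshold else "below threshold")
```

Because r² is the quantity that corresponds to variance explained, a correlation of exactly 0.70 accounts for about 49% of outcome variance. With only a few sites, the estimate of r is also very uncertain, so a confidence interval would normally accompany it.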

Protocol for Evaluating Classification Model Performance

For benchmarking AI/ML models used in tasks like target identification or virtual screening, a multi-faceted approach is required [35] [37].

- Objective: To comprehensively evaluate the performance of a classification model, balancing the detection of true positives against the cost of false positives and false negatives.

- Methodology:

  - Data Preparation: Split the data into training, validation, and test sets, ensuring the test set remains completely unseen during model training.

  - Generate Predictions: Run the trained model on the test set to obtain predicted classes or probability scores.

  - Construct Confusion Matrix: Tabulate the counts of True Positives (TP), True Negatives (TN), False Positives (FP), and False Negatives (FN).

  - Calculate Metric Suite: Compute a basket of metrics, most of them derived from the confusion matrix, to address different questions [37]:

    - Precision (Positive Predictive Value): TP / (TP + FP). Answers: "When the model predicts positive, how often is it correct?" Critical when the cost of FPs is high.

    - Recall (Sensitivity): TP / (TP + FN). Answers: "What proportion of actual positives did the model find?" Critical when the cost of FNs is high.

    - F1-Score: 2 * (Precision * Recall) / (Precision + Recall). The harmonic mean of Precision and Recall, providing a single score to balance both concerns.

    - AUC-ROC: Computed from probability scores rather than from a single confusion matrix. Plot the True Positive Rate (Recall) against the False Positive Rate, FP / (FP + TN), at various classification thresholds. The area under this curve measures the model's overall ability to discriminate between classes.

- Data Interpretation: There is no single "best" metric. The choice depends on the context of use (COU) [36]. For example, a model screening for rare drug targets may prioritize Recall to avoid missing potential hits, while a model predicting toxicity might prioritize Precision to avoid incorrectly flagging safe compounds.
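
The metric suite in this protocol can be sketched as follows. The labels, scores, 0.5 threshold, and function names are hypothetical. AUC-ROC is computed here through its pairwise-ranking interpretation (the probability that a randomly chosen positive scores higher than a randomly chosen negative), which equals the area under the ROC curve.

```python
def confusion_counts(y_true, y_pred):
    """Return (TP, TN, FP, FN) for binary labels coded as 0/1."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(t == 1 and p == 1 for t, p in pairs)
    tn = sum(t == 0 and p == 0 for t, p in pairs)
    fp = sum(t == 0 and p == 1 for t, p in pairs)
    fn = sum(t == 1 and p == 0 for t, p in pairs)
    return tp, tn, fp, fn


def precision_recall_f1(tp, fp, fn):
    """Precision, recall and F1; a metric is 0.0 when its denominator is 0."""
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    total = precision + recall
    f1 = 2 * precision * recall / total if total else 0.0
    return precision, recall, f1


def roc_auc(y_true, scores):
    """AUC-ROC as the share of positive/negative pairs ranked correctly."""
    pos = [s for t, s in zip(y_true, scores) if t == 1]
    neg = [s for t, s in zip(y_true, scores) if t == 0]
    if not pos or not neg:
        raise ValueError("both classes must be present")
    # Tied scores count as half a correctly ranked pair.
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0
               for p in pos for n in neg)
    return wins / (len(pos) * len(neg))


# Hypothetical held-out test set: 1 = active compound, 0 = inactive.
y_true = [1, 1, 1, 0, 0, 0, 0, 1]
scores = [0.9, 0.8, 0.4, 0.3, 0.2, 0.6, 0.1, 0.7]
y_pred = [1 if s >= 0.5 else 0 for s in scores]  # assumed threshold of 0.5

tp, tn, fp, fn = confusion_counts(y_true, y_pred)
precision, recall, f1 = precision_recall_f1(tp, fp, fn)
print(f"TP={tp} TN={tn} FP={fp} FN={fn}")
print(f"precision={precision:.2f} recall={recall:.2f} f1={f1:.2f}")
print(f"AUC-ROC={roc_auc(y_true, scores):.4f}")
```

Precision, Recall, and F1 change with the chosen threshold, whereas AUC-ROC does not, which is one reason to report them together.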

Building a reliable benchmarking protocol requires more than just algorithms; it depends on high-quality data, robust tools, and clear definitions. The following table details key "reagents" for conducting fidelity and efficiency research.

Table 2: Essential Research Reagents for Benchmarking Studies

| Tool/Resource | Function in Benchmarking | Application Example |
|---|---|---|
| Public DTI Datasets (e.g., BindingDB, Davis, KIBA) [38] | Provide standardized, curated data for training and evaluating predictive models. | Serving as a common ground for benchmarking the performance of new AI-based Drug-Target Interaction (DTI) prediction algorithms. |
| Fidelity Assessment Tool [2] | A customized checklist or scale to quantitatively measure adherence to a protocol's essential components. | Assessing whether a laboratory is correctly implementing a complex assay, or whether a clinical trial site is following the trial protocol. |
| "Fit-for-Purpose" Framework [36] | A strategic principle ensuring selected models and metrics are aligned with the specific Question of Interest (QOI) and Context of Use (COU). | Guiding the choice between a complex, high-power model for lead optimization vs. a simpler, more interpretable model for initial screening. |
| Model-Informed Drug Development (MIDD) Tools [36] | A suite of quantitative approaches (e.g., PBPK, QSP) that use models to simulate and predict drug behavior. | Benchmarking the predictive performance of different PBPK models for forecasting human pharmacokinetics prior to First-in-Human studies. |
| Confusion Matrix [37] | A foundational table that visualizes model performance by breaking down predictions into true/false positives/negatives. | The first step in calculating a suite of metrics (Precision, Recall, F1) to benchmark a new virtual screening model against an existing one. |

The rigorous selection of metrics, grounded in a clear understanding of statistical power and interpretability, is what separates conclusive benchmarking from mere data collection. As the pharmaceutical industry increasingly adopts AI-driven methodologies, the principles outlined here—embracing a suite of metrics tailored to the context of use, validating protocols through fidelity-outcome relationships, and leveraging a fit-for-purpose framework—become paramount [35] [36]. The future of benchmarking will likely involve the development of more sophisticated, multi-dimensional metric systems that can simultaneously optimize for statistical robustness, clinical interpretability, and regulatory acceptance. By adhering to disciplined metric selection protocols, researchers can ensure that their assessments of fidelity and efficiency are not only statistically sound but also meaningfully advance the ultimate goal of delivering safer and more effective medicines to patients.