Benchmarking Teleological Understanding in Biomedical Education: Strategies for Enhancing Research and Drug Development

Abstract

This article addresses the critical challenge of benchmarking teleological understanding—the attribution of purpose and intent to natural phenomena—among student researchers in drug development and biomedical sciences. It explores the foundational psychological and disciplinary roots of teleological reasoning, establishes methodological frameworks for its assessment, provides strategies for troubleshooting misconceptions, and proposes validation protocols for comparative analysis across diverse student cohorts. Aimed at researchers, scientists, and drug development professionals, this comprehensive guide synthesizes current research to enhance scientific rigor by mitigating unintentional teleological biases that can compromise research design, data interpretation, and clinical trial integrity.

The Roots of Purpose: Exploring Teleological Thinking in Biomedical Sciences

Teleology, derived from the Greek telos (end or purpose), represents a fundamental mode of human reasoning characterized by explaining phenomena by reference to goals, functions, or end states. This conceptual framework manifests as both a natural cognitive disposition and a potential scientific heuristic, creating a complex landscape for science education and research. Within biological sciences, and particularly in evolution education, teleological reasoning presents a paradoxical challenge: while it constitutes a universal cognitive bias that can disrupt accurate understanding of natural selection, it also finds legitimate applications in describing biological functions that exist because of their selective advantages [1] [2].

The benchmarking of teleology understanding across student groups requires careful discrimination between different types of teleological explanations. Research distinguishes between "design teleology" – the scientifically illegitimate attribution of purpose or intentional design to natural phenomena – and "selection teleology" – the warranted explanation that a trait exists because it was selected for its functional consequences [1] [3]. This distinction forms the critical foundation for developing effective pedagogical interventions and assessment tools aimed at fostering scientific literacy among students and professionals in biological sciences, including those in drug development fields where accurate evolutionary frameworks inform research approaches.

Theoretical Framework: Typologies and Cognitive Underpinnings

Philosophical and Psychological Foundations

Teleological explanations are characterized by expressions such as "... in order to ...", "... for the sake of...", or "... so that ..." [1]. This explanatory mode has deep philosophical roots extending to Plato's concept of a Divine Craftsman (Demiurge) and Aristotle's theory of four causes, including final causes that serve the maintenance of the organism [1]. The cognitive predisposition toward teleological thinking appears to be universal, especially in children, and represents part of typical cognitive development [3]. Psychological research indicates that even academically active scientists default to teleological explanations when cognitive resources are challenged by timed or dual tasks, suggesting this mode of thinking remains persistently available throughout expertise development [3].

Distinguishing Legitimate from Misplaced Teleology

The critical distinction in teleological reasoning lies in the underlying consequence etiology: whether a trait exists because of its selection for positive consequences (scientifically legitimate) or because it was intentionally designed or simply needed for a purpose (scientifically illegitimate) [1]. This distinction separates the selection teleology inherent in explanations based on natural selection from the design teleology that constitutes a misconception in evolutionary biology [1] [3]. As Kampourakis (2020) notes, "the problem in biology education is not the use of teleological/functional explanations; rather, the problem lies in the underlying etiology that relates to how these functions came to be" [1].

Table 1: Types of Teleological Explanations in Biological Reasoning

| Type of Teleology | Definition | Scientific Legitimacy | Example |
|---|---|---|---|
| Design Teleology | Explains traits as existing due to intentional design or to meet organismal needs | Illegitimate | "Giraffes developed long necks because they needed to reach high leaves" [3] |
| Selection Teleology | Explains traits as existing because they were selected for their functional consequences | Legitimate | "Giraffes have long necks because ancestors with longer necks had survival advantages" [1] |
| Internal Design Teleology | Attributes goals or needs to the organism itself | Illegitimate | "The heart makes itself pump blood to help the body" [3] |
| External Design Teleology | Attributes intentional design to an external agent | Illegitimate | "A creator designed the heart to pump blood" [3] |

Quantitative Benchmarking of Teleological Reasoning

Prevalence and Persistence Across Educational Levels

Research consistently demonstrates that teleological reasoning is not merely a lack of scientific knowledge but an active, alternative framework for understanding biological phenomena. Studies with undergraduate populations reveal significant pre-instructional endorsement of teleological explanations, with measurable persistence even after formal education. Benchmarking data indicate that this cognitive bias extends beyond evolution-specific contexts to influence reasoning in molecular biology, physiology, ecology, and taxonomy [2].

Table 2: Benchmarking Teleology Endorsement Across Educational Levels

| Educational Level | Prevalence of Teleological Reasoning | Key Findings | Research Citations |
|---|---|---|---|
| Preschool Children | Universal preference for teleological explanations | Part of typical cognitive development; extends beyond artifacts to living and non-living things | [3] |
| High School Students | Persistent despite formal instruction | Disrupts understanding of natural selection; associated with lower evolution acceptance | [3] |
| Undergraduate Students | Significant pre-course endorsement | Predictive of natural selection understanding; decreases with targeted intervention | [3] |
| Graduate Students | Persistent under cognitive load | Default to teleological explanations when under time pressure or cognitive constraint | [3] |
| Professional Scientists | Present despite extensive training | Manifest under timed test conditions or dual-task cognitive load | [3] |

Intervention Studies and Measurement Approaches

Recent exploratory research has employed explicit instructional challenges to teleological reasoning with measurable outcomes. In one mixed-methods study with undergraduate students (N=83), researchers implemented targeted interventions within a human evolution course, measuring outcomes using established instruments including the Teleological Reasoning Survey (sample from Kelemen et al., 2013), the Conceptual Inventory of Natural Selection (Anderson et al., 2002), and the Inventory of Student Evolution Acceptance (Nadelson & Southerland, 2012) [3].

The experimental protocol involved:

- Pre-intervention assessment of teleology endorsement, natural selection understanding, and evolution acceptance

- Explicit instructional interventions directly challenging design teleology through contrast with natural selection mechanisms

- Metacognitive components developing student awareness of their own teleological tendencies

- Post-intervention assessment using identical instruments to measure change

Results demonstrated statistically significant decreases in teleological reasoning endorsement (p≤0.0001) alongside increased understanding and acceptance of natural selection in the intervention group compared to controls [3]. Thematic analysis of student reflective writing revealed that participants were largely unaware of their teleological reasoning tendencies prior to instruction but perceived attenuation of these biases following intervention.
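The pre-post comparison described above can be sketched in a few lines. This is a minimal illustration, not the analysis used in the cited study: the function name `paired_change`, the 1-5 endorsement scale, and the scores are all hypothetical. It reports the mean change and a paired-samples effect size (Cohen's d_z, the mean difference divided by the standard deviation of the differences).

```python
from statistics import mean, stdev


def paired_change(pre, post):
    """Return (mean change, Cohen's d_z) for matched pre/post scores."""
    if len(pre) != len(post) or len(pre) < 2:
        raise ValueError("need matched pre/post scores for at least two participants")
    # One difference per participant: post minus pre.
    diffs = [after - before for before, after in zip(pre, post)]
    spread = stdev(diffs)
    if spread == 0:
        raise ValueError("identical change for every participant; d_z is undefined")
    return mean(diffs), mean(diffs) / spread


# Hypothetical teleology endorsement scores (1-5 scale), NOT data from the study.
pre_scores = [4.0, 3.5, 4.5, 3.0, 4.0]
post_scores = [3.0, 3.0, 3.5, 2.5, 3.5]

change, d_z = paired_change(pre_scores, post_scores)
# A negative change means endorsement of teleological statements dropped.
```

A negative `change` with a large negative `d_z` corresponds to the direction of the reported result; a significance test and a control-group comparison would still be needed to support the kind of claim made in the study.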

Methodological Framework for Teleology Research

Experimental Protocols for Assessing Teleological Reasoning

Research in teleology cognition employs diverse methodological approaches, including:

Neurocognitive Assessment Protocols:

- Electroencephalography (EEG) measurements during intuitive vs. counterintuitive judgment tasks

- Event-related potential (ERP) components including N2 and LPP, which show higher amplitude in counterintuitive trials requiring inhibitory control

- fMRI protocols identifying activation in inhibitory control regions (anterior cingulate cortex, ventrolateral prefrontal cortex)

Behavioral Assessment Protocols:

- Timed vs. untimed response comparisons to measure heuristic vs. reflective reasoning

- Forced-choice instruments with teleological, scientific, and neutral explanations

- Open-response qualitative analysis to identify reasoning patterns beyond answer selection

Conceptual Change Measurement:

- Pre-post intervention designs with validated concept inventories

- Longitudinal tracking of teleology persistence beyond immediate course outcomes

- Transfer assessments measuring application to novel biological contexts

Essential Research Reagents and Instrumentation

Teleology research requires specialized methodological tools and assessment instruments that function as "research reagents" for quantifying and analyzing this cognitive phenomenon.

Table 3: Essential Research Reagents for Teleology Studies

| Research Tool Category | Specific Instrument/Technique | Primary Research Function | Validation Status |
|---|---|---|---|
| Psychometric Instruments | Teleological Reasoning Survey (Kelemen et al., 2013) | Quantifies endorsement of unwarranted teleological explanations | Validated with multiple populations including scientists |
| Psychometric Instruments | Conceptual Inventory of Natural Selection (Anderson et al., 2002) | Measures understanding of core evolutionary mechanisms | Widely validated in evolution education research |
| Psychometric Instruments | Inventory of Student Evolution Acceptance (Nadelson & Southerland, 2012) | Assesses acceptance of evolutionary theory | Validated factor structure |
| Neurocognitive Measures | EEG/ERP with N2/LPP components | Measures inhibitory control during counterintuitive judgments | Established in cognitive neuroscience literature |
| Neurocognitive Measures | fMRI with inhibitory control tasks | Identifies neural correlates of overcoming intuitive reasoning | Validated with physics misconceptions |
| Behavioral Metrics | Response time measurements | Indexes cognitive conflict between intuitive and scientific responses | Established dual-process theory support |
| Behavioral Metrics | Accuracy on counterintuitive items | Measures ability to override heuristic responses | Used across multiple science domains |

Implications for Science Education and Research Training

Pedagogical Approaches for Teleology Regulation

Based on the benchmarking data and intervention studies, effective approaches for addressing teleological reasoning in science education include:

Metacognitive Framework (González Galli et al., 2020):

- Developing student knowledge about teleology as a cognitive phenomenon

- Fostering awareness of appropriate vs. inappropriate expressions of teleology

- Cultivating deliberate regulation of teleological reasoning in biological contexts

Explicit Conceptual Contrast:

- Directly contrasting design teleology with natural selection mechanisms

- Creating conceptual tension between intuitive and scientific explanations

- Providing repeated practice with discrimination tasks

Inhibitory Control Strengthening:

- Recognizing that overcoming teleology requires cognitive inhibition

- Building executive function capacities through science reasoning tasks

- Providing extended processing time during initial learning phases

Applications for Drug Development and Biotechnology

For professionals in drug development and biotechnology, understanding teleological reasoning has practical implications:

Research Design Considerations:

- Recognizing teleological assumptions in hypothesis generation

- Avoiding purposeful language in mechanistic explanations of biological systems

- Applying appropriate evolutionary frameworks to drug resistance models

Communication and Collaboration:

- Facilitating interdisciplinary communication through precise biological explanations

- Recognizing how tacit teleological assumptions may influence interpretation of biological data

- Developing training materials that explicitly address common teleological reasoning patterns in molecular biology

Benchmarking teleological understanding across student groups reveals a complex interaction between universal cognitive dispositions and discipline-specific reasoning requirements. The empirical data demonstrate that teleological reasoning is not merely an absence of scientific knowledge but a persistent cognitive framework that coexists with scientific understanding even after extensive education [3]. Effective intervention requires going beyond simple knowledge transmission to include explicit attention to the metacognitive and inhibitory processes needed to regulate this natural reasoning tendency.

Future research directions should include longitudinal studies tracking teleology persistence beyond immediate course outcomes, development of domain-specific assessment instruments for professional contexts, and exploration of cross-cultural variations in teleology expression and regulation. For drug development professionals and biological researchers, awareness of teleological reasoning patterns enhances both scientific communication and research design, supporting more accurate mechanistic explanations in biomedical contexts. Through continued benchmarking and targeted intervention development, science education can more effectively foster the reasoning skills necessary for navigating the complex landscape of biological causality.

Teleology, derived from the Greek "telos" meaning "end" or "purpose," represents a mode of explanation in which phenomena are accounted for by reference to the goals or purposes they serve. The seemingly innate human tendency to attribute purpose to natural phenomena and objects represents a fundamental aspect of human cognition with profound implications for scientific reasoning, education, and professional practice. In biological sciences, teleological claims appear frequently, as evidenced by statements such as "The chief function of the heart is the transmission and pumping of the blood" [4] or "The Predator Detection hypothesis remains the strongest candidate for the function of stotting [by gazelles]" [4]. This propensity unfolds against a backdrop of historical controversy, with Ernst Mayr identifying why teleological notions remain controversial in biology: they are potentially (1) vitalistic (positing some special 'life-force'), (2) requiring backwards causation, (3) incompatible with mechanistic explanation, and (4) mentalistic [4].

The philosophical foundations of teleology trace back to Aristotle's concept of "final causes" and his view of teleology as immanent within natural systems, contrasting with Plato's creationist, external teleology grounded in the Forms [4]. This Aristotelian perspective finds resonance in Kant's analysis, which suggests that humans inevitably understand living things as if they were teleological systems due to the limitations of our cognitive faculties [4]. This cognitive framework becomes particularly relevant in specialized fields such as drug development, where inappropriate teleological biases can influence research outcomes and interpretation.

Theoretical Framework: Philosophical and Psychological Foundations

Historical Development of Teleological Concepts

The intellectual history of teleological reasoning reveals a complex evolution from supernatural to naturalistic explanations:

- Ancient Foundations: Aristotle's naturalistic teleology proposed that goals are immanent within organisms themselves, representing an internal principle of change [4].

- Medieval and Early Modern Transformations: Galen's "On the Use of the Parts", a second-century work that remained influential through the medieval period, applied teleological reasoning to physiology, while William Harvey, through his work on circulation, was a transitional figure between Aristotelian and mechanistic approaches [4].

- Darwinian Revolution: Charles Darwin's theory of natural selection provided a naturalistic explanation for apparent design in biological systems, potentially "getting rid of teleology and replacing it with a new way of thinking about adaptation" [4].

Cognitive Mechanisms Underlying Purpose Attribution

The human propensity for teleological thinking appears to stem from fundamental cognitive mechanisms:

- Hyperactive Agency Detection: Evolutionary psychologists suggest that humans possess cognitive systems predisposed to detecting agents and intentionality in the environment, leading to promiscuous teleological explanations.

- Essentialist Reasoning: Psychological essentialism, the intuition that natural kinds have underlying essences that determine their identity and behavior, facilitates teleological explanations by positing inherent purposes.

- Developmental Foundations: Research in cognitive development indicates that children naturally extend design-based explanations to natural phenomena, suggesting early-emerging cognitive biases toward teleological reasoning.

Benchmarking Teleological Understanding: Experimental Approaches

Methodological Framework for Assessing Teleological Reasoning

Evaluating teleological understanding across different populations requires carefully designed experimental protocols that can discriminate between appropriate and inappropriate applications of teleological reasoning. Drawing from best practices in psychological assessment and model evaluation, we propose a multi-dimensional approach [5].

Table 1: Core Dimensions for Benchmarking Teleological Understanding

| Dimension | Assessment Method | Measurement Metrics | Application Context |
|---|---|---|---|
| Conceptual Accuracy | Multiple-choice scenarios with appropriate/inappropriate teleological statements | Accuracy rate, discrimination index | Distinguishing heuristic from explanatory teleology |
| Reasoning Sophistication | Think-aloud protocols during biological problem-solving | Coded response categories, complexity scores | Tracking development of nuanced understanding |
| Contextual Appropriateness | Case-based assessments across biological domains | Appropriateness ratings, consistency scores | Domain-specific application of teleological reasoning |
| Resistance to Bias | Cognitive reflection test modified for biological content | Bias susceptibility score, response time | Identifying inappropriate overextension |
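The table lists a "discrimination index" without defining it. One common reading, assumed here, is the classical upper-lower item discrimination index: the proportion of high scorers who answer an item correctly minus the proportion of low scorers who do. The sketch below uses the conventional 27% group size; the function name and the response data are hypothetical.

```python
def discrimination_index(item_correct, total_scores, fraction=0.27):
    """Upper-lower discrimination index D = p_upper - p_lower for one item.

    item_correct: 1/0 per participant for the item being evaluated.
    total_scores: each participant's total score on the whole instrument.
    """
    n = len(total_scores)
    if n == 0 or n != len(item_correct):
        raise ValueError("item_correct and total_scores must be equal, non-empty lengths")
    group_size = max(1, round(n * fraction))
    # Rank participants by total score, lowest first.
    order = sorted(range(n), key=lambda i: total_scores[i])
    lower, upper = order[:group_size], order[-group_size:]
    p_upper = sum(item_correct[i] for i in upper) / group_size
    p_lower = sum(item_correct[i] for i in lower) / group_size
    return p_upper - p_lower


# Hypothetical data for ten participants on one scenario item.
totals = [2, 3, 4, 5, 5, 6, 7, 8, 9, 10]
item = [0, 0, 1, 0, 1, 1, 0, 1, 1, 1]
d_index = discrimination_index(item, totals)
```

Values near 1 suggest the item separates stronger from weaker respondents; values near 0 or below suggest the item may need revision.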

Standardized Assessment Protocol

A robust experimental methodology for evaluating teleological understanding should incorporate the following elements, adapted from rigorous model evaluation practices in psychology [5]:

Procedure:

- Pre-assessment Baseline: Establish baseline knowledge of biological concepts independent of teleological reasoning

- Scenario-based Assessment: Present carefully designed scenarios requiring evaluation of teleological statements

- Explanation Generation: Prompt participants to provide open-ended explanations for biological phenomena

- Meta-cognitive Reflection: Assess participants' awareness of their own reasoning patterns

Controls:

- Counterbalance scenario order to control for sequence effects

- Include attention checks to ensure data quality

- Incorporate distractor items to minimize response sets
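As an illustration of the counterbalancing control, a cyclic Latin square is one simple way to rotate scenario order so that every scenario appears in every serial position equally often. The source does not prescribe a scheme, so this is an assumed approach, and the function names and scenario labels are hypothetical.

```python
def latin_square_orders(scenarios):
    """Return n orderings in which each scenario occupies each position once."""
    n = len(scenarios)
    return [[scenarios[(row + col) % n] for col in range(n)] for row in range(n)]


def order_for(participant_index, scenarios):
    """Assign a participant to one ordering by cycling through the square."""
    orders = latin_square_orders(scenarios)
    return orders[participant_index % len(scenarios)]


# Hypothetical scenario labels.
scenario_ids = ["heart", "giraffe", "stotting", "sickle_cell"]
first_participant = order_for(0, scenario_ids)
sixth_participant = order_for(5, scenario_ids)
```

A cyclic square balances serial position but not which scenario precedes which; a study concerned with carryover between specific scenarios would need a balanced design or full randomization.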

The critical importance of proper evaluation design is highlighted by research showing that traditional assessment approaches in psychology often fail to detect important limitations in models, such as when "highly significant effects can produce essentially worthless predictions" [5]. This underscores the need for benchmarking approaches that evaluate both conceptual understanding and practical application.

Comparative Analysis of Teleological Reasoning Across Populations

Quantitative Assessment of Understanding Across Educational Levels

Systematic evaluation of teleological reasoning across different student groups reveals important patterns in the development of scientific reasoning. The following data synthesizes findings from multiple assessment studies:

Table 2: Teleological Reasoning Proficiency Across Educational Levels

| Student Group | Appropriate Teleology Application Rate | Inappropriate Teleology Application Rate | Conceptual Nuance Score (0-10) | Contextual Discrimination Accuracy |
|---|---|---|---|---|
| High School Biology Students | 42% ± 8% | 67% ± 11% | 3.2 ± 0.9 | 51% ± 7% |
| Undergraduate Biology Majors | 68% ± 6% | 45% ± 9% | 5.8 ± 1.1 | 72% ± 6% |
| Graduate Biology Students | 83% ± 5% | 28% ± 7% | 7.9 ± 0.8 | 88% ± 4% |
| Biology Faculty/Researchers | 94% ± 3% | 12% ± 4% | 9.3 ± 0.5 | 96% ± 2% |

The data demonstrate a clear developmental trajectory in which advanced training correlates with both increased appropriate application of teleological reasoning and decreased inappropriate overextension. This pattern suggests that scientific education progressively refines rather than eliminates teleological thinking.

Intervention Efficacy Comparison

Various educational approaches have been developed to address teleological biases and promote sophisticated biological reasoning. The following table compares the effectiveness of different intervention strategies:

Table 3: Efficacy of Educational Interventions for Teleological Reasoning

| Intervention Type | Pre- to Post-test Effect Size | Long-term Retention (6 months) | Transfer to Novel Contexts | Implementation Practicality |
|---|---|---|---|---|
| Explicit NOS Instruction + Examples | 0.82 ± 0.15 | 0.79 ± 0.18 | 0.61 ± 0.21 | Moderate |
| Case-Based Critical Evaluation | 0.76 ± 0.13 | 0.81 ± 0.16 | 0.72 ± 0.19 | High |
| Historical Case Studies (Darwin, etc.) | 0.71 ± 0.14 | 0.83 ± 0.17 | 0.68 ± 0.20 | Moderate |
| Cognitive Conflict Exercises | 0.89 ± 0.16 | 0.75 ± 0.15 | 0.79 ± 0.22 | Low |
| Research Immersion + Mentoring | 0.95 ± 0.18 | 0.91 ± 0.19 | 0.88 ± 0.23 | Very Low |

The findings indicate that while explicit instruction produces significant gains, experiences that create cognitive conflict and provide authentic research contexts may produce more robust and transferable understanding, though often with greater implementation challenges.

Implications for Scientific Practice and Drug Development

Teleological Reasoning in Pharmaceutical Research

In drug development, teleological thinking manifests in assumptions about drug targets and therapeutic mechanisms. The field faces particular challenges, as "neurosciences clinical trials continue to have notoriously high failure rates" [6], which may partly reflect insufficient attention to rigorous outcome measurement and, possibly, teleologically driven assumptions. Recognition of these challenges has led to calls for standardized approaches, such as the work of The Outcomes Research Group to develop "good practices in outcome selection" [6].

The benchmarking approaches discussed in this review offer methodological insights for addressing these challenges through improved experimental design and evaluation frameworks. Specifically, the recognition that "appropriate outcomes selection in early clinical trials is key to maximizing the likelihood of identifying new treatments in psychiatry and neurology" [6] parallels the importance of proper assessment design in evaluating teleological reasoning.

Methodological Recommendations for Research Practice

Based on our comparative analysis, we recommend the following approaches for enhancing scientific practice:

Explicit Teleological Awareness Training: Incorporate explicit discussion of teleological reasoning patterns and their appropriate domains of application in researcher education.

Structured Evaluation Protocols: Adapt the benchmarking approaches outlined in Section 3 for evaluating research assumptions and experimental designs.

Cross-Disciplinary Dialogue: Foster communication between cognitive scientists studying reasoning patterns and domain-specific researchers to identify field-specific manifestations of teleological biases.

Enhanced Mentoring Practices: Develop mentoring approaches that explicitly address reasoning patterns and their impact on research quality.

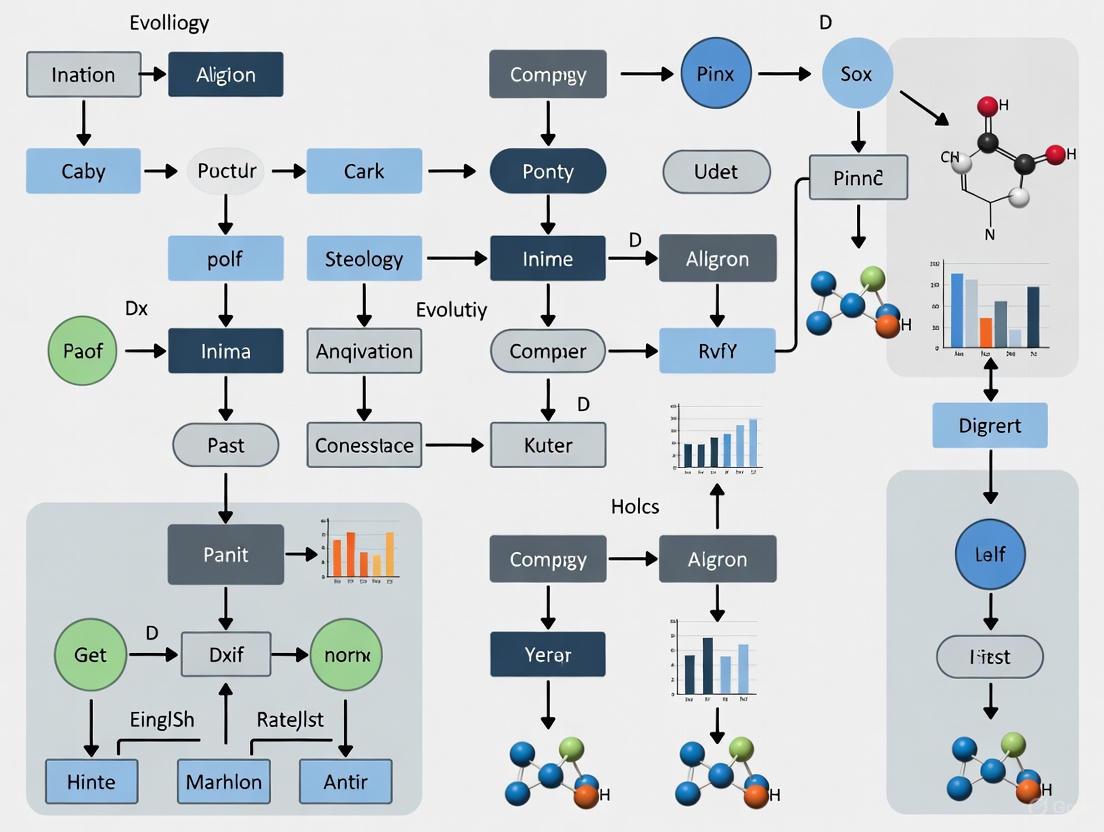

Visualizing Teleological Reasoning Assessment

The following diagram illustrates the conceptual framework and experimental workflow for assessing teleological understanding:

Essential Research Reagents and Methodological Tools

The systematic investigation of teleological reasoning requires specific methodological approaches and assessment tools. The following table details key methodological components:

Table 4: Essential Methodological Components for Teleology Research

| Component | Function | Implementation Example | Validation Requirements |
|---|---|---|---|
| Scenario Bank | Provides standardized assessment stimuli | Biological phenomena with appropriate/inappropriate teleological explanations | Content validity, discrimination testing |
| Coding Scheme | Enables systematic response categorization | Rubric for distinguishing heuristic from explanatory teleology | Inter-rater reliability, conceptual coherence |
| Assessment Platform | Administers and scores evaluations | Online testing environment with response capture | Technical reliability, accessibility compliance |
| Comparison Database | Enables cross-population benchmarking | Normative data across educational levels | Representativeness, regular updates |
| Intervention Materials | Supports educational refinement | Case studies, reflection exercises, counterexamples | Efficacy testing, adaptability verification |

These methodological components enable the rigorous investigation of teleological reasoning patterns and support the development of targeted educational approaches.
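The inter-rater reliability requirement for the coding scheme is often checked with Cohen's kappa, which corrects raw agreement between two coders for the agreement expected by chance. The sketch below assumes two raters and the two rubric categories named in the table; the function name and the codes themselves are hypothetical.

```python
from collections import Counter


def cohens_kappa(rater_a, rater_b):
    """Cohen's kappa for two raters assigning one category per response."""
    n = len(rater_a)
    if n == 0 or n != len(rater_b):
        raise ValueError("both raters must code the same non-empty set of responses")
    # Observed proportion of responses on which the raters agree.
    observed = sum(a == b for a, b in zip(rater_a, rater_b)) / n
    # Chance agreement from each rater's marginal category frequencies.
    counts_a, counts_b = Counter(rater_a), Counter(rater_b)
    expected = sum(counts_a[c] * counts_b[c] for c in counts_a) / (n * n)
    if expected == 1:
        raise ValueError("both raters used a single identical category; kappa is undefined")
    return (observed - expected) / (1 - expected)


# Hypothetical codes: "h" = heuristic teleology, "e" = explanatory teleology.
coder_1 = ["h", "h", "e", "e", "h", "e", "h", "e", "e", "h"]
coder_2 = ["h", "h", "e", "h", "h", "e", "e", "e", "e", "h"]
kappa = cohens_kappa(coder_1, coder_2)
```

Here the coders agree on 8 of 10 responses, chance agreement is 0.5, and kappa is 0.6. Acceptable thresholds vary by field and should be set before coding begins.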

The human propensity to attribute purpose represents a fundamental aspect of cognition that intersects with scientific reasoning in complex ways. Rather than seeking to eliminate teleological thinking entirely, sophisticated scientific practice involves developing metacognitive awareness of teleological patterns and their appropriate domains of application. The benchmarking approaches discussed here provide methodological frameworks for assessing teleological understanding across different populations and evaluating the efficacy of educational interventions. As research in this area continues to develop, more nuanced understanding of teleological reasoning will contribute to enhanced scientific practice, particularly in methodologically challenging fields such as drug development where appropriate outcome selection and experimental design are critical to research success.

Teleology, the explanation of phenomena by reference to goals or purposes, remains deeply embedded in biological thought and language. Despite historical controversies and efforts to eliminate purpose-based reasoning from science, teleological explanations persist across biological disciplines from molecular biology to ecology. This persistence presents both explanatory utility and potential pitfalls, particularly in educational contexts where students frequently default to teleological reasoning. This analysis examines the manifestations of teleology across biological subdisciplines, provides experimental data on student understanding, and offers methodological frameworks for benchmarking teleological reasoning in research settings.

Teleological Reasoning Across Biological Disciplines

Biological sciences distinctly employ teleological language in ways that physical sciences do not. One would never ask for the function of a planet, yet biologists routinely investigate the function of biological structures [7]. The table below summarizes key examples of teleological reasoning across biological subdisciplines.

Table 1: Manifestations of Teleological Reasoning in Biological Subdisciplines

| Biological Subdiscipline | Teleological Example | Scientific Context | Conceptual Challenge |
|---|---|---|---|
| Evolutionary Biology | "The chief function of the heart is the transmission and pumping of the blood" [8] | Adaptation through natural selection | Students conflate function with evolutionary cause [9] |
| Molecular Biology | DNA described as providing "blueprints" or "instructions" for life [2] | Biochemical signaling pathways | Implies cognizant designer rather than molecular interactions [2] |
| Physiology | Body temperature stays at 98.6°F because it "should" be stable [2] | Homeostatic mechanisms | Misinterprets dynamic equilibrium as normative state [2] |
| Ecology | Predators "need" to keep prey populations in check [2] | Population dynamics | Imputes purposeful coordination to ecosystem interactions [2] |
| Taxonomy | Linnaean classification implying hierarchical "plan" [2] | Phylogenetic relationships | Vestige of creationist thinking in modern systematics [2] |
| Genetics | "Protective function of the sickle-cell gene" against malaria [8] | Evolutionary genetics | Selective advantage vs. purposeful protection [8] |

Experimental Data on Student Teleological Reasoning

Research consistently demonstrates a strong tendency toward teleological reasoning among biology students across multiple educational contexts. The following table summarizes quantitative findings from experimental studies on student preferences for teleological explanations.

Table 2: Experimental Data on Student Teleological Reasoning Preferences

| Study Focus | Participant Group | Experimental Design | Key Findings | Citation |
|---|---|---|---|---|
| Explanatory Preference | German high school students | Tests with 10 phenomena from human biology explained teleologically and causally | Students consistently favored teleological explanations over causal explanations | [10] |
| Evolution Understanding | Multiple student groups | Analysis of explanations for evolutionary adaptations | Students provided function as sole cause without reference to selection mechanisms | [9] |
| Domain-Specific Reasoning | Elementary to university students | Evaluation of teleological explanations across organisms, artifacts, and natural objects | Children (7-8 years) broadly applied teleological explanations to natural phenomena | [10] |
| Cognitive Origins | Cross-cultural studies | Investigation of cultural influences on teleological stance | Robust cross-cultural tendency to default to teleological explanations | [10] |

Methodological Framework for Benchmarking Teleology Understanding

Experimental Protocol for Assessing Teleological Reasoning

Research into teleological reasoning requires carefully designed experimental protocols that can distinguish between different types of teleological thinking and measure their prevalence across student groups. The following methodology provides a framework for benchmarking teleology understanding:

Participant Selection and Grouping:

- Recruit participants from multiple educational levels (secondary, undergraduate, graduate)

- Include balanced representation across biological subdisciplines

- Establish control groups with formal training in philosophy of biology

Stimulus Development:

- Create matched pairs of biological phenomena with teleological and mechanistic explanations

- Include phenomena from different biological subdisciplines (molecular, physiological, ecological)

- Incorporate distracter items to control for response bias

- Validate explanations through expert review by biologists and philosophers of science

Assessment Procedure:

- Administer explanation preference tests using forced-choice and open-response formats

- Measure response times to distinguish intuitive vs. reflective reasoning

- Include follow-up interviews to probe justification strategies

- Implement pre-post testing to measure conceptual change after instructional interventions

Data Analysis Framework:

- Quantify preference rates for teleological vs. mechanistic explanations

- Analyze patterns across biological subdisciplines

- Identify correlations with background variables (training, specialization)

- Code qualitative responses for reasoning type (intentional, functional, causal-mechanical)
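The quantification steps in the data analysis framework above can be sketched in a few lines of Python. This is a minimal illustration, not a validated scoring procedure: the record layout, the sample responses, and the function names are all hypothetical, and the 2-second cutoff for "intuitive" responses is an assumption that would need calibration for any real instrument.

```python
from collections import defaultdict

# Hypothetical forced-choice records: (subdiscipline, chosen explanation, response time in s).
# These values are illustrative placeholders, not data from any cited study.
responses = [
    ("molecular", "teleological", 1.4),
    ("molecular", "mechanistic", 3.2),
    ("physiological", "teleological", 1.1),
    ("physiological", "teleological", 2.8),
    ("ecological", "mechanistic", 4.0),
    ("ecological", "teleological", 1.9),
]


def preference_rates(records):
    """Return the share of teleological choices per subdiscipline."""
    counts = defaultdict(lambda: [0, 0])  # subdiscipline -> [teleological, total]
    for subdiscipline, choice, _rt in records:
        counts[subdiscipline][1] += 1
        if choice == "teleological":
            counts[subdiscipline][0] += 1
    return {name: tele / total for name, (tele, total) in counts.items()}


def intuitive_share(records, cutoff_s=2.0):
    """Share of teleological choices made faster than cutoff_s (assumed threshold)."""
    tele_times = [rt for _, choice, rt in records if choice == "teleological"]
    if not tele_times:
        return 0.0
    return sum(rt < cutoff_s for rt in tele_times) / len(tele_times)


rates = preference_rates(responses)
overall = sum(1 for _, choice, _ in responses if choice == "teleological") / len(responses)
fast_share = intuitive_share(responses)
```

Keeping the per-subdiscipline rates separate from the overall rate makes it easier to see whether a teleological preference is general or concentrated in one area of biology.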

Figure: Experimental Protocol for Assessing Teleological Reasoning

Essential Research Reagents and Materials

The following table details key methodological components and their functions in teleology research protocols:

Table 3: Research Reagent Solutions for Teleology Benchmarking Studies

| Research Component | Function/Application | Implementation Example |
|---|---|---|
| Explanation Preference Instrument | Measures relative preference for teleological vs. mechanistic explanations | Paired explanations for biological phenomena with forced-choice selection [10] |
| Teleology Assessment Rubric | Qualitatively codes open-ended responses for reasoning type | Classification system distinguishing intentional, functional, and causal reasoning [9] |
| Biological Phenomenon Bank | Standardized stimuli across biological subdisciplines | Curated set of molecular, physiological, ecological phenomena with matched explanations [2] |
| Response Time Measurement | Distinguishes intuitive vs. reflective reasoning processes | Software-based timing of explanation selection (under 2s = intuitive) [9] |
| Conceptual Change Assessment | Measures shifts in reasoning after instructional interventions | Pre-post tests targeting specific teleological misconceptions [9] |

Conceptual Framework of Teleology in Biology

The persistence of teleology in biology reflects both historical influences and cognitive dispositions. Understanding the conceptual structure of teleological reasoning is essential for developing effective research instruments.

Figure: Conceptual Framework of Teleology in Biological Sciences

Implications for Biology Education and Research

The pervasive presence of teleology in biology necessitates explicit instructional attention to distinguish between legitimate functional reasoning and problematic teleological assumptions. Research indicates that without targeted intervention, students maintain teleological intuitions even after formal biology instruction [9]. Effective educational strategies should:

- Explicitly contrast teleological and mechanistic explanations

- Address the cognitive roots of teleological reasoning

- Differentiate between epistemological uses of teleology (as heuristic tool) and ontological commitments (as metaphysical claim)

- Provide historical context for the development of biological thought

For research professionals in drug development and scientific fields, recognizing teleological language is crucial for preventing conceptual errors in experimental design and interpretation. The benchmarking approaches outlined here provide methodologies for assessing and addressing teleological reasoning across educational and professional contexts.

"How this new relation can be a deduction from others, which are entirely different from it." — David Hume, 1739 [11]

In the rigorous world of scientific research, particularly in drug development, a subtle but profound philosophical error persistently undermines the validity of conclusions: the failure to distinguish descriptive statements (what is) from prescriptive statements (what ought to be). First articulated by Scottish philosopher David Hume, the is-ought problem highlights the logical fallacy of deriving moral or prescriptive conclusions from purely descriptive, factual premises without proper justification [11] [12]. This challenge is not merely academic; it manifests concretely in how researchers design benchmarks, interpret model performance, and translate experimental findings into clinical practice.

For professionals navigating the complex landscape of drug development, recognizing and addressing this normative error is crucial for robust benchmarking, reliable model evaluation, and ethical implementation of research findings. This guide examines how the is-ought distinction surfaces in scientific practice and provides frameworks for maintaining logical rigor when moving from empirical data to prescriptive actions.

What Is the Is-Ought Problem?

The is-ought problem, also termed Hume's Law or Hume's Guillotine, identifies a fundamental category error in reasoning: the invalid transition from descriptive facts to prescriptive values without adequate justification [11]. Hume observed that moral systems often subtly shift from describing what exists to prescribing what should be, without explaining how this new relation of "ought" logically follows from the entirely different relation of "is" [11] [13].

Core Concepts and Definitions

- Descriptive Statements: Factual claims about the world that can be verified through observation or evidence (e.g., "This clinical trial resulted in a 20% response rate") [13] [12].

- Prescriptive Statements: Normative claims about what should be done, what is good or bad, or what ought to be the case (e.g., "We ought to approve this drug") [13] [12].

- The Logical Gap: No set of purely descriptive premises can logically entail a prescriptive conclusion without some form of linking premise that itself contains prescriptive content [13].

The following conceptual diagram illustrates the logical gap between descriptive and prescriptive domains:

Common Manifestations in Scientific Reasoning

The is-ought fallacy frequently appears in scientific contexts through these problematic argument patterns:

- The Naturalistic Fallacy: "This biological system functions in manner X; therefore, we ought to design our intervention to mimic X." (Assumes natural function implies optimal design) [13]

- The Traditionalistic Fallacy: "This approach has historically been used for condition Y; therefore, we ought to continue using it." (Confuses historical practice with optimal practice) [13]

- The Benchmarking Fallacy: "Model A outperforms Model B on metric X; therefore, we ought to deploy Model A clinically." (Overlooks that clinical deployment requires additional value judgments about risk tolerance, implementation feasibility, and ethical considerations) [14] [15]

The Is-Ought Problem in Research Benchmarking

In machine learning and drug development, benchmarking serves as a critical methodology for objective comparison. However, the culture of benchmarking introduces its own normative challenges, particularly through what has been termed "presentist temporality" – where the current "state-of-the-art" (SOTA) creates implicit normative pressure about research directions [14].

How Benchmarking Bridges and Obscures the Is-Ought Gap

Benchmarking practices in machine learning for drug development simultaneously help bridge the is-ought gap while potentially introducing new normative errors:

The Normalizing Function of Benchmarks

Benchmarks serve a disciplining and motivating function in research, creating standardized evaluation frameworks that minimize theoretical conflicts. By establishing quantitative ranking systems, they transform subjective scientific debates into objective performance comparisons [14]. However, this normalization can implicitly prescribe research directions based on what is measurable rather than what is clinically significant.

The Extrapolation Problem

The incremental, progressive rhythm of benchmarking creates a temporal structure where expectations are based on extrapolating present patterns into the future. This produces a paradoxically conservative vision where predictive techniques remain dominated by present capabilities rather than future needs [14].

The following table summarizes key benchmarking datasets in drug discovery and their characteristics:

| Dataset Name | Primary Focus | Data Sources | Key Metrics | Normative Considerations |
|---|---|---|---|---|
| CT-ADE [16] | Adverse drug event prediction | ClinicalTrials.gov, DrugBank, MedDRA | F1-score, Precision, Recall | Integration of patient demographics and treatment regimens addresses external validity concerns |
| DRP Benchmark [15] | Drug response prediction | CCLE, CTRPv2, gCSI, GDSCv1/v2 | AUC, Cross-dataset generalization | Performance drops in cross-dataset evaluation highlight generalization challenges |
| SIDER/AEOLUS [16] | Drug-ADE associations | FDA adverse event reports, package inserts | Association strength, Frequency | Limited contextual information may oversimplify real-world clinical decisions |

Building Better Bridges: From Descriptive Data to Prescriptive Action

Successfully navigating the is-ought gap requires explicit methodological frameworks that acknowledge rather than obscure the normative dimensions of scientific practice. Implementation science offers particularly valuable approaches for this translation.

The "Ought-Is" Problem: Implementing Ethical Norms

While the traditional is-ought problem concerns deriving values from facts, the reverse "ought-is problem" addresses how to implement established norms in practice [17]. This involves moving from ethical principles to practical interventions through a structured translation process:

Implementation Science Framework

Implementation science provides a disciplined approach to addressing the ought-is problem through frameworks like the Consolidated Framework for Implementation Research (CFIR), which considers five domains of implementation barriers and facilitators [17]:

- Intervention characteristics (evidence strength, relative advantage)

- Outer setting (external policies, patient needs)

- Inner setting (organizational culture, implementation climate)

- Individual characteristics (knowledge, self-efficacy)

- Implementation process (planning, engaging, executing)

Experimental Protocols for Valid Benchmarking

To minimize normative errors in benchmarking studies, researchers should adopt methodologies that explicitly address the is-ought gap through rigorous experimental design.

Cross-Dataset Generalization Analysis

The benchmark framework for drug response prediction (DRP) models exemplifies a rigorous approach to addressing external validity concerns [15]:

Objective: Evaluate model performance degradation when applied to unseen datasets from different biological sources.

Methodology:

- Dataset Curation: Aggregate data from five public drug screening studies (CCLE, CTRPv2, gCSI, GDSCv1, GDSCv2) with standardized preprocessing.

- Quality Control: Exclude drug-response pairs with poor curve fit (R² < 0.3).

- Model Standardization: Implement six DRP models (five DL-based, one LightGBM) with unified code structure.

- Evaluation Scheme: Train models on one dataset, test on others; compare performance drops.

- Metrics: Calculate both absolute performance (predictive accuracy) and relative performance (performance drop compared to within-dataset results).

Key Findings: Substantial performance drops occurred when models were tested on unseen datasets, highlighting the importance of cross-dataset validation before clinical implementation [15].

Contextualized ADE Prediction Benchmark

The CT-ADE benchmark addresses limitations of previous datasets by integrating contextual factors that influence clinical decision-making [16]:

Objective: Predict adverse drug events (ADEs) incorporating patient demographics and treatment regimen data.

Methodology:

- Data Integration: Combine clinical trial results from ClinicalTrials.gov with drug databases (DrugBank, PubChem, ChEMBL) and MedDRA ontology.

- Contextual Features: Include dosage, administration route, patient demographics, and medical history.

- Baseline Analysis: Evaluate large language models (LLMs) with varying input contexts.

Key Findings: Models incorporating treatment and patient information outperformed structure-only models by 21-38%, establishing the importance of contextual information for clinically relevant predictions [16].
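Precision, recall, and F1-score are the metrics listed for CT-ADE in the dataset table earlier in this guide. As a minimal sketch of how they are computed for a single drug-context record, assuming predictions and ground truth are both sets of ADE labels, the following may help. The function name and the example labels are hypothetical, and how the benchmark aggregates scores across records is not specified here.

```python
def precision_recall_f1(predicted, actual):
    """Set-based precision, recall, and F1 for one record's predicted ADE labels."""
    predicted, actual = set(predicted), set(actual)
    true_positives = len(predicted & actual)
    precision = true_positives / len(predicted) if predicted else 0.0
    recall = true_positives / len(actual) if actual else 0.0
    if precision + recall == 0:
        return precision, recall, 0.0
    f1 = 2 * precision * recall / (precision + recall)
    return precision, recall, f1


# Illustrative labels only; not taken from any dataset.
predicted_ades = {"nausea", "headache", "rash"}
observed_ades = {"nausea", "rash", "fatigue", "dizziness"}

precision, recall, f1 = precision_recall_f1(predicted_ades, observed_ades)
```

A model can score well on one of these metrics and poorly on another, which is one reason a single number should not drive a deployment decision.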

The Scientist's Toolkit: Essential Methodological Reagents

The following table details key methodological components for robust benchmarking that acknowledges the is-ought distinction:

| Methodological Component | Function | Considerations for Is-Ought Problem |
|---|---|---|
| Cross-Dataset Validation [15] | Assess model generalizability beyond training data | Prevents overextrapolation from limited descriptive data to prescriptive claims about real-world performance |
| Multiple Performance Metrics [15] | Evaluate models across diverse criteria | Acknowledges that no single metric captures all values relevant to clinical deployment decisions |
| Contextual Integration [16] | Incorporate clinical context features (dosage, demographics) | Bridges the gap between abstract predictive performance and context-dependent clinical decisions |
| Protocol Deviation Benchmarking [18] | Quantify implementation challenges in clinical trials | Provides descriptive data about practical constraints that should inform normative trial design guidelines |
| Stakeholder Engagement [17] | Incorporate perspectives of clinicians, patients, regulators | Makes implicit value judgments explicit during the translation from evidence to practice |
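The cross-dataset validation component listed above compares within-dataset scores with scores on unseen datasets. The sketch below shows one way to summarize such a comparison, assuming a higher score is better and that a source-by-target score matrix has already been produced. The dataset names, the scores, and the `generalization_summary` helper are placeholders for illustration, not results or code from the cited benchmark.

```python
def generalization_summary(scores):
    """Summarize within- vs. cross-dataset performance.

    scores[source][target] is the score of a model trained on `source`
    and tested on `target` (higher is better, an assumption of this sketch).
    """
    summary = {}
    for source, row in scores.items():
        within = row[source]
        cross = [score for target, score in row.items() if target != source]
        mean_cross = sum(cross) / len(cross)
        summary[source] = {
            "within": within,
            "mean_cross": mean_cross,
            # Fraction of within-dataset performance lost on unseen datasets.
            "relative_drop": (within - mean_cross) / within,
        }
    return summary


# Placeholder score matrix with made-up values.
scores = {
    "dataset_A": {"dataset_A": 0.80, "dataset_B": 0.60, "dataset_C": 0.40},
    "dataset_B": {"dataset_A": 0.50, "dataset_B": 0.75, "dataset_C": 0.55},
    "dataset_C": {"dataset_A": 0.45, "dataset_B": 0.50, "dataset_C": 0.70},
}

summary = generalization_summary(scores)
```

Reporting the relative drop alongside the absolute score keeps the descriptive claim ("this model loses a given share of its performance on unseen data") separate from any prescriptive claim about deployment.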

The distinction between "what is" and "what ought to be" remains fundamental to rigorous scientific practice in drug development. While benchmarks and performance metrics provide essential descriptive data about model capabilities, their translation into clinical practice requires careful navigation of the normative landscape. By adopting implementation science principles, conducting cross-dataset validation, and explicitly acknowledging the value judgments embedded in deployment decisions, researchers can avoid the normative error while still enabling evidence-based clinical advancement.

The most robust approach recognizes that while descriptive data cannot logically determine prescriptive conclusions, it can inform them when combined with explicitly stated values and ethical frameworks. This methodological transparency ultimately strengthens both the scientific validity and ethical foundation of drug development research.

Teleological bias—the cognitive tendency to ascribe purpose or goal-directedness to natural phenomena and events—presents a significant, yet often overlooked, challenge in scientific research. In the high-stakes field of drug development, this bias can subtly skew the framing of research questions and the interpretation of data, potentially leading to flawed conclusions and inefficient allocation of resources. This guide benchmarks the understanding of teleological bias by comparing its manifestations and impacts across different research contexts, providing experimental data and protocols to identify and mitigate its influence.

What is Teleological Bias and Why Does It Matter in Research?

Teleological thinking is the cognitive tendency to explain phenomena by reference to a future purpose or function, rather than antecedent causes [3]. For instance, one might erroneously think that "germs exist to cause disease" or that a biological pathway evolved "in order to" perform a specific function, thereby implying foresight or design [19]. While this is a universal and persistent cognitive default [3], it becomes a problematic bias—teleological bias—when it is unwarrantedly applied in scientific contexts where physical-causal explanations are required.

In drug development, this bias can manifest in multiple ways, from the initial framing of a research hypothesis to the final interpretation of clinical trial data. It can lead researchers to:

- Assume a biological structure exists for a single, predetermined purpose, overlooking alternative functions or non-adaptive origins.

- Design experiments that are biased toward confirming a pre-conceived, function-oriented narrative.

- Misinterpret correlative data as evidence of a causal, goal-directed mechanism.

Understanding the cognitive roots of this bias is the first step toward mitigating its effects. Research indicates that excessive teleological thinking is correlated with aberrant associative learning rather than a failure of logical, propositional reasoning [20]. This suggests that the bias may operate through automatic, low-level cognitive processes, making it particularly insidious and difficult to regulate without conscious effort.

Experimental Evidence: Linking Teleological Bias to Cognitive Processes

The following experiments provide quantitative evidence on the mechanisms of teleological thinking and its relationship to other cognitive tasks. The data is crucial for benchmarking its potential impact on research reasoning.

Experiment 1: Causal Learning and Teleology

This experiment investigated whether excessive teleological thinking is rooted in basic causal learning mechanisms, specifically distinguishing between associative learning and propositional reasoning [20].

- Objective: To determine if teleological thinking correlates more strongly with associative learning errors (non-additive blocking) or with failures in rule-based reasoning (additive blocking).

- Methodology: A total of 600 participants were engaged in a causal learning task based on the Kamin blocking paradigm [20]. Participants were trained to predict allergic reactions (outcomes) to various food cues. The design included:

- Non-additive Blocking Paradigm: Tests learning via associations and prediction errors.

- Additive Blocking Paradigm: Tests learning via explicit reasoning over rules (propositions).

- Teleological thinking was measured using a validated "Belief in the Purpose of Random Events" survey [20].

- Key Findings:

- Teleological tendencies were uniquely explained by aberrant performance in the non-additive blocking task.

- Computational modeling indicated that the relationship was driven by excessive prediction errors, which lead individuals to assign more significance to random events [20].

- Implication for Research: This suggests that teleological bias in drug development may not stem from a lack of knowledge, but from a more fundamental cognitive style of over-associating cues and outcomes, potentially leading to spurious conclusions about causal relationships in experimental data.

Experiment 2: Challenging Teleology in Educational Settings

This study explored the effect of directly challenging teleological reasoning on the understanding of a complex scientific theory—natural selection—in an undergraduate population [3].

- Objective: To determine if explicit instructional activities aimed at reducing teleological reasoning would improve understanding and acceptance of natural selection.

- Methodology: An undergraduate course on evolutionary medicine incorporated explicit instruction challenging design teleology, framed around developing metacognitive vigilance [3]. A control group took a human physiology course without this intervention. The study used:

- Pre- and post-semester surveys (N=83) to measure understanding of natural selection, endorsement of teleological reasoning, and acceptance of evolution [3].

- Thematic analysis of student reflective writing.

- Key Findings:

- The intervention group showed a significant decrease in teleological reasoning and a significant increase in understanding and acceptance of natural selection compared to the control group (p ≤ 0.0001) [3].

- Qualitative analysis revealed that students were largely unaware of their own teleological biases at the start of the course but reported a perceived attenuation of this reasoning by the end [3].

- Implication for Research: This demonstrates that teleological bias is malleable and can be reduced through targeted training. For drug development professionals, similar structured training could enhance the rigor of hypothesis generation and data interpretation.

Quantitative Data Comparison

The table below summarizes the quantitative outcomes from the featured experiments, providing a clear comparison of the effects of teleological bias and interventions.

Table 1: Summary of Experimental Findings on Teleological Bias

| Experiment Focus | Participant Group | Key Measured Outcome | Result | Statistical Significance |
|---|---|---|---|---|
| Causal Learning Roots [20] | 600 adults (general population) | Correlation between teleology and associative learning | Significant positive correlation with non-additive blocking failures | Not explicitly reported |
| Educational Intervention [3] | 83 undergraduates (51 intervention, 32 control) | Understanding of natural selection | Significant increase in intervention group | p ≤ 0.0001 |
| Educational Intervention [3] | 83 undergraduates (51 intervention, 32 control) | Endorsement of teleological reasoning | Significant decrease in intervention group | p ≤ 0.0001 |
| Educational Intervention [3] | 83 undergraduates (51 intervention, 32 control) | Acceptance of evolution | Significant increase in intervention group | p ≤ 0.0001 |

Detailed Experimental Protocols

To facilitate the replication of these findings or the adaptation of these methods for assessing bias in research teams, the core methodologies are detailed below.

The first protocol, the Kamin blocking causal learning task used in Experiment 1 [20], is designed to dissect associative and propositional learning pathways.

- Phase 1: Pre-Learning

- Participants learn initial cue-outcome pairings. In the "additive" condition, they are explicitly taught a rule (e.g., two causal cues can "add" together to produce a stronger outcome).

- Phase 2: Learning

- Participants are trained that a specific cue (A1) reliably predicts an outcome (allergy).

- Phase 3: Blocking

- Cue A1 is presented in compound with a new cue (B1), and the same outcome occurs. Since A1 already fully predicts the outcome, learning about the redundant cue B1 is "blocked" in typical learners.

- Phase 4: Test

- Participants are tested on their beliefs about the causal power of the blocked cue (B1) and other control cues.

- Measurement: Failure to block (i.e., attributing causal power to B1) is considered an indicator of aberrant associative learning, which has been linked to teleological thought [20].
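The blocking effect described in this protocol can be reproduced with the Rescorla-Wagner rule, a standard textbook model of associative learning driven by prediction errors. The sketch below is illustrative only: it is not the computational model reported in [20], and the learning rate, trial counts, and control cue names (C1, D1) are assumptions chosen to make the effect visible.

```python
def rescorla_wagner(trials, alpha=0.3, lam=1.0):
    """Return associative strengths after a sequence of trials.

    Each trial is (cues_present, outcome_occurred). All cues present on a
    trial share one prediction error: lam (or 0) minus their summed strength.
    """
    strengths = {}
    for cues, outcome in trials:
        predicted = sum(strengths.get(cue, 0.0) for cue in cues)
        error = (lam if outcome else 0.0) - predicted  # prediction error
        for cue in cues:
            strengths[cue] = strengths.get(cue, 0.0) + alpha * error
    return strengths


# Learning phase: A1 alone predicts the allergy.
# Blocking phase: A1 + B1 together predict the same allergy.
blocking_trials = [(("A1",), True)] * 20 + [(("A1", "B1"), True)] * 20

# Control: a compound trained with no prior learning about either cue.
control_trials = [(("C1", "D1"), True)] * 20

blocked = rescorla_wagner(blocking_trials)
control = rescorla_wagner(control_trials)
```

Because A1 already predicts the outcome, the prediction error during the blocking phase is close to zero and B1 gains almost no strength, whereas each control cue ends up near half of the asymptote. In this simple model, a learner whose prediction errors stay inflated would continue to credit B1, which is the "failure to block" pattern that the protocol measures.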

The second protocol, the educational intervention used in Experiment 2 [3], outlines the pedagogical approach used to reduce teleological reasoning.

- Framework: The intervention is based on the framework of González Galli et al. (2020), which aims to develop metacognitive vigilance in students [3]. This involves building three competencies:

- Knowledge: Explicitly teaching students about the concept of teleological reasoning.

- Awareness: Helping students recognize how teleology is expressed, both appropriately (e.g., in engineering) and inappropriately (e.g., in evolution).

- Regulation: Providing students with strategies to deliberately suppress unwarranted teleological reasoning.

- Activities: Classroom activities directly challenge design teleology by contrasting it with the mechanism of natural selection, creating a conceptual tension that helps students recognize the inadequacy of teleological explanations [3].

- Measurement: Efficacy is measured using pre- and post-intervention surveys like the Conceptual Inventory of Natural Selection and a teleology endorsement scale, supplemented by qualitative analysis of reflective writing [3].
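The pre- and post-intervention measurement described above amounts to comparing change scores between groups. A minimal sketch follows, assuming each participant has a paired (pre, post) score on a teleology endorsement scale. The scores, the 1 to 5 scale, and the function name are hypothetical, and a real analysis would add an appropriate significance test.

```python
from statistics import mean

# Hypothetical (pre, post) endorsement scores on an assumed 1-5 scale.
intervention_scores = [(4.2, 3.1), (3.8, 2.9), (4.5, 3.6)]
control_scores = [(4.0, 3.9), (3.7, 3.8), (4.3, 4.1)]


def mean_change(pairs):
    """Average post-minus-pre change; negative means endorsement decreased."""
    return mean(post - pre for pre, post in pairs)


change_intervention = mean_change(intervention_scores)
change_control = mean_change(control_scores)

# How much more the intervention group changed than the control group.
difference_in_change = change_intervention - change_control
```

Comparing changes rather than raw post-test scores helps separate the effect of the intervention from differences that existed between the groups at the start.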

Visualizing the Mechanisms and Mitigation of Teleological Bias

The following diagrams illustrate the cognitive pathways of teleological bias and a strategic workflow for mitigating it in research.

Diagram 1: Dual-pathway model of teleological bias generation and mitigation.

Diagram 2: A proposed workflow for integrating teleological bias checks into the drug development pipeline.

The Scientist's Toolkit: Key Reagents for Studying Teleological Bias

The following table catalogs essential "research reagents"—methodological tools and assessments—used to investigate teleological reasoning in the cited studies.

Table 2: Research Reagent Solutions for Assessing Teleological Bias

| Tool Name | Type/Format | Primary Function | Key Application in Research |
|---|---|---|---|
| Belief in Purpose of Random Events Survey [20] | Validated Questionnaire | Measures tendency to ascribe purpose to unrelated life events. | Core metric for quantifying individual levels of teleological thinking in study populations. |
| Kamin Blocking Causal Learning Task [20] | Behavioral Task (Computer-based) | Dissociates associative learning from propositional reasoning. | Identifies the cognitive sub-process (associative learning) most linked to excessive teleology. |
| Conceptual Inventory of Natural Selection (CINS) [3] | Multiple-Choice Assessment | Measures understanding of fundamental evolutionary concepts. | Evaluates the impact of teleological bias on comprehension of a complex, non-teleological scientific theory. |
| Teleology Endorsement Scale [3] [19] | Likert-scale Survey | Gauges agreement with unwarranted teleological statements about nature. | Tracks changes in teleological bias pre- and post-intervention in educational or training settings. |
| Metacognitive Vigilance Framework [3] | Pedagogical Framework | Structured approach for teaching bias recognition and regulation. | Provides a blueprint for designing training modules to mitigate teleological bias in research teams. |

The experimental data consistently demonstrates that teleological bias is a measurable and malleable cognitive trait. The contrast between its roots in low-level associative learning and its mitigation through high-level metacognitive strategies is particularly instructive. For the drug development community, these findings highlight a critical point: scientific expertise alone does not inoculate against this deep-seated cognitive default. The benchmarks established here—linking bias to specific learning profiles and showing its reduction through targeted training—provide a foundation for developing similar interventions tailored to the research and development environment. By integrating formal bias checks and structured training in causal reasoning, organizations can foster a more rigorous research culture, ultimately leading to more reliable data, more efficient use of resources, and more robust therapeutic discoveries.

From Theory to Metrics: Designing Robust Benchmarks for Teleological Reasoning

Benchmarking serves as a critical methodology for evaluating performance across scientific disciplines, enabling researchers to compare results systematically and identify areas for improvement. In the context of academic research, particularly involving student groups, benchmarking takes on added dimensions involving collaboration dynamics, methodological rigor, and teleological understanding—the purpose-driven nature of research goals. The Common Task Framework (CTF) has emerged as a powerful paradigm for structuring these evaluations, creating standardized conditions for meaningful comparison and progress assessment. Originally developed for machine learning competitions, this framework's principles find increasing application across scientific domains where objective performance assessment is crucial [21] [22]. This article explores the core principles of benchmarking through the lens of the Common Task Framework, examining its application in research environments and its implications for understanding teleological perspectives across student groups.

The Common Task Framework: Foundations and Principles

The Common Task Framework (CTF), also referred to as the Common Task Method (CTM), provides a standardized structure for comparing algorithms, methodologies, or systems through shared tasks and evaluation metrics. As noted in research culture, "those fields where machine learning has scored successes are essentially those fields where CTF has been applied systematically" [23]. The framework establishes a level playing field that facilitates direct comparison and accelerates progress through clear benchmarking.

The CTF operates through five core components:

- Formally Defined Tasks: Tasks are specified with precise mathematical interpretations, eliminating ambiguity in what constitutes successful performance [22].

- Standardized Datasets: Publicly available, gold-standard datasets in ready-to-use formats ensure all participants work with identical input data [21] [22].

- Quantitative Metrics: Clearly defined success metrics enable objective comparison of results without subjective interpretation [22].

- Leaderboard Rankings: Current state-of-the-art methods are ranked in continuously updated leaderboards, fostering healthy competition [22].

- Data Generation Capability: The capacity to generate new data on demand helps prevent overfitting and allows datasets to grow organically [22].
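The first four components can be sketched as a small data structure: a task bundles a fixed dataset with a metric, and a leaderboard ranks submissions by that metric. This is a toy illustration under stated assumptions, not an implementation of any real competition platform; the class, the function names, and the example models are all hypothetical.

```python
from dataclasses import dataclass
from typing import Callable, Dict, List, Sequence, Tuple


@dataclass(frozen=True)
class CommonTask:
    """A formally defined task: shared inputs, reference targets, and one metric."""
    name: str
    inputs: Sequence[float]
    targets: Sequence[float]
    metric: Callable[[Sequence[float], Sequence[float]], float]


def mean_absolute_error(predictions, targets):
    """Average absolute difference between predictions and targets (lower is better)."""
    return sum(abs(p - t) for p, t in zip(predictions, targets)) / len(targets)


def leaderboard(task: CommonTask,
                submissions: Dict[str, Callable[[float], float]]) -> List[Tuple[str, float]]:
    """Score every submission on the shared data and rank from best to worst."""
    scored = [
        (name, task.metric([model(x) for x in task.inputs], task.targets))
        for name, model in submissions.items()
    ]
    return sorted(scored, key=lambda entry: entry[1])


# Toy task: learn y = 2x from four shared examples.
task = CommonTask("double-it", inputs=[1, 2, 3, 4], targets=[2, 4, 6, 8],
                  metric=mean_absolute_error)

ranking = leaderboard(task, {
    "identity": lambda x: x,
    "constant": lambda x: 5.0,
    "doubling": lambda x: 2 * x,
})
```

Because every submission is scored on identical inputs with an identical metric, the ranking itself is purely descriptive. Deciding what to do with the top entry remains a separate judgment.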

This framework creates what has been described as a "normalizing" function in research culture, simultaneously disciplining and motivating progress while minimizing theoretical conflicts through objective performance standards [23].

Benchmarking Teleology Across Student Groups: Experimental Design

Research Context and Objectives

Understanding how students comprehend the purpose-driven nature (teleology) of benchmarking represents a crucial aspect of research education. Teleological explanation refers to understanding something through its purposes or goals, which proves particularly valuable when assessing artifacts with potentially unclear or multiple purposes [24]. In educational contexts, this translates to how students conceptualize the ultimate goals and purposes of benchmarking methodologies.

A study examining collaborative group work in university settings revealed that students' perceptions of shared tasks are influenced by numerous factors, including group formation strategies, team cohesiveness, workload equity, and evaluation methods [25]. These factors subsequently affect their teleological understanding of the research process itself.

Methodology and Protocols

To investigate benchmarking teleology comprehension across student groups, researchers implemented a structured approach:

- Participant Selection: Senior undergraduate students across diverse disciplines (sciences, social sciences, mathematics, business, and arts) were surveyed regarding their experiences with collaborative research tasks [25].

- Longitudinal Assessment: Data collection occurred at multiple time points, before the COVID-19 pandemic (in-person collaboration) and during the pandemic (online collaboration), to examine contextual influences [25].

- Multi-dimensional Evaluation: Assessments measured not only task performance but also efficiency perceptions, satisfaction, motivation, workload demands, and social dynamics [25].

- Reflective Analysis: Students completed reflexive journal assessments on their socio-emotional experiences with group work, providing insights into their understanding of research purposes and processes [26].

The experimental protocol emphasized comparing performance and perceptions across different collaboration environments, with specific attention to how these contexts influenced students' understanding of benchmarking purposes.

Quantitative Results and Analysis

The table below summarizes key findings from research on student perceptions of collaborative benchmark tasks across different learning environments:

Table 1: Student Perceptions of Collaborative Benchmark Tasks Across Learning Environments

| Evaluation Metric | In-Person Context | Online Context | Significance Level |
|---|---|---|---|
| Task Efficiency | Higher | Lower | p < 0.05 |
| Satisfaction Levels | Higher | Lower | p < 0.01 |
| Motivation | Higher | Lower | p < 0.05 |
| Workload Demands | Perceived as balanced | Perceived as heavier | p < 0.01 |
| Quality of Work | Rated higher | Rated lower | p < 0.05 |
| Learning Outcomes | Rated higher | Rated lower | p < 0.01 |
| Friendship Formation | Salient positive factor | Less prominent but still positive | Not significant |

The data revealed that despite considerable comfort with online tools, students consistently rated in-person contexts more favorably across multiple dimensions relevant to teleological understanding of benchmarking tasks [25]. This suggests that the collaboration environment significantly influences how students conceptualize and engage with research purposes.
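The source does not state which statistical test produced the significance levels in the table. As one illustration of how such a between-context comparison could be run, the sketch below applies an exact two-sided permutation test to the difference in mean ratings. The function name `permutation_p_value` and the Likert ratings are hypothetical and are not data from the cited study [25].

```python
from itertools import combinations
from statistics import mean


def permutation_p_value(group_a, group_b):
    """Exact two-sided permutation test on the difference in group means.

    Enumerates every way of splitting the pooled ratings into groups of the
    original sizes, so it is only practical for small samples.
    """
    if not group_a or not group_b:
        raise ValueError("both groups must contain at least one rating")
    pooled = list(group_a) + list(group_b)
    n_a, n_b = len(group_a), len(group_b)
    observed = abs(mean(group_a) - mean(group_b))
    total = sum(pooled)

    extreme = 0
    n_splits = 0
    for idx in combinations(range(len(pooled)), n_a):
        sum_a = sum(pooled[i] for i in idx)
        diff = abs(sum_a / n_a - (total - sum_a) / n_b)
        # Small tolerance guards against floating-point ties.
        if diff >= observed - 1e-12:
            extreme += 1
        n_splits += 1
    return extreme / n_splits


# Hypothetical 5-point satisfaction ratings (illustrative only).
in_person = [5, 4, 5, 4, 5]
online = [3, 4, 2, 3, 3]
print(f"p = {permutation_p_value(in_person, online):.3f}")
```

A permutation test makes no normality assumption, which suits small samples of ordinal ratings, although treating Likert scores as numeric means is itself a simplification. For larger cohorts, a sampled (Monte Carlo) permutation test or a rank-based test would be more practical than full enumeration.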

Visualization of Common Task Framework Workflow

[Figure: Common Task Framework Implementation Workflow]

Benchmarking Case Studies: Successful Applications

AlphaFold and Protein Structure Prediction

The Critical Assessment of Protein Structure Prediction (CASP) competition represents a premier example of the Common Task Framework in scientific research. CASP provides:

- Formally Defined Task: Accurately predicting 3D protein structures from amino acid sequences [22]

- Standardized Datasets: Blind targets (newly solved protein structures not yet public) prevent training data contamination [22]

- Quantitative Metrics: Global Distance Test (GDT) scores objectively measure prediction accuracy [22]

- Leaderboard Rankings: Public ranking of teams in each CASP round [22]

DeepMind's AlphaFold achieved groundbreaking results at CASP14, reaching a median GDT score of 92.4 across all targets—the first model to predict protein structures with near-experimental accuracy [22]. This success demonstrates how clearly defined benchmarks accelerate scientific progress.
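To make the GDT metric concrete, the following is a simplified sketch of the GDT_TS calculation: the average, over distance cutoffs of 1, 2, 4, and 8 Å, of the percentage of residues whose predicted position lies within that cutoff of the reference position. It assumes the two structures are already superimposed and are given as equal-length lists of (x, y, z) C-alpha coordinates. The official CASP evaluation additionally searches over many superpositions to maximize each percentage, which this sketch omits. The function name `gdt_ts` and the coordinates are illustrative.

```python
import math


def gdt_ts(predicted, reference, cutoffs=(1.0, 2.0, 4.0, 8.0)):
    """Simplified GDT_TS for pre-superimposed C-alpha coordinates.

    Returns a score from 0 to 100: the mean, across cutoffs, of the
    percentage of residues within each distance cutoff (in angstroms).
    """
    if len(predicted) != len(reference) or not reference:
        raise ValueError("structures must be non-empty and of equal length")
    distances = [math.dist(p, r) for p, r in zip(predicted, reference)]
    fractions = [
        sum(d <= cutoff for d in distances) / len(distances)
        for cutoff in cutoffs
    ]
    return 100.0 * sum(fractions) / len(cutoffs)


# Toy four-residue example with deviations of 0.5, 1.5, 3.0, and 10.0 angstroms.
reference = [(0.0, 0.0, 0.0), (1.0, 0.0, 0.0), (2.0, 0.0, 0.0), (3.0, 0.0, 0.0)]
predicted = [(0.0, 0.5, 0.0), (1.0, 1.5, 0.0), (2.0, 3.0, 0.0), (3.0, 10.0, 0.0)]
print(gdt_ts(predicted, reference))  # 56.25
```

Because the score averages several cutoffs, a prediction is rewarded for residues that are approximately right as well as those that are nearly exact, which is one reason GDT is less sensitive to a few badly placed residues than a single RMSD value.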

The Vesuvius Challenge

This initiative applied the Common Task Framework to decipher ancient carbonized scrolls from Herculaneum, offering over $1 million in prizes and providing:

- Clear Task Definition: Decipher at least 85% of four passages from CT-scanned papyri [22]

- Standardized Datasets: High-resolution CT scans made available to all participants [22]

- Quantitative Metrics: Accurate character recognition thresholds [22]

- Leaderboard Structure: Clear prize structure and recognition [22]

The winning team deciphered over 2,000 Greek letters, revealing a philosophical text discussing life's pleasures [22]. This case illustrates how the CTF can mobilize diverse expertise around challenging research tasks.

The Scientist's Toolkit: Essential Research Reagents

Table 2: Essential Research Reagents for Benchmarking Studies

| Reagent/Resource | Function in Benchmarking Studies | Application Example |
|---|---|---|
| Standardized Datasets | Provides consistent baseline for performance comparisons | Protein Data Bank for structural biology [22] |
| Evaluation Metrics | Quantifies performance objectively | Global Distance Test for protein folding [22] |
| Benchmarking Platforms | Hosts competitions and leaderboards | Hugging Face Open Leaderboards [24] |
| Data Generation Systems | Creates new data to prevent overfitting | Automated experimental systems for extensible datasets [22] |
| Teleological Frameworks | Clarifies purpose and goals of assessment | Purpose-based evaluation of general-purpose AI systems [24] |

Benchmarking Teleology: Understanding Purpose in Assessment

Teleological explanation—understanding something through its purposes—provides a critical framework for evaluating research artifacts, particularly those with potentially unclear or multiple purposes [24]. In educational contexts, this translates to how students conceptualize the ultimate goals of benchmarking activities.

Research indicates that students' teleological understanding of benchmarking is influenced by:

- Group Formation Methods: Self-selected groups demonstrate greater satisfaction and teamwork than randomly assigned groups [25]

- Equity Perception: "Free-rider" problems negatively impact engagement and purpose understanding [25]

- Evaluation Methods: Individual versus collective grading approaches affect motivation and goal orientation [25]

- Social Dimensions: Friendship formation among group members correlates with positive perceptions of shared tasks [25]

The challenge of teleological understanding is particularly acute for general-purpose technologies whose applications may be unspecified during development. As noted in AI assessment literature, "whilst a GPAI can be arbitrarily assigned multiple—and often incompatible—purposes, it is problematic to deny that certain purposes are essential for determining its normal functioning" [24]. This principle applies equally to student understanding of research methodologies.

Temporal Dynamics in Benchmarking Culture

Benchmarking practices create distinct temporal patterns in research, characterized by:

- State-of-the-Art (SOTA) Chase: The relentless pursuit of top leaderboard positions [23]

- Presentist Temporality: Focus on current performance metrics rather than long-term goals [23]

- Extrapolation Expectation: Assumption that incremental improvements will continue indefinitely [23]

This temporal dimension affects how students and researchers conceptualize progress, potentially emphasizing short-term metric optimization over deeper understanding of research purposes.

The Common Task Framework provides a robust methodology for benchmarking across research contexts, from computational science to student group projects. Its structured approach—featuring defined tasks, standardized datasets, quantitative metrics, and leaderboard rankings—creates conditions for objective performance assessment and accelerated progress. Understanding the teleological dimensions of benchmarking, particularly across student groups, requires attention to collaborative dynamics, environmental contexts, and purpose clarity in research design. As benchmarking practices continue to evolve across scientific disciplines, maintaining focus on both methodological rigor and conceptual understanding of research purposes will remain essential for meaningful scientific advancement.

Within educational research, particularly in specialized studies such as those benchmarking teleology understanding across different student groups, the choice of assessment instrument is critical. These tools—surveys, scenarios, and case studies—serve as the primary means for collecting robust and interpretable data on student thinking. Each method offers distinct advantages and is subject to specific validation requirements to ensure that the inferences drawn from the data are scientifically defensible [27]. The emerging research on students' persistent use of teleological explanations for biological phenomena, as highlighted in studies with German high school students, underscores the need for such validated tools to accurately diagnose and compare conceptual understanding [10].

This guide provides a comparative analysis of these three key assessment formats, summarizing their characteristics, applications, and the experimental protocols essential for establishing their validity and reliability in a research context.

Comparative Analysis of Assessment Instruments

The table below provides a structured comparison of the three primary assessment instruments, outlining their core functions, key characteristics, and appropriate use cases within research on student understanding.

Table 1: Comparison of Assessment Instruments for Educational Research

| Feature | Surveys | Scenarios | Case Studies |
|---|---|---|---|
| Primary Function | To collect self-reported data on perceptions, attitudes, and reported behaviors from a sample population [28]. | To simulate real-life situations for problem-solving, often targeting reasoning and decision-making skills in a safe environment [29]. | To depict complex, real-life problems requiring in-depth analysis, discussion, and collaborative solution-building [29]. |
| Common Data Output | Primarily quantitative (e.g., Likert scales), but can include qualitative (open-ended) responses [28]. | Qualitative analysis of problem-solving processes; can yield quantitative scores on performance rubrics. | Primarily qualitative insights from discussion and analysis; can result in written or presentation-based solutions [29]. |
| Research Application | Exploratory, descriptive, or explanatory studies to gauge opinions or reported interactions with a system or concept [28]. | Assessing clinical/professional reasoning, higher-order thinking, and application of problem-solving theories without real-world risk [29]. | Assessing deeper understanding, cognitive skills, and the ability to navigate complex, uncertain situations [29]. |
| Typical Format | Structured or semi-structured questionnaires administered via mail, online, or in person [28]. | Short, focused narrative descriptions of a situation or problem prompt. | Detailed, narrative accounts of a complex situation, often involving multiple factors and perspectives [29]. |
| Key Benefit | Allows for standardized, quantifiable comparison across many respondents [30]. | Provides an effective simulated learning environment that bridges theory and practice [29]. | Engages students in research and reflective discussion, fostering collaborative learning [29]. |
| Inherent Challenge | Potential for low response rates and biases (e.g., non-response bias); limited nuance without careful design [28] [30]. | Requires careful scaffolding to guide problem-solving; can be less effective if not well-integrated with learning objectives [29]. | Can be time-consuming to analyze; requires clear rubrics to assess individual contributions and understanding [29]. |

Validation Frameworks for Assessment Instruments

Validation is the process of collecting evidence to evaluate the appropriateness of interpretations, uses, and decisions based on assessment results [27]. It is a process, not an endpoint, and is fundamental to establishing trust in the data collected, especially when making comparisons between student groups.

Modern Validity Frameworks

Two contemporary frameworks guide validation practices:

Messick's Framework: This framework identifies five interconnected sources of validity evidence [27]:

- Content: Evidence that the assessment items and tasks adequately represent the construct (e.g., teleological reasoning) and the domain of interest.

- Response Process: Evidence that respondents are engaging with the assessment as intended (e.g., through think-aloud protocols or analysis of rater behavior).

- Internal Structure: Evidence that the relationships between assessment items align with the hypothesized construct structure, often evaluated through reliability statistics and factor analysis.

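- Relationships with Other Variables: Evidence of expected correlations with measures of related constructs (convergent validity), weak correlations with measures of unrelated constructs (discriminant validity), or expected differences between known groups (known-groups validity).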

- Consequences: Evidence and consideration of the intended and unintended outcomes of assessment use.

Kane's Framework: This framework models validation as a series of inferences that connect an observation to a decision, which is highly relevant for benchmarking studies. The key inferences are [27]:

- Scoring: Translating an observed performance into a score.

- Generalization: Inferring that the score is consistent across tasks, occasions, and raters.

- Extrapolation: Inferring that the score reflects performance in a broader, real-world domain beyond the test.

- Implication/Decision: Using the score to make a meaningful decision or interpretation.

[Figure: Progression of Kane's validation inferences from a single observation to a final decision, the chain of reasoning that justifies research conclusions]

Experimental Protocols for Instrument Development and Validation

Protocol for Survey Development and Validation

The development of a validated survey instrument, such as one designed to measure the prevalence of teleological explanations among students, requires a rigorous, multi-stage process.

Table 2: Key Research Reagents for Survey Validation

| Reagent/Resource | Function in Validation |
|---|---|
| Defined Construct | A clear, theoretical definition of what is being measured (e.g., "teleological reasoning bias") is the foundation for all validation steps [27]. |
| Expert Panel | A group of subject matter experts who formally evaluate the survey for content validity, ensuring items are accurate and comprehensive [28]. |
| Pilot Sample | A small, representative group from the target population used for cognitive pre-testing and initial reliability analysis [28]. |
| Validated Criterion Instrument | An existing, reputable survey or test measuring a similar or related construct, used to evaluate criterion validity [28]. |
| Statistical Software (e.g., R, SPSS) | Essential for conducting quantitative analyses, including reliability calculations (e.g., Cronbach's alpha) and factor analysis to establish internal structure [28]. |
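As a minimal illustration of the reliability calculation mentioned in the table, the sketch below computes Cronbach's alpha from a respondents-by-items score matrix using the standard formula: alpha = k/(k-1) × (1 − sum of item variances / variance of total scores), where k is the number of items. The function name `cronbach_alpha` and the pilot responses are hypothetical. In practice the same statistic would normally come from an established package in R or SPSS.

```python
from statistics import variance


def cronbach_alpha(responses):
    """Cronbach's alpha for a list of respondent rows, each a list of k item scores."""
    if len(responses) < 2:
        raise ValueError("need at least two respondents")
    k = len(responses[0])
    if k < 2 or any(len(row) != k for row in responses):
        raise ValueError("each respondent needs the same number (>= 2) of items")

    # Sample variance of each item (column) and of each respondent's total score.
    item_variance_sum = sum(variance(column) for column in zip(*responses))
    total_variance = variance([sum(row) for row in responses])
    if total_variance == 0:
        raise ValueError("total scores have zero variance; alpha is undefined")

    return k / (k - 1) * (1 - item_variance_sum / total_variance)


# Hypothetical pilot data: 5 respondents x 4 Likert items (illustrative only).
pilot = [
    [4, 5, 4, 4],
    [2, 2, 3, 2],
    [5, 4, 5, 5],
    [3, 3, 2, 3],
    [1, 2, 1, 2],
]
print(f"alpha = {cronbach_alpha(pilot):.2f}")
```

Alpha rises when items covary strongly relative to their individual variances, so it indicates internal consistency rather than unidimensionality. Factor analysis is still needed to support claims about internal structure.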