Beyond Circular Logic: New Strategies to Overcome Tautology in Genetic Code Optimality Studies

This article addresses the persistent challenge of tautological reasoning in studies claiming the genetic code is optimized.

Abstract

This article addresses the persistent challenge of tautological reasoning in studies claiming the genetic code is optimized. Many analyses rely on amino acid substitution matrices, like BLOSUM, which themselves are products of the code's structure, creating a circular argument. We explore foundational critiques of this methodological pitfall and present modern solutions, including multi-objective evolutionary algorithms and physicochemical property clustering. The discussion extends to practical applications in synthetic biology, where recoded organisms and non-canonical amino acid incorporation provide real-world tests of code optimality. Finally, we outline a rigorous validation framework for researchers in drug development and synthetic biology to assess code fitness without falling into tautological traps, enabling more reliable engineering of biological systems.

The Tautology Trap: Deconstructing Circular Reasoning in Code Optimality Claims

Core Concepts: BLOSUM and PAM Matrices

What are substitution matrices and what is their primary function?

Substitution matrices, such as BLOSUM and PAM, are fundamental tools in bioinformatics used for sequence alignment of proteins. They provide a scoring system that quantifies the likelihood of one amino acid being replaced by another during evolution. These scores are crucial for algorithms that calculate the similarity between different protein sequences, helping researchers infer function and establish evolutionary relationships. The matrices assign higher scores to substitutions that occur more frequently in nature and are more likely to be functionally tolerated [1] [2].

What is the fundamental circularity in their construction?

The central problem is that these matrices are derived from, and subsequently used to analyze, the same biological system: the standard genetic code and the protein sequences it produces. This creates a circular argument, because the observed substitution patterns used to build the matrices are themselves shaped by the code's structure. Amino acids whose codons differ by a single nucleotide interconvert more often, so they score as more similar. Therefore, when these matrices are used to evaluate the optimality of the genetic code, the analysis is inherently biased. The matrices are built upon the very property they are often used to test [3].

Table 1: Core Characteristics of PAM and BLOSUM Matrices

| Feature | PAM (Point Accepted Mutation) | BLOSUM (BLOcks SUbstitution Matrix) |
|---|---|---|
| Underlying Data | Global alignments of closely related sequences (>85% identity) [2] | Local, conserved blocks of amino acid sequences from related proteins [1] |
| Construction Method | Extrapolation from closely related sequences to model distant relationships via matrix multiplication [4] [2] | Direct observation of substitutions from clustered sequences at a specific identity threshold [4] [1] |
| Matrix Naming | PAMn, where n is the evolutionary distance (e.g., PAM250) [4] | BLOSUMn, where n is the clustering identity threshold (e.g., BLOSUM62) [1] |
| Implicit Assumption | A Markov model of evolution where substitutions are independent and time-reversible [4] | That observed substitutions in conserved blocks reflect biologically accepted changes [1] |

Troubleshooting the Circular Logic Problem

FAQ: How does this circularity impact my research on genetic code optimality?

This circularity can lead to tautological conclusions. If you use a BLOSUM or PAM matrix to demonstrate that the standard genetic code is optimal at minimizing the effects of mutations, your result is pre-conditioned by the data used to create the matrix. The code appears optimal because you are measuring it with a tool that was built from data already filtered by that same code's properties. This can artificially reinforce the notion of optimality without providing an independent test [3].

FAQ: Are some matrices more prone to this issue than others?

The circularity is a foundational issue for both families of matrices. However, the PAM matrices may introduce an additional layer of circularity when used for studying code optimality. The initial PAM1 matrix, which corresponds to one accepted point mutation per 100 residues, is derived from highly similar sequences, so its counts are dominated by single-nucleotide changes whose outcomes are dictated by the structure of the standard genetic code. This matrix is then raised to higher powers to create matrices for more distantly related sequences, potentially amplifying the underlying circularity [4] [2].
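The extrapolation step can be written out explicitly. The following is a sketch of the standard Dayhoff-style formulation as commonly presented, not a derivation taken from the cited sources; the symbols $M_1$, $f_i$, and $S^{(n)}_{ij}$ are labels chosen here for illustration.

```latex
% M_1: PAM1 mutation probability matrix, (M_1)_{ij} = probability that
%      amino acid j is replaced by amino acid i over 1 PAM of divergence
% f_i: background frequency of amino acid i
M_n = M_1^{\,n}, \qquad
S^{(n)}_{ij} = 10 \log_{10} \frac{\left(M_1^{\,n}\right)_{ij}}{f_i}
```

Because every entry of $M_n$ is a product of entries of $M_1$, any code-dependent bias in the PAM1 counts propagates into all higher-order matrices.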

FAQ: What are the practical methodologies to overcome this tautology?

Researchers have developed several methodological approaches to break this circularity:

- Use of Theoretical Alternative Codes: Instead of relying solely on empirical matrices, compare the standard genetic code against a set of randomly generated or rationally designed alternative genetic codes. The optimality of the standard code is assessed by how well it minimizes the impact of mutations compared to these alternatives, using a chosen scoring metric [3].

- Validation with Expanded Genetic Codes: Utilize advances in synthetic biology, such as Genomically Recoded Organisms (GROs), which possess altered genetic codes. Studying the fitness and mutational robustness of these organisms provides a direct, empirical test of genetic code optimality independent of traditional substitution matrices [5].

- Robustness Analysis with Different Code Sets: As explored in recent research, the optimality of the standard genetic code should be tested against a variety of comparison code sets based on different sub-structures of the code itself. This tests whether the observed optimality is a robust finding or an artifact of a specific comparison set [3].

Table 2: Key Reagents and Computational Tools for Research

| Research Reagent / Tool | Function in Experimental Protocol |
|---|---|
| Genomically Recoded Organism (GRO) | A chassis with a reassigned codon, enabling direct testing of genetic code properties and resistance to viral infection [5]. |
| Non-Standard Amino Acid (nsAA) | An unnatural amino acid incorporated into proteins via codon reassignment, used to probe code flexibility and create novel biocatalysts [5]. |
| Orthogonal Translation System | A machinery (tRNA/aminoacyl-tRNA synthetase pair) that functions independently of the host's system, enabling specific nsAA incorporation [5]. |
| Theoretical Alternative Code Set | A computationally generated set of genetic codes used as a neutral baseline for comparing the optimality of the standard genetic code [3]. |
| MACSE (Multiple Alignment of Coding Sequences) | A multiple sequence alignment tool specific to coding sequences that accounts for frameshifts and stop codons, offering an alternative alignment perspective [6]. |

Experimental Protocol: Breaking the Circularity

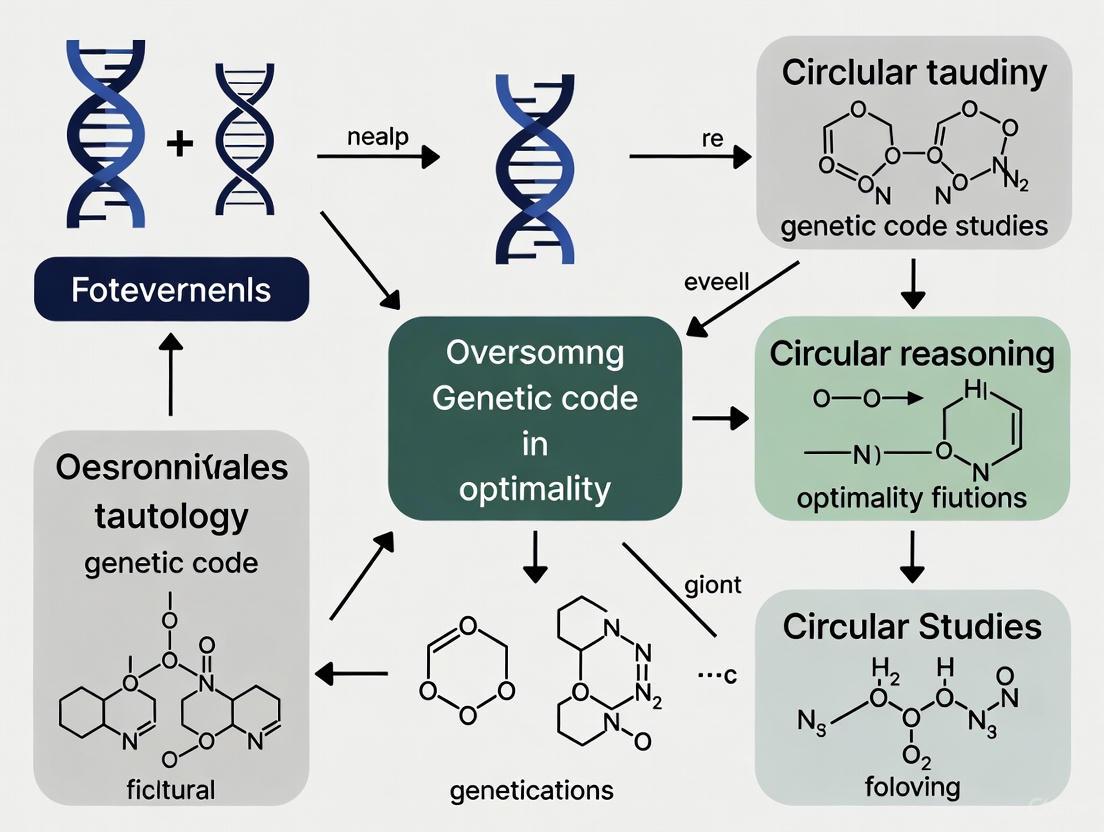

The following workflow diagram outlines a methodology for assessing genetic code optimality that avoids reliance on standard substitution matrices.

Workflow Title: Non-Circular Assessment of Genetic Code Optimality

Step-by-Step Protocol:

- Generate Alternative Genetic Codes: Create a large set of theoretical alternative genetic codes. These can be random permutations of the standard code or codes designed around specific evolutionary hypotheses [3].

- Define an Independent Fitness Metric: Establish a scoring metric that is not derived from observed amino acid substitutions. A common metric is "Mutational Robustness," which quantifies the average change in physicochemical properties (e.g., polarity, volume, hydrophobicity) when all possible single-nucleotide mutations are applied to a set of codons.

- Simulate Point Mutations: Apply all possible single-nucleotide mutations to a representative set of codons within both the standard genetic code (SGC) and each alternative code.

- Score the Standard Genetic Code: For the SGC, calculate the average change in your fitness metric across all simulated mutations. A lower average change indicates higher robustness to mutations.

- Score the Alternative Codes: Perform the same calculation for every alternative code in your set.

- Compare and Rank: Rank the SGC's performance against the distribution of scores from the alternative codes. If the SGC's score is significantly better (e.g., in the top percentile) than most random alternatives, this provides non-circular evidence for its optimality [3].
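The protocol above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the method of the cited study: it uses Kyte-Doolittle hydropathy as the code-independent property, skips substitutions to or from stop codons, and builds alternative codes by permuting the 20 amino acids among the standard code's synonymous blocks. All function names are hypothetical.

```python
import random
from itertools import product

BASES = "TCAG"
# Standard genetic code in TCAG order; "*" marks stop codons.
AMINO = ("FFLLSSSSYY**CC*W" "LLLLPPPPHHQQRRRR"
         "IIIMTTTTNNKKSSRR" "VVVVAAAADDEEGGGG")
CODONS = ["".join(c) for c in product(BASES, repeat=3)]
SGC = dict(zip(CODONS, AMINO))

# Kyte-Doolittle hydropathy: one possible property not derived from alignments.
HYDROPATHY = {
    "A": 1.8, "R": -4.5, "N": -3.5, "D": -3.5, "C": 2.5, "Q": -3.5, "E": -3.5,
    "G": -0.4, "H": -3.2, "I": 4.5, "L": 3.8, "K": -3.9, "M": 1.9, "F": 2.8,
    "P": -1.6, "S": -0.8, "T": -0.7, "W": -0.9, "Y": -1.3, "V": 4.2,
}

def mutational_cost(code, prop=HYDROPATHY):
    """Mean absolute property change over all single-nucleotide substitutions
    between sense codons (assumption: changes involving stops are skipped)."""
    total, count = 0.0, 0
    for codon, aa in code.items():
        if aa == "*":
            continue
        for pos in range(3):
            for base in BASES:
                if base == codon[pos]:
                    continue
                new_aa = code[codon[:pos] + base + codon[pos + 1:]]
                if new_aa == "*":
                    continue
                total += abs(prop[aa] - prop[new_aa])
                count += 1
    return total / count

def shuffled_code(rng):
    """Alternative code: permute the 20 amino acids among the SGC's blocks."""
    aas = sorted(set(AMINO) - {"*"})
    perm = aas[:]
    rng.shuffle(perm)
    mapping = dict(zip(aas, perm))
    mapping["*"] = "*"  # stop codons stay where they are
    return {codon: mapping[aa] for codon, aa in SGC.items()}

def fraction_better(n_codes=1000, seed=0):
    """Fraction of alternative codes with a lower (better) cost than the SGC."""
    rng = random.Random(seed)
    sgc_cost = mutational_cost(SGC)
    better = sum(mutational_cost(shuffled_code(rng)) < sgc_cost
                 for _ in range(n_codes))
    return better / n_codes

if __name__ == "__main__":
    print("SGC cost:", round(mutational_cost(SGC), 3))
    print("fraction of shuffled codes that beat it:", fraction_better())
```

A sample of 1,000 codes only resolves fractions down to about 10⁻³; resolving the much smaller fractions reported in the literature would need far larger samples.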

Advanced Technical Support

How do I select an appropriate matrix despite the circularity issue?

For standard sequence alignment tasks where the goal is homology detection rather than code optimality studies, BLOSUM and PAM matrices remain essential. The key is to choose based on evolutionary distance, acknowledging their inherent bias.

Table 3: Matrix Selection Guide for Practical Alignment Tasks

| Evolutionary Relationship | Recommended Matrix | Rationale and Notes |
|---|---|---|
| Very Close | BLOSUM80, PAM120 | For sequences with high identity. BLOSUM80 uses clusters at 80% identity. |
| Standard/Intermediate | BLOSUM62 (BLAST default) [1], PAM160 | A general-purpose matrix. BLOSUM62 offers a good balance for detecting most weak protein similarities [1]. |
| Distant | BLOSUM45, PAM250 | For more divergent sequences. BLOSUM45 clusters sequences at 45% identity, so its substitution counts come from more distantly related sequence pairs [4] [2]. |

- NCBI BLAST Suite: The primary tool for performing sequence alignments using various substitution matrices. The blastp program is used for protein-protein comparisons [7] [8].

- BLOCKS Database: The original source of aligned protein sequence segments used to derive the BLOSUM matrices [1].

- Orthogonal Translation Components: For experimental validation, these include orthogonal aminoacyl-tRNA synthetases and tRNAs, which are required for incorporating nsAAs in GROs [5].

- Computational Frameworks for Code Generation: Custom scripts or software (often in Python or R) are needed to generate theoretical alternative genetic codes and perform the mutational robustness simulations outlined in the protocol above.

Frequently Asked Questions (FAQs)

FAQ 1: What is the 'Frozen Accident' hypothesis of the genetic code? Proposed by Francis Crick in 1968, the 'Frozen Accident' hypothesis states that the specific assignments of codons to amino acids in the standard genetic code (SGC) are largely historical accidents [9] [10]. Once established in a primordial organism, any change in codon assignment would be highly deleterious because it would alter the amino acid sequences of countless essential proteins simultaneously [9]. This "freezes" the code, making it universal across all life forms descended from the last universal common ancestor, not because it is uniquely optimal, but because it became unchangeable [9].

FAQ 2: What is the Adaptive Hypothesis, and what evidence supports it? The Adaptive Hypothesis posits that the genetic code evolved its specific structure to minimize the negative effects of mutations and translation errors [11]. The key evidence is that the SGC shows a strong tendency for similar amino acids to have similar codons [12] [11]. For example, codons with U in the second position typically correspond to hydrophobic amino acids [9]. This organization means a single-point mutation or translation error often results in the incorporation of a chemically similar amino acid, thereby minimizing damage to the protein's structure and function [9] [12]. Quantitative studies show that only about 2 in a billion random codes are fitter than the natural code when using cost functions based on protein stability [12].

FAQ 3: How can research on code optimality avoid tautological reasoning? A common tautology occurs when the optimality of the genetic code is evaluated using amino acid substitution matrices (e.g., PAM, BLOSUM) derived from evolutionary protein sequence alignments [11]. These matrices already reflect the structure of the code itself, making any analysis circular [11]. To overcome this, researchers should use independent measures of amino acid similarity that are unrelated to the code's structure, such as:

- Fundamental physicochemical properties: Hydropathy, molecular volume, and polarity [11].

- In silico protein stability measures: Calculating the change in folding free energy caused by point mutations in protein structures [12].

FAQ 4: How optimal is the standard genetic code? Research indicates the standard genetic code is highly robust to errors, but it is not fully optimal [11]. It is significantly better than a random code and much closer to codes that minimize error costs than to those that maximize them [3] [11]. However, evolutionary algorithms can find theoretical codes that are even more robust, suggesting the SGC is a partially optimized system that emerged under the influence of multiple evolutionary factors [11].

FAQ 5: What are the main competing theories for the genetic code's evolution? The three primary competing hypotheses are:

- The Stereochemical Hypothesis: Postulates that direct chemical affinity between amino acids and their codons or anticodons determined the assignments [9] [11]. The main counterargument is a lack of widespread experimental evidence for such interactions [9] [11].

- The Coevolution Hypothesis: Suggests the code expanded alongside biosynthetic pathways, with new amino acids inheriting codons from their precursors [11].

- The Adaptive Hypothesis: Argues the code was shaped by natural selection to minimize the functional impact of errors, as discussed above [11].

Troubleshooting Common Research Challenges

Challenge 1: Inconclusive results when testing the adaptive hypothesis.

- Problem: Your analysis fails to clearly demonstrate whether the genetic code is optimized for error minimization.

- Solution:

- Verify Cost Function Independence: Ensure the amino acid similarity metric or cost function you are using is not derived from biological sequences that already encode the SGC's structure. Use physicochemical properties or in silico folding energy calculations instead [12] [11].

- Refine the Comparison Set: The optimality result can be influenced by the set of theoretical alternative codes used for comparison. Use a defined set of codes, such as those preserving the SGC's block structure, to generalize your results across different evolutionary hypotheses [3].

- Adopt a Multi-Objective Framework: The code was likely shaped by multiple properties simultaneously. Use a multi-objective evolutionary algorithm that optimizes for several independent amino acid properties (e.g., hydropathy, volume, charge) to gain a more general and robust assessment [11].

Challenge 2: Accounting for amino acid frequency in optimality calculations.

- Problem: Your model of code optimality does not reflect biological reality.

- Solution: Incorporate empirical amino acid frequencies into your fitness function. The genetic code is even more optimal when the relative abundance of amino acids in proteins is considered, as this weights the impact of errors on more frequent amino acids more heavily [12]. The table below shows sample frequencies from different domains of life.

Table 1: Example Amino Acid Frequencies in Different Domains of Life (%) [12]

| Amino Acid | Archaea | Bacteria | Eukaryotes |
|---|---|---|---|
| Leu | 9.65 | 10.52 | 9.35 |
| Ser | 5.93 | 6.18 | 8.50 |
| Ala | 7.85 | 8.08 | 6.48 |
| Glu | 7.79 | 6.35 | 6.64 |
| Val | 7.97 | 6.87 | 6.09 |
| Lys | 6.04 | 6.43 | 6.30 |
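One way to fold such frequencies into a fitness function is to weight each amino acid's mean error cost by its relative abundance. The sketch below assumes that per-amino-acid costs have already been computed elsewhere; the function name is hypothetical, and the cost values are placeholders chosen only to show the arithmetic, not measured data.

```python
def frequency_weighted_cost(cost_by_aa, freq_by_aa):
    """Average per-amino-acid error costs, weighting each by its abundance.

    Only amino acids present in both mappings are used, and the frequencies
    are renormalized over that shared subset.
    """
    shared = cost_by_aa.keys() & freq_by_aa.keys()
    if not shared:
        raise ValueError("no amino acids in common between costs and frequencies")
    total_freq = sum(freq_by_aa[aa] for aa in shared)
    return sum(cost_by_aa[aa] * freq_by_aa[aa] for aa in shared) / total_freq

# Bacterial frequencies (%) for the six residues listed in the table.
BACTERIA_FREQ = {"Leu": 10.52, "Ser": 6.18, "Ala": 8.08,
                 "Glu": 6.35, "Val": 6.87, "Lys": 6.43}

# Placeholder costs (hypothetical, for illustration only).
EXAMPLE_COST = {"Leu": 1.0, "Ser": 2.0, "Ala": 1.5,
                "Glu": 2.5, "Val": 1.0, "Lys": 3.0}

if __name__ == "__main__":
    print(round(frequency_weighted_cost(EXAMPLE_COST, BACTERIA_FREQ), 3))
```

With this weighting, an error affecting an abundant residue such as leucine contributes more to the overall score than the same error affecting a rare residue.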

Challenge 3: Designing a modern experiment based on code optimality.

- Problem: Translating theoretical knowledge of the genetic code into practical experimental design, such as for heterologous gene expression.

- Solution: Utilize contemporary deep learning tools for codon optimization. For example, CodonTransformer is a multispecies model that learns organism-specific codon usage bias from over a million DNA-protein pairs [13]. It can be used to design DNA sequences for a target host organism that have natural-like codon distribution profiles, with the aim of improving protein expression and reducing host toxicity [13].

Experimental Protocols

Protocol 1: Assessing Genetic Code Optimality Using a Random Code Comparison

Purpose: To quantitatively evaluate the error-minimization capacity of the Standard Genetic Code (SGC) compared to random alternative codes.

Methodology:

- Define a Cost Function: Select a relevant, independent physicochemical property of amino acids, such as hydropathy or molecular volume. Create a distance matrix where each value represents the absolute difference in this property between two amino acids [12] [11].

- Calculate the SGC Fitness Score (Φ_SGC):

- For all 64 codons, compute the cost of all possible single-base substitution errors (each codon has 3 positions × 3 alternative bases = 9 possible errors).

- For each error, use the cost matrix to find the property difference between the original and the substituted amino acid.

- Φ_SGC is the weighted average of all these costs. Weights can be applied based on the known higher error rates at the first and third codon positions [12].

- Generate Random Genetic Codes:

- Calculate Fitness for Random Codes: Compute the fitness score (Φ_random) for each random code in the set using the same method from step 2.

- Statistical Analysis: Determine the fraction of random codes that have a better (lower) fitness score than the SGC. A very small fraction (e.g., < 10⁻⁶) indicates the SGC is highly optimal for that property [12].
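The weighted average in step 2 and the comparison in the final step can be written compactly. This is one possible formalization, not notation taken from the cited work: $N_p(c)$, $w_p$, $d$, and $a(\cdot)$ are symbols introduced here, and the treatment of stop codons (excluded from $N_p(c)$) is an assumption.

```latex
% a(c):   amino acid encoded by codon c
% N_p(c): sense codons that differ from c only at position p
% w_p:    weight for errors at codon position p
% d:      property distance from the cost matrix defined in step 1
\Phi = \frac{\sum_{c} \sum_{p=1}^{3} w_p \sum_{c' \in N_p(c)} d\big(a(c), a(c')\big)}
            {\sum_{c} \sum_{p=1}^{3} w_p \, \lvert N_p(c) \rvert},
\qquad
f = \frac{\#\{\, k : \Phi_{\text{random},k} < \Phi_{\text{SGC}} \,\}}{K}
```

Here $K$ is the number of random codes sampled, and $f$ is the fraction reported in the statistical analysis step. Setting all $w_p = 1$ recovers the unweighted mean.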

Table 2: Key Reagents and Computational Tools for Code Optimality Research

| Item | Function in Research |
|---|---|
| Amino Acid Property Database (e.g., AAindex) | Provides hundreds of independent, quantitative indices of physicochemical properties to define non-tautological cost functions [11]. |
| Multi-Objective Evolutionary Algorithm (MOEA) | Computational method to find theoretical genetic codes that are simultaneously optimized for multiple amino acid properties, providing a robust Pareto front for comparison [11]. |
| Protein Structure Database (e.g., PDB) | Source of native protein structures for in silico calculations of folding free energy changes caused by amino acid substitutions [12]. |
| Codon Optimization Tool (e.g., CodonTransformer) | A deep learning model that uses organism-specific context to design optimal DNA sequences for synthetic biology applications [13]. |

Protocol 2: Multi-Objective Optimization with an Evolutionary Algorithm

Purpose: To explore the trade-offs between multiple amino acid properties in shaping the genetic code and to find a Pareto front of theoretical codes that are non-dominated in all objectives [11].

Methodology:

- Select Representative Properties: From a database of over 500 amino acid indices, select a small set (e.g., 8) that represent major, non-redundant clusters of physicochemical properties (e.g., hydropathy, volume, alpha-helix propensity, etc.) [11].

- Define the Search Space: Decide on a model for generating genetic codes. The Block Structure (BS) model permutes amino acids only within the canonical codon blocks of the SGC, while the Unrestricted Structure (US) model randomly assigns sense codons to amino acids with no structural constraints [11].

- Configure the Algorithm: Use a Strength Pareto Evolutionary Algorithm (SPEA2) or similar. The algorithm requires:

- Genetic Operators: Define crossover and mutation functions that work on your code representation (BS or US).

- Fitness Evaluation: For each candidate code, calculate not one but eight separate fitness functions, one for each of the selected properties [11].

- Run the Optimization: Evolve a population of codes over many generations. The output is a set of Pareto-optimal (non-dominated) codes: codes for which no other code is at least as good in all eight objectives and strictly better in at least one.

- Compare to SGC: Plot the SGC within this eight-dimensional space. Its proximity to the calculated Pareto front indicates its level of multi-property optimality [11].
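The core comparison in this protocol is Pareto dominance. The sketch below shows only that building block, assuming each code has already been scored as a tuple of costs where lower is better in every objective. It is a brute-force filter for illustration, not an implementation of SPEA2, and the function names are hypothetical.

```python
def dominates(a, b):
    """True if score vector a Pareto-dominates b (lower is better everywhere):
    a is at least as good in every objective and strictly better in one."""
    return (all(x <= y for x, y in zip(a, b))
            and any(x < y for x, y in zip(a, b)))

def pareto_front(scores):
    """Return the non-dominated score vectors (brute force, O(n^2))."""
    return [s for s in scores
            if not any(dominates(other, s) for other in scores if other is not s)]

def is_non_dominated(candidate, front):
    """Check whether a candidate (e.g. the SGC's scores) is beaten by the front."""
    return not any(dominates(f, candidate) for f in front)

if __name__ == "__main__":
    # Hypothetical two-objective scores for five candidate codes.
    scores = [(1, 4), (2, 2), (4, 1), (3, 3), (2, 5)]
    front = pareto_front(scores)
    print(front)
    print(is_non_dominated((3, 3), front))
```

In the protocol, each tuple would hold eight values, one per selected property, and the standard code's tuple would be tested against the evolved front.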

Research Workflow and Conceptual Diagrams

Research Workflow for Genetic Code Hypotheses

Modern Codon Optimization with AI

FAQs: Understanding Genetic Code Structure and Evolution

FAQ 1: If the genetic code is so optimal, why is it described as only "partially optimized"?

The standard genetic code is not perfectly optimal but represents a point on an evolutionary trajectory. Research comparing it to millions of random alternative codes shows it is significantly more robust than the vast majority of possibilities, yet it does not reside at a fitness peak. It appears to be about halfway to a local optimum, suggesting its evolution involved a trade-off between increasing robustness and the deleterious effects of reassigning codon series in an increasingly complex biological system [14]. This partial optimization helps overcome the tautology of assuming perfect design, pointing instead to a historical evolutionary process.

FAQ 2: What specific evidence supports the non-random, error-minimizing structure of the code?

The genetic code's structure is manifestly nonrandom. The key evidence includes [14]:

- Block Structure: Synonymous codons, and codons for similar amino acids, usually differ by a single nucleotide, typically in the third position.

- Physicochemical Similarity: Related amino acids (e.g., similar hydrophobicity) are assigned to related codons. For instance, all codons with a U in the second position code for hydrophobic amino acids.

- Quantitative Comparisons: When using cost functions based on physicochemical properties (like the polar requirement scale), the standard code is more robust than a vast majority of randomly generated codes, with one estimate finding it to be "one in a million" [14] [15].

FAQ 3: Beyond error minimization, what other function is programmed into the code's redundancy?

Codon redundancy ("degeneracy") also prescribes translational pausing (TP), which helps control the rate of translation. This temporal regulation is crucial for the co-translational folding of the nascent protein into its functional three-dimensional structure. Different synonymous codons, recognized by tRNAs with varying cellular abundances, can purposely slow down or speed up the decoding process. This allows a single codon sequence to dual-prescribe both an amino acid sequence and a folding schedule without cross-talk [16].

FAQ 4: Can the degeneracy of the genetic code be broken to encode new amino acids?

Yes, breaking codon degeneracy is the goal of Sense Codon Reassignment (SCR), a method in Genetic Code Expansion (GCE). A key challenge is the ribosome's inherent flexibility in reading codons, especially with post-transcriptionally modified tRNAs. This has been overcome using:

- Unmodified tRNAs: In vitro transcribed tRNAs (e.g., t7tRNA) lack modifications that promote "wobble" reading, reducing codon sharing.

- Hyperaccurate Ribosomes: Engineered ribosomes with mutations in the S12 protein exhibit enhanced proofreading, significantly improving codon orthogonality by minimizing near-cognate tRNA interactions [17].

These approaches have successfully reassigned multiple codons within a single six-fold degenerate codon box to encode distinct non-canonical amino acids [17].

Troubleshooting Guide for Genetic Code Expansion Experiments

Problem: Low Fidelity in Sense Codon Reassignment (SCR) – Misincorporation of standard amino acids.

| Possible Cause | Solution / Experimental Protocol |
|---|---|
| Wobble reading by tRNAs | Use in vitro transcribed tRNAs (t7tRNA) that lack post-transcriptional modifications (PTMs). PTMs like cmo5U34 in native tRNAs expand codon recognition. Unmodified tRNAs exhibit reduced readthrough of non-cognate codons [17]. |
| Poor ribosomal discrimination | Employ a hyperaccurate ribosome mutant. For example, use ribosomes with a mutated S12 protein (mS12). These ribosomes have enhanced proofreading capabilities during tRNA accommodation, which improves discrimination against near-cognate tRNAs and enforces stricter codon orthogonality [17]. |
| Competition from endogenous tRNAs | In an in vitro translation system (e.g., PURE system), reconstitute the system using only the orthogonal tRNAs required for your reassignment. This eliminates competition from the cell's full complement of native tRNAs [17]. |

Problem: Inefficient Reassignment of Multiple Codons Within a Single Codon Box.

| Possible Cause | Solution / Experimental Protocol |
|---|---|
| Overlapping codon reading by multiple tRNA isoacceptors | Rank tRNA-codon pairing efficiency using a competitive assay. 1. Charge individual tRNA isoacceptors with unique leucine isotopologues. 2. Allow them to compete in a translation reaction with a single-codon mRNA template. 3. Quantify incorporation by mass spectrometry (e.g., MALDI-MS) to create a heatmap of pairing efficiency. This data guides orthogonal pair selection [17]. |
| Unpredictable reassignment outcomes | Combine solutions: Use a system comprising unmodified tRNAs + hyperaccurate ribosomes. This combination has been shown to enable predictable, extensive SCR, allowing the reassignment of up to nine codons across two codon boxes to encode seven distinct amino acids [17]. |

Quantitative Data on Code Optimality

Table 1: Fraction of Random Genetic Codes More Robust Than the Standard Code. This table summarizes how the estimated optimality of the standard code changes with increasingly sophisticated fitness functions, demonstrating a non-tautological, quantifiable approach [14] [15].

| Fitness Function / Cost Measure Considered | Fraction of Random Codes That Are "Fitter" | Key Reference (Concept) |
|---|---|---|
| Polarity (Hydropathy) Differences | ~ 10⁻⁴ (1 in 10,000) | Haig & Hurst (1991) [14] |
| + Transition/Transversion Bias & Positional Error Differences | ~ 10⁻⁶ (1 in 1,000,000) | Freeland & Hurst (1998) [14] |
| + Amino Acid Frequencies & Mutation Matrix* | ~ 2 × 10⁻⁹ (2 in 1,000,000,000) | Gilis et al. (2001) [15] |

*The Mutation Matrix is a cost function based on in silico evaluation of changes in protein folding free energy upon mutation [15].

Experimental Protocol: Assessing Codon Reassignment Orthogonality

Objective: To quantitatively rank the ability of different tRNA isoacceptors to read a given codon in competition, providing actionable data for SCR.

Methodology (Competitive Codon Reading Assay):

- tRNA Preparation: Purify individual tRNA isoacceptors from total E. coli tRNA (wt tRNA) or produce them via in vitro transcription (t7tRNA).

- Aminoacylation: Charge each tRNA isoacceptor with a unique leucine isotopologue (e.g., ¹²C₆-Leu, ¹³C₆-Leu) to enable mass distinction.

- Translation Reaction:

- Use a custom PURE in vitro translation system.

- Combine the five charged AA-tRNAs in equal concentrations (confirm ratio via MALDI-MS charging assay).

- Add an mRNA template containing a single type of leucine codon (e.g., CUG, UUA, etc.).

- Product Analysis:

- Translate a short peptide sequence.

- Analyze the peptide products using MALDI-MS.

- Quantify the peak intensities for each isotopologue to determine the relative "win" rate of each tRNA for the tested codon.

- Data Representation: Compile results into a heatmap to visualize codon reading patterns and identify the most orthogonal tRNA-codon pairs [17].
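The quantification and data-representation steps amount to normalizing peak intensities per codon. The sketch below assumes the MALDI-MS intensities have already been extracted into a nested mapping of codon to tRNA label to intensity; the tRNA labels and numbers are hypothetical placeholders, not data from the cited study.

```python
def win_rates(peak_intensities):
    """Convert per-codon isotopologue peak intensities into fractions that
    sum to 1, i.e. each tRNA's relative 'win' rate for that codon."""
    rates = {}
    for codon, peaks in peak_intensities.items():
        total = sum(peaks.values())
        if total <= 0:
            raise ValueError(f"no signal recorded for codon {codon}")
        rates[codon] = {trna: value / total for trna, value in peaks.items()}
    return rates

def top_reader(rates):
    """For each codon, return the tRNA with the highest win rate and that rate."""
    return {codon: max(row.items(), key=lambda item: item[1])
            for codon, row in rates.items()}

if __name__ == "__main__":
    # Hypothetical intensities for two leucine codons and three tRNA labels.
    intensities = {
        "CUG": {"tRNA_1": 900.0, "tRNA_2": 80.0, "tRNA_3": 20.0},
        "UUA": {"tRNA_1": 50.0, "tRNA_2": 150.0, "tRNA_3": 800.0},
    }
    rates = win_rates(intensities)
    for codon, (trna, rate) in top_reader(rates).items():
        print(codon, trna, round(rate, 2))
```

The nested mapping returned by `win_rates` is already in row-per-codon form, so it can be passed to any plotting library to draw the heatmap. A codon whose top reader wins with a rate close to 1 is a candidate for an orthogonal pair.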

Workflow: Competitive Codon Reading Assay

The Scientist's Toolkit: Key Reagents for Genetic Code Expansion

Table 2: Essential Research Reagents for Genetic Code Expansion (GCE) and SCR Studies.

| Research Reagent | Function / Explanation |
|---|---|
| PURE In Vitro Translation System | A custom, reconstituted cell-free protein synthesis system. It allows for complete control over translation components, enabling the selective omission of natural tRNAs and addition of orthogonal components for SCR [17]. |
| Unmodified tRNAs (e.g., t7tRNA) | tRNAs produced by in vitro transcription, which lack natural post-transcriptional modifications. The absence of modifications like cmo5U34 reduces wobble pairing and narrows codon reading, making SCR more predictable [17]. |
| Hyperaccurate Ribosomes (mS12) | Ribosomes with a mutation in the S12 ribosomal protein. These mutant ribosomes have enhanced proofreading ability, leading to reduced misincorporation and improved discrimination between cognate and near-cognate tRNAs during SCR [17]. |
| Orthogonal Aminoacyl-tRNA Synthetases (oRS) | Engineered enzymes that specifically charge an orthogonal tRNA with a desired non-canonical amino acid (ncAA), without cross-reacting with endogenous tRNAs or standard amino acids. Essential for in vivo GCE [18]. |

A significant challenge in studying the genetic code's optimality is the risk of tautological reasoning—an "unnecessary repetition... of the same... idea, [or] argument" [19]. In this context, tautology occurs when researchers use the genetic code's observed structure to both define and then "prove" the optimization of a single physicochemical property, creating a circular argument [19]. This guide provides methodologies to help researchers overcome this limitation by implementing multi-property analysis and rigorous statistical frameworks, moving beyond single-factor analysis that has dominated the field [20].

Troubleshooting Guides

Guide: Resolving Contradictory Optimality Findings

- Problem: Different studies identify different amino acid properties (e.g., polarity vs. partition energy) as the "most optimized," leading to inconsistent conclusions about the genetic code's origin.

- Symptoms: Your analysis yields high optimization scores for multiple properties, but you cannot determine which is fundamental. Support for either the physicochemical theory or the coevolution theory seems to depend on the property chosen.

- Investigation Steps:

- Check the Model Scope: Are you testing your hypothesis against the full set of all possible random codes, or a restricted set that incorporates biosynthetic constraints? A finding that a property is 96% optimized is only meaningful when compared to the correct null model [20].

- Analyze by Code Structure: Conduct your optimization analysis separately on the columns and rows of the genetic code table. The coevolution theory predicts that biosynthetic relationships (structuring the rows) and error minimization (optimizing the columns) apply different selective pressures. A property reaching ~98% optimization on columns strongly suggests selective pressure for error minimization [20].

- Test a Diverse Property Set: Do not rely solely on classic properties like polarity. One study analyzed 530 different properties and found that partition energy, not polarity, reached the highest optimization level (~96% globally, ~98% on columns) when biosynthetic constraints were applied [20].

- Solution: Employ a model that simultaneously accounts for both biosynthetic relationships between amino acids and their physicochemical properties. This approach can resolve contradictions by showing, for instance, that partition energy is highly optimized under biosynthetic constraints, thereby corroborating the coevolution theory [20].
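Percent-optimization figures such as those quoted above depend on the null model through a ratio of the following kind. This is one common way to define a minimization percentage, given here on the assumption that the cited values are of this type, which the text does not state explicitly; the symbols are labels introduced for illustration.

```latex
% \Delta_code: error cost of the standard genetic code for the chosen property
% \Delta_mean: mean cost over the comparison set of alternative codes
% \Delta_min:  lowest cost found within the same comparison set
\text{MP} = 100 \times
  \frac{\Delta_{\text{mean}} - \Delta_{\text{code}}}
       {\Delta_{\text{mean}} - \Delta_{\text{min}}}
```

Both $\Delta_{\text{mean}}$ and $\Delta_{\text{min}}$ change when the comparison set is restricted by biosynthetic constraints, which is why the same property can score differently under different null models.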

Guide: Addressing the "Single-Property" Tautology

- Problem: The research design inadvertently makes the conclusion (the code is optimal for property X) a restatement of the premise (property X was selected because it fits the code's structure).

- Symptoms: The analysis feels circular. The outcome (e.g., "the code is optimal") is not a testable finding but is built into the methodology by the choice of a single property.

- Investigation Steps:

- Identify Implicit Repetition: Scrutinize your explanations for phrases that use different words to say the same thing. In writing, this appears as "necessary requirement" or "future planning." In research, it appears as using the code's structure to define the very property you are testing [21] [22].

- Search for Hidden Assumptions: Are you assuming the code is optimal for polarity because its structure correlates with polarity, without testing against a robust null model? A property appearing optimized in a vacuum may be a tautology; it must be shown to be significantly more optimized than in alternative, biologically plausible codes [23] [20].

- Validate with Independent Data: Use the Moran's I index of global spatial autocorrelation. This method can identify the property that best correlates with the genetic code's organization from a large database, reducing bias from pre-selecting a single property [20].

- Solution: Replace the tautological reasoning with a methodology that adds new information. If a formal explanation ("The code is error-minimizing because it is optimal") is tautological, a proper explanation ("The code is error-minimizing for partition energy because its structure minimizes the deleterious effects of translation errors, as shown by a 98% optimization score on columns") breaks the circle [19].

Frequently Asked Questions (FAQs)

Q1: What is the core of the tautology problem in genetic code optimality studies? A1: The core problem is circularity. A true tautology is an unnecessary repetition of the same idea. In this field, it occurs when the observed structure of the genetic code is used to define a "good" or "optimal" property, and the same structure is then presented as evidence for that optimality, providing no new explanatory information [19].

Q2: I have evidence that the genetic code is optimized for polarity. Why should I consider other properties? A2: While polarity (polar requirement) has been a historically important property, recent multi-property analyses suggest it may not be the primary driver. One extensive study found that partition energy was more optimized (~96% on the whole code table) than polarity when biosynthetic constraints were factored in. Focusing solely on polarity risks overlooking the property that may have been under the strongest selective pressure [20].

Q3: How can the coevolution theory explain high physicochemical optimality? A3: The coevolution theory posits that the code expanded by assigning codons to new amino acids based on their biosynthetic pathways. This process, by itself, does not preclude simultaneous physicochemical optimization. Research shows that as the code grew by adding biosynthetically related amino acids, the level of physicochemical optimization increased linearly. The very high optimization of partition energy on the code's columns is seen as a selective pressure that acted in concert with the biosynthetic process structuring the rows [24] [20].

Q4: What is a robust statistical method for identifying the key optimized property? A4: Using a spatial statistics index like Moran's I is a powerful method. It allows you to analyze a vast database of hundreds of amino acid properties and identify the one that shows the most significant non-random, spatially correlated organization within the genetic code table, thereby reducing investigator bias [20].

Q5: Are formal explanations always tautological and therefore invalid? A5: Not necessarily. Psychological studies show that formal explanations (e.g., "This creature flies because it is a bird") are often more satisfying than explicit tautologies, even if they are implicitly circular. This suggests that scientific audiences may find explanations based on categorical labels (e.g., "The code is optimal because it is the universal genetic code") persuasive, but this is a cognitive effect, not a validation of the logic. Proper explanations that provide mechanistic details (e.g., citing partition energy and error minimization) are consistently rated as most convincing [19].

Table 1: Levels of Optimization for Amino Acid Properties in the Genetic Code

| Amino Acid Property | Global Optimization (%) | Optimization on Columns (%) | Optimization on Rows (%) | Key Implication |
|---|---|---|---|---|
| Partition Energy [20] | ~96% | ~98% | Data Not Provided | Suggests protein structure/enzymatic catalysis was a key selective pressure. |
| Polarity (Polar Requirement) [20] | Lower than Partition Energy | Lower than Partition Energy | Data Not Provided | May not have been the primary structuring property, contrary to some prior views. |
| β-strands [20] | 95.45% | Data Not Provided | Data Not Provided | Supports the role of selection for secondary structure formation. |

Experimental Protocols

Protocol: Assessing Optimization with Biosynthetic Constraints

Purpose: To determine the optimization level of a physicochemical property in the genetic code while accounting for the code's evolutionary history as described by the coevolution theory [20].

Methodology:

- Define the Property: Select a physicochemical or biological property of the amino acids for testing (e.g., partition energy, polarity).

- Generate Permutation Codes: Instead of generating all possible random genetic codes, create a restricted set of "amino acid permutation codes" that are subject to biosynthetic constraints. This means codons are only permuted within biosynthetically related groups of amino acids [20].

- Calculate Cost/Fitness: For the real genetic code and for every permutation in the constrained set, calculate a cost function (or fitness function) that measures how well the code minimizes the impact of errors (e.g., point mutations, frameshifts) with respect to the chosen property [23] [20].

- Compute Optimization Percentage: Rank the real genetic code's performance against the distribution of performances from the constrained permutation set. The percentage of permutation codes in the set that perform worse than the real code is its optimization percentage [20].
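The four steps above can be sketched in a few lines of Python. This is a minimal illustration under stated assumptions, not the procedure of [20]: the property is Kyte-Doolittle hydropathy (a stand-in for partition energy), the cost is the mean squared property change over single-nucleotide substitutions, and `GROUPS` uses textbook biosynthetic families as a hypothetical constraint set. The names `cost`, `constrained_permutation`, and `optimization_percentage` are invented for this example.

```python
import random

BASES = "TCAG"
# Standard genetic code, codons ordered TTT, TTC, TTA, TTG, TCT, ... ("*" = stop).
AAS = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODONS = [a + b + c for a in BASES for b in BASES for c in BASES]
SGC = dict(zip(CODONS, AAS))

# Kyte-Doolittle hydropathy; an illustrative property, not the one used in [20].
HYDROPATHY = {
    "A": 1.8, "R": -4.5, "N": -3.5, "D": -3.5, "C": 2.5, "Q": -3.5, "E": -3.5,
    "G": -0.4, "H": -3.2, "I": 4.5, "L": 3.8, "K": -3.9, "M": 1.9, "F": 2.8,
    "P": -1.6, "S": -0.8, "T": -0.7, "W": -0.9, "Y": -1.3, "V": 4.2,
}

# ASSUMPTION: textbook biosynthetic families, used only to illustrate the constraint.
GROUPS = ["DNKTMI", "EQPR", "SGC", "AVL", "FYW", "H"]


def cost(code, prop):
    """Mean squared property change over all single-nucleotide substitutions
    between sense codons (substitutions to or from stop codons are skipped)."""
    total, count = 0.0, 0
    for codon, aa in code.items():
        if aa == "*":
            continue
        for pos in range(3):
            for base in BASES:
                if base == codon[pos]:
                    continue
                other = code[codon[:pos] + base + codon[pos + 1:]]
                if other != "*":
                    total += (prop[aa] - prop[other]) ** 2
                    count += 1
    return total / count


def constrained_permutation(rng):
    """Swap amino acid identities only within each biosynthetic group."""
    swap = {}
    for group in GROUPS:
        shuffled = list(group)
        rng.shuffle(shuffled)
        swap.update(zip(group, shuffled))
    return {codon: swap.get(aa, "*") for codon, aa in SGC.items()}


def optimization_percentage(prop, n_codes=500, seed=0):
    """Percentage of sampled constrained codes with a higher cost than the real code."""
    rng = random.Random(seed)
    real = cost(SGC, prop)
    worse = sum(cost(constrained_permutation(rng), prop) > real for _ in range(n_codes))
    return 100.0 * worse / n_codes


if __name__ == "__main__":
    print(f"optimization: {optimization_percentage(HYDROPATHY):.1f}%")
```

Sampling the constrained set rather than enumerating it keeps the sketch fast. A real analysis would need to justify the grouping, the cost function, and the sample size.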

Protocol: Identifying the Most Relevant Property via Spatial Autocorrelation

Purpose: To objectively identify the amino acid property that is most non-randomly structured within the genetic code, minimizing selection bias [20].

Methodology:

- Data Compilation: Gather a large database of physicochemical and biological properties for the 20 canonical amino acids. The study referenced analyzed 530 such properties [20].

- Apply Moran's I Index: Calculate the Moran's I index of global spatial autocorrelation for each property. This statistic measures whether the property's values are clustered, dispersed, or random across the two-dimensional layout of the genetic code table.

- Compare to Random Codes: For each property, compare the Moran's I value of the real genetic code to the distribution of Moran's I values from a large set of randomly generated code tables.

- Identify Key Property: The property for which the real genetic code shows the most extreme (statistically significant) spatial autocorrelation, indicating the strongest organizational signal, is identified as the most relevant.
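As a rough illustration of steps 2 and 3, the sketch below computes Moran's I on a grid and compares the real code to shuffled assignments. Several choices here are assumptions made for the example and are not taken from [20]: the code table is laid out as a 16×4 grid (rows indexed by first and third base, columns by second base), neighbours are the cells directly above, below, left, and right, stop codons are treated as missing cells, and random codes are produced by shuffling property values among the 20 amino acids. Kyte-Doolittle hydropathy stands in for a database property.

```python
import random

# Standard genetic code in TCAG order ("*" = stop).
AAS = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
HYDROPATHY = {
    "A": 1.8, "R": -4.5, "N": -3.5, "D": -3.5, "C": 2.5, "Q": -3.5, "E": -3.5,
    "G": -0.4, "H": -3.2, "I": 4.5, "L": 3.8, "K": -3.9, "M": 1.9, "F": 2.8,
    "P": -1.6, "S": -0.8, "T": -0.7, "W": -0.9, "Y": -1.3, "V": 4.2,
}


def morans_i(grid):
    """Global Moran's I with binary rook-adjacency weights; None marks a missing cell."""
    cells = {(r, c): v for r, row in enumerate(grid)
             for c, v in enumerate(row) if v is not None}
    n = len(cells)
    mean = sum(cells.values()) / n
    cross, weight_sum = 0.0, 0
    for (r, c), v in cells.items():
        for dr, dc in ((1, 0), (-1, 0), (0, 1), (0, -1)):
            neighbour = cells.get((r + dr, c + dc))
            if neighbour is not None:
                cross += (v - mean) * (neighbour - mean)
                weight_sum += 1
    variance_sum = sum((v - mean) ** 2 for v in cells.values())
    return (n / weight_sum) * cross / variance_sum


def code_grid(prop):
    """16x4 layout: row = 4 * first_base + third_base, column = second_base."""
    grid = [[None] * 4 for _ in range(16)]
    for i, aa in enumerate(AAS):
        first, second, third = i // 16, (i // 4) % 4, i % 4
        if aa != "*":
            grid[4 * first + third][second] = prop[aa]
    return grid


def shuffled_property(prop, rng):
    """Random code: reassign property values among amino acids."""
    aas = list(prop)
    values = [prop[a] for a in aas]
    rng.shuffle(values)
    return dict(zip(aas, values))


def fraction_at_least(prop, n_random=200, seed=0):
    """Observed Moran's I and the fraction of shuffled codes reaching at least that value."""
    rng = random.Random(seed)
    observed = morans_i(code_grid(prop))
    hits = sum(morans_i(code_grid(shuffled_property(prop, rng))) >= observed
               for _ in range(n_random))
    return observed, hits / n_random


if __name__ == "__main__":
    print(fraction_at_least(HYDROPATHY))
```

Repeating `fraction_at_least` over every property in a database and ranking the results corresponds to step 4. The neighbourhood definition strongly affects the outcome, so it should be reported alongside any result.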

Visualized Workflows & Relationships

[Diagram not reproduced: Multi-Factor Code Optimization Analysis]

[Diagram not reproduced: Tautology Avoidance in Research Design]

The Scientist's Toolkit

Table 2: Key Research Reagent Solutions for Genetic Code Analysis

| Reagent / Tool | Function / Description | Application in This Context |
|---|---|---|
| Constrained Null Model | A set of randomly generated genetic codes that are not completely random but obey specific biological rules (e.g., biosynthetic relationships between amino acids). | Provides a biologically realistic baseline against which the real genetic code's performance can be compared, preventing inflated optimality scores [20]. |
| Spatial Autocorrelation Index (Moran's I) | A statistical measure that quantifies how a property is clustered or dispersed across a spatial field. | Objectively identifies the physicochemical property that is most non-randomly organized within the 2D layout of the genetic code table, reducing researcher bias [20]. |
| Partition Energy Data | Experimental or calculated values representing the energy associated with the transfer of an amino acid from water to a non-polar environment. | Serves as a key physicochemical property for testing optimality, potentially more reflective of the selective pressures (e.g., for protein folding and catalysis) than polarity [20]. |
| Cost/Fitness Function | A mathematical function that quantifies the "goodness" of a genetic code, typically by calculating the average change in property value caused by errors like mutations. | The core metric for determining optimality. A code with a lower cost (or higher fitness) is more robust against genetic errors [23] [20]. |

Theoretical Foundation: Unraveling the "Frozen Accident"

The hypothesis that the standard genetic code is optimized for error minimization posits that its structure reduces the deleterious effects of both mutations and translation errors. This is achieved by ensuring that point mutations or translational misreading often result in the incorporation of amino acids with similar physicochemical properties, thereby preserving protein function. Overcoming tautological reasoning in this field requires moving beyond simply observing that the code is robust and instead focusing on testable, quantitative comparisons against neutral baselines.

The key evidence lies in comparing the standard genetic code to a vast space of theoretical alternatives. Research indicates that the standard code is significantly more robust than the great majority of random alternative codes [14] [25] [26]. One seminal study calculated that the probability of a random code being more robust than the standard genetic code is exceptionally low, on the order of 10⁻⁴ to 10⁻⁶, leading to the description of the standard code as "one in a million" [14] [25]. However, the standard code is not perfectly optimal; it appears to be the result of partial optimization of a random code, representing a point on an evolutionary trajectory rather than a global peak [14]. This finding helps circumvent tautology by demonstrating a level of optimization that is unlikely to have arisen from a purely neutral "frozen accident" [26].

Table 1: Key Hypotheses on the Origin of the Genetic Code's Robustness

| Hypothesis | Core Mechanism | Key Evidence | Status in Relation to Tautology |
|---|---|---|---|
| Natural Selection for Error Minimization | Direct selection for a code that buffers against mutations and translation errors. | The standard code is far more robust than the vast majority of random alternatives [25] [26]. | Avoids tautology by using quantitative comparison to a neutral null model (random codes). |
| Stereochemical | Physicochemical affinity between amino acids and their codons/anticodons. | Limited experimental evidence for widespread affinities; if similar amino acids bind similar triplets, robustness could be an epiphenomenon [14]. | Risk of tautology if "similarity" is defined post-hoc by the code's structure. |
| Coevolution | Code structure reflects biosynthetic pathways of amino acid formation. | Explains specific codon assignments but does not fully account for the overall error-minimizing structure [14]. | Complementary; can be integrated with selective hypotheses. |
| Neutral Emergence | Robustness is a passive by-product of other structuring forces, not direct selection. | Some simulations suggest error minimization can emerge without direct selection, but this is contested [26]. | Directly challenges selective hypotheses; requires careful modeling to avoid built-in selective assumptions. |

Quantitative Evidence: Measuring Robustness and Its Consequences

The robustness of the genetic code is quantified using cost functions that measure the average change in amino acid physicochemical properties (e.g., hydropathy, volume, charge) caused by point mutations or translation errors. This "code fitness" or "distortion" score demonstrates that the standard genetic code performs exceptionally well [14] [27].

Furthermore, this robustness is correlated with protein evolvability. Robustness to mutations creates a network of protein sequences with similar functions. This network can be explored by evolution, increasing the likelihood of finding new adaptive functions while mitigating the risk of deleterious mutations. A 2024 study found that, on average, more robust genetic codes confer greater protein evolvability, though this relationship is protein-specific and can be weak [25]. This means the standard genetic code not only protects existing functions but also facilitates the exploration of new ones.

Table 2: Empirical Measurements of Translational Fidelity and its Variation

| Organism / Context | Error Type Measured | Measured Rate | Methodology & Key Finding | Citation |
|---|---|---|---|---|
| HEK293 Cells (Human) | Stop-codon readthrough (UGA) | 4.03 × 10⁻³ | Dual luciferase reporter assay. UGA is more permissive to readthrough than UAG. | [28] |
| HEK293 Cells (Human) | Missense (near-cognate) error | 3.4 × 10⁻⁴ | Dual luciferase reporter assay with a specific mutation (R245C) in Fluc. | [28] |
| D. melanogaster | Amino acid misincorporation | ~10⁻³ to 10⁻⁴ per codon | Genome-wide detection using high-resolution mass spectrometry. Optimal codons had lower error rates. | [29] |
| Aging Mice | Stop-codon readthrough | Increase of +75% (muscle) and +50% (brain) with age | In-vivo and ex-vivo bioluminescent/fluorescent imaging in knock-in mouse model. Demonstrates organismal and tissue-level variation. | [28] |

Experimental Protocols for Assessing Error Minimization

Protocol 1: Quantifying Translational Readthrough In Vivo Using a Dual Reporter System

This protocol is based on the methodology used to generate knock-in mice for assessing age-dependent translational errors [28].

1. Principle: A single mRNA transcript is engineered to encode two reporter proteins. The first reporter (e.g., Katushka2S, a far-red fluorescent protein) serves as an internal control for transcription and translation efficiency. The second reporter (e.g., Firefly luciferase, Fluc) is separated from the first by a linker containing a stop codon (e.g., TGA). Successful termination produces only the fluorescent protein. Translational readthrough results in a single fusion protein possessing both fluorescence and bioluminescence activity.

2. Key Reagents:

- Reporter Construct: Plasmid or knock-in allele expressing Kat2-TGA-Fluc (or hRluc-TGA-Fluc for cell culture).

- Cell/Animal Model: HEK293 cells [28]; for in vivo studies, a homozygous knock-in mouse model [28].

- Detection Instruments: Fluorometer for Katushka2S (ex/em ~588/633 nm); Luminometer for Fluc (with D-luciferin substrate); in vivo imaging system (IVIS).

3. Procedure:

- Transfection/Genotyping: Introduce the reporter construct into cells or genotype mice to confirm the knock-in allele.

- Sample Collection: For longitudinal studies, collect tissues (muscle, brain, liver) or perform non-invasive imaging on live animals at defined time points.

- Luciferase Assay: Homogenize tissues or lyse cells in a passive lysis buffer. Incubate lysate with D-luciferin substrate and measure bioluminescence.

- Fluorescence Assay: Measure the fluorescence of the same lysate to quantify the Kat2 control reporter.

- Data Analysis: Calculate the readthrough frequency as the ratio of Fluc activity (Relative Light Units, RLU) to Kat2 fluorescence. Normalize this ratio to the same ratio measured for a positive control (e.g., a construct with no stop codon, FlucWT) [28].

Readthrough Frequency = (Fluc_TGA / Kat2_TGA) / (Fluc_WT / Kat2_WT)

Here the subscripts TGA and WT indicate the construct in which each signal was measured.

4. Troubleshooting:

- Low Signal-to-Noise: Ensure the stop codon context is neutral and that the linker does not destabilize the Fluc protein upon readthrough.

- High Variability: Normalize meticulously to the internal fluorescent control to account for differences in transfection efficiency, cell viability, and tissue extraction.
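The normalization in the Data Analysis step can be written as a small helper. This is a minimal sketch; the function name and the example readings are hypothetical, and it assumes each construct (stop-codon and no-stop control) supplies its own Fluc and Kat2 measurement.

```python
def readthrough_frequency(fluc_tga, kat2_tga, fluc_wt, kat2_wt):
    """Fluc/Kat2 ratio of the stop-codon construct, normalized to the
    Fluc/Kat2 ratio of the no-stop (WT) control construct."""
    if kat2_tga <= 0 or kat2_wt <= 0 or fluc_wt <= 0:
        raise ValueError("control signals must be positive")
    return (fluc_tga / kat2_tga) / (fluc_wt / kat2_wt)


if __name__ == "__main__":
    # Hypothetical readings: luminescence in RLU, fluorescence in arbitrary units.
    print(readthrough_frequency(fluc_tga=40.0, kat2_tga=1000.0,
                                fluc_wt=10000.0, kat2_wt=1000.0))
```

Background subtraction and averaging over replicates are left out here and would normally precede this calculation.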

Protocol 2: Genome-Wide Identification of Translation Errors via Mass Spectrometry

This protocol outlines the process for detecting amino acid misincorporation events, as applied in Drosophila melanogaster [29].

1. Principle: High-resolution mass spectrometry (MS) is used to detect peptides that differ from the expected genomic sequence by a single amino acid. By comparing "base peptides" (canonical sequences) to "dependent peptides" (variant sequences), and ruling out single nucleotide polymorphisms (SNPs) and RNA editing, these differences can be attributed to translation errors.

2. Key Reagents:

- Sample: Tissues or whole organisms across multiple developmental stages (e.g., 17 samples from 15 time points in Drosophila [29]).

- Software: MaxQuant for peptide identification and error detection [29].

- Genomic Data: A high-quality reference genome and known SNP database for the organism/strain used.

3. Procedure:

- Sample Preparation: Extract proteins from biological replicates, digest with trypsin, and prepare peptides for LC-MS/MS.

- Mass Spectrometry Analysis: Run samples on a high-resolution mass spectrometer (e.g., Orbitrap).

- Data Processing:

- Step 1: Create a customized reference proteome that incorporates known SNPs from the experimental strain to avoid false positives [29].

- Step 2: Use MaxQuant to search MS data against the reference. Identify "dependent peptides" that differ from "base peptides" by a single amino acid.

- Step 3: Filter out potential RNA editing sites (e.g., A-to-I sites) [29].

- Step 4: Perform statistical simulation to determine if errors detected in multiple samples are non-random "hotspots" or random events [29].

- Data Integration: Correlate misincorporation sites with codon usage (optimal vs. non-optimal) and genomic context.

4. Troubleshooting:

- False Positives from SNPs: The most critical step is creating an accurate, strain-specific reference database. Failure to do so will confound translation errors with genetic variation.

- Low Coverage: Use deep, multi-dimensional fractionation to increase proteome coverage and the probability of detecting low-frequency error peptides.
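The core filtering logic of the Data Processing steps can be illustrated with a short sketch. It is not MaxQuant's algorithm: the tuple layout for peptide pairs, the `known_variants` set, and both function names are assumptions made for this example. It also skips isoleucine/leucine swaps, since those two residues have identical mass and cannot be told apart by mass alone. RNA-editing filtering and the hotspot simulation are not covered.

```python
def single_substitution(base, dependent):
    """Return (index, expected_aa, observed_aa) if the two peptides differ at
    exactly one position, otherwise None. I/L swaps are ignored (isobaric)."""
    if len(base) != len(dependent):
        return None
    diffs = [(i, a, b) for i, (a, b) in enumerate(zip(base, dependent)) if a != b]
    if len(diffs) != 1:
        return None
    index, expected, observed = diffs[0]
    if {expected, observed} == {"I", "L"}:
        return None
    return index, expected, observed


def candidate_errors(peptide_pairs, known_variants):
    """Keep single-residue differences that a known strain variant does not explain.

    peptide_pairs: iterable of (protein_id, start_position, base, dependent)
    known_variants: set of (protein_id, position, variant_aa), e.g. from SNP calls
    """
    candidates = []
    for protein, start, base, dependent in peptide_pairs:
        hit = single_substitution(base, dependent)
        if hit is None:
            continue
        index, expected, observed = hit
        position = start + index
        if (protein, position, observed) not in known_variants:
            candidates.append((protein, position, expected, observed))
    return candidates


if __name__ == "__main__":
    pairs = [
        ("P1", 10, "PEPTIDEK", "PEPTIDQK"),  # E -> Q, unexplained
        ("P1", 30, "ALIVEK", "ALLVEK"),      # I -> L, isobaric, skipped
        ("P2", 5, "SAMPLER", "SAMPLEK"),     # R -> K, matches a known SNP
    ]
    print(candidate_errors(pairs, known_variants={("P2", 11, "K")}))
```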

Visualizing the Experimental Workflow for Readthrough Assays

The following diagram illustrates the logical structure and workflow of the dual reporter assay for quantifying translational readthrough, as described in the experimental protocol.

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Reagent Solutions for Genetic Code Robustness Research

| Reagent / Material | Function in Experiment | Specific Application Example |
|---|---|---|
| Dual Luciferase/Reporter Constructs | To quantitatively measure the frequency of translational errors (missense or readthrough) by normalizing a sensitive signal to a constitutive internal control. | pRM-based vectors with hRluc-Fluc or Kat2-Fluc configuration, containing sense or stop codons in the linker region [28]. |
| Knock-in Animal Models | To enable the study of translational fidelity in a whole-organism context, across different tissues and over time (e.g., aging). | Kat2-TGA-Fluc knock-in mice for longitudinal in-vivo and ex-vivo imaging of stop-codon readthrough [28]. |
| High-Resolution Mass Spectrometer | To detect and quantify low-frequency amino acid misincorporation events at the proteome-wide level. | Orbitrap-based LC-MS/MS systems used for identifying erroneous peptides in D. melanogaster developmental samples [29]. |
| Aminoglycosides (e.g., Geneticin) | To artificially induce mistranslation by binding to the ribosomal decoding center, serving as a positive control in error assays. | Treatment of HEK293 cells to demonstrate dose-dependent increase in missense errors and stop-codon readthrough [28]. |
| Ribosome Profiling (Ribo-Seq) | To map the positions of ribosomes on mRNA and infer translation elongation rates at codon resolution. | Used in D. melanogaster to show that optimal codons are translated more rapidly than non-optimal codons [29]. |
| Deep Mutational Scanning Datasets | To empirically define the fitness landscape of thousands of protein variants and model evolvability under different genetic codes. | Datasets of 3-4 site variants used to calculate protein evolvability networks under the standard and rewired genetic codes [25]. |

Frequently Asked Questions (FAQs)

Q1: If the genetic code is so robust, why are translation errors still a problem, and why do they increase with age? The genetic code is optimized to minimize, not eliminate, the impact of errors. The inherent error rate of the ribosome (~10⁻⁴ per codon) is a trade-off between accuracy, speed, and energetic cost. An age-related increase in errors, as observed in mouse brain and muscle [28], is thought to stem from declining function in multiple systems that maintain fidelity, including tRNA pools, rRNA modifications, and protein homeostasis networks. This accumulation of errors is itself a contributor to the aging process.

Q2: How can I distinguish between a translation error and a single nucleotide polymorphism (SNP) in my mass spectrometry data? This is a critical experimental challenge. The definitive method is to create a strain-specific reference proteome that includes all known SNPs from your experimental organism. Before analyzing for errors, you must sequence the genome of your subject strain (e.g., Oregon-R fly) and incorporate these SNPs into the reference database (e.g., ISO-1 genome) used for the MS search. This prevents SNPs from being mis-identified as translation errors [29].

Q3: Our lab wants to test the error minimization hypothesis directly. What is a modern approach that avoids circular reasoning? Move beyond simply describing the standard code's robustness. A powerful approach is to use deep mutational scanning data. You can take a dataset containing the fitness of thousands of protein variants, then use in silico simulations to "rewire" the genetic code. By comparing the evolvability and mutational robustness of your protein under the standard code versus thousands of random or optimized alternative codes, you can objectively test if the standard code performs remarkably well for your specific protein of interest, thereby avoiding the tautology of only looking at the standard code in isolation [25].

Q4: Does codon usage bias (CUB) influence error minimization? Absolutely. The robustness of the genetic code is not just about its static table but also about how it is used. Codon usage bias means that certain codons are used more frequently than their synonyms. Since different codons have different probabilities of being misread and different "mutation neighborhoods," the specific codon usage of an organism directly affects the expected average impact of mutations across its proteome—a property known as "distortion" [27]. For example, optimal codons in Drosophila are associated with both faster translation elongation and lower error rates [29].

Breaking the Cycle: Multi-Objective Frameworks and Synthetic Biology Applications

A significant challenge in evolutionary studies, particularly in genetic code optimality research, is the circular reasoning or tautology that arises when the same data is used to both define and test a hypothesis. This problem is acutely observed when studies attempt to evaluate the optimality of the standard genetic code (SGC). Many approaches fall into the trap of using amino acid substitution matrices (like PAM and BLOSUM) that themselves incorporate the very genetic code structure being evaluated, creating a self-referential system that invalidates the analysis [11]. This technical support center provides methodologies and troubleshooting guides to help researchers implement Multi-Objective Evolutionary Algorithms (MOEAs) that avoid such tautological pitfalls through proper experimental design, validation, and analysis techniques.

Frequently Asked Questions (FAQs) and Troubleshooting Guides

FAQ 1: How can I avoid tautological reasoning when evaluating genetic code optimality?

Problem: Circular analysis occurs when evaluation criteria presuppose the optimality being tested.

Solution:

- Use Independent Amino Acid Properties: Instead of substitution matrices influenced by the genetic code, use fundamental physicochemical properties from databases like AAindex. Research has successfully employed clustering techniques to select representative indices from over 500 properties [11].

- Implement Multi-Objective Validation: Evaluate codes against multiple independent physicochemical properties simultaneously (e.g., polar requirement, hydropathy index, molecular volume) rather than a single property [30] [11].

- Compare Against Appropriate Benchmarks: Rather than comparing only against random codes, establish both minimization and maximization bounds to properly contextualize SGC performance [11].

Troubleshooting:

- Issue: Results consistently show SGC as optimal regardless of parameter changes.

- Fix: Audit your evaluation criteria for embedded assumptions about the SGC structure. Implement negative controls using artificially generated codes.

FAQ 2: What are common MOEA convergence problems and their solutions?

Problem: Algorithm stagnates or converges prematurely to suboptimal solutions.

Solution:

- Implement Advanced Search Strategies: Neighbor and guidance strategies can improve search efficiency. Recent research shows these can improve convergence speed by 12.54% and solution accuracy by 3.67% [31].

- Enhance Diversity Mechanisms: Use random grouping and precise sampling techniques to maintain population diversity, especially under noisy conditions [32].

- Problem-Specific Operators: Incorporate domain knowledge through custom genetic operators and local search heuristics [33].

Troubleshooting:

- Issue: Population diversity decreases rapidly in early generations.

- Fix: Adjust selection pressure, implement crowding or niche preservation techniques, and consider restricted mating approaches.

FAQ 3: How should I handle noise and uncertainty in experimental data?

Problem: Input disturbances or measurement noise leads to unreliable fitness evaluations.

Solution:

- Implement Robust Optimization: Use survival rate-based approaches that treat robustness as an explicit objective rather than a constraint [32].

- Apply Precise Sampling: Evaluate solutions multiple times with smaller perturbations to estimate performance more accurately under uncertainty [32].

- Utilize Expectation Measures: Calculate expected performance across a neighborhood of solutions using Monte Carlo or similar integration methods [32].

Troubleshooting:

- Issue: High variability in solution performance despite good theoretical fitness.

- Fix: Incorporate variance measures into your fitness evaluation and implement noise-resistant selection operators.

FAQ 4: What visualization techniques help analyze complex Pareto fronts?

Problem: High-dimensional solution sets are difficult to interpret and compare.

Solution:

- Interactive Visual Analytics: Use frameworks like ParetoLens that enable exploration of solutions in both decision and objective spaces [34].

- Multiple Projection Techniques: Combine 2D/3D scatterplots, parallel coordinates, and radial visualization methods [34].

- Dynamic Filtering: Implement interactive capabilities to focus on regions of interest in the solution space [34].

Troubleshooting:

- Issue: Visual clutter obscures patterns in large solution sets.

- Fix: Use dimensionality reduction techniques (PCA, t-SNE) and implement focus+context visualization strategies.

Experimental Protocols for Non-Tautological Genetic Code Analysis

Protocol 1: Multi-Objective Assessment of Genetic Code Optimality

This protocol provides a methodology for evaluating genetic code optimality while avoiding circular reasoning, based on established research approaches [30] [11].

Detailed Methodology:

Property Selection:

- Access the AAindex database containing 500+ amino acid indices

- Select properties independent of genetic code structure (avoid substitution matrices)

- Commonly used properties: polar requirement, hydropathy index, molecular volume [30]

Representative Selection:

- Apply consensus fuzzy clustering to identify property groups

- Select one representative index from each major cluster

- Aim for 6-10 representative properties covering different characteristics [11]

MOEA Configuration:

- Algorithm: NSGA-II or SPEA2 for 2-3 objectives; NSGA-III for more objectives

- Population size: 100-500 depending on problem complexity

- Genetic operators: Permutation-based for code structure preservation

Validation:

- Compare SGC against both minimization and maximization fronts

- Calculate normalized distance metrics to proper reference points

- Perform statistical testing against appropriate null models
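Two building blocks of the validation step, Pareto dominance and normalized position between bounds, can be sketched as follows. This is a generic illustration for minimized objectives; it does not reproduce NSGA-II or the analysis in [30] [11], and the function names and example scores are hypothetical.

```python
def dominates(a, b):
    """True if objective vector a is no worse than b everywhere and strictly
    better somewhere (all objectives are minimized)."""
    return (all(x <= y for x, y in zip(a, b))
            and any(x < y for x, y in zip(a, b)))


def pareto_front(points):
    """Return the non-dominated subset of a list of objective vectors."""
    return [p for p in points if not any(dominates(q, p) for q in points)]


def normalized_position(value, best, worst):
    """Place a score between the minimization bound (0.0) and the
    maximization bound (1.0) for one objective."""
    if worst == best:
        raise ValueError("bounds must differ")
    return (value - best) / (worst - best)


if __name__ == "__main__":
    # Hypothetical (cost_property_1, cost_property_2) scores for five codes.
    scores = [(1, 4), (2, 2), (4, 1), (3, 3), (5, 5)]
    print(pareto_front(scores))
    print(normalized_position(3.0, best=1.0, worst=5.0))
```

In practice the `best` and `worst` bounds would come from the minimization and maximization fronts found by the MOEA, one pair per property.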

Protocol 2: Robust MOEA under Input Uncertainty

This protocol addresses experimental scenarios with input disturbances or noisy evaluations [32].

Materials and Equipment:

- High-performance computing cluster

- Statistical analysis software (R, Python with scipy)

- Benchmark problem sets (ZDT, DTLZ, WFG)

Procedure:

Uncertainty Characterization:

- Quantify input disturbance ranges for each decision variable

- Establish probability distributions for uncertain parameters

- Define maximum perturbation thresholds (δ^max)

Robust MOEA Configuration:

- Implement survival rate as additional objective

- Configure precise sampling mechanism (multiple smaller perturbations)

- Set up random grouping for diversity preservation

Execution:

- Run optimization with robust considerations

- Calculate both nominal and robust performance metrics

- Maintain archive of non-dominated robust solutions

Analysis:

- Evaluate trade-offs between optimality and robustness

- Identify solutions with acceptable performance under uncertainty

- Validate with Monte Carlo simulation on selected solutions
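The survival-rate idea, the probability that a solution still meets its criteria after its inputs are perturbed, can be estimated by Monte Carlo sampling. The sketch below is a simplified stand-in for the method of [32]. It assumes uniform perturbations within ±`delta_max` on every decision variable and a single "objective value at or below a threshold" criterion. All names are hypothetical.

```python
import random


def survival_rate(objective, x, delta_max, threshold, n_samples=1000, seed=0):
    """Estimate P(objective(x + delta) <= threshold) with delta drawn
    uniformly from [-delta_max, delta_max] for each decision variable."""
    rng = random.Random(seed)
    survived = 0
    for _ in range(n_samples):
        perturbed = [xi + rng.uniform(-delta_max, delta_max) for xi in x]
        if objective(perturbed) <= threshold:
            survived += 1
    return survived / n_samples


def sphere(x):
    """Simple test objective: sum of squares."""
    return sum(xi * xi for xi in x)


if __name__ == "__main__":
    # A solution near the optimum tolerates perturbation better than one
    # sitting at the edge of the acceptable region.
    print(survival_rate(sphere, [0.0, 0.0], delta_max=0.5, threshold=0.5))
    print(survival_rate(sphere, [0.7, 0.0], delta_max=0.5, threshold=0.5))
```

Treating this estimate as an additional objective, rather than a constraint, is what the configuration step above refers to.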

Performance Data and Benchmarking

Table 1: MOEA Performance Comparison on Standard Test Problems

| Algorithm | Convergence Speed (Generations) | Solution Accuracy (IGD) | Robustness (Survival Rate) | Computational Complexity |
|---|---|---|---|---|
| NSGA-III/NG | 12.54% improvement over baseline [31] | 3.67% improvement [31] | Not Reported | O(MN²) [30] |
| MOEA/D-NG | 12.54% improvement over baseline [31] | 3.67% improvement [31] | Not Reported | Varies with decomposition |
| RMOEA-SuR | Not Reported | Improved convergence [32] | 15-30% improvement [32] | Higher due to sampling |
| KMOEA/D | Faster convergence on scheduling problems [33] | Better makespan and energy efficiency [33] | Not Reported | Problem-dependent |

Table 2: Genetic Code Optimization Results with Multiple Objectives

| Optimization Approach | Number of Objectives | Distance to SGC | Optimality Gap | Key Findings |
|---|---|---|---|---|
| Single-Objective | 1 (Polar Requirement) | Larger [30] | Significant | Incomplete picture of code optimality |
| Multi-Objective | 2 (Polar Requirement + Hydropathy) | Closer [30] | Reduced | More realistic assessment |
| Eight-Objective | 8 (Cluster Representatives) | Intermediate [11] | Partial optimization | SGC not fully optimized but better than random |

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Computational Tools and Frameworks for MOEA Research

| Tool Name | Primary Function | Advantages | Limitations |
|---|---|---|---|
| ParetoLens [34] | Visual analytics of solution sets | Interactive exploration, multiple visualization techniques | Web-based, may lack advanced analysis |
| FADSE 2.0 [35] | Automatic design space exploration | Extensible architecture, multicore optimization | Requires Java expertise |
| MOVEA [36] | Brain stimulation optimization | Handles non-convex problems, Pareto front generation | Domain-specific (tES applications) |
| PlatEMO [35] | General MOEA framework | Comprehensive algorithm library, user-friendly | May not handle very large scales |
| jMetal [35] | Java-based MOEA development | Rich algorithm collection, active community | Java-centric, learning curve |

Table 4: Critical Validation Metrics and Their Applications

| Metric | Formula/Approach | Interpretation | Use Case |

|---|---|---|---|

| Survival Rate [32] | SR(x) = P(ƒ(x + δ) meets criteria) | Probability of acceptable performance under perturbation | Robust optimization |

| Hypervolume | Volume dominated relative to reference point | Combines convergence and diversity | General MOEA comparison |

| Inverted Generational Distance (IGD) | Mean distance from each reference-set point to its nearest point in the approximation | Lower is better; reflects both convergence to and coverage of the true Pareto front | Algorithm performance |

| Survival Rate Multi-objective | Combines convergence and robustness equally | Balanced optimality and stability | Noisy environments |
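The metrics in Table 4 can be illustrated with a minimal sketch. It assumes minimization problems and a two-objective front for the hypervolume. It also assumes Gaussian perturbations for the survival rate, a choice made here for illustration and not taken from [32]. The function names and the `noise_scale` parameter are hypothetical.

```python
import numpy as np

def igd(reference_front, approximation):
    """Mean Euclidean distance from each reference point to its nearest approximation point."""
    ref = np.asarray(reference_front, dtype=float)
    approx = np.asarray(approximation, dtype=float)
    # Pairwise distances, shape (n_reference, n_approximation).
    dists = np.linalg.norm(ref[:, None, :] - approx[None, :, :], axis=2)
    return dists.min(axis=1).mean()

def hypervolume_2d(front, reference_point):
    """Area dominated by a two-objective minimization front, bounded by reference_point."""
    pts = np.asarray(front, dtype=float)
    ref = np.asarray(reference_point, dtype=float)
    pts = pts[np.all(pts < ref, axis=1)]  # keep only points that dominate the reference point
    pts = pts[np.argsort(pts[:, 0])]      # sweep in ascending order of the first objective
    area, best_f2 = 0.0, ref[1]
    for f1, f2 in pts:
        if f2 < best_f2:                  # dominated points add no area
            area += (ref[0] - f1) * (best_f2 - f2)
            best_f2 = f2
    return area

def survival_rate(f, x, is_acceptable, noise_scale=0.05, n_samples=2000, seed=0):
    """Monte Carlo estimate of P(f(x + delta) meets criteria), with Gaussian delta (assumed)."""
    rng = np.random.default_rng(seed)
    x = np.asarray(x, dtype=float)
    deltas = rng.normal(0.0, noise_scale, size=(n_samples, x.size))
    hits = sum(bool(is_acceptable(f(x + d))) for d in deltas)
    return hits / n_samples
```

For example, `hypervolume_2d([(1, 3), (2, 2), (3, 1)], (4, 4))` sums three rectangular slices of area 3, 2, and 1. Larger hypervolume and smaller IGD both indicate a better approximation set.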

Advanced Technical Support: Specialized Scenarios

Industrial Manufacturing Application

Problem: Energy-efficient scheduling in distributed permutation flow shop with heterogeneous factories (DPFSP-HF) [33].

MOEA Configuration:

- Algorithm: Knowledge-driven MOEA/D (KMOEA/D)

- Objectives: Minimize makespan (Cmax) and total energy consumption (TEC)

- Specialized Operators:

- Problem-specific initialization (modified NEH heuristic)

- Knowledge-driven local search (critical factory identification)

- Energy-saving strategy (delayed job start times)


Biomedical Research Application

Problem: Designing transcranial electrical stimulation strategies for human brain stimulation [36].

MOEA Configuration:

- Algorithm: MOVEA (Multi-Objective Optimization via Evolutionary Algorithm)

- Objectives: Electric field intensity, focality, stimulation depth, avoidance zone respect

- Constraints: Safety limits, anatomical considerations

Key Considerations:

- Non-convex Optimization: Standard convex methods may fail

- Multiple Targets: Simultaneous optimization without predefined weights

- Personalization: Account for inter-subject variability in head anatomy

Regulatory Compliance in Pharmaceutical Applications

For drug development professionals implementing MOEAs, compliance with regulatory standards is essential:

Current Good Manufacturing Practice (cGMP) Considerations [37]:

- Process models must be paired with in-process testing, not used alone

- Scientific rationale required for defining "significant phases" of sampling

- Quality unit approval needed for established limits and control strategies

- Advanced manufacturing technologies require validation of underlying assumptions

Documentation Requirements:

- Complete MOEA parameter settings and justification

- Validation against traditional methods

- Robustness testing under expected operating conditions

- Change control procedures for algorithm modifications

This technical support center provides guidance for researchers employing cluster analysis on amino acid indices, a foundational technique for organizing and interpreting the multifaceted physicochemical and biochemical properties of amino acids. The AAindex database, a central resource in this field, has grown from an initial collection of 222 indices to over 500, enabling the prediction of protein structure, function, and evolution [38] [39]. Proper clustering of these indices is crucial for selecting non-redundant, representative properties for machine learning models, thereby enhancing interpretability and avoiding overfitting. Within the context of genetic code optimality studies, this rigorous approach helps overcome circular reasoning (tautology) by ensuring that the properties used to argue for the code's optimality are not themselves pre-selected based on the code's known structure.

Frequently Asked Questions (FAQs) & Troubleshooting

1. FAQ: I am new to the AAindex. How is the data organized, and what is the difference between AAindex1 and AAindex2?

- Answer: The AAindex database is segmented into two main sections [39].

- AAindex1 is a collection of published amino acid indices. Each entry is a set of 20 numerical values representing a specific physicochemical or biochemical property (e.g., hydrophobicity, alpha-helix propensity) for the 20 standard amino acids. The database now contains over 500 such indices [40] [41].

- AAindex2 is a collection of published amino acid mutation matrices. These are generally 20x20 matrices of numerical values representing the similarity or substitution probability between pairs of amino acids.

2. FAQ: My clustering results on the AAindex are difficult to interpret and seem to change with different algorithms. Why is this, and how can I achieve more stable clusters?

- Troubleshooting Guide:

- Problem: The high dimensionality and inherent correlations between many amino acid indices can lead to unstable and overlapping clusters when using traditional "crisp" clustering methods like hierarchical clustering, where each index is forced into a single group [41].

- Solution: Consider using consensus fuzzy clustering techniques. Fuzzy clustering acknowledges that an index can belong to multiple clusters with varying degrees of membership, which is often a more accurate reflection of the underlying data [41].

- Action: Employ algorithms like Fuzzy C-Medoids (FCMdd) and perform consensus across multiple runs or algorithms to generate a robust, high-quality set of cluster representatives.
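The fuzzy assignment described above can be sketched with a compact fuzzy c-medoids routine that works on a precomputed distance matrix. This is a simplified illustration, not the consensus procedure of [41]. The deterministic farthest-first initialization and the function name `fuzzy_c_medoids` are assumptions made here, and the consensus step across runs or algorithms is not shown.

```python
import numpy as np

def fuzzy_c_medoids(dist, n_clusters, m=2.0, max_iter=100):
    """Fuzzy c-medoids on a symmetric (n x n) distance matrix.

    Returns (medoid_indices, memberships); memberships has shape
    (n_clusters, n) and every column sums to 1.
    """
    dist = np.asarray(dist, dtype=float)
    eps = 1e-12  # avoids division by zero at the medoids themselves

    def memberships_for(medoids):
        # Inverse-distance weights with fuzzifier m, normalized per item.
        weights = (1.0 / (dist[medoids] + eps)) ** (1.0 / (m - 1.0))
        return weights / weights.sum(axis=0, keepdims=True)

    # Farthest-first initialization (an illustrative, deterministic choice).
    medoids = [int(np.argmin(dist.sum(axis=1)))]
    while len(medoids) < n_clusters:
        medoids.append(int(np.argmax(dist[medoids].min(axis=0))))
    medoids = np.array(medoids)

    for _ in range(max_iter):
        u = memberships_for(medoids)
        # New medoid of each cluster: the item with the lowest
        # membership-weighted total distance to all items.
        new_medoids = ((u ** m) @ dist).argmin(axis=1)
        if np.array_equal(new_medoids, medoids):
            break
        medoids = new_medoids
    return medoids, memberships_for(medoids)

# Toy data: two well-separated groups on a line.
values = np.array([0.0, 0.1, 0.3, 5.0, 5.1, 5.2])
toy_dist = np.abs(values[:, None] - values[None, :])
toy_medoids, toy_memberships = fuzzy_c_medoids(toy_dist, n_clusters=2)
hard_labels = toy_memberships.argmax(axis=0)
```

For amino acid indices, `dist` would typically be a correlation-based distance between indices. An index whose memberships are split across clusters is a candidate for the "overlapping" behaviour that crisp methods hide.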

3. FAQ: What is the most common categorical structure identified for amino acid indices?

- Answer: Early and subsequent clustering analyses have consistently identified several major groups. The foundational study by Nakai et al. (1988) categorized 222 indices into four primary regions [38] [39]:

- α-helix and turn propensities

- β-strand propensity

- Hydrophobicity (further subdivided into subclasses like inside/outside preference and accessible surface area)

- Other physicochemical properties (including bulkiness)

More recent work, like AAontology, has expanded this into a finer-grained classification of 8 categories and 67 subcategories for 586 scales [40].

4. FAQ: How can the clustering of amino acid indices help address tautology in genetic code optimality research?

- Troubleshooting Guide:

- Problem: Studies arguing that the standard genetic code (SGC) is optimal for error minimization can be circular if the physicochemical property used to measure "similarity" between amino acids was itself derived from or influenced by the known structure of the SGC [14] [3].

- Solution: Using a clustered set of amino acid indices provides a systematic and transparent method for property selection.

- Action: Instead of a single, potentially biased property, researchers can select one representative index from each major cluster (e.g., hydrophobicity, bulkiness, turn propensity) identified through unsupervised learning. Demonstrating that the SGC is robust to error across this diverse, representative, and independently derived set of properties provides much stronger evidence against a "frozen accident" and for adaptive evolution [14] [3].
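A minimal sketch of this kind of multi-property test follows. It scores the SGC against codes in which amino acids are shuffled among the synonymous codon blocks, once per property. The two properties used, Kyte-Doolittle hydropathy and amino acid molecular weight, are illustrative stand-ins chosen here and are not cluster representatives taken from the AAindex analyses. All single-base substitutions are weighted equally, which is a simplifying assumption, and the function names are hypothetical.

```python
import random

BASES = "TCAG"
SGC = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODONS = [a + b + c for a in BASES for b in BASES for c in BASES]
STANDARD_CODE = dict(zip(CODONS, SGC))

# Illustrative properties only (assumed stand-ins for cluster representatives).
PROPERTIES = {
    "hydropathy": {
        "A": 1.8, "R": -4.5, "N": -3.5, "D": -3.5, "C": 2.5, "Q": -3.5, "E": -3.5,
        "G": -0.4, "H": -3.2, "I": 4.5, "L": 3.8, "K": -3.9, "M": 1.9, "F": 2.8,
        "P": -1.6, "S": -0.8, "T": -0.7, "W": -0.9, "Y": -1.3, "V": 4.2,
    },
    "molecular_weight": {
        "A": 89.1, "R": 174.2, "N": 132.1, "D": 133.1, "C": 121.2, "Q": 146.2, "E": 147.1,
        "G": 75.1, "H": 155.2, "I": 131.2, "L": 131.2, "K": 146.2, "M": 149.2, "F": 165.2,
        "P": 115.1, "S": 105.1, "T": 119.1, "W": 204.2, "Y": 181.2, "V": 117.1,
    },
}

def error_cost(code, prop):
    """Mean squared property change over single-base substitutions between sense codons."""
    total, count = 0.0, 0
    for codon, aa in code.items():
        if aa == "*":
            continue
        for pos in range(3):
            for base in BASES:
                if base == codon[pos]:
                    continue
                mutant = code[codon[:pos] + base + codon[pos + 1:]]
                if mutant == "*":
                    continue  # substitutions to stop codons are ignored
                total += (prop[aa] - prop[mutant]) ** 2
                count += 1
    return total / count

def shuffled_code(code, rng):
    """Permute amino acids among the synonymous codon blocks; stop codons stay fixed."""
    amino_acids = sorted(set(code.values()) - {"*"})
    permuted = amino_acids[:]
    rng.shuffle(permuted)
    mapping = dict(zip(amino_acids, permuted))
    mapping["*"] = "*"
    return {codon: mapping[aa] for codon, aa in code.items()}

def fraction_better(prop, n_random=1000, seed=0):
    """Fraction of shuffled codes with a lower error cost than the standard code."""
    rng = random.Random(seed)
    sgc_cost = error_cost(STANDARD_CODE, prop)
    better = sum(
        error_cost(shuffled_code(STANDARD_CODE, rng), prop) < sgc_cost
        for _ in range(n_random)
    )
    return better / n_random

if __name__ == "__main__":
    for name, prop in PROPERTIES.items():
        print(name, fraction_better(prop))
```

A small fraction for every independently chosen property would support robustness of the SGC. A small fraction for only one hand-picked property would not, which is the point of selecting properties from clusters before looking at the code.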

5. FAQ: What are the key steps for preparing AAindex data before performing cluster analysis?

- Answer: Proper data preparation is critical for success [42].

- Handling Missing Values: Most clustering algorithms will not work with missing data. Options include complete case analysis (removing indices with missing values) or imputation (estimating missing values), each with its own trade-offs [42].

- Scaling and Normalization: Because indices measure properties on different scales (e.g., energy, volume, propensity scores), it is essential to standardize or normalize the data. This prevents variables with larger native ranges (e.g., energy values) from dominating the distance calculations in the clustering algorithm [42].

- Feature Selection: For very high-dimensional analyses, it may be beneficial to perform preliminary feature selection to reduce noise, though this is less common when the explicit goal is to cluster the indices themselves.
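The preparation steps above can be sketched with pandas. The example assumes the indices have already been parsed into a table with one row per index and one column per amino acid. The index names and toy values are hypothetical, and complete case analysis is used in place of imputation.

```python
import numpy as np
import pandas as pd

AMINO_ACIDS = list("ARNDCQEGHILKMFPSTWYV")

def prepare_indices(raw):
    """Drop incomplete or constant indices, then z-score each remaining row."""
    complete = raw.dropna(axis=0, how="any")       # complete case analysis
    spread = complete.std(axis=1, ddof=0)
    varying = complete.loc[spread > 0]             # a constant row cannot be standardized
    centered = varying.sub(varying.mean(axis=1), axis=0)
    return centered.div(varying.std(axis=1, ddof=0), axis=0)

# Toy table: four hypothetical indices on very different native scales.
rng = np.random.default_rng(0)
scales = np.array([[1.0], [100.0], [0.01], [10.0]])
raw = pd.DataFrame(
    rng.normal(size=(4, 20)) * scales,
    index=["index_a", "index_b", "index_c", "index_d"],
    columns=AMINO_ACIDS,
)
raw.loc["index_d", "W"] = np.nan                   # simulate a missing value

prepared = prepare_indices(raw)
```

After this step every retained index has mean zero and unit standard deviation across the 20 amino acids, so no index dominates a Euclidean distance because of its units.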

Detailed Experimental Protocol: Hierarchical Cluster Analysis of AAindex1

This protocol outlines the steps to perform a hierarchical cluster analysis on a set of amino acid indices, replicating and extending the methodology of foundational papers [38] [41].

Objective: To group a set of amino acid indices from AAindex1 based on their correlation, identify major clusters of physicochemical properties, and select representative indices for each cluster.

Workflow Diagram: Amino Acid Indices Clustering Workflow

Materials and Reagents:

- AAindex1 Database: The primary data source, available online [39].

- Statistical Software: R (with the stats, cluster, and corrplot packages) or Python (with the scipy, scikit-learn, pandas, and seaborn libraries).

- Computing Environment: A standard desktop computer is sufficient for datasets of this size.

Procedure:

- Data Curation: Download the latest version of AAindex1. Select only indices that are complete (no missing values for the 20 amino acids). This results in a numerical matrix of dimensions N x 20, where N is the number of selected indices.

- Data Normalization: Standardize each index (each row of the matrix) to have a mean of zero and a standard deviation of one. This ensures all properties contribute equally to the analysis.

- Compute Correlation Matrix: Calculate the N x N matrix of Pearson correlation coefficients between every pair of standardized indices. The similarity between two indices is often defined as their absolute correlation |r|, with 1 - |r| used as the corresponding distance.

- Perform Hierarchical Clustering: Using the correlation-based distance matrix, perform hierarchical clustering. Ward's linkage criterion is often used because it tends to create compact, spherical clusters, although it formally assumes Euclidean distances; average linkage is a common alternative for correlation-based distances.

- Generate and Interpret Dendrogram: Plot the dendrogram to visualize the hierarchical relationship between all indices. This helps in understanding the natural data structure and deciding where to "cut" the tree.

- Cut Dendrogram to Define Clusters: Based on the dendrogram and the goal of the analysis, cut the tree to create a specific number of clusters (e.g., 4-8). More advanced methods can determine the optimal number of clusters automatically.

- Cluster Analysis: For each cluster, analyze the common themes of the indices within it by examining their original publications and descriptions. This step assigns biological meaning to the mathematical groups.

- Select Representative Indices: Identify the index within each cluster that has the highest average correlation with all other members of the same cluster. This becomes a robust, non-redundant representative for that property group.
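The procedure above can be sketched end to end with NumPy and SciPy. The example runs on synthetic data, not on AAindex1, and uses average linkage as a swapped-in choice because the 1 - |r| distance is not Euclidean. The function names and the toy matrix are assumptions for illustration.

```python
import numpy as np
from scipy.cluster.hierarchy import fcluster, linkage
from scipy.spatial.distance import squareform

def cluster_indices(matrix, n_clusters):
    """Cluster the rows of an (N x 20) index matrix using the distance 1 - |Pearson r|."""
    abs_corr = np.abs(np.corrcoef(matrix))           # N x N absolute correlations
    dist = np.clip(1.0 - abs_corr, 0.0, None)
    dist = (dist + dist.T) / 2.0                     # enforce exact symmetry
    np.fill_diagonal(dist, 0.0)
    tree = linkage(squareform(dist, checks=False), method="average")
    labels = fcluster(tree, t=n_clusters, criterion="maxclust")
    return labels, abs_corr

def pick_representatives(labels, abs_corr):
    """For each cluster, return the member with the highest mean |r| to the other members."""
    representatives = {}
    for label in np.unique(labels):
        members = np.flatnonzero(labels == label)
        if len(members) == 1:
            representatives[int(label)] = int(members[0])
            continue
        sub = abs_corr[np.ix_(members, members)]
        # Subtract the self-correlation of 1 before averaging over the other members.
        mean_corr = (sub.sum(axis=1) - 1.0) / (len(members) - 1)
        representatives[int(label)] = int(members[np.argmax(mean_corr)])
    return representatives

# Synthetic stand-in for AAindex1: 3 latent properties, 4 noisy indices each.
# Half of the indices are sign-flipped to show why the absolute correlation is used.
rng = np.random.default_rng(0)
latent = rng.normal(size=(3, 20))
signs = np.array([1, -1, 1, -1])
toy = np.vstack(
    [s * latent[k] + 0.1 * rng.normal(size=20) for k in range(3) for s in signs]
)

labels, abs_corr = cluster_indices(toy, n_clusters=3)
representatives = pick_representatives(labels, abs_corr)
```

Because the Pearson correlation is invariant to shifting and rescaling each row, the normalization step does not change this particular distance; it matters when a Euclidean distance is used instead. The `tree` returned by `linkage` can be passed to `scipy.cluster.hierarchy.dendrogram` to draw the dendrogram before choosing where to cut.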

Data Presentation

Table 1: Evolution of the AAindex Database and its Categorization

| Database / Study Version | Number of Indices | Proposed Categorization | Key Clustering Method | Reference |

|---|---|---|---|---|

| Nakai et al. (1988) | 222 | 4 main clusters (α/turn, β, hydrophobicity, other physicochemical) | Hierarchical Cluster Analysis | [38] |

| Tomii & Kanehisa (1996) | 402 | 6 groups (e.g., alpha/turn, beta, composition, hydrophobicity, physicochemical) | Hierarchical Clustering | [41] |

| AAindex (2000) | 437 (AAindex1) | Based on prior clustering work | Database Release | [39] |

| Fuzzy Clustering Study (2011) | 544 | High-Quality Indices (HQI) subsets | Consensus Fuzzy Clustering (FCMdd) | [41] |

| AAontology (2024) | 586 | 8 categories, 67 subcategories | Bag-of-words, Clustering, Manual Refinement | [40] |

Table 2: Comparison of Clustering Algorithms for Amino Acid Indices

| Clustering Algorithm | Type | Key Principle | Advantages for AAindex | Disadvantages/Limitations |

|---|---|---|---|---|

| Hierarchical Clustering | Crisp | Creates a tree of nested clusters (dendrogram) based on proximity. | Excellent visualization; no need to pre-specify cluster count; foundational for AAindex [38]. | "Crisp" assignment can be forced; sensitive to outliers; computationally heavy for large N. |

| K-Means | Crisp | Partitions data into a pre-defined number (K) of spherical clusters by minimizing variance. | Simple, fast, and efficient for large datasets [42]. | Requires pre-specifying K; assumes spherical clusters; poor with correlated data. |

| Fuzzy C-Medoids (FCMdd) | Fuzzy | Each data point has a membership score to all clusters; uses actual data points (medoids) as centers. | Handles overlapping indices well; more robust and interpretable for AAindex [41]. | Computationally more intensive than K-Means. |

| DBSCAN | Crisp | Identifies clusters as high-density areas separated by low-density areas. | Can find arbitrary shapes; robust to outliers; does not require K [42]. | Struggles with data of varying densities; difficult to parameterize. |

Table 3: Essential Resources for Clustering Amino Acid Indices

| Resource Name | Type / Category | Function and Utility in Research |

|---|---|---|

| AAindex Database | Primary Database | The central, curated repository of published amino acid indices and mutation matrices. It is the essential starting point for any analysis [39]. |

| AAontology | Classification System | Provides a modern, fine-grained, and biologically interpretable hierarchy of amino acid scales, enhancing the explainability of machine learning models [40]. |