Beyond Infinite Sites: Advanced Methods for Accurate Mutation Rate Estimation in Biomedical Research

Accurate mutation rate estimation is fundamental for calibrating molecular clocks, understanding evolutionary history, and interpreting disease-associated genetic variation.

Abstract

Accurate mutation rate estimation is fundamental for calibrating molecular clocks, understanding evolutionary history, and interpreting disease-associated genetic variation. This article explores the frontier of mutation rate research, addressing the critical limitations of traditional infinite-sites models in the era of mega-datasets. We detail innovative methodologies like the DR EVIL framework that leverage rare variants and account for recurrent mutation and selection. The content provides a comprehensive guide for researchers and drug development professionals on integrating multi-generational pedigree studies, correcting for genomic heterogeneity, and validating estimates against empirical truth sets. Finally, we discuss the practical implications of these advances for characterizing mutational spectra across species and improving the accuracy of pathogen evolution forecasting.

The Foundation of Mutational Analysis: Core Concepts and Current Challenges

Accurate estimation of mutation rates is a foundational requirement in modern genomics, with profound implications for evolutionary biology, medical genetics, and therapeutic development. Mutation rates represent the frequency at which new genetic variations arise in DNA sequences, serving as the ultimate source of genetic diversity upon which evolutionary forces act. Recent research has demonstrated that these rates vary substantially across the genome, between individuals, and among populations, creating significant challenges for precise genetic analysis [1] [2]. The implications of these variations extend from dating evolutionary events using molecular clocks to interpreting the pathogenicity of variants in clinical settings.

Understanding why mutation rates matter requires recognizing their dual nature as both a biological parameter and an analytical tool. As a biological parameter, mutation rates reflect the complex interplay of DNA repair efficiency, environmental exposures, and cellular processes. As an analytical tool, they enable researchers to calibrate molecular clocks for dating evolutionary divergences and to establish baseline expectations for variant interpretation in disease genomics. This technical support center addresses the specific methodological challenges researchers encounter when measuring, interpreting, and applying mutation rates across diverse genomic contexts.

Key Concepts and Terminology

Mutation Rate: The frequency at which new genetic mutations occur in a DNA sequence per generation, per cell division, or per unit time. Typically measured as mutations per base pair per generation.

Molecular Clock: A technique in evolutionary biology that uses the mutation rate of biomolecules to deduce the time in prehistory when two or more life forms diverged.

De Novo Mutations (DNMs): New genetic variants that are present in an individual but absent from both biological parents' genomes, representing recently occurring mutations.

Infinite-Sites Assumption: A population genetics assumption that each polymorphic site in a genome has experienced only a single mutation event throughout history, which becomes problematic in large samples where recurrent mutation occurs.

Time Dependency Effect: The phenomenon where estimated evolutionary rates appear faster when measured over recent time scales compared to deeper evolutionary timescales, creating challenges for molecular dating [3].

Frequently Asked Questions (FAQs)

Q1: Why do my molecular dating estimates vary significantly when using different mutation rate calibrations?

Molecular dating estimates are highly sensitive to the mutation rates used for calibration due to the time dependency effect. Research on ancient and modern mitochondrial genomes has demonstrated that the substitution rate can be significantly slower or faster than the average germline mutation rate, depending on the timescale being measured [3]. This effect arises primarily from changes in effective population size over time, with exponential population growth in recent human history accelerating observed evolutionary rates. When dating recent evolutionary events (e.g., past 10,000 years), you will obtain more accurate estimates using mutation rates derived from pedigree studies, while deeper evolutionary divergences require phylogenetically-calibrated rates that account for this time-dependent effect.

Q2: How does sample size affect mutation rate estimation in large genomic datasets?

Extremely large sample sizes (e.g., hundreds of thousands to millions of genomes) violate the infinite-sites assumption that underlies many population genetic methods. When analyzing rare variants in massive datasets, you must account for recurrent mutation - where the same variant arises independently multiple times through separate mutation events [4]. Methods like DR EVIL (Diffusion for Rare Elements in Variation Inventories that are Large) use diffusion approximations that incorporate recurrent mutation and selection, providing more accurate estimates of mutation rates and demographic history from large samples where traditional approaches fail.

Q3: What factors explain mutation rate heterogeneity across genomic regions?

Mutation rates vary substantially across genomes due to multiple biological factors:

- Transcription factor binding: Proteins that regulate gene function can compete with DNA mismatch repair operations, increasing error rates at specific binding sites [5]

- Local sequence context: Trinucleotide content and homopolymer repeats significantly influence mutation susceptibility

- Epigenetic features: Methylation status, particularly at CpG sites, dramatically increases mutation rates

- Chromosomal features: Centromeres, segmental duplications, and tandem repeats exhibit elevated mutation rates [6]

- Replication timing: Late-replicating regions typically experience higher mutation rates

Q4: How do technical artifacts confound mutation rate estimation, and how can I mitigate them?

Technical artifacts pose significant challenges for accurate mutation rate estimation, particularly when relying on short-read sequencing technologies. Common issues include:

- Homopolymer-associated errors: Illumina sequencing exhibits "bleeding" errors near A/T homopolymeric runs that can be mistaken for true mutations [7]

- Mapping artifacts: Incorrect alignment of reads to repetitive regions, particularly centromeres, generates false variant calls

- Clustered false positives: Putative mutations that appear in tight clusters often represent technical artifacts rather than biological events

Mitigation strategies include implementing stringent variant filtering, requiring independent support from both sequencing strands, comparing mutation profiles against known artifact patterns, and validating unexpected findings with complementary technologies.
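Two of these mitigation strategies, strand support and removal of tightly clustered calls, can be sketched as simple filters. This is a minimal illustration, not a production pipeline: the `Candidate` record and its fields are hypothetical, and the 20 bp cluster window is an arbitrary placeholder rather than a recommended threshold.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Candidate:
    """Hypothetical record for one putative mutation."""
    chrom: str
    pos: int
    alt_fwd: int  # alt-supporting reads on the forward strand
    alt_rev: int  # alt-supporting reads on the reverse strand


def has_both_strands(c, min_per_strand=1):
    """True if the alternate allele is seen on both strands."""
    return c.alt_fwd >= min_per_strand and c.alt_rev >= min_per_strand


def drop_clusters(cands, window=20):
    """Remove every candidate with a same-chromosome neighbour within `window` bp."""
    ordered = sorted(cands, key=lambda c: (c.chrom, c.pos))
    keep = []
    for i, c in enumerate(ordered):
        prev_close = (i > 0 and ordered[i - 1].chrom == c.chrom
                      and c.pos - ordered[i - 1].pos <= window)
        next_close = (i + 1 < len(ordered) and ordered[i + 1].chrom == c.chrom
                      and ordered[i + 1].pos - c.pos <= window)
        if not (prev_close or next_close):
            keep.append(c)
    return keep


def filter_candidates(cands, window=20):
    """Apply the cluster filter first (on all calls), then the strand filter."""
    return [c for c in drop_clusters(cands, window) if has_both_strands(c)]
```

Clusters are assessed before the strand filter so that a strand-biased artifact can still flag its neighbours as suspicious.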

Troubleshooting Common Experimental Issues

Problem: Inconsistent Mutation Rates Between Pedigree and Phylogenetic Estimates

Symptoms: Mutation rates estimated from parent-offspring trios are approximately two-fold higher than those derived from phylogenetic comparisons across species.

Explanation: This discrepancy represents a real biological phenomenon rather than methodological error. Pedigree-based estimates count every new mutation, including transient polymorphisms that may be lost over evolutionary time, while phylogenetic approaches reflect only mutations that have fixed in populations. Changes in effective population size and the resulting time-dependent effects drive this difference [3].

Solution:

- For studies of recent evolutionary events (e.g., human population history), use pedigree-based rates (~1.0-1.3 × 10⁻⁸ mutations per base pair per generation)

- For deeper evolutionary divergences (e.g., primate speciation), use phylogenetically-calibrated rates

- Clearly specify which rate standard you're using and justify its appropriateness for your specific timescale
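To see how much the choice of rate matters, the standard strict-clock relation T = d / (2μ) can be applied with both calibrations. The sketch below assumes a simple two-lineage split and ignores ancestral polymorphism; the divergence value and the 25-year generation time are illustrative placeholders, and the 0.6 scaling is taken from the approximate phylogenetic-to-pedigree ratio quoted in Table 1.

```python
def divergence_time_years(pairwise_divergence, mu_per_generation,
                          generation_time_years):
    """Strict-clock divergence time.

    T = d / (2 * mu) generations: the factor 2 reflects that mutations
    accumulate independently on both lineages after the split.
    """
    if mu_per_generation <= 0:
        raise ValueError("mutation rate must be positive")
    generations = pairwise_divergence / (2.0 * mu_per_generation)
    return generations * generation_time_years


if __name__ == "__main__":
    d = 0.012              # illustrative per-site divergence (assumption)
    pedigree_mu = 1.2e-8   # within the pedigree range quoted above
    for label, mu in [("pedigree rate", pedigree_mu),
                      ("phylogenetic rate (0.6 x pedigree)", 0.6 * pedigree_mu)]:
        years = divergence_time_years(d, mu, generation_time_years=25)
        print(f"{label}: {years:,.0f} years")
```

Because the date scales inversely with the rate, a rate that is 40% lower pushes the inferred split back by roughly two thirds, which is why the rate standard should always be reported alongside the date.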

Problem: Ancestry-Associated Variation in Mutation Rates

Symptoms: Significant differences in mutation rates and spectra between populations of different genetic ancestries, potentially confounding association studies.

Explanation: Recent research analyzing >10,000 trios has identified modest but statistically significant ancestry-related differences in both mutation rate and spectra [2]. These effects may reflect a combination of genetic variation in DNA repair pathways, environmental exposures correlated with ancestry, or technical artifacts related to reference genome biases.

Solution:

- Account for genetic ancestry as a covariate in mutation rate analyses

- Use ancestry-specific mutation rate references when available

- Implement careful quality control to distinguish biological differences from technical artifacts related to mapping and variant calling

Problem: Low Precision in Single-Gene Molecular Dating

Symptoms: Divergence time estimates for individual gene trees show wide confidence intervals and significant variability between genes.

Explanation: Dating inconsistency in single-gene trees arises from limited informative sites, high rate heterogeneity between branches, and low average substitution rates [8]. The statistical power for dating is fundamentally limited by the amount of information in gene alignments.

Solution:

- Focus on genes with strong phylogenetic signals and minimal rate heterogeneity

- Incorporate information from multiple unlinked loci whenever possible

- Use Bayesian methods that explicitly model rate variation among branches

- Interpret single-gene dates with appropriate caution, acknowledging substantial uncertainty

Quantitative Data Reference Tables

Table 1: Comparison of Mutation Rate Estimation Methods

| Method Type | Typical Data Source | Resolution | Key Advantages | Key Limitations | Reported Mutation Rates |
|---|---|---|---|---|---|
| Direct (Pedigree) | Parent-offspring trios [2] | Genome-wide average | Measures contemporary mutations; Direct observation | Limited to few generations; Expensive for large samples | 1.0-1.3 × 10⁻⁸ per bp per generation (human) |
| Direct (Multi-generational) | Four-generation families [6] | Individual mutations across generations | Tracks transmission; Identifies de novo mutations | Extremely rare resource; Complex analysis | 98-206 de novo mutations per generation (human) |
| Indirect (Population) | Polymorphism data [1] | Fine-scale (1kb-1Mb) | High genomic resolution; Historical timescale | Confounded by demography and selection | Varies by genomic context (e.g., 0.4-1.1 × 10⁻⁸ in aye-aye) |
| Indirect (Phylogenetic) | Cross-species comparisons [3] | Genome-wide average | Deep evolutionary perspective; Uses published data | Depends on calibration; Assumes neutrality | ~0.5-0.7 × pedigree rate (time-dependent) |

Table 2: Factors Influencing Mutation Rate Variation

| Factor Category | Specific Factor | Effect Size/Direction | Key Evidence |
|---|---|---|---|
| Genomic Context | CpG sites | 10-12x increase vs background | Methylation-induced deamination [4] |
| Genomic Context | Transcription factor binding sites | Significant increase | Competition with repair machinery [5] |
| Genomic Context | Tandem repeats | 20-fold variation across genome [6] | Replication slippage mechanism |
| Demographic | Paternal age | ~2 additional mutations/year | Primarily paternal origin [6] |
| Demographic | Population bottlenecks | Transient rate acceleration | Reduced purifying efficiency |
| Environmental | Cigarette smoking | Modest but significant increase | Epidemiology study [2] |
| Technical | Homopolymer runs | 54% of artifactual mutations [7] | Sequencing bleeding errors |

Experimental Protocols and Workflows

Protocol: Accurate Mutation Rate Estimation from Pedigree Data

Principle: Identify de novo mutations by comparing offspring genomes to their parents, providing a direct measurement of mutation rates across generations.

Step-by-Step Methodology:

- Sample Collection: Collect whole-blood or cell-line DNA from multiple family members across ≥2 generations

- Library Preparation: Use PCR-free library protocols to minimize amplification artifacts

- Sequencing: Perform high-coverage (≥30x) whole-genome sequencing on all individuals

- Variant Calling:

  - Call variants jointly across all family members

  - Apply strict quality filters (mapping quality ≥60, base quality ≥40)

  - Require ≥5 supporting reads for alternative alleles

- De Novo Mutation Identification:

  - Identify sites homozygous reference in both parents but heterozygous in offspring

  - Exclude sites with any alternative allele evidence in parents

  - Remove mutations in problematic genomic regions (centromeres, telomeres, segmental duplications)

- Validation: Confirm putative DNMs using orthogonal technology (Sanger sequencing)

- Rate Calculation: Calculate mutation rate as: (validated DNMs) / (callable base pairs × number of meioses)
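The genotype rule and the rate formula from the steps above can be sketched in a few lines. This is a minimal illustration under stated assumptions: genotypes are encoded as allele-index pairs with 0 as reference, the function names are hypothetical, and a single trio is treated as contributing two meioses (one paternal, one maternal) to the denominator.

```python
import math


def is_candidate_dnm(child_gt, mother_gt, father_gt,
                     mother_alt_reads, father_alt_reads):
    """Apply the trio genotype rule from the protocol.

    Genotypes are (allele, allele) tuples with 0 = reference, 1 = alternate.
    """
    parents_hom_ref = mother_gt == (0, 0) and father_gt == (0, 0)
    child_het = sorted(child_gt) == [0, 1]
    no_parental_alt = mother_alt_reads == 0 and father_alt_reads == 0
    return parents_hom_ref and child_het and no_parental_alt


def mutation_rate(n_dnms, callable_bp, n_meioses):
    """Rate = validated DNMs / (callable base pairs x number of meioses)."""
    if callable_bp <= 0 or n_meioses <= 0:
        raise ValueError("denominator terms must be positive")
    return n_dnms / (callable_bp * n_meioses)


def poisson_standard_error(n_dnms, callable_bp, n_meioses):
    """Approximate standard error, treating the DNM count as Poisson."""
    return math.sqrt(n_dnms) / (callable_bp * n_meioses)


if __name__ == "__main__":
    # Illustrative numbers only: 70 validated DNMs, 2.7 Gb callable, one trio.
    rate = mutation_rate(70, 2.7e9, n_meioses=2)
    se = poisson_standard_error(70, 2.7e9, n_meioses=2)
    print(f"rate = {rate:.3e} +/- {se:.1e} per bp per generation")
```

The denominator matters as much as the numerator: overstating the callable genome deflates the rate, so the same filters used to call DNMs should define which bases count as callable.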

Technical Notes:

- Cell line DNA may accumulate somatic mutations during culture [6]

- Trio-based designs avoid this issue but provide less power for transmission pattern analysis

- Computational prediction of "callable" genomic regions is critical for accurate denominator estimation

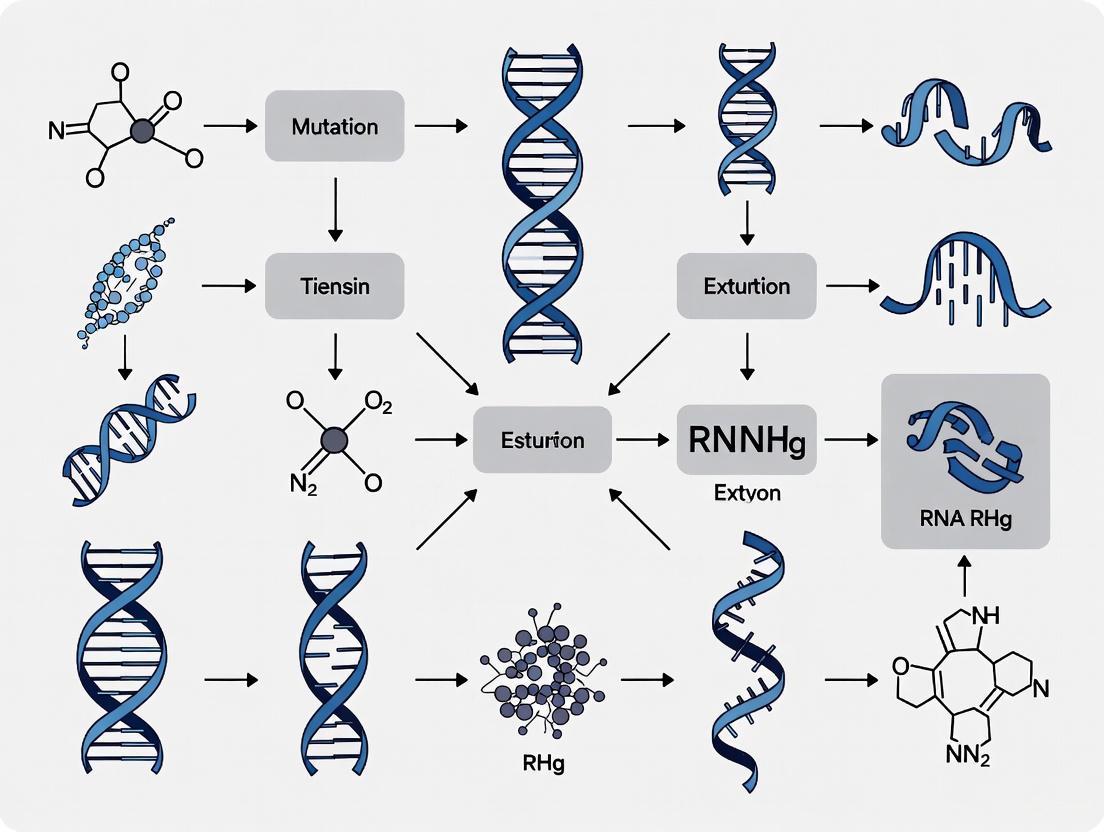

Workflow Visualization

Figure 1: Mutation rate estimation workflow from pedigree data

Research Reagent Solutions

Table 3: Essential Research Materials for Mutation Rate Studies

| Reagent/Resource | Specific Example | Application Purpose | Key Considerations |
|---|---|---|---|
| Reference Genome | GRCh38 (human) | Read mapping and variant calling | Use the most recent version to minimize mapping artifacts |
| Variant Caller | GATK HaplotypeCaller [7] | DNM identification | Joint calling across trios improves sensitivity |
| Mutation Catalog | gnomAD (human) [4] | Filtering common polymorphisms | Essential for distinguishing rare variants from sequencing errors |
| Cell Lines | NA12878 and CEPH pedigree [6] | Method validation | Well-characterized multi-generation resource available |
| Multiple Sequence Alignments | Zoonomia Consortium data [1] | Phylogenetic rate estimation | Multi-species alignment for neutral rate estimation |
| Annotation Databases | dbSNP, ClinVar | Variant interpretation | Filtering known polymorphisms and pathogenic variants |

Advanced Technical Considerations

Modeling Mutation Rate Heterogeneity

Accurate mutation rate estimation requires accounting for heterogeneity across multiple biological scales. At the genomic level, consider implementing context-specific mutation models that differentiate rates by trinucleotide context, replication timing, and functional annotation. At the population level, account for ancestry-associated differences in both mutation rate and spectra [2]. For temporal scaling, implement time-dependent models that adjust for the observed acceleration of mutation rate estimates in recent timeframes [3].

The DR EVIL method represents a significant advance for analyzing large datasets where recurrent mutation violates the infinite-sites assumption [4]. This approach uses a diffusion approximation to a branching-process model with recurrent mutation, enabling tractable likelihood calculations accurate for rare alleles. Implementation involves:

- Modeling allele frequency dynamics in populations of time-varying size

- Incorporating both recurrent mutation and selection parameters

- Using rare-variant approximation of standard diffusion approximations

- Optimizing likelihoods to estimate mutation and demographic parameters simultaneously

Mutation Rate Estimation in Non-Model Organisms

For species without extensive genomic resources, mutation rate estimation requires modified approaches:

- Create chromosomal-level genome assemblies to enable accurate variant calling and mapping

- Sequence pedigreed individuals when possible to directly estimate mutation rates

- Identify neutral genomic regions by masking functional elements using cross-species annotation

- Use phylogenetic contrast with closely-related species to estimate divergence-based rates [1]

The aye-aye genome project demonstrates this comprehensive approach, combining pedigree sequencing, population genomic data, and functional annotation to generate the first fine-scale mutation rate maps for this endangered primate [1].

Troubleshooting Guides & FAQs

Q1: My population genetic analysis of a large dataset (n > 10,000) is yielding inconsistent parameter estimates. Could the infinite-sites assumption be the cause?

A: Yes, this is a likely cause. The infinite-sites assumption (ISA), which posits that each polymorphic site in a sample has mutated at most once in its genealogical history, is frequently violated in large-scale genomic datasets [4]. In very large samples, the same site can undergo independent, recurrent mutation events, leading to an excess of rare variants and tri-allelic sites that are incompatible with the ISA [4] [9]. These violations can introduce significant biases in the estimation of fundamental parameters like mutation rates (\(\mu\)) and effective population size (\(N_e\)).

Solution: Transition to models that explicitly account for recurrent mutation.

- For demographic and mutation rate estimation: Use methods like DR EVIL, which employs a diffusion approximation to handle recurrent mutation and is designed for samples of millions of genomes [4].

- For phylogenetic inference: Consider the Almost Infinite Sites Model (AISM), which bridges the ISA and finite sites models for a more tractable solution [9]. Where the ISA remains approximately valid, inPhynite offers highly efficient ISA-based algorithms on coarse mutation spaces, but it does not itself model recurrent mutation [10].

- Data Pre-processing Check: Inspect your data for sites that violate the ISA, such as those with more than two alleles or those that fail the four-gamete test. While removing these sites is an option, it discards information and is not a long-term solution [9].
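The pre-processing check can be sketched as two small scans over a haplotype matrix. This is a minimal illustration assuming phased haplotypes given as equal-length strings of allele codes (a hypothetical input format); the pairwise scan is quadratic in the number of sites, so it suits small regions rather than whole genomes.

```python
from itertools import combinations


def multiallelic_sites(haplotypes):
    """Indices of sites carrying more than two distinct alleles."""
    n_sites = len(haplotypes[0])
    return [j for j in range(n_sites)
            if len({h[j] for h in haplotypes}) > 2]


def four_gamete_violations(haplotypes):
    """Pairs of biallelic sites at which all four allele combinations occur."""
    n_sites = len(haplotypes[0])
    biallelic = [j for j in range(n_sites)
                 if len({h[j] for h in haplotypes}) == 2]
    violations = []
    for i, j in combinations(biallelic, 2):
        gametes = {(h[i], h[j]) for h in haplotypes}
        if len(gametes) == 4:
            violations.append((i, j))
    return violations
```

A four-gamete violation signals either recurrent mutation or recombination between the two sites, so it is diagnostic of ISA violations only in non-recombining data such as mtDNA or within tightly linked regions.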

Q2: I am observing tri-allelic sites in my data. How should I handle them in my analysis?

A: Tri-allelic sites are a clear signature of recurrent mutation and represent a direct violation of the infinite-sites assumption [9]. Simply filtering them out, a common practice, results in a loss of information and can bias your results.

Solution: Employ a mutation model that can natively accommodate multi-allelic sites.

- Use a Finite Sites Model: While computationally intensive, these models allow for multiple mutations at a single site.

- Adopt a Hybrid Model: The Almost Infinite Sites Model (AISM) is a practical compromise, allowing for a bounded number of recurrent mutations while maintaining much of the tractability of the ISA, making it suitable for data like mitochondrial DNA [9].

- Leverage Simulation: Use simulation software like msprime with appropriate mutation models (e.g., HKY, GTR) and `discrete_genome=False` to generate data under the infinite sites assumption, or with `discrete_genome=True` and high mutation rates to explore scenarios with recurrent mutations [11]. Comparing your observed data to these simulations can help diagnose the severity of the problem.

Q3: How does sample size affect the validity of the infinite-sites assumption for mutation rate estimation?

A: The validity of the ISA deteriorates rapidly as sample size increases. In samples of hundreds of thousands to millions of haplotypes, the probability of recurrent mutation at a single site becomes substantial, especially at sites with high intrinsic mutation rates [4]. The following table summarizes the core issue:

Table 1: Impact of Sample Size on the Infinite-Sites Assumption

| Sample Size Scale | Consequence for Infinite-Sites Assumption | Recommended Action |
|---|---|---|
| Small (n < 1,000) | ISA is generally reasonable when per-site mutation rates are low. | Standard ISA-based methods (e.g., Coalescent with ISA) are applicable. |
| Large (n > 10,000) | Recurrent mutations become detectable, leading to violations and biased estimates [4]. | Use methods that model recurrent mutation, such as DR EVIL [4]. |
| Very Large (n > 1,000,000) | The alleles at most polymorphic sites with high mutation rates likely represent multiple mutation events, making the ISA untenable [4]. | Mandatory to use methods designed for recurrent mutation and to account for fine-scale mutation rate heterogeneity [4] [1]. |
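A back-of-envelope calculation shows why sample size and per-site rate both matter. The sketch below is a simplification, not the DR EVIL model: it assumes a constant-size neutral population, uses the standard expected total tree length of 4·N_e·H(n−1) generations for n sampled haplotypes, and treats the number of mutations at a site as Poisson with the tree length fixed at its expectation. All parameter values are illustrative.

```python
import math


def harmonic(n):
    """n-th harmonic number, H(n) = 1 + 1/2 + ... + 1/n."""
    return math.fsum(1.0 / i for i in range(1, n + 1))


def expected_mutations_per_site(n_haplotypes, mu, n_e):
    """mu times the expected total branch length (constant-size coalescent)."""
    return 4.0 * n_e * mu * harmonic(n_haplotypes - 1)


def prob_recurrent_given_polymorphic(n_haplotypes, mu, n_e):
    """P(at least 2 mutation events | at least 1) under a Poisson approximation."""
    lam = expected_mutations_per_site(n_haplotypes, mu, n_e)
    p_at_least_one = -math.expm1(-lam)
    p_at_least_two = p_at_least_one - lam * math.exp(-lam)
    return p_at_least_two / p_at_least_one


if __name__ == "__main__":
    n_e = 10_000  # illustrative effective population size
    for mu in (1.25e-8, 1.25e-7):  # genome-wide-like vs. elevated (e.g., CpG-like)
        for n in (1_000, 10_000, 1_000_000):
            p = prob_recurrent_given_polymorphic(n, mu, n_e)
            print(f"mu={mu:.2e}, n={n:>9,}: P(recurrent | polymorphic) = {p:.4f}")
```

Under constant population size the tree length grows only logarithmically with n, so this calculation is conservative; recent population growth, as in human data, lengthens the external branches considerably and makes recurrent mutation more common than these figures suggest.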

Q4: What are the best practices for estimating mutation rates from very large genomic datasets?

A: Best practices have shifted to address the limitations of the ISA:

- Avoid ISA-only Methods: Do not rely solely on methods that enforce the infinite-sites assumption, as they will systematically underestimate the number of mutation events in large samples [4] [12].

- Account for Rate Heterogeneity: Mutation rates are not uniform across the genome. Use fine-scale mutation rate maps where available, or estimate them jointly with demographic parameters. Failure to do so can bias inferences of both neutral and selective processes [1].

- Focus on Rare Variants: Large samples provide power through rare variants, which inform recent demographic history and mutation rates. Employ methods like DR EVIL that use a rare-variant approximation for tractable likelihood calculations [4].

- Validate with Direct Estimation: Compare population genetic estimates with direct estimates from pedigree or trio sequencing studies where possible to assess accuracy [1].

Experimental Protocols for Modern Mutation Rate Estimation

Protocol 1: Estimating Demography and Mutation Rates with DR EVIL

Application: Joint inference of mutation rate (\(\mu\)) and demographic history from very large samples (up to millions of genomes) while accounting for recurrent mutation [4].

Workflow:

- Input Data Preparation: Prepare a file of derived allele frequency counts from your large-scale genomic dataset.

- Model Specification: Define the Wright-Fisher model with parameters for per-site mutation rate (\(\mu\)), heterozygote selection coefficient (\(hs\)), and a piecewise-constant effective population size trajectory (\(N(t)\)).

- Likelihood Optimization: Use the DR EVIL software to maximize the approximate sampling formula for rare alleles (Equation 2 in [4]) to obtain maximum-likelihood estimates for \(\mu\) and demographic parameters.

- Model Checking: Compare the model's predicted site frequency spectrum to the observed one to assess goodness-of-fit.
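The input-preparation and model-checking steps can be illustrated generically. The sketch below does not use or reproduce the DR EVIL software or its file format; it only shows how per-site derived allele counts tabulate into a rare-variant site frequency spectrum and how an observed spectrum might be compared with model predictions using a Pearson statistic. Function names and the choice of statistic are assumptions for illustration.

```python
from collections import Counter


def site_frequency_spectrum(derived_counts, max_count):
    """Return [number of sites with derived allele count k] for k = 1..max_count."""
    tally = Counter(c for c in derived_counts if 1 <= c <= max_count)
    return [tally.get(k, 0) for k in range(1, max_count + 1)]


def pearson_chi_square(observed, expected):
    """Sum of (O - E)^2 / E over frequency classes with positive expectation."""
    if len(observed) != len(expected):
        raise ValueError("observed and expected spectra differ in length")
    return sum((o - e) ** 2 / e for o, e in zip(observed, expected) if e > 0)


if __name__ == "__main__":
    counts = [1, 1, 2, 5, 1, 3, 2, 0, 9]   # toy derived allele counts per site
    observed = site_frequency_spectrum(counts, max_count=3)
    predicted = [3.5, 1.5, 1.0]            # placeholder model predictions
    print(observed, pearson_chi_square(observed, predicted))
```

Restricting the spectrum to low counts mirrors the rare-variant focus of the method; large residuals concentrated in the singleton and doubleton classes usually point to a misspecified recent demography or mutation rate.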

Diagram 1: DR EVIL Analysis Workflow

Protocol 2: Phylogenetic Inference with the Almost Infinite Sites Model (AISM)

Application: Reconstructing phylogenetic trees from sequence data (e.g., mtDNA) where recurrent mutations are suspected, without having to remove incompatible sites [9].

Workflow:

- Data Input: Load aligned sequence data (e.g., in FASTA format).

- Model Setup: Specify the AISM in your phylogenetic software, which treats sites as unlabelled but allows for a bounded number of mutation events per site in the genealogy.

- Likelihood Calculation: Use the recursive characterization of the likelihood under the AISM. For computational tractability, a parsimonious approximation that considers ancestral histories with a limited number of mutations can be applied.

- Tree Search: Recover the maximum likelihood or Bayesian posterior distribution of phylogenetic trees that explain the observed data, including patterns that would violate the standard infinite-sites model.

Table 2: Performance Comparison of Mutation Models on Large Datasets

| Method / Model | Core Assumption | Handles Recurrent Mutation? | Computational Tractability | Reported Performance |
|---|---|---|---|---|
| Classical Coalescent + ISA | Infinite Sites | No | High | Biased estimates in large samples [4] |
| inPhynite | Infinite Sites (efficient) | No | Very High | >225x speedup in statistical efficiency on large data vs. competitors, but accuracy depends on ISA holding [10] |
| Almost Infinite Sites (AISM) | Almost Infinite Sites | Yes (bounded) | Medium | Recovers accurate mutation rate approximations with constrained mutation events [9] |
| DR EVIL | Finite Sites + Rare Variants | Yes | Medium-High | Accurate estimation of μ and demography from 1 million samples [4] |
| Finite Sites Model (FSM) | Finite Sites | Yes | Low (state space explosion) | Theoretically accurate but often impractical for large analyses [9] |

The Scientist's Toolkit: Essential Research Reagents & Solutions

Table 3: Key Software and Analytical Tools

| Item | Function / Description | Application in Mutation Research |
|---|---|---|
| DR EVIL | Software for estimating mutation rates and demography from large samples using a diffusion approximation with recurrent mutation [4]. | Corrects for ISA violations in ultra-large datasets (e.g., gnomAD) to infer accurate mutation rates and recent population history. |
| inPhynite | Highly efficient Bayesian phylogenetics algorithm under the infinite sites model [10]. | Rapid phylogenetic tree and population size trajectory inference when ISA is approximately valid. |
| Almost Infinite Sites Model (AISM) | A model bridging ISM and FSM, allowing recurrent mutations but with tractable inference [9]. | Phylogenetic analysis of non-recombining data (e.g., mtDNA) where recurrent mutations are present. |
| msprime | A simulation tool for generating ancestral histories and genetic variation data under a range of models [11]. | Simulating genetic data with and without recurrent mutation to benchmark methods and test for ISA violations. |
| Biopython | A collection of Python tools for computational molecular biology [13] [14]. | Parsing sequence file formats (FASTA, GenBank), sequence manipulation, and integrating analysis pipelines. |
| Fine-scale Mutation Map | Genomic map showing spatial variation in mutation rates [1]. | Accounting for mutation rate heterogeneity to avoid biases in population genetic inference. |

Diagram 2: Troubleshooting ISA Violations

Accurate estimation of mutation rates is fundamental to evolutionary biology, medical genetics, and genomic research. However, several biological factors systematically distort these estimates if not properly accounted for. Three key sources of bias—genomic heterogeneity, demography, and natural selection—frequently compromise the accuracy of mutation rate studies. Genomic heterogeneity describes how the same or similar phenotypes can arise through different genetic mechanisms in different individuals, while also encompassing variability in mutation rates themselves across the genome. Demographic history, particularly population bottlenecks and expansions, dramatically alters allele frequency distributions. Natural selection, whether positive or negative, shapes which mutations persist in populations. Together, these forces can lead to significant overestimation or underestimation of true mutation rates if not explicitly addressed in study design and analysis. This guide provides troubleshooting advice and methodological solutions to mitigate these biases in your research.

Frequently Asked Questions (FAQs)

Q1: What is genetic heterogeneity and how does it bias mutation rate estimates?

Genetic heterogeneity occurs when the same or similar phenotype arises through different genetic mechanisms in different individuals. In mutation rate studies, this manifests as variation in mutation rates across genomic regions due to factors like trinucleotide context, methylation status, and replication timing. This heterogeneity biases estimates because standard methods often assume a uniform mutation rate across the genome. When this assumption is violated, estimates become inaccurate, particularly for rare variants which provide substantial power for estimating mutation rates in large datasets. Failure to account for this heterogeneity can lead to both missed associations and incorrect inferences [15] [4].

Q2: How do demographic factors like population bottlenecks affect mutation rate estimation?

Demographic history profoundly affects mutation rate estimation. Population bottlenecks reduce effective population size (Nₑ), which in turn reduces the power of natural selection to remove mildly deleterious mutations. This can lead to the accumulation of mutations that would otherwise be purged, creating the illusion of a higher mutation rate. Conversely, rapid population growth generates an excess of rare variants that can be mistaken for recently increased mutation rates. Methods that assume constant population size will produce biased estimates when applied to populations with complex demographic histories [4] [16].

Q3: Can natural selection distort mutation rate estimates, and if so, how?

Yes, natural selection can significantly distort mutation rate estimates through multiple mechanisms. Negative selection against deleterious mutations removes them from the population, leading to underestimation of mutation rates, while positive selection can cause beneficial mutations to rise in frequency, potentially creating overestimation. The interaction is particularly complex at high mutation rates, where natural selection may become "neutralized" because lineages bearing adaptive mutations are eroded by excessive deleterious mutations. This can result in a zero or negative adaptation rate despite the continued availability of adaptive mutations, further complicating accurate mutation rate estimation [17].

Q4: What is the "infinite-sites assumption" and why does it cause problems in large datasets?

The infinite-sites assumption is a foundational principle in population genetics that presumes each mutant allele in a sample results from a single mutation event. This assumption is violated in large modern datasets (e.g., millions of genomes), where recurrent mutation (variants of a given type having multiple mutational origins) becomes detectable. When this violation occurs in standard analysis methods, it leads to incorrect estimates of both mutation rates and demographic history. New methods like DR EVIL explicitly avoid this assumption by using diffusion approximations that accommodate recurrent mutation [4].

Q5: How can I detect if genomic heterogeneity is affecting my mutation rate analysis?

Genomic heterogeneity can be detected through several methods. Local Haplotyping Analysis (LHA) examines adjacent SNPs close enough to be spanned by individual sequencing reads to identify more than two haplotypes, indicating cellular heterogeneity. Significant variation in mutation rates across genomic regions after accounting for known confounders (like trinucleotide context and methylation status) also suggests heterogeneity. Advanced methods like DR EVIL can directly estimate and correct for residual mutation-rate heterogeneity in large datasets [4] [18].

Q6: What are the practical consequences of ignoring these biases in drug development research?

Ignoring these biases in drug development can lead to significant errors in estimating treatment benefits and identifying therapeutic targets. One study demonstrated that failure to adjust for genetic heterogeneity in both disease progression and treatment response resulted in overestimation of life-years gained from pravastatin therapy by 5.5%. In extreme cases, this "pharmacogenomics bias" can exceed 100%, potentially leading to misallocated resources and failed clinical trials [19].

Troubleshooting Guides

Problem: Suspected Genomic Heterogeneity Bias

Symptoms:

- Unexplained variation in mutation rates across genomic regions

- Inconsistent results between different datasets or populations

- Failure to replicate associations in validation studies

Step-by-Step Solutions:

- Implement Local Haplotyping Analysis (LHA): Identify blocks where 2+ heterozygous SNPs fall within 500 bases, then enumerate haplotypes from read pairs spanning these blocks. Observation of >2 haplotypes indicates heterogeneity [18].

- Apply Heterogeneity-Aware Methods: Use tools like DR EVIL that employ diffusion approximations to a branching-process model with recurrent mutation, which avoids the infinite-sites assumption [4].

- Account for Known Covariates: Include trinucleotide context, methylation status, and replication timing in your models to account for known sources of mutation rate variation [4].

- Validate with Alternative Methods: Compare results across multiple estimation approaches (e.g., fluctuation assays, pedigree-based methods, and population genetic approaches) to identify inconsistencies suggesting heterogeneity bias.

Prevention Strategies:

- Design studies with sufficient power to detect heterogeneity

- Use sequencing approaches that enable haplotype resolution

- Pre-register analysis plans that explicitly test for heterogeneity

Problem: Demographic History Distorting Estimates

Symptoms:

- Excess of rare variants compared to expectations

- Inflated or deflated estimates of population-wide mutation rates

- Inconsistent estimates between populations

Step-by-Step Solutions:

- Estimate Demographic History: Use site frequency spectrum-based methods to infer population size changes independently of mutation rate estimation [4] [20].

- Incorporate Demography into Models: Implement methods that jointly estimate demography and mutation rates, such as the approach of Zeng and Charlesworth (2009) that accommodates population size changes [20].

- Focus on Rare Variants for Recent Demography: Leverage the correlation between variant age and frequency—rare variants likely arose recently and are particularly informative about recent population history [4].

- Use Appropriate Null Distributions: For FST-based analyses, employ methods that estimate the neutral distribution directly from multi-locus data rather than assuming a demographic model [21].

Prevention Strategies:

- Characterize demographic history before designing mutation rate studies

- Include multiple populations with different demographic histories

- Use methods that are robust to demographic assumptions

Problem: Natural Selection Skewing Mutation Spectra

Symptoms:

- Deviation from expected allele frequency spectra

- Unusual patterns of polymorphism and divergence

- Inconsistencies between synonymous and nonsynonymous mutation rates

Step-by-Step Solutions:

- Test for Selection: Implement likelihood-ratio tests (LRTγ for selection, LRTκ for mutational bias) within the reversible mutation model framework [20].

- Compare Selected and Neutral Sites: Use putatively neutral sites (e.g., ancient repeats, synonymous sites) as a baseline for estimating mutation rates, then compare with potentially selected sites.

- Account for Linked Selection: Consider Hill-Robertson interference and background selection, which reduce the effectiveness of selection at linked sites and distort allele frequency spectra [20].

- Model Selection Explicitly: Use methods that incorporate selection parameters directly into mutation rate estimation, such as diffusion approximations that include both recurrent mutation and selection [4].
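As a rough illustration of the likelihood-ratio step, the sketch below turns two maximized log-likelihoods into a test statistic and p-value. It assumes the statistic is asymptotically chi-square distributed with one degree of freedom (one parameter fixed under the null); the log-likelihood values and the function name are hypothetical and are not taken from [20].

```python
import math

def lrt_pvalue_1df(loglik_null: float, loglik_alt: float) -> tuple[float, float]:
    """Return (statistic, p-value) for a likelihood-ratio test with 1 degree of freedom."""
    stat = 2.0 * (loglik_alt - loglik_null)
    stat = max(stat, 0.0)  # guard against small negative values from optimizer noise
    # Survival function of a chi-square variable with 1 df: P(X > x) = erfc(sqrt(x / 2))
    p_value = math.erfc(math.sqrt(stat / 2.0))
    return stat, p_value

# Hypothetical maximized log-likelihoods: null model (e.g., no selection) vs. full model
stat, p = lrt_pvalue_1df(loglik_null=-1052.7, loglik_alt=-1049.2)
print(f"LRT statistic = {stat:.2f}, p = {p:.4f}")
```

The same calculation applies to either test as long as the null model fixes exactly one parameter of the full model.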

Prevention Strategies:

- Focus on putatively neutral genomic regions when possible

- Use methods that simultaneously estimate selection and mutation parameters

- Account for linked selection when analyzing specific genomic regions

Quantitative Data Tables

Table 1: Mutation Rate Variation Under Different Evolutionary Scenarios

| Condition | Mutation Rate Change | Statistical Significance | Key Factors |

|---|---|---|---|

| Intermediate resource cycles (L10) | 121.4-fold SNM increase, 77.3-fold SIM increase | P = 4.4 × 10⁻⁴⁴ (SNM), P = 2.5 × 10⁻⁴⁷ (SIM) | Environmental fluctuation, effective population size [16] |

| Strong population bottlenecks (S1, MMR- background) | 41.6% SNM decrease, 48.2% SIM decrease | P = 1.8 × 10⁻⁸ (SNM), P = 4.2 × 10⁻¹⁶ (SIM) | Reduced Nₑ, selection against high mutation load [16] |

| MMR-deficient background (ancestral) | 68.6-fold SNM increase vs wild-type | Reference baseline | DNA repair deficiency [16] |

| Pharmacogenomics bias example | 5.5% overestimation of life-years gained | Clinical significance | Heterogeneity in progression and treatment response [19] |

Table 2: Performance of Statistical Tests for Detecting Selection and Mutational Bias

| Test Type | False Positive Rate | Power to Detect Selection | Robustness to Demography | Robustness to Linkage |

|---|---|---|---|---|

| LRTγ (selection) | Appropriate (∼0.05) with constant population size | Good for weak selection at typical recombination rates | Relatively insensitive to demographic effects | Sensitive only at very high mutation rates [20] |

| LRTκ (mutational bias) | Appropriate (∼0.05) with constant population size | Good power to detect mutational bias | Relatively insensitive to demographic effects | Sensitive only at very high mutation rates [20] |

| FST outlier analysis | High with demographic deviations | Good for strong divergent selection | Low robustness to demographic history | Moderate, depends on method [21] |

Experimental Protocols

Protocol: Mutation Accumulation (MA) Assay with Whole-Genome Sequencing

Purpose: To obtain essentially unbiased mutation rate estimates by capturing mutations in an effectively neutral manner.

Materials:

- Clonal isolates of study organism (e.g., E. coli)

- Appropriate growth media

- Facilities for long-term propagation

- Whole-genome sequencing platform

- Bioinformatics pipeline for variant calling

Procedure:

- Isolate clones from populations of interest after experimental evolution or natural variation.

- Propagate clones through repeated single-cell bottlenecks to minimize natural selection.

- Sequence genomes of accumulated lines after multiple generations.

- Call mutations by comparing to ancestral reference genome.

- Calculate mutation rates using generation count and number of accumulated mutations.

- Compare rates across experimental conditions or genetic backgrounds.

Applications: This protocol was used to demonstrate that evolution of mutation rates proceeds rapidly (within 59 generations) in response to environmental and population-genetic challenges [16].
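The rate calculation in the final steps can be sketched as follows. This is a minimal example that pools mutations across lines and divides by the total number of site-generations; the function name, the line counts, and the callable genome size are hypothetical placeholders, not values from [16].

```python
def ma_mutation_rate(mutations_per_line, generations_per_line, callable_sites):
    """Per-site, per-generation mutation rate pooled across mutation accumulation lines."""
    if len(mutations_per_line) != len(generations_per_line):
        raise ValueError("need one generation count per line")
    total_mutations = sum(mutations_per_line)
    total_generations = sum(generations_per_line)
    if total_generations <= 0 or callable_sites <= 0:
        raise ValueError("generations and callable sites must be positive")
    return total_mutations / (total_generations * callable_sites)

# Hypothetical example: three lines, 1,000 generations each, 4.6 Mb callable genome
rate = ma_mutation_rate([3, 5, 4], [1000, 1000, 1000], 4_600_000)
print(f"{rate:.3e} mutations per site per generation")
```

Comparing conditions then reduces to comparing these pooled rates, ideally with confidence intervals derived from the mutation counts.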

Protocol: Local Haplotyping Analysis (LHA) for Detecting Cellular Heterogeneity

Purpose: To directly observe genomic heterogeneity in next-generation sequencing data.

Materials:

- NGS data from whole genome or exome sequencing

- BAM alignment files

- SAMtools API

- Custom LHA pipeline

Procedure:

- Call SNPs using standard tools (e.g., GATK UnifiedGenotyper) with minimum base quality threshold of 30.

- Identify blocks where 2+ heterozygous SNPs fall within 500 bases of each other.

- Extract read pairs overlapping each block, ignoring reads with mapping quality <30.

- Enumerate haplotypes observed in read pairs, requiring ≥3 supporting reads per haplotype.

- Cluster haplotypes parsimoniously to expand read-based haplotypes into local genomic haplotypes.

- Identify heterogeneity when >2 haplotypes are observed in a diploid organism.

Applications: This protocol has revealed that cellular heterogeneity at the genomic level is ubiquitous in both normal and tumor tissues [18].
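The block-finding and haplotype-counting steps can be sketched in a few lines. This is a simplified illustration, not the LHA pipeline from [18]: it assumes read pairs have already been reduced to dictionaries mapping SNP position to observed base (after the quality filters), it chains neighbouring SNPs that lie within 500 bases, and it omits the parsimonious clustering step. All function names are hypothetical.

```python
from collections import Counter

def find_blocks(snp_positions, max_gap=500):
    """Group SNP positions into blocks of 2+ SNPs whose neighbours are <= max_gap apart."""
    blocks, current = [], []
    for pos in sorted(snp_positions):
        if current and pos - current[-1] > max_gap:
            if len(current) >= 2:
                blocks.append(current)
            current = []
        current.append(pos)
    if len(current) >= 2:
        blocks.append(current)
    return blocks

def count_haplotypes(block, read_pairs, min_support=3):
    """Count haplotypes seen in read pairs covering every SNP in the block."""
    counts = Counter()
    for alleles in read_pairs:
        if all(pos in alleles for pos in block):
            counts[tuple(alleles[pos] for pos in block)] += 1
    # Keep only haplotypes with enough supporting read pairs
    return {hap: n for hap, n in counts.items() if n >= min_support}

def heterogeneous_blocks(snp_positions, read_pairs, ploidy=2):
    """Return blocks showing more supported haplotypes than the ploidy allows."""
    flagged = []
    for block in find_blocks(snp_positions):
        haplotypes = count_haplotypes(block, read_pairs)
        if len(haplotypes) > ploidy:
            flagged.append((block, haplotypes))
    return flagged

# Hypothetical read-pair observations at two nearby heterozygous SNPs
read_pairs = (
    [{100: "A", 350: "G"}] * 4
    + [{100: "C", 350: "T"}] * 3
    + [{100: "A", 350: "T"}] * 3
    + [{100: "C", 350: "G"}] * 1  # below the support threshold, ignored
)
flagged = heterogeneous_blocks([100, 350, 5000], read_pairs)
print(flagged)
```

One limitation of chaining is that a long block may exceed what a single read pair can span; a production pipeline would split such blocks before counting.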

Signaling Pathways and Workflow Diagrams

Diagram 1: Relationship between bias sources and methodological solutions in mutation rate estimation.

Diagram 2: Local Haplotyping Analysis (LHA) workflow for detecting genomic heterogeneity.

Research Reagent Solutions

Table 3: Essential Research Reagents and Computational Tools

| Reagent/Tool | Function | Application Context |

|---|---|---|

| DR EVIL (Diffusion for Rare Elements in Variation Inventories that are Large) | Estimates mutation rates and recent demographic history from large samples while avoiding infinite-sites assumption | Population genetic analysis of large datasets (>1M samples) with recurrent mutation [4] |

| GATK UnifiedGenotyper | Calls SNPs from NGS data with quality filtering | Initial variant calling in LHA pipeline and general mutation discovery [18] |

| SAMtools API | Processes sequence alignment/map (SAM/BAM) files | Extracting read-based haplotypes in LHA analysis [18] |

| MR-MEGA | Multi-ancestry meta-regression for GWAS aggregation | Accounting for allelic effect heterogeneity correlated with ancestry in diverse populations [22] |

| Mutation Accumulation (MA) Lines | Propagates clones with minimal selection | Direct estimation of mutation rates without selective interference [16] |

| Reversible Mutation Model Methods | Maximum-likelihood inference for selection and mutation parameters | Estimating weak selection acting on synonymous sites or base pairs [20] |

Technical Support Center

Frequently Asked Questions (FAQs)

FAQ 1: What is the fundamental difference between a mutation rate and a mutation frequency, and why does it matter for my analysis? Using these terms interchangeably is a common but critical error. The mutation rate is the probability of a mutation occurring per cell division or per generation. In contrast, the mutation frequency is simply the proportion of mutant bacteria or alleles present in a population at a specific time [23] [24]. The mutation rate is a stable, underlying parameter, while frequency is a snapshot influenced by random chance, such as whether a mutation happened early (creating a large clone, a "jackpot") or late in a population's growth [23]. Using frequency as a proxy for rate leads to highly inaccurate and irreproducible results [25].

FAQ 2: My genome-wide association studies (GWAS) are only explaining a small fraction of heritability. Could rare variants be the missing piece? Yes. The common disease-common variant (CD/CV) hypothesis, which guided early GWAS, is now understood to be incomplete [26]. Rare variants (typically defined as those with a Minor Allele Frequency, or MAF, of less than 5%) are a crucial component of the genetic architecture of common diseases [26]. They are more likely to be functional and can have stronger effect sizes than common variants. Most SNPs in the human genome are, in fact, rare variants, making them essential for a complete understanding of disease heritability [26].

FAQ 3: When analyzing very large genomic datasets, why do I need to worry about the "infinite-sites assumption"? The infinite-sites assumption, which underpins many population-genetic methods, posits that each polymorphic site in the genome mutated only once in its evolutionary history. In ultra-large samples (e.g., hundreds of thousands to millions of genomes), this assumption is frequently violated [4]. At polymorphic sites with high mutation rates, the rare alleles you observe are likely the descendants of multiple, independent mutation events. Methods that ignore this recurrent mutation will produce biased estimates of demographic history and mutation rates [4].
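A back-of-the-envelope calculation shows why sample size matters. The sketch below assumes mutations fall on the sample genealogy at a site as a Poisson process with mean equal to the mutation rate times the total branch length (in generations), and asks how often a site that mutated at all mutated more than once. The mutation rate and branch lengths are hypothetical placeholders, and this is not how DR EVIL itself models the problem [4].

```python
import math

def prob_multiple_origins_given_mutated(mu, total_branch_length):
    """P(at least 2 mutations | at least 1 mutation) under a Poisson model."""
    lam = mu * total_branch_length          # expected number of mutations at the site
    p_at_least_one = -math.expm1(-lam)      # 1 - exp(-lam), computed stably
    p_at_least_two = p_at_least_one - lam * math.exp(-lam)
    return p_at_least_two / p_at_least_one

# Hypothetical high-mutation-rate site; total branch length grows with sample size
for branch_length in (1e4, 1e6, 1e7):
    p = prob_multiple_origins_given_mutated(1e-7, branch_length)
    print(f"total branch length {branch_length:.0e}: P(recurrent | mutated) = {p:.4f}")
```

Because the total branch length of a genealogy grows as more genomes are added, the conditional probability of multiple origins rises with sample size, which is the effect the infinite-sites assumption ignores.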

FAQ 4: What are the best statistical practices for estimating mutation rates from fluctuation tests?

You should avoid using the simple arithmetic mean of mutant counts, as it is highly inaccurate and non-reproducible [25]. Instead, use methods specifically designed for the Luria-Delbrück distribution. Advanced, computer-based Maximum Likelihood Estimator (MLE) methods, such as those implemented in tools like rSalvador, FALCOR, or flan, are considered best practice as they use all the data and provide robust, accurate estimates [25]. Formula-based methods like the p0 method or Lea-Coulson's method of the median offer a balance of accuracy and simplicity if computational tools are unavailable [23] [25].

FAQ 5: How can AI models aid in the identification of disease-causing rare variants? New AI tools like popEVE help solve the problem of prioritizing which rare variants are most likely to be pathogenic [27]. These models integrate deep evolutionary information from across species with human population genetic data. They generate a score for each variant that predicts its likelihood of causing disease and its severity, allowing clinicians and researchers to efficiently find the "needle in a haystack" in a patient's genome, significantly speeding up the diagnosis of rare genetic diseases [27].

Troubleshooting Guides

Problem: Inconsistent and irreproducible mutation rate estimates from fluctuation experiments.

- Potential Cause: Using the arithmetic mean of mutant frequencies, which is extremely sensitive to the high inherent variance ("fluctuation") of the Luria-Delbrück distribution. A single "jackpot" culture can skew the results [23] [25].

- Solution: Adopt a proper statistical estimator.

- Immediate Action: Re-analyze your mutant count data using a maximum likelihood method (e.g., with the rSalvador package in R or the web-based webSalvador) [25].

- Best Practice for Future Experiments: Always design your fluctuation assays with an adequate number of independent cultures (replicates) and use an advanced method like MSS-MLE or the empirical generating function (GF) method from the start [25].

- Validation: Compare the estimate from the arithmetic mean with that from an MLE method; the discrepancy will demonstrate the magnitude of the error.

Problem: Failure to detect an association between a genetic region and a disease, despite strong clinical evidence.

- Potential Cause: The association may be driven by rare variants that are not effectively tagged by the common variants genotyped on standard arrays. These rare variants can have large effect sizes but are often missed by GWAS focused on common variants [26].

- Solution: Shift to a rare-variant analysis strategy.

- Sequencing: Use whole-genome or whole-exome sequencing instead of genotyping arrays to directly detect rare variants.

- Aggregation Tests: Employ statistical methods that aggregate the effects of multiple rare variants within a gene or pathway (e.g., SKAT, Burden tests).

- Study Design: Consider enriching your cohort by targeting patients with a family history of the disease, an extreme phenotype, or early disease onset, as these groups are more likely to carry high-effect rare variants [26].
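To make the aggregation idea concrete, here is a minimal sketch of a collapsing burden test: individuals carrying at least one rare allele in a gene are counted as carriers, and carrier enrichment among cases is assessed with a one-sided Fisher's exact test. It is not SKAT and not any specific published implementation; the function names, the genotype encoding (alternate allele counts of 0, 1, or 2), and the toy data are assumptions for illustration.

```python
from math import comb

def fisher_one_sided(a, b, c, d):
    """P(carriers among cases >= a) for the table [[a, b], [c, d]]
    (rows: cases, controls; columns: carrier, non-carrier)."""
    n_cases, n_carriers, n_total = a + b, a + c, a + b + c + d
    upper = min(n_cases, n_carriers)
    favourable = sum(
        comb(n_cases, k) * comb(n_total - n_cases, n_carriers - k)
        for k in range(a, upper + 1)
    )
    return favourable / comb(n_total, n_carriers)

def burden_test(genotypes, is_case, maf_threshold=0.05):
    """Collapse rare variants in one gene and test carrier enrichment in cases.

    genotypes: one list per individual of alternate allele counts per variant.
    The sample alternate allele frequency stands in for the MAF here.
    """
    n = len(genotypes)
    rare = [
        j for j in range(len(genotypes[0]))
        if 0 < sum(g[j] for g in genotypes) / (2 * n) < maf_threshold
    ]
    carrier = [any(g[j] > 0 for j in rare) for g in genotypes]
    a = sum(1 for car, case in zip(carrier, is_case) if car and case)
    b = sum(1 for car, case in zip(carrier, is_case) if not car and case)
    c = sum(1 for car, case in zip(carrier, is_case) if car and not case)
    d = sum(1 for car, case in zip(carrier, is_case) if not car and not case)
    return a, b, c, d, fisher_one_sided(a, b, c, d)

# Toy data: 6 individuals, 2 variants; a loose threshold is used only because n is tiny
genotypes = [[1, 0], [0, 1], [0, 0], [0, 0], [0, 0], [0, 0]]
is_case = [True, True, True, False, False, False]
print(burden_test(genotypes, is_case, maf_threshold=0.2))
```

Collapsing assumes the rare variants act in the same direction; variance-component tests such as SKAT relax that assumption at the cost of a more involved calculation.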

Problem: Estimates of demographic history are biased when using large sample sequencing data.

- Potential Cause: Violation of the infinite-sites assumption. In samples of millions of haplotypes, recurrent mutation at high-mutation-rate sites is common and, if unaccounted for, distorts the site frequency spectrum, which is used to infer demography [4].

- Solution: Use inference methods that explicitly model recurrent mutation.

- Tool Recommendation: Implement a method like DR EVIL (Diffusion for Rare Elements in Variation Inventories that are Large), which uses a diffusion approximation that incorporates recurrent mutation and is designed for very large samples [4].

- Analysis Focus: Ensure the method you use is accurate for rare alleles, as they hold most of the information about recent demographic history.

Problem: Difficulty in diagnosing rare genetic diseases from a patient's genomic sequence.

- Potential Cause: The presence of tens of thousands of genetic variants of unknown significance (VUS) makes it challenging to pinpoint the single pathogenic one [27].

- Solution: Utilize AI-based variant prioritization tools.

- Workflow Integration: Run your list of candidate variants through a model like popEVE, which scores each variant across genes for pathogenicity and disease severity [27].

- Validation: Prioritize variants with high scores for functional validation in the lab. This approach can help identify novel disease genes that were previously unknown [27].

Data Presentation

Table 1: Comparison of Methods for Estimating Mutation Rates from Fluctuation Tests [25]

| Method | Type | Key Principle | Advantages | Limitations |

|---|---|---|---|---|

| Arithmetic Mean | Inappropriate | Average of mutant frequencies. | Simple to calculate. | Highly inaccurate and non-reproducible; strongly discouraged. |

| p0 Method | Formula-based | Uses the proportion of cultures with zero mutants. | Simple formula; good for low mutation rates. | Inefficient; wastes data from cultures with mutants. |

| Lea-Coulson Median Estimator | Formula-based | Uses the median number of mutants. | More accurate than p0; relatively simple. | Less accurate than advanced methods; not ideal for all m values. |

| MSS-MLE | Advanced (MLE) | Maximizes likelihood of observed data using all cultures. | High accuracy and reproducibility; uses all data. | Requires computational tools (e.g., FALCOR). |

| rSalvador (NR-MLE) | Advanced (MLE) | Refined MLE using a Newton-Raphson algorithm. | Considered one of the most accurate methods currently available. | Requires R or webSalvador. |

Table 2: Typical Mutation Rates Across Biological Systems [24]

| Biological System | Typical Mutation Rate | Notes |

|---|---|---|

| Human Nuclear DNA | 10⁻⁷ to 10⁻⁸ per nucleotide per cell division | Applies to small-scale mutations. |

| RNA Viruses | 10⁻³ to 10⁻⁵ mutations/nucleotide/replication cycle | High rate due to lack of polymerase proofreading. |

| Plant RNA Viruses | ~10⁻⁴ mutations/nucleotide/replication cycle (median) | Lower than many animal RNA viruses. |

Experimental Protocols

Protocol: Luria-Delbrück Fluctuation Test for Bacteria [23] [25]

- Inoculation: Prepare a large number (e.g., 20-100) of independent, small cultures from a small initial inoculum of genetically identical cells. Use a volume that allows for sufficient growth.

- Growth: Incubate all cultures in the absence of selective pressure until they reach a high cell density. The number of generations of growth should be sufficient to allow mutations to occur.

- Plating: From each culture, plate the entire population or a known volume onto solid medium containing a selective agent (e.g., an antibiotic at 2-4x MIC). Also plate a diluted sample from each culture onto non-selective medium to determine the total number of viable cells (Nt).

- Counting: After incubation, count the number of mutant colonies on each selective plate and the number of colonies on the non-selective plates.

- Calculation: Input the distribution of mutant counts and the corresponding total cell counts for each culture into a dedicated analysis tool (e.g., rSalvador, webSalvador, FALCOR) to calculate the mutation rate using a maximum likelihood estimator. Do not use the arithmetic mean.
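For readers who want to see what a maximum likelihood estimator does under the hood, the sketch below computes Luria-Delbrück probabilities with the Ma-Sandri-Sarkar recursion and maximizes the likelihood over m by golden-section search. It is an illustration only, not the algorithm used by rSalvador, webSalvador, or FALCOR; it assumes the basic Lea-Coulson model (no plating-efficiency or fitness corrections), converts m to a rate by dividing by Nt, and uses hypothetical mutant counts.

```python
import math

def ld_pmf(m, r_max):
    """Probabilities p_0..p_r_max of the Luria-Delbruck distribution (MSS recursion)."""
    p = [math.exp(-m)]
    for r in range(1, r_max + 1):
        p.append((m / r) * sum(p[i] / (r - i + 1) for i in range(r)))
    return p

def log_likelihood(m, counts):
    """Log-likelihood of observed mutant counts for a given m."""
    p = ld_pmf(m, max(counts))
    return sum(math.log(p[r]) for r in counts)

def mss_mle(counts, lo=1e-6, hi=None, tol=1e-8):
    """Maximum likelihood estimate of m by golden-section search on [lo, hi]."""
    if hi is None:
        hi = max(1.0, float(max(counts)))
    phi = (math.sqrt(5) - 1) / 2
    a, b = lo, hi
    c, d = b - phi * (b - a), a + phi * (b - a)
    fc, fd = log_likelihood(c, counts), log_likelihood(d, counts)
    while b - a > tol:
        if fc > fd:  # maximum lies in [a, d]
            b, d, fd = d, c, fc
            c = b - phi * (b - a)
            fc = log_likelihood(c, counts)
        else:        # maximum lies in [c, b]
            a, c, fc = c, d, fd
            d = a + phi * (b - a)
            fd = log_likelihood(d, counts)
    return (a + b) / 2

# Hypothetical mutant counts from 10 cultures, including one "jackpot"
counts = [0, 0, 1, 0, 2, 0, 0, 1, 0, 30]
m_hat = mss_mle(counts)
n_t = 2e8  # hypothetical total viable cells per culture
print(f"m = {m_hat:.3f}, mutation rate = {m_hat / n_t:.2e} per cell per division")
print(f"arithmetic mean of counts = {sum(counts) / len(counts):.1f}")
```

The recursion costs quadratic time in the largest mutant count, so dedicated tools remain the better choice for real data with large jackpots.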

Protocol: Key Considerations for Mutation Rate Estimation [23] [25]

- Selective Agent: Choose an antibiotic to which resistance arises via single point mutations (e.g., rifampin, quinolones).

- Parameters: The expected number of mutational events per culture (m) influences which estimation method is most suitable. The p0 method works best for 0.3 ≤ m ≤ 2.3, while the method of the median is suitable for 1.5 ≤ m ≤ 15.

- Controls: Always include appropriate positive and negative controls to confirm the selectivity of your plates.
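The two formula-based estimators are short enough to write out. The sketch below uses the standard forms, m = -ln(p0) for the p0 method and the Lea-Coulson median relation r/m - ln(m) = 1.24 solved by bisection, and reports whether each estimate falls inside the range quoted above. Function names and the example counts are hypothetical.

```python
import math
from statistics import median

def p0_estimate(counts):
    """p0 method: m = -ln(fraction of cultures with zero mutants)."""
    p0 = sum(1 for r in counts if r == 0) / len(counts)
    if p0 in (0.0, 1.0):
        raise ValueError("p0 method needs some, but not all, cultures without mutants")
    return -math.log(p0)

def median_estimate(counts):
    """Lea-Coulson method of the median: solve r_med / m - ln(m) - 1.24 = 0 for m."""
    r_med = median(counts)
    if r_med <= 0:
        raise ValueError("median mutant count must be positive")
    lo, hi = 1e-9, 1e6
    for _ in range(200):  # bisection; the left-hand side decreases as m grows
        mid = (lo + hi) / 2
        if r_med / mid - math.log(mid) - 1.24 > 0:
            lo = mid
        else:
            hi = mid
    return (lo + hi) / 2

def in_recommended_range(m, method):
    """Check m against the ranges quoted in the text for each method."""
    low, high = {"p0": (0.3, 2.3), "median": (1.5, 15.0)}[method]
    return low <= m <= high

# Hypothetical mutant counts from two separate experiments
m_p0 = p0_estimate([0, 0, 1, 3])
m_med = median_estimate([2, 4, 6, 8, 40])
print(m_p0, in_recommended_range(m_p0, "p0"))
print(m_med, in_recommended_range(m_med, "median"))
```

If an estimate lands outside its recommended range, that is a signal to switch methods or to move to a maximum likelihood estimator.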

Methodologies and Workflow Visualization

Rare Variant Analysis Workflow

Mutation Rate Estimation Paths

The Scientist's Toolkit

Table 3: Essential Research Reagents and Tools [23] [27] [25]

| Item | Function in Research |

|---|---|

| Salmonella typhimurium TA Strains | Engineered auxotrophic bacterial strains used in the standardized Ames test for mutagenicity screening. |

| rSalvador / webSalvador | R package and web tool for accurately estimating mutation rates from fluctuation assays using the NR-MLE method. |

| popEVE AI Model | An artificial intelligence tool that scores genetic variants by their likelihood and severity of causing disease, crucial for diagnosing rare genetic disorders. |

| DR EVIL Software | A computational method for estimating mutation rates and demography from very large genomic samples while accounting for recurrent mutation. |

| Selective Antibiotics (e.g., Rifampin) | Antibiotics to which resistance can arise from single chromosomal point mutations, making them ideal for fluctuation tests. |

Accurate estimation of mutation rates is fundamental to evolutionary biology, medical genetics, and drug development. These rates represent the foundation for understanding genetic diversity, disease mechanisms, and evolutionary timelines. The two primary methodological frameworks—direct (pedigree-based) and indirect (phylogenetic) estimation—offer complementary insights yet present distinct advantages and challenges. Direct methods quantify mutations observed within familial lineages over a single generation, while indirect approaches infer historical rates from genetic variation accumulated across evolutionary timescales. Discrepancies between these methods can lead to significantly different biological interpretations, making the choice and application of appropriate methodologies crucial for research accuracy. This guide provides technical support for researchers navigating these complex methodologies within the broader context of improving mutation rate estimation accuracy.

Core Concepts: Methodological Frameworks and Key Distinctions

Direct (Pedigree-Based) Estimation

Definition: Direct estimation involves identifying de novo mutations (DNMs) by comparing the whole-genome sequences of parents and their offspring. The number of new mutations observed in the offspring that are absent from the parental genomes is counted and divided by the number of sites examined, yielding a per-generation rate [28] [29].

- Key Principle: The core principle is the direct observation of mutations within a known number of generational transmission events (meioses) [30].

- Typical Workflow: A standard pipeline involves (1) sampling and sequencing pedigree members, (2) aligning reads to a reference genome, (3) variant calling and genotyping, (4) DNM detection via filtering, and (5) mutation rate calculation accounting for the accessible genome size and false-negative rates [29].

Indirect (Phylogenetic/Coalescent) Estimation

Definition: Indirect methods infer mutation rates by analyzing the amount of genetic divergence between species or populations. This approach relies on a molecular clock assumption, where the rate of mutation is constant over time, and requires calibration using paleontological data for species divergence times [30].

- Key Principle: The method estimates the substitution rate, which reflects the mutations that have become fixed in a population over long evolutionary periods [30].

- Emerging Approaches: Newer methods like DR EVIL (Diffusion for Rare Elements in Variation Inventories that are Large) avoid the classic infinite-sites assumption (that each mutant allele is the result of a single mutation) by using a diffusion approximation to model recurrent mutation. This is particularly powerful for analyzing rare variants in very large samples (e.g., millions of genomes) to estimate recent demography and mutation rates [4]. Similarly, spectrumSplits is an algorithm that subdivides a phylogeny into subtrees with distinct mutational spectra, helping to identify shifts in mutation processes [31].

The following table summarizes the fundamental technical differences between the two approaches.

Table 1: Fundamental Comparison of Direct and Indirect Estimation Methods

| Feature | Direct (Pedigree-Based) Estimation | Indirect (Phylogenetic) Estimation |

|---|---|---|

| Basis of Estimate | Direct observation of de novo mutations (DNMs) in parent-offspring trios [32] | Inference from genetic divergence between species or populations [30] |

| Inherent Assumptions | Minimal; primarily that identified DNMs are true germline events and not artifacts [29] | Relies on a molecular clock, known divergence times, and often the infinite-sites assumption [4] [30] |

| Inferred Timescale | A single generation (recent) [30] | Thousands to millions of generations (historical) [30] |

| Key Advantage | Provides an unbiased view of the mutation spectrum and parental origin in the present generation [32] [29] | Can be applied to species without pedigree data and provides an evolutionary average [30] |

| Primary Limitation | Costly and labor-intensive; requires high-quality samples from family members [33] [29] | Calibration is often uncertain; estimates can be confounded by selection and demography [30] |

Figure 1: Logical workflow and key characteristics differentiating direct and indirect estimation methods.

Troubleshooting Guides & FAQs

Frequently Asked Questions

Q1: My phylogenetic and pedigree-based mutation rate estimates for the same species disagree significantly. Which one is correct? A: This common discrepancy, often called the "time-dependent mutation rate," does not necessarily mean one is incorrect. The estimates reflect different timescales. Pedigree estimates capture the raw mutation rate over one generation, including mutations that may be selectively removed before they become fixed. Phylogenetic estimates reflect the long-term substitution rate, which is the mutation rate filtered by natural selection and demographic history. The disparity itself is biologically informative about the action of purifying selection [30].

Q2: Why do different research labs obtain varying mutation rate estimates even when using the same pedigree dataset? A: This highlights a critical issue of standardization. A "Mutationathon" competition using the same rhesus macaque pedigree found nearly twofold variation in final estimates across expert labs. The differences stemmed from choices in:

- Bioinformatic Pipelines: Read alignment, variant calling, and genotyping algorithms.

- Filtering Strategies: Criteria for excluding false positives (e.g., due to sequencing errors, mapping errors, or somatic mutations).

- FDR/FNR Accounting: Differing methods to account for false discovery rates (FDR) and false-negative rates (FNR) [28] [29].

Solution: Adopt community-standardized benchmarks, replicate findings with multiple pipelines, and use extended pedigrees for DNM validation [29].

Q3: How can I accurately estimate mutation rates in the presence of null alleles or other technical artifacts?

A: Technical artifacts like null alleles (alleles that fail to amplify due to polymorphisms in the primer site) can severely bias estimates, particularly those based on population-level heterozygosity deficiency (FIS). One robust solution is to use methods based on identity disequilibrium (the correlation of heterozygosity across loci), such as implemented in the RMES software. This method has been shown to be insensitive to null alleles and can provide estimates that align closely with direct pedigree-based results, unlike FIS-based methods [33].

Q4: For large-scale genomic datasets (n > 1M), the infinite-sites assumption is violated. How can I proceed?

A: In ultra-large samples, recurrent mutation at a single site becomes detectable. Methods that explicitly model this, such as DR EVIL, should be employed. DR EVIL uses a diffusion approximation that incorporates recurrent mutation and selection, enabling accurate joint estimation of mutation rates and recent demographic history from rare variants without the infinite-sites assumption [4].

Troubleshooting Common Experimental and Analytical Issues

Problem: Low Concordance in De Novo Mutation Calls

- Symptoms: High number of candidate DNMs fail validation; large discrepancy between expected and observed mutation rates.

- Potential Causes & Solutions:

- Cause 1: High false-positive rate due to sequencing or mapping errors.

- Solution: Apply stringent filters, such as requiring a minimum read depth and alternative allele count in the offspring, and absence in parents confirmed by high-depth data. Use multigeneration pedigrees to validate transmission [29].

- Cause 2: Somatic mutations in the sampled offspring tissue mistaken for germline events.


Problem: Bias in Population-Level Selfing Rate Estimates

- Symptoms: Indirect estimates of selfing rates (e.g., from FIS) are significantly higher than direct estimates from progeny arrays.

- Potential Causes & Solutions:

- Cause: Violation of assumptions underlying indirect methods, such as the presence of null alleles, population subdivision, or biparental inbreeding.

- Solution: Use the RMES software, which estimates selfing rates from identity disequilibria and is robust to null alleles. Alternatively, validate findings with direct progeny-array methods where feasible [33].
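For context, the FIS-based estimate referred to above typically relies on the classical inbreeding-equilibrium relation s = 2F / (1 + F). The short sketch below applies it and shows how an upward shift in FIS, such as null alleles can produce by making heterozygotes look homozygous, translates into a higher apparent selfing rate. The FIS values and the function name are hypothetical, and this is not the RMES method.

```python
def selfing_rate_from_fis(f_is: float) -> float:
    """Selfing rate implied by F_IS at inbreeding equilibrium: s = 2F / (1 + F)."""
    if not -1.0 < f_is <= 1.0:
        raise ValueError("F_IS must lie in (-1, 1]")
    return 2.0 * f_is / (1.0 + f_is)

# Hypothetical values: a true F_IS and the same locus with F_IS inflated by null alleles
true_fis, inflated_fis = 0.20, 0.35
print(f"s from true F_IS:     {selfing_rate_from_fis(true_fis):.3f}")
print(f"s from inflated F_IS: {selfing_rate_from_fis(inflated_fis):.3f}")
```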

Problem: Inferred Mutation Spectrum Shifts are Not Robust

- Symptoms: Identified changes in the mutation spectrum (e.g., relative rates of C>T mutations) are not consistently supported across the phylogeny.

- Potential Causes & Solutions:

- Cause: The phylogenetic nodes used for comparison were defined a priori and may not correspond to the actual timing of the spectrum shift.

- Solution: Use a data-driven partitioning algorithm like spectrumSplits, which performs a traversal of the phylogeny to automatically identify nodes where the mutation spectrum changes significantly. Assess robustness with nonparametric bootstrapping [31].

Essential Methodologies and Protocols

Detailed Protocol: Pedigree-Based Germline Mutation Rate Estimation

This protocol outlines the key steps for a standard trio-based design [28] [29].

1. Sampling and Sequencing:

- Design: Collect samples from a trio (mother, father, offspring) or, ideally, an extended pedigree including a third generation for validation.

- Sample Type: Prefer blood or other primary tissues over cell lines, as cell culture can introduce non-germline (somatic) mutations [32] [29].

- Sequencing: Perform high-coverage (e.g., >30x) whole-genome sequencing on all individuals using a platform that provides uniform coverage.

2. Data Processing and Variant Calling:

- Alignment: Map sequencing reads to a high-quality reference genome using a standard aligner (e.g., BWA-MEM).

- Post-processing: Perform local realignment around indels and base quality score recalibration.

- Variant Calling: Call genotypes for all individuals jointly (e.g., GATK HaplotypeCaller in GVCF mode followed by joint genotyping), so that evidence at each site is evaluated across the whole pedigree.

3. De Novo Mutation Detection and Filtering:

- Initial Call: Use a DNM caller (e.g., DeNovoGear, TrioDeNovo) or custom filters to identify heterozygous sites in the offspring that are homozygous reference in both parents.

- Stringent Filtering: Apply a sequential filter to remove likely false positives:

- Mapping/Quality Filter: Remove sites with low mapping quality, low base quality, or located in problematic genomic regions (e.g., segmental duplications, telomeres).

- Genotype Quality Filter: Require high genotype quality for the offspring's heterozygous call and both parents' homozygous reference calls.

- Population Frequency Filter: Exclude sites present in population frequency databases (e.g., gnomAD), as these are likely inherited variants with genotyping errors in the parents.

- Visual Inspection: Manually inspect the alignment (BAM) files for all candidate DNMs using a tool like IGV to confirm the variant.
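The sequential filter can be expressed as a single predicate applied to each candidate. The sketch below is one possible arrangement; the record fields, the function name, and every threshold are hypothetical placeholders that would need tuning to the sequencing depth and species at hand, and none are taken from [28] or [29].

```python
from dataclasses import dataclass

@dataclass
class Candidate:
    """Summary of one candidate de novo mutation (hypothetical record layout)."""
    chrom: str
    pos: int
    child_depth: int
    child_alt_reads: int
    parent_depths: tuple[int, int]
    parent_alt_reads: tuple[int, int]
    min_genotype_quality: int   # lowest GQ across the three trio members
    mapping_quality: float
    population_af: float        # allele frequency in a reference database, 0 if absent
    in_problem_region: bool     # e.g., segmental duplication or telomere

def passes_filters(c, min_depth=20, min_alt_reads=5, min_gq=60, min_mq=50.0):
    """Apply mapping, genotype-quality, parental-absence, and frequency filters in turn."""
    if c.in_problem_region or c.mapping_quality < min_mq:
        return False
    if c.min_genotype_quality < min_gq:
        return False
    if c.child_depth < min_depth or min(c.parent_depths) < min_depth:
        return False
    if c.child_alt_reads < min_alt_reads:
        return False
    if any(n > 0 for n in c.parent_alt_reads):  # any parental support suggests inheritance
        return False
    if c.population_af > 0:                     # known polymorphism, likely inherited
        return False
    return True

good = Candidate("chr1", 12345, 35, 16, (32, 30), (0, 0), 99, 60.0, 0.0, False)
bad = Candidate("chr1", 67890, 35, 16, (32, 30), (0, 2), 99, 60.0, 0.0, False)
print(passes_filters(good), passes_filters(bad))
```

Candidates that pass still go to manual inspection and orthogonal validation; the predicate only narrows the list.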

4. Validation and Rate Calculation:

- Validation: Confirm all candidate DNMs using an orthogonal technology, typically Sanger sequencing or high-depth sequencing of the original DNA.

- Calculate Accessible Genome: Determine the number of sites in the genome that passed all sequencing and filtering thresholds and were callable in all trio members.

- Compute Rate: Calculate the mutation rate (μ) using the formula:

- μ = (Number of Validated DNMs) / (Number of Accessible Sites × 2)

The multiplication by 2 accounts for the two haploid genomes transmitted from parents to offspring.
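As a worked illustration of the rate calculation, the sketch below counts accessible sites from per-position read depths and then applies the formula. The depth thresholds, the function names, and the example numbers are assumptions chosen for illustration; real pipelines define callability with more criteria than depth alone.

```python
def count_accessible_sites(depths_by_member, min_depth=10, max_depth=100):
    """Count positions whose depth is within bounds in every trio member.

    depths_by_member: one equal-length list of per-position depths per
    individual. The depth bounds are illustrative assumptions.
    """
    return sum(
        all(min_depth <= depth <= max_depth for depth in depths_at_site)
        for depths_at_site in zip(*depths_by_member)
    )


def mutation_rate(n_validated_dnms, n_accessible_sites):
    """Per-site, per-generation rate: DNMs / (2 x accessible sites)."""
    if n_accessible_sites <= 0:
        raise ValueError("n_accessible_sites must be positive")
    return n_validated_dnms / (2 * n_accessible_sites)


if __name__ == "__main__":
    # Toy depths for four positions in mother, father, and child.
    toy_depths = [
        [32, 35, 4, 40],
        [30, 8, 33, 38],
        [31, 36, 29, 150],
    ]
    print(count_accessible_sites(toy_depths))

    # Hypothetical trio: 70 validated DNMs over 2.7 billion accessible sites.
    print(f"{mutation_rate(70, 2_700_000_000):.3e}")
```

With the hypothetical trio above, the estimate is roughly 1.3 × 10⁻⁸ per site per generation. Because the denominator counts only accessible sites, overly strict callability thresholds shrink the denominator along with the numerator rather than biasing the rate downward.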

Advanced Protocol: Estimating Rates from Large-Scale Population Data using DR EVIL

For datasets comprising hundreds of thousands to millions of genomes, the DR EVIL method is appropriate [4].

1. Data Preparation:

- Input: A site frequency spectrum (SFS) of rare variants, ideally from a sample of at least 100,000 haplotypes.

- Annotation: Annotate variants with genomic features known to influence mutation rates (e.g., trinucleotide context, replication timing, chromatin state).
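The data-preparation step amounts to tabulating how many sites carry the variant allele exactly k times, optionally split by an annotation such as trinucleotide context. The sketch below shows that tabulation in plain Python. It is not the input format of the DR EVIL software, which the source does not specify; the function names and the `(context, allele_count)` tuple layout are assumptions.

```python
from collections import Counter, defaultdict


def rare_variant_sfs(allele_counts, max_count):
    """Return [n_1, ..., n_max], where n_k is the number of sites at which
    the variant allele is observed exactly k times in the sample."""
    tally = Counter(c for c in allele_counts if 1 <= c <= max_count)
    return [tally.get(k, 0) for k in range(1, max_count + 1)]


def sfs_by_context(variants, max_count):
    """Build one rare-variant SFS per annotation class.

    variants: iterable of (context, allele_count) pairs, e.g. ("ACG", 3).
    """
    grouped = defaultdict(list)
    for context, allele_count in variants:
        grouped[context].append(allele_count)
    return {
        context: rare_variant_sfs(counts, max_count)
        for context, counts in grouped.items()
    }


if __name__ == "__main__":
    toy_variants = [("ACG", 1), ("ACG", 1), ("TTA", 2), ("ACG", 40)]
    print(sfs_by_context(toy_variants, max_count=2))
```

Sites whose allele count exceeds `max_count` are dropped from the spectrum, which mirrors the method's focus on rare variants.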

2. Model Specification:

- Define Demography: Specify a demographic model (e.g., constant population size, exponential growth).

- Parameterize Mutation & Selection: Set up parameters for mutation rate and a distribution of fitness effects for new mutations.

3. Likelihood Optimization:

- Implementation: Use the DR EVIL software to compute the likelihood of the observed rare allele counts under the specified model.

- Estimation: Optimize the likelihood to obtain joint maximum-likelihood estimates for the mutation rate and demographic parameters.

Quantitative Data and Comparative Analysis

Table 2: Comparison of Germline Mutation Rates Across Vertebrates via Pedigree Sequencing

Data compiled from the "Mutationathon" and other studies, highlighting methodological consistency and biological variation [28].

| Species | Mutation Rate (×10⁻⁸ per site per generation) | Number of Trios | Key Methodological Note |

|---|---|---|---|

| Human (Homo sapiens) | 1.17 – 1.30 | 78 - 1449 | Estimates have converged with large sample sizes and standardized pipelines [28] |

| Chimpanzee (Pan troglodytes) | 1.20 – 1.48 | 6 - 7 | |

| Rhesus Macaque (Macaca mulatta) | 0.58 – 0.77 | 14 - 19 | Variation between studies highlights impact of methodology [28] |

| Wolf (Canis lupus) | 0.45 | 4 | |

| Mouse (Mus musculus) | 0.39 – 0.57 | 8 - 15 | |

| Herring (Clupea harengus) | 0.20 | 12 | |

Table 3: Comparison of Indirect Estimation Methods for Large-Scale Data

Summary of advanced methods that move beyond the standard phylogenetic approach and infinite-sites assumption.

| Method | Core Principle | Key Advantage | Best Use Case |

|---|---|---|---|

| DR EVIL [4] | Uses a diffusion approximation with recurrent mutation and selection. | Avoids infinite-sites assumption; jointly estimates mutation rates and recent demography from rare variants. | Ultra-large samples (>100k haplotypes) for inferring recent history and mutation rate heterogeneity. |

| spectrumSplits [31] | Partitions a phylogeny into subtrees with distinct mutational spectra via depth-first traversal. | Data-driven identification of mutation spectrum shifts without a priori lineage designation. | Pinpointing branches in a large phylogeny (e.g., SARS-CoV-2) where mutation processes change. |

| ARG-derived IBD [34] | Leverages the Ancestral Recombination Graph (ARG) to infer Identical-by-Descent (IBD) segments. | No need for a hard length threshold on IBD; efficient data encoding enables use of short segments. | Powerful inference of evolutionary parameters (like mutation rate) in recombining populations. |

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Computational Tools and Resources for Mutation Rate Estimation

| Tool / Resource | Function | Application Context | Reference |

|---|---|---|---|

| GATK | Variant calling and genotyping from sequencing data. | Foundational step in most pedigree-based pipelines for generating accurate genotypes. | [29] |

| RMES | Estimates selfing rates using identity disequilibria. | Robust indirect estimation of mating system parameters in the presence of null alleles. | [33] |

| DR EVIL (R package) | Estimates mutation rates and demography from rare variants in large samples. | Analyzing population-scale sequencing data (e.g., gnomAD) while accounting for recurrent mutation. | [4] |

| spectrumSplits | Identifies shifts in the mutation spectrum across a phylogeny. | Analyzing viral evolution or any large phylogeny to find branches with altered mutational processes. | [31] |

| UShER | Builds and parses massive phylogenies using maximum parsimony. | Used by spectrumSplits to assign mutations to nodes in the tree (e.g., for SARS-CoV-2). | [31] |

| OrthoRep System | A highly error-prone orthogonal DNA replication system in yeast. | For experimental evolution studies, allowing direct and indirect selection on mutagenic polymerases. | [35] |

Figure 2: A decision workflow linking research goals to appropriate methodologies, key tools, and experimental protocols.

Next-Generation Estimation Frameworks: From Theory to Practical Application

Technical Support Center

Frequently Asked Questions (FAQs)

What is the DR EVIL framework and what is its primary purpose?

DR EVIL (Diffusion for Rare Elements in Variation Inventories that are Large) is a computational method designed for estimating mutation rates and recent demographic history from very large genomic samples, such as those containing hundreds of thousands to a million haploid genomes. Its core purpose is to model rare genetic variants while explicitly accounting for recurrent mutation and natural selection, thereby overcoming the limitations of the traditional infinite-sites assumption, which is often violated in large-scale datasets [4].

Why should I use DR EVIL instead of other methods for analyzing large genomic datasets?

DR EVIL is particularly suited for large samples where rare variants provide most of the information. Its key advantage is that it avoids the infinite-sites assumption, which posits that each mutant allele arises from a single mutation event. In very large samples, recurrent mutation, where the same variant arises from multiple independent mutations, becomes detectable and can bias results if not properly modeled. DR EVIL uses a diffusion approximation to handle this complexity, providing more accurate estimates of mutation rates and demography from rare allele counts [4].

What are the common data requirements and input formats for DR EVIL?

The method requires data on allele counts from a large sample of haploid genomes. The core of its analysis focuses on the frequencies of rare variants. The software for running DR EVIL is available as R code from its GitHub repository, suggesting that data is likely expected in a tabular format compatible with R, such as a count matrix for variants [4].

My analysis is running slowly. What factors affect the computational performance of DR EVIL?

Performance is influenced by the sample size (number of haploid genomes) and the number of polymorphic sites analyzed. The method was designed for computational efficiency on large datasets by focusing on a rare-variant approximation. This approximation simplifies the likelihood calculations, making it feasible to analyze samples on the scale of one million genomes [4].

Troubleshooting Guides

Issue: Inaccurate estimates of mutation rates or demographic history.

Potential Causes and Solutions:

- Cause 1: Violation of model assumptions. DR EVIL assumes a Wright-Fisher model of allele-frequency dynamics with time-varying population size. Ensure your data and study design are compatible with this underlying model.

- Cause 2: Presence of strong, unaccounted-for natural selection at many sites. DR EVIL can incorporate selection, but if the selection parameter is misspecified, it can affect other estimates. Review the selection coefficients used in your model.

- Solution: Validate your parameter estimates using simulated data with known properties, as described in the original paper, to ensure the method is correctly configured for your specific research context [4].

Issue: Difficulty interpreting the results related to recurrent mutation.

Explanation and Solution:

- Explanation: A key finding from applying DR EVIL to large samples is that at modern sample sizes, the alleles at most polymorphic sites with high mutation rates are likely the descendants of multiple mutation events. This means that for a given variant, not all copies in the population necessarily share a single common ancestor.

- Solution: Focus on the estimates of mutation rate heterogeneity. DR EVIL can identify this heterogeneity even after accounting for known factors like trinucleotide context and methylation status, potentially revealing new genomic features that influence mutation rates [4].
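To build intuition for why recurrent mutation matters more as samples grow, consider a deliberately simplified calculation: if mutations fall on a sample's genealogy as a Poisson process, the number of mutation events at a site has mean μ × L, where L is the total branch length of the genealogy in generations. The sketch below computes the probability that a polymorphic site carries two or more events. This is not the DR EVIL sampling formula; the function name is hypothetical, and treating L as a fixed, known number is an assumption.

```python
import math


def prob_multiple_origins(mu, total_branch_length):
    """P(at least 2 mutation events | at least 1) at a single site.

    Assumes the number of events is Poisson with mean mu * L, where L is
    the total branch length (in generations) of the sample genealogy.
    """
    lam = mu * total_branch_length
    if lam <= 0:
        raise ValueError("mu * total_branch_length must be positive")
    p_zero = math.exp(-lam)
    p_one = lam * p_zero
    p_at_least_one = -math.expm1(-lam)  # 1 - exp(-lam), stable for small lam
    return (1.0 - p_zero - p_one) / p_at_least_one


if __name__ == "__main__":
    mu = 1e-7  # illustrative high per-site rate, not an estimate
    for branch_length in (1e5, 1e6, 1e7, 1e8):
        share = prob_multiple_origins(mu, branch_length)
        print(f"L = {branch_length:.0e} generations: {share:.3f}")
```

Because adding haplotypes adds branches to the genealogy, L grows with sample size, and the share of polymorphic sites with multiple origins rises with it, fastest at sites with high μ.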

Experimental Protocols and Data

Core Methodology of DR EVIL

DR EVIL uses an approximate sampling formula for rare alleles based on a Wright-Fisher model with recurrent mutation and selection. The likelihoods derived from this model are then used for maximum-likelihood estimation [4].

Model Specification: Assume a standard Wright-Fisher model with:

- Mutation rate (μ) per site per generation.

- Heterozygote fitness (1+hs).

- A time-varying effective population size, N(t).

- Explicit allowance for recurrent mutation, which relaxes the infinite-sites assumption.
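The ingredients listed above can be made concrete with a small forward simulation of a single site. The sketch below is a toy Wright-Fisher simulator, not the DR EVIL method, which works with an approximate sampling formula rather than simulation. Several details are assumptions not stated in the source: mutant homozygotes have fitness 1+s, mutation is one-way from the ancestral to the mutant allele, and N(t) is supplied as a list of diploid population sizes.

```python
import random


def expected_next_freq(p, mu, s, h):
    """Deterministic change in mutant frequency from selection, then mutation.

    Fitnesses: ancestral homozygote 1, heterozygote 1 + h*s, and mutant
    homozygote 1 + s (the last is an assumption). Requires s > -1.
    """
    w_het = 1.0 + h * s
    w_hom = 1.0 + s
    q = 1.0 - p
    mean_fitness = p * p * w_hom + 2.0 * p * q * w_het + q * q
    p_after_selection = (p * p * w_hom + p * q * w_het) / mean_fitness
    # Recurrent one-way mutation: each ancestral copy mutates with rate mu.
    return p_after_selection + mu * (1.0 - p_after_selection)


def simulate_site(n_of_t, mu, s=0.0, h=0.5, p0=0.0, seed=0):
    """Simulate the mutant frequency under a time-varying population size.

    n_of_t: diploid population sizes, one entry per generation.
    Returns the frequency trajectory, starting with p0.
    """
    rng = random.Random(seed)
    p = p0
    trajectory = [p]
    for n_diploid in n_of_t:
        copies = 2 * n_diploid
        p_expected = expected_next_freq(p, mu, s, h)
        # Binomial sampling of 2N gene copies (genetic drift).
        mutant_copies = sum(rng.random() < p_expected for _ in range(copies))
        p = mutant_copies / copies
        trajectory.append(p)
    return trajectory


if __name__ == "__main__":
    # Small toy population that doubles in size halfway through.
    sizes = [500] * 50 + [1000] * 50
    path = simulate_site(sizes, mu=1e-4, s=-0.01, h=0.5)
    print(f"final mutant frequency: {path[-1]:.4f}")
```

Because mutation is applied every generation, the mutant allele can arise more than once along a trajectory, which is exactly the behavior the infinite-sites assumption rules out.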

Rare-Variant Approximation: The method focuses on modeling the site frequency spectrum for rare variants. This focus allows for computationally efficient handling of the model by utilizing a diffusion approximation to a branching-process model.

Likelihood Calculation and Optimization: The approximate sampling formula for allele counts is used as part of a maximum-likelihood estimation procedure to jointly infer:

- Recent demographic history (parameters of N(t)).

- Mutation rates (μ), including context-dependent heterogeneity.

- Selection coefficients (if modeled).

Table 1: Performance of DR EVIL in Simulation Studies

| Estimated Parameter | Performance Finding | Comparative Advantage |

|---|---|---|

| Mutation Rates | More accurate than existing methods | Can correct for the presence of mutation-rate heterogeneity [4] |

| Recent Demography | Accurate estimation | Highlighted importance of accounting for recurrent mutation to avoid bias [4] |

Table 2: Insights from Application to One Million Haploid Genomes (gnomAD data)

| Analysis Aspect | Key Finding |

|---|---|

| Mutation-Rate Heterogeneity | Detected even after accounting for trinucleotide context and methylation status [4] |

| Origin of Polymorphisms | Predicted that at modern sample sizes, alleles at most polymorphic sites with high mutation rates represent descendants of multiple mutation events [4] |

The Scientist's Toolkit

Table 3: Essential Research Reagents and Computational Tools

| Item | Function / Description | Relevance to DR EVIL Framework |

|---|---|---|

| Large-scale Genomic Data | Data from hundreds of thousands to millions of haploid genomes (e.g., from gnomAD). | Primary input for the method; provides the rare variant counts necessary for powerful inference [4]. |

| R Software Environment | A free software environment for statistical computing and graphics. | The DR EVIL software is implemented as R code, making this platform essential for analysis [4]. |

| DR EVIL R Code | The specific software package that implements the DR EVIL method. | Contains the algorithms for estimating mutation rates and demography via maximum likelihood [4]. |

| Computational Resources | Access to servers or computing clusters with sufficient memory and processing power. | Necessary for handling the large datasets (e.g., one million genomes) and performing optimizations in a reasonable time [4]. |

Workflow and Conceptual Diagrams

DR EVIL Analysis Workflow

Conceptual Relationship: Sample Size and Recurrent Mutation

Technical Support Center: Troubleshooting Guides and FAQs

This technical support center provides solutions for researchers, scientists, and drug development professionals working with ultra-large genomic datasets, specifically focusing on the DR EVIL tool for mutation rate estimation and demographic inference. The guidance is framed within the broader thesis of improving the accuracy of mutation rate estimation research.

Frequently Asked Questions

Q1: My analysis of a one-million-genome dataset is yielding biased mutation rate estimates. What could be the cause?

A common cause of bias is the violation of the infinite-sites assumption, which posits that each mutant allele in a sample is the result of a single, unique mutation event. In ultra-large samples (e.g., hundreds of thousands to millions of haplotypes), polymorphic sites with high mutation rates often represent the descendants of multiple, independent mutation events. This phenomenon, known as recurrent mutation, violates the infinite-sites assumption and can skew results if not properly accounted for. The DR EVIL method is specifically designed to avoid this pitfall [4] [36].