Beyond Just-So Stories: Correcting Need-Based Evolutionary Explanations in Biomedical Research

Abstract

This article critiques the pervasive 'need-based' and simplistic genetic explanations for evolutionary traits, which often manifest as modern 'just-so stories' in scientific literature and drug discovery. Aimed at researchers, scientists, and drug development professionals, it provides a framework to deconstruct these narratives. We explore the foundational theories challenging neutral evolution and simplistic adaptationism, outline methodologies for robust evolutionary analysis in biomedical contexts, address common pitfalls in interpreting genetic data, and validate approaches through comparative case studies. The goal is to foster a more rigorous, nuanced application of evolutionary principles that acknowledges complexity, changing environments, and cultural factors to enhance the predictive power of biomedical research.

The Flawed Paradigm: Deconstructing Need-Based Evolutionary Narratives

Troubleshooting Guides and FAQs

Frequently Asked Questions

Q: My gene expression results are inconsistent across replicates. What could be the cause? A: Inconsistent results often stem from RNA degradation or improper normalization. Ensure all RNA samples have an A260/A280 ratio between 1.8-2.0, use fresh RNase inhibitors, and validate your reference genes for qPCR normalization under your specific experimental conditions.

Q: What is the minimum acceptable color contrast ratio for graphical objects in publication figures? A: For graphical objects like charts and icons required to understand content, WCAG guidelines specify a minimum contrast ratio of 3:1 against adjacent colors [1]. For body text in figures, a higher ratio of at least 4.5:1 is required [2].

Q: How can I quickly check if my figure colors meet contrast requirements? A: Use free online tools like WebAIM's Color Contrast Checker [3] or accessibility features in browser developer tools. These tools calculate contrast ratios and indicate pass/fail status against WCAG standards.
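These tools report a contrast ratio that WCAG 2.x defines from the relative luminance of each color. The following is a minimal Python sketch of that calculation. It assumes colors are supplied as 8-bit sRGB hex strings, and the function names are illustrative rather than taken from any particular tool.

```python
def _linearize(channel: int) -> float:
    """Convert an 8-bit sRGB channel value (0-255) to linear light, per WCAG 2.x."""
    c = channel / 255.0
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color: str) -> float:
    """Relative luminance of a color written as '#RRGGBB'."""
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linearize(r) + 0.7152 * _linearize(g) + 0.0722 * _linearize(b)


def contrast_ratio(color_a: str, color_b: str) -> float:
    """(L_lighter + 0.05) / (L_darker + 0.05); ranges from 1:1 to 21:1."""
    la, lb = relative_luminance(color_a), relative_luminance(color_b)
    return (max(la, lb) + 0.05) / (min(la, lb) + 0.05)


def passes(color_a: str, color_b: str, is_text: bool) -> bool:
    """Check against 4.5:1 for body text or 3:1 for graphical objects."""
    return contrast_ratio(color_a, color_b) >= (4.5 if is_text else 3.0)


print(round(contrast_ratio("#767676", "#FFFFFF"), 2))
```

For example, mid-gray #767676 on white comes out at about 4.54:1, which just clears the 4.5:1 text threshold. The slightly lighter #777777 falls just below it but still meets the 3:1 threshold for graphical objects.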

Troubleshooting Common Experimental Issues

| Problem | Possible Causes | Solutions |

|---|---|---|

| High qPCR Ct values | RNA degradation, inefficient reverse transcription, poor primer design | Check RNA integrity, optimize RT reaction temperatures, validate primer efficiency with standard curve |

| Poor cell transfection efficiency | Low-quality DNA, incorrect DNA:reagent ratio, cells at wrong confluency | Use endotoxin-free DNA, optimize ratios for each cell type, ensure 70-80% confluency at transfection |

| Weak Western blot signals | Inadequate protein transfer, expired detection reagents, insufficient protein loading | Verify transfer efficiency with Ponceau S staining, use fresh ECL reagents, increase protein amount (20-50μg) |

| High background in immunofluorescence | Non-specific antibody binding, insufficient blocking, overfixation | Include isotype controls, increase blocking time (1-2 hours), optimize fixation duration |
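The "validate primer efficiency with standard curve" step can be scripted. This minimal sketch assumes a 10-fold dilution series with Ct values held in a plain list; the numbers below are hypothetical. It fits Ct against log10(input) by least squares and converts the slope to efficiency with E = 10^(-1/slope) - 1. Under that formula, a slope near -3.32 corresponds to about 100% efficiency.

```python
import math


def standard_curve_efficiency(quantities, ct_values):
    """Fit Ct = slope * log10(quantity) + intercept by ordinary least squares
    and return (slope, efficiency), where efficiency = 10**(-1/slope) - 1."""
    xs = [math.log10(q) for q in quantities]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ct_values) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ct_values))
    slope = sxy / sxx
    efficiency = 10 ** (-1.0 / slope) - 1.0
    return slope, efficiency


# Hypothetical 10-fold dilution series (input amounts in arbitrary units)
quantities = [1e4, 1e3, 1e2, 1e1, 1e0]
cts = [18.1, 21.4, 24.7, 28.0, 31.3]
slope, eff = standard_curve_efficiency(quantities, cts)
print(f"slope = {slope:.2f}, efficiency = {eff * 100:.1f}%")
```

A commonly cited acceptance range is 90-110% efficiency.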

Experimental Protocols

Protocol 1: RNA Isolation and Quality Control

Materials Required:

- TRIzol reagent

- Chloroform

- Isopropyl alcohol

- 75% ethanol

- Nuclease-free water

- Spectrophotometer/Nanodrop

Methodology:

- Homogenize tissue or cells in TRIzol (1ml per 50-100mg tissue)

- Incubate 5 minutes at room temperature

- Add 0.2ml chloroform per 1ml TRIzol, shake vigorously for 15 seconds

- Centrifuge at 12,000 × g for 15 minutes at 4°C

- Transfer aqueous phase to new tube, add 0.5ml isopropyl alcohol per 1ml TRIzol used

- Incubate 10 minutes at room temperature, then centrifuge at 12,000 × g for 10 minutes at 4°C

- Discard supernatant, wash pellet with 1ml 75% ethanol per 1ml TRIzol used, centrifuge at 7,500 × g for 5 minutes at 4°C

- Air dry pellet 5-10 minutes, resuspend in nuclease-free water

- Measure concentration and purity by spectrophotometry (A260/A280 ratio of 1.8-2.0 indicates pure RNA)
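Concentration and purity can be computed from the absorbance readings in the last step. This minimal sketch makes three assumptions. First, it assumes a 10 mm path length (instruments such as the NanoDrop report values normalized to 10 mm). Second, it uses the standard conversion of 40 µg/mL RNA per A260 unit. Third, it applies the 1.8-2.0 purity window used in this protocol. The readings are hypothetical, and `rna_quality` is an illustrative helper.

```python
RNA_UG_PER_ML_PER_A260 = 40.0  # standard conversion factor for RNA (10 mm path)


def rna_quality(a260, a280, dilution_factor=1.0, volume_ul=None):
    """Return concentration (ng/uL), A260/A280 ratio, purity flag and optional yield."""
    # ug/mL is numerically equal to ng/uL
    concentration = a260 * RNA_UG_PER_ML_PER_A260 * dilution_factor
    ratio = a260 / a280
    result = {"ng_per_ul": concentration, "a260_a280": ratio, "pure": 1.8 <= ratio <= 2.0}
    if volume_ul is not None:
        result["total_ug"] = concentration * volume_ul / 1000.0  # ng -> ug
    return result


qc = rna_quality(a260=0.5, a280=0.26, dilution_factor=10, volume_ul=30)
print(qc)
```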

Protocol 2: Western Blot for Protein Detection

Materials Required:

- RIPA lysis buffer with protease inhibitors

- BCA protein assay kit

- SDS-PAGE gel system

- PVDF or nitrocellulose membrane

- Blocking buffer (5% non-fat dry milk in TBST)

- Primary and secondary antibodies

- ECL detection reagents

Methodology:

- Lyse cells in RIPA buffer (100-200μl per 10^6 cells) on ice for 30 minutes

- Centrifuge at 14,000 × g for 15 minutes at 4°C, collect supernatant

- Quantify protein concentration using BCA assay

- Prepare samples with Laemmli buffer, denature at 95°C for 5 minutes

- Load 20-50μg protein per well, run SDS-PAGE at 100-150V until dye front reaches bottom

- Transfer to membrane using wet or semi-dry transfer system

- Block membrane with 5% milk in TBST for 1 hour at room temperature

- Incubate with primary antibody diluted in blocking buffer overnight at 4°C

- Wash 3× with TBST, 5 minutes each

- Incubate with HRP-conjugated secondary antibody for 1 hour at room temperature

- Wash 3× with TBST, 15 minutes total

- Develop with ECL reagents and image
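Loading 20-50 µg per well requires converting BCA concentrations into pipetting volumes. This minimal sketch makes three assumptions:

- a 4× Laemmli stock;
- a fixed final sample volume per well (20 µL here, which you should adjust to your comb and gel);
- lysis buffer or water as the diluent.

`loading_plan` is a hypothetical helper.

```python
def loading_plan(conc_ug_per_ul, target_ug=30.0, final_ul=20.0, laemmli_fold=4.0):
    """Volumes (uL) of lysate, Laemmli stock and diluent for one well.

    conc_ug_per_ul: protein concentration from the BCA assay.
    target_ug: protein to load (within the 20-50 ug range used above).
    final_ul: total sample volume per well (assumed; depends on comb/gel).
    laemmli_fold: concentration of the Laemmli stock, e.g. 4.0 for 4x buffer.
    """
    lysate_ul = target_ug / conc_ug_per_ul
    laemmli_ul = final_ul / laemmli_fold  # brings the buffer to 1x in the final volume
    diluent_ul = final_ul - lysate_ul - laemmli_ul  # lysis buffer or water
    if diluent_ul < 0:
        raise ValueError("lysate too dilute: lower target_ug or raise final_ul")
    return lysate_ul, laemmli_ul, diluent_ul


# Hypothetical BCA results in ug/uL
for sample, conc in {"control": 3.0, "treated": 2.4}.items():
    print(sample, [round(v, 1) for v in loading_plan(conc)])
```

Equalizing the final volume across wells keeps lanes comparable. The ValueError flags samples too dilute to reach the target amount.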

Quantitative PCR Results for Gene Expression Analysis

| Gene Target | Control Group (Mean ± SEM) | Treatment Group (Mean ± SEM) | Fold Change | P-value |

|---|---|---|---|---|

| MYC | 1.00 ± 0.08 | 3.45 ± 0.21 | 3.45 | 0.003 |

| TP53 | 1.00 ± 0.11 | 0.32 ± 0.05 | 0.32 | 0.008 |

| BCL2 | 1.00 ± 0.09 | 2.15 ± 0.18 | 2.15 | 0.023 |

| AKT1 | 1.00 ± 0.07 | 1.28 ± 0.12 | 1.28 | 0.142 |

| GAPDH | 1.00 ± 0.05 | 1.02 ± 0.06 | 1.02 | 0.811 |
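Fold changes of this kind are commonly computed with the 2^-ΔΔCt (Livak) method. This minimal sketch assumes that Ct values are paired by sample and that both target and reference assays amplify with efficiencies close to 100%. If efficiencies differ, an efficiency-corrected method such as Pfaffl's is more appropriate. The Ct values in the usage line are hypothetical.

```python
from statistics import mean


def delta_ct(target_cts, reference_cts):
    """Per-sample dCt = Ct(target) - Ct(reference gene), paired by sample order."""
    return [t - r for t, r in zip(target_cts, reference_cts)]


def fold_change_ddct(treated_target, treated_ref, control_target, control_ref):
    """Relative expression 2^-ddCt of treated versus control."""
    dd_ct = mean(delta_ct(treated_target, treated_ref)) - mean(
        delta_ct(control_target, control_ref)
    )
    return 2 ** (-dd_ct)


# Hypothetical Ct values: two treated and two control samples
fc = fold_change_ddct([22.1, 21.9], [18.0, 18.0], [24.0, 24.0], [18.0, 18.0])
print(f"fold change = {fc:.2f}")
```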

Cell Viability Assay Data (MTT Assay)

| Compound | Concentration (μM) | % Viability (24h) | % Viability (48h) | % Viability (72h) |

|---|---|---|---|---|

| Control (DMSO) | 0 | 100.0 ± 3.2 | 100.0 ± 4.1 | 100.0 ± 3.8 |

| Compound A | 1 | 95.4 ± 2.8 | 88.7 ± 3.5 | 75.3 ± 4.2 |

| Compound A | 5 | 82.1 ± 3.5 | 62.4 ± 4.8 | 45.6 ± 5.1 |

| Compound A | 10 | 65.8 ± 4.2 | 38.9 ± 5.3 | 22.7 ± 4.9 |

| Compound B | 10 | 98.2 ± 2.9 | 96.5 ± 3.7 | 94.8 ± 4.1 |
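Percent viability is normally calculated from blank-corrected absorbance relative to the vehicle control. The sketch below shows that calculation, together with a rough IC50. The IC50 is obtained by interpolating on log10(concentration) between the two tested doses that bracket 50% viability. This is a crude approximation that assumes mean values and a monotonic response. It is not a substitute for fitting a four-parameter logistic curve to replicate data, and the helper names are hypothetical.

```python
import math


def percent_viability(a_sample, a_control, a_blank):
    """MTT viability (%) relative to vehicle control, after blank subtraction."""
    return 100.0 * (a_sample - a_blank) / (a_control - a_blank)


def interpolate_ic50(concs, viabilities):
    """Rough IC50 by linear interpolation on log10(concentration) between the
    two doses that bracket 50% viability; returns None if none bracket it."""
    points = sorted(zip(concs, viabilities))
    for (c1, v1), (c2, v2) in zip(points, points[1:]):
        if v1 >= 50.0 >= v2:
            if v1 == v2:  # both exactly 50%
                return c1
            frac = (v1 - 50.0) / (v1 - v2)
            log_ic50 = math.log10(c1) + frac * (math.log10(c2) - math.log10(c1))
            return 10 ** log_ic50
    return None


# Compound A, 48 h mean viabilities from the table above
ic50_48h = interpolate_ic50([1, 5, 10], [88.7, 62.4, 38.9])
print(f"approximate IC50 (48 h) = {ic50_48h:.1f} uM")
```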

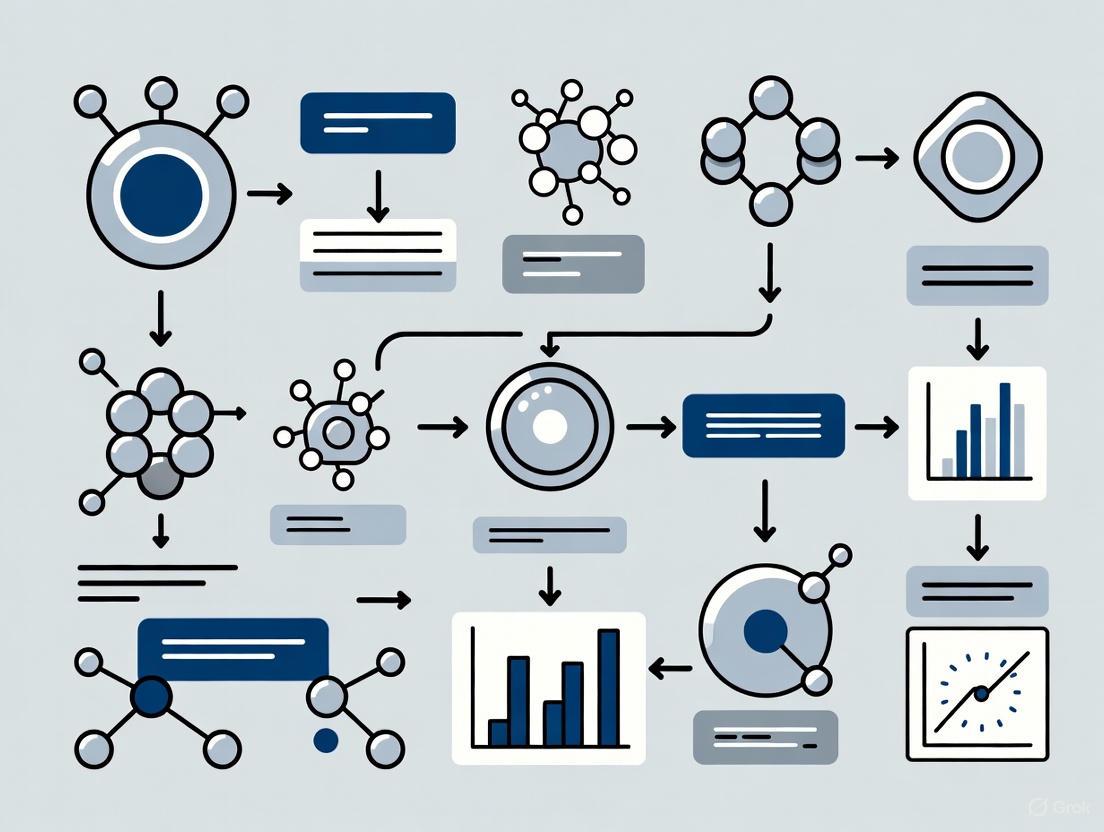

Research Visualization: Signaling Pathway Analysis; Experimental Workflow; Gene Regulation Network

The Scientist's Toolkit: Research Reagent Solutions

| Research Reagent | Function | Application Notes |

|---|---|---|

| TRIzol Reagent | Maintains RNA integrity during cell lysis, simultaneously isolates RNA, DNA and proteins | For difficult-to-lyse samples, increase volume 2-3x; critical for preserving RNA quality [4] |

| Lipofectamine 3000 | Lipid-based transfection reagent for nucleic acid delivery | Optimize DNA:reagent ratio for each cell type; reduce serum during transfection for better efficiency |

| Protease Inhibitor Cocktail | Prevents protein degradation by inhibiting serine, cysteine, and metalloproteases | Add fresh to lysis buffer; aliquot stock solutions to avoid freeze-thaw cycles |

| RNase Inhibitor | Protects RNA samples from degradation during handling and storage | Essential for reverse transcription and long-term RNA storage; use at 0.5-1U/μL |

| BCA Protein Assay Kit | Colorimetric detection and quantification of protein concentration | More detergent-compatible than Bradford assay; prepare fresh standards for accurate quantification |

| ECL Substrate | Chemiluminescent detection for immunoblotting | Sensitivity varies between formulations; high-sensitivity versions detect low-abundance targets |

| SYBR Green Master Mix | Fluorescent dye for qPCR detection of amplified DNA | Optimize primer concentrations to minimize primer-dimer formation; contains all reaction components except primers and template |

FAQs: Neutral Theory and Adaptive Tracking

Q1: What is the core finding of the recent research challenging the Neutral Theory? A1: The research led by Jianzhi Zhang at the University of Michigan found that over 1% of mutations are beneficial [5] [6] [7]. This frequency is orders of magnitude greater than expected under the Neutral Theory, which posits that beneficial mutations are exceedingly rare and that most fixed mutations are neutral [5] [6]. This creates a paradox: if beneficial mutations are so common, why is the observed rate of gene evolution in nature not higher? The resolution lies in environmental change. Beneficial mutations often confer an advantage only in a specific environment; when the environment changes, these mutations can become harmful and are thus eliminated before they become fixed in a population [5] [8] [7]. The outcome appears neutral, but the underlying process is not.
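Kimura's diffusion result for the fixation probability of a new mutation under constant selection makes this paradox concrete. This minimal sketch assumes:

- a diploid population of constant size N, with effective size equal to N;
- additive fitness effects, with heterozygote advantage s;
- a single initial copy of the mutation.

Under these assumptions a neutral mutation fixes with probability 1/(2N). A beneficial one fixes with probability close to 2s when s is small and Ns is large. The substitution rate is 2Nμ multiplied by the fixation probability, which reduces to μ for neutral mutations.

```python
import math


def fixation_probability(pop_size: int, s: float) -> float:
    """Kimura's fixation probability for one new mutant copy (initial frequency
    1/2N) in a diploid population of size N with additive selection coefficient s."""
    if s == 0:
        return 1.0 / (2 * pop_size)  # neutral limit
    if -4 * pop_size * s > 700:
        return 0.0  # strongly deleterious: probability is effectively zero
    # u = (1 - exp(-2s)) / (1 - exp(-4Ns)); expm1 keeps precision for small s
    return math.expm1(-2 * s) / math.expm1(-4 * pop_size * s)


def substitution_rate(pop_size: int, mu: float, s: float) -> float:
    """Substitutions per generation: 2N*mu new mutations times fixation probability."""
    return 2 * pop_size * mu * fixation_probability(pop_size, s)


N = 1000
for s in (0.0, 0.001, 0.01, -0.001):
    print(f"s = {s:+.3f}: fixation probability = {fixation_probability(N, s):.2e}")
```

With N = 1000 and s = 0.01, a single beneficial mutation is roughly 40 times more likely to fix than a neutral one. Common beneficial mutations under constant selection would therefore be expected to drive rapid substitution.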

Q2: What is "Adaptive Tracking with Antagonistic Pleiotropy"? A2: This is the new theoretical model proposed to explain the findings. "Adaptive Tracking" describes how populations are constantly, but imperfectly, chasing a moving target—their changing environment [6] [8] [7]. "Antagonistic Pleiotropy" is the key mechanism, where a single mutation has opposing fitness effects in different environments—beneficial in one set of conditions but deleterious in another [5] [8] [7]. This combination explains why many beneficial mutations are observed in the lab but do not lead to long-term evolutionary change, as environmental shifts prevent their fixation.

Q3: How does this research impact our understanding of adaptation in populations, including humans? A3: The model suggests that natural populations are never fully adapted to their environments [5] [6]. Because environments change frequently, populations are always in a state of "catching up" [6]. For humans, this implies that our genetic makeup may be a mismatch for modern environments. Some genetic variants that were beneficial in our ancestral environments may now contribute to disease or be suboptimal today [5] [8] [7]. This has significant implications for evolutionary medicine and understanding disease susceptibility.

Q4: What are the main cognitive obstacles to understanding natural selection, and how does this new theory address one of them? A4: A major cognitive obstacle is teleological reasoning: the tendency to explain evolution as a need-driven process, in which organisms develop traits because they "need" them to survive [9] [10]. The new theory counters this directly by showing that, although beneficial mutations are common, they do not arise in response to "need." They are random variations whose fate depends on the prevailing environment (and on chance). Their advantage is frequently reversed when that environment fluctuates, which prevents any directed, need-based progression [5] [9].

Troubleshooting Common Experimental & Conceptual Challenges

| Challenge | Symptom | Solution & Interpretation |

|---|---|---|

| High beneficial mutation rate in scans but low fixation rate in evolution experiments | Deep mutational scanning reveals >1% beneficial mutations, but long-term evolution shows a slower, seemingly neutral substitution rate [5] [7]. | Do not assume a constant environment. Replicate experiments in fluctuating environments. The discrepancy is likely due to antagonistic pleiotropy, where beneficial mutations in one condition are lost when the environment shifts [5] [8]. |

| Interpreting "neutral" outcomes | Population genetics analyses indicate many molecular changes are neutral, seemingly supporting the Neutral Theory. | Distinguish process from outcome. A neutral outcome does not imply a neutral process. The mutation may have been subject to selection that changed direction due to environmental shifts, leaving no net selective sweep [5] [6]. |

| Generalizing from model organisms | Data from unicellular organisms (yeast, E. coli) show clear patterns, but applicability to multicellular organisms is uncertain. | Explicitly test key premises in complex models. The next critical step is to perform deep mutational scanning in multicellular organisms to validate if adaptive tracking is a universal principle [5] [6] [7]. |

| Student/colleague uses need-based explanations | Explanations like "the cheetah evolved speed to catch prey" or "the ducks needed to swim so they got webbed feet" are used [9]. | Correct with the logic of selection, not transformation. Emphasize that evolution acts on existing random variation. Individuals with slightly webbed feet had an advantage and reproduced more; the population changed over generations without any "need" initiating the change [9]. |

Key Experimental Protocols

Deep Mutational Scanning (DMS) Protocol

Purpose: To systematically measure the fitness effects of thousands of individual mutations in a gene [5] [6] [7].

Workflow:

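- Library Construction: Create a comprehensive mutant library for a target gene in which each variant carries a single, defined mutation (e.g., via site-directed mutagenesis or synthesized oligonucleotide pools; error-prone PCR instead introduces random and often multiple mutations per variant) [7].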

- Transformation & Growth: Introduce the mutant library into model organisms (e.g., yeast or E. coli) and grow them in a controlled, defined medium for multiple generations.

- Selection & Sequencing: Harvest cells at the beginning (T0) and end (Tfinal) of the experiment. Use high-throughput sequencing to count the frequency of each mutant variant at both time points.

- Fitness Calculation: The fitness effect of each mutation is estimated from the change in its frequency, relative to the wild-type sequence, between T0 and Tfinal. A significant relative increase indicates a beneficial mutation; a decrease indicates a deleterious one [7].
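One common way to turn T0 and Tfinal read counts into a fitness estimate is a per-generation selection coefficient relative to wild type:

s = [ln(n_var/n_WT) at Tfinal - ln(n_var/n_WT) at T0] / generations

This minimal sketch assumes exponential growth between time points and a pseudocount of 0.5 to avoid log(0). The variant names and counts are hypothetical. Real analyses also need replicates and a statistical test before a mutation is called beneficial.

```python
import math


def selection_coefficients(counts_t0, counts_tf, generations, wt_key="WT", pseudocount=0.5):
    """Per-generation selection coefficient of each variant relative to wild type.

    s = [ln(n_var/n_wt at Tfinal) - ln(n_var/n_wt at T0)] / generations
    A pseudocount avoids log(0) for variants that drop out entirely.
    """
    wt_0 = counts_t0[wt_key] + pseudocount
    wt_f = counts_tf[wt_key] + pseudocount
    scores = {}
    for variant, n_0 in counts_t0.items():
        if variant == wt_key:
            continue
        n_f = counts_tf.get(variant, 0)
        change = math.log((n_f + pseudocount) / wt_f) - math.log((n_0 + pseudocount) / wt_0)
        scores[variant] = change / generations
    return scores


# Hypothetical read counts for wild type and two single-mutant variants
counts_t0 = {"WT": 10_000, "A12V": 1_000, "G45D": 1_000}
counts_tf = {"WT": 10_000, "A12V": 2_000, "G45D": 500}
scores = selection_coefficients(counts_t0, counts_tf, generations=10)
print({v: round(s, 4) for v, s in scores.items()})
```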

Experimental Evolution in Fluctuating Environments

Purpose: To directly test the role of environmental change in preventing the fixation of beneficial mutations [5] [6].

Workflow:

- Establish Populations: Initiate multiple replicate populations from a single clonal ancestor of a model organism like yeast.

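- Apply Environmental Regimes: Maintain one group of populations in a single, constant medium and a second group in a fluctuating environment in which the medium is switched periodically (e.g., among different media types) [5].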

- Monitor and Sequence: Regularly sample populations from both groups. Use whole-genome sequencing to track the frequency of mutations over time and identify which mutations become fixed.

- Expected Outcome: The constant-environment group is expected to fix more beneficial mutations than the fluctuating-environment group. In the fluctuating group, environmental changes should eliminate many beneficial mutations before they reach fixation, demonstrating adaptive tracking in action [5].
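A toy haploid Wright-Fisher simulation can illustrate the expected outcome; it is not the model used in the cited study. A single mutant has relative fitness 1 + s in environment A and 1 - s in environment B. The constant regime stays in A, while the fluctuating regime switches every `period` generations. The population size, s, period and replicate number below are arbitrary assumptions, chosen so the simulation runs quickly.

```python
import random


def simulate_fixation(pop_size, s, period, replicates, seed=1, max_generations=100_000):
    """Fraction of replicate haploid Wright-Fisher populations in which a single
    new mutant fixes. The mutant has fitness 1 + s in state A and 1 - s in
    state B; period=None keeps the environment in A, otherwise the environment
    switches every `period` generations."""
    rng = random.Random(seed)
    fixed = 0
    for _ in range(replicates):
        count = 1
        generation = 0
        while 0 < count < pop_size and generation < max_generations:
            in_state_a = period is None or (generation // period) % 2 == 0
            w = 1 + s if in_state_a else 1 - s
            p = count / pop_size
            p_sel = p * w / (p * w + (1 - p))  # expected frequency after selection
            # Binomial sampling of the next generation (genetic drift)
            count = sum(rng.random() < p_sel for _ in range(pop_size))
            generation += 1
        if count == pop_size:
            fixed += 1
    return fixed / replicates


constant = simulate_fixation(pop_size=100, s=0.1, period=None, replicates=500)
fluctuating = simulate_fixation(pop_size=100, s=0.1, period=10, replicates=500)
print(f"fixed fraction: constant = {constant:.3f}, fluctuating = {fluctuating:.3f}")
```

With symmetric ±s, the mutant's geometric-mean fitness over a full cycle is slightly below 1. Fixation in the fluctuating regime is therefore expected to be far rarer than in the constant regime, even though the mutation is strongly beneficial half of the time.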

Research Reagent Solutions

| Reagent / Resource | Function in Research | Specific Example / Application |

|---|---|---|

| Saccharomyces cerevisiae (Budding Yeast) | A model unicellular eukaryote suited to genetic manipulation and long-term, many-generation experiments. | Deep mutational scanning and experimental evolution, owing to its short generation time (~3 hours) [5] [6]. |

| Escherichia coli | A model prokaryote used for large-scale mutagenesis studies and long-term evolution experiments. | Tracking adaptation over many generations, e.g., the 50,000-generation study [8] [7]. |

| Deep Mutational Scanning (DMS) Pipeline | A high-throughput method to create and fitness-test thousands of mutations in a gene simultaneously [5] [7]. | Applied to 21 genes in budding yeast to systematically quantify the percentage of beneficial mutations [5] [8]. |

| Controlled Growth Media & Chemostats | To maintain precise and reproducible environmental conditions (constant or fluctuating) for evolution experiments over hundreds of generations [5] [8]. | Using 10 different media types to simulate a fluctuating environment for yeast populations [5]. |

| High-Throughput Sequencer (e.g., Illumina) | Tracks the frequency of thousands of mutant variants in a population over time. | Variant counting in DMS and whole-genome sequencing of evolved lineages [7]. |

Conceptual Diagrams: Adaptive Tracking Model; Experimental Evolution Workflow

Why 'Modern,' 'Primitive,' and 'Advanced' are Misleading Terms in Evolutionary Biology

A Technical Support Guide for Research and Drug Development

FAQ: Core Conceptual Issues

Q1: What is the fundamental error in labeling a species as 'primitive' or 'advanced'?

A: The core error is imposing a linear, hierarchical value judgment on a non-linear, branching process. Evolution is not a ladder of progress with some species being "better" than others. All extant species have been evolving for the same amount of time, approximately 3.5 billion years, since the origin of life. A so-called "primitive" species like a platypus is not an ancestor, or an unfinished version, of a "more advanced" mammal. It is a highly evolved, modern organism whose lineage has undergone its own gains, losses, and specializations suited to its ecological niche [11]. Using these terms leads to flawed hypotheses by misrepresenting the nature of evolutionary relationships.

Q2: How can this terminology negatively impact scientific research and communication?

A: Misleading terminology can:

- Introduce Bias: Describing a trait or organism as "primitive" can create an unconscious bias that devalues its complexity, potentially leading researchers to overlook unique and valuable biological mechanisms [11].

- Hinder Interdisciplinary Collaboration: Inconsistent terminology can cause confusion when scientists from different sub-fields (e.g., ornithology vs. mammalogy) or working on different organisms (e.g., viruses vs. animals) communicate, as they may use the same words with different implied meanings [12] [13].

- Perpetuate Exclusion: Language in science can unintentionally isolate marginalized groups by using terms with harmful alternate meanings, which can stifle diversity and innovation within the research community [14].

Q3: What is a more scientifically accurate framework for discussing evolutionary history?

A: The accurate framework is the Tree of Life, which views all organisms as interconnected cousins sharing a common ancestry [11]. The goal is to understand the specific adaptations that different lineages have acquired and lost over time. Apply these terms to individual traits, not to whole organisms. Instead of "advanced," describe a trait as "derived" (a state that arose within the lineage under study). Instead of "primitive," describe it as "ancestral" or "conserved." This focuses on the historical sequence of changes without implying superiority or a goal-oriented process.

Troubleshooting Guide: Common Experimental Pitfalls

| Problem | Underlying Flaw | Recommended Correction |

|---|---|---|

| Interpreting Model Organisms: Assuming a "simple" or "primitive" model organism (e.g., a placozoan) is an imperfect representative of a "higher" system (e.g., human). | Misapprehension that some species are living fossils or less evolved. All modern species are equally "evolved"; they simply possess different suites of ancestral and derived traits [11] [15]. | Select model organisms based on the specific ancestral or derived trait under investigation. Justify the choice based on its phylogenetic position and the specific biological question, not on a perceived position on an evolutionary ladder. |

| Analyzing Genomic Data: Interpreting genetic similarity to a supposedly "primitive" ancestor as a lack of complexity or evolutionary stasis. | Failure to recognize that every genome is a mosaic of deeply conserved and rapidly evolving elements. A "basal" lineage can possess novel genes and complex adaptations [11]. | Focus on identifying homologous genes and tracing their evolutionary history (e.g., identifying gains, losses, and positive selection) across a well-resolved phylogeny, rather than making assumptions based on overall similarity. |

| Classifying Pathogens: Relying on common names or outdated taxonomic rankings that imply linear progression or superiority. | Taxonomic ranks (e.g., phylum, class) are human constructs, not natural categories. They are not consistently applied across all life and can be misleading [13] [15]. | Use current, formal scientific nomenclature and refer to phylogenetic clades. For example, refer to virus species by their formal binomial names and define variants by their genetic clade rather than informal, value-laden terms [13]. |

Experimental Protocols: Methodologies for Critiquing and Correcting Terminology

Protocol 1: Quantitative Analysis of Term Usage in Literature

Objective: To empirically identify and quantify the use of misleading evolutionary terms in a defined body of scientific literature.

Materials:

- Research Reagent Solutions:

- Literature Database (e.g., PubMed, Google Scholar): A source for harvesting peer-reviewed article text and metadata.

- Text-Mining Software (e.g., R with 'tm' package, Python with NLTK): For automated parsing, tokenization, and frequency analysis of large text corpora.

- Controlled Vocabulary List: A predefined list of target terms (e.g., "primitive," "advanced," "higher," "lower," "modern") and their proposed alternatives (e.g., "ancestral," "derived").

Methodology:

- Define Corpus: Select a specific set of journals or a date range for articles within your research domain (e.g., "evolutionary developmental biology, 2010-2025").

- Data Extraction: Use database APIs or bulk download tools to acquire the full text or abstracts of the target articles.

- Text Processing: Clean the text data (remove punctuation, convert to lowercase) and tokenize it into individual words or phrases.

- Frequency Analysis: Program the text-mining software to count the instances of each term in your controlled vocabulary list.

- Contextual Analysis: Use collocation (e.g., bigram) or keyword-in-context analysis to determine the context in which the problematic terms are used (e.g., "primitive trait" vs. "primitive organism").

- Data Synthesis: Tabulate the results to show the prevalence of misleading terms and report the frequency of use per 10,000 words for standardized comparison.
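The frequency and contextual-analysis steps can be sketched with the Python standard library, used here in place of NLTK or tm as a deliberate simplification. The sketch assumes plain-text input, a simple regex tokenizer that keeps hyphenated words, and the target terms from the controlled vocabulary list. `term_usage` is a hypothetical helper. Counting the word that follows each target term separates uses such as "primitive trait" from "primitive organism." This matters because words like "higher" and "lower" are often used in non-evolutionary senses.

```python
import re
from collections import Counter

# Target terms taken from the controlled vocabulary list
TARGET_TERMS = {"primitive", "advanced", "higher", "lower", "modern"}


def term_usage(text, targets=TARGET_TERMS, per=10_000):
    """Return (rate per `per` words for each target term,
    Counter of 'target next_word' collocations)."""
    tokens = re.findall(r"[a-z]+(?:-[a-z]+)*", text.lower())
    counts = Counter(t for t in tokens if t in targets)
    rates = {term: counts[term] * per / len(tokens) for term in targets} if tokens else {}
    collocations = Counter(
        f"{word} {nxt}" for word, nxt in zip(tokens, tokens[1:]) if word in targets
    )
    return rates, collocations


rates, colloc = term_usage(
    "The primitive trait is retained. A primitive organism is not less evolved."
)
print(rates["primitive"], colloc.most_common(2))
```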

Protocol 2: Phylogenetic Trait Mapping

Objective: To visually demonstrate that traits are gained and lost in a branching, non-linear pattern, countering the "ladder of progress" narrative.

Materials:

- Research Reagent Solutions:

- Phylogenetic Tree: A well-supported tree of the taxa in question, derived from genomic or morphological data.

- Trait Data Matrix: A coded matrix (e.g., Nexus format) detailing the presence (1), absence (0), or ambiguous state (?) of specific traits for each taxon.

- Phylogenetic Software (e.g., Mesquite, R 'phytools' package): For mapping trait evolution onto the tree structure using parsimony, likelihood, or Bayesian methods.

Methodology:

- Tree and Data Curation: Assemble or select a published phylogenetic tree and code your traits of interest (e.g., "egg-laying," "electroreception," "placenta") for the terminal taxa.

- Trait Mapping: Use the phylogenetic software to reconstruct the ancestral states of the traits at each node of the tree.

- Visualization: Generate a figure where branches of the tree are colored based on the inferred trait state. Where a trait has been gained or lost more than once, this makes the independent gains and losses across lineages directly visible.

- Interpretation: Use the visualization to test whether any single lineage has accumulated all "advanced" traits and whether "primitive" traits are retained in otherwise highly derived species.
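As a minimal illustration of the trait-mapping step, the sketch below implements the bottom-up pass of Fitch parsimony for a single discrete character on a rooted binary tree. It returns the candidate states at the root and the minimum number of changes. A full reconstruction at every node needs a second, top-down pass, and tools such as phytools also offer likelihood-based methods. The tree (with chicken as outgroup) and the egg-laying coding are illustrative.

```python
def fitch(tree, states):
    """Bottom-up pass of Fitch parsimony for one discrete character.

    tree: a taxon name (str) or a 2-tuple of subtrees.
    states: maps taxon name -> observed state (e.g., 0 = absent, 1 = present).
    Returns (candidate state set at this node, minimum number of changes below it).
    """
    if isinstance(tree, str):
        return {states[tree]}, 0
    left, right = tree
    left_set, left_cost = fitch(left, states)
    right_set, right_cost = fitch(right, states)
    common = left_set & right_set
    if common:
        return common, left_cost + right_cost
    # No shared state: take the union and count one change
    return left_set | right_set, left_cost + right_cost + 1


mammals = (("platypus", "echidna"), ("opossum", ("human", "mouse")))
tree = ("chicken", mammals)
egg_laying = {"chicken": 1, "platypus": 1, "echidna": 1, "opossum": 0, "human": 0, "mouse": 0}
print(fitch(tree, egg_laying))
```

Here egg-laying is reconstructed as the ancestral state, retained by the monotremes, with a single loss on the branch leading to therian mammals. It is a retained ancestral trait, not evidence that monotremes are "less evolved."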

Visualizing the Logical Argument Against Misleading Terminology

Diagram: the logical pathway from flawed assumptions to scientific consequences, and the corresponding corrective actions.

Research Reagent Solutions: Essential Materials for Terminology Correction

| Reagent / Tool | Function / Purpose | Application in Research |

|---|---|---|

| Phylogenetic Analysis Software (e.g., BEAST, MrBayes) | Reconstructs evolutionary relationships to create a testable framework for trait comparison. | Used in Protocol 2 to build the Tree of Life scaffold, moving analysis away from subjective rankings to objective, historical relationships. |

| Controlled Vocabulary & Thesaurus | A pre-approved list of accurate terms and their problematic counterparts. | Serves as a standard operating procedure (SOP) for writing and reviewing manuscripts, grants, and lab communications to ensure consistent, precise language. |

| Text-Mining & Bibliometric Software | Empirically audits the scientific literature for problematic terminology. | Used in Protocol 1 to baseline current usage patterns, identify problem areas, and measure the impact of corrective interventions over time. |

| Formal Taxonomic Nomenclature (e.g., ICTV, ICZN) | Provides a standardized, international system for naming species and clades. | Prevents confusion in critical fields (e.g., pathogen identification in drug development) by moving away from common names to precise scientific names [12] [13]. |

Technical Support & Troubleshooting Center

Welcome to the Research Methodology Support Center. This resource provides troubleshooting guides and FAQs to help researchers address common methodological challenges in evolutionary biology and related fields, with a focus on correcting need-based evolutionary explanations.

Frequently Asked Questions

Q1: My hypothesis about a trait's adaptive function is being criticized as a "just-so story." How can I make it more robust? A "just-so story" is an adaptive explanation for a trait that appears logical but is not backed by testable evidence [16]. To strengthen your hypothesis:

- Make Testable Predictions: A strong evolutionary hypothesis must generate falsifiable predictions about the design of the trait [16]. For example, the hypothesis that pregnancy sickness is an adaptation to protect the fetus predicts specific patterns of food aversion, which a non-adaptive byproduct hypothesis does not [16]. Those patterns include aversions concentrated on foods likely to carry pathogens or toxins, timed to the period of greatest embryonic vulnerability.

- Employ Multiple Discovery Heuristics: Propose several competing hypotheses, including non-adaptive ones, and use data, confirmation strategies, and discovery heuristics to determine which is best supported [16].

- Avoid Single-Source Evidence: Rely on converging lines of evidence from genetics, comparative anatomy, and paleontology, rather than a single logical argument.

Q2: What is the "Environment of Evolutionary Adaptedness" (EEA) and why is it a source of controversy? The EEA refers to the ancestral environment(s) to which a species is adapted. It is a critical, yet often debated, concept in forming evolutionary hypotheses [16].

- The Problem: Critics argue that assuming human evolution occurred in a uniform environment is speculative, as we know little about the specific selective pressures [16].

- The Solution: Focus on known, recurring challenges of our evolutionary past. These include predictable selection pressures like dealing with predators and prey, mate choice, child rearing, social cooperation and aggression, and tool use [16]. Frame your research around these well-established challenges rather than a vague, monolithic EEA.

Q3: How can I troubleshoot complex research problems systematically? Adopt a structured, data-driven troubleshooting framework used in technical fields [17]. This method shifts the process from guesswork to a scientific, evidence-based investigation.

Table: A Five-Step Research Troubleshooting Framework

| Step | Key Actions for Researchers | Common Pitfalls to Avoid |

|---|---|---|

| 1. Identify the Problem | Gather detailed information; differentiate the root problem from surface-level symptoms. | Relying on a vague description of the issue (e.g., "the model doesn't work"). |

| 2. Establish Probable Cause | Analyze data, logs, and literature to pinpoint potential causes. Use evidence to narrow down possibilities. | Jumping to conclusions without sufficient evidence or considering alternative hypotheses. |

| 3. Test a Solution | Implement potential solutions or experiments one variable at a time in a controlled setting. | Testing multiple changes simultaneously, which makes it impossible to isolate the effective factor. |

| 4. Implement the Solution | Apply the proven solution to your main research pipeline. Update protocols and documentation. | Implementing a fix broadly before it has been validated in a controlled test. |

| 5. Verify Functionality | Conduct thorough testing to confirm the problem is resolved and no new issues have been introduced. | Assuming a fix works without rigorous verification under various conditions [17]. |

Q4: What are the key criticisms of "massive modularity" in evolutionary psychology? The "massive modularity" hypothesis proposes that the human mind is composed of many innate, specialized cognitive circuits, each shaped by natural selection to solve specific ancestral problems [16]. Key criticisms include:

- Neurological Evidence: Research shows brain plasticity, where neural networks change in response to environmental stimuli and experience, contradicting a purely innate, fixed modular structure [16].

- Lack of Empirical Support: Some argue there is little direct evidence for domain-specific modules and that the experiments used to support them (e.g., the Wason selection task) may not adequately eliminate rival general-purpose reasoning theories [16].

- Genetic Implausibility: Critics question whether the human genome contains sufficient information to encode the vast number of specialized circuits proposed by the theory, especially given we share much of our DNA with other species [16].

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Methodological Tools for Evolutionary Research

| Item | Function & Application |

|---|---|

| Comparative Phylogenetics | A methodological framework used to test adaptive hypotheses by comparing traits across related species, controlling for shared evolutionary history. |

| Digital Behavioral Assays | Software-based tools for precisely measuring cognitive biases and behavioral outcomes across diverse human populations. |

| Population Genetics Models | Statistical models that predict how gene frequencies change over time under forces like selection, drift, and mutation. |

| Experimental Evolution Protocols | Methodologies using organisms with short generation times (e.g., bacteria, fruit flies) to observe evolution in real-time in response to controlled selective pressures. |

Experimental Protocol: Testing Adaptive Hypotheses

Objective: To move beyond a "just-so story" and provide evidence-based support for an adaptive hypothesis.

Methodology:

- Hypothesis Formulation: Clearly state the trait and its proposed adaptive function. Example: "Trait X evolved to solve Problem Y in Environment Z."

- Generate Testable Predictions: Derive specific, falsifiable predictions from your hypothesis. For example:

- Prediction 1: The trait should be more developed in populations that historically experienced a stronger selective pressure from Problem Y.

- Prediction 2: Manipulating the presence of Problem Y in a lab setting will lead to a measurable change in the expression or effectiveness of the trait.

- Prediction 3: The trait should develop reliably in individuals raised in environments lacking explicit instruction for the trait (to address "prepared learning").

- Select Methodology: Choose methods that directly test your predictions.

- For Prediction 1: Use comparative phylogenetics or population genetics.

- For Prediction 2: Design a controlled laboratory experiment.

- For Prediction 3: Employ cross-cultural developmental studies or twin studies.

- Data Analysis and Interpretation: Analyze the data and compare the results against your initial predictions. The hypothesis is supported only if the results consistently align with the predictions. Actively seek and consider alternative, non-adaptive explanations for your findings (e.g., the trait is a byproduct of another adaptation or genetic drift) [16].
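For Prediction 1, a standard way to control for shared evolutionary history is Felsenstein's phylogenetically independent contrasts. This minimal sketch assumes a rooted, fully bifurcating tree with positive branch lengths and a Brownian-motion model of trait evolution. The tree encoding and taxon names are hypothetical. The slope is estimated by regression through the origin, with each contrast pair oriented so that the X contrast is positive.

```python
from math import sqrt


def contrasts(node, traits):
    """Felsenstein's (1985) phylogenetically independent contrasts.

    node: a taxon name (str) or a tuple (left, left_length, right, right_length).
    traits: maps taxon name -> trait value. Branch lengths must be positive.
    Returns (estimated ancestral value, extra length to add to the branch above
    this node, list of standardized contrasts)."""
    if isinstance(node, str):
        return traits[node], 0.0, []
    left, v_left, right, v_right = node
    x_l, extra_l, c_l = contrasts(left, traits)
    x_r, extra_r, c_r = contrasts(right, traits)
    v_l, v_r = v_left + extra_l, v_right + extra_r
    contrast = (x_l - x_r) / sqrt(v_l + v_r)  # standardized by expected variance
    ancestral = (x_l / v_l + x_r / v_r) / (1 / v_l + 1 / v_r)  # weighted average
    return ancestral, v_l * v_r / (v_l + v_r), c_l + c_r + [contrast]


def pic_slope(tree, x_traits, y_traits):
    """Regression through the origin of Y contrasts on X contrasts."""
    _, _, cx = contrasts(tree, x_traits)
    _, _, cy = contrasts(tree, y_traits)
    # Contrast direction is arbitrary: orient each pair so the X contrast is positive
    pairs = [(-a, -b) if a < 0 else (a, b) for a, b in zip(cx, cy)]
    return sum(a * b for a, b in pairs) / sum(a * a for a, _ in pairs)


tree = (("A", 1.0, "B", 1.0), 1.0, ("C", 1.0, "D", 1.0), 1.0)
x = {"A": 1.0, "B": 2.0, "C": 3.0, "D": 5.0}
y = {taxon: 2 * value for taxon, value in x.items()}
print(pic_slope(tree, x, y))
```

A tree with n tips yields n - 1 contrasts. These, rather than the raw species values, are treated as independent data points.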

Data Presentation: Contrasting Scientific Approaches

Table: Comparison of Scientific Approaches to Trait Explanation

| Aspect | Evidence-Based Approach | Faith/Need-Based Approach ("Just-So Story") |
|---|---|---|
| Foundation | Empirical data and testable hypotheses [16]. | Intuitive logic and post-hoc reasoning. |
| Predictions | Generates specific, falsifiable predictions [16]. | Often lacks specific, testable predictions. |
| Methodology | Uses controlled experiments, comparative analysis, and mathematical modeling. | Relies on narrative plausibility. |
| Handling of Contradictory Data | Revises or abandons hypotheses when data contradict predictions. | Tends to ignore or explain away contradictory evidence. |
| Role of Alternative Explanations | Actively seeks and tests alternative explanations (e.g., byproducts, exaptation) [16]. | Dismisses alternatives to maintain the original narrative. |

Technical Support Center

This support center provides resources for troubleshooting common conceptual and methodological issues in research on cultural and genetic evolution. The guidance is framed within the thesis of correcting need-based evolutionary explanations: traits arise through historical pathways and are maintained by selection on their current function, not because organisms need them [18].

Troubleshooting Guide: Conceptual Research Errors

Problem: Interpreting 'Current Utility' as 'Reason for Origin'

- Symptoms: Assuming a trait evolved because of its current function; framing research questions as "How did this trait evolve to meet the need for X?"

- Root Cause: Conflating the reason a trait is maintained in a population (its current function) with the historical reason it originated (which may involve drift, exaptation, or a different ancestral function) [18].

- Resolution Protocol:
  - Hypothesize Separately: Clearly state separate hypotheses for the trait's origin (historical sequence, initial variation) and its maintenance (current selective pressure, function) [18].
  - Test for Historical Pathways: Use comparative phylogenetic methods to determine whether the trait was associated with a different environmental factor or function in the past.
  - Test for Current Function: Design experiments to measure the fitness consequences of the trait in the current environment.

Problem: Misapplying Hypothetico-Deductive Logic to Historical Narratives

- Symptoms: Attempting to force phylogenetic or historical narrative explanations into a purely deductive framework; frustration when unique historical events cannot be predicted by universal laws [18].

- Root Cause: A misunderstanding of the dual nature of evolutionary explanations, which include both nomological-deductive (law-based) and historical-narrative (sequence-based) components [18].

- Resolution Protocol:
  - Causal Reasoning Check: Use the Top-Down or Follow-the-Path approach to trace the causal chain of events [19].
  - Narrative Construction: Build a coherent historical narrative that explains the specific sequence of events leading to the trait's evolution. This narrative should be consistent with known laws of biology but is not solely deduced from them [18].
  - Consilience Test: Gather evidence from multiple independent lines (genetics, archaeology, paleontology) to assess the coherence and robustness of the proposed historical narrative [18].

Frequently Asked Questions (FAQs)

Q1: What is the core theoretical error in 'need-based' evolutionary explanations? A1: The core error is teleology—the implication that future needs can cause past evolutionary changes. Evolution lacks foresight; traits are shaped by past and present selective pressures acting on available variation, not by what an organism 'needs' for future survival [18].

Q2: How can I operationally distinguish between a trait's origin and its current maintenance in my experimental design? A2: Design experiments that can dissociate the two. For example, if studying a cultural practice, investigate whether it provides a current fitness benefit (maintenance), while using archaeological or ethnographic data to trace its initial emergence (origin), which may have occurred in contexts unrelated to its current function [18].

Q3: Our research group is experiencing internal debates about the primacy of genetic vs. cultural adaptation. How can we structure this investigation? A3: Structure your investigation by dividing the research problem into more specialized teams, similar to how IT departments divide teams to handle specific domains like infrastructure versus user-facing tools [20]. One team could focus on quantifying the speed and dynamics of cultural transmission, while another analyzes the genomic data for signatures of recent selection. This allows for deeper expertise in each domain before synthesis.

Q4: Where can I find detailed protocols for standard experiments in gene-culture coevolution? A4: As an analog for structuring rigorous, phased research in human subjects, consider the FDA's guidelines for the stages of drug development [21]. Key phases include:

- Phase 1: Initial introduction of a concept or technology to a small group to determine basic parameters and safety (e.g., pilot studies) [21].

- Phase 2: Controlled studies on a larger, targeted group to obtain preliminary data on effectiveness and identify constraints [21].

- Phase 3: Expanded studies to gather comprehensive information on effectiveness, safety, and the overall benefit-risk relationship [21].

Experimental Protocol: Isolating Cultural vs. Genetic Adaptation

Objective: To determine whether an observed adaptive trait in a human population is primarily driven by cultural evolution or by genetic adaptation.


Methodology:

Phase 1: Phenotype Characterization

- Procedure: Systematically document the variation of the trait (e.g., lactase persistence, specific tool-making technique) within and between populations. Conduct detailed ethnographic interviews and behavioral observations to precisely define the phenotype.

- Data Analysis: Perform quantitative analyses to correlate the trait's presence or degree of expression with relevant environmental variables (e.g., subsistence strategy, pathogen load, historical climate data).

Phase 2: Heritability & Transmission Analysis

- Procedure:
  - Genetic Component: Collect DNA samples from a pedigree or a case-control cohort. Estimate the trait's heritability (from resemblance between relatives or, in unrelated cohorts, from genome-wide SNP data), and conduct a Genome-Wide Association Study (GWAS) to identify candidate loci.
  - Cultural Component: Use social network analysis and transmission chain experiments in the field. Apply the genealogical method to test whether trait adoption is better predicted by kinship (consistent with genetic inheritance, although parent-to-offspring cultural transmission produces the same pattern) or by social learning from unrelated experts (suggesting culture).

- Data Analysis: Compare the strength of genetic heritability (h²) versus cultural transmission pathways. Model the rate of trait spread; a rate faster than possible through genetic inheritance alone is indicative of cultural evolution.

Phase 3: Fitness Consequence Measurement

- Procedure: Design longitudinal studies or use historical data to measure the impact of the trait on surrogate fitness measures (e.g., health outcomes, reproductive success, wealth accumulation, social status). Compare individuals with and without the trait while controlling for confounding variables.

- Data Analysis: Calculate the selection differential or perform a structural equation model to quantify the trait's effect on fitness components.
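For a single trait, the selection differential can be computed with the Robertson-Price identity, S = cov(z, w) / mean(w), where z is the trait value and w a surrogate fitness measure such as reproductive success. The Python sketch below (standard library; function name and data hypothetical) does only that arithmetic. It does not adjust for confounding variables, which the protocol requires; a regression-based selection gradient or the structural equation model mentioned above would be needed for that.

```python
from statistics import fmean


def selection_differential(trait, fitness):
    """Selection differential S = cov(z, w) / mean(w).

    Equivalent to the fitness-weighted trait mean minus the unweighted mean.
    Uses the population (not sample) covariance, as in the Price identity.
    """
    if len(trait) != len(fitness) or not trait:
        raise ValueError("trait and fitness must be non-empty and equal length")
    w_bar = fmean(fitness)
    if w_bar <= 0:
        raise ValueError("mean fitness must be positive")
    z_bar = fmean(trait)
    cov_zw = fmean((z - z_bar) * (w - w_bar) for z, w in zip(trait, fitness))
    return cov_zw / w_bar


# Hypothetical trait scores and offspring counts for three individuals
print(selection_differential([1.0, 2.0, 3.0], [0.0, 1.0, 2.0]))  # about 0.667
```

A positive S means individuals with higher trait values had higher surrogate fitness in this sample; it says nothing by itself about whether the trait is transmitted genetically or culturally.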

Research Reagent Solutions

The table below details key reagents and materials for conducting research in this field.

| Item/Technique | Function in Research |
|---|---|
| Genome-wide genotyping arrays (for GWAS) | Genotype variants across the genome so that a genome-wide association study (GWAS) can identify variants associated with a trait, estimate SNP-based heritability, and test for signatures of recent natural selection [21]. |
| Social Network Analysis Software | Maps and quantifies the pathways of cultural transmission, distinguishing vertical (parent-offspring), horizontal (peer), and oblique (non-parental elder) learning. |
| Structured Ethnographic Interview Protocols | Systematically characterizes a cultural trait, its variation, and the local explanations for its use, providing crucial data for hypothesis generation about function. |
| Institutional Review Board (IRB) Protocol | Ensures the ethical conduct of research involving human subjects, protecting their rights and welfare, and is required for clinical investigations [21]. |
| Informed Consent Documents | Obtains legally effective permission from human subjects after disclosing the risks and benefits of the research, a mandatory requirement for studies governed by FDA-like regulations [21]. |

A Toolkit for Rigor: Methodologies for Accurate Evolutionary Analysis in Biomedicine

Implementing 'Descent with Modification' as an Operational Framework

Frequently Asked Questions (FAQs)

Q1: What is the core operational definition of 'descent with modification' in an experimental context? A1: In experimental terms, 'descent with modification' occurs when there is a measurable change in heritable information within a population over multiple generations. This is distinct from temporary, non-heritable changes caused by environmental factors. The key is to track shifts in allele (genotype) frequencies, not just phenotypic changes [22].

Q2: How can I distinguish between a true evolutionary change and a non-heritable adaptation in my cell culture or microbial population? A2: Implement a common garden experiment. Grow the evolved population and the ancestral population side by side in the same neutral or original environment for multiple generations. If the altered trait (e.g., resistance, growth rate) persists in the evolved population, it suggests a heritable, evolutionary change. If the trait reverts to the ancestral state, the change was likely a non-heritable, physiological adaptation [22].

Q3: What is a "need-based" evolutionary explanation and why is it problematic for research? A3: A "need-based" explanation, or teleological reasoning, is the incorrect assumption that organisms evolve traits because they need them for survival. This is a cognitive bias that misrepresents the mechanism of evolution, which is based on random variation and non-random selection. In research, this leads to flawed experimental design and erroneous conclusions about adaptive mechanisms [10].

Q4: How can the 'descent with modification' framework improve high-throughput screening in drug discovery? A4: This framework views compound libraries as populations of variants undergoing selection. By analyzing the 'lineage' of successful drug candidates (e.g., second-generation molecules derived from a first-generation lead), researchers can identify which 'modifications' (chemical alterations) consistently lead to improved efficacy or safety, thereby optimizing the screening process for subsequent generations of therapeutics [23].

Troubleshooting Guides

Problem: Inconclusive Results on Whether a Trait is Heritable

| Symptoms | Possible Causes | Diagnostic Steps | Solutions |
|---|---|---|---|
| Trait variation observed, but pattern is erratic across generations [22]. | Non-heritable environmental influence; high mutation rate; lateral gene transfer [24]. | 1. Conduct genomic sequencing to confirm vertical inheritance. 2. Perform control experiments in a constant environment. | Isolate genetic lineage; use controlled, stable environmental conditions for assays. |
| Observed trait does not follow expected Mendelian or population genetics patterns. | Complex polygenic traits; epigenetic factors; the presence of essentialist thinking in experimental design [10]. | 1. Perform quantitative trait locus (QTL) analysis. 2. Check for epigenetic markers (e.g., methylation). | Shift experimental model to account for multi-gene traits; incorporate epigenetic screening. |

Problem: Prevalence of "Need-Based" Reasoning in Experimental Design

| Symptoms | Possible Causes | Diagnostic Steps | Solutions |
|---|---|---|---|
| Hypotheses are framed as "Organism X evolved trait Y to survive stress Z." | Deep-seated cognitive essentialism and teleological biases among researchers [10]. | Review hypothesis language for forward-looking, goal-oriented wording. | Reframe hypotheses in terms of existing variation and selective pressure: "Did pre-existing variation in trait Y confer a survival advantage under stress Z?" |
| Experiments lack proper controls for existing genetic variation and assume all individuals are identical. | Essentialist view of species, ignoring within-population variation [10]. | Analyze baseline genetic and phenotypic diversity in the starting population before applying selective pressure. | Characterize population variation at the start of any selection experiment. Use diverse, outbred populations when possible. |

Experimental Protocols

Protocol 1: Quantifying Descent with Modification in a Microbial Population

Objective: To measure the change in allele frequency of a drug-resistance gene in bacteria over multiple generations under selective pressure.

Materials:

- Wild-type bacterial culture

- Antibiotic stock solution

- Liquid growth medium

- Sterile flasks and plates

- PCR kit and specific primers for the resistance gene

- qPCR machine or equipment for gel electrophoresis

Methodology:

- Baseline Measurement: Extract genomic DNA from the initial bacterial population. Use PCR/qPCR to determine the initial frequency of the drug-resistance allele.

- Application of Selective Pressure: Divide the culture into two flasks: a control flask (no antibiotic) and an experimental flask (with a sub-lethal concentration of antibiotic).

- Serial Passaging: Allow both cultures to grow for a set period (e.g., 24 hours). Each day, transfer a small aliquot of each culture to fresh medium with the same conditions (with/without antibiotic). Repeat for at least 10-15 passages.

- Monitoring Evolution: Every 3-5 passages, sample the populations from both flasks. Extract DNA and quantify the frequency of the resistance allele using PCR/qPCR.

- Data Analysis: Plot the frequency of the resistance allele over time (generations). A significant increase in frequency in the experimental flask, but not the control, demonstrates descent with modification via natural selection.

Protocol 2: Correcting for Teleological Bias in Adaptation Assays

Objective: To design an experiment that tests whether a beneficial trait arises from pre-existing random variation or from "need-induced" mutation.

Materials:

- Clonal population of cells (e.g., yeast, bacteria) to minimize initial variation.

- Selective agent (e.g., toxin, high salt, temperature).

- Replica plating equipment or cell sorter.

Methodology:

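- Replicate Populations: From the same clonal starter culture, establish hundreds of independent replicate populations in microplates, each founded from a small inoculum so that resistant mutants are unlikely to be present at the start.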

- Simultaneous Challenge: Expose all populations to the identical selective pressure at the same time.

- Independent Tracking: Monitor the survival and growth of each population independently.

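- Analysis:
  - If the number of resistant survivors varies far more between populations than a Poisson distribution predicts (variance much greater than the mean, with occasional "jackpot" populations), resistance mutations arose at random before exposure. This supports the 'descent with modification' model (random mutation followed by selection) and is the logic of the Luria-Delbrück fluctuation test.
  - If resistance appears at similar levels in all populations, with a variance close to the mean as expected for a Poisson process, the result would be consistent with a need-based, directed response (which is biologically implausible for most traits). This design directly tests the core principle that variation arises before selection, countering teleological assumptions.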
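The replicate-population design above closely resembles the classic Luria-Delbrück fluctuation test, whose readout is the between-population variance-to-mean ratio of resistant counts. Mutations that arise at random before exposure produce rare early "jackpots" and a variance far above the mean, whereas resistance induced only on exposure gives Poisson counts with a variance close to the mean. The Python simulation below (standard library only) contrasts the two models; the mutation rate, number of generations and number of cultures are arbitrary illustrative values, not parameters from the source.

```python
import math
import random
from statistics import fmean, variance


def poisson(lam, rng):
    """Knuth's Poisson sampler; adequate for the modest means used here."""
    if lam > 500:
        raise ValueError("mean too large for this simple sampler")
    threshold, k, prod = math.exp(-lam), 0, rng.random()
    while prod > threshold:
        k += 1
        prod *= rng.random()
    return k


def preexisting_mutants(generations, mu, rng):
    """Grow one culture from a single sensitive cell. Resistance mutations
    arise at random during division, before any selective agent is added."""
    sensitive, resistant = 1, 0
    for _ in range(generations):
        new = min(poisson(sensitive * mu, rng), sensitive)
        resistant = 2 * resistant + new  # earlier mutant clones keep doubling
        sensitive = 2 * sensitive - new
    return resistant


def variance_to_mean(counts):
    m = fmean(counts)
    if m == 0:
        raise ValueError("no resistant cells; raise mu or generations")
    return variance(counts) / m


rng = random.Random(1)
random_model = [preexisting_mutants(16, 1e-4, rng) for _ in range(200)]
# Directed ("need-induced") model: cells become resistant only on exposure,
# independently of one another, giving Poisson counts with the same mean.
mean_count = fmean(random_model)
induced_model = [poisson(mean_count, rng) for _ in range(200)]
print(variance_to_mean(random_model), variance_to_mean(induced_model))
```

With these settings the random-mutation model typically yields a ratio many times larger than 1, while the induced model stays near 1; real data are usually compared against the Luria-Delbrück distribution with dedicated estimators rather than this raw ratio.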

Key Signaling Pathways, Workflows & Logical Relationships

Descent with Modification Core Workflow

Heritable vs. Non-Heritable Change Diagnosis

Research Reagent Solutions

| Reagent / Material | Function in Experimental Framework |
|---|---|
| Clonal Cell Population | Provides a genetically uniform starting point to ensure that any new variation arises during the experiment, not from pre-existing differences [22]. |
| Selective Agents (e.g., Antibiotics) | Applies a well-defined selective pressure to the population, directly driving the 'modification' aspect of the framework by favoring beneficial mutations [23]. |
| DNA Sequencing Kits | Allows for the direct measurement of 'heritable information' change by quantifying allele frequencies and identifying specific mutations across generations [22]. |
| Environmental Control Chambers | Isolates the effect of genetic evolution from non-heritable phenotypic plasticity by maintaining constant, controlled conditions for control populations [22]. |
| High-Throughput Screening Assays | Enables the tracking of 'descent' lineages by rapidly testing the performance and relatedness of thousands of chemical or biological variants, as used in drug discovery [25] [23]. |

Leveraging Deep Mutational Scanning to Quantify Fitness Landscapes

Frequently Asked Questions

Q1: What is the core principle behind Deep Mutational Scanning (DMS)? DMS is a high-throughput technique that systematically maps genetic variation to phenotypic variation [26]. It involves creating a comprehensive mutant library, subjecting it to a high-throughput phenotyping assay, and using deep sequencing to measure each variant's frequency before and after selection, from which its fitness or functional effect is inferred [26].

Q2: My DMS data is noisy. What are common sources of error and how can I mitigate them? Noise often arises from biased mutant library generation or bottlenecks during selection. To mitigate this:

- Library Generation: Use oligo pool synthesis with NNK/NNS codons instead of error-prone PCR to ensure more uniform coverage of all possible amino acid substitutions and reduce sequence bias [26].

- Selection Bottlenecks: Ensure a high library coverage (typically >1000x) at every step to prevent the random loss of low-frequency variants [26].

- Data Standards: Adopt common data standards and metadata schemas, such as those found on repositories like FAIRsharing.org, to improve data quality and interoperability, which aids in cross-dataset comparison and error checking [27].
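A quick way to reason about bottlenecks is the Poisson approximation: if a library of L equally abundant variants is sampled with N cells, the expected fraction of variants lost is exp(-N/L). The Python sketch below (standard library; function names and library dimensions hypothetical) applies it. Real libraries are unevenly represented, so this estimate is optimistic, which is one reason to work at the much higher coverage recommended above.

```python
import math


def expected_fraction_lost(library_size, cells_sampled):
    """Expected fraction of variants absent after a bottleneck, assuming all
    variants are equally abundant and sampling is Poisson."""
    if library_size <= 0 or cells_sampled < 0:
        raise ValueError("library_size must be positive, cells_sampled non-negative")
    return math.exp(-cells_sampled / library_size)


def cells_needed(library_size, max_fraction_lost):
    """Smallest bottleneck size keeping the expected loss at or below the target."""
    if not 0.0 < max_fraction_lost < 1.0:
        raise ValueError("max_fraction_lost must be between 0 and 1")
    return math.ceil(library_size * -math.log(max_fraction_lost))


# Hypothetical library: 150 positions x 32 NNK codons
library = 150 * 32
print(expected_fraction_lost(library, 10 * library))  # about 4.5e-05
print(cells_needed(library, 0.01))  # 22105, i.e. about 4.6 cells per variant
```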

Q3: How can I study epistasis (genetic interactions) using DMS? DMS is powerful for revealing epistasis. By analyzing how the fitness effect of one mutation changes depending on the genetic background (presence of other mutations), you can infer genetic interactions. Machine learning approaches can be applied to the fitness landscape data to deconvolute these background-dependent effects [28].
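The background dependence described in Q3 is often summarized per pair of mutations. One common convention, assumed here rather than taken from the source, scores epistasis on a log scale against a multiplicative null model: ε = ln(w_AB/w_WT) - ln(w_A/w_WT) - ln(w_B/w_WT). The Python sketch below (standard library; fitness values hypothetical) shows only this pairwise score, not the machine-learning deconvolution cited above.

```python
import math


def log_epistasis(w_wt, w_a, w_b, w_ab):
    """Pairwise epistasis on a log (multiplicative) scale.

    Each fitness is expressed relative to wild type. A value of 0 means the
    double mutant is as fit as independent multiplicative effects of A and B
    predict; a nonzero value means the effect of one mutation depends on
    whether the other is present.
    """
    if min(w_wt, w_a, w_b, w_ab) <= 0:
        raise ValueError("fitness values must be positive")
    return (math.log(w_ab / w_wt)
            - math.log(w_a / w_wt)
            - math.log(w_b / w_wt))


# Hypothetical DMS fitness values (wild type = 1.0)
print(log_epistasis(1.0, 0.5, 0.8, 0.4))  # ~0: no epistasis
print(log_epistasis(1.0, 0.5, 0.5, 0.5))  # > 0: double mutant fitter than expected
```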

Q4: Can DMS be applied to study dynamic biomolecules, like RNAs that switch structures? Yes. DMS is particularly valuable for molecules that populate multiple conformational states. The resulting fitness landscape captures the functional constraints across all these states simultaneously. For example, a study on a self-splicing group I intron showed that fitness was jointly driven by constraints on two alternative RNA helices (P1ex and P10) that form at different stages of splicing [28].

Q5: What are the key considerations when choosing a method for mutant library generation? The table below compares the two primary methods [26]:

| Method | Description | Pros | Cons |
|---|---|---|---|
| Error-Prone PCR | Uses low-fidelity polymerases to introduce random mutations during DNA amplification. | Relatively cheap and easy to perform. | Introduces sequence biases; difficult to achieve all 19 amino acid substitutions per codon. |
| Oligo Pool Synthesis | A pool of oligonucleotides containing defined or degenerate (e.g., NNK) codons is synthesized. | Customizable, less biased, can achieve all single amino acid substitutions. | More costly than error-prone PCR. |

Troubleshooting Guides

Problem: Low Diversity in Mutant Library After Selection

- Potential Cause 1: The selection pressure was too strong, wiping out most variants.

- Solution: Titrate the selection pressure. For antibiotic resistance, use a gradient of concentrations. For fluorescence-activated cell sorting (FACS), use gentler gating strategies.

- Potential Cause 2: The initial transformation efficiency was too low, creating a bottleneck.

- Solution: Optimize transformation protocols and scale up to ensure the library complexity is maintained. Use electrocompetent cells for higher efficiency.

Problem: Poor Correlation Between Biological Replicates

- Potential Cause: Insufficient sequencing depth or technical artifacts during library preparation.

- Solution:
  - Increase Sequencing Depth: Ensure each variant is sequenced with a minimum depth (e.g., 200-500 reads) in each replicate.
  - Normalize Data: Apply robust statistical normalization to account for differences in library size and sequencing depth between replicates.
  - Control Experiments: Include wild-type and known negative controls in the library to monitor background noise.


Problem: Inability to Interpret Functional Scores for Dynamic Structures

- Potential Cause: The fitness score is a composite of effects from multiple conformational states.

- Solution: Employ a machine learning classifier to deconvolute the fitness landscape. The classifier can be trained to determine whether a mutation's effect is primarily due to its impact on one specific state (e.g., P1ex helix) or another (e.g., P10 helix) based on the pattern of epistasis and the mutation's location [28].

Experimental Protocols

Protocol 1: Generating a Mutant Library via Oligo Pool Synthesis

This protocol is preferred for comprehensive single amino acid substitutions [26].

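- Design Oligos: Design oligonucleotides to replace the target region. Use degenerate NNK or NNS codons at the positions to be mutated, flanked by constant wild-type sequence that carries the restriction sites used for cloning (or overlap regions, if an assembly method such as Gibson assembly is used instead of restriction-ligation).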

- Synthesize & Amplify: Synthesize the oligo pool and amplify it via PCR to create a library of double-stranded DNA gene blocks.

- Clone into Vector: Digest the expression vector and the PCR-amplified gene blocks with the appropriate restriction enzymes. Ligate the gene blocks into the vector backbone.

- Transform and Recover: Transform the ligation mix into a high-efficiency cloning strain (e.g., electrocompetent E. coli). Grow the transformants at large scale to recover the plasmid mutant library.

- Isolate Library: Extract the plasmid library using a maxi-prep kit. Verify library diversity by sequencing a small number of clones.
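Before ordering the oligo pool, it is worth checking what a degenerate codon actually encodes. This Python sketch (standard library; the standard genetic code is assumed) expands NNN, NNK and NNS and reports the number of codons, the amino acids covered and the stop codons. It confirms that NNK and NNS each reach all 20 amino acids with a single stop codon (TAG) in 32 codons, versus three stops in 64 codons for NNN.

```python
from itertools import product

BASES = "TCAG"
# Standard genetic code in TTT, TTC, TTA, TTG, TCT, ... GGG order ("*" = stop)
AMINO_ACIDS = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {"".join(c): aa for c, aa in zip(product(BASES, repeat=3), AMINO_ACIDS)}

# IUPAC degeneracy codes used in saturation mutagenesis
IUPAC = {"N": "ACGT", "K": "GT", "S": "CG"}


def expand(degenerate_codon):
    """List every concrete codon encoded by a degenerate codon such as 'NNK'."""
    choices = [IUPAC.get(base, base) for base in degenerate_codon]
    return ["".join(c) for c in product(*choices)]


def summarize(degenerate_codon):
    """Return (number of codons, number of distinct amino acids, stop codons)."""
    codons = expand(degenerate_codon)
    translated = {CODON_TABLE[c] for c in codons}
    stops = sorted(c for c in codons if CODON_TABLE[c] == "*")
    return len(codons), len(translated - {"*"}), stops


for scheme in ("NNN", "NNK", "NNS"):
    print(scheme, summarize(scheme))
# NNN (64, 20, ['TAA', 'TAG', 'TGA'])
# NNK (32, 20, ['TAG'])
# NNS (32, 20, ['TAG'])
```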

Protocol 2: High-Throughput Phenotyping Using an In Vivo Splicing Reporter Assay

This protocol, adapted from Soo et al. (2021), couples RNA structure function to cellular fitness [28].

- Clone Library into Reporter Vector: Clone the mutant RNA library (e.g., the group I intron) into a reporter vector where successful self-splicing leads to the expression of a selectable marker (e.g., antibiotic resistance gene).

- Transform into Expression Host: Transform the plasmid library into the appropriate host cells (e.g., E. coli).

- Apply Selection: Grow the transformed cells under selective conditions (e.g., with antibiotic). Cells containing functional self-splicing introns will survive and proliferate, while those with non-functional variants will not.

- Harvest Plasmid DNA: Before and after selection, isolate plasmid DNA from a representative sample of the cell population.

- Amplify and Sequence: Amplify the mutant region from the plasmid DNA and subject it to deep sequencing. The enrichment or depletion of each variant is calculated by comparing its frequency before and after selection.
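The enrichment calculation in the last step can be sketched as follows. Scoring each variant relative to wild type is a common convention in DMS analysis but is an assumption here, as is the "WT" key for the wild-type sequence; the pseudocount keeps variants that drop to zero reads finite. Because both samples' total read depths cancel in the wild-type-normalized ratio, raw counts can be used directly.

```python
import math


def enrichment_scores(pre, post, wt_key="WT", pseudocount=0.5):
    """log2 enrichment of each variant relative to wild type.

    score = log2(post_v / pre_v) - log2(post_wt / pre_wt). Normalizing to the
    wild-type ratio cancels differences in total read depth between the
    before- and after-selection samples.
    """
    if wt_key not in pre:
        raise KeyError(f"wild-type key {wt_key!r} missing from pre-selection counts")

    def log_ratio(variant):
        return math.log2((post.get(variant, 0) + pseudocount) / (pre[variant] + pseudocount))

    wt = log_ratio(wt_key)
    return {variant: log_ratio(variant) - wt for variant in pre}


# Hypothetical read counts before and after antibiotic selection
pre = {"WT": 100, "m1": 100, "m2": 100}
post = {"WT": 200, "m1": 400, "m2": 50}
print(enrichment_scores(pre, post, pseudocount=0))
# {'WT': 0.0, 'm1': 1.0, 'm2': -2.0}
```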

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function |
|---|---|
| NNK/NNS Oligo Pool | Defines the mutant library; NNK/NNS provides all amino acids and one stop codon, ensuring comprehensive coverage [26]. |
| High-Fidelity DNA Polymerase | For accurate amplification of the mutant library without introducing additional, spurious mutations during PCR. |
| Electrocompetent Cells | Essential for achieving the high transformation efficiency required to maintain library diversity [26]. |
| Reporter Plasmid | A vector where the gene of interest is placed upstream of a selectable or screenable marker (e.g., antibiotic resistance, GFP), linking molecular function to a measurable phenotype [28]. |
| Selection Agent (e.g., Antibiotic) | Applies the selective pressure that enriches for functional variants during the high-throughput phenotyping step [28]. |

Workflow and Data Analysis Diagrams

Diagram 1: Overall DMS Experimental Workflow.

Diagram 2: Deconvoluting Fitness for Dynamic RNA Structures.

Modeling Antagonistic Pleiotropy and Environmental Change in Experimental Designs

Frequently Asked Questions (FAQs)

1. What is antagonistic pleiotropy in the context of experimental evolution? Antagonistic pleiotropy occurs when a genetic mutation has opposing fitness effects in different environments. This means a mutation that is beneficial in one environment may become deleterious when the environment changes. This phenomenon can hinder the fixation of beneficial mutations in changing environments, which is a key consideration when designing evolution experiments [29].

2. How does environmental change affect the detection of molecular adaptation? Frequent environmental changes can conceal molecular adaptations. In a yeast evolution experiment, the ratio of nonsynonymous to synonymous nucleotide changes (ω) was significantly lower in changing antagonistic environments than in constant ones. This suggests that positive selection may be systematically underestimated in nature, because mutations have antagonistic fitness effects under fluctuating conditions [29].

3. What is an "evolutionary trap" and how can it be applied to drug development? An evolutionary trap leverages antagonistic pleiotropy to target drug resistance in diseases like cancer. Researchers can identify pathways where a genetic adaptation that confers resistance to one drug simultaneously creates hypersensitivity to a second drug. This approach provides a template for therapeutic strategies that selectively target resistant cancer cells [30].

4. What are the key differences between testing in constant versus changing environments? Using constant environments alone may provide an incomplete picture. Incorporating planned environmental changes is crucial to uncover antagonistic pleiotropy. Experimental populations evolved in constant antagonistic environments often showed lower fitness when measured in other antagonistic environments, highlighting the trade-offs that only become apparent under changing conditions [29].

5. How can I troubleshoot high variability or unexpected results in adaptation experiments? Begin by clearly defining the problem and your initial hypothesis. Then, systematically analyze your experimental design, paying close attention to the adequacy of your control groups, sample size, and randomization procedures. Investigate potential external variables such as environmental conditions and biological variability. Implementing detailed Standard Operating Procedures (SOPs) can help reduce variability [31].

Troubleshooting Guides

Problem: Inability to Detect Antagonistic Pleiotropy in Experimental Evolution

Potential Causes and Solutions:

Cause: Environment set is too similar (concordant).

- Solution: Select experimental environments based on evidence of negative genetic correlations. Utilize pre-existing fitness data, if available, to choose conditions where segregant or strain fitness is negatively correlated between any two environments to ensure a sufficiently antagonistic set [29].

Cause: Insufficient frequency of environmental switching.

- Solution: The frequency of environmental switches can impact the fixation of beneficial mutations. Experiment with different switching frequencies within your experimental timeline to determine the optimal rhythm for revealing trade-offs [29].

Cause: Inadequate genomic sequencing and analysis.

- Solution: Perform genome sequencing of evolved populations and the progenitor. Calculate the nonsynonymous to synonymous rate ratio (ω) for populations in both constant and changing environments. A statistically significant lower ω in changing environments supports the presence of antagonistic pleiotropy concealing adaptations [29].

Problem: Failure to Establish an Effective Evolutionary Trap in Cancer Therapy

Potential Causes and Solutions:

Cause: Incomplete mapping of fitness trade-offs.

- Solution: Employ high-throughput functional genomics tools, such as pooled CRISPR-Cas9 knockout screens, in cancer cells treated with your primary drug. This helps map the drug-dependent genetic basis of fitness trade-offs and identify potential antagonistic pleiotropy pathways [30].

Cause: The identified pathway does not create a strong, coincident hypersensitivity.

- Solution: Validate candidate pathways across diverse models (e.g., multiple cell lines and patient-derived xenografts). The goal is to confirm that acquisition of resistance to Drug A through the pathway reliably exposes a strong and targetable hypersensitivity to Drug B [30].

The following table summarizes key quantitative findings from a yeast evolution experiment investigating antagonistic pleiotropy.

Table 1: Summary of Experimental Evolution Outcomes in Different Environments [29]

| Experimental Condition | Mean Fitness in Adapted Environment (vs. Progenitor) | Mean Fitness in Other Environments in the Set (vs. Progenitor) | Fraction of Cases with Fitness < 1 (Antagonism) | Nonsynonymous to Synonymous Rate Ratio (ω) |
|---|---|---|---|---|
| Constant Concordant Environments | 1.096 ± 0.005 | 1.065 ± 0.004 | 8 of 240 (3.3%) | Higher (not significantly different from changing concordant) |
| Constant Antagonistic Environments | 1.174 ± 0.042 | 0.975 ± 0.014 | 124 of 240 (51.7%) | Higher |
| Changing Antagonistic Environments | Not explicitly stated | Not explicitly stated | Not explicitly stated | Significantly lower than in constant antagonistic environments |

Experimental Protocols

Protocol 1: Experimental Evolution in Changing Environments

This protocol is designed to detect antagonistic pleiotropy in a yeast model system, based on the methodology from [29].

Strain and Culture Preparation:

- Initiate all populations from a single, genetically identical haploid progenitor strain (e.g., Saccharomyces cerevisiae).

- Establish a sufficient number of replicate populations (e.g., 12 per condition) to ensure statistical power.

Environmental Regime Design:

- Antagonistic Set: Define a set of five growth conditions (e.g., different carbon sources, stressors, temperatures) where pre-existing data shows negative genetic correlations for fitness.

- Concordant Set: Define a set of five conditions where fitness correlations are positive.

- Constant Control: Maintain separate populations in each of the above conditions constantly.

- Changing Treatment: For changing environments, rotate populations among the five conditions in a set (antagonistic or concordant). Test different frequencies of environmental switches (e.g., every 56 generations, 112 generations, etc.).

Evolution and Maintenance:

- Propagate populations for a defined number of generations (e.g., 1,120) using serial dilution or a similar method in liquid culture or on plates.

- At regular intervals (e.g., every 56 generations), freeze a large sample of each population (large enough to preserve its diversity) to create a "fossil record."

Fitness Assays:

- At the endpoint, measure the fitness of evolved populations relative to the progenitor.

- Measure fitness not only in the environment in which each population evolved (for constant groups) but also in the other four environments of its set, to test for antagonistic pleiotropy.
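The protocol leaves the fitness assay unspecified. One widely used option, assumed here, is a head-to-head competition in which each evolved population is mixed with a marked progenitor strain, and relative fitness is the ratio of their realized log growth over the assay (the formulation used in Lenski's long-term E. coli experiment). Repeating the calculation in each of the other environments of the set gives the "fitness in other environments" values; a value below 1 marks an antagonistic outcome in the sense of Table 1. Function name and densities are hypothetical.

```python
import math


def relative_fitness(evolved_start, evolved_end, ancestor_start, ancestor_end):
    """Relative fitness from a head-to-head competition: ratio of the realized
    log growth of the evolved population to that of the (marked) progenitor."""
    if min(evolved_start, evolved_end, ancestor_start, ancestor_end) <= 0:
        raise ValueError("cell densities must be positive")
    ancestor_growth = math.log(ancestor_end / ancestor_start)
    if ancestor_growth == 0:
        raise ValueError("progenitor did not change in density; fitness undefined")
    return math.log(evolved_end / evolved_start) / ancestor_growth


# Hypothetical densities (cells/mL) at the start and end of one competition
w = relative_fitness(1e5, 1e5 * 2**11, 1e5, 1e5 * 2**10)
print(round(w, 3))  # 1.1: evolved line completed 11 doublings vs 10 for the progenitor
```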

Genomic Analysis:

- Sequence the genomes of the progenitor and all endpoint populations to a high coverage (e.g., 100x).

- Identify single nucleotide variants (SNVs) and indels with a frequency above a set threshold (e.g., 0.1).

- Calculate the nonsynonymous to synonymous rate ratio (ω) for each population and compare between constant and changing environments.
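Once mutations are classified, ω is a simple ratio of per-site change rates. In the Python sketch below, the numbers of nonsynonymous and synonymous sites are assumed to have been counted beforehand (for example with a Nei-Gojobori-style method), and no multiple-hit correction is applied, on the assumption that divergence over about 1,000 generations is very low. Synonymous changes are often rare in such experiments, so the example pools counts across replicate populations of one regime; both the pooling and the numbers are illustrative.

```python
def omega(nonsyn_changes, syn_changes, nonsyn_sites, syn_sites):
    """Ratio of per-site nonsynonymous to per-site synonymous change (pN/pS).

    No multiple-hit correction is applied; site counts are assumed to be
    precomputed for the genome.
    """
    if min(nonsyn_sites, syn_sites) <= 0:
        raise ValueError("site counts must be positive")
    if syn_changes == 0:
        raise ValueError("no synonymous changes; pool populations or report as undefined")
    return (nonsyn_changes / nonsyn_sites) / (syn_changes / syn_sites)


# Hypothetical counts pooled over replicate populations in one regime
print(omega(nonsyn_changes=30, syn_changes=5, nonsyn_sites=3000, syn_sites=1000))  # 2.0
```

Values above 1 indicate an excess of amino-acid-changing mutations, the signature of positive selection that the protocol compares between constant and changing regimes.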

Protocol 2: Identifying Drug-Induced Evolutionary Traps in Cancer

This protocol outlines a process for discovering evolutionary traps using antagonistic pleiotropy, based on the approach in [30].

CRISPR-Cas9 Screens:

- Perform a pooled genome-wide CRISPR-Cas9 knockout screen in a relevant cancer cell line (e.g., Acute Myeloid Leukemia cells) treated with your primary chemotherapeutic agent (e.g., a bromodomain inhibitor).

- Identify genes whose knockout confers resistance to the primary drug.
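The core quantity behind "genes whose knockout confers resistance" is the enrichment of their guides in drug-treated versus control cells. The Python sketch below (standard library; guide and gene names hypothetical) computes a gene-level median log2 fold change after normalizing to total reads. It is only an illustration: dedicated screen-analysis tools such as MAGeCK model count variance and guide-level statistics, which this sketch omits.

```python
import math
from collections import defaultdict
from statistics import median


def gene_log2_fold_changes(guide_to_gene, control, treated, pseudocount=0.5):
    """Gene-level median log2 fold change of sgRNA abundance (drug vs. control).

    Counts are normalized to each sample's total reads. A positive value means
    knockouts of that gene became more common under drug, i.e. the gene is a
    candidate whose loss confers resistance.
    """
    control_total, treated_total = sum(control.values()), sum(treated.values())
    if control_total == 0 or treated_total == 0:
        raise ValueError("empty sample")
    per_gene = defaultdict(list)
    for guide, gene in guide_to_gene.items():
        c = (control.get(guide, 0) + pseudocount) / control_total
        t = (treated.get(guide, 0) + pseudocount) / treated_total
        per_gene[gene].append(math.log2(t / c))
    return {gene: median(values) for gene, values in per_gene.items()}


# Hypothetical library: two guides per gene
guides = {"g1": "GENE_A", "g2": "GENE_A", "g3": "GENE_B", "g4": "GENE_B"}
control = {"g1": 100, "g2": 100, "g3": 100, "g4": 100}
treated = {"g1": 400, "g2": 400, "g3": 100, "g4": 100}
lfc = gene_log2_fold_changes(guides, control, treated)
print(sorted(lfc, key=lfc.get, reverse=True))  # ['GENE_A', 'GENE_B']
```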

Validation of Hits:

- Select candidate genes from the screen for further validation, for example genes that converge on a shared regulatory axis (e.g., a PRC2-NSD2/3-mediated MYC axis).

- Create isogenic cell lines with knockouts or knockdowns of the candidate genes.

Fitness Trade-off Testing:

- Test the resistance of the validated knockout lines to the primary drug to confirm the screen result.

- In parallel, test the sensitivity of these same lines to a panel of candidate secondary drugs (e.g., the BCL-2 inhibitor venetoclax).

- The goal is to identify a secondary drug to which the resistant cells show heightened sensitivity (hypersensitivity).
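Resistance and hypersensitivity are usually quantified by summarizing dose-response data as an IC50 and comparing knockout and wild-type lines. The sketch below substitutes a simple interpolation on log10(dose) for the four-parameter logistic (Hill) fit that is more commonly used. The function names are hypothetical, and viability is assumed to be expressed as a fraction of untreated controls.

```python
import math

def ic50(doses, viability):
    """Dose giving 50% viability, by linear interpolation on log10(dose).

    doses must be positive and ascending; viability is a fraction of the
    untreated control (1.0 = no effect). Returns None if 50% is never crossed.
    """
    if any(d <= 0 for d in doses):
        raise ValueError("doses must be positive for log interpolation")
    points = list(zip(doses, viability))
    for (d1, v1), (d2, v2) in zip(points, points[1:]):
        if v1 >= 0.5 >= v2 and v1 != v2:
            frac = (v1 - 0.5) / (v1 - v2)  # position of 50% between the two doses
            log_d = math.log10(d1) + frac * (math.log10(d2) - math.log10(d1))
            return 10 ** log_d
    return None

def fold_change(ic50_mutant, ic50_wildtype):
    """Above 1: the mutant tolerates more drug; below 1: it is hypersensitive."""
    return ic50_mutant / ic50_wildtype
```

A fold change above 1 for the primary drug confirms the screen result. A fold change below 1 for a secondary drug is the signature of the evolutionary trap being sought.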

In Vivo Validation:

- Validate the discovered evolutionary trap in vivo using patient-derived xenograft (PDX) models.

- Establish tumors from both wild-type and drug-resistant (knockout) cell lines.

- Treat the animals with the secondary drug to confirm that tumors which evolved resistance to the primary drug are now vulnerable to the secondary drug.

Signaling Pathways and Workflows

[Figures: Evolutionary Trap Pathway; Experimental Evolution Workflow]

Research Reagent Solutions

Table 2: Essential Materials for Key Experiments

| Reagent / Material | Function in Experiment | Example Application |
|---|---|---|
| Defined Media & Stressors | To create the selective antagonistic/concordant environmental sets for microbial evolution. | Creating different growth conditions for yeast evolution (e.g., varying carbon sources, salt, pH, drugs) [29]. |
| Pooled CRISPR-Cas9 Library | To perform genome-wide knockout screens to identify genes involved in drug resistance and fitness trade-offs. | Identifying the PRC2-NSD2/3-MYC axis as a source of antagonistic pleiotropy in leukemia cells [30]. |
| Next-Generation Sequencing Kits | For whole-genome sequencing of evolved populations to identify mutations and calculate ω. | Sequencing the yeast progenitor and endpoint populations to detect SNVs and indels [29]. |
| Patient-Derived Xenograft (PDX) Models | For in vivo validation of evolutionary traps in a clinically relevant model system. | Confirming that bromodomain-inhibitor resistant AML cells are hypersensitive to BCL-2 inhibition in vivo [30]. |
| Flow Cytometer with Cell Staining | To determine ploidy and analyze cell population dynamics during evolution. | Using SYTOX Green staining to monitor haploid vs. diploid transitions in evolving yeast populations [29]. |

Incorporating Phylogenetic Comparative Methods to Distinguish History from Adaptation

Conceptual Framework: Distinguishing Adaptation from Phylogenetic History

What is the fundamental challenge in distinguishing adaptation from history?

Evolutionary patterns observed across species result from two primary forces: adaptive evolution (responses to selective pressures) and phylogenetic history (descent from common ancestors). Phylogenetic comparative methods (PCMs) provide statistical tools to separate these forces by accounting for non-independence of species data due to shared ancestry. Without such correction, traits correlated due to common ancestry can be misinterpreted as adaptive correlations [32].

How does phylogenetic independent contrast (PIC) address this challenge?

PIC transforms trait data into evolutionary contrasts at each node in the phylogeny, effectively converting non-independent species data into independent data points for statistical analysis. When a correlation between two traits disappears after PIC analysis, it indicates the relationship was likely driven by shared phylogenetic history rather than adaptive evolution [32].
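For reference, Felsenstein's (1985) original algorithm works from the tips toward the root. At each step it takes two sister nodes i and j, with trait values x_i and x_j and branch lengths v_i and v_j, and computes their standardized contrast u_ij. It then assigns their parent k an estimated value x_k and lengthens k's own branch v_k to v_k' to reflect the uncertainty in that estimate:

```latex
\begin{aligned}
u_{ij} &= \frac{x_i - x_j}{\sqrt{v_i + v_j}} \\
x_k    &= \frac{x_i / v_i + x_j / v_j}{1 / v_i + 1 / v_j} \\
v_k'   &= v_k + \frac{v_i \, v_j}{v_i + v_j}
\end{aligned}
```

A fully bifurcating tree with n tips yields n - 1 such contrasts.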

Table: Interpretation of Correlation Results With and Without PIC Analysis

| Analysis Type | Correlation Result | Biological Interpretation |
|---|---|---|
| Standard correlation (without PIC) | Significant | Cannot distinguish adaptation from phylogenetic inertia |
| Phylogenetic independent contrasts | Significant | Evidence for correlated evolution independent of shared history; consistent with, but not proof of, adaptation |
| Phylogenetic independent contrasts | Not significant (after a significant standard correlation) | Relationship likely due to shared phylogenetic history, not adaptation |

What theoretical gaps exist in current evolutionary frameworks?

The Extended Evolutionary Synthesis (EES) highlights explanatory gaps in Standard Evolutionary Theory (SET), particularly regarding how to incorporate non-genetic inheritance, niche construction, and developmental plasticity as evolutionary causes rather than merely products. Phylogenetic methods must account for these processes when testing adaptation hypotheses [33].

Troubleshooting Common Experimental Issues

Why does my correlation disappear after phylogenetic independent contrasts?

Problem: A significant correlation between traits disappears after applying PIC.

Solution: This typically indicates that the apparent relationship was actually driven by phylogenetic inertia (shared history) rather than adaptive evolution. Closely related species share similar traits due to common descent, creating statistical non-independence that inflates correlation estimates. Your PIC analysis has successfully removed this confounding effect [32].

Next Steps:

- Verify your phylogeny is well-supported and includes branch length information

- Confirm trait data is properly normalized and transformed for PIC requirements

- Consider whether sample size provides sufficient statistical power after transformation

How should I interpret perceived acceleration in evolutionary rates over short timescales?

Problem: Evolutionary rates appear faster over shorter phylogenetic timescales.

Solution: Recent research indicates this perceived pattern may be statistical "noise" rather than biological reality. A novel statistical approach shows that time-independent noise creates a misleading hyperbolic pattern, making it seem like evolutionary rates increase over shorter time frames when they actually do not [34].

Experimental Adjustment: Apply methods that account for this statistical artifact before making biological interpretations about rate variation.
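A toy simulation reproduces the artifact. The sketch below assumes a constant true rate and Gaussian measurement noise whose spread does not depend on elapsed time. It estimates the rate as absolute change divided by elapsed time. It illustrates the mechanism only and is not the statistical method of [34]. The function name and parameter values are hypothetical.

```python
import random

def apparent_rates(true_rate, noise_sd, time_spans, reps=2000, seed=1):
    """Mean estimated rate |change| / t for each time span t.

    Simulated change = true_rate * t + Gaussian noise whose spread does not
    depend on t, so short spans are inflated by roughly |noise| / t.
    """
    rng = random.Random(seed)
    means = {}
    for t in time_spans:
        total = 0.0
        for _ in range(reps):
            change = true_rate * t + rng.gauss(0.0, noise_sd)
            total += abs(change) / t
        means[t] = total / reps
    return means

if __name__ == "__main__":
    # Constant true rate of 0.1 units per time unit; noise SD of 1 unit.
    for t, rate in apparent_rates(0.1, 1.0, [1, 2, 5, 10, 50, 100]).items():
        print(f"t = {t:>3}: apparent rate {rate:.3f}")
```

The simulated rate never changes, yet the shortest intervals show the highest apparent rates. The resulting curve falls off roughly as 1/t, which is the hyperbolic pattern described above.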

What are common pitfalls in phylogenetic tree construction and how can I avoid them?

Problem: Phylogenetic trees poorly represent true evolutionary relationships, compromising downstream comparative analyses.

Solutions:

- Model Selection: Use automated tools like ete-build to test multiple substitution models and select the best fit using likelihood ratio tests or AIC/BIC criteria [35]

- Data Quality: Implement alignment trimming and quality assessment pipelines

- Support Values: Always include bootstrap support or posterior probabilities for nodes

- Visualization: Use tools like ETE Toolkit to identify and troubleshoot problematic regions [35]
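For the model-selection step, AIC and BIC can be computed directly from each fitted model's maximized log-likelihood. The sketch below assumes you already have those log-likelihoods from your tree-building software. The model names, parameter counts, and log-likelihood values in the usage lines are hypothetical. Models fitted on the same topology share their branch-length parameters. Leaving those parameters out of k therefore shifts every score by the same amount and does not change the ranking.

```python
import math

def aic(log_likelihood, k):
    """Akaike information criterion: 2k - 2 lnL."""
    return 2 * k - 2 * log_likelihood

def bic(log_likelihood, k, n_sites):
    """Bayesian information criterion: k ln(n) - 2 lnL, with n = alignment sites."""
    return k * math.log(n_sites) - 2 * log_likelihood

def rank_models(fits, n_sites, criterion="AIC"):
    """Sort (name, lnL, k) tuples from best (lowest score) to worst."""
    if criterion not in ("AIC", "BIC"):
        raise ValueError("criterion must be 'AIC' or 'BIC'")

    def score(fit):
        _, lnl, k = fit
        return aic(lnl, k) if criterion == "AIC" else bic(lnl, k, n_sites)

    return sorted(fits, key=score)

if __name__ == "__main__":
    # Hypothetical log-likelihoods; k counts free substitution-model parameters only.
    fits = [("JC69", -5200.0, 0), ("HKY85", -5100.0, 4), ("GTR", -5098.0, 8)]
    for crit in ("AIC", "BIC"):
        print(crit, [name for name, _, _ in rank_models(fits, n_sites=1000, criterion=crit)])
```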

Essential Experimental Protocols

Protocol: Basic Phylogenetic Independent Contrasts Analysis

Purpose: Test trait correlations while accounting for phylogenetic non-independence.

Workflow:

- Input Data Preparation: Obtain or estimate a phylogeny with branch lengths for your taxa

- Trait Data Collection: Compile continuous trait measurements for all species

- Data Transformation: Log-transform traits if necessary to meet assumptions

- PIC Calculation: Compute independent contrasts for each trait at all phylogenetic nodes

- Regression Analysis: Test the correlation between contrasts using regression through the origin

- Interpretation: Compare results with non-phylogenetic analysis

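Validation: Check that the absolute values of the standardized contrasts are not correlated with their standard deviations (the square roots of the summed branch lengths). A significant relationship suggests that the branch lengths or the trait transformation need adjusting [32].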
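The PIC protocol can be sketched in pure Python for small, fully bifurcating trees. This minimal illustration rests on stated assumptions: the nested-tuple tree format is hypothetical, and all tip branch lengths must be positive. For real analyses, prefer established implementations such as `pic()` in the R package ape. The sketch computes contrasts for both traits in the same traversal order, so their signs match node by node. Regression through the origin depends on that matching.

```python
import math

# Hypothetical tree format: a node is (content, branch_length), where content
# is a species name (tip) or a (left, right) pair of child nodes.

def independent_contrasts(node, traits):
    """Return (node_value, adjusted_branch_length, [(contrast, sd), ...]).

    Felsenstein's (1985) algorithm for a fully bifurcating tree.
    """
    content, length = node
    if isinstance(content, str):  # tip: observed value, original branch length
        return traits[content], length, []
    left, right = content
    x_i, v_i, c_i = independent_contrasts(left, traits)
    x_j, v_j, c_j = independent_contrasts(right, traits)
    sd = math.sqrt(v_i + v_j)
    contrast = (x_i - x_j) / sd                          # standardized contrast
    x_k = (x_i / v_i + x_j / v_j) / (1 / v_i + 1 / v_j)  # weighted ancestral estimate
    v_k = length + v_i * v_j / (v_i + v_j)               # branch lengthened for uncertainty
    return x_k, v_k, c_i + c_j + [(contrast, sd)]

def slope_through_origin(x, y):
    """Least-squares slope of y on x with the intercept fixed at zero."""
    return sum(a * b for a, b in zip(x, y)) / sum(a * a for a in x)

def pearson(x, y):
    """Pearson correlation, used here for the |contrast| vs. sd diagnostic."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

if __name__ == "__main__":
    ab = ((("A", 1.0), ("B", 1.0)), 1.0)
    cd = ((("C", 1.0), ("D", 1.0)), 1.0)
    tree = ((ab, cd), 0.0)
    trait_x = {"A": 1.0, "B": 3.0, "C": 5.0, "D": 9.0}  # hypothetical log-scale traits
    trait_y = {"A": 0.8, "B": 2.1, "C": 3.9, "D": 6.2}
    _, _, cx = independent_contrasts(tree, trait_x)
    _, _, cy = independent_contrasts(tree, trait_y)
    x = [c for c, _ in cx]
    y = [c for c, _ in cy]
    print("slope:", slope_through_origin(x, y))
    print("diagnostic r:", pearson([abs(c) for c, _ in cx], [sd for _, sd in cx]))
```

With only a handful of tips, the diagnostic correlation has almost no power. It is included to show the mechanics, not as a meaningful test.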

Protocol: Testing Evolutionary Models with ETE Toolkit

Purpose: Identify patterns of natural selection acting on molecular sequences.

Implementation:

Interpretation: Use the built-in likelihood ratio tests to compare fitted models and identify the best-fitting evolutionary scenario [35].
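The likelihood ratio test behind these comparisons is easy to reproduce, which helps when checking reported results. The sketch below computes the statistic 2(lnL_alt - lnL_null) and its chi-square p-value. It uses exact closed forms for 1 degree of freedom and for even degrees of freedom. That covers common CodeML site-model pairs such as M1a vs. M2a and M7 vs. M8, each tested with 2 degrees of freedom. Function names are hypothetical, and the log-likelihoods would come from your fitted models.

```python
import math

def chi2_sf(x, df):
    """Upper-tail probability of a chi-square distribution.

    Exact closed forms: df == 1 via erfc, even df via a finite Poisson sum.
    Odd df greater than 1 is not handled in this sketch.
    """
    if x <= 0:
        return 1.0
    if df == 1:
        return math.erfc(math.sqrt(x / 2))
    if df % 2 == 0:
        half = x / 2
        term, total = 1.0, 1.0
        for i in range(1, df // 2):
            term *= half / i  # next term of the Poisson sum, (x/2)^i / i!
            total += term
        return math.exp(-half) * total
    raise NotImplementedError("odd df > 1 is not supported in this sketch")

def likelihood_ratio_test(lnl_null, lnl_alt, df):
    """Return the LRT statistic 2*(lnL_alt - lnL_null) and its p-value."""
    stat = 2 * (lnl_alt - lnl_null)
    return stat, chi2_sf(stat, df)

if __name__ == "__main__":
    # Hypothetical log-likelihoods for a nested pair such as M7 (null) vs. M8.
    print(likelihood_ratio_test(-2410.7, -2404.2, df=2))
```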

Research Reagent Solutions

Table: Essential Tools for Phylogenetic Comparative Methods

| Tool/Resource | Primary Function | Application Context | Key Features |
|---|---|---|---|
| ETE Toolkit | Phylogenomic analysis pipeline | Tree building, visualization, hypothesis testing | Unified interface for reproducible workflows, multiple sequence alignment, model testing [35] |
| CodeML/PAML | Molecular evolution analysis | Detecting selection, evolutionary rate estimation | Site models, branch models, branch-site models [35] |
| TreeKO | Tree comparison | Comparing gene trees, quantifying differences | Speciation distance, accounts for duplication events, trees of different sizes [35] |
| NCBI Taxonomy | Taxonomic database | Taxonomic standardization, lineage information | Efficient local queries, taxid conversion, lineage tracking [35] |
| Phylogenetic Independent Contrasts | Statistical correction | Accounting for phylogenetic non-independence | Transforms species data into independent contrasts at nodes [32] |

Workflow Visualization

[Figures: Phylogenetic Correction Workflow; Hypothesis Testing Framework; Extended Evolutionary Synthesis Integration]

Applying E-E-A-T Principles to Evaluate Evolutionary Hypotheses

The E-E-A-T framework (Experience, Expertise, Authoritativeness, Trustworthiness) originated in Google's Search Quality Rater Guidelines for evaluating web content. Adapted to science, it offers a structured approach to assessing research quality and credibility in evolutionary biology [36] [37]. It is particularly useful for evaluating need-based evolutionary explanations and for correcting methodological artifacts in evolutionary research [38].

Core Principles and Definitions

- Experience: Direct involvement in evolutionary research methodologies, data collection, and experimental procedures [36] [39]

- Expertise: Demonstrated knowledge of evolutionary theory, statistical methods, and relevant biological disciplines [40]

- Authoritativeness: Recognition from peer researchers, publication in respected journals, and citations by other experts [37] [39]

- Trustworthiness: Research integrity, methodological transparency, data accuracy, and reproducibility [41] [40]

Hypothesis Evaluation Methodology

E-E-A-T Assessment Protocol

Quantitative Assessment Metrics

Table 1: E-E-A-T Scoring Matrix for Evolutionary Hypotheses

| Criterion | Assessment Metrics | Weight | Data Sources |
|---|---|---|---|
| Experience | Years in evolutionary research, direct involvement in data collection, hands-on methodological experience | 25% | Author publications, lab protocols, methods sections |
| Expertise | Advanced degrees, peer-reviewed publications, technical statistical knowledge | 30% | Citation indices, academic credentials, method complexity |
| Authoritativeness | Journal impact factors, citation rates, peer recommendations | 25% | Web of Science, Google Scholar, peer reviews |
| Trustworthiness | Data transparency, methodological rigor, reproducibility rate | 20% | Data availability, code sharing, replication studies |
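Applying Table 1 amounts to taking a weighted average of per-criterion scores. The sketch below takes its weights from the table. The table does not define how each criterion is scored, so the 0-10 scale, the example scores, and the function name are hypothetical assumptions.

```python
# Weights from Table 1. The 0-10 scoring scale is a hypothetical assumption.
WEIGHTS = {
    "Experience": 0.25,
    "Expertise": 0.30,
    "Authoritativeness": 0.25,
    "Trustworthiness": 0.20,
}

def eeat_score(scores, weights=WEIGHTS):
    """Weighted mean of per-criterion scores; every weighted criterion must be scored."""
    missing = sorted(set(weights) - set(scores))
    if missing:
        raise ValueError(f"missing scores for: {missing}")
    total_weight = sum(weights.values())
    return sum(weights[c] * scores[c] for c in weights) / total_weight

if __name__ == "__main__":
    example = {"Experience": 8, "Expertise": 6, "Authoritativeness": 4, "Trustworthiness": 10}
    print(round(eeat_score(example), 2))
```

Dividing by the total weight keeps the score on the input scale even if the weights are later changed and no longer sum to 1.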

Common Research Challenges & Solutions

Troubleshooting Frequent Methodology Issues

Q1: How do we distinguish genuine evolutionary patterns from statistical artifacts?

Challenge: Apparent acceleration of evolutionary rates over short timescales may represent statistical noise rather than biological reality [38].

Solution: Implement the O'Meara-Beaulieu protocol for noise accounting:

- Apply time-independent noise modeling

- Conduct hyperbolic pattern detection

- Use bias-correction statistical methods [38]

Experimental Protocol:

- Collect evolutionary rate data across multiple timescales

- Apply noise-partitioning algorithms

- Test for significance of residual patterns after noise removal

- Validate with bootstrap resampling
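One simple way to approximate the noise-partitioning and bootstrap steps is to regress estimated rates on 1/t. The intercept then estimates a time-independent rate, and the slope absorbs the hyperbolic noise component. The intercept can then be bootstrapped by resampling (t, rate) pairs. This is a simplified stand-in, not the published method of [38], and the function names are hypothetical.

```python
import random

def fit_rate_vs_inverse_time(times, rates):
    """Least-squares fit of rate = a + b / t; needs at least two distinct t.

    a estimates a time-independent rate; b absorbs a time-independent noise
    term that inflates rate estimates over short spans.
    """
    xs = [1.0 / t for t in times]
    n = len(xs)
    mx, my = sum(xs) / n, sum(rates) / n
    sxx = sum((x - mx) ** 2 for x in xs)
    sxy = sum((x - mx) * (y - my) for x, y in zip(xs, rates))
    b = sxy / sxx
    a = my - b * mx
    return a, b

def bootstrap_intercept(times, rates, n_boot=1000, seed=0):
    """Percentile 95% interval for the intercept a, resampling (t, rate) pairs."""
    rng = random.Random(seed)
    pairs = list(zip(times, rates))
    estimates = []
    while len(estimates) < n_boot:
        sample = [rng.choice(pairs) for _ in pairs]
        if len({t for t, _ in sample}) < 2:
            continue  # a line cannot be fitted to a single time span
        estimates.append(fit_rate_vs_inverse_time(*zip(*sample))[0])
    estimates.sort()
    return estimates[int(0.025 * n_boot)], estimates[int(0.975 * n_boot) - 1]
```

Suppose the bootstrap interval for the intercept is tight, and the 1/t term accounts for most of the excess at short timescales. The apparent acceleration is then consistent with noise rather than a genuine change in rate.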

Q2: How should researchers account for non-genetic inheritance in evolutionary models?

Challenge: Standard models typically omit epigenetic, behavioral, and cultural inheritance channels [42].

Solution: Implement extended evolutionary models incorporating:

- Tracking of molecular epigenetic markers

- Analysis of cultural practice transmission

- Quantification of behavioral inheritance [42]

Q3: What methodologies properly address niche construction effects?

Challenge: Organisms modify their environments, creating feedback loops not captured in standard models [42].

Solution: Develop integrated models that:

- Quantify environmental modification rates

- Measure selective feedback strength

- Account for multi-generational niche effects [42]

Advanced Statistical Troubleshooting

Q4: How can we correct for perceived rate increases in younger clades?

Solution: Implement the statistical framework from the PLOS Computational Biology study [38]:

- Use the "perceived increase correction factor"

- Apply time-scale normalization protocols

- Account for measurement variance heterogeneity

Experimental Protocols & Methodologies

Model Error Correction Procedure (MECP)

Application Context: Correcting misspecified evolutionary models that contain both parameter and structural errors [43].

Protocol Steps:

Parameter Error Identification:

- Systematic search for optimal parameter values

- Sensitivity analysis across parameter space

- Cross-validation with independent datasets

Structural Error Correction: