Beyond Selection: How Neutral Emergence Theory is Reshaping Our Understanding of Genetic Code Evolution and Its Biomedical Applications


Abstract

This article explores the Neutral Emergence Theory, a paradigm-shifting concept in molecular evolution that challenges the long-standing assumption that beneficial traits arise primarily through direct natural selection. We examine how complex, optimized systems like the error-minimizing standard genetic code can emerge through non-adaptive, neutral processes. For researchers and drug development professionals, we provide a comprehensive analysis covering the foundational principles of neutral theory, advanced methodologies for studying non-adaptive evolution, challenges in validating these models, and the significant implications for synthetic biology, genetic engineering, and therapeutic development. By synthesizing recent empirical evidence and theoretical advances, this review establishes a framework for understanding evolution beyond adaptive constraints.

Rethinking Molecular Evolution: The Principles and Evidence for Neutral Emergence

The Neutral Theory of Molecular Evolution, introduced by Motoo Kimura in 1968, represents a foundational paradigm shift in evolutionary biology [1] [2]. This theory posits that the majority of evolutionary changes observed at the molecular level are not driven by natural selection but rather by the random genetic drift of mutant alleles that are selectively neutral [1]. The theory applies specifically to molecular evolution and remains compatible with Darwinian natural selection acting at the phenotypic level [1]. Within the broader context of neutral emergence theory in genetic code evolution research, the Neutral Theory provides a critical null hypothesis for distinguishing between stochastic and selective processes in genomic evolution [2] [3]. This framework has proven indispensable for interpreting patterns of molecular divergence and polymorphism across diverse organisms [1] [2].

Historical Development and Theoretical Foundations

Origins and Key Proponents

The conceptual foundations of the Neutral Theory emerged through independent work by researchers in the late 1960s. Motoo Kimura formally introduced the theory in 1968, with King and Jukes independently proposing similar concepts in 1969 [1]. While earlier scientists including Freese and Yoshida had suggested neutral mutations might be widespread, and R.A. Fisher had published mathematical derivations relevant to neutral evolution in 1930, Kimura provided the first coherent theoretical framework [1]. His 1983 monograph, "The Neutral Theory of Molecular Evolution," substantially expanded the evidence and arguments supporting the theory [4].

The development of neutral theory was deeply connected to Haldane's dilemma regarding the "cost of selection," which highlighted mathematical inconsistencies between the observed rate of molecular substitution and what could be reasonably explained by positive selection alone [1]. Kimura leveraged the established principles of population genetics developed by J.B.S. Haldane, R.A. Fisher, and Sewall Wright to create a mathematical approach for analyzing gene frequencies under neutral expectations [1].

Table 1: Key Historical Milestones in Neutral Theory Development

| Year | Event | Key Researchers | Significance |
|---|---|---|---|
| 1930 | Mathematical foundations | R.A. Fisher | Provided initial mathematical derivations for neutral evolution |
| 1968 | Formal theory proposal | Motoo Kimura | Introduced coherent neutral theory of molecular evolution |
| 1969 | Independent proposal | King and Jukes | Offered complementary evidence supporting neutral evolution |
| 1973 | Nearly neutral theory | Tomoko Ohta | Expanded theory to include slightly deleterious mutations |
| 1983 | Comprehensive monograph | Motoo Kimura | Synthesized evidence and arguments for neutral theory |

Core Principles and Mathematical Framework

The Neutral Theory rests on several fundamental principles. First, it holds that most mutations occurring at the molecular level are either deleterious or neutral, with beneficial mutations being sufficiently rare that they contribute little to overall genetic variation [1] [2]. Deleterious mutations are rapidly removed by purifying selection, while neutral mutations persist and may eventually become fixed through random genetic drift [1]. A neutral mutation is formally defined as one that does not affect an organism's ability to survive and reproduce [1].

Kimura's infinite sites model (ISM) provides key mathematical insights into evolutionary rates of mutant alleles [1]. The rate of substitution (K) is given by:

K = 2Nvμ

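where N is the population size, v is the neutral mutation rate, and μ is the probability of fixation [1]. The factor 2N counts the gene copies in a diploid population, so 2Nv new neutral mutations arise each generation. For strictly neutral mutations, every copy is equally likely to become the eventual ancestor of the population, so the probability of fixation is 1/(2N), leading to the elegant prediction that: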

K = v

This demonstrates that under neutral theory, the rate of molecular evolution equals the mutation rate, independent of population size [1] [2]. This relationship provides the mathematical basis for the molecular clock hypothesis, which predated neutral theory but found robust theoretical support in it [1].
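The cancellation of population size in K = v can be checked numerically. The following is a minimal sketch, assuming a simple Wright-Fisher model with 2N gene copies and binomial resampling each generation; the function names, the seed, and the mutation rate value are illustrative choices, not part of the original theory's presentation.

```python
import random


def neutral_fixation_probability(n_diploid, trials, rng):
    """Estimate the fixation probability of one new neutral allele
    by Wright-Fisher simulation with 2N gene copies."""
    copies = 2 * n_diploid
    fixed = 0
    for _ in range(trials):
        count = 1  # a single new mutant copy
        while 0 < count < copies:
            freq = count / copies
            # Binomial sampling of the next generation, one copy at a time.
            count = sum(1 for _ in range(copies) if rng.random() < freq)
        if count == copies:
            fixed += 1
    return fixed / trials


def substitution_rate(n_diploid, v, p_fix):
    """K = 2N * v * p_fix: new mutations per generation times fixation probability."""
    return 2 * n_diploid * v * p_fix


rng = random.Random(1)
v = 1e-8  # hypothetical neutral mutation rate per generation
for n in (5, 10, 20):
    p_sim = neutral_fixation_probability(n, trials=4000, rng=rng)
    k = substitution_rate(n, v, 1 / (2 * n))
    print(f"N={n}: simulated p_fix={p_sim:.4f}, expected={1 / (2 * n):.4f}, K={k:.2e}")
```

The simulated fixation probabilities should scatter around 1/(2N), while K stays at v for every N, because the 2N in the mutation supply cancels the 1/(2N) in the fixation probability.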

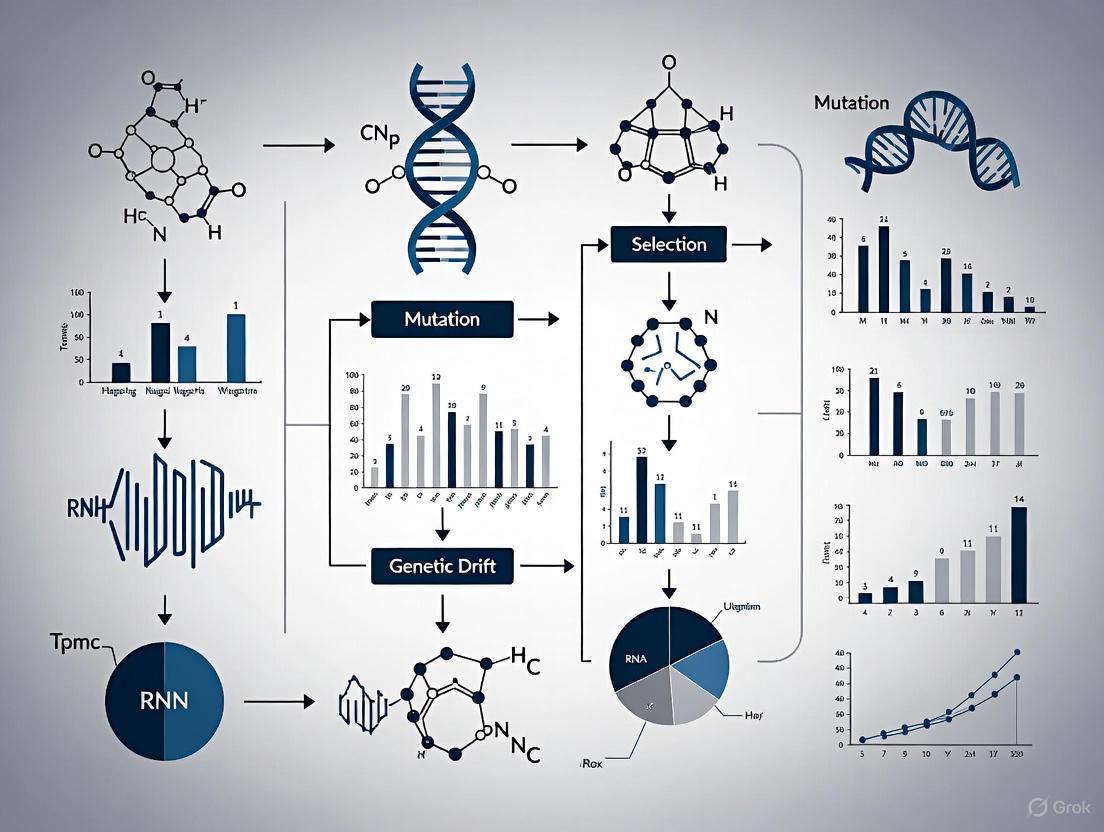

Figure 1: Fate of Mutations Under Neutral Theory. Mutations are classified by selection coefficient (s), determining their evolutionary trajectory through selective forces or genetic drift.

Key Evidence and Experimental Approaches

Functional Constraint and Evolutionary Rates

A critical prediction of neutral theory is that evolutionary rate should correlate inversely with functional constraint [1] [2]. As functional constraint diminishes, the probability that a mutation is neutral increases, leading to higher sequence divergence rates [1]. Early evidence supporting this prediction came from comparative studies of proteins with varying functional importance. Fibrinopeptides and the C chain of proinsulin, which have minimal biological function compared to their active molecules, exhibit extremely high evolutionary rates [1]. Similarly, Kimura and Ohta observed that the surface residues of hemoglobin evolve almost ten times faster than the interior pockets where heme groups bind, reflecting stronger functional constraints on interior regions essential for oxygen binding [1].

The degenerate genetic code provides further compelling evidence. Synonymous substitutions in the third codon position, which often do not change the encoded amino acid, accumulate much more rapidly than non-synonymous substitutions that alter amino acid sequences [1] [2]. This pattern is consistently observed across diverse taxa and genomes, supporting the neutral expectation that mutations with minimal functional consequences evolve more rapidly [2].

Table 2: Evolutionary Rates Across Genomic Elements with Varying Functional Constraints

| Genomic Element | Functional Constraint | Evolutionary Rate | Key Evidence |
|---|---|---|---|
| Fibrinopeptides | Very low | Very high | Rapid amino acid substitution |
| Hemoglobin surface residues | Low | High | 10x faster than interior residues |
| Synonymous sites | Low | High | Rapid nucleotide substitution |
| Non-synonymous sites | High | Low | Slow amino acid substitution |
| Pseudogenes | None | Highest | Rate similar across all positions |
| Conserved protein domains | Very high | Very low | Minimal amino acid substitution |

Experimental Protocols for Testing Neutral Theory

Researchers have developed multiple experimental approaches to test predictions of the Neutral Theory:

Comparative Sequence Analysis

This foundational approach compares DNA or protein sequences across species to quantify substitution patterns [2]. The protocol involves: (1) selecting orthologous sequences from multiple species with known divergence times, (2) aligning sequences using tools like ClustalW or MUSCLE, (3) calculating synonymous (dS) and non-synonymous (dN) substitution rates, and (4) applying statistical tests to detect selection, such as the McDonald-Kreitman test when within-species polymorphism data are also available [2]. Under neutrality the expected ratio is dN/dS ≈ 1, while dN/dS < 1 suggests purifying selection and dN/dS > 1 indicates positive selection [2].
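The classification behind step (3) can be illustrated with a small sketch. It assumes two already aligned, gap-free coding sequences and counts only codons that differ at exactly one position; the function name and the example sequences are hypothetical. Real dN/dS estimates additionally normalize by the number of synonymous and non-synonymous sites and correct for multiple hits, which this sketch deliberately omits.

```python
import itertools

BASES = "TCAG"
# Standard genetic code in TCAG order; '*' marks stop codons.
AMINO_ACIDS = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = dict(
    zip(("".join(c) for c in itertools.product(BASES, repeat=3)), AMINO_ACIDS)
)


def count_differences(seq_a, seq_b):
    """Return (synonymous, nonsynonymous) counts for codons that differ
    at exactly one nucleotide position."""
    if len(seq_a) != len(seq_b) or len(seq_a) % 3:
        raise ValueError("sequences must be aligned and a multiple of 3 long")
    syn = nonsyn = 0
    for i in range(0, len(seq_a), 3):
        codon_a, codon_b = seq_a[i:i + 3], seq_b[i:i + 3]
        n_diff = sum(x != y for x, y in zip(codon_a, codon_b))
        if n_diff != 1:
            continue  # identical codons, or multi-hit codons this sketch ignores
        if CODON_TABLE[codon_a] == CODON_TABLE[codon_b]:
            syn += 1
        else:
            nonsyn += 1
    return syn, nonsyn


# GCT -> GCC keeps alanine (synonymous); AAA -> AGA changes lysine to arginine.
print(count_differences("ATGGCTAAA", "ATGGCCAGA"))  # prints (1, 1)
```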

Deep Mutational Scanning

Modern implementations of this approach systematically measure the fitness effects of mutations [5] [6]. The methodology includes: (1) creating comprehensive mutant libraries for specific genes using error-prone PCR or synthetic oligonucleotides, (2) expressing these mutants in model organisms like yeast or E. coli, (3) tracking mutant frequency changes over multiple generations through high-throughput sequencing, and (4) calculating fitness effects by comparing growth rates to wild-type organisms [5]. This approach revealed that more than 1% of mutations are beneficial, challenging strict neutralist assumptions but supporting nearly neutral extensions [5].
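Step (4) is often expressed as a per-generation selection coefficient derived from the change in the mutant-to-wild-type ratio. The sketch below assumes that read counts are proportional to genotype frequencies, that growth is exponential, and that the selection coefficient is constant over the experiment; the function name and the counts are hypothetical and are not taken from the cited studies.

```python
import math


def selection_coefficient(mut_start, wt_start, mut_end, wt_end, generations):
    """Per-generation selection coefficient from the log change in the
    mutant/wild-type ratio: s = ln[(m_t / w_t) / (m_0 / w_0)] / t."""
    if min(mut_start, wt_start, mut_end, wt_end) <= 0 or generations <= 0:
        raise ValueError("counts and generations must be positive")
    ratio_change = (mut_end / wt_end) / (mut_start / wt_start)
    return math.log(ratio_change) / generations


# Hypothetical read counts: (start, end) for each variant; wild type is constant.
wild_type = (10_000, 10_000)
variants = {"variant_A": (100, 200), "variant_B": (100, 100), "variant_C": (100, 50)}
for name, (start, end) in variants.items():
    s = selection_coefficient(start, wild_type[0], end, wild_type[1], generations=10)
    print(f"{name}: s = {s:+.4f}")
```

Values near zero correspond to effectively neutral variants, positive values to beneficial ones, and negative values to deleterious ones.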

Population Polymorphism Analysis

This method examines within-species variation to test neutral predictions [2]. The protocol involves: (1) sequencing the same genomic region from multiple individuals within a population, (2) calculating polymorphism parameters such as nucleotide diversity (π) and Watterson's θ, (3) comparing polymorphism to divergence using the HKA test, and (4) examining the site frequency spectrum for deviations from neutral expectations [2]. Under neutral theory, polymorphism levels should correlate with effective population size, though this relationship is complicated by linked selection [3].
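The two summary statistics in step (2) can be computed directly from an alignment. This is a minimal sketch, assuming equal-length, gap-free sequences and reporting both estimators per site; the function names and the toy alignment are illustrative.

```python
from itertools import combinations


def nucleotide_diversity(seqs):
    """Average number of pairwise differences per site (pi)."""
    length = len(seqs[0])
    pairs = list(combinations(seqs, 2))
    total = sum(sum(a != b for a, b in zip(s1, s2)) for s1, s2 in pairs)
    return total / len(pairs) / length


def watterson_theta(seqs):
    """Watterson's estimator per site: S / a_n / L, with a_n = sum_{i=1}^{n-1} 1/i."""
    length = len(seqs[0])
    segregating = sum(1 for column in zip(*seqs) if len(set(column)) > 1)
    a_n = sum(1 / i for i in range(1, len(seqs)))
    return segregating / a_n / length


sample = ["AAAA", "AAAT", "AATT"]  # toy alignment of three sequences
print(nucleotide_diversity(sample), watterson_theta(sample))
```

Under the standard neutral model both statistics estimate the same quantity, so a large discrepancy between them is one of the signals that step (4) looks for in the site frequency spectrum.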

Figure 2: Workflow for Comparative Sequence Analysis to Test Neutral Theory

Evolution and Extensions of the Neutral Theory

The Neutralist-Selectionist Debate

The proposal of the Neutral Theory ignited a heated controversy throughout the 1970s and 1980s, creating the "neutralist-selectionist" debate [1] [2]. This debate centered on the relative proportions of polymorphic and fixed alleles that are neutral versus non-neutral [1]. Selectionists argued that genetic polymorphisms are maintained primarily by balancing selection, while neutralists viewed protein variation as a transient phase of molecular evolution [1].

Studies by Richard K. Koehn and W. F. Eanes demonstrated a correlation between polymorphism levels and the molecular weight of protein subunits, consistent with neutral theory predictions that larger subunits should have higher neutral mutation rates [1]. In contrast, selectionists emphasized environmental factors as primary determinants of polymorphisms [1]. The discovery that levels of genetic diversity vary much less than census population sizes—termed the "paradox of variation"—became one of the strongest arguments against strict neutral theory [1].

Nearly Neutral Theory

In 1973, Tomoko Ohta proposed the "nearly neutral theory" as a crucial extension to Kimura's original framework [1] [7]. This theory accounts for mutations with very small selection coefficients (|s| < 1/Ne), where Ne represents the effective population size [1] [7]. The nearly neutral theory recognizes that whether slightly deleterious mutations behave as effectively neutral depends on population size [1]. In large populations, selection can efficiently remove slightly deleterious mutations, while in small populations, genetic drift may overcome weak selection, allowing these mutations to behave as if they were neutral [1] [7].

This population-size-dependent threshold for purging mutations has been termed the "drift barrier" by Michael Lynch and helps explain differences in genomic architecture among species with varying population sizes [7]. The nearly neutral theory also resolved the apparent contradiction between per-generation and per-year rates of molecular evolution, as population size is generally inversely proportional to generation time [7].
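The population-size dependence described above can be made concrete with Kimura's diffusion approximation for the fixation probability of a new mutation. The sketch assumes a diploid population whose census size equals its effective size and genic selection with coefficient s; the function name, the overflow guard, and the example values are illustrative choices.

```python
import math


def fixation_probability(n_e, s):
    """Diffusion approximation for a single new mutation in a diploid
    population: (1 - exp(-2s)) / (1 - exp(-4 * n_e * s))."""
    if s == 0:
        return 1 / (2 * n_e)  # strictly neutral limit
    exponent = -4 * n_e * s
    if exponent > 700:
        return 0.0  # strongly deleterious; avoids floating-point overflow
    return math.expm1(-2 * s) / math.expm1(exponent)


s = -1e-4  # hypothetical slightly deleterious mutation
for n_e in (100, 10_000, 1_000_000):
    neutral = 1 / (2 * n_e)
    relative = fixation_probability(n_e, s) / neutral
    print(f"Ne={n_e}: |Ne*s|={abs(n_e * s):g}, p_fix relative to neutral={relative:.3g}")
```

With these values the same mutation fixes at nearly the neutral rate when |Nₑs| is well below 1 and is essentially never fixed once |Nₑs| is much larger than 1, which is the threshold behavior the nearly neutral theory describes.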

Constructive Neutral Evolution

Constructive Neutral Evolution (CNE) represents a more recent extension proposing that complex structures and processes can emerge through neutral transitions [1]. CNE involves scenarios where initially unnecessary interactions between molecular components (A and B) emerge randomly [1]. If a subsequent mutation compromises component A's independent functionality, the pre-existing A:B interaction can compensate, creating dependency through neutral processes [1]. This ratchet-like mechanism can drive increasing complexity without positive selection and has been applied to understanding origins of spliceosomal complexes, RNA editing, and other complex molecular systems [1].

The Neutral Theory in Modern Research and Drug Development

Contemporary Status and Challenges

Recent research continues to evaluate and refine the Neutral Theory. A 2023 systematic review of molecular evolution education literature highlighted the ongoing importance of neutral theory in evolutionary biology curricula, while noting limited coverage in education research [8]. Contemporary genomic data have revealed more complex patterns than initially recognized, including widespread effects of linked selection and background selection [3].

A 2024 study from the University of Michigan challenged strict neutralist assumptions by demonstrating that beneficial mutations occur more frequently than neutral theory predicts [5] [6]. However, these beneficial mutations often fail to become fixed due to changing environmental conditions—a phenomenon termed "Adaptive Tracking with Antagonistic Pleiotropy" [5]. This research suggests that while substitution patterns may appear neutral, the underlying processes involve more selection than traditionally acknowledged under neutral theory [5] [6].

Table 3: Key Research Reagent Solutions for Neutral Theory Investigations

| Research Reagent | Application | Function in Experimental Protocol |
|---|---|---|
| Error-prone PCR kits | Mutant library generation | Introduces random mutations throughout target genes |
| Site-directed mutagenesis kits | Specific variant creation | Creates precise nucleotide changes for functional testing |
| High-throughput sequencing reagents | Genotype characterization | Enables parallel sequencing of multiple genomes or mutant libraries |
| Orthologous gene sequences | Comparative analysis | Provides evolutionary divergence data for substitution rate calculations |
| Population genomic datasets | Polymorphism analysis | Supplies within-species variation data for neutrality tests |
| Model organisms (yeast, E. coli) | Experimental evolution | Allows controlled study of mutation fixation under laboratory conditions |

Implications for Drug Development and Biomedical Research

The Neutral Theory framework has significant implications for drug development, particularly in understanding drug resistance evolution and identifying conserved therapeutic targets. The theory predicts that functionally constrained regions of pathogen genomes will evolve more slowly, making them attractive targets for antimicrobial drugs [2]. Similarly, in cancer biology, the neutral theory provides models for understanding tumor evolution and the emergence of treatment-resistant cell populations through neutral drift processes.

By distinguishing between neutrally evolving regions and those under selective constraint, researchers can identify functionally important genomic elements likely to represent optimal drug targets. The molecular clock hypothesis, derived from neutral theory, also enables estimation of divergence times for pathogens and evolutionary reconstruction of disease transmission pathways, informing public health interventions and vaccine development strategies.

Over more than five decades, the Neutral Theory of Molecular Evolution has evolved from a controversial proposal to a foundational framework in evolutionary biology [3]. While ongoing research continues to refine its parameters and boundaries, the core principles established by Kimura, Ohta, and others remain essential for interpreting molecular evolutionary patterns [1] [7] [3]. The theory provides the critical null hypothesis for distinguishing between neutral and selective processes, enabling more rigorous detection of adaptation in genomic data [2]. Within the broader context of neutral emergence theory, the Neutral Theory continues to guide research into the evolution of genetic codes and complex biological systems, maintaining its relevance for contemporary evolutionary biology and its applications in biomedical science [1] [3].

The neutral theory of molecular evolution, introduced by Motoo Kimura in 1968, fundamentally reshaped our understanding of evolutionary mechanisms at the molecular level [1] [9]. Kimura's revolutionary proposition held that the majority of evolutionary changes observed at the molecular level are not driven by natural selection acting on advantageous mutations, but rather by the random fixation of selectively neutral mutants through genetic drift [2] [9]. This theory emerged from mathematical analyses revealing that the number of molecular substitutions occurring between species was too high to be reconciled with traditional selectionist views, particularly in light of what became known as Haldane's dilemma concerning the "cost of selection" [1]. The neutral theory does not dispute the role of natural selection in shaping phenotypic adaptations but contends that at the molecular level, most variations within and between species result from neutral mutations spreading through populations via random genetic drift rather than selective advantage [1].

The theory was independently developed by King and Jukes in 1969, who also noted the disconnection between molecular and phenotypic evolution and observed an inverse relationship between a protein's functional importance and its evolutionary rate [1] [10]. This challenged the then-prevailing neo-Darwinian synthesis and sparked the intense "neutralist-selectionist" debate that peaked throughout the 1970s and 1980s [1] [2]. During this period, the neutral theory provided a powerful null hypothesis for molecular evolution, enabling researchers to detect the signature of natural selection by identifying deviations from neutral expectations [2] [11]. The subsequent decades have witnessed a significant expansion of neutral concepts, with the framework evolving to incorporate nearly neutral mutations, constructive neutral evolution, and applications beyond population genetics to explain the emergence of biological complexity [1] [12].

Core Principles of the Neutral Theory

Theoretical Framework and Mathematical Foundations

The neutral theory rests on several foundational principles that distinguish it from selectionist explanations of molecular evolution. First, it posits that the overwhelming majority of molecular evolutionary changes result from random genetic drift of mutant alleles that are selectively neutral rather than beneficial [1] [9]. A neutral mutation is formally defined as one that does not significantly affect an organism's probability of survival and reproduction, meaning its selection coefficient (s) is approximately zero [1]. The theory acknowledges that most new mutations are actually deleterious and are rapidly removed by purifying selection, thus contributing little to standing variation or divergence between species [1]. For the remaining non-deleterious mutations, Kimura argued that neutral variants vastly outnumber beneficial ones, making genetic drift rather than positive selection the dominant force in molecular evolution [1] [2].

Kimura developed sophisticated mathematical models using diffusion equations to make quantitative predictions about molecular evolution [1] [9]. A fundamental derivation shows that for neutral mutations, the rate of molecular evolution (K) equals the mutation rate (u), independent of population size [2]. This relationship emerges because, in a diploid population of size N, 2Nu new mutations arise each generation, while the probability that any single neutral mutation eventually reaches fixation is 1/(2N), yielding K = 2Nu × 1/(2N) = u [2]. This elegant result provides the theoretical basis for the molecular clock hypothesis, which predated neutral theory but found its justification in it [1] [9]. The neutral theory also predicts that levels of genetic variation within species should be proportional to the product of the effective population size (Nₑ) and the mutation rate (u), specifically π = 4Nₑu for diploid organisms [1].
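The diversity prediction π = 4Nₑu is easy to evaluate for contrasting population sizes. The sketch below is only an arithmetic illustration: the mutation rate is a hypothetical value, and the two effective population sizes are round numbers of the order this article quotes for hominids and Drosophila.

```python
def expected_diversity(n_e, u):
    """Neutral expectation for nucleotide diversity in diploids: pi = 4 * Ne * u."""
    return 4 * n_e * u


u = 1e-8  # hypothetical per-site, per-generation mutation rate
for label, n_e in (("Ne = 10^4", 10**4), ("Ne = 10^6", 10**6)):
    print(f"{label}: expected pi = {expected_diversity(n_e, u):.1e}")
```

A hundredfold difference in Nₑ implies a hundredfold difference in expected diversity under strict neutrality, which is the scale of variation that observed diversity levels are compared against.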

Table 1: Key Predictions of the Neutral Theory of Molecular Evolution

| Prediction | Theoretical Basis | Empirical Evidence |
|---|---|---|
| Higher evolutionary rates in functionally less constrained sequences | Reduced functional constraint increases proportion of neutral mutations [1] | Synonymous substitutions > nonsynonymous; pseudogenes evolve rapidly [2] |
| Constant molecular clock | Neutral substitution rate equals mutation rate, independent of population size [1] [2] | Roughly constant rates of molecular evolution across lineages [1] |
| More genetic variation in larger populations | Polymorphism proportional to Nₑu [1] | Generally supported, though with less variation than expected (paradox of variation) [1] |
| Conservative amino acid changes favored | Less radical changes more likely to be neutral [2] | Observed in protein sequence comparisons [2] |

Functional Constraint and the Molecular Clock

The concept of functional constraint plays a crucial role in neutral theory, explaining variation in evolutionary rates across different genomic regions and protein types [1]. The theory holds that as functional constraint diminishes, the probability that a mutation will be neutral increases, leading to higher sequence divergence rates [1]. This principle explains several key observations: fibrinopeptides and similar proteins with minimal biological function evolve at extremely high rates, while critical proteins like histones exhibit remarkably slow evolution [1]. Similarly, within protein structures, residues in hemoglobin responsible for binding heme groups evolve much more slowly than surface residues subject to fewer functional constraints [1].

The genetic code itself embodies principles of functional constraint, with similar amino acids typically encoded by similar codons, thereby minimizing the deleterious effects of mutations or translation errors [12] [13]. This error-minimizing property of the genetic code represents a form of mutational robustness that the neutral theory helps explain. At the nucleotide level, the degeneracy of the genetic code means that mutations at the third codon position often represent synonymous changes that do not alter the encoded amino acid [1]. These "silent" or synonymous substitutions generally experience minimal functional constraint and accordingly evolve at higher rates than non-synonymous changes that alter amino acid sequences [1] [2]. The nearly universal observation that synonymous substitution rates exceed non-synonymous rates provides strong support for the neutral theory's prediction that functional importance inversely correlates with evolutionary rate [2].

The Nearly Neutral Theory and Population Size Effects

Theoretical Expansion by Tomoko Ohta

In the early 1970s, Tomoko Ohta extended Kimura's strictly neutral model by introducing the nearly neutral theory of molecular evolution, which emphasized the importance of slightly deleterious mutations [1] [10]. This theory addressed observations that many molecular variants appear to have very small selection coefficients that place them in a boundary zone between neutral and selected mutations [1]. The nearly neutral theory contends that the interaction between genetic drift and selection becomes particularly important for mutations whose effects are so small that their fate depends on population size [10]. Formally, mutations with selection coefficients where |Nₑs| < 1 are considered effectively neutral because genetic drift dominates over selection in determining their fate [1] [10].

The nearly neutral theory makes distinctive predictions about the relationship between evolutionary dynamics and population size [1] [2]. In large populations, where Nₑ is substantial, slightly deleterious mutations behave as if they are deleterious and are efficiently removed by purifying selection [1] [2]. However, in small populations, genetic drift can overcome weak selection, allowing slightly deleterious mutations to behave as if they are neutral and thus reach fixation through random sampling [1] [2]. This population-size effect leads to the prediction that species with smaller effective population sizes should experience higher rates of molecular evolution for slightly deleterious mutations, a pattern that has been observed in comparative genomic studies [2] [11].

Table 2: Comparison of Strictly Neutral and Nearly Neutral Theories

| Characteristic | Strictly Neutral Theory | Nearly Neutral Theory |
|---|---|---|
| Types of mutations | Strictly neutral (s = 0) | Nearly neutral (\|Nₑs\| < 1) |
| Dependence on population size | Substitution rate independent of Nₑ | Evolutionary rate depends on Nₑ |
| Expected pattern | Constant molecular clock | Faster evolution in smaller populations |
| Primary mechanism | Random genetic drift | Interaction of drift and weak selection |
| Distribution of mutations | Neutral mutations dominate | Continuum from deleterious to beneficial |

Empirical Evidence and Genomic Tests

The development of sophisticated statistical methods for detecting selection has provided mechanisms for testing predictions of the nearly neutral theory [11]. These approaches typically compare rates of evolution at sites under different functional constraints, such as synonymous versus non-synonymous sites in protein-coding genes [2] [11]. The McDonald-Kreitman test and its derivatives examine the ratio of polymorphic to divergent sites to detect signatures of natural selection [1]. When applied to genomic data, these tests generally reveal that while most mutations behave neutrally or nearly neutrally, a significant proportion experiences purifying selection, and positive selection affects a smaller but biologically important set of mutations [2] [11].

Analysis of taxonomic groups with different effective population sizes provides strong support for the nearly neutral theory [2]. In Drosophila species, which have large effective population sizes (Nₑ ≈ 10⁶), approximately 50% of non-synonymous substitutions show evidence of positive selection, while the proportion of effectively neutral non-synonymous mutations is less than 16% [2]. In contrast, hominids with much smaller effective population sizes (Nₑ ≈ 10,000-30,000) show almost no evidence of positive selection in protein-coding genes, with about 30% of non-synonymous mutations behaving as effectively neutral [2]. These observations confirm the nearly neutral theory's prediction that the proportion of effectively neutral mutations inversely correlates with effective population size [2].

Constructive Neutral Evolution and Neutral Emergence

Theoretical Framework of Constructive Neutral Evolution

A significant expansion of neutral concepts emerged in the 1990s with the development of constructive neutral evolution (CNE), which provides a neutral explanation for the emergence of biological complexity [1]. CNE challenges the adaptationist assumption that complex biological structures and processes necessarily originate through natural selection for their current functions [1]. Instead, CNE proposes that neutral processes can drive the development of complexity through a series of non-selective steps that become locked in through irreversible dependencies [1]. The theory suggests that neutral transitions can lead to the development of intricate biological systems without positive selection for the complexity itself [1].

The CNE process typically begins with an interaction between two components (A and B) where A performs its function independently of B, and their interaction represents an "excess capacity" that is unnecessary for function [1]. If a mutation subsequently compromises A's independent functionality, the pre-existing A:B interaction can compensate, making this deleterious mutation effectively neutral [1]. Once this dependency is established, purifying selection maintains both components and their interaction, as loss of either would now be deleterious [1]. Although each step is theoretically reversible, the accumulation of multiple dependencies makes a return to simplicity increasingly unlikely, creating a "ratchet-like" process that drives complexity forward through neutral mechanisms [1].

Diagram 1: Constructive Neutral Evolution (CNE) Process. This diagram illustrates the stepwise neutral emergence of biological complexity through CNE, where initially unnecessary interactions become essential through neutral mutations that create dependencies.

Neutral Emergence in Genetic Code Evolution

The concept of neutral emergence provides a powerful framework for understanding the evolution of the standard genetic code (SGC), particularly its remarkable property of error minimization [12]. The genetic code exhibits a non-random structure where similar codons typically encode amino acids with similar physicochemical properties, thereby minimizing the deleterious effects of point mutations or translation errors [12] [13]. This error-minimization property represents a form of mutational robustness that was traditionally explained through direct natural selection [12] [13].

However, research has demonstrated that genetic codes with significant error minimization can emerge through neutral processes alone, without direct selection for this property [12]. Simulations show that as the genetic code expanded through tRNA and aminoacyl-tRNA synthetase duplication, similar amino acids would naturally be added to codons related to those of their parent amino acids [12]. This neutral process of code expansion automatically generates error minimization as an emergent property rather than an adaptation, leading to the concept of "pseudaptations", beneficial traits that arise without direct natural selection [12]. This represents a significant departure from adaptationist explanations and highlights the explanatory power of neutral concepts in understanding fundamental biological systems.
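Error minimization itself can be quantified with a simple cost function. The sketch below is not the expansion simulation described in [12]; it only scores a code by the mean squared change in an amino acid property across all single-nucleotide substitutions and compares the standard code with randomized alternatives. Kyte-Doolittle hydropathy is used here as an assumed stand-in property, and the randomization (permuting amino acids among the existing synonymous codon blocks, with stop codons fixed) is one common choice among several.

```python
import itertools
import random

BASES = "TCAG"
AMINO = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODONS = ["".join(c) for c in itertools.product(BASES, repeat=3)]
STANDARD_CODE = dict(zip(CODONS, AMINO))

# Kyte-Doolittle hydropathy index (assumed property for this sketch).
HYDROPATHY = {
    "A": 1.8, "R": -4.5, "N": -3.5, "D": -3.5, "C": 2.5, "Q": -3.5, "E": -3.5,
    "G": -0.4, "H": -3.2, "I": 4.5, "L": 3.8, "K": -3.9, "M": 1.9, "F": 2.8,
    "P": -1.6, "S": -0.8, "T": -0.7, "W": -0.9, "Y": -1.3, "V": 4.2,
}


def error_cost(code):
    """Mean squared hydropathy change over all single-nucleotide
    substitutions between sense codons."""
    total, pairs = 0.0, 0
    for codon, aa in code.items():
        if aa == "*":
            continue
        for pos in range(3):
            for base in BASES:
                if base == codon[pos]:
                    continue
                neighbour = codon[:pos] + base + codon[pos + 1:]
                aa2 = code[neighbour]
                if aa2 == "*":
                    continue  # substitutions to stop codons are not scored
                total += (HYDROPATHY[aa] - HYDROPATHY[aa2]) ** 2
                pairs += 1
    return total / pairs


def shuffled_code(rng):
    """Permute amino acid identities among the synonymous codon blocks."""
    aas = sorted(set(AMINO) - {"*"})
    permuted = aas[:]
    rng.shuffle(permuted)
    mapping = dict(zip(aas, permuted))
    mapping["*"] = "*"
    return {codon: mapping[aa] for codon, aa in STANDARD_CODE.items()}


rng = random.Random(0)
sgc_cost = error_cost(STANDARD_CODE)
random_costs = [error_cost(shuffled_code(rng)) for _ in range(1000)]
better = sum(cost < sgc_cost for cost in random_costs)
print(f"standard code cost: {sgc_cost:.2f}")
print(f"random codes with lower cost: {better} of {len(random_costs)}")
```

A low fraction of better-scoring random codes indicates error minimization for the chosen property, but it does not by itself distinguish between selection and neutral emergence as the cause, which is the question the simulations in [12] address.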

Table 3: Evidence for Neutral Processes in Genetic Code Evolution

| Observation | Implication for Neutral Theory | References |
|---|---|---|
| Error minimization in standard genetic code | Can emerge neutrally through code expansion | [12] |
| Codon reassignments in small genomes | Support Crick's Frozen Accident theory; occur when proteome size reduces constraint | [12] [13] |
| Variant genetic codes in mitochondria | Smaller proteome size (P) reduces constraint, allowing neutral reassignments | [12] [13] |
| Experimental incorporation of unnatural amino acids | Demonstrates inherent malleability of genetic code | [13] |

The Genomic Era and Neutral Theory as a Null Hypothesis

Neutral Theory in Contemporary Genomics

The advent of large-scale genomic sequencing has transformed the testing and application of neutral theory, confirming many of its predictions while refining our understanding of its scope [11]. Genome-wide analyses generally support the neutral theory's core premise that the majority of molecular evolutionary changes are effectively neutral [11]. Observations that synonymous substitutions accumulate more rapidly than non-synonymous changes, that pseudogenes evolve at high rates similar to synonymous sites, and that non-coding DNA generally shows higher evolutionary rates than coding sequences all align with neutral theory predictions [2] [11]. These patterns persist across diverse taxonomic groups, though the proportion of neutral versus selected mutations varies with effective population size [2].

In contemporary genomics, the neutral theory serves primarily as a null hypothesis for detecting selection [2] [11]. By establishing expected patterns under neutrality, researchers can identify genomic regions exhibiting signatures of natural selection through significant deviations from these expectations [2] [11]. Statistical methods based on neutral theory have identified numerous cases of both purifying and positive selection acting on specific genes or genomic regions [1] [11]. However, some researchers have argued that many methods for detecting positive selection produce high rates of false positives when neutral assumptions are violated, and that when these methodological issues are addressed, the results largely align with neutral expectations [11].

Experimental Protocols for Testing Neutral Evolution

McDonald-Kreitman Test Protocol

The McDonald-Kreitman (MK) test provides a powerful method for detecting natural selection by comparing patterns of within-species polymorphism and between-species divergence [1]. The protocol involves:

Sequence Alignment: Obtain and align homologous DNA sequences from multiple individuals within a species (polymorphism data) and from at least one closely related species (divergence data).

Mutation Classification: Classify each site as synonymous (S) or non-synonymous (N) for both polymorphic and divergent sites.

Contingency Table Construction: Tabulate counts in a 2×2 contingency table:

- P_N: Number of non-synonymous polymorphisms within species

- P_S: Number of synonymous polymorphisms within species

- D_N: Number of non-synonymous fixed differences between species

- D_S: Number of synonymous fixed differences between species

Statistical Testing: Perform a Fisher's exact test or χ² test on the contingency table. A significant excess of non-synonymous fixed differences relative to non-synonymous polymorphisms (D_N/D_S > P_N/P_S) indicates positive selection, while a significant deficit (D_N/D_S < P_N/P_S) suggests purifying selection.

Neutrality Index Calculation: Compute NI = (P_N/P_S)/(D_N/D_S). Values significantly less than 1 suggest positive selection, while values greater than 1 indicate purifying selection.

This test is relatively robust to demographic fluctuations because synonymous and non-synonymous sites within the same gene share the same genealogical history, so population history affects both classes alike; this makes it one of the more reliable methods for detecting selection [1].
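The contingency-table and neutrality-index steps above can be sketched in a few lines of Python. This is a minimal illustration rather than a reference implementation: the function names (`fisher_exact_two_sided`, `neutrality_index`, `mk_test`) are hypothetical, the counts in the usage line are made up purely to show the arithmetic, and Fisher's exact test is written out with the standard library instead of calling a statistics package.

```python
from math import comb


def fisher_exact_two_sided(a, b, c, d):
    """Two-sided Fisher's exact test p-value for the 2x2 table [[a, b], [c, d]]."""
    row1, row2, col1 = a + b, c + d, a + c
    total_tables = comb(row1 + row2, col1)

    def prob(x):
        # Hypergeometric probability of observing x in the top-left cell
        return comb(row1, x) * comb(row2, col1 - x) / total_tables

    lo, hi = max(0, col1 - row2), min(row1, col1)
    p_obs = prob(a)
    # Sum over every table that is no more probable than the observed one
    p = sum(prob(x) for x in range(lo, hi + 1) if prob(x) <= p_obs * (1 + 1e-9))
    return min(1.0, p)


def neutrality_index(p_n, p_s, d_n, d_s):
    """NI = (P_N / P_S) / (D_N / D_S); undefined when P_S or D_N is zero."""
    if p_s == 0 or d_n == 0:
        raise ValueError("NI is undefined when P_S or D_N is zero")
    return (p_n * d_s) / (p_s * d_n)


def mk_test(p_n, p_s, d_n, d_s):
    """Return (NI, two-sided Fisher p-value) for one gene's MK table."""
    return (neutrality_index(p_n, p_s, d_n, d_s),
            fisher_exact_two_sided(p_n, p_s, d_n, d_s))


# Hypothetical counts, chosen only to illustrate the calculation
ni, p_value = mk_test(p_n=2, p_s=42, d_n=7, d_s=17)
print(f"NI = {ni:.3f}, Fisher p = {p_value:.4f}")
```

With these illustrative counts NI is well below 1 and the table departs significantly from the neutral expectation, which is the pattern the protocol reads as positive selection.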

Genetic Code Optimization Simulation Protocol

To test hypotheses about the neutral emergence of error minimization in the genetic code, researchers employ computational simulations of code evolution [12]:

Initial Code Setup: Begin with a simplified genetic code containing a subset of amino acids, typically 4-8 amino acids with defined physicochemical properties.

Define Amino Acid Similarity Matrix: Utilize a matrix based on physicochemical properties (e.g., polarity, volume, charge) rather than substitution frequencies to avoid circularity [12].

Code Expansion Simulation: Implement a neutral expansion process where:

- New amino acids are added to the code through duplication of existing tRNA and aminoacyl-tRNA synthetase pairs

- Similar amino acids are assigned to codons adjacent to their parent amino acids

- No direct selection for error minimization is implemented

Error Minimization Calculation: For each simulated code, calculate an error minimization value by comparing the average physicochemical distance between amino acids encoded by codons that differ by a single nucleotide with the average distance for random codon pairings.

Comparison to Random Codes: Generate numerous random genetic codes with the same amino acid and codon composition and compare their error minimization values to those produced through neutral expansion.

This protocol has demonstrated that codes with significant error minimization readily emerge through neutral expansion processes, supporting the concept of neutral emergence [12].
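A toy version of this protocol is sketched below. It rests on several assumptions that are not taken from the cited simulations: all 64 codons are treated as sense codons, the four founder amino acids (`"GADV"`) and their starting blocks are hypothetical, Kyte-Doolittle hydropathy stands in for the physicochemical similarity matrix, and "random codes" are produced by shuffling amino acid labels among the final codon blocks. It shows the mechanics only and does not reproduce any published result.

```python
import random

# Kyte-Doolittle hydropathy, used here as a stand-in physicochemical property
HYDROPATHY = {
    "A": 1.8, "R": -4.5, "N": -3.5, "D": -3.5, "C": 2.5, "Q": -3.5, "E": -3.5,
    "G": -0.4, "H": -3.2, "I": 4.5, "L": 3.8, "K": -3.9, "M": 1.9, "F": 2.8,
    "P": -1.6, "S": -0.8, "T": -0.7, "W": -0.9, "Y": -1.3, "V": 4.2,
}
BASES = "TCAG"


def distance(a, b):
    return abs(HYDROPATHY[a] - HYDROPATHY[b])


def neighbours(codon):
    """The 9 codons that differ from `codon` at exactly one position."""
    return [codon[:i] + b + codon[i + 1:]
            for i in range(3) for b in BASES if b != codon[i]]


def mean_neighbour_distance(code):
    """Average distance between single-mutation neighbours (lower = more robust)."""
    total = sum(distance(code[c], code[n]) for c in code for n in neighbours(c))
    return total / (9 * len(code))


def expand_code(founders, rng):
    """Grow a code by splitting a parent's codon block for its nearest unassigned amino acid."""
    # Toy assumption: each founder starts with the 16 codons sharing one second base
    blocks = {aa: [f + s + t for f in BASES for t in BASES]
              for aa, s in zip(founders, BASES)}
    unassigned = set(HYDROPATHY) - set(founders)
    while unassigned:
        parent = rng.choice(sorted(aa for aa, cs in blocks.items() if len(cs) > 1))
        daughter = min(sorted(unassigned), key=lambda aa: distance(parent, aa))
        half = len(blocks[parent]) // 2
        # The daughter inherits a contiguous half of the parent's block;
        # no step here evaluates or selects for error minimization
        blocks[daughter] = blocks[parent][half:]
        blocks[parent] = blocks[parent][:half]
        unassigned.remove(daughter)
    return blocks


def blocks_to_code(blocks):
    return {codon: aa for aa, codons in blocks.items() for codon in codons}


def fraction_of_shuffles_better(blocks, n_shuffles, rng):
    """Share of label-shuffled codes that are more robust than the expanded code."""
    observed = mean_neighbour_distance(blocks_to_code(blocks))
    labels, codon_sets = list(blocks), list(blocks.values())
    better = 0
    for _ in range(n_shuffles):
        rng.shuffle(labels)
        shuffled = blocks_to_code(dict(zip(labels, codon_sets)))
        better += mean_neighbour_distance(shuffled) < observed
    return better / n_shuffles


rng = random.Random(0)
blocks = expand_code(founders="GADV", rng=rng)
code = blocks_to_code(blocks)
print("expanded-code score:", round(mean_neighbour_distance(code), 3))
print("fraction of shuffled codes scoring better:",
      fraction_of_shuffles_better(blocks, 1000, rng))
```

A small fraction on the last line would mean the expanded code is more robust than most label-shuffled alternatives even though robustness was never a selection criterion. The actual value depends on the seed, the founders and the property scale chosen here.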

Research Reagent Solutions for Neutral Evolution Studies

Table 4: Essential Research Reagents and Resources for Studying Neutral Evolution

| Reagent/Resource | Function/Application | Specific Examples/Notes |

|---|---|---|

| Comparative Genomic Databases | Source of sequence data for polymorphism and divergence analyses | ENSEMBL, UCSC Genome Browser, NCBI databases providing multi-species alignments |

| Population Genetics Software | Statistical analysis of selection and neutrality tests | PAML (codon substitution models), DnaSP (polymorphism analysis), LIAN (linkage disequilibrium) |

| Amino Acid Similarity Matrices | Quantifying physicochemical distances for genetic code analysis | Matrices based on polarity, volume, and charge; avoid substitution-based matrices to prevent circularity [12] |

| tRNA and AaRS Expression Systems | Experimental study of codon reassignment mechanisms | In vitro translation systems; engineered bacteria with modified tRNA synthetases [13] |

| Mutagenesis and Selection Protocols | Experimental evolution studies | EMS mutagenesis; fluctuation tests; long-term evolution experiments (e.g., E. coli LTEE) |

| Codon Optimization Algorithms | Testing code optimality and robustness | Software for generating alternative genetic codes; calculating error minimization values [12] [14] |

From its initial formulation by Kimura in 1968, the neutral theory of molecular evolution has progressively expanded its explanatory domain, evolving from a controversial challenge to selectionist orthodoxy to a foundational framework for molecular evolution [1] [9] [11]. The theory has successfully incorporated more complex phenomena through the nearly neutral theory [1] [10] and constructive neutral evolution [1], while providing a robust null hypothesis for detecting selection in genomic data [2] [11]. The application of neutral concepts to explain the emergence of biological complexity, particularly through CNE, represents a significant extension beyond the theory's original scope [1].

The demonstration that key properties of the standard genetic code, such as error minimization, can arise through neutral processes rather than direct natural selection highlights the continued relevance and expanding explanatory power of neutral concepts [12]. This concept of "neutral emergence" provides a compelling alternative to adaptationist explanations for the origin of biological features with apparent benefits [12]. As genomic data continue to accumulate, the neutral theory remains essential for distinguishing random evolutionary processes from those driven by natural selection, enabling more accurate identification of genuinely adaptive changes [2] [11]. The ongoing integration of neutral concepts with evolutionary theory continues to refine our understanding of molecular evolution while maintaining the neutral theory's central insight: stochastic processes play a fundamental and underappreciated role in shaping biological complexity at all levels of organization.

Diagram 2: Historical Expansion of Neutral Concepts. This timeline illustrates the conceptual evolution of neutral theory from its original formulation by Kimura to its modern applications in genomics and complex systems.

The standard genetic code (SGC) is a foundational paradigm in molecular biology, representing the mapping of 64 codons to 20 canonical amino acids and translation stop signals. Its structure is highly non-random, with similar amino acids typically encoded by related codons, a design that minimizes the deleterious impact of point mutations and translational errors [12] [13]. This property of error minimization has long been interpreted as a hallmark of adaptive evolution, where the genetic code was optimized through natural selection for robustness. However, an emerging perspective rooted in neutral emergence theory challenges this adaptationist view, proposing that the error-minimizing structure of the code arose as a non-adaptive byproduct of neutral evolutionary processes, specifically through genetic code expansion via duplication of tRNA and aminoacyl-tRNA synthetase genes [12] [15].

This whitepaper examines the evidence for both adaptive and neutral models for the origin of error minimization in the genetic code, framing the discussion within the broader context of neutral emergence theory. We synthesize key findings from computational simulations, phylogenetic analyses, and experimental studies to evaluate the mechanisms that could have given rise to this fundamental biological property. For researchers in drug development and synthetic biology, understanding the evolutionary forces that shaped the genetic code is not merely an academic exercise; it provides critical insights for engineering genetic systems, designing novel biocircuits, and developing therapeutic strategies that leverage or modify the coding principle.

The Architecture of Error Minimization in the Standard Genetic Code

Quantitative Evidence for Error Minimization

The SGC exhibits a striking non-random organization where codons that differ by a single nucleotide often specify the same amino acid or physicochemically similar ones. This arrangement reduces the likelihood that a point mutation or a translational error will cause a radical change to the protein's chemical properties [13]. Quantitative analyses demonstrate that the SGC is near-optimal for error minimization compared to randomly generated alternative codes, though it is not perfectly optimal [12] [16].

Table 1: Key Properties of the Standard Genetic Code Related to Error Minimization

| Property | Description | Implication for Error Minimization |

|---|---|---|

| Block Structure | Codons are arranged in blocks where the third position is often redundant [17]. | Mutations in the third codon position are often silent or conservative. |

| Physicochemical Similarity | Similar amino acids (e.g., both hydrophobic) are assigned to codons related by a single nucleotide change [13]. | Point mutations are less likely to cause disruptive amino acid substitutions. |

| Error Minimization Level | The SGC is more robust than the vast majority of random codes, but not the absolute best possible [12]. | Suggests a possible non-adaptive origin or a failure to find the global optimum during evolution. |

The degree of optimality is influenced by the metric used to define amino acid similarity. Analyses based on physicochemical properties (e.g., polarity, volume) are less prone to circularity than those based on substitution frequencies in proteins, as the latter are themselves influenced by the code's structure [12].
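The block structure summarised in Table 1 can be checked directly by counting, for each codon position, how many single-nucleotide changes leave the encoded amino acid unchanged. The sketch below uses the standard code in the usual TCAG ordering; the function name and the counting conventions (mutations start from sense codons only, and a change to a stop codon counts as non-synonymous) are choices made for this illustration.

```python
from itertools import product

BASES = "TCAG"
# Standard genetic code in TCAG order (NCBI translation table 1); "*" marks stop codons
AMINO_ACIDS = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODE = {"".join(c): aa for c, aa in zip(product(BASES, repeat=3), AMINO_ACIDS)}


def synonymous_changes_by_position(code):
    """Count single-nucleotide changes from sense codons that keep the same amino acid."""
    counts = [0, 0, 0]
    for codon, aa in code.items():
        if aa == "*":
            continue  # only mutations starting from sense codons are considered
        for pos in range(3):
            for base in BASES:
                if base == codon[pos]:
                    continue
                mutant = codon[:pos] + base + codon[pos + 1:]
                counts[pos] += code[mutant] == aa
    return counts


counts = synonymous_changes_by_position(CODE)
# Each sense codon has 3 alternative bases at every position
n_changes = 3 * sum(aa != "*" for aa in CODE.values())
for pos, n in enumerate(counts, start=1):
    print(f"position {pos}: {n}/{n_changes} changes are synonymous")
```

Under these conventions the counts are 8, 0 and 126 out of 183 for the first, second and third positions, so nearly all silent changes fall at the third position.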

The Neutral Emergence Hypothesis and Pseudaptations

A central tenet of the neutral emergence theory is that beneficial traits can arise without direct selection for their beneficial effects. In this framework, error minimization is a pseudaptation—a trait that confers fitness benefits but was not built by natural selection for its current function [12]. The proposed mechanism is neutral emergence, where genetic codes with superior error minimization can arise neutrally through a process of code expansion. This occurs via gene duplication of tRNAs and aminoacyl-tRNA synthetases, where the duplicated copies diverge and assign similar amino acids to codons related to that of the parent amino acid [12] [15]. This process inherently clusters similar amino acids without requiring a selective search through a vast space of possible codes.

Experimental and Computational Evidence

Simulation Models of Code Evolution

Computer simulations have been instrumental in testing whether the SGC's structure can emerge from neutral or weakly constrained processes. These models often start with a population of hypothetical, ambiguous primordial codes and subject them to evolutionary pressures.

Table 2: Key Simulation Studies on Genetic Code Evolution

| Study Focus | Methodology | Key Finding | Support for Neutral Emergence? |

|---|---|---|---|

| Evolution of Reading Systems [17] | Simulated competition between three codon-reading mechanisms (M1, M2, M3) under selection to reduce ambiguity and error. | The M1 system (codons with two fixed positions, akin to the SGC) dominated quickly, yielding a code with low ambiguity and high robustness. | Mixed: Selection was applied, but the resulting SGC-like structure emerged rapidly from random initial conditions. |

| Neutral Expansion [12] [15] | Modeling code expansion via tRNA and synthetase duplication, adding amino acids to codons related to a parent amino acid. | Codes with error minimization superior to the SGC can emerge without selection for that trait, purely through this duplication-divergence process. | Yes: Demonstrates a plausible neutral pathway for the emergence of error minimization. |

A key simulation allowed different codon-reading systems to compete. The M1 system, which most closely resembles the wobble rules of the SGC, consistently outcompeted more ambiguous systems (M2, M3). This was driven by selection for reduced translational noise, not directly for error minimization, yet the final code was highly robust to errors [17]. The workflow and logical relationships of such a simulation are outlined below.

Diagram: Simulation Workflow for Code Evolution

Analyzing Putative Primordial Codes

Another line of evidence comes from reconstructing ancestral genetic codes. One study proposed that an early code used only the first two nucleotide positions (forming 16 "supercodons") to encode 10 primordial amino acids. When the error minimization level of this putative two-letter code is calculated, it is found to be exceptional, even superior to the modern SGC in some analyses [16]. This finding challenges a purely adaptive narrative; if the modern code is the product of prolonged selection for error minimization, why would an earlier, simpler code be more optimal? This is consistent with the neutral emergence view, where the initial random assignment of early amino acids to codons may have been "lucky," and subsequent expansion diluted this optimality to some extent [16].

Research Reagent Solutions for Genetic Code Studies

For researchers aiming to investigate genetic code evolution and engineering, a specific toolkit is required. The table below details essential reagents and their functions.

Table 3: Key Research Reagents for Genetic Code Evolution and Engineering Studies

| Research Reagent / Tool | Function/Application | Relevance to Code Studies |

|---|---|---|

| Aminoacyl-tRNA Synthetase (aaRS) & tRNA Pairs | Enzymes that charge tRNAs with specific amino acids; the core components defining the genetic code [13]. | Target for engineering novel codon assignments; studying evolutionary history through phylogenomics [18]. |

| tRNA Gene Mutants | tRNAs with altered anticodons or identity elements. | Used to test mechanisms of codon reassignment (e.g., ambiguous intermediate, codon capture) [13]. |

| Orthogonal Translation Systems | Engineered aaRS/tRNA pairs that function in a host without cross-reacting with the host's machinery [13]. | Essential for safely incorporating unnatural amino acids into proteins in live cells. |

| Whole-Genome Synthesis Platforms | Technologies for the de novo synthesis of entire genomes. | Allows for the testing of synthetic genetic codes and the removal of specific codons to test the Frozen Accident theory [13]. |

| Phylogenomic Software | Computational tools for building evolutionary timelines from molecular sequences (e.g., of protein domains, tRNAs) [18] [19]. | Used to reconstruct the order of amino acid entry into the genetic code and co-evolution with the translation machinery. |

| Molecular Gene Resurrection | A method to clone and correct mutations in pseudogenes to recover ancestral function [20]. | Provides direct experimental insight into the function of ancient genetic elements and their evolution. |

The conceptual relationships and workflow for incorporating an unnatural amino acid using engineered reagents are visualized in the following diagram.

Diagram: Unnatural Amino Acid Incorporation

Implications for Biomedical and Pharmaceutical Research

The debate over the origin of error minimization is not purely philosophical; it has practical implications. If the genetic code's structure is a frozen accident with beneficial byproducts (the neutral emergence view), it suggests a degree of inherent malleability that can be exploited. The existence of over 20 naturally occurring alternative genetic codes, particularly in genomes with small proteomes, confirms this malleability and aligns with the concept of a "proteomic constraint" [12].

In drug discovery, understanding the code's fundamental logic and evolutionary constraints aids in several areas:

- Engineering Cyclic Peptides: Resurrecting extinct plant genes, as demonstrated with the nanamin peptide, provides a platform for developing new peptide-based cancer treatments and antibiotics [20]. This approach starts from sequences already shaped by evolution rather than from random compounds.

- Expanding the Chemical Palette: The ability to incorporate unnatural amino acids into proteins through engineered genetic codes opens avenues for creating novel biologics, enzymes with new functions, and precisely labeled proteins for imaging and diagnostics [13].

- Understanding Genetic Disease: The code's error-minimizing design buffers against mutations. Understanding its structure and limits helps predict the severity of missense mutations and informs the development of suppressor tRNA therapies for genetic diseases caused by nonsense mutations.

The evidence from computational simulations, analyses of primordial codes, and the observed natural malleability of the code presents a strong case that error minimization in the genetic code is, at least in part, a neutral byproduct. The process of neutral emergence, driven by the expansion of the code through gene duplication and divergence, provides a viable and parsimonious pathway for the development of this near-optimal property without requiring an exhaustive adaptive search of code space. This is not to say that natural selection played no role; it likely fine-tuned the initial, neutrally emerged structure and acted to reduce translational ambiguity [17]. However, the core architecture of the genetic code, with its remarkable robustness to error, appears to be a quintessential pseudaptation [12]. For scientists and drug developers, this evolutionary perspective underscores the potential for reprogramming the genetic code, encouraging innovative approaches that treat it not as an immutable law, but as an evolved and engineerable system.

The concept of adaptation represents a cornerstone of evolutionary biology, typically describing traits that have been directly shaped by natural selection for their current beneficial functions. However, a growing body of theoretical and empirical evidence challenges the assumption that all beneficial traits arise through direct selective pressure. The term "pseudaptation" has been introduced to describe fitness-increasing traits that emerge through non-adaptive processes, rather than via the direct action of natural selection [12]. This concept is intrinsically linked to the theory of neutral emergence, a process by which advantageous system properties can arise spontaneously through non-selective mechanisms [12] [21].

The distinction between true adaptations and pseudaptations represents a paradigm shift in evolutionary thinking. Whereas adaptations are forged through selective fine-tuning, pseudaptations emerge as byproducts of other evolutionary processes, often through the internal dynamics of complex biological systems. The standard genetic code (SGC) serves as the paradigmatic example of a pseudaptation, exhibiting the property of error minimization that reduces the deleterious impact of point mutations, yet likely arising through neutral processes of code expansion rather than direct selective optimization [12] [22]. This framework provides a powerful lens through which to reexamine other seemingly optimized biological systems, from molecular networks to developmental programs.

The Genetic Code as a Paradigmatic Pseudaptation

Error Minimization in the Standard Genetic Code

The standard genetic code is remarkably optimized for error minimization, a form of mutational robustness that reduces the deleterious consequences of point mutations or translational errors [12]. This optimization manifests as a non-random arrangement of amino acids within the codon table, wherein physicochemically similar amino acids tend to be assigned to codons that differ by only a single nucleotide substitution. When mutations occur, this organization increases the probability that they will result in functionally conservative amino acid substitutions rather than radically different amino acids that would compromise protein structure and function [12].

The error minimization property of the standard genetic code is not merely a minor feature but represents a highly optimized characteristic. Computational analyses have demonstrated that the standard genetic code is near-optimal for this property when compared to randomly generated alternative codes [12] [22]. The extent of this optimization has been a subject of ongoing investigation, with some studies suggesting the standard genetic code may be "one in a million" in terms of its error-minimizing capacity [12]. This high degree of optimization has traditionally been interpreted through an adaptationist lens, presumed to result from direct selective pressure for reduced mutational load.

The Neutral Emergence Hypothesis

Contrary to the adaptationist interpretation, the neutral emergence hypothesis proposes that the error minimization observed in the standard genetic code arose primarily through non-adaptive processes [12] [22]. This hypothesis suggests that the genetic code expanded through a series of gene duplication events affecting transfer RNAs (tRNAs) and aminoacyl-tRNA synthetases, followed by the assignment of similar "daughter" amino acids to codons related to those of the parent amino acid [22].

Simulation studies have demonstrated that genetic codes with error minimization superior to the standard genetic code readily emerge when, at each step of code expansion, the most similar amino acid from the set of unassigned amino acids is assigned to codons related to those of the parent amino acid [22]. This process represents a form of self-organization at the coding level, whereby beneficial properties arise without the need for direct selection for those properties [22]. The neutral emergence of such optimized codes occurs across various expansion pathways and with different amino acid similarity matrices, suggesting its robustness as a mechanism [22].

Table 1: Key Evidence Supporting the Neutral Emergence of Genetic Code Optimization

| Evidence Type | Finding | Significance |

|---|---|---|

| Simulation Studies | Genetic codes with error minimization superior to the SGC readily emerge through code expansion models [22] | Demonstrates feasibility of non-adaptive emergence of beneficial traits |

| Mechanistic Plausibility | Process mimics known biological mechanisms of tRNA and aminoacyl-tRNA synthetase duplication [12] | Provides biologically realistic pathway |

| Pathway Independence | Result obtained for various code expansion schemes and similarity matrices [22] | Suggests robustness of neutral emergence mechanism |

Experimental Evidence and Methodologies

Simulation Protocols for Code Evolution

The experimental evidence for the neutral emergence of error minimization primarily comes from computational simulations that model the expansion of the genetic code. The core methodology involves simulating the stepwise addition of amino acids to an initially limited code through a process that mimics the duplication of tRNA and aminoacyl-tRNA synthetase genes [22].

The fundamental workflow follows these steps:

- Initialization: Begin with a small set of amino acids assigned to a subset of codons

- Expansion Iteration:

  - Select an already assigned "parent" amino acid

  - Identify the most similar unassigned amino acid based on physicochemical properties

  - Assign this "daughter" amino acid to codons related to the parent's codons

- Evaluation: Calculate the error minimization value of the resulting code after each expansion

- Comparison: Compare the emergent code's error minimization value with that of the standard genetic code

The error minimization value is quantitatively defined as:

\[ EM = \left( \sum_{n=1}^{61} \sum_{i=1}^{9} \frac{V_{c_n c_i}}{9} \right) / 61 \]

where \(c\) represents a sense codon, \(n\) indexes the 61 sense codons, \(i\) indexes the 9 codons that differ from \(c_n\) by a single point mutation, and \(V_{c_n c_i}\) represents the physicochemical similarity between the amino acids assigned to codons \(c_n\) and \(c_i\) [22].
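As a rough illustration of how the error minimization value might be evaluated for the standard genetic code, the sketch below averages a property difference over the single-mutation neighbours of every sense codon. Two choices are assumptions made for this example and are not taken from [22]: the similarity term V is replaced by the absolute difference in Kyte-Doolittle hydropathy, so lower values mean greater robustness, and neighbours that are stop codons are skipped, so the inner average runs over the remaining sense neighbours rather than a fixed 9. The function name `error_minimization` is likewise hypothetical.

```python
from itertools import product

BASES = "TCAG"
# Standard genetic code in TCAG order (NCBI translation table 1); "*" marks stop codons
AMINO_ACIDS = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
SGC = {"".join(c): aa for c, aa in zip(product(BASES, repeat=3), AMINO_ACIDS)}

# Kyte-Doolittle hydropathy, an assumed stand-in for the similarity matrix V
HYDROPATHY = {
    "A": 1.8, "R": -4.5, "N": -3.5, "D": -3.5, "C": 2.5, "Q": -3.5, "E": -3.5,
    "G": -0.4, "H": -3.2, "I": 4.5, "L": 3.8, "K": -3.9, "M": 1.9, "F": 2.8,
    "P": -1.6, "S": -0.8, "T": -0.7, "W": -0.9, "Y": -1.3, "V": 4.2,
}


def error_minimization(code, prop=HYDROPATHY):
    """Mean property difference between each sense codon and its sense neighbours."""
    sense = [c for c, aa in code.items() if aa != "*"]
    per_codon = []
    for c_n in sense:
        diffs = []
        for pos, base in product(range(3), BASES):
            if base == c_n[pos]:
                continue
            c_i = c_n[:pos] + base + c_n[pos + 1:]
            if code[c_i] != "*":  # assumption: stop-codon neighbours are skipped
                diffs.append(abs(prop[code[c_n]] - prop[code[c_i]]))
        per_codon.append(sum(diffs) / len(diffs))  # inner average over neighbours
    return sum(per_codon) / len(sense)  # outer average over sense codons


print("sense codons:", sum(aa != "*" for aa in SGC.values()))
print("EM (mean hydropathy difference):", round(error_minimization(SGC), 3))
```

Passing a different property dictionary through `prop` is one way to probe how sensitive the value is to the choice of similarity measure.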

Table 2: Key Parameters in Genetic Code Evolution Simulations

| Parameter | Description | Impact on Results |

|---|---|---|

| Amino Acid Similarity Matrix | Defines physicochemical relationships between amino acids | Different matrices yield consistent emergence of optimization [22] |

| Code Expansion Pathway | Order and mechanism of amino acid addition | Robust results across multiple expansion schemes [22] |

| Initial Code State | Starting amino acids and codon assignments | Affects trajectory but not overall capacity for neutral emergence [22] |

Diagram 1: Neutral Emergence Simulation Workflow

Empirical Support from Code Variants

Further support for the neutral emergence hypothesis comes from observations of codon reassignments in non-standard genetic codes, particularly in genomes with reduced proteome size (P, defined as the total number of codons/amino acids encoded by the genome) [12] [21]. The observed malleability of the genetic code in organisms with small proteome sizes suggests the existence of a proteomic constraint on genetic code evolution [12].

This constraint operates through the following mechanism:

- Smaller proteomes contain fewer instances of each codon

- Reassignment events affect fewer protein molecules

- Reduced deleterious impact allows for "unfreezing" of the frozen accident

- Code evolution becomes possible in constrained genomic contexts

This pattern is particularly evident in non-plant mitochondria and intracellular bacteria, which typically have small proteomes and frequently exhibit codon reassignments [12]. The inverse relationship between proteome size and code malleability provides indirect empirical support for the neutral emergence hypothesis by demonstrating that the genetic code is not immutable but can change under specific genomic conditions.
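The first two steps of the proteomic-constraint argument amount to simple counting, which can be sketched as follows. The function names and the toy sequences are hypothetical: the "large genome" is just the small one repeated, so it only illustrates how the number of affected sites scales with proteome size P.

```python
from collections import Counter


def codon_counts(coding_sequences):
    """Count in-frame codons (stop codons included) across coding DNA sequences."""
    counts = Counter()
    for seq in coding_sequences:
        seq = seq.upper()
        usable = len(seq) - len(seq) % 3  # ignore a trailing partial codon
        counts.update(seq[i:i + 3] for i in range(0, usable, 3))
    return counts


def sites_affected(coding_sequences, codon):
    """Number of codon positions that would change meaning if `codon` were reassigned."""
    return codon_counts(coding_sequences)[codon.upper()]


# Hypothetical toy "genomes": the large one is the small one repeated 50 times
small_genome = ["ATGAAAGCTTGA", "ATGGCTAAATAA"]
large_genome = small_genome * 50

for name, genome in [("small", small_genome), ("large", large_genome)]:
    proteome_size = sum(codon_counts(genome).values())
    print(name, "P =", proteome_size, "| AAA sites:", sites_affected(genome, "AAA"))
```

In this toy case reassigning AAA touches 2 sites in the small genome and 100 in the large one, which is the scaling the constraint relies on.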

Beyond the Genetic Code: Other Potential Pseudaptations

Pseudogenes as Regulatory Elements

The concept of pseudaptations extends beyond the genetic code to other biological systems. Pseudogenes, traditionally considered non-functional genomic relics, represent compelling candidates for pseudaptations [23]. Once dismissed as "junk DNA," pseudogenes are now recognized to frequently perform regulatory functions, despite arising through non-adaptive processes of gene duplication and inactivation [23].

Multiple lines of evidence support the functional importance of pseudogenes:

- Transcriptional Activity: Many pseudogenes are transcribed into RNA, sometimes exhibiting tissue-specific patterns [23]

- Sequence Conservation: Some pseudogenes show unexpected evolutionary conservation, suggesting functional constraint [23]

- Regulatory Mechanisms: Pseudogene transcripts can regulate their protein-coding counterparts through various mechanisms, including microRNA decoy activity [23]

These regulatory functions likely emerged neutrally following duplication events, with functional significance accruing secondarily rather than through direct selection for regulatory capacity.

Protein Biophysical Properties

The field of evolutionary protein biophysics provides additional examples of potential pseudaptations, particularly regarding mutational robustness and evolvability [24]. Proteins exhibit properties such as marginal stability and conformational dynamics that facilitate the exploration of sequence space while maintaining functional integrity [24].

These biophysical properties may have emerged neutrally as consequences of physical constraints on foldable sequences rather than through direct selection for robustness or evolvability [24]. The funnel-like energy landscape of proteins, which ensures reliable folding while accommodating sequence variation, represents a physical principle that necessarily confers evolutionary benefits without requiring direct selection for those benefits [24].

Research Reagents and Experimental Tools

Table 3: Essential Research Reagents for Studying Pseudaptations

| Reagent/Tool | Function/Application | Utility in Pseudaptation Research |

|---|---|---|

| Amino Acid Similarity Matrices | Quantify physicochemical relationships between amino acids [12] | Foundation for calculating error minimization in code simulations |

| Genetic Code Simulation Software | Model code expansion and calculate error minimization values [22] | Test neutral emergence hypothesis computationally |

| Phylogenetic Analysis Tools | Reconstruct evolutionary relationships and detect selection [24] | Distinguish neutral from adaptive evolutionary trajectories |

| tRNA/Aminoacyl-tRNA Synthetase Gene Sequences | Trace historical duplication events [12] | Reconstruct evolutionary history of coding machinery |

| Proteome Size Datasets | Quantify total codons across genomes [12] | Test correlation between proteome size and code variability |

Implications for Drug Development and Biomedical Research

The concept of pseudaptations has profound implications for drug development and biomedical research, particularly in understanding disease mechanisms and evolutionary constraints on molecular targets.

Understanding Genetic Disease and Mutation Impact

The error-minimizing architecture of the genetic code, even if neutrally emerged, has direct implications for understanding mutation impact in human disease [12]. The non-random distribution of amino acid assignments buffers against the most deleterious mutational outcomes, influencing the spectrum of observed disease-causing mutations. Drug development strategies can leverage this understanding to:

- Predict severity of missense mutations

- Identify genetic contexts with higher vulnerability to mutations

- Design therapeutic approaches that account for natural mutational robustness

Exploiting Evolutionary Principles in Drug Design

Understanding the neutral emergence of beneficial traits provides novel perspectives for drug design and target selection [24]. The biophysical properties of proteins that arise through neutral processes, such as marginal stability and conformational diversity, create opportunities for therapeutic intervention by:

- Identifying cryptic binding sites in alternative conformations

- Exploiting evolutionary histories to predict drug resistance pathways

- Targeting promiscuous functions performed by hidden conformational states

The recognition that beneficial properties can emerge without direct selection expands the toolkit for therapeutic development, encouraging researchers to look beyond adaptive explanations for target characteristics.

Diagram 2: From Neutral Processes to Biomedical Applications

The concept of pseudaptations challenges the adaptationist paradigm by demonstrating that beneficial biological traits can emerge through neutral processes rather than exclusively through direct natural selection. The standard genetic code stands as a paradigmatic example, with its remarkable error-minimizing properties likely arising through neutral expansion via duplication of tRNA and aminoacyl-tRNA synthetase genes, rather than through direct selection for error minimization [12] [22].

This theoretical framework finds support in empirical observations of codon reassignments in genomes with small proteome sizes, revealing a proteomic constraint on genetic code evolution [12]. Beyond the genetic code, other biological systems including pseudogenes and protein biophysical properties exhibit characteristics consistent with pseudaptations, suggesting the broader relevance of this concept [24] [23].

For biomedical researchers and drug development professionals, recognizing pseudaptations opens new avenues for understanding disease mechanisms and developing therapeutic strategies. By appreciating the neutral origins of certain beneficial traits, we gain a more nuanced and comprehensive understanding of evolutionary processes and their biomedical implications.

The Coevolution Theory of Genetic Code Expansion

The coevolution theory of genetic code expansion posits that the genetic code evolved through a progressive expansion from simpler early forms, where the biosynthetic pathways of amino acids and their corresponding codon assignments are intrinsically linked. This paper examines this theory through the lens of neutral emergence, which proposes that the modern code's error-minimizing properties arose not as a direct target of selection but as a byproduct of code expansion driven by neutral processes. We synthesize current computational and experimental evidence, provide detailed protocols for studying code evolution, and outline practical applications in drug development. The findings support a model where the genetic code's structure reflects a deep interplay between neutral expansion and adaptive refinement.

The standard genetic code (SGC) is the fundamental framework that maps 64 codons to 20 canonical amino acids and stop signals. Its non-random, error-minimizing structure has long prompted questions about its origin. The coevolution theory provides a compelling narrative, suggesting that the code expanded from a simpler primordial form as new amino acids were synthesized from existing ones. According to this theory, when a new amino acid was biosynthetically derived from an existing precursor, its codon assignments were "captured" from subsets of the precursor's codons [12]. This process intrinsically linked the structure of the genetic code to the evolution of metabolic pathways.

A critical re-examination of this theory involves the concept of neutral emergence. This concept challenges the assumption that the code's optimal properties, particularly its robustness against errors, were the direct target of natural selection. Instead, neutral emergence posits that these beneficial traits can arise as non-adaptive byproducts of other evolutionary processes. Simulation studies have demonstrated that genetic codes with significant levels of error minimization can emerge through a neutral process of code expansion via tRNA and aminoacyl-tRNA synthetase duplication, where similar amino acids are added to codons related to that of the parent amino acid [12]. Such beneficial traits that arise without direct selection have been termed "pseudaptations" [12]. This framework suggests that the coevolution of the code and amino acid biosynthesis may have neutrally established a foundation of mutational robustness that was later refined by natural selection.

Theoretical Framework and Computational Evidence

Core Principles of the Coevolution Theory

The coevolution theory rests on several foundational principles. First, it posits a directional expansion of the amino acid repertoire, from a small set of simple, prebiotically plausible amino acids to the more complex, biosynthetically derived ones found in the modern code. Second, it asserts a mechanistic link between the emergence of a new amino acid in metabolism and the assignment of its codons, which were necessarily reassigned from the codons of its biosynthetic precursor. This process would naturally lead to similar amino acids sharing related codons, a hallmark of the SGC's organization [12]. This inherent structure contributes to error minimization, as a mutation in a codon is more likely to result in a similar, and therefore functionally tolerable, amino acid.

The Neutral Emergence of Error Minimization

A central debate in genetic code evolution is whether its error-minimizing properties are an adaptation or a byproduct. Proponents of neutral emergence argue that the process of code expansion itself, via the duplication of tRNA and aminoacyl-tRNA synthetase genes, can lead to superior error minimization without requiring direct selection for this trait. In this model, a duplicated gene set specific to a precursor amino acid can evolve to recognize a new, similar amino acid and incorporate it into a subset of the precursor's codons. This mechanism automatically clusters similar amino acids in codon space, thereby reducing the impact of point mutations and translation errors [12]. This neutral emergence of mutational robustness presents a paradigm for how complex, beneficial traits can originate without being the immediate target of Darwinian selection.
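The duplication mechanism described above can be explored with a toy simulation. The sketch below is a deliberately simplified model, not the published simulation from [12]: it assumes 16 two-base codon boxes (the third position is ignored), Kyte-Doolittle hydropathy as the similarity measure, and a rule in which each new amino acid takes over half of a randomly chosen parent's boxes. All names and parameters are illustrative assumptions. No step selects on error cost, so any robustness that appears is a byproduct of the expansion rule.

```python
import random
from itertools import product

BASES = "TCAG"
BOXES = ["".join(p) for p in product(BASES, repeat=2)]  # 16 boxes, third base ignored

# Kyte-Doolittle hydropathy; an assumed proxy for amino acid similarity.
HYDROPATHY = {
    "A": 1.8, "R": -4.5, "N": -3.5, "D": -3.5, "C": 2.5, "Q": -3.5, "E": -3.5,
    "G": -0.4, "H": -3.2, "I": 4.5, "L": 3.8, "K": -3.9, "M": 1.9, "F": 2.8,
    "P": -1.6, "S": -0.8, "T": -0.7, "W": -0.9, "Y": -1.3, "V": 4.2,
}


def error_cost(code):
    """Mean squared hydropathy change between boxes that differ by one base."""
    total, n = 0.0, 0
    for box, aa in code.items():
        for pos in range(2):
            for base in BASES:
                if base != box[pos]:
                    alt = code[box[:pos] + base + box[pos + 1:]]
                    total += (HYDROPATHY[aa] - HYDROPATHY[alt]) ** 2
                    n += 1
    return total / n


def expand_code(rng, n_amino_acids=16, similar=True):
    """Grow a code by handing half of a parent's boxes to each new amino acid."""
    if not 1 <= n_amino_acids <= len(BOXES):
        raise ValueError("n_amino_acids must be between 1 and 16")
    pool = list(HYDROPATHY)
    founder = rng.choice(pool)
    pool.remove(founder)
    blocks = {founder: list(BOXES)}
    while len(blocks) < n_amino_acids:
        # Only amino acids that still own more than one box can donate.
        parent = rng.choice([aa for aa, boxes in blocks.items() if len(boxes) > 1])
        if similar:
            # The duplicated tRNA/synthetase pair adopts the closest unused amino acid.
            new = min(pool, key=lambda aa: abs(HYDROPATHY[aa] - HYDROPATHY[parent]))
        else:
            # Control: the new amino acid is unrelated to the parent.
            new = rng.choice(pool)
        pool.remove(new)
        half = len(blocks[parent]) // 2
        blocks[new] = blocks[parent][half:]
        blocks[parent] = blocks[parent][:half]
    return {box: aa for aa, boxes in blocks.items() for box in boxes}


if __name__ == "__main__":
    rng = random.Random(42)
    runs = 500
    similar = sum(error_cost(expand_code(rng, similar=True)) for _ in range(runs)) / runs
    control = sum(error_cost(expand_code(rng, similar=False)) for _ in range(runs)) / runs
    print(f"mean cost, similar additions: {similar:.2f}")
    print(f"mean cost, random additions:  {control:.2f}")
```

Comparing the two mean costs gives a direct, if crude, check on whether adding similar amino acids to related codons lowers error cost in the absence of any selection step.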

However, this view is contested. Critics argue that the high level of optimization observed in the SGC is unlikely to have arisen through neutral processes alone. They emphasize that the probability of a random code matching the SGC's level of error minimization is exceptionally low, on the order of "one in a million", which strongly implies the intervention of natural selection [25]. On this view, neutral processes may have played a role, but the final optimization of the code was likely shaped by selective forces.
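The kind of comparison behind such estimates can be sketched as a Monte Carlo test: permute the amino acids among the SGC's synonymous codon blocks and count how often a shuffled code has a lower error cost than the SGC. The version below is a minimal sketch under stated assumptions (Kyte-Doolittle hydropathy as the property, unweighted single-base substitutions, stop codons held fixed). It is not the procedure used in [25] and should not be expected to reproduce the quoted figure.

```python
import random
from itertools import product

BASES = "TCAG"
# Standard genetic code, listed with the first, second and third base cycling over TCAG.
AAS = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
SGC = {"".join(c): aa for c, aa in zip(product(BASES, repeat=3), AAS)}

# Kyte-Doolittle hydropathy; an assumed proxy for amino acid similarity.
HYDROPATHY = {
    "A": 1.8, "R": -4.5, "N": -3.5, "D": -3.5, "C": 2.5, "Q": -3.5, "E": -3.5,
    "G": -0.4, "H": -3.2, "I": 4.5, "L": 3.8, "K": -3.9, "M": 1.9, "F": 2.8,
    "P": -1.6, "S": -0.8, "T": -0.7, "W": -0.9, "Y": -1.3, "V": 4.2,
}


def error_cost(code):
    """Mean squared hydropathy change over single-base changes between sense codons."""
    total, n = 0.0, 0
    for codon, aa in code.items():
        if aa == "*":
            continue
        for pos in range(3):
            for base in BASES:
                if base == codon[pos]:
                    continue
                alt = code[codon[:pos] + base + codon[pos + 1:]]
                if alt != "*":
                    total += (HYDROPATHY[aa] - HYDROPATHY[alt]) ** 2
                    n += 1
    return total / n


def shuffled_code(rng):
    """Permute the 20 amino acids among the SGC's codon blocks; stops stay in place."""
    amino_acids = sorted(set(AAS) - {"*"})
    permuted = amino_acids[:]
    rng.shuffle(permuted)
    relabel = dict(zip(amino_acids, permuted))
    relabel["*"] = "*"
    return {codon: relabel[aa] for codon, aa in SGC.items()}


def fraction_better(n_codes=2000, seed=0):
    """Fraction of shuffled codes whose error cost is below that of the SGC."""
    rng = random.Random(seed)
    sgc_cost = error_cost(SGC)
    better = sum(error_cost(shuffled_code(rng)) < sgc_cost for _ in range(n_codes))
    return better / n_codes


if __name__ == "__main__":
    print(f"SGC cost: {error_cost(SGC):.3f}")
    print(f"fraction of shuffled codes with lower cost: {fraction_better():.4f}")
```

The estimate depends strongly on the chosen amino acid property, on how substitutions are weighted, and on the number of codes sampled, which is one reason published figures differ.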

Conflicting Pressures: Fidelity vs. Diversity

Recent computational models have advanced the discussion by framing code evolution as a balance between conflicting objectives. The code must not only be robust against errors (fidelity) but also encode a diverse set of amino acids with varied physicochemical properties to build complex and functional proteins.

Table 1: Key Conflicting Pressures in Genetic Code Evolution

| Pressure | Description | Evolutionary Implication |
|---|---|---|
| Fidelity (Error Minimization) | Reduces the deleterious impact of point mutations and translational errors. | Favors codes where similar amino acids share similar codons. |
| Diversity | Ensures the encoded amino acid repertoire supports the synthesis of complex, functional proteins. | Favors codes that incorporate a wide range of physicochemical properties. |
| Compositional Alignment | Matches codon usage and assignments to the natural abundance of amino acids in proteomes. | Optimizes for efficient resource use and translational throughput [26]. |

Studies using simulated annealing to explore this trade-off have found that the SGC is a highly effective solution that lies near local optima in this multi-dimensional parameter space [26]. This suggests that the modern code reflects a coevolutionary compromise under these conflicting pressures, with its structure being finely tuned to the empirical composition of modern proteomes.
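To make the fidelity-versus-diversity trade-off concrete, the sketch below applies simulated annealing to a toy code of 16 two-base codon boxes. It is an illustrative assumption throughout and not the model used in [26]: fidelity is scored with Kyte-Doolittle hydropathy, diversity is simply the number of distinct amino acids encoded, and the `weight` parameter sets how strongly diversity is rewarded.

```python
import math
import random
from itertools import product

BASES = "TCAG"
BOXES = ["".join(p) for p in product(BASES, repeat=2)]  # 16 boxes, third base ignored

# Kyte-Doolittle hydropathy; an assumed proxy for amino acid similarity.
HYDROPATHY = {
    "A": 1.8, "R": -4.5, "N": -3.5, "D": -3.5, "C": 2.5, "Q": -3.5, "E": -3.5,
    "G": -0.4, "H": -3.2, "I": 4.5, "L": 3.8, "K": -3.9, "M": 1.9, "F": 2.8,
    "P": -1.6, "S": -0.8, "T": -0.7, "W": -0.9, "Y": -1.3, "V": 4.2,
}
AMINO_ACIDS = sorted(HYDROPATHY)


def fidelity_cost(code):
    """Mean squared hydropathy change between boxes that differ by one base."""
    total, n = 0.0, 0
    for box, aa in code.items():
        for pos in range(2):
            for base in BASES:
                if base != box[pos]:
                    alt = code[box[:pos] + base + box[pos + 1:]]
                    total += (HYDROPATHY[aa] - HYDROPATHY[alt]) ** 2
                    n += 1
    return total / n


def diversity(code):
    """Number of distinct amino acids the code encodes."""
    return len(set(code.values()))


def objective(code, weight):
    """Lower is better: penalise error cost, reward diversity."""
    return fidelity_cost(code) - weight * diversity(code)


def anneal(weight, steps=5000, t_start=5.0, t_end=0.01, seed=0):
    """Metropolis search over box assignments with geometric cooling."""
    rng = random.Random(seed)
    code = {box: rng.choice(AMINO_ACIDS) for box in BOXES}
    current = objective(code, weight)
    best_code, best = dict(code), current
    for step in range(steps):
        temp = t_start * (t_end / t_start) ** (step / steps)
        box = rng.choice(BOXES)
        old = code[box]
        code[box] = rng.choice(AMINO_ACIDS)  # propose reassigning one box
        candidate = objective(code, weight)
        if candidate <= current or rng.random() < math.exp((current - candidate) / temp):
            current = candidate
            if current < best:
                best_code, best = dict(code), current
        else:
            code[box] = old  # reject the move and restore the assignment
    return best_code, best


if __name__ == "__main__":
    for weight in (0.0, 0.5, 2.0):
        code, score = anneal(weight)
        print(weight, diversity(code), round(fidelity_cost(code), 2), round(score, 2))
```

With `weight` set to zero the objective is minimised by a code that encodes a single amino acid, which is perfectly robust and useless; raising `weight` pushes the search toward more diverse codes at the expense of a higher error cost.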

Experimental Validation and Protocols

The principles of code evolution are not merely theoretical; they can be tested and exploited in the laboratory using Genetic Code Expansion (GCE) technology.

Fundamentals of Genetic Code Expansion

GCE enables the site-specific incorporation of non-canonical amino acids (ncAAs) into proteins. This is achieved by introducing an orthogonal aminoacyl-tRNA synthetase/tRNA pair into a host organism. The pair is "orthogonal" because it does not cross-react with the host's native translational machinery. The tRNA is engineered to recognize a specific codon, typically the amber stop codon (TAG in DNA, UAG in mRNA), which is repurposed to encode the ncAA [27]. This provides a powerful tool for probing protein function and introducing novel chemical properties.
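The effect of amber suppression on decoding can be shown with a small in-silico translation. This is a minimal sketch: the `translate` function, the `X` placeholder for the ncAA, and the example sequence are illustrative assumptions, and real suppression is partial rather than all-or-nothing.

```python
from itertools import product

BASES = "TCAG"
# Standard genetic code, listed with the first, second and third base cycling over TCAG.
AAS = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {"".join(c): aa for c, aa in zip(product(BASES, repeat=3), AAS)}


def translate(dna, amber_suppressed=False, ncaa_symbol="X"):
    """Translate an in-frame coding sequence, optionally decoding TAG as an ncAA."""
    protein = []
    for i in range(0, len(dna) - len(dna) % 3, 3):
        codon = dna[i:i + 3].upper()
        if codon == "TAG" and amber_suppressed:
            protein.append(ncaa_symbol)  # orthogonal tRNA delivers the ncAA
            continue
        aa = CODON_TABLE[codon]
        if aa == "*":
            break  # release factor terminates translation
        protein.append(aa)
    return "".join(protein)


if __name__ == "__main__":
    reporter = "ATGGCTTAGGGTAAATAA"  # hypothetical reporter with an in-frame TAG
    print(translate(reporter))                         # truncated product
    print(translate(reporter, amber_suppressed=True))  # full-length product with ncAA
```

Without the orthogonal pair the reporter terminates at the TAG codon; with it, translation continues to the next stop codon, which is the basis for the fluorescence readouts used later in the workflow.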

A Generic Workflow for GCE

The following protocol outlines a standard workflow for establishing GCE in a new microbial host, such as Bacillus subtilis [28].

Table 2: Key Research Reagent Solutions for Genetic Code Expansion

| Research Reagent | Function in GCE | Specific Examples |
|---|---|---|
| Orthogonal Aminoacyl-tRNA Synthetase (AARS) | Enzyme that specifically charges the orthogonal tRNA with the ncAA. | MjTyrRS (tyrosyl), MbPylRS/MaPylRS (pyrrolysyl), ScWRS (tryptophanyl) variants [28]. |
| Orthogonal tRNA | Transfer RNA that recognizes a repurposed codon (e.g., TAG) and delivers the ncAA. | tRNAPylCUA, Mj-tRNATyrCUA [29] [28]. |
| Non-Canonical Amino Acid (ncAA) | The novel amino acid to be incorporated. | Azidohomoalanine (Aha), p-azido-L-phenylalanine (Azf), photocrosslinking ncAAs [30] [28]. |
| Reporter Gene Cassette | A gene with a repurposed codon (e.g., TAG) at a defined site, used to assess incorporation efficiency. | mNeonGreen-TAG, sfGFP150TAG, mCherry-TAG-EGFP [29] [28]. |

Step 1: System Construction and Integration

- Objective: Genomically integrate an orthogonal AARS/tRNA pair and a reporter gene.

- Protocol:

  - Select an orthogonal pair (e.g., the M. jannaschii tyrosyl-tRNA synthetase/tRNA pair, MjTyrRS/tRNATyrCUA).

  - Assemble an integration vector (e.g., a PiggyBac transposon vector for mammalian cells [29] or a homologous recombination vector for B. subtilis [28]) containing:

    - The AARS gene driven by a constitutive promoter (e.g., pVeg).

    - The orthogonal tRNA gene driven by a separate promoter (e.g., pSer).

    - A selectable marker (e.g., antibiotic resistance).

  - Co-transfect/transform the host organism with the integration vector and a reporter vector containing a fluorescent protein gene (e.g., mNeonGreen) with an in-frame amber stop codon at a permissive site.

  - Select for stable integrants using the appropriate antibiotic.

Step 2: System Characterization and Optimization

- Objective: Measure the efficiency and fidelity of ncAA incorporation.

- Protocol:

  - Culture the stable cell line in media supplemented with the target ncAA.

  - Measure fluorescence (e.g., via flow cytometry) to quantify full-length protein yield, which indicates successful ncAA incorporation and amber suppression.

  - Assess fidelity by analyzing cells grown without the ncAA; low background fluorescence indicates minimal mis-incorporation of canonical amino acids.

  - Confirm incorporation accuracy via mass spectrometry analysis of the purified reporter protein [28].
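The fluorescence readouts from this step are often condensed into two numbers. The sketch below uses common working definitions, which are assumptions here rather than formulas taken from [28]: relative efficiency is the reporter signal with ncAA divided by the signal from a reporter lacking the TAG codon, and fold induction is the signal with ncAA divided by the signal without it. The function name and the example values are hypothetical.

```python
def suppression_metrics(plus_ncaa, minus_ncaa, wild_type, blank=0.0):
    """Return (relative efficiency, fold induction) from mean fluorescence values.

    plus_ncaa:  TAG reporter, cells grown with the ncAA
    minus_ncaa: TAG reporter, cells grown without the ncAA
    wild_type:  the same reporter without the TAG codon
    blank:      autofluorescence of cells carrying no reporter
    """
    signal = plus_ncaa - blank
    background = minus_ncaa - blank
    reference = wild_type - blank
    if reference <= 0:
        raise ValueError("wild-type reference must exceed the blank")
    efficiency = signal / reference
    # A background at or below the blank means no measurable mis-incorporation.
    fold = signal / background if background > 0 else float("inf")
    return efficiency, fold


if __name__ == "__main__":
    # Hypothetical arbitrary-unit values for illustration only.
    print(suppression_metrics(plus_ncaa=600, minus_ncaa=30, wild_type=1200))
```

A high fold induction with a modest efficiency points to a faithful but slow system, while a low fold induction indicates that canonical amino acids are being inserted at the TAG codon in the absence of the ncAA.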

Step 3: Application for Biological Discovery

- Objective: Utilize the incorporated ncAA for specific experiments.

- Protocol:

  - Bio-orthogonal Labeling (BONCAT): Incorporate an azide-containing ncAA (e.g., Aha). After protein synthesis, perform a copper-catalyzed or strain-promoted azide-alkyne cycloaddition ("click" reaction) with an alkyne-linked fluorescent dye or biotin for detection or purification [30].

  - Photo-crosslinking: Incorporate a photocrosslinking ncAA (e.g., diazirine-based). Irradiate cells with UV light to crosslink the ncAA with interacting biomolecules, which can then be identified via pull-down and mass spectrometry [28].

  - Translational Control: Incorporate a toxic ncAA or use incorporation to titrate the expression level of an essential protein (e.g., cell division protein FtsZ) to precisely modulate its function in vivo [28].

Diagram 1: GCE Experimental Workflow.

Insights from Experimental Code Evolution

GCE experiments have provided critical insights relevant to coevolution. A study in Bacillus subtilis demonstrated that, unlike in E. coli, the orthogonal system led to pervasive incorporation of ncAAs at native TAG stop codons across the proteome without significant fitness cost [28]. This finding highlights the role of proteome size and genomic context as constraints on code malleability, supporting the idea that smaller proteomes (like those in organelles, where codon reassignments are common) are more tolerant of genetic code changes [12]. Furthermore, the incorporation of 20 distinct ncAAs in B. subtilis using different synthetase families showcases the potential for further code expansion and its application in probing complex biological questions [28].
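One way to gauge how disruptive proteome-wide suppression might be is to ask how far translation would run on past each native TAG stop. The sketch below is an illustrative assumption, not an analysis from [28]: it takes a coding sequence and its downstream DNA, and counts the residues added before the next in-frame TAA or TGA, treating every TAG as fully suppressed.

```python
TERMINATING_STOPS = {"TAA", "TGA"}  # TAG is assumed to be read through


def amber_readthrough_extension(cds, downstream):
    """Residues added when a gene's TAG stop is suppressed.

    Returns None if the coding sequence does not end in TAG. If no TAA or TGA
    is found in frame, the count is a lower bound limited by `downstream`.
    """
    cds = cds.upper()
    if len(cds) % 3 != 0 or cds[-3:] != "TAG":
        return None
    downstream = downstream.upper()
    extension = 1  # the ncAA inserted at the former stop codon
    for i in range(0, len(downstream) - 2, 3):
        if downstream[i:i + 3] in TERMINATING_STOPS:
            return extension
        extension += 1
    return extension


if __name__ == "__main__":
    # Hypothetical gene and 3' flank for illustration only.
    print(amber_readthrough_extension("ATGAAATAG", "GGTGCTTAAGGG"))
```

Applied across an annotated genome, the distribution of these extension lengths gives a rough sense of how many proteins would gain long C-terminal tails, which is one plausible contributor to the fitness cost of suppressing a native stop codon.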

Applications in Drug Development and Biotechnology

The ability to expand the genetic code has profound implications for pharmaceutical research and development, enabling novel approaches to drug design and production.

Table 3: Applications of Genetic Code Expansion in Drug Development

| Application Area | Description | Benefit |
|---|---|---|
| Site-Specific Bioconjugation | Incorporation of ncAAs with bio-orthogonal chemical handles (e.g., azides, alkynes) allows for precise attachment of payloads like PEG chains, toxins, or fluorescent dyes to protein therapeutics. | Improves drug half-life (PEGylation), creates stable Antibody-Drug Conjugates (ADCs), and enables targeted delivery [30] [27]. |
| Probing Protein-Protein Interactions | Incorporation of photo-crosslinking ncAAs into a target protein of interest (e.g., a G-protein coupled receptor) enables capture and identification of weak or transient interaction partners in living cells. | Identifies novel drug targets and elucidates mechanisms of drug action [30] [28]. |
| Engineering Novel Therapeutics | Direct incorporation of stable mimics of post-translational modifications (e.g., acetyl-lysine, phosphoserine) or amino acids with novel chemistries can create proteins with enhanced or entirely new functions. | Develops more stable and potent peptide and protein drugs, and allows for the study of PTM function [29] [30]. |
| Cell-Specific Labeling | Using mutant methionyl-tRNA synthetases that incorporate methionine analogs (e.g., Azidohomoalanine, Aha) in a Cre-dependent manner allows for profiling of newly synthesized proteins in specific cell types in vivo. | Reveals cell-type-specific proteomic responses to drugs in complex tissues and disease models [30]. |

Diagram 2: GCE for Target & Therapeutic Discovery.