Beyond the Black Box: Interpretable and Trustworthy Machine Learning for Biomedical Discovery and Drug Development

Abstract

The 'black box' nature of advanced machine learning models poses a significant barrier to their adoption in high-stakes fields like drug development and clinical research. This article provides a comprehensive framework for overcoming this challenge, tailored for researchers and scientists. We explore the foundational ethical and practical implications of unexplainable AI, detail state-of-the-art interpretability methods like SHAP and LIME, and examine techniques for quantifying predictive uncertainty using Bayesian neural networks and conformal prediction. A comparative analysis guides the selection and rigorous validation of these methods, empowering professionals to build more transparent, reliable, and clinically actionable ML models.

The Black Box Problem: Why Unexplainable AI is a Critical Barrier in Biomedical Research

Frequently Asked Questions (FAQs)

What is a "Black Box" AI model? A Black Box AI model is a system where the internal decision-making process is opaque and difficult to understand, even for the developers who built it. Data goes in and results come out, but the inner mechanisms—how the model weights different factors and arrives at a specific conclusion—remain a mystery. This is common in complex models like deep neural networks and large language models (LLMs) [1] [2].

Why is the "Black Box" problem particularly critical in drug discovery research? In drug discovery, the stakes of unexplained predictions are exceptionally high. A lack of transparency can obscure a model's reasoning for recommending a specific drug candidate, making it difficult to validate the accuracy of the prediction, identify potential biases in the training data, or understand the biological mechanisms involved [3] [4] [2]. This opacity raises concerns about reliability and accountability, and it complicates regulatory approval, as agencies may require explanations for decisions made by AI systems [4] [2].

My model's performance is poor. Where should I start troubleshooting? Always start by investigating your data. Poor model performance is most commonly caused by issues with the input data [5]. The checklist below outlines the most frequent data-related challenges and how to identify them.

Table: Common Data Challenges and Identification Methods

| Challenge | Description | Identification Method |

|---|---|---|

| Corrupt Data | Data is mismanaged, improperly formatted, or combined with incompatible data [5]. | Data validation scripts; checking for formatting inconsistencies. |

| Incomplete/Insufficient Data | Values are missing from the dataset, or the dataset is too small overall [5]. | Summary statistics (e.g., .info() in pandas); detecting missing values. |

| Imbalanced Data | Data is unequally distributed or skewed towards one target class [5]. | Class distribution plots (e.g., using seaborn.countplot). |

| Outliers | Values that do not fit within a dataset or distinctly stand out [5]. | Box plots (e.g., seaborn.boxplot); scatter plots. |

| Improper Feature Scaling | Features are on drastically different scales, causing some to be unfairly weighted [5]. | Statistical summary (mean, std, min, max); histograms. |
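
Several of the checks in the table can be sketched with nothing beyond the Python standard library. The snippet below is a minimal illustration, not a replacement for pandas or seaborn; the toy records, the column names (`mol_weight`, `active`), and the 1.5 × IQR outlier rule are assumptions chosen for the example.

```python
from collections import Counter
from statistics import quantiles

# Hypothetical toy records: molecular weight (None = missing) and a binary activity label.
records = [
    {"mol_weight": 295.7, "active": 0},
    {"mol_weight": 298.0, "active": 0},
    {"mol_weight": 300.4, "active": 1},
    {"mol_weight": 302.3, "active": 0},
    {"mol_weight": None, "active": 0},
    {"mol_weight": 305.1, "active": 0},
    {"mol_weight": 307.9, "active": 1},
    {"mol_weight": 310.2, "active": 0},
    {"mol_weight": 1250.0, "active": 0},
]

def count_missing(rows, column):
    """Number of rows where `column` is None."""
    return sum(1 for r in rows if r[column] is None)

def class_distribution(rows, label):
    """Fraction of rows per class label (reveals imbalance)."""
    counts = Counter(r[label] for r in rows)
    total = sum(counts.values())
    return {cls: n / total for cls, n in counts.items()}

def iqr_outliers(values, k=1.5):
    """Values outside [Q1 - k*IQR, Q3 + k*IQR]; k=1.5 is a common convention."""
    q1, _, q3 = quantiles(values, n=4)
    iqr = q3 - q1
    low, high = q1 - k * iqr, q3 + k * iqr
    return [v for v in values if v < low or v > high]

weights = [r["mol_weight"] for r in records if r["mol_weight"] is not None]
print("missing mol_weight:", count_missing(records, "mol_weight"))
print("class balance:", class_distribution(records, "active"))
print("outliers:", iqr_outliers(weights))
```

On real data the same three questions (what is missing, how are classes distributed, which values are extreme) are usually answered with a dataframe library; the logic is the same.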

What does a typical troubleshooting workflow for a machine learning model look like? After addressing data quality, a systematic approach to model tuning is essential. The following diagram outlines a standard workflow for troubleshooting and improving model performance.

How can I visualize my model's performance to better understand its weaknesses? Visualization is key to moving from abstract metrics to concrete understanding. For classification models, a confusion matrix is a fundamental tool. It compares your model's predictions with the ground truth, clearly showing which classes are being confused with one another [6]. This helps in calculating precise metrics like precision and recall, and reveals if your model is consistently failing on a particular class.
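
As a rough sketch of how a confusion matrix leads to per-class precision and recall, the following standard-library example tallies predictions against ground truth. The three class labels and the toy predictions are hypothetical.

```python
def confusion_matrix(y_true, y_pred):
    """Nested dict m[true_label][predicted_label] = count."""
    labels = sorted(set(y_true) | set(y_pred))
    m = {t: {p: 0 for p in labels} for t in labels}
    for t, p in zip(y_true, y_pred):
        m[t][p] += 1
    return m

def precision_recall(m, label):
    """Precision and recall for one class, read off the matrix."""
    tp = m[label][label]
    fp = sum(m[t][label] for t in m if t != label)         # predicted `label`, truly something else
    fn = sum(m[label][p] for p in m[label] if p != label)  # truly `label`, predicted something else
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    return precision, recall

# Hypothetical labels for eight compounds
y_true = ["active", "active", "active", "inactive", "inactive", "toxic", "toxic", "toxic"]
y_pred = ["active", "active", "inactive", "inactive", "inactive", "active", "toxic", "toxic"]

matrix = confusion_matrix(y_true, y_pred)
for true_label, row in matrix.items():
    print(true_label, row)
print("active:", precision_recall(matrix, "active"))
print("toxic:", precision_recall(matrix, "toxic"))
```

Reading the rows shows which classes are confused with which: here one toxic compound is predicted as active, which lowers the precision of the active class and the recall of the toxic class.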

Troubleshooting Guides

Guide: Addressing Bias and Lack of Trust in Black Box Predictions

Problem: A model predicting compound efficacy appears to be biased against a certain structural class of molecules, and the research team cannot trace the rationale for its rejections, leading to a lack of trust [1].

Solution & Methodologies:

- Implement Explainable AI (XAI) Techniques: Use post-hoc interpretation methods to explain the model's predictions after it has been trained. Techniques like SHAP (SHapley Additive exPlanations) can show how each input feature (e.g., molecular weight, presence of a specific chemical group) pushed the model's prediction for a specific compound higher or lower [7] [2].

- Simplify the Model: If high predictive power is not absolutely critical for the initial screening phase, consider using a simpler, more interpretable model (e.g., Decision Tree, Logistic Regression) as a benchmark. The structure of a Decision Tree, for example, can be fully visualized and understood, providing clear decision rules [6] [2].

- Perform Bias Audits: Intentionally test the model on balanced datasets that contain equal representation of the under-performing structural class. Analyze the confusion matrix and performance metrics (precision, recall) specifically for this subgroup to quantify the bias [6].

Guide: Debugging a Model with High Error Rates

Problem: A model for predicting drug-target interactions was launched with high training accuracy but is now producing inaccurate and unreliable predictions on new validation data.

Solution & Methodologies: Follow the systematic workflow below to debug the model.

Diagnose Overfitting/Underfitting:

- Methodology: Use cross-validation. Split your data into k equal subsets (folds). Train the model k times, each time using a different fold as the validation set and the remaining k-1 folds as the training set. This creates a more robust estimate of model performance.

- Interpretation: If performance is excellent on the training data but poor on the validation folds, the model is overfitting—it has learned the noise in the training data rather than the generalizable pattern. If performance is poor on both, it may be underfitting—the model is too simple to capture the underlying trends [5].
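
The fold construction and the interpretation rule above can be sketched as follows. This is a minimal illustration: the shuffling seed and the thresholds inside `diagnose` are arbitrary assumptions, and in practice the scores would come from training a real model on each split.

```python
import random

def k_fold_indices(n_samples, k, seed=0):
    """Yield (train_indices, validation_indices) for each of k folds."""
    idx = list(range(n_samples))
    random.Random(seed).shuffle(idx)
    folds = [idx[i::k] for i in range(k)]  # k near-equal, disjoint folds
    for i in range(k):
        val = folds[i]
        train = [j for f_i, fold in enumerate(folds) if f_i != i for j in fold]
        yield train, val

def diagnose(train_score, val_score, good=0.85, gap=0.10):
    """Rough heuristic; `good` and `gap` are illustrative thresholds only."""
    if train_score - val_score > gap:
        return "overfitting"
    if train_score < good and val_score < good:
        return "underfitting"
    return "ok"

splits = list(k_fold_indices(n_samples=10, k=5))
for train, val in splits:
    print("train:", sorted(train), "validation:", sorted(val))

print(diagnose(train_score=0.99, val_score=0.70))
print(diagnose(train_score=0.60, val_score=0.58))
```

Each sample appears in exactly one validation fold, so averaging the k validation scores uses every data point for evaluation once.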

Conduct Feature Selection:

- Methodology: Not all input features contribute meaningfully to the output. Use algorithms to select the most useful features.

- Univariate Selection: Use statistical tests like ANOVA F-value to find features with the strongest relationship to the output variable [5].

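- Feature Importance: Tree-based models like Random Forest can output a score for how much each feature reduces impurity across the splits of its trees [5].

- Principal Component Analysis (PCA): A dimensionality reduction algorithm that projects features into a lower-dimensional space, helping to remove noise and redundancy [5] [7]. Strictly speaking, PCA extracts new combined features rather than selecting a subset of the original ones, so the resulting components are harder to map back to individual inputs.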

Hyperparameter Tuning:

- Methodology: Systematically search for the optimal combination of a model's hyperparameters (e.g., learning rate, number of layers in a neural network, k in k-NN). Use techniques like Grid Search or Random Search to train and evaluate the model across a range of hyperparameter values [5].
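
A grid search can be illustrated on the k-NN example mentioned above. The sketch below is an assumption-laden toy: a one-dimensional descriptor, binary labels, and leave-one-out accuracy as the evaluation score. Libraries such as scikit-learn provide production-grade equivalents.

```python
from itertools import product

def knn_predict(train, x, k):
    """Majority vote among the k nearest 1-D training points (labels are 0 or 1)."""
    nearest = sorted(train, key=lambda point: abs(point[0] - x))[:k]
    votes = sum(label for _, label in nearest)
    return 1 if votes * 2 > k else 0

def loo_accuracy(data, k):
    """Leave-one-out accuracy of k-NN on `data`."""
    hits = 0
    for i, (x, y) in enumerate(data):
        train = data[:i] + data[i + 1:]
        hits += knn_predict(train, x, k) == y
    return hits / len(data)

def grid_search(data, grid):
    """Score every hyperparameter combination; return the best one and all results."""
    names = list(grid)
    results = []
    for values in product(*(grid[name] for name in names)):
        params = dict(zip(names, values))
        results.append((loo_accuracy(data, **params), params))
    best_score, best_params = max(results, key=lambda r: r[0])
    return best_params, best_score, results

# Hypothetical (descriptor value, label) pairs
data = [(0.10, 0), (0.20, 0), (0.30, 0), (0.35, 1),
        (0.65, 0), (0.70, 1), (0.80, 1), (0.90, 1)]

best_params, best_score, results = grid_search(data, {"k": [1, 3, 5]})
for score, params in results:
    print(params, round(score, 3))
print("best:", best_params, best_score)
```

Random search follows the same pattern but samples combinations instead of enumerating them, which scales better when several hyperparameters are tuned at once.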

Guide: Interpreting a Complex Model's Predictions for Scientific Reporting

Problem: A deep learning model has identified a promising drug candidate, but researchers need to explain the "why" behind the prediction for internal scientific review and regulatory documentation.

Solution & Methodologies:

- Leverage Model Visualization:

- For models that are not deep neural networks, use built-in visualization. For instance, a Decision Tree can be rendered graphically, showing the entire decision-making path from input to prediction [6].

- For high-dimensional data, use dimensionality reduction visualizations like t-SNE or PCA to project features into a 2D or 3D space. This can reveal if the model is clustering similar compounds together, providing a visual intuition for its logic [7].

- Utilize Explainable AI (XAI) Libraries:

- Methodology: Employ libraries like SHAP or LIME (Local Interpretable Model-agnostic Explanations). These tools can be applied to any model ("model-agnostic") to create local explanations for individual predictions. For example, they can generate a list of the top molecular descriptors that contributed to a high efficacy score for a specific compound [7] [2].

The Scientist's Toolkit: Key Reagents for Transparent ML Research

Table: Essential "Research Reagents" for Overcoming Black Box Problems

| Tool / Solution | Function / Explanation | Commonly Used For |

|---|---|---|

| SHAP (SHapley Additive exPlanations) | A unified framework from game theory that assigns each feature an importance value for a particular prediction [7] [6]. | Explaining individual predictions; identifying global feature importance. |

| LIME (Local Interpretable Model-agnostic Explanations) | Creates a local, interpretable model to approximate the predictions of the black box model in the vicinity of a specific instance [2]. | Explaining individual predictions when model access is limited. |

| PCA (Principal Component Analysis) | A linear dimensionality reduction technique that helps in visualizing high-dimensional data and identifying broad patterns or clusters [5] [7]. | Data exploration; feature selection; simplifying model input. |

| t-SNE (t-distributed Stochastic Neighbor Embedding) | A non-linear dimensionality reduction technique optimized for visualizing local structure and revealing clusters in high-dimensional data [7]. | Exploring and visualizing complex data manifolds. |

| Cross-Validation | A resampling technique used to evaluate a model's ability to generalize to new data, primarily to diagnose overfitting [5]. | Model validation; hyperparameter tuning; model selection. |

| Confusion Matrix | A specific table layout that allows visualization of a classification algorithm's performance, showing true/false positives and negatives [6]. | Evaluating classification model performance; identifying class-specific errors. |

Technical Support Center

Troubleshooting Guides

FAQ 1: How can I diagnose and mitigate bias in my predictive model?

Bias in AI models often stems from unrepresentative training data or flawed model assumptions, which can lead to unfair outcomes and reduced generalizability [8]. To diagnose and mitigate this, follow this experimental protocol:

Step 1: Bias Diagnosis

- Method: Perform subgroup analysis. Test your model's performance (e.g., accuracy, positive predictive value) across different demographic groups (e.g., race, gender, age) [8].

- Measurement: Use metrics like disparate impact or equal opportunity difference to quantify performance gaps between groups [8]. A well-known example is the case of a commercial algorithm that showed racial bias by inadequately identifying the health needs of Black patients compared to White patients [8].
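
The two metrics named above can be computed directly from predictions. The sketch below uses the common definitions (disparate impact as the ratio of positive-prediction rates, equal opportunity difference as the difference in true positive rates, both unprivileged relative to privileged); the group labels and data are hypothetical.

```python
def selection_rate(y_pred):
    """Fraction of instances receiving the positive prediction."""
    return sum(y_pred) / len(y_pred)

def true_positive_rate(y_true, y_pred):
    """Fraction of truly positive instances that were predicted positive."""
    predicted_for_positives = [p for t, p in zip(y_true, y_pred) if t == 1]
    return sum(predicted_for_positives) / len(predicted_for_positives)

def fairness_report(y_true, y_pred, group, unprivileged, privileged):
    def subset(g):
        pairs = [(t, p) for t, p, member in zip(y_true, y_pred, group) if member == g]
        return [t for t, _ in pairs], [p for _, p in pairs]

    t_u, p_u = subset(unprivileged)
    t_p, p_p = subset(privileged)
    return {
        # 1.0 means equal selection rates; values far below 1.0 disadvantage the unprivileged group
        "disparate_impact": selection_rate(p_u) / selection_rate(p_p),
        # 0.0 means equal true positive rates
        "equal_opportunity_difference": true_positive_rate(t_u, p_u) - true_positive_rate(t_p, p_p),
    }

# Hypothetical predictions for two groups, "A" (privileged) and "B" (unprivileged)
group  = ["A"] * 5 + ["B"] * 5
y_true = [1, 1, 1, 0, 0, 1, 1, 1, 0, 0]
y_pred = [1, 1, 1, 1, 0, 1, 0, 0, 0, 0]

report = fairness_report(y_true, y_pred, group, unprivileged="B", privileged="A")
print(report)
```

In this toy case group B is selected a quarter as often as group A and its true positives are detected far less often, which is the kind of gap a subgroup analysis is meant to surface.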

Step 2: Data Remediation

Step 3: Algorithmic Fairness

- Method: Apply fairness constraints during model training or as a post-processing step. Use frameworks like AI Fairness 360 (AIF360) which provides a suite of algorithms to mitigate bias [8].

FAQ 2: My deep learning model is a "black box." How can I explain its predictions to satisfy regulatory and clinical scrutiny?

The "black box" nature of complex models like deep neural networks makes it difficult to understand their inner workings, which is a significant barrier to trust and adoption in clinical settings [9] [10] [1]. To address this, use post-hoc explainability techniques.

Step 1: Global Explainability

- Method: Use SHAP (SHapley Additive exPlanations) to understand the overall behavior of your model [11] [10]. SHAP calculates the contribution of each feature to the model's predictions based on concepts from cooperative game theory [11].

- Protocol: Compute SHAP values for your entire dataset (or a representative sample). Visualize the results using summary plots that show the global feature importance and how each feature affects the model output [11].
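
To make the game-theoretic idea concrete, the sketch below computes exact Shapley values for a tiny model by enumerating every feature coalition, then averages their absolute values for a global ranking. It does not use the `shap` library, which relies on faster approximations; the scoring function, the descriptor names, and the all-zero baseline are assumptions for illustration.

```python
from itertools import combinations
from math import factorial

def shapley_values(model, x, baseline):
    """Exact Shapley values for one instance.
    Features outside a coalition are replaced by their baseline value."""
    n = len(x)

    def value(coalition):
        z = [x[i] if i in coalition else baseline[i] for i in range(n)]
        return model(z)

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi

def global_importance(model, instances, baseline):
    """Mean absolute Shapley value per feature across instances."""
    all_phi = [shapley_values(model, x, baseline) for x in instances]
    return [sum(abs(phi[i]) for phi in all_phi) / len(all_phi)
            for i in range(len(baseline))]

# Hypothetical scoring function over three descriptors: logP, H-bond donors, scaled weight
def toy_model(z):
    logp, hbd, mw = z
    return 2.0 * logp - 1.0 * hbd + 0.5 * logp * mw

baseline = [0.0, 0.0, 0.0]
x = [1.0, 2.0, 3.0]
phi = shapley_values(toy_model, x, baseline)
print("local contributions:", phi)
print("sum of contributions:", sum(phi), "vs prediction gap:", toy_model(x) - toy_model(baseline))
print("global importance:", global_importance(toy_model, [x, [2.0, 0.0, 1.0]], baseline))
```

The contributions always sum to the difference between the prediction and the baseline prediction, and the interaction term is split evenly between the two features involved. Exact enumeration grows exponentially with the number of features, which is why practical tools approximate it.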

Step 2: Local Explainability

- Method: Use LIME (Local Interpretable Model-agnostic Explanations) or SHAP to explain individual predictions [11]. LIME works by perturbing the input data for a single instance and observing changes in the prediction, then training a simple, interpretable model (like linear regression) on this perturbed dataset to approximate the local decision boundary [11].

- Protocol: For a specific prediction, run LIME to generate a list of features with their corresponding weights, indicating which features most influenced that particular decision. This is crucial for clinicians who need to understand the rationale behind a specific diagnosis or risk assessment [11].
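
The perturb-and-fit procedure described above can be sketched in a few lines. This is a LIME-style illustration written from scratch, not the `lime` package itself; the Gaussian perturbations, the exponential proximity kernel, and the toy black-box function are assumptions.

```python
import math
import random

def solve(a, b):
    """Solve a small linear system by Gauss-Jordan elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b_i] for row, b_i in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                m[r] = [v - factor * p for v, p in zip(m[r], m[col])]
    return [m[i][n] / m[i][i] for i in range(n)]

def lime_like_explanation(model, x, n_samples=500, scale=0.5, kernel_width=1.0, seed=0):
    """Fit a proximity-weighted linear surrogate around x; return per-feature weights."""
    rng = random.Random(seed)
    rows, targets, weights = [], [], []
    for _ in range(n_samples):
        z = [xi + rng.gauss(0.0, scale) for xi in x]           # perturb the instance
        dist2 = sum((zi - xi) ** 2 for zi, xi in zip(z, x))
        rows.append([1.0] + z)                                  # intercept + features
        targets.append(model(z))                                # query the black box
        weights.append(math.exp(-dist2 / kernel_width ** 2))    # closer samples count more
    p = len(x) + 1
    # Weighted least squares via the normal equations: (X^T W X) beta = X^T W y
    xtwx = [[sum(w * r[i] * r[j] for r, w in zip(rows, weights)) for j in range(p)]
            for i in range(p)]
    xtwy = [sum(w * r[i] * t for r, w, t in zip(rows, weights, targets)) for i in range(p)]
    beta = solve(xtwx, xtwy)
    return beta[1:]  # drop the intercept

# Hypothetical non-linear black box: the local slope in feature 0 depends on where we look
def black_box(z):
    return z[0] ** 2 + z[1]

print(lime_like_explanation(black_box, [2.0, 1.0]))
print(lime_like_explanation(black_box, [-1.0, 1.0]))
```

The two calls explain the same model at different instances and give different weights for the first feature, which is the sense in which such explanations are local rather than global.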

FAQ 3: How do I validate an AI model for clinical use to ensure its safety and efficacy?

Rigorous validation is paramount before deploying AI in clinical practice [12]. A multi-faceted approach is required.

Step 1: Model-Centered Validation

- Method: Use standard machine learning validation techniques, but with a focus on clinical relevance [12].

- Protocol: Perform k-fold cross-validation on your development dataset. Then, evaluate the model on a held-out test set that was not used during training or tuning. Report clinically relevant metrics such as sensitivity, specificity, AUC-ROC, and calibration curves [12].
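
For the held-out evaluation, the headline metrics can be computed as in the sketch below. The labels, scores, and the 0.5 decision threshold are hypothetical; AUC-ROC is computed here through its rank interpretation (the probability that a random positive case scores higher than a random negative case).

```python
def sensitivity_specificity(y_true, y_score, threshold=0.5):
    """Sensitivity (true positive rate) and specificity (true negative rate)."""
    tp = sum(1 for t, s in zip(y_true, y_score) if t == 1 and s >= threshold)
    fn = sum(1 for t, s in zip(y_true, y_score) if t == 1 and s < threshold)
    tn = sum(1 for t, s in zip(y_true, y_score) if t == 0 and s < threshold)
    fp = sum(1 for t, s in zip(y_true, y_score) if t == 0 and s >= threshold)
    return tp / (tp + fn), tn / (tn + fp)

def auc_roc(y_true, y_score):
    """Fraction of (positive, negative) pairs ranked correctly; ties count as 0.5."""
    pos = [s for t, s in zip(y_true, y_score) if t == 1]
    neg = [s for t, s in zip(y_true, y_score) if t == 0]
    wins = sum(1.0 if p > n else 0.5 if p == n else 0.0 for p in pos for n in neg)
    return wins / (len(pos) * len(neg))

# Hypothetical held-out test set: 1 = disease present, scores are model probabilities
y_true  = [1, 1, 1, 1, 0, 0, 0, 0]
y_score = [0.9, 0.8, 0.6, 0.4, 0.7, 0.3, 0.2, 0.1]

sens, spec = sensitivity_specificity(y_true, y_score)
print("sensitivity:", sens, "specificity:", spec)
print("AUC-ROC:", auc_roc(y_true, y_score))
```

Sensitivity and specificity depend on the chosen threshold, while AUC-ROC summarizes ranking quality across all thresholds, so reporting both gives a fuller picture.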

Step 2: Simulation Testing

- Method: Deploy the model in a simulated clinical environment [12].

- Protocol: Use a digital twin of the clinical workflow or a test environment that mimics real-world data streams. This allows you to monitor the model's performance and interaction with other systems in a safe setting before patient impact [12].

Step 3: Prospective Clinical Trial (The Gold Standard)

- Method: Conduct a clinical trial to evaluate the AI system's performance in its intended clinical environment [12].

- Protocol: Design a randomized controlled trial (RCT) where one arm uses AI-assisted decision-making and the other is a control group (e.g., standard of care). The primary endpoint should be a clinically meaningful outcome (e.g., reduction in diagnostic errors or time to diagnosis, improvement in patient survival rates) rather than just a technical metric [12].

Step 4: Expert Opinion

- Method: Involve clinical experts to assess the model's practicality and adherence to medical standards [12].

- Protocol: Organize a panel of board-certified clinicians to review a set of the model's predictions and explanations. Use structured surveys to capture their assessment of the model's clinical utility and safety [12].

FAQ 4: What level of autonomy should I design into my clinical AI agent?

Determining the appropriate level of autonomy is a critical design choice that balances efficiency with patient safety [13] [12]. The general consensus in clinical research favors human-in-the-loop models for safety-critical decisions [13].

- Step 1: Risk Assessment

- Method: Classify the tasks your AI will perform based on potential patient harm [13].

- Protocol: Create a risk-based framework. Use a simple table to guide the level of autonomy:

| Task Risk Level | Example | Recommended Autonomy |

|---|---|---|

| Low Risk / High Labor | Uploading documents to eTMF, initial data cleaning [13] | Fully Autonomous |

| Medium Risk | Generating patient recruitment reports, flagging data anomalies [13] | Semi-Autonomous (AI recommends, human validates) |

| High Risk / Safety Critical | Serious Adverse Event (SAE) reporting, treatment recommendations [13] [12] | Human-in-the-Loop (AI provides input, human makes final decision) |

- Step 2: Implement Guardrails
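
One possible way to turn the risk table above into an enforceable guardrail is sketched below. The enum names, the `may_execute` helper, and the choice to treat unknown risk levels as high risk are all assumptions for illustration, not a prescribed design.

```python
from enum import Enum

class Autonomy(Enum):
    FULLY_AUTONOMOUS = "AI acts without review"
    SEMI_AUTONOMOUS = "AI recommends, human validates"
    HUMAN_IN_THE_LOOP = "AI provides input, human makes final decision"

# Mapping taken from the risk table; the keys are hypothetical identifiers
AUTONOMY_BY_RISK = {
    "low": Autonomy.FULLY_AUTONOMOUS,
    "medium": Autonomy.SEMI_AUTONOMOUS,
    "high": Autonomy.HUMAN_IN_THE_LOOP,
}

def required_autonomy(risk_level):
    """Look up the autonomy mode; unknown levels fall back to the most conservative mode."""
    return AUTONOMY_BY_RISK.get(risk_level, Autonomy.HUMAN_IN_THE_LOOP)

def may_execute(risk_level, human_approved):
    """Guardrail: only low-risk tasks may proceed without explicit human approval."""
    mode = required_autonomy(risk_level)
    return mode is Autonomy.FULLY_AUTONOMOUS or human_approved

print(may_execute("low", human_approved=False))      # document upload
print(may_execute("medium", human_approved=False))   # anomaly flag awaiting review
print(may_execute("high", human_approved=True))      # SAE report signed off by a clinician
```

Keeping the mapping in one place makes the policy auditable: changing the autonomy granted to a task class is a one-line, reviewable change.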

The Scientist's Toolkit: Research Reagent Solutions

The following table details key methodologies and tools essential for conducting rigorous and ethically sound biomedical AI research.

| Item | Function |

|---|---|

| SHAP (SHapley Additive exPlanations) | A unified method to explain the output of any machine learning model, providing both global and local interpretability by quantifying each feature's contribution to a prediction [11] [10]. |

| LIME (Local Interpretable Model-agnostic Explanations) | Explains individual predictions of any classifier or regressor by approximating it locally with an interpretable model [11]. |

| AI Fairness 360 (AIF360) | An open-source toolkit containing over 70 fairness metrics and 10 bias mitigation algorithms to help examine, report, and mitigate discrimination and bias in machine learning models [8]. |

| TensorBoard | A visualization toolkit for machine learning experimentation, providing tools to track metrics like loss and accuracy, visualize the model graph, and project embeddings to lower-dimensional spaces [14]. |

| Human-in-the-Loop (HITL) Framework | A system design paradigm where a human is involved in the decision-making process of an AI, crucial for validating actions and maintaining oversight in high-stakes clinical environments [13]. |

| Model Card Toolkit | A framework for documenting machine learning models, promoting transparency by providing a summary of the model's performance characteristics across different conditions and demographics. |

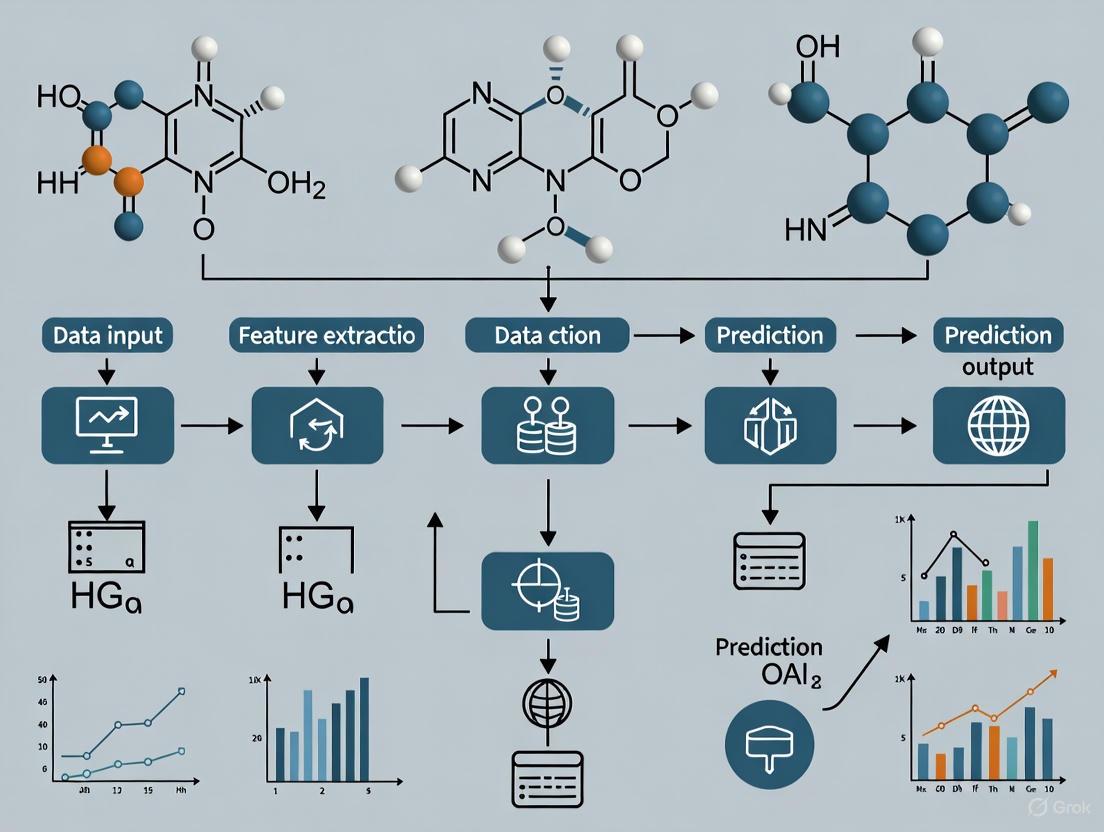

Experimental Workflow and System Diagrams

The following diagrams visualize key concepts and workflows in developing trustworthy biomedical AI systems.

Biomedical AI Model Pathways

Biomedical AI Validation Workflow

Technical Support Center

Troubleshooting Guides

Q1: Our AI diagnostic model performs well on internal test data but shows a significant drop in accuracy when deployed in a new hospital. What could be the root cause, and how can we address it?

A: This is a classic case of data distribution shift and is a common failure mode in real-world AI deployment [15]. The model's drop in performance is likely due to its training data not being representative of the new patient population or imaging equipment at the deployment site.

Experimental Protocol to Mitigate Data Pathology:

- Implement Dynamic Data Auditing: Use a federated learning framework where each clinical site computes performance metrics (e.g., AUC, sensitivity, specificity) locally on their own data [15].

- Monitor for Drift: Compute drift statistics such as the Population Stability Index (PSI) and Kullback-Leibler (KL) divergence from these privacy-preserving aggregates to detect data and performance drift [15].

- Trigger Retraining: Set threshold-based alerts. When drift exceeds a pre-defined limit, initiate a model update cycle using reweighted or newly sampled data quotas to mitigate representation disparities [15].
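
The drift statistics and the threshold-based alert can be sketched as follows, assuming the feature has already been binned into matching histograms at the training site and the new site. The bin proportions and the 0.25 alert threshold (a commonly quoted rule of thumb) are assumptions, not values from the cited framework.

```python
import math

def kl_divergence(p, q, eps=1e-6):
    """KL(p || q) over matching bins; eps guards against empty bins."""
    return sum(max(pi, eps) * math.log(max(pi, eps) / max(qi, eps))
               for pi, qi in zip(p, q))

def psi(expected, actual, eps=1e-6):
    """Population Stability Index: sum of (actual - expected) * ln(actual / expected)."""
    return sum((max(a, eps) - max(e, eps)) * math.log(max(a, eps) / max(e, eps))
               for e, a in zip(expected, actual))

def drift_alert(expected, actual, threshold=0.25):
    """True when PSI exceeds the (illustrative) retraining threshold."""
    return psi(expected, actual) > threshold

# Hypothetical bin proportions of one input feature (e.g., patient age bands)
training_site = [0.25, 0.50, 0.25]
new_hospital = [0.10, 0.40, 0.50]

print("PSI:", round(psi(training_site, new_hospital), 3))
print("KL(new || train):", round(kl_divergence(new_hospital, training_site), 3))
print("retraining triggered:", drift_alert(training_site, new_hospital))
```

PSI is the symmetric sum of the two KL divergences, so it does not depend on which distribution is treated as the reference; KL divergence on its own is asymmetric.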

Q2: Clinicians report that they do not trust the AI's diagnostic recommendations because they cannot understand the reasoning behind them. How can we make our "black box" model more interpretable?

A: This lack of trust stems from the "black box" problem, where the AI's decision-making process is opaque [1] [16]. Overcoming this requires implementing a hybrid explainability engine.

Experimental Protocol for Enhanced Explainability:

- Integrate Gradient-Based Saliency: Apply techniques like Grad-CAM or Integrated Gradients to generate heatmaps that highlight which areas of a medical image (e.g., a lung CT scan) most influenced the model's prediction [15].

- Incorporate Structural Causal Models (SCM): Align the top salient regions from the saliency maps with known clinical variables in an SCM to provide a causal context [15].

- Run Faithfulness Checks: Use deletion and insertion tests to verify that the highlighted regions are truly critical to the model's decision. This process yields concise, clinician-facing rationales for the AI's output [15].

Q3: A performance review revealed that our AI system has a 28% higher false-negative rate for melanoma detection in patients with dark skin tones. How did this bias occur, and how can we correct it?

A: This is a clear example of algorithmic bias caused by underrepresentation of dark-skinned patients in the training dataset [15]. This systematic disadvantage for marginalized populations is a critical failure mode.

Experimental Protocol for Bias Mitigation:

- Audit Training Data: Systematically analyze the demographic composition (e.g., skin tone, age, gender) of your training datasets to identify representation gaps [15].

- Employ Bias-Aware Data Curation: Use techniques like federated learning to incorporate more diverse data from multiple institutions in a privacy-preserving manner [15].

- Implement Subgroup-Stratified Metrics: Continuously monitor the model's performance (e.g., False Negative Rate, AUC) not just on the overall population, but on each demographic subgroup to ensure equity [15].

Frequently Asked Questions (FAQs)

Q4: What are the most common failure modes for AI in medical diagnostics? A: Research has identified three primary, interdependent failure modes [15]:

- Data Pathology: Caused by sampling bias in training data, leading to underdiagnosis in underrepresented subgroups.

- Algorithmic Bias: Often a result of overfitting to spurious correlations in the data, causing false positives or false negatives.

- Human-AI Interaction: Issues like automation complacency, where clinicians overlook AI errors, and the opposite problem of distrust, where clinicians override correct recommendations.

Q5: Is there a trade-off between AI model accuracy and interpretability? A: Yes, this is often referred to as the "accuracy vs. explainability" dilemma [1]. The most advanced models, like deep neural networks, often deliver superior predictive power but at the cost of transparency. Simpler, rule-based models are easier to interpret but may be less powerful and flexible [1].

Q6: Who is held accountable if a medical AI system causes a misdiagnosis that leads to patient harm? A: This remains a significant legal and ethical challenge. The lines of accountability are often blurred between the AI developers, the clinicians who use the tool, and the healthcare institutions that deploy it [15] [1]. This "tripartite accountability gap" is why new legal frameworks and "accountability-by-design" instruments, such as versioned model fact sheets, are being proposed [15].

Q7: What is an AI "hallucination" in a clinical context? A: In the context of Large Language Models (LLMs), a hallucination occurs when the model generates a plausible-sounding but factually incorrect or fabricated answer [1]. In clinical decision support, this could manifest as a confident diagnosis or treatment recommendation based on non-existent or misinterpreted evidence in the patient data, presenting a serious patient safety risk.

Quantitative Performance and Challenges in AI Diagnostics

The table below summarizes the performance and persistent challenges of AI diagnostics across key medical fields, based on published literature [15].

| Diagnostic Field | Application | Reported Diagnostic Accuracy | Key Strengths | Persistent Challenges |

|---|---|---|---|---|

| Dermatology | Skin cancer detection | 90–95% | High accuracy for melanoma; valuable for early detection | Struggles with atypical cases and non-Caucasian skin due to data bias |

| Radiology | Lung cancer detection | 85–95% | Sensitive to small nodules; reduces radiologist workload | Susceptible to image artifacts; can overfit to spurious correlations |

| Ophthalmology | Diabetic retinopathy screening | 90–98% | Enables mass screening; accurate in staging progression | Limited by dataset diversity; may miss atypical presentations |

| Pathology | Histopathology for cancer diagnosis | 90–97% | High sensitivity; helps prioritize critical cases | Limited interpretability; risk of clinician over-reliance |

| Neurology | Stroke Detection on MRI/CT | 88–94% | High accuracy for ischemic/hemorrhagic stroke; time-sensitive | Performance can drop due to limited diverse datasets; interpretability issues |

Research Reagent Solutions

The following table details key methodological solutions and tools for addressing the black box problem in medical AI research.

| Solution / Tool | Function | Relevance to Black Box Problem |

|---|---|---|

| Hybrid Explainability Engine | Combines saliency maps (e.g., Grad-CAM) with structural causal models to generate clinician-friendly rationales [15]. | Addresses model opacity by providing visual and causal explanations for AI decisions. |

| Federated Learning Framework | Enables model training across multiple institutions without sharing raw patient data, only sharing parameter updates [15]. | Mitigates data bias and improves generalizability while preserving privacy. |

| Dynamic Data Auditing | Monitors model performance and data distribution across subgroups in real-time to detect drift and bias [15]. | Provides continuous validation and alerts researchers to performance degradation and fairness issues. |

| Bias Detection & Mitigation Algorithms | Techniques like reweighting and adversarial debiasing to identify and reduce model bias [15] [17]. | Directly targets algorithmic bias, a key consequence of opaque models trained on non-representative data. |

| Accountability-by-Design Instruments | Versioned model fact sheets and blockchain-based hashing of model artifacts for audit trails [15]. | Creates transparency and traceability, helping to clarify accountability in case of model failure. |

Experimental Workflow and Accountability Framework

The diagram below outlines a proposed end-to-end workflow for developing and monitoring a responsible AI diagnostic system, integrating technical checks with accountability measures.

AI Diagnostic System Development Workflow

The following diagram illustrates the shared accountability framework required for trustworthy AI deployment in healthcare, showing the responsibilities of different stakeholders.

Shared Accountability Framework for Medical AI

Frequently Asked Questions

Q1: My machine learning model for compound screening performs well on training data but generalizes poorly to new data. What are the first things I should check?

Start by investigating data quality and splits. Poor generalization often stems from data issues like leakage or imbalance. Implement a robust data validation protocol: review and clean datasets to remove inconsistencies [17]. Check for data leakage by ensuring that preprocessing steps like scaling and encoding are fitted on the training set only and then applied unchanged to the test set [18]. Validate your data splits using methods like stratified k-fold, which preserves the percentage of samples for each class across training and validation sets and prevents skewed representation from biasing model output [19].
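
A leakage-safe pattern is to learn preprocessing statistics from the training portion only and reuse them on the test portion, after a split that preserves class proportions. The sketch below illustrates both ideas with the standard library; the data, the 25% test fraction, and the helper names are hypothetical.

```python
import random
from collections import defaultdict
from statistics import mean, pstdev

def stratified_split(labels, test_fraction=0.25, seed=0):
    """Split indices so each class keeps roughly the same share in train and test."""
    rng = random.Random(seed)
    by_class = defaultdict(list)
    for i, y in enumerate(labels):
        by_class[y].append(i)
    train, test = [], []
    for indices in by_class.values():
        rng.shuffle(indices)
        n_test = max(1, round(len(indices) * test_fraction))
        test += indices[:n_test]
        train += indices[n_test:]
    return sorted(train), sorted(test)

def fit_scaler(values):
    """Learn mean and standard deviation from TRAINING values only."""
    return mean(values), pstdev(values)

def transform(values, scaler):
    """Apply previously learned statistics; never refit on test data."""
    mu, sigma = scaler
    return [(v - mu) / sigma for v in values]

# Hypothetical dataset: one descriptor, 8 inactive (0) and 4 active (1) compounds
x = [float(v) for v in range(12)]
y = [0] * 8 + [1] * 4

train_idx, test_idx = stratified_split(y)
scaler = fit_scaler([x[i] for i in train_idx])            # fit on train only
train_scaled = transform([x[i] for i in train_idx], scaler)
test_scaled = transform([x[i] for i in test_idx], scaler)  # reuse train statistics

print("test labels:", [y[i] for i in test_idx])
print("train mean after scaling:", round(mean(train_scaled), 6))
```

Fitting the scaler on the full dataset, or separately on the test set, would let information about the test distribution influence evaluation, which is the leakage this pattern avoids.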

Q2: How can I detect and quantify "signaling bias" in GPCR drug candidates during high-throughput screening?

Signaling bias occurs when ligands preferentially activate specific downstream pathways. To detect it, you must develop assays for distinct signaling pathways (e.g., G-protein vs. β-arrestin recruitment) with appropriate dynamic range [20]. For quantification, Δlog(Emax/EC50) analysis provides a validated, high-throughput method to calculate pathway bias relative to a reference agonist [20]. This method offers a scalable alternative to the more complex operational model, enabling bias quantification across large compound libraries [20].

Q3: What are the key regulatory considerations when submitting an AI-derived drug candidate for approval?

Regulatory oversight of AI in drug development is evolving rapidly. Key considerations include:

- Transparency and Explainability: Regulators increasingly require understanding of AI decision logic, especially for "black box" models [17] [21]. The FDA has proposed a risk-based credibility framework for AI models used in regulatory decision-making [21].

- Data Provenance: Maintain detailed documentation of training data sources, preprocessing, and potential biases [21].

- Real-World Evidence: Regulatory agencies are developing frameworks to incorporate RWE into submissions, but expect stringent requirements for data quality and validation [21]. Engage regulators early through pre-IND meetings to align on evidence requirements [22].

Q4: What practical strategies can help overcome the "black box" problem of complex AI models in a regulated research environment?

Implement multiple complementary approaches:

- Interpretability Techniques: Apply methods like SHAP values to break down complex model outputs into understandable feature contributions [18] [17].

- Model Simplification: Start with simple baselines like linear models or decision trees as references; if complex models don't outperform these, it signals potential issues [18].

- Robust Validation: Use stress testing and scenario-based testing to evaluate model performance under varying conditions [17].

- Cross-Functional Collaboration: Work closely with data scientists, domain experts, and regulatory specialists to align AI capabilities with scientific and compliance needs [17].

Q5: How can I balance the trade-offs between model performance and interpretability when developing predictive models for drug discovery?

The performance-interpretability spectrum ranges from highly accurate but opaque "black box" models to more transparent but potentially less accurate "white box" alternatives [17]. Consider your specific application: for early discovery where exploration is key, performance may take priority, while for late-stage candidates requiring regulatory approval, interpretability becomes crucial [17]. Techniques like model distillation can help extract simpler, interpretable models from complex ones. Additionally, explainable AI (XAI) tools can provide insights into black box models without significantly compromising performance [17].

Troubleshooting Guides

Guide 1: Debugging Machine Learning Models in Drug Discovery

Table 1: Common ML Model Issues and Diagnostic Approaches

| Problem | Diagnostic Method | Solution Steps |

|---|---|---|

| Poor Generalization (High variance) | Plot learning curves to visualize gap between training and validation performance [18]. | Apply regularization (L1/L2, dropout), expand training data, or reduce model complexity [18]. |

| Underfitting (High bias) | Compare training and validation scores; both will be poor (i.e., error is high on both) [18]. | Increase model complexity, add relevant features, or reduce regularization [18]. |

| Data Quality Issues | Use data profiling tools (Great Expectations, Deequ) to identify missing values, outliers, or imbalances [19]. | Impute missing values, remove outliers, or apply resampling techniques for class imbalance [19]. |

| Unfair/Biased Predictions | Analyze feature importance scores or SHAP values to identify problematic dependencies [18]. | Implement bias detection algorithms, remove problematic features, or use fairness-aware ML techniques [17]. |

| Irreproducible Results | Track experiments, data versions, and hyperparameters with tools like Neptune.ai or Weights & Biases [19]. | Establish standardized experiment protocols, implement version control for data and code [19]. |

Experimental Protocol: Data-Centric Debugging

- Data Validation: Use tools like Great Expectations to create data quality assertions that check for missing values, value ranges, and allowed categories [19].

- Bias Detection: Assess reliability through data validation protocols that address diversity in datasets to minimize systemic biases [17].

- Feature Analysis: Check which features are most influential using feature importance scores or SHAP values to reveal if your model relies on irrelevant features [18].

- Cross-Validation: Implement k-fold cross-validation to ensure your model's performance is consistent across different data splits [18].
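As a minimal sketch of the cross-validation step, the code below builds shuffled k-fold splits in pure Python and reports the per-fold scores. The helper names (`k_fold_indices`, `cross_validate`), the mean-predictor "model", and the toy data are illustrative assumptions, not part of the cited protocol; in practice a library routine would normally replace the hand-written splitter.

```python
import random
import statistics

def k_fold_indices(n_samples, k, seed=0):
    """Yield (train_idx, val_idx) pairs for shuffled k-fold cross-validation."""
    indices = list(range(n_samples))
    random.Random(seed).shuffle(indices)
    folds = [indices[i::k] for i in range(k)]  # k roughly equal folds
    for i in range(k):
        val_idx = folds[i]
        train_idx = [j for f in folds[:i] + folds[i + 1:] for j in f]
        yield train_idx, val_idx

def cross_validate(X, y, fit, score, k=5, seed=0):
    """Fit on each training split and score on the matching validation split."""
    scores = []
    for train_idx, val_idx in k_fold_indices(len(X), k, seed):
        model = fit([X[i] for i in train_idx], [y[i] for i in train_idx])
        scores.append(score(model, [X[i] for i in val_idx], [y[i] for i in val_idx]))
    return scores

# Toy stand-ins (assumptions): the "model" is just the training-set mean.
def fit_mean(X_train, y_train):
    return statistics.fmean(y_train)

def neg_mae(model, X_val, y_val):
    return -statistics.fmean([abs(model - t) for t in y_val])

rng = random.Random(1)
X = [[rng.random()] for _ in range(50)]
y = [rng.gauss(5.0, 1.0) for _ in range(50)]

scores = cross_validate(X, y, fit_mean, neg_mae, k=5)
print("per-fold scores:", [round(s, 3) for s in scores])
print("mean:", round(statistics.fmean(scores), 3),
      "spread:", round(statistics.stdev(scores), 3))
```

A large spread between folds relative to the mean score is the signal to look for: it suggests that performance depends on which samples happened to land in the validation split.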

Guide 2: Detecting and Quantifying Biased Agonism

Table 2: Key Research Reagents for Biased Signaling Studies

| Research Reagent | Function/Application |

|---|---|

| PathHunter OPRM1 β-arrestin U2OS cells | Cell line for measuring β-arrestin2 recruitment to μ-opioid receptor using enzyme fragment complementation [20]. |

| CHO-μ cells | Chinese Hamster Ovary cells expressing μ opioid receptors for Gαi-dependent signaling assays [20]. |

| DAMGO ([D-Ala2, N-MePhe4, Gly-ol5]-enkephalin) | Reference balanced μ-opioid receptor agonist used to calculate relative bias [20]. |

| Membrane preparation from U2OS-μ cells | Source of μ receptor protein for binding studies and certain biochemical assays [20]. |

| TRV027 | AT1 receptor β-arrestin-biased agonist; example therapeutic candidate demonstrating translational potential of biased signaling [23]. |

Experimental Protocol: Δlog(Emax/EC50) Bias Quantification

- Assay Development: Establish at least two pathway-specific assays (e.g., G-protein activation and β-arrestin recruitment) with sufficient dynamic range and minimal systemic bias [20].

- Dose-Response Curves: Generate 11-point, half-log concentration-response curves for test compounds and reference agonist in all assays [20].

- Parameter Estimation: Calculate Emax (maximal response) and EC50 (half-maximal effective concentration) for each compound in each pathway [20].

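- Bias Calculation: For each pathway, normalize the test compound to the reference agonist: Δlog(Emax/EC50) = log(Emax/EC50)test - log(Emax/EC50)reference. Then compare the two pathways: ΔΔlog(Emax/EC50) = Δlog(Emax/EC50)pathway 1 - Δlog(Emax/EC50)pathway 2. A ΔΔlog(Emax/EC50) significantly different from zero indicates signaling bias [20].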
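The arithmetic of the bias calculation can be sketched as follows, under the usual reading of the method: within each pathway the test compound is first normalized to the reference agonist (Δlog), and the two pathways are then subtracted (ΔΔlog). The function names, pathway labels, and all Emax/EC50 numbers are hypothetical and chosen only to make the arithmetic easy to follow.

```python
import math

def log_ratio(emax, ec50):
    """log10(Emax/EC50) for one compound in one pathway."""
    return math.log10(emax / ec50)

def bias_factor(test, reference, pathways=("g_protein", "arrestin")):
    """Return (ddlog, dlog) where dlog maps pathway -> delta-log(Emax/EC50).

    `test` and `reference` map pathway name -> (Emax, EC50).
    """
    dlog = {
        p: log_ratio(*test[p]) - log_ratio(*reference[p])
        for p in pathways
    }
    ddlog = dlog[pathways[0]] - dlog[pathways[1]]
    return ddlog, dlog

# Hypothetical values: Emax in % of reference response, EC50 in molar.
reference = {"g_protein": (100.0, 1e-8), "arrestin": (100.0, 1e-7)}
test = {"g_protein": (90.0, 1e-8), "arrestin": (30.0, 1e-6)}

ddlog, dlog = bias_factor(test, reference)
print("delta-log per pathway:", {p: round(v, 3) for p, v in dlog.items()})
print("delta-delta-log:", round(ddlog, 3), "bias factor:", round(10 ** ddlog, 1))
```

In this made-up example the test compound keeps most of its G-protein activity but loses potency and efficacy for β-arrestin recruitment, so ΔΔlog is positive (G-protein bias relative to the reference). Whether a given value is significant still has to be judged against replicate variability.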

Guide 3: Navigating Regulatory Hurdles for AI-Enhanced Therapeutics

Table 3: Key Regulatory Requirements for AI in Drug Development

| Regulatory Aspect | Key Requirements | Agency Guidance |

|---|---|---|

| AI Model Validation | Risk-based credibility assessment; documentation of training data, architecture, and performance [21]. | FDA: "Considerations for the Use of AI to Support Regulatory Decision-Making" [21]. |

| Real-World Evidence (RWE) | Pre-specified analysis plans, demonstrated data quality and provenance [21]. | ICH M14 Guideline: Principles for pharmacoepidemiological studies using RWD [21]. |

| Transparency/Explainability | Ability to understand and interpret AI outputs; demonstration of algorithmic fairness [17]. | EU AI Act: High-risk AI systems require transparency and human oversight [21]. |

| Quality Control & Manufacturing | Adherence to Good Manufacturing Practices (GMP); consistent production quality [22]. | FDA and EMA requirements for manufacturing consistency and quality control [22]. |

| Clinical Trial Design | Meaningful endpoints, appropriate comparators, rigorous statistical plans [24]. | ICH E6(R3): Modernized standards for risk-based, decentralized trials [21]. |

Experimental Protocol: Proactive Regulatory Strategy

- Early Engagement: Schedule pre-IND meetings with regulators (FDA, EMA) to present research plans and gain alignment on data requirements [22].

- Documentation Management: Meticulously document all preclinical and clinical trial data, pharmacokinetics, toxicology reports, and manufacturing protocols [22].

- Evidence Integration: Combine clinical trial data with real-world evidence and digital biomarkers to create comprehensive dynamic evidence packages [21].

- Lifecycle Planning: Develop post-market surveillance plans, including pharmacovigilance strategies and real-world performance monitoring for AI algorithms [22].

Additional Challenges and Considerations

The Hype vs. Reality of AI in Drug Discovery

Experts report that overhyping AI can create several problems [25]:

- FOMO-Driven Decisions: Pressure to adopt AI can impinge on scientific rigor, leading to inappropriate application where traditional methods would be better suited [25].

- Unrealistic Expectations: When overhyped tools underdeliver, it can lead to loss of belief in the technology, potentially setting the field back [25].

- Diminished Creativity: Some medicinal chemists report that current AI applications can be too conservative, potentially reducing opportunities for serendipitous discoveries [25].

Economic and Productivity Pressures

The biopharma industry faces significant R&D productivity challenges, with success rates for Phase 1 drugs falling to just 6.7% in 2024 [24]. This creates pressure to adopt efficiency-enhancing technologies like AI while maintaining scientific rigor. Companies must design trials as "critical experiments with clear success or failure criteria" rather than "exploratory fact-finding missions" [24].

Interpretability and Uncertainty Quantification: A Toolkit for Transparent ML

## Frequently Asked Questions (FAQs)

Q1: What is the fundamental difference between LIME and SHAP in explaining machine learning predictions?

A1: LIME and SHAP differ primarily in their approach and theoretical foundation. LIME (Local Interpretable Model-agnostic Explanations) creates local surrogate models by perturbing the input data and observing changes in the prediction. It explains individual predictions by approximating the complex model locally with an interpretable one, such as linear regression [26] [27] [28]. SHAP (SHapley Additive exPlanations) is grounded in cooperative game theory, specifically Shapley values. It calculates the average marginal contribution of each feature to the model's prediction across all possible combinations of features, providing a unified measure of feature importance for each prediction [26] [29] [27]. While LIME provides explanations based on local fidelity, SHAP offers a theoretically robust framework with consistent explanations.
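To make the "average marginal contribution across all possible combinations of features" concrete, here is a brute-force sketch of exact Shapley values for a three-feature toy model. It is not the `shap` library's algorithm: features outside a coalition are simply replaced by a single baseline value (a simplifying assumption), and `toy_model`, `x`, and `baseline` are invented for illustration.

```python
from itertools import combinations
from math import factorial

def shapley_values(predict, x, baseline):
    """Exact Shapley values for one instance by enumerating all coalitions.

    Features not in the coalition take their baseline value.
    Cost grows as 2**n, so this is only feasible for a handful of features.
    """
    n = len(x)

    def value(coalition):
        z = [x[i] if i in coalition else baseline[i] for i in range(n)]
        return predict(z)

    phi = [0.0] * n
    for i in range(n):
        others = [j for j in range(n) if j != i]
        for size in range(n):
            weight = factorial(size) * factorial(n - size - 1) / factorial(n)
            for subset in combinations(others, size):
                s = set(subset)
                phi[i] += weight * (value(s | {i}) - value(s))
    return phi

def toy_model(z):
    """Hypothetical model: one additive term plus one interaction."""
    return 2.0 * z[0] + z[1] * z[2]

x = [1.0, 2.0, 3.0]
baseline = [0.0, 0.0, 0.0]
phi = shapley_values(toy_model, x, baseline)

print("Shapley values:", phi)
print("sum:", sum(phi), "f(x) - f(baseline):", toy_model(x) - toy_model(baseline))
```

The contributions add up to the difference between the prediction and the baseline prediction, and the interaction term is split evenly between the two features involved. The exponential cost of this enumeration is also why practical SHAP explainers rely on model-specific shortcuts or sampling.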

Q2: My SHAP analysis is computationally expensive and slow on my large drug compound dataset. How can I address this?

A2: Computational expense is a common challenge with SHAP. You can employ several strategies:

- Use Model-Specific Explainers: For tree-based models, use `shap.TreeExplainer`, which is optimized and faster than the model-agnostic explainers [30].

- Approximate with Smaller Samples: Calculate SHAP values on a representative subset of your data or use the `approximate` method available in some explainers.

- Leverage GPU Acceleration: If using deep learning models, ensure your SHAP implementation utilizes GPU resources, which can significantly speed up calculations.

- Consider Alternative Methods: For an initial global understanding, use faster model-specific feature importance or Permutation Importance before diving into local SHAP explanations [30].
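Permutation importance, mentioned above as a cheaper first pass, can be sketched in a few lines: shuffle one feature column at a time and record how much the error increases. The fixed `model` function, the synthetic data, and the choice of mean squared error are assumptions made for illustration; library implementations differ in details such as scoring and repeats.

```python
import random

def model(row):
    """Hypothetical fitted model: uses feature 0 and ignores feature 1."""
    return 3.0 * row[0]

def mse(X, y):
    return sum((model(r) - t) ** 2 for r, t in zip(X, y)) / len(y)

def permutation_importance(X, y, n_repeats=10, seed=0):
    """Mean increase in MSE when each feature column is shuffled."""
    rng = random.Random(seed)
    base = mse(X, y)
    importances = []
    for j in range(len(X[0])):
        increases = []
        for _ in range(n_repeats):
            column = [r[j] for r in X]
            rng.shuffle(column)
            X_perm = [r[:j] + [v] + r[j + 1:] for r, v in zip(X, column)]
            increases.append(mse(X_perm, y) - base)
        importances.append(sum(increases) / n_repeats)
    return importances

data_rng = random.Random(1)
X = [[data_rng.uniform(-1, 1), data_rng.uniform(-1, 1)] for _ in range(200)]
y = [3.0 * r[0] + data_rng.gauss(0, 0.1) for r in X]

imp = permutation_importance(X, y)
print("importance per feature:", [round(v, 3) for v in imp])
```

Because it needs only repeated predictions, this check scales linearly with the number of features, which makes it a reasonable screen before running local SHAP explanations on the features that matter.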

Q3: When I run LIME multiple times on the same instance, I get slightly different explanations. Is this normal?

A3: Yes, this is an expected behavior and a known characteristic of LIME. The variations occur because LIME generates explanations by sampling perturbed instances around the prediction to be explained [29] [28]. This sampling process has a random component, leading to minor fluctuations in the resulting explanation. Fixing the random seed makes a run reproducible, and increasing the number of samples reduces the fluctuation. If this instability is a critical issue for your application, you might consider using SHAP: Shapley values are uniquely defined for a given prediction and baseline, so exact explainers return consistent explanations, although sampling-based SHAP explainers can also vary between runs [29].
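The source of this run-to-run variation can be illustrated with a one-feature, LIME-style local surrogate: sample perturbations around the instance, weight them by proximity, and fit a weighted linear model. This is a simplified sketch, not the `lime` package's implementation; `black_box`, the Gaussian perturbation scale, and the kernel form are assumptions. In the real package, `LimeTabularExplainer` accepts a `random_state` argument that serves the same purpose as the `seed` parameter here.

```python
import math
import random

def black_box(x):
    """Stand-in for a complex model (hypothetical)."""
    return math.sin(3.0 * x) + 0.5 * x

def local_slope(x0, n_samples=200, kernel_width=0.3, seed=None):
    """Slope of a proximity-weighted linear surrogate fitted around x0."""
    rng = random.Random(seed)
    xs = [x0 + rng.gauss(0.0, 0.5) for _ in range(n_samples)]
    ys = [black_box(x) for x in xs]
    # Exponential kernel: perturbations closer to x0 get larger weights.
    ws = [math.exp(-((x - x0) ** 2) / kernel_width ** 2) for x in xs]
    w_sum = sum(ws)
    x_bar = sum(w * x for w, x in zip(ws, xs)) / w_sum
    y_bar = sum(w * y for w, y in zip(ws, ys)) / w_sum
    cov = sum(w * (x - x_bar) * (y - y_bar) for w, x, y in zip(ws, xs, ys))
    var = sum(w * (x - x_bar) ** 2 for w, x in zip(ws, xs))
    return cov / var

slopes = [local_slope(0.2, seed=s) for s in range(5)]   # different seeds
repeat = [local_slope(0.2, seed=42) for _ in range(2)]  # same seed twice

print("different seeds:", [round(s, 3) for s in slopes])
print("same seed:", [round(s, 3) for s in repeat])
```

Different seeds give slightly different slopes for the same instance, while a fixed seed reproduces the explanation exactly. Raising `n_samples` narrows the spread at the cost of more model evaluations.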

Q4: In the context of drug discovery, how can interpretability methods help in predicting drug efficacy and toxicity?

A4: Interpretability methods are crucial for building trust and providing insights in AI-driven drug discovery. They help in:

- Identifying Critical Features: SHAP and LIME can reveal which molecular descriptors or compound structures the model deems most important for predicting efficacy or toxicity, guiding chemists in compound design [3].

- Understanding Model Decisions: By explaining individual predictions, researchers can verify if the model is relying on biologically plausible patterns or spurious correlations, increasing confidence before experimental validation [31] [3].

- Debugging and Improving Models: If a model makes an incorrect prediction, these tools can help identify flaws, such as reliance on incorrect features, allowing data scientists to refine the model [31] [30].

Q5: What are the best practices for visualizing and communicating the results from Partial Dependence Plots (PDPs) and SHAP summary plots?

A5:

- For PDPs: Clearly label axes and indicate the feature distribution (e.g., with tick marks or a rug plot) to show where data is concentrated. This prevents over-interpreting regions with little data [30] [32]. When combined with ICE plots, ensure the PDP line is highlighted to distinguish the average effect from individual variations [26] [32].

- For SHAP Summary Plots: Use this plot to show both global feature importance (via the ordering of features) and the local relationship between a feature's value and its impact on the model output (via the color and spread of points) [30].

- General Practices: Adhere to color best practices. Use categorical color palettes to distinguish different classes and sequential color palettes to represent magnitude. Always ensure sufficient color contrast and avoid red-green palettes to accommodate color-blind users [33].
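The relationship between ICE curves and the PDP line can be sketched directly: each ICE curve sweeps one feature for a single instance, and the PDP is their pointwise average. The two-instance dataset and the interaction-only `predict` function below are deliberately artificial assumptions that show why plotting ICE curves alongside the PDP matters.

```python
def ice_curves(predict, X, feature, grid):
    """One curve per instance: predictions as `feature` sweeps over `grid`."""
    curves = []
    for row in X:
        curve = []
        for value in grid:
            modified = list(row)
            modified[feature] = value
            curve.append(predict(modified))
        curves.append(curve)
    return curves

def partial_dependence(curves):
    """PDP: pointwise average of the ICE curves."""
    n = len(curves)
    return [sum(c[k] for c in curves) / n for k in range(len(curves[0]))]

def predict(row):
    """Hypothetical model with a pure interaction between features 0 and 1."""
    return row[0] * row[1]

X = [[0.0, -1.0], [0.0, 1.0]]
grid = [0.0, 1.0, 2.0]

curves = ice_curves(predict, X, feature=0, grid=grid)
pdp = partial_dependence(curves)
print("ICE curves:", curves)
print("PDP:", pdp)
```

Here the averaged PDP is flat even though feature 0 strongly affects every individual prediction, because the two instances respond in opposite directions. A PDP shown without ICE curves would hide that heterogeneity.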

## Troubleshooting Guides

### Guide 1: Resolving Common SHAP Value Errors

Problem: You encounter errors like "Additivity check failed" or "Model type not yet supported" when calculating SHAP values.

Solution: This guide helps you diagnose and fix frequent SHAP computation issues.

Step 1: Verify Model and Explainer Compatibility. Ensure you are using the correct SHAP explainer for your model type. The `TreeExplainer` is for tree-based models (e.g., XGBoost, Random Forest), while `KernelExplainer` is a slower, model-agnostic alternative [30].

Step 2: Check Input Data Format. Confirm that the data passed to the explainer (for example, in the `shap_values` call) matches the format and shape (including feature names/order) expected by your model's prediction function.

Step 3: Inspect Model Output. SHAP expects the model output to be a probability or a deterministic decision. For classifiers, your model should have a `predict_proba` method. If it doesn't, you may need to wrap your model or use a different explainer [27].

### Guide 2: Debugging Uninformative or Poor LIME Explanations

Problem: The explanations provided by LIME are not meaningful, seem random, or do not align with domain knowledge.

Solution: Follow these steps to improve the quality of LIME explanations.

Step 1: Adjust Perturbation Parameters. The default parameters may not be optimal for your dataset. Experiment with the `kernel_width` parameter, which controls the locality of the explanation. A poorly chosen value can lead to explanations that are either too local or too global [28].

Step 2: Tune Feature Selection. LIME uses feature selection to create sparse explanations. The default setting is `'auto'`. You can explicitly set the `feature_selection` parameter to `'lasso_path'`, which often yields more stable and meaningful features [28].

Step 3: Validate with Domain Expertise. Compare the explanations for several instances with a domain expert (e.g., a medicinal chemist). If the explanations consistently fail to make sense, it may indicate an issue with the underlying model itself, not just the explainer [27] [30].

## Experimental Protocols & Methodologies

### Protocol 1: Implementing a SHAP Analysis for a Clinical Outcome Prediction Model

Objective: To explain a Random Forest model predicting patient response to a drug treatment using SHAP.

Materials:

- Software: Python with `shap`, `pandas`, `matplotlib` libraries.

- Input: Trained Random Forest model and a test dataset of patient features.

Procedure:

- Explainer Initialization: Initialize the SHAP TreeExplainer with your trained model: `explainer = shap.TreeExplainer(your_trained_model)`

- SHAP Value Calculation: Calculate SHAP values for the instances you wish to explain (e.g., the test set): `shap_values = explainer.shap_values(X_test)`

- Global Interpretation: Generate a summary plot to visualize global feature importance and feature effects: `shap.summary_plot(shap_values, X_test)`

- Local Interpretation: For a single patient's prediction, use a force plot or decision plot to detail how each feature contributed to the final score: `shap.force_plot(explainer.expected_value, shap_values[instance_index,:], X_test.iloc[instance_index,:])`

Note: for a classifier, `shap_values` and `explainer.expected_value` may hold one entry per class, depending on the `shap` version. In that case, select the class of interest before indexing or plotting.

Interpretation: The summary plot ranks features by their global impact. Each point represents a patient, its color shows the feature value (red=high, blue=low), and its position shows the impact on the prediction. A force plot for a single patient shows how feature values pushed the prediction above (positive) or below (negative) the base value [30].

### Protocol 2: Applying LIME to Interpret a Compound Toxicity Classifier

Objective: To explain why a deep learning model classified a specific chemical compound as "toxic".

Materials:

- Software: Python with `lime`, `numpy` libraries.

- Input: Trained deep learning model and the feature vector of the compound to be explained.

Procedure:

- Explainer Setup: Create a `LimeTabularExplainer`, providing the training data (used to compute feature statistics) and feature names: `explainer = lime.lime_tabular.LimeTabularExplainer(training_data, feature_names=feature_names, mode='classification')`

- Instance Explanation: Generate an explanation for the specific compound instance. Specify the number of top features to include in the explanation: `exp = explainer.explain_instance(compound_instance, model.predict_proba, num_features=5)`

- Result Visualization: Display the explanation, which will show the top features contributing to the "toxic" classification and their direction of influence: `exp.show_in_notebook(show_table=True)`

Interpretation: The output lists the features that most strongly influenced the prediction. For example, it might show that the presence of a specific molecular substructure (feature) significantly increased the probability of the "toxic" class [27] [28].

Table 1: Comparative Analysis of Model-Agnostic Interpretability Methods

| Method | Theoretical Foundation | Scope of Explanation | Computational Cost | Key Output | Primary Use Case |

|---|---|---|---|---|---|

| SHAP | Cooperative Game Theory (Shapley values) [26] [29] | Local & Global (by aggregation) [29] [30] | High [29] | Feature importance values for a prediction that sum to the difference from the baseline [26] | Explaining individual predictions with a robust, consistent metric; identifying global feature importance. |

| LIME | Local Surrogate Models [26] [28] | Local (per-instance) [26] [27] | Moderate [29] | A simple, interpretable model (e.g., linear coefficients) that approximates the complex model locally [27] | Providing intuitive, local explanations for specific predictions without requiring a global model interpretation. |

| Partial Dependence Plots (PDP) | Marginal Effect Estimation [26] [30] | Global (average effect) [26] [30] | Low to Moderate | A plot showing the average relationship between a feature and the predicted outcome [26] | Understanding the average direction and shape of a feature's relationship with the target variable. |

| Individual Conditional Expectation (ICE) | Marginal Effect Estimation [26] [32] | Local (per-instance) & Global | High (for many instances) | A plot showing the relationship for individual instances as the feature varies [26] [32] | Visualizing heterogeneity in the effect of a feature across different instances in the dataset. |

Table 2: Essential Research Reagent Solutions for Interpretability Experiments

| Reagent / Tool | Function / Purpose | Example in Context |

|---|---|---|

| SHAP Library (Python/R) | Computes Shapley values for various model types to explain model outputs [26] [30]. | A drug discovery researcher uses shap.TreeExplainer to identify which molecular features most contribute to a high predicted efficacy score for a new compound [3]. |

| LIME Library (Python/R) | Generates local surrogate models to explain individual predictions of any black-box model [27] [28]. | A scientist uses LimeTabularExplainer to understand why a specific patient's data was predicted to be a non-responder to a particular therapy [27]. |

| PDP/ICE Plots (via iml, PDPBox) | Visualizes the marginal effect of a feature on the model's prediction, with ICE plots showing individual conditional expectations [30] [32]. | A team analyzes a PDP for "molecular weight" to confirm that the model has learned a known non-linear relationship with solubility [32]. |

| Permutation Importance (via eli5) | Measures feature importance by calculating the decrease in a model's score when a feature's values are randomly shuffled [30]. | Used as a sanity check to ensure the global features identified by SHAP are also deemed important when the model's performance is directly measured [30]. |

## Interpretability Workflow Visualizations

Figure: LIME Explanation Workflow

Figure: SHAP Additive Explanation Concept

UQ as a Key to the Black Box

The "black box" problem, where even designers cannot fully explain how complex models like deep neural networks arrive at their conclusions, is a major barrier to trust in machine learning, especially in high-stakes fields like drug development [34]. This opacity raises practical, legal, and ethical concerns, as models may make incorrect predictions with high confidence or amplify biases present in the training data [34] [35].

Uncertainty Quantification (UQ) directly addresses this by adding a crucial layer of transparency: it tells you not just what the prediction is, but how much to trust it [36] [37]. Instead of a single answer, UQ provides a measure of confidence, turning a statement like "this model might be wrong" into specific, measurable information about how wrong it might be and in what ways [36]. By revealing the model's own doubt, UQ helps researchers identify when predictions are unreliable due to unfamiliar data or insufficient knowledge, thereby building a more principled and trustworthy foundation for decision-making.

FAQs & Troubleshooting: UQ in Practice

FAQ 1: What are the main types of uncertainty I need to consider? You will primarily deal with two types of uncertainty, which require different handling strategies [36] [38] [37]:

- Aleatoric uncertainty arises from inherent randomness in the data, such as measurement noise or the stochastic nature of a system. This "data uncertainty" cannot be reduced by collecting more data.

- Epistemic uncertainty stems from a lack of knowledge about the model. This "model uncertainty" is caused by limited training data and can be reduced by gathering more relevant data.

FAQ 2: My model is overconfident on new types of data. How can UQ help? Overconfidence on unfamiliar inputs is a classic sign that epistemic uncertainty is not being captured. When a model encounters Out-of-Distribution (OOD) samples (data that differ significantly from its training set), it often makes incorrect predictions with unjustified high confidence [37]. UQ methods like Bayesian Neural Networks or Ensembles are designed to detect this. They typically show a marked increase in predictive uncertainty for OOD samples, signaling that the result should not be trusted without further validation [37].

FAQ 3: UQ methods seem computationally expensive. Are there efficient approaches for complex models? Yes, you can choose from several strategies to balance cost and accuracy:

- For a quick, low-overhead method: Use Monte Carlo Dropout. For a network trained with dropout layers, it keeps dropout active during prediction and runs multiple forward passes to get a distribution of outputs instead of a single, overconfident point estimate [36].

- For a more robust, model-agnostic approach: Consider Conformal Prediction. This framework provides prediction sets (for classification) or intervals (for regression) with guaranteed coverage probabilities (e.g., 95%), and it can be applied to any pre-trained model without retraining [36].

- Leverage specialized toolboxes: Frameworks like Lightning UQ Box are designed to integrate UQ into standard deep learning workflows with minimal overhead, offering a wide range of methods from Bayesian to ensemble techniques [39].
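The mechanics of Monte Carlo Dropout can be sketched without a deep learning framework: keep the dropout mask random at prediction time, repeat the forward pass, and summarize the spread of the outputs. The tiny network, its fixed weights (`W1`, `W2`), and the dropout rate are hypothetical; a real application would reuse the dropout layers of the trained model.

```python
import random
import statistics

# Hypothetical fixed weights of a tiny one-hidden-layer regression network.
W1 = [0.5, -1.2, 0.8, 1.5]   # input -> 4 hidden units
W2 = [1.0, 0.7, -0.5, 0.3]   # hidden units -> scalar output

def forward(x, rng, drop_prob=0.5):
    """One stochastic forward pass with dropout left active."""
    out = 0.0
    for w1, w2 in zip(W1, W2):
        h = max(0.0, w1 * x)                  # ReLU activation
        keep = rng.random() >= drop_prob      # sample the dropout mask
        if keep:
            out += w2 * h / (1.0 - drop_prob)  # inverted-dropout rescaling
    return out

def mc_dropout_predict(x, n_passes=200, seed=0):
    """Mean and standard deviation over repeated stochastic passes."""
    rng = random.Random(seed)
    samples = [forward(x, rng) for _ in range(n_passes)]
    return statistics.mean(samples), statistics.stdev(samples)

mean1, std1 = mc_dropout_predict(1.0)
mean10, std10 = mc_dropout_predict(10.0)
print("x=1 :", round(mean1, 3), "+/-", round(std1, 3))
print("x=10:", round(mean10, 3), "+/-", round(std10, 3))
```

The standard deviation across passes is the uncertainty estimate. The cost is `n_passes` forward passes per input, which is usually far cheaper than training several models.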

FAQ 4: How can I trust the uncertainty estimates themselves? It is crucial to evaluate the quality of your predictive uncertainty [37]. A well-calibrated model should not be overconfident or underconfident. You can assess this using metrics like:

- Calibration curves: To visualize if the predicted confidence scores match the actual accuracy.

- Proper scoring rules: Such as the negative log-likelihood or the Brier score, which evaluate the quality of probabilistic predictions.
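Both proper scoring rules named above are short to compute for a binary task. The sketch below compares two made-up sets of predicted probabilities on the same labels; the numbers are purely illustrative, and the clipping constant `eps` is an assumption added to avoid taking the logarithm of zero.

```python
import math

def brier_score(probs, labels):
    """Mean squared difference between predicted probability and outcome."""
    return sum((p - y) ** 2 for p, y in zip(probs, labels)) / len(labels)

def negative_log_likelihood(probs, labels, eps=1e-12):
    """Average binary cross-entropy; heavily penalizes confident mistakes."""
    total = 0.0
    for p, y in zip(probs, labels):
        p = min(max(p, eps), 1.0 - eps)
        total -= y * math.log(p) + (1 - y) * math.log(1.0 - p)
    return total / len(labels)

labels = [1, 0, 1, 1, 0]
cautious = [0.8, 0.2, 0.7, 0.9, 0.3]             # hypothetical model A
overconfident = [0.99, 0.01, 0.01, 0.99, 0.99]   # hypothetical model B, two confident errors

for name, probs in [("cautious", cautious), ("overconfident", overconfident)]:
    print(name,
          "Brier:", round(brier_score(probs, labels), 4),
          "NLL:", round(negative_log_likelihood(probs, labels), 4))
```

Lower is better for both metrics. The overconfident model is punished far more by the negative log-likelihood than by the Brier score, which is why the two are often reported together.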

A Researcher's Toolkit: Core UQ Methods

The table below summarizes the primary UQ methods, helping you select the right tool for your experiment.

| Method | Type of Uncertainty Quantified | Key Principle | Best For |

|---|---|---|---|

| Gaussian Process Regression (GPR) [36] [37] | Aleatoric & Epistemic | A Bayesian non-parametric approach that places a prior over functions; inherently provides uncertainty via the posterior distribution. | Data-scarce regimes, surrogate modeling, and problems where a closed-form uncertainty measure is needed. |

| Bayesian Neural Networks (BNNs) [36] [37] | Primarily Epistemic | Treats network weights as probability distributions instead of fixed values, capturing model uncertainty. | Scenarios requiring rigorous uncertainty decomposition and where computational resources are sufficient. |

| Monte Carlo (MC) Dropout [36] | Epistemic | A computationally efficient approximation of a Bayesian model; performs multiple stochastic forward passes at inference time. | Easily adding UQ to existing neural networks trained with dropout, without changing the architecture. |

| Deep Ensembles [36] [37] | Aleatoric & Epistemic | Trains multiple models independently and quantifies uncertainty through the disagreement (variance) of their predictions. | Achieving high predictive accuracy and robust uncertainty estimates; often used as a strong baseline. |

| Conformal Prediction [36] | Total predictive uncertainty (not decomposed) | A distribution-free, model-agnostic framework that creates prediction sets/intervals with guaranteed coverage (e.g., 95%) for any black-box model. | Providing rigorous, finite-sample guarantees on uncertainty for any pre-trained model in classification or regression. |

The following diagram illustrates a general workflow for implementing and evaluating these UQ methods in a research project.

Experimental Protocol: Implementing Conformal Prediction for a Classification Task

This protocol provides a step-by-step guide to implementing conformal prediction, a powerful method for creating prediction sets with guaranteed coverage for any black-box classifier [36].

Objective: To generate a prediction set for a new data point that contains the true label with a user-specified probability (e.g., 95%).

Materials & Reagents:

| Item | Function in the Experiment |

|---|---|

| Trained Classifier | A pre-trained model (e.g., a neural network) that outputs predicted probabilities for each class. |

| Calibration Dataset | A held-out dataset, not used for training, to calculate nonconformity scores. |

| Nonconformity Measure | A function that quantifies how "strange" a data point is for a given label. For classification, this is often 1 - f(X_i)[y_i] (one minus the predicted probability for the true label) [36]. |

| Coverage Level (1 - α) | The desired probability that the prediction set contains the true label (e.g., 0.95 for 95% coverage). |

Methodology:

- Data Splitting: Split your data into three parts: a training set, a calibration set, and a test set. Train your classifier on the training set as usual.

- Compute Nonconformity Scores: For each data point `i` in the calibration set, calculate its nonconformity score using the chosen measure. For a multi-class classifier, this is typically `s_i = 1 - f(X_i)[y_i]`, where `f(X_i)[y_i]` is the model's predicted probability for the true class `y_i` [36].

- Determine the Threshold: Sort all the nonconformity scores from the calibration set in ascending order. For a calibration set of size `n`, take the `⌈(n+1)(1 - α)⌉`-th smallest score, which is the empirical quantile at level `⌈(n+1)(1 - α)⌉ / n` (slightly above `1 - α`, to correct for the finite sample). This value becomes your threshold, `q` [36].

- Form Prediction Sets: For a new test example `X_new`, form the prediction set as follows: include all labels `y` for which the nonconformity score `s_new^y = 1 - f(X_new)[y]` is less than or equal to the threshold `q` [36].

Validation:

The resulting prediction sets are guaranteed to contain the true label with a probability of at least (1 - α), provided the calibration and test data are exchangeable. The guarantee is marginal: it holds on average over data points rather than for each individual input. You can validate this on your test set by checking the empirical coverage (the fraction of test examples for which the prediction set contains the true label). It should be close to your desired (1 - α) coverage level [36].
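The protocol can be sketched end to end in pure Python: compute scores on a calibration set, take the finite-sample-corrected quantile (the ⌈(n+1)(1 - α)⌉-th smallest score), build prediction sets, and check empirical coverage. The `simulate` function is a stand-in for a trained classifier and held-out data, and is entirely an assumption: it draws random class probabilities and samples labels from them.

```python
import math
import random

def conformal_threshold(cal_probs, cal_labels, alpha):
    """Threshold q from calibration scores s_i = 1 - p_i[true label]."""
    scores = sorted(1.0 - p[y] for p, y in zip(cal_probs, cal_labels))
    n = len(scores)
    rank = math.ceil((n + 1) * (1.0 - alpha))
    if rank > n:
        return float("inf")  # too few calibration points: include every label
    return scores[rank - 1]

def prediction_set(probs, q):
    """All labels whose nonconformity score 1 - p[label] is at most q."""
    return [label for label, p in enumerate(probs) if 1.0 - p <= q]

def simulate(n, rng, n_classes=3):
    """Hypothetical classifier outputs with labels sampled from them."""
    probs, labels = [], []
    for _ in range(n):
        logits = [rng.gauss(0.0, 2.0) for _ in range(n_classes)]
        m = max(logits)
        exps = [math.exp(v - m) for v in logits]
        total = sum(exps)
        p = [e / total for e in exps]
        probs.append(p)
        labels.append(rng.choices(range(n_classes), weights=p)[0])
    return probs, labels

rng = random.Random(0)
alpha = 0.1
cal_probs, cal_labels = simulate(500, rng)
test_probs, test_labels = simulate(2000, rng)

q = conformal_threshold(cal_probs, cal_labels, alpha)
sets = [prediction_set(p, q) for p in test_probs]
coverage = sum(y in s for s, y in zip(sets, test_labels)) / len(test_labels)
avg_size = sum(len(s) for s in sets) / len(sets)

print("threshold q:", round(q, 3))
print("empirical coverage:", round(coverage, 3), "target:", 1 - alpha)
print("average set size:", round(avg_size, 2))
```

Coverage tells you whether the guarantee holds in practice; the average set size tells you how informative the sets are. A model with poor probabilities will still reach the target coverage, but only by returning larger sets.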

By integrating these UQ principles and tools into your research workflow, you can move beyond opaque point predictions and build machine learning systems that are not only powerful but also transparent, reliable, and worthy of trust in critical applications.

Frequently Asked Questions (FAQs)

FAQ 1: What is the fundamental difference between a traditional neural network and a Bayesian Neural Network (BNN)?

In a traditional deep learning model, the network's weights are treated as fixed, deterministic values learned during training. In contrast, a Bayesian Neural Network (BNN) treats these weights as random variables with associated probability distributions [40]. This probabilistic approach allows BNNs to naturally quantify uncertainty in their predictions, providing a principled way to know what the model does not know [41].

FAQ 2: Why are BNNs particularly important for scientific fields like drug discovery?

In drug discovery, experiments are costly and time-consuming. Computational models that predict drug-target interactions are valuable tools for prioritizing experiments [42]. BNNs provide uncertainty estimates alongside predictions, which helps professionals assess the risk associated with pursuing a particular drug candidate. A well-calibrated model ensures that a prediction of 70% probability of activity truly means there is a 70% chance the compound is active, enabling well-informed decision-making under uncertainty [42].

FAQ 3: What are the main types of uncertainty that Bayesian deep learning (BDL) can quantify?

BDL frameworks typically distinguish between two main types of uncertainty:

- Epistemic (Model) Uncertainty: Arises from uncertainty in the model parameters themselves. It can be reduced by gathering more data [42]. This is quantified by sampling multiple sets of model parameters from the posterior distribution and observing the variability in predictions [40].

- Aleatoric (Data) Uncertainty: Stems from inherent noise in the data and cannot be reduced by collecting more data [42]. It is typically represented as the variance of the predictive distribution [40].
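One common way to separate the two types in practice uses several sampled models (posterior samples or ensemble members) that each predict a mean and a variance. Under the law of total variance, the average predicted variance estimates the aleatoric part and the variance of the predicted means estimates the epistemic part. The sketch below assumes this setup; the member outputs are made-up numbers for one familiar and one unfamiliar input.

```python
import statistics

def decompose_uncertainty(member_means, member_variances):
    """Split total predictive variance into aleatoric and epistemic parts.

    aleatoric = average of the per-member predicted variances
    epistemic = variance of the per-member predicted means
    """
    aleatoric = statistics.fmean(member_variances)
    epistemic = statistics.pvariance(member_means)
    return aleatoric, epistemic, aleatoric + epistemic

# Hypothetical outputs of four sampled models for two inputs.
familiar = decompose_uncertainty([1.0, 1.1, 0.9, 1.0], [0.04, 0.04, 0.04, 0.04])
unfamiliar = decompose_uncertainty([0.2, 1.8, -0.5, 2.5], [0.04, 0.04, 0.04, 0.04])

for name, (alea, epi, total) in [("familiar", familiar), ("unfamiliar", unfamiliar)]:
    print(name, "aleatoric:", round(alea, 3),
          "epistemic:", round(epi, 3), "total:", round(total, 3))
```

The noise estimate is identical for both inputs, but the members disagree strongly on the unfamiliar one, so its epistemic term dominates. That is the pattern that suggests collecting more data near that input rather than repeating the measurement.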

FAQ 4: What is the main computational challenge of BDL, and how is it addressed?

The primary challenge is that exact Bayesian inference on network weights is typically computationally intractable for large models due to the complex posterior distribution [43] [42]. This is addressed using approximate inference methods. Common approaches include:

- Markov Chain Monte Carlo (MCMC): A sampling-based method to approximate the posterior [43].

- Variational Inference (VI): Poses inference as an optimization problem, fitting a simpler distribution (e.g., Gaussian) to the complex true posterior [41].

- Monte Carlo Dropout: A practical approximation that can be interpreted as performing variational inference [42].

Troubleshooting Guides

Issue 1: Poor Model Calibration

Problem: My model's confidence scores do not match the true probability of correctness. For example, of the molecules predicted to be active with 80% confidence, only 50% are actually active [42].

Diagnosis Steps:

- Calculate Calibration Error: Use calibration metrics like Expected Calibration Error (ECE) or Brier Score on a held-out validation set to quantify the miscalibration [42].

- Inspect the Data: Check for issues like noisy labels, imbalanced class distribution, or a significant distribution shift between your training and test data, all of which can harm calibration [42].

- Check Hyperparameters: Model calibration and accuracy are often optimized by different hyperparameter settings. Review your choices for regularization, model size, and learning rate [42].

Solutions:

- Apply Post-Hoc Calibration: Use methods like Platt Scaling (a logistic regression fit to the model's logits) on a separate calibration dataset to adjust the output probabilities [42].

- Use a Bayesian Method: Adopt a proper uncertainty quantification method like a BNN or deep ensemble. These methods account for model uncertainty during training and often yield better-calibrated outputs [42].

- Hyperparameter Tuning: Optimize your hyperparameters using a metric that considers both accuracy and calibration, rather than accuracy alone [42].
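The Expected Calibration Error used in the diagnosis step can be sketched as a bin-weighted average gap between stated confidence and observed accuracy. The equal-width binning and the ten-prediction dataset below are assumptions; the data simply mirror the 80%-confidence, 50%-correct example from the problem statement.

```python
def expected_calibration_error(confidences, correct, n_bins=10):
    """ECE: bin-size-weighted average of |accuracy - mean confidence|."""
    bins = [[] for _ in range(n_bins)]
    for conf, hit in zip(confidences, correct):
        index = min(int(conf * n_bins), n_bins - 1)  # equal-width bins on [0, 1]
        bins[index].append((conf, hit))

    n = len(confidences)
    ece = 0.0
    for members in bins:
        if not members:
            continue
        avg_conf = sum(c for c, _ in members) / len(members)
        accuracy = sum(h for _, h in members) / len(members)
        ece += len(members) / n * abs(accuracy - avg_conf)
    return ece

# Ten predictions made with 80% confidence, of which only half are correct.
confidences = [0.8] * 10
correct = [1] * 5 + [0] * 5

ece = expected_calibration_error(confidences, correct)
print("ECE:", round(ece, 3))
```

An ECE near zero means confidence and accuracy agree within each bin. Computing it before and after a post-hoc calibration step, on data not used to fit the calibrator, is a simple way to check whether the fix worked.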

Issue 2: High Memory Usage and Slow Training

Problem: Training my BNN is significantly slower and requires more memory than a standard deterministic network.

Diagnosis Steps:

- Identify the Method: Determine which approximate inference method you are using. Methods like Hamiltonian Monte Carlo (HMC) are asymptotically exact but can be very computationally intensive [42].

- Check Model Size: Large, over-parameterized models will naturally be more costly to train, especially when maintaining distributions over weights [43].

Solutions:

- Switch to a Lighter Method: Consider using a more computationally efficient Bayesian approximation. For example, the HMC Bayesian Last Layer (HBLL) method applies Bayesian inference only to the weights of the last layer, combining the scalability of standard networks with meaningful uncertainty estimation for the output [42].

- Use Variational Inference: VI is often faster than sampling-based methods like HMC because it turns the inference problem into a gradient-based optimization [41].

- Leverage the Reparameterization Trick: When using VI, ensure you are using the reparameterization trick to enable efficient low-variance gradient estimation, which is crucial for stable and efficient training [41].

Issue 3: Numerical Instabilities During Training

Problem: The training loss becomes NaN or inf during the optimization of the BNN.

Diagnosis Steps:

- Inspect the Loss Function: Check for incorrect input to the loss function (e.g., using softmax outputs with a loss that expects logits) [44].

- Check Operations: Look for operations that can cause numerical overflows, such as exponent, log, or division, especially when computing probabilities or likelihoods [44].

- Verify Priors: Ensure that the chosen prior distributions for the weights are numerically stable and appropriate for the model.

Solutions:

- Overfit a Single Batch: A key debugging heuristic is to try and overfit a very small batch of data (e.g., 2-4 samples). If the training error does not go close to zero, it often reveals implementation bugs, including numerical issues [44].

- Gradient Clipping: Implement gradient clipping to prevent exploding gradients.

- Use Built-in Functions: Rely on built-in functions from deep learning frameworks (e.g., PyTorch's `log_softmax`), which are numerically stable, rather than implementing the math yourself [44].

- Debug Step-by-Step: Use a debugger to step through the model creation and inference, checking the shapes and data types of all tensors to catch silent errors like incorrect broadcasting [44].
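Why the built-in functions are safer can be shown with a hand-written comparison. The stable version subtracts the maximum logit before exponentiating (the log-sum-exp trick), which is the standard approach behind such built-ins. The function names and the example logits are illustrative. Note that plain Python raises `OverflowError` on overflow, whereas tensor libraries typically return `inf` and then propagate `NaN` silently.

```python
import math

def naive_log_softmax(logits):
    """Direct formula; exp() overflows once logits get large."""
    total = sum(math.exp(v) for v in logits)
    return [math.log(math.exp(v) / total) for v in logits]

def log_softmax(logits):
    """Stable version: shift by the maximum logit before exponentiating."""
    m = max(logits)
    log_sum = m + math.log(sum(math.exp(v - m) for v in logits))
    return [v - log_sum for v in logits]

logits = [1000.0, 999.0, 0.0]

try:
    naive_log_softmax(logits)
    naive_failed = False
except OverflowError:
    naive_failed = True

stable = log_softmax(logits)
print("naive version failed:", naive_failed)
print("stable log-probabilities:", [round(v, 4) for v in stable])
```

Both functions compute the same quantity mathematically; only the order of operations differs. The same shift-by-maximum idea applies when summing likelihood terms in a BNN loss.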

Issue 4: Model Fails to Learn from Censored Data

Problem: In my regression task (e.g., predicting drug activity), a significant portion of the experimental data is censored (e.g., providing only a threshold rather than a precise value). Standard BDL models cannot utilize this partial information.

Diagnosis Steps:

- Analyze Data Labels: Identify what fraction of your labels are censored. In real pharmaceutical settings, this can be one-third or more of the data [45].

- Review Model Likelihood: Check if your model's likelihood function only accepts precise values.

Solutions:

- Adapt the Likelihood: Integrate tools from survival analysis. The Tobit model can be used to adapt ensemble-based, Bayesian, and Gaussian models to learn from censored labels by modifying the likelihood function to account for the thresholds [45].

- This adaptation is essential for reliably estimating uncertainties when working with real-world, incomplete experimental data [45].
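As a rough illustration of the likelihood adaptation described above, the sketch below writes a Tobit-style negative log-likelihood for a single label under a Gaussian predictive distribution. It assumes a predicted mean `mu` and standard deviation `sigma`; the function name `tobit_nll` and the `censoring` argument are hypothetical and not taken from [45].

```python
import math

def normal_logpdf(y, mu, sigma):
    z = (y - mu) / sigma
    return -0.5 * z * z - math.log(sigma) - 0.5 * math.log(2.0 * math.pi)

def normal_cdf(z):
    # Standard normal CDF via erfc, which stays accurate in the lower tail.
    return 0.5 * math.erfc(-z / math.sqrt(2.0))

def tobit_nll(y, mu, sigma, censoring="none"):
    """Negative log-likelihood of one label under N(mu, sigma^2).

    censoring="none":  y is an exact measurement.
    censoring="right": only "true value > y" is known.
    censoring="left":  only "true value < y" is known.
    """
    z = (y - mu) / sigma
    if censoring == "none":
        return -normal_logpdf(y, mu, sigma)
    if censoring == "right":
        prob = normal_cdf(-z)   # P(Y > y) = 1 - Phi(z) = Phi(-z)
    elif censoring == "left":
        prob = normal_cdf(z)    # P(Y < y) = Phi(z)
    else:
        raise ValueError("censoring must be 'none', 'right', or 'left'")
    return -math.log(max(prob, 1e-300))  # guard against log(0)

# A right-censored label "activity > 5.0" penalizes a prediction of 2.0
# far more than a prediction of 8.0.
print(tobit_nll(5.0, 8.0, 1.0, "right"), tobit_nll(5.0, 2.0, 1.0, "right"))
```

Summing this quantity over all labels gives a training loss in which censored measurements contribute through the tail probability instead of being discarded.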

Experimental Protocols & Data

Protocol 1: Implementing a Basic BNN with Variational Inference

This protocol outlines the steps to build a BNN using Variational Inference, a common approximate Bayesian method.

1. Define the Model and Prior:

- Choose a neural network architecture (e.g., a Multi-Layer Perceptron).

- Place a prior distribution over the weights, typically a simple distribution like a Gaussian with mean zero and a chosen variance: `p(w) = N(0, σ²I)` [41].

2. Define the Variational Posterior:

- Choose a family of simpler distributions `q(w|θ)` to approximate the true posterior `p(w|D)`. A common choice is a Gaussian distribution parameterized by `θ = (μ, σ)` for each weight [41].

3. Optimize the Variational Parameters:

- The goal is to make `q(w|θ)` as close as possible to `p(w|D)`. This is done by minimizing the Kullback-Leibler (KL) divergence between them.

- This is equivalent to maximizing the Evidence Lower Bound (ELBO), which is given by: `ELBO(θ) = E_{q(w|θ)}[log p(D|w)] - KL(q(w|θ) || p(w))` [41].

- The first term is the expected log-likelihood (ensuring the model fits the data), and the second term is the KL divergence from the prior (acting as a regularizer).

- Use gradient-based optimization (e.g., SGD or Adam) to maximize the ELBO. The reparameterization trick (e.g., expressing a sampled weight as `w = μ + σ ⊙ ε`, where `ε ~ N(0,1)`) is critical for enabling low-variance gradient estimation through this stochastic process [41].
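The ELBO above can be estimated numerically for a toy case. The sketch below assumes a one-weight linear model `y = w * x` with Gaussian observation noise, a prior `N(0, prior_sigma²)`, and a Gaussian variational posterior `N(mu, sigma²)`. All names (`elbo_estimate`, `kl_gaussian`, `noise_sigma`) are hypothetical. It uses only the standard library, so it evaluates the objective but does not differentiate it; in a deep learning framework, gradients would flow to `mu` and `sigma` through the reparameterized sample.

```python
import math
import random

def kl_gaussian(mu, sigma, prior_sigma):
    # Closed-form KL( N(mu, sigma^2) || N(0, prior_sigma^2) ).
    return (math.log(prior_sigma / sigma)
            + (sigma ** 2 + mu ** 2) / (2.0 * prior_sigma ** 2) - 0.5)

def log_likelihood(w, data, noise_sigma):
    # Sum of Gaussian log-densities of y given the prediction w * x.
    total = 0.0
    for x, y in data:
        r = (y - w * x) / noise_sigma
        total += -0.5 * r * r - math.log(noise_sigma) - 0.5 * math.log(2.0 * math.pi)
    return total

def elbo_estimate(mu, sigma, data, prior_sigma=1.0, noise_sigma=0.5,
                  n_samples=200, rng=None):
    rng = rng or random.Random(0)
    expected_ll = 0.0
    for _ in range(n_samples):
        eps = rng.gauss(0.0, 1.0)   # noise independent of (mu, sigma)
        w = mu + sigma * eps        # reparameterized weight sample
        expected_ll += log_likelihood(w, data, noise_sigma)
    # Monte Carlo expected log-likelihood minus the analytic KL term.
    return expected_ll / n_samples - kl_gaussian(mu, sigma, prior_sigma)

data = [(x, 2.0 * x) for x in (-1.0, -0.5, 0.0, 0.5, 1.0)]  # true weight is 2
elbo_good = elbo_estimate(2.0, 0.1, data, rng=random.Random(0))
elbo_bad = elbo_estimate(0.0, 0.1, data, rng=random.Random(0))
print(elbo_good, elbo_bad)
```

A posterior centered near the data-generating weight scores a higher ELBO than one centered at the prior mean, even though it pays a larger KL penalty.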

Protocol 2: Evaluating Predictive Uncertainty and Calibration

This protocol describes how to evaluate the quality of your BNN's uncertainty estimates.

1. Predictive Uncertainty Estimation:

- To make a prediction for a new input `x*`, use Bayesian model averaging. Draw multiple samples of the weights from the variational posterior, `w_t ~ q(w|θ)`. The final predictive distribution is the average of the predictions from all sampled models: `p(y*|x*, D) ≈ (1/T) Σ_{t=1}^T p(y*|x*, w_t)` [42].

- The variability across these predictions captures the model's epistemic uncertainty.
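A minimal sketch of this sampling procedure, assuming a toy one-weight model `y = w * x` with a Gaussian variational posterior over `w`. The function name `predictive_samples` and the chosen values of `mu` and `sigma` are hypothetical. For simplicity it averages point predictions, not full predictive distributions.

```python
import random
import statistics

def predictive_samples(x_star, mu, sigma, n_samples=500, seed=0):
    # Draw w_t ~ N(mu, sigma^2) and return each sampled model's prediction.
    rng = random.Random(seed)
    return [(mu + sigma * rng.gauss(0.0, 1.0)) * x_star for _ in range(n_samples)]

near = predictive_samples(0.5, mu=2.0, sigma=0.2)
far = predictive_samples(5.0, mu=2.0, sigma=0.2)

# Mean over samples approximates the model-averaged prediction;
# the spread over samples reflects epistemic uncertainty.
near_mean, near_std = statistics.fmean(near), statistics.pstdev(near)
far_mean, far_std = statistics.fmean(far), statistics.pstdev(far)
print(near_mean, near_std, far_mean, far_std)
```

In this toy model the spread grows with the magnitude of the input, since uncertainty in the weight is multiplied by `x*`.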

2. Calculate Calibration Metrics:

- Expected Calibration Error (ECE): Partition the predictions into bins based on their confidence (e.g., [0, 0.1), [0.1, 0.2), ...). For each bin, compute the absolute difference between the average confidence and the average accuracy. The ECE is the average of these differences, weighted by the fraction of predictions that fall into each bin [42].

- Brier Score: Measures the mean squared difference between the predicted probability and the actual outcome (1 if the event occurred, 0 otherwise). A lower score is better.
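Both metrics can be computed in a few lines. The sketch below assumes binary correctness indicators and equal-width confidence bins; the function names are hypothetical and the inputs are made-up values for illustration only.

```python
def expected_calibration_error(confidences, correct, n_bins=10):
    """Weighted average of |avg confidence - accuracy| over equal-width bins."""
    n = len(confidences)
    bins = [[] for _ in range(n_bins)]
    for conf, ok in zip(confidences, correct):
        idx = min(int(conf * n_bins), n_bins - 1)  # confidence 1.0 goes to the last bin
        bins[idx].append((conf, ok))
    ece = 0.0
    for members in bins:
        if not members:
            continue
        avg_conf = sum(c for c, _ in members) / len(members)
        accuracy = sum(ok for _, ok in members) / len(members)
        ece += (len(members) / n) * abs(avg_conf - accuracy)
    return ece

def brier_score(probs, outcomes):
    """Mean squared difference between predicted probability and 0/1 outcome."""
    return sum((p - o) ** 2 for p, o in zip(probs, outcomes)) / len(probs)

# Ten predictions at 90% confidence: nine correct (calibrated) vs five correct.
ece_calibrated = expected_calibration_error([0.9] * 10, [1] * 9 + [0])
ece_overconfident = expected_calibration_error([0.9] * 10, [1] * 5 + [0] * 5)
print(ece_calibrated, ece_overconfident, brier_score([0.5, 0.5], [1, 0]))
```

The number of bins affects the ECE value, so the same binning should be used when comparing models.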

Comparative Performance of Uncertainty Quantification Methods in Drug-Target Interaction Prediction

The following table summarizes findings from a calibration study in drug discovery, which can serve as a benchmark for your own experiments [42].

| Method | Description | Reported Impact on Calibration |
|---|---|---|
| Monte Carlo Dropout | Approximate Bayesian inference by applying dropout at test time. | Common method, but may be outperformed by other approaches in terms of calibration. |
| Deep Ensembles | Train multiple models with different random initializations. | Often achieves good performance and calibration. |
| HBLL (HMC Bayesian Last Layer) | Applies Hamiltonian Monte Carlo to sample weights of the last layer only. | Improves model calibration and achieves performance comparable to common UQ methods. |
| Platt Scaling | Post-hoc calibration method that fits a logistic regression to the model's logits. | Versatile; can be combined with other UQ methods to boost both accuracy and calibration. |

The Scientist's Toolkit

Key Research Reagent Solutions

| Item / Method | Function in Bayesian Deep Learning |
|---|---|
| Variational Inference (VI) | A scalable, optimization-based method for approximating the intractable true posterior distribution of neural network weights [41]. |
| Hamiltonian Monte Carlo (HMC) | A Markov Chain Monte Carlo (MCMC) method that uses Hamiltonian dynamics to sample efficiently from the posterior. Considered a gold standard but computationally expensive [42]. |
| Reparameterization Trick | A key technique that enables efficient gradient-based optimization of variational models by separating the stochasticity from the parameters, allowing backpropagation through random nodes [41]. |
| Gaussian Process (GP) | A non-parametric Bayesian model that defines a distribution over functions. Often used as a prior for BNNs to enhance interpretability [46]. |
| Evidence Lower Bound (ELBO) | The objective function maximized during Variational Inference. It balances data fit (likelihood) and conformity to the prior (regularization) [41]. |
| Platt Scaling | A simple, post-hoc probability calibration method that can be applied to a trained model to improve the reliability of its confidence scores [42]. |
| Tobit Model | A tool from survival analysis that can be integrated into BDL models to allow learning from censored regression labels, which are common in pharmaceutical data [45]. |

Workflow and Conceptual Diagrams

Bayesian vs Deterministic Neural Network Workflow

Variational Inference in BNNs

Frequently Asked Questions (FAQs)

Q1: What is the core guarantee that Conformal Prediction provides? Conformal Prediction provides finite-sample, distribution-free guarantees for prediction sets. For any new input \( X_{n+1} \), the prediction set \( C(X_{n+1}) \) satisfies \( \mathbb{P}(Y_{n+1} \in C(X_{n+1})) \geq 1 - \alpha \), where \( \alpha \) is a user-specified error rate (e.g., 0.1 for 90% coverage). This means the true label will be contained in the prediction set with a probability of at least \( 1-\alpha \), under the assumption that the data are exchangeable [47] [48]. The guarantee is marginal: it holds on average over the calibration data and the test point, not conditionally for each individual input.

Q2: Can I use Conformal Prediction with any pre-trained model? Yes. A principal advantage of Conformal Prediction is that it is a model-agnostic wrapper method. It can be applied to any pre-trained model (e.g., neural networks, random forests) without the need for retraining. It uses the model's outputs to calculate conformity scores and construct valid prediction sets [49] [47].

Q3: My model is deployed on time-series data. Is the exchangeability assumption violated? Yes, time-dependent data often violates the exchangeability assumption due to temporal correlations and potential distribution shifts. However, recent advancements address this. Methods are being developed for complex data like spatio-temporal data, streaming data, and one-dimensional/multi-dimensional series, which relax the strict exchangeability requirement [47].

Q4: What is the difference between Full and Split Conformal Prediction? The key difference lies in the data usage and computational cost. Split Conformal Prediction (also known as inductive CP) uses a dedicated calibration dataset to compute nonconformity scores, making it computationally efficient. Full Conformal Prediction instead refits the model on the training data augmented with the test input and each candidate label, which is computationally far more intensive but uses all available data for both fitting and calibration [47] [48].

Q5: In classification, my prediction set is sometimes empty. What does this mean? An empty prediction set indicates that for that specific sample, no class had a high enough conformity score to be included in the set at your chosen confidence level \( 1-\alpha \). This is a valid outcome and can be interpreted as the model detecting an outlier or a sample that is too difficult to classify with the required confidence. It signals that the input may be far from the data distribution seen during training and calibration [50].

Q6: How can I make my prediction sets smaller/more informative? Prediction set size (or efficiency) is influenced by the nonconformity measure and the quality of your underlying model. A better, more accurate model will typically produce smaller, more precise prediction sets. You can also experiment with different nonconformity scores tailored to your specific problem and data type [47] [51].

Troubleshooting Guides

Issue 1: Coverage is Incorrect on New Test Data

Problem: The empirical coverage of your prediction sets is significantly lower or higher than the expected \( 1-\alpha \) target.

Solution:

- Check the Exchangeability Assumption: CP's validity guarantee relies on the calibration and test data being exchangeable. If your test data comes from a different distribution (e.g., different time period, different lab), the coverage guarantee may not hold. Ensure your calibration set is representative of the test conditions [47] [50].

- Verify Your Calibration Set Size: A very small calibration set can lead to unstable and inaccurate quantile estimates, resulting in coverage mismatch. Use a calibration set of sufficient size (e.g., hundreds or thousands of data points, depending on the problem) for reliable results [48].

- Re-examine the Nonconformity Score: The choice of nonconformity score is crucial. For classification, a common choice is one minus the softmax probability of the true class, so that higher scores indicate worse agreement between the model and the label. For regression, the absolute residual \( |y_i - \hat{y}_i| \) is standard. Ensure your score is appropriate for your task [48] [50].
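A quick way to diagnose a coverage mismatch is to measure empirical coverage directly on held-out data. The sketch below simulates absolute-residual scores from a synthetic Gaussian noise model (an assumption for illustration, not real assay data) and compares exchangeable test data with test data whose noise level has shifted. The helper names `conformal_quantile` and `empirical_coverage` are hypothetical.

```python
import math
import random

def conformal_quantile(scores, alpha):
    # The ceil((n + 1) * (1 - alpha))-th smallest calibration score.
    n = len(scores)
    k = math.ceil((n + 1) * (1 - alpha))
    if k > n:
        return math.inf  # too few calibration points for this alpha
    return sorted(scores)[k - 1]

def empirical_coverage(test_scores, q_hat):
    # With absolute-residual scores, a test label is covered iff its score <= q_hat.
    return sum(s <= q_hat for s in test_scores) / len(test_scores)

rng = random.Random(0)
cal_scores = [abs(rng.gauss(0.0, 1.0)) for _ in range(1000)]
q_hat = conformal_quantile(cal_scores, alpha=0.1)

same_dist = [abs(rng.gauss(0.0, 1.0)) for _ in range(5000)]  # exchangeable with calibration
shifted = [abs(rng.gauss(0.0, 3.0)) for _ in range(5000)]    # noisier test conditions

cov_same = empirical_coverage(same_dist, q_hat)
cov_shifted = empirical_coverage(shifted, q_hat)
print(cov_same, cov_shifted)
```

In this simulation, coverage stays close to the 90% target on exchangeable data and falls well below it under the shift, which is the pattern to look for when calibration and test conditions differ.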

Issue 2: Prediction Sets are Too Large

Problem: The prediction sets contain too many labels, making them uninformative for decision-making.

Solution:

- Improve the Underlying Model: Large prediction sets often reflect high inherent uncertainty in the model's predictions. The most effective solution is to improve the accuracy of your base model \( \hat{f} \) through better feature engineering, model architecture, or training procedures [47] [50].

- Adapt the Nonconformity Score: Investigate more advanced nonconformity scores. For classification, using the Adaptive Prediction Set (APS) method, which considers the cumulative probability of labels until the true one is included, can often lead to smaller sets [51].

- Adjust the Significance Level: There is a direct trade-off between validity and informativeness. A lower \( \alpha \) (e.g., 0.05 for 95% coverage) will produce larger sets. If the application allows, consider using a higher \( \alpha \) (e.g., 0.2 for 80% coverage) to obtain smaller, more informative sets [48].
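The trade-off between the significance level and set size can be checked empirically. The following sketch uses synthetic five-class softmax outputs, with labels drawn from those same probabilities (an idealized, perfectly calibrated model), and the simple score of one minus the probability of the true class. It does not implement APS. All helper names and data are hypothetical.

```python
import math
import random

def softmax(logits):
    m = max(logits)
    exps = [math.exp(z - m) for z in logits]
    total = sum(exps)
    return [e / total for e in exps]

def conformal_quantile(scores, alpha):
    n = len(scores)
    k = math.ceil((n + 1) * (1 - alpha))
    return math.inf if k > n else sorted(scores)[k - 1]

def prediction_set(probs, q_hat):
    # Include every class whose score 1 - p_k is within the threshold.
    return [k for k, p in enumerate(probs) if 1.0 - p <= q_hat]

def make_data(n, rng, n_classes=5):
    data = []
    for _ in range(n):
        probs = softmax([rng.gauss(0.0, 2.0) for _ in range(n_classes)])
        label = rng.choices(range(n_classes), weights=probs)[0]
        data.append((probs, label))
    return data

rng = random.Random(0)
calibration = make_data(1000, rng)
test = make_data(2000, rng)
cal_scores = [1.0 - probs[label] for probs, label in calibration]

def average_set_size(alpha):
    q_hat = conformal_quantile(cal_scores, alpha)
    return sum(len(prediction_set(p, q_hat)) for p, _ in test) / len(test)

size_95 = average_set_size(0.05)  # 95% target coverage
size_80 = average_set_size(0.20)  # 80% target coverage
print(size_95, size_80)
```

Because the threshold can only decrease as \( \alpha \) increases, the 80% sets are never larger than the 95% sets for the same input. Some sets at the higher \( \alpha \) may be empty.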

Issue 3: Handling High-Dimensional or Structured Outputs

Problem: Standard CP methods are designed for scalar outputs, but my task involves complex outputs like text, graphs, or images.

Solution:

- Leverage Task-Specific Conformal Methods: The CP framework has been extended to handle structured data. For knowledge graphs, methods have been developed to provide prediction sets for entities and relationships [51]. For natural language processing, research is active in applying CP to tasks with unbounded output spaces [47].

- Define a Structured Nonconformity Score: The key is to define a meaningful nonconformity score \( s(x, y) \) that measures the "strangeness" of a structured output \( y \) given input \( x \). For a knowledge graph relationship extraction task, this could be the model's confidence in a predicted relationship tuple [51].

Experimental Protocols

Protocol 1: Split Conformal Prediction for Regression

This is a foundational protocol for creating prediction intervals for a continuous outcome, such as a compound's binding affinity.

1. Objective: To construct a prediction interval \( C(X_{test}) \) for a regression target such that \( \mathbb{P}(Y_{test} \in C(X_{test})) \geq 0.9 \).

2. Research Reagent Solutions:

| Item | Function in Protocol |
|---|---|
| Pre-trained Predictor \( \hat{f} \) | The core model that outputs a point prediction for a given input. |
| Held-out Calibration Dataset \( \{(X_i, Y_i)\}_{i=1}^n \) | A dataset, not used in training, for calculating nonconformity scores and the critical quantile. |
| Nonconformity Score \( s(x,y) = \lvert y - \hat{f}(x) \rvert \) | Measures the error between the actual and predicted value. |
| Significance Level \( \alpha = 0.1 \) | Determines the desired coverage probability of \( 1 - \alpha = 90\% \). |

3. Methodology:

- Step 1: Train a regression model \( \hat{f} \) on the training dataset.

- Step 2: For each sample \( (X_i, Y_i) \) in the calibration dataset, compute the nonconformity score: \( S_i = |Y_i - \hat{f}(X_i)| \).

- Step 3: Calculate the critical quantile \( \hat{q} \). With \( n \) calibration samples, \( \hat{q} \) is the empirical quantile of the scores \( \{S_1, \ldots, S_n\} \) at level \( \lceil (n+1)(1-\alpha) \rceil / n \), i.e., the \( \lceil (n+1)(1-\alpha) \rceil \)-th smallest score.

- Step 4: For a new test point \( X_{test} \), output the prediction interval: \( C(X_{test}) = [\hat{f}(X_{test}) - \hat{q}, \hat{f}(X_{test}) + \hat{q}] \) [49] [48].
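The four steps can be sketched end to end as follows. This example assumes a hypothetical stand-in predictor `f_hat` (a fixed linear function, not a trained model) and synthetic data with unit Gaussian noise; only the standard library is used.

```python
import math
import random

def f_hat(x):
    # Step 1 stand-in: a hypothetical pre-trained regressor.
    return 2.0 * x

def conformal_quantile(scores, alpha):
    # Step 3: the ceil((n + 1) * (1 - alpha))-th smallest calibration score.
    n = len(scores)
    k = math.ceil((n + 1) * (1 - alpha))
    return math.inf if k > n else sorted(scores)[k - 1]

def predict_interval(x, q_hat):
    # Step 4: symmetric interval around the point prediction.
    center = f_hat(x)
    return center - q_hat, center + q_hat

rng = random.Random(42)

def sample(n):
    # Synthetic (x, y) pairs: y = 2x + standard normal noise.
    points = []
    for _ in range(n):
        x = rng.uniform(0.0, 10.0)
        points.append((x, 2.0 * x + rng.gauss(0.0, 1.0)))
    return points

calibration = sample(500)
test = sample(2000)

# Step 2: absolute-residual nonconformity scores on the calibration set.
scores = [abs(y - f_hat(x)) for x, y in calibration]
q_hat = conformal_quantile(scores, alpha=0.1)

covered = 0
for x, y in test:
    lower, upper = predict_interval(x, q_hat)
    covered += lower <= y <= upper
coverage = covered / len(test)
print(q_hat, coverage)
```

With exchangeable calibration and test data, the empirical coverage should land near the 90% target. The interval half-width `q_hat` is the same for every input, which is a known limitation of the absolute-residual score.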

4. Visualization of Workflow:

Protocol 2: Split Conformal Prediction for Classification

This protocol creates prediction sets for discrete classes, which is crucial for tasks like molecular property classification.

1. Objective: To construct a prediction set \( C(X_{test}) \) for a classification target such that \( \mathbb{P}(Y_{test} \in C(X_{test})) \geq 0.95 \).