Beyond the False Signal: Strategies to Mitigate Ancestral Polymorphism in Introgression Detection

Accurately identifying true introgression is critical for evolutionary studies and biomedical research, yet it is frequently confounded by false positives from ancestral polymorphism and selection.

Abstract

Accurately identifying true introgression is critical for evolutionary studies and biomedical research, yet it is frequently confounded by false positives from ancestral polymorphism and selection. This article provides a comprehensive framework for researchers and drug development professionals to navigate these challenges. We explore the foundational causes of spurious signals, from lineage-specific rate variation to the pervasive effects of background selection. The content details robust methodological approaches, including alignment-based pipelines and site-pattern methods, and offers practical troubleshooting for dataset optimization. Finally, we present a rigorous validation protocol incorporating statistical benchmarking and case studies from human and plant genomics to ensure the reliability of introgression inferences in clinical and evolutionary contexts.

Unmasking the Imposters: How Ancestral Polymorphism and Selection Generate False Introgression Signals

Frequently Asked Questions (FAQs)

1. What is the fundamental difference between introgression and incomplete lineage sorting (ILS)? A: Introgression and ILS both create discordance between gene trees and species trees, but through different mechanisms. Introgression is the movement of genetic material from one species into the gene pool of another through hybridization and repeated backcrossing [1]. Incomplete lineage sorting (ILS), also called deep coalescence, is the failure of ancestral genetic polymorphisms to sort (coalesce) into distinct lineages between successive speciation events [2]; the apparent character conflict it produces is known as hemiplasy. This means a gene variant in one species might be more closely related to a variant in a more distantly related species than to the variant in its closest relative, not because of gene flow, but simply through chance retention of an ancient polymorphism [2].

2. What are the primary analytical challenges in distinguishing these two signals? A: The core challenge is that both processes produce similar patterns of shared genetic variation, making them difficult to separate using simple tree-based methods [1] [3]. Specific challenges include:

- Similar Gene Tree Discordance: Both ILS and introgression can cause a gene tree to show a different evolutionary relationship than the species tree.

- Model Violations: Statistical methods for distinguishing them often rely on simplifying assumptions (e.g., constant population size, neutral markers) that may be violated in real-world scenarios, complicating interpretation [3].

- Confounding with Other Forces: Variation in mutation rates across the genome can create patterns that mimic recent introgression [4]. Furthermore, natural selection can skew allele frequencies and complicate the picture [4].

3. What kind of data is most powerful for making this distinction? A: Using multiple, independent lines of evidence is crucial. No single locus can provide a definitive answer. Key data includes:

- Genome-Wide Data: Sequencing many unlinked loci from across the nuclear genome provides the statistical power to discern the underlying demographic history [1].

- Population-Level Sampling: Sampling multiple individuals from allopatric (geographically separated) and parapatric (adjacent) populations of the species in question is highly informative. Introgression often leaves a stronger signal in parapatric populations, while ILS is expected to be evenly distributed [1].

- Different Marker Types: Contrasting patterns from biparentally inherited nuclear DNA, maternally inherited mitochondrial DNA (mtDNA), and chloroplast DNA (paternally inherited in conifers such as pines, maternally inherited in most flowering plants) can be very revealing. For example, mtDNA can introgress more easily than nuclear genes, creating mito-nuclear discordance [1] [3].

Troubleshooting Guides

Problem 1: Incongruent Phylogenies from Different Genomic Regions

Observation: Gene trees built from different loci show conflicting topologies, and you need to determine if the cause is ILS or introgression.

| Troubleshooting Step | Rationale and Methodology |

|---|---|

| Analyze Geographic Pattern | Compare genetic differentiation between species in allopatry versus parapatry/sympatry. Lower differentiation in parapatry suggests ongoing gene flow (introgression), while similar levels of differentiation in allopatry and parapatry are more consistent with ILS [1]. |

| Utilize Coalescent-Based Model Selection | Use frameworks like Approximate Bayesian Computation (ABC) or the Isolation with Migration (IM) model to compare the statistical support for different speciation scenarios (e.g., strict isolation vs. isolation-with-migration). These methods can estimate parameters like population divergence times and migration rates [1]. |

| Apply Summary Statistics for Introgression | Calculate statistics designed to detect gene flow. RNDmin is a robust method that uses the minimum pairwise sequence distance between two populations relative to an outgroup to detect recently introgressed loci [4]. Gmin (dmin/dXY) is another statistic sensitive to recent migration that is normalized to account for variation in mutation rates [4]. |
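To make the two summary statistics in the last row concrete, here is a minimal sketch, assuming phased haplotypes for one genomic window stored as equal-length strings. The function names and the toy data are hypothetical; the definitions used are d_min (smallest between-population pairwise distance), RNDmin = d_min / d_out with d_out the average of the two mean ingroup-to-outgroup distances, and Gmin = d_min / mean d_XY. Treat it as an illustration of the arithmetic, not as the reference implementation from [4].

```python
from itertools import product
from statistics import mean


def site_distance(hap_a, hap_b):
    """Proportion of differing sites between two equal-length haplotypes."""
    return sum(a != b for a, b in zip(hap_a, hap_b)) / len(hap_a)


def between_population_distances(pop_x, pop_y):
    """Distances for every pair made of one haplotype from each population."""
    return [site_distance(a, b) for a, b in product(pop_x, pop_y)]


def rnd_min_and_g_min(pop_x, pop_y, outgroup):
    """Return d_min, RNDmin and Gmin for one window (NaN when undefined)."""
    d_xy = between_population_distances(pop_x, pop_y)
    d_min = min(d_xy)
    mean_d_xy = mean(d_xy)
    # d_out: average divergence of the two ingroup populations from the outgroup.
    d_out = (mean(between_population_distances(pop_x, outgroup))
             + mean(between_population_distances(pop_y, outgroup))) / 2
    nan = float("nan")
    return {
        "d_min": d_min,
        "rnd_min": d_min / d_out if d_out > 0 else nan,
        "g_min": d_min / mean_d_xy if mean_d_xy > 0 else nan,
    }


# Toy phased haplotypes for a single 10-site window (hypothetical data).
pop_x = ["AAAAAAAAAA", "AAAAAAAAAT"]
pop_y = ["AAAAAAAATT", "TTAAAAAAAA"]
outgroup = ["CCCCAAAAAA"]
print(rnd_min_and_g_min(pop_x, pop_y, outgroup))
```

Low values of either ratio in a window, relative to the genome-wide distribution, would flag that window as a candidate for recent introgression; significance still has to come from simulations or a genome-wide baseline.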

Workflow diagram (not reproduced here): the troubleshooting steps above integrated into a single decision-making process.

Problem 2: Mito-Nuclear Discordance

Observation: The phylogeny from mitochondrial DNA (mtDNA) does not match the phylogeny from nuclear DNA, a common form of cytonuclear discordance.

| Troubleshooting Step | Rationale and Methodology |

|---|---|

| Test for Pervasive Nuclear Introgression | If mtDNA has introgressed, it is often part of a broader, but potentially weaker, signal of nuclear introgression. Scan the nuclear genome for regions with exceptionally high similarity between the two species, using methods like RNDmin or FST outliers [4] [3]. |

| Evaluate the Role of Selection | Consider if natural selection could be driving the mtDNA capture. For example, an adaptive mitochondrial haplotype might sweep through a population, even in the absence of significant nuclear gene flow [3]. |

| Assess Reproductive Biology | Investigate the known reproductive mode of the species and their hybrids. In some systems, clonal reproduction of hybrids (e.g., gynogenesis) can prevent nuclear introgression while allowing for the complete fixation of allospecific mtDNA, pointing to an ancient hybridization event [3]. |

The following table summarizes empirical findings from published research that successfully differentiated between ILS and introgression.

Table 1: Empirical Data from Case Studies on ILS vs. Introgression

| Study System | Key Analytical Methods | Evidence for Introgression | Evidence Against ILS | Citation |

|---|---|---|---|---|

| Two pine species (Pinus massoniana and P. hwangshanensis) | Population structure analysis, Comparison of allopatric vs. parapatric FST, Approximate Bayesian Computation (ABC), Ecological Niche Modeling | More admixture in parapatric populations; Lower interspecific differentiation in parapatry; ABC supported a model of long isolation followed by secondary contact. | ILS would predict even sharing of polymorphisms across allopatric and parapatric populations, which was not observed. | [1] |

| Spined loach fish (Cobitis complex) | Multi-locus sequencing (nuclear and mtDNA), Coalescent-based analyses, Knowledge of hybrid clonal reproduction | Mito-nuclear mosaic genome (C. tanaitica nuclear DNA clusters with C. taenia, but its mtDNA clusters with C. elongatoides). Statistical rejection of ILS models. | Contemporary hybrids are clonal and cannot mediate nuclear introgression, implying an ancient hybridization event. | [3] |

| Mosquitoes (Anopheles quadriannulatus and A. arabiensis) | RNDmin statistic to detect introgressed regions | Identification of three novel candidate regions for introgression, including one on the X chromosome. | The RNDmin method is designed to be robust to ILS and variation in mutation rates, helping to pinpoint true introgression. | [4] |

The Scientist's Toolkit: Key Reagents and Computational Methods

Table 2: Essential Reagents and Computational Tools for Differentiation Studies

| Item / Method | Function / Purpose in Analysis |

|---|---|

| Multiple, Independent Intron Markers | Neutral, non-coding regions distributed across the genome are ideal for inferring demographic history without the confounding effects of natural selection [1]. |

| Outgroup Sequences | Essential for rooting phylogenetic trees and for normalizing statistics like RND (Relative Node Depth) and RNDmin to account for variation in mutation rates [4]. |

| Approximate Bayesian Computation (ABC) | A statistical framework for comparing complex demographic models (e.g., with and without gene flow) to identify the scenario best supported by the observed genetic data [1]. |

| Isolation with Migration (IM) Model | A coalescent-based model that jointly estimates population sizes, divergence times, and migration rates from multi-locus sequence data [1]. |

| RNDmin Statistic | A summary statistic used to test for introgression by examining the minimum pairwise sequence distance between populations relative to divergence to an outgroup. It is powerful for detecting recent and strong introgression [4]. |

| Phased Haplotypes | Knowing the phase of alleles (which alleles are linked on the same chromosome) is required for several powerful detection methods, including those based on minimum sequence distances (dmin) [4]. |

| Ecological Niche Modeling (ENM) | Used to infer historical species range shifts (e.g., during past climate oscillations), which can provide independent evidence for potential secondary contact zones that would facilitate introgression [1]. |

Technical Support Center

Troubleshooting Guides

Guide 1: Addressing False Positive Introgression Signals in Genomic Windows

- Problem: Your analysis detects local introgression signals that are not biologically plausible. These spurious signals often appear in genomic regions with low nucleotide diversity.

- Explanation: The widely used Patterson's D statistic can produce unreliable inferences in small genomic windows. Its variance increases in regions of low diversity, leading to false positives [5]. Furthermore, the stochastic nature of the substitution process means that even under a molecular clock, estimated substitution rates can vary erroneously between lineages, creating a pattern that mimics true rate variation [6].

- Solution:

- Implement the D+ Statistic: Use the D+ statistic, which incorporates both shared derived alleles (ABBA/BABA patterns) and shared ancestral alleles (BAAA/ABAA patterns). This increases the number of informative sites per region, improving precision and reducing the false positive rate in local analyses [5].

- Simulate Your Data: Use coalescent simulations under a model without introgression to establish a baseline for expected rate variation and D statistic variance due to stochastic error alone [5] [6].

- Compare Clade Averages: When checking for rate variation, compare average substitution rates between different clades rather than individual lineages, as this approach is less susceptible to erroneous inference from estimation error [6].

Guide 2: Managing Alert Fatigue from Statistical False Positives

- Problem: A high volume of false positive alerts from your detection pipelines is causing "alert fatigue," where real signals may be overlooked.

- Explanation: This is a common operational challenge. False positives waste time and can desensitize researchers to alerts, increasing the risk of missing genuine findings [7] [8].

- Solution:

- Clearly Define Detections: For every statistical test or alert, precisely define the specific evolutionary event or pattern it is designed to catch and the action to be taken if it fires [8].

- Tune Your Thresholds: Set accurate thresholds for your statistics by first baselining normal variation in your dataset. Avoid using default thresholds without adjusting them to your specific data and research question [9] [8].

- Implement a Feedback Loop: When a false positive is identified, use it as feedback to adjust your analytical parameters or detection rules. This continuous tuning prevents the same false positive from recurring [9].
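The threshold-tuning advice above can be sketched as follows, assuming you already have test-statistic values from a baseline that is believed to be free of introgression (for example, null simulations or putatively neutral windows). The function names and the target false positive rate are hypothetical choices for illustration, not values taken from the cited sources.

```python
def calibrate_threshold(null_values, false_positive_rate=0.01):
    """Cutoff on |statistic| that at most the target fraction of null values exceed."""
    ranked = sorted(abs(v) for v in null_values)
    allowed = int(false_positive_rate * len(ranked))  # null values permitted above the cutoff
    return ranked[len(ranked) - allowed - 1]


def flag_windows(observed_values, cutoff):
    """Indices of observed windows whose |statistic| is strictly above the cutoff."""
    return [i for i, v in enumerate(observed_values) if abs(v) > cutoff]


# Hypothetical baseline of 20 null D values and four observed windows.
null_d = [i / 100 for i in range(-10, 10)]
cutoff = calibrate_threshold(null_d, false_positive_rate=0.10)
observed_d = [0.02, -0.30, 0.09, 0.15]
print(cutoff, flag_windows(observed_d, cutoff))
```

Re-running the calibration whenever a confirmed false positive is added to the baseline is one simple way to implement the feedback loop described in the last bullet.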

Frequently Asked Questions (FAQs)

Q1: What is the primary cause of false positive introgression signals in local genomic regions? The primary cause is the application of statistics designed for genome-wide use, like Patterson's D, to small local regions. In windows with low nucleotide diversity there are few informative sites, so the variance of the statistic is inflated. This can create spurious signals that are not due to actual gene flow [5].

Q2: How does the D+ statistic improve upon the classic D statistic? The D statistic only uses shared derived alleles (ABBA and BABA site patterns). The D+ statistic increases analytical power by also leveraging shared ancestral alleles (BAAA and ABAA patterns). This provides more information per genomic region, leading to better precision and a lower false positive rate when detecting local introgression [5].

Q3: Can substitution rate variation be inferred even when the molecular clock holds true? Yes. Error in rate estimation, stemming from the stochastic (Poisson) nature of the substitution process and the underestimation of multiple substitutions, can lead to the erroneous inference of rate variation among lineages, even when the underlying substitution process is clock-like [6].

Q4: What is a key best practice for maintaining the reliability of a detection pipeline over time? Treat your detections like code. This "Detection as Code" (DaC) approach involves continuous maintenance, including testing new detections against historical data, tuning thresholds, and disabling or refining rules that become noisy as data and methods evolve [8].

Experimental Protocols & Methodologies

Protocol: Implementing the D+ Statistic for Introgression Detection

This protocol outlines the steps to compute the D+ statistic for a four-population system (P1, P2, P3, and an outgroup, P4).

- Data Preparation: Obtain genotype or allele frequency data for at least one individual from each of the four populations across your genomic regions of interest (e.g., 5kb windows) [5].

- Variant Site Identification: Identify biallelic Single Nucleotide Polymorphisms (SNPs). Use the outgroup (P4) to polarize alleles, determining the ancestral (A) and derived (B) states [5].

- Site Pattern Classification: For each SNP in a window, classify it into one of four key patterns based on the alleles present in P1, P2, and P3 (assuming the outgroup is fixed for the ancestral allele):

- ABBA: Derived allele in P3 and P2, ancestral in P1.

- BABA: Derived allele in P3 and P1, ancestral in P2.

- BAAA: Ancestral allele in P3 and P2, derived in P1.

- ABAA: Ancestral allele in P3 and P1, derived in P2 [5].

- Calculate the D+ Statistic: Use the following formula, summing over all L sites in the genomic window [5]:

D+ = Σ(ABBA - BABA + BAAA - ABAA) / Σ(ABBA + BABA + BAAA + ABAA)

For sample sizes greater than one, the calculation can be expressed in terms of derived allele frequencies (p̂ᵢⱼ for population j at site i).

- Interpretation: A significant positive D+ value indicates introgression between P3 and P2, while a significant negative value indicates introgression between P3 and P1.
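The protocol above can be sketched in a few lines, assuming each site is summarized by the derived allele frequencies in P1, P2, and P3 and that the outgroup is fixed for the ancestral allele. Under that assumption each site contributes, for example, (1 - p1) * p2 * p3 to the ABBA total; with one haploid sample per population the frequencies are 0 or 1 and the totals reduce to plain site-pattern counts. The function name and input layout are hypothetical.

```python
def d_and_d_plus(site_freqs):
    """Return (D, D+) for one window.

    site_freqs: iterable of (p1, p2, p3) derived-allele frequencies per site;
    the outgroup is assumed to carry only the ancestral allele.
    """
    abba = baba = baaa = abaa = 0.0
    for p1, p2, p3 in site_freqs:
        abba += (1 - p1) * p2 * p3        # derived allele shared by P2 and P3
        baba += p1 * (1 - p2) * p3        # derived allele shared by P1 and P3
        baaa += p1 * (1 - p2) * (1 - p3)  # ancestral allele shared by P2 and P3
        abaa += (1 - p1) * p2 * (1 - p3)  # ancestral allele shared by P1 and P3
    nan = float("nan")
    d_den = abba + baba
    plus_den = abba + baba + baaa + abaa
    d = (abba - baba) / d_den if d_den > 0 else nan
    d_plus = (abba - baba + baaa - abaa) / plus_den if plus_den > 0 else nan
    return d, d_plus


# Toy window with 6 ABBA, 2 BABA, 5 BAAA and 3 ABAA sites (hypothetical data).
window = [(0, 1, 1)] * 6 + [(1, 0, 1)] * 2 + [(1, 0, 0)] * 5 + [(0, 1, 0)] * 3
print(d_and_d_plus(window))
```

In this toy window both statistics are positive, which under the interpretation above points towards allele sharing between P3 and P2; whether the value is significant is a separate question that needs a resampling or simulation step.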

Signaling Pathways and Workflows

Diagram 1: D vs. D+ Site Pattern Logic

Diagram 2: False Positive Mitigation Workflow

Data Presentation

Table 1: Comparison of Introgression Detection Statistics

| Feature | Patterson's D Statistic | D+ Statistic |

|---|---|---|

| Core Principle | Measures excess of shared derived alleles (ABBA vs. BABA) [5] | Measures excess of shared derived AND shared ancestral alleles [5] |

| Informative Sites Used | Shared derived alleles only (ABBA, BABA) [5] | Shared derived and shared ancestral alleles (ABBA, BABA, BAAA, ABAA) [5] |

| Optimal Use Case | Detecting genome-wide introgression [5] | Detecting local, targeted introgression in genomic windows [5] |

| Performance in Low-Diversity Regions | High variance, prone to spurious results (false positives) [5] | Improved precision and lower false positive rate due to more informative sites [5] |

Table 2: Common Sources of Error in Substitution Rate Estimation

| Source of Error | Impact on Analysis | Mitigation Strategy |

|---|---|---|

| Stochastic Substitution Process | Even with a molecular clock, the number of substitutions per branch varies, creating erroneous rate variation [6]. | Use coalescent simulations to establish a null distribution; compare average rates between clades [6]. |

| Multiple Hit / Saturation | Underestimation of true substitutions on longer branches, leading to underestimation of rates and erroneous variation [6]. | Employ appropriate substitution models that correct for multiple hits; be aware of the node-density effect [6]. |

| Imperfect Model Fit | No model perfectly captures the substitution process, leading to residual error in rate estimation [6]. | Use model selection tools to choose the best-fitting model; consider model averaging. |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools and Resources

| Item | Function / Description | Relevance to Mitigating False Positives |

|---|---|---|

| Simulation Software (e.g., ms for coalescent simulation, SLiM for forward-time simulation) | Simulates genetic data under evolutionary models without introgression. | Creates a null distribution to test whether observed rate variation or D statistics exceed expectations by chance alone [5] [6]. |

| Population Genomic Analysis Toolkit (e.g., BCFtools, PLINK, ADMIXTOOLS) | Suites for processing VCF files, calculating basic statistics, and implementing tests like D and f4. | The foundational environment for data preparation and initial analysis. |

| D+ Statistic Script | Custom or published script/software to calculate the D+ statistic from aligned sequence or genotype data. | Directly implements the improved method for local introgression detection, reducing false positives [5]. |

| High-Quality Reference Genome & Annotated Outgroup | A well-assembled genome for mapping and an evolutionarily close outgroup for accurate allele polarization. | Critical for correctly identifying ancestral and derived alleles, which is the basis for both D and D+ statistics [5]. |

| High-Performance Computing (HPC) Cluster | Computing resources for large-scale genomic simulations and genome-scans. | Enables the computationally intensive simulations and bootstrapping required for robust statistical testing. |

Troubleshooting Guides

Issue 1: Distinguishing True Introgression from Ancestral Polymorphism

Problem: D-statistics (ABBA-BABA test) yield significant signals, but it is unclear if this results from true introgression or incomplete lineage sorting (ILS) of ancestral variation.

Diagnosis and Solution:

| Test/Metric | Calculation Formula | Interpretation | Key Thresholds |

|---|---|---|---|

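| D-Statistic | D = (N_ABBA - N_BABA) / (N_ABBA + N_BABA) | Measures the asymmetry between ABBA and BABA site-pattern counts; a D significantly different from 0 indicates excess allele sharing. | D more than 3-4 standard errors from zero (Z-score magnitude above 3) suggests significance. |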

| f-branch (fb) | Estimated via f4-ratio estimation: fb = (f4(P1, O; A, B) / f4(P1, P2; A, B)) | Estimates the proportion of introgressed ancestry in a genome. | fb ≈ 0: No introgression. fb > 0: Proportion of ancestry from sister lineage. |

| Site Frequency Spectrum (SFS) | — | Compare the SFS in the test population to neutral expectations; an excess of intermediate-frequency alleles may suggest balancing selection. | — |

Experimental Protocol:

- Variant Calling: Generate a whole-genome, multi-individual SNP dataset for P1, P2, P3, and an outgroup (O).

- D-statistic Calculation: Use packages like Dsuite to calculate D-statistics in sliding windows across the genome. Identify regions with significant D-values.

- f-branch Estimation: Apply f4-ratio estimation to significant regions to quantify the proportion of ancestry from an introgressed lineage.

- Coalescent Simulation: Simulate genomic data under a model of strict isolation (no introgression) with parameters (e.g., population size, divergence time) estimated from your data.

- Comparison: Compare the distribution and magnitude of significant D-values from your empirical data to the distribution obtained from simulations where no introgression is possible. If empirical signals are stronger/more frequent, true introgression is a more likely cause than ILS alone.
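The comparison step can be illustrated with an empirical p-value, assuming the null D values have already been produced by strict-isolation simulations. The function name and the numbers are hypothetical, and the add-one correction in numerator and denominator is a common convention for Monte Carlo tests rather than something prescribed by the sources cited here.

```python
def empirical_p_value(observed, null_values):
    """Two-sided Monte Carlo p-value of an observed statistic against a simulated null."""
    at_least_as_extreme = sum(abs(v) >= abs(observed) for v in null_values)
    # The +1 terms count the observed value itself, so p is never exactly zero.
    return (at_least_as_extreme + 1) / (len(null_values) + 1)


# Hypothetical D values from nine no-introgression simulations of one window.
null_d = [0.01, -0.02, 0.03, -0.05, 0.04, 0.00, 0.02, -0.01, 0.06]
print(empirical_p_value(0.05, null_d))  # modest signal, often matched by ILS alone
print(empirical_p_value(0.20, null_d))  # more extreme than every simulated value
```

With only nine simulations the smallest attainable p-value is 0.1, which shows why many replicates are needed before a window can be called an outlier.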

Issue 2: Confounding Effects of Background Selection

Problem: Background Selection (BGS) reduces genetic diversity in regions of low recombination, creating "valleys" of diversity that can be mistaken for selective sweeps or local reductions in gene flow, potentially creating spurious introgression signals.

Diagnosis and Solution:

| Analysis Method | Application | Interpretation of Results |

|---|---|---|

| Diversity (π) vs. Recombination Rate Correlation | Calculate π in non-overlapping windows and plot against the local recombination rate. | A strong positive correlation is a hallmark signature of linked selection, including BGS. |

| B-value Calculation | B = πobserved / πneutral expectation. The B-value estimates the reduction in diversity due to BGS. | B ≈ 1: No BGS effect. B < 1: Diversity is reduced by BGS. |

| Comparison to Neutral Model | Use software (e.g., SLiM, msprime) to simulate genomes with and without BGS. | If patterns in empirical data (e.g., diversity valleys) are replicated in BGS simulations, BGS is a sufficient explanation. |

Experimental Protocol:

- Estimate Recombination Rates: Generate a genetic map for your organism or use a published one.

- Calculate Genomic Diversity: Compute nucleotide diversity (π) in windows across the genome.

- Correlation Analysis: Perform a statistical test (e.g., Spearman's correlation) between π and recombination rate.

- Modeling and Simulation:

- Estimate the density of functional elements (e.g., using annotation files) across the genome.

- Input the recombination map and functional density into a simulator like SLiM to generate expected diversity patterns under a BGS model.

- Identify Outliers: Compare empirical diversity values to the simulated BGS distribution. Genomic windows where π is significantly lower than the BGS expectation are candidate regions for bona fide selective sweeps or other processes.
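The correlation step of this protocol can be sketched without external libraries, assuming per-window values of π and recombination rate have already been computed. The helper names and the toy numbers are hypothetical; in practice a statistics package would also give a p-value for the coefficient.

```python
def average_ranks(values):
    """Ranks starting at 1, with tied values sharing their average rank."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        shared_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = shared_rank
        i = j + 1
    return ranks


def pearson(x, y):
    """Pearson correlation coefficient of two equal-length sequences."""
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    var_x = sum((a - mx) ** 2 for a in x)
    var_y = sum((b - my) ** 2 for b in y)
    return cov / (var_x * var_y) ** 0.5


def spearman(x, y):
    """Spearman's rho: the Pearson correlation of the rank-transformed data."""
    return pearson(average_ranks(x), average_ranks(y))


# Hypothetical per-window diversity and recombination rate (cM/Mb).
pi_per_window = [0.001, 0.002, 0.004, 0.003, 0.006]
rec_rate = [0.1, 0.5, 1.0, 1.5, 2.0]
print(spearman(pi_per_window, rec_rate))
```

A clearly positive coefficient across many windows would be consistent with diversity being shaped by linked selection, which is the pattern the BGS simulations in the later steps are meant to reproduce.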

Frequently Asked Questions (FAQs)

Q1: My D-statistic is significant, and the f-branch estimate is high in a specific region. Can I conclusively say this is introgression? A1: Not yet. A high f-branch estimate suggests gene flow but does not rule out the retention of ancestral polymorphism in that specific region due to linked selection. You must test whether the region is also subject to BGS (low recombination, gene-dense) or balancing selection. If it is, the signal may be a false positive driven by past selection rather than by gene flow.

Q2: How can I tell if balancing selection is maintaining ancestral polymorphism in my data? A2: Look for specific genomic signatures: (1) an excess of intermediate-frequency alleles in the Site Frequency Spectrum (SFS); (2) higher than expected genetic diversity (e.g., π, θw) together with deeply divergent haplotypes; and (3) trans-species polymorphism, where alleles are shared between species that diverged a long time ago.
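One common way to summarize the first two signatures is Tajima's D, which contrasts π with the Watterson estimator θw; positive values reflect an excess of intermediate-frequency alleles. Tajima's D is not named in the answer above, so take this as one illustrative choice. The sketch assumes an unfolded SFS for n sampled chromosomes, given as a list whose entry i-1 is the number of sites where the derived allele is carried by i chromosomes; the function name and the toy spectra are hypothetical.

```python
import math


def tajimas_d(sfs):
    """Tajima's D from an unfolded SFS with entries for derived counts 1..n-1."""
    n = len(sfs) + 1                      # number of sampled chromosomes
    s = sum(sfs)                          # number of segregating sites
    if s == 0:
        return float("nan")
    # pi: mean pairwise differences, accumulated site by site.
    pi = sum(c * 2 * i * (n - i) for i, c in enumerate(sfs, start=1)) / (n * (n - 1))
    a1 = sum(1 / i for i in range(1, n))
    a2 = sum(1 / i ** 2 for i in range(1, n))
    b1 = (n + 1) / (3 * (n - 1))
    b2 = 2 * (n * n + n + 3) / (9 * n * (n - 1))
    c1 = b1 - 1 / a1
    c2 = b2 - (n + 2) / (a1 * n) + a2 / a1 ** 2
    e1 = c1 / a1
    e2 = c2 / (a1 ** 2 + a2)
    theta_w = s / a1                      # Watterson's estimator
    return (pi - theta_w) / math.sqrt(e1 * s + e2 * s * (s - 1))


# Hypothetical spectra for n = 10 chromosomes.
intermediate_excess = [0, 0, 0, 0, 10, 0, 0, 0, 0]
singleton_excess = [10, 0, 0, 0, 0, 0, 0, 0, 0]
print(tajimas_d(intermediate_excess), tajimas_d(singleton_excess))
```

A positive value in a candidate window is only suggestive: demographic events such as population contraction or structure also push the statistic upward, so it should be compared with the genome-wide distribution.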

Q3: What is the most critical negative control when performing these tests? A3: The most critical control is to analyze genomic regions you believe a priori to be evolving neutrally—areas far from genes, with high recombination rates, and devoid of conserved elements. Establish the null distribution of your test statistics (like D) in these regions. Any inference from putatively selected regions should be compared against this neutral baseline.

Experimental Workflow for Mitigating False Positives

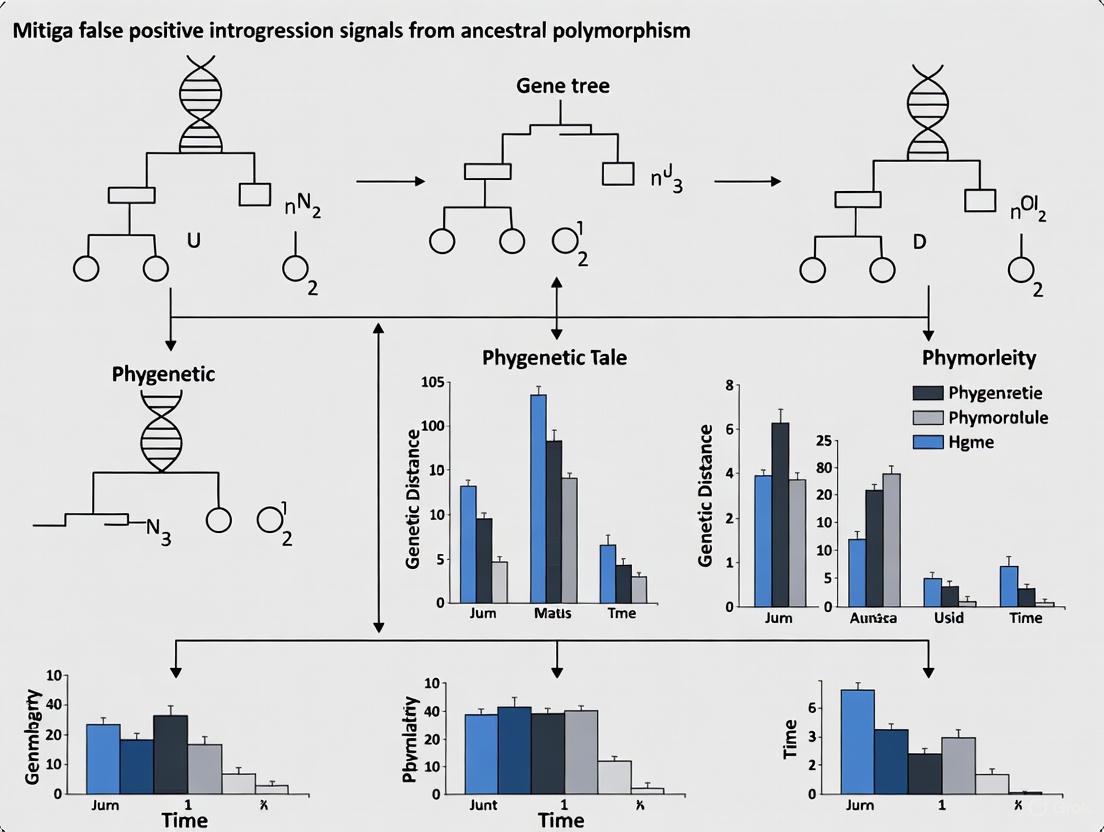

The following diagram outlines the core decision-making workflow for determining the source of an apparent introgression signal.

The Scientist's Toolkit: Research Reagent Solutions

| Reagent / Resource | Type | Primary Function in Analysis |

|---|---|---|

| Reference Genome Assembly | Dataset | Serves as the coordinate system for mapping sequence reads and calling genetic variants. A high-quality assembly is crucial. |

| Population SNP Dataset | Dataset | A VCF file containing genotype calls for multiple individuals across populations. The fundamental input for most population genetic analyses. |

| Genetic Map | Dataset | Provides estimates of recombination rates across the genome. Essential for diagnosing the effects of Background Selection. |

| Dsuite | Software Package | Efficiently calculates D-statistics (ABBA-BABA) and related metrics (f-branch) for genome-wide data to test for introgression. |

| ANGSD | Software Package | Used for estimating the Site Frequency Spectrum (SFS) and other summary statistics from next-generation sequencing data, even without full genotype calls. |

| SLiM | Software Package | A powerful simulation framework for forward-time, individual-based population genetic simulations. Ideal for modeling complex scenarios involving selection and demography. |

| msprime / stdpopsim | Software Package | Tools for coalescent simulations. Efficient for generating large genomic datasets under complex demographic models with neutral evolution or background selection. |

Accurate detection of introgression—the exchange of genetic material between species—is fundamental to understanding evolutionary processes. However, in shallow phylogenies (involving closely related taxa), commonly used methods like the D-statistic can produce false positive signals. These spurious signals are not due to actual gene flow but are often artifacts of substitution rate variation across lineages. This guide provides troubleshooting and methodologies to help researchers distinguish true introgression from these false positives, a critical consideration for research in areas like drug development where genetic insights can inform target identification.

Troubleshooting Guide: False Positive Introgression Signals

Problem: Your analysis using site-pattern methods (e.g., D-statistic, HyDe) on a shallow phylogeny indicates significant introgression, but you suspect the signal might be false.

Solution: Investigate the following potential causes and solutions.

| Symptom | Potential Cause | Diagnostic Steps | Recommended Solution |

|---|---|---|---|

| Significant D-statistic or HyDe signal in young phylogenies (< 300,000 generations) with small population sizes [10]. | Lineage-specific substitution rate variation (rate heterogeneity) [10]. | Perform a relative rate test to quantify rate differences between sister lineages [10]. | Use methods robust to rate variation, such as full-likelihood tests that incorporate branch length information [10]. |

| Inflated false-positive rates (up to 35% for weak, 100% for moderate rate variation) with a 500 Mb genome [10]. | Violation of the molecular clock assumption, leading to homoplasy that creates ABBA/BABA asymmetry [10]. | Simulate data under a null model of no gene flow but with estimated rate variation to establish a baseline false-positive rate [10]. | Employ the D+ statistic, which leverages both shared derived and ancestral alleles, as it has been shown to have better precision [5]. |

| Signal intensifies when a more distant outgroup is used [10]. | Increased potential for multiple hits with distant outgroups, violating the "no multiple hits" assumption [10]. | Re-run the analysis with a closer outgroup, if available, and compare the results. | Ensure the outgroup is not excessively distant from the ingroup taxa under study. |

| Unreliable local introgression signals in genomic windows, especially in regions of low nucleotide diversity [5]. | High variance of the D-statistic in small genomic windows with low diversity [5]. | Calculate the D+ statistic in these windows, as it incorporates more informative sites (BAAA and ABAA patterns) [5]. | Switch from the standard D-statistic to the D+ statistic for local inference of introgressed regions [5]. |

| Significant D-statistic but no known historical opportunity for gene flow. | Ghost introgression or other demographic complexities not accounted for in the model [10]. | Test complex demographic models that include population size changes and structure. | Consider using supervised learning or probabilistic modeling approaches designed for complex scenarios [11]. |

Frequently Asked Questions (FAQs)

1. What are the primary methods for detecting introgression, and how do they work?

Introgression detection methods generally fall into two categories:

- Site-pattern or gene-tree topology methods: These methods, like the D-statistic (ABBA-BABA test) and HyDe, detect gene flow by identifying significant asymmetries between discordant gene trees or site patterns. They operate on the principle that without introgression, two discordant site patterns (ABBA and BABA) occur with equal frequency due to Incomplete Lineage Sorting (ILS). An excess of one pattern suggests gene flow [10] [5].

- Gene-tree branch length methods: Methods like D3 and QuIBL use information from gene-tree branch lengths, examining whether their distributions deviate from expectations under ILS alone [10].

2. Why are "shallow phylogenies" particularly vulnerable to false positives?

Shallow phylogenies, involving closely related taxa, are often assumed to adhere to a molecular clock. However, empirical studies show that even within genera, species can exhibit substitution rate disparities of 10% to over 50% [10]. Methods like the D-statistic assume no multiple hits, but rate variation causes homoplasy (independent mutations), which creates an asymmetry in ABBA and BABA site patterns that mimics the signal of introgression [10].

3. What is the D+ statistic, and how does it improve upon the D-statistic?

The D+ statistic is an extension of the D-statistic that leverages both shared derived alleles (like ABBA and BABA) and shared ancestral alleles (BAAA and ABAA) [5]. Introgression reintroduces chunks of DNA containing both types of alleles into the recipient population. By using this additional information, D+ increases the number of informative sites per genomic region. This improves the precision of local introgression detection and reduces the false positive rate in small genomic windows compared to the standard D-statistic [5].

D+ = [ Σ(ABBA - BABA) + Σ(BAAA - ABAA) ] / [ Σ(ABBA + BABA) + Σ(BAAA + ABAA) ] [5]

4. Besides rate variation, what other factors can cause spurious introgression signals?

Other factors include:

- Ghost Introgression: Gene flow from an unsampled (ghost) lineage can produce a significant D-statistic signal that is misinterpreted [10].

- Inaccurate Branch Lengths: The use of "pseudo-chronograms" with suboptimal branch lengths (e.g., estimated via algorithms like BLADJ) can lead to strong overestimation of phylogenetic signal in other comparative metrics, highlighting the general sensitivity of evolutionary analyses to branch length quality [12].

- Purifying Selection: Selection against deleterious introgressed variants can create patterns that mimic adaptive introgression [5].

Experimental Protocols

Protocol 1: Quantifying Lineage-Specific Rate Variation

Objective: To test the molecular clock assumption and quantify the degree of substitution rate variation between sister lineages.

Materials:

- Genomic sequence data for the ingroup taxa and a suitable outgroup.

- Software for multiple sequence alignment (e.g., MAFFT, MUSCLE).

- Phylogenetic software capable of performing relative rate tests (e.g., HyPhy, MEGA).

Methodology:

- Sequence Alignment: Generate a whole-genome or multi-locus alignment for the taxa of interest, including the outgroup.

- Tree Estimation: Infer a time-calibrated species tree under a strict molecular clock model.

- Relative Rate Test: Apply the Relative Rate Test (RRT) to pairs of sister lineages. This test checks if the genetic distance from each sister lineage to the outgroup is equal [10].

- Interpretation: A significant RRT result indicates a violation of the molecular clock. The percentage difference in distances estimates the magnitude of rate variation.
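
As an illustration of the relative rate step, the sketch below uses Tajima's relative rate test, which compares the number of sites unique to each sister lineage with a one-degree-of-freedom chi-square. This is one common form of the RRT and is an assumption here; the protocol does not say which variant HYPHY or MEGA should run. The function name and example sequences are hypothetical.

```python
import math


def tajima_relative_rate(seq1, seq2, outgroup):
    """Tajima's relative rate test on three aligned sequences.

    Returns (m1, m2, chi2, p): counts of sites unique to each sister lineage,
    the chi-square statistic (1 d.f.), and its p-value.
    """
    if not (len(seq1) == len(seq2) == len(outgroup)):
        raise ValueError("sequences must be aligned to the same length")
    valid = set("ACGT")
    m1 = m2 = 0
    for a, b, o in zip(seq1.upper(), seq2.upper(), outgroup.upper()):
        if not {a, b, o} <= valid:
            continue  # skip gaps and ambiguity codes
        if a != b and b == o:
            m1 += 1   # change unique to lineage 1
        elif a != b and a == o:
            m2 += 1   # change unique to lineage 2
    total = m1 + m2
    if total == 0:
        return m1, m2, 0.0, 1.0
    chi2 = (m1 - m2) ** 2 / total
    # Survival function of a chi-square with 1 d.f.
    p = math.erfc(math.sqrt(chi2 / 2))
    return m1, m2, chi2, p


m1, m2, chi2, p = tajima_relative_rate("CCCCCCAAAA", "AAAAAAAAGG", "AAAAAAAAAA")
print(m1, m2, chi2, round(p, 4))
```

A small p-value would indicate unequal rates between the two sister lineages. The toy alignment here is far too short for a meaningful test and only shows the bookkeeping.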

Protocol 2: Implementing the D+ Statistic for Local Introgression Detection

Objective: To identify specific introgressed regions in a genome with improved precision.

Materials:

- Genotype or allele frequency data for four populations: P1 and P2 (sister taxa), P3 (putative donor), and P4 (outgroup).

- Scripts or software to calculate the D+ statistic (implementation may require custom scripts based on the published formula [5]).

Methodology:

- Data Preparation: Organize genomic data into non-overlapping or sliding windows.

- Site Pattern Counts: For each window, count the occurrences of the four key site patterns:

- ABBA: Derived allele in P2 and P3, ancestral in P1 and P4.

- BABA: Derived allele in P1 and P3, ancestral in P2 and P4.

- BAAA: Derived allele only in P1, ancestral in P2, P3, P4.

- ABAA: Derived allele only in P2, ancestral in P1, P3, P4 [5].

- Calculate D+: Compute the D+ value for each window using the formula provided in the FAQ section.

- Significance Testing: Determine the statistical significance of the D+ value, typically achieved through a block jackknife or bootstrap approach across windows or sites.
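
The significance step can be sketched with a delete-one block jackknife. This is a minimal, unweighted version that assumes blocks of roughly equal size; the block counts are hypothetical, and a published analysis may use a weighted jackknife instead.

```python
import math


def d_plus(blocks):
    """D+ from a list of per-block (ABBA, BABA, BAAA, ABAA) count tuples."""
    abba, baba, baaa, abaa = (sum(col) for col in zip(*blocks))
    denom = abba + baba + baaa + abaa
    if denom == 0:
        raise ValueError("no informative sites")
    return ((abba - baba) + (baaa - abaa)) / denom


def jackknife_z(blocks):
    """Return (D+, standard error, Z-score) from a delete-one block jackknife."""
    n = len(blocks)
    if n < 2:
        raise ValueError("need at least two blocks")
    full = d_plus(blocks)
    # Recompute D+ with each block left out in turn.
    leave_one_out = [d_plus(blocks[:i] + blocks[i + 1:]) for i in range(n)]
    mean = sum(leave_one_out) / n
    variance = (n - 1) / n * sum((x - mean) ** 2 for x in leave_one_out)
    se = math.sqrt(variance)
    z = full / se if se > 0 else float("nan")
    return full, se, z


# Hypothetical per-block counts: (ABBA, BABA, BAAA, ABAA)
blocks = [(10, 4, 50, 40), (8, 5, 45, 42), (12, 3, 55, 38), (9, 6, 48, 44)]
print(jackknife_z(blocks))
```

Real analyses use many more blocks than this example, each large enough to be approximately independent of its neighbours.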

Signaling Pathways and Workflows

Diagram: Site Patterns in Introgression Detection

Research Reagent Solutions

Essential computational tools and conceptual "reagents" for investigating introgression.

| Research Reagent | Function / Description | Example Use Case |
|---|---|---|
| D-statistic (ABBA-BABA) | A summary statistic that tests for an excess of shared derived alleles between populations to detect genome-wide introgression [10] [5]. | Initial screening for the presence of gene flow in a four-taxon system. |
| HyDe | A site-pattern method for detecting and characterizing hybrid speciation events by testing if the two least frequent site patterns occur at comparable frequencies [10]. | Identifying if a taxon is of hybrid origin and determining its parental species. |
| D+ Statistic | An extension of the D-statistic that incorporates shared ancestral alleles to improve precision in detecting local introgressed regions [5]. | Pinpointing the exact genomic locations of introgressed tracts in windows of low diversity. |
| Relative Rate Test | A phylogenetic method used to test the molecular clock hypothesis by comparing rates of evolution between two sister lineages using an outgroup [10]. | Diagnosing potential false positive drivers by quantifying lineage-specific rate variation. |
| Coalescent Simulator (e.g., MSci) | Software that implements the multispecies coalescent model with introgression to simulate genomic data under user-defined demographic parameters [10]. | Generating null distributions to estimate false-positive rates and validate findings. |

Frequently Asked Questions

Q1: What is the primary statistical limitation of Patterson's D statistic when analyzing local genomic regions, and how can it lead to false positives? The primary limitation is its high variance and spurious inferences in genomic windows with low nucleotide diversity. The D statistic becomes unreliable in small regions because it measures an excess of shared derived alleles (ABBA or BABA sites) but does not leverage shared ancestral variation. This can produce false positive signals of introgression in areas with low diversity, as the statistic is sensitive to the limited number of informative sites in these windows [5].

Q2: How does the D+ statistic improve upon the D statistic for detecting local introgression? The D+ statistic incorporates both shared derived alleles (like the D statistic) and shared ancestral alleles. This increases the number of informative sites per genomic region, which improves precision and reduces the false positive rate when identifying local introgressed segments. By using more data, D+ provides a more robust measure for pinpointing the exact location of introgression in a genome [5].

Q3: My analysis of local genomic windows shows high D statistic values, but I suspect they are false positives. What is a likely cause? A likely cause is analyzing regions with low nucleotide diversity. In such windows, the D statistic has high variance and can produce strong but spurious signals of gene flow. It is recommended to use statistics like D+ that are designed for local analysis and to validate findings with additional demographic context and independent methods [5].

Q4: Why are small sequence fragments (like 15-mers) problematic in genomic studies and patent claims? Short sequences are highly non-specific. A 15-nucleotide sequence from one gene can perfectly match sequences in hundreds of other genes. This creates extensive "cross-matches," leading to ambiguous results in sequence-based assays and uncertain infringement liability in patent claims. For example, a 15mer from the human BRCA1 gene matches at least 689 other genes [13].

Q5: What are the common challenges in detecting microexons, and how do they relate to broader issues in genomic analysis? Microexons (≤15 nucleotides) are frequently misannotated or missed entirely in genome annotations due to their small size, which challenges standard RNA-seq read mapping and statistical models for gene prediction. This reflects a broader pervasiveness of detection problems for small genomic features, similar to the challenges of identifying short, informative sequences for introgression analysis [14].

Troubleshooting Guides

| Observation | Potential Cause | Solution |
|---|---|---|
| High D statistic in small genomic windows | Low nucleotide diversity inflating variance | Use the D+ statistic to incorporate shared ancestral alleles and increase informative sites [5]. |
| Spurious local introgression signals | Incomplete Lineage Sorting (ILS) mimicking gene flow | Apply the D+ statistic; simulate data under a null model of no gene flow to establish a baseline [5]. |
| Inability to detect local introgression | Low number of informative derived alleles in the region | Switch from D to D+ statistic, which uses both derived and ancestral alleles to increase power [5]. |
| Primer dimers (40-80 bp peaks) in library prep | Self-annealing of primers during PCR | Perform a bead purification using the same ratio as the final library purification to remove the contaminant [15]. |
| Adaptor dimers (120-160 bp peaks) in library prep | Ligation of adaptors to each other instead of target DNA | Perform an additional bead purification; ensure fresh ethanol is used and beads are fully dry before elution [15]. |
| Low library yields from SPRI bead purifications | Beads not at room temperature, improper mixing, or old ethanol | Allow beads to reach room temperature; mix bead suspension and sample thoroughly; use fresh 70% ethanol [15]. |

Experimental Protocols

Protocol 1: Detecting Local Introgression with the D+ Statistic

- Define Populations: Select four populations for analysis: two closely related populations (P1 and P2) that are potential recipients of gene flow, a donor population (P3), and an outgroup (P4).

- Sequence and Call Variants: Obtain high-coverage genome sequencing data for all populations. Perform variant calling to identify biallelic Single Nucleotide Polymorphisms (SNPs).

- Determine Ancestral Alleles: Use the outgroup (P4) to determine the ancestral (A) and derived (B) allele at each SNP site.

- Calculate Site Patterns: For a given genomic window, tabulate the counts of the following site patterns across all SNPs:

- ABBA: Derived allele in P2 and P3, ancestral in P1 and P4.

- BABA: Derived allele in P1 and P3, ancestral in P2 and P4.

- BAAA: Derived allele only in P1, ancestral in P2, P3, and P4.

- ABAA: Derived allele only in P2, ancestral in P1, P3, and P4.

- Compute D+: Calculate the D+ statistic for the window using the formula:

- Formula: D+ = [Σ(ABBA - BABA) + Σ(BAAA - ABAA)] / [Σ(ABBA + BABA) + Σ(BAAA + ABAA)]

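- Interpretation: A significant positive D+ value indicates excess allele sharing between P3 and P2, consistent with introgression between them. A significant negative value indicates the same for P3 and P1. The sign alone does not establish the direction of gene flow [5].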
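
The polarization and tabulation steps can be sketched as follows. The example assumes one haploid allele call per population at each SNP and treats the outgroup (P4) allele as ancestral. The input layout, function names, and window size are illustrative assumptions, not part of the published method.

```python
from collections import Counter, defaultdict

# Patterns are written in the order P1, P2, P3, P4.
PATTERNS = {"ABBA", "BABA", "BAAA", "ABAA"}


def site_pattern(p1, p2, p3, p4):
    """Return the D+ site pattern for one SNP, or None if uninformative.

    The outgroup allele (p4) defines the ancestral state 'A'.
    """
    if len({p1, p2, p3, p4}) != 2:
        return None  # monomorphic or multiallelic site
    pattern = "".join("A" if allele == p4 else "B" for allele in (p1, p2, p3, p4))
    return pattern if pattern in PATTERNS else None


def count_patterns_by_window(sites, window_size):
    """Tabulate pattern counts per non-overlapping window.

    `sites` yields (position, p1, p2, p3, p4); keys are window start coordinates.
    """
    counts = defaultdict(Counter)
    for pos, p1, p2, p3, p4 in sites:
        pattern = site_pattern(p1, p2, p3, p4)
        if pattern:
            counts[(pos // window_size) * window_size][pattern] += 1
    return counts


sites = [(100, "A", "G", "G", "A"), (250, "G", "A", "A", "A"), (1200, "G", "A", "G", "A")]
for start, c in sorted(count_patterns_by_window(sites, 1000).items()):
    print(start, dict(c))
```

With population allele frequencies rather than single calls, each pattern would instead be weighted by products of derived and ancestral frequencies. That extension is left out here.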

Protocol 2: A Combined Pipeline for Plant Microexon Discovery

- Data Preparation: Collect RNA-seq datasets from multiple tissues and conditions.

- Read Mapping (Combined Approach):

- Perform ab initio spliced read mapping using OLego to identify novel splice junctions without reference to existing annotations.

- Perform a second mapping with STAR, using a splice junction database that combines existing genome annotations and the novel junctions found by OLego.

- Microexon Identification: Identify candidate internal microexons (1-15 nt) supported by junction reads. Require a minimum of 5 reads spanning each splice junction.

- Filtering and Validation: Filter candidates and experimentally validate a subset via RT-PCR followed by sequencing [14].
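
The identification criteria (internal exons of 1-15 nt with at least 5 reads spanning each flanking junction) can be expressed as a simple filter. The record layout below, with 0-based half-open coordinates and `up_reads`/`down_reads` fields, is a hypothetical structure for illustration and not the output format of OLego or STAR.

```python
def filter_microexons(candidates, max_len=15, min_junction_reads=5):
    """Keep candidates that are 1..max_len nt long and whose upstream and
    downstream junctions are each supported by enough spanning reads."""
    kept = []
    for c in candidates:
        length = c["end"] - c["start"]  # assumes 0-based, half-open coordinates
        supported = min(c["up_reads"], c["down_reads"]) >= min_junction_reads
        if 1 <= length <= max_len and supported:
            kept.append(c)
    return kept


candidates = [
    {"id": "me1", "start": 100, "end": 109, "up_reads": 12, "down_reads": 7},
    {"id": "me2", "start": 500, "end": 512, "up_reads": 9, "down_reads": 3},
    {"id": "ex3", "start": 900, "end": 960, "up_reads": 40, "down_reads": 35},
]
print([c["id"] for c in filter_microexons(candidates)])  # ['me1']
```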

Methodology Visualization

Four-Population Tree for Introgression Detection

D+ Statistic Calculation Workflow

The Scientist's Toolkit: Research Reagent Solutions

| Reagent / Tool | Function in Analysis |
|---|---|
| D+ Statistic | A refined population genetic statistic that leverages both shared derived and ancestral alleles to detect local genomic introgression with higher precision than the D statistic [5]. |
| OLego | A de novo spliced read mapping tool that is highly effective for the initial discovery of novel splice junctions, including those bordering microexons, without prior annotation [14]. |
| STAR | A fast and accurate RNA-seq aligner that is optimal for annotation-guided mapping. Used in conjunction with OLego for comprehensive microexon discovery [14]. |
| SPRI Beads | Magnetic beads used for the size-selective purification of DNA fragments (e.g., in NGS library preparation) to remove unwanted products like primer and adaptor dimers [15]. |
| EvaGreen | A fluorescent DNA dye that binds double-stranded DNA with high specificity. Recommended for optional qPCR steps to accurately estimate the cycle number needed for NGS library amplification [15]. |

Building a Robust Toolkit: Methodological Approaches to Minimize False Inferences

IntroMap is a bioinformatics pipeline that employs signal analysis techniques on next-generation sequencing (NGS) data to detect genomic introgressions—the transfer of genetic material between species through hybridization and backcrossing [16]. Designed primarily for plant breeding programs, it offers an automated approach to screen large populations, potentially replacing more labor-intensive marker-assisted assays. Its key innovation is the accurate identification of introgressed genomic regions using alignment to a reference genome without requiring a variant calling step or de novo assembly of the read data [16]. This article provides a technical support center for researchers using IntroMap, framed within the critical context of mitigating false positive signals in introgression research.

Troubleshooting Guides

Installation and Environment Setup

Problem: Conda environment creation fails.

- Solution: Ensure your conda installation is up to date. If the provided YAML file with specific package versions fails, try using the version-agnostic YAML file available on the IntroMap GitHub repository, which offers more flexibility [17].

Problem: Unable to execute the IntroMap.py script.

- Solution: Verify that the script has executable permissions. Run `chmod 755 IntroMap.py` in your terminal to grant the necessary permissions [17].

Runtime and Input Errors

Problem: The pipeline terminates with an error related to input files.

- Solution: Confirm that your input BAM file is properly aligned to the reference genome you provide using the `-r` option. The reference genome must share homology with the recurrent parental cultivar used in the experiment [16] [17].

Problem: No output files are generated, or the output is empty.

- Solution: Check the command-line arguments. The `-o` parameter specifies the output filename format. For example, using `-o lowess` would create files like `chr1.lowess`. Ensure you have write permissions in the output directory [17].

Performance and Interpretation

Problem: The pipeline runs successfully, but no introgressed regions are reported.

- Solution: Adjust the classification threshold using the `-t` parameter (default is 0.90) and the `-b` flag, which controls whether to report regions below (True) or at/above (False) the threshold [17]. The optimal threshold may vary based on your specific data and the expected signal strength.

Problem: Results are inconsistent with marker-based assays or other methods.

- Solution: Be aware that methods for detecting introgression, including the popular D-statistic, can produce false-positive signals when evolutionary rates vary between the divergent taxa being analyzed [18]. These rate variations lead to homoplasies (independent substitutions at the same site) that can be misinterpreted as evidence of introgression [18]. Always validate key findings with independent methods.

Frequently Asked Questions (FAQs)

Q1: What is the primary advantage of IntroMap over variant-calling methods? IntroMap identifies introgressed regions directly from alignment data, bypassing the variant calling step. This can reduce complexity and improve accuracy compared to some marker-based approaches [16].

Q2: What input data does IntroMap require? The pipeline requires an aligned BAM file from your sample and the reference genome sequence (in FASTA format) to which the reads were aligned. Genome annotation is not required [16] [17].

Q3: How does IntroMap mitigate issues with false positives? While the core method relies on alignment and signal analysis, users should be cautious of general limitations in introgression detection. A significant source of false positives in many methods, including the D-statistic, is variation in evolutionary rates among lineages [18]. IntroMap's signal-based approach may offer a different pathway, but it is crucial to tune parameters and validate results experimentally.

Q4: Can IntroMap be used for ancient introgression events? The pipeline was demonstrated on plant breeding data. Methods developed for recent introgression (like the D-statistic) can be unreliable for ancient events due to accumulated homoplasies and evolutionary rate variation [18]. IntroMap's applicability to very old hybridization events would require further validation.

Q5: Where can I find the software and its documentation? The IntroMap software is freely available on GitHub at https://github.com/danshea/IntroMap, which includes a Jupyter notebook with usage examples [17].

Essential Research Reagent Solutions

The following table details key materials and resources required for a successful IntroMap experiment.

Table 1: Key Research Reagents and Resources for IntroMap

| Item Name | Function / Description | Critical Parameters |
|---|---|---|
| Reference Genome | A genome sequence sharing homology with the recurrent parental line. | Required format: FASTA. Genome annotation is not necessary [16] [17]. |
| Aligned Sequencing Data | The sample data to be screened for introgressed regions. | Must be provided as a BAM file, aligned to the specified reference genome [17]. |
| Diagnostic SNP Sets | For mapping alien introgressions from specific donor species. | In Arachis, for example, pre-compiled sets for five diploid species are available. The pipeline can also generate new diagnostic SNPs [19]. |
| Conda Environment | A reproducible software environment to run IntroMap. | Resolves dependencies (e.g., Python, NumPy, SciPy). Use the YAML file from the GitHub repository [17]. |

Experimental Workflow and Signaling Pathways

The following diagram illustrates the logical workflow of the IntroMap pipeline, from data input to final output.

Diagram 1: IntroMap analysis workflow

Methodological Protocols

Core Introgression Detection Protocol using IntroMap

- Data Input Preparation: Generate a BAM file by aligning your NGS reads to a reference genome that is closely related to the recurrent parent in your hybridization experiment [16] [17].

- Software Activation: Activate the IntroMap conda environment using the command `source activate IntroMap` [17].

- Pipeline Execution: Run the IntroMap.py script from the command line with the following options: `-i` is the input BAM, `-r` is the reference, `-o` is the output prefix, `-t` is the classification threshold, `-b True` reports regions below the threshold, and `-f` sets the LOWESS smoothing window as a fraction of the chromosome length [17].

- Output Analysis: The pipeline generates output files for each chromosome containing the predicted introgressed regions. It also creates visualizations (PNG files) of the predictions for manual inspection [17].

- Validation: As with any computational prediction, it is critical to validate introgressed regions identified by IntroMap using independent methods, such as targeted marker-based assays or PCR, to confirm their biological validity [16].
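
To build intuition for how the threshold, below/above, and smoothing-fraction options interact, the sketch below smooths a per-position score track and reports contiguous runs on the chosen side of the threshold. It is a conceptual illustration only and is not IntroMap's code: the score track is hypothetical, and a centered moving average stands in for LOWESS.

```python
def smooth(scores, frac):
    """Centered moving average; the window is a fraction of the track length.
    This is a simple stand-in for LOWESS smoothing."""
    n = len(scores)
    half = max(1, int(n * frac)) // 2
    out = []
    for i in range(n):
        lo, hi = max(0, i - half), min(n, i + half + 1)
        out.append(sum(scores[lo:hi]) / (hi - lo))
    return out


def call_regions(scores, threshold=0.90, below=True, frac=0.05):
    """Return half-open (start, end) index runs where the smoothed score is
    below the threshold (below=True) or at/above it (below=False)."""
    fit = smooth(scores, frac)
    regions, start = [], None
    for i, value in enumerate(fit):
        hit = value < threshold if below else value >= threshold
        if hit and start is None:
            start = i
        elif not hit and start is not None:
            regions.append((start, i))
            start = None
    if start is not None:
        regions.append((start, len(fit)))
    return regions


# Hypothetical track: high similarity to the reference except in the middle.
track = [1.0] * 40 + [0.5] * 20 + [1.0] * 40
print(call_regions(track))               # [(39, 61)]
print(call_regions(track, below=False))  # [(0, 39), (61, 100)]
```

The called region extends one position beyond the true low-score block on each side. This shows how a wider smoothing window blurs region boundaries, while a narrower one is more sensitive to noise.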

Protocol for Mitigating False Positives from Rate Variation

- Awareness of Limitations: Understand that all introgression detection methods, including tree-based and site-based (D-statistic) approaches, are susceptible to false positives caused by homoplasies when evolutionary rates differ between lineages [18].

- Method Selection Consideration: For analyses involving highly divergent taxa, where rate variation is more likely, be cautious in interpreting results. A tree-based equivalent of the D-statistic (Dtree) has been used in some studies, but it may also be affected by rate variation [18].

- Independent Verification: Employ a test based on the expected clustering of introgressed sites along the genome, as implemented in the program Dsuite, to help distinguish genuine introgression from patterns caused by rate variation [18]. Using multiple, methodologically distinct detection approaches strengthens the evidence for true introgression.

Frequently Asked Questions (FAQs)

Q1: What is the fundamental difference between the D-statistic and the HyDe method? Both the D-statistic and HyDe use site pattern frequencies to detect hybridization but differ in their specific approach and output. The D-statistic (or ABBA-BABA test) calculates a normalized difference in the frequencies of two site patterns (ABBA and BABA) that are expected to be equal under a null model of no gene flow [20]. A significant deviation from zero is evidence of introgression. In contrast, HyDe uses a ratio of these same site pattern frequencies to not only test for the presence of hybridization but also to directly estimate the admixture proportion, gamma (γ) [20].

Q2: My analysis with HyDe shows significant hybridization, but I suspect it might be a false positive due to ancestral polymorphism. How can I investigate this? Ancestral polymorphism can create gene tree patterns that mimic hybridization. To mitigate this, you can:

- Incorporate an Outgroup: Always use a carefully selected outgroup population that diverged before the speciation event of your ingroup taxa. This helps polarize the site patterns (e.g., determine ancestral vs. derived states) and is a requirement for both D-statistic and HyDe analyses [20].

- Use a Network-Based Method: Consider using phylogenetic network inference software (e.g., like those referenced in [20]) as a complementary approach. These methods can explicitly model both incomplete lineage sorting and hybridization, helping to distinguish between the two processes.

- Validate with Simulations: Perform coalescent simulations under a model without hybridization but with ancestral population structure. If your empirical data shows significantly stronger signals than these simulations, it strengthens the case for true hybridization.
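
Once a set of null simulations is available, the comparison in the last step can be summarized as an empirical p-value. The sketch below assumes you have already computed the same statistic (for example, D) on each dataset simulated without hybridization. The values are hypothetical and the simulations themselves are outside its scope.

```python
def empirical_p_value(observed, null_values):
    """Two-sided empirical p-value: the share of null statistics at least as
    extreme as the observed one, with the usual +1 correction."""
    if not null_values:
        raise ValueError("null_values must not be empty")
    extreme = sum(1 for v in null_values if abs(v) >= abs(observed))
    return (extreme + 1) / (len(null_values) + 1)


# Hypothetical D values from nine null simulations and one empirical estimate.
null_d = [0.01, -0.05, 0.02, 0.31, -0.10, 0.04, -0.02, 0.07, 0.00]
print(empirical_p_value(0.30, null_d))  # 0.2
```

The +1 correction keeps the estimate from ever reaching zero, so the smallest attainable p-value is set by the number of simulations.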

Q3: When running HyDe with multiple individuals per population, what is the software actually doing? HyDe leverages all available individuals by calculating site patterns across all possible quartets formed by individuals from the four populations (Outgroup, P1, Hybrid, P2) [20]. This approach increases the effective sample size for estimating site pattern probabilities, which can lead to more accurate parameter estimates and greater statistical power for detecting hybridization.

Q4: Can HyDe tell me if only some individuals in a population are hybrids?

Yes, this is a key feature of HyDe. The standard test assumes all individuals in the putative hybrid population are admixed. However, HyDe provides the individual_hyde.py script to test each individual within the hybrid population separately [21] [22]. Furthermore, the bootstrap_hyde.py script can be used to assess heterogeneity in the admixture process through resampling [20] [21]. Non-uniform bootstrap support distributions can indicate that not all individuals are hybrids.

Q5: What are the common sources of false positives in D-statistic and HyDe analyses?

- Incorrect Rooting: Using an outgroup that does not properly root the tree can lead to incorrect interpretation of site patterns.

- Ancestral Population Structure: Population structure in the ancestral population of P1, P2, and the hybrid can create spurious signals of introgression.

- Genome Assembly Biases: Reference genome biases or alignment errors can create systematic artifacts that mimic introgression signals.

- Uneven Missing Data: If missing data is not random and is correlated with population relationships, it can bias site pattern counts.

Troubleshooting Guides

Issue 1: Weak or Non-Significant Introgression Signal

Problem: Your analysis yields a non-significant D-statistic or HyDe p-value, but you have a biological reason to expect hybridization.

Solution:

- Verify Data Quality:

- Check for an adequate number of informative sites (SNPs). Genome-scale data is typically required for sufficient power [20].

- Ensure high-quality alignments and variant calls to minimize noise.

- Check Population Labels:

- Confirm that you have correctly specified the parental populations (P1 and P2). Mis-specification will severely reduce power. Use population structure analyses (e.g., PCA, ADMIXTURE) to validate your assignments.

- Explore Different Triplets:

- Consider the Direction of Gene Flow: The signal might be stronger if you swap your hypotheses for P1 and P2.

Issue 2: Suspected False Positive Due to Ancestral Polymorphism

Problem: You detect a strong and significant signal of introgression, but you are concerned it is caused by incomplete lineage sorting (ILS) rather than true hybridization.

Solution:

- Re-run Analysis with a Closer Outgroup: If possible, use an outgroup that is more closely related to the ingroup. A more distant outgroup has a higher probability of containing ancestral polymorphisms.

- Conduct a D-statistic Test with Multiple Outgroups: Compare the D-statistic results across different outgroups. A consistent signal across multiple outgroups is more robust.

- Use the `bootstrap_hyde.py` Script: Bootstrap resampling of individuals can provide a distribution of the estimated admixture parameter (γ). A wide and unstable distribution might indicate a weak or false signal [20] [22].

- Compare with Other Methods: Corroborate your findings using an independent method. For example, use a phylogenetic network tool or a supervised machine learning approach like FILET, which combines multiple summary statistics to identify introgressed loci [23].

Issue 3: Interpreting HyDe's Output for Individual Tests

Problem: You have run individual_hyde.py and get varied p-values and γ estimates for different individuals within the same population.

Solution:

- Identify Pure Hybrids and Non-Hybrids: Individuals with significant p-values and intermediate γ estimates are strong hybrid candidates. Individuals with non-significant p-values are likely non-hybrids.

- Check for Backcrossing: Individuals with a significant signal but a γ estimate very close to 0 or 1 might be backcrosses to one parental population (F1 hybrid crossed with a pure parent).

- Visualize the Results: Create a plot of the γ estimate for each individual (or its bootstrap distribution) to easily visualize the variation in admixture proportions within the population.
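
The rules of thumb above can be written as a small classifier over per-individual results. The significance level and the margin defining "very close to 0 or 1" are illustrative assumptions, not HyDe defaults, and the individual names are hypothetical.

```python
def classify_individual(gamma, p_value, alpha=0.05, edge=0.1):
    """Coarse label for one individual's test result.

    alpha: significance level (adjust for multiple testing as appropriate).
    edge:  margin treated as 'very close to 0 or 1' for gamma.
    """
    if p_value >= alpha:
        return "non-hybrid"
    if gamma <= edge or gamma >= 1 - edge:
        return "possible backcross"
    return "hybrid candidate"


# Hypothetical per-individual results: name -> (gamma, p-value)
results = {"ind1": (0.48, 1e-6), "ind2": (0.04, 0.001), "ind3": (0.55, 0.40)}
for name, (gamma, p) in results.items():
    print(name, classify_individual(gamma, p))
```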

The table below summarizes the core quantitative relationships for the D-statistic and HyDe methods.

Table 1: Key Formulas and Thresholds for Site-Pattern Methods

| Method | Core Formula / Statistic | Null Hypothesis Value | Key Output |
|---|---|---|---|
| D-Statistic | \( D = \frac{N_{ABBA} - N_{BABA}}{N_{ABBA} + N_{BABA}} \) [20] | D = 0 (ABBA = BABA) | Test statistic (Z-score) for introgression [20] |
| HyDe | \( \gamma = 1 - \frac{f_{BABA}}{f_{ABBA}} \) (estimated from data) [20] | γ = 0 (Ratio test) | P-value for hybridization test and γ, the admixture proportion [20] |

Table 2: Essential Research Reagent Solutions for HyDe Analysis

| Item / Reagent | Function in Analysis | Implementation Note |
|---|---|---|
| Genomic SNP Data | The raw input data used to calculate site pattern counts (ABBA, BABA, etc.). | Data should be unlinked SNPs, ideally from genome-wide sequencing [20]. Can be in Phylip, Plink, or VCF format. |
| Population Map File | A text file specifying which individuals belong to which population. | Critical for correctly assigning individuals to Outgroup, P1, Hybrid, and P2 roles. |
| HyDe Software (phyde) | The Python package that performs the phylogenetic invariants-based test [22]. | Installed via `pip install phyde`. Contains the core scripts: run_hyde.py, individual_hyde.py, and bootstrap_hyde.py [21] [22]. |
| Triples File | A text file specifying the combinations of populations (P1, Hybrid, P2) to test. | Required for individual_hyde.py and bootstrap_hyde.py. Can be generated from the output of run_hyde.py [21]. |

Experimental Protocols

Protocol 1: Standard Genome-Wide Hybridization Detection with HyDe

This protocol describes a standard workflow for running a HyDe analysis on a genomic dataset.

1. Input Data Preparation:

- Obtain aligned genomic sequence data or a called SNP dataset in a supported format (e.g., Phylip, VCF).

- Create a population map file, a simple text file with two columns: `individual_name` and `population_name`.

2. Software Installation:

- Install the HyDe package using pip: `pip install phyde` [22].

3. Running the Analysis:

- Execute the main analysis script from the command line:

- This script will test all possible triplets and produce a filtered results file showing significant hybridization events.

4. Results Interpretation:

- Examine the output file. Key columns include:

- `P1`, `Hybrid`, `P2`: The tested populations.

- `Gamma`: The estimated admixture proportion.

- `Z_score` and `P_value`: The test statistic and its significance.

Protocol 2: Testing for Heterogeneous Introgression within a Population

This protocol is used when you suspect that not all individuals in a "hybrid" population are actually admixed.

1. Generate a Triples File:

- Use the significant results from your `run_hyde.py` analysis or create a custom file listing the specific (P1, Hybrid, P2) triplets you wish to investigate.

2. Run Individual-Level Tests:

- Use the `individual_hyde.py` script to test each individual in the hybrid population:

3. Run Bootstrap Resampling:

- To assess the stability of the admixture signal, run:

4. Interpret Individual Variation:

- The individual test output will provide a γ and p-value for each specimen. The bootstrap output allows you to visualize the distribution of γ, helping you identify whether admixture is a uniform property of the population.

Workflow and Conceptual Diagrams

HyDe Analysis and Troubleshooting Workflow

Conceptual Model of Signals and False Positives

In genomic research, accurately distinguishing somatic mutations from germline variants is a critical challenge. Somatic variants arise in non-germline tissues and are not inherited, playing key roles in diseases like cancer, while germline variants are present in all cells and are inherited from parents. This guide provides troubleshooting and best practices for leveraging allele frequency spectra to differentiate these variant types, a methodology crucial for avoiding false positives in introgression and ancestry analysis.

## Key Concepts and Definitions

- Allele Frequency Spectrum (AFS): The distribution of allele frequencies across multiple genomic sites in a population.

- Somatic Variants: DNA alterations acquired in somatic (non-germline) cells, not present in all cells, and not inherited by offspring.

- Germline Variants: DNA variations present in germ cells, inherited from parents, and found in all cells of an organism.

- Variant Allele Frequency (VAF): The proportion of sequencing reads at a genomic locus that support a specific variant.

## Allele Frequency Characteristics: Somatic vs. Germline

Table 1: Key Characteristics of Somatic and Germline Variants Based on Allele Frequency Spectra

| Characteristic | Germline Variants | Somatic Variants |
|---|---|---|
| Expected VAF in Heterozygous State | ~50% (or 100% for homozygous) [24] [25] | Highly variable (5-100%), often subclonal [24] |
| Distribution in Population | Follows Hardy-Weinberg equilibrium in large cohorts [26] | Population-specific, tissue-specific |
| Presence in Matched Normal Tissue | Present in all tissues [25] | Absent in matched normal tissue [24] [25] |
| Supporting Evidence | Population databases (gnomAD), clinical databases (ClinVar) [27] | Somatic databases (COSMIC), tumor-only evidence [27] [28] |

## Experimental Protocols

### Protocol 1: Tumor-Normal Paired Analysis

Purpose: To identify somatic variants by comparing tumor tissue to matched normal tissue.

Materials:

- DNA from tumor tissue

- DNA from matched normal tissue (e.g., blood, saliva)

- Next-generation sequencing platform

- Computational resources

Method:

- Sequencing: Perform whole-exome or whole-genome sequencing on both tumor and normal samples to a minimum coverage of 30x for normal and 60x for tumor samples [24].

- Variant Calling: Use somatic variant callers such as:

- Variant Filtering: Apply filters to remove artifacts:

- Remove variants with VAF < 5% in tumor unless ultra-deep sequencing

- Exclude variants present in matched normal at VAF > 2%

- Filter against population databases (gnomAD) to remove common germline variants [27]

- Annotation: Use annotation tools such as:

### Protocol 2: Tumor-Only Analysis with Germline Contamination Assessment

Purpose: To identify somatic variants when matched normal tissue is unavailable.

Materials:

- DNA from tumor tissue

- Population frequency databases (gnomAD)

- Computational resources

Method:

- Sequencing: Sequence tumor sample to high coverage (≥100x recommended).

- Variant Calling: Use tools capable of tumor-only analysis:

- GATK Mutect2's tumor-only mode [28]

- Implement additional filtering for germline contamination

- Germline Filtering:

- Validation: Confirm putative somatic variants with orthogonal methods (e.g., digital PCR, Sanger sequencing).

### Protocol 3: RNA-Seq Based Variant Calling

Purpose: To identify expressed variants and assess allele-specific expression.

Materials:

- RNA from tissue of interest

- RNA-Seq library preparation kit

- Computational resources

Method:

- Library Preparation and Sequencing: Perform RNA sequencing with a minimum of 70 million reads [24].

- Alignment: Use STAR two-pass alignment to GRCh38 [24].

- Variant Calling:
  - Use GATK best practices for RNA-seq short variant discovery [24]
  - Apply base quality score recalibration and split reads with N in CIGAR string

- Classification:
  - Implement VarRNA or similar tool to classify variants as germline, somatic, or artifact [24]
  - Compare VAFs between DNA and RNA to identify allele-specific expression

Diagram 1: Experimental Workflow for Variant Differentiation

## Troubleshooting FAQs

### FAQ 1: How do I handle variants with intermediate VAF (30-70%) in tumor-only samples?

Issue: Variants with VAF between 30-70% could be either germline heterozygous variants or clonal somatic variants, creating ambiguity in tumor-only analyses.

Solution:

- Database Filtering: Check population frequency databases (gnomAD). Variants with population frequency >0.1% are likely germline [27] [25].

- B-allele Frequency: Analyze B-allele frequency across the genomic region to identify copy number changes that might alter VAF expectations.

- Machine Learning Classification: Implement tools like VarRNA that use XGBoost models trained on multiple features to classify variants [24].

- Pathogenicity Assessment: Evaluate variant pathogenicity using ClinVar and other clinical databases - pathogenic germline variants in cancer predisposition genes may be present at ~50% VAF but are clinically significant [29] [25].

### FAQ 2: What are the best practices for minimizing false positives in somatic variant calling?

Issue: High false positive rates in somatic variant calling due to sequencing artifacts, mapping errors, and germline contamination.

Solution:

- Sequencing Depth: Ensure adequate sequencing depth (≥100x for tumor, ≥30x for normal) [24].

- Duplicate Removal: Remove PCR duplicates to avoid artifacts [28].

- Base Quality Recalibration: Implement base quality score recalibration (BQSR) as in GATK best practices [24].

- Strand Bias Filtering: Filter variants with significant strand bias (Fisher's exact test p-value < 0.05).

- Annotation Filtering: Use databases of common sequencing artifacts and cross-replicate validation.
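As an illustration of the strand bias check, the sketch below computes a two-sided Fisher's exact test on a 2x2 table of read counts using only the standard library. The table layout (reference versus alternate reads, forward versus reverse strand) and the function names are assumptions made for this example; variant callers usually report an equivalent statistic directly.

```python
from math import comb


def fisher_exact_two_sided(a, b, c, d):
    """Two-sided Fisher's exact p-value for the 2x2 table [[a, b], [c, d]]."""
    row1, row2, col1 = a + b, c + d, a + c
    denom = comb(row1 + row2, col1)

    def prob(x):
        # Hypergeometric probability of seeing x in the top-left cell.
        return comb(row1, x) * comb(row2, col1 - x) / denom

    p_obs = prob(a)
    lo, hi = max(0, col1 - row2), min(row1, col1)
    # Sum every table that is no more likely than the observed one.
    total = sum(p for p in (prob(x) for x in range(lo, hi + 1))
                if p <= p_obs * (1 + 1e-7))
    return min(1.0, total)


def has_strand_bias(ref_fwd, ref_rev, alt_fwd, alt_rev, alpha=0.05):
    """Flag a variant whose alternate reads are skewed to one strand."""
    return fisher_exact_two_sided(ref_fwd, ref_rev, alt_fwd, alt_rev) < alpha


if __name__ == "__main__":
    print(has_strand_bias(50, 50, 12, 0))  # alternate reads on one strand only
    print(has_strand_bias(50, 50, 6, 6))   # alternate reads balanced
```

A fixed cutoff of 0.05 becomes very sensitive at high depth, where even small imbalances reach significance, so the threshold may need tuning per assay.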

### FAQ 3: How can I differentiate true somatic variants from RNA editing events in RNA-Seq data?

Issue: RNA-seq variant calling may detect RNA editing events rather than true DNA variants, leading to misinterpretation.

Solution:

- Database Comparison: Cross-reference with known RNA editing databases (e.g., REDIportal).

- DNA-RNA Concordance: Require DNA validation for variants called from RNA-seq alone [24].

- Editing Context: Identify characteristic sequence contexts of common RNA editing (e.g., A-to-I editing in Alu elements).

- VAF Comparison: Compare VAF between DNA and RNA - RNA editing events typically show sub-50% VAF and may be tissue-specific.

### FAQ 4: What thresholds should I use for allele frequency in population filtering?

Issue: Inappropriate allele frequency thresholds can either remove true somatic variants or retain common germline variants.

Solution: Apply the thresholds in Table 2.

Table 2: Recommended Allele Frequency Thresholds for Variant Filtering

| Application | Recommended Threshold | Rationale |
|---|---|---|
| Common Germline Filter | MAF < 0.1% in gnomAD [29] [27] | Removes polymorphic germline variants while retaining rare disease-associated variants |
| Tumor-Only Somatic Calling | VAF 10-90% with supporting evidence [25] | Allows for subclonal populations and aneuploidy effects |
| Low-Frequency Somatic | VAF ≥ 5% (≥1% with ultra-deep sequencing) | Balances sensitivity with false positive rates |
| Germline Contamination | VAF 40-60% in tumor-only plus population frequency >1% [25] | Identifies likely heterozygous germline variants |
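One way to combine the Table 2 thresholds for a tumor-only sample is sketched below. The order in which the rules are applied, the label strings, and the function name `triage_tumor_only` are assumptions made for illustration; the table itself does not prescribe a precedence, and a "candidate_somatic" label still requires the supporting evidence the table mentions.

```python
def triage_tumor_only(vaf, population_af, ultra_deep=False):
    """Assign a coarse label to a tumor-only variant using Table 2 cutoffs.

    vaf and population_af are fractions in 0..1 (5% is 0.05).
    """
    vaf_floor = 0.01 if ultra_deep else 0.05

    # Germline contamination row: near-heterozygous VAF and frequency > 1%.
    if 0.40 <= vaf <= 0.60 and population_af > 0.01:
        return "likely_heterozygous_germline"
    # Common germline filter row: keep only variants with MAF < 0.1%.
    if population_af >= 0.001:
        return "common_germline"
    # Low-frequency somatic row: VAF floor depends on sequencing depth.
    if vaf < vaf_floor:
        return "below_vaf_floor"
    # Tumor-only somatic calling row: VAF between 10% and 90%.
    if 0.10 <= vaf <= 0.90:
        return "candidate_somatic"
    return "needs_review"


if __name__ == "__main__":
    for vaf, af in [(0.48, 0.05), (0.30, 0.0), (0.03, 0.0)]:
        print(vaf, af, triage_tumor_only(vaf, af))
```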

## Research Reagent Solutions

Table 3: Essential Research Reagents and Computational Tools for Variant Analysis

| Tool/Reagent | Function | Application Context |
|---|---|---|
| GATK Mutect2 [24] [28] | Somatic variant calling | Tumor-normal paired and tumor-only analysis |
| VarRNA [24] | Machine learning classification of RNA variants | Differentiation of germline/somatic variants from RNA-seq data |
| Ensembl VEP [28] | Variant effect prediction | Functional annotation of coding and non-coding variants |
| gnomAD [27] | Population frequency database | Filtering of common germline polymorphisms |
| COSMIC [27] [28] | Catalog of somatic mutations | Evidence for somatic variant classification |
| AutoGVP [29] | Automated germline variant pathogenicity classification | Standardized variant interpretation per ACMG/AMP guidelines |
| ANNOVAR [28] | Functional annotation of genetic variants | Comprehensive variant annotation including dbNSFP, ClinVar |

## Advanced Analysis Techniques

### Allele Frequency Spectrum Analysis

Purpose: To distinguish somatic from germline variants based on population-level allele frequency distributions.

Method:

- Cohort AFS Construction: Calculate allele frequencies across your study cohort for all putative variants.

- Distribution Analysis: Germline variants will show peaks at ~50% (heterozygous) and 100% (homozygous), while somatic variants show a continuous distribution skewed toward lower frequencies [26].

- Statistical Modeling: Implement binomial mixture models to identify different variant classes based on their AFS.

- Validation: Use known germline and somatic variants from public databases to validate the AFS-based classification.
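The statistical modeling step can be illustrated with a small expectation-maximization (EM) fit of a binomial mixture. This is a sketch under simplifying assumptions: each variant is summarized as a hypothetical `(alt_reads, depth)` pair, the number of components and their starting probabilities are chosen by the user, and a single low-frequency binomial component is only a crude stand-in for the continuous somatic distribution described above.

```python
from math import exp, log


def fit_binomial_mixture(counts, init_p, n_iter=200, eps=1e-6):
    """Fit a mixture of binomials by EM.

    counts: list of (alt_reads, depth); init_p: starting allele fraction
    per component. Returns (weights, probs, responsibilities), where the
    responsibilities come from the final E-step.
    """
    probs = [min(max(p, eps), 1 - eps) for p in init_p]
    k = len(probs)
    weights = [1.0 / k] * k
    resp = []
    for _ in range(n_iter):
        # E-step: posterior component membership, computed in log space.
        # The binomial coefficient is shared by all components and cancels.
        resp = []
        for alt, depth in counts:
            logs = [log(w) + alt * log(p) + (depth - alt) * log(1 - p)
                    for w, p in zip(weights, probs)]
            top = max(logs)
            unnorm = [exp(v - top) for v in logs]
            norm = sum(unnorm)
            resp.append([u / norm for u in unnorm])
        # M-step: update mixing weights and per-component allele fractions.
        for c in range(k):
            mass = sum(r[c] for r in resp)
            weights[c] = max(mass / len(counts), eps)
            reads = sum(r[c] * depth for r, (_, depth) in zip(resp, counts))
            alts = sum(r[c] * alt for r, (alt, _) in zip(resp, counts))
            if reads > 0:
                probs[c] = min(max(alts / reads, eps), 1 - eps)
    return weights, probs, resp


if __name__ == "__main__":
    data = [(48, 100), (52, 100), (50, 100), (47, 100),   # heterozygous-like
            (99, 100), (100, 100), (98, 100),             # homozygous-like
            (8, 100), (12, 100), (10, 100)]               # low-VAF
    weights, probs, resp = fit_binomial_mixture(data, [0.1, 0.5, 0.95])
    print([round(p, 3) for p in probs])
```

Each variant can then be assigned to the component with the largest responsibility, and the assignments checked against known germline and somatic variants as in the validation step.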

Diagram 2: Allele Frequency Spectrum Analysis Workflow

### Machine Learning Approaches

Purpose: To improve classification accuracy using multiple variant features beyond allele frequency.

Method:

- Feature Selection: Include VAF, read depth, base quality, mapping quality, population frequency, and functional impact.

- Model Training: Train XGBoost or random forest classifiers using validated variant sets [24].

- Cross-Validation: Use k-fold cross-validation to assess model performance.

- Implementation: Apply trained models to novel datasets for variant classification.

## Quality Control and Validation

### Internal QC Metrics

- Sequencing Depth: Minimum 30x for germline, 60x for somatic variants

- VAF Concordance: Technical replicates should show VAF correlation R² > 0.98

- Positive Controls: Include known variants with expected VAFs in each run
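The VAF concordance metric can be checked with a short helper. The sketch below computes the squared Pearson correlation between VAFs from two technical replicates; the function names and the example values are illustrative assumptions, and the 0.98 cutoff is the one listed above.

```python
def r_squared(x, y):
    """Squared Pearson correlation between two equal-length sequences."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy * sxy / (sxx * syy)


def replicates_concordant(vaf_rep1, vaf_rep2, threshold=0.98):
    """True when replicate VAFs exceed the R-squared QC threshold."""
    return r_squared(vaf_rep1, vaf_rep2) > threshold


if __name__ == "__main__":
    rep1 = [0.10, 0.25, 0.50, 0.75]
    rep2 = [0.11, 0.24, 0.52, 0.74]
    print(replicates_concordant(rep1, rep2))
```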

### External Validation

- Orthogonal Methods: Validate a subset of variants using digital PCR or Sanger sequencing

- Database Comparison: Compare variant calls with public datasets (TCGA, ICGC)

- Proficiency Testing: Participate in external quality assessment programs [28]

Frequently Asked Questions

Q1: Why should I use a Bayesian approach over traditional methods for estimating allele frequencies?

Traditional methods, like simply counting observed alleles, can be unreliable with modern sequencing data, which often has high error rates and low coverage [26]. A Bayesian framework allows you to formally incorporate prior knowledge, such as allele frequency distributions from evolutionarily related populations, to improve estimation accuracy. Crucially, it avoids the need for a "hard threshold" on whether to combine data from different samples, which can introduce bias. Instead, it uses a continuous "affinity measure" to adaptively borrow strength from related samples, typically resulting in a lower mean squared error for the frequency estimates [30].

Q2: How can I mitigate false positive signals of introgression that are actually caused by ancestral genetic polymorphism?

Ancestral polymorphism can be misidentified as recent introgression because both processes can create similar genetic patterns. To mitigate these false positives, your analysis should:

- Use an Unbiased Root: Employ an outgroup species to root your phylogenetic trees reliably. This helps distinguish shared ancestral alleles (present in the common ancestor) from alleles that have moved between species after divergence (introgression) [23].

- Refine Species Borders: Use patterns of gene flow to define species boundaries (creating BSC-species) rather than relying solely on arbitrary genetic distance thresholds (ANI-species). This can prevent the misclassification of recently diverged lineages within a single species as separate species experiencing high introgression [31].

- Leverage Machine Learning: Use supervised machine learning frameworks like FILET (Finding Introgressed Loci using Extra-Trees Classifiers) that combine multiple population genetic summary statistics. These methods are trained to recognize the complex genomic signatures of true introgression and can achieve higher accuracy and power than single-statistic approaches [23].

Q3: What are the main differences between the STRUCTURE software and the empirical Bayes approach for modeling population structure?

While both methods use Bayesian principles, they are designed for different primary purposes and operate differently, as summarized in the table below.

| Feature | STRUCTURE | Empirical Bayes for Allele Frequencies |
|---|---|---|
| Primary Purpose | Identify populations and assign individuals to them based on genetic similarity [32]. | Improve the estimation of allele frequencies in a target population using data from related populations [30]. |
| Core Methodology | Uses a Bayesian clustering algorithm with MCMC to estimate individual ancestry proportions (the Q-matrix) [32]. | Uses an empirical prior (e.g., a Beta distribution) informed by related samples to compute a posterior estimate for the frequency at each marker [30]. |
| Key Assumptions | Assumes markers are in linkage equilibrium and selectively neutral; some models assume Hardy-Weinberg equilibrium within populations [32]. | Assumes independence between markers and Hardy-Weinberg equilibrium within the target population [30]. |
| Output | Individual membership coefficients to K clusters; inference of the most likely number of populations (K) [32]. | A refined, lower-variance estimate of the allele frequency for each genetic marker in the target population [30]. |

Q4: My Bayesian model for genomic prediction is computationally slow. Are there efficient alternatives to MCMC?

Yes, several efficient alternatives exist. You can use models like Bayes-C0, which is equivalent to Genomic BLUP (GBLUP) and can be solved using efficient linear algebra methods without MCMC [33]. Furthermore, fast, non-MCMC approaches based on the Expectation-Maximization (EM) algorithm have been developed for models like Bayes-A and Bayes-B, providing estimates that are the maximum likelihood equivalent of the Bayesian approaches [33].

Experimental Protocols & Methodologies

Protocol 1: Empirical Bayes Estimation of Allele Frequencies with a Booster Sample

This protocol outlines the method for improving allele frequency estimates in a primary target population by incorporating data from a related "booster" population [30].

- Data Preparation: For a large number of biallelic markers (e.g., SNPs), collect genotype data. The data should include a primary sample of \( n \) alleles from your target population, \( \mathcal{P} \), and a booster sample of \( n \) alleles from a related population, \( \mathcal{S} \).

- Calculate Observed Frequencies: For each marker \( i \), compute the maximum likelihood estimate (MLE) in each population:
  - \( \hat{q}_i = X_i / n \) (Frequency in target population \( \mathcal{P} \))
  - \( \hat{p}_i = Y_i / n \) (Frequency in booster population \( \mathcal{S} \))

- Establish an Empirical Prior:
  - For a given marker \( i \) of interest in \( \mathcal{P} \), consider the set of all other markers \( j \) whose observed frequency in \( \mathcal{S} \), \( \hat{p}_j \), is within a small window of \( \hat{p}_i \).
  - Examine the distribution of the allele counts \( X_j \) in \( \mathcal{P} \) for this set of markers.
  - Fit a Beta distribution to this empirical distribution using maximum likelihood. This fitted Beta distribution, \( \text{Beta}(a, b) \), serves as your empirical prior for the true frequency \( q_i \) at marker \( i \).

- Compute the Posterior Estimate:
  - The prior is \( q_i \sim \text{Beta}(a, b) \).
  - The likelihood of observing \( X_i \) successes in \( n \) trials is Binomial: \( X_i \mid q_i \sim \text{Bin}(n, q_i) \).
  - Thanks to conjugacy, the posterior distribution is also a Beta distribution: \( q_i \mid X_i \sim \text{Beta}(a + X_i, b + n - X_i) \).
  - The Bayesian point estimate for the allele frequency is the mean of this posterior distribution: \( \hat{q}_i^{\text{Bayes}} = (a + X_i) / (a + b + n) \).
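The steps of this protocol can be sketched as follows. Two simplifications are assumptions of this example and not part of the protocol: the Beta prior is fitted by the method of moments instead of maximum likelihood, and it is fitted directly to the observed frequencies of the neighbouring markers, which ignores their binomial sampling noise. All function names and the default window width are illustrative.

```python
from statistics import mean, pvariance


def fit_beta_moments(freqs):
    """Method-of-moments Beta(a, b) fit (stand-in for a maximum-likelihood fit)."""
    m, v = mean(freqs), pvariance(freqs)
    if v <= 0 or v >= m * (1 - m):
        raise ValueError("frequencies cannot be matched by a Beta distribution")
    common = m * (1 - m) / v - 1
    return m * common, (1 - m) * common


def posterior_mean(a, b, x, n):
    """Mean of Beta(a + x, b + n - x), the conjugate posterior."""
    return (a + x) / (a + b + n)


def empirical_bayes_frequency(i, target_counts, booster_counts, n, window=0.05):
    """Shrunken frequency estimate for marker i in the target population."""
    p_i = booster_counts[i] / n
    # Markers whose booster frequency lies within the window around marker i.
    neighbours = [target_counts[j] / n
                  for j in range(len(target_counts))
                  if j != i and abs(booster_counts[j] / n - p_i) <= window]
    a, b = fit_beta_moments(neighbours)
    return posterior_mean(a, b, target_counts[i], n)


if __name__ == "__main__":
    booster = [10, 10, 11, 9, 10, 2]   # allele counts Y in the booster sample
    target = [4, 9, 11, 10, 12, 1]     # allele counts X in the target sample
    estimate = empirical_bayes_frequency(0, target, booster, n=20, window=0.06)
    print(round(estimate, 4), "versus MLE", target[0] / 20)
```

In this toy example the estimate for marker 0 is pulled from its raw frequency toward the frequencies seen at markers with a similar booster frequency, which is the shrinkage behaviour the protocol relies on.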

The following diagram illustrates the logical workflow and the relationship between the data, the prior, and the posterior in this empirical Bayes approach.

Protocol 2: A Bayesian Multiple Regression Framework for Genome-Wide Association (GWA)

This protocol uses Bayesian variable selection models for GWA analysis to identify markers associated with a quantitative trait, which helps control for population structure and mitigates false positives [33].

- Model Specification: Define a multiple regression model where the phenotype of an individual is a function of all genotyped markers simultaneously:

\( y = \mu + \sum_{k=1}^{K} z_k \beta_k + e \)

Here, \( y \) is the vector of phenotypes, \( \mu \) is the overall mean, \( z_k \) is the genotype vector for SNP