Beyond the Frozen Accident: The Stereochemical Hypothesis and the Modern Code of Life

This article examines the stereochemical hypothesis of codon assignments, a foundational theory proposing that the genetic code originated from direct physicochemical interactions between amino acids and nucleotides.

Abstract

This article examines the stereochemical hypothesis of codon assignments, a foundational theory proposing that the genetic code originated from direct physicochemical interactions between amino acids and nucleotides. We trace the theory's evolution from a historical concept to a framework tested with modern computational and experimental methods, and we assess the evidence for and against stereochemistry as a primary shaping force, contrasting it with adaptive and coevolutionary theories. For researchers and drug development professionals, we also discuss the hypothesis's practical implications, including its influence on molecular generative models and the AI-driven design of synthetic genes and mRNA therapeutics.

The Stereochemical Blueprint: Revisiting the Physicochemical Origins of the Genetic Code

The Stereochemical Hypothesis: A Physicochemical Challenge to the Frozen Accident

The "frozen accident" hypothesis, initially proposed by Francis Crick, posits that the genetic code's specific codon assignments are fundamentally historical and arbitrary, preserved not due to any special optimization but because any subsequent changes would be catastrophically disruptive after the code's establishment [1] [2]. This perspective, however, is challenged by the code's manifestly non-random structure, wherein related codons (differing by a single nucleotide) typically encode the same or physicochemically similar amino acids [2]. The stereochemical theory offers a physicochemical alternative, suggesting that codon assignments were originally dictated by direct, selective affinity between amino acids and their cognate codons or anticodons [3] [1] [2]. This implies that the code's structure is rooted in the inherent chemical properties of biomolecules, not mere contingency.

Experimental evidence supports the presence of such stereochemical relationships. For instance, analyses of amino acid binding to longer RNA sequences reveal that real codons for certain amino acids, including arginine, isoleucine, and tyrosine, are statistically overrepresented in their binding sites compared to randomized codes [3]. This indicates that some primordial chemical interactions have survived subsequent evolutionary selection. The core "codon-correspondence hypothesis" formalizes this idea, stating that for each amino acid, a coding sequence exists with which it has the greatest association, and this association influenced the code's final form [3].

Key Theories on the Origin and Evolution of the Genetic Code

The stereochemical theory is one of several major frameworks explaining the genetic code's origin and structure. The table below summarizes the core principles and evidence for each.

Table 1: Major Theories on the Origin of the Genetic Code

| Theory | Core Principle | Key Evidence | Limitations/Challenges |
|---|---|---|---|
| Stereochemical | Direct chemical affinity (e.g., hydrogen bonding, van der Waals forces) between amino acids and their codons/anticodons influenced assignments [4] [2]. | Concentration of real codons in selected amino acid binding sites [3]; specific molecular docking models, such as diketopiperazine dimers interacting with codon-anticodon sequences [4]. | Lack of strong, specific interactions for all amino acids with short oligonucleotides [3]; difficulty in proving these interactions were the sole determinant. |
| Error Minimization | The code's structure was shaped by selection to minimize the deleterious effects of point mutations and translation errors [1] [2]. | The standard genetic code is much more robust against errors than random codes: the probability that a random code matches its robustness is estimated at roughly one in a million [1]; codons for physicochemically similar amino acids are often neighbors. | Does not explain the initial, specific codon assignments, only their subsequent organization [2]. |
| Coevolution | The code coevolved with amino acid biosynthetic pathways, with new amino acids inheriting codons from their precursors [2]. | Patterns in the code table where structurally similar amino acids have related codons (e.g., aspartic acid -> asparagine -> lysine) [2]. | Does not fully account for the initial assignments of the earliest, prebiotic amino acids. |
| Frozen Accident | The specific codon assignments are a historical coincidence that became immutable ("frozen") once the code was established and proteins were widely integrated into cellular functions [1] [2]. | The near-universality of the code across all life forms [2]; the catastrophic effect of changing the code after its establishment. | Cannot explain the code's pronounced non-random, optimized structure [1]. |

Experimental Evidence and Methodologies for Stereochemical Interactions

Key Experimental Approaches and Reagents

Research into the stereochemical theory employs diverse biochemical and biophysical techniques to probe direct interactions. The following toolkit outlines essential reagents and their functions in these investigations.

Table 2: Research Reagent Solutions for Stereochemical Studies

| Research Reagent / Material | Function in Experimental Protocol |
|---|---|
| Immobilized Amino Acids | Affinity chromatography matrices to measure binding strength and specificity of nucleotides or oligonucleotides [3]. |
| RNA Homopolymers (e.g., poly(U), poly(A)) | Substrates to test esterification specificity of imidazole-activated amino acids to RNA 2'-OH groups [3]. |
| Dinucleoside Monophosphates | Model systems for chromatographic copartitioning studies to investigate anticodonic associations [3]. |
| In vitro Transcribed tRNA | Unmodified tRNA molecules (e.g., tRNAIle(CAU)) for cocrystallization with aminoacyl-tRNA synthetases (e.g., IleRS) to elucidate nucleotide recognition mechanisms [5]. |
| Aminoacyl-tRNA Synthetases (AARSs) | Key enzymes (e.g., ScIleRS) for structural studies on the discriminative charging of tRNAs, revealing how anticodon interactions enforce fidelity [5]. |

Detailed Experimental Protocols

Protocol 1: Affinity Chromatography for Amino Acid-Nucleotide Interaction

This protocol tests the binding strength between amino acids and nucleotides [3].

- Immobilization: Covalently immobilize a specific amino acid (e.g., Gly, Lys, Arg) onto a solid chromatography matrix via its carboxyl group.

- Equilibration: Equilibrate the column with a controlled buffer solution.

- Application: Apply a solution containing the four nucleotide monophosphates (AMP, GMP, CMP, UMP) to the column.

- Elution & Detection: Elute with a buffer and monitor the effluent to measure the retardation of each nucleotide.

- Analysis: Compare the binding strength (retardation) to the codon or anticodon assignments of the immobilized amino acid. A positive stereochemical relationship is suggested if nucleotides corresponding to the amino acid's codons show stronger binding.

Protocol 2: Assessing Esterification Specificity to RNA Homopolymers

This protocol investigates the specificity of amino acid attachment to RNA [3].

- Activation: Chemically activate an amino acid (e.g., phenylalanine or glycine) using imidazole.

- Incubation: Incubate the activated amino acid with different RNA homopolymers (poly(U), poly(A), poly(C), poly(G)).

- Quantification: Measure the rate or extent of esterification of the amino acid to the 2'-OH groups of the ribose sugars in each polymer.

- Specificity Analysis: Determine if the amino acid shows a preference for the polynucleotide corresponding to its modern codon (e.g., phenylalanine, codon UUU, should prefer poly(U)).
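The specificity analysis needs a prediction to compare against: which homopolymer should each amino acid prefer if esterification followed the modern code? The following minimal sketch derives that prediction from the standard genetic code. The helper name `expected_homopolymer` is ours, introduced only for illustration.

```python
from itertools import product

BASES = "UCAG"
# Standard genetic code, codons enumerated with bases in the order U, C, A, G
# (first position varies slowest, third fastest); '*' marks a stop codon.
AMINO_ACIDS = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {"".join(c): aa for c, aa in zip(product(BASES, repeat=3), AMINO_ACIDS)}


def expected_homopolymer(amino_acid):
    """Return the homopolymers whose repeating codon (e.g. UUU) encodes `amino_acid`."""
    return [f"poly({base})" for base in BASES if CODON_TABLE[base * 3] == amino_acid]


# One-letter codes: F = Phe, G = Gly, K = Lys, P = Pro, L = Leu
for aa in "FGKPL":
    print(aa, expected_homopolymer(aa))
```

Only four amino acids (Phe, Pro, Lys, Gly) have a homopolymer prediction at all, which is one reason homopolymer experiments can test only a small part of the code.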

Protocol 3: Crystallography of AARS-tRNA Complexes

This protocol provides atomic-level insight into how cognate tRNAs are recognized, revealing stereochemical principles [5].

- Complex Formation: Purify a specific aminoacyl-tRNA synthetase (e.g., ScIleRS) and its cognate tRNA (e.g., tRNAIle(GAU)), and form a complex with the amino acid (e.g., L-isoleucine).

- Crystallization: Crystallize the ternary complex under optimized conditions.

- Data Collection & Structure Solution: Collect X-ray diffraction data and solve the three-dimensional structure.

- Interaction Analysis: Analyze the structure to identify specific molecular interactions, such as hydrogen bonding between synthetase residues (e.g., a conserved arginine) and the wobble nucleotide (N34) of the tRNA anticodon, which is critical for discriminative aminoacylation.

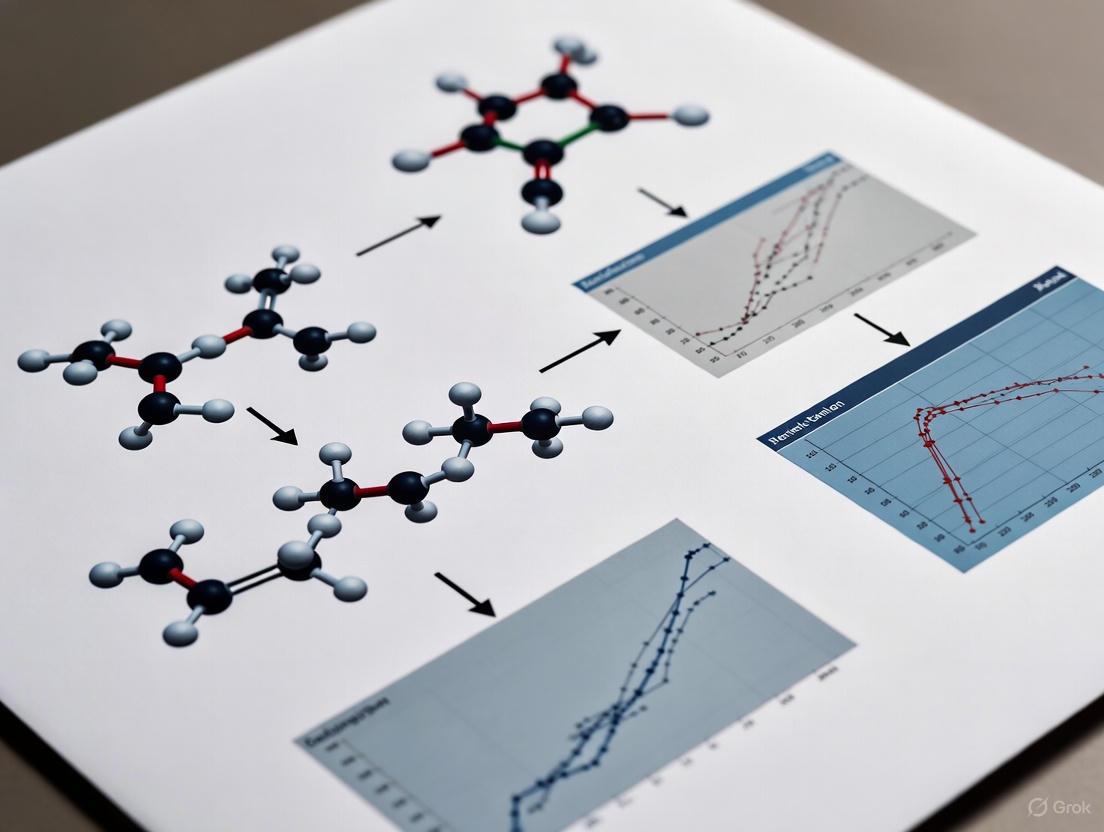

Diagram 1: Experimental Workflow for Stereochemical Research

Error Minimization and the Modern Synthesis

The error minimization theory presents a powerful complementary, and in some views alternative, explanation for the code's structure. It posits that the genetic code evolved to be highly robust, or "optimal," in minimizing the negative phenotypic impacts of both point mutations and translational errors [1]. Simulations show that the standard genetic code is exceptionally effective at ensuring that a single-base mutation or misreading often results in the incorporation of a chemically similar amino acid, thereby preserving protein function [1] [2]. This is not a feature of a random "accident"; statistical analysis suggests the probability of a random code achieving the level of error minimization seen in the standard genetic code is roughly one in a million [1].
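The kind of comparison behind such estimates can be sketched in a few lines. The example below is an illustration under stated assumptions, not the cited analysis. It scores a code by the mean squared change in Kyte-Doolittle hydropathy over all single-base substitutions between sense codons (the hydropathy scale is our choice of property for this sketch), and it generates random codes by permuting the 20 amino acids among the standard code's synonymous blocks with stop codons held fixed. It does not attempt to reproduce the one-in-a-million figure.

```python
import random
from itertools import product

BASES = "UCAG"
AMINO_ACIDS = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODONS = ["".join(c) for c in product(BASES, repeat=3)]
SGC = dict(zip(CODONS, AMINO_ACIDS))  # standard genetic code, '*' = stop

# Kyte-Doolittle hydropathy index (assumed here as the amino acid property)
HYDROPATHY = {
    "A": 1.8, "R": -4.5, "N": -3.5, "D": -3.5, "C": 2.5, "Q": -3.5, "E": -3.5,
    "G": -0.4, "H": -3.2, "I": 4.5, "L": 3.8, "K": -3.9, "M": 1.9, "F": 2.8,
    "P": -1.6, "S": -0.8, "T": -0.7, "W": -0.9, "Y": -1.3, "V": 4.2,
}


def error_cost(code):
    """Mean squared hydropathy change over single-base changes between sense codons."""
    total, count = 0.0, 0
    for codon in CODONS:
        aa = code[codon]
        if aa == "*":
            continue
        for pos in range(3):
            for base in BASES:
                if base == codon[pos]:
                    continue
                neighbor = codon[:pos] + base + codon[pos + 1:]
                aa2 = code[neighbor]
                if aa2 == "*":
                    continue  # substitutions to stop codons are ignored in this sketch
                total += (HYDROPATHY[aa] - HYDROPATHY[aa2]) ** 2
                count += 1
    return total / count


def shuffled_code(rng):
    """Permute the 20 amino acids among the SGC's codon blocks; stops stay in place."""
    aas = sorted(HYDROPATHY)
    perm = aas[:]
    rng.shuffle(perm)
    relabel = dict(zip(aas, perm))
    relabel["*"] = "*"
    return {codon: relabel[aa] for codon, aa in SGC.items()}


rng = random.Random(0)
sgc_cost = error_cost(SGC)
random_costs = [error_cost(shuffled_code(rng)) for _ in range(1000)]
fraction_better = sum(c <= sgc_cost for c in random_costs) / len(random_costs)
print(f"SGC cost: {sgc_cost:.2f}")
print(f"mean random cost: {sum(random_costs) / len(random_costs):.2f}")
print(f"fraction of random codes at least as robust: {fraction_better:.3f}")
```

The fraction printed at the end depends on the property scale, the substitution weighting, and the sample size, so it should be read as a qualitative check rather than as the published probability.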

Modern research frames the evolution of the code as a balancing act between two conflicting objectives: fidelity (minimizing errors) and diversity (maintaining a wide range of amino acids with different properties to build complex proteins) [1]. A code optimized only for error minimization would encode just one amino acid, which would be useless for building complex life. The standard genetic code appears to be a near-optimal solution to this trade-off, aligning codon assignments with the naturally occurring amino acid composition to balance high throughput and accuracy [1].

Diagram 2: Balancing Fidelity and Diversity in Code Evolution

The evidence from stereochemistry, error minimization, and coevolution theories collectively challenges a pure "frozen accident" perspective. While historical contingency undoubtedly played a role, the genetic code's structure shows clear signatures of physicochemical influences and evolutionary optimization. A modern synthesis suggests the code likely originated from weak, initial stereochemical biases between amino acids and short RNA sequences [3] [1] [2]. These initial assignments were then refined over time by powerful natural selection for error minimization, ensuring robustness against mutations and mistranslation, while simultaneously accommodating a diverse and functionally adequate set of amino acids [1] [2]. Therefore, the genetic code is not a mere fossil of a random event, but a sophisticated molecular protocol that reflects a complex interplay of chemical constraints and evolutionary pressures, fine-tuned for resilience and function.

The stereochemical hypothesis of the genetic code's origin posits that the foundational assignment of codons to amino acids was influenced by direct, selective, chemical interactions between them [3]. This theory stands in contrast to adaptive or "frozen accident" hypotheses, suggesting that the code's structure reflects physicochemical affinities that existed before the evolution of complex translation machinery [3] [6]. The core tenet, known as the codon-correspondence hypothesis, states: "For each amino acid, there is a coding sequence for which it has the greatest association. The association between these sequences and amino acids influenced the form and content of the genetic code" [3]. This premise implies that the modern genetic code may still bear the imprint of these primordial chemical relationships.

Theoretical Framework and Historical Evidence

The idea of a stereochemical basis for the genetic code predates its complete elucidation. Early proponents used molecular modeling to propose specific complementarities, suggesting amino acids could pair with codons, anticodons, or fit into cavities within nucleic acid structures [3]. For instance, some models proposed that amino acids intercalate between bases in double-stranded RNA or bind to pentanucleotide cups with the anticodon at the center [3]. Beyond modeling, chromatographic evidence revealed that the genetic code conserves amino acid properties like polarity. Amino acids with a U in the second codon position are generally hydrophobic, while those with an A are hydrophilic, indicating a possible link between codon composition and amino acid chemistry [3]. Early physicochemical experiments also tested for direct interactions, such as measuring the esterification of imidazole-activated amino acids to RNA homopolymers, though results were often inconsistent with modern codon assignments [3].
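The second-position pattern is easy to check against the standard code table. The sketch below groups amino acids by the middle base of their codons and averages a hydropathy value for each group. The Kyte-Doolittle scale is our choice for illustration; the chromatographic studies cited above used their own polarity measures.

```python
from itertools import product
from statistics import mean

BASES = "UCAG"
AMINO_ACIDS = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
SGC = {"".join(c): aa for c, aa in zip(product(BASES, repeat=3), AMINO_ACIDS)}

# Kyte-Doolittle hydropathy index: positive = hydrophobic, negative = hydrophilic
HYDROPATHY = {
    "A": 1.8, "R": -4.5, "N": -3.5, "D": -3.5, "C": 2.5, "Q": -3.5, "E": -3.5,
    "G": -0.4, "H": -3.2, "I": 4.5, "L": 3.8, "K": -3.9, "M": 1.9, "F": 2.8,
    "P": -1.6, "S": -0.8, "T": -0.7, "W": -0.9, "Y": -1.3, "V": 4.2,
}


def amino_acids_by_second_base():
    """Map each second-position base to the set of amino acids it helps encode."""
    groups = {base: set() for base in BASES}
    for codon, aa in SGC.items():
        if aa != "*":  # skip stop codons
            groups[codon[1]].add(aa)
    return groups


groups = amino_acids_by_second_base()
for base in BASES:
    members = sorted(groups[base])
    avg = mean(HYDROPATHY[aa] for aa in members)
    print(f"second base {base}: {''.join(members)}  mean hydropathy {avg:+.2f}")
```

On this scale every amino acid in the U column has a positive value and every amino acid in the A column a negative one, while the C and G columns are mixed.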

Modern Experimental Investigations and Challenges

Recent research has employed advanced computational and high-throughput experimental techniques to test the stereochemical hypothesis with greater precision.

Molecular Docking Studies

A significant 2020 study used molecular docking to systematically investigate the binding affinity between amino acids and their cognate anticodons [7]. The methodology involved:

- RNA Structure Preparation: A 192-nucleotide single-stranded RNA helix was created, containing all 64 codons, and split into eight fragments.

- Steered Molecular Dynamics (SMD): Each RNA fragment underwent SMD simulations to generate multiple structural conformations, simulating different potential interaction states.

- High-Throughput Docking: A total of 1,280 docking simulations were performed to calculate the binding energy between individual amino acids and anticodon nucleotides [7].

Key Quantitative Findings: The study found no correlation between the docking scores (expected to correlate with binding affinity) and the established correspondence rules of the genetic code. The computed binding energies did not show a trend where amino acids preferentially bound to their genetically assigned anticodons [7]. This suggests that direct binding alone is insufficient to explain codon-amino acid specificity and implies the involvement of more subtle processes or mediators in the ribosome machinery.
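One way to ask whether a table of docking scores follows the genetic code is a permutation test: compare the mean score of cognate amino acid-triplet pairs with that of non-cognate pairs, then repeat the comparison under codes with shuffled amino acid labels. The sketch below is not the cited study's analysis. It assumes a 20 x 64 score matrix indexed by codon (the anticodon is simply the reverse complement), and it fills that matrix with synthetic random numbers, so no effect is expected by construction.

```python
import random
from itertools import product

BASES = "UCAG"
AMINO_ACIDS = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODONS = ["".join(c) for c in product(BASES, repeat=3)]
SGC = dict(zip(CODONS, AMINO_ACIDS))
AAS = sorted(set(AMINO_ACIDS) - {"*"})


def cognate_gap(scores, code):
    """Mean score of cognate pairs minus mean score of non-cognate pairs.

    With energies as scores, a negative gap means cognate pairs bind more tightly.
    """
    cognate, other = [], []
    for (aa, codon), score in scores.items():
        (cognate if code[codon] == aa else other).append(score)
    return sum(cognate) / len(cognate) - sum(other) / len(other)


def permutation_p_value(scores, code, n_perm, rng):
    """One-sided p-value: how often a relabeled code gives a gap as low as the real one."""
    observed = cognate_gap(scores, code)
    hits = 0
    for _ in range(n_perm):
        perm = AAS[:]
        rng.shuffle(perm)
        relabel = dict(zip(AAS, perm))
        relabel["*"] = "*"
        shuffled = {codon: relabel[aa] for codon, aa in code.items()}
        if cognate_gap(scores, shuffled) <= observed:
            hits += 1
    return (hits + 1) / (n_perm + 1)


rng = random.Random(1)
# SYNTHETIC placeholder scores (arbitrary units); real docking output would go here.
scores = {(aa, codon): rng.gauss(-5.0, 1.0) for aa in AAS for codon in CODONS}
p_value = permutation_p_value(scores, SGC, n_perm=200, rng=rng)
print(f"{len(scores)} scores, permutation p-value = {p_value:.3f}")
```

Relabeling amino acids while keeping the block structure fixed is one of several reasonable null models; a different null could change the p-value.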

SELEX and RNA-Binding Site Analysis

Another line of evidence comes from techniques like SELEX, which selects RNA sequences with high affinity for specific targets. Some studies have identified RNA heptamers that bind specific amino acids and found these heptamers to be enriched with codons or anticodons corresponding to that amino acid [6]. For example, a natural RNA containing arginine codons has been identified that appears to bind this amino acid [6]. Analysis of such selected amino acid binding sites shows that real codons are concentrated in them to a greater extent than codons from randomized codes, providing support for the retention of some primordial chemical relationships [3].

Table 3: Key Experimental Findings in Support of and Against the Stereochemical Hypothesis

| Type of Evidence | Key Finding | Interpretation in Favor | Interpretation Against |
|---|---|---|---|
| Molecular Docking [7] | No correlation between docking scores and genetic code assignments. | N/A | Direct binding affinity is not the primary driver of codon assignment. |
| SELEX Experiments [6] | Selected RNA binding sites for an amino acid are enriched for its cognate codons/anticodons. | Indicates a surviving stereochemical relationship. | The association may be a historical relic, not the sole determinant of the modern code. |
| Code Structure Analysis [6] | Only some amino acid pairs (e.g., chemically similar) are coded by similar codons. | Partial support for a physicochemical basis. | The code is not optimally structured to reflect stereochemical predictions. |

Critical Arguments Against the Stereochemical Theory

Despite the evidence, several powerful arguments challenge the stereochemical theory:

- The Problem of Two Molecules: The theory requires interactions on a proto-tRNA to be faithfully transferred during the evolution of mRNA. This two-molecule mechanism is viewed by some as "unnatural" because it does not guarantee that amino acid-codon assignments realized in the first phase would be maintained in the second [6].

- The Functional Target of the Code: The genetic code specifies amino acids, but the truly functional, selectable entities are the resulting proteins. It is not immediately clear why stereochemical interactions would involve intermediary amino acids rather than the final functional proteins [6].

- Incomplete Reflection in the Code Table: If the code were determined by stereochemistry, chemically similar amino acids should be coded by similar codons. While this is true for some pairs (e.g., aspartic acid and glutamic acid both have GA* codons), there are many exceptions (e.g., leucine and serine have multiple, dissimilar codon sets) [6]. This lack of a consistent pattern weakens the theory.

Essential Research Reagents and Methodologies

Investigating codon-amino acid affinity requires a specialized toolkit. The table below details key reagents and their functions based on cited methodologies.

Table 4: Research Reagents and Tools for Stereochemical Studies

| Research Reagent / Tool | Function in Experimental Context |
|---|---|
| Molecular Docking Software | Computationally predicts the binding orientation and affinity of a small molecule (e.g., an amino acid) to a macromolecular target (e.g., an RNA codon fragment) [7]. |
| Steered Molecular Dynamics (SMD) | A simulation technique used to explore the energy landscape and conformational changes of a molecule (e.g., an RNA helix) by applying external forces, generating diverse structures for docking [7]. |
| SELEX (Systematic Evolution of Ligands by EXponential enrichment) | An in vitro selection technique that identifies high-affinity nucleic acid sequences (aptamers) that bind to a specific target, such as an amino acid [6]. |
| RNA Helix / Oligonucleotides | Synthetic RNA molecules containing specific codon or anticodon sequences, serving as the binding target in docking or SELEX experiments [7]. |
| Ribosome Profiling (Ribo-seq) | While not a direct test of stereochemistry, this high-throughput sequencing technique provides a snapshot of all actively translating ribosomes in a cell, revealing genome-wide translation efficiency and context effects that go beyond simple codon-anticodon pairing [8]. |

The question of whether a direct affinity between amino acids and their cognate codons/anticodons shaped the genetic code remains open. While specific, reproducible interactions—particularly between amino acids and longer RNA sequences—provide compelling, albeit partial, support for the stereochemical hypothesis [3], significant challenges remain. The failure of comprehensive molecular docking to recapitulate the genetic code [7], coupled with theoretical arguments about the code's structure and evolution [6], suggests that direct binding is not the sole explanatory mechanism. The prevailing view in much of modern molecular biology is that the adapter function of tRNA and the ribosomal machinery are the primary arbiters of translational specificity. However, the stereochemical theory persists as a viable, if not complete, explanation for the origin of at least some codon assignments, representing a fascinating intersection of evolutionary biology, biochemistry, and biophysics.

Diagram: Testing Amino Acid-Codon Affinity

The following diagram illustrates the key computational and experimental workflows discussed in this guide for testing the stereochemical hypothesis.

The stereochemical hypothesis of codon assignments posits that the genetic code's structure originates from direct physicochemical interactions between amino acids and their cognate codons or anticodons. This theory stands as a foundational pillar among several competing ideas seeking to explain the code's origin and evolution. Its core principle challenges the notion of a "frozen accident," suggesting instead that the specific mapping of codons to amino acids is rooted in the fundamental chemical affinities of these biological molecules [1] [9]. This in-depth technical guide traces the journey of this hypothesis from its early theoretical formulations to the key experimental findings that have shaped our current understanding, providing researchers and drug development professionals with a detailed examination of the evidence and methodologies central to this field of research.

The stereochemical theory is one of several major hypotheses, including the adaptive (error-minimization) and coevolution theories, that attempt to explain the genetic code's observed structure [9]. While the adaptive theory argues that the code evolved to minimize the phenotypic cost of mutations and translational errors, and the coevolution theory suggests the code expanded alongside amino acid biosynthetic pathways, the stereochemical hypothesis places direct physical interaction at the forefront of code determination [1] [9]. The modern genetic code, with its 64 codons encoding 20 amino acids and a stop signal, represents one possible mapping among a staggering ~10^84 alternatives, making its non-random structure a subject of intense scientific investigation [1].
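The ~10^84 figure follows from simple counting: each of the 64 codons can independently receive any of 21 labels (20 amino acids plus stop). A short check, offered as a sketch of the arithmetic rather than the cited derivation:

```python
import math

n_codons = 4 ** 3   # four bases at each of three positions
n_labels = 20 + 1   # 20 amino acids plus a stop signal

# Every codon independently takes one of the labels.
n_mappings = n_labels ** n_codons
order_of_magnitude = math.floor(math.log10(n_mappings))

print(f"{n_codons} codons, {n_labels} labels")
print(f"possible mappings: about 10^{order_of_magnitude}")
```

This count includes mappings that leave some amino acids unassigned; requiring every label to appear at least once lowers the total, but not its order of magnitude by much.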

Early Theoretical Proposals

The conceptual foundation of the stereochemical theory was laid in the mid-1960s, while the genetic code was still being deciphered. Early proponents suggested that the correspondence between specific amino acids and nucleotides was not arbitrary but dictated by stereochemical complementarity: amino acids could physically recognize and bind to their corresponding codons or anticodons without the complex machinery of modern translation [1] [9]. This idea offered an elegant solution to the code's origin problem, proposing that the first genetic codes emerged from these inherent chemical attractions.

Francis Crick's "frozen accident" hypothesis, which suggested the code was fixed early in evolution and resisted change due to the catastrophic consequences of altering a universal dictionary, served as a key counterpoint to stereochemical theories [1]. Crick acknowledged the code's non-random structure but attributed its universality to the impossibility of changing the dictionary after the emergence of complex life, rather than to specific chemical determinism [1].

Theoretical development of the stereochemical hypothesis was also influenced by the "operational RNA code" concept. This model proposes that the earliest code resided in the acceptor arm of tRNA, where direct amino acid-tRNA interactions could occur, predating the more complex anticodon-based system [10]. This perspective is supported by phylogenomic chronologies that trace the evolution of dipeptide sequences, suggesting an early operational code involving a limited set of amino acids like Leu, Ser, and Tyr [10].

Table: Major Historical Theories of Genetic Code Origin

| Theory | Core Principle | Key Predictions | Major Proponents |
|---|---|---|---|
| Stereochemical | Direct physicochemical affinity between amino acids and codons/anticodons [9]. | (1) Observable binding between amino acids and specific nucleotide sequences. (2) Code structure reflects binding energy landscapes. | Pelc, Woese, et al. (1960s) |
| Frozen Accident | Code is a historical accident that became immutable [1]. | (1) Code is largely arbitrary. (2) Universality stems from impossibility of change after fixation. | Francis Crick (1968) |
| Adaptive | Code optimized to minimize errors in translation and mutations [1]. | (1) Codons for similar amino acids are clustered. (2) Code is nearly optimal for error robustness. | Freeland, Hurst, et al. (1990s+) |
| Coevolution | Code structure reflects the biosynthetic pathways of amino acids [9]. | (1) Structurally related amino acids share codons. (2) Code expanded as new amino acids were biosynthesized. | Wong (1970s) |
| Operational RNA Code | Initial code was based on amino acid recognition by the tRNA acceptor stem [10]. | (1) Early amino acids show stronger relationship with tRNA acceptor sequences. (2) Phylogeny shows progressive code expansion. | de Duve, et al. (1990s) |

Evolution to Modern Frameworks

The stereochemical hypothesis has evolved significantly from its early formulations. Modern frameworks often present it not as an exclusive explanation but as one contributing factor within a broader evolutionary process. A prevailing contemporary view holds that stereochemical interactions provided an initial bias, setting boundaries for what was chemically plausible in the earliest, non-enzymatic translation systems [1]. This "limited determinism" perspective acknowledges that while physical chemistry likely shaped the initial assignments, other forces such as natural selection for error minimization and historical contingency refined the code into its modern form.

This integrated view is supported by analyses demonstrating that the standard genetic code effectively balances multiple competing objectives, including error minimization and the encoding of a functionally diverse amino acid repertoire [1]. The code's structure appears to be a trade-off between high fidelity and sufficient diversity to build complex molecular machines, suggesting that stereochemical interactions, while important, were part of a complex optimization process involving multiple selective pressures [1].

Computational models of code evolution have further refined our understanding. Simulations that begin with populations of ambiguous primitive codes demonstrate that stable and unambiguous coding systems can emerge through processes including mutation, gradual amino acid addition, and information exchange between codes [9]. These models often incorporate fitness functions that measure the accuracy of reading genetic information, showing that stereochemical affinities could have served as a starting point upon which selection acted to refine coding precision [9].

Key Experimental Findings and Methodologies

In Vitro Selection and Aptamer Binding Studies

A major line of experimental support for the stereochemical hypothesis comes from in vitro selection studies (SELEX). These experiments involve creating vast libraries of random RNA sequences and identifying those that bind specifically to a target amino acid.

Experimental Protocol:

- Library Construction: Generate a library of up to 10^15 unique RNA molecules with randomized sequences.

- Selection (Panning): Incubate the RNA library with the target amino acid, which is often immobilized on a solid support. Unbound RNAs are washed away.

- Amplification: Elute and reverse-transcribe the bound RNAs into DNA, then amplify using PCR. The DNA is transcribed back into RNA for the next selection round.

- Iteration: Repeat the selection-amplification process for multiple rounds (typically 8-15) to enrich high-affinity binders.

- Cloning and Sequencing: Clone the final selected RNA pool and sequence individual variants to identify consensus motifs.
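Why 8-15 rounds are typically needed can be seen from a toy enrichment model. In the sketch below, binders and non-binders are retained at different rates in each round, and the pool composition is renormalized after amplification. All numbers (the initial binder fraction and both retention rates) are hypothetical values chosen for illustration, not measurements from the cited work.

```python
def enrich(fraction, retain_binder, retain_nonbinder):
    """Binder fraction after one selection round followed by unbiased amplification."""
    kept_binders = fraction * retain_binder
    kept_others = (1.0 - fraction) * retain_nonbinder
    return kept_binders / (kept_binders + kept_others)


def run_selex(initial_fraction, retain_binder, retain_nonbinder, rounds):
    """Return the binder fraction before round 1 and after each subsequent round."""
    fractions = [initial_fraction]
    for _ in range(rounds):
        fractions.append(enrich(fractions[-1], retain_binder, retain_nonbinder))
    return fractions


# Hypothetical parameters: 1 binder per 10^10 molecules, 100-fold enrichment per round.
trajectory = run_selex(initial_fraction=1e-10, retain_binder=0.5,
                       retain_nonbinder=0.005, rounds=10)
for round_number, fraction in enumerate(trajectory):
    print(f"round {round_number:2d}: binder fraction = {fraction:.3e}")
```

With these assumed values the pool stays dominated by non-binders for the first several rounds and then switches over quickly, which is why early rounds often show little measurable binding.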

Key Findings: Such experiments have identified RNA motifs (aptamers) that bind certain amino acids, like arginine and phenylalanine, with some sequences showing resemblance to their codons or anticodons [1]. However, a significant challenge has been the generally low, non-specific binding energies measured for many amino acid-RNA pairs, and the fact that altered anticodons in tRNA often do not abolish function, suggesting that a purely stereochemical link did not exclusively dictate the final code [1].

Phylogenomic Analysis of Dipeptide Chronology

A more recent and powerful approach involves large-scale computational analysis of modern proteomes to infer evolutionary history.

Experimental Protocol:

- Data Collection: Compile a vast dataset of proteomes. One cited study analyzed 4.3 billion dipeptide sequences across 1,561 proteomes [10].

- Phylogenetic Reconstruction: Use phylogenetic methods to reconstruct the evolutionary chronology of the 400 canonical dipeptides, determining the order in which different amino acid pairs appeared.

- tRNA and Synthetase Co-evolution: Correlate the dipeptide chronology with the evolutionary history of tRNA molecules and aminoacyl-tRNA synthetases (aaRS).

- Code Assignment Mapping: Map the emergence of specific dipeptides onto the structure of the evolving genetic code.
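The raw input to such an analysis is a table of dipeptide counts. The 400 canonical dipeptides are the 20 x 20 ordered pairs of amino acids, and they can be tallied with a sliding window of length two. The sketch below shows only this counting step; the toy sequences are invented, and the phylogenetic reconstruction of the cited study is not reproduced.

```python
from collections import Counter
from itertools import product

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"
AA_SET = set(AMINO_ACIDS)
ALL_DIPEPTIDES = ["".join(pair) for pair in product(AMINO_ACIDS, repeat=2)]


def count_dipeptides(proteome):
    """Count overlapping dipeptides across an iterable of protein sequences.

    Pairs containing non-canonical or ambiguous residues (e.g. 'X') are skipped.
    """
    counts = Counter()
    for sequence in proteome:
        for i in range(len(sequence) - 1):
            first, second = sequence[i], sequence[i + 1]
            if first in AA_SET and second in AA_SET:
                counts[first + second] += 1
    return counts


# Toy "proteome" made up for illustration
toy_proteome = ["MLSYLS", "MSLY"]
counts = count_dipeptides(toy_proteome)
print(len(ALL_DIPEPTIDES), "possible dipeptides")
print(counts.most_common(3))
```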

Key Findings: This methodology provided direct support for an early 'operational' code. The phylogeny revealed the overlapping emergence of dipeptides containing Leu, Ser, and Tyr, which supported the operational RNA code model where direct interactions in the tRNA acceptor arm were primordial [10]. Furthermore, the synchronous appearance of dipeptide–antidipeptide sequences suggested an ancestral duality of bidirectional coding, a finding that aligns with stereochemical principles operating at a proteome level [10].

Computational Simulation of Code Evolution

Computer simulations have been used to test whether stereochemical principles can lead to the emergence of a genetic code resembling the standard genetic code (SGC).

Experimental Protocol:

- Model Setup: Initialize a population of "primitive" genetic codes with random, ambiguous assignments of a limited set of amino acids to codons [9].

- Define Evolutionary Forces: Incorporate parameters for:

  - m_c: Mutation rate for codon-label reassignment.

  - m_l: Rate for the addition of new amino acids to the code's repertoire.

  - m_e: Rate of genetic information exchange (horizontal gene transfer) between codes [9].

- Fitness Function: Define a fitness function (F) that measures a code's quality, often based on the accuracy of reading genetic information and its coding potential, which can include stereochemical affinity metrics [9].

- Selection and Iteration: Simulate evolution over many generations, selecting codes with higher fitness and applying the defined evolutionary forces.
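The protocol above can be sketched as a toy evolutionary loop. This is not the cited model [9]: the fitness function (reading accuracy times repertoire coverage), the use of m_c, m_l, and m_e as per-offspring event probabilities, the four-codon exchange block, and the elitist selection scheme are all simplifying assumptions made for illustration.

```python
import random

N_CODONS = 64
MAX_LABELS = 21  # 20 amino acids plus a stop signal


def random_code(rng, n_start):
    """Primitive code: each codon ambiguously maps to 1-2 of the starting labels."""
    return [frozenset(rng.sample(range(n_start), rng.randint(1, 2)))
            for _ in range(N_CODONS)]


def fitness(code):
    """Assumed fitness F: mean reading accuracy times fraction of labels encoded."""
    accuracy = sum(1 / len(labels) for labels in code) / N_CODONS
    coverage = len(set().union(*code)) / MAX_LABELS
    return accuracy * coverage


def mutate(code, population, rng, m_c, m_l, m_e):
    """Return a child code after applying the three evolutionary forces."""
    child = list(code)
    repertoire = sorted(set().union(*child))
    if rng.random() < m_c:  # reassign one codon to a single existing label
        child[rng.randrange(N_CODONS)] = frozenset([rng.choice(repertoire)])
    if rng.random() < m_l and len(repertoire) < MAX_LABELS:  # add a new label
        new_label = next(l for l in range(MAX_LABELS) if l not in repertoire)
        child[rng.randrange(N_CODONS)] = frozenset([new_label])
    if rng.random() < m_e:  # copy a block of four codons from another code
        donor = rng.choice(population)
        start = rng.randrange(N_CODONS - 4)
        child[start:start + 4] = donor[start:start + 4]
    return child


def evolve(pop_size, generations, m_c, m_l, m_e, seed=0):
    """Elitist selection: the fitter half survives unchanged and produces offspring."""
    rng = random.Random(seed)
    population = [random_code(rng, n_start=4) for _ in range(pop_size)]
    best_history = []
    for _ in range(generations):
        population.sort(key=fitness, reverse=True)
        best_history.append(fitness(population[0]))
        elites = population[: pop_size // 2]
        children = [mutate(rng.choice(elites), population, rng, m_c, m_l, m_e)
                    for _ in range(pop_size - len(elites))]
        population = elites + children
    population.sort(key=fitness, reverse=True)
    best_history.append(fitness(population[0]))
    return population, best_history


population, best_history = evolve(pop_size=40, generations=200,
                                  m_c=0.5, m_l=0.1, m_e=0.2)
print(f"best fitness: {best_history[0]:.3f} -> {best_history[-1]:.3f}")
```

Because the fitter half is carried over unchanged, the best fitness never decreases between generations. Stereochemical affinities could be added to this sketch as a bias on the initial assignments or as an extra term in the fitness function.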

Key Findings: These simulations show that starting from ambiguous codes, stable and unambiguous coding systems can emerge. The exchange of genetic information (m_e) is a crucial factor that significantly accelerates the convergence towards stable systems capable of encoding all 20 amino acids and a stop signal [9]. The resulting synthetic codes often share structural features with the SGC, such as blocks of synonymous codons, even without explicit stereochemical rules. This suggests that selection and information exchange can generate code-like structure on their own, with stereochemical affinities serving as a possible starting point rather than a required ingredient [9].

Diagram: Research Evolution in Stereochemical Hypothesis

The Scientist's Toolkit: Key Reagents and Experimental Materials

Research in the stereochemical hypothesis relies on a diverse set of biochemical and computational tools. The following table details key reagents and their applications in the experimental protocols discussed.

Table: Essential Research Reagents and Materials for Stereochemical Studies

| Reagent/Material | Specifications/Examples | Primary Function in Research |
|---|---|---|
| Immobilized Amino Acids | Amino acids coupled to solid supports (e.g., agarose, magnetic beads). | Facilitates selection and washing steps in in vitro aptamer binding experiments (SELEX) [9]. |
| Random RNA Library | Synthesized oligonucleotides with a central random region (e.g., N30-N50). | Serves as the diverse starting pool for selecting RNA aptamers that bind specific amino acids [9]. |
| Nucleoside Triphosphates (NTPs and dNTPs) | Modified NTPs (e.g., 2'-F, 2'-NH₂) can enhance nuclease resistance. | dNTPs are used for PCR and NTPs for in vitro transcription, to amplify selected RNA pools during SELEX cycles. |
| Reverse Transcriptase & Polymerases | Enzymes like SuperScript IV (RT) and Q5 or Taq DNA Polymerase. | Essential for converting selected RNA back to DNA (RT-PCR) and amplifying DNA templates between selection rounds [9]. |
| Proteomic Datasets | Curated, non-redundant protein sequences from public databases (UniProt, NCBI). | Provides the raw data for large-scale phylogenomic analysis of dipeptide frequencies and evolutionary chronology [10]. |
| Phylogenetic Analysis Software | Tools like MEGA, PhyML, RAxML, or custom scripts for ancestral state reconstruction. | Reconstructs evolutionary timelines and relationships between dipeptides, tRNAs, and synthetases [10]. |
| tRNA & Synthetase Sequences | Curated sequences from databases like GtRNAdb and aaRS-specific databases. | Used for co-evolutionary analysis with dipeptide appearance to test the operational RNA code model [10]. |

Synthesis and Current Status

The body of experimental evidence suggests a nuanced role for stereochemistry in the origin of the genetic code. While in vitro selection studies provide proof-of-concept that RNA can bind amino acids, the relatively weak and non-specific nature of many interactions, combined with the functional flexibility of modern tRNAs, indicates that a pure stereochemical model is insufficient to fully explain the standard genetic code's structure [1]. The code's organization reflects a balance between multiple competing objectives, including error minimization and the encoding of a functionally diverse amino acid repertoire, suggesting stereochemical interactions were part of a complex optimization process [1].

The most compelling modern support comes from phylogenomic analyses, which indicate that stereochemical interactions were likely most influential in the very earliest stages of code evolution. The early emergence of dipeptides containing Leu, Ser, and Tyr supports a model where an operational RNA code, potentially based on direct interactions in the tRNA acceptor stem, predated the full anticodon-based code [10]. This aligns with a synthesized view where stereochemistry provided an initial bias—a set of chemically plausible initial assignments—upon which other evolutionary forces like natural selection for error robustness and coevolution with biosynthetic pathways acted to refine and freeze the code into its near-universal form [1] [10] [9].

The historical trajectory of the stereochemical hypothesis demonstrates a maturation from a simple, deterministic model to a more sophisticated understanding of its role as one component in a multi-stage evolutionary process. Early theoretical proposals for direct, one-to-one correspondence have given way to a framework where stereochemical affinities provided a foundational bias that shaped the initial conditions of code evolution.

Future research will benefit from several promising directions. Integrated computational models that simultaneously simulate stereochemical binding energies, error minimization pressures, and coevolutionary expansion could provide more realistic insights into the code's emergence. Experimentally, high-throughput methods for quantitatively measuring amino acid-nucleotide interaction landscapes could offer a more comprehensive dataset against which to test predictions. Furthermore, exploring the stereochemical hypothesis in the context of synthetic biology and the creation of orthogonal genetic codes may provide empirical evidence for the role of physical chemistry in shaping codon assignments. As these research avenues progress, the stereochemical hypothesis will continue to be a central element in the ultimate resolution of the genetic code's enduring mystery.

The stereochemical hypothesis proposes that the genetic code's structure is not a frozen accident but reflects direct, physicochemical interactions between amino acids and their cognate codons or anticodons [3] [1]. This theory suggests that primordial molecular affinities, rooted in the complementary shapes and chemical properties of biological molecules, influenced which codons came to represent which amino acids [11]. Unlike purely adaptive models, which explain the code's organization through evolutionary optimization for error minimization, the stereochemical theory posits an initial, absolute assignment based on chemical law, which subsequent evolution could refine but not entirely erase [3]. A key prediction of this hypothesis is that vestiges of these primordial interactions should still be detectable today, manifesting as statistically significant associations between specific amino acids and their coding triplets [3] [11]. This guide analyzes the empirical evidence supporting these conserved relationships, evaluates the methodologies for their detection, and explores their predictive power for both fundamental biology and applied biotechnology.

Quantitative Evidence for Stereochemical Associations

Experimental and bioinformatic investigations have provided quantifiable, albeit uneven, support for stereochemical associations. The evidence indicates that a subset of the modern genetic code's assignments likely has a stereochemical origin.

Table 1: Experimentally Supported Stereochemical Associations

| Amino Acid | Supporting Evidence | Confidence Level | Key Experimental Method |

|---|---|---|---|

| Arginine (Arg) | Strong, natural RNA binder identified; significant in SELEX [3] [11] | Strongly Supported | SELEX, Ribosomal RNA-protein interaction analysis |

| Isoleucine (Ile) | Significant association in SELEX experiments [3] | Strongly Supported | SELEX |

| Tyrosine (Tyr) | Significant association in SELEX experiments [3] | Strongly Supported | SELEX |

| Histidine (His) | Significant association in SELEX experiments [11] | Supported | SELEX |

| Tryptophan (Trp) | Significant association in SELEX experiments [11] | Supported | SELEX |

| Phenylalanine (Phe) | Significant association in SELEX experiments [11] | Supported | SELEX |

Conversely, for several small and simpler amino acids, including glycine, alanine, valine, proline, serine, glutamic acid, and threonine, experimental evidence for stereochemical associations is notably lacking [11]. Chromatographic and direct interaction studies further complicate the stereochemical picture. Early work found that associations often involved anticodon doublets rather than codons, and interactions between free amino acids and mono-, di-, or trinucleotides were generally too weak and non-specific to parallel the genetic code [3]. This has led to the view that while stereochemistry likely provided an initial bias, it was not the sole determinant of the final code [1].

Key Experimental Methodologies and Protocols

Uncovering evidence for stereochemical relationships requires sophisticated experimental and computational techniques designed to detect specific molecular recognition.

SELEX (Systematic Evolution of Ligands by EXponential Enrichment)

Objective: To identify RNA sequences (aptamers) from a vast random pool that bind with high affinity and specificity to a target amino acid.

Detailed Protocol:

- Library Synthesis: Generate a synthetic library of single-stranded RNA molecules containing a central random region (e.g., 40-60 nucleotides) flanked by constant sequences for PCR amplification.

- Incubation and Binding: The RNA library is incubated with the target amino acid, which is often immobilized on a solid-phase column to facilitate separation.

- Partitioning: Unbound RNA sequences are washed away. RNA molecules that form stable complexes with the target amino acid are retained.

- Elution and Recovery: The bound RNAs are eluted from the column and purified.

- Amplification: The recovered RNA pool is reverse-transcribed into DNA, amplified by PCR, and then transcribed back into RNA for the next selection round.

- Repetition: The incubation, partitioning, elution, and amplification steps are repeated for multiple rounds (typically 8-15) to progressively enrich the RNA pool for the strongest binders.

- Cloning and Sequencing: The final enriched pool is cloned and sequenced. The resulting sequences are analyzed for statistically significant motifs, which are then compared to biological codons and anticodons [3] [11].
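
The effect of repeating the selection cycle can be illustrated with a two-class toy model. The sketch below assumes, purely for illustration, that the pool contains only "binders" and "non-binders", that each class is retained on the column with a fixed per-round probability, and that amplification preserves the proportions of whatever was retained. The function name `enrich_pool`, its parameters, and all numerical values are hypothetical and are not taken from [3] or [11].

```python
def enrich_pool(binder_fraction, p_retain_binder, p_retain_background, rounds):
    """Return the binder fraction before selection and after each round (toy model)."""
    history = [binder_fraction]
    f = binder_fraction
    for _ in range(rounds):
        kept_binders = f * p_retain_binder                   # binders surviving the wash
        kept_background = (1.0 - f) * p_retain_background    # non-specific carry-over
        f = kept_binders / (kept_binders + kept_background)  # renormalize after amplification
        history.append(f)
    return history


if __name__ == "__main__":
    # Assumed values: one binder per billion molecules, 50% vs. 1% retention.
    for r, frac in enumerate(enrich_pool(1e-9, 0.5, 0.01, rounds=10)):
        print(f"round {r:2d}: binder fraction = {frac:.3e}")
```

In this model the odds of drawing a binder are multiplied by the retention ratio in every round, which is one way to see why several rounds are needed before rare binders dominate the pool.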

Ribosome and RNA-Protein Interaction Analysis

Objective: To examine extant biological structures, like the ribosome, for evidence of historical, stereochemically-driven interactions.

Detailed Protocol:

- Structural Determination: Obtain high-resolution three-dimensional structures of ribosomal complexes or other RNA-protein assemblies via X-ray crystallography or cryo-electron microscopy.

- Interface Mapping: Identify all amino acid side chains making van der Waals contacts or hydrogen bonds with nucleotide bases in the RNA.

- Sequence Analysis: For each interacting amino acid, analyze the local RNA sequence, particularly in regions corresponding to the anticodon loops of tRNAs or other functionally critical sites.

- Statistical Comparison: Determine if the RNA sequences interacting with specific amino acids are enriched for that amino acid's codons or anticodons at a frequency significantly higher than expected by chance [11].
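
The statistical comparison step can be sketched as a triplet count followed by a one-sided binomial test. This is a minimal illustration under stated assumptions, not the procedure used in [11]: overlapping triplets are counted, the background frequency is supplied by the user, and the binomial model treats windows as independent even though overlapping windows are not. The names `count_triplets` and `binomial_upper_tail` and the example sequences are hypothetical.

```python
from math import comb

# The six arginine codons of the standard genetic code (RNA alphabet).
ARG_CODONS = {"CGU", "CGC", "CGA", "CGG", "AGA", "AGG"}


def count_triplets(sequences, triplets):
    """Count overlapping triplets found in `triplets`; return (hits, total windows)."""
    hits = total = 0
    for seq in sequences:
        seq = seq.upper().replace("T", "U")
        for i in range(len(seq) - 2):
            total += 1
            if seq[i:i + 3] in triplets:
                hits += 1
    return hits, total


def binomial_upper_tail(hits, total, p_background):
    """P(X >= hits) for X ~ Binomial(total, p_background)."""
    return sum(
        comb(total, k) * p_background**k * (1.0 - p_background) ** (total - k)
        for k in range(hits, total + 1)
    )


if __name__ == "__main__":
    interface_sites = ["GGAGACGGAAGG", "UUCGACGGCGAA"]  # made-up example sequences
    hits, total = count_triplets(interface_sites, ARG_CODONS)
    p0 = len(ARG_CODONS) / 64  # assumed uniform background over all 64 triplets
    print(hits, total, binomial_upper_tail(hits, total, p0))
```

A real analysis would need a background that reflects the base composition of the RNA in question, for example by shuffling sequences or randomizing the codon table, rather than the uniform assumption used here.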

Computational and Deep Learning Analysis

Objective: To infer evolutionary selection pressures on codon usage and predict optimal coding sequences based on learned patterns from large-scale biological data.

Detailed Protocol (e.g., RiboDecode Framework):

- Data Acquisition and Preprocessing: Collect large-scale ribosome profiling (Ribo-seq) and RNA sequencing (RNA-seq) datasets from diverse tissues and cell lines. Calculate translation levels (e.g., in RPKM) for thousands of mRNAs.

- Model Training: Train a deep neural network to predict the translation level of an mRNA sequence. Input features include the codon sequence, mRNA abundance (from RNA-seq), and cellular context (represented by gene expression profiles).

- Sequence Optimization: Use a gradient ascent-based optimizer (e.g., activation maximization) to iteratively adjust the codon distribution of an input sequence. A synonymous codon regularizer ensures the amino acid sequence remains unchanged while the model maximizes a fitness score (e.g., predicted translation level) [8].

- Validation: Test the optimized sequences in vitro and in vivo to measure improvements in protein expression and therapeutic efficacy [8].
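
The synonymous-codon constraint in the optimization step can be illustrated without a trained model. The sketch below does not reproduce RiboDecode's gradient-based optimizer. It swaps in a simple greedy per-codon choice and a hypothetical scoring function (`toy_score`, which merely counts G and C bases) to show that recoding within synonymous codon families leaves the translated protein unchanged. All function names are assumptions made for illustration, and the input is expected to be an uppercase DNA coding sequence.

```python
from itertools import product

# Standard genetic code (DNA alphabet), built in the conventional TCAG order.
BASES = "TCAG"
AMINO_ACIDS = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {"".join(c): aa for c, aa in zip(product(BASES, repeat=3), AMINO_ACIDS)}

# Group codons by the amino acid (or stop signal) they encode.
SYNONYMS = {}
for codon, aa in CODON_TABLE.items():
    SYNONYMS.setdefault(aa, []).append(codon)


def translate(cds):
    """Translate an in-frame coding sequence into one-letter amino acid codes."""
    return "".join(CODON_TABLE[cds[i:i + 3]] for i in range(0, len(cds), 3))


def toy_score(codon):
    """Hypothetical per-codon score (GC count); a stand-in for a learned predictor."""
    return sum(base in "GC" for base in codon)


def greedy_synonymous_recode(cds, score=toy_score):
    """Replace each codon with its highest-scoring synonym; the protein is unchanged."""
    if len(cds) % 3 != 0:
        raise ValueError("coding sequence length must be a multiple of 3")
    recoded = []
    for i in range(0, len(cds), 3):
        aa = CODON_TABLE[cds[i:i + 3]]
        recoded.append(max(SYNONYMS[aa], key=score))
    return "".join(recoded)


if __name__ == "__main__":
    original = "ATGGCTAAATTATAA"
    optimized = greedy_synonymous_recode(original)
    assert translate(optimized) == translate(original)
    print(original, "->", optimized)
```

A per-codon greedy choice ignores context effects such as mRNA structure and codon-pair bias, which is the kind of limitation that sequence-level models are meant to address.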

The Stereochemical Era: A Conceptual Workflow

The following diagram synthesizes current theories on how stereochemical interactions may have initiated the genetic code, leading to the modern translation system.

Figure 1: The Hypothesized Stereochemical Era of Genetic Code Evolution. This workflow illustrates the transition from an RNA world to a modern genetic code, driven initially by stereochemical interactions between large amino acids and RNA molecules, followed by the incorporation of smaller amino acids through gene duplication and adaptation [11].

The Scientist's Toolkit: Essential Research Reagents and Solutions

Investigating codon-amino acid relationships requires a multidisciplinary toolkit, ranging from molecular biology reagents to advanced computational resources.

Table 2: Key Research Reagent Solutions

| Reagent / Resource | Function / Application | Key Characteristics |

|---|---|---|

| SELEX Kit Systems | Isolation of RNA aptamers with affinity for specific amino acids. | Includes random RNA library, solid-phase amino acid immobilization supports, and reagents for RT-PCR. |

| Ribosome Profiling (Ribo-seq) Kit | Genome-wide snapshot of translating ribosomes. | Includes nuclease for ribosome-protected mRNA fragment generation, and buffers for library prep. |

| Codon Optimization Software (e.g., RiboDecode) | Generative design of mRNA sequences for enhanced translation. | Deep learning framework trained on Ribo-seq data; enables context-aware optimization [8]. |

| Codon Usage Databases (e.g., CoCoPUTs) | Reference data for codon and codon-pair usage tables. | Tissue- and species-specific tables essential for comparative analysis [12]. |

| mRNA Structure Prediction Tools (e.g., RNAfold) | Calculation of minimum free energy (MFE) for mRNA secondary structures. | Differentiable MFE predictors can be integrated into deep learning pipelines [8]. |

| Phylogenetic Analysis Software | Inference of evolutionary relationships and selection pressures. | Used with mutation-selection models to estimate site-specific substitution rates from sequence alignments [13]. |

The evidence for conserved codon-amino acid relationships presents a compelling, if incomplete, picture. The stereochemical hypothesis is strengthened by robust, reproducible data for a specific subset of amino acids, primarily those with large and complex side chains. The persistence of these relationships suggests they provided a foundational scaffold upon which the modern code was built. However, the theory's current predictive power is constrained, as it cannot explain all canonical assignments, particularly those of smaller amino acids. The emerging synergy between empirical biochemistry and advanced computational models like deep learning frameworks is forging a new path forward. These data-driven approaches are already demonstrating remarkable predictive power in practical applications, such as designing highly expressed therapeutic mRNAs, by implicitly capturing the complex evolutionary outcomes of primordial chemical constraints and subsequent selection pressures [8]. Future research that integrates these powerful computational predictions with targeted experimental validation will be crucial for refining our understanding of the genetic code's origin and for fully harnessing its potential in synthetic biology and medicine.

The stereochemical hypothesis of the genetic code posits that codon assignments are not arbitrary but are fundamentally dictated by physicochemical affinities between amino acids and their cognate codons or anticodons [3] [2]. This concept stands in contrast to adaptive or "frozen accident" theories, suggesting the code's structure reflects an ancestral era where direct chemical interactions governed amino acid-nucleotide pairing. This whitepaper examines two critical lines of experimental evidence that challenge and refine this hypothesis: studies involving artificially altered tRNA anticodons and data revealing pervasive non-specific binding in therapeutic antibodies.

Research into these areas reveals a complex reality. The genetic code and modern molecular recognition systems demonstrate a delicate balance between specificity and plasticity. While stereochemistry provides a plausible origin story, contemporary biological function is heavily modulated by evolutionary adaptations, including post-transcriptional tRNA modifications and stringent selection against promiscuous binding. Understanding these challenges is crucial for scientists exploring the fundamental principles of molecular biology and for drug development professionals working to improve the specificity and safety of biologic therapeutics.

The Stereochemical Hypothesis: A Primer and Its Modern Tests

The core of the stereochemical hypothesis, or the "codon-correspondence hypothesis," states that for each amino acid, a coding sequence exists for which it has the strongest association, and this association influenced the genetic code's form and content [3]. This idea predates the code's full elucidation, with early models like Gamow's ‘diamond code’ proposing that amino acids fit into specific pockets bounded by four DNA bases [3]. Modern tests have moved beyond molecular modeling to empirical investigations, primarily focusing on whether interactions between amino acids and longer nucleic acid sequences can recapture the modern code's assignments.

Evidence suggests that initial coding assignments were likely made through interaction with macromolecular RNA-like molecules. Real codons are concentrated in newly selected amino acid binding sites more than in randomized codes, implying that some primordial chemical relationships have survived subsequent evolutionary selection [3]. Specifically, significant stereochemical relationships are retained for at least three amino acids—arginine, isoleucine, and tyrosine—strongly supporting a stereochemical origin for part, but not all, of the code [3]. This partial fidelity indicates that while stereochemistry set the stage, it was not the sole actor in the code's evolution.

Challenge 1: The Complex Role of tRNA Modifications and Anticodon Alterations

The anticodon is the physical key to the genetic code, yet its function is not solely determined by its nucleotide sequence. Post-transcriptional modifications in the anticodon loop profoundly influence translational accuracy, and their experimental alteration reveals a system more complex than simple stereochemical pairing.

Quantitative Effects on Translational Accuracy

Research in E. coli demonstrates that blocking anticodon loop modifications produces two distinct, opposing effects on misreading error frequency, depending on the specific tRNA [14]. The table below summarizes experimental findings from studies where specific modifications were blocked.

Table 1: Impact of Blocking tRNA Anticodon Modifications on Translational Accuracy in E. coli

| tRNA | Modification Blocked | Effect on Misreading Errors | Proposed Mechanism |

|---|---|---|---|

| tRNALeu & tRNAPhe | Not specified (anticodon loop) | Increased errors | Modifications normally help maintain accuracy by ensuring proper cognate codon recognition [14]. |

| tRNAIle & tRNAGly | Not specified (anticodon loop) | Decreased errors | Unmodified tRNAs decode inefficiently ("weak" tRNAs), failing to compete against cognate tRNAs for near-cognate codons, thus reducing misreading [14]. |

| General tRNAs | mnm5s2U (wobble position 34) | Altered decoding range | Traditionally thought to restrict decoding to A (vs. G); can also expand pairing under certain contexts (e.g., cmo5U) [14] [15]. |

| General tRNAs | ms2i6A (position 37, 3' of anticodon) | Affects efficiency & accuracy | Stabilizes the codon-anticodon complex, particularly for weak U36-A1 base pairs; loss reduces decoding efficiency [14] [15]. |

Core Modifications and tRNA Stability

Modifications outside the anticodon loop, in the tRNA core, are equally vital. They are indispensable for maintaining the tRNA's L-shaped three-dimensional structure, which is a prerequisite for accurate function [15]. Key modifications and their structural roles include:

- Pseudouridylation (Ψ), 2′-O-methylation (Gm), and 2-thiolation (s2U): These modifications stabilize the C3'-endo conformation of the ribose and enhance base stacking, thereby increasing the tRNA's thermostability. For example, Ψ55, Ψ40, and Gm18 individually increase the melting temperature of E. coli tRNASer [15].

- Methylations (m5U, m5C): These increase hydrophobicity and base polarizability, reinforcing tertiary interactions like m5U54-m1A58 and G15-m5C48 [15].

- Positively charged methylations (m1A58, m7G46): The introduced positive charge can stabilize interactions with the negatively charged phosphate backbone or form specific base triplets (e.g., C13-G22-m7G46) [15].

Diagram: The Role of tRNA Core Modifications in Structure and Stability

This diagram illustrates how core modifications stabilize the tRNA's tertiary structure. The interaction between the T-loop and D-loop, fortified by modifications like Gm18 and Ψ55, forms the tRNA elbow, while other modifications like m7G46 and the m5U54-m1A58 pair reinforce key tertiary interactions essential for the overall L-shaped architecture [15].

Experimental Protocols for Studying Modified tRNAs

Key methodologies for investigating the role of tRNA modifications include:

- Generation of Modification-Deficient Mutants: In E. coli, specific genes involved in introducing modifications are knocked out (e.g., Δtgt, ΔmnmE, ΔmiaA). The phenotype is validated by analyzing cellular tRNAs via total hydrolysis and High-Performance Liquid Chromatography (HPLC) to confirm the complete absence of the target modification [14].

- In Vivo Misreading Reporter Systems: Plasmid-based systems express reporter genes (e.g., firefly luciferase, β-galactosidase) where a crucial active-site codon is mutated to a near-cognate codon. The error frequency is calculated as the ratio of enzyme activity from the mutant reporter to that from a wild-type codon reporter, providing a sensitive measure of misreading in vivo [14].

- Dual Luciferase High-Throughput Screening (HTS): This assay uses two luciferases expressed from a single plasmid. The firefly luciferase (Fluc) mRNA carries a near-cognate start codon (e.g., UUG), while the Renilla luciferase (Rluc) mRNA with an AUG start codon serves as an internal control. This setup allows for the identification of compounds or conditions that specifically alter the fidelity of start codon selection by measuring the UUG/AUG activity ratio [16].
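
The two ratios described in the list above reduce to simple arithmetic. The following sketch assumes that background-subtracted activity readings are already available. The function names and all numerical values are hypothetical, and the fold-change comparison against an untreated control is an added assumption rather than a step stated in [14] or [16].

```python
def misreading_frequency(mutant_activity, wild_type_activity):
    """Error frequency: activity of the near-cognate mutant reporter over wild type."""
    if wild_type_activity <= 0:
        raise ValueError("wild-type activity must be positive")
    return mutant_activity / wild_type_activity


def uug_aug_ratio(fluc_uug_activity, rluc_aug_activity):
    """Fluc (UUG start) signal normalized to the Rluc (AUG start) internal control."""
    if rluc_aug_activity <= 0:
        raise ValueError("Rluc control activity must be positive")
    return fluc_uug_activity / rluc_aug_activity


def fold_change(treated_ratio, control_ratio):
    """Relative change in the UUG/AUG ratio versus an untreated control (assumed step)."""
    return treated_ratio / control_ratio


if __name__ == "__main__":
    # Made-up readings in arbitrary luminescence units.
    print("misreading frequency:", misreading_frequency(2.0, 1000.0))
    control = uug_aug_ratio(50.0, 1000.0)
    treated = uug_aug_ratio(150.0, 1000.0)
    print("fold change in UUG/AUG ratio:", fold_change(treated, control))
```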

Challenge 2: Non-Specific Binding as a Model for Stereochemical Infidelity

The problem of non-specific binding provides a parallel challenge to the stereochemical hypothesis. If the genetic code originated from strong, specific affinities, why does modern molecular recognition, even in highly evolved systems like therapeutic antibodies, frequently exhibit off-target binding?

Quantitative Evidence from the Therapeutic Antibody Field

Recent empirical assessments of antibody-based drugs reveal that non-specific binding is a pervasive issue, challenging the assumption of absolute specificity in biomolecular interactions [17] [18].

Table 2: Prevalence of Off-Target Binding in Antibody Drug Development

| Pipeline Stage | Molecules Tested | Incidence of Nonspecific Binding | Implications |

|---|---|---|---|

| Lead Candidates | 254 lead molecules | 33% (84 molecules) | A major predictor of attrition in later development stages; highlights need for early screening [17]. |

| Clinically Administered Drugs | 83 drugs (in trials, FDA-approved, or withdrawn) | 18% (15 drugs) | Directly linked to adverse patient events, including severe complications and death [17] [18]. |

| Withdrawn Drugs | Subset of clinically administered drugs | 22% showed nonspecific binding | Off-target binding is a significant contributor to drug safety issues and market withdrawal [17]. |
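
The percentages in the first two rows of the table follow directly from the reported counts. The short check below recomputes them. The helper name `incidence_percent` is illustrative, and the withdrawn-drug row is omitted because its underlying counts are not given.

```python
def incidence_percent(positives, total):
    """Percentage of tested molecules that showed nonspecific binding."""
    return 100.0 * positives / total


lead = incidence_percent(84, 254)      # lead candidates
clinical = incidence_percent(15, 83)   # clinically administered drugs
print(f"lead candidates: {lead:.1f}%, clinical drugs: {clinical:.1f}%")
# prints "lead candidates: 33.1%, clinical drugs: 18.1%"
```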

Experimental Systems for Profiling Specificity

The primary tool for comprehensively assessing antibody specificity is the Membrane Proteome Array (MPA). This platform is a cell-based array representing approximately 6,000 human membrane proteins, each presented in its native structural conformation [17] [19]. The experimental workflow is as follows:

- Expression: Cloned genes for human membrane proteins are individually expressed in cell lines.

- Presentation: The full-length proteins are presented on the surface of live cells, preserving their native folding and post-translational modifications.

- Screening: The antibody therapeutic candidate is applied to the array.

- Detection: Binding to each of the ~6,000 targets is measured, typically using a high-throughput flow cytometry or imaging system.

- Data Analysis: Bioinformatic comparisons and statistical analyses identify off-target interactions, even those with very low affinity [17] [19].

This platform's significance is underscored by its ongoing qualification by the FDA as a Drug Development Tool (DDT), which signals regulatory interest in its use for de-risking drug development [19].

Diagram: Workflow for Antibody Specificity Profiling Using MPA

Synthesis: Interpreting the Evidence and Future Directions

The evidence from both anticodon alterations and non-specific binding studies paints a consistent picture: high-fidelity molecular recognition is a hard-won achievement, not a default state. The stereochemical hypothesis likely explains the initial, weak biases in the primordial code, where simple physicochemical affinities provided a starting point. However, the modern system is the product of extensive evolutionary refinement.

The intrinsic weakness of initial stereochemical interactions is highlighted by the failure of experiments to find strong, specific associations between short oligonucleotides (mono-, di-, or trinucleotides) and amino acids [3]. This suggests that the code was established through interactions with longer, structured RNA molecules, which could provide more complex binding pockets [3]. Furthermore, the pervasive nature of off-target antibody binding demonstrates that even millions of years of evolution cannot fully eradicate promiscuous interactions, underscoring the challenge of achieving perfect specificity.

These findings have direct implications for scientific and industrial research. They argue for the implementation of robust, systematic specificity screening protocols early in development pipelines, such as the use of the MPA for antibodies or comprehensive mutational scanning for tRNA and genetic code engineering.

The Scientist's Toolkit: Key Research Reagents and Solutions

Table 3: Essential Reagents for Studying Coding Specificity

| Reagent / Technology | Core Function | Application in Research |

|---|---|---|

| Membrane Proteome Array (MPA) | Profiles antibody binding across ~6,000 native human membrane proteins. | De-risking therapeutic antibody development by identifying off-target interactions; validating specificity claims for regulators [17] [19]. |

| Dual Luciferase Reporter Assays | Quantifies translational fidelity in vivo by measuring initiation/readthrough at near-cognate codons. | High-throughput screening for factors (e.g., compounds, tRNA mutations) that alter the accuracy of start codon selection or stop codon readthrough [16]. |

| tRNA Modification-Deficient Mutants | Bacterial/yeast strains with knocked-out genes for specific tRNA modification enzymes (e.g., miaA, mnmE, tgt). | Investigating the functional role of individual tRNA modifications in translational efficiency, accuracy, and cellular fitness [14]. |

| Misreading Reporter Plasmids | Plasmid vectors encoding reporter enzymes (e.g., luciferase, β-galactosidase) with defined near-cognate codons. | Sensitive measurement of amino acid misincorporation and translational error frequencies under different genetic or chemical conditions [14] [20]. |

| Liquid Chromatography-Mass Spectrometry (LC-MS/MS) | Precisely identifies and quantifies peptides and their variants with high sensitivity. | Detecting low-level stop codon readthrough events and identifying the specific misincorporated amino acids in recombinant proteins [20]. |

Investigations into artificially altered anticodons and non-specific binding force a nuanced interpretation of the stereochemical hypothesis. The genetic code's structure shows evidence of its stereochemical origins, but its high fidelity in modern biology is the result of evolutionary optimization that has layered sophisticated control mechanisms, like tRNA modification, atop primordial interactions. Similarly, the widespread off-target binding observed in therapeutic antibodies serves as a powerful model of stereochemical infidelity, demonstrating the constant evolutionary pressure against promiscuity. For researchers, this underscores that achieving and verifying specificity—whether in understanding the primordial code or in developing a safe drug—requires confronting and directly testing for error and promiscuity at every step.

From Theory to Tool: Computational and Experimental Methods for Stereochemical Analysis

The stereochemical hypothesis of codon assignments posits that the genetic code's structure originated from direct chemical interactions between amino acids and nucleotides or their precursors. This theory suggests that the canonical code preserves a molecular record of these primordial affinities, where amino acids with similar physicochemical properties are assigned to similar codons to minimize the deleterious effects of mutations and translation errors [3]. Unlike adaptive explanations that can only describe relative amino acid positioning, stereochemical explanations propose verifiable, absolute rules governing these assignments. However, a significant historical transition must be explained: modern translation proceeds without direct codon-amino acid interaction, implying that any initial stereochemical relationships were subsequently overlaid by evolutionary optimization [3].

Modern computational simulations provide the critical tools to test this hypothesis and model the code's subsequent evolution and stability. These simulations allow researchers to move beyond theoretical speculation into quantitative, hypothesis-driven testing. By constructing in silico models of primitive code evolution, scientists can evaluate whether stereochemical interactions could have sufficiently shaped the code, quantify the level of optimization achieved, and explore the transition from a chemistry-driven to a biology-driven genetic code. This technical guide explores the core computational methodologies, experimental protocols, and key reagents that empower this research at the intersection of molecular evolution and bioinformatics.

Core Computational Methodologies

Evolutionary Algorithms for Code Optimality Analysis

Evolutionary algorithms, particularly genetic algorithms (GAs), are deployed to search the vast landscape of possible genetic codes and quantitatively assess the optimality of the canonical code. This approach directly tests the "engineering" perspective, which seeks to determine how close the standard code is to a theoretical optimum, in contrast to the "statistical" approach that compares it to random codes [21].

Protocol: Simulated Evolution with a Genetic Algorithm

Define the Fitness Function: The most common metric is error minimization. Calculate the fitness of a genetic code as the mean square (MS) of the change in amino acid properties for all possible single-base mutations, weighted by mutation type and frequency [21].

- Formula: $\text{Fitness} = \sum \left[ \Pr(\text{mutation}) \cdot \Delta(\text{property})^{2} \right]$

- $\Pr(\text{mutation})$ is the probability of a specific point mutation (e.g., transition vs. transversion).

- $\Delta(\text{property})$ is the change in a key physicochemical property (e.g., polar requirement, hydropathy, molecular volume) between the original and substituted amino acid.

Encode the Genetic Code: Represent a hypothetical genetic code as an individual in the GA population.

- Model 1 (Block Permutation): The 64 codons are divided into the 21 blocks (20 amino acids + stop) observed in the standard code. An individual is encoded as a permutation of the 20 amino acids assigned to these fixed blocks [21].

- Model 2 (Codon Reassignment): A more realistic model where codons can be reassigned individually or in small groups, reflecting known biological mechanisms where tRNA anticodon mutations reassign codons to biosynthetically related amino acids [21].

Apply Genetic Operators:

- Crossover: Recombine sections of the genetic code from two parent individuals to create offspring.

- Mutation: Randomly swap the amino acid assignments of a small number of codons.

Run Simulation and Analyze: Evolve a population of codes over many generations. The efficiency of the canonical code is then evaluated using the percentage distance minimization (p.d.m.) metric [21]:

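- Formula: $\text{p.d.m.} = \dfrac{\Delta_{\text{mean}} - \Delta_{\text{code}}}{\Delta_{\text{mean}} - \Delta_{\text{low}}}$

- $\Delta_{\text{code}}$ is the error value of the canonical code.

- $\Delta_{\text{mean}}$ is the average error of random codes.

- $\Delta_{\text{low}}$ is the best error value found by the GA.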

This method has revealed that the canonical genetic code is significantly optimized but not globally optimal, achieving an estimated 68% minimization of polarity distance, leaving room for improvement from an engineering standpoint [21].
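
The error-minimization fitness and the p.d.m. metric from the protocol above can be sketched in a few lines. This is a minimal illustration under several assumptions, not the implementation of [21]: the Kyte-Doolittle hydropathy index stands in for the amino acid property, substitutions to or from stop codons are skipped, transition and transversion weights default to equal values, and the best of a batch of randomly block-permuted codes (Model 1) stands in for the GA optimum $\Delta_{\text{low}}$. All function names are hypothetical.

```python
import random
from itertools import product

BASES = "UCAG"
AMINO_ACIDS = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
STANDARD_CODE = {"".join(c): aa for c, aa in zip(product(BASES, repeat=3), AMINO_ACIDS)}

# Kyte-Doolittle hydropathy index, used here as the example property.
HYDROPATHY = {
    "A": 1.8, "R": -4.5, "N": -3.5, "D": -3.5, "C": 2.5, "Q": -3.5, "E": -3.5,
    "G": -0.4, "H": -3.2, "I": 4.5, "L": 3.8, "K": -3.9, "M": 1.9, "F": 2.8,
    "P": -1.6, "S": -0.8, "T": -0.7, "W": -0.9, "Y": -1.3, "V": 4.2,
}
TRANSITIONS = {("U", "C"), ("C", "U"), ("A", "G"), ("G", "A")}


def ms_error(code, prop=HYDROPATHY, w_transition=1.0, w_transversion=1.0):
    """Weighted mean square property change over all single-base substitutions."""
    weighted_sum = total_weight = 0.0
    for codon, aa in code.items():
        if aa == "*":
            continue  # assumption: mutations from stop codons are ignored
        for pos, new_base in product(range(3), BASES):
            if new_base == codon[pos]:
                continue
            new_aa = code[codon[:pos] + new_base + codon[pos + 1:]]
            if new_aa == "*":
                continue  # assumption: mutations to stop codons are ignored
            is_transition = (codon[pos], new_base) in TRANSITIONS
            w = w_transition if is_transition else w_transversion
            weighted_sum += w * (prop[aa] - prop[new_aa]) ** 2
            total_weight += w
    return weighted_sum / total_weight


def permuted_code(rng):
    """Model 1: shuffle the 20 amino acids among the fixed codon blocks; stops stay put."""
    aas = sorted(set(AMINO_ACIDS) - {"*"})
    shuffled = aas[:]
    rng.shuffle(shuffled)
    mapping = dict(zip(aas, shuffled))
    mapping["*"] = "*"
    return {codon: mapping[aa] for codon, aa in STANDARD_CODE.items()}


def pdm(delta_mean, delta_code, delta_low):
    """Percentage distance minimization, returned as a fraction."""
    return (delta_mean - delta_code) / (delta_mean - delta_low)


if __name__ == "__main__":
    rng = random.Random(0)
    random_errors = [ms_error(permuted_code(rng)) for _ in range(1000)]
    delta_code = ms_error(STANDARD_CODE)
    delta_mean = sum(random_errors) / len(random_errors)
    delta_low = min(random_errors)  # stand-in for the GA optimum, not a GA result
    print(f"canonical: {delta_code:.3f}  random mean: {delta_mean:.3f}  best random: {delta_low:.3f}")
    print(f"p.d.m. (relative to best random code): {pdm(delta_mean, delta_code, delta_low):.2f}")
```

Because `delta_low` comes from random sampling rather than a search, the resulting p.d.m. is not comparable to the 68% figure reported in [21]; a GA would typically find lower-error codes and therefore a smaller p.d.m. for the canonical code.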

Deep Learning for Context-Aware Codon Optimization

While evolutionary algorithms study the past, deep learning models like RiboDecode represent the state-of-the-art for understanding and engineering codon usage in the modern era. These models learn the complex relationship between mRNA codon sequences and their translation levels directly from large-scale experimental data, moving beyond simplistic rule-based optimization [8].

Protocol: mRNA Optimization with RiboDecode

- Data Acquisition and Preprocessing: Train the model on a massive corpus of ribosome profiling (Ribo-seq) and RNA sequencing (RNA-seq) data. Ribo-seq provides a snapshot of ribosome positions, yielding Reads Per Kilobase per Million (RPKM) as a measure of translation level [8].

- Model Architecture: Implement a deep neural network that takes three inputs:

- Codon Sequence: The mRNA sequence as a series of codons.

- mRNA Abundance: Derived from RNA-seq data.

- Cellular Context: Gene expression profiles of the specific cell type or tissue.

- Joint Optimization: The model is trained to predict translation levels from these joint inputs, allowing it to capture context-specific translation dynamics [8].

- Sequence Generation: Use an optimization algorithm (e.g., gradient ascent via activation maximization) to iteratively adjust the codon distribution of an input sequence. A synonymous codon regularizer ensures the encoded amino acid sequence remains unchanged, exploring the space of synonymous sequences to maximize the predicted fitness score [8].

- Multi-Objective Fitness: The final fitness score can be tuned to optimize for translation, stability, or both:

Fitness = (1 - w) * Translation_Score + w * MFE_Score, where w is a weighting parameter and MFE (minimum free energy) is a proxy for mRNA structural stability [8].
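The weighted objective and the synonymous constraint can be illustrated with a short sketch. It does not reproduce RiboDecode's model or its regularizer. The scores passed in are assumed to be already on comparable scales, which the source does not specify, and `combined_fitness`, `translate`, and `is_synonymous` are hypothetical helper names.

```python
from itertools import product

BASES = "TCAG"
AA_STRING = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
STANDARD_CODE = {"".join(c): aa for c, aa in zip(product(BASES, repeat=3), AA_STRING)}


def combined_fitness(translation_score, mfe_score, w):
    """Fitness = (1 - w) * Translation_Score + w * MFE_Score, with w in [0, 1]."""
    if not 0.0 <= w <= 1.0:
        raise ValueError("w must lie between 0 and 1")
    return (1.0 - w) * translation_score + w * mfe_score


def translate(seq):
    """Translate a coding sequence (DNA or RNA alphabet) into one-letter amino acids."""
    seq = seq.upper().replace("U", "T")
    if len(seq) % 3 != 0:
        raise ValueError("sequence length must be a multiple of 3")
    return "".join(STANDARD_CODE[seq[i:i + 3]] for i in range(0, len(seq), 3))


def is_synonymous(seq_a, seq_b):
    """True if both sequences encode the same protein (the constraint a regularizer must keep)."""
    return translate(seq_a) == translate(seq_b)


if __name__ == "__main__":
    # w = 0 uses the translation score only; w = 1 uses the MFE score only.
    for w in (0.0, 0.5, 1.0):
        print(w, combined_fitness(translation_score=0.8, mfe_score=0.2, w=w))
    print(is_synonymous("GCUAAA", "GCGAAG"))
```

Any candidate sequence proposed by an optimizer can be passed through `is_synonymous` against the input sequence before it is scored.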

Table 1: Key Parameters in the RiboDecode Optimization Framework

| Parameter | Description | Impact on Output |
|---|---|---|
| Weighting Parameter (w) | Balances focus on translation efficiency vs. mRNA stability. | w = 0: Optimizes translation only. 0 < w < 1: Joint optimization. w = 1: Optimizes MFE/stability only. |
| Cellular Context Input | Gene expression profile of the target cell type. | Enables context-aware optimization, producing sequences ideal for specific tissues or therapeutic targets. |
| Synonymous Codon Regularizer | Constraint ensuring amino acid sequence remains identical. | Allows exploration of the vast space of synonymous mRNA sequences without altering the protein product. |

Quantitative Analysis of Codon Usage and Stability

Computational analyses across diverse biological systems consistently show that codon usage is non-random and shaped by evolutionary pressures. Table 2 summarizes representative data from recent studies for comparison.

Table 2: Comparative Codon Usage Analysis Across Biological Systems

| Organism/Virus | Key Metric | Value | Primary Evolutionary Driver | Functional Implication |
|---|---|---|---|---|
| Pseudorabies Virus (gB gene) | Effective Number of Codons (ENC) [22] | 27.94 ± 0.1528 | Natural Selection | Maintains balance between functional expression and host immune evasion. |
| Seoul Virus (All segments) | ENC / Nucleotide Composition [23] | >35 / Varies by segment | Natural Selection & Mutational Pressure | S segment shows strongest host adaptation; L segment the weakest. |
| Saccharomyces cerevisiae (Yeast) | Codon Stability Coefficient (CSC) [24] | Correlates with mRNA half-life | Codon Optimality | Optimal codons enhance mRNA stability; non-optimal codons promote decay. |

The link between codon usage and molecular stability is a cornerstone of modern analysis. Research in yeast has definitively established codon optimality as a major determinant of mRNA stability. Stable mRNAs are enriched in optimal codons (e.g., GCT for Alanine), which are decoded rapidly by abundant tRNAs, leading to efficient ribosome translocation and transcript stabilization. In contrast, unstable mRNAs are dominated by non-optimal codons (e.g., GCG or GCA for Alanine), which slow ribosome elongation and trigger mRNA decay pathways [24]. This principle, first elucidated in model organisms, now underpins the optimization of therapeutic mRNAs.
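To make the codon stability coefficient concrete, the sketch below treats CSC as the Pearson correlation between a codon's frequency in each transcript and that transcript's half-life, which is how the metric is commonly described. The three toy transcripts and their half-lives are invented purely to exercise the code and carry no biological meaning; the function names are hypothetical.

```python
from math import sqrt


def codon_frequency(cds, codon):
    """Fraction of codons in a coding sequence that equal `codon`."""
    usable = len(cds) - len(cds) % 3
    codons = [cds[i:i + 3] for i in range(0, usable, 3)]
    if not codons:
        raise ValueError("coding sequence is shorter than one codon")
    return codons.count(codon) / len(codons)


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        raise ValueError("correlation is undefined for constant input")
    return cov / sqrt(var_x * var_y)


def codon_stability_coefficient(codon, transcripts):
    """Correlate a codon's per-transcript frequency with mRNA half-life.

    `transcripts` is a list of (coding_sequence, half_life) pairs.
    """
    freqs = [codon_frequency(cds, codon) for cds, _ in transcripts]
    half_lives = [half_life for _, half_life in transcripts]
    return pearson(freqs, half_lives)


if __name__ == "__main__":
    # Invented toy data: alanine-only sequences with made-up half-lives (minutes).
    toy = [("GCTGCTGCTGCG", 30.0), ("GCTGCTGCGGCG", 20.0), ("GCTGCGGCGGCG", 10.0)]
    for codon in ("GCT", "GCG"):
        print(codon, round(codon_stability_coefficient(codon, toy), 3))
```

A positive coefficient marks a codon enriched in stable transcripts, and a negative one marks a codon enriched in unstable transcripts.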

The Scientist's Toolkit: Essential Research Reagents & Solutions

The following table details key reagents and computational tools essential for conducting research in code evolution and stability.

Table 3: Research Reagent Solutions for Computational and Experimental Studies

| Item Name | Function / Application | Technical Notes |
|---|---|---|
| Ribo-seq Library Kit | Provides a genome-wide snapshot of translating ribosomes. | Critical for generating training data for deep learning models like RiboDecode. Data is expressed as RPKM. |
| IDT Codon Optimization Tool | Web-based tool for optimizing gene sequences for heterologous expression. | Uses codon usage tables and algorithms to enhance protein expression in target hosts [25]. |
| Gene Synthesis Service | Production of physically synthesized DNA sequences designed in silico. | Essential for experimentally validating computationally optimized or evolved genetic codes [25]. |
| Codon Usage Database | Repository of codon usage tables for a wide range of organisms. | Used for calculating indices like CAI and for designing recoded sequences [23]. |
| RDP4 Software | Detects recombination signals in genetic sequence datasets. | Important for pre-analysis filtering in evolutionary studies, as recombination can confound phylogenetic and codon usage analyses [23]. |

Experimental Workflow Visualization

The following diagram illustrates the integrated computational and experimental workflow for modeling code evolution and optimizing mRNA stability, as discussed in this guide.

Figure: Workflow for Code Evolution and mRNA Optimization

This workflow demonstrates the iterative process of generating hypotheses computationally and validating them experimentally, a paradigm central to modern biological research.

Stereochemistry-aware generative models represent a paradigm shift in computational drug discovery, moving beyond traditional 2D molecular representations to incorporate the critical third dimension of molecular structure. This technical review examines the fundamental algorithms, implementation protocols, and performance benchmarks of these advanced models, contextualizing their development within the broader framework of the stereochemical hypothesis of genetic code origins. By directly encoding chiral information, these models demonstrate superior performance in generating biologically relevant compounds with optimized binding characteristics, offering significant potential to accelerate therapeutic development for stereosensitive targets. The integration of stereochemical principles from molecular biology into artificial intelligence platforms establishes a new frontier in rational drug design.

The stereochemical hypothesis of genetic code emergence posits that primordial codon-amino acid assignments were influenced by direct physicochemical interactions between nucleotides and specific amino acids [26]. This theory suggests that the foundation of biological information processing rests upon stereochemical complementarity—the precise three-dimensional fitting of molecular structures. Modern drug discovery has increasingly recognized that this same principle governs drug-target interactions, where the chiral orientation of functional groups determines pharmacological activity.

Stereochemistry-aware generative models represent the computational evolution of this biological principle. Whereas conventional molecular generation algorithms often treat compounds as topological graphs or simplified strings, stereochemistry-aware implementations explicitly incorporate three-dimensional spatial arrangements, including tetrahedral chiral centers and E/Z isomerism [27] [28]. This approach mirrors the fidelity of biological systems, where enantiomers exhibit dramatically different behaviors in chiral environments such as enzyme active sites and receptor binding pockets.

The integration of stereochemical constraints addresses a fundamental limitation in AI-driven drug discovery: the generation of theoretically valid compounds that are synthetically inaccessible or biologically inactive due to incorrect stereochemistry. By embedding chiral information directly into the generation process, these models bridge the gap between computational prediction and experimental realization, potentially reducing the iterative cycles between virtual screening and wet-lab validation.

Computational Frameworks and Algorithmic Approaches

Foundational Architectures

Stereochemistry-aware generative models build upon several core algorithmic frameworks, each adapted to incorporate three-dimensional molecular information:

String-Based Representations with Stereochemical Extensions: These approaches extend traditional SMILES (Simplified Molecular Input Line Entry System) representations by incorporating chiral descriptors, using the @ and @@ symbols to specify the handedness of tetrahedral centers [28]. The generative algorithms, typically based on recurrent neural networks or transformers, learn to apply these descriptors according to chemical rules, ensuring stereochemical validity during sequence generation.

Graph Neural Networks with Geometric Features: These architectures represent molecules as graphs with nodes (atoms) and edges (bonds), augmented with three-dimensional coordinate information and chiral tags. Message-passing mechanisms propagate spatial information across the molecular structure, enabling the model to learn the complex relationships between atomic arrangement and biological activity [27].

3D-Convolutional Neural Networks for Volumetric Representation: These models represent molecular structures as 3D grids of electron density or atomic properties, allowing the direct learning of steric interactions and shape complementarity with target proteins. This approach naturally captures chiral information through the spatial distribution of atomic features.
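For the string-based representation described above, the sketch below shows how stereochemical markers end up as distinct tokens in a model's vocabulary. It is a simplified regular-expression tokenizer written for illustration and does not cover the full SMILES grammar. The example strings are the two enantiomers of alanine and the E and Z isomers of 1,2-difluoroethene; `tokenize`, `chiral_tokens`, and `build_vocab` are hypothetical names.

```python
import re

# Simplified SMILES token pattern: bracket atoms (which carry @ / @@), two-letter
# halogens, single-letter atoms, ring-closure digits, and bond/branch symbols.
SMILES_TOKEN = re.compile(r"\[[^\]]+\]|Br|Cl|[A-Za-z]|%\d{2}|\d|[()=#+\-/\\.:~*$]")


def tokenize(smiles):
    """Split a SMILES string into tokens, failing loudly on unrecognized characters."""
    tokens = SMILES_TOKEN.findall(smiles)
    if "".join(tokens) != smiles:
        raise ValueError(f"could not tokenize {smiles!r}")
    return tokens


def chiral_tokens(smiles):
    """Tokens that carry a tetrahedral chirality mark (@ or @@)."""
    return [tok for tok in tokenize(smiles) if "@" in tok]


def build_vocab(smiles_list):
    """Sorted vocabulary of every token seen in the corpus."""
    return sorted({tok for smi in smiles_list for tok in tokenize(smi)})


if __name__ == "__main__":
    alanine_enantiomers = ["C[C@H](N)C(=O)O", "C[C@@H](N)C(=O)O"]
    difluoroethene_isomers = ["F/C=C/F", "F/C=C\\F"]  # E and Z
    for smi in alanine_enantiomers + difluoroethene_isomers:
        print(smi, tokenize(smi))
    print(build_vocab(alanine_enantiomers + difluoroethene_isomers))
```

Because each enantiomer maps to a different bracket token, a sequence model trained on such tokens can in principle distinguish them, whereas a vocabulary without these tokens cannot.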

Comparative Performance Analysis

Recent benchmarking studies demonstrate the relative strengths and limitations of different stereochemistry-aware approaches across various molecular design tasks:

Table 1: Performance comparison of stereochemistry-aware generative models across key metrics

| Model Architecture | Stereochemical Accuracy (%) | Diversity (Tanimoto Index) | Synthetic Accessibility Score | Target Binding Affinity (pIC50) |
|---|---|---|---|---|
| String-Based (RL) | 98.7 | 0.86 | 3.2 | 7.4 |
| String-Based (GA) | 99.2 | 0.82 | 3.5 | 7.1 |
| Graph Neural Network | 99.8 | 0.91 | 2.9 | 7.8 |
| 3D-Convolutional | 99.5 | 0.79 | 4.1 | 8.2 |
| Stereochemistry-Unaware Baseline | 62.3 | 0.88 | 3.7 | 6.3 |

The performance data show that all stereochemistry-aware models far outperform the stereochemistry-unaware baseline in chiral accuracy (98.7-99.8% versus 62.3%), but they trade off against one another on the other metrics. Graph Neural Networks achieve the best overall balance, leading in stereochemical accuracy (99.8%) and diversity (0.91), while the 3D-convolutional model reaches the highest binding affinity (pIC50 8.2, versus 7.8 for the Graph Neural Network) [27].

Table 2: Task-specific performance advantages of different stereochemistry-aware models

| Design Task | Optimal Model Architecture | Key Performance Advantage |
|---|---|---|
| Scaffold Hopping | Graph Neural Network | Superior shape similarity recognition |
| Natural Product Analogs | String-Based (GA) | Better synthetic accessibility |
| PPI Inhibitors | 3D-Convolutional | Superior surface complementarity |
| CNS-Targeted Compounds | String-Based (RL) | Optimized blood-brain barrier penetration |
| Enzyme Inhibitors | Graph Neural Network | Precise catalytic pocket matching |

Experimental Implementation Protocols

Model Training Methodology

Implementing stereochemistry-aware generative models requires careful attention to data preparation, architecture configuration, and training procedures:

Data Curation and Preprocessing

- Source chiral molecular structures from authoritative databases (ChEMBL, PubChem, ZINC)

- Apply strict filtering for stereochemical accuracy and unambiguous assignment

- Standardize stereochemical descriptors using IUPAC conventions

- Augment data through enumerated stereoisomers with consistent annotation

- Partition datasets ensuring stereochemical diversity across training/validation splits

Architecture Configuration for String-Based Models

- Implement embedding layers with chiral token support

- Configure recurrent layers with 512-1024 units for complex pattern recognition

- Incorporate attention mechanisms to capture long-range stereochemical dependencies

- Add output layers with softmax activation over extended vocabulary including chiral symbols

Training Procedure

- Initialize with transfer learning from stereochemistry-unaware models when possible

- Use teacher forcing with scheduled sampling during training

- Apply gradient clipping with norm set to 1.0 to ensure training stability

- Implement early stopping based on chiral validity metrics on validation set

- Regularize using dropout rates between 0.2 and 0.5 to prevent overfitting

The training objective function typically combines standard likelihood maximization with stereochemical validity constraints, enforcing proper chiral center representation throughout the generation process [28].
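Three pieces of the training procedure above can be sketched without any deep learning framework: gradient clipping by norm, early stopping on a chiral validity metric, and a penalized objective. The penalty form (negative log-likelihood plus a weighted fraction of stereochemically invalid samples) is an assumption made for illustration, since the source does not give the exact objective. All names and default values other than the clipping norm of 1.0 are hypothetical.

```python
from math import sqrt


def clip_by_global_norm(grads, max_norm=1.0):
    """Rescale a flat list of gradient values so their L2 norm is at most max_norm."""
    norm = sqrt(sum(g * g for g in grads))
    if norm <= max_norm:
        return list(grads)
    scale = max_norm / norm
    return [g * scale for g in grads]


def penalized_loss(nll, invalid_fraction, penalty_weight=1.0):
    """Assumed objective: likelihood term plus a penalty on invalid stereochemistry."""
    if not 0.0 <= invalid_fraction <= 1.0:
        raise ValueError("invalid_fraction must lie between 0 and 1")
    return nll + penalty_weight * invalid_fraction


class EarlyStopping:
    """Stop when validation chiral validity has not improved for `patience` epochs."""

    def __init__(self, patience=5, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best = float("-inf")
        self.bad_epochs = 0

    def step(self, chiral_validity):
        """Record one epoch's metric; return True when training should stop."""
        if chiral_validity > self.best + self.min_delta:
            self.best = chiral_validity
            self.bad_epochs = 0
        else:
            self.bad_epochs += 1
        return self.bad_epochs >= self.patience


if __name__ == "__main__":
    print(clip_by_global_norm([3.0, 4.0], max_norm=1.0))
    stopper = EarlyStopping(patience=2)
    for epoch, validity in enumerate([0.90, 0.93, 0.93, 0.92]):
        if stopper.step(validity):
            print(f"stopping after epoch {epoch}")
            break
```

In a real training loop the same logic would typically be delegated to the framework's own clipping and callback utilities.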

Generation and Optimization Workflows

The molecular generation process in stereochemistry-aware models follows a structured workflow:

Figure 1: Stereochemistry-aware molecular generation workflow with chiral validation checks at each step.

For lead optimization applications, the generation process incorporates structure-activity relationship constraints:

Figure 2: Stereochemistry-aware lead optimization workflow with multi-objective selection.

Successful implementation of stereochemistry-aware generative models requires both computational tools and experimental validation resources: