Beyond the Known: Strategies to Overcome Database Selection Bias in Resistome Analysis

Abstract

Antimicrobial resistance (AMR) poses a critical global health threat, making accurate resistome characterization essential. However, database selection bias—where the choice of a specific Antibiotic Resistance Gene (ARG) database significantly influences the composition, diversity, and risk profile of the identified resistome—presents a major challenge to data comparability and biological interpretation. This article explores the foundational sources of this bias, from database curation philosophies to inherent methodological limitations. It provides a methodological guide to selecting and applying diverse databases and analytical pipelines like ARGs-OAP v3.0. The piece further offers troubleshooting strategies to mitigate bias and introduces validation frameworks for cross-database benchmarking. Aimed at researchers and bioinformaticians, this review synthesizes current knowledge to empower more robust, reproducible, and clinically relevant resistome studies.

The Hidden Variable: How Database Architecture Shapes Your Resistome

Defining Database Selection Bias in Resistome Profiling

Database selection bias occurs when the chosen reference database systematically misrepresents the true diversity and abundance of antibiotic resistance genes (ARGs) in a sample due to its inherent composition and design. This bias significantly impacts resistome profiling outcomes, as databases vary in scope, curation methods, and target sequences. When analyzing metagenomic samples, your results are constrained by the database's content; genes not included or underrepresented in your selected database will not be identified, leading to incomplete or skewed ecological conclusions [1].

The core of the problem lies in the fact that different databases have variable nucleotide or amino acid sequence similarity thresholds for defining ARGs, target different resistance mechanisms, and possess uneven taxonomic coverage. This variability precludes direct comparisons across studies using different database resources and can lead to false positives or negatives. Furthermore, databases are often populated with clinically relevant ARGs, creating a systematic underrepresentation of environmental and latent resistance elements, which form a vast reservoir of potential future resistance threats [2] [1].

Technical Support Center

Troubleshooting Guides

Guide 1: Diagnosing Database Selection Bias in Your Resistome Study

Problem: Your resistome profiling results show unexpected low diversity, fail to identify known resistance mechanisms, or are inconsistent with phenotypic resistance data.

Solution:

- Step 1: Cross-Database Validation. Process your raw sequencing data through at least two different, well-established ARG databases (e.g., CARD, ResFinder, MEGARes, ARG-ANNOT). A significant discrepancy in the number and type of ARGs recovered strongly indicates database-specific bias [1] [3].

- Step 2: Functional Enrichment Check. For resistomes derived from non-clinical environments (e.g., soil, sewage), compare your results against databases containing functional metagenomics (FG)-derived ARGs. If your primary database is clinically oriented, a failure to detect many FG ARGs suggests a bias against the latent environmental resistome [2].

- Step 3: Positive Control Analysis. Use a mock microbial community with a known, validated ARG composition as a positive control. If your database and pipeline fail to recover a subset of these known genes, this reveals gaps in database coverage or issues with detection parameters [4].
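
The cross-database check in Step 1 can be sketched as a set comparison. This is a minimal illustration, not a published pipeline: the gene lists are made up, and it assumes gene names have already been harmonised across databases (in practice the same gene is often named differently in each resource).

```python
def jaccard(a, b):
    """Jaccard similarity of two collections (1.0 when both are empty)."""
    a, b = set(a), set(b)
    union = a | b
    return len(a & b) / len(union) if union else 1.0


def compare_databases(hits_by_db):
    """Return pairwise Jaccard similarities and the genes unique to each database."""
    names = sorted(hits_by_db)
    pairwise = {}
    for i, first in enumerate(names):
        for second in names[i + 1:]:
            pairwise[(first, second)] = jaccard(hits_by_db[first], hits_by_db[second])
    unique = {}
    for name in names:
        others = set().union(*(set(hits_by_db[o]) for o in names if o != name))
        unique[name] = set(hits_by_db[name]) - others
    return pairwise, unique


# Hypothetical, already-harmonised ARG calls for one sample (illustrative only).
example_hits = {
    "CARD": {"tetM", "blaTEM", "ermB", "vanA"},
    "ResFinder": {"tetM", "blaTEM", "sul1"},
}
pairwise, unique = compare_databases(example_hits)
print(pairwise)  # similarity per database pair
print(unique)    # genes reported by only one database
```

A low similarity or a long list of database-specific genes is a prompt to inspect those genes, not proof of bias on its own.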

Guide 2: Mitigating Bias Through Probe and Capture Design

Problem: Targeted capture methods are not detecting rare or divergent ARG variants in complex metagenomes, leading to an underestimation of resistome diversity.

Solution:

- Step 1: Probe Design Optimization. Design custom capture probes based on a comprehensive database like the Comprehensive Antibiotic Resistance Database (CARD). Use 80-mer nucleotide probes that tile across the protein homolog model of curated ARGs. This design allows for the identification of new alleles with up to 15% sequence divergence from the reference [4].

- Step 2: Assess Probe Coverage. Before experimentation, verify that your probe set provides high length coverage for target genes (aim for >80% for most genes). Be aware that genes with low probe coverage (e.g., <5%) will be poorly captured and likely underrepresented in your results [4].

- Step 3: Validate with Control Genomes. Test the sensitivity and selectivity of your probe set on genomic DNA from multidrug-resistant bacteria with known resistance genotypes. Successful capture should reproducibly yield high numbers of on-target reads with extensive coverage, even for genes with low probe coverage [4].
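
The coverage check in Step 2 can be approximated before ordering probes. The sketch below assumes probe positions are available as 0-based, end-exclusive intervals on each target gene; the function names and example genes are hypothetical.

```python
def probe_length_coverage(gene_length, probe_intervals):
    """Fraction of a gene covered by at least one probe.

    probe_intervals: iterable of (start, end) pairs, 0-based, end-exclusive.
    """
    if gene_length <= 0:
        raise ValueError("gene_length must be positive")
    covered = 0
    current_end = 0
    for start, end in sorted(probe_intervals):
        start = max(start, current_end, 0)  # skip bases already counted
        end = min(end, gene_length)         # ignore overhang past the gene
        if end > start:
            covered += end - start
            current_end = end
    return covered / gene_length


def flag_low_coverage(genes, threshold=0.80):
    """Return {gene: coverage} for genes whose coverage is below the threshold."""
    flagged = {}
    for name, (length, intervals) in genes.items():
        coverage = probe_length_coverage(length, intervals)
        if coverage < threshold:
            flagged[name] = coverage
    return flagged


# Hypothetical genes: (length in bp, probe intervals).
example_genes = {
    "geneA": (300, [(0, 80), (40, 120), (200, 280)]),
    "geneB": (160, [(0, 80), (80, 160)]),
}
low = flag_low_coverage(example_genes)
print(low)  # genes below the 80% length-coverage target
```

The 0.80 default mirrors the >80% target mentioned above; adjust it to your own design criteria.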

Frequently Asked Questions (FAQs)

FAQ 1: What are the primary sources of database selection bias in resistome profiling?

- Variable ARG Definitions: Databases use different thresholds for defining an ARG, with nucleotide sequence identity cutoffs ranging from 80% to 95% and amino acid identity as low as 80%. This dramatically changes which sequences are annotated as resistance genes [1].

- Focus on Acquired Resistome: Many databases are skewed toward acquired ARGs that have mobilized and are known to transfer between species. This overlooks the vast reservoir of latent ARGs identified through functional metagenomics, which are more strongly associated with native bacterial taxa and show different global distribution patterns [2].

- Inconsistent Functional Annotation: The functional categories (e.g., drug class, resistance mechanism) and the classification schemes assigned to ARGs vary considerably between different reference databases, making functional profiling dependent on the database chosen [5].

FAQ 2: How does database choice affect the interpretation of a "healthy" gut resistome?

The definition of a healthy or baseline gut resistome is highly dependent on database selection. Studies show that the number of ARGs profiled in healthy populations can range from 12 to over 2,000 depending on the database and methodology used. Furthermore, the marker genes selected to represent resistance to a given antibiotic class are not consistent across studies. This variability precludes the establishment of a universal healthy resistome baseline and makes cross-study comparisons unreliable [1].

FAQ 3: What computational tools can help identify and correct for database selection bias?

- ResistoXplorer: This web-based tool supports the integrative analysis of resistome profiles generated from different pipelines and databases. It allows for visual and statistical comparison, helping to identify outliers that may be database-specific artifacts [5].

- Causal Inference Methods: Techniques like propensity score matching (PSM) and confounding adjustment can be applied to genotype-phenotype AMR data. These methods can help control for confounding biases introduced by uneven species representation and spatiotemporal sampling in the source data used to build prediction models [3].

- PanRes Database: Utilizing a consolidated database like PanRes, which integrates multiple ARG collections (including ResFinder and functional metagenomics collections), can provide a broader and less biased view of the resistome compared to any single database [2].

Quantitative Data on Database Variability

Table 1: Impact of Database Construction on Resistome Study Outcomes

| Variable | Range Observed in Literature | Impact on Resistome Profiling |
|---|---|---|
| Number of ARGs Profiled | 12 to 2,000+ genes [1] | Determines the upper limit of detectable resistance diversity in a sample. |
| Sequence Similarity Threshold | 80% (amino acid) to 95% (nucleotide) identity [1] | Affects stringency; lower thresholds may detect novel genes but increase false positives. |
| Acquired vs. FG ARG Focus | FG ARGs can be more abundant and evenly distributed globally than acquired ARGs [2] | Influences ecological conclusions about resistome distribution and drivers. |
| Probe Coverage per Gene | 3.17% to 100% (average 96.2%) [4] | In targeted capture, low coverage leads to failure in detecting specific gene variants. |

Table 2: Key Research Reagent Solutions for Bias-Aware Resistome Profiling

| Reagent / Resource | Function in Resistome Profiling | Role in Mitigating Selection Bias |
|---|---|---|
| Comprehensive Antibiotic Resistance Database (CARD) | A curated resource of ARGs and their associated phenotypes [4]. | Provides a rigorously curated set of reference sequences for probe design and in silico prediction. |
| Custom Capture Probes (e.g., myBaits) | Synthesized biotin-labeled RNA baits for hybrid capture of target ARG sequences [4]. | Enables sensitive detection of both rare and common resistance elements in complex metagenomes, bypassing PCR amplification bias. |
| PanRes Database | A consolidated database integrating acquired ARGs from ResFinder and FG ARGs from ResFinderFG [2]. | Reduces single-database bias by providing a more comprehensive view of known and latent resistance elements. |
| ResistoXplorer Tool | A web-based platform for visual, statistical, and exploratory analysis of resistome data [5]. | Facilitates cross-database comparison and integrative analysis, helping researchers identify and interpret potential biases. |
| SmartChip Real-Time PCR System | High-throughput qPCR system for flexible screening of many ARG targets [6]. | Allows for customizable, direct quantification of a user-defined set of ARGs, independent of sequencing database choices. |

Experimental Protocols

Protocol 1: Targeted Capture for Bias-Reduced Resistome Enrichment

This protocol is adapted from a method designed to sensitively identify both rare and common resistance elements in complex metagenomic samples where ARGs can represent less than 0.1% of the DNA [4].

- Probe Design: Reference a comprehensive database like CARD to design a set of 80-mer nucleotide probes that tile across the sequences of interest. The example study used 37,826 probes to target over 2,000 ARG sequences.

- Library Preparation: Prepare DNA libraries from your metagenomic or genomic samples. The method has been validated as insensitive to different library preparation kits (e.g., NEBNext Ultra II vs. modified Meyer and Kircher) and various insert sizes (e.g., 396 bp to 1,257 bp).

- Hybridization and Capture: Incubate the biotin-labeled probes with the denatured DNA libraries to allow for hybridization. The probes are designed with overlap and are tolerant of sequence divergence to capture new alleles.

- Streptavidin-Bead Separation: Capture the probe-target hybrids using streptavidin-coated magnetic beads and perform a series of washes to remove non-specifically bound DNA.

- Elution and Sequencing: Elute the captured DNA, which is now enriched for ARG sequences. Pool the enriched libraries and sequence on a next-generation sequencing platform.

- Validation: The method should reproducibly yield more on-target reads and greater length of coverage than shotgun sequencing, and should identify ARGs that shotgun sequencing alone failed to detect.
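
The probe design step can be illustrated with a simple tiling function. This is a sketch under stated assumptions: the 80-nt probe length comes from the protocol above, but the 20-nt step between probe starts is an arbitrary illustrative choice, and real designs also filter probes for GC content, repeats, and off-target matches.

```python
def tile_probes(sequence, probe_length=80, step=20):
    """Return (start, probe) tuples tiling a sequence with overlapping probes.

    The step size is a hypothetical parameter; smaller steps give denser tiling.
    """
    if probe_length <= 0 or step <= 0:
        raise ValueError("probe_length and step must be positive")
    n = len(sequence)
    if n < probe_length:
        return []  # target too short for a full-length probe
    starts = list(range(0, n - probe_length + 1, step))
    if starts[-1] != n - probe_length:
        starts.append(n - probe_length)  # make sure the 3' end is covered
    return [(s, sequence[s:s + probe_length]) for s in starts]


# Toy 200-nt target sequence (not a real ARG).
toy_target = "ACGT" * 50
probes = tile_probes(toy_target)
print(len(probes), "probes; first starts at", probes[0][0], "last at", probes[-1][0])
```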

Protocol 2: Assessing Putative Bias in Machine Learning-Based AMR Prediction

This protocol outlines a causal inference approach to evaluate and adjust for bias in models that predict AMR phenotypes from genotypic data, addressing confounding from non-random sampling [3].

- Data Preparation: Compile a dataset of bacterial genomes with paired genotype (e.g., k-mer signatures) and AMR phenotype data. Include metadata on putative confounders: species, country, and year of collection.

- Causal Assumption Modeling: Define a Directed Acyclic Graph (DAG) to formalize assumptions about how confounders (species, location, time) influence both genetic traits (exposure) and AMR (outcome).

- Propensity Score Estimation: For each genetic signature, estimate its propensity score—the probability of being present given the confounders (species, location, year)—using a regression technique.

- Data Rebalancing: Use propensity score matching (PSM) or inverse probability weighting to create a dataset where the distribution of confounders is balanced between exposed (gene present) and unexposed (gene absent) groups.

- Model Training and Evaluation: Train machine learning models (e.g., Random Forests, Boosted Logistic Regression) on both the crude and the bias-adjusted datasets. Evaluate performance on a carefully constructed external test set that features known distribution shifts (e.g., recent genomes from a single country).
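
The propensity estimation and rebalancing steps can be sketched with the standard library alone. Note the swap: the protocol describes a regression-based propensity score, whereas this toy version estimates the propensity as the frequency of the genetic signature within each (species, country, year) stratum and then applies inverse probability weighting. The record fields and example data are hypothetical.

```python
from collections import defaultdict


def stratum_propensities(records):
    """Estimate P(gene present | confounders) as the within-stratum frequency."""
    counts = defaultdict(lambda: [0, 0])  # stratum -> [n_present, n_total]
    for r in records:
        key = (r["species"], r["country"], r["year"])
        counts[key][0] += r["gene_present"]
        counts[key][1] += 1
    return {key: present / total for key, (present, total) in counts.items()}


def inverse_probability_weights(records, clip=(0.05, 0.95)):
    """Return one weight per record: 1/p if exposed, 1/(1-p) if unexposed."""
    propensities = stratum_propensities(records)
    low, high = clip
    weights = []
    for r in records:
        p = propensities[(r["species"], r["country"], r["year"])]
        p = min(max(p, low), high)  # clip to avoid extreme weights
        weights.append(1.0 / p if r["gene_present"] else 1.0 / (1.0 - p))
    return weights


def weighted_resistance_rate(records, weights, exposed):
    """Weighted fraction of resistant isolates among exposed or unexposed records."""
    num = den = 0.0
    for r, w in zip(records, weights):
        if bool(r["gene_present"]) == exposed:
            num += w * r["resistant"]
            den += w
    return num / den if den else float("nan")


# Hypothetical isolates: the gene is common in one stratum and rare in the other.
example_records = (
    [{"species": "E. coli", "country": "A", "year": 2020, "gene_present": 1, "resistant": 1}] * 3
    + [{"species": "E. coli", "country": "A", "year": 2020, "gene_present": 0, "resistant": 0}]
    + [{"species": "K. pneumoniae", "country": "B", "year": 2021, "gene_present": 1, "resistant": 1}]
    + [{"species": "K. pneumoniae", "country": "B", "year": 2021, "gene_present": 0, "resistant": 1}] * 3
)
weights = inverse_probability_weights(example_records)
rate_exposed = weighted_resistance_rate(example_records, weights, exposed=True)
rate_unexposed = weighted_resistance_rate(example_records, weights, exposed=False)
print(rate_exposed, rate_unexposed)
```

After weighting, each stratum contributes equally to the exposed and unexposed groups, which is the balance the rebalancing step aims for. Weights like these could then be passed as sample weights when training the models in the final step.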

Workflow and Relationship Visualizations

Diagram: Resistome Analysis Bias Identification Workflow

Welcome to the Technical Support Center

This resource provides troubleshooting guides and FAQs for researchers navigating the critical decision between manual curation and consolidated databases, specifically within the context of resistome studies. The following information is framed by the overarching thesis: Addressing database selection bias is fundamental to generating accurate, comparable, and meaningful data in antimicrobial resistance (AMR) research.

Frequently Asked Questions

FAQ 1: What is the core practical difference between using a manually curated database and a large, consolidated database for resistome analysis?

The core difference lies in the trade-off between precision and recall.

- Manually Curated Databases are characterized by high precision. Each data entry, such as the association between an antibiotic resistance gene (ARG) and its function, is validated by human experts. This dramatically reduces false positives but may result in a smaller, less comprehensive dataset [7] [8].

- Large, Consolidated Databases (often automatically assembled from multiple sources) prioritize high recall. They capture a much wider array of ARG sequences, which can improve the detection of novel genes, but at the cost of lower precision and a higher potential for false positives due to unvalidated or incorrect entries [8].

Table 1: Comparison of Curation Approaches Based on a Chemical Dictionary Study

| Curation Metric | Manually Curated Dictionary | Automated/Consolidated Dictionary |
|---|---|---|
| Precision | 0.87 (High) | 0.67 (Medium) |
| Recall | 0.19 (Low) | 0.40 (Medium) |
| F-score | 0.30 | 0.50 |
| Dictionary Size | ~80,000 terms | ~300,000 terms |
| Key Strength | Accuracy, reliability, reduced false positives | Comprehensiveness, discovery of novel elements |

Source: Adapted from a study comparing the ChemSpider and Chemlist dictionaries [8].
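
The F-score row combines precision and recall as their harmonic mean. The short sketch below recomputes it from the rounded precision and recall values in the table; small differences from the reported F-scores are expected because the inputs are rounded.

```python
def f_score(precision, recall):
    """Harmonic mean of precision and recall (the F1 score)."""
    if precision + recall == 0:
        return 0.0
    return 2 * precision * recall / (precision + recall)


manual = f_score(0.87, 0.19)     # manually curated dictionary
automated = f_score(0.67, 0.40)  # automated/consolidated dictionary
print(round(manual, 2), round(automated, 2))
```

Because the harmonic mean is dominated by the smaller of the two values, the low recall of the manually curated dictionary pulls its F-score down despite its high precision.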

FAQ 2: How does database choice directly impact the geographical conclusions of a global resistome study?

Your database selection can fundamentally shape your understanding of how ARGs are distributed across the globe.

- Acquired ARG Databases (tracking mobilized, clinically relevant genes) tend to show strong geographical clustering. Patterns will clearly distinguish between world regions, as these genes are heavily influenced by human activity and local antibiotic use [2].

- Functional Metagenomic (FG) ARG Databases (including latent environmental resistance) often show a more even global distribution. These genes are more tightly linked to underlying bacterial taxonomy and environmental reservoirs than to human-driven dispersal [2].

Table 2: Impact of Database Selection on Global Resistome Patterns

| Analysis Type | Findings with Acquired ARG Databases | Findings with FG ARG Databases |
|---|---|---|
| Global Distribution | Distinct geographical patterns; most abundant in Sub-Saharan Africa, Middle East & North Africa, and South Asia [2] | More evenly dispersed globally [2] |
| Distance-Decay Effect | Significant at both national and regional scales [2] | Significant only at the national level; not at inter-regional scales [2] |
| Primary Driver of Pattern | Human activities, antibiotic use, and regional factors [2] | Association with specific bacterial taxa and environmental niches [2] |

FAQ 3: What are the specific methodological inconsistencies in resistome studies that can lead to selection bias?

A systematic review of human gut resistome studies identified multiple sources of heterogeneity that preclude direct comparison between studies [1]:

- Variable ARG Profiling: The number of ARGs profiled in different studies can range from as few as 12 to over 2,000.

- Lack of Standardized Healthy Baseline: The definition of a "healthy" gut resistome for baseline comparison is inconsistent, with antibiotic-free periods prior to sampling defined as 3, 6, or 12 months.

- Inconsistent Bioinformatics Thresholds: The sequence similarity thresholds used to identify ARGs are arbitrary, varying from 80% amino acid identity to 95% nucleotide sequence identity.

- Different Marker Genes: Studies use disparate genes to represent resistance to the same antibiotic class.

- Lack of Phenotypic Validation: The function of bioinformatically detected resistance genes is rarely validated in the laboratory.

Troubleshooting Guides

Problem: My resistome analysis yields an unmanageably high number of false-positive ARG hits.

Solution: Implement a multi-step filtering and disambiguation pipeline.

- Step 1: Apply Rule-Based Filtering. Remove common English words or non-specific biological terms that are incorrectly annotated as ARGs in consolidated databases [8].

- Step 2: Utilize Disambiguation Rules. Create rules to handle context, for example, by checking if a gene is located near known mobile genetic elements or within a credible genomic context [1] [8].

- Step 3: Cross-Reference with a Manually Curated Database. Use a high-precision, manually curated database as a secondary filter to verify your final list of high-confidence ARGs [7].

Problem: I am concerned that my chosen database is missing novel or latent resistance genes.

Solution: Supplement your analysis with data from functional metagenomics (FG) studies.

- Action 1: Integrate FG ARG databases (e.g., ResFinderFG) into your analysis. These databases contain genes identified through phenotypic selection in heterologous hosts, capturing a reservoir of resistance that is often missed by sequence-based databases focused on acquired genes [2].

- Action 2: Recognize that the acquired resistome (mobilized genes) and the latent FG resistome are shaped by different ecological forces. Using both provides a more complete picture of current and future resistance threats [2].

Problem: My institutional review board (IRB) has strict policies based on HHS subparts B, C, and D, but my NSF-funded resistome research uses only de-identified sewage samples.

Solution: Clarify the applicable federal regulations for your funding source.

- Action: The National Science Foundation (NSF) has not adopted subparts B, C, and D of the HHS regulations. Only the Common Rule (Subpart A) is necessarily applicable to NSF-funded projects. If your institution's IRB applies a more restrictive interpretation, you are advised to consult with your NSF program officer for guidance [9].

Experimental Protocols

Protocol 1: Systematic Review and Meta-Analysis of Resistome Studies

This methodology is used to identify and quantify biases and heterogeneity across existing research [1].

- Bibliographic Search: Develop a search strategy using MEDLINE/PubMed, EMBASE, Web of Science, and Scopus. Use Medical Subject Headings (MeSH) and title/abstract keywords (e.g., "resistome," "gut microbiota," "antibiotic resistance genes").

- Study Selection: Apply pre-defined inclusion/exclusion criteria. For example, include primary studies on healthy populations and exclude studies on critically ill patients. Use a tool like the PRISMA flowchart to document screening.

- Data Extraction: Extract key data from full-text articles: first author, publication year, sample size, ARGs profiled, laboratory methods, bioinformatic pipelines, and reference databases used.

- Quality Assessment: Rate study quality using a standardized tool like the Newcastle-Ottawa Scale for observational studies, focusing on cohort representativeness and ascertainment of antibiotic exposure.

Protocol 2: Functional Metagenomics for Latent Resistome Discovery

This protocol describes the workflow for identifying novel, functional ARGs from environmental or clinical samples [2].

- DNA Extraction: Isolate total community DNA from the sample matrix (e.g., sewage, soil, feces).

- Metagenomic Library Construction: Fragment the DNA and clone large fragments (~40 kb) into a fosmid or bacterial artificial chromosome (BAC) vector.

- Transformation and Phenotypic Selection: Transform the library into a susceptible host bacterium (e.g., E. coli) and plate onto media containing an antibiotic at a concentration that inhibits the untransformed host, so that only clones carrying a functional resistance gene grow.

- Sequence and Annotate Resistance Clones: Isolate DNA from resistant colonies and sequence the inserted DNA fragment. Annotate the open reading frames (ORFs) and compare the sequence to known ARG databases to identify novel resistance genes.

Functional Metagenomics Workflow for Novel ARG Discovery

The Scientist's Toolkit

Table 3: Key Research Reagents and Databases for Resistome Studies

| Tool Name | Type | Function in Research |
|---|---|---|
| ResFinder | Database | A reference database for acquired antimicrobial resistance genes in bacterial pathogens [2]. |
| ResFinderFG | Database | A companion to ResFinder containing ARGs identified through functional metagenomics, capturing the latent resistome [2]. |
| PanRes | Database | A consolidated database combining multiple ARG collections, including those from ResFinder and functional metagenomic studies [2]. |
| ChemSpider | Database | An example of a manually curated chemical database, demonstrating the high-precision approach to name-structure relationships [8]. |
| mOTUs | Software Tool | A tool for profiling microbial taxonomic abundance from metagenomic sequencing data, used to correlate ARGs with bacterial hosts [2]. |
| Procrustes Analysis | Statistical Method | A multivariate analysis used to assess the congruence between two data matrices (e.g., resistome composition vs. bacteriome composition) [2]. |

Antimicrobial resistance (AMR) is a global health crisis, and metagenomics has become a pivotal tool for surveilling antibiotic resistance genes (ARGs) in diverse environments. However, your research can be significantly skewed by a critical choice: whether to analyze the acquired resistome or the functional metagenomic (FG) resistome. These two approaches probe different parts of the resistome and can lead to divergent conclusions about the abundance, diversity, and spread of ARGs.

The acquired resistome typically refers to known, often mobilized, resistance genes that have been identified in pathogens and are cataloged in databases like ResFinder. In contrast, the FG resistome is identified through functional metagenomics, a method that involves cloning environmental DNA into a host bacterium and selecting for resistance phenotypes, thereby discovering novel and latent ARGs without prior sequence knowledge [2] [1].

This guide will help you troubleshoot the specific challenges that arise from this dichotomy, ensuring your resistome studies are accurately interpreted.

Key Concepts FAQ

Q1: What is the fundamental difference between the acquired and FG resistomes in terms of their biological significance?

- Acquired Resistome: Comprises genes that are often associated with mobile genetic elements (MGEs) like plasmids, integrons, or transposons. These genes have a demonstrated potential for horizontal gene transfer between bacteria and are directly linked to clinical resistance problems [10] [11].

- FG Resistome: Represents a broader collection of resistance genes, including "latent" or "cryptic" genes. These may be intrinsic to certain bacterial taxa, not yet mobilized, or not expressed in their native hosts. The FG resistome is thus considered a reservoir of potential future resistance threats [2] [10].

Q2: My analysis shows that FG ARGs are more evenly distributed across the globe than acquired ARGs. Is this a technical artifact or a real biological pattern?

This is likely a real biological pattern. A landmark study analyzing 1240 sewage samples from 111 countries found that the FG resistome was more evenly dispersed globally, while the acquired resistome followed distinct geographical patterns [2]. This suggests that the latent resistance potential (FG ARGs) is widespread, but the mobilization and establishment of these genes into pathogens (acquired ARGs) are influenced by local factors such as antibiotic use, sanitation, and socioeconomic conditions.

Q3: Why do the acquired and FG resistomes show different associations with bacterial taxonomy?

Network analyses have confirmed that FG ARGs show stronger associations with specific bacterial taxa than acquired ARGs do [2]. This is because many FG ARGs are intrinsic, chromosome-encoded genes of these taxa. Acquired ARGs, by virtue of their mobility, can be found in a wider variety of genomic backgrounds and bacterial hosts, leading to a weaker signal with any specific taxon.

Q4: From a One-Health perspective, which resistome is more important to monitor?

Both are critical, but for different reasons. The acquired resistome helps you track the current, immediate public health threat [11]. Monitoring the FG resistome allows for a proactive surveillance strategy, identifying potential resistance threats before they mobilize and enter pathogenic bacteria [2]. A comprehensive One-Health approach should integrate both perspectives to address both current and future risks.

Troubleshooting Guide: Addressing Common Experimental Challenges

Challenge 1: Inconsistent or Non-Comparable ARG Profiles

- Problem: Different studies report vastly different ARG abundances and diversities, making synthesis of results difficult.

- Root Cause: A major source of bias is the lack of standardization in resistome studies. This includes the selection of different target genes and arbitrary thresholds for sequence similarity to define a "hit" (e.g., using 80% vs. 95% nucleotide identity) [1].

- Solution:

  - Pre-defined Databases: Clearly state the ARG database used (e.g., CARD, ResFinder, SARG) and its version.

  - Consistent Cut-offs: Justify and consistently apply alignment parameters (e.g., ≥80% sequence identity over ≥75% of the gene length is a common benchmark) [12] [1].

  - Report Methodology in Detail: In your methods, explicitly detail the bioinformatics pipeline, including the tools and parameters used for read-based and/or assembly-based analysis.
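
Applying the cut-offs consistently is easiest when they live in one function. The sketch below uses the ≥80% identity and ≥75% gene-length coverage benchmark mentioned above; the hit dictionaries and their field names are hypothetical and would need to be mapped from your aligner's actual output.

```python
def passes_cutoffs(hit, min_identity=80.0, min_coverage=75.0):
    """Check one alignment hit against identity and gene-coverage thresholds.

    hit: dict with percent identity, aligned length, and reference gene length.
    """
    coverage = 100.0 * hit["aligned_length"] / hit["gene_length"]
    return hit["identity"] >= min_identity and coverage >= min_coverage


# Hypothetical alignment hits (illustrative values only).
alignment_hits = [
    {"gene": "tetM", "identity": 98.5, "aligned_length": 1900, "gene_length": 1920},
    {"gene": "blaTEM", "identity": 85.0, "aligned_length": 400, "gene_length": 861},
    {"gene": "ermB", "identity": 72.0, "aligned_length": 730, "gene_length": 738},
]
kept = [h["gene"] for h in alignment_hits if passes_cutoffs(h)]
print(kept)  # hits that satisfy both thresholds
```

Re-running the same hits with a different pair of thresholds is a quick way to see how sensitive a result is to the chosen cut-offs.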

Challenge 2: Loss of Genomic Context for ARGs During Assembly

- Problem: Assembled contigs containing ARGs are too short, breaking apart at the gene itself. This prevents you from determining the bacterial host or the mobility potential of the ARG.

- Root Cause: ARGs are often flanked by repetitive regions, insertion sequences, and other MGEs. When the same ARG exists in multiple genomic contexts within a sample, the assembly graph becomes highly complex and assemblers tend to fragment the output [13].

- Solution:

  - Assembler Choice: Benchmark assemblers for your specific sample type. One study found that the transcriptome assembler Trinity can sometimes recover longer ARG-containing contigs in complex metagenomes, while metaSPAdes is also a good option [13].

  - Complement with Read-Based Quantification: For accurate ARG abundance estimates, always complement assembly-based results with a read-based approach where reads are mapped directly to an ARG database. Assembly fragmentation can lead to significant underestimation of abundance [13].

  - Utilize Long-Read Sequencing: When possible, incorporate Oxford Nanopore or PacBio long-read sequencing. Long reads can span repetitive regions and help link ARGs to their host genome and nearby MGEs [14].

Challenge 3: Difficulty Linking ARGs to Their Bacterial Hosts

- Problem: You've identified numerous ARGs in your metagenome, but you don't know which bacteria carry them.

- Root Cause: Standard metagenomic assembly and binning struggle to associate small, mobile elements like plasmids (which often carry ARGs) with their host bacterial chromosome.

- Solution:

  - Advanced Long-Read Techniques: Leverage novel methods that use DNA methylation profiling on Oxford Nanopore data. Tools like Nanomotif can exploit the fact that a plasmid and its host chromosome share a common methylation signature, allowing you to bin plasmids and other MGEs with their bacterial hosts [14].

  - Strain-Resolved Metagenomics: Apply haplotype phasing tools to long-read data to resolve strain-level variation and precisely identify which strain carries a specific resistance mutation or ARG [14].

Challenge 4: Contamination in Low-Biomass Samples

- Problem: Detection of ARGs or bacterial taxa that are contaminants from reagents or the lab environment, rather than the sample itself.

- Root Cause: Laboratory reagents, extraction kits, and polymerase enzymes can contain trace amounts of microbial DNA, which is amplified during sequencing and can be misinterpreted as a true signal [15].

- Solution:

  - Include Control Samples: Always run negative control samples (e.g., blank extractions with no sample) alongside your experimental samples.

  - Batch Reagents: Use the same batch of extraction kits and reagents for an entire project to characterize the consistent "kitome" background [15].

  - Bioinformatic Subtraction: Subtract the ARG profiles and taxonomic reads found in your negative controls from your experimental samples.
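
The bioinformatic subtraction step can be sketched as follows. This is a deliberately simple version that treats the highest count seen for each gene in any negative control as background. It is one possible convention rather than a standard method, and the counts are made up.

```python
def subtract_controls(sample_counts, control_profiles):
    """Subtract the per-gene maximum seen in negative controls from a sample.

    Genes whose count does not exceed the background are dropped.
    """
    background = {}
    for profile in control_profiles:
        for gene, count in profile.items():
            background[gene] = max(background.get(gene, 0), count)
    cleaned = {}
    for gene, count in sample_counts.items():
        remaining = count - background.get(gene, 0)
        if remaining > 0:
            cleaned[gene] = remaining
    return cleaned


# Hypothetical read counts per ARG.
sample = {"tetM": 120, "blaTEM": 15, "sul1": 4}
controls = [{"blaTEM": 10, "sul1": 6}, {"blaTEM": 12}]
cleaned = subtract_controls(sample, controls)
print(cleaned)
```

Raw-count subtraction assumes samples and controls were sequenced to comparable depth; if not, normalise counts first.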

Experimental Protocols & Workflows

Protocol 1: A Standard Workflow for Comparative Resistome Analysis

This workflow is adapted from the global sewage study that directly compared acquired and FG ARGs [2].

- Sample Collection & DNA Extraction: Collect environmental samples (e.g., sewage, soil) in triplicate. Use a standardized, high-yield DNA extraction kit. Include negative extraction controls.

- Shotgun Metagenomic Sequencing: Sequence on an Illumina platform to a sufficient depth (e.g., >20 million reads per sample).

- Bioinformatic Processing:

  - Quality Control: Trim adapters and filter low-quality reads with tools like Trimmomatic or Fastp.

  - Resistome Profiling:

    - Acquired Resistome: Map quality-filtered reads to a curated database of acquired ARGs (e.g., ResFinder) using BWA or Bowtie2.

    - FG Resistome: Map reads to a database of functionally confirmed ARGs (e.g., ResFinderFG).

  - Taxonomic Profiling: Map reads to a marker gene database (e.g., mOTUs) or a whole-genome database to characterize the bacterial community.

- Data Analysis:

  - Abundance & Diversity: Calculate normalized abundances (e.g., reads per kilobase per million reads - RPKM) and alpha/beta diversity indices for both resistomes.

  - Statistical Correlation: Perform network analysis (e.g., SparCC) to link ARG subtypes to bacterial taxa. Use PERMANOVA to test the influence of geography on resistome structure.
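
The abundance and diversity calculations can be sketched as below. RPKM follows its usual definition; the Shannon index is used here as one example of an alpha diversity measure, since the protocol does not specify which indices to use. The numbers are illustrative.

```python
import math


def rpkm(read_count, gene_length_bp, total_reads):
    """Reads per kilobase of gene per million sequenced reads."""
    return read_count / (gene_length_bp / 1_000) / (total_reads / 1_000_000)


def shannon_index(abundances):
    """Shannon diversity (natural log) of a list of non-negative abundances."""
    total = sum(abundances)
    if total <= 0:
        return 0.0
    return -sum((a / total) * math.log(a / total) for a in abundances if a > 0)


# Hypothetical sample: 500 reads on a 1,000 bp ARG out of 20 million reads.
example_rpkm = rpkm(500, 1_000, 20_000_000)
# Four equally abundant ARGs give the maximum diversity for four genes, ln(4).
example_shannon = shannon_index([10.0, 10.0, 10.0, 10.0])
print(example_rpkm, example_shannon)
```

Computing these separately for the acquired and FG resistomes keeps the two profiles comparable within a sample.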

Protocol 2: Advanced Contextualization of ARGs using Long Reads

This protocol leverages long-read sequencing to solve the challenge of genomic context [14].

- Long-Read Library Preparation & Sequencing: Extract high-molecular-weight DNA. Prepare a library for Oxford Nanopore Technologies (ONT) sequencing from native DNA (without PCR amplification) to preserve methylation signals.

- Hybrid Assembly: Assemble the long reads using a tool like Flye. Polish the assembly with the ONT reads using Medaka and, if Illumina short reads are available, polish further with Pilon.

- ARG and MGE Annotation: Annotate the assembled contigs for ARGs (using CARD, etc.) and MGEs (using tools like MobileElementFinder).

- Host Linking via Methylation:

  - Use Nanomotif to detect DNA methylation motifs and signals from the raw ONT reads.

  - Bin contigs (plasmids and chromosomes) that share highly correlated methylation patterns.

- Strain-Level Haplotyping: Use a tool like StrainGE to phase sequence variants and reconstruct haplotypes, allowing you to detect low-frequency, resistance-conferring point mutations within a population.

Data Presentation: Quantitative Comparisons

Table 1: Key Comparative Characteristics of Acquired vs. Functional Metagenomic (FG) Resistomes. Data synthesized from a global sewage metagenomic study [2].

| Characteristic | Acquired Resistome | Functional Metagenomic (FG) Resistome |

|---|---|---|

| Typical Abundance | Lower and more variable | Higher and more evenly distributed |

| Geographic Pattern | Strong regional clustering (e.g., higher in Sub-Saharan Africa, South Asia) | Weaker regional structure; more uniform globally |

| Association with Bacteriome | Weaker association with bacterial taxonomy | Stronger, more specific association with bacterial taxa |

| Distance-Decay Effect | Significant at national and regional scales | Significant only at the national scale; no effect globally |

| Representation in Databases | Well-represented in curated DBs (e.g., ResFinder) | Represented in specialized DBs (e.g., ResFinderFG) |

| Implied Risk | Direct, current threat (mobilized genes) | Latent, future threat (reservoir of genes) |

Table 2: Essential Research Reagent Solutions for Resistome Studies

| Research Reagent / Tool | Function / Application | Key Considerations |

|---|---|---|

| ResFinder / CARD | Database for profiling the acquired resistome. | Provides a curated collection of known ARGs from pathogens. May miss novel/divergent genes. |

| ResFinderFG | Database for profiling the FG resistome. | Contains ARGs identified through functional selection; crucial for studying the latent resistome [2]. |

| metaSPAdes / MEGAHIT | Metagenome assemblers for short-read data. | metaSPAdes often recovers better context; MEGAHIT can produce fragmented contigs around ARGs [13]. |

| Flye | Assembler for long-read (ONT/PacBio) data. | Essential for producing contiguous assemblies that can span entire ARG contexts and MGEs [14]. |

| Nanomotif | Bioinformatics tool for methylation-based binning. | Links plasmids to their bacterial hosts in metagenomic assemblies using DNA modification signals [14]. |

| StrainGE | Haplotype phasing tool. | Resolves strain-level variation and genotypes directly from metagenomic data [14]. |

Visualization of Methodologies and Concepts

Diagram 1: Comparative Resistome Analysis Workflow

Diagram 2: ARG Host Linking with Long-Read Technologies

The Impact of Database Scope and Classification Hierarchies

This resource provides troubleshooting guides and FAQs for researchers addressing database selection bias in resistome studies. The content is designed to help you identify and overcome common pitfalls related to database scope and classification hierarchies that can impact your research outcomes.

Frequently Asked Questions (FAQs)

How does the scope of different ARG databases contribute to selection bias in resistome comparisons?

Answer: Direct comparisons between resistome studies are often invalid due to significant variations in the number and type of Antibiotic Resistance Gene (ARG) targets each database uses. These differences in scope introduce substantial selection bias.

- Variable Target Range: Published studies profile widely varying numbers of ARGs, from as few as 12 to 13,218 different genes [1]. This variation precludes direct comparison.

- Lack of Standardized Markers: Different studies and databases use disparate marker genes to represent resistance to the same antibiotic class [1]. There is no international consensus on which genes should be used to define resistance for a given class.

- Inconsistent Healthy Baseline Definitions: The definition of a "healthy" gut resistome baseline, used for comparison, is inconsistent across studies. The period subjects must be free from antibiotic exposure prior to sampling ranges from 3 to 12 months [1].

Table 1: Factors Contributing to Selection Bias in Resistome Database Scope

| Factor | Impact on Bias | Example from Literature |

|---|---|---|

| Number of ARG Targets | Studies using more targets report a larger, more diverse resistome. | ARG counts range from 12 to 13,218 across studies [1]. |

| Choice of Marker Genes | Resistance for an antibiotic class may be reported based on different genetic determinants. | Disparate genes were selected to represent resistance to a given class [1]. |

| Threshold for Homology | Looser thresholds may overestimate functional ARGs. | Sequence similarity thresholds are arbitrary (80% amino acid to 95% nucleotide identity) [1]. |

What are the methodological consequences of inconsistent classification hierarchies in ARG databases?

Answer: Inconsistent classification hierarchies affect how ARGs are categorized and grouped, leading to challenges in tracking resistance reservoirs and their dissemination.

- Non-Uniform Taxonomy: There is no universal standard for classification taxonomy. Hierarchies are organization-driven, capturing compliance needs, promised features, or business criteria rather than a consistent biological framework [16]. Rather than defining an ad hoc scheme of your own, rely on a taxonomy provided by your institution or consortium [16].

- Functional Validation Gaps: Phenotypic resistance is frequently assumed based on sequence similarity beyond an arbitrary threshold, without laboratory validation [1]. A single amino acid substitution can alter drug target affinity, making phenotype questionable when sequence similarity is as low as 80% [1].

- Lack of Contextual Data: The genetic context of ARGs (e.g., promoter and repressor sequences) is not consistently reported. This information is crucial for predicting bacterial hosts, gene expression, and horizontal transfer potential [1].

How can I troubleshoot errors related to database connectivity and performance during large-scale resistome analysis?

Answer: Performance issues during analysis can often be traced to database connectivity, inefficient queries, or resource constraints.

- Check Connectivity: Slow responses or inaccessible databases can stem from network issues or hardware failures. Ensure your database server is reachable before proceeding [17].

- Identify Slow Queries: Use your database's monitoring tools to pinpoint the slowest queries. Focus on whether the slowdown affects a specific query pattern or all queries are performing worse than their historical average [17].

- Analyze Execution Plans: Use execution plans to understand how the database engine executes a query. Look for steps with the longest duration, highest cost, or that read the most rows. Tools like Metis Query Analyzer for Postgres or explainmysql for MySQL can visualize these plans [17].

- Manage Memory and CPU: High CPU usage can indicate poorly written or frequent queries. Monitor memory usage, particularly the Buffer Cache hit ratio. A low ratio means the database is frequently reading from the slower disk instead of memory [17].

- Use Connection Pooling: For databases like PostgreSQL, having hundreds of concurrent connections can consume excessive memory. Using a connection pooler like pgBouncer is recommended to manage this [17].

What steps can I take to ensure my chosen database's classification system aligns with my research objectives and minimizes bias?

Answer: Proactively defining your scope and understanding your database's taxonomy are critical steps to align tools with research goals and mitigate bias.

- Define Your Classification Scope: Clearly identify which data assets are in-scope and out-of-scope. Be granular; for a tabular data store, classify sensitivity at the table or even column level. Don't forget to classify related components like backups or cached data [16].

- Understand the Taxonomy: Secure a well-defined taxonomy from your database provider or institutional standard. All research team members must have a shared understanding of the structure, nomenclature, and definition of sensitivity levels or classification labels [16].

- Take Inventory of Data Stores: For existing systems, inventory all data stores and components in scope. For new systems, create a data flow diagram and perform an initial categorization based on taxonomy definitions [16].

- Apply Taxonomy for Querying: Implement a consistent classification schema with metadata to apply taxonomy labels. This standardization ensures accurate reporting and minimizes variation. Use built-in classification features of platforms like Azure SQL or specialized tools like Microsoft Purview where available [16].

Experimental Protocols for Mitigating Bias

Protocol 1: Cross-Database Validation for Resistome Profiling

Objective: To assess and correct for selection bias introduced by the scope of a single ARG database.

Methodology:

- Sample Processing: Extract metagenomic DNA from fecal specimens according to your standardized laboratory protocol.

- Sequencing & Assembly: Perform high-throughput sequencing and de novo assembly of contigs.

- Multi-Database Analysis: Query the assembled contigs against at least three distinct ARG databases (e.g., ResFinder, ARDB, MERGEM). Use each database's recommended BLAST settings and identity thresholds.

- Data Integration: Compile the results, noting ARGs that are unique to each database and those that are consistently identified across all.

- Functional Validation: For ARGs of high interest (e.g., those with clinical relevance or unique to one database), clone and express them in a competent heterologous bacterial host (e.g., E. coli) to validate phenotypic resistance using broth microdilution methods [1].
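The Data Integration step above reduces to set operations once gene names have been harmonized across databases. The sketch below assumes that harmonization has already happened. The function name `partition_hits` and the example hit lists are hypothetical and do not reflect real database output.

```python
def partition_hits(hits_by_db: dict[str, set[str]]) -> tuple[set[str], dict[str, set[str]]]:
    """Return (ARGs reported by every database, ARGs unique to each database)."""
    if not hits_by_db:
        raise ValueError("at least one database result is required")
    shared = set.intersection(*hits_by_db.values())
    unique = {}
    for db, hits in hits_by_db.items():
        # Everything any *other* database reported.
        others = set().union(*(h for name, h in hits_by_db.items() if name != db))
        unique[db] = hits - others
    return shared, unique


# Hypothetical, already name-harmonized hits for one sample.
hits = {
    "ResFinder": {"blaTEM-1", "tet(M)", "sul1"},
    "ARDB": {"blaTEM-1", "tet(M)", "vanA"},
    "MERGEM": {"blaTEM-1", "sul1", "ermB"},
}
shared, unique = partition_hits(hits)
print("shared:", sorted(shared))
print("unique:", {db: sorted(genes) for db, genes in unique.items()})
```

Genes that appear in only one database's `unique` set are natural candidates for the functional validation step, since their detection depends entirely on that database's scope.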

Protocol 2: Harmonizing Classification Hierarchies for Comparative Analysis

Objective: To enable valid cross-study comparisons by mapping different classification taxonomies to a unified standard.

Methodology:

- Taxonomy Audit: Document the full classification hierarchy (e.g., Class -> Fold -> Superfamily -> Family) for each ARG in your primary database [16] [18].

- Define a Common Framework: Establish a consensus hierarchy within your research group or consortium. This framework should include sensitivity levels, information types, and compliance scopes relevant to your research [16].

- Mapping and Annotation: Manually or programmatically map the existing classifications from your source database to the new consensus framework. Maintain consistency in key/value pairs. Use metadata for this mapping to keep it separate from the primary data [16].

- Validation and Reporting: Generate reports from the harmonized metadata. Conduct regular reviews to maintain classification accuracy, as stale metadata leads to erroneous results [16].
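The Mapping and Annotation step can be illustrated with a lookup table kept separate from the primary records, as the protocol recommends. The class labels, the `CONSENSUS_CLASS` table, and the record fields below are assumptions made for the example, not labels taken from any specific database release.

```python
# Hypothetical source labels mapped to a consensus drug-class vocabulary.
CONSENSUS_CLASS = {
    "beta-lactam": "Beta-lactam",
    "betalactams": "Beta-lactam",
    "beta_lactamase": "Beta-lactam",
    "tetracycline": "Tetracycline",
    "tetracyclines": "Tetracycline",
    "mls": "MLS",
    "macrolide-lincosamide-streptogramin": "MLS",
}


def harmonize(label: str) -> str:
    """Map one source label to the consensus class, or 'Unmapped'."""
    return CONSENSUS_CLASS.get(label.strip().lower(), "Unmapped")


def annotate(records: list[dict]) -> tuple[list[dict], set[str]]:
    """Add a consensus_class field to copies of the records.

    Also returns the source labels that could not be mapped, so they can be
    reviewed manually instead of being silently dropped.
    """
    annotated, unmapped = [], set()
    for rec in records:
        consensus = harmonize(rec["source_class"])
        if consensus == "Unmapped":
            unmapped.add(rec["source_class"])
        annotated.append({**rec, "consensus_class": consensus})
    return annotated, unmapped


records = [
    {"gene": "blaTEM-1", "source_class": "betalactams"},
    {"gene": "tet(M)", "source_class": "Tetracycline"},
    {"gene": "vanA", "source_class": "Glycopeptide"},
]
annotated, unmapped = annotate(records)
print(annotated)
print("needs manual review:", unmapped)
```

Returning the unmapped labels explicitly supports the regular review called for in the Validation and Reporting step.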

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Materials and Tools for Resistome Research

| Item | Function / Explanation |

|---|---|

| High-Throughput Sequencer | Generates the metagenomic data required for culture-independent resistome analysis [1]. |

| ARG Reference Databases | Databases like ResFinder, ARG-ANNOT, and MERGEM are used for BLAST-based identification of resistance genes in sequence data [1]. |

| Competent Heterologous Host | A laboratory strain of E. coli used for functional validation of putative ARGs via cloning and expression experiments [1]. |

| Broth Microdilution Panels | Standardized panels for determining the Minimum Inhibitory Concentration (MIC) of an antibiotic, validating phenotypic resistance [1]. |

| Bioinformatic Pipelines | Custom or published workflows (e.g., using Prodigal, CD-HIT) for sequence assembly, gene prediction, and homology comparison [1]. |

| Classification & Metadata Tools | Tools like Microsoft Purview or custom scripts to apply consistent taxonomy labels to data assets for standardized reporting [16]. |

Workflow and Relationship Diagrams

Diagram: Resistance Database (ResDB) Classification Hierarchy

Diagram: Bias Troubleshooting Workflow

Inherent Biases in Sequencing-Based ARG Detection

Troubleshooting Guides

Why is my ARG detection inconsistent across different bioinformatics tools?

Problem: Variability in ARG detection results when the same dataset is analyzed with different bioinformatics pipelines.

Explanation: Different tools use distinct algorithms, database versions, and detection thresholds, leading to inconsistent ARG identification and abundance estimates. SRST2, for instance, may report distantly related ARGs due to its allowance for reads to map to multiple targets, while KMA and CARD-RGI are more specific but may miss true positives at lower coverages [19].

Solution:

- Standardize your toolkit: Select one primary bioinformatics method and use additional tools for validation.

- Verify detection thresholds: For metagenomic analysis, ensure your target organism has a minimum of 5X isolate genome coverage for reliable ARG detection [19].

- Cross-validate findings: Use multiple tools and compare results, focusing on ARGs identified by more than one method.
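The cross-validation advice above amounts to a simple vote across tools. The following sketch keeps ARGs reported by at least `min_tools` methods. The function name `consensus_args`, the parameter, and the example calls are hypothetical. It also assumes gene names have already been reconciled between tools.

```python
from collections import Counter


def consensus_args(calls_by_tool: dict[str, list[str]], min_tools: int = 2) -> set[str]:
    """Return ARGs reported by at least `min_tools` different tools."""
    counts = Counter(
        gene
        for calls in calls_by_tool.values()
        for gene in set(calls)  # count each tool at most once per gene
    )
    return {gene for gene, n in counts.items() if n >= min_tools}


# Hypothetical calls for the same sample from three tools.
calls = {
    "KMA": ["mcr-1", "blaCTX-M-15"],
    "CARD-RGI": ["mcr-1"],
    "SRST2": ["mcr-1", "blaCTX-M-15", "blaTEM-like"],
}
print(sorted(consensus_args(calls)))               # supported by two or more tools
print(sorted(consensus_args(calls, min_tools=3)))  # supported by all three
```

Raising `min_tools` trades sensitivity for specificity, so the threshold should be reported alongside the results.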

How does database selection impact ARG detection sensitivity?

Problem: The choice of reference database significantly affects which ARGs are detected and how they are classified.

Explanation: Databases vary in scope, curation, and update frequency. Studies have used anywhere from 12 to over 2000 AR genes in their profiling, with different similarity thresholds (80% amino acid identity to 95% nucleotide identity) for defining resistance [1].

Solution:

- Use comprehensive databases: Implement pipelines like ARGem that include extensive ARG and mobile genetic element databases [20].

- Document database versions: Maintain records of database versions and parameters for reproducibility.

- Consider custom databases: For specific research questions, supplement standard databases with custom-curated gene targets.

What causes false positives in ARG detection, and how can I minimize them?

Problem: Incorrect identification of non-ARG sequences as antibiotic resistance genes.

Explanation: False positives can arise from:

- Overly permissive similarity thresholds

- Detection of distantly related gene alleles with unknown function

- Misannotation of housekeeping genes with structural similarities to ARGs

- Tool-specific biases, such as SRST2's tendency to report distantly related ARGs at all coverage levels [19]

Solution:

- Adjust stringency parameters: Implement stricter cutoffs for sequence similarity and coverage.

- Validate functionally: When possible, use phenotypic validation to confirm resistance function [1].

- Leverage multiple tools: Use tools with different algorithmic approaches to consensus-validate findings.

Why do I detect different ARG profiles in similar sample types?

Problem: Inconsistent resistome profiles across technically comparable samples.

Explanation: Multiple factors contribute to this variability:

- Geographical patterns: Acquired ARGs show strong geographical clustering, with different abundance patterns across world regions [2].

- Bacterial community composition: The underlying bacteriome strongly influences both acquired and functionally identified ARG profiles [2].

- Limits of detection: ARG detection requires sufficient sequencing coverage (approximately 5X isolate genome coverage) which may not be achieved for all community members [19].

Solution:

- Increase sequencing depth: Ensure sufficient coverage for low-abundance community members.

- Account for geographical factors: Consider regional resistance patterns when designing studies and interpreting results.

- Normalize to bacterial abundance: Express ARG abundance relative to 16S rRNA gene counts or other universal marker genes.
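Normalizing to a universal marker can be written as a ratio of length-corrected read counts. The sketch below shows one common convention, ARG copies per 16S rRNA gene copy. It assumes equal read lengths for both targets so that read length cancels. The function name and the default 16S length of 1,500 bp are illustrative assumptions.

```python
def arg_per_16s(
    arg_reads: int,
    arg_length_bp: int,
    reads_16s: int,
    length_16s_bp: int = 1500,  # assumed approximate full-length 16S rRNA gene
) -> float:
    """ARG copies per 16S rRNA gene copy, using length-normalized read counts."""
    if min(arg_length_bp, reads_16s, length_16s_bp) <= 0:
        raise ValueError("lengths and 16S read count must be positive")
    arg_coverage = arg_reads / arg_length_bp      # reads per base of the ARG
    marker_coverage = reads_16s / length_16s_bp   # reads per base of 16S
    return arg_coverage / marker_coverage


# Hypothetical counts for one sample.
print(arg_per_16s(arg_reads=100, arg_length_bp=1000, reads_16s=1500))
```

Because both numerator and denominator scale with sequencing depth, this ratio is less sensitive to library size than raw counts. It still depends on how reliably 16S reads are identified.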

Frequently Asked Questions

What is the minimum sequencing coverage required for reliable ARG detection?

Accurate ARG detection typically requires approximately 5X isolate genome coverage. Below this threshold, detection becomes unreliable, though some tools may identify closely related alleles at lower coverages if using a lower coverage cutoff (<80%) [19].
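Whether a planned sequencing run is likely to reach the 5X threshold for a given organism can be estimated before sequencing. The sketch below is a back-of-the-envelope calculation. It assumes reads are drawn in proportion to each organism's share of the total DNA, and all numbers (read length, fraction of reads, genome size) are hypothetical inputs.

```python
import math


def expected_coverage(
    total_reads: int, read_length_bp: int, fraction_of_reads: float, genome_size_bp: int
) -> float:
    """Mean genome coverage for an organism contributing `fraction_of_reads`."""
    return total_reads * read_length_bp * fraction_of_reads / genome_size_bp


def reads_needed(
    target_coverage: float, read_length_bp: int, fraction_of_reads: float, genome_size_bp: int
) -> int:
    """Total reads required to reach `target_coverage` for that organism."""
    return math.ceil(target_coverage * genome_size_bp / (read_length_bp * fraction_of_reads))


# Hypothetical scenario: a 5 Mb genome making up 1% of the reads, 150 bp reads.
cov = expected_coverage(20_000_000, 150, 0.01, 5_000_000)
need = reads_needed(5, 150, 0.01, 5_000_000)
print(f"coverage at 20M reads: {cov:.1f}X; reads needed for 5X: {need:,}")
```

Organisms at lower relative abundance need proportionally more total reads, which is why rare ARG hosts can fall below the detection limit even in deeply sequenced samples.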

How does sample type influence ARG detection limits?

Sample type significantly impacts detection limits due to differences in background microbiota and inhibitor content. For example, mcr-1 was detectable at 0.1X isolate coverage in lettuce metagenomes but not in beef metagenomes with the same bioinformatic tools [19].

What are the key differences between acquired ARGs and functionally identified (FG) ARGs?

- Acquired ARGs: Show distinct geographical patterns, follow distance-decay relationships at national and regional scales, and have been mobilized from their origin [2].

- FG ARGs: More evenly distributed globally, show distance-decay only within countries, and represent a latent reservoir often linked to specific bacterial taxa [2].

How can I improve cross-study comparability of resistome results?

- Standardize metadata collection: Use structured formats and common data elements [20].

- Harmonize bioinformatics pipelines: Implement consistent tools and parameters across studies.

- Document methodological details: Record DNA extraction methods, sequencing platforms, and analysis parameters comprehensively.

Performance Metrics of Bioinformatics Tools for ARG Detection

Table 1: Comparison of bioinformatics tool performance for ARG detection in metagenomic samples

| Tool | Optimal Coverage | Strengths | Limitations |

|---|---|---|---|

| KMA | 5X isolate coverage | High specificity; only predicts expected ARG targets or closely related alleles | Background microbiota influences detection accuracy |

| CARD-RGI | 5X isolate coverage | Specific detection; minimal false positives | May miss divergent alleles |

| SRST2 | Varies | Sensitive for multiple targets | Reports distantly related ARGs at all coverage levels |

| Kraken2/Bracken | N/A (taxonomic) | Closest to expected species abundance values | Reports organisms not present in synthetic metagenomes |

| MetaPhlAn3/4 | N/A (taxonomic) | High specificity for community composition | Lower sensitivity than Kraken2/Bracken |

Detection Limits Under Different Conditions

Table 2: Factors affecting ARG detection limits in metagenomic studies

| Factor | Impact on Detection | Recommended Mitigation |

|---|---|---|

| Sequencing coverage | Accurate detection drops drastically below 5X isolate genome coverage | Increase sequencing depth; target 10-20X for reliable detection [19] |

| Similarity thresholds | 80% amino acid identity vs. 95% nucleotide identity yields different results | Use consistent thresholds; document thoroughly [1] |

| Sample matrix | Background microbiota influences detection accuracy | Consider sample-specific validation [19] |

| Tool selection | Different tools report different ARG profiles | Use multiple tools; establish consensus approach [19] |

| Geographical origin | Acquired ARGs show regional patterns | Account for geographical factors in study design [2] |

Experimental Protocols

Protocol 1: Assessing Bioinformatics Tool Performance for ARG Detection

Purpose: Systematically evaluate different bioinformatics tools for detecting antimicrobial resistance genes in metagenomic samples.

Materials: Synthetic metagenomes with known composition; computing infrastructure; bioinformatics tools (KMA, CARD-RGI, SRST2, Kraken2/Bracken, MetaPhlAn3/4) [19].

Methodology:

- Create synthetic metagenomes: Combine sequences from known bacterial isolates with predetermined ARG content in defined proportions [19].

- Bioinformatic analysis: Process synthetic metagenomes through multiple bioinformatics pipelines using consistent parameters.

- Performance assessment: Compare detected ARGs and taxonomic composition against expected values.

- Sensitivity analysis: Evaluate detection limits by analyzing samples with varying coverage of ARG-encoding organisms.

Key parameters:

- Isolate genome coverage (0.1X to 20X)

- Bioinformatics tools and their specific parameters

- Sequence similarity thresholds for ARG identification
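The performance assessment step compares detected ARGs with the known content of the synthetic metagenome. A minimal way to score one tool at one coverage level is shown below. The function name `detection_metrics` and the gene sets are hypothetical, and the comparison assumes exact name matching.

```python
def detection_metrics(expected: set[str], detected: set[str]) -> dict[str, float]:
    """Sensitivity and precision of detected ARGs against the known truth set."""
    tp = len(expected & detected)   # true ARGs that were found
    fp = len(detected - expected)   # reported ARGs that were not spiked in
    fn = len(expected - detected)   # true ARGs that were missed
    return {
        "true_positives": tp,
        "false_positives": fp,
        "false_negatives": fn,
        "sensitivity": tp / (tp + fn) if expected else float("nan"),
        "precision": tp / (tp + fp) if detected else float("nan"),
    }


# Hypothetical truth set and tool output for one synthetic metagenome.
expected = {"mcr-1", "blaCTX-M-15", "tet(A)", "sul2"}
detected = {"mcr-1", "blaCTX-M-15", "blaTEM-like"}
metrics = detection_metrics(expected, detected)
print(metrics)
```

Repeating this calculation across the coverage range listed under key parameters (0.1X to 20X) gives a detection-limit curve for each tool.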

Protocol 2: Evaluating Geographical Patterns in Resistomes

Purpose: Characterize and compare the distribution of acquired versus functionally identified ARGs across geographical gradients.

Materials: Sewage samples from multiple geographical locations; DNA extraction kits; sequencing platform; computational resources for metagenomic analysis [2].

Methodology:

- Sample collection: Collect sewage samples from cities across different world regions and geographical distances [2].

- Metagenomic sequencing: Extract DNA and perform shotgun metagenomic sequencing.

- ARG profiling: Identify and quantify both acquired ARGs (from reference databases) and functionally identified ARGs (from functional metagenomics studies).

- Distance-decay analysis: Measure similarity in ARG composition as a function of geographical distance.

- Network analysis: Examine associations between ARGs and bacterial taxa.

Key parameters:

- Number and distribution of sampling locations

- Sequencing depth and quality metrics

- Statistical measures for distance-decay relationships
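The distance-decay step can be illustrated by pairing geographic distance with compositional similarity for every pair of sites. The sketch below uses great-circle (haversine) distance, Jaccard similarity on presence/absence data, and an ordinary least-squares slope. These choices are simplifying assumptions, not the statistics used in the cited study, and the site coordinates and ARG sets are invented. A significance test (for example a Mantel test) would be needed in practice and is not shown.

```python
import math
from itertools import combinations

EARTH_RADIUS_KM = 6371.0


def haversine_km(a: tuple[float, float], b: tuple[float, float]) -> float:
    """Great-circle distance between two (latitude, longitude) points in degrees."""
    lat1, lon1, lat2, lon2 = map(math.radians, (*a, *b))
    h = (
        math.sin((lat2 - lat1) / 2) ** 2
        + math.cos(lat1) * math.cos(lat2) * math.sin((lon2 - lon1) / 2) ** 2
    )
    return 2 * EARTH_RADIUS_KM * math.asin(math.sqrt(h))


def jaccard_similarity(x: set[str], y: set[str]) -> float:
    union = x | y
    return len(x & y) / len(union) if union else 1.0


def distance_decay_slope(sites: dict[str, tuple[tuple[float, float], set[str]]]) -> float:
    """Least-squares slope of Jaccard similarity against distance (per km)."""
    pairs = [
        (haversine_km(c1, c2), jaccard_similarity(g1, g2))
        for (c1, g1), (c2, g2) in combinations(sites.values(), 2)
    ]
    if len(pairs) < 2:
        raise ValueError("need at least three sites")
    mean_d = sum(d for d, _ in pairs) / len(pairs)
    mean_s = sum(s for _, s in pairs) / len(pairs)
    sxx = sum((d - mean_d) ** 2 for d, _ in pairs)
    if sxx == 0:
        raise ValueError("all site pairs are equidistant")
    sxy = sum((d - mean_d) * (s - mean_s) for d, s in pairs)
    return sxy / sxx


# Hypothetical sites: name -> ((lat, lon), set of detected ARGs)
sites = {
    "A": ((0.0, 0.0), {"a", "b", "c", "d"}),
    "B": ((0.0, 10.0), {"a", "b", "c", "e"}),
    "C": ((0.0, 40.0), {"a", "f", "g", "h"}),
}
slope = distance_decay_slope(sites)
print(f"similarity change per km: {slope:.2e}")
```

A negative slope indicates that resistomes become less similar as sites get farther apart, which is the pattern described for acquired ARGs at national and regional scales.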

Research Reagent Solutions

Table 3: Essential materials and resources for resistome studies

| Item | Function | Example/Specification |

|---|---|---|

| DNA extraction kits | Isolation of high-quality DNA from complex samples | Commercial kits from QIAGEN, used with automated extraction systems [21] |

| Quantification tools | Accurate measurement of DNA concentration and quality | Fluorometric methods (Qubit) preferred over UV spectrophotometry [22] |

| Reference databases | ARG identification and annotation | CARD, ResFinder, PanRes, ResFinderFG, custom databases [2] [20] |

| Bioinformatics pipelines | Processing and analysis of metagenomic data | ARGem, MetaWRAP, SqueezeMeta, PathoFact [20] |

| Synthetic metagenomes | Method validation and benchmarking | Communities with known composition and ARG content [19] |

| Standardized metadata templates | Ensuring comparability across studies | Spreadsheets with required and recommended fields following MIxS guidelines [20] |

Workflow Diagrams

Inherent Biases Detection Workflow

Major Bias Sources in ARG Detection

A Practical Toolkit for Bias-Aware Resistome Analysis

A Comparative Guide to Major ARG Databases (CARD, ResFinder, SARG, MEGARes)

Antimicrobial resistance (AMR) poses a catastrophic threat to global health, with estimates suggesting it could claim 10 million lives annually by 2050 if left unchecked [23]. The genetic basis of AMR, particularly antibiotic resistance genes (ARGs), has become a focal point of research utilizing next-generation sequencing technologies. This has led to the development of specialized databases and tools for ARG identification and analysis [23] [24].

A critical challenge in resistome studies is database selection bias, where the choice of ARG database significantly influences research outcomes. Different databases vary substantially in content, curation methods, and underlying structure, leading to inconsistent results and hampering comparative analyses across studies [1] [25]. This technical guide examines four major ARG databases—CARD, ResFinder, SARG, and MEGARes—to help researchers understand and mitigate this bias in their experimental workflows.

Database Architectures and Curation Philosophies

Comprehensive Antibiotic Resistance Database (CARD)

CARD employs an ontology-driven framework built around the Antibiotic Resistance Ontology (ARO), which systematically classifies resistance determinants, mechanisms, and antibiotic molecules [23] [24]. This structured ontology enables sophisticated computational analyses and data integration.

Curation Methodology:

- Strict inclusion criteria requiring experimental validation via increased Minimum Inhibitory Concentration (MIC) and peer-reviewed publication [23]

- Combination of expert manual curation with machine learning assistance (CARD*Shark) to prioritize relevant literature [24]

- Specialized "Resistomes & Variants" module for in silico validated ARGs to enhance sensitivity while maintaining quality standards [23]

ResFinder

ResFinder specializes in acquired resistance genes with particular strength in pathogens of clinical relevance. It has recently integrated with PointFinder for comprehensive coverage of both acquired genes and chromosomal mutations [24] [25].

Curation Methodology:

- Originally based on the Lahey Clinic β-Lactamase Database, ARDB, and extensive literature review [24]

- Utilizes a K-mer-based alignment algorithm enabling rapid analysis directly from raw sequencing reads without assembly [24]

- Species-specific focus, particularly for PointFinder mutations, enhancing clinical applicability [25]

Structured Antibiotic Resistance Gene (SARG)

SARG represents a consolidated database approach, integrating and re-annotating sequences from multiple resources including CARD and ARDB [23]. It employs a machine learning model to predict the best nomenclature for gene names and incorporates crowdsourcing with trust-validation filters for annotation refinement [23].

MEGARes

MEGARes is designed specifically for metagenomic analysis with a structured hierarchy that facilitates accurate classification of sequencing reads [23]. Its annotation system organizes data into increasingly specific levels, from resistance mechanism to individual gene variants [23].

Table 1: Fundamental Characteristics of Major ARG Databases

| Database | Primary Focus | Curation Method | Update Frequency | Unique Features |

|---|---|---|---|---|

| CARD | Comprehensive ARG coverage | Expert curation + experimental validation | Regular, with ML-assisted literature review | ARO ontology; RGI tool; Strict quality control |

| ResFinder | Acquired resistance genes in pathogens | Literature-based + integration of specialized DBs | Regularly updated | Integrated with PointFinder; K-mer based alignment |

| SARG | Consolidated ARG collection | Machine learning + crowdsourcing | Last update April 2019 | ML-based nomenclature; Integrated mobility data |

| MEGARes | Metagenomic resistome analysis | Structured hierarchical annotation | Not reported | Hierarchical classification; Optimized for read classification |

Comparative Analysis of Database Content and Coverage

Gene Content and Resistance Mechanism Representation

Substantial differences exist in the number and type of resistance determinants across databases, which directly impacts detection sensitivity and specificity [25]. CARD provides the most comprehensive coverage of resistance mechanisms, including enzymatic resistance, target modification, efflux pumps, and regulatory changes [24]. ResFinder focuses predominantly on acquired resistance genes with strong pathogen coverage, while MEGARes and SARG offer broader environmental and metagenomic relevance [23].

Metadata and Annotation Depth

The richness of metadata associated with ARG sequences varies significantly:

- CARD provides extensive metadata through its ARO framework, including resistance mechanisms, antibiotic molecules, and taxonomic information [23]

- SARG incorporates mobility predictions (ACLAME database) and pathogenicity data (PATRIC database) through its machine learning pipeline [23]

- MEGARes offers hierarchical classification optimized for metagenomic read placement [23]

- ResFinder includes phenotype prediction tables linking genetic markers to resistance traits [24]

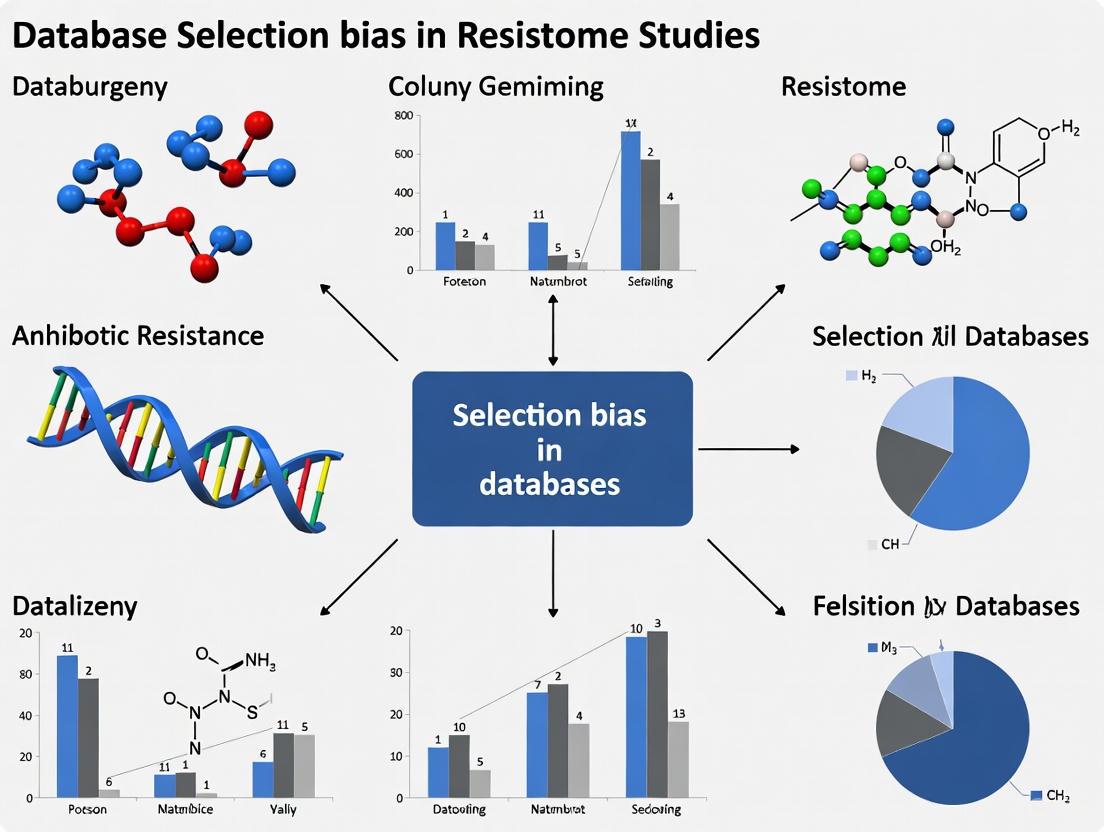

Diagram 1: Database Selection Influences on Research Outcomes and Potential Biases. The choice of ARG database directly shapes the scope, depth, and potential limitations of resistome study results.

Troubleshooting Common Experimental Issues

FAQ 1: Why do I get different ARG detection results when using different databases?

Issue: Inconsistent ARG profiles across database choices.

Root Cause: Each database has unique curation standards, gene content, and annotation structures [23] [25]. For example, CARD's strict requirement for experimental validation excludes potential ARGs lacking laboratory confirmation, while SARG's consolidated approach includes more sequences but with possible annotation inconsistencies [23].

Solution:

- Perform preliminary analysis using multiple databases to assess consistency

- Align database selection with research objectives (clinical vs. environmental focus)

- Implement a tiered approach: use CARD for high-confidence results and supplement with broader databases for exploratory analysis

- Clearly report database versions and parameters in methods sections

FAQ 2: How can I improve detection of low-abundance ARGs in metagenomic samples?

Issue: Limited sensitivity for rare resistance genes in complex microbial communities.

Root Cause: Standard shotgun metagenomics distributes sequencing depth across entire metagenomes, making low-abundance targets difficult to detect [26].

Solution:

- Consider targeted capture sequencing approaches using customized bait panels

- For MEGARes, leverage its hierarchical classification system for improved read placement

- Increase sequencing depth specifically for resistome profiling (e.g., at least 10-20 million reads per sample)

- Utilize computational tools like DeepARG that employ deep learning models for sensitive detection [24]

Table 2: Recommended Database-Tool Pairings for Specific Research Scenarios

| Research Context | Recommended Database | Complementary Tool | Justification |

|---|---|---|---|

| Clinical pathogen WGS | ResFinder + PointFinder | AMRFinderPlus | Optimal for acquired genes & mutations in key pathogens |

| Environmental metagenomics | MEGARes | Targeted capture + DeepARG | Hierarchical classification; Enhanced low-abundance detection |

| Comprehensive resistome | CARD | RGI | Strict curation ensures high-confidence results |

| Exploratory ARG discovery | SARG + CARD | ABRicate | Balanced coverage with ML-enhanced annotation |

| One Health studies | Multi-database approach | Custom pipeline | Captures clinical, environmental, and agricultural ARGs |

FAQ 3: How do I handle discordant phenotypic and genotypic resistance predictions?

Issue: Discrepancy between computational ARG detection and laboratory susceptibility testing.

Root Cause: Not all detected ARGs are expressed or functional in their host organisms. Additionally, databases have varying coverage of resistance mechanisms for different antibiotic classes [25].

Solution:

- For critical clinical isolates, use ResFinder's integrated phenotype prediction tables

- Consult CARD's ARO mechanism information to assess functional requirements

- Investigate genetic context (promoters, flanking regions) for expression potential

- For problem antibiotics (e.g., certain β-lactams), employ minimal models to identify knowledge gaps [25]

Experimental Protocols for Database Selection Bias Assessment

Protocol: Cross-Database Comparative Analysis

Purpose: To evaluate how database selection influences ARG profiling results in your specific research context.

Materials:

- High-quality bacterial genomes or metagenomic datasets

- Computational tools: ABRicate (multi-database support), RGI for CARD, ResFinder, custom scripts for SARG and MEGARes

- Analysis environment: Linux workstation with sufficient RAM (≥16GB) and storage

Methodology:

- Data Preparation: Assemble or obtain test datasets representing your research focus (clinical isolates, environmental metagenomes, etc.)

- Parallel Annotation: Process all samples through each target database using consistent parameters (recommended: ≥90% identity, ≥80% coverage)

- Result Normalization: Convert all outputs to standardized format (e.g., presence/absence matrix)

- Comparative Analysis:

  - Calculate total ARG richness and abundance per database

  - Identify database-specific and shared ARGs using Venn diagrams

  - Assess variation in resistance class representation

- Bias Quantification: Measure Jaccard dissimilarity indices between database results
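The bias quantification step can be sketched in a few lines of Python. This is a minimal illustration, not part of any published pipeline: it assumes gene names have already been harmonized across databases (which in practice requires a mapping step), and the `profiles` dictionary holds made-up gene calls for a single sample.

```python
from itertools import combinations


def jaccard_dissimilarity(a, b):
    """Return 1 - |A ∩ B| / |A ∪ B| for two collections of ARG names."""
    a, b = set(a), set(b)
    union = a | b
    if not union:
        return 0.0  # two empty profiles are treated as identical
    return 1.0 - len(a & b) / len(union)


def pairwise_dissimilarity(profiles):
    """Map every pair of database names to the dissimilarity of their ARG sets."""
    return {
        (x, y): jaccard_dissimilarity(profiles[x], profiles[y])
        for x, y in combinations(sorted(profiles), 2)
    }


# Hypothetical, already-harmonized gene calls for one sample (toy data).
profiles = {
    "CARD": {"tetM", "blaTEM-1", "sul1", "ermB"},
    "ResFinder": {"tetM", "blaTEM-1", "sul1"},
    "MEGARes": {"tetM", "sul1", "ermB", "aadA1", "mexB"},
}

result = pairwise_dissimilarity(profiles)
for pair, value in result.items():
    print(pair, round(value, 3))
```

A value of 0 means two databases returned identical gene sets, and a value of 1 means they share nothing. Values in between only become interpretable once naming differences between databases have been resolved.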

Troubleshooting Tips:

- For incompatible output formats, develop parsing scripts to extract key information (gene name, coverage, identity)

- When comparing metagenomic results, normalize by sequencing depth and sample biomass

- Account for database size differences by reporting relative abundances rather than absolute counts
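For the parsing tip above, a small helper along these lines may be enough for tab-separated outputs. It is a sketch under assumptions: the column names (`GENE`, `%COVERAGE`, `%IDENTITY`) follow an ABRicate-style header and must be checked against the header your tool actually writes, the function names are hypothetical, and the demo table is invented.

```python
import csv
import io


def load_hits(handle, gene_col, identity_col, coverage_col,
              min_identity=90.0, min_coverage=80.0):
    """Return the set of gene names whose hits pass both thresholds."""
    genes = set()
    for row in csv.DictReader(handle, delimiter="\t"):
        if (float(row[identity_col]) >= min_identity
                and float(row[coverage_col]) >= min_coverage):
            genes.add(row[gene_col])
    return genes


def presence_absence(profiles):
    """Build {gene: {database: 0 or 1}} from {database: set_of_genes}."""
    all_genes = sorted(set().union(*profiles.values()))
    return {
        gene: {db: int(gene in hits) for db, hits in profiles.items()}
        for gene in all_genes
    }


# Invented tab-separated output; real files come from your annotation tool.
demo = (
    "GENE\t%COVERAGE\t%IDENTITY\n"
    "tetM\t99.5\t98.2\n"
    "sul1\t75.0\t99.0\n"   # fails the coverage threshold
    "ermB\t100.0\t85.1\n"  # fails the identity threshold
)
hits = load_hits(io.StringIO(demo), "GENE", "%IDENTITY", "%COVERAGE")

# "tool_b" is a second, hypothetical result set used to show the matrix shape.
matrix = presence_absence({"tool_a": hits, "tool_b": {"tetM", "sul1"}})
print(sorted(hits))
print(matrix)
```

Passing the column names as arguments keeps the same function usable for tools with different headers, so the recommended thresholds (≥90% identity, ≥80% coverage) are applied identically everywhere.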

Protocol: Minimal Model Performance Assessment

Purpose: To identify antibiotics with significant knowledge gaps where novel resistance mechanisms may exist [25].

Materials:

- Bacterial genome collection with paired phenotypic susceptibility data

- Machine learning environment (Python/R)

- Annotated ARG datasets from target databases

Methodology:

- Feature Extraction: Annotate all genomes using selected databases to create presence/absence matrices of known resistance markers

- Model Training: Build predictive models (e.g., logistic regression, XGBoost) using only known ARGs as features

- Performance Evaluation: Assess model accuracy, precision, and recall for predicting phenotypic resistance

- Gap Identification: Flag antibiotics (or antibiotic classes) where model performance is poor (e.g., accuracy <80%), indicating incomplete knowledge of resistance mechanisms

Interpretation:

- High-performance minimal models suggest well-characterized resistance mechanisms

- Poor performance highlights priority areas for novel ARG discovery

- Cross-database performance variation reveals curation differences for specific antibiotics
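The logic of this protocol can be illustrated with a deliberately simplified stand-in. Instead of logistic regression or XGBoost, the sketch below scores the simplest possible rule, "predict resistant if any known marker is present", and flags antibiotics whose accuracy falls below the 80% example threshold. All genomes, phenotypes, and the `drug_B`/`markerB1` names are toy data invented for illustration.

```python
def evaluate_minimal_rule(genomes, markers):
    """Score the rule 'resistant if any known marker is present'.

    genomes: list of (set_of_detected_genes, is_resistant) pairs.
    markers: set of known resistance markers for one antibiotic.
    """
    tp = fp = tn = fn = 0
    for genes, resistant in genomes:
        predicted = bool(genes & markers)
        if predicted and resistant:
            tp += 1
        elif predicted and not resistant:
            fp += 1
        elif not predicted and resistant:
            fn += 1
        else:
            tn += 1
    total = tp + fp + tn + fn
    return {
        "accuracy": (tp + tn) / total if total else 0.0,
        "precision": tp / (tp + fp) if (tp + fp) else 0.0,
        "recall": tp / (tp + fn) if (tp + fn) else 0.0,
    }


def flag_gaps(metrics_by_antibiotic, min_accuracy=0.80):
    """Return antibiotics whose rule accuracy is below the threshold."""
    return sorted(
        name for name, m in metrics_by_antibiotic.items()
        if m["accuracy"] < min_accuracy
    )


# Toy data: known markers explain tetracycline well but miss drug_B resistance.
datasets = {
    "tetracycline": (
        [({"tetM"}, True), ({"tetA", "sul1"}, True), ({"sul1"}, False),
         (set(), False), ({"tetM"}, True)],
        {"tetM", "tetA"},
    ),
    "drug_B": (
        [({"markerB1"}, True), (set(), True), ({"sul1"}, True),
         (set(), False), ({"markerB1"}, True)],
        {"markerB1"},
    ),
}
results = {
    name: evaluate_minimal_rule(genomes, markers)
    for name, (genomes, markers) in datasets.items()
}
print(flag_gaps(results))
```

In the toy data, resistant genomes without any known marker pull recall down for `drug_B`, which is the pattern the protocol treats as a signal of uncharacterized mechanisms. A real assessment would use a trained model with cross-validation rather than this fixed rule.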

Table 3: Key Resources for ARG Detection and Analysis

| Resource | Type | Primary Function | Application Context |
|---|---|---|---|
| CARD with RGI | Database + Analysis Tool | High-confidence ARG annotation | Clinical isolates; Regulatory applications |
| ResFinder/PointFinder | Web Service + Database | Acquired gene & mutation detection | Clinical outbreak investigations; WGS of pathogens |
| MEGARes | Database | Hierarchical ARG classification | Metagenomic resistome studies; Environmental monitoring |
| ABRicate | Analysis Tool | Multi-database screening | Comparative studies; Preliminary analyses |
| AMRFinderPlus | Analysis Tool | Comprehensive ARG & mutation detection | NCBI pipeline integration; Large-scale genomic studies |
| Targeted Capture Probes | Wet-bench Reagent | Enrichment of ARG sequences from metagenomes | Sensitive detection in complex samples; Low-biomass environments |
| WHONET | Data Management | Microbiology laboratory data analysis | AMR surveillance data harmonization; Pattern recognition [27] |

The selection of appropriate ARG databases requires careful consideration of research objectives, sample types, and required confidence levels. To mitigate database selection bias:

- Employ multi-database strategies for comprehensive resistome assessment, particularly in exploratory studies

- Align database selection with research questions: clinical applications benefit from ResFinder's pathogen focus, while environmental studies may require MEGARes' metagenomic optimization

- Implement validation procedures for critical findings, especially when database results conflict

- Document database versions and parameters meticulously to ensure reproducibility

- Contribute to community curation efforts by reporting novel resistance determinants with experimental validation

As the AMR crisis continues to evolve, so too must our computational resources and methodologies. The development of standardized benchmarking datasets and implementation of regular cross-database comparisons will strengthen the field and enhance our ability to combat this global health threat.

The ARGs Online Analysis Pipeline (ARGs-OAP) is a specialized bioinformatics platform designed for the high-throughput profiling of antibiotic resistance genes (ARGs) from metagenomic data. The release of version 3.0 marks a significant advancement, featuring major improvements to both its reference database—the structured ARG (SARG) database—and its integrated analysis pipeline [28] [29].

The enhanced SARG database incorporates sequence curation to improve the reliability of ARG annotations and includes newly discovered resistance genotypes. It is meticulously organized with a rigorous mechanism-based classification system and is available online in a tree-like structure with a dictionary for easier navigation. To cater to diverse research scenarios, the database has been divided into several sub-databases [28]. The accompanying pipeline has been optimized with adjusted quantification methods, simplified tool implementation, and supports user-defined reference databases, providing a more robust framework for resistome studies [28] [29].

Frequently Asked Questions (FAQs)

Q1: What are the primary advantages of using ARGs-OAP v3.0 over other ARG profiling pipelines like ARGem?

A1: ARGs-OAP v3.0 and ARGem are both designed for ARG detection but have different strengths. ARGs-OAP v3.0 provides a highly standardized and integrated pipeline built around the curated SARG database, which is specifically enhanced for annotation reliability and classification [28] [29]. Its online platform offers diverse biostatistical analysis and visualization packages, making it highly accessible. In contrast, ARGem is a locally deployable pipeline that emphasizes extensive metadata capture and normalization to facilitate cross-study comparisons. It also includes integrated tools for building co-occurrence networks and supports visualization with Cytoscape [30] [20]. Your choice should depend on your need for a standardized online service versus a customizable local workflow with robust metadata support.

Q2: How does the SARG database in v3.0 help address database selection bias in resistome studies?

A2: Database selection bias occurs when a reference database does not adequately represent the genetic diversity of ARGs in the environment being studied, leading to inaccurate profiles. The SARG database in v3.0 mitigates this by:

- Incorporating emerging resistance genotypes, which expands its coverage and reduces the omission of novel or rare ARGs [28].

- Implementing rigorous mechanism classification and sequence curation, which improves annotation accuracy and minimizes false positives [28] [29].

- Offering sub-databases for different application scenarios, allowing researchers to select a more tailored and relevant reference set for their specific sample type (e.g., human gut, water, soil) [28].

Q3: My analysis reveals a high background of ARGs not directly related to the administered antibiotic. Is this a common finding, and what does it imply?

A3: Yes, this is a common and critical finding in resistome research. Studies, particularly in Low- and Middle-Income Countries (LMICs), have revealed that antibiotic exposure not only enriches for ARGs that match the drug class administered but also unveils a substantial reservoir of diverse, pre-existing background ARGs [31]. This "silent resistome" often includes genes conferring resistance to beta-lactams, aminoglycosides, vancomycin, and tetracyclines [31]. This indicates that microbial communities carry a latent resistance potential, which can be enriched by antibiotic pressure, highlighting a complex ecological challenge that goes beyond simple drug-class selection.

Q4: What is the difference between the acquired resistome and the latent resistome characterized by functional metagenomics (FG)?

A4: These concepts describe different components of the total resistome, as illustrated in the table below:

Table 1: Key Differences Between Acquired and Latent Resistomes

| Feature | Acquired Resistome | Latent (FG) Resistome |
|---|---|---|
| Definition | ARGs known to be mobilized and transferred between bacteria, often associated with pathogens. | ARGs identified through functional cloning; often intrinsic genes in environmental bacteria that represent a potential future threat. |
| Typical Analysis Method | In silico alignment to databases of known, mobilized ARGs (e.g., ResFinder). | Functional metagenomics (cloning and phenotypic selection). |
| Geographical Pattern | Shows strong, distinct geographical patterns and distance-decay relationships. | More evenly distributed globally, showing weaker geographical structuring. |
| Association with Bacteria | More associated with human activities and mobilization events. | More strongly linked to the underlying taxonomic composition of the bacterial community. |

Troubleshooting Common Experimental Issues

Issue 1: Inconsistent ARG Quantification Between Samples

- Problem: Reported ARG abundances vary widely between samples, making comparisons difficult.

- Solution: ARGs-OAP v3.0 has adjusted quantification methods. Ensure you are using the latest pipeline version and have normalized your ARG abundance data correctly, for example, by using reads per million of total reads or contigs per million of total contigs, as is standard practice in metagenomic studies [28] [32]. Verify that sequencing depths and quality are comparable across all samples.
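As a rough illustration of depth normalization, the sketch below converts raw ARG read counts to reads per million of total reads. It is not the quantification code used inside ARGs-OAP; the function name and the sample counts are assumptions made for the example.

```python
def reads_per_million(arg_read_counts, total_reads):
    """Scale raw ARG read counts to reads per million of total reads."""
    if total_reads <= 0:
        raise ValueError("total_reads must be positive")
    return {
        gene: count * 1_000_000 / total_reads
        for gene, count in arg_read_counts.items()
    }


# Two invented samples sequenced at different depths.
sample_a = reads_per_million({"tetM": 120, "sul1": 40}, total_reads=10_000_000)
sample_b = reads_per_million({"tetM": 300, "sul1": 50}, total_reads=25_000_000)

print(sample_a)
print(sample_b)
```

In this toy case the raw `tetM` counts differ by a factor of 2.5, yet the normalized values are identical, which shows how unnormalized counts can suggest a difference that is only an effect of sequencing depth.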

Issue 2: Difficulty in Interpreting and Visualizing Complex Resistome Data

- Problem: The output of ARG profiles is complex and hard to visualize for publication or reporting.

- Solution: Utilize the diverse biostatistical analysis workflow with visualization packages integrated into the ARGs-OAP v3.0 online platform [28]. For more advanced visualizations like co-occurrence networks, you might consider using a pipeline like ARGem, which supports Cytoscape visualization directly from its output [30] [20].

Issue 3: Managing Metadata for Cross-Study Comparison

- Problem: Inconsistent metadata collection prevents meaningful comparison of your results with other studies.

- Solution: While ARGs-OAP provides an analysis platform, if metadata flexibility is a core need, consider leveraging pipelines like ARGem, which are specifically designed to capture extensive and standardized metadata in a relational database to support comparability across projects [30]. Adhering to established guidelines like MIxS (Minimum Information about any (x) Sequence) is also recommended.

Key Experimental Protocols for Resistome Analysis

Standard Workflow for Resistome Profiling with ARGs-OAP v3.0

The following diagram outlines the core workflow for analyzing metagenomic data with the ARGs-OAP v3.0 pipeline.

Protocol: Mitigating Database Bias via a Dual-Database Approach

Purpose: To validate ARG findings and minimize bias inherent in using a single database.

Procedure:

- Process your metagenomic sequences (post-quality control) through the ARGs-OAP v3.0 pipeline using the SARG database.

- In parallel, process the same sequences through an alternative pipeline, such as ARGem or another tool that utilizes a different comprehensive database (e.g., one that includes CARD or MEGARes) [30] [3].

- Compare the results from both analyses. Focus on:

  - Overlap: ARGs identified by both pipelines (high-confidence hits).

  - Unique Calls: ARGs identified by only one pipeline. Investigate these by checking for their presence in other databases or via manual curation.

  - Abundance Correlation: For ARGs found in both, check if the relative abundance trends are consistent.
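The comparison step can be sketched as follows. This is a minimal example under assumptions: both pipelines' outputs have been reduced to `{gene: normalized_abundance}` dictionaries with harmonized gene names, the abundance values are invented, and Pearson correlation is used only because it is easy to write out by hand (a rank-based measure may suit skewed abundance data better).

```python
import math


def compare_pipelines(abund_a, abund_b):
    """Split gene calls into shared, only-in-A, and only-in-B lists."""
    a, b = set(abund_a), set(abund_b)
    return sorted(a & b), sorted(a - b), sorted(b - a)


def pearson(xs, ys):
    """Pearson correlation; returns None when it is undefined."""
    n = len(xs)
    if n < 2:
        return None
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    sxx = sum((x - mean_x) ** 2 for x in xs)
    syy = sum((y - mean_y) ** 2 for y in ys)
    if sxx == 0 or syy == 0:
        return None
    return sxy / math.sqrt(sxx * syy)


# Invented normalized abundances from two pipelines for the same sample.
sarg_run = {"tetM": 12.0, "sul1": 8.0, "ermB": 3.0, "mexB": 1.5}
other_run = {"tetM": 10.0, "sul1": 9.0, "ermB": 2.0, "aadA1": 4.0}

shared, only_sarg, only_other = compare_pipelines(sarg_run, other_run)
r = pearson([sarg_run[g] for g in shared], [other_run[g] for g in shared])

print("shared:", shared)
print("unique to first pipeline:", only_sarg)
print("unique to second pipeline:", only_other)
print("abundance correlation:", r)
```

The two "unique" lists are the candidates for follow-up in other databases or by manual curation. With only a handful of shared genes, the correlation should be read as a rough consistency check rather than a statistic.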

Protocol: Assessing Active vs. Latent Resistome with Metatranscriptomics

Purpose: To move beyond the mere presence of ARGs (potential) and determine which genes are actively expressed.

Procedure:

- Sample Collection: Co-extract DNA and RNA from the same sample (e.g., rumen content, sewage, stool) [32].

- Sequencing: Perform shotgun metagenomic sequencing on the DNA to characterize the resident resistome. Perform shotgun metatranscriptomic sequencing on the RNA (converted to cDNA) to characterize the active resistome.

- Bioinformatic Analysis:

  - Analyze DNA-derived sequences with ARGs-OAP v3.0 to establish the baseline resistome.

  - Analyze RNA-derived sequences with the same pipeline and database to identify expressed ARGs.

- Data Integration: Compare the two profiles. As demonstrated in cattle rumen studies, only a fraction of the genomic ARGs (e.g., ~30%) may be actively expressed, providing a much clearer picture of the immediate resistance threat and its link to microbiome function and stability [32].
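The data integration step reduces to a set comparison once both profiles are available. The sketch below assumes each profile has been reduced to a list of detected gene names. The gene lists are toy data chosen so that 3 of 10 DNA-level genes appear in the transcript data, and the function name is hypothetical.

```python
def summarize_expression(dna_args, rna_args):
    """Compare the resident (DNA) and active (RNA) resistome of one sample."""
    dna, rna = set(dna_args), set(rna_args)
    expressed = dna & rna
    return {
        "expressed": sorted(expressed),
        "silent": sorted(dna - rna),    # present in DNA, no transcripts detected
        "rna_only": sorted(rna - dna),  # worth checking: depth or annotation issue
        "fraction_expressed": len(expressed) / len(dna) if dna else 0.0,
    }


# Toy gene lists for a single sample.
dna_profile = ["tetM", "tetW", "tetQ", "ermB", "ermF",
               "sul1", "sul2", "blaTEM-1", "aadA1", "vanA"]
rna_profile = ["tetW", "tetQ", "ermF", "mefA"]

summary = summarize_expression(dna_profile, rna_profile)
print(summary)
```

Genes that appear only in the RNA profile deserve a second look, since they may reflect insufficient metagenomic sequencing depth rather than true absence from the community DNA.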

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 2: Key Resources for Resistome Analysis

| Item Name | Function/Description | Relevance to ARGs-OAP v3.0 |

|---|---|---|

| SARG Database | The core structured ARG reference database, curated and classified by mechanism. | The foundational resource for all ARG annotation within the pipeline. Essential for accurate profiling. [28] [29] |

| High-Quality Metagenomic DNA | Input material for shotgun sequencing. Purity and integrity are critical. | The starting point for the entire workflow. Quality directly impacts assembly and annotation accuracy. |