Bioinformatic Pipelines for Evolutionary Model Validation: Enhancing Drug Discovery and Biomedical Research

Abstract

This article provides a comprehensive overview of bioinformatic pipelines for validating evolutionary models, a critical area bridging computational biology and drug development. It explores the foundational principles of Model-Informed Drug Development (MIDD) and the integration of machine learning in evolutionary genomics. The content details methodological applications, including specific tools and techniques for analyzing genetic diversity and phylogenetic relationships. A significant focus is placed on troubleshooting data quality issues and optimizing pipeline efficiency to ensure reliability. Finally, the article covers rigorous validation frameworks and comparative analyses of methodologies, offering researchers and drug development professionals actionable insights for improving the accuracy and reproducibility of evolutionary models in biomedical research.

Core Principles and the Role of Evolutionary Models in Modern Biology

Model-Informed Drug Development (MIDD) is a quantitative framework that applies pharmacokinetic (PK), pharmacodynamic (PD), and disease progression models to inform drug development decisions and regulatory evaluations [1] [2]. This approach uses a variety of modeling and simulation techniques to integrate data from nonclinical and clinical studies, helping to balance the risks and benefits of drug products in development [3]. The primary goals of MIDD are to improve clinical trial efficiency, increase the probability of regulatory success, and support dose optimization, in some cases without the need for dedicated clinical trials [3] [4].

MIDD represents a shift from empirical drug development toward a more predictive, knowledge-driven paradigm. When successfully applied, MIDD approaches can significantly shorten development cycle timelines, reduce discovery and trial costs, and improve quantitative risk estimates, particularly when facing development uncertainties [4]. The framework is considered "fit-for-purpose" when the modeling tools are well-aligned with the specific "Question of Interest," "Context of Use," and the potential influence and risk of the model in presenting the totality of evidence for regulatory review [4].

Evolutionary Frameworks in Drug Discovery

The process of drug discovery and development shares remarkable similarities with biological evolution, operating through mechanisms of variation, selection, and inheritance [5]. This evolutionary analogy provides a powerful lens through which to understand the dynamics of pharmaceutical innovation.

Drug Discovery as an Evolutionary Process

In evolutionary terms, the vast chemical space represents the variation upon which selective pressures act. Between 1958 and 1982, the National Cancer Institute in the USA screened approximately 340,000 natural products for biological activity, while a major pharmaceutical company may maintain a library of over 2 million compounds available for screening [5]. This immense molecular diversity undergoes a rigorous selection process with high attrition rates, where few candidate molecules survive the prolonged development process to become successful medicines [5].

The classification system of pharmacology echoes the taxonomy of flora and fauna, with new molecular entities often representing modifications of earlier designs, frequently referred to as first, second, or third-generation compounds [5]. This iterative refinement mirrors evolutionary descent with modification, where successful molecular scaffolds serve as platforms for further optimization.

Selection Pressures in Drug Development

The evolutionary process of drug development operates under multiple selection pressures that determine which candidates progress through the development pipeline:

Scientific and Technological Pressures: Advances in basic science continuously raise the standards for drug efficacy and safety assessment. As our understanding of disease mechanisms deepens, the criteria for promising drug candidates become more stringent [5].

Regulatory Pressures: The "Red Queen Hypothesis" from evolutionary biology applies to drug development, where continuous adaptation is necessary merely to maintain relative position. As scientific knowledge expands therapeutic possibilities, it simultaneously advances toxicity assessment capabilities, creating a dynamic equilibrium where developers must continually innovate to meet evolving regulatory standards [5].

Economic Pressures: The substantial resources required for drug development act as a powerful selection mechanism. With annual world pharmaceutical sales of approximately £250 billion and about 14% spent on research, investment decisions significantly influence which drug candidates advance [5] [6].

Phylogenetics in Targeted Drug Discovery

Evolutionary principles directly inform practical drug discovery through phylogenetic analysis. By reconstructing evolutionary relationships among species, researchers can identify clades likely to produce useful compounds, effectively creating a "phylogenetic road map" for bioprospecting [7].

A classic example is the discovery of paclitaxel (Taxol), an anticancer compound initially harvested from the Pacific Yew tree (Taxus brevifolia). Through phylogenetic analysis, researchers identified related compounds in the needles of the abundant European Yew (T. baccata), providing a sustainable production method. Further research revealed the compound was actually produced by a fungal symbiont, highlighting how understanding evolutionary relationships can uncover novel drug sources [7].

Similarly, phylogenetic approaches have identified more than 1,200 species of fish not previously known to be venomous, representing a largely unexplored resource for drug discovery. This approach has also proven valuable for discovering therapeutic compounds from snake, lizard, and snail venoms [7].

Quantitative MIDD Tools and Applications

MIDD employs a diverse toolkit of quantitative approaches that address specific questions throughout the drug development lifecycle. The selection of appropriate tools follows a "fit-for-purpose" strategy aligned with development stage and specific research questions [4].

Table 1: Key MIDD Methodologies and Their Applications

| Methodology | Description | Primary Applications | Development Stage |
|---|---|---|---|
| Physiologically Based Pharmacokinetic (PBPK) | Mechanistic modeling that simulates drug absorption, distribution, metabolism, and excretion based on human physiology [4]. | Predicting drug-drug interactions, organ impairment effects, formulation optimization, and first-in-human dose prediction [1] [4]. | Preclinical to Post-Market |
| Population PK (PPK) and Exposure-Response (ER) | Models that quantify and explain variability in drug exposure (PK) and its relationship to efficacy/safety outcomes (ER) in a patient population [4]. | Dose optimization, identifying covariates affecting drug response, and supporting labeling recommendations [1] [4]. | Clinical Phase 1-3 and Post-Market |
| Quantitative Systems Pharmacology (QSP) | Integrative models combining systems biology with pharmacology to simulate drug effects on disease pathways and networks [4]. | Target validation, biomarker selection, combination therapy strategy, and understanding mechanism of action [4]. | Discovery to Clinical |
| Model-Based Meta-Analysis (MBMA) | Quantitative framework that integrates and analyzes summary data from multiple clinical trials across a drug class or disease area [1] [4]. | Competitive landscape analysis, trial design optimization, and benchmarking drug performance against standard of care [1] [4]. | Discovery to Phase 3 |
| Clinical Trial Simulation | Use of computational models to predict trial outcomes, optimize study designs, and explore scenarios before conducting actual trials [4]. | Optimizing trial duration, sample size, endpoint selection, and predicting probability of success [3] [4]. | Preclinical to Phase 3 |

These methodologies are not mutually exclusive; they often interconnect to form a comprehensive model-informed strategy. For example, PBPK models might inform PPK models, which in turn feed into ER models to fully characterize a drug's behavior across different populations and conditions [2] [4].
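As a minimal illustration of one model's output becoming another's input, the sketch below assumes linear PK with full bioavailability, so exposure is AUC = Dose / CL, and feeds that exposure into a hyperbolic Emax exposure-response model. The function names and all parameter values (CL, EC50, Emax) are hypothetical and not taken from any specific drug.

```python
def auc_from_dose(dose_mg: float, clearance_l_per_h: float) -> float:
    """PK step: AUC (mg*h/L) under linear PK with F = 1, AUC = Dose / CL."""
    return dose_mg / clearance_l_per_h


def emax_response(auc: float, emax: float, ec50: float, e0: float = 0.0) -> float:
    """ER step: E = E0 + Emax * AUC / (EC50 + AUC)."""
    return e0 + emax * auc / (ec50 + auc)


def dose_to_response(dose_mg, clearance_l_per_h, emax, ec50, e0=0.0):
    """Chain the models: the PK output (exposure) is the ER input."""
    return emax_response(auc_from_dose(dose_mg, clearance_l_per_h), emax, ec50, e0)


if __name__ == "__main__":
    # Hypothetical drug: CL = 5 L/h, EC50 = 20 mg*h/L, Emax = 100 (% effect).
    for dose in (50, 100, 200):
        print(dose, round(dose_to_response(dose, 5.0, 100.0, 20.0), 1))
```

In a real workflow the PK step would come from a fitted PBPK or PPK model rather than a single clearance value.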

MIDD Protocol: Model-Based Dose Optimization in Oncology

The following protocol outlines a structured approach for applying MIDD principles to optimize dosing regimens in oncology drug development, integrating evolutionary concepts of variability and selection.

Objective: To develop a quantitative framework for selecting the optimal dosing regimen for an oncology drug candidate using integrated PK-PD-efficacy-toxicity modeling and simulation.

Background: Oncology drug development faces unique challenges in balancing efficacy and toxicity, often within narrow therapeutic windows. This protocol provides a systematic approach to dose optimization prior to pivotal trials.

Context of Use: To inform Phase 3 dose selection and provide evidence for potential inclusion in product labeling.

Materials and Reagent Solutions

Table 2: Essential Research Reagents and Computational Tools

| Item Name | Specifications/Provider | Critical Function |
|---|---|---|
| Nonlinear Mixed-Effects Modeling Software | NONMEM, Monolix, or equivalent | Platform for developing population PK and PD models to quantify between-subject variability and identify covariates. |
| Clinical Trial Simulation Environment | R, Python, or SAS with custom scripts | Environment for simulating virtual patient populations and trial outcomes under different dosing scenarios. |
| PBPK Modeling Platform | GastroPlus, Simcyp, or PK-Sim | Mechanistic simulation of drug disposition in specific populations (e.g., organ impairment) and drug-drug interactions. |
| Data Assembly and Curation Tools | Standard statistical software (e.g., R, SAS) | Tools for pooling, cleaning, and summarizing PK, PD, efficacy, and safety data from prior study phases. |
| Visual Predictive Check Tools | Standard diagnostic tools within modeling software | Methods for evaluating model performance and validating its predictive capability against observed data. |

Experimental Workflow

The dose optimization workflow follows a logical progression from data integration to decision-making, incorporating feedback loops for model refinement.

Step-by-Step Procedure

Data Assembly and Curation

- Pool all available PK data from Phase 1 and 2 studies, including rich sampling and sparse population data.

- Compile corresponding efficacy endpoints (e.g., tumor size reduction, progression-free survival [PFS]) and safety data (e.g., incidence of grade 3+ adverse events) for the same patients.

- Document all patient demographics and clinical laboratory values that may serve as potential covariates (e.g., body size, renal/hepatic function, biomarkers).

Population PK Model Development

- Develop a structural PK model (e.g., 2-compartment vs. 3-compartment) to describe the drug's concentration-time profile.

- Identify and quantify between-subject and between-occasion variability on key PK parameters (e.g., clearance, volume of distribution).

- Perform covariate analysis to identify patient factors (e.g., body weight, renal function) that explain variability in PK parameters. This step is crucial for understanding the "evolutionary" variability in drug exposure across a heterogeneous patient population.
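The covariate and variability structure described above is often written as a typical value scaled by covariates and multiplied by a log-normal random effect, for example CL_i = TVCL × (WT_i / 70)^0.75 × exp(η_i) with η_i ~ N(0, ω²). The sketch below simulates individual clearances under that assumed structure. The allometric exponent 0.75, the 70 kg reference weight, and the parameter values are common illustrative choices, not estimates from this protocol.

```python
import math
import random


def individual_clearance(tv_cl, weight_kg, eta, ref_weight=70.0, exponent=0.75):
    """CL_i = TVCL * (WT_i / WT_ref)^exponent * exp(eta_i)."""
    return tv_cl * (weight_kg / ref_weight) ** exponent * math.exp(eta)


def simulate_clearances(tv_cl, omega_cl, weights, seed=1):
    """Draw eta_i ~ N(0, omega^2) for each subject and return individual CLs."""
    rng = random.Random(seed)
    return [individual_clearance(tv_cl, w, rng.gauss(0.0, omega_cl)) for w in weights]


if __name__ == "__main__":
    weights = [50, 70, 90, 110]
    # With eta = 0, clearance varies only through the body-weight covariate.
    print([round(individual_clearance(5.0, w, 0.0), 2) for w in weights])
    # Adding between-subject variability (omega = 0.3, roughly 30% CV).
    print([round(cl, 2) for cl in simulate_clearances(5.0, 0.3, weights)])
```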

Exposure-Response (E-R) Analysis

- Develop an E-R model for the primary efficacy endpoint. For oncology, this may be a time-to-event model for overall survival/progression-free survival or a logistic model for objective response rate.

- Develop a separate E-R model for key safety endpoints, such as the probability of a dose-limiting toxicity.

- Document the uncertainty around the parameter estimates for both efficacy and safety models.
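For binary endpoints such as objective response or a dose-limiting toxicity, one common choice is a logistic exposure-response model, logit P = β0 + β1 × exposure. The sketch below evaluates hypothetical efficacy and toxicity models and shows one simple way to carry parameter uncertainty forward: sampling β0 and β1 from normal distributions (independence of the two coefficients is a simplifying assumption; a fitted model would supply their covariance).

```python
import math
import random
import statistics


def logistic_prob(exposure, beta0, beta1):
    """P(event) = 1 / (1 + exp(-(beta0 + beta1 * exposure)))."""
    return 1.0 / (1.0 + math.exp(-(beta0 + beta1 * exposure)))


def prob_with_uncertainty(exposure, beta0, beta1, se0, se1, n_draws=2000, seed=7):
    """Median and 90% interval of P(event) under sampled coefficients."""
    rng = random.Random(seed)
    draws = sorted(
        logistic_prob(exposure, rng.gauss(beta0, se0), rng.gauss(beta1, se1))
        for _ in range(n_draws)
    )
    lo = draws[int(0.05 * n_draws)]
    hi = draws[int(0.95 * n_draws) - 1]
    return statistics.median(draws), lo, hi


if __name__ == "__main__":
    # Hypothetical models: response rises with AUC, DLT risk starts lower.
    for auc in (10, 20, 40):
        print(auc, round(logistic_prob(auc, -2.0, 0.10), 3),
              round(logistic_prob(auc, -5.0, 0.08), 3))
    print(prob_with_uncertainty(20, -2.0, 0.10, 0.3, 0.02))
```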

Integrated Model Development and Validation

- Link the finalized population PK model with the E-R models for efficacy and safety to create a drug-trial-disease modeling framework.

- Validate the integrated model using techniques like Visual Predictive Check (VPC) and bootstrap analysis to ensure its adequacy for simulation purposes.
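A visual predictive check compares percentiles of the observed data with prediction intervals for the same percentiles computed across many datasets simulated from the model. The sketch below implements only the numeric core of a VPC for a single time bin, with a hypothetical log-normal model standing in for the integrated model; a full VPC repeats this across time bins and plots the result.

```python
import math
import random


def percentile(values, q):
    """Linear-interpolation percentile, q in [0, 100]."""
    s = sorted(values)
    pos = (len(s) - 1) * q / 100.0
    lo, hi = math.floor(pos), math.ceil(pos)
    return s[lo] + (s[hi] - s[lo]) * (pos - lo)


def vpc_bin(observed, simulate_once, n_rep=500, qs=(5, 50, 95), pi=(5, 95)):
    """For each percentile q: (observed q-th percentile, lower and upper
    bounds of the simulated q-th percentile across replicates, inside?)."""
    sims = [simulate_once(len(observed)) for _ in range(n_rep)]
    results = {}
    for q in qs:
        sim_q = [percentile(s, q) for s in sims]
        lo, hi = percentile(sim_q, pi[0]), percentile(sim_q, pi[1])
        obs_q = percentile(observed, q)
        results[q] = (obs_q, lo, hi, lo <= obs_q <= hi)
    return results


if __name__ == "__main__":
    rng = random.Random(42)

    def simulate_once(n):
        # Hypothetical model: concentrations log-normal around 10 mg/L.
        return [rng.lognormvariate(math.log(10), 0.4) for _ in range(n)]

    observed = simulate_once(60)  # stand-in for observed data in this bin
    for q, (obs, lo, hi, ok) in vpc_bin(observed, simulate_once).items():
        print(f"P{q}: observed {obs:.2f}, 90% PI [{lo:.2f}, {hi:.2f}], inside={ok}")
```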

Clinical Trial Simulation and Dose Strategy Evaluation

- Simulate a virtual population of 1,000 to 10,000 patients that reflects the target Phase 3 population, incorporating the variability and covariate relationships identified in the population PK model.

- Simulate the clinical outcomes (efficacy and safety) for multiple candidate dosing regimens (e.g., different doses, schedules, or flat vs. weight-based dosing).

- For each regimen, calculate the predicted probability of efficacy and the predicted probability of key toxicities.
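The simulation step can be sketched as a loop over candidate regimens, each evaluated in the same virtual population. Everything below is an illustrative assumption: linear PK with AUC = Dose / CL, log-normal variability and allometric weight scaling on CL, and logistic response and toxicity models with made-up coefficients. Reusing one seed for every regimen gives common random numbers, so differences between regimens are not blurred by Monte Carlo noise.

```python
import math
import random


def simulate_population(n, seed=2024):
    """Virtual patients as (body weight in kg, random effect eta on CL)."""
    rng = random.Random(seed)
    return [
        (min(max(rng.gauss(75, 15), 40), 140), rng.gauss(0.0, 0.3))
        for _ in range(n)
    ]


def logistic(z):
    return 1.0 / (1.0 + math.exp(-z))


def simulate_regimen(dose_mg, population, weight_based=False, tv_cl=5.0,
                     b_eff=(-2.0, 0.10), b_tox=(-5.0, 0.08), seed=99):
    """Return (predicted P(response), predicted P(grade 3+ toxicity))."""
    rng = random.Random(seed)  # same draws for every regimen
    n_resp = n_tox = 0
    for weight, eta in population:
        dose = dose_mg * weight / 70.0 if weight_based else dose_mg
        cl = tv_cl * (weight / 70.0) ** 0.75 * math.exp(eta)
        auc = dose / cl  # linear PK, F = 1 assumed
        n_resp += rng.random() < logistic(b_eff[0] + b_eff[1] * auc)
        n_tox += rng.random() < logistic(b_tox[0] + b_tox[1] * auc)
    n = len(population)
    return n_resp / n, n_tox / n


if __name__ == "__main__":
    population = simulate_population(5000)
    regimens = {"100 mg flat": (100, False), "200 mg flat": (200, False),
                "200 mg per 70 kg": (200, True)}
    for name, (dose, wb) in regimens.items():
        p_eff, p_tox = simulate_regimen(dose, population, weight_based=wb)
        print(f"{name}: P(response)={p_eff:.2f}, P(toxicity)={p_tox:.2f}")
```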

Benefit-Risk Analysis and Dose Selection

- Compare the simulated outcomes across all tested dosing regimens.

- Select the optimal dose(s) that maximize therapeutic benefit while maintaining an acceptable safety profile. This represents the "selection" phase in the evolutionary framework of drug development.

- Prepare a comprehensive model summary report detailing assumptions, methodologies, and results to support regulatory interactions [3].
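One simple way to formalize the selection rule is to discard regimens whose predicted toxicity exceeds a prespecified ceiling and, among the rest, choose the one with the highest predicted efficacy. The 30% ceiling, the function name, and the numbers below are hypothetical; real criteria, and any explicit weighting of benefit against risk, would be prespecified in the analysis plan.

```python
def select_regimen(predictions, max_tox=0.30):
    """predictions: {regimen: (p_efficacy, p_toxicity)}.

    Returns the admissible regimen with the highest p_efficacy (ties broken
    by lower toxicity), or None if every regimen exceeds max_tox."""
    admissible = {r: v for r, v in predictions.items() if v[1] <= max_tox}
    if not admissible:
        return None
    return max(admissible, key=lambda r: (admissible[r][0], -admissible[r][1]))


if __name__ == "__main__":
    preds = {
        "100 mg QD": (0.42, 0.12),
        "200 mg QD": (0.55, 0.24),
        "300 mg QD": (0.61, 0.38),  # exceeds the toxicity ceiling
    }
    print(select_regimen(preds))  # → 200 mg QD
```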

Regulatory Integration and Future Directions

The application of MIDD is increasingly formalized within regulatory science. The FDA's MIDD Paired Meeting Program provides a pathway for sponsors to discuss MIDD approaches with Agency staff, focusing on dose selection, clinical trial simulation, and predictive safety evaluation [3]. Regulatory acceptance hinges on clearly defining the "Context of Use" and providing a comprehensive assessment of model risk, which considers the weight of model predictions and the potential consequence of an incorrect decision [3].

The future of MIDD is evolving with emerging technologies. The integration of artificial intelligence (AI) and machine learning (ML) promises to enhance model development and interpretation [2] [4]. Furthermore, the incorporation of Real-World Data (RWD) and evidence from Digital Health Technologies (DHTs) offers opportunities to refine models with broader, more diverse patient data, creating a continuous feedback loop that mirrors an adaptive evolutionary process [2] [4]. This positions MIDD as a dynamic framework capable of accelerating the development of new therapies for patients with unmet medical needs.

Understanding the interplay between genetic diversity, natural selection, and phylogeny is a cornerstone of modern evolutionary biology. Recent research has revealed a negative, global-scale association between intraspecific genetic diversity and speciation rates across mammalian species [8]. This finding challenges simplistic assumptions and underscores the complex relationship between microevolutionary processes and macroevolutionary patterns. Meanwhile, advances in bioinformatic pipelines and computational tools are transforming our capacity to analyze genetic data, validate evolutionary models, and reconstruct phylogenetic histories accurately and efficiently [9] [10] [11]. These methodologies provide the essential framework for testing evolutionary hypotheses and exploring the mechanisms driving biodiversity patterns.

This article presents application notes and protocols designed to equip researchers with practical methodologies for investigating these core evolutionary concepts. By integrating cutting-edge bioinformatic workflows with classical evolutionary theory, we establish a robust foundation for analyzing the genetic underpinnings of evolutionary processes across different biological scales—from population-level diversity to deep phylogenetic splits.

Theoretical Foundation: Genetic Diversity and Speciation Dynamics

Empirical Evidence from Mammalian Systems

A comprehensive study of 1,897 mammal species, representing approximately one-third of all mammalian diversity, found a statistically significant negative relationship between mitochondrial genetic diversity and speciation rates [8]. The analysis encompassed all mammalian orders and showed that lineages with higher speciation rates tend to exhibit lower within-species genetic variation. The association is highly significant under phylogenetic generalized least squares (PGLS slope estimate = -0.431, p = 2.69×10⁻⁹), indicating a systematic link between microevolutionary and macroevolutionary processes that operates across deep phylogenetic scales [8].
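PGLS fits an ordinary regression but replaces the assumption of independent residuals with a covariance matrix V derived from the phylogeny, giving the generalized least squares estimate β̂ = (XᵀV⁻¹X)⁻¹XᵀV⁻¹y. The sketch below computes that estimator in plain Python for a toy four-species tree under Brownian motion, where V[i][j] is the shared root-to-ancestor path length. It is a didactic illustration with hypothetical data, not the model specification used in [8], which may include further parameters such as Pagel's λ.

```python
def solve(A, b):
    """Solve A x = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(A)
    M = [[float(v) for v in row] + [float(rhs)] for row, rhs in zip(A, b)]
    for col in range(n):
        piv = max(range(col, n), key=lambda r: abs(M[r][col]))
        M[col], M[piv] = M[piv], M[col]
        for r in range(n):
            if r != col:
                f = M[r][col] / M[col][col]
                for c in range(col, n + 1):
                    M[r][c] -= f * M[col][c]
    return [M[i][n] / M[i][i] for i in range(n)]


def pgls_fit(x, y, V):
    """Return [intercept, slope] = (X' V^-1 X)^-1 X' V^-1 y."""
    n = len(x)
    X_cols = [[1.0] * n, [float(v) for v in x]]
    Vinv_X = [solve(V, col) for col in X_cols]  # V^-1 X, column by column
    Vinv_y = solve(V, y)
    XtViX = [[sum(a * b for a, b in zip(ci, vj)) for vj in Vinv_X] for ci in X_cols]
    XtViy = [sum(a * b for a, b in zip(ci, Vinv_y)) for ci in X_cols]
    return solve(XtViX, XtViy)


# Toy ultrametric tree ((A:1,B:1):1,(C:1,D:1):1) under Brownian motion.
TOY_TREE_V = [
    [2.0, 1.0, 0.0, 0.0],
    [1.0, 2.0, 0.0, 0.0],
    [0.0, 0.0, 2.0, 1.0],
    [0.0, 0.0, 1.0, 2.0],
]

if __name__ == "__main__":
    speciation_rate = [0.31, 0.27, 0.22, 0.18]  # hypothetical tip rates
    log_diversity = [-4.2, -3.9, -3.7, -3.5]    # hypothetical log(theta)
    print(pgls_fit(speciation_rate, log_diversity, TOY_TREE_V))
```

With V equal to the identity matrix, the estimate reduces to ordinary least squares.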

Table 1: Genetic Diversity and Speciation Rates Across Major Mammalian Clades

| Clade | Mean θTsyn (Genetic Diversity) | Mean Speciation Rate (events/million years) | Number of Species Sampled |
|---|---|---|---|
| Castorimorpha | 0.0254 | 0.18 | 47 |
| Carnivora | 0.0151 | 0.31 | 192 |
| Rodentia | 0.0208 | 0.27 | 523 |
| Primates | 0.0182 | 0.23 | 178 |
| Artiodactyla | 0.0169 | 0.22 | 156 |
| All Mammals | 0.0193 | 0.25 | 1,897 |

Theoretical Explanations and Competing Hypotheses

Several non-exclusive mechanistic hypotheses may explain this negative diversity-speciation association:

- Faster Accumulation of Reproductive Incompatibilities: Species with low genetic diversity (reflecting small effective population size, Nₑ) may accumulate reproductive incompatibilities more rapidly due to reduced efficacy of purifying selection, potentially leading to higher speciation rates [8].

- Speciation-Related Bottlenecks: Speciation events themselves may reduce genetic diversity through population bottleneck effects, creating a signature of low diversity in rapidly speciating lineages [8].

- Selection-Driven Reductions: If speciation is primarily adaptive, positive selection can simultaneously reduce genetic diversity (by fixing beneficial alleles) while promoting population divergence [8].

- Geographic Structure Effects: While geographic structure often promotes speciation, it may decrease species-wide genetic diversity if migration is highly asymmetric between subpopulations [8].

Table 2: Key Variables in the Genetic Diversity-Speciation Relationship

| Variable | Measurement Approach | Biological Significance | Data Source |
|---|---|---|---|
| Synonymous Genetic Diversity (θTsyn) | Tajima's θ estimator applied to cytochrome b sequences | Proxy for effective population size and neutral evolutionary potential | 90,337 mitochondrial sequences from GenBank [8] |
| Tip Speciation Rate | ClaDS model applied to time-calibrated phylogeny | Species-specific rate of lineage splitting | Mammal phylogeny from Upham et al. (2019) [8] |
| Life History Traits | Body mass, generation time, fecundity metrics | Position on r/K-strategist gradient; correlates with both diversity and speciation | PanTHERIA database; species-specific literature [8] |
| Latitudinal Zone | Tropical vs. temperate classification | Proxy for multiple environmental covariates affecting both diversity and speciation | Geographic range maps [8] |
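Tajima's estimator of θ is the mean number of pairwise differences between sequences. The sketch below computes it per compared site for a set of aligned fragments, comparing each pair only where both carry an unambiguous base. It deliberately omits the step of restricting to synonymous sites (which requires codon-aware annotation), so it is a simplified illustration rather than the θTsyn calculation used in [8].

```python
from itertools import combinations


def tajima_theta_per_site(seqs, valid="ACGT"):
    """Mean pairwise differences per compared site (Tajima's estimator).

    Each pair is compared only at sites where both bases are unambiguous;
    per-pair proportions are then averaged over all pairs."""
    if len(seqs) < 2:
        raise ValueError("need at least two sequences")
    if len({len(s) for s in seqs}) != 1:
        raise ValueError("sequences must be aligned to equal length")
    total, n_pairs = 0.0, 0
    for a, b in combinations(seqs, 2):
        compared = diffs = 0
        for x, y in zip(a.upper(), b.upper()):
            if x in valid and y in valid:
                compared += 1
                diffs += x != y
        if compared:
            total += diffs / compared
            n_pairs += 1
    if n_pairs == 0:
        raise ValueError("no comparable sites")
    return total / n_pairs


if __name__ == "__main__":
    cytb = ["ACGTACGT", "ACGTACGA", "ACGAACGA"]  # toy aligned fragments
    # Pairs differ at 1, 2, and 1 of 8 sites, so theta = (4 / 8) / 3 = 1/6.
    print(tajima_theta_per_site(cytb))
```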

Bioinformatic Protocols for Evolutionary Analysis

Protocol 1: DNA Metabarcoding for Dietary Analysis

The Kartzinel lab's standardized DNA metabarcoding pipeline provides a robust framework for analyzing complex dietary data from fecal or gut content samples [9]. This approach enables researchers to quantify trophic interactions and assess how natural selection shapes feeding strategies across populations and species.

Workflow Overview:

- DNA Extraction and Amplification: Extract genomic DNA from environmental samples using commercial kits. Amplify target barcode regions (e.g., rbcL, trnL for plants; COI for animals) with PCR primers carrying sample-specific index sequences (tags), so that reads can be assigned back to individual samples after pooling.

- High-Throughput Sequencing: Pool amplified products and sequence on Illumina platforms. Include both negative controls (to detect contamination) and positive controls (to assess sequencing accuracy).

- Bioinformatic Processing:
  - Demultiplex sequences by sample-specific barcodes.
  - Filter reads by quality and remove primer sequences.
  - Cluster sequences into operational taxonomic units (OTUs) or amplicon sequence variants (ASVs).
  - Assign taxonomy using reference databases (e.g., GenBank, BOLD).

- Ecological Statistical Analysis:
  - Calculate dietary richness, diversity indices, and composition.
  - Perform multivariate statistics to test for dietary differences between populations.
  - Relate dietary variation to genetic diversity metrics.
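The demultiplexing, primer removal, and diversity steps can be illustrated on toy reads. This sketch assumes each read starts with an exact-match sample barcode followed by the primer, and it uses exact dereplication of identical sequences (dropping singletons) as a crude stand-in for ASV inference; production pipelines tolerate barcode and primer errors and infer ASVs with error models. Barcodes, primer, and reads are hypothetical.

```python
import math
from collections import Counter, defaultdict


def demultiplex(reads, barcodes, primer):
    """Assign reads to samples by exact barcode prefix, then strip the
    barcode and primer. Reads lacking a known barcode or the primer are dropped."""
    per_sample = defaultdict(list)
    for read in reads:
        for sample, bc in barcodes.items():
            if read.startswith(bc):
                rest = read[len(bc):]
                if rest.startswith(primer):
                    per_sample[sample].append(rest[len(primer):])
                break
    return per_sample


def dereplicate(seqs, min_count=2):
    """Count identical sequences; drop those seen fewer than min_count times."""
    return {s: c for s, c in Counter(seqs).items() if c >= min_count}


def shannon(counts):
    """Shannon diversity H' = -sum(p_i * ln p_i)."""
    total = sum(counts)
    return -sum((c / total) * math.log(c / total) for c in counts if c > 0)


if __name__ == "__main__":
    barcodes = {"S1": "AAAA", "S2": "CCCC"}
    reads = (["AAAAGGTTTACG"] * 3 + ["AAAAGGTTTACC"] * 3
             + ["AAAAGGTTTAGG", "CCCCGGTTTACG", "CCCCGGTTTACG", "TTTTGGTTTACG"])
    for sample, seqs in demultiplex(reads, barcodes, "GGT").items():
        asvs = dereplicate(seqs)
        print(sample, asvs, round(shannon(list(asvs.values())), 3))
```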

Troubleshooting Tips:

- For low-quality DNA samples, consider increasing PCR cycle number or using specialized polymerases.

- To minimize cross-contamination, physically separate pre- and post-PCR workspaces and use UV irradiation in hoods between sample processing.

- For problematic taxonomic assignments, implement a bootstrap threshold (e.g., ≥80%) and manually verify unexpected taxa.
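Classifiers that report a bootstrap confidence for each taxonomic rank are commonly post-processed by keeping the assignment only down to the deepest rank that meets the threshold. The sketch below assumes a hypothetical list of (rank, taxon, confidence) tuples ordered from the top rank downwards (actual output formats differ between tools) and also flags assignments whose family is not in a project-specific expected list for manual checking.

```python
def truncate_assignment(ranks, min_conf=80.0):
    """Keep (rank, taxon) pairs until the first rank below min_conf."""
    kept = []
    for rank, taxon, conf in ranks:
        if conf < min_conf:
            break
        kept.append((rank, taxon))
    return kept


def needs_review(kept, expected_families):
    """Flag assignments whose family is absent from the expected list."""
    family = dict(kept).get("family")
    return family is not None and family not in expected_families


if __name__ == "__main__":
    ranks = [("kingdom", "Plantae", 100.0), ("family", "Poaceae", 97.0),
             ("genus", "Cynodon", 72.0), ("species", "Cynodon dactylon", 55.0)]
    kept = truncate_assignment(ranks)
    print(kept, needs_review(kept, {"Fabaceae", "Asteraceae"}))
```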

Protocol 2: Phylogenetic Analysis with PsiPartition

The PsiPartition tool addresses the critical challenge of site heterogeneity in phylogenetic inference by automatically partitioning genomic data into subsets with similar evolutionary rates [10]. This approach significantly improves both computational efficiency and the accuracy of reconstructed phylogenetic trees.

Workflow Implementation:

- Data Preparation:
  - Compile a DNA or protein sequence alignment in FASTA or NEXUS format.
  - Define initial data partitions if known a priori (e.g., by gene or codon position).

- PsiPartition Execution:
  - Run PsiPartition with Bayesian optimization to identify the optimal number and composition of partitions:

    ```shell
    psipartition -in alignment.phy -model GTR+G -out partitions.txt
    ```

  - The algorithm uses parameterized sorting indices to group sites with similar evolutionary patterns without requiring an exhaustive search.

- Phylogenetic Reconstruction:
  - Conduct tree inference using the optimized partitions in standard software (e.g., RAxML, IQ-TREE).
  - Assess node support with bootstrapping (≥100 replicates) or Bayesian posterior probabilities.

- Tree Evaluation:
  - Compare the resulting trees to previously published topologies.
  - Assess improvements in bootstrap support values, particularly for previously poorly resolved nodes.
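PsiPartition's own algorithm (Bayesian optimization over parameterized sorting indices) is not reproduced here. As a much cruder stand-in that conveys the idea of grouping sites with similar behavior, the sketch below scores each alignment column by its number of distinct unambiguous states, sorts columns by that score, cuts them into k bins, and writes each bin as a line in the RAxML-style "MODEL, name = ranges" layout. The score, the bin count, and the model string are illustrative assumptions; check the exact partition-file syntax expected by your inference program.

```python
def site_scores(alignment, valid="ACGT"):
    """Number of distinct unambiguous states in each alignment column."""
    return [
        len({seq[i].upper() for seq in alignment} & set(valid))
        for i in range(len(alignment[0]))
    ]


def to_ranges(positions):
    """Compress sorted 1-based positions into 'a-b' or 'a' strings."""
    ranges, start, prev = [], None, None
    for p in positions:
        if start is None:
            start = prev = p
        elif p == prev + 1:
            prev = p
        else:
            ranges.append(f"{start}-{prev}" if start != prev else str(start))
            start = prev = p
    if start is not None:
        ranges.append(f"{start}-{prev}" if start != prev else str(start))
    return ranges


def partition_by_variability(alignment, k=2, model="GTR+G"):
    """Sort sites by score, cut into k roughly equal bins, emit partition lines."""
    scores = site_scores(alignment)
    order = sorted(range(len(scores)), key=lambda i: (scores[i], i))
    size = -(-len(order) // k)  # ceiling division
    lines = []
    for b in range(k):
        chunk = sorted(i + 1 for i in order[b * size:(b + 1) * size])
        if chunk:
            lines.append(f"{model}, p{b + 1} = {', '.join(to_ranges(chunk))}")
    return lines


if __name__ == "__main__":
    aln = ["AAAAACGT", "AAAATTGT", "AAAAGCAT"]
    print("\n".join(partition_by_variability(aln, k=2)))
```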

Validation Case Study: When applied to the moth family Noctuidae, PsiPartition significantly improved topological accuracy and produced trees with higher bootstrap support compared to traditional partitioning approaches [10]. The method demonstrated particular efficacy with large, complex datasets exhibiting substantial site heterogeneity.

Table 3: Key Research Reagent Solutions for Evolutionary Genomics

| Resource/Reagent | Function/Application | Example/Supplier |
|---|---|---|
| nf-core Pipelines | Curated, community-supported bioinformatic workflows for various data types | 124 pipelines available covering sequencing, proteomics, and more [11] |
| Nextflow DSL2 | Workflow management system enabling scalable, reproducible analyses | Nextflow (version 24.10.4+) with support for 18 schedulers/cloud services [11] |
| PsiPartition | Computational tool for optimal partitioning of genomic data for phylogenetic analysis | Hokkaido University implementation [10] |
| Click-qPCR | Web-based Shiny application for ΔCq and ΔΔCq calculations from qPCR data | https://kubo-azu.shinyapps.io/Click-qPCR/ [12] |
| ColabFold | Protein structure prediction for functional annotation of evolutionary changes | Integrated with OmicsBox for structural characterization [12] |
| TaDRIM-seq | Technique for profiling chromatin-associated RNAs and RNA-RNA interactions | Protocol for mammalian and plant systems [12] |

Workflow Reproducibility through Nextflow and nf-core

Standardized Pipeline Implementation

The nf-core framework provides a community-driven platform for developing and sharing reproducible bioinformatic pipelines [11]. With 124 peer-reviewed pipelines covering diverse data types from high-throughput sequencing to mass spectrometry, nf-core establishes best-practice standards that ensure analytical consistency across evolutionary studies.

Key Features:

- Modular Architecture: The Nextflow Domain-Specific Language (DSL2) enables composing workflows from reusable modules and subworkflows [11].

- Containerization Support: Automatic provisioning of software environments via Docker, Singularity, Podman, or Charliecloud ensures consistent computational environments [11].

- Portability: Pipelines run across HPC clusters, cloud platforms, and local workstations without changes to the pipeline code; site-specific settings are supplied through configuration profiles [11].

- Community Governance: All pipeline changes undergo rigorous review with required approval from at least two nf-core members for release pull requests [11].

Community Example: The nf-core community runs a mentorship program that pairs experienced developers with new members from underrepresented groups, fostering inclusive development while maintaining quality standards [11]. This model helps sustain a community of over 2,600 GitHub contributors and more than 10,000 Slack members over the long term.

Data Visualization and Accessibility Standards

Effective communication of evolutionary data requires careful attention to visual design principles. Research examining over 1000 tables published in ecology and evolution journals identified key guidelines for presenting quantitative data [13]:

- Aid Comparisons: Right-flush alignment of numeric columns with consistent precision and tabular fonts (e.g., Lato, Roboto, Source Sans Pro) [13].

- Reduce Visual Clutter: Avoid heavy grid lines, remove unit repetition, and group similar data logically [13].

- Enhance Readability: Ensure headers stand out from body content, highlight statistical significance, and use active, concise titles [13].
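The alignment and precision guidelines can be applied programmatically when tables are generated from analysis output. The helper below is a minimal sketch (its name and behavior are illustrative): text columns are left-aligned, numeric columns are right-aligned, and every number in a column is printed with the same number of decimal places.

```python
def format_table(header, rows, decimals):
    """decimals maps numeric column index -> number of decimal places."""
    cells = [list(header)]
    for row in rows:
        cells.append([
            f"{v:,.{decimals[j]}f}" if j in decimals else str(v)
            for j, v in enumerate(row)
        ])
    widths = [max(len(r[j]) for r in cells) for j in range(len(header))]
    lines = []
    for r in cells:
        parts = [
            r[j].rjust(widths[j]) if j in decimals else r[j].ljust(widths[j])
            for j in range(len(header))
        ]
        lines.append("  ".join(parts).rstrip())
    return "\n".join(lines)


if __name__ == "__main__":
    rows = [("Carnivora", 0.0151, 0.31, 192), ("Rodentia", 0.0208, 0.27, 523),
            ("All Mammals", 0.0193, 0.25, 1897)]
    print(format_table(["Clade", "Mean θTsyn", "Speciation rate", "n"], rows,
                       {1: 4, 2: 2, 3: 0}))
```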

Additionally, all visualizations must meet accessibility standards for color contrast, with minimum ratios of 4.5:1 for body text and 3:1 for large-scale text or graphical objects [14]. These guidelines ensure that evolutionary insights are accessible to researchers with diverse visual capabilities.
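The contrast thresholds can be checked directly from color values. The sketch below follows the WCAG 2.x definitions: each sRGB channel is linearized, relative luminance is L = 0.2126 R + 0.7152 G + 0.0722 B, and the contrast ratio is (L_lighter + 0.05) / (L_darker + 0.05). The function names are illustrative.

```python
def _linearize(channel_8bit):
    """sRGB channel (0-255) to linear light, per the WCAG 2.x formula."""
    c = channel_8bit / 255.0
    return c / 12.92 if c <= 0.03928 else ((c + 0.055) / 1.055) ** 2.4


def relative_luminance(hex_color):
    """Relative luminance of a '#RRGGBB' color."""
    h = hex_color.lstrip("#")
    r, g, b = (int(h[i:i + 2], 16) for i in (0, 2, 4))
    return 0.2126 * _linearize(r) + 0.7152 * _linearize(g) + 0.0722 * _linearize(b)


def contrast_ratio(fg, bg):
    """Contrast ratio between two colors, from 1:1 up to 21:1."""
    l1, l2 = sorted((relative_luminance(fg), relative_luminance(bg)), reverse=True)
    return (l1 + 0.05) / (l2 + 0.05)


def passes(fg, bg, large_or_graphic=False):
    """4.5:1 for body text; 3:1 for large text or graphical objects."""
    return contrast_ratio(fg, bg) >= (3.0 if large_or_graphic else 4.5)


if __name__ == "__main__":
    for color in ("#767676", "#777777"):
        print(color, round(contrast_ratio(color, "#FFFFFF"), 2), passes(color, "#FFFFFF"))
```

Mid-gray is a useful boundary case: #767676 on white just clears 4.5:1, while #777777 falls just short and is acceptable only for large text or graphics.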

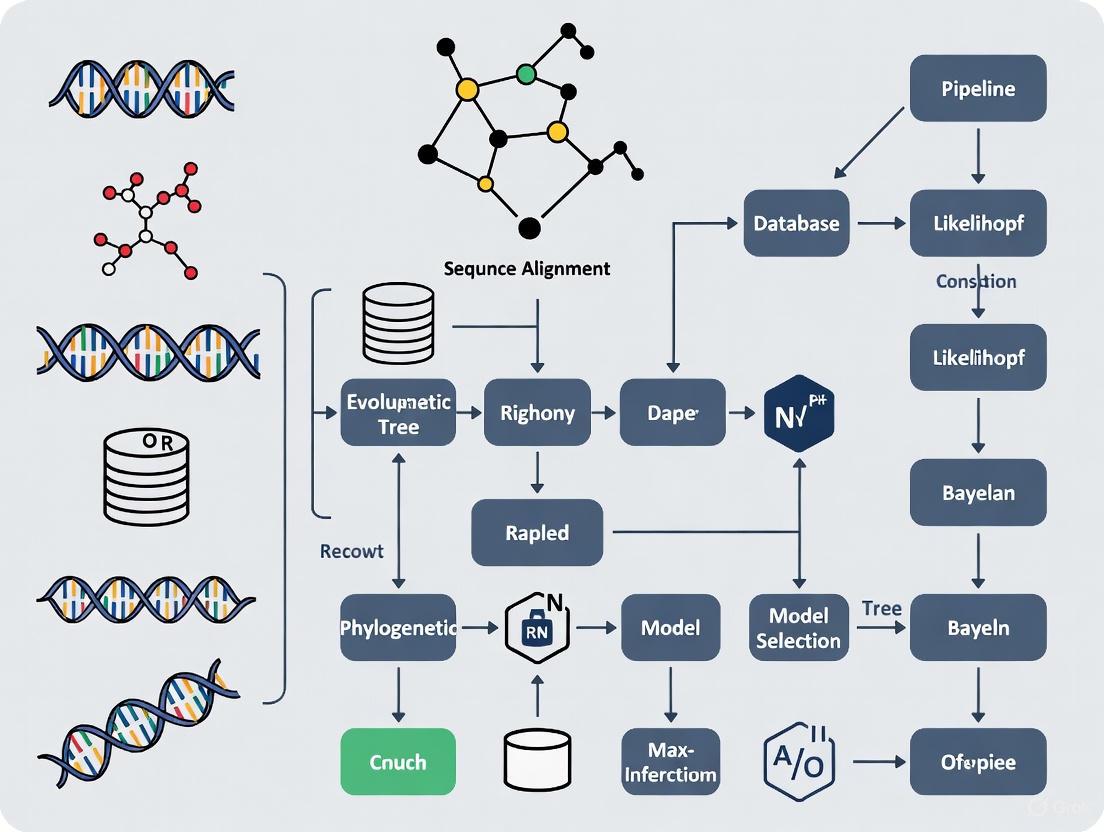

Visualizing Evolutionary Bioinformatics Workflows

Workflow diagrams (Graphviz DOT, WCAG-compliant color contrast): DNA Metabarcoding Analysis; Phylogenetic Analysis with PsiPartition; nf-core Pipeline Architecture.

The integration of genetic diversity studies with phylogenetic comparative methods represents a powerful approach for unraveling evolutionary processes across biological scales. The documented negative association between genetic diversity and speciation rates in mammals [8] provides a compelling example of how bioinformatic advances enable testing of long-standing evolutionary hypotheses. Meanwhile, frameworks like nf-core [11] and analytical tools like PsiPartition [10] continue to lower technical barriers while increasing reproducibility in evolutionary bioinformatics.

As these methodologies become increasingly accessible through standardized pipelines and user-friendly interfaces, researchers can focus more attention on biological interpretation rather than computational implementation. This progression promises to accelerate our understanding of how microevolutionary processes scale to macroevolutionary patterns—a central challenge in evolutionary biology that now lies within practical reach through the integrated application of these concepts and protocols.

The Expanding Role of Machine Learning in Evolutionary Genomics and Population Genetics

Evolutionary genomics and population genetics are undergoing a profound transformation, shifting from a traditionally theory-driven discipline to a data-driven science. This shift is largely driven by the unprecedented volume of genomic data generated by next-generation sequencing technologies, which has rendered traditional model-based statistical approaches increasingly intractable [15]. Methods such as maximum-likelihood estimation and Bayesian inference, the latter often implemented via computationally expensive Markov chain Monte Carlo (MCMC) sampling, struggle with the scale and complexity of modern datasets comprising thousands of genomes [15].

Machine learning, particularly deep learning, has emerged as a powerful framework to address these challenges. Unlike traditional approaches that rely on human-constructed summary statistics and explicit probabilistic models, ML algorithms can learn non-linear relationships between input data and model parameters directly through representation learning from training datasets [15]. This paradigm shift enables researchers to tackle increasingly complex evolutionary scenarios, from demographic history reconstruction to detecting subtle signatures of natural selection, often with greater accuracy and computational efficiency than approaches based on hand-crafted summary statistics.

Machine Learning Architectures in Evolutionary Genomics

The application of machine learning in evolutionary genomics encompasses diverse architectural approaches, each with distinct strengths for specific analytical tasks.

Deep Neural Networks for Population Genetic Inference

Deep learning algorithms currently employed in the field comprise both discriminative and generative models with various network architectures [15]. Fully connected networks serve as foundational architectures, while convolutional neural networks (CNNs) excel at capturing spatial patterns in genetic data, and recurrent networks (RNNs) model sequential dependencies in haplotype structures. These approaches typically utilize simulation-based training, where models learn from vast datasets generated under known evolutionary scenarios to make inferences from empirical data [15].

Representation Learning for Evolutionary Patterns

A key advantage of deep learning approaches is their ability to automatically discover informative features from raw genetic data, moving beyond the limitations of predefined summary statistics [15]. Through representation learning, neural networks can identify complex, multi-locus patterns that signal evolutionary processes such as selection, migration, or population bottlenecks. This capability is particularly valuable for detecting subtle signatures that may be missed by traditional approaches relying on human-curated statistics [15].

Table 1: Machine Learning Approaches in Evolutionary Genomics

| ML Approach | Architecture | Key Applications | Advantages |
|---|---|---|---|
| Discriminative Models | Fully Connected Networks | Demographic inference, selection scans | High accuracy for classification tasks |
| Convolutional Neural Networks | Multi-layer convolutions | Spatial pattern detection in genomic data | Captures local genomic dependencies |
| Recurrent Neural Networks | LSTM, GRU architectures | Haplotype analysis, sequential modeling | Handles variable-length sequences |
| Generative Models | GANs, VAEs | Synthetic data generation, imputation | Models complex distributions |

Application Notes: Implementing ML for Evolutionary Analysis

Protocol: Deep Learning for Balancing Selection Detection

Objective: Implement a branched neural network architecture to detect recent balancing selection from temporal haplotypic data [15].

Workflow:

Data Simulation:

- Use evolutionary genetics simulators (e.g., SLiM, msprime) to generate training data under various selection scenarios

- Parameterize simulations to include balanced polymorphisms with varying selection coefficients, dominance effects, and time depths

- Generate balanced case/control datasets with appropriate labeling

Input Representation:

- Encode haplotypes as binary matrices (individuals × variants)

- Incorporate temporal dimension through paired sampling across generations

- Add contextual features including recombination rates, genomic annotation
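The input-representation steps above can be sketched in plain Python. This is a minimal illustration, not the method of [15]: it assumes haplotypes arrive as strings of '0' (ancestral) and '1' (derived) with the same individuals and variants at both time points, and all function names are hypothetical. Real pipelines would typically use array libraries and may also sort rows to reduce sensitivity to sample order.

```python
from typing import List, Sequence


def encode_haplotypes(haplotypes: Sequence[str]) -> List[List[int]]:
    """Turn '0'/'1' strings (one per sampled haplotype) into a binary
    individuals x variants matrix."""
    if not haplotypes:
        raise ValueError("no haplotypes supplied")
    n_sites = len(haplotypes[0])
    matrix = []
    for hap in haplotypes:
        if len(hap) != n_sites:
            raise ValueError("all haplotypes must cover the same variants")
        if set(hap) - {"0", "1"}:
            raise ValueError("haplotypes may contain only '0' and '1'")
        matrix.append([int(allele) for allele in hap])
    return matrix


def stack_timepoints(earlier: Sequence[str], later: Sequence[str]) -> List[List[List[int]]]:
    """Pair samples from two generations into a 2 x individuals x variants
    nested list, i.e. a two-channel input for a convolutional branch."""
    first, second = encode_haplotypes(earlier), encode_haplotypes(later)
    if len(first) != len(second) or len(first[0]) != len(second[0]):
        raise ValueError("both time points need the same shape")
    return [first, second]


def append_site_feature(matrix: List[List[float]], feature: Sequence[float]) -> List[List[float]]:
    """Append one per-variant covariate (e.g. local recombination rate) as an
    extra row so it stays aligned with the variant axis."""
    if len(feature) != len(matrix[0]):
        raise ValueError("need exactly one feature value per variant")
    return matrix + [list(feature)]
```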

Network Architecture:

- Implement branched design with separate pathways for temporal comparison and haplotype patterning

- Use convolutional layers for local haplotype structure detection

- Include attention mechanisms for identifying influential variants

- Final classification layer with softmax activation for selection probability

Training Protocol:

- Employ stratified k-fold cross-validation so that every fold preserves the overall class proportions

- Use weighted loss function to account for unequal selection scenario prevalence

- Implement learning rate reduction on plateau with factor=0.5, patience=10 epochs

- Apply early stopping with patience=15 epochs to prevent overfitting
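A framework-agnostic sketch of the training controls above follows. The factor of 0.5 and the patience values of 10 and 15 epochs come from the protocol; the initial learning rate, class labels, and function names are illustrative assumptions. Deep learning frameworks provide ready-made equivalents (for example Keras's ReduceLROnPlateau and EarlyStopping callbacks, or PyTorch's torch.optim.lr_scheduler.ReduceLROnPlateau), which would normally be preferred.

```python
from collections import Counter
from typing import Dict, List, Sequence


def balanced_class_weights(labels: Sequence[str]) -> Dict[str, float]:
    """n_samples / (n_classes * class_count) per class; the same heuristic
    scikit-learn applies for class_weight='balanced'."""
    counts = Counter(labels)
    n, k = len(labels), len(counts)
    return {cls: n / (k * c) for cls, c in counts.items()}


def stratified_folds(labels: Sequence[str], k: int) -> List[List[int]]:
    """Deal sample indices into k folds round-robin within each class so
    every fold keeps roughly the overall class proportions."""
    by_class: Dict[str, List[int]] = {}
    for idx, label in enumerate(labels):
        by_class.setdefault(label, []).append(idx)
    folds: List[List[int]] = [[] for _ in range(k)]
    for indices in by_class.values():
        for j, idx in enumerate(indices):
            folds[j % k].append(idx)
    return folds


class PlateauController:
    """Halve the learning rate after `lr_patience` epochs without a new best
    validation loss; signal early stopping after `stop_patience` such epochs."""

    def __init__(self, lr: float = 1e-3, factor: float = 0.5,
                 lr_patience: int = 10, stop_patience: int = 15) -> None:
        self.lr = lr
        self.factor = factor
        self.lr_patience = lr_patience
        self.stop_patience = stop_patience
        self.best = float("inf")
        self.since_best = 0    # epochs since the last improvement
        self.since_lr_cut = 0  # reset on improvement and after each LR cut

    def step(self, val_loss: float) -> bool:
        """Record one epoch's validation loss; return True to stop training."""
        if val_loss < self.best:
            self.best = val_loss
            self.since_best = 0
            self.since_lr_cut = 0
        else:
            self.since_best += 1
            self.since_lr_cut += 1
            if self.since_lr_cut >= self.lr_patience:
                self.lr *= self.factor
                self.since_lr_cut = 0
        return self.since_best >= self.stop_patience
```

Shuffling indices within each class before dealing them into folds is advisable in practice; it is omitted here to keep the fold assignment deterministic.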

Validation:

- Benchmark against established methods (e.g., Tajima's D, HKA test)

- Perform robustness analysis with varying demographic histories

- Apply permutation testing for empirical p-value calculation
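For the benchmarking and permutation steps, a classical baseline such as Tajima's D can be computed directly from the same binary haplotype matrix. The sketch below implements the standard Tajima (1989) formula, assuming rows are sampled haplotypes and 1 marks the derived allele; the empirical p-value helper uses the common (1 + exceedances) / (1 + permutations) convention and assumes larger scores are more extreme. Function names are hypothetical. Positive D indicates an excess of intermediate-frequency variants, which is consistent with balancing selection but can also arise from demographic history.

```python
import math
from typing import Sequence


def tajimas_d(matrix: Sequence[Sequence[int]]) -> float:
    """Tajima's D from a binary haplotype matrix (rows = n haplotypes,
    columns = sites, 1 = derived allele). Returns nan with no segregating sites."""
    n = len(matrix)
    if n < 4:
        raise ValueError("Tajima's D needs at least 4 sequences")
    seg = 0      # number of segregating sites S
    pi = 0.0     # mean pairwise differences
    for j in range(len(matrix[0])):
        k = sum(row[j] for row in matrix)  # derived-allele count at site j
        if 0 < k < n:
            seg += 1
            pi += 2.0 * k * (n - k) / (n * (n - 1))
    if seg == 0:
        return float("nan")
    a1 = sum(1.0 / i for i in range(1, n))
    a2 = sum(1.0 / i ** 2 for i in range(1, n))
    b1 = (n + 1) / (3.0 * (n - 1))
    b2 = 2.0 * (n * n + n + 3) / (9.0 * n * (n - 1))
    c1 = b1 - 1.0 / a1
    c2 = b2 - (n + 2) / (a1 * n) + a2 / a1 ** 2
    e1 = c1 / a1
    e2 = c2 / (a1 ** 2 + a2)
    return (pi - seg / a1) / math.sqrt(e1 * seg + e2 * seg * (seg - 1))


def empirical_p_value(observed: float, null_scores: Sequence[float]) -> float:
    """One-sided empirical p-value: (1 + #null >= observed) / (1 + n_null)."""
    exceed = sum(1 for s in null_scores if s >= observed)
    return (1 + exceed) / (1 + len(null_scores))
```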

Protocol: Phylogenetic Inference with Protein Language Models

Objective: Leverage protein language models (pLMs) for coevolution-based inference and phylogenetic analysis [16].

Workflow:

Data Curation:

- Retrieve protein sequences from hierarchical orthologous groups (e.g., EggNOG v6) [17]

- Perform multiple sequence alignment using MAFFT or Clustal Omega

- Curate alignment quality with trimAl or similar tools
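The alignment-curation step can be approximated by a gap-threshold column filter. The sketch below is conceptually similar to trimAl's -gt option (which sets the minimum fraction of sequences without a gap in a column) but is not a reimplementation of trimAl; the 0.7 default and the function name are assumptions, and only '-' is treated as a gap.

```python
from typing import Dict


def trim_gappy_columns(alignment: Dict[str, str], min_non_gap: float = 0.7) -> Dict[str, str]:
    """Keep columns in which at least `min_non_gap` of the sequences carry a
    residue rather than a gap ('-')."""
    seqs = list(alignment.values())
    length = len(seqs[0])
    if any(len(s) != length for s in seqs):
        raise ValueError("sequences must be aligned (equal length)")
    keep = [
        col for col in range(length)
        if sum(s[col] != "-" for s in seqs) / len(seqs) >= min_non_gap
    ]
    return {name: "".join(seq[c] for c in keep) for name, seq in alignment.items()}
```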

Representation Learning:

- Initialize embeddings using pre-trained pLMs (e.g., ESM, ProtTrans)

- Fine-tune embeddings on task-specific data with masked language modeling

- Extract residue-level and sequence-level representations

Coevolution Analysis:

- Compute mutual information between residue positions using embedded representations

- Identify coevolution networks using graph-based approaches

- Filter spurious correlations using statistical pruning methods
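As a baseline for the coevolution step, mutual information can be computed from raw alignment columns rather than from embeddings. The sketch below uses plain frequency counts and skips sequences with a gap at either position; it applies no phylogenetic or low-count correction (such as the average product correction, APC), so it illustrates the quantity rather than a production coevolution method. The function name is hypothetical.

```python
import math
from collections import Counter
from typing import Sequence


def column_mutual_information(msa: Sequence[str], i: int, j: int, gap: str = "-") -> float:
    """Mutual information (in nats) between alignment columns i and j."""
    pairs = [(s[i], s[j]) for s in msa if s[i] != gap and s[j] != gap]
    if not pairs:
        return 0.0
    n = len(pairs)
    joint = Counter(pairs)
    left = Counter(a for a, _ in pairs)
    right = Counter(b for _, b in pairs)
    mi = 0.0
    for (a, b), count in joint.items():
        p_ab = count / n
        mi += p_ab * math.log(p_ab / ((left[a] / n) * (right[b] / n)))
    return mi
```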

Phylogenetic Reconstruction:

- Calculate evolutionary distances from embedding similarities

- Build neighbor-joining or minimum evolution trees from distance matrices

- Compare with traditional maximum likelihood approaches
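The distance-based reconstruction step can be sketched with a cosine distance between sequence-level embeddings and a compact Saitou-Nei neighbor-joining implementation. Treating 1 minus cosine similarity as an evolutionary distance is an assumption made here for illustration; the resulting branch lengths are in embedding-distance units, not substitutions per site. Dedicated tools (or libraries such as Biopython's Bio.Phylo.TreeConstruction) would normally be used instead, and the function names are hypothetical.

```python
import math
from typing import List, Sequence


def cosine_distance(u: Sequence[float], v: Sequence[float]) -> float:
    """1 - cosine similarity between two embedding vectors."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return 1.0 - dot / norm


def neighbor_joining(names: List[str], dist: List[List[float]]) -> str:
    """Neighbor joining on a symmetric distance matrix; returns an unrooted
    tree as a Newick string with branch lengths."""
    nodes = list(names)
    d = {(a, b): dist[i][j] for i, a in enumerate(names) for j, b in enumerate(names)}
    while len(nodes) > 2:
        n = len(nodes)
        r = {a: sum(d[(a, b)] for b in nodes) for a in nodes}  # row sums
        best = None
        for x in range(n):
            for y in range(x + 1, n):
                a, b = nodes[x], nodes[y]
                q = (n - 2) * d[(a, b)] - r[a] - r[b]  # Q-criterion
                if best is None or q < best[0]:
                    best = (q, a, b)
        _, a, b = best
        la = 0.5 * d[(a, b)] + (r[a] - r[b]) / (2 * (n - 2))  # branch to a
        lb = d[(a, b)] - la                                  # branch to b
        u = f"({a}:{la:.4g},{b}:{lb:.4g})"  # new internal node, labeled by its subtree
        for c in nodes:
            if c not in (a, b):
                d[(u, c)] = d[(c, u)] = 0.5 * (d[(a, c)] + d[(b, c)] - d[(a, b)])
        d[(u, u)] = 0.0
        nodes = [c for c in nodes if c not in (a, b)] + [u]
    a, b = nodes
    return f"({a},{b}:{d[(a, b)]:.4g});"
```

For example, the additive matrix derived from the tree ((A:1,B:2):1,(C:3,D:4)) yields ((A:1,B:2),(C:3,D:4):1);, the same unrooted tree.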

Functional Prediction:

- Annotate functional divergence using attention patterns from pLMs

- Predict interaction partners from coevolution networks

- Identify key residues for functional specialization

Table 2: Performance Benchmarks of ML Methods in Evolutionary Genomics

| Task | Traditional Method | ML Approach | Performance Gain | Key Metrics |
|---|---|---|---|---|
| Demographic Inference | ∂a∂i, ABC | CNN-based inference | 25-40% accuracy improvement | MSE, calibration error |
| Selection Scans | XP-EHH, FST | Custom branched networks | 30% higher true positive rate | AUC-ROC, precision-recall |
| Variant Calling | GATK, Samtools | DeepVariant (CNN) | >50% error reduction | F1 score, genotype concordance |
| Ancestry Prediction | PCA, STRUCTURE | Deep learning models | 15-25% higher assignment accuracy | Assignment accuracy, cross-entropy |

Integrated Bioinformatic Pipeline for Evolutionary Model Validation

The implementation of machine learning in evolutionary genomics requires robust bioinformatic pipelines that ensure reproducibility, scalability, and validation. Nextflow and Snakemake have emerged as dominant workflow management systems, with nf-core providing curated, community-developed pipelines that adhere to best-practice standards [11].

Pipeline Architecture for ML-Based Evolutionary Inference

A validated bioinformatic pipeline for evolutionary model validation should integrate these critical components:

Data Preprocessing Module:

- Quality control with FastQC/MultiQC

- Format standardization and normalization

- Data partitioning for training/validation/testing
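One way to implement the partitioning step without leakage is to assign whole simulation replicates, rather than individual genomic windows, to splits by hashing a replicate identifier; the split is then stable across pipeline re-runs. The sketch assumes each simulated example carries such an ID; the 80/10/10 proportions and the function name are illustrative.

```python
import hashlib


def assign_split(replicate_id: str, train: float = 0.8, val: float = 0.1) -> str:
    """Deterministically map a replicate ID to 'train', 'val', or 'test'."""
    digest = hashlib.sha256(replicate_id.encode("utf-8")).hexdigest()
    u = int(digest[:8], 16) / 0xFFFFFFFF  # approximately uniform on [0, 1]
    if u < train:
        return "train"
    if u < train + val:
        return "val"
    return "test"
```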

Simulation Engine:

- Integration with forward-time (SLiM) and coalescent (msprime) simulators

- Parameter space exploration for training data generation

- Labeling and annotation of simulated scenarios
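Parameter-space exploration and labeling can be as simple as drawing settings from priors and recording the label alongside each draw. The parameter names, ranges, and the alternating neutral/balancing design below are hypothetical. The dominance values above 1 assume a SLiM-style convention in which heterozygote fitness is 1 + h*s, so h > 1 with s > 0 produces heterozygote advantage. Each record would then be passed to a simulator such as SLiM.

```python
import random
from typing import Dict, List


def sample_scenarios(n: int, seed: int = 1) -> List[Dict[str, object]]:
    """Draw illustrative simulation settings, alternating neutral and
    balancing-selection scenarios, each carrying its training label."""
    rng = random.Random(seed)
    scenarios = []
    for i in range(n):
        label = "balancing" if i % 2 else "neutral"
        scenarios.append({
            "replicate": i,
            "label": label,
            # log-uniform selection coefficient; zero for neutral runs
            "s": 10 ** rng.uniform(-4, -1) if label == "balancing" else 0.0,
            # h > 1 gives heterozygote advantage under fitness 1 + h*s
            "h": rng.uniform(1.0, 2.0) if label == "balancing" else 0.5,
            # time depth of the selected polymorphism
            "onset_generations_ago": rng.randint(100, 10_000),
        })
    return scenarios
```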

Model Training Framework:

- Version-controlled model architectures (Git)

- Hyperparameter optimization with cross-validation

- Distributed training on HPC clusters (SLURM) or cloud platforms

Validation Suite:

- Comparison with ground truth in simulated benchmarks

- Empirical calibration with known biological examples

- Robustness testing under model misspecification

The nf-core framework, with its extensive library of modules and subworkflows, enables research communities to progressively adopt common standards as resources and needs allow [11]. At the time of the cited report, the nf-core community maintained 124 pipelines covering diverse data types, including high-throughput sequencing, mass spectrometry, and protein structure prediction [11].

Workflow Visualization: ML-Based Evolutionary Genomics Pipeline

ML-Based Evolutionary Genomics Pipeline: This workflow integrates empirical data with simulations for robust model training and validation.

Pathway Visualization: Neural Network Architecture for Selection Detection

Neural Network for Selection Detection: Branched architecture processes temporal and haplotype data through separate pathways before integration.

Table 3: Research Reagent Solutions for ML in Evolutionary Genomics

| Resource Category | Specific Tools/Databases | Function | Application Context |
|---|---|---|---|
| Workflow Management | Nextflow, Snakemake, nf-core | Pipeline orchestration, reproducibility | Scalable execution on HPC/cloud infrastructure [11] |
| Training Data Generation | SLiM, msprime, stdpopsim | Forward-time and coalescent simulations | Generating labeled data for supervised learning [15] |
| Model Architectures | TensorFlow, PyTorch, JAX | Deep learning frameworks | Implementing custom neural network architectures [15] |
| Evolutionary Databases | EggNOG, TreeSAPP, OrthoDB | Orthology inference, functional annotation | Curating training data, validating predictions [17] |
| Genomic Data Repositories | UK Biobank, gnomAD, ENA | Large-scale empirical datasets | Model testing, transfer learning, real-world validation [15] |
| Benchmarking Suites | MLGE (Machine Learning in Genomics Evaluation) | Standardized performance assessment | Comparative analysis of different approaches [15] |

Validation Framework and Performance Metrics

Rigorous validation is essential for establishing the reliability of ML-based inferences in evolutionary genomics. A comprehensive validation framework should include:

Validation Protocol: Model Assessment and Calibration

Objective: Establish standardized procedures for evaluating ML model performance on evolutionary inference tasks.

Workflow:

Simulation-Based Benchmarking:

- Create test datasets with known ground truth parameters

- Evaluate calibration (reliability diagrams, expected calibration error)

- Assess accuracy (mean squared error, absolute error for continuous parameters; precision/recall for classification)
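The calibration metric named above can be computed as follows for a binary classifier. This sketch bins the predicted probability of the positive class into equal-width bins and averages the gap between observed frequency and mean predicted probability, weighted by bin size; the bin count and function name are assumptions, and multi-class settings often use the top-label variant instead.

```python
from typing import Sequence


def expected_calibration_error(probs: Sequence[float], labels: Sequence[int],
                               n_bins: int = 10) -> float:
    """ECE for predicted P(class 1) against 0/1 labels."""
    if len(probs) != len(labels) or not probs:
        raise ValueError("need equal-length, non-empty inputs")
    bins = [[] for _ in range(n_bins)]
    for p, y in zip(probs, labels):
        idx = min(int(p * n_bins), n_bins - 1)  # p == 1.0 falls in the last bin
        bins[idx].append((p, y))
    ece = 0.0
    for members in bins:
        if members:
            confidence = sum(p for p, _ in members) / len(members)
            frequency = sum(y for _, y in members) / len(members)
            ece += len(members) / len(probs) * abs(frequency - confidence)
    return ece
```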

Empirical Validation:

- Test predictions against established biological knowledge

- Validate predictions on independent datasets that were not used for training

- Implement sanity checks with negative controls

Robustness Analysis:

- Test performance under model misspecification

- Evaluate sensitivity to hyperparameter choices

- Assess stability across different demographic histories

Comparative Benchmarking:

- Compare with traditional methods (ABC, composite likelihood)

- Evaluate computational efficiency (runtime, memory usage)

- Assess scalability to large genomic datasets

Community standards also improve deployability: independent assessments found that 83% of nf-core's released pipelines could be deployed as expected, nearly four times the rate reported for other workflow catalogs [11].

Future Directions and Implementation Guidelines

As machine learning becomes increasingly integrated into evolutionary genomics, several emerging trends are shaping future developments:

Emerging Paradigms and Integration Strategies

Foundation Models and Transfer Learning: The success of protein language models and other biological foundation models suggests a future where pre-trained representations will accelerate evolutionary inference [18]. These models can be fine-tuned for specific tasks with limited labeled data, reducing the reliance on extensive simulations.

Multi-Modal Integration: Combining genomic data with other data types (e.g., environmental variables, phenotypic measurements, geographic information) through multi-modal learning approaches will enable more comprehensive evolutionary analyses [18].

Evolutionary Optimization of Models: Inspired by natural processes, evolutionary algorithms are being used to automate the development of foundation models, discovering novel architectures and model combinations that can outperform human-designed approaches [19].

Interpretability and Explainability: As ML models become more complex, developing methods to interpret their predictions and extract biological insights becomes increasingly important. Techniques such as attention visualization, feature importance scoring, and symbolic regression are being adapted for evolutionary applications.

Implementation Guidelines for Research Teams

For research teams implementing ML approaches in evolutionary genomics, we recommend:

Start with Community Standards: Begin with established frameworks like nf-core pipelines to ensure reproducibility and benefit from community best practices [11].

Invest in Simulation Infrastructure: Develop robust simulation capabilities for generating diverse training data that captures relevant evolutionary scenarios.

Prioritize Validation: Implement comprehensive validation frameworks that include both simulation-based and empirical testing.

Embrace Modular Design: Create modular, reusable components that can be adapted to multiple research questions and easily updated as methods evolve.

Focus on Interpretability: Balance predictive performance with biological interpretability to ensure that ML approaches yield actionable insights into evolutionary processes.

The integration of machine learning into evolutionary genomics represents a paradigm shift that is transforming how we reconstruct evolutionary history, detect natural selection, and understand the genetic basis of adaptation. By leveraging these powerful new approaches within robust bioinformatic pipelines, researchers can unlock the full potential of genomic data to address fundamental questions in evolutionary biology.

Essential Biological Databases and Knowledge Bases for Evolutionary Analysis

Biological databases are fundamental, structured repositories for storing, retrieving, and analyzing vast amounts of biological data, enabling modern research in genomics, evolution, and drug discovery [20]. In the specific context of evolutionary analysis, these resources allow scientists to compare genetic sequences and structural information across different species to infer evolutionary relationships, trace the origins of genetic variations, and understand the molecular basis of adaptation and disease [20] [21]. The integration of these databases into robust bioinformatic pipelines is crucial for processing complex data and implementing sophisticated evolutionary models, bridging the gap between computational prediction and biological validation [21] [22].

Essential Databases for Evolutionary Research

Evolutionary analysis leverages data from multiple molecular levels. The following tables summarize key databases critical for different stages of research, from sequence retrieval to functional interpretation.

Table 1: Core Sequence and Genome Databases for Evolutionary Studies

| Database | Primary Focus | Key Features for Evolutionary Analysis | Data Types |
|---|---|---|---|
| GenBank [23] | Nucleotide sequences | Comprehensive collection of annotated DNA/RNA sequences; integrated with BLAST for similarity searching. | DNA sequences, RNA sequences |
| Ensembl [23] | Genome annotation | Genome browser with detailed gene annotations, comparative genomics, and genetic variation data. | Genomes, genes, genetic variants |
| Gene Expression Omnibus (GEO) [23] | Gene expression | Public repository for high-throughput gene expression data from diverse conditions and species. | Gene expression profiles |

Table 2: Databases for Protein and Functional Analysis

| Database | Primary Focus | Key Features for Evolutionary Analysis | Data Types |
|---|---|---|---|
| UniProt [23] | Protein sequence & function | Protein sequences with functional annotations, domains, and interactions; the Swiss-Prot section is manually curated. | Protein sequences, functional data |
| Protein Data Bank (PDB) [20] [23] | 3D macromolecular structures | Repository for 3D structures of proteins and nucleic acids; essential for studying structural evolution. | 3D protein structures, nucleic acid structures |
| KEGG (Kyoto Encyclopedia of Genes and Genomes) [23] | Pathways and networks | Graphical representations of metabolic and signaling pathways for systems-level evolutionary analysis. | Pathway maps, molecular interactions |

Integrated Protocols for Evolutionary Model Validation

Validating findings from bioinformatic pipelines is a critical step to ensure biological relevance. The following protocols outline a pathway from in silico prediction to experimental confirmation.

Protocol 1: Computational Prediction of Evolutionary Relationships

This protocol forms the foundational computational workflow for evolutionary analysis [21].

- Data Acquisition: Obtain raw genomic data through high-throughput sequencing (e.g., Illumina, PacBio) or retrieve existing sequences from public repositories like GenBank or the European Nucleotide Archive (ENA) [21].

- Preprocessing and Quality Control: Perform quality assessment on raw reads using tools like FastQC. Trim low-quality bases and remove adapter sequences with tools like Trimmomatic to ensure clean data for downstream analysis [21].

- Genome Assembly & Annotation: For de novo studies, assemble genomes from raw reads using software such as SPAdes or Canu. Annotate the assembled genome to identify genes and other functional elements using tools like Prokka or MAKER [21].

- Comparative Genomics: Align multiple sequences or whole genomes from different species using alignment tools such as BLAST, MAFFT, or Clustal Omega to identify conserved regions, structural variations, and evolutionary patterns [21].

- Phylogenetic Analysis: Construct phylogenetic trees to infer evolutionary relationships using software like RAxML, IQ-TREE, or BEAST (a platform for Bayesian evolutionary analysis) [21] [24].

- Visualization and Reporting: Generate visualizations of results, such as phylogenetic trees and genome alignments, using tools like the Integrative Genomics Viewer (IGV), iTOL, or Circos [21].
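The trimming part of the preprocessing step can be illustrated with Phred decoding and a simplified sliding-window rule. This is in the spirit of Trimmomatic's SLIDINGWINDOW step but is not its exact algorithm; Phred+33 encoding, the window of 4, the threshold of 20, and the function names are assumptions, and adapter removal is not shown.

```python
from typing import List, Tuple


def phred_scores(quality: str, offset: int = 33) -> List[int]:
    """Decode a FASTQ quality string (Phred+33 by default)."""
    return [ord(ch) - offset for ch in quality]


def sliding_window_trim(seq: str, quality: str, window: int = 4,
                        threshold: float = 20.0) -> Tuple[str, str]:
    """Scan from the 5' end and cut the read at the start of the first window
    whose mean Phred quality falls below `threshold`. Reads shorter than the
    window are returned unchanged."""
    scores = phred_scores(quality)
    for start in range(max(len(scores) - window + 1, 0)):
        if sum(scores[start:start + window]) / window < threshold:
            return seq[:start], quality[:start]
    return seq, quality
```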

Protocol 2: Experimental Validation of Bioinformatics Predictions

Computational predictions must be confirmed through experimental methods. This protocol describes the key validation steps [22].

Gene Expression Validation:

- Computational Prediction: Identify differentially expressed genes (DEGs) from transcriptomic data (e.g., RNA-Seq) under evolutionary pressures [22].

- Experimental Validation: Verify expression levels using Quantitative PCR (qPCR). Isolate RNA from target cells or tissues, reverse transcribe to cDNA, and perform qPCR with gene-specific primers. Compare the expression fold-changes between experimental groups to the computational predictions [22].

Protein-Protein Interaction (PPI) Validation:

- Computational Prediction: Predict potential interactions between proteins using bioinformatics tools that leverage sequence similarity, structural data, or network analysis [22].

- Experimental Validation: Validate these interactions using Co-Immunoprecipitation (Co-IP). Lyse cells and incubate the lysate with an antibody specific to the bait protein. Precipitate the antibody-protein complex and analyze the co-precipitated proteins (prey) via Western blotting to confirm the interaction [22].

Functional and Phenotypic Validation:

- Computational Prediction: Predict the functional role of a gene or genetic variant in an evolutionary trait or disease [22].

- Experimental Validation: Use CRISPR-Cas9 gene editing to knock out or introduce specific mutations in a model organism or cell line. Analyze the resulting phenotypic changes to confirm the functional role predicted by the evolutionary model [22].
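The comparison of qPCR fold-changes with computational predictions is commonly done with the 2^-ΔΔCt (Livak) method. The sketch assumes roughly 100% amplification efficiency for both target and reference assays and averages technical replicates before differencing; the function names are hypothetical.

```python
import math
from statistics import mean
from typing import Sequence


def fold_change_ddct(target_treated: Sequence[float], ref_treated: Sequence[float],
                     target_control: Sequence[float], ref_control: Sequence[float]) -> float:
    """Relative expression (treated vs control) by the 2^-ddCt method."""
    d_ct_treated = mean(target_treated) - mean(ref_treated)  # normalize to reference gene
    d_ct_control = mean(target_control) - mean(ref_control)
    dd_ct = d_ct_treated - d_ct_control
    return 2 ** (-dd_ct)


def same_direction(qpcr_fold: float, rnaseq_log2fc: float) -> bool:
    """True when qPCR and the RNA-Seq prediction agree on the sign of change."""
    return (math.log2(qpcr_fold) > 0) == (rnaseq_log2fc > 0)
```

For example, a target Ct of 24 against a reference Ct of 18 in treated samples, versus 26 and 18 in controls, gives ΔΔCt = -2 and a 4-fold increase.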

Workflow Visualization

The following diagrams illustrate the logical flow of the computational and validation protocols described above.

Diagram 1: Computational Evolutionary Analysis Pipeline

Diagram 2: Hypothesis Validation Workflow

The Scientist's Toolkit: Research Reagent Solutions

The following table details essential materials and reagents used in the experimental validation protocols.

Table 3: Essential Research Reagents for Experimental Validation

| Reagent / Material | Function in Validation | Example Application in Protocol |
|---|---|---|
| qPCR Reagents (Primers, SYBR Green, Reverse Transcriptase) | Enable precise quantification of gene expression levels by amplifying and detecting cDNA targets. | Validating differential gene expression predictions from RNA-Seq data [22]. |
| Specific Antibodies | Bind to target proteins (bait) for immunoprecipitation or detection, allowing for protein interaction and expression studies. | Co-Immunoprecipitation (Co-IP) to validate predicted protein-protein interactions [22]. |
| CRISPR-Cas9 System (Cas9 Nuclease, gRNA) | Provides a targeted method for gene knockout or editing to study the functional consequences of genetic changes. | Determining the phenotypic impact of an evolutionarily relevant gene or mutation [22]. |
| Cell Culture Models | Serve as a controlled, in vitro system for testing hypotheses about gene function and protein interactions. | Hosting Co-IP experiments and providing a platform for CRISPR editing before moving to complex organisms [22]. |
| Next-Generation Sequencing (NGS) Kits | Generate the high-throughput genomic and transcriptomic data that forms the basis for computational predictions. | Initial data acquisition for the entire bioinformatics pipeline (e.g., Illumina, Oxford Nanopore) [21] [25]. |

High-Throughput Sequencing Technologies and the Genomic Data Commons

The National Cancer Institute's Genomic Data Commons (GDC) provides the cancer research community with a unified data repository and computational platform designed to facilitate the analysis of genomic and clinical data [26]. It is a critical resource for researchers seeking to understand cancer at the molecular level [26]. The GDC standardizes and harmonizes diverse genomic datasets, making them accessible to researchers investigating cancer progression, therapeutic response, and the genomic drivers of malignancy.

Within the context of evolutionary model validation, the GDC provides essential data resources for studying tumor evolution and clonal dynamics. The platform enables researchers to access and analyze large-scale genomic datasets that capture the evolutionary trajectories of cancers, offering insights into the mutational processes, selective pressures, and phylogenetic relationships that shape tumor development over time. This data is particularly valuable for developing and validating probabilistic models of genome evolution in cancer, allowing researchers to test evolutionary hypotheses against comprehensive molecular profiles from thousands of patients across diverse cancer types.

High-Throughput Sequencing Technologies: Template Preparation to Data Generation

Foundational Technologies and Sequencing Approaches

High-throughput sequencing technologies have revolutionized genomic research by enabling the rapid generation of enormous numbers of sequence reads at dramatically reduced cost [27]. These technologies form the foundation of modern cancer genomics and evolutionary studies, providing the raw data needed to analyze mutational patterns, structural variations, and evolutionary relationships. Most next-generation sequencing platforms monitor the sequential addition of nucleotides to immobilized DNA templates but differ significantly in how they generate templates and detect incorporation [27]; nanopore sequencing, which infers bases from changes in ionic current as a strand passes through a pore, is a notable exception.

Table 1: Comparison of Major High-Throughput Sequencing Technologies

| Technology/Method | Read Length (bp) | Accuracy (%) | Throughput (reads/hour) | Cost per Megabase | Primary Applications |
|---|---|---|---|---|---|
| CRT (Cyclic Reversible Termination) | 50-300 | 98 | 45,000,000 | $0.10 | Whole genome sequencing, transcriptomics |
| SBL (Sequencing by Ligation) | 85-100 | 99.9 | 7,000,000 | $0.13 | Variant detection, targeted sequencing |
| SAPY (Single-Nucleotide Addition via Pyrosequencing) | 700 | 99.9 | 40,000 | $10.00 | Amplicon sequencing, metagenomics |
| RTS (Real-Time Sequencing) | 14,000 | 99.9 | 500,000,000 | $0.13-$0.60 | De novo assembly, structural variant detection |

Template Preparation Strategies

The initial stage of any NGS workflow involves template preparation, which determines the quality and characteristics of the resulting genomic data [27]. Three well-established approaches exist for template creation:

Clonally Amplified Templates utilize PCR-based amplification methods, either through emulsion PCR (ePCR) or bridge PCR (bPCR), to generate millions of identical DNA fragments for sequencing. This approach requires sample concentrations of less than 20 ng/μL and is particularly suitable for qualitative analyses such as mutation detection or methylation profiling, though it may introduce amplification bias in AT-rich and GC-rich genomic regions [27].

Single-Molecule Templates involve the direct sequencing of individual DNA molecules without amplification, typically immobilized on a solid surface. This approach requires less preparation material (<1 μg) and avoids PCR-induced errors and biases, making it ideal for quantitative applications such as transcriptome analysis and for sequencing larger DNA molecules up to tens of thousands of base pairs [27].

Circle Templates represent a more recent library preparation method that dramatically reduces error rates through rolling circle replication. Double-stranded DNA is denatured and circularized, followed by amplification using random primers and Phi29 polymerase. This approach generates multiple tandem-copy dsDNA products that are sequenced simultaneously, making it particularly suitable for cancer profiling, diploid and rare-variant calling, and immunogenetics applications [27].

Sequencing and Imaging Methodologies

The sequencing and imaging components of NGS workflows employ various technological approaches to detect nucleotide incorporation:

Complementary Metal-Oxide Semiconductor (CMOS) technology, utilized by Ion Torrent's Personal Genome Machine, represents a non-optical sequencing method that detects hydrogen ions released during DNA polymerase activity using ion-sensitive field-effect transistors (ISFETs) [27].

Single-Molecule Real-Time (SMRT) sequencing, implemented in Pacific Biosciences platforms, and Fluorescently Labeled Reversible Terminator (FLRT) technologies, used by Illumina systems, constitute the primary optical sequencing methods. These approaches incorporate dye-labeled modified nucleotides during DNA synthesis, with fluorescent signals detected and recorded through advanced imaging systems [27].

Cyclic Reversible Termination (CRT) represents a widely used cyclic sequencing approach that involves nucleotide incorporation, fluorescence imaging, and signal detection. Different platforms implement CRT with either four-color cycles (Illumina/Solexa) or one-color cycles (Helicos BioSciences), with careful selection of reversible terminators being critical for sequencing quality [27].

GDC Data Processing and Bioinformatics Pipelines

The GDC employs standardized bioinformatics pipelines to process submitted FASTQ or BAM files, generating derived analytical data including somatic variant calls, gene expression quantification values, and copy-number segmentation data [28]. All sequence data undergoes alignment to the current human reference genome (GRCh38), with subsequent processing through specialized pipelines to produce harmonized, analysis-ready datasets. The GDC genomic data processing pipelines were developed in consultation with senior experts in cancer genomics and are regularly evaluated and updated as analytical tools and parameter sets improve [28].

A critical component of the GDC alignment workflow involves the inclusion of viral and decoy sequences, which serve to capture reads that would not normally map to the human genome. This approach provides information on the presence of oncoviruses and enables more accurate alignment. The current virus decoy set includes 10 viruses: human cytomegalovirus (CMV), Epstein-Barr virus (EBV), hepatitis B virus (HBV), hepatitis C virus (HCV), human immunodeficiency virus (HIV), human herpes virus 8 (HHV-8), human T-lymphotropic virus 1 (HTLV-1), Merkel cell polyomavirus (MCV), simian vacuolating virus 40 (SV40), and human papillomavirus (HPV) [28].

Specialized Analysis Pipelines

The GDC implements multiple specialized processing pipelines tailored to different data types and analytical requirements:

DNA-Seq Somatic Variant Analysis identifies somatic mutations by comparing tumor and normal samples from the same case. The pipeline incorporates a co-cleaning step involving base quality score recalibration and indel realignment for improved accuracy. Variant calling employs four separate algorithms (MuSE, Mutect2, Pindel, VarScan2) to identify somatic mutations, with variants subsequently annotated using information from external databases including dbSNP and OMIM. Filtered variant calls are aggregated into Mutation Annotation Format (MAF) files, with open-access versions available to the general public and comprehensive unfiltered versions restricted to dbGaP-authorized investigators [28].

RNA-Seq Gene Expression Analysis quantifies protein-coding gene expression through a "two-pass" alignment method. Reads are initially aligned to the reference genome to detect splice junctions, followed by a second alignment that incorporates splice junction information to improve alignment quality. Read counts are generated at the gene level using STAR and normalized using Fragments Per Kilobase of transcript per Million mapped reads (FPKM) and FPKM Upper Quartile (FPKM-UQ) methods. Transcript fusions are identified using STAR Fusion and Arriba tools [28].

Single-Cell RNA-Seq Analysis generates expression counts using CellRanger, available in both filtered and raw formats. Secondary analysis employing Seurat produces coordinates for graphical representation, identifies differentially expressed genes, and generates comprehensive analysis results in loom format for downstream interpretation [28].

miRNA-Seq Analysis quantifies micro-RNA expression using annotations from miRBase, with expression levels measured and normalized using Reads per Million (RPM) methodology. The pipeline generates expression profiles for both known miRNAs and observed miRNA isoforms for each analyzed sample [28].
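The normalizations named in the RNA-Seq and miRNA-Seq descriptions reduce to short formulas. The sketch below follows the commonly documented definitions: FPKM = reads × 10^9 / (total protein-coding reads × gene length in bp); FPKM-UQ replaces the total with the read count of the 75th-percentile gene; RPM = reads × 10^6 / total mapped reads. It assumes the input counts contain only protein-coding genes for FPKM, and its nearest-rank percentile is a simplification that may differ in detail from the GDC implementation. Function names are hypothetical.

```python
import math
from typing import Dict


def fpkm(counts: Dict[str, int], lengths_bp: Dict[str, int]) -> Dict[str, float]:
    """Reads * 1e9 / (total protein-coding reads * gene length in bp)."""
    total = sum(counts.values())
    return {g: c * 1e9 / (total * lengths_bp[g]) for g, c in counts.items()}


def fpkm_uq(counts: Dict[str, int], lengths_bp: Dict[str, int]) -> Dict[str, float]:
    """Like FPKM, but scaled by the read count of the 75th-percentile gene."""
    ordered = sorted(counts.values())
    # Nearest-rank 75th percentile: a simplifying assumption.
    upper_quartile = ordered[max(0, math.ceil(0.75 * len(ordered)) - 1)]
    return {g: c * 1e9 / (upper_quartile * lengths_bp[g]) for g, c in counts.items()}


def rpm(counts: Dict[str, int]) -> Dict[str, float]:
    """Reads per million mapped reads (as used for miRNA expression)."""
    total = sum(counts.values())
    return {g: c * 1e6 / total for g, c in counts.items()}
```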

Data Processing Workflow in the GDC

Experimental Protocols for Evolutionary Model Validation Using GDC Data

Protocol: Accessing and Processing Whole Genome Sequencing Data for Evolutionary Analysis

Purpose: To extract and process WGS data from the GDC for phylogenetic analysis of tumor evolution.

Materials:

- GDC Data Transfer Tool

- Computational resources (minimum 16GB RAM, multi-core processor)

- Reference genome (GRCh38)

- GDC-generated BAM and VCF files

Procedure:

Data Access and Authentication

- Register for a dbGaP account and obtain appropriate data access approvals

- Install and configure the GDC Data Transfer Tool

- Log in to the GDC Data Portal and download an authentication token file, which the Data Transfer Tool uses to access controlled data

Data Retrieval

- Identify relevant WGS datasets through the GDC Data Portal using filters for "Whole Genome Sequencing" and specific cancer projects

- Generate a manifest file for selected cases

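- Download BAM files and corresponding MAF files with the GDC Data Transfer Tool, supplying the manifest and token file (for example, gdc-client download -m manifest.txt -t token.txt, where the file names are placeholders)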

Variant Processing for Evolutionary Analysis

- Extract high-confidence somatic variants from MAF files

- Filter variants based on read depth (>30x), variant allele frequency (>5%), and GDC quality flags

- Convert variant calls to multiple sequence alignment format for phylogenetic analysis
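The filtering and conversion steps above can be sketched with the standard library. The column names (t_depth, t_alt_count, FILTER, Tumor_Sample_Barcode, and so on) are standard MAF fields, and the thresholds come from the protocol; encoding each variant as a presence/absence character and adding an all-zero "normal" row as an unmutated root are illustrative choices, and the function names are hypothetical. A binary matrix like this can be analyzed with binary-character models in tools such as RAxML or IQ-TREE, provided each patient contributes multiple samples (e.g., multi-region sequencing).

```python
import csv
from typing import Dict, List, Set, Tuple

Key = Tuple[str, int, str, str]


def filter_maf(maf_text: str, min_depth: int = 30, min_vaf: float = 0.05) -> List[Dict[str, str]]:
    """Keep PASS variants with tumor depth > min_depth and VAF > min_vaf."""
    lines = [ln for ln in maf_text.splitlines() if ln and not ln.startswith("#")]
    kept = []
    for row in csv.DictReader(lines, delimiter="\t"):
        depth, alt = int(row["t_depth"]), int(row["t_alt_count"])
        # depth is checked first, so the VAF division never sees zero depth
        if row["FILTER"] == "PASS" and depth > min_depth and alt / depth > min_vaf:
            kept.append(row)
    return kept


def variant_key(row: Dict[str, str]) -> Key:
    return (row["Chromosome"], int(row["Start_Position"]),
            row["Reference_Allele"], row["Tumor_Seq_Allele2"])


def presence_matrix(rows: List[Dict[str, str]],
                    add_normal: bool = True) -> Tuple[List[str], Dict[str, str]]:
    """One 0/1 string per sample, one character per unique variant."""
    variants = sorted({variant_key(r) for r in rows})
    present: Dict[str, Set[Key]] = {}
    for r in rows:
        present.setdefault(r["Tumor_Sample_Barcode"], set()).add(variant_key(r))
    matrix = {sample: "".join("1" if v in found else "0" for v in variants)
              for sample, found in present.items()}
    if add_normal:
        matrix["normal"] = "0" * len(variants)  # unmutated root for outgroup rooting
    labels = [f"{c}:{p}:{ref}>{alt}" for c, p, ref, alt in variants]
    return labels, matrix
```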

Evolutionary Model Selection and Validation

- Select appropriate probabilistic models (e.g., Bayesian evolutionary models) based on data characteristics and research questions

- Implement model validation protocols including coverage tests and prior sensitivity analyses [29]

- Execute phylogenetic inference using validated models to reconstruct tumor evolutionary histories

Protocol: Comparative Analysis of Tumor Subtypes Using RNA-Seq Data

Purpose: To identify evolutionary patterns across cancer subtypes using transcriptomic data from the GDC.

Materials:

- GDC RNA-Seq quantification files (FPKM or count data)

- Differential expression analysis software (DESeq2, edgeR)

- Phylogenetic analysis tools (RAxML, BEAST2)

Procedure:

Data Acquisition

- Access HTSeq count data or FPKM-normalized expression values from the GDC Data Portal

- Download clinical annotation data for sample stratification

Expression Data Processing

- Filter genes based on expression thresholds (minimum 10 reads in at least 10% of samples)

- Normalize data using variance-stabilizing transformation or regularized-log transformation

- Perform quality control to identify batch effects and outliers

Evolutionary Transcriptomics Analysis

- Construct gene co-expression networks to identify evolutionarily conserved modules

- Calculate molecular evolutionary rates using orthologous gene comparisons where possible

- Perform phylogenetic analysis of tumor subtypes using expression-based distance metrics
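The gene filter from the processing step and an expression-based distance for the subtype comparison can be sketched together. Here log2(count + 1) stands in for DESeq2's variance-stabilizing or regularized-log transforms, and 1 minus the Pearson correlation between subtype mean profiles is one assumed choice of distance; function names are hypothetical. The resulting matrix could be passed to a distance-based tree builder such as neighbor joining. Because expression distances measure similarity rather than shared descent, such trees are best read as phenetic summaries.

```python
import math
from statistics import mean
from typing import Dict, List, Sequence, Tuple


def filter_genes(counts: Dict[str, List[int]], min_reads: int = 10,
                 min_fraction: float = 0.10) -> Dict[str, List[int]]:
    """Keep genes with at least `min_reads` reads in at least `min_fraction` of samples."""
    return {gene: row for gene, row in counts.items()
            if sum(c >= min_reads for c in row) >= min_fraction * len(row)}


def log_transform(counts: Dict[str, List[int]]) -> Dict[str, List[float]]:
    """log2(count + 1), a simple stand-in for variance-stabilizing transforms."""
    return {g: [math.log2(c + 1) for c in row] for g, row in counts.items()}


def pearson(x: Sequence[float], y: Sequence[float]) -> float:
    mx, my = mean(x), mean(y)
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)


def subtype_distances(expr: Dict[str, List[float]],
                      subtypes: List[str]) -> Tuple[List[str], List[List[float]]]:
    """1 - Pearson correlation between subtype mean-expression profiles."""
    labels = sorted(set(subtypes))
    genes = sorted(expr)
    centroids = {
        st: [mean(expr[g][i] for i, s in enumerate(subtypes) if s == st) for g in genes]
        for st in labels
    }
    dist = [[0.0 if a == b else 1.0 - pearson(centroids[a], centroids[b]) for b in labels]
            for a in labels]
    return labels, dist
```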

Validation and Interpretation

- Validate identified evolutionary patterns using orthogonal data types (e.g., DNA methylation, somatic mutations)

- Perform functional enrichment analysis on rapidly evolving gene sets

- Correlate evolutionary trajectories with clinical outcomes and therapeutic responses

Table 2: GDC Analysis Tools for Evolutionary Studies

| Tool Category | Specific Tools/Approaches | Application in Evolutionary Studies | Data Sources |
|---|---|---|---|
| Variant Analysis | MuSE, Mutect2, VarScan2, Pindel | Somatic variant calling for phylogenetic marker identification | WGS, WXS |
| Expression Analysis | STAR, HTSeq, FPKM normalization | Gene expression evolution, selection detection | RNA-Seq |
| Copy Number Analysis | ASCAT, Copy number segments | Genomic instability, chromosomal evolution | WGS, SNP arrays |
| Epigenomic Analysis | Methylation beta values, Masked arrays | Regulatory element evolution, epigenetic clocks | Methylation arrays |
| Clinical Data Integration | Annotated clinical data elements | Phenotype-genotype evolutionary correlations | Clinical supplements |

Table 3: Research Reagent Solutions for Genomic Evolutionary Studies

| Resource Category | Specific Resource | Function/Purpose | Access Method |
|---|---|---|---|
| Data Repositories | GDC Data Portal | Primary access to harmonized cancer genomic data | https://portal.gdc.cancer.gov |
| Reference Sequences | GRCh38 human genome | Standardized reference for alignment and variant calling | GDC Documentation |
| Viral Decoy Sequences | 10-oncovirus set | Improved alignment accuracy and viral detection | GDC Alignment Resources |
| Variant Callers | MuSE, Mutect2, VarScan2, Pindel | Somatic mutation identification for evolutionary analysis | GDC Pipelines |
| Expression Quantifiers | STAR, HTSeq | Gene expression quantification for transcriptome evolution | GDC RNA-Seq Pipeline |
| Annotation Databases | dbSNP, OMIM, miRBase | Functional annotation of genomic variants and non-coding RNAs | GDC Annotation Resources |
| Analysis Frameworks | ngs_toolkit, PEP format | Streamlined analysis of NGS data with reproducible workflows | [30] |
| Evolutionary Analysis | BEAST2, RAxML, IQ-TREE | Phylogenetic inference and evolutionary model testing | External installation |

Advanced Applications in Evolutionary Model Validation

Bayesian Evolutionary Model Validation Framework

The validation of probabilistic models, particularly Bayesian evolutionary models, is a critical component of evolutionary genomic studies that use GDC data [29]. Model validation checks that the computational tools implementing these models produce accurate and reliable inferences about evolutionary processes. A comprehensive validation framework has two primary components: validating the model simulator (S[ℳ]) and validating the inferential engine (I[ℳ]) [29].

For evolutionary studies utilizing GDC data, model validation should include:

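Coverage Analyses: Assessing whether Bayesian credible intervals achieve their nominal coverage (for example, whether 95% credible intervals contain the true simulated parameter value in about 95% of replicates), which indicates proper uncertainty quantification in evolutionary parameter estimates [29].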

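Simulation-Based Calibration: Using the model to simulate data under known parameters and verifying that the inference procedure accurately recovers them, particularly evolutionary rates and divergence times [29]. When the true parameters are drawn from the prior, the rank of each true value among its posterior samples should be uniformly distributed across replicates.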

Sensitivity Analyses: Evaluating the robustness of evolutionary inferences to prior specification and model assumptions, which is especially important for cancer evolutionary studies where population genetic parameters may be poorly characterized.

Model Comparison Techniques: Implementing formal approaches such as marginal likelihood estimation (for example, to compute Bayes factors) to identify the evolutionary models best supported by GDC data, alongside posterior predictive checks that assess whether a chosen model adequately reproduces the observed data [29].
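A coverage analysis can be sketched with a toy model whose exact posterior is known in closed form: a normal mean with a normal prior and known noise, standing in for an evolutionary parameter such as a log substitution rate. True values are drawn from the prior, data are simulated, and the fraction of 95% credible intervals that contain the truth is counted. A correctly implemented inference engine should give a value close to 0.95; passing a deliberately wrong assumed_noise_sd mimics a faulty engine and produces under-coverage. The model, function names, and defaults are illustrative assumptions, not the framework described in [29].

```python
import random
from statistics import NormalDist


def posterior_normal(xs, prior_mean, prior_sd, noise_sd):
    """Closed-form posterior of mu for x_i ~ N(mu, noise_sd^2), mu ~ N(prior_mean, prior_sd^2)."""
    prior_prec = 1.0 / prior_sd ** 2
    data_prec = len(xs) / noise_sd ** 2
    post_var = 1.0 / (prior_prec + data_prec)
    post_mean = post_var * (prior_prec * prior_mean + sum(xs) / noise_sd ** 2)
    return post_mean, post_var ** 0.5


def interval_coverage(n_reps=2000, n_obs=10, level=0.95, prior_mean=0.0,
                      prior_sd=1.0, noise_sd=0.5, assumed_noise_sd=None, seed=1):
    """Fraction of central credible intervals that contain the simulated true value."""
    rng = random.Random(seed)
    z = NormalDist().inv_cdf(0.5 + level / 2)  # about 1.96 for level=0.95
    fit_sd = noise_sd if assumed_noise_sd is None else assumed_noise_sd
    hits = 0
    for _ in range(n_reps):
        true_mu = rng.gauss(prior_mean, prior_sd)                  # 1. draw truth from the prior
        xs = [rng.gauss(true_mu, noise_sd) for _ in range(n_obs)]  # 2. simulate data
        m, s = posterior_normal(xs, prior_mean, prior_sd, fit_sd)  # 3. run inference
        hits += (m - z * s) <= true_mu <= (m + z * s)              # 4. check the interval
    return hits / n_reps


print(interval_coverage())                      # should be close to 0.95
print(interval_coverage(assumed_noise_sd=0.1))  # overconfident engine: well below 0.95
```

In a real pipeline, step 3 would call the phylogenetic inference tool under test, and the same loop would be repeated for each parameter of interest.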

Integration of Multi-Modal Data for Comprehensive Evolutionary Analysis

The GDC enables integrative evolutionary analyses through its collection of diverse data types from the same cases:

Multi-Modal Data Integration for Evolutionary Inference

Cross-Data Type Validation: Using orthogonal data types to validate evolutionary inferences, such as confirming putative positively selected genes identified through dN/dS analysis with expression-based evidence of functional importance.

Temporal Evolutionary Inference: Leveraging longitudinal clinical data when available to calibrate evolutionary rates and validate phylogenetic trees against known sampling times.

Spatial Heterogeneity Analysis: Integrating multi-region sequencing data to reconstruct spatial evolutionary patterns and validate models of tumor migration and metastasis.

The GDC's continuous updates and data releases, such as Data Release 44 with new projects and cases, allow evolutionary models to be tested against increasingly comprehensive and diverse datasets, which strengthens the validation process and improves the robustness of evolutionary inferences in cancer genomics [26].

Building and Applying Evolutionary Analysis Pipelines

Next-generation sequencing (NGS) has revolutionized genomic research, enabling comprehensive analysis of genetic variation across diverse organisms. In evolutionary biology, robust bioinformatic pipelines are essential for transforming raw sequencing data into reliable variant calls that can test evolutionary models and phylogenetic hypotheses. This application note details the critical components and methodologies for processing sequencing data, from initial quality assessment through alignment to variant calling, with particular emphasis on practices that ensure data integrity for downstream evolutionary analyses. The protocols outlined here provide a standardized framework suitable for studying molecular evolution, population genetics, and phylogenetic relationships.

Raw Data Quality Control and Preprocessing

Quality Assessment of FASTQ Files

Raw sequencing data in FASTQ format requires rigorous quality assessment before any downstream analysis. The FASTQ format stores each nucleotide sequence together with a quality score for every base, encoded as an ASCII character (current Illumina data use an offset of 33, known as Phred+33) [31]. These quality scores (Q scores) encode the probability of an incorrect base call as Q = -10 log₁₀P, where P is the error probability [31]; for example, Q30 corresponds to P = 0.001, or 99.9% base-call accuracy.
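The Phred relationship can be checked directly. This short Python sketch decodes a FASTQ quality string, assuming Phred+33 encoding, and converts between Q scores and error probabilities; the function names are illustrative.

```python
import math


def decode_qualities(qual, offset=33):
    """Convert a FASTQ quality string to integer Q scores (Phred+33 by default)."""
    return [ord(ch) - offset for ch in qual]


def q_to_error_prob(q):
    """P = 10^(-Q/10), the inverse of Q = -10 log10(P)."""
    return 10 ** (-q / 10)


def error_prob_to_q(p):
    """Q = -10 log10(P)."""
    return -10 * math.log10(p)


scores = decode_qualities("!5?I")
print(scores)                                # [0, 20, 30, 40]
print([q_to_error_prob(q) for q in scores])  # approximately 1.0, 0.01, 0.001, 0.0001
```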

Essential Quality Metrics:

- Per-base sequence quality: Determines if any positions in the read have consistently poor quality

- GC content: Identifies deviations from expected nucleotide composition

- Adapter contamination: Detects presence of adapter sequences in reads

- Sequence duplication levels: Highlights potential over-representation of certain sequences

- Overrepresented sequences: Flags possible contaminants
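To make several of these metrics concrete, the sketch below computes per-position mean quality, overall GC fraction, and a simple exact-duplicate fraction from a FASTQ stream. It assumes four-line records with Phred+33 qualities and is a simplified illustration; FastQC computes its duplication and overrepresentation statistics differently.

```python
from collections import Counter
from io import StringIO


def read_fastq(handle):
    """Yield (name, seq, qual) from a FASTQ stream with four lines per record."""
    while True:
        header = handle.readline().rstrip("\n")
        if not header:
            return
        seq = handle.readline().rstrip("\n")
        plus = handle.readline()
        qual = handle.readline().rstrip("\n")
        if not header.startswith("@") or not plus.startswith("+") or len(seq) != len(qual):
            raise ValueError(f"malformed FASTQ record: {header!r}")
        yield header[1:], seq, qual


def qc_summary(records, offset=33):
    """Per-position mean quality, GC fraction, and exact-duplicate read fraction."""
    q_sums, q_counts = [], []
    gc = bases = 0
    seq_counts = Counter()
    for _, seq, qual in records:
        for i, ch in enumerate(qual):
            if i == len(q_sums):  # extend the arrays for longer reads
                q_sums.append(0)
                q_counts.append(0)
            q_sums[i] += ord(ch) - offset
            q_counts[i] += 1
        gc += sum(base in "GCgc" for base in seq)
        bases += len(seq)
        seq_counts[seq] += 1
    n_reads = sum(seq_counts.values())
    return {
        "per_base_mean_q": [s / c for s, c in zip(q_sums, q_counts)],
        "gc_fraction": gc / bases if bases else 0.0,
        "duplicate_fraction": 1 - len(seq_counts) / n_reads if n_reads else 0.0,
    }


fastq = StringIO("@r1\nACGT\n+\nIIII\n@r2\nGGCC\n+\nII55\n@r3\nACGT\n+\nIIII\n")
print(qc_summary(read_fastq(fastq)))
```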

The FastQC tool is widely used for initial quality assessment, generating comprehensive reports with interactive graphs [31]. For long-read technologies (Oxford Nanopore, PacBio), specialized tools like NanoPlot or PycoQC provide tailored quality assessment with statistical summaries [31].

Table 1: Quality Control Tools and Their Applications

| Tool Name | Sequencing Technology | Primary Function | Key Outputs |
|---|---|---|---|
| FastQC | Short-read (Illumina) | Comprehensive quality metrics | HTML report with quality graphs |
| NanoPlot | Long-read (ONT) | Quality and length distribution | Statistical summary, quality plots |
| PycoQC | Long-read (ONT) | Interactive quality control | Customizable QC plots |
| MultiQC | Both | Aggregate results from multiple tools | Consolidated report across samples |

Read Trimming and Filtering

Quality-trimming and adapter removal are critical preprocessing steps that significantly impact downstream alignment and variant calling accuracy. Reads with poor quality tails should be trimmed to retain only high-quality segments, while adapter sequences must be removed to prevent misalignment [31].
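The quality-trimming logic can be illustrated with a minimal sliding-window trimmer followed by a minimum-length filter. It is loosely modeled on the sliding-window idea found in tools such as Trimmomatic, but it is a simplified sketch rather than a reimplementation of any tool; the window size, quality threshold, and minimum length are placeholder values.

```python
def sliding_window_trim(seq, qual, window=4, min_mean_q=20, offset=33):
    """Cut the read at the first window (scanning 5'->3') whose mean quality is below min_mean_q."""
    scores = [ord(ch) - offset for ch in qual]
    for start in range(len(scores) - window + 1):
        if sum(scores[start:start + window]) / window < min_mean_q:
            return seq[:start], qual[:start]
    return seq, qual  # no low-quality window found (or read shorter than the window)


def trim_and_filter(records, window=4, min_mean_q=20, min_len=36, offset=33):
    """Trim each (name, seq, qual) record and drop reads shorter than min_len afterwards."""
    for name, seq, qual in records:
        seq, qual = sliding_window_trim(seq, qual, window, min_mean_q, offset)
        if len(seq) >= min_len:
            yield name, seq, qual


# '#' encodes Q2 in Phred+33, so the 3' tail of this read is trimmed
print(sliding_window_trim("ACGTACGTAC", "IIIIII####"))  # ('ACGTA', 'IIIII')
```

Trimming sequence and quality strings together keeps them the same length, which downstream FASTQ parsers require.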

Common Trimming Tools and Applications:

- Trimmomatic: Removes low-quality bases and Illumina adapter sequences [32]

- Cutadapt: Specializes in adapter removal using error-tolerant adapter matching [31]

- FASTQ Quality Trimmer: Filters reads based on quality thresholds and minimum length requirements [31]

- NanoFilt/Chopper: Filters long reads based on quality and length [31]

- Porechop: Removes adapters from Oxford Nanopore reads [31]
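Adapter removal can likewise be sketched. The function below finds where a 3' adapter starts, either as a full match inside the read or as a partial adapter prefix at the very end of the read, and returns the cut position so that sequence and quality strings can be trimmed together. The default is the common Illumina adapter prefix AGATCGGAAGAGC; unlike Cutadapt, this sketch tolerates no mismatches or indels, and the minimum-overlap value is an illustrative assumption.

```python
def adapter_cut_position(seq, adapter="AGATCGGAAGAGC", min_overlap=3):
    """Return the index where the 3' adapter begins, or len(seq) if none is found (exact matching only)."""
    full = seq.find(adapter)
    if full != -1:
        return full
    # Partial adapter at the read end: longest adapter prefix that is also a read suffix
    for k in range(min(len(adapter) - 1, len(seq)), min_overlap - 1, -1):
        if seq.endswith(adapter[:k]):
            return len(seq) - k
    return len(seq)


def trim_adapter(seq, qual, **kwargs):
    """Trim sequence and quality strings at the same adapter cut position."""
    cut = adapter_cut_position(seq, **kwargs)
    return seq[:cut], qual[:cut]


print(adapter_cut_position("ACGTACGTAGATCGGAAGAGCTTTT"))  # 8 (full adapter)
print(adapter_cut_position("ACGTACGTAGATC"))              # 8 (5-base partial adapter)
```

The minimum overlap prevents trimming reads that end in a very short adapter-like prefix, which would often occur by chance.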

After trimming, verification of cleaning efficiency should be performed by rerunning FastQC to confirm improved quality metrics and absence of adapter contamination [31].

Reference-Based Sequence Alignment

Reference Genome Preparation

A reference genome serves as a template for aligning sequencing reads to reconstruct genomic sequences [32]. The reference is typically stored in FASTA format, beginning with a header line containing ">" followed by sequence identifiers and annotations [32].

Reference Genome Considerations:

- Completeness: Assess genome assembly quality and coverage

- Annotation: Gene models and functional elements for variant interpretation

- Evolutionary appropriateness: Phylogenetic distance to study species

- Format verification: Use tools like seqkit stats to calculate basic statistics [32]
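As a rough illustration of the kind of summary seqkit stats reports, the sketch below parses a (possibly multi-line) FASTA file and computes sequence count, total and extreme lengths, N50, and GC and N fractions. It is an illustrative computation of standard summary statistics, not seqkit's code, and the toy input is hypothetical.

```python
from io import StringIO


def read_fasta(handle):
    """Yield (header, sequence) pairs; sequences may span multiple lines."""
    header, chunks = None, []
    for raw in handle:
        line = raw.strip()
        if not line:
            continue
        if line.startswith(">"):
            if header is not None:
                yield header, "".join(chunks)
            header, chunks = line[1:], []
        elif header is None:
            raise ValueError("sequence data found before the first '>' header")
        else:
            chunks.append(line)
    if header is not None:
        yield header, "".join(chunks)


def n50(lengths):
    """Length L such that sequences of length >= L together hold at least half of all bases."""
    total, running = sum(lengths), 0
    for length in sorted(lengths, reverse=True):
        running += length
        if 2 * running >= total:
            return length
    return 0


def fasta_stats(records):
    lengths, gc, n_bases = [], 0, 0
    for _, seq in records:
        s = seq.upper()
        lengths.append(len(s))
        gc += s.count("G") + s.count("C")
        n_bases += s.count("N")
    total = sum(lengths)
    return {
        "num_seqs": len(lengths),
        "sum_len": total,
        "min_len": min(lengths, default=0),
        "max_len": max(lengths, default=0),
        "n50": n50(lengths),
        "gc_fraction": gc / total if total else 0.0,  # denominator includes N bases
        "n_fraction": n_bases / total if total else 0.0,
    }


fasta = StringIO(">chr1 toy contig\nACGTN\nGGCC\n>chr2\nAAAA\n>chr3\nGG\n")
print(fasta_stats(read_fasta(fasta)))
```

A high N fraction or a low N50 relative to related assemblies is a quick warning sign about reference completeness.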

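For evolutionary studies, selection of an appropriate reference is critical: reads from regions that have diverged substantially from the reference map less reliably, so greater phylogenetic distance lowers alignment rates and biases variant discovery toward conserved regions.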

Alignment Algorithms and Tools

Sequence alignment determines the genomic origin of each read by mapping it to the reference genome. Different alignment tools are optimized for specific sequencing technologies and applications.

Short-read Aligners:

- HISAT2: Splice-aware aligner for RNA sequencing data [32]

- BWA-MEM: Popular for DNA sequencing alignment [33]

- Minimap2: Versatile aligner supporting both short and long reads [33]

- DRAGEN: Commercial solution with optimized performance [33]

Long-read Aligners:

- Minimap2: Widely used for Oxford Nanopore and PacBio data [33] [34]

- NGMLR: Designed for long reads from PacBio and Oxford Nanopore, with an emphasis on accurate alignment around structural variants [35]

For RNA sequencing analyses, splice-aware aligners like HISAT2 are essential for correctly mapping reads that span exon-exon junctions [32].

Alignment Workflow Protocol

Indexing the Reference Genome:

Building the index is a one-time step that produces index files, which significantly accelerate the alignment process [32].
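Why an index speeds up alignment can be shown with a toy hash-based k-mer index: instead of scanning the whole reference for every read, each read's k-mers are looked up directly, and the hits vote for a candidate start position. This is only a conceptual sketch; HISAT2 (a hierarchical graph FM index) and BWA-MEM (an FM index) use compressed index structures rather than a Python dictionary, and the reference, read, and k value below are hypothetical.

```python
from collections import defaultdict


def build_kmer_index(reference, k):
    """Map every k-mer in the reference to the list of positions where it occurs."""
    index = defaultdict(list)
    for pos in range(len(reference) - k + 1):
        index[reference[pos:pos + k]].append(pos)
    return index


def candidate_starts(read, index, k):
    """Rank candidate read start positions by the number of supporting k-mer hits."""
    votes = defaultdict(int)
    for offset in range(len(read) - k + 1):
        for pos in index.get(read[offset:offset + k], ()):
            votes[pos - offset] += 1  # a hit at pos implies the read starts at pos - offset
    return sorted(votes.items(), key=lambda kv: (-kv[1], kv[0]))


reference = "TTTTACGTACGGATCCAAAA"
index = build_kmer_index(reference, k=4)
print(candidate_starts("ACGGATCC", index, k=4))  # [(8, 5)]
```

Real aligners then extend and score the top candidates with a full alignment algorithm; the index only narrows down where to look.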


Parallel Processing Multiple Samples:

The SLURM --cpus-per-task option can be used to allocate computational resources to each alignment job efficiently [32].
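One way to script per-sample alignment is sketched below in Python: it builds (but does not run) one paired-end HISAT2 command per sample and reads the thread count from SLURM_CPUS_PER_TASK, the environment variable SLURM sets when --cpus-per-task is requested. The sample IDs, directory layout, and {sample}_R1.fastq / {sample}_R2.fastq naming scheme are hypothetical, and the flags should be checked against the HISAT2 manual for the installed version.

```python
import os
import shlex
from pathlib import Path


def slurm_threads(default=1):
    """Threads granted by SLURM via --cpus-per-task, or a default outside SLURM."""
    return int(os.environ.get("SLURM_CPUS_PER_TASK", default))


def hisat2_command(sample, index_base, fastq_dir, out_dir, threads):
    """Build a paired-end HISAT2 command (hypothetical file-naming scheme)."""
    fq, out = Path(fastq_dir), Path(out_dir)
    return [
        "hisat2",
        "-p", str(threads),
        "-x", str(index_base),  # index basename produced by hisat2-build
        "-1", str(fq / f"{sample}_R1.fastq"),
        "-2", str(fq / f"{sample}_R2.fastq"),
        "-S", str(out / f"{sample}.sam"),
        "--summary-file", str(out / f"{sample}.summary.txt"),
    ]


threads = slurm_threads()
for sample in ["sampleA", "sampleB"]:  # hypothetical sample IDs
    cmd = hisat2_command(sample, "ref/genome", "fastq", "aligned", threads)
    print(shlex.join(cmd))  # pass cmd to subprocess.run(cmd, check=True) to execute
```

Keeping each command as an argument list (rather than a single shell string) avoids quoting problems with unusual file names.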

Alignment Quality Assessment

After alignment, quality metrics should be evaluated to identify potential issues:

Key Alignment Statistics:

- Total alignment rate: Percentage of successfully mapped reads

- Concordant alignment rate: Properly oriented read pairs with expected insert size

- Discordant alignment rate: Improperly oriented or sized read pairs

- Multiple alignment rate: Reads mapping to multiple genomic locations

The alignment summary file from HISAT2 provides detailed statistics for evaluating mapping quality [32]. For comprehensive BAM file quality assessment, Qualimap offers detailed metrics including coverage distribution and mapping quality [34].
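The rates listed above can be approximated directly from SAM records. This sketch works on (FLAG, NH) pairs, using the SAM-specification flag bits for paired (0x1), properly paired (0x2), unmapped (0x4), secondary (0x100), and supplementary (0x800) alignments. It counts primary records only, uses the proper-pair flag as a proxy for concordance, and treats NH > 1 as multi-mapping. Rates are per read rather than per pair, so they will not match HISAT2's summary line for line, and the example records are hypothetical.

```python
PAIRED, PROPER_PAIR, UNMAPPED = 0x1, 0x2, 0x4
SECONDARY, SUPPLEMENTARY = 0x100, 0x800


def alignment_rates(records):
    """records: iterable of (flag, nh) tuples; nh is the NH tag value or None."""
    n = mapped = proper = multi = 0
    for flag, nh in records:
        if flag & (SECONDARY | SUPPLEMENTARY):
            continue  # count each read once, via its primary record
        n += 1
        if flag & UNMAPPED:
            continue
        mapped += 1
        if flag & PAIRED and flag & PROPER_PAIR:
            proper += 1
        if nh is not None and nh > 1:
            multi += 1
    if n == 0:
        return {}
    return {
        "primary_reads": n,
        "overall_alignment_rate": mapped / n,
        "proper_pair_rate": proper / n,
        "multi_mapping_rate": multi / n,
    }


# Hypothetical records: two properly paired pairs (one multi-mapped), one unmapped pair,
# and one secondary alignment that is ignored
records = [(99, 1), (147, 1), (83, 2), (163, 2), (77, None), (141, None), (355, 2)]
print(alignment_rates(records))
```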

Variant Calling and Detection

Variant Calling Strategies

Variant calling identifies genomic differences between sequencing data and the reference genome. Different computational approaches are required for different variant types and sequencing technologies.

Structural Variant Calling Approaches:

- Read-pair: Analyzes discordant insert sizes and orientations [33]

- Split-read: Detects breakpoints through partially aligned reads [33]

- Read-depth: Identifies copy number variations through coverage analysis [33]

- Assembly-based: Assembles reads (locally or genome-wide) and compares the resulting contigs with the reference [33]
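The read-depth approach can be sketched as follows: reads are counted in fixed-size bins, each bin is compared with the median bin as a log2 ratio, and adjacent bins with the same gain or loss state are merged into segments. The thresholds, pseudocount, and toy counts are illustrative assumptions; production callers also correct for GC content and mappability and use dedicated segmentation algorithms.

```python
import math
from statistics import median


def depth_states(bin_counts, gain_log2=0.4, loss_log2=-0.4, pseudocount=0.5):
    """Classify each bin by its log2 read-count ratio to the median bin."""
    med = median(bin_counts)
    states = []
    for count in bin_counts:
        ratio = math.log2((count + pseudocount) / (med + pseudocount))
        if ratio >= gain_log2:
            states.append("gain")
        elif ratio <= loss_log2:
            states.append("loss")
        else:
            states.append("neutral")
    return states


def merge_segments(states, bin_size):
    """Merge runs of adjacent non-neutral bins into (start, end, state) segments."""
    segments = []
    for i, state in enumerate(states):
        if state == "neutral":
            continue
        start, end = i * bin_size, (i + 1) * bin_size
        if segments and segments[-1][2] == state and segments[-1][1] == start:
            segments[-1] = (segments[-1][0], end, state)
        else:
            segments.append((start, end, state))
    return segments


counts = [100, 98, 102, 100, 200, 210, 100, 99, 50, 48, 101]  # hypothetical reads per 1 kb bin
print(merge_segments(depth_states(counts), bin_size=1000))
# [(4000, 6000, 'gain'), (8000, 10000, 'loss')]
```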

Table 2: Structural Variant Callers for Long-Read Sequencing

| Tool | Strengths | Optimal Coverage | Variant Types Detected |
|---|---|---|---|
| Sniffles2 | Versatile for various data types | >20X | DEL, INS, DUP, INV, BND |
| cuteSV | Sensitive SV detection | >20X | DEL, INS, DUP, INV |
| DeBreak | Specialized for long-read SV discovery | >20X | DEL, INS, DUP |
| Dysgu | Supports both short and long reads | >20X (best at higher coverages) | DEL, INS, DUP, INV |
| SVIM | Excellent at distinguishing similar SV types | >20X | DEL, INS, DUP, INV |
| NanoVar | Accurate for low-depth long reads | <10X | DEL, INS, DUP |

Somatic Variant Detection

For cancer genomics or somatic evolution studies, specialized tools identify variants present in tumor samples but absent in matched normal tissue:

Somatic SV Calling Workflow:

- Separate variant calling: Call variants independently in tumor and normal samples

- VCF filtering: Retain only high-confidence calls using quality filters

- VCF merging: Identify tumor-specific variants using SURVIVOR [34]

- Validation: Manual inspection of candidate variants in IGV [34]
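The merging step in this workflow can be illustrated with a simplified, distance-based comparison: a tumor SV is kept as putatively somatic only if no normal-sample call of the same type lies on the same chromosome within a maximum breakpoint distance. This mirrors the general idea of distance-based SV merging but is not SURVIVOR's algorithm; the 1,000 bp window, the SV record type, and the example calls are hypothetical.

```python
from typing import NamedTuple


class SV(NamedTuple):
    chrom: str
    pos: int
    svtype: str


def tumor_specific(tumor_calls, normal_calls, max_dist=1000):
    """Keep tumor SVs with no same-type normal SV within max_dist bp on the same chromosome."""
    normal_by_key = {}
    for sv in normal_calls:
        normal_by_key.setdefault((sv.chrom, sv.svtype), []).append(sv.pos)
    return [
        sv for sv in tumor_calls
        if not any(abs(sv.pos - p) <= max_dist
                   for p in normal_by_key.get((sv.chrom, sv.svtype), []))
    ]


tumor = [SV("chr1", 10_000, "DEL"), SV("chr1", 50_000, "INS"), SV("chr2", 7_000, "DUP")]
normal = [SV("chr1", 10_400, "DEL"), SV("chr2", 7_000, "DEL")]
print(tumor_specific(tumor, normal))
# [SV(chrom='chr1', pos=50000, svtype='INS'), SV(chrom='chr2', pos=7000, svtype='DUP')]
```

Calls that survive this filter are still candidates, which is why the manual IGV inspection step remains useful.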

Specialized Somatic Callers:

- Severus: Specifically designed for tumor-normal analysis using long-read phasing [34]

Consensus Approaches for Enhanced Accuracy

Individual variant callers have distinct strengths and biases, so consensus approaches that combine multiple callers can substantially improve detection accuracy:

ConsensuSV-ONT: Integrates six independent SV callers (cuteSV, Sniffles, Dysgu, SVIM, PBSV, NanoVar) and filters their combined output with a convolutional neural network to generate high-confidence variant sets [35]. This meta-caller approach was reported to outperform the individual tools, particularly for complex variants relevant to evolutionary studies [35].
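ConsensuSV-ONT's neural-network filter is beyond a short example, but the simpler idea of a k-of-n caller vote can be sketched: calls from all callers are pooled, same-type calls on the same chromosome within a distance threshold are clustered, and a cluster is kept only if enough distinct callers support it. This majority-vote scheme is a swapped-in technique, not ConsensuSV-ONT's method; the thresholds and call sets are hypothetical.

```python
from statistics import median


def consensus_calls(callsets, min_support=2, max_dist=500):
    """callsets: dict caller -> list of (chrom, pos, svtype).

    Single-linkage clustering on sorted positions, then keep clusters
    supported by at least min_support distinct callers.
    """
    pooled = sorted(
        (chrom, svtype, pos, caller)
        for caller, calls in callsets.items()
        for chrom, pos, svtype in calls
    )
    clusters = []
    for chrom, svtype, pos, caller in pooled:
        last = clusters[-1] if clusters else None
        if (last and last["chrom"] == chrom and last["svtype"] == svtype
                and pos - last["positions"][-1] <= max_dist):
            last["positions"].append(pos)
            last["callers"].add(caller)
        else:
            clusters.append({"chrom": chrom, "svtype": svtype,
                             "positions": [pos], "callers": {caller}})
    return [
        (c["chrom"], median(c["positions"]), c["svtype"], sorted(c["callers"]))
        for c in clusters
        if len(c["callers"]) >= min_support
    ]


callsets = {
    "cuteSV": [("chr1", 1000, "DEL"), ("chr2", 500, "INS")],
    "Sniffles2": [("chr1", 1100, "DEL")],
    "SVIM": [("chr1", 1050, "DEL"), ("chr3", 9000, "INV")],
}
print(consensus_calls(callsets))
```

Single-linkage clustering can chain nearby but distinct events into one cluster, so real consensus tools typically add size and breakpoint-similarity checks.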


Validation and Benchmarking

Pipeline Validation Standards

For robust evolutionary inference, bioinformatics pipelines require rigorous validation against established standards and benchmarks. The Association for Molecular Pathology and the College of American Pathologists recommend 17 best practices for clinical NGS bioinformatics pipeline validation [36]; although written for clinical settings, they also provide a useful framework for validating research pipelines:

Key Validation Components:

- Accuracy assessment: Comparison against orthogonal methods or reference materials

- Precision (reproducibility) evaluation: Measurement of agreement across replicate runs

- Analytical sensitivity: Determination of detection limits for variant types

- Specificity assessment: False positive rate quantification
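Reproducibility across replicates can be summarized as the pairwise agreement between their call sets. A minimal sketch using the Jaccard index (shared calls divided by the union of calls) is shown below; the choice of Jaccard and the replicate call sets are illustrative assumptions rather than a metric mandated by the AMP/CAP recommendations.

```python
from itertools import combinations


def pairwise_jaccard(replicates):
    """replicates: dict name -> set of variant keys; returns the Jaccard index per replicate pair."""
    result = {}
    for a, b in combinations(sorted(replicates), 2):
        union = replicates[a] | replicates[b]
        shared = replicates[a] & replicates[b]
        result[(a, b)] = len(shared) / len(union) if union else 1.0
    return result


reps = {
    "rep1": {("chr1", 100, "A", "G"), ("chr1", 200, "C", "T"), ("chr2", 50, "T", "C")},
    "rep2": {("chr1", 100, "A", "G"), ("chr1", 200, "C", "T")},
    "rep3": {("chr1", 100, "A", "G"), ("chr1", 200, "C", "T"), ("chr2", 50, "T", "C")},
}
print(pairwise_jaccard(reps))
```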

Benchmarking Datasets and Metrics

Reference Materials:

- Genome in a Bottle (GIAB): Provides benchmark variant calls for reference samples [33] [34]

- IGSR datasets: Curated variant sets from the 1000 Genomes Project [35]

Performance Metrics:

- Precision: Proportion of true variants among all calls (1 - false discovery rate)

- Recall: Proportion of benchmark variants detected (sensitivity)

- F1 score: Harmonic mean of precision and recall

- Genotype concordance: Accuracy of genotype assignments
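These metrics can be computed from a call set and a benchmark set once variants are keyed consistently. The sketch below assumes variants are already normalized and matched by exact (chrom, pos, ref, alt) keys with unphased genotype strings; dedicated tools such as hap.py or RTG vcfeval (small variants) and Truvari (SVs) handle representation differences that exact matching misses. The example variants are hypothetical.

```python
def benchmark(calls, truth):
    """calls, truth: dict mapping (chrom, pos, ref, alt) -> genotype string such as '0/1'."""
    tp_keys = calls.keys() & truth.keys()
    tp = len(tp_keys)
    fp = len(calls) - tp  # called but absent from the benchmark
    fn = len(truth) - tp  # benchmark variants that were missed
    precision = tp / (tp + fp) if calls else 0.0
    recall = tp / (tp + fn) if truth else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    gt_concordance = sum(calls[k] == truth[k] for k in tp_keys) / tp if tp else 0.0
    return {"TP": tp, "FP": fp, "FN": fn, "precision": precision,
            "recall": recall, "F1": f1, "genotype_concordance": gt_concordance}


truth = {
    ("chr1", 100, "A", "G"): "0/1",
    ("chr1", 200, "C", "T"): "1/1",
    ("chr1", 300, "G", "A"): "0/1",
    ("chr2", 50, "T", "C"): "0/1",
}
calls = {
    ("chr1", 100, "A", "G"): "0/1",
    ("chr1", 200, "C", "T"): "0/1",  # correct site, wrong genotype
    ("chr2", 50, "T", "C"): "0/1",
    ("chr2", 999, "G", "T"): "0/1",  # false positive
}
print(benchmark(calls, truth))  # TP=3, FP=1, FN=1; precision, recall and F1 all 0.75
```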