Bridging Deep Time and Modern Data: A Framework for Validating Molecular Ecology Predictions with Fossil Evidence

Abstract

This article provides a comprehensive guide for researchers and scientists on integrating molecular data with the fossil record to test and validate evolutionary hypotheses. It explores the foundational necessity of this synergy, detailing methodological frameworks like the fossilized birth-death model and novel deep learning approaches for analyzing biodiversity. The piece critically examines common pitfalls, such as inappropriate calibration, and offers strategies for optimization. Finally, it provides a comparative analysis of validation techniques, demonstrating how fossil data can ground-truth molecular predictions in studies of speciation, extinction, and demographic history, with significant implications for understanding evolutionary processes in biomedical research.

Why Fossils are Non-Negotiable for Molecular Ecology

In molecular ecology, accurately estimating the timing of evolutionary events is paramount for drawing correlations between speciation, demographic history, and palaeoclimatic events [1]. Calibration—the process of converting genetic divergence into units of geological time—serves as the foundation for these estimates. When researchers employ inappropriate calibration points, particularly by applying deep phylogenetic scales to recent genealogical events, they risk generating significantly misleading evolutionary timeframes [1]. This distortion directly impacts subsequent inferences about how species respond to environmental changes, ultimately affecting conservation planning and policy decisions.

The core of the problem lies in the fundamental difference between long-term substitution rates and short-term mutation rates. Studies focusing on intraspecific data (within species) primarily observe segregating sites or polymorphisms, many of which are transient and will be removed by genetic drift or selection [1]. In contrast, interspecific comparisons (between species) reflect past fixations (substitutions). Using deep fossil calibrations or canonical substitution rates (e.g., the traditional 1% per million years for birds and mammals) for recent evolutionary events can thus lead to a substantial underestimation of substitution rates and a corresponding overestimation of divergence times [1]. This article explores the consequences of such inappropriate calibration through concrete case studies and highlights methodologies for robust, validated estimates.

Comparative Analysis: The Impact of Calibration Choices on Divergence Time Estimates

The following case studies illustrate how the choice of calibration point dramatically alters biological interpretation. The table below summarizes the divergence time estimates obtained using external versus internal calibration points across different biological systems.

Table 1: Comparison of Divergence Time Estimates Using External vs. Internal Calibration Points

| Study System | External Calibration Method | Estimate with External Calibration | Internal Calibration Method | Estimate with Internal Calibration | Impact on Biological Interpretation |
|---|---|---|---|---|---|
| Avian Speciation [1] | Traditional mitochondrial rate (0.01 subs/site/Myr) | Majority of 22 species had pre-Pleistocene divergences (>2.4 Mya) | Revised rate from amakihi subspecies (0.075 subs/site/Myr) | Most phylogroup divergences occurred within the last 250,000 years | Supports Late Pleistocene speciation, consistent with the "Late Pleistocene Origins" hypothesis |
| Bowhead Whales (Demographic History) [1] | Deep fossil calibrations or canonical rates | Overestimated times to divergence and overestimated past population sizes (both scale inversely with the assumed rate) | Heterochronous ancient DNA sequences from radiocarbon-dated samples | Revised, more recent timeline for population expansions and contractions | Alters understanding of population responses to historical climate cycles and hunting pressure |
| Brown Bears (Pleistocene Biogeography) [1] | Deep fossil calibrations or canonical rates | Overestimated divergence times for biogeographic events | Internally calibrated substitution rates from within-species data | Significantly more recent timing for colonization and population isolation events | Changes correlation of dispersal events with specific Pleistocene glaciations or sea-level changes |
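
The size of the effect in the avian row can be checked with strict-clock arithmetic. If two lineages differ at a proportion d of sites and each lineage accumulates substitutions at rate r, their split lies T = d / (2r) in the past. The sketch below assumes both rates in the table are per-lineage values and uses a hypothetical divergence of 1.5%; it ignores multiple hits and ancestral polymorphism.

```python
def divergence_time_myr(pairwise_divergence, rate_per_lineage):
    """Strict-clock divergence time in Myr.

    pairwise_divergence: proportion of sites differing between two sequences.
    rate_per_lineage: substitutions/site/Myr along each lineage; both lineages
    accumulate changes after the split, hence the factor of 2.
    """
    return pairwise_divergence / (2.0 * rate_per_lineage)


d = 0.015  # hypothetical mtDNA divergence between two phylogroups

external = divergence_time_myr(d, 0.01)   # traditional rate quoted in the table
internal = divergence_time_myr(d, 0.075)  # internally calibrated amakihi rate

print(f"external calibration: {external * 1000:.0f} kyr")
print(f"internal calibration: {internal * 1000:.0f} kyr")
print(f"ratio: {external / internal:.1f}")
```

Whatever value of d is used, the two estimates differ by the ratio of the rates (7.5-fold here), so the calibration choice alone can move a split from before the Pleistocene into its later stages.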

Experimental Protocols: Methodologies for Robust Molecular Dating

Protocol: Calibration Using Heterochronous Ancient DNA

The analysis of DNA from radiocarbon-dated subfossils, such as bones or teeth, provides a powerful internal calibration method for studying demographic history on genealogical scales [1].

- Sample Collection & Dating: Collect biological remains (e.g., bones, teeth, feathers) from stratified deposits or with secure archaeological context. Submit each specimen for radiocarbon dating where possible (the method reaches back only about 50,000 years), so that every sequence used in the analysis has a direct age estimate.

- Ancient DNA (aDNA) Extraction: Perform DNA extraction in a dedicated, clean-room facility to prevent contamination with modern DNA. Use extraction protocols designed to recover short, degraded DNA fragments typical of ancient material.

- Library Preparation and Sequencing: Build DNA libraries, including dual-indexing with unique barcodes for each sample to track it through pooled sequencing. Use high-throughput sequencing platforms to generate millions of sequences.

- Bioinformatic Processing: Map sequence reads to a reference genome of the study species. Apply strict filters to retain only high-quality, authentic ancient DNA, checking for characteristic postmortem damage patterns such as elevated C-to-T substitutions near read ends.

- Molecular Dating Analysis: Compile the radiocarbon ages and genetic sequences into a single dataset. Use Bayesian phylogenetic frameworks (e.g., BEAST2) that can directly incorporate sample ages as calibration points to estimate substitution rates and divergence times.

Protocol: Fossil-Informed Ecological Niche Modeling (ENM)

While not a direct molecular dating method, integrating fossil data with Ecological Niche Models (ENMs) provides an independent means of validating hypotheses about species' past distributions and range changes, which are often inferred from molecular data [2].

- Data Compilation:

  - Modern Occurrences: Compile georeferenced locality data for the target species from databases like GBIF.

  - Fossil Occurrences: Gather fossil occurrence data from paleontological databases and literature, ensuring they are taxonomically validated and reliably dated.

  - Paleoclimatic Data: Obtain simulated paleoclimatic layers (e.g., temperature, precipitation) for the relevant time periods from databases like PaleoClim.

- Model Calibration:

  - For a Modern-Only ENM, calibrate the model using only the modern occurrence data and corresponding modern climate layers.

  - For a Fossil-Informed ENM, combine both modern and fossil occurrence data. Use the fossil data with the paleoclimatic layers from their specific time periods to calibrate the model, thereby capturing a broader fraction of the species' fundamental climatic niche [2].

- Model Projection and Validation: Project both models onto past climatic scenarios to predict potential species' ranges at different times in the past. Compare the predictions against independent evidence, such as fossil occurrences withheld from model calibration or phylogeographic patterns, to assess which model is more accurate.
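
The benefit of adding fossil occurrences can be illustrated with the simplest possible niche model, a rectangular climate envelope in the style of BIOCLIM, substituted here for the more elaborate algorithms used in practice. The envelope is the range of each climate variable across the training occurrences, and a site counts as suitable only if every variable falls inside it. All climate values are hypothetical, and one fossil record is held out of calibration so it can serve as an independent test.

```python
from typing import List, Tuple

Point = Tuple[float, float]  # (mean annual temperature °C, annual precipitation mm)


def fit_envelope(points: List[Point]) -> List[Tuple[float, float]]:
    """Return (min, max) of each climate variable across training points."""
    return [(min(values), max(values)) for values in zip(*points)]


def is_suitable(envelope, point: Point) -> bool:
    """A point is suitable if every variable lies within the envelope."""
    return all(lo <= x <= hi for (lo, hi), x in zip(envelope, point))


# Hypothetical climate values at modern occurrence localities.
modern = [(12.0, 800.0), (14.5, 950.0), (13.2, 700.0), (15.0, 1_000.0)]
# Hypothetical fossil occurrences paired with paleoclimate for their own time slice.
fossil_calibration = [(8.5, 600.0), (10.0, 650.0)]
fossil_holdout = [(9.0, 620.0)]  # withheld from calibration for validation

models = {
    "modern-only": fit_envelope(modern),
    "fossil-informed": fit_envelope(modern + fossil_calibration),
}

for name, env in models.items():
    hits = sum(is_suitable(env, p) for p in fossil_holdout)
    print(f"{name}: envelope={env}, held-out fossils predicted={hits}/{len(fossil_holdout)}")
```

The modern-only envelope misses the cooler, drier held-out fossil while the fossil-informed envelope includes it, a toy version of the argument that modern occurrences alone may capture only part of the fundamental niche.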

Table 2: Key Research Reagents and Computational Tools for Molecular Dating and Validation

| Item/Tool Name | Category | Primary Function | Application Context |
|---|---|---|---|
| Radiocarbon Dating | Dating Method | Provides absolute age for organic samples | Calibrating heterochronous ancient DNA datasets [1] |
| High-Throughput Sequencer | Laboratory Instrument | Generates millions of DNA sequences in parallel | Sequencing modern and ancient DNA for population genetic analyses [3] |
| BEAST2 (Bayesian Evolutionary Analysis Sampling Trees) | Software Package | Bayesian inference of phylogenies and divergence times | Molecular dating with various calibration types (fossils, heterochronous sequences) [1] |
| gridCoal | Software Package | Spatially explicit coalescent simulations | Assessing expected genetic patterns under demographic models with spatio-temporal variation [4] |
| Paleoclimatic Layers | Data | Simulated historical climate data | Informing Ecological Niche Models (ENMs) and species distribution models in deep time [2] |
| Environmental DNA (eDNA) | Molecular Data | Trace DNA from environmental samples | Highly sensitive presence/absence detection to validate model predictions [5] |

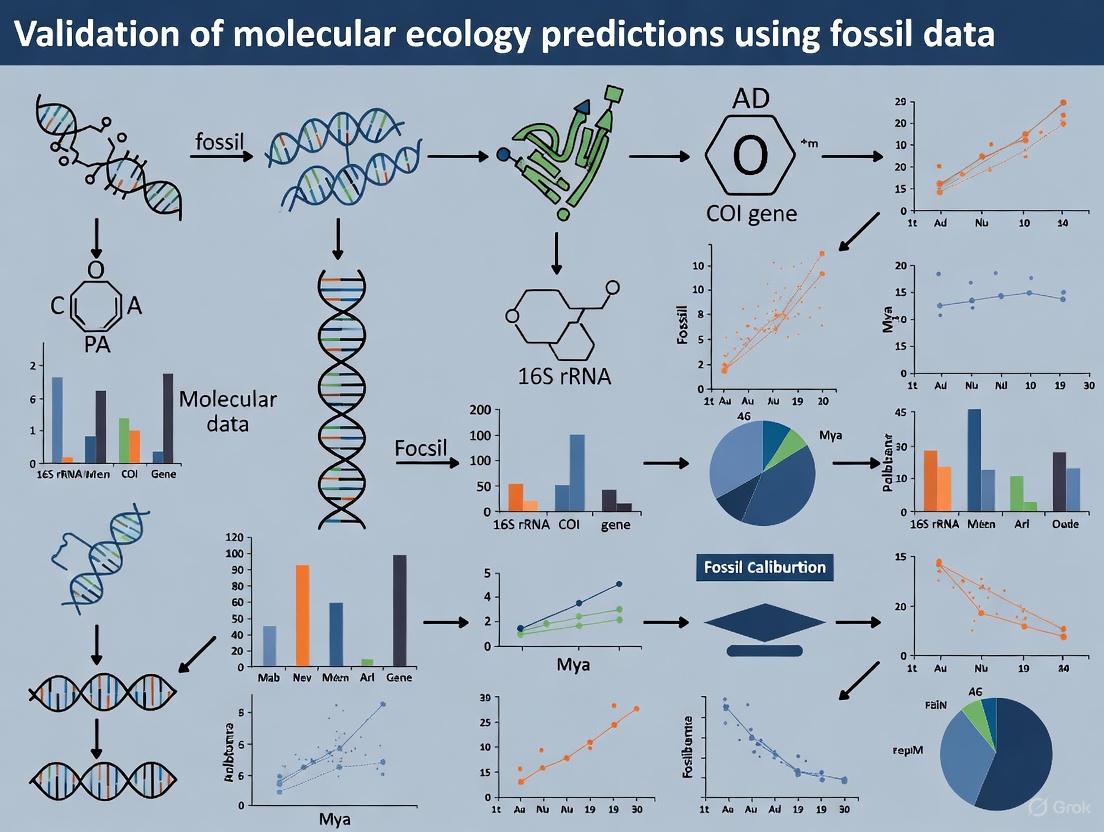

Visualizing Calibration Workflows and Their Outcomes

The diagram below illustrates the logical workflow and contrasting outcomes of using inappropriate external calibration versus validated internal calibration.

The case studies presented here underscore a critical methodological principle in molecular ecology: calibration must be temporally and biologically appropriate for the evolutionary question at hand. The persistent use of deep fossil calibrations or standardized "canonical" rates for analyzing recent intraspecific divergence events has likely led to a widespread overestimation of divergence times across numerous studies [1]. This, in turn, has skewed our understanding of how biodiversity responds to climatic oscillations and other recent environmental pressures.

The path forward requires a disciplined and integrative approach. Researchers must leverage internal calibration points, such as those provided by heterochronous ancient DNA, whenever possible [1]. Furthermore, molecular inferences should be cross-validated with independent evidence, such as fossil-informed ecological niche models [2] or patterns from spatially explicit simulations [6] [4]. By adopting these rigorous practices, the field can generate more reliable estimates of evolutionary time scales, thereby strengthening the foundation upon which we build our understanding of past, present, and future biodiversity.

The Late Pleistocene, spanning from approximately 129,000 to 11,700 years ago, was a period of significant climatic fluctuations that profoundly influenced the evolutionary trajectories of species. Within molecular ecology, hypotheses about speciation events from this era are often generated through the analysis of genetic data from modern populations. However, these molecular predictions require rigorous validation against the physical evidence of the fossil record. This guide objectively compares the insights gained from molecular data against those from paleontological data, using the divergence of three closely related tree peony species (Paeonia qiui, P. jishanensis, and P. rockii) as a central case study [7]. The debate centers on whether molecular clocks, which estimate divergence times, align with the morphological and distributional evidence from fossils, and how the integration of both provides a more robust understanding of speciation dynamics.

The following tables consolidate key quantitative findings from the tree peony study, illustrating the genetic and ecological dimensions of the speciation debate.

Table 1: Summary of Genetic Data and Analysis from the Tree Peony Study

| Analysis Type | Key Findings | Interpretation & Support for Speciation |
|---|---|---|
| Nuclear Microsatellites (nSSRs) | Clear genetic differentiation among the three species [7]. | Supports reproductive isolation and distinct evolutionary pathways. |
| Chloroplast DNA Sequences | Phylogenetic placement suggests historical introgression between P. qiui/P. jishanensis and P. rockii [7]. | Indicates a complex evolutionary history with potential gene flow after initial divergence. |
| Coalescent Analysis (DIYABC) | Estimated divergence in the late Pleistocene [7]. | Provides a temporal hypothesis for speciation, coinciding with Pleistocene climatic oscillations. |

Table 2: Ecological Niche Modeling (ENM) and Morphological Data

| Data Type | Key Findings | Interpretation & Role in Speciation |
|---|---|---|
| Ecological Niche Modeling (ENM) | Larger species ranges during the Last Glacial Maximum (LGM) compared to the present [7]. | Suggests range shifts and fragmentation due to climate change, creating conditions for allopatric speciation. |
| Morphological Characterization | Consistent, clear morphological differences between the species [7]. | Provides phenotypic evidence for speciation, correlating with genetic differentiation. |

Experimental Protocols for Key Methodologies

To ensure reproducibility and critical evaluation, this section details the core experimental protocols used in the cited research.

Phylogeographic Sampling and Genetic Data Collection

- Population Sampling: The study collected leaf samples from 587 individuals across 40 natural populations of P. qiui, P. jishanensis, and P. rockii in the Qinling-Daba Mountains, covering the majority of their known ranges [7].

- Nuclear Microsatellite Genotyping: Genomic DNA was extracted from dried leaf tissue. Twenty-two nuclear simple sequence repeat (nSSR) markers were amplified via PCR and analyzed to assess genetic diversity, population structure, and differentiation [7].

- Chloroplast DNA Sequencing: Three non-coding regions of chloroplast DNA were sequenced for multiple individuals. These sequences were used to construct phylogenetic trees and assess evolutionary relationships that might differ from nuclear DNA due to their distinct inheritance patterns [7].
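
Differentiation among populations at multiallelic markers such as nSSRs is often summarized with Nei's G_ST = (H_T − H_S) / H_T, where H_S is the mean expected heterozygosity within populations and H_T is the expected heterozygosity of the pooled populations. The differentiation statistic used in the cited study is not specified here, so this is only a single-locus sketch that weights populations equally; the allele frequencies are hypothetical.

```python
from typing import Dict, List


def expected_heterozygosity(freqs: Dict[int, float]) -> float:
    """H = 1 - sum(p_i^2) for one population at one locus."""
    return 1.0 - sum(p * p for p in freqs.values())


def nei_gst(populations: List[Dict[int, float]]) -> float:
    """Nei's G_ST for one locus, weighting populations equally."""
    k = len(populations)
    h_s = sum(expected_heterozygosity(p) for p in populations) / k
    alleles = set().union(*populations)  # all allele sizes seen in any population
    pooled = {a: sum(p.get(a, 0.0) for p in populations) / k for a in alleles}
    h_t = expected_heterozygosity(pooled)
    return (h_t - h_s) / h_t


# Hypothetical allele frequencies (allele size in bp -> frequency).
pop_a = {150: 0.6, 152: 0.4}
pop_b = {150: 0.1, 154: 0.9}
pop_c = {152: 0.5, 154: 0.5}

print(round(nei_gst([pop_a, pop_b, pop_c]), 3))
```

Values near 0 indicate populations sharing the same allele pool; larger values indicate that much of the total diversity is partitioned among populations. Multilocus estimates average the numerator and denominator across loci.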

Coalescent Analysis for Divergence Time Estimation

- Modeling Framework: The study employed DIYABC, a software package that uses Approximate Bayesian Computation (ABC) to infer population history from genetic data [7].

- Scenario Testing: Multiple demographic scenarios (e.g., different sequences of population divergence and admixture) were simulated and compared. The scenario with the highest posterior probability was selected as the most likely evolutionary history.

- Parameter Estimation: Based on the best-supported model, key parameters including the timing of divergence between species pairs were estimated, pointing to the late Pleistocene [7].
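
The parameter-estimation logic of ABC can be illustrated with a toy rejection sampler. This is not DIYABC's implementation. It assumes a single divergence time T (in generations), one sequence sampled from each of two species at many independent loci, an ancestral population of fixed diploid size N (so the extra coalescence time in the ancestor is exponential with mean 2N generations), and a Poisson number of mutations along both branches. The mutation rate, population size, prior, and tolerance are all hypothetical.

```python
import math
import random
import statistics

MU_LOCUS = 1e-5   # hypothetical mutation rate per locus per generation
N_ANC = 10_000    # hypothetical diploid ancestral population size
N_LOCI = 50       # hypothetical number of independent loci


def poisson(lam: float, rng: random.Random) -> int:
    """Knuth's multiplicative Poisson sampler (adequate for small lam)."""
    limit, k, p = math.exp(-lam), 0, rng.random()
    while p > limit:
        k += 1
        p *= rng.random()
    return k


def simulate_mean_differences(t_div: float, rng: random.Random) -> float:
    """Mean differences per locus between one sequence from each species."""
    diffs = []
    for _ in range(N_LOCI):
        # Lineages can only coalesce in the ancestral population, after t_div;
        # the extra waiting time there is exponential with mean 2 * N_ANC.
        t_mrca = t_div + rng.expovariate(1.0 / (2 * N_ANC))
        # Mutations accumulate on both branches back to the common ancestor.
        diffs.append(poisson(2 * MU_LOCUS * t_mrca, rng))
    return statistics.fmean(diffs)


def abc_rejection(observed, n_sims, tolerance, rng):
    """Keep prior draws of T whose simulated statistic lies within tolerance."""
    accepted = []
    for _ in range(n_sims):
        t_div = rng.uniform(0, 200_000)  # uniform prior on T (generations)
        if abs(simulate_mean_differences(t_div, rng) - observed) < tolerance:
            accepted.append(t_div)
    return accepted


rng = random.Random(42)
# Pseudo-observed data simulated with a known T of 60,000 generations.
observed = simulate_mean_differences(60_000, rng)
posterior = abc_rejection(observed, n_sims=2_000, tolerance=0.1, rng=rng)
print(f"accepted {len(posterior)} draws; "
      f"posterior median T ≈ {statistics.median(posterior):,.0f} generations")
```

The accepted draws approximate the posterior of T; converting generations to years would require a generation-time estimate. Scenario choice in ABC follows the same idea, comparing how often simulations under each competing scenario are accepted.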

Ecological Niche Modeling (ENM) Protocol

- Occurrence Data: Georeferenced locations of the three tree peony species were compiled from field surveys and herbarium records [7].

- Environmental Data: Bioclimatic variables representing temperature and precipitation patterns for both the present day and the Last Glacial Maximum (LGM, ~21,000 years ago) were obtained from WorldClim.

- Model Simulation: A Maximum Entropy algorithm (MaxEnt) was used to predict the potential geographic distribution of each species under current and past climatic conditions. The model correlates known occurrence points with environmental layers to identify suitable habitat [7].

- Niche Overlap/Divergence Test: The resulting models were compared statistically to quantify the degree of niche similarity or divergence between the species, providing insight into the ecological context of their separation.
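
One widely used niche-overlap metric is Schoener's D, computed from two suitability surfaces over the same grid cells: each surface is normalized to sum to 1, and D = 1 − ½ Σ|p_i − q_i|, ranging from 0 (no overlap) to 1 (identical). Whether the cited study used D is not stated here; the six-cell suitability grids below are hypothetical.

```python
from typing import Sequence


def schoeners_d(suit_a: Sequence[float], suit_b: Sequence[float]) -> float:
    """Schoener's D between two suitability surfaces on the same grid cells."""
    if len(suit_a) != len(suit_b):
        raise ValueError("surfaces must cover the same grid cells")
    total_a, total_b = sum(suit_a), sum(suit_b)
    p = [x / total_a for x in suit_a]  # normalize so each surface sums to 1
    q = [x / total_b for x in suit_b]
    return 1.0 - 0.5 * sum(abs(pi - qi) for pi, qi in zip(p, q))


# Hypothetical suitability values (0-1) for six grid cells, flattened row by row.
species_1 = [0.9, 0.8, 0.4, 0.1, 0.0, 0.0]
species_2 = [0.0, 0.1, 0.5, 0.8, 0.9, 0.7]

print(round(schoeners_d(species_1, species_2), 3))
print(schoeners_d(species_1, species_1))  # identical surfaces
```

Statistical tests of niche divergence typically compare an observed D against a null distribution generated by randomizing occurrences, a step not shown here.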

Visualizing the Speciation Workflow

The following diagram illustrates the integrated methodological workflow used to test speciation hypotheses, from data collection to synthesis.

The Scientist's Toolkit: Key Research Reagent Solutions

This table details essential reagents, materials, and tools required for conducting similar research in molecular ecology and phylogeography.

Table 3: Essential Research Reagents and Tools for Phylogeographic Studies

| Item Name | Function/Brief Explanation |
|---|---|
| DNeasy Plant Kit | Standardized kit for high-quality genomic DNA extraction from silica gel-dried leaf tissue, crucial for downstream genetic analyses [7]. |
| Nuclear Simple Sequence Repeat (nSSR) Primers | Species-specific fluorescently labelled primers to amplify highly variable microsatellite regions for assessing contemporary genetic structure and diversity [7]. |
| Chloroplast DNA Primers | Universal primers designed to amplify non-coding regions of chloroplast DNA for reconstructing deep evolutionary relationships and maternal lineages [7]. |
| DIYABC Software | A user-friendly computational tool for Approximate Bayesian Computation, used to infer population history (divergence times, admixture) from genetic data [7]. |
| MaxEnt Software | A powerful algorithm for species distribution modeling, predicting potential geographic ranges based on occurrence records and environmental data [7]. |
| WorldClim Paleoclimatic Data | A publicly available database of interpolated global climate surfaces for past (e.g., LGM), current, and future conditions, essential for ecological niche modeling [7]. |

The case of the tree peonies demonstrates that the Late Pleistocene speciation debate is not a binary argument but a call for integrative analysis. Molecular hypotheses provide powerful, quantifiable estimates of divergence times and reveal genetic structure, while fossil and ecological data offer a vital reality check, confirming the geographical and ecological feasibility of these events [7]. The debate is advanced by acknowledging that speciation is rarely a simple, clean split; it often involves periods of isolation, secondary contact, and introgression, as suggested by the genetic signals in the peonies [8].

Future research will be shaped by frameworks like Bayesian Hypothesis Generation, which provides a structured, probabilistic approach for evaluating novel hypotheses before extensive data collection, balancing skepticism with openness to high-impact ideas [9]. Furthermore, the rise of agentic AI systems and large language models holds promise for revolutionizing hypothesis generation in fields like molecular ecology. These systems can systematically map connections across disparate domains—such as genetics, paleoclimatology, and morphology—to uncover testable, interdisciplinary insights that might elude human researchers due to cognitive constraints or disciplinary silos [10]. The continued challenge and validation of molecular hypotheses with fossil data, aided by these new computational tools, will undoubtedly lead to a richer and more complex understanding of life's history.

The pursuit of evolutionary history often runs into an apparent conflict between the deep divergences predicted by molecular clock analyses and the relatively recent appearance of organisms in the fossil record. This dichotomy has historically fueled perceptions of the fossil record as hopelessly incomplete. However, methodological advances across paleontological and geochemical disciplines are transforming this perspective, enabling researchers to embrace fossil evidence as a critical archive for directly testing and validating molecular ecology predictions. This guide compares the experimental approaches and data types that power this scientific synthesis, providing a framework for researchers to evaluate the strengths and appropriate applications of complementary evolutionary dating methods.

Contemporary research demonstrates that the fossil record's value extends far beyond providing individual calibration points. It serves as an independent source of hypothesis testing through:

- Exceptional Preservation Detection: Geochemical signatures that identify environments conducive to preserving early, soft-bodied organisms [11].

- Morphological Trajectories: Continuous fossil sequences that document the pace and sequence of phenotypic diversification [12].

- Biomolecular Preservation: Direct chemical evidence of original biomolecules that provides insights into evolutionary relationships [13].

- Bias-Quantifying Algorithms: Deep learning approaches that correct for spatial, temporal, and taxonomic sampling heterogeneity [6].

The following sections compare specific technologies and experimental approaches that enable this research paradigm, providing methodological details and performance metrics essential for designing studies that integrate molecular and fossil evidence.

Comparative Analysis of Fossil-Based Research Methodologies

Quantitative Comparison of Experimental Approaches in Fossil Analysis

Table 1: Comparison of key methodological approaches for extracting evolutionary data from fossils

| Methodology | Primary Application | Spatial/Temporal Resolution | Key Measurable Parameters | Technical Limitations |
|---|---|---|---|---|
| Fossilized Biomolecule Analysis [13] | Detecting preserved original biomolecules (e.g., collagen I) | Microscopic (tissue-level); works on specimens up to 80 million years old | Presence of proteins via immunofluorescence, ELISA absorbance values, electrophoretic bands | Requires exceptional preservation; potential for contamination; humic substance interference |
| Rare Earth Element (REE) Profiling [13] | Screening fossils for likely biomolecular preservation | Microscopic (cortical bone depth profiling); applicable across Phanerozoic | REE concentration gradients, diffusion patterns, overall concentration levels | Indirect proxy; requires validation; destructive sampling |
| Geochemical Preservation Mapping [11] | Identifying rocks with exceptional preservation potential | Macroscopic (rock composition); focused on Neoproterozoic to Cambrian | Berthierine/kaolinite clay content (>20% predictive of preservation) | Regional lithological constraints; not all environments represented |
| Geometric Morphometrics of Continuous Traits [12] | Tracking phenotypic diversification through time | Population-level; millennial-scale resolution over 17,000-year sequences | Landmark-based shape coordinates, morphospace occupation, disparity metrics | Requires abundant specimens; trait-dependent |
| Deep Learning Biodiversity Estimation (DeepDive) [6] | Correcting biodiversity estimates for sampling bias | Global/regional scales; bin-level resolution across geologic eras | Re-scaled MSE (0.114-0.132 validation), R² values, confidence interval coverage | Requires extensive training data; computational intensity |

Performance Metrics in Bias Correction and Temporal Reconstruction

Table 2: Performance characteristics of biodiversity estimation methods across preservation scenarios

| Method | Optimal Preservation Context | Completeness Threshold | Error Metrics | Advantages Over Alternatives |
|---|---|---|---|---|
| DeepDive [6] | Variable preservation, strong spatial/taxonomic biases | Effective even at <20% completeness (fraction of species with fossils) | rMSE <0.01 at >0.2 completeness; test MSE 0.197-0.229 | Accounts for spatial, temporal, AND taxonomic biases simultaneously |
| Shareholder Quorum Subsampling (SQS) [6] | Consistent preservation, moderate sampling | Requires reasonable occurrence data density | Not quantified in direct comparison | Widely adopted; computationally efficient |
| Fossil Geochemical Screening [11] | Mudstone deposits with specific clay compositions | Identifies rocks with >90% probability of preserving soft tissues | Identified Cambrian shales with berthierine/kaolinite preservation conditions with 100% accuracy | Directly identifies preservation potential rather than correcting estimates |
| REE Biomolecular Proxy [13] | Vertebrate bone with minimal diagenetic alteration | Low REE concentrations with steep cortical gradients | 500% higher ELISA absorbance vs. controls in positive specimens | Enables targeted sampling for destructive biomolecular analyses |

Experimental Protocols for Key Methodologies

Detecting Fossilized Biomolecules with REE Screening

Principle: The Rare Earth Element (REE) composition of fossil bone reflects its diagenetic alteration, with low concentrations and steeply declining profiles indicating minimal pore fluid interaction and thus higher potential for biomolecular preservation [13].

Protocol:

- Sample Preparation: Cut cortical bone fragments, sequentially abrade outer surfaces, and powder samples at multiple cortical depths.

- REE Quantification: Analyze powders via ICP-MS to determine REE concentrations and distribution patterns.

- Biomolecular Extraction:

  - Demineralize bone fragments in 0.5 M EDTA, pH 8.0

  - Extract proteins in ammonium bicarbonate (ABC) and guanidine hydrochloride (GuHCl)

  - Centrifuge and collect supernatant

- Immunological Assays:

  - ELISA: Coat plates with extracts, incubate with anti-collagen I primary antibodies, detect with HRP-conjugated secondary antibodies

  - Immunofluorescence: Apply primary antibodies to demineralized bone sections, visualize with FITC-conjugated secondaries

  - Specificity Controls: Include collagenase digestion, antibody inhibition, and secondary-only controls

Validation Criteria: Positive ELISA signal ≥2 times background levels; specific fluorescence localization in tissue sections; reduced signal in digestion controls [13].

Geometric Morphometrics for Tracking Adaptive Radiation

Principle: Morphological diversification in continuous fossil sequences can document the pace and sequence of adaptive radiation, testing predictions about evolutionary tempo [12].

Protocol:

- Stratigraphic Sampling: Collect fossils from precisely dated sediment cores with continuous deposition (e.g., Lake Victoria cores spanning 17,000 years).

- Trait Selection and Digitization: Focus on ecologically informative traits (e.g., cichlid oral jaw teeth); capture 2D or 3D landmark coordinates.

- Morphospace Construction:

  - Perform Generalized Procrustes Analysis to remove non-shape variation

  - Calculate principal components of shape variation

  - Project fossil specimens into morphospace

- Temporal Analysis:

  - Calculate disparity metrics (e.g., sum of variances) through time bins

  - Compare morphospace occupation across intervals

  - Test for early burst patterns vs. constant diversification

Analytical Framework: Use PERMANOVA to test shape differences between trophic groups; dispRity package in R for disparity-through-time analyses [12].
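
The disparity-through-time step can be sketched without the dispRity package. For each time bin, disparity measured as the sum of variances is the sum, over principal-component axes, of the sample variance of specimen scores on that axis. The PC scores and bin labels below are hypothetical placeholders for the output of a Procrustes and PCA pipeline.

```python
from collections import defaultdict
from statistics import variance


def sum_of_variances(scores):
    """Sum over PC axes of the sample variance of specimen scores."""
    return sum(variance(axis) for axis in zip(*scores))


# Hypothetical (time bin, [PC1, PC2]) scores for fossil teeth, oldest bin first.
specimens = [
    ("17-12 ka", [0.010, -0.004]), ("17-12 ka", [0.012, -0.002]),
    ("17-12 ka", [0.008, -0.003]),
    ("12-6 ka", [0.020, 0.010]), ("12-6 ka", [-0.015, 0.004]),
    ("12-6 ka", [0.002, -0.012]),
    ("6-0 ka", [0.030, 0.015]), ("6-0 ka", [-0.025, -0.010]),
    ("6-0 ka", [0.005, 0.020]), ("6-0 ka", [-0.010, -0.018]),
]

bins = defaultdict(list)
for time_bin, pcs in specimens:
    bins[time_bin].append(pcs)

for time_bin, scores in bins.items():  # insertion order keeps bins chronological
    print(f"{time_bin}: disparity = {sum_of_variances(scores):.6f}")
```

Each bin needs at least two specimens for a sample variance. Distinguishing an early burst from constant diversification would then compare such a trajectory against model-based expectations, which this sketch does not attempt.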

Deep Learning Biodiversity Estimation (DeepDive)

Principle: Recurrent neural networks trained on simulated biodiversity data can learn to correct for sampling biases in fossil occurrence data [6].

Protocol:

- Training Data Generation:

  - Simulate diversification trajectories with varying speciation/extinction rates

  - Generate fossil records with known spatial, temporal, and taxonomic biases

  - Create features from occurrence data (singletons, localities per region)

- Model Architecture:

  - Implement recurrent neural network (RNN) with LSTM layers

  - Include Monte Carlo dropout for uncertainty estimation

  - Train multiple models with different initializations

- Application to Empirical Data:

  - Customize training simulations with clade-specific constraints

  - Input empirical occurrence data with spatial/temporal information

  - Generate diversity trajectories with confidence intervals

- Validation: Compare predictions to simulated true diversity; calculate rMSE and R² values [6].
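
The validation step compares predicted trajectories with the simulated true diversity. A minimal sketch of three common summaries: mean squared error on trajectories rescaled by the maximum true diversity (this rescaling is an assumption, and DeepDive's exact definition of rMSE may differ), the coefficient of determination R², and the share of time bins whose true value falls inside the 95% interval of an ensemble of stochastic predictions such as Monte Carlo dropout replicates. All numbers are hypothetical.

```python
from statistics import fmean


def rescaled_mse(true_div, pred_div):
    """MSE after dividing both trajectories by the max true diversity (assumed scaling)."""
    scale = max(true_div)
    return fmean(((t - p) / scale) ** 2 for t, p in zip(true_div, pred_div))


def r_squared(true_div, pred_div):
    """Coefficient of determination: 1 - SS_res / SS_tot."""
    mean_t = fmean(true_div)
    ss_res = sum((t - p) ** 2 for t, p in zip(true_div, pred_div))
    ss_tot = sum((t - mean_t) ** 2 for t in true_div)
    return 1.0 - ss_res / ss_tot


def percentile(values, q):
    """Linear-interpolation percentile for q in [0, 1]."""
    s = sorted(values)
    pos = q * (len(s) - 1)
    lo = int(pos)
    hi = min(lo + 1, len(s) - 1)
    return s[lo] + (s[hi] - s[lo]) * (pos - lo)


def interval_coverage(true_div, replicates, level=0.95):
    """Fraction of time bins whose true value lies inside the central interval."""
    q_lo, q_hi = (1 - level) / 2, (1 + level) / 2
    hits = 0
    for i, t in enumerate(true_div):
        values = [rep[i] for rep in replicates]
        hits += percentile(values, q_lo) <= t <= percentile(values, q_hi)
    return hits / len(true_div)


# Hypothetical true diversity per time bin, a point prediction, and five replicates.
true_div = [10, 25, 40, 38, 30]
pred_div = [12, 23, 41, 35, 31]
replicates = [
    [11, 22, 39, 34, 29],
    [13, 24, 42, 36, 32],
    [12, 23, 41, 37, 31],
    [10, 21, 43, 33, 30],
    [14, 26, 40, 35, 33],
]

print(f"rescaled MSE = {rescaled_mse(true_div, pred_div):.5f}")
print(f"R^2 = {r_squared(true_div, pred_div):.3f}")
print(f"95% interval coverage = {interval_coverage(true_div, replicates):.2f}")
```

Five replicates are far too few for a stable 95% interval; they are used here only to keep the example readable.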

Visualization Frameworks for Fossil Data Interpretation

Integrated Workflow for Molecular-Fossil Synthesis

Experimental Pipeline for Biomolecular Fossil Analysis

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key research reagents and materials for fossil-based evolutionary studies

| Reagent/Material | Application | Function | Experimental Context |
|---|---|---|---|
| Berthierine/Kaolinite Clays [11] | Preservation potential assessment | Antibacterial barrier preventing organic decay | Identifying rocks with exceptional fossil preservation |
| Rare Earth Element Standards [13] | Diagenetic history reconstruction | Proxies for pore fluid interactions and preservation quality | Screening fossils for biomolecular preservation potential |
| Anti-Collagen I Antibodies [13] | Biomolecular detection | Specific recognition of preserved collagen epitopes | Immunological verification of original biomolecules |
| Collagenase Enzymes [13] | Specificity controls | Enzymatic digestion of collagen substrates | Verifying antibody specificity in immunoassays |
| Ammonium Bicarbonate Buffer [13] | Protein extraction | Mild buffer for solubilizing fossil proteins | Extracting non-denatured proteins for immunological assays |
| Guanidine Hydrochloride [13] | Protein extraction | Denaturing agent for refractory proteins | Extracting tightly bound or cross-linked fossil proteins |
| EDTA Solution [13] | Demineralization | Chelates calcium ions to dissolve mineral matrix | Releasing organic components from fossil bone |
| Geometric Morphometrics Software [12] | Shape analysis | Quantifying morphological evolution from fossils | Tracking phenotypic diversification through time |

The methodologies compared in this guide demonstrate that the fossil record, when interrogated with appropriate tools and statistical corrections, provides a robust historical archive for testing evolutionary hypotheses. Rather than treating molecular clocks and fossil evidence as conflicting datasets, researchers can now leverage their complementary strengths: molecular data provide a broad phylogenetic framework, while fossil evidence offers direct temporal calibration and independent tests of diversification scenarios. The experimental approaches detailed here—from REE screening for biomolecular preservation to deep learning bias correction—enable precisely this integration, moving beyond perceptions of incompleteness to embrace the fossil record as an essential resource for reconstructing evolutionary history.

In molecular ecology and evolution, estimating the timing of species divergence is a fundamental challenge. The molecular clock hypothesis, proposed in the 1960s, serves as a crucial tool for this purpose, suggesting that DNA and protein sequences evolve at a rate that is relatively constant over time and among different organisms [14]. A direct consequence of this hypothesis is that the genetic difference between any two species is proportional to the time since they last shared a common ancestor. This principle allows researchers to estimate evolutionary timescales, especially for organisms with poor fossil records such as flatworms and viruses [14]. However, the practical application of molecular clocks reveals a significant complication: a pronounced discrepancy between genealogical mutation rates (measured from individuals with known relationships) and phylogenetic mutation rates (calculated from fixed differences between species divided by their estimated time since divergence) [15]. This guide provides a comparative analysis of the key concepts, methods, and tools for estimating divergence times, focusing on how fossil data validates molecular ecology predictions.

Core Concepts: Mutation Rates and Scales

The Genealogical vs. Phylogenetic Rate Discrepancy

The conflict between genealogical and phylogenetic mutation rates represents a significant challenge in evolutionary studies. Genealogical mutation rates, derived from comparing closely related individuals, are typically considerably faster than phylogenetic rates [15], often by around an order of magnitude in mitochondrial DNA. This discrepancy has substantial implications for evolutionary modeling. For instance, [15] notes that applying the genealogical rate would place estimates for "Y Chromosome Adam" and "Mitochondrial Eve" well within a biblical timeframe, in tension with evolutionary models that rely on much slower phylogenetic rates. The discrepancy is commonly attributed to purifying selection and genetic drift removing many new mutations before they reach fixation, although [15] argues from population modeling that these explanations may be insufficient.

The Molecular Clock: From Strict to Relaxed Models

The original molecular clock hypothesis, backed by Motoo Kimura's neutral theory of molecular evolution, assumed a strictly constant substitution rate across lineages [14]. However, subsequent research revealed that rates of molecular evolution can vary significantly among organisms, rendering the strict molecular clock too simplistic [14]. This recognition led to the development of "relaxed" molecular clock models that accommodate rate variation among lineages in a limited manner:

- Type 1 Relaxed Clocks: Allow rate variation over time and among organisms, but this variation occurs around an average value [14].

- Type 2 Relaxed Clocks: Permit the evolutionary rate to "evolve" over time, based on the assumption that molecular evolution rates are tied to other evolving biological characteristics (e.g., metabolic rate) [14].
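To make the two relaxed-clock families concrete, the minimal sketch below draws branch rates along one hypothetical lineage of consecutive, unit-length branches. It assumes that Type 1 corresponds to uncorrelated rates (each branch drawn independently from a lognormal distribution with mean 1) and Type 2 to autocorrelated rates (the log-rate takes a random walk from ancestor to descendant). This mapping, the function names, and all parameter values are illustrative assumptions rather than the settings of any particular program.

```python
import math
import random
from typing import List

def uncorrelated_rates(n_branches: int, sigma: float, rng: random.Random) -> List[float]:
    """Type 1 reading: each branch rate is an independent lognormal draw.
    The log-mean -sigma**2/2 makes the expected rate exactly 1."""
    return [rng.lognormvariate(-sigma**2 / 2, sigma) for _ in range(n_branches)]

def autocorrelated_rates(n_branches: int, sigma: float, rng: random.Random) -> List[float]:
    """Type 2 reading: each branch's log-rate is its parent's log-rate plus
    Gaussian noise (unit-length branches assumed), so the rate drifts."""
    log_rate, rates = 0.0, []
    for _ in range(n_branches):
        log_rate += rng.gauss(0.0, sigma)
        rates.append(math.exp(log_rate))
    return rates

def lag1_correlation(values: List[float]) -> float:
    """Pearson correlation between log-rates of consecutive branches."""
    logs = [math.log(v) for v in values]
    x, y = logs[:-1], logs[1:]
    mx, my = sum(x) / len(x), sum(y) / len(y)
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    return cov / math.sqrt(vx * vy)

rng = random.Random(1)
type1 = uncorrelated_rates(20_000, 0.5, rng)
type2 = autocorrelated_rates(20_000, 0.1, rng)
print(f"Type 1: mean rate {sum(type1) / len(type1):.3f}, lag-1 corr {lag1_correlation(type1):.3f}")
print(f"Type 2: lag-1 corr {lag1_correlation(type2):.3f}")
```

Uncorrelated rates scatter around their average with essentially no memory between neighbouring branches, whereas autocorrelated rates are strongly correlated from one branch to the next, which is the behaviour the Type 2 description ("rates evolve") implies.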

Table 1: Comparison of Molecular Clock Models

| Clock Type | Rate Variation | Theoretical Basis | Best Application Context |
|---|---|---|---|
| Strict Clock | Constant across lineages | Neutral Theory | Closely related species with similar generation times |
| Relaxed Type 1 | Varies around an average | Empirical observations | Datasets with moderate taxonomic diversity |
| Relaxed Type 2 | Evolves over time | Correlation with biological traits | Evolutionarily distant groups |

Comparative Analysis of Divergence Time Estimation Methods

Computational Approaches and Their Performance

Modern molecular dating techniques must accommodate extensive heterogeneity of evolutionary rates among lineages, especially with today's large genomic datasets [16]. Several computational approaches have been developed to address this challenge:

The RelTime method estimates relative divergence times for all branching points in large phylogenetic trees without assuming a specific model for lineage rate variation or requiring clock calibrations [16]. In comparative studies, RelTime demonstrated a linear relationship with true divergence times in simulations, accurately capturing node times and time elapsed on branches even when evolutionary rates varied extensively under autocorrelated, uncorrelated, constant rate, and random rate models [16]. Computationally, RelTime completed calculations approximately 1,000 times faster than the fastest Bayesian method (MCMCTree), with this speed advantage increasing for larger datasets [16].
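RelTime's own relative-rate algorithm is more involved than can be shown here. As a stand-in, the sketch below uses the simpler mean-path-length heuristic to illustrate the two-stage logic the paragraph describes: relative node heights are computed from branch lengths alone, with no calibration, and a single calibration then rescales them to absolute ages. The tree, branch lengths, calibration age, and function names are all hypothetical, and unlike RelTime this heuristic can give poorly ordered heights on strongly rate-heterogeneous trees.

```python
from typing import Dict, List, Tuple

# Hypothetical rooted tree: parent -> [(child, branch length in substitutions/site)].
Tree = Dict[str, List[Tuple[str, float]]]

def tip_distances(tree: Tree, node: str) -> List[float]:
    """Path lengths from `node` to each of its descendant tips."""
    children = tree.get(node, [])
    if not children:  # a tip is at distance 0 from itself
        return [0.0]
    return [length + d for child, length in children for d in tip_distances(tree, child)]

def relative_heights(tree: Tree, root: str) -> Dict[str, float]:
    """Mean-path-length node heights: no rate model and no calibration needed."""
    heights: Dict[str, float] = {}
    stack = [root]
    while stack:
        node = stack.pop()
        dists = tip_distances(tree, node)
        heights[node] = sum(dists) / len(dists)
        stack.extend(child for child, _ in tree.get(node, []))
    return heights

def calibrate(heights: Dict[str, float], node: str, age_ma: float) -> Dict[str, float]:
    """Rescale relative heights so that `node` receives the given absolute age."""
    scale = age_ma / heights[node]
    return {n: h * scale for n, h in heights.items()}

tree: Tree = {
    "root": [("X", 0.02), ("C", 0.10)],
    "X": [("A", 0.04), ("B", 0.08)],
}
rel = relative_heights(tree, "root")
ages = calibrate(rel, "root", 26.0)  # hypothetical calibration: root at 26 Ma
print({n: round(a, 2) for n, a in ages.items()})
```

The relative heights fix the ratio of node X to the root; only the final scaling step depends on the calibration, so swapping in a different calibration rescales every age without re-estimating anything.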

DeepDive represents a more recent innovation that uses deep learning to estimate biodiversity patterns through time while incorporating spatial, temporal, and taxonomic sampling variation [6]. This approach couples a simulation module that generates synthetic biodiversity and fossil datasets with a recurrent neural network that uses fossil data to predict diversity trajectories [6]. In validation tests, DeepDive outperformed alternative methods like Shareholder Quorum Subsampling (SQS), especially at large spatial scales, providing robust paleodiversity estimates under various preservation scenarios [6]. DeepDive predictions were most accurate in datasets with completeness exceeding 0.2 (where up to 80% of species were not sampled) and with higher preservation rates [6].
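For comparison with the baseline mentioned above, the following is a simplified, occurrence-based sketch of shareholder quorum subsampling. Each taxon's "share" is its relative frequency in a time bin; occurrences are drawn in random order and drawing stops once the shares of the taxa seen so far reach the quorum. It deliberately omits the Good's u coverage correction and the overshoot handling of the published method, and the function name and occurrence data are hypothetical.

```python
import random
from collections import Counter
from typing import Sequence

def sqs_richness(occurrences: Sequence[str], quorum: float,
                 trials: int = 100, seed: int = 0) -> float:
    """Simplified shareholder quorum subsampling (no Good's u correction).

    A taxon's share is its relative frequency among all occurrences. A share
    is added the first time its taxon is drawn; drawing stops once the summed
    shares (frequency coverage) reach `quorum`. Returns the mean number of
    taxa seen across trials.
    """
    counts = Counter(occurrences)
    total = len(occurrences)
    share = {taxon: n / total for taxon, n in counts.items()}
    rng = random.Random(seed)
    richness = []
    for _ in range(trials):
        order = list(occurrences)
        rng.shuffle(order)
        seen, coverage = set(), 0.0
        for taxon in order:
            if taxon not in seen:
                seen.add(taxon)
                coverage += share[taxon]
                if coverage >= quorum:
                    break
        richness.append(len(seen))
    return sum(richness) / trials

# Hypothetical occurrence list for one time bin with uneven abundances.
bin_occurrences = ["a"] * 50 + ["b"] * 30 + ["c"] * 15 + ["d"] * 5
for q in (0.4, 0.7, 1.0):
    print(q, sqs_richness(bin_occurrences, q))
```

Because the stopping rule is tied to frequency coverage rather than a fixed number of draws, bins with different sampling intensity are compared at the same coverage level, which is the standardisation SQS is used for.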

Table 2: Performance Comparison of Dating and Diversity-Estimation Methods

| Method | Theoretical Approach | Calibration Requirements | Computational Speed | Key Strengths |
|---|---|---|---|---|
| RelTime | Maximum likelihood relative dating | No calibrations needed for relative times | ~1,000x faster than MCMCTree in comparative tests [16] | Accuracy across diverse rate variation models |
| DeepDive | Deep learning/simulations | Incorporates fossil data directly | Varies with model architecture | Handles spatial, temporal, taxonomic biases |
| Bayesian (MCMCTree) | Bayesian inference with priors | Requires multiple clock calibrations | Computationally intensive | Sophisticated modeling of rate heterogeneity |
| SQS | Subsampling approach | None (standardizes sampling coverage) | Fast, but less accurate than DeepDive in validation tests [6] | Widely applied for fossil data standardization |

Mutation-Selection Models for Site-Specific Rate Estimation

Beyond species divergence times, estimating substitution rates at specific protein sites provides invaluable information about biophysical and functional constraints [17]. Traditional phylogenetic models account for variation by introducing factors that scale the relative substitution rate at sites to the overall mean substitution rate of a multiple sequence alignment (MSA) [17]. However, mutation-selection models offer a more sophisticated approach by modeling evolutionary processes at the codon level, providing greater realism than protein-level models [17]. These models describe the relative instantaneous rate between codons as the product of the mutation rate and the site-specific fixation probability [17]. When applied to natural sequences, site rates from the mutation-selection model show strong correlation with rates calculated with empirical Bayes methods [17]. This approach can be rapidly calculated on large sequence alignments and performs particularly well on shallow multiple sequence alignments [17].
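The rate definition above can be sketched for a single site. To keep it short, the example uses a toy four-state alphabet instead of the 61 sense codons and assumes equal mutation rates between all states, so the neutral equilibrium is uniform and the selective equilibrium is proportional to exp(F) for scaled fitness F. It also assumes the widely used Halpern and Bruno form of the fixation factor, S / (1 - exp(-S)). These are illustrative assumptions, not necessarily the exact formulation of [17], and the function names are hypothetical.

```python
import math
from typing import Sequence

def fixation_factor(s: float) -> float:
    """Fixation probability of a mutation with scaled selection coefficient S,
    relative to a neutral one: S / (1 - exp(-S)); equals 1 when S -> 0."""
    if abs(s) < 1e-12:
        return 1.0
    return s / -math.expm1(-s)

def relative_site_rate(fitness: Sequence[float]) -> float:
    """Expected substitution rate at one site, relative to a neutral site.

    q_ij = mu * fixation_factor(F_j - F_i) (mutation rate times fixation term);
    pi_i is proportional to exp(F_i); rate = sum_i pi_i sum_{j != i} q_ij.
    mu is chosen so that a neutral site has rate (n - 1) * mu = 1.
    """
    n = len(fitness)
    mu = 1.0 / (n - 1)
    weights = [math.exp(f) for f in fitness]
    z = sum(weights)
    pi = [w / z for w in weights]
    rate = 0.0
    for i in range(n):
        for j in range(n):
            if i != j:
                rate += pi[i] * mu * fixation_factor(fitness[j] - fitness[i])
    return rate

neutral = relative_site_rate([0.0, 0.0, 0.0, 0.0])
constrained = relative_site_rate([3.0, 0.0, 0.0, 0.0])  # one strongly preferred state
print(f"neutral site: {neutral:.3f}, constrained site: {constrained:.3f}")
```

A site with one strongly favoured state spends most of its time in that state, and substitutions away from it are rarely fixed, so its rate falls well below the neutral value of 1; this is the sense in which site rates reflect functional constraint.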

Experimental Protocols and Validation Frameworks

Calibrating the Molecular Clock with Fossil Data

Calibration is the most critical consideration when using either strict or relaxed molecular clocks [14]. Without calibration, a 5% sequence difference could have accumulated over 5 million years at a divergence rate of 1% per million years, or over 1 million years at a fivefold higher rate, and the genetic data alone offer no statistical way to distinguish between these possibilities [14]. The standard calibration protocol involves:

- Identifying Divergence Events: Determine absolute ages of evolutionary divergence events (e.g., mammal-bird split) from the fossil record or geological events of known antiquity (e.g., mountain range formation that initiated speciation) [14].

- Calculating Evolutionary Rates: Use these known divergence times to calculate substitution rates for specific genetic markers.

- Applying Calibrations: Extrapolate the calibrated rates to estimate timing of evolutionary events in other organisms.
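The calculate-then-extrapolate steps above reduce to simple arithmetic under a strict clock. The sketch assumes uncorrected pairwise distances (no saturation correction) and hypothetical calibration values; its main point is the factor of two between a per-lineage rate and the rate at which two lineages diverge from each other.

```python
def lineage_rate(pairwise_distance: float, calibration_age_myr: float) -> float:
    """Substitutions per site per lineage per Myr.
    Both descendant lineages accumulate changes after the split, so pairwise
    distance grows at twice the per-lineage rate."""
    return pairwise_distance / (2.0 * calibration_age_myr)

def divergence_time(pairwise_distance: float, rate_per_lineage: float) -> float:
    """Extrapolate a calibrated rate to another pair (strict clock assumed)."""
    return pairwise_distance / (2.0 * rate_per_lineage)

# Hypothetical calibration: a pair split by a barrier dated to 2 Myr differs by 4%.
r = lineage_rate(0.04, 2.0)   # 1% per lineage per Myr, i.e. 2% pairwise per Myr
t = divergence_time(0.05, r)  # date a pair that differs by 5%
print(f"rate = {r:.3f} per lineage per Myr; divergence = {t:.2f} Myr")
```

Mixing up per-lineage and pairwise rates halves or doubles every date, which is why the same avian clock is quoted both as 1% per lineage and as the "2% rule" for pairwise comparisons.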

A study by Weir and Schluter (2008) illustrates calibration at scale, using 90 calibrations derived from dated fossils, land bridge formations, oceanic islands, and mountain ranges [14]. After statistical consistency checks eliminated 16 inconsistent calibrations, the remaining 74 yielded an average cytochrome b substitution rate in birds of approximately 1% per lineage per million years (equivalent to the "2% rule" for pairwise species comparisons) [14]. Notably, rates varied more than fourfold among bird lineages, and this variation was not correlated with biological characteristics such as body mass [14].
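The idea of screening calibrations for mutual consistency can be illustrated with a simple leave-one-out cross-check. This is not Weir and Schluter's statistical procedure; it is a generic sketch in which each calibration's age is predicted from the median per-lineage rate implied by all the others and flagged when the relative error exceeds a tolerance. The calibration labels, values, tolerance, and function name are hypothetical.

```python
from statistics import median
from typing import List, Tuple

# Hypothetical calibrations: (label, pairwise distance, age in Myr).
Calibration = Tuple[str, float, float]

def flag_inconsistent(cals: List[Calibration], tolerance: float = 0.5) -> List[str]:
    """Leave-one-out check: predict each calibration's age from the median
    per-lineage rate of the remaining calibrations; flag large misfits."""
    flagged = []
    for i, (label, dist, age) in enumerate(cals):
        others = [d / (2.0 * a) for j, (_, d, a) in enumerate(cals) if j != i]
        predicted = dist / (2.0 * median(others))
        if abs(predicted - age) / age > tolerance:
            flagged.append(label)
    return flagged

calibrations = [
    ("isthmus", 0.06, 3.0),
    ("island", 0.02, 1.0),
    ("fossil", 0.22, 11.0),
    ("outlier", 0.08, 1.0),  # implies a rate four times faster than the others
]
print(flag_inconsistent(calibrations))
```

Using the median rather than the mean keeps a single aberrant calibration from dragging the reference rate and causing consistent calibrations to be flagged.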

Integrating Fossil Data to Validate Ecological Niche Models

Beyond divergence time estimation, fossil data plays a crucial role in validating ecological predictions under climate change scenarios. Ecological niche models (ENMs) typically learn species' climatic preferences from their current geographic distributions, leaving them vulnerable to niche truncation from non-climatic limits like anthropogenic activities and competition [2]. Supplementing current species observations with fossil data explores a larger fraction of the species' fundamental niche, as fossil occurrences represent periods when these non-climatic limits were absent or differently distributed [2].

Experimental protocols for integrating fossil data include:

- Data Combination: Combining current and fossil occurrence data for species of conservation concern [2].

- Niche Width Assessment: Comparing climatic niche width estimates from current data alone versus current + fossil data [2].

- Range Change Prediction: Evaluating predictions of range change under future climate scenarios using both data approaches [2].

This approach reveals that while adding fossil data invariably increases estimated niche width, it improves range change predictions for only about half of species, suggesting many species may currently be in non-equilibrium with their environment [2].
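A one-dimensional toy version of the niche-width comparison shows why adding fossils tends to widen the estimate. The sketch assumes niche width is measured as the min-max range of a single climate variable at occurrence sites; real ENMs use several climate layers and more elaborate fitting. The temperature values and function names are hypothetical.

```python
from typing import Sequence, Tuple

def niche_envelope(values: Sequence[float]) -> Tuple[float, float]:
    """Range (min, max) of one climate variable across occurrence records."""
    return min(values), max(values)

def niche_width(values: Sequence[float]) -> float:
    lo, hi = niche_envelope(values)
    return hi - lo

# Hypothetical mean annual temperature (deg C) at occurrence sites.
current = [8.5, 9.1, 10.2, 11.0, 11.4]
fossil = [6.0, 7.2, 12.9]  # e.g. records from before modern non-climatic limits

w_current = niche_width(current)
w_combined = niche_width(current + fossil)
print(f"current only: {w_current:.1f} C; current + fossil: {w_combined:.1f} C")
```

With an envelope measure like this, adding records can only keep or widen the range. A wider estimated niche does not by itself guarantee better range-change predictions, which is consistent with the mixed results reported above.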

Figure 1: Experimental workflow for validating ecological niche models with fossil data

Phylogenetic Scale in Biodiversity Studies

The phylogenetic scale (representing different evolutionary depths) significantly influences relationships between biodiversity patterns and environmental conditions [18]. Research on angiosperms across latitudinal and longitudinal gradients in China shows that the relationship between β-diversity and climatic distance weakens conspicuously from shallow to deep evolutionary time slices [18]. This effect differs between gradients:

- Latitudinal Gradients: Show steeper decreases in climate-β-diversity relationship strength from shallow to deep evolutionary time [18].

- Longitudinal Gradients: Exhibit less steep decreases, likely reflecting historical processes like the collision of the Indian plate with the Eurasian plate [18].

The analysis slices the phylogenetic tree at multiple evolutionary depths (e.g., 0, 15, 30, 45, 60, and 75 million years ago) and quantifies taxonomic and phylogenetic β-diversity at each depth [18]. The weakening relationship at deeper evolutionary depths suggests that deeper clades are more likely to overlap in geographic or environmental space, and that present-day environmental conditions may not reflect deep-time climate change [18].
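One simple way to implement the slicing step is to replace each species in a site by the lineage it belonged to at a given depth, then compare sites on those lineage sets. The sketch assumes an ultrametric tree stored as node -> (parent, age), two hypothetical sites, and the Sørensen dissimilarity as the β-diversity index; these choices are illustrative and not necessarily the metrics used in [18].

```python
from typing import Dict, Iterable, Optional, Set, Tuple

# Hypothetical ultrametric tree: node -> (parent, age in Ma); tips have age 0.
Tree = Dict[str, Tuple[Optional[str], float]]

def lineage_at(tree: Tree, tip: str, slice_ma: float) -> str:
    """Node whose subtending branch is cut by a time slice at `slice_ma`.
    Climb from the tip while the parent node is no older than the slice."""
    node = tip
    parent = tree[node][0]
    while parent is not None and tree[parent][1] <= slice_ma:
        node, parent = parent, tree[parent][0]
    return node

def sliced_assemblage(tree: Tree, tips: Iterable[str], slice_ma: float) -> Set[str]:
    """Replace each species in a site by its lineage at the slice depth."""
    return {lineage_at(tree, tip, slice_ma) for tip in tips}

def sorensen(a: Set[str], b: Set[str]) -> float:
    """Sorensen dissimilarity: 0 = identical assemblages, 1 = nothing shared."""
    return 1.0 - 2.0 * len(a & b) / (len(a) + len(b))

tree: Tree = {
    "root": (None, 80.0),
    "clade_1": ("root", 40.0), "clade_2": ("root", 20.0),
    "sp1": ("clade_1", 0.0), "sp2": ("clade_1", 0.0),
    "sp3": ("clade_2", 0.0), "sp4": ("clade_2", 0.0),
}
north, south = ["sp1", "sp3"], ["sp2", "sp4"]
for depth in (0, 15, 30, 45, 60, 75):
    beta = sorensen(sliced_assemblage(tree, north, depth),
                    sliced_assemblage(tree, south, depth))
    print(f"{depth:>2} Ma slice: beta-diversity = {beta:.2f}")
```

In this toy case the two sites share no species, but once the slice is older than the crown ages of their clades they share every lineage, so β-diversity drops from 1 to 0 with depth, mirroring the overlap of deeper clades described above.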

Visualization and Analysis Tools

Phylogenetic Tree Visualization Platforms

Effective visualization is essential for interpreting divergence time estimates and phylogenetic relationships:

- iTOL (Interactive Tree Of Life): Supports trees with 50,000+ leaves, offers 19 dataset types for annotation, and provides advanced display of unrooted, circular, and regular cladograms or phylograms [19].

- OneZoom: A fractal-based tree of life explorer showing relationships between 2.2+ million species with a zoomable interface, particularly valuable for public engagement and education [20].

- ggtree: An R package that extends ggplot2 for visualizing phylogenetic trees with associated data, supporting multiple layouts (rectangular, circular, slanted, etc.) and high levels of customization [21].

Table 3: Essential Research Tools for Divergence Time Estimation

| Tool/Resource | Function | Application Context |
|---|---|---|
| RelTime Software | Estimates relative divergence times | Large phylogenetic datasets with rate heterogeneity |
| DeepDive Framework | Estimates biodiversity through time using deep learning | Datasets with strong spatial, temporal, taxonomic biases |
| ggtree R Package | Visualizes and annotates phylogenetic trees | All stages of phylogenetic analysis and publication |
| Mutation-Selection Models | Predicts site-specific substitution rates | Protein evolution studies and functional constraint analysis |
| Fossil Calibration Databases | Provides absolute timepoints for molecular clock calibration | Establishing temporal frameworks for evolutionary studies |
| Ecological Niche Modeling Software | Predicts species distributions under climate change | Conservation planning and climate vulnerability assessment |

Figure 2: Logical workflow for molecular dating analysis

The integration of molecular data with fossil evidence remains essential for robust estimates of divergence times and substitution rates. While relaxed molecular clock methods and new computational approaches like RelTime and DeepDive have significantly improved our ability to estimate evolutionary timescales, the fundamental discrepancy between genealogical and phylogenetic mutation rates persists as a challenging problem in evolutionary biology [16] [15] [6]. The use of fossil data for calibrating molecular clocks and validating ecological niche models provides critical temporal frameworks that would be impossible from genetic data alone [14] [2]. As molecular datasets continue to grow in size and taxonomic breadth, developing increasingly sophisticated methods that account for rate heterogeneity while incorporating robust fossil calibrations will remain essential for understanding the tempo and mode of evolution across the tree of life.

Integrating Fossils and Molecules: From Bayesian Frameworks to Deep Learning

The Fossilized Birth-Death (FBD) Model represents a significant advancement in Bayesian phylogenetic inference by providing a unified framework for integrating data from both extant and fossil species. For researchers and drug development professionals investigating evolutionary timelines, this model addresses a critical limitation of methods that use only contemporary molecular data: the difficulty in accurately estimating extinction rates and the consequent potential for biased divergence time estimates [22]. The FBD process treats fossil observations as an integral part of the tree-generating process, explicitly modeling speciation, extinction, and fossil sampling rates to infer phylogenetic relationships and divergence times simultaneously [23]. This approach is particularly valuable for validating molecular ecology predictions, as it allows for the direct incorporation of paleontological data—the only direct record of past biodiversity—into phylogenetic analyses, creating a more robust framework for testing evolutionary hypotheses [24].

Model Foundations: The Statistical Framework of the FBD Process

The FBD model is an extension of the birth-death process, a fundamental stochastic model in phylogenetics that describes how lineages accumulate through speciation (birth) and are removed through extinction (death). The key innovation of the FBD model is the incorporation of a fossil recovery rate (ψ), which quantifies the rate at which fossils are sampled along lineages of the complete tree [23]. This allows fossils to be treated as direct observations of the diversification process, rather than as supplemental or external data points.

In the FBD framework, the probability of the tree and fossils is conditional on the birth-death parameters: f[𝒯 | λ, μ, ρ, ψ, φ], where:

- 𝒯 denotes the tree topology, divergence times, fossil occurrence times, and fossil attachment points

- λ and μ represent the speciation and extinction rates, respectively

- ρ represents the probability that an extant species is sampled

- ψ represents the fossil recovery rate

- φ represents the origin time of the process [23]

The model distinguishes between the "complete tree" (containing all extant and extinct lineages) and the "reconstructed tree" (representing only the lineages sampled as extant taxa or fossils) [23]. An important characteristic is its ability to account for the probability of sampled ancestor-descendant relationships, which is correlated with turnover rate (r = μ/λ), fossil recovery rate (ψ), and the probability of sampling an extant taxon (ρ) [23].
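To connect the parameters λ, μ, ψ, ρ and the origin φ to the "complete tree", the sketch below runs a forward-time Gillespie simulation of a birth-death process with Poisson fossil sampling. It records counts only and does not build the tree topology or the reconstructed tree. It assumes, as in the basic FBD process, that a fossil sampling event does not end the lineage (which is what allows sampled ancestors). The function name, dataclass, and parameter values are hypothetical.

```python
import random
from dataclasses import dataclass

@dataclass
class FBDCounts:
    extant: int          # lineages alive at the present in the complete tree
    sampled_extant: int  # extant lineages retained with probability rho
    births: int          # speciation events
    extinctions: int     # extinction events
    fossils: int         # fossil recovery events along all lineages
    lineage_time: float  # summed duration of all lineages (Myr)

def simulate_fbd_counts(lam: float, mu: float, psi: float, rho: float,
                        origin: float, rng: random.Random,
                        max_lineages: int = 100_000) -> FBDCounts:
    """Start with one lineage `origin` Myr before the present. Each lineage
    speciates at rate lam, goes extinct at rate mu and leaves a fossil at
    rate psi; each extant lineage is sampled with probability rho."""
    n, t = 1, 0.0
    births = extinctions = fossils = 0
    lineage_time = 0.0
    per_lineage = lam + mu + psi
    while n > 0:
        total_rate = n * per_lineage
        wait = rng.expovariate(total_rate) if total_rate > 0 else float("inf")
        if t + wait >= origin:  # no further events before the present
            lineage_time += n * (origin - t)
            break
        t += wait
        lineage_time += n * wait
        u = rng.random() * per_lineage  # choose which event occurred
        if u < lam:
            n += 1
            births += 1
        elif u < lam + mu:
            n -= 1
            extinctions += 1
        else:
            fossils += 1  # sampling leaves the lineage alive
        if n > max_lineages:
            raise RuntimeError("process exploded; lower lam or origin")
    sampled = sum(1 for _ in range(n) if rng.random() < rho)
    return FBDCounts(n, sampled, births, extinctions, fossils, lineage_time)

counts = simulate_fbd_counts(lam=0.5, mu=0.3, psi=0.2, rho=0.8,
                             origin=10.0, rng=random.Random(42))
print(counts)
```

The expected number of fossils is ψ times the total lineage duration, including lineages that later go extinct; this is why fossils carry information about extinction that extant-only trees lack.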

For analyses dealing with stratigraphic range data rather than individual fossil specimens, the FBD Range Process (FBDRP) incorporates a model of asymmetric or "budding" speciation. This allows fossil specimens sampled along a lineage to be mapped to unique species, with the tips in the sampled tree representing the age of the youngest sample for each species [23].
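A typical data-preparation step for range-based analyses is collapsing individual specimen ages into one stratigraphic range per species. The sketch assumes point ages in millions of years before present and ignores age uncertainty; the species labels and ages are hypothetical.

```python
from typing import Dict, Iterable, Tuple

def stratigraphic_ranges(occurrences: Iterable[Tuple[str, float]]) -> Dict[str, Tuple[float, float]]:
    """Collapse (species, age in Ma) occurrences into (first, last) appearance ages.

    Ages count backwards from the present, so the first appearance is the
    oldest (largest) age and the last appearance is the youngest (smallest).
    """
    ranges: Dict[str, Tuple[float, float]] = {}
    for species, age in occurrences:
        first, last = ranges.get(species, (age, age))
        ranges[species] = (max(first, age), min(last, age))
    return ranges

# Hypothetical specimens.
specimens = [("taxon_A", 12.0), ("taxon_A", 7.3), ("taxon_B", 4.1),
             ("taxon_A", 5.5), ("taxon_B", 2.0)]
for sp, (first, last) in stratigraphic_ranges(specimens).items():
    print(f"{sp}: first appearance {first} Ma, last appearance {last} Ma")
```

The last-appearance age corresponds to the youngest sample, which the range process uses as the species' tip age.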

Model Comparison: FBD Versus Alternative Phylogenetic Approaches

The following table compares the FBD model against other major phylogenetic approaches, highlighting key differences in methodology, data requirements, and analytical outputs.

Table 1: Comparison of the FBD Model with Alternative Phylogenetic Approaches

| Model/Approach | Data Requirements | Key Parameters Estimated | Treatment of Fossils | Strengths | Limitations |
|---|---|---|---|---|---|
| Fossilized Birth-Death (FBD) | Molecular data from extant species, morphological data, fossil occurrence ages [23] [24] | Speciation rate (λ), extinction rate (μ), fossil recovery rate (ψ), divergence times [22] [23] | Directly integrated as observations in the tree-generating process [23] | Provides unified framework for extant and fossil data; improved accuracy of extinction rate estimates [22] [24] | Complex implementation; computationally intensive; requires working knowledge of Bayesian phylogenetics [24] |
| Birth-Death (BD) with Extant Taxa Only | Molecular data from extant species only [22] | Speciation rate (λ), extinction rate (μ), divergence times | Not applicable | Simpler implementation; less computationally demanding | Limited power to estimate extinction rates; potential for biased parameter estimates [22] |
| State-Dependent Speciation and Extinction (SSE) Models | Molecular data from extant species, trait information [22] | Trait-dependent speciation and extinction rates, transition rates between traits | Not typically incorporated; some recent extensions | Can test hypotheses about trait-dependent diversification [22] | High rate of spurious correlations with neutral traits; limited power for extinction rate estimation [22] |
| Node Dating with Fossil Calibrations | Molecular data from extant species, fossil-based minimum age constraints for nodes | Divergence times, substitution rates | Used as external calibration points for constraining node ages | More straightforward interpretation; well-established software support | Does not fully utilize phylogenetic information from fossils; potential for subjective prior specification |

Performance Advantages of the FBD Model

Simulation studies have demonstrated that the inclusion of fossils in FBD analyses significantly improves the accuracy of extinction-rate (μ) estimates compared to analyses using only extant taxa, with no negative impact on speciation-rate (λ) and state transition-rate estimates [22]. This improvement is particularly valuable because extinction rates are notoriously difficult to estimate from molecular phylogenies of extant species alone. The FBD model also provides a more natural statistical framework for incorporating fossil age uncertainties, as it can accommodate probability distributions for fossil occurrence times rather than requiring fixed point estimates [24].

However, even with fossil data, state-dependent diversification models such as BiSSE may still incorrectly identify correlations between diversification rates and neutral traits when the trait that truly drives diversification is not observed [22]. This highlights the importance of careful model selection and hypothesis testing when investigating trait-dependent diversification.

Experimental Protocols: Implementing FBD Analyses

Combined-Evidence Analysis Workflow

A standard "combined-evidence" phylogenetic analysis under the FBD model integrates three separate likelihood components or data partitions: one for molecular data, one for morphological data, and one for fossil stratigraphic range data [23]. The FBD process then serves as a joint prior distribution on tree topologies and divergence times, modeling all observed data (both extant and fossil) as part of the same generating process.

The following diagram illustrates the workflow and logical relationships of a combined-evidence analysis:

Diagram 1: Combined-Evidence Analysis Workflow

Software Implementation Protocols

The FBD model has been implemented in several Bayesian phylogenetic software packages, each with specific capabilities:

RevBayes provides a flexible platform for FBD analyses, implementing both the specimen-level FBD process (FBDP) and the FBD Range Process (FBDRP) for stratigraphic range data [23]. The software allows for complex model specification and can accommodate various clock models and substitution models for different data types.

BEAST2 offers a user-friendly implementation of the FBD model through its graphical interface BEAUti, with available packages for skyline and stratigraphic range implementations [24]. This makes it particularly accessible for researchers new to Bayesian phylogenetics.

MrBayes also includes implementations of the FBD model, providing another option for Bayesian phylogenetic inference with fossil data [24].

A typical analysis involves specifying the FBD process as a tree prior, then combining it with appropriate substitution models for molecular data (e.g., GTR+Γ) and morphological data (e.g., the Mk model) [23]. The analysis is typically conducted using Markov chain Monte Carlo (MCMC) sampling to approximate the joint posterior distribution of parameters and trees.
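The Mk model's transition probabilities have a closed form, which makes its behaviour easy to inspect. The sketch below assumes the standard k-state Mk model with branch lengths in expected changes per character, and uses a two-taxon "tree" so the likelihood stays a one-liner; real analyses evaluate full trees with Felsenstein's pruning algorithm inside RevBayes, BEAST2, or MrBayes. It also shows conditioning on variable characters (Lewis's Mkv correction); conditioning on parsimony-informative characters is a further variant not shown. Function names are hypothetical.

```python
import math
from itertools import product

def mk_prob(k: int, same: bool, t: float) -> float:
    """Mk transition probability for k states over branch length t
    (expected changes per character): the k-state Jukes-Cantor form."""
    decay = math.exp(-k * t / (k - 1))
    return 1.0 / k + (k - 1) / k * decay if same else 1.0 / k - decay / k

def two_taxon_likelihood(k: int, a: int, b: int, t: float) -> float:
    """Likelihood of tip states (a, b) separated by total path length t.
    With uniform root frequencies and a reversible model this is (1/k) P_ab(t)."""
    return mk_prob(k, a == b, t) / k

def mkv_likelihood(k: int, a: int, b: int, t: float) -> float:
    """Condition on the character being variable: divide by one minus the
    total probability of all constant patterns."""
    if a == b:
        raise ValueError("Mkv is conditioned on variable characters")
    p_constant = sum(two_taxon_likelihood(k, s, s, t) for s in range(k))
    return two_taxon_likelihood(k, a, b, t) / (1.0 - p_constant)

k, t = 3, 0.7
variable = [(a, b) for a, b in product(range(k), repeat=2) if a != b]
# The conditional probabilities of all variable patterns sum to 1 (up to rounding).
print(sum(mkv_likelihood(k, a, b, t) for a, b in variable))
```

Without the correction, a matrix that omits constant characters looks artificially variable, which inflates estimated morphological branch lengths; the conditioning removes that ascertainment bias.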

Research Reagent Solutions: Essential Tools for FBD Analyses

Table 2: Essential Research Reagents and Software for FBD Analyses

| Tool/Resource | Type | Primary Function | Implementation Considerations |
|---|---|---|---|
| RevBayes | Software Platform | Flexible environment for specifying FBD models and extensions [23] | Steeper learning curve but maximum model flexibility; command-line interface |
| BEAST2 | Software Platform | User-friendly FBD implementation with graphical interface (BEAUti) [24] | More accessible for beginners; limited morphological model options |
| FBD Model (Tree Prior) | Statistical Model | Provides joint prior distribution for tree topology and divergence times incorporating fossils [23] | Requires specification of priors for λ, μ, ρ, ψ parameters |
| Mk Model | Morphological Model | Models discrete morphological character evolution for fossil and extant taxa [23] | Should correct for ascertainment bias when only variable or parsimony-informative characters are coded |
| Uncorrelated Relaxed Clock Models | Molecular Clock Model | Accommodates rate variation across lineages for molecular data [23] | Important for accommodating rate heterogeneity in molecular data |
| Stratigraphic Range Data | Data Type | First and last occurrence dates for fossil species [23] | Requires careful assessment of fossil identification and dating uncertainties |

Applications in Evolutionary Biology: Validating Molecular Predictions

The FBD model has been applied in over 170 empirical studies across diverse taxonomic groups [24], demonstrating its broad utility in evolutionary biology. These applications typically fall into several key research domains:

Divergence Time Estimation: The FBD model provides a more biologically realistic approach to dating evolutionary events by directly incorporating the fossil record, leading to more reliable estimates of clade ages and diversification patterns.

Diversification Rate Analysis: By improving the accuracy of extinction rate estimates, the FBD model enables more robust tests of hypotheses about how speciation and extinction rates have varied over time and across clades [22].

Trait-Dependent Diversification: Extensions of the FBD model that incorporate trait evolution allow researchers to test hypotheses about how specific morphological, ecological, or behavioral traits influence diversification rates, though caution is needed to avoid spurious correlations [22].

Historical Biogeography: The FBD framework can be combined with biogeographic models to reconstruct how species' ranges have shifted over geological timescales, providing insight into the role of geography in diversification.

In the context of validating molecular ecology predictions, the FBD model serves as a critical bridge between neontological and paleontological data. By integrating these complementary sources of evidence, researchers can test molecular-based hypotheses about evolutionary timescales and diversification patterns against the direct historical evidence provided by the fossil record. This integrative approach is particularly valuable for calibrating molecular clocks and testing hypotheses about how environmental changes have influenced biodiversity through deep time.

Future Directions and Methodological Challenges

Despite its significant advantages, applying the FBD model in practice presents several challenges. The method requires a working knowledge of paleontological data and their complex properties, Bayesian phylogenetics, and the mechanics of evolutionary models [24]. Important considerations include:

Fossil Identification and Dating: Uncertainties in fossil taxonomic assignment and geochronological dating must be properly accounted for in analyses.

Model Misspecification: As with any model-based approach, violations of FBD model assumptions can lead to biased parameter estimates. Developing model adequacy tests for FBD analyses remains an active research area.

Computational Demands: FBD analyses, particularly those combining molecular and morphological data for large datasets, can be computationally intensive.

Future methodological developments are likely to focus on extending the FBD framework to better accommodate features of the fossil record, such as variation in preservation potential across environments and taxonomic groups, and integrating additional data sources such as geochemical or environmental information [24]. As these models continue to develop, they will further enhance our ability to synthesize paleontological and neontological data to reconstruct evolutionary history.

Integrating fossil data into phylogenetic analyses represents a significant advancement in testing and validating molecular evolutionary hypotheses. The Fossilized Birth-Death (FBD) model provides a coherent statistical framework for combining molecular data from extant species with morphological and temporal data from fossils, enabling joint inference of divergence times and phylogenetic relationships [25] [24]. For researchers in molecular ecology and drug development who utilize evolutionary patterns, FBD models offer a powerful approach to ground-truth molecular clock predictions against the tangible evidence of the fossil record. This guide objectively compares the implementation, capabilities, and application of FBD models across three major Bayesian software toolkits: BEAST2, MrBayes, and RevBayes, providing a foundation for selecting appropriate tools for fossil-calibrated evolutionary analyses.

The Fossilized Birth-Death Model: Core Concepts

The FBD model is a generating process that describes the joint distribution of phylogenetic trees, divergence times, and fossil observations under a single statistical framework [25] [23]. It combines two fundamental processes:

- Birth-Death Process: Models lineage diversification through time with speciation (λ) and extinction (μ) rate parameters, generating tree topology and divergence times [25].

- Fossilization Process: Accounts for the sampling of fossil specimens along lineages via a Poisson process with fossil recovery rate (ψ) [25].

A key advantage of the FBD process is its treatment of fossils as tips in the phylogeny or as sampled ancestors, naturally incorporating them into the tree without requiring arbitrary node calibrations [26] [24]. When combined with the Mk model for morphological character evolution [25] [23], it enables true "total-evidence" dating, simultaneously inferring relationships and divergence times from molecular, morphological, and fossil occurrence data.

Comparative Analysis of Software Implementations

Table 1: Platform Overview and Implementation Characteristics

| Feature | BEAST2 | MrBayes | RevBayes |
|---|---|---|---|
| FBD Model Implementation | FBDP and FBDRP via packages | Integrated FBDP implementation | FBDP and FBDRP with range data |
| Graphical Interface | BEAUti for model setup | Limited GUI options | Command-line only |
| Learning Resources | Taming the BEAST tutorials [24] | Extensive manual | Comprehensive tutorial series [25] [23] |
| Morphological Models | Lewis Mk via morph-models package [27] | Extended Mk models | Customizable Mk models [25] |

Model Specification and Flexibility

Table 2: Model Specification Capabilities and Data Integration

| Model Component | BEAST2 | MrBayes | RevBayes |
|---|---|---|---|
| Molecular Clock Models | Relaxed clocks (lognormal) [27] | Strict and relaxed clocks | Strict, relaxed, and uncorrelated exponential [23] |
| Morphological Clock | Strict clock | Strict clock | Strict and relaxed clocks [28] |
| FBD Parameter Handling | Estimated with uniform priors [27] | Estimated with specified priors | Highly customizable priors |
| Fossil Age Uncertainty | Through age ranges [27] | Through age distributions | Uniform and other distributions [25] |

Performance Considerations and Experimental Data

While direct performance comparisons are limited in the literature, practical considerations emerge from implementation differences:

- BEAST2 benefits from its mature architecture and efficient MCMC sampling, particularly for large molecular datasets, though morphological model options are more limited [27] [24].

- RevBayes offers superior model flexibility but may require more tuning for convergence due to its highly customizable nature [25] [23].

- MrBayes provides a balance between usability and capability, with integrated FBD implementation but fewer extensions for fossil data [24].

A critical methodological consideration is the impact of model violations on FBD analysis accuracy. Studies demonstrate that selective sampling of fossils (e.g., using only the oldest fossils per clade) can produce dramatically overestimated divergence times in FBD analyses due to underestimation of net diversification rates and fossil-sampling proportions [26]. This highlights the importance of appropriate sampling strategies or alternative approaches like CladeAge when complete sampling is impractical [26].

Experimental Protocols and Methodologies

Standardized FBD Analysis Workflow

The following diagram illustrates the core workflow for implementing FBD models across the three toolkits:

Protocol for Combined-Evidence Analysis

A robust combined-evidence FBD analysis follows these key methodological steps, with variations depending on the software platform:

Data Preparation and Alignment

- Compile molecular sequences (DNA/RNA) for extant taxa, with "empty" sequences (gaps or question marks) for fossil taxa [27].

- Prepare morphological character matrix for fossil and extant taxa using NEXUS format.

- Document fossil occurrence dates, ideally with minimum and maximum age bounds to account for uncertainty [25].
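The first preparation step, padding fossil taxa with missing molecular data so they share one taxon set with the extant species, can be scripted. The sketch assumes a DNA alignment held in a Python dict and writes a minimal NEXUS DATA block with `?` as the missing-data symbol; the taxon names, toy sequences, and function name are hypothetical.

```python
from typing import Dict, List

def combined_molecular_block(extant: Dict[str, str], fossils: List[str]) -> str:
    """Build a NEXUS DATA block in which fossil taxa carry an all-missing ('?')
    molecular sequence of the same length as the extant alignment."""
    lengths = {len(seq) for seq in extant.values()}
    if len(lengths) != 1:
        raise ValueError("extant sequences must be aligned to equal length")
    nchar = lengths.pop()
    rows = dict(extant)
    rows.update({name: "?" * nchar for name in fossils})
    width = max(len(name) for name in rows) + 2
    matrix = "\n".join(f"    {name.ljust(width)}{seq}" for name, seq in rows.items())
    return (
        "#NEXUS\n"
        "BEGIN DATA;\n"
        f"  DIMENSIONS NTAX={len(rows)} NCHAR={nchar};\n"
        "  FORMAT DATATYPE=DNA MISSING=? GAP=-;\n"
        "  MATRIX\n"
        f"{matrix}\n"
        "  ;\n"
        "END;\n"
    )

# Hypothetical taxa and a toy 12-site alignment.
block = combined_molecular_block(
    {"extant_sp1": "ACGTACGTTAGC", "extant_sp2": "ACGTACGATAGC"},
    ["fossil_sp1"],
)
print(block)
```

Coding fossils as missing (rather than omitting them) lets the molecular partition and the morphological partition refer to the same taxa, while contributing no molecular signal for the fossils.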

Model Specification

- Define the FBD tree prior with parameters for speciation (λ), extinction (μ), fossil recovery (ψ), and extant sampling (ρ) [23].

- Specify clock models: typically relaxed lognormal for molecular data [27] and strict clock for morphological data [23].

- Configure site models: GTR+Γ for molecular data [23] and Mk model for morphological data [25].

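Prior Selection

- Specify priors for the FBD parameters λ, μ, and ψ (BEAST2, for example, estimates them under uniform priors [27]), and set ρ from the fraction of extant species included in the dataset, which is usually known rather than estimated.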

MCMC Execution and Diagnostics

- Run extended MCMC chains (often millions of generations) to ensure adequate parameter sampling.

- Assess convergence through effective sample sizes (ESS > 200) and trace plot inspection.

- Compare marginal likelihoods for model selection when testing alternatives.
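The ESS threshold above can be checked with a short script. The sketch uses a simple estimator, ESS = N / (1 + 2 Σ ρ_k), summing autocorrelations until the first non-positive lag, after discarding an assumed 10% burn-in. Tracer and similar tools use their own estimators, so values will differ somewhat; the function name and simulated traces are hypothetical.

```python
import random
from typing import Sequence

def effective_sample_size(trace: Sequence[float], burnin_frac: float = 0.1) -> float:
    """ESS = N / (1 + 2 * sum of autocorrelations), truncating the sum at the
    first non-positive autocorrelation."""
    x = list(trace[int(len(trace) * burnin_frac):])  # discard burn-in
    n = len(x)
    mean = sum(x) / n
    dev = [v - mean for v in x]
    var = sum(d * d for d in dev) / n
    if var == 0.0:
        raise ValueError("constant trace: ESS is undefined")
    tau = 1.0  # integrated autocorrelation time
    for lag in range(1, n):
        rho = sum(dev[i] * dev[i + lag] for i in range(n - lag)) / (n * var)
        if rho <= 0.0:
            break
        tau += 2.0 * rho
    return n / tau

rng = random.Random(7)
iid = [rng.gauss(0.0, 1.0) for _ in range(5000)]
sticky, value = [], 0.0
for _ in range(5000):
    value = 0.9 * value + rng.gauss(0.0, 1.0)  # strongly autocorrelated, like a poorly mixing chain
    sticky.append(value)
for name, tr in (("well mixed", iid), ("poorly mixed", sticky)):
    ess = effective_sample_size(tr)
    print(f"{name}: ESS = {ess:.0f} ({'ok' if ess > 200 else 'run longer'})")
```

Both traces contain 4,500 post-burn-in samples, but the autocorrelated one carries far fewer effectively independent draws, which is exactly what a low ESS warns about even when the chain looks long.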

Case Study: Bear Family (Ursidae) Phylogeny

The ursid phylogeny provides an exemplary application of FBD methodology, implemented across multiple platforms [25] [23] [24]. This analysis demonstrates:

- Data Integration: Combination of molecular data from living bear species with morphological character matrices for fossil and extant taxa.

- Fossil Handling: Treatment of fossil specimens as tips in the phylogeny with accurate age representations.

- Parameter Estimation: Joint inference of divergence times and macroevolutionary parameters (speciation, extinction, and fossilization rates).

- Model Validation: Assessment of molecular clock predictions against the fossil record of well-documented bear lineages.

Research Reagent Solutions

Table 3: Essential Resources for FBD Model Implementation

| Resource Category | Specific Tools/Functions | Application in FBD Analysis |
|---|---|---|
| Data Formats | NEXUS files with CHARSTATELABELS commands | Standardized encoding of morphological character matrices [27] |
| Morphological Models | Lewis Mk model [27] | Modeling discrete morphological character evolution with coding bias correction |
| Tree Priors | FBD Range Process (FBDRP) | Handling stratigraphic range data for fossil species [23] |
| Clock Models | Uncorrelated lognormal relaxed clock [27] | Accounting for rate variation across molecular lineages |
| MCMC Diagnostics | Effective Sample Size (ESS) & trace plots | Assessing convergence of parameter estimates |
| Sampling Methods | Sampled ancestors [22] | Modeling direct ancestor-descendant relationships in the fossil record |

Discussion and Recommendations

Platform Selection Guidelines

Choosing among BEAST2, MrBayes, and RevBayes depends on several factors:

BEAST2 is recommended for analysts prioritizing user-friendliness, particularly those with molecular phylogenetics experience who are expanding to include fossil data. Its BEAUti interface provides guided model specification, though morphological model options are limited [27] [24].

RevBayes is ideal for methodologically focused researchers requiring custom model development or complex FBD extensions. Its modular design supports sophisticated analyses such as state-dependent speciation-extinction models with fossils [22], but it requires proficiency with the Rev language [25] [23].

MrBayes offers a middle ground with its integrated FBD implementation and familiar Bayesian framework, suitable for analysts already experienced with the platform who want to incorporate fossil tips without extensive retooling [24].

Methodological Considerations for Molecular Ecology

For researchers validating molecular ecology predictions with fossil data, several critical factors emerge:

Fossil Sampling Strategies: Selective sampling of only the oldest fossils per clade can bias divergence time estimates [26]. Whenever possible, include comprehensive fossil occurrence data rather than just first appearances.

Model Adequacy: The FBD model assumes homogeneous diversification and fossilization rates [25], which may not hold for many clades. Consider model extensions with time-heterogeneous parameters when analyzing groups with known radiations or mass extinctions [28].

Morphological Clock Implementation: Unlike the relaxed clocks commonly applied to molecular data, morphological clock models are typically implemented as strict clocks [23]. Evaluate whether this assumption is biologically justified for your dataset, as violating it can affect divergence time estimates [28].

The integration of FBD models across multiple software platforms significantly enhances our ability to test molecular ecological predictions against the fossil record, providing a more empirical framework for understanding evolutionary timelines and processes. As these implementations continue to mature, they offer increasingly robust tools for connecting neontological and paleontological data in unified statistical frameworks.

In molecular ecology, the estimation of divergence times is fundamental for understanding the tempo of evolutionary processes, such as speciation, adaptation, and responses to historical climate changes. The molecular clock hypothesis provides the theoretical foundation for translating genetic distances into absolute time. However, this clock requires calibration with independent temporal evidence to move from relative to absolute timescales. The choice of calibration strategy—using primary evidence like fossils or secondary estimates from previous molecular dating studies—profoundly influences the accuracy and precision of resulting evolutionary timelines. This guide objectively compares these two approaches within the critical context of validating molecular ecology predictions with fossil data. We summarize experimental data on their performance, detail key methodologies, and provide resources to inform calibration decisions in evolutionary research.

Defining Primary and Secondary Calibrations

Primary Calibrations are temporal constraints derived directly from independent, non-molecular evidence. The most common source is the fossil record, where the first appearance of a taxon in the geological strata provides a minimum age for the node representing its divergence from its closest relative [29] [30]. Other sources include dated biogeographic events, such as the formation of a mountain range or the isolation of a landmass, which can constrain the maximum age of a lineage.
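
A fossil minimum age is often turned into a calibration prior by offsetting a skewed distribution so that it has zero density below the fossil's age. The sketch below assumes an offset lognormal, one common choice among several (exponential and uniform priors are also used). The class name and the example numbers are hypothetical and do not refer to any real fossil.

```python
import math
from dataclasses import dataclass
from statistics import NormalDist


@dataclass(frozen=True)
class OffsetLognormalPrior:
    """Node-age prior: a hard minimum from a fossil plus a lognormal tail.

    Ages are in Ma. `offset` is the fossil's minimum age; `mu` and `sigma`
    are the mean and standard deviation of log(age - offset).
    """
    offset: float
    mu: float
    sigma: float

    def pdf(self, age):
        x = age - self.offset
        if x <= 0.0:
            return 0.0  # the node cannot be younger than the fossil
        z = (math.log(x) - self.mu) / self.sigma
        return math.exp(-0.5 * z * z) / (x * self.sigma * math.sqrt(2.0 * math.pi))

    def quantile(self, p):
        """Age below which the prior places probability p."""
        return self.offset + math.exp(self.mu + self.sigma * NormalDist().inv_cdf(p))


if __name__ == "__main__":
    # hypothetical fossil with a minimum age of 20 Ma
    prior = OffsetLognormalPrior(offset=20.0, mu=1.0, sigma=0.5)
    print("median node age:", prior.quantile(0.5))
    print("95% upper quantile:", prior.quantile(0.95))
```

Because the density is zero below the offset, the fossil acts as a hard minimum, while `mu` and `sigma` control how much older than the fossil the divergence is allowed to be.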

Secondary Calibrations are temporal constraints derived from the results of previous molecular dating analyses. In this approach, a node age and its associated uncertainty (e.g., a 95% credible interval), estimated in a "primary" study that used fossil evidence, are applied as a calibration prior in a new, "secondary" study on a different dataset or taxonomic group [29] [31]. This practice is often employed in groups that lack a robust fossil record of their own.

Comparative Analysis: Accuracy, Precision, and Error

Experimental simulations have quantified the distinct error profiles associated with primary and secondary calibration strategies. The table below summarizes key performance differences.

Table 1: Performance comparison of primary versus secondary calibrations based on simulation studies

| Aspect | Primary Calibrations | Secondary Calibrations |
|---|---|---|
| Overall Accuracy | More accurate, especially with multiple, deep-node calibrations [30]. | Estimates can shift significantly from true times; reported shifts include overestimation by ~10% and ages younger than the primary estimates [29] [31]. |
| Precision (CI Width) | Confidence/credible intervals (CIs) are wider, reflecting more appropriate uncertainty [31]. | CIs are artificially narrow, giving a false impression of precision [29] [31]. |
| Impact of Calibration Position | Deeper node calibrations yield more accurate and precise timescale estimates [30]. | Error increases with the age of the calibrated node and the number of tips in the tree [31]. |
| Error Propagation | Errors are contained within the analysis. | Compounds errors from the primary study (e.g., in fossil placement, model choice) [29]. |
| Best Practice Use Case | The preferred and recommended method whenever possible [31] [30]. | May be useful for exploring plausible evolutionary scenarios when primary calibrations are utterly unavailable [29]. |

The quantitative consequences of using secondary calibrations are significant. One study found that secondary calibrations produced age estimates that were significantly different from primary estimates in 97% of replicates, with the 95% credible intervals being significantly narrower [31]. Furthermore, the total error in the secondary analysis was positively correlated with the number of tips and the age of the secondary tree [31].

Key Experimental Protocols and Methodologies

To ensure the reliability and comparability of data on calibration performance, researchers typically employ controlled simulation studies. The following workflow outlines a standard methodology for quantifying calibration error.

Workflow for Quantifying Calibration Error

Detailed Methodology

The standard protocol, as used in studies like Schenk (2016) and others, involves several key stages [29] [31]:

Simulate a "True" Phylogeny: A large, known phylogeny (e.g., 1500 tips) is generated under a defined model of diversification, such as a pure-birth process. The tree is scaled to a known timescale (e.g., 70 million years), establishing the "true" divergence times for all nodes [31].
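
The tree-simulation step can be sketched in a few lines. The Python below is a simplified illustration, not the procedure of the cited studies: it draws the internal-node ages of a pure-birth tree by accumulating exponential waiting times and then rescales them so the root is 70 time units old, matching the example above. The function name is hypothetical, topology is not tracked, and real studies would typically rely on established simulators such as the R packages listed in Table 2.

```python
import random


def simulate_yule_node_ages(n_tips, root_age=70.0, seed=None):
    """Internal-node ages (oldest first) of a pure-birth (Yule) tree.

    Simplified forward simulation: start from the root split (2 lineages),
    add exponential waiting times until n_tips lineages exist, then rescale
    so the root sits at `root_age` (e.g. Ma). Because of the rescaling, the
    birth rate only sets relative times, so it is fixed at 1. Topology is not
    tracked; under the Yule model it is independent of the branching times.
    """
    if n_tips < 2:
        raise ValueError("a tree needs at least two tips")
    rng = random.Random(seed)
    t = 0.0
    split_times = [0.0]  # root split at time 0, measured forward from the root
    for k in range(2, n_tips):
        # with k lineages, the waiting time to the next split is Exp(k * rate)
        t += rng.expovariate(k)
        split_times.append(t)
    # let the n_tips lineages persist for one more waiting time before "today"
    present = t + rng.expovariate(n_tips)
    scale = root_age / present
    return [(present - s) * scale for s in split_times]


if __name__ == "__main__":
    ages = simulate_yule_node_ages(1500, root_age=70.0, seed=42)
    print(len(ages), "internal nodes; youngest at", round(ages[-1], 3), "Ma")
```

A tree with 1500 tips has 1499 internal nodes, and these known ages serve as the "true" values against which the dating analyses are later scored.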

Simulate DNA Sequence Evolution: A DNA sequence alignment (e.g., 2000 base pairs) is simulated along the branches of the true tree using a specific nucleotide substitution model (e.g., HKY). This creates a realistic genetic dataset with a known evolutionary history [31].
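
The sketch below shows what a sequence simulator such as Seq-Gen does on a single branch under HKY. It builds the rate matrix from a transition/transversion ratio (kappa) and base frequencies, exponentiates it to obtain substitution probabilities for a given branch length, and draws each site's new state. It assumes NumPy and SciPy are available. The function names, kappa, and base frequencies are hypothetical, and recursing over every branch of the true tree is omitted for brevity.

```python
import numpy as np
from scipy.linalg import expm

BASES = "ACGT"
TRANSITIONS = {(0, 2), (2, 0), (1, 3), (3, 1)}  # A<->G and C<->T


def hky_rate_matrix(kappa, freqs):
    """HKY85 rate matrix, normalised to one expected substitution per unit time."""
    pi = np.asarray(freqs, dtype=float)
    Q = np.zeros((4, 4))
    for i in range(4):
        for j in range(4):
            if i != j:
                # transitions are kappa times faster than transversions
                Q[i, j] = pi[j] * (kappa if (i, j) in TRANSITIONS else 1.0)
    np.fill_diagonal(Q, -Q.sum(axis=1))
    rate = -np.dot(pi, np.diag(Q))  # expected substitution rate at equilibrium
    return Q / rate


def evolve_along_branch(parent, Q, t, rng):
    """Draw a child sequence (array of state indices) after branch length t."""
    P = expm(Q * t)                      # substitution probabilities for this branch
    cum = np.cumsum(P[parent], axis=1)   # per-site cumulative probabilities
    u = rng.random(len(parent))[:, None]
    # first state whose cumulative probability exceeds u; clip guards rounding
    return np.minimum((u > cum).sum(axis=1), 3)


if __name__ == "__main__":
    rng = np.random.default_rng(7)
    freqs = [0.3, 0.2, 0.2, 0.3]  # hypothetical base frequencies
    Q = hky_rate_matrix(kappa=4.0, freqs=freqs)
    root = rng.choice(4, size=2000, p=freqs)  # 2000 bp drawn at equilibrium
    child = evolve_along_branch(root, Q, t=0.1, rng=rng)
    print("".join(BASES[i] for i in child[:60]))
    print("proportion of sites differing:", np.mean(root != child))
```

Normalising the rate matrix means branch lengths are in expected substitutions per site, so scaling the simulated tree's time units by a chosen clock rate determines how much divergence the alignment contains.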

Primary Divergence Time Estimation (Primary Calibration):

- The simulated DNA data is analyzed using a relaxed-clock molecular dating method (e.g., an uncorrelated lognormal model in a Bayesian framework like BEAST).

- A set of nodes on the tree is calibrated using the known true node ages from the simulation, mimicking the use of perfect fossil information. Prior distributions (e.g., lognormal) are placed on these node ages to incorporate uncertainty [31] [30].

- The output is a set of estimated node ages with confidence/credible intervals for the primary analysis.

Secondary Divergence Time Estimation (Secondary Calibration):

- A subset of the tree (a "secondary tree") is selected, or a new dataset is simulated based on a part of the original tree.

- The posterior estimates (e.g., mean and 95% CI) for one or more nodes from the primary analysis are used as the calibration priors for the secondary analysis [31].

- The secondary analysis is run on its dataset, producing a new set of divergence time estimates.
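
The hand-off from primary to secondary analysis can be sketched as turning a reported 95% interval into a prior. Below, a normal distribution is assumed, although the protocol above does not prescribe one; the function name and the 38 to 52 Ma interval are hypothetical. The resulting prior encodes only the uncertainty the primary study reported, not any error in how that study was calibrated, which is one way the compounding errors noted in Table 1 can enter the secondary analysis.

```python
from statistics import NormalDist


def normal_prior_from_interval(lower, upper, mass=0.95):
    """Normal calibration prior matching a primary study's central interval.

    Centres the prior on the interval midpoint and picks sigma so that the
    prior's central `mass` interval reproduces [lower, upper].
    """
    if not lower < upper:
        raise ValueError("lower must be below upper")
    z = NormalDist().inv_cdf(0.5 + mass / 2.0)  # about 1.96 for mass = 0.95
    return NormalDist(mu=(lower + upper) / 2.0, sigma=(upper - lower) / (2.0 * z))


if __name__ == "__main__":
    # hypothetical primary-study estimate: 95% interval of 38 to 52 Ma
    prior = normal_prior_from_interval(38.0, 52.0)
    print("prior mean:", prior.mean, "prior SD:", round(prior.stdev, 3))
```

A symmetric prior also discards any skew in the primary posterior, so if the reported mean sits well away from the interval midpoint, a skewed distribution may be the more faithful translation.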

Error Quantification: The accuracy and precision of both the primary and secondary analyses are assessed by comparing their estimated node ages to the known true ages from the simulation. Metrics include:

- Bias: The direction and magnitude of the shift in estimated ages (e.g., consistent overestimation or underestimation).

- Precision: The width of the confidence/credible intervals.

- Coverage: Whether the true age falls within the stated confidence/credible interval as often as its nominal level implies (e.g., in roughly 95% of replicates for a 95% interval) [29] [31].
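
The three metrics can be computed directly once true and estimated ages are paired node by node. This is a minimal sketch with a hypothetical function name and made-up example values. Bias is taken as estimate minus true age, so with ages measured backward from the present a positive value means the estimates are too old.

```python
from statistics import fmean


def calibration_error_metrics(true_ages, estimates, lowers, uppers):
    """Bias, precision and coverage of node-age estimates against known true ages."""
    if not (len(true_ages) == len(estimates) == len(lowers) == len(uppers)):
        raise ValueError("all inputs must have one entry per node")
    rows = list(zip(true_ages, estimates, lowers, uppers))
    if not rows:
        raise ValueError("no nodes to evaluate")
    return {
        # mean signed error: positive means estimates are too old
        "bias": fmean(est - true for true, est, _, _ in rows),
        # mean interval width: smaller means more (apparent) precision
        "mean_ci_width": fmean(hi - lo for _, _, lo, hi in rows),
        # fraction of nodes whose true age lies inside the interval
        "coverage": fmean(1.0 if lo <= true <= hi else 0.0 for true, _, lo, hi in rows),
    }


if __name__ == "__main__":
    # hypothetical values for three nodes (Ma)
    print(calibration_error_metrics(
        true_ages=[10.0, 20.0, 30.0],
        estimates=[11.0, 19.0, 33.0],
        lowers=[9.0, 18.0, 31.0],
        uppers=[12.0, 22.0, 35.0],
    ))
```

Running the same function on the primary and secondary outputs makes the contrast in Table 1 measurable: narrower mean widths paired with coverage well below the nominal level signal the false precision described there.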

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 2: Key computational tools and resources for molecular dating and calibration analysis

| Tool/Resource | Function |
|---|---|
| BEAST (Bayesian Evolutionary Analysis Sampling Trees) | A powerful software platform for Bayesian phylogenetic analysis, widely used for divergence time estimation with relaxed molecular clocks [31] [30]. |
| MEGA X | An integrated software tool that includes the RelTime method for rapid estimation of divergence times with minimal assumptions, used in simulation studies [29]. |
| R with specialized packages (e.g., ape, geiger) | A statistical programming environment used for simulating phylogenetic trees and sequence data, and for analyzing the results of dating analyses [31]. |
| Seq-Gen | A program for simulating the evolution of DNA or protein sequences along a phylogenetic tree, crucial for generating test datasets [29]. |
| Fossil Occurrence Data (e.g., from PBDB) | Empirical fossil data from public databases like the Paleobiology Database (PBDB) are used to establish primary calibration priors and validate models [2] [6]. |
| DeepDive | A deep learning framework designed to estimate biodiversity trajectories from fossil data, accounting for spatial, temporal, and taxonomic sampling biases [6]. |

The choice between primary and secondary calibrations is not merely a technicality but a fundamental decision that shapes the reliability of evolutionary timelines. Experimental data consistently demonstrates that primary calibrations, particularly multiple constraints placed on deep nodes, provide the most accurate and robust estimates of divergence times [30]. While secondary calibrations offer a tempting solution for data-poor groups, they introduce predictable inaccuracies and an illusion of precision that can mislead downstream interpretations [29] [31]. The most effective strategy for validating molecular ecology predictions is to ground them firmly in the fossil record, using careful fossil selection and appropriate priors. When secondary calibrations must be used, their inherent limitations and compounded uncertainties should be explicitly acknowledged and reported.

Understanding how biodiversity has changed through time is a central goal of evolutionary biology, creating a critical intersection where molecular ecology predictions require validation from fossil evidence. However, the fossil record presents substantial challenges for robust analysis due to inherent incompleteness and pervasive sampling biases that distort our perception of past diversity. These biases reflect variation in sampling effort, fossil site accessibility, preservation potential across organisms and habitats, and geological history, resulting in temporal, spatial, and taxonomic heterogeneities that create a significant mismatch between true and sampled diversity patterns [6].

Traditional methods for estimating past biodiversity, including rarefaction techniques, maximum likelihood models, and richness extrapolators, have primarily focused on correcting temporal variation in preservation rates. These approaches often fail to adequately account for geographic scope, temporal duration, or environmental representation of sampling. A recent analysis highlighted that spatial sampling heterogeneity alone accounts for 50-60% of changes in standardized richness estimates, underscoring the critical need for spatially explicit methods in deep-time biodiversity research [6].

Artificial intelligence is now reshaping palaeontology and biodiversity research, offering transformative tools to analyze complex fossil data and evolutionary patterns across deep time [32]. Within this context, DeepDive represents a significant methodological advance—a deep learning framework specifically designed to estimate global biodiversity patterns through time while explicitly incorporating spatial, temporal, and taxonomic sampling variation. This approach enables researchers to test molecular ecology predictions against fossil evidence with greater accuracy, particularly for large spatial scales and across wide temporal spans where traditional methods struggle most [6].

What is DeepDive? A Framework for Estimating Past Biodiversity

DeepDive is a novel approach for estimating biodiversity trajectories from fossil data that couples mechanistic simulations with deep learning inference. The methodology was specifically developed to infer richness at global or regional scales through time while addressing the limitations of previous methods that ignore geographic and taxonomic sampling biases [6].

The framework consists of two integrated modules working in tandem: