Bridging the Virtual and the Real: A Practical Guide to Validating Molecular Dynamics Simulations with Experimental Data


Abstract

This article provides a comprehensive guide for researchers and drug development professionals on the critical process of validating molecular dynamics (MD) simulations against experimental data. It covers the foundational principles of why validation is essential, explores methodological frameworks for integration across techniques like NMR, SAXS, and cryo-EM, addresses common troubleshooting and optimization challenges including force field selection and sampling limitations, and finally, establishes robust practices for comparative analysis and quantitative validation. By synthesizing current literature and best practices, this guide aims to enhance the reliability and predictive power of MD simulations in biomedical research.

The Indispensable Bridge: Why Validating Simulations with Experiments is Non-Negotiable

In computational chemistry and materials science, force fields and empirical potentials underpin molecular dynamics (MD) simulations by defining the potential energy surface that governs atomic interactions. These methods face a fundamental trade-off between computational efficiency on one side and physical accuracy and transferability on the other [1]. Traditional classical force fields, with their simple functional forms and limited parameters, excel in simulation speed but often fail to capture complex quantum mechanical effects, particularly bond formation and breaking [1] [2]. Machine learning force fields (MLFFs) have emerged as powerful alternatives offering near-quantum accuracy, but they introduce new challenges, including data hunger, limited interpretability, and specialized computational requirements [3]. This guide compares the performance limitations of prevailing force field methodologies using experimental data and case studies, giving researchers a framework for selecting models according to their accuracy requirements and computational constraints.

Force Field Taxonomy and Fundamental Limitations

Force fields can be broadly categorized into three distinct classes, each with characteristic functional forms, parameterization strategies, and inherent limitations. The following table summarizes their core characteristics and primary constraints.

Table 1: Classification and Fundamental Limitations of Force Field Approaches

| Force Field Type | Typical Number of Parameters | Parameter Interpretability | Primary Limitations | Computational Cost Relative to QM |
|---|---|---|---|---|
| Classical (e.g., AMBER, CHARMM) | 10–100 | High (clear physical meaning) | Cannot describe chemical reactions; accuracy limited by fixed functional forms [1]. | 10⁻³ – 10⁻⁶ [1] |
| Reactive (e.g., ReaxFF) | 100–1000 | Medium (complex relationships) | Struggles with DFT-level accuracy for reaction potential energy surfaces; high parameterization effort [2]. | 10⁻² – 10⁻⁴ |
| Machine Learning (e.g., MACE, DeePMD) | 10⁴ – 10⁷ | Low ("black box" models) | Require extensive training data; limited transferability to unseen configurations [4] [5]. | 10⁻¹ – 10⁻⁴ [6] |

The limitations extend beyond these fundamental characteristics to specific failure modes in practical applications. Classical force fields typically employ fixed atomic charges, omitting polarization effects crucial for realistic simulation of biological systems and molecular recognition events [7]. Reactive force fields, while addressing bond formation/breaking, often exhibit documented deficiencies in accurately describing reaction barriers and energies [2]. MLFFs demonstrate remarkable accuracy within their training domain but frequently fail to generalize to extreme conditions such as high pressure or anharmonic regions of the potential energy surface not represented in training data [4] [5].

Quantitative Performance Benchmarks

Accuracy Under Standard Conditions

Benchmarking against density functional theory (DFT) calculations provides crucial insights into force field performance. The Ultra-Fast Force Fields (UF3) approach demonstrates exceptional efficiency, achieving speeds comparable to traditional empirical potentials while maintaining accuracy close to state-of-the-art ML potentials [6]. For elemental tungsten, UF3 closely matches DFT-predicted properties including phonon spectra and elastic constants [6]. Universal MLFFs trained on extensive datasets generally perform well for equilibrium properties, with studies showing that models like M3GNet, CHGNet, and MACE can achieve force mean absolute errors (MAEs) below 100 meV/Å on diverse materials systems [3] [5].
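A force MAE of the kind quoted above can be computed directly from paired predictions. The following is a minimal sketch, assuming forces are available as per-atom (x, y, z) tuples in eV/Å; the function name `force_mae` and all numbers are hypothetical and serve only as an illustration.

```python
def force_mae(predicted, reference):
    """Mean absolute error over all force components (same units as the inputs)."""
    if len(predicted) != len(reference):
        raise ValueError("predicted and reference must contain the same number of atoms")
    total, count = 0.0, 0
    for f_pred, f_ref in zip(predicted, reference):
        for p, r in zip(f_pred, f_ref):
            total += abs(p - r)
            count += 1
    return total / count

# Hypothetical forces in eV/Å for a three-atom configuration (illustrative values only).
dft_forces = [(0.10, -0.20, 0.00), (-0.05, 0.15, 0.30), (-0.05, 0.05, -0.30)]
mlff_forces = [(0.13, -0.18, 0.02), (-0.09, 0.11, 0.33), (-0.02, 0.06, -0.36)]

# Convert eV/Å to meV/Å to compare against thresholds such as 100 meV/Å.
mae_mev = 1000.0 * force_mae(mlff_forces, dft_forces)
print(f"force MAE = {mae_mev:.1f} meV/Å")
```

In a real benchmark the average would run over many configurations, and a single aggregate number can hide large errors on rare atomic environments.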

However, this accuracy deteriorates significantly for properties sensitive to anharmonic effects or specific atomic environments. In simulations of PbTiO₃, universal MLFFs trained on PBE-derived data largely failed to capture realistic finite-temperature phase transitions under constant-pressure MD, often exhibiting unphysical instabilities [4]. This failure stems from inherited biases in the exchange-correlation functionals used for training data generation and limited generalization to anharmonic interactions governing dynamic behavior [4].

Performance Under Extreme Conditions

The accuracy degradation under non-ambient conditions represents a critical limitation for many force fields. Systematic investigation of universal ML interatomic potentials under pressure reveals that while these models excel at standard pressure, their predictive accuracy deteriorates considerably as pressure increases from 0 to 150 GPa [5]. This decline originates from fundamental limitations in training data rather than algorithmic constraints, as most training datasets inadequately represent compressed atomic environments [5].

Table 2: Performance Degradation of Universal ML Potentials Under Pressure (0-150 GPa)

| Pressure Range | Typical Energy MAE Increase | Typical Force MAE Increase | Primary Structural Cause |
|---|---|---|---|
| 0-25 GPa | Minimal | Minimal | Slight compression of atomic environments |
| 25-75 GPa | Moderate (2-3×) | Significant (3-5×) | Narrowing distribution of neighbor distances |
| 75-150 GPa | Severe (5-10×) | Severe (5-10×) | Fundamental shift in atomic environments not represented in training data [5] |

This performance degradation manifests structurally through systematic shifts in first-neighbor distances, with distribution maxima decreasing from nearly 5 Å at ambient pressure to approximately 3.3 Å at 150 GPa [5]. The volume per atom distribution similarly narrows and shifts toward lower values under compression, creating atomic environments fundamentally different from those in standard training datasets [5].
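One way to expose this kind of pressure-dependent degradation is to bin prediction errors by the pressure of the reference calculation. The sketch below assumes a list of (pressure in GPa, signed error) pairs; the bin edges mirror the ranges in Table 2, while the names `bin_label` and `mae_by_pressure` and the error values are hypothetical.

```python
from collections import defaultdict

# Pressure bins in GPa, mirroring the ranges used in Table 2.
PRESSURE_BINS = [(0.0, 25.0), (25.0, 75.0), (75.0, 150.0)]

def bin_label(pressure):
    """Return the label of the bin containing `pressure` (upper edge of the last bin included)."""
    for index, (low, high) in enumerate(PRESSURE_BINS):
        is_last = index == len(PRESSURE_BINS) - 1
        if low <= pressure < high or (is_last and pressure == high):
            return f"{low:g}-{high:g} GPa"
    raise ValueError(f"pressure {pressure} GPa is outside the benchmark range")

def mae_by_pressure(records):
    """Average absolute error per pressure bin from (pressure, signed_error) pairs."""
    sums = defaultdict(lambda: [0.0, 0])
    for pressure, error in records:
        entry = sums[bin_label(pressure)]
        entry[0] += abs(error)
        entry[1] += 1
    return {label: total / count for label, (total, count) in sums.items()}

# Hypothetical force errors in eV/Å at several pressures (illustrative values only).
records = [(0.0, 0.02), (10.0, -0.04), (50.0, 0.09), (100.0, -0.25), (150.0, 0.35)]
for label, mae in mae_by_pressure(records).items():
    print(f"{label}: MAE = {mae:.3f} eV/Å")
```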

Experimental Validation Case Studies

Case Study: Ferroelectric Phase Transition in PbTiO₃

The temperature-driven ferroelectric-paraelectric phase transition in PbTiO₃ (the PTO-test) provides a rigorous benchmark for evaluating force field performance under realistic MD conditions [4]. The protocol assesses whether a force field reproduces dynamic properties and phase transition kinetics, rather than merely static energies and forces.

Experimental Protocol:

- Structural Optimization: Perform ground-state structural optimization of tetragonal PbTiO₃ using each force field methodology

- Property Prediction: Calculate lattice parameters (a, c) and tetragonality (c/a ratio) for comparison with experimental values (c/a ≈ 1.06)

- Phonon Analysis: Compute phonon spectra using finite-displacement method with atomic forces evaluated directly by respective force fields

- MD Simulations: Conduct constant-pressure MD simulations to observe temperature-driven phase transitions

- Validation: Compare predicted transition temperatures and structural evolution with experimental data

Results and Limitations: Universal MLFFs trained on PBE-based databases (CHGNet, M3GNet, MACE) inherited functional biases, predicting c/a ratios even larger than PBE itself (up to 1.23 vs experimental 1.06) [4]. While most MLFFs generated phonon spectra free of imaginary frequencies, confirming dynamical stability, they largely failed to capture realistic finite-temperature phase transitions under constant-pressure MD, often exhibiting unphysical instabilities [4]. The exception was UniPero, a specialized model for perovskite oxides trained on PBEsol-derived data, which successfully predicted the phase transition, highlighting the critical importance of training data quality and specificity [4].

Case Study: High-Pressure Performance

The accuracy of force fields under extreme pressure conditions represents a significant challenge with implications for materials discovery and planetary science.

Experimental Protocol:

- Dataset Generation: Create benchmark datasets through DFT calculations across pressure ranges (0-150 GPa)

- Structure Relaxation: Perform full ionic relaxations at each pressure value to obtain equilibrium volumes, atomic positions, and total energies

- Force Field Evaluation: Assess energy, force, and stress tensor predictions across pressure spectrum

- Fine-Tuning: Implement targeted fine-tuning on high-pressure configurations to improve robustness

- Validation: Compare predicted structural properties and phase stability with high-pressure experimental data

Results and Limitations: Benchmarking revealed that universal MLIPs fail to generalize to high-pressure regimes without targeted fine-tuning [5]. Through partitioning datasets at the material level and assigning all frames from a given relaxation trajectory to the same partition, researchers demonstrated that fine-tuning significantly improved model robustness under pressure [5]. This approach prevents data leakage across subsets and ensures proper evaluation of generalization capability.

High-Pressure Benchmarking Workflow: This methodology emphasizes proper dataset partitioning to prevent data leakage and enable accurate assessment of force field performance under extreme conditions.
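The material-level partitioning described above can be sketched as a grouped split: every frame of a relaxation trajectory inherits the partition of its parent material. This is an illustrative sketch rather than the procedure of [5]; the `material_id` key, the function name, and the toy dataset are assumptions.

```python
import random

def split_by_material(frames, test_fraction=0.2, seed=0):
    """Split frames into train/test sets so that no material appears in both."""
    material_ids = sorted({frame["material_id"] for frame in frames})
    rng = random.Random(seed)          # fixed seed keeps the split reproducible
    rng.shuffle(material_ids)
    n_test = max(1, round(test_fraction * len(material_ids)))
    test_ids = set(material_ids[:n_test])
    train = [f for f in frames if f["material_id"] not in test_ids]
    test = [f for f in frames if f["material_id"] in test_ids]
    return train, test

# Hypothetical dataset: 5 materials, each with a 3-step relaxation trajectory.
frames = [{"material_id": f"mat-{m}", "step": s} for m in range(5) for s in range(3)]
train, test = split_by_material(frames, test_fraction=0.2)

train_ids = {f["material_id"] for f in train}
test_ids = {f["material_id"] for f in test}
print("shared materials:", train_ids & test_ids)
```

Splitting at the frame level instead would place nearly identical neighboring relaxation steps in both sets, which inflates apparent test accuracy.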

Methodologies for Assessment and Improvement

Force Field Selection Protocol

Choosing an appropriate force field requires systematic evaluation of multiple factors beyond reported accuracy metrics:

Application-Specific Assessment:

- System Characteristics: Identify key system components (elements, bonding types, phases)

- Process Requirements: Determine if processes involve bond formation/breaking (requiring reactive or MLFFs)

- Property Targets: Define target properties (structural, dynamic, mechanical, thermal)

- Condition Range: Specify temperature, pressure, and environmental conditions

- Validation Metrics: Establish relevant experimental or theoretical validation benchmarks

Computational Resource Evaluation:

- Training Data Availability: Assess existence of relevant training data or resources for generation

- Parameterization Effort: Evaluate available resources for system-specific parameterization

- Computational Budget: Determine available resources for production simulations

- Software Compatibility: Verify integration with existing simulation workflows and codes

Accuracy Improvement Strategies

Several methodologies can address fundamental force field limitations:

Transfer Learning and Fine-Tuning: Leveraging pre-trained models and adapting them to specific systems with minimal additional data significantly improves accuracy while reducing computational cost [2]. Fine-tuning universal models with targeted datasets successfully restores predictive accuracy for challenging systems like perovskite oxides [4].

Hybrid Approaches: Combining universal pretraining with targeted optimization achieves both broad applicability and system-specific accuracy [4]. This approach addresses the trade-off between generality and precision, particularly for systems with strong anharmonicity or specific chemical environments.

Active Learning and Data Augmentation: Automated sampling of configurations beyond the training distribution improves model robustness and transferability [3] [5]. This strategy is particularly valuable for exploring high-pressure regimes or reactive pathways not adequately represented in standard datasets.

Essential Research Reagent Solutions

Table 3: Key Computational Tools for Force Field Development and Validation

| Tool Category | Specific Solutions | Primary Function | Application Context |
|---|---|---|---|
| MLFF Frameworks | DeePMD-kit [3], MACE-MP-0 [5], UF3 [6] | Developing machine learning potentials | Achieving near-DFT accuracy with molecular dynamics efficiency |
| Benchmarking Datasets | Alexandria [5], MD17/MD22 [3], OAM [5] | Providing training and testing data | Standardized performance assessment across diverse chemical spaces |
| Specialized Validation | PTO-test [4], High-pressure benchmarks [5] | Testing specific failure modes | Evaluating performance under challenging conditions |
| Transfer Learning Tools | DP-GEN [2], Fine-tuning protocols [4] | Adapting general models to specific systems | Reducing data requirements for new applications |
| Analysis Packages | Phonopy [4], Matbench [5] | Calculating derived properties | Comparing experimental observables beyond energies/forces |

Force fields and empirical potentials remain indispensable tools for molecular dynamics simulations, yet each approach carries characteristic limitations that must be considered for specific applications. Classical force fields provide interpretability and efficiency but lack transferability and cannot describe chemical reactions. Reactive force fields address bond formation but struggle with quantitative accuracy across diverse reaction spaces. Machine learning potentials offer unprecedented accuracy but face challenges in data requirements, transferability to extreme conditions, and interpretability.

The emerging paradigm of hybrid approaches—combining universal pretraining with targeted optimization—offers a promising path forward [4]. As force field methodologies continue evolving, rigorous validation against experimental data and critical assessment of limitations remain essential for advancing computational molecular science. By understanding these fundamental accuracy problems, researchers can make informed decisions in force field selection and application, ultimately enhancing the reliability of computational predictions across chemistry, materials science, and drug development.

In molecular dynamics (MD), the "sampling problem" refers to the central challenge of determining when a simulation has run for a sufficient duration to adequately explore the conformational landscape of a biological molecule, thus providing reliable, statistically meaningful results that can be trusted for comparison with experimental data [8]. Far from the static, idealized conformations deposited into structural databases, proteins and nucleic acids are highly dynamic molecules that undergo conformational changes across temporal and spatial scales spanning several orders of magnitude [8]. These motions, often intimately connected to biological function, may be obscured by traditional biophysical techniques. While MD simulations complement these techniques by providing atomistic details underlying molecular dynamics, a fundamental limitation remains: the degree to which simulations accurately and quantitatively describe these motions depends critically on achieving sufficient sampling [8].

The requisite simulation times to accurately measure dynamical properties are rarely known a priori; instead, simulations are often deemed ‘sufficiently long’ when some observable quantity appears to have ‘converged’ [8]. However, the concept of convergence is itself problematic. The timescales required to satisfy stringent tests of convergence vary from system to system and depend heavily on the method used to assess it [8]. This ambiguity places a heavy burden on researchers to rigorously demonstrate that their simulations have, in fact, sampled the relevant conformational space, especially when the results are to be compared with or validated against experimental data.

Quantitative Metrics for Assessing Sampling Adequacy

A variety of metrics and methods are employed to assess whether a simulation has reached equilibrium and is sampling conformations adequately. The choice of metric can significantly influence the conclusion about whether a simulation is "long enough."

Table 1: Common Metrics for Assessing Sampling in Molecular Dynamics Simulations

| Metric | Description | What it Measures | Key Limitations |
|---|---|---|---|
| Root Mean Square Deviation (RMSD) [9] | Spatial deviation of a structure from a reference (e.g., the starting crystal structure). | Overall structural stability and drift from the initial coordinates. | Prone to subjective interpretation; does not confirm sampling of the transition-state ensemble [9]. |
| Root Mean Square Fluctuation (RMSF) [9] | Fluctuation of each residue around its average position. | Local flexibility and regional stability within the protein. | Does not report on global conformational changes or the diversity of states sampled. |
| Convergence of Potential Energy [9] | Stability of the total potential energy of the system. | Energetic equilibrium of the entire simulated system. | System can be energetically stable but structurally trapped in a local minimum. |
| Principal Component Analysis (PCA) [9] | Analysis of the dominant collective motions from the covariance matrix of atomic positions. | The most significant large-scale conformational motions. | Requires sufficient sampling to accurately build the covariance matrix. |
| Number of Hydrogen Bonds / Torsion Angle Transitions [9] | Counts of specific, stable interactions or transitions in dihedral angles. | Stability of specific secondary structural elements or local dynamics. | Provides only a local, not global, picture of conformational sampling. |
| Cluster Counting [9] | Groups similar structures from the trajectory into clusters to see how many distinct states are visited. | Diversity of conformational states sampled during the simulation. | Dependent on the parameters chosen for clustering (cut-off, algorithm). |

Among these, Root Mean Square Deviation (RMSD) is one of the most common, and most critiqued, methods. One study highlighted how subjective RMSD plots are as a test of equilibrium: scientists from the same field reached no consensus about the point of equilibration when looking at the same plots [9]. Their decisions were strongly biased by factors such as the scaling of the y-axis and the color of the plot, leading the authors to conclude that RMSD plots alone should not be used to judge equilibration [9].
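A numerical criterion removes at least the dependence on axis scaling and plot color. The sketch below is a simple block-averaging heuristic, not a method taken from [9]: it reports the first block from which all later block means stay within a tolerance of the final block mean. The function names, the tolerance, and the RMSD series are assumptions made for illustration.

```python
from statistics import mean

def block_means(series, n_blocks):
    """Split a time series into equal consecutive blocks and return each block's mean."""
    size = len(series) // n_blocks
    if size == 0:
        raise ValueError("series is too short for the requested number of blocks")
    return [mean(series[i * size:(i + 1) * size]) for i in range(n_blocks)]

def plateau_start(series, n_blocks=5, tolerance=0.1):
    """Index of the first block from which every block mean lies within
    `tolerance` of the final block mean. A result of n_blocks - 1 means that
    only the final block agrees with itself, i.e. no plateau was detected."""
    means = block_means(series, n_blocks)
    final = means[-1]
    for start in range(n_blocks):
        if all(abs(m - final) <= tolerance for m in means[start:]):
            return start
    return n_blocks - 1

# Hypothetical backbone RMSD values (illustrative only): initial drift, then a plateau.
rmsd_series = [0.5, 0.9, 1.3, 1.6, 1.8, 1.9, 2.0, 2.0, 2.1, 2.0,
               2.0, 2.1, 1.9, 2.0, 2.0, 2.1, 2.0, 1.9, 2.0, 2.0]

start = plateau_start(rmsd_series, n_blocks=5, tolerance=0.1)
print(f"block means: {block_means(rmsd_series, 5)}")
print(f"plateau begins at block {start}")
```

A stable RMSD plateau is still only a necessary condition: the limitation noted above, that a system can sit in one basin without sampling others, applies to this check as well.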

A Framework for Reliable Sampling: Best Practices and Protocols

To overcome the limitations of individual metrics and subjective assessments, the field is moving toward a more rigorous, multi-faceted framework for ensuring reliable sampling.

The Critical Role of Independent Replicates

A foundational practice is running multiple independent simulations starting from different initial conditions. Running at least three independent replicates is recommended to demonstrate that the properties being measured have converged and are reproducible [10]. A single continuous simulation, even a long one, risks being trapped in a local energy minimum, giving a false impression of stability. Multiple replicates starting from different conformations or velocities provide a much more robust assessment of whether the simulation is sampling consistently from the underlying equilibrium distribution.
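Agreement between replicates can be quantified by treating each replicate's mean as one independent estimate. The following is a minimal sketch under that assumption; the function name, the minimum of three replicates (taken from the recommendation above), and the radius-of-gyration values are illustrative.

```python
from math import sqrt
from statistics import mean, stdev

def replicate_summary(replicates):
    """Return (grand mean, standard error) using one mean per independent replicate."""
    if len(replicates) < 3:
        raise ValueError("at least three independent replicates are recommended")
    per_replicate = [mean(values) for values in replicates]
    grand_mean = mean(per_replicate)
    # Standard error from the spread of replicate means, not from individual frames,
    # because frames within one trajectory are strongly correlated.
    standard_error = stdev(per_replicate) / sqrt(len(per_replicate))
    return grand_mean, standard_error

# Hypothetical radius of gyration samples (nm) from three replicates (illustrative only).
replicates = [[1.0, 1.2], [1.1, 1.3], [0.9, 1.1]]
grand_mean, standard_error = replicate_summary(replicates)
print(f"Rg = {grand_mean:.3f} ± {standard_error:.3f} nm")
```

If the spread between replicate means is large compared with the effect being studied, the simulations have not converged for that observable.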

Enhanced Sampling for Complex Landscapes

For slow dynamical processes like protein folding or large-scale conformational changes in RNA, the requisite timescales may remain out of reach for conventional MD [8] [11]. In such cases, where the functional states of biomolecules are separated by rugged free energy landscapes, convergence analysis of unbiased trajectories may fail to detect slow transitions between kinetically trapped metastable states [10]. The use of enhanced sampling methods is therefore critical. These techniques (e.g., metadynamics, replica-exchange) accelerate the exploration of conformational space by biasing the simulation or running multiple replicas at different temperatures, helping to ensure that relevant states are not missed due to high energy barriers [10] [11].
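For temperature replica exchange, the standard Metropolis criterion accepts a swap between neighboring replicas with probability min(1, exp[(β_i − β_j)(E_i − E_j)]), where β = 1/(k_B T) and E is the potential energy of the configuration currently held at that temperature. The sketch below evaluates this expression; the function name and the energies are hypothetical, and real MD packages handle the exchange internally.

```python
from math import exp

K_B = 0.0019872041  # Boltzmann constant in kcal/(mol*K)

def exchange_probability(energy_i, temp_i, energy_j, temp_j):
    """Metropolis acceptance probability for swapping the configurations held at
    temperatures temp_i and temp_j (energies in kcal/mol, temperatures in K)."""
    beta_i = 1.0 / (K_B * temp_i)
    beta_j = 1.0 / (K_B * temp_j)
    delta = (beta_i - beta_j) * (energy_i - energy_j)
    return 1.0 if delta >= 0.0 else exp(delta)

# Hypothetical potential energies (kcal/mol) for neighboring replicas at 300 K and 310 K.
p_swap = exchange_probability(-1000.0, 300.0, -995.0, 310.0)
print(f"acceptance probability = {p_swap:.3f}")
```

The acceptance rate falls off quickly as the energy distributions of neighboring replicas stop overlapping, which is why temperature ladders need closer spacing for larger systems.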

Experimental Validation as the Ultimate Test

Perhaps the most compelling measure of sufficient sampling is the ability of the simulation to recapitulate and predict experimental observables [8]. However, this approach has its own challenges. Experimental data are typically ensemble and time averages, meaning multiple diverse conformational ensembles could produce averages consistent with experiment [8]. Therefore, a successful strategy involves validating against multiple, independent experimental sources.

Table 2: Experimental Techniques for Validating MD Simulations

| Experimental Technique | Observable for Validation | Role in Assessing Sampling |
|---|---|---|
| Nuclear Magnetic Resonance (NMR) [11] | Scalar couplings (J-couplings), Nuclear Overhauser Effect (NOE), chemical shifts, relaxation parameters. | Provides exquisite detail on local conformations and dynamics on fast timescales; can validate the population of specific structural states. |
| Small-Angle X-Ray Scattering (SAXS) [11] | Scattering profile that reports on the global shape and size of the molecule in solution. | Validates the overall compactness and shape of the simulated ensemble, ensuring global conformations are correct. |
| Single-Molecule FRET [11] | Distance distributions between two fluorophores attached to the biomolecule. | Probes heterogeneity and dynamics of specific intramolecular distances, directly testing the diversity of the simulated ensemble. |
| Cryo-Electron Microscopy [11] | 3D electron density maps. | Can be used for "flexible fitting" to validate large-scale conformational changes, though quantitative dynamics control can be challenging. |

The process of integrating experimental data can go beyond simple validation. Quantitative integrative methods, such as maximum entropy reweighting or maximum parsimony, can be used to refine simulated ensembles to match experimental data [11]. In this approach, the simulation provides a prior distribution of structures, which is then reweighted so that the ensemble averages of back-calculated experimental observables match the actual experimental data. If a simulation cannot be reweighted to match the data, it is a strong indicator of inadequate sampling or force field inaccuracy.
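For a single ensemble-averaged observable, maximum entropy reweighting reduces to finding one Lagrange multiplier λ such that weights of the form w_i ∝ w_i⁰ exp(−λ s_i) reproduce the experimental average. The sketch below solves for λ by bisection and ignores experimental error, which practical implementations include; the function name, the bounds on λ, and the radius-of-gyration values are assumptions.

```python
from math import exp

def maxent_reweight(values, target, prior=None, lam_bounds=(-50.0, 50.0), tol=1e-10):
    """Find weights w_i ∝ prior_i * exp(-lam * values_i) whose weighted average equals target."""
    n = len(values)
    prior = list(prior) if prior is not None else [1.0 / n] * n

    def weights(lam):
        exponents = [-lam * v for v in values]
        shift = max(exponents)  # subtract the maximum for numerical stability
        raw = [p * exp(e - shift) for p, e in zip(prior, exponents)]
        norm = sum(raw)
        return [r / norm for r in raw]

    def average(lam):
        return sum(w * v for w, v in zip(weights(lam), values))

    low, high = lam_bounds
    # The weighted average decreases monotonically as lam increases.
    if not (average(high) < target < average(low)):
        raise ValueError("target cannot be reached by reweighting this ensemble")
    while high - low > tol:
        mid = 0.5 * (low + high)
        if average(mid) > target:
            low = mid    # average still too high: increase lam
        else:
            high = mid
    lam = 0.5 * (low + high)
    return lam, weights(lam)

# Hypothetical per-frame radii of gyration (Å) and an experimental average (illustrative only).
rg_values = [10.0, 12.0, 14.0, 16.0]
lam, w = maxent_reweight(rg_values, target=12.5)
effective_frames = 1.0 / sum(x * x for x in w)  # Kish effective sample size
print(f"lambda = {lam:.4f}, effective frames = {effective_frames:.2f} of {len(w)}")
```

The `ValueError` branch corresponds to the case described above, where the experimental value lies outside what the simulation sampled. A very small effective sample size is a softer warning of the same problem.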

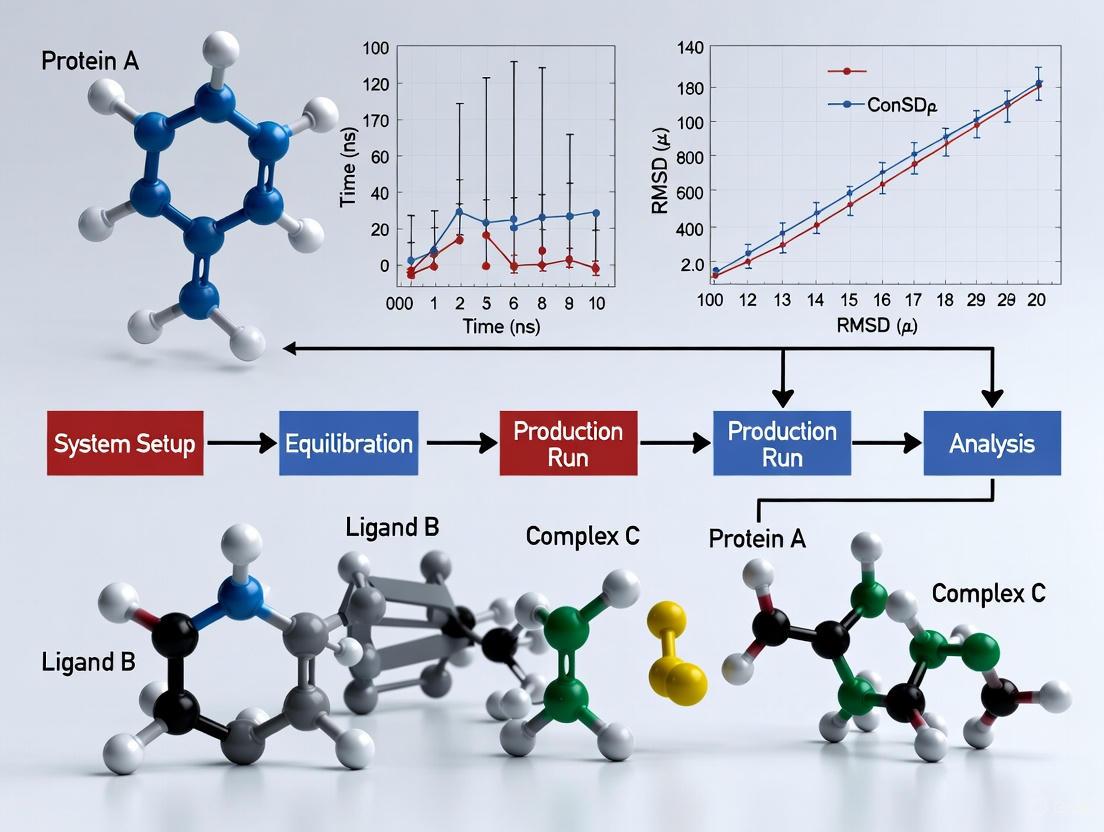

The following workflow outlines a robust protocol for running and validating a simulation to ensure sufficient sampling:

Workflow for Running and Validating MD Simulations

Table 3: Research Reagent Solutions for Molecular Dynamics

| Tool / Resource | Category | Function in Addressing Sampling |
|---|---|---|
| AMBER [8] | MD Software Package | Suite for simulating biomolecules; its performance in sampling can be compared with other packages. |
| GROMACS [8] | MD Software Package | Highly optimized software for MD, allowing for efficient sampling on CPU/GPU hardware. |
| CHARMM36 Force Field [8] | Force Field | An empirical potential energy function; its parameters influence the conformational landscape and required sampling time. |
| AMBER ff99SB-ILDN [8] | Force Field | Another widely used protein force field; different force fields can yield different sampling efficiencies and convergences. |
| TIP4P-EW Water Model [8] | Solvent Model | The water model used in solvation can affect the dynamics and stability of the simulated biomolecule. |
| Maximum Entropy Reweighting [11] | Analysis/Integration Method | A quantitative method to refine a simulated ensemble to match experimental data, correcting for incomplete sampling or force field bias. |
| Bayesian Optimization [12] | Optimization Algorithm | Can be used for efficient parameter space exploration, though noted to have unfavorable computational scaling for very large in silico iteration budgets. |
| Differential Evolution [12] | Optimization Algorithm | A powerful evolutionary algorithm for optimization problems, found to be highly competitive in terms of time and data efficiency for dry optimization. |

Determining when a molecular dynamics simulation is 'long enough' remains a complex problem with no universal solution. It requires moving beyond simplistic and subjective measures like visual inspection of RMSD plots [9]. Instead, a rigorous, multi-pronged approach is necessary: running multiple independent replicates, employing enhanced sampling techniques for complex transitions, and, most critically, using a suite of experimental data to quantitatively validate the simulated conformational ensemble [8] [10] [11]. As MD simulations tackle larger and more complex biological systems, the development of, and adherence to, robust community-accepted standards for convergence and reproducibility will be paramount in ensuring that these powerful "virtual molecular microscopes" yield results that are both mechanistically insightful and quantitatively reliable.

Molecular dynamics (MD) simulations provide a "virtual molecular microscope" into biomolecular processes, generating vast amounts of atomistic data over temporal and spatial scales spanning several orders of magnitude [8]. However, a fundamental challenge persists: while simulations produce detailed conformational ensembles, experimental data typically report ensemble averages that obscure underlying distributions and timescales. This averaging creates inherent difficulties in validating the biological accuracy of computational models, as multiple diverse ensembles may produce averages consistent with experiment [8]. This comparison guide objectively examines current methodologies for benchmarking MD simulations against experimental data, providing researchers with a framework for selecting appropriate validation strategies based on their specific system requirements and experimental constraints.

The Core Challenge: When Multiple Ensembles Explain the Same Data

The central problem in simulation validation stems from the nature of experimental observables. Techniques including nuclear magnetic resonance (NMR), small-angle X-ray scattering (SAXS), and chemical probing measure properties that represent averages over the entire ensemble of structures present in solution [11]. Consequently, agreement between simulation and experiment does not necessarily validate the underlying conformational ensemble, as distinctly different ensembles may produce identical averages [8].
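A toy example makes the degeneracy concrete. The sketch below builds two hypothetical distance ensembles, one narrow and one split between a compact and an extended state, that share the same mean; all values are invented for illustration.

```python
from statistics import mean, pstdev

# Hypothetical inter-residue distances (Å) for two very different ensembles.
single_state = [29.0, 30.0, 31.0, 30.0, 29.5, 30.5]  # one state near 30 Å
two_state = [20.0, 40.0, 21.0, 39.0, 19.0, 41.0]     # compact and extended states

for name, ensemble in (("single-state", single_state), ("two-state", two_state)):
    print(f"{name}: mean = {mean(ensemble):.2f} Å, spread = {pstdev(ensemble):.2f} Å")
```

An observable that reports only the mean distance cannot separate these ensembles, whereas one sensitive to the distribution, or one that averages nonlinearly in distance (such as an NOE-type r⁻⁶ average), can.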

This challenge is particularly acute for multidomain proteins containing both folded and intrinsically disordered regions (IDRs), where heterogeneity creates complex dynamics that are difficult to capture with single experimental techniques or simulation approaches [13]. Similarly, RNA molecules exhibit crucial functional dynamics that are often probed through ensemble-averaging techniques, making it difficult to deconvolve the individual contributing conformations [11].

Comparative Analysis of MD Validation Methodologies

Direct Validation Against Experimental Data

The most straightforward approach uses experimental data to quantitatively validate MD-generated ensembles, testing multiple force fields to determine which produces the most trustworthy results [11].

Table 1: Experimental Techniques for MD Simulation Validation

| Experimental Technique | Observables Measured | Timescale Sensitivity | Key Validation Metrics | Best For Protein Types |
|---|---|---|---|---|
| NMR Spectroscopy | T1/T2 relaxation times, hetNOE | Picoseconds to microseconds | Spin relaxation times, order parameters | Multidomain proteins, IDPs [13] |
| SAXS/WAXS | Scattering intensity profiles | Ensemble average over all timescales | Radius of gyration, pair distribution function | RNA, disordered proteins [11] |
| Single-molecule FRET | Distance distributions | Nanoseconds to seconds | Inter-dye distances, dynamics | Conformational changes [11] |
| Chemical Probing | Reactivity patterns | Seconds | Solvent accessibility, secondary structure | RNA folding, protein dynamics [11] |
| Cryo-EM | 3D density maps | Static snapshots | Structural fitting, flexible fitting | Large complexes, structural refinement [11] |
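As an example of one metric from the table, the radius of gyration can be back-calculated from simulated coordinates as follows. This is a geometric sketch only: it ignores the hydration layer that contributes to SAXS-derived values, and the function names and coordinates are hypothetical.

```python
from math import sqrt

def radius_of_gyration(coords, masses=None):
    """Mass-weighted radius of gyration; units follow the input coordinates."""
    if masses is None:
        masses = [1.0] * len(coords)
    total_mass = sum(masses)
    center = [sum(m * c[k] for m, c in zip(masses, coords)) / total_mass for k in range(3)]
    weighted_sq = sum(
        m * sum((c[k] - center[k]) ** 2 for k in range(3))
        for m, c in zip(masses, coords)
    )
    return sqrt(weighted_sq / total_mass)

def ensemble_rg(frames, masses=None):
    """Root-mean-square Rg over frames: squared values are averaged before the
    square root, which is the form usually compared with a scattering-derived Rg."""
    squared = [radius_of_gyration(frame, masses) ** 2 for frame in frames]
    return sqrt(sum(squared) / len(squared))

# Hypothetical two-particle frames (Å): a compact and an extended conformation.
compact = [(-1.0, 0.0, 0.0), (1.0, 0.0, 0.0)]
extended = [(-3.0, 0.0, 0.0), (3.0, 0.0, 0.0)]
print(f"compact Rg = {radius_of_gyration(compact):.2f} Å")
print(f"ensemble Rg = {ensemble_rg([compact, extended]):.2f} Å")
```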

Integrative Approaches: Combining Simulations with Experiments

Beyond simple validation, more sophisticated integrative methods use experimental data to refine simulated structural ensembles. These approaches range from qualitative methods that use data to generate initial models to quantitative methods that enforce agreement through statistical mechanics principles [11].

Table 2: Integrative Methods for Ensemble Refinement

| Method Type | Key Principle | Transferability | Computational Demand | Implementation Complexity |
|---|---|---|---|---|
| Maximum Entropy Reweighting | Adjust weights of existing structures to match experiments | Limited to specific system | Low | Moderate [11] |
| Maximum Parsimony/Sample-and-Select | Select subset of structures matching experiments | Limited to specific system | Low | Low [11] |
| On-the-fly Restraining | Apply experimental restraints during simulation | High with force field improvement | High | High [11] |
| QEBSS Protocol | Select best simulations from diverse set using NMR data | High across systems | Medium | Moderate [13] |

The QEBSS Protocol: A Case Study in Systematic Validation

The Quality Evaluation Based Simulation Selection (QEBSS) protocol addresses validation challenges for multidomain proteins with heterogeneous dynamics. This method combines MD simulations with NMR-derived protein backbone ¹⁵N T1 and T2 spin relaxation times and hetNOE values to interpret conformational ensembles [13].

QEBSS Workflow: Systematic selection of conformational ensembles

In practice, QEBSS involves running microsecond-length MD simulations from multiple different starting structures using several force fields, including ones designed for disordered proteins (a99SB-ILDN, DES-Amber, a99SB-disp, a03ws, a99SBws) [13]. For each trajectory, the root-mean-square deviation (RMSD) between simulated and experimental spin relaxation parameters is calculated (a deviation in observables, not a structural RMSD), and simulations meeting predefined quality thresholds are selected for further analysis. This approach has been successfully applied to characterize flexible multidomain proteins including calmodulin, Engrailed 2 (EN2), MANF, and CDNF [13].
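The selection step can be sketched as scoring each trajectory against the experimental values and keeping those below a cutoff. This is an illustrative reduction, not the published QEBSS implementation: it uses a single relaxation parameter, and the trajectory names, values, and threshold are hypothetical.

```python
from math import sqrt

def rmsd(simulated, experimental):
    """Root-mean-square deviation between simulated and experimental per-residue values."""
    if len(simulated) != len(experimental):
        raise ValueError("simulated and experimental data must cover the same residues")
    squared = sum((s - e) ** 2 for s, e in zip(simulated, experimental))
    return sqrt(squared / len(experimental))

def select_trajectories(trajectories, experimental, threshold):
    """Score every trajectory and return (scores, names passing the threshold, best first)."""
    scores = {name: rmsd(values, experimental) for name, values in trajectories.items()}
    selected = sorted((n for n, s in scores.items() if s <= threshold), key=scores.get)
    return scores, selected

# Hypothetical per-residue T1 values in seconds (illustrative only).
experimental_t1 = [0.50, 0.55, 0.60]
trajectories = {
    "ff_a_rep1": [0.52, 0.54, 0.61],
    "ff_b_rep1": [0.70, 0.80, 0.75],
    "ff_c_rep1": [0.49, 0.56, 0.60],
}

scores, selected = select_trajectories(trajectories, experimental_t1, threshold=0.05)
for name in selected:
    print(f"{name}: RMSD = {scores[name]:.4f} s")
```

With several observables (T1, T2, hetNOE), each would need its own normalization before the deviations are combined, since their units and typical magnitudes differ.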

Force Field Performance Comparison

Different force fields show variable performance depending on the protein system and experimental observables being matched. Notably, no single force field consistently outperforms others across all systems [8] [13].

Table 3: Force Field Performance Across Biomolecular Systems

| Force Field | Optimized For | EnHD Performance | RNase H Performance | IDP Performance | Key Limitations |
|---|---|---|---|---|---|
| AMBER ff99SB-ILDN | Folded proteins | Good at 298K [8] | Good at 298K [8] | Overly compact ensembles [13] | Poor high-temperature unfolding [8] |
| CHARMM36 | Biomolecular systems | Good overall [8] | Good overall [8] | Moderate | Varies with water model [8] |
| DES-Amber | Disordered proteins | N/A | N/A | Good expansion [13] | Limited testing on folded domains |
| a99SB-disp | Disordered proteins | N/A | N/A | Excellent agreement with SAXS [13] | High computational demand |
| a99SBws/a03ws | Disordered proteins | N/A | N/A | Good agreement with NMR [13] | Parameter specificity |

Critical Considerations in Simulation Validation

Sampling Limitations and Convergence

The requisite simulation times to accurately measure dynamical properties are rarely known a priori, and "convergence" metrics vary significantly between systems [8]. Multiple short simulations often yield better sampling of protein conformational space than a single simulation with equivalent aggregate time, particularly for systems with complex energy landscapes [8].
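One simple way to gauge how well a property has converged is to compare independent replicas. The sketch below treats each replica's time average as one independent sample and reports the mean and its standard error. This is a common heuristic rather than a method taken from [8], and the radius-of-gyration values are invented for illustration.

```python
import numpy as np

def replica_mean_and_sem(replica_values):
    """Mean over independent replicas and the standard error of that mean.

    Each replica contributes a single number (its time average), so the
    error estimate does not depend on correlation times within a replica.
    """
    per_replica = np.array([np.mean(values) for values in replica_values], dtype=float)
    mean = per_replica.mean()
    sem = per_replica.std(ddof=1) / np.sqrt(len(per_replica))
    return float(mean), float(sem)

# Hypothetical radius-of-gyration time series (nm) from three short replicas.
replicas = [
    [1.50, 1.52, 1.48],
    [1.60, 1.62, 1.58],
    [1.40, 1.42, 1.38],
]
mean_rg, sem_rg = replica_mean_and_sem(replicas)
print(f"Rg = {mean_rg:.3f} +/- {sem_rg:.3f} nm")
```

A standard error that stays large as replicas are extended suggests that the replicas are still exploring different regions of conformational space.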

Forward Model Accuracy

The equations used to "back-calculate" experimental observables from MD-simulated structures are often empirically parameterized and subject to systematic errors [11]. For example, different forward models for SAXS can yield substantially different interpretations of the same structural ensemble [11].

Force Field Selection and Parameter Sensitivity

While force fields typically receive primary attention in validation discussions, simulation outcomes depend significantly on other factors including water models, algorithms constraining motion, treatment of nonbonded interactions, and the simulation ensemble employed [8]. Using the same force field with different simulation packages can produce divergent results for larger amplitude motions [8].

Emerging Approaches and Future Directions

Artificial Intelligence and Machine Learning

AI methods, particularly deep learning, are emerging as transformative alternatives for sampling conformational ensembles of intrinsically disordered proteins (IDPs) [14]. These approaches leverage large-scale datasets to learn complex sequence-to-structure relationships, enabling ensemble modeling without traditional physics-based constraints [14]. Hybrid approaches that combine AI with MD show particular promise for integrating statistical learning with thermodynamic feasibility [14].

Multi-Technique Integration

No single experimental technique provides sufficient information to fully validate complex conformational ensembles. The most robust validation strategies combine multiple complementary techniques—such as NMR, SAXS, and single-molecule FRET—to provide overlapping constraints that significantly reduce the ambiguity in ensemble interpretation [11].

Essential Research Reagent Solutions

Table 4: Key Research Reagents and Computational Tools

| Reagent/Tool | Category | Primary Function | Example Applications |

|---|---|---|---|

| AMBER | MD Software Package | Biomolecular simulation with explicit solvents | Protein folding, nucleic acid dynamics [8] |

| GROMACS | MD Software Package | High-performance molecular dynamics | Large-scale biomolecular systems [8] |

| NAMD | MD Software Package | Scalable parallel molecular dynamics | Massive systems on supercomputers [8] |

| ilmm | MD Software Package | Specialized molecular mechanics | Alternative sampling approaches [8] |

| TIP4P-EW | Water Model | Explicit water representation | Solvation effects in AMBER [8] |

| HCL Wizard | Color Scheme Tool | Accessible data visualization | Scientific figures, publication graphics [15] |

| QEBSS Protocol | Validation Method | Simulation selection based on NMR data | Multidomain protein characterization [13] |

Validating molecular dynamics simulations against experimental ensemble data remains challenging due to the inherent averaging in experimental measurements. The most successful approaches combine multiple simulation packages and force fields with diverse experimental techniques, applying systematic selection protocols like QEBSS to identify the most physically realistic conformational ensembles [13]. As the field advances, integration of artificial intelligence with traditional physics-based simulations promises to enhance sampling efficiency while maintaining physical accuracy [14]. For researchers, selecting appropriate validation strategies requires careful consideration of their specific biological system, available experimental data, and the dynamical processes of interest.

Table 1: Quantitative Metrics for Molecular Dynamics Simulation Validation

| Application Domain | Validated Simulation Property | Experimental Benchmark | Correlation / Error Metric | Key Finding |

|---|---|---|---|---|

| Materials Science [16] | Grain growth kinetics in polycrystalline nickel | 3D experimental microstructure characterization | Broad match of growth characteristics | Absence of correlation between grain boundary velocity and curvature is confirmed, unrelated to solutes or processing. |

| Solvent Formulations [17] | Density, Heat of Vaporization (ΔHvap), Enthalpy of Mixing (ΔHm) | Experimental property measurements for pure and binary solvents | R² ≥ 0.84 for all properties | High-throughput MD can generate reliable, consistent datasets for benchmarking machine learning models. |

| Drug Solubility [18] | Aqueous Solubility (logS) | Experimental solubility values from literature | R² = 0.87 (RMSE = 0.537) on test set | MD-derived properties (SASA, DGSolv, etc.) combined with logP are highly effective for predicting solubility. |

| Virtual Screening [19] | Target-specific inhibition score (h-score) | Experimental IC50 values from the NCATS OpenData Portal | Higher sensitivity (0.5) vs. docking score (0.38) | MD-driven active learning drastically reduced compounds needing experimental testing from ~1300 to under 10. |

Molecular dynamics (MD) simulation has transcended its role as a purely theoretical tool to become an indispensable partner in experimental science. From designing more effective drugs to optimizing enhanced oil recovery, researchers rely on MD to provide atomic-level insights that are often difficult or impossible to obtain in the laboratory [20] [19]. However, the predictive power of any simulation is contingent upon its validation against empirical reality. A validated simulation is not merely one that produces visually plausible results; it is one whose outputs demonstrate consistent, quantitative agreement with high-quality experimental data. This process transforms a computational model from an interesting hypothesis into a trusted tool for discovery and design. This guide objectively compares validation methodologies across scientific fields, providing a framework for researchers to define and achieve success in their own simulation endeavors.

Core Principles of Simulation Validation

The process of validating a molecular dynamics simulation rests on several key pillars. First, the simulation must be grounded in a physically accurate force field, which is a set of equations and parameters that describe the potential energy of the system as a function of particle coordinates [21]. Force fields like OPLS-AA, CHARMM, and GROMOS are parameterized to reproduce key experimental data, forming the foundational layer of a reliable simulation. Second, the thermodynamic ensemble (NPT, NVT, NVE) must be appropriately chosen and controlled using thermostats and barostats to mimic the experimental conditions being modeled [21]. Finally, validation requires a direct, quantitative comparison of simulation-derived properties with experimental measurements, moving beyond qualitative observations to statistical metrics of agreement [17] [18].

Comparative Analysis of Validation Approaches

Materials Science and Chemical Engineering

In fields like materials science and chemical engineering, validation often focuses on replicating bulk thermodynamic and structural properties.

- Experimental Protocols: In solvent formulation research, validation involves simulating properties like density and heat of vaporization (ΔHvap) for a set of pure solvents and binary mixtures. These simulation-derived values are then plotted against experimentally measured values from trusted sources to calculate the coefficient of determination (R²) and the root-mean-square error (RMSE) [17].

- Validation Workflow: The typical workflow involves constructing a simulation box with the correct composition, running an equilibration phase (e.g., in the NPT ensemble), and then running a production simulation from which ensemble-averaged properties are calculated [21]. As shown in Table 1, the solvent study reported R² ≥ 0.84 for all properties, indicating that the simulations capture the experimental trends [17].
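The two agreement metrics mentioned above can be computed in a few lines. This sketch takes R² as the coefficient of determination relative to the identity line (simulation treated as a direct prediction of experiment). Some studies report the squared Pearson correlation instead, so the definition should be checked against the source being reproduced. The density values are hypothetical.

```python
import numpy as np

def validation_metrics(simulated, experimental):
    """Return R^2 (coefficient of determination) and RMSE of simulation vs. experiment."""
    simulated = np.asarray(simulated, dtype=float)
    experimental = np.asarray(experimental, dtype=float)
    residuals = experimental - simulated
    ss_res = np.sum(residuals ** 2)                               # unexplained variation
    ss_tot = np.sum((experimental - experimental.mean()) ** 2)    # total variation
    r2 = 1.0 - ss_res / ss_tot
    rmse = np.sqrt(np.mean(residuals ** 2))
    return {"r2": float(r2), "rmse": float(rmse)}

# Hypothetical liquid densities in g/cm^3 for four solvents.
experimental_density = [0.79, 0.87, 1.00, 1.11]
simulated_density = [0.80, 0.86, 0.99, 1.13]

metrics = validation_metrics(simulated_density, experimental_density)
print(metrics)
```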

Pharmaceutical Development and Drug Discovery

In drug discovery, the stakes for accurate prediction are high, and validation strategies are correspondingly rigorous, often extending to functional biological outcomes.

- Experimental Protocols: For validating solubility predictions, the standard experimental benchmark is the shake-flask method followed by techniques like HPLC-UV to determine the thermodynamic solubility (logS) of a drug compound [18]. For virtual screening, the ultimate validation is the experimental measurement of the half-maximal inhibitory concentration (IC50), which quantifies a compound's potency in blocking a target protein's function [19].

- Validation Workflow: Researchers run MD simulations of drug molecules in explicit solvent to derive properties like the solvent-accessible surface area (SASA) and solvation free energy (DGSolv). These properties, sometimes combined with experimental logP, are used as features in machine learning models to predict logS [18]. In virtual screening, a target-specific score is computed from MD trajectories and used to rank potential drugs; success is defined when top-ranked compounds are confirmed to be potent inhibitors in subsequent wet-lab experiments [19].

Diagram: Simulation Validation Workflow. This flowchart outlines the iterative process of building and validating an MD simulation, from initial setup to final application.

Essential Research Reagents and Computational Tools

Table 2: Key Research Reagent Solutions for MD Validation

| Reagent / Tool Category | Specific Examples | Function in Validation |

|---|---|---|

| Force Fields | OPLS-AA, CHARMM27, GROMOS 54a7 | Provides the fundamental physics governing atomic interactions; choice is critical for accuracy [18] [21]. |

| Simulation Software | GROMACS, LAMMPS | The engine for running MD simulations; different packages offer various force fields and algorithms [16] [18]. |

| Validation Datasets | OMol25, Experimental Solubility (logS) Data, PDBbind | High-quality, curated experimental data used as a benchmark to test and validate simulation predictions [22] [18] [19]. |

| Analysis & ML Tools | Python (with ML libraries), In-house analysis scripts | Used to compute properties from simulation trajectories, perform statistical analysis, and build predictive models [17] [18]. |

A molecular dynamics simulation earns the status of "validated" not through a single test, but through a rigorous, multi-faceted process of quantitative benchmarking against experimental data. As evidenced across diverse fields, the gold standard involves demonstrating strong statistical correlation (e.g., R² > 0.8) for key properties, and crucially, the ability to generate accurate predictions that are later confirmed by experiment, such as identifying a nanomolar inhibitor [19] or predicting drug solubility [18]. The convergence of high-throughput MD simulations and machine learning is setting a new bar for validation, enabling the creation of large, consistent datasets that provide an even more robust foundation for testing model performance [17]. Ultimately, a validated simulation is a powerful scaffold for innovation, reducing reliance on costly trial-and-error and providing a trusted atomic-scale lens for scientific discovery.

A Toolkit for Integration: Methodologies for Combining MD with Experimental Data

In the field of structural biology, validating molecular dynamics (MD) simulations with experimental data is crucial for achieving accurate, physiologically relevant models. No single experimental technique can capture the full complexity of biomolecular behavior, which spans a vast range of spatial and temporal scales. Consequently, researchers increasingly rely on a multipronged approach, using complementary methods to constrain and validate their computational models. This guide provides an objective comparison of four key experimental techniques—NMR, SAXS, Cryo-EM, and single-molecule FRET—focusing on their unique strengths, limitations, and specific applications in validating MD simulations of proteins and nucleic acids.

Technical Comparison at a Glance

The following table summarizes the core characteristics of each technique to help guide your choice of validation metric.

Table 1: Key Characteristics of Major Structural Validation Techniques

| Technique | Typical Resolution | Optimal Distance Range | Sample Requirements | Key Strength for MD Validation | Primary Limitation |

|---|---|---|---|---|---|

| NMR [23] [24] | Atomic (0.1 - 1 Å) for local structure | Short-range (< 1 nm) | 0.2-1 mL, 50 µM–1 mM, isotope-labeled | Atomic-level dynamics on ps-ms timescales; ensemble information for IDPs [25]. | Limited to smaller proteins (< 50-70 kDa); requires significant sample. |

| SAXS [24] | Low (1-2 nm) | Overall size (1-100 nm) | 50-100 µL, 1-5 mg/mL | Low-resolution overall shape and dimensions in solution; assesses flexibility [26]. | Low information density; difficult for heterogeneous mixtures. |

| Cryo-EM [27] | Near-atomic (1-3 Å) for single-particle | N/A | ~3 µL, 0.01-1 mg/mL (low conc. possible) | Near-atomic structures of large complexes without crystallization [27]. | Static snapshot; limited direct observation of dynamics. |

| smFRET [28] [29] | Distance change (~0.1 nm) | 2-10 nm | 10-20 µL, 10-100 pM for single molecules | Detects subpopulations and conformational dynamics in real-time at the single-molecule level [30]. | Requires site-specific labeling; provides distance only between labels. |

Table 2: Quantitative Data Output for MD Validation

| Technique | Primary Measured Parameter | Directly Validates MD Output | Temporal Resolution |

|---|---|---|---|

| NMR | Chemical shifts, J-couplings, Relaxation rates (R₁, R₂, NOE), Residual Dipolar Couplings (RDCs) | Chemical environment, secondary structure, backbone dynamics, conformational ensembles [23]. | ps-s [23] |

| SAXS | Scattering intensity I(s), Radius of gyration (Rg), Pair-distance distribution function p(r) | Overall molecular shape, compactness, and oligomeric state [24]. | ms-min (time-resolved) |

| Cryo-EM | 3D Electron Density Map | Atomic coordinates, side-chain rotamers, and fitting of large complexes [27]. | Static (snapshot) |

| smFRET | FRET Efficiency (E), Photon counts, Fluorescence lifetimes | Inter-dye distances and their distributions, population heterogeneity, kinetics of transitions [28] [31]. | ms-s (millisecond dynamics with advanced methods) |

Experimental Protocols for Integration with MD

Understanding the standard workflows for each technique is essential for designing effective validation experiments.

NMR Spectroscopy

NMR is a powerful technique for studying biomolecular structure and dynamics in solution.

- Sample Preparation: Recombinantly express the protein of interest in isotope-enriched media (¹⁵N, ¹³C). The sample is typically in a buffered aqueous solution at concentrations of 0.1 to 1 mM in a volume of 200-500 µL [24].

- Key Experiments:

- Chemical Shifts: From ¹H-¹⁵N and ¹H-¹³C HSQC spectra, used to identify secondary structure and backbone conformation [23].

- Spin Relaxation (R₁, R₂, hetNOE): Measures fast backbone dynamics on ps-ns timescales [23] [25].

- Residual Dipolar Couplings (RDCs): Provides information on the orientation of bond vectors, crucial for validating the relative orientation of domains in MD ensembles [24].

- Data Integration with MD: NMR data is often used to refine or select conformational ensembles. Experimental parameters (e.g., RDCs, relaxation rates, PREs) can be calculated from an MD trajectory and directly compared to the measured values to validate the simulation's accuracy [26].

Small-Angle X-Ray Scattering (SAXS)

SAXS provides low-resolution information about the overall shape and size of a biomolecule in solution.

- Sample Preparation: Requires a monodisperse, purified protein solution at multiple concentrations (e.g., 1, 2, and 5 mg/mL) to enable extrapolation to infinite dilution and account for inter-particle interactions. A matched buffer is used for background subtraction [24].

- Key Measurements:

- Radius of Gyration (Rg): Calculated from the Guinier plot at low scattering angles, indicating overall compactness.

- Pair-Distance Distribution Function (p(r)): Obtained via indirect Fourier transformation of the scattering data, revealing the molecule's shape (e.g., globular vs. elongated) [24].

- Kratky Plot: Used to assess the folded state and flexibility of the molecule.

- Data Integration with MD: The theoretical scattering profile can be computed from individual snapshots or an entire ensemble of structures from an MD simulation. The computed profile is then compared to the experimental SAXS data to validate the overall conformational ensemble sampled in the simulation [26] [24].
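As a rough illustration of the Rg comparison described above, the sketch below computes Rg directly from snapshot coordinates and recovers Rg from a scattering curve with a Guinier fit, ln I(q) = ln I(0) − (Rg²/3) q². The symbol q for the scattering vector magnitude, the function names, and the synthetic noise-free data are assumptions made for illustration. A coordinate-based Rg also ignores the hydration layer and scattering contrast that a full SAXS forward model would include.

```python
import numpy as np

def radius_of_gyration(coords, weights=None):
    """Rg of one snapshot; coords has shape (n_atoms, 3), weights default to equal."""
    coords = np.asarray(coords, dtype=float)
    if weights is None:
        weights = np.ones(len(coords))
    weights = np.asarray(weights, dtype=float)
    center = np.average(coords, axis=0, weights=weights)
    sq_dist = np.sum((coords - center) ** 2, axis=1)
    return float(np.sqrt(np.average(sq_dist, weights=weights)))

def guinier_rg(q, intensity):
    """Linear fit of ln I against q^2; returns (Rg, I0).

    Only meaningful at low angles, conventionally q * Rg below about 1.3
    for compact particles.
    """
    q = np.asarray(q, dtype=float)
    intensity = np.asarray(intensity, dtype=float)
    slope, intercept = np.polyfit(q ** 2, np.log(intensity), 1)
    return float(np.sqrt(-3.0 * slope)), float(np.exp(intercept))

# Synthetic Guinier-region curve for a particle with Rg = 2.0 nm (q in nm^-1).
true_rg, true_i0 = 2.0, 100.0
q = np.linspace(0.05, 0.6, 20)            # keeps q * Rg <= 1.2
intensity = true_i0 * np.exp(-(q * true_rg) ** 2 / 3.0)

fitted_rg, fitted_i0 = guinier_rg(q, intensity)
print(f"Guinier Rg = {fitted_rg:.3f} nm, I(0) = {fitted_i0:.1f}")
```

In a validation setting, `radius_of_gyration` would be averaged over trajectory frames and compared with the value from `guinier_rg` applied to the measured curve.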

Cryo-Electron Microscopy (Cryo-EM)

Cryo-EM visualizes biomolecules in a near-native, frozen-hydrated state and can achieve near-atomic resolution.

- Sample Preparation: A 3-5 µL aliquot of the sample (0.01-1 mg/mL) is applied to a grid, blotted with filter paper, and rapidly plunged into a cryogen (ethane). This vitrifies the water, embedding the particles in a thin layer of amorphous ice [27].

- Key Steps:

- Data Collection: Automated imaging at the electron microscope collects thousands to millions of particle images using direct electron detectors.

- Image Processing: 2D particle images are classified, aligned, and averaged to generate a 3D reconstruction [27].

- Model Building: An atomic model is built de novo or by fitting a known structure into the electron density map.

- Data Integration with MD: The Cryo-EM density map serves as a structural template. MD simulations can be initiated from the fitted model to study flexibility and dynamics not captured in the static map. Furthermore, flexible fitting algorithms use MD-like protocols to refine atomic models into lower-resolution density maps [27].

Single-Molecule FRET (smFRET)

smFRET measures distances and their dynamics between two fluorescent dyes on a single molecule, revealing heterogeneities and conformational changes.

- Sample Preparation: The protein or RNA is site-specifically labeled with a donor (e.g., Cy3) and an acceptor (e.g., Cy5) fluorophore via cysteine or other chemistries. Measurements are performed at picomolar concentrations to ensure only one molecule is observed at a time [28] [29].

- Key Measurements:

- FRET Efficiency (E): Calculated from the ratio of acceptor and donor fluorescence intensities, reporting on the inter-dye distance.

- FRET Histograms: Reveal the number of conformational states and their populations.

- Transition Analysis: For immobilized molecules, transitions between FRET states can be tracked over time to obtain kinetic rates [28].

- Data Integration with MD: The inter-dye distance distribution from an MD trajectory can be converted into a predicted FRET efficiency distribution, accounting for dye dynamics via tools like FRETraj [31]. This predicted distribution is then directly compared to the experimental smFRET histogram to validate the conformational landscape sampled in the simulation [31] [29].
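The core of the distance-to-efficiency conversion is the Förster relation E = 1 / (1 + (r/R₀)⁶). The sketch below applies it to a set of inter-dye distances and bins the result into a histogram. It is a deliberately simplified illustration: it does not use the FRETraj API, it ignores dye linker dynamics, orientation effects, and photon shot noise, and the Förster radius and distances are assumed values.

```python
import numpy as np

def fret_efficiency(distance_nm, forster_radius_nm):
    """Förster relation E = 1 / (1 + (r / R0)^6), applied element-wise."""
    r = np.asarray(distance_nm, dtype=float)
    return 1.0 / (1.0 + (r / forster_radius_nm) ** 6)

def efficiency_histogram(distances_nm, forster_radius_nm, bins=20):
    """Normalized histogram of predicted efficiencies on the interval [0, 1]."""
    efficiencies = fret_efficiency(distances_nm, forster_radius_nm)
    counts, edges = np.histogram(efficiencies, bins=bins, range=(0.0, 1.0))
    return counts / counts.sum(), edges

# Assumed Förster radius (nm) and hypothetical per-frame inter-dye distances (nm).
R0 = 5.4
distances = np.array([4.0, 5.4, 7.0, 5.0, 6.0])

mean_efficiency = float(fret_efficiency(distances, R0).mean())
populations, bin_edges = efficiency_histogram(distances, R0, bins=10)
print(f"mean E = {mean_efficiency:.3f}")
```

Because E depends on the sixth power of distance, averaging efficiencies over frames is not the same as converting the average distance, which is why the conversion is applied per frame here.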

Visualizing Integrative Workflows

The synergy between these techniques is best leveraged in integrative modeling workflows. The following diagrams illustrate two common approaches for combining experimental data with MD simulations.

Workflow 1: Ensemble Refinement with SAXS and NMR

Workflow 2: FRET-Guided Conformer Selection

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful execution of these validation experiments relies on high-quality, specific reagents.

Table 3: Key Reagent Solutions for Experimental Validation

| Reagent / Material | Function / Application | Key Considerations |

|---|---|---|

| Isotope-labeled Nutrients (¹⁵NH₄Cl, ¹³C-Glucose) [24] | Enables NMR spectroscopy of proteins by incorporating magnetically active nuclei. | Cost; required for all multi-dimensional NMR experiments on proteins. |

| Site-directed Mutagenesis Kit | Introduces cysteine residues or other mutations for specific labeling or functional studies. | Critical for smFRET and DEER, which require site-specific attachment of probes [29]. |

| Thiol-reactive Fluorophores (e.g., Cy3, Cy5 maleimides) [29] | Covalently attach to cysteine residues for smFRET measurements. | Dye photophysics, linker length, and potential perturbation of native structure must be controlled. |

| Spin Labels (e.g., MTSL) [29] | Covalently attach to cysteine residues for PELDOR/DEER EPR spectroscopy. | Similar cysteine-labeling requirements as smFRET; used for distance measurements in frozen solution. |

| Cryo-EM Grids (e.g., UltrAuFoil, Quantifoil) [27] | Support for vitrifying the sample in a thin layer of ice for Cryo-EM imaging. | Grid type and surface treatment are optimized to ensure even ice thickness and particle distribution. |

| Size Exclusion Chromatography (SEC) Columns | Final purification step to ensure sample monodispersity for SAXS, Cryo-EM, and smFRET. | Essential for removing aggregates that can severely interfere with data interpretation. |

The choice of validation metric is not a matter of selecting the "best" technique, but rather the most appropriate one for the specific biological question and molecular system. Cryo-EM provides high-resolution structural snapshots of large complexes; NMR offers unparalleled detail on atomic-level dynamics; SAXS delivers overall shape and flexibility in solution; and smFRET uniquely captures conformational heterogeneity and dynamics at the single-molecule level. The most powerful and reliable insights into molecular dynamics increasingly come from integrative approaches, where MD simulations are iteratively refined and validated against a consortium of these complementary experimental techniques. This multi-faceted strategy is fundamental for building accurate, dynamic, and physiologically relevant models of biomolecular machinery.

In molecular dynamics (MD) simulations, the force field is the mathematical heart that defines the potential energy surface and dictates the accuracy of every simulation outcome. The choice of force field is far from academic; it directly influences the predictive power of simulations studying drug-receptor binding, material properties, and biological mechanisms. Without rigorous validation against experimental data, MD results remain unverified computational hypotheses. This guide establishes a structured pipeline for force field selection grounded in experimental benchmarking, providing researchers across drug development and materials science with a methodological framework for making informed, evidence-based decisions about which force fields to trust for their specific systems.

The fundamental challenge is straightforward: while force fields aim to provide a universal description of atomic interactions, their performance varies significantly across different chemical environments and physical properties. As recent studies demonstrate, this variability necessitates a systematic approach to validation. For instance, a 2025 benchmarking study on cholesterol-containing membranes revealed that while all-atom force fields like CHARMM36 and Slipids accurately captured the cholesterol condensing effect, united-atom and coarse-grained models substantially under-predicted this phenomenon [32]. Such force-field-dependent outcomes underscore why a standardized validation pipeline is indispensable for computational science.

Core Principles of Force Field Validation

Before examining specific validation case studies, it is essential to establish the core principles that govern a rigorous force field benchmarking process. First and foremost, validation must be property-specific – a force field that excels at predicting density may perform poorly at capturing transport properties or conformational equilibria. Second, multiple experimental observables should be used for comprehensive assessment, as overfitting to a single type of measurement provides false confidence. Third, chemical transferability must be assessed by testing performance across related but distinct molecular systems beyond the original parameterization set. Finally, experimental data quality is paramount; the validation is only as reliable as the reference data used for comparison.

These principles inform the development of a generalized validation workflow that can be adapted to diverse research contexts. The following diagram illustrates this structured approach to force field selection:

Figure 1: The Force Field Validation Pipeline. This workflow provides a systematic approach for selecting force fields based on experimental validation.

Case Studies in Force Field Benchmarking

Biomembranes: Capturing Cholesterol Condensing Effects

Biomembranes represent a critical test case for force fields due to their complex composition and the central role of cholesterol in modulating membrane properties. A 2025 comparative analysis evaluated eight popular force fields—including CHARMM36, Slipids, Lipid17, GROMOS variants, and MARTINI models—for their ability to replicate the cholesterol condensing effect observed experimentally in phospholipid membranes [32].

The cholesterol condensing effect refers to cholesterol's ability to increase lipid tail ordering, decrease membrane lateral area, and increase membrane thickness. This phenomenon results from non-ideal mixing in cholesterol-lipid mixtures and directly affects membrane properties crucial for cellular function, including mechanical properties, lipid raft formation, and membrane fusion [32].

Experimental Protocol: Researchers simulated 1,2-dimyristoyl-sn-glycero-3-phosphocholine (DMPC) and 1,2-dioleoyl-sn-glycero-3-phosphocholine (DOPC) membranes with varying cholesterol concentrations. The key experimental observables were partial molecular areas of cholesterol and other parameters quantifying the condensing effect, which were compared directly against experimental measurements [32].

Performance Insights:

- All-atom force fields (CHARMM36, Slipids) successfully captured the significant deviations from ideal mixing expected in DMPC membranes.

- United-atom and coarse-grained models (GROMOS 53A6L, GROMOS-CKP, MARTINI) under-predicted the effect, condensing fewer neighboring lipids by smaller magnitudes.

- All tested force fields predicted small negative deviations from ideal mixing in cholesterol-DOPC membranes, but only all-atom models captured the more substantial effects in saturated lipid systems [32].
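The quantities behind these comparisons can be sketched as follows. For a binary mixture with cholesterol mole fraction x and mean area per molecule a(x), the deviation from ideal mixing is a(x) − [(1 − x)·a_lipid + x·a_chol], and the partial molecular area of cholesterol is a(x) + (1 − x)·da/dx. The numbers below, including the reference area assumed for pure cholesterol, are hypothetical and are not taken from [32].

```python
import numpy as np

def excess_area(x_chol, area_per_molecule, a_lipid, a_chol):
    """Deviation from ideal mixing; negative values indicate condensation."""
    x = np.asarray(x_chol, dtype=float)
    ideal = (1.0 - x) * a_lipid + x * a_chol
    return np.asarray(area_per_molecule, dtype=float) - ideal

def partial_area_cholesterol(x_chol, area_per_molecule):
    """Partial molecular area a_chol(x) = a(x) + (1 - x) * da/dx."""
    x = np.asarray(x_chol, dtype=float)
    a = np.asarray(area_per_molecule, dtype=float)
    dadx = np.gradient(a, x)   # finite-difference derivative on the sampled compositions
    return a + (1.0 - x) * dadx

# Hypothetical mean areas per molecule (nm^2) at increasing cholesterol mole fraction.
x = np.array([0.0, 0.1, 0.2, 0.3, 0.4])
a_mix = np.array([0.60, 0.55, 0.51, 0.48, 0.46])

# a_chol = 0.39 nm^2 is an assumed reference value for illustration only.
deviation = excess_area(x, a_mix, a_lipid=0.60, a_chol=0.39)
a_chol_partial = partial_area_cholesterol(x, a_mix)
print(deviation)
print(a_chol_partial)
```

With only a handful of compositions, the finite-difference derivative is coarse; fitting a smooth function to a(x) before differentiating would be a reasonable refinement.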

Table 1: Performance of Force Fields for Biomembrane Simulations

| Force Field | Resolution | Cholesterol Condensing Effect | Recommended Use |

|---|---|---|---|

| CHARMM36 | All-atom | Accurately captures experimental effect | Primary choice for cholesterol-containing membranes |

| Slipids | All-atom | Accurately captures experimental effect | Alternative all-atom option |

| Lipid17 | All-atom | Moderate accuracy | General membrane simulations |

| GROMOS 53A6L | United-atom | Under-predicts effect | Computationally efficient screening |

| GROMOS-CKP | United-atom | Under-predicts effect | Improved order parameters |

| MARTINI 2 | Coarse-grained | Substantially under-predicts effect | Large-scale membrane reorganization |

| MARTINI 3 | Coarse-grained | Substantially under-predicts effect | Enhanced MARTINI for complex systems |

Oxide Melts: Balancing Structural and Transport Properties

Industrial applications in steelmaking, glass manufacturing, and ceramics rely on understanding the structural and transport properties of oxide melts such as CaO-Al₂O₃-SiO₂. A comprehensive 2025 benchmarking study evaluated three empirical force fields (Matsui, Guillot, and Bouhadja) across ten compositions and temperatures from 1400 to 1600 °C [33].

Experimental Protocol: Researchers compared MD predictions against experimental data, CALPHAD-based density models, and ab initio MD simulations for multiple properties. Structural assessments included density, bond lengths, and coordination environments. Dynamic properties included self-diffusion coefficients and electrical conductivity calculated using the Einstein relation, which relates mean squared displacement to diffusion coefficients [33].
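The Einstein relation mentioned above states that, in the diffusive regime, the mean squared displacement grows linearly in time, MSD(t) ≈ 2·d·D·t, where d is the number of dimensions. The sketch below estimates D from the slope of a linear fit and checks it on a synthetic random walk with a known diffusion coefficient. It assumes unwrapped coordinates and arbitrary units, and it does not cover the conductivity calculation used in [33].

```python
import numpy as np

def mean_squared_displacement(positions, max_lag):
    """MSD averaged over particles and time origins.

    positions: array of shape (n_frames, n_particles, 3) with unwrapped coordinates.
    Returns an array whose element i is the MSD at a lag of i + 1 frames.
    """
    positions = np.asarray(positions, dtype=float)
    msd = np.zeros(max_lag)
    for i, lag in enumerate(range(1, max_lag + 1)):
        displacement = positions[lag:] - positions[:-lag]
        msd[i] = np.mean(np.sum(displacement ** 2, axis=-1))
    return msd

def diffusion_coefficient(lag_times, msd, dimensions=3):
    """Einstein relation: D is the fitted MSD slope divided by 2 * dimensions."""
    slope, _intercept = np.polyfit(lag_times, msd, 1)
    return float(slope / (2 * dimensions))

# Synthetic random walk: Gaussian steps with variance sigma^2 per axis per frame,
# for which the expected diffusion coefficient is sigma^2 / (2 * dt).
rng = np.random.default_rng(0)
sigma, dt, max_lag = 1.0, 1.0, 20
steps = rng.normal(0.0, sigma, size=(2000, 50, 3))
positions = np.cumsum(steps, axis=0)

lag_times = dt * np.arange(1, max_lag + 1)
msd = mean_squared_displacement(positions, max_lag)
d_estimate = diffusion_coefficient(lag_times, msd)
d_expected = sigma ** 2 / (2 * dt)
print(f"estimated D = {d_estimate:.3f}, expected D = {d_expected:.3f}")
```

For real trajectories, the fit should be restricted to the lag range where the MSD is clearly linear, excluding the short-time ballistic regime and the poorly sampled long-lag tail.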

Key Findings:

- Matsui's force field accurately reproduced densities and Si-O tetrahedral environments but showed limitations in capturing Al-O and Ca-O bonding.

- Guillot's force field performed similarly to Matsui's for structural properties but exhibited transferability issues beyond its original parameterization range.

- Bouhadja's force field demonstrated superior performance for dynamic properties, yielding the best agreement with experimental activation energies and robust transferability across compositions [33].

Table 2: Force Field Performance for Oxide Melt Properties

| Property Category | Specific Metric | Matsui et al. FF | Guillot & Sator FF | Bouhadja et al. FF |

|---|---|---|---|---|

| Structural Properties | Density | Accurate | Accurate | Accurate |

| Structural Properties | Si-O bond lengths | Accurate | Accurate | Accurate |

| Structural Properties | Al-O coordination | Moderate | Moderate | Most accurate |

| Structural Properties | Ca-O coordination | Moderate | Moderate | Most accurate |

| Transport Properties | Self-diffusion coefficients | Under-estimates | Moderate | Most accurate |

| Transport Properties | Activation energy | Least accurate | Moderate | Most accurate |

| Transport Properties | Electrical conductivity | Under-estimates | Moderate | Most accurate |

| Transferability | Beyond parameterization range | Limited | Limited | Excellent |

Reverse Osmosis Membranes: Predicting Material Performance

Polyamide-based reverse osmosis membranes are essential for water purification and desalination, but their performance depends on nanoscale properties that can be probed through MD simulations. A 2025 benchmarking study evaluated eleven force-field-water combinations for simulating both dry and hydrated polyamide membranes, with validation against experimentally synthesized membranes of similar composition [34].

Experimental Protocol: The study assessed force fields including PCFF, CVFF, SwissParam, CGenFF, GAFF, and DREIDING using multiple validation approaches. Equilibrium MD simulations compared dry-state properties (density, porosity, pore size distribution, Young's modulus) and hydrated-state properties (water uptake, pore size) against experimental measurements. Non-equilibrium MD simulations evaluated pure water permeability at experimental pressures (~100 bar), providing critical validation of transport predictions [34].

Performance Insights:

- CVFF, SwissParam, and CGenFF most accurately predicted dry-state properties including Young's modulus.

- For hydrated membranes, PCFF with the PCFF water model and GAFF with TIP4P water best reproduced experimental water uptake.

- In non-equilibrium simulations, the best-performing force fields predicted experimental pure-water permeability within a 95% confidence interval, with specific force field-water model combinations proving critical for accuracy [34].

Specialized Force Fields for Unique Systems

Mycobacterial Membranes: Addressing Specialized Lipid Composition

The unique cell envelope of Mycobacterium tuberculosis, rich in complex lipids like mycolic acids, presents particular challenges for simulation. General force fields often fail to capture the key biophysical properties of these specialized membranes, prompting the development of BLipidFF (Bacteria Lipid Force Fields) in 2025 [35].

Parameterization Methodology: BLipidFF employed quantum mechanics-based parameterization for four key mycobacterial outer membrane lipids: phthiocerol dimycocerosate (PDIM), α-mycolic acid (α-MA), trehalose dimycolate (TDM), and sulfoglycolipid-1 (SL-1). The development process involved:

- Divide-and-conquer strategy: Large lipids were divided into segments for quantum mechanical calculations

- RESP charge derivation: Using B3LYP-D3(BJ)/def2TZVP level of theory on 25 conformations

- Torsion parameter optimization: Targeting accurate reproduction of quantum mechanical potential energy surfaces [35]

Validation Results: BLipidFF successfully captured membrane properties poorly described by general force fields, including the high rigidity and appropriate diffusion rates of α-mycolic acid bilayers. Crucially, predictions based on BLipidFF showed excellent agreement with biophysical experiments including Fluorescence Recovery After Photobleaching (FRAP) measurements [35].

Active Pharmaceutical Ingredients: Solid-State Properties

For pharmaceutical applications, accurately simulating crystalline active pharmaceutical ingredients (APIs) requires force fields that capture solid-state packing and energetics. A 2025 study developed a specialized force field for organosulfur and organohalogen APIs, with validation against experimental sublimation enthalpies and single-crystal X-ray diffraction data [36].

Validation Methodology: The force field was incrementally developed starting from OPLS-AA, with missing dihedral parameters obtained from MP2/aug-cc-pVDZ potential energy surfaces. Atomic point charges were derived using the ChelpG methodology, with X-sites added to model σ-holes for iodine atoms. Validation compared MD simulations against:

- Sublimation enthalpies: Determined experimentally using Calvet microcalorimetry

- Unit cell dimensions: From single-crystal X-ray diffraction

The test set included sulfanilamide, sulfapyridine, chlorzoxazone, clioquinol, and triclosan [36].

This approach highlights the importance of validating against both structural and thermodynamic data when developing force fields for pharmaceutical solids.
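As a rough illustration of how the two comparisons above might be scripted, the sketch below uses the widely used approximation ΔH_sub ≈ ⟨U_gas⟩ − ⟨U_crystal⟩/N + RT (ideal-gas vapor, negligible pV term for the crystal). This formula is an assumption made here for illustration and is not taken from [36]. The function names and every numerical input are hypothetical placeholders, not data from the study.

```python
# Gas constant in kJ mol^-1 K^-1.
R_KJ_PER_MOL_K = 8.314462618e-3

def sublimation_enthalpy(u_gas, u_crystal, n_molecules, temperature):
    """Approximate sublimation enthalpy in kJ/mol.

    u_gas       : mean potential energy of one isolated molecule (kJ/mol)
    u_crystal   : mean potential energy of the crystal supercell (kJ/mol)
    n_molecules : number of molecules in that supercell
    temperature : temperature in K
    """
    return u_gas - u_crystal / n_molecules + R_KJ_PER_MOL_K * temperature

def percent_deviation(simulated, experimental):
    """Signed deviation of a simulated value from experiment, in percent."""
    return 100.0 * (simulated - experimental) / experimental

# Placeholder inputs for illustration only (not values from any study).
dh_sub = sublimation_enthalpy(u_gas=-50.0, u_crystal=-6400.0,
                              n_molecules=64, temperature=298.15)
cell_error = percent_deviation(simulated=10.1, experimental=10.0)
print(f"dH_sub = {dh_sub:.2f} kJ/mol, cell-length deviation = {cell_error:.1f} %")
```

Reporting a signed percentage for each unit cell parameter alongside the enthalpy makes it easier to see whether a force field errs systematically toward over- or under-binding.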

Experimental Methodologies for Force Field Validation

Key Experimental Techniques and Observables

Different experimental techniques provide distinct insights for force field validation, each probing specific aspects of molecular behavior:

Structural Techniques:

- X-ray Crystallography: Provides precise atomic positions in crystalline materials, validating bond lengths, angles, and molecular packing [37] [36].

- Neutron Scattering: Offers insight into atomic positions and dynamics, particularly useful for hydrogen bonding networks.

- NMR Spectroscopy: Delivers information on local chemical environments, dihedral angle distributions, and dynamics across various timescales [37] [38].

Thermodynamic and Dynamic Measurements:

- Sublimation Enthalpies: Reflect lattice energies in crystalline materials, providing rigorous tests for non-bonded interactions [36].

- Density Measurements: Fundamental property for validating overall molecular packing and non-bonded parameter balance.

- Diffusion Coefficients: Probe the accuracy of dynamic properties and activation barriers [33] [34].

- Elastic Moduli: Mechanical properties that are sensitive to intermolecular forces and packing [34].
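To make one of these observables concrete, the following sketch estimates a diffusion coefficient from the mean squared displacement using the Einstein relation, MSD(t) ≈ 6Dt in three dimensions. It is a minimal example under stated assumptions: coordinates are already unwrapped across periodic boundaries, the fitting window (20% to 80% of the computed lags) is an arbitrary choice, and the trajectory is a synthetic random walk rather than real MD output. The function name is hypothetical.

```python
import numpy as np

def diffusion_coefficient(positions, dt, fit_start=0.2, fit_end=0.8):
    """Estimate D from unwrapped positions of shape (n_frames, n_particles, 3).

    dt is the time between frames; D is returned in length^2 / time.
    """
    pos = np.asarray(positions, dtype=float)
    lags = np.arange(1, pos.shape[0] // 2)
    # MSD averaged over all time origins and all particles for each lag.
    msd = np.array([
        np.mean(np.sum((pos[lag:] - pos[:-lag]) ** 2, axis=-1))
        for lag in lags
    ])
    lo, hi = int(fit_start * len(lags)), int(fit_end * len(lags))
    # Linear fit of MSD against time; the slope equals 6 D in 3D.
    slope, _intercept = np.polyfit(lags[lo:hi] * dt, msd[lo:hi], 1)
    return slope / 6.0

# Synthetic Brownian trajectory with a known diffusion coefficient.
rng = np.random.default_rng(0)
d_true, dt = 1.0, 1.0
steps = rng.normal(scale=np.sqrt(2.0 * d_true * dt), size=(1000, 100, 3))
trajectory = np.cumsum(steps, axis=0)
d_est = diffusion_coefficient(trajectory, dt)
print(f"true D = {d_true:.3f}, estimated D = {d_est:.3f}")
```

For real systems, finite-size corrections and the choice of fitting window can change the estimate noticeably, so both should be reported with the result.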

The following diagram illustrates how these experimental techniques integrate into a comprehensive validation framework:

Figure 2: Experimental Techniques for Force Field Validation. Different experimental methods provide complementary data for validating various aspects of force field performance.

Best Practices in Benchmarking Simulations

To ensure meaningful comparisons between simulation and experiment, several methodological considerations are essential:

Simulation Setup:

- System Size: Must be large enough to minimize finite-size effects while remaining computationally feasible

- Sampling Adequacy: Simulation length must sufficiently explore relevant conformational space

- Ensemble Choice: The thermodynamic ensemble (NPT, NVT, etc.) should match experimental conditions

- Statistical Precision: Use multiple independent replicates, or estimate errors through block averaging
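The block-averaging idea in the last item can be sketched as follows. This is a minimal illustration, not a prescribed protocol: the function name, the number of blocks, and the synthetic correlated "density" trace are all assumptions chosen for the example.

```python
import numpy as np

def block_average(series, n_blocks=5):
    """Return (mean, standard error) from n_blocks contiguous blocks."""
    x = np.asarray(series, dtype=float)
    if n_blocks < 2:
        raise ValueError("need at least two blocks to estimate an error")
    block_len = len(x) // n_blocks
    if block_len < 1:
        raise ValueError("series too short for the requested number of blocks")
    # Drop trailing frames that do not fill a complete block.
    blocks = x[: block_len * n_blocks].reshape(n_blocks, block_len)
    block_means = blocks.mean(axis=1)
    # Standard error of the mean, treating block means as independent samples.
    sem = block_means.std(ddof=1) / np.sqrt(n_blocks)
    return block_means.mean(), sem

# Synthetic, time-correlated trace standing in for a simulated observable.
rng = np.random.default_rng(0)
noise = rng.normal(size=10_000)
trace = np.zeros_like(noise)
for i in range(1, len(noise)):
    trace[i] = 0.95 * trace[i - 1] + noise[i]   # AR(1) correlation
density = 1.25 + 0.01 * trace

mean, sem_block = block_average(density, n_blocks=10)
sem_naive = density.std(ddof=1) / np.sqrt(len(density))
print(f"mean = {mean:.4f}, block SEM = {sem_block:.5f}, naive SEM = {sem_naive:.5f}")
```

The naive standard error treats every frame as independent and therefore understates the uncertainty of correlated data; the block estimate is only trustworthy when each block is much longer than the correlation time.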

Experimental Data Considerations:

- Data Quality: Prefer high-precision measurements with well-characterized uncertainties

- Condition Matching: Ensure simulation conditions (temperature, pressure, composition) match experiments

- Multiple Observables: Validate against diverse properties to avoid overfitting to specific measurements

As emphasized in recent guidelines for protein force field benchmarking, "best practices for setup and analysis of benchmark simulations" are crucial for obtaining reliable validation results [37].

Emerging Directions in Force Field Validation

Data-Driven and Machine Learning Approaches

Traditional force field development relied on parameter lookup tables and manual optimization, but recent advances leverage machine learning to expand chemical space coverage. The ByteFF force field, introduced in 2025, exemplifies this trend using graph neural networks trained on 2.4 million optimized molecular fragment geometries and 3.2 million torsion profiles [39].

This data-driven approach addresses key limitations of traditional methods:

- Chemical Diversity: Training on expansive datasets covering drug-like chemical space

- Continuous Representation: Moving beyond discrete atom types to continuous chemical environment descriptions

- Automated Parameterization: Reducing manual curation while improving accuracy for novel compounds [39]

Community-Wide Benchmarking Initiatives

Growing recognition of the importance of force field validation has spurred organized community efforts. COST Action CA22107 is a European initiative that brings together experimental and simulation groups to standardize validation protocols for molecular organic solids [36]. Similarly, the Living Journal of Computational Molecular Science has published structured guidelines titled "Structure-Based Experimental Datasets for Benchmarking Protein Simulation Force Fields" [37].

These initiatives emphasize:

- Standardized Datasets: Curated experimental data specifically for force field validation

- Protocol Harmonization: Consistent simulation methodologies across research groups

- Open Data Sharing: Making high-quality experimental data accessible to the computational community

Table 3: Key Research Reagents and Computational Tools for Force Field Validation

| Resource Category | Specific Tools/Databases | Primary Function | Application Context |
|---|---|---|---|
| Experimental Data Sources | Cambridge Structural Database (CSD) | Crystal structure repository | Solid-state validation [36] |
| | RCSB Protein Data Bank (PDB) | Biomolecular structures | Protein-ligand validation [22] |
| | BioLiP2 | Protein-ligand complexes | Binding site validation [22] |
| Validation Software | MD analysis tools (GROMACS, AMBER) | Simulation trajectory analysis | Property calculation |
| | Force field comparison frameworks | Automated benchmarking | Multi-force field assessment |
| Specialized Force Fields | BLipidFF | Bacterial membrane lipids | Mycobacterial systems [35] |
| | ByteFF | Drug-like molecules | Expanded chemical space [39] |
| | OPLS-AA/API variants | Pharmaceutical compounds | Solid-form prediction [36] |
| Quantum Chemical References | OMol25 dataset | High-accuracy QM calculations | ML force field training [22] |
| | ωB97M-V/def2-TZVPD | Reference quantum chemistry | Target accuracy for ML potentials [22] |

The evidence from recent benchmarking studies consistently demonstrates that force field performance is highly system- and property-dependent. There is no universal "best" force field for all applications. Instead, researchers must select force fields based on rigorous validation against experimental data relevant to their specific research questions.

The most successful validation strategies share common elements: they use multiple experimental observables, assess both structural and dynamic properties, test transferability beyond training systems, and employ high-quality experimental reference data. As force field development increasingly incorporates machine learning and data-driven approaches, the importance of rigorous experimental validation only grows—these advanced models must be tested against real-world measurements to ensure their predictive power extends beyond their training data.

By implementing the structured validation pipeline outlined in this guide, researchers across drug development, materials science, and molecular modeling can make evidence-based decisions about force field selection, ultimately enhancing the reliability and impact of their molecular simulations.

Proteins, especially intrinsically disordered proteins (IDPs) and proteins with flexible regions, do not typically exist as single, rigid structures but as dynamic ensembles of interconverting conformations. [40] [41] The concept of a conformational ensemble—a set of molecular structures with associated statistical weights—is fundamental to understanding the structure-function relationship in dynamic biomolecules. [40] Ensemble refinement refers to computational methods that determine these ensembles by integrating data from molecular dynamics (MD) simulations with experimental measurements. [42] [43] This integration is crucial because neither computational nor experimental approaches alone can fully capture the complexity of flexible protein systems. MD simulations provide atomic-level detail but suffer from force field inaccuracies and sampling limitations, while experimental data provide macroscopic measurements but represent ensemble averages that are consistent with numerous underlying structural distributions. [40] [43] Two dominant philosophical and methodological approaches have emerged for ensemble refinement: maximum entropy methods and sample-and-select approaches. This guide provides a comprehensive comparison of these methodologies, their underlying principles, performance characteristics, and optimal application domains.

Theoretical Foundations and Methodological Principles

Maximum Entropy Methods

Maximum entropy methods operate on the principle that, when refining a conformational ensemble with experimental data, one should introduce the minimal perturbation necessary to achieve agreement with experiments. [44] [43] Formally, they seek to minimize the Kullback-Leibler divergence (a measure of information gain) between the initial simulation ensemble and the refined ensemble while satisfying constraints derived from experimental data. [44] [42]

The core optimization problem involves determining optimal statistical weights \( w_\alpha \) for each structure \( \alpha \) in the ensemble by maximizing the posterior probability, which is equivalent to minimizing the negative log-posterior \( L(w) \). This objective balances agreement with experiment against faithfulness to the prior simulation:

\[ L(w) = \frac{1}{2} \sum_{i=1}^{M} \left( \frac{Y_i - \langle y_i \rangle}{\sigma_i} \right)^2 - \theta S_{KL} \]

where \( Y_i \) are the \( M \) experimental measurements, \( \langle y_i \rangle \) are the corresponding ensemble-averaged calculated observables evaluated with the weights \( w_\alpha \), \( \sigma_i \) are the uncertainties, and \( S_{KL} \) is the negative Kullback-Leibler divergence of the refined weights relative to the reference weights. The parameter \( \theta \) expresses confidence in the reference ensemble: larger values keep the refined weights closer to the simulation. [42] The result is a reweighted ensemble where structures that better explain experimental data receive higher weights, without discarding any conformations from the original ensemble. [42] [43]
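A minimal numerical sketch of this objective is given below. It evaluates L(w) as written above and, for the special case of a single observable, scans a one-parameter family of weights of the form w_α ∝ w0_α exp(−λ y_α), which is the form maximum entropy solutions take under one averaged constraint. Everything here is an illustrative assumption: the function names, the uniform prior weights, the λ grid, and the synthetic data. Cases with several observables would need a general-purpose optimizer, which is not shown.

```python
import numpy as np

def neg_log_posterior(w, w0, y_calc, y_exp, sigma, theta):
    """L(w) = chi^2 / 2 - theta * S_KL, with S_KL = -sum_a w_a ln(w_a / w0_a)."""
    avg = w @ y_calc                      # ensemble averages <y_i>, shape (M,)
    chi2 = np.sum(((y_exp - avg) / sigma) ** 2)
    mask = w > 0                          # treat 0 * ln(0) as 0
    s_kl = -np.sum(w[mask] * np.log(w[mask] / w0[mask]))
    return 0.5 * chi2 - theta * s_kl

def reweight_one_observable(y, y_exp, sigma, theta, lambdas=None):
    """Scan w_a ~ w0_a * exp(-lam * y_a) and keep the weights with lowest L."""
    y = np.asarray(y, dtype=float)
    w0 = np.full(y.size, 1.0 / y.size)    # uniform prior weights (assumption)
    if lambdas is None:
        lambdas = np.linspace(-10.0, 10.0, 2001)
    best_w, best_l = w0, np.inf
    for lam in lambdas:
        log_w = np.log(w0) - lam * y
        w = np.exp(log_w - log_w.max())   # subtract max for numerical stability
        w /= w.sum()
        l_val = neg_log_posterior(w, w0, y[:, None], np.array([y_exp]),
                                  np.array([sigma]), theta)
        if l_val < best_l:
            best_w, best_l = w, l_val
    return best_w, best_l

# Synthetic per-structure observable; the "experiment" is deliberately offset.
rng = np.random.default_rng(1)
y_sim = rng.normal(loc=5.0, scale=1.0, size=500)
y_target, y_err = 5.8, 0.1
weights, l_min = reweight_one_observable(y_sim, y_target, y_err, theta=1.0)
print(f"prior <y> = {y_sim.mean():.3f}, refined <y> = {weights @ y_sim:.3f}")
```

Repeating the scan for several values of θ shows the trade-off directly: large θ leaves the weights near the prior, while small θ pulls the ensemble average toward the measurement at the cost of a larger divergence from the simulation.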

Sample-and-Select Approaches

Sample-and-select approaches (also called minimal-ensemble or selection-based methods) operate on a different principle. They aim to identify the smallest possible subset of conformations from a large initial pool that collectively explain the experimental data within error. [42] Unlike maximum entropy methods that assign continuous weights to all structures, sample-and-select methods typically work with discrete weights (often equal weights for selected structures) and strive to minimize the number of structures in the final ensemble.

These methods solve an optimization problem that minimizes the discrepancy between calculated and experimental observables while imposing a penalty on the number of structures included. The resulting ensembles are often sparser and easier to interpret, as they highlight the specific conformational states that are most relevant to explaining the experimental data. However, this sparsity comes at the cost of potentially over-representing certain states and under-representing the true heterogeneity of the system.
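The selection idea can be illustrated with a simple greedy forward search. This is a sketch of the general principle only and does not reproduce any specific published algorithm: the function name, the equal-weight assumption, the linear size penalty, and the toy data are all hypothetical choices.

```python
import numpy as np

def greedy_select(y_calc, y_exp, sigma, max_size=5, size_penalty=0.5):
    """Greedily build a small equal-weight ensemble.

    y_calc : (N, M) calculated observables for N structures
    y_exp  : (M,) experimental values; sigma : (M,) uncertainties
    Returns (selected indices, chi^2 of the selected ensemble).
    """
    y_calc = np.asarray(y_calc, dtype=float)
    y_exp = np.asarray(y_exp, dtype=float)
    sigma = np.asarray(sigma, dtype=float)
    selected, best_chi2, best_score = [], np.inf, np.inf
    running_sum = np.zeros(y_calc.shape[1])
    for _ in range(max_size):
        n_new = len(selected) + 1
        # Equal-weight averages if each candidate were added next.
        trial_avg = (running_sum + y_calc) / n_new
        chi2 = np.sum(((y_exp - trial_avg) / sigma) ** 2, axis=1)
        if selected:
            chi2[selected] = np.inf          # do not pick a structure twice
        idx = int(np.argmin(chi2))
        score = chi2[idx] + size_penalty * n_new
        if score >= best_score:
            break                            # extra structure is not worth it
        selected.append(idx)
        running_sum += y_calc[idx]
        best_chi2, best_score = chi2[idx], score
    return selected, best_chi2

# Toy pool: two extreme states whose average matches the "experiment".
pool = np.array([[0.0], [10.0]])
chosen, chi2_final = greedy_select(pool, y_exp=[5.0], sigma=[1.0])
print(f"selected structures: {chosen}, chi^2 = {chi2_final:.2f}")
```

Greedy search is not guaranteed to find the smallest adequate ensemble, and different penalties can yield different subsets, which is one practical face of the over-representation risk noted above.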

Table 1: Core Philosophical Differences Between the Approaches

| Feature | Maximum Entropy | Sample-and-Select |
|---|---|---|
| Core Principle | Minimal perturbation from prior | Minimal explanatory ensemble |
| Weight Treatment | Continuous, probabilistic | Discrete, often equal weights for selected structures |
| Ensemble Size | Maintains original ensemble size | Drastically reduces ensemble size |
| Information Basis | Information-theoretic | Parsimony-based |
| Handling Uncertainty | Explicit through Bayesian framework | Implicit through ensemble selection |

Comparative Performance Analysis

Methodological Strengths and Limitations

Both maximum entropy and sample-and-select approaches have distinct advantages and limitations that make them suitable for different scenarios in structural biology research.