Coalescent Theory in Viral Phylogenies: A Phylodynamic Framework for Epidemic Response and Drug Development


Abstract

This article provides a comprehensive overview of coalescent theory and its critical applications in viral phylogenetics for biomedical researchers and drug development professionals. It explores the foundational principles of how genetic lineages merge backward in time to common ancestors, establishing the mathematical framework for interpreting viral genealogies. The content covers methodological approaches for inferring epidemiological parameters like transmission dynamics and population sizes, addresses key analytical challenges including model limitations and computational constraints, and validates methods through comparative analysis of full-likelihood versus summary approaches. By synthesizing evolutionary biology with epidemiology, this guide enables more effective analysis of viral sequence data to inform public health interventions and therapeutic development.

Tracing Viral Ancestry: The Core Principles of the Coalescent Model

Coalescent theory provides a powerful population genetics-based framework for reconstructing the ancestral relationships of genes or organisms by looking backward in time from contemporary samples to their common ancestors [1]. In the context of viral phylogenetics, this approach enables researchers to model how viral sequences sampled from infected hosts originated from a common ancestral virus through a series of coalescence events [1]. The model essentially traces the genealogy of sequences backward in time, merging lineages at coalescence events until reaching the most recent common ancestor (MRCA) of the entire sample [1]. This backward-time perspective has proven particularly valuable for understanding viral transmission dynamics, estimating evolutionary parameters, and reconstructing epidemiological histories from genetic data.

The mathematical foundation of coalescent theory was primarily developed by John Kingman in the early 1980s and has since been extended to incorporate various complexities including recombination, selection, and population structure [1]. For viral pathogens like HIV, coalescent models enable inference of key epidemiological parameters such as time of transmission, population size changes, and rates of migration between populations [2] [3]. The theory operates under ideal assumptions of no recombination, no natural selection, and random mating, though practical applications often incorporate modifications to address these biological realities [1].

Theoretical Foundations of the Coalescent

Basic Coalescent Model

The simplest form of coalescent theory considers a sample of two gene lineages from a population with constant effective size \(N_e\) that carries \(2N_e\) gene copies, as in a diploid population. The probability that these two lineages coalesce in the immediately preceding generation is \(1/(2N_e)\), while the probability that they do not coalesce is \(1 - 1/(2N_e)\) [1]. Looking backward in time, the probability that the lineages first coalesce exactly t generations ago follows a geometric distribution:

\[ P_c(t) = \left(1 - \frac{1}{2N_e}\right)^{t-1} \frac{1}{2N_e} \]

For large values of \(N_e\), this distribution is well approximated by the exponential distribution:

\[ P_c(t) = \frac{1}{2N_e} e^{-\frac{t-1}{2N_e}} \]

Under this continuous approximation, both the expected time to coalescence and its standard deviation equal \(2N_e\) generations [1]. Two lineages therefore take \(2N_e\) generations to coalesce on average, but individual coalescence times vary substantially around this expectation.
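The geometric and exponential expressions above can be compared numerically. The following is a minimal sketch, assuming a hypothetical effective size of 500 (so 1000 gene copies); the function and variable names are illustrative and do not come from any cited software.

```python
import math

def geometric_pmf(t, two_ne):
    """Probability that two lineages first coalesce exactly t generations back."""
    p = 1.0 / two_ne
    return (1.0 - p) ** (t - 1) * p

def exponential_approx(t, two_ne):
    """Continuous-time approximation of the same probability for large 2*Ne."""
    return (1.0 / two_ne) * math.exp(-(t - 1) / two_ne)

two_ne = 2 * 500  # hypothetical value: Ne = 500, i.e. 1000 gene copies

# Largest relative gap between the two formulas over the first 3000 generations.
max_rel_gap = max(
    abs(geometric_pmf(t, two_ne) - exponential_approx(t, two_ne))
    / geometric_pmf(t, two_ne)
    for t in range(1, 3001)
)

# Mean coalescence time under the geometric distribution, summed numerically.
# The tail beyond 50,000 generations is negligible for this value of 2*Ne.
mean_time = sum(t * geometric_pmf(t, two_ne) for t in range(1, 50_001))

print(f"largest relative gap: {max_rel_gap:.4f}")
print(f"mean coalescence time: {mean_time:.1f} generations")
```

With these settings the two formulas stay within about one percent of each other, and the numerical mean matches the stated expectation of 2N_e generations.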

Extensions to Viral Applications

When applied to viral populations, the basic coalescent model requires modifications to account for peculiarities of viral evolution, including high mutation rates, rapid population dynamics, and structured populations within and between hosts [2]. The effective population size (\(N_e\)) for viruses represents the number of infected individuals contributing to the next generation's infection pool, which may differ substantially from census population sizes.

For within-host viral dynamics, such as HIV infection, the virus effective population size within a host has been empirically documented to increase linearly with time [2]. This necessitates modifications to the basic coalescent model to accommodate changing population sizes over the course of infection. Additionally, models must account for the transmission bottleneck that occurs when only a subset of viral variants establishes infection in a new host [2].

Methodological Approaches for Transmission Time Inference

Phylogical Window Approach

A phylogeny-based logical framework places bounds on possible transmission times using time-scaled virus phylogenetic trees (timetrees) from epidemiologically linked hosts [2]. Transmission cannot have occurred before the most recent node that yields descendant tips in both hosts, and it must have occurred by the first sampling time of both hosts [2]. This logical time window, termed the "phylogical window," provides initial constraints on transmission timing before model-based inference.
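The lower bound of this window can be read directly off a timetree. The sketch below is illustrative only: the `Node` class, the host labels "A" and "B", and the toy tree are hypothetical and are not the data structures of any published implementation. It finds the most recent node with descendant tips in both hosts and lists each host's first sampling time, from which the upper bound is taken.

```python
from dataclasses import dataclass, field
from typing import Dict, List, Optional, Set

@dataclass
class Node:
    time: float                       # calendar time of the node (decimal year)
    host: Optional[str] = None        # host label for tips, None for internal nodes
    children: List["Node"] = field(default_factory=list)

def hosts_below(node: Node) -> Set[str]:
    """Set of host labels found among the tips descending from this node."""
    if not node.children:
        return {node.host}
    found: Set[str] = set()
    for child in node.children:
        found |= hosts_below(child)
    return found

def earliest_transmission_bound(root: Node, hosts=("A", "B")) -> Optional[float]:
    """Time of the most recent node with descendant tips in every listed host."""
    best = None
    stack = [root]
    while stack:
        node = stack.pop()
        if node.children and set(hosts) <= hosts_below(node):
            if best is None or node.time > best:
                best = node.time
            # Only subtrees that still contain both hosts can hold a later node.
            stack.extend(node.children)
    return best

def first_sampling_times(root: Node) -> Dict[str, float]:
    """Earliest tip (sampling) time for each host."""
    first: Dict[str, float] = {}
    stack = [root]
    while stack:
        node = stack.pop()
        if node.children:
            stack.extend(node.children)
        else:
            first[node.host] = min(node.time, first.get(node.host, node.time))
    return first

# Hypothetical timetree: two tips from host A, two from host B.
n2 = Node(2003.5, children=[Node(2007.0, "B"), Node(2008.0, "B")])
n1 = Node(2002.0, children=[Node(2006.0, "A"), n2])
root = Node(2000.0, children=[Node(2005.0, "A"), n1])

lower_bound = earliest_transmission_bound(root)
sampling = first_sampling_times(root)
print(lower_bound, sampling)
```

In this toy tree the node at 2002.0 is the most recent one with descendants in both hosts, so transmission cannot be placed earlier than that date.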

Markov Time-Slice Model

A single-parameter Markov model has been developed to infer transmission time from HIV phylogenies constructed from multiple virus sequences from people in a transmission pair [2]. This approach uses a Markov model with parameter q denoting the instantaneous rate at which lineages change from one host state to another, representing the stochastic nature of which lineage(s) happen to be involved in transmission [2]. The model allows the transmission process to be active (q > 0) only in one direction and during a specific time slice, enabling statistical evaluation of support for transmission occurring in different possible time intervals [2].

Table 1: Comparison of Methods for Inferring Transmission Time from Viral Phylogenies

| Method | Key Principles | Data Requirements | Advantages | Limitations |
|---|---|---|---|---|
| Phylogical Window [2] | Logical bounds based on tree topology | Time-scaled phylogeny | Simple, intuitive | Provides range rather than point estimate |
| Markov Time-Slice Model [2] | Host-state transition during specific time intervals | Multiple sequences per host, timed phylogeny | Provides statistical support for different transmission times | Sensitive to time calibration accuracy |
| Coalescent Model [2] [3] | Population genetic model of lineage merging | Genome-scale variation data | Incorporates population dynamics | Computationally intensive, many parameters |
| Parsimony Rules-Based [2] | Heuristic node assignment rules | Tree topology only | Simple, fast | No confidence assessment, potentially misleading |

Coalescent-Based Inference

Coalescent-based methods frame transmission time inference within a population genetics framework, treating the phylogenetic tree as a random draw from a model of virus population history that includes within-host dynamics and transmission events [2] [3]. These models typically require estimation of multiple parameters including infection times, transmission bottleneck sizes, and viral population growth rates in each host [2]. Methods like CLEAX (Consensus-tree based Likelihood Estimation for AdmiXture) use a two-step approach that first learns summary descriptions of population history from molecular data, then applies coalescent-based inference to estimate divergence times and admixture fractions [3].

Experimental Protocols and Workflows

Phylogenetic Reconstruction from Viral Sequences

Sample Collection and Sequencing:

- Collect multiple viral sequences from source and recipient hosts using high-throughput sequencing technologies

- Ensure adequate coverage of viral genetic diversity within each host

- Record precise sampling dates for temporal calibration

Sequence Alignment and Quality Control:

- Perform multiple sequence alignment using appropriate algorithms (e.g., MAFFT, MUSCLE)

- Implement quality filtering to remove poor-quality sequences and potential contaminants

- Verify absence of recombination in aligned sequences or apply appropriate recombination-aware methods

Phylogenetic Tree Construction:

- Implement model selection to identify optimal substitution model (e.g., using ModelTest)

- Construct initial trees using maximum likelihood or Bayesian methods

- Calibrate trees using sampling dates to generate time-scaled phylogenies

Transmission Time Inference Protocol

Initial Phylogical Window Estimation:

- Identify the most recent common ancestor of sequences from both hosts

- Determine earliest possible transmission time

- Establish latest possible transmission time based on sampling dates

Model-Based Inference:

- Apply Markov time-slice model across candidate transmission intervals

- Compute statistical support for each transmission time hypothesis

- Compare results with coalescent-based approaches when computationally feasible

- Evaluate consistency across methods

Validation and Sensitivity Analysis:

- Assess impact of phylogenetic uncertainty on transmission time estimates

- Evaluate sensitivity to model assumptions and parameter choices

- Compare inferred transmission times with known epidemiological data when available

Workflow for Viral Transmission Time Inference

Quantitative Analysis and Parameter Estimation

Key Parameters in Coalescent Modeling

Coalescent-based methods for viral transmission inference estimate several key parameters that characterize the evolutionary and epidemiological history of viral populations:

Effective Population Size (\(N_e\)): The number of individuals in an idealized population that would show the same amount of genetic drift as the actual population. For viral populations, this represents the number of infected individuals contributing to the next generation.

Time to Coalescence: The expected time for two lineages to merge into a common ancestor, typically measured in generations or calendar time using appropriate conversion factors.

Mutation Rate (μ): The rate at which mutations accumulate in the viral genome, usually expressed as substitutions per site per year.

Transmission Bottleneck Size: The number of viral variants that successfully establish infection in a new host, which affects genetic diversity in the recipient population.

Table 2: Key Parameters in Viral Coalescent Models

| Parameter | Symbol | Typical Estimation Methods | Biological Interpretation |
|---|---|---|---|
| Effective Population Size | \(N_e\) | Coalescent interval analysis, Bayesian sampling | Number of infected individuals contributing to next generation |
| Time to Most Recent Common Ancestor | \(T_{MRCA}\) | Node height in time-scaled phylogeny | Time since lineages shared a common ancestor |
| Mutation Rate | \(\mu\) | Molecular clock calibration, external references | Rate of genetic change over time |
| Transmission Bottleneck Size | \(N_b\) | Diversity comparison pre/post transmission | Number of founding variants in new infection |
| Transmission Time | \(t_{trans}\) | Markov time-slice model, coalescent inference | Time when transmission occurred between hosts |

Performance Evaluation of Inference Methods

Simulation studies have evaluated the performance of different methods for inferring transmission times from viral phylogenies. The Markov time-slice model has demonstrated accurate and relatively narrow estimates of transmission time across simulations with varying numbers of transmitted lineages, different transmission times relative to the source's infection, and different sampling times relative to transmission [2]. Performance challenges emerge when transmission occurs long after the source was infected and when sampling occurs long after transmission, making estimation difficult by any method [2].

Comparative analyses show that the Markov time-slice model and coalescent models perform comparably despite utilizing different information within phylogenetic trees, suggesting potential for future methods that more fully integrate both topological and temporal information [2]. In applications to real HIV transmission pairs, inferred transmission times generally align with known case histories, though uncertainty in time-calibration of phylogenies often contributes more to estimation uncertainty than the model inference itself [2].

Research Reagent Solutions

Table 3: Essential Research Reagents and Computational Tools for Coalescent Analysis

| Reagent/Tool | Function | Application Context |
|---|---|---|
| High-Throughput Sequencer | Generate viral sequence data from patient samples | Obtain genetic sequences for phylogenetic analysis |
| Multiple Sequence Alignment Software | Align viral sequences for comparison | Prepare data for tree building |
| Bayesian Evolutionary Analysis Sampling Trees (BEAST) | Bayesian MCMC analysis of molecular sequences | Time-scaled phylogeny reconstruction with coalescent models [1] |
| BPP | Multispecies coalescent analysis | Infer phylogeny and divergence times among populations [1] |
| CoaSim | Simulate genetic data under coalescent model | Method validation and power analysis [1] |
| GENOME | Coalescent-based whole-genome simulation | Large-scale genomic simulations [1] |
| IQ-TREE | Maximum likelihood phylogenetic inference | Fast tree estimation with model selection |
| R/ape package | Phylogenetic analysis and visualization | Tree manipulation and comparative analysis |

Evolutionary Relationships

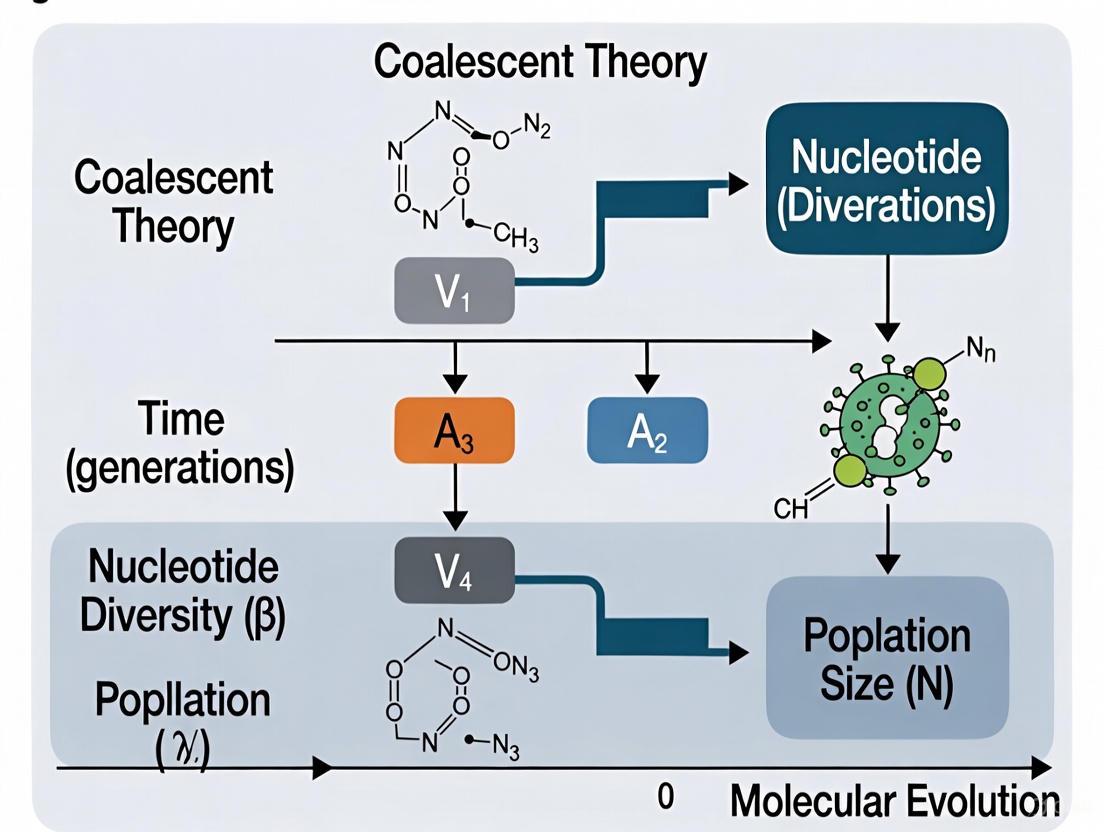

Backward-Time Coalescent Process

The diagram illustrates the fundamental concept of coalescent theory where contemporary sequences (S1-S5) are traced backward in time through a series of coalescence events. Each merging point represents a common ancestor, with the process continuing until reaching the most recent common ancestor (MRCA) of all samples. This backward-time perspective forms the basis for estimating evolutionary parameters and transmission histories from viral sequence data.

Applications in Viral Research and Drug Development

Coalescent-based methods provide powerful approaches for addressing critical questions in viral research and therapeutic development:

Transmission Network Characterization: By estimating transmission times and directions, coalescent methods help reconstruct transmission networks and identify factors influencing spread, informing targeted intervention strategies [2].

Transmission Circumstance Identification: Precise transmission timing can reveal specific circumstances enabling transmission, such as in mother-to-child transmission pairs where timing relative to birth informs intervention strategies [2].

Evolutionary Rate Estimation: Comparison of evolutionary rates within and between hosts provides insights into selective pressures and adaptation mechanisms relevant to drug resistance development [2] [3].

Forensic Applications: In legal contexts, establishing whether transmission occurred at specific times can determine criminal responsibility, particularly when transmission timing relative to knowledge of infection status is relevant [2].

Vaccine and Therapeutic Design: Understanding the rate and patterns of viral evolution informs the design of vaccines and therapeutics that anticipate viral escape mutations and maintain efficacy over time.

The backward-time perspective of coalescent theory continues to provide fundamental insights into viral evolution and transmission dynamics, with ongoing methodological refinements enhancing the precision and applicability of these approaches for public health and clinical practice.

Kingman's coalescent provides a powerful mathematical framework for modeling the genealogical history of biological samples, forming a cornerstone for modern population genetic analysis. This technical guide details its core principles, from foundational mathematical assumptions to its critical role in interpreting viral sequence data. By offering a stochastic process that traces ancestral lineages backward in time to their most recent common ancestor (MRCA), the coalescent enables researchers to make inferences about population history, demographic parameters, and evolutionary dynamics. The model's application to viral phylogenies has revolutionized our understanding of rapidly evolving pathogens, providing insights into epidemic origins, transmission dynamics, and effective population sizes.

Coalescent theory represents a mathematical model describing how genetic lineages sampled from a population merge as we trace them backward in time to their common ancestors [1]. Unlike forward-time population genetics models, the coalescent adopts a retrospective approach, focusing on the ancestral process of a sample rather than the entire population. This conceptual reversal provides extraordinary computational efficiency and analytical tractability, particularly for large sample sizes.

John Kingman's seminal work in the early 1980s established the mathematical foundation for this approach, though several research groups developed similar concepts independently during this period [1]. The coalescent has since been extended in numerous ways to accommodate recombination, natural selection, population structure, and various demographic scenarios. Nevertheless, the standard Kingman coalescent remains the fundamental reference model against which more complex variations are measured.

In viral phylogenetics, the coalescent has proven particularly valuable due to the rapid evolutionary rates of viruses, which create measurable genetic diversity within epidemiologically relevant timescales [4]. The concomitant occurrence of ecological and evolutionary processes in viruses means that neutral genetic variation can effectively track both evolutionary relationships and population dynamics, creating a record of past evolutionary events that researchers can reconstruct using coalescent-based methods.

Mathematical Foundations

The Basic Coalescent Process

The Kingman coalescent models the ancestry of a sample of n genes (or n haploid individuals) as a backward-looking stochastic process in which lineages merge through pairwise coalescence events until reaching the MRCA of the entire sample. The process operates in continuous time, with coalescence times following exponential distributions that depend on the number of ancestral lineages present.

For a sample of size n, the process begins with n lineages and moves backward in time. When k lineages remain, the waiting time until the next coalescence event follows an exponential distribution with rate parameter \(\binom{k}{2} = \frac{k(k-1)}{2}\). This rate reflects the number of possible pairs that could coalesce when k lineages are present.

The probability density function for the waiting time \(t_k\) when k lineages are present is:

\[ f(t_k) = \frac{k(k-1)}{2} e^{-\frac{k(k-1)}{2} t_k} \]

After each coalescence event, the number of lineages decreases by one, and the process continues until only a single lineage remains (the MRCA of the entire sample).
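The process described above is straightforward to simulate. The sketch below is a minimal illustration in coalescent time units (rate k(k-1)/2 while k lineages remain); the function name, the seed, and the nested-parentheses labels are arbitrary choices made for this example.

```python
import random

def simulate_kingman(n, rng):
    """Simulate one Kingman genealogy for n samples.

    Returns a list of (time, merged_label) events in coalescent time units,
    ordered from the most recent coalescence back to the MRCA.
    """
    lineages = [str(i) for i in range(1, n + 1)]
    t = 0.0
    events = []
    while len(lineages) > 1:
        k = len(lineages)
        # Exponential waiting time with rate C(k, 2) = k(k-1)/2.
        t += rng.expovariate(k * (k - 1) / 2)
        # Every pair of lineages is equally likely to coalesce.
        i, j = sorted(rng.sample(range(k), 2))
        merged = f"({lineages[i]},{lineages[j]})"
        lineages.pop(j)  # remove the larger index first
        lineages.pop(i)
        lineages.append(merged)
        events.append((t, merged))
    return events

rng = random.Random(1)
n = 10
example = simulate_kingman(n, rng)

# Average time to the MRCA over many replicates, compared with the sum of
# the expected waiting times, sum over k of 2 / (k(k-1)) = 2(1 - 1/n).
reps = 20_000
mean_tmrca = sum(simulate_kingman(n, rng)[-1][0] for _ in range(reps)) / reps
expected = 2 * (1 - 1 / n)
print(example[-1][1])
print(f"simulated mean TMRCA {mean_tmrca:.3f}, expected {expected:.3f}")
```

Each replicate produces n - 1 coalescence events, and the simulated mean time to the MRCA should lie close to 2(1 - 1/n) coalescent units.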

Time Scaling and Effective Population Size

In natural populations, time is measured in generations, and the coalescent rate is influenced by population size. For a diploid population with constant effective size \(N_e\), the waiting time while k lineages remain has an exponential distribution with rate \(\binom{k}{2} / (2N_e)\) when time is measured in generations [1], so that \(E[t_k] = \frac{4N_e}{k(k-1)}\).

The expected time to the MRCA for a sample of size n is the sum of these expected waiting times, which telescopes:

\[ E[T_{MRCA}] = \sum_{k=2}^{n} E[t_k] = 4N_e \left(1 - \frac{1}{n}\right) \]

For n = 2 this reduces to \(2N_e\) generations, the expected pairwise coalescence time.

The total expected tree length (sum of all branch lengths) is:

\[ E[L_n] = \sum_{k=2}^{n} k \cdot E[t_k] = 4N_e \sum_{k=1}^{n-1} \frac{1}{k} \approx 4N_e (\ln(n) + \gamma) \]

where \(\gamma \approx 0.577\) is the Euler-Mascheroni constant and the approximation holds for large n.

The Coalescent as a Limit Process

Kingman's coalescent emerges as a limit process for a broad class of population models as the population size \(N_e\) approaches infinity, with time measured in units of \(2N_e\) generations [5]. This rescaling of time by population size is fundamental to coalescent theory, and the convergence holds provided that the variance in offspring number is not too large.

Mathematically, this limit process requires that:

- The population is panmictic (random mating)

- Generations are either discrete (as in the Wright-Fisher model) or overlapping (as in the Moran model)

- The variance of offspring number, \(\sigma^2\), converges to a finite constant as \(N \to \infty\)

- The sample size n is much smaller than \(N_e\)

Under these conditions, the ancestral process converges to Kingman's coalescent as \(N_e \to \infty\), with time rescaled in units of \(2N_e\) generations [5].
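This convergence can be checked with a small discrete-generation simulation. The sketch below assumes a Wright-Fisher population of `n_copies` gene copies (corresponding to 2N_e in the notation above) in which every lineage picks its parent uniformly at random each generation. All names and parameter values are illustrative.

```python
import random

def wright_fisher_tmrca(n_sample, n_copies, rng):
    """Generations back to the MRCA of n_sample lineages in a
    Wright-Fisher population of n_copies gene copies."""
    lineages = n_sample
    generations = 0
    while lineages > 1:
        # Each lineage draws a parent copy; lineages sharing a parent merge.
        parents = {rng.randrange(n_copies) for _ in range(lineages)}
        lineages = len(parents)
        generations += 1
    return generations

rng = random.Random(7)
n_sample, n_copies, reps = 5, 200, 4000

mean_generations = sum(
    wright_fisher_tmrca(n_sample, n_copies, rng) for _ in range(reps)
) / reps

# Rescale time in units of n_copies generations and compare with the
# Kingman expectation 2(1 - 1/n) in coalescent units.
scaled_mean = mean_generations / n_copies
kingman_expectation = 2 * (1 - 1 / n_sample)
print(f"scaled mean {scaled_mean:.3f} vs Kingman {kingman_expectation:.3f}")
```

Because the sample (5) is much smaller than the population (200 copies), simultaneous mergers are rare and the rescaled mean should sit close to the Kingman value.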

Table 1: Key Mathematical Properties of Kingman's Coalescent

| Property | Mathematical Expression | Biological Interpretation |
|---|---|---|
| Coalescence rate with k lineages (coalescent units) | \(\frac{k(k-1)}{2}\) | Rate of merging increases quadratically with k |
| Waiting time distribution (coalescent units) | \(t_k \sim \text{Exp}\left(\frac{k(k-1)}{2}\right)\) | Higher coalescence rates lead to shorter expected waiting times |
| Expected time to MRCA | \(4N_e(1 - \frac{1}{n})\) generations | MRCA time approaches \(4N_e\) generations for large samples |
| Total tree length | \(4N_e \sum_{k=1}^{n-1} \frac{1}{k}\) generations | Sum of all branch lengths in the genealogy |
| Time scaling | \(1 \text{ unit} = 2N_e \text{ generations}\) | Natural timescale for the coalescent process |

Key Assumptions and Their Implications

The standard Kingman coalescent rests upon several simplifying assumptions that make the model mathematically tractable but also limit its direct application to real populations. Understanding these assumptions is crucial for proper application and interpretation of coalescent-based analyses.

Neutral Evolution

The coalescent assumes selective neutrality at the studied locus, meaning all genetic variants have equal fitness and their dynamics are governed solely by genetic drift [1]. This assumption implies that:

- Mutation rates are constant across lineages

- No natural selection affects the studied variants

- Allele frequency changes result from random reproduction rather than adaptive advantage

In viral phylogenies, the neutral evolution assumption is frequently violated due to immune pressure, antiviral treatments, and other selective forces. Methods have been developed to detect and account for such deviations, but the standard coalescent remains a valuable null model for hypothesis testing.

Constant Population Size

The basic coalescent model assumes a constant effective population size (\(N_e\)) through time [1]. This assumption makes the coalescent process time-homogeneous, with rates depending only on the number of lineages. In reality, most natural populations experience size fluctuations, with important implications for coalescent analyses:

- Population expansions concentrate coalescence events deeper in the past, when the population was smaller, producing star-like genealogies with long terminal branches

- Population bottlenecks cluster coalescence events around the time of the bottleneck

- Seasonal fluctuations create complex coalescence patterns

For viruses, population sizes often change dramatically during transmission events and epidemic waves, making the constant population size assumption particularly problematic.
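One standard way to relax the constant-size assumption is to rescale time by the changing population size. The sketch below assumes exponential growth forward in time, so that the size t generations in the past is `two_ne_now * exp(-r * t)`, and uses the pairwise rate convention C(k,2) / (2N_e(t)). The parameter names and values are hypothetical, and the closed-form inversion applies only to this exponential model.

```python
import math
import random

def wait_from_unit_exponential(e, k, t0, two_ne_now, r):
    """Waiting time (generations) from time t0 until the next coalescence,
    obtained by inverting the cumulative coalescent intensity for a unit
    exponential draw e, with k lineages and growth rate r."""
    pairs = k * (k - 1) / 2
    if r == 0:
        return e * two_ne_now / pairs  # constant-size special case
    return math.log1p(e * two_ne_now * r * math.exp(-r * t0) / pairs) / r

def simulate_coalescence_times(n, two_ne_now, r, rng):
    """Coalescence times, measured backward from the present."""
    t = 0.0
    times = []
    for k in range(n, 1, -1):
        t += wait_from_unit_exponential(rng.expovariate(1.0), k, t, two_ne_now, r)
        times.append(t)
    return times

# Same unit-exponential draw under constant size and under growth.
constant_wait = wait_from_unit_exponential(1.0, 2, 0.0, 1000.0, 0.0)
growth_wait = wait_from_unit_exponential(1.0, 2, 0.0, 1000.0, 0.01)
print(constant_wait, round(growth_wait, 2))

rng = random.Random(3)
print(simulate_coalescence_times(5, 1000.0, 0.01, rng))
```

Under growth the final pair coalesces far sooner than in a constant population of the present-day size, because the population shrinks going backward in time and coalescence events pile up near the start of the expansion.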

Random Mating and Panmixia

The model assumes panmixia (random mating) within the population, meaning:

- All individuals have equal probability of mating with any other individual

- There is no population structure or subdivision

- Lineages are equally likely to coalesce regardless of their spatial or social origin

Violations of this assumption occur in many viral populations due to spatial structure, host specialization, or transmission networks. Structured coalescent models have been developed to address these limitations.

No Recombination

The standard coalescent assumes no recombination within the studied genomic region [1]. This means:

- The entire region shares a single genealogical history

- The genealogy can be represented as a bifurcating tree

- All sites in the sequence have correlated ancestral histories

For viruses with RNA genomes or high recombination rates, this assumption is frequently violated, necessitating the use of ancestral recombination graphs (ARGs) that can model conflicting genealogical histories across the genome [6].

Table 2: Key Assumptions of Kingman's Coalescent and Their Implications

| Assumption | Biological Meaning | Consequences of Violation |
|---|---|---|
| Selective neutrality | All variants have equal fitness | Selection distorts branch lengths and tree shapes |
| Constant population size | Effective population size stable over time | Demographic history affects coalescence rates |
| Random mating (panmixia) | No population structure | Subdivision creates longer coalescence times |
| No recombination | Complete linkage across locus | Genealogies vary across the genome |
| Large population size | \(N_e \to \infty\) with time rescaled | Finite size effects in small populations |
| Non-overlapping generations | Discrete reproduction cycles | Complex coalescence rates in age-structured populations |

Visualizing the Coalescent Process

Kingman's Coalescent Process

The diagram illustrates the fundamental structure of Kingman's coalescent, showing how four sampled lineages merge backward in time through pairwise coalescence events until reaching their most recent common ancestor (MRCA). Each coalescence event reduces the number of lineages by one, with waiting times between events that follow exponential distributions depending on the number of lineages present. This binary tree structure emerges from the assumption that only two lineages coalesce at a time, which holds when the variance in offspring number is not too large [5].

Applications in Viral Phylogenetics

Estimating Evolutionary Parameters

The coalescent provides a powerful framework for estimating key parameters in viral evolution, including:

- Mutation rates: Derived from branch lengths under a molecular clock assumption

- Effective population size: Inferred from the rate of coalescence

- Growth rates: Estimated from changes in coalescence rates through time

In Bayesian phylogenetic analyses, the coalescent serves as a prior distribution on tree topologies and branch lengths, enabling joint inference of phylogeny and population dynamics [4]. For rapidly evolving viruses like HIV and influenza, this approach has revealed complex demographic histories including exponential growth, bottlenecks, and seasonal fluctuations.
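The link between coalescence rate and effective population size can be illustrated with a simple method-of-moments estimator, in the spirit of a classic skyline plot rather than the Bayesian approach described above. The sketch assumes the rate convention C(k,2) / (2N_e) per generation, so each interval with k lineages and duration w gives the estimate 2N_e = w * C(k,2). The interval durations are made up for illustration.

```python
def skyline_estimates(waiting_times):
    """Per-interval estimates of 2*Ne from coalescent interval durations.

    waiting_times[i] is the duration (in generations) of the interval
    during which k = n - i lineages were present, for n sampled lineages.
    """
    n = len(waiting_times) + 1
    estimates = []
    for i, w in enumerate(waiting_times):
        k = n - i
        pairs = k * (k - 1) / 2
        # E[w] = 2*Ne / C(k, 2), so w * C(k, 2) estimates 2*Ne.
        estimates.append(w * pairs)
    return estimates

# Hypothetical intervals for n = 4 samples: durations with 4, 3, 2 lineages.
intervals = [50.0, 100.0, 400.0]
two_ne_estimates = skyline_estimates(intervals)
print(two_ne_estimates)
```

Single-interval estimates are very noisy, which is one reason practical analyses pool intervals or place a smoothing prior on the population-size trajectory.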

Dating Evolutionary Events

Coalescent methods enable researchers to estimate the timing of key evolutionary events in viral history, including:

- Origin dates of epidemics: Using molecular clock models with coalescent priors

- Divergence times between viral strains: From the depth of coalescence events

- Rate of evolutionary change: By comparing genetic distance with time calibration points

The application to HIV research has been particularly illuminating, with coalescent analyses helping to date the zoonotic transfers of SIVcpz from chimpanzees to humans that gave rise to HIV-1 groups M and N [4]. Similar approaches have shed light on the origins of SARS-CoV-2 and seasonal influenza variants.
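The idea of comparing genetic distance with sampling time can be shown with a root-to-tip regression, a simpler technique than the coalescent-prior methods discussed above. In the sketch below the sampling dates and distances are invented and lie exactly on a line. With real data the fit is noisy and the tips are not independent, so this is an exploratory check rather than a formal estimate.

```python
def root_to_tip_regression(dates, distances):
    """Ordinary least-squares fit of root-to-tip distance on sampling date.

    Returns (rate, root_date): the slope in substitutions/site/year and the
    date at which the fitted line crosses zero distance.
    """
    n = len(dates)
    mean_x = sum(dates) / n
    mean_y = sum(distances) / n
    sxx = sum((x - mean_x) ** 2 for x in dates)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(dates, distances))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    return slope, -intercept / slope

# Hypothetical data: sampling years and root-to-tip distances (subs/site).
dates = [2000.0, 2005.0, 2010.0, 2015.0]
distances = [0.02, 0.03, 0.04, 0.05]

rate, root_date = root_to_tip_regression(dates, distances)
print(f"rate = {rate:.4f} subs/site/year, estimated root date = {root_date:.1f}")
```

The slope plays the role of the evolutionary rate and the x-intercept gives a rough date for the root of the tree.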

Phylodynamic Analysis

Phylodynamics represents the unification of phylogenetic analysis with epidemiological models, with the coalescent serving as a bridge between these traditionally separate fields [4]. This integrated approach allows researchers to:

- Infer transmission dynamics from genetic sequences

- Estimate basic reproduction numbers (R₀) from genealogical patterns

- Reconstruct spatial spread from phylogenies with geographic metadata

- Identify superspreading events from imbalanced tree structures

The phylodynamic framework has been successfully applied to numerous viral pathogens, providing insights that complement traditional epidemiological surveillance.

Methodological Approaches and Computational Tools

Bayesian Coalescent Inference

Modern coalescent analyses typically employ a Bayesian framework with Markov Chain Monte Carlo (MCMC) sampling to estimate posterior distributions of parameters of interest [4]. The general form of the Bayesian coalescent model is:

\[ P(\theta, T, M | D) = \frac{P(D | T, M) P(T | \theta) P(\theta) P(M)}{\int P(D | T, M) P(T | \theta) P(\theta) P(M) \, d\theta \, dT \, dM} \]

where:

- \(P(\theta, T, M | D)\) is the posterior probability of parameters, tree, and model

- \(P(D | T, M)\) is the phylogenetic likelihood of sequence data given tree and substitution model

- \(P(T | \theta)\) is the coalescent prior on tree topology and branch lengths

- \(P(\theta)\), \(P(M)\) are priors on population parameters and substitution model

This framework enables joint inference of evolutionary relationships, divergence times, and population history while accounting for uncertainty in all estimated parameters.
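The coalescent prior term P(T | θ) has a simple closed form in the constant-size case. The sketch below is a minimal illustration, not the implementation used by any particular package: it takes θ to be 2N_e, measures time in generations, and evaluates the log density of a labeled genealogy from its interval durations. The durations are hypothetical.

```python
import math

def log_coalescent_density(waiting_times, two_ne):
    """Log density of a labeled genealogy under the constant-size coalescent.

    waiting_times[i] is the duration (generations) with k = n - i lineages.
    Each interval contributes log(1 / two_ne) for the specific pair that
    merges, minus C(k, 2) * w / two_ne for the time without any coalescence.
    """
    n = len(waiting_times) + 1
    logp = 0.0
    for i, w in enumerate(waiting_times):
        k = n - i
        pairs = k * (k - 1) / 2
        logp += -math.log(two_ne) - pairs * w / two_ne
    return logp

# Hypothetical interval durations for n = 5 samples (k = 5, 4, 3, 2).
waits = [20.0, 45.0, 130.0, 610.0]

# Grid search for the value of 2*Ne that maximizes the density, compared
# with the analytic maximum sum(C(k,2) * w) / (n - 1).
best_two_ne = max(range(100, 1001), key=lambda m: log_coalescent_density(waits, m))
analytic_mle = sum(
    (5 - i) * (4 - i) / 2 * w for i, w in enumerate(waits)
) / len(waits)
print(best_two_ne, analytic_mle)
```

In a full Bayesian analysis this term is combined with the phylogenetic likelihood and the priors, and the tree itself is sampled by MCMC rather than held fixed as it is here.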

Software Implementations

Table 3: Computational Tools for Coalescent Analysis

| Software | Primary Function | Key Features | Application in Viral Research |
|---|---|---|---|
| BEAST/BEAST2 | Bayesian evolutionary analysis | Coalescent models, molecular dating, phylodynamics | HIV origins, influenza evolution, SARS-CoV-2 spread |
| GENOME | Coalescent simulation | Whole-genome simulation, complex demography | Method development, validation studies |
| IBDSim | Isolation by distance simulation | Spatial structure, realistic dispersal | Geographic spread of viral variants |
| IMa/IMa2 | Isolation with Migration analysis | Divergence times, migration rates | Cross-species transmission, host adaptation |
| tskit | Tree sequence processing | ARG manipulation, efficient computation | Large-scale genomic analysis, simulation |

Research Reagent Solutions for Coalescent Analysis

BEAST 2 - Bayesian evolutionary analysis software package providing a comprehensive platform for coalescent-based inference with a wide range of population models, molecular clock models, and data visualization tools [1].

Molecular clock models - Statistical frameworks for translating genetic divergence into time estimates, enabling the dating of evolutionary events from sequence data calibrated with sampling dates or known historical events [4].

Ancestral recombination graph (ARG) methods - Computational approaches for inferring and analyzing complex genealogies with recombination, implemented in tools such as tskit, which provides efficient processing of tree sequences [6].

Coalescent simulation algorithms - Computational methods for generating sample genealogies under various demographic scenarios, including the topology-free sampling approach that efficiently generates specific portions of genealogies without full simulation [7].

Relaxed phylogenetics models - Methodological frameworks that allow evolutionary rates to vary across branches, accommodating rate heterogeneity while maintaining temporal calibration for divergence time estimation [4].

Limitations and Model Extensions

Violations of Key Assumptions

The standard Kingman coalescent makes several simplifying assumptions that are frequently violated in natural populations, particularly viral pathogens:

High Variance in Offspring Number - When the variance in reproductive success is large, as in many marine organisms or during viral superspreading events, the ancestral process can deviate significantly from Kingman's coalescent [5]. In such cases, the genealogy may feature multiple mergers of ancestral lineages rather than simple pairwise coalescence, requiring more general Λ-coalescent processes.

Population Structure - Real populations often exhibit spatial, age, or social structure that violates the panmixia assumption. Recent work has extended coalescent theory to accommodate arbitrary population structures while maintaining the core conceptual framework [8].

Selection - The neutral assumption is frequently violated in viral populations subject to immune pressure, antiviral treatments, or other selective forces. While the standard coalescent does not incorporate selection, various extensions have been developed to model selected loci alongside neutral background variation.

Beyond Kingman: Extended Coalescent Models

Λ-Coalescents - These generalized coalescent processes allow multiple lineages to merge simultaneously in a single coalescence event, accommodating scenarios with high variance in offspring number or selective sweeps [1].

Ancestral Recombination Graphs (ARGs) - For genomic regions with recombination, ARGs provide a comprehensive representation of genealogical history across the genome, capturing how different genomic regions have different evolutionary histories [6].

Structured Coalescents - These models incorporate population subdivision, allowing lineages to reside in different demes with migration between them. Recent work has generalized this approach to arbitrary population structures [8].

Phylodynamic Models - Integrating epidemiological dynamics with coalescent processes, these frameworks connect population genetic patterns with the ecological processes driving transmission and population size changes [4].

Kingman's coalescent provides an elegant mathematical foundation for understanding the genealogical processes that shape genetic variation in populations. Its key assumptions of neutrality, constant population size, panmixia, and no recombination make it analytically tractable while serving as an important null model for hypothesis testing. For viral phylogenies, the coalescent has been instrumental in dating evolutionary events, estimating population parameters, and reconstructing transmission dynamics.

Despite its simplifying assumptions, the coalescent has proven remarkably robust and extensible, with numerous generalizations developed to accommodate real-world complexities. The ongoing development of more efficient computational methods, including algorithms for targeted genealogy sampling [7] and generalized representations of ancestral processes [6] [8], continues to expand the utility of coalescent theory for analyzing viral sequence data.

As genomic data sets grow in size and complexity, the coalescent remains an essential tool for making inferences about evolutionary history from patterns of genetic variation. Its application to viral pathogens has provided unique insights into the origins, spread, and dynamics of epidemics, with important implications for public health interventions and pandemic preparedness.

Coalescent theory provides a powerful mathematical framework for reconstructing population history from genetic data. This technical guide examines the fundamental relationship between coalescent waiting times, their exponential distributions, and effective population size ($N_e$). We explore how this relationship forms the basis for inferring past population dynamics, with particular emphasis on applications in viral phylogenetics. The theory enables researchers to estimate timing of evolutionary events, population size changes, and demographic histories from molecular sequence data, making it indispensable for understanding viral evolution and spread.

Coalescent theory is a retrospective model that traces genetic lineages backward in time to their most recent common ancestor (MRCA). Originally developed by Kingman and others in the 1980s, it has become the cornerstone of population genetic analysis [1] [9]. The theory operates under a random mating model assuming no recombination, no natural selection, and no gene flow or population structure, meaning each genetic variant is equally likely to have been passed from one generation to the next [1]. In viral phylogenetics, coalescent methods enable researchers to estimate population size changes, divergence times, and evolutionary rates from pathogen genetic sequences.

The model examines how alleles sampled from a population may have originated from a common ancestor, looking backward in time and merging alleles into single ancestral copies in coalescence events [1]. The key insight is that the waiting times between these coalescence events follow exponential distributions whose parameters are determined by the effective population size. This fundamental relationship provides a powerful framework for inferring past demographic changes from contemporary genetic data.

Mathematical Foundations

Coalescent Waiting Times under Constant Population Size

Under the constant population size model, the waiting times between coalescent events are independent exponentially distributed random variables. Specifically, the time $T_k$ during which there are exactly $k$ lineages follows an exponential distribution:

$$T_k \sim \text{Exp}\left(\frac{\binom{k}{2}}{N}\right)$$

where $N$ is the effective population size, and $\binom{k}{2} = \frac{k(k-1)}{2}$ represents the number of possible pairwise coalescences when $k$ lineages are present [10]. In discrete generations, for a pair of lineages in a diploid population carrying $2N_e$ gene copies (which corresponds to $N = 2N_e$ in the expression for $T_k$), the probability that coalescence occurs exactly $t$ generations back is geometric:

$$P_c(t) = \left(1-\frac{1}{2N_e}\right)^{t-1}\left(\frac{1}{2N_e}\right)$$

For large values of $N_e$, this distribution is well approximated by the exponential distribution:

$$P_c(t) = \frac{1}{2N_e}e^{-\frac{t-1}{2N_e}}$$

This exponential distribution has both expected value and standard deviation equal to $2N_e$ generations [1]. Although the expected time to coalescence is $2N_e$, actual coalescence times exhibit substantial variation.
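As a quick numerical check on the constant-size result, the following minimal sketch draws the waiting times $T_k$ from their exponential distributions and compares the simulated mean time to the MRCA with the closed-form sum $\sum_k N/\binom{k}{2} = 2N(1 - 1/n)$. The function names and parameter values are illustrative assumptions, and $N$ is in the same units as in the expression for $T_k$.

```python
import random
from math import comb

def expected_tmrca(n, N):
    """Sum of E[T_k] = N / C(k,2) for k = n..2; equals 2N(1 - 1/n)."""
    return sum(N / comb(k, 2) for k in range(2, n + 1))

def simulate_tmrca(n, N, rng):
    """Draw one time to the MRCA by summing independent exponential waits."""
    total = 0.0
    for k in range(n, 1, -1):
        rate = comb(k, 2) / N          # coalescent rate while k lineages remain
        total += rng.expovariate(rate)
    return total

rng = random.Random(1)
n, N = 5, 1000
draws = [simulate_tmrca(n, N, rng) for _ in range(20000)]
print(expected_tmrca(n, N), sum(draws) / len(draws))
```

The wide spread of individual draws around the mean illustrates why a single genealogy carries limited information about $N$.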

Varying Population Size Models

When population size varies as a function of time ($N(t)$), the waiting times to coalescence are no longer independent. For $k \in [2,n-1]$, $T_k$ depends on all previous coalescent times from $T_{k+1}$ to $T_n$ [10]. The distribution of coalescent times under varying population size can be derived using the approach of Polanski et al. (2003), which expresses the density function of coalescent times as linear combinations of a family of functions $(q_j)_{2\leq j\leq n}$:

$$q_j(t) = \frac{\binom{j}{2}}{N(t)} \exp\left(-\binom{j}{2} \int_0^t \frac{1}{N(\sigma)} d\sigma\right)$$

For $k \in [2,n]$, the relationship between the density function $\pi_k$ of the cumulative coalescent time $V_k$ and $q_j$ is:

$$\pi_k(t) = \sum_{j=k}^n A_{jk} q_j(t)$$

where the coefficients $A_{jk}$ are defined as:

$$A_{jk} = \frac{\prod_{l=k, l\neq j}^n \binom{l}{2}}{\prod_{l=k, l\neq j}^n \left[\binom{l}{2} - \binom{j}{2}\right]}, \text{ for } k \leq j$$

with $A_{nn} = 1$ and $A_{jk} = 0$ for $k > j$ [10]. This formulation enables the estimation of past population sizes from distributions of coalescent times.
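The coefficients $A_{jk}$ can be evaluated exactly with rational arithmetic. The sketch below is a minimal illustration (the function name `coef_A` is an assumption, not taken from [10]); for a small sample it prints each row of coefficients and their sum, which equals 1 in the cases shown, as expected when both $\pi_k$ and the $q_j$ are proper densities.

```python
from fractions import Fraction
from math import comb

def coef_A(n, j, k):
    """Coefficient A_{jk} linking pi_k to q_j for a sample of size n."""
    if k > j:
        return Fraction(0)
    num, den = Fraction(1), Fraction(1)
    for l in range(k, n + 1):
        if l == j:
            continue                    # the products skip l = j
        num *= comb(l, 2)
        den *= comb(l, 2) - comb(j, 2)
    return num / den

n = 4
for k in range(2, n + 1):
    row = {j: coef_A(n, j, k) for j in range(k, n + 1)}
    print(k, row, sum(row.values()))
```

Note that the coefficients alternate in sign, which is one reason exact fractions are used here instead of floating point.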

Table 1: Key Parameters in Coalescent Theory

| Parameter | Symbol | Description | Biological Significance |

|---|---|---|---|

| Effective population size | $N_e$ | The size of an idealized population that would experience genetic drift at the same rate | Determines rate of coalescence; proportional to genetic diversity |

| Coalescent rate | $\binom{k}{2}/N$ | Rate at which k lineages coalesce to k-1 lineages | Increases with more lineages and smaller populations |

| Waiting time | $T_k$ | Time during which exactly k lineages exist | Follows exponential distribution under constant size |

| Cumulative time | $V_k$ | Sum of times from present to coalescent event | $V_k = T_n + \cdots + T_k$ |

Quantitative Framework

Estimating Effective Population Size from Coalescent Times

The relationship between coalescent time distributions and effective population size enables the estimation of $N_e$ from genetic data. The Population Size Coalescent-times-based Estimator (Popsicle) provides an analytic method for solving this problem by inverting the relationship between coalescent time distributions and population size [10]. The theorem states that:

$$q_j(t) = \sum_{k=j}^n B_{kj} \pi_k(t)$$

where the coefficients $B_{kj}$ are defined as:

$$B_{kj} = \frac{\binom{j}{2}}{\binom{k}{2}} \prod_{l=k+1}^n \left(1 - \frac{\binom{j}{2}}{\binom{l}{2}}\right), \text{ for } j \leq k < n$$

with $B_{kj} = \frac{\binom{j}{2}}{\binom{k}{2}}$ for $k = n$, and $B_{kj} = 0$ for $k < j$ [10]. The nonzero coefficients are all positive and take values between 0 and 1, providing numerical stability even for large sample sizes.
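To make the claim about the coefficient range concrete, the following minimal sketch evaluates $B_{kj}$ exactly for a small sample and checks that every nonzero coefficient lies in $(0, 1]$. The function name `coef_B` and the choice $n = 4$ are illustrative assumptions.

```python
from fractions import Fraction
from math import comb

def coef_B(n, k, j):
    """Coefficient B_{kj} expressing q_j through pi_k (zero when k < j)."""
    if k < j:
        return Fraction(0)
    value = Fraction(comb(j, 2), comb(k, 2))
    for l in range(k + 1, n + 1):       # empty product when k == n
        value *= 1 - Fraction(comb(j, 2), comb(l, 2))
    return value

n = 4
for j in range(2, n + 1):
    coeffs = [coef_B(n, k, j) for k in range(j, n + 1)]
    assert all(0 < c <= 1 for c in coeffs)
    print(j, coeffs, sum(coeffs))
```

Because every factor is a ratio of binomial coefficients below 1, no cancellation between large terms of opposite sign occurs, in contrast to the forward relationship.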

The integral of $q_j$ with respect to $t$ is:

$$Q_j(t) = \int_0^t q_j(u)du = 1 - \exp\left(-\binom{j}{2} \int_0^t \frac{1}{N(\sigma)} d\sigma\right)$$

From this, the population size can be obtained as:

$$N(t) = \frac{\binom{j}{2} (1 - Q_j(t))}{q_j(t)}$$

This theoretical correspondence enables the reduction of the full inference problem of population size from sequence data to a problem of estimating gene genealogies from sequence data [10].
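The inversion can be checked on a case where everything is available in closed form. The sketch below assumes a hypothetical exponentially growing population, $N(t) = N_0 e^{-rt}$ with $t$ measured backward from the present, builds $q_j$ and $Q_j$ from it, and confirms that $\binom{j}{2}(1 - Q_j(t))/q_j(t)$ returns $N(t)$. The parameter values and function names are illustrative only; in practice $q_j$ and $Q_j$ would be estimated from genealogies, not computed from a known $N(t)$.

```python
from math import comb, exp

N0, r = 1000.0, 0.01   # hypothetical present-day size and growth rate

def pop_size(t):
    """Assumed N(t), with t measured backward in time."""
    return N0 * exp(-r * t)

def cumulative_intensity(t):
    """Closed form of the integral of 1/N(s) from 0 to t for this model."""
    return (exp(r * t) - 1.0) / (N0 * r)

def q(j, t):
    c = comb(j, 2)
    return c / pop_size(t) * exp(-c * cumulative_intensity(t))

def Q(j, t):
    return 1.0 - exp(-comb(j, 2) * cumulative_intensity(t))

def recover_pop_size(j, t):
    """Invert the relationship: N(t) = C(j,2) * (1 - Q_j(t)) / q_j(t)."""
    return comb(j, 2) * (1.0 - Q(j, t)) / q(j, t)

for t in (0.0, 50.0, 200.0):
    print(t, pop_size(t), recover_pop_size(3, t))
```

For large $j$ or distant times, $1 - Q_j(t)$ becomes very small and the ratio loses floating-point precision, so small $j$ is used here.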

Impact of Sample Size and Genetic Loci

Simulation studies have demonstrated that the Popsicle approach performs well with genomic data (≥10,000 loci). Increasing sample size from 2 to 10 greatly improves inference of $N_e(t)$, while further increases yield modest improvements, even under exponential growth scenarios [10]. The method can handle large sample sizes with fast computations, making it suitable for whole-genome analysis.

Table 2: Factors Affecting Coalescent-Based Estimation Accuracy

| Factor | Impact on Estimation | Practical Recommendations |

|---|---|---|

| Number of loci | Higher numbers (≥10,000) significantly improve accuracy | Use genome-wide data when possible |

| Sample size | Diminishing returns beyond 10 samples | Balance sequencing costs with information gain |

| Recombination | Can introduce bias if unaccounted for | Use methods that explicitly model recombination |

| Mutation rate | Affects branch length estimation | Incorporate reliable mutation rate estimates |

| Preferential sampling | Biases population size estimates | Model sampling times explicitly [11] |

Methodological Approaches

Experimental Protocols for Coalescent-Based Inference

Protocol 1: Bayesian Coalescent Inference using BEAST

Sequence Alignment: Compile and align molecular sequence data, noting sampling dates for each sequence.

Model Selection: Choose appropriate substitution models (e.g., HKY, GTR) and clock models (strict vs. relaxed clock).

Tree Prior Specification: Select coalescent tree priors based on demographic assumptions (constant size, exponential growth, Bayesian skyline).

MCMC Configuration: Set up Markov Chain Monte Carlo parameters for posterior sampling, including chain length, burn-in, and sampling frequency.

Analysis Validation: Assess convergence by inspecting parameter traces in Tracer and confirming adequate effective sample sizes (ESS > 200).

This protocol implements the Bayesian framework for coalescent inference, jointly estimating genealogies, effective population size trajectories, and other parameters directly from sequence data [11].
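The ESS threshold in the validation step can be illustrated with a simple autocorrelation-based estimate. The sketch below is not the estimator used by any particular package; it assumes the common form ESS = n / (1 + 2 Σ ρ_lag), truncating the sum at the first non-positive autocorrelation, and compares an independent trace with a strongly autocorrelated one. Both traces are synthetic.

```python
import random

def effective_sample_size(trace):
    """ESS = n / (1 + 2 * sum of positive-lag autocorrelations).

    The sum stops at the first non-positive autocorrelation, a simple
    truncation rule that may differ from what a given package does.
    """
    n = len(trace)
    mean = sum(trace) / n
    centered = [x - mean for x in trace]
    var = sum(c * c for c in centered) / n
    if var == 0:
        return float(n)
    tau = 1.0
    for lag in range(1, n // 2):
        acov = sum(centered[i] * centered[i + lag] for i in range(n - lag)) / n
        rho = acov / var
        if rho <= 0:
            break
        tau += 2.0 * rho
    return n / tau

rng = random.Random(0)
iid = [rng.gauss(0, 1) for _ in range(2000)]
sticky = [0.0]
for _ in range(1999):
    sticky.append(0.9 * sticky[-1] + rng.gauss(0, 1))   # autocorrelated chain
print(effective_sample_size(iid), effective_sample_size(sticky))
```

A chain of the same length can therefore fall well short of the ESS > 200 guideline when successive samples are highly correlated, which is the usual reason for running longer chains or sampling less frequently.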

Protocol 2: Preferential Sampling Adjustment

Sampling Time Modeling: Model sampling times as an inhomogeneous Poisson process with log-intensity as a linear function of log-effective population size.

Covariate Incorporation: Include time-varying covariates (e.g., sequencing effort, seasonal effects) in the sampling model.

Joint Estimation: Simultaneously estimate genealogy, population size trajectory, and sampling model parameters.

Model Comparison: Use marginal likelihood estimation to compare models with and without preferential sampling adjustments.

This protocol addresses biases introduced when sampling intensity depends on population size, particularly relevant in surveillance data [11].
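The sampling model in the first step of this protocol can be sketched as follows. The code assumes an intensity of the form exp(β₀) · Nₑ(t)^β₁, which is one way to write a log-intensity that is linear in log-effective population size, and draws sampling times by thinning a homogeneous Poisson process. The trajectory, coefficient values, and function names are hypothetical.

```python
import random
from math import exp, log

def sampling_intensity(ne, beta0, beta1):
    """Sampling rate with log-intensity linear in log N_e."""
    return exp(beta0 + beta1 * log(ne))

def simulate_sampling_times(ne_func, beta0, beta1, t_max, rate_bound, rng):
    """Draw sampling times on [0, t_max] by thinning.

    rate_bound must be an upper bound on the intensity over the interval.
    """
    times, t = [], 0.0
    while True:
        t += rng.expovariate(rate_bound)       # candidate event time
        if t > t_max:
            return times
        lam = sampling_intensity(ne_func(t), beta0, beta1)
        if rng.random() < lam / rate_bound:    # accept with probability lam / bound
            times.append(t)

def ne_trajectory(t):
    """Hypothetical epidemic-like trajectory peaking at t = 50."""
    return 50.0 + 450.0 * exp(-((t - 50.0) / 15.0) ** 2)

rng = random.Random(42)
beta0, beta1 = log(0.002), 1.0
bound = sampling_intensity(500.0, beta0, beta1)   # trajectory maximum is 500
times = simulate_sampling_times(ne_trajectory, beta0, beta1, 100.0, bound, rng)
near_peak = sum(1 for t in times if 35.0 <= t <= 65.0)
print(len(times), near_peak)
```

With β₁ > 0 the simulated samples cluster around the peak, which is the dependence that biases population size estimates when sampling times are treated as fixed.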

Computational Implementation

Several software packages implement coalescent-based methods for population size estimation:

- BEAST and BEAST 2: Bayesian inference packages that use MCMC and support a wide range of coalescent models [1] [11]

- MSMC (Multiple Sequentially Markovian Coalescent): Uses information from multiple samples, focusing on the first coalescence event at each locus [10]

- PSMC (Pairwise Sequentially Markovian Coalescent): Works with a single diploid individual, leading to simple tree topologies without requiring phase information [10]

- DiCal (Demographic Inference using Composite Approximate Likelihood): Uses all coalescent events in gene genealogies but becomes computationally intensive with large sample sizes [10]

These tools enable researchers to estimate effective population size changes from genetic sequence data, with different trade-offs between computational efficiency, sample size, and model complexity.

Visualization Framework

Coalescent Process Diagram

Coalescent Process with Waiting Times

This diagram illustrates the coalescent process for a sample of 5 individuals, showing how lineages merge backward in time until reaching the most recent common ancestor (MRCA). Waiting times between coalescent events (T5 to T2) follow exponential distributions whose parameters depend on the number of lineages and effective population size.

Population Size Inference Workflow

Population Size Inference Methodology

This workflow outlines the process of estimating effective population size changes from genetic data, highlighting key steps from sequence data to final population trajectory. Dashed lines indicate optional inputs like preferential sampling adjustments and time-varying covariates that can improve estimation accuracy.

Research Applications

The Scientist's Toolkit

Table 3: Essential Research Reagents and Computational Tools

| Tool/Reagent | Function | Application Context |

|---|---|---|

| BEAST/BEAST 2 Software | Bayesian evolutionary analysis | Coalescent parameter estimation, divergence dating |

| MSMC/PSMC Algorithms | Demographic history reconstruction | Inferring population size changes from genomic data |

| High-Throughput Sequencer | Generate genomic sequences | Obtain genetic data for multiple individuals/loci |

| Molecular Clock Models | Estimate evolutionary rates | Calibrate phylogenetic trees to geological time |

| Sampling Time Data | Record collection dates | Account for preferential sampling in analyses |

| Time-Varying Covariates | External factors affecting sampling | Improve model accuracy in surveillance data |

Applications in Viral Phylogenetics

In viral phylogenetics, coalescent methods have been applied to track population dynamics of pathogens like seasonal influenza and Ebola virus. These approaches can estimate effective population size trajectories from viral genetic sequences, providing insights into epidemic growth patterns and response to interventions [11]. The methods are particularly valuable for:

- Epidemic Tracking: Monitoring changes in effective population size during outbreaks

- Intervention Assessment: Evaluating impact of control measures on pathogen population dynamics

- Evolutionary Studies: Understanding how selection and demography shape viral diversity

- Transmission Inference: Reconstructing spread pathways using phylogenetic approaches [12]

Recent advances incorporate preferential sampling models that explicitly account for dependence between sampling intensity and population size, reducing bias in estimates [11]. This is particularly important in surveillance data where sampling effort often increases during epidemic peaks.

Coalescent theory provides a robust mathematical framework for inferring past population dynamics from genetic data. The relationship between coalescent waiting times, exponential distributions, and effective population size enables researchers to reconstruct historical population size changes, estimate divergence times, and understand evolutionary processes. In viral phylogenetics, these methods have proven invaluable for tracking epidemic dynamics, assessing interventions, and understanding evolutionary patterns.

Recent methodological advances, including preferential sampling adjustments and improved computational algorithms, have enhanced the accuracy and applicability of coalescent-based inference. These developments continue to expand the utility of coalescent theory for addressing fundamental questions in evolutionary biology, epidemiology, and conservation genetics.

Connecting Epidemiological Models to Coalescent Rates at Equilibrium

Coalescent theory, a cornerstone of population genetics, provides a powerful mathematical framework for interpreting gene genealogies. In recent decades, its application has expanded into viral phylogenetics, enabling researchers to reconstruct demographic histories of infected individuals and estimate key epidemiological parameters such as the basic reproduction number (R₀) [13] [14]. Despite its widespread use, the mathematical expressions central to coalescent theory have rarely been explicitly linked to the structure of epidemiological models used to describe pathogen transmission dynamics [13] [14]. This gap is particularly notable given that epidemiological characteristics of the infection process—such as transmission heterogeneity, exposed periods, and infectious period distributions—fundamentally shape viral genealogies, patterns of genetic diversity, and rates of genetic drift [14].

This technical guide establishes a formal connection between epidemiological models and coalescent theory under the assumption of endemic equilibrium. We present a generalized framework for deriving rates of coalescence from common epidemiological model structures, synthesize quantitative findings into comparable formats, and provide practical methodologies for applying these concepts in viral phylodynamic research. By bridging these theoretical domains, we aim to enhance the rigor of phylodynamic inference and provide researchers with tools to better understand how epidemiological processes leave identifiable signatures in pathogen genetic data.

Theoretical Foundations

Core Principles of Coalescent Theory

Coalescent theory, largely developed by John Kingman, traces the ancestral lineages of gene copies backward in time to their most recent common ancestor (MRCA) [15]. The fundamental analytical result of the Kingman coalescent states that the rate at which any two lineages coalesce is inversely proportional to the effective population size (Nₑ). For a sample of i lineages, the rate of coalescence per generation is given by [14]:

$$\lambda_i = \binom{i}{2}\frac{1}{N_e} = \frac{i(i-1)}{2N_e}$$

This yields the probability density function for the time to first coalescence:

$$f(t) = \lambda_i e^{-\lambda_i t}$$

Time t in these expressions must be appropriately scaled. Rescaling time in units of Nₑ generations reduces the rate to $\binom{i}{2}$: for a Wright-Fisher model with generation time τ, time is then measured in units of N generations, while for a Moran model, time is measured in units of N/2 generations [14]. The difference in these scaling factors arises from differences in offspring distribution variances between models.

Table 1: Key Parameters in Coalescent Theory

| Parameter | Symbol | Description | Relationship to Coalescent Rate |

|---|---|---|---|

| Effective Population Size | Nₑ | Population size that yields appropriate time scaling in coalescent | Inversely proportional to coalescent rate |

| Mutation Rate | μ | Rate at which mutations accumulate | Determines genetic diversity alongside Nₑ |

| Generation Time | τ | Time between infection generations | Scales time in coalescent expressions |

| Basic Reproduction Number | R₀ | Average number of secondary infections | Affects Nₑ through offspring distribution |

| Offspring Variance | σ² | Variance in number of secondary infections | Directly affects Nₑ calculation |

The Relevance of Coalescent Theory to Viral Phylogenies

For rapidly evolving pathogens like RNA viruses, coalescent theory provides a critical link between genetic sequences and epidemiological dynamics [14]. Unlike traditional population genetic applications where the population size corresponds to the number of organisms, in infectious disease epidemiology the relevant "population" is the number of infected individuals, with "offspring" representing new infections [14]. This conceptual shift requires modifications to standard coalescent theory to account for the specific characteristics of pathogen transmission dynamics.

Phylodynamic inference leverages this connection to estimate epidemiological parameters from time-stamped viral sequences. Two primary approaches have emerged: (1) estimation of θ = 2Nₑμ (for contemporaneous samples) or θ = 2Nₑτ (for serially sampled data) to derive effective population sizes [14], and (2) direct estimation of epidemiological parameters like R₀ using demographic models with analytical solutions [14]. Recent advances have extended these approaches to nonlinear epidemiological models without analytical solutions, enabling more realistic phylodynamic inference [14] [16].

A General Framework for Connecting Epidemiological Models to Coalescent Rates

Deriving Coalescent Rates from Epidemiological Models

To derive coalescent rates appropriate for epidemiological models, we must consider four key quantities: (1) the relevant population size N (number of infected individuals), (2) the mean of the basic reproduction number distribution E[R₀], (3) the variance of this distribution var(R₀), and (4) the relevant generation time τ [14]. At endemic equilibrium, when each infected individual causes exactly one new infection on average (E[Ω] = 1), the variance in the number of new infections (the offspring distribution) can be expressed as [14]:

$$\sigma^2 = \frac{\text{var}(R_0)}{(E[R_0])^2} + 1$$

This relationship emerges because an individual with net reproductive rate r produces a Poisson-distributed number of offspring with mean and variance r. The effective population size Nₑ can then be calculated using the formula:

$$N_e = \frac{N}{\sigma^2}$$

The general rate of coalescence for a sample of i lineages in an epidemiological context therefore becomes, with time measured in generations of length τ:

$$\lambda_i = \binom{i}{2}\frac{\sigma^2}{N} = \frac{i(i-1)}{2N}\left[\frac{\text{var}(R_0)}{(E[R_0])^2} + 1\right]$$

This framework provides a powerful approach for deriving model-specific coalescent rates by incorporating the appropriate values of N, E[R₀], var(R₀), and τ for different epidemiological scenarios [14].
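A minimal sketch of this calculation follows. It uses the rate expression that appears in the table of model-specific rates, λ_i = i(i-1)[var(R₀)/(E[R₀])² + 1]/(2N), and assumes Nₑ = N/σ², which is consistent with that expression. Rates are per generation, and the function names and example values are illustrative.

```python
from math import comb

def offspring_variance(mean_r0, var_r0):
    """sigma^2 at equilibrium: var(R0) / E[R0]^2 + 1."""
    return var_r0 / mean_r0 ** 2 + 1.0

def effective_size(n_infected, sigma2):
    """N_e = N / sigma^2 (assumed relationship, see lead-in)."""
    return n_infected / sigma2

def coalescent_rate(i, n_infected, mean_r0, var_r0):
    """lambda_i = C(i,2) / N_e, per generation, for i sampled lineages."""
    sigma2 = offspring_variance(mean_r0, var_r0)
    return comb(i, 2) / effective_size(n_infected, sigma2)

# Same number of infected hosts, with and without transmission heterogeneity
print(coalescent_rate(5, 1000, 2.0, 0.0))   # homogeneous transmission
print(coalescent_rate(5, 1000, 2.0, 4.0))   # var(R0) equal to E[R0]^2
```

Only the ratio var(R₀)/(E[R₀])² enters the rate, so doubling both the mean and the standard deviation of R₀ leaves the coalescent rate unchanged.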

Diagram 1: Framework for deriving coalescent rates from epidemiological models

The Impact of Population Structure on Coalescent Models

Population structure significantly influences coalescent processes, particularly in epidemiological contexts where host populations may be divided by geography, infection stage, or other factors [16]. Structured coalescent models account for this by allowing coalescence rates to vary between subpopulations and incorporating migration events between them [16]. The multitype birth-death model provides an alternative approach for handling population structure, particularly during epidemic outbreaks when population sizes are changing rapidly [17].

Recent comparative studies indicate that for endemic diseases with stable population sizes, structured coalescent and birth-death models produce comparable estimates of migration rates, with coalescent models offering slightly greater precision [17]. However, for epidemic outbreaks with changing population sizes, birth-death models demonstrate superior performance in estimating migration rates [17]. This highlights the importance of selecting appropriate modeling frameworks based on specific epidemiological contexts.

Application to Common Epidemiological Models

Model-Specific Coalescent Rates

The general framework for deriving coalescent rates can be applied to specific families of epidemiological models. Below, we present the key parameters and resulting coalescent rates for four common model families under endemic equilibrium conditions.

Table 2: Coalescent Rates for Common Epidemiological Models at Equilibrium

| Epidemiological Model | Key Parameters | Coalescent Rate (λ_i) | Epidemiological Interpretation |

|---|---|---|---|

| Standard SIS/SIR/SIRS | N = number of infected individuals; τ = generation time; var(R₀) = 0 | λ_i = i(i-1) / (2N) | Standard neutral coalescent applies when all infected individuals have equal transmission potential |

| Superspreading Models | N = number of infected individuals; τ = generation time; var(R₀) > 0, often following negative binomial distribution | λ_i = i(i-1) * [var(R₀)/(E[R₀])² + 1] / (2N) | Increased variance in transmission increases coalescent rate, reducing time to common ancestor |

| Models with Exposed Class | N = number of infected individuals; τ = generation time (includes exposed period); var(R₀) = 0 | λ_i = i(i-1) / (2N) | Longer generation times (due to exposed period) stretch coalescent times without changing overall rate structure |

| Variable Infectious Period | N = number of infected individuals; τ = generation time; var(R₀) depends on infectious period distribution | λ_i = i(i-1) * [var(R₀)/(E[R₀])² + 1] / (2N) | More variable infectious periods increase variance in transmission, accelerating coalescence |

Special Considerations for Superspreading and Outbreak Scenarios

Models incorporating superspreading require special consideration due to their highly overdispersed offspring distributions [18]. The variance in R₀ for these models is substantial, leading to significantly increased coalescent rates and more star-like genealogies with simultaneously coalescing lineages [14] [18]. For small outbreaks with substantial superspreading, the standard Kingman coalescent may be inadequate, necessitating alternative models such as the Omega-coalescent which allows multiple lineages to merge simultaneously [18].

The negative binomial distribution is commonly used to model superspreading, with its dispersion parameter k controlling the degree of overdispersion. As k decreases (increased superspreading), the variance in R₀ increases, further accelerating the coalescent process [18]. This has practical implications for phylodynamic inference, as superspreading events can create challenging patterns in genealogies that may be misinterpreted as population bottlenecks or other demographic events.
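The effect of the dispersion parameter can be illustrated by simulation. The sketch below assumes the usual gamma-Poisson construction of the negative binomial: each individual's transmission rate is gamma-distributed with shape k and mean 1 (the equilibrium case), and the number of secondary infections is Poisson given that rate. Under this assumption the offspring variance is 1 + 1/k, which matches var(R₀)/(E[R₀])² + 1 for a gamma-distributed rate. The helper names and sample sizes are illustrative.

```python
import random
from math import exp

def poisson(mean, rng):
    """Knuth's multiplication method; adequate for the small means used here."""
    limit, k, p = exp(-mean), 0, 1.0
    while True:
        p *= rng.random()
        if p <= limit:
            return k
        k += 1

def offspring_counts(k_disp, n, rng, mean=1.0):
    """Gamma-Poisson mixture: rate ~ Gamma(shape k_disp, mean `mean`), then Poisson."""
    counts = []
    for _ in range(n):
        nu = rng.gammavariate(k_disp, mean / k_disp)   # shape, scale
        counts.append(poisson(nu, rng))
    return counts

def variance(xs):
    m = sum(xs) / len(xs)
    return sum((x - m) ** 2 for x in xs) / (len(xs) - 1)

rng = random.Random(7)
for k_disp in (10.0, 1.0, 0.5):
    sims = offspring_counts(k_disp, 20000, rng)
    print(k_disp, 1.0 + 1.0 / k_disp, round(variance(sims), 3))
```

Each printed line shows the dispersion parameter, the predicted variance 1 + 1/k, and the simulated variance; the coalescent rate scales with the same factor.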

Experimental Validation and Methodologies

Forward Simulation Approaches

Validating derived coalescent rates requires comparison with forward simulations of epidemiological and evolutionary processes. The general methodology involves [14]:

Implementing the Forward Model: Develop a stochastic simulation of the epidemiological model, tracking both the number of infected individuals over time and the transmission network between them.

Genealogy Reconstruction: For each simulation, reconstruct the genealogy of infected individuals by tracing their transmission chains backward in time.

Coalescent Time Distribution: Extract the distribution of coalescence times from the simulated genealogies.

Comparison with Prediction: Compare the empirical distribution of coalescence times with those predicted by the analytical coalescent rate expressions.

This approach has been used to verify the accuracy of coalescent rates for SIS/SIR/SIRS models, models with superspreading, models with exposed classes, and models with variable infectious period distributions [14]. The close agreement between simulated and predicted coalescent times across these model families supports the validity of the general framework.
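The four validation steps can be sketched in miniature. The example below substitutes a haploid Wright-Fisher process with a constant number of infected hosts for a full epidemiological model, which is a deliberate simplification: parent assignments are simulated forward in time, two sampled lineages are traced backward until they share a parent, and the mean pairwise coalescence time is compared with the prediction of N generations. All names and parameter values are illustrative.

```python
import random

def forward_simulate(n_pop, n_gen, rng):
    """Forward pass: each individual picks its parent uniformly at random.

    Returns parents[g][i], the index (in generation g) of the parent of
    individual i in generation g + 1.
    """
    return [rng.choices(range(n_pop), k=n_pop) for _ in range(n_gen)]

def pairwise_coalescence_time(parents, a, b):
    """Backward pass: trace two sampled lineages until they share a parent."""
    for generations_back, layer in enumerate(reversed(parents), start=1):
        a, b = layer[a], layer[b]
        if a == b:
            return generations_back
    return None  # no coalescence within the simulated window

rng = random.Random(2024)
n_pop, n_gen, n_rep = 20, 300, 600
times = []
for _ in range(n_rep):
    parents = forward_simulate(n_pop, n_gen, rng)
    a, b = rng.sample(range(n_pop), 2)
    t = pairwise_coalescence_time(parents, a, b)
    if t is not None:
        times.append(t)
mean_time = sum(times) / len(times)
print(mean_time, "predicted:", n_pop)
```

Replacing `forward_simulate` with a stochastic SIR-type simulator that records who infected whom would turn this into the comparison described in the text.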

Diagram 2: Workflow for validating coalescent rates

Particle Filtering for Complex Models

For structured epidemiological models with complex population dynamics, standard forward simulation approaches face computational challenges. In such cases, particle filtering methods combined with Markov Chain Monte Carlo (MCMC) techniques provide a powerful alternative [16]. This approach:

Forward Simulates Population Dynamics: Generates multiple possible trajectories (particles) of the epidemiological model forward in time.

Calculates Conditional Probabilities: Computes the probability of the observed genealogy given each simulated trajectory.

Marginalizes Latent Variables: Averages over the particles to obtain a marginal likelihood that accounts for uncertainty in the population dynamics.

This particle MCMC approach has been successfully applied to stochastic, nonlinear epidemiological models with various forms of population structure, including spatial models and multi-stage infection models [16]. The method enables estimation of key epidemiological parameters (e.g., stage-specific transmission rates in HIV) and reconstruction of past incidence and prevalence trends from genealogical data [16].
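The three steps above can be sketched with a bootstrap particle filter. This is a toy illustration and not the algorithm of [16]: the latent population size is assumed to follow a log-scale random walk, the genealogy is reduced to a list of time steps recording the number of lineages and whether a coalescent event falls in that step, and the per-step likelihood uses a discrete-time approximation. Every name and value is hypothetical.

```python
import random
from math import comb, exp, log

def step_loglik(k, coalesced, pop, dt):
    """Log-likelihood of one step with k lineages given population size pop.

    Steps flagged as coalesced must have k >= 2.
    """
    rate = comb(k, 2) / pop
    ll = -rate * dt                       # no event over the step
    if coalesced:
        ll += log(rate * dt)              # approximate probability of one event
    return ll

def particle_loglik(observations, pop0, sigma, dt, n_particles, rng):
    """Estimate log P(genealogy | model) by averaging over simulated trajectories.

    observations: list of (k, coalesced) per time step, ordered forward in time.
    """
    particles = [pop0] * n_particles
    total = 0.0
    for k, coalesced in observations:
        # 1. forward-simulate each particle through the population model
        particles = [p * exp(rng.gauss(0.0, sigma)) for p in particles]
        # 2. conditional probability of the genealogy segment per particle
        logw = [step_loglik(k, coalesced, p, dt) for p in particles]
        top = max(logw)
        weights = [exp(lw - top) for lw in logw]
        # 3. marginalize: the mean weight estimates the step likelihood
        total += top + log(sum(weights) / n_particles)
        # resample particles in proportion to their weights
        particles = rng.choices(particles, weights=weights, k=n_particles)
    return total

obs = [(2, True), (2, False), (3, True), (3, False), (4, True),
       (4, False), (5, True), (5, False), (5, False)]
rng = random.Random(11)
ll_small = particle_loglik(obs, 100.0, 0.1, 5.0, 200, rng)
ll_big = particle_loglik(obs, 1e6, 0.1, 5.0, 200, rng)
print(ll_small, ll_big)
```

In a particle MCMC scheme, an estimate like `ll_small` would stand in for the exact likelihood inside the Metropolis-Hastings acceptance ratio when proposing new model parameters.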

Practical Implementation

The Researcher's Toolkit

Implementing coalescent-based inference for epidemiological models requires both theoretical knowledge and practical tools. Below we outline key components of the methodological toolkit for researchers in this field.

Table 3: Essential Methodological Components for Coalescent-Based Epidemiological Inference

| Component | Function | Implementation Considerations |

|---|---|---|

| Structured Coalescent Framework | Provides mathematical foundation for calculating coalescent rates in structured populations | Must be adapted to specific epidemiological model structure; requires careful specification of state transitions |

| Particle Filtering Algorithms | Enable integration over uncertain population dynamics | Computational intensity scales with model complexity; requires optimization for specific model structures |

| Bayesian MCMC Methods | Allow parameter estimation and uncertainty quantification | Requires appropriate prior specification; convergence diagnostics essential |

| Forward Simulation Capability | Validates analytical results and tests inference methods | Should incorporate stochastic elements; must track transmission chains for genealogy reconstruction |

Application to Specific Pathogens

The choice of appropriate epidemiological model and corresponding coalescent framework depends on the specific pathogen under investigation. For example:

HIV: Models with progressive stages of infection (early, chronic, AIDS) are appropriate, with stage-specific transmission rates affecting the coalescent process [16].

Influenza and other acute infections: SIR-type models with potentially seasonal forcing, requiring careful consideration of changing population sizes [14].

Emerging pathogens with superspreading: Models with negative binomial offspring distributions, potentially requiring multiple-merger coalescents rather than the standard Kingman coalescent [18].

In all cases, the assumption of endemic equilibrium should be carefully evaluated. While the framework presented here focuses on equilibrium conditions, recent advances have extended approaches to non-equilibrium settings, particularly for structured coalescent models [14] [16].

This guide has established a formal connection between epidemiological models and coalescent theory under endemic equilibrium conditions. The generalized framework for deriving coalescent rates from epidemiological parameters provides researchers with a powerful approach for interpreting viral genealogies through an epidemiological lens. The quantitative synthesis presented in tabular format enables direct comparison across model families, while the methodological protocols offer practical guidance for implementation and validation.

As phylodynamic inference continues to evolve, future work should focus on extending these approaches to increasingly realistic epidemiological scenarios, including more complex population structures, non-equilibrium conditions, and the integration of additional data sources. By strengthening the connection between epidemiological dynamics and genetic evolution, we move closer to a comprehensive framework for understanding and predicting pathogen spread at the molecular level.

Phylodynamics has emerged as a pivotal synthetic framework for understanding the spread and evolution of infectious pathogens by integrating epidemiological dynamics with phylogenetic inference. This paradigm is fundamentally rooted in coalescent theory, which provides the mathematical foundation for reconstructing demographic histories from genetic sequence data. For rapidly evolving viruses, the timescales of ecological (epidemiological) and evolutionary processes converge, enabling researchers to trace transmission dynamics, estimate key parameters like the basic reproduction number (R₀), and identify determinants of disease spread through the analysis of viral genealogies. This technical guide explores the core principles, methodologies, and applications of phylodynamics, emphasizing its critical role in shaping effective disease control strategies in public health and drug development.

The Theoretical Bedrock: Coalescent Theory

Coalescent theory is a population genetic model that traces the ancestry of alleles sampled from a population backward in time to their most recent common ancestor (MRCA) [1]. It represents the most significant progress in theoretical population genetics in the past few decades and provides a rigorous statistical framework for analyzing molecular data from populations [19]. When applied to pathogens, the theory allows investigators to infer population genetic parameters such as effective population size, migration rates, and population growth from viral phylogenies [13] [1]. Looking backward in time, the model merges alleles into a single ancestral copy through a series of random coalescence events. The expected time between successive coalescence events increases almost exponentially back in time, with wide variance introduced by the random passing of alleles between generations and the random occurrence of mutations [1].

The coalescent process is typically modeled as a stochastic process in which the probability that any two lineages coalesce in the preceding generation is 1/Nₑ for a haploid population of effective size Nₑ. For a diploid population, which carries 2Nₑ gene copies, this becomes 1/(2Nₑ), and for effectively haploid elements like mitochondrial DNA, whose effective size is taken as Nₑ/2, it is 1/(Nₑ/2) [1]. The mathematical convenience of the coalescent framework has led to its widespread adoption in population genetic analysis, with numerous extensions now incorporating recombination, selection, and complex demographic scenarios [1].
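
As a rough illustration of how these per-generation probabilities translate into waiting times, the sketch below computes the expected duration of each coalescent interval and the expected time to the MRCA under a constant-size model. The function names and the `pair_prob` parameter (the per-generation probability that a given pair of lineages coalesces) are illustrative choices, not part of any cited software, and the calculation assumes the number of sampled lineages is small relative to the population.

```python
from math import comb


def expected_interval(k, pair_prob):
    """Expected generations during which k lineages persist.

    With k lineages there are C(k, 2) pairs, each coalescing with
    probability pair_prob per generation, so the waiting time to the
    next coalescence is approximately 1 / (C(k, 2) * pair_prob).
    """
    return 1.0 / (comb(k, 2) * pair_prob)


def expected_tmrca(n, pair_prob):
    """Expected generations until n sampled lineages reach their MRCA."""
    return sum(expected_interval(k, pair_prob) for k in range(2, n + 1))


if __name__ == "__main__":
    p = 1.0 / (2 * 500)  # assumed example: diploid population with Ne = 500
    for k in (10, 5, 2):
        print(k, "lineages:", round(expected_interval(k, p), 1), "generations")
    print("expected TMRCA for n = 10:", round(expected_tmrca(10, p), 1))
```

The intervals lengthen sharply as the number of lineages falls, which is why the deepest part of a genealogy, where only two lineages remain, accounts for roughly half of the expected time to the MRCA.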

The Emergence of Phylodynamics

The term "phylodynamics" was formally introduced by Grenfell et al. (2004) to describe the "melding of immunodynamics, epidemiology, and evolutionary biology" required to analyze the interacting evolutionary and ecological processes of rapidly evolving viruses [20]. This approach recognizes that for pathogens with high mutation rates and short generation times, evolutionary and ecological processes operate on comparable timescales, making it possible to observe the imprint of epidemiological dynamics on viral genealogies [20].

The field encompasses two distinct but related pursuits [20]:

- Phylogenetic Epidemiology: Using neutral genetic variation to track ecological processes and population dynamics, under the premise that past ecological events are "imprinted" in genetic variation.

- Phylodynamics Sensu Stricto: Analyzing the inevitable interaction of evolutionary and ecological processes, which requires joint modeling of both phenomena, particularly when mutations actively influence population dynamics through factors like immune evasion or antiviral resistance.

Core Principles of Phylodynamics

The Epidemiological-Coalescent Relationship

A fundamental contribution of phylodynamics is the explicit linkage between epidemiological model structures and their expected patterns in viral genealogies. At equilibrium, the rate of coalescence in common epidemiological models can be derived, revealing how population dynamics shape genetic diversity [13]. For instance, in standard susceptible-infected-recovered (SIR) models, the coalescence rate depends on the number of infected individuals and the transmission rate. This relationship allows researchers to move beyond simple population genetic models to frameworks that explicitly incorporate epidemiological parameters [13].
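
To make the dependence on infected numbers and transmission rate concrete, the following sketch evaluates a pairwise coalescence rate for an unstructured SIR model. It assumes the form widely used in the phylodynamic literature, in which a pair of lineages coalesces at rate 2f/I², with incidence f = βSI/N; the function names and example values are hypothetical, and the expression is an approximation that holds when the number of sampled lineages is small relative to I.

```python
from math import comb


def pair_coalescence_rate(beta, S, I, N):
    """Pairwise coalescence rate 2f / I^2 with incidence f = beta * S * I / N.

    This is an assumed, commonly used approximation for an unstructured
    SIR model; it simplifies to 2 * beta * S / (N * I).
    """
    incidence = beta * S * I / N
    return 2.0 * incidence / I**2


def total_coalescence_rate(k, beta, S, I, N):
    """Rate at which any of the C(k, 2) pairs among k lineages coalesces."""
    return comb(k, 2) * pair_coalescence_rate(beta, S, I, N)


if __name__ == "__main__":
    # Hypothetical values: transmission rate 0.5 per day, 500 susceptible
    # and 100 infected hosts in a population of 1000.
    print(pair_coalescence_rate(beta=0.5, S=500, I=100, N=1000))
    print(total_coalescence_rate(3, beta=0.5, S=500, I=100, N=1000))
```

Under this form, doubling the number of infected hosts halves the pairwise rate, while doubling the transmission rate doubles it.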

Phylodynamic models have demonstrated that the effective population size (Nₑ) estimated from genetic data often reflects the number of infected individuals rather than the total host population size, providing a critical link between genetic diversity and epidemic dynamics [20]. This connection enables the estimation of key epidemiological parameters directly from genetic data.

Key Epidemiological and Evolutionary Parameters

Table 1: Core Parameters in Phylodynamic Analysis

| Parameter | Symbol | Description | Phylodynamic Inference Method |
|---|---|---|---|
| Basic Reproduction Number | R₀ | Average number of secondary infections from a single infected individual in a susceptible population | Estimated from population growth rates through phylogenetic analysis or Bayesian coalescent methods [20] |
| Effective Population Size | Nₑ | Number of individuals in an idealized population that would generate the same genetic diversity as observed | Inferred through coalescent-based models (e.g., Bayesian Skyline Plots); in epidemics, often correlates with the number of infected individuals [20] [1] |
| Evolutionary Rate | μ | Rate of genetic change per unit time (e.g., substitutions per site per year) | Estimated from temporally spaced sequence data using molecular clock models [13] |
| Generation Time | T_g | Time between successive generations of infection | Distribution must be assumed or estimated from epidemiological data; relates growth rate (r) to R₀ [20] |
| Coalescence Rate | λ | Rate at which lineages merge when traced backward in time | Derived from epidemiological model structure and population dynamics at equilibrium [13] |

The Coalescent Process in Epidemics

The following diagram illustrates how the coalescent process operates within an epidemic context, connecting transmission dynamics with genealogical relationships:

Coalescent Process in Epidemics: This diagram illustrates the fundamental connection between forward-time epidemiological dynamics and backward-time genealogical processes. Infected individuals (red) transmit the pathogen to susceptible individuals (blue), moving forward in time. When samples are taken from infected hosts (dashed lines) and their genetic sequences are analyzed, the process is traced backward through coalescent events where lineages find common ancestors. The transmission dynamics directly influence the rate of coalescence, with higher transmission rates typically leading to faster coalescence [13] [20].

Methodological Approaches and Experimental Protocols

Phylodynamic Analysis Workflow

A standardized workflow for phylodynamic analysis incorporates multiple steps from data collection through to epidemiological interpretation:

Phylodynamic Analysis Workflow: This comprehensive workflow outlines the key stages in phylodynamic analysis, from initial sample collection through to epidemiological interpretation and intervention planning. The critical transition from phylogenetic tree inference to demographic reconstruction represents the application of coalescent theory, which enables the estimation of population dynamics from genetic data [13] [20] [1]. Time-stamped sequences (temporally structured data) are essential for calibrating molecular clocks and estimating evolutionary rates.

Detailed Methodological Protocols

Estimating R₀ from Genetic Data Using Coalescent Theory

The basic reproduction number (R₀) can be estimated from viral sequence data using coalescent-based approaches through the following protocol [20]:

Data Requirements: Collect temporally spaced viral sequences (at least 20-30 sequences spanning the epidemic period) with accurate sampling dates.

Population Growth Rate Estimation:

- Infer a dated phylogeny using Bayesian molecular clock methods (e.g., BEAST) [1].

- Estimate the exponential growth rate (r) from the distribution of node heights in the time-calibrated tree.

- Apply coalescent models such as the Bayesian Skyline Plot or exponential growth model to infer changes in effective population size over time [20].

R₀ Calculation:

- Use the formula relating growth rate to R₀: R₀ = 1 + r × T_g, where T_g is the mean generation time. This linear form corresponds to exponentially distributed generation times.

- Account for the generation time distribution (exponential, normal, or delta distributions) using the approach of Wallinga and Lipsitch (2007) [20].

- Compute credible intervals through Bayesian Markov Chain Monte Carlo (MCMC) methods, typically running chains for 10-100 million steps with appropriate burn-in.

Validation: Compare estimates with independent epidemiological data where available, and perform sensitivity analyses on key assumptions (particularly generation time distribution).
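
The sensitivity of R₀ to the assumed generation time distribution can be checked with a few lines of code. The sketch below applies the standard growth-rate relationships for exponential, fixed (delta), and normal generation time distributions; the function names and the example values of r and T_g are illustrative assumptions rather than estimates from any dataset.

```python
import math


def r0_exponential(r, tg):
    """R0 when generation times are exponentially distributed with mean tg."""
    return 1.0 + r * tg


def r0_delta(r, tg):
    """R0 when every generation time equals tg exactly (delta distribution)."""
    return math.exp(r * tg)


def r0_normal(r, tg, sd):
    """R0 when generation times are normal with mean tg and std. deviation sd."""
    return math.exp(r * tg - 0.5 * (r * sd) ** 2)


if __name__ == "__main__":
    r, tg = 0.1, 5.0  # hypothetical growth rate (per day) and mean generation time (days)
    print("exponential:", r0_exponential(r, tg))
    print("delta:      ", r0_delta(r, tg))
    print("normal:     ", r0_normal(r, tg, sd=2.0))
```

For a given growth rate and mean generation time, the exponential assumption gives the lowest of these estimates and the delta assumption the highest, so reporting both brackets the uncertainty attributable to this modeling choice.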

Bayesian Skyline Plot for Demographic Reconstruction

The Bayesian Skyline Plot (BSP) method reconstructs historical changes in effective population size from time-stamped genetic sequences [20]:

Sequence Preparation: Align viral sequences and ensure accurate sampling dates are included in the dataset.

Model Specification:

- Select an appropriate nucleotide substitution model (e.g., HKY, GTR) with gamma-distributed rate heterogeneity.

- Choose a relaxed or strict molecular clock model based on preliminary analyses.

- Specify the Bayesian Skyline Plot as the coalescent prior for population size changes.

MCMC Analysis:

- Run extended MCMC simulations (typically 50-500 million steps depending on dataset size) to ensure adequate parameter sampling.

- Monitor convergence using effective sample size (ESS) diagnostics (target ESS >200 for all parameters).

- Adjust operator weights and tuning parameters to improve mixing if necessary.
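
To show what the ESS diagnostic measures, the sketch below estimates it from a single parameter trace as N / (1 + 2 × sum of autocorrelations), truncating the sum at the first non-positive lag. This is a generic textbook-style estimator written for illustration; dedicated trace-analysis tools may use a different truncation rule, so values will not match them exactly. The function name is hypothetical.

```python
def effective_sample_size(samples):
    """Estimate ESS of an MCMC trace from its lagged autocorrelations."""
    n = len(samples)
    mean = sum(samples) / n
    centered = [x - mean for x in samples]
    var = sum(c * c for c in centered) / n
    if var == 0:
        raise ValueError("trace is constant; ESS is undefined")
    tau = 1.0  # integrated autocorrelation time
    for lag in range(1, n):
        acf = sum(centered[i] * centered[i + lag] for i in range(n - lag)) / (n * var)
        if acf <= 0:  # stop at the first non-positive autocorrelation
            break
        tau += 2.0 * acf
    return n / tau
```

A strongly autocorrelated trace yields an ESS far below the number of logged samples, which is the situation in which longer chains or retuned operators are warranted.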

Post-processing and Interpretation:

- Use Bayesian model averaging to summarize population size through time.

- Visualize the median estimated effective population size with credible intervals across time intervals.

- Interpret changes in effective population size in the context of epidemiological events and interventions.
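
The intuition behind skyline methods can be seen in the classic skyline estimator, which the Bayesian Skyline Plot generalizes: an interval of duration w during which k lineages exist gives the estimate w × k(k−1)/2 for the scaled effective population size (Nₑ multiplied by generation time). The sketch below implements only this classic estimator, not the Bayesian method itself, and assumes all sequences are sampled at the same time; the function name and input layout are illustrative.

```python
def classic_skyline(interval_durations):
    """Classic skyline estimates from coalescent interval durations.

    interval_durations[0] is the time spent with all n lineages, the next
    entry the time with n - 1 lineages, and so on down to 2 lineages.
    Returns a list of (k, estimate) pairs, where each estimate is in the
    same time units as the durations.
    """
    n = len(interval_durations) + 1
    estimates = []
    for i, w in enumerate(interval_durations):
        k = n - i  # number of lineages present during this interval
        estimates.append((k, w * k * (k - 1) / 2))
    return estimates


if __name__ == "__main__":
    # Hypothetical interval durations (years) read from a dated tree of 4 tips.
    for k, est in classic_skyline([0.2, 0.5, 3.0]):
        print(k, "lineages:", est)
```

Because each interval yields its own noisy estimate, the Bayesian version groups intervals and samples over trees, which is why its output is summarized as a median with credible intervals.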

Table 2: Essential Computational Tools and Resources for Phylodynamic Research

| Tool/Resource | Type | Primary Function | Application in Phylodynamics |
|---|---|---|---|
| BEAST/BEAST2 | Software Package | Bayesian evolutionary analysis sampling trees | Inference of timed phylogenies, demographic history, and evolutionary parameters using coalescent models [1] |
| Coalescent Models | Theoretical Framework | Mathematical models of genealogical relationships | Deriving expected patterns in viral genealogies from epidemiological dynamics; estimating population parameters [13] [1] |
| Bayesian Skyline Plot | Analytical Method | Non-parametric estimation of population size changes | Reconstructing historical changes in effective population size from genetic data [20] |
| Molecular Clock Models | Analytical Framework | Dating evolutionary events using mutation rates | Estimating evolutionary rates and timescale of epidemic spread [20] |
| GeneRecon | Software Package | Fine-scale mapping of disease genes | Coalescent-based analysis for linkage disequilibrium mapping of disease genes using Bayesian MCMC framework [1] |
| GENOME | Software Tool | Rapid coalescent-based whole-genome simulation | Simulating genomic data under various demographic scenarios for method validation [1] |

Applications in Disease Research and Control

Tracking Pathogen Transmission and Spread

Phylodynamic approaches have proven particularly valuable in reconstructing transmission networks and identifying factors driving disease spread:

- Farm-to-Farm Transmission: In veterinary diseases like foot and mouth disease virus, phylodynamic analysis of consensus sequences (one sequence per farm) has enabled researchers to trace contacts between farms and identify undetected infections, informing targeted control measures [20].

- HIV Dynamics: Studies of the heterosexual HIV epidemic in the UK have used Bayesian skyline plots to reconstruct population structure changes and understand transmission patterns [20].

- Influenza Global Spread: Analyses of human Influenza A virus have explored sink-source dynamics and investigated spatial connections in seasonal global epidemics, revealing migration patterns and sources of new strains [20].

Understanding Determinants of Disease Emergence

Phylodynamics provides a powerful framework for investigating the factors that facilitate disease emergence and spread:

- Individual Variation: Models incorporating individual heterogeneity in infectivity (superspreading) demonstrate how host contact structures influence disease spread and genetic diversity [13] [20]. In scale-free contact networks common in sexually transmitted diseases, genetic variation is lower in highly connected hosts than in weakly connected hosts [20].

- Wildlife-Livestock-Human Interface: Phylodynamics is particularly valuable at critical interfaces for disease emergence, improving our ability to manage complex epidemics involving multiple species [20].

- Reproduction Number Estimation: Accurate estimation of R₀ from genetic data enables better targeting of control measures to populations driving transmission [20].

Future Directions and Challenges

As phylodynamics continues to evolve, several promising directions and challenges merit attention:

- Integration of Complex Population Structures: Future models need to better incorporate realistic host population structures, including contact networks, spatial heterogeneity, and multiple host species [20].

- Genealogical Discordance: Accounting for discordance between gene trees and species trees remains challenging, particularly for traits controlled by multiple loci. New multispecies coalescent models for quantitative traits are being developed to address this issue [21].

- Selection and Adaptation: More sophisticated models are needed to jointly analyze the interaction of ecological dynamics and natural selection, particularly for antigens under immune pressure [20].