Computational Requirements for Bayesian Phylodynamics: A Guide for Biomedical Researchers

Abstract

Bayesian phylodynamics has become an indispensable tool for reconstructing the evolutionary and population dynamics of pathogens, directly informing public health and drug development strategies. However, the computational burden of these analyses presents a significant hurdle. This article provides a comprehensive overview for researchers and scientists, exploring the foundational principles of Bayesian phylodynamic inference, the advanced models and software that drive modern applications, and the critical hardware and algorithmic optimizations required for efficient computation. We further cover methods for model validation and comparison, synthesizing key takeaways to guide future research in infectious disease and cancer genomics.

The Computational Engine of Phylodynamics: Core Concepts and Scaling Challenges

Core Concepts: Bayesian Phylogenetics and MCMC

What is Bayesian Phylogenetic Inference?

Bayesian inference is a statistical methodology that has revolutionized molecular phylogenetics since the 1990s [1]. Its main feature is the use of probability distributions to describe the uncertainty of all unknown parameters in a model [2]. In the context of phylogenetics, these unknowns typically include the phylogenetic tree topology, branch lengths, and parameters of the evolutionary model [2] [1].

The framework combines prior knowledge with observed data to produce posterior probabilities of phylogenetic trees. This is mathematically expressed through Bayes' theorem:

f(θ|D) = (1/z) f(θ) f(D|θ) [2]

Where:

- f(θ|D) is the posterior probability of the tree parameters (θ) given the observed data (D)

- f(θ) is the prior distribution, representing previous knowledge about the parameters

- f(D|θ) is the likelihood function, indicating the probability of observing the data given the parameters

- z is the normalizing constant ensuring the posterior distribution integrates to 1 [2]
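As a toy illustration of the formula above, the following sketch normalizes prior-times-likelihood over a small discrete set of candidate trees. The three topology labels and their likelihood values are hypothetical numbers chosen for illustration; in a real analysis the sum defining z runs over an astronomically large tree space and cannot be enumerated.

```python
def posterior_from(priors, likelihoods):
    """Apply Bayes' theorem over a finite set of hypotheses.

    priors and likelihoods are dicts keyed by hypothesis label.
    """
    unnormalized = {h: priors[h] * likelihoods[h] for h in priors}
    z = sum(unnormalized.values())  # normalizing constant
    return {h: value / z for h, value in unnormalized.items()}


# Hypothetical example: the three unrooted topologies for four taxa,
# a uniform prior, and made-up likelihood values f(D | tree).
priors = {"((A,B),(C,D))": 1 / 3, "((A,C),(B,D))": 1 / 3, "((A,D),(B,C))": 1 / 3}
likelihoods = {"((A,B),(C,D))": 0.006, "((A,C),(B,D))": 0.003, "((A,D),(B,C))": 0.001}
posterior = posterior_from(priors, likelihoods)
```

With a uniform prior, the posterior is simply the likelihood rescaled to sum to 1, so the first topology receives the highest posterior probability.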

An appealing property of Bayesian inference is that it enables direct probabilistic statements about parameters. For example, the posterior probability of a tree is the probability that the tree is correct given the data, prior, and model assumptions. Similarly, 95% credibility intervals (CI) for parameters have a straightforward interpretation: there is a 95% probability that the true parameter value lies within this interval, conditional on the data [2].

The Role of Markov Chain Monte Carlo (MCMC)

For complex phylogenetic models, the posterior distribution cannot be calculated analytically [3] [1]. MCMC methods solve this problem by constructing a Markov chain that randomly walks through the parameter space, spending time in each region in proportion to its posterior probability [3] [4] [1]. After many iterations, the visited states form a sample from the posterior distribution [3] [1].

The MCMC process can be summarized in three key steps [1]:

- Proposal: A stochastic mechanism proposes a new state for the Markov chain by modifying the current state (e.g., proposing a new tree topology or branch length).

- Acceptance Probability Calculation: The probability of accepting this new state is computed from the ratio of its posterior probability to that of the current state, corrected by the proposal ratio when the proposal mechanism is asymmetric.

- Decision: A random uniform variable determines whether the chain moves to the new state or remains at the current state.

When this process runs for thousands or millions of iterations, the proportion of times the chain visits a particular tree approximates its posterior probability [1].
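The three steps can be sketched with a minimal random-walk Metropolis sampler. This is an illustration on a one-dimensional toy target (a standard normal), not a phylogenetic sampler: the function names, the Gaussian proposal, the step size, and the burn-in length are all assumptions made for the example. Because the proposal is symmetric, no proposal-ratio correction is needed.

```python
import math
import random


def metropolis(log_post, init, n_iter, step=1.0, seed=0):
    """Random-walk Metropolis sampler for a one-dimensional parameter."""
    rng = random.Random(seed)
    current = init
    current_lp = log_post(current)
    samples = []
    accepted = 0
    for _ in range(n_iter):
        # 1. Proposal: symmetric Gaussian perturbation of the current state.
        proposal = current + rng.gauss(0.0, step)
        proposal_lp = log_post(proposal)
        # 2. Acceptance probability: min(1, posterior ratio), in log space.
        log_ratio = proposal_lp - current_lp
        # 3. Decision: compare a uniform random draw with the ratio.
        if log_ratio >= 0 or rng.random() < math.exp(log_ratio):
            current, current_lp = proposal, proposal_lp
            accepted += 1
        samples.append(current)  # a rejected move repeats the current state
    return samples, accepted / n_iter


def log_standard_normal(x):
    # Unnormalized log density: the normalizing constant z cancels in the ratio.
    return -0.5 * x * x


samples, acc_rate = metropolis(log_standard_normal, init=5.0, n_iter=20000)
kept = samples[2000:]  # discard burn-in
mean = sum(kept) / len(kept)
var = sum((s - mean) ** 2 for s in kept) / len(kept)
```

Note that only the ratio of posterior densities is ever evaluated, which is why MCMC does not require the normalizing constant z.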

Troubleshooting Common MCMC Issues

Diagnosing Convergence Problems

| Symptom | Potential Causes | Diagnostic Tools | Solutions |

|---|---|---|---|

| Low ESS (Effective Sample Size) values [2] | Poor mixing, insufficient chain length, inappropriate tuning parameters [2] [4] | Tracer software [2] | Increase chain length; adjust tuning parameters; use multiple chains [2] |

| Chain not reaching stationarity [4] | Inappropriate initial values; poorly chosen priors [2] [4] | Time-series plots of parameter values (should look like "fuzzy caterpillars") [2] | Change initial values; modify prior distributions; use burn-in [2] |

| Poor mixing (high autocorrelation) [2] | Inefficient proposals; complex multi-modal parameter spaces [2] [1] | Autocorrelation plots [2] | Use Metropolis-coupled MCMC (MC³) [1]; adjust step size [4] |

ESS (Effective Sample Size) is a key metric that indicates how many independent samples your correlated MCMC samples are worth. Low ESS values (typically <100-200) for important parameters indicate that the chain has not explored the posterior distribution sufficiently [2].
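To make the idea concrete, here is a simplified ESS estimator based on the sum of sample autocorrelations, ESS = N / (1 + 2 Σ ρ_k). It is a sketch only: the truncation rule (stop at the first non-positive autocorrelation) is an assumption chosen for simplicity, and tools such as Tracer use their own estimators, so values will not match exactly.

```python
import numpy as np


def effective_sample_size(x):
    """Estimate ESS = N / (1 + 2 * sum of positive-lag autocorrelations)."""
    x = np.asarray(x, dtype=float)
    n = len(x)
    centered = x - x.mean()
    var = np.dot(centered, centered) / n
    if var == 0:
        return float(n)  # constant trace: no autocorrelation to estimate
    rho_sum = 0.0
    for lag in range(1, n):
        rho = np.dot(centered[:-lag], centered[lag:]) / (n * var)
        if rho <= 0:  # truncate at the first non-positive autocorrelation
            break
        rho_sum += rho
    return n / (1.0 + 2.0 * rho_sum)
```

For an uncorrelated trace the estimate is close to the number of samples, while a strongly autocorrelated trace of the same length yields a much smaller value.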

Addressing Mixing and Efficiency Issues

MCMC mixing refers to how efficiently the Markov chain explores the parameter space [2]. Poor mixing manifests as high autocorrelation between samples and slow exploration of the posterior distribution [2].

Strategies to improve mixing:

- Use Metropolis-Coupled MCMC (MC³): This approach runs multiple chains in parallel at different "temperatures," allowing better exploration of complex parameter spaces with multiple peaks [1].

- Adjust tuning parameters: The step size or proposal distribution significantly impacts sampling efficiency [4].

- Algorithm selection: For high-dimensional problems, Hamiltonian Monte Carlo (HMC) can be more efficient than conventional Metropolis-Hastings samplers [5].
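The MC³ strategy can be sketched in terms of its two ingredients: a ladder of chain "heats" and the rule for swapping states between chains. The incremental heating formula below, 1 / (1 + λi), is a commonly used scheme; the value λ = 0.1 and all function names are assumptions for illustration. Chain i is assumed to sample from the posterior raised to the power of its heat.

```python
import math
import random


def incremental_heats(n_chains, lam=0.1):
    """Heat of chain i is 1 / (1 + lam * i); chain 0 is the cold chain."""
    return [1.0 / (1.0 + lam * i) for i in range(n_chains)]


def swap_log_ratio(log_post_i, log_post_j, beta_i, beta_j):
    """Log acceptance ratio for exchanging the states of chains i and j.

    log_post_i and log_post_j are the unheated log posterior densities of
    the states currently held by chains i and j.
    """
    return (beta_i - beta_j) * (log_post_j - log_post_i)


def accept_swap(log_post_i, log_post_j, beta_i, beta_j, rng=random):
    """Metropolis decision for a proposed state swap between two chains."""
    log_r = swap_log_ratio(log_post_i, log_post_j, beta_i, beta_j)
    return log_r >= 0 or rng.random() < math.exp(log_r)
```

Heated chains see a flattened posterior and cross valleys more easily; a swap is always accepted when it moves a better state to the colder chain. Only samples from the cold chain (heat 1) are used for inference.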

Managing Computational Challenges

Bayesian phylogenetic analyses using MCMC can be computationally intensive, especially for large datasets or complex models [6] [4]. The BEAGLE library helps address this by leveraging high-performance computing resources, including multicore CPUs and GPUs, to substantially reduce computation time for likelihood calculations [6].

Frequently Asked Questions (FAQs)

Algorithm and Conceptual Questions

Q: What is the difference between MCMC convergence and mixing? A: Convergence occurs when the Markov chain has reached a stationary distribution, meaning that further sampling will not systematically change the estimated posterior distribution. Mixing refers to how efficiently the chain moves through the parameter space, with good mixing showing low autocorrelation between samples [2] [4].

Q: How do I know if my MCMC run has converged? A: Diagnosing convergence requires multiple approaches [2]:

- Examine time-series plots of log-likelihoods and parameters - they should look stable and "fuzzy caterpillar"-like

- Check ESS values for all parameters - should typically be >200

- Run multiple independent chains from different starting points and verify they produce similar distributions (using potential scale reduction factors) [2]
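For the last check, the potential scale reduction factor (PSRF, the Gelman-Rubin statistic) compares the variance between chains with the variance within chains. The sketch below implements the basic form of the statistic for a single scalar parameter; it assumes burn-in has already been removed and that all chains have equal length.

```python
import numpy as np


def potential_scale_reduction(chains):
    """Basic Gelman-Rubin PSRF for an array of shape (n_chains, n_samples)."""
    chains = np.asarray(chains, dtype=float)
    n = chains.shape[1]
    chain_means = chains.mean(axis=1)
    within = chains.var(axis=1, ddof=1).mean()   # W: mean within-chain variance
    between = n * chain_means.var(ddof=1)        # B: between-chain variance
    pooled = (n - 1) / n * within + between / n  # pooled variance estimate
    return float(np.sqrt(pooled / within))
```

Values close to 1 suggest that the chains are sampling the same distribution; values well above 1 indicate that at least one chain has not converged to it.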

Q: What are the advantages of Bayesian phylogenetic methods over other approaches? A: Key advantages include [2] [1]:

- Direct probabilistic interpretations of results (e.g., posterior probabilities)

- Natural uncertainty quantification for all parameters

- Ability to incorporate prior knowledge through prior distributions

- Flexible framework for integrating complex evolutionary models

Practical Implementation Questions

Q: How do I choose an appropriate substitution model for my data? A: For nucleotide data, models range from simple (JC69) to complex (GTR+Γ) [2]. Programs like jModelTest, ModelGenerator, or PartitionFinder can help select models based on statistical fit [2]. However, different substitution models often produce similar tree estimates, particularly when sequence divergence is low (<10%) [2].

Q: What are the risks of over-parameterization in Bayesian phylogenetic models? A: A model is non-identifiable if different parameter values make identical predictions about the data [2]. This occurs when parameters cannot be estimated separately (e.g., estimating both divergence time and evolutionary rate from a single sequence pair) [2]. Non-identifiable models can lead to convergence problems and unreliable inferences.

Q: How long should I run my MCMC analysis? A: There is no fixed rule - run length depends on your data complexity and model. Continue running until ESS values for all parameters of interest exceed 200, and convergence diagnostics indicate stationarity [2]. For complex analyses, this may require millions of generations.

Research Reagent Solutions: Software and Tools

| Tool Name | Primary Function | Key Features | Reference |

|---|---|---|---|

| BEAST/BEAST X | Bayesian evolutionary analysis | Divergence dating, phylodynamics, phylogeography | [6] [5] |

| MrBayes | Bayesian phylogenetic inference | Analysis of nucleotide, amino acid, morphological data | [2] [1] |

| BEAGLE | High-performance computation library | Accelerates likelihood calculations on GPUs and multicore systems | [6] |

| Tracer | MCMC diagnostics | Analysis of ESS, convergence, parameter distributions | [2] |

| BAMM | Diversification rate analysis | Estimates clade diversification rates on phylogenies | [2] |

| RevBayes | Flexible Bayesian inference | Programmable environment for complex hierarchical models | [2] |

Experimental Protocols for Bayesian Phylogenetic Analysis

Standard Protocol for MCMC Analysis

- Data Preparation: Assemble the aligned character data (DNA or amino acid sequences, or a morphological character matrix) [2]

- Model Selection: Choose appropriate substitution models and partitioning schemes using model-testing software [2]

- Prior Specification: Define prior distributions for all parameters based on biological knowledge [2]

- MCMC Settings: Configure chain length, burn-in period, and sampling frequency based on dataset size and complexity

- Convergence Assessment: Run multiple independent chains and verify convergence using diagnostic tools [2]

- Posterior Summarization: Combine samples from converged chains to summarize phylogenetic trees and parameter estimates

Protocol for Handling Convergence Problems

- Initial Diagnosis: Check ESS values and trace plots in Tracer [2]

- Chain Length Adjustment: Increase chain length by a factor of 2-10 if ESS values are low

- Proposal Mechanism Adjustment: Modify tuning parameters to improve acceptance rates [4]

- MC³ Implementation: Enable Metropolis-coupled MCMC with 4-10 chains if multimodal posteriors are suspected [1]

- Prior Sensitivity Analysis: Verify that results are robust to reasonable changes in prior distributions [2]

Frequently Asked Questions

What makes tree topology estimation computationally difficult? The problem of reconstructing the most likely tree topology from data is NP-hard, meaning the computation time can grow exponentially as the size of your dataset (e.g., number of taxa) increases. Searching through the vast space of all possible tree topologies to find the best one is a massive computational challenge [7].
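The size of tree space can be made concrete with the standard counting formulas for strictly bifurcating trees: (2n - 5)!! unrooted and (2n - 3)!! rooted topologies for n taxa. The short sketch below evaluates them; the function names are chosen for this example.

```python
def num_unrooted_topologies(n_taxa):
    """Number of unrooted binary tree topologies: (2n - 5)!! for n >= 3."""
    if n_taxa < 3:
        raise ValueError("need at least 3 taxa")
    count = 1
    for k in range(3, 2 * n_taxa - 4, 2):  # odd factors 3, 5, ..., 2n - 5
        count *= k
    return count


def num_rooted_topologies(n_taxa):
    """Number of rooted binary tree topologies: (2n - 3)!! for n >= 2."""
    if n_taxa < 2:
        raise ValueError("need at least 2 taxa")
    # Rooting adds one more branch to attach to, like adding one more taxon.
    return num_unrooted_topologies(n_taxa + 1)
```

Ten taxa already give 2,027,025 unrooted topologies, and the count exceeds 10^20 by 20 taxa, which is why exhaustive evaluation is replaced by heuristic search or MCMC sampling.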

Why are likelihood calculations so slow in phylogenetics? Calculating the likelihood for a given phylogenetic tree involves computing the probability of the observed data (e.g., DNA sequences) given that tree topology, its branch lengths, and a model of evolution. This calculation must be performed for every site in the alignment and across the entire tree, making it a computationally intensive operation, especially for large datasets [8].
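The per-site, whole-tree nature of the calculation is easiest to see in a minimal version of the pruning algorithm. The sketch below assumes the JC69 substitution model, equal base frequencies, no rate heterogeneity, and ungapped sequences; the nested-tuple tree representation is a convention invented for this example. Production libraries such as BEAGLE perform the same kind of computation with far more general models and heavy optimization.

```python
import math

NUCS = "ACGT"


def jc69_prob(i, j, t):
    """JC69 probability of changing from state i to j over branch length t."""
    e = math.exp(-4.0 * t / 3.0)
    return 0.25 + 0.75 * e if i == j else 0.25 - 0.25 * e


def partial_likelihoods(node, site):
    """Likelihood of the data below `node` for each possible state at `node`.

    A leaf is a sequence string. An internal node is a pair
    ((left_subtree, left_branch_length), (right_subtree, right_branch_length)).
    """
    if isinstance(node, str):
        return [1.0 if nuc == node[site] else 0.0 for nuc in NUCS]
    result = [1.0] * 4
    for child, t in node:
        child_l = partial_likelihoods(child, site)
        for x in range(4):
            # Sum over the child's possible states y, weighted by P(x -> y).
            result[x] *= sum(jc69_prob(x, y, t) * child_l[y] for y in range(4))
    return result


def log_likelihood(tree, n_sites):
    """Log-likelihood of an alignment, treating sites as independent."""
    total = 0.0
    for site in range(n_sites):
        partial = partial_likelihoods(tree, site)
        total += math.log(sum(0.25 * p for p in partial))  # root frequencies
    return total
```

Every MCMC iteration that changes the tree or a branch length triggers at least part of this computation for every site, which is why likelihood evaluation dominates runtime.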

What is the difference between Maximum Likelihood and Bayesian inference in this context? Maximum Likelihood (ML) aims to find the single best tree topology and model parameters that maximize the likelihood function. In contrast, Bayesian inference uses Markov Chain Monte Carlo (MCMC) sampling to approximate the posterior probability distribution of trees and parameters, providing a set of plausible hypotheses rather than a single best tree. While ML gives you one answer, Bayesian inference quantifies uncertainty, but at the cost of requiring more computation to adequately sample the tree space [8].

What are marginal likelihoods and why are they important? A marginal likelihood is the average fit of a model to a dataset, calculated by integrating the likelihood over all possible parameter values (including all tree topologies and branch lengths), weighted by the prior probability of those parameters. It is central to Bayesian model comparison because it allows you to compute Bayes Factors, which measure how much the data favor one model over another [9].

Why is the MCMC algorithm fundamental to Bayesian phylogenetics? Markov Chain Monte Carlo (MCMC) is a numerical method that allows us to approximate the posterior distribution of phylogenetic trees and model parameters without having to calculate the intractable marginal likelihood directly. It works by generating a correlated sample of parameter values (including trees) from their posterior distribution [8].

How do priors impact Bayesian model selection compared to parameter estimation? When estimating parameters, the posterior distribution can be robust to the choice of prior if the data are highly informative. However, in model selection via marginal likelihoods, the choice of prior is always critical. Marginal likelihoods are inherently sensitive to the prior because they average the likelihood over the entire prior space. A diffuse prior that places weight on biologically unrealistic parameter values with low likelihood can unfairly penalize an otherwise good model [9].

Troubleshooting Common Experimental Issues

Problem 1: MCMC Convergence Failures

Symptoms: Poor effective sample sizes (ESS), high variance in parameter traces, inconsistent results between independent runs.

Solution:

| Troubleshooting Step | Action | Rationale |

|---|---|---|

| Check Prior Sensitivity | Run analysis with different, biologically justified priors. | Overly broad or misspecified priors can slow convergence and bias marginal likelihood estimates [9]. |

| Adjust Proposal Mechanisms | Tune the step size of parameter updates or use algorithms with multiple MCMC chains. | If proposed new hypotheses are too different from the current one, they are often rejected; if steps are too small, the chain explores slowly and can remain trapped near a local optimum [8]. |

| Extend Run Length | Dramatically increase the number of MCMC generations. | Inadequate sampling fails to explore the complex tree topology space fully, leading to unreliable inferences. |

Problem 2: Inaccurate Marginal Likelihood Estimation

Symptoms: Unstable model support, conflicting results between different estimation methods.

Solution:

| Troubleshooting Step | Action | Rationale |

|---|---|---|

| Validate with Simulations | Test estimation methods on simulated data where the true model is known. | Benchmarks performance and identifies biases in estimation methods [9]. |

| Use Multiple Estimators | Compare results from several methods (e.g., path sampling, stepping-stone). | Different approximation methods have varying strengths and weaknesses; consensus increases confidence [9]. |

| Ensure Proper Priors | Avoid improper priors and use informed, proper priors for model parameters. | Improper priors make marginal likelihoods undefined. Diffuse priors can sink the marginal likelihood [9]. |

Methodological Guide: Core Computational Protocols

Protocol 1: Heuristic Search for Tree Topology Estimation

Objective: Efficiently navigate the vast tree topology space to find a highly probable tree.

Procedure:

- Initialization: Start with an initial tree estimate (e.g., from a fast distance-based method).

- Tree Perturbation: Propose a new tree topology \( H^* \) by modifying the current tree \( H \) using operations like Tree Bisection and Reconnection (TBR).

- Likelihood Evaluation: Calculate the likelihood \( P(D \mid H^*) \) for the new tree.

- Acceptance Decision: Accept or reject the new tree based on the acceptance ratio. In a Bayesian MCMC framework with a symmetric proposal, this ratio \( R \) is: \[ R = \frac{P(D \mid H^*)\,P(H^*)}{P(D \mid H)\,P(H)} \] If \( R \geq 1 \), accept \( H^* \). If \( R < 1 \), accept with probability \( R \) [8].

- Iteration: Repeat steps 2-4 for a large number of generations.

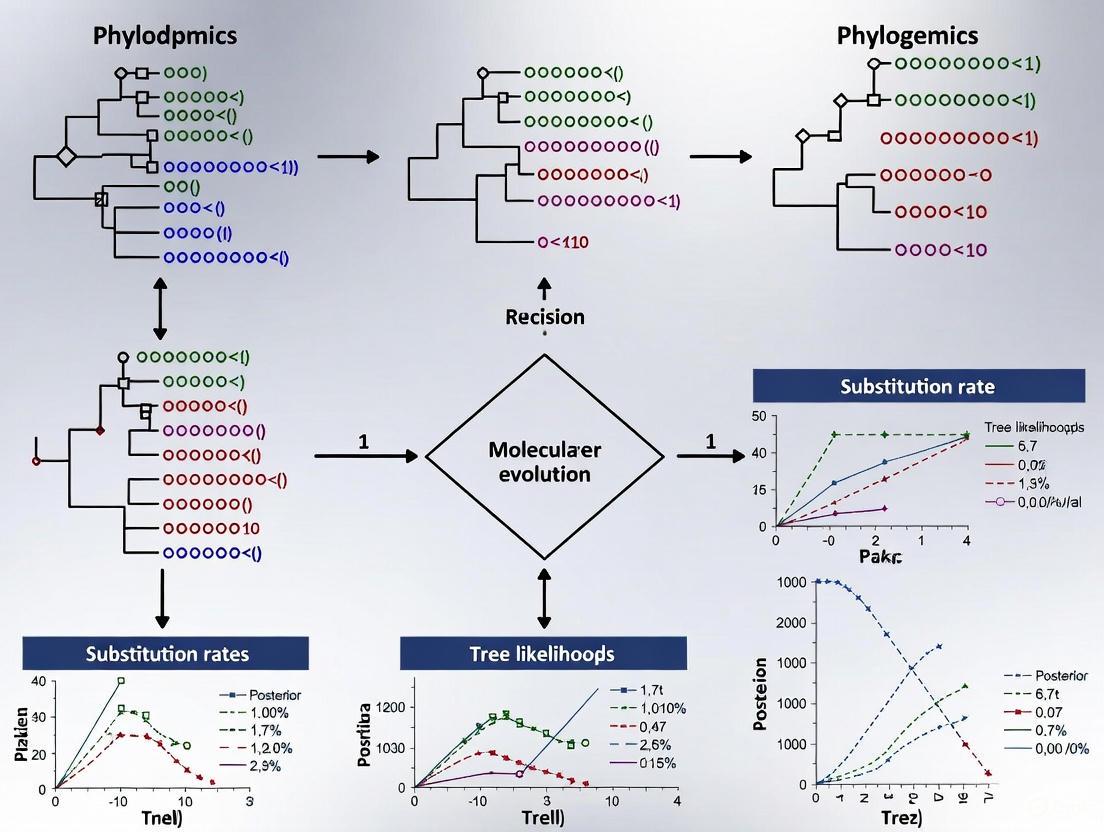

The following diagram illustrates the workflow of this heuristic search process:

Protocol 2: Bayesian Model Comparison via Marginal Likelihoods

Objective: Compare the fit of different evolutionary models to the data.

Procedure:

- Model Specification: Define the candidate models \( M_1, M_2, \ldots \), including their priors \( P(H) \).

- MCMC Sampling: For each model, run an MCMC analysis to sample from the posterior distribution \( P(H \mid D, M_i) \).

- Marginal Likelihood Estimation: Use the posterior samples to approximate the marginal likelihood \( P(D \mid M_i) \) for each model. Common methods include path sampling and stepping-stone sampling [9].

- Model Selection: Calculate Bayes Factors as the ratio of the marginal likelihoods of two models: \[ BF_{12} = \frac{P(D \mid M_1)}{P(D \mid M_2)} \] A \( BF_{12} > 1 \) indicates support for model \( M_1 \) over \( M_2 \) [9].
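The estimation and selection steps can be sketched on a toy problem where the answer is known. The example below applies stepping-stone sampling to a conjugate normal model (data from Normal(mu, sigma^2) with a Normal(0, tau^2) prior on mu), for which the marginal likelihood has a closed form. Everything here is an assumption made for illustration: the model, the function names, the number of stones, and the power schedule. The power posteriors are sampled exactly, whereas a phylogenetic analysis would run MCMC at each power.

```python
import numpy as np


def log_likelihood(mu, y, sigma=1.0):
    """Log-likelihood of data y under Normal(mu, sigma^2), vectorized over mu."""
    mu = np.atleast_1d(mu)[:, None]
    terms = -0.5 * np.log(2 * np.pi * sigma**2) - 0.5 * ((y[None, :] - mu) / sigma) ** 2
    return terms.sum(axis=1)


def sample_power_posterior(beta, y, tau, sigma, n, rng):
    """Exact draws from prior(mu) * likelihood(mu)^beta for this toy model."""
    precision = 1.0 / tau**2 + beta * len(y) / sigma**2
    mean = (beta * y.sum() / sigma**2) / precision
    return rng.normal(mean, np.sqrt(1.0 / precision), size=n)


def stepping_stone_log_ml(y, tau=2.0, sigma=1.0, n_stones=32, n_samples=2000, seed=0):
    """Stepping-stone estimate of the log marginal likelihood."""
    rng = np.random.default_rng(seed)
    # Powers from 0 (prior) to 1 (posterior), concentrated near 0.
    betas = (np.arange(n_stones + 1) / n_stones) ** (1.0 / 0.3)
    log_ml = 0.0
    for b_prev, b_next in zip(betas[:-1], betas[1:]):
        mu = sample_power_posterior(b_prev, y, tau, sigma, n_samples, rng)
        w = (b_next - b_prev) * log_likelihood(mu, y, sigma)
        log_ml += w.max() + np.log(np.mean(np.exp(w - w.max())))  # log-mean-exp
    return log_ml


def exact_log_ml(y, tau=2.0, sigma=1.0):
    """Closed-form log marginal likelihood of the toy model, for validation."""
    n = len(y)
    quad = (np.sum(y**2) - tau**2 * y.sum() ** 2 / (sigma**2 + n * tau**2)) / sigma**2
    log_det = n * np.log(sigma**2) + np.log(1.0 + n * tau**2 / sigma**2)
    return -0.5 * (n * np.log(2 * np.pi) + log_det + quad)
```

A log Bayes factor is then the difference of two log marginal likelihoods, for example `stepping_stone_log_ml(y, tau=2.0) - stepping_stone_log_ml(y, tau=100.0)` to compare a moderately informative prior with a very diffuse one. Checking the estimator against `exact_log_ml` mirrors the simulation-based validation recommended in the troubleshooting table.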

The logical relationship between these components in Bayesian model choice is shown below:

The Scientist's Toolkit

| Research Reagent Solution | Function in Computational Experiments |

|---|---|

| Markov Chain Monte Carlo (MCMC) Sampler | The core engine for performing Bayesian phylogenetic inference; it generates a sample of trees and parameters from the posterior distribution [8]. |

| Marginal Likelihood Estimator | Software routines (e.g., for path sampling) that approximate the marginal likelihood from MCMC samples, enabling rigorous model comparison [9]. |

| Heuristic Tree Search Algorithm | Methods used to efficiently explore the space of possible tree topologies, often employing strategies like subtree pruning and regrafting to navigate this complex space [7]. |

| Evolutionary Substitution Model | A parametric model (e.g., GTR + Γ) that describes the process of sequence evolution along the branches of a tree; it is a core component of the likelihood function [8]. |

| Informed Prior Distributions | Carefully chosen probability distributions for model parameters based on existing biological knowledge, which are essential for obtaining meaningful marginal likelihoods [9]. |

Frequently Asked Questions (FAQs)

Q1: My Bayesian phylodynamic analysis is running extremely slowly on a large dataset of viral sequences. What could be the cause?

Exceedingly long runtimes are a common challenge when applying Bayesian MCMC methods to large sequence alignments, particularly those from within-host pathogen studies. The computational burden increases significantly when an alignment contains thousands of sequences, many of which are duplicates of a much smaller set of unique sequences (haplotypes) [10]. The classic approach of treating every sequence as a separate tip in the phylogenetic tree requires the MCMC chain to sample a vast tree space, leading to very poor chain mixing and slow convergence. For a data set of 6,000 sequences, a classic method may fail to converge even after running for 7 days [10].

Q2: What is the problem with simply using only the unique sequences to speed up my analysis?

While using only unique sequences (haplotypes) is a common tactic to reduce computational load, this approach can introduce significant biases into your parameter estimates [10]. These sequences, along with their frequencies, contain valuable information about evolutionary rates and population dynamics. Ignoring the frequency of haplotypes effectively analyzes a biased dataset, which can lead to inaccurate conclusions about the pathogen's evolutionary history [10].

Q3: My research involves detecting rare variants in a heterogeneous tumor sample. How can I overcome high sequencing error rates?

Standard high-throughput sequencing error rates (~0.1-1%) can obscure true rare variants. To address this, consider employing specialized library preparation methods like circle sequencing [11]. This protocol involves circularizing DNA templates and using rolling circle amplification to produce tandem copies of the original molecule within a single sequencing read. Computational consensus of these linked copies dramatically reduces the error rate. This method has been shown to lower errors to a rate as low as 7.6 × 10⁻⁶ per base, making it highly suitable for identifying low-frequency variants in complex samples [11].

Q4: When should I choose whole-exome sequencing (WES) over whole-genome sequencing (WGS) for a large-scale study?

The choice depends on your research question and resources. The table below summarizes the key differences:

| Feature | Whole-Exome Sequencing (WES) | Whole-Genome Sequencing (WGS) |

|---|---|---|

| Target Region | Protein-coding exons (<2% of genome) [12] | Entire genome (coding & non-coding) |

| Cost | Lower [12] | Higher [12] |

| Data Volume | Lower (easier storage/analysis) [12] | Higher (requires more computational resources) [12] |

| Best For | Identifying disease-causing variants in known coding regions; large cohort studies [13] [12] | Comprehensive variant discovery (SNVs, CNVs, structural variants in coding and non-coding regions) [12] |

| Major Limitation | Misses regulatory variants and large structural variants [12] | Higher cost and computational burden; potential for more false positives [12] |

Q5: What are the main computational challenges when working with large single-cell atlases?

Large cell atlases, which can exceed 1 terabyte in size, present several challenges [14]:

- Data Handling: Downloading and processing these datasets requires significant bandwidth, storage, and computing memory [14].

- Batch Effects: Technical variations between datasets generated in different batches or by different labs can confound biological signals and must be detected and corrected [14].

- Metadata and Ontology: Consistent cell type annotation across many datasets is difficult and time-consuming. Inconsistent metadata can severely limit data reuse and interpretation [14].

Troubleshooting Guides

Problem: Slow Convergence in Bayesian Phylodynamic Analysis with Large Datasets

Issue: MCMC analysis in software like BEAST 2 fails to converge in a reasonable time when using a large sequence alignment containing many duplicate sequences.

Diagnosis and Solution:

- Diagnose the Data: Determine the proportion of unique sequences (haplotypes) versus duplicate sequences in your alignment. High duplicity is a primary cause of slow convergence [10].

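- Avoid Suboptimal Shortcuts: Do not simply reduce the alignment to its unique sequences. Discarding haplotype frequencies can bias parameter estimates [10].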

- Implement a Computational Solution: Use the PIQMEE (Phylogenetic Inference of Quasispecies Molecular Evolution and Epidemiology) add-on for BEAST 2 [10].

- Principle: PIQMEE resolves the tree structure for unique sequences while simultaneously estimating the branching times for duplicates. It avoids computationally wasteful sampling of the subtree topology for identical sequences [10].

- Benefit: This method maintains accuracy while dramatically improving speed. PIQMEE can handle a dataset of ~21,000 sequences (with only 20 unique sequences) in about 14 days, a task that is practically infeasible with the classic method [10].
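The first diagnostic step, measuring how much of the alignment consists of duplicates, can be sketched in a few lines. The function name and the returned fields are chosen for this example, and sequences are assumed to be already aligned and supplied as plain strings.

```python
from collections import Counter


def haplotype_summary(sequences):
    """Count unique sequences (haplotypes) and their frequencies."""
    counts = Counter(seq.upper() for seq in sequences)
    n_total = sum(counts.values())
    if n_total == 0:
        raise ValueError("no sequences supplied")
    n_unique = len(counts)
    return {
        "n_sequences": n_total,
        "n_haplotypes": n_unique,
        # Fraction of sequences that are copies of an already-seen haplotype.
        "duplicate_fraction": 1.0 - n_unique / n_total,
        "haplotype_counts": counts,
    }
```

A duplicate fraction close to 1 indicates the kind of dataset for which the classic one-tip-per-sequence approach mixes poorly; the per-haplotype counts are the frequency information that should be retained rather than discarded.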

Problem: High Error Rates Obscuring Rare Genetic Variants

Issue: Standard Illumina sequencing error rates are too high to confidently identify true rare variants present at frequencies below 1%.

Diagnosis and Solution:

- Confirm the Artifact: Rule out sample cross-contamination or poor DNA quality.

- Apply an Error-Correction Protocol: Implement the circle sequencing wet-lab protocol [11].

- Workflow:

- Circularize size-selected DNA fragments.

- Perform rolling circle amplification with Phi29 polymerase to generate a single DNA molecule containing multiple tandem copies of the original fragment.

- Sequence the resulting concatemers.

- Use a dedicated bioinformatic pipeline to identify repeating units within each read and derive a high-accuracy consensus sequence for each original molecule.

- Key Advantage: Unlike barcoding strategies, circle sequencing is resistant to "jackpot mutations" from PCR, as each copy in the concatemer is independently derived from the original template [11].
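The consensus step of the workflow can be illustrated with a simple majority vote across tandem copies. This is a deliberately simplified sketch, not the published pipeline: it assumes the repeat unit length is already known, that the read starts at a unit boundary, and that there are no insertions or deletions. A real pipeline must detect the repeat period and handle these cases.

```python
from collections import Counter


def consensus_from_concatemer(read, unit_length):
    """Majority-vote consensus across tandem copies within a single read."""
    copies = [
        read[i:i + unit_length]
        for i in range(0, len(read) - unit_length + 1, unit_length)
    ]
    if not copies:
        raise ValueError("read is shorter than one repeat unit")
    consensus = []
    for column in zip(*copies):
        base, count = Counter(column).most_common(1)[0]
        # Require a strict majority; otherwise mark the position as ambiguous.
        consensus.append(base if count > len(copies) / 2 else "N")
    return "".join(consensus)
```

Because an error in one copy is outvoted by the other copies of the same molecule, isolated sequencing errors are removed while true variants, which appear in every copy, are preserved.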

Circle Sequencing Wet-Lab and Computational Workflow

The Scientist's Toolkit: Essential Research Reagent Solutions

| Reagent / Tool | Function in Experimental Protocol |

|---|---|

| Phi29 Polymerase | A highly processive DNA polymerase with strand-displacement activity. It is essential for rolling circle amplification in the circle sequencing protocol, generating long concatemers from a circular DNA template [11]. |

| BEAST 2 Software | A cross-platform program for Bayesian phylogenetic and phylodynamic analysis. It provides a framework for inferring evolutionary history and population dynamics from genetic sequence data [10]. |

| PIQMEE BEAST 2 Add-on | A specialized software package that enables efficient Bayesian phylodynamic analysis of very large datasets containing many duplicate sequences, overcoming a major computational bottleneck [10]. |

| Exonuclease Enzyme | Used in circle sequencing to digest linear DNA fragments that failed to circularize, enriching the sample for successful circular templates [11]. |

Frequently Asked Questions (FAQs)

Q1: What is the core difference between using a Coalescent prior and a Birth-Death prior for my phylogenetic analysis?

The primary difference lies in the underlying population model and sampling assumptions.

- Coalescent Model: This model works backward in time, tracing the ancestry of a sample of individuals from a population. It is typically used when sequences are sampled from a single population at one or more points in time. Its parameters, like population size, directly affect the tree's shape: lineages coalesce more slowly in large populations, which makes longer internal branches likely [15].

- Birth-Death Model: This model works forward in time, modeling the processes of speciation (birth) and extinction (death) or, in epidemiology, new infections (birth) and recovery/death (death). It is more appropriate when the tree represents a process of diversification, such as species evolution or an epidemic, and it explicitly models the rate of origin of new lineages [16].

Q2: My BEAST analysis is taking too long. Are there ways to resume an analysis or add new data without starting over?

Yes, BEAST includes an online inference feature for this purpose. You can configure your analysis to save a state file at regular intervals using the -save_every and -save_state command-line arguments. If you need to add new sequences, you can use the CheckPointUpdaterApp to create an updated state file from your checkpoint file and a new XML file containing the extended sequence alignment. The analysis can then be resumed from this updated state file using the -load_state argument, saving significant computation time [17].

Q3: When should I use a relaxed molecular clock instead of a strict clock?

A strict molecular clock assumes that the evolutionary rate is constant across all branches of the tree. A relaxed molecular clock model allows the rate to vary among branches. You should consider a relaxed clock when you have prior reason to believe evolutionary rates are heterogeneous, or when a preliminary relaxed-clock analysis indicates substantial rate variation among branches (e.g., a coefficient of variation of rates whose posterior is clearly above zero) [18] [16].

Q4: What does "time-scaled" or "time-measured" mean in the context of a phylogenetic tree?

A time-scaled tree, or chronogram, is one where the branch lengths are proportional to real (calendar) time. This is in contrast to a phylogram, where branch lengths represent the amount of genetic change. Time-scaled trees are essential for phylodynamic inference because they allow the estimation of the timing of evolutionary events and the rates of population dynamic processes like growth and differentiation [18].

Troubleshooting Guides

Problem 1: Poor MCMC Convergence in Birth-Death Model Analysis

Symptoms: Low effective sample sizes (ESS) for key parameters like birth or death rates, and parameter traces that do not look like "fuzzy caterpillars."

Solutions:

- Parameter Identifiability: Check if your death (extinction) rate is identifiable. With only modern samples, the death rate is often correlated with the birth rate and can be difficult to estimate precisely. Consider fixing the death rate based on prior knowledge or using a more informative prior distribution.

- Model Parameterization: For models with time-varying rates, consider using a more robust prior. For example, a Horseshoe Markov Random Field (HSMRF) prior has been shown to offer higher precision in detecting rate shifts compared to a Gaussian Markov Random Field (GMRF) prior, with little loss of accuracy [16].

- Run Length and Sampling: Increase the number of MCMC iterations. For complex models, chain lengths of tens to hundreds of millions of steps may be necessary. Also, avoid logging samples too frequently: consecutive states are highly autocorrelated, so very dense logging inflates output files without adding much independent information.

Problem 2: Inability to Reconcile Tree Prior with Data

Symptoms: A significant difference in marginal likelihood between a model with a Coalescent prior and one with a Birth-Death prior, but unclear which is more biologically realistic.

Solutions:

- Validate Sampling Assumptions:

- The structured coalescent tree prior is conditioned on sample locations and makes no assumption about the randomness of sampling with respect to location. Uneven sampling will reduce power but not bias results [15].

- Mugration models (a type of discrete trait analysis), however, treat location as data and can be prone to bias if samples are collected non-randomly with respect to location [15].

- Test Model Fit: Use posterior predictive simulations to check which tree prior better predicts statistics of your actual data, such as tree balance or branch length distributions.

Problem 3: Visualizing and Annotating Complex Phylogenetic Trees

Symptoms: Difficulty in creating publication-quality figures that incorporate node annotations, branch colors, and metadata.

Solutions:

- Use the R package ggtree: This package is specifically designed for visualizing and annotating phylogenetic trees with associated data. It integrates with the ggplot2 system, allowing you to add multiple annotation layers flexibly [19].

- Map Metadata to Visual Elements: You can visualize metadata by mapping it to visual elements of the tree, such as node annotations and branch colors.

- Explore Different Layouts: ggtree supports various layouts, including rectangular, circular, slanted, and unrooted, to best present your data [19].

Computational Requirements and Specifications

The following table summarizes the computational resources and software used for Bayesian phylodynamic inference as discussed in the cited sources.

Table 1: Key Research Reagent Solutions for Bayesian Phylodynamics

| Category | Item/Software | Primary Function | Key Application / Note |

|---|---|---|---|

| Core Software | BEAST 2 (Bayesian Evolutionary Analysis Sampling Trees) [18] [17] | A software platform for Bayesian phylogenetic and phylodynamic inference. | The primary software for this guide. Supports molecular clock, coalescent, and birth-death models. |

| Online Inference | BEAST's -save_state & -load_state [17] | Saves/loads analysis state to resume runs or add data. | Critical for managing long-running analyses and incorporating new sequences. |

| Specialized Packages | GABI (GESTALT analysis using Bayesian inference) [18] | Extends BEAST 2 for single-cell lineage tracing data. | An example of a specialized package for a specific data type (CRISPR-based barcodes). |

| Specialized Packages | MultiTypeTree, BASTA [15] | Implements structured coalescent models. | For phylogeographic inference when sampling is non-random. |

| Birth-Death Models | HSMRF & GMRF Priors [16] | Bayesian nonparametric priors for modeling time-varying birth/death rates. | The HSMRF prior is recommended for its high precision in detecting rate shifts. |

| Visualization | ggtree (R package) [19] | Visualizes and annotates phylogenetic trees. | The recommended tool for creating complex, annotated tree figures. |

| Visualization | DensiTree, FigTree, iTOL | Other software for tree visualization. | Alternative tools for viewing and exporting trees [15] [19]. |

| Methodology | Bayesian Inference (BI) [21] | A general statistical framework for phylogenetic inference. | Suitable for a small number of sequences, uses MCMC for sampling posteriors. |

Experimental Protocol: Implementing an Online Bayesian Phylodynamic Analysis

This protocol details the steps to set up a BEAST analysis with checkpointing, allowing you to add new sequence data later without restarting from scratch [17].

Workflow Overview:

Step-by-Step Instructions:

Initial BEAST Configuration and Execution

- Prepare your initial XML file (epiWeekX1.xml) in BEAUti, configuring your nucleotide substitution model, molecular clock model, and tree prior (e.g., Coalescent or Birth-Death).

- Execute BEAST from the command line, instructing it to save a state file every 20,000 iterations and to maintain a single, updated checkpoint file.

- Command:

Incorporating New Sequence Data

- When new sequences become available, create an updated XML file (epiWeekX2.xml) in BEAUti. The model configuration must be identical to the initial XML file; only the sequence alignment should be extended.

- Use the CheckPointUpdaterApp to integrate the new data into the latest checkpoint file. This application uses a genetic distance metric (e.g., JC69) to place the new sequences into the existing tree topology.

- Command:

Resuming the Analysis

- Use the -load_state argument to resume the analysis from the updated checkpoint file, continuing to save state files periodically.

- Command:

Foundational Model Relationships

The following diagram illustrates the logical relationships between the foundational models discussed in this guide and how they contribute to the overarching goal of phylodynamic inference.

Advanced Models and Software Platforms for Real-World Applications

BEAST (Bayesian Evolutionary Analysis by Sampling Trees) software encompasses two main, independent platforms for Bayesian phylogenetic and phylodynamic inference. The table below summarizes their key characteristics.

Table 1: Comparison of BEAST Software Platforms

| Feature | BEAST 2 | BEAST X |

|---|---|---|

| Project Nature | Independent project led by the University of Auckland [22] [23] | Orthogonal project; formerly BEAST 1.x series [22] [24] |

| Core Focus | Cross-platform program for Bayesian phylogenetic analysis of molecular sequences using MCMC; estimates rooted, time-measured phylogenies [25] [23] | Bayesian phylogenetic, phylogeographic, and phylodynamic inference; focuses on pathogen genomics and complex trait evolution [5] [22] |

| Key Strength | Modularity and extensibility via a robust package system [25] [23] [26] | Flexibility and scalability of evolutionary models; advanced computational algorithms [5] |

| Architecture | A software platform and library for MCMC-based Bayesian inference, easily extended via BEAST 2 Packages [25] [23] | An efficient statistical inference engine combining molecular phylogenetics with complex trait evolution and demographics [5] |

| Typical Applications | Reconstructing phylogenies, testing evolutionary hypotheses, phylogenetics, population genetics, phylogeography [25] [26] | Phylodynamics, molecular epidemiology of infectious diseases, phylogeography with sampling bias correction [5] |

| Notable Modeling Advances | Relaxed clocks, non-parametric coalescent analysis, multispecies coalescent [23] [26] | Markov-modulated substitution models, random-effects clock models, scalable Gaussian trait-evolution models [5] |

| User Interface | BEAUti 2 [25] [23] | Not specified in the cited sources |

Frequently Asked Questions (FAQs) and Troubleshooting

FAQ 1: What is the difference between BEAST 2 and BEAST X?

BEAST 2 and BEAST X are separate software projects that have been developed in parallel [22]. BEAST 2 is a complete rewrite of the original BEAST 1.x software, with a primary emphasis on creating a modular, extensible platform via its package management system [25] [23]. The project formerly known as BEAST 1.x was renamed to BEAST X (currently version v10.5.0) to distinguish it from the independent BEAST 2 project and to allow for a new versioning scheme [22] [24].

FAQ 2: My BEAST analysis fails to start with a "Class could not be found" error. What should I do?

This error means BEAST 2 could not identify a component (e.g., a model) specified in your XML file. The two main causes and solutions are [27]:

- Missing Package: The BEAST 2 package containing the component is not installed. Check the first line of your XML file for a list of required packages (e.g., required="BEAST.base v2.7.4:SA v1.2.0"). Use BEAUti's File > Manage Packages menu to install any missing packages [27] [28].

- Manual XML Error: If you edited the XML file manually, a typo may have been introduced in the component's name. Carefully review the file and correct any errors [27].
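The package check described above can also be scripted. The following is a minimal sketch, assuming the `required` attribute sits on the root element of the XML file and uses the colon-separated "name version" format shown in the example. The function name `required_packages` and the sample XML header are hypothetical and are not part of BEAST 2.

```python
import xml.etree.ElementTree as ET


def required_packages(xml_text):
    """Return {package_name: version} parsed from the root element's `required` attribute."""
    root = ET.fromstring(xml_text)
    required = root.get("required", "")
    packages = {}
    for entry in required.split(":"):
        entry = entry.strip()
        if not entry:
            continue
        # Each entry looks like "SA v1.2.0": a name, a space, then a version.
        name, _, version = entry.rpartition(" ")
        if name:
            packages[name] = version
        else:
            # No space found: treat the whole entry as a name without a version.
            packages[version] = ""
    return packages


# Hypothetical minimal XML header, for illustration only.
example_xml = '<beast version="2.7" required="BEAST.base v2.7.4:SA v1.2.0"></beast>'
print(required_packages(example_xml))
```

Comparing the returned names against the packages installed on your system shows which ones still need to be added.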

FAQ 3: BEAST will not run because my output log or tree files already exist. How can I fix this?

BEAST prevents overwriting files by default to avoid accidental data loss. You have several options [27]:

- Overwrite: In the BEAST launcher, select the Overwrite option from the dropdown menu before running, or use the -overwrite flag on the command line.

- Resume: To continue a previous analysis, select the Resume option or use the -resume flag.

- Rename Outputs: In BEAUti's MCMC panel, change the File Name for the tracelog and treelog to create new files. Using the $(filebase) variable in filenames can help avoid future conflicts [27].

FAQ 4: What does a low Effective Sample Size (ESS) value mean, and how can I improve it?

The Effective Sample Size (ESS) indicates the number of effectively independent draws from the posterior distribution. A low ESS (e.g., below 100-200) means the estimate of the posterior distribution for that parameter is unreliable [24].

Improving ESS: Low ESS values often indicate poor mixing of the Markov chain. This can sometimes be improved by adjusting the tuning parameters of MCMC operators to increase sampling efficiency. However, fundamentally poor mixing may require a longer MCMC run (increasing the chain length) [24].
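To make the idea of "effectively independent draws" concrete, here is a small sketch of one common textbook estimator, ESS = N / (1 + 2 Σ ρ_k), where ρ_k is the lag-k autocorrelation of the trace. The truncation rule (stop at the first non-positive autocorrelation) is a simplifying assumption, so the numbers will not match Tracer's estimator exactly. Both traces are synthetic.

```python
import random


def effective_sample_size(samples):
    """Estimate ESS as N / (1 + 2 * sum of positive-lag autocorrelations)."""
    n = len(samples)
    mean = sum(samples) / n
    centered = [x - mean for x in samples]
    variance = sum(c * c for c in centered) / n
    if variance == 0:
        return float(n)
    rho_sum = 0.0
    for lag in range(1, n):
        autocov = sum(centered[i] * centered[i + lag] for i in range(n - lag)) / n
        rho = autocov / variance
        if rho <= 0:  # simple truncation rule: stop at the first non-positive lag
            break
        rho_sum += rho
    return n / (1 + 2 * rho_sum)


random.seed(1)
# Independent draws: ESS should be close to the number of samples.
independent = [random.gauss(0, 1) for _ in range(2000)]
# Strongly autocorrelated AR(1) chain: ESS should be far smaller.
correlated = [0.0]
for _ in range(1999):
    correlated.append(0.95 * correlated[-1] + random.gauss(0, 1))

print(round(effective_sample_size(independent)), round(effective_sample_size(correlated)))
```

Both traces contain 2000 samples, but the autocorrelated chain carries far fewer independent draws. A poorly mixing MCMC run is in the same situation.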

FAQ 5: How do I install or manage packages on a high-performance computing (HPC) cluster without a graphical interface?

For servers or HPC clusters without a GUI, BEAST 2 provides a command-line tool called packagemanager. Use it to list, install, and uninstall packages directly from the terminal [28]. For example:

- To list available packages: packagemanager -list

- To install a package (e.g., SA): packagemanager -add SA

- To make a package available to all users on a cluster, use the -useAppDir option (requires write access to the system directory) [28].

Essential Packages and Research Reagent Solutions

The functionality of BEAST 2 is greatly expanded through its package system. The table below lists a selection of key packages that facilitate a wide range of advanced analyses.

Table 2: Key BEAST 2 Packages and Their Functions

| Package Name | Function / Analytical Capability | Brief Description |

|---|---|---|

| bModelTest | Substitution Model Averaging | Performs Bayesian model averaging and comparison for nucleotide substitution models [26]. |

| SNAPP | Species Tree Inference | Infers species trees from single nucleotide polymorphism (SNP) data under the multispecies coalescent model [26]. |

| BDSKY | Epidemiological Inference | Implements the birth-death skyline model to infer time-varying reproductive numbers and epidemic dynamics from time-stamped genetic data [26]. |

| SA (Sampled Ancestors) | Fossil Placement | Allows for the direct inclusion of sampled ancestors in the tree, which is crucial for realistic fossil calibration in divergence dating [27] [26]. |

| MM (Morphological Models) | Morphological Data Analysis | Enables models of morphological character evolution for analyses that incorporate fossil or trait data [27] [26]. |

| MultiTypeTree | Structured Population Inference | Infers population structure and migration history using structured coalescent models [26]. |

Standard Experimental Protocol and Workflow

A standard BEAST analysis for divergence dating or phylodynamics follows a defined workflow. The diagram below outlines the key steps from data preparation to result interpretation.

Workflow Diagram Title: BEAST Analysis Steps

Detailed Methodology:

- Data Preparation: Gather your molecular sequence alignment (e.g., in NEXUS format) and any associated metadata, such as sampling dates for tips or morphological character matrices [29].

- BEAUti Configuration: Use the BEAUti GUI to set up the analysis. This involves several critical sub-steps [29]:

- Import Alignment: Load your sequence data file.

- Site Model (Substitution Model): Specify the evolutionary model for sequence change (e.g., HKY or GTR), and account for among-site rate heterogeneity (e.g., using a Gamma distribution with 4 categories) [29].

- Clock Model: Choose a molecular clock model to describe the rate of evolution over time. A Strict Clock assumes a constant rate, while Relaxed Clock models (e.g., Uncorrelated Lognormal) allow variation across branches [29].

- Priors (Tree Prior & Calibrations): Select a tree-generating prior. The Yule Model is often used for speciation-level data, while the Coalescent model is for intra-species data. Define calibration points (e.g., using fossil evidence) to constrain node ages by applying log-normal or other prior distributions [29].

- MCMC Settings: Configure the length of the MCMC chain (number of steps), logging frequencies for parameters and trees, and output file names.

- Generate XML File: BEAUti saves all these settings into a single XML configuration file, which is the input for the BEAST engine.

- Run BEAST: Execute the BEAST program, providing the generated XML file as input. This step is computationally intensive and can take hours to days depending on dataset size and model complexity.

- Diagnostic Checks: After the run completes, analyze the log file (e.g., using Tracer). Check that the Effective Sample Size (ESS) for all key parameters is greater than 200, a common threshold for adequate mixing, and inspect the traces for stationarity, since a high ESS alone does not prove convergence. If ESS values are low, the analysis may need to be run for longer [24].

- Post-Process Results: Use the post-processing tools:

- LogCombiner: To combine results from multiple independent runs or to subsample a long run.

- TreeAnnotator: To generate a single Maximum Clade Credibility (MCC) tree from the posterior set of trees, summarizing node ages and posterior probabilities [24].

- Visualization: Finally, view the annotated MCC tree in a viewer like FigTree or IcyTree to interpret and present your results [29].

Computational Requirements and Performance

Performance in BEAST is highly dependent on the data and model. The table below summarizes key considerations for planning your computational resources.

Table 3: Computational Considerations for BEAST Analyses

| Factor | Impact on Performance & Resource Needs |

|---|---|

| Number of Taxa (N) | Computational complexity generally increases more than linearly with the number of taxa, significantly impacting memory and computation time [5]. |

| Sequence Alignment Length | Longer alignments increase the cost of likelihood calculations. Partitions with many unique site patterns (>500) benefit most from high-performance libraries like BEAGLE [24]. |

| Model Complexity | Complex models (e.g., relaxed clocks, structured coalescents, high-dimensional trait evolution) increase the parameter space, requiring longer MCMC runs for adequate convergence [5] [26]. |

| BEAGLE Library | Using the BEAGLE library can dramatically improve performance. To efficiently use a GPU for acceleration, long sequence partitions are required, and high-end GPUs are recommended [24]. |

| MCMC Chain Length | Adequate chain length is crucial for achieving high ESS. Required length varies massively based on the dataset and model, from hundreds of thousands to hundreds of millions of steps [24]. |
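The "unique site patterns" mentioned in the table can be counted directly from an alignment. Likelihood implementations typically evaluate each distinct alignment column once and weight it by how often it occurs, so the pattern count is a better guide to cost than the raw alignment length. The sketch below assumes the alignment is already loaded as a dictionary of equal-length strings. The function name and toy data are hypothetical.

```python
from collections import Counter


def site_pattern_counts(alignment):
    """Count how often each alignment column (site pattern) occurs."""
    sequences = list(alignment.values())
    length = len(sequences[0])
    if any(len(seq) != length for seq in sequences):
        raise ValueError("all sequences must have the same aligned length")
    # zip(*sequences) walks the alignment column by column.
    return Counter("".join(column) for column in zip(*sequences))


# Toy alignment (hypothetical data): 3 taxa, 8 sites.
toy_alignment = {
    "taxon_A": "ACGTACGA",
    "taxon_B": "ACGTACGC",
    "taxon_C": "ACGAACGA",
}
patterns = site_pattern_counts(toy_alignment)
print(len(patterns), "unique patterns out of", sum(patterns.values()), "sites")
```

Running this per partition gives a quick indication of which partitions are large enough for BEAGLE acceleration to pay off.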

Frequently Asked Questions (FAQs)

Q: What is the fundamental difference between discrete and continuous phylogeographic inference? A: Discrete phylogeography models pathogen spread between distinct, predefined locations (e.g., countries or cities), inferring migration rates and effective population sizes for each location. Continuous phylogeography, in contrast, models the spread across a continuous landscape, often producing a diffusion path for the pathogen without the need for predefined geographic units.

Q: My analysis under the structured coalescent is computationally very slow. What are my options? A: Computational cost is a known challenge. You can consider the following approaches:

- Approximate Methods: Tools like BASTA or MASCOT use approximations to integrate over parts of the migration history, improving scalability for large datasets [30].

- Fixed Phylogeny: If appropriate for your analysis, you can fix a precomputed dated phylogeny and focus the inference only on the migration history and parameters, which significantly reduces the computational burden [30].

- Discrete Trait Analysis (DTA): While DTA is a rougher approximation that models location evolution similarly to a genetic trait on a fixed tree, it is generally the fastest method, though it may introduce unpredictable biases [30].

Q: What file format is commonly used for representing phylogenetic trees in computational tools?

A: The Newick format is a standard and widely supported text-based format for representing tree structures. You can easily write and read trees to and from files in this format using packages like ape in R [31].
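For readers unfamiliar with the format, the following sketch shows how the nested parentheses of a Newick string map onto a tree structure. It is a deliberately minimal parser written for illustration and is not how ape reads trees. It assumes unquoted labels and no comments, and the example tree is hypothetical.

```python
def parse_newick(text):
    """Parse a simple Newick string into nested dicts: {'name', 'length', 'children'}."""
    text = text.strip().rstrip(";")
    pos = 0

    def parse_node():
        nonlocal pos
        children = []
        if pos < len(text) and text[pos] == "(":
            pos += 1  # consume "("
            while True:
                children.append(parse_node())
                if text[pos] == ",":
                    pos += 1
                    continue
                if text[pos] == ")":
                    pos += 1
                    break
                raise ValueError(f"unexpected character at position {pos}")
        # Read the optional label and optional ":length" that follow the node.
        start = pos
        while pos < len(text) and text[pos] not in ",():":
            pos += 1
        name = text[start:pos]
        length = None
        if pos < len(text) and text[pos] == ":":
            pos += 1
            start = pos
            while pos < len(text) and text[pos] not in ",()":
                pos += 1
            length = float(text[start:pos])
        return {"name": name, "length": length, "children": children}

    return parse_node()


def tip_labels(node):
    """Collect tip labels in left-to-right order."""
    if not node["children"]:
        return [node["name"]]
    return [label for child in node["children"] for label in tip_labels(child)]


tree = parse_newick("((A:0.1,B:0.2):0.3,C:0.4);")
print(tip_labels(tree))
```

For real analyses, an established library is preferable because it handles quoted labels, comments, and annotations.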

Q: How can I visualize a phylogenetic tree in R?

A: The ape and phytools packages in R provide multiple functions for plotting trees. You can visualize phylogenies in different styles, such as rectangular, circular, or unrooted phylograms [31].

Troubleshooting Guides

Poor Mixing or Non-Convergence in MCMC Analysis

Problem: The Markov Chain Monte Carlo (MCMC) sampler exhibits poor mixing, fails to converge, or gets stuck in local optima during Bayesian phylogeographic inference.

Solutions:

- Adjust Operators: Fine-tune the scale and weight of MCMC proposal operators to improve the exploration of the parameter space [30].

- Parameter Re-parameterization: Consider re-parameterizing your model, as this can sometimes lead to more efficient sampling.

- Extended Runs: Run multiple, independent MCMC chains for a longer number of generations. Use diagnostics like Effective Sample Size (ESS) to confirm convergence (values >200 are generally acceptable).

Inaccurate or Biased Parameter Estimates

Problem: Inferred parameters, such as migration rates or effective population sizes, seem biologically implausible or are inconsistent across runs.

Solutions:

- Validate with Simulations: Test your inference pipeline using simulated data where the "true" parameters are known. This helps identify potential biases in the model or method [30].

- Method Comparison: Compare results obtained from different models (e.g., structured coalescent vs. DTA) to assess robustness. Be aware that approximate models can introduce biases [30].

- Review Priors: Carefully select and, if necessary, adjust the prior distributions for your model parameters, as inappropriate priors can strongly influence the results.

Handling and Visualizing Large Phylogenies

Problem: Standard visualization tools become slow or unresponsive when dealing with large datasets containing thousands of taxa, making exploration and interpretation difficult.

Solutions:

- Tree Condensation: Use tools that allow you to collapse or summarize clades of interest, reducing visual complexity.

- Interactive Viewers: Employ specialized software or libraries designed for large trees, which often offer interactive features like zooming, panning, and selective labeling.

- Focus on Sub-trees: For specific questions, extract and visualize a relevant sub-tree (e.g., a specific transmission cluster) for detailed inspection [32].

Experimental Protocols & Workflows

Protocol 1: Bayesian Phylogeographic Inference using the Structured Coalescent

This protocol outlines the steps for inferring past migration history and effective population sizes using an exact structured coalescent model, as implemented in tools like StructCoalescent [30].

Input Data Preparation:

- Sequence Alignment: Compile a multiple sequence alignment (FASTA format) of pathogen genomes.

- Metadata: Prepare a file containing the sampling location for each sequence.

- Dated Phylogeny: Obtain a dated phylogeny (Newick format), either inferred directly from the genomic data using software like BEAST or BEAST2, or derived from an undated tree using tools like LSD, treedater, TreeTime, or BactDating [30].

Model Configuration:

- Specify the structured coalescent model in your chosen software (e.g., StructCoalescent, MultiTypeTree in BEAST2).

- Define the discrete locations for the analysis.

- Set priors for the parameters of interest (e.g., migration rates, effective population sizes).

MCMC Execution:

- Run the MCMC analysis for a sufficient number of generations to ensure convergence.

- Monitor the run using tracer software to check Effective Sample Size (ESS) for all parameters.

Post-processing and Interpretation:

- Summarize the posterior distribution of trees and parameters after discarding an appropriate burn-in.

- Generate a maximum clade credibility tree annotated with location changes.

- Analyze the posterior estimates of migration rates and effective population sizes.
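The burn-in and summary steps above can be sketched as follows. This is an illustrative helper, not code from any of the cited tools. The 10% burn-in fraction, the function name, and the toy trace are assumptions, and the highest posterior density (HPD) interval is computed as the narrowest window containing the requested share of samples.

```python
def summarize_posterior(samples, burn_in_fraction=0.1, mass=0.95):
    """Drop the burn-in, then return (median, (hpd_low, hpd_high)) of the rest."""
    kept = sorted(samples[int(len(samples) * burn_in_fraction):])
    n = len(kept)
    mid = n // 2
    median = kept[mid] if n % 2 else (kept[mid - 1] + kept[mid]) / 2
    # The HPD interval is the narrowest window containing `mass` of the samples.
    window = max(1, int(round(mass * n)))
    best_start = min(range(n - window + 1), key=lambda i: kept[i + window - 1] - kept[i])
    return median, (kept[best_start], kept[best_start + window - 1])


# Hypothetical trace of a migration rate: 10 unconverged samples, then 90 settled ones.
trace = [5.0] * 10 + [i / 100 for i in range(1, 91)]
median, hpd = summarize_posterior(trace)
print(median, hpd)
```

Because the first 10% of the trace is discarded, the unconverged values at the start do not contaminate the median or the interval.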

The following diagram illustrates the core computational workflow for this protocol:

Protocol 2: Basic Phylogenetic Tree Handling and Visualization in R

This protocol provides a basic workflow for reading, manipulating, and visualizing phylogenetic trees in the R environment, which is a common platform for phylogenetic analysis [31].

Package Installation and Loading:

- Install and load necessary R packages (e.g.,

ape,phytools).

- Install and load necessary R packages (e.g.,

Reading a Tree:

- Import a tree from a Newick format file.

Basic Tree Manipulation (Optional):

- Root, drop tips, or extract clades as needed for your analysis.

Visualization:

- Plot the tree using different styles (rectangular, fan, unrooted).

Table 1: Comparison of Phylogeographic Inference Methods

| Method | Core Principle | Computational Scalability | Key Outputs | Considerations |

|---|---|---|---|---|

| Structured Coalescent | Models ancestry within a structured population using coalescent theory [30]. | Computationally demanding, scales poorly to very large datasets [30]. | Past migration rates, effective population sizes per location [30]. | Considered a "gold standard" but requires careful MCMC tuning [30]. |

| Discrete Trait Analysis (DTA) | Models location as a trait evolving along branches of a fixed tree [30]. | Highly scalable, fast computation [30]. | History of location state changes. | A rough approximation; can introduce unpredictable biases [30]. |

| Approximate Methods (e.g., BASTA, MASCOT) | Uses approximations to integrate over parts of the migration history [30]. | Better scalability than the exact model [30]. | Similar to structured coalescent but approximated. | Balance between accuracy and computational efficiency [30]. |

Table 2: Essential R Packages for Phylogenetic Analysis [31]

| Package | Version | Primary Function |

|---|---|---|

| ape | 4.1+ | Analysis of Phylogenetics and Evolution; core functions for reading, writing, and manipulating trees [31]. |

| phytools | 0.6.20+ | Phylogenetic tools; extensive functions for comparative biology and visualization [31]. |

| phangorn | 2.2.0+ | Phylogenetic analysis; focuses on estimating phylogenetic trees and networks. |

| geiger | 2.0.6+ | Analysis of evolutionary diversification. |

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Key Software and Analytical Tools

| Item | Function in Analysis |

|---|---|

| BEAST / BEAST2 | Software package for Bayesian evolutionary analysis by sampling trees, used to infer dated phylogenies and perform phylodynamic analysis [30]. |

| StructCoalescent | An R package for performing Bayesian inference of pathogen phylogeography using the exact structured coalescent model on a precomputed phylogeny [30]. |

| MultiTypeTree | A BEAST2 package that implements the structured coalescent for joint inference of the phylogeny and ancestral locations [30]. |

| ape R package | A core R package providing functions for reading, writing, plotting, and manipulating phylogenetic trees [31]. |

| Structured Coalescent Model | The underlying population genetic model that describes the ancestry of samples from a geographically structured population, informing inferences about population sizes and migration [30]. |

| Precomputed Dated Phylogeny | A fixed tree with branch lengths in units of time, which can be used as input for phylogeographic inference to reduce computational complexity [30]. |

Frequently Asked Questions

Q1: My skyline plot shows a spurious population bottleneck. What could be causing this? A common cause is biased geographical sampling. If your dataset includes many recent sequences from a single location, it can create an artificial signal of a population decline in the reconstruction. It is recommended to avoid this sampling scheme and instead sample sequences with uniform probability with respect to both time and spatial location [33].

Q2: When should I model spatial and temporal dynamics jointly? You should model them jointly even if you are only interested in reconstructing one of the two. Inferring one without the other can lead to unclear biases in the reconstruction. Methods like the MASCOT-Skyline integrate population and migration dynamics to mitigate this issue [34].

Q3: How can I improve the computational speed of my BEAST analysis? The latest software versions offer significant performance enhancements. BEAST X incorporates linear-time gradient algorithms and Hamiltonian Monte Carlo (HMC) transition kernels for models like the nonparametric coalescent-based Skygrid, leading to substantial increases in effective sample size (ESS) per unit time [5].

Q4: What is the difference between Coalescent and Birth-Death Skyline plots? The core difference lies in the direction of time and how sampling is treated. The Coalescent Bayesian Skyline models time backwards from the present to the past and infers effective population size without modeling the sampling process. The Birth-Death Skyline models time forwards and is parameterized by birth, death, and sampling rates [35].

Troubleshooting Guides

Issue 1: Biased Phylogeographic Reconstructions

- Problem: Inferred migration rates and ancestral locations are unreliable, potentially due to uneven sampling across locations or unmodeled population size changes.

- Solution:

- Use a structured coalescent skyline approach: Implement the MASCOT-Skyline method, available as a BEAST2 package. It jointly models time-varying effective population sizes in different locations with spatial migration dynamics, reducing bias [34].

- Re-evaluate sampling strategy: Ensure your sequence sampling is as uniform as possible across time and demes (geographic locations) [33].

- Validate with simulations: For complex scenarios, use simulated outbreaks to illuminate the nature of potential biases in your specific context [34].

Issue 2: Poor Parameter Convergence in Coalescent Analyses

- Problem: Low Effective Sample Sizes (ESS) for parameters like effective population size (Ne) or migration rates, indicating the MCMC chain has not sampled the posterior distribution adequately.

- Solution:

- Upgrade software: Use BEAST X, which offers more efficient samplers like HMC for many models, leading to better convergence [5].

- Check clock model calibration: For homochronous data (all samples from the same time point), the clock rate cannot be estimated and must be fixed using an externally derived value [35].

- Replicate analysis: Run your analysis with multiple different stochastic choices of sequences to confirm that inferred dynamics are not spurious and are consistent across replicates [33].

Issue 3: Choosing a Model for Populations with Complex Demography

- Problem: Uncertainty in selecting an appropriate coalescent model for a population with a known or suspected complex history (e.g., rapidly varying size, seedbank effects).

- Solution:

- Understand model classes:

- Kingman’s coalescent is the standard for neutral evolution in stable populations [36].

- Beta coalescents are suitable for populations with skewed offspring distributions or strong selection [36].

- Time-inhomogeneous coalescents describe genealogies of populations with deterministically varying size [36].

- Leverage the TMRCA: Use the distribution of the Time to the Most Recent Common Ancestor (TMRCA) from your data as an explanatory variable to distinguish between different evolutionary scenarios and infer model parameters [36].
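As a reference point for the TMRCA-based reasoning above, the sketch below covers only the simplest case, Kingman's coalescent with constant population size. While k lineages remain, the waiting time to the next coalescence is exponential with rate C(k, 2), so the expected TMRCA is the sum of 1/C(k, 2) for k = 2..n, which equals 2(1 - 1/n) in coalescent time units. Beta and time-inhomogeneous coalescents need different waiting-time distributions and are not covered. The function names are hypothetical.

```python
import random
from math import comb


def expected_tmrca(n):
    """Expected TMRCA for n lineages under Kingman's coalescent (coalescent time units)."""
    # While k lineages remain, the waiting time is exponential with rate C(k, 2).
    return sum(1 / comb(k, 2) for k in range(2, n + 1))


def simulate_tmrca(n, rng):
    """Draw one TMRCA by summing the exponential waiting times from k = n down to 2."""
    return sum(rng.expovariate(comb(k, 2)) for k in range(n, 1, -1))


rng = random.Random(42)
n_lineages = 20
draws = [simulate_tmrca(n_lineages, rng) for _ in range(20000)]
print(expected_tmrca(n_lineages), sum(draws) / len(draws))
```

An observed TMRCA distribution that departs strongly from this baseline is one signal that a constant-size Kingman model may not describe the population.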


Experimental Protocols & Workflows

Protocol 1: Running a Coalescent Bayesian Skyline Analysis in BEAST2

This protocol outlines the key steps to infer past population dynamics from a homochronous sequence alignment [35].

- Data Preparation: Load your nucleotide sequence alignment (e.g., a NEXUS file) into BEAUti.

- Site Model Setup: Select a substitution model like GTR.

- To account for rate heterogeneity among sites, set the Gamma Category Count to 4 (or a value between 4 and 6) and ensure the Shape parameter is set to "Estimate".

- Clock Model Setup: For homochronous data, use a Strict Clock model.

- Since the clock rate is unidentifiable from the data alone, fix the clock rate to an externally obtained value (e.g., from previous literature) [35].

- Priors Setup (Tree Prior): In the Priors panel, select "Coalescent Bayesian Skyline" as the tree prior.

- Configure Skyline Parameters:

- The model divides the tree's history into segments. Set the dimension for the bPopSizes (effective population sizes) and bGroupSizes (number of coalescent events per segment) parameters to the same value. This determines the number of population size segments.

- Run Analysis: Generate the BEAST2 XML file and run the analysis.

- Diagnose and Interpret: Use Tracer to analyze the log file, check ESS values, and visualize the median and credible intervals of the effective population size through time.
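The relationship between the skyline dimension and the tree in the protocol above can be illustrated with a short sketch. A tree with n tips has n - 1 coalescent events, and the group sizes must together account for all of them. The helper below shows that constraint with a near-equal split. It is not BEAST2's own initialization code, and the function name and example numbers are hypothetical.

```python
def initial_group_sizes(n_tips, dimension):
    """Split the n_tips - 1 coalescent events into `dimension` near-equal groups."""
    n_events = n_tips - 1
    if not 1 <= dimension <= n_events:
        raise ValueError("dimension must be between 1 and the number of coalescent events")
    base, extra = divmod(n_events, dimension)
    # The first `extra` groups receive one additional event so the sizes sum to n_events.
    return [base + 1 if i < extra else base for i in range(dimension)]


sizes = initial_group_sizes(n_tips=63, dimension=5)
print(sizes, sum(sizes))
```

The sketch also shows why the dimension cannot exceed the number of coalescent events: each population size segment needs at least one event to inform it.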

Protocol 2: Simulating and Testing Sampling Strategies

This methodology uses simulations to evaluate how sampling schemes impact the quality of population dynamic reconstruction [33].

- Simulate Ground Truth: Simulate large phylogenies and sequence datasets under a known coalescent or structured coalescent model. This defines the "true" population history.

- Design Sampling Schemes: Create multiple downsampling schemes from the complete simulated dataset. Key schemes to compare include:

- Uniform spatiotemporal sampling: Sampling sequences with uniform probability across all time points and geographic demes.

- Proportional sampling: Sampling with probability proportional to the effective population size at each time and location.

- Biased sampling: For example, heavily sampling recent sequences from a single location.

- Reconstruct Dynamics: Analyze each downsampled dataset using the target coalescent method (e.g., GMRF Skygrid).

- Compare to Truth: Compare the reconstructed population size curves from each sampling scheme against the known, simulated history.

- Make a Recommendation: Based on the performance across replicates, identify the sampling strategy that most accurately and robustly recovers the true dynamics. Simulation studies consistently recommend uniform spatiotemporal sampling [33].
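The downsampling step of this protocol can be sketched as below. The example assumes each simulated sequence is a record carrying a deme label and a discrete time bin. It implements uniform spatiotemporal sampling as an equal draw from every deme-by-time cell, which is one possible reading of the scheme in [33]. All names and the toy dataset are hypothetical.

```python
import random
from collections import Counter, defaultdict


def uniform_spatiotemporal_sample(records, per_cell, rng):
    """Draw up to `per_cell` sequences from every (deme, time_bin) cell."""
    cells = defaultdict(list)
    for record in records:
        cells[(record["deme"], record["time_bin"])].append(record)
    sample = []
    for key in sorted(cells):
        members = cells[key]
        sample.extend(rng.sample(members, min(per_cell, len(members))))
    return sample


def biased_recent_sample(records, size, deme, latest_bin, rng):
    """Biased scheme: draw only recent sequences from a single deme."""
    pool = [r for r in records if r["deme"] == deme and r["time_bin"] == latest_bin]
    return rng.sample(pool, min(size, len(pool)))


rng = random.Random(7)
# Hypothetical simulated dataset: 2 demes x 4 time bins, oversampled in deme "A", bin 3.
records = []
for deme in ("A", "B"):
    for time_bin in range(4):
        count = 200 if (deme == "A" and time_bin == 3) else 20
        records += [
            {"id": f"{deme}_{time_bin}_{i}", "deme": deme, "time_bin": time_bin}
            for i in range(count)
        ]

uniform = uniform_spatiotemporal_sample(records, per_cell=10, rng=rng)
biased = biased_recent_sample(records, size=80, deme="A", latest_bin=3, rng=rng)
print(Counter((r["deme"], r["time_bin"]) for r in uniform))
print(Counter((r["deme"], r["time_bin"]) for r in biased))
```

Both samples contain 80 sequences, so any difference in the reconstructed dynamics comes from the sampling scheme and not from the amount of data.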

Workflow Diagram: Phylodynamic Inference with Skyline Methods

The diagram below visualizes the logical workflow and key decision points in a typical skyline-based phylodynamic analysis.

The Scientist's Toolkit

Table 1: Key Software and Models for Phylodynamic Inference

| Item Name | Type | Primary Function | Key Considerations |

|---|---|---|---|

| BEAST X [5] | Software Platform | Open-source, cross-platform for Bayesian phylogenetic, phylogeographic, and phylodynamic inference. | Latest version; features HMC samplers for increased efficiency and scalability for high-dimensional models. |

| MASCOT-Skyline [34] | Software Package / Model | A structured coalescent skyline that jointly infers spatial (migration) and temporal (population size) dynamics in BEAST2. | Reduces bias from unmodeled population size changes in phylogeography. |

| Coalescent Bayesian Skyline [35] | Tree Prior / Model | Infers non-parametric changes in effective population size (Ne) over time from a phylogenetic tree. | Models time backwards; number of population size segments (dimension) is a key setting. |

| Birth-Death Skyline [35] | Tree Prior / Model | Infers rates of birth, death, and sampling over time-intervals in a forward-time model. | Models time forwards; available via the BDSKY package in BEAST2. |

| Tracer [35] | Analysis Tool | Analyzes MCMC log files to assess parameter convergence (via ESS) and summarize posterior estimates. | Essential for diagnosing run performance and visualizing results like skyline plots. |

| Structured Coalescent [34] | Model Class | Models how lineages coalesce within, and move between, discrete sub-populations (demes). | Traditionally assumes constant parameters; newer methods (e.g., MASCOT-Skyline) relax this. |

| Time-Inhomogeneous Coalescent [36] | Model Class | A general class of coalescent processes for populations with deterministically varying size over time. | The TMRCA of a sample under this model can be used for demographic inference and parameter estimation. |

Quantitative Data for Method Selection

Table 2: Comparison of Coalescent Model Features and Data Requirements

| Model / Feature | Handles Population Structure? | Infers Population Size Changes? | Model Flexibility | Key Application Context |

|---|---|---|---|---|

| Kingman's Coalescent [36] | No | No (Constant size) | Low | Neutral evolution in stable, equilibrium populations (null model). |

| Coalescent Bayesian Skyline [35] | No | Yes (Non-parametric) | Medium | Inferring past population dynamics from a single, well-mixed population. |

| Structured Coalescent [34] | Yes | No (Constant rates) | Medium | Inferring migration rates between discrete, stable sub-populations. |

| MASCOT-Skyline [34] | Yes | Yes (Non-parametric) | High | Jointly inferring migration and complex, time-varying population dynamics in different locations. |

| Beta Coalescents [36] | No | No (Constant size) | High | Populations with strongly skewed offspring distributions (e.g., high fecundity) or under strong selection. |

Technical Support Center

Troubleshooting Guides

Issue 1: Model Fails to Converge During Bayesian Phylodynamic Analysis

User Question: "My Bayesian coalescent model in BEAST2 with the PhyDyn package is failing to converge, even after extended runtimes. What steps should I take?"

Symptoms:

- MCMC chains show low effective sample sizes (ESS < 200)

- Poor mixing observed in tracer analysis

- Parameter traces show clear trends rather than stable stationarity

Diagnosis and Resolution:

Verify Model Specification

- Check that your demographic or epidemiological model is correctly translated to the structured coalescent framework using PhyDyn's markup language [37].

- Ensure parametric models properly reflect the biological reality of your system.

Adjust MCMC Parameters

- Increase chain length substantially - complex models may require tens of millions of iterations.

- Adjust sampling frequency to balance disk usage and parameter estimation accuracy.
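
The relationship between chain length, sampling frequency, and the number of logged states is simple arithmetic. The sketch below uses hypothetical helper names and assumes that the initial state is logged along with every `log_every`-th state; the target of 10,000 logged samples in the usage line is only an illustrative choice.

```python
def logged_states(chain_length: int, log_every: int) -> int:
    """Number of states written to the log, assuming state 0 is also logged."""
    if chain_length < 0 or log_every <= 0:
        raise ValueError("chain_length must be >= 0 and log_every must be > 0")
    return chain_length // log_every + 1


def log_interval_for(chain_length: int, target_samples: int) -> int:
    """Largest logging interval that keeps at least target_samples logged
    states, provided the chain is at least target_samples steps long."""
    if target_samples <= 0:
        raise ValueError("target_samples must be > 0")
    return max(1, chain_length // target_samples)


if __name__ == "__main__":
    chain = 50_000_000
    interval = log_interval_for(chain, 10_000)
    print(interval, logged_states(chain, interval))
```

Disk usage for the tree log scales with the number of logged states times the size of one tree, so lengthening the chain while widening the logging interval keeps file sizes roughly constant.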

Simplify Initial Model

- Begin with a simpler demographic model (constant population size) before progressing to more complex skyline or structured models.

- Gradually add complexity while monitoring convergence metrics at each step.

Utilize Advanced Sampling Methods

- Consider advanced sampling techniques for complex parameter spaces, such as Hamiltonian Monte Carlo or Metropolis-coupled MCMC, where your BEAST version and installed packages support them.

- Once chains have converged, use path sampling to estimate marginal likelihoods and compare candidate models.

Issue 2: Handling Context Dependency in Complex Trait Prediction

User Question: "When should I incorporate gene-by-environment (GxE) interactions in my GWAS versus using simple additive models?"

Symptoms:

- Poor prediction accuracy when models are applied to new populations or environments

- Unexplained heterogeneity in effect sizes across subgroups

- Missing heritability despite adequate sample sizes

Diagnosis and Resolution:

Apply Bias-Variance Tradeoff Framework

- Recognize this as a classic bias-variance tradeoff problem [38].

- Use the decision rule: employ GxE models when the bias reduction from context-specific estimation outweighs the increased estimation noise.

Sample Size Considerations

Implementation Workflow:

Empirical Testing Protocol

- Split data by context (e.g., environment, sex) and estimate effects separately.

- Compare MSE between context-specific and pooled estimators.

- Use cross-validation to assess prediction accuracy in held-out samples.
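
The MSE comparison in this protocol can be worked through analytically in a deliberately simple setting. The sketch below assumes two equally sized contexts, independent unbiased effect estimates with the same standard error `se`, and a pooled estimator that is the plain average of the two; `delta` is the true difference in effect between contexts. All function names are illustrative.

```python
def mse_context_specific(se: float) -> float:
    """Unbiased estimate from one context only: MSE equals its sampling variance."""
    return se ** 2


def mse_pooled(delta: float, se: float) -> float:
    """Average of two context estimates, evaluated against one context's true effect.

    Pooling halves the variance but introduces a bias of delta / 2.
    """
    bias = delta / 2
    variance = se ** 2 / 2
    return bias ** 2 + variance


def prefer_context_specific(delta: float, se: float) -> bool:
    """True when the context-specific estimator has the lower MSE."""
    return mse_context_specific(se) < mse_pooled(delta, se)


if __name__ == "__main__":
    for delta in (0.0, 0.1, 0.2, 0.5):
        print(delta, prefer_context_specific(delta, se=0.1))
```

Under these assumptions the context-specific estimator wins only when |delta| exceeds sqrt(2) times the standard error, which illustrates why small samples (large `se`) tend to favor the pooled, additive estimate.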

Experimental Protocols and Methodologies

Protocol 1: Polygenic Risk Score Optimization for Clinical Traits

Table 1: PRS Optimization Workflow for Epidemiological Models

| Step | Method | Software/Tools | Key Parameters |

|---|---|---|---|

| GWAS | Linear/Logistic Regression | PLINK v1.9 [39] | Top 10 PCs as covariates |

| PRS Optimization | Clumping and Thresholding | PRSice v2.3.3 [39] | P-value thresholds, LD r² = 0.1 |

| Association Testing | Logistic Regression (binary), Cox PH (survival) | R v3.6.2+ [39] | LR test P-value < 0.05 |

| Model Refinement | Backwards Stepwise Regression | Custom R scripts [39] | Remove correlated PRS (R² ≥ 0.8) |

Detailed Methodology:

- Cohort Separation: Remove target cohort samples from GWAS training data to prevent overlap [39].

- Ancestry Considerations: Perform the analysis in both transethnic and ancestry-specific (e.g., white European) populations [39].

- Clinical Adjustment: Sequentially adjust for sociodemographic then clinical variables to isolate genetic effects [39].

- Pathway Analysis: Follow significant PRS associations with enrichment analysis to identify biological mechanisms [39].
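
The thresholding half of clumping and thresholding can be illustrated with a toy calculation. This is a simplified sketch, not PRSice's implementation: it assumes the SNPs have already been clumped to approximate independence, uses made-up dosages and effect sizes, and the function name `polygenic_score` is hypothetical.

```python
def polygenic_score(dosages, effects, p_values, p_threshold):
    """Sum effect-weighted allele dosages over SNPs whose GWAS p-value
    is at or below the chosen threshold."""
    if not (len(dosages) == len(effects) == len(p_values)):
        raise ValueError("inputs must have one entry per SNP")
    return sum(
        dosage * beta
        for dosage, beta, p in zip(dosages, effects, p_values)
        if p <= p_threshold
    )


if __name__ == "__main__":
    # Toy data for one individual and three clumped SNPs (values are invented).
    dosages = [0, 1, 2]            # copies of the effect allele
    effects = [0.1, -0.2, 0.3]     # per-allele effect sizes from the GWAS
    p_values = [1e-8, 0.04, 0.5]
    for threshold in (5e-8, 0.05, 1.0):
        print(threshold, polygenic_score(dosages, effects, p_values, threshold))
```

Scanning several thresholds and keeping the one that best predicts the target phenotype is the optimization step; because that choice is fitted to the target data, prediction accuracy should be assessed on held-out samples.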

Protocol 2: Big Data Integration for Complex Trait Prediction

Table 2: Sample Size Requirements for Genomic Prediction

| Trait Heritability | Min Sample Size (Additive) | Min Sample Size (GxE) | Expected R² |

|---|---|---|---|

| High (h² > 0.5) | 80,000 [40] | 400,000+ [40] | Up to 0.38 for height [40] |

| Medium (h² = 0.3-0.5) | 100,000-200,000 [40] | 500,000+ [40] | 0.2-0.3 [40] |

| Low (h² < 0.3) | 200,000+ [40] | 1,000,000+ [40] | < 0.2 [40] |

Implementation Details:

- Data Sources: Utilize large biomedical datasets (UK Biobank, Million Veteran Program) with individual genotype-phenotype data [40].

- Method Selection: Employ whole-genome regression methods (Bayesian, penalized regression) that can handle high-dimensional inputs [40].

- Marker Density: Use imputed genotypes to maximize genomic coverage and improve SNP-based heritability estimates [40].

- Validation: Always use cross-validation or hold-out samples to assess prediction accuracy and avoid overfitting.

Visualization of Research Workflows

Research Methodology Decision Framework

Bayesian Phylodynamic Analysis Pipeline

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Bayesian Phylodynamics Research

| Tool/Resource | Function | Application Context | Key Features |

|---|---|---|---|

| PhyDyn for BEAST2 [37] | Structured coalescent modeling | Bayesian phylodynamic inference | Flexible markup language for demographic/epidemiological models |

| PRSice v2.3.3 [39] | Polygenic risk score optimization | Complex trait prediction | Clumping and thresholding approach for PRS calculation |

| UK Biobank Data [40] [39] | Large-scale genetic & phenotypic data | Training prediction models | 500,000+ participants with genotype-phenotype data |

| PLINK v1.9 [39] | Genome-wide association analysis | Variant-trait association testing | Efficient handling of large-scale genetic data |

| R Statistical Environment [39] | General statistical analysis & modeling | Data analysis and visualization | Comprehensive packages for genetic epidemiology |

Frequently Asked Questions

Q: How do I determine if my dataset is large enough to warrant GxE analysis?

A: Apply the bias-variance tradeoff rule [38]: Estimate the potential bias from ignoring context effects versus the increased estimation variance from context-specific modeling. For human physiological traits, empirical evidence suggests that for most individual variants, the increased noise of context-specific estimation outweighs bias reduction. However, for polygenic analyses considering many variants simultaneously, GxE models become beneficial with sample sizes exceeding 100,000 individuals.

Q: What are the computational requirements for Bayesian phylodynamic analysis with complex models?

A: Complex structured coalescent models in PhyDyn require substantial computational resources [37]:

- Memory: 16GB+ RAM for moderate datasets (>100 sequences)

- Processing: Multi-core processors for parallelization of MCMC chains

- Storage: Significant disk space for posterior distributions of trees and parameters

- Runtime: Days to weeks for convergence of complex epidemiological models

Q: How can I improve prediction accuracy for complex traits with missing heritability?

A: Several strategies have proven effective [40]:

- Increase Sample Size: Utilize large biobanks (UK Biobank, Million Veteran Program) with 100,000+ participants

- Improve Marker Density: Use imputation to increase genomic coverage beyond standard arrays

- Advanced Methods: Employ Bayesian or penalized regression methods that can handle high-dimensional data

- Polygenic Modeling: Move beyond GWAS-significant hits to include thousands of variants with small effects

Q: Can I use polygenic risk scores as proxies for clinical variables in epidemiological models?

A: Yes, recent research demonstrates this approach [39]. PRS for conditions like hypertension, atrial fibrillation, and Alzheimer's disease remained significantly associated with severe COVID-19 outcomes even after adjustment for sociodemographic and clinical variables. This suggests PRS can provide complementary information beyond traditional risk factors in epidemiological models.

Frequently Asked Questions (FAQs)

FAQ 1: What common issues affect molecular clock calibration in pathogens, and how can they be resolved? Pathogens such as Mycobacterium tuberculosis (Mtb), which evolve slowly or have been sampled only over a short recent period, often lack sufficient temporal signal for reliable clock calibration. For Mtb, sampling windows shorter than 15-20 years may be insufficient. Solutions include using ancient DNA (aDNA) for deeper calibration, employing date randomization tests (DRT) to validate temporal structure, and applying lineage-specific evolutionary rates, which can vary substantially (e.g., between Mtb lineages L2 and L4) [41].

FAQ 2: How can I visualize ancestral state reconstruction when results contain significant uncertainty? When marginal posterior probabilities show uncertainty (e.g., multiple states have similar probabilities), use tools like PastML that incorporate decision-theoretic concepts (Brier score). These methods predict a set of likely states in uncertain regions of the tree (e.g., near the root) and a single state in well-resolved regions (e.g., near the tips). The tool then clusters neighboring nodes with the same state assignments for clear visualization, representing uncertainty without overwhelming detail [42].

FAQ 3: My Bayesian phylodynamic analysis is computationally prohibitive for large datasets. What approximate methods are available? For large datasets or real-time analysis, consider these approximate Bayesian inference methods:

- Approximate Bayesian Computation (ABC): Replaces likelihood calculation with simulation and data summary.

- Bayesian Synthetic Likelihood (BSL): Uses a synthetic likelihood from simulated data.

- Integrated Nested Laplace Approximation (INLA): A deterministic approach for latent Gaussian models.

- Variational Inference (VI): Optimizes a probabilistic approximation to the posterior [43].

Select methods based on your model complexity, data size, and required inferential accuracy.
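
To show the simulate-and-summarize idea behind ABC, here is a minimal rejection-sampling sketch on a toy problem: inferring the rate of exponentially distributed waiting times from their sample mean. The uniform prior bounds, the tolerance, and the function name `abc_rejection` are all assumptions made for illustration; real phylodynamic applications use tree or sequence simulators and richer summary statistics.

```python
import random


def abc_rejection(observed_mean, n_obs, n_draws, tolerance, rng):
    """Rejection ABC: keep prior draws whose simulated summary statistic
    (the sample mean) lands within `tolerance` of the observed one."""
    accepted = []
    for _ in range(n_draws):
        rate = rng.uniform(0.1, 5.0)  # draw from an assumed uniform prior
        simulated = [rng.expovariate(rate) for _ in range(n_obs)]
        simulated_mean = sum(simulated) / n_obs
        if abs(simulated_mean - observed_mean) < tolerance:
            accepted.append(rate)
    return accepted


if __name__ == "__main__":
    rng = random.Random(1)
    # An observed mean of 0.5 corresponds to a true rate near 2.
    posterior = abc_rejection(0.5, n_obs=50, n_draws=5000, tolerance=0.05, rng=rng)
    print(len(posterior), sum(posterior) / len(posterior))
```

No likelihood is ever evaluated; the accepted draws approximate the posterior, and tightening the tolerance trades acceptance rate for accuracy.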

FAQ 4: How does pathogen dormancy influence phylodynamic inferences, and how can I account for it? Dormancy (e.g., in Mtb) causes individuals to temporarily drop out of the active population, creating a "seedbank." This violates standard coalescent model assumptions because dormant lineages accumulate mutations more slowly and cannot coalesce. Failing to account for dormancy leads to underestimated evolutionary rates and biased estimates of population dynamics. Use the SeedbankTree software within BEAST 2, which implements a strong seedbank coalescent model to jointly infer genealogies, seedbank parameters, and evolutionary parameters from sequence data [44] [45].

Troubleshooting Guides

Issue: Lack of Clocklike Signal in Pathogen Genome Data

Problem: During molecular clock analysis, the root-to-tip regression against sampling time shows no clear temporal signal (low R² value), making it impossible to calibrate the evolutionary timeline.

Solutions:

- Assemble Larger Dataset: Increase the number of genomes. For slow-evolving organisms like Mtb, sample collection over decadal scales may be necessary [41].

- Incorporate Ancient DNA: Use aDNA sequences to provide deeper calibration points, which can help establish a temporal structure even when modern samples are closely dated [41].

- Validate with Date Randomization: Perform a Date Randomization Test (DRT). If the estimated rate from randomized data overlaps with the real data estimate, the temporal signal is insufficient [41].

- Check for Lineage-Specific Effects: Apply lineage-specific evolutionary rates, as clock rates can differ significantly between pathogen lineages [41].

Applicable Tools: TempEst (for root-to-tip regression), BEAST, SeedbankTree (for dormant pathogens).
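
The root-to-tip regression and the logic of a date randomization check can be sketched in a few lines. This is a regression-based stand-in only: a full DRT reruns the Bayesian clock analysis on each date-shuffled dataset, whereas the sketch below simply refits the regression slope. The function names and the toy data are hypothetical.

```python
import random


def root_to_tip_regression(dates, distances):
    """Least-squares fit of root-to-tip distance on sampling date.

    Returns (slope, r_squared); the slope is a crude clock-rate estimate.
    """
    n = len(dates)
    mean_t = sum(dates) / n
    mean_d = sum(distances) / n
    sxx = sum((t - mean_t) ** 2 for t in dates)
    syy = sum((d - mean_d) ** 2 for d in distances)
    sxy = sum((t - mean_t) * (d - mean_d) for t, d in zip(dates, distances))
    if sxx == 0 or syy == 0:
        raise ValueError("dates and distances must both vary")
    return sxy / sxx, (sxy * sxy) / (sxx * syy)


def randomized_slopes(dates, distances, n_reps, rng):
    """Refit the slope after shuffling sampling dates among the tips."""
    slopes = []
    for _ in range(n_reps):
        shuffled = list(dates)
        rng.shuffle(shuffled)
        slopes.append(root_to_tip_regression(shuffled, distances)[0])
    return slopes


if __name__ == "__main__":
    dates = [2000, 2005, 2010, 2015, 2020]
    distances = [1e-3 * (t - 1990) for t in dates]  # perfectly clocklike toy data
    slope, r2 = root_to_tip_regression(dates, distances)
    null = randomized_slopes(dates, distances, 100, random.Random(0))
    print(slope, r2, max(null))
```

If the slope from the real dates sits well outside the spread of slopes from shuffled dates, the data carry temporal signal; substantial overlap suggests the sampling window is too short for clock calibration.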

Issue: Inaccurate Reconstruction of Geographic Spread in Phylogeography

Problem: The inferred migration routes between locations are biased or lack resolution, potentially due to uneven sampling across regions.

Solutions:

- Model Selection: Choose an appropriate phylogeographic model.

- Incorporate Auxiliary Data: Integrate data like air passenger volume or human mobility patterns to inform and constrain the migration model [46] [47].

- Continuous Phylogeography: For dense spatial data, use a Cauchy relaxed random walk (RRW) model to reconstruct continuous diffusion paths, rather than just discrete location jumps [48].

Applicable Tools: BEAST or BEAST 2 (with an appropriate phylogeography package), SPREAD4 (for visualization).

Issue: Accounting for Dormancy in Bacterial Population Inference

Problem: Standard coalescent models infer an artificially slow evolutionary rate and miss key population dynamics because they do not account for dormant individuals that periodically leave and re-enter the active population.

Solutions:

- Use a Seedbank Model: Implement the "strong seedbank coalescent" model, which correctly handles the fact that only active lineages coalesce and that dormant lineages mutate at a different (often slower) rate [45].

- Joint Parameter Inference: Use a Bayesian framework to simultaneously estimate the genealogy, the seedbank parameters, and the evolutionary parameters from sequence data [45].

- Software: Conduct analysis using the SeedbankTree package within BEAST 2, which is specifically designed for this purpose [45].

Applicable Tools: SeedbankTree (in BEAST 2).

Experimental Protocols & Data

Protocol 1: Phylogeographic Analysis of Viral Variants of Concern (VoCs)

Objective: To reconstruct the spatial spread and evolutionary dynamics of SARS-CoV-2 Variants of Concern (e.g., Alpha, Delta) in a specific region [48].

Methodology:

- Data Collection: Download SARS-CoV-2 whole-genome sequences and metadata (collection date, location) from GISAID. Filter for high-coverage sequences of the target lineages.