Cross-Platform Validation of Antibiotic Resistance Gene Detection: From Sequencing Technologies to AI-Driven Analysis

Abstract

The accurate detection of antibiotic resistance genes (ARGs) is critical for combating the global antimicrobial resistance crisis. This article provides a comprehensive framework for researchers and drug development professionals to validate ARG detection methodologies across diverse next-generation sequencing platforms, including Illumina and Oxford Nanopore Technologies. We explore foundational principles, advanced computational tools leveraging protein language models and deep learning, and standardized protocols for troubleshooting and cross-platform validation. By synthesizing current advancements in CRISPR-enhanced NGS, bioinformatics pipelines, and AI-based predictors, this guide aims to establish robust benchmarks for ARG detection accuracy, sensitivity, and reproducibility, ultimately supporting reliable antimicrobial resistance surveillance and clinical diagnostics.

The ARG Detection Landscape: Principles, Platforms, and Databases

Antimicrobial resistance (AMR) represents one of the most severe global health threats, with bacterial AMR directly contributing to approximately 1.14 million deaths annually worldwide [1]. The genetic foundations of AMR arise through two primary pathways: intrinsic resistance mechanisms and the acquisition of resistance via horizontal gene transfer (HGT). Intrinsic resistance refers to innate characteristics of bacteria that confer resistance to specific antibiotic classes, such as reduced membrane permeability, constitutive expression of efflux pumps, and production of inactivating enzymes [2]. Acquired resistance develops through genetic changes including chromosomal mutations or the incorporation of exogenous DNA encoding antibiotic resistance genes (ARGs) through HGT [3] [2].

The rapid global dissemination of AMR is predominantly fueled by HGT, which enables resistance genes to transfer between different bacterial species across One Health compartments (human, animal, and environmental settings) [1]. Mobile genetic elements (MGEs), including plasmids, transposons, and integrons, serve as the primary vehicles for ARG transfer, creating a dynamic "environmental resistome" from which pathogens can acquire resistance traits [1] [2]. Understanding these fundamental mechanisms is critical for developing accurate ARG detection methodologies, which in turn inform clinical treatment decisions and public health interventions to combat the AMR crisis.

Fundamental Genetic Mechanisms of Resistance

Intrinsic Resistance Mechanisms

Intrinsic resistance encompasses the innate, chromosomal characteristics of bacterial species that enable survival under antibiotic exposure without prior mutation or foreign gene acquisition. These mechanisms include physiological barriers and constitutive cellular functions that limit antibiotic efficacy [2]. The primary intrinsic resistance strategies include:

Reduced Membrane Permeability: Many Gram-negative bacteria possess an outer membrane that restricts antibiotic penetration, creating an effective barrier against numerous antimicrobial agents including β-lactams, glycopeptides, and macrolides [2]. This structural characteristic explains why some antibiotics effective against Gram-positive bacteria demonstrate limited activity against Gram-negative organisms.

Constitutive Efflux Pump Expression: Membrane-associated transporter proteins actively export antibiotics from bacterial cells, reducing intracellular concentrations below effective levels. These efflux systems, such as AcrAB-TolC in Escherichia coli, may have broad specificity, conferring resistance to multiple antibiotic classes simultaneously [2].

Natural Enzymatic Inactivation: Some bacteria inherently produce enzymes that modify or degrade antibiotics. For instance, many Pseudomonas aeruginosa strains chromosomally encode AmpC β-lactamase, providing intrinsic resistance to aminopenicillins and cephalosporins [2].

Acquired Resistance Through Mutation

Bacteria can develop resistance through spontaneous chromosomal mutations that alter drug targets, regulate gene expression, or modify cellular pathways [3] [2]. These mutations are selected under antibiotic pressure, leading to resistant populations. Clinically significant mutation-based resistance mechanisms include:

Target Site Modifications: Mutations in genes encoding antibiotic target proteins can reduce drug binding affinity. For example, mutations in the gyrA and parC genes encoding DNA gyrase and topoisomerase IV confer fluoroquinolone resistance across multiple bacterial species [4] [2].

Regulatory Mutations: Mutations in promoter or regulatory genes can lead to overexpression of resistance mechanisms. Upregulation of efflux pump expression through regulatory gene mutations can transform previously susceptible bacteria into multidrug-resistant organisms [2].

Horizontal Gene Transfer of Resistance Determinants

HGT represents the most significant pathway for the rapid dissemination of ARGs among bacterial populations, enabling the transfer of resistance traits across species and genus boundaries [1]. This process occurs through three primary mechanisms:

Conjugation: Direct cell-to-cell transfer of MGEs, particularly plasmids, through specialized conjugation machinery. Plasmid-mediated transfer represents the most efficient and clinically significant route for ARG dissemination, often enabling simultaneous transfer of multiple resistance determinants [1] [5].

Transformation: Uptake and incorporation of free environmental DNA released from deceased bacterial cells. This process allows for the acquisition of ARGs from distantly related species in the environment [2].

Transduction: Bacteriophage-mediated transfer of bacterial DNA between cells. While less common than conjugation, transduction can facilitate the movement of specific ARGs between closely related bacteria [2].

The association of ARGs with MGEs dramatically increases their potential for dissemination across diverse bacterial hosts, significantly amplifying AMR risk, particularly in environmental settings where multiple bacterial species coexist [1].

Table 1: Fundamental Antibiotic Resistance Mechanisms

| Mechanism Category | Specific Process | Genetic Basis | Example |
|---|---|---|---|
| Intrinsic Resistance | Reduced permeability | Chromosomal genes | Gram-negative outer membrane |
| | Efflux systems | Constitutive transporters | AcrAB-TolC in E. coli |
| | Enzymatic inactivation | Chromosomal enzymes | AmpC β-lactamase in P. aeruginosa |
| Acquired via Mutation | Target modification | Point mutations | gyrA mutations (fluoroquinolone resistance) |
| | Regulatory changes | Promoter mutations | Efflux pump overexpression |
| Acquired via HGT | Plasmid transfer | Conjugation | blaKPC carbapenemase genes |
| | Transposon transfer | Insertion sequences | Tetracycline resistance transposons |
| | Phage-mediated | Transduction | Staphylococcal β-lactamase |

ARG Detection Sequencing Platforms: Performance Comparison

Multiple sequencing platforms with distinct technical approaches are currently employed for ARG detection, each offering different advantages in accuracy, speed, throughput, and cost-effectiveness. Understanding the performance characteristics of these platforms is essential for selecting appropriate methodologies for specific research or clinical applications.

Short-Read Sequencing (Illumina)

Illumina sequencing employs synthesis-based sequencing of short DNA fragments (typically 150-300 bp) with high per-base accuracy (>99.9%). This technology provides exceptional throughput at relatively low cost per gigabase, making it suitable for large-scale surveillance studies [4] [6]. Performance characteristics include:

Sensitivity and Coverage Requirements: For isolate sequencing, approximately 300,000 reads or 15× genome coverage is sufficient to detect ARGs in E. coli with high sensitivity (1.00 ± 0.00) and positive predictive value (1.00 ± 0.00) [4]. In metagenomic samples, detecting ARGs in organisms present at 1% relative abundance requires assembly of approximately 30 million reads to achieve adequate 15× target coverage [4].

Limitations in Contextual Analysis: Short reads struggle to resolve repetitive regions and complex genomic structures, limiting their ability to determine ARG chromosomal location or association with specific MGEs without additional analytical techniques [7].
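The read-depth figures above follow from simple coverage arithmetic, which the sketch below works through. The 5 Mb genome size and 250 bp of sequence per read are assumptions chosen only so that the numbers line up with the reported 300,000 reads at 15× coverage; they are not taken from the cited study, and both function names are hypothetical.

```python
import math
from fractions import Fraction


def reads_for_coverage(genome_size_bp: int, read_length_bp: int, coverage: int) -> int:
    """Reads needed so that total sequenced bases equal coverage x genome size."""
    total_bases = coverage * genome_size_bp
    return -(-total_bases // read_length_bp)  # ceiling division on integers


def metagenome_reads(isolate_reads: int, relative_abundance: float) -> int:
    """Scale an isolate read budget to a metagenome in which the target
    organism contributes only `relative_abundance` of all reads."""
    if not 0 < relative_abundance <= 1:
        raise ValueError("relative_abundance must be in (0, 1]")
    # Fraction(str(...)) keeps the division exact before rounding up.
    return math.ceil(isolate_reads / Fraction(str(relative_abundance)))


# Assumed inputs: ~5 Mb genome, 250 bp per read, 15x coverage.
isolate = reads_for_coverage(5_000_000, 250, 15)
print(isolate)                          # 300000
print(metagenome_reads(isolate, 0.01))  # 30000000
```

The second function makes the scaling explicit: an organism at 1% relative abundance receives roughly one read in a hundred, so the isolate read budget is multiplied by 100.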

Long-Read Sequencing (Oxford Nanopore Technologies)

Oxford Nanopore Technologies (ONT) sequencing measures electrical current changes as DNA strands pass through nanopores, generating long reads (typically >10 kb) that facilitate assembly of complex genomic regions and direct linkage of ARGs with MGEs [8] [5]. Key performance attributes include:

Rapid Resistance Prediction: ONT enables real-time genomic analysis, with studies demonstrating inference of carbapenem resistance in Klebsiella pneumoniae within 10-60 minutes using whole-genome or plasmid matching approaches, achieving 77.3-85.7% accuracy compared to 54.2% accuracy for AMR gene detection at 6 hours [8].

Low-Abundance Variant Detection: Nanopore sequencing can identify low-abundance plasmid-mediated resistance that often escapes detection by conventional methods. In one clinical case, ONT detected a single copy of the blaKPC-14 resistance gene that conferred resistance to ceftazidime-avibactam (CAZ-AVI) and was missed by established diagnostic methods [5].

Multiplexing Considerations: While higher multiplexing levels (8 samples per flowcell) reduce costs, lower multiplexing (4 samples per flowcell) enhances detection sensitivity for low-abundance ARGs and pathogens in metagenomic samples [7].

Emerging and Targeted Sequencing Approaches

CRISPR-Enriched Metagenomics: A CRISPR-Cas9-modified next-generation sequencing method enriches targeted ARGs during library preparation, dramatically improving detection sensitivity. This approach detects up to 1,189 more ARGs than conventional NGS in wastewater samples and lowers the detection limit of ARGs from 10⁻⁴ to 10⁻⁵ relative abundance [9].

Targeted Panels: Commercially available targeted enrichment panels, such as the AmpliSeq for Illumina Antimicrobial Resistance Panel (targeting 478 AMR genes across 28 antibiotic classes) and hybrid capture approaches, provide focused analysis of known resistance determinants with reduced sequencing requirements and enhanced sensitivity for low-abundance targets [6].

Table 2: Performance Comparison of Sequencing Platforms for ARG Detection

| Platform/Method | Read Length | Key Strengths | Limitations | Optimal Application Context |
|---|---|---|---|---|
| Illumina Short-Read | 150-300 bp | High base accuracy (>99.9%), Cost-effective for large studies | Limited contextual information for MGE association | Large-scale surveillance, Metagenomic resistome profiling |
| Oxford Nanopore | >10 kb | Real-time analysis (minutes-hours), Direct plasmid detection | Higher error rate requires coverage, Lower throughput | Clinical diagnostics, Outbreak investigation, Hybrid assemblies |
| CRISPR-Enriched | Varies | Exceptional sensitivity for low-abundance targets, Detects novel variants | Targeted approach, Additional laboratory steps | Monitoring environmental reservoirs, Detecting emerging threats |
| Targeted Panels | Varies | High sensitivity for known targets, Cost-effective for focused studies | Limited to predefined targets, Misses novel genes | Routine clinical screening, Therapeutic guidance |

Experimental Protocols for ARG Detection

"Align-Search-Infer" Pipeline for Rapid Resistance Prediction

A novel bioinformatics approach for rapid antimicrobial susceptibility prediction from urine samples employs a three-step "Align-Search-Infer" pipeline [8]:

Alignment: Query reads (bacterial DNA sequences) are aligned against a curated whole-genome database of bacterial isolates with known antimicrobial susceptibility testing (AST) profiles using minimap2 with default parameters.

Search: The best-matched genome in the database is identified based on metrics including read abundance (number of hits) and the total number of matched bases, prioritizing matches with comprehensive genomic coverage.

Inference: The antimicrobial susceptibility phenotype of the query sample is inferred to match the AST profile of the best-matched genome in the database, enabling prediction without direct gene detection.

This method achieved 85.7% accuracy (95% CI: 70.7-100.0%) for predicting carbapenem resistance in Klebsiella pneumoniae within 1 hour using plasmid matching, outperforming conventional AMR gene detection (54.2% accuracy at 6 hours) [8]. The approach requires only 50-500 kilobases of sequencing data compared to 5,000 kilobases for conventional gene detection, making it particularly suitable for low bacterial load clinical samples [8].
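The Search and Inference steps can be sketched as follows, assuming the alignment step has already been reduced to one record per read hit. The `Hit` record, the ranking rule (hit count first, total matched bases as tie-breaker), and the example genome identifiers and AST profiles are all hypothetical illustrations; the cited study's exact scoring is not reproduced here.

```python
from collections import defaultdict
from typing import Dict, Iterable, NamedTuple, Tuple


class Hit(NamedTuple):
    """One read-to-genome alignment, e.g. parsed from aligner output."""
    read_id: str
    genome_id: str
    matched_bases: int


def best_matched_genome(hits: Iterable[Hit]) -> str:
    """Search: rank database genomes by number of hits, then matched bases."""
    n_hits: Dict[str, int] = defaultdict(int)
    bases: Dict[str, int] = defaultdict(int)
    for hit in hits:
        n_hits[hit.genome_id] += 1
        bases[hit.genome_id] += hit.matched_bases
    if not n_hits:
        raise ValueError("no alignments to search")
    return max(n_hits, key=lambda g: (n_hits[g], bases[g]))


def infer_ast(hits: Iterable[Hit],
              ast_profiles: Dict[str, Dict[str, str]]) -> Tuple[str, Dict[str, str]]:
    """Infer: report the AST profile of the best-matched genome."""
    genome = best_matched_genome(hits)
    return genome, ast_profiles[genome]


# Hypothetical database genomes and phenotypes (R = resistant, S = susceptible).
hits = [Hit("r1", "KP_A", 900), Hit("r2", "KP_A", 1200), Hit("r3", "KP_B", 5000)]
profiles = {"KP_A": {"meropenem": "R"}, "KP_B": {"meropenem": "S"}}
print(infer_ast(hits, profiles))  # ('KP_A', {'meropenem': 'R'})
```

Because the prediction is copied from the nearest database genome rather than derived from detected genes, its quality depends on how well the database covers circulating lineages.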

Real-Time Genomics Protocol for Hidden Resistance Detection

A clinically validated protocol for detecting low-abundance resistance determinants using ONT sequencing involves [5]:

Library Preparation and Sequencing: DNA is extracted from bacterial isolates using a magnetic bead-based method (e.g., Quick-DNA HMW Magbead Kit). Libraries are prepared with rapid barcoding kits (SQK-RBK110-96) and sequenced on portable MinION Mk1B devices using FLO-MIN106 (R9.4.1) flow cells.

Basecalling and Assembly: Real-time basecalling is performed using Guppy (v6.1.7) in super-accuracy mode with a minimum read quality score of 10. De novo genome assembly is conducted using the Flye assembler with default parameters for bacterial genomes.

Resistance Gene Identification: Assembled contigs are analyzed using the EPI2ME ARG platform with the Antimicrobial Resistance protein homolog model, which reports ARG copies above a sequence identity threshold (>90%). Copy number is quantified by normalizing against chromosomal markers or highly abundant reference genes.

This protocol successfully identified a previously undetected blaKPC-14 gene present in low abundance (initially just one copy) that conferred resistance to CAZ-AVI in a Klebsiella pneumoniae infection, demonstrating how extended sequencing (2-8 hours additional run time) can reveal clinically significant resistance determinants missed by conventional diagnostics [5].
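The copy number normalization mentioned in the protocol can be illustrated with a minimal sketch. It assumes that mean read depth has already been computed for the ARG and for a set of single-copy chromosomal markers; using the median marker depth as the baseline is an illustrative choice, not a detail from the cited protocol, and the depth values are invented.

```python
from statistics import median
from typing import Sequence


def arg_copy_number(arg_depth: float, marker_depths: Sequence[float]) -> float:
    """Estimate ARG copies per chromosome as ARG depth over baseline depth."""
    if not marker_depths:
        raise ValueError("at least one chromosomal marker depth is required")
    baseline = median(marker_depths)  # robust to one unusually deep marker
    if baseline <= 0:
        raise ValueError("chromosomal marker depth must be positive")
    return arg_depth / baseline


# Hypothetical depths: ARG at 42x, three chromosomal markers near 42x.
print(arg_copy_number(42.0, [40.0, 44.0, 42.0]))  # 1.0
print(arg_copy_number(84.0, [40.0, 44.0, 42.0]))  # 2.0
```

A ratio near 1 suggests a single copy per chromosome, while values well below 1 would indicate that only part of the population carries the gene.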

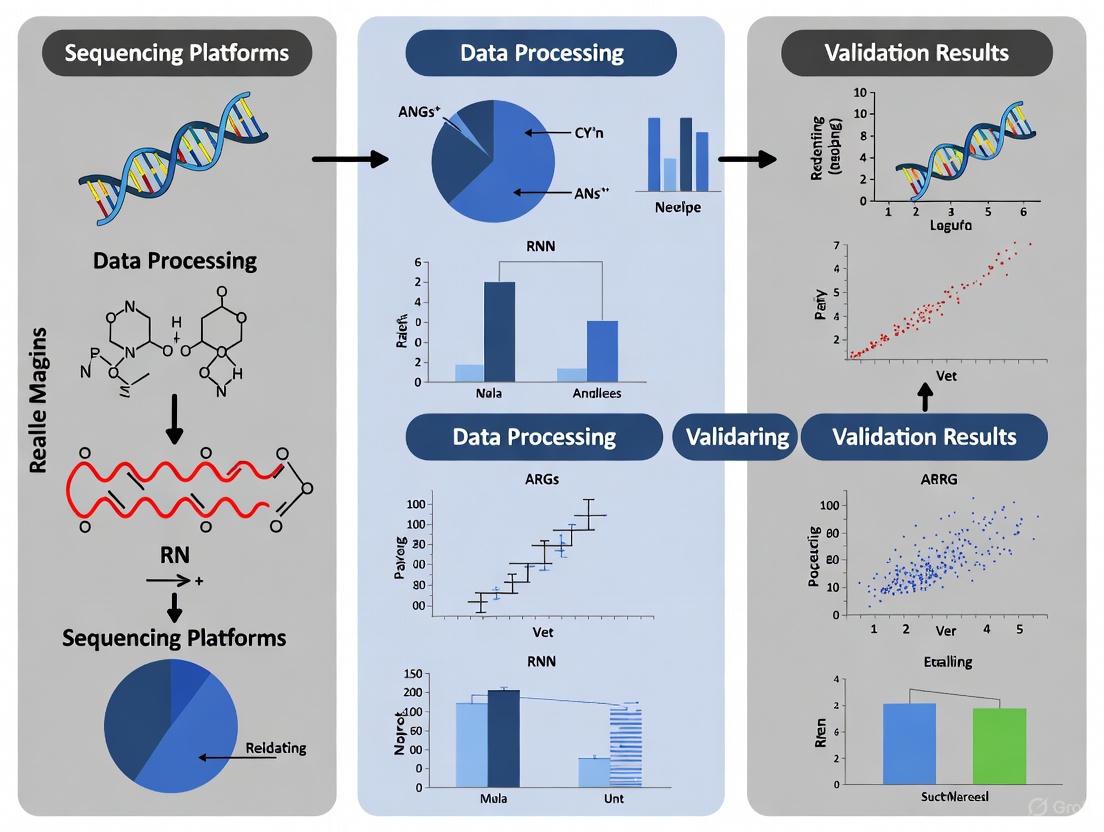

Figure 1: Workflow for Real-Time Genomic Detection of Antibiotic Resistance Genes

The accuracy of ARG detection from sequencing data depends heavily on the reference databases used for annotation. Major databases differ in curation methods, scope of resistance determinants, and associated metadata, influencing their suitability for different research applications [3] [2].

Manually Curated Databases

Comprehensive Antibiotic Resistance Database (CARD): Employing the Antibiotic Resistance Ontology (ARO), CARD provides rigorous manual curation of resistance determinants, mechanisms, and antibiotic molecules [2]. Inclusion requires experimental validation of resistance phenotype through peer-reviewed publications, ensuring high-quality annotations. The Resistance Gene Identifier (RGI) tool facilitates ARG prediction using curated reference sequences and BLASTP alignment bit-score thresholds [2].

ResFinder/PointFinder: This integrated resource combines ResFinder, which focuses on acquired AMR genes using a k-mer-based alignment algorithm for rapid analysis, with PointFinder, which specializes in detecting chromosomal point mutations conferring resistance in specific bacterial species [2]. The platform includes phenotype prediction tables that link genetic information to potential resistance traits [2].

Consolidated and Specialized Databases

National Database of Antibiotic-Resistant Organisms (NDARO): Maintained by the NCBI, this database integrates data from multiple sources including CARD and provides comprehensive information on both acquired and mutation-based AMR mechanisms [3] [2].

MEGARes: Designed specifically for metagenomic analysis, MEGARes contains sequence data for antimicrobial resistance genes accompanied by an acyclic graph-based ontology for hierarchical annotation of resistance classes, mechanisms, and groups [3].

SARG: The Structured Antibiotic Resistance Gene database organizes ARGs into a structured database that facilitates analysis of resistance gene distribution across different environments, with particular utility for environmental resistome studies [3].

Table 3: Comparison of Major ARG Annotation Databases

| Database | Curation Method | Resistance Determinants | Key Features | Best Application Context |
|---|---|---|---|---|
| CARD | Manual expert curation with inclusion criteria | Acquired genes, Mutations, Protein variants | Antibiotic Resistance Ontology (ARO), RGI tool | Comprehensive research, Clinical isolate characterization |
| ResFinder/PointFinder | Manual curation with automated updates | Acquired genes (ResFinder), Mutations (PointFinder) | K-mer based alignment, Species-specific mutation database | Clinical diagnostics, Outbreak strain analysis |
| NDARO | Consolidated from multiple sources | Acquired genes, Mutations | Integrates CARD and other resources, NCBI pathogen focus | Public health surveillance, Reference for clinical labs |
| MEGARes | Manual curation with hierarchical ontology | Acquired genes | Designed for metagenomics, Acyclic graph ontology | Environmental resistome studies, Metagenomic analysis |
| SARG | Consolidation with manual refinement | Acquired genes | Environmental focus, Structured taxonomy | Tracking ARGs in environmental settings |

The Scientist's Toolkit: Essential Research Reagents and Materials

Successful ARG detection and characterization requires specific laboratory reagents, sequencing materials, and bioinformatic resources. The following table details essential components for comprehensive antibiotic resistance research.

Table 4: Essential Research Reagents and Materials for ARG Detection Studies

| Category | Specific Item/Kit | Function/Application | Example Use Case |
|---|---|---|---|
| DNA Extraction | Quick-DNA HMW Magbead Kit | High-molecular-weight DNA extraction | ONT sequencing requiring long DNA fragments [7] |
| | Illumina DNA Prep | Flexible DNA library preparation | Short-read WGS and metagenomic sequencing [6] |
| Library Preparation | Ligation Sequencing Kits (SQK-LSK114) | ONT library prep with ligation chemistry | Whole-genome sequencing for assembly [5] |
| | Rapid Barcoding Kits (SQK-RBK110-96) | Quick ONT library prep with barcoding | Multiplexed sequencing of multiple isolates [8] |
| Targeted Enrichment | AmpliSeq for Illumina Antimicrobial Resistance Panel | Amplification-based target enrichment | Focused detection of 478 AMR genes [6] |
| | Respiratory Pathogen ID/AMR Enrichment Panel | Hybrid-capture target enrichment | Simultaneous pathogen ID and AMR detection [6] |
| Sequencing Platforms | Oxford Nanopore MinION/GridION | Portable and benchtop long-read sequencing | Real-time ARG detection and plasmid analysis [5] |
| | Illumina MiSeq/iSeq | Benchtop short-read sequencing | High-accuracy ARG detection in isolates [4] |
| Bioinformatic Tools | Resistance Gene Identifier (RGI) | ARG detection using CARD database | Comprehensive resistome analysis [4] |
| | KMA (K-mer Alignment) | Rapid read mapping for ARG assignment | High-throughput screening of metagenomes [7] |
| Reference Databases | CARD | Curated ARG sequences and ontology | Gold-standard ARG annotation [3] [2] |
| | ResFinder | Acquired resistance gene database | Clinical isolate analysis [2] |

Figure 2: Fundamental Mechanisms of Antibiotic Resistance

The comprehensive comparison of ARG detection methodologies presented herein demonstrates that effective antimicrobial resistance surveillance requires careful platform selection based on specific research objectives and clinical scenarios. Short-read sequencing technologies offer high accuracy and cost-efficiency for large-scale resistome profiling, while long-read platforms provide critical contextual information about ARG location and mobility, enabling real-time clinical decision-making [4] [5]. Emerging enrichment strategies, such as CRISPR-based target selection, dramatically enhance sensitivity for detecting low-abundance resistance determinants that would otherwise escape conventional detection methods [9].

The integration of ARG mobility assessment into environmental surveillance represents a crucial advancement for accurate risk assessment, as the association of resistance genes with mobile genetic elements significantly increases their potential for dissemination to pathogenic species [1]. Future directions in AMR research should focus on standardizing analytical frameworks across platforms, developing real-time bioinformatic tools for clinical applications, and establishing comprehensive surveillance networks that capture the dynamic nature of resistance gene flow across One Health compartments. As sequencing technologies continue to evolve, the validation of ARG detection across platforms will remain essential for generating comparable, actionable data to inform both clinical practice and public health policy in the ongoing battle against antimicrobial resistance.

Antimicrobial resistance (AMR) represents a critical global health threat, necessitating robust surveillance strategies to understand and mitigate its spread. The analysis of the resistome, the comprehensive collection of antibiotic resistance genes (ARGs) within a sample, relies heavily on advanced genomic technologies. Next-generation sequencing (NGS) platforms, particularly Illumina and Oxford Nanopore Technologies (ONT), have become foundational tools for this purpose. However, these technologies differ significantly in their underlying chemistry, performance characteristics, and application suitability. This guide provides an objective comparison of Illumina and Oxford Nanopore platforms for resistome profiling, framing the analysis within the broader context of validating ARG detection across sequencing methodologies. It synthesizes current experimental data to help researchers, scientists, and drug development professionals select the appropriate technology based on their specific research objectives, whether for high-resolution surveillance, outbreak investigation, or real-time environmental monitoring.

Platform Comparison: Core Technologies and Performance

The fundamental differences between Illumina and Oxford Nanopore technologies dictate their performance in resistome profiling applications. The table below summarizes the core characteristics of each platform.

Table 1: Core Technology and Performance Characteristics of Illumina and Oxford Nanopore

| Feature | Illumina | Oxford Nanopore (ONT) |
|---|---|---|
| Sequencing Principle | Short-read; Sequencing by Synthesis (SBS) [6] | Long-read; Real-time electronic signal measurement [10] |
| Typical Read Length | 100-300 base pairs [11] | Several kilobases to over 100 kilobases [12] |
| Raw Read Accuracy | ~99.9% (Q30) [12] | ~96.84% (Q15) to >99% with latest chemistry [11] [12] |
| Primary Advantage | High accuracy, high throughput, low cost per base | Long reads, portability, real-time analysis |
| Primary Disadvantage | Limited ability to resolve repetitive regions and link ARGs to hosts [10] | Higher raw error rate can affect single-nucleotide variant calling [11] |

Illumina sequencing is characterized by its high-throughput output and exceptional base-level accuracy, making it a gold standard for applications requiring precise variant calling [11] [6]. In contrast, Oxford Nanopore technology generates long reads in real time, enabling the resolution of complex genomic regions and direct linkage of ARGs to their microbial hosts on a single, continuous read [10] [12]. A direct comparison of sequencing quality for Clostridioides difficile analysis showed Illumina had an average base quality of Q25 (99.68% accuracy), while Nanopore reads reached Q15 (96.84% accuracy), a tenfold difference in per-base error rate [11]. ONT accuracy has improved significantly with newer flow cells (R10.4.1) and base-calling algorithms [12].
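The quality scores quoted above map to accuracy through the standard Phred relation Q = -10·log10(p), where p is the per-base error probability. The short sketch below, with hypothetical helper names, reproduces the Q25 and Q15 figures and shows that the gap corresponds to a tenfold difference in error rate.

```python
def phred_to_error(q: float) -> float:
    """Per-base error probability for a Phred quality score Q."""
    return 10 ** (-q / 10)


def phred_to_accuracy(q: float) -> float:
    """Per-base accuracy (1 - error probability) for a Phred score Q."""
    return 1 - phred_to_error(q)


print(f"{phred_to_accuracy(25):.2%}")  # 99.68%
print(f"{phred_to_accuracy(15):.2%}")  # 96.84%
print(round(phred_to_error(15) / phred_to_error(25), 6))  # 10.0
```

Every 10-point drop in Q multiplies the error rate by ten, which is why a difference that looks small in percentage accuracy matters for single-nucleotide variant calling.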

Comparative Experimental Data for Resistome Profiling

The performance differences between platforms directly impact the results and biological inferences drawn from resistome studies. The following table synthesizes key findings from comparative studies.

Table 2: Comparative Performance in Resistome and Microbiome Analysis

| Analysis Aspect | Illumina Performance | Oxford Nanopore Performance |
|---|---|---|
| ARG Detection Sensitivity | High sensitivity; unassembled reads yield high ARG diversity/abundance [12] | Can miss some low-abundance genes; better for assembled, contextualized ARGs [12] |
| ARG Host Linkage | Limited; requires complex assembly and statistical inference, often unreliable [10] | Excellent; long reads directly link ARGs to hosts and mobile genetic elements (MGEs) [10] [12] |
| Mobile Genetic Element (MGE) Analysis | Poor assembly of MGEs flanking ARGs, hindering context understanding [10] | Enables in-depth exploration of co-location between ARGs, MGEs, and plasmids [10] |
| Taxonomic Profiling (Genus Level) | Can detect more potential pathogens but may miss native taxa; depends on classifier [12] | Shows greater consistency with 16S data; more accurate host assignment for ARGs [12] |
| Epidemiological Resolution | High-resolution for SNP-based phylogenies and outbreak investigation [11] | Limited by higher error rate; can be inadequate for precise transmission tracing [11] |

A critical application is linking ARGs to their bacterial hosts. One study on river water samples found that while unassembled Illumina data showed higher ARG diversity, assembled Illumina contigs and ONT long reads provided comparable results for dominant genes and their host associations [12]. However, ONT's long reads facilitate direct host linkage without the need for complex bioinformatic inference, providing a more straightforward and reliable association [10]. For instance, ONT has been successfully used to characterize the resistome and link AMR genes to microbial hosts in complex environmental samples like subaerial biofilms on monuments and wetland waters [13] [14].
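When ARG calls from two platforms are compared on the same sample, agreement can be summarized with a few set-based metrics. The sketch below treats one platform's calls as the reference, which is an assumption made for illustration rather than a statement about which platform is correct; the function name and gene lists are hypothetical and not drawn from the cited studies.

```python
from typing import Dict, Set, Union


def compare_arg_calls(reference: Set[str], test: Set[str]) -> Dict[str, Union[int, float]]:
    """Summarize agreement between two sets of ARG calls for one sample."""
    shared = reference & test
    union = reference | test
    nan = float("nan")
    return {
        "shared": len(shared),
        "only_reference": len(reference - test),
        "only_test": len(test - reference),
        # Fraction of reference calls recovered by the test platform.
        "sensitivity": len(shared) / len(reference) if reference else nan,
        # Fraction of test calls that are also in the reference.
        "ppv": len(shared) / len(test) if test else nan,
        # Symmetric overlap, independent of which set is the reference.
        "jaccard": len(shared) / len(union) if union else nan,
    }


# Hypothetical call sets for a single sample.
illumina_calls = {"blaTEM-1", "tetA", "sul1", "qnrS1"}
ont_calls = {"blaTEM-1", "tetA", "sul1", "ermB"}
print(compare_arg_calls(illumina_calls, ont_calls))
```

For these example sets, three genes are shared, giving a sensitivity and PPV of 0.75 and a Jaccard index of 0.6. Reporting the Jaccard index alongside sensitivity and PPV avoids implying that either platform is ground truth.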

Detailed Experimental Protocols from Cited Studies

To ensure reproducibility and provide a clear framework for method selection, this section outlines the experimental protocols from key comparative studies.

Protocol: Comparative Analysis of ARG Detection in River Water Microbiomes

This protocol is derived from a 2025 study comparing Illumina amplicon, Illumina shotgun, and ONT long-read metagenomics for profiling river water samples [12].

- Sample Collection and DNA Extraction: 48 river water samples were collected from four sites in the Lavaca River watershed, Texas, across 12 time points. A volume of 100 mL of each water sample was filtered through a 0.2 µm pore-size membrane. DNA was extracted using the ZymoBIOMICS DNA Miniprep Kit.
- Library Preparation and Sequencing:
  - Illumina 16S Amplicon Sequencing: The V3 region of the 16S rRNA gene was amplified and sequenced.
  - Illumina Shotgun Metagenomics: Libraries were prepared and sequenced on an Illumina platform to generate short reads.
  - ONT Long-Read Metagenomics: Libraries were prepared and sequenced on Oxford Nanopore devices (presumably MinION) to generate long reads.
- Bioinformatic Analysis:
  - Quality Control: Illumina reads were trimmed for quality. ONT reads were base-called with Guppy, and adapters were removed with Porechop. Low-quality sequences were filtered using Nanofilt.
  - Metagenomic Assembly: Illumina reads were assembled into contigs using metaSPAdes. ONT long reads can be assembled with Flye or used directly.
  - ARG and Taxonomy Profiling: ARGs were identified by aligning reads/contigs to the Comprehensive Antibiotic Resistance Database (CARD). Taxonomy was assigned using tools like Kraken2.

Protocol: Comparison of Illumina and ONT for Bacterial Genome Analysis

This protocol is based on a 2025 study comparing sequencing data quality for Clostridioides difficile genome analysis [11].

- Bacterial Isolates and DNA Extraction: 37 C. difficile isolates were cultured. DNA was extracted using either a Roche MagNA Pure 96 system with an enzymatic lysis step or a Qiagen DNeasy PowerSoil Pro Kit with mechanical bead-beating.
- Library Preparation and Sequencing:
  - Illumina Sequencing: Libraries were constructed with the Nextera XT Kit and sequenced on an Illumina NextSeq 500 platform (2x150 bp).
  - Oxford Nanopore Sequencing: Libraries were prepared with rapid barcoding kits (SQK-RBK110-96 or SQK-RBK114-96) and sequenced on a MinION device using R9.4.1 or R10.4.1 flow cells.
- Bioinformatic Analysis:
  - Read Processing: Illumina paired-end reads were trimmed with Trimmomatic. ONT raw FAST5 files were base-called and demultiplexed with Guppy.
  - Genome Assembly: Illumina reads were assembled with SPAdes. ONT reads were assembled with Flye or Unicycler. Hybrid assemblies were also generated.
  - Downstream Analysis: Assemblies were used for sequence type (ST) designation, core genome MLST (cgMLST) analysis, and virulence gene detection.

The Scientist's Toolkit: Essential Research Reagents and Materials

The following table details key reagents, kits, and software tools essential for conducting resistome profiling studies, as referenced in the cited literature.

Table 3: Essential Reagents and Tools for Resistome Profiling

| Item Name | Function/Application | Example Use Case |
|---|---|---|
| ZymoBIOMICS DNA Miniprep Kit | DNA extraction from complex environmental and microbial samples [14] [12] | DNA extraction from river water filters and subaerial biofilms [14] [12] |
| Nextera XT DNA Library Prep Kit (Illumina) | Preparation of sequencing libraries for Illumina platforms [11] | Library construction for C. difficile WGS [11] |
| SQK-RBK114-96 Rapid Barcoding Kit (ONT) | Rapid preparation and multiplexing of libraries for Nanopore sequencing [11] | Multiplexing C. difficile isolates for sequencing on MinION [11] |
| Comprehensive Antibiotic Resistance Database (CARD) | Reference database for identifying and characterizing ARGs [15] [16] [12] | Primary database for aligning reads/contigs to identify ARGs [16] [12] |
| Guppy (ONT) | Base-calling software for converting raw Nanopore signals (FAST5) to nucleotide sequences (FASTQ) [11] [10] | First step in ONT data processing post-sequencing [11] |
| Trimmomatic | Quality control tool for trimming and filtering Illumina short reads [11] | Removing adapters and low-quality bases from Illumina reads [11] |
| Porechop & Nanofilt | Adapter trimming (Porechop) and quality filtering (Nanofilt) for ONT reads [10] | Pre-processing ONT long reads before assembly or analysis [10] |
| SPAdes & Flye Assemblers | Genome assemblers for short reads (SPAdes) and long reads (Flye) [11] | De novo assembly of Illumina and ONT sequences, respectively [11] |

The choice between Illumina and Oxford Nanopore for resistome profiling is not a matter of one platform being universally superior, but rather depends on the specific research questions and practical constraints.

- Select Illumina when the research priority is maximum accuracy and precision. This includes applications such as high-resolution epidemiological surveillance where single-nucleotide polymorphisms (SNPs) are critical for tracking transmission routes [11], detecting low-frequency ARG variants, and studies requiring the highest possible throughput at the lowest cost per base.

- Select Oxford Nanopore when the research priority is genomic context and real-time analysis. This is ideal for studies focusing on the genetic environment of ARGs, such as their association with plasmids, phages, and other mobile genetic elements [10], for directly linking ARGs to their bacterial hosts without inference [12], and for field-based or point-of-care applications where portability and rapid turnaround are essential [13] [14].

For the most comprehensive analysis, a hybrid approach using both technologies is increasingly employed. This strategy leverages the high accuracy of Illumina short reads to polish and correct the long reads generated by Nanopore, resulting in highly contiguous and accurate genome assemblies that provide both context and precision [10]. As Illumina continues to raise throughput and Nanopore continues to improve accuracy and read length, combining the two platforms is likely to give an increasingly complete picture of the resistome and its dynamics.

The rise of antimicrobial resistance (AMR) presents a grave global health threat, with antibiotic-resistant bacteria implicated in hundreds of thousands of deaths annually [17] [2]. The accurate identification of antibiotic resistance genes (ARGs) through genomic and metagenomic sequencing has become a cornerstone of AMR surveillance and research. This endeavor relies heavily on specialized databases that catalog known resistance determinants, yet significant variability in their design, curation, and content affects ARG detection outcomes [18] [2]. Within the context of validating ARG detection across different sequencing platforms, this review provides a critical examination of four prominent ARG databases: the Comprehensive Antibiotic Resistance Database (CARD), ResFinder, MEGARes, and HMD-ARG-DB. By comparing their structures, curation methodologies, and performance characteristics, this guide aims to assist researchers, scientists, and drug development professionals in selecting the most appropriate resource for their specific experimental and surveillance needs.

Database Structures and Curation Methodologies

ARG databases are foundational to resistance detection, but their utility is directly shaped by their underlying architecture and data curation principles. The four databases reviewed here employ distinct strategies, ranging from rigorous manual curation to automated consolidation of diverse sources.

CARD employs an ontology-driven framework, the Antibiotic Resistance Ontology (ARO), which systematically classifies resistance determinants, mechanisms, and antibiotic molecules [2] [19]. This structure facilitates detailed mechanistic insights and logical data organization. CARD maintains strict inclusion criteria, typically requiring that ARG sequences be deposited in GenBank and demonstrate an experimentally validated increase in Minimal Inhibitory Concentration (MIC) reported in peer-reviewed literature [2]. This focus on experimental validation ensures high confidence in its entries but may limit the inclusion of emerging, unvalidated resistance genes.

ResFinder, often used alongside PointFinder for chromosomal mutations, primarily focuses on acquired resistance genes [2]. Its original curation was based on the Lahey Clinic β-Lactamase Database, ARDB, and extensive literature review [2]. It utilizes a K-mer-based alignment algorithm, enabling rapid analysis directly from raw sequencing reads, which enhances its utility for clinical diagnostics and surveillance [2].

MEGARes adopts a consolidation approach, integrating data from multiple primary databases including CARD, ARG-ANNOT, and ResFinder to create a non-redundant resource optimized for high-throughput sequencing analysis [2] [19]. This design aims to minimize sequence redundancy, thereby streamlining the annotation process for metagenomic data.

HMD-ARG-DB represents one of the most comprehensive consolidated resources, curated from seven widely-used databases: AMRFinder, CARD, ResFinder, Resfams, DeepARG, MEGARes, and ARG-ANNOT [17]. It contains over 17,000 ARG sequences distributed across 33 antibiotic resistance classes, making it particularly valuable for training machine learning models like ProtAlign-ARG and for capturing broad resistome diversity [17].

The following diagram illustrates the complex relationships and data flow between these major databases and the analytical tools they support.

Comparative Analysis of Database Characteristics

The structural and functional differences between databases directly impact their application in research settings. The table below provides a quantitative comparison of key characteristics.

Table 1: Comparative Characteristics of Major ARG Databases

| Database | Primary Focus | Curation Approach | Key Features | Update Frequency | Notable Limitations |
|---|---|---|---|---|---|
| CARD | Comprehensive ARG catalog | Manual expert curation with ontology (ARO) | Includes RGI tool, resistome & variants module | Regular, with community input (CARD:Live) [2] | Limited to experimentally validated genes; slower updates due to manual curation [2] |
| ResFinder | Acquired ARGs | Manual curation from literature & specific sources | Integrated with PointFinder for mutations; k-mer based for speed [2] | Periodically updated | Limited coverage of point mutations; primarily for acquired genes [2] [20] |
| MEGARes | High-throughput screening | Consolidated from CARD, ARG-ANNOT, ResFinder | Non-redundant design for efficient metagenomic analysis [2] [19] | Dependent on source updates | Limited novel gene discovery due to dependency on source DBs [2] |
| HMD-ARG-DB | Machine learning training | Consolidated from 7 major databases [17] | Over 17,000 sequences across 33 ARG classes; used for ProtAlign-ARG [17] | Consolidated, not primary | Potential redundancy; context depends on original source curation |

Performance in Experimental Validation

Independent evaluations provide critical insights into how these databases perform in real-world research scenarios, particularly for genotype-phenotype correlation.

Minimal Model Benchmarking in Klebsiella pneumoniae

A 2025 study evaluating annotation tools on K. pneumoniae genomes established "minimal models" using only known resistance determinants from various databases to predict binary resistance phenotypes [18]. This approach highlighted antibiotics for which known mechanisms insufficiently explained observed resistance, thereby identifying knowledge gaps. The performance of these minimal models, built using annotations from tools relying on different databases, varied significantly across antibiotic classes.

Table 2: Performance of Minimal Models for Predicting Resistance in K. pneumoniae [18]

| Antibiotic Class | Annotation Tool (Database) | Accuracy Range | Notes on Resistance Mechanism Coverage |
|---|---|---|---|
| β-lactams | Kleborate, AMRFinderPlus (Multiple) | 85-95% | Well-characterized mechanisms; high accuracy for known genes and mutations [18] |
| Aminoglycosides | ResFinder, RGI (CARD) | 75-90% | Good coverage for acquired genes; some unexplained resistance suggests novel variants [18] |
| Fluoroquinolones | PointFinder, AMRFinderPlus (Mutation DBs) | 70-88% | Chromosomal mutations in gyrA/parC are primary drivers; performance depends on mutation database completeness [18] |
| Tetracyclines | DeepARG, HMD-ARG (Expanded DBs) | 65-82% | Unexplained resistance indicates potential novel efflux pumps or ribosomal protection genes [18] |
| Macrolides | Multiple Tools | 60-78% | Significant knowledge gaps; known mechanisms fail to explain many resistant phenotypes [18] |
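The minimal-model idea can be illustrated with a deliberately simplified rule: call an isolate resistant if it carries at least one known determinant for the antibiotic, then score that rule against the observed phenotypes. This is a sketch under stated assumptions, not the study's implementation; the determinant set, the isolate records, and the presence/absence rule itself are hypothetical choices made for illustration.

```python
from typing import List, Set, Tuple

# (annotated determinants, observed resistance phenotype)
Isolate = Tuple[Set[str], bool]

def predict_resistant(genes: Set[str], known: Set[str]) -> bool:
    """Predict resistance when any known determinant is present."""
    return bool(genes & known)

def accuracy(isolates: List[Isolate], known: Set[str]) -> float:
    """Fraction of isolates whose predicted phenotype matches the observed one."""
    correct = sum(predict_resistant(g, known) == observed for g, observed in isolates)
    return correct / len(isolates)

def unexplained_resistance(isolates: List[Isolate], known: Set[str]) -> List[int]:
    """Indices of resistant isolates that carry no known determinant."""
    return [
        i for i, (g, observed) in enumerate(isolates)
        if observed and not predict_resistant(g, known)
    ]

# Hypothetical determinant set and isolates, for illustration only.
KNOWN_DETERMINANTS = {"blaCTX-M-15", "blaKPC-2"}
ISOLATES: List[Isolate] = [
    ({"blaCTX-M-15", "oqxA"}, True),
    ({"oqxA"}, False),
    ({"fosA"}, True),        # resistant, but no known determinant annotated
    ({"blaKPC-2"}, True),
]

print(accuracy(ISOLATES, KNOWN_DETERMINANTS))
print(unexplained_resistance(ISOLATES, KNOWN_DETERMINANTS))
```

Isolates returned by `unexplained_resistance` correspond to the knowledge gaps described above: resistant phenotypes that the known mechanisms do not account for.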

Impact on Machine Learning Model Performance

The composition and scope of training databases directly influence the performance of machine learning models for ARG detection. ProtAlign-ARG, a hybrid model incorporating both protein language models and alignment-based scoring, was trained on HMD-ARG-DB due to its comprehensive coverage of over 17,000 sequences across numerous resistance classes [17]. This extensive training data contributed to the model's high accuracy and recall, particularly for identifying remote ARG homologs that might be missed by alignment-only methods [17]. Furthermore, tools like DeepARG, which are trained on expanded databases, demonstrate lower false-negative rates compared to traditional best-hit methods that rely on narrower databases [21]. This underscores a critical trade-off: consolidated databases like HMD-ARG-DB and MEGARes can enhance sensitivity for novel gene detection, while tightly curated databases like CARD may provide higher specificity for well-validated mechanisms.

Essential Research Reagents and Tools

The practical application of these databases requires integration with specific computational tools and reagents. The following table catalogues key resources for a functional ARG detection pipeline.

Table 3: Research Reagent Solutions for ARG Detection and Analysis

| Resource Name | Type | Function in ARG Research | Relevant Database(s) |
|---|---|---|---|
| Resistance Gene Identifier (RGI) | Software Tool | Predicts ARGs in sequencing data using CARD's curated models and bit-score thresholds [2] [19] | CARD |
| GraphPart | Software Tool | Partitions datasets for machine learning with precise similarity thresholds to prevent biased accuracy metrics [17] | HMD-ARG-DB |
| AMRFinderPlus | Software Tool | Identifies ARGs and point mutations using NCBI's Reference Gene Catalog; command-line tool [18] [20] | Multiple |
| Kleborate | Software Tool | Species-specific tool for cataloging resistance and virulence variants in K. pneumoniae [18] | Species-specific |
| ProtAlign-ARG | Software Model | Hybrid deep learning model integrating protein language models with alignment scoring for improved ARG classification [17] | HMD-ARG-DB |
| BV-BRC Public Database | Data Resource | Source of bacterial genome sequences and associated AMR metadata for model training and testing [18] | N/A |
| COALA Dataset | Data Resource | Collection of ARG sequences from 15 published databases used for standardized tool comparison [17] | Multiple |

The critical review of CARD, ResFinder, MEGARes, and HMD-ARG-DB reveals that database selection must be aligned with specific research objectives within the broader context of ARG detection validation. CARD excels in scenarios requiring high-confidence, experimentally validated annotations and mechanistic insights through its ontology. ResFinder offers speed and efficiency for tracking acquired resistance genes in clinical isolates. MEGARes provides a streamlined, non-redundant resource for high-throughput metagenomic screening. HMD-ARG-DB, with its extensive consolidated sequence collection, is particularly powerful for training machine learning models and capturing a broad spectrum of resistance determinants.

No single database is universally superior. The observed performance variations in experimental validations underscore the persistent challenge of incomplete ARG annotation, especially for certain antibiotic classes. Future efforts should focus on integrating contextual data on mobility and host pathogens, improving standardization across resources, and developing more adaptable frameworks for capturing novel resistance mechanisms. As sequencing technologies evolve, the synergy between comprehensive, well-curated databases and sophisticated computational models will remain fundamental to advancing AMR research and surveillance.

The rapid evolution and global spread of antibiotic resistance genes (ARGs) represent one of the most pressing public health challenges of our time, with antibiotic-resistant infections causing an estimated 700,000 deaths annually worldwide [17]. Comprehensive surveillance of ARGs through genomic and metagenomic sequencing has become fundamental to understanding and mitigating this threat [4] [2]. Traditional methods for identifying ARGs have predominantly relied on alignment-based approaches that compare query sequences against reference databases. While these methods provide a reliable foundation, they face inherent limitations in detecting novel variants and remote homologs due to their dependence on existing database entries and predefined similarity thresholds [17] [22].

Recent advances in computational biology have introduced powerful alternatives using deep learning and protein language models, which can identify ARGs based on learned patterns and structural features rather than sequence similarity alone [23] [22] [24]. This guide provides an objective comparison of these methodological paradigms, presenting experimental data and protocols to assist researchers in selecting appropriate tools for ARG detection across different sequencing platforms and research contexts.

Alignment-Based Approaches

Alignment-based methods identify ARGs by computationally aligning nucleotide or amino acid sequences to determine regions of similarity that may indicate functional, structural, or evolutionary relationships [17]. These approaches typically use tools like BLAST, DIAMOND, or Bowtie2 to compare query sequences against reference databases such as the Comprehensive Antibiotic Resistance Database (CARD) or ResFinder [2] [19]. The alignment process involves calculating similarity scores (e.g., bit scores, e-values, percentage identity) to determine matches, with results highly dependent on the selected thresholds and database comprehensiveness [17] [22].
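To make the threshold dependence concrete, the following sketch filters BLAST/DIAMOND-style tabular hits (the standard 12-column format: query, subject, percent identity, alignment length, mismatches, gap opens, query start/end, subject start/end, e-value, bit score) and keeps the best-scoring hit per query. The cut-off values and the example rows are hypothetical; real pipelines such as RGI apply their own curated thresholds.

```python
from typing import Dict, NamedTuple

class Hit(NamedTuple):
    query: str
    subject: str
    pident: float
    evalue: float
    bitscore: float

def best_hits(tsv_text: str, min_pident: float = 80.0,
              max_evalue: float = 1e-10, min_bitscore: float = 50.0) -> Dict[str, Hit]:
    """Keep, per query, the highest bit-score hit that passes all thresholds."""
    best: Dict[str, Hit] = {}
    for line in tsv_text.splitlines():
        fields = line.split("\t")
        if len(fields) < 12:
            continue  # skip blank or malformed rows
        hit = Hit(fields[0], fields[1], float(fields[2]),
                  float(fields[10]), float(fields[11]))
        if (hit.pident < min_pident or hit.evalue > max_evalue
                or hit.bitscore < min_bitscore):
            continue
        if hit.query not in best or hit.bitscore > best[hit.query].bitscore:
            best[hit.query] = hit
    return best

# Hypothetical alignment rows, for illustration only.
EXAMPLE_ROWS = [
    ["contig1", "blaCTX-M-15", "99.3", "291", "2", "0", "1", "291", "1", "291", "1e-150", "580"],
    ["contig1", "blaCTX-M-3", "97.0", "291", "9", "0", "1", "291", "1", "291", "1e-140", "560"],
    ["contig2", "tetM", "35.0", "120", "78", "3", "1", "120", "5", "124", "1e-4", "40"],
]
EXAMPLE_TSV = "\n".join("\t".join(row) for row in EXAMPLE_ROWS)

strict = best_hits(EXAMPLE_TSV)
relaxed = best_hits(EXAMPLE_TSV, min_pident=30.0, max_evalue=1e-3, min_bitscore=30.0)
print(sorted(strict), sorted(relaxed))
```

With the strict defaults only `contig1` is annotated; relaxing the thresholds also reports the weak `contig2` hit, which is the sensitivity/specificity trade-off described above.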

Key Limitations: Alignment-based approaches are inherently constrained by their reliance on existing databases, making them unable to detect truly novel ARGs absent from reference collections [17]. They also struggle with remote homologs where evolutionary relationships have significantly diverged over time, and they demonstrate limited capability in identifying species-specific ARGs, particularly in gram-negative bacteria [23]. Performance is further complicated by the lack of universal optimal similarity thresholds, often resulting in high false-negative rates if thresholds are too stringent or false positives if too liberal [22].

Novel Computational Approaches

Novel computational methods leverage artificial intelligence to overcome alignment-based limitations, using deep neural networks and protein language models to identify ARGs based on learned features rather than direct sequence similarity [22] [24].

- Protein Language Models (PLMs): Tools like PLM-ARG and ProtAlign-ARG utilize transformer-based models (e.g., ESM-1b) pretrained on millions of protein sequences to generate embedding representations that capture complex sequence-structure-function relationships [23]. These embeddings serve as input for classifiers that identify ARGs and categorize their resistance mechanisms.

- Deep Neural Networks: Frameworks like ARGNet employ autoencoders for unsupervised learning of sequence features combined with convolutional neural networks (CNNs) for classification, accepting both nucleotide and amino acid sequences of variable lengths [22].

- Multi-Channel Transformers: MCT-ARG integrates multiple protein modalities including primary sequences, predicted secondary structure, and relative solvent accessibility to construct comprehensive representations for ARG prediction [24].

- Hybrid Models: ProtAlign-ARG combines the strengths of prediction based on a pretrained protein language model (PPLM) with alignment-based scoring, creating a robust solution that enhances predictive accuracy, particularly with limited training samples [17].

Performance Comparison & Experimental Data

Quantitative Performance Metrics

Table 1: Comparative Performance Metrics of ARG Detection Tools

| Tool | Methodology | Binary Classification MCC | Multi-class Accuracy | Key Strengths |
|---|---|---|---|---|
| ProtAlign-ARG [17] | Hybrid (Protein Language Model + Alignment) | 0.983 ± 0.001 (5-fold CV) | Superior recall in ARG classification | Excels with limited training data; integrates bit scores and e-values |
| PLM-ARG [23] | Protein Language Model (ESM-1b) + XGBoost | 0.838 (Independent validation) | N/A | Improved MCC by 51.8%-107.9% over other tools |
| MCT-ARG [24] | Multi-channel Transformer | 0.927 | 92.42% (15 antibiotic categories) | Robust under class imbalance (MCC = 90.97%); integrates structural features |
| ARGNet [22] | Deep Neural Network (Autoencoder + CNN) | N/A | Outperformed DeepARG & HMD-ARG | 57% reduced inference runtime vs DeepARG; handles variable-length sequences |
| DeepARG [17] [22] | Deep Learning + Similarity Scores | Lower than PLM-based tools | Lower than newer tools | Early deep learning approach; limited by similarity score dependency |
| HMD-ARG [17] [22] | Hierarchical Multi-task CNN | Lower than PLM-based tools | Lower than newer tools | Comprehensive annotations; limited sequence length range (50-1571 aa) |
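The binary-classification column above reports the Matthews correlation coefficient (MCC), which ranges from -1 to 1 and stays informative when ARG and non-ARG classes are imbalanced. A minimal sketch of the standard formula follows; the confusion-matrix counts are hypothetical, and returning 0 for an undefined denominator is a common convention assumed here.

```python
import math

def mcc(tp: int, tn: int, fp: int, fn: int) -> float:
    """Matthews correlation coefficient from confusion-matrix counts."""
    denominator = math.sqrt((tp + fp) * (tp + fn) * (tn + fp) * (tn + fn))
    if denominator == 0:
        return 0.0  # convention when a row or column of the matrix is empty
    return (tp * tn - fp * fn) / denominator

# Hypothetical counts, for illustration only.
print(round(mcc(tp=90, tn=85, fp=15, fn=10), 3))

# A classifier that labels everything as non-ARG on an imbalanced set
# reaches 99% accuracy but an MCC of 0.
print(mcc(tp=0, tn=990, fp=0, fn=10))
```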

Experimental Protocols & Benchmarking Methodologies

Data Curation and Partitioning Protocols

Comprehensive benchmarking requires rigorous dataset preparation to ensure unbiased evaluation:

- Data Sources: Leading tools utilize ARG sequences curated from multiple databases including CARD, ResFinder, AMRFinder, MEGARes, DeepARG, HMD-ARG, and ARG-ANNOT, containing between 17,000-28,000 ARG sequences distributed across numerous antibiotic-resistance classes [17] [23] [22].

- Non-ARG Datasets: Critical for model training, non-ARG sequences are typically obtained from UniProt, excluding known ARGs. Sequences with e-value > 1e-3 and percentage identity < 40% to ARG databases are classified as non-ARGs, ensuring the model learns discriminative features [17].

- Data Partitioning: To prevent biased accuracy metrics, datasets are partitioned using tools like GraphPart that guarantee specified maximum similarity between training and testing sets (e.g., 40% threshold). This ensures model evaluation on genuinely unseen data rather than close homologs [17].
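The two numeric criteria above can be written down directly. The sketch below labels a candidate as non-ARG from its best hit against the ARG databases, and checks a train/test split for pairs that exceed the similarity ceiling. It does not reproduce GraphPart; the function names, the treatment of sequences with no hit, and the toy identity table are assumptions made for illustration.

```python
from itertools import product
from typing import Callable, Iterable, List, Optional, Tuple

def is_non_arg(best_evalue: Optional[float], best_pident: Optional[float],
               min_evalue: float = 1e-3, max_pident: float = 40.0) -> bool:
    """True when the best ARG-database hit is weak: e-value > 1e-3 and identity < 40%."""
    if best_evalue is None or best_pident is None:
        return True  # assumption: no hit at all is treated as non-ARG
    return best_evalue > min_evalue and best_pident < max_pident

def partition_leaks(train: Iterable[str], test: Iterable[str],
                    identity: Callable[[str, str], float],
                    max_identity: float = 40.0) -> List[Tuple[str, str]]:
    """Train/test pairs whose pairwise identity exceeds the allowed maximum."""
    return [(a, b) for a, b in product(list(train), list(test))
            if identity(a, b) > max_identity]

# Hypothetical precomputed pairwise identities (percent), for illustration only.
TOY_IDENTITY = {("seqA", "seqC"): 35.0, ("seqB", "seqC"): 72.0}

def toy_identity(a: str, b: str) -> float:
    return TOY_IDENTITY.get((a, b), 0.0)

print(is_non_arg(0.5, 25.0), is_non_arg(1e-20, 95.0))
print(partition_leaks(["seqA", "seqB"], ["seqC"], toy_identity))
```

Any pair reported by `partition_leaks` indicates a close homolog shared between training and test sets, which would inflate the measured accuracy.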

Sequencing Depth Requirements

Experimental design must account for sequencing depth requirements for reliable ARG detection:

- Isolate Sequencing: For pure bacterial isolates, approximately 300,000 reads or 15× genome coverage using 2×150bp Illumina sequencing is sufficient for ARG detection with high sensitivity and positive predictive value comparable to deeper coverage [4].

- Metagenomic Sequencing: For complex microbial communities, detecting ARGs in organisms present at 1% relative abundance requires assembly of approximately 30 million reads to achieve 15× target coverage [4].
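The relationship between these figures can be checked with simple coverage arithmetic. The sketch below assumes 150 bp reads counted individually, a 3 Mb genome (a hypothetical value chosen so the numbers line up), and that relative abundance equals the fraction of reads originating from the target organism; none of these assumptions is stated in the source.

```python
def coverage(n_reads: float, read_length_bp: int, genome_size_bp: int) -> float:
    """Mean fold coverage = total sequenced bases / genome size."""
    return n_reads * read_length_bp / genome_size_bp

def metagenome_reads_needed(target_coverage: float, genome_size_bp: int,
                            read_length_bp: int, relative_abundance: float) -> float:
    """Total metagenome reads needed for a target organism at a given read fraction."""
    target_reads = target_coverage * genome_size_bp / read_length_bp
    return target_reads / relative_abundance

# Isolate: 300,000 reads of 150 bp on an assumed 3 Mb genome.
print(coverage(300_000, 150, 3_000_000))

# Metagenome: same organism at 1% of reads, 15x target coverage.
print(round(metagenome_reads_needed(15, 3_000_000, 150, 0.01)))
```

Under these assumptions the isolate reaches 15× coverage, and the 1% abundance case requires about 30 million reads, matching the figures quoted above.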

Computational Workflows

Diagram: Computational Workflows for ARG Detection Methodologies

Research Reagent Solutions

Table 2: Essential Research Reagents and Computational Resources for ARG Detection

| Resource Category | Specific Tools/Databases | Function & Application |
|---|---|---|
| Reference Databases | CARD [2] [19], ResFinder [2] [19], MEGARes [2], SARG+ [25] | Curated collections of known ARGs; provide reference sequences for alignment and model training |
| Alignment Tools | DIAMOND [17] [25], BLAST [4] [23], BWA [23], Bowtie2 [19] | Perform sequence similarity searches; enable read-based or assembly-based ARG identification |
| Protein Language Models | ESM-1b [23], Transformer Architectures [17] [24] | Generate embedding representations from protein sequences; capture complex sequence-structure relationships |
| Machine Learning Frameworks | XGBoost [23], TensorFlow/Keras [22], PyTorch | Implement classifiers for ARG identification and categorization; enable model training and deployment |
| Metagenomic Assembly Tools | MetaSPAdes [19], MEGAHIT [19], IDBA-UD [19] | Reconstruct contiguous sequences from raw reads; enable assembly-based ARG detection |
| Taxonomic Classification | GTDB [25], Centrifuge [25], Kraken2 [25] | Assign taxonomic labels to ARG-containing sequences; enable host identification |

The expanding toolkit for ARG detection offers researchers multiple pathways for investigating antibiotic resistance, each with distinct strengths and optimal applications. Alignment-based methods provide reliability and interpretability for tracking known resistance determinants, while novel computational approaches significantly expand detection capabilities for novel and divergent ARGs.

For comprehensive ARG profiling in complex samples, hybrid approaches like ProtAlign-ARG that integrate alignment-based scoring with protein language models demonstrate superior performance, particularly in scenarios with limited training data [17]. When investigating novel resistance mechanisms or analyzing sequences with low similarity to reference databases, protein language model-based tools like PLM-ARG and MCT-ARG offer enhanced capability for detecting remote homologs [23] [24]. For large-scale metagenomic studies with computational constraints, deep learning tools like ARGNet provide efficient inference while maintaining high accuracy [22].

Future methodological development will likely focus on improving interpretability, integrating multimodal data (including protein structural information), and enhancing capabilities for tracking ARG mobility and host associations [25] [24]. As sequencing technologies continue to evolve, with long-read platforms becoming more accessible, bioinformatic methods must adapt to leverage the advantages of these platforms for resolving ARG contexts and host relationships [25].

The escalating global health crisis of antimicrobial resistance (AMR) has made the accurate identification of antibiotic resistance genes (ARGs) a critical endeavor for clinical, agricultural, and environmental sectors [2]. Advances in next-generation sequencing (NGS) technologies have revolutionized AMR surveillance by enabling comprehensive analysis of ARGs from both bacterial whole genomes and complex metagenomic datasets [2] [26]. However, the reliability of these genomic analyses fundamentally depends on rigorous validation using standardized metrics including sensitivity, specificity, and limit of detection (LOD). These parameters provide the essential framework for evaluating the performance of ARG detection platforms, allowing researchers to understand the capabilities and limitations of their chosen methodologies [27] [28].

The precision of ARG detection is complicated by significant variability in database structures, data curation methodologies, annotation depth, and coverage of resistance determinants across available bioinformatics resources [2]. Furthermore, the inherent challenges of detecting low-abundance targets within complex sample matrices and the presence of eukaryotic DNA in metagenomic samples can substantially impact detection accuracy [28]. This comparison guide provides an objective evaluation of ARG detection platform performance, presenting structured experimental data and methodologies to assist researchers in selecting appropriate tools and interpreting results within the broader context of AMR research validation.

Foundational Validation Metrics: Definitions and Calculations

Sensitivity and Specificity

In diagnostic testing, including ARG detection, sensitivity and specificity are fundamental indicators of test accuracy that exhibit an inherent inverse relationship [27] [29].

- Sensitivity (True Positive Rate): Proportion of true positives detected out of all actual positive conditions. It answers: "How well does the test identify those with the ARG?" [27] [29].

  - Formula: Sensitivity = True Positives (TP) / [True Positives (TP) + False Negatives (FN)]

- Specificity (True Negative Rate): Proportion of true negatives detected out of all actual negative conditions. It answers: "How well does the test identify those without the ARG?" [27] [29].

  - Formula: Specificity = True Negatives (TN) / [True Negatives (TN) + False Positives (FP)]

Table 1: Interpretation of High vs. Low Sensitivity and Specificity

| Metric | High Value | Low Value |
|---|---|---|
| Sensitivity | Excellent at "ruling out" disease/ARG presence when test is negative | Misses many true positives; negative result unreliable for exclusion |
| Specificity | Excellent at "ruling in" disease/ARG presence when test is positive | Many false positives; positive result unreliable for confirmation |

Predictive Values and Likelihood Ratios

Beyond sensitivity and specificity, other crucial metrics include Positive Predictive Value (PPV), Negative Predictive Value (NPV), and Likelihood Ratios (LRs), with PPV and NPV being particularly influenced by disease prevalence in the population [27].

- Positive Predictive Value (PPV): Proportion of true positives out of all positive test results [27].

  - Formula: PPV = TP / (TP + FP)

- Negative Predictive Value (NPV): Proportion of true negatives out of all negative test results [27].

  - Formula: NPV = TN / (TN + FN)

- Likelihood Ratios: Unlike predictive values, LRs are not impacted by disease prevalence [27].

  - Positive LR = Sensitivity / (1 - Specificity)

  - Negative LR = (1 - Sensitivity) / Specificity
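The formulas in this section can be collected into one small helper. The sketch below computes each metric from confusion-matrix counts and adds a prevalence-adjusted PPV (Bayes' rule) to show why predictive values shift with prevalence while likelihood ratios do not. The counts are hypothetical, and the class and function names are illustrative.

```python
from dataclasses import dataclass

@dataclass(frozen=True)
class ConfusionMatrix:
    tp: int
    fp: int
    tn: int
    fn: int

    @property
    def sensitivity(self) -> float:
        return self.tp / (self.tp + self.fn)

    @property
    def specificity(self) -> float:
        return self.tn / (self.tn + self.fp)

    @property
    def ppv(self) -> float:
        return self.tp / (self.tp + self.fp)

    @property
    def npv(self) -> float:
        return self.tn / (self.tn + self.fn)

    @property
    def lr_positive(self) -> float:
        false_positive_rate = 1 - self.specificity
        if false_positive_rate == 0:
            return float("inf")  # no false positives observed
        return self.sensitivity / false_positive_rate

    @property
    def lr_negative(self) -> float:
        return (1 - self.sensitivity) / self.specificity

def ppv_at_prevalence(sensitivity: float, specificity: float, prevalence: float) -> float:
    """PPV for a given prevalence, from sensitivity and specificity (Bayes' rule)."""
    true_pos = sensitivity * prevalence
    false_pos = (1 - specificity) * (1 - prevalence)
    return true_pos / (true_pos + false_pos)

# Hypothetical validation counts, for illustration only.
cm = ConfusionMatrix(tp=90, fp=50, tn=950, fn=10)
print(cm.sensitivity, cm.specificity, round(cm.ppv, 3), round(cm.npv, 3))
print(round(cm.lr_positive, 1), round(cm.lr_negative, 3))
print(round(ppv_at_prevalence(0.90, 0.95, 0.50), 3),
      round(ppv_at_prevalence(0.90, 0.95, 0.01), 3))
```

With sensitivity and specificity held fixed, dropping prevalence from 50% to 1% sharply lowers PPV, which is why a positive call for a rare ARG warrants orthogonal confirmation.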

Limit of Detection (LOD)

The Limit of Detection (LOD) represents the lowest concentration or abundance of an analyte (e.g., an ARG) that can be reliably distinguished from its absence [30]. In ARG detection, LOD is often expressed in terms of minimum genome coverage or variant allele frequency (VAF).

For metagenomic sequencing, accurate ARG detection typically requires approximately 5X isolate genome coverage [28]. For targeted NGS panels in cancer genomics (an analogous field), the minimum detected VAF can be as low as 2.9% for both SNVs and INDELs [31]. The LOD is statistically derived from blank measurements, often calculated as $\mathrm{LOD} = \mu_{bl} + 3\sigma_{bl}$, where $\mu_{bl}$ is the mean of the blank signal and $\sigma_{bl}$ is its standard deviation [30].
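The blank-based LOD calculation is short enough to show in full. This sketch assumes replicate blank measurements in arbitrary signal units and uses the sample standard deviation (n - 1 denominator); both the example values and that choice of estimator are assumptions.

```python
from statistics import mean, stdev
from typing import Sequence

def limit_of_detection(blank_signals: Sequence[float], k: float = 3.0) -> float:
    """LOD = mean of blanks + k * standard deviation of blanks (k = 3 by default)."""
    if len(blank_signals) < 2:
        raise ValueError("at least two blank measurements are required")
    return mean(blank_signals) + k * stdev(blank_signals)

def is_detected(signal: float, blank_signals: Sequence[float]) -> bool:
    """A signal counts as detected only if it exceeds the blank-derived LOD."""
    return signal > limit_of_detection(blank_signals)

# Hypothetical blank measurements, for illustration only.
blanks = [1.0, 2.0, 3.0]
print(limit_of_detection(blanks))
print(is_detected(4.5, blanks), is_detected(6.0, blanks))
```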

Experimental Data: Cross-Platform Performance Comparison

NGS Panel Performance in Clinical Settings

Targeted next-generation sequencing panels represent a sophisticated approach for genomic analysis. Performance validation of one such clinical oncology panel demonstrated exceptional metrics across 43 unique samples, achieving 98.23% sensitivity and 99.99% specificity at a 95% confidence interval, with additional precision and accuracy measurements at 99.99% [31]. The assay successfully detected 794 mutations, including all 92 known variants from orthogonal methods, with a minimum detection threshold of 2.9% variant allele frequency for both SNVs and INDELs [31].

The K-MASTER project, a Korean national precision medicine platform, provided revealing data on how NGS performance varies across gene types and cancer cohorts [32]. When comparing their NGS panel with orthogonal methods, the platform showed variable sensitivity for ERBB2 amplification detection: 53.7% in breast cancer and 62.5% in gastric cancer, while maintaining high specificity (99.4% and 98.2%, respectively) [32]. This variability highlights the significant influence of genomic context on assay performance.

Table 2: Performance Metrics of the K-MASTER NGS Panel Across Cancer Types [32]

| Cancer Type | Gene/Target | Sensitivity (%) | Specificity (%) | Concordance with Orthogonal Methods |
|---|---|---|---|---|
| Colorectal Cancer | KRAS | 87.4 | 79.3 | Moderate |
| Colorectal Cancer | NRAS | 88.9 | 98.9 | High |
| Colorectal Cancer | BRAF | 77.8 | 100.0 | High |
| NSCLC | EGFR | 86.2 | 97.5 | High |
| NSCLC | ALK Fusion | 100.0 | 100.0 | Perfect |
| NSCLC | ROS1 Fusion | 33.3 | 100.0 | Low |
| Breast Cancer | ERBB2 Amplification | 53.7 | 99.4 | Moderate |
| Gastric Cancer | ERBB2 Amplification | 62.5 | 98.2 | Moderate |

Metagenomic Sequencing for ARG Detection

Metagenomic sequencing enables ARG profiling in complex microbial communities but faces sensitivity challenges for low-abundance targets. Research on synthetic metagenomes has established that accurate ARG detection requires approximately 5X coverage of the isolate genome encoding the ARG [28]. This coverage requirement translates to the ARG-encoding organism needing to represent approximately 0.4% of a 40 million read metagenome [28].

The LOD is significantly influenced by both bioinformatic tools and sample type. In benchmarking studies, KMA and CARD-RGI reported only the expected ARG targets or closely related gene alleles, while SRST2 (which allows reads to map to multiple targets) falsely reported distantly related ARGs at all coverage levels [28]. Notably, the background microbiota affected detection accuracy differently depending on the sample matrix: mcr-1 was detectable at 0.1X isolate coverage in lettuce metagenomes but not in beef metagenomes [28].

Enhanced Detection Methods

Novel approaches are emerging to address sensitivity limitations in conventional metagenomic sequencing. A CRISPR-Cas9-enriched NGS method demonstrated a substantially improved LOD for ARGs in wastewater samples, detecting up to 1,189 more ARGs and 61 more ARG families than regular NGS [9]. This method lowered the ARG detection limit by an order of magnitude, from a relative abundance on the order of 10⁻⁴ to 10⁻⁵ as quantified by qPCR, while keeping false negatives (2/1208) and false positives (1/1208) low [9].

Methodologies: Experimental Protocols for Validation

Synthetic Metagenome Construction for LOD Determination

To establish limits of detection for ARGs in metagenomic samples, researchers have developed rigorous protocols using synthetic metagenomes with known composition [28].

Protocol:

- Strain Selection and Sequencing: Select bacterial strains encoding specific ARGs of interest (e.g., Enterococcus faecalis with vanB, Escherichia coli with mcr-1.1, Klebsiella pneumoniae with blaCTX-M-15) [28].

- Sequence Data Generation: Generate whole-genome sequence data for selected strains through Illumina HiSeq or similar platforms [28].

- Metagenome Formulation: Create synthetic metagenomes by spiking sequence reads from ARG-encoding strains into background metagenomes from relevant sample types (e.g., beef fecal metagenomes, lettuce metagenomes) at varying proportions [28].

- Bioinformatic Analysis: Analyze synthetic metagenomes using multiple bioinformatics tools (Kraken2/Bracken, MetaPhlAn, KMA, CARD-RGI, SRST2) for taxonomic composition and ARG detection [28].

- Coverage Calculation: Calculate isolate genome coverage for the spiked strains within each synthetic metagenome [28].

- Threshold Determination: Establish detection thresholds by identifying the minimum coverage at which ARGs are accurately detected across tools and sample types [28].
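The spiking and coverage steps of this protocol can be sketched as follows: compute how many isolate reads give a target fold coverage, then mix that many randomly sampled isolate reads into a background read set. The 5 Mb genome size, 150 bp read length, and placeholder read identifiers are hypothetical; the cited study's exact tooling is not reproduced here.

```python
import random
from typing import List, Sequence

def reads_for_coverage(target_coverage: float, genome_size_bp: int,
                       read_length_bp: int) -> int:
    """Number of isolate reads needed to reach the target fold coverage."""
    return round(target_coverage * genome_size_bp / read_length_bp)

def spike(background: Sequence[str], isolate: Sequence[str],
          n_spike: int, seed: int = 0) -> List[str]:
    """Mix n_spike randomly sampled isolate reads into the background reads."""
    if n_spike > len(isolate):
        raise ValueError("not enough isolate reads for the requested coverage")
    rng = random.Random(seed)  # fixed seed keeps the synthetic metagenome reproducible
    mixed = list(background) + rng.sample(list(isolate), n_spike)
    rng.shuffle(mixed)
    return mixed

# Assumed 5 Mb genome and 150 bp reads, across a range of target coverages.
for cov in (0.1, 1, 5):
    n = reads_for_coverage(cov, 5_000_000, 150)
    print(cov, n, f"{100 * n / 40_000_000:.3f}% of a 40M-read metagenome")

# Tiny placeholder read sets, for illustration only.
synthetic = spike(["bg_read"] * 10, ["iso_1", "iso_2", "iso_3"], n_spike=2)
print(len(synthetic))
```

Under these assumed values, 5X coverage corresponds to roughly 0.4% of a 40 million read metagenome, in line with the proportion reported for the synthetic metagenome experiments.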

Targeted NGS Panel Validation

Comprehensive validation of targeted NGS panels requires multi-faceted performance assessment [31].

Protocol:

- Sample Selection: Include diverse sample types (clinical tissues, external quality assessment samples, reference controls) to assess performance across matrices [31].

- DNA Input Titration: Titrate DNA input (e.g., 10-100 ng) to determine minimum input requirements while maintaining detection sensitivity [31].

- Limit of Detection Analysis: Serially dilute positive control samples to establish minimum detectable variant allele frequency [31].

- Reproducibility Assessment:

  - Intra-run precision: Sequence replicates within a single run

  - Inter-run precision: Sequence replicates across different runs

  - Long-term reproducibility: Repeatedly test positive controls over extended periods [31]

- Orthogonal Method Comparison: Compare NGS results with established orthogonal methods (e.g., PCR, ddPCR, FISH) for concordance assessment [31] [32].

- Quality Metrics Establishment: Set thresholds for sequencing quality metrics including percentage of target regions with coverage ≥100× unique molecules, coverage uniformity, and mean read coverage [31].
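The quality metrics in the last step can be computed from per-position depths over the target regions. The sketch below is illustrative only: the depth values are hypothetical, and "uniformity" is defined here as the share of positions with at least 0.2× the mean depth, which is one common convention rather than the definition used in the cited validation.

```python
from typing import Dict, Sequence

def coverage_qc(depths: Sequence[int], min_depth: int = 100,
                uniformity_fraction: float = 0.2) -> Dict[str, float]:
    """Summarise target-region coverage from per-position depths."""
    if not depths:
        raise ValueError("no depth values supplied")
    n = len(depths)
    mean_depth = sum(depths) / n
    pct_at_min_depth = 100 * sum(d >= min_depth for d in depths) / n
    # Assumed uniformity definition: positions at >= 0.2x the mean depth.
    pct_uniform = 100 * sum(d >= uniformity_fraction * mean_depth for d in depths) / n
    return {
        "mean_depth": mean_depth,
        "pct_ge_min_depth": pct_at_min_depth,
        "pct_uniform": pct_uniform,
    }

# Hypothetical per-position depths, for illustration only.
qc = coverage_qc([250, 300, 90, 400, 10, 150])
print(qc)
```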

Figure 1: Workflow for ARG Detection Platform Validation

Bioinformatics Tools and Databases for ARG Detection

The accuracy of ARG detection depends significantly on the selection of appropriate bioinformatics tools and databases, which vary substantially in their structures, curation methodologies, and detection algorithms [2].

Manually Curated Databases

- CARD (Comprehensive Antibiotic Resistance Database): A rigorously curated resource built around the Antibiotic Resistance Ontology (ARO) with strict inclusion criteria requiring experimental validation of resistance mechanisms [2]. It employs the Resistance Gene Identifier (RGI) tool for prediction of ARGs based on curated reference sequences and trained BLASTP alignment bit-score thresholds [2].

- ResFinder/PointFinder: Specialized tools focused on acquired AMR genes and chromosomal point mutations, respectively, now integrated under ResFinder 4.0 [2]. They use a K-mer-based alignment algorithm enabling rapid analyses directly from raw sequencing reads without assembly [2].
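To give an intuition for why k-mer-based matching can work directly on raw reads, the sketch below measures what fraction of a reference gene's k-mers appear in a set of reads. This is a deliberately simplified illustration and not the algorithm implemented by ResFinder or KMA; the function names and the default k are assumptions.

```python
_COMPLEMENT = str.maketrans("ACGT", "TGCA")


def reverse_complement(seq):
    """Reverse complement of an uppercase A/C/G/T sequence."""
    return seq.translate(_COMPLEMENT)[::-1]


def kmers(seq, k):
    """Set of all k-mers in a sequence (empty if the sequence is shorter than k)."""
    seq = seq.upper()
    return {seq[i:i + k] for i in range(len(seq) - k + 1)}


def kmer_containment(reference, reads, k=16):
    """Fraction of reference k-mers observed in the reads on either strand."""
    ref_kmers = kmers(reference, k)
    if not ref_kmers:
        return 0.0
    read_kmers = set()
    for read in reads:
        read = read.upper()
        read_kmers |= kmers(read, k)
        read_kmers |= kmers(reverse_complement(read), k)
    return len(ref_kmers & read_kmers) / len(ref_kmers)
```

Because no assembly or full alignment is needed, a score like this can be computed in a single pass over the reads; production tools add scoring, tie-breaking between similar alleles, and error handling that this sketch omits.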

Consolidated Databases

- NDARO (National Database of Antibiotic-Resistant Organisms): Integrates data from multiple sources including CARD and Lahey Clinic β-Lactamase Database, offering broad coverage but facing challenges with consistency and redundancy [2].

- SARG (Structured Antibiotic Resistance Gene): Organizes ARGs into over 30 distinct categories based on resistance mechanisms and antibiotics neutralized, providing a clearer framework for understanding gene function [2].

Table 3: Performance Comparison of Bioinformatics Tools for ARG Detection

| Tool/Database | Primary Methodology | Strengths | Limitations | Optimal Use Case |
|---|---|---|---|---|
| CARD/RGI | Homology-based with curated BLASTP thresholds | High accuracy with experimentally validated references | May miss novel genes; slower updates due to manual curation | Detection of well-characterized ARGs with experimental support |
| ResFinder | K-mer-based alignment | Fast analysis from raw reads; integrated mutation detection | Focused on acquired resistance genes | Routine surveillance of known acquired ARGs |
| DeepARG | Machine learning | Can identify novel or divergent ARGs | Potential for false positives with distantly related sequences | Exploratory studies or environments with unknown resistance profiles |
| KMA | k-mer alignment | Specific detection of expected targets | May miss divergent alleles at lower coverage | Verification of specific ARG targets in complex samples |
| SRST2 | Read mapping allowing multiple targets | Sensitive for diverse gene variants | Higher false positive rate for distantly related genes | Detection of ARG diversity in complex resistomes |

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 4: Essential Research Reagents and Materials for ARG Detection Validation

| Category | Item | Function/Application |
|---|---|---|
| Reference Materials | HD701 Reference Standard | Positive control for assay validation and LOD determination [31] |
| Reference Materials | Synthetic Metagenomes | Custom mixtures with known ARG content for method benchmarking [28] |
| Sequencing Kits | Hybridization-capture Library Kits (e.g., Sophia Genetics) | Target enrichment for focused genomic analyses [31] |
| Sequencing Kits | Amplicon-based Library Kits | PCR-based target enrichment for high-sensitivity detection [33] |
| Bioinformatics Tools | CARD-RGI | Reference-based ARG identification using curated database [2] [28] |
| Bioinformatics Tools | DeepARG | Machine learning-based prediction of novel ARGs [2] |
| Bioinformatics Tools | KMA | k-mer alignment for specific ARG detection [28] |
| Validation Reagents | Droplet Digital PCR (ddPCR) Assays | Orthogonal validation of specific genetic variants [32] |
| Validation Reagents | PNAClamp Mutation Detection Kits | Orthogonal method for specific mutation confirmation [32] |

Figure 2: Relationship Between ARG Detection Components and Validation Metrics

The validation of ARG detection across sequencing platforms reveals a complex landscape where sensitivity, specificity, and limits of detection must be balanced against practical considerations including throughput, cost, and analytical requirements [27] [28]. Metagenomic approaches require approximately 5× genome coverage for reliable ARG detection, while targeted NGS panels can achieve sensitivities above 98% with specificities approaching 100% for many applications [31] [28].

The selection of an appropriate ARG detection platform should be guided by specific research objectives. For routine surveillance of known resistance determinants, tools like ResFinder and CARD-RGI offer robust performance through homology-based approaches [2] [28]. When investigating novel or divergent ARGs, machine learning-based tools such as DeepARG may be more appropriate despite potentially higher false positive rates [2]. For clinical applications requiring the highest sensitivity, CRISPR-enriched methods can lower detection limits by an order of magnitude compared to conventional NGS [9].

Ultimately, comprehensive validation using the metrics and methodologies outlined in this guide provides the foundation for reliable ARG detection across diverse research and clinical applications. As AMR continues to pose grave threats to global health, rigorous platform validation remains paramount for accurate surveillance and effective intervention strategies.

Advanced Workflows for ARG Detection: From Wet-Lab to Computational Analysis

Sample Preparation and Library Construction Strategies for Optimal ARG Recovery

The accurate detection and characterization of antibiotic resistance genes (ARGs) is a critical objective in modern public health and microbiological research. The choice of sequencing technology and the corresponding library construction strategy directly influences the sensitivity, accuracy, and comprehensiveness of ARG recovery. This guide provides an objective comparison of current next-generation sequencing (NGS) and third-generation sequencing (TGS) platforms, framing their performance in the context of validating ARG detection. We summarize experimental data and detailed methodologies to help researchers, scientists, and drug development professionals select optimal workflows for their specific applications.

Sequencing Platform Comparison for ARG Analysis

The selection of a sequencing platform involves a trade-off between read length, accuracy, throughput, and cost. Table 1 summarizes the key characteristics of the major platforms used in antimicrobial resistance (AMR) research.

Table 1: Comparison of Sequencing Platforms for ARG Detection and Analysis

| Platform/Technology | Typical Read Length | Key Strength for ARG Analysis | Primary Limitation for ARG Analysis | Suitable for Metagenomic ARG Profiling? |
|---|---|---|---|---|
| Short-Read (Illumina/MGI) [34] [35] | 50-600 bp | High per-base accuracy (>99.9%); Excellent for detecting single-nucleotide variations (SNVs) [35] [36] | Inability to resolve long repetitive regions or complex genetic structures without fragmentation [37] [36] | Yes, but results in fragmented gene assemblies and may miss novel or complex ARG contexts [38] |
| PacBio HiFi Reads [39] [40] | 15,000-25,000 bp | Long, accurate reads (99.9% accuracy); Enables complete assembly of bacterial genomes and plasmids carrying ARGs [39] [40] | Higher DNA input requirements; Traditionally higher cost per gigabase than short-read platforms | Highly suitable, provides long-range context for ARGs within metagenome-assembled genomes (MAGs) |
| Oxford Nanopore (ONT) [37] [36] | 1,000 bp to >100 kb | Ultra-long reads; Real-time sequencing; Direct detection of epigenetic modifications; Portability [37] [36] | Raw read error rate historically higher than short-reads, though recent chemistry (R10.4.1) achieves >98.9% accuracy [36] | Yes, increasingly used for real-time resistome profiling; long reads help link ARGs to mobile genetic elements [38] |

Recent advancements are rapidly changing the landscape. Long-read technologies no longer force a trade-off between read length and accuracy. PacBio's HiFi sequencing uses circular consensus sequencing (CCS) to generate long reads with 99.9% accuracy, making it powerful for assembling complete genomes and precisely locating ARGs [39] [40]. Meanwhile, Oxford Nanopore Technologies (ONT) has significantly improved its raw read accuracy with the latest chemistry (SQK-LSK114 kit with R10.4.1 flow cells), enabling de novo assembly of high-quality finished bacterial and plasmid genomes with >99.99% accuracy without the need for short-read polishing [36]. This is particularly valuable for tracking the transmission of plasmid-borne ARGs.

Experimental Data: Platform Performance in AMR Studies

Comparative Assessment of Annotation Tools and Minimal Models

A 2025 study on Klebsiella pneumoniae highlighted the impact of database and tool selection on ARG annotation completeness [18]. Researchers built "minimal models" of resistance using known AMR markers from eight different annotation tools (Kleborate, ResFinder, AMRFinderPlus, DeepARG, RGI, SraX, Abricate, and StarAMR) to predict binary resistance phenotypes for 20 antimicrobials.

- Key Finding: The performance of predictive machine learning models (Elastic Net, XGBoost) varied significantly depending on the annotation tool used, revealing critical knowledge gaps for certain antibiotics [18].

- Implication: Even with the same genomic data, the choice of bioinformatic pipeline directly affects ARG calling and phenotypic resistance prediction. This underscores the need for standardized benchmarking datasets in AMR research [18].

Impact of Multiplexing on ARG Detection in Metagenomics

A 2025 study evaluated how sample multiplexing on ONT platforms (GridION and PromethION) influences the detection sensitivity of ARGs and pathogens in pig fecal metagenomes [38]. The study compared four-plex and eight-plex sequencing runs.

- Finding on Sensitivity: While overall resistome and bacterial community profiles were comparable across multiplexing levels, ARG detection was more comprehensive in the four-plex setting for low-abundance genes [38].

- Finding on Pathogen Detection: Similarly, pathogen detection was more sensitive in the four-plex setting, identifying a broader range of low-abundance bacterial taxa [38].

- Practical Guidance: The study concluded that eight-plex sequencing is more cost-effective for general surveillance where overall community structure is the goal. In contrast, lower multiplexing levels (four-plex) are advantageous for applications requiring enhanced sensitivity for low-abundance ARGs or detailed pathogen research [38].
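The sensitivity difference between multiplexing levels follows from simple arithmetic: the flow cell output is shared among barcoded samples, so expected depth over a rare target scales inversely with the number of samples. The sketch below makes that explicit. It assumes perfectly balanced barcodes, and every input value a user supplies (yield, target fraction, target length) is hypothetical rather than taken from [38].

```python
def per_sample_yield(flow_cell_yield_bases, n_samples):
    """Bases available per sample, assuming evenly balanced barcodes."""
    if n_samples < 1:
        raise ValueError("n_samples must be at least 1")
    return flow_cell_yield_bases / n_samples


def expected_target_depth(flow_cell_yield_bases, n_samples,
                          target_fraction, target_length):
    """Expected fold-depth over a target sequence.

    target_fraction: share of the sample's sequenced bases that derive
    from the target (a low-abundance ARG has a very small fraction).
    target_length: length of the target in bases.
    """
    sample_bases = per_sample_yield(flow_cell_yield_bases, n_samples)
    return sample_bases * target_fraction / target_length
```

Under these assumptions, moving from eight-plex to four-plex doubles the expected depth over any given target, which is consistent with the more complete recovery of low-abundance ARGs reported for the four-plex runs.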

Concordance in Variant Calling Across Platforms

A 2025 study comparing four NGS platforms for detecting drug-resistant mutations in HIV, HBV, HCV, SARS-CoV-2, and Mycobacterium tuberculosis demonstrated high concordance across Illumina iSeq100, MiSeq, MGI DNBSEQ-G400, and ONT MinION for both majority variants and minority variants present at frequencies above 20% [35]. However, nanopore sequencing reported a higher number of minority mutations below 20% frequency. This may reflect its different error profile or higher sensitivity in certain contexts and warrants further investigation [35].
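One way to quantify this kind of cross-platform agreement is to compare the sets of mutations each platform calls above a frequency threshold and to count low-frequency calls separately. The sketch below does this with a Jaccard index. The function names, the input layout (platform name mapped to mutation frequencies), and the example values are all made up for illustration and are not data from [35].

```python
from itertools import combinations


def calls_above(frequencies, threshold=0.20):
    """Mutations whose frequency is at or above the threshold."""
    return {m for m, f in frequencies.items() if f >= threshold}


def pairwise_concordance(platform_calls, threshold=0.20):
    """Jaccard index of above-threshold calls for each pair of platforms."""
    result = {}
    for a, b in combinations(sorted(platform_calls), 2):
        set_a = calls_above(platform_calls[a], threshold)
        set_b = calls_above(platform_calls[b], threshold)
        union = set_a | set_b
        # Two platforms that both call nothing are treated as concordant.
        result[(a, b)] = len(set_a & set_b) / len(union) if union else 1.0
    return result


def minority_counts(platform_calls, threshold=0.20):
    """Number of mutations reported below the threshold on each platform."""
    return {
        platform: sum(1 for f in freqs.values() if 0 < f < threshold)
        for platform, freqs in platform_calls.items()
    }


# Made-up frequencies for two hypothetical platforms.
example_calls = {
    "platform_A": {"mut1": 0.95, "mut2": 0.35, "mut3": 0.04},
    "platform_B": {"mut1": 0.93, "mut2": 0.31, "mut3": 0.06, "mut4": 0.08},
}
```

In this toy example the two platforms agree fully above 20% while `platform_B` reports one extra low-frequency call, mirroring the pattern described above; whether such extra calls are real variants or errors still has to be settled with an orthogonal method.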

Detailed Experimental Protocols for ARG Recovery

Protocol 1: High-Quality Bacterial Genome and Plasmid Assembly using ONT

This protocol, adapted from [36], is designed to generate complete genomes of multidrug-resistant (MDR) bacteria and their plasmids using only long-read sequencing.

- DNA Extraction: Use the TIANamp Bacteria DNA Kit for high-molecular-weight DNA extraction. Quantify DNA using a fluorometer (e.g., Qubit).

- Library Preparation: Utilize the ONT Ligation Sequencing Kit V14 (SQK-LSK114). The workflow involves DNA end-prep, native barcode ligation (for multiplexing), adapter ligation, and loading onto a flow cell.

- Sequencing: Sequence on a GridION device using an R10.4.1 flow cell (FLO-MIN114). Perform sequencing with MinKNOW software (v22.08.9 or later) with the super-accuracy base-calling mode selected.

- Data Analysis:

  - Base-calling & QC: Use Guppy for base-calling and NanoPlot for quality assessment.

  - Read Filtering: Use NanoFilt to remove reads shorter than 1,000 bp or with a mean quality score below 10.

  - De novo Assembly: Assemble filtered reads using Flye v2.8.2 with default parameters.

  - Polishing: Perform error correction on the assembly by running Medaka v1.2.2 three times.

- Validation: The study achieved finished genome sequences with >99.99% accuracy at 75x coverage depth, enabling high-confidence ARG analysis and plasmid tracking [36].
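The read-filtering step above can be illustrated in plain Python. This is a sketch of the filtering criteria (length of at least 1,000 bp and mean quality of at least 10), not a replacement for NanoFilt. It assumes four-line FASTQ records with Phred+33 encoding, and it averages quality through error probabilities, which is an assumption about how the mean should be computed.

```python
import io
import math


def mean_quality(quality_string, offset=33):
    """Mean Phred score, averaged over per-base error probabilities."""
    probs = [10 ** (-(ord(ch) - offset) / 10) for ch in quality_string]
    return -10 * math.log10(sum(probs) / len(probs))


def filter_fastq(handle, min_length=1000, min_quality=10.0):
    """Yield (header, sequence, quality) for reads passing both filters.

    Assumes well-formed four-line FASTQ records without blank lines.
    """
    while True:
        header = handle.readline().rstrip("\n")
        if not header:
            break
        sequence = handle.readline().rstrip("\n")
        handle.readline()  # skip the '+' separator line
        quality = handle.readline().rstrip("\n")
        if (len(sequence) >= min_length and quality
                and mean_quality(quality) >= min_quality):
            yield header, sequence, quality


# Tiny in-memory demonstration with synthetic reads.
demo = io.StringIO(
    "@long_good\n" + "A" * 1200 + "\n+\n" + "I" * 1200 + "\n"
    "@short\n" + "A" * 500 + "\n+\n" + "I" * 500 + "\n"
    "@long_poor\n" + "A" * 1200 + "\n+\n" + "#" * 1200 + "\n"
)
kept = [header for header, _, _ in filter_fastq(demo)]
```

Averaging error probabilities rather than raw Phred scores keeps a handful of very poor bases from being masked by many good ones, which is why a simple arithmetic mean of the scores can give a more optimistic number.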

Protocol 2: Metagenomic Workflow for Resistome Profiling

This protocol, based on [38], is optimized for profiling ARGs in complex microbial communities.

- Sample & DNA Extraction: Extract total DNA from complex samples (e.g., feces) using the Quick-DNA HMW Magbead Kit with enhanced lysis steps (e.g., 100 μL of 100 mg/mL lysozyme, extended incubation).

- Library Preparation: Use the ONT Ligation gDNA Native Barcoding Kit 24 V14 (SQK-NBD114.24). Use 1 μg of DNA as input. Incubate for 10 min during end-prep and 40 min during barcode and adapter ligation steps.

- Multiplexing & Sequencing: Multiplex 4 or 8 samples per flow cell. Load onto PromethION P2 Solo (FLO-PRO114M) or GridION (FLO-MIN114) flow cells, both using R10.4.1 chemistry. Sequence for 72 hours.

- Base-calling & Filtering: Use Guppy Basecaller (v7.2.13+) with the super-accurate base-calling option. Filter low-quality reads (quality score <9, length <200 bp) within MinKNOW.

- ARG Assignment: Align reads to the ResFinder database using KMA v1.4.12a for alignment and gene assignment [38].
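After the ARG assignment step, the alignment summary usually needs to be filtered before interpretation. The sketch below reads a KMA-style tab-separated result table and keeps hits above identity and coverage cut-offs. The column names (`#Template`, `Template_Identity`, `Template_Coverage`, `Depth`) are assumed to match the `.res` layout and should be checked against the KMA version in use; the cut-off values and the example rows are hypothetical and not taken from [38].

```python
import csv
import io


def load_kma_hits(handle, min_identity=90.0, min_coverage=60.0):
    """Return hits passing both cut-offs, sorted by depth (highest first).

    handle: text handle over a tab-separated table with a header row.
    Column names are an assumption; adjust them to the actual file.
    """
    reader = csv.DictReader(handle, delimiter="\t")
    hits = []
    for row in reader:
        identity = float(row["Template_Identity"])
        coverage = float(row["Template_Coverage"])
        if identity >= min_identity and coverage >= min_coverage:
            hits.append({
                "gene": row["#Template"].strip(),
                "identity": identity,
                "coverage": coverage,
                "depth": float(row["Depth"]),
            })
    return sorted(hits, key=lambda hit: hit["depth"], reverse=True)


# Made-up example table with three hypothetical templates.
example_res = io.StringIO(
    "#Template\tTemplate_Identity\tTemplate_Coverage\tDepth\n"
    "geneA\t99.50\t100.00\t12.30\n"
    "geneB\t85.00\t95.00\t40.00\n"
    "geneC\t97.20\t75.00\t30.10\n"
)
filtered_hits = load_kma_hits(example_res)
```

Keeping the cut-offs as explicit parameters makes it easy to report them alongside the results, which matters when resistome profiles from different multiplexing levels or platforms are compared.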

Workflow Visualization and Reagent Solutions

The following diagram illustrates the core decision-making workflow for selecting a sequencing strategy based on research priorities.

Diagram: Sequencing Strategy Decision Workflow for ARG Recovery

The Scientist's Toolkit: Key Research Reagent Solutions