Decoding the Blueprint: Principles of Genotype-Phenotype Linkage and Their Evolutionary Impact


Abstract

This article synthesizes foundational principles and cutting-edge methodologies for understanding genotype-phenotype relationships, a core challenge in evolutionary genetics and biomedicine. We explore the historical distinction between genotype and phenotype and its modern reinterpretation through concepts like the 'supervisor-worker' gene architecture. The review covers transformative methodologies, from deep mutational scanning to AI-driven frameworks like G–P Atlas, which enable the mapping of complex genetic interactions. We address key challenges such as pervasive epistasis and data scarcity, while evaluating solutions through metabolic control theory and multi-omics integration. Finally, we compare the predictive power of different modeling approaches. This synthesis provides researchers and drug development professionals with a comprehensive framework for leveraging genetic principles to predict evolutionary trajectories, understand disease mechanisms, and accelerate therapeutic discovery.

From Classical Concepts to Modern Architectures: Deconstructing the Genotype-Phenotype Map

The genotype-phenotype distinction, first proposed by Danish scientist Wilhelm Johannsen in 1909, represents one of the conceptual pillars of twentieth-century genetics and remains a cornerstone of modern evolutionary research [1]. Johannsen introduced these terms in his seminal work "Elemente der exakten Erblichkeitslehre" (The Elements of an Exact Theory of Heredity) and further elaborated them in his 1911 paper "The Genotype Conception of Heredity" [1]. This distinction emerged from Johannsen's pure-line breeding experiments on barley and the common bean, through which he demonstrated that the hereditary dispositions of organisms (genotypes) could be distinguished from their physical manifestations (phenotypes) [1]. The profound insight that phenotypes represent the variable expression of stable genotypes under different environmental conditions fundamentally reshaped biological research and continues to influence how researchers investigate the genetic architecture of complex traits.

Johannsen's conceptual framework was developed amidst intense scientific debates between biometricians, who supported Darwinian gradualist evolution through continuous variation, and Mendelians, who advocated for discontinuous evolutionary leaps [1]. His distinction provided a resolution to these controversies by demonstrating that continuous phenotypic variation could arise from stable genotypes through environmental influences and developmental processes. This historical context underscores how foundational concepts continue to shape contemporary research into genotype-phenotype relationships in evolutionary biology and drug development.

Historical Foundation: Johannsen's Experiments and Theoretical Contributions

The Pure Line Experiments

Johannsen's conceptual breakthrough emerged from meticulously designed experiments with self-fertilizing plants, primarily the princess bean (Phaseolus vulgaris) [1]. His experimental protocol involved several key steps that established the empirical basis for the genotype-phenotype distinction:

- Seed Selection and Grouping: Johannsen began with 5,000 bean seeds in 1900, selecting 100 average-weight seeds plus 25 each of the smallest and largest seeds for planting [2].

- Pedigree Tracking: He maintained careful pedigree records through multiple generations of self-fertilization, establishing pure lines descended from single individuals [1].

- Statistical Analysis: Johannsen measured seed weights across generations and applied statistical methods to analyze variation patterns [1] [3].

- Selection Testing: He applied selection pressure within pure lines to test hereditary response, discovering that selection only produced changes when applied across genetically mixed populations, not within pure lines [1].

The critical finding was that within pure lines, selection for larger or smaller seeds produced no hereditary change, despite phenotypic variation existing [1]. This demonstrated that the genotype remained stable while the phenotype fluctuated in response to environmental conditions, fundamentally challenging the then-prevailing "transmission conception" of heredity which assumed parental traits were directly transmitted to offspring [1].
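The logic of the selection test can be illustrated with a small simulation. This is a minimal sketch, not Johannsen's data or analysis: the genotypic values, the environmental standard deviation, and the function names are all illustrative assumptions. Each offspring inherits its parent's genotypic value unchanged (as under self-fertilization) and receives fresh environmental noise.

```python
import random
import statistics


def offspring_mean(parent_genotypic_values, env_sd, n_offspring, rng):
    """Mean offspring weight: inherited genotypic value plus new environmental noise."""
    weights = [g + rng.gauss(0.0, env_sd)
               for g in parent_genotypic_values
               for _ in range(n_offspring)]
    return statistics.mean(weights)


def selection_response(genotypic_values, env_sd=10.0, top_fraction=0.2,
                       n_offspring=20, seed=1):
    """Offspring mean of the heaviest parents minus offspring mean of all parents."""
    rng = random.Random(seed)
    # Parental phenotype = genotypic value + environmental deviation.
    parents = [(g + rng.gauss(0.0, env_sd), g) for g in genotypic_values]
    parents.sort(reverse=True)  # heaviest phenotypes first
    n_selected = max(1, int(len(parents) * top_fraction))
    selected = [g for _, g in parents[:n_selected]]
    everyone = [g for _, g in parents]
    return (offspring_mean(selected, env_sd, n_offspring, rng)
            - offspring_mean(everyone, env_sd, n_offspring, rng))


# Hypothetical seed weights in arbitrary units.
pure_line = [50.0] * 500                    # one genotype only
mixed_population = [35.0, 50.0, 65.0] * 167  # three pure lines pooled

pure_response = selection_response(pure_line)
mixed_response = selection_response(mixed_population)
print(f"response within pure line:    {pure_response:+.2f}")
print(f"response in mixed population: {mixed_response:+.2f}")
```

Under these assumptions the response within the pure line stays close to zero, because the selected parents differ from the rest only in their environmental deviations, while selection in the mixed population shifts the offspring mean by enriching for the heavier lines.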

Conceptual Innovation and Terminology

Johannsen's genius lay in recognizing that his experimental results required a new conceptual vocabulary. He introduced three fundamental terms that would become foundational to genetics:

- Genotype: "The sum total of all the 'genes' in a gamete or in a zygote" [4], representing the hereditary constitution of an organism.

- Phenotype: "The statistical average of the environmentally influenced variable appearances of individuals" [2], comprising the observable characteristics.

- Gene: A "fully free from any hypothesis" unit representing the "securely ascertained fact that at least many properties of the organism are conditional on individual, separable and thus independent 'states', 'basis', 'dispositions' found in the gametes" [3].

Johannsen explicitly contrasted his "genotype conception of heredity" with what he termed the "transmission conception" of heredity [5]. He rejected the notion that characteristics themselves were transmitted from parents to offspring, instead arguing that what was inherited was the genotype, which then interacted with environmental factors during development to produce the phenotype [5]. This ahistorical view of heredity positioned the genotype as immune to environmental influences across generations, though it expressed differently depending on developmental conditions [1].

Table: Key Terminology Introduced by Johannsen

| Term | Original Meaning | Modern Interpretation |
|---|---|---|
| Genotype | The hereditary constitution underlying a pure line; "the type as determined by the gametes" [1] | The full hereditary information of an organism, encoded in DNA |
| Phenotype | Observable characteristics of an organism as expressed under particular conditions; "the type as it is seen" [1] | The observable physical, biochemical, and behavioral properties of an organism |
| Gene | A unit of calculation for hereditary dispositions; explicitly non-hypothetical [3] | A unit of heredity composed of DNA sequence encoding functional product |
| Pure Line | A population of organisms descending from a single self-fertilized individual through repeated inbreeding [1] | A genetically homogeneous population maintained through specific breeding protocols |

Modern Research Methodologies: From Univariate to Multivariate Approaches

Evolution of Genotype-Phenotype Mapping

Contemporary research has dramatically expanded Johannsen's original concepts through sophisticated methodologies that capture the multidimensional nature of genotype-phenotype relationships. Where traditional approaches examined single genetic loci against individual traits, modern frameworks employ multivariate strategies that acknowledge the complex interplay between multiple genetic variants and phenotypic measures [6]. This evolution reflects the growing recognition that both genotypes and phenotypes exist as complex systems rather than as collections of independent elements.

The limitations of univariate approaches became increasingly apparent as researchers recognized that complex phenotypes rarely stem from single genetic variants. As noted in recent literature, "studying the pairwise associations between all measurements and all alleles is highly inefficient and prevents insight into the genetic pattern underlying the observed phenotypes" [6]. This realization has driven the development of multivariate genotype-phenotype mapping (MGP) approaches that identify patterns of allelic variation (genetic latent variables) maximally associated with patterns of phenotypic variation (phenotypic latent variables) [6].
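One way to make the idea of paired latent variables concrete is to extract the leading singular vectors of the genotype-phenotype cross-covariance matrix. The sketch below does this by power iteration on simulated data. It is an assumption-laden illustration rather than the method of [6]: it maximizes covariance (in the style of two-block partial least squares), whereas an MGP analysis may optimize a different association criterion, and all names and simulated values are hypothetical.

```python
import math
import random


def center(matrix):
    """Subtract each column mean; rows are individuals."""
    n = len(matrix)
    means = [sum(col) / n for col in zip(*matrix)]
    return [[v - m for v, m in zip(row, means)] for row in matrix]


def cross_covariance(x, y):
    """p x q matrix of covariances between genotype and phenotype columns."""
    n = len(x)
    xc, yc = center(x), center(y)
    return [[sum(xr[i] * yr[j] for xr, yr in zip(xc, yc)) / (n - 1)
             for j in range(len(y[0]))]
            for i in range(len(x[0]))]


def normalize(v):
    norm = math.sqrt(sum(a * a for a in v))
    return [a / norm for a in v]


def first_latent_pair(x, y, n_iter=200):
    """Leading pair of weight vectors (SNP weights, trait weights) by power iteration."""
    c = cross_covariance(x, y)
    p, q = len(c), len(c[0])
    u = normalize([1.0] * p)
    for _ in range(n_iter):
        v = normalize([sum(c[i][j] * u[i] for i in range(p)) for j in range(q)])
        u = normalize([sum(c[i][j] * v[j] for j in range(q)) for i in range(p)])
    strength = sum(u[i] * c[i][j] * v[j] for i in range(p) for j in range(q))
    return u, v, strength


# Simulated data: SNPs 0 and 1 jointly drive traits 0 and 1; the rest is noise.
rng = random.Random(0)
genotypes = [[rng.choice([0, 1, 2]) for _ in range(4)] for _ in range(200)]
phenotypes = []
for g in genotypes:
    signal = g[0] + g[1]
    phenotypes.append([signal + rng.gauss(0, 1),
                       signal + rng.gauss(0, 1),
                       rng.gauss(0, 1)])

snp_weights, trait_weights, strength = first_latent_pair(genotypes, phenotypes)
print("SNP weights:  ", [round(w, 2) for w in snp_weights])
print("trait weights:", [round(w, 2) for w in trait_weights])
```

In this toy setting a single pair of weight vectors summarizes what would otherwise be twelve separate SNP-by-trait tests, which is the efficiency argument made above.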

Contemporary Experimental Workflows

Modern genotype-phenotype research typically follows sophisticated experimental workflows that integrate large-scale genomic data with multidimensional phenotypic characterization:

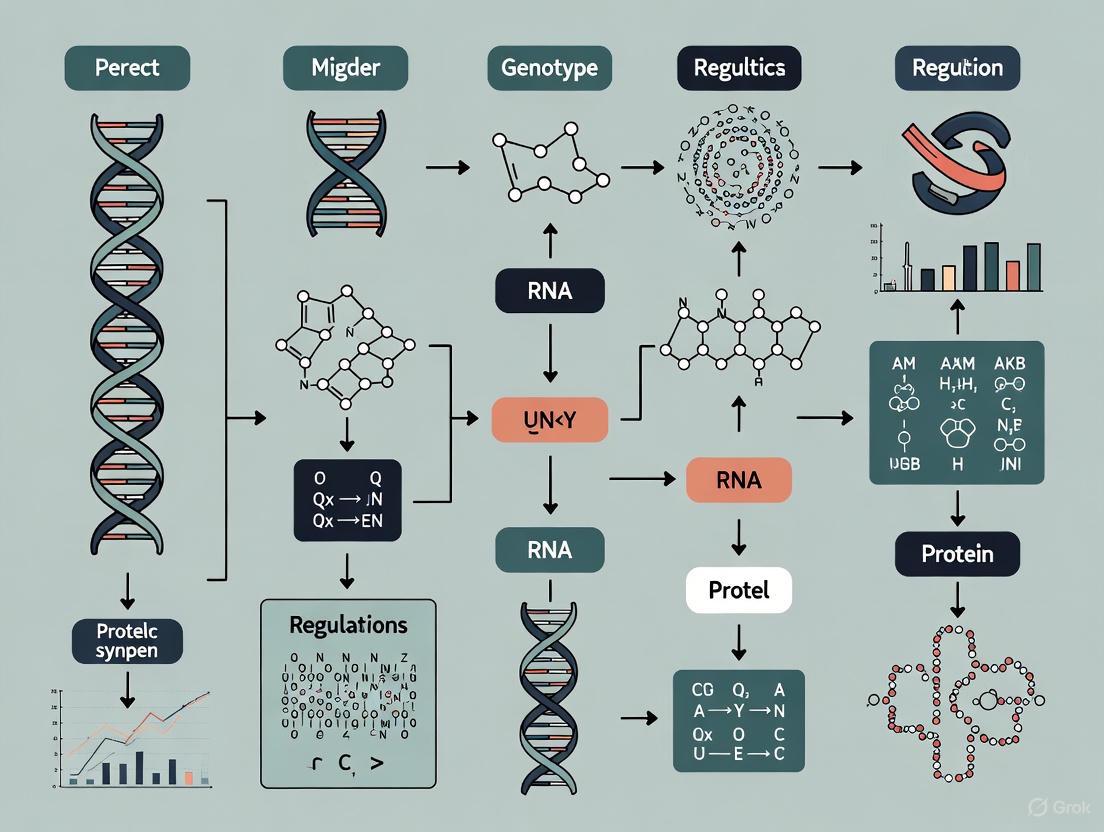

Diagram: Modern Genotype-Phenotype Association Workflow. This workflow illustrates the integrated approach used in contemporary studies, combining comprehensive genomic sequencing with multidimensional phenotypic assessment.

A landmark example of this approach comes from a recent study of 1,086 Saccharomyces cerevisiae isolates, which employed near telomere-to-telomere assemblies to generate a species-wide structural variant atlas [7]. The experimental protocol included:

- Genome Assembly: Long-read sequencing using Oxford Nanopore technology (average depth 95×, N50 19.1 kb) with hybrid assembly pipeline for chromosome-scale contiguity [7].

- Variant Detection: Comprehensive identification of structural variants (SVs >50 bp) through pairwise alignment with reference genome, classifying variants into presence-absence variations (PAVs), copy-number variations (CNVs), inversions, and translocations [7].

- Phenotypic Profiling: High-throughput phenotyping across 8,391 molecular and organismal traits, including transcriptomic, proteomic, and metabolic profiling integrated with growth and morphological assessments [7].

- Association Analysis: Genome-wide association studies (GWAS) incorporating the full spectrum of genetic variation, including single-nucleotide polymorphisms (SNPs), small indels, and structural variants [7].

This comprehensive approach revealed that structural variants contribute significantly to phenotypic variation, with SV inclusion improving heritability estimates by an average of 14.3% compared to SNP-only analyses [7]. Moreover, structural variants demonstrated greater pleiotropy than other variant types and were more frequently associated with organismal traits [7].

Machine Learning Approaches

The growing complexity of genomic and phenomic data has motivated the development of specialized machine learning tools such as deepBreaks, which identifies and prioritizes genotype-phenotype associations using multiple algorithms [8]. The deepBreaks workflow involves:

- Data Preprocessing: Imputation of missing values, handling of ambiguous reads, removal of zero-entropy columns, and clustering of correlated features using DBSCAN algorithm [8].

- Model Training: Simultaneous training of multiple machine learning models (AdaBoost, Decision Tree, Random Forest, etc.) with k-fold cross-validation [8].

- Feature Importance Analysis: Interpretation of model results to identify and prioritize sequence positions most predictive of phenotypic variation [8].

This approach addresses key challenges in genotype-phenotype mapping, including nonlinear associations, feature collinearity, and high-dimensional data, thereby uncovering complex relationships that traditional methods might miss [8].
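As an illustration of one preprocessing step named above, the removal of zero-entropy columns, the following sketch filters invariant positions out of a toy alignment. It does not use or reproduce the deepBreaks API; the function names and the example sequences are hypothetical, and Shannon entropy is assumed as the entropy measure.

```python
import math
from collections import Counter


def column_entropy(column):
    """Shannon entropy (bits) of the characters in one alignment column."""
    counts = Counter(column)
    total = len(column)
    return -sum((c / total) * math.log2(c / total) for c in counts.values())


def drop_zero_entropy_columns(alignment):
    """Return the indices of variable columns and the filtered sequences."""
    columns = list(zip(*alignment))
    kept = [i for i, col in enumerate(columns) if column_entropy(col) > 0.0]
    filtered = ["".join(seq[i] for i in kept) for seq in alignment]
    return kept, filtered


# Hypothetical aligned sequences of equal length.
alignment = ["ACGT", "ACGA", "ATGA"]
kept_positions, filtered_alignment = drop_zero_entropy_columns(alignment)
print(kept_positions)      # columns 0 and 2 are invariant and are dropped
print(filtered_alignment)
```

Invariant positions carry no information about phenotypic differences, so removing them shrinks the feature space before any model is trained.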

Table: Comparison of Genotype-Phenotype Mapping Methods

| Method | Key Features | Advantages | Limitations |
|---|---|---|---|
| Univariate GWAS | Single marker-trait associations; Linear models | Simple interpretation; Well-established statistics | Multiple testing burden; Misses epistatic effects |
| Multivariate Genotype-Phenotype Mapping (MGP) | Identifies latent variables maximizing genotype-phenotype association [6] | Reduces dimensionality; Captures pleiotropic effects | Complex implementation; Challenging biological interpretation |
| Machine Learning (deepBreaks) | Multiple algorithm comparison; Non-linear pattern detection [8] | Handles complex interactions; Robust to collinearity | "Black box" interpretation; Computationally intensive |
| Graph Pangenome GWAS | Incorporates full genomic variation spectrum; Population-scale assemblies [7] | Comprehensive variant representation; Improved heritability estimates | Resource-intensive sequencing; Complex data integration |

Key Research Findings and Quantitative Insights

Structural Variants as Major Drivers of Phenotypic Diversity

Recent research has illuminated the critical role of structural variants (SVs) in shaping phenotypic diversity, a dimension largely inaccessible in earlier genetic studies. The comprehensive yeast genome study [7] quantified this contribution.

Diagram: Structural Variant Contributions to Phenotypic Diversity. Structural variants, particularly presence-absence variations and copy-number variations, contribute disproportionately to phenotypic variation and heritability compared to single-nucleotide polymorphisms.

The yeast genome analysis identified 262,629 redundant structural variants across 1,086 isolates, corresponding to 6,587 unique events spanning 27.3 Mb of sequence [7]. The distribution of these variants across functional categories revealed:

- Presence-Absence Variations (PAVs): 4,755 events, frequently associated with transposable elements (39% involved Ty elements) [7].

- Copy-Number Variations (CNVs): 1,207 events, with 9% associated with Ty elements [7].

- Inversions: 231 events, 20% associated with Ty elements [7].

- Translocations: 394 events, predominantly in subtelomeric regions [7].

Notably, 69% of SVs were rare (minor allele frequency <1%), suggesting potential selective constraints, while SVs exhibited significantly higher heterozygosity than SNPs, particularly for larger variants (>30 kb) where 78% were heterozygous [7].

Dimensionality of Genotype-Phenotype Maps

Multivariate analyses have revealed the surprisingly low dimensionality of genotype-phenotype relationships, with fundamental implications for evolutionary biology. In a study of mice scored for 353 SNPs and 11 phenotypic traits:

- The first dimension of genetic and phenotypic latent variables accounted for >70% of genetic variation present in all 11 measurements [6].

- 43% of variation in this phenotypic pattern was explained by the corresponding genetic latent variable [6].

- The first three dimensions together accounted for almost 90% of genetic variation in the measurements and for all interpretable genotype-phenotype association [6].

This low dimensionality enables researchers to reduce the number of statistical tests from thousands to just a few meaningful independent tests, dramatically improving statistical power while providing a more integrated view of how genetic variation shapes phenotypic diversity [6].
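The gain in power can be made concrete with simple arithmetic for the mouse example. The sketch below assumes a Bonferroni correction at a family-wise error rate of 0.05, which is an illustrative choice and not necessarily the correction used in [6].

```python
def bonferroni_threshold(alpha, n_tests):
    """Per-test significance threshold under a Bonferroni correction."""
    return alpha / n_tests


n_snps, n_traits = 353, 11
pairwise_tests = n_snps * n_traits  # every SNP against every trait
latent_tests = 3                    # the three interpretable dimensions

print(pairwise_tests)                               # 3883
print(bonferroni_threshold(0.05, pairwise_tests))   # about 1.3e-05
print(bonferroni_threshold(0.05, latent_tests))     # about 0.017
```

Under this assumption, the per-test threshold relaxes by roughly three orders of magnitude when three latent dimensions replace the 3,883 pairwise tests.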

Table: Quantitative Findings from Contemporary Genotype-Phenotype Studies

| Study System | Sample Size | Genetic Variants | Phenotypic Measures | Key Finding |
|---|---|---|---|---|
| S. cerevisiae [7] | 1,086 isolates | 6,587 unique SVs; 262,629 redundant SVs | 8,391 molecular and organismal traits | SVs improved heritability estimates by 14.3% compared to SNP-only analyses |
| Mouse sample [6] | Unspecified | 353 SNPs | 11 phenotypic traits | First three dimensions accounted for ~90% of genetic variation |
| Machine learning simulation [8] | 1,000 samples (simulated) | 1,000-2,000 features | Single continuous trait | ML approaches maintained performance despite feature collinearity |

The Scientist's Toolkit: Essential Research Reagents and Materials

Table: Essential Research Reagents for Genotype-Phenotype Studies

| Reagent/Material | Function | Application Example |
|---|---|---|
| Long-read Sequencing Platforms (Oxford Nanopore, PacBio) | Generate high-contiguity assemblies; Resolve complex genomic regions | Near telomere-to-telomere assemblies for structural variant detection [7] |
| Reference Genomes | Provide coordinate system for variant calling; Enable comparative analyses | S288c reference genome for yeast pangenome construction [7] |
| Phenotypic Screening Platforms | High-throughput characterization of molecular and organismal traits | Multiplexed growth assays; Transcriptomic, proteomic, and metabolic profiling [7] |
| Machine Learning Frameworks (deepBreaks, etc.) | Detect nonlinear genotype-phenotype associations; Prioritize predictive variants | Identification of important sequence positions associated with phenotypic traits [8] |
| Graph Pangenome Structures | Represent full spectrum of population genetic variation; Include non-reference sequences | 2.5 Mb of non-reference sequence uncovered in yeast graph pangenome [7] |
| Multiple Sequence Alignment | Align homologous sequences across individuals; Identify variable positions | Input for deepBreaks analysis to identify phenotype-associated positions [8] |

Implications for Evolutionary Research and Drug Development

Evolutionary Biology Perspectives

Johannsen's distinction between genotype and phenotype established the conceptual foundation for understanding how hereditary information passes between generations while phenotypic expression remains contingent on developmental and environmental contexts [1]. This insight continues to shape evolutionary research.

The genotype-phenotype map concept has become central to evolutionary biology, as it determines how genetic variation translates into phenotypic variation available for natural selection [9]. As Lewontin articulated, the theoretical task for population genetics involves a process in two spaces: "genotypic space" and "phenotypic space" [9]. The challenge lies in providing laws that predictably map populations of genotypes to phenotypes where selection operates, then back to genotype space where Mendelian genetics predict subsequent generations [9].

Modern research has revealed that the genotype-phenotype relationship exhibits both phenotypic plasticity (environmental influence on phenotype expression) and genetic canalization (mutations having minimal effect on phenotypes due to developmental buffering) [9]. These complementary concepts, derived from Johannsen's original distinction, help explain how organisms maintain robustness to genetic and environmental perturbations while retaining evolutionary adaptability.

Biomedical and Pharmacological Applications

In drug development, the genotype-phenotype distinction provides a crucial framework for understanding individual variation in drug response and disease susceptibility:

- Precision Medicine: Understanding how genetic variation influences phenotypic traits enables targeted therapies for specific genotypic subgroups [7].

- Variant Interpretation: Comprehensive variant catalogs, including structural variants, improve identification of clinically relevant mutations [7].

- Pleiotropy Assessment: Recognition that genetic variants often influence multiple phenotypes helps anticipate unintended therapeutic consequences [7].

The multivariate approaches discussed in this review directly address the challenge of "missing heritability" in complex traits by considering the joint effects of multiple genetic variants on integrated phenotypic representations [6]. This represents a fundamental advancement beyond single-variant association studies that have dominated biomedical genetics.

More than a century after Wilhelm Johannsen introduced the genotype-phenotype distinction, his conceptual framework continues to guide and inspire genetic research. While modern science has revealed extraordinary complexity in the relationships between genetic information and phenotypic expression, Johannsen's fundamental insight—that heredity involves the transmission of potentialities rather than predetermined traits—remains valid [1] [4].

Contemporary research has expanded Johannsen's concepts in unexpected directions, demonstrating that structural variants often contribute more significantly to phenotypic diversity than single-nucleotide polymorphisms [7], that machine learning approaches can detect nonlinear genotype-phenotype relationships inaccessible to traditional methods [8], and that multivariate frameworks can dramatically reduce the dimensionality of genotype-phenotype maps [6]. Yet all of these advances still operate within the conceptual space that Johannsen first delineated.

As genetic research continues to evolve, with increasingly sophisticated technologies for characterizing both genomic variation and phenotypic expression, Johannsen's genotype-phenotype distinction remains an indispensable foundation for understanding biological heredity. Its enduring relevance across a century of dramatic scientific progress testifies to the power of this fundamental conceptual framework to organize our understanding of how genetic information manifests in living organisms.

The relationship between genotype and phenotype is a cornerstone of evolutionary biology, and the distribution of fitness effects (DFE) of new mutations is a critical determinant of evolutionary trajectories. The nearly neutral theory of molecular evolution emphasizes the importance of weakly selected mutations, proposing that a substantial proportion of mutations are slightly deleterious and that their fate is governed by the interplay of selection and genetic drift [10]. This framework predicts that the effective population size (Nₑ) is a key factor, with genetic drift overpowering weak selection in smaller populations [10]. A profound insight from recent empirical studies is that the DFE is often bimodal, with mutations clustering into categories of nearly neutral and strongly deleterious effects [11]. This bimodality has significant implications for understanding evolutionary dynamics, from the evolution of drug resistance in pathogens to the identification of disease-causing mutations in humans. This section explores the principles, evidence, and methodologies for studying this bimodal distribution within the context of genotype-phenotype linkage, providing researchers with a technical guide for probing one of evolution's fundamental patterns.

Theoretical Foundations: The Nearly Neutral Theory

The nearly neutral theory, primarily developed by Tomoko Ohta, represents a crucial refinement of the strict neutral and selectionist models of molecular evolution. It posits that a significant fraction of mutations are not strictly neutral, but are subject to very weak selection [10]. The theory assigns a central role to genetic drift, recognizing that in finite populations, the stochastic effects of drift can permit the fixation of slightly deleterious mutations and prevent the fixation of slightly advantageous ones.

The core prediction of the theory is a dependence on population size. The strength of genetic drift is inversely related to the effective population size (Nₑ). Consequently, the efficacy of selection in purging deleterious mutations and promoting advantageous ones is correlated with Nₑ. This leads to the expectation of a selection-drift balance, where the same mutation can behave as effectively neutral in a small population but be subject to selection in a larger one [10].
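The dependence on population size can be illustrated with Kimura's diffusion approximation for the fixation probability of a new mutation. The sketch below assumes a diploid population in which the census size equals Nₑ, additive selection with heterozygote advantage `s`, and an initial frequency of 1/(2N); the chosen value of `s` and the two population sizes are hypothetical.

```python
import math


def fixation_probability(s, n_e):
    """Kimura's approximation: (1 - exp(-2s)) / (1 - exp(-4 * Ne * s)).

    Assumes a new mutation at frequency 1/(2N) with N = Ne and additive selection.
    """
    if abs(s) < 1e-12:
        return 1.0 / (2.0 * n_e)      # strictly neutral limit
    x = -4.0 * n_e * s
    if x > 700.0:                     # exp(x) would overflow; probability is ~0
        return 0.0
    return math.expm1(-2.0 * s) / math.expm1(x)


s = -1e-4  # hypothetical slightly deleterious mutation
for n_e in (100, 100_000):
    relative = fixation_probability(s, n_e) * 2 * n_e
    print(f"Ne = {n_e:>7}: fixation probability relative to neutral = {relative:.3g}")
```

Under these assumptions the same mutation fixes at almost the neutral rate when Nₑ is 100 but is essentially never fixed when Nₑ is 100,000, which is the selection-drift balance described above.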

The nearly neutral theory provides a powerful explanation for the observed bimodality in the DFE. If the fitness effects of new mutations are continuously distributed but clustered near neutrality, the interaction with population size will naturally separate them into two fates: those that are effectively neutral and can fix via drift, and those that are sufficiently deleterious to be efficiently removed by purifying selection. This theoretical framework is supported by both population genetic models and, as detailed in subsequent sections, a growing body of empirical evidence.

Empirical Evidence for Bimodal Distributions

Key Experimental Findings

The advent of deep mutational scanning assays has enabled the high-throughput, empirical measurement of fitness effects for thousands of mutations in parallel. These experiments have provided direct, quantitative evidence for the bimodal nature of the DFE.

Table 1: Empirical Evidence for Bimodal DFE from Deep Mutational Scanning

| Protein/Gene System | Key Finding | Implication for DFE | Citation |
|---|---|---|---|
| S. cerevisiae Hsp90 | A comprehensive study of a 9-amino acid region revealed a bimodal distribution with "a fairly equal proportion of mutations being either strongly deleterious or nearly neutral". | Direct empirical support for the nearly neutral model; synonymous changes had minimal effects compared to nonsynonymous. | [11] |
| Human Growth Hormone | High tolerance to mutations in solvent-exposed positions; many mutations existed that increased both stability and binding affinity over wild-type. | Suggests a distribution where a subset of mutations are not deleterious, challenging a simple unimodal DFE. | [11] |
| Human WW Domain | 97% of library variants bound ligand less tightly than wild-type; mutational intolerance correlated with evolutionary conservation. | Indicates a DFE skewed towards deleterious effects, with a mode near neutrality and a long tail of deleteriousness. | [11] |
| Gβ1 Domain | Systematic mutagenesis of the 56-residue domain, assessing stability for over 400 mutations, provides a robust dataset for benchmarking predictive models. | Provides a high-resolution map of mutational effects for a complete protein domain. | [12] |

Quantitative Measures of Selection

The effect of mutations can be quantified using population genetic measures that contrast neutral and non-neutral evolution. At the microevolutionary scale (within species), the ratio of nonsynonymous to synonymous diversity (πN/πS) is used. At the macroevolutionary scale (between species), the ratio of nonsynonymous to synonymous substitutions (dN/dS, denoted as ω) is applied [10]. The nearly neutral theory predicts a negative correlation between effective population size (Nₑ) and both πN/πS and ω, as larger populations more efficiently purge slightly deleterious mutations [10]. However, these relationships are predicated on equilibrium assumptions, and demographic histories, such as population bottlenecks or expansions, can disturb the selection-drift balance and complicate interpretation [10].

In cancer genomics, the concept of "cancer effect size" has been developed to move beyond mere statistical significance (P-values) and quantify the selective advantage conferred by somatic mutations. This metric estimates the selection intensity for variants in cancer cell lineages, providing a more direct measure of a mutation's functional impact on tumorigenesis [13].

Methodological Approaches for Analysis

Experimental Protocols

Deep Mutational Scanning (DMS) for Fitness Effects: This protocol enables the large-scale measurement of genotype-fitness relationships [11].

- Library Construction: Create a comprehensive library of genetic variants (e.g., all possible single point mutations in a gene-coding region) using methods such as Kunkel mutagenesis or synthetic oligonucleotide pools.

- Transformation & Propagation: Introduce the variant library into a model organism (e.g., yeast) and culture the population under competitive growth conditions. In essential gene studies, repress the native genomic copy to make cell fitness dependent on the library variant.

- Time-Point Sampling: Sample the population at multiple time points over several generations.

- Genotype Frequency Quantification: Use high-throughput sequencing (e.g., Illumina) to sequence the library variants at each time point. The read count for each variant serves as a proxy for its frequency in the population.

- Fitness Calculation: For each mutant, calculate the selection coefficient relative to the wild type. This is derived from the change in the ratio of mutant to wild-type read counts over time, normalized by the known wild-type generation time. The result is a quantitative fitness estimate for every mutation in the library.
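The fitness calculation in the final step can be sketched as a regression of the log ratio of mutant to wild-type read counts on time. This is one common way to implement the description above, not necessarily the exact estimator used in [11]; the pseudocount, the read counts, and the 2-hour wild-type generation time are hypothetical.

```python
import math


def selection_coefficient(mutant_counts, wildtype_counts, times_h,
                          wt_generation_time_h, pseudocount=0.5):
    """Per-generation selection coefficient of a mutant relative to wild type.

    Fits a least-squares slope to ln(mutant / wild type) against time in hours,
    then converts it to a per-generation value using the wild-type generation time.
    """
    log_ratios = [math.log((m + pseudocount) / (w + pseudocount))
                  for m, w in zip(mutant_counts, wildtype_counts)]
    t_mean = sum(times_h) / len(times_h)
    r_mean = sum(log_ratios) / len(log_ratios)
    covariance = sum((t - t_mean) * (r - r_mean)
                     for t, r in zip(times_h, log_ratios))
    variance = sum((t - t_mean) ** 2 for t in times_h)
    slope_per_hour = covariance / variance
    return slope_per_hour * wt_generation_time_h


# Hypothetical read counts at four sampling times (hours).
times_h = [0, 2, 4, 6]
wildtype = [1000, 1050, 990, 1020]
mutant = [500, 420, 330, 280]
s_hat = selection_coefficient(mutant, wildtype, times_h, wt_generation_time_h=2.0)
print(f"estimated selection coefficient per generation: {s_hat:.3f}")
```

A negative value indicates that the mutant loses ground to the wild type each generation. The pseudocount is there only to keep the logarithm defined when a variant drops to zero reads.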

Assessing Fitness Trade-offs in Drug Resistance: This methodology, as applied to fluconazole-resistant yeast, identifies distinct classes of adaptive mutations based on their phenotypic trade-offs [14].

- Laboratory Evolution: Perform massively parallel evolution experiments in a range of drug concentrations (e.g., fluconazole), sometimes in combination with a second drug, to generate a diverse set of resistant mutants.

- Lineage Tracking: Use DNA barcoding to track a wide spectrum of adaptive lineages, including those that do not come to dominate the population, thus capturing a fuller range of resistance mechanisms.

- High-Throughput Phenotyping: Measure the fitness of each evolved mutant (e.g., 774 mutants) across a panel of distinct environments (e.g., 12 different drug conditions).

- Clustering Analysis: Group mutants based on their fitness profiles (trade-offs) across the tested environments. Mutants clustering together are inferred to operate through similar underlying molecular mechanisms.
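The clustering step can be illustrated with a small example that groups mutants by their fitness profiles across environments. The source does not specify the clustering algorithm used in [14], so k-means with a deterministic farthest-point initialization is substituted here as an assumption, and the four fitness profiles are invented for illustration.

```python
def squared_distance(a, b):
    return sum((x - y) ** 2 for x, y in zip(a, b))


def kmeans(profiles, k, n_iter=50):
    """Cluster fitness profiles; returns one cluster label per mutant."""
    # Deterministic farthest-point initialization, starting from the first profile.
    centroids = [list(profiles[0])]
    while len(centroids) < k:
        farthest = max(profiles,
                       key=lambda p: min(squared_distance(p, c) for c in centroids))
        centroids.append(list(farthest))
    labels = [0] * len(profiles)
    for _ in range(n_iter):
        # Assign each mutant to its nearest centroid.
        labels = [min(range(k), key=lambda j: squared_distance(p, centroids[j]))
                  for p in profiles]
        # Move each centroid to the mean profile of its members.
        for j in range(k):
            members = [p for p, lab in zip(profiles, labels) if lab == j]
            if members:
                centroids[j] = [sum(col) / len(members) for col in zip(*members)]
    return labels


# Hypothetical relative fitness in (drug A, drug B, drugs A + B).
fitness_profiles = [
    [0.9, -0.2, -0.1],  # resistant to drug A only
    [1.0, -0.3, -0.2],
    [0.8, 0.7, 0.6],    # resistant to both drugs and the combination
    [0.9, 0.8, 0.5],
]
cluster_labels = kmeans(fitness_profiles, k=2)
print(cluster_labels)
```

Mutants that share a label have similar trade-offs across environments, which is the basis for inferring that they act through similar mechanisms.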

Diagram 1: DMS Workflow for DFE.

Computational and Statistical Algorithms

Computational methods are essential for predicting mutational effects and analyzing genetic data.

Physics-Based Free Energy Prediction: Methods like Free Energy Perturbation (FEP) simulate the atomic-level thermodynamics of mutations. The QresFEP-2 protocol is a hybrid-topology approach that calculates the change in free energy (ΔΔG) associated with a point mutation, predicting its impact on protein stability or ligand binding with high accuracy [12]. It outperforms many machine learning and statistical methods by explicitly modeling physics-based interactions and solvation effects.
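For orientation, the general relations behind FEP-based stability prediction can be written as follows. These are the standard textbook thermodynamic cycle and Zwanzig formula, stated here as background and not as a description of the specific QresFEP-2 implementation; the symbols $U_A$, $U_B$, $k_B$, and $T$ are introduced only for this illustration.

```latex
% Thermodynamic cycle: the change in folding free energy upon mutation equals
% the difference between the alchemical wt -> mut transformation in the folded
% and unfolded states.
\Delta\Delta G_{\mathrm{fold}}
  = \Delta G_{\mathrm{fold}}^{\mathrm{mut}} - \Delta G_{\mathrm{fold}}^{\mathrm{wt}}
  = \Delta G_{\mathrm{wt}\to\mathrm{mut}}^{\mathrm{folded}}
  - \Delta G_{\mathrm{wt}\to\mathrm{mut}}^{\mathrm{unfolded}}

% Zwanzig (exponential averaging) estimate for one transformation A -> B,
% with the ensemble average taken over configurations sampled in state A.
\Delta G_{A\to B}
  = -k_B T \,\ln \left\langle
      \exp\!\left( -\frac{U_B - U_A}{k_B T} \right)
    \right\rangle_{A}
```

The cycle is what makes the calculation tractable: the physical folding process never has to be simulated, only the non-physical transformation of one residue into another in each state.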

Statistical Genetics for Model Selection: Algorithms have been developed to analyze deleterious mutations within family pedigrees using phenotypic data alone. These methods perform model selection and parameter estimation to distinguish between scenarios like single gene mutation, double cross-effect mutations, or no genetic cause, using both classical fit methods and neural network approaches [15].

Mendelian Randomization (MR) for Causal Inference: MR uses genetic variants as instrumental variables to infer causal relationships between a biomarker (e.g., gene expression) and a complex trait. Drug target MR specifically uses genetic variants in or around a drug target gene to mimic the effect of pharmacological perturbation, thereby informing on target efficacy and safety during drug development [16]. The TWMR (Transcriptome-Wide Mendelian Randomization) extension integrates GWAS and eQTL data from multiple genes simultaneously to better account for pleiotropy and identify putatively causal gene-trait associations [17].
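The core MR estimators are simple enough to sketch from summary statistics. The code below shows the single-variant Wald ratio and the fixed-effect inverse-variance weighted (IVW) estimate, assuming independent variants and valid instruments. It is a univariable illustration and does not implement TWMR, which models multiple genes jointly; the effect sizes are hypothetical.

```python
import math


def wald_ratio(beta_exposure, beta_outcome):
    """Causal estimate from one variant: outcome effect divided by exposure effect."""
    return beta_outcome / beta_exposure


def ivw_estimate(beta_exposure, beta_outcome, se_outcome):
    """Fixed-effect IVW estimate and standard error across independent variants."""
    weights = [1.0 / se ** 2 for se in se_outcome]
    numerator = sum(w * bx * by
                    for w, bx, by in zip(weights, beta_exposure, beta_outcome))
    denominator = sum(w * bx * bx for w, bx in zip(weights, beta_exposure))
    return numerator / denominator, math.sqrt(1.0 / denominator)


# Hypothetical summary statistics for three variants near a drug target gene:
# effects on the exposure (e.g., gene expression) and on the outcome trait.
beta_x = [0.10, 0.20, 0.30]
beta_y = [0.05, 0.10, 0.15]
se_y = [0.01, 0.02, 0.01]

estimate, std_error = ivw_estimate(beta_x, beta_y, se_y)
print(f"IVW causal estimate: {estimate:.3f} (SE {std_error:.3f})")
```

The IVW estimate is a weighted average of the per-variant Wald ratios, so variants with stronger exposure effects and more precise outcome estimates contribute more.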

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Research Reagents and Resources for DFE Studies

| Reagent/Resource | Function and Application | Key Features |
|---|---|---|
| Deep Mutational Scanning Libraries | Comprehensive collections of genetic variants (e.g., all single-nucleotide mutants of a gene) for high-throughput phenotyping. | Synthesized via pooled oligo libraries; cloned into display vectors (phage, yeast) or expression plasmids. |
| Display Systems (Phage, Yeast) | Couples the phenotype of a protein variant (e.g., binding affinity) to its genetic material, enabling selection and sequencing-based enrichment. | Critical for measuring biochemical phenotypes beyond fitness in cellular contexts. |
| QresFEP-2 Software | Open-source, physics-based software for predicting the effect of point mutations on protein stability and binding free energy. | Uses hybrid-topology Free Energy Perturbation (FEP); high accuracy and computational efficiency [12]. |
| cancereffectsizeR Software | R package for calculating the selection intensity (cancer effect size) of somatic mutations in tumor populations from sequencing data. | Based on evolutionary principles; estimates selective advantage of cancer drivers [13]. |
| eQTL/pQTL Datasets (e.g., GTEx, eQTLGen) | Provide summary-level data on associations between genetic variants and gene expression (eQTLs) or protein levels (pQTLs). | Used as proxies for drug target perturbation in Mendelian randomization studies [16] [17]. |

Applications and Implications

Evolutionary Dynamics and Drug Resistance

The bimodal DFE and nearly neutral theory are critical for understanding the adaptation of pathogens and cancer cells. The distribution of fitness effects dictates the rate of adaptation and the potential for evolutionary predictability. For instance, the evolution of drug resistance is not a uniform process; a single drug can select for hundreds of different resistant mutations. Research in yeast has shown that these mutants can be clustered into a limited number of groups based on their fitness trade-offs across different environments [14]. Some mutants resistant to a single drug may not resist drug combinations, while others do. This diversity of mechanisms and associated trade-offs complicates the design of sequential or combination drug therapies, which rely on the assumption that resistance to one drug confers a predictable cost (sensitivity to another) [14].

Drug Development and Evolutionary Safety

Understanding mutational effects is directly applicable to pharmaceutical development. Drug target Mendelian randomization leverages human genetics to validate therapeutic targets; targets with genetic support have been reported to be about twice as likely to succeed in clinical development [16]. This approach can inform efficacy, safety, and repurposing decisions by using genetic variants as proxies for lifelong drug target modulation.

A particularly nuanced application concerns mutagenic drugs, which act by increasing the mutation rate of pathogens (e.g., molnupiravir for SARS-CoV-2). The evolutionary safety of such drugs—whether they reduce the total load of viable mutant pathogens—must be rigorously assessed. A four-step framework has been proposed for this evaluation, involving measuring the natural mutation rate, the mutagenic potential of the drug, clinical trial assessment, and post-approval surveillance [18]. The goal is to ensure that the drug pushes the pathogen population toward error catastrophe without increasing the risk of generating dangerous escape mutants.

The exploration of the distribution of mutational effects has revealed a fundamental bimodality, strongly supporting the nearly neutral theory of molecular evolution. This pattern, where mutations often fall into categories of near-neutrality or strong deleteriousness, is a powerful emergent property of the genotype-phenotype map with profound consequences. The integration of high-throughput experimental genetics like deep mutational scanning with sophisticated computational models and population genetic theory allows researchers to move from descriptive observation to predictive power. This understanding is not merely academic; it is essential for tackling some of the most pressing challenges in medicine, from anticipating the evolution of antibiotic resistance to the rational design of evolutionarily robust therapeutics. As methods for profiling and predicting mutational effects continue to advance, so too will our ability to decipher the complex rules governing evolution and disease.

The relationship between genotype and phenotype is not a simple linear pathway but a complex network shaped by two fundamental forces: epistasis (the non-linear interaction between genes) and pleiotropy (the phenomenon of a single gene influencing multiple traits). Together, these forces structure the fitness landscape, determining the paths available for evolutionary adaptation and constraining the phenotypes that can emerge from genetic variation. Understanding their interplay is crucial for explaining how populations evolve complex adaptations, why genetic backgrounds influence phenotypic expression, and how biological systems balance evolutionary stability with adaptive potential.

For researchers investigating complex traits and their evolution, recognizing that epistasis and pleiotropy are integral features of genetic architecture—rather than rare exceptions—transforms our approach to studying genotype-phenotype relationships. As this technical guide will demonstrate, these forces operate across biological scales, from molecular networks to organismal phenotypes, with profound implications for evolutionary genetics, disease research, and therapeutic development.

Conceptual Foundations and Definitions

Epistasis: Beyond Simple Additivity

Epistasis occurs when the effect of a genetic variant depends on the genetic background in which it appears. In quantitative genetics, this is formally defined as a statistical deviation from additive expectation for multi-locus genotypes [19]. The biological reality is that genes operate within interconnected networks rather than in isolation, creating dependence structures where the phenotypic impact of a mutation is context-dependent.

Mathematically, for two mutations (A and B) occurring on a haplotype with wild-type fitness $W_0$, epistasis ($\varepsilon$) quantifies the deviation from multiplicative expectation [20]:

$$\varepsilon = \log\left(\frac{W_{AB}}{W_0}\right) - \left[\log\left(\frac{W_A}{W_0}\right) + \log\left(\frac{W_B}{W_0}\right)\right]$$

Where $W_{AB}/W_0$ represents the fitness effect of the double mutant, while $W_A/W_0$ and $W_B/W_0$ represent the fitness effects of each single mutation alone. When $\varepsilon = 0$, mutations act independently; when $\varepsilon \neq 0$, epistatic interactions are present.
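As a worked illustration of this definition, the short sketch below computes ε from four fitness values. The function name and the fitness numbers are hypothetical; natural logarithms are assumed, and the choice of base only rescales ε.

```python
import math

def epistasis(w0, wa, wb, wab):
    """Log-scale deviation of the double mutant from the multiplicative expectation."""
    return math.log(wab / w0) - (math.log(wa / w0) + math.log(wb / w0))

# Hypothetical fitness values relative to a wild type with fitness 1.0.
w0, wa, wb = 1.0, 0.9, 0.8

# Double mutant exactly at the multiplicative expectation (0.9 * 0.8): epsilon ~ 0.
print(epistasis(w0, wa, wb, 0.72))

# Double mutant fitter than expected: epsilon > 0.
print(epistasis(w0, wa, wb, 0.80))

# Double mutant less fit than expected: epsilon < 0.
print(epistasis(w0, wa, wb, 0.60))
```

In practice each fitness value carries measurement error, so ε is usually reported with a confidence interval rather than compared to exactly zero.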

Pleiotropy: One Gene, Multiple Effects

Pleiotropy describes the phenomenon whereby a single genetic polymorphism affects multiple phenotypic traits [21]. Two distinct types have been characterized:

- True pleiotropy occurs when a polymorphism directly and independently affects multiple traits ("horizontal pleiotropy") or affects one trait that subsequently influences another ("mediated pleiotropy").

- Apparent pleiotropy arises when different polymorphisms in linkage disequilibrium within a gene or haplotype independently affect different traits.

The distinction has significant implications for interpreting genetic associations and predicting evolutionary trajectories, as true pleiotropy creates stronger genetic constraints than apparent pleiotropy, which can dissipate with recombination.

Quantitative Framework and Measurement

Quantifying Epistatic and Pleiotropic Effects

Researchers can measure epistasis and pleiotropy through several established quantitative frameworks. The following table summarizes key metrics and their applications:

Table 1: Quantitative Measures for Epistasis and Pleiotropy

| Measure | Formula/Approach | Application Context | Interpretation |
|---|---|---|---|
| Epistasis Coefficient ($\varepsilon$) | $\varepsilon = \log(W_{AB}/W_0) - [\log(W_A/W_0) + \log(W_B/W_0)]$ | Fitness landscapes, adaptive evolution [20] | $\varepsilon = 0$: No epistasis; $\varepsilon > 0$: Positive/synergistic epistasis; $\varepsilon < 0$: Negative/antagonistic epistasis |
| Pleiotropic Degree (PD) | Number of traits significantly affected by a mutation | Gene function characterization, genetic constraint estimation [21] [22] | High PD indicates greater pleiotropy; distribution often follows power law with few highly pleiotropic genes |
| Epistatic Pleiotropy (PDE) | Number of traits affected by a pairwise genetic interaction | Network analysis, evolutionary potential [22] | Measures how epistasis modifies pleiotropic patterns; high PDE increases evolutionary modularity |
| Variance Component Analysis | Partitioning genetic variance into additive, dominance, and epistatic components | Quantitative genetics, breeding values, heritability estimation [19] | Epistatic variance typically smaller than additive variance but biologically important |

The NK Model: A Framework for Studying Interactions

The NK model provides a powerful computational framework for investigating how epistasis and pleiotropy shape evolutionary dynamics [20] [23]. In this model:

- N represents the number of loci in the genome.

- K represents the number of other loci that interact with each locus (epistasis).

- Each locus contributes to fitness via interactions with K other loci, creating a tunably rugged fitness landscape.

- Increasing K amplifies both epistasis and pleiotropy, as each locus affects more phenotypic traits and interacts with more genetic partners.

Simulations using this model reveal that intermediate K values (moderate epistasis/pleiotropy) often optimize the balance between fitness potential and evolvability, allowing populations to discover high-fitness peaks without becoming trapped on local optima [20].
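The effect of K on ruggedness can be explored with a small simulation. The sketch below assumes the conventional NK formulation (binary alleles, randomly chosen interaction partners, uniform random fitness contributions) and counts local optima by exhaustive enumeration, which is only feasible for small N. The function names, the seed, and the parameter values are illustrative choices rather than details taken from the cited studies.

```python
import itertools
import random

def make_nk_landscape(n, k, seed=0):
    """Return a fitness function for a random NK landscape with binary alleles."""
    rng = random.Random(seed)
    # Each locus interacts with k other loci chosen at random.
    partners = [rng.sample([j for j in range(n) if j != i], k) for i in range(n)]
    # One random contribution per locus for every state of (own allele, partner alleles).
    tables = [
        {states: rng.random() for states in itertools.product((0, 1), repeat=k + 1)}
        for _ in range(n)
    ]

    def fitness(genotype):
        total = 0.0
        for i in range(n):
            key = (genotype[i],) + tuple(genotype[j] for j in partners[i])
            total += tables[i][key]
        return total / n  # mean contribution, so fitness lies in [0, 1]

    return fitness

def count_local_optima(n, fitness):
    """Count genotypes with no fitter single-mutant neighbor."""
    count = 0
    for genotype in itertools.product((0, 1), repeat=n):
        own = fitness(genotype)
        neighbors = (
            genotype[:i] + (1 - genotype[i],) + genotype[i + 1:] for i in range(n)
        )
        if all(fitness(neighbor) <= own for neighbor in neighbors):
            count += 1
    return count

n_loci = 8
for k in (0, 2, 7):
    landscape = make_nk_landscape(n_loci, k, seed=1)
    print(f"K={k}: {count_local_optima(n_loci, landscape)} local optima")
```

With K = 0 the landscape is additive and has a single peak; larger K generally produces more local optima, which is the ruggedness the text describes.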

Experimental Approaches and Methodologies

Research Reagent Solutions for Interaction Studies

Table 2: Essential Research Reagents for Epistasis and Pleiotropy Studies

| Reagent/Resource | Function | Example Applications | Key References |
|---|---|---|---|
| Gene deletion/knockout collections | Systematic assessment of single gene effects across multiple traits | Quantifying pleiotropic degree; synthetic genetic array screens | [19] [21] |
| CRISPR-Cas9 genome editing | Precise engineering of specific variants in isogenic backgrounds | Testing epistasis between specific alleles; creating allelic series | [24] |
| Diallel cross designs | Comprehensive analysis of pairwise interactions between alleles | Mapping epistatic networks; detecting background effects | [19] |
| Near-isogenic lines (NILs); chromosome substitution lines | Isolating specific genomic regions in uniform genetic backgrounds | Measuring epistatic effects without confounding variation | [19] [21] |
| Transcriptional/reporter constructs | Quantifying gene expression effects in different genetic backgrounds | Cis-regulatory epistasis; network perturbations | [24] |
| P-element insertions; transposon mutagenesis | Generating mutational variation for systematic interaction studies | Forward genetic screens for modifiers; pleiotropy assessment | [21] |

Protocol: Systematic Epistasis Mapping in Model Organisms

The following workflow outlines a comprehensive approach for detecting and quantifying epistatic interactions, integrating methodologies from multiple model systems [19] [24]:

Experimental Workflow Description

Generate Mutant Collection: Create a comprehensive set of single mutants in a uniform genetic background using gene knockouts (yeast), CRISPR-Cas9 (plants, animals), or transposon mutagenesis (Drosophila). For the tomato inflorescence study, CRISPR-Cas9 was used to generate promoter variants in the EJ2 gene [24].

Comprehensive Phenotyping: Characterize each mutant across multiple phenotypic domains. In the tomato study, this involved quantifying inflorescence branching architecture across over 35,000 inflorescences [24].

Select Query Mutations: Choose mutations representing biological pathways of interest or those showing interesting single-mutant phenotypes. Selection should balance coverage and practical feasibility.

Construct Double Mutants: Systematically cross query mutations with target mutations. In yeast, this uses synthetic genetic array technology; in plants and animals, planned crosses with genotypic verification.

High-Throughput Phenotyping: Measure relevant phenotypes in all single and double mutants. The scale required typically demands automated systems and quantitative imaging.

Statistical Interaction Analysis: Calculate epistasis using appropriate models. For continuous traits, compare observed double-mutant values to expectations based on additive or multiplicative models. Account for multiple testing using false discovery rate control.

Network Modeling: Build interaction networks from significant epistatic pairs, identifying hub genes and modular structures. Use topology measures to characterize network properties.

Functional Validation: Test predictions from network models using additional genetic perturbations or molecular assays to confirm biological mechanisms.
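The false discovery rate step in the statistical interaction analysis can be sketched as follows. This is a plain implementation of the Benjamini-Hochberg step-up procedure applied to per-pair interaction p-values. The gene-pair labels and p-values are hypothetical, and the sketch assumes the p-values have already been computed from the double-mutant comparison.

```python
def benjamini_hochberg(p_values, alpha=0.05):
    """Return a dict marking which tests are significant at FDR level alpha.

    `p_values` maps a test label (here, a gene pair) to its p-value.
    """
    labels = sorted(p_values, key=p_values.get)  # labels ordered by ascending p-value
    m = len(labels)
    # Largest rank r whose p-value satisfies p_(r) <= alpha * r / m.
    cutoff_rank = 0
    for rank, label in enumerate(labels, start=1):
        if p_values[label] <= alpha * rank / m:
            cutoff_rank = rank
    # Every test ranked at or below the cutoff is declared significant.
    return {label: rank <= cutoff_rank for rank, label in enumerate(labels, start=1)}

# Hypothetical interaction p-values for six double mutants.
interaction_p = {
    ("geneA", "geneB"): 0.001,
    ("geneA", "geneC"): 0.008,
    ("geneB", "geneC"): 0.039,
    ("geneA", "geneD"): 0.041,
    ("geneB", "geneD"): 0.200,
    ("geneC", "geneD"): 0.740,
}
significant = benjamini_hochberg(interaction_p, alpha=0.05)
for pair, is_hit in significant.items():
    print(pair, "epistatic" if is_hit else "not significant")
```

Only the significant pairs would then be carried forward as edges in the network modeling step.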

Protocol: Pleiotropy Quantification Across Traits

The following diagram illustrates the hierarchical nature of epistasis revealed through recent research on tomato inflorescence development, showing how different genetic layers interact to produce phenotypic outcomes [24]:

Experimental Workflow Description

Standardized Genetic Background: Use inbred lines or isogenic strains to minimize confounding variation. Engineered mutations in a common background provide the clearest evidence for true pleiotropy.

Multi-Trait Phenotyping: Measure a comprehensive set of phenotypes relevant to the biological system. This should include morphological, physiological, and molecular traits. High-throughput phenotyping platforms can automate this process.

Control for Linkage Disequilibrium: In natural populations, use fine-mapping approaches to distinguish true pleiotropy from apparent pleiotropy due to linked variants.

Effect Size Estimation: Calculate additive effects for each trait by comparing means between genotypes. Standardize effects to enable cross-trait comparisons.

Pleiotropic Degree Calculation: Count the number of traits with statistically significant effects after multiple testing correction. Alternatively, use multivariate methods like principal components analysis to identify trait covariation patterns.

Pleiotropy Network Construction: Create bipartite networks connecting genetic variants to affected traits. Analyze network topology to identify hubs and modules.
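The last two steps can be sketched together: counting significant traits per variant and recording the variant-trait edges of the bipartite network. The example below uses a Bonferroni correction as one simple choice of multiple testing correction. The variant names, trait names, and p-values are hypothetical.

```python
def pleiotropic_degree(p_by_variant, alpha=0.05):
    """Return (degree per variant, list of variant-trait edges).

    `p_by_variant` maps each variant to a dict of {trait: p-value}.
    Significance uses a Bonferroni threshold across all variant-trait tests.
    """
    n_tests = sum(len(traits) for traits in p_by_variant.values())
    threshold = alpha / n_tests
    edges = [
        (variant, trait)
        for variant, traits in p_by_variant.items()
        for trait, p_value in traits.items()
        if p_value <= threshold
    ]
    degree = {variant: 0 for variant in p_by_variant}
    for variant, _trait in edges:
        degree[variant] += 1
    return degree, edges

# Hypothetical association p-values for two variants across three traits.
toy_p_values = {
    "variant_1": {"height": 1e-6, "weight": 2e-5, "pigment": 0.30},
    "variant_2": {"height": 0.40, "weight": 0.01, "pigment": 1e-4},
}
degree, edges = pleiotropic_degree(toy_p_values)
print(degree)
print(edges)
```

The edge list is a minimal bipartite network representation; hubs correspond to variants with high pleiotropic degree.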

Key Findings and Empirical Patterns

Prevalence and Functional Patterns

Recent empirical studies across model organisms reveal consistent patterns in epistasis and pleiotropy:

Table 3: Empirical Patterns of Epistasis and Pleiotropy Across Biological Systems

| System | Epistasis Prevalence | Pleiotropy Patterns | Functional Consequences |
|---|---|---|---|
| Yeast (S. cerevisiae) | ~1-3% of tested pairs in qualitative screens; 13-35% in quantitative assays [19] | Most genes affect multiple growth conditions; few genes are essential | Network robustness; essential genes have higher pleiotropy |
| HIV-1 drug resistance | Extensive epistasis between reverse transcriptase and protease mutations [22] | Mutations show variable pleiotropy across drug environments | Epistatic pleiotropy creates modular cross-resistance patterns |
| Tomato inflorescence | Hierarchical epistasis with synergism within paralogs, antagonism between paralogs [24] | cis-regulatory variants show trait-specificity with minimal pleiotropy | Cryptic variation enables sudden phenotypic change |
| Drosophila melanogaster | 27% of tested random mutation pairs show epistasis for metabolic traits [19] | Distribution of pleiotropic degrees follows power law | Most mutations affect few traits; few affect many |

Evolutionary Dynamics and Landscape Topology

Research combining theoretical models with empirical data demonstrates how epistasis and pleiotropy shape evolutionary trajectories:

Fitness Valley Crossing: Epistasis can facilitate crossing fitness valleys through compensatory mutations and synergistic interactions. Populations with higher mutation rates navigate valleys more effectively but may sacrifice robustness [23].

Modularity Emergence: Epistatic pleiotropy—where the pleiotropic degree of mutations depends on genetic background—promotes the evolution of modular genetic architectures, allowing traits to evolve independently [22].

Cryptic Genetic Variation: Epistasis creates reservoirs of hidden variation that can be exposed under environmental change or genetic perturbation, fueling rapid adaptation [24].

Additive Variance Dominance: Despite pervasive biological interactions, additive genetic variance typically dominates in populations because much of the variance generated by epistatic interactions is captured statistically as additive variance, with the share depending on allele frequencies [19].

Implications for Biomedical Research and Therapeutic Development

The interplay of epistasis and pleiotropy has profound implications for human genetics and drug development:

Complex Disease Genetics

Missing Heritability: Epistatic interactions may contribute to the missing heritability problem in GWAS, as standard approaches primarily detect additive effects [19] [21].

Background Effects: The impact of risk alleles often depends on genetic background, explaining reduced replicability across populations with different allele frequencies and linkage disequilibrium patterns [21].

Variant Interpretation: Pleiotropy complicates causal inference, as associated variants may affect multiple traits through shared biological processes or mediated effects [21].

Antimicrobial and Antiviral Resistance

HIV research demonstrates how epistasis and pleiotropy shape resistance evolution:

Cross-Resistance Networks: Mutations in HIV reverse transcriptase and protease show distinct pleiotropic profiles across drug classes, with epistasis increasing drug-specificity of pleiotropic effects [22].

Combination Therapy: Understanding epistatic networks informs rational combination therapies that create evolutionary traps or high fitness costs for resistant variants.

Drug Target Identification

Dual-Purpose Targets: Genes with pleiotropic effects on aging and multiple age-related diseases represent promising therapeutic targets with broad impacts [25].

Network Pharmacology: Considering epistatic interactions improves prediction of drug effects across genetic backgrounds, enabling stratification by genetic context.

Epistasis and pleiotropy are not merely statistical curiosities but fundamental forces that shape evolutionary landscapes and biological complexity. Rather than representing noise in the genotype-phenotype map, they constitute essential features of its structure, enabling both robustness and adaptability in biological systems.

For research professionals, incorporating these concepts into experimental design and analysis is crucial for meaningful biological inference. Future progress will depend on developing more sophisticated computational models that capture the hierarchical nature of genetic interactions, expanding multi-trait phenotyping capabilities, and creating new statistical methods that bridge quantitative genetics and systems biology.

The integration of epistasis and pleiotropy into evolutionary models and biomedical research represents a paradigm shift from a reductionist, single-locus perspective to a network-based understanding of genetic effects. This transition promises not only more accurate predictions of evolutionary outcomes and disease risk but also more effective therapeutic interventions that work with, rather than against, the complex architecture of biological systems.

Understanding how genetic information translates into observable traits represents one of the most fundamental challenges in evolutionary biology and genetics. The genotype-phenotype relationship has long been conceptualized through various models, yet emerging evidence suggests this relationship operates through a sophisticated hierarchical architecture that reflects evolutionary processes. Recent research has revealed that natural selection and neutral drift, the dual engines of evolution, have shaped a structured gene architecture that governs complex traits through specialized genetic components with distinct functional roles. This architecture, termed the "supervisor-worker" framework, provides not only a mechanistic understanding of trait development but also insights into evolutionary constraints and opportunities that have shaped biological diversity across timescales [26]. The elucidation of this hierarchy addresses critical challenges in reconciling observations from different research strategies and offers a unified framework for interpreting how genetic variation manifests at phenotypic levels.

Within evolutionary biology, the supervisor-worker model helps explain how evolutionary forces operate differently on various components of the genetic architecture. This perspective aligns with broader efforts in comparative genomics that seek to illuminate the genetic basis of phenotypic diversity across macro-evolutionary timescales [27] [28]. As the field moves toward more comprehensive analyses, understanding this hierarchical organization becomes essential for deciphering how multiple molecular mechanisms jointly contribute to differences in cognition, metabolism, body plans, and medically relevant phenotypes. The framework also provides context for interpreting why some genetic approaches successfully identify certain components of trait architecture while overlooking others, thereby offering a more principled foundation for future research on complex traits and their evolution.

Core Concepts: Defining the Supervisor-Worker Architecture

Theoretical Foundation and Key Definitions

The supervisor-worker gene architecture represents a hierarchical model for understanding how genes collectively influence complex traits. This framework emerged from systematic analyses of approximately 500 quantitative traits in yeast, which revealed a fundamental organizational principle: genes controlling a trait segregate into two non-overlapping functional categories with distinct characteristics and roles [26]. The architecture resolves apparent contradictions between different research strategies by demonstrating that each approach targets different components of the same hierarchical system.

Supervisor Genes: These regulatory elements occupy upper hierarchical positions in gene regulatory networks and exhibit strong, detectable effects when perturbed. Supervisors are primarily identified through perturbational approaches (P-strategy) such as gene deletion, knockout, or overexpression experiments. These genes typically function as master regulators or key signaling nodes that coordinate the activity of downstream worker genes. Supervisor genes often show pleiotropic effects, influencing multiple traits simultaneously, and are enriched for functional annotations such as "Biological Regulator" in Gene Ontology analysis [26].

Worker Genes: These operational elements execute the mechanistic processes that directly construct traits but typically show small, statistically insignificant effects when individually perturbed. Workers are primarily identified through observational approaches (O-strategy) that examine correlations between gene activity patterns and trait values across various genetic or environmental backgrounds. While individually subtle in their effects, worker genes collectively implement the biochemical and cellular processes that manifest as observable phenotypes [26].

Complementary Research Strategies

The supervisor-worker architecture emerged from recognizing that two fundamental research strategies in genetics target different components of the hierarchical system:

Perturbational Strategy (P-strategy): This approach establishes causal relationships by measuring phenotypic consequences of direct genetic perturbations. It excels at identifying supervisor genes with strong phenotypic effects but typically fails to detect worker genes due to their functional redundancy or subtle individual contributions [26].

Observational Strategy (O-strategy): This approach identifies statistical correlations between gene activity patterns (e.g., mRNA expression, protein abundance) and trait values across different conditions. It effectively detects worker genes but often misses supervisors, which may not show consistent expression-trait correlations across backgrounds [26].

The surprising finding that these strategies identify essentially non-overlapping gene sets underscores the fundamental dichotomy in genetic functional organization and explains why integrative frameworks are necessary for comprehensive understanding of trait architecture.

Quantitative Evidence: Data from Yeast Morphological Traits

Empirical Patterns and Statistical Relationships

The discovery of the supervisor-worker architecture emerged from comprehensive analysis of yeast cell morphology, in which 501 quantitative morphological traits were characterized for 4,718 yeast mutants, each lacking a different nonessential gene [26]. This systematic approach provided unprecedented resolution for examining gene-trait relationships through both perturbational and observational strategies.

Table 1: Summary of Supervisor (PIG) and Worker (OIG) Identification in Yeast Morphological Traits

| Parameter | Supervisor Genes (PIGs) | Worker Genes (OIGs) |
|---|---|---|
| Identification Method | Perturbational (gene deletion) | Observational (expression-trait correlation) |
| Number of Traits Analyzed | 216 morphological traits | 501 morphological traits |
| Genes Examined | 4,718 nonessential genes | 6,123 yeast genes |
| Mean Genes per Trait | 301 | 138 |
| Median Genes per Trait | 212 | 12 |
| Proportion of Trait Variance Explained | Not quantified | 3.4% ± 2.1% (mean ± SD) |
| Total Nonredundant Genes Identified | 4,554 genes | 2,541 genes |
| Overlap Between PIGs and OIGs | Minimal (even slightly less than expected by chance) | Minimal (even slightly less than expected by chance) |

The data reveal several striking patterns. First, the number of worker genes (OIGs) identified for a trait poorly predicts the number of supervisor genes (PIGs) for that same trait (Spearman's ρ = 0.21, n = 216, P = 0.002) [26]. Although statistically significant, this correlation is weak, which underscores the functional specialization within the hierarchy. Some traits had hundreds of worker genes but no supervisor genes, while others showed the opposite pattern, indicating that traits differ in their regulatory complexity.

Table 2: Representative Examples of Supervisor and Worker Genes in Yeast

| Gene Name | Architectural Role | Biological Function | Phenotypic Impact |
|---|---|---|---|
| YIL040W | Supervisor | Regulates nuclear envelope morphology | Strong deletion effects on dozens of traits |
| YGR092W | Supervisor | Primary septum formation and cytokinesis | Strong deletion effects on dozens of traits |
| YNL148C | Supervisor | Folding of alpha-tubulin | Strong deletion effects on dozens of traits |
| Typical Worker Genes | Worker | Diverse cellular functions | Small, statistically insignificant individual deletion effects |

The minimal overlap between supervisor and worker genes persists even under varying statistical thresholds, with only three "super-informative" genes (YIL040W, YGR092W, and YNL148C) appearing as both strong supervisors and workers across dozens of traits [26]. When these exceptional genes are excluded, the remaining overlaps show no special status in terms of deletion effect size or explained trait variance, confirming the fundamental distinction between architectural roles.

Methodological Details for Experimental Replication

For researchers seeking to implement similar analyses, the experimental workflow involves several critical stages:

Strain Library Preparation: Generate a comprehensive collection of mutant strains, typically through homologous recombination-based gene deletion for nonessential genes. For essential genes, consider conditional knockdown systems (tet-off promoters, degrons) or temperature-sensitive alleles.

High-Content Phenotyping: Implement automated microscopy with multi-parameter staining (e.g., triple-stained cells for different cellular compartments) followed by computational image analysis to extract quantitative morphological descriptors.

Expression Profiling: Conduct transcriptome-wide mRNA quantification using RNA-seq across multiple mutant backgrounds, ensuring sufficient biological replicates to distinguish technical from biological variation.

Integrated Data Analysis:

- For P-strategy: Calculate deletion effects using appropriate linear mixed models that account for batch effects and genetic background.

- For O-strategy: Compute expression-trait correlations across mutants, applying false discovery rate control for multiple testing.

- Implement cross-validation approaches to assess robustness of identified gene-trait relationships.

This integrated methodology enables simultaneous mapping of both supervisor and worker components, providing a comprehensive view of the genetic architecture.
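The O-strategy step can be illustrated with a minimal sketch that correlates each gene's expression with a trait across mutants and ranks genes by the strength of that correlation. This is a simplified stand-in for the analysis described above: it uses Pearson correlation, omits the false discovery rate control and cross-validation steps, and all gene names and values are hypothetical.

```python
import math

def pearson(x, y):
    """Pearson correlation of two equal-length sequences (both must vary)."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    cov = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    var_x = sum((a - mean_x) ** 2 for a in x)
    var_y = sum((b - mean_y) ** 2 for b in y)
    return cov / math.sqrt(var_x * var_y)

def rank_candidate_workers(expression, trait):
    """Rank genes by |expression-trait correlation| across mutant backgrounds.

    `expression` maps gene -> expression level in each mutant;
    `trait` lists the trait value in the same mutants, in the same order.
    """
    scores = {gene: pearson(levels, trait) for gene, levels in expression.items()}
    return sorted(scores.items(), key=lambda item: abs(item[1]), reverse=True)

# Hypothetical data: one trait and three genes measured in five mutants.
trait_values = [1.0, 2.0, 3.0, 4.0, 5.0]
expression_levels = {
    "geneA": [2.0, 4.0, 6.0, 8.0, 10.0],
    "geneB": [5.0, 3.0, 4.0, 1.0, 2.0],
    "geneC": [3.0, 1.0, 2.0, 1.0, 3.0],
}
for gene, r in rank_candidate_workers(expression_levels, trait_values):
    print(f"{gene}: r = {r:+.2f}")
```

Genes at the top of the ranking are candidate workers only; with thousands of genes tested, a multiple testing correction is needed before any are called significant.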

Evolutionary Significance: Selection and Neutral Drift in Architectural Formation

Distinct Evolutionary Forces Shape Different Architectural Components

The supervisor-worker architecture reflects the operation of different evolutionary forces on its distinct components. Analyses suggest that most worker-worker interactions evolve largely through neutral drift, resulting in pervasive epistasis that reduces the tractability of worker genes to traditional genetic analysis [26]. This neutral evolution of worker networks creates a background of complex interactions that can obscure detection of individual worker contributions.

In contrast, supervisor genes are often recruited or maintained by natural selection to establish and preserve coordinated expression patterns among worker genes. This selective maintenance boosts the tractability of worker genes by reducing interaction complexity and establishing predictable regulatory relationships [26]. The evolutionary process thus creates a mixed architecture where selection acts predominantly on supervisors to maintain functional coherence, while neutral processes shape the detailed implementation networks among workers.

This evolutionary perspective helps explain the missing heritability problem observed in human genome-wide association studies, where even extensive catalogs of associated variants fail to account for most of the estimated heritability of complex traits [29]. The supervisor-worker framework suggests this missing heritability may partly reflect the limited detection power for distributed worker genes with small individual effects and context-dependent contributions.

Implications for Comparative Genomics and Evolutionary Analysis

The hierarchical architecture provides a new lens for interpreting comparative genomics studies that link phenotypic diversity to genotypic differences across species [27] [28]. Rather than seeking one-to-one mappings between genetic changes and phenotypic innovations, this framework suggests that evolutionary changes often occur through modifications to supervisor genes that subsequently reorganize worker networks. This perspective may explain why studies frequently uncover joint contributions of multiple molecular mechanisms to phenotypic differences and indicate an underappreciated role for gene and enhancer losses in driving phenotypic change [28].

The architecture also offers insights into the genetic complexity of traits, defined as the excess of genotypic diversity over phenotypic diversity [29]. Supervisor genes may buffer phenotypic variation against genotypic variation in worker networks, allowing for evolutionary exploration of genotypic space while maintaining phenotypic stability. This buffering capacity could facilitate evolutionary innovation by permitting the accumulation of potentially useful genetic variation without immediate phenotypic consequences.

Research Applications: Methods and Experimental Toolkit

Experimental Approaches for Dissecting Architectural Components

The supervisor-worker framework necessitates specialized methodological approaches for characterizing different components of the hierarchy. The complementary strengths of perturbational and observational strategies can be leveraged in a coordinated manner to fully elucidate trait architecture.

Table 3: Research Reagent Solutions for Supervisor-Worker Architecture Studies

| Research Reagent | Function in Analysis | Architectural Target |
|---|---|---|
| CRISPR-Cas9 Gene Editing | Targeted gene knockout or modification | Supervisor identification via P-strategy |
| RNAi Libraries | Gene knockdown through RNA interference | Supervisor validation and partial perturbation |
| Single-Cell RNA Sequencing | High-resolution expression profiling | Worker identification via O-strategy |
| Yeast Deletion Collection | Systematic analysis of nonessential gene deletions | Supervisor screening in model organisms |
| Tiling Deletion Libraries | Saturation mutagenesis for essential regions | Comprehensive supervisor mapping |
| Massively Parallel Reporter Assays | Functional assessment of regulatory elements | Supervisor regulatory logic dissection |
| Protein-Protein Interaction Mapping | Physical network determination | Worker network characterization |
| Chromatin Conformation Capture | 3D genomic architecture analysis | Supervisor regulatory domain identification |

For supervisor gene identification, optimal approaches include:

- Systematic gene perturbation: Implement genome-wide CRISPR screens with deep phenotypic profiling across multiple cellular contexts.

- Epistasis mapping: Cross supervisor mutants with worker mutants to delineate hierarchical relationships.

- Expression quantitative trait locus (eQTL) analysis: Map genetic variants that regulate worker gene expression to identify potential supervisors.
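As a rough illustration of the epistasis-mapping step, the sketch below scores a double mutant against the additive expectation from the two single mutants. The additive (for example log-transformed) trait scale, the function name `epistasis_score`, and all numeric values are assumptions made for illustration; they are not taken from the studies cited here.

```python
def epistasis_score(wt, single_a, single_b, double_ab):
    """Deviation of the double mutant from the additive expectation.

    All inputs are trait values on an additive (e.g. log) scale.
    A score of zero means the two mutations combine independently.
    """
    effect_a = single_a - wt
    effect_b = single_b - wt
    expected_double = wt + effect_a + effect_b
    return double_ab - expected_double


# Hypothetical log-scale trait values, invented for illustration only.
wt, supervisor_mut, worker_mut = 0.0, -2.0, -0.5

# Double mutant looks like the supervisor mutant alone: the worker effect is masked.
masked = epistasis_score(wt, supervisor_mut, worker_mut, double_ab=-2.0)

# Double mutant equals the sum of both single effects: no interaction.
additive = epistasis_score(wt, supervisor_mut, worker_mut, double_ab=-2.5)

print(masked, additive)
```

A nonzero score in which the double mutant resembles one single mutant is the classical signature used to order genes in a hierarchy, although real screens would also need replicate measurements and an error model.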

For worker gene network characterization, effective strategies include:

- Multi-condition expression profiling: Measure transcriptomes across dozens to hundreds of genetic or environmental perturbations.

- Machine learning approaches: Train models to predict traits from expression patterns, then extract feature importance.

- Network inference algorithms: Reconstruct co-expression modules to identify worker communities.
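The network-inference strategy can be sketched in a few lines. The example below is a deliberately simple stand-in for dedicated co-expression tools: it thresholds the absolute Pearson correlation between genes and reports connected components as candidate worker modules. The threshold of 0.8, the function name `coexpression_modules`, and the simulated expression matrix are all assumptions.

```python
import numpy as np


def coexpression_modules(expr, threshold=0.8):
    """Group genes into modules by thresholding absolute Pearson correlation.

    expr: array of shape (n_conditions, n_genes).
    Returns a sorted list of gene-index lists, one per connected component.
    """
    corr = np.corrcoef(expr, rowvar=False)
    adjacency = np.abs(corr) >= threshold
    unvisited = set(range(adjacency.shape[0]))
    modules = []
    while unvisited:
        seed = unvisited.pop()
        stack, module = [seed], [seed]
        while stack:  # depth-first walk over correlated genes
            gene = stack.pop()
            for g in np.flatnonzero(adjacency[gene]):
                g = int(g)
                if g in unvisited:
                    unvisited.discard(g)
                    stack.append(g)
                    module.append(g)
        modules.append(sorted(module))
    return sorted(modules)


# Simulated data: genes 0-2 follow one latent factor, genes 3-5 another.
rng = np.random.default_rng(0)
factors = rng.normal(size=(200, 2))
expr = np.column_stack([factors[:, 0]] * 3 + [factors[:, 1]] * 3)
expr = expr + 0.1 * rng.normal(size=expr.shape)

modules = coexpression_modules(expr)
print(modules)
```

Hard thresholding is sensitive to the chosen cutoff; it is used here only because it makes the idea of a "worker community" concrete.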

Visualization of Experimental Workflows

The following diagram illustrates the integrated experimental approach for dissecting supervisor-worker architecture:

Diagram: Experimental Workflow for Supervisor-Worker Architecture Dissection.

Statistical Considerations and Analytical Framework

The distinct properties of supervisor and worker genes necessitate specialized statistical approaches:

For supervisor detection: Employ false discovery rate control on deletion effect sizes, with careful attention to pleiotropy metrics and network centrality measures.

For worker detection: Use correlation-based approaches with permutation testing to establish significance thresholds, accounting for the multiple testing burden across thousands of genes.

For hierarchical modeling: Implement Bayesian hierarchical models that simultaneously estimate supervisor effects and worker contributions, partially pooling information across genes to improve stability of estimates [30].
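The correlation-plus-permutation approach to worker detection, combined with false discovery rate control, might look like the following minimal sketch. It assumes a single quantitative trait, Pearson correlation as the test statistic, and the Benjamini-Hochberg procedure for FDR control; the function names and the simulated data are hypothetical.

```python
import numpy as np


def permutation_pvalues(expr, trait, n_perm=1000, seed=0):
    """Permutation p-values for |Pearson r| between each gene and the trait."""
    rng = np.random.default_rng(seed)
    z_expr = (expr - expr.mean(axis=0)) / expr.std(axis=0)

    def abs_corr(y):
        z = (y - y.mean()) / y.std()
        return np.abs(z_expr.T @ z) / len(y)

    observed = abs_corr(trait)
    exceed = np.zeros(expr.shape[1])
    for _ in range(n_perm):
        # Shuffling the trait breaks any real gene-trait association.
        exceed += abs_corr(rng.permutation(trait)) >= observed
    return (exceed + 1) / (n_perm + 1)


def benjamini_hochberg(pvals, alpha=0.05):
    """Boolean mask of hypotheses rejected at FDR level alpha."""
    pvals = np.asarray(pvals)
    m = len(pvals)
    order = np.argsort(pvals)
    passed = pvals[order] <= alpha * np.arange(1, m + 1) / m
    reject = np.zeros(m, dtype=bool)
    if passed.any():
        cutoff = np.max(np.flatnonzero(passed))
        reject[order[: cutoff + 1]] = True
    return reject


# Simulated data: only genes 0 and 1 contribute to the trait.
rng = np.random.default_rng(42)
expr = rng.normal(size=(100, 20))
trait = expr[:, 0] + expr[:, 1] + rng.normal(scale=0.5, size=100)

pvals = permutation_pvalues(expr, trait)
reject = benjamini_hochberg(pvals)
print(np.flatnonzero(reject))
```

The smallest attainable p-value is 1 / (n_perm + 1), so the number of permutations has to grow with the number of genes tested for any gene to survive the correction.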

Recent methodological advances in hierarchical modeling offer promising approaches for more stable ranking of gene effects, addressing the inherent noise in individual gene effect estimates [30]. These approaches can be particularly valuable for worker gene identification, where individual effects are small and measurements noisy.
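To make the idea of partial pooling concrete, the sketch below applies a simple empirical-Bayes normal-normal shrinkage to per-gene effect estimates. This is a simplified stand-in chosen for illustration, not the hierarchical method of [30]; the method-of-moments variance estimate, the function name `shrink_effects`, and the example numbers are assumptions.

```python
import numpy as np


def shrink_effects(estimates, std_errors):
    """Shrink noisy per-gene effects toward a precision-weighted grand mean."""
    estimates = np.asarray(estimates, dtype=float)
    variances = np.asarray(std_errors, dtype=float) ** 2
    grand_mean = np.average(estimates, weights=1.0 / variances)
    # Method-of-moments estimate of the between-gene variance, floored at zero.
    tau2 = max(np.var(estimates, ddof=1) - variances.mean(), 0.0)
    # Weight near 1 trusts the gene's own estimate; near 0 trusts the pool.
    weight = tau2 / (tau2 + variances)
    return grand_mean + weight * (estimates - grand_mean)


# Hypothetical effect estimates; the third gene is measured very noisily.
raw = np.array([0.1, 0.2, 2.0, -0.3])
se = np.array([0.1, 0.1, 1.5, 0.1])

shrunk = shrink_effects(raw, se)
print(np.round(shrunk, 3))
```

Precisely measured genes barely move, while the noisy outlier is pulled strongly toward the pooled mean, which is the behavior that stabilizes rankings of small worker effects.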

The supervisor-worker gene architecture represents a significant advance in understanding the relationship between genotype and phenotype within an evolutionary framework. This hierarchical model provides a principled explanation for why different research strategies identify distinct genetic components and how evolutionary forces shape these components differently. By revealing the complementary roles of supervisor and worker genes, this framework offers a more comprehensive understanding of complex trait architecture that integrates both regulatory and mechanistic perspectives.

For evolutionary research, this architecture provides insights into how natural selection and neutral drift operate on different genetic components to produce the patterns of trait variation observed within and between species. For biomedical applications, it suggests new strategies for identifying therapeutic targets by distinguishing between master regulatory elements and implementation networks. As the field progresses, integrating this architectural perspective with comparative genomics approaches [27] [28] and large-scale mapping studies [31] will further illuminate the genetic basis of phenotypic diversity and its evolution.

The supervisor-worker framework ultimately bridges molecular genetics with evolutionary theory, providing a more sophisticated understanding of how genetic information flows through biological systems to produce the remarkable diversity of life. This perspective moves beyond simple genotype-phenotype mappings toward a more nuanced understanding of the hierarchical genetic architectures that have evolved to balance phenotypic stability with evolutionary flexibility.

Accurate phenotypic replication is the fundamental mechanism through which evolutionary processes operate and become observable. In evolutionary biology research, the fidelity with which genotypes map to phenotypes determines not only the capacity to predict evolutionary trajectories but also the feasibility of identifying genuine biological relationships. This section examines phenotypic replication accuracy as a prerequisite for evolution, framing this necessity within the broader thesis of robust genotype-phenotype linkage. For researchers and drug development professionals, understanding and quantifying these relationships has direct implications for predicting disease risk, reconstructing evolutionary histories, and engineering biological systems. Contemporary research reveals that even with incomplete genotype-to-phenotype maps, phenotypic differences can be predicted with greater than 90% accuracy in specific contexts, which suggests that more phenotypic information can be extracted from genomic data than previously appreciated [32]. The emerging paradigm holds that the direction of a phenotypic difference (whether one individual will exhibit a greater or lesser phenotypic value than another) is often more achievable to predict, and more biologically actionable, than a precise phenotypic value.

Theoretical Foundations: Quantitative Genetics of Phenotypic Replication

The Genetic Architecture of Complex Traits

Quantitative trait locus (QTL) analysis provides the statistical foundation for linking phenotypic data with genotypic information to explain the genetic basis of variation in complex traits [33]. This methodology bridges the gap between genes and the phenotypic traits resulting from them, allowing researchers to identify the action, interaction, number, and precise location of chromosomal regions contributing to trait variation. The fundamental principle underpinning QTL analysis is that markers genetically linked to a QTL will segregate more frequently with specific trait values, whereas unlinked markers show no significant association with phenotype [33]. Historically, a key question addressed through QTL analysis has been whether phenotypic differences stem primarily from few loci with large effects or many loci each with minute effects, with evidence suggesting both contribute substantially across different traits and organisms [33].
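The linkage principle can be illustrated with a single-marker scan on simulated backcross data. The sketch assumes markers coded 0/1, a recombination fraction of 0.1 between adjacent markers, and one QTL sitting exactly at marker 10; the LOD score uses the standard marker-regression identity LOD = -(n/2) log10(1 - r²). All names and parameter values are hypothetical.

```python
import numpy as np


def single_marker_lod(genotypes, phenotype):
    """LOD score from regressing the phenotype on each marker in turn."""
    g = genotypes - genotypes.mean(axis=0)
    y = phenotype - phenotype.mean()
    r2 = (g.T @ y) ** 2 / ((g**2).sum(axis=0) * (y**2).sum())
    return -(len(y) / 2) * np.log10(1 - r2)


# Simulate one chromosome: each marker differs from its neighbour
# with probability 0.1 (a crude model of recombination).
rng = np.random.default_rng(1)
n_ind, n_markers, qtl = 200, 20, 10
flips = rng.random((n_ind, n_markers)) < 0.1
flips[:, 0] = rng.random(n_ind) < 0.5  # first marker is a fair coin
genotypes = np.cumsum(flips, axis=1) % 2

# The trait depends only on the genotype at the QTL, plus noise.
phenotype = 1.0 * genotypes[:, qtl] + rng.normal(scale=0.5, size=n_ind)

lod = single_marker_lod(genotypes, phenotype)
print(int(np.argmax(lod)))
```

Markers close to the QTL inherit part of its signal through linkage, so the LOD profile forms a peak that decays with distance rather than a single spike.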

The additive genetic covariance matrix (G matrix) serves as a primary statistical tool for predicting phenotypic evolution: it captures the additive genetic variance of each trait in a set and the genetic covariances between them [34]. The leading eigenvector of this matrix, $g_{max}$, is the combination of trait values with the greatest amount of genetic variation and indicates the direction in which a population will evolve most rapidly. Observational and manipulative experiments have demonstrated that the G matrix corresponds with how natural populations adapt to different environments, with meta-analyses showing that genetic variation can predict approximately 40% of phenotypic differences in plant populations [34].
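A small numerical sketch shows how the direction of greatest genetic variation falls out of an eigendecomposition of G, and how G shapes the response to selection through the multivariate breeder's equation, Δz = Gβ. The two-trait G matrix and the selection gradient β below are invented for illustration.

```python
import numpy as np


def g_max(G):
    """Leading eigenvector of G and the share of genetic variance it carries."""
    eigenvalues, eigenvectors = np.linalg.eigh(G)  # eigenvalues in ascending order
    leading = eigenvectors[:, -1]
    # Eigenvector sign is arbitrary; orient it so the largest loading is positive.
    if leading[np.argmax(np.abs(leading))] < 0:
        leading = -leading
    return leading, eigenvalues[-1] / eigenvalues.sum()


# Hypothetical G matrix for two positively correlated traits.
G = np.array([[1.0, 0.8],
              [0.8, 1.0]])
direction, share = g_max(G)

# Selection acting on trait 1 only (hypothetical gradient).
beta = np.array([1.0, 0.0])
response = G @ beta

print(direction, share, response)
```

Even though selection targets only the first trait, the second trait responds as well, because the genetic covariance pulls the response toward the leading eigenvector.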

Probabilistic Effects in Genotype-Phenotype Mapping

Although traditional approaches focus on deterministic genotype-phenotype relationships, recent evidence highlights the importance of probabilistic effects at the cellular level. Single-cell Probabilistic Trait Loci (scPTL) are genetic variants that modify the statistical properties of cellular-level quantitative traits without necessarily altering mean trait values [35]. These probabilistic effects may underlie phenomena such as incomplete penetrance, where carriers of a mutation display a phenotype at increased frequency but not universally [35]. Technological advances in high-throughput flow cytometry, multiplexed mass cytometry, image content analysis, and droplet-based single-cell transcriptome profiling now enable empirical estimation of statistical distributions for molecular and cellular traits, facilitating the detection of scPTL [35].
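The following sketch illustrates what a probabilistic effect looks like in data: two genotype groups whose single-cell trait distributions share a mean but differ in spread. It uses a two-sample Kolmogorov-Smirnov statistic with a permutation p-value as a generic distribution comparison; this is an assumption made for illustration and not a description of how any scPTL mapping software works. The simulated cells and parameter values are hypothetical.

```python
import numpy as np


def ks_statistic(a, b):
    """Largest gap between the empirical CDFs of two samples."""
    a, b = np.sort(a), np.sort(b)
    grid = np.concatenate([a, b])
    cdf_a = np.searchsorted(a, grid, side="right") / len(a)
    cdf_b = np.searchsorted(b, grid, side="right") / len(b)
    return np.max(np.abs(cdf_a - cdf_b))


def distribution_shift_test(trait, genotype, n_perm=500, seed=0):
    """KS statistic between genotype groups, with a permutation p-value."""
    rng = np.random.default_rng(seed)
    observed = ks_statistic(trait[genotype == 0], trait[genotype == 1])
    exceed = 0
    for _ in range(n_perm):
        shuffled = rng.permutation(genotype)
        stat = ks_statistic(trait[shuffled == 0], trait[shuffled == 1])
        exceed += int(stat >= observed)
    return observed, (exceed + 1) / (n_perm + 1)


# Simulated single-cell trait: same mean, doubled spread for genotype 1.
rng = np.random.default_rng(7)
genotype = np.repeat([0, 1], 500)
trait = np.concatenate([rng.normal(0, 1, 500), rng.normal(0, 2, 500)])

ks, p = distribution_shift_test(trait, genotype)
mean_gap = abs(trait[genotype == 0].mean() - trait[genotype == 1].mean())
print(ks, p, mean_gap)
```

A comparison of group means would find little here, which is why distribution-level statistics are needed to detect loci of this kind.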

Table 1: Key Concepts in Genotype-Phenotype Mapping

| Concept | Definition | Research Application |
|---|---|---|
| QTL (Quantitative Trait Locus) | A chromosomal region linked to variation in a quantitative trait [33] | Mapping genetic loci contributing to continuous phenotypes |
| scPTL (Single-cell Probabilistic Trait Locus) | A genetic locus modifying any characteristics of a single-cell trait density function [35] | Identifying genetic variants affecting cellular heterogeneity |
| G Matrix | Additive genetic covariance matrix capturing genetic variation underlying a set of traits [34] | Predicting multivariate phenotypic evolution |
| PGRM (Phenotype-Genotype Reference Map) | Curated set of genetic associations for high-throughput replication studies [36] | Validating phenotype-genotype associations across biobanks |
| Known-to-Total Ratio (κ) | Ratio between the sum of known effects and total effects, $\lvert\Delta\rvert / (\lvert\Delta\rvert + \sigma)$ [32] | Estimating accuracy of directional phenotype predictions |

Methodological Frameworks: Ensuring Accuracy in Phenotypic Replication

Experimental Designs for Phenotypic Replication Studies

Robust phenotypic replication requires carefully controlled experimental designs that account for sources of biological and technical variation. Traditional QTL analysis requires two or more strains of organisms that differ genetically with respect to the trait of interest, along with genetic markers that distinguish between these parental lines [33]. Molecular markers (SNPs, SSRs, RFLPs) are preferred for genotyping because they are unlikely to affect the trait of interest. After the parental strains are crossed, the phenotypes and genotypes of the derived populations are scored, enabling identification of markers linked to QTLs influencing the trait [33].

For multicellular organisms, single-cell phenotypic replication studies must account for cell types and intermediate differentiation states that constitute predominant sources of cellular trait variation [35]. Unicellular model organisms like Saccharomyces cerevisiae provide powerful experimental systems by eliminating this complexity, enabling studies of individual cells belonging to a single cell type [35]. Methodological innovations like ptlmapper (an open-source R package) implement novel genetic mapping approaches that scan genomes for scPTL by comparing distributions of single-cell traits without prior assumptions about how genetic loci affect these distributions [35].

Diagram 1: QTL Mapping Workflow. This experimental design illustrates the process from parental crosses through genotyping, phenotyping, and statistical analysis to identify loci associated with trait variation.

Multi-Omics Integration for Enhanced Prediction

The integration of multi-omics data addresses limitations of single-omics analyses by providing more comprehensive biological context for genotype-phenotype associations. Methodologies such as GSPLS (Group lasso and SPLS model) handle the challenge of large feature sets with small sample sizes in four steps: clustering genes using protein-protein interaction networks and gene expression data, screening the gene clusters with group lasso, obtaining SNP clusters through expression quantitative trait locus (eQTL) data, and integrating the clusters into three-layer network blocks for analysis [37]. This approach accounts for intra-omics associations and biological pathway relationships across omics layers, improving prediction accuracy while maintaining biological interpretability [37].

Comparative analyses demonstrate that methods incorporating biological network clustering (GSPLS and GGLM) outperform approaches without such clustering (NETAM) or those ignoring inter-omics associations (mixOmics), particularly with small sample sizes [37]. This superiority highlights the importance of leveraging known biological relationships to enhance phenotypic replication accuracy when data limitations exist.

Table 2: Methodological Comparisons for Genotype-Phenotype Association Studies

| Method | Approach | Key Features | Performance (AUC) |
|---|---|---|---|
| GSPLS [37] | Multi-omics integration with biological networks | Gene clustering via PPI networks, accounts for intra-omics associations | 0.85-0.90 (superior on tested datasets) |
| GGLM [37] | Group lasso with generalized linear model | Gene network clustering, multiple regression for SNP-gene association | 0.75-0.80 (improved over basic methods) |
| NETAM [37] | Multi-staged analysis without clustering | Direct multiple regression with lasso on three-layer network | 0.60-0.65 (unsuitable for small samples) |
| mixOmics [37] | Meta-dimensional integration | Independent prediction models for each omics type | 0.70-0.75 (improves on single-omics) |
| PGRM [36] | Phenotype-genotype reference mapping | Standardized phecode phenotypes for replication studies | Effective for biobank data quality assessment |

The Phenotype-Genotype Reference Map Framework