Directed Evolution in Enzyme Engineering: From Basic Principles to AI-Driven Design

Abstract

This article provides a comprehensive overview of enzyme engineering via directed evolution, tailored for researchers, scientists, and drug development professionals. It covers the foundational principles of mimicking natural evolution in a laboratory setting, details the core methodologies for generating diversity and high-throughput screening, and addresses key challenges and optimization strategies. Furthermore, it explores the emerging frontier of machine learning and AI, which are revolutionizing the field by enabling predictive design and more efficient navigation of protein sequence space, ultimately accelerating the development of specialized biocatalysts for biomedical and industrial applications.

The Principles and Power of Directed Evolution

Harnessing Darwinian Principles for Protein Design

The application of Darwinian principles—variation, selection, and heredity—to protein design represents a paradigm shift in enzyme engineering. Directed evolution mimics natural evolution in laboratory settings, enabling researchers to develop enzymes with enhanced or entirely novel functions. This approach has become a cornerstone of modern biocatalysis, yielding engineered enzymes for applications ranging from pharmaceutical synthesis to sustainable energy. The fundamental process involves creating genetic diversity in protein-coding sequences, screening or selecting for improved variants, and iteratively repeating this cycle to accumulate beneficial mutations. Unlike rational design approaches that require deep mechanistic understanding, directed evolution leverages Darwinian principles to explore vast sequence spaces efficiently, often revealing solutions that would be difficult to predict computationally. This technical guide examines the core methodologies, experimental protocols, and emerging trends that enable researchers to harness evolutionary principles for protein design, with particular emphasis on recent advances in high-throughput screening, continuous evolution, and machine-learning integration.

Core Darwinian Concepts in Enzyme Engineering

The Evolutionary Cycle in Laboratory Settings

The directed evolution workflow operationalizes Darwinian principles into a controlled engineering pipeline. Variation is introduced through mutagenesis techniques that create diverse gene libraries. Selection pressure is applied through screening methods that identify improved variants based on desired functional parameters. Heredity ensures successful variants are propagated to subsequent generations for further optimization. This cycle creates an evolutionary trajectory toward proteins with tailored properties, compressing timeframes that span millennia in nature into weeks or days in the laboratory.

The effectiveness of directed evolution hinges on several critical factors. The quality and diversity of the initial mutant library significantly influence outcomes, as larger, more diverse libraries increase the probability of discovering rare beneficial mutations. The fidelity of the genotype-phenotype linkage ensures that genetic information encoding improved functions can be reliably recovered and propagated. Finally, the sensitivity and throughput of screening methods determine the efficiency with which improved variants can be identified from large populations.

Quantitative Framework for Evolutionary Engineering

The success of directed evolution campaigns can be quantified through several key metrics that reflect Darwinian processes:

- Functional Information Gain: Measures the increase in information content resulting from mutations that enhance catalytic efficiency, substrate specificity, or stability.

- Variant Enrichment Efficiency: Quantifies the effectiveness of selection methods at identifying improved variants from complex libraries.

- Evolutionary Trajectory Analysis: Maps the historical sequence of mutations that led to functional improvements, revealing epistatic interactions and contingency effects.

Recent advances in next-generation sequencing and machine learning have enabled researchers to quantitatively analyze these parameters with unprecedented resolution, creating predictive models of protein fitness landscapes.
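As an illustration of how variant enrichment can be quantified from sequencing data, the sketch below computes a log2 enrichment score per variant from read counts taken before and after selection. The variant names, read counts, and pseudocount are hypothetical values chosen for the example, and this is only one common way to define enrichment, not a metric prescribed by the cited platforms.

```python
import math

def log2_enrichment(pre_counts, post_counts, pseudocount=0.5):
    """Return log2(post-selection frequency / pre-selection frequency) per variant.

    A small pseudocount (an assumption of this sketch) avoids division by zero
    for variants that are missing from one of the two samples.
    """
    variants = set(pre_counts) | set(post_counts)
    pre_total = sum(pre_counts.get(v, 0) + pseudocount for v in variants)
    post_total = sum(post_counts.get(v, 0) + pseudocount for v in variants)
    scores = {}
    for v in variants:
        pre_freq = (pre_counts.get(v, 0) + pseudocount) / pre_total
        post_freq = (post_counts.get(v, 0) + pseudocount) / post_total
        scores[v] = math.log2(post_freq / pre_freq)
    return scores

# Hypothetical read counts before and after one round of selection.
pre = {"WT": 9000, "A45G": 500, "L112F": 500}
post = {"WT": 6000, "A45G": 3500, "L112F": 500}

scores = log2_enrichment(pre, post)
for variant, score in sorted(scores.items(), key=lambda kv: -kv[1]):
    print(f"{variant}: log2 enrichment = {score:+.2f}")
```

Positive scores mark variants that became more frequent under selection; scores near zero indicate variants that behaved like the population average.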

Experimental Methodologies and Platforms

High-Throughput Spore-Display Platform

Spore-display technology represents an advanced platform for implementing Darwinian protein design. This system uses bacteria to produce and assemble enzymes on the surface of spores, creating self-assembling, genetically encoded microparticles. The platform is based on the characterization of 37 proteins that constitute the spore coat of Bacillus subtilis, which function as fusion partners for enzyme immobilization [1].

The key advantage of spore-display lies in its integration of enzyme expression, immobilization, and screening into a single system. This platform enables directed evolution of spore-displayed enzymes through high-throughput screening of >1 million variants per day using microfluidic encapsulation approaches [1]. The methodology supports rapid prototyping of spore-enzyme variants to improve critical parameters including enzyme activity, stability, and loading density while maintaining reusability—a significant challenge in enzyme catalysis.

Table 1: Key Components of Spore-Display Directed Evolution Platform

| Component | Function | Application in Darwinian Protein Design |
|---|---|---|
| Spore coat proteins | Fusion partners for enzyme display | Genetically encoded immobilization creating genotype-phenotype linkage |
| Microfluidic encapsulation | Compartmentalization of single variants | Enables high-throughput screening of >10^6 variants daily |
| Bacillus subtilis spores | Self-assembling microparticles | Provides stable platform for enzyme display and screening |
| Machine learning algorithms | Analysis of variant sequences | Predicts beneficial mutations and guides library design |

Experimental Protocol: Spore-Display Directed Evolution

- Library Construction: Generate mutant libraries of target enzyme genes fused to spore coat protein genes using error-prone PCR or DNA shuffling

- Spore Transformation: Introduce mutant libraries into Bacillus subtilis host cells for sporulation

- Spore Harvesting: Isolate mature spores displaying enzyme variants from culture media

- Microfluidic Encapsulation: Compartmentalize individual spore-displayed variants into water-in-oil emulsions using microfluidic devices

- High-Throughput Screening: Apply fluorescence-activated cell sorting (FACS) or substrate-based assays to identify improved variants

- Genotype Recovery: Isolate DNA from improved variants and sequence to identify beneficial mutations

- Machine Learning Analysis: Use sequence-function data to train predictive models for guiding subsequent library design

- Iterative Cycling: Repeat process with focused libraries based on ML predictions to accumulate beneficial mutations
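The microfluidic encapsulation step in the protocol above depends on most occupied droplets containing a single variant. A minimal sketch of that trade-off follows, assuming spores are loaded into droplets at random so that occupancy follows a Poisson distribution; this loading model and the example loading densities are assumptions of the sketch, not details reported for the platform in [1].

```python
import math

def poisson_pmf(k, lam):
    """Probability of observing exactly k events for a Poisson mean of lam."""
    return math.exp(-lam) * lam ** k / math.factorial(k)

def droplet_occupancy(lam):
    """Fractions of droplets that are empty, hold one spore, or hold several.

    lam is the mean number of spores per droplet (hypothetical parameter).
    """
    empty = poisson_pmf(0, lam)
    single = poisson_pmf(1, lam)
    multiple = 1.0 - empty - single
    return {"empty": empty, "single": single, "multiple": multiple}

for lam in (0.1, 0.3, 1.0):
    occ = droplet_occupancy(lam)
    # Among droplets that contain anything, how many are clonal (one spore)?
    clonal = occ["single"] / (1.0 - occ["empty"])
    print(f"lambda={lam}: empty={occ['empty']:.3f}, "
          f"single={occ['single']:.3f}, clonal among occupied={clonal:.3f}")
```

Lower loading densities give cleaner genotype-phenotype linkage at the cost of more empty droplets, which is why the effective screening rate can be well below the raw droplet generation rate.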

Growth-Coupled Continuous Directed Evolution

Continuous evolution systems represent a significant advancement in Darwinian protein design by eliminating discrete cycles of mutagenesis and screening. The Growth-Coupled Continuous Directed Evolution (GCCDE) approach links enzyme activity directly to bacterial growth, enabling real-time selection of superior variants in continuous culture systems [2].

The GCCDE platform utilizes the MutaT7 system for in vivo mutagenesis, which combines targeted mutagenesis with selection based on growth advantage. In this system, bacteria containing improved enzyme variants metabolize substrate more efficiently, leading to faster growth rates under selective conditions. This creates a self-perpetuating cycle where beneficial mutations automatically enrich in the population without researcher intervention.
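To give a feel for how growth coupling enriches a beneficial variant, the sketch below tracks the frequency of a single faster-growing variant competing against its parent. It is an idealized two-strain model under stated assumptions: constant growth rates, no further mutation, and no washout effects. The starting frequency and relative growth advantage `s` are hypothetical values, not measurements from [2].

```python
import math

def variant_frequency(f0, s, generations):
    """Frequency of a variant after a number of parent generations.

    f0: starting frequency of the variant (0 < f0 < 1)
    s:  relative growth-rate advantage, (mu_variant / mu_parent) - 1
    The variant:parent ratio is multiplied by 2**s per parent generation.
    """
    ratio = (f0 / (1.0 - f0)) * 2.0 ** (s * generations)
    return ratio / (1.0 + ratio)

def generations_to_majority(f0, s):
    """Parent generations needed for the variant to reach 50% of the culture."""
    return math.log2((1.0 - f0) / f0) / s

# Hypothetical case: one improved cell in a population of 10^9.
f0 = 1e-9
for s in (0.1, 0.25, 0.5):
    g = generations_to_majority(f0, s)
    print(f"s={s}: about {g:.0f} generations to reach 50% of the population")
```

Under these assumptions, even a rare variant takes over within tens to hundreds of generations, provided its growth advantage is substantial.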

Table 2: Quantitative Performance of Directed Evolution Platforms

| Evolution Platform | Throughput (Variants/Day) | Key Advantage | Typical Timeline | Applications |
|---|---|---|---|---|
| Spore-display with microfluidics [1] | >1,000,000 | Integrated expression and screening | 2-4 weeks | Enzyme activity, stability optimization |
| Growth-coupled continuous evolution (GCCDE) [2] | >1,000,000,000 | Automated continuous selection | 1-2 weeks | Substrate specificity, catalytic efficiency |
| Machine-learning guided cell-free [3] | 10,000-100,000 | Rapid sequence-function mapping | 1-3 weeks | Multi-property optimization, novel reactions |

Experimental Protocol: Growth-Coupled Continuous Directed Evolution

- Strain Engineering: Construct host strain with chromosomal integration of MutaT7 mutagenesis system (T7 RNA polymerase and mutagenic plasmid)

- Library Transformation: Introduce target enzyme gene into engineered strain under control of inducible promoter

- Growth Coupling Design: Establish conditions where target enzyme activity is essential for growth (e.g., sole carbon source utilization)

- Continuous Culture Setup: Implement chemostat or turbidostat system with controlled nutrient feed and waste removal

- Evolution Campaign: Operate continuous culture system for 50-200 generations under selective pressure

- Population Monitoring: Regularly sample population to track enzyme activity improvements and genetic diversity

- Variant Isolation: Plate samples on selective media to isolate individual clones from evolved population

- Characterization: Sequence and biochemically characterize improved variants to identify beneficial mutations

Machine-Learning Guided Cell-Free Protein Engineering

The integration of machine learning with cell-free expression systems has created a powerful platform for mapping protein fitness landscapes. This approach combines cell-free DNA assembly, cell-free gene expression, and functional assays to rapidly generate sequence-function data for ML model training [3].

A key application of this platform demonstrated the engineering of amide synthetases by evaluating substrate preference for 1217 enzyme variants across 10,953 unique reactions [3]. The resulting data was used to build augmented ridge regression ML models that successfully predicted enzyme variants with 1.6- to 42-fold improved activity for synthesizing nine pharmaceutical compounds compared to the parent enzyme.

Experimental Protocol: ML-Guided Cell-Free Enzyme Engineering

- DNA Library Construction: Generate site-saturation mutagenesis libraries using cell-free DNA assembly with mismatched primers

- Linear Expression Template Preparation: Amplify linear DNA expression templates (LETs) via PCR for direct use in cell-free systems

- Cell-Free Protein Synthesis: Express enzyme variants using cell-free gene expression (CFE) systems

- High-Throughput Screening: Assay enzyme variants in multi-well plates using fluorescence, absorbance, or mass spectrometry

- Data Curation: Compile sequence-function relationships into structured dataset for ML training

- Model Training: Implement ridge regression, random forest, or neural network models to predict variant fitness

- Variant Prediction: Use trained models to identify promising higher-order mutants from sequence space

- Experimental Validation: Test ML-predicted variants to validate model accuracy and identify improved enzymes
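The model-training and variant-prediction steps above can be sketched with a plain ridge regression on one-hot encoded sequences. This is a simplified stand-in, not the augmented ridge regression used in [3]: the five-residue sequences, activity values, candidate list, and regularization strength `alpha` are all hypothetical, and the model is purely additive, so it cannot capture epistasis.

```python
import numpy as np

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"

def one_hot(seq):
    """Flattened one-hot encoding: one block of 20 indicator features per position."""
    x = np.zeros((len(seq), len(AMINO_ACIDS)))
    for pos, aa in enumerate(seq):
        x[pos, AMINO_ACIDS.index(aa)] = 1.0
    return x.ravel()

def fit_ridge(X, y, alpha=1.0):
    """Closed-form ridge fit on mean-centered targets: w = (X^T X + alpha*I)^-1 X^T y."""
    y_mean = y.mean()
    A = X.T @ X + alpha * np.eye(X.shape[1])
    w = np.linalg.solve(A, X.T @ (y - y_mean))
    return w, y_mean

def predict(x, w, y_mean):
    return x @ w + y_mean

# Hypothetical training data: parent plus four single mutants with relative activity.
train = {"MKTAY": 1.0, "MKSAY": 1.8, "MKTGY": 0.4, "MRTAY": 1.5, "MKTAF": 1.1}
X = np.array([one_hot(s) for s in train])
y = np.array(list(train.values()))
w, y_mean = fit_ridge(X, y, alpha=0.1)

# Rank untested double mutants (higher-order variants) by predicted activity.
candidates = ["MRSAY", "MKSGY", "MRTGY"]
preds = {c: float(predict(one_hot(c), w, y_mean)) for c in candidates}
best = max(preds, key=preds.get)
print(preds, "-> test first:", best)
```

In this toy data set the model favors the double mutant that combines the two beneficial single substitutions, which is the behavior expected of an additive model and the reason experimental validation remains the final step.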

Essential Research Reagents and Solutions

Table 3: Research Reagent Solutions for Darwinian Protein Design

| Reagent/Solution | Composition/Description | Function in Experimental Workflow |
|---|---|---|
| PURE System [4] | Recombinant transcription-translation machinery | Cell-free protein synthesis without cellular constraints |
| MutaT7 System [2] | T7 RNA polymerase + mutator plasmid | In vivo mutagenesis for continuous evolution |
| Microfluidic Encapsulation Reagents [1] | Water-in-oil emulsion components | Compartmentalization for high-throughput screening |
| Spore-Display Fusion Partners [1] | Bacillus subtilis spore coat proteins | Enzyme immobilization with genotype-phenotype linkage |
| Linear DNA Expression Templates [3] | PCR-amplified gene fragments | Rapid protein expression without cloning |
| Liposome Compartments [4] | Phospholipid vesicles resembling cell membranes | Compartmentalization for genotype-phenotype linkage |

Case Studies in Darwinian Enzyme Engineering

Engineering CelB β-Galactosidase Using Continuous Evolution

The GCCDE platform was validated by evolving the thermostable enzyme CelB from Pyrococcus furiosus to enhance its β-galactosidase activity at lower temperatures while maintaining thermal stability [2]. Enzyme activity was coupled to E. coli growth by making lactose metabolism dependent on CelB function. The continuous culture system enabled automated high-throughput mutagenesis and simultaneous real-time selection of over 10⁹ variants per culture. The evolved CelB variants showed significantly enhanced low-temperature activity while preserving thermostability, with sequencing revealing key mutations responsible for improved substrate binding and catalytic turnover.

Divergent Evolution of Amide Synthetase Specialists

Machine-learning guided directed evolution was used to convert a generalist amide bond-forming enzyme (McbA) into multiple specialist enzymes [3]. Starting with evaluation of enzymatic substrate promiscuity across 1100 unique reactions, researchers identified nine pharmaceutical compounds for optimization. Using cell-free protein synthesis to test 1217 enzyme variants, they built ML models that predicted variants with significantly improved activity (1.6- to 42-fold) for all nine target compounds. This demonstrated the power of ML-guided evolution to efficiently navigate sequence space for multiple optimization targets simultaneously.

Future Perspectives and Emerging Trends

The field of Darwinian protein design is rapidly advancing through increased automation and computational integration. Continuous evolution systems are becoming more sophisticated through engineered mutagenesis systems and improved growth coupling strategies. Machine learning methodologies are evolving from predictive models to generative approaches that can design novel enzyme sequences de novo [5]. The combination of large language models with evolutionary principles shows particular promise for exploring regions of sequence space not represented in natural proteins.

Another significant trend is the movement toward fully automated directed evolution systems that integrate library construction, screening, and data analysis with minimal human intervention. These systems leverage robotics and artificial intelligence to accelerate the design-build-test-learn cycle, potentially reducing optimization timelines from months to days. As these technologies mature, Darwinian protein design will become increasingly accessible and powerful, enabling engineering of complex enzymatic functions that have previously proven intractable through rational design approaches alone.

Directed evolution is a transformative protein engineering methodology that harnesses the principles of Darwinian evolution—iterative cycles of genetic diversification and selection—within a laboratory setting to tailor proteins for specific, human-defined applications [6]. This approach represents a paradigm shift in how new biological functions are created and optimized, earning Frances H. Arnold the 2018 Nobel Prize in Chemistry for its pioneering development [6] [7]. The profound strategic advantage of directed evolution lies in its capacity to deliver robust solutions—such as enhanced stability, novel catalytic activity, or altered substrate specificity—without requiring detailed a priori knowledge of a protein's three-dimensional structure or its catalytic mechanism [6]. By exploring vast sequence landscapes through a process of mutation and functional screening, directed evolution frequently uncovers non-intuitive and highly effective solutions that would not have been predicted by computational models or human intuition, thereby bypassing the inherent limitations of rational design [6].

At its core, the directed evolution workflow functions as a two-part iterative engine, relentlessly driving a protein population toward a desired functional goal [6]. This process compresses geological timescales of natural evolution into weeks or months by intentionally accelerating the rate of mutation and applying an unambiguous, user-defined selection pressure [6]. The success of any directed evolution campaign hinges on the quality of the initial library and, most critically, the power of the screening method used to find the rare variants with improved performance from a population dominated by neutral or non-functional mutants [6] [7]. Today, this technology is routinely deployed across the pharmaceutical, chemical, and agricultural industries to create enzymes and proteins with properties optimized for performance, stability, and cost-effectiveness, with applications ranging from developing highly stable enzymes for detergents and biofuel production to engineering therapeutic antibodies and viral vectors for gene therapy [6].

The Diversification Phase: Generating Genetic Diversity

The creation of a diverse library of gene variants is the foundational step that defines the boundaries of the explorable sequence space in directed evolution [6]. The quality, size, and nature of this diversity directly constrain the potential outcomes of the entire evolutionary campaign [6]. Several methods have been developed to introduce genetic variation, each with distinct advantages, limitations, and inherent biases that shape the evolutionary trajectories available to the protein.

Random Mutagenesis Techniques

Random mutagenesis aims to introduce mutations across the entire length of a gene without pre-selecting specific sites [6]. The most established and widely used method is Error-Prone Polymerase Chain Reaction (epPCR) [6]. This technique is a modified PCR that intentionally reduces the fidelity of the DNA polymerase, thereby introducing errors during gene amplification [6]. This is typically achieved through a combination of factors: using a polymerase that lacks a 3' to 5' proofreading exonuclease activity (such as Taq polymerase), creating an imbalance in the concentrations of the four deoxynucleotide triphosphates (dNTPs), and, most critically, adding manganese ions (Mn2+) to the reaction [6]. The concentration of Mn2+ can be precisely controlled to tune the mutation rate, which is typically targeted to 1–5 base mutations per kilobase, resulting in an average of one or two amino acid substitutions per protein variant [6].
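The relationship between mutation rate and library composition can be estimated with a short calculation. The sketch below assumes that the number of mutations per gene copy is Poisson-distributed around the mean set by the per-kilobase rate; that is a common simplification rather than a model stated in [6], and the gene length and rates are example values.

```python
import math

def mutation_count_distribution(rate_per_kb, gene_length_bp, max_k=5):
    """Return the mean mutations per gene and P(k mutations) for k = 0..max_k."""
    lam = rate_per_kb * gene_length_bp / 1000.0
    probs = [math.exp(-lam) * lam ** k / math.factorial(k) for k in range(max_k + 1)]
    return lam, probs

# Example: a 1 kb gene mutagenized at 1, 2, or 5 base mutations per kilobase.
for rate in (1, 2, 5):
    lam, probs = mutation_count_distribution(rate, gene_length_bp=1000)
    unmutated = probs[0]
    print(f"{rate}/kb: mean={lam:.1f} mutations per gene, "
          f"unmutated fraction={unmutated:.3f}, "
          f"single-mutant fraction={probs[1]:.3f}")
```

At low rates a large share of the library is unmutated parent sequence, while at high rates most clones carry several mutations, many of them deleterious; the 1 to 5 per kilobase window is a compromise between the two.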

While powerful and straightforward, epPCR is not truly random [6]. DNA polymerases have an intrinsic bias that favors transition mutations (purine-to-purine or pyrimidine-to-pyrimidine) over transversion mutations (purine-to-pyrimidine or vice versa) [6]. This bias, combined with the degeneracy of the genetic code, means that at any given amino acid position, epPCR can only access an average of 5–6 of the 19 possible alternative amino acids [6]. This inherent limitation constrains the accessible sequence space and may prevent the discovery of an optimal variant if it requires a specific transversion mutation [6].
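The effect of codon degeneracy can be checked directly against the standard genetic code. The sketch below counts, for each sense codon, how many different amino acids are reachable by changing a single nucleotide. It treats all single-base substitutions as equally likely, so it ignores the transition bias described above and gives an upper bound on what a biased polymerase would actually produce.

```python
from itertools import product

BASES = "TCAG"
# Standard genetic code, codons ordered TTT, TTC, TTA, TTG, TCT, ... ("*" = stop).
AA_STRING = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {"".join(c): aa for c, aa in zip(product(BASES, repeat=3), AA_STRING)}

def reachable_amino_acids(codon):
    """Amino acids reachable from a codon by exactly one nucleotide substitution."""
    original = CODON_TABLE[codon]
    reachable = set()
    for pos in range(3):
        for base in BASES:
            if base == codon[pos]:
                continue
            mutant = codon[:pos] + base + codon[pos + 1:]
            aa = CODON_TABLE[mutant]
            if aa != "*" and aa != original:  # skip stops and silent changes
                reachable.add(aa)
    return reachable

sense_codons = [c for c, aa in CODON_TABLE.items() if aa != "*"]
mean_reachable = sum(len(reachable_amino_acids(c)) for c in sense_codons) / len(sense_codons)

print("ATG (Met) ->", sorted(reachable_amino_acids("ATG")))
print("TGG (Trp) ->", sorted(reachable_amino_acids("TGG")))
print(f"Mean over sense codons: {mean_reachable:.2f} of 19 alternatives")
```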

Recombination-Based Methods (Gene Shuffling)

To overcome the limitations of point mutagenesis and to more closely mimic the power of natural sexual recombination, methods based on gene shuffling were developed [6]. These techniques allow for the combination of beneficial mutations from multiple parent genes into a single, improved offspring [6].

DNA Shuffling, also known as "sexual PCR," was pioneered by Willem P. C. Stemmer [6]. In this method, one or more related parent genes are randomly fragmented using the enzyme DNaseI [6]. These small fragments (typically 100–300 bp) are then reassembled in a PCR reaction without any added primers [6]. During the annealing step, homologous fragments from different parental templates can overlap and prime each other for extension by the polymerase [6]. This template switching results in crossovers, effectively shuffling the genetic information and creating a library of chimeric genes that contain novel combinations of mutations from the parent pool [6].

A highly effective extension of this concept is Family Shuffling [6]. This method applies the DNA shuffling protocol to a set of homologous genes isolated from different species [6]. By drawing from the standing variation that nature has already created, family shuffling provides access to a much broader and more functionally relevant region of sequence space than mutating a single gene [6]. It has been shown to significantly accelerate the rate of functional improvement compared to epPCR or single-gene DNA shuffling [6]. The primary limitation of recombination-based methods is their requirement for sequence homology [6]. The parental genes must typically share at least 70–75% sequence identity to ensure efficient and correct reassembly; with lower homology, the reaction strongly favors the regeneration of the original parent sequences [6].
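Before committing to a shuffling experiment, the homology requirement can be checked with a simple pairwise identity calculation. The sketch below assumes the parent sequences have already been aligned to equal length with `-` as the gap character, and it counts identity over ungapped columns only, which is one of several conventions. The sequences and the 70% cutoff applied in the loop are illustrative.

```python
from itertools import combinations

def percent_identity(seq_a, seq_b):
    """Percent identity of two pre-aligned sequences, ignoring gapped columns."""
    if len(seq_a) != len(seq_b):
        raise ValueError("sequences must be pre-aligned to equal length")
    compared = 0
    matches = 0
    for a, b in zip(seq_a, seq_b):
        if a == "-" or b == "-":
            continue
        compared += 1
        if a == b:
            matches += 1
    if compared == 0:
        raise ValueError("no ungapped columns to compare")
    return 100.0 * matches / compared

# Hypothetical aligned fragments from three homologous parent genes.
parents = {
    "parent_A": "ATGGCTAAAGGTCTGACC",
    "parent_B": "ATGGCAAAAGGTTTGACC",
    "parent_C": "ATGTCTCGTGAACAGGCC",
}

for (name_a, seq_a), (name_b, seq_b) in combinations(parents.items(), 2):
    identity = percent_identity(seq_a, seq_b)
    flag = "ok" if identity >= 70.0 else "below 70%: reassembly may favor parental sequences"
    print(f"{name_a} vs {name_b}: {identity:.1f}% ({flag})")
```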

Focused and Semi-Rational Mutagenesis

As an alternative to random approaches, focused mutagenesis targets specific regions or residues within a protein [6]. This is often employed when some structural or functional information is available, allowing for the creation of smaller, higher-quality libraries [6].

Site-Saturation Mutagenesis is a powerful example of this strategy [6]. This technique is used to comprehensively explore the functional importance of one or a few amino acid positions, often "hotspots" identified from a prior round of random mutagenesis or predicted from a structural model [6]. At the target codon, a library is created that encodes for all 19 other possible amino acids [6]. This allows for a deep, unbiased interrogation of a residue's role, something that is statistically improbable with epPCR [6]. This semi-rational approach, which combines knowledge-based targeting with random diversification at those sites, can dramatically increase the efficiency of a directed evolution campaign by reducing the library size and increasing the frequency of beneficial variants [6].
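The reduction in screening effort can be made concrete by estimating how many clones must be screened to cover a saturation library. The sketch below assumes an NNK degenerate codon (N = any base, K = G or T), which gives 32 equally likely codons per position covering all 20 amino acids and one stop codon. The choice of NNK and the 95% coverage target are assumptions of this example, not details taken from [6].

```python
import math

def clones_for_coverage(n_variants, coverage=0.95):
    """Clones to screen so the expected fraction of variants sampled reaches `coverage`.

    Assumes every variant is equally likely in each draw, so the expected
    fraction sampled after T clones is 1 - (1 - 1/n_variants)**T.
    """
    return math.ceil(math.log(1.0 - coverage) / math.log(1.0 - 1.0 / n_variants))

NNK_CODONS = 32  # 4 * 4 * 2 codon combinations

for sites in (1, 2, 3):
    library = NNK_CODONS ** sites
    needed = clones_for_coverage(library)
    print(f"{sites} NNK site(s): {library} codon variants, "
          f"about {needed} clones for 95% expected coverage")
```

A single saturated position fits comfortably on one or two 96-well plates, whereas randomizing three positions at once already calls for a much higher-throughput screen.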

Table 4: Comparison of Key Genetic Diversification Methods in Directed Evolution

| Method | Principle | Typical Library Size | Key Advantages | Key Limitations |
|---|---|---|---|---|
| Error-Prone PCR (epPCR) | Random point mutations via low-fidelity PCR | 10^4 - 10^6 variants | Simple, requires no structural information; broad exploration of local sequence space [6] | Mutation bias (favors transitions); limited to ~5-6 amino acid substitutions per position [6] |
| DNA Shuffling | In vitro recombination of fragmented genes | 10^6 - 10^8 variants | Recombines beneficial mutations; mimics natural sexual recombination [6] | Requires high sequence homology (>70-75%); crossovers biased to regions of high identity [6] |
| Family Shuffling | DNA shuffling of homologous genes from different species | 10^6 - 10^8 variants | Accesses nature's pre-evaluated diversity; significantly accelerates functional improvement [6] | Limited to natural sequence diversity; requires multiple homologous genes [6] |
| Site-Saturation Mutagenesis | Systematic mutation of specific codons to all amino acids | 10^2 - 10^3 variants per position | Comprehensive exploration of specific residues; highly efficient for optimizing known hotspots [6] | Requires prior knowledge of important residues; limited to targeted regions [6] |

The Selection Phase: Identifying Improved Variants

Once a diverse library of gene variants is created, the central challenge of directed evolution emerges: identifying the rare variants with improved properties [6]. This step, which links the genetic code of a variant (genotype) to its functional performance (phenotype), is widely recognized as the primary bottleneck in the process [6]. The success of a campaign is dictated by the axiom, "you get what you screen for" [6]. The power and throughput of the screening platform must match the size and complexity of the library generated in the first step [6].

A key distinction exists between screening and selection [6]. Screening involves the individual evaluation of every member of the library for the desired property [6]. In contrast, Selection establishes a system where the desired function is directly coupled to the survival or replication of the host organism, automatically eliminating non-functional variants [6]. Selections can handle much larger libraries and are less labor-intensive, but they are often difficult to design, can be prone to artifacts, and provide little information about the distribution of activities within the library [6]. Screening, while lower in throughput, guarantees that every variant is tested and provides quantitative data on its performance [6].

Plate-Based and Colony Screening Platforms

The most traditional screening formats utilize agar plates or multi-well microtiter plates [6]. In a colony-based screen, host cells (e.g., bacteria) expressing the enzyme library are grown on a solid medium containing a substrate that produces a visible product [6]. For example, in the landmark evolution of subtilisin, colonies expressing active variants formed clear halos on milk-agar plates due to the degradation of the protein casein [6]. In a microtiter plate format (typically 96- or 384-well), individual clones are cultured, and their cell lysates are assayed for activity using colorimetric or fluorometric substrates that can be read by a plate reader [6]. While these methods are robust and relatively simple to establish, their throughput is limited, typically to 10^3−10^4 variants [6].

High-Throughput Selection Methods

To overcome the throughput limitations of screening methods, powerful selection techniques have been developed. Phage Display, for which George P. Smith and Gregory P. Winter shared the 2018 Nobel Prize, involves fusing protein variants to the coat protein of a bacteriophage, creating a physical link between the protein (phenotype) and its encoding DNA (genotype) [7]. Variants with desired binding properties can be isolated through affinity selection against a target [7].

Fluorescence-Activated Cell Sorting (FACS) is an ultrahigh-throughput screening technique that can evaluate up to 10^8 variants per day [7]. In this approach, protein activity is coupled to a fluorescent readout, enabling individual cells to be sorted by activity level [7]. For instance, FACS has been used to evolve glycosyltransferases, yielding variants with over 400-fold improved activity by sorting on fluorescence intensity thresholds [7].

Continuous evolution systems, such as Phage-Assisted Continuous Evolution (PACE), further enhance throughput by enabling real-time mutation and selection in microbial hosts [7]. More recent systems such as T7-ORACLE accelerate evolution further by introducing mutations every time a cell divides (roughly every 20 minutes), rather than requiring repeated rounds of DNA manipulation and testing that can take a week or more per round [8]. This system uses an engineered E. coli strain to host a second, artificial DNA replication system that operates separately from the cell's own machinery, allowing scientists to introduce mutations with each cell division while the cell's original genome remains untouched [8].

Table 5: Comparison of Screening and Selection Methods in Directed Evolution

| Method | Principle | Throughput | Key Advantages | Key Limitations |
|---|---|---|---|---|
| Microtiter Plate Screening | Individual assay of clones in multi-well plates | 10^3 - 10^4 variants per day | Quantitative data; robust and established; amenable to various assay types [6] | Low throughput; labor-intensive; requires individual handling [6] |
| Colony-Based Screening | Activity detection on solid growth medium | 10^3 - 10^4 variants per day | Visual identification; no specialized equipment needed; simple to implement [6] | Semi-quantitative at best; limited to reactions producing visible products [6] |
| FACS (Fluorescence-Activated Cell Sorting) | Cell sorting based on fluorescence coupled to activity | Up to 10^8 variants per day [7] | Extremely high throughput; quantitative; can multiplex different activities [7] | Requires fluorescence coupling; specialized equipment needed; can be technically challenging [7] |
| Phage Display | Fusion of protein to phage coat protein; affinity selection | 10^9 - 10^11 variants per round [7] | Extremely high throughput; direct physical genotype-phenotype link [7] | Primarily for binding interactions; not directly applicable to enzymatic activity [7] |
| Continuous Evolution (e.g., PACE, T7-ORACLE) | Continuous mutation and selection in self-replicating systems | Essentially continuous | Extremely rapid; minimal researcher intervention; automated cycles [8] [7] | Complex to establish; limited to compatible systems; requires specialized expertise [8] [7] |

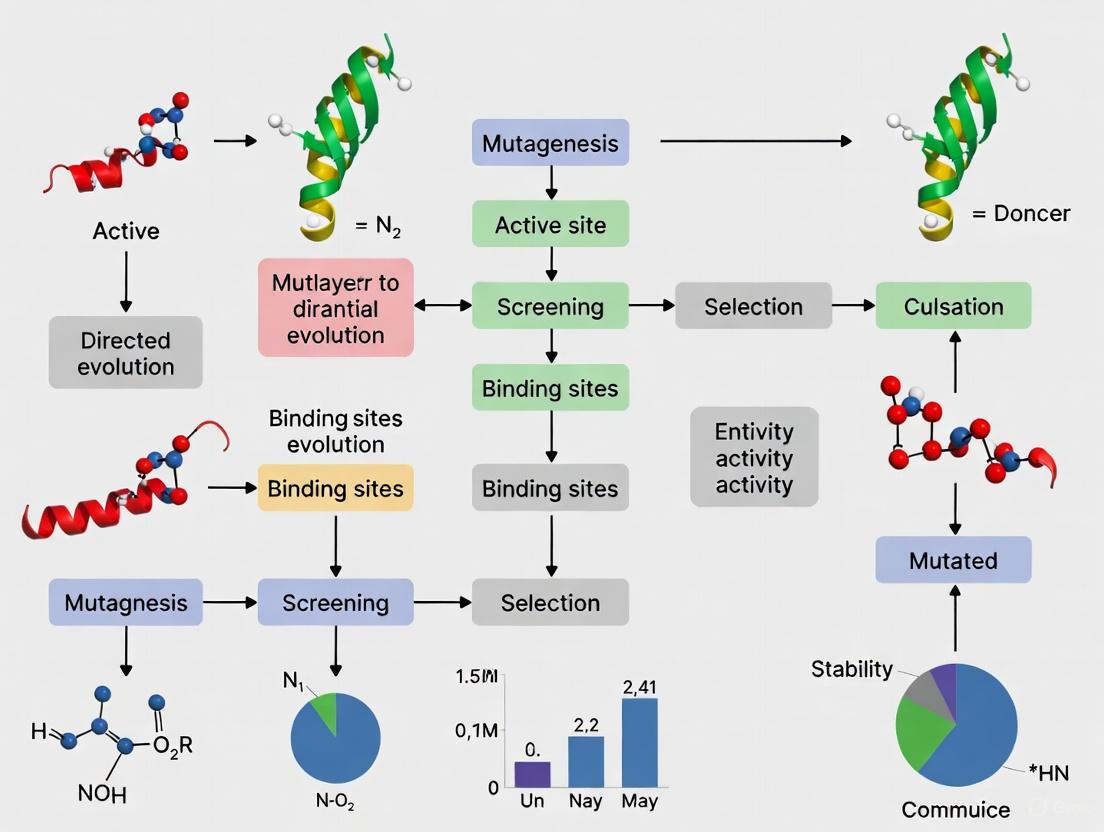

The Directed Evolution Workflow

The directed evolution process follows an iterative cycle of diversification and selection, where the output of each round serves as the input for the next, progressively optimizing the protein toward the desired function. The workflow can be visualized as follows:

Diagram 1: The Directed Evolution Cycle. This workflow illustrates the iterative process of diversification and selection that drives protein optimization.
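The same cycle can be expressed as a toy simulation. In the sketch below, "fitness" is simply the number of positions matching an arbitrary target sequence, a stand-in for a real activity assay; the sequences, library size, and number of rounds are hypothetical. Each round diversifies the current parent by random point mutation, screens the library, and carries the best variant forward only if it improves on the parent.

```python
import random

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"

def fitness(seq, target):
    """Toy assay: number of positions that match the target sequence."""
    return sum(a == b for a, b in zip(seq, target))

def mutate(seq, rng, n_mutations=1):
    """Replace n randomly chosen positions with randomly chosen amino acids."""
    residues = list(seq)
    for pos in rng.sample(range(len(residues)), n_mutations):
        residues[pos] = rng.choice(AMINO_ACIDS)
    return "".join(residues)

def directed_evolution(parent, target, rounds=10, library_size=200, seed=0):
    rng = random.Random(seed)
    history = [fitness(parent, target)]
    for _ in range(rounds):
        # Diversification: build a library of point mutants of the current parent.
        library = [mutate(parent, rng) for _ in range(library_size)]
        # Screening: evaluate every library member with the assay.
        best = max(library, key=lambda s: fitness(s, target))
        # Selection: the best variant becomes the next parent only if improved.
        if fitness(best, target) > fitness(parent, target):
            parent = best
        history.append(fitness(parent, target))
    return parent, history

evolved, history = directed_evolution("MKTAYIAKQR", "MRSAYLAKQW")
print("fitness per round:", history)
print("evolved sequence: ", evolved)
```

Because only improved variants are accepted, fitness never decreases between rounds; real campaigns face noisier assays and rugged landscapes, so this greedy walk is best read as the idealized case.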

Advanced Integration: AI and Machine Learning in Directed Evolution

The integration of artificial intelligence and machine learning with directed evolution represents a paradigm shift, moving from purely experimental approaches to computationally guided design [9] [10] [11]. This hybrid approach leverages the power of computational models to predict which mutations or sequences are most likely to yield improvements, dramatically reducing the experimental burden.

Deep Learning for Kinetic Parameter Prediction

Recent advances in deep learning have enabled the development of models that can predict enzyme kinetic parameters, such as kcat (turnover number), Km (Michaelis constant), and kcat/Km (catalytic efficiency), from protein sequences and substrate structures [9] [11]. Models like UniKP and CataPro combine embeddings from pre-trained language models (e.g., ProtT5 for protein sequences) with molecular representations of the substrate to make these predictions [9] [11].

The UniKP framework, for instance, transforms amino acid sequences into 1024-dimensional vectors using the ProtT5-XL-UniRef50 model and processes substrate structures represented in SMILES format through a pretrained SMILES transformer [11]. These representations are then concatenated and fed into machine learning models, with ensemble methods like extra trees demonstrating superior performance (R² = 0.65 compared to linear regression's R² = 0.38) [11]. Similarly, CataPro has been shown to have clearly enhanced accuracy and generalization ability on unbiased datasets compared to previous baseline models [9].
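To make the data flow and the reported metric concrete, the sketch below shows the concatenation of a protein embedding with a substrate embedding, and a plain implementation of the coefficient of determination (R²) used to compare models. The vectors are random placeholders rather than real ProtT5 or SMILES-transformer outputs, and the 1024-dimensional size of the substrate vector is an assumption of this example.

```python
import numpy as np

def r_squared(y_true, y_pred):
    """Coefficient of determination: 1 - SS_residual / SS_total."""
    y_true = np.asarray(y_true, dtype=float)
    y_pred = np.asarray(y_pred, dtype=float)
    ss_res = np.sum((y_true - y_pred) ** 2)
    ss_tot = np.sum((y_true - y_true.mean()) ** 2)
    return 1.0 - ss_res / ss_tot

rng = np.random.default_rng(0)
protein_vec = rng.normal(size=1024)    # placeholder for a ProtT5 sequence embedding
substrate_vec = rng.normal(size=1024)  # placeholder; dimension assumed for illustration
features = np.concatenate([protein_vec, substrate_vec])
print("feature vector length:", features.shape[0])

# Hypothetical measured vs. predicted log10(kcat) values for five enzyme-substrate pairs.
measured = [0.5, 1.2, 2.0, 2.9, 3.4]
predicted = [0.8, 1.0, 2.3, 2.5, 3.1]
print(f"R^2 = {r_squared(measured, predicted):.2f}")
```

An R² of 1 means the predictions reproduce the measurements exactly, while 0 means the model does no better than always predicting the mean.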

AI-Driven De Novo Design

Beyond predicting the effects of mutations, AI frameworks are now advancing toward de novo enzyme design [10]. A visionary perspective proposes a sophisticated AI-driven framework centered on a unified, controllable generative model that learns the joint distribution of protein sequences, 3D structures, and their functions [10]. This approach moves beyond simple prediction to achieve true de novo design through three key principles:

- Unified Generative Modeling: A single, powerful model learns the deep relationships between protein sequences, structures, and functions, enabling it to generate novel protein "blueprints" that are not just structurally plausible but also functionally viable [10].

- Controllable Generation: The model can be conditioned on a desired function, allowing researchers to specify a target chemical reaction—even one for which no natural enzyme exists—and receive novel protein sequences predicted to catalyze it [10].

- Active Learning via an Automated Loop: The framework creates a closed loop where the AI designs candidate enzymes, which are then synthesized and tested in high-throughput automated experiments, with results fed back into the model to continuously refine its understanding [10].

This "design-build-test-learn" cycle creates a powerful engine for discovery that transcends the limitations of traditional directed evolution by enabling exploration beyond naturally evolved enzyme scaffolds [10].

Essential Research Reagents and Tools

Table 3: Key Research Reagents and Platforms for Directed Evolution

| Reagent/Platform | Function | Application Example |

|---|---|---|

| Error-Prone PCR Kits | Introduce random mutations during gene amplification | Commercial kits (e.g., from Thermo Fisher, Takara) with optimized Mn²⁺ concentrations for controlled mutation rates [6] |

| DNase I Enzyme | Fragments genes for DNA shuffling | Creating random fragments of 100-300 bp for recombination in DNA shuffling protocols [6] |

| Phage Display Vectors | Genotype-phenotype linkage for selection | pIII or pVIII fusion vectors for displaying protein variants on bacteriophage surfaces [7] |

| FACS (Fluorescence-Activated Cell Sorting) | High-throughput screening based on fluorescence | Sorting microbial cells expressing enzyme variants fused to fluorescent reporters [7] |

| Microtiter Plates (96/384-well) | Individual variant screening | Hosting cell cultures for colorimetric or fluorometric enzyme activity assays [6] |

| Specialized Cell Lines | Host organisms for library expression | T7-ORACLE engineered E. coli with separate artificial DNA replication system for continuous evolution [8] |

| AI Prediction Tools (UniKP, CataPro) | In silico prediction of enzyme kinetic parameters | Ranking enzyme variants or designs before experimental testing to prioritize library synthesis [9] [11] |

The core cycle of diversification and selection remains the fundamental engine of directed evolution, providing a robust framework for optimizing and creating novel protein functions [6]. While the basic principles have remained consistent since the field's inception, methodologies have advanced dramatically—from early random mutagenesis and simple plate screens to sophisticated recombination techniques, ultra-high-throughput selection platforms, and continuous evolution systems that compress evolutionary timescales from millennia to days [6] [8] [7]. The ongoing integration of artificial intelligence and machine learning represents the next frontier, transitioning directed evolution from a largely experimental process to a computationally guided design discipline that can explore the vast uncharted regions of protein sequence space beyond natural evolution's constraints [9] [10] [11]. As these technologies mature and converge, they promise to unlock unprecedented capabilities in enzyme engineering for therapeutic development, sustainable chemistry, and beyond.

Key Advantages Over Rational Design

Enzyme engineering is a cornerstone of modern biotechnology, enabling the development of biocatalysts for applications ranging from pharmaceutical synthesis to sustainable industrial processes. Within this field, two primary engineering strategies have emerged: rational design and directed evolution. While rational design relies on detailed structural knowledge and computational modeling to make precise, targeted mutations, directed evolution (DE) mimics natural selection in a laboratory setting to steer proteins toward user-defined goals without requiring prior mechanistic understanding [12]. This forward-engineering approach harnesses iterative cycles of genetic diversification and functional selection to optimize enzyme properties, compressing geological timescales of evolution into manageable laboratory timelines [6]. The profound impact of directed evolution was recognized with the 2018 Nobel Prize in Chemistry, one half of which was awarded to Frances H. Arnold for the directed evolution of enzymes [6]. This technical guide examines the core advantages of directed evolution over rational design, providing researchers and drug development professionals with a comprehensive framework for leveraging this powerful methodology in their enzyme engineering initiatives.

Fundamental Comparative Analysis: Directed Evolution vs. Rational Design

The choice between directed evolution and rational design represents a fundamental strategic decision in protein engineering projects. Each approach employs distinct methodologies, underlying assumptions, and success criteria, making them differentially suited for specific research objectives and resource constraints.

Rational design operates analogously to architectural planning, requiring extensive pre-existing knowledge of protein structure and catalytic mechanism. Researchers using this approach employ computational models to predict how specific amino acid substitutions will affect protein function, then introduce these changes through site-directed mutagenesis [13]. This method excels when comprehensive structural data is available and the desired functional improvements can be achieved through well-understood structural modifications. However, its significant limitation lies in the inherent complexity of protein structure-function relationships, where even carefully calculated mutations often produce unexpected results due to the intricate network of interactions within protein architectures [12].

In contrast, directed evolution employs an empirical discovery-based approach that does not require mechanistic understanding of the target enzyme. By generating diverse genetic libraries and applying high-throughput screening or selection for the desired function, directed evolution identifies beneficial mutations through experimental observation rather than theoretical prediction [12] [6]. This methodology acknowledges the current limitations in our ability to fully predict protein behavior from sequence and structure alone, instead leveraging biological diversity and functional screening to uncover optimal solutions that might elude rational design efforts [14].

Table 1: Fundamental Comparison Between Directed Evolution and Rational Design

| Aspect | Directed Evolution | Rational Design |

|---|---|---|

| Knowledge Requirement | No need for detailed structural or mechanistic knowledge [12] [6] | Requires extensive structural and mechanistic understanding [13] [12] |

| Methodological Approach | Empirical, discovery-based; mimics natural evolution [12] [6] | Theoretical, structure-based; uses computational modeling [13] |

| Mutation Strategy | Random or semi-random mutagenesis across gene [12] [6] | Targeted, specific mutations based on structure [13] [12] |

| Handling of Complexity | Can navigate complex epistatic interactions experimentally [15] [16] | Struggles with predicting epistatic effects and long-range interactions [13] |

| Optimal Application Scope | Optimizing complex functions like thermostability, organic solvent tolerance, and novel activities [14] [6] | Making specific, well-understood alterations to binding sites or catalytic residues [13] |

The critical advantage of directed evolution lies in its ability to address engineering challenges where the relationship between sequence modification and functional improvement is poorly understood. Properties such as thermostability, solvent resistance, and activity toward non-natural substrates often involve complex, global changes to protein structure that are difficult to predict using rational design methodologies [14]. Through its iterative search-and-selection process, directed evolution can identify non-intuitive mutations and combinations that collectively enhance enzyme performance, frequently discovering solutions that would not have been conceived through rational approaches [6].

Core Technical Advantages of Directed Evolution

Ability to Function Without Structural Information

Perhaps the most significant advantage of directed evolution is its independence from detailed structural or mechanistic knowledge of the target enzyme. Whereas rational design requires high-resolution structural data (from X-ray crystallography, cryo-EM, or NMR) and comprehensive understanding of catalytic mechanisms to inform targeted mutations, directed evolution operates effectively with only a functional assay for the desired property [12] [6]. This capability dramatically expands the scope of enzymes accessible to engineering efforts, particularly for membrane-associated proteins, large complexes, and other targets resistant to high-resolution structural determination.

The practical implication of this advantage is that researchers can initiate engineering campaigns for enzymes with commercially or therapeutically valuable activities without investing months or years in structural characterization efforts. As long as a functional readout (however rudimentary) can be established, directed evolution can proceed to optimize the enzyme. This structural independence has enabled the engineering of numerous biocatalysts for industrial processes where structural information was limited or non-existent but where high-throughput screening methods could be developed [6].

Capacity to Address Complex Functional Properties

Directed evolution excels at optimizing complex enzyme properties that involve global structural changes and multiple synergistic mutations. These include characteristics such as thermostability, organic solvent tolerance, substrate specificity, and enantioselectivity, which often emerge from distributed networks of amino acid interactions throughout the protein structure rather than discrete localized changes [14] [6].

Thermostability engineering provides a compelling example of this advantage. Improving an enzyme's thermal stability requires enhancing the collective network of weak interactions (hydrogen bonds, van der Waals forces, hydrophobic interactions) that maintain the native folded state—a challenge poorly suited to rational design due to the distributed and cooperative nature of protein stability. Directed evolution approaches this problem by simply applying thermal challenge during the screening process, allowing variants with improved stability to be identified functionally without needing to understand the structural basis for their enhancement [6]. This empirical approach has successfully generated enzymes capable of functioning in industrial processes at temperatures up to 15°C higher than their wild-type counterparts [17].

Similarly, altering enzyme enantioselectivity for asymmetric synthesis—a valuable property for pharmaceutical production—often requires subtle coordination of multiple active site residues and access tunnels. Rational design of enantioselectivity remains exceptionally challenging, while directed evolution has produced numerous highly enantioselective biocatalysts by screening variant libraries against enantiomeric substrates [17].

Capacity to Navigate Epistatic Interactions

Proteins exhibit extensive epistasis, where the functional effect of one mutation depends on the presence or absence of other mutations in the sequence [15] [16]. This non-additive complexity creates rugged fitness landscapes with multiple local optima, presenting a fundamental challenge for rational design approaches that typically assume additive or predictable mutational effects.

Directed evolution inherently accounts for epistatic interactions through its iterative process of mutation and functional screening. As beneficial mutations are identified and accumulated in successive generations, their combinatorial effects are evaluated experimentally rather than computationally predicted. This empirical approach allows directed evolution to discover synergistic mutation combinations that collectively enhance enzyme performance beyond what would be expected from individual mutations [15].

The challenge of epistasis is particularly pronounced when engineering enzyme active sites, where residues work in concert to position substrates, stabilize transition states, and facilitate catalysis. In such landscapes, stepwise accumulation of single mutations behaves like greedy hill climbing and can become trapped in local optima; machine learning-assisted directed evolution has been shown to offer its largest advantage over traditional methods in exactly these cases [16]. Testing combinations of mutations directly, for example through recombination or simultaneous saturation of several positions, gives directed evolution a route out of these local optima that the additive assumptions common in rational design tend to miss.
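
The effect of epistasis on a stepwise search can be illustrated with a minimal sketch. The two-site landscape below is entirely hypothetical (the fitness values are chosen for illustration and are not measured data); it shows a case where each single mutation is deleterious alone, so a greedy single-mutation walk stays at the starting variant, while screening the full combinatorial library finds the double mutant.

```python
from itertools import product

# Hypothetical two-site landscape over a two-letter alphabet
# (A = wild-type residue, B = mutant residue). Illustrative values only.
FITNESS = {
    "AA": 1.0,  # starting variant
    "BA": 0.6,  # first single mutant: deleterious alone
    "AB": 0.7,  # second single mutant: deleterious alone
    "BB": 2.0,  # double mutant: beneficial only in combination
}


def neighbors(seq):
    """All variants that differ from seq at exactly one position."""
    out = []
    for i, residue in enumerate(seq):
        for alt in "AB":
            if alt != residue:
                out.append(seq[:i] + alt + seq[i + 1:])
    return out


def greedy_walk(start):
    """Single-mutation hill climbing: move to the best improving neighbor."""
    current = start
    while True:
        best = max(neighbors(current), key=FITNESS.get)
        if FITNESS[best] <= FITNESS[current]:
            return current  # no improving single mutation: local optimum
        current = best


def additive_prediction():
    """Double-mutant fitness expected if single-mutant effects simply added."""
    wt = FITNESS["AA"]
    return wt + (FITNESS["BA"] - wt) + (FITNESS["AB"] - wt)


greedy_end = greedy_walk("AA")
combinatorial_best = max(
    ("".join(p) for p in product("AB", repeat=2)), key=FITNESS.get
)
epistasis = FITNESS["BB"] - additive_prediction()

print("greedy walk ends at:", greedy_end)
print("best variant in the combinatorial library:", combinatorial_best)
print("epistasis (observed minus additive):", round(epistasis, 2))
```

On this toy landscape the greedy walk never leaves the starting variant, which is the situation in which combinatorial libraries or model-guided selection become attractive.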

Discovery of Non-Intuitive and Novel Solutions

The random mutagenesis component of directed evolution enables the exploration of sequence-function space beyond human intuition and current theoretical models. This capacity regularly leads to the discovery of non-intuitive mutations—changes at positions distant from active sites or involving unexpected amino acid substitutions—that nevertheless significantly enhance enzyme function [6].

These non-intuitive solutions often emerge because directed evolution selects purely based on functional outcomes rather than preconceived notions of which mutations "should" work. For example, beneficial mutations might occur in surface residues that affect protein dynamics and flexibility, in loop regions that influence active site accessibility, or at subunit interfaces in multimeric enzymes [17]. Such mutations would rarely be considered in rational design campaigns focused exclusively on active site engineering.

The ability to discover novel solutions is particularly valuable when engineering enzymes for non-natural functions or substrates. Directed evolution has successfully generated catalysts for reactions not found in nature, including cyclopropanation, Diels-Alder reactions, and silicon-carbon bond formation [15]. In these cases, where natural mechanistic principles provide limited guidance, directed evolution's empirical approach can explore entirely new catalytic solutions that expand the scope of biocatalysis beyond natural metabolic pathways.

Advanced Methodologies and Workflows

The Directed Evolution Workflow

The core directed evolution process follows an iterative cycle of diversity generation, screening or selection, and amplification. This workflow compresses evolutionary timescales into practical laboratory timelines by applying strong selective pressure for targeted enzyme properties.

Diagram 1: Directed Evolution Workflow

Diversity Generation Methods

Creating genetic diversity represents the foundational first step in any directed evolution campaign. Multiple molecular biology techniques have been developed to introduce variation into the target gene, each with distinct advantages and applications.

Error-Prone PCR (epPCR) stands as the most widely used random mutagenesis method. This technique modifies standard PCR conditions to reduce polymerase fidelity through manganese ions (Mn²⁺), unbalanced dNTP concentrations, and the use of polymerases lacking proofreading capability [6]. These conditions typically yield mutation rates of 1-5 base substitutions per kilobase, resulting in libraries with an average of one to two amino acid changes per variant. A significant limitation of epPCR is its bias toward transition mutations (purine-to-purine or pyrimidine-to-pyrimidine changes), which restricts the accessible amino acid substitutions at any given position to approximately 5-6 of the 19 possible alternatives [6].
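
The limited amino acid coverage of epPCR can be checked with a short calculation. The sketch below assumes the standard genetic code and that each mutated codon carries only one nucleotide substitution (a reasonable simplification at the low mutation rates quoted above); the function names are illustrative. It counts how many different amino acids are reachable from a codon, either by any single-nucleotide change or by transitions only.

```python
BASES = "TCAG"
# Standard genetic code with codons ordered T, C, A, G at each position.
AMINO_ACIDS = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {
    a + b + c: AMINO_ACIDS[16 * i + 4 * j + k]
    for i, a in enumerate(BASES)
    for j, b in enumerate(BASES)
    for k, c in enumerate(BASES)
}
# Transitions: purine <-> purine (A/G) and pyrimidine <-> pyrimidine (C/T).
TRANSITIONS = {"A": "G", "G": "A", "C": "T", "T": "C"}


def reachable(codon, transitions_only=False):
    """Amino acids reachable from codon by one nucleotide substitution.

    Synonymous changes and stop codons are excluded.
    """
    original = CODON_TABLE[codon]
    found = set()
    for pos, base in enumerate(codon):
        if transitions_only:
            alternatives = TRANSITIONS[base]
        else:
            alternatives = BASES.replace(base, "")
        for alt in alternatives:
            aa = CODON_TABLE[codon[:pos] + alt + codon[pos + 1:]]
            if aa not in (original, "*"):
                found.add(aa)
    return found


def mean_reachable(transitions_only=False):
    """Average number of reachable amino acids over all sense codons."""
    sense = [c for c, aa in CODON_TABLE.items() if aa != "*"]
    return sum(len(reachable(c, transitions_only)) for c in sense) / len(sense)


print("ATG, any single substitution:", sorted(reachable("ATG")))
print("ATG, transitions only:", sorted(reachable("ATG", transitions_only=True)))
print("mean over sense codons (any / transitions only):",
      round(mean_reachable(), 2), round(mean_reachable(True), 2))
```

For the methionine codon ATG, six amino acids are reachable by any single substitution and three by transitions alone, which gives a sense of how strongly polymerase bias narrows the sampled substitutions.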

DNA Shuffling represents a more sophisticated approach that mimics natural recombination. In this method, one or more parent genes are fragmented with DNase I, then reassembled in a primer-free PCR reaction where fragments from different templates cross-prime each other [6]. This process generates chimeric genes containing novel combinations of mutations from the parent sequences. Family Shuffling extends this concept by recombining homologous genes from different species, accessing the functional diversity that natural evolution has already created. These recombination methods typically require at least 70-75% sequence identity between parent genes for efficient reassembly [6].
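
Before committing to a shuffling experiment, the identity requirement can be checked computationally. The sketch below is a minimal illustration that assumes the parent genes have already been aligned to equal length (with "-" for gaps); the parent names and short sequences are made up for the example, and the 70% default threshold is taken from the range quoted above.

```python
from itertools import combinations


def percent_identity(seq_a, seq_b):
    """Percent identical positions between two pre-aligned sequences.

    Columns containing a gap ("-") in either sequence are ignored.
    """
    if len(seq_a) != len(seq_b):
        raise ValueError("sequences must be aligned to the same length")
    compared = [(a, b) for a, b in zip(seq_a, seq_b) if a != "-" and b != "-"]
    if not compared:
        return 0.0
    matches = sum(a == b for a, b in compared)
    return 100.0 * matches / len(compared)


def pairs_below_threshold(parents, threshold=70.0):
    """Return (name_a, name_b, identity) for parent pairs under the threshold."""
    low = []
    for (name_a, seq_a), (name_b, seq_b) in combinations(parents.items(), 2):
        identity = percent_identity(seq_a, seq_b)
        if identity < threshold:
            low.append((name_a, name_b, identity))
    return low


# Hypothetical, pre-aligned toy sequences (real genes would be far longer).
parents = {
    "parent_a": "ATGGCTAAGCTTGGATCCGA",
    "parent_b": "ATGGCAAAGCTTGGTTCCGA",
    "parent_c": "ATGACGTTTCAGGCATGGCT",
}

low_pairs = pairs_below_threshold(parents)
for name_a, name_b, identity in low_pairs:
    print(f"{name_a} vs {name_b}: {identity:.0f}% identity, poor shuffling candidates")
```

In practice the alignment itself would come from a dedicated alignment tool; only the identity bookkeeping is shown here.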

Site-Saturation Mutagenesis offers a semi-rational middle ground, targeting specific regions or residues for comprehensive variation. Using degenerate codons (such as NNK, where N represents any nucleotide and K represents G or T), researchers can create libraries that explore all 20 possible amino acids at targeted positions [6]. This approach is particularly valuable for focused optimization of active site residues or "hotspots" identified in preliminary evolution rounds, enabling deep exploration of specific sequence regions with manageable library sizes.
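
The properties of the NNK codon set can be verified by enumeration. The following sketch assumes the standard genetic code and simply lists every NNK codon, counts the amino acids and stop codons it encodes, and shows how the library grows when several positions are saturated at once.

```python
from collections import Counter
from itertools import product

BASES = "TCAG"
# Standard genetic code with codons ordered T, C, A, G at each position.
AMINO_ACIDS = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {
    a + b + c: AMINO_ACIDS[16 * i + 4 * j + k]
    for i, a in enumerate(BASES)
    for j, b in enumerate(BASES)
    for k, c in enumerate(BASES)
}

# N = any nucleotide, K = G or T.
nnk_codons = ["".join(p) for p in product("ACGT", "ACGT", "GT")]

encoded = Counter(CODON_TABLE[codon] for codon in nnk_codons)
n_stop = encoded.pop("*", 0)  # remove stop codons from the amino acid tally

print("NNK codons:", len(nnk_codons))
print("amino acids encoded:", len(encoded))
print("stop codons:", n_stop)
print("codons per amino acid:", dict(sorted(encoded.items())))

# Library size when several positions are saturated simultaneously.
for sites in range(1, 5):
    print(f"{sites} site(s): {32 ** sites:>9,} codon combinations, "
          f"{20 ** sites:>7,} protein variants")
```

The enumeration gives 32 codons covering all 20 amino acids with a single stop codon (TAG). Some amino acids are encoded by three NNK codons and others by one, so variants are not equally represented, and the codon-level library grows as 32 to the power of the number of saturated sites.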

Table 2: Key Diversity Generation Methods in Directed Evolution

| Method | Mechanism | Diversity Scope | Library Size | Key Applications |

|---|---|---|---|---|

| Error-Prone PCR | Reduced polymerase fidelity introduces random point mutations [6] | Entire gene; 1-2 amino acid changes/variant | 10³-10⁶ variants | Initial exploration; stability improvements |

| DNA Shuffling | Fragmentation and recombination of homologous genes [6] | Recombines existing mutations; crossovers in regions of high identity | 10⁴-10⁸ variants | Combining beneficial mutations; accessing natural diversity |

| Site-Saturation Mutagenesis | Degenerate codons at targeted positions [6] | All 20 amino acids at specific residues | 10²-10⁴ variants per position | Active site engineering; optimizing key positions |

Screening and Selection Strategies

Identifying improved variants within large libraries represents the critical bottleneck in directed evolution. The screening or selection strategy must reliably detect functional enhancements while handling the library's size and complexity.

Selection methods directly couple desired enzyme function to host organism survival or replication. For example, an enzyme that degrades an environmental toxin could enable host growth in the toxin's presence, or an enzyme in an essential metabolic pathway could become necessary under specific nutrient conditions [12]. Selection approaches can handle extremely large libraries (up to 10¹⁵ variants) through survival-based enrichment but provide limited quantitative information about performance improvements and can be susceptible to false positives from general stress resistance mechanisms [12].

Screening approaches individually assay each variant's function, typically using colorimetric, fluorogenic, or spectrophotometric readouts in microtiter plate formats [6]. While lower in throughput (typically 10³-10⁴ variants per round) than selection methods, screening provides rich quantitative data on each variant's performance and enables multi-parameter optimization (e.g., balancing activity and stability). Recent advances in microfluidics and droplet-based assays have dramatically increased screening throughput while maintaining quantitative assessment capabilities [14].

The empirical principle "you get what you screen for" underscores the critical importance of assay design in directed evolution [6]. The screening method must directly measure the desired enzyme property or employ a reliable proxy that correlates with the target function. For industrial applications, it is particularly important to design screening conditions that mimic the final application environment, including factors like temperature, pH, solvent composition, and substrate concentration.

Emerging Innovations and Hybrid Approaches

Machine Learning-Assisted Directed Evolution

The integration of machine learning (ML) with directed evolution represents a paradigm shift in protein engineering methodology. ML-assisted directed evolution (MLDE) uses computational models trained on sequence-function data to predict high-fitness variants, dramatically reducing experimental screening requirements [16].

These approaches are particularly valuable for navigating epistatic landscapes where traditional directed evolution struggles. Active Learning-assisted Directed Evolution (ALDE) employs an iterative workflow where machine learning models select which variants to test in each round based on previous experimental results and uncertainty quantification [15]. This strategy has demonstrated remarkable efficiency in challenging engineering problems, such as optimizing five epistatic active site residues in a protoglobin for non-native cyclopropanation activity. Where traditional directed evolution failed to make significant progress, ALDE improved product yield from 12% to 93% in just three rounds while evaluating only ~0.01% of the possible sequence space [15].
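
The scale of this search problem, and the general shape of an active-learning loop, can be sketched in a few lines. The code below is not the ALDE implementation from [15]: it is a toy under stated assumptions, with a synthetic three-site landscape standing in for wet-lab measurements, a simple per-site average model, and a count-based exploration bonus in place of proper uncertainty quantification. All names, batch sizes, and parameters are illustrative.

```python
import random
from itertools import product

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"

# Arithmetic from the text: five fully randomized positions.
space_5_sites = len(AMINO_ACIDS) ** 5        # 3,200,000 sequences
budget_5_sites = space_5_sites // 10_000     # 0.01% of that space

# Synthetic landscape (hypothetical; 3 sites keeps the demo fast).
rng = random.Random(0)
N_SITES = 3
SITE_EFFECT = [{aa: rng.gauss(0, 1) for aa in AMINO_ACIDS} for _ in range(N_SITES)]
# Pairwise term between sites 0 and 1 introduces epistasis.
PAIR_EFFECT = {(a, b): rng.gauss(0, 1) for a in AMINO_ACIDS for b in AMINO_ACIDS}


def measure(seq):
    """Stand-in for an experimental assay on one variant."""
    additive = sum(SITE_EFFECT[i][aa] for i, aa in enumerate(seq))
    return additive + PAIR_EFFECT[(seq[0], seq[1])]


def fit_site_means(measured):
    """Mean measured fitness for every (site, residue) seen so far."""
    sums, counts = {}, {}
    for seq, value in measured.items():
        for key in enumerate(seq):
            sums[key] = sums.get(key, 0.0) + value
            counts[key] = counts.get(key, 0) + 1
    return {k: sums[k] / counts[k] for k in sums}, counts


def acquisition(seq, means, counts, overall_mean, bonus=1.0):
    """Predicted fitness plus a bonus for rarely observed residues."""
    score = 0.0
    for key in enumerate(seq):
        score += means.get(key, overall_mean)
        score += bonus / (1 + counts.get(key, 0)) ** 0.5
    return score


BATCH = 48
all_seqs = ["".join(p) for p in product(AMINO_ACIDS, repeat=N_SITES)]
measured = {s: measure(s) for s in rng.sample(all_seqs, BATCH)}  # initial round
best_per_round = [max(measured.values())]

for _ in range(3):  # three model-guided rounds
    means, counts = fit_site_means(measured)
    overall = sum(measured.values()) / len(measured)
    candidates = [s for s in all_seqs if s not in measured]
    candidates.sort(key=lambda s: acquisition(s, means, counts, overall), reverse=True)
    for seq in candidates[:BATCH]:
        measured[seq] = measure(seq)
    best_per_round.append(max(measured.values()))

print(f"5-site space: {space_5_sites:,}; 0.01% budget: {budget_5_sites}")
print(f"toy run measured {len(measured)} of {len(all_seqs)} variants")
print("best fitness after each round:", [round(b, 2) for b in best_per_round])
```

The arithmetic shows why the reported efficiency matters: five saturated positions give 3.2 million sequences, so 0.01% of the space corresponds to only a few hundred measured variants.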

Focused training MLDE (ftMLDE) enhances these approaches by using zero-shot predictors—computational models that estimate fitness without experimental training data—to pre-enrich libraries with promising variants before screening [16]. These predictors leverage evolutionary, structural, or biophysical principles to prioritize variants more likely to exhibit improved function. Research has demonstrated that MLDE methods provide the greatest advantage over traditional directed evolution precisely in landscapes that are most challenging for conventional approaches, such as those with few functional variants, high epistasis, and multiple local optima [16].

Semi-Rational and Hybrid Methods

The distinction between directed evolution and rational design has blurred with the emergence of semi-rational approaches that incorporate structural and sequence information to create focused, intelligent libraries. These methods leverage available knowledge to restrict mutagenesis to promising regions while still employing empirical screening to identify optimal solutions [17] [18].

Sequence-based consensus design uses multiple sequence alignments of homologous proteins to identify conserved and variable positions, guiding mutagenesis to naturally variable sites more likely to tolerate mutations [17]. Structure-guided focused libraries target residues near active sites, substrate access tunnels, or flexible regions likely to influence catalytic properties [17]. Computational design algorithms can identify positions with high potential for functional improvement based on evolutionary coupling analysis, molecular dynamics simulations, or predicted stability effects [17] [19].

These semi-rational approaches create smaller, higher-quality libraries (often <1000 variants) that require less screening effort while maintaining the exploratory power of directed evolution. This strategy has proven particularly effective for challenging engineering objectives like altering substrate specificity or enhancing stereoselectivity, where random mutagenesis of entire genes would produce impractically large libraries with low frequencies of improved variants [17].

The Scientist's Toolkit: Essential Research Reagents

Successful directed evolution campaigns require carefully selected molecular biology reagents and methodologies tailored to each project's specific goals and constraints.

Table 3: Essential Research Reagents and Methods for Directed Evolution

| Reagent/Method | Function | Key Considerations |

|---|---|---|

| Error-Prone PCR Kits | Introduce random mutations across gene [6] | Tunable mutation rates; bias toward transitions; typically 1-2 aa changes/variant |

| Site-Saturation Mutagenesis Kits | Comprehensive variation at targeted residues [6] | NNK degeneracy covers all 20 amino acids; library size manageable for screening |

| High-Throughput Screening Assays | Identify functional variants from libraries [6] | Colorimetric/fluorogenic substrates; microtiter plate compatibility; throughput 10³-10⁴ variants |

| Emulsion PCR/Compartmentalization | Genotype-phenotype linkage [14] | Aqueous droplets in oil create microreactors; enables screening of 10⁸+ variants |

| Homologous Gene Sets | DNA shuffling and family shuffling [6] | >70% sequence identity for efficient recombination; accesses natural diversity |

| Machine Learning Platforms | Predict high-fitness variants [15] [16] | Active learning; zero-shot predictors; reduces experimental screening load |

Directed evolution provides a powerful, versatile platform for enzyme engineering that demonstrates distinct advantages over rational design approaches, particularly when tackling complex functional objectives or working with structurally uncharacterized proteins. Its capacity to function without detailed mechanistic knowledge, navigate epistatic landscapes, address global protein properties, and discover non-intuitive solutions has established it as the method of choice for numerous biotechnology applications.

The continuing evolution of this technology—through machine learning integration, semi-rational methodologies, and high-throughput screening innovations—promises to further expand its capabilities and applications. As these computational and experimental advances mature, directed evolution is poised to become increasingly predictive and efficient while retaining its fundamental strength: the empirical discovery of functional solutions through experimental observation rather than theoretical prediction alone.

For researchers and drug development professionals, directed evolution offers a robust methodological framework for optimizing biocatalysts across the pharmaceutical, chemical, and biotechnology sectors. Its demonstrated success in generating enzymes with enhanced stability, novel activities, and tailored specificities underscores its value as a cornerstone technology for the ongoing development of sustainable bioprocesses and therapeutic innovations.

Historical Context and Nobel Prize Recognition

Directed evolution stands as a transformative methodology in protein engineering, enabling researchers to tailor enzymes and other biomolecules for specific applications by mimicking natural selection in a controlled laboratory environment [12]. This forward-engineering process harnesses iterative cycles of genetic diversification and functional selection to optimize protein properties such as catalytic activity, stability, and substrate specificity [6]. The profound impact of this approach on basic research and biotechnology was formally recognized with the awarding of the 2018 Nobel Prize in Chemistry to Frances H. Arnold for her pioneering work in evolving enzymes, and to George Smith and Gregory Winter for developing phage display techniques [20] [6] [12]. This whitepaper provides researchers and drug development professionals with a comprehensive technical examination of directed evolution's historical context, fundamental principles, and methodological approaches.

Historical Development

The conceptual foundations of directed evolution trace back to pioneering in vitro evolution experiments in the 1960s, most notably Spiegelman's landmark study with the Qβ bacteriophage RNA replicase [20]. In these experiments, RNA molecules were evolved based on their replication efficiency, demonstrating Darwinian principles in a test tube [12]. The field expanded significantly in the 1980s with the development of phage display technology, which enabled the selection of binding proteins by linking genotype to phenotype through physical connection between displayed peptides and their encoding DNA [20] [12].

During the 1990s, methodological advances brought directed evolution to a wider scientific audience, particularly for enzyme engineering [12]. Key developments included:

- Error-prone PCR for introducing random mutations throughout gene sequences [21] [6]

- DNA shuffling pioneered by Willem P.C. Stemmer to mimic sexual recombination and combine beneficial mutations [6]

- In vitro compartmentalization using water-in-oil emulsions to maintain genotype-phenotype linkage [21]

The subsequent decades witnessed rapid diversification of techniques for creating genetic diversity and screening for desired functions, establishing directed evolution as a cornerstone of modern protein engineering [20].

Table 1: Historical Milestones in Directed Evolution

| Time Period | Key Development | Primary Application | Key Researchers |

|---|---|---|---|

| 1960s | In vitro RNA evolution | Fundamental evolution principles | Spiegelman et al. |

| 1980s | Phage display | Peptide and antibody selection | George Smith |

| 1990s | Error-prone PCR, DNA shuffling | Enzyme engineering | Frances Arnold, Willem Stemmer |

| 2000s-present | High-throughput screening, automation | Metabolic engineering, biocatalysis | Multiple groups |

Fundamental Principles

The directed evolution cycle operates through an iterative process of diversification, selection, and amplification that mimics natural evolution while operating on laboratory timescales [6] [12]. This systematic approach enables researchers to navigate the vast landscape of protein sequence space efficiently.

The Core Evolutionary Cycle

- Diversification: Creating genetic diversity through targeted or random mutagenesis of parent gene(s) [12]

- Selection or Screening: Identifying variants with desired properties from the mutant library [12]

- Amplification: Propagating selected variants to enrich the population and serve as templates for subsequent cycles [12]

The critical distinction from natural evolution lies in the application of user-defined selection pressures specifically designed to optimize particular protein properties rather than organismal fitness [6]. Success in directed evolution experiments correlates directly with the total library size screened, as evaluating more mutants increases the probability of discovering rare beneficial variants [12].
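
The link between library size and the chance of success can be made concrete with a simple probability model. The sketch below assumes that each screened variant is an independent draw and that improved variants occur at a fixed frequency; the frequency of 1 in 10,000 is a hypothetical value chosen for illustration, not a figure from the cited sources.

```python
import math


def p_at_least_one_hit(frequency, n_screened):
    """Probability of seeing at least one improved variant in n_screened draws."""
    return 1.0 - (1.0 - frequency) ** n_screened


def variants_needed(frequency, confidence=0.95):
    """Smallest number of variants to screen to reach the given confidence."""
    return math.ceil(math.log(1.0 - confidence) / math.log1p(-frequency))


hit_frequency = 1e-4  # hypothetical: 1 improved variant per 10,000

for n in (10**3, 10**4, 10**5):
    print(f"screen {n:>7,} variants: "
          f"P(at least one hit) = {p_at_least_one_hit(hit_frequency, n):.3f}")

print("variants needed for 95% confidence:", variants_needed(hit_frequency))
```

Under these assumptions, reaching 95% confidence requires screening roughly three times the reciprocal of the hit frequency, which is why assay throughput so often sets the practical limit of a campaign.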

Comparison with Rational Design

Directed evolution offers distinct advantages and limitations compared to rational design approaches:

Advantages:

- Does not require detailed knowledge of protein three-dimensional structure or catalytic mechanism [12]

- Capable of discovering non-intuitive mutations and complex epistatic solutions that would not be predicted computationally [6]

- Systematically explores sequence-function relationships through empirical testing [20]

Limitations:

- Requires development of high-throughput assays compatible with large library sizes [12]

- May lead to specialization on assay conditions rather than the true desired activity [12]

- Practical constraints on the number of variants that can be screened limit exploration of sequence space [12]

Modern protein engineering often employs semi-rational approaches that combine structural insights with directed evolution, using focused libraries to target specific regions while maintaining the benefits of empirical screening [12].

Methodologies and Experimental Approaches

Library Generation Strategies

Creating genetic diversity represents the foundational step in directed evolution, with method selection profoundly influencing experimental outcomes.

Table 2: Library Generation Methods in Directed Evolution

| Method | Mechanism | Advantages | Limitations | Typical Library Size |

|---|---|---|---|---|

| Error-prone PCR | Reduced fidelity polymerase with Mn²⁺ and dNTP imbalance [6] | Easy implementation; no prior structural knowledge needed [21] | Mutational bias (transition favored); limited amino acid sampling (5-6 alternatives per position) [6] | 10⁴-10⁶ variants |

| DNA Shuffling | DNase I fragmentation + reassembly of homologous genes [6] | Recombines beneficial mutations; mimics natural recombination [6] | Requires high sequence identity (>70-75%); non-uniform crossover distribution [6] | 10⁶-10⁸ variants |

| Site-Saturation Mutagenesis | Targeted randomization of specific codons to all amino acids [6] | Comprehensive exploration of key positions; smaller, higher-quality libraries [21] [12] | Requires identification of target residues; limited to focused regions [12] | 10²-10⁴ variants per position |

| Trimer Codon Mutagenesis | Trimeric phosphoramidites encoding optimal codons [21] | Avoids stop codons and skewed representations; improved protein expression [21] | Custom synthesis required; higher cost [21] | 10⁴-10⁶ variants |

Screening and Selection Platforms

Identifying improved variants from mutant libraries represents the critical bottleneck in directed evolution, with method selection dictated by the specific protein property being optimized and available assay throughput.

Selection Methods directly couple desired function to host survival or replication, enabling efficient processing of extremely large libraries (up to 10¹⁵ variants) [12]. Examples include:

- Phage display for binding affinity optimization [20] [12]

- Complementation assays where enzyme activity is necessary for survival under selective pressure [12]

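- FACS-based methods, which use fluorescence-activated cell sorting to enrich active variants from very large libraries; strictly speaking, FACS is a high-throughput screen rather than a selection, because each cell is measured individually [21] [20]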

Screening Methods involve individual assessment of each variant, providing quantitative activity data but with lower throughput [12]. Common approaches include:

- Microtiter plate assays using colorimetric or fluorogenic substrates [21] [6]

- Colony-based screens on solid media with chromogenic substrates [6]

- Emulsion-based technologies compartmentalizing reactions in picoliter droplets [21]

Table 3: Screening and Selection Method Comparison

| Method | Throughput | Quantitation | Key Applications | Technical Requirements |
|---|---|---|---|---|
| Microtiter plate screening | 10³-10⁴ variants | Quantitative kinetic data | Enzyme activity, specificity [21] | Plate readers, liquid handling |
| Colony screening | 10⁴-10⁵ variants | Semi-quantitative | Hydrolytic enzymes, metabolic pathways [6] | Solid media, imaging systems |
| FACS-based sorting | 10⁷-10⁸ variants | Quantitative | Binding affinity, cell-surface enzymes [21] [20] | Flow cytometer, fluorogenic substrates |
| In vitro compartmentalization | 10⁸-10¹⁰ variants | Quantitative | Antibody evolution, catalytic activity [21] | Microfluidics, emulsion expertise |
| Phage display | 10⁹-10¹¹ variants | Qualitative | Protein-protein interactions, binding proteins [12] | Phage library, immobilization |

The Scientist's Toolkit: Research Reagent Solutions

Successful directed evolution campaigns require specialized reagents and materials to enable library construction, protein expression, and functional screening.

Table 4: Essential Research Reagents for Directed Evolution

| Reagent/Material | Function | Application Examples | Technical Considerations |
|---|---|---|---|
| NNK Degenerate Codon Oligos | Incorporates all 20 amino acids at targeted positions [21] | Site-saturation mutagenesis, focused libraries [15] | NNK encodes 32 codons covering all 20 amino acids and one stop codon |
| Trimer Phosphoramidites | Equimolar mixture coding for optimal codons [21] | Targeted mutagenesis with biased codon usage [21] | Customized mixes available from vendors like IDT; avoids rare codons |
| Error-Prone PCR Kit | Modified polymerase with low fidelity for random mutagenesis [6] | Whole-gene random mutagenesis [6] | Typically uses Taq polymerase with Mn²⁺ and dNTP imbalance |

- Fluorogenic Substrates: Enable high-throughput screening by generating fluorescent signal upon enzymatic reaction; essential for FACS-based methods [21]

- Water-in-Oil Emulsion Reagents: Create artificial compartments for in vitro transcription-translation and screening [21]

- Microfluidic Devices: Generate uniform monodisperse droplets for high-throughput screening [21]
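The NNK entry in Table 4 can be checked by direct enumeration. The following is a minimal sketch that expands the NNK degenerate codon against the standard genetic code; the names `CODON_TABLE` and `expand_nnk` are illustrative and do not come from any of the cited protocols.

```python
from itertools import product

BASES = "TCAG"
# Standard genetic code, ordered by first, second, then third base over T, C, A, G.
AMINO_ACIDS = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {
    "".join(codon): aa
    for codon, aa in zip(product(BASES, repeat=3), AMINO_ACIDS)
}


def expand_nnk():
    """Return every codon matching NNK (N = A/C/G/T, K = G/T)."""
    return ["".join(c) for c in product("ACGT", "ACGT", "GT")]


nnk_codons = expand_nnk()
# Distinct amino acids encoded, excluding the stop symbol "*".
amino_acids = sorted({CODON_TABLE[c] for c in nnk_codons} - {"*"})
# Stop codons that survive the NNK restriction.
stop_codons = [c for c in nnk_codons if CODON_TABLE[c] == "*"]

print(len(nnk_codons), "codons;", len(amino_acids), "amino acids; stops:", stop_codons)
```

Because the third base is restricted to G or T, the TAA and TGA stop codons are excluded and only TAG remains, which is why NNK is often preferred over fully random NNN codons.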

Advanced Applications and Recent Innovations

Directed evolution has demonstrated remarkable success across diverse biotechnology sectors, from industrial biocatalysis to therapeutic development.

Industrial and Therapeutic Applications

- Enzyme Stabilization: Enhancing thermostability and solvent tolerance for industrial processes [12]

- Substrate Specificity Engineering: Altering native enzyme specificity for non-natural substrates and industrial applications [12]

- Therapeutic Antibody Optimization: Improving binding affinity and reducing immunogenicity through phage display and other display technologies [20] [12]

- Metabolic Pathway Engineering: Optimizing biosynthetic pathways for production of pharmaceuticals and fine chemicals [6]

Machine Learning-Enhanced Directed Evolution

Recent advances integrate machine learning (ML) with directed evolution to navigate protein fitness landscapes more efficiently. Active Learning-assisted Directed Evolution (ALDE) represents a cutting-edge approach that leverages uncertainty quantification to prioritize which variants to test in each iterative cycle [15].

This ML-guided approach demonstrated remarkable efficiency in optimizing a challenging five-residue active site in a protoglobin for non-native cyclopropanation activity, achieving 99% total yield and 14:1 diastereoselectivity after exploring only ~0.01% of the theoretical sequence space [15]. The integration of computational modeling with empirical screening represents the future of protein engineering, particularly for navigating epistatic fitness landscapes where mutation effects are non-additive [15].
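To make the idea of uncertainty-guided prioritization concrete, here is a minimal sketch of one common acquisition rule, the upper confidence bound (UCB), which ranks candidates by predicted fitness plus a multiple of the model's uncertainty. This article does not state which acquisition function ALDE uses, so the rule, the function name `select_batch`, the `beta` parameter, and the toy predictions are all assumptions for illustration only.

```python
def select_batch(predictions, batch_size, beta=1.0):
    """Pick the next variants to test using an upper-confidence-bound score.

    predictions: dict mapping variant name -> (predicted_mean, predicted_std).
    beta: weight on uncertainty; 0 gives pure exploitation of the predicted mean.
    """
    ranked = sorted(
        predictions.items(),
        key=lambda item: item[1][0] + beta * item[1][1],
        reverse=True,
    )
    return [variant for variant, _ in ranked[:batch_size]]


# Hypothetical model outputs for three variants (mean, standard deviation).
toy_predictions = {
    "variant_A": (0.50, 0.05),
    "variant_B": (0.40, 0.40),
    "variant_C": (0.60, 0.00),
}
exploratory = select_batch(toy_predictions, batch_size=2, beta=1.0)
greedy = select_batch(toy_predictions, batch_size=2, beta=0.0)

# Scale of the search problem described above: five fully randomized residues.
design_space = 20 ** 5             # 3,200,000 possible sequences
tested_at_0_01_percent = design_space // 10_000
```

With `beta=1.0` the uncertain `variant_B` is prioritized even though its predicted mean is lowest, whereas `beta=0.0` ignores uncertainty entirely. For scale, 0.01% of a five-residue design space corresponds to roughly 320 variants.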

Directed evolution has matured from fundamental evolutionary studies into an indispensable protein engineering platform that has transformed biotechnology and biomedical research. The field's progression from simple random mutagenesis to sophisticated ML-integrated approaches demonstrates how methodological innovations continue to expand the scope and efficiency of protein optimization. The 2018 Nobel Prize in Chemistry, awarded in part for the directed evolution of enzymes, cemented its status as a foundational technology that will continue to drive innovation in therapeutic development, industrial biocatalysis, and basic research. As methodological advances enable exploration of increasingly complex sequence-function relationships, directed evolution promises to unlock new frontiers in protein design and engineering.

Core Techniques and Real-World Applications

In the field of enzyme engineering, directed evolution mimics natural selection in the laboratory to develop enzymes with enhanced properties, such as improved catalytic efficiency, stability, or novel substrate specificity [22] [23]. The process hinges on the creation of diverse genetic libraries, from which improved protein variants are identified. Two foundational strategies for generating these libraries are random mutagenesis, typically using error-prone PCR (epPCR), and site-saturation mutagenesis (SSM). The choice between creating diversity throughout an entire gene or focusing it on specific amino acid positions represents a critical strategic decision in any directed evolution campaign. This guide provides an in-depth technical comparison of these two methods, detailing their principles, protocols, and applications within a modern enzyme engineering workflow.

Core Principles and Strategic Comparison

Error-Prone PCR (epPCR)

Error-prone PCR is a method for introducing random mutations throughout a target gene. It relies on reducing the fidelity of the DNA polymerase during amplification by manipulating PCR conditions, such as using manganese ions or unbalanced nucleotide concentrations [24] [25]. This results in a library of gene variants with mutations scattered randomly across the entire sequence. The major advantage of epPCR is its ability to discover beneficial mutations anywhere in the protein, including distant residues that can profoundly influence activity and stability through long-range effects [26]. However, because the mutations are random, the library can contain a high proportion of neutral or deleterious variants, and the number of possible variants is so vast that even the largest libraries can only sample a tiny fraction of the sequence space.
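The composition of an epPCR library can be estimated with a simple model. The sketch below assumes that the number of mutations per gene copy follows a Poisson distribution, which is a common simplification rather than something stated in the cited protocols; the mutation rate and gene length are hypothetical values chosen only for illustration.

```python
import math


def mean_mutations_per_gene(rate_per_kb, gene_length_bp):
    """Expected number of mutations per gene copy."""
    return rate_per_kb * gene_length_bp / 1000.0


def mutation_count_distribution(mean_mutations, max_count):
    """P(k mutations) for k = 0..max_count under the assumed Poisson model."""
    return [
        math.exp(-mean_mutations) * mean_mutations ** k / math.factorial(k)
        for k in range(max_count + 1)
    ]


# Hypothetical example: 3 mutations per kb across a 700 bp gene.
mean = mean_mutations_per_gene(rate_per_kb=3.0, gene_length_bp=700)
distribution = mutation_count_distribution(mean, max_count=6)
for count, probability in enumerate(distribution):
    print(f"{count} mutations: {probability:.3f}")
```

Under this model, the first entry of the distribution is the fraction of unmutated (wild-type) clones, which is screening effort that yields no new information, while the upper tail estimates the heavily mutated clones that are most likely to be inactive.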

Site-Saturation Mutagenesis (SSM)

Site-saturation mutagenesis is a targeted approach where one specific codon in a gene is replaced with a mixture of codons encoding all 20 possible amino acids [27] [28]. This process is typically repeated for a set of pre-selected residues. This method is highly precise, allowing researchers to systematically interrogate the functional role of every amino acid at a defined position. Its key strength is the efficient exploration of local sequence space around active sites, substrate-binding pockets, or regions suspected to be important for stability [26]. SSM libraries are much smaller and more manageable than random mutagenesis libraries, so they can be screened to near-complete coverage even with modest-throughput assays. The primary limitation is that it requires prior knowledge or a hypothesis about which residues to target.

Choosing the Right Strategy

epPCR and SSM are not mutually exclusive, and the choice between them often depends on the available structural and functional information.

- Use epPCR when working with a protein of unknown structure or when you aim to discover unexpected improvements from mutations anywhere in the sequence.

- Use SSM when you have a structural model or functional data pointing to specific residues of interest, or when you need to comprehensively analyze a defined region like an active site.

Modern directed evolution experiments often combine both strategies; for instance, using epPCR for broad discovery in early rounds, followed by SSM to fine-tune key positions identified in the best variants [23].

Table 1: Strategic Comparison of epPCR and Site-Saturation Mutagenesis

| Feature | Error-Prone PCR (epPCR) | Site-Saturation Mutagenesis (SSM) |
|---|---|---|
| Principle | Introduces random mutations throughout the entire gene [25]. | Systematically substitutes a specific residue with all 20 amino acids [27] [28]. |
| Library Diversity | Global, untargeted | Localized, focused |
| Prior Knowledge Required | Minimal | High (e.g., structural or functional data) |
| Library Size | Very large, often >10⁶ variants [29] | Smaller and more defined (e.g., ~32,000 codon combinations for 3 simultaneously randomized residues) [26] |
| Key Advantage | Discovers beneficial mutations in unexpected locations [26]. | Precisely maps function to specific residues [28] [26]. |
| Primary Limitation | High frequency of neutral/deleterious mutations; vast sequence space [22]. | Restricted to pre-selected sites; can miss distant stabilizing mutations. |
| Ideal Application | Initial rounds of evolution to discover beneficial mutations [23]. | Optimizing specific regions like active sites or protein interfaces [27] [26]. |
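The library sizes in Table 1 translate directly into screening effort. The sketch below computes the codon-level size of a multi-site NNK library and the number of clones needed for a target coverage, assuming every variant is equally likely to be sampled. Under that assumption the expected fraction of variants seen at least once after sampling N clones from V variants is approximately 1 − exp(−N/V). The function names and the 95% target are illustrative choices, not values taken from the cited sources.

```python
import math


def nnk_library_size(num_positions, codons_per_position=32):
    """Number of distinct codon combinations when NNK is used at each position."""
    return codons_per_position ** num_positions


def clones_for_coverage(library_size, coverage=0.95):
    """Clones to sample so the expected fraction of variants observed
    at least once reaches `coverage`, assuming equiprobable variants."""
    return math.ceil(-library_size * math.log(1.0 - coverage))


for positions in (1, 2, 3):
    size = nnk_library_size(positions)
    print(positions, "position(s):", size, "codon combinations,",
          clones_for_coverage(size), "clones for 95% coverage")
```

Three simultaneously randomized positions give 32³ = 32,768 codon combinations, and the rule of thumb that emerges is roughly threefold oversampling for 95% coverage. Real libraries are rarely uniform, so this should be read as a lower bound on the screening effort.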

Experimental Protocols

Library Generation via Error-Prone PCR and CPEC Cloning

A significant bottleneck in library generation is the cloning of PCR products into plasmid vectors. Traditional restriction enzyme-based cloning is inefficient. An advanced protocol using Circular Polymerase Extension Cloning (CPEC) overcomes these limitations and enhances library coverage [24].

Step 1: Perform Error-Prone PCR

- Template: Plasmid containing the target gene (e.g., pDsRed2 for DsRed2 gene).

- Primers: Design primers homologous to the vector sequence flanking the insertion site.

- Reaction: Use a commercial random mutagenesis kit (e.g., GeneMorph II Random Mutagenesis Kit) with conditions that promote polymerase errors.

- Cycling Conditions:

  - Initial Denaturation: 94°C for 2 min

  - 30 Cycles:

    - Denaturation: 94°C for 15 s

    - Annealing: 68°C for 30 s

    - Extension: 72°C for 60 s

  - Final Extension: 72°C for 5 min [24]

- Product Verification: Analyze the PCR product by 1% agarose gel electrophoresis and purify it.

Step 2: Clone using Circular Polymerase Extension Cloning (CPEC)

- Vector Preparation: Amplify the linearized plasmid backbone using high-fidelity PCR. The primers for the backbone and the insert must have complementary overlapping sequences.

- CPEC Reaction:

  - Components: Combine the purified epPCR product (insert) and the linearized vector in a 1:1 molar ratio. Add a high-fidelity DNA polymerase (e.g., TaKaRa LA Taq), buffer, and dNTPs.

  - Cycling Conditions:

    - Initial Denaturation: 94°C for 2 min

    - 30 Cycles:

      - Denaturation: 94°C for 15 s

      - Annealing: 63°C for 30 s

      - Extension: 68°C for 4 min

    - Final Extension: 72°C for 5 min [24]

  - Mechanism: In the first cycle, the overlapping ends of the insert and vector anneal. The DNA polymerase then extends these ends, creating a nicked, circular double-stranded plasmid. The product is directly used to transform competent E. coli.
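Setting up the 1:1 molar ratio requires converting between mass and length, because equal masses of a short insert and a long vector contain very different numbers of molecules. The helper below is a minimal sketch of that arithmetic; the function name and the example lengths and masses are hypothetical.

```python
def insert_mass_ng(vector_mass_ng, vector_length_bp, insert_length_bp,
                   insert_to_vector_ratio=1.0):
    """Mass of insert (ng) that gives the requested insert:vector molar ratio.

    For double-stranded DNA, moles scale with mass divided by length, so an
    equimolar amount of insert is the vector mass scaled by the length ratio.
    """
    return vector_mass_ng * (insert_length_bp / vector_length_bp) * insert_to_vector_ratio


# Hypothetical example: 200 ng of a 4,000 bp linearized vector and a 1,000 bp insert.
print(insert_mass_ng(200, 4000, 1000))
```

In this example an equimolar reaction needs only 50 ng of insert, a quarter of the vector mass.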

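Workflow summary: error-prone PCR of the target gene, purification of the product, CPEC assembly with the linearized vector, and direct transformation of competent E. coli.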

Library Generation via Site-Saturation Mutagenesis

This protocol, based on modifications to the QuikChange method, allows for the efficient creation of a saturation library at a single amino acid position without requiring purified oligonucleotides or PCR products [27].

Step 1: Primer Design

- Design two primers that are complementary to each other and anneal to the same sequence on opposite strands of the plasmid template.

- The primers should contain the degenerate codon NNK (where N is A/T/G/C and K is G/T) at the position to be randomized. This mixture encodes all 20 amino acids and one stop codon.

- Each primer should have 15–20 base pairs of correct sequence on both sides of the degenerate codon. The total primer length is typically 30–40 bases [27].
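The primer design rules above can be sketched in a few lines. This example assumes the standard QuikChange-style layout in which the reverse primer is the exact reverse complement of the forward primer, so the NNK codon appears as MNN (M = A/C) on the reverse strand. The function names, the 0-based codon index, and the example sequence are illustrative and are not taken from the cited protocol.

```python
# Complements for the bases and degenerate symbols used here (K = G/T, M = A/C).
COMPLEMENT = {"A": "T", "C": "G", "G": "C", "T": "A", "N": "N", "K": "M", "M": "K"}


def reverse_complement(sequence):
    """Reverse complement of a DNA sequence that may contain N, K, or M."""
    return "".join(COMPLEMENT[base] for base in reversed(sequence))


def design_nnk_primers(coding_sequence, codon_index, flank=15):
    """Return (forward, reverse) primers that randomize one codon with NNK.

    codon_index is 0-based; flank is the number of template-matching bases
    kept on each side of the degenerate codon.
    """
    start = codon_index * 3
    if start - flank < 0 or start + 3 + flank > len(coding_sequence):
        raise ValueError("not enough flanking sequence around the target codon")
    forward = (
        coding_sequence[start - flank:start]
        + "NNK"
        + coding_sequence[start + 3:start + 3 + flank]
    )
    return forward, reverse_complement(forward)


# Hypothetical 51-nucleotide coding sequence; randomize the ninth codon (index 8).
example_gene = "ATGGCTAGCAAAGGTGAAGAACTGTTTACCGGTGTTGTTCCGATTCTGGTT"
forward_primer, reverse_primer = design_nnk_primers(example_gene, codon_index=8)
print(forward_primer)
print(reverse_primer)
```

A practical design would also check melting temperature and GC content, which this sketch deliberately omits.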

Step 2: Mutagenic PCR

- Reaction Mixture:

  - Template plasmid DNA (methylated, from a standard E. coli strain): 20 ng

  - Forward and reverse mutagenic primers (desalted): 6 pmol each

  - dNTPs: 200 µM each

  - PfuTurbo or similar high-fidelity DNA polymerase: 1 unit

  - Appropriate reaction buffer

- Cycling Conditions:

  - Initial Denaturation: 95°C for 2 min

  - 16 Cycles:

    - Denaturation: 95°C for 30 s

    - Annealing: 55°C for 1 min

    - Extension: 68°C for 10 min (adjust for larger plasmids)

  - Final Extension: 68°C for 10 min [27]