Directed Evolution in Synthetic Biology: From Protein Engineering to Next-Generation Therapeutics

This article explores the transformative role of directed evolution in advancing synthetic biology applications for biomedical research and drug development.

Abstract

This article explores the transformative role of directed evolution in advancing synthetic biology applications for biomedical research and drug development. It covers foundational principles where directed evolution acts as a discovery engine, modern methodologies enhanced by machine learning and orthogonal systems, strategies to overcome stability and efficiency challenges, and comparative validation across diverse biological systems. By synthesizing recent breakthroughs, this resource provides scientists and researchers with a comprehensive framework for leveraging directed evolution to engineer novel biocatalysts, stabilize synthetic genetic circuits, and develop advanced therapeutic platforms.

The Engine of Innovation: How Directed Evolution Drives Synthetic Biology Discovery

Protein engineering endeavors to create biomolecules with novel or enhanced functions, a pursuit critical for advancing therapeutic development, industrial biocatalysis, and synthetic biology. For decades, the field has been dominated by two primary philosophies: rational design and directed evolution. Rational design relies on in-depth knowledge of protein structure and mechanism to make precise, computed amino acid changes [1]. In practice, however, the effects of mutations are notoriously difficult to predict a priori due to the complex, non-linear interactions within protein structures [2] [1]. Directed evolution mimics the process of natural selection in a laboratory setting, iteratively accumulating beneficial mutations without requiring pre-existing structural knowledge [3] [1]. This method has emerged as a powerful solution to the limitations of purely rational approaches, bridging knowledge gaps where our understanding of structure-function relationships is incomplete. By combining elements of both strategies, and increasingly leveraging machine learning, researchers are developing more robust semi-rational pipelines that accelerate the engineering of biomolecules for a wide range of applications in synthetic biology research [4] [1] [5].

The Principles and Workflow of Directed Evolution

Directed evolution is an iterative biomimetic process comprising three core stages: diversification, selection, and amplification [1]. This cycle recapitulates natural evolution—variation, selection, and heredity—but operates on a compressed timescale under a selection regime designed to achieve a predefined goal [3].

Table 1: Core Steps in a Directed Evolution Cycle

| Step | Description | Common Methodologies |
|---|---|---|
| 1. Diversification | Creating a library of genetic variants of the starting sequence. | Error-prone PCR, DNA shuffling, site-saturation mutagenesis, synthetic oligonucleotide libraries [2] [1]. |
| 2. Selection | Identifying library variants that exhibit the desired functional improvement. | Phage/yeast display, robotic high-throughput screening, in vitro compartmentalization, survival-based selection [3] [1]. |
| 3. Amplification | Isolating the genes of the best variants to serve as templates for the next cycle. | PCR, transformation into host bacteria (e.g., E. coli) for propagation [1]. |

The power of directed evolution is rooted in its ability to explore a vast landscape of sequence variants and their linked activities. In a well-designed experiment, most sequence positions are sampled with some degree of amino acid diversity. Any sequence conferring improved activity is retained, and in the next iteration, it is re-scanned for additional beneficial substitutions, allowing combinatorial optimization of residue positions [3]. This process can reveal key activity-determining residues, combinatorial contributors to function, and even potential functional mechanisms, providing deep insight into the molecular basis of protein function [3].
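
The diversification, selection, and amplification cycle described above can be sketched as a toy simulation. This is a minimal illustration under stated assumptions, not a model of any real experiment: the "assay" simply counts matches to a hidden optimum, and the sequences, function names, and parameter values are all hypothetical.

```python
import random

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"

def fitness(seq, target):
    """Toy assay (hypothetical): number of positions matching a hidden optimum."""
    return sum(a == b for a, b in zip(seq, target))

def diversify(parent, library_size, mutation_rate, rng):
    """Diversification: random point substitutions, loosely mimicking error-prone PCR."""
    library = []
    for _ in range(library_size):
        variant = [rng.choice(AMINO_ACIDS) if rng.random() < mutation_rate else aa
                   for aa in parent]
        library.append("".join(variant))
    return library

def directed_evolution(start, target, rounds=10, library_size=200,
                       mutation_rate=0.05, seed=0):
    rng = random.Random(seed)
    parent = start
    history = [fitness(parent, target)]
    for _ in range(rounds):
        library = diversify(parent, library_size, mutation_rate, rng)
        # Selection: screen every variant; the parent stays in the pool,
        # so the best fitness can never decrease between rounds.
        best = max(library + [parent], key=lambda s: fitness(s, target))
        # Amplification: the winner becomes the template for the next round.
        parent = best
        history.append(fitness(parent, target))
    return parent, history

target = "MKTAYIAKQR"  # hypothetical optimum, unknown to the "experimenter"
start = "MATAYLAKGR"   # hypothetical starting sequence (7 of 10 positions match)
evolved, history = directed_evolution(start, target)
print(evolved, history)
```

Because each round re-scans the current best sequence for further substitutions, beneficial mutations accumulate step by step, which is the greedy hill-climbing behavior that the more advanced strategies below try to improve on.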

Standard Experimental Workflow

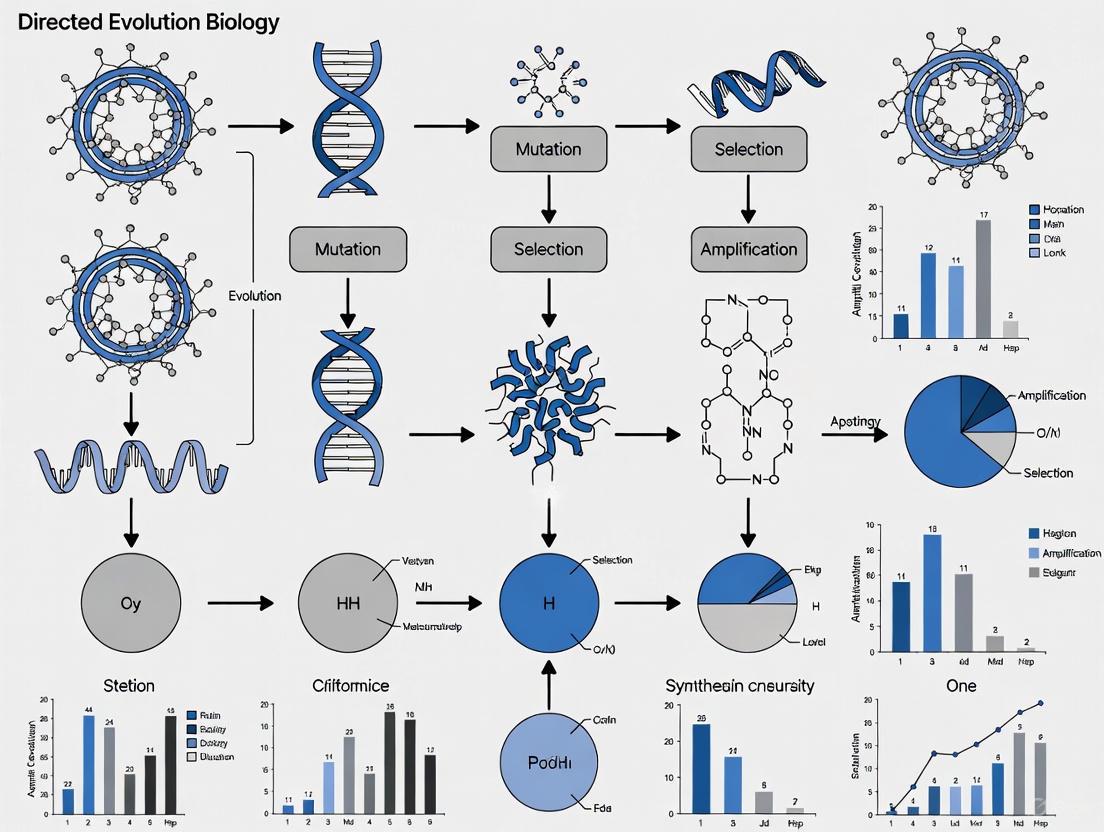

The following diagram illustrates the foundational, iterative cycle of a directed evolution experiment.

Key Methodologies and Recent Technical Advances

The field of directed evolution has progressed from simple random mutagenesis to sophisticated strategies that enhance library quality and screening efficiency. Recent advances integrate machine learning and continuous evolution systems to navigate sequence space more effectively.

Library Creation Strategies

Library creation methodologies can be broadly classified as random or targeted.

- Random Mutagenesis: Techniques like error-prone PCR introduce mutations throughout the gene, simulating random point mutations [2] [1]. While comprehensive, this approach often generates a high proportion of deleterious mutations, making it inefficient for exploring vast sequence spaces.

- Gene Recombination: Methods like DNA shuffling mimic natural recombination by fragmenting and reassembling homologous genes, generating chimeric proteins that jump through sequence space [2].

- Targeted/Semi-Rational Approaches: Focused libraries concentrate diversity on specific regions, such as active sites or regions known to be variable in nature, yielding a higher frequency of improved variants [1]. This requires some prior knowledge but drastically reduces library size and screening burden [1].
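
To make the screening burden of focused libraries concrete, the sketch below estimates library size and the number of clones to screen. It assumes full randomization with NNK codons (32 codons per site) and equally likely variants, so that the probability of sampling a given variant among T clones from V variants is about 1 - exp(-T/V). The function names are illustrative.

```python
import math

def library_size(n_sites, codons_per_site=32):
    """Distinct DNA variants when n_sites are fully randomized (NNK gives 32 codons)."""
    return codons_per_site ** n_sites

def clones_for_coverage(n_variants, coverage=0.95):
    """Clones to screen so any given variant is sampled with probability `coverage`,
    assuming all variants are equally likely: coverage = 1 - exp(-T / V)."""
    return math.ceil(-n_variants * math.log(1.0 - coverage))

for n_sites in (1, 2, 3, 5):
    v = library_size(n_sites)
    print(f"{n_sites} site(s): {v} variants, ~{clones_for_coverage(v)} clones for 95% coverage")
```

Under these assumptions, 95% coverage needs roughly threefold oversampling, so every additional randomized site multiplies the screening effort by 32. This is why concentrating diversity on a few informed positions pays off.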

Machine Learning-Guided Directed Evolution

A significant modern advancement is the integration of active learning, which uses machine learning models to guide the exploration of protein sequence space more efficiently than greedy hill-climbing. This is particularly powerful for navigating rugged fitness landscapes with significant epistasis, where mutations have non-additive effects [4].

Table 2: Representative Advanced Directed Evolution Platforms

| Platform/Strategy | Core Innovation | Key Outcome / Demonstration | Reference |
|---|---|---|---|
| Active Learning-assisted DE (ALDE) | Iterative machine learning with uncertainty quantification to balance exploration and exploitation. | Optimized 5 epistatic residues in an enzyme; increased cyclopropanation yield from 12% to 93%. | [4] |
| DeepDE | Iterative deep learning using triple mutants as building blocks, trained on ~1,000 variants. | Achieved 74.3-fold increase in GFP activity over 4 rounds, surpassing superfolder GFP. | [5] |
| T7-ORACLE | Continuous in vivo evolution using an orthogonal, error-prone T7 replisome in E. coli. | Evolved antibiotic resistance 100,000x faster; resistance to doses 5,000x higher in <1 week. | [6] |

The workflow for ALDE demonstrates the tight integration between computational prediction and experimental validation.
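
The general shape of an active-learning loop with uncertainty quantification can be sketched as follows. This is not the ALDE implementation: it swaps in a deliberately simple additive surrogate, a bootstrap ensemble for uncertainty, and an upper-confidence-bound acquisition rule, all chosen here for illustration. The landscape, alphabet, and parameter values are hypothetical.

```python
import itertools
import random
import statistics

ALPHABET = "ACDE"  # hypothetical reduced alphabet to keep the space small (4^3 = 64)
N_SITES = 3

def true_fitness(variant):
    """Hypothetical landscape: additive effects plus one epistatic pair."""
    additive = sum(ALPHABET.index(aa) * (site + 1) for site, aa in enumerate(variant))
    epistasis = 6.0 if (variant[0] == "A" and variant[2] == "E") else 0.0
    return additive + epistasis

def fit_additive_model(pairs):
    """Surrogate: mean measured fitness for each residue at each site."""
    global_mean = statistics.mean(f for _, f in pairs)
    table = {}
    for site in range(N_SITES):
        for aa in ALPHABET:
            values = [f for v, f in pairs if v[site] == aa]
            table[(site, aa)] = statistics.mean(values) if values else global_mean

    def predict(variant):
        return statistics.mean(table[(s, aa)] for s, aa in enumerate(variant))
    return predict

def active_learning(rounds=4, batch=6, n_models=10, beta=1.0, seed=0):
    rng = random.Random(seed)
    space = ["".join(p) for p in itertools.product(ALPHABET, repeat=N_SITES)]
    # Round 0: measure a random batch.
    measured = {v: true_fitness(v) for v in rng.sample(space, batch)}
    best_by_round = [max(measured.values())]
    for _ in range(rounds):
        pairs = list(measured.items())
        # Bootstrap ensemble: disagreement between members is a crude uncertainty.
        models = [fit_additive_model(rng.choices(pairs, k=len(pairs)))
                  for _ in range(n_models)]

        def ucb(variant):
            preds = [m(variant) for m in models]
            return statistics.mean(preds) + beta * statistics.pstdev(preds)

        candidates = [v for v in space if v not in measured]
        chosen = sorted(candidates, key=ucb, reverse=True)[:batch]
        # Stand-in for the wet-lab measurement of the proposed batch.
        measured.update({v: true_fitness(v) for v in chosen})
        best_by_round.append(max(measured.values()))
    return measured, best_by_round

measured, best_by_round = active_learning()
print(len(measured), best_by_round)
```

The `beta` parameter controls the exploration and exploitation balance: larger values favor variants the ensemble disagrees on, smaller values favor variants predicted to be good.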

The Scientist's Toolkit: Essential Research Reagents and Methods

Successful directed evolution campaigns rely on a suite of reliable reagents, methods, and model systems.

Table 3: Key Research Reagent Solutions for Directed Evolution

| Reagent / Tool | Function in Directed Evolution | Application Example |
|---|---|---|
| Error-Prone Polymerase | Enzyme for error-prone PCR; introduces random point mutations during gene amplification. | Creating diverse initial libraries from a single parent gene [2]. |
| NNK Degenerate Codons | Synthetic oligonucleotides for saturation mutagenesis; allows all 20 amino acids at a target site (N=A/T/G/C; K=G/T). | Focused library generation on key active-site residues [4]. |
| Orthogonal Replicon Plasmid | Specialized plasmid (e.g., in T7-ORACLE) mutated by an error-prone polymerase; host genome remains intact. | Enables continuous, hyper-accelerated evolution of target genes in vivo [6]. |
| Bacterial/Yeast Display | Phenotype-genotype linkage system; library proteins expressed on cell surface for binding-based selection. | Selection of high-affinity antibodies or binding proteins [3] [1]. |
| In Vitro Compartmentalization | Encapsulates individual genes & expressed proteins in water-in-oil emulsion droplets for screening. | High-throughput screening of enzymatic activities without cellular constraints [3]. |
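
The claim that NNK codons cover all 20 amino acids can be checked by enumeration. The sketch below expands the degenerate codon against the standard genetic code, written out in the usual TCAG ordering. The helper names are illustrative.

```python
from itertools import product

# Standard genetic code, codons ordered T, C, A, G at each position.
BASES = "TCAG"
AMINO = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {a + b + c: AMINO[16 * i + 4 * j + k]
               for i, a in enumerate(BASES)
               for j, b in enumerate(BASES)
               for k, c in enumerate(BASES)}

IUPAC = {"N": "ACGT", "K": "GT"}  # only the degenerate symbols needed here

def expand(degenerate_codon):
    """All concrete codons encoded by a degenerate codon such as 'NNK'."""
    return ["".join(p) for p in product(*(IUPAC.get(ch, ch) for ch in degenerate_codon))]

nnk = expand("NNK")
amino_acids = sorted({CODON_TABLE[c] for c in nnk} - {"*"})
stops = [c for c in nnk if CODON_TABLE[c] == "*"]
print(len(nnk), len(amino_acids), stops)  # 32 20 ['TAG']
```

NNK therefore halves the codon count relative to NNN (32 instead of 64) and retains only one of the three stop codons, which is why it is a common choice for saturation mutagenesis.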

Directed evolution has proven to be an indispensable tool for overcoming the fundamental limitations of rational design. By harnessing evolutionary principles and coupling them with technological advances in library creation, high-throughput screening, and machine learning, it provides a robust pathway for optimizing and creating protein function where predictive knowledge fails. As the field progresses, the fusion of synthetic biology platforms like T7-ORACLE with active learning algorithms heralds a new era of intelligent design. This synergy between stochastic exploration and predictive modeling is revolutionizing synthetic biology research, enabling the rapid development of novel enzymes, therapeutic proteins, and engineered biosystems that address pressing challenges in medicine and biotechnology.

The design of functional proteins represents a grand challenge in synthetic biology and drug development. The core problem lies in the astronomical size of the protein sequence space. For a typical protein of 100 amino acids, there exist over 10^130 possible sequences—a number that vastly exceeds the number of atoms in the observable universe [7]. This immense complexity renders exhaustive experimental screening practically impossible, creating a critical bottleneck in protein engineering. Traditional rational design approaches, which often rely on providing a predefined protein backbone and solving the "inverse folding problem," have significant limitations. They typically require a predetermined scaffold that may not be optimal for the desired function, and the integration of functional properties is often a separate, time-consuming process that can extend over several years [7].
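
The size estimate follows from 20 possible amino acids at each of 100 positions, which a few lines of arithmetic confirm. The figure of roughly 10^80 atoms in the observable universe is a commonly quoted order-of-magnitude estimate and is used here only for comparison.

```python
import math

n_sequences = 20 ** 100               # 20 amino acids at each of 100 positions
exponent = 100 * math.log10(20)       # about 130.1
atoms_in_universe = 10 ** 80          # rough, commonly quoted estimate (assumption)

print(f"20^100 is about 10^{exponent:.1f}")
print(n_sequences > 10 ** 130)             # True
print(n_sequences > atoms_in_universe)     # True
```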

Within the broader context of directed evolution applications in synthetic biology, this challenge becomes particularly acute. Directed evolution, defined as the application of selective pressure to libraries of variants to identify those with desired properties, has proven to be a vital tool for synthetic biology, enabling the rapid screening or selection of construct variants when rational design proves prohibitively difficult [8]. However, the effectiveness of traditional directed evolution is inherently constrained by the size and quality of the physical libraries that can be created and screened. Navigating the protein sequence space effectively requires computational strategies that guide the exploration toward functional regions, accelerating the design process and expanding the scope of accessible proteins for therapeutic and industrial applications.

Computational Frameworks for Sequence Space Navigation

Data-Driven Fitness Landscapes

A powerful approach to modeling sequence space involves building data-driven fitness landscapes inferred from natural protein families. These landscapes serve as proxies for protein fitness and are constructed from multiple sequence alignments (MSAs) of homologous proteins. The underlying idea is to represent natural variability via a generative statistical model, often formalized as a Potts model, where the probability of a sequence is given by:

\[ P(a_1,\dots,a_L) = \frac{1}{Z} \exp\left\{ -E(a_1,\dots,a_L) \right\} \]

with the statistical energy defined as:

\[ E(a_1,\dots,a_L) = -\sum_{i} h_i(a_i) - \sum_{i<j} J_{ij}(a_i,a_j) \]

Here, \(h_i(a_i)\) represent position-specific amino acid biases, and \(J_{ij}(a_i,a_j)\) capture epistatic couplings between residue pairs [9]. This model assigns low statistical energy (high probability) to "fit" sequences and high energy to non-functional sequences. These landscapes can then be used to simulate protein evolution under various experimental conditions, predicting outcomes like fitness distributions and mutational spectra, thereby offering a way to computationally optimize experimental protocols before resource-intensive wet-lab work begins [9].
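
As a small worked example of the Potts model, the sketch below evaluates the statistical energy and the resulting probabilities for a toy system. The two-letter alphabet, the three-site length, and all field and coupling values are hypothetical. Real models are inferred from alignments and are far too large to normalize by enumeration.

```python
import itertools
import math

ALPHABET = "AB"  # hypothetical two-letter alphabet
L = 3            # sequence length

# Hypothetical parameters: fields h[i][a] and couplings J[(i, j)][(a, b)] for i < j.
h = [{"A": 1.0, "B": 0.0}, {"A": 0.0, "B": 0.0}, {"A": 0.0, "B": 0.5}]
J = {
    (0, 1): {("A", "A"): 0.8, ("B", "B"): 0.8},  # sites 0 and 1 prefer to match
    (1, 2): {("A", "B"): -0.5},                  # this residue pair is penalized
}

def energy(seq):
    """E = -sum_i h_i(a_i) - sum_{i<j} J_ij(a_i, a_j); unlisted couplings are zero."""
    e = -sum(h[i][a] for i, a in enumerate(seq))
    for (i, j), couplings in J.items():
        e -= couplings.get((seq[i], seq[j]), 0.0)
    return e

# The space has only 2^3 sequences, so Z can be computed exactly.
sequences = ["".join(s) for s in itertools.product(ALPHABET, repeat=L)]
Z = sum(math.exp(-energy(s)) for s in sequences)
prob = {s: math.exp(-energy(s)) / Z for s in sequences}

for s in sorted(sequences, key=energy):
    print(s, round(energy(s), 2), round(prob[s], 3))
```

Sequences are printed from lowest to highest energy, so the probabilities decrease down the list, matching the statement that low statistical energy corresponds to high probability.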

Artificial Intelligence and Language Models

Breakthroughs in artificial intelligence have revolutionized the field of protein design. Transformer-based architectures, which have profoundly impacted natural language processing, are now being applied to protein sequences with remarkable success [7]. These models can be broadly categorized into encoder-only and decoder-only architectures.

Encoder-only models, such as ESM-1b, ESM2, and ProtTrans, are trained to reconstruct the original sequence from corrupted input (e.g., masked tokens). While not inherently generative, they create powerful representations of protein sequences that can be used for tasks like contact prediction and functional annotation. The largest ESM2 model, with 15 billion parameters, has been used for de novo protein design by sampling sequences for a defined backbone using Markov chain Monte Carlo (MCMC) methods with simulated annealing [7].

Decoder-only models, inspired by OpenAI's GPT series, are trained on the classic language-modeling task of predicting the next item in a sequence. This autoregressive objective makes them particularly powerful for unconditional protein sequence generation. Notable implementations include:

- ProtGPT2: A 738 million parameter model that generates de novo sequences in unexplored regions of the protein space while maintaining natural sequence properties like disorder, dynamic properties, and predicted stability [7].

- ProGen2: A family of models of up to 6.4 billion parameters trained on over a billion proteins that can generate well-folded sequences significantly distant from natural protein space [7].

- RITA: A suite of generative models ranging from 85 million to 1.2 billion parameters that demonstrated the relationship between model size and performance in predicting protein fitness [7].

These models can be used in a zero-shot manner or fine-tuned on specific protein families to generate new sequences from that group, effectively augmenting protein family repertoires for directed evolution campaigns [7].

Table 1: Key AI Models for Protein Sequence Generation

| Model Name | Architecture | Parameters | Key Capabilities |
|---|---|---|---|
| ESM2 [7] | Encoder-only | 15 billion | Structure prediction, sequence representation for design |
| ProtGPT2 [7] | Decoder-only | 738 million | Unconditional generation of novel, stable sequences |
| ProGen2 [7] | Decoder-only | Up to 6.4 billion | Generation of distant, well-folded sequences |
| RITA [7] | Decoder-only | 85M - 1.2B | Demonstrates scaling laws for fitness prediction |

Experimental Methodologies and Protocols

In Silico Guided Directed Evolution

The integration of computational models with experimental directed evolution creates a powerful feedback loop for navigating sequence space. The following workflow outlines a typical in silico guided directed evolution campaign:

Step 1: Data-Driven Landscape Construction

- Collect a multiple sequence alignment (MSA) of natural homologs of your target protein from databases like Pfam.

- Use statistical inference methods (e.g., Direct Coupling Analysis) to infer a generative Potts model from the MSA, capturing both single-residue conservation and pairwise epistatic constraints [9].

- Validate the landscape by comparing predicted mutational effects with available deep mutational scanning data to ensure the model accurately captures fitness constraints.

Step 2: In Silico Library Generation

- Use the inferred fitness landscape or a pre-trained protein language model (e.g., ProtGPT2) to generate a diverse library of sequence variants in silico.

- For fitness landscape-based generation, implement stochastic evolutionary simulations that introduce mutations at the DNA level but select based on the statistical energy of the translated protein sequence [9].

- For language model-based generation, sample sequences either unconditionally or conditioned on specific properties (e.g., by fine-tuning on a specific protein family) [7].
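
The landscape-based option in Step 2, where mutations are introduced at the DNA level but selection acts on the translated protein, can be sketched with a Metropolis acceptance rule. This is an illustrative stand-in rather than the protocol of [9]: the energy function below is a hypothetical mismatch count used in place of an inferred Potts energy, and the sequence, temperature, and step count are arbitrary.

```python
import math
import random

# Standard genetic code, codons ordered T, C, A, G at each position.
BASES = "TCAG"
AMINO = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {a + b + c: AMINO[16 * i + 4 * j + k]
               for i, a in enumerate(BASES)
               for j, b in enumerate(BASES)
               for k, c in enumerate(BASES)}

def translate(dna):
    return "".join(CODON_TABLE[dna[i:i + 3]] for i in range(0, len(dna), 3))

def toy_energy(protein, reference):
    """Hypothetical stand-in for a Potts energy: mismatches to a reference protein.
    Any sequence containing a stop codon is treated as non-functional."""
    if "*" in protein:
        return float("inf")
    return float(sum(a != b for a, b in zip(protein, reference)))

def evolve(dna, reference, steps=2000, temperature=1.0, seed=0):
    rng = random.Random(seed)
    current_e = toy_energy(translate(dna), reference)
    for _ in range(steps):
        # Mutation at the DNA level: one random nucleotide substitution.
        pos = rng.randrange(len(dna))
        new_base = rng.choice([b for b in BASES if b != dna[pos]])
        proposal = dna[:pos] + new_base + dna[pos + 1:]
        # Selection at the protein level: energy of the translated sequence.
        new_e = toy_energy(translate(proposal), reference)
        # Metropolis rule: accept downhill moves, sometimes accept uphill ones.
        if new_e <= current_e or rng.random() < math.exp(-(new_e - current_e) / temperature):
            dna, current_e = proposal, new_e
    return dna

wild_type = "ATGGCTAAAGGTTGG"  # hypothetical gene encoding MAKGW
reference = translate(wild_type)
evolved = evolve(wild_type, reference)
print(translate(wild_type), "->", translate(evolved))
```

Working at the DNA level means synonymous substitutions are always accepted and some amino acid changes need more than one nucleotide step, two effects that a protein-level simulation would miss.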

Step 3: Variant Filtering and Selection

- Rank in silico variants based on their statistical energy (for landscape models) or sequence probability (for language models).

- Apply additional filters based on structural stability predictions (using tools like AlphaFold2), functional site conservation, or other domain-specific constraints.

- Select a manageable number of top candidates (typically dozens to hundreds) for experimental validation.

Step 4: Experimental Validation and Model Refinement

- Synthesize DNA for selected variants and express them in an appropriate host system.

- Screen or select for the desired function using high-throughput assays.

- Sequence functional variants and use this new experimental data to refine the computational model, creating a powerful feedback loop for subsequent evolution cycles [9].

Enhancing Evolutionary Stability with Machine Learning

A persistent challenge in synthetic biology is the evolutionary instability of heterologous gene expression, which often leads to loss of function over time. The STABLES (stop codon–tunable alternative bifunctional mRNA leading to expression and stability) methodology addresses this by physically linking a gene of interest (GOI) to an essential endogenous gene (EG) [10].

STABLES Experimental Protocol:

Machine Learning-Guided EG Selection:

- Train a machine learning model (e.g., ensemble of k-nearest neighbors and XGBoost) on features including codon usage bias (tAI, CAI), GC content, mRNA folding energy, and other bioinformatic features to predict optimal EG partners for a given GOI [10].

- The model should be trained on fluorescence or expression data from GOI-EG fusion libraries under various conditions.

- Select the top 1-3 EG candidates recommended by the model for experimental validation.
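
Two of the sequence features named above, GC content and the codon adaptation index (CAI), are simple to compute and are sketched below. This covers feature extraction only, not the k-nearest-neighbors and XGBoost ensemble itself. The reference genes are hypothetical, and the pseudocount of 0.5 for unseen codons is an assumption of this sketch.

```python
import math
from collections import Counter

# Standard genetic code, codons ordered T, C, A, G at each position.
BASES = "TCAG"
AMINO = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {a + b + c: AMINO[16 * i + 4 * j + k]
               for i, a in enumerate(BASES)
               for j, b in enumerate(BASES)
               for k, c in enumerate(BASES)}

def codons(cds):
    """Split a coding sequence into codons, ignoring any trailing partial codon."""
    return [cds[i:i + 3] for i in range(0, len(cds) - len(cds) % 3, 3)]

def gc_content(seq):
    return sum(base in "GC" for base in seq) / len(seq)

def relative_adaptiveness(reference_cds):
    """w = codon count / count of the most used synonymous codon in reference genes."""
    counts = Counter(c for cds in reference_cds for c in codons(cds))
    weights = {}
    for codon, aa in CODON_TABLE.items():
        top = max(counts[c] for c, a in CODON_TABLE.items() if a == aa)
        # Pseudocount of 0.5 for unseen codons avoids log(0) (assumption).
        weights[codon] = max(counts[codon], 0.5) / max(top, 0.5)
    return weights

def cai(cds, weights):
    """CAI: geometric mean of w over codons, skipping Met, Trp, and stop codons."""
    used = [c for c in codons(cds) if CODON_TABLE[c] not in "MW*"]
    return math.exp(sum(math.log(weights[c]) for c in used) / len(used))

# Hypothetical "highly expressed" reference genes for the host.
reference_genes = ["ATGGCTGCTAAAGGTGCTAAATAA", "ATGAAAGGTGGTGCTGCCAAGTAA"]
weights = relative_adaptiveness(reference_genes)

preferred = "ATGGCTAAAGGT"  # uses only the most frequent codons
rare = "ATGGCCAAGGGA"       # same protein, less frequent codons
print(gc_content(preferred), cai(preferred, weights), cai(rare, weights))
```

In a real pipeline, features like these would be computed for each candidate GOI-EG pair and passed to the trained model as inputs.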

Fusion Construct Design:

- Design a genetic construct where the GOI's C-terminus is fused to the EG's N-terminus via a selected linker sequence, under a shared promoter in a single open reading frame.

- Select linkers by comparing disorder profiles of the GOI and EG before and after fusion using biophysical models to minimize disruption to protein folding [10].

- Place a "leaky" stop codon (e.g., UGA or UAG with known read-through rates) after the GOI to enable differential expression—producing both the GOI alone and the GOI-EG fusion protein.

- Optimize the fusion gene sequence for expression and avoidance of mutationally unstable sites using codon optimization tools.

Host Engineering and Validation:

- Replace the native EG in the host organism (e.g., Saccharomyces cerevisiae) with the fusion construct.

- Validate functionality by measuring GOI expression (e.g., fluorescence for reporter proteins) and host fitness over multiple generations (e.g., 15+ days) [10].

- Compare stability against unfused GOI controls to quantify improvement.

Table 2: STABLES System Components and Functions

| Component | Function | Design Considerations |
|---|---|---|
| Gene of Interest (GOI) | Encodes the target protein for expression | May require codon optimization for host system |
| Essential Gene (EG) | Provides selective pressure against deleterious mutations | Selected via ML model based on expression/stability features |
| Linker Sequence | Connects GOI and EG, minimizes misfolding | Chosen by comparing disorder profiles pre-/post-fusion |
| Leaky Stop Codon | Enables differential expression | Selected for appropriate read-through rate (e.g., UGA) |
| Shared Promoter | Drives expression of the fusion construct | Strength matched to EG function and desired GOI expression |

Quantitative Analysis of Sequence Space Exploration

The effectiveness of sequence space navigation strategies can be quantitatively evaluated using several key metrics. Experimental data should be systematically analyzed to guide the optimization of evolution protocols.

Table 3: Quantitative Metrics for Sequence Space Exploration

| Metric | Description | Measurement Method | Target Range |
|---|---|---|---|
| Sequence Divergence | Average percentage of mutated amino acids relative to wild-type | Sequence alignment and comparison | 10-15% for initial libraries [9] |
| Functional Retention | Percentage of library variants maintaining basal function | High-throughput functional screening | >70% for effective epistasis detection [9] |
| Epistatic Signal Strength | Accuracy of contact prediction from sequence correlations | plmDCA/evCouplings analysis | >50% top-L/10 precision for structure prediction [9] |
| Evolutionary Stability | Maintenance of function over generations | Longitudinal expression measurement (e.g., fluorescence) | <30% decline over 15 generations [10] |
| Library Diversity | Coverage of sequence space in the variant library | Shannon entropy or unique sequence clusters | Maximize while maintaining functionality |

Analysis of two recent experiments that used evolved sequence libraries for contact prediction illustrates the importance of these parameters. Although both experiments used similar approaches (iterative rounds of diversification via error-prone PCR followed by weak selection for functionality), they produced different outcomes in their ability to detect epistasis for structure prediction. Simulations using data-driven fitness landscapes revealed that this difference could be explained by key experimental parameters: sequence libraries with greater divergence from wild-type (15% vs. 10%) and larger sequencing depth (>10^4 vs. <10^4 sequences) produced significantly stronger epistatic signals, enabling accurate contact prediction [9]. This quantitative understanding allows researchers to optimize experimental design before committing substantial resources.
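
Two of the metrics in the table, sequence divergence and Shannon-entropy-based library diversity, can be computed directly from aligned reads. The sketch below assumes equal-length, pre-aligned protein sequences. The wild type and library are hypothetical.

```python
import math
from collections import Counter

def divergence(variant, wild_type):
    """Percentage of positions that differ from wild type (aligned, equal length)."""
    mismatches = sum(a != b for a, b in zip(variant, wild_type))
    return 100.0 * mismatches / len(wild_type)

def column_entropy(column):
    """Shannon entropy in bits of the residues observed at one alignment column."""
    counts = Counter(column)
    total = len(column)
    return sum((n / total) * math.log2(total / n) for n in counts.values())

wild_type = "MKTAYIAK"
library = ["MKTAYIAK", "MRTAYIAK", "MKTGYIAK", "MRTAYLAK"]  # hypothetical reads

mean_divergence = sum(divergence(v, wild_type) for v in library) / len(library)
entropies = [column_entropy(col) for col in zip(*library)]

print(f"mean divergence: {mean_divergence:.1f}%")
print("per-position entropy (bits):", [round(e, 2) for e in entropies])
```

Tracking both numbers across rounds shows whether a library is approaching the divergence range quoted above while still spreading its diversity over many positions.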

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Research Reagents for Protein Sequence Space Exploration

| Reagent / Tool | Function | Example Applications |
|---|---|---|
| Error-Prone PCR Kits | Introduces random mutations during DNA amplification | Library diversification in directed evolution [9] |
| PlmDCA/evCouplings Software | Detects epistatic couplings from multiple sequence alignments | Predicting residue-residue contacts for structure modeling [9] |
| Pre-trained Protein Language Models (e.g., ProtGPT2, ESM2) | Generates novel protein sequences or predicts fitness | Zero-shot design or fine-tuning for specific families [7] |
| Fluorescent Protein Reporters (e.g., GFP) | Serves as proxy for gene expression and protein stability | Quantifying evolutionary stability in fusion systems [10] |
| Codon Optimization Tools | Optimizes DNA sequence for expression in host systems | Enhancing stability and expression of heterologous genes [10] |
| Essential Gene Tagging Libraries (e.g., SWAp-Tag) | Provides characterized essential gene clones | Source of essential genes for fusion strategies like STABLES [10] |

The navigation of vast protein sequence spaces for functional variants has been transformed by the integration of computational and experimental approaches. Data-driven fitness landscapes and protein language models now enable researchers to focus their experimental efforts on the most promising regions of sequence space, dramatically accelerating the protein design process. The STABLES system exemplifies the next generation of synthetic biology tools that not only facilitate the initial design of functional proteins but also ensure their long-term evolutionary stability—a critical consideration for industrial and therapeutic applications. As these computational and experimental methodologies continue to mature and converge, they promise to expand the scope of accessible protein functions and streamline the development of novel biocatalysts, therapeutics, and biosensors within the broader framework of directed evolution in synthetic biology research.

Directed evolution stands as a powerful methodology in synthetic biology, emulating natural evolution in a laboratory setting to engineer biomolecules with enhanced or novel functions. This iterative process of creating diversity, screening, and selecting superior variants is fundamentally powered by core technical capabilities in DNA assembly, recombineering, and high-throughput screening. The efficiency and success of directed evolution campaigns are directly contingent on the robustness, versatility, and scalability of this underlying toolkit. This technical guide provides an in-depth examination of these core methodologies, framing them within the context of accelerating research and drug development. By detailing standardized protocols, quantitative performance data, and integrated workflows, this document serves as a resource for researchers and scientists aiming to harness directed evolution for applications ranging from therapeutic antibody development to the optimization of biosynthetic pathways.

DNA Assembly: Foundational Methods for Construct Engineering

The construction of genetic variants is the first critical step in any directed evolution workflow. Modern DNA assembly techniques allow for the precise and modular assembly of multiple DNA fragments into functional constructs.

Standardized and Modular Toolkits

The development of standardized toolkits has significantly advanced the field by enabling versatile and flexible DNA assembly. For instance, one such toolkit for Streptomyces—a prolific producer of natural products like antibiotics and immunosuppressants—is compatible with various assembly approaches including BioBrick, Golden Gate, CATCH, and yeast homologous recombination [11]. This compatibility offers tremendous flexibility for handling multiple genetic parts or refactoring large biosynthetic gene clusters (BGCs), which is often necessary for activating silent pathways for novel drug discovery [11]. These toolkits allow for the easy exchange of plasmid copy numbers, selection markers, integration sites, and regulatory parts, facilitating the rapid generation of diverse variant libraries for evolutionary experiments.

Advanced Cloning Techniques for Large Gene Clusters

Many BGCs for natural products exceed the cloning capacity of standard vectors. Techniques like Cas9-Assisted Targeting of CHromosome segments (CATCH) have been developed to clone large gene clusters directly from genomic DNA [11]. The CATCH method involves:

- Embedding microbial cells in agarose plugs to protect high-molecular-weight DNA.

- In vitro digestion of the genomic DNA using the Cas9 enzyme guided by sequence-specific sgRNAs that flank the target gene cluster.

- Joining the liberated gene cluster fragment to a linearized capture vector carrying homologous overlaps (e.g., by Gibson assembly), followed by transformation of the assembled construct into a suitable host (e.g., E. coli) [11].

This capability is crucial for directed evolution of entire biosynthetic pathways, as it allows researchers to capture and manipulate large genetic units as single, manageable entities.

Table 1: Key DNA Assembly Methods and Their Applications in Directed Evolution

| Method | Key Principle | Typical Throughput | Best Suited For |
|---|---|---|---|
| Golden Gate Assembly | Type IIS restriction enzyme digestion and ligation | Moderate to High | Modular, hierarchical assembly of standard parts [11]. |
| Gibson Assembly | Exonuclease, polymerase, and ligase enzymatic assembly | Moderate | Seamless assembly of 2-10 fragments with overlaps [11]. |
| Yeast Homologous Recombination | In vivo recombination in S. cerevisiae | High | Assembly of very large DNA fragments (>100 kb) and multi-site editing [11]. |
| CATCH Cloning | Cas9-mediated excision from chromosomes | Targeted | Cloning of specific, large gene clusters directly from genomic DNA [11]. |
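
Because Golden Gate assembly relies on Type IIS digestion, parts are usually checked for internal recognition sites before use. The sketch below scans both strands of a part for a site. It assumes BsaI (recognition sequence GGTCTC), a widely used Type IIS enzyme, purely as an example; the enzyme used by a given toolkit may differ, and the part sequences are hypothetical.

```python
def reverse_complement(seq):
    return seq.translate(str.maketrans("ACGT", "TGCA"))[::-1]

BSAI_SITE = "GGTCTC"  # BsaI recognition sequence (example enzyme, an assumption)

def internal_sites(part, site=BSAI_SITE):
    """0-based start positions of the site on either strand of `part`."""
    part = part.upper()
    hits = []
    for motif in {site, reverse_complement(site)}:
        start = part.find(motif)
        while start != -1:
            hits.append(start)
            start = part.find(motif, start + 1)
    return sorted(hits)

parts = {  # hypothetical part sequences
    "promoter_A": "TTGACAGCTAGCTCAGTCCTAGGTATAATGCTAGC",
    "cds_B": "ATGAAAGGTCTCAAATAA",       # contains GGTCTC on the top strand
    "terminator_C": "AAAGAGACCTTTT",     # contains the site on the bottom strand
}
for name, seq in parts.items():
    hits = internal_sites(seq)
    print(name, "OK" if not hits else f"needs domestication at {hits}")
```

Parts flagged here would typically be recoded with silent mutations before entering a modular assembly workflow.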

Recombineering and High-Throughput Screening

Genome Editing via Recombineering

Beyond in vitro assembly, recombineering (recombination-mediated genetic engineering) is a powerful method for introducing diversity directly onto the chromosome or large-insert clones in vivo. This technique utilizes the highly efficient homologous recombination systems of prokaryotes (e.g., Lambda Red in E. coli) or eukaryotes (e.g., in S. cerevisiae) to introduce targeted changes. In a directed evolution context, recombineering can be coupled with CRISPR-Cas9 counter-selection to dramatically enhance the efficiency of generating and isolating desired mutants.

A demonstrated protocol for editing a biosynthetic gene cluster (e.g., the act cluster in Streptomyces) involves [11]:

- Designing sgRNAs targeting specific promoter regions within the cluster for replacement.

- In vitro digestion of the cluster-carrying plasmid using Cas9 complexed with the designed sgRNAs.

- Co-transforming the digested plasmid and a synthesized promoter cassette (along with a yeast selectable marker like URA3) into S. cerevisiae.

- Harvesting the correctly assembled plasmid from yeast and transforming it into the final production host.

This method allows for the precise "refactoring" of native pathways, for example, by replacing native promoters with stronger, inducible ones to boost the production of a target molecule—a common goal in directed evolution.

Quantitative High-Throughput Screening

The success of directed evolution hinges on the ability to screen vast libraries of variants. High-throughput screening (HTS) pipelines rely on automation and quantitative readouts to identify top performers.

Automation and Workstation Integration: Automated pipetting workstations and integrated liquid handling systems can execute a substantial portion of the repetitive tasks in synthetic biology, reducing manual labor and enhancing efficiency and reproducibility in library creation and screening [12].

Quantification of Regulatory Parts: Establishing libraries of characterized, modular genetic parts is essential for predictable engineering. For example, the strength of promoters can be quantitatively measured by fusing them to a reporter gene like sfGFP (super-folder Green Fluorescent Protein) and measuring fluorescence output in a host strain [11]. This data allows researchers to make informed choices when tuning gene expression levels during pathway optimization.
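
One common way to turn such reporter measurements into comparable promoter strengths is sketched below. The normalization scheme is an assumption of this example rather than the procedure of [11]: fluorescence is divided by OD600, the signal of a promoterless control is subtracted, and the result is expressed relative to a reference promoter. All readings are hypothetical.

```python
from statistics import mean

def per_od(replicates):
    """Mean fluorescence per unit OD600 across replicate wells."""
    return mean(fluorescence / od for fluorescence, od in replicates)

def relative_strengths(readings, control="no_promoter", reference="ref"):
    """Background-subtracted fluorescence/OD, scaled so the reference promoter is 1."""
    background = per_od(readings[control])
    ref_signal = per_od(readings[reference]) - background
    return {name: (per_od(reps) - background) / ref_signal
            for name, reps in readings.items() if name != control}

readings = {  # hypothetical plate-reader data: (sfGFP fluorescence, OD600)
    "no_promoter": [(50.0, 0.5), (60.0, 0.6)],
    "ref": [(550.0, 0.5), (660.0, 0.6)],
    "candidate": [(1050.0, 0.5), (1260.0, 0.6)],
}
strengths = relative_strengths(readings)
print(strengths)
```

Reporting strengths relative to a shared reference makes measurements from different plates or days easier to compare when assembling a promoter library.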

Table 2: Essential Research Reagent Solutions for Synthetic Biology Toolkits

| Reagent / Material | Function / Explanation |
|---|---|
| Orthogonal Integration Vectors | Plasmids with diverse replication origins and integration sites (e.g., φC31, φBT1) for stable heterologous expression in various hosts [11]. |
| Standardized Modular Plasmids | Plasmids designed for compatibility with assembly standards (e.g., BioBrick, Golden Gate) to facilitate reproducible genetic construction [11]. |
| Library of Characterized Promoters | A collection of regulatory elements with quantified strengths (e.g., via sfGFP expression) for predictable tuning of gene expression [11]. |
| Cumate-Inducible Expression System | A tightly regulated promoter system that can be switched on by the addition of cumate, allowing precise control over the timing of gene expression [11]. |
| Homing Endonuclease Cloning Systems | Systems using endonucleases like I-SceI for the assembly of very large DNA constructs, often necessary for manipulating entire gene clusters [11]. |

Integrated Workflows and Data Standards

An Integrated Workflow for Pathway Directed Evolution

The individual techniques of DNA assembly, recombineering, and screening converge into a cohesive, iterative cycle for directed evolution. The diagram below outlines a representative workflow for evolving a biosynthetic pathway to enhance product yield.

Data Visualization and Standardization

Effective communication and reproducibility in synthetic biology are bolstered by community standards.

The Synthetic Biology Open Language (SBOL) is a free, open-source data standard for the representation of biological designs, enabling the standardized electronic exchange of information on the structural and functional aspects of genetic components [13]. SBOL Visual provides a standardized set of glyphs (symbols) for drawing genetic diagrams, ensuring clarity and uniformity in visual communication [13]. Tools like DNAplotlib allow for highly customizable visualization of genetic constructs, functioning as a "matplotlib for genetic diagrams" [13].

For computational modeling, the Systems Biology Markup Language (SBML) is an XML-based format for representing models of biological processes, facilitating simulation and analysis [14]. These standards are coordinated under the COMBINE initiative, which harmonizes the development of compatible and interoperable standards in systems and synthetic biology [14].

The relentless advancement of the synthetic biology toolkit is fundamentally accelerating the pace of directed evolution and drug discovery. The integration of versatile DNA assembly techniques, efficient recombineering systems, and automated high-throughput screening platforms creates a powerful, iterative engine for biomolecular optimization. By adhering to community-developed data standards and visualization conventions, researchers can ensure the reproducibility, scalability, and shareability of their work. As these tools continue to become more robust, accessible, and automated, they will undoubtedly unlock new frontiers in engineering biology for therapeutic applications, pushing the boundaries of what is possible in synthetic biology and directed evolution.

Directed evolution has emerged as a transformative approach in synthetic biology, enabling researchers to engineer novel biocatalysts and optimize metabolic pathways with precision and efficiency. This powerful methodology mimics the process of natural selection in a laboratory setting, employing iterative cycles of genetic diversification and screening to evolve proteins or microbial strains with enhanced desired traits. The technique's primary advantage lies in its ability to generate improved biological systems without requiring complete a priori knowledge of the system's intricate structure-function relationships, thereby bypassing the limitations of purely rational design approaches [15].

The fundamental directed evolution workflow operates as an iterative two-step process: first, the generation of genetic diversity to create variant libraries, and second, the application of high-throughput screening or selection to identify variants exhibiting improvement in the target trait [15]. This engineered Darwinian process compresses the long timescales of natural evolution into manageable laboratory timeframes by intentionally accelerating mutation rates and applying user-defined selection pressures [15]. The impact of this approach was formally recognized by the 2018 Nobel Prize in Chemistry, one half of which was awarded to Frances H. Arnold for the directed evolution of enzymes, a method that has become a cornerstone of modern biotechnology and industrial biocatalysis [15].

Within synthetic biology, directed evolution provides indispensable tools for addressing two fundamental challenges: engineering individual enzymes with novel or enhanced catalytic properties, and optimizing complex metabolic pathways for the sustainable production of valuable compounds. This technical guide examines the key applications, methodologies, and recent advancements in these domains, with a particular focus on the convergence of directed evolution with automation and artificial intelligence, which is dramatically accelerating the pace of biological engineering.

Engineering Novel Biocatalysts Through Directed Evolution

Fundamental Principles and Methodologies

The directed evolution cycle for biocatalyst development follows a systematic, iterative approach centered on two core components: diversity generation and functional identification. Success in any directed evolution campaign hinges on the strategic implementation of both phases, with the screening method representing the most critical bottleneck as it determines which variants are selected for subsequent rounds of evolution [15].

Library Creation Methods encompass several established techniques, each with distinct advantages. Error-Prone PCR (epPCR) introduces random mutations throughout the gene by reducing the fidelity of DNA polymerase through factors such as manganese ions and unbalanced nucleotide concentrations, typically achieving 1-5 base mutations per kilobase [15]. DNA Shuffling fragments multiple parent genes and reassembles them through primerless PCR, enabling recombination of beneficial mutations from different variants [15]. Site-Saturation Mutagenesis comprehensively explores all possible amino acid substitutions at targeted positions, often focusing on structural "hotspots" identified from prior evolution rounds [15].
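
To make the epPCR mutation rate concrete, the sketch below estimates how many mutations a single library member is likely to carry. It assumes mutations occur independently and follow a Poisson distribution, which is a common simplification and not something stated in [15]; the function name and the example gene length are illustrative.

```python
import math


def mutation_count_distribution(gene_length_bp, mutations_per_kb, max_k=5):
    """Return (mean mutations per gene, [P(0), P(1), ..., P(max_k)]) under a Poisson model."""
    lam = gene_length_bp / 1000.0 * mutations_per_kb  # expected mutations per gene copy
    probs = [math.exp(-lam) * lam ** k / math.factorial(k) for k in range(max_k + 1)]
    return lam, probs


# Hypothetical 1 kb gene mutagenized at 2 base changes per kilobase.
mean_mutations, probabilities = mutation_count_distribution(1000, 2.0)
fraction_unmutated = probabilities[0]  # share of clones identical to the parent
```

Under this model a meaningful share of clones carries no mutation at all, which is one reason the mutation rate is tuned to the screening capacity available.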

Screening and Selection Strategies form the critical link between genotype and phenotype. Microtiter plate-based screening assays individual variants in 96- or 384-well formats using colorimetric or fluorometric substrates, offering quantitative data with moderate throughput (10³-10⁴ variants) [15]. Growth-coupled selection directly links desired enzymatic activity to host organism survival or growth, enabling extremely high throughput but requiring sophisticated genetic design [16]. Fluorescence-activated cell sorting (FACS) and microfluidics-based screening provide ultra-high-throughput analysis of cell populations, dramatically accelerating the identification of improved variants [16].
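
Throughput matters because the number of clones that must be assayed grows with library size. The following sketch estimates the screening effort needed to sample a given variant at least once with a chosen probability. It assumes every variant is equally represented in the library, which real libraries only approximate; the function name is hypothetical.

```python
import math


def clones_for_coverage(n_variants, coverage=0.95):
    """Clones to screen so that any one variant is seen at least once with probability `coverage`.

    Assumes all n_variants are equally likely to be picked (an idealization).
    """
    if n_variants < 2:
        raise ValueError("n_variants must be at least 2")
    if not 0.0 < coverage < 1.0:
        raise ValueError("coverage must be strictly between 0 and 1")
    # P(variant missed after N picks) = (1 - 1/V)^N, solved for N.
    return math.ceil(math.log(1.0 - coverage) / math.log(1.0 - 1.0 / n_variants))


# One fully randomized NNK codon gives 32 distinct codons.
single_site_effort = clones_for_coverage(32, 0.95)
```

For a single randomized site this stays within one 96-well plate, whereas libraries randomized at several sites quickly exceed plate-based throughput and call for selection or sorting methods.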

Case Studies in Biocatalyst Engineering

Directed evolution has demonstrated remarkable success in enhancing critical enzyme properties including thermostability, solvent tolerance, catalytic activity, and substrate specificity. Recent research highlights the substantial improvements achievable through systematic evolution campaigns.

In the green synthesis of cardiac drugs, directed evolution of key enzymes including cytochrome P450 monooxygenases, ketoreductases, transaminases, and hydrolases yielded dramatically improved biocatalysts [17] [18]. Evolved cytochrome P450 variant CYP450-F87A achieved 97% substrate conversion efficiency, while ketoreductase variant KRED-M181T reached 99% enantioselectivity in asymmetric reductions crucial for pharmaceutical synthesis [17]. These evolved enzymes also exhibited significantly enhanced stability, with elevated melting temperatures (+10-15°C) and maintained 85% activity in 30% ethanol solutions, making them suitable for industrial process conditions [17].

The engineering of hydrocarbon-producing enzymes represents another compelling application, particularly for sustainable fuel production. Enzymes such as the cytochrome P450 enzyme OleTJE, which catalyzes the decarboxylation of fatty acids to alkenes, have been targeted for directed evolution to improve their properties for industrial alkene and alkane biosynthesis [19]. These efforts face unique challenges due to the physicochemical properties of hydrocarbon products, which can be insoluble, gaseous, and chemically inert, complicating the development of high-throughput screening assays [19].

Table 1: Performance Metrics of Evolved Biocatalysts for Cardiac Drug Synthesis

| Enzyme Variant | Catalytic Improvement | Stability Enhancement | Application |
|---|---|---|---|
| CYP450-F87A | 97% substrate conversion | Tm +10°C | Cardiac drug intermediate synthesis |
| KRED-M181T | 99% enantioselectivity | 85% activity in 30% ethanol | Chiral alcohol synthesis |
| General variants | 7-fold increase in kcat; 12-fold increase in kcat/Km | Tm +10-15°C | Multiple synthesis steps |

Experimental Protocol: Basic Directed Evolution Workflow

A standard directed evolution protocol for enzyme engineering typically proceeds through the following methodological stages:

Gene Diversification: Employ error-prone PCR to introduce random mutations into the parent gene, targeting a mutation rate of 1-3 amino acid changes per variant. Reaction conditions include: 10-100 ng template DNA, 0.5 mM Mn²⁺, unbalanced dNTP ratios (e.g., 0.2 mM dATP/dGTP, 1 mM dCTP/dTTP), and 5 U Taq polymerase in standard PCR buffer [15].

Library Construction: Clone the mutated gene fragments into an appropriate expression vector using restriction digestion and ligation or recombination-based cloning. Transform the library into a microbial host (typically E. coli) to create a variant library of 10⁴-10⁶ members.

Expression and Screening: Culture individual clones in deep-well microtiter plates and induce protein expression. Prepare cell lysates or use whole-cell assays to measure enzymatic activity with specific substrates. For oxidative enzymes like P450s, assays may monitor NADPH consumption or product formation via HPLC or GC-MS [17] [19].

Hit Identification and Characterization: Identify top-performing variants based on quantitative activity measurements. Sequence these hits to identify beneficial mutations. Purify selected enzyme variants for detailed biochemical characterization including kinetic parameters (kcat, Km), thermostability (Tm), and solvent tolerance.

Iterative Evolution: Use improved variants as templates for subsequent rounds of diversification, potentially employing different mutagenesis strategies such as DNA shuffling to combine beneficial mutations or site-saturation mutagenesis to optimize key positions [15].
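
The stages above can be sketched as a toy simulation of the diversify, screen, and select loop. Everything here is illustrative: the "assay" simply counts matches to a hidden target sequence, one residue is changed per variant, and the function names are hypothetical. Real campaigns replace `toy_fitness` with a wet-lab measurement.

```python
import random

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"


def mutate(seq, rng, n_mutations=1):
    """Return a copy of seq with n_mutations positions changed to a different residue."""
    chars = list(seq)
    for pos in rng.sample(range(len(chars)), n_mutations):
        chars[pos] = rng.choice([aa for aa in AMINO_ACIDS if aa != chars[pos]])
    return "".join(chars)


def toy_fitness(seq, target):
    """Stand-in for a screening assay: number of positions matching a hidden target."""
    return sum(a == b for a, b in zip(seq, target))


def directed_evolution(parent, target, rounds=10, library_size=200, seed=0):
    """Greedy directed evolution: keep the best variant of each round if it beats the parent."""
    rng = random.Random(seed)
    history = [toy_fitness(parent, target)]
    for _ in range(rounds):
        library = [mutate(parent, rng) for _ in range(library_size)]   # diversification
        best = max(library, key=lambda s: toy_fitness(s, target))      # screening
        if toy_fitness(best, target) > toy_fitness(parent, target):    # selection
            parent = best
        history.append(toy_fitness(parent, target))
    return parent, history


evolved, fitness_history = directed_evolution("AAAAAAAAAA", "ACDEFGHIKL")
```

Because each round accepts only a strictly better variant, the recorded fitness never decreases. This is the greedy behavior that works well on smooth landscapes and can stall on rugged ones.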

Optimizing Metabolic Pathways Through Directed Evolution

Strategies for Pathway-Level Optimization

While enzyme engineering focuses on individual biocatalysts, metabolic pathway engineering addresses the optimization of multi-enzyme systems for the synthesis of complex valuable compounds. Directed evolution approaches at the pathway level present unique challenges and opportunities, requiring strategies that balance the activity of multiple enzymes while managing metabolic flux and avoiding toxic intermediate accumulation.

Growth-Coupled Selection Strategies represent a powerful approach for pathway optimization. This method engineers the host organism's metabolism such that the production of the target compound becomes essential for growth, creating a direct selection pressure for improved pathway performance [16]. Implementation involves deleting native genes to create auxotrophies that can only be complemented by the engineered pathway, or designing synthetic circuits that link product formation to essential cellular processes [16].

Automated Continuous Evolution Systems integrate directed evolution with laboratory automation to accelerate the optimization of metabolic pathways. These systems employ hypermutation strains that increase the mutation rate specifically in pathway genes, combined with continuous cultivation in bioreactors or chemostats that maintain selection pressure [16]. This approach enables real-time evolution of pathway performance over extended cultivation periods, allowing beneficial mutations to accumulate without researcher intervention.
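
A back-of-the-envelope model helps show why continuous cultivation enriches improved variants. The sketch below assumes just two competing populations growing exponentially with a constant difference in specific growth rate, so the odds of the fitter variant grow as exp(s·t). This two-population model and the parameter values are assumptions for illustration, not details from [16].

```python
import math


def variant_frequency(p0, growth_advantage_per_h, hours):
    """Frequency of the fitter variant after `hours`, starting from frequency p0."""
    odds = p0 / (1.0 - p0) * math.exp(growth_advantage_per_h * hours)
    return odds / (1.0 + odds)


def hours_to_reach(p0, target, growth_advantage_per_h):
    """Time needed for the fitter variant to rise from frequency p0 to `target`."""
    odds_ratio = (target / (1.0 - target)) / (p0 / (1.0 - p0))
    return math.log(odds_ratio) / growth_advantage_per_h


# Hypothetical case: a mutant at 1 in 10^6 cells with a 0.05 per hour growth advantage.
takeover_time_h = hours_to_reach(1e-6, 0.5, 0.05)
```

Even a modest growth advantage lets a rare mutant reach half of the population within days in this idealized setting, which is why sustained selection pressure can replace manual screening rounds.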

Sensor-Regulator Systems utilize biosensors that detect intracellular metabolite levels and regulate the expression of reporter genes or antibiotic resistance markers. This creates a high-throughput screening system in which fluorescence intensity or survival under antibiotic pressure indicates pathway efficiency [16]. When combined with FACS, this approach enables rapid screening of libraries exceeding 10⁸ variants.

Case Studies in Metabolic Pathway Engineering

Directed evolution has successfully optimized metabolic pathways for diverse applications including biofuel production, pharmaceutical synthesis, and commodity chemical manufacturing. The integration of directed evolution with synthetic biology tools has enabled significant advances in pathway performance and host robustness.

In biofuel production, directed evolution has been applied to engineer hydrocarbon-producing pathways in microbial hosts. Native enzymes such as fatty acid decarboxylases and aldehyde deformylating oxygenases often exhibit insufficient activity for industrial-scale hydrocarbon production [19]. Directed evolution campaigns have focused on improving these enzymes' catalytic rates, solvent tolerance, and cofactor utilization to enhance biofuel yields. Engineering the terminal enzymes in hydrocarbon biosynthesis pathways has proven particularly impactful, as these steps often represent metabolic bottlenecks that limit overall pathway flux [19].

For sustainable pharmaceutical synthesis, directed evolution has optimized complete biosynthetic pathways for cardiac drugs, achieving substantial improvements in sustainability metrics. Evolved enzymatic pathways demonstrated significantly improved environmental profiles compared to conventional chemical synthesis, with E-factors reduced from 15.2 to 3.7 (lower values indicate less waste), CO₂ emissions decreased by 50%, and energy usage reduced by 45% while maintaining excellent 85-92% atom economy [17]. These improvements highlight the potential of directed evolution to contribute to greener manufacturing processes in the pharmaceutical industry.

Table 2: Sustainability Metrics of Evolved Biocatalytic vs. Conventional Chemical Synthesis

| Performance Metric | Conventional Synthesis | Evolved Biocatalysis | Improvement |
|---|---|---|---|
| E-factor (waste mass/product mass) | 15.2 | 3.7 | 76% reduction |
| CO₂ Emissions | Baseline | -50% | 50% reduction |
| Energy Consumption | Baseline | -45% | 45% reduction |
| Atom Economy | Variable | 85-92% | Highly efficient |
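
The E-factor row can be checked with a few lines of arithmetic. The sketch below uses the definition given in the table (waste mass divided by product mass) and the two reported values; the function names are illustrative.

```python
def e_factor(waste_mass_kg, product_mass_kg):
    """E-factor: kilograms of waste generated per kilogram of product."""
    return waste_mass_kg / product_mass_kg


def percent_reduction(baseline, improved):
    """Relative reduction from baseline to improved, in percent."""
    return 100.0 * (baseline - improved) / baseline


# Values reported for conventional synthesis and evolved biocatalysis.
reduction = percent_reduction(15.2, 3.7)  # about 75.7, reported as 76% after rounding
```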

Experimental Protocol: Growth-Coupled Pathway Evolution

Implementing growth-coupled selection for metabolic pathway optimization involves the following detailed methodology:

Selection Strain Design: Identify an essential metabolic reaction that can be replaced by the target pathway. Delete the corresponding gene(s) to create an auxotrophic strain that cannot grow without pathway functionality. Computational modeling and genome-scale metabolic networks can inform optimal gene deletion strategies [16].

Pathway Integration and Library Generation: Introduce the heterologous pathway into the selection strain using chromosomal integration or stable plasmid systems. Generate pathway diversity through (i) combinatorial library assembly of promiscuous enzyme variants; (ii) tuning-element engineering of ribosomal binding sites and promoters to vary expression levels; and (iii) genome-wide mutagenesis using chemical mutagens or transposons to uncover global beneficial mutations [16].

Continuous Evolution Cultivation: Cultivate the library in controlled bioreactors under steady-state conditions with limiting substrate availability. For production pathways, implement dynamic regulation where the essential nutrient is only available when the pathway produces a precursor. Monitor culture density and product titers regularly to track evolution progress.

Population Monitoring and Analysis: Sample the evolving population at intervals to monitor genetic and phenotypic changes. Use next-generation sequencing to identify mutations that rise to prominence in the population. Isolate individual clones from endpoint populations for detailed characterization of pathway performance and genetic alterations.

Validated Hit Characterization: Ferment superior evolved strains in controlled bioreactors to quantitatively measure key performance metrics including titer (g/L), yield (g product/g substrate), and productivity (g/L/h). Analyze metabolic fluxes through ¹³C tracing or enzyme activity assays to understand the evolved phenotype.
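
The three performance metrics named in the final stage follow directly from their units. A minimal sketch, with a hypothetical function name and made-up fermentation numbers:

```python
def fermentation_metrics(product_g, substrate_consumed_g, volume_l, duration_h):
    """Compute titer (g/L), yield (g product/g substrate), and productivity (g/L/h)."""
    titer = product_g / volume_l
    return {
        "titer_g_per_l": titer,
        "yield_g_per_g": product_g / substrate_consumed_g,
        "productivity_g_per_l_h": titer / duration_h,
    }


# Hypothetical run: 50 g product from 200 g substrate in 2 L over 50 h.
metrics = fermentation_metrics(50.0, 200.0, 2.0, 50.0)
```

Reporting all three together is useful because an evolved strain can improve one metric, such as final titer, while another, such as productivity, stays flat or declines.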

The Scientist's Toolkit: Essential Research Reagents and Solutions

Successful implementation of directed evolution campaigns requires specialized reagents, genetic tools, and screening systems. The following toolkit details essential materials and their applications in biocatalyst and pathway engineering.

Table 3: Essential Research Reagents for Directed Evolution Experiments

| Reagent/Tool Category | Specific Examples | Function and Application |
|---|---|---|
| Mutagenesis Reagents | Error-prone PCR kits (with Mn²⁺, unbalanced dNTPs), DNase I for DNA shuffling, Site-directed mutagenesis kits | Introduction of genetic diversity into target genes through random, semi-rational, or recombination-based approaches |
| Library Construction Tools | Restriction enzymes, Ligases, Gateway or Golden Gate assembly systems, Plasmid vectors with tunable promoters | Cloning of variant libraries into expression systems with varying copy numbers and expression strengths |
| Expression Hosts | E. coli BL21(DE3), Pseudomonas putida, Saccharomyces cerevisiae, specialized hypermutation strains | Heterologous expression of enzyme variants or metabolic pathways with options for inducible expression and genetic stability |
| Screening Assays | Colorimetric substrates (p-nitrophenyl esters, etc.), Fluorogenic probes, HPLC/MS systems, Biosensor strains | Detection and quantification of enzymatic activity or metabolite production with varying throughput and sensitivity |
| Selection Systems | Antibiotic resistance markers, Auxotrophic complementation strains, Toxin-antitoxin systems | Growth-coupled selection linking desired enzymatic function to host organism survival or proliferation |
| Automation Equipment | Liquid handling robots, Microplate readers, FACS instruments, Microfluidic droplet generators | Enabling high-throughput screening of large variant libraries with minimal manual intervention |

Emerging Frontiers: AI and Automation in Directed Evolution

The convergence of directed evolution with artificial intelligence (AI) and laboratory automation represents a paradigm shift in biological engineering, dramatically accelerating the design-build-test-learn cycle. Machine learning algorithms analyze complex sequence-activity relationships from directed evolution data to predict beneficial mutations and guide library design, moving beyond traditional random mutagenesis approaches [20] [16].

AI-Guided Library Design uses sequence-function data from preliminary evolution rounds to train predictive models that identify mutation hotspots and beneficial amino acid substitutions. These models can explore sequence spaces far beyond the reach of practical screening capabilities, prioritizing variants with a high probability of improved function [16]. Advanced approaches include generative models that propose entirely novel sequences optimized for multiple properties simultaneously, such as activity, stability, and expression [20].

Automated Biofoundries integrate robotic systems for liquid handling, cultivation, and screening with AI-driven experimental design and analysis. These platforms enable fully automated directed evolution campaigns where the computer plans experiments, robots execute them, and the system learns from results to design improved subsequent rounds [16]. This "self-driving lab" approach continuously refines biological systems with minimal human intervention, potentially reducing development timelines from years to months or weeks [16].

De Novo Enzyme Design represents the ultimate application of AI in biocatalyst development. Tools such as Rosetta and RFdiffusion use physical principles and deep learning to generate entirely novel enzyme scaffolds capable of catalyzing non-natural reactions [16]. While these designed enzymes typically require subsequent directed evolution to achieve practical activity levels, they provide powerful starting points for creating catalysts with functions not found in nature [16].

The integration of these advanced computational and automation technologies with established directed evolution methodologies is creating unprecedented capabilities for engineering novel biocatalysts and optimizing metabolic pathways, positioning directed evolution as an increasingly powerful approach for addressing challenges in sustainable manufacturing, therapeutic development, and bio-based production.

Next-Generation Engineering: Machine Learning, Orthogonal Systems, and Advanced Applications

In the field of synthetic biology, directed evolution (DE) stands as a powerful methodology for engineering biomolecules with enhanced functions, from novel enzymes for biocatalysis to optimized antibodies for therapeutic applications [8]. This process mimics natural selection in a controlled laboratory environment, iteratively accumulating beneficial mutations through cycles of mutagenesis and screening. However, traditional DE operates largely as a local, greedy search, which renders it particularly inefficient when navigating rugged fitness landscapes—those characterized by non-additive epistatic interactions between mutations [4] [21]. In such landscapes, the effect of a mutation depends critically on the genetic background in which it appears, leading to fitness landscapes with multiple peaks and valleys. This complexity often traps traditional DE approaches at local optima, preventing access to higher-fitness regions of sequence space [4].

The integration of artificial intelligence (AI), particularly active learning and Bayesian optimization (BO), is revolutionizing directed evolution by transforming it from a purely empirical local search into an intelligent, adaptive global exploration. These methods use machine learning (ML) models to learn the underlying sequence-function relationship and strategically propose informative experiments. This paradigm shift enables synthetic biologists to navigate epistatic landscapes more efficiently, requiring fewer experimental rounds and screening resources to discover high-performing variants [4] [22]. This technical guide delves into the core principles, methodologies, and applications of these AI-enhanced techniques, providing a framework for their implementation in advanced synthetic biology research.

Core Computational Methodologies

Active Learning-Assisted Directed Evolution (ALDE)

Active Learning-assisted Directed Evolution (ALDE) is an iterative machine learning-assisted workflow designed to address the inefficiencies of traditional DE on challenging, epistatic landscapes. Its core innovation lies in leveraging uncertainty quantification to balance the exploration of unseen regions of sequence space with the exploitation of promising leads [4] [21] [23].

The ALDE cycle involves several key stages, as shown in Figure 1. Initially, a combinatorial design space is defined, typically focusing on a set of k residues known or suspected to influence function. An initial library of variants is synthesized and screened to generate a foundational set of sequence-fitness data. This data is used to train a supervised ML model that learns a mapping from protein sequence to fitness. The trained model then evaluates all possible sequences within the defined design space. Crucially, an acquisition function is applied to rank these sequences, prioritizing those that are either predicted to have high fitness (exploitation) or those where the model's prediction is most uncertain (exploration). The top-ranked variants from this process are synthesized and assayed in the next wet-lab round, and their experimental fitness data is used to retrain and refine the model, closing the loop and initiating the next cycle [4]. This iterative process of model-guided proposal and experimental validation allows ALDE to efficiently climb fitness landscapes that would confound traditional methods.

Bayesian Optimization in Embedding Space

Bayesian Optimization (BO) is a class of active learning algorithms well suited to optimizing expensive black-box functions, which makes it a natural fit for protein engineering, where fitness assays are costly and time-consuming. The goal is to find the protein sequence x that maximizes a fitness function f(x) with as few evaluations as possible [24].

A typical BO framework uses a probabilistic surrogate model, often a Gaussian Process (GP), to model the fitness landscape. The GP provides a posterior distribution for the fitness of any sequence, quantifying both the predicted mean fitness and the associated uncertainty. An acquisition function, such as Expected Improvement (EI) or Upper Confidence Bound (UCB), uses this posterior to decide which sequence to test next by balancing exploration and exploitation [24] [22].
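
To show how an acquisition function trades off predicted fitness against uncertainty, the sketch below implements the standard closed-form Expected Improvement for a Gaussian posterior, along with Upper Confidence Bound. The posterior means and standard deviations in the example are invented; in practice they would come from the surrogate model. The `xi` and `beta` parameters are conventional tuning knobs and are not specified in [24] or [22].

```python
import math


def normal_pdf(z):
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)


def normal_cdf(z):
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def expected_improvement(mean, std, best_so_far, xi=0.0):
    """Expected Improvement (maximization) for a Gaussian posterior N(mean, std^2)."""
    improvement = mean - best_so_far - xi
    if std <= 0.0:
        return max(improvement, 0.0)  # no uncertainty: improvement is deterministic
    z = improvement / std
    return improvement * normal_cdf(z) + std * normal_pdf(z)


def upper_confidence_bound(mean, std, beta=2.0):
    """UCB: optimistic estimate that rewards both high mean and high uncertainty."""
    return mean + beta * std


# Hypothetical posteriors: A is slightly better but certain, B is worse but uncertain.
best = 0.75
ei_a = expected_improvement(0.80, 0.02, best)
ei_b = expected_improvement(0.70, 0.30, best)
```

In this example the uncertain candidate B receives the higher Expected Improvement even though its predicted mean is below the current best, which is the exploration behavior described above.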

A key advancement is performing BO in a semantically rich embedding space learned by a pre-trained protein language model (pLM) such as ESM-2 [24] [25]. These pLMs, trained on millions of natural protein sequences, generate dense, low-dimensional vector representations (embeddings) that encapsulate evolutionary and functional information. The BOES method (Bayesian Optimization in Embedding Space) exploits this by using pLM embeddings as the input space for the GP model [24]. This approach defines a sensible metric of similarity between variants, creating a smoother fitness landscape that is more amenable to optimization, and often leads to better results with the same screening budget [24].

Table 1: Key Computational Components in AI-Enhanced Directed Evolution

| Component | Description | Common Examples/Notes |
|---|---|---|
| Probabilistic Model | A model that predicts fitness and quantifies uncertainty. | Gaussian Process (GP), Ensemble of Deep Neural Networks [24] [22] |
| Acquisition Function | Strategy for selecting the next variants to test. | Expected Improvement (EI), Upper Confidence Bound (UCB) [24] |
| Sequence Representation | The numerical encoding of a protein sequence for the model. | One-hot encoding, Amino Acid Features, Embeddings from pLMs (e.g., ESM-2) [24] [22] |
| Optimization Algorithm | The overarching procedure for navigating the landscape. | Active Learning-assisted DE (ALDE), Bayesian Optimization (BO) [4] [24] |

Experimental Implementation: A Case Study on Epistatic Landscapes

Defining a Challenging Protein Engineering Problem

To demonstrate the practical efficacy of ALDE, researchers applied it to a model system engineered to be difficult for traditional DE: optimizing five epistatic residues (W56, Y57, L59, Q60, and F89) in the active site of a protoglobin from Pyrobaculum arsenaticum (ParPgb) [4]. The goal was to enhance the enzyme's performance in a non-native cyclopropanation reaction, converting 4-vinylanisole and ethyl diazoacetate into cyclopropane products trans-2a and cis-2a with high yield and diastereoselectivity for the cis product. The objective function was explicitly defined as the difference between the yield of cis-2a and trans-2a [4].

This system was intentionally designed as a rugged landscape. Initial single-site saturation mutagenesis (SSM) at these five positions showed no single mutant that conferred a significant desirable shift in the objective. Furthermore, recombining the best-performing single mutants failed to produce a high-fitness variant, providing strong evidence of negative epistasis and making this a challenging test case for any protein engineering method [4].

The ALDE Workflow in Practice

The experimental campaign began with the synthesis of an initial library of ParPgb variants mutated at all five target positions using PCR-based mutagenesis with NNK degenerate codons [4]. The workflow then proceeded through iterative ALDE cycles, as previously described. In just three rounds of wet-lab experimentation, exploring only about 0.01% of the total design space, ALDE successfully identified an optimal variant that improved the yield of the desired cis product from 12% to 93%, while also achieving high diastereoselectivity (14:1) [4] [21] [23]. The final variant contained a combination of mutations that was not predictable from the initial single-mutant screens, underscoring the critical importance of the ML model in navigating the epistatic interactions to discover a globally optimal sequence.
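
The scale of this search problem is easy to quantify. The short calculation below converts the five-residue design space and the roughly 0.01% figure into variant counts. The exact number of variants screened is not stated here, so the result should be read as an order-of-magnitude estimate; the function names are illustrative.

```python
def design_space_size(k, alphabet_size=20):
    """Number of protein variants when k positions can each take any amino acid."""
    return alphabet_size ** k


def variants_for_percent(k, percent):
    """How many variants correspond to `percent` of the k-site design space."""
    return design_space_size(k) * percent / 100.0


total_variants = design_space_size(5)             # 20^5 = 3,200,000
approx_screened = variants_for_percent(5, 0.01)   # on the order of a few hundred variants
```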

Table 2: Key Reagents and Research Tools for AI-Enhanced Directed Evolution

| Research Tool / Reagent | Function in the Workflow |
|---|---|
| NNK Degenerate Codon Primers | Allows for randomization of target codons during library construction, encoding all 20 amino acids. |
| Parent Plasmid (e.g., ParPgb W59L Y60Q) | The DNA template for mutagenesis, containing the gene of interest and necessary regulatory elements. |
| PCR Reagents for Mutagenesis | Enzymes and nucleotides for performing site-saturation or combinatorial mutagenesis. |
| Heterologous Expression System (e.g., E. coli) | Cellular chassis for expressing the library of protein variants. |
| High-Throughput Assay | A functional screen (e.g., via GC, HPLC, or fluorescence) to measure the fitness of library variants. |
| Pre-trained Protein Language Model (e.g., ESM) | Provides informative sequence embeddings for the ML model [24] [25]. |
| Computational Framework (e.g., ALDE, BOES) | Software for training models, running optimization, and proposing new variants [4] [24]. |
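
The claim that NNK codons cover all 20 amino acids can be verified by enumeration. The sketch below builds the standard genetic code, lists every NNK codon (N = any base, K = G or T), and counts what each one encodes. The helper names are illustrative.

```python
from itertools import product

# Standard genetic code, with codons ordered by the bases T, C, A, G at each position.
BASES = "TCAG"
AMINO = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {
    a + b + c: AMINO[16 * i + 4 * j + k]
    for i, a in enumerate(BASES)
    for j, b in enumerate(BASES)
    for k, c in enumerate(BASES)
}


def nnk_codons():
    """All codons matching NNK: any base at positions 1 and 2, G or T at position 3."""
    return [a + b + c for a, b, c in product("ACGT", "ACGT", "GT")]


def residue_counts(codons):
    """Count how many of the given codons encode each amino acid ('*' marks a stop)."""
    counts = {}
    for codon in codons:
        residue = CODON_TABLE[codon]
        counts[residue] = counts.get(residue, 0) + 1
    return counts


counts = residue_counts(nnk_codons())
n_amino_acids = len([aa for aa in counts if aa != "*"])  # 20
n_stop_codons = counts.get("*", 0)                       # 1 (TAG)
```

NNK reduces the 64 possible codons to 32 while keeping a single stop codon, although amino acid representation remains uneven (for example, leucine, serine, and arginine are each encoded three times).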

Technical Protocols and Best Practices

Detailed Protocol for an ALDE Campaign

1. Define the Combinatorial Design Space: Select k target residues based on structural knowledge (e.g., active site residues) or previous mutational studies. This defines a search space of 20^k possible variants [4].

2. Generate Initial Library and Collect Data: Perform simultaneous mutagenesis at all k positions, for example using NNK codons. Screen a randomly selected or strategically chosen subset (e.g., hundreds) of variants to establish an initial dataset of sequence-fitness pairs [4].

3. Train the Machine Learning Model: Use the collected data to train a supervised ML model. The model can use various sequence encodings, from one-hot encoding to embeddings from a pLM. The model should, where possible, provide uncertainty estimates [4] [24].

4. Propose New Variants with the Acquisition Function: Use the trained model to predict the fitness and uncertainty for all sequences in the design space. Apply an acquisition function (e.g., Expected Improvement) to these predictions to rank the sequences. Select the top N (e.g., tens to hundreds) for the next experimental round [4] [24].

5. Iterate Until Convergence: Synthesize and screen the proposed variants. Add the new data to the training set and repeat steps 3-5 until a fitness goal is reached or performance plateaus [4].
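
The protocol can be sketched as a small, self-contained simulation. This is not the published ALDE implementation: the four-letter alphabet, the `toy_fitness` function standing in for the wet-lab assay, the additive surrogate model, and the bootstrap ensemble used for uncertainty are all simplifying assumptions chosen to keep the example short. An additive model cannot itself represent epistasis; real campaigns would use richer models such as those discussed in [4] and [24].

```python
import itertools
import random
import statistics

ALPHABET = "AVLF"  # reduced alphabet so the toy design space has only 4^3 = 64 variants
K = 3              # number of targeted positions


def toy_fitness(seq):
    """Hypothetical assay with one epistatic pair: F at site 1 helps only with V at site 3."""
    score = 0.1 * seq.count("L")
    if seq[0] == "F" and seq[2] == "V":
        score += 1.0
    return score


def fit_additive_model(pairs):
    """Estimate a per-(position, residue) effect as the deviation from the overall mean."""
    overall = statistics.fmean([f for _, f in pairs])
    effects = {}
    for pos in range(K):
        for aa in ALPHABET:
            values = [f for s, f in pairs if s[pos] == aa]
            effects[(pos, aa)] = statistics.fmean(values) - overall if values else 0.0
    return overall, effects


def predict(model, seq):
    overall, effects = model
    return overall + sum(effects[(pos, aa)] for pos, aa in enumerate(seq))


def alde(rounds=3, batch_size=8, n_models=10, beta=1.0, seed=0):
    rng = random.Random(seed)
    space = ["".join(p) for p in itertools.product(ALPHABET, repeat=K)]    # step 1
    measured = {s: toy_fitness(s) for s in rng.sample(space, batch_size)}  # step 2
    for _ in range(rounds):
        pairs = list(measured.items())
        # Step 3: bootstrap ensemble; disagreement between members estimates uncertainty.
        ensemble = [fit_additive_model(rng.choices(pairs, k=len(pairs)))
                    for _ in range(n_models)]

        def ucb(seq):
            preds = [predict(m, seq) for m in ensemble]
            return statistics.fmean(preds) + beta * statistics.pstdev(preds)

        # Step 4: rank untested variants by the acquisition function.
        candidates = [s for s in space if s not in measured]
        batch = sorted(candidates, key=ucb, reverse=True)[:batch_size]
        # Step 5: "screen" the proposed batch and add the data to the training set.
        measured.update({s: toy_fitness(s) for s in batch})
    return measured


results = alde()
best_variant = max(results, key=results.get)
```

The loop structure (fit, score all candidates, pick a batch, measure, refit) carries over directly when the toy pieces are swapped for a real assay, a pLM-based encoding, and a better-calibrated surrogate model.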

Key Considerations for Implementation

- Uncertainty Quantification: Empirical evidence from ALDE studies suggests that for high-dimensional protein data, frequentist uncertainty quantification (e.g., from model ensembles) can be more consistent and better calibrated than some Bayesian deep learning approaches [4].

- Sequence Representation: The choice of how to represent a protein sequence for the model is critical. While one-hot encoding is simple, using embeddings from a pre-trained pLM can significantly boost performance and data efficiency by providing a more informative and smoother latent space for optimization [24] [22].

- Handling Epistasis: The primary strength of ALDE and BO is their ability to model non-additive effects. Using models like GPs or deep ensembles with non-linear kernels or architectures allows the model to capture the interactions between mutations that define a rugged landscape [4] [25].

The integration of active learning and Bayesian optimization into directed evolution represents a transformative advancement for synthetic biology. By intelligently modeling the protein fitness landscape, these methods enable a more efficient and effective search for high-fitness variants, particularly in the face of challenging epistatic interactions. The demonstrated success of ALDE in optimizing a rugged, five-residue landscape in an enzyme active site, achieving a dramatic improvement in yield and selectivity in only three rounds, underscores the practical power of this approach [4].

As the field progresses, several frontiers are poised to further enhance these methodologies. The use of reinforcement learning (RL) in latent space, as seen in methods like LatProtRL, offers a complementary strategy for navigating rugged landscapes and escaping local optima [25]. Furthermore, the rise of generative models for protein design suggests a future where directed evolution is not merely guided by AI but is initiated with AI-designed protein scaffolds that already occupy novel regions of the functional universe [26] [27]. For researchers in drug development and synthetic biology, mastering these AI-enhanced directed evolution tools is becoming increasingly crucial to unlock new therapeutic, catalytic, and synthetic biological capabilities that lie beyond the reach of natural evolution and traditional engineering methods.

Directed evolution is a powerful method for engineering biomolecules with new or improved functions through iterative rounds of mutation and artificial selection [8]. While this approach has been successfully implemented in prokaryotic and yeast-based systems, establishing stable mammalian directed evolution platforms has proven difficult [28]. Mammalian systems offer crucial advantages for evolving therapeutic proteins and biological tools, including appropriate post-translational modifications, protein-protein interactions, and signaling networks that may be absent in simpler organisms [28]. The PROTEUS platform addresses these limitations through an orthogonal replication system that enables extended evolution campaigns in mammalian cells while maintaining system integrity and generating sufficient diversity for meaningful directed evolution.

PROTEUS: A Chimeric Viral Platform for Mammalian Directed Evolution

System Architecture and Design Principles

The PROTEUS (PROTein Evolution Using Selection) platform utilizes chimeric virus-like vesicles (VLVs) to enable directed evolution in mammalian cells [28]. This system is based on a modified Semliki Forest Virus (SFV) replicon engineered to encode only non-structural viral proteins, with infectivity determined by host cell expression of the Indiana vesiculovirus G (VSVG) coat protein [28].

Key modifications to the SFV replicon include:

- Fourteen point mutations (ten non-synonymous, four synonymous) in Non-Structural Proteins (NSPs 1-4) to increase VLV titer

- Attenuated NSP2 variant with a three amino acid loop exchange (A674R/D675L/A676E) to reduce cytopathic effects

- Elimination of capsid protein to prevent cheater particle formation that interferes with viral replication

- No sequence homology between VSVG RNA and SFV genome to reduce recombination events

The system demonstrates robust host-dependent propagation, with amplification factors exceeding 1000 in VSVG-expressing cells versus less than 1 in mock-transfected cells [28]. This dependency creates the essential link between transgene activity and viral propagation that enables effective selection pressure during evolution campaigns.
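As a rough sketch of how the host-dependence check could be expressed, the snippet below assumes the amplification factor is the ratio of output to input genome copies for one passage. That definition, the function names, and the example titers are assumptions for illustration; only the thresholds (above 1000 with VSVG, below 1 in mock-transfected cells) come from the text.

```python
def amplification_factor(input_gc: float, output_gc: float) -> float:
    """Assumed definition: genome copies harvested divided by genome copies applied."""
    if input_gc <= 0:
        raise ValueError("input_gc must be positive")
    return output_gc / input_gc


def is_host_dependent(af_with_vsvg: float, af_mock: float,
                      min_with_vsvg: float = 1000.0, max_mock: float = 1.0) -> bool:
    """True when propagation matches the reported pattern: >1000 with VSVG, <1 without."""
    return af_with_vsvg > min_with_vsvg and af_mock < max_mock


if __name__ == "__main__":
    # Hypothetical titers (genome copies) for a single passage.
    af_vsvg = amplification_factor(input_gc=1e6, output_gc=5e9)
    af_mock = amplification_factor(input_gc=1e6, output_gc=2e5)
    print(af_vsvg, af_mock, is_host_dependent(af_vsvg, af_mock))
```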

Mutation Generation and System Stability

PROTEUS leverages the natural error-prone replication of alphaviruses to generate diversity:

- Mutation rate: 2.6 mutations per 10^5 transduced cells

- Mutational bias: Strong A-to-G and U-to-C transitions consistent with ADAR-dependent editing

- ADAR dependence: ADAR/ADARB1 knockout reduces the mutation rate roughly 3-fold (to 0.8 mutations per 10^5 cells)

- Mutation detection: Sensitive to 0.3% variant frequency by amplicon deep sequencing
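The figures above can be checked with a few lines of arithmetic. This sketch recomputes the fold reduction from the two reported rates and applies the 0.3% detection threshold to read counts; the helper names and the example read counts are hypothetical, and the simple frequency cutoff stands in for whatever variant-calling pipeline is actually used.

```python
WT_MUTATIONS_PER_1E5 = 2.6       # reported for wildtype BHK-21 hosts
ADAR_KO_MUTATIONS_PER_1E5 = 0.8  # reported for ADAR/ADARB1 knockout hosts
DETECTION_THRESHOLD = 0.003      # 0.3% variant frequency


def fold_reduction(wt_rate: float, ko_rate: float) -> float:
    """How many times lower the knockout rate is than the wildtype rate."""
    return wt_rate / ko_rate


def variant_frequency(variant_reads: int, total_reads: int) -> float:
    """Fraction of amplicon reads carrying the variant."""
    if total_reads <= 0:
        raise ValueError("total_reads must be positive")
    return variant_reads / total_reads


def is_detectable(variant_reads: int, total_reads: int,
                  threshold: float = DETECTION_THRESHOLD) -> bool:
    """Simple cutoff: the variant counts as detected at or above the threshold."""
    return variant_frequency(variant_reads, total_reads) >= threshold


if __name__ == "__main__":
    print(round(fold_reduction(WT_MUTATIONS_PER_1E5, ADAR_KO_MUTATIONS_PER_1E5), 2))
    print(is_detectable(variant_reads=50, total_reads=10_000))  # hypothetical counts
```

The ratio works out to about 3.25, consistent with the "3-fold" figure quoted for the knockout host.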

The platform maintains stability over multiple evolution rounds, with progressive transgene truncation observed only in the absence of selective pressure [28]. This stability enables extended evolution campaigns without loss of system integrity.

Experimental Implementation and Workflows

VLV Production and Propagation Protocol

VLV Packaging:

- Transfect BHK-21 cells with pSFV-DE replicon vector and pCMV_VSVG vector

- Harvest chimeric VLVs after 48-72 hours

- Concentrate and titer VLVs using genome copy quantification

VLV Evolution Cycles:

- Transduce naive BHK-21 cells with VLV stock

- Transfect transduced cells to express VSVG protein

- Monitor transgene expression and circuit activation

- Harvest progeny VLVs for subsequent rounds

- Repeat, typically for 3-5 evolution cycles

Critical Parameters:

- Maintain VLV titers >10^8 genome copies/mL

- Use consistent BHK-21 host cell passage number

- Monitor amplification factors at each transfer

- Verify transgene integrity by PCR periodically
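The critical parameters above lend themselves to a simple per-round log check. The sketch below is one hypothetical way to record each evolution round and flag deviations; the `RoundRecord` fields and the passage-spread tolerance are assumptions, and only the 10^8 genome copies/mL titer threshold is taken from the protocol.

```python
from dataclasses import dataclass

MIN_TITER_GC_PER_ML = 1e8  # from the protocol: keep titers above this value
MAX_PASSAGE_SPREAD = 5     # hypothetical tolerance for "consistent" passage number


@dataclass
class RoundRecord:
    round_number: int
    titer_gc_per_ml: float
    host_passage: int
    transgene_pcr_ok: bool


def qc_warnings(records: list[RoundRecord]) -> list[str]:
    """Return human-readable warnings for rounds that miss the critical parameters."""
    warnings = []
    for rec in records:
        if rec.titer_gc_per_ml <= MIN_TITER_GC_PER_ML:
            warnings.append(f"round {rec.round_number}: titer not above 1e8 gc/mL")
        if not rec.transgene_pcr_ok:
            warnings.append(f"round {rec.round_number}: transgene PCR check failed")
    passages = [rec.host_passage for rec in records]
    if passages and max(passages) - min(passages) > MAX_PASSAGE_SPREAD:
        warnings.append("host cell passage numbers are not consistent across rounds")
    return warnings


if __name__ == "__main__":
    log = [
        RoundRecord(1, 3e8, 12, True),
        RoundRecord(2, 6e7, 13, True),   # hypothetical low-titer round
        RoundRecord(3, 2e8, 14, False),  # hypothetical failed PCR check
    ]
    for line in qc_warnings(log):
        print(line)
```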

Selection Circuit Implementation

The platform enables diverse selection strategies through synthetic circuit design:

Tetracycline-Responsive Circuit:

- Transactivator: tTA (TetR-VP16 fusion)

- Response element: TRE3G promoter driving VSVG expression

- Selection pressure: Doxycycline concentration modulates circuit activation

- Application: Evolution of doxycycline-resistant tTA variants

Serum-Responsive Circuit:

- Transactivator: SRF-VP64 fusion (serum response factor DNA binding domain)

- Response element: SRE-driven VSVG expression

- Selection pressure: Serum concentration modulates circuit activation

- Outcome: Rapid selection for truncated SRF transgene with minimal DNA-binding domain

Competition experiments demonstrate that VLVs carrying circuit-activating transgenes outcompete neutral eGFP-LUC controls within 3-4 rounds, even at 1:1000 initial dilution [28].
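A back-of-the-envelope model helps interpret the competition result. The sketch below assumes two VLV populations whose ratio is multiplied by a constant relative amplification advantage each round; this two-population model, the function name, and the "majority" criterion are illustrative assumptions rather than the analysis used in [28].

```python
from typing import Optional


def rounds_to_majority(initial_ratio: float, relative_advantage: float,
                       max_rounds: int = 50) -> Optional[int]:
    """Rounds until the selected VLV outnumbers the control.

    initial_ratio: selected-to-control ratio at the start (1:1000 -> 1/1000).
    relative_advantage: assumed per-round fold advantage in amplification.
    Returns None if the selected VLV never reaches a majority within max_rounds.
    """
    if initial_ratio <= 0 or relative_advantage <= 0:
        raise ValueError("initial_ratio and relative_advantage must be positive")
    odds = initial_ratio
    for n in range(1, max_rounds + 1):
        odds *= relative_advantage  # ratio grows by the advantage each round
        if odds > 1.0:
            return n
    return None


if __name__ == "__main__":
    for advantage in (6, 11):  # hypothetical per-round advantages
        print(advantage, rounds_to_majority(1 / 1000, advantage))
```

Under these assumptions, overtaking a control from a 1:1000 start within 3-4 rounds requires a per-round advantage of roughly 6-fold or more (since 1000^(1/4) ≈ 5.6), and just over 10-fold to do so in 3 rounds.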

Quantitative Performance Data

Table 1: PROTEUS Platform Performance Metrics

| Parameter | Value | Measurement Context |
|---|---|---|
| VLV Titer | >10^8 gc/mL | Standard production protocol |
| Amplification Factor | >1000 | VSVG-expressing host cells |
| Mutation Rate | 2.6/10^5 cells | Wildtype BHK-21 host |
| Mutation Rate (ADAR KO) | 0.8/10^5 cells | ADAR/ADARB1 knockout host |
| Selection Advantage | 3-4 rounds | tTA vs eGFP-LUC competition |
| Detection Sensitivity | 0.3% | Variant frequency by amplicon sequencing |

Table 2: Comparison of Mammalian Directed Evolution Systems

| Feature | PROTEUS Platform | Traditional Viral Systems |
|---|---|---|
| Host Dependency | Complete (VSVG-dependent) | Variable (cheater particles common) |
| Mutation Generation | Natural error-prone replication (2.6/10^5) | Often requires external mutagenesis |
| System Stability | Stable over extended campaigns | Frequently compromised by cheaters |
| Cytopathic Effects | Attenuated (NSP2 modifications) | Often significant |
| Selection Flexibility | Customizable synthetic circuits | Target-specific limitations |
| Transgene Capacity | Full-length maintained under selection | Progressive truncation common |

Research Reagent Solutions

Table 3: Essential Research Reagents for PROTEUS Implementation

| Reagent | Function | Application Notes |
|---|---|---|
| pSFV-DE Replicon | Engineered SFV genome without capsid | Contains 14 point mutations in NSPs, attenuated NSP2 |
| pCMV_VSVG | VSVG envelope protein expression | No sequence homology to SFV genome |
| BHK-21 Cells | Host cell line for VLV propagation | Wildtype preferred for higher mutation rates |
| ADAR/ADARB1 KO Cells | Host with reduced mutation bias | 3-fold lower mutation rate, reduced A-to-G bias |
| TRE3G Reporter System | Doxycycline-responsive selection | For Tet transactivator evolution |
| SRE Reporter System | Serum-responsive selection | For SRF domain evolution |

Application Case Studies

Evolution of Tetracycline-Controlled Transactivators

Using PROTEUS, researchers successfully altered the doxycycline responsiveness of tetracycline-controlled transactivators (tTA) [28]. The selection campaign:

- Circuit: tTA-activated TRE3G driving VSVG expression

- Selection pressure: Increasing doxycycline concentrations

- Outcome: Generated TetON-4G with enhanced sensitivity

- Validation: Mammalian-specific adaptations with improved regulatory properties

This application demonstrates the platform's capability to evolve complex allosteric regulatory proteins in mammalian cellular environments.

Intracellular Nanobody Evolution

PROTEUS compatibility with intracellular nanobody evolution was established through selection for DNA damage-responsive anti-p53 nanobodies [28]. This application highlights the platform's ability to:

- Evolve binding domains in appropriate cellular context

- Maintain functional protein folding and interactions

- Select for condition-responsive behavior

- Generate research tools with mammalian-specific functionality

Integration with Broader Directed Evolution Applications

The PROTEUS platform represents a significant advancement in the broader context of synthetic biology and directed evolution applications [8]. Recent advances in directed evolution have focused on techniques that limit required researcher intervention and guide library design, with applications targeting biosynthetic pathways, signal transduction pathways, and multiplex genome evolution [8].

PROTEUS addresses key limitations in mammalian synthetic biology by providing:

- Contextual relevance: Mammalian post-translational modifications and signaling networks

- Scalable diversity generation: Natural mutation rates sufficient for library creation

- Selection fidelity: Tight coupling between protein function and cellular fitness

- Technical accessibility: Simplified implementation compared to ad hoc systems

System Diagrams and Workflows

Diagram 1: PROTEUS Directed Evolution Workflow

Diagram 2: PROTEUS System Architecture and Components

A paramount challenge in scaling synthetic biology for therapeutic protein production, biosensing, and biomanufacturing is maintaining the stability of engineered genes over evolutionary timescales. Heterologous gene expression often imposes a metabolic burden on host organisms, creating a selective advantage for mutants that reduce or eliminate expression. Over time, this leads to the loss of functionality and impairs the viability of engineered systems for industrial or environmental use. This instability adds regulatory concerns and limits the use of synthetic biology outside controlled laboratory environments, as it leads to a lack of control over the generated sequences [10].

Within the broader context of directed evolution applications in synthetic biology research, overcoming evolutionary instability is particularly crucial. Directed evolution, an iterative laboratory-based process that applies Darwinian principles to engineer proteins and enzymes, has become an established approach for developing new drugs using enzymatic catalysis [29] [30]. Enzymes engineered through directed evolution can show higher activity, better specificity, and greater stability than their natural counterparts [30]. However, the effectiveness of directed evolution campaigns can be undermined if the beneficial mutations identified are not stably maintained in host organisms over multiple generations. The STABLES strategy addresses this persistent challenge by offering a mechanism to extend the evolutionary half-life of engineered biological systems [10].

The STABLES Platform: Core Mechanism and Components

Strategic Framework and Design Rationale

STABLES (stop codon–tunable alternative bifunctional mRNA leading to expression and stability) is a comprehensive, host- and gene-agnostic approach to enhancing evolutionary stability through gene fusion. Unlike previous strategies that attempted to couple gene expression to host fitness through complex methods like engineered gene overlaps or biosensor systems, STABLES employs a physically linked gene fusion strategy that is robust to many mutation types and provides a generic, systematic framework [10].

The fundamental innovation lies in creating a system where mutations that disrupt the gene of interest (GOI) also critically compromise the function of an essential endogenous gene (EG), thereby making such deleterious mutations lethal to the host organism. This creates a powerful selective pressure that maintains GOI expression across generations. The strategy is notably robust against promoter mutations, mutations causing misfolding, and those reducing production levels, offering broader protection than previous solutions [10].

System Architecture and Key Components

The STABLES platform integrates six components into a unified stabilization system:

- Gene of Interest (GOI): The heterologous gene to be expressed in the host organism.

- Essential Endogenous Gene (EG): Selected for optimal gene expression and mutational stability using a machine learning model. The GOI and EG are expressed from a shared promoter as a single open reading frame, in which the GOI's C terminus is fused to the EG's N terminus [10].

- Optimized Linker: Selected to minimize disruption to protein folding by comparing disorder profiles of the GOI and EG before and after fusion using biophysical models. A commercial linker yielding minimal structural change is chosen [10].

- Sequence Optimization: The fusion gene is optimized for expression and avoidance of mutationally unstable sites, including optimization of the GOI, linker, and potentially the EG [10].

- Leaky Stop Codon: A stop codon with a non-zero read-through rate is placed after the GOI. Translation therefore yields two products: the GOI alone when the ribosome terminates, or the full fusion protein when it reads through. The expression ratio is controlled by selecting an appropriate read-through rate, so that the fusion protein is produced at levels just sufficient for host viability while the GOI alone is expressed at higher levels [10].

- Endogenous Gene Replacement: The native EG is deleted from the host and replaced by the gene fusion, making the host dependent on the fusion protein for the essential function [10].
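The effect of the leaky stop codon on the two products can be illustrated with simple arithmetic. The sketch below assumes every translation event either terminates at the stop codon (GOI alone) or reads through (fusion protein), and ignores differences in protein stability or degradation; the function name and the example numbers are hypothetical.

```python
def expression_split(total_translation: float, readthrough_rate: float) -> dict:
    """Split translation events between GOI alone and the GOI-EG fusion.

    total_translation: translation events initiated on the fusion mRNA (arbitrary units).
    readthrough_rate: assumed fraction of ribosomes that read through the leaky stop codon.
    """
    if not 0.0 < readthrough_rate < 1.0:
        raise ValueError("readthrough_rate must be strictly between 0 and 1")
    fusion = total_translation * readthrough_rate
    goi_alone = total_translation - fusion
    return {
        "goi_alone": goi_alone,
        "fusion": fusion,
        "goi_to_fusion_ratio": goi_alone / fusion,
    }


if __name__ == "__main__":
    # Hypothetical values: 1000 translation events, 5% read-through.
    print(expression_split(total_translation=1000.0, readthrough_rate=0.05))
```

In this simplified picture, lowering the read-through rate raises the share of free GOI, but the fusion level must stay high enough to supply the essential function once the native EG has been replaced.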

Table 1: Core Components of the STABLES Platform

| Component | Function | Design Consideration |

|---|---|---|

| Gene of Interest (GOI) | Target heterologous gene for expression | Varies by application; requires codon optimization |