Directed Evolution: Key Terms, Methodologies, and Applications in Drug Development

Abstract

This article provides a comprehensive resource for researchers, scientists, and drug development professionals on the field of directed evolution. It covers foundational principles and key terminology, explores modern methodologies for library generation and screening, and addresses common challenges and optimization strategies. Further, it examines real-world applications and validation through comparative case studies, highlighting the impact of this powerful protein engineering tool on the development of new therapeutics, including antibodies, enzymes, and gene therapies.

The Principles and Language of Directed Evolution

Directed evolution stands as a foundational methodology in modern biotechnology, operating through an iterative process of mutagenesis and selection to steer proteins or nucleic acids toward a user-defined goal [1]. This approach systematically mimics the principles of natural selection (genetic variation, selective pressure, and heredity) within a controlled laboratory environment to optimize biological molecules for specific applications [2] [3]. Since its early in vitro demonstrations in the 1960s, directed evolution has matured into a sophisticated engineering tool, enabling researchers to enhance enzyme stability, alter substrate specificity, and even develop entirely novel functionalities not found in nature [3] [4]. The profound impact of this technology was recognized with the 2018 Nobel Prize in Chemistry, half of which was awarded to Frances H. Arnold for her pioneering work in this domain [2]. This technical guide examines the core principles, methodologies, and applications of directed evolution, providing a comprehensive resource for researchers and drug development professionals.

Core Principles and Key Terminology

At its essence, directed evolution functions as a laboratory-accelerated evolutionary engine that drives a population of biomolecules toward a desired functional objective [2]. The process compresses geological timescales into manageable experimental timelines by intentionally introducing mutations and applying unambiguous, user-defined selection pressures [2]. Unlike rational design approaches that require detailed structural knowledge, directed evolution bypasses the need for complete a priori understanding of sequence-structure-function relationships, often uncovering non-intuitive and highly effective solutions through iterative exploration [2] [5].

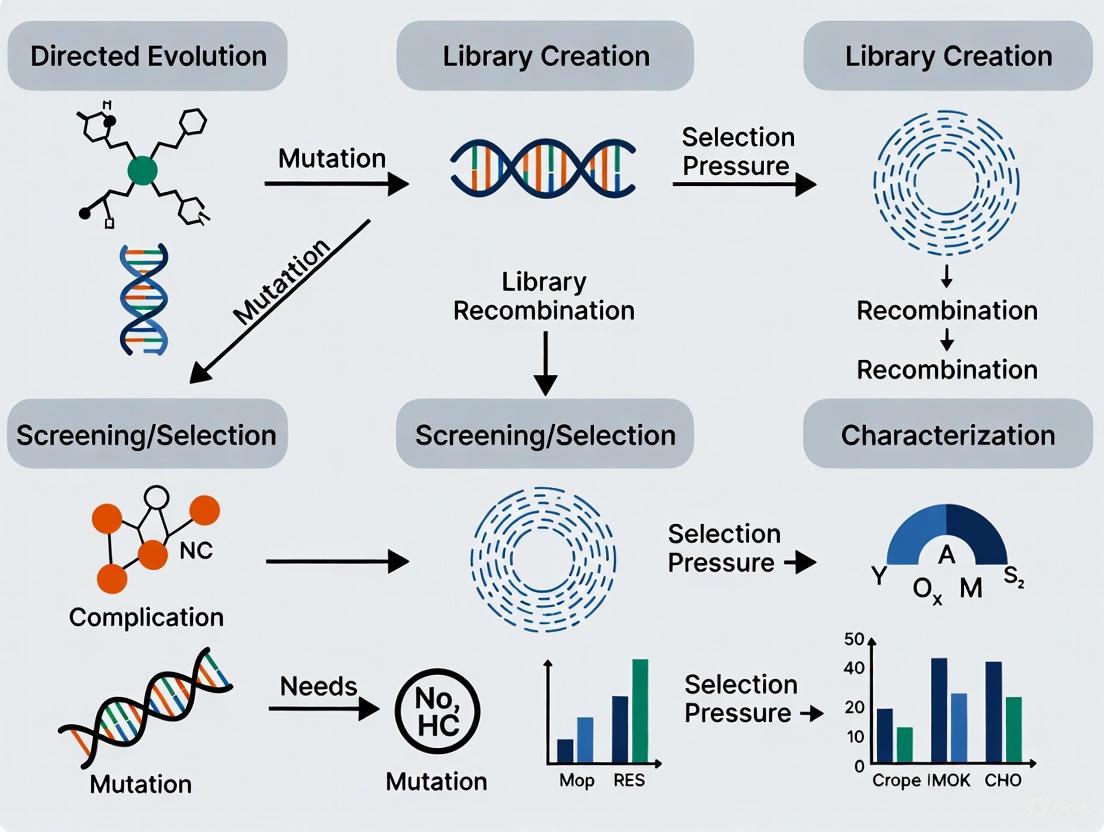

The directed evolution workflow operates through a cyclic process of two fundamental steps, as illustrated in Figure 1. First, genetic diversity is introduced to create a library of protein or gene variants. Second, a high-throughput screening or selection method identifies rare variants exhibiting improvement in the desired trait [2]. The genes encoding these improved variants are then isolated and serve as templates for subsequent evolutionary rounds, allowing beneficial mutations to accumulate over successive generations [1] [2]. A critical distinction from natural evolution is that the selection pressure is decoupled from organismal fitness, with the sole objective being optimization of a specific protein property defined by the experimenter [2].

Table 1: Key Terminology in Directed Evolution

| Term | Definition | Application Context |
|---|---|---|
| Directed Evolution | Laboratory process mimicking natural selection to steer proteins/nucleic acids toward user-defined goals [1] [6] | Protein engineering, enzyme optimization, metabolic pathway engineering |
| Genetic Diversity | Introduction of variation in gene sequences through mutagenesis or recombination [2] | Creation of mutant libraries for functional screening |
| Selection Pressure | Experimental conditions that favor survival or identification of variants with desired properties [6] | Screening for improved enzyme activity, stability, or specificity |
| High-Throughput Screening | Individual evaluation of library members for desired properties using automated assays [1] [2] | Microtiter plate-based enzymatic assays, colorimetric/fluorimetric analysis |
| Library | A collection of gene or protein variants created through diversification methods [4] | Mutant populations subjected to screening or selection |
| Epistasis | Non-additive interactions between mutations in a protein sequence [7] | Understanding synergistic mutation effects in evolved variants |
| Genotype-Phenotype Link | Covalent or physical connection between a genetic sequence and its functional expression [1] [4] | Phage display, mRNA display, in vitro compartmentalization |
| Error-Prone PCR (epPCR) | Modified PCR that reduces polymerase fidelity to introduce random point mutations [2] [5] | Whole-gene random mutagenesis without structural information |
| DNA Shuffling | In vitro recombination method that fragments and reassembles genes to create chimeras [3] | Combining beneficial mutations from multiple parent genes |

Methodological Framework

Generating Genetic Diversity

The creation of a diverse library of gene variants constitutes the foundational step that defines the explorable sequence space in a directed evolution campaign [2]. The quality, size, and nature of this diversity directly constrain potential outcomes, with several established methods available, each possessing distinct advantages and limitations [2].

Random Mutagenesis Techniques

Error-Prone Polymerase Chain Reaction (epPCR) represents the most established method for introducing random mutations across an entire gene sequence [2] [5]. This technique utilizes a modified PCR protocol that intentionally reduces the fidelity of DNA polymerase, thereby introducing errors during gene amplification [2]. This is typically achieved by employing a polymerase lacking 3' to 5' proofreading exonuclease activity, creating dNTP concentration imbalances, and adding manganese ions (Mn²⁺) to the reaction [2]. The Mn²⁺ concentration can be precisely tuned to achieve mutation rates typically targeted at 1–5 base mutations per kilobase, resulting in approximately one or two amino acid substitutions per protein variant [2]. A significant limitation of epPCR is its inherent bias toward transition mutations over transversion mutations, constraining the accessible sequence space [2].
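
Because errors arise roughly independently along the template, the number of mutations per gene copy is often approximated as Poisson-distributed. The sketch below assumes that model and a hypothetical 1.2 kb gene. It ignores the transition bias described above, silent mutations, and insertions or deletions, and `poisson_fractions` is an illustrative helper rather than part of any library.

```python
import math


def poisson_fractions(rate_per_kb: float, gene_length_bp: int, max_k: int = 5) -> list[float]:
    """Fraction of library members carrying k = 0..max_k mutations (Poisson model)."""
    lam = rate_per_kb * gene_length_bp / 1000.0  # expected mutations per gene copy
    return [math.exp(-lam) * lam**k / math.factorial(k) for k in range(max_k + 1)]


if __name__ == "__main__":
    # Hypothetical settings: a 1.2 kb gene mutagenized at 2 base mutations per kb.
    for k, frac in enumerate(poisson_fractions(rate_per_kb=2.0, gene_length_bp=1200)):
        print(f"{k} mutations: {frac:.1%} of variants")
```

With these settings the expected load is 2.4 mutations per gene, and about 9% of clones would carry no mutation at all. Raising the rate shrinks that unmutated fraction but also increases the share of heavily mutated, often inactive, variants, which is why the Mn²⁺ concentration is tuned rather than maximized.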

Recombination-Based Methods

To more closely mimic natural sexual recombination, methods such as DNA Shuffling were developed to combine beneficial mutations from multiple parent genes into single, improved offspring [2] [3]. In this method, one or more related parent genes are randomly fragmented using DNaseI, and the resulting small fragments are reassembled in a primer-free PCR reaction [3]. During annealing, homologous fragments from different parental templates can overlap and prime each other, resulting in crossovers that create novel chimeric genes [3]. Family Shuffling extends this concept by applying the DNA shuffling protocol to a set of homologous genes from different species, accessing nature's standing variation to explore functionally relevant sequence regions [2]. These methods typically require at least 70-75% sequence identity between parental genes for efficient reassembly [2].
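
The template-switching logic behind shuffling can be illustrated with a toy model. The sketch below assumes the parent genes are already aligned and of equal length (no insertions or deletions), and it accepts a crossover only where the current and the incoming parent share an identical stretch of `window` bases, a crude stand-in for the homology needed for fragments to anneal. The function name, parameters, and parent sequences are hypothetical; the model does not capture DNase I fragment sizes or reassembly kinetics.

```python
import random
from typing import Optional, Sequence


def shuffle_chimera(parents: Sequence[str], window: int = 6, p_switch: float = 0.1,
                    rng: Optional[random.Random] = None) -> str:
    """Build one chimeric gene by template switching between aligned parents.

    A switch from the current parent to another is accepted only when the
    last `window` bases copied are identical in both parents, mimicking the
    homologous overlap a fragment needs before it can prime on a new template.
    """
    rng = rng or random.Random()
    length = len(parents[0])
    if any(len(p) != length for p in parents):
        raise ValueError("parents must be aligned and of equal length")
    current = rng.randrange(len(parents))
    chimera = []
    for i in range(length):
        chimera.append(parents[current][i])
        if i + 1 >= window and rng.random() < p_switch:
            candidate = rng.randrange(len(parents))
            overlap = slice(i + 1 - window, i + 1)
            if parents[candidate][overlap] == parents[current][overlap]:
                current = candidate  # crossover: keep copying from the new parent
    return "".join(chimera)


if __name__ == "__main__":
    # Two hypothetical parents differing at a few scattered positions.
    parent_a = "ATGGCTAAAGGTCTGACCGAAGCTTTAGGCAAACTGTAA"
    parent_b = "ATGGCAAAAGGTCTGACGGAAGCTCTAGGCAAACTTTAA"
    rng = random.Random(7)
    for _ in range(3):
        print(shuffle_chimera([parent_a, parent_b], rng=rng))
```

Increasing `window` in this sketch makes crossovers rarer between divergent parents, which is a qualitative picture of why shuffling needs substantial sequence identity.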

Focused and Semi-Rational Approaches

When structural or functional information is available, focused mutagenesis enables more efficient exploration of sequence space by targeting specific regions or residues [2]. Site-Saturation Mutagenesis represents a powerful example, comprehensively exploring the functional importance of specific "hotspot" residues by creating libraries that encode all 19 possible alternative amino acids at targeted positions [2]. This semi-rational approach, combining knowledge-based targeting with random diversification, dramatically increases evolutionary efficiency by reducing library size and increasing the frequency of beneficial variants [2].
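
A practical consequence of saturation libraries is the screening effort needed to sample them. A commonly used estimate for the number of clones T required so that any given variant is present with probability F is T = -V ln(1 - F), where V is the number of equally likely codon combinations. The sketch below assumes NNK degenerate codons (32 codons per site, encoding all 20 amino acids plus one stop codon), which the text above does not specify, and perfectly uniform codon representation, which real libraries rarely achieve.

```python
import math


def clones_to_screen(n_sites: int, codons_per_site: int = 32, coverage: float = 0.95) -> int:
    """Estimate clones needed so any given codon combination is present with probability `coverage`.

    Implements T = -V * ln(1 - F) with V = codons_per_site ** n_sites and
    assumes every codon combination is equally likely in the library.
    """
    v = codons_per_site ** n_sites
    return math.ceil(-v * math.log(1.0 - coverage))


if __name__ == "__main__":
    for n in (1, 2, 3):
        aa_variants = 20 ** n  # protein-level diversity
        print(f"{n} site(s): {aa_variants} protein variants, screen ~{clones_to_screen(n)} clones")
```

Under these assumptions a single NNK site needs roughly 96 clones for 95% coverage, while three simultaneous sites already require on the order of 10⁵, beyond typical microtiter-plate throughput. This is why the number of positions randomized at once is usually kept small.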

Table 2: Comparison of Library Generation Methods in Directed Evolution

| Method | Mechanism | Advantages | Disadvantages | Typical Library Size |
|---|---|---|---|---|
| Error-Prone PCR [2] [5] | Reduced polymerase fidelity introduces random point mutations | Easy to perform; requires no prior knowledge of key positions | Biased mutagenesis spectrum; limited amino acid sampling | 10³ - 10⁶ |
| DNA Shuffling [3] | Fragmentation and recombination of homologous genes | Combines beneficial mutations; mimics natural recombination | Requires high sequence homology (>70%) | 10⁴ - 10⁸ |
| Site-Saturation Mutagenesis [2] | Systematic randomization of targeted codons to all amino acids | Comprehensive exploration of key positions; high frequency of beneficial variants | Limited to a few positions; libraries become large with multiple sites | 20ⁿ (n = number of residues) |
| Mutator Strains [4] | In vivo mutagenesis using bacterial strains with defective DNA repair | Simple system; continuous mutagenesis possible | Uncontrolled mutagenesis spectrum; mutations not restricted to target | Varies |
| Orthogonal Replication Systems [4] | Engineered DNA polymerases with reduced fidelity in vivo | Mutagenesis restricted to target sequence | Technically challenging; limited target size | 10³ - 10⁵ |

Screening and Selection Methodologies

The identification of improved variants from mutant libraries represents the critical bottleneck in directed evolution, with success dictated by the principle that "you get what you screen for" [2]. The power and throughput of the screening platform must align with the library size generated in the diversification step [2]. A fundamental distinction exists between screening and selection approaches [1] [2].

Screening involves individual evaluation of each library member for the desired property [2]. Plate-based and colony screening platforms represent the most traditional formats, where host cells expressing enzyme libraries are grown on solid medium or in multi-well plates containing substrates that produce visible products [2]. For example, in the landmark evolution of subtilisin, colonies expressing active variants formed clear halos on milk-agar plates due to casein degradation [2]. Microtiter plate formats allow individual clone culturing with subsequent assay of cell lysates using colorimetric or fluorometric substrates readable by plate readers [2]. While providing quantitative data on variant performance, screening throughput is typically limited to 10³-10⁴ variants [2].

Selection establishes conditions where desired function directly couples to host survival or replication, automatically eliminating non-functional variants [1] [2]. Phage display represents a powerful selection technique where exogenous peptides are fused to phage coat proteins, enabling affinity-based selection of binding variants [4]. Selection methods can handle significantly larger libraries (up to 10¹⁵ variants) but are often difficult to design, prone to artifacts, and provide limited information about activity distribution within the library [1] [2].
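
The mismatch between library size and throughput can be made concrete with a simple sampling argument. If improved variants occur at frequency f and N clones are drawn at random, the probability of recovering at least one is 1 - (1 - f)^N. The sketch below assumes a hypothetical hit frequency of one in 10,000 and independent sampling; it does not account for assay noise or false positives.

```python
import math


def p_at_least_one_hit(hit_frequency: float, n_sampled: int) -> float:
    """Probability that n_sampled random clones include at least one improved variant."""
    # 1 - (1 - f)**N, computed with log1p/expm1 for accuracy when f is tiny
    return -math.expm1(n_sampled * math.log1p(-hit_frequency))


def clones_for_confidence(hit_frequency: float, target_p: float = 0.95) -> int:
    """Smallest sample size giving at least target_p chance of one hit."""
    return math.ceil(math.log1p(-target_p) / math.log1p(-hit_frequency))


if __name__ == "__main__":
    f = 1e-4  # hypothetical: 1 improved variant per 10,000 clones
    for n in (10**3, 10**4, 10**6):
        print(f"N = {n:>9,}: P(at least one hit) = {p_at_least_one_hit(f, n):.3f}")
    print("Clones for 95% confidence:", clones_for_confidence(f))
```

Under these assumptions a 10⁴-clone plate screen has only about a 63% chance of containing even one hit, whereas a selection that interrogates 10⁶ or more clones makes recovery practically certain.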

Figure 1: The Directed Evolution Workflow. This iterative cycle involves diversification of a parent gene, creation of variant libraries, screening or selection for improved function, isolation of successful variants, and evaluation against target criteria. The process repeats until desired properties are achieved.

Advanced Applications and Case Studies

Engineering Novel Enzyme Functions

Directed evolution has demonstrated remarkable success in creating enzymes with novel catalytic activities not observed in nature. The Arnold laboratory pioneered this approach by evolving cytochrome P450 monooxygenases to catalyze non-natural carbene transfer reactions for cyclopropanation, a transformation previously unknown in biological systems [7]. In a recent application of Active Learning-assisted Directed Evolution (ALDE), researchers optimized five epistatic residues in the active site of a Pyrobaculum arsenaticum protoglobin (ParPgb) for enhanced cyclopropanation activity [7]. Through three rounds of wet-lab experimentation combining machine learning with functional screening, the product yield increased from 12% to 93%, demonstrating the power of iterative evolution to navigate complex fitness landscapes with significant epistasis [7].
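
Epistasis (non-additive interaction between mutations) can be quantified from four measurements: the parent, each single mutant, and the double mutant. A common convention, assumed here, works on a log scale so that a value of zero means the two mutations combine multiplicatively. The activity values in the example are hypothetical and are not taken from the ParPgb study.

```python
import math


def pairwise_epistasis(f_parent: float, f_a: float, f_b: float, f_ab: float) -> float:
    """Log-scale epistasis: log(f_ab * f_parent / (f_a * f_b)).

    0   -> mutations act independently (multiplicative effects)
    > 0 -> the pair performs better than predicted (positive epistasis)
    < 0 -> the pair performs worse than predicted (negative epistasis)
    """
    return math.log((f_ab * f_parent) / (f_a * f_b))


if __name__ == "__main__":
    # Hypothetical activities relative to the parent (parent = 1.0).
    # Each single mutation is mildly deleterious, yet the double mutant is 3x better,
    # so a search that only keeps improved single mutants would discard both.
    eps = pairwise_epistasis(f_parent=1.0, f_a=0.8, f_b=0.9, f_ab=3.0)
    print(f"epistasis = {eps:.2f}")
```

Landscapes containing this kind of sign epistasis are the setting the ALDE study addressed, since stepwise accumulation of individually beneficial mutations can miss such combinations.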

Development of Molecular Sensors

Directed evolution has enabled the creation of novel protein-based sensors for molecular imaging applications. In a landmark study, researchers evolved the heme domain of bacterial cytochrome P450-BM3 (BM3h) to develop magnetic resonance imaging (MRI) contrast agents sensitive to the neurotransmitter dopamine [8]. Starting from a protein with natural affinity for arachidonic acid, five rounds of evolution employing error-prone PCR and absorbance-based screening produced BM3h variants with dramatically altered specificity [8]. The optimized dopamine sensor exhibited a dissociation constant (Kd) of 3.3 μM for dopamine—a 300-fold improvement over the wild-type protein—while simultaneously reducing affinity for the natural substrate [8]. These evolved sensors enabled imaging of dopamine release in live animal brains, demonstrating the potential for molecular-level functional MRI [8].

Table 3: Representative Experimental Outcomes from Directed Evolution Campaigns

| Evolved Protein | Engineering Goal | Methodology | Key Improvement | Reference |
|---|---|---|---|---|
| ParPgb Protoglobin [7] | Optimize cyclopropanation activity | Active learning-assisted directed evolution (ALDE) | Product yield increased from 12% to 93% | [7] |
| P450-BM3 Heme Domain [8] | Develop dopamine MRI sensor | Error-prone PCR with absorbance screening | 300-fold improved dopamine affinity (Kd = 3.3 μM) | [8] |
| Phosphite Dehydrogenase [5] | Enhance thermostability | Combination of multiple mutagenesis methods | 23,000-fold improved half-life at 45°C | [5] |
| Subtilisin E [3] | Increase activity in organic solvent | Error-prone PCR | 256-fold higher activity in 60% DMF | [3] |
| β-Lactamase [3] | Improve antibiotic resistance | DNA shuffling | 32,000-fold increase in cefotaxime MIC | [3] |
| Pseudomonas aeruginosa Lipase [5] | Enhance enantioselectivity | Iterative saturation mutagenesis | 594-fold improved E-value for chiral ester | [5] |

Experimental Protocol: Evolution of a Dopamine-Sensitive MRI Sensor

The development of dopamine-sensitive MRI sensors from P450-BM3 exemplifies a well-executed directed evolution campaign [8]. The following protocol details the key methodological steps:

Step 1: Library Generation

- Perform error-prone PCR on the BM3h gene using Mutazyme polymerase or similar low-fidelity polymerase to achieve 1-2 amino acid substitutions per variant [8].

- Include Mn²⁺ ions and dNTP concentration imbalances to enhance mutation rate [8] [2].

- Clone the mutated genes into an appropriate expression vector and transform into E. coli host cells [8].

Step 2: Primary Screening

- Grow approximately 900 randomly selected clones in deep-well microtiter plates and induce protein expression [8].

- Prepare cleared lysates and transfer to assay plates for optical titration [8].

- Measure absorbance spectra (350-500 nm) before and after addition of dopamine and arachidonic acid [8].

- Calculate dissociation constants (Kd) for both ligands by monitoring spectral shifts as a function of ligand concentration [8].
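
The Kd calculation above can be sketched as a fit of a single-site binding isotherm, ΔA = ΔA_max · [L] / (Kd + [L]), to the spectral-shift signal at each ligand concentration. This sketch assumes single-site binding, ligand in large excess over protein (so total and free ligand are approximately equal), and a pre-computed signal such as an absorbance difference between two wavelengths. The concentrations and signals are hypothetical, and a dependency-free grid search is used in place of any particular fitting library.

```python
import math


def fit_kd(conc_um: list[float], signal: list[float], kd_min: float = 1e-2,
           kd_max: float = 1e4, n_grid: int = 2001) -> tuple[float, float]:
    """Fit signal = a_max * L / (Kd + L); return (Kd, a_max), Kd in the units of conc_um.

    For a fixed Kd the model is linear in a_max, so a_max has a closed-form
    least-squares solution; only Kd is scanned, on a log-spaced grid.
    At least one concentration must be non-zero.
    """
    lo, hi = math.log10(kd_min), math.log10(kd_max)
    best_sse, best_kd, best_amax = math.inf, float("nan"), float("nan")
    for i in range(n_grid):
        kd = 10 ** (lo + (hi - lo) * i / (n_grid - 1))
        g = [c / (kd + c) for c in conc_um]  # fractional saturation at each concentration
        a_max = sum(y * gi for y, gi in zip(signal, g)) / sum(gi * gi for gi in g)
        sse = sum((y - a_max * gi) ** 2 for y, gi in zip(signal, g))
        if sse < best_sse:
            best_sse, best_kd, best_amax = sse, kd, a_max
    return best_kd, best_amax


if __name__ == "__main__":
    # Hypothetical titration: noise-free data generated with Kd = 3.3 uM, a_max = 0.05.
    conc = [0.5, 1, 2, 4, 8, 16, 32, 64]
    data = [0.05 * c / (3.3 + c) for c in conc]
    kd, a_max = fit_kd(conc, data)
    print(f"Kd ~ {kd:.2f} uM, max signal ~ {a_max:.3f}")
```

Fitting the dopamine and arachidonic acid titrations separately yields the two Kd values that the clone-selection criteria in Step 3 compare.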

Step 3: Secondary Validation

- Select 8-10 clones based on improved dopamine affinity and reduced arachidonic acid affinity [8].

- Purify selected variants in bulk and characterize using more precise spectrophotometric titrations [8].

- Validate functional MRI response by measuring T1 relaxivity changes in the presence and absence of saturating dopamine concentrations [8].

Step 4: Iterative Rounds

- Use the best-performing variant from each round as the template for subsequent evolution [8].

- Continue for multiple rounds (typically 3-5) until target affinity and specificity parameters are achieved [8].

Figure 2: Screening Workflow for Directed Evolution of MRI Sensor. The process involves high-throughput absorbance-based screening of mutant libraries, hit selection based on dissociation constants, and subsequent validation of promising variants using MRI relaxivity measurements.

The Scientist's Toolkit: Essential Research Reagents

Successful implementation of directed evolution requires specific reagents and methodologies to generate diversity, express variants, and screen for desired functions. The following table details essential research solutions commonly employed in directed evolution campaigns.

Table 4: Essential Research Reagents and Methodologies for Directed Evolution

| Reagent/Methodology | Function | Application Example |
|---|---|---|
| Error-Prone PCR Kits [2] [5] | Introduce random point mutations across gene of interest | Whole-gene mutagenesis of P450-BM3 heme domain [8] |
| Site-Saturation Mutagenesis Kits [2] | Systematically randomize specific codons to all amino acids | Focused exploration of enzyme active site residues [2] |
| Expression Vectors & Host Strains [1] | Enable protein expression and genotype-phenotype linkage | E. coli expression of subtilisin E variants [3] |
| Phage Display Systems [1] [4] | Selection-based platform for binding protein evolution | Antibody affinity maturation [4] |
| Fluorescence-Activated Cell Sorting (FACS) [4] | Ultra-high-throughput screening using fluorescent reporters | Evolution of fluorescent proteins with novel properties [4] |
| Microtiter Plate Readers [2] | Absorbance/fluorescence measurement for plate-based screens | Dopamine binding assays for P450-BM3 variants [8] |
| DNA Shuffling Protocols [3] | Recombine homologous genes to create chimeric libraries | Generation of improved β-lactamase variants [3] |

Directed evolution has established itself as an indispensable protein engineering methodology that harnesses nature's evolutionary principles to solve complex biotechnology challenges. By iteratively cycling through diversification and selection, researchers can optimize enzyme properties, develop novel catalytic activities, and create valuable biological tools without requiring complete structural knowledge. Recent advances, including machine learning integration [7] and ultra-high-throughput screening methods [4], continue to expand the capabilities and applications of this powerful technology. As directed evolution methodologies mature, they promise to deliver increasingly sophisticated solutions across pharmaceutical development, industrial biocatalysis, and basic scientific research, solidifying their role as essential tools in the modern biotechnology arsenal.

In the field of molecular evolution and protein engineering, a precise understanding of core terminology is fundamental for both research and application. This guide provides an in-depth technical examination of four pivotal concepts—genotype, phenotype, mutation, and selection pressure—framed within the context of directed evolution. Directed evolution is a powerful laboratory method that mimics natural selection to steer proteins, pathways, or entire organisms toward user-defined goals, with immense implications for therapeutic development, industrial biocatalysis, and basic evolutionary science [1] [3]. For researchers and drug development professionals, grasping the intricate interplay between these core terms is essential for designing robust experiments to evolve novel biologics, antibodies, and enzymes. This whitepaper details these concepts, their interrelationships, quantitative frameworks, and practical experimental protocols.

Defining the Core Terms

Genotype

The genotype constitutes the genetic makeup of a cell or organism. It is the entire set of genes, including all alleles, that an individual possesses [6]. In directed evolution experiments, the target is typically a single gene that codes for a protein of interest. This gene is the unit that is subjected to iterative rounds of diversification and selection [1] [3]. The genotype is the fundamental template that is manipulated and inherited.

Phenotype

The phenotype encompasses the observable characteristics and appearance of an organism, resulting from the expression of its genotype in a given environment [6]. At the molecular level in directed evolution, the phenotype is often a specific, measurable function of a protein, such as its catalytic activity, stability under harsh conditions, or binding affinity to a therapeutic target [1] [3]. It is the phenotypic expression that is directly screened or selected for in the laboratory.

Mutation

A mutation is defined as a change in the genetic material of an organism [6]. Mutations can range from a single nucleotide change (a point mutation) to larger insertions, deletions, or rearrangements [6]. In directed evolution, mutations are deliberately introduced into the gene of interest to create a library of gene variants. This library is the source of genetic diversity from which improved phenotypes can be selected [1]. These variants may produce slightly different proteins that affect function, such as the efficiency with which an enzyme catalyzes a reaction [6].

Selection Pressure

Selection pressure refers to environmental factors or constraints that determine which genes are transmitted from one generation to the next [6]. It is the driving force of adaptive evolution. In a natural context, this could be the presence of predators or limited resources. In directed evolution, scientists artificially apply a selection pressure to a library of variants. This pressure ensures that only individuals whose genes (genotypes) confer a desired function (phenotype) will survive and/or reproduce (be amplified) [6] [1]. For example, selection pressure could be the presence of a toxin that only cells expressing an evolved detoxifying enzyme can survive, or a substrate that yields a colored product only when acted upon by an active enzyme [1].

Table 1: Core Terminology in Genetics and Directed Evolution

| Term | Definition | Role in Directed Evolution |
|---|---|---|
| Genotype | The genetic makeup of a cell or organism [6]. | The DNA sequence of the gene being evolved; the template for diversification. |
| Phenotype | The observable characteristics of an organism [6]. | The function of the protein of interest (e.g., catalytic activity, binding). |
| Mutation | A change in the genetic material of an organism [6]. | The source of diversity; creates a library of gene variants for screening. |
| Selection Pressure | Environmental constraints that influence gene transmission [6]. | The artificial constraint applied to select for improved variants. |

The Genotype-Phenotype Relationship

The relationship between genotype and phenotype is not a simple one-to-one mapping but a complex, often non-linear interaction that is central to evolution.

The Differential View

A powerful framework for understanding this relationship is the differential view. This perspective holds that the link between genotype and phenotype is best viewed as a connection between a difference at the genetic level and a difference at the phenotypic level [9]. In genetics, the focus is often not on the absolute character of a single organism but on the phenotypic variation between individuals that can be attributed to genetic variation [9]. For instance, stating that a gene "causes" brown hair is a simplification; more accurately, a variation in that gene can cause a variation in hair color from, for example, blonde to brown [9]. This view is particularly relevant in the context of pervasive pleiotropy (where one gene affects multiple traits) and epistasis (where the effect of one gene depends on the presence of other genes) [9].

The Link in Directed Evolution

Directed evolution critically depends on establishing a strong genotype-phenotype link. This is a physical or conceptual connection that allows researchers to identify the gene sequence (genotype) responsible for a desired function (phenotype) after a selection or screening step [1]. Several methods ensure this link:

- In vivo compartmentalization: Each variant gene is expressed inside a single cell (e.g., bacteria or yeast). The cell itself acts as the compartment that links the genotype to the phenotype it produces [1].

- In vitro compartmentalization: Genes are expressed in artificial microdroplets using in vitro transcription-translation systems. Each droplet contains a single gene and the protein it encodes, maintaining the link [1] [3].

- Covalent linkage: Methods like mRNA display use puromycin to create a covalent bond between an mRNA molecule (genotype) and the protein (phenotype) it encodes [1].

The following diagram illustrates the core conceptual relationship between these terms and the process of directed evolution.

Quantitative Frameworks and Selection Classifications

Classifying Selection Pressures

Selection pressures in evolution can be categorized based on how they affect the distribution of phenotypes in a population. The table below summarizes the main types of selective pressures.

Table 2: Types of Selective Pressures and Their Population Effects [10]

| Type of Selection | Description | Effect on Population Variance | Example |
|---|---|---|---|
| Stabilizing Selection | Favors the average phenotype and selects against extreme variants. | Decreases genetic variance. | Mouse fur color that closely matches a consistent brown forest floor, selected by predators [10]. |
| Directional Selection | Favors phenotypes at one end of the spectrum of existing variation. | Shifts the phenotype distribution (and underlying allele frequencies) toward the favored extreme. | The shift to darker peppered moths in soot-polluted environments [10]. |
| Diversifying Selection | Favors two or more distinct phenotypes over intermediate forms. | Increases genetic variance, making the population more diverse. | In a beach habitat, both light-colored mice (on sand) and dark-colored mice (in grass) are favored over medium-colored mice [10]. |
| Frequency-Dependent Selection | Favors a phenotype because it is either common (positive) or rare (negative). | Positive: decreases variance. Negative: increases variance. | In side-blotched lizards, throat color patterns cycle in frequency based on which is rare or common in the population [10]. |
| Sexual Selection | Selection based on the ability to obtain mates, leading to secondary sexual characteristics. | Can decrease or increase variance, often leading to sexual dimorphism. | The evolution of the peacock's large, colorful tail, which impairs survival but increases mating success [10]. |
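
The first three modes in the table can be contrasted with a simple truncation-selection toy model: start from a normally distributed phenotype, keep a fixed fraction of individuals according to each rule, and compare the survivors' mean and variance. The rules and the 30% survival fraction are illustrative assumptions; frequency-dependent and sexual selection are not modeled.

```python
import random
import statistics


def apply_selection(phenotypes: list[float], mode: str, keep_fraction: float = 0.3) -> list[float]:
    """Return the individuals that survive a toy truncation-selection rule."""
    n_keep = max(1, int(len(phenotypes) * keep_fraction))
    mu = statistics.fmean(phenotypes)
    if mode == "directional":      # favor one extreme (largest values)
        ranked = sorted(phenotypes, reverse=True)
    elif mode == "stabilizing":    # favor values closest to the mean
        ranked = sorted(phenotypes, key=lambda x: abs(x - mu))
    elif mode == "diversifying":   # favor both extremes over intermediates
        ranked = sorted(phenotypes, key=lambda x: abs(x - mu), reverse=True)
    else:
        raise ValueError(f"unknown selection mode: {mode}")
    return ranked[:n_keep]


if __name__ == "__main__":
    rng = random.Random(1)
    population = [rng.gauss(0.0, 1.0) for _ in range(10_000)]
    print(f"start: mean={statistics.fmean(population):+.2f} var={statistics.pvariance(population):.2f}")
    for mode in ("directional", "stabilizing", "diversifying"):
        kept = apply_selection(population, mode)
        print(f"{mode:>12}: mean={statistics.fmean(kept):+.2f} var={statistics.pvariance(kept):.2f}")
```

In this model directional selection moves the mean, stabilizing selection narrows the distribution, and diversifying selection widens it. Keeping only the top-ranked clones from a screen corresponds to the directional case.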

Fitness Landscapes and Evolutionary Theory

The quantitative relationship between genotype and fitness (a measure of reproductive success) is often conceptualized as a fitness landscape [11]. In this metaphor, genotypes are mapped to a landscape where height corresponds to fitness. Populations evolve by moving toward fitness peaks. However, real landscapes are high-dimensional and often rugged, with multiple local peaks, so a population that only moves uphill can become trapped on a suboptimal peak.
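
How an uphill-only search stalls on a local peak can be seen on a small synthetic landscape. The sketch below assumes binary genotypes (each site is either parental or mutant) and assigns every genotype an independent random fitness, a maximally rugged "house-of-cards" model chosen here only for illustration. A greedy adaptive walk then moves to the fittest single mutant until no neighbor is fitter.

```python
import itertools
import random
from typing import Dict, Iterator, List, Tuple

Genotype = Tuple[int, ...]


def random_landscape(n_sites: int, seed: int = 0) -> Dict[Genotype, float]:
    """Give each of the 2**n_sites genotypes an independent random fitness."""
    rng = random.Random(seed)
    return {g: rng.random() for g in itertools.product((0, 1), repeat=n_sites)}


def neighbors(g: Genotype) -> Iterator[Genotype]:
    """All genotypes one mutation away from g."""
    for i in range(len(g)):
        yield g[:i] + (1 - g[i],) + g[i + 1:]


def adaptive_walk(landscape: Dict[Genotype, float], start: Genotype) -> List[Genotype]:
    """Repeatedly move to the fittest single mutant; stop at a local peak."""
    path = [start]
    while True:
        current = path[-1]
        best = max(neighbors(current), key=landscape.__getitem__)
        if landscape[best] <= landscape[current]:
            return path  # no fitter neighbor: local (possibly global) optimum
        path.append(best)


if __name__ == "__main__":
    land = random_landscape(8, seed=42)
    walk = adaptive_walk(land, start=(0,) * 8)
    peak = max(land.values())
    print(f"{len(walk) - 1} steps, final fitness {land[walk[-1]]:.3f}, global peak {peak:.3f}")
```

Rerunning the walk from different starting genotypes typically ends on different peaks, which is a direct way to see how rugged such a landscape is.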

Advanced theoretical work, such as the double-replica theory, uses statistical physics to model the co-evolution of genotypes and phenotypes. This theory introduces separate "replicas" for genotypes (the interaction couplings) and phenotypes (the spin configurations) to describe how their relationship evolves under selection pressure and noise (e.g., mutation) [11]. A key insight from such models is the existence of a "robust fitted phase" at an intermediate level of noise, in which phenotypes achieve high fitness and robustness to environmental noise is correlated with robustness to genetic mutation [11]. This phase is proposed to be relevant to biological evolution and, by extension, to directed evolution campaigns.

Experimental Protocols in Directed Evolution

Directed evolution is an iterative process designed to mimic natural selection in the laboratory. The following workflow details a generalized protocol for evolving a protein with improved function.

The Directed Evolution Workflow

The directed evolution cycle consists of three steps: Diversification, Selection or Screening, and Amplification [1] [3]. This cycle is repeated until a variant with the desired level of performance is obtained.

Step 1: Diversification (Creating Variation)

The first step is to create a diverse library of mutant genes from the parent template.

- Method: Error-Prone PCR (epPCR)

- Principle: Standard PCR is performed under conditions that reduce the fidelity of the DNA polymerase, introducing random point mutations throughout the gene [1] [3].

- Protocol:

- Reaction Setup: Set up a standard PCR reaction mixture containing the plasmid DNA template (~10-100 ng), primers that flank the gene of interest, dNTPs, and a suitable buffer.

- Introduce Errors: Use a DNA polymerase known for low fidelity (e.g., Taq polymerase) and alter the reaction conditions to promote misincorporation. This can be achieved by adding manganese ions (Mn²⁺), using unbalanced dNTP concentrations, and increasing the magnesium ion (Mg²⁺) concentration [3].

- Amplification: Run the PCR for 25-30 cycles.

- Purification: Purify the resulting mutagenized PCR product.

- Cloning: Clone the purified product back into an expression vector to create the mutant library. The library size can range from 10⁴ to 10⁸ variants, depending on the method and screening capacity [1].
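
The effect of these reaction conditions can be explored in silico before committing to wet-lab work. The sketch below applies a single net per-base error probability to a parent sequence and splits errors into transitions and transversions using a tunable `transition_fraction`. Both parameters are hypothetical placeholders, not measured values for any polymerase, and the model ignores cycle-by-cycle amplification, insertions, and deletions.

```python
import random
from typing import List, Tuple

TRANSITION = {"A": "G", "G": "A", "C": "T", "T": "C"}          # purine<->purine, pyrimidine<->pyrimidine
TRANSVERSIONS = {"A": "CT", "G": "CT", "C": "AG", "T": "AG"}   # purine<->pyrimidine


def error_prone_copy(seq: str, error_rate: float, transition_fraction: float,
                     rng: random.Random) -> Tuple[str, int]:
    """Return a mutated copy of seq and the number of substitutions introduced."""
    out, n_mut = [], 0
    for base in seq:
        if rng.random() < error_rate:
            if rng.random() < transition_fraction:
                out.append(TRANSITION[base])
            else:
                out.append(rng.choice(TRANSVERSIONS[base]))
            n_mut += 1
        else:
            out.append(base)
    return "".join(out), n_mut


def make_library(parent: str, size: int, error_rate: float = 0.002,
                 transition_fraction: float = 0.7, seed: int = 0) -> List[Tuple[str, int]]:
    """Generate `size` independent mutant copies of the parent sequence."""
    rng = random.Random(seed)
    return [error_prone_copy(parent, error_rate, transition_fraction, rng) for _ in range(size)]


if __name__ == "__main__":
    rng = random.Random(0)
    parent = "".join(rng.choice("ACGT") for _ in range(1000))  # hypothetical 1 kb gene
    library = make_library(parent, size=500)
    mean_load = sum(n for _, n in library) / len(library)
    print(f"mean substitutions per variant: {mean_load:.2f}")
```

With the default `error_rate` of 0.002 the expected load is about two substitutions per kilobase, inside the 1–5 per kb range cited earlier in this article; lowering `transition_fraction` shifts the simulated spectrum toward transversions.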

Step 2: Selection or Screening (Detecting Fitness Differences)

The library of variant genes must be interrogated to find the rare clones with improved function. This is achieved through selection or screening.

- Method: High-Throughput Microtiter Plate Screening

- Principle: Each variant is expressed and cultured individually, and its activity is measured using a quantitative assay, often based on color or fluorescence [1].

- Protocol:

- Transformation and Expression: Transform the library of plasmid variants into a suitable host cell (e.g., E. coli). Plate the cells onto agar plates to form individual colonies.

- Culturing in Plates: Pick individual colonies into the wells of a 96- or 384-well microtiter plate containing growth medium. Induce protein expression.

- Lysate Preparation: Lyse the cells, often chemically or enzymatically, to release the expressed protein.

- Activity Assay: Add a substrate to each well. The substrate should be converted into a colored or fluorescent product by the desired enzyme activity.

- Quantitative Measurement: Use a plate reader to measure the absorbance or fluorescence in each well.

- Data Analysis: Rank the variants based on their signal intensity relative to controls (e.g., the parent sequence). The top performers are chosen for the next round.
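
The data-analysis step can be sketched as blank subtraction followed by normalization to parent-control wells on the same plate. The sketch assumes each plate carries parent and empty-vector (blank) controls; the 1.5-fold cutoff, the top-k limit, and the well readings in the example are illustrative assumptions rather than recommended values.

```python
import statistics
from typing import Dict, List, Tuple


def rank_hits(wells: Dict[str, float], parent_wells: List[float], blank_wells: List[float],
              top_k: int = 5, min_fold: float = 1.5) -> List[Tuple[str, float]]:
    """Rank library wells by blank-corrected signal relative to the parent control.

    wells        -- raw signal for each library clone, keyed by well ID
    parent_wells -- raw signals from wells expressing the parent protein
    blank_wells  -- raw signals from empty-vector (no enzyme) wells
    Returns up to top_k (well, fold_over_parent) pairs with fold >= min_fold.
    """
    blank = statistics.fmean(blank_wells)
    parent = statistics.fmean(parent_wells) - blank
    if parent <= 0:
        raise ValueError("parent signal does not exceed background")
    folds = {well: (signal - blank) / parent for well, signal in wells.items()}
    ranked = sorted(folds.items(), key=lambda item: item[1], reverse=True)
    return [(well, fold) for well, fold in ranked[:top_k] if fold >= min_fold]


if __name__ == "__main__":
    plate = {"A1": 0.30, "A2": 0.55, "A3": 0.12, "B1": 0.90}  # hypothetical absorbances
    for well, fold in rank_hits(plate, parent_wells=[0.29, 0.31], blank_wells=[0.10, 0.10]):
        print(f"{well}: {fold:.1f}x parent")
```

Normalizing within each plate, rather than across plates, is one simple way to limit the influence of plate-to-plate differences in growth or expression.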

Step 3: Amplification (Ensuring Heredity)

The genes encoding the best-performing variants are isolated and prepared for the next round of evolution.

- Method: Gene Amplification and Vector Preparation

- Principle: The genetic material from the top hits is amplified to serve as the template for the next round of diversification [1].

- Protocol:

- Plasmid Isolation: Isolate the plasmid DNA from the host cells of the top-performing variants.

- PCR Amplification: Use standard high-fidelity PCR to amplify the variant gene from the isolated plasmid. Alternatively, the plasmid itself can be used directly.

- Template for Next Round: The amplified gene (or pool of genes from the best performers) is used as the starting template for the next round of directed evolution, beginning again with the Diversification step.

This cycle continues iteratively until the evolved protein exhibits the target level of improvement in the desired property.
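
The three-step cycle can be condensed into a toy loop. In the sketch below the "assay" is a hypothetical stand-in that counts how many residues match an arbitrary target sequence, something no real campaign has access to; it exists only so that the diversification, screening, and amplification steps can run end to end. Library size, mutation rate, and round count are illustrative assumptions.

```python
import random
from typing import List, Tuple

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"  # 20 standard amino acids, one-letter codes


def assay(seq: str, target: str) -> int:
    """Hypothetical screen: number of positions matching a target sequence."""
    return sum(a == b for a, b in zip(seq, target))


def mutate(seq: str, rate: float, rng: random.Random) -> str:
    """Replace each residue with a random amino acid with probability `rate`."""
    return "".join(rng.choice(AMINO_ACIDS) if rng.random() < rate else aa for aa in seq)


def evolve(parent: str, target: str, rounds: int = 40, library_size: int = 200,
           rate: float = 0.02, seed: int = 0) -> Tuple[str, List[int]]:
    """Run the diversify -> screen -> amplify cycle; return final sequence and score history."""
    rng = random.Random(seed)
    history = [assay(parent, target)]
    for _ in range(rounds):
        library = [mutate(parent, rate, rng) for _ in range(library_size)]  # Step 1: diversification
        best = max(library, key=lambda s: assay(s, target))                 # Step 2: screening
        if assay(best, target) > assay(parent, target):                     # Step 3: best hit becomes next template
            parent = best
        history.append(assay(parent, target))
    return parent, history


if __name__ == "__main__":
    rng = random.Random(1)
    target = "".join(rng.choice(AMINO_ACIDS) for _ in range(30))
    final, history = evolve("A" * 30, target)
    print("score per round:", history)
```

Keeping the current template unless a variant strictly beats it prevents the score from drifting downward between rounds; as noted in Step 3, real campaigns may instead carry a pool of top performers forward.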

Advanced Modern Methods

Recent technological advances have dramatically accelerated the pace of directed evolution.

- T7-ORACLE System: This system uses an engineered E. coli bacterium that hosts a second, artificial DNA replication system operating separately from the cell's own genome. This allows scientists to introduce mutations every time the cell divides (roughly every 20 minutes), effectively condensing 100,000 years of evolution into a much shorter timeframe and enabling continuous evolution without repeated manual intervention [12].

- PROTEUS Platform: This is a platform designed to evolve proteins directly within mammalian cells, which is particularly relevant for evolving therapeutic proteins that must function in a human-cell-like environment [12].

The Scientist's Toolkit: Key Research Reagents

The following table details essential materials and reagents used in a standard directed evolution experiment.

Table 3: Essential Research Reagents for Directed Evolution

| Reagent / Tool | Function in Directed Evolution |
|---|---|
| DNA Polymerase | Enzyme that amplifies the gene of interest. Error-prone PCR uses low-fidelity versions to introduce random mutations [1] [3]. |
| Restriction Enzymes | Proteins that cut DNA at specific sequences. Used for moving genes in and out of expression vectors (cloning) [6]. |
| DNA Ligase | Enzyme that joins DNA fragments together by forming phosphodiester bonds. Essential for sealing genes into plasmid vectors to create recombinant DNA [6]. |
| Plasmids | Small, circular DNA molecules that replicate independently of the chromosome. Used as vectors to carry the gene of interest into a host cell for expression [6] [1]. |
| Host Cells | Living systems (e.g., E. coli, yeast) used to express the library of variant genes. Each cell produces a single protein variant, linking genotype to phenotype [1]. |
| Reporter Gene / Assay | A marker system (e.g., producing a colored or fluorescent product) used to detect and quantify the desired protein activity during screening [6]. |
| Selection Agent | An environmental pressure (e.g., an antibiotic, a toxin, or a required nutrient) applied to select for cells expressing a protein with the desired function [1]. |

The concepts of genotype, phenotype, mutation, and selection pressure form the foundational pillars of evolutionary theory and of its practical application in directed evolution. Selection acts on phenotypes, but only genetic changes are inherited. This link between genetic variation and phenotypic variation is the engine that drives adaptation, whether in nature or in the laboratory. For scientists in drug development and biotechnology, it is indispensable to master these terms and the associated methodologies, from creating diverse mutant libraries to applying sophisticated high-throughput screens. Directed evolution technologies such as ultra-rapid continuous evolution systems continue to advance. As they do, designing and interpreting these experiments with a deep understanding of core terminology will remain critical for developing new therapies, diagnostics, and sustainable biocatalysts.

Directed evolution represents a cornerstone technique in modern biotechnology, enabling researchers to engineer biomolecules with enhanced or entirely novel functions by mimicking the principles of natural selection in a laboratory setting [6]. This iterative, two-step process involves the generation of genetic diversity within a population of biological entities, followed by the screening or selection of variants exhibiting desirable traits [3]. The selected individuals then serve as templates for subsequent rounds of diversification and selection, progressively optimizing the function of interest. The historical arc of this field stretches from foundational in vitro experiments demonstrating molecular evolution to its recognition as a powerful tool for protein engineering, culminating in the 2018 Nobel Prize in Chemistry awarded to Frances H. Arnold for the directed evolution of enzymes [13]. This article traces this scientific journey, detailing the key experiments, methodological advances, and technical reagents that have shaped the field of directed evolution, providing a resource for researchers and drug development professionals engaged in this transformative area of study.

Spiegelman's Monster: The Foundational Experiment

In the 1960s, Sol Spiegelman and his colleagues at the University of Illinois conducted a landmark study that laid the experimental groundwork for directed evolution [14] [15]. Their work demonstrated that Darwinian principles of evolution—variation and selection—could operate on simple molecular systems outside of a living cell.

Experimental Protocol and Methodology

Spiegelman's team established a cell-free system to study the replication of RNA from the bacteriophage Qβ [14] [15]. The core methodology can be broken down into the following steps:

- System Setup: A solution containing the Qβ RNA replicase (the enzyme that replicates the viral RNA), free nucleotide building blocks (ATP, GTP, CTP, UTP), and necessary salts was prepared.

- Initial Incubation: The natural Qβ phage RNA, approximately 4,500 nucleotides in length, was introduced into this solution. The Qβ replicase, which is highly specific for its own RNA, began copying the template.

- Serial Transfer: After a period of replication, a sample of the synthesized RNA was transferred to a fresh tube containing new solution with replicase and nucleotides. This process of transfer and replication was repeated multiple times—a serial passage experiment at the molecular level.

- Application of Selective Pressure: In this artificial environment, the only selective pressure was the speed of replication. The researchers observed that the RNA population changed dramatically over generations. RNA molecules that could replicate faster were favored. Since shorter RNA molecules required less time to be synthesized, deletion mutants that had shed parts of the genome not essential for replication in this simplified system emerged and came to dominate the population.

- Outcome: After 74 serial transfers, the original RNA genome had evolved into a highly truncated "monster" of only 218 nucleotides [14]. This minimal RNA, dubbed "Spiegelman's Monster," replicated rapidly but had lost the genetic information needed to produce infectious viral particles, as those genes were superfluous in an environment where the replicase was provided externally.

This experiment provided a powerful demonstration of Darwinian evolution in a test tube, proving that natural selection can act on non-cellular molecular systems.
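A deliberately simple competition model illustrates why short deletion mutants win. The sketch below rests on three assumptions:

- Each RNA species replicates exponentially at a rate inversely proportional to its length, because a replicase copying at a fixed speed finishes short templates sooner.
- Shorter variants are already present at low frequency. In the real experiment they arose through replication errors.
- Each transfer samples the population without changing relative frequencies.

The intermediate lengths in the test are illustrative; only the 4,500- and 218-nucleotide endpoints come from the text above. The `growth` parameter is a hypothetical scaling constant.

```python
import math

def serial_transfer(lengths, freqs, transfers, growth=2000.0):
    """Toy model of Spiegelman-style serial passage.

    lengths: RNA lengths in nucleotides; freqs: starting relative frequencies.
    During each incubation a species multiplies by exp(growth / length), i.e. its
    replication rate is assumed inversely proportional to its length. The transfer
    to a fresh tube is modelled as renormalizing the frequencies.
    """
    freqs = list(freqs)
    for _ in range(transfers):
        grown = [f * math.exp(growth / length) for f, length in zip(freqs, lengths)]
        total = sum(grown)
        freqs = [g / total for g in grown]
    return freqs
```

Even when the shortest species starts as 1 in 10,000 molecules, it dominates within a few transfers in this model. No selection for function is involved: the only "fitness" is replication speed.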

Research Reagent Solutions for Spiegelman's Experiment

Table 1: Key research reagents used in Spiegelman's foundational experiment.

| Reagent | Function in the Experiment |
|---|---|
| Qβ Bacteriophage RNA | Initial template for replication; the "genotype" and "phenotype" under selection. |
| Qβ RNA Replicase | Enzyme catalyst responsible for recognizing the RNA template and synthesizing new complementary strands. |
| Ribonucleotides (ATP, GTP, CTP, UTP) | Building blocks for the synthesis of new RNA strands by the replicase. |
| Inorganic Salts Buffer | Provided the optimal ionic and pH conditions for replicase activity and stability. |

The Rise of Modern Directed Evolution

The principles demonstrated by Spiegelman were later formalized into a robust methodology for protein engineering. Modern directed evolution, as pioneered in the 1990s, applies these concepts to create enzymes with improved stability, activity, or novel functions [3]. A landmark example was the evolution of subtilisin E, a serine protease, for increased activity in the organic solvent dimethylformamide (DMF) [3]. This involved sequential rounds of error-prone PCR to introduce random mutations, followed by screening for improved activity.

Core Methodologies and Workflows

The general workflow for the directed evolution of a protein is an iterative cycle of diversification, screening or selection, and amplification. Two primary strategies create genetic diversity. The first is random mutagenesis (e.g., error-prone PCR). The second is recombination-based methods (e.g., DNA shuffling), which mimic sexual recombination by shuffling fragments from multiple parent genes [3].
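Recombination can be sketched as building chimeras from segments of several parents. This is a simplified model that assumes the parent genes are already aligned and of equal length. The gene is cut at random crossover points, and each segment is taken from a randomly chosen parent, so a chimera can have up to `n_crossovers` parent switches.

Real DNA shuffling depends on sequence homology between overlapping fragments during reassembly, so crossovers cluster where the parents are identical. That bias is not modelled here. The function name `dna_shuffle` is hypothetical.

```python
import random

def dna_shuffle(parents, n_crossovers, rng=None):
    """Return one chimera assembled from aligned, equal-length parent sequences."""
    if rng is None:
        rng = random.Random()
    length = len(parents[0])
    if any(len(p) != length for p in parents):
        raise ValueError("parents must be aligned to the same length")
    # Choose distinct internal cut points, then take each segment from a random parent.
    cuts = sorted(rng.sample(range(1, length), n_crossovers))
    bounds = [0] + cuts + [length]
    segments = []
    for start, end in zip(bounds, bounds[1:]):
        donor = rng.choice(parents)
        segments.append(donor[start:end])
    return "".join(segments)
```

Because each position is inherited from one parent, beneficial mutations carried by different parents can end up in the same chimera. Point mutagenesis of a single template cannot do this in one step.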

Key Techniques and Their Applications

Table 2: Quantitative outcomes from landmark directed evolution experiments.

| Evolved Protein / System | Technique Used | Selection Pressure | Key Outcome |
|---|---|---|---|
| Subtilisin E [3] | Error-prone PCR | Activity in 60% DMF | 256-fold increase in activity after 3 rounds |
| β-lactamase [3] | DNA Shuffling | Antibiotic (Cefotaxime) resistance | 32,000-fold increase in MIC vs. 16-fold without recombination |
| Qβ RNA (Spiegelman's Monster) [14] | Serial Passage | Replication speed | Genome reduced from 4,500 to 218 nucleotides |
| Novel ATP-binding proteins [3] | mRNA display | Binding to ATP | Isolated from a library of 6×10¹² random sequences |
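The fold changes in Table 2 can be expressed per round. This assumes that improvements compound multiplicatively across rounds, which is only an approximation, since individual rounds rarely contribute equally. Under that assumption, the average per-round gain is the geometric mean, F^(1/r), for a total fold change F over r rounds.

```python
def per_round_fold(total_fold, rounds):
    """Geometric-mean fold improvement per round, assuming multiplicative compounding."""
    return total_fold ** (1.0 / rounds)

# Subtilisin E: 256-fold over 3 rounds of error-prone PCR
subtilisin = per_round_fold(256, 3)      # about 6.35-fold per round
# Beta-lactamase: 32,000-fold MIC increase with shuffling vs. 16-fold without
recombination_advantage = 32_000 / 16    # 2000.0
```

By this measure, the subtilisin E campaign averaged roughly a 6.35-fold gain per round. The recombination-based β-lactamase campaign outperformed mutation alone by a factor of 2,000 in MIC.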

The 2018 Nobel Prize in Chemistry and Current Frontiers

The practical power of directed evolution was unequivocally recognized when the Royal Swedish Academy of Sciences awarded one half of the 2018 Nobel Prize in Chemistry to Frances H. Arnold "for the directed evolution of enzymes" [13]. The other half was jointly awarded to George P. Smith and Sir Gregory P. Winter for the phage display of peptides and antibodies. The Academy noted that the Laureates had "harnessed the power of evolution" to develop proteins that solve humankind's chemical problems. Frances Arnold's enzymes, for instance, are used in the more environmentally friendly manufacturing of pharmaceuticals and the production of renewable biofuels [13].

The Scientist's Toolkit: Essential Reagents for Directed Evolution

Table 3: Key research reagents and their functions in a standard directed evolution pipeline.

| Research Reagent / Tool | Function in Directed Evolution |
|---|---|
| Polymerase Chain Reaction (PCR) Reagents | Gene amplification; error-prone PCR introduces random mutations for diversity generation [6]. |
| DNA Ligase | Joins DNA fragments together; essential for cloning variants into expression vectors [6]. |
| Restriction Enzymes | Cuts DNA at specific sites, enabling the movement of genes into plasmids or other vectors for expression and screening [6]. |
| Expression Plasmids | Vectors used to transfer the gene library into a host organism (e.g., E. coli) for protein expression [6]. |
| Host Cells (e.g., E. coli) | Cellular factories for producing the protein variants from the gene library. |
| Selection Media / Assays | Provides the selective pressure (e.g., antibiotic, substrate, fluorescence) to identify improved variants. |

Contemporary Advances and Future Outlook

The field of directed evolution continues to advance rapidly. Current research focuses on overcoming bottlenecks such as host genome interference, small library sizes, and uncontrolled mutagenesis in complex systems [16]. A recent breakthrough, termed RNA replicase-assisted continuous evolution (REPLACE), engineers an orthogonal RNA replication system within mammalian cells [16]. This allows for the continuous evolution of RNA-based devices and proteins directly in a mammalian environment, opening new avenues for synthetic biology and cell engineering. Furthermore, directed evolution principles are now being applied beyond individual proteins to entire metabolic pathways and genomes, creating whole-cell biocatalysts for the synthesis of valuable chemicals and pharmaceuticals [3]. The integration of these methods with artificial intelligence for predicting productive mutations promises to further accelerate the design of biomolecules, solidifying directed evolution's role as an indispensable tool in the molecular life sciences [17].

Protein engineering is a cornerstone of modern biotechnology, enabling the creation of novel enzymes, therapeutic antibodies, and biosensors with tailored properties. Within this field, two primary methodologies have emerged: directed evolution and rational protein design. While both aim to optimize protein function, they approach this goal from fundamentally different perspectives. Directed evolution mimics natural selection in a laboratory setting, employing iterative rounds of mutation and selection to improve protein function without requiring detailed structural knowledge. In contrast, rational design employs computational and structure-based approaches to make precise, targeted mutations aimed at achieving predefined functional outcomes. This review provides a comprehensive technical comparison of these methodologies, detailing their underlying principles, experimental protocols, applications, and relative advantages for research and drug development professionals.

Fundamental Principles and Comparative Framework

Conceptual Foundations

Directed evolution simulates natural evolution through iterative cycles of diversification, selection, and amplification to steer proteins toward user-defined goals [18] [1]. This approach operates on the principle that random mutagenesis coupled with high-throughput screening can identify beneficial mutations without requiring prior knowledge of protein structure or mechanism [4]. The success of directed evolution was recognized with the 2018 Nobel Prize in Chemistry awarded for the directed evolution of enzymes and phage display techniques [18].

Rational protein design relies on precise, knowledge-driven modifications to protein sequences based on detailed understanding of structure-function relationships [19] [20]. This approach requires comprehensive structural information, often obtained through X-ray crystallography, NMR spectroscopy, or computational prediction, to identify specific residues for mutation that will confer desired properties [21] [22]. Rational design operates under the "sequence-structure-function" paradigm, where amino acid sequence determines three-dimensional structure, which in turn dictates protein function [18].

Key Characteristics Comparison

Table 1: Fundamental Characteristics of Directed Evolution and Rational Design

| Characteristic | Directed Evolution | Rational Design |
|---|---|---|
| Knowledge Requirements | Minimal structural knowledge needed | Detailed structural and mechanistic understanding essential |
| Mutagenesis Approach | Random or semi-random throughout gene | Targeted to specific residues |
| Throughput Requirements | Very high (10³-10¹⁵ variants) | Low to moderate (tens to hundreds of variants) |
| Handles Complexity | Excellent for complex or unknown mechanisms | Limited to well-understood structure-function relationships |
| Primary Advantage | Explores vast sequence space; no structural bias | Precise, targeted changes; minimal wasted screening effort |
| Major Limitation | Requires robust high-throughput screening | Difficult to predict distal effects of mutations |

Methodologies and Experimental Protocols

Directed Evolution Workflow

Directed evolution follows an iterative cycle of diversification, selection, and amplification [18] [4] [1]. The process begins with the generation of genetic diversity through random mutagenesis of the target gene. Common methods include error-prone PCR, which introduces random point mutations throughout the sequence [4], and DNA shuffling, which recombines fragments from related genes to explore sequence space between parent sequences [1]. More recent techniques include RAISE (random insertion/deletion mutagenesis) for introducing indels and TRINS for generating random tandem repeats [4].
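Error-prone PCR can be mimicked in silico to show how the per-base error rate translates into mutations per gene. This is a simplified sketch. It assumes each base is independently replaced, with probability `error_rate`, by one of the other three bases. Real error-prone PCR shows polymerase- and condition-specific mutational biases and occasional insertions or deletions, and none of these are modelled. The function names are hypothetical.

```python
import random

BASES = "ACGT"

def error_prone_pcr(template, error_rate, rng=None):
    """Return a copy of `template` with independent random base substitutions."""
    if rng is None:
        rng = random.Random()
    copy = []
    for base in template:
        if rng.random() < error_rate:
            # Substitute with one of the three other bases.
            copy.append(rng.choice([b for b in BASES if b != base]))
        else:
            copy.append(base)
    return "".join(copy)

def count_mutations(a, b):
    """Number of positions at which two equal-length sequences differ."""
    return sum(x != y for x, y in zip(a, b))
```

Because substitutions are independent in this model, the expected number per copy is error_rate × gene length. For example, a 1,000-bp gene copied at an error rate of 0.003 per base would carry about 3 substitutions per copy on average.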

Following diversification, the mutant library undergoes selection or screening to identify variants with improved properties. Selection methods directly couple the desired function to survival or replication, as in phage display for binding proteins or growth complementation for enzyme activity [1]. When selection is not feasible, screening methods assay variants individually using colorimetric, fluorogenic, or other detectable signals [4]. High-throughput approaches like fluorescence-activated cell sorting (FACS) enable screening of libraries exceeding 10⁸ variants [4]. The best-performing variants are then amplified and serve as templates for subsequent rounds of evolution.

Table 2: Common Mutagenesis Methods in Directed Evolution

| Method | Mechanism | Advantages | Library Size |
|---|---|---|---|
| Error-prone PCR | Random point mutations via low-fidelity amplification | Easy to perform; no prior knowledge needed | 10⁶-10¹⁰ |
| DNA Shuffling | Fragmentation and recombination of homologous genes | Explores combinatorial benefits; recombines beneficial mutations | 10⁶-10¹² |
| Site-Saturation Mutagenesis | Targeted randomization of specific codons | Focused exploration of key positions; reduced library size | 10²-10⁵ per position |
| Orthogonal Replication Systems | In vivo mutagenesis using engineered polymerases | Targeted in vivo evolution; continuous evolution possible | 10⁸-10¹¹ |

Rational Design Workflow

Rational design begins with comprehensive analysis of the target protein's structure and mechanism [19] [20]. Researchers identify specific residues influencing target properties through structural visualization, molecular dynamics simulations, or computational docking studies [20]. Tools like CAVER analyze tunnels and channels in protein structures to identify "hot spot" residues affecting activity, stability, or specificity [20]. Molecular dynamics simulations generate conformational ensembles that reveal dynamic features crucial for function [20].

Once target residues are identified, computational design predicts optimal amino acid substitutions. The Rosetta software suite is widely used for designing novel proteins and optimizing existing ones [20] [22]. For enzyme design, RosettaMatch identifies protein scaffolds that can accommodate theorized catalytic sites (theozymes), while RosettaDesign optimizes the surrounding active site pocket [20]. Designed variants are then experimentally validated, with iterative refinement based on performance data.

Semi-Rational Approaches

Semirational design represents a hybrid approach that combines elements of both methods [21] [20]. This strategy uses computational or bioinformatic analysis to identify promising regions for randomization, creating focused libraries with higher probabilities of containing beneficial mutations [20]. Techniques like iterative saturation mutagenesis (ISM) systematically explore combinations of mutations at identified hot spots [20]. This approach maintains the exploratory power of directed evolution while significantly reducing library size and screening burden.
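The idea behind iterative saturation mutagenesis can be sketched as a greedy walk over hot-spot sites. The illustration simplifies in three ways:

- Each site is a single residue.
- All 20 amino acids are tested exhaustively at every site.
- A hypothetical `score` function stands in for the screening assay.

Published ISM work typically randomizes groups of residues with degenerate codons and can compare several orders (pathways) through the sites.

```python
AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"

def iterative_saturation_mutagenesis(parent, sites, score):
    """Greedy ISM sketch along one pathway through the chosen sites.

    sites: hot-spot positions (0-based), e.g. picked from structural analysis.
    score: hypothetical higher-is-better assay function on a sequence.
    The best variant at each site becomes the template for the next site.
    """
    best = parent
    for pos in sites:
        # Saturation step: every amino acid at this position on the current best.
        library = [best[:pos] + aa + best[pos + 1:] for aa in AMINO_ACIDS]
        candidate = max(library, key=score)
        if score(candidate) > score(best):
            best = candidate
    return best
```

Site order can matter. Mutations may interact (epistasis), so visiting the same sites in a different order can lead to a different final variant.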

Applications in Research and Drug Development

Therapeutic Applications

Both directed evolution and rational design have proven valuable for therapeutic development. Directed evolution has been particularly successful for antibody engineering through phage display technology, enabling development of high-affinity therapeutic antibodies [18] [1]. Rational design has advanced protein-based therapeutics such as engineered insulin analogs with optimized pharmacokinetics and enzyme replacements with enhanced stability [21].

Enzyme Engineering for Industrial Catalysis

Industrial applications often require enzymes with enhanced stability, altered substrate specificity, or novel catalytic activities. Directed evolution has successfully engineered enzymes for operation under harsh industrial conditions (extreme temperatures, organic solvents) [18] [1]. Notable examples include cytochrome P450 enzymes engineered through directed evolution to catalyze non-natural transformations, expanding their synthetic utility [18]. Rational design has been employed to modify fatty acid selectivity in lipases by strategically introducing bulky residues to block binding pockets [19].

Novel Protein Design

Rational design enables creation of entirely novel proteins not found in nature. The de novo design of protein folds like Top7 demonstrates the power of computational methods to create stable proteins with unprecedented structures [22]. Similarly, rational design has created novel protein-protein interfaces, metalloproteins, and enzymes for non-biological reactions [20] [22].

Essential Research Reagents and Tools

Table 3: Key Research Reagent Solutions for Protein Engineering

| Category | Specific Tools/Reagents | Function in Protein Engineering |
|---|---|---|
| Mutagenesis | Error-prone PCR kits, DNA shuffling kits, Site-directed mutagenesis kits | Introduce genetic diversity for library generation |
| Expression Systems | Bacterial (E. coli), yeast, or in vitro transcription/translation systems | Produce protein variants for screening |
| Screening Tools | Phage display systems, FACS, Microtiter plate assays, HPLC/GC-MS | Identify variants with desired properties from libraries |
| Computational Software | Rosetta, YASARA, CAVER, PyMol, Molecular dynamics packages | Predict protein structures, identify mutation sites, design variants |
| Specialized Reagents | Kapa Biosystems polymerases, Modified nucleotides, Unnatural amino acids | Enable specialized mutagenesis and expression approaches |


Emerging Trends and Future Perspectives

The distinction between directed evolution and rational design is increasingly blurred by hybrid approaches and new technologies. Machine learning algorithms now analyze sequence-function relationships from directed evolution data to guide rational design decisions [23]. Deep learning models like DeepDE enable more efficient exploration of sequence space by predicting promising mutation combinations [23]. Autonomous protein engineering systems such as SAMPLE (Self-driving Autonomous Machines for Protein Landscape Exploration) combine robotic experimentation with AI-driven design to accelerate both approaches [21].

Advances in structural biology, particularly in cryo-electron microscopy and artificial intelligence-based structure prediction (exemplified by AlphaFold2 and RoseTTAFold), are expanding the scope of rational design to previously intractable targets [21] [22]. Simultaneously, improvements in high-throughput screening technologies continue to enhance the efficiency of directed evolution. The integration of non-canonical amino acids and artificial cofactors further expands the functional repertoire of engineered proteins beyond natural capabilities [20].

For drug development professionals, the strategic selection between directed evolution, rational design, or hybrid approaches depends on multiple factors: the availability of structural information, the complexity of the target function, the availability of high-throughput assays, and project timelines. As both methodologies continue to advance and converge, they promise to accelerate the development of novel biologics, enzymes for sustainable chemistry, and research tools that deepen our understanding of protein function.

In the field of protein engineering, directed evolution (DE) stands as a powerful methodology for tailoring enzymes and other biomolecules to meet specific industrial and therapeutic needs. It mimics the principles of natural selection—variation, selection, and heredity—in a controlled laboratory environment to steer proteins toward a user-defined goal [1]. Unlike rational design approaches that require extensive knowledge of protein structure and mechanism, directed evolution can generate improved proteins without this prerequisite, making it a highly versatile tool [1]. The efficacy of this process hinges on a core set of molecular components: genes, which provide the blueprint; enzymes, the functional workhorses; catalysts that drive reactions; and reporter systems, which enable the detection of successful variants [6] [24]. This guide provides an in-depth technical examination of these essential components, framed within the context of directed evolution for a scientific audience.

Core Conceptual Framework

Directed evolution is an iterative process that consists of three fundamental steps. The cycle begins with the introduction of genetic variation, which serves as the raw material for evolution. A selection or screening step follows, in which variants with desired traits are identified, often using reporter systems. Finally, the genes of the selected variants are amplified to serve as templates for the next round, progressively enhancing the protein's properties [24] [1].

The success of a directed evolution campaign is directly linked to the total library size screened, as evaluating more mutants increases the probability of finding one with significantly improved properties [1]. The components and processes involved in this cycle are detailed in the following sections.
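A simple binomial argument quantifies how library size affects the chance of success. Assume improved variants occur at a frequency f in the library and clones are sampled independently. The probability that N screened clones contain at least one improvement is then 1 - (1 - f)^N. The function names below are illustrative.

```python
import math

def p_at_least_one_hit(hit_frequency, n_screened):
    """Probability that n independently sampled clones include >= 1 improved variant."""
    return 1.0 - (1.0 - hit_frequency) ** n_screened

def clones_needed(hit_frequency, confidence=0.95):
    """Smallest n for which p_at_least_one_hit(hit_frequency, n) >= confidence."""
    return math.ceil(math.log(1.0 - confidence) / math.log(1.0 - hit_frequency))
```

For example, if one variant in 1,000 is improved, roughly 3,000 clones must be screened to have a 95% chance of finding at least one. Rarer improvements push the requirement up proportionally.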

Genes: The Blueprint for Variation

In directed evolution, the gene encoding the target protein is the fundamental starting point. The process involves creating a diverse library of gene variants to explore a vast sequence space and identify mutants with enhanced functions [1].

Methods for Genetic Diversification

Several laboratory techniques are employed to introduce genetic diversity, each with its own advantages and applications. These methods can be broadly categorized as random or semi-rational.

Table 1: Key Methods for Generating Genetic Diversity in Directed Evolution

| Method | Principle | Key Advantage | Typical Library Size |
|---|---|---|---|
| Error-Prone PCR [24] [1] | Uses reaction conditions that reduce polymerase fidelity, introducing random point mutations. | Simple; requires no prior structural knowledge. | Large (>10⁶) |
| DNA Shuffling [1] | Fragments of homologous genes are reassembled randomly, mimicking genetic recombination. | Recombines beneficial mutations from multiple parents. | Large |
| Site-Saturation Mutagenesis [1] | All possible amino acid substitutions are systematically introduced at a specific residue or region. | Focuses diversity on "hotspot" regions, reducing library size. | Focused (20ⁿ for a region of n residues) |

The choice of mutagenesis method is critical. While random mutagenesis methods like error-prone PCR allow for a blind exploration of sequence space, semi-rational approaches that focus on specific regions (e.g., the active site or regions identified from phylogenetic analysis) create focused libraries with a higher probability of containing beneficial mutants, thus streamlining the screening process [25] [1].
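Focused libraries are usually built with degenerate codons. The sizing sketch below assumes NNK codons (N = A/C/G/T, K = G/T). These give 32 codons per randomized position and encode all 20 amino acids plus one stop codon, so the codon-level diversity (32ⁿ) exceeds the amino-acid-level 20ⁿ in Table 1.

If every codon variant is equally likely, screening N clones from a library of V variants samples an expected fraction of about 1 - e^(-N/V) of them. Reaching coverage c therefore needs N ≈ -V ln(1 - c). The function names are hypothetical.

```python
import math

def saturation_library_size(n_sites, codons_per_site=32):
    """Codon-level diversity of a saturation library (32 codons per NNK site)."""
    return codons_per_site ** n_sites

def clones_for_coverage(n_sites, coverage=0.95, codons_per_site=32):
    """Clones to screen so the expected fraction of distinct codon variants
    sampled reaches `coverage`, assuming all variants are equally likely."""
    v = saturation_library_size(n_sites, codons_per_site)
    return math.ceil(-v * math.log(1.0 - coverage))
```

For a single NNK site this gives 96 clones for 95% coverage, about one 96-well plate. For two simultaneously randomized sites the figure rises to 3,068. This steep growth is why focused libraries usually target only a few positions at a time.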

Enzymes and Catalysts: The Functional Targets

Enzymes as Biological Catalysts

Enzymes are proteins that act as biological catalysts: they significantly speed up specific biochemical reactions without being consumed in the process [6]. In directed evolution, enzymes are the primary targets for optimization. The goal is to alter properties such as activity, thermostability, solubility, and substrate specificity, making enzymes more suitable for industrial applications like biocatalysis in drug development [24]. A classic example is the engineering of cytochrome P450 enzymes. Through directed evolution, their activity was redirected from fatty acid hydroxylation toward the hydroxylation of alkanes, showcasing the power of DE to create new biocatalytic activities [24].

The Role of Catalysts in Reporter Systems

Beyond being the target of evolution, enzyme catalytic activity is also harnessed in reporter genes, which are a cornerstone of the screening step. A reporter gene produces a detectable signal (e.g., color, fluorescence) when expressed, allowing researchers to track gene expression or enzyme activity [6]. In a typical setup, an evolved enzyme acts on a proxy substrate and converts it into a colored or fluorescent product, enabling high-throughput screening [1]. This creates a direct, quantifiable link between the desired enzymatic function and an easily detectable output.

Reporter Systems and Selection: Detecting Success

Accurately and efficiently identifying improved variants from a vast library is often the main bottleneck of directed evolution. This is achieved through selection or screening methodologies, many of which rely on reporter systems.

High-Throughput Screening Methodologies

Screening involves individually assaying each variant for the desired activity, typically using a quantitative measure. The table below summarizes key tools used in high-throughput screening (HTS) platforms.

Table 2: High-Throughput Screening and Selection Methods for Directed Evolution

| Method / Tool | Principle | Measured Output | Throughput |
|---|---|---|---|
| Microtiter Plates [24] | Cell cultures or reactions are isolated in wells, and activity is measured via spectroscopy. | Color or fluorescence intensity. | Medium (10³-10⁴) |
| Fluorescence-Activated Cell Sorting (FACS) [24] | Cells displaying enzymes or containing fluorescent products are automatically sorted. | Fluorescence per cell. | Very high (>10⁸ cells) |
| Phage Display [1] | Variant proteins are expressed on the surface of phage particles, which are selected by binding to an immobilized target. | Binding affinity; enriched phage clones. | High (10⁹-10¹¹) |
| Growth Complementation [1] | Enzyme activity is coupled to the synthesis of a metabolite essential for survival. | Cell growth. | Extremely high (limited by transformation efficiency) |

The Critical Role of Reporter Genes

The reporter gene is a pivotal component in many screening systems. It serves as a marker to detect a cellular response, such as the activation of a specific pathway or the success of a genetic modification, by producing a colored, fluorescent, or otherwise measurable product [6]. For instance, in a screen for improved enzymes, the reaction catalyzed by a successful variant might trigger the expression of a reporter gene like GFP, or the enzyme might directly process a substrate into a colored molecule. This allows for the rapid isolation of high-performing variants from a massive pool.

The Scientist's Toolkit: Research Reagent Solutions

The practical application of directed evolution relies on a suite of specialized reagents and materials. The following table details essential items and their functions in a typical DE workflow.

Table 3: Essential Research Reagents and Materials for Directed Evolution

| Reagent / Material | Function in Directed Evolution |
|---|---|
| DNA Polymerase for Error-Prone PCR [24] | Introduces random mutations during gene amplification under controlled, low-fidelity conditions. |
| Restriction Enzymes & DNA Ligase [6] | Restriction enzymes cut DNA at specific sites, and DNA ligase seals DNA fragments together; used for cloning variant genes into expression vectors. |
| Expression Vectors (Plasmids) [6] | Small, circular DNA molecules that carry the variant gene into a host cell (e.g., E. coli or yeast) for protein expression. |
| Host Cells [24] | Bacterial or yeast cells that act as factories to express the library of variant proteins. |
| Kapa Biosystems PCR Reagents [24] | Example of commercially available enzymes (e.g., novel polymerases) engineered via directed evolution for enhanced performance in PCR, qPCR, and NGS applications. |
| Fluorogenic/Chromogenic Substrates [1] | Proxy molecules that produce a fluorescent or colored signal when acted upon by the target enzyme, enabling high-throughput screening. |

Directed evolution is a cornerstone of modern protein engineering, enabling the development of novel enzymes for therapeutics, biocatalysis, and green chemistry. Its success is fundamentally built upon the precise interplay of its core components: the genes that are diversified, the enzymes and catalysts that are optimized for new functions, and the reporter systems that make it possible to find these rare variants in a vast pool of possibilities. As high-throughput screening technologies advance and computational tools like AlphaFold provide deeper structural insights, the integration of semi-rational design with directed evolution will further accelerate the engineering of bespoke proteins, pushing the boundaries of what is achievable in scientific research and industrial application [25].

Executing Directed Evolution: From Library Creation to Real-World Applications

Within the structured framework of directed evolution, the generation of diverse genetic libraries constitutes the foundational first step, mimicking the role of genetic variation in natural selection. This section examines three cornerstone techniques for creating these mutant libraries: error-prone PCR (epPCR), DNA shuffling, and saturation mutagenesis. The strategic application of these methods enables researchers and drug development professionals to steer proteins and nucleic acids toward user-defined goals, such as enhanced catalytic activity, altered substrate specificity, or improved stability. The success of any directed evolution campaign is intrinsically linked to the quality and diversity of the initial library, making the choice and optimization of the library generation technique a critical determinant of experimental success [1].

Core Principles and Comparative Analysis

Directed evolution operates through iterative cycles of diversification, selection, and amplification [1]. Library generation encompasses the diversification phase, where a single gene is transformed into a vast collection of variants. The principles of these techniques are grounded in molecular biology and harnessed for protein engineering:

- Error-Prone PCR (epPCR): This method introduces random point mutations throughout the gene by exploiting the reduced fidelity of DNA polymerase under non-ideal reaction conditions, such as the presence of manganese ions (Mn²⁺) or unequal dNTP concentrations [26] [1]. It requires no prior structural knowledge but predominantly yields single base substitutions.

- DNA Shuffling: A technique for in vitro recombination that mimics natural homologous recombination. Parental genes are randomly fragmented (typically with DNase I) and then reassembled in a primerless PCR in which overlapping fragments prime one another, creating chimeric sequences with crossovers between the parent sequences [27]. This method is particularly valuable for recombining beneficial mutations from different variants.

- Saturation Mutagenesis: A targeted approach where one or more specific codons in a gene are systematically replaced with codons encoding all or many of the possible amino acid alternatives [28] [29]. This allows a comprehensive exploration of the sequence-function relationship at defined positions and is ideal for probing active sites or other critical regions.
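To make the contrast concrete, the three diversification strategies can be mimicked as string operations on a DNA sequence. This is a toy sketch only, and the function names are hypothetical: the uniform substitution model ignores the base-specific biases of real epPCR, and the recombination function assumes two aligned, equal-length parents and swaps fixed-size blocks rather than modeling homology-driven annealing.

```python
import random

BASES = "ACGT"


def error_prone_pcr(seq, rate, rng):
    """Substitute each base with probability `rate` (uniform, unbiased toy model)."""
    out = []
    for base in seq:
        if rng.random() < rate:
            out.append(rng.choice([b for b in BASES if b != base]))
        else:
            out.append(base)
    return "".join(out)


def shuffle_parents(parent_a, parent_b, block_len, rng):
    """Build a chimera by taking each block of `block_len` bases from a random parent."""
    if len(parent_a) != len(parent_b):
        raise ValueError("parents must be aligned to equal length")
    blocks = []
    for start in range(0, len(parent_a), block_len):
        donor = parent_a if rng.random() < 0.5 else parent_b
        blocks.append(donor[start:start + block_len])
    return "".join(blocks)


def saturate_codon(seq, codon_index):
    """Return all 32 NNK variants at one codon (0-based codon index)."""
    start = 3 * codon_index
    return [seq[:start] + n1 + n2 + k + seq[start + 3:]
            for n1 in BASES for n2 in BASES for k in "GT"]


rng = random.Random(42)
wild_type = "ATGGCTAAAGGTGAA"
print(error_prone_pcr(wild_type, 0.1, rng))
print(shuffle_parents(wild_type, "ATGGCAAAGGGCGAG", 6, rng))
print(len(saturate_codon(wild_type, 2)))  # 32
```

The first function touches any position but only one base at a time, the second only reshuffles variation already present in the parents, and the third explores every codon at a single chosen position.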

Table 1: Comparative Analysis of Library Generation Techniques

| Feature | Error-Prone PCR | DNA Shuffling | Saturation Mutagenesis |
|---|---|---|---|
| Principle | Low-fidelity PCR introduces random point mutations [26] | DNase I fragmentation & recombination of homologous genes [27] | Targeted replacement of codons with degenerate oligonucleotides [28] |
| Mutation Type | Primarily point mutations (substitutions) [30] | Crossovers, insertions, deletions, and point mutations [27] | Single or multiple amino acid substitutions [28] |
| Control | Low control; mutations are stochastic | Moderate control; depends on sequence homology | High control; precise targeting of specific residues |
| Library Diversity | Broad but shallow (limited to point mutations) | High; combines existing variation | Deep but narrow (focused on specific sites) |
| Key Advantage | Simple; requires no structural information | Recombines beneficial mutations; accesses larger sequence space [27] | Systematically explores all possible substitutions at a given site [28] |
| Primary Limitation | Limited mutation types; high proportion of deleterious mutations [30] | Requires high sequence homology between parents; complex optimization [27] | Restricted to predefined sites; can suffer from codon bias [30] |
| Best Suited For | Initial exploration, when no structural data is available | Recombining beneficial mutations from diverse parents, functional domain swapping [27] | Probing active sites, functional epitopes, and structure-function relationships [28] |
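The "broad but shallow" versus "deep but narrow" trade-off in the table can be quantified by counting variants. The sketch below assumes a hypothetical 300-residue protein and treats every one of the 19 alternative amino acids as reachable at each position. Because a single-nucleotide change reaches only a subset of those alternatives, the random-mutagenesis figure is an upper bound, not an estimate of what a real epPCR library samples.

```python
from math import comb


def point_mutant_space(protein_length, n_substitutions):
    """Distinct proteins with exactly n amino-acid substitutions (upper bound)."""
    return comb(protein_length, n_substitutions) * 19 ** n_substitutions


def saturation_space(n_sites, codons_per_site=32, residues_per_site=20):
    """(codon-level, protein-level) size of an NNK library randomized at n_sites."""
    return codons_per_site ** n_sites, residues_per_site ** n_sites


for m in (1, 2, 3):
    print(f"random, {m} substitution(s) anywhere in 300 aa: {point_mutant_space(300, m):,}")
for k in (1, 2, 3):
    codons, proteins = saturation_space(k)
    print(f"NNK at {k} site(s): {codons:,} codon combinations, {proteins:,} proteins")
```

Both spaces grow exponentially, so the throughput of the available screen, rather than the mutagenesis chemistry, often decides which strategy is practical.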

Experimental Protocols and Methodologies

Error-Prone PCR (epPCR)

The following protocol for random mutagenesis of a defined gene region is adapted from a study on viral haemagglutinin [26].

1. Primer and Template Design:

- Design primers flanking the gene or region of interest (in the source study, the 549 bp receptor-binding domain, RBD).

- Include appropriate restriction enzyme sites and promoter sequences (e.g., T7 promoter) in the primers for downstream cloning.

- Use a high-fidelity polymerase to generate the initial "faithful" wild-type (WT) template.

2. Error-Prone PCR Reaction Setup:

- Template: 10-100 ng of purified WT plasmid or PCR product.

- Primers: 0.2-1.0 µM each forward and reverse primer.

- Polymerase: Use a low-fidelity polymerase (e.g., Taq DNA polymerase).

- Buffer Conditions: Introduce mutagenesis by adding Mn²⁺ (0.1-0.5 mM MnCl₂) and/or using unbalanced dNTP concentrations (e.g., higher dCTP and dTTP) [26] [31].

- Cycling Conditions:

  - Initial Denaturation: 95°C for 2-5 minutes.

  - 25-35 cycles of:

    - Denaturation: 95°C for 20-30 seconds.

    - Annealing: 50-60°C for 20-30 seconds.

    - Extension: 72°C for 1-2 minutes per kb.

  - Final Extension: 72°C for 5-10 minutes.

3. Product Analysis and Cloning:

- Analyze the epPCR product by agarose gel electrophoresis.

- Purify the product, digest it with the restriction enzymes matching the sites designed into the primers, and ligate it into a similarly digested expression vector.

- Transform the ligated product into a competent host (e.g., E. coli) to create the mutant library.
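Both the mutagenic buffer and the amount of template shape how many mutations each clone carries. A common back-of-the-envelope model multiplies the per-base error rate per doubling by the gene length and by the number of doublings, then treats mutations per clone as Poisson-distributed. The sketch below applies that simplified model. The error rate in the example is a hypothetical placeholder that would have to be calibrated by sequencing clones under the actual conditions, and the masses refer to the target region only (for a plasmid template, scale by insert length over plasmid length).

```python
import math


def epcr_mutation_load(gene_length_bp, error_rate, input_ng, output_ng):
    """Estimate mutations per clone under a simple Poisson model.

    error_rate: substitutions per base per doubling (condition-dependent;
    a hypothetical value here, to be calibrated experimentally).
    input_ng / output_ng: mass of target DNA before and after amplification.
    """
    doublings = math.log2(output_ng / input_ng)
    mean = gene_length_bp * error_rate * doublings
    p0 = math.exp(-mean)  # probability of zero mutations
    p1 = mean * p0        # probability of exactly one mutation
    return {
        "doublings": doublings,
        "mean_mutations": mean,
        "fraction_unmutated": p0,
        "fraction_single": p1,
        "fraction_multiple": 1.0 - p0 - p1,
    }


# 549 bp region, hypothetical rate of 5e-4 per base per doubling, 10 ng -> 10.24 µg
print(epcr_mutation_load(549, 5e-4, 10, 10240))
```

Raising template input from 10 ng to 100 ng at the same yield removes about 3.3 doublings and lowers the mean load proportionally, which is one reason template amount is specified as a range.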

DNA Shuffling

This protocol is based on a computational and experimental analysis of the DNA shuffling process [27].

1. DNA Fragmentation:

- Pool the parent genes (e.g., GFP gene sequences).

- Digest 8 µg of pooled DNA with 0.05 units of DNase I in a 60 µL reaction containing 10 mM MnCl₂ and 25 mM Tris-HCl (pH 7.4) for 1-5 minutes at room temperature.

- Terminate the reaction by adding EDTA to 50 mM and heat-inactivating at 95°C for 10 minutes.

- Purify the fragments using gel filtration (e.g., Centri-Sep column) to remove very small fragments (<25 bp). The goal is an average fragment size of 50-200 bp.

2. Primerless Reassembly PCR:

- Assemble a 50 µL reassembly reaction containing:

  - Purified DNA fragments (10-100 ng/µL).

  - Standard PCR buffer (e.g., NEB ThermoPol Buffer).

  - 0.2 mM dNTPs.

  - 1 unit of a non-proofreading DNA polymerase (e.g., Vent (exo-) or Taq polymerase).

- Perform PCR with the following cycling profile:

  - Initial Denaturation: 95°C for 1-2 minutes.

  - 30-45 cycles of:

    - Denaturation: 95°C for 30-60 seconds.

    - Annealing: 50-60°C for 30-60 seconds.

    - Extension: 72°C for 1-2 minutes (with a 1-2 second increment per cycle).

  - Final Extension: 72°C for 5-10 minutes.

3. Amplification of Full-Length Products:

- Use the reassembled product as a template in a standard PCR with outer primers that bind the ends of the target gene.

- Clone the resulting full-length chimeric genes into an expression vector for library creation.
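Shuffled clones are usually sequenced to confirm that recombination actually occurred. One simple way to read a chimera, sketched below, is to look only at positions where the parents differ, assign each such position to the parent it matches, and count switches between consecutive informative positions. The sketch assumes pre-aligned sequences of equal length; bases that match no parent (new point mutations) or several parents are skipped, so a crossover is located only to within the interval between two informative sites.

```python
def map_crossovers(chimera, parents):
    """Assign informative positions of a chimera to parents and count crossovers.

    parents: dict of parent name -> aligned sequence (same length as chimera).
    Returns (list of (position, parent_name), number of parent switches).
    """
    length = len(chimera)
    if any(len(seq) != length for seq in parents.values()):
        raise ValueError("sequences must be aligned to equal length")
    assignments = []
    for pos in range(length):
        column = {name: seq[pos] for name, seq in parents.items()}
        if len(set(column.values())) == 1:
            continue  # identical in all parents: uninformative
        matches = [name for name, base in column.items() if base == chimera[pos]]
        if len(matches) == 1:
            assignments.append((pos, matches[0]))
        # no match = new point mutation; several matches = ambiguous; both skipped
    crossovers = sum(1 for (_, a), (_, b) in zip(assignments, assignments[1:]) if a != b)
    return assignments, crossovers


parents = {"A": "AAAAAAAA", "B": "ATATATAT"}
print(map_crossovers("AAAAATAT", parents))
```

In the toy example the chimera follows parent A through position 3 and parent B from position 5, so one crossover is reported between positions 3 and 5.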

Saturation Mutagenesis

This protocol outlines the oligonucleotide-based method for creating a single-site saturation mutagenesis library [30] [28].

1. Oligonucleotide Design:

- Design a mutagenic primer that is complementary to the target region but contains a degenerate codon (e.g., NNK or NNN, where N is A/C/G/T and K is G/T) at the specific amino acid position to be randomized. The NNK set reduces redundancy to 32 codons that still encode all 20 amino acids, and it excludes two of the three stop codons (TAA and TGA), leaving only TAG [30].

- The primer should be phosphorylated at the 5' end if it will be used in a ligation step.

- For multiple sites, oligonucleotides can be synthesized in parallel on a high-throughput chip [30].

2. PCR Amplification:

- Perform PCR using a high-fidelity DNA polymerase (e.g., KAPA HiFi HotStart or Q5) to minimize secondary mutations.

- Method A: Overlap Extension PCR

  - Perform two separate PCRs to generate overlapping fragments containing the mutation.

  - Mix the fragments and use them as templates in a second PCR with outer primers to assemble the full-length mutated gene [28].

- Method B: Whole-Plasmid PCR

  - Amplify the entire plasmid using outward-facing (back-to-back) primers, one of which carries the degenerate codon.

  - Digest the PCR product with DpnI, which cleaves only Dam-methylated DNA, to eliminate the original template plasmid (this requires template isolated from a dam⁺ E. coli strain).

  - Circularize the resulting linear, mutated DNA using a ligase.

3. Library Transformation and Validation:

- Transform competent E. coli with the circularized plasmid (Method B) or with the assembled gene after cloning it into an expression vector (Method A).

- Sequence a subset of colonies to assess library quality, mutation coverage, and randomness.
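The codon arithmetic behind NNK versus NNN, and the number of colonies needed to sample a site, can be checked directly. The sketch below translates every codon in a degenerate set with the standard genetic code and applies a widely used oversampling estimate, T = ln(1 - P) / ln(1 - 1/V), for the number of transformants T needed to observe any given one of V equally likely codon variants with probability P. Treating all codons as equally likely is an assumption; primer synthesis and cloning biases make real libraries less uniform.

```python
import itertools
import math

# Standard genetic code: codons ordered TCAG x TCAG x TCAG; '*' marks stop codons.
_ORDER = "TCAG"
_AA = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {"".join(c): aa for c, aa in zip(itertools.product(_ORDER, repeat=3), _AA)}
IUPAC = {"A": "A", "C": "C", "G": "G", "T": "T", "N": "ACGT", "K": "GT"}


def expand_degenerate(pattern):
    """All codons matching a degenerate pattern such as 'NNK'."""
    return ["".join(c) for c in itertools.product(*(IUPAC[ch] for ch in pattern))]


def codon_set_summary(pattern):
    """Codon count, distinct amino acids, and stop codons in a degenerate set."""
    codons = expand_degenerate(pattern)
    amino_acids = {CODON_TABLE[c] for c in codons} - {"*"}
    stops = sorted(c for c in codons if CODON_TABLE[c] == "*")
    return {"codons": len(codons), "amino_acids": len(amino_acids), "stop_codons": stops}


def clones_for_coverage(n_variants, probability=0.95):
    """Transformants needed to sample a given variant with the stated probability."""
    return math.ceil(math.log(1 - probability) / math.log(1 - 1 / n_variants))


for pattern in ("NNN", "NNK"):
    print(pattern, codon_set_summary(pattern))
print("NNK, one site, 95% coverage:", clones_for_coverage(32))  # 95
```

For a single NNK site this amounts to roughly three-fold oversampling of the 32 codons, which is why a few hundred colonies are usually far more than enough for one position while multi-site libraries quickly become impractical to screen exhaustively.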

Recent Advancements and Novel Techniques

The field of library generation is continuously evolving, with new technologies emerging to overcome the limitations of traditional methods.

- Chip-Based Oligonucleotide Synthesis: Advanced high-throughput methods now use chip-based oligonucleotide synthesis to construct highly complex and precisely controlled mutagenesis libraries. For example, a full-length amber codon scanning library for the PSMD10 gene achieved 93.75% mutation coverage using this technology. The choice of high-fidelity DNA polymerase (e.g., KAPA HiFi HotStart, Platinum SuperFi II) was critical for minimizing synthesis errors and chimeric sequences [30].

- Deaminase-Driven Random Mutation (DRM): This novel strategy addresses the low mutation efficiency of epPCR. DRM uses engineered cytidine (A3A-RL) and adenosine (ABE8e) deaminases to introduce a broad spectrum of C-to-T, G-to-A, A-to-G, and T-to-C mutations in a single round. The authors report a 14.6-fold higher mutation frequency and a 27.7-fold greater diversity of mutation types than conventional epPCR, enabling a more comprehensive exploration of the genetic landscape [31].

- CRISPR-Assisted Directed Evolution: CRISPR-Cas systems have been repurposed to create powerful in vivo mutagenesis tools. Systems like "EvolvR" use a Cas9 nickase fused to an error-prone polymerase to introduce mutations continuously and locally in a user-defined window. This allows for the simulation of natural evolutionary processes directly in the host genome without the need for iterative in vitro library construction [32].

- SMuRF Framework: The Saturation Mutagenesis-Reinforced Functional (SMuRF) assay combines a simplified saturation mutagenesis method (Programmed Allelic Series with Common procedures, PALS-C) with streamlined functional assays. This framework lowers the barrier for high-throughput functional characterization of variants, making it accessible for standard research laboratories to generate functional scores for all possible coding single-nucleotide variants in disease-related genes [33] [29].
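An amber-codon scanning library, like the PSMD10 example above, is designed rather than random: one variant per codon, each carrying TAG at that position. The sketch below enumerates such a design from a coding sequence. Skipping the start codon and any existing stop codons is an assumption made here for illustration, not a detail from the cited study, and each variant would still need flanking sequence added before oligonucleotide synthesis.

```python
STOP_CODONS = {"TAA", "TAG", "TGA"}


def amber_scan(cds):
    """Map 1-based codon number -> sequence with that codon replaced by TAG.

    Illustrative assumptions: the start codon is left intact and existing
    stop codons are skipped.
    """
    cds = cds.upper()
    if len(cds) % 3:
        raise ValueError("coding sequence length must be a multiple of 3")
    variants = {}
    for start in range(3, len(cds), 3):  # begin at codon 2
        codon = cds[start:start + 3]
        if codon in STOP_CODONS:
            continue
        variants[start // 3 + 1] = cds[:start] + "TAG" + cds[start + 3:]
    return variants


design = amber_scan("ATGGCTAAATAA")
for position, variant in design.items():
    print(position, variant)
```

Because the design is an explicit list, library quality can later be judged by comparing sequencing results against it position by position.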

The Scientist's Toolkit: Essential Research Reagents

Successful execution of library generation techniques requires a suite of reliable reagents and materials. The following table details key solutions used in the featured protocols.

Table 2: Key Research Reagent Solutions for Library Generation

| Reagent / Material | Function / Application | Example Products / Notes |
|---|---|---|
| High-Fidelity DNA Polymerase | Accurate amplification for template prep and saturation mutagenesis; minimizes spurious mutations [30]. | KAPA HiFi HotStart, Q5 High-Fidelity, Platinum SuperFi II |
| Low-Fidelity DNA Polymerase | Introduces random mutations during error-prone PCR [26]. | Standard Taq Polymerase |
| DNase I | Randomly fragments DNA for shuffling protocols [27]. | - |
| Restriction Endonucleases | Cloning of mutant libraries into expression vectors [26]. | BsaI-HFv2, BamHI-HF, etc. |
| DNA Ligase | Joins DNA fragments during cloning and plasmid reassembly. | T4 DNA Ligase |
| Deaminases (Engineered) | Drive comprehensive random mutagenesis in novel strategies like DRM [31]. | A3A-RL (cytidine), ABE8e (adenosine) |
| Electrocompetent Cells | High-efficiency transformation for large library construction. | Endura Electrocompetent E. coli [33] |
| Next-Generation Sequencing (NGS) | Quality control of libraries; deep mutational scanning to assess variant distribution and coverage [30]. | - |
| Degenerate Codons | Encode all possible amino acids at a target site during saturation mutagenesis [30]. | NNK, NNN codons in primers |
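Once a library has been sequenced, NGS-based quality control largely comes down to two numbers: the fraction of designed variants actually observed (coverage) and how evenly reads are spread across them. The sketch below computes both from a dictionary of read counts. The variant IDs are hypothetical, and the 90th-to-10th percentile read-count ratio is one commonly used evenness summary chosen here for illustration (values near 1 indicate a uniform library).

```python
import math
import statistics


def library_qc(designed, read_counts, min_reads=1):
    """Coverage and read-count skew for a designed variant library.

    designed: iterable of intended variant IDs.
    read_counts: variant ID -> reads; IDs absent from the dict count as 0 reads.
    """
    designed = list(designed)
    if not designed:
        raise ValueError("no designed variants supplied")
    counts = [read_counts.get(v, 0) for v in designed]
    observed = sum(1 for c in counts if c >= min_reads)
    skew = None
    if len(counts) >= 2:
        deciles = statistics.quantiles(counts, n=10)  # 9 cut points
        p10, p90 = deciles[0], deciles[-1]
        skew = p90 / p10 if p10 > 0 else math.inf
    return {
        "coverage": observed / len(designed),
        "missing": len(designed) - observed,
        "skew_90_10": skew,
    }


designed = [f"var{i}" for i in range(16)]
reads = {f"var{i}": 100 for i in range(15)}  # var15 was never observed
print(library_qc(designed, reads))
```

Raising `min_reads` above 1 gives a more conservative coverage figure, since variants seen in only a handful of reads may be sequencing errors rather than true library members.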

Workflow and Logical Relationships

The following diagram illustrates the standard iterative cycle of directed evolution and how the three library generation techniques integrate into this framework.

[Figure: Directed Evolution Workflow]
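The iterative cycle can also be written as a short loop: diversify the current best sequence, screen every variant, and carry the winner into the next round. The toy simulation below uses a hypothetical fitness function (identity to an arbitrary target peptide) in place of a real assay and a uniform per-residue mutation rate in place of any specific library method. Keeping the parent in each round's pool is a design choice that makes the best score non-decreasing.

```python
import random

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"


def evolve(parent, fitness, rounds=10, library_size=50, mutation_rate=0.1, seed=0):
    """Toy directed-evolution loop: diversify, screen, select, repeat.

    Returns the best sequence found and the best fitness after each round.
    """
    rng = random.Random(seed)
    best = parent
    history = [fitness(best)]
    for _ in range(rounds):
        # Diversify: random substitutions (a replacement may match the original residue)
        library = [
            "".join(rng.choice(AMINO_ACIDS) if rng.random() < mutation_rate else aa
                    for aa in best)
            for _ in range(library_size)
        ]
        library.append(best)  # keep the parent so the best score never decreases
        # Screen and select: score every variant and keep the top one
        best = max(library, key=fitness)
        history.append(fitness(best))
    return best, history


TARGET = "MKTAYIAKQR"  # hypothetical optimum standing in for a real assay


def identity_to_target(seq):
    return sum(a == b for a, b in zip(seq, TARGET))


best, history = evolve("A" * len(TARGET), identity_to_target, rounds=15)
print(best, history)
```

Real campaigns differ mainly in the diversification step (epPCR, shuffling, or saturation) and in how many variants the screen can score per round; the select-and-iterate skeleton stays the same.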

Error-prone PCR, DNA shuffling, and saturation mutagenesis each offer distinct and powerful pathways for generating genetic diversity in directed evolution experiments. The choice of technique is strategic, hinging on the specific goals of the project, the availability of structural information, and the desired type of genetic variation. While epPCR provides a straightforward entry into random mutagenesis, DNA shuffling excels at recombining existing traits, and saturation mutagenesis offers unparalleled precision for probing specific sites. The ongoing innovation in this field, exemplified by chip-based synthesis, deaminase-driven mutagenesis, and CRISPR-based tools, continues to expand the frontiers of protein engineering. These advancements empower researchers and drug developers to explore sequence space more efficiently than ever, accelerating the discovery of novel biologics, enzymes, and therapeutic agents.