Directed Evolution: Mimicking Natural Selection in the Lab to Engineer Better Proteins and Drugs

This article explores how directed evolution accelerates natural selection in laboratory settings to develop proteins and enzymes with enhanced functions for biomedical and therapeutic applications.


Abstract

This article explores how directed evolution accelerates natural selection in laboratory settings to develop proteins and enzymes with enhanced functions for biomedical and therapeutic applications. It details the foundational principles of creating genetic diversity and selecting for desired traits, covering established methodologies like error-prone PCR and phage display alongside cutting-edge techniques such as CRISPR-based mutagenesis and active learning with machine learning. The content addresses common challenges and optimization strategies, validates the approach through comparative analysis with rational design, and provides insights for researchers and drug development professionals on implementing these powerful protein engineering tools.

The Principles of Artificial Selection: How Directed Evolution Harnesses Darwinian Principles

Directed evolution stands as a transformative methodology in protein engineering and synthetic biology, deliberately mimicking the principles of natural selection within a controlled laboratory environment. This technical guide delineates the core conceptual framework of directed evolution, drawing direct parallels to natural evolutionary processes. It provides a comprehensive examination of contemporary methodologies, detailed experimental protocols, and advanced applications, with a specific emphasis on drug development and therapeutic discovery. The document is structured to serve researchers and scientists by synthesizing current literature and presenting quantitative data, essential reagent toolkits, and standardized workflows to facilitate the design and execution of directed evolution campaigns.

Natural evolution operates on three fundamental principles: 1) the introduction of genetic variation, 2) selection of variants based on heritable phenotypic differences, and 3) the amplification of selected variants through reproduction [1]. Over millions to billions of years, this process has yielded an immense diversity of life and optimized biological molecules for specific functions.

Directed evolution (DE) harnesses this powerful Darwinian algorithm, condensing it into a practical and rapid laboratory technique [2] [3]. It enables the "breeding" of biomolecules, such as enzymes and antibodies, guiding them toward user-defined goals that may not be favored in natural environments [4] [3]. The success of this approach, recognized by the 2018 Nobel Prize in Chemistry, has revolutionized fields from industrial biocatalysis to the development of therapeutic proteins [1] [4].

Table 1: Core Principles - Natural Evolution vs. Directed Evolution

| Principle | Natural Evolution | Directed Evolution |
|---|---|---|
| Variation | Random mutations and genetic recombination in genomes. | Artificial mutagenesis of a target gene (e.g., error-prone PCR, DNA shuffling). |
| Selection | Environmental pressures determine survival and reproduction. | Application of artificial selection or screening for a desired function. |
| Amplification | Reproduction of selected organisms. | PCR or cellular replication of selected gene variants. |
| Time Scale | Thousands to millions of years. | Weeks to months in the laboratory. |
| Goal | Adaptation to a changing environment. | Achievement of a researcher-defined biochemical or biophysical property. |

The Directed Evolution Workflow: A Detailed Methodology

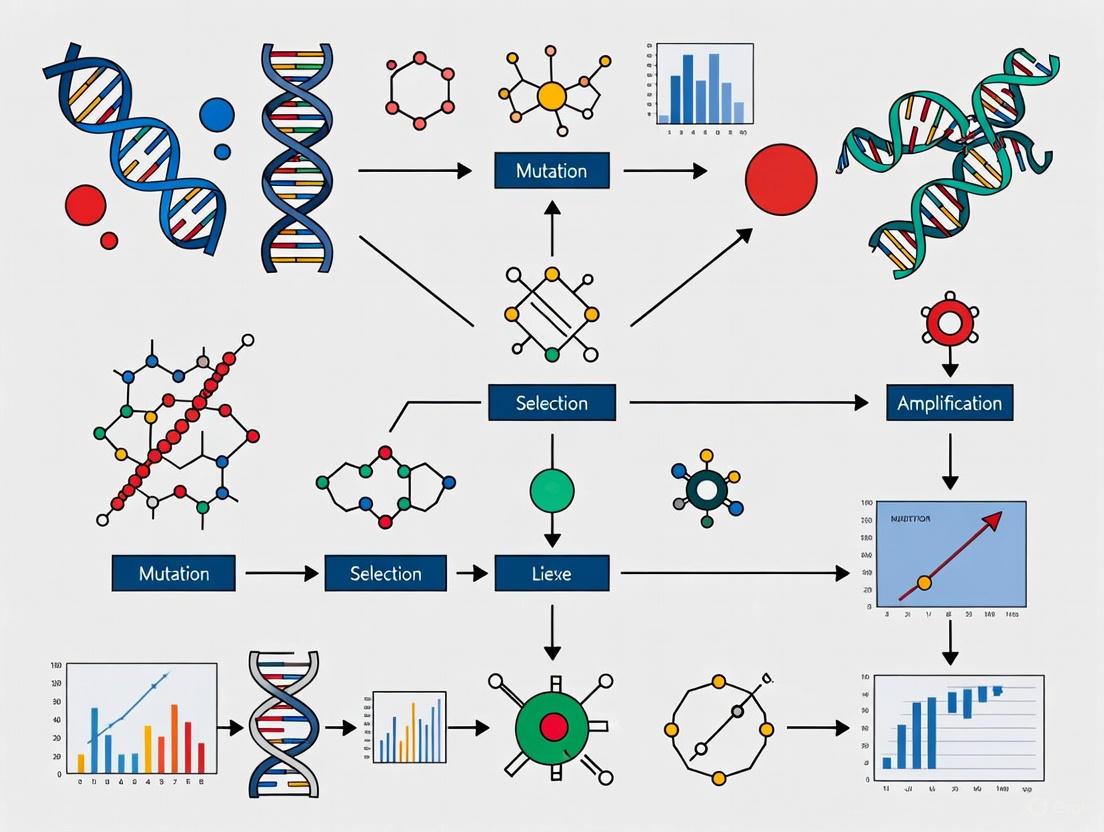

The standard directed evolution cycle is an iterative process comprising three critical stages: Diversification, Selection or Screening, and Amplification [1] [2] [4]. A generalized workflow is depicted in the accompanying diagram.

Stage 1: Generating Diversity (Mutagenesis)

The first step involves creating a vast library of genetic variants from a starting gene. The methods chosen dictate the nature and quality of the library [2].

Table 2: Common Mutagenesis Methods in Directed Evolution

| Method | Principle | Key Advantage | Key Disadvantage |
|---|---|---|---|
| Error-Prone PCR [2] [4] | Uses reaction conditions that reduce the fidelity of DNA polymerase, introducing random point mutations. | Easy to perform; requires no prior structural knowledge. | Biased mutagenesis spectrum; limited sampling of sequence space. |
| DNA Shuffling [1] [2] | Fragments of homologous genes are reassembled randomly via PCR. | Recombines beneficial mutations from multiple parents. | Requires high sequence homology between parent genes. |
| Site-Saturation Mutagenesis [2] | All possible amino acid substitutions are introduced at one or more predefined residues. | Enables deep exploration of specific, functionally important positions. | Library size can become impractically large if many positions are targeted. |
| RAISE [2] | Random insertion and deletion of short sequences. | Mimics indels common in natural evolution. | Often introduces frameshifts, generating many non-functional proteins. |
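As a rough illustration of how error-prone PCR populates a library, the sketch below applies random point substitutions to a toy gene at a fixed per-base rate. The sequence, the rate of 0.005, and the function names are illustrative assumptions rather than values from any protocol. The substitutions are uniform, so the sketch does not reproduce the biased mutational spectrum listed as a disadvantage in the table.

```python
import random

BASES = "ACGT"

def error_prone_copy(gene: str, per_base_rate: float, rng: random.Random) -> str:
    """Return a copy of `gene` with uniform random point substitutions."""
    out = []
    for base in gene:
        if rng.random() < per_base_rate:
            # Replace with one of the three other bases, chosen uniformly.
            # Real error-prone polymerases favor some substitutions over others.
            out.append(rng.choice([b for b in BASES if b != base]))
        else:
            out.append(base)
    return "".join(out)

def hamming(a: str, b: str) -> int:
    """Count positions at which two equal-length sequences differ."""
    return sum(x != y for x, y in zip(a, b))

rng = random.Random(0)
# Hypothetical 360-nt parent gene (a 36-nt motif repeated 10 times).
parent = "ATGGCTAGCAAAGGAGAAGAACTTTTCACTGGAGTT" * 10
library = [error_prone_copy(parent, 0.005, rng) for _ in range(1000)]

mean_mutations = sum(hamming(parent, v) for v in library) / len(library)
# The expected value is length * rate = 360 * 0.005 = 1.8 mutations per variant.
print(f"mean mutations per variant: {mean_mutations:.2f}")
```

Tuning `per_base_rate` mirrors the practical trade-off: too low and most clones are unmutated parent, too high and most clones carry inactivating mutations.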

Stage 2: Identifying Improved Variants (Selection and Screening)

This is the critical step that mimics natural selection. A high-throughput assay is essential to find the rare, beneficial variants within a large library [1].

- Selection: Couples the desired protein function directly to the survival or replication of the host organism (e.g., bacteria or phage). For example, an enzyme's activity can be linked to the production of an essential nutrient or the degradation of an antibiotic [1] [2]. Methods like Phage-Assisted Continuous Evolution (PACE) automate this process by linking protein function to the propagation of bacteriophages [5].

- Screening: Involves assaying individual clones from the library for the desired activity. While lower in throughput than selection, screening provides quantitative data on each variant [1]. Techniques include fluorescence-activated cell sorting (FACS), microtiter plate-based assays, and mass spectrometry [2] [4].

Stage 3: Amplification and Iteration

The genes encoding the top-performing variants are isolated and amplified, typically using PCR or by growing the host cells. This amplified genetic material then serves as the template for the next round of mutagenesis and selection, creating an iterative optimization loop [1] [4].
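The three stages can be sketched as a simple loop. This is a toy model under stated assumptions: the "assay" just counts positions that match a hypothetical optimum sequence, and the sequences, library size, and mutation rate are invented for illustration. It is meant to show the shape of the iteration, not to model any real protein.

```python
import random

ALPHABET = "ACDEFGHIKLMNPQRSTVWY"  # the 20 standard amino acids

def fitness(variant: str, target: str) -> int:
    """Toy assay: number of positions matching a hypothetical optimum."""
    return sum(a == b for a, b in zip(variant, target))

def mutate(seq: str, rate: float, rng: random.Random) -> str:
    """Redraw each residue from ALPHABET with probability `rate`."""
    return "".join(rng.choice(ALPHABET) if rng.random() < rate else aa for aa in seq)

def evolve(parent, target, rounds=10, library_size=500, keep=10, rate=0.05, seed=0):
    rng = random.Random(seed)
    parents = [parent]
    history = []
    for _ in range(rounds):
        # Stage 1, diversification: mutagenize the current templates.
        library = [mutate(rng.choice(parents), rate, rng) for _ in range(library_size)]
        # Stage 2, screening: rank the library and the templates by the assay.
        ranked = sorted(library + parents, key=lambda v: fitness(v, target), reverse=True)
        # Stage 3, amplification: the top variants seed the next round.
        parents = ranked[:keep]
        history.append(fitness(parents[0], target))
    return parents[0], history

target = "MKTAYIAKQRQISFVKSHFS"   # hypothetical optimum
start = "MATAAAAKQAAISAVKAAAS"    # hypothetical starting protein
best, history = evolve(start, target)
print(best, history)
```

Because the templates are re-ranked alongside each new library, the best score never decreases between rounds, which is the in silico analogue of carrying the best clone forward.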

Advanced Platforms and Cutting-Edge Applications

Recent advancements have expanded the scope and efficiency of directed evolution. A prime example is the development of the GRAPE (Geminivirus Replicon-Assisted in Planta Directed Evolution) platform, which enables rapid evolution of genes directly in plant cells [6]. The workflow of this novel system is illustrated below.

Case Study: Evolving Bridge Recombinases for Gene Therapy

A compelling 2025 application of directed evolution aims to overcome limitations in CRISPR-based gene editing. The project focuses on evolving bridge recombinases, enzymes that use a bridge RNA (bRNA) to precisely insert large DNA fragments, such as healthy gene copies, without creating double-strand breaks [5].

- Therapeutic Goal: Develop a universal gene replacement therapy for Alpha-1 Antitrypsin Deficiency (A1ATD) by inserting a healthy SERPINA1 gene into its natural genomic location [5].

- Directed Evolution Strategy: The team employs two sophisticated in vivo evolution systems:

  - E. coli Orthogonal Replicon (EcORep): A system that uses a high-mutation-rate replicon within E. coli to continuously generate and enrich for recombinase variants with higher activity [5].

  - Phage-Assisted Continuous Evolution (PACE): Links bridge recombinase activity directly to the propagation of bacteriophages, creating a continuous evolution system with minimal researcher intervention [5].

- Integration of Machine Learning: A computational screening method called deep mutational learning (DML) is used to analyze thousands of sequence variants and identify the most promising candidates for experimental testing, thereby accelerating the optimization process [5].

The Scientist's Toolkit: Essential Reagents and Platforms

Successful directed evolution experiments rely on a suite of specialized reagents and systems. The table below catalogs key solutions used in the field.

Table 3: Research Reagent Solutions for Directed Evolution

| Reagent / System Name | Function / Application | Key Feature |
|---|---|---|
| Kapa Biosystems Reagents [4] | PCR, qPCR, and NGS library preparation. | Utilizes novel DNA polymerases engineered via directed evolution for enhanced fidelity, processivity, and inhibitor resistance. |
| Error-Prone PCR Kits [2] [4] | Generation of random mutant libraries. | Pre-optimized buffer conditions to control mutation rate and spectrum. |
| Phage Display Systems [1] [2] | Selection of high-affinity binding proteins (e.g., antibodies, peptides). | Links the displayed protein to its genetic code, allowing for genotype-phenotype coupling. |
| PACE System [5] | Continuous evolution of proteins in bioreactors. | Automates the evolution cycle by linking protein function to phage replication, enabling evolution over hundreds of generations. |
| GRAPE Platform [6] | Directed evolution of genes directly in plants. | Uses geminivirus replicons to couple gene function to DNA replication, enabling rapid 4-day selection cycles in plant leaves. |
| OrthoRep System [2] [7] | Targeted in vivo mutagenesis in yeast. | An orthogonal DNA polymerase-plasmid pair that mutates only the target gene at a high rate within the host cell. |

Directed evolution has matured into an indispensable component of the modern molecular biology toolkit. By strategically applying the selective pressures of natural evolution in a controlled and accelerated laboratory setting, researchers can solve complex problems in protein engineering, metabolic engineering, and therapeutic development. The continued development of more efficient, scalable, and intelligent evolution platforms such as GRAPE and PACE, coupled with machine learning, promises to further expand the boundaries of what is possible. These advances are likely to yield new breakthroughs in green chemistry, agriculture, and next-generation genetic medicines.

Directed evolution is one of the most powerful tools in protein engineering, functioning by harnessing the principles of natural evolution on a laboratory timescale [2]. This method enables the rapid selection of protein variants with properties that make them more suitable for specific applications, from industrial biocatalysts to therapeutic drugs [2] [8]. The process mimics the core mechanism of natural selection—variation, selection, and heredity—but under conditions directed by researchers to achieve predefined goals [1]. Since the pioneering in vitro evolution experiments performed by Sol Spiegelman in the 1960s, the field has diversified dramatically, incorporating a wide range of sophisticated techniques for genetic diversification and variant isolation [2] [9]. This whitepaper traces the historical foundation of directed evolution, detailing its core principles, methodologies, and its transformative impact on modern drug discovery and protein engineering.

Spiegelman's Monster: The Foundational Experiment

The origins of directed evolution can be traced back to a groundbreaking experiment in the 1960s by Sol Spiegelman and his team [9]. This experiment, often called "Spiegelman's Monster," demonstrated for the first time that biomolecules could be evolved in a test tube.

- Objective: To study RNA evolution under selective pressure for replication speed [9].

- Experimental System: The experiment used the replicase enzyme from the Qβ bacteriophage, which replicates the phage's RNA genome. Spiegelman introduced this enzyme into a test tube with the building blocks for RNA synthesis [9].

- Methodology: The initial RNA strand was replicated. After a period of replication, a sample of the resulting RNA population was transferred to a new tube with fresh reagents. This process was repeated iteratively across multiple generations [9].

- Outcome: Over time, the RNA molecules that could replicate the fastest were selectively favored. This resulted in the evolution of a highly optimized, minimal RNA genome of only 218 nucleotides—dubbed "Spiegelman's Monster"—that had lost its original biological function but was supremely adapted for rapid replication in the test tube environment [9].

This experiment established a critical precedent: Darwinian evolution could be reproduced and directed in a laboratory setting, setting the stage for the application of these principles to proteins.

Experimental Protocol: Spiegelman's In Vitro RNA Evolution

1. Reagent Setup:

- Qβ Replicase: Purified RNA-dependent RNA polymerase from the Qβ bacteriophage.

- NTPs Solution: Adenosine, Guanosine, Cytidine, and Uridine 5'-triphosphates (building blocks for RNA synthesis).

- Reaction Buffer: Provides optimal pH and ionic strength (e.g., Tris-HCl, MgCl₂, DTT).

- Template RNA: The native Qβ bacteriophage RNA genome.

2. Procedure:

- Step 1 - Initial Reaction: Combine the template RNA, Qβ replicase, NTPs, and reaction buffer in a test tube. Incubate at 37°C for a defined period to allow RNA replication.

- Step 2 - Serial Transfer: After incubation, take an aliquot of the reaction mixture and transfer it to a fresh tube containing Qβ replicase, NTPs, and reaction buffer but no added template RNA, so that the transferred RNA is the only template.

- Step 3 - Iteration: Repeat Step 2 multiple times, serially transferring an aliquot from one replication reaction to the next over dozens of generations.

- Step 4 - Analysis: Analyze the RNA products at different generational time points using gel electrophoresis to observe the reduction in genome size over time.

3. Key Outcome: The sequential transfers created a selective pressure where only the fastest-replicating RNA molecules could outcompete others. The final evolved RNA (the "Monster") was significantly shorter and replicated more efficiently than the starting template [9].
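The selective logic of serial transfer can be sketched with a small deterministic model. It assumes that replication rate is inversely proportional to RNA length and that each aliquot preserves the proportions of the grown population. The species lengths other than 218 nt, the starting frequencies, and the rate constant `k` are illustrative assumptions. Unlike the real experiment, the shorter species are present from the start rather than arising by spontaneous deletion.

```python
import math

def serial_transfer(freqs, lengths, transfers, growth_time=1.0, k=1000.0):
    """Track species frequencies across serial transfers.

    Each species grows by exp(rate * growth_time) with rate = k / length,
    then an aliquot with the same proportions seeds the next tube.
    """
    freqs = list(freqs)
    for _ in range(transfers):
        grown = [f * math.exp(k / n * growth_time) for f, n in zip(freqs, lengths)]
        total = sum(grown)
        freqs = [g / total for g in grown]  # dilution keeps proportions
    return freqs

# Hypothetical starting mix: mostly full-length RNA, traces of shorter species.
lengths = [4200, 2000, 218]        # nucleotides; only 218 comes from the text
start = [0.98, 0.019, 0.001]

for n in (0, 1, 3, 5):
    fractions = serial_transfer(start, lengths, n)
    print(n, [round(f, 4) for f in fractions])
```

Under these assumptions the shortest species dominates within a handful of transfers, which is the qualitative behavior the gel analysis in Step 4 is meant to reveal.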

Core Principles: Mimicking Natural Selection in the Lab

Directed evolution in protein engineering formalizes Spiegelman's approach into a cyclical, iterative process with three defined steps, directly analogous to natural selection.

- Diversification (Creating Variation): A parent gene encoding the protein of interest is subjected to mutagenesis to create a vast library of genetic variants. This library represents the genetic diversity upon which selection can act [2] [1].

- Screening/Selection (Applying Selective Pressure): The library of protein variants is expressed and subjected to a high-throughput assay designed to identify individuals with improved or desired properties (e.g., higher stability, enzymatic activity, binding affinity) [2] [1].

- Amplification (Ensuring Heredity): The genes encoding the best-performing variants are isolated and amplified, typically using PCR or by replicating the host cells. This creates a new, enriched template for the next round of evolution [1].

The likelihood of success in a directed evolution experiment is directly related to the total library size, as screening more mutants increases the probability of finding a rare beneficial mutation [1].
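The link between library size and success can be made concrete with a simple sampling model. If a fraction f of variants is beneficial and N variants are evaluated independently, the chance of seeing at least one hit is 1 - (1 - f)^N. The sketch below encodes that formula; the value f = 1e-4 is a hypothetical example, and independent, uniform sampling is an assumption that real libraries only approximate.

```python
import math

def p_at_least_one_hit(variants_screened: int, beneficial_fraction: float) -> float:
    """Probability that a screen of this size contains one or more hits."""
    return 1.0 - (1.0 - beneficial_fraction) ** variants_screened

def variants_needed(beneficial_fraction: float, confidence: float = 0.95) -> int:
    """Smallest screen size that reaches the requested confidence."""
    return math.ceil(math.log(1.0 - confidence) / math.log(1.0 - beneficial_fraction))

f = 1e-4  # hypothetical: 1 beneficial variant per 10,000
for n in (1_000, 10_000, 100_000):
    print(n, round(p_at_least_one_hit(n, f), 3))
print("variants for 95% confidence:", variants_needed(f))
```

For small f the required screen size is close to 3 / f at 95% confidence, which is why rare improvements push campaigns toward selection-based or ultra-high-throughput methods.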

Modern Methodologies in Directed Evolution

Library Generation Techniques

A variety of sophisticated methods have been developed to create genetic diversity, each with distinct advantages and applications.

Table 1: Key Methods for Genetic Diversification in Directed Evolution

| Method | Principle | Key Advantage | Key Disadvantage |
|---|---|---|---|
| Error-Prone PCR [2] | Uses PCR under conditions that introduce random point mutations across the whole gene. | Easy to perform; does not require prior knowledge of key positions. | Reduced sampling of mutagenesis space; mutagenesis bias. |
| DNA Shuffling [2] [1] | Fragments of homologous genes are reassembled randomly, creating chimeric proteins. | Recombines beneficial mutations from multiple parents. | Requires high sequence homology between parent genes. |
| Site-Saturation Mutagenesis [2] [1] | All possible amino acid substitutions are systematically introduced at one or more predefined positions. | In-depth exploration of chosen positions; enables smart library design. | Libraries can become very large; only a few positions are mutated. |
| RAISE [2] | Inserts random short insertions and deletions (indels) across the sequence. | Enables random indels, mimicking a broader range of natural mutations. | Can introduce frameshifts, leading to non-functional proteins. |
| SCRATCHY [2] | Combines two non-homologous genes through incremental truncation. | Allows recombination of sequences with no homology. | Gene length and reading frame are not always preserved. |
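The note that saturation libraries "can become very large" follows from simple combinatorics. The sketch below assumes NNK degenerate codons (32 codons per site, a common choice that is not specified in the table) and equal representation of every variant. It reports the number of protein variants, the number of DNA variants, and an estimate of the clones to sample so that any given variant is seen with about 95% probability (T = -V ln(1 - 0.95), roughly 3V).

```python
import math

def saturation_library(n_positions: int, codons_per_site: int = 32):
    """Return (protein variants, DNA variants, clones for ~95% coverage)."""
    protein_variants = 20 ** n_positions
    dna_variants = codons_per_site ** n_positions
    # Oversampling estimate under equal representation of all DNA variants.
    clones_95 = math.ceil(-dna_variants * math.log(1 - 0.95))
    return protein_variants, dna_variants, clones_95

for n in range(1, 6):
    proteins, dna, clones = saturation_library(n)
    print(f"{n} site(s): {proteins:>9,} proteins, {dna:>11,} codon combos, "
          f"~{clones:,} clones to screen")
```

Each added position multiplies the screening burden by 32, so plate-based screens typically become impractical beyond a few simultaneously saturated sites.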

Screening and Selection Platforms

Isolating improved variants from a large library requires robust high-throughput methods.

Table 2: Prominent Screening and Selection Methodologies

| Method | Principle | Throughput | Best For |
|---|---|---|---|
| Phage/Yeast Display [2] [8] [1] | The protein variant is displayed on the surface of a phage or yeast cell, while its gene is inside. Binding to an immobilized target selects for high-affinity binders. | Very High (up to 10¹⁰ variants) | Selecting antibodies, peptides, or other proteins based on binding affinity. |
| Fluorescence-Activated Cell Sorting (FACS) [2] | A fluorescent signal linked to protein function (e.g., enzymatic activity via a surrogate substrate) is used to sort single cells. | Very High (up to 10⁸ variants/day) | Activities that can be coupled to a fluorescent readout. |
| Microtiter Plate Screening [2] | Variants are expressed in individual wells and assayed using colorimetric or fluorogenic assays. | Medium (10³ to 10⁴ variants) | Enzymatic assays where substrates or products have spectral properties. |
| mRNA Display [1] | The protein is covalently linked to its encoding mRNA molecule via puromycin, creating a direct genotype-phenotype link. | High (up to 10¹³ variants) | In vitro selection of peptides and proteins without cellular constraints. |
| In Vivo Selection [1] | Enzyme activity is coupled to cell survival, e.g., by enabling the synthesis of a vital metabolite or destroying a toxin. | Extremely High (limited only by transformation efficiency) | When protein function can be directly linked to host cell fitness. |
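The throughput figures in the table can be used as a first-pass filter when matching a library to a platform. The sketch below takes the quoted upper bounds at face value and assumes a threefold oversampling factor. Both the factor and the helper name `feasible_platforms` are assumptions for illustration, and in vivo selection is omitted because the table gives it no numeric ceiling.

```python
# Upper-bound throughputs quoted in the table above (variants, or variants/day for FACS).
THROUGHPUT = {
    "Microtiter plate screening": 1e4,
    "FACS (per day)": 1e8,
    "Phage/yeast display": 1e10,
    "mRNA display": 1e13,
}

def feasible_platforms(library_size: float, oversampling: float = 3.0):
    """List platforms whose quoted capacity covers the oversampled library."""
    needed = library_size * oversampling
    return [name for name, capacity in THROUGHPUT.items() if capacity >= needed]

# Example: NNK saturation (32 codons per site) at 2, 4, and 7 positions.
for sites in (2, 4, 7):
    size = 32 ** sites
    print(f"{sites} sites ({size:.2e} variants): {feasible_platforms(size)}")
```

Throughput is only one criterion. The "Best For" column still decides whether the desired property can be read out on a given platform at all.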

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Reagents and Materials for Directed Evolution Experiments

| Reagent / Material | Function in the Experiment |
|---|---|
| Parent Plasmid DNA | The vector containing the gene of interest to be evolved; the starting genetic template. |
| Oligonucleotide Primers | For PCR-based mutagenesis (error-prone PCR, saturation mutagenesis) and gene amplification. |
| Mutagenic Polymerase & Biased Nucleotides | Enzymes and nucleotide mixes used in error-prone PCR to introduce random mutations during amplification [2]. |
| E. coli or Yeast Strains | Workhorse host organisms for library transformation, protein expression, and in vivo selection. |
| Phage or Yeast Display System | An engineered virus (phage) or yeast strain designed to display protein variants on its surface for selection [1]. |
| Immobilized Target Antigen/Ligand | For display techniques; the target molecule is fixed to a solid support to capture binding variants [1]. |
| Fluorescent Substrate/Probe | A compound that yields a fluorescent product upon enzymatic reaction, enabling FACS-based screening [2]. |
| Microtiter Plates (96/384-well) | High-density plates for culturing and assaying thousands of individual variants in a screening campaign. |
| Next-Generation Sequencing (NGS) Platform | For deep analysis of library diversity and identifying enriched mutations after selection rounds. |

The Computational Revolution: AI and Biophysics in Protein Engineering

A significant modern shift is the integration of advanced computational tools with directed evolution, creating "semi-rational" approaches that accelerate the engineering cycle [10] [11].

Machine Learning and Protein Language Models (PLMs): Models like METL (Mutational Effect Transfer Learning) are pretrained on vast datasets of protein sequences and biophysical simulation data. They learn the fundamental relationships between protein sequence, structure, and energetics [10]. When fine-tuned on small sets of experimental data, these models can predict the effects of mutations with high accuracy, guiding the design of smarter, more focused libraries [10]. This is particularly powerful for generalizing from small training sets, a common challenge in protein engineering [10].
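To make the idea of model-guided library design concrete, the sketch below uses a deliberately simple additive baseline. It is not METL or any protein language model: it estimates each mutation's effect from measured single mutants and ranks untested combinations by the sum of those effects. All scores and mutation names are hypothetical, and the additive assumption ignores epistasis, which learned models aim to capture.

```python
from itertools import combinations

def additive_effects(wild_type_score, single_mutant_scores):
    """Effect of each mutation = measured score minus the wild-type score."""
    return {m: s - wild_type_score for m, s in single_mutant_scores.items()}

def rank_combinations(wild_type_score, single_mutant_scores, order=2, top=3):
    """Predict combined variants additively and return the best `top`."""
    effects = additive_effects(wild_type_score, single_mutant_scores)
    candidates = []
    for combo in combinations(sorted(effects), order):
        # Mutations are written like "A12V"; the position is the middle part.
        positions = {m[1:-1] for m in combo}
        if len(positions) < order:
            continue  # two substitutions cannot occupy the same site
        predicted = wild_type_score + sum(effects[m] for m in combo)
        candidates.append((predicted, combo))
    return sorted(candidates, reverse=True)[:top]

# Hypothetical assay data (relative activity, wild type = 1.0).
singles = {"A12V": 1.4, "A12G": 0.8, "K45R": 1.2, "S78T": 0.9, "L90F": 1.3}
for predicted, combo in rank_combinations(1.0, singles):
    print(f"{'+'.join(combo)}: predicted {predicted:.2f}")
```

A ranked shortlist like this is what lets a focused library replace a fully random one; a learned model would slot in where the additive prediction is computed.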

AlphaFold and Structure Prediction: The rise of highly accurate protein structure prediction tools, such as AlphaFold, has provided unprecedented structural insights [11]. Researchers can now use predicted structures to identify key regions for mutagenesis (e.g., active sites, binding interfaces) without requiring experimental structural determination, thereby informing more rational library design [11].

Applications in Drug Discovery and Therapeutic Development

Directed evolution has profoundly impacted biopharmaceuticals, enabling the development of highly specific and effective protein-based therapeutics [8] [12].

- Therapeutic Antibodies: Phage display, for which George Smith and Gregory Winter shared the 2018 Nobel Prize in Chemistry, and the related yeast display technology are used to engineer monoclonal antibodies with very high affinity (affinity maturation) and reduced immunogenicity [1] [12]. This has led to treatments for cancer, autoimmune diseases, and other conditions.

- Enzyme Therapeutics: Enzymes can be evolved for enhanced stability in the bloodstream, altered substrate specificity, or reduced immunogenicity for use as therapeutic agents (e.g., enzyme replacement therapies) [8] [1].

- Optimized Biologics: Properties such as pharmacokinetics and pharmacodynamics can be tuned. For instance, site-specific mutagenesis in the Fc region of antibodies can increase circulation half-life by enhancing binding to the neonatal Fc receptor (FcRn) [12].

The journey from Spiegelman's Monster to today's AI-powered directed evolution platforms illustrates a powerful narrative in biotechnology. The core principle remains unchanged: applying selective pressure to populations of evolving molecules to solve complex problems. However, the methodologies have evolved from simple serial transfers of RNA to an integrated, sophisticated toolkit that combines the exploratory power of random mutagenesis with the predictive power of computational models. As these tools continue to advance, particularly with the integration of biophysical models and machine learning, the capacity to engineer novel proteins for therapeutics, industrial catalysis, and synthetic biology will expand further, solidifying directed evolution's role as a cornerstone of modern bioengineering.

Directed evolution serves as a powerful laboratory analogue of natural selection, accelerating the process of adaptation to evolve biomolecules with novel functions. This technical guide deconstructs the core cycle of directed evolution—genetic diversification, phenotype screening, and gene amplification—within the context of a broader thesis on how this methodology mimics natural selection in vitro. We provide a comprehensive overview of modern platforms, detailed experimental protocols, and a curated toolkit for researchers and drug development professionals, synthesizing the most recent advancements in the field.

Natural selection operates on heritable genetic variation that influences an organism's fitness. Directed evolution meticulously replicates this process in a controlled laboratory setting through iterative rounds of: 1) Genetic Diversification, which introduces mutations to create vast variant libraries; 2) Phenotype Screening, where high-throughput assays select for desired functional traits; and 3) Gene Amplification, which physically enriches the genetic material of superior performers for the next cycle [13] [14]. This recursive biomolecular evolution has become an indispensable tool for generating proteins, enzymes, and antibodies with enhanced properties for therapeutic and industrial applications [13].

The field has recently seen the development of platforms that integrate the core cycle with unprecedented speed and scale. The table below summarizes key quantitative metrics for two cutting-edge systems: GRAPE for plant cells and T7-ORACLE for bacterial systems.

Table 1: Comparison of Modern Directed Evolution Platforms

| Platform Feature | GRAPE (Geminivirus Replicon-Assisted in Planta Directed Evolution) | T7-ORACLE (Orthogonal T7 Replisome for Continuous Hypermutation) |
|---|---|---|
| Host System | Plant cells (Nicotiana benthamiana) | Escherichia coli |
| Core Mechanism | Geminivirus rolling circle replication (RCR) linked to gene function [15] | Orthogonal, error-prone T7 DNA polymerase [13] |
| Mutation Rate | Not explicitly quantified | 100,000 times higher than normal [13] |
| Cycle Duration | ~4 days per full selection cycle on a single leaf [15] | ~20 minutes (with each bacterial cell division) [13] |
| Key Demonstration | Evolution of NLR immune receptors (NRC3, Pikm-1) to recognize new pathogen effectors [15] | Evolution of TEM-1 β-lactamase to resist antibiotic levels 5,000x higher than wild-type [13] |
| Primary Advantage | Evolves plant-specific phenotypes directly in plant cells [15] | Ultra-fast, continuous evolution in a scalable, standard bacterial workflow [13] |
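The cycle durations in the table translate into very different numbers of evolutionary rounds per unit time. The short calculation below uses only the two quoted durations and assumes uninterrupted operation. The two kinds of cycle are not directly equivalent, since a GRAPE cycle selects across a whole library while a T7-ORACLE cycle is a single cell division.

```python
MINUTES_PER_DAY = 24 * 60

def cycles_per_week(cycle_minutes: float) -> float:
    """Number of back-to-back cycles that fit into seven days."""
    return 7 * MINUTES_PER_DAY / cycle_minutes

t7_oracle = cycles_per_week(20)                # ~20 minutes per cell division
grape = cycles_per_week(4 * MINUTES_PER_DAY)   # ~4 days per selection cycle

print(f"T7-ORACLE: {t7_oracle:.0f} generations per week")   # 504
print(f"GRAPE: {grape:.2f} selection cycles per week")      # 1.75
```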

Detailed Experimental Protocols

Protocol for Optical Pooled Screening with In Situ Sequencing

This protocol enables high-content image-based screening of pooled genetic libraries by linking cell phenotype to genotype via in situ sequencing [16].

Day 1: Library Delivery and Cell Culture

- Lentiviral Transduction: Transduce a population of adherent cells (e.g., a cancer cell line) with a pooled lentiviral CRISPR library (e.g., CROP-seq vector) at a low Multiplicity of Infection (MOI) to ensure most cells receive a single viral integration.

- Selection and Expansion: Culture cells under appropriate selection (e.g., puromycin) for 3-5 days to eliminate untransduced cells and expand the library population.

- Phenotypic Assay: Perform the desired phenotypic assay, which may involve live-cell imaging, immunostaining, or response to environmental stimuli. Subsequently, fix cells with a paraformaldehyde-based fixative. A key optimization is to add a glutaraldehyde post-fixation step after reverse transcription to improve sequencing read quality [16].
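The reason for transducing at low MOI can be illustrated with a Poisson model of viral integration, a common simplifying assumption that is not stated in the protocol itself. The sketch computes, for a given MOI, the fraction of cells that are transduced and the fraction of those that carry exactly one integration. The MOI values are examples.

```python
import math

def poisson(k: int, moi: float) -> float:
    """Probability that a cell receives exactly k integrations."""
    return math.exp(-moi) * moi ** k / math.factorial(k)

def transduced_fraction(moi: float) -> float:
    """Fraction of cells with one or more integrations."""
    return 1.0 - poisson(0, moi)

def single_integrant_fraction(moi: float) -> float:
    """Among transduced cells, the fraction with exactly one integration."""
    return poisson(1, moi) / transduced_fraction(moi)

for moi in (0.1, 0.3, 1.0):
    print(f"MOI {moi}: {transduced_fraction(moi):.1%} transduced, "
          f"{single_integrant_fraction(moi):.1%} of those single-copy")
```

Lowering the MOI raises the single-copy fraction at the cost of transducing fewer cells, which is why the selection and expansion step follows.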

Day 2-3: In Situ Amplification

- Reverse Transcription: Generate cDNA from the barcode or sgRNA mRNA within the fixed cells.

- Padlock Probe Hybridization and Gap-Filling: Add padlock probes that are complementary to the target cDNA sequence. Carefully titrate the dNTP concentration in the gap-fill reaction to maximize efficiency, which improves the number and brightness of sequencing reads [16].

- Rolling Circle Amplification (RCA): Amplify the circularized padlock probes via RCA to create large, detectable DNA amplicons ("spots") at the site of the original mRNA.

Day 4: In Situ Sequencing and Imaging (~1.5 hours per cycle)

- Sequencing by Synthesis (SBS): Perform fluorescent in situ SBS. Each cycle involves the addition of fluorescently-labeled nucleotides, imaging, and cleavage.

- High-Throughput Imaging: Use a high-content microscope with 10x magnification to image the sequencing spots across multiple cycles. The provided image analysis pipeline, designed for cloud or cluster computing, then aligns phenotypic data to perturbation identities for each cell [16].
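Two small calculations sit behind the sequencing step: how many cycles are needed to tell the library members apart, and how a per-cell read is matched back to a perturbation. The sketch below is a minimal illustration with hypothetical barcodes and function names. It is not the published analysis pipeline, and it tolerates one mismatch by default as an assumed setting.

```python
def min_cycles(n_barcodes: int) -> int:
    """Fewest cycles that can distinguish n barcodes (4 possible bases per cycle)."""
    cycles = 0
    while 4 ** cycles < n_barcodes:
        cycles += 1
    return cycles

def mismatches(a: str, b: str) -> int:
    """Count differing positions over the shared length of two sequences."""
    return sum(x != y for x, y in zip(a, b))

def decode(read: str, barcodes, max_mismatches: int = 1):
    """Return the single barcode within the mismatch budget, else None."""
    hits = [b for b in barcodes if mismatches(read, b) <= max_mismatches]
    return hits[0] if len(hits) == 1 else None

library = ["ACGTT", "TTTTT", "GGCCA"]          # hypothetical barcodes
print(min_cycles(1000))                        # 5, since 4**5 = 1024
print(decode("ACGTA", library))                # one mismatch from ACGTT
print(decode("ACGTA", ["ACGTT", "ACGTC"]))     # ambiguous, so None
```

In practice more cycles than the minimum are read so that barcodes stay separable after base-calling errors, at roughly 1.5 hours per additional cycle.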

Protocol for GRAPE in Plant Cells

This platform enables directed evolution directly in plant cells by exploiting geminivirus replication [15].

- Library Construction: Mutagenize the gene of interest (GOI) in vitro and clone the variant library into an artificial geminivirus replicon vector.

- Plant Transformation: Deliver the replicon library into Nicotiana benthamiana leaves via Agrobacterium-mediated infiltration (agroinfiltration).

- Selection via Replication Coupling: Inside the plant cells, the activity of the GOI variant is functionally coupled to the replication of the geminivirus replicon. Variants that promote replication are selectively amplified, while those that inhibit it are depleted.

- Harvest and Analysis: Recover the enriched replicon DNA from the plant tissue after a 4-day selection cycle. The DNA can be sequenced directly to identify beneficial mutations or re-cloned for subsequent iterative cycles [15].
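The analysis step usually comes down to comparing variant frequencies before and after selection. The sketch below computes a log2 enrichment score from read counts, with a pseudocount to avoid division by zero. The counts and variant names are hypothetical, and this is a generic scoring scheme rather than the specific analysis used for GRAPE.

```python
import math

def log2_enrichment(pre_counts, post_counts, pseudocount=1.0):
    """Return log2(post frequency / pre frequency) for every variant."""
    variants = set(pre_counts) | set(post_counts)
    pre_total = sum(pre_counts.values()) + pseudocount * len(variants)
    post_total = sum(post_counts.values()) + pseudocount * len(variants)
    scores = {}
    for v in variants:
        pre_f = (pre_counts.get(v, 0) + pseudocount) / pre_total
        post_f = (post_counts.get(v, 0) + pseudocount) / post_total
        scores[v] = math.log2(post_f / pre_f)
    return scores

# Hypothetical read counts for the input library and the recovered replicons.
pre = {"WT": 900, "V1": 50, "V2": 50}
post = {"WT": 500, "V1": 450, "V2": 50}

scores = log2_enrichment(pre, post)
for variant, score in sorted(scores.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{variant}: {score:+.2f}")
```

Variants with positive scores were amplified during selection and are natural candidates for re-cloning into the next cycle.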

Visualizing the Directed Evolution Workflow

The following diagram illustrates the core iterative cycle of directed evolution and the specific mechanisms of the GRAPE and T7-ORACLE platforms.

Diagram 1: Core cycle and platform mechanisms.

The Scientist's Toolkit: Essential Research Reagents

Successful execution of directed evolution campaigns relies on a suite of specialized reagents and tools. The following table details key components.

Table 2: Essential Research Reagent Solutions for Directed Evolution

| Reagent / Tool | Function / Description | Example Application |
|---|---|---|
| Barcoded Lentiviral Libraries | Programmable genetic perturbation vectors (e.g., CRISPR) that allow for pooled screening and genotype tracking via a unique barcode [16]. | Delivering a diverse set of genetic perturbations to a pooled population of cells for optical pooled screening. |
| Padlock Probes & In Situ Sequencing Kits | Reagents for amplifying and reading out nucleotide barcodes directly within fixed cells, linking genotype to cellular phenotype [16]. | Identifying which genetic perturbation is present in each cell during an image-based screen. |
| Artificial Geminivirus Replicon | A plant virus-based vector that undergoes rolling circle replication (RCR) in plant cells, used to link gene function to DNA amplification [15]. | Serving as the platform for variant library delivery and selection in the GRAPE system. |
| Orthogonal T7 Replisome | A synthetic DNA replication system derived from bacteriophage T7, engineered to be highly error-prone, which operates independently of the host genome [13]. | Driving continuous and rapid mutation of a target gene in E. coli without damaging the host cell's DNA. |
| Fluorescent Protein Reporters | Proteins whose fluorescence properties (intensity, color) can be quantitatively measured, serving as a selectable phenotype [14]. | Providing a high-throughput screenable output in evolution experiments, such as in tests of Ohno's hypothesis. |

The deliberate deconstruction of directed evolution into its fundamental phases—genetic diversification, phenotype screening, and gene amplification—reveals a powerful framework for mimicking natural selection in the laboratory. The advent of integrated platforms like GRAPE and T7-ORACLE, which dramatically accelerate this cycle, underscores the field's trajectory toward higher throughput, greater scalability, and more physiologically relevant contexts. By providing detailed protocols and a catalog of essential tools, this guide aims to empower researchers and drug developers to harness these methodologies, accelerating the discovery of novel proteins and therapeutic agents.

Protein engineering is a powerful biotechnological process that focuses on creating new enzymes or proteins and improving the functions of existing ones by manipulating their natural macromolecular architecture [17]. Within this field, two primary philosophies have emerged: directed evolution, which mimics natural selection in the laboratory, and rational design, which employs computational and structure-based approaches for precise modifications [1] [17]. These methodologies represent fundamentally different approaches to navigating the vast sequence space of proteins—directed evolution empirically explores functional variants through iterative selection, while rational design attempts to predict them through knowledge-driven computation.

The core distinction lies in their treatment of natural evolutionary principles. Directed evolution explicitly harnesses Darwinian principles of mutation, selection, and amplification in a controlled setting, steering proteins toward user-defined goals without requiring mechanistic understanding [1] [18]. In contrast, rational design adopts a more Lamarckian perspective, using intelligent design and prior knowledge to specify beneficial mutations [17]. This whitepaper examines these contrasting engineering philosophies, their methodological frameworks, experimental protocols, and emerging synergisms, providing researchers and drug development professionals with a comprehensive technical comparison.

Directed Evolution: Mimicking Natural Selection in the Laboratory

Philosophical and Mechanistic Principles

Directed evolution (DE) operates on the fundamental principle that natural evolutionary processes—variation, selection, and heredity—can be replicated and accelerated in a laboratory setting to achieve specific functional objectives [1]. This approach requires no prior knowledge of protein structure or mechanism, instead relying on the power of high-throughput screening to identify beneficial mutations from large variant libraries [1] [18]. The process mimics millions of years of natural evolution but condenses it into a practical timeframe through iterative rounds of genetic diversification and selection [2].

The theoretical foundation rests on three essential requirements, mirroring natural evolution: (1) variation between replicators, (2) fitness differences upon which selection acts, and (3) heritability of favorable variations [1]. In directed evolution, a single gene is evolved through iterative rounds of mutagenesis (creating a library of variants), selection or screening (isolating members with desired function), and amplification (generating a template for the next round) [1]. The likelihood of success is directly related to total library size, as evaluating more mutants increases the chances of finding one with improved properties [1].
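The relationship between library size and the chance of success can be illustrated with a short calculation. The sketch below assumes, purely for illustration, that every variant in a library is independently "improved" with a fixed probability `hit_fraction`. This parameter and the example value of one in a million are hypothetical and are not taken from the cited work. Real libraries contain redundant and biased diversity, so the numbers are best read as an optimistic upper bound.

```python
import math


def prob_at_least_one_hit(library_size: int, hit_fraction: float) -> float:
    """P(at least one improved variant) = 1 - (1 - f)^N for independent variants."""
    if not 0.0 < hit_fraction < 1.0:
        raise ValueError("hit_fraction must be strictly between 0 and 1")
    if library_size < 0:
        raise ValueError("library_size must be non-negative")
    # log1p/expm1 keep the result accurate when f is tiny and N is large.
    return -math.expm1(library_size * math.log1p(-hit_fraction))


def library_size_for_confidence(hit_fraction: float, confidence: float = 0.95) -> int:
    """Smallest N for which P(at least one hit) reaches the requested confidence."""
    if not 0.0 < hit_fraction < 1.0 or not 0.0 < confidence < 1.0:
        raise ValueError("hit_fraction and confidence must be strictly between 0 and 1")
    return math.ceil(math.log1p(-confidence) / math.log1p(-hit_fraction))


if __name__ == "__main__":
    f = 1e-6  # hypothetical: one improved variant per million
    for n in (10**3, 10**6, 10**7):
        print(f"N = {n:>12,d}   P(hit) = {prob_at_least_one_hit(n, f):.3f}")
    print("N needed for 95% confidence:", library_size_for_confidence(f))
```

Under these assumptions, roughly three times the inverse of the hit fraction must be evaluated to reach 95% confidence, which is one way to see why assay throughput so often limits a campaign.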

Core Methodologies and Experimental Protocols

The directed evolution workflow follows a consistent iterative protocol, though specific techniques vary. A standard experimental cycle proceeds as follows:

Library Generation via Mutagenesis: Create genetic diversity through:

- Error-prone PCR: Random point mutations are introduced throughout the gene by adjusting PCR conditions to promote nucleotide misincorporation [1] [2].

- DNA Shuffling: Genes from homologous enzymes or beneficial mutants are fragmented with DNase I, then reassembled in a primer-free PCR-like reaction that recombines fragments into novel chimeric sequences [18].

- Site-saturation Mutagenesis: All possible amino acid substitutions are introduced at specific targeted positions [2].

Library Expression and Phenotypic Interrogation: Identify improved variants through:

- Screening: Each variant is individually expressed and assayed, often using colorimetric or fluorogenic substrates, then ranked by performance [1] [2]. This provides detailed information on each variant but typically has lower throughput than selection.

- Selection: Protein function is directly coupled to host survival or binding affinity, enabling extremely high-throughput isolation of functional variants from non-functional ones [1] [2].

Template Amplification: Genes from the best-performing variants are isolated and amplified to serve as templates for the next round of diversification [1].

This cycle repeats until the desired level of improvement is attained. The process can be performed in vivo (in living cells) or in vitro (in cell-free systems), with the latter often enabling larger library sizes due to bypassing cellular transformation bottlenecks [1].
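The diversify, screen, and amplify cycle can be sketched as a small simulation. Everything here is a toy stand-in: the "fitness" is simply the number of positions matching a hidden target sequence, and the function names, mutation rate, and library size are hypothetical choices made for illustration rather than values from the cited protocols.

```python
import random

ALPHABET = "ACDEFGHIKLMNPQRSTVWY"  # the 20 canonical amino acids


def fitness(seq: str, target: str) -> int:
    """Toy assay: count positions that match a hidden 'ideal' sequence."""
    return sum(a == b for a, b in zip(seq, target))


def mutate(seq: str, rate: float, rng: random.Random) -> str:
    """Diversification: redraw each residue at random with probability `rate`."""
    return "".join(
        rng.choice(ALPHABET) if rng.random() < rate else residue
        for residue in seq
    )


def directed_evolution(parent, target, rounds=10, library_size=200, rate=0.05, seed=0):
    """Run iterative rounds of mutagenesis, screening, and template amplification."""
    rng = random.Random(seed)
    history = [fitness(parent, target)]
    for _ in range(rounds):
        # 1. Library generation from the current template.
        library = [mutate(parent, rate, rng) for _ in range(library_size)]
        library.append(parent)  # keeping the parent means fitness never decreases
        # 2. Screening: assay every variant and take the best performer.
        parent = max(library, key=lambda s: fitness(s, target))
        # 3. Amplification: the winner becomes the template for the next round.
        history.append(fitness(parent, target))
    return parent, history


if __name__ == "__main__":
    rng = random.Random(42)
    target = "".join(rng.choice(ALPHABET) for _ in range(30))
    start = "".join(rng.choice(ALPHABET) for _ in range(30))
    best, history = directed_evolution(start, target)
    print("fitness per round:", history)
```

Because only the single best variant is carried forward, this sketch is a greedy walk; it illustrates the cycle but also hints at why real campaigns can stall on local optima.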

Research Reagent Solutions Toolkit

Table 1: Essential Research Reagents for Directed Evolution

| Reagent/Category | Specific Examples | Function in Experimental Workflow |

|---|---|---|

| Mutagenesis Reagents | Error-prone PCR kits, DNase I, DNA polymerases | Introduces genetic diversity into the target gene to create variant libraries [1] [2]. |

| Cloning & Expression Systems | Expression vectors, competent cells (E. coli, yeast) | Enables propagation and expression of genetic variants to link genotype with phenotype [1]. |

| Screening Assays | Fluorogenic/colorimetric substrates, FACS | Identifies and isolates variants with improved properties from the library [1] [2]. |

| Selection Systems | Phage display, metabolic selection | Couples protein function to survival or binding for high-throughput variant isolation [1] [2]. |

Rational Protein Design: A Knowledge-Driven Approach

Philosophical and Mechanistic Principles

Rational protein design operates on the principle that detailed knowledge of protein structure, function, and mechanism enables precise, computational prediction of beneficial mutations [17]. This approach requires in-depth structural information (from X-ray crystallography or NMR) and understanding of catalytic mechanisms to make specific changes via site-directed mutagenesis [1] [17]. Unlike directed evolution's exploratory approach, rational design follows a deterministic model where researchers hypothesize specific structure-function relationships and test them through targeted modifications.

The core strength of rational design lies in its precision and efficiency—when successful, it can achieve significant functional improvements without requiring the screening of large libraries [17]. However, a significant limitation is the difficulty in accurately predicting sequence-structure-function relationships, particularly at the single amino acid level, as the structural and dynamic consequences of mutations remain challenging to model [17] [2]. This approach traditionally required extensive structural knowledge, though artificial intelligence has substantially improved protein structure prediction capabilities in recent years [17].

Core Methodologies and Experimental Protocols

Rational design employs a more linear workflow compared to the iterative cycling of directed evolution:

Structural and Sequence Analysis:

- Obtain high-resolution 3D structure of the target protein through crystallography, NMR, or computational prediction (AlphaFold, RoseTTAFold) [17].

- Identify key functional regions (active site, binding interfaces, flexible loops) and residues through structural analysis and multiple sequence alignments [19].

Computational Modeling and In Silico Design:

- Use molecular modeling software (Rosetta, FoldX) to predict the energetic effects of proposed mutations on protein stability and function [19] [20].

- Apply quantum mechanics/molecular mechanics (QM/MM) calculations to model catalytic mechanisms and transition states [19].

- Generate a limited set of candidate variants predicted to improve the target property.

Experimental Validation:

- Construct top-predicted variants using site-directed mutagenesis.

- Express and purify protein variants.

- Characterize biochemical and biophysical properties to test design hypotheses.

Recent advances incorporate machine learning and generative models to expand the capabilities of rational design. For instance, the Omni-Directional Multipoint Mutagenesis (ODM) pipeline uses a fine-tuned protein BERT model to generate and rank mutant sequences, enabling multipoint mutation design with high accuracy in recovering functional regions [21]. Another emerging approach uses deep generative models to learn "nature's blueprint" for protein design, creating synthetic proteins with elevated or novel properties through a computational-experimental feedback loop [22].

Comparative Analysis: Performance and Applications

Quantitative Comparison of Engineering Outcomes

Table 2: Comparative Analysis of Directed Evolution vs. Rational Design

| Parameter | Directed Evolution | Rational Design |

|---|---|---|

| Philosophical Basis | Darwinian/exploratory [1] [18] | Lamarckian/knowledge-driven [17] |

| Knowledge Requirements | Low (no structural/mechanistic knowledge needed) [1] | High (requires detailed structural/functional knowledge) [1] [17] |

| Library Size | Very large (10³-10¹⁵ variants) [1] | Small (often <10 variants) [19] [17] |

| Success Rate | High with adequate screening [1] | Variable; depends on prediction accuracy [17] [20] |

| Stabilization Achieved | ~3.1 ± 1.9 kcal/mol (location-agnostic) [20] | ~2.0 ± 1.4 kcal/mol (structure-based) [20] |

| Primary Limitations | Requires high-throughput assay; can get stuck in local optima [1] [23] | Difficult to predict mutation effects; limited by current knowledge [1] [17] |

| Ideal Applications | Improving stability in harsh conditions, altering substrate specificity, optimizing binding affinity [1] [18] | Engineering catalytic machinery, designing protein-protein interactions, creating de novo functions [19] [17] |

A side-by-side comparison of stabilization strategies for α/β-hydrolase fold enzymes reveals that location-agnostic directed evolution approaches (e.g., error-prone PCR) yielded the highest stabilization increases (average 3.1 ± 1.9 kcal/mol), followed by structure-based approaches (2.0 ± 1.4 kcal/mol) and sequence-based consensus approaches (1.2 ± 0.5 kcal/mol) [20]. This performance ranking held even when normalizing for the number of substitutions, suggesting that empirical exploration can identify cooperative stabilizing effects that are difficult to predict computationally [20].

Application-Specific Considerations

The choice between directed evolution and rational design often depends on the specific engineering goal and available resources. Directed evolution has proven particularly successful for: improving protein stability under harsh industrial conditions (e.g., thermostability, solvent tolerance) [1] [18]; altering substrate specificity [1]; and enhancing binding affinity of therapeutic antibodies [1]. Notable successes include the evolution of subtilisin E for 256-fold higher activity in dimethylformamide [18] and β-lactamase variants conferring 32,000-fold increased antibiotic resistance [18].

Rational design excels when precise structural modifications are required, such as: engineering catalytic residues to alter reaction specificity [19]; designing protein-protein interactions; and de novo protein design [19] [17]. Successes include the computational design of a stereoselective Diels-Alderase [19] and the creation of functional models of nitric oxide reductase in myoglobin [19].

Emerging Paradigms: Integrated and Machine Learning-Enhanced Approaches

Semi-Rational Design and Machine Learning Integration

The historical dichotomy between directed evolution and rational design is increasingly bridged by hybrid approaches that leverage the strengths of both philosophies. Semi-rational design utilizes computational and bioinformatic analysis to identify promising target regions, then creates focused libraries that are much smaller than traditional directed evolution libraries but enriched in functional variants [19] [17]. These approaches use evolutionary information from multiple sequence alignments, phylogenetic analysis, and structural constraints to preselect target sites and limited amino acid diversity [19].

Machine learning has dramatically enhanced both directed evolution and rational design. Active Learning-assisted Directed Evolution (ALDE) employs iterative machine learning with uncertainty quantification to explore protein sequence space more efficiently than traditional DE, particularly for challenging landscapes with epistatic interactions [23]. In one application to optimize a non-native cyclopropanation reaction, ALDE improved product yield from 12% to 93% in just three rounds while exploring only ~0.01% of the design space [23]. Similarly, generative models like ProteinBERT are being used to create omni-directional mutagenesis pipelines that can generate and rank thousands of mutant sequences in silico before experimental testing [21].
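The general idea of active learning with uncertainty quantification can be sketched in a few lines. This is not the ALDE implementation from [23]: the landscape, the reduced four-letter alphabet, the bootstrapped additive models, and the upper-confidence-bound acquisition rule are all assumptions chosen to keep the example small and dependency-free. The function `true_fitness` stands in for the wet-lab assay.

```python
import itertools
import random
import statistics

ALPHABET = "ACDE"  # reduced alphabet (assumption) to keep the design space small
N_SITES = 4


def true_fitness(seq: str) -> float:
    """Hidden toy landscape with one epistatic term; stands in for the experiment."""
    additive = sum(ALPHABET.index(res) * (pos + 1) for pos, res in enumerate(seq))
    epistasis = 10 if seq[0] == "E" and seq[3] == "A" else 0
    return float(additive + epistasis)


def fit_additive_model(data, rng):
    """One ensemble member: per-site mean fitness estimated from a bootstrap resample."""
    sample = [rng.choice(data) for _ in data]
    overall = statistics.fmean(y for _, y in sample)
    table = {}
    for pos in range(N_SITES):
        for res in ALPHABET:
            ys = [y for seq, y in sample if seq[pos] == res]
            table[pos, res] = statistics.fmean(ys) if ys else overall
    return table


def predict(table, seq: str) -> float:
    return sum(table[pos, res] for pos, res in enumerate(seq)) / N_SITES


def active_learning(rounds=3, batch=16, n_models=10, beta=1.0, seed=0):
    """Alternate between model fitting and measuring the most promising variants."""
    rng = random.Random(seed)
    space = ["".join(p) for p in itertools.product(ALPHABET, repeat=N_SITES)]
    measured = {seq: true_fitness(seq) for seq in rng.sample(space, batch)}
    for _ in range(rounds):
        data = list(measured.items())
        ensemble = [fit_additive_model(data, rng) for _ in range(n_models)]

        def acquisition(seq):
            # Upper confidence bound: predicted mean plus ensemble disagreement.
            preds = [predict(table, seq) for table in ensemble]
            return statistics.fmean(preds) + beta * statistics.pstdev(preds)

        candidates = [seq for seq in space if seq not in measured]
        for seq in sorted(candidates, key=acquisition, reverse=True)[:batch]:
            measured[seq] = true_fitness(seq)  # "run the experiment"
    return measured


if __name__ == "__main__":
    results = active_learning()
    best = max(results, key=results.get)
    print(f"measured {len(results)} of {len(ALPHABET) ** N_SITES} variants; best = {best}")
```

The disagreement term rewards variants the ensemble is unsure about, which is the mechanism that lets such a loop keep exploring instead of exploiting only the current best guess.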

Autonomous Protein Engineering Systems

Fully integrated platforms are emerging that combine AI-driven design with automated experimental workflows. The Self-driving Autonomous Machines for Protein Landscape Exploration (SAMPLE) platform uses AI programs to learn protein sequence-function relationships and design new proteins, with a robotic system automatically performing experiments to test designs and provide feedback [17]. These systems represent the cutting edge of protein engineering, potentially accelerating the design-build-test cycle beyond human capabilities.

Directed evolution and rational design represent complementary philosophies for protein engineering, each with distinct strengths, limitations, and ideal applications. Directed evolution excels through its empirical exploration of sequence space and ability to identify beneficial mutations without requiring mechanistic understanding, directly mimicking natural selection principles in an accelerated timeframe. Rational design offers precision and efficiency when sufficient structural and mechanistic knowledge exists to make informed predictions. The future of protein engineering lies not in choosing between these approaches, but in developing integrated strategies that combine the exploratory power of directed evolution with the predictive capabilities of rational design, increasingly enhanced by machine learning and automation. As these methodologies continue to converge and advance, they promise to unlock new possibilities in therapeutic development, industrial biocatalysis, and fundamental biological research.

The Protein Engineer's Toolkit: Methods and Real-World Applications in Biomedicine

Directed evolution harnesses the principles of Darwinian evolution—iterative cycles of genetic diversification and selection—within a laboratory setting to tailor proteins for specific, human-defined applications. [24] This process compresses geological timescales into weeks or months by intentionally accelerating the rate of mutation and applying a user-defined selection pressure. [24] The profound impact of this approach was formally recognized with the 2018 Nobel Prize in Chemistry awarded to Frances H. Arnold for her pioneering work. [24]

A key strategic advantage of directed evolution lies in its capacity to deliver robust solutions without requiring detailed a priori knowledge of a protein's three-dimensional structure or its catalytic mechanism. [24] This allows it to bypass the inherent limitations of rational design. The process functions as a two-part iterative engine: first, the generation of genetic diversity to create a library of protein variants, and second, the application of a high-throughput screen or selection to identify the rare improved variants. [24] The success of any directed evolution campaign hinges on the quality of the initial library and the power of the screening method. [24]

This technical guide provides a detailed examination of the core techniques for generating genetic diversity, with a focus on established methods like error-prone PCR, DNA shuffling, and saturation mutagenesis, and explores how modern CRISPR-based tools are further enhancing these capabilities.

Foundational Techniques for Library Generation

The creation of a diverse library of gene variants is the foundational step that defines the boundaries of the explorable sequence space. [24] Several methods have been developed, each with distinct advantages, limitations, and inherent biases that shape evolutionary trajectories.

Error-Prone PCR (epPCR)

Error-prone PCR (epPCR) is a widely utilized biological mutagenesis technique for generating DNA mutations during protein evolution. [25] This method exploits the inherent error-prone nature of Taq DNA polymerase in the presence of manganese ions (Mn2+), which reduces the enzyme's fidelity and leads to base mutations during PCR amplification. [25]

- Mechanism: The technique is a modified PCR that intentionally reduces fidelity. This is typically achieved by using a polymerase that lacks proofreading activity, creating an imbalance in dNTP concentrations, and adding manganese ions (Mn2+). [24] The concentration of Mn2+ can be tuned to achieve a mutation rate of 1–5 base mutations per kilobase. [24]

- Inherent Bias: epPCR is not truly random. DNA polymerases have an intrinsic bias that favors transition mutations (purine-to-purine or pyrimidine-to-pyrimidine) over transversion mutations (purine-to-pyrimidine or vice-versa). Due to the degeneracy of the genetic code, this means epPCR can only access an average of 5–6 of the 19 possible alternative amino acids at any given position. [24]

- Limitations: A significant drawback is that relatively low mutation efficiency often requires multiple rounds of mutagenesis to achieve a diverse array of mutation types, which can be time-consuming. [25] Furthermore, excessive Mn2+ can significantly impede PCR amplification efficiency, resulting in low yields. [25]
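The restricted amino acid reach of single-nucleotide changes can be checked directly against the standard genetic code. The sketch below counts, for every sense codon, how many different amino acids are reachable by changing exactly one base, and repeats the count when only transitions are allowed. It assumes one mutation per codon and equal weighting of all 61 sense codons, so it approximates the accessibility argument above rather than reproducing the analysis in [24].

```python
from itertools import product

BASES = "TCAG"
# Standard genetic code, codons ordered TTT, TTC, TTA, TTG, TCT, ... ('*' = stop).
AMINO_ACIDS = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {
    "".join(codon): aa for codon, aa in zip(product(BASES, repeat=3), AMINO_ACIDS)
}
TRANSITIONS = {"A": "G", "G": "A", "C": "T", "T": "C"}


def reachable_amino_acids(codon: str, transitions_only: bool = False) -> set:
    """Amino acids reachable from `codon` by exactly one base substitution."""
    original = CODON_TABLE[codon]
    reachable = set()
    for pos, base in enumerate(codon):
        alternatives = TRANSITIONS[base] if transitions_only else BASES.replace(base, "")
        for alt in alternatives:
            aa = CODON_TABLE[codon[:pos] + alt + codon[pos + 1:]]
            if aa not in (original, "*"):  # ignore silent and nonsense changes
                reachable.add(aa)
    return reachable


def mean_reachable(transitions_only: bool = False) -> float:
    """Average number of reachable amino acids over the 61 sense codons."""
    sense = [codon for codon, aa in CODON_TABLE.items() if aa != "*"]
    total = sum(len(reachable_amino_acids(c, transitions_only)) for c in sense)
    return total / len(sense)


if __name__ == "__main__":
    print(f"any single substitution: {mean_reachable():.2f} amino acids per codon")
    print(f"transitions only:        {mean_reachable(True):.2f} amino acids per codon")
```

Comparing the two averages shows how a polymerase's preference for transitions narrows an already limited substitution space.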

DNA Shuffling

DNA Shuffling, also known as "sexual PCR," was pioneered by Willem P. C. Stemmer to overcome the limitations of point mutagenesis and more closely mimic the power of natural sexual recombination. [24] This technique allows for the combination of beneficial mutations from multiple parent genes into a single, improved offspring. [24]

- Mechanism: In this method, one or more related parent genes are randomly fragmented using DNaseI. These small fragments are then reassembled in a PCR reaction without primers. During annealing, homologous fragments from different parental templates can prime each other, resulting in crossovers that shuffle genetic information. [24]

- Family Shuffling: An extension of this concept uses homologous genes from different species. By drawing from nature's standing variation, family shuffling provides access to a broader and more functionally relevant region of sequence space. [24]

- Limitations: The requirement for sequence homology is a primary limitation. Parental genes typically need at least 70–75% sequence identity for efficient reassembly. Crossovers also tend to occur more frequently in regions of high sequence identity. [24]
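A toy model makes the template-switching idea and the homology requirement more concrete. The sketch below is a deliberate simplification: it assumes the parent genes are already aligned and of equal length, checks identity only against the first parent, and places crossovers uniformly at random, whereas real crossovers favor stretches of high identity. The function names and the 70% default threshold are illustrative choices based on the range quoted above.

```python
import random


def percent_identity(a: str, b: str) -> float:
    """Percentage of identical positions between two pre-aligned sequences."""
    if len(a) != len(b):
        raise ValueError("sequences must be aligned to the same length")
    return 100.0 * sum(x == y for x, y in zip(a, b)) / len(a)


def shuffle_genes(parents, n_crossovers=3, min_identity=70.0, rng=None):
    """Build one chimeric gene by switching parental template at random crossover points."""
    rng = rng or random.Random()
    if len(parents) < 2:
        raise ValueError("at least two parent genes are required")
    length = len(parents[0])
    for parent in parents[1:]:
        if percent_identity(parents[0], parent) < min_identity:
            raise ValueError("parents are too divergent for efficient reassembly")
    if not 0 < n_crossovers < length:
        raise ValueError("n_crossovers must be between 1 and gene length - 1")

    crossover_points = sorted(rng.sample(range(1, length), n_crossovers))
    template = rng.randrange(len(parents))
    segments, start = [], 0
    for end in crossover_points + [length]:
        segments.append(parents[template][start:end])
        # Switch to a different parent at every crossover.
        template = rng.choice([i for i in range(len(parents)) if i != template])
        start = end
    return "".join(segments)


if __name__ == "__main__":
    parent_a = "ATGGCTAGCAAAGGTGAAGAACTGTTTACC"
    parent_b = "ATGGCAAGCAAGGGTGAGGAACTGTTCACC"
    print(f"identity: {percent_identity(parent_a, parent_b):.1f}%")
    print("chimera: ", shuffle_genes([parent_a, parent_b], rng=random.Random(7)))
```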

Saturation Mutagenesis

As a semi-rational alternative to random approaches, saturation mutagenesis targets specific regions or residues within a protein. [24] This is often employed when structural or functional information is available, allowing for the creation of smaller, higher-quality libraries. [24]

- Mechanism: This technique is used to comprehensively explore the functional importance of one or a few amino acid positions. At the target codon, a library is created that encodes for all 19 other possible amino acids. [24] This allows for a deep, unbiased interrogation of a residue's role. [24]

- Application: This approach is highly effective for targeting "hotspots" identified from a prior round of random mutagenesis or predicted from a structural model. It dramatically increases the efficiency of directed evolution by reducing library size and increasing the frequency of beneficial variants. [24]
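Library size is the practical constraint in saturation mutagenesis, and it can be estimated before any cloning is done. The sketch below uses the NNK degenerate codon (N = A/C/G/T, K = G/T), a commonly used randomization scheme that is an assumption here and is not specified in the text above. It also assumes every codon is equally represented when estimating how many clones to sample so that any given variant is present with a chosen probability.

```python
import math
from itertools import product

BASES = "TCAG"
# Standard genetic code, codons ordered TTT, TTC, TTA, TTG, TCT, ... ('*' = stop).
AMINO_ACIDS = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {
    "".join(codon): aa for codon, aa in zip(product(BASES, repeat=3), AMINO_ACIDS)
}


def nnk_codons():
    """All codons encoded by NNK: any base at positions 1 and 2, G or T at position 3."""
    return ["".join(codon) for codon in product("ACGT", "ACGT", "GT")]


def summarize(codons):
    """Count codons, distinct amino acids, and stop codons in a degenerate set."""
    encoded = [CODON_TABLE[codon] for codon in codons]
    return {
        "codons": len(codons),
        "amino_acids": len(set(encoded) - {"*"}),
        "stop_codons": encoded.count("*"),
    }


def clones_for_coverage(n_sites: int, codons_per_site: int = 32, coverage: float = 0.95) -> int:
    """Clones to sample so a specific variant appears at least once with probability `coverage`."""
    variants = codons_per_site ** n_sites
    return math.ceil(math.log(1.0 - coverage) / math.log1p(-1.0 / variants))


if __name__ == "__main__":
    print(summarize(nnk_codons()))
    for sites in (1, 2, 3):
        print(f"{sites} site(s): sample about {clones_for_coverage(sites):,d} clones")
```

The screening effort grows exponentially with the number of randomized positions, which is why targeting only a few hotspots keeps these libraries tractable.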

Table 1: Quantitative Comparison of Library Generation Techniques

| Technique | Mutational Diversity | Typical Mutation Rate | Key Advantage | Primary Limitation |

|---|---|---|---|---|

| Error-Prone PCR (epPCR) | Primarily transition mutations (C↔T, A↔G) [24] | 1-5 mutations/kb [24] | Simple, applicable to any gene | Limited amino acid substitution space (5–6 of 19 alternatives on average) [24] |

| DNA Shuffling | Recombination of existing mutations; crossovers | N/A (depends on parents) | Combines beneficial mutations from multiple genes | Requires high sequence homology (>70-75%) [24] |

| Saturation Mutagenesis | All 19 amino acids at targeted positions [24] | Focused on specific codons | Comprehensive exploration of specific sites | Requires prior knowledge to identify key residues |

| DRM (Deaminase-driven) | C-to-T, G-to-A, A-to-G, T-to-C [25] | 14.6x higher frequency than epPCR [25] | High mutation frequency in a single round | Limited to specific transition mutations |

| EvolvR | All four nucleotides (all 12 possible substitutions) [26] | Tunable window of at least 40 bp [26] | Access to transversion mutations in genomic DNA | Performance varies with gRNA sequence [26] |

Advanced and Emerging Techniques

Recent research has focused on developing novel methods that overcome the limitations of traditional techniques, offering higher mutation frequencies, broader mutational diversity, and the ability to operate directly on chromosomal DNA.

Deaminase-Driven Random Mutation (DRM)

To address the low mutation efficiency of epPCR, researchers have developed a novel DNA mutagenesis strategy termed deaminase-driven random mutation (DRM). [25]

- Mechanism: DRM utilizes an engineered cytidine deaminase (A3A-RL) and an engineered adenosine deaminase (ABE8e) to introduce a broad spectrum of mutations, including C-to-T, G-to-A, A-to-G, and T-to-C, in both DNA strands. [25]

- Performance: This approach enables the generation of a multitude of DNA mutation types within a single round. Results show that the DRM strategy exhibits a 14.6-fold higher DNA mutation frequency and produces a 27.7-fold greater diversity of mutation types compared to epPCR. [25]

CRISPR-Guided Diversification

The advent of CRISPR technology has significantly advanced the field by enabling precise and efficient gene targeting directly on chromosomes. [27] [28] CRISPR-based methods can be categorized into two distinct mechanistic paradigms: double-strand break (DSB)-dependent and DSB-independent systems. [27]

- DSB-Dependent (CRISPR-HDR): This method uses Cas9 to create a DSB at a target locus, which is then repaired using a library of mutagenic donor DNA templates via Homology-Directed Repair (HDR). [28] This allows for the integration of user-defined variant libraries into the genome. [28]

- DSB-Independent (Base Editing & EvolvR): These systems fuse a catalytically impaired Cas9 (dCas9 or nCas9) to mutagenic proteins. For example, fusing a nickase to an error-prone DNA polymerase creates a tool like EvolvR. [26] Unlike deaminases, which are limited to transition mutations, EvolvR can generate both transition and transversion mutations throughout a mutation window of at least 40 base pairs, enabling access to a much wider diversity of missense mutations. [26]
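The difference in mutational scope between deaminase-based tools and a polymerase-based diversifier can be enumerated explicitly. The sketch below lists all 12 possible single-base substitutions, classifies each as a transition or a transversion, and compares them with the four deaminase-accessible changes named in the DRM description above. The variable names are illustrative only.

```python
from itertools import permutations

PURINES = {"A", "G"}


def classify(ref: str, alt: str) -> str:
    """Transition = purine<->purine or pyrimidine<->pyrimidine; otherwise transversion."""
    same_class = (ref in PURINES) == (alt in PURINES)
    return "transition" if same_class else "transversion"


# Every ordered pair of distinct bases: the 12 possible substitutions.
ALL_SUBSTITUTIONS = list(permutations("ACGT", 2))

# The four changes attributed to cytidine and adenosine deaminases in the text.
DEAMINASE_SUBSTITUTIONS = {("C", "T"), ("G", "A"), ("A", "G"), ("T", "C")}

if __name__ == "__main__":
    for ref, alt in ALL_SUBSTITUTIONS:
        source = "deaminase" if (ref, alt) in DEAMINASE_SUBSTITUTIONS else "polymerase only"
        print(f"{ref}>{alt}: {classify(ref, alt):<12} ({source})")
```

The eight transversions are exactly the substitutions that deaminases cannot supply, which is the gap an error-prone polymerase fusion is meant to fill.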

Figure 1: Workflow of Library Generation Techniques. Traditional methods (green) are complemented by modern CRISPR-based and enzymatic methods (red) to create diverse variant libraries (blue).

Experimental Protocols

Standard Error-Prone PCR Protocol

A typical epPCR protocol aims for a mutation rate of 1–5 mutations per kilobase. [24]

- Template: 1 ng of plasmid DNA containing the gene of interest.

- Primers: Forward and reverse primers (10 µM each) flanking the gene.

- Reaction Mix:

  - 25 µL of 2x master mix containing a non-proofreading polymerase (e.g., Taq).

  - Imbalanced dNTPs (e.g., higher dCTP and dTTP).

  - 0.5 mM MnCl₂ (concentration can be adjusted to tune error rate). [24]

  - Add primers and template, then bring to 50 µL with nuclease-free water.

- PCR Cycling Conditions:

  - Initial Denaturation: 95°C for 3 min.

  - 30 cycles of:

    - Denaturation: 95°C for 30 sec.

    - Annealing: 65°C for 30 sec.

    - Extension: 68°C for 30 sec/kb.

  - Final Extension: 68°C for 10 min. [25]

- Post-Processing: Analyze the PCR product by agarose gel electrophoresis and purify using a gel extraction kit. [25]
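A target of 1–5 mutations per kilobase translates into a distribution of mutation counts per clone, which helps in judging whether a library will be dominated by unmutated or over-mutated genes. The sketch below assumes that mutations arise independently and therefore follow a Poisson distribution. This is a common simplifying model rather than a result from the cited protocols, and the 900 bp gene length is a hypothetical example.

```python
import math


def expected_mutations(gene_length_bp: int, rate_per_kb: float) -> float:
    """Mean number of mutations per gene copy for a given per-kilobase rate."""
    return gene_length_bp * rate_per_kb / 1000.0


def poisson_pmf(k: int, mean: float) -> float:
    """Probability of exactly k mutations when the expected number is `mean`."""
    return math.exp(-mean) * mean ** k / math.factorial(k)


def mutation_load(gene_length_bp: int, rate_per_kb: float, max_k: int = 5) -> dict:
    """Fraction of clones carrying 0, 1, ..., max_k mutations."""
    mean = expected_mutations(gene_length_bp, rate_per_kb)
    return {k: poisson_pmf(k, mean) for k in range(max_k + 1)}


if __name__ == "__main__":
    gene_length = 900  # hypothetical gene length in base pairs
    for rate in (1, 3, 5):
        load = mutation_load(gene_length, rate)
        summary = ", ".join(f"{k}: {p:.2f}" for k, p in load.items())
        print(f"{rate} mutations/kb -> {summary}")
```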

DNA Shuffling Protocol

- Fragmentation: Digest 1-2 µg of the parent DNA(s) with DNase I in the presence of Mn2+ to generate random fragments of 100-300 bp. [24]

- Purification: Gel-purify the fragments of the desired size.

- Reassembly PCR:

  - Combine fragments without added primers.

  - Use a thermocycler program with cycles of denaturation (95°C for 1 min), annealing (55-60°C for 1 min), and extension (72°C for 1-2 min) to allow fragments to prime each other. [24]

  - Run for 40-60 cycles.

- Amplification: Use the reassembled product as a template for a standard PCR with outer primers to amplify the full-length, shuffled genes. [24]

Table 2: Research Reagent Solutions for Library Generation

| Reagent / Tool | Function / Description | Example Use Case |

|---|---|---|

| Taq DNA Polymerase | Low-fidelity polymerase used for error-prone PCR. | Introducing random point mutations across a gene in the presence of Mn²⁺. [25] [24] |

| KAPA HiFi DNA Polymerase | An engineered, high-fidelity polymerase developed via directed evolution. [29] | Amplifying mutant libraries with high accuracy and yield for NGS library preparation. [29] |

| DNase I | Enzyme that cleaves DNA to generate random fragments. | Creating small DNA fragments for the initial step of DNA shuffling. [24] |

| A3A-RL & ABE8e Deaminases | Engineered cytidine and adenosine deaminases for in vitro mutagenesis. | DRM strategy for high-frequency C-to-T and A-to-G mutagenesis. [25] |

| nCas9-PolI3M/5M (EvolvR) | Fusion protein of a Cas9 nickase and an error-prone DNA polymerase. | Targeted in vivo diversification of genomic loci with all 12 possible substitutions. [26] |

| sgRNA Library | Library of single-guide RNAs targeting different genomic sites. | Directing CRISPR-based diversifiers (like EvolvR or base editors) to multiple locations in a gene or genome. [26] [28] |

The toolbox for generating diversity in directed evolution has expanded significantly from its foundational methods. While error-prone PCR, DNA shuffling, and saturation mutagenesis remain critically important, they each possess inherent limitations in mutational scope and efficiency. The field is now being transformed by new technologies that more comprehensively mimic natural mutation. Techniques like DRM offer dramatically higher mutation frequencies, while CRISPR-guided systems like EvolvR break the constraint of transition-only mutations by enabling all 12 possible base substitutions directly in the chromosome. [25] [26] This progression towards more powerful, targeted, and diverse library generation methods continues to accelerate the exploration of protein fitness landscapes, enabling researchers to more efficiently discover novel enzymes, therapeutics, and biomaterials.

Directed evolution (DE) is a powerful protein engineering method that mimics the process of natural selection in a laboratory setting to steer proteins, nucleic acids, or entire organisms toward a user-defined goal [1] [18]. It operates on the fundamental principles of evolution: variation, selection, and heredity [1] [30]. In nature, random genetic mutations create diversity in a population. Environmental pressures then select for individuals with beneficial traits that enhance survival and reproduction, ensuring these advantageous traits are passed to the next generation.

The laboratory process of directed evolution mirrors this natural cycle through iterative rounds of:

- Diversification: Creating a library of genetic variants.

- Selection or Screening: Identifying variants with desired properties.

- Amplification: Replicating the best variants to serve as templates for the next round [1] [18].

The critical step that determines the success of any directed evolution campaign is the ability to efficiently identify the rare, improved "winners" from a vast pool of variants. This is where high-throughput screening and selection methods become indispensable [1] [31]. This guide provides an in-depth technical examination of the core high-throughput methods—including Phage Display, Fluorescence-Activated Cell Sorting (FACS), and other emerging techniques—used to isolate these winners, thereby accelerating the engineering of biological molecules for research, industrial, and therapeutic applications.

High-Throughput Screening and Selection: Core Principles

In directed evolution, a high-throughput assay is vital for finding the rare variants with beneficial mutations amid a library where the majority of mutations are deleterious [1]. The terms "screening" and "selection" refer to distinct, yet complementary, approaches for this identification.

- Screening involves individually assaying each variant from a library to quantitatively measure its activity (e.g., using a colorimetric or fluorogenic reaction) [1]. The variants are then ranked based on their performance, and the experimenter decides which ones to proceed with. Screening provides detailed information on every tested variant, allowing for the characterization of the activity distribution across the library [1].

- Selection directly couples the desired protein function to the survival or physical isolation of the host organism or the gene itself. For example, an enzyme's activity can be made essential for cell survival by enabling it to synthesize a vital metabolite or destroy a toxin [1]. In cell-based selections, library size is typically limited only by transformation efficiency, whereas in vitro systems bypass this bottleneck and can evaluate extremely large libraries (up to 10^15 variants) [1]. Selections are less expensive and labor-intensive than screening but can be more challenging to engineer and may provide less granular data on library performance [1].
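The practical difference between the two approaches lies in what information survives the experiment. In the sketch below, `screen` measures and ranks every variant, while `select` returns only the identities of variants that pass a survival threshold. The variant names, activity values, and threshold are hypothetical.

```python
from __future__ import annotations


def screen(library: dict[str, float], top_n: int) -> list[tuple[str, float]]:
    """Screening: assay every variant, rank by measured activity, keep the best."""
    ranked = sorted(library.items(), key=lambda item: item[1], reverse=True)
    return ranked[:top_n]


def select(library: dict[str, float], survival_threshold: float) -> set[str]:
    """Selection: only variants above the threshold survive; activities are not recorded."""
    return {name for name, activity in library.items() if activity >= survival_threshold}


if __name__ == "__main__":
    # Hypothetical activities relative to wild type (WT = 1.0).
    library = {"WT": 1.0, "A45G": 0.2, "L78P": 0.0, "T102S": 1.8, "K210R": 3.1}
    print("screen :", screen(library, top_n=2))
    print("select :", sorted(select(library, survival_threshold=1.5)))
```

Both calls recover the same two winners here, but only the screen reports how much better each one is, which reflects the trade-off between data granularity and throughput described above.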

A key enabler for both approaches, especially screening, is High-Throughput Screening (HTS) technology. HTS is a method for scientific discovery that uses robotics, data processing software, liquid handling devices, and sensitive detectors to quickly conduct millions of chemical, genetic, or pharmacological tests [32]. The process is built around microtiter plates (with 96, 384, 1536, or more wells) and integrated robotic systems that automate the plate handling, reagent addition, incubation, and final readout steps [32].
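Plate arithmetic is a routine part of planning a screen. The sketch below estimates how many plates a library requires and converts a well index into the usual row-letter and column-number label. The row and column layouts are the standard 8×12, 16×24, and 32×48 grids. The double-letter row labels (AA to AF) for 1536-well plates follow a common convention but should be checked against the instrument in use, and the number of control wells per plate is a hypothetical parameter.

```python
import math
import string

# wells per plate -> (rows, columns)
PLATE_FORMATS = {96: (8, 12), 384: (16, 24), 1536: (32, 48)}


def plates_needed(n_variants: int, wells_per_plate: int = 96, controls_per_plate: int = 0) -> int:
    """Number of plates required once control wells are set aside on each plate."""
    usable = wells_per_plate - controls_per_plate
    if usable <= 0:
        raise ValueError("controls_per_plate leaves no usable wells")
    return math.ceil(n_variants / usable)


def well_name(index: int, wells_per_plate: int = 96) -> str:
    """Convert a 0-based, row-major well index into a label such as 'A1' or 'H12'."""
    rows, cols = PLATE_FORMATS[wells_per_plate]
    if not 0 <= index < rows * cols:
        raise IndexError("well index out of range for this plate format")
    row, col = divmod(index, cols)
    letters = string.ascii_uppercase
    row_label = letters[row] if row < 26 else "A" + letters[row - 26]
    return f"{row_label}{col + 1}"


if __name__ == "__main__":
    library_size = 10_000  # hypothetical number of variants to screen
    for fmt in PLATE_FORMATS:
        print(f"{fmt}-well: {plates_needed(library_size, fmt, controls_per_plate=8)} plates")
    print(well_name(0), well_name(95), well_name(383, 384), well_name(1535, 1536))
```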

The Scientist's Toolkit: Essential Reagents and Materials for HTS

Table 1: Key research reagent solutions and materials used in high-throughput screening and selection workflows.

| Item | Function/Description | Application Example |

|---|---|---|

| Microtiter Plates | Disposable plastic plates with a grid of wells (96, 384, 1536); the primary labware for HTS. | Used in all HTS phases for assay execution [32]. |

| Liquid Handling Robots | Automated systems for precise transfer of nanoliter to microliter volumes of liquids (samples, reagents). | Assay plate preparation from stock plates; reagent addition [32]. |

| Cell Sorters (e.g., FACS) | Instruments that automatically sort cells or other microscopic particles based on specific fluorescent labels. | Isolation of cells displaying binding antibodies or enzymes from a library [33]. |

| Phage Display Libraries | Libraries of bacteriophages (e.g., M13) genetically engineered to display proteins/peptides (e.g., antibody ScFvs) on their surface. | Selection of antibodies against cell-surface targets like CCR5 [33]. |

| Fluorescent Dyes (e.g., PrestoBlue, PI) | Reagents that produce a colorimetric or fluorogenic signal in response to biological activity (e.g., cell viability, enzymatic activity). | PrestoBlue for cell viability in outgrowth assays; Propidium Iodide (PI) for dead cell staining [34]. |

| 96-Pin Replicators | Tools for simultaneous transfer of small liquid volumes (∼1 µL) between well plates. | Transfer of phage lysates during enrichment steps in high-throughput phage isolation [35]. |

Key Methodologies and Experimental Protocols

Phage Display with FACS Screening

Phage display is a foundational selection technology where a library of proteins or peptides is displayed on the surface of bacteriophages, physically linking the protein (phenotype) to its genetic code (genotype) [1] [18]. This linkage allows for the affinity-based selection of binders. When combined with FACS, it becomes a powerful tool for isolating binders to complex cellular targets.

- Principle: A phage library is incubated with a mixed population of target cells (e.g., cells expressing a protein of interest) and control cells (not expressing it). Phages binding to common surface antigens are removed by the control cells, while specific binders attach to the target cells. FACS then quantitatively sorts single cells (with bound phages) based on fluorescent labeling, enabling the isolation of highly specific binders [33].

- Experimental Protocol for Isolating Anti-CCR5 Antibodies [33]:

- Cell Preparation: Generate a target cell line (e.g., 3T3.T4.CCR5-GFP) expressing the membrane protein of interest (CCR5) and an intracellular fluorescent marker (GFP). A control cell line is otherwise identical but does not express the target.

- Negative Selection (Pre-clearing): Incubate the pooled human ScFv phage display library (e.g., Metha1 and Metha2) with an excess of control cells to remove phages that bind nonspecifically to common cell surface molecules.

- Positive Selection: Incubate the pre-cleared phage library with the target cell population (CCR5+ GFP+).

- Flow Cytometry Sorting: Use a FACS sorter (e.g., FACSAria) to isolate single GFP-positive target cells that have bound phages on their surface. The GFP signal identifies the target cells.

- Phage Elution and Amplification: Recover the bound phages from the sorted cells, typically by a low-pH elution. Infect E. coli cells (e.g., TG1 strain) with the eluted phages to amplify the selected pool.

- Iteration: Repeat the cycle from negative selection through phage elution and amplification (steps 2-5) for 3-4 rounds to enrich for specific high-affinity binders.

- Characterization: After the final round, isolate single clones, and characterize the displayed ScFv antibodies for specificity and affinity. Promising candidates can be converted to full-length IgG.

Figure 1: Workflow for isolating specific binders using phage display combined with FACS screening. The process involves pre-clearing against control cells to remove non-specific phages, positive selection on target cells, and FACS-based isolation to recover specific clones.
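The effect of repeating the selection cycle for several rounds can be illustrated with a simple enrichment model. This is a minimal sketch, not part of the cited protocol: it assumes that specific binders are recovered a constant number of times more efficiently than background phages in every round. The starting fraction and the enrichment factor used below are hypothetical values chosen only for illustration.

```python
def enrich(fraction, enrichment_factor):
    """Fraction of specific binders after one selection round.

    Assumption: specific binders are recovered `enrichment_factor` times
    more efficiently than background phages (a hypothetical constant).
    """
    if not 0.0 <= fraction <= 1.0:
        raise ValueError("fraction must be between 0 and 1")
    specific = enrichment_factor * fraction
    background = 1.0 - fraction
    return specific / (specific + background)


def panning_trajectory(initial_fraction, enrichment_factor, rounds):
    """Specific-binder fraction before selection and after each round."""
    fractions = [initial_fraction]
    for _ in range(rounds):
        fractions.append(enrich(fractions[-1], enrichment_factor))
    return fractions


if __name__ == "__main__":
    # Hypothetical inputs: 1 specific clone per 10^7 phages, 1000-fold per round.
    for round_no, f in enumerate(panning_trajectory(1e-7, 1000.0, 4)):
        print(f"round {round_no}: specific fraction = {f:.3g}")
```

Under these assumptions a rare binder comes to dominate the pool only after several rounds, which is consistent with the 3-4 rounds recommended in the protocol. Real enrichment factors vary between rounds and targets.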

High-Throughput Phage Isolation (HiTS)

While phage display often focuses on engineering known proteins, there is also a need to rapidly isolate novel, natural phages for therapy or biocontrol. The HiTS method is a high-throughput process for enriching and isolating distinct phages from hundreds of environmental samples simultaneously.

- Principle: The method organizes and upscales traditional enrichment and soft-agar overlay techniques into a 96-well plate format, using a 96-pin replicator for efficient liquid transfer. It selects for lytic, culturable phages from a large number of samples in a short time [35].

- Experimental Protocol [35]:

- Day 1: Phage Amplification: Distribute up to 94 environmental samples (≤1.5 mL) in a deep-well plate. To each well, add CaCl₂, MgCl₂, host medium, and an overnight host culture (e.g., E. coli, Salmonella). Incubate overnight on a shaker.

- Day 2: Liquid Purification: Filter the culture through a 0.45 μm filter plate to remove host bacteria, collecting the filtrate (containing phages) in a new plate. Prepare a second plate with fresh medium, host culture, and salts. Use a 96-pin replicator to transfer ∼1 μL of each filtrate to this new plate for a second overnight incubation.

- Day 3: Spot Test: Filter the second culture. Spot the filtrates onto two large soft-agar overlay plates seeded with the host bacteria. Incubate to allow plaque formation.

- Day 4: Phage Collection and Sequencing: Pick individual plaques for further purification or proceed directly to sequencing of the phage DNA (e.g., by Direct Plaque Sequencing).
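Keeping track of which sample sits in which well matters once dozens of samples are processed in parallel. The sketch below builds a 96-well plate map for up to 94 samples. The use of the two remaining wells for controls, and the control names, are assumptions made for illustration; the protocol summary above does not state what those wells contain.

```python
from string import ascii_uppercase

ROWS = ascii_uppercase[:8]   # plate rows A-H
COLUMNS = range(1, 13)       # plate columns 1-12
MAX_SAMPLES = 94             # limit stated in the protocol above


def plate_map(sample_ids, control_labels=("host_only", "medium_only")):
    """Assign samples to wells A1..H12 row by row.

    Hypothetical convention: the last wells of the plate hold the controls.
    """
    if len(sample_ids) > MAX_SAMPLES:
        raise ValueError(f"at most {MAX_SAMPLES} samples per plate")
    wells = [f"{row}{col}" for row in ROWS for col in COLUMNS]
    layout = dict(zip(wells, sample_ids))
    if control_labels:
        control_wells = wells[-len(control_labels):]
        layout.update(zip(control_wells, control_labels))
    return layout


if __name__ == "__main__":
    layout = plate_map([f"sample_{i:02d}" for i in range(1, 95)])
    print(layout["A1"], layout["H10"], layout["H11"], layout["H12"])
```

Because the 96-pin replicator preserves well positions, the same map can be reused for the Day 2 plate and for interpreting the Day 3 spot test.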

Quantitative High-Throughput Screening (qHTS) in Directed Evolution

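Screening is the alternative to selection: rather than enriching a pooled library for binders or survivors, each variant is assayed individually. qHTS is an advanced HTS paradigm that generates full concentration-response curves for each compound or variant in a library, providing rich datasets for analysis.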

- Principle: Instead of testing a single concentration, qHTS assays each library member across a range of concentrations in an automated, miniaturized format. This allows for the determination of parameters like EC₅₀, maximal response, and Hill coefficient for the entire library, enabling a more robust assessment of activity and the early establishment of structure-activity relationships (SAR) [32].

- Application in Enzyme Evolution: Classical screening often relies on a proxy substrate to indicate activity, whereas quantitative assays can be used to screen directly for improved enzyme properties. For instance, to evolve a lignin-degrading enzyme, a researcher could use a two-step HTS process [36]:

- Primary Screening: Screen a large library of microbial consortia or enzyme variants in a 96-well plate format with a liquid culture-based assay. Use a quantitative enzyme assay (e.g., for laccase activity) to identify the top performers.

- Secondary Screening and Characterization: Take the hits from the primary screen and characterize them further for multiple related enzyme activities (e.g., laccase, xylanase, and β-glucanase activities) to identify the most promising and versatile candidates.
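The parameters named in the qHTS principle above (EC₅₀, maximal response, Hill coefficient) come from fitting a concentration-response model to each variant's data. The following is a minimal sketch using the Hill equation with a zero baseline and a linearised least-squares fit. It assumes the maximal response is known and that every response lies strictly between zero and that maximum; production qHTS analysis typically fits a four-parameter model by nonlinear regression instead. The function names are illustrative.

```python
import math


def hill(conc, ec50, hill_coeff, top=1.0):
    """Hill equation with zero baseline: response at concentration `conc`."""
    return top * conc**hill_coeff / (ec50**hill_coeff + conc**hill_coeff)


def fit_hill(concs, responses, top=1.0):
    """Estimate (EC50, Hill coefficient) from a concentration series.

    Uses the linearised form log(r / (top - r)) = n*log(c) - n*log(EC50)
    and ordinary least squares. Assumes `top` is known and 0 < r < top.
    """
    xs = [math.log(c) for c in concs]
    ys = [math.log(r / (top - r)) for r in responses]
    x_mean = sum(xs) / len(xs)
    y_mean = sum(ys) / len(ys)
    sxy = sum((x - x_mean) * (y - y_mean) for x, y in zip(xs, ys))
    sxx = sum((x - x_mean) ** 2 for x in xs)
    slope = sxy / sxx                      # Hill coefficient n
    intercept = y_mean - slope * x_mean    # equals -n * log(EC50)
    return math.exp(-intercept / slope), slope


if __name__ == "__main__":
    # Synthetic, noise-free data generated from known parameters.
    concs = [0.1, 0.3, 1.0, 3.0, 10.0, 30.0]
    responses = [hill(c, ec50=2.0, hill_coeff=1.5) for c in concs]
    ec50, n = fit_hill(concs, responses)
    print(f"EC50 ~ {ec50:.3f}, Hill coefficient ~ {n:.3f}")
```

The linearised fit is sensitive to noise near the curve's plateaus, so it is best read as a way to see how the parameters relate to the data rather than as a recommended analysis method.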

Table 2: Comparison of high-throughput screening and selection methods in directed evolution.

| Method | Principle | Typical Library Size | Throughput | Key Applications | Advantages | Limitations |
|---|---|---|---|---|---|---|
| Phage Display with FACS | Binding to target cells followed by fluorescence-based sorting. | 10^10 - 10^11 [33] | 10^7 - 10^8 events per hour (FACS dependent) | Selecting antibodies against cell-surface proteins (e.g., GPCRs) [33]. | High specificity; direct selection on native cell-surface targets. | Requires a fluorescent label; equipment is expensive. |
| In vitro Selection (e.g., mRNA Display) | Covalent genotype-phenotype link; selection in vitro. | Up to 10^15 [1] [31] | Limited by selection steps, not transformation | Evolving protein/peptide binders and catalysts; incorporating unnatural amino acids [31]. | Largest possible library sizes; versatile selection conditions. | No cellular amplification; can be technically complex to establish. |
| Microtiter Plate-Based HTS | Individual assay of each variant in multi-well plates. | 10^4 - 10^6 [1] | 10^3 - 10^5 variants per day | Screening enzyme variants for improved activity, stability, or specificity [1] [36]. | Provides quantitative data on every variant; highly adaptable. | Lower throughput than selection; requires a good assay. |
| Quantitative HTS (qHTS) | Assaying each variant at multiple concentrations. | 10^4 - 10^5 | 10^3 - 10^4 concentration curves per day | Detailed pharmacological profiling of enzyme variants or inhibitors [32]. | Generates rich data (EC₅₀, efficacy); reduces false positives/negatives. | Even lower throughput per variant; complex data analysis. |

High-throughput screening and selection methods are the critical engines that drive successful directed evolution experiments, directly enabling the "survival of the fittest" principle in a laboratory context. Methods like phage display coupled with FACS allow for the precise isolation of binders against complex, native targets, while advanced HTS and qHTS platforms enable the quantitative ranking of enzyme variants for detailed functional improvements. The choice of method is dictated by the experimental goal, the desired library size, and the available assay technology. As these methodologies become faster, more sensitive, and more tightly integrated with automation and data analysis, they will further accelerate our ability to engineer biological molecules with novel and enhanced functions, bridging the gap between natural evolutionary principles and human-designed objectives.
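The dependence of method choice on library size can be made concrete with a small lookup based on the upper library sizes listed in Table 2. This is only a sketch: the dictionary transcribes the table's upper bounds, while the function names and the simple "fits within the typical maximum" rule are assumptions for illustration. Assay availability and the experimental goal, which the text also names as deciding factors, are not modelled.

```python
import math

# Upper ends of the "Typical Library Size" column in Table 2.
TYPICAL_MAX_LIBRARY_SIZE = {
    "Phage display with FACS": 1e11,
    "In vitro selection (e.g., mRNA display)": 1e15,
    "Microtiter plate-based HTS": 1e6,
    "Quantitative HTS (qHTS)": 1e5,
}


def candidate_methods(library_size):
    """Methods whose typical maximum library size can hold `library_size`."""
    return [
        method
        for method, max_size in TYPICAL_MAX_LIBRARY_SIZE.items()
        if library_size <= max_size
    ]


def days_to_screen(library_size, variants_per_day):
    """Whole days needed to assay every variant once at a given throughput."""
    return math.ceil(library_size / variants_per_day)


if __name__ == "__main__":
    print(candidate_methods(1e12))
    # Plate-based HTS at the upper throughput from Table 2 (10^5 per day).
    print(days_to_screen(1e6, 1e5), "days for a 10^6-member library")
```

The second function shows why plate-based screening is rarely applied to libraries much larger than about 10^6 members: the screening time grows linearly with library size.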

The cytochrome P450 (CYP) enzyme superfamily represents one of nature's most remarkable evolutionary success stories, with members found across all biological domains that catalyze oxidative reactions with extraordinary regio- and stereoselectivity under mild conditions [37]. These heme-containing monooxygenases have evolved in nature to perform critical functions ranging from detoxification to the biosynthesis of complex natural products [38]. The catalytic versatility of P450s, combined with their relaxed substrate specificity, makes them ideal candidates for repurposing in industrial biocatalysis, particularly for pharmaceutical synthesis where selective C-H functionalization remains a formidable challenge [37].

This case study examines how directed evolution mimics natural evolutionary processes in the laboratory to optimize P450 enzymes for novel biocatalytic applications. While natural evolution operates through random mutation and selective pressure over geological timescales, directed evolution accelerates this process by applying gene mutagenesis and high-throughput screening to achieve desired enzymatic properties within weeks [39]. Both processes rely on sequence diversity and functional selection to solve complex biochemical challenges; directed evolution adds the advantage that the target function is chosen deliberately [40].

Evolutionary Principles in Natural P450 Diversity

Molecular Drivers of P450 Evolution in Nature

Natural P450 diversity has primarily been generated through gene duplication events followed by functional divergence, operating under a birth-and-death evolution model [41]. In this process, duplicated genes undergo neofunctionalization (acquiring novel functions) or subfunctionalization (partitioning ancestral functions between paralogs) [42]. The CYP superfamily exhibits particularly rapid evolution in response to ecological pressures, as evidenced by the expanded CYPomes in herbivorous insects and their host plants, a clear sign of a molecular arms race [41].

A compelling example of natural P450 evolution is documented in the Brassicales plant order, where a CYP98A3 retrogene emerged in a common ancestor and subsequently underwent tandem duplication, giving rise to CYP98A8 and CYP98A9 [42]. This duplication led to initial functional overlap followed by subfunctionalization, where ancestral activities partitioned between paralogs, and eventually neofunctionalization through the acquisition of novel substrate specificities [42]. This evolutionary trajectory mirrors the stepwise optimization achieved through laboratory directed evolution campaigns.

Structural Conservation Amid Sequence Divergence

Despite remarkable sequence divergence among P450 families, these enzymes maintain a conserved structural fold with a heme-binding domain that facilitates oxygen activation and catalysis [43] [38]. This structural conservation amid sequence variation enables phylogenetic analysis using physicochemical properties and structural alignment techniques, revealing evolutionary relationships that are obscured at the sequence level alone [43]. The interplay between structural constraint and functional plasticity makes P450s ideal systems for engineering, as their fundamental catalytic machinery remains intact while substrate recognition elements can be readily modified.

Directed Evolution Methodologies for P450 Optimization

Experimental Workflows for Laboratory Evolution

Directed evolution applies iterative cycles of mutagenesis and screening to enhance enzyme properties, mimicking natural selection's explore-and-exploit strategy at a greatly accelerated tempo. The standard workflow encompasses three fundamental phases: diversity generation, high-throughput screening, and variant characterization [39] [38].

Diagram 1: Directed evolution workflow for P450 enzyme engineering.
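The iterative cycle described above can be sketched as a toy simulation. Everything here is a stand-in: the "fitness" is simply the number of positions matching a hypothetical optimal sequence, whereas a real campaign measures activity or selectivity experimentally, and the mutation rate, library size, and number of rounds are arbitrary illustrative values.

```python
import random

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"


def fitness(seq, target):
    """Toy screen: number of positions matching a hypothetical optimum."""
    return sum(a == b for a, b in zip(seq, target))


def mutate(seq, rng, rate=0.05):
    """Diversity generation: resample each position with probability `rate`."""
    return "".join(
        rng.choice(AMINO_ACIDS) if rng.random() < rate else aa for aa in seq
    )


def directed_evolution(parent, target, rounds=4, library_size=200, seed=0):
    """Run mutate -> screen -> pick-best cycles; return best variant and history."""
    rng = random.Random(seed)
    history = [fitness(parent, target)]
    for _ in range(rounds):
        library = [mutate(parent, rng) for _ in range(library_size)]
        library.append(parent)  # keep the parent so fitness never decreases
        parent = max(library, key=lambda s: fitness(s, target))
        history.append(fitness(parent, target))
    return parent, history


if __name__ == "__main__":
    target = "ACDEFGHIKLMNPQRSTVWYACDEFGHIKL"  # hypothetical optimum
    best, history = directed_evolution("A" * len(target), target)
    print("fitness per round:", history)
```

Carrying only the single best variant forward is the simplest possible strategy. Campaigns like the one described later in this case study instead combine several beneficial mutations between rounds.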

Key Engineering Approaches

Three complementary strategies dominate modern P450 engineering, each with distinct advantages and applications:

Rational design utilizes structural and mechanistic knowledge to introduce targeted mutations at specific residues. This approach has successfully repurposed P450s for non-natural reactions like C-H amination by disrupting the native proton relay network and modifying conserved structural elements [38]. For example, mutations at residues T268, H266, E267, and T438 in bacterial P450s suppressed unproductive pathways while enhancing nitrene transfer activity [38].

Semi-rational design focuses mutagenesis on substrate-binding regions identified through phylogenetic analysis or structural modeling, creating smaller but higher-quality mutant libraries. This approach balances the comprehensiveness of random methods with the efficiency of rational design [38].

Directed evolution through random mutagenesis explores sequence space more broadly and is particularly effective when structural information is limited or when targeting multiple enzyme properties simultaneously [37]. Recent advances incorporate machine learning to predict beneficial mutations from large datasets, reducing experimental burden [40].
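The trade-off between library size and screening effort that separates these strategies can be estimated with a standard sampling calculation. The sketch below assumes NNK codon randomization (32 codons per site) and equal representation of all variants; neither assumption comes from the cited studies, and the function names are illustrative. It returns the number of clones that must be screened so that any given codon variant has been sampled with the requested probability.

```python
import math


def nnk_library_size(n_sites):
    """Codon-level diversity when `n_sites` are randomized with NNK (32 codons each)."""
    return 32 ** n_sites


def clones_for_coverage(n_variants, coverage=0.95):
    """Clones to screen so a given variant is sampled with probability `coverage`.

    Assumes every variant is equally frequent in the library, which real
    libraries only approximate.
    """
    if n_variants < 2:
        return 1
    return math.ceil(math.log(1.0 - coverage) / math.log(1.0 - 1.0 / n_variants))


if __name__ == "__main__":
    for sites in (1, 2, 3):
        size = nnk_library_size(sites)
        print(f"{sites} site(s): {size} codon variants, "
              f"screen ~{clones_for_coverage(size)} clones for 95% coverage")
```

Screening effort grows roughly 32-fold with each additional randomized site, which illustrates why semi-rational design restricts mutagenesis to a few positions.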

Case Study: Engineering P450s for Cardiac Drug Synthesis

Experimental Protocol and Performance Metrics

A recent application of directed evolution for synthesizing cardiac drugs demonstrates the power of this approach [39]. Researchers engineered multiple enzyme classes including cytochrome P450 monooxygenases, ketoreductases (KREDs), transaminases, and hydrolases to optimize a biocatalytic route for cardiac drug synthesis. The experimental methodology followed a comprehensive workflow:

Library Construction: Mutant libraries were created via site-saturation mutagenesis targeting substrate-binding regions and potential bottleneck residues identified from structural models.

High-Throughput Screening: Approximately 10,000 variants were screened using colorimetric assays for activity and HPLC for enantioselectivity.

Iterative Evolution: Beneficial mutations were combined in subsequent rounds, with 3-4 cycles typically performed.