Directed Evolution vs. Rational Design: A Data-Driven Comparison of Success Rates in Modern Drug Discovery

Abstract

This article provides a comparative analysis of directed evolution and rational design, two cornerstone methodologies in protein engineering and drug development. Tailored for researchers, scientists, and drug development professionals, it explores the foundational principles, distinct methodological workflows, and real-world success rates of each approach. By examining recent technological integrations (such as AI and automation) and presenting concrete case studies, this review offers a framework for selecting and optimizing these strategies. It concludes with a forward-looking synthesis on how hybrid models are accelerating the development of novel enzymes, antibodies, and gene therapies, providing actionable insights for strategic planning in biomedical research.

Core Principles: Deconstructing the Philosophies of Directed Evolution and Rational Design

In the pursuit of advanced biologics, sustainable biocatalysts, and novel research tools, protein engineering has emerged as a transformative discipline driving innovation across the global bioeconomy, projected to exceed $500 billion by 2035 [1]. This rapid growth is powered by two fundamentally distinct yet increasingly complementary methodologies: directed evolution (empirical exploration) and rational design (structure-informed prediction). Directed evolution mimics natural selection in the laboratory through iterative rounds of mutagenesis and screening, requiring no prior structural knowledge to discover improved variants. In contrast, rational design employs computational models and structural insights to predictively engineer proteins with specific characteristics. While early implementations highlighted the philosophical and practical divides between these approaches, modern protein engineering increasingly demonstrates their powerful synergy. This guide provides an objective comparison of these paradigms, examining their success rates, methodological frameworks, and experimental requirements to inform research strategy and resource allocation in scientific and drug development contexts.

Directed Evolution: Harnessing Evolutionary Principles

Directed evolution is an iterative laboratory process that applies Darwinian principles of mutation and selection to engineer biomolecules with improved or novel functions. As formally defined, it constitutes "an iterative two-step process involving first the generation of a library of variants of a biological entity of interest, and second the screening of this library in a high-throughput fashion to identify those mutants that exhibit better properties" [2]. This empirical approach explores sequence space through random or targeted mutagenesis followed by phenotypic screening, relying on high-throughput methods to identify beneficial mutations without requiring mechanistic understanding of their effects.

The fundamental strength of directed evolution lies in its ability to discover cooperative mutations that would be difficult to predict computationally. Early landmark demonstrations included the evolution of subtilisin E for 256-fold higher activity in dimethylformamide [2] and β-lactamase for 32,000-fold increased antibiotic resistance using DNA shuffling techniques [2]. The methodology has since expanded beyond individual proteins to encompass metabolic pathways, circuits, and entire genomes [2].

Rational Design: Computational Prediction and Structure-Based Engineering

Rational design employs computational models and structural biology insights to predictively engineer proteins with desired functions. This paradigm encompasses structure-based virtual screening, molecular dynamics simulations, and artificial intelligence-driven models that explore vast chemical spaces, investigate molecular interactions, predict binding affinity, and optimize drug candidates with increasing accuracy [3]. Unlike directed evolution's exploratory approach, rational design seeks to establish causal relationships between sequence, structure, and function to enable targeted engineering.

The core assumption underlying many rational design approaches is that "natural proteins are under evolutionary pressure to be functional; therefore, novel sequences drawn from the same distribution will also be functional" [4]. This principle enables the use of generative protein sequence models to sample novel sequences with predicted functionality, though challenges remain in predicting whether generated proteins will fold and function as intended [4].

Comparative Analysis: Success Metrics and Performance Indicators

Table 1: Quantitative Performance Comparison of Protein Engineering Approaches

| Engineering Approach | Reported Improvement | Experimental Scale | Timeframe | Key Applications |
|---|---|---|---|---|
| Classical Directed Evolution | 256-fold activity increase (subtilisin E) [2] | Multiple iterative rounds | Several months | Enzyme activity, stability |
| AI-Guided Directed Evolution | 74.3-fold activity increase (GFP) in 4 rounds [5] | ~1,000 mutants per round | Weeks | Optimizing protein activity |
| CRISPR-Enhanced Directed Evolution | Efficient evolution in mammalian cells [6] | Varies by system | Varies | Mammalian-specific adaptations |
| Autonomous AI-Powered Engineering | 16- to 26-fold activity improvements; 90-fold improvement in substrate preference [7] | <500 variants total | 4 weeks | Multi-property optimization |
| Generative Model-Guided Design | 50-150% improved success rate for active variants [4] | 500+ expressed variants | Multiple rounds | Generating diverse functional sequences |

Table 2: Methodological Characteristics and Resource Requirements

| Parameter | Directed Evolution | Rational Design |
|---|---|---|
| Knowledge Requirements | Minimal structural knowledge needed | Requires detailed structural data |
| Infrastructure Needs | High-throughput screening capabilities | Computational resources, structural biology tools |
| Typical Experimental Workflow | Iterative: diversify → screen → select [2] | Predictive: model → design → validate |
| Strengths | Discovers cooperative mutations; does not require mechanistic understanding | Targeted interventions; tests specific hypotheses |
| Limitations | Screening throughput constraints, potential for missing optimal variants | Prediction inaccuracies, limited by current model capabilities |

Experimental Protocols and Methodological Frameworks

Directed Evolution Workflows: From Classical to CRISPR-Enhanced

The classical directed evolution workflow follows a systematic, iterative process of diversity generation and screening. A representative protocol for enzyme improvement typically involves:

Library Construction: Genetic diversity is introduced through error-prone PCR or DNA shuffling. For example, error-prone PCR introduces random mutations throughout the gene of interest at controlled mutation rates [2].

Expression and Screening: Variant libraries are expressed in suitable host systems (typically E. coli or yeast) and screened for desired properties using high-throughput assays. Recent advances employ droplet microfluidics, which can improve screening efficiency by several hundredfold [6].

Selection and Iteration: Improved variants are selected as templates for subsequent rounds of diversification. Modern platforms, such as the autonomous engineering system reported in Nature Communications, can complete four evolution rounds in just four weeks while testing fewer than 500 variants [7].
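The diversify → screen → select cycle described above can be sketched as a toy simulation. This is a minimal illustration under stated assumptions, not a model of any real campaign: `toy_fitness` is a hypothetical stand-in for a screening assay (it simply counts matches to an arbitrary target sequence), and the mutation rate, library size, and number of rounds are illustrative values.

```python
import random

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"


def mutate(seq: str, rate: float, rng: random.Random) -> str:
    """Random point mutagenesis: each position is substituted with probability `rate`."""
    out = []
    for aa in seq:
        if rng.random() < rate:
            # Substitute with a residue different from the current one.
            out.append(rng.choice([a for a in AMINO_ACIDS if a != aa]))
        else:
            out.append(aa)
    return "".join(out)


def toy_fitness(seq: str, target: str) -> int:
    """Hypothetical stand-in for an assay: number of positions matching `target`."""
    return sum(a == b for a, b in zip(seq, target))


def directed_evolution(parent: str, target: str, rounds: int = 4,
                       library_size: int = 200, rate: float = 0.02, seed: int = 0):
    """Iterate diversify -> screen -> select, keeping the best variant as the next template."""
    rng = random.Random(seed)
    best = parent
    history = [toy_fitness(best, target)]
    for _ in range(rounds):
        # Diversify: build a mutant library around the current template.
        library = [mutate(best, rate, rng) for _ in range(library_size)]
        # Screen every variant, then select the top one if it beats the template.
        top = max(library, key=lambda s: toy_fitness(s, target))
        if toy_fitness(top, target) > toy_fitness(best, target):
            best = top
        history.append(toy_fitness(best, target))
    return best, history


if __name__ == "__main__":
    rng = random.Random(42)
    target = "".join(rng.choice(AMINO_ACIDS) for _ in range(100))
    parent = mutate(target, 0.5, rng)  # start far from the arbitrary optimum
    best, history = directed_evolution(parent, target)
    print(history)  # best-so-far fitness after each round
```

Because only the single best variant is carried forward, and it is replaced only when a library member beats it, the recorded fitness never decreases; real campaigns may instead carry several parents forward or recombine hits (as in DNA shuffling).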

CRISPR-based directed evolution represents a significant methodological advance: it "employs RNA-guided nucleases (e.g., Cas9, Cas12a) from the CRISPR-Cas system to achieve precise and efficient gene targeting" [6]. The methodology uses both double-strand break (DSB)-dependent and DSB-independent systems to generate genetic diversity, with applications spanning enzymatic engineering, metabolic engineering, antibody engineering, and plant breeding [6].

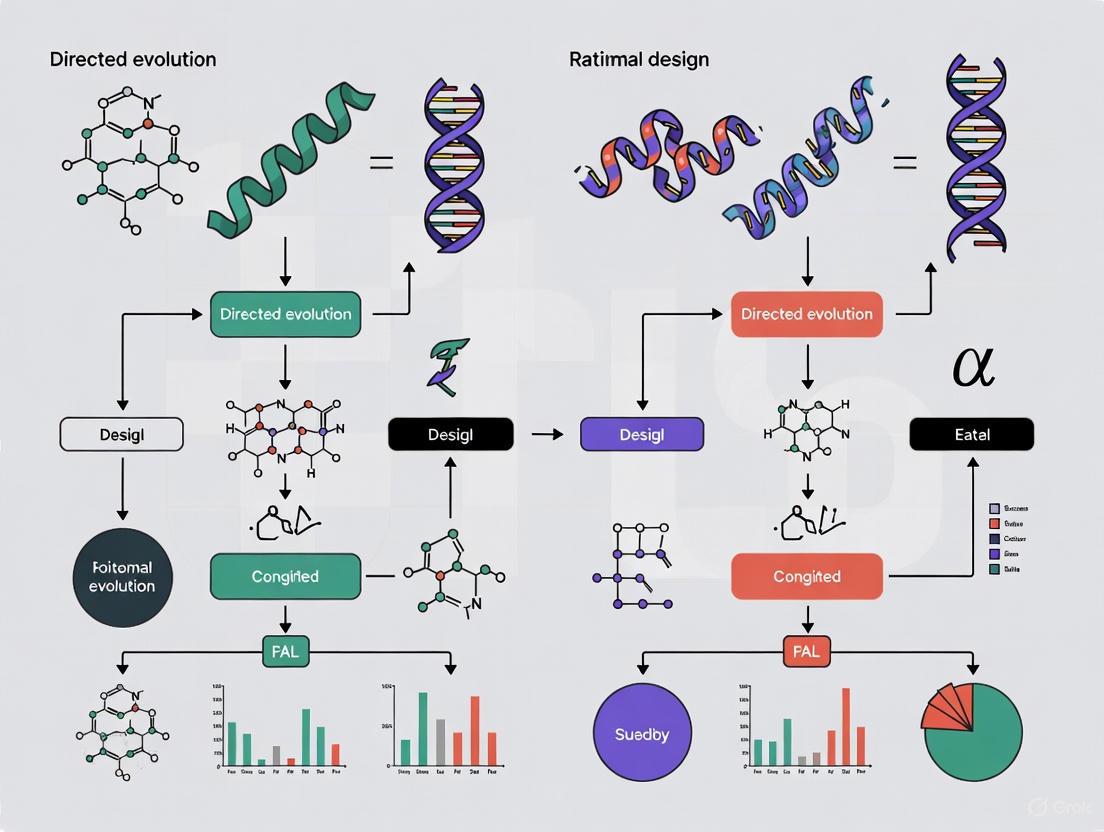

Diagram 1: Directed Evolution Workflow - Classical empirical approach

Rational Design Protocols: From Molecular Modeling to AI-Driven Design

Rational design methodologies employ computational frameworks to predict protein behavior and guide engineering decisions:

Structure Prediction and Analysis: Initial phase involves obtaining or generating high-quality protein structures through X-ray crystallography, NMR, or computational prediction tools like AlphaFold [1] [3].

Computational Scoring and Filtering: Generated sequences are evaluated using composite metrics. The COMPSS (composite metrics for protein sequence selection) framework enables selection of phylogenetically diverse functional sequences, improving experimental success rates by 50-150% [4].

Validation and Iteration: Computational predictions are validated through experimental testing, and the results inform the next design round. The DeepDE algorithm exemplifies this iterative refinement: it performs "supervised learning on ~1,000 mutants," and its developers report that "a mutation radius of three allows efficient exploration of vast sequence space" [5].
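To make "vast sequence space" concrete, the sketch below counts how many substitution variants lie within a given mutation radius of a parent: a protein of L residues has C(L, k) · 19^k variants carrying exactly k substitutions. It assumes substitutions only (no insertions or deletions) and uses a hypothetical 238-residue length, roughly that of avGFP; it is an illustration of the arithmetic, not part of DeepDE itself.

```python
from math import comb


def variants_at_distance(length: int, k: int, alphabet: int = 20) -> int:
    """Number of sequences with exactly k substitutions relative to a parent of `length` residues."""
    return comb(length, k) * (alphabet - 1) ** k


def variants_within_radius(length: int, radius: int, alphabet: int = 20) -> int:
    """Size of the substitution-only neighbourhood up to `radius` mutations (parent included)."""
    return sum(variants_at_distance(length, k, alphabet) for k in range(radius + 1))


if __name__ == "__main__":
    L = 238  # hypothetical length, roughly that of avGFP
    for r in (1, 2, 3):
        n = variants_within_radius(L, r)
        print(f"radius {r}: {n:.3e} variants; 1,000 screens sample {1000 / n:.1e} of them")
```

For L = 238 there are about 1.5 × 10^10 triple mutants, so a round of ~1,000 measured mutants samples well under one millionth of the radius-three neighbourhood; a learned model, rather than exhaustive screening, has to decide which variants to test.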

Machine learning pipelines like ProDomino demonstrate the power of rational design for specific engineering challenges. ProDomino uses "ESM-2-derived protein sequence representations as model inputs in combination with a masking strategy" to predict domain insertion sites in proteins, achieving approximately 80% success rates in experimental validation [8].

Diagram 2: Rational Design Workflow - Structure-informed predictive approach

Integrated Approaches: Hybrid Methodologies

Contemporary protein engineering increasingly employs hybrid approaches that leverage the strengths of both paradigms. For example, the DeepDE algorithm "enables iterative protein evolution via supervised learning on ~1,000 mutants" and achieved "a 74.3-fold increase in GFP488nm activity in just four rounds" by combining directed evolution's iterative approach with deep learning guidance [5].

Autonomous enzyme engineering platforms represent the cutting edge of this integration, combining "machine learning and large language models with biofoundry automation to eliminate the need for human intervention, judgement, and domain expertise" [7]. These systems have produced enzyme variants with 16- to 26-fold improvements in activity (and, for AtHMT, a 90-fold improvement in substrate preference) within four weeks, while requiring construction and characterization of fewer than 500 variants [7].

Research Reagent Solutions: Essential Materials and Platforms

Table 3: Key Research Reagents and Platforms for Protein Engineering

| Reagent/Platform | Function | Application Context |
|---|---|---|
| Error-Prone PCR | Introduces random mutations throughout target gene | Directed evolution library generation [2] |
| DNA Shuffling | Recombines gene fragments to create chimeric variants | Directed evolution of homologous genes [2] |
| CRISPR-Cas Systems | Enables precise gene targeting and editing | CRISPR-directed evolution in various host systems [6] |
| ESM-2 (Evolutionary Scale Modeling) | Protein language model for sequence likelihood prediction | Variant fitness prediction and library design [7] |
| AlphaFold2 | Protein structure prediction from sequence | Structure-informed rational design [1] |
| PROTEUS Platform | Mammalian directed evolution using virus-like vesicles | Evolution of proteins requiring mammalian cellular environment [9] |
| ProDomino | Machine learning pipeline for predicting domain insertion sites | Engineering allosteric protein switches [8] |
| Rosetta | Molecular modeling software for protein design | Structure-based design and stability prediction [4] |
| Autonomous Biofoundries | Integrated robotic systems for automated experimentation | AI-powered autonomous protein engineering [7] |

The comparative analysis of directed evolution and rational design reveals a complex landscape in which strategic selection and integration of approaches deliver the best outcomes. Directed evolution excels when structural information is limited or when targeting complex phenotypes involving cooperative mutations, with recent AI-guided approaches dramatically improving its efficiency. Rational design provides superior precision for well-characterized systems and when specific molecular properties are targeted, with computational advances continuously expanding its applicability.

The emerging paradigm of autonomous protein engineering represents the logical convergence of these approaches, combining "machine learning and large language models with biofoundry automation" to achieve dramatic improvements in efficiency and success rates [7]. These systems highlight the diminishing distinction between empirical exploration and structure-informed prediction, instead leveraging both methodologies within integrated frameworks.

For research and drug development professionals, selection criteria should consider target characterization status, available structural data, throughput capabilities, and computational resources. The experimental data and methodological comparisons presented in this guide provide a foundation for these strategic decisions, enabling more effective navigation of the protein engineering landscape and contributing to accelerated development of novel biologics, enzymes, and research tools.

Directed evolution is a powerful protein engineering technology that harnesses Darwinian principles in a laboratory setting. Through iterative cycles of genetic diversification and functional screening, researchers can tailor proteins for specific applications without requiring detailed prior knowledge of their structure, thereby bypassing the limitations of purely rational design approaches [10] [2]. This guide provides an objective comparison of its performance against rational design, supported by experimental data and detailed protocols.

Comparative Success Rates: Directed Evolution vs. Rational Design

The table below summarizes key performance metrics from recent studies, illustrating the distinct advantages and outputs of each protein engineering strategy.

| Engineering Strategy | Key Principle | Typical Fold Improvement (Recent Examples) | Required Structural Knowledge | Typical Experimental Timeline |
|---|---|---|---|---|
| Directed Evolution | Iterative random mutagenesis and screening/selection to discover improved variants [10]. | AtHMT enzyme: 16-fold improvement in ethyltransferase activity [7]; YmPhytase: 26-fold improvement in activity at neutral pH [7]; Subtilisin E: 256-fold higher activity in organic solvent [2]. | Low to None [10] | 4 weeks for 4 rounds (AI-powered platform) [7] |
| Rational Design | Targeted mutations based on structural knowledge and computational models to achieve a predefined design [11]. | De novo serine hydrolase: catalytic efficiency (kcat/Km) of up to 2.2 × 10⁵ M⁻¹·s⁻¹ [11]; often results in low initial activities requiring subsequent evolution [11]. | High [11] | Varies; can be lengthy for de novo design and validation |

Detailed Experimental Protocols

Protocol 1: A Generalized Autonomous Directed Evolution Workflow

This modern protocol integrates machine learning (ML) and laboratory automation for efficient enzyme engineering [7].

- Library Design: Generate an initial library of protein variants using a combination of a protein language model (e.g., ESM-2) and an epistasis model (e.g., EVmutation) to maximize diversity and quality [7].
- Automated Library Construction:
  - Utilize a biofoundry (e.g., the Illinois Biological Foundry for Advanced Biomanufacturing, iBioFAB) for a fully automated workflow.
  - Employ a high-fidelity (HiFi) assembly-based mutagenesis method to create variant libraries without the need for intermediate sequencing, achieving ~95% accuracy [7].
  - The process is divided into automated modules: mutagenesis PCR, DNA assembly, transformation, colony picking, plasmid purification, and protein expression [7].
- High-Throughput Screening:
  - Culture expressed variants in a 96-well format.
  - Perform automated, quantifiable functional enzyme assays (e.g., measuring ethyltransferase activity for AtHMT or phytase activity at neutral pH for YmPhytase) to determine variant fitness [7].
- Machine Learning-Driven Learning and Iteration:
  - Train machine learning models on the sequence-activity data from each round to predict improved variants for the next round [7].
  - Repeat the design-build-test-learn cycle; in the reported campaigns, four rounds were completed within four weeks with fewer than 500 variants constructed in total [7].
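The library-design step can be sketched as combining two precomputed zero-shot scores. The platform in [7] pairs ESM-2 with EVmutation, but its exact combination rule is not described here, so the mean-rank scheme and the per-site cap below are hypothetical choices; scores are assumed to be precomputed (higher = more favourable) and keyed by substitution labels such as `A123V`.

```python
from __future__ import annotations

from collections import defaultdict


def rank(scores: dict[str, float]) -> dict[str, int]:
    """Rank variants by score, 0 = best (higher score assumed better)."""
    ordered = sorted(scores, key=lambda v: (-scores[v], v))
    return {v: i for i, v in enumerate(ordered)}


def position(variant: str) -> int:
    """Residue position from a substitution label such as 'A123V'."""
    return int(variant[1:-1])


def select_initial_library(plm_scores: dict[str, float],
                           epistasis_scores: dict[str, float],
                           n: int, max_per_position: int = 2) -> list[str]:
    """Pick n variants by mean rank across two zero-shot scores, capping picks per site."""
    shared = plm_scores.keys() & epistasis_scores.keys()
    r_plm = rank({v: plm_scores[v] for v in shared})
    r_epi = rank({v: epistasis_scores[v] for v in shared})
    ordered = sorted(shared, key=lambda v: ((r_plm[v] + r_epi[v]) / 2, v))
    chosen: list[str] = []
    per_site: dict[int, int] = defaultdict(int)
    for v in ordered:
        if len(chosen) == n:
            break
        if per_site[position(v)] < max_per_position:  # spread picks across positions
            chosen.append(v)
            per_site[position(v)] += 1
    return chosen
```

The per-site cap is one simple way to trade a little predicted quality for diversity; with `n` set to 150-200 it would yield a first-round library of the size quoted for the autonomous workflow.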

Protocol 2: Directed Evolution of Enzyme Stability in Organic Solvents

This classic protocol outlines the evolution of subtilisin E for enhanced function in dimethylformamide (DMF) [2].

- Diversification via Error-Prone PCR (epPCR):
  - Method: Perform PCR on the gene of interest under low-fidelity conditions. This is achieved by using a non-proofreading polymerase (e.g., Taq polymerase), biasing dNTP concentrations, and adding manganese ions (Mn²⁺) to introduce random mutations at a target rate of 1-5 mutations per kilobase [10] [2].
- Library Expression and Screening:
  - Clone the mutated gene library into an expression vector and transform into a host organism (e.g., E. coli).
  - Culture individual clones in 96-well microtiter plates and induce protein expression.
  - Assay: Transfer cell lysates to a new plate containing a colorimetric or fluorometric substrate dissolved in 60% DMF. Measure the reaction rate using a plate reader to identify variants with retained or improved activity under the denaturing condition [2].
- Iteration:
  - Use the best-performing variants as templates for the next round of epPCR and screening. In the subtilisin E campaign, sequential rounds accumulated six mutations that together gave 256-fold higher activity in 60% DMF [2].
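Mutation counts per clone in an epPCR library are commonly approximated as Poisson-distributed, which makes the trade-off behind the 1-5 mutations/kb target easy to see. The sketch below assumes that approximation and a hypothetical 1 kb gene; it ignores polymerase mutational bias.

```python
from __future__ import annotations

import math


def poisson_pmf(k: int, lam: float) -> float:
    """Probability of exactly k events for a Poisson process with mean lam."""
    return math.exp(-lam) * lam ** k / math.factorial(k)


def mutation_spectrum(rate_per_kb: float, gene_length_bp: int, k_max: int = 8) -> dict[int, float]:
    """Fraction of clones carrying k = 0..k_max mutations, assuming Poisson-distributed mutations."""
    lam = rate_per_kb * gene_length_bp / 1000  # expected mutations per gene
    return {k: poisson_pmf(k, lam) for k in range(k_max + 1)}


if __name__ == "__main__":
    for rate in (1, 3, 5):  # the 1-5 mutations/kb range quoted above
        spec = mutation_spectrum(rate, gene_length_bp=1000)  # hypothetical 1 kb gene
        print(f"{rate}/kb: unmutated {spec[0]:.3f}, 1-2 mutations {spec[1] + spec[2]:.3f}, "
              f">=3 mutations {1 - spec[0] - spec[1] - spec[2]:.3f}")
```

At 1 mutation/kb roughly 37% of clones (e^-1) carry no mutation and simply reproduce the parent, while at 5 mutations/kb fewer than 1% are unmutated but most clones carry several mutations, raising the chance that at least one is deleterious.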

Protocol 3: Rational De Novo Enzyme Design

This protocol describes the workflow for designing a novel enzyme from scratch, culminating in a designed serine hydrolase [11].

- Theozyme (Theoretical Enzyme) Construction:
  - Quantum Mechanics (QM) Calculation: Define the transition state of the target chemical reaction (e.g., C-H bond cleavage). Use QM methods (e.g., Density Functional Theory) to build a minimal active-site model ("theozyme") by positioning idealized catalytic amino acid side chains around the transition state to stabilize it. This provides key geometric constraints [11].
- Backbone Generation and Sequence Design:
  - Use generative AI models (e.g., RFdiffusion) to create novel protein backbone structures that are compatible with the theozyme's geometric constraints [11].
  - Apply inverse-folding neural networks (e.g., ProteinMPNN) to design amino acid sequences that will fold into the generated backbone and maintain the pre-organized active site [11].
- Computational Filtering and Experimental Testing:
  - Filter the designed enzyme candidates using structural prediction tools (e.g., AlphaFold2) and active-site geometry scoring [11].
  - Synthesize the genes encoding the top candidates, express them in a host system, and purify the proteins for experimental characterization of catalytic efficiency (kcat/Km) [11].
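The filtering step can be sketched as two checks per design: a confidence threshold on the AlphaFold2 model and agreement between the predicted catalytic-atom geometry and the theozyme. The geometry score used here, a superposition-free distance RMSD (dRMSD) over all catalytic-atom pairs, is a swapped-in choice, and the thresholds are hypothetical; coordinates and mean pLDDT are assumed to have been extracted from the predictions beforehand.

```python
import math
from itertools import combinations
from typing import Iterable, List, Sequence, Tuple

Coord = Tuple[float, float, float]


def drmsd(ref: Sequence[Coord], model: Sequence[Coord]) -> float:
    """Distance RMSD over all atom pairs; needs no superposition."""
    if len(ref) != len(model) or len(ref) < 2:
        raise ValueError("need two matched atom lists with at least two atoms")
    sq = [(math.dist(ref[i], ref[j]) - math.dist(model[i], model[j])) ** 2
          for i, j in combinations(range(len(ref)), 2)]
    return math.sqrt(sum(sq) / len(sq))


def filter_designs(designs: Iterable[Tuple[str, float, Sequence[Coord]]],
                   theozyme: Sequence[Coord],
                   min_plddt: float = 80.0,   # hypothetical confidence cut-off
                   max_drmsd: float = 1.0) -> List[str]:  # hypothetical geometry cut-off (Å)
    """Keep designs whose predicted model is confident and reproduces the theozyme geometry.

    designs: (name, mean_plddt, catalytic_atom_coords), atoms in the same order as `theozyme`.
    """
    return [name for name, plddt, site in designs
            if plddt >= min_plddt and drmsd(theozyme, site) <= max_drmsd]
```

Because dRMSD compares internal distances, a design that places the catalytic atoms correctly but in a different frame still scores zero; the trade-off is that it cannot distinguish a geometry from its mirror image.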

Workflow Visualization: Directed Evolution vs. Rational Design

The diagrams below illustrate the core logical workflows for both directed evolution and rational design, highlighting their fundamental differences.

Directed Evolution Workflow

Rational Design Workflow

The Scientist's Toolkit: Key Research Reagent Solutions

The following table details essential materials and their functions in a standard directed evolution campaign.

| Research Reagent / Tool | Function in Workflow |
|---|---|
| Error-Prone PCR (epPCR) Kit | Introduces random mutations across the gene of interest during amplification to create genetic diversity [10]. |
| High-Fidelity DNA Polymerase | Used for accurate gene amplification in library construction methods like HiFi assembly, minimizing unintended errors [7]. |
| Expression Vector (e.g., pPICZαA) | Plasmid for cloning the variant library and expressing the target protein in a host organism (e.g., E. coli or P. pastoris; pPICZαA itself is a P. pastoris secretion vector) [12]. |
| Colorimetric/Fluorometric Substrate | Enables high-throughput screening by producing a measurable signal (color/fluorescence) in response to enzyme activity [10] [12]. |
| Automated Liquid Handling System | Robotics platform that automates repetitive tasks like pipetting, colony picking, and assay setup, enabling high-throughput workflows [7]. |
| Machine Learning Models (e.g., ESM-2) | AI tools used to design initial variant libraries and predict fitness from screening data, guiding the exploration of sequence space [7]. |
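Turning plate-reader kinetic reads into a ranking can be sketched as fitting an initial rate per well and normalizing it to the parent. The well names, the no-enzyme blank, and the assumption that all reads fall in the linear phase of the assay are illustrative; the same calculation applies to a colorimetric or fluorometric readout.

```python
from __future__ import annotations


def initial_rate(times: list[float], signal: list[float]) -> float:
    """Least-squares slope of signal versus time (assumes reads lie in the linear phase)."""
    n = len(times)
    mt, ms = sum(times) / n, sum(signal) / n
    sxx = sum((t - mt) ** 2 for t in times)
    sxy = sum((t - mt) * (s - ms) for t, s in zip(times, signal))
    return sxy / sxx


def fold_improvement(plate: dict[str, list[float]], times: list[float],
                     parent_well: str, blank_well: str) -> dict[str, float]:
    """Blank-corrected rate of each well divided by the blank-corrected parent rate."""
    blank = initial_rate(times, plate[blank_well])
    parent = initial_rate(times, plate[parent_well]) - blank
    return {well: (initial_rate(times, reads) - blank) / parent
            for well, reads in plate.items() if well != blank_well}


if __name__ == "__main__":
    t = [0, 30, 60, 90, 120]  # seconds, hypothetical read schedule
    plate = {
        "A1": [0.05 + 0.0010 * x for x in t],   # parent enzyme (hypothetical)
        "A2": [0.05 + 0.0030 * x for x in t],   # variant (hypothetical)
        "H12": [0.05 + 0.0002 * x for x in t],  # no-enzyme blank (hypothetical)
    }
    ranked = sorted(fold_improvement(plate, t, "A1", "H12").items(), key=lambda kv: -kv[1])
    print(ranked)
```

Subtracting the blank slope removes background substrate turnover, which can be substantial under harsh conditions such as high organic-solvent content.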

In the field of protein engineering, two dominant philosophies have emerged for tailoring biocatalysts: directed evolution, which mimics natural selection through iterative rounds of randomization and screening, and rational design, which employs structural and mechanistic knowledge to make targeted enhancements [2] [13]. While directed evolution has transformed protein engineering over the last two decades, its requirement for high-throughput screening and the vastness of protein sequence space present significant limitations [14] [2]. In contrast, rational design employs a "knowledge-based" strategy, leveraging understanding of protein sequence, structure, and function to preselect promising mutations, resulting in dramatically reduced library sizes and more predictable engineering outcomes [14] [13].

This guide objectively compares the performance of rational design against directed evolution and its AI-enhanced derivatives, providing experimental data and methodologies that highlight the distinct advantages, limitations, and optimal application spaces for each approach. As the toolkit available to protein engineers expands with artificial intelligence and advanced computation, the lines between these strategies are blurring, giving rise to powerful hybrid methodologies [11] [7].

Core Principles and Workflows

The Rational Design Workflow

Rational enzyme design aims to predict mutations that confer desired properties based on understanding the relationships between protein structure and function [13]. The strategy is universal, relatively fast, and has the potential to be developed into algorithms that can quantitatively predict the performance of designed sequences [13]. Its successful application, however, often depends on the availability of structural data and a deep understanding of catalytic mechanisms.

The typical workflow for rational design involves several key stages, as visualized below.

Directed Evolution and AI-Enhanced Workflows

Directed evolution employs an iterative "design-build-test-learn" (DBTL) cycle, mimicking natural selection in the laboratory [2]. Traditional directed evolution relies on random mutagenesis and high-throughput screening, while modern implementations increasingly incorporate machine learning to guide the exploration of sequence space [7] [5].

Quantitative Performance Comparison

The table below summarizes representative performance data for rational design, directed evolution, and AI-enhanced approaches across various protein engineering campaigns.

Table 1: Performance Comparison of Protein Engineering Approaches

| Target Protein | Engineering Goal | Approach | Library Size | Improvement | Key Mutations | Ref. |
|---|---|---|---|---|---|---|
| Pseudomonas fluorescens esterase | Improved enantioselectivity | Semi-rational (3DM analysis) | ~500 variants | 200-fold improved activity, 20-fold improved enantioselectivity | 4 active site positions | [14] |
| Halide methyltransferase (AtHMT) | Altered substrate preference | AI-powered autonomous platform | <500 variants over 4 rounds | 90-fold improvement in substrate preference, 16-fold in ethyltransferase activity | Combination of mutations from initial library | [7] |
| GFP from Aequorea victoria | Increased activity | DeepDE (AI-guided) | ~1,000 mutants per round | 74.3-fold increase in activity over 4 rounds | Triple mutant combinations | [5] |
| Thermus aquaticus DNA polymerase | Altered substrate specificity | Semi-rational (REAP analysis) | 93 variants | Efficient incorporation of unnatural nucleotides | Single amino acid substitution | [14] |
| Phytase (YmPhytase) | Improved neutral pH activity | AI-powered autonomous platform | <500 variants over 4 rounds | 26-fold improvement in activity at neutral pH | Combination of mutations from initial library | [7] |
| Subtilisin E | Improved organic solvent stability | Traditional directed evolution | Not specified | 256-fold higher activity in 60% DMF | 6 cumulative point mutations | [2] |

Methodologies and Experimental Protocols

Key Rational Design Strategies

Sequence-Based Design: Multiple Sequence Alignment

Multiple sequence alignment (MSA) leverages evolutionary information from homologous proteins to identify functionally important residues [13]. The underlying principle is that enzymes with high sequence identity and structural similarity tend to have functional similarity.

Protocol:

- Collect homologous sequences from databases using BLAST or specialized tools like 3DM [14]

- Perform multiple sequence alignment using tools like ClustalOmega or MUSCLE

- Identify conserved regions and CbD (conserved but different) sites: positions that are conserved among homologs but differ in the target enzyme [13]

- Design mutations to match consensus sequences or incorporate beneficial residues from high-performing homologs

- Validate structural compatibility of proposed mutations

Case Study: Engineering Bacillus-like esterase (EstA) for improved activity toward tertiary alcohol esters [13]. MSA of 1,343 sequences revealed a conserved GGG motif in the oxyanion hole, whereas EstA contained GGS. The S→G mutation generated EstA-GGG, with a 26-fold higher conversion rate.
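The consensus and CbD search can be sketched in a few lines. The toy alignment below is hypothetical (it is not the 1,343-sequence alignment from the case study) and simply mimics a conserved GGG motif where the target carries GGS; positions are alignment columns, which must still be mapped back to residue numbers in the target, and the 80% conservation cut-off is an assumption.

```python
from __future__ import annotations

from collections import Counter


def consensus(alignment: list[str]) -> list[tuple[str, float]]:
    """Most common residue and its frequency in each column (gaps ignored)."""
    cols = []
    for column in zip(*alignment):
        residues = [aa for aa in column if aa != "-"]
        if not residues:
            cols.append(("-", 0.0))
            continue
        aa, count = Counter(residues).most_common(1)[0]
        cols.append((aa, count / len(residues)))
    return cols


def cbd_sites(alignment: list[str], target: str, min_conservation: float = 0.8):
    """Columns conserved among homologs where the aligned target residue differs."""
    hits = []
    for col, ((aa, freq), t) in enumerate(zip(consensus(alignment), target), start=1):
        if t != "-" and aa != "-" and freq >= min_conservation and t != aa:
            hits.append((col, t, aa, freq))
    return hits


if __name__ == "__main__":
    homologs = ["HGGGAW", "HGGGAW", "HGGGSW", "HGGGAF", "YGGGAW"]  # toy aligned homologs
    target = "HGGSAW"  # toy target carrying GGS instead of GGG
    for col, t, aa, freq in cbd_sites(homologs, target):
        print(f"column {col}: target {t}, consensus {aa} ({freq:.0%}) -> candidate {t}{col}{aa}")
```

Each hit is only a candidate back-to-consensus mutation; the final protocol step (checking structural compatibility) still applies before it is tested.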

Structure-Based Design: Active Site Remodeling

This approach utilizes 3D structural information to design mutations that alter steric hindrance, electrostatic interactions, or hydrogen bonding networks in the active site [14] [13].

Protocol:

- Obtain 3D structure through X-ray crystallography, NMR, or homology modeling

- Identify key active site residues involved in substrate binding and catalysis

- Analyze substrate binding mode and potential bottlenecks (steric clashes, poor geometry)

- Design mutations to optimize substrate positioning, transition state stabilization, or product release

- Compute binding energies and verify geometric compatibility using molecular modeling software
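The bottleneck analysis step above often starts with a simple steric screen of a docked substrate against active-site atoms. The sketch below flags atom pairs whose van der Waals spheres overlap by more than a tolerance, using Bondi radii; the 0.4 Å tolerance, atom labels, and coordinates are assumptions for illustration, and this check does not replace the energy calculations in the final step.

```python
import math

# Bondi van der Waals radii in Å.
BONDI_RADII = {"H": 1.20, "C": 1.70, "N": 1.55, "O": 1.52, "S": 1.80}


def find_clashes(ligand, pocket, tolerance=0.4):
    """Return (ligand_atom, pocket_atom, distance, overlap) for overlaps above `tolerance` Å.

    ligand, pocket: lists of (label, element, (x, y, z)) with coordinates in Å.
    """
    clashes = []
    for l_name, l_elem, l_xyz in ligand:
        for p_name, p_elem, p_xyz in pocket:
            d = math.dist(l_xyz, p_xyz)
            overlap = BONDI_RADII[l_elem] + BONDI_RADII[p_elem] - d
            if overlap > tolerance:
                clashes.append((l_name, p_name, round(d, 2), round(overlap, 2)))
    return sorted(clashes, key=lambda c: -c[3])  # worst overlaps first


if __name__ == "__main__":
    substrate = [("C7", "C", (0.0, 0.0, 0.0)), ("O2", "O", (1.2, 0.8, 0.0))]  # hypothetical pose
    pocket = [("F120:CZ", "C", (2.5, 0.0, 0.0)), ("S200:OG", "O", (3.9, 1.5, 0.0))]  # hypothetical atoms
    for clash in find_clashes(substrate, pocket):
        print(clash)
```

Residues that recur in clashes across plausible substrate poses are natural candidates for substitution with smaller side chains, to be confirmed with proper energy calculations.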

Computational Protein Design with Theozymes

Theozymes (theoretical enzymes) are minimal active site models composed of catalytic groups positioned to stabilize the reaction transition state, designed using quantum mechanical calculations [11].

Protocol:

- Define the catalytic mechanism and identify key catalytic residues

- Model the transition state using quantum mechanical methods (DFT, HF, semi-empirical)

- Position catalytic groups around the transition state to form stabilizing interactions

- Scan protein scaffolds for compatibility with the designed active site (e.g., using RosettaMatch)

- Design the surrounding protein matrix to support the preorganized catalytic geometry
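Checking whether a scaffold placement reproduces the theozyme can be sketched as testing measured distances and angles against ideal values with tolerances, the kind of geometric constraint that scaffold-matching tools such as RosettaMatch work with. The atom names, ideal values, and tolerances below are hypothetical, and the sketch does not read any real tool's constraint format.

```python
import math


def angle_deg(a, b, c) -> float:
    """Angle a-b-c in degrees, with the vertex at b."""
    ba = [x - y for x, y in zip(a, b)]
    bc = [x - y for x, y in zip(c, b)]
    cos = sum(x * y for x, y in zip(ba, bc)) / (math.hypot(*ba) * math.hypot(*bc))
    return math.degrees(math.acos(max(-1.0, min(1.0, cos))))  # clamp rounding error


def violations(atoms, constraints):
    """Return constraints whose measured value deviates from ideal by more than the tolerance.

    atoms: {atom_name: (x, y, z)} in Å.
    constraints: (kind, atom_names, ideal, tolerance), kind "distance" (Å) or "angle" (degrees).
    """
    bad = []
    for kind, names, ideal, tol in constraints:
        pts = [atoms[n] for n in names]
        value = math.dist(*pts) if kind == "distance" else angle_deg(*pts)
        if abs(value - ideal) > tol:
            bad.append((kind, names, round(value, 2), ideal))
    return bad


if __name__ == "__main__":
    placement = {"SER_OG": (0.0, 0.0, 0.0), "HIS_NE2": (3.0, 0.0, 0.0), "TS_O": (3.0, 3.0, 0.0)}
    theozyme_constraints = [  # hypothetical ideal geometry
        ("distance", ("SER_OG", "HIS_NE2"), 2.8, 0.3),
        ("angle", ("SER_OG", "HIS_NE2", "TS_O"), 120.0, 15.0),
    ]
    print(violations(placement, theozyme_constraints) or "all constraints satisfied")
```

A placement with no violations would then proceed to the final step, where the surrounding protein matrix is designed to hold that geometry in place.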

AI-Enhanced Directed Evolution Methodologies

Modern autonomous enzyme engineering platforms combine machine learning with biofoundry automation to accelerate protein optimization [7].

Protocol for AI-Powered Engineering:

- Initial Library Design: Use protein language models (ESM-2) and epistasis models (EVmutation) to select 150-200 diverse, high-quality variants [7]

- Automated Library Construction: Implement high-fidelity assembly methods with ~95% accuracy, eliminating intermediate sequencing verification [7]

- High-Throughput Screening: Automate protein expression, purification, and functional assays on robotic platforms

- Machine Learning Analysis: Train models on sequence-activity relationships to predict improved variants for subsequent rounds

- Iterative Optimization: Conduct multiple DBTL cycles, with each round informed by previous results
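The machine-learning analysis step can be illustrated with a deliberately simple model. This is a swapped-in technique, not the model used in [7]: a ridge regression of log-fitness on mutation indicators (an additive, non-epistatic model) fitted by coordinate descent, followed by ranking untested combinations of predicted-beneficial mutations. Mutation labels and fitness values in the usage example are hypothetical.

```python
import math
from itertools import combinations


def fit_additive_ridge(variants, fitness, alpha=1.0, sweeps=200):
    """Fit log(fitness) ~ b + sum of per-mutation effects by ridge coordinate descent.

    variants: list of tuples of labels such as ("A10V", "G20S"); () is the parent.
    fitness: activity relative to the parent (must be > 0).
    """
    muts = sorted({m for v in variants for m in v})
    y = [math.log(f) for f in fitness]
    w = {m: 0.0 for m in muts}
    b = sum(y) / len(y)
    for _ in range(sweeps):
        for m in muts:
            rows = [i for i, v in enumerate(variants) if m in v]
            # Partial residual of the variants carrying m, excluding m's own effect.
            r = sum(y[i] - b - sum(w[k] for k in variants[i] if k != m) for i in rows)
            w[m] = r / (len(rows) + alpha)  # closed-form ridge update for one coordinate
        # Unpenalized intercept update.
        b = sum(yi - sum(w[k] for k in v) for yi, v in zip(y, variants)) / len(y)
    return b, w


def propose(b, w, tested, k=3, max_order=3):
    """Rank untested combinations of predicted-beneficial mutations at distinct sites."""
    beneficial = sorted(m for m in w if w[m] > 0)
    candidates = []
    for order in range(1, max_order + 1):
        for combo in combinations(beneficial, order):
            sites = {int(m[1:-1]) for m in combo}
            if len(sites) < order or frozenset(combo) in tested:
                continue  # skip two substitutions at one site and already-measured variants
            candidates.append((b + sum(w[m] for m in combo), combo))
    candidates.sort(key=lambda c: (-c[0], c[1]))
    return [combo for _, combo in candidates[:k]]


if __name__ == "__main__":
    variants = [(), ("A10V",), ("G20S",), ("K30R",), ("L40F",), ("A10V", "G20S")]
    fitness = [1.0, 2.0, 1.5, 0.5, 1.5, 3.0]  # hypothetical round-1 measurements
    b, w = fit_additive_ridge(variants, fitness)
    print(propose(b, w, {frozenset(v) for v in variants}))
```

An additive model cannot capture epistasis, so the combinations it ranks highly still need to be measured in the next round; its advantage is that each fitted weight reads directly as a per-mutation effect on log-activity.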

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 2: Key Research Reagents and Computational Tools for Rational Design

| Category | Tool/Reagent | Specific Examples | Function in Workflow |
|---|---|---|---|
| Computational Design Software | Rosetta Design Suite | RosettaMatch, RosettaDesign | Scaffold screening, sequence design, and energy calculations for de novo enzyme design [14] [11] |
| Molecular Dynamics Software | GROMACS, AMBER, NAMD | VMD for visualization | Simulating protein dynamics, identifying functional motions, and analyzing access tunnels [14] [15] |
| Quantum Chemistry Packages | Gaussian, ORCA | DFT (B3LYP/6-31+G*) | Transition state optimization and theozyme design [11] |
| Sequence Analysis Platforms | 3DM, HotSpot Wizard | Multiple sequence alignment, phylogenetic analysis | Identifying evolutionary patterns and functional hotspots [14] |
| AI/ML Tools | Protein language and inverse-folding models | ESM-2 (language model), ProteinMPNN (inverse folding) | Variant fitness prediction (ESM-2) and structure-conditioned sequence design (ProteinMPNN) [7] [15] |
| Experimental Mutagenesis Kits | Site-directed mutagenesis kits | Q5 Site-Directed Mutagenesis Kit | Introducing specific mutations into target genes [13] |
| Structural Biology Reagents | Crystallization screens | Hampton Research screens | Protein crystallization for structural determination |

The comparative analysis presented in this guide demonstrates that rational design, directed evolution, and AI-enhanced approaches each occupy distinct but complementary niches in the protein engineering landscape.

Rational design excels when substantial structural and mechanistic knowledge is available, enabling targeted interventions with minimal experimental screening [14] [13]. Its strength lies in engineering specific properties like enantioselectivity or altering substrate specificity where the structural determinants are reasonably well-understood. The methodology is particularly powerful for introducing novel catalytic functions through de novo design, as demonstrated by the creation of artificial Diels-Alderases [14].

Traditional directed evolution remains valuable for optimizing complex phenotypes where structural insights are limited, or when multiple interdependent properties require improvement simultaneously [2]. However, its requirement for large library sizes and high-throughput screening presents practical limitations.

AI-enhanced approaches represent the emerging frontier, combining the exploratory power of directed evolution with the predictive capability of computational design [7] [5]. These methods significantly reduce experimental burden while efficiently navigating sequence space, as evidenced by the rapid optimization of halide methyltransferase and phytase enzymes within four weeks [7].

For researchers and drug development professionals, the strategic selection of an engineering approach should be guided by the availability of structural information, understanding of mechanism, complexity of the target property, and available screening capacity. As computational power and biological understanding advance, the integration of these methodologies will continue to push the boundaries of what is achievable in protein engineering.

Historical Context and Nobel Prize-Winning Advancements

The quest to tailor enzymes and proteins for research, therapeutics, and industrial applications has been fundamentally shaped by two powerful philosophies: directed evolution and rational design. Directed evolution, a method that mimics natural selection in the laboratory, was championed by Frances H. Arnold, whose groundbreaking work earned her the Nobel Prize in Chemistry in 2018 [16]. This approach stands in contrast to rational design, which relies on detailed knowledge of protein structure and mechanism to make precise, computational predictions. For decades, these strategies have been viewed as distinct, even competing, paths to engineering better biocatalysts. Today, the most advanced platforms in the field are moving beyond this dichotomy, leveraging artificial intelligence (AI) to fuse the exploratory power of evolution with the precision of design, thereby creating a new, hybrid paradigm for protein engineering [7] [16].

Historical Development and Key Methodologies

The journey of protein engineering reflects a continuous effort to balance the exploration of vast sequence space with the practical constraints of laboratory work.

The Rise of Directed Evolution

Modern directed evolution emerged in the 1990s as an iterative, two-step process of diversification and screening. [2] Early landmark studies, such as the evolution of the serine protease subtilisin E for enhanced activity in an organic solvent, demonstrated its power. Through sequential rounds of random mutagenesis via error-prone PCR and screening, researchers identified a variant with a 256-fold improvement, a feat achieved through the accumulation of six cooperative mutations that would have been difficult to predict in advance. [2] A significant leap forward came with the development of recombination-based techniques like DNA shuffling, which recombines beneficial mutations from different parent genes. This approach, mimicking natural sexual reproduction, proved vastly more efficient than purely random methods, yielding a β-lactamase variant that conferred a 32,000-fold increase in antibiotic resistance to its host E. coli. [2]

The Principles of Rational and Semi-Rational Design

Concurrently, rational design sought to apply a "first-principles" understanding of protein structure and function. This approach often begins with a detailed analysis of a protein's three-dimensional structure to identify key active site residues, which are then targeted for site-directed mutagenesis. [16] A more recent and powerful extension is semi-rational design, which uses computational tools to leverage evolutionary information. [14] By analyzing multiple sequence alignments of homologous proteins, tools such as HotSpot Wizard and the 3DM database can identify "hotspot" positions that are naturally tolerant to mutation, allowing engineers to create small, high-quality libraries focused on functionally rich regions of the protein. [14] This strategy dramatically increases the odds of success while reducing the number of variants that need to be screened.
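Simple arithmetic can estimate how much screening a focused library saves. The sketch below illustrates the idea under stated assumptions and is not a method from the cited studies. It assumes NNK randomization (32 codons per position) with equal codon representation. It uses the common oversampling approximation T = -V ln(1 - F), where V is the number of codon combinations and F is the desired probability of sampling any given one. The function names are hypothetical.

```python
import math

def nnk_codon_diversity(n_positions: int) -> int:
    """Distinct codon combinations when n positions are randomized with NNK.

    NNK = 4 (N) x 4 (N) x 2 (K: G or T) = 32 codons per position,
    which covers all 20 amino acids plus one stop codon (TAG).
    """
    return 32 ** n_positions

def clones_for_coverage(diversity: int, coverage: float = 0.95) -> int:
    """Clones to screen so each codon combination is sampled with probability `coverage`.

    Assumes every combination is equally likely (no PCR or codon bias):
    T = -V * ln(1 - F).
    """
    if not 0 < coverage < 1:
        raise ValueError("coverage must be between 0 and 1")
    return math.ceil(-diversity * math.log(1 - coverage))

if __name__ == "__main__":
    for n in (1, 2, 3):
        v = nnk_codon_diversity(n)
        print(f"{n} NNK site(s): {v} codon variants, "
              f"~{clones_for_coverage(v)} clones for 95% coverage")
```

Under these assumptions, one randomized site needs on the order of a hundred clones for 95% coverage. Three simultaneous sites already need roughly a hundred thousand, which is why focused libraries usually target only a few positions at a time.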

Table 1: Core Methodologies in Protein Engineering

| Methodology | Underlying Principle | Key Tools & Techniques | Library Size |
|---|---|---|---|
| Directed Evolution | Mimics natural selection; exploration without requiring structural knowledge. | Error-prone PCR, DNA shuffling, StEP recombination. [2] | Very Large (10^4 - 10^6+ variants) |
| Rational Design | Structure-based precise alterations using physics and knowledge. | Site-directed mutagenesis, computational modeling (e.g., Rosetta). [16] | Very Small (Often < 10 variants) |
| Semi-Rational Design | Combines evolutionary information with structural data to target specific regions. | Sequence conservation analysis (e.g., 3DM), structural analysis. [14] | Small (10^2 - 10^3 variants) |

Quantitative Comparison of Success Rates and Performance

Directly comparing the success rates of directed evolution and rational design is complex, as "success" is project-dependent. However, emerging data from AI-powered platforms that integrate both approaches provide compelling evidence for their combined efficacy.

A 2025 study demonstrated a generalized autonomous platform that integrated machine learning with biofoundry automation. This system uses both protein language models (e.g., ESM-2) and epistasis models (e.g., EVmutation) to design variants, and it completed each engineering campaign in four weeks. For two different enzymes, the initial designed libraries contained 180 variants each. A substantial proportion of these designed variants performed above the wild-type baseline (59.6% for AtHMT and 55% for YmPhytase), and 50% and 23%, respectively, were significantly better. [7] After four rounds of iterative optimization, the platform generated variants with up to 90-fold improvement in substrate preference and 26-fold improvement in catalytic activity. [7]

Another 2025 study on the AiCE (AI-informed constraints for protein engineering) platform, which uses inverse folding models, reported success rates across eight different protein engineering tasks ranging from 11% to 88%, successfully engineering proteins from tens to thousands of residues in size. [17]
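Because "success" is project-dependent, it helps to state the operational definition being used. A common definition, and the one implied by the AtHMT and YmPhytase figures above, is the fraction of tested variants whose activity exceeds the wild-type baseline, optionally by some fold cutoff. The minimal sketch below uses hypothetical activity values. The fold cutoff is an assumed stand-in for the statistical test a real study would use to call a variant "significantly better".

```python
def fraction_above(baseline: float, activities: list[float], fold: float = 1.0) -> float:
    """Fraction of tested variants whose activity exceeds fold x baseline."""
    if not activities:
        raise ValueError("no variants measured")
    cutoff = fold * baseline
    return sum(a > cutoff for a in activities) / len(activities)

if __name__ == "__main__":
    wild_type = 1.0  # hypothetical normalized wild-type activity
    measured = [0.2, 0.8, 1.1, 1.3, 2.5, 0.9, 1.05, 3.0]  # hypothetical variant activities
    print(f"above wild type: {fraction_above(wild_type, measured):.0%}")
    print(f"above 1.5x wild type: {fraction_above(wild_type, measured, fold=1.5):.0%}")
```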

Table 2: Representative Experimental Outcomes from Integrated AI Platforms

| Engineering Platform | Target Protein | Engineering Goal | Key Results | Experimental Timeline |
|---|---|---|---|---|
| AI-Powered Autonomous Platform [7] | Halide methyltransferase (AtHMT) | Improve ethyltransferase activity | 16-fold improvement in activity; 90-fold change in substrate preference. [7] | 4 weeks (4 rounds) |
| AI-Powered Autonomous Platform [7] | Phytase (YmPhytase) | Improve activity at neutral pH | 26-fold improvement in activity at neutral pH. [7] | 4 weeks (4 rounds) |
| AiCE Platform [17] | Various (Deaminases, Nucleases, etc.) | Improve activity & specificity | Success rates of 11%-88% across 8 different protein tasks. [17] | Not Specified |

Detailed Experimental Protocols

To illustrate how these methodologies are implemented, below are detailed protocols for a classic directed evolution campaign and a modern semi-rational design workflow.

Protocol 1: Directed Evolution via Error-Prone PCR and Screening

This protocol outlines the iterative DBTL (Design-Build-Test-Learn) cycle used in traditional directed evolution. [2]

- Diversification (Design & Build): The gene of interest is amplified using error-prone PCR. This technique uses altered reaction conditions (e.g., unbalanced dNTP concentrations, the addition of Mn²⁺) to reduce the fidelity of the DNA polymerase, introducing random mutations throughout the gene sequence.

- Library Construction: The mutated PCR products are cloned into an appropriate expression vector and transformed into a host organism (e.g., E. coli) to create a library of variant clones.

- Screening (Test): The expressed variants are screened for the desired property using high-throughput assays. This could involve growing colonies on selective media, assaying cell lysates for enzymatic activity against a chromogenic substrate, or using fluorescence-activated cell sorting (FACS) for surface-displayed proteins.

- Selection (Learn): The best-performing variants from the screen are identified. Their genes are sequenced and serve as the templates for the next round of diversification. This cycle is repeated until the desired level of improvement is attained.
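A toy simulation can illustrate this iterative cycle. This hypothetical sketch does not model any real protein. The "assay" counts matches to a hidden optimum sequence, and random substitutions stand in for error-prone PCR. Only the single best variant from each round is carried forward. All names and parameter values are illustrative assumptions.

```python
import random

ALPHABET = "ACDEFGHIKLMNPQRSTVWY"  # the 20 standard amino acids

def toy_fitness(seq: str, optimum: str) -> int:
    """Hypothetical assay: number of positions matching a hidden optimum."""
    return sum(a == b for a, b in zip(seq, optimum))

def random_mutagenesis(seq: str, rate: float, rng: random.Random) -> str:
    """Stand-in for epPCR: each residue is substituted with probability `rate`."""
    out = []
    for aa in seq:
        if rng.random() < rate:
            out.append(rng.choice([x for x in ALPHABET if x != aa]))
        else:
            out.append(aa)
    return "".join(out)

def directed_evolution(parent: str, optimum: str, rounds: int = 10,
                       library_size: int = 200, rate: float = 0.02,
                       seed: int = 0) -> list[int]:
    """Run diversify -> screen -> select rounds; return the best fitness after each round."""
    rng = random.Random(seed)
    best, history = parent, []
    for _ in range(rounds):
        library = [random_mutagenesis(best, rate, rng) for _ in range(library_size)]
        # Screen: the top variant becomes the new template only if it beats the parent
        top = max(library, key=lambda s: toy_fitness(s, optimum))
        if toy_fitness(top, optimum) > toy_fitness(best, optimum):
            best = top
        history.append(toy_fitness(best, optimum))
    return history

if __name__ == "__main__":
    rng = random.Random(1)
    start = "".join(rng.choice(ALPHABET) for _ in range(50))
    target = "".join(rng.choice(ALPHABET) for _ in range(50))
    print("start:", toy_fitness(start, target), "per round:", directed_evolution(start, target))
```

The simulation accepts a variant only when it beats the current parent, so the best fitness never decreases from one round to the next.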

Protocol 2: Semi-Rational Design with Consensus Analysis

This protocol leverages evolutionary data to create smart, focused libraries. [14]

- Sequence Analysis (Design): A multiple sequence alignment (MSA) is generated by collecting hundreds to thousands of homologous sequences from public databases. Residues that are highly conserved across the family are identified as potential critical functional spots. Positions that show natural variability are identified as potential "hotspots" for engineering.

- Target Selection: Based on the MSA and, if available, a 3D structure of the target protein, a subset of hotspot residues (e.g., those lining the active site or a substrate access tunnel) is chosen for mutagenesis.

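- Library Construction (Build): Instead of random mutagenesis, site-saturation mutagenesis is performed at each selected position, either individually or in combination. Fully degenerate codons (e.g., NNK, which encodes all 20 amino acids) vary each targeted position to every residue. Reduced or custom codon sets can instead restrict each position to the amino acids observed in the alignment, so that only evolutionarily "allowed" substitutions are sampled. In either case, only the targeted positions are varied.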

- Screening & Characterization (Test & Learn): The smaller, functionally enriched library is screened. The hit rate is typically much higher than in a random library. Positive hits are characterized in detail to understand the structural and mechanistic basis for the improvement.
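Per-column Shannon entropy is one common way to quantify variability in an alignment, and it can be used to sketch the conservation analysis in the first step. This simplified illustration is not the algorithm used by HotSpot Wizard or 3DM. It assumes a pre-computed alignment and ignores gaps and sequence weighting. The entropy thresholds are arbitrary example values.

```python
import math
from collections import Counter

def column_entropy(column: str) -> float:
    """Shannon entropy (bits) of residues in one alignment column, ignoring gaps."""
    residues = [c for c in column if c != "-"]
    if not residues:
        return 0.0
    n = len(residues)
    return -sum((k / n) * math.log2(k / n) for k in Counter(residues).values())

def classify_positions(msa: list[str], conserved_max: float = 0.5,
                       variable_min: float = 1.5) -> dict[str, list[int]]:
    """Split 1-based alignment columns into conserved and variable ("hotspot") sets.

    Thresholds are illustrative only; columns in between are left unclassified.
    """
    length = len(msa[0])
    if any(len(s) != length for s in msa):
        raise ValueError("sequences must be aligned to equal length")
    result: dict[str, list[int]] = {"conserved": [], "variable": []}
    for i in range(length):
        h = column_entropy("".join(s[i] for s in msa))
        if h <= conserved_max:
            result["conserved"].append(i + 1)
        elif h >= variable_min:
            result["variable"].append(i + 1)
    return result

if __name__ == "__main__":
    toy_msa = ["MKTAYIA", "MKSAHIA", "MKEGWIA", "MKDAFIA"]  # hypothetical homologs
    print(classify_positions(toy_msa))
```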

The logical flow of this semi-rational design strategy is summarized in the diagram below.

The Modern Toolkit: AI-Integrated Platforms

The most significant recent advancement is the fusion of these approaches into autonomous AI-powered platforms. These systems close the DBTL loop with minimal human intervention, creating a hyper-efficient cycle of protein optimization.

- AI-Driven Design: Machine learning models, particularly protein language models (e.g., ESM-2) and inverse folding models (e.g., ProteinMPNN), are used to predict beneficial mutations and generate novel sequences that are likely to be stable and functional. [7] [11] [17]

- Automated Build & Test: Robotic biofoundries, like the Illinois Biological Foundry (iBioFAB), automatically execute the construction of DNA variants, transform hosts, express proteins, and run high-throughput functional assays. [7]

- Machine Learning (Learn): The experimental data from each round is used to retrain and refine the AI models, improving their predictive power for subsequent iterations. This allows the system to efficiently navigate the fitness landscape. [7]
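A deliberately simple model can illustrate the Learn step. The sketch below is not the platform's low-N regression model [7]. It assumes a purely additive model in which each mutation's effect is the mean log2 fold-change of the variants carrying it, and it ignores epistasis. It then ranks untested pairwise combinations of beneficial mutations for the next round. The mutation labels (e.g., "A23V") and activity values are hypothetical.

```python
import itertools
import math

def fit_additive_effects(measurements: dict[tuple[str, ...], float],
                         wt_activity: float) -> dict[str, float]:
    """Per-mutation effect = mean log2 fold-change share of the variants carrying it.

    A variant's log2 fold-change is split equally among its mutations (additive, no epistasis).
    """
    shares: dict[str, list[float]] = {}
    for muts, activity in measurements.items():
        log_fc = math.log2(activity / wt_activity)
        for m in muts:
            shares.setdefault(m, []).append(log_fc / len(muts))
    return {m: sum(v) / len(v) for m, v in shares.items()}

def propose_next_round(effects: dict[str, float], order: int = 2,
                       top_k: int = 3) -> list[tuple[tuple[str, ...], float]]:
    """Rank combinations of beneficial mutations by summed predicted log2 fold-change."""
    beneficial = sorted(m for m, e in effects.items() if e > 0)
    ranked = []
    for combo in itertools.combinations(beneficial, order):
        positions = {m[1:-1] for m in combo}  # "A23V" -> "23"
        if len(positions) < order:
            continue  # skip combinations that mutate the same residue twice
        ranked.append((combo, sum(effects[m] for m in combo)))
    ranked.sort(key=lambda item: item[1], reverse=True)
    return ranked[:top_k]

if __name__ == "__main__":
    round1 = {("A23V",): 2.0, ("L45F",): 4.0, ("G12S",): 0.5,
              ("T80I",): 1.5, ("A23C",): 3.0}  # hypothetical single-mutant activities
    for combo, score in propose_next_round(fit_additive_effects(round1, wt_activity=1.0)):
        print(combo, f"predicted log2 FC = {score:.2f}")
```

An additive model is easy to fit with very few data points. The trade-off is that it will miss any epistatic interactions, which is exactly what the richer models used by such platforms aim to capture.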

This integrated workflow is depicted in the following diagram.

The Scientist's Toolkit: Essential Research Reagents and Solutions

The experiments cited rely on a suite of specialized reagents and computational tools.

Table 3: Key Research Reagents and Solutions for Modern Protein Engineering

| Tool / Reagent | Function / Application | Example Use Case |
|---|---|---|
| Error-Prone PCR Kit | Introduces random mutations across a gene during amplification. | Creating diverse variant libraries for initial directed evolution rounds. [2] |
| Site-Directed Mutagenesis Kit | Introduces a specific, pre-determined mutation into a plasmid. | Validating individual hits or conducting rational design of active sites. [16] |
| Protein Language Models (ESM-2) | AI model trained on protein sequences; predicts variant fitness and allowed mutations. | Generating high-quality initial variant libraries without structural data. [7] |
| Inverse Folding Models (ProteinMPNN) | AI model that designs protein sequences that will fold into a desired structure. | De novo enzyme design or optimizing sequences for a given scaffold. [11] [17] |
| Robotic Biofoundry (e.g., iBioFAB) | Integrated automation system for molecular biology and assays. | Executing the entire Build-Test cycle autonomously for high-throughput engineering. [7] |

The historical narrative of directed evolution versus rational design has converged into a unified story of integration. Their foundational principles differ (broad exploration versus targeted precision), but the data show that neither is obsolete. Instead, the highest success rates and most dramatic performance improvements are now being achieved by platforms that synergize their strengths. By using AI to draw insights from both evolutionary history and physical first principles, and by employing automation to accelerate experimentation, this hybrid approach is setting a new standard for protein engineering. It enables researchers to navigate the complex fitness landscape of proteins with unprecedented speed and accuracy, paving the way for breakthroughs in drug development, synthetic biology, and green chemistry.

Methodologies in Action: Techniques, Workflows, and Success Stories

In the pursuit of advanced biocatalysts and therapeutic proteins, researchers primarily employ two philosophical approaches: directed evolution, which mimics natural selection through iterative rounds of mutation and screening, and rational design, which relies on precise, knowledge-based modifications [10]. The successful application of these strategies depends heavily on core laboratory techniques for generating genetic diversity. Among these, Error-Prone PCR (epPCR), DNA Shuffling, and Site-Directed Mutagenesis constitute a fundamental toolkit. This guide provides an objective comparison of these three key techniques, detailing their performance characteristics, experimental protocols, and ideal applications within modern protein engineering workflows, particularly in pharmaceutical development contexts.

Core Technique Comparison

The following table summarizes the fundamental characteristics, advantages, and limitations of the three techniques, providing a high-level overview for experimental planning.

Table 1: Core Characteristics of Key Protein Engineering Techniques

| Feature | Error-Prone PCR (epPCR) | DNA Shuffling | Site-Directed Mutagenesis |
|---|---|---|---|
| Core Principle | Introduces random point mutations via low-fidelity PCR [10]. | Recombines beneficial mutations from multiple parent genes [10]. | Introduces specific, pre-determined mutations at a targeted site [18]. |
| Primary Application | Initial exploration of sequence space for property improvement [19] [10]. | Combining beneficial mutations to achieve additive or synergistic effects [19] [20]. | Probing function of specific residues or constructing known beneficial variants [18]. |
| Typical Library Size | Very Large (10^7 - 10^12) | Large (10^6 - 10^9) | Small (Single variant to 10^3 for saturation) |
| Key Advantage | Requires no structural or mechanistic knowledge; explores broad mutational space. | Mimics natural sexual recombination; can rapidly improve function. | High precision; generates clean, specific mutations without unwanted changes. |
| Key Limitation | Mutation bias (favors transitions); most mutations are neutral or deleterious [10]. | Requires high sequence homology (>70-75%) for efficient crossovers [10]. | Requires prior knowledge of which residues to target. |
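The homology requirement for DNA shuffling can be checked before committing to a library. The minimal sketch below computes pairwise percent identity for parent sequences. It assumes the parents are already aligned to equal length, with gaps written as "-". It applies a 70% cutoff taken from the rule of thumb in the table. The sequences and function names are hypothetical.

```python
import itertools

def percent_identity(seq_a: str, seq_b: str, gap: str = "-") -> float:
    """Percent identity over aligned columns where neither sequence has a gap."""
    if len(seq_a) != len(seq_b):
        raise ValueError("sequences must be pre-aligned to equal length")
    pairs = [(a, b) for a, b in zip(seq_a.upper(), seq_b.upper())
             if a != gap and b != gap]
    if not pairs:
        raise ValueError("no ungapped columns to compare")
    return 100.0 * sum(a == b for a, b in pairs) / len(pairs)

def shuffling_compatible(parents: list[str], min_identity: float = 70.0) -> bool:
    """True if every pair of aligned parents meets the identity cutoff."""
    return all(percent_identity(a, b) >= min_identity
               for a, b in itertools.combinations(parents, 2))

if __name__ == "__main__":
    p1, p2, p3 = "ATGGCTAAAGGT", "ATGGCAAAAGGC", "ATGTTTCCCGGT"  # hypothetical aligned parents
    print(f"p1 vs p2: {percent_identity(p1, p2):.1f}%")
    print(f"p1 vs p3: {percent_identity(p1, p3):.1f}%")
    print("all three compatible:", shuffling_compatible([p1, p2, p3]))
```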

Technical Performance and Experimental Data

When selecting a methodology, understanding the practical outcomes and experimental requirements is crucial. The following table compares the techniques based on quantitative data, technical requirements, and success rates from cited studies.

Table 2: Experimental Performance and Data from Applied Studies

| Aspect | Error-Prone PCR (epPCR) | DNA Shuffling | Site-Directed Mutagenesis |
|---|---|---|---|
| Mutation Rate/Fidelity | 1-5 mutations/kb, tunable via Mn²⁺ and dNTP imbalance [10]. Bias towards transition mutations [10]. | Crossovers not uniform; favors regions of high sequence identity [10]. | Near 100% fidelity for the targeted codon when optimized. |
| Reported Outcome | Identified variants with 1.2-1.3x increased activity and expanded pH range (pH 4.0-11.25) [19]. | Generated a 7-mutation variant with a 1.2x activity increase and shifted product ratio from 1:3 to 1:7 [19]. | High success in altering product specificity when targeting known subsites (e.g., -3, -6, -7) [19]. |
| Documented Improvement | Enhanced γ-cyclodextrin synthesis and created activity in a broader pH range [19]. | Combined beneficial mutations from earlier epPCR rounds for additive improvements [19] [20]. | Successfully enhanced product specificity in α-, β-, and γ-CGTases [19]. |
| Technical Complexity | Low. A single, standard PCR reaction, though cloning can be a bottleneck [21]. | Moderate to High. Involves gene fragmentation, reassembly, and amplification [10]. | Low to Moderate. Simplified by modern kits and two-stage PCR methods [18]. |
| Structural Data Required | None | None (but beneficial for interpreting results). | Essential for effective targeting. |

Analysis of Comparative Data

The experimental data reveal a clear synergy between these techniques. A classic strategy uses epPCR for initial discovery of beneficial mutations, as demonstrated by the engineering of a bacterial cyclodextrin glucanotransferase (CGTase), where epPCR identified variants with up to 1.3-fold increased activity [19]. DNA shuffling then combines these hits: in the same study, a variant (S54) with seven combined amino acid substitutions showed a 1.2-fold increase in activity and a significantly improved product ratio [19]. By contrast, Site-Directed Mutagenesis is unparalleled for focused interrogation, such as probing the role of specific amino acids at substrate-binding subsites, which has successfully altered the product spectrum of CGTases [19].

Detailed Experimental Protocols

Error-Prone PCR (epPCR)

The following workflow visualizes the standard epPCR process, from gene amplification to variant screening.

Title: Error-Prone PCR Workflow

Key Protocol Steps [10]:

- Reaction Setup: A standard PCR is assembled using a DNA polymerase lacking proofreading activity (e.g., Taq polymerase). Fidelity is reduced by adding manganese ions (Mn²⁺) and creating an imbalance in the concentrations of the four dNTPs.

- Amplification: The PCR is run with standard thermocycling conditions. The mutation rate can be tuned by adjusting the Mn²⁺ concentration, typically aiming for 1-5 base substitutions per kilobase.

- Library Construction: The mutated PCR product must be cloned into an expression vector. Traditional restriction-enzyme-based cloning (Ligation-Dependent Cloning Process, LDCP) can limit library diversity. Circular Polymerase Extension Cloning (CPEC) joins insert and vector through overlapping ends by polymerase extension, without restriction digestion or ligation, and is a more efficient alternative [21].

- Screening: The library of clones is then screened for the desired improved protein function.
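A simple model of mutational load makes mutation-rate tuning easier to reason about. A common assumption, only approximately true for epPCR, is that mutations occur independently and that their count per clone follows a Poisson distribution. Under that assumption, the fraction of clones carrying k mutations depends only on the rate per kilobase and the gene length. The sketch and its function names are illustrative.

```python
import math

def mutation_load(rate_per_kb: float, gene_length_bp: int, max_k: int = 5) -> dict[int, float]:
    """Expected fraction of clones carrying exactly k mutations, for k = 0..max_k.

    Assumes independent mutations, so the count per clone is Poisson
    with mean lam = rate_per_kb * gene_length_bp / 1000.
    """
    lam = rate_per_kb * gene_length_bp / 1000
    return {k: math.exp(-lam) * lam ** k / math.factorial(k) for k in range(max_k + 1)}

if __name__ == "__main__":
    for rate in (1.0, 2.0, 5.0):  # spans the 1-5 mutations/kb range quoted above
        load = mutation_load(rate, gene_length_bp=1000)
        print(f"{rate} /kb: unmutated {load[0]:.1%}, exactly one mutation {load[1]:.1%}")
```

For example, at 2 mutations/kb on a 1 kb gene, about 13.5% of clones (e^-2) are expected to carry no mutation at all. That share of screening effort goes to re-testing the parent sequence.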

DNA Shuffling

DNA shuffling recombines beneficial mutations from multiple gene variants. The process is more complex than epPCR, as shown in the following workflow.

Title: DNA Shuffling Workflow

Key Protocol Steps [10]:

- Fragmentation: A pool of related parent genes (e.g., beneficial mutants from a prior epPCR round) is randomly digested into small fragments (100–300 base pairs) using DNase I.

- Reassembly: The fragments are purified and subjected to a PCR-like reaction without added primers. During the annealing step, homologous fragments from different parents prime each other. The polymerase then extends these overlaps, leading to template switching and the recombination of genetic information.

- Amplification: Standard PCR with outer primers is used to amplify the newly assembled, full-length chimeric genes.

- Cloning and Screening: The shuffled genes are cloned into an expression vector, and the library is screened for variants with enhanced properties, often uncovering additive or synergistic effects.
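An in silico caricature of the reassembly step can show how template switching combines mutations from different parents. In this hypothetical sketch, the parents are pre-aligned sequences of equal length. A crossover to a randomly chosen parent is allowed only at positions where all parents are identical, a crude stand-in for fragments annealing through shared sequence. The sketch does not model DNase I fragment sizes or annealing thermodynamics.

```python
import random

def shuffle_parents(parents: list[str], switch_prob: float,
                    rng: random.Random) -> tuple[str, list[int]]:
    """Build one chimera from equal-length aligned parents.

    Crossovers happen only at positions where all parents agree.
    Returns the chimera and the index of the parent used at each position.
    """
    length = len(parents[0])
    if any(len(p) != length for p in parents):
        raise ValueError("parents must be aligned to equal length")
    current = rng.randrange(len(parents))
    chimera, origin = [], []
    for i in range(length):
        identical = all(p[i] == parents[0][i] for p in parents)
        if identical and rng.random() < switch_prob:
            current = rng.randrange(len(parents))  # template switch
        chimera.append(parents[current][i])
        origin.append(current)
    return "".join(chimera), origin

if __name__ == "__main__":
    # Hypothetical parents: two single mutants of a shared wild-type sequence
    parents = ["ATGTAACCCGGGTTT", "ATGAAACCAGGGTTT", "ATGAAACCCGGGTTT"]
    double_mutant = "ATGTAACCAGGGTTT"  # carries both point mutations
    rng = random.Random(0)
    hits = sum(shuffle_parents(parents, 0.2, rng)[0] == double_mutant for _ in range(1000))
    print(f"{hits} of 1000 chimeras combine both mutations")
```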

Site-Directed Mutagenesis

Modern Site-Directed Mutagenesis often uses efficient whole-plasmid amplification methods. The following diagram illustrates a robust two-stage PCR method.

Title: Site-Directed Mutagenesis via Megaprimer PCR

Key Protocol Steps (Improved Two-Stage Method) [18]:

- Stage 1 - Megaprimer Synthesis: A mutagenic primer (and optionally an antiprimer) is used in a few cycles of PCR with the plasmid template. This generates a double-stranded megaprimer containing the desired mutation.

- Stage 2 - Plasmid Amplification: The product from Stage 1 is used as a megaprimer in a second PCR. The annealing temperature is increased to prevent the original oligonucleotide primers from binding. Over 20 cycles of amplification extend the megaprimer around the entire plasmid, producing a nicked, circular DNA molecule.

- Digestion and Transformation: The PCR product is treated with DpnI to digest the original, methylated template DNA. The remaining mutated plasmid is then transformed directly into E. coli, where cellular machinery repairs the nicks. This method is highly efficient and works well for difficult-to-amplify templates.
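Designing the mutagenic primer is the main sequence-level step of the methods above. The sketch below builds a primer with the replacement codon centred between equal flanks and also returns its reverse complement. The 15-nt flank length is an assumed, typical value, and the gene is assumed to start in frame at codon 1. Real designs would also check melting temperature, GC content, and secondary structure. Which primers a particular protocol, such as the two-stage method [18], actually requires should be taken from that protocol.

```python
COMPLEMENT = str.maketrans("ACGTacgt", "TGCAtgca")

def reverse_complement(seq: str) -> str:
    """Reverse complement of a DNA sequence."""
    return seq.translate(COMPLEMENT)[::-1]

def mutagenic_primers(gene: str, codon_number: int, new_codon: str,
                      flank: int = 15) -> tuple[str, str]:
    """Return (forward, reverse) primers with `new_codon` centred between `flank` nt each side.

    `codon_number` is 1-based and counted from the first base of `gene` (assumed in frame).
    """
    new_codon = new_codon.upper()
    if len(new_codon) != 3 or set(new_codon) - set("ACGT"):
        raise ValueError("new_codon must be three unambiguous bases")
    start = (codon_number - 1) * 3
    if start < flank or start + 3 + flank > len(gene):
        raise ValueError("not enough flanking sequence around this codon")
    forward = gene[start - flank:start] + new_codon + gene[start + 3:start + 3 + flank]
    return forward, reverse_complement(forward)

if __name__ == "__main__":
    # Hypothetical in-frame gene: Met, 10 x Ala, Lys, 10 x Gly, stop
    gene = "ATG" + "GCT" * 10 + "AAA" + "GGC" * 10 + "TAA"
    fwd, rev = mutagenic_primers(gene, codon_number=12, new_codon="TGG")  # Lys12 -> Trp
    print(fwd)
    print(rev)
```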

Essential Research Reagent Solutions

Successful implementation of these techniques relies on a suite of specialized reagents and tools. The following table details key solutions and their functions in the experimental workflow.

Table 3: Key Research Reagent Solutions for Mutagenesis and Screening

| Reagent/Tool Category | Specific Examples | Function in Workflow |
|---|---|---|
| Low-Fidelity DNA Polymerases | Taq Polymerase [10] | Essential for epPCR; inherent lack of proofreading allows incorporation of random mutations during amplification. |
| High-Fidelity DNA Polymerases | KOD Hot Start DNA Polymerase [18] | Used in protocols requiring high accuracy, such as CPEC [21] or the two-stage Site-Directed Mutagenesis where faithful amplification is critical [18]. |
| Cloning & Assembly Kits | CPEC (in-house) [21], T7 Ligase [21] | For assembling DNA fragments into vectors. CPEC is highlighted as a more efficient alternative to traditional LDCP for library construction [21]. |
| Specialized Primers | Mutagenic Primers, Snapback Primers [22] | Mutagenic primers introduce specific or saturated changes. Snapback primers are used in advanced SNP genotyping methods like HRM [22]. |
| Restriction Enzymes | DpnI [18], EcoRI-HF, BamHI-HF [21] | DpnI selectively digests methylated template DNA in Site-Directed Mutagenesis [18]. Other enzymes are used in traditional LDCP of epPCR products [21]. |
| Host Organisms | E. coli DH5α [18], Saccharomyces cerevisiae [20] | Standard cloning and expression hosts. S. cerevisiae is noted for its high recombination efficiency, useful for in vivo assembly techniques like Directed DNA Shuffling [20]. |
| Screening Assays | Congo Red Agar Plate Assay [19], Microtiter Plate Fluorometry [10] | Enable high-throughput identification of improved variants. The Congo red assay is highly selective for γ-cyclodextrin production [19]. |

Error-Prone PCR, DNA Shuffling, and Site-Directed Mutagenesis are not mutually exclusive techniques but are instead complementary tools in the protein engineer's arsenal. The experimental data show that a strategic combination of these methods often yields the best results: starting with epPCR to explore sequence space, using DNA shuffling to combine beneficial mutations, and finishing with Site-Directed Mutagenesis to fine-tune key residues. The choice of technique and the design of the screening method remain the most critical factors in a successful directed evolution campaign. Newer approaches such as machine learning-assisted library design and continuous evolution platforms are emerging, yet the three core techniques discussed here remain the foundational workhorses for generating genetic diversity and driving innovation in enzyme engineering and drug development.

The Rise of AI and Generative Models in De Novo Enzyme Design

The field of enzyme engineering is undergoing a transformative shift from methodology-dependent approaches to function-driven computational creation. Traditional enzyme engineering has primarily relied on two paradigms: rational design, which uses structural knowledge to make targeted mutations, and directed evolution, which mimics natural selection through iterative rounds of randomization and screening [2] [11]. While directed evolution has proven remarkably successful for optimizing existing enzymes and was recognized with a Nobel Prize, it remains inherently constrained by its requirement for a natural starting scaffold, labor-intensive experimental screening, and a tendency to discover local optima rather than globally novel solutions [23] [24] [25].

The emerging paradigm of de novo enzyme design aims to transcend these limitations by creating entirely novel enzymes from first principles without relying on natural templates [11] [24] [26]. This approach has been supercharged by artificial intelligence (AI) and generative models, which enable the computational generation of protein sequences and structures tailored to specific catalytic functions. By leveraging deep learning and structural bioinformatics, researchers can now explore regions of the protein universe that natural evolution has not sampled, potentially bypassing the evolutionary constraints that have limited traditional enzyme engineering [23] [24]. This article compares the performance, success rates, and methodological frameworks of these competing approaches through experimental data and case studies.

Quantitative Comparison of Engineering Approaches

The table below summarizes key performance metrics and characteristics across different enzyme engineering methodologies, synthesized from recent experimental validations.

Table 1: Comparative Analysis of Enzyme Engineering Methodologies

| Engineering Approach | Reported Success Rates | Catalytic Efficiency (kcat/Km) or Activity Gain | Experimental Throughput Requirements | Key Advantages | Major Limitations |
|---|---|---|---|---|---|
| Directed Evolution | Varies significantly by target | Gradual improvement over starting point | High: Requires screening of 10^3-10^6 variants per round [2] [5] | Requires no structural knowledge; proven experimental track record | Labor-intensive; confined to local optima around parent scaffold [2] [24] |
| Traditional De Novo Design | Typically <1% for novel functions [27] [11] | Often orders of magnitude below natural enzymes [11] | Medium: Computational design with experimental validation | Can create entirely novel scaffolds | Low success rates; limited catalytic efficiency [11] [26] |
| AI-Guided Directed Evolution | N/A (optimization approach) | 74.3-fold improvement in GFP activity over 4 rounds [5] | Reduced: ~1,000 variants per round sufficient for training [5] | Efficient exploration of sequence space; reduces screening burden | Still requires starting scaffold; limited to optimization rather than creation |
| AI-Driven De Novo Design | Up to 15% for functional designs [23] | Up to 2.2×10^5 M^-1·s^-1 for novel hydrolases [23] | Lower: In silico filtering prior to experimental testing | Creates novel folds; explores uncharted protein space; higher success rates | Requires sophisticated computational infrastructure [28] [23] |

The data reveal a clear progression in capabilities. Directed evolution remains invaluable for optimizing existing enzymes. For novel enzymes, however, AI-driven de novo design achieves success rates (up to 15%) and catalytic efficiencies well beyond those of traditional de novo design. A notable example is a fully de novo serine hydrolase with catalytic efficiencies approaching those of natural enzymes and a novel fold not observed in nature [23].

Table 2: Experimental Validation Metrics for AI-Designed Proteins

| Designed Protein | Structural Accuracy (Cα RMSD) | Experimental Success Rate | Key Validation Methods | Reference |
|---|---|---|---|---|
| De novo serine hydrolase | <1.0 Å | 15% (20/132 variants active) [23] | X-ray crystallography, kinetic assays | Lauko et al. [23] |
| Venom toxin binders | 0.42-1.32 Å | 14% improved affinity after optimization [23] | Surface plasmon resonance, animal studies | Torres et al. [23] |
| Thermostable myoglobin | 0.66 Å | 25% (5/20 designs functional at 95°C) [23] | Thermal denaturation, spectroscopy | Sumida et al. [23] |
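The success rates in Table 2 come from design batches of very different sizes (132 vs. 20 variants), so their uncertainty matters when comparing them. The sketch below is an addition not drawn from the cited studies. It computes the standard Wilson score interval for a binomial proportion at roughly 95% confidence (z = 1.96).

```python
import math

def wilson_interval(successes: int, trials: int, z: float = 1.96) -> tuple[float, float]:
    """Wilson score interval for a binomial success rate (z = 1.96 gives ~95%)."""
    if trials <= 0 or not 0 <= successes <= trials:
        raise ValueError("need trials > 0 and 0 <= successes <= trials")
    p = successes / trials
    denom = 1 + z ** 2 / trials
    centre = (p + z ** 2 / (2 * trials)) / denom
    half = z * math.sqrt(p * (1 - p) / trials + z ** 2 / (4 * trials ** 2)) / denom
    return max(0.0, centre - half), min(1.0, centre + half)

if __name__ == "__main__":
    for label, hits, n in [("serine hydrolase", 20, 132), ("thermostable myoglobin", 5, 20)]:
        lo, hi = wilson_interval(hits, n)
        print(f"{label}: {hits}/{n} = {hits / n:.0%}, 95% CI {lo:.0%}-{hi:.0%}")
```

Applied to the hydrolase (20/132) and myoglobin (5/20) entries, the intervals are roughly 10-22% and 11-47%, respectively. They overlap substantially, so these two rates alone do not establish a real difference between the campaigns.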

Experimental Protocols and Workflows

Traditional Directed Evolution Protocol

The classical directed evolution workflow follows an iterative Design-Build-Test-Learn (DBTL) cycle [2] [25]:

- Library Generation: Create genetic diversity through error-prone PCR or DNA shuffling. Early studies used error-prone PCR to introduce random mutations throughout the gene of interest [2].

- Expression and Screening: Express variant libraries in host systems (typically E. coli) and screen for improved activity using high-throughput assays (fluorescence, survival selection, etc.).

- Selection: Identify improved variants through selective pressure (e.g., antibiotic resistance for enzyme evolution) [2].

- Iteration: Use improved variants as templates for subsequent rounds of diversification and selection.

This process typically requires screening thousands to millions of variants per round and multiple iterative cycles to achieve significant improvements [2] [5].

AI-Driven De Novo Design Protocol

Modern AI-driven de novo enzyme design implements a more computationally intensive but experimentally efficient workflow [11] [26]:

- Catalytic Requirement Definition: Specify the target chemical reaction and transition state geometry.

- Active Site Design: Create a theoretical enzyme (theozyme) using quantum mechanical calculations to identify optimal arrangements of catalytic residues [11].

- Backbone Generation: Use generative models (RFdiffusion, RFdiffusion2) to create protein backbones compatible with the active site geometry [27] [23].

- Sequence Design: Apply inverse folding models (ProteinMPNN, LigandMPNN) to generate amino acid sequences that stabilize the designed backbone [23].

- Computational Filtering: Prioritize designs using structure prediction (AlphaFold2/3) together with confidence and interface metrics (e.g., pLDDT, ipSAE) [27] [23].

- Experimental Validation: Express and characterize a limited set of top-ranking designs.
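The filtering step amounts to thresholding and ranking predicted metrics. In the hypothetical sketch below, designs must pass minimum pLDDT and interface shape-complementarity cutoffs, and the survivors are then ranked by ipSAE (treated as higher-is-better). The thresholds, field names, and scores are illustrative assumptions, not values from [27] or [23].

```python
from dataclasses import dataclass

@dataclass
class Design:
    """Predicted metrics for one candidate design (all values hypothetical)."""
    name: str
    plddt: float       # mean predicted confidence, 0-100
    ipsae: float       # interface score, treated here as higher-is-better
    shape_comp: float  # interface shape complementarity, 0-1

def filter_and_rank(designs: list[Design], min_plddt: float = 80.0,
                    min_shape_comp: float = 0.6, top_n: int = 2) -> list[Design]:
    """Drop designs failing either cutoff, then keep the top_n by ipSAE."""
    passed = [d for d in designs
              if d.plddt >= min_plddt and d.shape_comp >= min_shape_comp]
    return sorted(passed, key=lambda d: d.ipsae, reverse=True)[:top_n]

if __name__ == "__main__":
    candidates = [
        Design("d1", 92.0, 0.71, 0.68),
        Design("d2", 75.0, 0.90, 0.70),  # fails pLDDT cutoff despite best ipSAE
        Design("d3", 88.0, 0.55, 0.72),
        Design("d4", 90.0, 0.80, 0.50),  # fails shape-complementarity cutoff
        Design("d5", 85.0, 0.62, 0.65),
    ]
    print([d.name for d in filter_and_rank(candidates)])
```

Applying hard cutoffs before ranking keeps a single strong score from rescuing a design that is likely misfolded. Which metrics to combine is itself an empirical question, as the metric-combination work cited later in this section suggests.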

AI-Guided Directed Evolution Protocol

Hybrid approaches like DeepDE combine AI with directed evolution principles [5]:

- Initial Library Construction: Generate ~1,000 protein variants focusing on triple mutants for broader sequence space coverage.

- Deep Learning Training: Train supervised learning models on the variant library and corresponding activity measurements.

- In Silico Prediction: Use trained models to predict improved sequences from virtual libraries.

- Focused Experimental Testing: Validate top AI-predicted candidates experimentally.

- Iterative Model Refinement: Incorporate new experimental data to retrain and improve predictive models.

This approach achieved a 74.3-fold increase in GFP activity over just four rounds with significantly reduced screening burden compared to conventional directed evolution [5].
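The sheer size of combinatorial sequence space explains why a supervised model trained on ~1,000 variants is useful. The sketch below counts the distinct k-point mutants of a protein of a given length. The 238-residue length is an assumption (the length of wild-type Aequorea victoria GFP), since the exact construct used in [5] is not stated here.

```python
from math import comb

def n_variants(length: int, k: int, alphabet: int = 20) -> int:
    """Count distinct proteins carrying exactly k substitutions relative to the parent.

    Choose k of `length` positions, then one of (alphabet - 1) non-parent residues at each.
    """
    if not 0 <= k <= length:
        raise ValueError("k must be between 0 and length")
    return comb(length, k) * (alphabet - 1) ** k

if __name__ == "__main__":
    length = 238  # assumed: wild-type Aequorea victoria GFP
    for k in (1, 2, 3):
        total = n_variants(length, k)
        print(f"{k}-point mutants: {total:,}; fraction covered by 1,000 variants: {1000 / total:.1e}")
```

For a 238-residue protein there are about 1.5 × 10^10 triple mutants, so an initial library of ~1,000 variants samples well under one millionth of that space. The model, not the screen, has to generalize to the rest.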

The Scientist's Toolkit: Essential Research Reagents and Computational Tools

Table 3: Essential Resources for AI-Driven Enzyme Design

| Tool Category | Specific Tools | Function | Application Context |
|---|---|---|---|
| Generative Models | RFdiffusion [27] [23], RFdiffusion2 [23], SCUBA-D [11] | De novo backbone generation conditioned on functional motifs | Creating novel protein scaffolds around catalytic sites |
| Inverse Folding | ProteinMPNN [23], LigandMPNN [23] | Sequence design for stable protein structures | Optimizing sequences for designed backbones and active sites |
| Structure Prediction | AlphaFold2/3 [27] [23], RoseTTAFold All-Atom [23] | Protein structure prediction from sequence | Validating designs and filtering candidates before experimental testing |
| Functional Metrics | ipSAE [27], pLDDT [27], Interface Shape Complementarity [27] | Quantitative assessment of design quality | Ranking candidates by predicted binding and catalytic capability |
| Quantum Chemistry | DFT (B3LYP/6-31+G*) [11] | Transition state optimization and theozyme construction | Defining optimal catalytic geometry for novel reactions |

The experimental data demonstrate that AI-driven de novo enzyme design represents a paradigm shift rather than merely an incremental improvement over traditional methods. While directed evolution excels at optimizing existing functions and will continue to play a role in enzyme engineering, AI-driven approaches offer unprecedented capabilities for creating novel enzymes with customized functions. The key differentiator is the ability of generative models to explore protein sequence and structure spaces beyond natural evolutionary boundaries, accessing regions that would be unreachable through mutation of existing scaffolds alone [23] [24].

Future developments will likely focus on improving the precision of functional predictions, with recent research identifying optimized metric combinations (AF3 ipSAE_min with interface shape complementarity) that significantly enhance experimental success rates [27]. As these computational tools mature and integrate with high-throughput experimental validation, the design-build-test-learn cycle will accelerate, potentially making the precise design of efficient artificial enzymes with novel functions a mature technology in the near future [28] [26]. For researchers, the strategic implication is clear: while directed evolution remains viable for optimization problems, de novo design approaches now offer compelling advantages for creating entirely new catalytic functions not found in nature.

The field of protein engineering has traditionally been dominated by two distinct methodologies: rational design and directed evolution. Rational design operates as a precise, knowledge-driven approach, relying on detailed structural information to make targeted mutations that alter protein function. In contrast, directed evolution mimics natural selection through iterative rounds of random mutagenesis and screening to accumulate beneficial mutations without requiring prior structural knowledge [29] [30]. While both methods have successfully generated engineered enzymes for various applications, they face significant limitations in efficiency, scalability, and accessibility to non-specialists.

The recent emergence of autonomous AI-powered platforms represents a paradigm shift that transcends this traditional dichotomy. These integrated systems combine artificial intelligence, robotic automation, and biofoundry infrastructure to create self-driving laboratories capable of executing the entire design-build-test-learn (DBTL) cycle with minimal human intervention [7] [31]. This case study examines the implementation of one such generalized AI platform for engineering two distinct enzyme classes: Arabidopsis thaliana halide methyltransferase (AtHMT) and Yersinia mollaretii phytase (YmPhytase). The performance data generated from these engineering campaigns provide compelling evidence for the superior efficiency and effectiveness of autonomous platforms compared to traditional protein engineering methodologies.

Platform Architecture: Integrating AI with Biofoundry Automation

The autonomous enzyme engineering platform developed by Zhao and colleagues represents a landmark achievement in synthetic biology, integrating multiple technological innovations into a seamless workflow [7] [31]. The system's architecture eliminates human decision-making bottlenecks through a sophisticated multi-stage process that operates continuously once initiated with only a protein sequence and a quantifiable fitness metric.
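The continuous DBTL loop described above can be sketched as a small simulation. This is an illustrative toy under stated assumptions, not the platform's software. The fitness landscape is randomly generated. The design step simply mutates the current best variant, standing in for the ESM-2, EVmutation, and low-N models. The batch size of 96 and all names (`assay`, `design`, `run_campaign`) are hypothetical.

```python
import math
import random
from typing import Dict, FrozenSet, List, Tuple

Mutation = Tuple[int, str]      # (position, substituted amino acid)
Variant = FrozenSet[Mutation]   # empty set = wild type

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"
SEQ_LEN = 50
rng = random.Random(0)

# Hypothetical, randomly drawn additive effects (log scale) standing in for the
# unknown real fitness landscape; most mutations are mildly deleterious.
EFFECTS: Dict[Mutation, float] = {
    (pos, aa): rng.gauss(-0.2, 0.3) for pos in range(SEQ_LEN) for aa in AMINO_ACIDS
}

def assay(variant: Variant) -> float:
    """Test: fold change in activity relative to wild type (wild type = 1.0)."""
    return math.exp(sum(EFFECTS[m] for m in variant))

def design(history: Dict[Variant, float], batch_size: int) -> List[Variant]:
    """Design: random single substitutions on the best variant so far
    (a placeholder for the zero-shot and low-N models described in the text)."""
    parent = max(history, key=history.get)
    batch = set()
    while len(batch) < batch_size:
        pos, aa = rng.randrange(SEQ_LEN), rng.choice(AMINO_ACIDS)
        # Replace any existing substitution at this position with the new one.
        child = frozenset(m for m in parent if m[0] != pos) | {(pos, aa)}
        if child not in history:
            batch.add(child)
    return list(batch)

def run_campaign(rounds: int = 4, batch_size: int = 96) -> Dict[Variant, float]:
    history: Dict[Variant, float] = {frozenset(): assay(frozenset())}
    for _ in range(rounds):
        built = design(history, batch_size)  # Design; Build (robotic cloning) is not modeled
        for v in built:                      # Test
            history[v] = assay(v)
        # Learn: all measurements stay in `history` and drive the next design step.
    return history

history = run_campaign()
best = max(history, key=history.get)
print(len(history) - 1, "variants screened; best fold change:", round(history[best], 2))
```

Four rounds of 96 give 384 variants, which stays under the roughly 500-variant budget reported for each campaign.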

Core Workflow and Integration

Table: Core Components of the Autonomous AI Platform

| Platform Component | Technology Implementation | Function in Workflow |
|---|---|---|
| AI-Driven Design | Protein LLM (ESM-2) & epistasis model (EVmutation) | Designs initial variant libraries without experimental data |
| Automated Construction | iBioFAB robotic biofoundry with HiFi-assembly mutagenesis | Executes gene synthesis, cloning, and protein expression |
| High-Throughput Testing | Integrated assay systems | Measures variant activity with minimal human intervention |
| Machine Learning | Low-N regression model | Learns from data to predict improved variants for next cycle |

The platform operates through seven fully automated modules that handle mutagenesis PCR, DNA assembly, transformation, colony picking, plasmid purification, protein expression, and enzyme assays [7]. A critical innovation enabling this continuous workflow is a high-fidelity mutagenesis method that achieves approximately 95% accuracy without intermediate sequence verification, a step that traditionally introduces significant delays into protein engineering campaigns [7]. This automated pipeline allows complete DBTL cycles to be executed rapidly, as demonstrated by the engineering of significantly improved enzyme variants in just four weeks.
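A short calculation shows what roughly 95% assembly accuracy implies when per-construct sequencing is skipped. The sketch assumes independent errors and a hypothetical batch of one 96-well plate (the source does not state a plate size). It also assumes that assembly errors are unrelated to measured activity.

```python
from math import comb

ACCURACY = 0.95   # approximate per-construct accuracy reported for the HiFi-assembly step
PLATE = 96        # assumed batch size (one 96-well plate); not stated in the source

def binom_tail(n: int, k: int, p: float) -> float:
    """P(X >= k) for X ~ Binomial(n, p): chance that at least k of n constructs are correct."""
    return sum(comb(n, i) * p**i * (1 - p) ** (n - i) for i in range(k, n + 1))

# Expected number of correctly assembled variants per plate.
expected_correct = PLATE * ACCURACY
# Chance that at least 85 of the 96 wells hold the intended sequence.
p_at_least_85 = binom_tail(PLATE, 85, ACCURACY)
# Chance that the five top hits are all the intended sequences, assuming
# assembly errors are independent of measured activity (an assumption).
p_top5_all_correct = ACCURACY ** 5

print(f"expected correct per plate: {expected_correct:.1f}")
print(f"P(>= 85 correct): {p_at_least_85:.3f}")
print(f"P(top 5 hits all correct): {p_top5_all_correct:.3f}")
```

Under these assumptions, about 5% of wells are wasted on incorrect constructs. One way to read the trade-off is that confirming only the few top hits costs far less than verifying every construct before screening.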

Research Reagent Solutions

Table: Essential Research Reagents and Materials

| Reagent/Platform | Specific Implementation | Function in Workflow |
|---|---|---|
| Protein Language Model | ESM-2 (Evolutionary Scale Modeling) | Predicts beneficial mutations from natural sequence patterns |
| Epistasis Model | EVmutation | Identifies co-evolved residues and functional constraints |
| Biofoundry System | Illinois Biological Foundry (iBioFAB) | Robotic automation of molecular biology and screening |
| Mutagenesis Method | HiFi-assembly | High-fidelity DNA assembly without sequence verification |
| Host System | Komagataella phaffii (Pichia pastoris) | Eukaryotic expression host for phytase production |
| Screening Method | Oxygen Transfer Rate (OTR) monitoring | High-throughput activity assessment without manual assays |

Experimental Protocols and Performance Outcomes

Engineering Campaign for Halide Methyltransferase (AtHMT)

The engineering campaign focused on improving the ethyltransferase activity of Arabidopsis thaliana halide methyltransferase (AtHMT), an enzyme with potential applications in synthesizing S-adenosyl-L-methionine (SAM) analogs from cost-effective alkyl halides and S-adenosyl-L-homocysteine (SAH) [7]. The platform initiated the process without any prior experimental data for AtHMT, instead relying on unsupervised models (ESM-2 and EVmutation) to design the initial library of 180 variants. These AI-designed variants were subsequently constructed, expressed, and screened by the iBioFAB automated system.
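The zero-shot library design step can be sketched as ranking candidate single mutants under two unsupervised scores and keeping the best. This text does not describe how the platform combines ESM-2 and EVmutation outputs, so the rank averaging below is an assumption. The scores are toy numbers standing in for ESM-2 log-likelihood ratios and EVmutation statistical-energy differences, both assumed to be oriented so that higher means predicted better. The function names are hypothetical.

```python
from typing import Dict, List, Tuple

Mutation = Tuple[int, str]  # (position, substituted amino acid)

def percentile_ranks(scores: Dict[Mutation, float]) -> Dict[Mutation, float]:
    """Map each mutation to a rank in (0, 1]; 1.0 = most favourable score."""
    ordered = sorted(scores, key=lambda m: (scores[m], m))
    n = len(ordered)
    return {m: (i + 1) / n for i, m in enumerate(ordered)}

def zero_shot_library(plm_scores: Dict[Mutation, float],
                      coupling_scores: Dict[Mutation, float],
                      size: int) -> List[Mutation]:
    """Rank single mutants by the mean of their percentile ranks under two
    unsupervised scores (higher = predicted better) and keep the top `size`."""
    shared = plm_scores.keys() & coupling_scores.keys()
    r_plm = percentile_ranks({m: plm_scores[m] for m in shared})
    r_cpl = percentile_ranks({m: coupling_scores[m] for m in shared})
    combined = {m: (r_plm[m] + r_cpl[m]) / 2 for m in shared}
    # Ties are broken by the mutation tuple so the output is deterministic.
    return sorted(shared, key=lambda m: (combined[m], m), reverse=True)[:size]

# Toy scores for four single mutants (illustrative values only).
plm = {(10, "A"): 1.2, (10, "W"): -3.0, (25, "L"): 0.4, (40, "E"): -0.5}
evm = {(10, "A"): 1.5, (10, "W"): -2.5, (25, "L"): 1.1, (40, "E"): 0.1}
print(zero_shot_library(plm, evm, size=2))
```

Converting to ranks avoids having to put two differently scaled scores on a common numeric scale. In the AtHMT campaign the equivalent `size` would be 180.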

The platform demonstrated remarkable efficiency in navigating the sequence-function landscape of AtHMT. In the initial round, 59.6% of variants performed above the wild-type baseline, with 50% showing significant improvement [7]. Through four iterative DBTL cycles, the system identified a variant with an approximately 16-fold increase in ethyltransferase activity and another variant with a ~90-fold shift in substrate preference toward ethyl iodide over methyl iodide [7] [31]. This engineering accomplishment was achieved while screening fewer than 500 total variants and completing the entire process in just four weeks—a timeline that would be challenging with traditional methods.
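The metrics quoted above are simple ratios, sketched below with toy numbers. The substrate-preference shift is a ratio of ratios: a variant's ethyl/methyl activity ratio divided by that of the wild type. It can therefore exceed the gain in ethyl activity alone when methyl activity falls. The source does not state its criterion for "significant improvement", so the 1.5x cut-off here is hypothetical.

```python
from typing import Sequence

def fraction_above(activities: Sequence[float], wt: float, factor: float = 1.0) -> float:
    """Fraction of variants whose activity exceeds `factor` x wild-type activity."""
    return sum(a > factor * wt for a in activities) / len(activities)

def fold_change(variant: float, wt: float) -> float:
    """Activity of a variant relative to wild type."""
    return variant / wt

def preference_shift(var_et: float, var_me: float, wt_et: float, wt_me: float) -> float:
    """Fold change in the ethyl/methyl activity ratio, variant relative to wild type."""
    return (var_et / var_me) / (wt_et / wt_me)

# Toy relative activities for one screening round (wild type = 1.0).
round1 = [0.8, 1.2, 1.6, 0.9, 2.5]
hit_rate = fraction_above(round1, wt=1.0)                  # above wild type
strong_rate = fraction_above(round1, wt=1.0, factor=1.5)   # hypothetical 1.5x cut-off

# Toy ethyl- and methyl-iodide activities (arbitrary units).
ethyl_gain = fold_change(6.0, 2.0)
shift = preference_shift(var_et=6.0, var_me=1.0, wt_et=2.0, wt_me=8.0)
print(hit_rate, strong_rate, ethyl_gain, shift)  # 0.6 0.4 3.0 24.0
```

In this toy case a 3-fold gain in ethyl activity coincides with a 24-fold preference shift. This is why fold improvement in activity and fold shift in substrate preference should be reported separately.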

Engineering Campaign for Phytase (YmPhytase)

The parallel engineering campaign targeted Yersinia mollaretii phytase (YmPhytase), an enzyme with industrial importance in animal feed applications where high activity at neutral pH is essential for functionality throughout the gastrointestinal tract [32] [7]. Traditional directed evolution had previously been applied to this enzyme, achieving a 7-fold improvement in specific activity at neutral pH through identification of key positions T44 and K45 in the active site loop [32]. This prior work provided a valuable benchmark against which to compare the performance of the autonomous AI platform.

The AI-driven engineering campaign employed the same generalized workflow used for AtHMT, beginning with AI-designed libraries and progressing through iterative DBTL cycles. The platform successfully identified a YmPhytase variant with an approximately 26-fold higher specific activity at neutral pH compared to the wild-type enzyme [7] [31]. This result significantly surpassed the improvements achieved through traditional directed evolution and was accomplished with exceptional efficiency—requiring only four weeks and screening fewer than 500 variants.

Comparison of Engineering Methodologies

Table: Performance Comparison of Protein Engineering Methods

| Engineering Method | Time Required | Variants Screened | Fold Improvement | Key Limitations |
|---|---|---|---|---|
| Traditional Directed Evolution | Several months | 10,000+ | ~7-fold specific activity at neutral pH (YmPhytase) [32] | Labor-intensive, expert-dependent |
| Rational Design | 1-2 months | 10-100 | Varies by target | Requires structural data, limited exploration |
| Autonomous AI Platform | 4 weeks | <500 per enzyme | ~16-fold ethyltransferase activity and ~90-fold substrate-preference shift (AtHMT); ~26-fold specific activity at neutral pH (YmPhytase) [7] [31] | Requires quantifiable fitness assay |

The experimental outcomes from both engineering campaigns demonstrate the superior efficiency of the autonomous AI platform. The platform achieved substantially greater improvements in enzyme function while screening more than an order of magnitude fewer variants (fewer than 500, versus the 10,000+ typical of directed evolution) and completing the process in less time than traditional directed evolution. Furthermore, the generalized nature of the platform allowed it to engineer two distinct enzymes with different catalytic mechanisms and engineering goals using the same underlying workflow.

Comparative Analysis: Traditional vs. Autonomous Approaches

Efficiency and Resource Utilization

The quantitative data from the enzyme engineering campaigns reveal dramatic differences in resource utilization between traditional and autonomous approaches. Where traditional directed evolution typically requires screening tens of thousands of variants over multiple months, the AI-powered platform achieved superior results with fewer than 500 variants screened in just four weeks [7]. This improvement in efficiency stems from several key advantages:

Intelligent Library Design: Unlike random mutagenesis methods used in directed evolution, the AI platform uses protein language models to design libraries with a higher probability of containing beneficial mutations, resulting in 55-60% of initial variants performing above wild-type levels [7].

Efficient Exploration: The machine learning component enables the system to strategically explore the fitness landscape by combining beneficial mutations identified in previous rounds, avoiding wasteful screening of non-productive regions of sequence space.

Continuous Operation: The integrated biofoundry eliminates manual workflows and operates 24/7, dramatically compressing each DBTL cycle compared to human-paced experimentation.
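The "efficient exploration" step, in which a low-N model learns from one round and proposes combinations of beneficial mutations for the next, can be sketched as follows. The text names only a low-N regression model. The additive one-hot ridge regression on log fold changes used here is an assumed stand-in, a common low-N baseline. The recombination rule (combine the top positive-weight mutations, one substitution per position, skip variants already measured) is also an assumption. All names and data are hypothetical.

```python
from itertools import combinations
from typing import Dict, FrozenSet, List, Tuple

Mutation = Tuple[int, str]        # (position, substituted amino acid)
Variant = FrozenSet[Mutation]     # empty set = wild type

def solve(a: List[List[float]], b: List[float]) -> List[float]:
    """Solve a @ x = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        piv = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[piv] = m[piv], m[col]
        for r in range(n):
            if r != col:
                f = m[r][col] / m[col][col]
                m[r] = [x - f * y for x, y in zip(m[r], m[col])]
    return [m[i][n] / m[i][i] for i in range(n)]

def fit_ridge(data: Dict[Variant, float], lam: float = 1.0) -> Dict[Mutation, float]:
    """Additive ridge model y ~ sum of per-mutation weights; no intercept,
    because y is a log fold change relative to wild type."""
    feats = sorted({m for v in data for m in v})
    idx = {m: i for i, m in enumerate(feats)}
    k = len(feats)
    xtx = [[lam if i == j else 0.0 for j in range(k)] for i in range(k)]  # X^T X + lam*I
    xty = [0.0] * k                                                       # X^T y
    for variant, y in data.items():
        active = [idx[m] for m in variant]
        for i in active:
            xty[i] += y
            for j in active:
                xtx[i][j] += 1.0
    return dict(zip(feats, solve(xtx, xty)))

def propose(weights: Dict[Mutation, float], measured: set,
            top_k: int = 4, max_order: int = 3, n_out: int = 5) -> List[Variant]:
    """Combine the top positive-weight mutations into untested multi-mutants,
    ranked by predicted (additive) score."""
    good = sorted((m for m, w in weights.items() if w > 0),
                  key=lambda m: (weights[m], m), reverse=True)[:top_k]
    scored = []
    for order in range(2, max_order + 1):
        for combo in combinations(good, order):
            if len({pos for pos, _ in combo}) < order:
                continue  # at most one substitution per position
            variant = frozenset(combo)
            if variant not in measured:
                scored.append((sum(weights[m] for m in combo), sorted(combo)))
    scored.sort(reverse=True)
    return [frozenset(c) for _, c in scored[:n_out]]

# Toy round-1 data: log2 fold change vs wild type (illustrative values only).
data = {
    frozenset({(12, "L")}): 1.0,
    frozenset({(30, "F")}): 0.8,
    frozenset({(45, "K")}): 0.5,
    frozenset({(12, "P")}): -1.5,
    frozenset({(60, "D")}): -0.3,
    frozenset({(12, "L"), (30, "F")}): 1.9,
}
weights = fit_ridge(data, lam=1.0)
proposals = propose(weights, measured=set(data))
print(proposals[0])
```

The ridge penalty keeps the weights stable when only a handful of variants are measured. The additive assumption means epistasis is captured only indirectly, through the data from each new round.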

Accessibility and Generalizability

A significant limitation of both rational design and directed evolution has been their dependence on specialized expertise—structural biology and computational modeling for rational design, and extensive experimental optimization for directed evolution [29] [30]. The autonomous platform effectively democratizes protein engineering by encapsulating this expertise within AI tools and automated workflows [31]. Researchers need only provide a protein sequence and a quantifiable fitness assay, making advanced protein engineering capabilities accessible to non-specialists.

The platform's generalizability across two enzymatically distinct targets—AtHMT (methyltransferase) and YmPhytase (hydrolase)—with different engineering objectives demonstrates its versatility across the enzyme engineering landscape [7]. This stands in contrast to traditional methods that often require customization for each new engineering target.

Limitations and Future Directions

Despite its impressive capabilities, the autonomous platform has certain limitations. Its effectiveness depends on the availability of quantifiable high-throughput assays for the target property, which can be challenging for complex phenotypes such as organic solvent tolerance or in vivo efficacy [31]. Additionally, while the platform efficiently explores point mutations, engineering more complex structural changes such as domain swaps or insertions remains challenging.

Future developments will likely address these limitations through expanded assay capabilities and more sophisticated AI models capable of predicting the effects of larger structural modifications. The integration of foundation models trained on broader biological contexts may further enhance prediction accuracy and expand the platform's applicability to more complex engineering challenges [33].

The empirical data generated from engineering methyltransferases and phytases with autonomous AI platforms provide compelling evidence for a paradigm shift in protein engineering methodology. The demonstrated capabilities significantly surpass what is routinely achievable through traditional directed evolution or rational design alone [7] [31]. These include roughly 16- to 26-fold gains in enzyme activity and a ~90-fold shift in substrate preference, with fewer than 500 variants screened in just four weeks.

This case study illustrates how autonomous platforms have effectively transcended the historical rational design versus directed evolution dichotomy by creating a unified approach that combines the precision of computational design with the exploratory power of evolutionary methods. The resulting technology enables more efficient, accessible, and generalizable enzyme engineering that has profound implications for accelerating advancements in biotechnology, therapeutic development, and sustainable manufacturing.

As these platforms continue to evolve and become more widely adopted, they promise to transform protein engineering from a specialized craft requiring deep expertise into a more democratized capability accessible to broader scientific communities. This transition has the potential to dramatically accelerate innovation across numerous fields that rely on engineered enzymes, from pharmaceutical development to renewable energy and green chemistry.

The development of therapeutic antibodies represents a cornerstone of modern biologics, with a market value expected to reach $445 billion in the coming years and over 160 antibody therapeutics currently licensed globally [34]. A critical challenge in therapeutic antibody development involves reducing immunogenicity of antibodies derived from non-human sources, necessitating sophisticated humanization processes. Traditional antibody humanization methods have primarily relied on CDR-grafting and backmutation techniques, which often require extensive experimental optimization and can result in variable success rates [35].