Discrete Trait Analysis vs. Structured Birth-Death Models: A Comprehensive Guide for Biomedical Researchers


Abstract

This article provides a comprehensive comparison of Discrete Trait Analysis (DTA) and Structured Birth-Death Models (SBDM), two foundational methods in phylogenetic inference for studying trait evolution and population dynamics. Tailored for researchers, scientists, and drug development professionals, it covers the core principles, methodological applications, and practical challenges of both approaches. Drawing on current research and software advancements, the guide offers a clear framework for selecting and optimizing these models to track pathogen spread, quantify transmission dynamics, and inform public health strategies, with a focus on real-world use cases in infectious disease and genomic epidemiology.

Core Concepts: Understanding Discrete Trait Analysis and Structured Birth-Death Models

Discrete Trait Analysis (DTA) is a phylogenetic comparative method used to infer the evolutionary history of discrete characteristics—such as geographic location, disease state, or morphological feature—across a phylogeny. In essence, DTA treats these traits as if they were evolutionary characters that can "mutate" from one state to another (e.g., from geographic region A to region B) along the branches of a tree [1] [2]. This approach allows researchers to reconstruct the ancestral states of these traits at internal nodes of the phylogeny, providing insights into historical evolutionary processes, migration patterns, and trait associations.

The method operates by modeling trait evolution using a continuous-time Markov chain (CTMC), typically defined by a transition rate matrix (Q-matrix) that describes the rate of change between all possible pairs of discrete states [3]. DTA has become a widely used technique in fields ranging from viral phylogeography, where it helps trace the spread of pathogens, to macroevolution, where it investigates correlations between phenotypic traits [4] [1]. Its popularity stems from its computational efficiency and intuitive analogy to substitution processes in molecular evolution, though this very analogy also underpins its primary limitations when applied to population-level processes such as migration [2].

Core Principles and Methodological Framework

The Statistical Foundation of DTA

The statistical engine of Discrete Trait Analysis is a Markov model that describes the instantaneous rates of change between discrete character states. The core component is the Q-matrix, a square matrix in which each off-diagonal element qᵢⱼ is the instantaneous rate of change from state i to state j. Each diagonal element is set to the negative sum of the other entries in its row, so every row sums to zero and the transition probabilities over a branch of length t, P(t) = exp(Qt), form a valid probability distribution [3]. The likelihood of the observed tip states is then computed as a product of transition probabilities along all branches, summed over all possible ancestral states at internal nodes.
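As a rough illustration of how a Q-matrix turns into branch-level transition probabilities, the sketch below builds Q from a set of off-diagonal rates and approximates P(t) = exp(Qt) in pure Python. The function names, the example rates (0.4 and 0.1), and the scaling-and-squaring scheme are assumptions made for this example; production software would normally rely on a tested linear algebra routine.

```python
def make_q_matrix(rates, n_states):
    """Build a Q-matrix from off-diagonal rates given as {(i, j): rate}."""
    q = [[0.0] * n_states for _ in range(n_states)]
    for (i, j), rate in rates.items():
        q[i][j] = rate
    for i in range(n_states):
        # Diagonal is minus the sum of the other entries, so each row sums to zero.
        q[i][i] = -sum(q[i][j] for j in range(n_states) if j != i)
    return q


def mat_mul(a, b):
    """Multiply two square matrices stored as lists of lists."""
    n = len(a)
    return [[sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n)]
            for i in range(n)]


def transition_probabilities(q, t, squarings=16):
    """Approximate P(t) = exp(Q t) by scaling and squaring."""
    n = len(q)
    step = t / 2 ** squarings
    a = [[q[i][j] * step for j in range(n)] for i in range(n)]
    a2 = mat_mul(a, a)
    # Second-order Taylor expansion of exp(A) for the very small matrix A.
    p = [[(1.0 if i == j else 0.0) + a[i][j] + 0.5 * a2[i][j]
          for j in range(n)] for i in range(n)]
    # Squaring repeatedly undoes the scaling: exp(A)^(2^k) = exp(Q t).
    for _ in range(squarings):
        p = mat_mul(p, p)
    return p


# Hypothetical two-state example: rate 0.4 for 0 -> 1 and 0.1 for 1 -> 0.
q = make_q_matrix({(0, 1): 0.4, (1, 0): 0.1}, n_states=2)
p = transition_probabilities(q, t=2.0)
```

Each row of `p` gives the probability of ending in every state after time `t`, conditional on the starting state; these are the per-branch terms that enter the tree likelihood.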

Model selection plays a crucial role in DTA, with researchers typically comparing different structures of the Q-matrix:

- Equal Rates (ER): All transition rates are equal

- Symmetric (SYM): Forward and backward rates between any two states are equal

- All Rates Different (ARD): Each possible transition has a distinct rate parameter [3]

The choice among these models depends on biological rationale and can be evaluated using statistical criteria such as Akaike Information Criterion (AIC) or Bayesian model comparison [3] [5].
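A minimal sketch of an AIC comparison across the three Q-matrix structures is given below. The parameter counts follow from the model definitions (one shared rate for ER, one rate per unordered pair for SYM, one rate per ordered pair for ARD); the log-likelihood values are invented placeholders, not results from any dataset.

```python
def n_rate_parameters(model, k):
    """Number of free rate parameters for a k-state model."""
    if model == "ER":
        return 1
    if model == "SYM":
        return k * (k - 1) // 2
    if model == "ARD":
        return k * (k - 1)
    raise ValueError(f"unknown model: {model}")


def aic(log_likelihood, n_params):
    """Akaike Information Criterion: lower values indicate a better trade-off."""
    return 2 * n_params - 2 * log_likelihood


# Hypothetical maximized log-likelihoods for a three-state trait.
k = 3
log_likelihoods = {"ER": -45.2, "SYM": -42.9, "ARD": -41.8}

scores = {
    model: aic(lnl, n_rate_parameters(model, k))
    for model, lnl in log_likelihoods.items()
}
best_model = min(scores, key=scores.get)
```

With these placeholder values the richer ARD model has the highest likelihood but is penalized for its six parameters, which is the trade-off AIC is meant to capture.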

Implementation Workflows

The practical implementation of DTA typically follows a structured workflow, whether for ancestral state reconstruction or phylogeographic analysis:

Data Preparation: The process begins with assembling two core components: a phylogenetic tree with branch lengths (typically time-calibrated) and a dataset of discrete traits for the tips of the tree. These traits must be carefully coded into discrete states (e.g., 0/1 for binary traits, or specific labels for multi-state traits) [3] [5].

Model Specification and Fitting: Researchers specify the structure of the transition rate matrix based on biological hypotheses or employ model selection to determine the best-fitting structure. The model is then fitted to the data using maximum likelihood or Bayesian inference methods [5].

Ancestral State Reconstruction: Once the model is fitted, marginal or joint ancestral state reconstruction is performed to estimate the probability of each discrete state at internal nodes of the phylogeny [5].
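The sketch below shows the idea behind marginal reconstruction at the root for a binary trait, using a pruning-style pass over a three-tip tree. It assumes a symmetric two-state model (so the transition probabilities have a closed form), a uniform root prior, and a hypothetical nested-list tree encoding; it handles only the root, whereas marginal estimates at other internal nodes need an additional pass from the root towards the tips.

```python
import math


def p_matrix(rate, t):
    """Transition probabilities for a symmetric two-state model."""
    same = 0.5 + 0.5 * math.exp(-2.0 * rate * t)
    return [[same, 1.0 - same], [1.0 - same, same]]


def partial_likelihood(node, tip_states, rate):
    """Likelihood of the data below `node` for each state at `node`.

    A tip is a string label; an internal node is a list of
    (child, branch_length) pairs.
    """
    if isinstance(node, str):
        return [1.0 if s == tip_states[node] else 0.0 for s in (0, 1)]
    partial = [1.0, 1.0]
    for child, length in node:
        child_partial = partial_likelihood(child, tip_states, rate)
        p = p_matrix(rate, length)
        for s in (0, 1):
            # Sum over the child's possible states, weighted by P(length).
            partial[s] *= sum(p[s][c] * child_partial[c] for c in (0, 1))
    return partial


def root_posterior(tree, tip_states, rate, prior=(0.5, 0.5)):
    """Posterior probability of each state at the root."""
    partial = partial_likelihood(tree, tip_states, rate)
    weighted = [prior[s] * partial[s] for s in (0, 1)]
    total = sum(weighted)
    return [w / total for w in weighted]


# Hypothetical tree ((A:1.0,B:1.0):0.5,C:1.5) with tip states A=0, B=0, C=1.
tree = [([("A", 1.0), ("B", 1.0)], 0.5), ("C", 1.5)]
tips = {"A": 0, "B": 0, "C": 1}
posterior = root_posterior(tree, tips, rate=0.3)
```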

Visualization and Interpretation: The results are typically visualized by projecting the reconstructed states onto the phylogeny, often using color coding or other visual cues to represent state changes across evolutionary history [3].

The following diagram illustrates this generalized workflow for a DTA analysis:

DTA Versus Alternative Phylogeographic Models

Conceptual Comparison of Modeling Approaches

Discrete Trait Analysis represents just one approach to modeling trait evolution and population history. When compared with structured coalescent and birth-death models, fundamental differences emerge in their underlying assumptions and mathematical foundations.

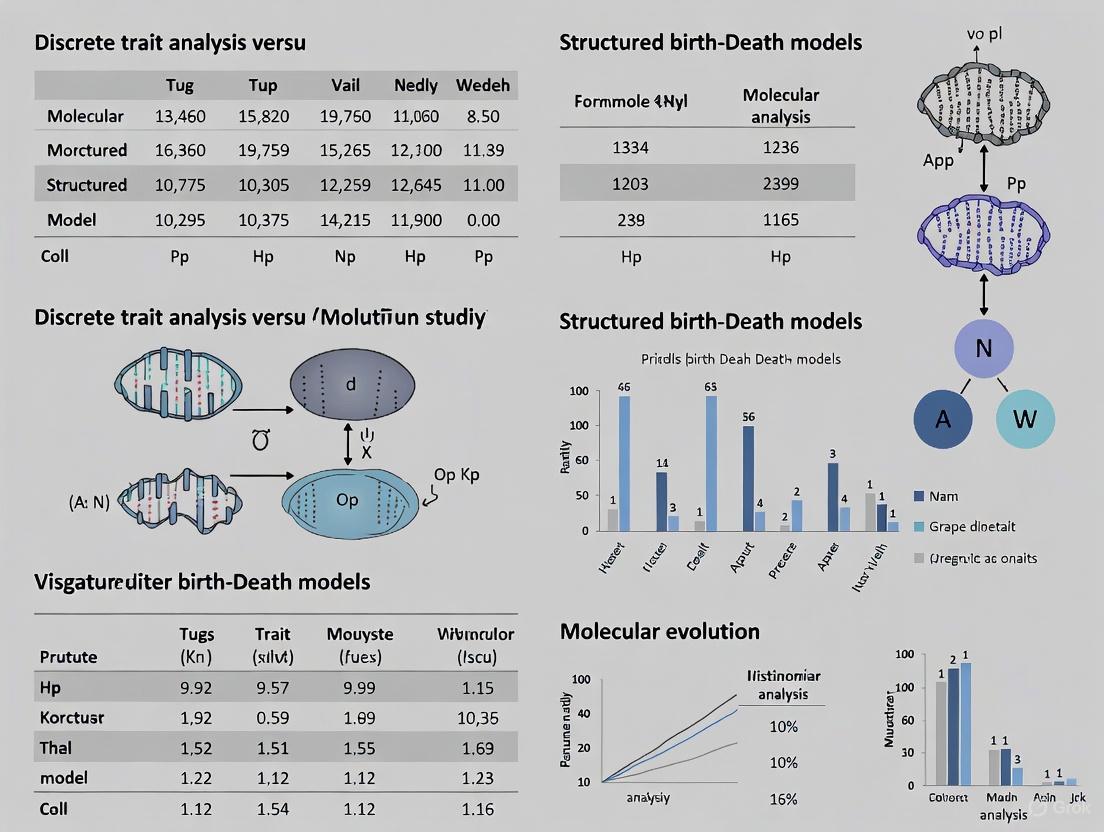

The table below summarizes the key distinctions between these approaches:

| Feature | Discrete Trait Analysis (DTA) | Structured Coalescent Models | Structured Birth-Death Models |
|---|---|---|---|
| Core Concept | Treats location/trait as evolving like a discrete character [2] | Models genealogy within structured population considering lineage migration [2] | Models lineage birth/death events across different structured populations [4] |
| Computational Demand | Low to moderate [2] | High to very high [4] [2] | Moderate to high [4] |
| Handling of Sampling Bias | Sensitive to uneven sampling across states [6] [2] | Better accounts for variable sampling intensity [2] | Can incorporate sampling proportions [4] |
| Population Size Inference | Not directly inferred | Infers effective population sizes per deme [2] | Infers birth, death, and sampling rates [4] |
| Typical Applications | Discrete trait evolution, phylogeography with limited demes [4] [2] | Accurate migration history, outbreak source attribution [2] | Emerging outbreak dynamics, serially sampled data [4] |
| Key Limitations | Assumes independence of trait evolution from tree-generating process [4] [2] | Computationally intensive with many demes [4] [2] | May require strong priors for convergence [4] |

Performance Comparison: Empirical Evidence

Experimental comparisons between DTA and structured coalescent models reveal significant performance differences, particularly in scenarios involving biased sampling or outbreak origin estimation.

A seminal study by De Maio et al. highlighted these disparities through simulations and empirical analyses [2]. When investigating the zoonotic transmission of Ebola virus, DTA implausibly suggested sustained undetected human-to-human transmission over four decades, while the structured coalescent analysis correctly identified repeated seeding from a large unsampled non-human reservoir population [2]. This case exemplifies how model misspecification in DTA can lead to fundamentally incorrect biological conclusions.

Another critical evaluation focused on root state classification accuracy—the ability to correctly infer the geographic origin of an outbreak at the root of the phylogeny [6]. This research demonstrated that DTA performance peaks at intermediate sequence dataset sizes and that common metrics like Kullback-Leibler divergence can provide misleading support for models with finer discretization schemes, unrelated to actual classification accuracy [6].

The following diagram illustrates the fundamental conceptual differences in how these models approach lineage history:

Experimental Protocols and Validation Frameworks

Standard DTA Implementation Protocol

A robust DTA implementation for ancestral state reconstruction typically follows this experimental protocol:

Data Curation and Alignment:

- Obtain or infer a time-calibrated phylogenetic tree with branch lengths

- Code discrete traits consistently across all tips

- Ensure trait data alignment with tree tip labels

- Address missing data using appropriate methods (e.g., partial assignment or modeling uncertainty) [3] [5]
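A small consistency check along the following lines can catch label mismatches before model fitting. The function name, the example labels, and the use of a flat partial-assignment vector for missing traits are assumptions made for this sketch.

```python
def check_tip_trait_alignment(tip_labels, traits):
    """Report tips without a trait value and trait rows without a tip."""
    tips = set(tip_labels)
    coded = set(traits)
    return {
        "missing_traits": sorted(tips - coded),
        "unmatched_traits": sorted(coded - tips),
    }


def tip_partials(tip_labels, traits, states):
    """Encode each tip as a 0/1 vector over states.

    Tips with no recorded trait get a vector of ones, i.e. every state is
    treated as compatible with the observation (partial assignment).
    """
    partials = {}
    for tip in tip_labels:
        if tip in traits:
            partials[tip] = [1.0 if s == traits[tip] else 0.0 for s in states]
        else:
            partials[tip] = [1.0] * len(states)
    return partials


# Hypothetical labels: one tip lacks a trait, one trait row has a typo.
tip_labels = ["sample_1", "sample_2", "sample_3"]
traits = {"sample_1": "A", "sample_2": "B", "sampel_3": "A"}

report = check_tip_trait_alignment(tip_labels, traits)
partials = tip_partials(tip_labels, traits, states=["A", "B"])
```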

Model Selection and Fitting:

- Specify multiple candidate Q-matrix structures (ER, SYM, ARD)

- Fit models using maximum likelihood or Bayesian inference

- Conduct statistical model comparison using AIC, BIC, or Bayes factors

- Select best-fitting model for subsequent reconstruction [3] [5]

Ancestral State Reconstruction:

- Perform marginal ancestral state estimation using the selected model

- Calculate state probabilities at all internal nodes

- Optionally, perform joint reconstruction to identify the most probable overall history

- Assess uncertainty in reconstructions through bootstrap resampling or Bayesian credible intervals [5]

Validation and Sensitivity Analysis:

- Conduct posterior predictive simulations to assess model adequacy

- Perform sensitivity analyses on key prior assumptions

- Validate reconstructions using known historical events or fossil data when available [6] [5]

Performance Evaluation Experiments

To quantitatively evaluate DTA performance against alternative methods, researchers have developed standardized simulation protocols:

Root State Classification Accuracy:

- Simulate phylogenetic trees under known migration models

- Generate trait data with known root states

- Apply DTA and structured models to reconstruct root states

- Compare accuracy rates across methods and conditions [6]
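The data-generation and scoring steps of such an experiment can be sketched as follows. The code simulates a discrete trait down a fixed tree from a known root state with a Gillespie-style scheme and scores root-state calls against the truth. The tree encoding, function names, and rates are hypothetical, and the inference step itself is left out.

```python
import random


def simulate_branch(state, length, q, rng):
    """Simulate a CTMC along one branch and return the state at its end."""
    elapsed = 0.0
    n = len(q)
    while True:
        exit_rate = -q[state][state]
        if exit_rate <= 0.0:
            return state
        elapsed += rng.expovariate(exit_rate)
        if elapsed >= length:
            return state
        weights = [q[state][j] if j != state else 0.0 for j in range(n)]
        state = rng.choices(range(n), weights=weights)[0]


def simulate_tips(node, state, q, rng, out):
    """Propagate a state from `node` to all tips below it.

    A tip is a string label; an internal node is a list of
    (child, branch_length) pairs.
    """
    if isinstance(node, str):
        out[node] = state
        return
    for child, length in node:
        child_state = simulate_branch(state, length, q, rng)
        simulate_tips(child, child_state, q, rng, out)


def classification_accuracy(true_roots, inferred_roots):
    """Fraction of replicates in which the inferred root state is correct."""
    hits = sum(1 for t, i in zip(true_roots, inferred_roots) if t == i)
    return hits / len(true_roots)


# Hypothetical two-deme migration model and three-tip tree.
q = [[-0.2, 0.2], [0.5, -0.5]]
tree = [([("A", 1.0), ("B", 1.0)], 0.5), ("C", 1.5)]
tip_states = {}
simulate_tips(tree, 0, q, random.Random(42), tip_states)
```

Repeating the simulation over many replicates and root states, then passing the true and inferred roots to `classification_accuracy`, gives the accuracy rate compared across methods.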

Sampling Bias Sensitivity Assessment:

- Simulate data with controlled sampling inequalities across demes

- Systematically vary the degree of sampling bias

- Measure reconstruction accuracy degradation under bias

- Compare robustness between DTA and structured approaches [2]

Migration Rate Estimation Precision:

- Simulate data with known migration rate matrices

- Recover rates using different inference methods

- Calculate deviation between true and estimated parameters

- Assess statistical consistency and precision across methods [2]

Essential Research Toolkit for DTA

Successful implementation of Discrete Trait Analysis requires familiarity with both conceptual frameworks and practical software tools. The following table outlines key resources in the DTA researcher's toolkit:

| Tool Category | Specific Software/Package | Primary Function | Key Considerations |
|---|---|---|---|
| Bayesian Evolutionary Analysis | BEAST 2 [4] [7] | Bayesian phylogenetic inference with discrete trait models | Supports both DTA and structured coalescent approximations; packages include BEASTCLASSIC, MASCOT, GEOSPHERE [4] |
| R Comparative Methods Packages | phytools [3] [5] | Phylogenetic comparative methods including ancestral state reconstruction | Provides functions for plotting, simulation, and model fitting; integrates well with other R packages [5] |
| R Comparative Methods Packages | corHMM [3] | Hidden Markov Models for phylogenetic comparative analysis | Specializes in correlated trait evolution; efficient for complex model fitting [3] |
| Model Selection Frameworks | AIC/BIC [3] | Statistical model comparison | Standard approach for comparing DTA model structures (ER, SYM, ARD) [3] |
| Simulation Tools | Phylogenetic simulation packages | Generating synthetic data under known models | Essential for method validation and power analysis [6] [2] |

Discrete Trait Analysis represents a powerful but nuanced approach for investigating the evolutionary history of discrete characteristics across phylogenies. Its computational efficiency and intuitive framework make it well-suited for exploratory analyses, discrete phenotypic trait evolution, and situations with limited computational resources or few discrete states [4] [3]. However, the method's sensitivity to sampling bias and its fundamental assumption of independence between trait evolution and the tree-generating process necessitate careful application [2].

For researchers studying population-level processes such as migration or epidemic spread, structured coalescent models or their approximations (e.g., BASTA) generally provide more accurate inference, particularly when sampling is uneven or the number of demes is manageable [2]. The emerging generation of phylogenetic software, including BEAST X with its Hamiltonian Monte Carlo samplers, promises to reduce the computational barriers to these more sophisticated approaches [7].

Ultimately, method selection should be guided by biological context, sampling structure, and inferential goals. Discrete Trait Analysis remains a valuable tool in the phylogenetic toolkit when applied judiciously to questions aligned with its theoretical foundations and with appropriate caveats regarding its limitations.

Structured Birth-Death Models (SBDMs) represent a significant advancement in phylodynamic analysis by integrating population dynamics directly with lineage sorting in structured populations. Unlike approaches that treat discrete traits as independently evolving characters, SBDMs explicitly model how birth (speciation), death (extinction), and migration processes between subpopulations shape phylogenetic trees. This comprehensive analysis compares SBDMs against discrete trait analysis (DTA), examining their theoretical foundations, performance characteristics, and practical applications through current experimental data and case studies. The findings demonstrate that while DTA offers computational efficiency, SBDMs provide superior accuracy for inferring migration history and root state locations, particularly in epidemiological investigations and evolutionary studies of pathogens.

Phylodynamic methods aim to quantify past population dynamics from genetic sequencing data, with particular importance for understanding the spread of infectious diseases in structured populations [8]. When analyzing pathogens, the host population may be geographically structured, or the pathogen population may consist of different subpopulations, such as drug-sensitive and drug-resistant variants. Understanding how these subpopulations interact—whether separated by geographic distance, host characteristics, or other barriers—represents a key determinant in understanding how epidemics spread and evolve [8].

Two primary classes of models exist for phylodynamic analysis of structured populations: structured birth-death models (SBDMs) and discrete trait analysis (DTA). These approaches differ fundamentally in their theoretical foundations and biological assumptions. SBDMs, implemented in packages such as BDMM (birth-death migration model) for BEAST2, are based on birth-death processes that explicitly model speciation, extinction, and migration rates between demes (subpopulations) [9] [8]. In contrast, DTA treats sampling locations as discrete traits that evolve along branches of the phylogenetic tree in a manner analogous to the substitution of alleles at a genetic locus, an approach often described as the "mugration" model [2].

The core distinction lies in how each approach integrates the tree-generating process with migration dynamics. SBDMs incorporate migration directly into the population dynamic process that generates the tree, while DTA models migration as a separate process occurring upon an already-existing tree [4] [2]. This fundamental difference has profound implications for model accuracy, computational requirements, and appropriate application domains.

Theoretical Foundations and Model Specifications

Structured Birth-Death Models: Mathematical Framework

Structured Birth-Death Models are continuous-time Markov processes that track the number of individuals in different subpopulations through time [10] [11]. In macroevolution and epidemiology, these "individuals" typically represent species or infected hosts. The model defines several key parameters operating within and between d discrete types (demes):

- Birth rate (λ): The per-lineage rate of speciation or infection generation

- Death rate (μ): The per-lineage rate of extinction or recovery

- Migration rate (m): The rate at which lineages move between demes

In the multi-type birth-death model with sampling as implemented in the BDMM package, the process begins at time 0 (the origin) with one individual of type i with probability hᵢ [8]. The time interval (0,T) is partitioned into n epochs through time points 0 < t₁ < ... < tₙ₋₁ < T, allowing rate parameters to change at predefined intervals. Each individual of type i at time t (where tₖ₋₁ ≤ t < tₖ) gives birth to a new individual of type j at rate λᵢⱼ,ₖ, migrates to type j at rate mᵢⱼ,ₖ (with mᵢᵢ,ₖ = 0), dies at rate μᵢ,ₖ, and is sampled at rate ψᵢ,ₖ [8]. At specific sampling times tₖ, each individual of type i is sampled with probability ρᵢ,ₖ. Upon sampling, individuals are removed from the infectious pool with probability rᵢ,ₖ [8].

The probability density of the resulting sampled phylogeny is computed by numerically integrating a system of differential equations backward along all branches to the origin of the tree [8]. This computation involves calculating the probability flow through the tree while accounting for all possible migration histories and population dynamics.
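To make the event structure of the process concrete, the sketch below runs a forward Gillespie simulation of per-deme population counts. It is a simplification and not the BDMM likelihood computation: it assumes a single epoch, no ρ-sampling at fixed times, and removal upon sampling (r = 1). All names and rate values are hypothetical.

```python
import random


def simulate_multitype_bd(birth, death, sampling, migration,
                          start_type, t_max, seed=1, max_pop=10000):
    """Simulate deme counts under a multi-type birth-death-sampling process.

    birth[i][j] and migration[i][j] are per-lineage rates from type i to
    type j; death[i] and sampling[i] are per-lineage rates within type i.
    """
    rng = random.Random(seed)
    d = len(death)
    counts = [0] * d
    counts[start_type] = 1
    sampled = [0] * d
    t = 0.0
    while 0 < sum(counts) < max_pop:
        events = []  # (total rate, kind, source type, target type)
        for i in range(d):
            if counts[i] == 0:
                continue
            for j in range(d):
                if birth[i][j] > 0:
                    events.append((counts[i] * birth[i][j], "birth", i, j))
                if i != j and migration[i][j] > 0:
                    events.append((counts[i] * migration[i][j], "migration", i, j))
            if death[i] > 0:
                events.append((counts[i] * death[i], "death", i, i))
            if sampling[i] > 0:
                events.append((counts[i] * sampling[i], "sampling", i, i))
        total_rate = sum(e[0] for e in events)
        if total_rate == 0:
            break
        t += rng.expovariate(total_rate)
        if t > t_max:
            break
        _, kind, i, j = rng.choices(events, weights=[e[0] for e in events])[0]
        if kind == "birth":
            counts[j] += 1
        elif kind == "migration":
            counts[i] -= 1
            counts[j] += 1
        elif kind == "death":
            counts[i] -= 1
        else:
            # Sampling removes the individual (assumes r = 1).
            sampled[i] += 1
            counts[i] -= 1
    return {"counts": counts, "sampled": sampled}


# Hypothetical two-deme setup with within-deme births only.
result = simulate_multitype_bd(
    birth=[[1.5, 0.0], [0.0, 1.0]],
    death=[0.8, 0.8],
    sampling=[0.2, 0.1],
    migration=[[0.0, 0.3], [0.1, 0.0]],
    start_type=0,
    t_max=5.0,
)
```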

Table 1: Key Parameters in Structured Birth-Death Models

| Parameter | Symbol | Description | Units |
|---|---|---|---|
| Speciation/Birth Rate | λᵢⱼ,ₖ | Rate at which lineage in deme i gives birth to lineage in deme j during epoch k | events/time |
| Extinction/Death Rate | μᵢ,ₖ | Rate at which lineages in deme i are lost during epoch k | events/time |
| Migration Rate | mᵢⱼ,ₖ | Rate at which lineages migrate from deme i to deme j during epoch k | events/time |
| Sampling Rate | ψᵢ,ₖ | Rate at which lineages in deme i are sampled through time during epoch k | events/time |
| Sampling Probability | ρᵢ,ₖ | Probability that a lineage in deme i is sampled at the fixed sampling time tₖ | dimensionless |
| Removal Probability | rᵢ,ₖ | Probability that sampling removes lineage from infectious pool | dimensionless |
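In epidemiological applications these rates are often reported as compound quantities. The sketch below converts per-deme birth, death, and sampling rates into a reproductive number, a becoming-uninfectious rate, and a sampling proportion. It assumes removal upon sampling (r = 1) and within-deme transmission only; the function name and numeric values are hypothetical.

```python
def epi_parameters(birth, death, sampling):
    """Convert rates to epidemiological quantities, assuming r = 1.

    becoming-uninfectious rate: delta = mu + psi
    reproductive number:        R = lambda / delta
    sampling proportion:        s = psi / delta
    """
    delta = death + sampling
    return {
        "reproductive_number": birth / delta,
        "become_uninfectious_rate": delta,
        "sampling_proportion": sampling / delta,
    }


# Hypothetical rates in events per year for a single deme.
params = epi_parameters(birth=2.0, death=0.9, sampling=0.1)
```

A reproductive number above 1 corresponds to a growing subpopulation, which is why priors are frequently placed on these compound parameters and not on the raw rates.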

Discrete Trait Analysis: Underlying Assumptions

Discrete Trait Analysis (DTA) operates on fundamentally different principles from SBDMs. In DTA, the geographic location or other discrete trait of interest is treated as a character state that evolves along the branches of a phylogenetic tree according to a continuous-time Markov process [2]. The model assumes that:

- The relative size of subpopulations drifts over time, such that subpopulations can become lost (extinct) or fixed (the sole remaining subpopulation) without constraints

- Sample sizes across subpopulations are proportional to their relative sizes

- The migration process is conceptually separated from the coalescent process

The DTA model inherits assumptions appropriate for the independent mutation of loci within lineages but profoundly at odds with classical population genetics models of migration [2]. Specifically, it does not account for the effects of population structure on the coalescent process itself, treating the tree as fixed rather than shaped by the population dynamics it aims to infer.

Performance Comparison: Experimental Data and Case Studies

Quantitative Performance Metrics

Recent empirical studies have directly compared the performance of SBDMs and DTA across multiple metrics, revealing significant differences in accuracy, computational efficiency, and robustness to sampling bias.

Table 2: Performance Comparison of SBDM vs. DTA

| Performance Metric | Structured Birth-Death Models | Discrete Trait Analysis |
|---|---|---|
| Root State Classification | Higher accuracy, particularly with intermediate sequence dataset sizes [6] | Lower accuracy, sensitive to sampling bias [6] [2] |
| Migration Rate Estimation | More accurate across simulated and empirical datasets [2] | Often inaccurate, particularly with biased sampling [2] |
| Computational Efficiency | More demanding, especially with many demes [4] [8] | Faster computation, enabling analysis of large datasets [4] [2] |
| Sampling Bias Sensitivity | Robust to uneven sampling across demes [2] | Highly sensitive to uneven sampling [2] |
| Maximum Dataset Size | ~250 sequences in initial implementation, now improved to 500+ [8] | Effectively unlimited with sufficient computational resources |
| Theoretical Foundation | Based on population genetics principles [8] [2] | Based on phylogenetic character evolution [2] |

Case Study: Ebola Virus Transmission Dynamics

A compelling illustration of the practical implications of model choice comes from the analysis of Ebola virus genomic data [2]. When investigating the zoonotic transmission of Ebola virus, structured coalescent methods (conceptually similar to SBDMs) correctly inferred that successive human Ebola outbreaks were seeded by a large unsampled non-human reservoir population. In contrast, the discrete trait analysis implausibly concluded that undetected human-to-human transmission had allowed the virus to persist over the past four decades [2].

These diametrically opposed conclusions have significant implications for public health policy and intervention strategies. The DTA results would suggest focusing resources on detecting and interrupting human transmission chains, while the structured coalescent results correctly highlight the importance of understanding and monitoring the animal reservoir to prevent future spillover events. This case study underscores how model misspecification in phylogeographic analyses can lead to fundamentally incorrect inferences with real-world consequences.

Algorithmic Improvements in BDMM

Recent algorithmic enhancements to the BDMM package have substantially improved its practical utility. Initial versions were limited to analyzing datasets of approximately 250 genetic sequences due to numerical instability caused by underflow in probability density calculations [8]. Important algorithmic changes have dramatically increased the number of genetic samples that can be analyzed while improving numerical robustness and computational efficiency [8].

These improvements allow for enhanced precision of parameter estimates, particularly for structured models with a high number of inferred parameters. Additional model extensions include support for homochronous sampling events at multiple time points (not only the present), removal of the requirement that individuals are necessarily removed upon sampling, and more flexible migration rate specification through piecewise-constant changes through time [8].

Experimental Protocols and Methodologies

Standard Implementation of SBDM Analysis

The implementation of Structured Birth-Death Models using the BDMM package in BEAST2 follows a standardized workflow with specific requirements at each stage:

Software Requirements:

- BEAST2 v2.7.4 or later for Bayesian evolutionary analysis

- BEAUti2 for generating XML configuration files

- BDMM package (with automatic installation of MultiTypeTree and MASTER dependencies)

- Tracer v1.7.2 for parameter analysis

- TreeAnnotator for summary tree production

- IcyTree for tree visualization [9]

Data Preparation Protocol:

- Sequence data in FASTA format with labels containing sampling metadata

- Temporal information encoded as last element in underscore-delimited sequence names

- Location data encoded as second element in underscore-delimited sequence names

- Setting tip dates through the "Use tip dates" option with "after last" delimiter configuration

- Configuring tip locations using the "Guess" function with "split on character" and group 2 selection [9]
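The naming convention above can be checked outside BEAUti with a short script. The sketch below applies the same rules (location as the second underscore-delimited element, sampling date after the last underscore) to a few invented sequence names; the function name and labels are assumptions for illustration.

```python
def parse_sequence_label(label, delimiter="_"):
    """Extract location (second element) and date (last element) from a name."""
    parts = label.split(delimiter)
    if len(parts) < 3:
        raise ValueError(f"expected at least 3 fields in {label!r}")
    return {"location": parts[1], "date": float(parts[-1])}


# Hypothetical sequence names following the convention described above.
labels = [
    "seqA_HongKong_2005.34",
    "seqB_NewYork_2003.92",
    "seqC_NewZealand_2004.10",
]
metadata = {label: parse_sequence_label(label) for label in labels}
locations = sorted({m["location"] for m in metadata.values()})
latest_date = max(m["date"] for m in metadata.values())
```

Running this before loading the alignment makes it easy to spot names with a missing field or a non-numeric date.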

Model Configuration Specifications:

- Substitution Model: HKY with gamma-distributed rate variation across sites

- Gamma Category Count: 4 for discrete gamma approximation

- Shape Parameter: Estimated with starting value of 1.0

- Proportion of Invariant Sites: Fixed to alignment-specific value (e.g., 0.867)

- Clock Model: Strict clock with rate set to 0.005 substitutions/site/year

- Tree Prior: Multi-type birth-death model with appropriate epoch specification [9]

MCMC Analysis Parameters:

- Chain length: 10,000,000 to 100,000,000 steps depending on dataset size

- Log parameters: Every 1,000 to 10,000 steps

- Burn-in: 10% of chain length

- Convergence assessment: Effective Sample Size (ESS) > 200 for all parameters [9]
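The burn-in and ESS criteria can be illustrated with a simple estimator. The sketch below discards the first 10% of a trace and estimates ESS from the autocorrelation function, truncating at the first non-positive lag. This is a basic textbook-style estimator chosen for clarity, not the algorithm used by Tracer, and the two traces are synthetic.

```python
import random


def discard_burn_in(trace, fraction=0.1):
    """Drop the first `fraction` of the samples."""
    return trace[int(len(trace) * fraction):]


def effective_sample_size(trace):
    """Estimate ESS as n / (1 + 2 * sum of positive autocorrelations)."""
    n = len(trace)
    mean = sum(trace) / n
    var = sum((x - mean) ** 2 for x in trace) / n
    if var == 0:
        return float(n)
    rho_sum = 0.0
    for lag in range(1, n):
        cov = sum((trace[i] - mean) * (trace[i + lag] - mean)
                  for i in range(n - lag)) / n
        rho = cov / var
        if rho <= 0:
            break  # truncate at the first non-positive autocorrelation
        rho_sum += rho
    return n / (1.0 + 2.0 * rho_sum)


# Synthetic traces: independent draws versus a strongly autocorrelated chain.
rng = random.Random(0)
independent = [rng.gauss(0.0, 1.0) for _ in range(1000)]
correlated = [0.0]
for _ in range(999):
    correlated.append(0.95 * correlated[-1] + rng.gauss(0.0, 1.0))

ess_independent = effective_sample_size(discard_burn_in(independent))
ess_correlated = effective_sample_size(discard_burn_in(correlated))
```

The autocorrelated chain yields a much smaller ESS for the same number of logged samples, which is why the ESS threshold, and not the raw chain length, is used to judge whether a run is long enough.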

Model Selection and Validation Framework

Robust comparison between SBDM and DTA approaches requires a systematic validation framework:

Simulation-Based Calibration:

- Simulate phylogenetic trees under known birth-death parameters

- Generate sequence data along simulated trees using appropriate substitution models

- Analyze simulated data with both SBDM and DTA approaches

- Compare estimated parameters to known values to assess accuracy and bias [2]
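The final comparison step is usually summarized with a few standard metrics. The sketch below computes relative bias, root-mean-square error, and interval coverage for one parameter across replicate simulations; the function names and all numbers are placeholders, not results from any study.

```python
def relative_bias(estimates, truth):
    """Mean estimate minus the true value, as a fraction of the true value."""
    mean_estimate = sum(estimates) / len(estimates)
    return (mean_estimate - truth) / truth


def rmse(estimates, truth):
    """Root-mean-square error of the estimates around the true value."""
    return (sum((e - truth) ** 2 for e in estimates) / len(estimates)) ** 0.5


def interval_coverage(intervals, truth):
    """Fraction of (lower, upper) intervals that contain the true value."""
    hits = sum(1 for lower, upper in intervals if lower <= truth <= upper)
    return hits / len(intervals)


# Placeholder values: a migration rate simulated at 1.0 in three replicates.
true_rate = 1.0
posterior_medians = [0.9, 1.1, 1.0]
credible_intervals = [(0.8, 1.2), (1.05, 1.3), (0.7, 1.0)]

bias = relative_bias(posterior_medians, true_rate)
error = rmse(posterior_medians, true_rate)
coverage = interval_coverage(credible_intervals, true_rate)
```

For a well-calibrated method, the coverage of 95% credible intervals across many replicates should be close to 0.95; computing the same metrics for both SBDM and DTA runs makes the comparison direct.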

Empirical Data Benchmarking:

- Select empirical datasets with known epidemiological history

- Analyze with both SBDM and DTA approaches

- Compare inferred parameters to historical records

- Assess consistency across multiple independent datasets [2]

Sensitivity Analysis Protocol:

- Vary sampling schemes across demes to assess robustness

- Test different epoch configurations for time-varying parameters

- Evaluate prior sensitivity through alternative prior specifications

- Assess convergence across multiple independent MCMC runs [8] [6]

Visualization and Workflow Diagrams

Structured Birth-Death Model Workflow

SBDM Analysis Workflow: This diagram illustrates the standard workflow for implementing Structured Birth-Death Models in BEAST2, from data preparation through final visualization.

Model Comparison Framework

Model Comparison Framework: This diagram contrasts the theoretical foundations, applications, performance characteristics, and limitations of SBDM (green) versus DTA (red) approaches.

Table 3: Essential Research Tools for SBDM Implementation

| Tool/Resource | Function | Application Context |
|---|---|---|
| BEAST2 Platform | Bayesian evolutionary analysis using MCMC | Primary inference framework for SBDM and DTA [9] |
| BDMM Package | Implements multi-type birth-death model | Phylodynamic inference in structured populations [9] [8] |
| BEAUti2 | Graphical configuration of BEAST2 XML files | Setting up analysis parameters and model specifications [9] |
| Tracer | MCMC diagnostics and parameter summary | Assessing convergence and summarizing posterior distributions [9] |
| TreeAnnotator | Summary tree production from posterior tree distribution | Generating maximum clade credibility trees [9] |
| MultiTypeTree Package | Defines colored trees for structured populations | Required dependency for BDMM analyses [9] |
| MASTER Package | Stochastic simulation of birth-death processes | Model validation and simulation-based calibration [9] |
| IcyTree | Browser-based phylogenetic tree visualization | Rapid visualization of phylogenetic trees with annotations [9] |

Discussion and Future Directions

The comparative analysis presented here demonstrates that Structured Birth-Death Models and Discrete Trait Analysis represent fundamentally different approaches to phylogeographic inference with distinct strengths and limitations. SBDMs rest on an explicit population-dynamic model and generally offer superior accuracy for inferring migration history and root state locations, particularly in scenarios with biased sampling across demes [2]. However, this accuracy comes at the cost of increased computational demands, which have historically limited applications to smaller datasets.

Recent algorithmic improvements to BDMM have substantially addressed these limitations, enabling analysis of datasets containing several hundred genetic sequences [8]. These advances, coupled with the development of approximate structured coalescent methods like BASTA (BAyesian STructured coalescent Approximation) that maintain accuracy while improving computational efficiency, suggest a promising trajectory for structured phylogeographic models more broadly [2].

For researchers and drug development professionals, model selection should be guided by the specific research question and data characteristics. When accurate reconstruction of migration history and outbreak origins is paramount—particularly in public health contexts where inferences directly inform intervention strategies—SBDMs represent the preferred approach despite their computational demands. In exploratory analyses or applications where computational efficiency is a primary concern, DTA may still offer utility, though conclusions should be interpreted with appropriate caution regarding potential biases.

Future methodological development will likely focus on further improving the scalability of SBDMs to accommodate the increasingly large genomic datasets generated by modern surveillance systems, while maintaining the theoretical rigor and statistical accuracy that distinguish them from alternative approaches.

Evolutionary trees serve as the foundational scaffold for investigating the transmission dynamics and evolutionary history of pathogens. Within Bayesian phylogenetic software platforms like BEAST2, Discrete Trait Analysis (DTA) and Structured Birth-Death (SBD) models represent two principal approaches for leveraging these trees to understand spatial spread and population dynamics [12] [7]. While both methods operate on a phylogenetic tree, their core mechanisms, underlying assumptions, and susceptibility to bias differ significantly. This guide provides an objective comparison for researchers, scientists, and drug development professionals, focusing on their application in phylogeography and phylodynamics.

Core Methodological Comparison

The table below summarizes the fundamental characteristics of Discrete Trait Analysis and Structured Birth-Death models.

Table 1: Fundamental Comparison of Discrete Trait Analysis and Structured Birth-Death Models

| Feature | Discrete Trait Analysis (DTA) | Structured Birth-Death (SBD) Models |
|---|---|---|
| Core Framework | Neutral trait evolution model mapped onto a fixed tree [12]. | Tree-generating process; the tree is an output of the model itself [12]. |
| Primary Output | History and rates of trait changes (e.g., location transitions) [12]. | Population growth rates, transmission rates, and becoming uninfectious rates [12]. |
| Key Assumption | Trait evolution does not influence the tree's branching structure [12]. | Transmission dynamics directly shape the phylogenetic tree [12]. |
| Handling of Bias | Can be sensitive to and produce biased results from uneven geographic sampling [12] [7]. | Less subject to sampling biases; better accounts for population structure [12]. |
| Computational Speed | Generally faster due to its conditional nature [12]. | Typically more computationally intensive [12]. |

Experimental Protocols & Performance Data

Simulation-Based Validation of Methodological Performance

A key approach to evaluating these methods involves simulation studies, where "truth" is known. Researchers often use software like MASTER to simulate phylogenetic trees under a controlled structured coalescent model with predefined parameters, such as effective population size (Ne) trajectories and migration rates [12]. These simulated trees then serve as input for inference by both DTA and structured models (e.g., MASCOT-Skyline). Performance is quantified by how accurately each method recovers the known simulated parameters [12].

Table 2: Comparative Performance in Key Analytical Scenarios

| Analysis Scenario | DTA Performance | Structured Model Performance | Supporting Evidence |
|---|---|---|---|
| Uneven Geographic Sampling | Biased reconstruction of migration rates and ancestral states [12]. | Significantly more robust; mitigates bias by modeling population structure [12]. | Simulation studies using SIR models and SARS-CoV-2 data [12]. |
| Inferring Population Dynamics | Not a primary function; models trait evolution conditional on the tree. | Accurately retrieves non-parametric Ne trajectories over time in different locations [12]. | Simulation of Ne trajectories from a Gaussian Markov Random Field (GMRF) [12]. |
| Joint Inference | Not designed for joint spatio-temporal inference. | Jointly infers spatial transmission and temporal outbreak dynamics, improving accuracy for both [12]. | Development of the MASCOT-Skyline method, which integrates both aspects [12]. |

Case Study: SARS-CoV-2 Omicron BA.1 Invasion

The application of these methods to real-world data is exemplified by studies of the SARS-CoV-2 Omicron variant. Research leveraging the advanced phylogeographic and phylodynamic models in BEAST X has traced the invasion of the Omicron BA.1 lineage in England [7]. Such analyses often employ discrete-trait phylogeography but are increasingly enhanced by models that parameterize transition rates between locations as functions of epidemiological predictors, helping to address inherent sensitivities to sampling bias [7].

The Scientist's Toolkit: Essential Research Reagents & Software

Successful phylodynamic analysis requires a suite of specialized software tools and computational resources.

Table 3: Key Reagents and Software for Phylogenetic Analysis

| Item | Function | Relevance to Methods |
|---|---|---|
| BEAST2 / BEAST X | A cross-platform software platform for Bayesian evolutionary analysis sampling trees; the primary engine for inference [12] [7]. | Core platform for implementing both DTA and structured models. BEAST X introduces newer, more scalable models [7]. |
| MASCOT | A BEAST2 package implementing the Marginal Approximation of the Structured COalescenT [12]. | Enables computationally efficient inference under the structured coalescent. MASCOT-Skyline adds time-varying dynamics [12]. |
| MASTER | A software package for simulating stochastic phylogenetic trees under birth-death or coalescent models [12]. | Used for validation studies and assessing model performance against known parameters [12]. |
| BEAGLE | A high-performance computational library for phylogenetic inference [7]. | Accelerates likelihood calculations for all models, enabling analysis of larger datasets [7]. |
| Hamiltonian Monte Carlo (HMC) | An advanced Markov chain Monte Carlo (MCMC) algorithm for sampling from complex, high-dimensional posterior distributions [7]. | Implemented in BEAST X to improve inference efficiency for complex models like structured coalescents and relaxed random walks [7]. |

Visualizing Methodological Workflows

The following diagram illustrates the logical relationship and application focus of DTA and SBD models within a phylogenetic framework.

Diagram: Methodological Pathways. DTA and SBD models use the phylogenetic tree as input but answer different biological questions.

Discussion for Research Application

The choice between Discrete Trait Analysis and Structured Birth-Death models is not merely a technicality but a strategic decision that directly influences research conclusions. DTA offers a faster, more accessible path for initial phylogeographic reconstruction, making it suitable for exploratory analyses or when computational resources are limited. However, its known vulnerability to sampling bias necessitates cautious interpretation, particularly with unevenly sampled data [12]. In contrast, SBD models provide a more robust and mathematically coherent framework for questions where the transmission process itself is the primary object of study, as they explicitly model the processes that generate the tree [12]. They are essential for jointly inferring population dynamics and spatial spread, leading to more accurate parameter estimates. The advent of more scalable software like BEAST X and efficient algorithms like Hamiltonian Monte Carlo is making these more complex models increasingly practical for larger datasets [7]. For grant proposals or drug development research where understanding the precise dynamics of pathogen spread is critical, investing in the structured modeling approach is often the more rigorous and reliable choice.

Phylogeographic inference aims to reconstruct the spatial spread and population dynamics of pathogens using genetic sequence data. For researchers and drug development professionals, selecting the appropriate model is critical for accurately identifying outbreak origins and transmission patterns. Two principal methodologies dominate this field: Discrete Trait Analysis (DTA), which models location history as a discrete trait evolving on a phylogeny, and structured birth-death models, which explicitly incorporate population dynamics through birth (speciation/transmission) and death (recovery/removal) rates [4] [2]. The performance of these models varies significantly in accuracy, bias, and computational demand, influenced by factors such as sampling proportion across populations and the underlying biological reality. This guide provides an objective, data-driven comparison to inform model selection for genomic epidemiology.

Theoretical Foundations and Key Terminology

Core Definitions in Phylogeographic Modeling

- Traits and States: In phylogeography, a trait often represents the geographic location of a sampled sequence. These locations are categorized into distinct states (or demes), such as specific countries, regions, or host species [4] [2]. The evolution of this discrete trait over the phylogeny forms the basis for inferring migration history.

- Birth Rates and Death Rates: In structured population models, the birth rate refers to the rate at which new lineages are generated (e.g., through transmission or speciation), while the death rate is the rate at which lineages are removed from the population (e.g., through recovery or death) [2]. These parameters are fundamental to birth-death models, which use them to infer population dynamics and evolutionary history.

- Sampling Proportions: This refers to the fraction of individuals sequenced from each subpopulation (deme). Biased sampling proportions, where some populations are over- or under-represented, are a major source of inference error, particularly for some model types [2].
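As a small illustration of the sampling-proportion definition above, the sketch below computes the fraction of cases sequenced per deme and a simple imbalance ratio. The function names and counts are hypothetical, and in practice the number of infected individuals per deme is usually itself an estimate.

```python
def sampling_proportions(sequenced, infected):
    """Fraction of infected individuals that were sequenced in each deme."""
    return {deme: sequenced[deme] / infected[deme] for deme in sequenced}

def sampling_imbalance(proportions):
    """Ratio of the highest to the lowest sampling proportion (1.0 means even sampling)."""
    values = list(proportions.values())
    return max(values) / min(values)

# Hypothetical counts for three demes
sequenced = {"A": 200, "B": 50, "C": 10}
infected = {"A": 1000, "B": 1000, "C": 2000}

props = sampling_proportions(sequenced, infected)
print(props)
print(sampling_imbalance(props))
```

A large imbalance ratio is a warning sign that location-based inferences may partly reflect sampling effort rather than migration.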

Model Classifications

Table 1: Core Phylogeographic Model Classifications in BEAST

| Model Category | Key Feature | Representative Software/Package |
|---|---|---|
| Discrete Trait Models | Treats location as a discrete trait evolving like a mutation; fast, but ignores how population structure and sampling shape the tree [4] [2]. | BEAST Classic [4] |
| Structured Coalescent Models | Accounts for the effect of population structure on the genealogy; more accurate but computationally intensive [2]. | MultiTypeTree (MTT) [4] |
| Approximated Structured Coalescent | Approximates the structured coalescent to maintain accuracy with better computational efficiency [2]. | BASTA, MASCOT [4] [2] |
| Structured Birth-Death Models | Uses birth and death rates in a structured population; appropriate when a birth-death tree prior is justified [4]. | BDMM [4] |

Performance Comparison: Quantitative Data

Accuracy and Bias in Root State Inference

A critical test for phylogeographic models is accurately identifying the root state (geographic origin) of an outbreak. Simulations based on the structured coalescent reveal significant performance differences.

Table 2: Comparative Model Performance on Simulated and Empirical Data

| Model / Method | Performance on Simulated Data | Performance on Ebola Virus Data | Key Limitation |
|---|---|---|---|
| Discrete Trait Analysis (DTA) | Highly unreliable root state inference; extremely sensitive to sampling bias [2]. | Implausibly concluded decades of undetected human-to-human transmission [2]. | Conceptual separation of migration and coalescent processes; assumes population sizes drift over time [2]. |
| Structured Coalescent (MTT) | High accuracy but becomes computationally intractable with >3-4 demes [2]. | Correctly inferred human outbreaks seeded by an unsampled non-human reservoir [2]. | Computational intensity limits application to complex models [2]. |
| BASTA (Approximated Structured Coalescent) | High accuracy, comparable to full structured coalescent, but with greater computational efficiency [2]. | Maintains reliability in complex real-world scenarios like Ebola zoonotic transmission [2]. | An approximation, though a close one to the structured coalescent [2]. |

For DTA, a study evaluating Bayesian phylogeographic models found that root state classification accuracy is highest at intermediate sequence dataset sizes and does not consistently improve with more data. Furthermore, the commonly used Kullback-Leibler (KL) divergence metric was found to increase with both the number of discrete traits and dataset size, but was not a predictor of model accuracy, limiting its utility for assessing performance on empirical data [6].
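To make the KL metric concrete, here is a minimal sketch of the divergence between a root-state posterior and its prior. It assumes a uniform prior over the K states, which may not match the prior used in a given analysis; the function names are illustrative.

```python
from math import log

def kl_divergence(posterior, prior):
    """KL(posterior || prior) in nats; states with zero posterior mass contribute 0."""
    return sum(p * log(p / q) for p, q in zip(posterior, prior) if p > 0)

def root_state_kl(posterior):
    """KL divergence of a root-state posterior from a uniform prior (an assumption)."""
    k = len(posterior)
    return kl_divergence(posterior, [1.0 / k] * k)

print(root_state_kl([0.25, 0.25, 0.25, 0.25]))  # posterior equals prior
print(root_state_kl([1.0, 0.0, 0.0, 0.0]))      # fully concentrated posterior
```

Under a uniform prior the maximum attainable value is log K, so the metric's ceiling rises with the number of states regardless of whether the favored root state is correct. This is consistent with the observation above that KL grows with the number of traits without tracking accuracy.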

Computational Efficiency and Sample Size

Table 3: Computational and Practical Requirements

| Aspect | Discrete Trait Analysis (DTA) | Structured Birth-Death & Coalescent |
|---|---|---|
| Computational Speed | Fast; efficient for large datasets with many demes [2]. | Slower; computational demand increases with complexity and number of demes [2]. |
| Sample Size for Robust Inference | Performance can degrade with large, biased samples [6] [2]. | A study on HIV migration rate inference found a sample size of at least 1,000 sequences was needed for robust estimation with model-based phylodynamics [13]. |
| Handling of Sampling Bias | Poor; conclusions are highly sensitive to biased sampling across locations [2]. | Better; designed to explicitly account for population structure and sampling proportions [2]. |

Experimental Protocols and Methodologies

Protocol 1: Benchmarking Model Accuracy with Simulations

This protocol is used to evaluate the root state inference accuracy of different models, as referenced in studies by [6] and [2].

- Data Simulation: Generate multiple sequence alignments using a known evolutionary model and a predefined phylogenetic tree with a known root state. Key parameters to vary include:

  - The number of sequences in the dataset (from small to large).

  - The number of possible discrete trait values (e.g., geographic locations).

  - The sampling proportion across different demes, intentionally introducing bias.

- Phylogeographic Inference: For each simulated dataset, perform inference using the models under comparison (e.g., DTA, Structured Coalescent, BASTA). Use software implementations such as BEAST2 with relevant packages.

- Accuracy Assessment: Compare the inferred root state from each model and analysis against the known, simulated root state. Calculate the classification accuracy for each model across multiple simulation replicates.

- Metric Evaluation: Record model selection metrics like KL divergence and assess their correlation with the measured classification accuracy.
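The accuracy assessment step can be sketched as follows. This is an illustrative helper under the assumption that each replicate yields a posterior probability for every candidate root state; the names `map_state` and `root_state_accuracy` and the example values are hypothetical.

```python
def map_state(posterior):
    """Return the state with the highest posterior probability."""
    return max(posterior, key=posterior.get)

def root_state_accuracy(replicates):
    """Fraction of replicates whose most probable root state matches the simulated truth.

    replicates: list of (true_state, posterior) pairs, where posterior maps
    each state to its posterior probability at the root.
    """
    hits = sum(map_state(posterior) == truth for truth, posterior in replicates)
    return hits / len(replicates)

# Hypothetical results from four simulation replicates
replicates = [
    ("A", {"A": 0.7, "B": 0.2, "C": 0.1}),
    ("B", {"A": 0.6, "B": 0.3, "C": 0.1}),  # misclassified
    ("C", {"A": 0.1, "B": 0.1, "C": 0.8}),
    ("A", {"A": 0.5, "B": 0.4, "C": 0.1}),
]
accuracy = root_state_accuracy(replicates)
print(accuracy)
```

Computing this separately for each sampling scheme makes the effect of biased sampling on each model directly comparable.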

Protocol 2: Assessing Model Performance on Empirical Data with Known Histories

This approach tests models against real-world outbreaks where the transmission history is well-documented.

- Dataset Selection: Curate a genomic dataset from a pathogen outbreak with a reliably known origin and spread pattern (e.g., the 2014 Ebola virus outbreak in West Africa).

- Model Application: Analyze the dataset using DTA and structured models (e.g., BASTA, BDMM) in a Bayesian framework.

- Result Validation: Compare the model-inferred origin and key migration events against the known epidemiological history to determine which model produces more plausible and accurate results [2].

Workflow Diagram: Phylogeographic Model Testing Pipeline

The following diagram illustrates the logical workflow for evaluating phylogeographic models, integrating the protocols above.

The Scientist's Toolkit: Research Reagent Solutions

Table 4: Essential Software and Analytical Tools for Phylogeographic Research

| Tool Name | Type | Primary Function | Relevance to Model Comparison |
|---|---|---|---|
| BEAST 2 / BEAST X [4] [7] | Software Platform | A comprehensive, open-source package for Bayesian phylogenetic, phylogeographic, and phylodynamic inference. | The primary ecosystem for implementing and comparing DTA, structured coalescent (e.g., MTT, BASTA), and structured birth-death (e.g., BDMM) models. |
| BASTA Package [2] | Software Package (for BEAST 2) | Implements a Bayesian structured coalescent approximation. | A key tool that balances the accuracy of the structured coalescent with the computational efficiency needed for analyses with more than a few demes. |
| BDMM Package [4] | Software Package (for BEAST 2) | Implements the structured birth-death model for scenarios where a birth-death tree prior is more appropriate than a coalescent prior. | Essential for comparing the coalescent and birth-death paradigms in structured populations. |
| MASCOT Package [4] | Software Package (for BEAST 2) | An approximated structured coalescent model that allows migration rates to be informed by predictors (e.g., flight data) via a GLM. | Used for more complex, real-world scenarios where external data can inform migration patterns. |
| ProteinEvolver [14] [15] | Software Framework | A simulator for forecasting protein evolution using birth-death models integrated with structurally constrained substitution models. | Useful for forward-time simulation of evolutionary trajectories to generate benchmark data under realistic models of selection. |

The choice between discrete trait analysis and structured birth-death/coalescent models involves a direct trade-off between computational expediency and statistical accuracy.

- Discrete Trait Analysis (DTA) offers speed and is practical for an initial exploration of datasets with many locations. However, its fundamental assumptions make it highly susceptible to sampling bias, potentially leading to misleading conclusions about the origin and spread of an outbreak [2]. Its use requires caution, and its findings should not be relied upon exclusively for critical public health decisions.

- Structured Models, including the approximated coalescent (BASTA) and structured birth-death (BDMM) models, are more reliable for inferring migration rates and root states because they explicitly account for population structure and sampling proportions [2]. While computationally more demanding, they are less sensitive to sampling bias and provide a more realistic representation of the underlying evolutionary and epidemiological processes.

For researchers and drug development professionals, the recommendation is clear: for robust, publication-quality phylogeographic inference, particularly when investigating outbreak origins or transmission dynamics, structured models should be the preferred choice. The use of DTA should be limited to preliminary analyses or cases where its assumptions are explicitly met, with results interpreted with appropriate caution. The ongoing development of efficient approximations like BASTA and advances in software like BEAST X are making these more accurate models increasingly accessible for complex, real-world analyses [7] [2].

In the study of pathogen evolution and spread, phylogeographic models are indispensable for transforming genetic sequence data into epidemiological insights. The core challenge for researchers lies in selecting the appropriate model to reconstruct transmission dynamics from molecular data. The central thesis in modern methodological research revolves around a key dichotomy: discrete trait analysis versus structured birth-death models [4]. While discrete trait models excel at identifying major transitions between predefined locations, particularly with complex population structures, structured birth-death models incorporate the tree-generating process directly, offering a more dynamic representation of how populations evolve and migrate across landscapes [4]. This guide provides an objective comparison of these approaches, detailing their performance, data requirements, and applicability to specific biological questions in pathogen research.

Model Comparison: Discrete Trait Analysis vs. Structured Birth-Death Models

The choice between discrete and structured models fundamentally shapes the inferences drawn from pathogen genetic data. The table below provides a systematic comparison of these model families based on key analytical characteristics.

Table 1: Core Model Comparison for Phylogeographic Inference

| Characteristic | Discrete Trait Analysis | Structured Birth-Death Models |
|---|---|---|
| Core Methodology | Models location as an evolving discrete trait on the phylogeny, often using Bayesian stochastic search variable selection [4]. | Integrates population structure directly into the tree prior, modeling birth, death, and migration events [4]. |
| Underlying Process | Does not incorporate the tree-generating process; trait evolution is modeled independently along branches [4]. | Explicitly models the tree-generating process (birth/death) within and between populations [4]. |
| Computational Demand | Generally faster; often the only feasible option with many demes (>10) [4]. | Computationally intensive; pure implementations are limited to 3-4 demes, though approximations exist [4]. |
| Typical Applications | Identifying major migration pathways between countries or regions; outbreaks with many locations of origin [4]. | Detailed dynamics within meta-populations; inferring migration rates together with birth and death rates [4]. |
| Informing Mechanisms | Migration matrix can be informed by covariates (e.g., flight data, borders) in models like MASCOT [4]. | Rate matrices can be set for different epochs but not yet informed by GLM in all implementations [4]. |

Experimental Protocols and Methodologies

Protocol for Discrete Trait Analysis

Discrete trait analysis requires careful data preparation and model configuration to ensure robust inference of geographic spread.

Data Collection and Curation:

- Genetic Sequence Alignment: Assemble a multiple sequence alignment from pathogen genomes (e.g., SARS-CoV-2, influenza).

- Trait Annotation: Annotate each sequence with a discrete location trait (e.g., country, region). The granularity should reflect the biological question and data density [4].

- Covariate Data (Optional): For advanced models like MASCOT, gather relevant covariate data (e.g., flight passenger numbers, geographical adjacency, trade volumes) to inform the migration rate matrix [4].

Model Specification in BEAST 2:

- Package Selection: Typically implemented using the BEAST_CLASSIC package for basic analysis or MASCOT for a structured coalescent approximation informed by covariates [4].

- Clock Model: Select a strict or relaxed molecular clock model based on prior knowledge of the pathogen's evolutionary rate.

- Site Model: Define the nucleotide substitution model (e.g., HKY, GTR), often with a gamma distribution for among-site rate variation.

- Trait Model: Set up the discrete trait model for the location data. In MASCOT, specify the GLM to include the collected covariate data to explain migration rates [4].

- Tree Prior: Use a coalescent or birth-death tree prior that is appropriate for the population history and sampling scheme.

Analysis and Output:

- MCMC Execution: Run a Markov Chain Monte Carlo (MCMC) analysis for a sufficient number of steps to achieve convergence (effective sample sizes >200 for key parameters).

- Posterior Analysis: Use software like Tracer to assess convergence and TreeAnnotator to generate a maximum clade credibility tree.

- Visualization: Visualize the spatiotemporal spread using tools like SpreaD3, which can map the posterior distribution of ancestral locations onto the phylogeny.
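The ESS threshold mentioned in the MCMC step can also be checked outside Tracer with a rough estimator. The sketch below uses the common formula ESS = N / (1 + 2 Σ ρ_k), truncating the sum at the first non-positive autocorrelation. This truncation rule is an assumption made for simplicity, and the result will not necessarily match the value reported by Tracer.

```python
def effective_sample_size(samples):
    """Rough ESS estimate: N / (1 + 2 * sum of positive-lag autocorrelations)."""
    n = len(samples)
    mean = sum(samples) / n
    centered = [x - mean for x in samples]
    variance = sum(c * c for c in centered) / n
    if variance == 0:
        raise ValueError("trace is constant; ESS is undefined")
    rho_sum = 0.0
    for lag in range(1, n):
        cov = sum(centered[i] * centered[i + lag] for i in range(n - lag)) / n
        rho = cov / variance
        if rho <= 0:  # truncate at the first non-positive autocorrelation
            break
        rho_sum += rho
    return n / (1 + 2 * rho_sum)

# Two artificial traces of length 100
alternating = [0.0, 1.0] * 50        # no positive autocorrelation
blocky = [0.0] * 50 + [1.0] * 50     # strongly autocorrelated
print(effective_sample_size(alternating))
print(effective_sample_size(blocky))
```

The strongly autocorrelated trace yields an ESS far below its nominal length, which is the situation the >200 guideline is meant to detect.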

Protocol for Structured Birth-Death Models

This protocol is designed for inferring population dynamics and migration rates in a structured population framework.

Data Collection and Curation:

- Genetic Sequences and Traits: Follow the same steps as for discrete trait analysis to obtain an alignment and discrete location traits.

- Epoch Definition: If analyzing data across different time periods (e.g., before and after a travel ban), define the epochs and prepare any epoch-specific rate matrices [4].

Model Specification in BEAST 2:

- Package Selection: Use the BDMM (Birth-Death Migration Model) package [4].

- Model Parameterization: Define the structured birth-death model by specifying:

  - Birth Rate: The rate of lineage diversification within each population.

  - Death Rate: The rate of lineage extinction (sampling-through-time can inform this).

  - Migration Rates: The rates of movement between the defined populations (demes). These can be symmetric or asymmetric.

- Epoch Settings: Configure different rate matrices for each defined epoch to model changing migration dynamics over time [4].

- Priors: Place strong, well-justified priors on at least one model parameter to aid convergence, as the model can be parameter-rich [4].
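Birth-death rates are often reported in an epidemiological parameterization. The sketch below converts a birth rate, death rate, and sampling rate for one deme into a reproductive number, a becoming-uninfectious rate, and a sampling proportion. It assumes that sampled individuals are removed from the infectious population; the function name and the example values are hypothetical and do not reflect BDMM's configuration interface.

```python
def epi_parameters(birth_rate, death_rate, sampling_rate):
    """Convert per-lineage rates into an epidemiological parameterization.

    Assumes sampling removes the individual from the infectious population,
    so the total becoming-uninfectious rate is death_rate + sampling_rate.
    """
    uninfectious_rate = death_rate + sampling_rate
    return {
        "reproductive_number": birth_rate / uninfectious_rate,
        "becoming_uninfectious_rate": uninfectious_rate,
        "sampling_proportion": sampling_rate / uninfectious_rate,
    }

# Hypothetical rates (per unit time) for a single deme
params = epi_parameters(birth_rate=1.5, death_rate=0.75, sampling_rate=0.25)
print(params)
```

Because several combinations of these rates can explain the same tree, fixing or strongly constraining one of them (for example the sampling proportion) is what the prior recommendation above is meant to achieve.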

Analysis and Sensitivity:

- MCMC Execution: Run long MCMC chains, as structured models are computationally intensive. Monitor mixing and convergence closely.

- Sensitivity Analysis: Perform sensitivity analyses on key priors (e.g., the birth rate prior) to ensure results are robust to prior choice [4].

- Interpretation: Analyze the posterior estimates of migration rates and of birth and death rates (or derived quantities such as reproductive numbers) to understand the dynamics of the meta-population.

Conceptual Workflows and Logical Relationships

Decision Framework for Model Selection

The following diagram outlines the logical workflow for choosing between discrete and structured phylogeographic models based on the research question and data.
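The rules of thumb stated in this guide (exact structured coalescent feasible up to about 3-4 demes, approximations such as MASCOT up to roughly 10 demes, DTA beyond that, and BDMM when a birth-death tree prior is justified) can be sketched as a small helper. The thresholds and the function `suggest_model` are illustrative assumptions, not a validated selection procedure.

```python
def suggest_model(n_demes, birth_death_prior_justified=False, has_migration_predictors=False):
    """Suggest a phylogeographic model family from rough rules of thumb."""
    if n_demes < 2:
        raise ValueError("structured analyses need at least two demes")
    if n_demes > 10:
        # Structured models become impractical; DTA is often the only feasible option
        return "Discrete trait analysis (BEAST_CLASSIC)"
    if birth_death_prior_justified:
        return "Structured birth-death (BDMM)"
    if n_demes <= 4 and not has_migration_predictors:
        return "Exact structured coalescent (MultiTypeTree)"
    # Moderate deme counts, or predictors to include through a GLM
    return "Approximate structured coalescent (MASCOT)"

print(suggest_model(25))
print(suggest_model(3))
print(suggest_model(8, has_migration_predictors=True))
print(suggest_model(3, birth_death_prior_justified=True))
```

Such a helper is only a starting point; sampling bias and the research question should weigh at least as heavily as deme count.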

Workflow for a Discrete Trait Phylogeographic Analysis

This diagram illustrates the key steps in a standard discrete trait analysis, from data preparation to the final visualization of results.

Successful phylogeographic analysis relies on a suite of software, data sources, and computational resources. The table below details key components of the modern molecular epidemiologist's toolkit.

Table 2: Key Research Reagent Solutions for Phylogeographic Analysis

| Tool/Resource | Type | Primary Function | Relevance to Model Comparison |
|---|---|---|---|
| BEAST 2 [4] | Software Package | A cross-platform program for Bayesian phylogenetic analysis of molecular sequences. | Core platform for implementing both discrete trait and structured birth-death models. |
| BEAST_CLASSIC [4] | Software Package (BEAST 2) | Contains the standard discrete trait model for phylogeography. | Enables analysis of location as an evolving trait, modeled independently of the tree prior. |
| MASCOT [4] | Software Package (BEAST 2) | Approximates the structured coalescent and allows migration rates to be informed by a GLM. | Bridges discrete and structured approaches; allows many demes and covariate inclusion. |
| BDMM [4] | Software Package (BEAST 2) | Implements the structured birth-death model for phylogeographic inference. | The primary package for a full structured birth-death analysis with migration. |
| Tracer | Software Tool | Analyzes the trace files from BEAST MCMC runs to assess convergence and parameter estimates. | Essential for diagnosing model performance and ensuring robust conclusions from any analysis. |
| Discrete Location Traits | Data | Categorical data (e.g., country, state) assigned to each genetic sequence. | The fundamental input for defining populations or demes in both model families. |
| Covariate Data (e.g., flight passenger numbers) [4] | Data | External data used to inform the migration rate matrix in models like MASCOT. | Adds ecological realism to the model, helping to explain why certain migration routes are preferred. |

The choice between discrete trait analysis and structured birth-death models is not a matter of one being universally superior, but of aligning the model with the specific biological question and data constraints. Discrete trait models, particularly when enhanced with covariate data in frameworks like MASCOT, offer a powerful and computationally efficient method for reconstructing large-scale spread patterns across many locations. In contrast, structured birth-death models provide a more mechanistically rich framework for inferring the dynamic processes of birth, death, and migration within a meta-population, at a higher computational cost. As the field advances, the integration of these approaches with other data streams, such as human mobility models [16] [17] and detailed social mixing patterns [18], will further refine our ability to reconstruct and forecast the complex spread of infectious diseases.

From Theory to Practice: Implementing DTA and SBDM in Research

Discrete Trait Analysis (DTA) represents a fundamental methodological approach in Bayesian phylogeography, enabling researchers to reconstruct the evolutionary history and dispersal dynamics of discrete characteristics across phylogenetic trees. Within the BEAST2 ecosystem, DTA serves as a computationally efficient method for inferring how traits such as geographical locations or phenotypic states transition through time. This approach must be understood in contrast to alternative frameworks, particularly the structured birth-death models, which offer different theoretical foundations and computational trade-offs. The core distinction lies in their treatment of the tree generating process: DTA operates by modeling trait evolution along the branches of a fixed or co-estimated phylogeny without explicitly linking the trait dynamics to the population processes that shape the tree itself. In contrast, structured models like the structured birth-death model (implemented in the bdmm package) explicitly connect population dynamics in different demes to the tree formation process, providing a more integrated but computationally demanding framework [4].

The discrete trait model in BEAST2 is implemented through the BEAST_CLASSIC package and utilizes Bayesian stochastic search variable selection (BSSVS) to reduce parameter dimensionality, making it particularly advantageous when analyzing systems with many discrete states or demes [4]. This methodological choice becomes particularly significant when designing studies to trace pathogen spread, species migration, or the evolution of drug resistance, where accurately modeling transition rates between states can illuminate critical patterns in evolutionary and epidemiological dynamics. For researchers operating within the constraints of limited computational resources or those requiring rapid analytical turnaround, DTA often presents a pragmatic solution, though its theoretical simplifications must be acknowledged and justified within the specific biological context under investigation.
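To see why variable selection matters as the number of states grows, the sketch below counts the pairwise rate parameters and computes a Bayes factor for including a single rate from its posterior and prior inclusion probabilities. The prior inclusion probability is passed in by the user here, because it depends on the prior placed on the number of non-zero rates in a given analysis; the function names are illustrative.

```python
def n_rate_parameters(n_states, symmetric=True):
    """Number of pairwise transition rates among n_states discrete states."""
    ordered_pairs = n_states * (n_states - 1)
    return ordered_pairs // 2 if symmetric else ordered_pairs

def inclusion_bayes_factor(posterior_prob, prior_prob):
    """Posterior odds divided by prior odds that a rate is included (non-zero).

    Both probabilities must lie strictly between 0 and 1.
    """
    posterior_odds = posterior_prob / (1 - posterior_prob)
    prior_odds = prior_prob / (1 - prior_prob)
    return posterior_odds / prior_odds

print(n_rate_parameters(10))                   # symmetric model, 10 locations
print(n_rate_parameters(10, symmetric=False))  # asymmetric model, 10 locations
print(inclusion_bayes_factor(0.9, 0.2))        # hypothetical indicator summary
```

With 10 locations an asymmetric model already has 90 rates, most of which a typical dataset cannot inform, which is the motivation for switching uninformative rates off.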

Theoretical Framework: DTA versus Structured Models in Evolutionary Inference

Fundamental Methodological Divergences

The choice between Discrete Trait Analysis and structured population models represents a critical branch point in phylogenetic study design, with each approach embodying distinct philosophical and statistical assumptions about the evolutionary process. DTA conceptualizes trait evolution as a separate process that occurs along the branches of a phylogenetic tree, typically modeled using continuous-time Markov chains that describe the stochastic transition between discrete states. This methodological separation allows for computational efficiency but makes the fundamental assumption that the trait's evolutionary dynamics are conditionally independent of the underlying tree-generating process given the tree topology and branch lengths. While this simplification enables the analysis of complex multi-state systems, it potentially ignores important feedbacks between population dynamics and trait evolution [4].
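The continuous-time Markov chain at the heart of DTA gives the probability of moving between states along a branch of length t as P(t) = exp(Qt), where Q is the transition rate matrix. The following is a generic numerical sketch using a scaled Taylor series with repeated squaring; it is not how any particular phylogenetics package computes these probabilities, and the rate values are hypothetical.

```python
def mat_mul(a, b):
    """Multiply two square matrices given as lists of rows."""
    n = len(a)
    return [[sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n)] for i in range(n)]

def transition_probabilities(q, t, terms=20, squarings=10):
    """Approximate P(t) = exp(Q t) by a Taylor series on Q t / 2**squarings, then squaring."""
    n = len(q)
    scale = t / (2 ** squarings)
    a = [[q[i][j] * scale for j in range(n)] for i in range(n)]
    result = [[1.0 if i == j else 0.0 for j in range(n)] for i in range(n)]  # identity
    term = [row[:] for row in result]
    for k in range(1, terms + 1):
        term = [[x / k for x in row] for row in mat_mul(term, a)]  # a**k / k!
        result = [[result[i][j] + term[i][j] for j in range(n)] for i in range(n)]
    for _ in range(squarings):
        result = mat_mul(result, result)
    return result

# Symmetric two-location model with rate 0.5 per unit time; rows of Q sum to zero
rate = 0.5
Q = [[-rate, rate], [rate, -rate]]
P = transition_probabilities(Q, t=2.0)
print(P)
```

For this two-state symmetric case the result can be checked against the closed form P_00(t) = 1/2 + 1/2 exp(-2rt), and each row of P sums to one.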

In contrast, structured models like the MultiTypeTree (MTT) implementation of the structured coalescent or the birth-death migration model (BDMM) explicitly incorporate the effect of population structure on the tree generation process itself. These models treat the discrete traits (e.g., geographical locations) as integral components that shape the genealogical history through their influence on migration rates and population sizes. The structured coalescent, for instance, models how lineages coalesce within demes and migrate between them, creating a more biologically realistic but computationally intensive framework. Similarly, the birth-death serial sampling model in the bdmm package incorporates temporal epochs, allowing migration and birth rates to vary across predefined time intervals, capturing dynamic processes like seasonal migration patterns or changing connectivity between populations [4] [9].

Performance Trade-offs in Practical Applications

The theoretical distinctions between these approaches manifest in tangible performance trade-offs that researchers must navigate when designing phylogenetic studies. The computational burden of structured models increases dramatically with the number of demes, with pure implementations of the structured coalescent becoming computationally intractable beyond 3-4 demes. Approximation methods like MASCOT (Marginal Approximation of the Structured COalescenT) extend this limit to approximately 10 demes while introducing GLM capabilities to model migration rates as functions of external predictors like flight passenger volumes or trade relationships [4].

Table 1: Computational and Methodological Trade-offs Between Phylogeographic Models

| Model Characteristic | Discrete Trait Analysis (DTA) | Structured Birth-Death (BDMM) | Structured Coalescent Approximation (MASCOT) |
|---|---|---|---|
| Theoretical Foundation | Trait evolution independent of tree process | Tight integration of trait and tree processes | Approximation to structured coalescent |
| Computational Scaling | Scales well with many demes (>10) | Limited to moderate deme numbers | Handles more demes than exact methods |
| Treatment of Tree Process | Ignores tree generating process | Explicitly models population dynamics | Approximates population structure effects |
| Data Integration Capabilities | Limited external data integration | Epoch models for rate variation | GLM for migration predictors |
| Best Application Context | Exploratory analysis, many demes | Few demes with strong population dynamics | Many demes with known migration predictors |

The discrete trait model's computational efficiency stems from its treatment of the trait evolution process as separate from the tree prior, significantly reducing the parameter space that must be explored during Markov Chain Monte Carlo (MCMC) sampling. However, this efficiency comes at the cost of biological realism, as the approach does not account for how population structure in the trait of interest might have influenced the phylogenetic tree's shape and branching times. Simulation studies have demonstrated that this disconnect can introduce biases in parameter estimation, particularly when migration rates between demes are high or when the trait exhibits strong influence on population dynamics [4].

Experimental Protocols: Implementing DTA in BEAST2

Software Environment Configuration

Establishing a proper computational environment represents the foundational step in implementing Discrete Trait Analysis within BEAST2. Researchers must first install the core BEAST2 package, which includes the essential BEAUti2 configuration tool, TreeAnnotator for summarizing posterior tree distributions, and associated utilities. The critical additional requirement for DTA is the BEAST_CLASSIC package, which contains the discrete trait evolutionary model implementation. Installation occurs through BEAUti2's package manager interface (File > Manage Packages), where users can select and install BEAST_CLASSIC, with the system automatically handling any dependencies [19]. Following installation, a BEAUti2 restart is required to activate the newly installed packages and their associated templates.

The broader analytical workflow typically involves several additional software components that facilitate pre-processing, analysis, and post-processing. Tracer provides essential MCMC diagnostics and parameter summary capabilities, allowing researchers to assess chain convergence through Effective Sample Size (ESS) metrics and visualize posterior distributions. For tree visualization and annotation, FigTree offers publication-ready rendering of phylogenetic trees with node annotations, while DensiTree enables qualitative assessment of tree posterior distributions, revealing areas of topological uncertainty or consensus across the MCMC samples [20].

Step-by-Step Analytical Workflow

The implementation of a discrete trait analysis follows a structured pathway from data preparation through to inference and interpretation, with specific considerations at each stage to ensure biologically meaningful and computationally efficient analysis.

Data Preparation and Configuration: The analytical process begins with assembling the molecular sequence alignment in NEXUS or FASTA format and preparing a corresponding trait data set. For the geographical discrete trait analysis exemplified by the primate mitochondrial DNA data set, trait states (e.g., geographical locations) can often be extracted directly from sequence headers using BEAUti's automated parsing capabilities. The Tip Dates panel configures the temporal dimension of the analysis, critical for calibrating evolutionary rates, while the Tip Locations panel assigns discrete trait states to each taxon. The Guess function automates this process by splitting sequence names on delimiters (e.g., underscores) and extracting the relevant trait field [9].
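The delimiter-based extraction described above can be sketched in a few lines of Python. This is only an illustration of the splitting logic, not BEAUti's own code; the function names and the example taxon names (with the hypothetical layout `id_location_date`) are assumptions made for this sketch.

```python
def guess_trait(taxon_name, delimiter="_", field_index=1):
    """Split a taxon name on a delimiter and return one field as the trait value."""
    fields = taxon_name.split(delimiter)
    if not -len(fields) <= field_index < len(fields):
        raise ValueError(f"{taxon_name!r} has no field {field_index}")
    return fields[field_index]


def build_trait_map(taxon_names, delimiter="_", field_index=1):
    """Map every taxon name to the trait value parsed from its name."""
    return {name: guess_trait(name, delimiter, field_index) for name in taxon_names}


# Hypothetical sequence names laid out as id_location_date.
taxa = ["seq01_HongKong_2005.5", "seq02_Guangdong_2004.2", "seq03_HongKong_2003.9"]

locations = build_trait_map(taxa, field_index=1)   # discrete trait states
tip_dates = build_trait_map(taxa, field_index=2)   # sampling dates, still as strings

for name in taxa:
    print(name, locations[name], tip_dates[name])
```

Checking the parsed map by eye before running the analysis is worthwhile, since a single misnamed sequence silently creates an extra trait state.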

Model Specification: Within the Site Model panel, researchers specify the nucleotide substitution model (e.g., HKY or GTR) and, where appropriate, among-site rate heterogeneity (Gamma category count typically set to 4). The Clock Model panel determines the mode of evolutionary rate variation across branches, with the strict clock representing the simplest assumption and relaxed clocks accommodating rate variation among lineages. Critically, the tree prior (selected in the Priors panel in BEAUti2) must be an unstructured Coalescent or Birth-Death model rather than a structured tree prior when implementing standard DTA, as the discrete trait evolution is modeled separately from the tree generation process [20] [21].

Trait Model Configuration: The discrete trait model itself is specified through an additional trait partition, which can be added via the + button in the Partitions panel. After importing the trait data as a separate partition, researchers must navigate to the Traits tab to associate this trait data with the tree. The evolutionary model for the discrete trait typically employs Bayesian Stochastic Search Variable Selection (BSSVS), which effectively reduces the number of estimated transition rate parameters by allowing the MCMC to explore different configurations of non-zero rates between states, with Bayes Factor tests identifying well-supported migration pathways [4].
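The Bayes Factor test mentioned above compares the posterior odds that a rate is switched on with the corresponding prior odds. The sketch below assumes the rate indicators have already been read from the BEAST2 log as rows of 0/1 values, and that the prior inclusion probability is supplied by the user, because it depends on the prior placed on the number of non-zero rates in the particular analysis. The function names and the toy samples are hypothetical.

```python
def inclusion_probabilities(indicator_samples):
    """Posterior inclusion probability per rate: column means of 0/1 indicator samples."""
    n_samples = len(indicator_samples)
    n_rates = len(indicator_samples[0])
    return [sum(row[j] for row in indicator_samples) / n_samples for j in range(n_rates)]


def bssvs_bayes_factor(posterior_prob, prior_prob):
    """Bayes factor for including one rate: posterior odds divided by prior odds."""
    if not 0.0 < prior_prob < 1.0:
        raise ValueError("prior_prob must lie strictly between 0 and 1")
    if posterior_prob >= 1.0:
        return float("inf")  # indicator was on in every sample
    posterior_odds = posterior_prob / (1.0 - posterior_prob)
    prior_odds = prior_prob / (1.0 - prior_prob)
    return posterior_odds / prior_odds


# Toy indicator samples: rows are MCMC samples, columns are candidate rates.
samples = [
    [1, 0],
    [1, 0],
    [1, 1],
    [0, 0],
]
prior_inclusion = 0.25  # assumed value for illustration only

for rate_index, p in enumerate(inclusion_probabilities(samples)):
    print(rate_index, p, bssvs_bayes_factor(p, prior_inclusion))
```

An infinite Bayes factor only means the indicator was never switched off in the sample; with a finite chain it is better reported as a lower bound than as decisive support.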

Prior Specification and MCMC Execution: The Priors panel requires careful attention, particularly for the newly added discrete trait rate parameters. Default priors (e.g., Exponential distributions) often provide reasonable starting points, though these should be adjusted based on prior biological knowledge. The MCMC settings (chain length, sampling frequency) in the MCMC panel must be configured to ensure adequate exploration of the parameter space, with chain lengths typically ranging from 10-100 million generations depending on dataset size and complexity. Following MCMC execution, diagnostic tools like Tracer assess convergence (ESS > 200 for all parameters), with TreeAnnotator generating a maximum clade credibility tree from the posterior sample for visualization and interpretation in FigTree or similar software [20].
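To make the ESS criterion concrete, the following sketch estimates an effective sample size from a single parameter trace by summing sample autocorrelations until they first become non-positive. This is a simplified estimator written for illustration; it is not claimed to reproduce Tracer's calculation, and the simulated traces stand in for columns of a real log file with burn-in already removed.

```python
import random


def effective_sample_size(trace):
    """Simplified ESS: n / (1 + 2 * sum of leading positive autocorrelations)."""
    n = len(trace)
    mean = sum(trace) / n
    centered = [x - mean for x in trace]
    variance = sum(c * c for c in centered) / n
    if variance == 0.0:
        raise ValueError("trace is constant; ESS is undefined")
    rho_sum = 0.0
    for lag in range(1, n):
        autocov = sum(centered[i] * centered[i + lag] for i in range(n - lag)) / n
        rho = autocov / variance
        if rho <= 0.0:
            break  # truncate at the first non-positive autocorrelation
        rho_sum += rho
    return n / (1.0 + 2.0 * rho_sum)


def simulate_ar1(n, phi, seed=0):
    """Simulate an AR(1) series; phi=0 gives independent draws, phi near 1 a sticky chain."""
    rng = random.Random(seed)
    x, series = 0.0, []
    for _ in range(n):
        x = phi * x + rng.gauss(0.0, 1.0)
        series.append(x)
    return series


well_mixed = simulate_ar1(2000, phi=0.0, seed=1)
sticky = simulate_ar1(2000, phi=0.95, seed=1)
print("well-mixed ESS:", round(effective_sample_size(well_mixed)))
print("sticky ESS:", round(effective_sample_size(sticky)))
```

The sticky chain has the same number of samples but far fewer effectively independent ones, which is the situation the ESS > 200 rule of thumb is meant to flag.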

Table 2: Essential Research Reagent Solutions for DTA Implementation

| Research Reagent | Function in Analysis | Implementation Details |
|---|---|---|
| BEAST_CLASSIC Package | Provides discrete trait evolutionary model | Install via BEAUti package manager; required for DTA |
| BEAUti2 Configuration Tool | Generates BEAST2 XML configuration files | Graphical interface for model specification and data import |
| Tracer | MCMC diagnostic assessment | Evaluates chain convergence via ESS statistics |
| TreeAnnotator | Summarizes posterior tree distribution | Generates maximum clade credibility trees with node annotations |
| FigTree/DensiTree | Phylogenetic tree visualization | Renders annotated trees and posterior tree distributions |

Comparative Performance Assessment: Empirical Data and Simulation Studies

Methodological Comparison Through Benchmarking

The performance characteristics of Discrete Trait Analysis versus structured models have been elucidated through both empirical applications and carefully designed simulation studies, revealing context-dependent advantages and limitations. A critical benchmark emerges from the analysis of influenza H3N2 evolution, where the structured birth-death model (BDMM) implemented through the bdmm package has demonstrated enhanced precision in reconstructing migration pathways between geographical regions when compared to standard DTA approaches. In these applications, BDMM recovered posterior estimates that more closely aligned with known epidemiological patterns, particularly when incorporating temporal epoch models that accommodated seasonal variation in migration rates [9].

Simulation studies examining phylogenetic regression under tree misspecification provide indirect but valuable insights into the robustness of different analytical approaches. Recent investigations have revealed that phylogenetic comparative methods become more sensitive to incorrect tree specification as dataset size increases, with false positive rates approaching 100% in some misspecified scenarios [22]. This finding is relevant to DTA, whose trait model is conditioned on the tree without modeling how the trait shaped it, and especially to post-hoc analyses that treat a previously inferred tree as fixed. Structured models partially mitigate this concern by modeling the tree-generating process and the trait dynamics jointly, though at substantial computational cost. The application of robust estimators in phylogenetic regression has shown promise in rescuing analyses from tree misspecification, suggesting potential avenues for enhancing the robustness of both DTA and structured approaches [22].

Computational Efficiency and Scalability

The difference in computational burden between DTA and structured models is one of the most practically significant considerations for researchers designing phylogeographic studies. Empirical benchmarks conducted on influenza and rabies virus datasets have demonstrated that DTA implementations typically achieve convergence 3-5 times faster than structured birth-death models for equivalent datasets, making them particularly valuable for exploratory analysis or when computational resources are constrained. This efficiency advantage widens substantially as the number of discrete states increases, with DTA remaining tractable for systems with 10 or more demes, where structured models become computationally prohibitive without approximation methods [4].
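One way to see why the number of demes matters is to count the pairwise transition rates a model has to estimate. The sketch below does only that arithmetic: a symmetric model has one rate per unordered pair of states and an asymmetric model one per ordered pair. Parameter counts are a rough proxy for cost rather than a runtime model, and the function name is hypothetical.

```python
def dta_rate_count(n_states, symmetric=True):
    """Number of pairwise transition rates among n_states discrete states."""
    if n_states < 2:
        raise ValueError("need at least two states")
    ordered_pairs = n_states * (n_states - 1)
    return ordered_pairs // 2 if symmetric else ordered_pairs


# Rate counts grow quadratically with the number of demes.
for k in (3, 5, 10, 20):
    print(k, dta_rate_count(k, symmetric=True), dta_rate_count(k, symmetric=False))
```

This quadratic growth is also the motivation for BSSVS, which lets the sampler switch most of these rates off.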

The introduction of approximation methods like MASCOT for the structured coalescent and the continued refinement of BDMM have narrowed but not eliminated this performance gap. For the critical task of ancestral state reconstruction at internal nodes, which forms the core objective of many discrete trait analyses, both approaches demonstrate similar accuracy under conditions of moderate migration rates and clearly differentiated populations. However, under high migration scenarios or when population structure strongly influences the tree shape, structured models consistently outperform DTA in reconstruction accuracy, justifying their additional computational requirements in these specific biological contexts [4].

Advanced Implementation Strategies and Future Directions

Post-hoc Analysis and Integration Approaches

Advanced implementation strategies for discrete trait analysis have emerged that leverage the computational advantages of DTA while mitigating some of its theoretical limitations. The recently enhanced fixed tree and tree set support in BEAST2, implemented through the FixedTreeAnalysis package, enables a hybrid approach where a previously inferred posterior tree distribution serves as the foundation for subsequent discrete trait analysis. This post-hoc strategy offers significant computational advantages for large datasets, particularly when the primary phylogenetic relationships have been well-established through previous genomic analyses and the research question focuses specifically on trait evolution patterns [23].

The post-hoc approach involves importing a fixed tree or tree set through BEAUti's template system (File > Templates > Fixed Tree Analysis or Tree Set Analysis), then adding the discrete trait partition and configuring the evolutionary model as in standard DTA. When utilizing a tree set drawn from a previous posterior distribution, the MCMC samples trees from this set throughout the analysis, preserving some uncertainty in phylogenetic relationships while dramatically reducing computational time compared to full joint inference. Empirical validation studies have demonstrated that this approach can produce comparable results to joint inference when the fixed trees adequately represent the posterior distribution, though it necessarily ignores potential feedbacks between trait evolution and tree generation [23].

Methodological Integration and Emerging Solutions

The methodological landscape for discrete trait analysis continues to evolve, with several emerging solutions addressing longstanding limitations of standard approaches. For geographical trait analysis, the discrete phylogeographic model in BEAST_CLASSIC remains the standard implementation, but alternative frameworks like the random walk on a sphere model (in the GEO_SPHERE package) offer continuous alternatives that may better reflect biological reality for certain study systems. Similarly, the break-away package implements a founder-dispersal model that assumes one population remains in place while the other migrates at each branching event, producing fundamentally different root location estimates compared to standard random walk models [4].

Future methodological development appears focused on enhancing the integration of external data sources and accommodating more complex evolutionary scenarios. The MASCOT package's GLM capabilities, which allow migration rates to be modeled as functions of predictor variables, represent a promising direction that could potentially be incorporated into DTA frameworks. Similarly, the epoch modeling functionality in BDMM, which accommodates discrete changes in migration rates over time, addresses an important biological reality that currently requires custom implementation in standard DTA [4] [9]. As Bayesian computational methods continue to advance, particularly through Hamiltonian Monte Carlo and other efficient sampling algorithms, the current computational barriers separating DTA from more complex structured models may diminish, potentially enabling more researchers to employ the most biologically appropriate methods regardless of dataset size or complexity.
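The idea of modeling migration rates as functions of predictors can be illustrated with a generic log-linear form, in which the log of each rate is a sum of coefficient-weighted predictors and each predictor can be switched on or off by an indicator. The sketch below assumes this general form for illustration; it does not reproduce the MASCOT implementation or its parameter names, and the predictor values are invented.

```python
import math


def glm_migration_rate(predictors, coefficients, indicators, scale=1.0):
    """Log-linear rate: scale * exp(sum of indicator * coefficient * predictor)."""
    if not len(predictors) == len(coefficients) == len(indicators):
        raise ValueError("predictors, coefficients and indicators must have equal length")
    log_rate = sum(d * b * x for x, b, d in zip(predictors, coefficients, indicators))
    return scale * math.exp(log_rate)


# Hypothetical standardized predictors for one pair of demes:
# (log passenger flux, log geographic distance).
predictors = [1.2, -0.4]
coefficients = [0.8, -0.5]

both_on = glm_migration_rate(predictors, coefficients, indicators=[1, 1])
flux_only = glm_migration_rate(predictors, coefficients, indicators=[1, 0])
print(both_on, flux_only)
```

With all indicators set to zero the rate collapses to the scale parameter, so every pair of demes shares one baseline rate.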

The comparative analysis of Discrete Trait Analysis and structured birth-death models reveals a landscape of methodological trade-offs rather than absolute superiority of either approach. DTA emerges as the preferred choice for exploratory analyses, systems with numerous discrete states (>10), and situations with computational constraints. Its implementation through the BEAST_CLASSIC package offers a robust, well-supported workflow with relatively straightforward interpretation. In contrast, structured models like BDMM provide enhanced biological realism for systems with strong population dynamics, fewer discrete states, and available prior information about birth, death, and migration parameters.

Strategic implementation of DTA should incorporate several evidence-based practices: (1) utilization of BSSVS to reduce parameter dimensionality and identify well-supported transitions, (2) consideration of post-hoc approaches using fixed tree sets when analyzing large datasets, (3) comprehensive model diagnostics including Bayes Factor tests for migration rates, and (4) sensitivity analyses examining the impact of prior choices on posterior estimates. For research questions where the discrete trait of interest likely influenced population dynamics and thereby shaped the phylogenetic tree itself, structured models warrant their additional computational requirements. Ultimately, the expanding toolkit for discrete trait evolution in BEAST2 provides researchers with multiple pathways to reconstruct evolutionary history, with selection criteria extending beyond statistical performance to encompass biological realism, computational feasibility, and analytical transparency.