Error Minimization in the Standard Genetic Code: From Evolutionary Origins to Therapeutic Applications

Abstract

This article synthesizes current research on error minimization, a fundamental property of the standard genetic code where physicochemically similar amino acids are assigned to codons related by single-nucleotide changes, thereby buffering the deleterious effects of mutations and translational errors. We explore the foundational theories of its origin, debating whether it arose through direct natural selection or as a neutral byproduct of code expansion. The discussion extends to modern computational methodologies quantifying this optimization and its implications for synthetic biology, including the engineering of expanded genetic codes for novel therapeutic protein design. Finally, we compare the standard code's performance against random and synthetic alternatives, providing a comprehensive resource for researchers and drug development professionals aiming to harness these principles for biomedical innovation.

The Puzzle of the Genetic Code: Exploring the Evolutionary Drive for Error Minimization

The standard genetic code (SGC) is a fundamental set of rules used by virtually all life forms to translate the information stored in DNA and RNA sequences into functional proteins [1]. This code is a mapping of 64 possible triplet codons to 20 canonical amino acids and a translation stop signal [2]. The remarkable universality of this code across the tree of life implies that its fundamental structure was already present in the last universal common ancestor (LUCA) of all extant organisms [1]. A critical observation that has intrigued scientists for decades is the highly non-random organization of this code [1]. Rather than being arranged arbitrarily, amino acids with similar physicochemical properties tend to be encoded by codons that are related to one another by single nucleotide changes. This structured organization provides the genetic code with a significant degree of error minimization, reducing the likelihood that point mutations or translation errors will drastically alter protein function [1] [3].

Structural Organization of the Standard Genetic Code

The Triplet Codon Framework

The genetic code is composed of 64 triplet codons, each a unique sequence of three nucleotides [4]. Of these, 61 specify amino acids, while three (UAA, UAG, and UGA in RNA; TAA, TAG, and TGA in DNA) function as stop codons that signal the termination of protein synthesis [4]. The codon AUG serves a dual purpose, encoding methionine and often functioning as the initiation codon for translation [4]. The code is redundant, meaning that most amino acids are encoded by more than one codon—a property known as degeneracy [2]. This redundancy is not random; codons for the same amino acid typically differ only in the third nucleotide position, forming what are known as codon families [1].

Non-Random Distribution of Amino Acids

The assignment of amino acids to codons exhibits a striking pattern of organization that minimizes the chemical consequences of errors [1]. Several key patterns illustrate this non-random structure [4]:

- Similar amino acids share similar codons: Amino acids with similar physicochemical properties (e.g., hydrophobicity, size, or charge) tend to be clustered within the codon table.

- Conservative substitutions: Single nucleotide substitutions often result in the replacement of one amino acid with another that has similar chemical properties.

- First and second position conservation: The first two nucleotide positions of codons are typically more critical for determining the encoded amino acid, while the third position often allows for "wobble" and contributes to degeneracy.

Table 1: Standard Genetic Code (RNA Codons)

| Amino Acid | Codons | Amino Acid | Codons |
|---|---|---|---|
| Ala (A) | GCU, GCC, GCA, GCG | Ile (I) | AUU, AUC, AUA |
| Arg (R) | CGU, CGC, CGA, CGG; AGA, AGG | Leu (L) | CUU, CUC, CUA, CUG; UUA, UUG |
| Asn (N) | AAU, AAC | Lys (K) | AAA, AAG |
| Asp (D) | GAU, GAC | Met (M) | AUG |
| Cys (C) | UGU, UGC | Phe (F) | UUU, UUC |
| Gln (Q) | CAA, CAG | Pro (P) | CCU, CCC, CCA, CCG |
| Glu (E) | GAA, GAG | Ser (S) | UCU, UCC, UCA, UCG; AGU, AGC |
| Gly (G) | GGU, GGC, GGA, GGG | Thr (T) | ACU, ACC, ACA, ACG |
| His (H) | CAU, CAC | Trp (W) | UGG |
| Tyr (Y) | UAU, UAC | Val (V) | GUU, GUC, GUA, GUG |
| Start | AUG (GUG, UUG, and CUG act as alternative start codons in some organisms) | Stop | UAA, UGA, UAG |
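For computational work, the table above is usually stored as a codon-to-amino-acid lookup. The sketch below builds one from a 64-character string in UCAG order at all three positions (the layout of NCBI translation table 1, written here with U in place of T), with `*` marking stop codons. The names `BASES`, `AAS`, and `STANDARD_CODE` are illustrative choices, not part of any cited study.

```python
from collections import Counter
from itertools import product

BASES = "UCAG"
# One-letter amino acid for each codon in UCAG order at all three positions; '*' = stop.
AAS = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"

# product() varies the third position fastest, which matches the layout of AAS.
STANDARD_CODE = {"".join(codon): aa for codon, aa in zip(product(BASES, repeat=3), AAS)}
degeneracy = Counter(STANDARD_CODE.values())

print(len(STANDARD_CODE))                                            # 64
print(sorted(c for c, aa in STANDARD_CODE.items() if aa == "*"))     # ['UAA', 'UAG', 'UGA']
print(degeneracy["L"], degeneracy["S"], degeneracy["R"], degeneracy["W"])  # 6 6 6 1
```

Counting the values recovers the degeneracy pattern in the table: six codons each for Leu, Ser, and Arg, and a single codon for Trp.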

Error Minimization: A Fundamental Property

Quantitative Evidence for Error Minimization

The error minimization property of the standard genetic code can be quantitatively demonstrated by comparing its robustness against random alternative codes. The error minimization value is formally defined as [3]:

\[EM = \frac{1}{61}\sum_{n=1}^{61}\left(\frac{1}{9}\sum_{i=1}^{9} V_{c_n c_i}\right)\]

where \(c\) denotes a sense codon, \(n\) indexes the 61 sense codons \(c_n\), \(i\) indexes the 9 codons \(c_i\) that differ from \(c_n\) by a single point mutation, and \(V_{c_n c_i}\) is the physicochemical similarity between the amino acids encoded by \(c_n\) and \(c_i\).
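A minimal sketch of the EM calculation follows. The formula leaves the similarity measure \(V\) open, so this example assumes one: \(V = 1 - |\Delta h| / \text{range}\), computed from the Kyte-Doolittle hydropathy scale, so that identical amino acids score 1 and the most dissimilar pair scores 0. It further assumes that a neighbour which is a stop codon contributes \(V = 0\). Both choices are illustrative and are not taken from [3].

```python
from itertools import product

BASES = "UCAG"
AAS = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODE = {"".join(c): aa for c, aa in zip(product(BASES, repeat=3), AAS)}

# Kyte-Doolittle hydropathy (assumed property scale for the similarity V).
KD = {"A": 1.8, "R": -4.5, "N": -3.5, "D": -3.5, "C": 2.5, "Q": -3.5, "E": -3.5,
      "G": -0.4, "H": -3.2, "I": 4.5, "L": 3.8, "K": -3.9, "M": 1.9, "F": 2.8,
      "P": -1.6, "S": -0.8, "T": -0.7, "W": -0.9, "Y": -1.3, "V": 4.2}
KD_RANGE = max(KD.values()) - min(KD.values())

def point_mutants(codon):
    """The 9 codons that differ from `codon` at exactly one position."""
    return [codon[:p] + b + codon[p + 1:]
            for p in range(3) for b in BASES if b != codon[p]]

def similarity(a, b):
    """Assumed V: 1 for equal hydropathy, 0 for the most distant pair or a stop codon."""
    if a == "*" or b == "*":
        return 0.0
    return 1.0 - abs(KD[a] - KD[b]) / KD_RANGE

def error_minimization(code):
    """EM = mean over sense codons of the mean similarity to their 9 point mutants."""
    sense = [c for c, aa in code.items() if aa != "*"]
    per_codon = [sum(similarity(code[c], code[m]) for m in point_mutants(c)) / 9
                 for c in sense]
    return sum(per_codon) / len(sense)

print(round(error_minimization(CODE), 3))
```

Because \(V\) is a similarity, a larger EM indicates a more error-tolerant code under this particular scale.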

Computational analyses have shown that the standard genetic code is nearly optimal in its level of error minimization, performing significantly better than the vast majority of randomly generated alternative codes [1] [3]. One study found that the SGC is better at error minimization than approximately 99.99% of randomly generated alternative codes [3].

Table 2: Error Minimization Performance Comparison

| Code Type | EM Value (Representative) | Relative Performance |
|---|---|---|
| Standard Genetic Code | Reference EM | 1.00 |
| Random Code (Average) | ~0.75 × Reference EM | 0.75 |
| Putative Primordial 2-Letter Code | ~0.95-1.05 × Reference EM | 0.95-1.05 |
| Optimal Theoretical Code | ~1.10 × Reference EM | 1.10 |

Experimental Validation of Error Minimization

Several methodological approaches have been employed to validate and quantify the error minimization properties of the genetic code:

- Computational comparison with random codes: Researchers generate millions of random alternative genetic codes and compare their error minimization values to that of the standard code using robust statistical methods [3].

- Amino acid similarity matrices: These experiments employ different quantitative measures of physicochemical similarity between amino acids (e.g., based on polarity, volume, or chemical properties) to ensure findings are not biased by the choice of a particular similarity metric [3].

- Analysis of primordial genetic codes: Studies investigate simpler, putative ancestral codes to determine if error minimization was an early feature of code evolution [1].

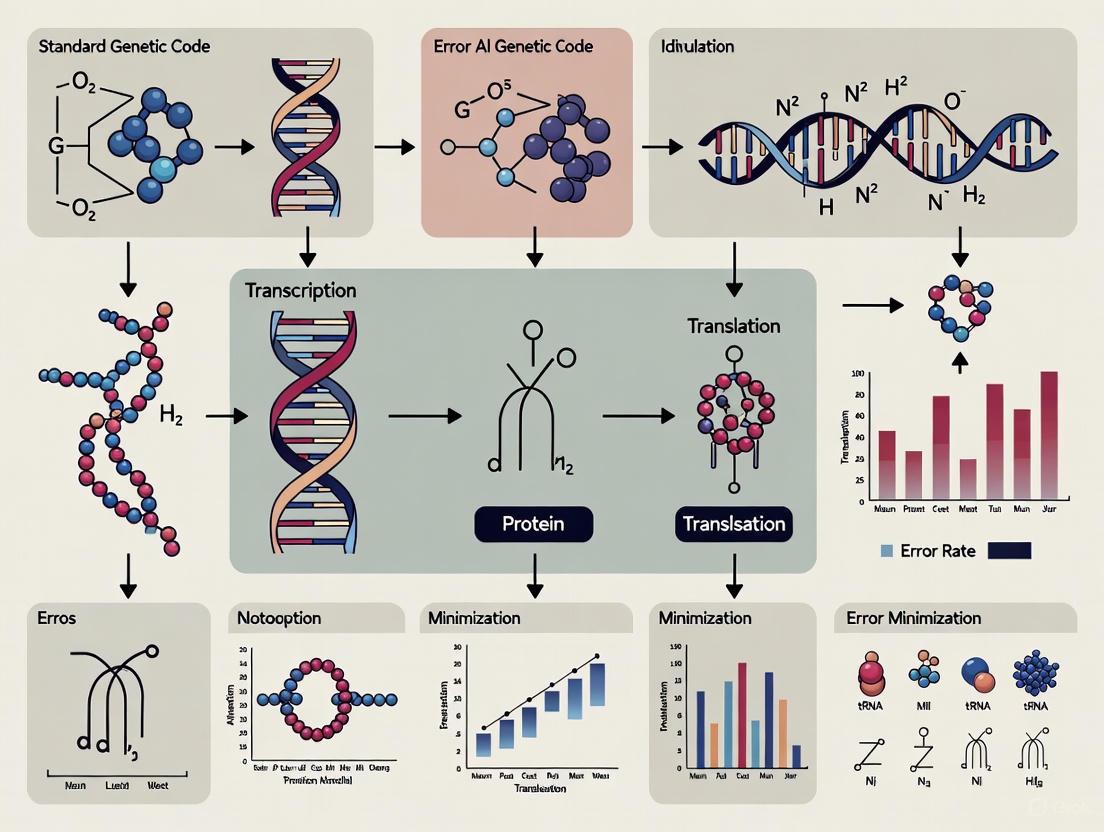

Diagram 1: Error Minimization in Genetic Code

Evolutionary Origins of Error Minimization

Theories of Code Evolution

The remarkable error minimization properties of the standard genetic code have led to several competing theories about its evolutionary origins:

- The Physicochemical Theory: Proposes that the genetic code was directly shaped by natural selection to minimize the deleterious effects of mutations and translation errors [3].

- The Frozen Accident Theory: Suggests that the code structure was initially arbitrary but became fixed early in evolution, making changes difficult due to the disruptive effect of reassignments on the proteome [3].

- The Coevolution Theory: Posits that the code evolved through the stepwise addition of new amino acids, with newer amino acids being assigned to codons related to those of their biosynthetic precursors [1] [3].

Evidence from Putative Primordial Codes

Research on simpler, putative ancestral genetic codes provides compelling insights into the early evolution of error minimization. Evidence from multiple independent lines of investigation—including abiogenic synthesis experiments, analysis of biosynthetic pathways, and consensus temporal ordering of amino acids—suggests that the earliest genetic codes likely encoded only a subset of the modern 20 amino acids [1]. A set of 10 "early" amino acids consistently emerges from these studies:

Putative Early Amino Acids: Ala, Asp, Glu, Gly, Ile, Leu, Pro, Ser, Thr, Val [1]

Strikingly, computational analyses of putative primordial codes containing only these 10 early amino acids arranged in a 2-letter supercodon structure (where only the first two nucleotide positions were informative) demonstrate that such codes would have been nearly optimal in terms of error minimization [1]. This suggests that the error minimization property may have been established very early in the evolution of the genetic code.

Diagram 2: Primordial Code Evolution

Experimental and Theoretical Approaches

Graph Theory Representation of the Genetic Code

Modern theoretical approaches have employed sophisticated mathematical frameworks to analyze the genetic code's properties. One powerful method represents the genetic code as a graph where [2]:

- Vertices (nodes) represent the 64 possible codons

- Edges (connections) represent all possible single point mutations between codons

In this representation, each codon is connected to 9 others (3 possible point mutations at each of the 3 codon positions), creating a complex network that can be analyzed for its error-buffering capacity [2]. This approach allows researchers to formally quantify the robustness of the genetic code and explore theoretical expansions or modifications to the standard code.
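The graph described above can be built directly from the codon alphabet. This sketch uses plain Python dictionaries rather than a graph library (an arbitrary choice) and checks the structural facts stated in the text: 64 vertices, each of degree 9. It also counts the edges that join two sense codons for the same amino acid, using the standard code as an assumed labelling of the vertices.

```python
from itertools import product

BASES = "UCAG"
AAS = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODONS = ["".join(c) for c in product(BASES, repeat=3)]
CODE = dict(zip(CODONS, AAS))

# Adjacency sets: an edge joins two codons that differ by one point mutation.
graph = {c: {c[:p] + b + c[p + 1:] for p in range(3) for b in BASES if b != c[p]}
         for c in CODONS}

# Each undirected edge appears twice in the adjacency sets; frozensets deduplicate it.
edges = {frozenset((u, v)) for u, nbrs in graph.items() for v in nbrs}

def is_synonymous(edge):
    """True if both endpoints are sense codons for the same amino acid."""
    aas = {CODE[c] for c in edge}
    return len(aas) == 1 and "*" not in aas

synonymous = [e for e in edges if is_synonymous(e)]
print(len(graph), {len(n) for n in graph.values()}, len(edges))  # 64 {9} 288
print(f"{len(synonymous)} of {len(edges)} edges are synonymous")
```

The edge count follows from the degree: 64 vertices × 9 neighbours / 2 = 288 undirected edges.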

Code Expansion and Reprogramming

Contemporary research has explored methods for expanding or reprogramming the genetic code to incorporate non-canonical amino acids (ncAAs) for biotechnological and therapeutic applications [2]. Several key approaches include:

- Stop-codon suppression: Reassigning a rarely used stop codon (most often the amber codon UAG) to encode a new amino acid [2]

- Programmed frameshift suppression: Employing four-base codons (quadruplets) to encode new amino acids [2]

- Synonymous codon reassignment: Recruiting selected synonymous codons whose corresponding tRNAs are pre-charged with ncAAs [2]

- Unnatural base pairs: Adding novel nucleotide pairs to expand the genetic alphabet [2]

Theoretical analyses using graph theory have helped identify optimal strategies for genetic code expansion that maintain robustness to errors while enabling the incorporation of new chemical functionalities [2].

Table 3: Research Reagent Solutions for Genetic Code Studies

| Reagent/Method | Function | Application in Research |
|---|---|---|
| Amino Acid Similarity Matrices | Quantifies physicochemical relationships between amino acids | Calculating error minimization values for genetic codes [3] |
| Graph Theory Models | Represents codons and mutations as connected networks | Analyzing code robustness and designing expanded codes [2] |
| tRNA Synthetase Engineering | Charges tRNAs with non-canonical amino acids | Genetic code expansion and reprogramming [2] |
| Computational Random Code Generators | Produces random alternative genetic codes | Statistical comparison with standard code [3] |
| Abiogenic Synthesis Simulation | Recreates putative prebiotic conditions | Studying early amino acid repertoire [1] |

The standard genetic code exhibits a highly non-random structure that minimizes the functional consequences of translation errors and point mutations. This error minimization property is not merely a fortunate accident but appears to be the result of evolutionary processes that may date back to the earliest stages of code evolution. The demonstration that putative primordial codes encoding only 10 early amino acids already exhibited near-optimal error minimization suggests that this property was established early and maintained throughout the code's expansion to its modern form.

Ongoing research using sophisticated mathematical frameworks and experimental approaches continues to unravel the complexities of the genetic code's structure and evolutionary history. Furthermore, understanding these principles enables the rational design of expanded genetic codes for biotechnology and therapeutic applications, demonstrating both the fundamental importance and practical utility of studying the non-random structure of the genetic code.

The standard genetic code (SGC) is the nearly universal set of rules that translates nucleotide triplets (codons) into the amino acid sequences of proteins. Its structure is manifestly non-random, with similar amino acids often encoded by codons that differ by a single nucleotide, particularly in the third position [5]. This organization suggests that the code has been shaped by evolutionary forces to minimize the deleterious effects of errors. The concept of error minimization refers to the code's inherent robustness—its ability to buffer the effects of point mutations and translation errors such that these errors are less likely to produce radical changes in the physicochemical properties of the encoded amino acids [6] [7]. This in-depth technical guide explores the quantitative evidence supporting the conclusion that the genetic code represents a highly optimized configuration, often described as a 'one in a million' code, and frames these findings within the broader context of research on error minimization.

Quantitative Evidence of Code Optimization

The hypothesis that the SGC is optimized for error minimization has been tested extensively through computational comparisons with randomly generated alternative genetic codes. These studies measure the average change in amino acid properties when a random substitution error occurs, a value often termed "error cost" or "distortion" [6] [5].

Foundational Statistical Analyses

Early quantitative studies by Haig and Hurst calculated the fraction of random codes that outperformed the SGC in preserving the polar requirement (a measure of hydrophilicity) to be approximately 10⁻⁴ [5]. Subsequent work by Freeland and Hurst incorporated a more refined cost function that accounted for the non-uniformity of misreading error probabilities across codon positions and a bias toward transition-type mutations over transversions. This more sophisticated model revealed that only about one in a million (p ≈ 10⁻⁶) random alternative codes was fitter than the standard code [8] [5]. This finding solidified the "one in a million" characterization of the SGC's optimality.

The Distortion Metric and Environmental Influence

Recent research has expanded this understanding using the information-theoretic metric of distortion, which incorporates codon usage bias into the robustness calculation. The distortion (D) is formally defined as:

D = Σᵢ Σⱼ P(cᵢ) × P(Y=cⱼ|X=cᵢ) × d(aaᵢ, aaⱼ)

where P(cᵢ) is the source codon distribution, P(Y=cⱼ|X=cᵢ) is the probability of codon cᵢ mutating into cⱼ (based on a background mutation model), and d(aaᵢ, aaⱼ) is the cost, measured as the absolute change in a specified physicochemical property between the original amino acid aaᵢ (encoded by cᵢ) and the mutant amino acid aaⱼ (encoded by cⱼ) [6].
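The distortion sum can be written almost verbatim as code. In this sketch the source distribution, mutation probabilities, and codon table are plain dictionaries supplied by the caller, and the cost d is assumed to be the absolute difference in Kyte-Doolittle hydropathy. The three-codon example is a toy input, not data from [6].

```python
# Kyte-Doolittle hydropathy for the amino acids in the toy example (assumed cost scale).
HYDROPATHY = {"D": -3.5, "E": -3.5, "V": 4.2}

def distortion(p_source, p_mut, code, prop):
    """D = sum_i sum_j P(c_i) * P(Y=c_j | X=c_i) * |prop(aa_i) - prop(aa_j)|."""
    total = 0.0
    for ci, p_ci in p_source.items():
        for cj, p_cj_given_ci in p_mut[ci].items():
            total += p_ci * p_cj_given_ci * abs(prop[code[ci]] - prop[code[cj]])
    return total

# Toy input: a single source codon that is read correctly 90% of the time.
code = {"GAU": "D", "GAA": "E", "GUU": "V"}
p_source = {"GAU": 1.0}
p_mut = {"GAU": {"GAU": 0.90, "GAA": 0.05, "GUU": 0.05}}

print(distortion(p_source, p_mut, code, HYDROPATHY))  # about 0.385
```

On this scale the GAU to GAA error (Asp to Glu) costs nothing, so the whole distortion comes from the GAU to GUU error (Asp to Val), 0.05 × 7.7.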

A 2021 study applying this metric across all three domains of life demonstrated that the code's performance is environment-dependent. The fidelity of physicochemical properties is expected to deteriorate with extremophilic codon usages, particularly in thermophiles, suggesting the SGC is best adapted to non-extremophilic conditions [6]. This indicates that the code's optimization is not absolute but is fine-tuned to a specific biological and environmental context.

Table 1: Key Quantitative Studies on Genetic Code Robustness

| Study | Metric Used | Amino Acid Property Analyzed | Fraction of Random Codes Superior to SGC | Key Finding |
|---|---|---|---|---|
| Haig & Hurst (1991) | Error Cost | Polar Requirement | ~ 10⁻⁴ | First robust quantitative evidence of optimization |
| Freeland & Hurst (1998) | Error Cost (with ti/tv bias) | Polar Requirement | ~ 10⁻⁶ | "One in a million" code |
| Błażej et al. (2021) | Distortion (with codon usage) | Hydropathy, Polar Requirement, Volume, Isoelectric Point | Context-dependent | Code performs better under non-extremophilic conditions |

Experimental and Computational Methodologies

Quantifying the robustness of the genetic code requires well-defined experimental and computational protocols. Below is a detailed methodology for conducting such an analysis.

Protocol for Quantifying Code Robustness

Define the Physicochemical Distance Matrix

- Procedure: Select a set of key physicochemical properties for amino acids. Commonly used properties include [6] [9]:

- Hydropathy: Measure of hydrophobicity.

- Polar Requirement: Another measure of hydrophilicity.

- Molecular Volume: Size of the amino acid side chain.

- Isoelectric Point: Charge-related property.

- For each property, create a symmetric distance matrix, d, where each element d(aaᵢ, aaⱼ) is the absolute difference in the property value between amino acid i and amino acid j. The diagonal elements are zero (d(aaᵢ, aaᵢ) = 0).
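As a concrete sketch of this step, the matrix can be built from any per-residue scale. Kyte-Doolittle hydropathy is assumed here as the property; substituting polar requirement, volume, or isoelectric point changes only the input dictionary. The function name `distance_matrix` is illustrative.

```python
# Kyte-Doolittle hydropathy (assumed property; any per-residue scale works).
KD = {"A": 1.8, "R": -4.5, "N": -3.5, "D": -3.5, "C": 2.5, "Q": -3.5, "E": -3.5,
      "G": -0.4, "H": -3.2, "I": 4.5, "L": 3.8, "K": -3.9, "M": 1.9, "F": 2.8,
      "P": -1.6, "S": -0.8, "T": -0.7, "W": -0.9, "Y": -1.3, "V": 4.2}

def distance_matrix(scale):
    """Symmetric matrix d[a][b] = |scale[a] - scale[b]|, zero on the diagonal."""
    aas = sorted(scale)
    return {a: {b: abs(scale[a] - scale[b]) for b in aas} for a in aas}

d = distance_matrix(KD)
print(d["I"]["R"], d["D"]["E"])  # 9.0 0.0
```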

Establish a Background Mutation Model

- Procedure: Model the probabilities of codon mutations to estimate P(Y=cⱼ|X=cᵢ). A common approach is a model reminiscent of Kimura's two-parameter model [6]:

- Define the transition/transversion rate ratio (κ).

- The mutation probability from codon cᵢ to cⱼ is a function of κ and the number of nucleotide changes required.

- Mutations to stop codons are typically forbidden or assigned a maximal cost.
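A minimal sketch of such a model, conditional on exactly one point mutation having occurred: each of the 9 neighbours of a codon receives weight κ if the change is a transition (A↔G or C↔U) and 1 if it is a transversion, neighbours that are stop codons are dropped, and the weights are renormalised to sum to 1. The function name `mutation_probs` and the value κ = 2 in the example are illustrative assumptions, not parameters from [6].

```python
STOPS = {"UAA", "UAG", "UGA"}
PURINES, PYRIMIDINES = set("AG"), set("CU")

def is_transition(x, y):
    """True for purine<->purine or pyrimidine<->pyrimidine substitutions."""
    return {x, y} <= PURINES or {x, y} <= PYRIMIDINES

def mutation_probs(codon, kappa, forbidden=STOPS):
    """P(Y=c_j | X=c_i) over single-nucleotide neighbours, given one mutation occurs."""
    weights = {}
    for pos in range(3):
        for base in "UCAG":
            if base == codon[pos]:
                continue
            mutant = codon[:pos] + base + codon[pos + 1:]
            if mutant in forbidden:
                continue  # mutations to stop codons are excluded in this sketch
            weights[mutant] = kappa if is_transition(codon[pos], base) else 1.0
    total = sum(weights.values())
    return {m: w / total for m, w in weights.items()}

probs = mutation_probs("UGG", kappa=2.0)
print(len(probs), round(sum(probs.values()), 6))  # 7 1.0
```

For UGG (Trp), two of the nine neighbours (UAG and UGA) are stops, leaving seven; the only surviving transition, UGG to CGG, gets twice the weight of each transversion.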

Calculate the Robustness Metric

- Procedure: For a given genetic code (standard or alternative), codon usage distribution P(cᵢ), mutation model, and distance matrix d, compute the overall distortion D using the distortion formula defined earlier. A lower D indicates a more robust code.

Compare against Alternative Codes

- Procedure:

- Generate a large number (e.g., 1,000,000) of random alternative genetic codes. These codes must maintain the same block structure as the SGC to ensure a fair comparison: the codons stay partitioned into the same synonymous blocks, so the set of block sizes is unchanged, while the amino acid assigned to each block is shuffled [5].

- Calculate the distortion D for each random code using the same parameters.

- Rank the SGC's distortion value against the distribution of values from the random codes. The fraction of random codes with a lower D than the SGC is the p-value representing its estimated improbability.
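The comparison steps can be sketched as follows. Assumptions for this illustration: the cost is the mean absolute Kyte-Doolittle hydropathy change over all single-point mutations between sense codons (a simple stand-in for D with uniform codon usage and κ = 1), random codes are made by shuffling the 20 amino acids among the SGC's 20 codon sets with stop codons fixed, and only a few thousand codes are sampled so the example runs quickly.

```python
import random
from itertools import product

BASES = "UCAG"
AAS = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
SGC = {"".join(c): aa for c, aa in zip(product(BASES, repeat=3), AAS)}
AMINO_ACIDS = sorted(set(AAS) - {"*"})
# Kyte-Doolittle hydropathy (assumed property scale).
KD = {"A": 1.8, "R": -4.5, "N": -3.5, "D": -3.5, "C": 2.5, "Q": -3.5, "E": -3.5,
      "G": -0.4, "H": -3.2, "I": 4.5, "L": 3.8, "K": -3.9, "M": 1.9, "F": 2.8,
      "P": -1.6, "S": -0.8, "T": -0.7, "W": -0.9, "Y": -1.3, "V": 4.2}

def neighbours(codon):
    """The 9 codons one point mutation away from `codon`."""
    return [codon[:p] + b + codon[p + 1:] for p in range(3) for b in BASES if b != codon[p]]

# Sense-to-sense mutation pairs; valid for every random code because stops never move.
PAIRS = [(c, m) for c in SGC if SGC[c] != "*" for m in neighbours(c) if SGC[m] != "*"]

def cost(code):
    """Mean |change in hydropathy| over sense-to-sense point mutations (lower is better)."""
    return sum(abs(KD[code[a]] - KD[code[b]]) for a, b in PAIRS) / len(PAIRS)

def random_block_code(rng):
    """Shuffle the 20 amino acids among the SGC's 20 codon sets; stop codons stay fixed."""
    relabel = dict(zip(AMINO_ACIDS, rng.sample(AMINO_ACIDS, len(AMINO_ACIDS))))
    return {c: relabel.get(aa, aa) for c, aa in SGC.items()}

def empirical_p(n_codes=2000, seed=0):
    """Fraction of random codes with a strictly lower cost than the SGC."""
    rng = random.Random(seed)
    sgc_cost = cost(SGC)
    better = sum(cost(random_block_code(rng)) < sgc_cost for _ in range(n_codes))
    return better / n_codes

print(empirical_p())
```

With 2,000 samples the smallest nonzero estimate is 1/2,000, so values near 10⁻⁴ or 10⁻⁶ cannot be resolved; that is why the protocol calls for on the order of a million codes.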

Empirical Landscape Analysis

A 2024 study used empirical adaptive landscapes from massively parallel sequence-to-function assays to move beyond purely physicochemical models. This method [8]:

- Uses combinatorially complete data sets that provide a quantitative phenotype (e.g., binding affinity) for all possible amino acid sequences at a few protein sites.

- Computationally translates all possible mRNA sequences into amino acid sequences under hundreds of thousands of rewired genetic codes.

- Constructs and analyzes the topography of the adaptive landscape for each code, showing that robust genetic codes tend to produce smoother landscapes with fewer peaks, thereby enhancing protein evolvability.
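The study works with measured landscapes spanning several protein sites; the sketch below shrinks the idea to a single site so it fits in a few lines. Every name here is hypothetical: `fitness` is a random toy phenotype per amino acid (not assay data), and an amino acid counts as a peak when none of its codons has a single-nucleotide neighbour encoding a fitter amino acid. Comparing peak counts between the standard code and rewired codes shows how the code alone reshapes the landscape that mutations explore.

```python
import random
from itertools import product

BASES = "UCAG"
AAS = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
SGC = {"".join(c): aa for c, aa in zip(product(BASES, repeat=3), AAS)}
AMINO_ACIDS = sorted(set(AAS) - {"*"})

def neighbours(codon):
    """The 9 codons one point mutation away from `codon`."""
    return [codon[:p] + b + codon[p + 1:] for p in range(3) for b in BASES if b != codon[p]]

def peaks(code, fitness):
    """Amino acids with no codon neighbour (ignoring stops) that encodes a fitter amino acid."""
    result = set()
    for aa in AMINO_ACIDS:
        codons = [c for c, a in code.items() if a == aa]
        fitter = [code[n] for c in codons for n in neighbours(c)
                  if code[n] != "*" and fitness[code[n]] > fitness[aa]]
        if not fitter:
            result.add(aa)
    return result

def rewired(rng):
    """Hypothetical rewired code: shuffle amino acids among the SGC's codon sets."""
    relabel = dict(zip(AMINO_ACIDS, rng.sample(AMINO_ACIDS, len(AMINO_ACIDS))))
    return {c: relabel.get(aa, aa) for c, aa in SGC.items()}

rng = random.Random(42)
fitness = {aa: rng.random() for aa in AMINO_ACIDS}  # toy phenotype, not assay data
print("SGC peaks:", len(peaks(SGC, fitness)))
print("rewired peaks:", [len(peaks(rewired(rng), fitness)) for _ in range(5)])
```

The fittest amino acid is always a peak, so every code yields at least one; differences in the remaining count reflect how each code connects amino acids by single mutations.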

The following workflow diagram illustrates the core computational protocol for assessing genetic code robustness.

The Physicochemical and Structural Basis of Optimization

The error-minimizing capacity of the genetic code is rooted in the specific arrangement of amino acids within the codon table.

The Central Role of the Second Codon Position

Analysis of all 24 possible hierarchical arrangements of the four nucleotides reveals that the second codon base carries the majority of information concerning key physicochemical properties [10] [9]. The nucleotide hierarchy U < C < G < A at the second position and its complement (A < G < C < U) show the strongest correlation with amino acid hydropathy and polarity. For instance [10]:

- Codons with U in the second position exclusively code for hydrophobic amino acids (e.g., Phe, Leu, Ile, Val, Met).

- Codons with A in the second position exclusively code for hydrophilic amino acids (e.g., Asp, Glu, Lys, Asn, Gln).

- Codons with C or G in the second position typically code for semi-polar amino acids.

Because errors at the first and third positions leave the second base unchanged, they tend to substitute an amino acid from the same hydropathy class, so most single-base errors preserve this key property.
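The second-position pattern can be checked numerically. This sketch groups sense codons by their middle base and averages the Kyte-Doolittle hydropathy of the encoded amino acids; the hydropathy scale is an assumed stand-in for the properties analysed in [10] [9], where positive values mean hydrophobic.

```python
from itertools import product
from statistics import mean

BASES = "UCAG"
AAS = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
SGC = {"".join(c): aa for c, aa in zip(product(BASES, repeat=3), AAS)}
# Kyte-Doolittle hydropathy (assumed scale; positive = hydrophobic).
KD = {"A": 1.8, "R": -4.5, "N": -3.5, "D": -3.5, "C": 2.5, "Q": -3.5, "E": -3.5,
      "G": -0.4, "H": -3.2, "I": 4.5, "L": 3.8, "K": -3.9, "M": 1.9, "F": 2.8,
      "P": -1.6, "S": -0.8, "T": -0.7, "W": -0.9, "Y": -1.3, "V": 4.2}

# Hydropathy of every sense codon, grouped by the base at the second position.
by_second = {b: [KD[aa] for c, aa in SGC.items() if c[1] == b and aa != "*"]
             for b in BASES}

for base, values in by_second.items():
    print(base, round(mean(values), 2))

ranking = sorted(BASES, key=lambda b: mean(by_second[b]), reverse=True)
print(" > ".join(ranking))  # U > C > G > A (most to least hydrophobic)
```

Read as increasing hydrophilicity, the ranking is consistent with the U < C < G < A hierarchy described above; every U-second codon encodes a positive-hydropathy residue and every A-second sense codon a negative one.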

Table 2: Key Research Reagents and Computational Tools for Code Robustness Analysis

| Item/Tool Type | Specific Examples / Functions | Role in Experimental or Computational Analysis |
|---|---|---|
| Codon Usage Datasets | UniProt Reference Proteome Database, NCBI Taxonomy | Provides the source codon distribution P(cᵢ) for specific organisms or taxa. |
| Environmental Databases | BacDive Database, Engquist Compendium | Links genomic data to optimal growth conditions (temp, pH, salinity) for environmental analysis. |
| Physicochemical Scales | Hydropathy Index, Polar Requirement, Molecular Volume | Defines the cost function d for quantifying the impact of an amino acid substitution. |
| Mutation Model Parameters | Transition/Transversion Ratio (κ), Mutation Rate (μ) | Defines the probabilities P(Y=cⱼ\|X=cᵢ) in the distortion calculation. |
| Empirical Fitness Landscapes | GB1, ParD3, ParB protein assay data | Provides experimental genotype-to-phenotype maps for testing code evolvability under real biological constraints. |

The Evolutionary Debate: Selection vs. Neutral Emergence

A central debate in the field concerns the evolutionary mechanism responsible for the code's optimized state.

The Natural Selection Argument: The primary argument for an adaptive origin is the sheer improbability of the observed level of optimization. Proponents argue that the probability of the SGC's robustness arising by chance is so low (on the order of 10⁻⁶) that it strongly implies the action of natural selection to minimize the phenotypic effects of errors [7] [5]. This selection would have been particularly strong in the early, error-prone stages of evolution.

The Neutral Emergence Argument: Alternative hypotheses suggest that the code's robustness could be a neutral by-product, or epiphenomenon, of other evolutionary processes. These include the stereochemical hypothesis (direct chemical affinity between amino acids and codons) and the coevolution hypothesis (code structure reflects biosynthetic pathways of amino acids) [5] [7]. A critical analysis of simulations supporting the neutral emergence view argues that they often contain hidden elements of selection, rendering their conclusions partly tautological [7]. The consensus remains that natural selection played a significant role in shaping the genetic code.

Implications for Biotech and Drug Development

Understanding the structure and evolvability of the genetic code has tangible applications in modern biotechnology and pharmaceutical research.

Enhancing Protein Evolvability: Robust genetic codes tend to produce smoother adaptive landscapes with fewer fitness peaks, making it easier for evolving populations to find mutational paths to high fitness [8]. This principle can inform directed protein evolution experiments, where the goal is to rapidly generate proteins with novel or enhanced functions.

Engineering Non-Standard Genetic Codes: Synthetic biology efforts are already creating organisms with recoded genomes. Understanding the design principles of the natural code allows engineers to build new codes with either enhanced evolvability (to accelerate protein engineering) or diminished evolvability (as a biocontainment strategy for synthetic organisms) [8] [11].

Informing AI-Driven Drug Discovery: The paradigm of optimizing a system (the genetic code) to be robust against errors (mutations) is analogous to the challenges in drug development. The "one in a million" optimization of the code serves as a powerful example of a biologically evolved, highly efficient system. This conceptual framework aligns with new AI-driven paradigms in drug discovery that aim to shift from a "one-gene perspective to a systemic view of the human body" [12], seeking to understand and predict the system-wide effects of therapeutic interventions.

Quantitative evidence firmly supports the conclusion that the standard genetic code is a highly optimized framework for error minimization, often quantified as a 'one in a million' configuration. This optimization is demonstrated through rigorous computational comparisons with random alternative codes and is rooted in the specific physicochemical organization of the codon table, particularly the preeminent role of the second base. While debates continue regarding the precise evolutionary mechanisms, the weight of evidence strongly favors the intervention of natural selection. The principles of genetic code optimization are now providing valuable insights and inspiration for advancing synthetic biology and developing the next generation of AI-powered drug discovery platforms.

The standard genetic code (SGC) is the fundamental set of rules by which DNA and RNA sequences are translated into the amino acid sequences of proteins. Its near-universality across all domains of life and its non-random, error-minimizing structure present a dual puzzle regarding its origin and evolution [13] [14]. The code's structure is highly robust, meaning that point mutations or translational errors often result in the incorporation of a chemically similar amino acid, thereby mitigating the deleterious effects on protein function [13]. This observation is central to the broader thesis that error minimization is a critical evolutionary pressure that has shaped the genetic code. The probability of the SGC's level of error robustness arising by chance has been estimated to be less than one in a million, suggesting a non-accidental origin [14]. This whitepaper examines the three core competing theories—the Frozen Accident, the Stereochemical theory, and the Error Minimization theory—that seek to explain the origin and evolution of the genetic code, with a particular focus on their implications for and interactions with the principle of error minimization.

The Frozen Accident Theory

Historical Concept and Definition

Proposed by Francis Crick in 1968, the Frozen Accident theory posits that the initial assignment of codons to amino acids was a matter of historical chance [15] [13]. Once established in a primitive biological system, the code became immutable because any subsequent change in codon assignment would have catastrophically altered the amino acid sequences of a vast number of essential, highly evolved proteins, leading to non-viable organisms [13] [16]. Crick contrasted this with the stereochemical theory, arguing that the code is universal not because it is optimal, but because it is too dangerous to change; it is a "frozen accident" [17].

Modern Interpretations and Evidence

While the core premise of universality remains, the pure "accident" aspect has been challenged. The discovery of non-canonical codes in mitochondria and certain microorganisms demonstrates that the code is not entirely frozen [15] [13]. However, these variants are minor, typically involving the reassignment of rare amino acids or stop codons, and do not represent a fundamental rewrite of the code [13]. This supports Crick's argument that only changes with minimal disruptive impact are viable.

Computational models using Ising spin systems from statistical mechanics have explored how a code could physically "freeze." In these models, codons are represented as nodes and amino acids as spins. Monte Carlo simulations show that complex interactions can lead to stable, regular patterns that resist change, compatible with a freezing process [17]. This provides a physical metaphor for Crick's biological hypothesis, suggesting that the code reached a local minimum in a fitness landscape, separated from other potential codes by deep valleys of low fitness [13].

The Stereochemical Theory

Core Principle and Hypothesized Mechanisms

The stereochemical theory proposes that the genetic code's structure originated from direct physicochemical affinities between amino acids and their cognate codons or anticodons [18] [13]. This theory suggests that these interactions, such as selective binding or molecular complementarity, directly determined the initial codon assignments.

The "codon-correspondence hypothesis" formalizes this idea, stating that for each amino acid, there is a coding nucleotide sequence with which it has the greatest association, and this association influenced the code's form [18]. These interactions may have arisen in an RNA world, where amino acids functioned as cofactors for ribozymes or stabilized RNA structures, with the binding sites containing sequences that would later become codons [18] [13].

Experimental Evidence and Methodologies

Researchers have employed several methodologies to test for stereochemical relationships, with mixed results.

- Molecular Modeling: Early attempts used molecular models to demonstrate complementarity between amino acids and codons or anticodons. However, this approach has been criticized for being insufficiently constrained, allowing for too many potential solutions and sometimes relying on incorrectly built models [18].

- Chromatography: This technique has been used to test if amino acids and nucleotides with similar physicochemical properties (e.g., hydrophobicity) co-partition in plausibly prebiotic conditions. Some studies found correlations between amino acids and their anticodon nucleotides, but the evidence for correlations with codons is weaker. Overall, the data does not strongly support copartitioning as a definitive mechanism for codon assignment [18].

- Affinity Chromatography and NMR: These methods directly test the binding strength and specificity between amino acids and short oligonucleotides. Results have been largely negative or inconclusive; interactions measured between amino acids and mono-, di-, or trinucleotides are generally weak and non-specific and do not recapitulate the pattern of the modern genetic code [18].

Despite these challenges, evidence for interactions between amino acids and longer RNA sequences exists. For some amino acids, including arginine, isoleucine, and tyrosine, their cognate codons are statistically enriched in experimentally selected RNA binding sites, implying that initial stereochemical assignments for a subset of amino acids may have survived [18].

Key Experimental Protocol: In Vitro Selection of RNA Binding Sites

A key modern protocol for investigating stereochemistry is the in vitro selection (SELEX) of RNA aptamers that bind specific amino acids.

1. Library Generation: Create a vast library of random RNA sequences (~10¹⁴ different molecules).

2. Binding and Partition: Incubate the RNA library with the target amino acid, which is often immobilized on a solid support (e.g., a chromatography column). Unbound RNA molecules are washed away.

3. Elution and Amplification: Bound RNA molecules are eluted and reverse-transcribed into DNA.

4. Polymerase Chain Reaction (PCR): The DNA is amplified by PCR.

5. In Vitro Transcription: The amplified DNA is transcribed back into RNA, creating an enriched pool for the next selection round.

6. Iteration: Steps 2-5 are repeated multiple times (typically 5-15 rounds) to selectively amplify RNAs with high affinity for the target amino acid.

7. Sequencing and Analysis: The final pool of RNA sequences is cloned and sequenced. The occurrence of specific codons or anticodons in the binding sites is statistically compared to a randomized control to determine if real codons are enriched [18].
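The final statistical comparison can be sketched as a permutation test. Assumptions: a mononucleotide shuffle of each sequence serves as the randomized control (published analyses may use more careful controls), overlapping occurrences are counted, the isoleucine codon AUA is used as the example motif, and the "selected" sequences are made-up placeholders rather than real aptamers.

```python
import random

def count_motif(seqs, motif):
    """Count overlapping occurrences of `motif` across all sequences."""
    k = len(motif)
    return sum(s[i:i + k] == motif for s in seqs for i in range(len(s) - k + 1))

def shuffle_seq(seq, rng):
    """Mononucleotide shuffle: same base composition, random order."""
    return "".join(rng.sample(seq, len(seq)))

def codon_enrichment(seqs, motif, n_shuffles=1000, seed=0):
    """Observed count, mean shuffled count, and a one-sided empirical p-value."""
    rng = random.Random(seed)
    observed = count_motif(seqs, motif)
    null = [count_motif([shuffle_seq(s, rng) for s in seqs], motif)
            for _ in range(n_shuffles)]
    # The +1 terms count the observed data as one permutation, so p is never zero.
    p = (1 + sum(n >= observed for n in null)) / (1 + n_shuffles)
    return observed, sum(null) / n_shuffles, p

# Placeholder "selected" binding sites; real input would be the sequenced final pool.
selected = ["GGAUAUCAGAUAGC", "CAUAUAGGCUAAUC", "AUAGGCCAUAUUGA"]
print(codon_enrichment(selected, "AUA"))
```

An observed count well above the shuffled mean, with a small p-value, is the kind of signal reported for the arginine, isoleucine, and tyrosine binding sites.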

The Error Minimization Theory

Definition and Theoretical Foundation

The error minimization theory posits that the SGC's non-random structure is the result of natural selection for robustness against genetic mutations and translational errors [19] [7] [14]. A code is considered error-minimizing if a substitution error (e.g., a point mutation in DNA or a misreading by tRNA) at a single nucleotide position is likely to result in the incorporation of an amino acid that is chemically similar to the original one, thus preserving the protein's structure and function [13]. This property is quantitatively measured using cost functions based on amino acid physicochemical properties, such as polarity, volume, or hydropathy [14].

Quantitative Evidence and Computational Analyses

Computational analyses form the backbone of evidence for this theory. Studies compare the error cost of the SGC to a vast number of randomly generated alternative codes.

- The "One in a Million" Finding: A seminal study by Freeland and Hurst calculated that, depending on the error model used, the SGC is more robust than all but about 0.01% (1 in 10,000) to 0.0001% (1 in 1 million) of random codes, making its error-minimizing structure a significant statistical outlier [14].

- Positional Asymmetry and Transition Bias: The code's structure accounts for real-world error patterns. Transition mutations (purine-purine or pyrimidine-pyrimidine) are more common than transversions (purine-pyrimidine swaps). The SGC is organized so that transition mutations in the third codon position are often synonymous (no amino acid change) or conservative (similar amino acid), and this robustness is greater for transitions than for transversions [19] [14].

- Trade-off with Diversity: Recent work has highlighted that error minimization is not the only evolutionary pressure. A code that was perfectly robust would encode only one amino acid, lacking the diversity needed to build complex proteins. Research suggests the SGC is a near-optimal solution balancing error minimization against the need for physicochemical diversity in the encoded amino acid repertoire [14].
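A random-code comparison of this kind can be sketched in a few dozen lines. The Python below is a minimal sketch under stated assumptions: random codes are produced by permuting which amino acid owns each of the SGC's 20 synonymous codon sets (stop codons stay fixed), and the cost is the mean squared change in Kyte-Doolittle hydropathy over all single-nucleotide substitutions between sense codons, with no transition/transversion weighting. The hydropathy scale and the unweighted error model are assumptions; studies such as [14] use other property scales and error models, so this sketch should not be read as reproducing their figures.

```python
import random

BASES = "UCAG"
# Standard genetic code in UCAG order (index = 16*first + 4*second + third); '*' = stop.
SGC_STRING = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODONS = [a + b + c for a in BASES for b in BASES for c in BASES]
SGC = dict(zip(CODONS, SGC_STRING))
# Kyte-Doolittle hydropathy, used here as the (assumed) physicochemical property.
HYDROPATHY = {"A": 1.8, "R": -4.5, "N": -3.5, "D": -3.5, "C": 2.5, "Q": -3.5,
              "E": -3.5, "G": -0.4, "H": -3.2, "I": 4.5, "L": 3.8, "K": -3.9,
              "M": 1.9, "F": 2.8, "P": -1.6, "S": -0.8, "T": -0.7, "W": -0.9,
              "Y": -1.3, "V": 4.2}


def error_cost(code: dict[str, str]) -> float:
    """Mean squared hydropathy change over all single-nucleotide substitutions
    between sense codons (substitutions to or from stop codons are skipped)."""
    total, n = 0.0, 0
    for codon, aa in code.items():
        if aa == "*":
            continue
        for pos in range(3):
            for base in BASES.replace(codon[pos], ""):
                mutant = code[codon[:pos] + base + codon[pos + 1:]]
                if mutant != "*":
                    total += (HYDROPATHY[aa] - HYDROPATHY[mutant]) ** 2
                    n += 1
    return total / n


def random_block_code(rng: random.Random) -> dict[str, str]:
    """Permute which amino acid owns each synonymous codon set; stops stay fixed."""
    amino_acids = sorted(HYDROPATHY)
    relabel = dict(zip(amino_acids, rng.sample(amino_acids, len(amino_acids))))
    return {c: (aa if aa == "*" else relabel[aa]) for c, aa in SGC.items()}


def fraction_better(n_codes: int = 10_000, seed: int = 0) -> float:
    """Fraction of random block-permuted codes with a strictly lower cost than the SGC."""
    rng = random.Random(seed)
    sgc_cost = error_cost(SGC)
    better = sum(error_cost(random_block_code(rng)) < sgc_cost for _ in range(n_codes))
    return better / n_codes


if __name__ == "__main__":
    print(f"SGC cost: {error_cost(SGC):.3f}")
    print(f"Fraction of random codes beating the SGC: {fraction_better():.5f}")
```

Keeping the block structure fixed isolates the question of how amino acids are placed, rather than how many codons each one receives.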

Key Computational Protocol: Simulated Annealing for Code Optimization

A key methodology for exploring error minimization is using optimization algorithms like simulated annealing to find optimal genetic codes.

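- Define the Cost Function: A function $E(c)$ is defined that quantifies the total error cost of a genetic code $c$. This typically involves:

  - Error Matrix: A matrix defining the probability of one codon being misread as another (incorporating transition/transversion bias and positional effects).

  - Distance Matrix: A matrix defining the physicochemical "distance" or dissimilarity between every pair of amino acids (e.g., based on polarity).

  - Calculation: For each possible codon mispairing, the cost is the product of the error probability and the amino acid distance. The total cost $E(c)$ is the sum over all such possible mispairings, often weighted by amino acid frequency [14]:

    $$E(c) = \sum_{i} \sum_{j \neq i} w_i \, P(i \to j) \, D\big(c(i), c(j)\big)$$

    where $P(i \to j)$ is the error-matrix entry for codon $i$ being read as codon $j$, $D$ is the distance-matrix entry for the amino acids that code $c$ assigns to those two codons, and $w_i$ is an optional frequency weight ($w_i = 1$ when unweighted).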

- Generate Initial Code: Start with a random assignment of codons to amino acids.

- Perturb the Code: Make a small random change to the code, such as swapping the amino acid assignments of two codons.

- Evaluate Energy Change: Calculate the new cost $E(c_{\text{new}})$ and the change in cost $\Delta E = E(c_{\text{new}}) - E(c_{\text{old}})$.

- Metropolis Criterion: Decide whether to accept the new code.

  - If $\Delta E \le 0$ (the new code is no worse), always accept the change.

  - If $\Delta E > 0$ (the new code is worse), accept the change with probability $P = \exp(-\Delta E / T)$, where $T$ is a "temperature" parameter.

- Iterate and Cool: Repeat the perturbation, evaluation, and acceptance steps for many iterations, gradually lowering the temperature $T$ according to a predefined "cooling schedule." As $T$ decreases, the system becomes less likely to accept worse solutions and converges toward a low-energy (low-cost), error-minimizing code [14].

- Comparison: Compare the error cost of the optimized code from the simulation with the cost of the natural SGC.
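The annealing protocol can be sketched as follows. This is an illustrative Python implementation, not the procedure used in [14]: the distance is the squared difference in Kyte-Doolittle hydropathy, the error matrix is a hypothetical weighting in which transitions are twice as likely as transversions at every codon position, codon frequencies are uniform, stop codons are held fixed, and the temperature schedule (`t0`, `alpha`) is an arbitrary geometric schedule. Each perturbation swaps the amino acids assigned to two sense codons, so the number of codons per amino acid is preserved.

```python
import math
import random

BASES = "UCAG"
PURINES = set("AG")
SGC_STRING = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODONS = [a + b + c for a in BASES for b in BASES for c in BASES]
SGC = dict(zip(CODONS, SGC_STRING))
HYDROPATHY = {"A": 1.8, "R": -4.5, "N": -3.5, "D": -3.5, "C": 2.5, "Q": -3.5,
              "E": -3.5, "G": -0.4, "H": -3.2, "I": 4.5, "L": 3.8, "K": -3.9,
              "M": 1.9, "F": 2.8, "P": -1.6, "S": -0.8, "T": -0.7, "W": -0.9,
              "Y": -1.3, "V": 4.2}
SENSE = [c for c in CODONS if SGC[c] != "*"]  # stop codons are held fixed


def error_weight(a: str, b: str, ts_weight: float = 2.0) -> float:
    """Hypothetical error-matrix entry for base a read as base b (a != b)."""
    return ts_weight if (a in PURINES) == (b in PURINES) else 1.0


# Single-nucleotide neighbours of each sense codon, with their error weights.
NEIGHBORS = {
    c: [(c[:p] + b + c[p + 1:], error_weight(c[p], b))
        for p in range(3) for b in BASES.replace(c[p], "")
        if SGC[c[:p] + b + c[p + 1:]] != "*"]
    for c in SENSE
}


def cost(code: dict[str, str]) -> float:
    """E(c) divided by the total error weight (a constant, so codes rank the same)."""
    num = den = 0.0
    for c, nbrs in NEIGHBORS.items():
        for nb, w in nbrs:
            num += w * (HYDROPATHY[code[c]] - HYDROPATHY[code[nb]]) ** 2
            den += w
    return num / den


def anneal(steps: int = 20_000, t0: float = 1.0, alpha: float = 0.9995, seed: int = 0):
    """Return (best code, best cost, initial cost) from one annealing run."""
    rng = random.Random(seed)
    aas = [SGC[c] for c in SENSE]
    rng.shuffle(aas)                      # random initial code with the SGC's degeneracies
    code = dict(zip(SENSE, aas))
    e = initial_e = cost(code)
    best, best_e, t = dict(code), e, t0
    for _ in range(steps):
        a, b = rng.sample(SENSE, 2)       # perturb: swap two codons' assignments
        code[a], code[b] = code[b], code[a]
        new_e = cost(code)
        delta = new_e - e
        if delta <= 0 or rng.random() < math.exp(-delta / t):  # Metropolis criterion
            e = new_e
            if e < best_e:
                best, best_e = dict(code), e
        else:
            code[a], code[b] = code[b], code[a]                 # reject: undo the swap
        t = max(t * alpha, 1e-12)                               # geometric cooling
    return best, best_e, initial_e


if __name__ == "__main__":
    best, best_e, initial_e = anneal()
    sgc_e = cost({c: SGC[c] for c in SENSE})
    print(f"initial {initial_e:.3f}  optimized {best_e:.3f}  SGC {sgc_e:.3f}")
```

Recomputing the full cost after every swap keeps the sketch short but is slow; rescoring only the neighbours of the two swapped codons would be the natural optimization. The final comparison step corresponds to the `sgc_e` line in the usage block.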

Comparative Analysis of Theories

The following table summarizes the core principles, strengths, and weaknesses of the three major theories.

Table 1: Comparative Analysis of Core Theories on the Origin of the Genetic Code

| Theory | Core Mechanism | Key Evidence | Strengths | Weaknesses |
|---|---|---|---|---|
| Frozen Accident [15] [13] [16] | Historical contingency followed by evolutionary immutability | Near-universality of the code; computational Ising models showing freezing; minor variant codes are small in scope | Explains universality; simple premise | Does not explain the code's non-random, error-minimizing structure |
| Stereochemical [18] [13] | Direct physicochemical affinity between amino acids and codons/anticodons | Enrichment of specific codons in RNA aptamer binding sites for some amino acids (e.g., Arg, Ile, Tyr) | Provides a concrete physicochemical mechanism for initial assignments | Lack of strong, specific affinity between short oligonucleotides and amino acids; cannot account for the entire code |
| Error Minimization [19] [7] [14] | Natural selection for robustness against mutations and translation errors | Statistical outlier in error cost compared to random codes; resilience to transition mutations | Quantitatively explains the code's non-random structure and its biological benefit | The level of optimization is high but not perfect; requires a trade-off with amino acid diversity |

Synthesis and Interplay of Theories

The three theories are not mutually exclusive, and a modern synthesis provides a more plausible evolutionary narrative. A compelling integrated model suggests that the genetic code evolved in stages:

- Stereochemical Initialization: The earliest assignments were likely influenced by weak stereochemical affinities between a small set of amino acids and their cognate codons or, more likely, proto-tRNA molecules [18] [13]. This provided an initial, biased mapping.

- Coadaptive Expansion and Error Minimization: The code expanded through processes such as tRNA duplication and the coevolution of new amino acids from existing ones [15] [13]. During this expansion, selective pressure for error minimization would have shaped the assignment of new codons and the reorganization of existing ones. Codes that were more robust produced more functional proteins and outcompeted others. This "code-message coevolution" occurred in a punctuated manner [19].

- Freezing: By the time of the last universal common ancestor (LUCA), the growing complexity of the proteome had made the code increasingly immutable. Any major change would have been lethal, freezing the code in its then-current, highly optimized state [13] [14]. This frozen state preserves the historical signatures of both stereochemistry and selective optimization.

This synthesized view resolves the tension between the theories: the code is not a pure accident, nor is it solely determined by chemistry or selection. It is a historical record of early physical and biological interactions, optimized under the dominant constraint of error minimization and subsequently frozen in place.

The Scientist's Toolkit: Key Research Reagents and Methodologies

Table 2: Essential Research Tools for Genetic Code Studies

| Tool / Reagent | Function in Research | Example Application |
|---|---|---|
| Cell-Free Translation System [11] | An in vitro platform for protein synthesis that operates without intact cells. | Used to decipher codons (e.g., poly-U RNA encoding phenylalanine), test synthetic genetic codes, and incorporate non-canonical amino acids. |
| In Vitro Selection (SELEX) [18] | Technique to isolate high-affinity nucleic acid ligands (aptamers) from a random sequence pool. | Used to test stereochemical theory by selecting RNA molecules that bind specific amino acids and analyzing enriched sequences. |
| Aminoacyl-tRNA Synthetase (aaRS)/tRNA Pairs [11] | Engineered synthetase/tRNA pairs that are orthogonal to the host cell's endogenous synthetases and tRNAs. | Essential for synthetic biology to expand the genetic code and incorporate non-canonical amino acids into proteins in vivo. |
| Monte Carlo Simulation [17] | A computational algorithm that relies on random sampling to obtain numerical results. | Used to model the "freezing" of the genetic code via Ising models and to explore the space of possible codes for error minimization. |
| Simulated Annealing [14] | A probabilistic metaheuristic optimization algorithm. | Used to find genetic code mappings that minimize a defined error cost function, testing the optimality of the standard code. |

Visualizing the Synthesis of Theories

Diagram: Synthesis of Genetic Code Evolution Theories. The diagram illustrates the synthesized, multi-stage model of genetic code evolution, integrating elements from all three core theories.

The quest to understand the origin of the genetic code remains a vibrant field of interdisciplinary research. While Crick's Frozen Accident theory compellingly explains the code's universality, the robust, non-random structure of the code demands a deeper explanation. The Stereochemical and Error Minimization theories provide critical mechanistic and selective insights, respectively. The most coherent modern framework synthesizes these ideas: the code was likely initiated by stereochemistry, optimized over evolutionary time by natural selection for error minimization amidst pressures for diversity, and ultimately frozen in place due to the increasing complexity of the encoded proteome. This synthesis underscores that error minimization is not merely an emergent property but was likely a central driving force in shaping the fundamental dictionary of life. For researchers in drug development, understanding these principles and the tools used to study them is foundational for efforts to expand the genetic code, which enables the incorporation of novel amino acids into therapeutic proteins, paving the way for new classes of biologics.

The coevolution theory posits that the standard genetic code (SGC) evolved its structure in tandem with the development of amino acid biosynthetic pathways. This theory provides a compelling framework for understanding the non-random organization of the codon table, linking the chemical relatedness of amino acids sharing codons to their metabolic relationships. Under this hypothesis, early genetic codes incorporated a limited set of primordial amino acids available through prebiotic synthesis. As biological systems evolved the capacity to manufacture new amino acids through biosynthetic pathways, these novel amino acids were incorporated into the expanding genetic code, often inheriting the codons of their metabolic precursors [20] [21]. This process created a systematic relationship between the structure of the genetic code and the biochemical relationships between amino acids, offering an explanation for why similar amino acids often share related codons. The theory stands alongside other major hypotheses for genetic code evolution, including the stereochemical theory (direct physicochemical interactions) and the adaptive theory (error minimization), with modern research often suggesting a complementary interplay between these mechanisms [20] [14].

The coevolution theory gains significance when examined alongside the concept of error minimization in the genetic code. The SGC exhibits a remarkable robustness against mutations and translation errors, as codons that differ by a single nucleotide typically encode amino acids with similar physicochemical properties. This error-buffering capacity suggests the code has been optimized through evolutionary processes. The coevolution mechanism may have contributed significantly to this optimization by ensuring that biosynthetically related amino acids—which often share structural similarities—were assigned to adjacent codons [7] [21]. Thus, when a mutation occurs, it is more likely to result in a similar amino acid, potentially preserving protein function. This review examines the mechanistic basis of the coevolution theory, presents contemporary evidence, and explores its integration with error minimization principles.

Theoretical Framework and Core Mechanisms

Fundamental Principles of Code Expansion

The coevolution theory rests on several foundational principles that describe how the genetic code expanded from a simpler primordial state to the complex modern code:

Stepwise Addition: The genetic code did not emerge fully formed but rather expanded through a series of sequential additions. Early versions of the code encoded only a small subset of amino acids, with new amino acids incorporated as their biosynthetic pathways evolved [20]. This stepwise process is more evolutionarily plausible than the sudden appearance of the complete code.

Inheritance of Codon Blocks: When a new amino acid was biosynthesized from an existing one, it often "inherited" part of the precursor's codon domain. For instance, a precursor amino acid encoded by a four-codon block might cede two of its codons to its biosynthetic product [21]. This inheritance mechanism created permanent metabolic signatures within the genetic code's structure.

Reduced Disruption: Incorporating new amino acids through codon inheritance minimized disruption to existing proteins. Since the new amino acid was structurally similar to its precursor, substituting one for the other was less likely to be catastrophic than a random substitution, making code expansion evolutionarily viable [21].

Biosynthetic Pathways and Codon Assignment

The theory identifies specific biosynthetic relationships that have left imprints on the genetic code's structure. The following table summarizes key amino acid pairs with their biosynthetic relationships and corresponding codon block relationships:

Table 1: Key Biosynthetic Relationships and Corresponding Codon Assignments

| Precursor | Product Amino Acid | Biosynthetic Relationship | Codon Block Relationship |
|---|---|---|---|
| Serine | Tryptophan | Serine contributes to tryptophan's biosynthesis | UCN (Ser) → UGG (Trp) |
| Aspartate | Lysine | Aspartate is a precursor in lysine biosynthesis | GAY (Asp) → AAR (Lys) |
| Glutamate | Glutamine | Direct amidation of glutamate | GAR (Glu) → CAR (Gln) |
| Glutamate | Proline | Glutamate is cyclized to form proline | GAR (Glu) → CCN (Pro) |
| Aspartate | Asparagine | Direct amidation of aspartate | GAY (Asp) → AAY (Asn) |
| Pyruvate (metabolite) | Valine | Valine is synthesized from pyruvate | Not applicable (pyruvate is not encoded); GUN (Val) |
| Valine | Leucine | Leucine is synthesized from α-ketoisovalerate, the keto-acid precursor of valine | GUN (Val) → CUN, UUR (Leu) |

These relationships demonstrate how metabolic pathways shaped codon assignments. For example, aspartate (codons GAC, GAU) and asparagine (codons AAC, AAU) differ only at the first codon position (a G-to-A transition), while the second-position adenine is maintained, potentially minimizing functional disruption during substitution events [21]. Similarly, glutamate (GAA, GAG) and glutamine (CAA, CAG) differ only at the first position; the amino acid change is conservative, although the underlying G-to-C substitution is a transversion rather than a transition.
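Whether two codons differ by a transition or a transversion can be checked mechanically. The small Python helper below uses hypothetical function names and applies the purine/pyrimidine definition given earlier in this article; it lists each differing position and classifies the change.

```python
PURINES = set("AG")  # A and G are purines; C and U (or T) are pyrimidines


def classify_change(a: str, b: str) -> str:
    """Classify a single-base change as a transition or a transversion."""
    if a == b:
        return "none"
    return "transition" if (a in PURINES) == (b in PURINES) else "transversion"


def compare_codons(c1: str, c2: str) -> list[tuple[int, str, str, str]]:
    """Return (1-based position, base in c1, base in c2, change type) per difference."""
    return [(i + 1, x, y, classify_change(x, y))
            for i, (x, y) in enumerate(zip(c1, c2)) if x != y]


if __name__ == "__main__":
    print(compare_codons("GAU", "AAU"))  # Asp -> Asn: [(1, 'G', 'A', 'transition')]
    print(compare_codons("GAA", "CAA"))  # Glu -> Gln: [(1, 'G', 'C', 'transversion')]
```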

Table 2: Chronology of Amino Acid Addition to the Genetic Code Based on Biosynthetic Evidence

| Evolutionary Stage | Amino Acids | Basis for Classification |
|---|---|---|
| Early/Phase 1 | Gly, Ala, Asp, Glu, Val, Ser, Pro, Thr, Ile, Leu | Products of prebiotic synthesis experiments; lowest free energies of formation [21] |
| Intermediate Phase | Asn, Gln, Tyr, Cys, His, Arg, Lys, Met, Phe | Require more complex biosynthetic pathways; incorporated after evolution of necessary enzymes |
| Late/Phase 2 | Trp | Most complex biosynthetic pathway; considered the final addition in many models |

This chronological framework aligns with the coevolution theory's prediction that simpler, prebiotically available amino acids formed the core coding set, with more complex amino acids joining later as biosynthetic capabilities expanded.

Modern Evidence and Computational Modeling

Contemporary Phylogenomic Support

Recent phylogenomic analyses provide quantitative support for the coevolution theory. A 2025 study analyzed 4.3 billion dipeptide sequences across 1,561 proteomes to reconstruct the evolutionary chronology of the genetic code. This massive dataset revealed that:

- The temporal emergence of specific dipeptides supported an early operational RNA code in the acceptor arm of tRNA prior to the implementation of the standard genetic code in the anticodon loop [22].

- Dipeptides containing Leu, Ser, and Tyr emerged first, followed by those containing Val, Ile, Met, Lys, Pro, and Ala, creating a detailed timeline of genetic code expansion that aligns with biosynthetic pathway development [22].

- Protein thermostability was identified as a late evolutionary development, supporting an origin of proteins in mild environments and contradicting hypotheses that the code originated in high-temperature conditions [22].

This research demonstrates how contemporary bioinformatics can trace historical evolutionary processes through statistical analysis of modern protein sequences, providing empirical support for the coevolution theory's predicted sequence of amino acid additions to the code.

Computational Evolutionary Models

Computational approaches have been instrumental in testing the coevolution theory's plausibility. A 2025 study used evolutionary algorithms to simulate the emergence of stable coding systems from primitive ambiguous codes. Key findings included:

- Initial codes began with ambiguous encoding of only 3-7 amino acids (labels), progressively expanding through mutation, incorporation of new amino acids, and information exchange between codes [20].

- The simulated evolution consistently converged toward stable, unambiguous coding systems with higher coding capacity, facilitated by exchange of encoded information between evolving codes [20].

- Three factors proved crucial for efficient code evolution: mutations altering amino acid-codon assignments, progressive incorporation of new amino acids, and information exchange between organisms carrying different codes [20].

Table 3: Key Parameters in Computational Models of Genetic Code Evolution

| Parameter | Symbol | Role in Simulation | Biological Equivalent |
|---|---|---|---|
| Mutation rate of label-to-codon assignment | mc | Introduces variability in codon assignments | Random mutations in translation machinery |
| Rate of new label introduction | ml | Allows expansion of amino acid repertoire | Evolution of new biosynthetic pathways |
| Rate of information exchange | me | Enables transfer of coding innovations | Horizontal gene transfer in early life |

These computational models demonstrate that code evolution following coevolution principles can realistically produce stable, optimized genetic codes resembling the standard genetic code. The models further suggest that horizontal gene transfer between primitive organisms significantly accelerated the emergence of an efficient, universal code [20].
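To make the roles of the three parameters concrete, the Python below is a deliberately simplified toy model loosely inspired by the factors listed in Table 3; it is not the model of [20]. Its representation (each codon holds a set of labels, with more than one label meaning ambiguity), its fitness function (labels encoded unambiguously, minus the fraction of ambiguous codons), truncation selection, and all parameter values are assumptions made for illustration.

```python
import itertools
import random

N_CODONS = 64


def fitness(code: list[frozenset[int]]) -> float:
    """Labels encoded unambiguously by at least one codon, minus the ambiguous-codon fraction."""
    unambiguous = {next(iter(s)) for s in code if len(s) == 1}
    ambiguous_fraction = sum(len(s) > 1 for s in code) / N_CODONS
    return len(unambiguous) - ambiguous_fraction


def random_initial_code(rng: random.Random) -> list[frozenset[int]]:
    """Primitive ambiguous code: 3-7 labels, every codon carries two of them."""
    n_labels = rng.randint(3, 7)
    return [frozenset(rng.sample(range(n_labels), 2)) for _ in range(N_CODONS)]


def offspring(parent, donors, rng, mc, ml, me, new_labels):
    child = list(parent)                      # copy; the parent is never modified
    labels = sorted(set().union(*child))
    for i in range(N_CODONS):
        if rng.random() < mc:                 # mc: mutate a label-to-codon assignment
            child[i] = frozenset([rng.choice(labels)])
    if rng.random() < ml:                     # ml: introduce a new label (ambiguously)
        i = rng.randrange(N_CODONS)
        child[i] = child[i] | {next(new_labels)}
    if rng.random() < me:                     # me: copy a 4-codon block from another code
        donor = rng.choice(donors)
        start = rng.randrange(0, N_CODONS, 4)
        child[start:start + 4] = donor[start:start + 4]
    return child


def evolve(pop_size=50, generations=200, mc=0.01, ml=0.1, me=0.2, seed=0):
    rng = random.Random(seed)
    new_labels = itertools.count(7)           # ids above any initial label (0-6)
    population = [random_initial_code(rng) for _ in range(pop_size)]
    history = []
    for _ in range(generations):
        population.sort(key=fitness, reverse=True)
        history.append(fitness(population[0]))
        survivors = population[: pop_size // 2]  # truncation selection; survivors unchanged
        population = survivors + [
            offspring(rng.choice(survivors), survivors, rng, mc, ml, me, new_labels)
            for _ in range(pop_size - len(survivors))]
    return population, history


if __name__ == "__main__":
    _, history = evolve()
    print(f"best fitness: first generation {history[0]:.2f}, last {history[-1]:.2f}")
```

Because the fittest codes survive unchanged, the best fitness in this toy can only stay level or rise; setting `me=0` or `ml=0` is a simple way to explore how exchange and label introduction affect it.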

Methodologies for Investigating Coevolution

Phylogenomic Analysis Protocols

The phylogenomic approach to investigating genetic code evolution involves several methodical steps:

- Proteome Dataset Curation: Collect comprehensive proteome data from diverse organisms. The 2025 study utilized 1,561 proteomes spanning the tree of life to ensure representative sampling [22].

- Dipeptide Frequency Analysis: Extract and quantify all dipeptide sequences from the proteomes. The cited study analyzed 4.3 billion dipeptide sequences, providing substantial statistical power [22].

- Phylogenetic Reconstruction: Apply phylogenetic methods to reconstruct evolutionary chronologies. This involves using statistical models to infer ancestral states and temporal relationships between different dipeptides [22].

- Timeline Validation: Cross-reference the inferred timeline with independent evidence, including biosynthetic pathway complexity, amino acid physicochemical properties, and geological records [22] [21].

This methodology leverages the power of big data and evolutionary modeling to extract historical signals from contemporary biological sequences, effectively "reading" the evolutionary history embedded in modern proteomes.
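The dipeptide frequency step amounts to counting adjacent residue pairs. The Python below is a minimal sketch of that counting step only; the phylogenetic reconstruction in [22] is not reproduced, and the function names and the decision to skip non-standard residue letters are assumptions.

```python
from collections import Counter

STANDARD = set("ACDEFGHIKLMNPQRSTVWY")  # the 20 canonical one-letter codes


def dipeptide_counts(proteins: list[str]) -> Counter:
    """Count overlapping dipeptides (adjacent residue pairs) across protein sequences."""
    counts: Counter = Counter()
    for seq in proteins:
        seq = seq.upper()
        counts.update(seq[i:i + 2] for i in range(len(seq) - 1)
                      if seq[i] in STANDARD and seq[i + 1] in STANDARD)
    return counts


def dipeptide_frequencies(proteins: list[str]) -> dict[str, float]:
    """Relative frequency of each dipeptide; empty dict if no valid pairs were found."""
    counts = dipeptide_counts(proteins)
    total = sum(counts.values())
    return {dp: n / total for dp, n in counts.items()} if total else {}


if __name__ == "__main__":
    # Toy sequences; a real analysis would parse one proteome per organism from FASTA.
    print(dipeptide_counts(["MKLLV", "MKV"]).most_common(3))
```

Keeping one counter per proteome, rather than pooling, would preserve the per-organism signal that a phylogenetic step needs.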

Experimental Reagent Solutions

Table 4: Essential Research Reagents and Computational Tools for Coevolution Research

| Reagent/Resource | Function/Application | Example Use Case |
|---|---|---|
| Curated proteome databases (e.g., UniProt) | Source of protein sequence data for phylogenetic analysis | Provides evolutionary raw material for tracing code development [22] |
| Phylogenetic software (e.g., PhyML, RAxML) | Reconstruction of evolutionary relationships and timelines | Building evolutionary chronologies of dipeptide appearance [22] |
| Molecular evolution simulators | Computational testing of evolutionary hypotheses | Modeling code expansion under different parameters [20] |
| Metabolic pathway databases (e.g., KEGG) | Reference for biosynthetic relationships between amino acids | Correlating codon assignments with biosynthetic pathways [21] |
| Amino acid property databases | Physicochemical characterization of amino acids | Assessing error minimization in context of biosynthetic relationships [14] |

Integration with Error Minimization

Complementary Explanatory Frameworks

The coevolution and error minimization theories are not mutually exclusive but rather provide complementary explanations for the genetic code's structure. Research indicates that:

- The addition of amino acids to the genetic code followed their relationships in biosynthetic pathways, primarily organizing the rows of the SGC table, while the allocation of amino acids to columns was optimized based on partition energy, favoring efficient protein folding and enzymatic catalysis [20].

- Putative primordial 2-letter codes containing 10 early amino acids demonstrate exceptional error minimization properties, suggesting that early stages of code evolution already exhibited substantial optimization [21].

- The standard genetic code balances two competing objectives: minimizing error load and maintaining physicochemical diversity in the encoded amino acid repertoire [14].

This integrated perspective suggests the genetic code evolved through a process where biosynthetic relationships determined the overall architecture (coevolution), while selective pressure for error minimization refined the detailed assignments within that framework.

Resolving Theoretical Controversies

The relationship between coevolution and error minimization has been the subject of scientific debate. Some researchers argue that the error minimization observed in the genetic code is too extensive to be merely a byproduct of coevolution and must result from direct natural selection [7]. Proponents of this view further contend that simulations claiming to support a neutral emergence of error minimization contain elements of natural selection, potentially rendering their conclusions tautological [7].

A synthesis view proposes that coevolution created the initial framework for error minimization by assigning similar amino acids to related codons, with subsequent refinement through direct selection for error robustness. This hybrid model acknowledges the role of both historical contingency (coevolution) and adaptive optimization (error minimization) in shaping the genetic code [7] [20] [14].

Diagram 1: Coevolution and Error Minimization Integration. This diagram illustrates how biosynthetic relationships between amino acids (coevolution) and selection for error robustness interacted during genetic code evolution.

Applications and Research Implications

Informing Genetic Code Engineering

Understanding the evolutionary principles underlying the natural genetic code has practical applications in synthetic biology:

- Genetic Code Expansion (GCE): Research into the natural expansion of the genetic code informs efforts to engineer organisms with expanded amino acid repertoires. Recent advances include hijacking bacterial ABC transporters to efficiently import non-canonical amino acids (ncAAs) by packaging them as tripeptides that are processed intracellularly [23].

- Biosynthetic Integration: Engineering platforms that couple the biosynthesis of aromatic ncAAs with genetic code expansion in E. coli enable more efficient production of proteins containing ncAAs, mirroring the natural integration of biosynthesis and coding [24].

- Overcoming Uptake Limitations: Identifying cellular uptake as a major bottleneck in genetic code expansion has led to innovative solutions, including engineering specialized transport systems that actively import ncAA precursors, dramatically improving incorporation efficiency [23].

These applications demonstrate how understanding natural genetic code evolution can guide bioengineering strategies, particularly in overcoming practical challenges in synthetic biology.

Future Research Directions

The coevolution theory continues to generate productive research questions and experimental approaches:

- Integrating Chronologies: Future research should reconcile the amino acid recruitment chronology derived from biosynthetic pathways with timelines obtained from molecular fossil records and computational models [22] [25].

- Experimental Evolution: Laboratory evolution experiments with synthetic genetic systems could directly test coevolution predictions by observing how codes expand when presented with new amino acids [20].

- Structural Basis: Investigating the structural and mechanistic links between biosynthetic enzymes, tRNA aminoacylation, and codon assignment could reveal the molecular mechanisms that facilitated code coevolution [22] [23].

- Error Minimization Quantification: Developing more sophisticated metrics to quantify error minimization in putative ancestral codes could clarify whether error robustness was an emergent property or a selected feature during code expansion [7] [14].

Diagram 2: Experimental Biosynthetic Pathway for Non-Canonical Amino Acids. This workflow illustrates a generic pathway for producing aromatic ncAAs from aldehyde precursors, demonstrating how modern synthetic biology mimics natural biosynthetic principles [24].

The coevolution theory provides a robust framework explaining how biosynthetic relationships between amino acids shaped the genetic code's structure through a stepwise expansion process. Contemporary evidence from phylogenomics, computational modeling, and synthetic biology continues to support and refine this theory, revealing a complex evolutionary trajectory where historical contingency (biosynthetic pathways) interacted with selective pressures (error minimization) to produce the optimized genetic code observed today. The theory's predictive power and explanatory scope make it an enduring component of origins of life research, with practical applications in genetic engineering and synthetic biology. Future research integrating coevolution with other evolutionary mechanisms promises to further illuminate one of biology's most fundamental systems.

The standard genetic code (SGC) is remarkably optimized for error minimization, a feature that reduces the deleterious impact of point mutations and translational errors by ensuring that similar codons typically encode amino acids with similar physicochemical properties [26] [14]. For decades, the prevailing assumption was that this optimized structure was a clear product of direct natural selection for robustness. However, a significant body of contemporary research challenges this view, proposing that a substantial degree of this optimization could have arisen neutrally, as a byproduct of the code's historical expansion [27] [26]. This whitepaper delineates the core conflict between these two paradigms—selection for robustness versus neutral emergence—synthesizing current research, quantitative data, and methodologies relevant to researchers and drug development professionals working with genetic fidelity and evolutionary constraints.

The central question is whether the genetic code's error minimization is a true adaptation, shaped by direct selective pressure, or a pseudaptation, a beneficial trait that emerged without direct selection [26]. Resolving this conflict is not merely an academic exercise; it has profound implications for understanding fundamental evolutionary mechanisms, the origins of biological complexity, and the constraints on protein evolution that can inform drug design strategies aimed at combating antibiotic resistance or understanding disease-causing mutations.

Theoretical Frameworks and Key Evidence

The Case for Selective Pressure for Robustness

The selection theory posits that the genetic code's structure was actively refined by natural selection to minimize the phenotypic cost of errors. Statistical analyses show that the standard genetic code is highly efficient at buffering against the effects of mutations, performing much better than a random assignment of amino acids to codons would [14]. Some analyses suggest the probability of the SGC's level of error minimization arising by chance is extremely low, on the order of one in a million [14]. This high level of optimization is argued to be the signature of a selective process.

The Case for Neutral Emergence

In contrast, the neutral emergence theory argues that the genetic code's robustness is a non-adaptive byproduct of its evolutionary history. Simulation studies demonstrate that a substantial proportion of error minimization can arise neutrally through a process of code expansion facilitated by the duplication of genes encoding tRNAs and aminoacyl-tRNA synthetases [27] [26]. In this scenario, new amino acids are added to the coding repertoire in a non-random fashion; when a tRNA gene duplicates, the new copy is initially identical and recognizes the same set of codons. If this copy later acquires a mutation that allows it to be charged with a similar, new amino acid, the code expands by assigning this similar amino acid to a set of codons closely related to the original. This process inherently clusters similar amino acids in codon space, generating error minimization without any direct selection for that property [27]. Under certain models of expansion, such as the 213 Model, a significant proportion (up to 22%) of simulated codes can possess error minimization equivalent or superior to the natural code [27].

Table 1: Key Predictions and Evidence for the Two Competing Theories

| Aspect | Selection for Robustness Theory | Neutral Emergence Theory |
|---|---|---|
| Primary Mechanism | Direct natural selection for error-minimizing codon assignments [26] | Code expansion via tRNA/aaRS duplication and assignment of similar amino acids to adjacent codons [27] [26] |
| Predicted Code Structure | Globally optimal or near-optimal for error minimization [14] | "Near-optimal," but with many alternative, equally robust codes possible [26] |
| Key Quantitative Evidence | The SGC is a statistical outlier for error minimization compared to random codes [14] | A high proportion of codes evolved in neutral simulations show strong error minimization [27] |
| Interpretation of Optimality | The SGC is a highly refined adaptation [26] | The SGC is a "pseudaptation," a beneficial trait that emerged non-adaptively [26] |

Quantitative Data and Computational Analyses

Computational simulations have been instrumental in quantifying the potential for neutral emergence. These models test whether randomly generated genetic codes, evolved under specific, non-adaptive constraints, can achieve levels of error minimization comparable to the standard genetic code.

Table 2: Summary of Simulation Models and Their Findings on Neutral Emergence

| Simulation Model | Core Mechanism | Key Parameters | Findings on Error Minimization |
|---|---|---|---|
| Random Stepwise Addition [27] | Random addition of physicochemically similar amino acids to the code | Physicochemical similarity matrix | Results in substantial error minimization compared to a purely random code |
| Ambiguity Reduction Model [27] [28] | Code expansion within a framework that reduces translational ambiguity | Codon domain size, ancestor-descendant relationships | Produces improved error minimization over the simple stepwise model |
| 213 Model [27] | Random addition of similar amino acids to a primordial core of 4 amino acids | Primordial amino acids, duplication and divergence rules | Under certain conditions, 22% of resulting codes possessed equivalent or superior error minimization to the SGC |
| Fidelity-Diversity Trade-off [14] | Simulated annealing to balance error load against amino acid diversity | Mutation rates, translational error rates, amino acid frequencies | The SGC lies near a local optimum, balancing two conflicting pressures |

These simulations suggest that the SGC is not a unique solution but one of many possible codes with high error-minimizing capacity. The 213 Model, in particular, shows that under certain conditions a neutral process produces codes as robust as the one used by nature in a substantial fraction (22%) of runs [27]. Furthermore, modern analyses suggest the code is optimized not just for raw error minimization but for balancing this against the need for a diverse amino acid vocabulary, a trade-off that can also emerge from an evolutionary process [14].

Experimental and Analytical Methodologies

Researchers employ a range of computational and theoretical methods to investigate the origins of the genetic code's robustness.

Protocol 1: Genetic Code Simulation and Neutral Evolution Analysis

This protocol tests the capacity of neutral processes to generate error minimization.

- Define a Primordial Code: Start with a small, simplified genetic code containing only a few amino acids [27].

- Establish an Expansion Rule: Model the duplication of tRNA genes and their subsequent divergence. A key rule is that a new amino acid can only be assigned to a codon related to that of a physicochemically similar "parent" amino acid [27] [26].

- Run Iterative Expansion: Expand the code stepwise by adding new amino acids according to the rule until a full code (e.g., 20 amino acids) is generated.

- Calculate Error Minimization: For the resulting simulated code, quantify its error minimization using a cost function. This function sums the physicochemical distance between amino acids paired by point mutations, weighted by mutation probability [14]. The formula is often of the form: Cost = Σ P(mutation) * Distance(AA_original, AA_mutant)

- Compare to Standard Genetic Code: Benchmark the error minimization value of the simulated code against that of the SGC and a large sample of randomly generated codes [27] [14].

- Statistical Analysis: Repeat the simulation thousands of times to determine the percentage of neutrally evolved codes that match or exceed the error minimization of the SGC [27].
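The steps of Protocol 1 can be sketched in Python as below. This is a minimal illustration under stated assumptions, not the model of [27]: the Kyte-Doolittle hydropathy scale stands in for the similarity matrix, the primordial code is a hypothetical four-amino-acid code in which the second codon base alone fixes the amino acid (Val, Ala, Asp, Gly), stop codons are held at their SGC positions, and each newcomer takes over part of the codon set of its most hydropathically similar "parent", split at a codon position where the parent's codons differ. All function names are hypothetical.

```python
import random
from itertools import product

# Kyte-Doolittle hydropathy; a stand-in for a full similarity matrix (assumption).
KD = {"A": 1.8, "R": -4.5, "N": -3.5, "D": -3.5, "C": 2.5, "Q": -3.5, "E": -3.5,
      "G": -0.4, "H": -3.2, "I": 4.5, "L": 3.8, "K": -3.9, "M": 1.9, "F": 2.8,
      "P": -1.6, "S": -0.8, "T": -0.7, "W": -0.9, "Y": -1.3, "V": 4.2}
BASES = "TCAG"
CODONS = ["".join(p) for p in product(BASES, repeat=3)]  # TTT, TTC, ..., GGG
SGC = dict(zip(CODONS, "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"))
STOPS = {c for c, aa in SGC.items() if aa == "*"}


def cost(code):
    """Mean squared hydropathy change over all single-base substitutions between sense codons."""
    total, n = 0.0, 0
    for c in CODONS:
        if c in STOPS:
            continue
        for pos in range(3):
            for b in BASES:
                mutant = c[:pos] + b + c[pos + 1:]
                if b == c[pos] or mutant in STOPS:
                    continue
                total += (KD[code[c]] - KD[code[mutant]]) ** 2
                n += 1
    return total / n


def primordial_code():
    """Hypothetical 4-amino-acid code: the second base alone picks the amino acid."""
    by_second = {"T": "V", "C": "A", "A": "D", "G": "G"}
    return {c: by_second[c[1]] for c in CODONS if c not in STOPS}


def expand(code, rng):
    """Add the missing amino acids one at a time by splitting a similar parent's codons."""
    code = dict(code)
    present = set(code.values())
    newcomers = [aa for aa in KD if aa not in present]
    rng.shuffle(newcomers)
    for new_aa in newcomers:
        blocks = {}
        for c, aa in code.items():
            blocks.setdefault(aa, []).append(c)
        # Parent: the most similar amino acid that still owns at least two codons.
        splittable = [aa for aa, cs in blocks.items() if len(cs) >= 2]
        parent = min(splittable, key=lambda aa: abs(KD[aa] - KD[new_aa]))
        cs = blocks[parent]
        # "Duplication and divergence": the codons sharing one base at a varying
        # position go to the newcomer; the parent keeps the rest.
        pos = rng.choice([p for p in range(3) if len({c[p] for c in cs}) > 1])
        base = rng.choice(sorted({c[pos] for c in cs}))
        for c in cs:
            if c[pos] == base:
                code[c] = new_aa
    return code


def fraction_matching_sgc(n_runs=1000, seed=0):
    """Share of neutrally expanded codes whose cost is <= that of the SGC."""
    rng = random.Random(seed)
    target = cost(SGC)
    hits = sum(cost(expand(primordial_code(), rng)) <= target for _ in range(n_runs))
    return hits / n_runs


if __name__ == "__main__":
    print(fraction_matching_sgc())
```

The fraction this toy rule reports depends on every assumption above (scale, primordial core, split rule) and should not be expected to reproduce the 22% figure reported for the 213 Model.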

Protocol 2: Quantifying Optimality via Simulated Annealing

This protocol maps the fitness landscape of genetic codes to locate optima and assess the SGC's position.

- Define an Objective Function: Create a function that combines two competing objectives: Error Load (favoring minimal change upon mutation) and Compositional Alignment (favoring a match between codon usage and the natural amino acid frequency distribution in proteomes) [14].

- Parameterize the Model: Incorporate empirical data, such as transition/transversion mutation bias (γ) and position-dependent mutation rates within codons [14].

- Initialize and Perturb: Start with a random code or the SGC. Use a simulated annealing algorithm to explore the landscape by making small random changes (e.g., swapping the amino acid assignments of two codons).

- Evaluate and Iterate: Accept changes that improve the objective function and, with a defined probability, accept some that worsen it (to escape local optima). Gradually reduce this acceptance probability over time [14].

- Identify Optima: Run the algorithm to convergence to find local optima in the fitness landscape. Determine if the SGC is located at or near one of these optima, indicating its high, but not necessarily unique, fitness [14].
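A minimal Python sketch of Protocol 2 follows, offered as an illustration rather than the implementation used in [14]. Assumptions: Kyte-Doolittle hydropathy measures amino acid distance; transitions (A↔G, C↔T) are weighted by a hypothetical γ = 2; position weights default to 1; the compositional target is a placeholder (the SGC's own codon shares), not measured proteome frequencies; and the weight `lam` between the two objectives is arbitrary. Because swapping two codons never changes how many codons each amino acid holds, the move used here reassigns a single codon instead, so the compositional term can actually vary.

```python
import math
import random
from itertools import product

KD = {"A": 1.8, "R": -4.5, "N": -3.5, "D": -3.5, "C": 2.5, "Q": -3.5, "E": -3.5,
      "G": -0.4, "H": -3.2, "I": 4.5, "L": 3.8, "K": -3.9, "M": 1.9, "F": 2.8,
      "P": -1.6, "S": -0.8, "T": -0.7, "W": -0.9, "Y": -1.3, "V": 4.2}
BASES = "TCAG"
CODONS = ["".join(p) for p in product(BASES, repeat=3)]
SGC = dict(zip(CODONS, "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"))
SENSE = [c for c in CODONS if SGC[c] != "*"]
STOPS = set(CODONS) - set(SENSE)


def is_transition(x, y):
    """For distinct bases: A<->G and C<->T are transitions, all others transversions."""
    return (x in "AG") == (y in "AG")


def error_load(code, gamma, pos_weights=(1.0, 1.0, 1.0)):
    """Weighted mean squared hydropathy change; transitions weighted by gamma."""
    total = wsum = 0.0
    for c in SENSE:
        for pos in range(3):
            for b in BASES:
                mutant = c[:pos] + b + c[pos + 1:]
                if b == c[pos] or mutant in STOPS:
                    continue
                w = pos_weights[pos] * (gamma if is_transition(c[pos], b) else 1.0)
                total += w * (KD[code[c]] - KD[code[mutant]]) ** 2
                wsum += w
    return total / wsum


def composition_mismatch(code, target):
    """Squared gap between each amino acid's codon share and its target frequency."""
    counts = {aa: 0 for aa in target}
    for aa in code.values():
        counts[aa] += 1
    return sum((counts[aa] / len(code) - f) ** 2 for aa, f in target.items())


def objective(code, target, lam, gamma):
    return error_load(code, gamma) + lam * composition_mismatch(code, target)


def anneal(start, target, lam=100.0, gamma=2.0, t0=1.0, cooling=0.999, steps=5000, seed=0):
    """Metropolis-style simulated annealing over single-codon reassignments."""
    rng = random.Random(seed)
    code, aas = dict(start), sorted(KD)
    cur = objective(code, target, lam, gamma)
    best, best_val, temp = dict(code), cur, t0
    for _ in range(steps):
        temp *= cooling  # geometric cooling schedule
        c, new = rng.choice(SENSE), rng.choice(aas)
        old = code[c]
        # Skip no-op moves and moves that would delete an amino acid from the code.
        if new == old or list(code.values()).count(old) == 1:
            continue
        code[c] = new
        val = objective(code, target, lam, gamma)
        if val <= cur or rng.random() < math.exp((cur - val) / temp):
            cur = val
            if val < best_val:
                best, best_val = dict(code), val
        else:
            code[c] = old  # reject: undo the move
    return best, best_val


if __name__ == "__main__":
    start = {c: SGC[c] for c in SENSE}
    # Placeholder target: the SGC's own codon shares, NOT measured proteome frequencies.
    target = {aa: list(start.values()).count(aa) / len(start) for aa in KD}
    best, best_val = anneal(start, target)
    print(objective(start, target, 100.0, 2.0), best_val)
```

Starting from the SGC and comparing its objective with the best value found gives a rough sense of how far the SGC sits from the nearest optimum under these particular, hypothetical weights.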

Diagram 1: Neutral Emergence Simulation Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational and Theoretical Tools for Genetic Code Research

| Tool / Reagent | Type | Function in Research |
|---|---|---|
| Amino Acid Similarity Matrix | Data Structure | Quantifies physicochemical distance between amino acids (e.g., polarity, volume, charge) to calculate the cost of a mis-incorporation in error minimization models [26]. |
| Genetic Code Simulator | Software/Model | Implements code evolution models (e.g., 213 Model, Ambiguity Reduction) to generate alternative genetic codes and test evolutionary hypotheses in silico [27] [26]. |
| Error Minimization Cost Function | Algorithm | Computes a single fitness value for any given genetic code, allowing for quantitative comparison between the SGC and simulated or random codes [14]. |
| Simulated Annealing Algorithm | Optimization Algorithm | Explores the vast space of possible genetic codes to find local and global optima, helping to map the code's fitness landscape and identify conflicting pressures [14]. |
| tRNA & aaRS Duplication Model | Conceptual Framework | Provides the mechanistic biological premise for how the genetic code could expand neutrally, linking molecular genetics to code evolution [27] [26]. |

Diagram 2: Neutral Expansion via tRNA Duplication

The conflict between selection and neutral emergence is not a simple dichotomy. Current syntheses propose that the evolution of the genetic code was shaped by multiple factors. Neutral processes, particularly the duplication and divergence of tRNA and aminoacyl-tRNA synthetase genes, may have established a foundation of high error minimization on which selection could then act [27] [26]. This initial neutral emergence may have provided a "head start", sparing selection the need to search an impossibly vast space of possible codes.

Furthermore, the genetic code is now understood to be a compromise between several competing pressures, not just error minimization. These include the need for a diverse amino acid repertoire to build complex proteins and the constraints imposed by the proteome size of an organism, which affects the code's malleability [26] [14]. Therefore, the standard genetic code is likely the product of a complex evolutionary trajectory involving both stochastic, neutral forces and deterministic selective pressures, resulting in a robust, near-optimal solution that was crucial for the emergence of complex life. For drug development professionals, this nuanced understanding underscores that the genetic code's robustness is a fundamental, evolved constraint on sequence evolution, influencing the landscape of permissible mutations that can lead to drug resistance or genetic disease.

Quantifying and Engineering Robustness: Methods and Therapeutic Applications

The standard genetic code (SGC) exhibits a distinctly non-random structure, in which similar amino acids are often encoded by codons that differ by a single nucleotide substitution, typically in the third or first codon position [5]. This organization provides robustness against translational errors and mutations: a single-base change often yields an amino acid with comparable physicochemical properties, minimizing detrimental effects on protein function [5] [29]. This section explores the computational frameworks, cost functions, and simulation methodologies used to quantify and evaluate the error-minimization capacity of the genetic code.

The adaptive hypothesis posits that the genetic code evolved to minimize the effects of amino acid replacements caused by mutations or translational errors [29]. Computational models testing this hypothesis compare the standard genetic code against theoretically possible alternatives to determine whether its structure represents a locally or globally optimized solution for error tolerance [5] [29]. These models operate within an astronomically large space of possible genetic codes: there are approximately 1.51 × 10⁸⁴ ways to map 64 codons onto 21 items (20 amino acids plus the stop signal) such that every item receives at least one codon [29].
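The figure of roughly 1.51 × 10⁸⁴ can be checked as the number of surjective maps from 64 codons onto 21 labels, counted by inclusion-exclusion (equivalently 21! times the Stirling number of the second kind S(64, 21)). That this is exactly how [29] derived the number is our reading; the arithmetic below is a sketch with a hypothetical helper name.

```python
from math import comb


def surjections(n_items: int, n_labels: int) -> int:
    """Number of maps from n_items onto n_labels that use every label (inclusion-exclusion)."""
    return sum((-1) ** k * comb(n_labels, k) * (n_labels - k) ** n_items
               for k in range(n_labels + 1))


n_codes = surjections(64, 21)  # 64 codons onto 20 amino acids + stop
print(f"{n_codes:.2e}")  # 1.51e+84
```

Python's arbitrary-precision integers keep the alternating sum exact, so no floating-point cancellation occurs before the final formatting step.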

Computational Frameworks and Cost Functions

Foundational Cost Functions

At the core of error minimization research are cost functions that quantify the robustness of a genetic code. These functions typically measure the average physicochemical similarity between amino acids whose codons are connected by single-point mutations or mistranslations.

Table 1: Evolution of Cost Functions in Genetic Code Research

| Cost Function | Mathematical Formulation | Key Parameters | Reported Fraction of Random Codes Better Than SGC |
|---|---|---|---|
| Haig & Hurst (1991) [5] | $\phi = \sum_{c}\sum_{c'} p(c' \mid c)\,\big(h(a(c)) - h(a(c'))\big)^2$ | Amino acid polarity (hydropathy); equal probability for all single-base changes | ~10⁻⁴ |
| Freeland & Hurst (1998) [5] | Modified $\phi$ function | Incorporates transition/transversion bias and positional error effects | ~10⁻⁶ |
| Gilis et al. (2001) [30] | $\phi = \sum_{c} \frac{p(a(c))}{n(a(c))} \sum_{c'} p(c' \mid c)\, g(a(c), a(c'))$ | Amino acid frequencies, mutation matrix based on protein folding energies | 2×10⁻⁹ (with mutation matrix) |
| Expanded Function (with termination) [30] | Extended $\phi$ function | Includes mistranslations leading to stop codons; amino acid frequencies | Even lower fractions reported |

Where:

- $a(c)$ = amino acid encoded by codon $c$
- $p(c' \mid c)$ = probability of codon $c$ being misread as $c'$
- $h(a)$ = hydropathy index of amino acid $a$
- $p(a(c))$ = relative frequency of amino acid $a(c)$
- $n(a(c))$ = number of synonymous codons for amino acid $a(c)$
- $g(a(c), a(c'))$ = cost measure function (e.g., from PAM matrices or mutation matrices)

The Gilis et al. approach was significant for incorporating amino acid frequencies and synonym numbers, recognizing that frequently used amino acids benefit more from robust encoding [30]. Their use of a mutation matrix derived from in silico studies of protein folding energy changes provided a biologically relevant cost measure less biased by the genetic code's structure than earlier matrices [30].
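The Gilis-style $\phi$ can be sketched directly from the formula in Table 1. This is an illustrative sketch, not the authors' code: `phi` and `squared_hydropathy` are hypothetical names, the default $g$ is a squared Kyte-Doolittle difference rather than the folding-energy matrix of [30], $p(c' \mid c)$ is taken as uniform over the sense point-mutants of $c$, misreadings into stop codons are ignored (unlike the expanded function), and the default $p(a)$ is a uniform placeholder rather than measured frequencies.

```python
from itertools import product

KD = {"A": 1.8, "R": -4.5, "N": -3.5, "D": -3.5, "C": 2.5, "Q": -3.5, "E": -3.5,
      "G": -0.4, "H": -3.2, "I": 4.5, "L": 3.8, "K": -3.9, "M": 1.9, "F": 2.8,
      "P": -1.6, "S": -0.8, "T": -0.7, "W": -0.9, "Y": -1.3, "V": 4.2}
BASES = "TCAG"
CODONS = ["".join(p) for p in product(BASES, repeat=3)]
SGC = dict(zip(CODONS, "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"))


def squared_hydropathy(a, b):
    """Default g(a, a'): squared Kyte-Doolittle difference (stand-in cost measure)."""
    return (KD[a] - KD[b]) ** 2


def phi(code, freq=None, g=squared_hydropathy):
    """phi = sum_c p(a(c))/n(a(c)) * sum_c' p(c'|c) * g(a(c), a(c'))."""
    sense = [c for c in CODONS if code[c] != "*"]
    if freq is None:  # placeholder: uniform p(a), not measured frequencies
        present = {code[c] for c in sense}
        freq = {aa: 1 / len(present) for aa in present}
    n_syn = {}  # n(a): number of synonymous codons per amino acid
    for c in sense:
        n_syn[code[c]] = n_syn.get(code[c], 0) + 1
    total = 0.0
    for c in sense:
        a = code[c]
        mutants = [c[:i] + b + c[i + 1:] for i in range(3) for b in BASES if b != c[i]]
        mutants = [m for m in mutants if code[m] != "*"]  # stop readings ignored here
        p = 1 / len(mutants)  # p(c'|c): uniform over sense point-mutants (assumption)
        total += freq[a] / n_syn[a] * sum(p * g(a, code[m]) for m in mutants)
    return total


if __name__ == "__main__":
    print(phi(SGC))
```

Because the weights $p(a)/n(a)$ sum to 1 over sense codons and $p(c' \mid c)$ sums to 1 for each $c$, setting $g \equiv 1$ returns exactly 1, so $\phi$ behaves as a weighted mean of $g$. Passing `freq` proportional to each amino acid's codon count gives every sense codon equal weight, close to the unweighted Haig & Hurst form.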

Multi-Objective Optimization Approaches

More recent research has adopted multi-objective optimization frameworks that simultaneously consider multiple physicochemical properties of amino acids. This approach acknowledges that multiple amino acid properties likely influenced code evolution rather than a single property alone [29].

One comprehensive study employed eight objective functions based on a clustering of over 500 amino acid indices from the AAindex database, selecting representative indices that capture diverse physicochemical dimensions including hydropathy, molecular volume, and isoelectric point [29]. This approach revealed that while the standard genetic code could be significantly improved in terms of error minimization, it is decidedly closer to optimal codes than to maximally inefficient ones [29].
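Multi-objective evolutionary algorithms commonly rank candidate codes by Pareto dominance rather than by a single scalar cost. The sketch below shows only that dominance test; it is not the algorithm of [29]. The function names are hypothetical, and the objective vectors in the example are invented placeholders (imagine each coordinate as an error cost computed with a different amino acid index), not real results.

```python
def dominates(a, b):
    """Minimisation: a is no worse than b on every objective and strictly better on one."""
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))


def pareto_front(costs):
    """Sorted names of codes whose objective vectors no other code dominates."""
    return sorted(name for name, v in costs.items()
                  if not any(dominates(w, v) for w in costs.values()))


# Hypothetical two-objective costs (e.g. hydropathy error, volume error); not real data.
costs = {"SGC": (5.1, 3.2), "code_A": (4.0, 3.0), "code_B": (6.0, 2.0), "code_C": (7.0, 4.0)}
print(pareto_front(costs))  # ['code_A', 'code_B']
```

In this toy example the SGC is dominated by `code_A`, so it lies off the front; a code need not be on the front to be far better than most random codes.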

Simulation Methodologies and Experimental Protocols

Random Code Comparison

The classic Monte Carlo approach generates large sets of random genetic codes and calculates their error costs using selected cost functions [5]. The fraction of random codes that outperform the standard genetic code provides a statistical measure of its optimality.
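A minimal Monte Carlo sketch of this comparison follows, under stated assumptions. Random codes are generated by keeping the SGC's synonymous codon blocks and stop codons fixed and shuffling the 20 amino acids among the blocks, which is one common choice (fully unrestricted assignments are another). The cost is an equal-weight, Haig & Hurst-style mean squared difference, but on the Kyte-Doolittle scale rather than polar requirement, so the resulting fraction should not be expected to match the published values. Function names are hypothetical.

```python
import random
from itertools import product

KD = {"A": 1.8, "R": -4.5, "N": -3.5, "D": -3.5, "C": 2.5, "Q": -3.5, "E": -3.5,
      "G": -0.4, "H": -3.2, "I": 4.5, "L": 3.8, "K": -3.9, "M": 1.9, "F": 2.8,
      "P": -1.6, "S": -0.8, "T": -0.7, "W": -0.9, "Y": -1.3, "V": 4.2}
BASES = "TCAG"
CODONS = ["".join(p) for p in product(BASES, repeat=3)]
SGC = dict(zip(CODONS, "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"))


def cost(code):
    """Mean squared hydropathy change over single-base substitutions between sense codons."""
    diffs = []
    for c in CODONS:
        if code[c] == "*":
            continue
        for i in range(3):
            for b in BASES:
                m = c[:i] + b + c[i + 1:]
                if b != c[i] and code[m] != "*":
                    diffs.append((KD[code[c]] - KD[code[m]]) ** 2)
    return sum(diffs) / len(diffs)


def random_code(rng):
    """Shuffle the 20 amino acids among the SGC's synonymous blocks; stop codons stay put."""
    aas = sorted(KD)
    perm = dict(zip(aas, rng.sample(aas, len(aas))))
    return {c: aa if aa == "*" else perm[aa] for c, aa in SGC.items()}


def fraction_better(n_codes=10_000, seed=0):
    """Share of random codes with a strictly lower cost than the SGC."""
    rng = random.Random(seed)
    ref = cost(SGC)
    return sum(cost(random_code(rng)) < ref for _ in range(n_codes)) / n_codes


if __name__ == "__main__":
    print(cost(SGC), fraction_better(n_codes=2000))
```

Resolving fractions as small as 1 in 10⁶ requires correspondingly large samples, which is why the studies in Table 2 draw on the order of 10⁶ codes.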

Table 2: Key Simulation Methods in Genetic Code Research

| Method | Code Space Definition | Optimization Approach | Key Findings |
|---|---|---|---|
| Random Code Comparison [5] | Purely random assignments of codons to amino acids | Statistical analysis of large samples (e.g., 10⁶ codes) | SGC more robust than vast majority of random codes (1 in 10⁴ to 1 in 10⁹ depending on cost function) |
| Block-Structure Model [5] [29] | Codes preserving the block structure of SGC (same degeneracy) | Evolutionary algorithms with codon block swaps | SGC appears to be partially optimized, about halfway to local optimum |
| Unrestricted Structure Model [29] | Random division of 61 sense codons into 20 non-empty sets | Multi-objective evolutionary algorithms | SGC not fully optimized but significantly better than random |
| Primordial Code Simulation [1] | 16 supercodons (XYN) encoding 10 primordial amino acids | Error minimization calculation with fixed assignments | Putative primordial codes show exceptional error minimization |

Diagram: Workflow for the random code comparison methodology

Evolutionary Algorithms

Evolutionary algorithms simulate code evolution through iterative improvement, providing insight into possible evolutionary trajectories [5] [29]. These algorithms require: (1) a well-defined search space representing potential solutions, (2) objective functions to evaluate solution quality, (3) genetic operators to create new solutions, and (4) selection mechanisms to choose solutions for subsequent generations [29].

For the block-structure model, evolutionary steps typically comprise swaps of four-codon or two-codon series while maintaining the degeneracy pattern of the standard code [5]. Studies using this approach have revealed that the standard genetic code appears to be a point on an evolutionary trajectory from a random code about halfway to the summit of a local peak in a rugged fitness landscape [5].
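The block-structure search can be illustrated with the simplest evolutionary scheme, a (1+1) hill climber. This is a hedged sketch, not the algorithm of [5]: a move swaps the amino acids assigned to two whole synonymous blocks (a simplification of the four-codon/two-codon series swaps described above), the cost is the equal-weight Kyte-Doolittle measure used as a stand-in, and `slope_position` is a hypothetical, illustrative way to express "how far up the slope" the SGC sits (0 = average random code, 1 = the local optimum reached by climbing from the SGC). It need not reproduce the "about halfway" result.

```python
import random
from itertools import product

KD = {"A": 1.8, "R": -4.5, "N": -3.5, "D": -3.5, "C": 2.5, "Q": -3.5, "E": -3.5,
      "G": -0.4, "H": -3.2, "I": 4.5, "L": 3.8, "K": -3.9, "M": 1.9, "F": 2.8,
      "P": -1.6, "S": -0.8, "T": -0.7, "W": -0.9, "Y": -1.3, "V": 4.2}
BASES = "TCAG"
CODONS = ["".join(p) for p in product(BASES, repeat=3)]
SGC = dict(zip(CODONS, "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"))
AAS = sorted(KD)


def cost(code):
    """Mean squared hydropathy change over single-base substitutions between sense codons."""
    diffs = []
    for c in CODONS:
        if code[c] == "*":
            continue
        for i in range(3):
            for b in BASES:
                m = c[:i] + b + c[i + 1:]
                if b != c[i] and code[m] != "*":
                    diffs.append((KD[code[c]] - KD[code[m]]) ** 2)
    return sum(diffs) / len(diffs)


def relabel(perm):
    """Code in which the SGC block of amino acid x now encodes perm[x]; stops fixed."""
    return {c: aa if aa == "*" else perm[aa] for c, aa in SGC.items()}


def climb(perm, rng, steps=2000):
    """(1+1) hill climber: swap two blocks' amino acids, keep the swap unless cost rises."""
    perm = dict(perm)
    cur = cost(relabel(perm))
    for _ in range(steps):
        x, y = rng.sample(AAS, 2)
        perm[x], perm[y] = perm[y], perm[x]
        new = cost(relabel(perm))
        if new <= cur:
            cur = new
        else:
            perm[x], perm[y] = perm[y], perm[x]  # undo the swap
    return perm, cur


def slope_position(n_random=200, seed=0, steps=2000):
    """0 = average random block code, 1 = local optimum reached by climbing from the SGC."""
    rng = random.Random(seed)
    _, peak = climb({a: a for a in AAS}, rng, steps)
    rand_mean = sum(cost(relabel(dict(zip(AAS, rng.sample(AAS, len(AAS))))))
                    for _ in range(n_random)) / n_random
    return (rand_mean - cost(SGC)) / (rand_mean - peak)


if __name__ == "__main__":
    print(round(slope_position(), 2))
```

Accepting equal-cost swaps lets the climber drift across plateaus; a population-based algorithm with recombination would explore the rugged landscape more broadly at higher computational cost.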

Specialized Simulation Environments

Modern computational frameworks like TraitSimulation (a Julia package within the OpenMendel suite) provide specialized environments for simulating genetic traits under various models [31]. While primarily designed for trait simulation rather than code evolution studies, such platforms demonstrate the integration of modern computational approaches with genetic analysis, leveraging efficient programming languages for high-performance computing [31].

Advanced Considerations in Model Design

Termination Codon Effects