Estimating Effective Population Size from Genetic Data: A Comprehensive Guide for Biomedical Researchers



Abstract

This article provides a comprehensive overview of methodologies for estimating effective population size (Ne) from genetic data, tailored for researchers, scientists, and drug development professionals. It covers foundational concepts, including the definition of Ne as the size of an idealized population experiencing the same genetic drift as a real population and its critical role in understanding inbreeding, genetic diversity, and evolutionary potential. The scope extends to a detailed examination of contemporary estimation methods—such as linkage disequilibrium, temporal allele frequency changes, and heterozygosity excess—along with their underlying assumptions and required data inputs. The article further addresses common challenges and biases in Ne estimation, offers strategies for method selection and optimization, and provides guidance for validating and interpreting results within biomedical and clinical research contexts, such as clinical trial design and pharmacogenomics.

Understanding Effective Population Size: Core Concepts and Significance in Genetic Analysis

Effective population size (Ne) represents a cornerstone concept in population genetics, conservation biology, and evolutionary studies. Formally defined as the size of an idealized population that would experience the same rate of genetic drift or inbreeding as the real population under consideration [1], Ne provides a powerful metric for quantifying evolutionary processes in natural populations. The concept was first introduced by Sewall Wright in 1931 [1] [2] to bridge the gap between theoretical models and the complexities of real-world populations. Unlike census population size (N), which simply counts individuals, Ne captures the strength of genetic drift, thereby influencing the rate of genetic diversity loss, the efficiency of selection, and the dynamics of inbreeding [3] [4].

The fundamental importance of Ne extends across multiple biological disciplines. In evolutionary biology, it determines the relative power of drift versus selection [5]. In conservation genetics, Ne predicts vulnerability to inbreeding depression and loss of adaptive potential [4]. In breeding programs, it guides strategies for maintaining genetic diversity [6]. The "50/500" rule, a widely cited conservation guideline, proposes that Ne > 50 is required for short-term viability and Ne > 500 for long-term evolutionary potential [4]. However, empirical studies reveal that Ne is typically much smaller than census size, with an average Ne/N ratio of approximately 0.34 across 102 animal and plant species, dropping to just 0.10-0.11 after accounting for fluctuations in population size, variance in family size, and unequal sex ratio [1].

Theoretical Foundations: From Idealized Populations to Modern Interpretations

Wright's Idealized Population

The conceptual foundation of Ne rests on Wright's idealized population model [7], which makes several simplifying assumptions: (1) constant population size with discrete generations, (2) random mating including self-fertilization in hermaphrodites, (3) Poisson distribution of offspring number (mean equal to variance), and (4) no selection, migration, or mutation [1] [7]. Under these conditions, the rate of genetic drift is inversely proportional to population size, and Ne equals the census size N.

In this idealized Wright-Fisher model, the conditional variance of allele frequency p' given p is:

var(p' | p) = p(1-p) / (2N) [1]

This equation establishes the fundamental relationship between population size and genetic drift, with the variance in allele frequency change increasing as N decreases.
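The single-generation variance above can be checked numerically. The following is a minimal sketch, assuming a diploid Wright-Fisher population in which the next generation is formed by drawing 2N gene copies at random; the function names, replicate count, and seed are illustrative choices, not part of any cited method.

```python
import random
from statistics import pvariance


def next_generation_freq(p, n_diploid, rng):
    """Draw 2N gene copies binomially and return the new allele frequency."""
    copies = 2 * n_diploid
    count = sum(1 for _ in range(copies) if rng.random() < p)
    return count / copies


def simulated_drift_variance(p, n_diploid, replicates=2000, seed=1):
    """Variance of p' across independent replicate populations started at p."""
    rng = random.Random(seed)
    freqs = [next_generation_freq(p, n_diploid, rng) for _ in range(replicates)]
    return pvariance(freqs)


def expected_drift_variance(p, n_diploid):
    """Theoretical value p(1-p)/(2N) for an idealized population."""
    return p * (1 - p) / (2 * n_diploid)


if __name__ == "__main__":
    for n in (10, 50, 250):
        print(n, simulated_drift_variance(0.5, n), expected_drift_variance(0.5, n))
```

Running the loop for several values of N shows the simulated variance tracking p(1-p)/(2N) and shrinking as N grows.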

Extensions of the Basic Concept

As population genetics developed, several distinct definitions of Ne emerged to address different aspects of genetic drift:

Variance Effective Size (Ne(v)) relates to the change in allele frequency variance across generations [1] [2]. It is defined as Ne(v) = p(1-p) / (2 var̂(p)), where var̂(p) is the estimated variance of allele frequency change [1].

Inbreeding Effective Size (Ne(f)) relates to the rate of increase in inbreeding coefficient [1] [8]. It measures how quickly heterozygosity is lost from a population.

Eigenvalue Effective Size is derived from the largest non-unit eigenvalue of the transition matrix describing allele frequency dynamics [2] [9].

Coalescent Effective Size is defined through coalescence theory, where the expected coalescence time for two genes is T = 2Ne [2].

For a population with constant size and stable breeding structure, these different definitions generally converge to the same value, but they may diverge in populations with changing size or complex structure [2].
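As an illustration of the coalescent definition, the sketch below traces two gene copies backwards in an idealized diploid population and records how many generations pass before they share a parental copy. It assumes a per-generation coalescence probability of 1/(2Ne); the function names and simulation settings are hypothetical.

```python
import random


def coalescence_time(ne, rng):
    """Generations until two gene copies coalesce (probability 1/(2Ne) each generation)."""
    t = 1
    while rng.random() >= 1.0 / (2 * ne):
        t += 1
    return t


def mean_coalescence_time(ne, replicates=5000, seed=7):
    """Average coalescence time over independent replicate pairs of lineages."""
    rng = random.Random(seed)
    total = sum(coalescence_time(ne, rng) for _ in range(replicates))
    return total / replicates


if __name__ == "__main__":
    for ne in (25, 50, 100):
        print(ne, mean_coalescence_time(ne), 2 * ne)
```

Under these assumptions the simulated mean should sit close to 2Ne, which is the expectation quoted for the coalescent effective size.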

Factors Influencing Effective Population Size

Demographic Factors

Real populations systematically deviate from idealized assumptions, leading to Ne < N. The major demographic factors affecting Ne include:

Table 1: Demographic Factors Affecting Ne/N Ratio

| Factor | Effect on Ne | Mathematical Formulation | Biological Interpretation |
|---|---|---|---|
| Fluctuating Population Size | Decreases Ne dramatically | 1/Ne = (1/t)Σ(1/Ni) [1] [8] | Harmonic mean is dominated by smallest bottleneck |
| Unequal Sex Ratio | Decreases Ne, especially with few breeding males | Ne = (4NmNf)/(Nm + Nf) [1] [8] | Reduced contribution from the scarce sex increases drift |
| Variance in Family Size | Generally decreases Ne | Ne = (4N - 2)/(2 + Vk) [1] | Vk > 2 (Poisson expectation) increases drift; Vk < 2 increases Ne |
| Overlapping Generations | Decreases Ne | Complex, depends on age-specific reproduction [8] | Increases variance in reproductive success across generations |
| Population Subdivision | Variable effects | Depends on migration rates and selection [8] | Limited gene flow allows independent drift in subpopulations |
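The three closed-form expressions in Table 1 are straightforward to evaluate. The sketch below implements them directly; the function names are illustrative, and each formula is applied in isolation, so combined effects are not modeled.

```python
def ne_fluctuating(sizes):
    """Harmonic mean of per-generation sizes: 1/Ne = (1/t) * sum(1/Ni)."""
    return len(sizes) / sum(1.0 / n for n in sizes)


def ne_sex_ratio(n_males, n_females):
    """Ne = 4*Nm*Nf / (Nm + Nf) for unequal numbers of breeding males and females."""
    return 4.0 * n_males * n_females / (n_males + n_females)


def ne_family_size(n, vk):
    """Ne = (4N - 2) / (2 + Vk), with Vk the variance in family size."""
    return (4.0 * n - 2.0) / (2.0 + vk)


if __name__ == "__main__":
    print(ne_fluctuating([1000, 1000, 10, 1000]))  # one bottleneck generation
    print(ne_sex_ratio(10, 90))                    # 100 breeders, skewed sex ratio
    print(ne_family_size(100, 2.0))                # Poisson family size
    print(ne_family_size(100, 6.0))                # inflated family-size variance
```

In the first example a single generation of 10 individuals pulls Ne down to about 39 even though the other three generations number 1,000, and in the second, 100 breeders with a 10:90 sex ratio give Ne = 36.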

The following diagram illustrates how these demographic factors reduce the effective population size relative to the census count:

Selective and Genomic Factors

Beyond demographic factors, heterogeneity in Ne across the genome arises from selection at linked sites:

- Background selection against deleterious mutations reduces Ne in regions with low recombination [1] [5]

- Selective sweeps from positive selection temporarily reduce Ne in surrounding genomic regions [5]

- Genetic hitchhiking can cause neutral mutations to have dynamics characteristic of smaller populations [1]

These processes create variation in local Ne along chromosomes, with areas of low recombination typically exhibiting lower effective sizes due to reduced efficacy of selection against linked deleterious mutations [1].

Methodologies for Estimating Effective Population Size

Contemporary methods for estimating Ne leverage different genetic signals and data types, each with specific strengths and applications:

Table 2: Methodologies for Estimating Effective Population Size

| Method | Genetic Basis | Timescale | Key Software | Data Requirements |
|---|---|---|---|---|
| Linkage Disequilibrium (LD) | Non-random association of alleles at unlinked loci | Recent (1-100 generations) | NeEstimator [6] | Single-sample SNP data |
| Temporal Method | Allele frequency change between generations | Historical (t generations ago) | MaxTemp [10] | Two or more temporal samples |
| Coalescent-based | Time to most recent common ancestor | Deep evolutionary | fastsimcoal2 [5] | DNA sequence data |
| Pedigree-based | Rate of inbreeding accumulation | Recent generations | - | Multi-generational pedigree |
| Sibship Assignment | Reconstruction of family structure | Contemporary | - | Single-sample genotype data |

Detailed Experimental Protocol: LD-based Ne Estimation

The linkage disequilibrium (LD) method is widely used for estimating contemporary Ne from single-time-point genetic samples. Below is a detailed protocol for implementing this approach:

1. Sample Collection and DNA Extraction

- Collect tissue, blood, or other appropriate biological samples from the target population

- Aim for a sample size of at least 50 individuals, as this provides a reasonable approximation of the true Ne value [6]

- Extract high-quality DNA using standard protocols (e.g., phenol-chloroform, silica column, or magnetic bead methods)

- Quantify DNA concentration and quality using spectrophotometry or fluorometry

2. Genotyping and Quality Control

- Genotype samples using appropriate SNP arrays or sequencing approaches

- Apply quality filters to remove:

  - SNPs with high missing data rates (>5-10%)

  - Individuals with excessive missing genotypes (>10%)

  - Markers with minor allele frequency below 0.01-0.05

  - SNPs deviating from Hardy-Weinberg equilibrium (p < 0.001)

- For LD-based Ne estimation, prune markers in high linkage disequilibrium (r² > 0.5) using software such as PLINK [6]

3. Data Formatting for NeEstimator

- Convert genotype data to the appropriate input format (e.g., GENEPOP format)

- Ensure correct population assignment and sampling information

- For large datasets, consider partitioning chromosomes to reduce computational load

4. Parameter Settings in NeEstimator v2.1

- Select the LD method and specify the mating model: monogamy for species with pair bonding, random mating otherwise

- Set the critical value for excluding rare alleles (recommended: 0.05 for sample sizes > 100, 0.02 for smaller samples)

- Choose the jackknifing option for confidence interval estimation

5. Interpretation of Results

- Examine the relationship between Ne estimates and the allele frequency threshold

- Consider the confidence intervals, which are typically wide due to the inherent stochasticity of genetic drift

- For declining populations, note that LD methods reflect Ne approximately 1-100 generations in the past [6]
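To make the logic of the single-sample LD estimator concrete, the sketch below uses the classical approximation for unlinked loci under random mating, E(r²) ≈ 1/(3Ne) + 1/S, where S is the number of sampled individuals. This is a deliberately simplified stand-in: NeEstimator applies additional bias corrections that are not reproduced here, and the function name is hypothetical.

```python
def ne_from_unlinked_r2(mean_r2, sample_size):
    """Approximate Ne from mean r^2 across pairs of unlinked loci.

    Assumes E[r^2] ~ 1/(3*Ne) + 1/S, so the sampling term 1/S is subtracted
    before inverting. Returns infinity when no drift signal remains.
    """
    r2_drift = mean_r2 - 1.0 / sample_size
    if r2_drift <= 0:
        return float("inf")
    return 1.0 / (3.0 * r2_drift)


if __name__ == "__main__":
    # Mean r^2 of 0.03 observed in a sample of 50 individuals
    print(ne_from_unlinked_r2(0.03, 50))
    # Observed r^2 no larger than expected from sampling alone
    print(ne_from_unlinked_r2(0.015, 50))
```

The second call shows why estimates can be infinite: when the observed r² is fully explained by sampling noise, the data carry no detectable drift signal, which is one reason confidence intervals often have no finite upper bound.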

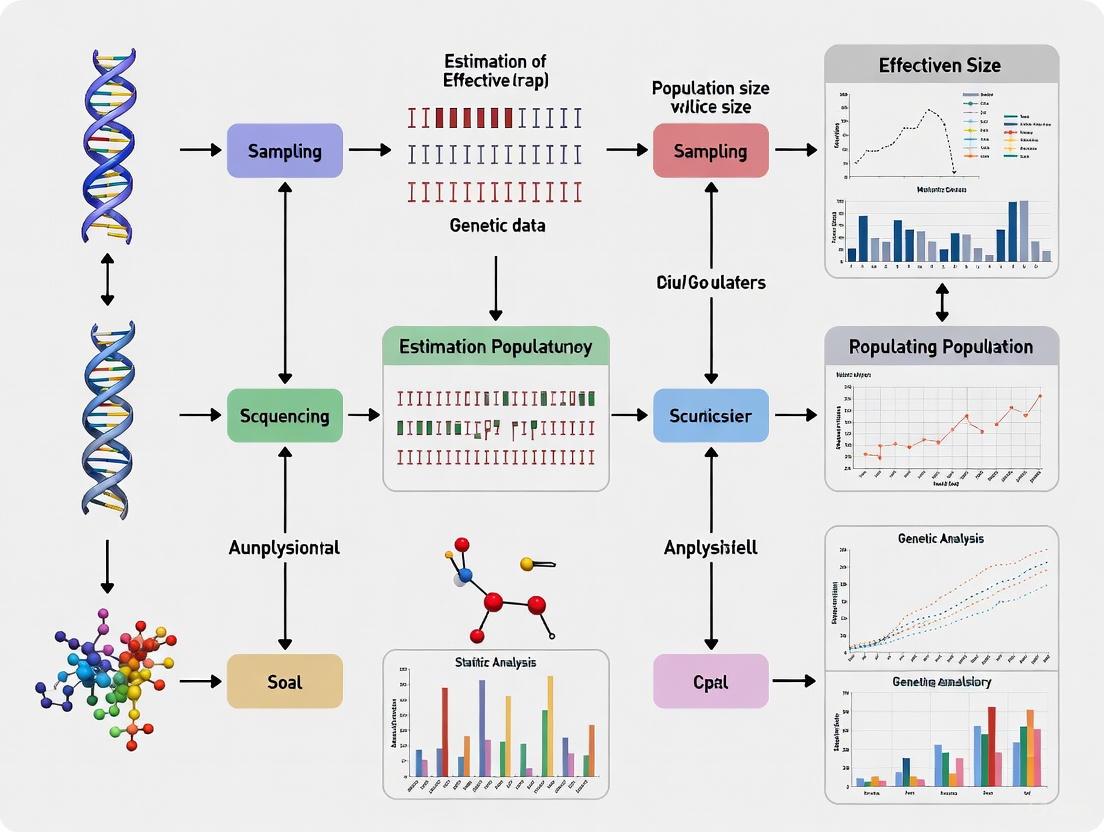

The following workflow diagram illustrates the complete process from sample collection to Ne estimation:

Detailed Experimental Protocol: Temporal Method with MaxTemp

For populations with samples collected across multiple generations, the temporal method provides estimates of historical Ne:

1. Study Design and Sampling

- Collect samples from the same population at multiple time points (minimum 2, preferably more)

- Ensure sufficient generational separation (at least 1, preferably 2-5 generations between samples)

- Maintain consistent sampling strategies across time points

- Record sample sizes for each temporal collection

2. Laboratory Analysis

- Use consistent genotyping methods across all temporal samples

- Include technical replicates to estimate genotyping error rates

- Target the same set of markers across all sampling events

3. Data Processing with MaxTemp

- Use the MaxTemp software, which increases the precision of temporal Ne estimates [10]

- Input allele frequency data for all time points

- The software optimizes the weighting of temporal F (F̂) estimates to maximize precision

- MaxTemp produces single-generation estimates of Ne, allowing matching with specific management actions or environmental events [10]

4. Validation and Interpretation

- Compare estimates with demographic data when available

- Assess consistency across different marker sets

- Consider confidence intervals, which remain challenging for temporal methods
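For orientation, the classical moment-based temporal estimator can be sketched in a few lines. This is not the MaxTemp algorithm; it is the standard two-sample calculation using Nei and Tajima's Fc with a correction for sampling at both time points, assuming biallelic loci and samples that are not returned to the population before reproduction. All names are illustrative.

```python
def nei_tajima_fc(freq_pairs):
    """Mean Fc over loci; each pair is (x, y), the allele frequency at time 0 and time t."""
    values = []
    for x, y in freq_pairs:
        denom = (x + y) / 2.0 - x * y
        if denom > 0:  # skip loci fixed for the same allele in both samples
            values.append((x - y) ** 2 / denom)
    if not values:
        raise ValueError("no informative loci")
    return sum(values) / len(values)


def temporal_ne(freq_pairs, generations, s0, st):
    """Ne = t / (2 * [Fc - 1/(2*S0) - 1/(2*St)]), with S0 and St sampled individuals."""
    f_drift = nei_tajima_fc(freq_pairs) - 1.0 / (2 * s0) - 1.0 / (2 * st)
    if f_drift <= 0:
        return float("inf")
    return generations / (2.0 * f_drift)


if __name__ == "__main__":
    pairs = [(0.5, 0.6), (0.3, 0.2)]  # two loci sampled two generations apart
    print(temporal_ne(pairs, generations=2, s0=50, st=50))
```

With these two loci, 50 individuals per sample, and two generations between samples, the estimate is 38. A real analysis would use many more loci, since precision depends heavily on the number of independent alleles.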

Table 3: Essential Research Tools for Effective Population Size Estimation

| Tool/Resource | Type | Primary Application | Key Features | Implementation Considerations |
|---|---|---|---|---|
| NeEstimator v2.1 | Software | LD-based Ne estimation | User-friendly interface, multiple methods, confidence intervals | Requires unlinked markers; sensitive to rare alleles [6] |
| MaxTemp | Software | Temporal method with enhanced precision | Optimizes weighting of temporal F estimates | Newly developed; requires multiple temporal samples [10] |
| fastsimcoal2 | Software | Coalescent-based inference | Flexible demographic modeling, uses SFS | Computationally intensive; works from the site frequency spectrum, so phased data are not required [5] |
| PLINK | Software | Data quality control and processing | Efficient handling of large SNP datasets | Essential preprocessing for LD-based methods [6] |
| SNP Arrays | Genotyping platform | High-throughput marker generation | 50K-800K SNPs available for model species | Species-specific arrays needed; limited to known variants |
| Whole Genome Sequencing | Sequencing | Comprehensive variant discovery | Identifies novel variants; highest resolution | Higher cost; computational challenges for large sample sizes |
| Goat/Sheep SNP50K | Species-specific array | Livestock Ne studies | Standardized panels for consistent genotyping | Used in recent Ne optimization studies [6] |

Applications in Conservation and Management

Conservation Genetics

In conservation biology, Ne serves as a key indicator of population viability. Small populations with low Ne face elevated risks from:

- Inbreeding depression: Reduced fitness due to increased homozygosity of deleterious alleles [4]

- Loss of adaptive potential: Diminished capacity to respond to environmental change [4]

- Extinction vortices: Synergistic interactions between genetic and demographic threats [4]

The "50/500" rule provides a practical guideline, suggesting that Ne > 50 is needed for short-term viability and Ne > 500 for long-term evolutionary potential [4]. However, some argue that these values may be insufficient when considering demographic and environmental stochasticity, suggesting that Ne in the thousands may be necessary for long-term persistence [4].

Agricultural and Breeding Applications

In livestock and crop improvement programs, monitoring Ne helps balance selection intensity with maintenance of genetic diversity. Recent studies in sheep and goats have demonstrated that a sample size of approximately 50 animals provides a reasonable approximation of Ne, enabling cost-effective genetic monitoring in conservation programs [6]. This is particularly valuable for local breeds with limited conservation funding.

Future Directions and Methodological Challenges

Despite substantial progress in Ne estimation, several challenges remain:

- Integration of multiple methods: Combining information from LD, temporal, and pedigree approaches for more robust estimates

- Accounting for population structure: Developing better methods for structured populations and metapopulations

- Genomic heterogeneity: Modeling variation in Ne along chromosomes due to linked selection

- Single-generation estimates: New methods like MaxTemp aim to provide Ne estimates for specific generations [10]

- Standardization of reporting: Developing guidelines for sample sizes, quality control, and interpretation across studies

As sequencing technologies continue to advance and sample sizes increase, precision of Ne estimates will improve, providing deeper insights into population history and contemporary dynamics. However, careful interpretation of results remains essential, as different methodological approaches and biological factors can significantly influence estimates [6] [2].

The continued refinement of effective population size concepts and estimation methods will enhance our ability to monitor genetic health, predict evolutionary potential, and develop effective conservation strategies in an era of rapid environmental change.

The effective population size (Ne) is a foundational concept in population genetics, first introduced by Sewall Wright in 1931 [2] [11]. It is defined as the size of an idealized Wright-Fisher population that would experience the same amount of genetic drift or inbreeding as the real population under study [2] [12]. Unlike the census population size (Nc), which simply counts the number of mature individuals, Ne quantifies the number of individuals effectively contributing genes to the next generation, thereby determining the rate of genetic change in a population [13] [14]. Understanding and accurately estimating Ne is critical across evolutionary biology, conservation genetics, and breeding programs, as it directly influences a population's evolutionary potential, risk of inbreeding depression, and long-term viability [2] [11].

This article outlines the pivotal role of Ne in understanding microevolutionary dynamics and its practical estimation from genetic data. We detail the theoretical underpinnings linking Ne to genetic drift and inbreeding, provide structured protocols for its estimation, and showcase applications through contemporary case studies.

Theoretical Foundations: Ne, Genetic Drift, and Inbreeding

Ne as a Measure of Genetic Drift

Genetic drift refers to the random fluctuations in allele frequencies from one generation to the next, a process whose intensity is governed by the effective population size. In an idealized diploid Wright-Fisher population, the variance in allele frequency of a neutral gene after t generations is p(1-p)[1-(1-1/(2Ne))^t] [12], which for t = 1 reduces to the single-generation value p(1-p)/(2Ne). This establishes that genetic drift occurs more rapidly in populations with a small Ne, leading to an increased risk of allele loss or fixation due to chance alone, rather than selection [11]. The coalescent effective population size further frames this concept in terms of genealogy, where the expected coalescence time for two random gene copies is T = 2Ne generations [2].

Ne as a Determinant of Inbreeding

The inbreeding effective population size specifically relates to the rate at which individuals become more genetically similar over time. A small Ne accelerates the accumulation of identical-by-descent (IBD) alleles, increasing the homozygosity of deleterious recessive alleles and manifesting as inbreeding depression—a reduction in fitness traits such as survival and fertility [15]. The following conceptual diagram illustrates how a small Ne drives this process.
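The accumulation of identity by descent can also be shown numerically. The sketch below assumes an idealized diploid population of constant effective size in which the inbreeding coefficient rises by a fraction 1/(2Ne) of the remaining heterozygosity each generation, starting from F = 0; the function names are illustrative.

```python
def expected_inbreeding(ne, generations):
    """F_t = 1 - (1 - 1/(2Ne))^t for an idealized population starting at F = 0."""
    return 1.0 - (1.0 - 1.0 / (2.0 * ne)) ** generations


def heterozygosity_retained(ne, generations):
    """Fraction of initial heterozygosity expected to remain after t generations."""
    return (1.0 - 1.0 / (2.0 * ne)) ** generations


if __name__ == "__main__":
    for ne in (25, 50, 500):
        print(ne, round(expected_inbreeding(ne, 10), 4), round(heterozygosity_retained(ne, 10), 4))
```

Comparing Ne = 25, 50, and 500 over the same ten generations makes the contrast explicit: the smaller the effective size, the faster F rises and heterozygosity falls.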

The Critical Distinction Between Ne and Nc

A common but consequential oversimplification is to equate the census size (Nc) with the effective size. In reality, Ne is almost always smaller than Nc due to factors such as unequal sex ratios, variance in reproductive success, and population size fluctuations [13] [2]. The relationship can be conceptually framed through the Diversity Partitioning Theorem, where the census size (Nc) represents a "richness" (the total number of potential breeders), while the effective size (Ne) is an "evenness-based diversity" that accounts for disparities in reproductive output [13]. The ratio Ne/Nc is therefore a key metric, often ranging from 0.1 to 0.3 in many vertebrates and plants, with 0.1 considered a conservative general estimate [14].

Quantitative Data and Predictive Equations

Predictive equations for Ne have been developed for populations with various reproductive modes and structures. The following table summarizes key predictive equations for different population models.

Table 1: Predictive Equations for Effective Population Size (Ne) Under Different Population Models

| Population Model | Predictive Equation | Key Parameters | Primary Reference |
|---|---|---|---|
| Simple, Constant Size | Ne ≈ (4Nc - 2) / (Vk + 2) | Nc: Census size; Vk: variance in reproductive success | [13] |
| Separate Sexes (Dioecious) | Ne ≈ (4NmNf) / (Nm + Nf) | Nm: Number of males; Nf: Number of females | [2] |
| Partial Selfing (Hermaphrodites) | Ne ≈ Nc / [σ²(1+α) + (1-α)/2] | σ²: Variance in offspring number; α: Correlation of genes within individuals | [2] |

These equations highlight that Ne is not a direct count but a complex parameter shaped by demography and breeding structure. For instance, the equation for separate sexes shows that Ne is maximized when the sex ratio is equal and is drastically reduced if one sex becomes a reproductive bottleneck [2].

Protocols for Estimating Ne from Genetic Data

Several genetic methods have been developed to estimate contemporary Ne. The Linkage Disequilibrium (LD) method is among the most widely used due to its practicality and reliability [11] [14] [16]. The following workflow outlines the key steps for Ne estimation using the LD method, applicable to SNP data from diploid organisms.

Protocol 4.1: Estimating Ne Using the Linkage Disequilibrium Method

Principle: Linkage disequilibrium (LD) refers to the non-random association of alleles at different loci. In finite populations, genetic drift generates LD, the extent of which is inversely proportional to the effective population size and the recombination rate c [11] [16]. The relationship is described by Sved's formula: E(r²) ≈ 1 / (4Nec + 1), which can be rearranged to estimate Ne [16].

Step 1: Sample Collection and Genotyping

- Sample a representative cohort of individuals from the target population. The sample should be random with respect to family structure to avoid bias.

- Extract high-quality DNA and generate genotype data using a high-throughput platform such as Genotyping-by-Sequencing (GBS), Whole Genome Sequencing (WGS), or SNP arrays [16].

Step 2: Data Quality Control (QC)

Perform rigorous QC on the raw variant call data using software like PLINK 1.9 [11] [16]. Standard filters include:

- Call Rate: Remove samples and SNPs with more than 5% missing data.

- Minor Allele Frequency (MAF): Filter out SNPs with MAF < 0.05, as rare alleles can bias LD estimates [16].

- Hardy-Weinberg Equilibrium (HWE): Exclude markers significantly deviating from HWE.

- Heterozygosity: Remove samples with exceptionally high heterozygosity, which may indicate contamination.
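The call-rate and MAF filters in Step 2 amount to simple per-SNP summaries. The sketch below shows the logic on an in-memory data structure; in practice these filters would be run in PLINK on the full dataset. It assumes genotypes coded as allele dosages (0, 1, 2) with None for missing calls, and the function names and thresholds are illustrative defaults taken from the list above.

```python
def call_rate(genotypes):
    """Fraction of individuals with a non-missing genotype at this SNP."""
    called = [g for g in genotypes if g is not None]
    return len(called) / len(genotypes)


def minor_allele_frequency(genotypes):
    """MAF from dosage-coded genotypes (0, 1, 2), ignoring missing calls."""
    called = [g for g in genotypes if g is not None]
    if not called:
        return 0.0
    freq = sum(called) / (2.0 * len(called))
    return min(freq, 1.0 - freq)


def filter_snps(snps, max_missing=0.05, min_maf=0.05):
    """Keep SNPs passing both filters; snps maps SNP id -> list of dosages."""
    kept = {}
    for snp_id, genotypes in snps.items():
        if 1.0 - call_rate(genotypes) > max_missing:
            continue  # too many missing calls
        if minor_allele_frequency(genotypes) < min_maf:
            continue  # allele too rare
        kept[snp_id] = genotypes
    return kept


if __name__ == "__main__":
    example = {
        "snp_common": [0, 1, 2, 1, 0, 1, 2, 1, 0, 1] * 2,
        "snp_rare": [0] * 19 + [1],
        "snp_missing": [None, None] + [1] * 18,
    }
    print(sorted(filter_snps(example)))
```

Only the first SNP survives in this toy example: the second has a minor allele frequency of 0.025 and the third has 10% missing calls.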

Step 3: Calculate Linkage Disequilibrium

- Use software such as PLINK 1.9 or GCTA to compute the squared correlation coefficient (r²) between all pairs of SNP markers within a specified physical distance (e.g., 0-750 kb) [16].

- The analysis should stratify SNPs by distance bins (e.g., 0-10 kb, 10-20 kb, etc.) to observe the decay of LD with physical distance.
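The two operations in Step 3, computing r² for a marker pair and averaging it within distance bins, can be sketched as follows. The example assumes unphased genotypes coded as allele dosages and uses the squared Pearson correlation of dosages as the LD measure; dedicated tools may compute r² differently (for example from phased haplotypes), and the function names and bin width are illustrative.

```python
from collections import defaultdict


def genotype_r2(g1, g2):
    """Squared Pearson correlation between two dosage vectors (0/1/2)."""
    n = len(g1)
    m1, m2 = sum(g1) / n, sum(g2) / n
    cov = sum((a - m1) * (b - m2) for a, b in zip(g1, g2))
    v1 = sum((a - m1) ** 2 for a in g1)
    v2 = sum((b - m2) ** 2 for b in g2)
    if v1 == 0 or v2 == 0:
        return 0.0  # a monomorphic marker carries no LD information
    return cov * cov / (v1 * v2)


def mean_r2_by_distance(pairs, bin_size_bp=10_000):
    """pairs: iterable of (distance_bp, r2); returns {bin_start_bp: mean r2}."""
    sums = defaultdict(lambda: [0.0, 0])
    for distance_bp, r2 in pairs:
        start = (distance_bp // bin_size_bp) * bin_size_bp
        sums[start][0] += r2
        sums[start][1] += 1
    return {start: total / count for start, (total, count) in sorted(sums.items())}


if __name__ == "__main__":
    print(genotype_r2([0, 1, 2, 0], [0, 1, 2, 1]))
    print(mean_r2_by_distance([(500, 0.8), (9_000, 0.6), (15_000, 0.3)]))
```

The binned means are the quantities that feed into the Ne calculation in the next step, one estimate per distance class.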

Step 4: Estimate Ne from LD

- Apply Sved's formula rearranged for Ne: Ne ≈ (1/r² - 1) / (4c), which simplifies to Ne ≈ 1 / (4cr²) when r² is small. Here c is the genetic distance between the two markers in Morgans. Often, c is approximated from physical distance using a constant recombination rate (e.g., 1 cM/Mb = 10⁻⁸ M/bp) [16].

- This calculation can be performed using specialized software like SNeP 1.1 [11] or custom scripts in R.

- The estimate reflects the effective population size t generations ago, where t = 1/(2c) generations [11]. Therefore, LD at short distances informs about more ancient Ne, while LD at long distances reflects recent Ne.
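A worked example helps fix the units in Step 4. The sketch below inverts E(r²) ≈ 1 / (4Nec + 1) exactly, giving Ne = (1/r² - 1) / (4c), and converts physical distance to Morgans with the constant rate of 10⁻⁸ M/bp mentioned above. It omits the sample-size and mutation corrections that tools such as SNeP can apply, and the function names are hypothetical.

```python
def genetic_distance_morgans(distance_bp, rate_morgans_per_bp=1e-8):
    """Convert physical distance to genetic distance under a constant rate (1 cM/Mb)."""
    return distance_bp * rate_morgans_per_bp


def ne_from_sved(r2, c):
    """Invert E[r^2] = 1 / (4*Ne*c + 1) to get Ne = (1/r^2 - 1) / (4*c)."""
    return (1.0 / r2 - 1.0) / (4.0 * c)


def generations_ago(c):
    """Approximate age of the estimate: t = 1 / (2c) generations."""
    return 1.0 / (2.0 * c)


if __name__ == "__main__":
    for distance_bp, r2 in [(100_000, 0.45), (1_000_000, 0.20)]:
        c = genetic_distance_morgans(distance_bp)
        print(distance_bp, round(ne_from_sved(r2, c)), round(generations_ago(c)))
```

For markers 1 Mb apart (c = 0.01 M) with mean r² = 0.20, this gives Ne of about 100 roughly 50 generations ago; the 100 kb bin refers to a point about 500 generations back, which illustrates why short distances inform on older Ne.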

The Scientist's Toolkit: Software and Reagents

Accurate estimation of Ne relies on a suite of bioinformatics tools and laboratory reagents. The tables below catalog essential resources for researchers.

Table 2: Key Software for Estimating Effective Population Size

| Software Name | Primary Method | Application Scope | Input Data |
|---|---|---|---|
| NeEstimator v2.1 [17] [14] | LD, Heterozygosity excess, Temporal, Sibship | All-in-one suite for contemporary Ne estimation | SNP, Microsatellite |
| SNeP 1.1 [11] | Linkage Disequilibrium (LD) | Trajectory of historical Ne from SNP data | SNP data |
| GONE [17] [14] | Linkage Disequilibrium (LD) | Estimation of historical Ne over the last ~1000 generations | SNP data |
| Lamarc [18] | Coalescent Likelihood | Estimation of Ne, growth rates, and migration | Sequence, Microsatellite |
| gesp [19] | Analytical Framework | Prediction of Ne for complex, subdivided populations | Demographic parameters |

Table 3: Essential Research Reagents and Materials

| Reagent/Material | Function in Ne Estimation Workflow | Example Protocols |
|---|---|---|
| DNA Extraction Kit (e.g., DNeasy Plant Mini Kit) | High-quality DNA isolation from tissue samples (leaf, blood, etc.) for subsequent genotyping [16] | Standard silica-membrane based protocol |
| Restriction Enzyme ApeKI | Used in Genotyping-by-Sequencing (GBS) library preparation to reduce genome complexity [16] | GBS protocol as in Bari et al. [16] |
| Illumina NovaSeq S1 | High-throughput sequencing platform for generating genome-wide SNP data | Manufacturer's sequencing protocol |
| PLINK 1.9 [11] [16] | Command-line tool for robust data management, QC, and basic LD calculations | plink --bfile mydata --r2 --ld-window-kb 750 --ld-window 99999 --ld-window-r2 0 |

Case Studies in Conservation and Breeding

Case Study: Genetic Health of Kelp Forests

A 2025 genomic study of bull and giant kelp in the Northeast Pacific provides a stark example of the consequences of low Ne [15]. Researchers sequenced 429 bull kelp and 211 giant kelp genomes, identifying 6-7 genetically distinct populations. They found that populations with low Ne exhibited significantly reduced genetic diversity and higher inbreeding coefficients. Crucially, small bull kelp populations showed fixation of many deleterious alleles due to strong genetic drift, with no evidence of purging by natural selection. This reduces within-population inbreeding depression but predicts hybrid vigor in crosses between different small populations, a key insight for designing restoration strategies [15].

Case Study: Monitoring Diversity in Crop Breeding

Monitoring Ne in plant breeding programs is essential to prevent the loss of genetic gain. A 2024 study estimated Ne in two field pea germplasm sets: a set of elite breeding lines (NDSU set) and a genetically diverse panel (USDA set) [16]. Using the LD method with GBS SNP data, they found the elite lines had a much smaller Ne (64) compared to the diversity panel (Ne = 174). The elite lines also showed higher and longer-range LD, consistent with their history of selection and a smaller effective number of founders. This nearly three-fold difference in Ne highlights how breeding practices can narrow genetic diversity and underscores the need to actively monitor Ne to sustain long-term breeding progress [16].

The effective population size (Ne) is more than an abstract parameter; it is a vital indicator of a population's genetic health and evolutionary potential. A small Ne accelerates the loss of genetic diversity through drift and increases the genetic load through inbreeding, directly compromising population viability [15]. Modern genomics, combined with robust analytical methods and software, provides researchers and conservation managers with the tools to accurately estimate Ne and interpret its implications. Integrating these estimates into management frameworks—from setting conservation priorities for kelp forests to optimizing selection protocols in crop breeding—is fundamental to ensuring the long-term survival and adaptability of populations in a changing world.

Effective population size (Ne) is a foundational concept in population genetics, translating the complex genetic drift of a real population into the simplified framework of an idealized Wright-Fisher population [1]. It is a critical parameter for understanding the dynamics of genetic variation, inbreeding, and adaptive potential in fields ranging from conservation biology to animal breeding [2]. While often summarized as a single number, different definitions of Ne exist, each tailored to specific genetic processes and time scales. Among these, the inbreeding effective size, the variance effective size, and the coalescent effective size are paramount. These variants, though often equivalent in a constant, ideal population, can diverge significantly under realistic biological conditions such as fluctuating population size, overlapping generations, or population sub-structure [20] [12]. This article delineates these three key types of effective population size, providing a structured comparison, detailed protocols for their estimation, and practical guidance for researchers working with genetic data.

Conceptual Frameworks and Quantitative Comparison

The following table summarizes the core definitions, focal processes, and typical applications of the three primary effective population size types.

Table 1: Key Types of Effective Population Size (Ne)

| Type of Ne | Definitional Focus | Key Genetic Process | Primary Applications | Underlying Idealized Model |
|---|---|---|---|---|
| Inbreeding Effective Size | The size of an idealized population that would exhibit the same rate of increase in identity by descent (inbreeding) as the real population [1] [2]. | Rate of inbreeding | Conservation genetics (assessing inbreeding depression), managing breeding programs [2] [12]. | Wright-Fisher Model |
| Variance Effective Size | The size of an idealized population that would experience the same variance in allele frequency change over a generation due to genetic drift [1] [12]. | Allele frequency variance (genetic drift) | Microevolutionary studies, quantifying genetic drift over short terms, temporal method estimation [2] [12]. | Wright-Fisher Model |
| Coalescent Effective Size | The size of an idealized population where two gene lineages have the same expected time to coalesce (find a common ancestor) as in the real population [2] [20]. | Time to most recent common ancestor (coalescence) | Analyzing molecular sequence and polymorphism data, inferring long-term demographic history [2] [20]. | Coalescent Theory |

The relationships between these concepts and the genetic processes they represent can be visualized as a unified logical framework.

Predictive Equations and Empirical Data

Theoretical predictions for Ne are crucial for study design and interpretation. The foundational equation for a dioecious population with separate sexes, derived from the variance of individual contributions, is often expressed as:

Ne ≈ (4NmNf) / (Nm + Nf)

Here, Nm and Nf are the numbers of breeding males and females, respectively [2]. This approximation assumes a Poisson distribution of offspring number. More complex equations account for variances and covariances in offspring number [2]. For a population with partial selfing (β), the effective size is approximated by Ne ≈ N / (1 + β), highlighting how inbred mating systems reduce Ne [2].

Empirical data across taxa reveal that Ne is typically much smaller than the census size. A large-scale review of 102 wildlife species found that the average ratio of effective to census size (Ne/N) was only 0.34, and when accounting for fluctuations and unequal sex ratios, this average dropped to a mere 0.10-0.11 [1]. This means a population of 1,000 individuals might genetically behave like a population of only 100-110. Furthermore, a global survey of 3829 populations showed that many taxonomic groups struggle to meet conservation thresholds, with plants, mammals, and amphibians having less than a 54% probability of reaching Ne = 50 and less than 9% probability of reaching Ne = 500 [21].

Estimation Protocols and Methodologies

Protocol for Estimating Recent Ne via Linkage Disequilibrium (LD)

The LD method is a widely used single-sample estimator for contemporary (recent) effective population size, based on the principle that genetic drift generates linkage disequilibrium between neutral loci.

- Principle: The amount of linkage disequilibrium (non-random association of alleles at different loci) in a population is inversely related to its effective size. In smaller populations, genetic drift creates stronger LD [2] [22].

- Workflow: The following diagram outlines the standard workflow for LD-based Ne estimation, from sampling to interpretation.

- Key Considerations:

- Marker Type: High-density SNPs are now standard. The number of loci should be large (hundreds to thousands) [21].

- Sample Size: A larger sample size improves precision. Typically, 50-100 individuals are used, but more may be needed for large populations [21].

- Allele Frequency Cut-off: Applying a minor allele frequency (MAF) cut-off (e.g., 0.05) is critical to avoid upward bias in Ne estimates [21].

- Software: NeEstimator (v1 or v2) and LDNe are commonly used software implementations for this method [21].
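The principle behind the LD method can be illustrated with a simplified sketch. It assumes unlinked loci, random mating, and the first-order expectation E[r²] ≈ 1/(3Ne) + 1/S for a sample of S individuals. It also uses the squared Pearson correlation of genotype dosages (0/1/2) as the LD statistic. These are simplifying assumptions made for illustration. The empirical bias corrections applied by LDNe and NeEstimator are not reproduced here, and all function names are hypothetical.

```python
from itertools import combinations


def pearson_r2(x, y):
    """Squared Pearson correlation between two dosage vectors (None if undefined)."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((a - mx) ** 2 for a in x)
    syy = sum((b - my) ** 2 for b in y)
    if sxx == 0 or syy == 0:
        return None  # monomorphic locus: correlation is undefined
    sxy = sum((a - mx) * (b - my) for a, b in zip(x, y))
    return sxy * sxy / (sxx * syy)


def mean_pairwise_r2(genotypes):
    """Mean r^2 over all locus pairs; genotypes is a list of per-locus dosage lists."""
    values = [pearson_r2(a, b) for a, b in combinations(genotypes, 2)]
    values = [v for v in values if v is not None]
    if not values:
        raise ValueError("no informative locus pairs")
    return sum(values) / len(values)


def ld_ne(mean_r2, sample_size):
    """Naive Ne estimate: subtract the sampling term 1/S, then invert 1/(3 Ne)."""
    drift_r2 = mean_r2 - 1.0 / sample_size
    if drift_r2 <= 0:
        return float("inf")  # LD is no larger than expected from sampling alone
    return 1.0 / (3.0 * drift_r2)


if __name__ == "__main__":
    # Hypothetical mean r^2 of 0.02 observed in a sample of 100 individuals.
    print(ld_ne(0.02, 100))
```

An infinite estimate here means that the observed LD can be explained by sampling alone, which is why large populations need large samples and many loci.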

Protocol for Estimating Historical Ne Using the GONE Software

For inferring recent historical Ne (over the last ~100-200 generations), methods leveraging long-range linkage disequilibrium from linked markers, such as those implemented in the software GONE, have become prominent.

- Principle: The extent of LD between linked loci decays over generations due to recombination. The pattern of LD at different genetic distances therefore contains information about the effective population size in the past: loosely linked loci, whose LD decays quickly, reflect more recent history, while tightly linked loci retain signal from more distant generations [22].

- Workflow: The estimation of historical Ne requires high-density genomic data and careful pre-processing to ensure accuracy.

- Key Considerations:

- Assumption of Isolation: GONE assumes the population has been isolated for a significant period. Recent admixture or high migration rates can create severe biases, generating spurious signals of population bottlenecks or growth [22].

- Data Requirements: High-quality, phased genotype data from a single, well-defined population is essential. Sample sizes of 100 or more individuals are recommended.

- Chromosomal Inversions: Genomic regions with suppressed recombination (e.g., chromosomal inversions) can distort estimates and should be identified and removed prior to analysis [22].
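The link between genetic distance and time depth can be sketched with a classical approximation. This is not the algorithm GONE implements. It assumes E[r²] ≈ 1/(1 + 4Ne·c) for loci separated by recombination fraction c, and that LD at distance c mainly reflects Ne roughly 1/(2c) generations ago. Function names and input values are hypothetical.

```python
def ne_from_linked_ld(r2, c):
    """Invert E[r^2] ~ 1 / (1 + 4 * Ne * c) for Ne (no sampling correction applied)."""
    if not 0 < r2 < 1 or c <= 0:
        raise ValueError("need 0 < r2 < 1 and c > 0")
    return (1.0 / r2 - 1.0) / (4.0 * c)


def generations_ago(c):
    """Approximate time depth (in generations) informed by loci at distance c."""
    return 1.0 / (2.0 * c)


if __name__ == "__main__":
    # Hypothetical LD bins: (recombination fraction, mean r^2 in that bin).
    for c, r2 in [(0.05, 0.06), (0.01, 0.20), (0.0025, 0.50)]:
        print(f"c={c}: ~{generations_ago(c):.0f} generations ago, "
              f"Ne ~ {ne_from_linked_ld(r2, c):.0f}")
```

Smaller recombination fractions map to older time points, which is why dense markers with reliable genetic map positions are needed to resolve a trajectory.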

The Scientist's Toolkit: Essential Reagents and Software

Table 2: Key Research Reagents and Solutions for Effective Population Size Estimation

| Item Name | Type | Critical Function | Application Context |
|---|---|---|---|
| NeEstimator (v2.1) | Software Program | Implements multiple methods for contemporary Ne estimation, including LD, heterozygote excess, and temporal method [21]. | General use for estimating recent Ne from microsatellite or SNP data. |
| GONE | Software Program | Estimates historical Ne for the past ~200 generations from patterns of linkage disequilibrium in a single sample [22]. | Inferring recent demographic history (bottlenecks, expansions). |
| SNP Genotyping Array | Wet-Lab / Bioinformatic Reagent | Provides high-density genotype data (1000s to millions of SNPs) from which LD is calculated. | Primary data source for most modern LD-based Ne estimates. |
| Whole-Genome Sequencing Data | Wet-Lab / Bioinformatic Reagent | Provides the most comprehensive genetic data, allowing for the highest-resolution Ne estimates and the detection of runs of homozygosity (ROH). | Advanced analyses, including historical inference and inbreeding assessment via FROH [23]. |
| Minor Allele Frequency (MAF) Filter | Bioinformatics Parameter | Reduces bias in LD-based Ne estimates by excluding rare variants [21]. | A standard quality control step in LD and GONE analyses. |

Advanced Considerations and Future Directions

The estimation of Ne is not without challenges. A critical consideration is that the coalescent effective population size, often considered the most general form, only exists when the genealogical process of a population can be approximated by the standard coalescent with a simple linear scaling of time [20]. Complex demographic histories, such as strong continuous population subdivision, can violate this condition, meaning no single Ne can accurately describe the genetic diversity and drift across the entire genome [20].

Furthermore, different genomic regions can have different effective histories due to selection at linked sites. Areas of low recombination have a lower effective population size because selection at one site affects linked neutral variants, a process known as genetic hitchhiking or background selection [1]. This means that a single genome-wide estimate is an average, and local Ne can vary significantly along chromosomes.

Emerging methods continue to refine our ability to track Ne. For example, the Ttne software leverages identity-by-descent (IBD) segments detected in ancient DNA time-series data to infer effective population size trajectories with increased resolution for recent fluctuations [24]. The use of runs of homozygosity (ROH) is also a powerful tool for quantifying individual inbreeding levels, which reflects past Ne, as demonstrated in studies of isolated wolf populations [23]. As genomic datasets grow in size and temporal depth, the integration of these various methods and a careful acknowledgment of their assumptions will be key to robust inferences of effective population size.
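The ROH-based inbreeding coefficient (FROH) is commonly computed as the summed length of an individual's ROH segments divided by the length of the genome that was scanned. The sketch below shows that calculation. The minimum segment length, the function name, and the input values are assumptions chosen for illustration.

```python
def f_roh(roh_lengths_bp, genome_length_bp, min_length_bp=1_000_000):
    """Fraction of the scanned genome covered by ROH of at least min_length_bp."""
    if genome_length_bp <= 0:
        raise ValueError("genome length must be positive")
    kept = [length for length in roh_lengths_bp if length >= min_length_bp]
    return sum(kept) / genome_length_bp


if __name__ == "__main__":
    # Hypothetical individual: three ROH segments on a 100 Mb scanned genome.
    segments = [2_000_000, 500_000, 3_000_000]
    print(f_roh(segments, 100_000_000))
```

Raising the length threshold restricts the statistic to longer segments, which tend to trace to more recent common ancestors.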

In population genetics, the effective population size (Ne) is a cornerstone concept, defined as the size of an idealized Wright-Fisher population that would experience the same rate of genetic drift or inbreeding as the real population under consideration [1]. In contrast, the census size (Nc) represents the total number of individuals in a population, typically counting only reproductively mature individuals for conservation and monitoring purposes [14]. This distinction is not merely academic; it has profound implications for understanding evolutionary trajectories, predicting the loss of genetic diversity, and designing effective conservation strategies [2]. The ratio between these two parameters (Ne/Nc) provides a crucial metric for evaluating population viability and genetic health, yet this relationship is notoriously complex and influenced by numerous biological and demographic factors [25] [2].

The conceptual foundation of effective population size was introduced by Sewall Wright in 1931 to quantify genetic drift in real populations by comparing them to an idealized random mating population [1]. This idealized population assumes constant size, equal sex ratio, random mating, no selection, mutation, or migration, and Poisson distribution of offspring number [2]. Real populations inevitably deviate from these assumptions, resulting in Ne values that are typically substantially lower than Nc [1]. Understanding the relationship between Ne and Nc is particularly critical in conservation biology, where Ne determines the rate of genetic diversity loss and inbreeding accumulation, ultimately affecting population adaptive potential and extinction risk [26].

Key Conceptual Differences Between Ne and Nc

The distinction between effective and census population size transcends mere numerical difference, representing fundamentally different aspects of population biology with significant consequences for genetic diversity and evolutionary potential.

Conceptual Definitions and Biological Significance

The census population size (Nc) serves as a straightforward demographic count, typically of reproductively mature individuals in a population [14]. It provides essential information about population density and abundance but offers limited insight into genetic health or evolutionary potential. In contrast, the effective population size (Ne) represents a genetic parameter that quantifies the rate of genetic drift and inbreeding [2]. Different types of effective sizes focus on specific genetic processes: the variance effective size relates to changes in allele frequency variance due to sampling error, while the inbreeding effective size relates to the rate at which heterozygosity decreases over generations [1]. For populations in equilibrium, these values converge, but they can differ dramatically in non-equilibrium populations [27].

The biological significance of Ne becomes apparent when considering its relationship to key evolutionary processes. The magnitude of genetic drift is inversely proportional to Ne, meaning smaller effective populations experience stronger drift, leading to faster loss of genetic diversity and increased fixation of deleterious mutations [28]. Similarly, the efficiency of natural selection is directly related to Ne, with larger populations better able to purge deleterious mutations and fix beneficial ones [1]. This relationship has profound implications for genome evolution, potentially affecting transposable element accumulation and overall genome architecture [28].
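The inverse relationship between Ne and drift can be made concrete with the standard expectation for an idealized population: heterozygosity declines by a factor of (1 - 1/(2Ne)) per generation. The following sketch assumes constant Ne, discrete generations, and no mutation or migration. Function names and parameter values are illustrative.

```python
def expected_heterozygosity(h0, ne, generations):
    """Expected heterozygosity after t generations: H_t = H_0 * (1 - 1/(2 Ne))^t."""
    return h0 * (1.0 - 1.0 / (2.0 * ne)) ** generations


def expected_inbreeding(ne, generations):
    """Expected inbreeding coefficient relative to generation 0."""
    return 1.0 - (1.0 - 1.0 / (2.0 * ne)) ** generations


if __name__ == "__main__":
    for ne in (10, 50, 500):
        h = expected_heterozygosity(0.5, ne, 20)
        print(f"Ne={ne}: H after 20 generations ~ {h:.3f}")
```

For Ne = 50 the expected loss is 1/(2Ne) = 1% of the remaining heterozygosity per generation, whereas for Ne = 500 it is 0.1%.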

Factors Creating Discrepancy Between Ne and Nc

The disparity between Ne and Nc arises from systematic deviations from the idealized Wright-Fisher population assumptions. These factors can be quantified through predictive equations that adjust Ne based on specific population characteristics:

Table 1: Factors Causing Discrepancy Between Ne and Nc

| Factor | Effect on Ne | Mathematical Relationship | Biological Basis |
|---|---|---|---|
| Unequal sex ratio | Reduces Ne | \( N_e = \frac{4 N_m N_f}{N_m + N_f} \) [2] | Skewed reproductive contributions between sexes |
| Variance in reproductive success | Reduces Ne | \( N_e = \frac{4N - 2D}{2 + V_k} \), where \( V_k \) = variance in offspring number and \( D \) = 1 for dioecious species, 0 for hermaphrodites [1] | Certain individuals contribute disproportionately to next generation |
| Population fluctuations | Reduces Ne (harmonic mean) | \( \frac{1}{N_e} = \frac{1}{t} \sum_{i=1}^{t} \frac{1}{N_i} \) [1] | Bottlenecks have disproportionate effect |
| Overlapping generations | Complex effects | Age-structured models [2] | Different age classes contribute unequally to reproduction |
| Population subdivision | Variable effects | Dependent on migration rates and subpopulation sizes [29] | Restricted gene flow between demes affects overall genetic drift |

These factors often interact in natural populations, creating complex relationships between census counts and genetic parameters. For instance, social structure in many vertebrate species can create substantial reproductive skew, where a few dominant individuals monopolize reproduction while others contribute little to the next generation [29]. This effectively creates a genetic bottleneck regardless of the actual number of individuals physically present. Similarly, historical population fluctuations can leave a lasting genetic signature, with the harmonic mean of population sizes over time determining contemporary Ne rather than current abundance [1].

Quantitative Relationship: The Ne/Nc Ratio

Typical Values and Biological Determinants

The Ne/Nc ratio provides a practical metric for translating between demographic counts and genetic parameters, with considerable variation across taxa and populations. Empirical studies have documented Ne/Nc ratios ranging from as low as 10^-6 for Pacific oysters to 0.994 for humans, with an average of approximately 0.34 across examined species [1]. After accounting for fluctuations in population size, variance in family size, and unequal sex ratios, more comprehensive estimates average only 0.10-0.11 [1]. These low ratios indicate that census counts often substantially overestimate genetically effective population sizes.

For conservation applications, a general conversion ratio of 0.1 is widely recommended as a conservative and suitable approximation when precise genetic data are unavailable [14]. This means that an Ne of 500—a commonly cited threshold for maintaining evolutionary potential—translates to a census size of approximately 5,000 mature individuals [26]. However, this ratio represents a generalization, with typical values potentially ranging from 0.1 to 0.3 in many vertebrates and plants [14].
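The conversion between an Ne threshold and a census target is simple arithmetic, sketched below. The default ratio of 0.1 follows the text. The function name and the alternative ratios are illustrative assumptions.

```python
def census_target(ne_threshold, ne_nc_ratio=0.1):
    """Census size (mature individuals) implied by an Ne threshold and an Ne/Nc ratio."""
    if not 0 < ne_nc_ratio <= 1:
        raise ValueError("Ne/Nc ratio must lie in (0, 1]")
    return ne_threshold / ne_nc_ratio


if __name__ == "__main__":
    for ratio in (0.1, 0.2, 0.3):
        print(f"Ne/Nc={ratio}: Nc needed for Ne=500 ~ {census_target(500, ratio):.0f}")
```

Because the target scales as 1/ratio, a species with a ratio far below 0.1 needs a correspondingly larger census size to reach the same Ne.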

Table 2: Empirical Ne/Nc Ratios Across Taxonomic Groups

| Taxonomic Group | Typical Ne/Nc Range | Notable Examples | Primary Influencing Factors |
|---|---|---|---|
| Marine fishes and invertebrates | Highly variable; as low as 10^-6 [1] | Pacific oyster (10^-6) [1] | Extreme variance in reproductive success, sweepstakes reproduction |
| Elasmobranchs | Near 1 in some species [25] | Grey shark, Leopard shark [25] | More stable reproductive success, different life history |
| Forest trees | Often very low [30] | Various conifers and hardwoods | Pollen and seed dispersal patterns, mating system |
| Birds and mammals | 0.1-0.5 [14] | Wide variation among species | Social structure, mating systems, reproductive skew |
| Humans | ~0.994 [1] | Inuit populations [1] | Cultural factors moderating reproductive variance |

The biological determinants of Ne/Nc ratios are complex and multifaceted. Life history traits play a predominant role, with species exhibiting high fecundity, Type III survivorship curves, and high variance in reproductive success typically demonstrating lower Ne/Nc ratios [25]. This pattern is particularly pronounced in marine species with "sweepstakes reproduction," where environmental stochasticity creates massive variance in reproductive success among individuals [25]. Similarly, mating systems profoundly influence Ne/Nc ratios, with monogamous species typically exhibiting higher ratios than polygynous or promiscuous species where reproductive skew is more extreme [29].

Implications for Conservation and Management

The Ne/Nc ratio has direct practical applications in conservation policy and management. The Ne > 500 indicator has been formally adopted as a genetic diversity metric, measuring the proportion of populations within species that maintain sufficient size to preserve evolutionary potential [26]. This threshold translates to approximately Nc > 5,000 individuals when applying the conservative 0.1 ratio, providing a tangible conservation target [26] [14].

This relationship becomes particularly important when considering minimum viable populations and conservation prioritization. Population viability analyses that consider only demographic parameters without accounting for genetic erosion may substantially overestimate long-term persistence probabilities. Furthermore, the Ne/Nc ratio provides a mechanism for estimating genetic parameters for species where comprehensive genetic studies are logistically or financially prohibitive, allowing managers to make preliminary assessments based on census data alone [14].

Methodologies for Estimating Effective Population Size

Genetic Methods for Contemporary Ne Estimation

Several methodological approaches have been developed to estimate effective population size from genetic data, each with specific requirements, assumptions, and applications. These methods leverage different signatures of genetic drift detectable in population genetic data:

Figure 1. Genetic methods for estimating contemporary versus historical effective population sizes from different analytical approaches and software implementations.

The linkage disequilibrium (LD) method is among the most widely used approaches for estimating contemporary Ne [25]. This method capitalizes on the fact that genetic drift generates non-random associations between loci (linkage disequilibrium) in finite populations, with the extent of LD inversely related to Ne [25]. The standardized LD statistic (r²) is calculated between unlinked pairs of loci, with corrections for sampling bias [25]. This approach, implemented in software such as LDNe and NeEstimator, provides a snapshot of contemporary effective size but requires large sample sizes and dense genetic markers for accurate estimation, particularly in large populations [25] [30].

The temporal method estimates Ne by analyzing changes in allele frequencies between samples collected across multiple generations [27]. The principle underpinning this approach is that the variance in allele frequency change over time is inversely proportional to Ne [27]. Software such as MLNE and TempoFS implements this approach. It can provide accurate estimates, but it requires sampling across generations, which may be impractical for long-lived species [14].

The heterozygosity excess method leverages deviations from Hardy-Weinberg equilibrium expectations in finite populations [27]. In Wright-Fisher populations, genetic drift generates a systematic heterozygote excess relative to Hardy-Weinberg proportions by an amount approximately equal to 1/(2N-1) [27]. This method, implemented in NeEstimator, can be applied to single samples but typically exhibits low precision and is most appropriate for very small populations [27].

More recent approaches include sibship assignment methods that estimate Ne from patterns of relatedness within a sample [14], and coalescent-based methods that reconstruct historical demographic trajectories over deeper timescales [31]. The latter includes pairwise sequentially Markovian coalescent (PSMC) approaches that can infer historical population size changes from single genomes but are not appropriate for estimating contemporary Ne [14].

Demographic and Predictive Approaches

In the absence of genetic data, predictive equations based on demographic parameters provide an alternative approach for estimating Ne. These methods build on the mathematical relationships summarized in Table 1, incorporating species-specific life history information including sex ratio, variance in reproductive success, population fluctuation data, and mating systems [2].

For dioecious species with separate sexes, the foundational equation incorporating sex ratio is:

\[ N_e = \frac{4 N_m N_f}{N_m + N_f} \]

where \(N_m\) and \(N_f\) represent the number of breeding males and females, respectively [2]. More comprehensive equations incorporate variance in reproductive success:

\[ N_e = \frac{4N - 2D}{2 + V_k} \]

where \(D\) represents dioeciousness (0 for hermaphrodites, 1 for dioecious species) and \(V_k\) is the variance in offspring number [1]. Under ideal Wright-Fisher conditions with Poisson-distributed reproductive success (\(V_k = 2\)), this simplifies to \(N_e = N - D/2\), which equals \(N\) for hermaphrodites and is approximately \(N\) for dioecious species [1].
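The effect of reproductive variance can be explored with a short sketch of the equation above. The function name and the example values of N and Vk are illustrative assumptions.

```python
def ne_reproductive_variance(n, vk, dioecious=True):
    """Ne = (4N - 2D) / (2 + Vk), with D = 1 for dioecious species and 0 otherwise."""
    if n <= 0 or vk < 0:
        raise ValueError("need N > 0 and Vk >= 0")
    d = 1 if dioecious else 0
    return (4 * n - 2 * d) / (2 + vk)


if __name__ == "__main__":
    # Hypothetical population of 100 breeders under increasing reproductive skew.
    for vk in (2, 5, 10, 50):
        print(f"Vk={vk}: Ne ~ {ne_reproductive_variance(100, vk):.1f}")
```

With N = 100, a Poisson-like variance (Vk = 2) gives Ne = 99.5 for a dioecious species, while Vk = 10 gives 398/12, or roughly 33.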

For populations with fluctuating sizes, the harmonic mean provides the appropriate estimator:

\[ \frac{1}{N_e} = \frac{1}{t} \sum_{i=1}^{t} \frac{1}{N_i} \]

where \(N_i\) represents population size in generation \(i\) [1]. This relationship explains the disproportionate impact of population bottlenecks on effective size, as the harmonic mean is heavily weighted toward the smallest values in a series.
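A brief sketch shows how strongly a single bottleneck pulls down the harmonic mean. The population sizes are hypothetical and the function name is an illustrative assumption.

```python
def harmonic_mean_ne(sizes):
    """Long-term Ne as the harmonic mean of per-generation sizes N_1..N_t."""
    if not sizes or any(n <= 0 for n in sizes):
        raise ValueError("sizes must be a non-empty sequence of positive numbers")
    return len(sizes) / sum(1.0 / n for n in sizes)


if __name__ == "__main__":
    # Hypothetical trajectory with a one-generation bottleneck.
    trajectory = [1000, 1000, 10, 1000, 1000]
    arithmetic = sum(trajectory) / len(trajectory)
    print(f"arithmetic mean = {arithmetic:.0f}")
    print(f"harmonic mean   = {harmonic_mean_ne(trajectory):.1f}")
```

Here the arithmetic mean is 802, but the harmonic mean is about 48, so one generation at N = 10 dominates the long-term effective size.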

Experimental Protocols and Research Reagent Solutions

Protocol for Contemporary Ne Estimation via Linkage Disequilibrium

The linkage disequilibrium method provides a robust approach for estimating contemporary Ne from genetic data. The following protocol outlines the key steps for implementation:

Sample Collection and DNA Extraction

- Collect tissue samples from 50-100+ unrelated individuals, with larger sample sizes required for larger populations [30]

- Extract high-quality DNA using standardized extraction kits (e.g., DNeasy Blood & Tissue Kit, Qiagen)

- Quantify DNA concentration using fluorometric methods and normalize to working concentration

Genotype Data Generation

- For non-model organisms: Utilize restriction site-associated DNA sequencing (RAD-seq) to discover and genotype thousands of single nucleotide polymorphisms (SNPs) [30]

- For organisms with reference genomes: Apply whole-genome resequencing or targeted capture approaches

- Ensure adequate marker density: Minimum 1,000 SNPs recommended, with 10,000+ preferred for large populations [25]

- Apply standard quality control filters: Call rate >95%, minor allele frequency >0.01, Hardy-Weinberg equilibrium p-value >1×10^-6
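The call-rate and MAF filters listed above can be sketched as follows. Genotypes are assumed to be coded as alternate-allele counts (0, 1, 2) with `None` for missing calls. The Hardy-Weinberg test is omitted for brevity, and all function names are hypothetical. In practice these steps are usually run with established tools such as PLINK or VCFtools.

```python
def call_rate(locus):
    """Fraction of individuals with a non-missing genotype at this locus."""
    return sum(g is not None for g in locus) / len(locus)


def minor_allele_frequency(locus):
    """Minor allele frequency from 0/1/2 dosages, ignoring missing calls."""
    called = [g for g in locus if g is not None]
    if not called:
        return 0.0
    p = sum(called) / (2 * len(called))
    return min(p, 1 - p)


def filter_loci(genotypes, min_call_rate=0.95, min_maf=0.01):
    """Return indices of loci passing both thresholds (strictly greater than)."""
    return [
        i for i, locus in enumerate(genotypes)
        if call_rate(locus) > min_call_rate
        and minor_allele_frequency(locus) > min_maf
    ]


if __name__ == "__main__":
    # Hypothetical toy data: 4 loci x 4 individuals.
    loci = [
        [0, 1, 2, 1],     # passes
        [0, 0, 0, 0],     # monomorphic, fails MAF
        [0, 1, None, 2],  # call rate 0.75, fails
        [2, 2, 1, 2],     # passes (MAF = 0.125)
    ]
    print(filter_loci(loci))
```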

Data Analysis with LDNe Software

- Convert genotype data to appropriate format (e.g., GENEPOP)

- Execute LDNe with sampling correction for bias [25]

- Apply allele frequency threshold (e.g., exclude alleles with frequency <0.05) to minimize bias from rare alleles [25]

- Generate point estimate and confidence intervals through jackknifing procedures

- Interpret results considering the method's limitations for very large populations (>10,000) where confidence intervals may be wide [25]
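The format-conversion step can be sketched for biallelic SNPs. The sketch assumes the common two-digit GENEPOP layout (a title line, one locus name per line, a `Pop` line, then one line per individual with the ID, a comma, and the genotypes) and codes the reference allele as 01, the alternate as 02, and missing data as 0000. The function name and coding choices are illustrative. Check the output against the documentation of the estimation software before use.

```python
def to_genepop(sample_ids, locus_names, genotypes, title="SNP dataset"):
    """Build a single-population GENEPOP string from 0/1/2 dosages.

    genotypes[i][j] is the alternate-allele count for individual i at locus j,
    or None when the call is missing.
    """
    codes = {0: "0101", 1: "0102", 2: "0202", None: "0000"}
    lines = [title]
    lines.extend(locus_names)
    lines.append("Pop")
    for sample_id, row in zip(sample_ids, genotypes):
        if len(row) != len(locus_names):
            raise ValueError(f"{sample_id}: expected {len(locus_names)} genotypes")
        lines.append(f"{sample_id} , " + " ".join(codes[g] for g in row))
    return "\n".join(lines) + "\n"


if __name__ == "__main__":
    text = to_genepop(["ind1", "ind2"], ["snp1", "snp2"], [[0, 1], [2, None]])
    print(text, end="")
```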

Validation and Interpretation

- Compare estimates from multiple methods when possible (e.g., temporal, heterozygosity excess)

- Consider biological plausibility given species' life history and census data

- Report confidence intervals and methodological limitations transparently

Research Reagent Solutions for Effective Population Size Studies

Table 3: Essential Research Reagents and Tools for Ne Estimation Studies

| Reagent/Tool | Function | Example Products/Software | Application Notes |
|---|---|---|---|
| DNA Extraction Kits | High-quality DNA isolation from various tissue types | DNeasy Blood & Tissue Kit (Qiagen), MagMAX DNA Multi-Sample Kit (Thermo Fisher) | Critical for downstream genotyping success; choose based on source material |
| SNP Genotyping Platforms | Genome-wide polymorphism discovery and scoring | Illumina NovaSeq, DNBSEQ-G400, RAD-seq protocols | Balance between coverage, cost, and information content |
| Genotype Calling Software | Raw sequence data to genotype format | STACKS, GATK, FreeBayes | Parameter optimization critical for data quality |
| Ne Estimation Software | Implementation of LD, temporal, and other methods | NeEstimator v2.1, LDNe, GONE, SNeP | Method selection depends on data type and population characteristics |
| Bioinformatics Tools | Data format conversion, quality control, visualization | VCFtools, PLINK, R/genetics packages | Essential for preprocessing and results interpretation |

Critical Considerations and Methodological Limitations

Technical Challenges and Validation Approaches

Estimating effective population size presents substantial technical challenges that researchers must acknowledge and address. A primary limitation concerns statistical power, particularly for large populations where confidence intervals may be extremely wide without massive sample sizes [30]. For instance, accurate estimation of Ne > 1,000 may require sampling hundreds of individuals and genotyping tens of thousands of markers [25]. This creates practical and financial constraints, especially for conservation applications where resources are limited.

The interpretation of Ne estimates requires careful consideration of underlying assumptions. Methods based on linkage disequilibrium assume unlinked loci, an assumption increasingly violated with genomic data where physical linkage is common [25]. Similarly, most methods assume discrete generations and random mating, assumptions frequently violated in natural populations with overlapping generations and complex social structures [29]. Violations of these assumptions can generate spurious signals of population size changes, with population subdivision particularly problematic as it can create false bottleneck or expansion signatures [31] [29].

Validation approaches should include:

- Comparison of multiple estimation methods applied to the same dataset

- Simulation studies using known demographic models to assess method performance

- Comparison with demographic estimates where available

- Sensitivity analyses evaluating the impact of sampling scheme and marker selection
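For the simulation-based validation listed above, a minimal Wright-Fisher drift simulator is often a sufficient starting point. The sketch below assumes a single biallelic neutral locus, constant Ne, and discrete generations. Dedicated simulators would be used for realistic demographic models. The function name and parameter values are illustrative.

```python
import random


def simulate_wright_fisher(ne, p0, generations, seed=None):
    """Simulate allele-frequency drift by binomial sampling of 2*Ne gene copies.

    Returns the allele-frequency trajectory, including the starting value.
    """
    rng = random.Random(seed)
    p = p0
    trajectory = [p]
    for _ in range(generations):
        # Each of the 2*Ne gene copies draws its allele from the parental pool.
        copies = sum(1 for _ in range(2 * ne) if rng.random() < p)
        p = copies / (2 * ne)
        trajectory.append(p)
    return trajectory


if __name__ == "__main__":
    # Known-truth data sets against which an Ne estimator can be checked.
    for ne in (20, 200):
        finals = [simulate_wright_fisher(ne, 0.5, 10, seed=s)[-1] for s in range(200)]
        mean = sum(finals) / len(finals)
        var = sum((f - mean) ** 2 for f in finals) / len(finals)
        print(f"Ne={ne}: variance of final frequency over replicates = {var:.4f}")
```

Feeding simulated samples with known Ne into an estimator shows how bias and precision change with sample size and marker number.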

Conservation Applications and Policy Implications

The translation of Ne estimates into conservation policy requires careful consideration of several conceptual and practical issues. The Ne > 500 threshold widely adopted in conservation represents a practical compromise based on theoretical considerations and empirical observations [26]. This threshold aims to balance short-term demographic stability with long-term evolutionary potential, with populations below this value considered at risk of losing adaptive capacity.

However, practical application of this threshold faces challenges. Many species exhibit Ne/Nc ratios substantially lower than the conservative 0.1 value, meaning census sizes must be much larger than 5,000 to maintain genetic health [1]. This is particularly problematic for marine species with sweepstakes reproduction, where Ne/Nc ratios can approach 10^-6, requiring impossibly large census sizes to maintain genetic diversity [25]. In such cases, conservation strategies must focus on maintaining connectivity and multiple populations rather than single population size targets.

Emerging issues in conservation genetics include the environmental costs of intensive genetic monitoring programs [30]. As conservation genetics increasingly relies on genomic approaches with substantial carbon footprints through sequencing and computational requirements, the field must balance information gain against environmental impact [30]. This necessitates careful consideration of when genomic monitoring is truly necessary for conservation decision-making versus when simpler approaches may suffice.

Furthermore, the interpretation of Ne estimates in structured populations remains challenging, as different sampling schemes can yield dramatically different estimates [29]. Conservation decisions based on flawed Ne estimates risk misallocating limited resources or implementing inappropriate management strategies. As such, effective population size should be interpreted as one component of a comprehensive conservation assessment rather than a definitive metric in isolation.

Biomedical research is undergoing a paradigm shift toward approaches centered on human disease models, driven by the notoriously high failure rates of the current drug development process. Despite a 44% increase in research and development investments among the 15 largest pharmaceutical companies since 2016, the drug attrition rate reached an all-time high of 95% in 2021 [32]. Most drugs fail in clinical stages despite proven efficacy and safety in animal models, highlighting a critical translational gap between preclinical research and clinical success [32]. This gap partially stems from relying almost exclusively on animal-derived data for decisions about clinical trial entry, despite fundamental interspecies differences in anatomical layouts, biological barriers, receptor expression, immune responses, and pathomechanisms [32].

The concept of effective population size (Ne), introduced by Sewall Wright in 1931, provides a crucial framework for quantifying genetic drift and inbreeding in real-world populations [2] [27]. In biomedical contexts, understanding and accurately estimating Ne is paramount for interpreting genetic variation, validating disease targets, and designing clinically relevant experimental models. This application note establishes protocols for Ne estimation and demonstrates its critical importance across the biomedical research continuum, from basic disease mechanism discovery to clinical therapeutic development.

Ne Estimation Methodologies: Experimental Protocols and Workflows

Linkage Disequilibrium-Based Estimation Protocol

Principle: This method estimates contemporary Ne from patterns of linkage disequilibrium (LD), the non-random association of alleles at different loci, within a single population sample. LD increases as population size decreases due to greater genetic drift [25] [27].

Table 1: Key Reagents and Software for LD-Based Ne Estimation

| Category | Specific Tool/Reagent | Specifications/Requirements | Primary Function |
|---|---|---|---|
| Genomic Data | Whole Genome Sequencing (WGS) Data | Blood-derived DNA; ≥30x mean coverage; PCR-free libraries; Illumina NovaSeq 6000 [33] | High-density variant discovery |
| Genomic Data | Genotyping Array Data | Different DNA aliquot than WGS; for quality control [33] | Sample validation and QC |
| Software | NeEstimator2 | Includes bias correction for sample size [25] | Standardized LD calculation |
| Software | GONE | Requires ~10^4 loci; provides historical Ne trends [25] | Estimates Ne over recent generations |
| QC Materials | NIST Reference Materials | Genome in a Bottle consortium samples [33] | Sensitivity and precision validation |

Procedural Workflow:

- Sample Collection & DNA Extraction: Collect blood samples in EDTA-treated tubes from the target population. Process to extract high-molecular-weight DNA. Preserve plasma, genomic DNA, and urine samples at -80°C for additional studies [34] [33].

- Library Preparation & Sequencing: Prepare PCR-free barcoded WGS libraries using the Illumina Kapa HyperPrep kit. Pool libraries and sequence on an Illumina NovaSeq 6000 instrument to generate paired-end reads (150 bp) [33].

- Data Processing & QC: Demultiplex sequences and perform initial quality control using the Illumina DRAGEN pipeline. Assess lane, library, and sample-level metrics, including contamination, mapping quality, and concordance with genotyping array data [33].

- Variant Calling & Joint Calling: Align FASTQ data to the human reference genome (e.g., hg19) using Burrows–Wheeler Aligner (BWA). Perform variant calling with Genome Analysis Toolkit (GATK) HaplotypeCaller. Implement large-scale joint calling across all samples to prune artefact variants and increase sensitivity [33].

- LD Calculation & Ne Estimation: Convert the final variant call format (VCF) file to an input format supported by the estimation software (e.g., GENEPOP for NeEstimator2). The software calculates a standardized LD statistic (r²) between unlinked pairs of loci, applying necessary corrections for sampling bias and pseudo-replication in high-density data. Generate Ne estimates with confidence intervals [25].

Temporal Allele Frequency Change Protocol

Principle: This method estimates Ne by analyzing the variance in allele frequency changes at neutral markers over multiple generations between temporally spaced samples [27].

Procedural Workflow:

- Cohort Establishment & Baseline Sampling: Establish a defined patient cohort, such as newborns with congenital anomalies and their parents (trio-based design). Collect blood samples and generate whole genome sequencing data as described in the linkage disequilibrium-based estimation protocol above [34].

- Phenotypic Data Collection: Record detailed phenotype information according to Human Phenotype Ontology (HPO) terms. Collect epidemiological data through environmental factor questionnaires covering parental occupational history, exposure to hazardous substances, medication intake, and other relevant factors [34].

- Longitudinal Follow-up & Resampling: Implement a long-term tracking system to record newly added or changed clinical symptoms and genetic information over time. Collect subsequent samples after a defined number of generations have passed [34].

- Variant Frequency Comparison: For neutral loci, calculate the standardized variance in allele frequency change (F) between the temporal samples. Estimate Ne using the formula Ne ≈ t / (2F), where t is the number of generations between samples and F has first been corrected for the sampling variance introduced by finite sample sizes [27].
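The final step of the temporal protocol can be sketched as follows. This sketch uses the Nei-Tajima estimator of the standardized variance (Fc) and subtracts the sampling terms 1/(2S0) and 1/(2St) before applying Ne ≈ t / (2F). It assumes samples that are drawn independently of the breeding population. These choices are illustrative assumptions, since the text above does not specify a particular estimator, and the function names and input values are hypothetical.

```python
def nei_tajima_fc(freqs_t0, freqs_t1):
    """Mean standardized variance in allele frequency change across loci.

    Per locus: (x - y)^2 / ((x + y) / 2 - x * y), with x, y the allele
    frequencies in the first and second sample.
    """
    terms = []
    for x, y in zip(freqs_t0, freqs_t1):
        denom = (x + y) / 2 - x * y
        if denom > 0:  # skip loci fixed for the same allele in both samples
            terms.append((x - y) ** 2 / denom)
    if not terms:
        raise ValueError("no informative loci")
    return sum(terms) / len(terms)


def temporal_ne(fc, generations, s0, st):
    """Ne ~ t / (2 * F'), where F' = Fc - 1/(2 S0) - 1/(2 St)."""
    corrected = fc - 1.0 / (2 * s0) - 1.0 / (2 * st)
    if corrected <= 0:
        return float("inf")  # change is no larger than expected from sampling
    return generations / (2.0 * corrected)


if __name__ == "__main__":
    # Hypothetical frequencies at four loci, two generations apart, 50 sampled each time.
    fc = nei_tajima_fc([0.50, 0.30, 0.70, 0.20], [0.58, 0.24, 0.66, 0.29])
    print(f"Fc = {fc:.4f}, Ne ~ {temporal_ne(fc, 2, 50, 50):.1f}")
```

For example, Fc = 0.03 with 50 individuals in each sample and two generations between samples gives a corrected F of 0.01 and Ne of about 100.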

Quantitative Data Synthesis: Ne Values Across Biological Contexts

Table 2: Effective Population Size Estimates and Ratios Across Species and Genomic Contexts

| Species/System | Census Size (N) | Effective Size (Ne) | Ne/N Ratio | Estimation Method | Key Implications |
|---|---|---|---|---|---|
| Drosophila | 16 | 11.5 | 0.72 | Direct measurement of drift [1] | High reproductive variance reduces Ne |
| Various Wildlife | Variable | Variable | 0.10-0.11 (avg, adjusted) [1] | Multiple methods [1] | Fluctuations, family size variance reduce Ne |
| Inuit Humans | Census | Autosomal: 0.6-0.7N | 0.6-0.7 | Genealogical analysis [1] | Differences in inheritance patterns |
| Inuit Humans | Census | mtDNA: 0.7-0.9N | 0.7-0.9 | Genealogical analysis [1] | Haploid, maternal inheritance |
| Inuit Humans | Census | Y-DNA: 0.5N | 0.5 | Genealogical analysis [1] | Haploid, paternal inheritance |
| Human Genomic Regions | - | Low in low recombination areas | Variable | Coalescent rate [1] | Selection at linked sites reduces Ne |
| Human Genomic Regions | - | High in high recombination areas | Variable | Coalescent rate [1] | Recombination uncouples loci from selection |

Application in Disease Modeling and Drug Development

Enhancing Preclinical Model Selection and Validation

Advanced human disease models, including organoids, bioengineered tissue models, and organs-on-chips (OoCs), are being developed to bridge the translational gap [32]. Understanding Ne is critical for characterizing the genetic diversity and potential drift within these model systems, especially when derived from specific patient populations.

- Stratified Epithelia Models: Bioengineered tissue models of the gut, lungs, and skin are cultivated at air–liquid interfaces to emulate in vivo-like tissue conditions. The genetic characterization of the primary cell sources used to create these models should include Ne considerations to ensure they adequately represent the genetic diversity of the target human population [32].

- Organoid Systems: Self-organizing 3D structures generated from tissue-specific adult stem cells (ASCs) or induced pluripotent stem (iPS) cells can mimic human organs. However, the cell type composition of organoids can vary significantly depending on the protocol, impacting reproducibility. Genetic monitoring, informed by Ne concepts, can help assess stability and representativeness [32].

- Organs-on-Chips (OoCs): These perfused microfluidic platforms contain bioengineered tissues interconnected by microchannels. Multi-organ systems aim to emulate inter-tissue crosstalk. When these systems incorporate cells from multiple donors, understanding Ne-related dynamics helps maintain representative genetic variation throughout experimental durations [32].

Informing Genetic Study Design and Analysis in Drug Discovery

The drug development process is exceptionally long and costly, requiring over 12 years and approximately $2.6 billion on average to bring a new molecular entity to market [35]. The likelihood of advancing a candidate from clinical testing to market is substantially lower for neuropsychiatric drugs (8.2%) than for all drugs combined (15%) [35].

- Target Validation: A biological target must be validated as relevant to the human disease. Genetic data from diverse human populations, accounting for Ne, provides critical evidence. However, drug developers face challenges as many published findings on new targets cannot be reproduced [35]. Accurate Ne estimation in source populations strengthens the validity of genetic associations.

- Clinical Trial Design: The All of Us Research Program highlights the importance of diversity in genomic datasets. This program, with 77% of participants from communities historically underrepresented in biomedical research, provides a resource to better understand genetic variants and their health correlations across diverse groups [33]. Considering Ne and population structure is essential when using such datasets for trial design to ensure findings are generalizable.

Advanced Considerations and Computational Tools

Modern algorithms, particularly Sequentially Markovian Coalescent (SMC) methods, can reconstruct historical population sizes over thousands of generations [31]. These tools are computationally efficient and can exploit large sample sizes, providing a rich picture of demographic history. However, population subdivision can produce strong false signatures of changing population size: a signal often interpreted as a recent decline (bottleneck) may actually reflect a history of structured populations undergoing range changes [31]. Collaboration between geneticists, paleoecologists, and climatologists is crucial for accurate interpretation.

Table 3: Software Tools for Advanced Ne and Demographic Inference

| Software | Method Class | Key Features | Application Scope |

|---|---|---|---|

| SLiM | Simulation | Forward-time simulation of complex evolutionary scenarios [25] | Generating biologically realistic data for method testing |

| msprime | Simulation | Efficient coalescent simulations [25] | Simulating genetic data under complex demographies |

| GADMA | SFS-based | Genetic algorithm for demographic model selection [25] | Inferring complex demographic histories, including Ne changes |

| ∂a∂i (dadi) | SFS-based | Uses diffusion approximation for allele frequency spectrum [25] | Model selection and parameter estimation for 1-5 populations |

A Practical Guide to Contemporary Ne Estimation Methods and Software

The effective population size (Ne) is a fundamental parameter in population genetics, quantifying the number of individuals in an idealized population that would experience the same amount of genetic drift or inbreeding as the observed population [22]. Accurate estimation of Ne is crucial for understanding evolutionary processes, assessing population viability, and informing conservation strategies [36]. Among various genetic methods for estimating contemporary Ne, the linkage disequilibrium (LD) method has emerged as a powerful and widely used single-sample approach [37].

Linkage disequilibrium refers to the non-random association of alleles at different loci within a population [38]. The core principle of the LD method is that in a finite population, genetic drift generates random LD between unlinked loci. The magnitude of this drift-generated LD is inversely related to the effective population size. The expected relationship is formalized as E(r²) ≈ 1/(3Ne) + 1/S, where S is the sample size and the 1/S term reflects the LD contributed by sampling error [37]. This theoretical foundation allows researchers to estimate Ne from a single sample of individuals, making it particularly valuable for studying natural populations where temporal data are unavailable.

The LD method presents significant advantages for conservation applications, as it performs best for relatively small populations (Ne < 200) [37], which are often the focus of conservation efforts. With the advent of high-throughput sequencing technologies, the availability of vast numbers of genetic markers has further enhanced the precision and utility of LD-based Ne estimates across diverse taxa [39] [40].

Theoretical Foundations and Mathematical Principles

Core Mathematical Formulations

The linkage disequilibrium method for estimating effective population size derives from the expected equilibrium between the creation of LD by genetic drift and its breakdown by recombination. The fundamental equation describing this relationship for a finite population was established by Hill (1981):

E(r²) ≈ 1/(3Ne) + 1/S [37]

In this formulation, E(r²) is the expected squared correlation between alleles at pairs of loci, Ne is the effective population size, and S is the number of individuals sampled. The 1/S term accounts for the LD generated by sampling error. To isolate the drift component, this sampling error must be subtracted:

1/(3Ne) ≈ E(r²) - 1/S

This adjusted estimate of the drift contribution to LD can then be used to solve for Ne. However, this initial formulation is approximate and ignores second-order terms in S and Ne, which can lead to substantial bias in certain circumstances [37]. Subsequent work has developed adjusted expectations for the drift and sampling error components to address these biases, leading to improved accuracy in Ne estimation.
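As a worked illustration of the first-order relationship above, the following Python sketch solves 1/(3Ne) ≈ E(r²) - 1/S for Ne. It deliberately omits the second-order corrections mentioned in the text, so it should be read as a demonstration of the arithmetic, not as a substitute for bias-adjusted software. The function name `ld_ne` and the input values are hypothetical.

```python
def ld_ne(mean_r2, sample_size):
    """First-order LD estimate of Ne for unlinked loci.

    Solves 1 / (3 * Ne) = mean_r2 - 1 / S. Second-order bias corrections
    are intentionally left out of this sketch.
    """
    if sample_size <= 0:
        raise ValueError("sample_size must be positive")

    # Remove the LD expected from sampling S individuals.
    drift_r2 = mean_r2 - 1.0 / sample_size
    if drift_r2 <= 0:
        # Observed LD is fully explained by sampling error, so the data
        # carry no drift signal and the estimate is unbounded.
        return float("inf")
    return 1.0 / (3.0 * drift_r2)


# Hypothetical example: mean r² of 0.03 across locus pairs, 50 individuals.
print(ld_ne(0.03, 50))
```

Returning infinity when the sampling term exceeds the observed r² mirrors the common practice of reporting such results as "infinite" or negative estimates, meaning the sample cannot distinguish the population from a very large one.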

Accounting for Allele Frequency and Population Structure

The performance of the LD method is significantly influenced by allele frequency distributions, particularly the presence of rare alleles. Low-frequency alleles can upwardly bias Ne estimates, but this can be mitigated by excluding alleles below a frequency threshold (typically Pcrit = 0.05 or 0.02) [37]. The method's precision increases with the number of independent allelic comparisons, which is a function of both the number of loci (L) and the number of alleles per locus (K). The total degrees of freedom for the weighted mean r² is given by:

n = Σ (Ki - 1)(Kj - 1), summed over all pairs of loci i < j, where Ki is the number of alleles at locus i [37]
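The degrees-of-freedom sum is straightforward to compute directly. The short Python sketch below assumes the input is simply a list of allele counts per locus (for example, after any rare-allele filtering); the function name and the example counts are hypothetical.

```python
from itertools import combinations


def ld_degrees_of_freedom(alleles_per_locus):
    """Sum (Ki - 1) * (Kj - 1) over every unordered pair of loci."""
    return sum(
        (k_i - 1) * (k_j - 1)
        for k_i, k_j in combinations(alleles_per_locus, 2)
    )


# Three biallelic SNPs give 3 pairs with 1 degree of freedom each.
print(ld_degrees_of_freedom([2, 2, 2]))
# Three microsatellites with 3, 4 and 2 alleles: 2*3 + 2*1 + 3*1.
print(ld_degrees_of_freedom([3, 4, 2]))
```

For biallelic SNPs every pair contributes exactly one comparison, so n reduces to L(L - 1)/2, which shows why large SNP panels compensate for low per-locus allelic diversity.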

Recent theoretical advances have extended the LD method to account for population structure through a partitioned approach:

δ² = δw² + δb² + 2·δbw² [40]

This formulation decomposes total LD (δ²) into within-subpopulation (δw²), between-subpopulation (δb²), and between-within components (δbw²). This allows for more accurate estimation in structured populations by explicitly modeling migration rates (m), genetic differentiation (FST), and the number of subpopulations (s) [40].

Software Implementation: NeEstimator and Beyond

NeEstimator v2 is a comprehensive software package for estimating contemporary effective population size from genetic data [41]. This completely revised and updated version includes:

- Three single-sample estimators: Updated versions of the linkage disequilibrium and heterozygote-excess methods, plus a new method based on molecular coancestry

- Two-sample temporal method: A moment-based temporal approach for comparing samples across generations

- Enhanced data handling: Improved methods for accounting for missing data and analyzing datasets with large numbers of genetic markers (10,000 or more)

- Bias reduction: Options for screening out rare alleles that can upwardly bias estimates

- Confidence assessment: Confidence intervals for all estimation methods

- Batch processing: Capability to analyze large numbers of datasets sequentially, facilitating method comparisons

The software features a user-friendly Java interface compatible with macOS, Linux, and Windows operating systems, making it accessible to a broad research community [41].

Next-Generation Software Tools

While NeEstimator remains a cornerstone for LD-based Ne estimation, several advanced tools have emerged to address specific methodological challenges:

Table 1: Software Tools for LD-Based Effective Population Size Estimation

| Software | Key Features | Data Requirements | Strengths |

|---|---|---|---|

| GONE2 [40] | Infers recent Ne changes; accounts for population structure; handles haploid data and genotyping errors | SNP data with genetic map | Accurate for recent demographic history; models migration and subdivision |

| currentNe2 [40] | Estimates contemporary Ne without genetic maps; accounts for population structure | SNP data without genetic map | Ideal for non-model organisms; provides FST and migration estimates |

| Ttne [42] | Uses identity-by-descent (IBD) in time-series ancient DNA; models time-transect sampling | Ancient DNA with temporal sampling | Leverages temporal stratification for improved accuracy |

| HapNe [42] | Estimates recent Ne from IBD or LD; designed for modern and ancient DNA | Phased genotypes | Flexible for different data types and quality |

Experimental Protocols and Application Workflows

Standard Protocol for LD-based Ne Estimation Using NeEstimator

Step 1: Data Collection and Quality Control

- Genotype a sufficient number of individuals (recommended S = 50-100) [37]

- Utilize highly polymorphic markers (microsatellites or SNPs); for SNPs, >1000 loci are typically necessary

- Ensure genotypes represent a random sample from the population of interest

Step 2: Input File Preparation

- Format data according to NeEstimator requirements (multiple formats supported)

- Include appropriate metadata (sample size, ploidy, missing data codes)

- For large SNP datasets, consider filtering to minimize linkage between markers

Step 3: Parameter Selection in NeEstimator

- Select the LD method from available estimators

- Set allele frequency threshold (Pcrit) to exclude rare alleles; Pcrit = 0.05 is often optimal [37]

- Specify confidence interval method (jackknifing or parametric)

- Choose output options for results and diagnostic information

Step 4: Results Interpretation

- Examine point estimate and confidence intervals for Ne

- Check for diagnostic warnings about potential biases

- Consider multiple Pcrit values if results are sensitive to allele frequency threshold
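The sensitivity check in Step 4 can be illustrated with a small, self-contained Python sketch. It is not NeEstimator's algorithm: it assumes biallelic SNPs coded as allele dosages (0, 1, or 2), uses the squared Pearson correlation between dosage vectors as a simple stand-in for r², and applies only the first-order formula without bias corrections. All function names and the toy data are hypothetical.

```python
from itertools import combinations
from statistics import mean, pvariance


def minor_allele_freq(dosages):
    """Minor allele frequency of one biallelic locus coded as 0/1/2 dosages."""
    p = sum(dosages) / (2 * len(dosages))
    return min(p, 1 - p)


def squared_correlation(a, b):
    """Squared Pearson correlation between two dosage vectors."""
    mean_a, mean_b = mean(a), mean(b)
    cov = sum((x - mean_a) * (y - mean_b) for x, y in zip(a, b)) / len(a)
    return cov ** 2 / (pvariance(a) * pvariance(b))


def ne_at_pcrit(loci, pcrit):
    """First-order LD estimate of Ne using only loci with MAF >= pcrit.

    loci: list of per-locus dosage lists, one value per individual.
    """
    kept = []
    for dosages in loci:
        maf = minor_allele_freq(dosages)
        # Monomorphic loci (MAF of zero) carry no LD information.
        if maf > 0 and maf >= pcrit:
            kept.append(dosages)
    if len(kept) < 2:
        raise ValueError("fewer than two loci pass the Pcrit filter")

    sample_size = len(kept[0])
    r2 = mean(squared_correlation(a, b) for a, b in combinations(kept, 2))
    drift_r2 = r2 - 1 / sample_size  # subtract the sampling-error term
    return float("inf") if drift_r2 <= 0 else 1 / (3 * drift_r2)


# Toy data: 4 individuals, 4 loci; the last locus carries a rare allele.
toy_loci = [[0, 1, 2, 1], [0, 1, 2, 1], [2, 1, 0, 1], [0, 0, 0, 1]]
for threshold in (0.2, 0.05):
    print(threshold, ne_at_pcrit(toy_loci, threshold))
```

Running the estimate at several thresholds, as in the loop above, makes it easy to see whether the result hinges on a handful of rare alleles; a real dataset would need far more individuals and loci than this toy example.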

Advanced Protocol for Structured Populations Using GONE2

For populations with suspected subdivision or migration, the standard LD method may produce biased estimates. The following protocol adapts the process for structured populations:

Step 1: Preliminary Population Structure Analysis

- Conduct PCA, STRUCTURE, or ADMIXTURE analysis to identify genetic clusters

- Determine whether analysis should focus on total or subpopulation-level Ne

- If strong structure is detected, consider separate analyses for distinct clusters

Step 2: Data Preparation for GONE2

- Convert genotype data to required format (PLINK or similar)

- Ensure genetic map is available for the species

- If no species-specific map exists, use a proxy from a related species

Step 3: Parameter Optimization

- Run initial analysis with default parameters

- Adjust number of chromosomes and sample size settings as needed

- Set appropriate recombination rate bins for LD decay analysis

Step 4: Metapopulation Parameter Estimation

- Use GONE2's integrated approach to estimate migration rate (m), FST, and number of subpopulations (s)

- Validate parameter estimates against biological knowledge of the population

- If structure is confirmed, use the metapopulation-aware Ne estimates

Step 5: Trajectory Interpretation

- Examine Ne trajectory over recent generations (typically 100-200 generations)

- Identify periods of stability, decline, or expansion

- Correlate demographic changes with historical events or conservation interventions

Research Reagent Solutions and Materials

Table 2: Essential Research Reagents and Materials for LD-based Ne Estimation

| Category | Specific Examples | Function/Application |

|---|---|---|

| Genotyping Platforms | Illumina SNP arrays; RADseq; Whole-genome sequencing | Generating multilocus genotype data from sampled individuals |

| DNA Extraction Kits | Qiagen DNeasy Blood & Tissue Kit; Macherey-Nagel NucleoSpin | High-quality DNA extraction from various tissue types |

| Analysis Software | NeEstimator v2.1; GONE2; currentNe2; R/popgen packages | Implementing LD algorithms and estimating Ne with confidence intervals |

| Genetic Markers | Microsatellite panels; SNP sets (hundreds to thousands of loci); Sequence variants | Providing polymorphic loci for LD calculation; more loci improve precision |