Evolutionary Algorithms for Protein Structure Prediction: A Comprehensive Guide for Biomedical Research

Abstract

This article provides a comprehensive examination of evolutionary algorithms (EAs) in protein structure prediction, a critical challenge in structural bioinformatics. Aimed at researchers and drug development professionals, it explores the foundational principles of EAs, detailing how they navigate the vast conformational search space. The content covers advanced methodological implementations, including dynamic speciation and the integration of problem information like contact maps and secondary structure. It further addresses key optimization challenges and presents rigorous validation protocols using established metrics like RMSD and GDT. By comparing EAs with cutting-edge deep learning tools like AlphaFold2, this review highlights the unique advantages and complementary role of evolutionary approaches, offering valuable insights for de novo structure prediction and therapeutic discovery.

The Protein Folding Problem and Evolutionary Computation

The prediction of a protein's native three-dimensional (3D) structure from its amino acid sequence alone represents one of the most significant challenges in computational structural biology. This problem, often termed the "protein folding problem," is fundamentally important because a protein's structure directly determines its biological function. Levinthal's paradox captures the scale of the challenge: a protein chain could in principle adopt an astronomical number of conformations, far too many to visit one by one, yet real proteins fold on biologically relevant timescales. The same combinatorial explosion makes it computationally infeasible to find the native state by brute-force enumeration [1]. Anfinsen's dogma, in turn, holds that a protein's native structure is determined by its amino acid sequence, which implies that prediction should be possible in principle [1]. This challenge has driven decades of research into computational methods, and evolutionary algorithms have emerged as one important approach for navigating the vast conformational space to identify energetically favorable native structures.

Computational Methodologies in Structure Prediction

Historical Foundations and Energy Functions

Early computational approaches to protein structure prediction relied heavily on molecular mechanics principles adapted from small-molecule modeling. The development of "Consistent Force Field" (CFF) energy functions led to widely used all-atom potentials including CHARMM, Amber, and ECEPP [2]. These classical potentials combine bonded (covalent) terms with non-bonded van der Waals and electrostatic terms. However, they proved inadequate for reliably discriminating native folds from misfolded models, primarily because of difficulties in accounting for solvation effects [2]. Subsequent improvements added implicit solvation terms based on continuum electrostatics, such as the Poisson-Boltzmann method and the Generalized Born approximation. These improved native-state identification, although accuracy remained limited [2].
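The functional form shared by classical all-atom potentials can be summarized in a simplified, generic form. Exact terms differ between force fields (CHARMM, for example, adds Urey-Bradley and improper-dihedral terms), so treat the following as orientation rather than the definition of any one force field:

```latex
E = \sum_{\text{bonds}} k_b (b - b_0)^2
  + \sum_{\text{angles}} k_\theta (\theta - \theta_0)^2
  + \sum_{\text{dihedrals}} k_\phi \left[ 1 + \cos(n\phi - \delta) \right]
  + \sum_{i<j} \left[ 4\varepsilon_{ij} \left( \left( \frac{\sigma_{ij}}{r_{ij}} \right)^{12}
      - \left( \frac{\sigma_{ij}}{r_{ij}} \right)^{6} \right)
      + \frac{q_i q_j}{4\pi\varepsilon_0 \varepsilon_r r_{ij}} \right]
```

The first three sums are the bonded (covalent) terms. The last sum runs over non-bonded atom pairs and combines a Lennard-Jones van der Waals term with Coulomb electrostatics. Implicit-solvent treatments such as Generalized Born add a solvation free-energy term to this expression.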

The limitations of physics-based potentials led to the development of knowledge-based statistical potentials derived from frequencies of structural features in experimentally determined protein structures [2]. These computationally efficient potentials used simplified residue-based representations reminiscent of coarse-grained potentials used in early folding calculations [2]. When combined with energy optimization methods, they enabled ab-initio protein modeling for small proteins, though conformational sampling remained challenging for larger proteins.
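Many knowledge-based potentials rest on an inverse-Boltzmann idea, which can be sketched in a few lines. Feature frequencies observed in known structures are compared with a reference state and converted into pseudo-energies. This is a minimal illustration. The reference state, the pseudocount, and the kT value are assumptions, and published potentials differ substantially in these details.

```python
import math

def inverse_boltzmann(observed_counts, reference_counts, kT=0.593, pseudocount=1.0):
    """Convert histogram counts into pseudo-energies E = -kT * ln(P_obs / P_ref).

    observed_counts: counts per bin of a structural feature (e.g. a residue-pair
        distance bin) collected from experimental structures
    reference_counts: counts for the same bins under a chosen reference state
    kT: thermal energy in kcal/mol (about 0.593 near room temperature)
    pseudocount: added to every bin so that empty bins do not produce log(0)
    """
    if len(observed_counts) != len(reference_counts):
        raise ValueError("histograms must have the same number of bins")
    n_bins = len(observed_counts)
    obs_total = sum(observed_counts) + pseudocount * n_bins
    ref_total = sum(reference_counts) + pseudocount * n_bins
    energies = []
    for obs, ref in zip(observed_counts, reference_counts):
        p_obs = (obs + pseudocount) / obs_total
        p_ref = (ref + pseudocount) / ref_total
        # features seen more often than the reference predicts get negative (favourable) energies
        energies.append(-kT * math.log(p_obs / p_ref))
    return energies

# Three hypothetical distance bins for one residue-pair type
print(inverse_boltzmann([10, 30, 60], [30, 30, 40]))
```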

Template-Based and Coevolution-Based Methods

The observation that evolutionarily related proteins adopt similar 3D structures gave rise to homology (comparative) modeling, where protein structures are modeled using experimentally determined structures of related proteins as templates [2]. In aligned regions, template backbones are copied to the target, while specialized methods predict loops in non-aligned regions and place side chains of non-conserved residues [2].

Fragment-based assembly approaches bridged template-based and ab-initio methods by constructing models from short backbone fragments (3-15 residues) extracted from known structures, assembled into full-length models using Monte Carlo simulated annealing [2].
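A toy version of fragment assembly by Monte Carlo simulated annealing might look like the following sketch. The chain is reduced to one torsion per position, the fragment library and energy are hypothetical placeholders, and the geometric cooling schedule is an assumption. Real protocols work with full backbone torsions and carefully staged scoring.

```python
import math
import random

def fragment_assembly_sa(n_res, fragments, energy, frag_len=3, n_steps=5000,
                         t_start=2.0, t_end=0.05, seed=0):
    """Toy Monte Carlo simulated annealing over fragment insertions.

    n_res: number of torsion positions in a simplified chain
    fragments: dict mapping a start index to candidate windows of frag_len torsions
               (standing in for fragments excised from known structures)
    energy: callable scoring a full torsion list (lower is better)
    """
    for start, windows in fragments.items():
        if start + frag_len > n_res or any(len(w) != frag_len for w in windows):
            raise ValueError(f"invalid fragment windows at position {start}")
    rng = random.Random(seed)
    starts = sorted(fragments)
    current = [180.0] * n_res                 # start from an extended chain
    e_current = energy(current)
    best, e_best = list(current), e_current
    cooling = (t_end / t_start) ** (1.0 / max(1, n_steps - 1))
    temperature = t_start
    for _ in range(n_steps):
        start = rng.choice(starts)
        trial = list(current)
        trial[start:start + frag_len] = rng.choice(fragments[start])  # fragment insertion
        e_trial = energy(trial)
        delta = e_trial - e_current
        # Metropolis criterion: accept downhill moves, uphill moves with probability exp(-delta/T)
        if delta <= 0 or rng.random() < math.exp(-delta / temperature):
            current, e_current = trial, e_trial
            if e_current < e_best:
                best, e_best = list(current), e_current
        temperature *= cooling                # geometric cooling schedule
    return best, e_best

# Toy usage: the hypothetical energy rewards helix-like torsions of -60 degrees
n = 9
library = {s: [[-60.0] * 3, [180.0] * 3, [-120.0, 130.0, -120.0]] for s in range(n - 2)}
best, e_best = fragment_assembly_sa(n, library, lambda t: sum((a + 60.0) ** 2 for a in t) / 1000)
print(best, e_best)
```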

A transformative advance came from effectively leveraging coevolutionary information through the analysis of correlated mutations in multiple sequence alignments. Methods like direct coupling analysis and pseudo-likelihood optimization identified evolutionarily coupled residue pairs likely to form contacts in 3D space, providing restraints for ab-initio modeling [2]. This approach eventually enabled neural network-based learning methods to achieve unprecedented accuracy in end-to-end protein structure prediction [2].
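Direct coupling analysis and pseudo-likelihood methods are too involved for a short example. The underlying signal, covariation between alignment columns, can still be illustrated with plain mutual information. This is a swapped-in, simpler statistic: unlike DCA, it does not separate direct from indirect couplings, so it is only a rough proxy for contacts.

```python
import math
from collections import Counter
from itertools import combinations

def column_mi(msa, i, j):
    """Mutual information (in nats) between alignment columns i and j."""
    n = len(msa)
    fi = Counter(seq[i] for seq in msa)
    fj = Counter(seq[j] for seq in msa)
    fij = Counter((seq[i], seq[j]) for seq in msa)
    mi = 0.0
    for (a, b), count in fij.items():
        p_ab = count / n
        mi += p_ab * math.log(p_ab / ((fi[a] / n) * (fj[b] / n)))
    return mi

def ranked_pairs(msa, min_separation=1):
    """Rank column pairs by MI as a crude proxy for coevolving (possibly contacting) residues."""
    length = len(msa[0])
    scores = [(column_mi(msa, i, j), i, j)
              for i, j in combinations(range(length), 2) if j - i >= min_separation]
    return sorted(scores, reverse=True)

# Hypothetical 4-sequence alignment: columns 0 and 3 covary perfectly (A-E, G-R)
msa = ["AKLE", "GKLR", "AKME", "GKMR"]
print(ranked_pairs(msa)[:3])
```

In practice, a minimum sequence separation (for example, ignoring near neighbours along the chain) is usually applied before reading high-scoring pairs as contacts.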

Evolutionary Algorithms for Protein Structure Prediction

The USPEX Framework

Evolutionary algorithms represent a class of global optimization techniques inspired by biological evolution, well-suited for navigating the complex energy landscape of protein folding. The USPEX (Universal Structure Predictor: Evolutionary Xtallography) algorithm has been successfully extended to protein structure prediction starting from amino acid sequences [3].

USPEX operates through iterative generations of candidate structures that undergo selection, variation, and inheritance. The algorithm employs novel variation operators specifically designed for protein structures to create new candidate models, exploring the conformational space while selecting for lower energy states [3]. Protein structure relaxation and energy calculations within USPEX can be performed using different force fields, including those implemented in Tinker and Rosetta with its REF2015 scoring function [3].

Performance and Force Field Limitations

Testing USPEX on proteins up to 100 residues in length (excluding those with cis-proline residues) demonstrated its ability to predict tertiary structures with high accuracy [3]. Comparative analyses revealed that USPEX frequently identified structures with potential energies comparable to or lower than those generated by Rosetta's AbInitio protocol across multiple force fields, including Amber, CHARMM, and OPLS-AA/L [3].

However, a critical finding from these studies was that despite the algorithm's powerful optimization capabilities, existing force fields remain insufficient for accurate blind prediction of protein structures without experimental verification [3]. This highlights a fundamental challenge in the field: the energy function accuracy ultimately limits prediction reliability, regardless of sampling efficiency.

Table 1: Comparison of Protein Structure Prediction Methods

| Method | Approach | Strengths | Limitations |
|---|---|---|---|
| USPEX | Evolutionary algorithm with global optimization | Finds deep energy minima; effective conformational sampling | Limited by force field inaccuracies; tested on small proteins |
| Classical Force Fields | Physics-based molecular mechanics | Physically realistic energy terms; transferable | Inadequate solvation treatment; poor native state discrimination |
| Knowledge-Based Potentials | Statistical potentials from known structures | Computationally efficient; effective for scoring | Limited by database size and representativeness |
| Homology Modeling | Template-based structure building | Highly accurate with good templates | Requires evolutionarily related templates |
| Fragment Assembly | Combination of template and ab-initio | Balances accuracy and coverage | Limited by fragment library quality |
| Coevolution-Based Methods | Evolutionary coupling analysis | High-accuracy contact prediction; no templates needed | Requires large multiple sequence alignments |

The AI Revolution: Deep Learning Approaches

AlphaFold and Related Architectures

The application of deep learning to protein structure prediction has dramatically transformed the field. AlphaFold, developed by DeepMind (now Google DeepMind), was a landmark achievement: at CASP13 it produced the most accurate prediction for nearly 60% of targets, compared with 7% for the second-placed method [4]. Its initial architecture used convolutional neural networks, trained on Protein Data Bank structures and fed with features derived from multiple sequence alignments, to predict distributions of distances between residue pairs ("distograms"). These distograms were then used to build the 3D structure [4].
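To make the distogram idea concrete, the sketch below converts pairwise distances from a known structure into discrete bin indices. This is the kind of target that a distogram network learns to predict as a probability distribution for each residue pair. The bin layout (64 bins spanning 2 to 22 Å) and the use of one representative atom per residue are assumptions for illustration.

```python
import math

def distogram_bins(coords, n_bins=64, d_min=2.0, d_max=22.0):
    """Map pairwise distances between representative atoms to distance-bin indices.

    coords: list of (x, y, z) tuples, one per residue (e.g. C-beta atoms).
    Distances below d_min fall into bin 0 and those at or above d_max into the
    last bin; the bin edges are illustrative assumptions.
    """
    width = (d_max - d_min) / n_bins
    n = len(coords)
    bins = [[0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + 1, n):
            d = math.dist(coords[i], coords[j])
            k = int((d - d_min) // width)
            k = min(max(k, 0), n_bins - 1)   # clamp to the first/last bin
            bins[i][j] = bins[j][i] = k
    return bins

# Three hypothetical residues: two at 3.8 A, one far away
print(distogram_bins([(0.0, 0.0, 0.0), (3.8, 0.0, 0.0), (30.0, 0.0, 0.0)]))
```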

AlphaFold2 introduced a substantially redesigned architecture that achieved atomic-level accuracy competitive with experimental methods [4]. Key innovations included the Evoformer and structure module neural networks that work iteratively to refine structures using MSA and template information [4]. Subsequent developments included AlphaFold Multimer for predicting multi-chain protein complexes and database expansions incorporating over 200 million structure predictions [4] [5].

RoseTTAFold and Open-Source Alternatives

Inspired by AlphaFold2, RoseTTAFold employs a three-track network that simultaneously considers protein sequence (1D), amino acid interactions (2D), and 3D structural information [4]. This architecture allows information to flow back and forth across dimensions, enabling collective reasoning about relationships within and between sequences, distances, and coordinates [4]. The recent RoseTTAFold All-Atom extension can model assemblies containing proteins, nucleic acids, small molecules, metals, and chemical modifications [4].

The OpenFold consortium emerged to address limitations in AlphaFold2's code accessibility: the public AlphaFold2 release provided inference code and model weights but not the training pipeline. OpenFold developed a fully trainable, open-source reimplementation that matches AlphaFold2's accuracy [4]. Similarly, the controversy surrounding AlphaFold3's initial release without source code has prompted the development of open-source alternatives to maintain scientific reproducibility and progress [4].

Experimental Protocols and Methodologies

USPEX Implementation Protocol

For evolutionary algorithm-based prediction using USPEX, the following methodology provides a framework for structure prediction:

Initialization: Generate an initial population of candidate structures through random conformation generation or using fragment-based assembly.

Variation Operators: Apply specialized variation operators developed for protein structures including:

- Heredity: Combining segments from different parent structures

- Mutation: Introducing local conformational changes

- Random generation: Maintaining diversity

Energy Evaluation: Perform structure relaxation and energy calculation using selected force fields (Tinker with various force fields or Rosetta with REF2015).

Selection: Identify low-energy structures for propagation to the next generation using tournament selection or ranking based on energy.

Iteration: Repeat the variation, energy evaluation, and selection steps for multiple generations until convergence criteria are met (minimal energy improvement over successive generations or a maximum number of generations).

Validation: Compare predicted structures using energy values, structural similarity measures, and experimental data when available.
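The protocol above can be sketched as a generic generational loop. This is not USPEX's implementation. The torsion-angle genome, operator fractions, stall-based stopping rule, and toy energy are all assumptions. In practice, `energy` would wrap relaxation and scoring in an external package such as Tinker or Rosetta.

```python
import math
import random

def evolve(energy, n_torsions, pop_size=40, n_generations=60,
           frac_heredity=0.5, frac_mutation=0.3, n_keep=10,
           max_stall=15, tol=1e-6, seed=1):
    """Minimal generational EA following the steps above (illustrative, not USPEX code)."""
    rng = random.Random(seed)

    def random_conf():
        # random generation operator: keeps fresh diversity in every generation
        return [rng.uniform(-180.0, 180.0) for _ in range(n_torsions)]

    def heredity(a, b):
        # splice a contiguous segment of parent b into parent a
        i, j = sorted(rng.sample(range(n_torsions + 1), 2))
        return a[:i] + b[i:j] + a[j:]

    def mutate(a):
        # local perturbation of a few torsions, wrapped back into [-180, 180)
        child = list(a)
        for k in rng.sample(range(n_torsions), k=max(1, n_torsions // 5)):
            child[k] = (child[k] + rng.gauss(0.0, 30.0) + 180.0) % 360.0 - 180.0
        return child

    population = sorted((random_conf() for _ in range(pop_size)), key=energy)
    best_e, stall = energy(population[0]), 0
    for _ in range(n_generations):
        parents = population[:n_keep]                 # ranking-based selection
        n_her = int(frac_heredity * pop_size)
        n_mut = int(frac_mutation * pop_size)
        offspring = [heredity(*rng.sample(parents, 2)) for _ in range(n_her)]
        offspring += [mutate(rng.choice(parents)) for _ in range(n_mut)]
        offspring += [random_conf() for _ in range(pop_size - n_her - n_mut)]
        population = sorted(parents + offspring, key=energy)[:pop_size]
        e = energy(population[0])
        stall = 0 if e < best_e - tol else stall + 1
        best_e = min(best_e, e)
        if stall >= max_stall:                        # convergence: no further improvement
            break
    return population[0], best_e

def toy_energy(torsions):
    # hypothetical energy with its minimum when every torsion equals -60 degrees
    return sum(1.0 - math.cos(math.radians(a + 60.0)) for a in torsions)

best, best_e = evolve(toy_energy, n_torsions=6)
print(best_e)
```

Keeping the parents in the candidate pool before truncating to the population size makes the loop elitist, so the best energy never worsens between generations.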

AI-Assisted Generative Design Protocol

Generative AI models like RFdiffusion have created new methodologies for protein design:

Scaffold Generation: Create initial structural templates using non-ML programs or natural protein fragments as starting points for diffusion.

Partial Diffusion: Use RFdiffusion's "partial diffusion" mode to generate plausible protein binders or designs from scaffold libraries.

Sequence Design: Apply ProteinMPNN to generate amino acid sequences for the designed backbones.

Validation: Verify generated structures by running structure prediction (e.g., AlphaFold2) on the designed sequences and comparing with the RFdiffusion+ProteinMPNN generated structure.

Iterative Refinement: Conduct multiple rounds of generation, with results from one round informing subsequent rounds through sequence threading and structural optimization.

Functional Enhancement: Combine with other models like AF2 Hallucination to enhance specific properties such as binding affinity.
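The validation step usually depends on a superposition-based RMSD between the designed backbone and the structure predicted for the designed sequence. A minimal Kabsch-superposition sketch using NumPy follows. The inputs are assumed to be matched C-alpha coordinates, and any pass/fail threshold (for example, 2 Å) is an assumption rather than a fixed standard.

```python
import numpy as np

def kabsch_rmsd(p, q):
    """RMSD between two N x 3 coordinate sets after optimal rigid superposition (Kabsch)."""
    p = np.asarray(p, dtype=float)
    q = np.asarray(q, dtype=float)
    if p.shape != q.shape or p.ndim != 2 or p.shape[1] != 3:
        raise ValueError("expected two arrays of identical shape (N, 3)")
    p_c = p - p.mean(axis=0)                  # remove translation
    q_c = q - q.mean(axis=0)
    h = p_c.T @ q_c                           # 3 x 3 covariance matrix
    u, _, vt = np.linalg.svd(h)
    # flip the last axis if needed so the result is a proper rotation, not a reflection
    d = 1.0 if np.linalg.det(vt.T @ u.T) >= 0 else -1.0
    rotation = vt.T @ np.diag([1.0, 1.0, d]) @ u.T
    diff = p_c @ rotation.T - q_c
    return float(np.sqrt((diff ** 2).sum() / len(p)))

# Hypothetical usage: designed_ca and predicted_ca are matched (N, 3) C-alpha arrays
# passes = kabsch_rmsd(designed_ca, predicted_ca) < 2.0
```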

Large-Scale Structural Analysis Protocol

The integration of massive structural databases enables comprehensive analysis of protein structure space:

Dataset Curation: Collect non-redundant sequences from major protein structure databases (AFDB, ESMAtlas high-quality subset, MIP).

Structural Clustering: Eliminate structural redundancy using Foldseek with optimized parameters for each database, followed by cross-database clustering.

Functional Annotation: Annotate clusters using structure-based function prediction methods like deepFRI.

Representation Learning: Generate structural representations using Geometricus to embed protein structures into fixed-length shape-mer vectors.

Dimensionality Reduction: Project shape-mer features into two-dimensional structure space using PaCMAP.

Functional Localization: Identify regions of structure space enriched for specific biological functions and analyze complementarity between databases.
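The representation-learning step relies on turning each structure into a fixed-length vector. The sketch below assumes the shape-mer identifiers have already been computed (for example, with Geometricus) and are available as hypothetical integer tokens. It then builds a count-vector embedding and compares structures by cosine similarity. This is not Geometricus's own API.

```python
import math
from collections import Counter

def shapemer_vectors(structures):
    """Turn per-structure lists of discrete shape-mer IDs into fixed-length count vectors."""
    vocab = sorted({token for tokens in structures.values() for token in tokens})
    index = {token: k for k, token in enumerate(vocab)}
    vectors = {}
    for name, tokens in structures.items():
        vec = [0] * len(vocab)
        for token, count in Counter(tokens).items():
            vec[index[token]] = count
        vectors[name] = vec
    return vocab, vectors

def cosine(u, v):
    """Cosine similarity of two count vectors (0.0 if either is all zeros)."""
    dot = sum(a * b for a, b in zip(u, v))
    norm = math.sqrt(sum(a * a for a in u)) * math.sqrt(sum(b * b for b in v))
    return dot / norm if norm else 0.0

# Hypothetical precomputed shape-mer tokens for three models
vocab, vecs = shapemer_vectors({"m1": [3, 3, 7, 9], "m2": [3, 7, 9, 9], "m3": [12, 12, 15]})
print(vocab, vecs, cosine(vecs["m1"], vecs["m2"]))
```

Vectors of this kind can then be passed to a dimensionality-reduction method such as PaCMAP to obtain the two-dimensional structure-space projection.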

Research Reagents and Computational Tools

Table 2: Essential Research Reagents and Computational Tools

| Tool/Resource | Type | Function | Application Context |
|---|---|---|---|
| USPEX | Evolutionary Algorithm | Global optimization of protein conformations | Ab-initio structure prediction |
| AlphaFold2 | Deep Learning Network | End-to-end structure prediction from sequence | High-accuracy single/multi-chain prediction |
| RoseTTAFold | Three-Track Neural Network | Simultaneous 1D/2D/3D structure modeling | General biomolecular modeling |
| RFdiffusion | Diffusion Model | Protein backbone generation | De novo protein design |
| ProteinMPNN | Neural Network | Sequence design for backbone structures | Protein sequence optimization |
| ESM-2 | Transformer Model | Protein sequence embedding and generation | Sequence analysis and feature extraction |
| Foldseek | Structural Alignment | Fast protein structure comparison | Structural clustering and classification |
| Geometricus | Structural Embedding | Protein structure representation as shape-mers | Structural similarity analysis |
| deepFRI | Functional Annotation | Structure-based function prediction | Functional characterization |
| AlphaFold DB | Structure Database | Repository of precomputed AF2 predictions | Structure retrieval and analysis |

Integration and Future Perspectives

The integration of evolutionary algorithms with modern deep learning approaches represents a promising direction for advancing protein structure prediction. While evolutionary algorithms like USPEX excel at global optimization and finding deep energy minima, their performance is ultimately limited by the accuracy of force fields [3]. In contrast, deep learning methods like AlphaFold2 achieve remarkable accuracy but face challenges in generalization and interpretability [4].

The creation of unified structural landscapes that integrate data from multiple sources (AFDB, ESMAtlas, MIP) reveals significant complementarity between databases, with distinct regions of structure space occupied by different data sources while sharing common functional profiles [5]. This integrative approach enables biological questions to be asked about taxonomic assignments, environmental factors, and functional specificity across the entire protein structure universe [5].

Future developments will likely focus on improving accuracy for challenging targets including intrinsically disordered regions, multi-domain proteins, and protein-ligand complexes. The extension to non-protein biomolecules (DNA, RNA, ligands) as demonstrated by AlphaFold3 and RoseTTAFold All-Atom represents another important frontier [4]. As generative models like RFdiffusion become more sophisticated, the field is shifting from pure prediction to design, enabling the creation of novel proteins with tailored functions [6]. However, critical challenges remain in evaluating generated structures and ensuring they improve upon natural designs rather than merely replicating them [6].

Evolutionary Algorithms as a Search Strategy for the Conformational Landscape

Proteins are dynamic polymers that sample an astronomical number of possible conformations to perform their biological functions. The computational prediction of these three-dimensional structures from amino acid sequences represents one of the most challenging problems in structural biology, particularly for understanding protein function in drug discovery [7]. The conceptual framework for this challenge is often described through the Levinthal paradox, which highlights the contradiction between the vast conformational space proteins must theoretically sample and the rapid timescales on which they actually fold [7] [8]. While recent AI-based methods like AlphaFold2 have revolutionized the field by predicting static structures with remarkable accuracy, they face fundamental limitations in capturing the dynamic reality of proteins in their native biological environments [7] [8]. These machine learning methods primarily rely on experimentally determined structures from databases that may not fully represent the thermodynamic environment controlling protein conformation at functional sites [7].

Evolutionary Algorithms (EAs) offer a complementary approach by directly addressing the multi-basin, funnel-like topography of protein energy landscapes. Unlike methods that produce single static models, EAs can generate ensembles of structures that represent the thermodynamic stability and conformational heterogeneity essential for biological function, especially for proteins with flexible or intrinsically disordered regions [7] [9]. This technical guide examines the fundamental principles, methodologies, and applications of EAs for mapping conformational landscapes, providing researchers with a comprehensive framework for implementing these strategies in protein structure prediction and drug discovery.

Fundamental Principles and Algorithmic Design

Energy Landscape Theory and the Need for EAs

The protein energy landscape is characterized by a complex, high-dimensional surface with multiple local minima and energy barriers separating stable and semi-stable states. Proteins functionally switch between these thermodynamically stable states, and understanding these transitions is crucial for elucidating molecular mechanisms in health and disease [9]. The limitations of a strictly static interpretation of Anfinsen's dogma have become increasingly apparent: while the amino acid sequence determines the structure, the native biological environment significantly influences the conformations a protein adopts [7]. This realization creates substantial barriers to predicting functional structures solely through static computational means.

EAs excel in this context through their ability to balance exploration and exploitation of the nonlinear, multimodal landscapes that characterize multi-state proteins [9]. Where local optimization methods become trapped in single minima, EAs maintain a population of candidate solutions that collectively map multiple basins of attraction, providing a more comprehensive representation of the conformational ensemble.

Core Components of Evolutionary Algorithms for Conformational Search

Table 1: Core Components of Evolutionary Algorithms for Protein Structure Prediction

| Component | Implementation in Protein Folding | Biological Analogy |
|---|---|---|
| Representation | All-atom or coarse-grained models with torsion angles and spatial coordinates | Physical protein structure with atomic-level detail |
| Fitness Function | Physics-based force fields (e.g., PFF01) or knowledge-based potentials | Energetic favorability of folded state |
| Selection | Tournament or fitness-proportional selection favoring low-energy conformations | Natural selection pressure |
| Variation Operators | Crossover exchanging structural fragments; Mutation modifying torsion angles | Genetic recombination and point mutations |
| Population Management | Fixed-size populations with elitism and diversity preservation | Maintaining genetic diversity in biological populations |

The representation of protein conformations varies in resolution from all-atom models that explicitly include every atom to coarse-grained representations that group atoms into larger interaction centers. The fitness function, typically a physics-based force field like PFF01 validated for tertiary structure prediction, evaluates the thermodynamic stability of each candidate conformation [10]. Selection operators emulate natural selection by preferentially retaining low-energy structures, while variation operators introduce structural diversity through crossover and mutation operations that modify torsion angles and spatial arrangements [9] [10].
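The representation and variation operators in the table can be sketched with a residue-level (phi, psi) genome. The dataclass, the two-point fragment crossover, and the per-angle Gaussian mutation below are illustrative assumptions, not the encoding of any specific published EA. Because each residue's (phi, psi) pair is kept together, crossover points always fall on residue boundaries.

```python
import random
from dataclasses import dataclass, field
from typing import List, Tuple

Torsions = List[Tuple[float, float]]   # one (phi, psi) pair per residue, in degrees

def wrap(angle: float) -> float:
    """Map an angle in degrees onto [-180, 180)."""
    return (angle + 180.0) % 360.0 - 180.0

@dataclass
class Conformation:
    sequence: str
    torsions: Torsions
    energy: float = field(default=float("inf"))   # filled in later by the fitness function

def fragment_crossover(a: Conformation, b: Conformation, rng: random.Random):
    """Two-point crossover: swap one aligned backbone fragment between two parents."""
    n = len(a.torsions)
    i, j = sorted(rng.sample(range(n + 1), 2))
    child1 = a.torsions[:i] + b.torsions[i:j] + a.torsions[j:]
    child2 = b.torsions[:i] + a.torsions[i:j] + b.torsions[j:]
    return Conformation(a.sequence, child1), Conformation(a.sequence, child2)

def torsion_mutation(c: Conformation, rng: random.Random, rate=0.1, sigma=20.0):
    """Gaussian mutation applied to each phi and psi independently with probability `rate`."""
    new = []
    for phi, psi in c.torsions:
        if rng.random() < rate:
            phi = wrap(phi + rng.gauss(0.0, sigma))
        if rng.random() < rate:
            psi = wrap(psi + rng.gauss(0.0, sigma))
        new.append((phi, psi))
    return Conformation(c.sequence, new)
```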

Implementation Framework and Experimental Protocols

Evolutionary Mapping Algorithm Methodology

The evolutionary mapping algorithm employs a novel combination of global and local search to generate a dynamically-updated, information-rich map of a protein's energy landscape [9]. The protocol involves several key phases:

Initialization Phase: Generate an initial population of diverse conformations using fragment assembly, random torsion angle assignments, or templates from known structures if available. For the bacterial ribosomal protein L20, successful folding employed all-atom representations with an initial population of 500-1000 individuals [10].

Iterative Optimization Cycle:

- Fitness Evaluation: Score each conformation using the chosen force field (e.g., PFF01 for all-atom folding)

- Selection for Mating Pool: Implement tournament selection with size 2-3, preserving elitism

- Variation Operations: Apply geometric crossover (fragment exchange) and Gaussian mutation on torsion angles

- Local Refinement: Apply basin-hopping or short molecular dynamics to promising candidates

- Population Update: Replace least-fit individuals while maintaining diversity

Termination and Analysis: The algorithm terminates when convergence metrics stabilize or after a fixed number of generations (typically 100-500). The final population represents a map of low-energy regions in the conformational landscape [9] [10].
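The cycle above can be condensed into a small steady-state sketch on a hypothetical two-basin (phi, psi) landscape. It is not the published evolutionary mapping algorithm. The toy energy, the one-child-per-iteration scheme, the greedy local search standing in for basin hopping or molecular dynamics, and the niche radius are all assumptions. The final leader-clustering step turns the surviving population into a coarse map of low-energy basins.

```python
import math
import random

def angdiff(a, b):
    """Smallest signed difference between two angles, in degrees."""
    return (a - b + 180.0) % 360.0 - 180.0

def toy_energy(x):
    """Hypothetical two-basin (phi, psi) landscape standing in for a force field."""
    def well(center, depth):
        d2 = angdiff(x[0], center[0]) ** 2 + angdiff(x[1], center[1]) ** 2
        return -depth * math.exp(-d2 / (2 * 30.0 ** 2))
    return well((-60.0, -45.0), 1.0) + well((-120.0, 130.0), 0.9)

def evolutionary_map(energy, dim=2, pop_size=60, generations=300,
                     tour=3, sigma=15.0, niche=20.0, seed=0):
    rng = random.Random(seed)
    wrap = lambda a: (a + 180.0) % 360.0 - 180.0
    dist = lambda p, q: math.sqrt(sum(angdiff(a, b) ** 2 for a, b in zip(p, q)))
    pop = [[rng.uniform(-180.0, 180.0) for _ in range(dim)] for _ in range(pop_size)]
    for _ in range(generations):
        # tournament selection (size `tour`) of two parents
        a, b = (min(rng.sample(pop, tour), key=energy) for _ in range(2))
        # fragment-exchange crossover plus Gaussian torsion mutation
        child = [wrap(rng.choice((x, y)) + rng.gauss(0.0, sigma)) for x, y in zip(a, b)]
        # local refinement: short greedy search standing in for basin hopping / MD
        for _ in range(10):
            trial = [wrap(c + rng.gauss(0.0, sigma / 3)) for c in child]
            if energy(trial) < energy(child):
                child = trial
        # replacement: compete with the worst member of the child's niche,
        # or with the least-fit individual overall if the child opens a new niche
        near = [i for i, p in enumerate(pop) if dist(p, child) < niche]
        pool = near if near else range(pop_size)
        worst = max(pool, key=lambda i: energy(pop[i]))
        if energy(child) < energy(pop[worst]):
            pop[worst] = child   # only ever displaces a worse individual, so the best survives
    # analysis: greedy leader clustering of the final population into basins
    basins = []
    for p in sorted(pop, key=energy):
        for basin in basins:
            if dist(basin["rep"], p) < 2 * niche:
                basin["size"] += 1
                break
        else:
            basins.append({"rep": p, "energy": energy(p), "size": 1})
    return basins

for basin in evolutionary_map(toy_energy):
    print(basin)
```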

Quantitative Performance Metrics

Table 2: Key Metrics for Evolutionary Algorithm Performance Evaluation

| Metric | Description | Typical Range for Success |
|---|---|---|
| Native Content | Fraction of correctly predicted structural elements | >70% for high-accuracy prediction [10] |
| RMSD to Native | Root-mean-square deviation from experimental structure | <2 Å for core regions [10] |
| Energy Landscape Coverage | Number of distinct low-energy basins identified | Varies by protein flexibility |
| Convergence Generations | Number of iterations until stability | 100-500 generations [10] |
| Population Diversity | Structural variety maintained in final population | Critical for multi-state proteins [9] |

For the 60-amino-acid bacterial ribosomal protein L20, EA implementations achieved steady increases in native content across generations, with final populations containing numerous near-native conformations (RMSD <2Å) representing a significant fraction of the low-energy metastable conformations in the folding funnel [10]. Comparative studies with the basin-hopping technique for the Trp-cage protein demonstrated that the evolutionary algorithm generates a dynamic memory in the simulated population, leading to faster overall convergence [10].
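Definitions of "native content" vary between studies. One possible interpretation, used here purely for illustration, is the fraction of native helix and strand residues whose secondary-structure state is reproduced by a model. The three-state H/E/C alphabet and the 0.7 threshold (mirroring the table above) are assumptions.

```python
def native_content(predicted_ss, native_ss, states="HE"):
    """Fraction of native secondary-structure residues reproduced in a model.

    predicted_ss / native_ss: per-residue strings over H (helix), E (strand), C (coil),
    e.g. DSSP output reduced to three states. Only residues that are H or E in the
    native structure are scored.
    """
    if len(predicted_ss) != len(native_ss):
        raise ValueError("secondary-structure strings must have equal length")
    scored = [(p, n) for p, n in zip(predicted_ss, native_ss) if n in states]
    if not scored:
        return 0.0
    return sum(p == n for p, n in scored) / len(scored)

def population_native_content(population_ss, native_ss, threshold=0.7):
    """Per-model native content and the fraction of the population above `threshold`."""
    values = [native_content(ss, native_ss) for ss in population_ss]
    return values, sum(v > threshold for v in values) / len(values)

# Hypothetical 11-residue example
print(population_native_content(["CHHHHCCEEEC", "CHHHCCCEEEC", "CCCCCCCCCCC"], "CHHHHCCEEEC"))
```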

Research Reagents and Computational Tools

Table 3: Essential Research Reagents and Computational Tools

| Resource Type | Specific Tool/Resource | Function in Conformational Analysis |
|---|---|---|
| Force Fields | PFF01 [10] | All-atom free energy evaluation for tertiary structure prediction |
| Structure Databases | PDB, AFDB, ESMAtlas [8] [5] | Source of template structures and evolutionary constraints |
| Clustering Tools | Foldseek [5] | Structural similarity assessment and redundancy removal |
| Analysis Frameworks | Geometricus [5] | Protein structure representation via shape-mers for dimensionality reduction |
| Visualization | Custom web servers [5] | Exploration of structural landscapes and functional annotations |

The AlphaFold Protein Structure Database (AFDB) and ESMAtlas provide reference structures for validation and template-based initialization, though their static nature limits direct application to dynamic ensembles [8] [5]. Foldseek enables efficient structural clustering and redundancy removal from large conformational ensembles generated by EAs [5]. The Geometricus framework provides fixed-length shape-mer representations that facilitate structural comparisons and dimensionality reduction for visualizing high-dimensional conformational spaces [5].

Applications to Dynamic Proteins and Disease Variants

Mapping Multi-State Proteins and Dysfunctional Variants

The evolutionary mapping algorithm has been successfully applied to several dynamic proteins and their disease-implicated variants to illustrate its ability to map complex energy landscapes in a computationally feasible manner [9]. Comparison between the maps of wildtype and variant proteins allows for the formulation of a structural and thermodynamic basis for the impact of sequence mutations on dysfunction.

For proteins that switch between thermodynamically stable or semi-stable structural states to regulate their biological activity, EAs provide critical insights not available from single-structure predictions. The algorithm's balance between exploration and exploitation enables comprehensive mapping of the multi-basin energy landscapes characteristic of these dynamic proteins [9]. This approach has particular value for understanding intrinsically disordered proteins and proteins with flexible regions that cannot be adequately represented by single static models [7].

Integration with AI-Based Prediction Methods

While EAs provide distinct advantages for mapping conformational diversity, they can be integrated with AI-based prediction methods like AlphaFold2 to leverage their respective strengths. The static structures from AF2 can serve as starting points for EA exploration of conformational dynamics, particularly for functional states not well-represented in structural databases [7] [8]. This synergistic approach addresses the fundamental epistemological challenge that the machine learning methods used to create structural ensembles are based on experimentally determined structures under conditions that may not fully represent the thermodynamic environment controlling protein conformation at functional sites [7].

Future Directions in Drug Discovery

The application of EAs to conformational landscape mapping holds significant promise for drug discovery, particularly for targeting allosteric sites and understanding the structural consequences of disease mutations. By providing ensembles of structures rather than single models, EAs enable virtual screening against multiple conformational states, potentially identifying compounds that stabilize specific functional states or inhibit pathological conformations [7] [9].

Future developments will likely focus on improving the scalability of EAs for larger proteins and complexes, refining force fields for more accurate energy evaluation, and developing better metrics for assessing ensemble quality. As structural biology continues to recognize the importance of protein dynamics for function, evolutionary algorithms will play an increasingly vital role in bridging the gap between sequence and biological mechanism, ultimately enabling more effective therapeutic interventions guided by comprehensive conformational understanding [7] [9].

The application of Evolutionary Algorithms (EAs) to protein folding represents a sophisticated computational approach to one of biology's most fundamental challenges: predicting the three-dimensional native structure of a protein from its amino acid sequence. This problem remains daunting because the number of possible conformations grows exponentially with chain length; Levinthal's paradox famously contrasts this vast space with the speed at which real proteins fold [11]. EAs offer a powerful metaheuristic framework for navigating this vast conformational space efficiently by mimicking natural selection. Within this framework, three components form the algorithmic core: the population of candidate solutions, their representation or encoding, and the fitness function that evaluates their quality. When properly designed and implemented, these components enable researchers to sample protein conformations without exhaustive search, moving toward biologically functional structures through iterative improvement. This guide examines the technical implementation of these core components within the context of modern computational structural biology, providing researchers with both theoretical foundations and practical methodologies.

Core Component I: Population

Population Initialization and Management

In evolutionary algorithms for protein folding, the population refers to the set of candidate protein structures being evaluated and evolved throughout the optimization process. The initialization and management of this population critically impact the algorithm's ability to explore the conformational landscape effectively while avoiding premature convergence to local minima.

Population size represents a fundamental trade-off between diversity and computational expense. Research indicates that initial populations of approximately 200 individuals provide sufficient structural diversity to initiate the evolutionary process without imposing prohibitive computational costs [12]. This size balances the competing needs of capturing promising structural motifs while maintaining manageable runtime. The stochastic nature of evolutionary optimization means that smaller populations risk excessive homogeneity, while larger populations can introduce noise that hinders selective pressure and slows convergence.

Selection mechanisms determine which individuals proceed to subsequent generations. Maintaining approximately 25% of the population (50 individuals from an initial 200) between generations has demonstrated effective performance in benchmarks [12]. This selective pressure preserves the most promising structural elements while allowing sufficient diversity for continued exploration of the conformational space. Implementation typically involves tournament selection or fitness-proportional methods that favor individuals with lower energy scores while maintaining some less-fit candidates to preserve genetic diversity.
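The carryover scheme described above (about 50 survivors from 200) can be sketched as elitism plus tournaments without replacement. This favors low energies while still letting some less-fit candidates through. The elite count and tournament size are assumptions.

```python
import random

def select_survivors(population, energy, n_keep=50, n_elite=10, tour=4, seed=None):
    """Pick `n_keep` survivors: the `n_elite` lowest-energy individuals unchanged,
    then the rest by tournaments without replacement so less-fit candidates keep a chance."""
    rng = random.Random(seed)
    ranked = sorted(population, key=energy)
    survivors = ranked[:n_elite]               # elitism
    remaining = ranked[n_elite:]
    while len(survivors) < n_keep and remaining:
        contenders = rng.sample(remaining, min(tour, len(remaining)))
        winner = min(contenders, key=energy)   # lowest energy wins the tournament
        survivors.append(winner)
        remaining.remove(winner)               # without replacement
    return survivors

# Toy usage: individuals are their own energies
print(select_survivors(list(range(200)), lambda x: x, seed=0))
```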

Generational dynamics in protein folding EAs typically extend for 30 or more generations, with significant discoveries often emerging after approximately 15 generations [12]. The algorithm generally does not fully converge but rather continues discovering novel well-scored molecules even after hundreds of generations, though with diminishing returns. Consequently, researchers often employ multiple independent runs with different random seeds to explore diverse regions of the conformational landscape, as each run may unveil distinct structural motifs.

Table: Population Parameters in Protein Folding Evolutionary Algorithms

| Parameter | Typical Value | Functional Role | Impact of Deviation |
|---|---|---|---|
| Initial Population Size | 200 individuals | Provides initial structural diversity | Smaller: Limited exploration; Larger: Computational inefficiency |
| Generational Carryover | 25% (50/200) | Maintains selective pressure | Higher: Weaker selection, slower convergence; Lower: Premature convergence and loss of promising motifs |
| Generation Count | 30+ generations | Allows exploration-convergence balance | Fewer: Incomplete optimization; More: Diminishing returns |
| Independent Runs | 20+ runs | Explores diverse conformational regions | Fewer: Risk of missing optimal folds; More: Resource intensive |

Advanced Population Strategies

Sophisticated EA implementations for protein folding employ additional strategies to enhance population diversity and search efficiency. Niche techniques maintain subpopulations that explore different regions of the conformational landscape, preventing any single structural motif from dominating prematurely. Elitism preserves the best-performing individuals unchanged between generations, ensuring that high-quality solutions are not lost through stochastic operations. Migration policies in island models periodically exchange individuals between subpopulations, introducing novel structural elements that may combine beneficially with existing motifs.
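Island-model migration can be sketched in Python as follows. The ring topology and the rule that migrants overwrite the worst individuals of the receiving island are assumptions chosen for illustration. As long as the number of migrants is smaller than the island size, each island's best individual is never overwritten, which acts as a simple form of elitism.

```python
def migrate_ring(islands, energies, n_migrants=2):
    """Ring migration between subpopulations (islands).

    islands[k] is a list of individuals and energies[k] the matching list of
    scores (lower is better). The n_migrants best individuals of island k
    replace the n_migrants worst individuals of island k + 1 (wrapping
    around). Lists are modified in place and also returned.
    """
    n_islands = len(islands)
    # Choose all emigrants first so that the migration order does not matter.
    emigrants = []
    for pop, en in zip(islands, energies):
        best = sorted(range(len(pop)), key=lambda i: en[i])[:n_migrants]
        emigrants.append([(pop[i], en[i]) for i in best])
    for src in range(n_islands):
        dst = (src + 1) % n_islands
        pop, en = islands[dst], energies[dst]
        worst = sorted(range(len(pop)), key=lambda i: en[i], reverse=True)[:n_migrants]
        for slot, (individual, energy) in zip(worst, emigrants[src]):
            pop[slot] = individual
            en[slot] = energy
    return islands, energies
```

Migration is usually triggered only every few generations, so that each island can first refine its own structural motifs before exchanging them.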

The REvoLd algorithm exemplifies modern population management through its implementation of multiple reproduction steps [12]. By increasing crossover operations between fit molecules, the algorithm encourages recombination of promising structural elements. Additionally, introducing mutation steps that switch single fragments to low-similarity alternatives preserves well-performing regions while enabling exploration of novel conformations. A second round of crossover and mutation that excludes the fittest molecules allows poorer-scoring candidates to contribute potentially valuable structural information, maintaining diversity throughout the optimization process.
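The following Python sketch illustrates these reproduction ideas; it is not REvoLd's Rosetta implementation. It assumes, hypothetically, that each molecule is a tuple of building-block IDs (one per reaction slot), that `pools[k]` lists the building blocks allowed in slot k, and that similarity is a Tanimoto coefficient over feature sets standing in for chemical fingerprints. For brevity, every child is both recombined and mutated, whereas REvoLd applies crossover and mutation as separate steps.

```python
import random

def tanimoto(a, b):
    """Tanimoto similarity of two feature sets (stand-in for fingerprints)."""
    union = len(a | b)
    return len(a & b) / union if union else 1.0

def crossover(parent_a, parent_b, rng):
    """Uniform crossover: each slot's building block comes from one parent."""
    return tuple(rng.choice(pair) for pair in zip(parent_a, parent_b))

def low_similarity_mutation(molecule, pools, features, rng, max_sim=0.3):
    """Swap one building block for a dissimilar one from the same slot,
    leaving every other slot unchanged."""
    slot = rng.randrange(len(molecule))
    current = molecule[slot]
    candidates = [b for b in pools[slot]
                  if b != current and tanimoto(features[b], features[current]) <= max_sim]
    if not candidates:
        return molecule
    mutated = list(molecule)
    mutated[slot] = rng.choice(candidates)
    return tuple(mutated)

def reproduction_round(ranked, start, stop, n_children, pools, features, rng):
    """Breed n_children from ranked[start:stop] (ranked is best-first).

    A first round can draw on the fittest molecules (start=0); a second
    round can skip them (start > 0) so poorer-scoring molecules contribute.
    """
    parents = ranked[start:stop]
    children = []
    for _ in range(n_children):
        a, b = rng.sample(parents, 2)
        # Simplification: recombine, then mutate, every child.
        children.append(low_similarity_mutation(crossover(a, b, rng), pools, features, rng))
    return children
```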

Core Component II: Representation

Molecular Encoding Schemes

Representation encompasses the method for encoding protein structures within the evolutionary algorithm, significantly impacting the search efficiency and biological relevance of sampled conformations. An effective representation must balance biological realism with computational tractability, providing sufficient resolution to capture essential structural features while remaining amenable to evolutionary operations.

Lattice models offer a simplified discrete representation in which amino acids are positioned on a two- or three-dimensional grid. In the tetrahedral lattice model, for instance, each amino acid occupies a vertex with four neighbors, so each folding direction can be encoded with two qubits (00→0, 01→1, 10→2, 11→3) [13]. This representation reduces the continuous conformational space to discrete states, making exhaustive sampling more feasible. For a protein of N amino acids, the number of qubits required to describe the lattice directions is 2(N-3): the chain has N-1 bond directions, and the first two can be fixed because they only set the overall orientation of the molecule [13]. While sacrificing atomic-level precision, lattice models enable exploration of fundamental folding principles and large-scale conformational features.
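A minimal NumPy sketch of this encoding follows. It assumes the common convention that steps leaving one sublattice of the tetrahedral (diamond) lattice use the four vectors below and steps leaving the other sublattice use their negatives; fixing the first two direction codes to 1 and 0 is an illustrative assumption, not necessarily the convention used in [13]. Decoding a bitstring of length 2(N-3) yields the N-1 bond directions and the bead coordinates.

```python
import numpy as np

# Bond vectors of the tetrahedral (diamond) lattice. Steps taken from one
# sublattice use these vectors; steps from the other use their negatives.
TETRA = np.array([[1, 1, 1], [1, -1, -1], [-1, 1, -1], [-1, -1, 1]])
FIXED_FIRST_TWO = (1, 0)  # illustrative orientation-fixing choice (assumption)

def decode_bitstring(bits, n_residues):
    """Map 2(N-3) bits to the N-1 direction codes (00->0, 01->1, 10->2, 11->3)."""
    n_free = n_residues - 3
    if len(bits) != 2 * n_free:
        raise ValueError(f"expected {2 * n_free} bits, got {len(bits)}")
    free = [int(bits[2 * k:2 * k + 2], 2) for k in range(n_free)]
    return list(FIXED_FIRST_TWO) + free

def directions_to_coords(directions):
    """Bead coordinates, shape (N, 3), starting at the origin."""
    coords = [np.zeros(3, dtype=int)]
    for step, d in enumerate(directions):
        sign = 1 if step % 2 == 0 else -1  # alternate between the two sublattices
        coords.append(coords[-1] + sign * TETRA[d])
    return np.array(coords)

def is_self_avoiding(coords):
    """True if no two beads occupy the same lattice site."""
    return len({tuple(c) for c in coords}) == len(coords)
```

Under these conventions, two identical consecutive codes move the chain straight back onto the previous bead (for example, code 0 followed by code 0 gives +(1, 1, 1) and then -(1, 1, 1)). This is the backtracking that the geometric-constraint term Hgc is designed to penalize.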

Fragment assembly approaches represent proteins as combinations of structural fragments derived from known protein structures. These methods leverage the observation that local structural patterns recur frequently in protein databases. Because candidate conformations are assembled from fragments of experimentally determined structures, these representations inherently incorporate biophysically realistic local geometries. The encoding typically specifies backbone torsion angles or selects a predefined structural motif at each position along the chain.
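A fragment-insertion mutation on a torsion-angle representation can be sketched in NumPy as follows. The fragment library is a hypothetical dictionary mapping a start position to candidate (phi, psi) windows. It is a simplified stand-in for the picked fragment files used by real fragment-assembly tools.

```python
import numpy as np

def fragment_insertion(phi_psi, fragment_library, frag_len, rng):
    """Return a copy of phi_psi with one window replaced by a library fragment.

    phi_psi: (n_residues, 2) array of backbone (phi, psi) angles in degrees.
    fragment_library: hypothetical dict {start_position: [(frag_len, 2) arrays]}.
    rng: a NumPy Generator, e.g. np.random.default_rng(seed).
    """
    n = phi_psi.shape[0]
    # Only windows that fit entirely inside the chain are eligible.
    starts = [s for s in fragment_library if s + frag_len <= n]
    start = int(rng.choice(starts))
    fragments = fragment_library[start]
    fragment = fragments[int(rng.integers(len(fragments)))]
    child = phi_psi.copy()
    child[start:start + frag_len] = fragment  # overwrite the torsions of that window only
    return child
```

The parent array is never modified, so the same parent can be used to generate several independent offspring.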

Real-valued atomic coordinates provide the most detailed representation, encoding the explicit three-dimensional positions of atoms within the protein. While offering high fidelity, this representation dramatically increases the dimensionality of the search space, requiring sophisticated constraint handling to maintain realistic bond lengths, angles, and chirality. This approach often incorporates energy functions that account for van der Waals interactions, electrostatics, solvation effects, and hydrogen bonding.

Table: Protein Representation Methods in Evolutionary Algorithms

| Representation Scheme | Structural Resolution | Computational Complexity | Best-Suited Applications |
|---|---|---|---|
| Tetrahedral Lattice | Coarse-grained (Cα atoms) | Low (2(N-3) qubits for N residues) | Fundamental folding principles, large proteins |
| Fragment Assembly | Medium (local structure) | Moderate (database-dependent) | Homology modeling, loop prediction |
| Real-valued Coordinates | High (all-atom) | High (3N coordinates for N atoms) | Refined structure prediction, docking studies |
| Combinatorial Library | Variable (molecular level) | High (billions of compounds) | Ligand design, molecular docking [12] |

Representation in Modern Evolutionary Algorithms

Contemporary EA implementations often employ hybrid representations that combine multiple encoding schemes. In the related domain of ligand discovery, the REvoLd algorithm represents molecules in combinatorial chemical spaces through their synthetic building blocks and reaction pathways [12]. This representation maps directly onto make-on-demand compound libraries, ensuring that the proposed molecules are synthetically accessible.

The quantum computing approach to protein folding implements a specialized representation that maps the conformational problem to a quantum Hamiltonian [13]. The complete energy function incorporates both geometrical constraints (Hgc) that prevent chain backtracking and interaction terms (Hint) that favor biologically realistic contact formations. This representation enables the application of quantum approximate optimization algorithms (QAOA) to identify low-energy configurations, potentially offering computational advantages for certain problem classes.

Representation significantly influences the design of evolutionary operators. Mutation operations in fragment-based representations might substitute structural fragments with alternatives of similar sequence but different conformation. In lattice models, mutations typically modify directional assignments at specific positions, while real-valued representations require more sophisticated perturbation strategies that maintain physical constraints such as bond lengths and angles.
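For real-valued coordinates, one simple constraint-preserving perturbation adds Gaussian noise to a Cα trace and then rebuilds the chain so that every virtual bond keeps a fixed length. The sketch below assumes a Cα-only model with the approximately 3.8 Å Cα–Cα distance of trans peptides. It preserves bond lengths only: bond angles are not constrained, and steric clashes are not checked.

```python
import numpy as np

CA_CA = 3.8  # approximate Cα–Cα virtual-bond length for trans peptides, in Å

def perturb_ca_trace(coords, sigma, rng):
    """Gaussian perturbation of an (n, 3) Cα trace that restores bond lengths.

    The first atom stays fixed (assumption); every later atom is placed at
    distance CA_CA from its rebuilt predecessor, in the direction of its
    noisy position.
    """
    noisy = coords + rng.normal(scale=sigma, size=coords.shape)
    rebuilt = [coords[0].astype(float)]
    for i in range(1, len(noisy)):
        v = noisy[i] - rebuilt[-1]
        rebuilt.append(rebuilt[-1] + CA_CA * v / np.linalg.norm(v))
    return np.array(rebuilt)
```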

Core Component III: Fitness Evaluation

Energy Functions and Scoring Metrics

The fitness function serves as the objective function guiding the evolutionary search, quantitatively evaluating the quality of candidate protein structures. Effective fitness functions for protein folding must accurately distinguish native-like conformations from misfolded states, balancing computational efficiency with biological accuracy.

Physics-based energy functions derive from molecular mechanics principles, incorporating terms for bond stretching, angle bending, torsional energies, van der Waals interactions, electrostatics, and solvation effects. The Hamiltonian in quantum-inspired protein folding includes both geometrical constraints (Hgc) that prevent consecutive directions from folding back and interaction terms (Hint) that favor biologically realistic contact formations [13]. The Hgc term applies a substantial penalty (parameter L, typically 500) when two consecutive directions are identical, ensuring chain continuity without backtracking [13]. The Hint term incorporates an energy benefit (ε, typically -5000) for favorable amino acid interactions at appropriate distances, with penalty terms (L1=300, L2=500) discouraging unrealistic geometries [13].
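The geometric-constraint term can be evaluated classically for a given list of direction codes. This Python sketch assumes the penalty L = 500 is added once for each pair of consecutive identical codes. `same_code_bits` is an illustration only: it shows how the equality test for two 2-bit codes can be written as a product of factors of the form (1 - (x - y)²), the polynomial form needed when the test is expressed over binary (qubit) variables.

```python
def same_code_bits(code_a, code_b):
    """1 if two 2-bit direction codes are identical, else 0.

    Each factor (1 - (x - y) ** 2) is 1 when bits x and y agree and 0 when
    they differ, so the product is 1 only if both bits agree.
    """
    (a_hi, a_lo), (b_hi, b_lo) = code_a, code_b
    return (1 - (a_hi - b_hi) ** 2) * (1 - (a_lo - b_lo) ** 2)

def to_bits(direction):
    """Direction code 0..3 -> (high bit, low bit), i.e. 00, 01, 10, 11."""
    return (direction >> 1) & 1, direction & 1

def h_gc(directions, penalty=500):
    """Penalty L for every pair of consecutive identical direction codes."""
    return penalty * sum(same_code_bits(to_bits(a), to_bits(b))
                         for a, b in zip(directions, directions[1:]))
```

For example, `h_gc([1, 0, 0, 2, 2, 2])` contains three identical consecutive pairs and therefore evaluates to 1500.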

Knowledge-based scoring functions leverage statistical preferences derived from databases of known protein structures. These potentials typically include pairwise contact terms that favor amino acid interactions observed in native structures, solvation parameters that model the hydrophobic effect, and secondary structure propensities. Such functions effectively capture evolutionary constraints on protein folds without explicitly modeling physical chemistry.
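A knowledge-based pairwise contact term can be sketched as a table lookup summed over residue pairs in contact. The 8 Å cutoff, the minimum sequence separation of 3, and the energy values in the test data are placeholders, not a published statistical potential.

```python
import numpy as np

def contact_energy(sequence, coords, table, cutoff=8.0, min_sep=3):
    """Sum pair energies table[(a, b)] over residues i < j in contact.

    A pair counts if j - i >= min_sep and its distance is below cutoff (Å).
    The table is treated as symmetric: (a, b) and (b, a) are looked up
    interchangeably, and pairs missing from the table contribute 0.
    """
    coords = np.asarray(coords, dtype=float)
    energy = 0.0
    n = len(sequence)
    for i in range(n):
        for j in range(i + min_sep, n):
            if np.linalg.norm(coords[i] - coords[j]) < cutoff:
                a, b = sequence[i], sequence[j]
                energy += table.get((a, b), table.get((b, a), 0.0))
    return energy
```

The minimum sequence separation excludes trivially close neighbors along the chain, so that only contacts formed by folding are scored.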

Template-based similarity metrics evaluate candidates against known structural motifs, rewarding conformity to established fold families. These are particularly valuable in homology modeling applications where the target protein likely shares structural features with experimentally characterized relatives. Comparison methods might include root-mean-square deviation (RMSD) calculations, template modeling score (TM-score), or global distance test (GDT) metrics.
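RMSD is normally reported after optimal rigid-body superposition. The following compact NumPy version of the Kabsch algorithm assumes two equal-length (n, 3) arrays of corresponding atoms, for example Cα atoms.

```python
import numpy as np

def kabsch_rmsd(P, Q):
    """RMSD between two (n, 3) coordinate arrays after optimal superposition."""
    P = P - P.mean(axis=0)                   # remove translation
    Q = Q - Q.mean(axis=0)
    H = P.T @ Q                              # 3x3 covariance matrix
    U, _, Vt = np.linalg.svd(H)
    d = np.sign(np.linalg.det(Vt.T @ U.T))   # -1 would indicate a reflection
    D = np.diag([1.0, 1.0, d])
    R = Vt.T @ D @ U.T                       # proper rotation mapping P onto Q
    diff = P @ R.T - Q
    return float(np.sqrt((diff ** 2).sum(axis=1).mean()))
```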

Multiobjective Fitness Evaluation

Sophisticated EA implementations often employ multiobjective fitness evaluation that simultaneously optimizes several competing criteria. This approach acknowledges that biological fitness encompasses multiple structural and energetic factors beyond a single energy minimum. Common objective combinations include:

- Minimization of potential energy (stability)

- Maximization of similarity to known structural motifs

- Minimization of solvent-accessible surface area for hydrophobic residues

- Agreement of secondary structure with prediction algorithms

- Minimization of steric clashes
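When several of these objectives are optimized simultaneously, candidates are usually compared by Pareto dominance rather than by a single score. The following minimal sketch assumes that every objective has been expressed so that lower is better, for example by negating a similarity score.

```python
def dominates(a, b):
    """True if score vector a is no worse than b in every objective and
    strictly better in at least one (all objectives are minimized)."""
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))

def pareto_front(scores):
    """Indices of the non-dominated score vectors."""
    return [i for i, s in enumerate(scores)
            if not any(dominates(t, s) for j, t in enumerate(scores) if j != i)]
```

For example, with (energy, clash count) pairs `[(1, 5), (2, 2), (3, 3), (5, 1)]`, the candidate `(3, 3)` is dominated by `(2, 2)`, and the front consists of the other three.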

The REvoLd algorithm exemplifies modern fitness evaluation through its integration with flexible docking protocols [12]. Rather than relying on rigid docking, which can introduce errors in protein-ligand complex prediction, REvoLd employs the RosettaLigand flexible docking protocol, which accommodates both protein and ligand flexibility. Combined with its evolutionary search, this approach improved hit rates by factors between 869 and 1622 compared to random selection [12].

Table: Fitness Function Components in Protein Folding Evolutionary Algorithms

| Fitness Component | Mathematical Formulation | Biological Basis | Computational Cost |
|---|---|---|---|
| Geometric Constraints (H_gc) | L × (1 - (f_i - f_j)²) × (1 - (f_(i+1) - f_(j+1))²) | Prevents chain backtracking and ensures proper geometry | Low (scales linearly with chain length) |
| Interaction Energy (H_int) | ε + L1×(d(i,j)-1)² + L2×neighbor terms | Favors biologically realistic amino acid contacts | High (scales with N² potential interactions) |
| Solvation Effects | Based on solvent-accessible surface area | Models hydrophobic effect driving folding | Medium (depends on surface calculation method) |
| Knowledge-Based Terms | Statistical potentials from structure databases | Captures evolutionary constraints on fold space | Low to medium (database lookup) |

Integrated Experimental Protocol

Implementation Workflow

The effective integration of population, representation, and fitness components follows a structured experimental protocol. The following workflow outlines a comprehensive methodology for applying evolutionary algorithms to protein structure prediction, incorporating best practices from recent implementations.

Step 1: Problem Formulation and Representation Selection

- Define the target protein sequence and determine the appropriate representation scheme based on sequence length and research objectives

- For lattice models, establish the lattice type and resolution parameters

- For fragment-based approaches, select fragment libraries and assemble initial population

- For real-valued representations, establish boundary conditions and constraint handling methods

Step 2: Initial Population Generation

- Initialize population with 200 individuals using diverse construction methods [12]

- Apply random fragment assembly, lattice walk algorithms, or homology-derived models

- Ensure initial diversity by sampling structurally dissimilar starting conformations

- Validate initial conformations for physical realism (no steric clashes, reasonable geometry)

Step 3: Fitness Evaluation

- Calculate fitness scores for all individuals using the selected energy function

- For docking applications, employ flexible docking protocols like RosettaLigand [12]

- Parallelize fitness evaluation to distribute computational load

- Rank individuals based on fitness scores for selection operations

Step 4: Evolutionary Operations

- Select top 25% of individuals (50 from 200) for generational carryover [12]

- Apply crossover operations with increased frequency between fit molecules

- Implement mutation operators: fragment substitution, directional changes, or coordinate perturbations

- Introduce specialized mutations that switch fragments to low-similarity alternatives

- Execute second-round crossover and mutation excluding fittest molecules to maintain diversity

Step 5: Generational Advancement and Termination

- Advance the population through 30+ generations or until convergence criteria are met [12]

- Implement niche preservation techniques to maintain structural diversity

- Apply elitism to preserve best-performing individuals unchanged

- Execute multiple independent runs (20+) with different random seeds [12]

- Aggregate and analyze results across all runs to identify consensus folds
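Steps 2 to 5 can be condensed into the Python skeleton below. It is a generic sketch rather than the REvoLd protocol: the problem-specific functions are passed in, and the toy problem (chains of lattice direction codes scored by the number of consecutive identical codes) is a hypothetical stand-in for a real energy function. Survivors are carried over unchanged, which also provides elitism.

```python
import random

def run_ea(energy, random_individual, crossover, mutate, seed,
           pop_size=200, n_keep=50, n_generations=30):
    """One EA run: keep the n_keep lowest-energy individuals unchanged
    (elitist carryover) and refill the population with mutated offspring."""
    rng = random.Random(seed)
    population = [random_individual(rng) for _ in range(pop_size)]
    for _ in range(n_generations):
        survivors = sorted(population, key=energy)[:n_keep]
        children = []
        while n_keep + len(children) < pop_size:
            a, b = rng.sample(survivors, 2)
            children.append(mutate(crossover(a, b, rng), rng))
        population = survivors + children
    best = min(population, key=energy)
    return best, energy(best)

# Hypothetical toy problem: chains of 20 lattice direction codes (0..3);
# energy = number of consecutive identical codes (backtracking steps).
CHAIN = 20

def toy_energy(ind):
    return sum(a == b for a, b in zip(ind, ind[1:]))

def toy_random(rng):
    return [rng.randrange(4) for _ in range(CHAIN)]

def one_point_crossover(a, b, rng):
    cut = rng.randrange(1, len(a))
    return a[:cut] + b[cut:]

def point_mutation(ind, rng):
    child = list(ind)
    child[rng.randrange(len(child))] = rng.randrange(4)
    return child

# Independent runs with different seeds; compare the best folds across runs.
results = [run_ea(toy_energy, toy_random, one_point_crossover, point_mutation, seed=s)
           for s in range(3)]
```

In practice, energy evaluations dominate the runtime, so real implementations cache scores and evaluate offspring in parallel (Step 3); this sketch recomputes energies for brevity.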

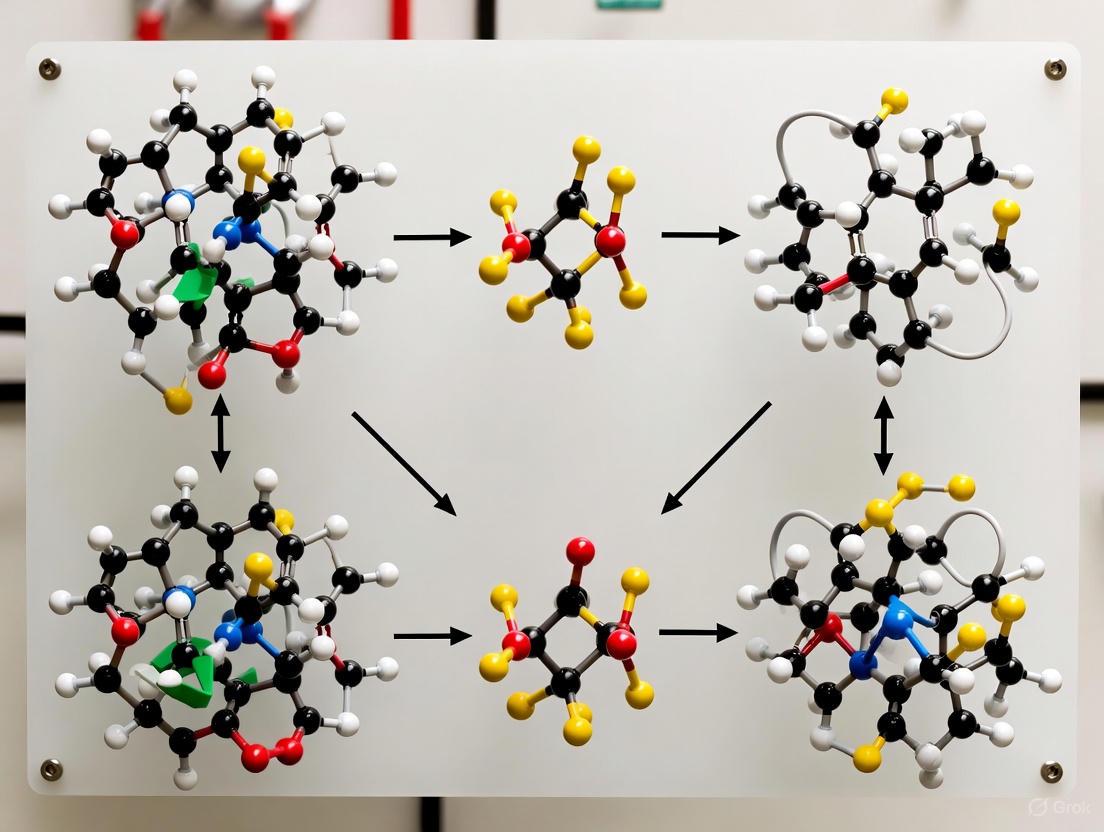

EA Workflow for Protein Folding

Validation and Analysis Protocols

Robust validation methodologies are essential for assessing the biological relevance of EA-derived protein structures. The following protocols provide a framework for evaluating prediction quality:

Structural Validation Metrics

- Calculate RMSD against experimental structures when available

- Compute TM-score for fold-level similarity assessment

- Analyze Ramachandran plot statistics for backbone torsion quality

- Assess steric clashes and structural outliers

- Evaluate residue-residue contact accuracy
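TM-score and GDT_TS can be sketched from per-residue distances, assuming the model has already been superimposed on the reference (for example, with a Kabsch fit) and that residues correspond one to one. Both official programs search over many superpositions and keep the best, so values computed from a single fixed superposition, as here, are lower bounds. The TM-score uses the length-dependent scale d0 = 1.24·(L - 15)^(1/3) - 1.8, floored at 0.5 Å.

```python
import numpy as np

def gdt_ts(model, reference):
    """GDT_TS (0-100) for one fixed superposition of two (n, 3) arrays."""
    d = np.linalg.norm(model - reference, axis=1)
    return 100.0 * float(np.mean([(d <= cutoff).mean() for cutoff in (1.0, 2.0, 4.0, 8.0)]))

def tm_score(model, reference):
    """TM-score for one fixed superposition, normalized by reference length."""
    n = len(reference)
    d0 = 1.24 * (n - 15) ** (1.0 / 3.0) - 1.8 if n > 15 else 0.5
    d0 = max(d0, 0.5)
    d = np.linalg.norm(model - reference, axis=1)
    return float(np.mean(1.0 / (1.0 + (d / d0) ** 2)))
```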

Statistical Significance Assessment

- Compare results against negative controls (decoy structures)

- Evaluate enrichment factors relative to random selection [12]

- Apply appropriate corrections for multiple hypothesis testing

- Assess convergence across independent runs
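The enrichment factor compares the hit rate among the selected candidates with the hit rate expected from random selection. Here is a minimal sketch; the numbers in the example are hypothetical, not data from [12].

```python
def enrichment_factor(hits_selected, n_selected, hits_total, n_total):
    """Hit rate of the selected subset divided by the hit rate of the whole library."""
    if n_selected == 0 or hits_total == 0:
        raise ValueError("need a non-empty selection and at least one hit in the library")
    return (hits_selected / n_selected) / (hits_total / n_total)

# Hypothetical example: 10 hits among 100 picks, 100 hits in a 1,000,000-compound library.
ef = enrichment_factor(10, 100, 100, 1_000_000)
```

In this example, the selection's hit rate (10%) is 1000 times the library-wide rate (0.01%).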

Biological Functional Analysis

- Map functional sites (catalytic residues, binding pockets)

- Assess conservation of structural motifs

- Evaluate druggability for pharmaceutical applications

- Analyze quaternary structure predictions

Research Reagent Solutions

Table: Essential Research Resources for Protein Folding Evolutionary Algorithms

| Resource Category | Specific Tools/Services | Primary Function | Access Information |
|---|---|---|---|
| Evolutionary Algorithm Software | REvoLd (RosettaEvolutionaryLigand) | Evolutionary optimization in combinatorial chemical space | Available within Rosetta suite [12] |
| Compound Libraries | Enamine REAL Space | Provides make-on-demand compounds for validation | Commercial library (20B+ compounds) [12] |
| Docking Protocols | RosettaLigand | Flexible protein-ligand docking with full atom flexibility | Part of Rosetta molecular modeling suite [12] |
| Quantum Optimization | Classiq QAOA Platform | Quantum-enhanced optimization for protein folding | Commercial quantum algorithm platform [13] |
| Structure Databases | Protein Data Bank (PDB) | Repository of experimentally determined structures | Public database (200,000+ structures) [8] |
| Predicted Structure Databases | AlphaFold Database (AFDB) | Repository of AI-predicted protein structures | Public database (200M+ predictions) [14] |
| Structural Search Tools | Foldseek Cluster | Rapid structural comparison and clustering | Algorithm for large-scale structural analysis [14] |
| Validation Resources | CASP Targets | Blind test datasets for method validation | Biennial competition with unpublished structures [8] |

The integration of population management, structural representation, and fitness evaluation forms the computational foundation for applying evolutionary algorithms to protein folding challenges. As demonstrated through implementations like REvoLd, modern EA approaches can achieve substantial enrichment factors (869-1622× improvement over random selection) when these core components are properly engineered [12]. The field continues to evolve, incorporating quantum-inspired optimization [13], flexible docking protocols [12], and ultra-large library screening [12]. By adhering to the protocols and using the research reagents outlined in this guide, researchers can leverage evolutionary algorithms to advance both the fundamental understanding of protein folding and practical applications in drug discovery and protein engineering.

The prediction of a protein's three-dimensional structure from its amino acid sequence remains one of the most significant challenges in computational biology and biophysics. Proteins, essential for virtually all biological processes, undertake vital activities including material transport, energy conversion, and catalytic reactions [15]. Their function is intrinsically determined by their three-dimensional native structure, which corresponds to a thermodynamically stable energy minimum under physiological conditions [15]. The fundamental problem of protein structure prediction focuses on the transformation from a linear amino acid sequence to a folded, functional three-dimensional structure [15] [16]. This process is complicated by the Levinthal paradox, which highlights the astronomical number of possible conformations a protein could theoretically sample, making it impossible to find the native structure through random search [15] [7]. Computational approaches to this problem have historically been categorized into three main paradigms: template-based modeling (TBM), template-free modeling (TFM), and ab initio methods [15]. TBM methods, including homology modeling and threading, rely on identifying known protein structures as templates and are highly accurate when homologous structures exist [15] [17]. In contrast, TFM and ab initio methods are required for proteins with no homologous templates, attempting prediction primarily from physicochemical principles and the amino acid sequence alone [15] [16]. Within this landscape, Evolutionary Algorithms (EAs) have emerged as powerful global optimization strategies for navigating the vast conformational space of protein structures, offering a unique approach to the ab initio and template-free modeling challenges.

Evolutionary Algorithms: Core Principles and Methodological Fit

Evolutionary Algorithms are population-based metaheuristic optimization techniques inspired by the process of natural selection. They operate through iterative cycles of selection, variation (crossover and mutation), and fitness-based reproduction. This fundamental approach makes them exceptionally well-suited for the complex, high-dimensional, and non-convex optimization landscape of protein structure prediction.

In the context of protein folding, EAs treat potential protein conformations as individuals in a population. The fitness of each individual is typically evaluated using a scoring function or force field that aims to approximate the thermodynamic stability of the conformation, often based on the principle that the native state resides at the global free energy minimum [17] [16]. The iterative application of variation and selection operators allows the population to explore the fitness landscape and converge toward low-energy, stable structures. The key advantage of EAs in this domain is their ability to escape local minima and perform a robust global search, which is crucial given the rugged nature of protein energy landscapes [3]. Furthermore, their population-based nature facilitates the exploration of multiple promising regions of conformational space simultaneously.

Recent advancements in EA methodologies for protein structure prediction have incorporated deeper problem information to guide the search more effectively. This includes the use of fragment insertion from known protein structures to promote realistic local geometries, the application of secondary structure predictions to constrain the search, and the utilization of predicted residue-residue contact maps to bias the folding pathway toward more probable conformations [18]. Additionally, techniques such as dynamic speciation have been employed to maintain population diversity and prevent premature convergence, a common pitfall in complex optimization problems [18].
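One simple way to implement speciation is shown here as a Python sketch; it is not necessarily the scheme used in [18]. Species are rebuilt every generation: individuals are visited from best to worst, and each joins the first species whose seed lies within a distance radius, or else founds a new species. The distance function (for example, RMSD between conformations) and the radius are assumptions supplied by the caller.

```python
def assign_species(population, energies, distance, radius):
    """Greedy speciation, recomputed every generation.

    Individuals are visited from lowest to highest energy; each joins the
    first existing species whose seed is within `radius`, otherwise it
    founds a new species. Returns {seed_index: [member indices]}.
    """
    order = sorted(range(len(population)), key=lambda i: energies[i])
    seeds, species = [], {}
    for i in order:
        for s in seeds:
            if distance(population[i], population[s]) <= radius:
                species[s].append(i)
                break
        else:  # no seed was close enough: start a new species
            seeds.append(i)
            species[i] = [i]
    return species
```

Selection and replacement can then be applied per species, for example by always keeping each species' seed, so that no single structural motif takes over the whole population.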

EAs in Practice: Key Algorithms and Experimental Protocols

Implemented Evolutionary Algorithms

Several specialized EAs have been developed and tested for protein structure prediction, demonstrating the practical application of the principles outlined above.

USPEX for Protein Structure Prediction: The evolutionary algorithm USPEX (Universal Structure Predictor: Evolutionary Xtallography), well-known in crystal structure prediction, has been extended to predict protein structures based on global optimization from the amino acid sequence [3]. In this implementation, protein structure relaxation and energy calculations are performed using molecular modeling packages like Tinker (with various force fields including Amber, Charmm, and Oplsaal) and Rosetta (with the REF2015 scoring function) [3]. The developers created novel variation operators specifically for proteins to generate new candidate structures within the evolutionary loop. Testing on proteins up to 100 residues in length demonstrated that USPEX could find structures with energies comparable to or lower than those produced by the established Rosetta AbInitio protocol [3]. A critical finding from this study, however, was that even when EAs successfully locate deep energy minima, the accuracy of the final prediction remains limited by the fidelity of the underlying force fields [3].

Fragment-Assisted EA with Problem Information: Another proposed EA uses a multi-faceted approach to leverage problem information [18]. This method employs:

- A dynamic speciation technique and fragment insertion to promote population diversity.

- A fragment library generated based on the Rosetta Quota protocol to ensure diversity in the building blocks.

- Information from contact maps and secondary structure, used in two selection strategies to better explore the conformational search space [18].

Experiments on nine proteins showed results competitive with the literature, evaluated using standard metrics such as Root-Mean-Square Deviation (RMSD) and Global Distance Test (GDT) [18].
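Predicted contacts can enter a fitness or selection criterion as the fraction of predicted residue pairs that are actually in contact in a candidate model; the sketch below shows one way to compute it. The 8 Å threshold follows the common CASP-style contact definition and is an assumption here, as is the choice of which atom (Cα or Cβ) the coordinates represent. The cited method [18] may use a different formulation.

```python
import numpy as np

def contact_satisfaction(coords, predicted_contacts, threshold=8.0):
    """Fraction of predicted residue pairs (i, j) closer than `threshold` Å
    in the model. coords: (n, 3) array of representative atom positions."""
    if not predicted_contacts:
        return 0.0
    hits = sum(np.linalg.norm(coords[i] - coords[j]) < threshold
               for i, j in predicted_contacts)
    return hits / len(predicted_contacts)
```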

REvoLd: An EA for Drug Discovery: Although it does not predict protein structures itself, the REvoLd (RosettaEvolutionaryLigand) algorithm exemplifies the successful application of EAs in a closely related domain: ultra-large library screening for drug discovery [12]. REvoLd efficiently explores the vast combinatorial space of "make-on-demand" chemical libraries for protein-ligand docking with full flexibility. Its protocol maintains a population of ligands, and individuals are selected for "crossover" (combining parts of different molecules) and "mutation" (swapping molecular fragments) based on their docking scores. This EA-based approach achieved enrichment factors of 869 to 1622 compared to random selection, demonstrating the power of evolutionary approaches in navigating complex biological configuration spaces [12].

Quantitative Performance Comparison

The table below summarizes the key characteristics and performance outcomes of the EA approaches discussed, alongside a benchmark deep learning method for context.

Table 1: Comparison of Evolutionary and Deep Learning Approaches in Protein Structure Prediction

| Method | Type | Key Features | Test Scope | Reported Outcome |
|---|---|---|---|---|
| USPEX-EA [3] | Ab Initio / TFM | Global optimization, novel variation operators, multiple force fields (Tinker, Rosetta REF2015) | 7 proteins (≤100 residues) | Found structures with close or lower energy vs. Rosetta AbInitio; accuracy limited by force fields. |
| Problem-Information EA [18] | TFM | Dynamic speciation, fragment library (Rosetta Quota), contact maps & secondary structure | 9 proteins | Competitive results in terms of RMSD, GDT, and processing time. |
| AlphaFold2 [19] | Deep Learning (TFM) | Deep neural networks, attention mechanisms, end-to-end learning | CASP14 competition (96 targets) | GDT_TS >90 (competitive with experiment) for ~2/3 of targets [19]. |

Detailed Workflow of a Typical EA for Protein Structure Prediction

The following diagram illustrates the generic workflow of an evolutionary algorithm applied to protein structure prediction, integrating components from the specific implementations described above.

Successful implementation of evolutionary algorithms for protein structure prediction relies on a suite of software tools, force fields, and data resources. The table below details key components of the research toolkit as evidenced in the cited studies.

Table 2: Essential Research Reagents and Computational Tools

| Tool/Resource | Type | Function in EA-based Prediction | Example Use |
|---|---|---|---|
| Rosetta Software Suite [3] [12] | Software Platform | Provides scoring functions (e.g., REF2015) and protocols for structure relaxation, folding, and docking. | Used in USPEX for energy evaluation [3]; REvoLd is built within it [12]. |
| Tinker [3] | Molecular Modeling | Performs protein structure relaxation and energy calculations using classical force fields. | Used in USPEX with Amber, Charmm, and Oplsaal force fields [3]. |
| Fragment Libraries [18] | Data Resource | Provides short protein structure segments used in variation operators to build realistic conformations. | Libraries generated via the Rosetta Quota protocol to increase diversity [18]. |
| Evolutionary Algorithm (USPEX) [3] | Algorithm | The core optimization engine that evolves populations of structures towards the energy minimum. | Customized with protein-specific variation operators for structure prediction [3]. |
| Force Fields (Amber, CHARMM, OPLS) [3] | Scoring Function | Physics-based potential functions to calculate the potential energy of a protein conformation. | Used by Tinker to evaluate fitness; a critical factor in prediction accuracy [3]. |
| Protein Data Bank (PDB) [15] | Data Resource | A repository of experimentally solved protein structures used for training, fragment extraction, and validation. | Source of known structures for fragment libraries and template-based modeling. |

EAs in the Post-AlphaFold Era: Challenges and Strategic Positioning

The advent of deep learning methods, particularly AlphaFold2, has dramatically reshaped the field of protein structure prediction. AlphaFold2's performance in the CASP14 competition was extraordinary, with models competitive with experimental accuracy for approximately two-thirds of the targets [19] [17]. This success has established a new baseline for accuracy, especially for proteins with homologous sequences in databases. However, this does not render EAs obsolete; rather, it redefines their strategic position within the computational biology toolkit.

Enduring Strengths and Niche Applications of EAs

Evolutionary Algorithms retain several key advantages in specific scenarios:

- Database Independence and Physical Principles: Deep learning models like AlphaFold2 are heavily dependent on the patterns and templates present in their training data (the PDB) [7]. They can struggle to predict structures of proteins that lack homologous counterparts or have novel folds that are poorly represented in the data [15]. EAs, relying on physicochemical principles and force fields, are not constrained by the limits of existing structural databases and are inherently designed for de novo exploration of conformational space. This makes them a crucial tool for investigating proteins with novel folds.

- Modeling Dynamics and Flexibility: A significant limitation of current AI approaches is that they produce single, static models, which fail to capture the dynamic reality of proteins in their native biological environments [7]. Proteins, especially those with flexible or intrinsically disordered regions, exist as ensembles of conformations. EAs, by their nature, evolve a population of solutions, which makes them well suited for sampling conformational ensembles and investigating protein flexibility, allostery, and folding pathways.

- Handling Unusual Systems: EAs can be applied to proteins under non-biological conditions (e.g., extreme pH or temperature) or to proteins containing non-canonical amino acids, provided the force field can represent them. In these regimes, training data for deep learning models are scarce.

Fundamental Challenges and Future Directions

Despite their strengths, EAs face fundamental challenges that must be addressed for them to remain competitive and valuable.

- Computational Cost: EAs typically require thousands to millions of energy evaluations, which can be computationally prohibitive for large proteins compared to the inference time of a trained neural network.

- Accuracy of Force Fields: As highlighted by the USPEX study, the accuracy of EA predictions is ultimately bounded by the quality of the force field or scoring function used [3]. Current force fields are not yet sufficiently accurate for reliable blind prediction without experimental verification [3].

- The Levinthal Paradox and Search Efficiency: While EAs are powerful global optimizers, the vastness of protein conformational space means that an exhaustive search is still impossible. Improving variation operators with deeper biological insights is crucial.

The future of EAs in this field likely lies in hybridization and specialization. One promising direction is the use of EAs for model refinement, where initial models from fast methods (like AlphaFold2) are further refined using evolutionary optimization with more sophisticated, physically detailed force fields. Another is the tight integration of EAs with experimental data from techniques like cryo-EM, NMR, or cross-linking mass spectrometry in a hybrid modeling approach to determine structures that are resistant to canonical methods. Furthermore, the development of EAs that are tightly integrated with deep learning potentials—where neural networks learn more accurate energy functions from quantum mechanical calculations or physical data—could combine the global search power of EAs with the accuracy of modern machine learning.

Evolutionary Algorithms have proven their mettle as robust and powerful tools for the ab initio and template-free prediction of protein structures. Their capacity for global optimization, ability to incorporate diverse problem information, and inherent suitability for exploring conformational ensembles secure them a durable, albeit evolved, position in the computational biology toolkit. While deep learning has set a new high-water mark for predictive accuracy on many targets, the fundamental limitations of data-driven approaches—particularly regarding novel folds, protein dynamics, and physical realism—create a persistent and vital niche for physics-based evolutionary approaches. The path forward is not one of replacement, but of synergy. The continued development of EAs, especially through hybridization with machine learning and closer integration with experimental data, will be essential for tackling the next frontiers in structural biology: understanding protein dynamics, characterizing disordered states, and designing novel proteins from first principles.

Implementing Evolutionary Algorithms: From Theory to Practice

The prediction of a protein's three-dimensional structure from its amino acid sequence represents one of the most challenging problems in computational biology and bioinformatics. This challenge stems from the astronomically vast conformational space that must be searched; even a small protein can adopt more possible conformations than there are atoms in the universe [20]. The protein structure prediction (PSP) problem is further complicated by the Levinthal paradox, which highlights that proteins fold reliably in microseconds to seconds despite the impossibility of randomly sampling all possible conformations [21]. For decades, researchers have sought computational methods to navigate this complex search space efficiently, leading to the adoption of evolutionary-inspired algorithms. Genetic Algorithms (GAs) and Multi-Objective Optimization (MOO) frameworks have emerged as powerful approaches for tackling PSP and related protein design problems by mimicking natural selection and evolution processes to explore conformational landscapes effectively [22] [23].

The integration of these algorithmic frameworks with modern deep learning has created particularly powerful hybrid tools. As one recent study notes, "With recent methodological advances in the field of computational protein design, in particular those based on deep learning, there is an increasing need for frameworks that allow for coherent, direct integration of different models and objective functions into the generative design process" [24]. This review comprehensively examines the key algorithmic frameworks of genetic algorithms and multi-objective optimization for protein structure prediction, providing researchers with both theoretical foundations and practical implementation guidance.

Algorithmic Foundations

Genetic Algorithms for Protein Structure Prediction

Genetic Algorithms belong to a broader class of evolutionary algorithms that mimic natural selection to solve optimization problems. In the context of protein structure prediction, GAs operate by maintaining a population of candidate conformations (individuals) that undergo simulated evolution through selection, crossover, and mutation operations [22] [25]. Each individual in the population encodes a particular conformation of a protein molecule, typically represented as strings or chromosomes that can be manipulated by genetic operators [22].
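As a concrete illustration of the representation and fitness components, the sketch below assumes the two-dimensional hydrophobic-polar (HP) lattice model, a widely used toy benchmark chosen here for brevity rather than taken from [22] or [25]. A conformation is encoded as a string of relative moves (`L` = turn left, `S` = straight, `R` = turn right). Decoding the string yields lattice coordinates, and fitness is the HP energy, which subtracts 1 for every pair of hydrophobic (`H`) residues that touch on the lattice without being chain neighbours.

```python
from typing import Dict, List, Optional, Tuple

Coord = Tuple[int, int]

# Unit steps in counterclockwise order: east, north, west, south.
DIRECTIONS: List[Coord] = [(1, 0), (0, 1), (-1, 0), (0, -1)]
# How each relative move changes the current heading index.
TURN: Dict[str, int] = {"L": 1, "S": 0, "R": -1}


def decode(moves: str) -> Optional[List[Coord]]:
    """Decode a relative-move string into 2D lattice coordinates.

    A chain of n residues needs n - 2 moves because the first bond is
    fixed to point east. Returns None if the walk revisits a lattice site.
    """
    coords: List[Coord] = [(0, 0), (1, 0)]
    occupied = set(coords)
    heading = 0  # index into DIRECTIONS; the first bond points east
    for move in moves:
        heading = (heading + TURN[move]) % 4
        dx, dy = DIRECTIONS[heading]
        x, y = coords[-1]
        nxt = (x + dx, y + dy)
        if nxt in occupied:
            return None  # self-intersection: not a valid conformation
        coords.append(nxt)
        occupied.add(nxt)
    return coords


def hp_energy(sequence: str, moves: str) -> Optional[int]:
    """HP energy (lower is better): -1 for each pair of H residues that are
    lattice neighbours but not chain neighbours. None if the move string is
    invalid or does not match the sequence length."""
    coords = decode(moves)
    if coords is None or len(coords) != len(sequence):
        return None
    position: Dict[Coord, int] = {c: i for i, c in enumerate(coords)}
    energy = 0
    for i, (x, y) in enumerate(coords):
        if sequence[i] != "H":
            continue
        for dx, dy in DIRECTIONS:
            j = position.get((x + dx, y + dy))
            # j > i + 1 counts each pair once and skips bonded neighbours
            if j is not None and j > i + 1 and sequence[j] == "H":
                energy -= 1
    return energy
```

For example, `hp_energy("HPPH", "LL")` returns `-1` because the chain folds into a square that brings residues 0 and 3 into contact, while a self-intersecting move string returns `None` so that a GA can penalise or discard it.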

The fundamental components of a GA for PSP include:

- Representation: How protein conformations are encoded in the algorithm

- Fitness Function: The energy function or scoring method that evaluates conformation quality

- Selection: The process of choosing individuals for reproduction based on fitness

- Crossover: Combining elements of parent conformations to create offspring

- Mutation: Introducing random changes to maintain diversity
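These components can be wired together in a minimal generational GA. This is a generic sketch, not the procedure of any cited study. Genomes are assumed to be relative-move strings over `L`, `S`, `R` (a lattice encoding common in early GA work), the caller supplies a higher-is-better fitness function, and the operators (tournament selection, one-point crossover, per-gene mutation, single-individual elitism) and parameter values are illustrative choices.

```python
import random
from typing import Callable, List

ALPHABET = "LSR"  # relative lattice moves: left, straight, right


def tournament(pop: List[str], scores: List[float], k: int, rng: random.Random) -> str:
    """Selection: return the best of k randomly drawn individuals (higher score wins)."""
    contenders = rng.sample(range(len(pop)), k)
    return pop[max(contenders, key=lambda i: scores[i])]


def one_point_crossover(a: str, b: str, rng: random.Random) -> str:
    """Crossover: prefix of one parent joined to the suffix of the other."""
    cut = rng.randint(1, len(a) - 1)
    return a[:cut] + b[cut:]


def point_mutation(genome: str, rate: float, rng: random.Random) -> str:
    """Mutation: each gene is resampled from ALPHABET with probability `rate`."""
    return "".join(rng.choice(ALPHABET) if rng.random() < rate else g for g in genome)


def run_ga(fitness: Callable[[str], float], length: int, pop_size: int = 40,
           generations: int = 60, k: int = 3, rate: float = 0.05,
           seed: int = 0) -> List[float]:
    """Minimal generational GA with single-individual elitism.

    `length` must be at least 2 so that crossover has a cut point.
    Returns the best fitness observed in each generation.
    """
    rng = random.Random(seed)
    pop = ["".join(rng.choice(ALPHABET) for _ in range(length)) for _ in range(pop_size)]
    history: List[float] = []
    for _ in range(generations):
        scores = [fitness(g) for g in pop]
        best = max(range(pop_size), key=lambda i: scores[i])
        history.append(scores[best])
        children = [pop[best]]  # elitism: carry the current best over unchanged
        while len(children) < pop_size:
            p1 = tournament(pop, scores, k, rng)
            p2 = tournament(pop, scores, k, rng)
            children.append(point_mutation(one_point_crossover(p1, p2, rng), rate, rng))
        pop = children
    return history
```

Elitism guarantees that the best score never decreases between generations. With a real energy function, `fitness` would typically return the negated energy, plus a large penalty for self-intersecting conformations.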

Early applications of genetic algorithms to protein structure prediction demonstrated potential, though reviewers noted that "more data are needed before a complete assessment can be made" [22] [25]. Subsequent research has refined these approaches, particularly through specialized representations and operators that incorporate domain knowledge about protein biochemistry and folding principles.

Multi-Objective Optimization Frameworks

Multi-objective optimization reformulates the PSP problem to simultaneously consider multiple, often conflicting objectives. Rather than seeking a single optimal solution, MOO identifies a set of Pareto-optimal solutions representing different trade-offs among objectives [23]. This approach better reflects the complex nature of protein folding, where different energy terms and structural constraints must be balanced.

The concept of Pareto optimality is central to MOO. A solution is Pareto optimal if no objective can be improved without worsening at least one other objective. The set of all Pareto-optimal solutions, mapped into objective space, forms the Pareto front, which represents the best achievable trade-offs among the competing objectives [23]. For protein structure prediction, this is particularly valuable because, as researchers have noted, "at any stage the molecule exists in an ensemble of conformations" rather than a single structure [23], so a set of trade-off solutions is a more natural output than a single optimum.
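The dominance relation behind this definition is short enough to state in code. The sketch assumes that every objective is minimised (for example, two separate energy terms) and returns the indices of the non-dominated points, that is, the current approximation of the Pareto front.

```python
from typing import List, Sequence


def dominates(a: Sequence[float], b: Sequence[float]) -> bool:
    """True if `a` Pareto-dominates `b` (all objectives minimised):
    a is no worse in every objective and strictly better in at least one."""
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))


def pareto_front(points: List[Sequence[float]]) -> List[int]:
    """Indices of the points that no other point dominates."""
    return [i for i, p in enumerate(points)
            if not any(dominates(q, p) for j, q in enumerate(points) if j != i)]
```

For the points `(1, 5)`, `(2, 3)`, `(3, 4)` and `(4, 1)`, only `(3, 4)` is dominated (by `(2, 3)`), so `pareto_front` returns `[0, 1, 3]`.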

The conflicting nature of different energy terms in protein folding provides a strong rationale for multi-objective approaches. As one study puts it, "the potential energy functions used in the literature to evaluate the conformation of a protein are based on the calculations of two different interaction energies: local (bond atoms) and non-local (non-bond atoms) and experiments have shown that those types of interactions are in conflict" [26]. This conflict makes multi-objective optimization, which can treat the two classes of interactions as separate objectives rather than as a single summed energy, particularly suitable for protein structure prediction.
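To make the local/non-local split concrete, the toy sketch below scores a coarse-grained C-alpha chain with two separate objectives: a harmonic bond-stretch term for local (bonded) interactions and a Lennard-Jones term for non-local (non-bonded) pairs. The functional forms, the sequence-separation cutoff and the parameter values are illustrative assumptions, not the energy function used in [26].

```python
import math
from typing import List, Tuple

Vec = Tuple[float, float, float]


def local_energy(coords: List[Vec], b0: float = 3.8, kb: float = 1.0) -> float:
    """Bonded (local) term: harmonic penalty on consecutive C-alpha distances.
    b0 = 3.8 Angstrom is the typical spacing between consecutive C-alpha atoms."""
    return sum(kb * (math.dist(coords[i], coords[i + 1]) - b0) ** 2
               for i in range(len(coords) - 1))


def nonlocal_energy(coords: List[Vec], sigma: float = 4.0, eps: float = 1.0,
                    min_sep: int = 3) -> float:
    """Non-bonded (non-local) term: Lennard-Jones over residue pairs at least
    `min_sep` apart in sequence. Assumes no two such residues coincide."""
    total = 0.0
    for i in range(len(coords)):
        for j in range(i + min_sep, len(coords)):
            sr6 = (sigma / math.dist(coords[i], coords[j])) ** 6
            total += 4.0 * eps * (sr6 * sr6 - sr6)
    return total


def objectives(coords: List[Vec]) -> Tuple[float, float]:
    """Both terms, to be minimised jointly rather than summed into one score."""
    return local_energy(coords), nonlocal_energy(coords)
```

Returning the two terms as a tuple rather than as their sum lets a multi-objective algorithm retain conformations that accept some bond strain in exchange for better non-bonded packing, and vice versa.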

Integrated GA-MOO Frameworks

The combination of genetic algorithms with multi-objective optimization creates powerful frameworks for navigating protein conformational spaces. These integrated approaches leverage the population-based search of GAs with the trade-off analysis capabilities of MOO. The Non-dominated Sorting Genetic Algorithm II (NSGA-II) has emerged as a particularly influential algorithm in this domain [24].

In NSGA-II applied to protein design, the algorithm maintains a population of candidate sequences or structures and uses non-dominated sorting to classify solutions into Pareto fronts [24]. Crowding distance computation helps maintain diversity along the Pareto front, while specialized genetic operators enable effective exploration of the sequence-structure space. These integrated frameworks can incorporate multiple biological objectives simultaneously, such as stability, binding affinity, and specificity, to produce designed proteins that balance competing requirements.
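The two mechanisms named above can be sketched in plain Python following the standard NSGA-II description. Fast non-dominated sorting peels the population into successive fronts, crowding distance measures how isolated each solution is within its front, and survivor selection fills the next population front by front, truncating the last front in favour of less crowded solutions. Minimisation of every objective is assumed, and the code is an illustrative sketch rather than the implementation used in [24].

```python
from typing import Dict, List, Sequence


def dominates(a: Sequence[float], b: Sequence[float]) -> bool:
    """Minimisation: a is no worse everywhere and strictly better somewhere."""
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))


def fast_non_dominated_sort(objs: List[Sequence[float]]) -> List[List[int]]:
    """Return fronts as lists of indices; fronts[0] is the non-dominated set."""
    n = len(objs)
    dominated_by: List[List[int]] = [[] for _ in range(n)]  # solutions p dominates
    counts = [0] * n                                        # how many dominate p
    fronts: List[List[int]] = [[]]
    for p in range(n):
        for q in range(n):
            if dominates(objs[p], objs[q]):
                dominated_by[p].append(q)
            elif dominates(objs[q], objs[p]):
                counts[p] += 1
        if counts[p] == 0:
            fronts[0].append(p)
    while fronts[-1]:
        nxt: List[int] = []
        for p in fronts[-1]:
            for q in dominated_by[p]:
                counts[q] -= 1
                if counts[q] == 0:  # every solution dominating q is already placed
                    nxt.append(q)
        fronts.append(nxt)
    return fronts[:-1]  # drop the trailing empty front


def crowding_distance(objs: List[Sequence[float]], front: List[int]) -> Dict[int, float]:
    """Crowding distance of each index in one front; boundary points get inf."""
    dist = {i: 0.0 for i in front}
    for m in range(len(objs[front[0]])):
        ordered = sorted(front, key=lambda i: objs[i][m])
        lo, hi = objs[ordered[0]][m], objs[ordered[-1]][m]
        dist[ordered[0]] = dist[ordered[-1]] = float("inf")
        if hi == lo:
            continue
        for k in range(1, len(ordered) - 1):
            gap = objs[ordered[k + 1]][m] - objs[ordered[k - 1]][m]
            dist[ordered[k]] += gap / (hi - lo)
    return dist


def select_survivors(objs: List[Sequence[float]], size: int) -> List[int]:
    """Environmental selection over the merged parent + offspring pool: take
    whole fronts, then fill the remainder by descending crowding distance."""
    chosen: List[int] = []
    for front in fast_non_dominated_sort(objs):
        if len(chosen) + len(front) <= size:
            chosen.extend(front)
        else:
            dist = crowding_distance(objs, front)
            front = sorted(front, key=lambda i: dist[i], reverse=True)
            chosen.extend(front[: size - len(chosen)])
            break
    return chosen
```

Keeping boundary solutions (infinite crowding distance) whenever a front must be truncated is what preserves the spread of the approximated Pareto front across generations.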

Current Methodologies and Experimental Protocols

Multi-Objective Evolutionary Approaches for Protein Structure Prediction

Recent advances in multi-objective evolutionary algorithms have addressed key limitations in protein structure prediction. The MultiSFold method exemplifies this progress by employing a distance-based multi-objective evolutionary algorithm specifically designed to predict multiple protein conformations [27]. This approach addresses a significant limitation of static structure prediction tools like AlphaFold2, which tend to represent single conformational states despite the dynamic nature of proteins in solution.

The MultiSFold protocol implements an iterative modal exploration and exploitation strategy with three key phases:

- Energy Landscape Construction: Multiple energy landscapes are built using different competing constraints generated by deep learning.

- Conformational Sampling: Multi-objective optimization, geometric optimization, and structural similarity clustering are combined to sample diverse conformations.

- Spatial Refinement: A loop-specific sampling strategy adjusts spatial orientations to generate the final population [27].
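The source describes MultiSFold only at this level of detail, so the following is a hypothetical illustration of the idea behind the first phase, not MultiSFold's energy function. It assumes that each deep-learning prediction arrives as a plain distance matrix and defines one objective per matrix: the mean squared deviation of a model's C-alpha distances from that map. A conformation that matches one state then scores well on one objective and poorly on the other, which is what makes the landscapes compete.

```python
import math
from typing import List, Sequence, Tuple

Vec = Tuple[float, float, float]


def distance_matrix(coords: Sequence[Vec]) -> List[List[float]]:
    """Pairwise C-alpha distances of one conformation."""
    return [[math.dist(a, b) for b in coords] for a in coords]


def restraint_violation(coords: Sequence[Vec], target: Sequence[Sequence[float]],
                        min_sep: int = 3) -> float:
    """Mean squared deviation from one predicted distance map over residue
    pairs at least `min_sep` apart (hypothetical scoring choice).
    Requires more than `min_sep` residues."""
    d = distance_matrix(coords)
    n = len(coords)
    pairs = [(i, j) for i in range(n) for j in range(i + min_sep, n)]
    return sum((d[i][j] - target[i][j]) ** 2 for i, j in pairs) / len(pairs)


def multi_state_objectives(coords: Sequence[Vec],
                           maps: Sequence[Sequence[Sequence[float]]]) -> Tuple[float, ...]:
    """One objective per competing distance map; all are to be minimised."""
    return tuple(restraint_violation(coords, m) for m in maps)
```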

In the reported benchmarks, MultiSFold achieved a 56.25% success ratio in predicting multiple conformations, compared with 10.00% for AlphaFold2 alone [27]. When tested on 244 human proteins with low structural accuracy in the AlphaFold database, it also improved TM-scores by 2.97% over AlphaFold2 and by 7.72% over RoseTTAFold [27].
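Because these gains are reported as TM-scores, it may help to recall how the score is computed. The sketch implements the standard TM-score formula for a model that is assumed to be already superposed on the native structure, with residue i of the model paired with residue i of the native. The 0.5 floor on d0 for short chains follows common practice. Full TM-score tools additionally search for the superposition that maximises the score, so this fixed-superposition value can only be equal to or lower than theirs.

```python
import math
from typing import Sequence, Tuple

Vec = Tuple[float, float, float]


def tm_score_fixed(model: Sequence[Vec], native: Sequence[Vec]) -> float:
    """TM-score for a fixed superposition and residue-by-residue pairing.

    TM = (1 / L) * sum_i 1 / (1 + (d_i / d0)^2), normalised by the native
    length L, with d0 = 1.24 * (L - 15)^(1/3) - 1.8 floored at 0.5.
    """
    L = len(native)
    # Guard L <= 15: a negative base raised to 1/3 would give a complex number.
    d0 = max(1.24 * (L - 15) ** (1.0 / 3.0) - 1.8, 0.5) if L > 15 else 0.5
    total = sum(1.0 / (1.0 + (math.dist(a, b) / d0) ** 2)
                for a, b in zip(model, native))
    return total / L
```

Identical coordinates give exactly 1.0, and each residue's contribution decays with its deviation on the length-dependent scale d0, which is why TM-score is less dominated by a few badly placed residues than RMSD is.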

Another innovative approach, the Modified Immune-inspired Pareto Archived Evolution Strategy (MI-PAES), incorporates immune-inspired operators to exploit knowledge about hydrophobic interactions, which represent one of the most important driving forces in protein folding [26]. This algorithm demonstrates comparable or better performance than canonical genetic algorithms in both solution quality and computational efficiency [26].
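Pareto archived evolution strategies keep an external archive of the mutually non-dominated solutions found so far. The sketch below shows only the archive update step, in simplified form. The immune-inspired, hydrophobicity-aware operators of MI-PAES [26] are not reproduced, and the archive size bound and adaptive crowding grid of the original PAES are omitted.

```python
from typing import List, Sequence, Tuple


def dominates(a: Sequence[float], b: Sequence[float]) -> bool:
    """Minimisation: a is no worse everywhere and strictly better somewhere."""
    return all(x <= y for x, y in zip(a, b)) and any(x < y for x, y in zip(a, b))


def update_archive(archive: List[Tuple[str, Tuple[float, ...]]],
                   candidate: str, objs: Tuple[float, ...]) -> bool:
    """Try to add (candidate, objs) to an archive of mutually non-dominated
    solutions, modifying `archive` in place. Returns True if accepted."""
    if any(dominates(o, objs) or o == objs for _, o in archive):
        return False  # dominated by, or a duplicate of, an archived solution
    # Evict every archived solution that the newcomer dominates.
    archive[:] = [(s, o) for s, o in archive if not dominates(objs, o)]
    archive.append((candidate, objs))
    return True
```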

Multi-Objective Frameworks for Protein Design

Beyond structure prediction, multi-objective evolutionary optimization has shown significant promise for protein design applications. Recent research has demonstrated how the NSGA-II algorithm can integrate multiple deep learning models, including AlphaFold2, ProteinMPNN, and ESM-1v, to guide the sequence design process [24].

In one implementation, researchers used AlphaFold2 and ProteinMPNN confidence metrics to define the objective space, while ESM-1v and ProteinMPNN were embedded into a mutation operator to rank and redesign the least favorable positions [24]. This approach was particularly valuable for challenging design problems such as the multistate design of the fold-switching protein RfaH, which adopts dramatically different conformational states (RfaHα and RfaHβ) [24].

The experimental protocol for this integrative design framework involves:

- Population Initialization: Creating a population of randomized protein sequences

- Objective Calculation: Scoring sequences against multiple states using AF2Rank and pMPNN log-likelihood

- Non-dominated Sorting: Classifying solutions into Pareto fronts

- Informed Mutation: Using ESM-1v to identify unfavorable positions for ProteinMPNN to redesign

- Iterative Improvement: Repeating the process across generations to approximate the Pareto front [24]
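The informed-mutation step can be sketched as a higher-order function: one callable scores every position, and a second redesigns the lowest-scoring ones. In the framework described in [24] these roles fall to ESM-1v and ProteinMPNN. The `toy_position_score` and `toy_redesign` functions below are hypothetical placeholders that call no real model, and the objective calculation and non-dominated sorting steps are left out.

```python
import random
from typing import Callable, List

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"


def informed_mutation(seq: str,
                      position_score: Callable[[str, int], float],
                      redesign: Callable[[str, List[int], random.Random], str],
                      n_positions: int,
                      rng: random.Random) -> str:
    """Rank positions with `position_score` (higher = more favourable) and
    pass the `n_positions` least favourable ones to `redesign`."""
    ranked = sorted(range(len(seq)), key=lambda i: position_score(seq, i))
    return redesign(seq, ranked[:n_positions], rng)


# --- hypothetical stand-ins, for illustration only ---
def toy_position_score(seq: str, i: int) -> float:
    """Placeholder scorer: treats hydrophobic residues as favourable."""
    return 1.0 if seq[i] in "AILMFVW" else 0.0


def toy_redesign(seq: str, positions: List[int], rng: random.Random) -> str:
    """Placeholder redesigner: resamples the chosen positions uniformly."""
    chars = list(seq)
    for i in positions:
        chars[i] = rng.choice(AMINO_ACIDS)
    return "".join(chars)
```

Restricting redesign to the worst-ranked positions keeps the rest of the sequence intact, which is the intended contrast with resampling a whole sequence at once.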

This approach yielded significant improvements over direct application of ProteinMPNN, with reduced bias and variance in native sequence recovery, particularly at positions where ProteinMPNN alone fails [24].

Quantitative Assessment of Algorithm Performance

Table 1: Performance Comparison of Multi-Objective Evolutionary Algorithms for Protein Structure Prediction and Design

| Algorithm | Application | Key Metrics | Performance | Reference |
|---|---|---|---|---|
| MultiSFold | Multiple conformation prediction | Success ratio for predicting multiple conformations | 56.25% (vs. 10.00% for AlphaFold2) | [27] |
| MultiSFold | Accuracy improvement on 244 human proteins with low structural accuracy in the AlphaFold database | TM-score improvement | 2.97% over AlphaFold2; 7.72% over RoseTTAFold | [27] |
| NSGA-II with informed operators | Multistate protein design | Native sequence recovery | Significant reduction in bias and variance compared with ProteinMPNN alone | [24] |
| MI-PAES | Protein structure prediction with immune-inspired operators | Search ability and computational time | Comparable to or better than a canonical GA | [26] |

Table 2: Key Biological and Topological Objectives in Multi-Objective Optimization for Protein Complex Detection

| Objective Type | Specific Metrics | Role in Multi-Objective Optimization | Reference |
|---|---|---|---|
| Topological Objectives | Modularity (Q), Conductance (CO), Expansion (EX), Cut Ratio (CR), Normalized Cut (NC), Internal Density (ID) | Evaluate the structural properties of detected complexes within PPI networks | [28] |
| Biological Objectives | Gene Ontology (GO) semantic similarity | Incorporate functional annotation to improve biological relevance of detected complexes | [28] |
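Several of the topological objectives in Table 2 have simple closed forms. The sketch computes four of them for one candidate complex in an undirected PPI network, using widely cited community-scoring definitions of internal density, expansion, cut ratio and conductance. Whether [28] uses exactly these normalisations is an assumption, and modularity, normalized cut and GO similarity are omitted.

```python
from typing import Dict, Iterable, Set, Tuple

Edge = Tuple[str, str]


def complex_scores(edges: Iterable[Edge], members: Set[str]) -> Dict[str, float]:
    """Community-quality scores for one candidate complex `members` in an
    undirected network given as an edge list (nodes are taken from the edges)."""
    edge_list = list(edges)
    n = len({u for e in edge_list for u in e})  # nodes in the network
    n_s = len(members)
    m_s = sum(1 for u, v in edge_list if u in members and v in members)   # internal edges
    c_s = sum(1 for u, v in edge_list if (u in members) != (v in members))  # boundary edges
    return {
        # fraction of possible internal edges that are present
        "internal_density": m_s / (n_s * (n_s - 1) / 2) if n_s > 1 else 0.0,
        # boundary edges per member node
        "expansion": c_s / n_s,
        # fraction of possible edges to the rest of the network that are present
        "cut_ratio": c_s / (n_s * (n - n_s)) if n > n_s else 0.0,
        # share of the complex's edge endpoints that leave the complex
        "conductance": c_s / (2 * m_s + c_s) if (m_s or c_s) else 0.0,
    }
```

For a triangle `a-b-c` attached by a single edge to the chain `c-d-e`, the complex `{a, b, c}` has internal density 1.0, expansion 1/3, cut ratio 1/6 and conductance 1/7: a dense, well-separated module, which is the profile these objectives reward (high density, low conductance).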

Implementation and Workflow Visualization

Experimental Workflows

The implementation of genetic algorithms and multi-objective optimization for protein structure prediction follows systematic workflows that integrate various computational models and optimization steps. The workflow below illustrates a generalized framework for multi-objective evolutionary protein design:

Figure: Multi-Objective Evolutionary Algorithm for Protein Design

NSGA-II Algorithm Structure

The NSGA-II algorithm provides the optimization engine for many contemporary protein design frameworks. Its specialized structure enables efficient approximation of Pareto fronts for multiple competing objectives:

Figure: NSGA-II Algorithm Structure for Multi-Objective Optimization
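Alongside survivor selection, the NSGA-II structure includes parent selection, which in the standard algorithm uses binary tournaments under the crowded-comparison operator. A solution in a better front wins, and within the same front the one with the larger crowding distance wins. The sketch assumes that front ranks and crowding distances have already been computed by the sorting step.

```python
import random
from typing import Sequence


def crowded_better(i: int, j: int, rank: Sequence[int], crowd: Sequence[float]) -> bool:
    """Crowded comparison: lower front rank wins; within the same front,
    the larger crowding distance (less crowded region) wins."""
    return rank[i] < rank[j] or (rank[i] == rank[j] and crowd[i] > crowd[j])


def binary_tournament(rank: Sequence[int], crowd: Sequence[float],
                      rng: random.Random) -> int:
    """Draw two distinct individuals at random and return the winner's index."""
    i, j = rng.sample(range(len(rank)), 2)
    return i if crowded_better(i, j, rank, crowd) else j
```

Because the tie-break favours isolated solutions, parents from sparse regions of the front are chosen more often, which pushes offspring toward unexplored trade-offs.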

Implementing genetic algorithms and multi-objective optimization for protein structure prediction requires specialized computational tools and resources. The table below summarizes key components of the research toolkit:

Table 3: Essential Computational Tools and Resources for Protein Structure Prediction Using Evolutionary Algorithms

| Tool/Resource | Type | Function in Workflow | Application Context |
|---|---|---|---|
| AlphaFold2 | Structure Prediction Model | Provides folding-propensity confidence metrics (AF2Rank) used as objective functions | Multistate design, stability optimization [24] |
| ProteinMPNN | Inverse Folding Model | Generates and evaluates sequences; embedded in the mutation operator | Sequence design, mutation operator [24] |
| ESM-1v | Protein Language Model | Ranks designable positions by predicted evolutionary fitness | Informing mutation operators, sequence evaluation [24] |
| NSGA-II | Multi-objective Evolutionary Algorithm | Core optimization framework for approximating Pareto fronts | General multi-objective optimization [24] |
| MultiSFold | Specialized MOEA Implementation | Distance-based multi-objective conformational sampling | Multiple conformation prediction [27] |
| MI-PAES | Immune-inspired Multi-objective Algorithm | Incorporates hydrophobic-interaction knowledge through immune-inspired operators | Structure prediction with specialized biological insights [26] |
| FS-PTO | Gene Ontology-based Mutation Operator | Enhances complex detection in PPI networks using functional similarity | Protein complex identification [28] |

Future Directions and Challenges

Despite significant advances, several challenges remain in the application of genetic algorithms and multi-objective optimization to protein structure prediction. A primary limitation is the computational expense of integrating multiple deep learning models, particularly AlphaFold2, in iterative optimization loops. Future work may develop more efficient surrogate models or distillation techniques to accelerate evaluation.
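One way such a surrogate could reduce the number of expensive calls is to pre-screen offspring with a cheap predictor and send only the most promising ones to the full evaluator. The sketch below is an assumption for illustration, not a method from the cited work. It uses a k-nearest-neighbour predictor over Hamming distance between equal-length sequences, treats lower scores as better, and caches every true evaluation; `expensive` stands in for a costly scorer such as an AlphaFold2-based objective.

```python
from typing import Callable, List, Sequence, Tuple


def hamming(a: str, b: str) -> int:
    """Number of differing positions between equal-length sequences."""
    return sum(x != y for x, y in zip(a, b))


def knn_predict(seq: str, evaluated: Sequence[Tuple[str, float]], k: int = 3) -> float:
    """Cheap surrogate: mean true score of the k nearest evaluated sequences.
    Requires at least one evaluated sequence."""
    nearest = sorted(evaluated, key=lambda item: hamming(seq, item[0]))[:k]
    return sum(score for _, score in nearest) / len(nearest)


def prescreen(offspring: List[str], evaluated: List[Tuple[str, float]],
              expensive: Callable[[str], float], budget: int,
              k: int = 3) -> List[Tuple[str, float]]:
    """Evaluate only the `budget` offspring the surrogate ranks best (lowest).
    New true evaluations are appended to `evaluated` so the surrogate improves."""
    cache = dict(evaluated)
    ranked = sorted(offspring, key=lambda s: knn_predict(s, evaluated, k))
    results = []
    for s in ranked[:budget]:
        if s not in cache:  # skip the expensive call for already-scored sequences
            cache[s] = expensive(s)
            evaluated.append((s, cache[s]))
        results.append((s, cache[s]))
    return results
```

The design trades some risk of discarding good offspring that the surrogate misjudges for a hard cap of `budget` expensive calls per generation.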

Another important frontier is better modeling of protein dynamics and conformational ensembles. While methods like MultiSFold represent progress in predicting multiple conformations [27], capturing the full breadth of functional dynamics remains challenging. Future frameworks may integrate molecular dynamics simulations with multi-objective evolutionary algorithms to better model temporal transitions between states.

The interpretability of predictive models also deserves attention. While deep learning models have dramatically improved accuracy, their complexity can limit biological insights. As noted in recent research, "protein genetics is actually both rather simple and intelligible" [20], suggesting opportunities for developing more interpretable multi-objective frameworks that balance accuracy with biological insight.

Finally, extending these approaches to larger protein systems and complexes will be essential for addressing biologically significant problems. Current methods show promise but face scalability challenges with large multi-domain proteins and assemblies. Algorithmic innovations in representation, decomposition, and parallelization will help address these limitations in future work.