Evolutionary Algorithms in Protein Design: Bridging AI and Synthetic Biology for Novel Therapeutics

Abstract

This article explores the transformative role of evolutionary algorithms (EAs) and artificial intelligence (AI) in de novo protein design, a field poised to revolutionize drug discovery and synthetic biology. We provide a comprehensive analysis for researchers and drug development professionals, covering the foundational principles of navigating the vast protein functional universe beyond natural evolutionary constraints. The article delves into cutting-edge methodological frameworks, including protein language models and multi-objective optimization, and their applications in creating novel enzymes, therapeutics, and biosensors. It further addresses critical challenges in optimization and troubleshooting, such as balancing exploration with convergence and ensuring synthetic accessibility. Finally, we examine rigorous validation paradigms and comparative performance of EA-driven approaches against traditional methods, synthesizing key takeaways and future directions for clinical and biomedical translation.

The Protein Universe and Evolutionary Constraints: Foundations for Computational Design

Defining the Vast Protein Functional Universe and Its Unexplored Potential

The endeavor to define the protein functional universe reveals a domain of staggering complexity and immense opportunity. The fundamental challenge in exploring this universe lies in the astronomical scale of protein sequence space. For a protein 300 amino acids long, there are 20³⁰⁰ (approximately 10³⁹⁰) possible sequences, a number that vastly exceeds the total number of atoms in the universe [1]. Despite this vastness, functional proteins are not randomly scattered: they cluster together within sequence space, which makes practical navigation and optimization possible [1]. This clustering principle underpins modern protein exploration strategies.
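These magnitudes are easiest to check in log space. The sketch below is a minimal Python illustration; the exponent of 80 for atoms in the observable universe is the commonly quoted order-of-magnitude estimate, and the helper names are hypothetical. It computes 20^L for 100- and 300-residue proteins without building the huge integer.

```python
import math

ATOMS_LOG10 = 80  # common order-of-magnitude estimate for atoms in the observable universe

def log10_sequence_space(length: int, alphabet_size: int = 20) -> float:
    """log10 of alphabet_size ** length, computed without building the huge integer."""
    return length * math.log10(alphabet_size)

def scientific(log10_value: float) -> tuple[float, int]:
    """Split a base-10 logarithm into (mantissa, exponent)."""
    exponent = math.floor(log10_value)
    return 10 ** (log10_value - exponent), exponent

for length in (100, 300):
    mantissa, exponent = scientific(log10_sequence_space(length))
    excess = log10_sequence_space(length) - ATOMS_LOG10
    print(f"L={length}: 20^{length} ~ {mantissa:.2f}e{exponent}, "
          f"about {excess:.0f} orders of magnitude beyond 1e{ATOMS_LOG10}")
```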

Current research also suffers from a pronounced bias: scientific inquiry has concentrated on a limited subset of disease-associated proteins, leaving many potentially important therapeutic targets overlooked [2]. This bias is compounded by the exploration bottleneck inherent in traditional methods. For instance, thousands of human proteins are modified by an unusual pair of enzymes, OGT and OGA, which are implicated in major diseases but remain poorly understood because of their atypical behavior and the lack of appropriate research tools [3]. Similarly, intrinsically disordered proteins (highly dynamic structural ensembles involved in various human diseases) represent a significant untapped resource in drug discovery, because current development processes are strongly biased toward structured proteins [4]. Overcoming these limitations requires computational approaches that can efficiently navigate the functional protein landscape and identify promising but under-explored regions.

Quantitative Landscape of Protein Function

Current Mapping of Protein Space

Systematic classification efforts have provided valuable frameworks for understanding protein structure and function. Traditional classification schemes have relied on sequence-based methods (e.g., Pfam), fold-domain approaches (e.g., CATH, SCOP), and more specialized methods focusing on functional surfaces [5] [6]. These complementary approaches have revealed that protein functions are distributed non-uniformly across the structural landscape.

The Protein Surface Classification (PSC) method offers a particularly insightful approach by focusing on local spatial regions that perform biological functions. This method has established a library of 1,974 surface types derived from approximately 28,986 bound forms, with the distribution of members across these types being highly uneven [5]. This uneven distribution reflects both biological reality and research bias, with only 502 surface types containing 10 or more members, and a mere 31 types containing 100 or more [5]. This skewed distribution highlights significant gaps in our functional characterization of the protein universe.

Table 1: Distribution of Proteins in Surface Type Classification

| Number of Members (Ns) in Surface Type | Number of Surface Types |
|---|---|
| Ns ≥ 100 | 31 |
| Ns ≥ 50 | 95 |
| Ns ≥ 10 | 502 |
| Total Surface Types | 1,974 |
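The rows of Table 1 are cumulative (each "≥" row includes the rows above it), so differencing them gives disjoint bins. A minimal sketch of that arithmetic, using only the counts in the table:

```python
# Cumulative counts from Table 1: surface types with at least N members.
TOTAL_TYPES = 1974
AT_LEAST = {100: 31, 50: 95, 10: 502}

# Differences between consecutive thresholds give disjoint bins.
bins = {
    "100 or more": AT_LEAST[100],
    "50-99": AT_LEAST[50] - AT_LEAST[100],
    "10-49": AT_LEAST[10] - AT_LEAST[50],
    "fewer than 10": TOTAL_TYPES - AT_LEAST[10],
}
for label, count in bins.items():
    print(f"{label:>13}: {count:5d} types ({100 * count / TOTAL_TYPES:.1f}%)")
```

Binned this way, 1,472 of the 1,974 surface types (about three quarters) have fewer than 10 members, which makes the skew described above concrete.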

The Untapped Functional Potential

Several specific protein families exemplify the untapped potential within the functional protein universe. The O-GlcNAc modification system, involving only two enzymes (OGT and OGA) that regulate thousands of human proteins, represents a particularly promising yet challenging frontier [3]. This system modifies at least 4,000 different proteins in the human body and is dysregulated in Alzheimer's disease, Type II diabetes, cardiovascular disease, and nearly every type of cancer [3]. Despite this broad relevance, the system defies conventional research approaches because OGT and OGA do not appear to follow standard sequence motif recognition rules, and there are currently no FDA-approved drugs targeting O-GlcNAc modification [3].

Similarly, intrinsically disordered proteins represent a significant unexplored frontier. Comprehensive analysis of the druggable human proteome reveals a substantial bias toward high structural coverage and low abundance of intrinsic disorder, despite the high disorder content of the human proteome overall and the involvement of disordered proteins in various human diseases [4]. This bias stems from heavy reliance on structural information in drug development and the difficulty of attaining structures for intrinsically disordered proteins, creating a significant gap in therapeutic exploration.

Table 2: Promising Yet Understudied Protein Systems

| Protein System | Estimated Scale | Disease Relevance | Research Challenges |
|---|---|---|---|
| O-GlcNAc Modification | Modifies ≥4,000 human proteins | Alzheimer's, cancer, diabetes, cardiovascular disease | Atypical sequence recognition; dynamic modification |
| Intrinsically Disordered Proteins | High abundance in human proteome | Various human diseases | Lack of fixed 3D structure; difficult to characterize |
| Understudied Biomedical Proteins | Identified through literature and interactome analysis | Multiple disease pathways | Research bias toward previously characterized targets |

Evolutionary Algorithms as Exploration Engines

Navigating Functional Landscapes

Evolutionary algorithms provide a powerful framework for navigating the vast, high-dimensional fitness landscapes of protein function. A protein fitness landscape represents protein sequences as positions in a multidimensional space, with fitness (the desired function) represented as elevation [1]. These landscapes are not smooth but rugged and epistatic: the effect of a mutation often depends on higher-order interactions with other residues rather than being purely additive [1]. This ruggedness arises from structural contacts, allostery, conformational dynamics, and interactions with ligands or cofactors, producing a complex fitness topography with multiple local optima.
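One common way to quantify pairwise epistasis is to compare a double mutant with the additive expectation from its two single mutants, assuming fitness is expressed on an additive scale such as log-transformed activity. The sketch below uses hypothetical fitness values chosen to show reciprocal sign epistasis, the situation in which greedy single-mutation steps lead nowhere even though a better double mutant exists.

```python
def pairwise_epistasis(f_wt: float, f_a: float, f_b: float, f_ab: float) -> float:
    """Deviation of a double mutant from the additive expectation f_a + f_b - f_wt.

    Assumes fitness is on an additive scale, e.g. log-transformed activity.
    """
    return f_ab - (f_a + f_b - f_wt)

# Hypothetical log-fitness values: each single mutation is deleterious alone,
# yet the double mutant beats wild type (reciprocal sign epistasis).
eps = pairwise_epistasis(f_wt=0.0, f_a=-0.5, f_b=-0.4, f_ab=0.6)
print(f"epistasis = {eps:+.2f}")

# A greedy single-mutation climber starting at wild type sees only downhill steps.
print("greedy step available:", max(-0.5, -0.4) > 0.0)
```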

The evolutionary approach mimics natural selection while dramatically accelerating the process through intelligent sampling. Rather than exhaustively screening all possible variants—a computationally impossible task for even moderately sized proteins—evolutionary algorithms iteratively generate and test populations of sequences, applying selection pressure to favor beneficial mutations and combinations. This approach effectively navigates the fitness landscape by taking greedy uphill steps toward fitness peaks while maintaining sufficient diversity to avoid becoming trapped in suboptimal local maxima [1].

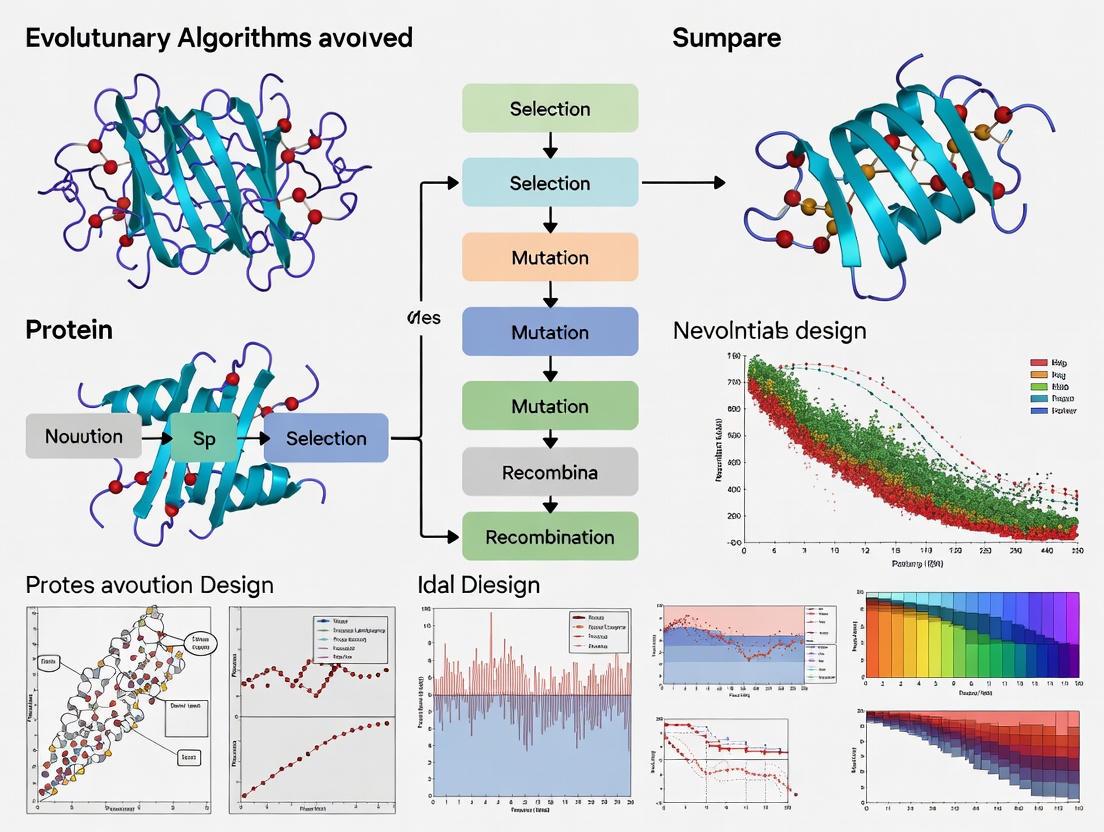

Diagram 1: Evolutionary Algorithm Cycle. This workflow illustrates the iterative process of evolutionary optimization for protein engineering.

Advanced Implementation: REvoLd and SEWING

Recent advances in evolutionary algorithms have demonstrated remarkable efficiency in exploring ultra-large protein and chemical spaces. The RosettaEvolutionaryLigand (REvoLd) algorithm represents a cutting-edge approach designed specifically for screening ultra-large make-on-demand compound libraries containing billions of readily available compounds [7]. REvoLd exploits the combinatorial nature of these libraries by searching the synthetic building block space rather than enumerating all possible molecules, enabling efficient exploration without exhaustive screening.

In benchmark studies across five drug targets, REvoLd achieved improvements in hit rates by factors between 869 and 1622 compared to random selection, while docking only between 49,000 and 76,000 unique molecules per target—a tiny fraction of the billions of compounds in the screening library [7]. This dramatic enrichment demonstrates the algorithm's ability to efficiently navigate vast combinatorial spaces and identify promising regions with minimal sampling.
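Because make-on-demand libraries are built from reactions and building-block lists, the number of enumerable products is the sum, over reactions, of the product of the slot sizes, while a search in building-block space only handles the much shorter lists. The sketch below uses a hypothetical two-reaction library (sizes are illustrative and describe no real catalogue) and then computes the screened fraction implied by the REvoLd docking counts quoted above against 20 billion compounds.

```python
from math import prod

# Hypothetical make-on-demand library: each reaction has building-block slots,
# and each number is how many reagents fit that slot (illustrative sizes only).
REACTIONS = {
    "two_component": [150_000, 120_000],
    "three_component": [2_000, 3_000, 1_500],
}

def library_size(reactions: dict[str, list[int]]) -> int:
    """Products an exhaustive screen would have to enumerate and dock."""
    return sum(prod(slots) for slots in reactions.values())

def building_block_count(reactions: dict[str, list[int]]) -> int:
    """Entries an evolutionary search in building-block space manipulates."""
    return sum(sum(slots) for slots in reactions.values())

print(f"{library_size(REACTIONS):,} products from "
      f"{building_block_count(REACTIONS):,} building-block entries")

# Screened fraction for the REvoLd docking counts, against a 20-billion-compound library.
for docked in (49_000, 76_000):
    print(f"{docked:,} docked -> {100 * docked / 20e9:.6f}% of the library")
```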

Complementary to ligand-focused approaches, the SEWING (Structure Extension With Native-substructure Graphs) protocol addresses the challenge of protein backbone design [8]. SEWING performs requirement-driven protein design by assembling novel protein backbones from fragments of naturally occurring proteins, then applying Rosetta-based sequence optimization and backbone refinement. This approach enables the creation of proteins that satisfy specific functional requirements rather than adopting predetermined folds, which is particularly valuable for designing ligand-binding sites and protein-protein interfaces [8].

Table 3: Performance Metrics of Evolutionary Algorithms

| Algorithm | Application Domain | Library Size | Screened Fraction | Hit Rate Improvement |
|---|---|---|---|---|
| REvoLd | Ligand docking | >20 billion compounds | ~0.0003% | 869-1622x vs. random |
| SEWING | Protein backbone design | Combinatorial fragment space | Not quantified | Successful novel helical bundles |

Experimental Protocols and Methodologies

REvoLd Protocol for Ultra-Large Library Screening

The REvoLd protocol implements an evolutionary algorithm for protein-ligand docking within the Rosetta software suite. The method performs flexible docking of both ligand and receptor using RosettaLigand, exploring the combinatorial make-on-demand chemical space through iterative generations of selection, crossover, and mutation [7].

Initialization and Hyperparameters:

- Begin with a random population of 200 ligands constructed from available building blocks and reactions

- Advance 50 individuals to each subsequent generation to balance diversity and selection pressure

- Run for 30 generations to optimize the trade-off between convergence and exploration

- Conduct multiple independent runs (typically 20) to sample different regions of chemical space

Reproduction Mechanics:

- Implement crossover operations that recombine well-performing molecular fragments

- Apply mutation steps that switch single fragments to low-similarity alternatives

- Include reaction-switching mutations that change the core reaction while preserving similar fragments

- Incorporate a second round of crossover and mutation excluding the fittest molecules to promote diversity

Validation and Output:

- Dock each proposed compound using flexible RosettaLigand protocol

- Select top-performing compounds for experimental validation

- The algorithm typically identifies hit-like molecules within 15 generations, with continued discovery through extended runs [7]
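The generational loop above can be sketched as a toy genetic algorithm using the listed hyperparameters (population 200, 50 survivors, 30 generations). This is a minimal illustration, not REvoLd's implementation: the two-reaction space, `toy_score` (a stand-in for a RosettaLigand docking score, lower is better), and all function names are hypothetical. The similarity-aware mutations and the second diversity-promoting round of crossover are simplified away.

```python
import random

# Hypothetical toy space: reaction name -> number of building blocks per slot.
SLOT_SIZES = {"rxn_A": [40, 40], "rxn_B": [20, 20, 20]}

def random_molecule(rng):
    rxn = rng.choice(sorted(SLOT_SIZES))
    return (rxn, tuple(rng.randrange(n) for n in SLOT_SIZES[rxn]))

def toy_score(mol):
    """Stand-in for a docking score (lower is better); not a RosettaLigand score."""
    rxn, frags = mol
    target, penalty = (7, 0) if rxn == "rxn_A" else (3, 5)
    return penalty + sum((f - target) ** 2 for f in frags)

def crossover(a, b, rng):
    """Recombine fragments of two parents that share the same reaction."""
    if a[0] != b[0]:
        return a
    return (a[0], tuple(rng.choice(pair) for pair in zip(a[1], b[1])))

def mutate(mol, rng, reaction_switch_rate=0.1):
    rxn, frags = mol
    if rng.random() < reaction_switch_rate:
        return random_molecule(rng)  # simplified reaction switch: redraw everything
    new = list(frags)
    i = rng.randrange(len(new))
    new[i] = rng.randrange(SLOT_SIZES[rxn][i])  # single-fragment switch
    return (rxn, tuple(new))

def evolve(pop_size=200, survivors=50, generations=30, seed=0):
    rng = random.Random(seed)
    population = [random_molecule(rng) for _ in range(pop_size)]
    scores = {}  # every unique molecule is "docked" (scored) only once
    for _ in range(generations):
        for mol in population:
            if mol not in scores:
                scores[mol] = toy_score(mol)
        unique = list(dict.fromkeys(population))  # order-preserving de-duplication
        parents = sorted(unique, key=scores.get)[:survivors]
        children = []
        while len(children) < pop_size - len(parents):
            a, b = rng.sample(parents, 2)
            children.append(mutate(crossover(a, b, rng), rng))
        population = parents + children
    for mol in population:
        scores.setdefault(mol, toy_score(mol))
    best = min(population, key=scores.get)
    return best, scores[best], len(scores)

best, score, n_docked = evolve()
print(best, score, n_docked)
```

Caching scores by molecule mirrors the point made in the benchmark: only the unique molecules actually proposed are ever docked, which is a tiny subset of the enumerable space.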

SEWING Protocol for Requirement-Driven Protein Design

The SEWING protocol enables requirement-driven protein design through a fragment assembly approach implemented in Rosetta. This method is particularly valuable for creating proteins with specific functional capabilities without constraining the overall fold [8].

Substructure Database Preparation:

- Extract supersecondary structure elements (e.g., helix-loop-helix motifs) from native proteins in the PDB

- Currently compatible with helical substructures (beta strands may be present but cannot be hybridized)

- Store substructures in a searchable database for the assembly process

Monte Carlo Assembly Process:

- Specify design requirements as filters or score terms (e.g., metal coordination geometry)

- Begin with a starting substructure or random initial fragment

- Perform Monte Carlo simulation with add, delete, and switch moves for a minimum of 10,000 cycles

- Use temperature cooling from start_temperature to end_temperature during the assembly

- Apply a window_width of 4 residues (approximately one helical turn) for fragment alignment
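The Monte Carlo assembly can be illustrated with a toy simulated-annealing loop that uses add, delete, and switch moves and cools from a start to an end temperature. This is a hedged sketch, not the Rosetta SEWING code: the fragment library, the requirement score (a target length plus a required "site" fragment), the linear cooling schedule, and all names are assumptions, and real SEWING additionally superimposes overlapping helices over window_width residues and checks geometry, which is ignored here.

```python
import math
import random

# Hypothetical fragment library: (name, length in residues, carries the required site?).
FRAGMENTS = [(f"hlh_{i}", 18 + 3 * (i % 6), i % 7 == 0) for i in range(40)]

def assembly_score(assembly, target_length=120, missing_site_penalty=50):
    """Toy requirement score (lower is better): hit a target length and include a site."""
    total = sum(FRAGMENTS[i][1] for i in assembly)
    has_site = any(FRAGMENTS[i][2] for i in assembly)
    return abs(total - target_length) + (0 if has_site else missing_site_penalty)

def propose(assembly, rng):
    """Apply one add, delete, or switch move to a copy of the assembly."""
    new = list(assembly)
    move = rng.choice(("add", "delete", "switch"))
    if move == "add":
        new.append(rng.randrange(len(FRAGMENTS)))
    elif move == "delete" and len(new) > 1:
        new.pop(rng.randrange(len(new)))
    else:  # switch; also used when a delete would leave the assembly empty
        new[rng.randrange(len(new))] = rng.randrange(len(FRAGMENTS))
    return new

def assemble(cycles=10_000, start_temperature=10.0, end_temperature=0.1, seed=1):
    rng = random.Random(seed)
    current = [rng.randrange(len(FRAGMENTS))]
    current_score = assembly_score(current)
    best, best_score = list(current), current_score
    for step in range(cycles):
        # Linear cooling (an assumption; Rosetta's schedule may differ).
        frac = step / max(cycles - 1, 1)
        temperature = start_temperature + (end_temperature - start_temperature) * frac
        candidate = propose(current, rng)
        delta = assembly_score(candidate) - current_score
        # Metropolis criterion: always accept improvements, sometimes accept worse moves.
        if delta <= 0 or rng.random() < math.exp(-delta / temperature):
            current, current_score = candidate, current_score + delta
            if current_score < best_score:
                best, best_score = list(current), current_score
    return best, best_score

best_assembly, best_score = assemble()
print([FRAGMENTS[i][0] for i in best_assembly], best_score)
```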

Sequence Design and Refinement:

- Generate at least 10,000 backbone assemblies using SEWING

- Select the top 10% by total SEWING score for further refinement

- Perform rotamer-based sequence optimization using Rosetta's fixed-backbone design protocols

- Apply quality metrics (packing statistics, interface scores, etc.) to select final sequences for experimental characterization

- The entire process requires approximately 100 CPU-hours for backbone generation and 500-1000 CPU-hours for refinement of 1000 assemblies [8]

Integration with Deep Learning Approaches

RFdiffusion for De Novo Protein Design

The integration of evolutionary methods with deep learning represents the cutting edge of protein exploration. RFdiffusion is a powerful generative model that adapts the RoseTTAFold structure prediction network for protein design using denoising diffusion probabilistic models (DDPMs) [9]. This approach enables the creation of novel protein structures with atomic-level precision, facilitating the design of functional proteins for specific applications.

RFdiffusion operates by learning to reverse a gradual noising process applied to protein structures. Starting from random noise, the model iteratively denoises the structure through up to 200 steps, progressively refining it into a coherent protein backbone [9]. Key advancements in RFdiffusion include:

- Self-conditioning: The model conditions each prediction on its own prediction from the previous timestep, improving coherence and performance

- Fine-tuning from RoseTTAFold: Leveraging pre-trained weights dramatically improves performance compared to training from scratch

- Auxiliary conditioning: The model can incorporate specific design constraints including partial sequences, fold information, or fixed functional motifs
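The noising process that RFdiffusion learns to reverse can be illustrated with the generic DDPM forward process on 3D coordinates, x_t = √ᾱ_t · x_0 + √(1 − ᾱ_t) · ε with ε ~ N(0, I), where ᾱ_t is the cumulative product of (1 − β_s). The sketch below is an assumption-laden illustration: the linear β schedule (1e-4 to 0.07 over 200 steps), the toy pre-scaled Cα trace, and all names are hypothetical. RFdiffusion uses its own schedules, also noises residue orientations with a separate process on rotations (omitted here), and the learned reverse denoising network is not shown.

```python
import math
import random

def alpha_bars(T=200, beta_start=1e-4, beta_end=0.07):
    """Cumulative products abar_t = prod_{s<=t} (1 - beta_s) for a linear beta schedule."""
    out, running = [], 1.0
    for t in range(T):
        beta = beta_start + (beta_end - beta_start) * t / (T - 1)
        running *= 1.0 - beta
        out.append(running)
    return out

def noise_coordinates(x0, t, abar, rng):
    """Sample x_t ~ N(sqrt(abar_t) * x0, (1 - abar_t) * I) independently for each 3D point."""
    signal, noise = math.sqrt(abar[t]), math.sqrt(1.0 - abar[t])
    return [tuple(signal * c + noise * rng.gauss(0.0, 1.0) for c in point) for point in x0]

abar = alpha_bars()
rng = random.Random(0)
backbone = [(float(i), 0.0, 0.0) for i in range(10)]  # toy, pre-scaled C-alpha trace
early = noise_coordinates(backbone, 10, abar, rng)
late = noise_coordinates(backbone, 199, abar, rng)
print(f"abar_10 = {abar[10]:.3f}, abar_199 = {abar[199]:.5f}")
```

Early timesteps leave the structure nearly intact, while by the last step almost only noise remains; generation runs this process in reverse from random noise.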

Experimental validation has confirmed RFdiffusion's capability to design diverse functional proteins, including symmetric assemblies, metal-binding proteins, and protein binders. In one notable example, the cryo-EM structure of a designed binder in complex with influenza hemagglutinin was nearly identical to the design model [9].

Combined Workflow for Functional Protein Design

Diagram 2: Integrated Protein Design Workflow. This hybrid approach combines deep learning-based structure generation with evolutionary sequence optimization.

The integration of diffusion-based backbone generation with evolutionary sequence optimization represents a powerful combined workflow for exploring the protein functional universe. This hybrid approach leverages the strengths of both methodologies:

- Specification Phase: Define functional requirements (e.g., binding site geometry, catalytic residues, structural motifs)

- Backbone Generation: Use RFdiffusion to generate protein backbones compatible with functional specifications

- Initial Sequence Design: Apply ProteinMPNN or similar networks to design initial sequences for generated backbones

- Evolutionary Optimization: Implement evolutionary algorithms to optimize sequences for stability, expression, and function

- Experimental Validation: Test designed proteins experimentally, with results informing subsequent design cycles

This combined approach enables comprehensive exploration of both structural and sequence space, efficiently navigating the vast protein functional universe to identify novel solutions to complex design challenges.
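The data flow of the five phases can be sketched as a small orchestration skeleton. Everything here is hypothetical: each callable is a placeholder for a real tool (a backbone generator such as RFdiffusion, an inverse-folding model such as ProteinMPNN, an evolutionary optimizer, an assay), and no real API of those tools is implied. The dummy lambdas exist only so that the skeleton runs end to end.

```python
from dataclasses import dataclass, field
from typing import Callable, List

@dataclass
class Design:
    backbone: object   # whatever the backbone generator returns (e.g. coordinates)
    sequence: str
    fitness: float     # measured or predicted objective; higher is better here

@dataclass
class DesignCycle:
    """One design-build-test pass; each callable is a placeholder for a real tool or assay."""
    generate_backbones: Callable[[dict, int], List[object]]  # backbone generation
    design_sequence: Callable[[object], str]                 # initial sequence design
    optimize_sequence: Callable[[object, str], str]          # evolutionary optimization
    measure: Callable[[str], float]                          # experimental validation
    history: List[Design] = field(default_factory=list)

    def run(self, spec: dict, n_backbones: int) -> List[Design]:
        designs = []
        for backbone in self.generate_backbones(spec, n_backbones):
            seq = self.optimize_sequence(backbone, self.design_sequence(backbone))
            designs.append(Design(backbone, seq, self.measure(seq)))
        self.history.extend(designs)  # results retained to inform the next cycle
        return sorted(designs, key=lambda d: d.fitness, reverse=True)

# Dummy stand-ins so the skeleton runs end to end.
cycle = DesignCycle(
    generate_backbones=lambda spec, n: list(range(n)),
    design_sequence=lambda backbone: "A" * (backbone + 1),
    optimize_sequence=lambda backbone, seq: seq + "K",
    measure=lambda seq: float(len(seq)),
)
ranked = cycle.run({"motif": "hypothetical"}, n_backbones=3)
print([d.sequence for d in ranked])
```

Keeping every phase behind a plain callable makes it straightforward to swap one generator or optimizer for another without touching the loop.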

Table 4: Key Computational Tools for Protein Exploration

| Tool/Resource | Primary Function | Application Context | Key Features |
|---|---|---|---|
| Rosetta Software Suite | Protein structure prediction and design | General protein engineering | Modular architecture; physics-based scoring |
| REvoLd | Evolutionary ligand docking | Ultra-large library screening | Flexible docking; combinatorial space exploration |
| SEWING | Requirement-driven protein design | Novel protein backbone generation | Fragment assembly; Monte Carlo sampling |
| RFdiffusion | De novo protein structure generation | Functional protein design | Diffusion models; conditional generation |
| ProteinMPNN | Protein sequence design | Sequence optimization for fixed backbones | Inverse folding; high success rates |
| AlphaFold2 | Protein structure prediction | Structure validation and analysis | High accuracy; confidence metrics |
| CATH/SCOP | Protein structure classification | Functional annotation and analysis | Hierarchical classification; evolutionary relationships |

The protein functional universe is a vast, largely unexplored territory with tremendous potential for therapeutic intervention and biological discovery. Although its scale is daunting, with sequence spaces of astronomical size, advanced computational methods now enable efficient navigation and exploitation of this space. Evolutionary algorithms, particularly when integrated with deep learning approaches, provide powerful frameworks for identifying functional proteins that would remain inaccessible through traditional methods. As these technologies mature, they promise to unlock the considerable untapped potential of the protein functional universe, enabling new therapeutic strategies and deepening our understanding of biological systems.

The concept of evolutionary myopia represents a fundamental constraint in biological systems, wherein natural proteins are optimized for biological fitness within specific ecological niches rather than for the diverse applications demanded by human biotechnology. This evolutionary short-sightedness has profound implications for protein engineering, as natural proteins often lack the stability, specificity, and functional versatility required for industrial processes, therapeutic interventions, and synthetic biology applications. The extraordinary diversity observed in natural proteins constitutes merely a glimpse of the theoretical protein functional universe—the vast space encompassing all possible protein sequences, structures, and their corresponding biological activities [10]. This universe remains largely unexplored, constrained by the limitations of natural evolution and conventional protein engineering methodologies [10].

Substantial evidence indicates that the known natural fold space is approaching saturation, with novel folds rarely emerging in nature [10]. Contemporary comparative analyses suggest that recent functional innovations in nature predominantly arise from domain rearrangements rather than the de novo emergence of entirely new structural motifs or folds [10]. This selective paradigm reinforces an evolutionary trajectory that diversifies proteomes through reorganization and repurposing, thereby constraining the exploration of genuinely novel sequences and structures [10]. The evolutionary process is inherently conservative, favoring incremental modifications to existing frameworks over revolutionary architectural innovations, creating a fundamental bottleneck in our access to the full potential of the protein universe.

The Vast but Constrained Protein Functional Universe

The Combinatorial Challenge of Sequence-Structure Space

The protein functional universe is characterized by its unimaginable scale, presenting a fundamental challenge for comprehensive exploration. The sequence → structure → function paradigm, a central principle of protein science holding that a protein's amino acid sequence encodes its three-dimensional fold and that this fold in turn determines its biological function, defines a landscape of astronomical proportions [10]. For a modest 100-residue protein, the theoretical number of possible amino acid arrangements is 20^100 (≈1.27 × 10^130), a number that exceeds the estimated number of atoms in the observable universe (~10^80) by more than fifty orders of magnitude [10]. Within this vast space, the probability that a random sequence will fold into a stable structure and display useful biological activity is vanishingly small, rendering unguided experimental screening profoundly inefficient and cost-prohibitive [10].

Quantitative Dimensions of Known Protein Space

Table 5: Quantitative Cataloguing of Known Protein Sequence and Structure Space

| Resource | Type | Scale | Reference |
|---|---|---|---|
| MGnify Protein Database | Sequences | ~2.4 billion non-redundant sequences | [10] |
| Profluent Protein Atlas v1 | Sequences | ~3.4 billion full-length proteins | [10] |
| AlphaFold Protein Structure Database | Structures | ~214 million models | [10] |
| ESM Metagenomic Atlas | Structures | ~600 million predicted structures | [10] |

Despite these impressive numbers, the known protein space represents only an infinitesimal fraction of the theoretical protein functional universe [10]. Furthermore, these datasets exhibit significant biases reflecting evolutionary history and experimental assay capabilities, which channel data-driven methods toward well-explored regions of the sequence-structure space [10]. This sampling bias creates a fundamental limitation for protein engineering approaches that rely exclusively on natural templates, as they are inherently confined to the functional neighborhoods of existing proteins and cannot access the vast unexplored territories of the protein universe [10].

Computational Methodologies to Overcome Evolutionary Constraints

Physics-Based versus Evolution-Based Design Approaches

Conventional protein engineering strategies, particularly directed evolution, have demonstrated remarkable success in optimizing existing proteins but remain fundamentally constrained by their dependence on natural starting points [10]. These methods perform local searches within the protein functional universe through iterative cycles of mutation and selection, requiring the construction and experimental screening of immense variant libraries [10]. This process is not only labor-intensive and costly but, more fundamentally, confines discovery to the immediate "functional neighborhood" of the parent scaffold, making them ill-equipped to access genuinely novel functional regions beyond natural evolutionary pathways [10].
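The size of a parent's "functional neighborhood" can be made concrete: the number of sequences that differ from a parent of length L at exactly k positions is C(L, k) × 19^k, because each mutated position can take any of the 19 other amino acids. A minimal sketch for a 100-residue parent:

```python
from math import comb

def k_mutant_count(length: int, k: int, alphabet_size: int = 20) -> int:
    """Sequences differing from a parent at exactly k positions: C(L, k) * (A - 1)^k."""
    return comb(length, k) * (alphabet_size - 1) ** k

for k in (1, 2, 3):
    print(f"{k} substitution(s): {k_mutant_count(100, k):,} variants")
```

Single mutants number 1,900 and double mutants already about 1.8 million, while triple mutants exceed a billion, so library-based screening in practice samples only the first few mutational shells around the parent scaffold.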

Table 6: Comparative Analysis of Protein Design Methodologies

| Methodology | Underlying Principle | Advantages | Limitations | Reference |
|---|---|---|---|---|
| Physics-Based (e.g., Rosetta) | Energy minimization based on physical force fields | Principles-based; can create novel folds (e.g., Top7) | Approximate force fields; high computational cost; limited sampling | [10] |
| Evolution-Based (e.g., EvoDesign) | Evolutionary profile guidance from structural analogs | Native-like sequences; implicit capture of folding constraints | Limited to fold space represented in databases | [11] |
| AI-Driven De Novo Design | Machine learning on sequence-structure-function mappings | Rapid exploration; customized folds and functions | Training data limitations; black box predictions | [10] |

EvoDesign: An Evolution-Based Algorithm for Protein Design

The EvoDesign algorithm represents a sophisticated methodology that leverages evolutionary information to guide the protein design process [11]. This approach is distinguished by its use of evolutionary constraints implicitly encoded in protein families to navigate the sequence space efficiently. The algorithm operates through a systematic workflow:

- Structural Analog Identification: A set of proteins with folds similar to the target scaffold is collected from the Protein Data Bank (PDB) using the structural alignment program TM-align, with similarity defined by a TM-score cutoff [11].

- Profile Construction: A position-specific scoring matrix M(p,a) is created from a multiple sequence alignment (MSA) of the structural analogs [11]. The matrix is calculated as M(p,a) = Σ_x w(p,x) × B(a,x), where the sum runs over the amino acid types x, B(a,x) is the BLOSUM62 substitution score between amino acids a and x, and w(p,x) is the frequency of amino acid x at position p in the MSA [11].

- Local Feature Optimization: Back-propagation neural network predictors are used to estimate secondary structure, solvent accessibility, and torsion angles, smoothing out singularities in local sequences [11].

- Energy Function Integration: The evolutionary potential is defined as E_evolution = ∑max[M(p,a) + w1ΔSS(p) + w2ΔSA(p) + w3(Δφ(p) + Δψ(p))], where ΔSS, ΔSA, Δφ, and Δψ are differences in secondary structure, solvent accessibility, and torsion angles between target assignments and predictions from decoy sequences [11].

- Monte Carlo Sequence Search: Sequences are generated through Monte Carlo searches starting from 10 random sequences updated by random residue mutations [11].

- Sequence Clustering: Instead of selecting the lowest energy sequence, all sequences from the 10 runs are pooled, and the sequence with the maximum number of neighbors is identified using the SPICKER clustering algorithm [11].

This methodology harnesses the critical insight that evolution implicitly encodes information on protein folds and binding interactions that greatly exceeds our ability to describe it through reductionist, physics-based methods alone [11].
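The profile formula M(p,a) = Σ_x w(p,x) × B(a,x) and the "most neighbors" selection can be sketched in a few lines. This is a minimal illustration under loud assumptions: the three-letter alphabet and the substitution values are toys (not BLOSUM62 over 20 amino acids), gaps are ignored, and `most_connected` is a simplified stand-in that counts neighbors above an identity cutoff rather than running SPICKER.

```python
from collections import Counter

# Hypothetical 3-letter alphabet and symmetric toy substitution scores (NOT BLOSUM62).
ALPHABET = "AVL"
_TOY_SCORES = {("A", "A"): 4, ("V", "V"): 4, ("L", "L"): 4,
               ("A", "V"): 0, ("A", "L"): -1, ("V", "L"): 1}

def substitution(a: str, x: str) -> int:
    return _TOY_SCORES.get((a, x), _TOY_SCORES.get((x, a)))

def profile(msa: list[str]) -> list[dict[str, float]]:
    """M(p, a) = sum over x of w(p, x) * B(a, x), with w(p, x) the column frequency of x."""
    matrix = []
    for p in range(len(msa[0])):
        counts = Counter(seq[p] for seq in msa)
        w = {x: counts[x] / len(msa) for x in ALPHABET}
        matrix.append({a: sum(w[x] * substitution(a, x) for x in ALPHABET) for a in ALPHABET})
    return matrix

def identity(s: str, t: str) -> float:
    return sum(x == y for x, y in zip(s, t)) / len(s)

def most_connected(pool: list[str], cutoff: float) -> str:
    """Sequence with the most neighbours at >= cutoff identity (simplified SPICKER stand-in)."""
    def n_neighbours(i: int) -> int:
        return sum(1 for j, t in enumerate(pool) if j != i and identity(pool[i], t) >= cutoff)
    return pool[max(range(len(pool)), key=n_neighbours)]

msa = ["AVL", "AVL", "ALL", "VVL"]  # toy alignment of structural analogs
M = profile(msa)
print(M[0])  # position 0 is mostly A, so A scores highest there
print(most_connected(["AVLA", "AVLL", "AVVA", "LLLL"], cutoff=0.75))
```

Selecting the most-connected sequence rather than the single lowest-energy one favors designs that many independent runs converge on, which is the rationale the EvoDesign workflow gives for its clustering step.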

Genetic Algorithm Approaches for Protein Redesign

Genetic algorithms (GAs) provide another evolutionary computing approach for protein engineering, implementing virtual evolutionary processes to optimize protein sequences. GAOptimizer represents one such tool that employs genetic algorithm principles to engineer diverse enzymes [12]. The algorithm requires two key input parameters: fitness functions (which can include stability-based and non-stability-based scores) and sequence libraries that define the sequence space for selecting mutation candidates [12]. The process mirrors natural selection through iterative generations of selection, crossover, and mutation, efficiently exploring the combinatorial sequence space without exhaustive enumeration.
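The two inputs described for GAOptimizer, a fitness function and a sequence library that restricts mutation candidates, can be illustrated with a toy genetic algorithm. This is a hedged sketch, not GAOptimizer's API: `run_ga`, the tournament selection, uniform crossover, elitism, the per-position library format, and the additive fitness are all hypothetical choices made for illustration.

```python
import random

def run_ga(parent, library, fitness, pop_size=60, generations=40, mut_rate=0.1, seed=0):
    """Toy GA: only positions in `library` vary, and only to the residues it lists."""
    rng = random.Random(seed)
    positions = sorted(library)

    def mutate(seq):
        s = list(seq)
        for p in positions:
            if rng.random() < mut_rate:
                s[p] = rng.choice(library[p])
        return "".join(s)

    def crossover(a, b):
        # Uniform crossover restricted to the designable positions.
        s = list(a)
        for p in positions:
            if rng.random() < 0.5:
                s[p] = b[p]
        return "".join(s)

    def tournament(pop, k=3):
        return max(rng.sample(pop, k), key=fitness)

    population = [mutate(parent) for _ in range(pop_size)]
    for _ in range(generations):
        elite = max(population, key=fitness)  # elitism: keep the current best
        population = [elite] + [
            mutate(crossover(tournament(population), tournament(population)))
            for _ in range(pop_size - 1)
        ]
    return max(population, key=fitness)

# Hypothetical inputs: a short parent, a per-position library, and an additive fitness.
parent = "MKTAYIAK"
library = {1: "KRE", 3: "AVL", 6: "AGS"}
preferred = {1: "R", 3: "L", 6: "G"}

def fitness(seq: str) -> int:
    return sum(seq[p] == r for p, r in preferred.items())

best = run_ga(parent, library, fitness)
print(best, fitness(best))
```

In a real setting, the fitness would be a stability-based or other computed score, and the library would come from the design problem rather than being hand-written.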

Similarly, the REvoLd (RosettaEvolutionaryLigand) algorithm demonstrates the application of evolutionary algorithms to ultra-large library screening in protein-ligand docking [7]. This approach explores the vast search space of combinatorial libraries without enumerating all molecules, exploiting the fact that make-on-demand compound libraries are constructed from lists of substrates and chemical reactions [7]. In benchmark tests across five drug targets, REvoLd showed improvements in hit rates by factors between 869 and 1622 compared to random selections, demonstrating the remarkable efficiency of evolutionary approaches for navigating vast chemical spaces [7].

Experimental Protocols and Validation Methodologies

Computational Validation of Designed Proteins

The validation of computationally designed proteins requires rigorous computational assessment before proceeding to experimental characterization. Key computational validation protocols include:

- Structural Integrity Prediction: Using protein structure prediction tools (e.g., AlphaFold2, RoseTTAFold) to verify that the designed sequence adopts the intended fold [10].

- Thermodynamic Stability Calculations: Employing tools like FoldX to calculate folding free energy (ΔG) and estimate thermal stability [11].

- Aggregation Propensity Assessment: Utilizing algorithms (e.g., TANGO, AGGRESCAN) to identify sequences with high aggregation potential and modify them accordingly [11].

- Functional Site Geometry Analysis: For enzymatic designs, validating the spatial arrangement of catalytic residues and substrate binding pockets using molecular docking simulations [11].

These computational validations provide essential filters to prioritize the most promising designs for experimental characterization, significantly reducing experimental costs and time investments.

Experimental Characterization of Designed Proteins

Following computational design and validation, experimental characterization is essential to confirm the design specifications. Core experimental protocols include:

- Recombinant Protein Expression: Heterologous expression in systems such as E. coli, followed by purification using affinity, ion exchange, and size exclusion chromatography [11].

- Biophysical Characterization:

  - Circular Dichroism (CD) Spectroscopy: To verify secondary structure content and assess thermal stability by monitoring unfolding transitions [11].

  - Nuclear Magnetic Resonance (NMR) Spectroscopy: For high-resolution structural validation and dynamics characterization, particularly for smaller protein domains [11].

  - Differential Scanning Calorimetry (DSC): To measure melting temperature (Tm) and obtain thermodynamic parameters of folding [11].

- Functional Assays: Enzyme kinetics (Km, kcat), binding affinity measurements (SPR, ITC), or cellular activity assays relevant to the intended function [11].

These experimental protocols provide the critical link between computational designs and real-world functionality, closing the design-validation loop and enabling iterative improvement of design methodologies.

Table 7: Key Research Reagents and Computational Tools for Evolutionary Protein Design

| Resource Category | Specific Tools/Resources | Function/Application | Reference |
|---|---|---|---|
| Protein Design Software | Rosetta, EvoDesign, GAOptimizer | De novo protein design and optimization | [10] [12] [11] |
| Structure Prediction | AlphaFold2, ESMFold, RoseTTAFold | Protein structure prediction from sequence | [10] |
| Structure Databases | PDB, AlphaFold DB, ESM Metagenomic Atlas | Template structures and evolutionary information | [10] [11] |
| Sequence Databases | MGnify, Profluent Protein Atlas | Natural sequence diversity for profile construction | [10] |
| Experimental Validation | CD Spectroscopy, NMR, X-ray Crystallography | Structural and biophysical characterization | [11] |
| Ultra-Large Library Screening | REvoLd, V-SYNTHES, SpaceDock | Efficient exploration of combinatorial chemical space | [7] |

Future Directions and Concluding Perspectives

The field of AI-driven de novo protein design is rapidly advancing beyond the constraints of evolutionary myopia, fundamentally expanding our access to the protein functional universe [10]. By integrating generative models, structure prediction tools, and iterative experimental validation, these approaches enable researchers to directly explore regions of the functional landscape that natural evolution has not sampled [10]. This paradigm shift from template-based engineering to computational de novo design represents a fundamental transformation in protein science, with profound implications for biotechnology, medicine, and synthetic biology.

Future advancements will likely focus on several key areas: (1) improved integration of physical principles with evolutionary information to enhance design accuracy; (2) development of more sophisticated multi-state design methodologies for creating dynamically functional proteins; (3) expansion of design capabilities to include non-canonical amino acids and novel chemical functionalities; and (4) increased automation of the design-build-test-learn cycle to accelerate iterative optimization [10] [11] [7]. As these methodologies mature, they promise to unlock a new era of biological engineering, providing custom-made protein tools for advances in medicine, agriculture, and green technology that transcend the limitations of natural evolutionary history [10].

The Immeasurable Vastness of Protein Sequence-Structure Space

The fundamental challenge in protein engineering lies in the astronomical scale of the protein sequence-structure landscape. For a relatively short protein of 100 amino acids, the number of possible sequence arrangements is 20^100 (approximately 1.27 × 10^130), a figure that exceeds the estimated number of atoms in the observable universe (~10^80) by more than fifty orders of magnitude [10]. Within this unimaginably vast sequence space, the subset of sequences that fold into stable, functional structures is exceptionally small, making the probability that a random sequence will possess useful biological activity vanishingly small [10].

This combinatorial explosion creates a fundamental exploration bottleneck. Experimental laboratories can typically screen only thousands to millions of variants, representing an infinitesimal fraction of the possible sequence space [10]. This disparity between what is theoretically possible and what is practically explorable defines the core challenge in conventional protein engineering.
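The figures above can be reproduced with a few lines of arithmetic. The sketch below works in log10 space to keep the numbers readable. The 10^6 screening capacity is an illustrative assumption taken from the upper end of the "thousands to millions" range, and the ~10^80 atom count is the estimate quoted above.

```python
import math

def sequence_space_log10(length: int, alphabet: int = 20) -> float:
    """log10 of the number of possible sequences of `length` residues."""
    return length * math.log10(alphabet)

def to_scientific(log10_value: float) -> tuple[float, int]:
    """Split a log10 value into (mantissa, exponent) so that value = mantissa * 10**exponent."""
    exponent = math.floor(log10_value)
    return 10 ** (log10_value - exponent), exponent

log_space = sequence_space_log10(100)            # 100-residue protein
mantissa, exponent = to_scientific(log_space)
print(f"20^100 ≈ {mantissa:.2f} × 10^{exponent}")

ATOMS_LOG10 = 80                                  # ~10^80 atoms in the observable universe
print(f"excess over atom count: {log_space - ATOMS_LOG10:.1f} orders of magnitude")

SCREEN_LOG10 = 6                                  # assumed 10^6-variant screen (illustrative)
print(f"fraction of space screened ≈ 10^{SCREEN_LOG10 - log_space:.1f}")
```

Even an optimistic million-variant screen covers only about 10^-124 of the sequence space of a 100-residue protein.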

Table 1: The Scale of the Protein Sequence-Structure Universe

| Dimension | Scale | Contextual Reference |
|---|---|---|
| Theoretical Sequence Space (100-residue protein) | 20^100 ≈ 1.27 × 10^130 sequences | Exceeds the number of atoms in the observable universe (~10^80) [10] |
| Known Natural Sequences (UniRef90) | ~172 million sequences [13] | Infinitesimal fraction of theoretical space |
| Known/Predicted Structures (AlphaFold DB) | ~214 million structures [10] [13] | Infinitesimal fraction of theoretical space |
| Functional Subset | An astronomically small fraction of sequence space [10] | Needle-in-a-haystack problem |

The Limitations of Conventional Protein Engineering

Traditional protein engineering methods, most notably directed evolution, are fundamentally constrained by their reliance on existing biological templates and local search strategies. These methods operate through iterative cycles of random mutagenesis and high-throughput screening to identify variants with improved traits [14]. While successful for optimizing existing functions, this approach is inherently limited in its ability to discover genuinely novel folds or functions [10].

The core limitation is that directed evolution performs a local search within the protein fitness landscape. It remains tethered to the evolutionary history and structural biases of the parent scaffold, exploring only its immediate "functional neighborhood" [10]. This "evolutionary myopia" means that natural proteins are optimized for biological fitness in specific niches, not for human-desired properties such as stability under industrial conditions or novel catalytic functions [10]. Consequently, these methods are structurally biased and ill-equipped to access genuinely novel functional regions that lie beyond the boundaries of natural evolutionary pathways [10].

Furthermore, the process is inherently resource-intensive, requiring the construction and experimental screening of immense variant libraries through iterative cycles, which is laborious, costly, and slow [10] [14]. As the complexity of the desired function increases, the library sizes and screening efforts required become practically infeasible.

A Paradigm Shift: AI-Driven De Novo Protein Design

Artificial intelligence (AI) is now catalyzing a paradigm shift in protein engineering, transcending the limitations of conventional methods. Modern AI-driven de novo protein design enables the computational creation of proteins with customized folds and functions from first principles, rather than by modifying existing natural scaffolds [10]. This represents a fundamental transition from empirical trial-and-error exploration to systematic rational design.

This new paradigm leverages machine learning (ML) models trained on vast biological datasets to establish high-dimensional mappings between sequence, structure, and function [10]. Key computational frameworks include:

- Generative Models (e.g., RFdiffusion, ProteinMPNN): These models generate entirely new proteins rather than variants of natural ones. RFdiffusion produces novel backbone structures, and ProteinMPNN designs amino acid sequences predicted to fold into a given backbone; together they reach designs far beyond natural evolutionary pathways [15].

- Protein Language Models (PLMs) (e.g., ESM-2): Trained on evolutionary-scale protein sequence databases, these models learn the fundamental "grammar" of proteins. They can be used for zero-shot prediction of functional variants and to guide exploration in sequence space [14] [16].

- Structure Prediction Tools (e.g., AlphaFold 2/3, Boltz-2): These tools accurately predict the 3D structure of a protein from its amino acid sequence, and newer models such as Boltz-2 can also predict functional properties such as ligand binding affinity [15].

Table 2: Comparison of Protein Engineering Methodologies

| Methodology | Search Type | Key Advantage | Primary Limitation |
|---|---|---|---|
| Directed Evolution [10] [14] | Local search | Proven, reliable for optimizing existing functions | Limited to neighborhoods of known proteins; resource-intensive |
| Physics-Based De Novo Design (e.g., Rosetta) [10] | Global search (theoretical) | Can create novel folds (e.g., Top7) | Computationally expensive; force fields are approximations |
| AI-Driven De Novo Design [10] [15] | Global search (informed) | Explores beyond evolutionary boundaries; high speed and accuracy | Dependent on quality and bias of training data |

These AI methodologies employ a powerful filter-and-refine strategy [10] [13]. Coarse, fast filters first eliminate structurally irrelevant sequences, after which accurate, slower alignment and scoring steps are applied only to the remaining promising candidates. This strategy, enhanced by machine learning, allows for efficient navigation of the combinatorial space that would be prohibitive for exhaustive search methods [13].
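As a rough illustration of the filter-and-refine idea, the sketch below ranks a small sequence database against a query in two stages. It is not SARST2's algorithm: the k-mer Jaccard pre-filter and the `difflib` similarity ratio are hypothetical stand-ins for a coarse structural filter and an accurate alignment score, and `keep` (how many candidates survive the filter) is an assumed parameter.

```python
from difflib import SequenceMatcher

def kmer_set(seq: str, k: int = 3) -> set[str]:
    """All overlapping k-mers of a sequence."""
    return {seq[i:i + k] for i in range(len(seq) - k + 1)}

def cheap_score(query: str, target: str, k: int = 3) -> float:
    """Fast pre-filter: Jaccard overlap of k-mer sets (stand-in for a coarse filter)."""
    q, t = kmer_set(query, k), kmer_set(target, k)
    union = q | t
    return len(q & t) / len(union) if union else 0.0

def expensive_score(query: str, target: str) -> float:
    """Slower refinement: difflib similarity ratio (stand-in for a real alignment score)."""
    return SequenceMatcher(None, query, target).ratio()

def filter_and_refine(query: str, database: list[str], keep: int = 2) -> list[str]:
    # Stage 1: score every entry cheaply and keep only the top `keep` candidates.
    shortlist = sorted(database, key=lambda t: cheap_score(query, t), reverse=True)[:keep]
    # Stage 2: apply the expensive scorer only to the shortlist.
    return sorted(shortlist, key=lambda t: expensive_score(query, t), reverse=True)

db = ["MKTAYIAKQRQISFVKSHFSRQ", "MKTAYIAKQRQISFVKSHFSRE", "GGGGSGGGGSGGGGS", "PEPTIDEPEPTIDE"]
print(filter_and_refine("MKTAYIAKQRQISFVKSHFSRQ", db))
```

The expensive scorer runs on `keep` entries instead of the whole database, which is where the savings come from when the database holds hundreds of millions of entries.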

Experimental Protocols & Research Toolkit

Protocol: AI-Guided Automated Protein Engineering (PLMeAE)

The Protein Language Model-enabled Automatic Evolution (PLMeAE) platform exemplifies the modern, closed-loop approach to protein engineering [14]. This system integrates AI with automated biofoundries to accelerate the Design-Build-Test-Learn (DBTL) cycle.

Workflow Overview:

- Design: A protein language model (ESM-2) performs zero-shot prediction of 96 high-fitness variants to initiate the cycle. Two modules exist:

  - Module I (No prior sites): The PLM identifies critical mutation sites and predicts beneficial single mutants.

  - Module II (Known sites): For predefined mutation sites, the PLM samples informative multi-mutant variants.

- Build: An automated biofoundry constructs the proposed variant library.

- Test: The biofoundry expresses and tests the variants, collecting fitness data (e.g., enzyme activity).

- Learn: Experimental results are fed back to train a supervised ML model (e.g., a multi-layer perceptron) as a fitness predictor. This model then designs the next round of 96 variants with improved fitness.

This iterative process enabled a 2.4-fold improvement in enzyme activity for a tRNA synthetase within four rounds (10 days), significantly outperforming traditional directed evolution [14].
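A minimal simulation of the Design-Build-Test-Learn loop is sketched below under stated assumptions. It does not reproduce PLMeAE: the first batch is random rather than ESM-2 zero-shot predictions, the supervised learner is a simple additive mean-effect model instead of a multi-layer perceptron, and `assay` is a hypothetical stand-in for the biofoundry's Build and Test phases. The batch size of 96 and the four rounds follow the protocol above; the 8 sites, noise level, and candidate pool size are illustrative.

```python
import random

AAS = "ACDEFGHIKLMNPQRSTVWY"
SITES = 8                                   # hypothetical number of mutable positions
rng = random.Random(0)
# Hidden "ground truth" for the simulation only: additive effect of each (site, amino acid).
TRUE_EFFECT = {(s, a): rng.gauss(0, 1) for s in range(SITES) for a in AAS}

def assay(variant):
    """Stand-in for Build + Test: additive fitness plus measurement noise."""
    return sum(TRUE_EFFECT[key] for key in enumerate(variant)) + rng.gauss(0, 0.5)

def fit_additive(data):
    """Learn: effect of (site, aa) = mean fitness of variants carrying it minus the overall mean."""
    overall = sum(y for _, y in data) / len(data)
    sums, counts = {}, {}
    for variant, y in data:
        for key in enumerate(variant):
            sums[key] = sums.get(key, 0.0) + y
            counts[key] = counts.get(key, 0) + 1
    return {key: sums[key] / counts[key] - overall for key in sums}

def predict(model, variant):
    # Unseen (site, aa) pairs contribute 0, i.e. an average effect.
    return sum(model.get(key, 0.0) for key in enumerate(variant))

def random_variant():
    return tuple(rng.choice(AAS) for _ in range(SITES))

BATCH, ROUNDS = 96, 4
data, best_per_round = [], []
batch = [random_variant() for _ in range(BATCH)]           # round 1: no trained model yet
for _ in range(ROUNDS):
    results = [(v, assay(v)) for v in batch]                 # Build + Test
    data.extend(results)
    best_per_round.append(max(y for _, y in results))
    model = fit_additive(data)                               # Learn (retrained on all data)
    pool = [random_variant() for _ in range(5000)]           # Design: rank a candidate pool
    batch = sorted(pool, key=lambda v: predict(model, v), reverse=True)[:BATCH]

print([round(b, 2) for b in best_per_round])
```

Retraining on all accumulated data each round is what closes the loop: every batch of 96 measurements sharpens the predictor used to choose the next batch.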

The Scientist's Toolkit: Essential Research Reagents & Platforms

Table 3: Key Research Reagents and Platforms for AI-Driven Protein Design

| Tool/Reagent | Type | Primary Function |
|---|---|---|
| RFdiffusion [15] | Generative AI Model | Designs novel protein backbone structures and complexes from scratch. |
| ProteinMPNN [15] | Generative AI Model | Designs optimal amino acid sequences for a given protein backbone structure, improving stability and function. |
| AlphaFold 2/3 [10] [15] | Structure Prediction AI | Predicts 3D protein structures from sequences (AF3 extends to multi-molecule complexes). |
| ESM-2 [14] | Protein Language Model (PLM) | Learns evolutionary principles from sequences; used for zero-shot variant prediction and sequence encoding. |
| Automated Biofoundry [14] | Integrated Robotic System | Executes high-throughput, reproducible Build and Test phases (cloning, expression, assay). |
| SARST2 [13] | Structural Alignment Algorithm | Rapidly searches massive structural databases (e.g., AlphaFold DB) to identify homologs and analyze new designs. |

Quantitative Benchmarks: Demonstrating the AI Advantage

The performance advantages of modern computational methods are quantifiable. In benchmark evaluations, the SARST2 structural alignment algorithm achieved an average information retrieval precision of 96.3%, outperforming other methods like Foldseek (95.9%) and TM-align (94.1%) [13]. Crucially, it completed a search of the massive AlphaFold Database (214 million structures) in just 3.4 minutes using 32 processors, significantly faster than Foldseek (18.6 minutes) and BLAST (52.5 minutes), while also using substantially less memory [13]. This efficiency is critical for practical research applications.
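Expressed as ratios, these timings correspond to roughly 5.5-fold and 15-fold speed-ups. The short calculation below makes the arithmetic explicit, assuming the three reported timings are directly comparable.

```python
# Reported wall-clock times (minutes) for searching the AlphaFold DB [13].
search_minutes = {"SARST2": 3.4, "Foldseek": 18.6, "BLAST": 52.5}

baseline = search_minutes["SARST2"]
speedups = {tool: t / baseline for tool, t in search_minutes.items() if tool != "SARST2"}
for tool, factor in speedups.items():
    print(f"SARST2 vs {tool}: ~{factor:.1f}x faster")
```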

In de novo design, AI-driven workflows have produced synthetic binding proteins (SBPs) with improved solubility, stability, and binding affinity compared to conventionally engineered ones [15]. These AI-designed proteins occupy regions of the functional landscape that traditional methods cannot efficiently reach, showing that computational design can search well beyond the local neighborhoods to which combinatorial explosion confines experimental screening.

The field of protein engineering is undergoing a fundamental transformation, moving beyond the constraints of natural evolutionary history. Traditional methods, which rely on modifying existing protein scaffolds, are being superseded by computational approaches that design entirely novel proteins from first principles. This whitepaper details this paradigm shift, focusing on the central role of evolutionary algorithms and artificial intelligence in exploring the vast, uncharted regions of the protein functional universe. We provide a technical examination of cutting-edge methodologies, benchmark performance data, and a detailed toolkit for researchers driving the next wave of discovery in therapeutics, catalysis, and synthetic biology.

Proteins are the fundamental molecular machines of life, but the diversity found in nature represents only a minuscule fraction of the theoretical protein functional universe [10]. This universe encompasses all possible protein sequences, structures, and their biological activities. Conventional protein engineering strategies, most notably directed evolution, have achieved remarkable successes by mimicking natural evolution—applying iterative cycles of mutation and selection to a parent protein to improve its function [10].

However, these methods are inherently constrained. They perform a local search within the functional landscape, tethered to the starting scaffold's evolutionary history. This makes them ill-suited for accessing genuinely novel functions that lie beyond natural pathways [10]. Furthermore, the known natural fold space is approaching saturation, with recent innovations arising primarily from domain rearrangements rather than the emergence of new folds [10].

Table 1: Comparison of Protein Engineering Paradigms

| Feature | Directed Evolution | AI-Driven De Novo Design |
|---|---|---|
| Starting Point | A natural protein template | First principles / Computational specification |
| Exploration Scope | Local "functional neighborhood" | Vast, uncharted regions of sequence-structure space |
| Dependence on Natural Evolution | High | Low (no template required, although models are trained on natural sequence and structure data) |
| Capacity for Novel Folds | Limited | High |
| Primary Constraint | Experimental screening throughput | Computational sampling & force field accuracy |

The AI-Driven Paradigm Shift

De novo protein design aims to transcend these limits by computationally creating proteins with customized folds and functions without relying on a natural template [10]. This represents a shift from empirical trial-and-error to systematic rational design.

Early de novo methods, such as those implemented in the Rosetta software suite, relied on physics-based modeling and force-field energy minimization [10]. While successful in creating novel folds like Top7, these methods face challenges: the computational expense of sampling is prohibitive for large complexes, and inaccuracies in energy calculations can lead to designs that fail to fold correctly in vitro [10].

The paradigm shift is being driven by the integration of artificial intelligence. Modern AI-augmented strategies use machine learning models trained on vast biological datasets to establish high-dimensional mappings between sequence, structure, and function [10]. This AI-driven approach enables the rapid generation of novel, stable, and functional proteins, dramatically accelerating the exploration of the functional universe.

Evolutionary Algorithms in Ultra-Large Library Screening

A key challenge in computational design is navigating make-on-demand combinatorial libraries, which can contain billions of readily available compounds. Exhaustive screening of these libraries with flexible docking is computationally intractable. Evolutionary algorithms have emerged as a powerful solution to this optimization problem.

The REvoLd Algorithm: A Case Study

The RosettaEvolutionaryLigand (REvoLd) algorithm is a state-of-the-art example designed specifically for screening ultra-large make-on-demand chemical spaces without enumerating all molecules [7]. REvoLd exploits the combinatorial nature of these libraries, constructed from lists of substrates and chemical reactions, to efficiently search for high-affinity protein ligands with full ligand and receptor flexibility using RosettaLigand.

Table 2: REvoLd Benchmark Performance on Five Drug Targets

| Metric | Result |
|---|---|
| Improvement in Hit Rate | 869 to 1622 times higher than random selection [7] |
| Total Unique Molecules Docked per Target | ~49,000 to ~76,000 [7] |
| Typical Run Parameters | Initial population: 200 ligands; generations: 30; population advancement: 50 individuals [7] |

Detailed REvoLd Experimental Protocol

The following workflow outlines the core methodology for a REvoLd screening campaign as described in its benchmark studies [7]:

Key Protocol Steps:

- Initialization: The algorithm begins by generating a random population of 200 ligands from the combinatorial library building blocks.

- Fitness Evaluation: Each ligand in the population undergoes flexible protein-ligand docking using RosettaLigand, which provides a binding score (fitness).

- Selection: The top 50 scoring individuals (ligands) are selected to advance and reproduce.

- Reproduction: The selected individuals undergo a series of operations to create the next generation:

  - Crossover: Pairs of fit ligands are recombined to create novel hybrids, enforcing variance.

  - Fragment Mutation: Single fragments in a promising ligand are switched to low-similarity alternatives, preserving most of the structure while enforcing exploration.

  - Reaction Switch Mutation: The reaction core of a molecule is changed, searching for similar fragments within the new reaction group to access different regions of the chemical space.

- Iteration: Fitness evaluation, selection, and reproduction are repeated for a predetermined number of generations (typically 30). To prevent premature convergence, a second round of crossover and mutation is often performed that excludes the very fittest molecules, allowing worse-scoring ligands to contribute their molecular information.
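The loop below sketches the structure of such a campaign on a toy combinatorial library. It is not REvoLd or RosettaLigand: `dock_score` is a hypothetical additive per-fragment score (lower is better) standing in for flexible docking, the three reaction names are invented, fragment mutation picks replacements at random rather than by low similarity, and reaction switching simply keeps fragment indices. The population size (200), survivors (50), and generation count (30) follow the benchmark parameters above; the operator probabilities and the "exclude the 10 fittest" rule are assumptions.

```python
import random

rng = random.Random(7)
REACTIONS = ("amide_coupling", "suzuki", "reductive_amination")   # hypothetical reaction set
N_FRAGS = 300                                                      # fragments per reaction slot
# Toy additive "binding energy" per fragment (lower = better), standing in for docking.
FRAG_EFFECT = {r: [[rng.gauss(0, 1) for _ in range(N_FRAGS)] for _ in range(2)] for r in REACTIONS}

def dock_score(mol):
    rxn, (a, b) = mol
    return FRAG_EFFECT[rxn][0][a] + FRAG_EFFECT[rxn][1][b]

def random_molecule():
    return (rng.choice(REACTIONS), (rng.randrange(N_FRAGS), rng.randrange(N_FRAGS)))

def crossover(p1, p2):
    # Join the first fragment of p1 with the second fragment of p2 when both share a reaction.
    return (p1[0], (p1[1][0], p2[1][1])) if p1[0] == p2[0] else p1

def fragment_mutation(mol):
    # Replace one fragment; REvoLd prefers low-similarity alternatives, here the pick is random.
    frags = list(mol[1])
    frags[rng.randrange(2)] = rng.randrange(N_FRAGS)
    return (mol[0], tuple(frags))

def reaction_switch(mol):
    # Move to another reaction, keeping fragment indices as a crude stand-in for "similar fragments".
    return (rng.choice([r for r in REACTIONS if r != mol[0]]), mol[1])

def evolve(pop_size=200, survivors=50, generations=30):
    population = {random_molecule() for _ in range(pop_size)}
    docked, history = set(population), []
    for _ in range(generations):
        ranked = sorted(population, key=lambda m: (dock_score(m), m))   # deterministic order
        parents = ranked[:survivors]                                     # selection
        history.append(dock_score(parents[0]))
        children = set()
        while len(children) < pop_size - survivors:
            # Half the offspring are bred without the 10 fittest to limit premature convergence.
            pool = parents if rng.random() < 0.5 else parents[10:]
            p1, p2 = rng.sample(pool, 2)
            op = rng.random()
            if op < 0.4:
                child = crossover(p1, p2)
            elif op < 0.8:
                child = fragment_mutation(p1)
            else:
                child = reaction_switch(p1)
            if child not in population:          # only genuinely new molecules get "docked"
                children.add(child)
        population = set(parents) | children
        docked |= children
    best = min(population, key=dock_score)
    return best, len(docked), history

best, n_docked, history = evolve()
print(best, round(dock_score(best), 2), n_docked)
```

Because the 50 survivors are carried over unchanged, the best score can only improve or stay flat between generations, while the number of molecules actually scored stays in the low thousands rather than the full library size.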

The Scientist's Toolkit: Essential Research Reagents & Solutions

Implementing these advanced computational methods requires a suite of specialized software and data resources.

Table 3: Key Research Reagent Solutions for AI-Driven Protein Design

| Item Name | Function / Explanation |
|---|---|
| Rosetta Software Suite | A comprehensive platform for macromolecular modeling, used for flexible docking (RosettaLigand) and as the computational engine for algorithms like REvoLd [7]. |
| Enamine REAL Space | A make-on-demand combinatorial library of billions of chemically accessible compounds, serving as a primary search space for virtual screening campaigns [7]. |
| Protein Data Bank (PDB) | The single global archive for 3D structural data of proteins and nucleic acids, essential for obtaining target structures and training data [17]. |
| Evolutionary Algorithm (e.g., REvoLd) | A meta-heuristic optimization method inspired by natural selection, used to efficiently navigate ultra-large combinatorial chemical spaces without exhaustive enumeration [7]. |
| ESM Protein Language Model | A deep learning model trained on millions of protein sequences; its embeddings can be used as input representations for other machine learning models to improve performance on sequence-function prediction tasks [18]. |
| Uncertainty Quantification (UQ) Methods | Techniques (e.g., ensembles, dropout) to estimate the uncertainty of a model's predictions, which is critical for guiding active learning and Bayesian optimization in protein engineering [18]. |

Uncertainty Quantification: Critical Support for the Paradigm

Machine learning models can perform poorly when the test data are shifted relative to the training data (domain shift). Uncertainty Quantification (UQ) is therefore critical for reliably deploying these models in protein engineering, where data collection often violates the standard assumption that training and test data are independent and identically distributed (i.i.d.) [18].

Benchmarking studies on protein fitness landscapes (e.g., GB1, AAV) reveal that the quality of UQ is dataset- and task-dependent, with no single method consistently outperforming all others [18]. For convolutional neural networks, model ensembles have been shown to be particularly robust to distribution shift [18]. Well-calibrated UQ methods enable more effective experiment selection in active learning and Bayesian optimization cycles, ensuring that computational resources are focused on the most informative sequences.
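To make the ensemble idea concrete, the sketch below uses a bootstrap ensemble of one-dimensional linear regressions, a deliberately simple substitute for the CNN ensembles benchmarked in [18]. The spread of member predictions serves as the uncertainty estimate, and an upper-confidence-bound (UCB) rule with an assumed `beta = 2.0` picks the next experiment. The data and the single feature are synthetic.

```python
import random
import statistics

def fit_line(xs, ys):
    """Ordinary least squares for y = a + b*x."""
    mx, my = statistics.fmean(xs), statistics.fmean(ys)
    sxx = sum((x - mx) ** 2 for x in xs)
    b = sum((x - mx) * (y - my) for x, y in zip(xs, ys)) / sxx if sxx else 0.0
    return my - b * mx, b

def bootstrap_ensemble(xs, ys, n_models=20, seed=0):
    """Train each member on a resample (with replacement) of the training data."""
    rng = random.Random(seed)
    models = []
    for _ in range(n_models):
        idx = [rng.randrange(len(xs)) for _ in xs]
        models.append(fit_line([xs[i] for i in idx], [ys[i] for i in idx]))
    return models

def predict_with_uncertainty(models, x):
    """Ensemble mean and standard deviation of member predictions at x."""
    preds = [a + b * x for a, b in models]
    return statistics.fmean(preds), statistics.pstdev(preds)

def ucb_pick(models, candidates, beta=2.0):
    """UCB acquisition: favour high predicted fitness and high ensemble disagreement."""
    def ucb(x):
        mean, std = predict_with_uncertainty(models, x)
        return mean + beta * std
    return max(candidates, key=ucb)

rng = random.Random(1)
xs = [i / 9 for i in range(10)]                  # hypothetical 1-D sequence feature
ys = [2.0 * x + rng.gauss(0, 0.3) for x in xs]   # noisy synthetic fitness measurements
models = bootstrap_ensemble(xs, ys)
for x in (0.5, 5.0):
    mean, std = predict_with_uncertainty(models, x)
    print(f"x={x}: mean={mean:.2f}, std={std:.2f}")
print("next experiment:", ucb_pick(models, [0.5, 5.0]))
```

Far from the training data the ensemble members disagree more, so the UCB score rewards both predicted fitness and model uncertainty.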

The paradigm in protein engineering has shifted decisively. The move from directed evolution to AI-driven de novo design, powered by evolutionary algorithms and advanced computational tools, is freeing researchers from the constraints of natural evolutionary history and allows the systematic exploration and creation of bespoke proteins with tailored functionalities. As these methodologies mature and integrate robust uncertainty quantification, they pave the way for advances in designing novel therapeutics, enzymes, and biomaterials, substantially expanding access to the protein functional universe.

The fitness landscape is a foundational concept for understanding and engineering protein evolution. It provides a powerful theoretical framework for visualizing evolution as a navigation problem in a high-dimensional space. In this model, each point in the protein sequence space represents a unique amino acid sequence, and the height at that point corresponds to its "fitness"—a measure of its functional performance within a given selective environment [19]. Evolution, whether natural or directed, can then be conceptualized as an adaptive walk across this landscape, moving from sequences of lower fitness to those of higher fitness through iterative rounds of mutation and selection [19].

The structure of these landscapes profoundly influences evolutionary dynamics. Landscapes can range from smooth, "Fujiyama"-like surfaces with single peaks and gradual slopes, to highly rugged, "Badlands"-like terrains riddled with local optima that can trap evolutionary processes [19]. The roughness of the landscape determines the accessibility of functional sequences and the potential paths evolution can take. In protein engineering, the goal of directed evolution is to efficiently traverse this landscape to discover sequences with new or enhanced functions, circumventing our often-incomplete knowledge of the precise molecular details linking sequence to function [19].
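The effect of ruggedness on an adaptive walk can be illustrated with a toy model. The sketch below uses a binary alphabet for brevity and compares an additive, single-peaked landscape with a fully random "house-of-cards" landscape; both are assumed models, not fitted to protein data. The walk greedily moves to the fittest single mutant and stops at the first local optimum.

```python
import random

L = 10  # sequence length (binary alphabet for brevity; proteins would use 20 letters)

def neighbours(g):
    """All single-mutant neighbours of genotype g (a tuple of 0/1)."""
    return [g[:i] + (1 - g[i],) + g[i + 1:] for i in range(len(g))]

def adaptive_walk(start, fitness):
    """Greedy adaptive walk: move to the fittest single mutant until none is better."""
    current, path = start, [start]
    while True:
        best = max(neighbours(current), key=fitness)
        if fitness(best) <= fitness(current):
            return current, path          # local optimum reached
        current = best
        path.append(current)

rng = random.Random(3)
weights = [rng.gauss(0, 1) for _ in range(L)]

def smooth(g):
    # "Fujiyama"-like: additive effects, a single peak.
    return sum(w for w, bit in zip(weights, g) if bit)

def rugged(g):
    # "Badlands"-like: an independent random fitness for every genotype.
    return random.Random(f"rugged:{g}").random()

start = tuple([0] * L)
peak_s, path_s = adaptive_walk(start, smooth)
peak_r, path_r = adaptive_walk(start, rugged)
print(len(path_s) - 1, "steps on the smooth landscape;", len(path_r) - 1, "on the rugged one")
```

On the additive landscape the walk always ends at the global peak; on the random landscape it usually stops after a few steps at a local optimum, which is the trapping behaviour described above.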

The Sequence-Structure-Function Paradigm

The relationship between a protein's sequence, its three-dimensional structure, and its biological function is central to the fitness landscape concept. The classical view follows the sequence-structure-function paradigm, where the amino acid sequence uniquely determines the folded structure, which in turn dictates its biochemical function [20]. However, large-scale structural studies have revealed that this relationship is more complex and nuanced. Similar functions can be achieved by different sequences and structures, and the overall protein structure universe appears to be continuous and largely saturated rather than composed of discrete, isolated folds [20].

Structural Determinants of Sequence Plasticity

A protein's capacity to accept mutations without losing stability or function—its sequence plasticity—is influenced by quantifiable features of its three-dimensional architecture. Research has identified contact density as a key structural metric that serves as a determinant of entropy in sequence space [21]. This metric reflects a structure's potential for sequence variability and is statistically correlated with the size of gene families in nature. Essentially, some protein folds are more "designable," meaning they can be encoded by a larger number of different sequences, making them more prevalent and more tolerant to mutation during evolutionary processes [21].

Table 1: Structural Features Influencing Evolutionary Capacity

| Structural Feature | Description | Impact on Evolvability |
|---|---|---|
| Contact Density | A measure of the compactness of the network of interactions within a protein structure [21]. | Higher contact density correlates with greater designability and larger potential sequence diversity [21]. |
| Mutational Robustness | The ability of a protein to maintain its function despite mutations [19]. | Can be increased by stabilizing mutations, which open new routes for further adaptation [19]. |
| Local Optima | Regions in sequence space where all immediate mutations lead to reduced fitness [19]. | Create evolutionary traps on rugged landscapes; escaping may require multiple simultaneous mutations [19]. |

Quantitative Metrics and Landscape Topography

The topography of a fitness landscape is characterized by specific quantitative metrics that predict evolutionary behavior and functional outcomes. Analyzing these metrics allows researchers to distinguish between different types of landscapes and design more effective protein engineering strategies.

Metrics for Landscape Analysis

Key quantitative measures include the average fraction of incorrect rotamers (<f>) and the average energy difference from the global minimum energy conformation (GMEC) (<ΔE>), which gauge the accuracy of computational protein design algorithms in navigating the landscape [22]. For structural comparisons, the TM-score is a popular metric for measuring the similarity of two protein models, with a cutoff of 0.5 typically indicating the same fold [20]. Furthermore, model quality assessment (MQA) scores, often derived from averaging pairwise TM-scores of low-energy models, help filter out low-quality structural predictions and assess the confidence of a given model [20].
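The sketch below shows how two of these metrics are computed. `fraction_incorrect_rotamers` counts positions whose rotamer differs from the GMEC for a single design. `tm_score` implements the standard TM-score sum with d0(L) = 1.24 (L - 15)^(1/3) - 1.8 for one fixed superposition; the full metric maximizes this quantity over superpositions, a search omitted here, and the 0.5 Å floor on d0 for short chains is an assumption mirroring common implementations.

```python
def fraction_incorrect_rotamers(solution, gmec):
    """Share of positions whose rotamer differs from the GMEC (one design; <f> averages these)."""
    if len(solution) != len(gmec):
        raise ValueError("solution and GMEC must cover the same positions")
    return sum(s != g for s, g in zip(solution, gmec)) / len(gmec)

def tm_score(distances, target_length):
    """TM-score for ONE fixed superposition.

    distances: deviations (Å) of the aligned residue pairs after superposition.
    The full TM-score is the maximum of this value over all superpositions (search omitted).
    """
    if target_length > 21:
        d0 = 1.24 * (target_length - 15) ** (1 / 3) - 1.8
    else:
        d0 = 0.5                                   # floor for very short chains (assumption)
    d0 = max(d0, 0.5)
    # Unaligned residues contribute 0, which is why the sum is divided by the target length.
    return sum(1 / (1 + (d / d0) ** 2) for d in distances) / target_length

print(fraction_incorrect_rotamers(["r1", "r3", "r2", "r1"], ["r1", "r2", "r2", "r1"]))  # 0.25
print(tm_score([0.0] * 100, 100))                  # 1.0
```

A residue deviating by exactly d0 contributes 0.5, so a model whose aligned residues all sit at about d0 from the target scores near the 0.5 same-fold threshold.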

Table 2: Key Quantitative Metrics in Fitness Landscape Analysis

| Metric | Calculation/Definition | Application and Interpretation |
|---|---|---|
| Fraction of Incorrect Rotamers (<f>) | Proportion of amino acid side-chain conformers incorrectly assigned compared to the GMEC [22]. | Lower values indicate higher accuracy in computational protein design; <f> can range from 0.04 (good) to 0.44 (poor) depending on algorithm and protein region [22]. |
| Energy Difference from GMEC (<ΔE>) | Energy difference between a computed solution and the GMEC, in kcal/mol [22]. | Gauges how close a search algorithm comes to the global minimum of the energy function; larger positive values indicate a less optimal (higher-energy) solution [22]. |
| TM-Score | Metric for measuring structural similarity between two protein models [20]. | A TM-score > 0.5 suggests the same fold; used to identify novel folds and validate model quality [20]. |
| Contact Density | Computed from traces of powers of the protein's contact matrix (e.g., Tr(CM²), Tr(CM⁴)) [21]. | Correlates with fold designability; higher values allow a structure to accommodate more sequence variation [21]. |
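A minimal sketch of the contact-matrix quantities follows. The 8 Å Cα distance cutoff and the exclusion of immediate chain neighbours are assumptions (the cited work may define contacts differently), and the traces are reported raw because the normalization used in [21] is not given here.

```python
import math

def contact_matrix(coords, cutoff=8.0, min_separation=2):
    """Symmetric 0/1 contact map from C-alpha coordinates (cutoff and separation are assumptions)."""
    n = len(coords)
    cm = [[0] * n for _ in range(n)]
    for i in range(n):
        for j in range(i + min_separation, n):          # skip immediate chain neighbours
            if math.dist(coords[i], coords[j]) < cutoff:
                cm[i][j] = cm[j][i] = 1
    return cm

def matmul(a, b):
    """Plain-Python square matrix product (adequate for small illustrative maps)."""
    n = len(a)
    return [[sum(a[i][k] * b[k][j] for k in range(n)) for j in range(n)] for i in range(n)]

def trace_of_power(cm, p):
    """Tr(CM^p): number of closed walks of length p through the contact network."""
    result = cm
    for _ in range(p - 1):
        result = matmul(result, cm)
    return sum(result[i][i] for i in range(len(cm)))

# Toy example: an idealised straight chain with 3.8 Å spacing between consecutive C-alphas.
coords = [(3.8 * i, 0.0, 0.0) for i in range(5)]
cm = contact_matrix(coords)
print(trace_of_power(cm, 2), trace_of_power(cm, 4))   # 6 10
```

For a symmetric 0/1 map with an empty diagonal, Tr(CM²) is simply twice the number of contacts, while higher powers also reward contacts that are interconnected, which is one way these traces capture the compactness of the interaction network.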

Experimental Methodologies for Navigating Fitness Landscapes

Directed Evolution Workflow

Directed evolution is a powerful experimental methodology that mimics natural selection in the laboratory to navigate fitness landscapes and discover proteins with novel or optimized functions. It operates through iterative cycles of diversity generation and screening or selection [19].

The following diagram outlines the key stages of a standard directed evolution experiment:

Key Methodological Components

Diversity Generation: The process begins with the introduction of genetic diversity into the starting gene sequence. This can be achieved through error-prone PCR to introduce random point mutations, DNA shuffling to recombine segments of related sequences, or site-saturation mutagenesis targeted to specific residues [19]. This step creates a vast library of protein variants.

Screening and Selection: The variant library is then subjected to a high-throughput assay that applies the desired functional pressure. This could involve genetic selections (e.g., where survival or reporter gene expression is linked to protein function) or physical screens (e.g., microtiter plate assays, fluorescence-activated cell sorting) to identify individuals with improved traits [19].

Iteration and Analysis: The best-performing variants from the screen are isolated, and their genes are used as the template for the next cycle of diversification and selection. This iterative process allows the protein sequence to ascend the fitness landscape through an adaptive walk. After several rounds, individual clones are characterized to validate functional improvements and to understand the sequence changes responsible [19].
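The cycle described above can be simulated in a few lines. In this sketch, `error_prone_copy` mimics random point mutagenesis at an assumed 2% per-residue rate, `toy_assay` is a hypothetical activity measure (identity to an arbitrary target sequence), and the parent is screened alongside its library as a control so that measured activity never decreases between rounds. Library size and round count are also assumptions.

```python
import random

AAS = "ACDEFGHIKLMNPQRSTVWY"
rng = random.Random(11)

def error_prone_copy(seq, rate):
    """Mimic error-prone PCR: each residue is substituted with probability `rate`."""
    return "".join(rng.choice(AAS) if rng.random() < rate else aa for aa in seq)

def screen(library, assay):
    """High-throughput screen: return the best variant and its measured activity."""
    return max(((v, assay(v)) for v in library), key=lambda pair: pair[1])

def directed_evolution(parent, assay, rounds=5, library_size=500, rate=0.02):
    history = [assay(parent)]
    for _ in range(rounds):
        library = [error_prone_copy(parent, rate) for _ in range(library_size)]   # diversify
        parent, activity = screen(library + [parent], assay)                      # select
        history.append(activity)                                                  # iterate
    return parent, history

TARGET = "MKWVTFISLLLLFSSAYSRG"   # hypothetical optimum used only by the toy assay

def toy_assay(seq):
    return sum(a == b for a, b in zip(seq, TARGET)) / len(TARGET)

final, history = directed_evolution("A" * len(TARGET), toy_assay)
print([round(h, 2) for h in history])
```

Each round is an adaptive step: only variants that beat the current parent replace it, so the lineage climbs the (here smooth) landscape one screen at a time.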

Computational Protein Design Algorithms

Complementing experimental directed evolution, computational algorithms are used to search the sequence-conformation space for low-energy, stable proteins. Different algorithms offer trade-offs between computational speed and accuracy [22]:

- Dead-End Elimination (DEE): A deterministic algorithm that is guaranteed to find the GMEC if it converges. It is highly accurate for side-chain placement but becomes computationally intractable for very complex design problems [22].

- Monte Carlo (MC) and Monte Carlo plus Quench (MCQ): Stochastic methods that sample the energy landscape through random steps. They are faster than DEE for large problems but do not guarantee finding the GMEC, with accuracy varying by protein region (e.g., <f> of 0.04 for protein cores vs. 0.44 for surfaces) [22].

- Self-Consistent Mean Field (SCMF): A deterministic method that is computationally efficient but often converges on solutions that are not the GMEC, showing similar accuracy challenges to MCQ, particularly on protein surfaces [22].
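To see how a stochastic search relates to the GMEC, the sketch below builds a small random rotamer energy model (6 positions, 3 rotamers each; all energies are synthetic and unitless), finds the exact GMEC by exhaustive enumeration (used here in place of DEE, which reaches the same answer by pruning), and compares it with a Monte Carlo plus Quench solution. The temperature and step count are assumptions, and SCMF is not shown.

```python
import itertools
import math
import random

rng = random.Random(5)
N_POS, N_ROT = 6, 3                                   # tiny synthetic design problem
SELF = [[rng.gauss(0, 1) for _ in range(N_ROT)] for _ in range(N_POS)]
PAIR = {(i, j): [[rng.gauss(0, 0.5) for _ in range(N_ROT)] for _ in range(N_ROT)]
        for i in range(N_POS) for j in range(i + 1, N_POS)}

def energy(rots):
    """Total energy = rotamer self-energies + pairwise interaction energies."""
    e = sum(SELF[i][r] for i, r in enumerate(rots))
    return e + sum(PAIR[(i, j)][rots[i]][rots[j]] for (i, j) in PAIR)

def gmec_exhaustive():
    """Exact GMEC by enumerating all 3^6 = 729 rotamer combinations."""
    return list(min(itertools.product(range(N_ROT), repeat=N_POS), key=energy))

def monte_carlo(steps=2000, temperature=1.0):
    """Metropolis Monte Carlo over single-position rotamer changes."""
    rots = [rng.randrange(N_ROT) for _ in range(N_POS)]
    e = energy(rots)
    for _ in range(steps):
        i = rng.randrange(N_POS)
        trial = rots[:i] + [rng.randrange(N_ROT)] + rots[i + 1:]
        e_trial = energy(trial)
        # Always accept downhill moves; accept uphill moves with Boltzmann probability.
        if e_trial <= e or rng.random() < math.exp((e - e_trial) / temperature):
            rots, e = trial, e_trial
    return rots

def quench(rots):
    """Greedy descent: set each position to its best rotamer until no change lowers the energy."""
    rots, changed = list(rots), True
    while changed:
        changed = False
        for i in range(N_POS):
            options = [rots[:i] + [r] + rots[i + 1:] for r in range(N_ROT)]
            best = min(options, key=energy)
            if energy(best) < energy(rots):
                rots, changed = best, True
    return rots

gmec = gmec_exhaustive()
mcq = quench(monte_carlo())
f = sum(a != b for a, b in zip(mcq, gmec)) / N_POS      # fraction of incorrect rotamers
delta_e = energy(mcq) - energy(gmec)                     # energy gap above the GMEC
print(f"<f> = {f:.2f}, ΔE = {delta_e:.3f}")
```

Exhaustive enumeration is only possible because this problem is tiny; real designs have far too many combinations, which is why the speed/accuracy trade-offs among DEE, MCQ, and SCMF matter.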

The Scientist's Toolkit: Essential Research Reagents and Materials

The experimental and computational workflows described rely on a suite of specialized reagents, databases, and software tools.

Table 3: Essential Resources for Protein Fitness Landscape Research

| Category / Item Name | Function and Application |
|---|---|
| Experimental Materials | |
| TMT10 Isobaric Labeling Kit | Allows multiplexed quantitative mass spectrometry for comparing protein abundance across up to 10 different samples (e.g., subcellular fractions) [23]. |
| Sequencing-grade Trypsin/LysC | High-purity enzymes for specific protein digestion into peptides for mass spectrometric analysis [23]. |
| Nycodenz/Sucrose | Inert density gradient media for the separation of subcellular organelles by centrifugation [23]. |
| Software & Algorithms | |
| Rosetta | A comprehensive software suite for de novo protein structure prediction and design, using physics-based energy functions [20]. |
| DMPfold | A machine learning-based method for protein structure prediction from sequence [20]. |
| AlphaFold2 | A deep learning system for highly accurate protein structure prediction [20]. |
| DeepFRI | A Graph Convolutional Network that provides residue-specific functional annotations from protein structures [20]. |
| Databases & Resources | |
| Protein Data Bank (PDB) | The single global archive for 3D structural data of proteins and nucleic acids [20]. |
| CATH Database | A hierarchical classification of protein domain structures into Fold, Superfamily, and Family levels [20]. |
| AlphaFold Protein Structure Database | A vast resource containing predicted structural models for nearly all cataloged proteins across multiple model organisms [20]. |
| MIP Database | A database of ~200,000 predicted structures for microbial proteins, complementary to other structural databases [20]. |

Algorithmic Frameworks and Real-World Applications: From Theory to Bench

The exploration of the protein functional universe (the theoretical space encompassing all possible protein sequences, structures, and activities) represents one of the most significant challenges and opportunities in modern biotechnology. Despite nature's astounding diversity, known proteins constitute merely a fraction of this potential, constrained by evolutionary history and experimental limitations. The sequence space for even a small 100-residue protein encompasses approximately 20^100 possible amino acid arrangements, a number so vast it exceeds the estimated number of atoms in the observable universe [10]. Conventional protein engineering methods, particularly directed evolution, remain tethered to natural templates, performing local searches within functional neighborhoods, and are therefore largely unable to access genuinely novel regions of this vast landscape. This limitation underscores the critical need for computational approaches that can transcend evolutionary boundaries [10].

Artificial intelligence (AI)-driven de novo protein design is overcoming these constraints by enabling the computational creation of proteins with customized folds and functions [10]. This paradigm shift moves beyond modifying existing scaffolds to designing proteins from first principles. Central to this revolution are sophisticated computational architectures that can navigate the complex, high-dimensional search spaces of molecular design. Among these, three core architectures have emerged as particularly powerful: Genetic Algorithms (GAs) for evolutionary optimization, Monte Carlo Tree Search (MCTS) for structured exploration and planning, and Multi-Objective Optimization (MOO) frameworks for balancing competing design criteria. These algorithms facilitate a systematic exploration of the protein functional universe, accelerating the discovery of novel biomolecules with tailored properties for therapeutic, catalytic, and synthetic biology applications [24] [10]. By integrating these computational strategies with experimental validation, researchers are now building a modular toolkit to rewrite the rules of synthetic biology, from functional protein modules to fully synthetic cellular systems [24].

Genetic Algorithms for Evolutionary Protein Optimization

Genetic Algorithms (GAs) belong to a class of evolutionary computation techniques inspired by biological evolution, including selection, crossover (recombination), and mutation. In protein design, GAs treat candidate amino acid sequences as "individuals" in a population. These individuals undergo iterative cycles of evaluation and modification, where sequences with superior properties (higher "fitness") are preferentially selected to produce offspring for subsequent generations [25]. This process enables an efficient exploration of the rugged fitness landscape of protein sequences, progressively driving populations toward regions with optimized characteristics.

Core Methodology and Experimental Protocol

The application of GAs to protein design follows a structured workflow. The process begins with the initialization of a population of candidate sequences. This initial pool can be generated randomly or seeded with known sequences to bootstrap the search. A critical component is the fitness function, which quantitatively assesses each sequence's performance against the design objective, such as aggregation propensity, binding affinity, or catalytic efficiency [25].

The algorithm then enters its main generational loop:

- Selection: Individuals are selected for reproduction based on their fitness, using methods like tournament or roulette-wheel selection. This mimics natural selection by giving fitter sequences a higher chance to pass on their genetic information.

- Crossover: Pairs of selected "parent" sequences are recombined to create "child" sequences. This operation exchanges subsequences between parents, potentially merging beneficial traits from different individuals.

- Mutation: Random changes are introduced into the child sequences with a low probability. In peptide design, this typically means substituting one amino acid for another at a given position. A study on decapeptides used a mutation rate of 1% per residue, effectively balancing the introduction of novel variations with the preservation of existing beneficial sequences [25].

This cycle of selection, crossover, and mutation repeats for a predetermined number of generations or until a convergence criterion is met. Because crossover and mutation are stochastic, GAs can escape local optima, which makes them particularly suited to complex, non-linear protein fitness landscapes.
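The generational loop described above can be sketched in a few dozen lines of Python. This is a minimal illustration under stated assumptions, not the code used in the cited study: `toy_fitness` is a hypothetical stand-in for a learned property predictor (it simply rewards residues from an illustrative hydrophobic set), and the tournament size, population size, and generation count are arbitrary choices. Only the 1% per-residue mutation rate mirrors the value reported above.

```python
import random

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"

def toy_fitness(seq: str) -> float:
    """Hypothetical stand-in for a learned predictor: fraction of
    residues drawn from an illustrative hydrophobic set."""
    return sum(aa in "FILMVW" for aa in seq) / len(seq)

def tournament_select(pop, scores, k, rng):
    """Sample k individuals at random and return the fittest of them."""
    contenders = rng.sample(range(len(pop)), k)
    return pop[max(contenders, key=lambda i: scores[i])]

def one_point_crossover(a, b, rng):
    """Exchange the suffixes of two parents after a random cut point."""
    cut = rng.randint(1, len(a) - 1)
    return a[:cut] + b[cut:], b[:cut] + a[cut:]

def mutate(seq, rate, rng):
    """Substitute each residue independently with probability `rate`."""
    return "".join(rng.choice(AMINO_ACIDS) if rng.random() < rate else aa
                   for aa in seq)

def run_ga(fitness, length=10, pop_size=200, generations=100,
           mutation_rate=0.01, tournament_k=3, seed=0):
    """Evolve a population; return the best sequence and the mean
    fitness recorded at each generation."""
    rng = random.Random(seed)
    pop = ["".join(rng.choice(AMINO_ACIDS) for _ in range(length))
           for _ in range(pop_size)]
    history = []
    for _ in range(generations):
        scores = [fitness(s) for s in pop]                 # evaluation
        history.append(sum(scores) / pop_size)
        children = []
        while len(children) < pop_size:
            p1 = tournament_select(pop, scores, tournament_k, rng)  # selection
            p2 = tournament_select(pop, scores, tournament_k, rng)
            for child in one_point_crossover(p1, p2, rng):          # crossover
                children.append(mutate(child, mutation_rate, rng))  # mutation
        pop = children[:pop_size]
    history.append(sum(fitness(s) for s in pop) / pop_size)
    return max(pop, key=fitness), history

best, history = run_ga(toy_fitness)
print(best, round(history[0], 2), round(history[-1], 2))
```

Replacing `toy_fitness` with a real predictor's scoring function redirects the search toward a different objective, and the mean-fitness history gives a quick check that selection pressure is actually moving the population.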

Application in De Novo Peptide Design

Genetic algorithms have demonstrated remarkable success in designing short peptides with tunable aggregation propensities (AP). In one study, researchers aimed to evolve decapeptides (10-residue peptides) toward high AP, defined by the ratio of solvent-accessible surface area before and after coarse-grained molecular dynamics (CGMD) simulations [25]. The protocol was as follows:

- Initial Population: 1,000 randomly sampled decapeptide sequences.

- Fitness Evaluation: A trained Transformer-based deep learning model predicted the AP of each sequence, serving as a rapid proxy for computationally intensive CGMD simulations [25].

- Evolution Parameters: The GA was run for 500 generations with a mutation rate of 1% per residue.

- Results: The average AP of the population increased from 1.76 to 2.15 over the course of evolution. The algorithm successfully generated sequences like WFLFFFLFFW, which was validated by CGMD simulations to form large cluster structures, confirming its high aggregation propensity [25].
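Because aggregation propensity is a simple ratio, the reported numbers are easy to sanity-check. The sketch below assumes AP = SASA before / SASA after the CGMD run, following the definition given earlier; the SASA values in the example are hypothetical, while 1.76 and 2.15 are the population averages reported for the study.

```python
def aggregation_propensity(sasa_initial: float, sasa_final: float) -> float:
    """AP = SASA before CGMD / SASA after CGMD.
    Values above 1 mean surface area became buried, i.e. the peptides clustered."""
    if sasa_initial <= 0 or sasa_final <= 0:
        raise ValueError("SASA values must be positive")
    return sasa_initial / sasa_final

# Hypothetical SASA values (arbitrary units): half the surface is buried after simulation
print(aggregation_propensity(4800.0, 2400.0))   # 2.0

# Relative gain in the population-average AP reported for the GA run
initial_ap, evolved_ap = 1.76, 2.15
print(f"{(evolved_ap - initial_ap) / initial_ap:.0%}")   # 22%
```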

Table 1: Performance Metrics of a Genetic Algorithm for De Novo Peptide Design

| Metric | Initial Population | Evolved Population (After 500 Generations) |
|---|---|---|
| Average Aggregation Propensity (AP) | 1.76 | 2.15 |
| Sample Evolved Sequence | N/A | WFLFFFLFFW |
| CGMD-Validated AP for WFLFFFLFFW | N/A | 2.24 |
| Key Driver of Evolution | N/A | Increased hydrophobicity in optimized sequences |
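The table attributes the evolved population's higher AP to increased hydrophobicity. One conventional way to quantify that trend is the GRAVY score, the mean Kyte-Doolittle hydropathy over a sequence. This is an illustrative, swapped-in metric; the cited study's own hydrophobicity analysis may differ.

```python
# Kyte-Doolittle hydropathy values (positive = hydrophobic)
KYTE_DOOLITTLE = {
    "A": 1.8, "R": -4.5, "N": -3.5, "D": -3.5, "C": 2.5,
    "Q": -3.5, "E": -3.5, "G": -0.4, "H": -3.2, "I": 4.5,
    "L": 3.8, "K": -3.9, "M": 1.9, "F": 2.8, "P": -1.6,
    "S": -0.8, "T": -0.7, "W": -0.9, "Y": -1.3, "V": 4.2,
}

def gravy(seq: str) -> float:
    """Grand average of hydropathy: mean Kyte-Doolittle value over all residues."""
    return sum(KYTE_DOOLITTLE[aa] for aa in seq) / len(seq)

# The evolved decapeptide from Table 1: six F, two L, two W
print(round(gravy("WFLFFFLFFW"), 2))   # 2.26
```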

The following diagram illustrates the iterative workflow of a genetic algorithm as applied to protein sequence design:

Monte Carlo Tree Search for Strategic Protein Design

Monte Carlo Tree Search (MCTS) is a search algorithm renowned for its success in complex decision-making problems like computer Go. It combines the precision of tree search with the randomness of Monte Carlo simulations. In the context of protein design, particularly for challenges like inverse folding (finding a sequence that folds into a given structure), MCTS strategically explores the vast sequence space by building a search tree where each node represents a partial or complete amino acid sequence, and edges represent amino acid choices [26].
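The four MCTS phases (selection, expansion, rollout, backpropagation) can be illustrated on a deliberately tiny problem. The sketch below uses plain random rollouts and the standard UCT selection rule; it is not the diffusion-guided, multi-expert framework described below. The two-letter H/P alphabet, `TARGET` pattern, and `toy_reward` are hypothetical stand-ins for a real amino acid alphabet and a structure-based score.

```python
import math
import random

class Node:
    """A search-tree node holding a partial sequence; each child appends one residue."""
    def __init__(self, prefix, parent=None):
        self.prefix = prefix
        self.parent = parent
        self.children = {}       # residue -> Node
        self.visits = 0
        self.value_sum = 0.0

    def uct_child(self, c):
        """Standard UCT: mean reward plus an exploration bonus for rarely visited children."""
        log_n = math.log(self.visits)
        return max(self.children.values(),
                   key=lambda ch: ch.value_sum / ch.visits
                   + c * math.sqrt(log_n / ch.visits))

def mcts_design(reward, length, alphabet, iterations=2000, c=1.4, seed=0):
    """Build sequences residue by residue with MCTS; return the best full sequence seen."""
    rng = random.Random(seed)
    root = Node("")
    best_seq, best_r = None, float("-inf")
    for _ in range(iterations):
        node = root
        # 1. Selection: descend through fully expanded, non-terminal nodes
        while len(node.prefix) < length and len(node.children) == len(alphabet):
            node = node.uct_child(c)
        # 2. Expansion: attach one untried residue choice
        if len(node.prefix) < length:
            aa = rng.choice([a for a in alphabet if a not in node.children])
            node.children[aa] = Node(node.prefix + aa, parent=node)
            node = node.children[aa]
        # 3. Rollout: complete the sequence with random residues
        seq = node.prefix + "".join(rng.choice(alphabet)
                                    for _ in range(length - len(node.prefix)))
        r = reward(seq)
        if r > best_r:
            best_seq, best_r = seq, r
        # 4. Backpropagation: update statistics along the path to the root
        while node is not None:
            node.visits += 1
            node.value_sum += r
            node = node.parent
    return best_seq, best_r

TARGET = "HPHPPH"  # hypothetical target pattern in a two-letter H/P alphabet

def toy_reward(seq):
    """Hypothetical reward: fraction of positions matching TARGET."""
    return sum(a == b for a, b in zip(seq, TARGET)) / len(TARGET)

print(mcts_design(toy_reward, length=len(TARGET), alphabet="HP"))
```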

Advancements Beyond Autoregressive Methods

Traditional autoregressive methods for protein design generate sequences one amino acid at a time, left-to-right. This approach struggles with long-range dependencies in protein structures, where distant residues in the sequence must interact closely in the folded tertiary structure. The sequential nature of autoregressive generation makes it difficult to plan for these critical interactions from the outset [26].

To address this limitation, a novel framework called Monte Carlo Tree Diffusion with Multiple Experts (MCTD-ME) has been developed. MCTD-ME integrates masked diffusion models with MCTS to enable multi-token planning. Unlike autoregressive methods, this approach can jointly revise multiple amino acid positions during the search process. It uses "biophysical-fidelity-enhanced diffusion denoising" as its rollout engine, allowing for a more holistic and efficient exploration of the sequence space [26].

Core Methodology and Experimental Protocol

The MCTD-ME protocol enhances standard MCTS through several key innovations:

- Diffusion-Based Rollouts: Instead of random or heuristic-based rollouts, MCTD-ME employs a masked diffusion model to evaluate promising regions of the search space. This model is capable of denoising multiple masked positions simultaneously, effectively assessing the quality of partial sequences and proposing coordinated changes to several residues [26].

- Multiple Experts: The framework leverages an ensemble of "experts" (models) of varying capacities. This enriches the exploration strategy by providing diverse perspectives on sequence quality.

- pLDDT-based Masking Schedule: To guide the search, MCTD-ME uses the predicted local distance difference test (pLDDT)—a confidence metric from structure prediction networks like AlphaFold. This schedule strategically masks low-confidence regions of the developing sequence for revision while preserving reliable residues, focusing computational effort on the most uncertain and potentially problematic areas [26].

- Expert Selection Rule (PH-UCT-ME): A novel selection rule extends the standard Upper Confidence Bound for Trees (UCT) to handle multiple experts. This rule balances exploration and exploitation across the ensemble of models, guiding the search toward sequences that are not only optimal but also structurally plausible [26].
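The pLDDT-based masking idea can be sketched as follows. This is a minimal illustration under assumptions: the mask token `X`, the helper names, and the linear shrinking schedule are hypothetical choices rather than the published MCTD-ME schedule, and the pLDDT values in the example are invented, not taken from a real AlphaFold prediction.

```python
from typing import Sequence

MASK = "X"  # hypothetical mask token for illustration

def plddt_mask(seq: str, plddt: Sequence[float], fraction: float) -> str:
    """Mask the `fraction` of positions with the lowest per-residue pLDDT,
    leaving high-confidence residues untouched."""
    if len(seq) != len(plddt):
        raise ValueError("need one pLDDT value per residue")
    k = round(fraction * len(seq))
    lowest = sorted(range(len(seq)), key=lambda i: plddt[i])[:k]
    chars = list(seq)
    for i in lowest:
        chars[i] = MASK
    return "".join(chars)

def linear_schedule(step: int, total_steps: int,
                    start: float = 0.5, end: float = 0.1) -> float:
    """Assumed schedule: shrink the masked fraction linearly as the search proceeds."""
    t = step / max(total_steps - 1, 1)
    return start + (end - start) * t

seq = "MKTAYIAKQR"
plddt = [92, 88, 45, 50, 91, 30, 85, 90, 60, 95]   # invented confidence values
print(plddt_mask(seq, plddt, 0.3))   # MKXXYXAKQR
```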

The performance of MCTD-ME was evaluated on standard inverse folding benchmarks drawn from CAMEO and the PDB. The framework outperformed its baselines on both amino acid recovery (AAR, also called sequence recovery), which measures how accurately a designed sequence recapitulates the native sequence, and self-consistent TM-score (scTM), which measures how closely the structure predicted for the designed sequence matches the target structure. The gains were especially pronounced for longer proteins, where long-range interactions matter more and the search space is exponentially larger [26].
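Sequence recovery is simple to compute once a designed sequence is aligned position by position with the native sequence of the target structure. The helper below is an illustrative sketch (the function name and example sequences are hypothetical); scTM is not shown because it requires refolding the design with a structure predictor and computing a TM-score against the target.

```python
def sequence_recovery(designed: str, native: str) -> float:
    """Amino acid recovery (AAR): fraction of positions where the designed
    residue matches the native residue."""
    if len(designed) != len(native):
        raise ValueError("sequences must be aligned and of equal length")
    matches = sum(d == n for d, n in zip(designed, native))
    return matches / len(native)

# Two mismatches (positions 5 and 10) out of ten residues
print(sequence_recovery("MKTAYIAKQR", "MKTAHIAKQL"))   # 0.8
```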

Table 2: Performance of MCTD-ME on Inverse Folding Tasks

| Benchmark | Key Metric | MCTD-ME Performance |
|---|---|---|
| CAMEO | Sequence Recovery (AAR) | Outperformed single-expert and unguided baselines |
| PDB | Structural Similarity (scTM) | Outperformed single-expert and unguided baselines |
| Long Proteins | AAR & scTM Gains | Increasing performance gains observed with protein length |

The logical flow of the MCTD-ME framework, illustrating the interaction between its core components, is shown below:

Multi-Objective Optimization for Balanced Protein Engineering

Proteins for real-world applications are rarely optimized for a single property. A therapeutic antibody must exhibit high target affinity while minimizing immunogenicity; an industrial enzyme needs high activity, stability at high temperatures, and solubility. These objectives are often in conflict—optimizing one can deteriorate another. Multi-Objective Optimization (MOO) addresses this challenge by seeking a set of solutions that represent optimal trade-offs, known as the Pareto front [27] [28].

In protein design, MOO is often cast as a discrete sampling problem over a complex, high-dimensional sequence space. The goal is to identify sequences on the Pareto front: sequences for which no other sequence is at least as good in every desired property and strictly better in at least one. This approach is crucial for practical protein engineering, where a balanced profile of properties is more valuable than excellence in a single, narrowly defined metric.
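The Pareto-front definition translates directly into a pairwise dominance check. The sketch below assumes every objective is to be maximized, and the two-property scores (for example affinity and stability) are hypothetical numbers. The all-pairs scan is quadratic, which is fine for small candidate sets; larger populations usually call for a dedicated non-dominated sorting routine.

```python
from typing import List, Sequence, Tuple

def dominates(a: Sequence[float], b: Sequence[float]) -> bool:
    """True if `a` is at least as good as `b` on every objective and
    strictly better on at least one (all objectives maximized)."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))

def pareto_front(scores: List[Tuple[float, ...]]) -> List[int]:
    """Indices of non-dominated candidates (O(n^2) pairwise check)."""
    return [i for i, s in enumerate(scores)
            if not any(dominates(t, s) for j, t in enumerate(scores) if j != i)]

# Hypothetical (affinity, stability) scores for five candidate sequences
scores = [(0.9, 0.2), (0.6, 0.6), (0.3, 0.9), (0.5, 0.5), (0.2, 0.1)]
print(pareto_front(scores))   # [0, 1, 2]
```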

Frameworks and Methodologies

Two advanced frameworks exemplify the application of MOO in protein science: MosPro and CMOMO.

MosPro (Multi-objective Protein Sequence Design): This algorithm utilizes a pre-trained, differentiable machine learning model that predicts multiple properties from a sequence. MosPro shapes a probability distribution over the sequence space, assigning high mass to regions containing high-property sequences. It then efficiently samples from this constructed distribution. Furthermore, MosPro incorporates a Pareto optimization algorithm to explicitly propose sequences that are simultaneously optimized for multiple, potentially competing properties [27].
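The idea of shaping a distribution that places high probability mass on high-property sequences, then sampling from it, can be illustrated with a simple Metropolis sampler over p(x) ∝ exp(score(x) / T). This is a swapped-in technique for illustration only: MosPro itself relies on a differentiable property predictor and adds an explicit Pareto optimization step, neither of which is reproduced here. `toy_score`, the temperature, and the step count are hypothetical.

```python
import math
import random

ALPHABET = "ACDEFGHIKLMNPQRSTVWY"

def metropolis_sample(score, init: str, steps: int = 5000,
                      temperature: float = 0.1, seed: int = 0):
    """Sample from p(x) ∝ exp(score(x) / T) using single-site substitutions.
    The proposal (uniform position, uniform residue) is symmetric, so the
    acceptance probability is min(1, exp((score(new) - score(old)) / T))."""
    rng = random.Random(seed)
    current, current_score = init, score(init)
    samples = []
    for _ in range(steps):
        i = rng.randrange(len(current))
        proposal = current[:i] + rng.choice(ALPHABET) + current[i + 1:]
        proposal_score = score(proposal)
        delta = proposal_score - current_score
        if delta >= 0 or rng.random() < math.exp(delta / temperature):
            current, current_score = proposal, proposal_score
        samples.append(current)
    return samples

def toy_score(seq: str) -> float:
    """Hypothetical property score: fraction of residues from an illustrative hydrophobic set."""
    return sum(aa in "FILMVW" for aa in seq) / len(seq)

start = "G" * 10
samples = metropolis_sample(toy_score, start)
tail = samples[-1000:]
# Compare the mean score of late samples with toy_score(start), which is 0.0
print(sum(toy_score(s) for s in tail) / len(tail))
```

Lowering the temperature concentrates samples on the highest-scoring sequences at the cost of diversity; raising it does the opposite.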

CMOMO (Constrained Molecular Multi-objective Optimization): While developed for molecular optimization, CMOMO's principles are directly applicable to peptide and small protein design. It specifically addresses the common real-world scenario where, in addition to optimizing multiple properties, designs must satisfy hard drug-like constraints (e.g., synthesizability, absence of toxic substructures). CMOMO's innovation lies in its two-stage dynamic constraint handling strategy:

- Unconstrained Scenario: The algorithm first performs multi-objective optimization focusing solely on property enhancement, freely exploring the sequence space.

- Constrained Scenario: The search then shifts to balance property optimization with strict constraint satisfaction, identifying high-quality sequences that are also feasible for development [28].

This strategy effectively navigates the often narrow, disconnected, and irregular regions of the search space that contain feasible molecules [28].
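The two-stage idea can be sketched as a survivor-selection rule that changes behavior between stages. This is a generic illustration in the spirit of CMOMO's dynamic constraint handling, not its actual algorithm (which, per Table 3, evolves candidates in a latent space): the feasibility-first rule, the scalar `violations` measure (0 means all constraints satisfied), and the example numbers are assumptions, and all objectives are maximized.

```python
from typing import List, Sequence, Tuple

def dominates(a: Sequence[float], b: Sequence[float]) -> bool:
    """Pareto dominance for maximized objectives."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))

def nondominated(indices: List[int], objs: List[Tuple[float, ...]]) -> List[int]:
    """Indices within `indices` not dominated by any other member of `indices`."""
    return [i for i in indices
            if not any(dominates(objs[j], objs[i]) for j in indices if j != i)]

def select_survivors(objs: List[Tuple[float, ...]], violations: List[float],
                     stage: int) -> List[int]:
    """Stage 1: ignore constraints and keep the Pareto front on the objectives.
    Stage 2: keep the Pareto front among feasible candidates; if none is
    feasible, keep those with the smallest total constraint violation."""
    all_idx = list(range(len(objs)))
    if stage == 1:
        return nondominated(all_idx, objs)
    feasible = [i for i in all_idx if violations[i] == 0]
    if feasible:
        return nondominated(feasible, objs)
    least = min(violations)
    return [i for i in all_idx if violations[i] == least]

# Hypothetical candidates: index 0 scores best but violates a constraint
objs = [(0.9, 0.8), (0.7, 0.6), (0.5, 0.9), (0.4, 0.3)]
violations = [2.0, 0.0, 0.0, 0.0]
print(select_survivors(objs, violations, stage=1))   # [0, 2]
print(select_survivors(objs, violations, stage=2))   # [1, 2]
```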

Experimental Protocol and Evaluation

In practice, these frameworks have been validated on complex design tasks. For example, MosPro was evaluated on experimental fitness landscapes, where it successfully generated sequences that optimally traded off multiple desiderata, demonstrating the "unparalleled potential of generative ML for efficient and controllable design of functional proteins" [27].

CMOMO was benchmarked against other state-of-the-art methods. In one task involving the optimization of inhibitors for the glycogen synthase kinase-3 (GSK3) target, CMOMO demonstrated a two-fold improvement in success rate. It successfully identified molecules with favorable bioactivity, drug-likeness, synthetic accessibility, and adherence to structural constraints [28]. The table below summarizes the capabilities of these two frameworks.

Table 3: Comparison of Multi-Objective Optimization Frameworks

| Framework | Core Approach | Key Feature | Validated Application |
|---|---|---|---|
| MosPro | Pareto-optimal sampling from a learned distribution over sequences | Explicitly trades off multiple, competing protein properties | Design of functional proteins on experimental fitness landscapes [27] |
| CMOMO | Two-stage dynamic optimization with latent space evolution | Balances multiple property optimization with hard drug-like constraints | GSK3 inhibitor optimization, achieving 2x success rate [28] |

The following workflow diagram captures the dynamic two-stage process of the CMOMO framework, which can be adapted for constrained protein design:

The experimental and computational protocols outlined in this whitepaper rely on a suite of key software tools, databases, and analytical methods. The following table details these essential "research reagents" for scientists seeking to implement these core architectures.

Table 4: Research Reagent Solutions for Algorithmic Protein Design

| Tool/Resource | Type | Function in Protein Design |
|---|---|---|
| Rosetta Software Suite | Software Framework | Provides physics-based energy functions and flexible docking protocols (e.g., RosettaLigand, REvoLd) for evaluating protein structures and interactions [7]. |
| Enamine REAL Space | Chemical Database | An ultra-large make-on-demand combinatorial library of billions of synthesizable compounds; used as a search space for evolutionary algorithms like REvoLd [7]. |
| Transformer-based AP Predictor | Deep Learning Model | A self-attention-based network that predicts peptide aggregation propensity (AP), serving as a fast proxy for coarse-grained molecular dynamics simulations [25]. |
| Coarse-Grained Molecular Dynamics (CGMD) | Simulation Method | Uses simplified molecular models to simulate peptide aggregation behavior over time, providing ground-truth data for training predictors or validating designs [25]. |
| pLDDT (from AlphaFold) | Confidence Metric | A per-residue local confidence score; used in frameworks like MCTD-ME to guide search algorithms toward refining low-confidence regions [26]. |
| Latent Vector Fragmentation (VFER) | Algorithmic Strategy | An evolutionary reproduction strategy that operates in a continuous latent space to efficiently generate promising new molecular structures [28]. |