Evolutionary Algorithms in Protein Folding: From Sequence to Functional Structure

Abstract

This article explores the pivotal role of evolutionary algorithms (EAs) in tackling the complex challenge of protein folding and design. Aimed at researchers and drug development professionals, it details how EAs, inspired by natural selection, efficiently navigate the vast conformational space of proteins. The content covers foundational principles, specific methodologies for protein optimization, advanced multi-objective and troubleshooting techniques, and the critical validation of EA-generated models against experimental data and AI-based predictions. By synthesizing these aspects, the article provides a comprehensive overview of how EAs enable the design of novel proteins and the discovery of stable folds, with significant implications for therapeutic development and understanding evolutionary biology.

The Evolutionary Blueprint: Core Principles of EAs for Protein Folding

Defining the Protein Folding Problem and the Conformational Search Space

The protein folding problem represents one of the most significant challenges in modern computational biology and biophysics. At its core, the problem asks how a protein's one-dimensional amino acid sequence dictates its specific three-dimensional atomic structure, which in turn determines its biological function [1]. This question has profound implications for drug discovery, as the ability to predict protein structure from sequence alone could dramatically accelerate the identification of therapeutic targets and the design of novel drugs. For researchers and drug development professionals, understanding both the nature of this problem and the computational methods being developed to solve it is crucial for advancing structural biology applications in medicine.

The challenge is magnified by the astronomical size of the conformational search space, the vast landscape of possible shapes any given protein chain could adopt before settling into its functional, native structure. This section examines the protein folding problem in technical depth, with particular focus on how evolutionary algorithms are used to navigate the conformational search space of proteins, offering powerful solutions where traditional computational methods often struggle.

Defining the Protein Folding Problem

The Threefold Nature of the Problem

The protein folding problem is conceptually divided into three closely related puzzles that address different aspects of the folding phenomenon. Table 1 summarizes these interconnected problems and their central questions.

Table 1: The Three Components of the Protein Folding Problem

| Component | Central Question | Research Focus |
|---|---|---|
| The Folding Code | What balance of interatomic forces dictates the native structure for a given amino acid sequence? | Thermodynamic principles and molecular forces |
| Structure Prediction | How can we predict a protein's native structure from its amino acid sequence? | Computational methods and algorithms |
| The Folding Mechanism | What pathways do proteins use to fold so quickly? | Folding kinetics and pathways |

The foundational principle underlying the folding problem is Anfinsen's thermodynamic hypothesis, which posits that a protein's native structure represents its thermodynamically most stable state under physiological conditions, determined solely by its amino acid sequence [1]. This principle implies that evolution acts on amino acid sequences, while the folding process itself is governed by the laws of physical chemistry. However, notable exceptions exist, including kinetically trapped proteins like insulin and α-lytic protease, where the biologically active form is not the thermodynamic ground state [1].

The Forces Driving Protein Folding

A critical debate in understanding the folding code concerns whether protein stability emerges from one dominant driving force or a delicate balance of many small interactions. While native proteins typically maintain only 5-10 kcal/mol stability over their denatured states—requiring that no intermolecular force be entirely neglected—substantial evidence points to hydrophobic interactions playing a major role [1]. Key observations supporting this view include: the presence of hydrophobic cores in virtually all globular proteins; model compound studies showing significant favorable free energy changes (1-2 kcal/mol) when hydrophobic side chains transfer from water to oil-like media; and the demonstration that sequences retaining only correct hydrophobic and polar patterning often fold to expected native states without explicit design of packing, charges, or hydrogen bonding [1]. Nevertheless, hydrogen bonding, electrostatic interactions, and van der Waals forces all contribute significantly to stabilizing specific native structures.

The Conformational Search Space

The Vastness of Protein Sequence and Structure Space

The conceptual challenge of protein folding becomes quantitatively apparent when examining the scale of the conformational search space. Proteins are molecular sentences written with an alphabet of 20 amino acids, with many functional proteins exceeding 1000 residues in length [2]. This creates a search space of 20ⁿ possible sequences for a protein of length n, an astronomically large number for even small proteins. Within this space, most random amino acid sequences would be unstable and non-functional, creating what researchers describe as "a few tiny islands within a vast sea of invalidity" [2]. This archipelago metaphor powerfully illustrates that naturally evolved proteins occupy only a minute fraction of possible functional sequences, with the remaining islands representing potential functional proteins that either went extinct or never evolved through natural selection.
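To make the scale of 20ⁿ concrete, the short sketch below computes the order of magnitude of the sequence space for a few chain lengths. The function name `log10_sequence_space` is introduced here purely for illustration.

```python
import math

AMINO_ACIDS = 20  # size of the standard amino acid alphabet

def log10_sequence_space(length: int) -> float:
    """Return log10 of the number of possible sequences of the given length."""
    return length * math.log10(AMINO_ACIDS)

for n in (10, 100, 1000):
    # Report the exponent only; the raw number is too large to be useful
    print(f"n = {n:4d}: roughly 10^{log10_sequence_space(n):.0f} possible sequences")
```

Working in logarithms avoids handling the enormous integers directly and shows that even a 100-residue protein has on the order of 10^130 possible sequences.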

The Hierarchy of Protein Structure

Protein structure is organized hierarchically, which helps constrain the conformational search problem by defining discrete levels of organization. Table 2 outlines the four hierarchical levels of protein structure organization.

Table 2: Hierarchical Organization of Protein Structure

| Structural Level | Description | Key Features |
|---|---|---|
| Primary Structure | Linear sequence of amino acids | Encoded in DNA; determines higher-order structure |
| Secondary Structure | Local structural elements | α-helices and β-strands stabilized by hydrogen bonding |
| Tertiary Structure | Overall 3D structure of a single chain | Folding of secondary elements into globular domains |
| Quaternary Structure | Assembly of multiple chains | Functional multi-subunit complexes |

This hierarchical organization reveals that proteins employ a limited repertoire of structural motifs. Structural classification databases like CATH and SCOP have identified approximately 1,200-1,400 distinct protein folds in nature, suggesting strong evolutionary constraints on protein structure space [3] [4]. Secondary structures themselves are substantially stabilized by chain compactness, an indirect consequence of the hydrophobic driving force for collapse [1]. Like airport security lines, helical and sheet configurations represent some of the only regular ways to pack a linear chain into a tight space.

The Challenge of Fold Switching and Dynamics

The complexity of the conformational landscape is further compounded by proteins that defy the one-sequence-one-structure paradigm. An increasing number of proteins have been shown to remodel their secondary and tertiary structures in response to cellular stimuli, a phenomenon known as fold switching [5]. These metamorphic proteins represent a particular challenge for structure prediction algorithms, as they transition between distinct stable structures to modulate biological functions—including suppressing human innate immunity during SARS-CoV-2 infection, controlling bacterial virulence gene expression, and maintaining cyanobacterial circadian rhythms [5]. State-of-the-art algorithms like AlphaFold2 typically predict only one conformation for 92% of known dual-folding proteins, often failing to identify the functionally critical alternative folds [5]. This limitation stems from the reliance of these algorithms on coevolutionary signals, which may be masked when analyzing diverse protein families.

Evolutionary Algorithms: Principles and Applications

Fundamental Concepts of Evolutionary Algorithms

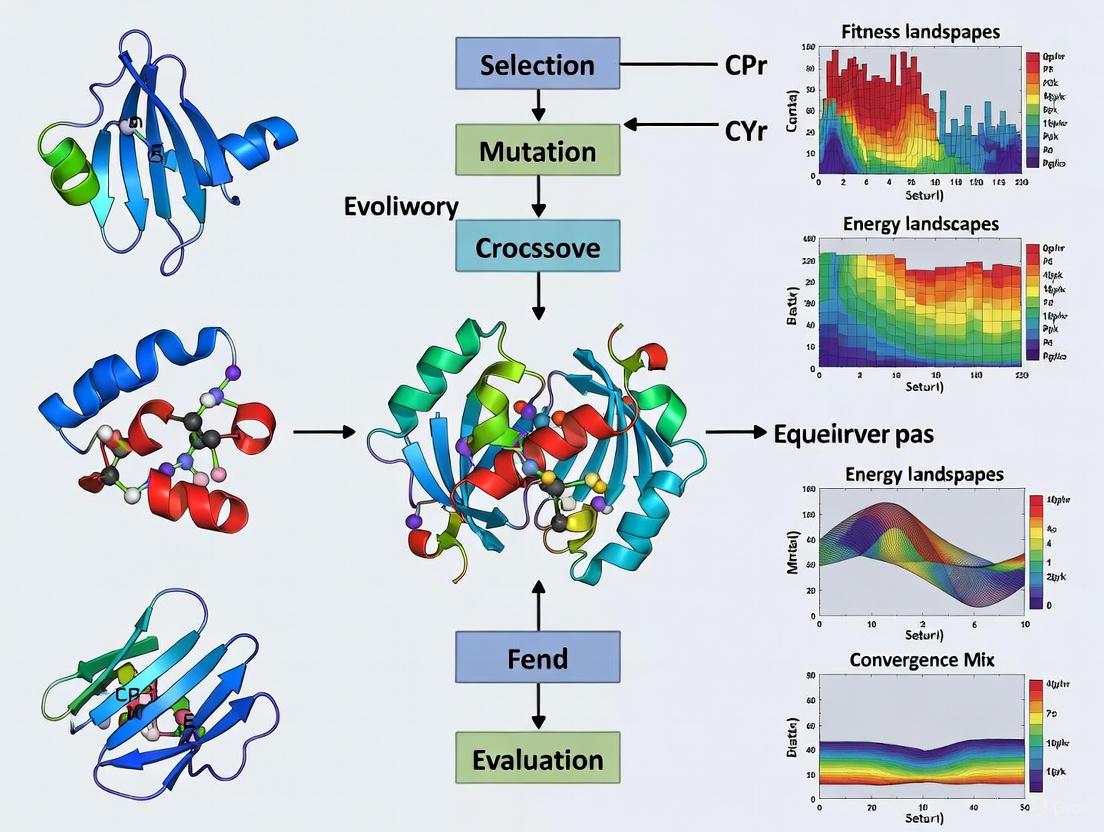

Evolutionary algorithms represent a family of population-based optimization techniques inspired by biological evolution. These metaheuristics imitate essential mechanisms of natural selection—reproduction, mutation, recombination, and selection—to solve complex optimization problems for which traditional methods are inadequate [6]. In the context of protein folding, candidate solutions (potential protein structures) play the role of individuals in a population, with a fitness function evaluating how well each structure matches experimental data or physical constraints. The general workflow of an evolutionary algorithm follows a well-defined cycle, illustrated in Diagram 1 below.

Diagram 1: Evolutionary Algorithm Workflow. This flowchart illustrates the iterative process of evolutionary algorithms, beginning with population initialization and proceeding through fitness evaluation, selection, genetic operations, and replacement until convergence criteria are met.

The power of evolutionary algorithms lies in their ability to efficiently explore vast, complex search spaces without requiring gradient information or smooth landscapes. Unlike gradient-based optimization methods that follow a single path downhill and frequently become trapped in local optima, evolutionary algorithms maintain a population of diverse solutions that can collectively "jump" between different regions of the fitness landscape [7]. This makes them particularly suited for protein folding, where the energy landscape is characterized by numerous local minima and a complex funnelling topography.
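The cycle of initialization, fitness evaluation, selection, variation, and replacement can be sketched in a few lines. The example below is a minimal illustration under stated assumptions, not a protein folding code: the Rastrigin function stands in for a rugged energy landscape with many local minima, and the operator choices (truncation selection, uniform crossover, Gaussian mutation) and all parameter values are arbitrary.

```python
import math
import random

def rugged_energy(x):
    """Toy multi-minimum 'energy' (Rastrigin function); global minimum 0 at the origin."""
    return 10 * len(x) + sum(xi * xi - 10 * math.cos(2 * math.pi * xi) for xi in x)

def evolve(energy, dim=2, pop_size=40, generations=150, sigma=0.3, seed=0):
    rng = random.Random(seed)
    # Initialization: random candidates in [-5, 5]^dim
    population = [[rng.uniform(-5, 5) for _ in range(dim)] for _ in range(pop_size)]
    for _ in range(generations):
        # Fitness evaluation and truncation selection: the better half become parents
        population.sort(key=energy)
        parents = population[: pop_size // 2]
        offspring = []
        while len(offspring) < pop_size - len(parents):
            a, b = rng.sample(parents, 2)
            # Uniform crossover followed by Gaussian mutation of every coordinate
            child = [rng.choice(pair) + rng.gauss(0, sigma) for pair in zip(a, b)]
            offspring.append(child)
        # Replacement: parents survive unchanged (elitism), offspring fill the rest
        population = parents + offspring
    return min(population, key=energy)

best = evolve(rugged_energy)
print(best, rugged_energy(best))
```

Because the parents are carried over unchanged, the best energy in the population can never get worse from one generation to the next, while the mutated offspring keep probing other basins of the landscape.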

Types of Evolutionary Algorithms in Protein Research

Several specialized variants of evolutionary algorithms have been developed, each with particular strengths for different aspects of protein research. Table 3 compares the major evolutionary algorithm types relevant to protein folding studies.

Table 3: Evolutionary Algorithm Types for Protein Folding Research

| Algorithm Type | Representation | Key Operators | Protein Applications |
|---|---|---|---|
| Genetic Algorithms (GAs) | Strings of numbers (binary or real-valued) | Selection, crossover, mutation | Sequence optimization, conformational sampling |
| Genetic Programming (GP) | Computer programs | Program structure evolution, subtree crossover | Rule-based folding simulations, analytical models |
| Evolution Strategies (ES) | Vectors of real numbers | Self-adaptive mutation, deterministic selection | Continuous parameter optimization, force field tuning |
| Differential Evolution (DE) | Real-valued vectors | Differential mutation, crossover | Numerical optimization of energy functions |
| Neuroevolution | Artificial neural networks | Topology and weight evolution | Structure prediction networks, potential functions |

An important theoretical caveat for evolutionary algorithms is the No Free Lunch theorem, which states that, averaged over all possible problems, every optimization strategy performs equally well [6]. No search method is therefore universally superior, and successful application of evolutionary algorithms to protein folding requires incorporating problem-specific knowledge through specialized genetic representations, tailored genetic operators, or hybrid approaches that combine evolutionary search with local optimization methods.

Evolutionary Algorithms for Protein Structure Prediction

Addressing the Limitations of Machine Learning

While machine learning approaches like AlphaFold2 have demonstrated remarkable success in protein structure prediction, they face fundamental limitations in exploring novel regions of protein sequence space. ML models are ultimately constrained by their training data, which is restricted to the "archipelago of extant functional proteins" [2]. This limitation becomes particularly significant when attempting to predict or design proteins that diverge significantly from natural sequences, including fold-switching proteins that adopt multiple stable structures [5]. Evolutionary algorithms offer complementary strengths by employing generative approaches that can explore beyond the constraints of existing protein databases. The explainable nature of evolutionary algorithms represents another significant advantage, as the decisions produced by these systems are often more comprehensible to human researchers compared to the "black box" nature of complex neural networks [2].

EASME: Evolutionary Algorithms Simulating Molecular Evolution

A specialized framework called Evolutionary Algorithms Simulating Molecular Evolution (EASME) has recently emerged to address the particular challenges of protein sequence and structure space exploration [2]. EASME employs evolutionary algorithms with biologically realistic DNA string representations, molecular-level bioinformatics, and structure-informed fitness functions to expand the set of functional proteins beyond naturally occurring sequences. This approach can operate in two distinct modes:

- Unknown to Known: Evolving random sequences toward known consensus sequences to reconstruct evolutionary intermediates that may have gone extinct.

- Known to Unknown: Forward-evolving known proteins toward desired phenotypic characteristics, effectively implementing a "fast forward" button on evolution to discover novel functional proteins.

The EASME framework leverages increasing computational power to simulate evolving biochemical systems with unprecedented biological realism, enabling researchers to model protein-protein co-evolution across networks of discrete molecular interactions [2].
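The "Unknown to Known" mode can be illustrated with a toy sketch that evolves a random DNA string until its translation approaches a target consensus. This is not the EASME implementation: the fitness used here is plain sequence identity, a stand-in for the structure-informed fitness functions the framework describes, and the function names, the example consensus, and all parameters are assumptions made for illustration.

```python
import random
from itertools import product

BASES = "TCAG"
# Standard genetic code, with codons enumerated in TCAG order
CODON_TABLE = dict(zip(
    ("".join(c) for c in product(BASES, repeat=3)),
    "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG",
))

def translate(dna):
    """Translate a DNA string codon by codon ('*' marks a stop codon)."""
    return "".join(CODON_TABLE[dna[i:i + 3]] for i in range(0, len(dna) - 2, 3))

def identity(protein, consensus):
    """Fraction of consensus positions matched by the translated protein."""
    return sum(a == b for a, b in zip(protein, consensus)) / len(consensus)

def point_mutate(dna, rng):
    """Substitute one randomly chosen nucleotide with a different base."""
    i = rng.randrange(len(dna))
    return dna[:i] + rng.choice(BASES.replace(dna[i], "")) + dna[i + 1:]

def evolve_toward(consensus, generations=2000, offspring=20, seed=1):
    rng = random.Random(seed)
    parent = "".join(rng.choice(BASES) for _ in range(3 * len(consensus)))
    history = [identity(translate(parent), consensus)]
    for _ in range(generations):
        children = [point_mutate(parent, rng) for _ in range(offspring)]
        best = max(children, key=lambda d: identity(translate(d), consensus))
        # Accept the best child if it is at least as fit (neutral changes allowed)
        if identity(translate(best), consensus) >= history[-1]:
            parent = best
        history.append(identity(translate(parent), consensus))
    return parent, history

dna, history = evolve_toward("MKTAYIAK")  # hypothetical consensus sequence
print(translate(dna), history[-1])
```

Mutating at the DNA level rather than the amino acid level means that synonymous substitutions and stop codons arise naturally, and the sequence of accepted parents forms a trajectory of candidate intermediates.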

The ACE Methodology for Fold-Switching Proteins

To address the particular challenge of fold-switching proteins, researchers have developed the Alternative Contact Enhancement (ACE) method to detect coevolutionary signatures of alternative conformations [5]. This methodology analyzes multiple sequence alignments at several depths, systematically searching for evolutionary signals of structural heterogeneity. The workflow, depicted in Diagram 2, has successfully revealed coevolution of amino acid pairs corresponding to both conformations in 56 out of 56 tested fold-switching proteins from distinct families [5].

Diagram 2: ACE Methodology for Detecting Dual-Fold Coevolution. This workflow illustrates the Alternative Contact Enhancement approach for identifying evolutionary signatures of fold-switching proteins through systematic analysis of multiple sequence alignments at varying levels of sequence diversity.

The ACE methodology represents a significant advancement because it successfully identifies coevolutionary signals that conventional methods miss. When applied to known fold-switching proteins, ACE enhanced the prediction of contacts uniquely corresponding to alternative conformations by mean/median increases of 201%/187%, while increasing correctly predicted contacts for all 56 tested proteins by mean/median increases of 111%/107% [5]. This performance demonstrates that evolution-informed computational analysis can extract meaningful biological signals that remain hidden to standard analysis techniques.

Experimental Protocols and Research Tools

Key Experimental Methodologies

Research at the intersection of evolutionary algorithms and protein folding relies on both computational and experimental methodologies. For the computational identification and validation of fold-switching proteins, the following protocol has proven effective:

- Multiple Sequence Alignment Generation: Collect deep multiple sequence alignments using the query sequence as a template, incorporating diverse homologous sequences from public databases.

- MSA Pruning Strategy: Systematically prune the deep MSA to create successively shallower alignments with sequences increasingly identical to the query, enhancing sensitivity to alternative conformations.

- Coevolutionary Analysis: Apply Markov Random Fields (implemented in GREMLIN) and language models (MSA Transformer) to each MSA to infer coevolved amino acid pairs.

- Contact Map Integration: Superimpose predictions from all nested MSAs onto a single contact map, categorizing contacts as dominant fold, alternative fold, common to both folds, or unobserved.

- Density-Based Filtering: Remove noisy predictions while preserving legitimate contacts using clustering-based filtering algorithms.

- Experimental Validation: Verify computational predictions through experimental structural biology techniques, including X-ray crystallography, NMR spectroscopy, or cryo-electron microscopy.

This methodology successfully identified dual-fold coevolution in 56 out of 56 tested fold-switching proteins and enabled the development of a blind prediction pipeline that correctly identified 13 out of 56 fold-switching proteins with a false-positive rate of 0 out of 181 [5].
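Two steps of the protocol above, MSA pruning and contact map categorization, lend themselves to a small sketch. The code below is illustrative only: the identity thresholds, the toy alignment, and the function names are assumptions, and the coevolutionary inference itself (GREMLIN, MSA Transformer) is not reproduced, so the predicted contacts are supplied by hand.

```python
def sequence_identity(seq, query):
    """Fraction of non-gap query positions at which seq matches the query."""
    pairs = [(s, q) for s, q in zip(seq, query) if q != "-"]
    return sum(s == q for s, q in pairs) / len(pairs)

def nested_msas(msa, query, thresholds=(0.0, 0.3, 0.5, 0.7)):
    """Build successively shallower alignments, each keeping only the sequences
    that are at least `t` identical to the query."""
    return {t: [s for s in msa if sequence_identity(s, query) >= t] for t in thresholds}

def categorize_contacts(predicted, dominant, alternative):
    """Label each predicted residue pair by the fold(s) in which it is observed."""
    labels = {}
    for pair in predicted:
        in_dom, in_alt = pair in dominant, pair in alternative
        if in_dom and in_alt:
            labels[pair] = "common"
        elif in_dom:
            labels[pair] = "dominant"
        elif in_alt:
            labels[pair] = "alternative"
        else:
            labels[pair] = "unobserved"
    return labels

# Toy aligned sequences; the first one is the query
query = "MKTAYIAK"
msa = ["MKTAYIAK", "MKTAYLAK", "MRSAYLGK", "QRSGWLGE"]
for t, sub in nested_msas(msa, query).items():
    print(f"identity >= {t:.1f}: {len(sub)} sequences")

# Hand-written contacts standing in for predictions and experimental structures
predicted = {(1, 5), (2, 7), (3, 8), (4, 6)}
print(categorize_contacts(predicted, dominant={(1, 5), (2, 7)}, alternative={(2, 7), (3, 8)}))
```

In a real pipeline each nested alignment would be passed to a coevolution predictor, and the union of the resulting contacts would be categorized against the two experimentally determined folds.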

Table 4: Key Research Resources for Protein Folding Studies with Evolutionary Algorithms

| Resource Category | Specific Tools | Function in Research |
|---|---|---|
| Structure Prediction | AlphaFold2, RoseTTAFold, trRosetta, EVCouplings | Predict protein structures from sequence using coevolution and deep learning |
| Coevolution Analysis | GREMLIN, MSA Transformer, plmDCA | Identify evolutionarily coupled residues from multiple sequence alignments |
| Structure Databases | Protein Data Bank (PDB), CATH, SCOP, ECOD | Classify and provide reference protein structures for validation |
| Sequence Databases | UniProt, Pfam, InterPro | Provide homologous sequences for multiple sequence alignments |
| Molecular Visualization | Mol*, PyMOL, ChimeraX | Visualize and analyze protein structures and conformational changes |
| Force Fields | CHARMM, AMBER, OPLS | Provide energy functions for physics-based folding simulations |
| Evolutionary Algorithms | DEAP, ECJ, OpenBEAM | Implement evolutionary optimization for protein design and folding |

This toolkit enables researchers to implement integrated computational-experimental pipelines for protein folding research. The resources listed facilitate everything from initial sequence analysis and coevolution detection to structure prediction, molecular visualization, and experimental validation.

The protein folding problem remains a central challenge in structural biology, with profound implications for understanding biological function and accelerating drug discovery. The conformational search space that must be navigated to solve this problem is astronomically large, characterized by a complex landscape of stable, metastable, and unstable structures. Evolutionary algorithms provide powerful methods for exploring this vast space, complementing the recent advances in machine learning by offering explainable, generative approaches that can venture beyond the constraints of naturally evolved protein sequences. Frameworks like EASME and methodologies like ACE demonstrate how evolutionary principles can be translated into computational tools that address fundamental limitations in current structure prediction pipelines. For researchers and drug development professionals, these approaches offer promising pathways to discover novel protein folds, engineer proteins with customized functions, and ultimately expand our understanding of the sequence-structure-function relationship that underpins all of structural biology.

Genetic Algorithms as a Search Heuristic for Protein Landscapes

The prediction and design of protein structures represent one of the most complex computational challenges in modern biology. The fundamental problem can be framed as a search through an astronomically large conformational space. As noted by Levinthal in 1969, a typical-length protein could theoretically fold into 10³⁰⁰ possible configurations, a number so vast that exhaustive search would require longer than the age of the known universe [8]. This combinatorial explosion necessitates intelligent search heuristics, and genetic algorithms (GAs) have emerged as a powerful approach to navigate this complex landscape. Within the broader context of evolutionary algorithms for protein folding research, GAs simulate natural evolution by maintaining a population of candidate solutions that undergo selection, recombination, and mutation to progressively evolve toward improved solutions [9] [10]. This methodology is particularly well-suited to protein engineering because it mimics the very evolutionary processes that created proteins in nature, while enabling researchers to explore sequence and structural spaces far beyond what natural evolution has sampled.

The core challenge in protein folding stems from the fact that a protein's function is determined by its three-dimensional structure, which in turn depends on its linear amino acid sequence [8]. Genetic algorithms address this challenge by treating protein sequences or structures as individuals in a population that evolves toward optimal solutions based on fitness criteria such as thermodynamic stability, specific functional properties, or structural similarity to a target fold. Unlike traditional optimization methods that may become trapped in local optima, GAs maintain population diversity, allowing them to explore multiple regions of the fitness landscape simultaneously [9] [11]. This makes them exceptionally well-suited for protein engineering applications where the relationship between sequence and function is often highly nonlinear and complex.

Foundations of Genetic Algorithms in Protein Engineering

Core Algorithmic Framework

Genetic algorithms belong to the broader class of evolutionary algorithms that emulate natural selection processes. When applied to protein landscapes, the basic GA cycle consists of several key components that work together to evolve solutions to complex optimization problems. The process begins with the initialization of a population of candidate solutions, which may represent protein sequences, structural conformations, or refolding conditions. Each candidate solution is evaluated using a fitness function that quantifies how well it solves the problem at hand. Selection then prioritizes higher-fitness individuals as parents for the next generation. Genetic operators, including crossover (recombination) and mutation, introduce variation by creating new candidate solutions from the selected parents. Finally, replacement strategies determine how the new offspring are incorporated into the population for the next generational cycle [9] [10] [11].

The power of this approach lies in its ability to efficiently explore high-dimensional search spaces through parallel evaluation of multiple solutions while simultaneously exploiting promising regions through selective pressure. Unlike gradient-based optimization methods that require smooth, continuous search spaces, GAs can handle discontinuous, multi-modal, and noisy fitness landscapes commonly encountered in protein folding and design problems. The population-based approach also makes GAs less susceptible to becoming trapped in local optima compared to single-solution search methods, though careful parameter tuning is still required to maintain the balance between exploration and exploitation throughout the evolutionary process [10].

Representation Strategies for Protein Landscapes

The representation of candidate solutions is a critical design choice that significantly impacts algorithm performance. For protein-related optimization, researchers have developed several effective representation strategies:

Sequence-based representation: Amino acid sequences are encoded as strings of characters or integers, with each position corresponding to one of the 20 standard amino acids. This representation is commonly used for sequence design and optimization problems [12] [11].

Lattice models: In protein structure prediction, simplified models like the Hydrophobic-Polar (HP) model represent protein conformations as self-avoiding walks on 2D or 3D lattices. The 3D Face-Centered Cubic (FCC) lattice is particularly valued for its high packing density and more realistic angular distributions compared to simple cubic lattices [10].

Real-valued parameter encoding: For experimental optimization, such as refolding condition screening, parameters like pH, buffer concentrations, and additive concentrations can be encoded as real-valued vectors [9].

Regular expression patterns: In advanced applications like POETRegex, protein motifs are represented as regular expressions, providing flexible pattern matching capabilities for identifying functional peptide sequences [12].

Each representation offers distinct advantages for different protein engineering tasks. Lattice models dramatically reduce computational complexity while preserving essential physics of protein folding, making them valuable for fundamental studies of folding principles [10]. Sequence-based representations directly manipulate the genetic code of proteins, enabling both natural and unnatural sequence variations. The choice of representation typically involves trade-offs between biological realism, computational tractability, and alignment with the target application.
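Two of these encodings can be sketched briefly: an integer encoding of amino acid sequences, and a move-string encoding of a lattice conformation. The example uses a 2D square lattice for readability, and the move alphabet and function names are assumptions chosen for illustration rather than taken from the cited work.

```python
AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"  # the 20 standard amino acids, one-letter codes
AA_INDEX = {aa: i for i, aa in enumerate(AMINO_ACIDS)}

def encode_sequence(seq):
    """Sequence-based representation: one integer gene (0 to 19) per residue."""
    return [AA_INDEX[aa] for aa in seq]

def decode_sequence(genes):
    """Map integer genes back to a one-letter amino acid string."""
    return "".join(AMINO_ACIDS[g] for g in genes)

# Lattice representation: a conformation is a string of absolute moves
MOVES = {"U": (0, 1), "D": (0, -1), "L": (-1, 0), "R": (1, 0)}

def walk(moves):
    """Convert a move string into lattice coordinates, starting at the origin."""
    coords = [(0, 0)]
    for m in moves:
        dx, dy = MOVES[m]
        x, y = coords[-1]
        coords.append((x + dx, y + dy))
    return coords

def is_self_avoiding(coords):
    """A valid conformation never visits the same lattice site twice."""
    return len(set(coords)) == len(coords)

print(encode_sequence("MKTAYIAK"))
print(walk("RUL"), is_self_avoiding(walk("RUL")))
print(is_self_avoiding(walk("RL")))  # the chain steps back onto itself
```

With the move-string encoding, crossover and mutation can produce chains that collide with themselves, which is why lattice-based algorithms need either a validity check like the one above or operators that preserve self-avoidance by construction.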

Key Methodologies and Experimental Protocols

Genetic Algorithm for Experimental Refolding Optimization

A notable application of genetic algorithms in protein engineering is the optimization of protein refolding conditions. A 2010 study demonstrated a comprehensive methodology for experimentally optimizing refolding yields using a multiobjective genetic algorithm [9]. The protocol addresses the critical bottleneck of refolding recombinant proteins from inclusion bodies, which has traditionally relied on extensive empirical screening.

Table 1: Search Space Parameters for Refolding Optimization GA

| Parameter/Substance Class | Minimum Value | Maximum Value | Units | Combination Rules |
|---|---|---|---|---|
| pH | 6.0 | 9.5 | - | - |
| Buffer Substances | 20 | 1250 | mM | No combination between different buffers |
| Salts (NaCl, KCl) | 0 | 350 | mM | NaCl and KCl can be combined |
| Additives (glycerol, PEG, arginine, glutamine, glycine) | 0 | 15 | % v/v or mM | Complex combination rules apply |
| Cofactors (Cu²⁺, Zn²⁺, Mg²⁺, Mn²⁺) | 0 | 5 | mM | No combination between different cofactors |
| Detergents (various classes) | 0 | 1500 | mM | No combination between different detergents |
| Redox Agents (DTT, TCEP, GSH/GSSG) | 0 | 10 | mM | Specific pairing rules for redox systems |

The experimental workflow begins with defining the search space based on literature review and database analysis (e.g., the REFOLD database), encompassing critical parameters known to influence refolding efficiency. The first generation consists of 22 randomly generated refolding conditions. Each condition is evaluated experimentally by diluting denatured protein into the respective refolding buffer and measuring the yield of properly folded, functional protein. The multiobjective optimization typically targets both refolding yield and protein activity, though cost factors can also be incorporated [9].
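When two objectives such as refolding yield and activity are optimized together, candidates are usually compared by Pareto dominance rather than by a single score. The sketch below shows that comparison on invented measurements; the buffer names and values are hypothetical, and the cited study's exact ranking procedure is not reproduced here.

```python
def dominates(a, b):
    """True if a is at least as good as b in every objective and strictly
    better in at least one (all objectives are maximized)."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))

def pareto_front(scores):
    """Return the names of conditions that no other condition dominates."""
    return [
        name for name, s in scores.items()
        if not any(dominates(t, s) for other, t in scores.items() if other != name)
    ]

# Hypothetical (refolding yield %, relative activity %) for four conditions
scores = {
    "buffer_A": (74, 60),
    "buffer_B": (90, 40),
    "buffer_C": (70, 55),
    "buffer_D": (85, 65),
}
print(pareto_front(scores))
```

Here one condition wins on yield and another on activity, so both stay on the front, while the remaining two are dominated and would be less likely to be chosen as parents.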

The genetic algorithm employs tournament selection to identify the best-performing conditions, which then serve as parents for the next generation through variation operators. Specifically, the algorithm uses simulated binary crossover with a distribution index of 10 and polynomial mutation with a distribution index of 20. This approach efficiently navigates the complex, multi-dimensional parameter space, achieving 74-100% refolding yields for four structurally distinct model proteins within a manageable number of experimental generations [9].
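The three operators named above can be written compactly for a single real-valued gene such as pH. The sketch below follows the commonly published textbook forms of simulated binary crossover and polynomial mutation with the stated distribution indices; it is an assumption that the study used these basic forms, and the clipping to bounds and the tournament size of two are illustrative choices.

```python
import random

def tournament(population, fitness, rng, size=2):
    """Return the fittest of `size` randomly drawn individuals (fitness is maximized)."""
    return max(rng.sample(population, size), key=fitness)

def sbx_pair(p1, p2, rng, eta=10.0):
    """Simulated binary crossover on one gene; larger eta keeps children near parents."""
    u = rng.random()
    if u <= 0.5:
        beta = (2 * u) ** (1 / (eta + 1))
    else:
        beta = (1 / (2 * (1 - u))) ** (1 / (eta + 1))
    c1 = 0.5 * ((1 + beta) * p1 + (1 - beta) * p2)
    c2 = 0.5 * ((1 - beta) * p1 + (1 + beta) * p2)
    return c1, c2

def polynomial_mutation(x, low, high, rng, eta=20.0):
    """Polynomial mutation of one gene, clipped to the interval [low, high]."""
    u = rng.random()
    if u < 0.5:
        delta = (2 * u) ** (1 / (eta + 1)) - 1
    else:
        delta = 1 - (2 * (1 - u)) ** (1 / (eta + 1))
    return min(max(x + delta * (high - low), low), high)

rng = random.Random(42)
ph_a, ph_b = 7.0, 8.5                      # two parent pH values
child_a, child_b = sbx_pair(ph_a, ph_b, rng)
mutated = polynomial_mutation(child_a, 6.0, 9.5, rng)  # pH bounds from the table above
print(child_a, child_b, mutated)
```

A useful property of this crossover is that the two children always have the same mean as the two parents, so recombination spreads the population without shifting its center.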

POETRegex: Genetic Programming for Peptide Discovery

The POETRegex algorithm represents an advanced application of evolutionary computation to peptide discovery and optimization. This approach uses genetic programming with regular expression-based representations to evolve models that predict protein function and generate novel functional peptides [12]. The methodology was successfully applied to discover peptides with enhanced sensitivity for Chemical Exchange Saturation Transfer (CEST) magnetic resonance imaging, achieving a 58% performance improvement over the gold-standard peptide [12].

The algorithm begins with a curated dataset of peptide sequences and their corresponding functional measurements. In the case of CEST MRI optimization, the training set contained 127 peptide sequences of 10-13 amino acids in length, with measured CEST contrast values. Individuals in the genetic programming population are represented as lists of regular expressions, which provide flexible pattern matching capabilities beyond simple sequence motifs [12].

The evolutionary process employs a steady-state genetic programming approach with tournament selection. Genetic operators include crossover (swapping regular expressions between parents), mutation (modifying existing regular expressions), and a shrink step to control bloat by removing less useful rules. A key enhancement in POETRegex is the incorporation of a weight adjustment step where regular expressions are weighted based on their significance, improving the model's predictive accuracy [12].
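The idea of an individual as a weighted list of regular expressions can be sketched with the standard `re` module. This is not the POETRegex code: the patterns, weights, scoring rule (sum of weights of matching rules), and the simplified shrink criterion are all assumptions made for illustration.

```python
import re

# One individual: a list of (regular expression, weight) rules; values are illustrative
rules = [(r"K.K", 1.5), (r"[ST]{2}", 0.8), (r"^R", 0.4), (r"W", -1.0)]

def score(peptide, rules):
    """Predicted fitness: sum of the weights of all rules that match the peptide."""
    return sum(w for pattern, w in rules if re.search(pattern, peptide))

def shrink(rules, training_peptides):
    """Drop rules that match no training peptide (a simple stand-in for bloat control)."""
    return [(p, w) for p, w in rules if any(re.search(p, s) for s in training_peptides)]

def crossover(parent_a, parent_b, cut_a, cut_b):
    """Swap rule tails between two parents at the given cut points."""
    return parent_a[:cut_a] + parent_b[cut_b:], parent_b[:cut_b] + parent_a[cut_a:]

training = ["RKTKSSGG", "KAKTT"]           # hypothetical peptides
print(score("RKTKSSGG", rules))
print(shrink(rules, training))
```

Because each rule is a readable pattern with an explicit weight, the evolved model can be inspected directly to see which motifs it associates with higher or lower predicted performance.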

Table 2: Performance Comparison of Protein Optimization Algorithms

| Algorithm | Application Domain | Key Innovation | Performance Metrics |
|---|---|---|---|
| Standard GA with Multiobjective Optimization [9] | Experimental refolding condition optimization | Combines screening and optimization in a single process | 74-100% refolding yield for 4 model proteins |
| POETRegex [12] | Computational peptide discovery | Regular expression representation with weight adjustment | 58% performance increase over gold-standard peptide |
| EA with FCC Lattice [10] | Protein structure prediction | Combines lattice rotation, K-site move, and generalized pull move | Finds optimal conformations not found by previous EA approaches |
| In silico Panning [12] | Peptide inhibitor selection | Docking simulation combined with GA | Effective identification of peptide inhibitors |

Lattice-Based Protein Folding with Evolutionary Algorithms

For protein structure prediction, evolutionary algorithms have been successfully applied to lattice models, particularly the 3D Face-Centered Cubic (FCC) HP model. This approach combines several innovative local search techniques to enhance traditional evolutionary algorithms [10]:

Lattice Rotation for Crossover: This operator rotates substructures around specific pivot points during recombination, increasing the success rate of crossover operations while maintaining structural validity.

K-site Move for Mutation: The K-site move introduces localized structural changes by modifying a contiguous segment of K amino acids in the chain, providing a balance between local refinement and broader exploration.

Generalized Pull Move: An extension of the original pull move, this operator ensures connectivity while allowing individual amino acids to move to adjacent lattice positions, efficiently exploring conformational space while maintaining chain connectivity.

The fitness function for these algorithms typically minimizes the free energy of the conformation, which in the HP model corresponds to maximizing the number of hydrophobic-hydrophobic contacts while ensuring valid chain geometry. The FCC lattice is particularly advantageous because it provides higher packing density and more realistic angular distributions compared to simpler cubic lattices, better approximating real protein structures [10].
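A minimal sketch of such a fitness function on the FCC lattice is shown below. It assumes the common convention of scoring -1 for each pair of hydrophobic residues that are lattice neighbours but not adjacent in the chain; the function names and the three-residue example are illustrative and do not come from the cited implementation.

```python
from itertools import permutations

# The 12 nearest-neighbour vectors of the FCC lattice: all permutations of (±1, ±1, 0)
FCC_NEIGHBOURS = {p for a in (1, -1) for b in (1, -1) for p in permutations((a, b, 0))}

def difference(c1, c2):
    """Vector from lattice site c1 to lattice site c2."""
    return tuple(q - p for p, q in zip(c1, c2))

def is_valid(coords):
    """Valid geometry: consecutive residues are FCC neighbours and no site is reused."""
    connected = all(difference(a, b) in FCC_NEIGHBOURS for a, b in zip(coords, coords[1:]))
    return connected and len(set(coords)) == len(coords)

def hp_energy(sequence, coords):
    """Energy of -1 per H-H pair in lattice contact, excluding chain neighbours."""
    energy = 0
    for i in range(len(coords)):
        for j in range(i + 2, len(coords)):  # skip residues adjacent in the chain
            if sequence[i] == sequence[j] == "H":
                if difference(coords[i], coords[j]) in FCC_NEIGHBOURS:
                    energy -= 1
    return energy

coords = [(0, 0, 0), (1, 1, 0), (1, 0, 1)]   # a three-residue chain forming a triangle
print(len(FCC_NEIGHBOURS), is_valid(coords))
print(hp_energy("HHH", coords), hp_energy("HHP", coords))
```

Unlike the simple cubic lattice, the FCC lattice allows residues i and i+2 to be in contact, which is one reason its packing and angular distributions are considered closer to those of real proteins.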

Table 3: Key Research Reagents and Computational Tools for GA-Based Protein Engineering

| Item | Function/Purpose | Example Applications |
|---|---|---|
| Refolding Buffer Components [9] | Create chemical environment promoting proper protein folding | Multiobjective GA refolding optimization |
| cDNA Display Proteolysis Materials [13] | High-throughput stability measurement enabling large-scale fitness evaluation | Mega-scale stability analysis for fitness evaluation |
| HP Lattice Model Framework [10] | Simplified representation of protein structures for computational folding studies | 3D FCC lattice protein folding simulations |
| POETRegex Software [12] | Genetic programming implementation for peptide discovery and optimization | CEST MRI contrast agent development |
| trRosetta Neural Network [14] | Provides gradient information for landscape-aware sequence design | Conformational landscape optimization |
| Directed Evolution Wet-Lab Equipment [11] | Traditional mutagenesis and screening infrastructure | Experimental validation of computationally designed variants |

Integration with Modern AI Approaches

While genetic algorithms provide powerful search capabilities for protein engineering, recent advances in artificial intelligence have created opportunities for synergistic combinations of approaches. Deep learning models like AlphaFold and trRosetta have revolutionized structure prediction by leveraging coevolutionary information and sophisticated neural network architectures [8] [14]. These AI systems can enhance genetic algorithms in several ways:

First, deep learning models can provide more accurate and efficient fitness evaluations, reducing the computational cost of assessing candidate solutions. For example, the trRosetta network can rapidly predict distance distributions for protein sequences, enabling landscape-aware design that explicitly considers alternative conformations [14]. This approach can create more funneled energy landscapes with fewer alternative minima compared to traditional energy-based design.

Second, gradient information from differentiable models can guide genetic operators toward more promising regions of the search space. The method of backpropagating gradients through structure prediction networks to input sequences enables direct optimization of sequences for target structures [14]. When combined with population-based genetic algorithms, this hybrid approach can leverage both gradient information and global search capabilities.

However, despite these advances, limitations remain. A 2025 case study highlighted significant deviations between AI-predicted and experimental structures for a two-domain protein, with positional differences exceeding 30 Å and an overall RMSD of 7.7 Å [15]. These discrepancies underscore the continued importance of experimental validation and the potential role of genetic algorithms in refining AI predictions through incorporation of experimental data.

Current Limitations and Future Directions

Despite their considerable success in protein engineering applications, genetic algorithms face several important limitations. The enormous size of protein sequence space remains a fundamental challenge: for a modest peptide of just 12 amino acids, there are 20¹² (about 4.1 × 10¹⁵, or roughly 4 quadrillion) possible sequences to explore [12]. While GAs are more efficient than random sampling, they still require substantial computational resources or experimental effort to navigate these vast spaces effectively.
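The size of this search space is easy to check directly. The short calculation below counts the 12-residue sequences and estimates how long exhaustive enumeration would take; the rate of one million fitness evaluations per second is an assumption chosen only for illustration.

```python
ALPHABET_SIZE = 20      # standard amino acids
PEPTIDE_LENGTH = 12

n_sequences = ALPHABET_SIZE ** PEPTIDE_LENGTH
print(f"{n_sequences:,} possible sequences")  # 4,096,000,000,000,000

# Hypothetical throughput, used only to give a sense of scale.
EVALUATIONS_PER_SECOND = 1_000_000
SECONDS_PER_YEAR = 365 * 24 * 3600
years = n_sequences / EVALUATIONS_PER_SECOND / SECONDS_PER_YEAR
print(f"about {years:.0f} years of exhaustive evaluation")
```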

Another significant challenge is the accuracy of fitness functions. Computational energy functions may not perfectly correlate with experimental stability or function, while experimental fitness evaluation can be time-consuming and expensive. Recent advances in high-throughput experimental methods, such as cDNA display proteolysis that can measure stability for up to 900,000 protein domains in a single week, are helping to address this bottleneck by providing large-scale experimental data for fitness evaluation [13].

Future developments in genetic algorithms for protein landscapes will likely focus on several key areas:

Tighter integration with deep learning: Using neural networks as surrogate models for fitness prediction can dramatically reduce the cost of fitness evaluation while maintaining accuracy [14] [16].

Multiobjective optimization: Most protein engineering problems involve balancing multiple competing objectives such as stability, activity, specificity, and expressibility. Advanced multiobjective GAs can efficiently navigate these trade-offs [9].

Adaptive operators: Genetic algorithms with self-adjusting parameters and operators that adapt to the search landscape can improve efficiency and solution quality.

Hybrid approaches: Combining the global search capabilities of GAs with local gradient-based optimization from differentiable models may offer the best of both worlds [14].
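Multiobjective GAs of the kind mentioned above typically rank candidates by Pareto dominance rather than by a single score. The sketch below extracts the non-dominated set from a list of objective tuples; it assumes every objective is to be maximised, and the example scores (stability, activity) are made-up values, not results from the cited studies.

```python
def dominates(a, b):
    """True if a is at least as good as b in every objective and strictly better in one."""
    return (all(x >= y for x, y in zip(a, b))
            and any(x > y for x, y in zip(a, b)))


def pareto_front(scores):
    """Return indices of candidates that no other candidate dominates."""
    return [i for i, s in enumerate(scores)
            if not any(dominates(other, s)
                       for j, other in enumerate(scores) if j != i)]


# Hypothetical (stability, activity) scores for four variants.
variants = [(0.9, 0.2), (0.5, 0.5), (0.4, 0.4), (0.1, 0.95)]
print(pareto_front(variants))  # [0, 1, 3]
```

The third variant is dropped because the second is better on both objectives; the remaining three represent different trade-offs that a selection scheme would need to keep alive.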

As these methodologies continue to evolve, genetic algorithms will remain an essential component of the protein engineer's toolkit, providing robust and flexible approaches to some of the most challenging optimization problems in computational biology and drug development.

Representing Protein Sequences and Structures for Evolutionary Optimization

The fundamental challenge in applying evolutionary algorithms (EAs) to protein science lies in effectively representing complex biological sequences and structures for computational optimization. Proteins, as the essential engines driving most metabolic processes, are sentences written with an alphabet of 20 amino acids, with many exceeding 1000 characters in length [2]. This creates a vast search space of possible proteins where most string permutations would be unstable and non-functional, existing as mere "islands" within a "sea of invalidity" [2]. Evolutionary optimization in this context aims to colonize new islands in this sea of invalidity by expanding the set of extant proteins through computational means.

The representation of protein sequences and structures serves as the critical bridge between biological reality and computational efficiency. How we choose to represent our data has a fundamental impact on our ability to subsequently extract information from them [17]. Machine learning promises to automatically determine efficient representations from large unstructured datasets, but empirical evidence suggests that seemingly minor changes to these models yield drastically different data representations that result in different biological interpretations [17]. This comprehensive technical guide examines current methodologies for representing protein sequences and structures specifically for evolutionary optimization frameworks, providing researchers with practical implementation strategies alongside theoretical foundations.

Representation Methods for Protein Sequences

Traditional Representation Schemes

Traditional representation methods for protein sequences in evolutionary algorithms often rely on discrete encoding strategies that facilitate the application of genetic operators. The HP (hydrophobic-hydrophilic) model represents a foundational approach where amino acids are classified based on their hydrophobicity, enabling simplified lattice-based folding simulations [18]. This abstraction reduces the 20-letter amino acid alphabet to a binary or ternary code, which makes structure prediction problems computationally tractable. The simplicity of this representation allows evolutionary algorithms to efficiently explore conformational space, though at the cost of biological fidelity.
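As a concrete illustration of the HP reduction, the snippet below maps a 20-letter sequence to a binary H/P string. The hydrophobic set used here is an assumption; published HP classifications differ in how they treat borderline residues such as Gly, Pro, Tyr, and Cys.

```python
AMINO_ACIDS = set("ACDEFGHIKLMNPQRSTVWY")

# Assumed hydrophobic residues; adjust to the classification used in a given study.
HYDROPHOBIC = set("AVLIMFWC")


def to_hp(sequence):
    """Map a one-letter amino acid sequence to an H/P string."""
    sequence = sequence.upper()
    unknown = set(sequence) - AMINO_ACIDS
    if unknown:
        raise ValueError(f"non-standard residues: {sorted(unknown)}")
    return "".join("H" if aa in HYDROPHOBIC else "P" for aa in sequence)


print(to_hp("MKVLA"))  # HPHHH
```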

Direct one-hot encoding of each amino acid in the sequence provides another straightforward representation scheme where each amino acid position is represented as a 20-dimensional binary vector [17]. While this approach preserves the full chemical diversity of amino acids, it lacks evolutionary context and structural information, potentially limiting the effectiveness of evolutionary search processes. This representation often serves as a baseline for more sophisticated embedding approaches and can be directly utilized in genetic algorithm representations with appropriate variation operators.
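A minimal sketch of the one-hot scheme follows, using plain Python lists so that it has no dependencies. The alphabet ordering and the helper names (`one_hot`, `decode`, `point_mutate`) are illustrative choices, not part of any cited tool.

```python
AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"
AA_INDEX = {aa: i for i, aa in enumerate(AMINO_ACIDS)}


def one_hot(sequence):
    """Encode a sequence as a list of 20-dimensional binary rows."""
    rows = []
    for aa in sequence:
        row = [0] * len(AMINO_ACIDS)
        row[AA_INDEX[aa]] = 1  # raises KeyError for non-standard residues
        rows.append(row)
    return rows


def decode(rows):
    """Invert one_hot by reading the position of the single 1 in each row."""
    return "".join(AMINO_ACIDS[row.index(1)] for row in rows)


def point_mutate(rows, position, new_aa):
    """Variation operator: return a copy with one position replaced."""
    mutated = [row[:] for row in rows]
    mutated[position] = one_hot(new_aa)[0]
    return mutated


encoded = one_hot("MKV")
print(decode(point_mutate(encoded, 1, "A")))  # MAV
```

Because every pair of distinct residues is equally far apart in this encoding, a point mutation to a chemically similar residue looks no different from one to a dissimilar residue, which is the lack of context noted above.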

Learned Representation Embeddings

Contemporary representation learning approaches dispense with hand-crafted features and instead seek highly non-linear relations directly from sequence data [17]. Inspired by developments in natural language processing, protein language models aim to reproduce their own input, either by predicting the next character given the sequence observed so far, or by predicting the entire sequence from a partially obscured input sequence [17]. The representation learned by such models is typically a sequence of local representations \( (r_1, r_2, \ldots, r_L) \), each corresponding to one amino acid in the input sequence \( (s_1, s_2, \ldots, s_L) \).

Table 1: Comparison of Global Representation Aggregation Methods

| Method | Mechanism | Advantages | Limitations |
|---|---|---|---|
| Attention-based Averaging | Learned weights average local representations | Preserves some global signals | Potential information loss |
| Concatenation (Concat) | Direct concatenation with padding | No aggregation information loss | Limited by fixed representation size |
| Bottleneck Autoencoder | Learned aggregation through compression | Optimized for global structure | Requires specialized architecture |

Research demonstrates that constructing a global representation as a simple average of local representations is suboptimal for downstream tasks [17]. More effective strategies include concatenation approaches that preserve all information stored in local representations (though requiring dimensional restrictions) and bottleneck autoencoders that learn optimal aggregation operations during pre-training [17]. The bottleneck strategy, where global representation is learned, clearly outperforms other approaches as it encourages the model to find more global structure in the representations during pre-training.
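The difference between averaging and concatenation can be seen with toy local representations. The sketch below is illustrative only: the two-dimensional vectors are made up, and the learned bottleneck strategy is not shown because it requires a trained model.

```python
def mean_pool(local_reps):
    """Global representation as the unweighted average of local representations."""
    length = len(local_reps)
    dim = len(local_reps[0])
    return [sum(r[k] for r in local_reps) / length for k in range(dim)]


def concat_pool(local_reps, max_length):
    """Global representation as zero-padded concatenation up to a fixed length."""
    if len(local_reps) > max_length:
        raise ValueError("sequence longer than the fixed representation size")
    dim = len(local_reps[0])
    flat = [x for r in local_reps for x in r]
    return flat + [0.0] * ((max_length - len(local_reps)) * dim)


reps = [[1.0, 2.0], [3.0, 4.0]]        # toy local representations r_1, r_2
reversed_reps = list(reversed(reps))   # same residues in the opposite order

print(mean_pool(reps) == mean_pool(reversed_reps))            # True: order is lost
print(concat_pool(reps, 3) == concat_pool(reversed_reps, 3))  # False: order is kept
```

Averaging maps both orderings to the same vector, which is one way information is lost; concatenation keeps the order but fixes a maximum sequence length in advance.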

Representation Geometry and Evolutionary Search

The geometric properties of representation space significantly influence the effectiveness of evolutionary optimization. Representations that preserve evolutionary relationships between sequences create smoother fitness landscapes more amenable to evolutionary search. In transfer learning settings, the quality of a representation is judged by predictive performance on downstream tasks, which similarly applies to fitness evaluation in evolutionary algorithms [17].

A critical consideration is the risk of overfitted representations when fine-tuning embedding models for specific tasks. Studies show that fine-tuning a representation to a specific task often reduces test performance, as it increases the number of free parameters substantially [17]. This has direct implications for evolutionary algorithms: keeping the embedding model fixed during task training may provide more robust performance than continuously adapting the representation, particularly when fitness evaluations are limited.

Representation Methods for Protein Structures

Contact-Based Representations

Contact maps provide a fundamental representation for protein structures in evolutionary algorithms. The Size-Modified Contact Order (SMCO) offers a quantitative representation that captures the non-locality of intramolecular contacts in proteins [19]. It is calculated as \( \text{SMCO} = \frac{100}{L} \cdot \frac{1}{N_c} \sum_{i,j>i} |i-j| \), where \( L \) is the total number of amino acids, \( N_c \) is the number of contacts, and \( |i-j| \) is the sequence separation between residues \( i \) and \( j \) forming a native contact. This representation correlates well with folding times (correlation coefficient of 0.74) [19]. Evolutionary algorithms can leverage this representation to optimize proteins for folding speed, with research indicating an overall decrease in SMCO during natural evolution between 3.8 and 1.5 billion years ago, suggesting evolutionary optimization for rapid folding [19].
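The SMCO formula as stated above translates directly into code. The sketch assumes the native contacts are already available as a list of residue index pairs; how contacts are defined (distance cutoff, atom selection) is left to the caller, and the example contact list is invented.

```python
def smco(contacts, length):
    """SMCO = (100 / L) * (1 / N_c) * sum over contacts of |i - j|."""
    if not contacts:
        raise ValueError("at least one contact is required")
    total_separation = sum(abs(i - j) for i, j in contacts)
    return 100.0 / length * total_separation / len(contacts)


# Hypothetical 10-residue chain with three native contacts.
example_contacts = [(1, 5), (2, 10), (3, 4)]
print(round(smco(example_contacts, length=10), 2))  # 43.33
```

Used as a fitness term, a lower value favours structures dominated by local contacts, which the text associates with faster folding.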

Tightness metrics that measure shortest paths in the network of protein contacts provide complementary structural representations [19]. These representations capture the local interconnectedness of residue contacts, offering evolutionary algorithms a multi-faceted view of structural constraints beyond simple contact maps. The evolutionary trend in tightness parallel to SMCO suggests these representations capture fundamental structural determinants of foldability.

Coordinate-Based Representations

Direct atomic coordinate representations provide high-fidelity structural descriptions but present challenges for evolutionary algorithms due to their high dimensionality and continuous nature. The USPEX evolutionary algorithm employs coordinate representations with specialized variation operators for protein structure prediction, performing protein structure relaxation and energy calculations using molecular mechanics force fields like those implemented in Tinker and Rosetta [20]. This approach has demonstrated capability in predicting tertiary structures of proteins up to 100 residues with high accuracy, finding structures with comparable or lower energy than Rosetta's Abinitio approach [20].

Table 2: Force Field Performance in Evolutionary Structure Prediction

| Force Field | Implementation | Strengths | Accuracy Limitations |
|---|---|---|---|
| AMBER/CHARMM/OPLS-AA/L | Tinker | Physics-based parameters | Limited blind prediction accuracy |
| REF2015 | Rosetta | Knowledge-based potentials | Dependent on fragment libraries |
| Custom Fitness Functions | EASME | Direct biological measurements | Requires experimental validation |

A significant finding from evolutionary structure prediction efforts is that existing force fields remain insufficiently accurate for blind prediction of protein structures without further experimental verification, despite algorithmic capabilities to find deep energy minima [20]. This highlights the critical importance of representation fidelity in evolutionary optimization.

Evolutionary Algorithm Frameworks for Protein Optimization

EASME: Evolutionary Algorithms Simulating Molecular Evolution

The EASME framework represents a specialized approach to protein optimization that employs evolutionary algorithms with DNA string representations, biologically accurate molecular evolution, and bioinformatics-informed fitness functions [2]. This methodology encodes the full complexity of molecular evolution rather than abstracting it away, modeling actual DNA chromosomes encoding actual genes and their downstream proteins in the context of realistic fitness evaluations and structure predictions [2].

EASME operates through two primary modalities:

- Unknown to known: Evolving random sequences toward known consensus sequences to reconstruct sequence clusters that went extinct during natural evolution.

- Known to unknown: Forward-evolving known entities into the future by implementing selection regimens that drive toward desired phenotypic characteristics.

This framework leverages the explainability advantages of evolutionary computation, where decisions produced by the algorithm are often more comprehensible to human operators compared to black-box machine learning approaches [2].

Genetic Algorithm-Based Redesign Tools

GAOptimizer exemplifies applied evolutionary algorithms for protein redesign, implementing a genetic algorithm-based approach for optimizing mutation combinations to engineer diverse enzymes [21]. This tool requires two key input parameters influencing mutation selection: fitness functions and sequence libraries. Both stability-based and non-stability-based scores can serve as fitness functions, determining whether selected mutations are favorable in the design process [21].

Sequence libraries define the sequence space for selecting mutation candidates, constraining the evolutionary search to functionally plausible regions. Functional analyses of enzymes designed using GAOptimizer demonstrate the ability to produce proteins exhibiting superior properties to their native counterparts with high success rates [21], validating the practical utility of evolutionary approaches for protein engineering.

Deep-Learning Enhanced Evolutionary Frameworks

Hybrid approaches that infuse evolutionary algorithms with deep learning capabilities demonstrate enhanced performance for protein optimization. The insights-infused framework utilizes deep neural networks to learn evolutionary processes of EAs and extract useful synthesis insights from evolutionary data [22]. These insights guide the algorithm to evolve in better directions not only on original problems but also improve performance on new problems through transfer learning capabilities.

These frameworks employ specialized encoding methods to handle variable-length protein representations, often using padding strategies to standardize input dimensions for neural network processing [22]. The resulting systems demonstrate the ability to leverage abundant data generated during evolution that would otherwise be discarded, extracting valuable patterns that enhance optimization effectiveness and efficiency.

Implementation Methodologies

Workflow for Evolutionary Protein Optimization

The following diagram illustrates the comprehensive workflow for evolutionary protein optimization, integrating representation learning with evolutionary algorithms:

Experimental Protocols

Protocol for Learned Representation Construction

Data Collection: Extract protein sequences from diverse databases such as Pfam [17] or the NCBI Protein database [23]. Ensure representation across different protein families and functional classes.

Pre-training Setup: Configure embedding models (LSTM, Transformer, or Dilated Resnet) with appropriate hyperparameters. Use attention-based mechanisms for local representation extraction [17].

Global Representation Learning: Implement bottleneck autoencoder strategy rather than simple averaging. Train models to reconstruct inputs while forcing information through low-dimensional bottlenecks [17].

Representation Validation: Evaluate representations on downstream tasks including fold classification, fluorescence prediction, and stability prediction. Use cross-validation to prevent overfitting during evaluation [17].

Embedding Fixation: Fix embedding model parameters before evolutionary optimization to prevent overfitting during task-specific evolution [17].

Protocol for Evolutionary Optimization with EASME

Representation Initialization: Initialize population using either random sequences ("unknown to known" approach) or known protein sequences ("known to unknown" approach) [2].

Fitness Function Definition: Implement biologically-informed fitness functions incorporating structural stability predictions, functional constraints, and evolutionary conservation patterns [2].

Variation Operator Application: Apply specialized variation operators for protein sequences, including point mutations, recombination events, and domain shuffling operations while maintaining structural plausibility [20].

Selection and Iteration: Perform tournament selection based on multi-objective fitness evaluation, preserving diversity through niching techniques or Pareto optimization [21].

Validation and Iteration: Validate predicted proteins experimentally through wet-lab characterization, and feed the results back to refine fitness functions and variation operators [2].
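The computational steps of this protocol can be sketched as a toy loop in the "unknown to known" mode. This is not the EASME implementation: it works on amino acid strings rather than DNA chromosomes, uses sequence identity to a target as a stand-in fitness function, and all parameter values and function names are assumptions.

```python
import random

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"


def identity(seq, target):
    """Stand-in fitness: fraction of positions matching the target consensus."""
    return sum(a == b for a, b in zip(seq, target)) / len(target)


def mutate(seq, rate, rng):
    """Point mutations: each residue is resampled with probability `rate`."""
    return "".join(rng.choice(AMINO_ACIDS) if rng.random() < rate else aa
                   for aa in seq)


def recombine(parent_a, parent_b, rng):
    """One-point recombination between two equal-length parents."""
    cut = rng.randrange(1, len(parent_a))
    return parent_a[:cut] + parent_b[cut:]


def evolve(target, pop_size=50, generations=100, rate=0.05, k=3, seed=0):
    rng = random.Random(seed)

    def fitness(seq):
        return identity(seq, target)

    def tournament():
        return max(rng.sample(population, k), key=fitness)

    # "Unknown to known": start from random sequences.
    population = ["".join(rng.choice(AMINO_ACIDS) for _ in target)
                  for _ in range(pop_size)]
    history = []
    for _ in range(generations):
        elite = max(population, key=fitness)
        history.append(fitness(elite))
        children = [mutate(recombine(tournament(), tournament(), rng), rate, rng)
                    for _ in range(pop_size - 1)]
        population = [elite] + children  # elitism keeps the best sequence
    best = max(population, key=fitness)
    history.append(fitness(best))
    return best, history


best, history = evolve("MKVLAAGIVG", generations=30)
print(best, history[-1])
```

In a realistic setting the identity score would be replaced by structure or stability predictions, and the final validation step would close the loop with experimental data.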

Table 3: Essential Resources for Evolutionary Protein Optimization

| Resource | Function | Access |
|---|---|---|
| RCSB Protein Data Bank | Source of experimental protein structures for training and validation | https://www.rcsb.org/ [24] |
| NCBI Protein Database | Comprehensive sequence database for representation learning | https://www.ncbi.nlm.nih.gov/protein/ [23] |
| Pfam Database | Curated protein families for pre-training representations | https://pfam.xfam.org/ [17] |
| USPEX Algorithm | Evolutionary algorithm for protein structure prediction | Implementation described in literature [20] |
| GAOptimizer | Genetic algorithm-based protein redesign tool | Open-source implementation available [21] |
| Tinker/Rosetta | Molecular modeling packages for fitness evaluation | Academic licensing available [20] |

Effective representation of protein sequences and structures constitutes the foundation for successful evolutionary optimization in protein engineering. The integration of learned representations from large sequence databases with evolutionary algorithms incorporating biological constraints creates a powerful framework for exploring protein sequence space beyond natural boundaries. Current methodologies demonstrate robust capabilities in predicting protein structures, optimizing existing enzymes, and generating novel protein sequences with desired properties.

The emerging field of Evolutionary Algorithms Simulating Molecular Evolution (EASME) represents a promising direction that embraces biological complexity rather than abstracting it away [2]. As computing power continues to increase and experimental validation methods improve, the integration of more realistic fitness functions, more sophisticated representation learning, and more biologically-plausible variation operators will further enhance our ability to engineer proteins for biomedical and industrial applications. The explainable nature of evolutionary approaches provides additional value for scientific discovery, offering insights into sequence-structure-function relationships that purely black-box approaches may obscure.

The future of evolutionary protein optimization lies in tighter integration between computational prediction and experimental validation, creating feedback loops that continuously improve representation quality and evolutionary search efficiency. By leveraging the fundamental principles of evolution that shaped natural proteins, researchers can now harness these processes to design the next generation of protein-based therapeutics, enzymes, and biomaterials.

Evolutionary algorithms (EAs) have emerged as powerful computational tools for tackling the complex problem of protein structure prediction (PSP). By mimicking natural selection, these algorithms explore the vast conformational space of polypeptide chains to identify low-energy, native-like structures. This whitepaper provides an in-depth technical examination of the three core operators—mutation, crossover, and selection pressure—within the context of protein folding research. We detail their mechanistic implementation, present quantitative analyses of their performance, and outline standardized experimental protocols. Aimed at researchers and drug development professionals, this guide serves as a foundational resource for understanding and applying these bio-inspired optimization strategies to elucidate protein structure and function.

The "protein folding problem"—predicting a protein's three-dimensional native structure solely from its amino acid sequence—remains a cornerstone challenge in structural biology and drug discovery [25]. The conformational space is astronomically large; even for a small protein, the number of possible backbone configurations can exceed 10^50, making exhaustive search strategies infeasible [26] [27]. Evolutionary algorithms (EAs) are a class of population-based, metaheuristic optimization techniques inspired by biological evolution that are particularly well-suited for navigating such complex landscapes.

In the EA framework for protein folding, a population of candidate protein conformations is evolved over successive generations. Each individual in the population represents a potential structural solution. The quality of a conformation is evaluated by a fitness function, typically a physics-based or knowledge-based energy function that approximates the thermodynamic stability of the fold. The algorithm proceeds iteratively by applying the genetic operators of selection, crossover, and mutation to guide the population toward regions of the conformational space associated with low energy and high stability [28] [26]. The following diagram illustrates this core workflow.

Core Operator 1: Mutation

Mechanistic Role and Implementation

The mutation operator introduces stochastic, small-scale alterations to individual conformations, thereby injecting novelty into the population and preventing premature convergence at local energy minima. It serves as a crucial mechanism for maintaining population diversity and exploring the immediate neighborhood of existing solutions [25] [27].

In protein-folding EAs, mutation is strategically applied to degrees of freedom that define the protein's conformation. The most common implementations include:

- Torsion Angle Mutations: The protein backbone is defined by a sequence of phi (φ) and psi (ψ) torsion angles. A mutation randomly selects one or more of these angles and perturbs their values within allowed Ramachandran regions [25]. This induces a local change in the backbone's trajectory.

- Side-Chain Angle Mutations: For all-atom models, the chi (χ) torsion angles of side chains are mutated to alter rotameric states, optimizing side-chain packing without drastically perturbing the backbone [25].

- Move Sets in Lattice Models: In simplified lattice representations, a mutation might change a specific move instruction (e.g., from "left" to "up") in the self-avoiding walk, effectively kinking the chain at a particular position [26].

A significant advancement is the Self-Organizing Mutation Operator (SOMO), which dynamically adapts the mutation rate during execution. Instead of a fixed rate, SOMO starts with an initial value and increases it uniformly at each generation until an upper limit is reached. This self-configuration helps balance exploration and exploitation, preventing the search from stagnating in local optima [25].
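A torsion angle mutation driven by a uniformly increasing rate, in the spirit of SOMO, might look like the sketch below. The start value, step, upper limit, and Gaussian perturbation width are assumptions rather than values from [25], and the sketch only wraps angles to [-180, 180) instead of checking Ramachandran regions.

```python
import random


def somo_rate(generation, start=0.01, step=0.005, upper=0.2):
    """Mutation rate that grows by `step` per generation until it reaches `upper`."""
    return min(upper, start + step * generation)


def wrap_angle(angle):
    """Map an angle in degrees to the interval [-180, 180)."""
    return (angle + 180.0) % 360.0 - 180.0


def mutate_torsions(angles, rate, sigma=15.0, rng=random):
    """Perturb each torsion angle with probability `rate` by Gaussian noise."""
    return [wrap_angle(a + rng.gauss(0.0, sigma)) if rng.random() < rate else a
            for a in angles]


rng = random.Random(1)
backbone = [-60.0, -45.0, -120.0, 130.0]  # hypothetical phi/psi values in degrees
for generation in (0, 10, 100):
    rate = somo_rate(generation)
    print(generation, rate, mutate_torsions(backbone, rate, rng=rng))
```

A production operator would additionally reject or resample perturbations that leave the allowed Ramachandran regions.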

Quantitative Analysis of Mutation Strategies

The table below summarizes key performance data for different mutation strategies as applied to various protein models.

Table 1: Performance Metrics of Mutation Strategies in Protein Folding EAs

| Mutation Strategy | Model Type | Key Performance Indicator | Reported Outcome/Value | Biological Rationale |
|---|---|---|---|---|
| Self-Organizing Mutation (SOMO) [25] | All-Atom (Met-enkephalin) | Energy Minimization | Significant improvement vs. fixed-rate mutation | Prevents search stagnation and premature convergence |
| Fixed-Rate Mutation [26] | 2D HP Lattice | Success Rate in Finding Global Min. | Lower performance compared to adaptive methods | Maintains basic population diversity |
| Torsion Angle Mutation [25] | All-Atom | Ramachandran Plot Quality | Conformations better than native in benchmark tests | Explores locally feasible backbone conformations |

Core Operator 2: Crossover

Mechanistic Role and Implementation

Crossover, or recombination, is a distinguishing feature of EAs that combines genetic material from two parent structures to generate one or more offspring. This operator leverages the building-block hypothesis (the idea that high-quality solutions are composed of good "building blocks") by swapping stable sub-conformations between parents [26] [27].

The effectiveness of crossover is highly dependent on the chromosome representation of the protein structure. Common representations and their corresponding crossover methods include:

- Torsion Angle Representation: The chromosome is a string of backbone and side-chain torsion angles. Single-point or two-point crossover can be applied to this string, swapping contiguous segments of the structure between parents [25].

- Lattice Move Representation: In 2D or 3D lattice models, a conformation is encoded as a sequence of moves (e.g., '1'=right, '2'=left, '3'=forward). Crossover splices and combines these move sequences from two parents [26].

A major challenge with crossover in the dense, compact environment of a protein fold is the high probability of creating invalid offspring with atomic clashes or non-self-avoiding walks [27]. To address this, advanced crossover strategies have been developed:

- Systematic Crossover (Sys-Cross): This method couples the two fittest individuals and tests every possible crossover point. From all trials, it selects the two best offspring for the next generation. This exhaustive local search around high-quality solutions has been shown to find the global minimum faster and more frequently than standard crossover [26].

- DFS-Guided Crossover: When a standard crossover fails, Depth-First Search (DFS) is used to generate a short, self-avoiding pathway that connects the two parent segments, thereby "repairing" the invalid conformation. This strategy reveals convoluted pathways that would otherwise be lost [27].
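The systematic crossover idea can be illustrated on a 2D HP lattice. The sketch below assumes an absolute-direction move encoding (`U`, `D`, `L`, `R`), which differs from the numeric move codes mentioned above, and it simply discards invalid offspring instead of repairing them with DFS. Choosing the two fittest parents is left to the caller.

```python
MOVES = {"U": (0, 1), "D": (0, -1), "L": (-1, 0), "R": (1, 0)}


def decode(moves):
    """Turn a move string into lattice coordinates, starting at the origin."""
    coords = [(0, 0)]
    for m in moves:
        dx, dy = MOVES[m]
        x, y = coords[-1]
        coords.append((x + dx, y + dy))
    return coords


def energy(hp, moves):
    """-1 per non-bonded H-H contact; None if the walk is not self-avoiding."""
    coords = decode(moves)
    if len(set(coords)) != len(coords):
        return None
    index_at = {c: i for i, c in enumerate(coords)}
    total = 0
    for i, (x, y) in enumerate(coords):
        if hp[i] != "H":
            continue
        for dx, dy in MOVES.values():
            j = index_at.get((x + dx, y + dy))
            if j is not None and j > i + 1 and hp[j] == "H":
                total -= 1
    return total


def systematic_crossover(hp, parent_a, parent_b):
    """Try every crossover point and return the two best distinct valid offspring."""
    offspring = set()
    for cut in range(1, len(parent_a)):
        for child in (parent_a[:cut] + parent_b[cut:],
                      parent_b[:cut] + parent_a[cut:]):
            e = energy(hp, child)
            if e is not None:  # invalid (self-intersecting) children are dropped
                offspring.add((e, child))
    return sorted(offspring)[:2]


print(systematic_crossover("HPPH", "URR", "RRD"))  # [(-1, 'URD'), (0, 'RRR')]
```

The cost is one energy evaluation per crossover point and child, which is the moderate overhead attributed to this strategy.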

The following diagram contrasts a standard crossover with a DFS-guided crossover.

Quantitative Analysis of Crossover Strategies

Table 2: Efficacy of Advanced Crossover Strategies in Lattice and All-Atom Models

| Crossover Strategy | Model & Chain Length | Performance Gain | Key Metric | Computational Overhead |
|---|---|---|---|---|
| Systematic Crossover (Sys-Cross) [26] | 2D HP, 20 residues | Found global min. 1.5x faster | Speed to Global Minimum | Moderate (test all crossover points) |
| DFS-Guided Crossover (X(d) variant) [27] | 2D HP, 64 residues | ~10% higher success rate | Success Rate vs. Standard Crossover | Low (DFS used sparingly on failure) |
| Self-Organizing Crossover (SOCO) [25] | All-Atom | Improved convergence to low energy | Final Energy Value | Low (dynamic parameter adjustment) |
| Standard Crossover [26] | 2D HP, 20 residues | Baseline | Success Rate | Low |

Core Operator 3: Selection Pressure

Mechanistic Role and Biophysical Foundation

Selection pressure is the driving force that guides the evolutionary search toward optimality. It determines which individuals in the current population are privileged to pass their genetic information to the next generation. The primary measure for selection is the fitness of a candidate conformation, which, in the context of protein folding, is almost universally related to the stability of the fold [29] [30] [31].

The biophysical basis for this is Anfinsen's dogma, which states that a protein's native state is the one that minimizes its free energy [29]. Consequently, the fitness function is typically a potential energy function or a statistical potential that approximates the folding free energy. A widely used fitness function is based on the CHARMM force field [25]:

Fitness (Total Energy): \( E_{\text{total}} = E_{\text{bond}} + E_{\text{angle}} + E_{\text{torsion}} + E_{\text{van der Waals}} + E_{\text{electrostatics}} \)

Selection schemes commonly used in protein-folding EAs include:

- Elitism: The best individual(s) are automatically carried over to the next generation, ensuring that the best solution found is not lost [25].

- Fitness-Proportionate Selection: Individuals are selected with a probability proportional to their fitness. In the context of energy minimization, this often means converting energy to a "fitness" score, for example, by using a linear ranking or Boltzmann selection [26].

- Tournament Selection: A subset of individuals is chosen randomly from the population, and the best among them is selected to be a parent. This provides a tunable selection pressure based on the tournament size [32].
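The three selection schemes listed above can be sketched for an energy-minimisation setting as follows. Boltzmann weighting is used here as one way of turning energies into selection probabilities; the temperature and the example energies are arbitrary illustrative values.

```python
import math
import random


def elite(population, energies):
    """Elitism: the lowest-energy individual."""
    best = min(range(len(population)), key=lambda i: energies[i])
    return population[best]


def tournament_select(population, energies, k, rng=random):
    """Pick k distinct individuals at random and return the lowest-energy one."""
    contenders = rng.sample(range(len(population)), k)
    return population[min(contenders, key=lambda i: energies[i])]


def boltzmann_weights(energies, temperature):
    """Selection probabilities proportional to exp(-E / T), shifted for stability."""
    e_min = min(energies)
    weights = [math.exp(-(e - e_min) / temperature) for e in energies]
    total = sum(weights)
    return [w / total for w in weights]


def boltzmann_select(population, energies, temperature, rng=random):
    """Fitness-proportionate selection using Boltzmann weights."""
    weights = boltzmann_weights(energies, temperature)
    return rng.choices(population, weights=weights, k=1)[0]


conformations = ["a", "b", "c"]   # placeholders for candidate structures
energies = [-1.0, -5.0, 0.0]      # made-up energies, lower is better
print(elite(conformations, energies))
print(boltzmann_weights(energies, temperature=1.0))
```

A larger tournament size `k` or a lower temperature both increase selection pressure, at the cost of faster loss of diversity.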

Connecting Selection Pressure to Protein Evolution and dN/dS

The concept of selection pressure in EAs directly mirrors evolutionary selection in nature. In molecular evolution, the ratio of non-synonymous to synonymous substitutions (dN/dS) is a key metric to identify selection pressures acting on a protein [31]. A dN/dS < 1 indicates purifying selection, which preserves the protein's structure and function by removing destabilizing mutations. This is analogous to the EA selection pressure favoring low-energy (high-fitness) conformations.

Simulations coupling population genetics with protein biophysics show that selection acts primarily to maintain marginal stability (typically with an upper stability bound of ΔG ~ 7.4 kcal/mol) [29] [31]. This stability margin exists because overly stable proteins may be rigid and non-functional, while overly unstable proteins risk misfolding and aggregation. Therefore, the selection pressure in a well-designed EA should not only seek the absolute lowest energy but also navigate a landscape that reflects these biological constraints.

Experimental Protocols & Researcher's Toolkit

Detailed Protocol: Self-Organizing Genetic Algorithm (SOGA) for PSP

This protocol, adapted from [25], outlines the steps for implementing a SOGA for protein structure prediction (PSP) using self-configuring mutation and crossover rates.

Step 1: Initialization

- Population Generation: Generate n random chromosomes. Encode each chromosome using torsion angles (phi, psi) and side-chain angles (chi) to define the 3D structure.

- Structure Modeling: Use molecular modeling software like TINKER to convert the chromosomal representation into an atomic 3D structure.

- Fitness Calculation: Calculate the potential energy for each structure in the population using a force field like CHARMM (implemented in software such as Discovery Studio). This energy value is the fitness score.

Step 2: Selection and Elite Preservation

- Identify and save the elite chromosome (the one with the minimal energy value).

Step 3: Regeneration with Self-Organizing Operators

- Repeat the following to create a new population:

  - Self-Organizing Crossover (SOCO): Initialize a low crossover rate. Perform single-point crossover, then uniformly increase the rate. After each operation, calculate the energy of the new children and update the elite if a better conformation is found. Continue until the rate reaches an optimal upper limit (e.g., 0.85).

  - Self-Organizing Mutation (SOMO): Similarly, initialize a low mutation rate. Mutate genes (torsion angles) at the current rate, then uniformly increase it. Calculate the energy of new mutants and update the elite. Continue until an optimal upper limit is reached.

Step 4: Termination

- The algorithm terminates when a predetermined number of generations is reached or the energy converges.
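The four steps above can be sketched in Python as follows. This is a toy illustration under stated assumptions, not the implementation from [25]: `toy_energy` is a hypothetical stand-in for a CHARMM energy evaluation, the rate schedules and the mutation upper limit are invented values, and the rule for forming the next population (keep the lowest-energy candidates) is an assumption because the protocol text does not specify it.

```python
import math
import random


def toy_energy(chromosome):
    """Hypothetical stand-in for a force-field energy.
    Zero when every torsion angle equals -60 degrees."""
    return sum(1.0 - math.cos(math.radians(a + 60.0)) for a in chromosome)


def single_point_crossover(p1, p2, rng):
    cut = rng.randrange(1, len(p1))
    return p1[:cut] + p2[cut:], p2[:cut] + p1[cut:]


def mutate(chromosome, rate, rng):
    """Resample each torsion angle with probability `rate`."""
    return [rng.uniform(-180.0, 180.0) if rng.random() < rate else a
            for a in chromosome]


def soga(n_angles=8, pop_size=20, generations=25, seed=0):
    rng = random.Random(seed)
    # Step 1: random population of torsion-angle chromosomes.
    population = [[rng.uniform(-180.0, 180.0) for _ in range(n_angles)]
                  for _ in range(pop_size)]
    # Step 2: identify the elite (minimal energy).
    best = min(population, key=toy_energy)
    history = [toy_energy(best)]
    for _ in range(generations):
        candidates = [best]  # the elite always survives
        # Step 3a (SOCO): sweep the crossover rate from low to 0.85.
        for rate in (0.05, 0.25, 0.45, 0.65, 0.85):
            for _ in range(pop_size // 2):
                if rng.random() < rate:
                    p1, p2 = rng.sample(population, 2)
                    candidates.extend(single_point_crossover(p1, p2, rng))
        # Step 3b (SOMO): sweep the mutation rate (upper limit assumed).
        for rate in (0.05, 0.15, 0.25, 0.35, 0.45):
            candidates.extend(mutate(ind, rate, rng) for ind in population)
        # Elite update is folded into this sort (an assumption of the sketch).
        candidates.sort(key=toy_energy)
        population = candidates[:pop_size]
        best = population[0]
        history.append(toy_energy(best))
    # Step 4: terminate after a fixed number of generations.
    return best, history


best, history = soga()
```

Because the elite is always carried into the candidate pool, the recorded best energy can never increase from one generation to the next.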

The Scientist's Toolkit: Essential Research Reagents

Table 3: Key Software and Computational Tools for Protein Folding EAs

| Tool Name | Type/Function | Role in EA Workflow | Relevant Citation |
|---|---|---|---|
| TINKER | Molecular Modeling Software | Chromosome encoding; converts torsion angle strings to 3D coordinates | [25] |
| CHARMM | Molecular Mechanics Force Field | Fitness function; calculates potential energy of a conformation | [25] [32] |
| Discovery Studio | Molecular Simulation & Visualization | Environment for energy calculation and structural analysis | [25] |
| HP Lattice Model | Simplified Protein Model | Benchmarking and testing EA operators (mutation, crossover) | [26] [27] |
| Protein Data Bank (PDB) | Structural Database | Source of native structures for validation and training | [33] |

The operators of mutation, crossover, and selection pressure form the computational backbone of evolutionary algorithms applied to protein folding. Mutation ensures diversity and local exploration, crossover enables the constructive combination of stable sub-structures, and selection pressure, grounded in protein biophysics, steers the population toward stable, native-like folds. While current methods show significant success, particularly on simplified models and small peptides, the field continues to evolve. The integration of these EA strategies with deep learning approaches like AlphaFold, especially for predicting dynamic conformational states [34] [33], represents the next frontier in achieving a complete, mechanistic understanding of protein folding and function. This synergy holds great promise for accelerating drug discovery and the rational design of novel proteins.

In the realm of protein folding research, evolutionary algorithms (EAs) operate on a fundamental principle: they explore the vast conformational space of a polypeptide chain through cycles of selection, reproduction, and mutation to discover low-energy, native-like structures. The critical component that guides this search is the fitness function, a computational scoring system that evaluates the quality of candidate protein structures. An effective fitness function must accurately quantify the thermodynamic stability of a fold and its similarity to the native, biologically active state, serving as an in-silico surrogate for natural selection. The development of such functions represents a central challenge in computational biology, as their accuracy directly determines the success of protein structure prediction and design. This guide examines the core components, performance, and implementation of these crucial scoring metrics within the framework of evolutionary algorithms, providing researchers with a detailed technical roadmap for their application.

Core Components of a Fitness Function

A robust physics-based fitness function for scoring protein structures typically integrates several energy terms to describe atomic interactions and solvent effects. The general form can be summarized as:

E_total = E_bonded + E_nonbonded + E_solvation

- E_bonded: This term encompasses the internal covalent energy of the protein chain, including bond stretching, angle bending, and dihedral torsion potentials. These terms ensure the proper stereochemistry of the generated models.

- E_nonbonded: This term describes non-covalent interactions between atoms that are not directly bonded. It is typically decomposed into:

  - Van der Waals (E_vdw): Accounts for short-range attractive (dispersion) and repulsive (steric overlap) forces.

  - Electrostatics (E_elec): Describes the interaction between partial atomic charges, calculated via Coulomb's law.

- E_solvation: This term is critical for modeling the protein's interaction with its aqueous environment. Implicit solvent models are used for computational efficiency, primarily via two approaches:

  - Surface Area (SA) Models: Estimate the solvation free energy as a term proportional to the solvent-accessible surface area (SASA) of the protein. The ECEPP05/SA potential is an example of this approach, where the parameters were optimized against protein decoy sets [35].

  - Poisson-Boltzmann (PB) Models: Provide a more physically realistic description by solving the Poisson-Boltzmann equation for the electrostatic potential in a continuum solvent. The ECEPP05/FAMBEpH potential uses the FAMBEpH method to solve this equation [35].
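To make the non-bonded and surface-area terms concrete, here is a minimal Python sketch. It assumes a 12-6 Lennard-Jones form for E_vdw, a single `epsilon`/`sigma` pair shared by all atoms (real force fields use per-atom-type parameters), a constant dielectric, and precomputed per-atom SASA values; none of the parameter values are ECEPP or CHARMM parameters.

```python
import math

# Approximate Coulomb conversion factor in kcal*Angstrom/(mol*e^2).
COULOMB_CONSTANT = 332.06


def lennard_jones(r, epsilon, sigma):
    """12-6 Lennard-Jones energy: repulsive r^-12 and attractive r^-6 terms."""
    sr6 = (sigma / r) ** 6
    return 4.0 * epsilon * (sr6 * sr6 - sr6)


def coulomb(r, q1, q2, dielectric=1.0):
    """Coulomb energy between two partial charges at distance r."""
    return COULOMB_CONSTANT * q1 * q2 / (dielectric * r)


def nonbonded_energy(coords, charges, epsilon=0.1, sigma=3.4,
                     excluded=frozenset()):
    """Sum E_vdw and E_elec over all atom pairs (i < j) not in `excluded`.
    `excluded` holds index pairs of directly bonded atoms."""
    e_vdw = 0.0
    e_elec = 0.0
    for i in range(len(coords)):
        for j in range(i + 1, len(coords)):
            if (i, j) in excluded:
                continue
            r = math.dist(coords[i], coords[j])
            e_vdw += lennard_jones(r, epsilon, sigma)
            e_elec += coulomb(r, charges[i], charges[j])
    return e_vdw, e_elec


def sasa_solvation(sasa_by_atom, sigma_by_atom):
    """Surface-area model: sum of (per-atom coefficient * per-atom SASA)."""
    return sum(s * a for s, a in zip(sigma_by_atom, sasa_by_atom))


# Two hypothetical atoms separated by exactly sigma.
coords = [(0.0, 0.0, 0.0), (3.4, 0.0, 0.0)]
charges = [0.5, -0.5]
e_vdw, e_elec = nonbonded_energy(coords, charges)
e_solv = sasa_solvation([10.0, 20.0], [0.01, -0.02])
e_nonbonded_plus_solvation = e_vdw + e_elec + e_solv
```

The pairwise loop scales quadratically with atom count, which is one reason fitness evaluation dominates the cost of an EA run on all-atom models.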

The accuracy of a fitness function is highly dependent on the specific force field parameters and the solvation model employed. For instance, the ECEPP05/SA potential represented a significant improvement over its predecessor, ECEPP3/OONS, by better discriminating native-like structures [35].

Quantitative Comparison of Scoring Function Performance

The benchmark performance of a fitness function is measured by its ability to identify native or near-native structures from a set of non-native decoys. The following table summarizes the reported success rates for several physics-based scoring functions from a large-scale study on protein decoys [35].

Table 1: Performance of All-Atom Scoring Functions in Discriminating Native-like Protein Structures

| Scoring Function | Solvation Model | Scoring Method | Success Rate (Lowest Energy) | Success Rate (Top 10) |
|---|---|---|---|---|
| ECEPP05/SA | Surface Area (SA) | Monte-Carlo-with-Minimization (MCM) | 76% | 87% |
| ECEPP3/OONS | Surface Area (Ooi et al.) | Monte-Carlo-with-Minimization (MCM) | 69% | 80% |
| ECEPP05/FAMBEpH | Poisson-Boltzmann (FAMBE) | Single Energy Calculation | 89%* | - |

* The ECEPP05/FAMBEpH function showed the highest discriminative ability, though the exact "Top 10" success rate was not provided in the source material [35].

Performance benchmarks reveal key challenges. Scoring functions can struggle with fold-switching proteins, which remodel their secondary and tertiary structures in response to cellular stimuli [5]. For these proteins, state-of-the-art algorithms like AlphaFold2 predict only one conformation in 92% of known cases, often missing the functionally critical alternative fold [5]. This suggests that standard fitness functions, and the evolutionary algorithms they guide, may be biased toward a single energy minimum and require specialized approaches to explore multiple native states.

Experimental Protocols for Validation

Benchmarking with Decoy Sets

A standard protocol for validating a new fitness function involves its application to curated protein decoy sets.

- Decoy Set Selection: Utilize a comprehensive set of protein decoys, such as the Rosetta set, which contains conformations for proteins with different architectures, including a sufficient number of near-native (<4 Å Cα RMSD) and non-native structures [35].

- Structure Preparation: Prepare the decoy structures and the known native structure for scoring. This may involve adding hydrogen atoms and assigning protonation states.

- Energy Scoring: Apply the fitness function to score every decoy in the set. This can be a single-point energy evaluation, but is often followed by a brief local energy minimization or a short simulation (e.g., Monte-Carlo-with-Minimization) to relieve minor steric clashes [35].

- Performance Analysis: For each protein, rank all decoys by their energy score. The success of the function is measured by its ability to rank native or near-native structures (e.g., <3.5 Å Cα RMSD) as the lowest-energy conformation or within the top 10 lowest-energy models [35].
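The performance-analysis step above reduces to a ranking check per protein. The sketch below assumes each decoy has already been scored and compared to the native structure, so it is represented as an `(energy, ca_rmsd)` pair; the data layout, function names, and numbers are made up for illustration.

```python
def decoy_success(decoys, rmsd_cutoff=3.5, top_n=10):
    """Rank one protein's decoys by energy and test two criteria.

    decoys: list of (energy, ca_rmsd) tuples.
    Returns (lowest_energy_is_near_native, near_native_in_top_n).
    """
    ranked = sorted(decoys, key=lambda d: d[0])
    lowest_ok = ranked[0][1] < rmsd_cutoff
    top_n_ok = any(rmsd < rmsd_cutoff for _, rmsd in ranked[:top_n])
    return lowest_ok, top_n_ok


def success_rates(decoy_sets, rmsd_cutoff=3.5, top_n=10):
    """Fraction of proteins passing each criterion across a decoy benchmark."""
    results = [decoy_success(d, rmsd_cutoff, top_n)
               for d in decoy_sets.values()]
    n = len(results)
    return (sum(r[0] for r in results) / n,
            sum(r[1] for r in results) / n)


# Hypothetical scores: (energy in kcal/mol, C-alpha RMSD in Angstrom).
decoy_sets = {
    "protein_1": [(-120.0, 1.8), (-110.0, 6.2), (-95.0, 9.1)],
    "protein_2": [(-200.0, 7.5), (-198.0, 2.9), (-150.0, 11.0)],
}
lowest_rate, top10_rate = success_rates(decoy_sets)
# lowest_rate == 0.5, top10_rate == 1.0 for this toy data
```

In this toy data the second protein's lowest-energy decoy is far from native, but a near-native decoy ranks second, which is exactly the gap between the two success-rate columns reported in benchmarks of this kind.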

Identifying Dual-Fold Coevolution with ACE

For fold-switching proteins, the Alternative Contact Enhancement (ACE) protocol can uncover evolutionary signatures for multiple folds, which can then be incorporated into fitness constraints [5].

- Generate Multiple Sequence Alignments (MSAs): For a query sequence with two known folds, generate a deep MSA of a protein superfamily. Prune this MSA to create successively shallower, subfamily-specific MSAs with sequences increasingly identical to the query [5].

- Coevolutionary Analysis: Perform coevolutionary analysis on each MSA using tools like GREMLIN (Generative Regularized ModeLs of proteINs) and MSA Transformer to predict residue-residue contacts [5].

- Combine and Filter Predictions: Superimpose predictions from all nested MSAs onto a single contact map. Filter the combined predictions using density-based scanning to remove noise [5].

- Categorize Contacts: Categorize predicted contacts as belonging to the "dominant" fold, the "alternative" fold, or contacts common to both experimentally determined structures [5].

The workflow below illustrates the ACE protocol for detecting coevolution in fold-switching proteins.

Figure: ACE workflow for identifying coevolution in fold-switching proteins. Adapted from [5].
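The combine, filter, and categorize steps of the protocol listed above can be sketched in Python. This is a simplified illustration, not the ACE code from [5]: contact prediction itself (GREMLIN, MSA Transformer) is assumed to have already produced a set of residue-index pairs per MSA, the neighbor-count filter is a crude stand-in for density-based scanning, and the "unassigned" label is an assumption for contacts matching neither structure.

```python
def filter_isolated(contacts, radius=2, min_neighbors=1):
    """Crude density filter: keep a contact (i, j) only if at least
    `min_neighbors` other contacts lie within `radius` in both indices."""
    kept = set()
    for (i, j) in contacts:
        neighbors = sum(
            1 for (a, b) in contacts
            if (a, b) != (i, j) and abs(a - i) <= radius and abs(b - j) <= radius
        )
        if neighbors >= min_neighbors:
            kept.add((i, j))
    return kept


def categorize(predicted, dominant, alternative):
    """Label each predicted contact by the experimental fold(s) it matches."""
    labels = {}
    for contact in predicted:
        in_dom = contact in dominant
        in_alt = contact in alternative
        if in_dom and in_alt:
            labels[contact] = "common"
        elif in_dom:
            labels[contact] = "dominant"
        elif in_alt:
            labels[contact] = "alternative"
        else:
            labels[contact] = "unassigned"
    return labels


def ace_combine(predictions_per_msa, dominant, alternative):
    """Superimpose predictions from all nested MSAs, filter, then categorize."""
    combined = set().union(*predictions_per_msa)
    return categorize(filter_isolated(combined), dominant, alternative)


# Hypothetical contact sets, as (i, j) residue-index pairs with i < j.
deep_msa = {(1, 10), (2, 11), (30, 45)}
shallow_msa = {(2, 11), (5, 20), (6, 21)}
dominant_fold = {(1, 10), (2, 11)}
alternative_fold = {(2, 11), (5, 20), (6, 21)}
labels = ace_combine([deep_msa, shallow_msa], dominant_fold, alternative_fold)
```

Here the isolated prediction `(30, 45)` is discarded as noise, and the contacts that appear only in the shallower MSA are the ones labeled "alternative", mirroring the idea that subfamily-specific alignments can expose the second fold.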

Integration with Evolutionary Algorithms

Evolutionary algorithms for protein folding leverage these fitness functions to navigate the vast conformational search space. The following diagram outlines a generic EA cycle for protein structure prediction, highlighting the role of the fitness function.

Figure: Evolutionary algorithm cycle for protein folding. The fitness function (shown in red in the original figure) guides the search. Adapted from [2] [36].

The EASME (Evolutionary Algorithms Simulating Molecular Evolution) framework represents an advanced approach that merges EAs with bioinformatics to design novel proteins. It can run in two primary modes [2]:

- "Unknown to Known": Evolves a random sequence toward a known consensus sequence of an extant protein family, effectively reconstructing extinct evolutionary intermediates.

- "Known to Unknown": Forward-evolves a known protein sequence toward a desired phenotypic characteristic, acting as a "fast forward" button for evolution.

In both modes, the fitness function is the agent of selection, quantifying how well a candidate protein sequence or structure meets the target objective.
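As a rough illustration of the "Unknown to Known" mode, the sketch below evolves random sequences toward a target consensus. It is not the EASME implementation: the fitness (fractional identity to the consensus), the truncation selection, the single-point mutation operator, and the example consensus string are all assumptions chosen for brevity.

```python
import random

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"


def identity(seq, target):
    """Fitness: fraction of positions that match the target consensus."""
    return sum(a == b for a, b in zip(seq, target)) / len(target)


def point_mutate(seq, rng):
    """Replace one randomly chosen residue with a random amino acid."""
    pos = rng.randrange(len(seq))
    return seq[:pos] + rng.choice(AMINO_ACIDS) + seq[pos + 1:]


def evolve_toward(consensus, pop_size=30, generations=200, seed=0):
    rng = random.Random(seed)
    population = ["".join(rng.choice(AMINO_ACIDS) for _ in consensus)
                  for _ in range(pop_size)]
    best_history = []
    for _ in range(generations):
        # Selection: rank by fitness and keep the better half.
        population.sort(key=lambda s: identity(s, consensus), reverse=True)
        best_history.append(identity(population[0], consensus))
        if population[0] == consensus:
            break
        survivors = population[: pop_size // 2]
        # Reproduction with mutation refills the population.
        offspring = [point_mutate(rng.choice(survivors), rng)
                     for _ in range(pop_size - len(survivors))]
        population = survivors + offspring
    best = max(population, key=lambda s: identity(s, consensus))
    return best, best_history


# Hypothetical consensus sequence, for illustration only.
consensus = "MKTAYIAKQR"
best, best_history = evolve_toward(consensus)
```

The intermediate best sequences recorded along the way are the analogue of the reconstructed evolutionary intermediates described above. A "Known to Unknown" run would start from a real sequence and swap `identity` for a score of the desired phenotypic property.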

The Scientist's Toolkit: Research Reagents & Databases

Table 2: Essential Resources for Protein Scoring and Design Research

| Resource Name | Type | Primary Function |
|---|---|---|
| ECEPP/3 & ECEPP05 [35] | Force Field | Provides parameters for bonded and non-bonded atomic interactions in physics-based scoring. |
| AlphaFold DB [37] [38] [39] | Structure Database | Repository of hundreds of millions of pre-computed protein structures for benchmarking and analysis. |
| RoseTTAFold [38] [40] | Software Tool | Deep learning network for protein structure prediction, often used for model generation. |
| GREMLIN [5] | Software Tool | Infers co-evolved residue-residue contacts from MSAs for contact-based constraints. |
| Protein Data Bank (PDB) [39] | Structure Database | Primary archive of experimentally determined 3D structures of proteins, used as gold-standard references. |
| Rosetta [35] | Software Suite | A comprehensive platform for protein structure prediction, design, and refinement, using its own energy functions. |