Evolutionary Predictions: From Theoretical Foundations to Biomedical Applications

Abstract

This article provides a comprehensive examination of the theoretical basis for evolutionary predictions, a field rapidly transforming biomedical research and drug development. We explore the core principles from Darwinian theory to modern non-equilibrium thermodynamics and information theory, which posit evolution as a quantifiable process. The scope encompasses foundational concepts, diverse methodological approaches from population genetics to machine learning, and strategies for troubleshooting predictability limits. A critical analysis of validation frameworks, including long-term studies and clinical data refinement, underscores the transition of evolutionary forecasting from a theoretical concept to a practical tool. Tailored for researchers and drug development professionals, this review synthesizes how predictive evolutionary models are being leveraged to combat antimicrobial resistance, optimize therapeutic discovery, and personalize medical interventions.

The Conceptual Pillars of Evolutionary Forecasting

Evolutionary biology has undergone a profound transformation from a historical science describing past events to a predictive discipline capable of forecasting future evolutionary outcomes. This transition represents a paradigm shift rooted in Charles Darwin's foundational principles of natural selection, now enhanced by sophisticated quantitative frameworks. The theory of evolution by natural selection, as originally articulated by Darwin and Wallace, establishes that populations will adapt to their environments when three conditions are met: phenotypic variation exists among individuals, this variation influences differential fitness, and advantageous traits are heritable [1]. For much of its history, evolutionary biology focused on reconstructing and explaining past events, and the predictability of evolutionary processes was considered limited at best. As one contemporary review puts it, "Evolution has traditionally been a historical and descriptive science, and predicting future evolutionary processes has long been considered impossible" [2].

The emerging capacity for evolutionary prediction represents the maturation of Darwin's theoretical framework into quantitatively precise models with significant applications across medicine, agriculture, biotechnology, and conservation biology. This whitepaper examines the core principles, mathematical foundations, and methodological approaches that enable researchers to transform Darwinian natural selection into testable, quantitative predictions of evolutionary dynamics.

Historical Foundations: From Darwin's Theory to Predictive Frameworks

Darwin's seminal work On the Origin of Species established natural selection as the primary mechanism for evolutionary change, though the word "evolved" appears only as the final word of the first edition, and the noun "evolution" does not appear in it at all [3]. Darwin identified evolutionary patterns and the ecological processes driving them, but his proposed proximate mechanisms predated the discovery of genetics, requiring subsequent theoretical refinement through Neo-Darwinism and the Modern Synthesis [3].

The integration of Mendelian genetics with Darwinian selection theory during the Modern Synthesis of the 1930s-1940s established the mathematical foundations for evolutionary prediction. Key developments included:

- Population Genetics: The work of Fisher, Wright, and Haldane established quantitative models of allele frequency change under selection, mutation, migration, and drift [4].

- The Logical Skeleton of Evolution: Lewontin (1970) formalized the necessary conditions for evolution by natural selection: phenotypic variation, differential fitness based on that variation, and heritability of fitness-related traits [1].

- Proof-of-Concept Modeling: Mathematical models began serving as tests of verbal hypotheses, clarifying thinking and uncovering hidden assumptions in evolutionary reasoning [4].

Table 1: Historical Development of Evolutionary Prediction Capabilities

| Time Period | Theoretical Framework | Predictive Capability | Key Innovations |
|---|---|---|---|
| 1859-1900 | Darwinian Natural Selection | Qualitative | Variation, inheritance, and differential success identified as necessary conditions |
| 1900-1930 | Neo-Darwinism | Semi-quantitative | Germ-plasm theory; rejection of inheritance of acquired characteristics |
| 1930s-1940s | Modern Synthesis | Statistical | Population genetics; mathematical models of selection; integration of genetics with natural selection |
| 1950s-1990s | Extended Synthesis | Short-term microevolutionary | Inclusive fitness; evolutionary game theory; quantitative genetics |
| 2000s-Present | Predictive Evolutionary Modeling | Quantitative forecasting | Genomic selection; experimental evolution; machine learning applications |

Mathematical Foundations of Evolutionary Prediction

The transformation of Darwin's verbal theory into quantitative predictive frameworks relies on mathematical formalisms that capture the dynamics of evolutionary change across different biological contexts.

Fundamental Equations and Models

Evolutionary prediction employs diverse mathematical approaches depending on the biological scale and question:

Type Recursion Equations model allele frequency change in discrete generations: \[ p' = \frac{p \, w_A}{\bar{w}} \] where \(p'\) is the frequency of allele A in the next generation, \(p\) is its current frequency, \(w_A\) is the fitness of genotype A, and \(\bar{w}\) is the mean population fitness [1].
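The recursion can be iterated directly. The following is a minimal sketch, assuming a haploid population with only two types, A and an alternative type with fitness `w_b` (a symbol not in the original text), so that the mean fitness is p·w_A + (1 − p)·w_b. The fitness values are hypothetical.

```python
def next_frequency(p, w_a, w_b):
    """Apply one generation of selection: p' = p * w_A / w_bar."""
    w_bar = p * w_a + (1.0 - p) * w_b  # mean population fitness
    return p * w_a / w_bar


def trajectory(p0, w_a, w_b, generations):
    """Return allele-A frequencies for generation 0 through `generations`."""
    freqs = [p0]
    for _ in range(generations):
        freqs.append(next_frequency(freqs[-1], w_a, w_b))
    return freqs


# Hypothetical example: a rare allele with a 10% fitness advantage.
freqs = trajectory(p0=0.01, w_a=1.1, w_b=1.0, generations=100)
for gen in range(0, 101, 25):
    print(f"generation {gen:3d}: p = {freqs[gen]:.4f}")
```

Under these assumptions the odds p/(1 − p) are multiplied by w_A/w_b every generation, which gives a quick sanity check on any implementation.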

The Price Equation provides a general covariance formulation for evolutionary change: \[ \Delta \bar{z} = \frac{1}{\bar{w}} \operatorname{Cov}(w_i, z_i) + \frac{1}{\bar{w}} \mathbb{E}(w_i \, \Delta z_i) \] where \(\Delta \bar{z}\) is the change in average character value, \(w_i\) is the fitness of entity \(i\), \(z_i\) is its character value, and the two terms represent selection and transmission bias, respectively [1].
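Because the Price equation is an identity, it can be checked numerically. The sketch below assumes a small hypothetical population, uses the population (divide-by-n) covariance, and compares the sum of the selection and transmission terms with the change in mean trait computed directly from fitness-weighted offspring values. The function names and data are illustrative only.

```python
def mean(values):
    return sum(values) / len(values)


def price_terms(w, z, dz):
    """Return the (selection, transmission) terms of the Price equation."""
    w_bar = mean(w)
    cov_wz = mean([wi * zi for wi, zi in zip(w, z)]) - w_bar * mean(z)
    selection = cov_wz / w_bar
    transmission = mean([wi * dzi for wi, dzi in zip(w, dz)]) / w_bar
    return selection, transmission


def direct_change(w, z, dz):
    """Change in mean trait from fitness-weighted offspring trait values."""
    offspring_mean = sum(wi * (zi + dzi) for wi, zi, dzi in zip(w, z, dz)) / sum(w)
    return offspring_mean - mean(z)


# Hypothetical data: fitness w_i, trait z_i, parent-offspring change dz_i.
w = [1.0, 2.0, 3.0]
z = [1.0, 2.0, 3.0]
dz = [0.1, 0.0, -0.1]

selection, transmission = price_terms(w, z, dz)
print(f"selection term:    {selection:.4f}")
print(f"transmission term: {transmission:.4f}")
print(f"sum of terms:      {selection + transmission:.4f}")
print(f"direct change:     {direct_change(w, z, dz):.4f}")
```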

The Breeder's Equation predicts response to selection in quantitative genetics: \[ R = h^2 S \] where \(R\) is the response to selection, \(h^2\) is the heritability, and \(S\) is the selection differential [2].
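As a worked illustration with hypothetical numbers (not taken from the cited sources), suppose a trait has heritability \(h^2 = 0.4\) and the selected parents exceed the population mean by \(S = 2.0\) trait units. The expected response in the next generation is then:

\[ R = h^2 S = 0.4 \times 2.0 = 0.8 \text{ trait units} \]

Only 40% of the selection differential is expected to be transmitted to offspring in this example, because the remaining phenotypic variation is assumed to be non-heritable.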

Modeling Approaches and Their Applications

Different evolutionary questions require distinct modeling approaches, varying in their level of biological abstraction:

Table 2: Mathematical Modeling Approaches in Evolutionary Prediction

| Model Type | Level of Abstraction | Primary Application | Examples |
|---|---|---|---|
| Proof-of-Concept Models | High | Testing logical coherence of verbal hypotheses | Fisher's fundamental theorem; Price equation |
| Population Genetic Models | Medium | Predicting allele frequency changes | Wright-Fisher model; Moran model |
| Quantitative Genetic Models | Medium | Predicting complex trait evolution | Breeder's equation; genomic selection |
| Optimality Models | High | Predicting adaptation | Life history theory; foraging theory |
| Phylogenetic Models | Low | Reconstructing evolutionary histories | DNA substitution models; comparative methods |

Proof-of-concept models serve a particularly important role in evolutionary biology by formally testing the logic of verbal hypotheses. As noted by researchers, "Proof-of-concept models, used in many fields, test the validity of verbal chains of logic by laying out the specific assumptions mathematically" [4]. These models help identify hidden assumptions and spur new research directions even when they don't generate immediately testable quantitative predictions.
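To make the population genetic row of Table 2 concrete, here is a minimal sketch of a haploid Wright-Fisher model with selection and drift. It assumes non-overlapping generations, a constant population size, and a selection coefficient `s` for the focal allele; all parameter values and function names are hypothetical.

```python
import random


def wright_fisher(p0, pop_size, generations, s=0.0, rng=None):
    """Simulate focal-allele frequency under selection followed by drift."""
    if rng is None:
        rng = random.Random()
    p = p0
    freqs = [p]
    for _ in range(generations):
        # Deterministic selection step: p* = p(1 + s) / (1 + s p).
        p_selected = p * (1.0 + s) / (1.0 + s * p)
        # Drift step: binomial sampling of pop_size offspring.
        count = sum(1 for _ in range(pop_size) if rng.random() < p_selected)
        p = count / pop_size
        freqs.append(p)
    return freqs


# Hypothetical replicate populations started from the same frequency.
rng = random.Random(42)  # fixed seed so the run is reproducible
replicates = [wright_fisher(0.1, 50, 100, s=0.05, rng=rng) for _ in range(50)]
fixed = sum(1 for run in replicates if run[-1] == 1.0)
lost = sum(1 for run in replicates if run[-1] == 0.0)
print(f"fixed: {fixed}, lost: {lost}, still segregating: {50 - fixed - lost}")
```

Replicates started from identical conditions diverge because of sampling alone, which illustrates why forecasts from such models are probabilistic rather than exact.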

Methodological Framework: Protocols for Evolutionary Prediction

The predictive capacity of evolutionary theory rests on rigorous methodological approaches that combine theoretical models with empirical data.

Experimental Evolution Protocols

Protocol 1: Microbial Experimental Evolution

- Objective: Predict adaptation to novel environmental conditions

- Key Components:

  - Replicate populations in controlled environments

  - Freezing of ancestral stocks ("fossil records")

  - Regular monitoring of fitness and phenotypic changes

  - Whole-genome sequencing of evolved lineages

- Applications: Used to reveal general rules of microbial adaptation, including that fitness improvement is faster in maladapted genotypes and that multiple beneficial mutations often coexist in adapting populations [2]

Protocol 2: Phylodynamic Analysis of Pathogens

- Objective: Forecast viral evolutionary trajectories

- Key Components:

  - Collection of temporal sequence data

  - Reconstruction of ancestral states

  - Estimation of selection pressures on specific codons

  - Integration of epidemiological data

- Applications: Seasonal influenza vaccine selection; SARS-CoV-2 variant monitoring [2]

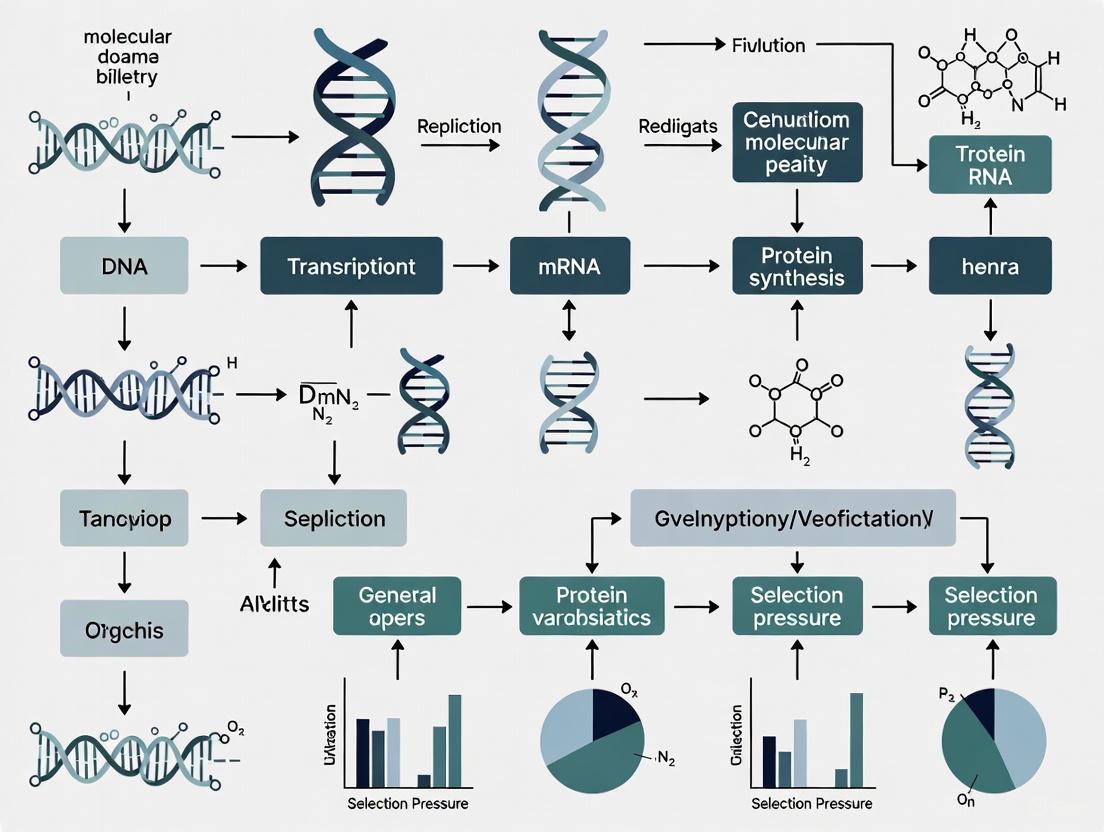

Figure 1: Workflow for Experimental Evolution Studies, illustrating the iterative process of selection, reproduction, measurement, and model refinement.

Genomic Prediction Methods

Protocol 3: Genomic Selection in Breeding

- Objective: Predict breeding values for complex traits

- Key Components:

  - Genome-wide marker data (SNPs)

  - Phenotypic records from reference population

  - Statistical models (GBLUP, Bayesian methods)

  - Validation in independent populations

- Applications: Crop and livestock improvement; prediction of complex disease risk in humans [2]

Protocol 4: Machine Learning in Evolutionary Forecasting

- Objective: Predict evolutionary outcomes from complex datasets

- Key Components:

  - Integration of genomic and climate time-series data

  - Spatiotemporal dataframe construction

  - Algorithm selection (random forests, neural networks)

  - Model validation and feature importance analysis

- Applications: Predicting pathogen responses to climate change; identifying future hotspots of evolutionary innovation [5]

Research Reagent Solutions for Evolutionary Studies

The experimental basis of evolutionary prediction relies on specialized reagents and materials that enable precise manipulation and measurement of evolutionary processes.

Table 3: Essential Research Reagents for Evolutionary Prediction Studies

| Reagent/Material | Function | Application Examples |
|---|---|---|
| Experimental Evolution Kits | | |
| Cycler chemostats | Continuous culture with controlled nutrient flow | Microbial experimental evolution; mutation rate studies |
| Animal model colonies | Controlled breeding populations | Drosophila selection experiments; rodent life history studies |
| Genomic Analysis Tools | | |
| Whole-genome sequencing kits | Comprehensive mutation detection | E. coli mutation accumulation lines; viral evolution studies |
| Barcoded strain libraries | Tracking lineage dynamics | Yeast competition experiments; cancer cell evolution |
| SNP chips | Genotyping at scale | Genomic selection in breeding programs; GWAS of fitness components |
| Computational Resources | | |
| Population genetic simulation software | Forward-time simulations | SLiM; simuPOP; NEMO |
| Phylogenetic inference packages | Reconstructing evolutionary histories | BEAST; RevBayes; IQ-TREE |
| Machine learning frameworks | Predictive modeling from complex data | TensorFlow; scikit-learn; R machine learning packages |

Applications in Drug Development and Public Health

Evolutionary prediction has found particularly valuable applications in pharmaceutical development and public health, where anticipating pathogen evolution is crucial for intervention effectiveness.

Predicting Antibiotic and Antiviral Resistance

The evolution of drug resistance represents a classic example of evolution in response to strong selection, with significant implications for treatment strategies:

- Competitive Release Strategies: Treatment regimes may be designed to guide pathogen evolution toward less fit genotypes that are less likely to spread in antibiotic-free environments [2].

- Collateral Sensitivity Networks: Using drugs where resistance to one drug increases sensitivity to another, creating evolutionary traps for pathogens [2].

- Barcoded Microbial Libraries: These enable high-throughput measurement of fitness effects of mutations across multiple drug environments, allowing researchers to predict evolutionary trajectories and identify evolutionary constraints [2].

Figure 2: Evolutionary Control Strategy, using collateral sensitivity networks to direct pathogen evolution toward vulnerability.

Vaccine Strain Selection

Seasonal influenza represents a prime example of applied evolutionary forecasting, where vaccine composition must be decided months before the flu season based on predictions of which strains will dominate:

- Phylodynamic Models: Combine phylogenetic trees with epidemiological data to forecast variant spread [2].

- Antigenic Cartography: Quantify antigenic distances between strains to predict immune escape potential [2].

- Sequence-Based Forecasting: Models such as those developed by Łuksza and Lässig (2014) predict which influenza variants will be most prevalent in upcoming seasons based on mutational effects and current frequency data [2].
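The core step shared by fitness-based forecasting approaches can be sketched as projecting current variant frequencies forward with exponential growth weights and renormalizing. This is a simplified illustration in the spirit of such models, not the published method itself: the clade names, frequencies, and fitness values below are hypothetical, and in practice the fitness estimates come from mutational and antigenic data.

```python
import math


def forecast_frequencies(freqs, fitness, dt=1.0):
    """Project frequencies forward: x_i' = x_i * exp(f_i * dt) / sum_j x_j * exp(f_j * dt)."""
    weights = {clade: x * math.exp(fitness[clade] * dt) for clade, x in freqs.items()}
    total = sum(weights.values())
    return {clade: weight / total for clade, weight in weights.items()}


# Hypothetical current clade frequencies and per-season fitness estimates.
current = {"clade_A": 0.6, "clade_B": 0.3, "clade_C": 0.1}
fitness = {"clade_A": -0.2, "clade_B": 0.1, "clade_C": 0.5}

predicted = forecast_frequencies(current, fitness)
for clade in sorted(predicted):
    print(f"{clade}: {current[clade]:.2f} -> {predicted[clade]:.3f}")
```

The normalization means only fitness differences between clades matter: adding the same constant to every fitness value leaves the forecast unchanged.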

An Integrated Eco-Evolutionary Prediction Framework

Contemporary evolutionary prediction increasingly recognizes that evolutionary processes cannot be fully understood in isolation from ecological dynamics. Eco-evolutionary feedback loops, where populations both respond to and modify their environments, create complex dynamics that challenge traditional predictive approaches [2].

An integrated framework for eco-evolutionary prediction includes:

- Coupling Genomic and Environmental Data: Combining genomic evolutionary analysis with climate time-series data in spatiotemporal dataframes for machine learning applications [5].

- Early-Warning Systems: Developing accessible public health tools that incorporate evolutionary forecasts to mitigate future health threats [5].

- Multi-Scale Modeling: Integrating within-host evolutionary dynamics with between-host transmission dynamics to predict public health outcomes [5].

The field continues to develop more sophisticated integration of genomic data, environmental variables, and population dynamics to enhance predictive accuracy across biological scales from microbial populations to global biodiversity patterns.

Future Directions and Conceptual Challenges

Despite significant advances, evolutionary prediction faces fundamental challenges that define the current frontiers of research:

- Predictability Limits: Inherent stochasticity in mutation, reproduction, and environmental variation imposes fundamental limits on predictive accuracy, especially for long-term forecasts [2].

- Eco-Evolutionary Dynamics: Feedback between evolutionary and ecological processes creates complex nonlinear dynamics that challenge prediction [2].

- Genotype-Phenotype Map: Unknowns in how genetic variation maps to phenotypic variation and fitness create significant uncertainty in evolutionary forecasts [2].

- Scaling Issues: Predicting macroevolutionary patterns from microevolutionary processes remains a significant challenge [4].

The most promising avenues for addressing these challenges include improved integration of mechanistic biological knowledge with machine learning approaches, development of more sophisticated multi-scale models, and enhanced data collection through emerging monitoring technologies.

As evolutionary prediction continues to mature, its applications will expand across medicine, conservation, and biotechnology, transforming Darwin's foundational insights into increasingly precise forecasts of biological change. This progression from qualitative principle to quantitative prediction represents the ongoing synthesis of evolutionary biology as both a historical and predictive science.

The Red Queen Hypothesis, derived from Lewis Carroll's Through the Looking-Glass, posits that organisms must constantly adapt and evolve merely to survive in the face of ever-evolving opposing species [6]. In evolutionary biology, this concept explains the constant extinction probability observed in the fossil record and has been pivotal in understanding the advantage of sexual reproduction. In the context of infectious diseases and cancer, this hypothesis provides a critical framework for understanding the continuous coevolutionary arms race between therapeutic agents and their rapidly adapting targets. The relentless evolutionary pressure drives pathogens and cancer cells to develop resistance, often negating the efficacy of drugs within years of their introduction. This dynamic necessitates a paradigm shift in drug discovery—from designing static molecules to anticipating and outmaneuvering evolutionary counter-strategies. The field of evolutionary prediction seeks to transform this challenge into a quantifiable discipline, using evolutionary principles to forecast resistance and design more durable therapeutic interventions [2].

Theoretical Basis: From Biological Principle to Predictive Framework

Core Principles of the Red Queen Hypothesis

Leigh Van Valen's 1973 hypothesis introduced the metaphor of species running to stay in the same place, locked in a zero-sum evolutionary game [6]. The hypothesis originally aimed to explain the "law of extinction," which observes that the probability of extinction for a taxon remains constant over millions of years, independent of its age. This occurs because the evolutionary progress of one species deteriorates the fitness of its competitors, predators, parasites, or prey; but since all are evolving simultaneously, no single species gains a permanent advantage.

The microevolutionary version of the hypothesis, later applied to host-parasite interactions, provides a powerful explanation for the maintenance of sexual reproduction. As Bell (1982) and others argued, sexual recombination generates genetic variability, allowing hosts to produce offspring that are genetically unique and potentially resistant to co-evolving parasites [6]. This antagonistic coevolution drives oscillating genotype frequencies in host and parasite populations without necessarily changing their phenotypes.

The Barrier Theory: When the Red Queen Stops

A crucial extension of the Red Queen framework is the Barrier Theory, which distinguishes between barriers that completely block exploitation and restraints that merely impede it [7]. While classic Red Queen dynamics typically involve restraints that lead to ongoing coevolutionary chases, barriers can temporarily halt these arms races.

- Barriers are mechanisms that completely block exploitation (e.g., cell cycle arrest blocking viral replication, a mutation that prevents pathogen entry)

- Restraints are mechanisms that slow but do not prevent exploitation (e.g., immune responses that reduce pathogen load but don't prevent infection)

This distinction is fundamental for drug discovery. Therapies designed as evolutionary barriers aim for complete, durable protection, while those acting as restraints predictably engender resistance, requiring continuous innovation. The transformation of a barrier into a restraint—when a pathogen evolves a countermeasure—restarts the Red Queen process, as illustrated in the workflow below [7].

Figure 1: The Barrier Theory in Coevolutionary Dynamics. This diagram illustrates how barriers can halt exploitation unless genetic variation in the exploiter population transforms them into restraints, restarting Red Queen dynamics.

Evolutionary Predictions Research Framework

The science of evolutionary prediction provides the methodological backbone for applying the Red Queen hypothesis to drug discovery. This emerging field aims to forecast evolutionary trajectories using a combination of population genetics, ecological modeling, and empirical data [2]. The predictive scope can range from short-term genotypic changes (e.g., predicting specific resistance mutations) to long-term phenotypic outcomes (e.g., fitness trajectories of resistant strains).

The Generalized Models of Divergent Selection (GMDS) approach offers a unifying framework for evolutionary predictions by deriving a priori predictions of phenotypic or genetic change based on specified assumptions for a particular system [8]. These models generate probabilistic predictions rather than precise endpoints, acknowledging the stochastic nature of evolutionary processes while still offering testable forecasts.

Quantitative Framework: Measuring the Arms Race

Key Parameters in Coevolutionary Dynamics

The table below summarizes essential quantitative parameters for measuring and predicting Red Queen dynamics in therapeutic contexts.

Table 1: Key Quantitative Parameters for Monitoring Coevolutionary Arms Races

| Parameter | Description | Measurement Approach | Therapeutic Significance |
|---|---|---|---|
| Rate of Genotype Oscillation | Frequency changes of host/resistance alleles over time | Longitudinal genome sequencing | Predicts timing of drug resistance emergence |
| Selection Coefficient (s) | Fitness advantage of resistant variant in drug environment | Competition assays in vitro/in vivo | Quantifies strength of selective pressure |
| Mutation Supply Rate | Product of population size and mutation rate | Fluctuation tests; NGS error-rate analysis | Determines probability of resistance emergence |
| Genetic Diversity | Heterogeneity in pathogen or tumor population | Heterozygosity; Shannon diversity index | Predicts adaptive potential and resistance risk |
| Coevolutionary Load | Fitness cost of resistance mutations in absence of drug | Growth rate comparisons in drug-free media | Informs drug cycling strategies to exploit fitness costs |
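Several of the parameters in this table reduce to short calculations. The sketch below computes the mutation supply rate, a Poisson approximation for the probability that at least one resistance mutant arises per generation (an assumption added here for illustration), and two standard diversity measures. All input values are hypothetical.

```python
import math


def mutation_supply_rate(pop_size, mutation_rate):
    """Expected number of new resistance mutants per generation (N * mu)."""
    return pop_size * mutation_rate


def prob_at_least_one_mutant(pop_size, mutation_rate):
    """P(at least one mutant), assuming mutant counts are Poisson with mean N * mu."""
    return -math.expm1(-mutation_supply_rate(pop_size, mutation_rate))


def heterozygosity(freqs):
    """Expected heterozygosity: 1 - sum(p_i^2)."""
    return 1.0 - sum(p * p for p in freqs)


def shannon_diversity(freqs):
    """Shannon diversity index: -sum(p_i * ln p_i), skipping absent variants."""
    return -sum(p * math.log(p) for p in freqs if p > 0.0)


# Hypothetical infection: 1e8 cells, resistance mutation rate 1e-9 per division.
print(f"mutation supply rate: {mutation_supply_rate(1e8, 1e-9):.3f}")
print(f"P(>=1 mutant):        {prob_at_least_one_mutant(1e8, 1e-9):.4f}")

# Hypothetical variant frequencies within a pathogen or tumor population.
variant_freqs = [0.7, 0.2, 0.1]
print(f"heterozygosity:       {heterozygosity(variant_freqs):.3f}")
print(f"Shannon diversity:    {shannon_diversity(variant_freqs):.3f}")
```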

Experimental Data from Model Systems

Research in model systems has yielded quantifiable evidence of Red Queen dynamics. The following table compiles key experimental findings that demonstrate measurable evolutionary parameters in host-pathogen systems.

Table 2: Experimental Evidence of Red Queen Dynamics in Model Systems

| Experimental System | Key Finding | Quantitative Outcome | Implication for Drug Discovery |
|---|---|---|---|
| C. elegans / S. marcescens [6] | Sexual populations resisted extinction by coevolving parasites | Self-fertilizing populations went extinct in <20 generations | Genetic recombination provides evolutionary advantage against pathogens |
| Potamopyrgus antipodarum snails [6] | Clonal types became susceptible to parasites over time | Once-plentiful clones dwindled; some disappeared entirely | Static genotypes become evolutionary targets; supports resistance monitoring |
| P. vivax / Duffy antigen [7] | Duffy receptor mutation blocked parasite entry in W. Africa | Near-fixation of mutation correlated with P. vivax disappearance | Example of complete barrier to infection; informs receptor-targeting therapies |
| Influenza A H3N2 [2] | Predictable antigenic drift enables vaccine strain selection | Annual vaccine efficacy correlates with prediction accuracy | Proof-of-concept for evolutionary forecasting in public health |

Methodologies: Experimental Protocols for Evolutionary Prediction

Directed Evolution of Resistance Mutations

Objective: To experimentally evolve and identify pre-existing resistance mutations in pathogen populations under drug selective pressure.

Materials:

- Pathogen strain (e.g., Pseudomonas aeruginosa, Mycobacterium tuberculosis)

- Antimicrobial agent of interest

- Culture media and equipment

- Genome sequencing capabilities

Procedure:

- Prepare multiple (≥10) parallel populations of the pathogen in appropriate liquid media.

- Expose populations to sub-inhibitory concentrations of the drug (e.g., 0.5× MIC).

- Serially passage populations, progressively increasing drug concentration with each transfer.

- Monitor population growth kinetics daily using optical density measurements.

- Isolate single clones from populations showing increased MIC.

- Sequence whole genomes of resistant clones and their ancestral strain.

- Identify mutations through comparative genomic analysis.

- Recreate identified mutations in naive background via genetic engineering to confirm causal relationship with resistance.

This experimental evolution approach directly measures the adaptive potential of pathogens and identifies likely resistance trajectories before they emerge clinically [2].

Measuring Fitness Landscapes of Resistance Mutations

Objective: To quantify the fitness effects of resistance mutations in both drug-present and drug-absent environments.

Materials:

- Isogenic strains with specific resistance mutations

- Fluorescent markers or barcode sequences

- Flow cytometer or sequencing platform

Procedure:

- Construct marked strains containing resistance mutations of interest.

- Mix all strains in equal proportions in both drug-containing and drug-free media.

- Sample the competition cultures at regular intervals (e.g., every 4-8 generations).

- Quantify relative strain frequencies using flow cytometry (for fluorescent markers) or barcode sequencing.

- Calculate selection coefficients (s) from the change in frequency over time.

- Compute fitness costs as the difference in selection coefficients between drug-free and drug-containing environments.

This protocol generates quantitative data on the fitness trade-offs associated with resistance, informing predictions about which mutations are likely to fix in populations and persist after drug withdrawal [2].
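The selection-coefficient step of this protocol can be sketched as a regression. The example below assumes a pairwise competition between one marked mutant and a reference strain (a simplification of the pooled design above) and estimates s as the least-squares slope of ln(x / (1 − x)) against generations, where x is the mutant frequency. The frequency data are synthetic and generated inside the script, and the helper names are hypothetical.

```python
import math


def selection_coefficient(generations, mutant_freqs):
    """Least-squares slope of ln(x / (1 - x)) versus generation number."""
    log_ratios = [math.log(x / (1.0 - x)) for x in mutant_freqs]
    n = len(generations)
    g_bar = sum(generations) / n
    y_bar = sum(log_ratios) / n
    sxy = sum((g - g_bar) * (y - y_bar) for g, y in zip(generations, log_ratios))
    sxx = sum((g - g_bar) ** 2 for g in generations)
    return sxy / sxx


def synthetic_frequencies(x0, s, generations):
    """Noise-free mutant frequencies for a given s (used only to build test data)."""
    logit0 = math.log(x0 / (1.0 - x0))
    return [1.0 / (1.0 + math.exp(-(logit0 + s * g))) for g in generations]


sampled = [0, 4, 8, 12, 16]  # sampling points, in generations
with_drug = synthetic_frequencies(0.5, 0.15, sampled)   # mutant favored
drug_free = synthetic_frequencies(0.5, -0.05, sampled)  # mutant pays a cost

s_drug = selection_coefficient(sampled, with_drug)
s_free = selection_coefficient(sampled, drug_free)
print(f"s with drug:  {s_drug:+.3f} per generation")
print(f"s drug-free:  {s_free:+.3f} per generation")
```

A negative estimate in drug-free medium indicates a fitness cost of resistance, while a positive estimate under drug quantifies the selective advantage. Real frequency data are noisy, so replicate cultures and confidence intervals on the slope would be needed in practice.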

Research Reagent Solutions

The table below outlines essential research tools for studying evolutionary dynamics in therapeutic contexts.

Table 3: Essential Research Reagents for Studying Evolutionary Arms Races

| Reagent/Category | Specific Examples | Function in Research |
|---|---|---|
| Model Pathogens | P. aeruginosa, C. elegans (host); S. marcescens (pathogen) | Provide tractable systems for experimental evolution studies [6] |
| Genetic Barcoding Systems | Unique sequence tags, fluorescent protein markers | Enable high-throughput tracking of multiple lineages in competition assays [2] |
| Next-Generation Sequencing | Whole genome sequencing, RNA-Seq | Identify resistance mutations and characterize compensatory evolution [2] |
| Microfluidic Devices | Microbial evolution chips, droplet microfluidics | Allow high-replication studies of evolution in spatially structured environments |
| Fitness Assay Platforms | Growth rate scanners, flow cytometers, plate readers | Precisely quantify selection coefficients and fitness trade-offs |

Applications in Drug Discovery

Antimicrobial Drug Development

The Red Queen framework informs several innovative approaches to antimicrobial development:

Evolution-Proof Drugs target highly conserved essential genes with low mutation rates or where mutations impose catastrophic fitness costs. For example, drugs targeting the bacterial ribosome exploit its constrained evolution: mutations in core ribosomal components typically cause severe fitness defects, creating an evolutionary barrier rather than a temporary restraint [7].

Collateral Sensitivity-Based Therapies exploit trade-offs in resistance evolution. Some resistance mutations to one drug increase sensitivity to a second, unrelated drug. Smart treatment cycling can exploit these predictable evolutionary trajectories, creating a "lose-lose" scenario for pathogens. The workflow below illustrates this therapeutic approach.

Figure 2: Collateral Sensitivity Therapeutic Strategy. Resistance to Drug A can increase sensitivity to Drug B, enabling smart treatment cycling strategies.
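One way to screen for candidate drug pairs is to scan a cross-resistance matrix for collateral sensitivity. The sketch below assumes a hypothetical table of log2 fold changes in MIC, where each row is a lineage evolved for resistance to one drug and each column is the drug it was then tested against. Negative values indicate increased sensitivity. The drug labels, values, and threshold are illustrative assumptions.

```python
def collateral_sensitive_pairs(log2_fc, threshold=-1.0):
    """Return (evolved_against, tested_drug) pairs with log2 MIC change <= threshold."""
    pairs = []
    for evolved, row in log2_fc.items():
        for tested, change in row.items():
            if evolved != tested and change <= threshold:
                pairs.append((evolved, tested))
    return sorted(pairs)


def reciprocal_pairs(pairs):
    """Return unordered drug pairs where sensitivity holds in both directions."""
    pair_set = set(pairs)
    return sorted({tuple(sorted(p)) for p in pair_set if (p[1], p[0]) in pair_set})


# Hypothetical log2 fold changes in MIC relative to the ancestor.
log2_fc = {
    "drug_A": {"drug_A": 4.0, "drug_B": -2.0, "drug_C": 0.5},
    "drug_B": {"drug_A": -1.5, "drug_B": 5.0, "drug_C": 1.0},
    "drug_C": {"drug_A": 0.0, "drug_B": -1.0, "drug_C": 3.0},
}

one_way = collateral_sensitive_pairs(log2_fc)
print("collateral sensitivity:", one_way)
print("reciprocal pairs:", reciprocal_pairs(one_way))
```

Reciprocal pairs are the natural candidates for cycling, since resistance to either drug in the pair is assumed to restore sensitivity to the other.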

Anticancer Therapy

In oncology, the Red Queen manifests as therapy-resistant clones that expand under treatment selective pressure. Evolutionary forecasting approaches include:

Adaptive Therapy modulates drug dose and timing to maintain treatment-sensitive cells that competitively suppress resistant clones, effectively harnessing ecological competition to prolong therapeutic efficacy. This approach acknowledges that complete eradication inevitably selects for resistance, instead aiming for long-term disease control.
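The competitive-suppression logic behind adaptive therapy can be illustrated with a toy model. The sketch below assumes Lotka-Volterra competition between sensitive (S) and resistant (R) cells sharing one carrying capacity, a drug that kills only sensitive cells, and a simple on/off rule (pause treatment once the burden halves, resume when it returns to baseline). The model form, the rule, and every parameter value are hypothetical choices for illustration, not a clinical protocol.

```python
def simulate(adaptive, days=400.0, dt=0.1):
    """Euler-integrate S and R under continuous or adaptive dosing."""
    # Hypothetical parameters, chosen only for illustration.
    r_s, r_r = 0.035, 0.025  # growth rates per day (resistance carries a cost)
    k = 1.0                  # shared carrying capacity
    kill = 0.07              # drug-induced death rate of sensitive cells per day
    s, r = 0.70, 0.01        # initial sensitive and resistant burdens
    baseline = s + r
    drug_on = True
    history = []
    for step in range(int(round(days / dt))):
        total = s + r
        if adaptive:
            if drug_on and total <= 0.5 * baseline:
                drug_on = False  # pause once the burden has halved
            elif not drug_on and total >= baseline:
                drug_on = True   # resume when the burden returns to baseline
        dose = 1.0 if drug_on else 0.0
        crowding = 1.0 - total / k
        ds = r_s * s * crowding - kill * dose * s
        dr = r_r * r * crowding
        s, r = s + dt * ds, r + dt * dr
        history.append(((step + 1) * dt, s, r, dose))
    return history


continuous = simulate(adaptive=False)
adaptive = simulate(adaptive=True)
print(f"final resistant burden, continuous: {continuous[-1][2]:.3f}")
print(f"final resistant burden, adaptive:   {adaptive[-1][2]:.3f}")
```

In this toy model the resistant population grows more slowly under the adaptive schedule because surviving sensitive cells keep occupying shared capacity. Whether that translates to a real tumor depends on assumptions such as the cost of resistance and the strength of competition.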

Barrier-Based Approaches in cancer target multiple oncogenic pathways simultaneously to create evolutionary barriers. For example, combining cell cycle inhibitors with apoptosis inducers creates a higher barrier to full resistance than either approach alone [7].

The Red Queen Hypothesis provides both a metaphor and a mechanistic framework for understanding the inevitable emergence of drug resistance. By integrating this evolutionary perspective with quantitative predictions and barrier-based design, drug discovery can transition from reactive to proactive—anticipating evolutionary countermoves before they occur clinically. The emerging science of evolutionary prediction offers the methodological toolkit to make this transition, transforming drug discovery from an arms race into a game of strategic foresight. As these approaches mature, we may increasingly design therapies that not only treat disease today but remain effective against the evolved pathogens and cancers of tomorrow.

Traditional evolutionary theory, centered on natural selection and genetic mutation, provides a powerful framework for understanding adaptation and fitness optimization. However, it offers limited insight into the physical principles underlying the spontaneous emergence of complex, ordered biological systems [9]. This whitepaper explores two complementary theoretical frameworks—thermodynamics and information theory—that address this gap by proposing fundamental physical drivers of evolutionary complexity. These frameworks do not seek to replace Darwinian theory but rather to embed it within broader physical laws that govern the emergence of biological organization, from prebiotic chemistry to cognitive systems [9] [10] [11]. For researchers in drug development and evolutionary prediction, these approaches offer a more granular, physics-based understanding of the constraints and trajectories of evolutionary processes, potentially informing new strategies for antimicrobial development and synthetic biological systems.

Thermodynamic Frameworks for Evolution and the Origin of Life

Non-Equilibrium Thermodynamics as a Driver of Biological Organization

The apparent contradiction between life's increasing order and the second law of thermodynamics is resolved by considering living systems as dissipative structures [9]. These are open, non-equilibrium systems that maintain internal order by dissipating energy and exporting entropy to their surroundings. This perspective reframes evolution as a process in which systems are selected for their capacity to most effectively dissipate prevailing environmental energy gradients [9] [10]. The Thermodynamic Abiogenesis Likelihood Model (TALM) formalizes this for life's origin, proposing that selection-like dynamics emerge from differential persistence of chemical reaction networks based on their thermodynamic compatibility with environmental energy fluctuations, prior to the emergence of heredity or replication [10].

The Persistence Function and Reaction Viability

A core thermodynamic proposal is that persistence itself constitutes a primordial selection filter. A chemical system will persist if its energy budget remains viable, as defined by the inequality [10]:

\[ y(t) = z(t) + S(t) + \sum_i r_i - \sum_i x_i \geq 0 \]

where:

- \(y(t)\) is the residual energy at time \(t\)

- \(z(t)\) is the time-varying environmental energy input

- \(S(t)\) is the stored energy within the system

- \(r_i\) is the energy released from reaction \(i\)

- \(x_i\) is the energy required to perform reaction \(i\)

This model identifies differential persistence—arising from variations in how reaction networks manage energy input, storage, release, and expenditure—as the foundation for selection-like behavior in prebiotic chemistry [10].
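The viability inequality translates directly into a check on an energy budget. The following is a minimal sketch with hypothetical energy values in arbitrary units. For simplicity it holds stored energy and the reaction terms constant while the environmental input varies, which is an assumption made here and not part of the cited model.

```python
def residual_energy(env_input, stored, released, required):
    """y(t) = z(t) + S(t) + sum(r_i) - sum(x_i)."""
    return env_input + stored + sum(released) - sum(required)


def persists(env_input, stored, released, required):
    """A system is viable at time t if its residual energy is non-negative."""
    return residual_energy(env_input, stored, released, required) >= 0.0


def persistence_time(env_inputs, stored, released, required):
    """Count consecutive time steps from t = 0 for which y(t) >= 0."""
    steps = 0
    for z_t in env_inputs:
        if not persists(z_t, stored, released, required):
            break
        steps += 1
    return steps


# Hypothetical reaction network facing a fluctuating environmental input z(t).
env_inputs = [2.0, 1.5, 0.5, 2.0]
steps_viable = persistence_time(env_inputs, stored=1.0, released=[1.0], required=[3.0])
print(f"network persists for {steps_viable} time steps")
```

Comparing `persistence_time` across networks with different storage or reaction costs gives a simple picture of the differential persistence described above.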

Key Metrics and Experimental Validation in Prebiotic Systems

Recent theoretical work has formalized several testable metrics to quantify entropy-reducing dynamics [9]. These are summarized in Table 1 below.

Table 1: Key Quantitative Metrics for Thermodynamic Evolution

| Metric | Description | Theoretical Application |

|---|---|---|

| Information Entropy Gradient (IEG) | Measures the directionality of informational entropy change in an evolving system. | Quantifies the tendency of a system to reduce internal uncertainty over time [9]. |

| Entropy Reduction Rate (ERR) | The rate at which a system reduces its informational entropy. | Could measure the efficiency of different prebiotic networks at constructing order [9]. |

| Compression Efficiency (CE) | Efficiency with which a system compresses meaningful information from environmental noise. | Applicable to the evolution of genetic codes and predictive models in neural systems [9]. |

| Normalized Information Compression Ratio (NICR) | A normalized measure of how much randomness is reduced in a system's architecture. | Useful for comparing entropy reduction across different biological scales, from molecules to ecosystems [9]. |

| Structural Entropy Reduction (SER) | Quantifies the reduction of entropy achieved through physical structure. | Can be applied to the self-assembly of membranes, protocells, and multicellular structures [9]. |

Experimental validation of these thermodynamic principles often involves analyzing amphiphilic molecules of varying chain length. These molecules form persistent structures like micelles and vesicles, with their stability (persistence time) serving as a proxy for y'(t), the augmented persistence function that includes resilience and entropic-diffusive penalties [10].

Information-Theoretic Frameworks for Evolution

Informational Entropy Reduction as an Evolutionary Driver

An information-theoretic perspective posits that evolution is fundamentally driven by the reduction of informational entropy—a measure of uncertainty or randomness within a system's state [9]. In this framework, living systems are self-organizing structures that extract and compress meaningful information from environmental noise, thereby reducing internal uncertainty while increasing complexity [9]. This process operates in synergy with Darwinian mechanisms: entropy reduction generates the structural and informational complexity upon which natural selection acts, while selection refines and stabilizes configurations that most effectively manage information [9].

Quantifying Information and Selection

Information theory provides the mathematical language to quantify uncertainty and information flow. Shannon entropy, H(P) = -Σ p_i log₂ p_i, quantifies the uncertainty in a system described by probability distribution P = {p_i} [9]. The mutual information, I(X;Y), between two variables (e.g., an organism and its environment) measures the reduction in uncertainty about one variable given knowledge of the other, representing the information gained [9].
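Both quantities are straightforward to compute for discrete distributions. The sketch below uses only the standard definitions of Shannon entropy and mutual information; the example distributions are hypothetical and chosen so that the results are easy to check by hand.

```python
import math


def shannon_entropy(p):
    """H(P) = -sum p_i log2 p_i, in bits (terms with p_i = 0 contribute 0)."""
    return -sum(pi * math.log2(pi) for pi in p if pi > 0)


def mutual_information(joint):
    """I(X;Y) in bits, where joint[i][j] = P(X = i, Y = j)."""
    px = [sum(row) for row in joint]          # marginal of X
    py = [sum(col) for col in zip(*joint)]    # marginal of Y
    mi = 0.0
    for i, row in enumerate(joint):
        for j, pxy in enumerate(row):
            if pxy > 0:
                mi += pxy * math.log2(pxy / (px[i] * py[j]))
    return mi


print(shannon_entropy([0.25] * 4))                        # 2.0
print(mutual_information([[0.5, 0.0], [0.0, 0.5]]))       # 1.0
print(mutual_information([[0.25, 0.25], [0.25, 0.25]]))   # 0.0
```

The second example corresponds to an "organism" variable that tracks a two-state "environment" perfectly (one full bit of shared information), and the third to complete independence.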

A modern approach quantifies selection by measuring the adaptive information flow into a population. This is framed as a divergence between the actual evolutionary trajectory of a population under selection and the expected trajectory under a null model of neutral evolution. This divergence is measured using relative entropy (Kullback-Leibler divergence), which quantifies the informational content of selection itself [12].

Evolution as a Learning Process

A powerful synthesis views biological evolution through the lens of statistical learning theory [11]. In this model, evolutionary processes involve "trainable variables" (e.g., genotypes and phenotypes) that are refined by natural selection, and "non-trainable variables" (the environment) that define the constraints for learning. This establishes a threefold correspondence between thermodynamics, learning theory, and evolutionary biology, as summarized in Table 2.

Table 2: Correspondence Between Thermodynamics, Learning, and Evolution

| Thermodynamics | Machine Learning | Evolutionary Biology |

|---|---|---|

| Energy | Loss Function | Additive Fitness |

| Partition Function | Partition Function | Macroscopic Fitness |

| Helmholtz Free Energy | Free Energy | Adaptive Potential |

| Temperature | Temperature | Evolutionary Temperature (stochasticity) |

| Chemical Potential | (Absent) | Evolutionary Potential (cost of new genes) |

| Number of Molecules | Number of Neurons | Effective Population Size |

Within this framework, the maximum entropy principle, constrained by the requirement to minimize a loss function (e.g., maximize fitness), can be used to derive a canonical ensemble of organisms and a corresponding partition function—the macroscopic counterpart of population fitness [11]. This provides a formal basis for modeling major evolutionary transitions, including the origin of life, as physical phase transitions associated with the emergence of a new level of description [11].
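As a rough illustration of the correspondence, the maximum-entropy distribution under a fixed mean loss has the familiar Boltzmann form. The sketch below is a generic statistical-mechanics calculation, not the specific derivation in [11]: the loss values and temperature are hypothetical, and treating "loss" as a per-state scalar is an assumption made for brevity.

```python
import math


def canonical_ensemble(losses, temperature):
    """Return (probabilities, partition function Z, free energy F = -T ln Z)
    for states weighted by exp(-loss / T)."""
    weights = [math.exp(-loss / temperature) for loss in losses]
    z = sum(weights)
    probs = [w / z for w in weights]
    free_energy = -temperature * math.log(z)
    return probs, z, free_energy


# Hypothetical loss values for three organism states (lower loss = fitter).
losses = [0.0, 1.0, 2.0]
probs, z, free_energy = canonical_ensemble(losses, temperature=1.0)

# Consistency check: F = <loss> - T * H, with entropy H measured in nats.
mean_loss = sum(p * l for p, l in zip(probs, losses))
entropy = -sum(p * math.log(p) for p in probs)
print(abs(free_energy - (mean_loss - 1.0 * entropy)) < 1e-12)  # True
```

Raising the temperature flattens the distribution toward uniform, which matches the reading of evolutionary temperature as stochasticity in Table 2.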

Integrating Thermodynamic and Information-Theoretic Approaches

A Unified Conceptual Framework

These frameworks are not contradictory but complementary. Thermodynamics provides the "hard" physical constraint of energy dissipation, while information theory provides the "soft" currency of uncertainty reduction. They are unified by the understanding that to reduce its internal informational entropy, a system must be sufficiently organized—a state that is thermodynamically permitted only through the continuous dissipation of energy and export of thermal entropy [9]. This creates a recursive feedback loop: energy dissipation enables informational organization, which in turn creates more complex structures capable of more efficient energy dissipation and further entropy reduction [9].

A Research Protocol for Quantifying Evolutionary Information

A key experimental methodology involves quantifying the information imparted by selection throughout a population's lifecycle [12]. The protocol involves:

- Defining the State Space and Lifecycle: Precisely define the population state (e.g., genotype frequencies) and all possible transitions (birth, death, mutation) that constitute the reproductive lifecycle.

- Modeling the Process: Formulate the population dynamics as a stochastic process, specifying the generator that defines transition rates between states.

- Constructing a Neutral Null Model: Define a reference stochastic process that lacks selection (e.g., mutation and genetic drift occur, but without differential fitness).

- Calculating the Relative Entropy: For a population trajectory, compute the relative entropy (Kullback-Leibler divergence) between the path measure of the actual process with selection and the path measure of the neutral null process. This measures the adaptive information flow due to selection.

- Large Population Approximation: In large populations, this relative entropy can often be approximated by a large-deviation function, simplifying calculation and highlighting the exponential rate at which unlikely, adapted trajectories are selected.
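The protocol above can be sketched for a deliberately simple case. The example assumes a discrete-generation haploid Wright-Fisher model with one beneficial allele, which is a stand-in chosen for illustration and not the generator-based formulation of [12]; the population size, selection coefficient, and function names are hypothetical. For two Markov chains started from the same state, the relative entropy between path measures equals the sum, over generations, of the expected one-step relative entropy under the process with selection.

```python
import math


def binom_pmf(n, p):
    """Binomial(n, p) probabilities for k = 0..n."""
    return [math.comb(n, k) * p**k * (1 - p)**(n - k) for k in range(n + 1)]


def transition(n, s):
    """Row i: distribution of the next allele count given i copies now."""
    rows = []
    for i in range(n + 1):
        x = i / n
        p = (1 + s) * x / (1 + s * x)   # allele frequency after selection
        rows.append(binom_pmf(n, p))
    return rows


def kl(p, q):
    """Relative entropy D(p || q) in nats."""
    return sum(pi * math.log(pi / qi) for pi, qi in zip(p, q) if pi > 0)


def path_relative_entropy(n, s, i0, generations):
    """D_KL between path measures of the selected and neutral processes."""
    with_sel = transition(n, s)       # process with selection
    neutral = transition(n, 0.0)      # neutral null model (drift only)
    step_kl = [kl(with_sel[i], neutral[i]) for i in range(n + 1)]
    state = [0.0] * (n + 1)
    state[i0] = 1.0                   # start from a known allele count
    total = 0.0
    for _ in range(generations):
        total += sum(w * d for w, d in zip(state, step_kl))
        state = [sum(state[i] * with_sel[i][j] for i in range(n + 1))
                 for j in range(n + 1)]
    return total


print(path_relative_entropy(n=20, s=0.0, i0=10, generations=5))  # 0.0
print(path_relative_entropy(n=20, s=0.1, i0=10, generations=5) > 0)  # True
```

With no selection the two processes coincide and the adaptive information is zero; with selection it is positive and accumulates over generations until the allele fixes or is lost.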

Diagram: Logical Workflow for Quantifying Adaptive Information

The Scientist's Toolkit: Research Reagents and Models

Table 3: Essential Research Tools for Investigating Thermodynamic and Information-Theoretic Evolution

| Tool / Model | Type | Function and Application |

|---|---|---|

| Amphiphile Chain-Length Series | Chemical System | Isolates the effect of molecular structure on persistence (e.g., vesicle stability) to test thermodynamic models of abiogenesis [10]. |

| Autocatalytic Reaction Networks (ARNs) | Chemical / Computational Model | Models self-sustaining, self-replicating chemical cycles to study the emergence of selection and information compression from thermodynamics [9]. |

| Stoichiometric Generators | Mathematical Framework | Formally describes transitions in population states (e.g., genetic states) around reproductive lifecycles, enabling precise calculation of information flow [12]. |

| Partition Function (Z(T, q)) | Analytical Tool | The macroscopic counterpart of fitness; summing over all possible organism states, it is used to derive macroscopic evolutionary properties like free energy [11]. |

| Relative Entropy (D_KL) | Quantitative Metric | Measures the informational divergence between a population undergoing selection and a neutral null model, quantifying the "amount" of selection [12]. |

| Large-Deviation Theory | Mathematical Framework | Provides approximations for the probability of rare evolutionary events and the exponential rate of adaptive information accumulation in large populations [12]. |

The integration of thermodynamic and information-theoretic frameworks provides a profound expansion of evolutionary theory, moving beyond a gene-centric view to one grounded in universal physical principles. These approaches suggest that the trajectory of life toward greater complexity is not a historical accident but a physical inevitability under given constraints—a tendency for systems to evolve toward states of reduced informational entropy through energy dissipation. For researchers, this offers a more predictive, physics-based foundation for modeling evolutionary dynamics, with significant potential implications for understanding drug resistance, engineering synthetic biological systems, and probing the fundamental laws that govern the origin and evolution of life.

The field of evolutionary biology has traditionally been divided into two distinct domains: microevolution, which focuses on evolutionary processes occurring within species, and macroevolution, which investigates patterns of evolution above the species level [13]. This conventional dichotomy has limited our ability to understand the interconnected relationship between evolutionary process and pattern [13]. Long-term evolutionary studies provide a crucial scientific bridge connecting these domains by directly investigating how short-term microevolutionary dynamics, measured in real time, manifest as long-term evolutionary patterns over extended periods [13]. These studies have revealed that evolutionary dynamics unfold through complex interactions operating at multiple temporal and spatial scales, often exhibiting oscillations, stochastic fluctuations, and systematic trends that cannot be detected in short-term observations [13].

The critical importance of long-term perspectives becomes evident when considering the fundamental limitations of short-term evolutionary research. Nearly three-quarters of evolutionary field studies measure natural selection across five or fewer time periods, with approximately one-quarter conducting measurements just once [13]. Similarly, the vast majority of laboratory evolution studies operate on comparatively short timescales [13]. While these approaches have undoubtedly advanced our mechanistic understanding of evolutionary processes, they provide only snapshots of dynamics that inherently unfold across extended timelines. Long-term studies fulfill their unique scientific niche by uncovering critical time lags between environmental shifts and population responses, allowing weak effects to accumulate into detectable patterns, and enabling observation of rare events that spur new evolutionary hypotheses [13].

Quantitative Frameworks for Connecting Evolutionary Timescales

Modeling Approaches for Evolutionary Dynamics

The development of quantitative frameworks has been essential for bridging micro- and macroevolutionary dynamics. Mathematical modeling of speciation and extinction patterns plays an important role in quantitative inference of macroevolutionary processes, especially when combined with large-scale phylogenetic data [14]. The most commonly used framework is the birth-death model and its variations, which assume that phylogenetic lineages accumulate at a net rate of λ - μ, where λ is the speciation rate and μ is the extinction rate [14]. Recently, more sophisticated models have incorporated rate heterogeneity, including density-dependent, trait-dependent, and geography-dependent rate shifts within phylogenies [14].
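A constant-rate birth-death process is easy to simulate, which makes the net diversification rate λ - μ concrete. The sketch below uses a standard Gillespie-style simulation; the rate values, starting lineage count, and function names are hypothetical, and rate heterogeneity is deliberately left out.

```python
import math
import random


def expected_lineages(n0, lam, mu, t):
    """Expected lineage count under constant rates: n0 * exp((lam - mu) * t)."""
    return n0 * math.exp((lam - mu) * t)


def simulate_birth_death(n0, lam, mu, t_max, rng):
    """Gillespie simulation; returns the number of lineages alive at t_max."""
    n, t = n0, 0.0
    while n > 0:
        t += rng.expovariate(n * (lam + mu))   # waiting time to next event
        if t > t_max:
            break
        # The event is a speciation with probability lam / (lam + mu).
        n += 1 if rng.random() < lam / (lam + mu) else -1
    return n


rng = random.Random(1)
runs = [simulate_birth_death(10, 0.3, 0.1, 5.0, rng) for _ in range(2000)]
print(sum(runs) / len(runs), expected_lineages(10, 0.3, 0.1, 5.0))
```

The simulated mean should sit close to the analytical expectation, while individual runs vary widely. That spread is one reason diversification rates are usually inferred from whole phylogenies and not from lineage counts alone.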

For gene expression evolution across species, the Ornstein-Uhlenbeck (OU) process has emerged as a powerful modeling framework [15]. This stochastic process elegantly quantifies the contribution of both drift and selective pressure for any given gene by describing changes in expression (dXₜ) across time (dt) according to the equation: dXₜ = σdBₜ + α(θ - Xₜ)dt, where dBₜ denotes a Brownian motion process [15]. In this model:

- Drift is modeled by Brownian motion with a rate σ

- Selective pressure driving expression back to an optimal level θ is parameterized by α

- The interplay between drift (σ) and selection (α) reaches equilibrium at longer timescales

This framework allows researchers to move beyond theoretical inferences and apply the model to characterize the evolutionary history of a gene's expression for biological insight, including quantifying stabilizing selection, identifying deleterious expression levels in disease, and detecting directional selection in lineage-specific adaptations [15].
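The OU equation can be simulated with a simple Euler-Maruyama discretization. The sketch below evolves expression along a single lineage and ignores the phylogenetic structure that real comparative analyses would fit; the parameter values and function names are hypothetical. At long timescales the variance of the process approaches σ²/(2α), which is the equilibrium between drift and selection noted above.

```python
import math
import random


def simulate_ou(x0, theta, alpha, sigma, dt, n_steps, rng):
    """Euler-Maruyama path of dX = sigma dB + alpha (theta - X) dt."""
    x = x0
    path = [x]
    for _ in range(n_steps):
        drift = sigma * math.sqrt(dt) * rng.gauss(0.0, 1.0)  # Brownian term
        pull = alpha * (theta - x) * dt                      # selection term
        x += drift + pull
        path.append(x)
    return path


def stationary_variance(alpha, sigma):
    """Long-run variance of the OU process: sigma^2 / (2 alpha)."""
    return sigma**2 / (2 * alpha)


rng = random.Random(0)
path = simulate_ou(x0=0.0, theta=1.0, alpha=2.0, sigma=1.0,
                   dt=0.01, n_steps=5000, rng=rng)
print(path[-1], stationary_variance(alpha=2.0, sigma=1.0))
```

Setting sigma to zero removes drift entirely, and the trajectory then relaxes deterministically toward the optimum θ at a rate set by α.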

The Protracted Speciation Framework

The protracted speciation framework represents a significant advancement beyond traditional birth-death models by explicitly acknowledging that speciation and extinction are typically protracted processes rather than point events [14]. This framework recognizes that the process between initial population divergence and formation of a full-fledged species is complex and influenced by numerous ecological mechanisms [14]. Within this framework, within-species lineages are considered the basic units of diversification, and their proliferation is subject to three major events:

- Population splitting: Represents initial divergence and reduction of gene flow between within-species lineages

- Population conversion: Indicates formation of a fully reproductively isolated "good" species

- Population extirpation: Caused by either death of all lineage members or lineage merging back into its original gene pool [14]

Application of this protracted speciation framework has the potential to disentangle causes underlying differences in species richness among regions by modeling population-level dynamics that ultimately generate macroevolutionary patterns [14].
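The three events can be sketched as a small stochastic simulation that tracks counts of "good" species and incipient (within-species) lineages. This is a simplified illustration under several assumptions that are not taken from [14]: every lineage splits at the same rate, each split adds one incipient lineage, extirpation acts equally on both lineage types, and lineage merging is folded into the extirpation rate. All rate values and names are hypothetical.

```python
import random


def simulate_protracted(split, convert, extirpate, t_max, rng,
                        good=1, incipient=0):
    """Gillespie simulation returning (good species, incipient lineages)."""
    t = 0.0
    events = ["split", "convert", "lose_good", "lose_incipient"]
    while good + incipient > 0:
        rates = [
            split * (good + incipient),   # population splitting
            convert * incipient,          # conversion to a good species
            extirpate * good,             # extirpation of a good lineage
            extirpate * incipient,        # extirpation of an incipient lineage
        ]
        total = sum(rates)
        if total == 0:
            break                         # nothing can happen any more
        t += rng.expovariate(total)
        if t > t_max:
            break
        event = rng.choices(events, weights=rates)[0]
        if event == "split":
            incipient += 1
        elif event == "convert":
            incipient -= 1
            good += 1
        elif event == "lose_good":
            good -= 1
        else:
            incipient -= 1
    return good, incipient


rng = random.Random(0)
print(simulate_protracted(split=0.5, convert=0.2, extirpate=0.1,
                          t_max=10.0, rng=rng))
```

Lowering the conversion rate in this sketch leaves more diversity sitting in incipient lineages at any given time, which is how the framework separates population-level divergence from recognized species richness.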

Table 1: Key Quantitative Frameworks for Evolutionary Analysis

| Framework | Primary Application | Key Parameters | Advantages |

|---|---|---|---|

| Birth-Death Models | Phylogenetic lineage diversification | Speciation rate (λ), Extinction rate (μ) | Tests relationships between diversification rates and ecological factors |

| Ornstein-Uhlenbeck Process | Gene expression evolution | Selection strength (α), Drift rate (σ), Optimal value (θ) | Incorporates both drift and stabilizing selection; models saturation of divergence |

| Protracted Speciation | Population to species transition | Population splitting, conversion, and extirpation rates | Explicitly models microevolutionary processes underlying macroevolutionary patterns |

Experimental Approaches in Long-Term Evolutionary Studies

Major Research Designs

Scientists have developed three principal methodological approaches to empirically examine long-term evolutionary processes through continuous study of single systems [13]:

Observational Field Studies: Direct and unmanipulated long-term sampling of natural populations has documented evolutionary changes in real time as they occur in nature, incorporating the complexities of natural environmental fluctuations, population demographics, and species interactions [13]. Seminal examples include the Grants' 40-year study of Darwin's finches in the Galápagos and research on Soay sheep in the Outer Hebrides [13].

Experimental Field Studies: Field experiments in which researchers manipulate one or more factors offer a powerful tool for investigating causal links between environmental factors and evolutionary outcomes in natural settings [13]. These studies either maintain consistent manipulative treatments throughout the experiment (e.g., the Park Grass Experiment established in 1856) or build a long-term evolutionary perspective through successive studies within a cohesive research framework (e.g., studies of guppies in Trinidadian streams and Anolis lizards on Bahamian islands) [13].

Laboratory Evolution Studies: Research using microbial populations has provided remarkable insights into evolutionary dynamics across thousands of generations [13]. These systems enable exceptional environmental control and offer unparalleled opportunities to examine the role of chance and historical contingency through initially identical replicate populations [13]. A distinctive feature is the ability to cryogenically store samples throughout experiments, creating a living 'frozen fossil record' that allows historical populations to be resurrected and re-examined as analytical technologies advance [13].

Table 2: Key Research Reagent Solutions for Long-Term Evolutionary Studies

| Research Resource | Function/Application | Key Features |

|---|---|---|

| Cryogenic Storage Systems | Preservation of historical populations in evolution experiments | Enables creation of "frozen fossil record"; allows resurrection of ancestral populations |

| RNA-seq Technologies | Comparative transcriptomics across species and timepoints | Enables quantification of gene expression evolution; applications in phylogenetic comparative methods |

| Long-Term Environmental Monitoring | Tracking environmental covariates in field studies | Documents selection pressures; correlates environmental changes with evolutionary responses |

| Pedigree Analysis Software | Tracking kinship and inheritance in natural populations | Enables quantification of selection differentials and heritability in the wild |

| Phylogenetic Comparative Methods | Analyzing trait evolution across species | Models evolutionary processes using phylogenetic trees; tests adaptive hypotheses |

Case Studies: Illuminating the Process-Pattern Connection

Experimental Evolution of Multicellularity

The ongoing Multicellularity Long-Term Evolution Experiment (MuLTEE) exemplifies how long-term studies can illuminate major evolutionary transitions [13]. This experiment uses replicate populations of simple group-forming 'snowflake' yeast (a Saccharomyces cerevisiae mutant that grows as fractally branching multicellular clusters), which are passaged with daily selection for larger multicellular size [13]. Over 3,000 generations, snowflake yeast have evolved from small, brittle clusters to become tens of thousands of times larger and as tough as wood [13].

The physics of cellular packing gives rise to the first multicellular life cycles, within which novel, highly heritable multicellular traits arise via both genetic and epigenetic mechanisms [13]. The long-term value of the MuLTEE lies in its ability to prospectively explore how simple multicellular groups gradually evolve into increasingly integrated multicellular organisms, providing a window into evolutionary processes that cannot easily be reconstructed by looking backward in time [13]. This experimental system directly addresses how evolutionary innovations initially evolve and how they shape macroevolutionary trajectories, bridging the process-pattern divide for one of life's most significant transitions [13].

Diagram: Yeast Multicellularity Experimental Evolution Workflow

Speciation in Action: Darwin's Finches

Perhaps the most compelling example documenting the process of speciation comes from the Grants' longitudinal research on Darwin's finches on the small island of Daphne Major in the Galápagos [13]. In 1981, eight years into the study, a single male large cactus finch (Geospiza conirostris) immigrated from the island of Española over 100 km away [13]. This bird successfully reproduced with two female medium ground finches (Geospiza fortis), producing offspring that gave rise to a genetically divergent lineage.

Through multi-generational pedigree analysis, researchers documented that this "Big Bird" lineage was strikingly different from either parental species, possessing larger body size, bigger beaks, and a distinctive song [13]. By the third generation, members of this new lineage were breeding exclusively with each other, demonstrating reproductive isolation—a hallmark of speciation [13]. This case study highlighted how the combination of song preference and cultural inheritance of song type could be powerful facilitators of the evolution of reproductive isolation, directly connecting microevolutionary mating behaviors to macroevolutionary speciation patterns [13].

Evolutionary Predictions and the Discovery of Eusocial Mammals

Evolutionary theory has demonstrated remarkable predictive power in forecasting novel biological discoveries. Based on first principles of the evolution of social behavior, Richard Alexander developed a 12-part model predicting the characteristics of a eusocial vertebrate before any such mammal was known to science [16]. His prediction was grounded in an understanding of the selective forces involved in the evolution of insect eusociality and included specific characteristics such as safe, expandable, subterranean nests; abundant food obtainable with minimal risk; and specific predator-prey relationships [16].

Alexander's model specifically predicted the animal would be a completely subterranean mammal, most likely a rodent, feeding on large underground roots and tubers, living in the wet-dry tropics with hard clay soils in open woodland or scrub of Africa [16]. Remarkably, this hypothetical description perfectly matched the naked mole-rat (Heterocephalus glaber), which was subsequently confirmed to exhibit true eusociality [16]. This successful prediction demonstrated how evolutionary theory could connect understanding of microevolutionary selective pressures to macroevolutionary outcomes across distant taxonomic groups.

Methodological Protocols for Key Experimental Approaches

Laboratory Experimental Evolution Protocol

Long-term laboratory evolution experiments require standardized methodologies to ensure reproducibility and meaningful interpretation across generations:

Population Establishment: Initiate multiple (typically 6-12) genetically identical replicate populations from a single ancestral clone to control for initial genetic variation [13].

Environmental Regime: Maintain consistent environmental conditions (temperature, nutrient composition, pH) while applying consistent selective pressure (e.g., daily transfer to fresh medium under specific conditions) [13].

Propagation Schedule: Implement regular transfer schedules (typically daily for microorganisms) with controlled population bottlenecks to standardize selection regimes across treatments [13].

Archival Preservation: Cryogenically preserve samples at regular intervals (every 50-500 generations) to create a "frozen fossil record" for subsequent resurrection and comparative analysis [13].

Phenotypic Monitoring: Conduct regular assays of relevant phenotypic traits (fitness measurements, morphological characteristics, metabolic capabilities) using standardized protocols [13].

Genomic Analysis: Periodically sequence complete populations or isolated clones to identify genetic changes underlying adaptations, using the archived fossil record to reconstruct evolutionary trajectories [13].
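The propagation and archival steps imply some simple bookkeeping. The sketch below assumes serial batch culture in which each transfer dilutes the population by a fixed factor and the culture regrows to the same density, so that each transfer corresponds to log2(dilution factor) generations. That assumption, the 100-fold dilution, and the function names are illustrative and are not specified in [13].

```python
import math


def generations_per_transfer(dilution_factor):
    """Doublings needed to regrow after a fixed-factor dilution."""
    return math.log2(dilution_factor)


def archive_transfers(dilution_factor, archive_every_gens, total_transfers):
    """Transfer numbers at which a sample should be frozen."""
    gens = generations_per_transfer(dilution_factor)
    step = max(1, round(archive_every_gens / gens))
    return list(range(step, total_transfers + 1, step))


# Hypothetical regime: daily 100-fold dilution, archive every ~500 generations.
print(round(generations_per_transfer(100), 2))                      # 6.64
print(archive_transfers(100, archive_every_gens=500,
                        total_transfers=300))    # [75, 150, 225, 300]
```

Under these assumed settings, a 500-generation archive interval corresponds to freezing a sample roughly every 75 daily transfers.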

Field-Based Selection Studies Protocol

Long-term field studies of evolutionary processes require distinct methodological considerations:

Demographic Monitoring: Implement systematic capture-recapture, marking, or tracking of individuals to document survival, reproduction, and genealogical relationships across generations [13].

Environmental Characterization: Quantify relevant environmental variables (climate data, resource availability, predator densities) that constitute potential selective agents [13].

Phenotypic Measurement: Standardize measurement of relevant morphological, physiological, and behavioral traits using methods that ensure comparability across years and researchers [13].

Genetic Sampling: Collect non-invasive genetic material (feathers, hair, feces) or conduct controlled captures to obtain tissue samples for pedigree reconstruction and genomic analysis [13].

Statistical Modeling: Implement quantitative genetic approaches to estimate selection differentials, heritabilities, and evolutionary responses using mixed models that account for environmental covariates [13].
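The quantities named in the statistical modeling step are linked, in the simplest univariate case, by the breeder's equation R = h²S. The sketch below is that textbook calculation only. It does not implement the mixed models mentioned above, and the trait values and heritability are hypothetical.

```python
from statistics import mean


def selection_differential(trait_all, trait_breeders):
    """S: mean trait of successful breeders minus mean of the whole cohort."""
    return mean(trait_breeders) - mean(trait_all)


def predicted_response(h2, s):
    """Breeder's equation R = h^2 * S (expected shift in the offspring mean)."""
    return h2 * s


# Hypothetical beak depths (mm) for a cohort and for the birds that bred.
cohort = [9.0, 9.5, 10.0, 10.5, 11.0]
breeders = [10.5, 11.0]

s = selection_differential(cohort, breeders)
r = predicted_response(h2=0.8, s=s)
print(s, round(r, 3))  # 0.75 0.6
```

In practice, heritability and selection are estimated jointly from pedigrees with environmental covariates, so this calculation is best read as a first-order check on what a fitted model predicts.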

Diagram: Field Study Methodology for Evolutionary Monitoring

Implications for Evolutionary Predictions in Applied Contexts

The integration of micro- and macroevolutionary perspectives through long-term studies has profound implications for evolutionary predictions in applied contexts. Evolutionary predictions are increasingly being developed and used in medicine, agriculture, biotechnology, and conservation biology [2]. These predictions serve different purposes, including preparing for the future (e.g., predicting seasonal influenza strains) and influencing evolutionary trajectories through evolutionary control (e.g., suppressing pathogen resistance or promoting beneficial adaptations) [2].

The predictive framework emerging from long-term evolutionary studies acknowledges that while evolution can be predicted in the short term from knowledge of selection and inheritance, long-term evolution remains inherently unpredictable because environments—which determine the directions and magnitudes of selection coefficients—fluctuate unpredictably [13]. This probabilistic nature of evolutionary forecasting necessitates approaches that incorporate environmental stochasticity, historical contingency, and the complex feedback between evolutionary and ecological dynamics [2].

Recent advances have demonstrated that evolutionary predictions can focus on different population variables (majority genotype, average fitness, allele frequencies, population size) across various timescales, from hours to many years [2]. The burgeoning field of evolutionary control seeks to apply these predictions to alter evolutionary processes with specific purposes, such as preventing evolution of drug resistance in pathogens or increasing the ecological range of endangered species to avoid extinction [2]. These applications highlight the translational potential of fundamental research bridging the process-pattern divide through long-term evolutionary studies.

Table 3: Evolutionary Prediction Categories and Applications

| Prediction Category | Timescale | Primary Variables | Application Examples |

|---|---|---|---|

| Short-Term Microevolutionary | Days to years | Allele frequencies, phenotype distributions | Antibiotic resistance management, seasonal vaccine design |

| Medium-Term Eco-Evolutionary | Years to decades | Population dynamics, species interactions | Conservation planning, invasive species management |

| Long-Term Macroevolutionary | Centuries to millennia | Speciation/extinction rates, phylogenetic patterns | Biodiversity conservation planning, climate change impacts |

Long-term evolutionary studies provide an indispensable approach for bridging the traditional divide between microevolutionary processes and macroevolutionary patterns. By directly investigating evolutionary dynamics in real time across extended temporal scales, these research programs have revealed complex interactions that unfold through oscillations, stochastic fluctuations, and systematic trends that cannot be detected through short-term observations alone [13]. The integration of quantitative frameworks—including birth-death models, Ornstein-Uhlenbeck processes, and protracted speciation frameworks—with sustained empirical investigations in laboratory and field settings has enabled researchers to connect genetic and phenotypic evolution within populations to the emergence of biodiversity patterns across species and higher taxa.

The methodological advances and conceptual insights emerging from long-term studies have profound implications for evolutionary forecasting and management across diverse applied contexts. As we face accelerating environmental change and its impacts on biological systems, the continued support for long-term evolutionary research remains critical both for advancing fundamental understanding of evolutionary processes and for addressing pressing challenges in human health, agriculture, and biodiversity conservation.

Methodologies for Modeling Evolution: From Theory to Clinical and Industrial Practice

The ability to accurately predict evolutionary processes represents a frontier in modern biology with profound implications for medicine, agriculture, and conservation science. Evolutionary predictions have traditionally been considered challenging, if not impossible, due to the inherent stochasticity of mutation, reproduction, and environmental change [2]. However, the integration of sophisticated computational approaches across population genetics, phylogenetics, and fitness landscape analysis is progressively transforming evolutionary biology into a predictive science. These disciplines provide complementary theoretical frameworks and analytical tools for interrogating evolutionary processes across different temporal and biological scales, from real-time adaptation in microbial populations to deep phylogenetic relationships spanning millions of years.

The theoretical foundation for evolutionary predictions rests on Darwin's theory of evolution by natural selection, extended by quantitative population genetics principles that account for genetic drift, mutation, migration, and recombination [2]. Population genetics provides the mathematical framework for understanding how genetic variation is distributed within and between populations and how it changes over time. Phylogenetics reconstructs evolutionary histories among species or genes, providing the historical context for understanding evolutionary processes. Fitness landscapes model the relationship between genotype and reproductive success, offering a powerful conceptual framework for predicting adaptive trajectories [17] [18]. Together, these approaches form an integrated toolkit for making evolutionary predictions that range from statistical likelihoods to specific forecasts of evolutionary outcomes.

Core Computational Frameworks and Their Methodologies

Population Genetic Analysis

Population genetics provides the statistical foundation for inferring evolutionary processes from genetic data. Modern population genomic analyses utilize whole-genome sequencing or genotyping-by-sequencing to acquire extensive variant information, including single nucleotide polymorphisms (SNPs), insertions/deletions (indels), structural variations (SVs), and copy number variations (CNVs) [19]. These data enable researchers to investigate population genetic structure, demographic history, domestication processes, and dynamic evolutionary processes.

Table 1: Key Methods in Population Genetic Analysis

| Method | Primary Application | Data Input | Key Output |

|---|---|---|---|

| Principal Component Analysis (PCA) | Identifying major patterns of population structure | Genome-wide SNP data | Visualization of genetic similarity/dissimilarity |

| Population Structure Analysis | Inferring ancestry proportions and admixture | Genome-wide allele frequencies | Ancestral components and admixture levels |

| Selective Sweep Analysis | Detecting signatures of natural/artificial selection | Polymorphism and divergence data | Genomic regions under selection |

| Pairwise Sequentially Markovian Coalescent (PSMC) | Inferring historical population size changes | Single genome sequence | Historical effective population size trajectories |

| Gene Flow Analysis | Quantifying genetic exchange between populations | Allele frequency data across populations | Migration rates and admixture timing |

Several widely applied methods exemplify the population genetics toolkit. Principal Component Analysis (PCA) simplifies complex genetic data by transforming interrelated variables into orthogonal principal components that capture the largest amounts of variation [20] [19]. When applied to genome-wide SNP data, PCA efficiently visualizes genetic relationships among individuals, often revealing correlations between genetic variation and geography. Population structure analysis employs Bayesian clustering algorithms to determine the number of subpopulations (K), assess genetic exchange between populations, and quantify admixture in individual samples [19]. The Pairwise Sequentially Markovian Coalescent (PSMC) method infers historical population sizes from a single genome sequence, enabling reconstruction of demographic history over evolutionary timescales [19].
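To make the PCA step concrete, the following is a minimal sketch of extracting the first principal component from a small genotype matrix. It assumes genotypes coded as 0/1/2 alternate-allele counts, uses hypothetical toy data, and relies on simple power iteration rather than a full eigendecomposition. It also omits the per-SNP scaling that dedicated tools typically apply, so it illustrates the idea rather than any particular package's implementation.

```python
import math
import random


def first_pc_scores(genotypes, iterations=200, seed=0):
    """Return each individual's score on the first principal component.

    genotypes: list of rows (individuals), each a list of 0/1/2 allele counts.
    Assumption: no missing data, and SNPs are only mean-centered, not scaled.
    """
    n = len(genotypes)
    m = len(genotypes[0])
    # Center every SNP (column) on its mean allele count.
    means = [sum(row[j] for row in genotypes) / n for j in range(m)]
    centered = [[row[j] - means[j] for j in range(m)] for row in genotypes]
    # Individual-by-individual Gram matrix (n x n).
    gram = [[sum(a * b for a, b in zip(ri, rj)) for rj in centered]
            for ri in centered]
    # Power iteration converges to the leading eigenvector of the Gram matrix.
    rng = random.Random(seed)
    v = [rng.uniform(-1.0, 1.0) for _ in range(n)]
    for _ in range(iterations):
        w = [sum(g * x for g, x in zip(row, v)) for row in gram]
        norm = math.sqrt(sum(x * x for x in w))
        if norm == 0.0:
            return [0.0] * n  # no variation in the data
        v = [x / norm for x in w]
    gv = [sum(g * x for g, x in zip(row, v)) for row in gram]
    eigenvalue = max(sum(a * b for a, b in zip(v, gv)), 0.0)
    # Scores are the eigenvector scaled by the square root of its eigenvalue.
    return [x * math.sqrt(eigenvalue) for x in v]


# Hypothetical toy data: three individuals from each of two populations.
toy_genotypes = [
    [0, 0, 2, 2], [0, 1, 2, 2], [0, 0, 2, 1],
    [2, 2, 0, 0], [2, 1, 0, 0], [2, 2, 0, 1],
]
print(first_pc_scores(toy_genotypes))
```

In this toy case the two groups receive scores of opposite sign on the first component, which is the kind of separation a PCA plot of population structure displays.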

Phylogenetic Inference Methods

Phylogenetics has evolved from morphological comparisons to sophisticated computational analyses of molecular sequence data (DNA, RNA, or proteins) [21]. The field encompasses two major methodological approaches: distance-based methods and character-based methods, with a further distinction between alignment-based and alignment-free techniques.

Table 2: Comparison of Phylogenetic Tree Construction Methods

| Method | Category | Advantages | Disadvantages |
|---|---|---|---|
| Maximum Parsimony | Character-based | Appropriate for very similar sequences; minimizes evolutionary steps | Time-consuming; suffers from long-branch attraction; fails for diverged sequences |
| Maximum Likelihood | Character-based | Suitable for dissimilar sequences; allows hypothesis testing; more accurate for small taxa sets | Computationally intensive; slow for large datasets |
| Neighbor Joining | Distance-based | Fast; works with a variety of models | Loss of information from converting sequences to distances |
| UPGMA | Distance-based | Simple algorithm; provides rooted tree | Assumes constant evolutionary rate (often violated) |

Character-based methods such as Maximum Parsimony and Maximum Likelihood compare all sequences simultaneously, considering one character/site at a time [21]. Maximum Parsimony seeks the evolutionary tree that requires the fewest changes to explain observed sequence variation, while Maximum Likelihood identifies the tree (topology and branch lengths) under which the observed sequences are most probable given a specified evolutionary model. Distance-based methods like Neighbor Joining and UPGMA utilize dissimilarity measures between sequences to construct trees through hierarchical clustering algorithms [21].
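As an illustration of the distance-based family, here is a minimal UPGMA sketch. It assumes pre-aligned sequences of equal length, uses the uncorrected proportion of differing sites (p-distance) as the dissimilarity measure, and returns only the tree topology as nested tuples without branch lengths. The sequences and names are hypothetical.

```python
def p_distance(a, b):
    """Proportion of aligned sites at which two sequences differ."""
    if len(a) != len(b):
        raise ValueError("sequences must be aligned to equal length")
    return sum(x != y for x, y in zip(a, b)) / len(a)


def upgma(names, seqs):
    """Cluster sequences by UPGMA and return the topology as nested tuples."""
    n = len(names)
    clusters = {i: (names[i], 1) for i in range(n)}  # id -> (subtree, size)
    dist = {(i, j): p_distance(seqs[i], seqs[j])
            for i in range(n) for j in range(i + 1, n)}
    next_id = n
    while len(clusters) > 1:
        i, j = min(dist, key=dist.get)  # closest pair of clusters
        tree_i, size_i = clusters.pop(i)
        tree_j, size_j = clusters.pop(j)
        del dist[(i, j)]
        for k in clusters:
            d_ik = dist.pop((min(i, k), max(i, k)))
            d_jk = dist.pop((min(j, k), max(j, k)))
            # Size-weighted average keeps distances as unweighted pair-group means.
            dist[(k, next_id)] = (size_i * d_ik + size_j * d_jk) / (size_i + size_j)
        clusters[next_id] = ((tree_i, tree_j), size_i + size_j)
        next_id += 1
    return next(iter(clusters.values()))[0]


# Hypothetical aligned sequences.
names = ["A", "B", "C", "D"]
seqs = ["ACGTACGT", "ACGTACGA", "TGCAACGT", "TGCAACGA"]
print(upgma(names, seqs))
```

Because UPGMA assumes a constant evolutionary rate, a sketch like this is best read as a demonstration of hierarchical clustering on distances rather than as a recommended inference method.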

The critical methodological decision in phylogenetic analysis involves the sequence comparison approach. Alignment-based methods arrange sequences to highlight common symbols and substrings but face computational limitations with large or highly divergent datasets [21]. Alignment-free methods overcome these limitations through alternative metrics like k-word frequency, graphical representation, compression algorithms, or probabilistic methods using Markov chains [21].
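The k-word frequency idea can be sketched in a few lines. The example below is a minimal illustration that assumes Euclidean distance between normalized k-mer frequency profiles; the choice of k = 3, the distance metric, and the test sequences are assumptions made for this sketch, and published alignment-free methods use a range of alternative metrics.

```python
import math
from collections import Counter


def kmer_profile(seq, k):
    """Relative frequency of every overlapping k-mer in a sequence."""
    if len(seq) < k:
        raise ValueError("sequence is shorter than k")
    counts = Counter(seq[i:i + k] for i in range(len(seq) - k + 1))
    total = sum(counts.values())
    return {kmer: c / total for kmer, c in counts.items()}


def kmer_distance(a, b, k=3):
    """Euclidean distance between the k-mer frequency profiles of a and b."""
    pa, pb = kmer_profile(a, k), kmer_profile(b, k)
    words = set(pa) | set(pb)
    return math.sqrt(sum((pa.get(w, 0.0) - pb.get(w, 0.0)) ** 2 for w in words))


# Hypothetical sequences; no alignment is required and lengths may differ.
print(kmer_distance("ACGTACGTAC", "ACGTACGTAA"))
print(kmer_distance("ACGTACGTAC", "TTTTTTTTTT"))
```

The resulting pairwise distances can be passed to any distance-based tree-building method, which is how alignment-free comparison sidesteps the cost of aligning large or highly divergent datasets.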

Fitness Landscape Modeling and Analysis

Fitness landscapes represent the relationship between genotype and reproductive fitness, providing a powerful conceptual framework for predicting evolutionary trajectories [17] [18]. Initially proposed by Sewall Wright, fitness landscapes visualize genotypes as points in multidimensional space with fitness as the height, where populations evolve toward fitness peaks.

The topography of fitness landscapes fundamentally influences evolutionary predictability. Smooth landscapes with minimal epistasis (where mutation effects are independent) facilitate predictable evolutionary trajectories, while rugged landscapes with significant epistasis (where mutation effects depend on genetic background) create multiple fitness peaks and alternative evolutionary paths [18]. Quantitative measures of landscape topography include:

- Deviation from additivity: Measures how well fitness can be predicted by summing individual mutation effects

- Local roughness: The root mean squared difference between fitness of a point and its neighbors

- Mean path divergence: Quantifies how similar evolutionary trajectories are between starting and ending points
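Two of these measures can be sketched on a small biallelic landscape. The code below is a simplified illustration under stated assumptions: genotypes are tuples of 0/1 alleles, the additive expectation is built from single-mutant effects measured against the all-zero genotype (published analyses may instead fit the additive model by least squares), and the fitness values are hypothetical.

```python
import math
from itertools import product


def neighbors(genotype):
    """All genotypes that differ from the given one at exactly one site."""
    return [genotype[:i] + (1 - genotype[i],) + genotype[i + 1:]
            for i in range(len(genotype))]


def local_roughness(landscape):
    """Root mean squared fitness difference between each genotype and its neighbors."""
    squared = [(f - landscape[nb]) ** 2
               for g, f in landscape.items() for nb in neighbors(g)]
    return math.sqrt(sum(squared) / len(squared))


def deviation_from_additivity(landscape):
    """RMS gap between observed fitness and the sum of single-mutant effects."""
    n_sites = len(next(iter(landscape)))
    wild_type = (0,) * n_sites
    base = landscape[wild_type]
    effects = [landscape[wild_type[:i] + (1,) + wild_type[i + 1:]] - base
               for i in range(n_sites)]
    squared = []
    for g, f in landscape.items():
        expected = base + sum(e for e, allele in zip(effects, g) if allele)
        squared.append((f - expected) ** 2)
    return math.sqrt(sum(squared) / len(squared))


# Hypothetical three-site landscapes: one purely additive, one with epistasis.
additive = {g: 1.0 + 0.1 * sum(g) for g in product((0, 1), repeat=3)}
epistatic = dict(additive)
epistatic[(1, 1, 0)] = 0.8  # this double mutant is worse than either single mutant

for name, land in (("additive", additive), ("epistatic", epistatic)):
    print(name, local_roughness(land), deviation_from_additivity(land))
```

On the additive landscape the deviation from additivity is zero up to floating-point error, while the epistatic variant scores higher on both measures, matching the intuition that epistasis reduces how well summed mutation effects predict fitness.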

Empirical studies reveal that real fitness landscapes are rugged but significantly smoother than random landscapes, exhibiting a substantial deficit of suboptimal peaks compared to uncorrelated landscapes [17]. This relative smoothness appears to be a fundamental consequence of protein folding physics, enhancing evolutionary predictability [17].

Experimental characterization of fitness landscapes has been achieved for several systems, including TEM-1 β-lactamase, heat shock proteins, and RNA viruses, using deep sequencing to measure fitness effects of thousands of genotypes in bulk competitions [18]. These empirical landscapes demonstrate that epistatic interactions occur even among synonymous mutations and can be environment-dependent [18].

Experimental Protocols for Key Applications

Protocol: Inferring Selection from Coding Sequences

This protocol outlines a population genetics-phylogenetics approach for detecting natural selection in protein-coding genes, integrating polymorphism within species and divergence between species [22].

1. Data Collection and Preparation

- Sequence coding regions of interest from multiple individuals across related species

- Align sequences using codon-aware alignment algorithms (e.g., PRANK, MACSE)

- Generate polymorphism data (within-species variation) and divergence data (between-species differences)

2. Joint Population Genetics-Phylogenetics Analysis

- Apply Bayesian sliding window model to detect spatial clustering of selection signatures

- Estimate the nonsynonymous-to-synonymous substitution rate ratio (ω = dN/dS), a proxy for the strength and direction of selection, using codon substitution models

- Utilize allele frequency information to distinguish recent from ancient selective events

- Infer ancestral states probabilistically across the phylogeny

3. Interpretation and Validation

- Identify regions with significantly elevated dN/dS ratios indicating positive selection

- Distinguish between persistent and episodic selective regimes

- Correlate selective signatures with known functional domains or structural features

- Validate findings through experimental assays or independent datasets

This joint approach overcomes limitations of methods that analyze polymorphism and divergence separately, providing enhanced power to detect heterogeneous selection pressures across genes and lineages [22].
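The logic of contrasting polymorphism with divergence can be illustrated with a much simpler classical summary than the Bayesian sliding window model of the protocol. The sketch below computes a McDonald-Kreitman style neutrality index and the derived quantity alpha from four counts. It is not the method of [22], and the counts and function name are hypothetical.

```python
def mk_summary(dn, ds, pn, ps):
    """Summarize a McDonald-Kreitman style 2x2 table.

    dn, ds: fixed nonsynonymous / synonymous differences between species.
    pn, ps: nonsynonymous / synonymous polymorphisms within species.
    """
    if dn <= 0 or ds <= 0 or ps <= 0:
        raise ValueError("dn, ds and ps must be positive for the ratios to be defined")
    # Neutrality index: ratio of the polymorphism ratio to the divergence ratio.
    neutrality_index = (pn / ps) / (dn / ds)
    # alpha: estimated fraction of nonsynonymous divergence attributed to positive selection.
    alpha = 1.0 - neutrality_index
    return {"neutrality_index": neutrality_index, "alpha": alpha}


# Hypothetical counts for a single gene.
print(mk_summary(dn=20, ds=20, pn=5, ps=20))
```

A neutrality index below 1 (alpha above 0) points to an excess of nonsynonymous divergence relative to polymorphism, which is the gene-wide signal that the joint approach resolves at finer spatial and temporal scales.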

Protocol: Empirical Fitness Landscape Characterization

This protocol describes the systematic measurement of fitness landscapes for a protein or RNA molecule, enabling predictions of evolutionary trajectories [18].

1. Library Design and Generation

- Select target gene and identify sites for mutagenesis (typically 4-8 amino acid positions)

- Generate combinatorial mutant library covering all possible combinations:

  - Site-directed mutagenesis for small libraries

  - Error-prone PCR or DNA synthesis for larger libraries

- Clone variants into expression vector with selectable or screenable marker

2. High-Throughput Fitness Assay

- Express mutant library in appropriate host system (e.g., bacteria, yeast)

- Subject to selective pressure (antibiotics, novel substrate, environmental stress)

- Use bulk competition assays with deep sequencing to quantify variant frequencies

- Calculate relative fitness from frequency changes over time: W_i = ln(f_i,t2 / f_i,t1) / (t2 - t1) where f_i,t is frequency of variant i at time t
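The frequency-based fitness calculation in the last step can be sketched as follows. The code applies the stated formula, W_i = ln(f_i,t2 / f_i,t1) / (t2 - t1), to raw sequencing counts. The optional subtraction of a reference variant's value, the variant names, and the read counts are assumptions added for illustration.

```python
import math


def fitness_from_counts(counts_t1, counts_t2, t1, t2, reference=None):
    """Per-variant W_i = ln(f_i,t2 / f_i,t1) / (t2 - t1) from read counts.

    counts_t1, counts_t2: dicts mapping variant name to sequencing read count.
    reference: optional variant whose value is subtracted from all others
               (an assumption of this sketch, e.g. the wild type).
    """
    total_1 = sum(counts_t1.values())
    total_2 = sum(counts_t2.values())
    fitness = {}
    for variant, c1 in counts_t1.items():
        c2 = counts_t2.get(variant, 0)
        if c1 == 0 or c2 == 0:
            continue  # the log ratio is undefined for variants with zero reads
        f1, f2 = c1 / total_1, c2 / total_2
        fitness[variant] = math.log(f2 / f1) / (t2 - t1)
    if reference is not None:
        ref = fitness[reference]
        fitness = {v: w - ref for v, w in fitness.items()}
    return fitness


# Hypothetical read counts at generation 0 and generation 10.
before = {"wt": 500, "mutA": 250, "mutB": 250}
after = {"wt": 500, "mutA": 400, "mutB": 100}
print(fitness_from_counts(before, after, t1=0, t2=10, reference="wt"))
```

Variants that drop to zero reads are skipped here because their log ratio is undefined; real pipelines usually handle such cases with pseudocounts or explicit error models, which this sketch does not attempt.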

3. Landscape Analysis and Visualization

- Construct fitness matrix with measurements for all combinatorial mutants

- Quantify epistasis as deviation from additive fitness expectations

- Identify accessible evolutionary paths between low and high fitness genotypes

- Validate predictions through experimental evolution studies
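Identifying accessible paths can be illustrated by enumerating every order in which the required mutations might accumulate and keeping only those orders along which fitness strictly increases at each step. This is a minimal sketch under that assumption; the strict-increase criterion, the tuple encoding of genotypes, and the two-site fitness values are choices made for this example.

```python
from itertools import permutations


def accessible_paths(landscape, start, end):
    """List mutational paths from start to end with strictly increasing fitness.

    landscape: dict mapping genotype tuples to fitness values.
    Only direct paths are considered (each differing site mutates exactly once).
    """
    sites = [i for i in range(len(start)) if start[i] != end[i]]
    paths = []
    for order in permutations(sites):
        genotype = start
        path = [genotype]
        for site in order:
            nxt = genotype[:site] + (end[site],) + genotype[site + 1:]
            if landscape[nxt] <= landscape[genotype]:
                break  # a neutral or deleterious step blocks this path
            genotype = nxt
            path.append(genotype)
        else:
            paths.append(path)
    return paths


# Hypothetical two-site landscape with sign epistasis at the first site.
landscape = {(0, 0): 1.0, (1, 0): 0.9, (0, 1): 1.1, (1, 1): 1.3}
for path in accessible_paths(landscape, (0, 0), (1, 1)):
    print(path)
```

Enumerating all orders scales factorially with the number of sites, which is tolerable for the 4-8 positions typical of these libraries but would need a different strategy for larger landscapes.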

This approach has been successfully applied to TEM-1 β-lactamase, Hsp90, and viral proteins, revealing constraints on evolutionary paths and principles of adaptive landscapes [18].

Visualization of Computational Workflows

[Figure: Workflow: Integrated Evolutionary Analysis Pipeline]

[Figure: Workflow: From Genetic Data to Evolutionary Predictions]

Essential Research Reagents and Computational Tools

Table 3: Research Reagent Solutions for Evolutionary Prediction Studies

| Resource Type | Specific Examples | Research Application |
|---|---|---|
| Genomic Data Sources | Human Genome Diversity Project, 1000 Genomes, Bergström et al. (2020) | Reference datasets for population genetic analysis and demographic inference |
| Analysis Software | EIGENSOFT (SMARTPCA), STRUCTURE, BEAST, PSMC | Implementing population genetic and phylogenetic analyses |
| Sequencing Approaches | Whole-genome resequencing, Genotyping-by-sequencing, Reduced-representation sequencing | Generating genome-wide variant data for population studies |
| Fitness Assay Systems | TEM-1 β-lactamase, Hsp90, Viral genomes (TEV) | Model systems for empirical fitness landscape characterization |
| Computational Frameworks | Fisher's Geometric Model, Landscape State Models, Markov chain models | Theoretical frameworks for predicting evolutionary trajectories |

Applications in Disease Research and Drug Development

The predictive power of computational evolutionary approaches finds crucial applications in understanding disease mechanisms and informing therapeutic development. In infectious disease management, phylogenetic methods track pathogen transmission and evolution, enabling identification of outbreak sources and informing public health interventions [23]. For instance, seasonal influenza vaccine selection relies on evolutionary forecasts of which strains will dominate in upcoming seasons [2]. These predictions use relatively simple fitness models based on viral sequence data to anticipate antigenic evolution [18].

In cancer research, phylogenetic methods reconstruct the evolutionary history of tumor development, identifying key mutational events and classifying cancer subtypes according to their evolutionary pathways [21]. By capturing important mutational events among different cancer types, phylogenetic trees help elucidate the progression pathways and genetic heterogeneity within and between tumors. The combination of mutated genes across a population can be summarized in a phylogeny describing different evolutionary pathways in cancer development [21].

The drug resistance prediction field has benefited substantially from fitness landscape analyses. Studies of TEM-1 β-lactamase adaptation to cefotaxime revealed that epistasis constrains evolutionary paths to resistance, with specific amino acid substitutions required in a particular order [18]. Similarly, evolution experiments with bacteria and yeast combined with fitness landscape simulations address the relative contributions of standing genetic variation versus de novo mutations to antibiotic resistance evolution under different drug concentrations [18].

The integration of population genetics, phylogenetics, and fitness landscape modeling represents a powerful paradigm for advancing evolutionary predictions from retrospective explanations to prospective forecasts. While each approach provides unique insights, their synthesis offers the most promising path toward robust predictive frameworks. Population genetics reveals the processes shaping contemporary variation, phylogenetics reconstructs historical relationships, and fitness landscapes model the constraints and opportunities for future adaptation.

Challenges remain in scaling these approaches to complex, polygenic traits and incorporating eco-evolutionary feedbacks where populations modify their own selective environments [2]. However, the rapidly expanding availability of genomic data, coupled with increasingly sophisticated computational methods, suggests a promising trajectory for evolutionary prediction research. As these fields continue to converge, we anticipate enhanced capacity to forecast evolutionary outcomes across biological systems—from managing antibiotic resistance and predicting viral emergence to conserving biodiversity and understanding cancer progression—ultimately fulfilling the promise of evolutionary biology as a predictive science.

Experimental evolution uses controlled laboratory experiments to study evolutionary dynamics in real time, providing a powerful tool for testing fundamental predictions in evolutionary biology. This approach allows researchers to move beyond comparative studies and directly observe evolution, offering unprecedented validation of theoretical models. The core premise is that by subjecting microbial populations to defined selection pressures over multiple generations, one can observe and quantify adaptive processes, thereby testing the predictability of evolution [24] [25]. This methodology is particularly valuable for investigating evolutionary constraints, fitness landscapes, and the dynamics of adaptation—areas where traditional theoretical models often lack empirical validation [26]. The emerging synergy between experimental evolution and machine learning further enhances predictive capabilities, drawing analogies between evolutionary optimization and computational learning algorithms [27]. This guide details the laboratory models and methodologies that enable researchers to conduct such rigorous, prediction-focused evolutionary studies.

Core Concepts and Theoretical Frameworks

Key Theoretical Models for Experimental Testing

Table 1: Foundational Theoretical Models in Experimental Evolution

| Model Name | Core Principle | Evolutionary Prediction | Key Testable Parameters |

|---|---|---|---|