From Genomes to Trees: Advanced Whole-Genome Alignment for Phylogenetic Block Extraction

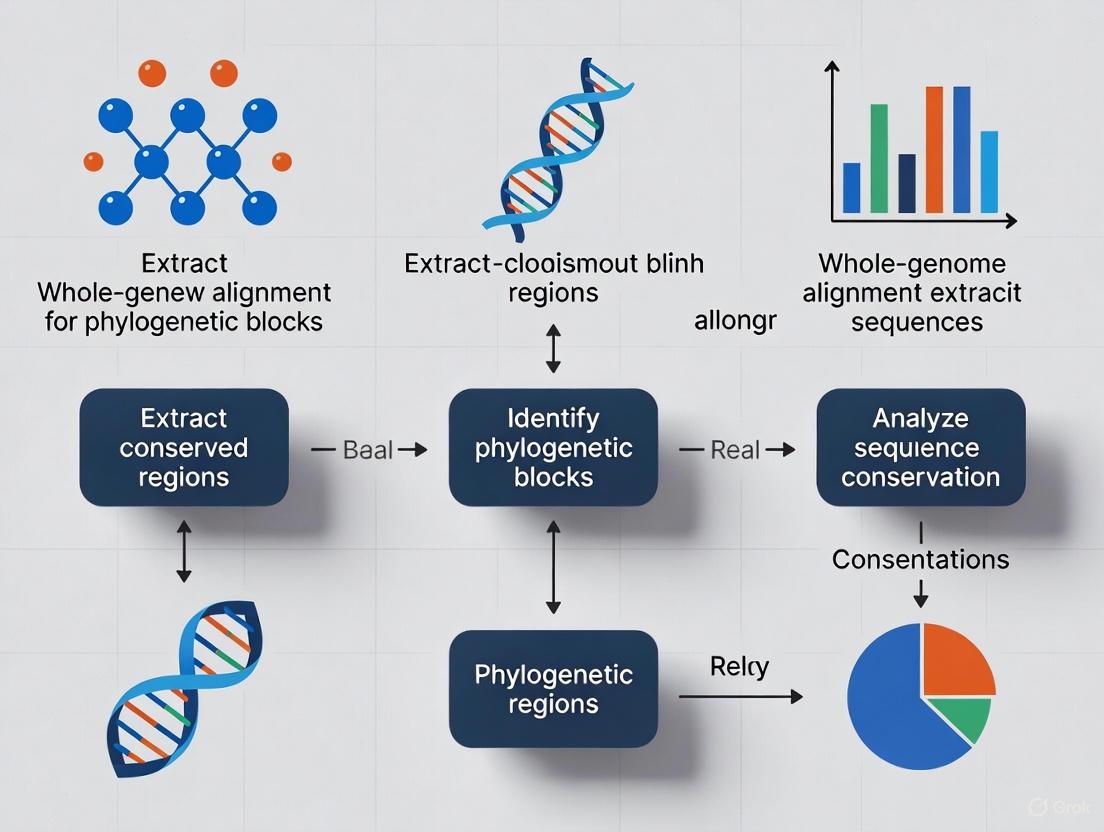

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on extracting phylogenetically informative blocks from whole-genome alignments. It covers foundational concepts of phylogenomics and whole-genome alignment, explores cutting-edge methodologies and tools like CASTER and wgatools, addresses troubleshooting and optimization strategies for data processing, and outlines validation techniques for comparative analysis. By integrating the latest advancements in computational genomics, this resource enables robust evolutionary analyses and enhances our understanding of genomic evolution for biomedical applications.

Phylogenomic Foundations: Understanding Whole-Genome Alignment and Evolutionary Trees

Phylogenetic trees are fundamental tools in evolutionary biology, providing a graphical representation of the evolutionary relationships among species or other taxonomic groups based on their shared ancestry [1] [2]. These diagrams illustrate how life diversifies over time, tracing lineages back to common ancestors. In modern genomic research, understanding phylogenetic trees is crucial for interpreting the results of whole-genome alignment and comparative genomics studies [3]. The foundational distinction in this field lies between rooted and unrooted phylogenetic trees, each conveying different types of evolutionary information and serving complementary roles in phylogenomic analysis [4] [1]. This application note delineates these tree types, their construction, visualization, and significance within the context of whole-genome alignment extraction for phylogenetic blocks research, providing experimental protocols and resources tailored for researchers and drug development professionals.

Core Concepts: Rooted vs. Unrooted Trees

Rooted Phylogenetic Trees

A rooted phylogenetic tree possesses a single, unique node known as the root, which represents the inferred most recent common ancestor of all entities included in the tree [4] [1]. The root provides a directional axis for evolutionary time, enabling interpretation of evolutionary sequence and chronological relationships.

Key features of rooted trees include:

- Temporal Directionality: Evolution proceeds directionally from the root (oldest) to the tips (most recent) [4].

- Ancestral State Inference: The root and internal nodes represent hypothetical ancestral states, allowing reconstruction of evolutionary history.

- Common Ancestor Identification: Explicitly identifies a single common ancestor for all taxa in the tree [4].

Rooted trees are typically constructed using an outgroup (a taxon known to have diverged before the lineage of interest) or by applying assumptions such as the molecular clock hypothesis [1]. The most common method employs an uncontroversial outgroup that is close enough to allow inference from trait data or molecular sequencing, but sufficiently distant to be a clear outgroup [1].

Unrooted Phylogenetic Trees

An unrooted phylogenetic tree illustrates the relatedness of taxonomic units without specifying evolutionary direction or identifying a common ancestor [4] [1]. These trees simply depict the connectivity and relative evolutionary distances between species.

Key features of unrooted trees include:

- Topology-Only Representation: Shows branching patterns and relationships without evolutionary direction [4].

- No Defined Ancestral Root: Does not indicate the order of divergence or identify a common ancestor [4].

- Comparative Analysis Utility: Primarily used for comparative studies when evolutionary roots are unknown or uncertain [4].

Unrooted trees can be converted to rooted trees by introducing a root through the inclusion of outgroup data or by applying evolutionary rate assumptions [1].

Comparative Analysis: Key Differences

Table 1: Fundamental Differences Between Rooted and Unrooted Phylogenetic Trees

| Feature | Rooted Phylogenetic Tree | Unrooted Phylogenetic Tree |
|---|---|---|
| Root Presence | Has a common root representing the most recent common ancestor [4] | No defined root [4] |
| Evolutionary Direction | Shows clear evolutionary paths from ancestral to descendant taxa [4] | Does not indicate direction of evolution [4] |
| Ancestral Relations | Defines explicit ancestral relationships [4] | Only shows relatedness without ancestral inference [4] |
| Common Usage | Evolutionary history studies, divergence time estimation [4] | Genetic comparisons when root position is unknown [4] |
| Information Content | Higher (includes topology and temporal direction) [2] | Lower (topology only) [2] |

Table 2: Tree Enumeration for Different Types of Phylogenetic Trees (for labeled, bifurcating trees)

| Number of Tips | Number of Rooted Trees | Number of Unrooted Trees |
|---|---|---|
| 3 | 3 [1] | 1 [1] |
| 4 | 15 [1] | 3 [1] |
| 5 | 105 [1] | 15 [1] |
| 6 | 945 [1] | 105 [1] |
| 7 | 10,395 [1] | 945 [1] |
| 8 | 135,135 [1] | 10,395 [1] |
| 9 | 2,027,025 [1] | 135,135 [1] |
| 10 | 34,459,425 [1] | 2,027,025 [1] |

The number of possible trees increases dramatically with additional taxa, presenting computational challenges for phylogenetic analysis [1]. For bifurcating labeled trees, the total number of rooted trees with n leaves is calculated as (2n-3)!!, while unrooted trees follow (2n-5)!! [1].
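The double-factorial formulas can be checked directly against Table 2. The following is a minimal sketch; the function names are illustrative and not taken from any phylogenetics library.

```python
def double_factorial(m: int) -> int:
    """Return m!! = m * (m - 2) * (m - 4) * ... down to 1 (defined as 1 for m <= 1)."""
    result = 1
    while m > 1:
        result *= m
        m -= 2
    return result


def count_rooted_trees(n_tips: int) -> int:
    """Number of rooted, bifurcating, labeled trees on n tips: (2n - 3)!!."""
    if n_tips < 2:
        raise ValueError("a rooted bifurcating tree needs at least 2 tips")
    return double_factorial(2 * n_tips - 3)


def count_unrooted_trees(n_tips: int) -> int:
    """Number of unrooted, bifurcating, labeled trees on n tips: (2n - 5)!!."""
    if n_tips < 3:
        raise ValueError("an unrooted bifurcating tree needs at least 3 tips")
    return double_factorial(2 * n_tips - 5)


if __name__ == "__main__":
    for n in range(3, 11):
        print(n, count_rooted_trees(n), count_unrooted_trees(n))
```

Note that the unrooted count for n + 1 tips equals the rooted count for n tips, which is why the two columns of Table 2 are offset by one row.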

Tree Construction Methods and Protocols

Whole-Genome Alignment for Phylogenetic Analysis

Whole-genome alignment (WGA) serves as the foundation for modern phylogenomic tree construction, enabling comparison of entire genomes across species [3]. This protocol outlines key methodologies for extracting phylogenetic blocks from whole-genome alignments.

Protocol 3.1.1: Suffix Tree-Based WGA Using MUMmer

Principle: Suffix tree-based methods identify Maximal Unique Matches (MUMs) between genomes as anchors for alignment [3].

Procedure:

- Suffix Tree Construction: Build suffix trees for each input genome sequence using Ukkonen's or McCreight's algorithm [3].

- MUM Decomposition: Identify all maximal unique matches between genome pairs using the constructed suffix trees [3].

- Match Filtering: Apply filtering techniques to remove spurious matches and improve alignment accuracy [3].

- MUM Organization: Identify the longest sequence of matches maintaining original order in both genomes [3].

- Gap Processing: Detect and characterize insertions, repetitions, and single nucleotide variations between MUMs [3].

- Local Alignment: Perform Smith-Waterman alignment for regions between MUMs to construct the final alignment [3].

- Output Generation: Produce alignments suitable for phylogenetic tree construction [3].

Applications: Particularly effective for aligning closely related genomes with high sequence similarity [3].
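The "MUM Organization" step amounts to a longest-increasing-subsequence problem: once the matches are sorted by their position in the first genome, the goal is the longest chain whose positions also increase in the second genome. The sketch below illustrates that idea only. It assumes each MUM is a hypothetical `(start_in_A, start_in_B, length)` tuple, ignores match length and overlaps when scoring, and uses a simple quadratic dynamic program, so it should not be read as MUMmer's actual implementation.

```python
from typing import List, Tuple

# Hypothetical representation: (start in genome A, start in genome B, match length).
Mum = Tuple[int, int, int]


def chain_mums(mums: List[Mum]) -> List[Mum]:
    """Return the longest chain of MUMs that is increasing in both genomes."""
    ordered = sorted(mums)  # sort by start position in genome A
    n = len(ordered)
    if n == 0:
        return []
    best = [1] * n    # best[i]: length of the longest chain ending at ordered[i]
    prev = [-1] * n   # prev[i]: index of the previous MUM in that chain
    for i in range(n):
        for j in range(i):
            collinear = ordered[j][0] < ordered[i][0] and ordered[j][1] < ordered[i][1]
            if collinear and best[j] + 1 > best[i]:
                best[i] = best[j] + 1
                prev[i] = j
    # Trace back from the end of the longest chain.
    end = max(range(n), key=best.__getitem__)
    chain = []
    while end != -1:
        chain.append(ordered[end])
        end = prev[end]
    return chain[::-1]


if __name__ == "__main__":
    example = [(0, 0, 20), (30, 100, 15), (50, 40, 25), (90, 70, 18), (120, 130, 30)]
    print(chain_mums(example))
```

In the example, the match at `(30, 100, 15)` is dropped because keeping it would break collinearity with the matches at positions 40 and 70 in the second genome; such out-of-order matches are the candidates for rearrangements or spurious hits.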

Protocol 3.1.2: CASTER Protocol for Genome-Wide Phylogeny Inference

Principle: The CASTER method enables direct species tree inference from whole-genome alignments using all aligned base pairs [5].

Procedure:

- Data Input: Compile whole-genome alignment data comprising aligned positions across multiple species [5].

- Model Selection: Choose appropriate evolutionary models for different genomic regions [5].

- Tree Search: Employ heuristic search algorithms to explore tree space [5].

- Likelihood Calculation: Compute likelihood scores for candidate trees using all aligned positions [5].

- Topology Evaluation: Assess topological support through bootstrap resampling or posterior probabilities [5].

Advantages: CASTER provides truly genome-wide analysis using every base pair aligned across species with standard computational resources, offering interpretable outputs that help biologists understand species relationships and evolutionary histories across the genome [5].

Tree Inference Methodologies

Protocol 3.2.1: Maximum Likelihood Phylogenetic Inference

Principle: This statistical approach evaluates the probability of observing the sequence data given a particular phylogenetic tree and evolutionary model [2].

Procedure:

- Model Selection: Select appropriate nucleotide or amino acid substitution models using model-testing software.

- Tree Space Exploration: Employ heuristic search algorithms (e.g., hill-climbing, genetic algorithms) to identify high-likelihood tree topologies.

- Likelihood Calculation: For each candidate tree, compute the likelihood score using Felsenstein's pruning algorithm [6].

- Tree Selection: Choose the tree with the highest maximum likelihood score.

- Support Assessment: Evaluate branch support using bootstrap resampling (typically with 100-1000 replicates).
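To make the likelihood calculation step concrete, the following is a minimal sketch of Felsenstein's pruning algorithm for a fixed tree. Several simplifications are assumptions made for illustration: the substitution model is Jukes-Cantor (JC69) with equal base frequencies, sequences contain only A, C, G, and T, and the tree is a hypothetical nested tuple `(left, left_branch_length, right, right_branch_length)` with taxon names as leaves. Production software adds rate heterogeneity, numerical scaling, and tree search on top of this core recursion.

```python
import math
from typing import Dict, List, Union

BASES = "ACGT"
# A leaf is a taxon name; an internal node is (left, left_length, right, right_length).
Tree = Union[str, tuple]


def jc69_prob(parent: str, child: str, t: float) -> float:
    """JC69 probability of observing `child` after branch length t, given `parent`."""
    decay = math.exp(-4.0 * t / 3.0)
    return 0.25 + 0.75 * decay if parent == child else 0.25 - 0.25 * decay


def partial_likelihoods(node: Tree, site: Dict[str, str]) -> List[float]:
    """Likelihood of the data below `node`, conditional on each base at `node`."""
    if isinstance(node, str):
        return [1.0 if base == site[node] else 0.0 for base in BASES]
    left, t_left, right, t_right = node
    below_left = partial_likelihoods(left, site)
    below_right = partial_likelihoods(right, site)
    result = []
    for parent in BASES:
        from_left = sum(jc69_prob(parent, child, t_left) * below_left[i]
                        for i, child in enumerate(BASES))
        from_right = sum(jc69_prob(parent, child, t_right) * below_right[i]
                         for i, child in enumerate(BASES))
        result.append(from_left * from_right)
    return result


def log_likelihood(tree: Tree, alignment: Dict[str, str]) -> float:
    """Sum of per-site log-likelihoods, assuming sites evolve independently."""
    n_sites = len(next(iter(alignment.values())))
    total = 0.0
    for col in range(n_sites):
        site = {name: seq[col] for name, seq in alignment.items()}
        # Weight each root state by the JC69 stationary frequency of 1/4.
        site_likelihood = sum(0.25 * p for p in partial_likelihoods(tree, site))
        total += math.log(site_likelihood)
    return total


if __name__ == "__main__":
    tree = (("human", 0.1, "chimp", 0.1), 0.05, "mouse", 0.4)
    alignment = {"human": "ACGTAC", "chimp": "ACGTAT", "mouse": "ACTTGC"}
    print(log_likelihood(tree, alignment))
```

A tree search would call `log_likelihood` for each candidate topology and set of branch lengths and keep the highest-scoring one.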

Protocol 3.2.2: Bayesian Phylogenetic Inference

Principle: This method incorporates prior knowledge and updates beliefs based on sequence data to produce a posterior distribution of trees [2].

Procedure:

- Prior Specification: Define prior distributions for tree topology, branch lengths, and evolutionary model parameters.

- Markov Chain Monte Carlo (MCMC) Sampling: Run MCMC simulations to sample from the posterior distribution of phylogenetic trees.

- Chain Convergence Assessment: Monitor MCMC convergence using diagnostic tools (e.g., Tracer).

- Burn-in Discard: Exclude the initial, pre-convergence samples before summarizing the posterior.

- Posterior Distribution Summarization: Summarize the remaining sampled trees as a consensus tree with posterior probabilities for clades.

Visualization and Annotation of Phylogenetic Trees

Effective visualization is essential for interpreting phylogenetic trees, especially when integrating diverse associated data types. The ggtree package in R provides a versatile platform for phylogenetic tree visualization and annotation [7] [8].

Protocol 4.1: Basic Tree Visualization with ggtree

Procedure:

- Data Import: Import tree files (Newick, NEXUS, etc.) into R using treeio or ape packages [7].

- Basic Plotting: Generate basic tree visualizations using the `ggtree()` function [7].

- Layout Selection: Choose appropriate tree layouts based on analytical needs.

- Annotation Layers: Add annotation layers using the `+` operator.

Protocol 4.2: Advanced Annotation with Phylogenomic Data

Procedure:

- Data Integration: Combine tree objects with associated data (evolutionary rates, ancestral sequences, phenotypic traits) [8].

- Branch Annotation: Map data variables to visual properties (color, size, linetype) of tree branches.

- Node Annotation: Display inferred ancestral states or support values at internal nodes.

- Heatmap Integration: Associate phylogenetic trees with heatmaps of genomic features using `gheatmap()`.

- Publication-Quality Output: Customize visual elements and export in appropriate formats.

Diagram 1: ggtree Visualization Workflow

Table 3: Essential Research Reagents and Computational Tools for Phylogenomic Analysis

| Resource | Type | Primary Function | Application Context |
|---|---|---|---|
| MUMmer | Software Suite | Whole-genome alignment via suffix trees [3] | Alignment of closely related genomes |
| CASTER | Algorithm | Direct species tree inference from WGAs [5] | Genome-wide phylogeny reconstruction |
| ggtree | R Package | Phylogenetic tree visualization and annotation [7] [8] | Tree visualization and data integration |
| PhyloGPN | Genomic Language Model | Phylogenetics-based genomic pre-trained network [6] | Variant effect prediction and transfer learning |
| APE | R Package | Analysis of phylogenetics and evolution [7] | Fundamental phylogenetic analyses |
| Whole-Genome Alignments | Data Resource | Multi-species genome alignments [3] | Comparative genomics and phylogenomics |
| Zoonomia Consortium Alignment | Data Resource | 447 placental mammalian genomes [6] | Mammalian evolutionary analyses |

Advanced Applications in Genome Research

Phylogenetic Signal Detection in Genomic Blocks

Extracting phylogenetic blocks from whole-genome alignments enables detection of evolutionary signals across different genomic regions. Discordant phylogenetic signals between blocks may indicate incomplete lineage sorting, hybridization, or horizontal gene transfer.

Protocol 6.1.1: Phylogenomic Block Analysis

Procedure:

- Genome Partitioning: Divide whole-genome alignments into blocks based on functional annotation or sliding windows.

- Individual Tree Inference: Reconstruct separate phylogenetic trees for each block.

- Tree Comparison: Assess topological congruence between blocks using tree distance metrics.

- Signal Integration: Apply species tree methods (e.g., ASTRAL) to reconcile conflicting signals.

- Biological Interpretation: Interpret discordance in evolutionary context.

Integration with Genomic Language Models

PhyloGPN represents a novel framework integrating phylogenetic principles with genomic language models, trained to model nucleotide evolution on phylogenetic trees using multispecies whole-genome alignments [6]. This approach enhances variant effect prediction from single sequences alone and demonstrates strong transfer learning capabilities [6].

Diagram 2: Phylogenomic Analysis Pipeline

Rooted and unrooted phylogenetic trees serve as complementary frameworks for representing evolutionary relationships, each with distinct advantages for specific research contexts. Rooted trees provide temporal directionality and explicit ancestral inference, while unrooted trees offer flexibility when evolutionary roots are uncertain. Within whole-genome alignment extraction for phylogenetic blocks research, selection between these representations depends on available data, research questions, and analytical goals. Emerging methods like CASTER enable truly genome-wide phylogenetic inference, while tools like ggtree facilitate sophisticated visualization of complex phylogenomic data. Integration of phylogenetic principles with genomic language models represents a promising frontier for enhancing variant effect prediction and functional genome interpretation. As phylogenomic datasets continue expanding, robust protocols for tree construction, visualization, and interpretation remain essential for advancing evolutionary genomics and translational applications in drug development.

The Challenge of Incomplete Lineage Sorting and Horizontal Gene Transfer in Phylogenomics

In the era of whole-genome sequencing, reconstructing the evolutionary history of species (the species tree) is a fundamental goal. However, this process is significantly complicated by biological processes that cause individual gene histories to differ from the species tree. Two of the most significant sources of such incongruence are Incomplete Lineage Sorting (ILS) and Horizontal Gene Transfer (HGT).

ILS occurs when ancestral genetic polymorphisms persist through multiple speciation events, leading to gene trees that diverge from the species tree. This is modeled by the multi-species coalescent (MSC) model, where gene trees evolve within the species tree in a backward process, and lineages coalesce as they move from leaves toward the root. When coalescence fails to occur in the earliest possible branch, the resulting gene tree topology can differ from the species tree [9]. Conversely, HGT involves the lateral transfer of genetic material between distinct species, bypassing vertical inheritance. In phylogenetic terms, HGT introduces a reticulate, rather than purely treelike, evolutionary history [9].

These processes create gene tree heterogeneity, presenting a major challenge for species tree estimation. While multiple loci are needed to estimate a species phylogeny accurately, the conflicting signals from ILS and HGT can mislead traditional phylogenetic methods. This Application Note outlines the theoretical foundations, practical protocols, and analytical tools for accurately inferring species trees in the presence of these confounding factors, with a focus on workflows integrated with whole-genome alignment extraction.

Quantitative Comparison of Species Tree Estimation Methods

The performance of species tree estimation methods varies significantly under different conditions of ILS and HGT. The table below summarizes key characteristics and empirical performance of major method categories based on simulation studies.

Table 1: Comparison of Species Tree Estimation Methods Under ILS and HGT

| Method Category | Example Methods | Theoretical Consistency (ILS alone) | Theoretical Consistency (Bounded HGT) | Accuracy under Moderate ILS + Low HGT | Accuracy under Moderate ILS + High HGT | Scalability (to 50+ species, 1000+ loci) |
|---|---|---|---|---|---|---|
| Quartet-Based Summary Methods | ASTRAL-2, wQMC | Statistically consistent [9] | Statistically consistent (under bounded models) [9] | High [9] | High (robust) [9] | High [9] |
| Other Coalescent-Based Summary Methods | NJst, MP-EST | Statistically consistent [9] | Not fully established | High [9] | Low (NJst); Medium-Low (MP-EST) [9] | High (NJst); Low (MP-EST) [9] |
| Concatenation (Maximum Likelihood) | RAxML, IQ-TREE | Not statistically consistent [9] | Not statistically consistent | High [9] | Low [9] | High |
| Bayesian Methods | *BEAST, BEST | Statistically consistent [9] | Not fully established | High [9] | Not fully evaluated | Low (for large datasets) [9] |

Diagram: Decision process for selecting a species tree estimation method based on dataset characteristics and biological assumptions.

Theoretical Foundations and Statistical Consistency

The effectiveness of quartet-based methods in the presence of both ILS and HGT is grounded in mathematical theory. Under the MSC model, for any set of four leaves, the most probable unrooted gene tree topology is identical to the species tree topology restricted to those leaves [9]. Crucially, similar theorems have been proven under bounded models of HGT (both stochastic and highways models), where the most probable quartet tree remains topologically identical to the underlying species tree, provided the amount of HGT per gene is bounded [9].

This leads to a powerful conclusion: summary methods that construct a species tree from the dominant quartet trees are statistically consistent under both the MSC model and bounded HGT models. This means that as the number of loci and the number of sites per locus increase, the estimated species tree converges in probability to the true species tree. ASTRAL-2 and wQMC, which operate on this principle, have been proven to be statistically consistent under these conditions [9].
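The "dominant quartet" idea can be illustrated with a short sketch. It assumes gene trees are given as hypothetical nested tuples of taxon names (for example `(("A", "B"), ("C", "D"))`) and simply tallies, for one chosen set of four taxa, which of the three possible unrooted quartet topologies each gene tree induces. This shows the counting principle only; it is not how ASTRAL-2 or wQMC are implemented, since both combine information from all quartets simultaneously.

```python
from collections import Counter
from typing import FrozenSet, Iterable, List, Optional

Split = FrozenSet[FrozenSet[str]]


def leaf_sets(tree, collected: List[FrozenSet[str]]) -> FrozenSet[str]:
    """Return the leaves under `tree`, recording the leaf set of every internal node."""
    if isinstance(tree, str):
        return frozenset([tree])
    leaves = frozenset().union(*(leaf_sets(child, collected) for child in tree))
    collected.append(leaves)
    return leaves


def quartet_topology(tree, quartet: Iterable[str]) -> Optional[Split]:
    """Return the split {{a, b}, {c, d}} induced on four taxa, or None if unresolved."""
    wanted = frozenset(quartet)
    collected: List[FrozenSet[str]] = []
    leaf_sets(tree, collected)
    for clade in collected:
        inside = clade & wanted
        if len(inside) == 2:  # this edge separates two quartet taxa from the other two
            return frozenset([inside, wanted - inside])
    return None


def dominant_quartet(gene_trees: Iterable, quartet: Iterable[str]) -> Optional[Split]:
    """Return the most frequent induced quartet topology across the gene trees."""
    quartet = list(quartet)
    counts = Counter(quartet_topology(tree, quartet) for tree in gene_trees)
    counts.pop(None, None)  # ignore gene trees that leave the quartet unresolved
    return counts.most_common(1)[0][0] if counts else None


if __name__ == "__main__":
    gene_trees = [
        (("A", "B"), ("C", "D")),
        (("A", ("B", "E")), ("C", "D")),
        (("A", "B"), ("C", "D")),
        (("A", "C"), ("B", "D")),
        (("A", "D"), ("B", "C")),
    ]
    print(dominant_quartet(gene_trees, ["A", "B", "C", "D"]))
```

Under the MSC and bounded-HGT results cited above, the most frequent topology in such a tally is expected to match the species tree restricted to those four taxa once enough loci are sampled.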

Protocols for Species Tree Estimation

Protocol 1: Evaluating Species Tree Methods with Simulated Data

This protocol is designed to benchmark the performance of different species tree estimation methods under controlled conditions of ILS and HGT.

1. Input Data Preparation:

- Software Required: Simulators like `SimPhy` (for ILS under MSC) or custom scripts (to inject HGT events).

- Procedure:

  - Generate a known model species tree (e.g., using a Yule process).

  - Simulate gene trees within this species tree under the MSC model to introduce ILS. Branch lengths in coalescent units (`θ`) control the level of ILS (smaller `θ` increases ILS).

  - Introduce HGT events on the species tree according to a stochastic model (e.g., Poisson process) or a highways model. The rate parameter (`λ`) controls the frequency of HGT events.

  - For each gene tree, simulate sequence evolution (e.g., using `INDELible` or `Seq-Gen`) to produce multiple sequence alignments for each locus.

2. Species Tree Inference:

- Software: ASTRAL-2, wQMC, NJst, and Maximum Likelihood concatenation (e.g., RAxML).

- Procedure for Summary Methods (ASTRAL-2, wQMC, NJst):

- Step 1: Estimate individual gene trees from the simulated sequence alignments using a method like RAxML or IQ-TREE.

- Step 2: Input the set of estimated gene trees into the species tree method.

- For ASTRAL-2: Run `astral -i input_gene_trees.tre -o species_tree.tre`.

- For wQMC: Use the supplied script to process gene trees into quartets and amalgamate.

- Procedure for Concatenation (CA-ML):

- Step 1: Concatenate all sequence alignments into a single supermatrix.

- Step 2: Infer a tree on the supermatrix using RAxML: `raxmlHPC -s supermatrix.fa -n concat -m GTRGAMMA -p 12345` (RAxML requires a random seed via `-p`; the value shown is arbitrary).

3. Accuracy Assessment:

- Metric: Compare the estimated species tree to the true, simulated species tree using the Robinson-Foulds (RF) distance. Lower RF distances indicate higher accuracy.

- Tool: Use `HashRF` or the `RF.dist` function in the `phangorn` R package to calculate distances [9].
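For readers who want to see what the RF distance measures, here is a minimal sketch for two trees on the same taxon set. It assumes trees are hypothetical nested tuples of taxon names and treats them as unrooted by comparing only non-trivial bipartitions. It is meant as an illustration of the metric, not as a replacement for the tools named above.

```python
from typing import FrozenSet, List, Set

Split = FrozenSet[FrozenSet[str]]


def leaf_sets(tree, collected: List[FrozenSet[str]]) -> FrozenSet[str]:
    """Return the leaves under `tree`, recording the leaf set of every internal node."""
    if isinstance(tree, str):
        return frozenset([tree])
    leaves = frozenset().union(*(leaf_sets(child, collected) for child in tree))
    collected.append(leaves)
    return leaves


def bipartitions(tree) -> Set[Split]:
    """Non-trivial bipartitions (both sides have at least two taxa) of an unrooted tree."""
    collected: List[FrozenSet[str]] = []
    all_leaves = leaf_sets(tree, collected)
    splits = set()
    for clade in collected:
        rest = all_leaves - clade
        if len(clade) >= 2 and len(rest) >= 2:
            splits.add(frozenset([clade, rest]))
    return splits


def rf_distance(tree_a, tree_b) -> int:
    """Number of bipartitions found in exactly one of the two trees."""
    if leaf_sets(tree_a, []) != leaf_sets(tree_b, []):
        raise ValueError("both trees must contain the same taxa")
    return len(bipartitions(tree_a) ^ bipartitions(tree_b))


if __name__ == "__main__":
    true_tree = ((("A", "B"), "C"), ("D", "E"))
    estimated = ((("A", "C"), "B"), ("D", "E"))
    print(rf_distance(true_tree, estimated))
```

For fully resolved trees on n taxa the distance ranges from 0 (identical topologies) to 2(n - 3), so dividing by that maximum gives a normalized score that is comparable across datasets of different sizes.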

Diagram: Workflow summary of Protocol 1.

Protocol 2: Phylogenetic Marker Selection from Whole-Genome Alignments

This protocol describes the use of the Phylomark algorithm to identify a minimal set of conserved phylogenetic markers that recapitulate the whole-genome alignment (WGA) phylogeny, ideal for downstream species tree analysis [10].

1. Whole-Genome Alignment and Filtering:

- Input: Multiple draft or complete genomes.

- Software: `Mugsy` or `Progressive Mauve` for WGA construction.

- Procedure:

  - Align genomes using `Mugsy` (output is a Multiple Alignment Format, MAF, file).

  - Parse the MAF file to retain only blocks containing homologous sequence from all genomes.

  - Convert conserved blocks to a concatenated FASTA alignment.

  - Remove all columns containing gaps using a tool like `mothur` to create a final, gapless WGA.
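The block filtering, concatenation, and gap removal in this step can be sketched in a few lines. The example below is an illustration under stated assumptions, not the Phylomark or mothur code: it assumes MAF `s` lines whose source field has the form `genome.contig` (so the genome name is the text before the first dot), keeps only the first sequence per genome in each block, and ignores strand and coordinate fields. The function names are hypothetical.

```python
from typing import Dict, Iterable, List


def parse_maf_blocks(lines: Iterable[str]) -> List[Dict[str, str]]:
    """Parse MAF text into a list of {genome_name: aligned_text} blocks."""
    blocks: List[Dict[str, str]] = []
    current = None
    for line in lines:
        fields = line.split()
        if not fields:                      # a blank line ends the current block
            if current:
                blocks.append(current)
            current = None
        elif fields[0] == "a":              # an "a" line starts a new block
            if current:
                blocks.append(current)
            current = {}
        elif fields[0] == "s" and current is not None and len(fields) >= 7:
            genome = fields[1].split(".")[0]      # assumed "genome.contig" naming
            current.setdefault(genome, fields[6])  # keep first sequence per genome
    if current:
        blocks.append(current)
    return blocks


def gapless_core_alignment(blocks: List[Dict[str, str]],
                           genomes: List[str]) -> Dict[str, str]:
    """Concatenate blocks present in all genomes and drop columns containing gaps."""
    parts: Dict[str, List[str]] = {g: [] for g in genomes}
    for block in blocks:
        if all(g in block for g in genomes):
            for g in genomes:
                parts[g].append(block[g])
    joined = {g: "".join(chunks) for g, chunks in parts.items()}
    length = len(joined[genomes[0]])
    keep = [i for i in range(length) if all(joined[g][i] != "-" for g in genomes)]
    return {g: "".join(joined[g][i] for i in keep) for g in genomes}


if __name__ == "__main__":
    with open("alignment.maf") as handle:   # hypothetical input path
        maf_blocks = parse_maf_blocks(handle)
    core = gapless_core_alignment(maf_blocks, ["g1", "g2", "g3"])
    for name, seq in core.items():
        print(f">{name}\n{seq}")
```

Dropping every gapped column is a conservative choice: it shrinks the alignment but leaves only positions where homology is asserted for all genomes, which is what the reference WGA tree in the next step is built on.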

2. Reference WGA Phylogeny Estimation:

- Software: `RAxML` or `FastTree2`.

- Procedure:

  - Infer a phylogenetic tree from the filtered WGA.

  - This tree serves as the reference "gold standard" (e.g., `WGA_tree.tre`).

3. Phylomark Analysis:

- Software: `Phylomark` Python script.

- Input Files:

  - The concatenated WGA in FASTA format.

  - A filter mask from `mothur` indicating polymorphic sites.

  - The reference WGA tree.

  - Multi-FASTA files of all input genomes.

  - A single reference genome FASTA file.

- Procedure:

  - Run `Phylomark` with a sliding window (e.g., fragment length=500 nt, step size=5) to slice the WGA.

  - The script filters fragments with insufficient polymorphic sites (user-defined minimum, e.g., 50).

  - For each retained fragment, it:

    - Uses BLAST to verify genomic contiguity.

    - Infers a tree (`FastTree2`).

    - Calculates the Robinson-Foulds (RF) distance between this fragment tree and the reference WGA tree.

  - The output is a list of genomic fragments and their RF distances.
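The sliding-window slicing and polymorphic-site filter described in this step can be sketched as follows. This is an independent illustration rather than Phylomark's own code: the function names are hypothetical, the alignment is assumed to be a gapless dictionary of equal-length sequences, and a column counts as polymorphic whenever it contains more than one distinct character. The defaults mirror the example values given above (500 nt fragments, step 5, at least 50 polymorphic sites).

```python
from itertools import accumulate
from typing import Dict, List, Tuple


def polymorphic_flags(alignment: Dict[str, str]) -> List[int]:
    """Return 1 for each alignment column with more than one distinct character."""
    sequences = list(alignment.values())
    return [1 if len(set(column)) > 1 else 0 for column in zip(*sequences)]


def candidate_fragments(alignment: Dict[str, str],
                        length: int = 500,
                        step: int = 5,
                        min_polymorphic: int = 50) -> List[Tuple[int, int]]:
    """Return (start, polymorphic_site_count) for every window passing the filter."""
    flags = polymorphic_flags(alignment)
    # Prefix sums let each window's count be read off in constant time.
    prefix = [0] + list(accumulate(flags))
    kept = []
    for start in range(0, len(flags) - length + 1, step):
        count = prefix[start + length] - prefix[start]
        if count >= min_polymorphic:
            kept.append((start, count))
    return kept


if __name__ == "__main__":
    toy = {
        "a": "AAAAAAAAAA",
        "b": "AATAAAAGCA",
        "c": "AATAAAAAAA",
    }
    print(candidate_fragments(toy, length=4, step=2, min_polymorphic=2))
```

Each retained window would then go through the contiguity check, tree inference, and RF comparison listed in the procedure.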

4. Marker Selection and Validation:

- Procedure:

- Select markers with the lowest RF distances.

- A shell script can be used to randomly select combinations of 3-8 top markers, concatenate their alignments, and calculate the combined RF distance to the WGA phylogeny.

- Manually compare the tree from the best marker set to the WGA tree to verify that major lineages are resolved.

- Design PCR primers for wet-lab validation if needed [10].

Visualization and Annotation of Phylogenetic Trees

The ggtree R package is a powerful tool for visualizing and annotating phylogenetic trees, especially when integrating complex associated data [8] [7].

Basic Tree Visualization:

- The tree is viewed using `ggtree(tree_object)`. Layers of annotations are added sequentially with the `+` operator [8].

- Key geometric layers include:

  - `geom_tiplab()`: Add taxa labels.

  - `geom_hilight()`: Highlight a clade with a rectangle.

  - `geom_cladelabel()`: Annotate a clade with a bar and text label.

  - `geom_tippoint()`, `geom_nodepoint()`: Add symbols to tips and internal nodes.

Tree Layouts: ggtree supports multiple layouts, including rectangular, circular, fan, slanted, and unrooted (using equal-angle or daylight algorithms), as well as cladograms (branch.length='none') [8] [7].

Annotation with Associated Data: The package seamlessly integrates with the treeio package to import and visualize diverse annotation data (e.g., evolutionary rates, ancestral states, geographic data) directly on the tree, mapping them to colors, sizes, and shapes of tree components [8].

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Software and Data Resources for Phylogenomic Analysis

| Resource Name | Category | Primary Function | Key Application in Protocol |
|---|---|---|---|
| ASTRAL-2 | Software Tool | Quartet-based species tree estimation | Primary species tree inference from gene trees (Protocol 1) [9]. |
| Phylomark | Software Algorithm | Identification of phylogenetic markers from WGA | Selecting optimal marker set from whole-genome data (Protocol 2) [10]. |
| RAxML | Software Tool | Phylogenetic inference under Maximum Likelihood | Estimating gene trees and the reference WGA tree [9] [10]. |
| Mugsy | Software Tool | Multiple whole-genome alignment | Creating the initial WGA for marker selection (Protocol 2) [10]. |
| ggtree | R Package | Visualization and annotation of phylogenetic trees | Creating publication-quality figures of species trees (Visualization Section) [8] [7]. |
| Whole-Genome Alignment (WGA) | Data Resource | Core genomic data for phylogenomic analysis | Serves as the reference phylogeny and source for marker extraction [10]. |
| Robinson-Foulds (RF) Distance | Analysis Metric | Topological distance between two trees | Quantifying accuracy in simulations and marker performance (Protocols 1 & 2) [9] [10]. |

Whole-Genome Alignment as the Cornerstone of Modern Comparative Genomics

Whole-genome alignment (WGA) stands as a foundational methodology in comparative genomics, enabling researchers to perform large-scale comparisons of entire genomes from different species or individuals within the same species [3]. These alignments provide a global perspective on genomic similarity and variation, yielding critical insights into species evolution, gene function, and the genetic basis of diseases [3]. The process of WGA involves identifying homologous regions between genomes, which may have been altered through evolutionary processes such as mutations, insertions, deletions, and rearrangements. As genomic sequencing technologies continue to advance, producing ever-increasing amounts of data, efficient and accurate WGA methods have become indispensable for unlocking the biological information contained within these sequences.

The significance of WGA extends beyond basic evolutionary studies into applied biomedical research. In drug development, for instance, understanding conserved genomic regions across species can inform target validation and toxicity studies [11]. Furthermore, population-scale sequencing projects, such as the Tohoku Medical Megabank Project, which sequenced 100,000 participants, rely on WGA methodologies to build foundations for personalized medicine and prevention strategies [12]. The growing recognition of population-specific genetic variants underscores the need for comprehensive WGA approaches that can accommodate diverse genomic datasets beyond populations of European descent, enabling more equitable precision medicine initiatives [11].

Methodological Approaches to Whole-Genome Alignment

Classification of WGA Algorithms

Whole-genome alignment algorithms can be broadly categorized into several classes based on their underlying computational strategies. Understanding these categories is essential for selecting the appropriate tool for specific research applications.

Table 1: Classification of Whole-Genome Alignment Methods

| Method Type | Core Principle | Representative Tools | Strengths | Limitations |
|---|---|---|---|---|
| Suffix Tree-Based | Uses tree structures containing all suffixes of reference sequences to find maximal unique matches | MUMmer | High accuracy for identifying conserved regions; efficient for closely related genomes | High memory consumption for large genomes |
| Hash-Based | Creates tables of k-mers or seeds for rapid sequence comparison | BWA, BOWTIE2 | Fast alignment of short reads; optimized for large volumes of data | Challenges with complex repetitive regions |
| Anchor-Based | Identifies conserved anchors first, then extends alignment | Minimap2 | Balanced speed and sensitivity; good for long-read technologies | Performance depends on anchor identification quality |
| Graph-Based | Represents reference as a graph to capture variation | GraphAligner, VG | Handles structural variation well; pangenome applications | Computational complexity; newer tools still evolving |

Suffix tree-based methods, such as MUMmer, utilize a "Maximal Unique Match" (MUM) finding algorithm that identifies subsequences occurring exactly once in each genome [3]. These MUMs represent regions of high similarity or conserved regions between genomes, which are then organized to maintain their original order before filling in the spaces between them with detailed alignment. This approach is particularly effective for aligning closely related genomes, such as different bacterial strains, though newer versions have been adapted to handle larger eukaryotic genomes [3].

Hash-based methods employ a different strategy, creating indexes of short sequences (k-mers) from the reference genome to enable rapid comparison with query sequences [3]. Tools like BWA and BOWTIE2, which are commonly grouped with these indexed aligners although they store the reference in an FM-index (Burrows-Wheeler transform) rather than a plain hash table, have been optimized for short reads generated by next-generation sequencing technologies, excelling in processing large volumes of data and pinpointing small-scale genetic variations with high accuracy. These methods are particularly valuable for population genetics studies, such as the whole-genome sequencing of 3,135 Japanese individuals that identified over 44 million genetic variants [11].
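The k-mer indexing idea can be shown with a plain dictionary. The sketch below is a generic illustration of hash-based seeding, with hypothetical function names; it is not the indexing scheme of any specific aligner, and it handles only exact k-mer matches on the forward strand.

```python
from collections import defaultdict
from typing import Dict, List, Tuple


def build_kmer_index(reference: str, k: int) -> Dict[str, List[int]]:
    """Map every k-mer in the reference to the list of positions where it starts."""
    index: Dict[str, List[int]] = defaultdict(list)
    for pos in range(len(reference) - k + 1):
        index[reference[pos:pos + k]].append(pos)
    return dict(index)


def find_seeds(index: Dict[str, List[int]], query: str, k: int) -> List[Tuple[int, int]]:
    """Return (query_position, reference_position) pairs for exact k-mer hits."""
    seeds = []
    for q_pos in range(len(query) - k + 1):
        for r_pos in index.get(query[q_pos:q_pos + k], []):
            seeds.append((q_pos, r_pos))
    return seeds


if __name__ == "__main__":
    reference = "ACGTACGTGA"
    index = build_kmer_index(reference, k=4)
    print(find_seeds(index, "TACGTG", k=4))
```

Seeds that share the same diagonal (reference position minus query position) point to one candidate alignment location; in the example, three of the four seeds fall on diagonal 3. The k-mer `ACGT` occurs twice in the reference and therefore also produces an off-diagonal hit, which is a small-scale version of the repeat problem noted in the table above.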

Anchor-based methods represent a hybrid approach that first identifies high-similarity regions (anchors) between genomes and then performs more detailed alignment in these regions. Tools like Minimap2 use this strategy to achieve a balance between speed and sensitivity, making them particularly suitable for long-read sequencing technologies [3]. These methods have proven effective for aligning sequences with moderate levels of divergence.

Graph-based methods constitute the most recent advancement in WGA algorithms, representing genomes as graphs rather than linear sequences [13]. This approach naturally captures genetic variation and uncertainty, enabling more comprehensive comparisons across diverse individuals or populations. GraphAligner, for example, has demonstrated the ability to align long reads to genome graphs 13 times faster than previous state-of-the-art tools while using 3 times less memory [13]. Such graph-based approaches are particularly powerful for variant-rich regions and for building pangenome references that encompass the genetic diversity of a species.

Advanced and Emerging Approaches

Recent methodological innovations continue to expand the capabilities of whole-genome alignment. Graph-based alignment tools like GraphAligner implement a seed-and-extend strategy with minimizer-based seeding that exploits the fact that long reads typically span simpler genomic regions [13]. This approach enables efficient alignment of noisy long reads to complex graphs, facilitating applications in error correction, genome assembly, and genotyping of variants in a pangenome context.
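Minimizer-based seeding reduces the number of k-mers that need to be indexed by keeping, for each window of consecutive k-mers, only the smallest one. The sketch below illustrates the general (w, k)-minimizer scheme under simplifying assumptions: k-mers are ordered lexicographically, whereas practical tools typically order them by a hash value, and only the forward strand is considered. It is not GraphAligner's implementation.

```python
from typing import List, Tuple


def minimizers(seq: str, k: int, w: int) -> List[Tuple[int, str]]:
    """Return (position, k-mer) for the smallest k-mer in each window of w k-mers."""
    kmers = [seq[i:i + k] for i in range(len(seq) - k + 1)]
    chosen: List[Tuple[int, str]] = []
    for start in range(len(kmers) - w + 1):
        window = kmers[start:start + w]
        # Pick the smallest k-mer; ties go to the leftmost occurrence.
        offset = min(range(w), key=lambda i: (window[i], i))
        pos = start + offset
        # Adjacent windows often share a minimizer, so record each position once.
        if not chosen or chosen[-1][0] != pos:
            chosen.append((pos, kmers[pos]))
    return chosen


if __name__ == "__main__":
    print(minimizers("ACGTTGCA", k=3, w=3))
```

Because two sequences that share a sufficiently long exact match also share the minimizers inside it, comparing minimizers instead of all k-mers preserves most seed hits while shrinking the index.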

The integration of machine learning, particularly deep learning, represents a promising frontier in phylogenetic analysis and WGA [14]. While adoption has been slower in phylogenetics due to the complex nature of phylogenetic data, new methods for encoding training data using compact bijective ladderized vectors or transformers are enabling the handling of larger trees and genomic datasets [14]. These approaches have the potential to significantly reduce computational costs compared to traditional methods, especially for computationally demanding tasks such as model selection or estimating branch support values.

Commercial WGA solutions have also seen continuous improvement, with tools like Qiagen's Whole Genome Alignment plugin receiving regular updates for enhanced functionality [15]. Recent versions have introduced capabilities such as contig rearrangement to minimize crossing connections between genomes and options to color alignment blocks by their position on a reference genome, improving visualization and interpretation of results [15].

Experimental Protocols and Workflows

Standardized WGA Protocol for Phylogenetic Studies

The following protocol outlines a comprehensive workflow for whole-genome alignment focused on extracting phylogenetic blocks, incorporating best practices from large-scale sequencing projects [12] [3] [11].

Sample Preparation and DNA Extraction

- Obtain high-quality genomic DNA from biological samples using standardized extraction methods. For blood samples, both the Autopure LS system (Qiagen) and the GENE PREP STAR NA-480 (Kurabo) have been used in large-scale studies [12]. For cord blood, QIAsymphony SP (Qiagen) is recommended, while saliva samples can be processed using Oragene preservative solution (DNA Genotek).

- Quantify DNA concentration using fluorescence-based methods such as the Quant-iT PicoGreen dsDNA kit (Invitrogen) and adjust to 50 ng/μL [12]. Verify DNA quality through fragment analysis, ensuring minimal degradation.

- Store extracted DNA at 4°C for short-term use or -80°C for long-term preservation. Implement quality control measures following international standards such as ISO 20387:2018 for biobanking [12].

Library Preparation and Sequencing

- Fragment genomic DNA to an average target size of 550 bp using focused-ultrasonication (e.g., Covaris LE220) [12].

- Prepare sequencing libraries using PCR-free protocols to maintain natural sequence representation. For Illumina platforms, use TruSeq DNA PCR-free HT sample prep kit with unique dual indexes for 96 samples. For MGI platforms, employ MGIEasy PCR-Free DNA Library Prep Set [12].

- Implement automation for library preparation using liquid handling systems (e.g., Agilent Bravo for Illumina libraries or MGI SP-960 for MGI platforms) to ensure reproducibility and throughput [12].

- Perform library quality control using Qubit dsDNA HS Assay for concentration measurement and fragment analyzers (e.g., Advanced Analytical Technologies) or TapeStation systems for size distribution analysis [12].

- Sequence libraries on appropriate platforms (Illumina NovaSeq series or MGI DNBSEQ series) following manufacturer protocols, aiming for sufficient coverage (typically 30x for variant detection) [12].

Data Preprocessing and Quality Control

- Transfer raw sequencing data (FASTQ format) to high-performance computing infrastructure [12].

- Perform initial quality assessment using FastQC to evaluate base quality scores, duplication rates, and sequence composition [12].

- Align reads to an appropriate reference genome using optimized aligners such as BWA-MEM or BWA-mem2 [12] [11].

- Process aligned BAM files to mark duplicates, perform base quality score recalibration, and generate alignment metrics using tools like Picard and GATK following best practices [12] [11].

- Verify sample identity by comparing with independently generated genotype data when available to detect sample mix-ups [12].

Figure 1: Comprehensive workflow for whole-genome alignment and phylogenetic analysis.

Whole-Genome Alignment and Phylogenetic Block Extraction

Genome Alignment and Variant Calling

- Select appropriate WGA tools based on research objectives, data type, and evolutionary distance between genomes (refer to Table 1 for guidance).

- For closely related genomes or reference-based alignment, use suffix tree-based tools like MUMmer, which identifies maximal unique matches between genomes and performs detailed alignment between these anchor points [3].

- For population-scale studies with significant diversity, employ graph-based aligners like GraphAligner, which provides rapid and versatile sequence-to-graph alignment, effectively handling genetic variation [13].

- Execute alignment with parameters optimized for specific sequencing technologies and evolutionary distances. For NovaSeq series, monitor percentage occupied and pass filter metrics to ensure optimal loading concentrations [12].

- Perform variant calling following GATK Best Practices, including haplotype calling with GATK HaplotypeCaller, multi-sample joint calling with GATK GnarlyGenotyper, and variant quality score recalibration [12] [11].

- Apply stringent quality filters, excluding variants with low quality scores, excess heterozygosity, or unexpected allele-frequency differences between datasets [11].

Phylogenetic Block Identification and Analysis

- Extract conserved phylogenetic blocks from whole-genome alignments using identity and length thresholds appropriate for the evolutionary scope of the study.

- Annotate variants using functional prediction tools like ANNOVAR and VEP with LOFTEE plugin to identify potentially damaging variants [11].

- For population genetics analyses, filter variants to remove those in segmental duplications or low-complexity regions to avoid alignment artifacts [11].

- Construct phylogenetic trees using appropriate methods (maximum likelihood, Bayesian inference) based on the identified conserved blocks.

- Perform additional population genetics analyses such as principal component analysis (PCA) using PLINK and ancestry estimation with ADMIXTURE when working with multiple populations [11].
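The block-extraction step that opens this list can be sketched in Python. This is a minimal illustration, not the method of any tool cited here: the function name `extract_blocks`, the thresholds, and the toy alignment are all hypothetical, and "identity" is assumed to mean the fraction of columns in a gap-free run where every genome carries the same base.

```python
from typing import Dict, List, Tuple

def extract_blocks(alignment: Dict[str, str],
                   min_identity: float = 0.9,
                   min_length: int = 5) -> List[Tuple[int, int]]:
    """Return half-open (start, end) column ranges of gap-free runs whose
    fraction of fully conserved columns is at least min_identity."""
    seqs = list(alignment.values())
    n_cols = len(seqs[0])
    if any(len(s) != n_cols for s in seqs):
        raise ValueError("all aligned sequences must have the same length")

    blocks = []
    start = None
    # Iterate one column past the end so a run touching the last column is closed.
    for col in range(n_cols + 1):
        gap_free = col < n_cols and all(s[col] != "-" for s in seqs)
        if gap_free and start is None:
            start = col
        elif not gap_free and start is not None:
            length = col - start
            identical = sum(1 for c in range(start, col)
                            if len({s[c] for s in seqs}) == 1)
            if length >= min_length and identical / length >= min_identity:
                blocks.append((start, col))
            start = None
    return blocks

# Toy three-genome alignment (hypothetical data).
toy_alignment = {
    "genome_a": "ACGTACGT--ACG",
    "genome_b": "ACGTACCT-AACG",
    "genome_c": "ACGTACGTA-ACG",
}
blocks_relaxed = extract_blocks(toy_alignment, min_identity=0.8, min_length=5)
blocks_strict = extract_blocks(toy_alignment, min_identity=0.9, min_length=5)
```

In practice the thresholds would be tuned to the evolutionary distance among the genomes: divergent taxa usually need a lower identity cutoff, and a longer minimum length for enough informative sites per block.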

Figure 2: Phylogenetic block extraction and analysis workflow from aligned genomes.

Essential Research Reagents and Computational Tools

Research Reagent Solutions

Table 2: Essential Research Reagents for Whole-Genome Sequencing and Alignment

| Category | Specific Product/Kit | Manufacturer/Provider | Primary Function |
|---|---|---|---|
| DNA Extraction | Autopure LS | Qiagen | Automated purification of high-quality DNA from blood samples |
| DNA Extraction | QIAsymphony SP | Qiagen | DNA extraction from cord blood |
| DNA Extraction | Oragene | DNA Genotek | Saliva collection and DNA preservation |
| DNA Quantification | Quant-iT PicoGreen dsDNA | Invitrogen | Fluorescence-based accurate DNA concentration measurement |
| Library Preparation | TruSeq DNA PCR-free HT | Illumina | PCR-free library construction for Illumina platforms |
| Library Preparation | MGIEasy PCR-Free DNA Library Prep Set | MGI Tech | PCR-free library construction for MGI platforms |
| Library QC | Qubit dsDNA HS Assay | Life Technologies | Accurate library concentration measurement |
| Library QC | Fragment Analyzer | Advanced Analytical Technologies | Library size distribution analysis |
| Sequencing | NovaSeq S4/S1 Reagent Kits | Illumina | High-throughput sequencing on NovaSeq platforms |
| Sequencing | DNBSEQ-G400RS Sequencing Set | MGI Tech | High-throughput sequencing on MGI platforms |

Table 3: Essential Computational Tools for Whole-Genome Alignment and Analysis

| Tool | Primary Function | Application Context | Key Features |
|---|---|---|---|
| BWA/BWA-mem2 | Sequence alignment | Short-read alignment to linear references | Optimized for speed and accuracy with NGS data |
| GraphAligner | Sequence-to-graph alignment | Long-read alignment to variation graphs | 13x faster than previous tools; handles complex variation |
| MUMmer | Whole-genome comparison | Alignment of closely related genomes | Suffix tree-based; identifies maximal unique matches |
| GATK | Variant discovery | Variant calling and filtering | Industry standard; best practices workflow |
| IGV | Data visualization | Exploration of alignments and variants | Interactive; handles large-scale genomic data |
| Jalview | Multiple sequence alignment | Visualization and analysis of phylogenetic blocks | Linked view of DNA and protein products |
| PLINK | Population genetics | PCA, relatedness estimation | Efficient handling of large genotype datasets |
| ADMIXTURE | Population structure | Ancestry estimation | Maximum likelihood estimation of ancestry proportions |

The computational toolkit for WGA has evolved to address specific challenges in modern genomics. For conventional alignment to linear references, BWA-MEM and BWA-mem2 remain widely used for their efficiency with short-read data [12] [11]. For more complex alignment scenarios involving structural variation or diverse haplotypes, graph-based aligners like GraphAligner offer significant advantages in speed and accuracy [13]. The VG toolkit provides alternative graph-based alignment capabilities, though benchmarking has shown GraphAligner to be approximately 13 times faster with 3 times less memory usage [13].

Visualization tools are essential for interpreting whole-genome alignments and validating phylogenetic blocks. The Integrative Genomics Viewer (IGV) enables interactive exploration of large-scale genomic datasets, allowing researchers to visualize alignments, variants, and annotations simultaneously [16]. Jalview provides specialized functionality for multiple sequence alignment visualization and analysis, particularly valuable for examining conserved regions across species [17]. These visualization tools often incorporate color schemes optimized for biological data visualization, following principles such as using perceptually uniform color spaces and considering color deficiencies among researchers [18].

Applications in Pharmaceutical and Clinical Research

Whole-genome alignment methodologies have profound implications for pharmaceutical research and drug development. By enabling comprehensive identification of genetic variation across diverse populations, WGA facilitates the discovery of clinically actionable variants that may influence drug response, toxicity, and efficacy [11]. The construction of population-specific reference panels, such as the Japanese haplotype reference panel developed from 3,135 individuals, demonstrates how WGA can address the current bias in genomic databases toward European populations [11]. This is particularly important for drug safety, as population-specific variants in pharmacogenes can lead to unexpected therapeutic effects in underrepresented populations.

The functional annotation of variants identified through WGA enables researchers to prioritize putative loss-of-function (pLOF) variants in drug target genes [11]. By integrating WGA data with resources like the DrugBank and Therapeutic Target databases, researchers can assess the constraint of pLOF variants in genes relevant to specific therapeutic areas. This approach allows for the evaluation of potential genetic constraints on drug targets before significant investment in development, potentially de-risking the drug discovery process.

In clinical research, WGA supports the development of personalized treatment strategies by providing a comprehensive view of an individual's genomic variation in the context of population diversity. Graph-based reference genomes that incorporate variation from diverse populations enable more accurate alignment and variant calling for clinical genomes, potentially improving the diagnostic yield in genomic medicine [13]. As long-read sequencing technologies become more accessible in clinical settings, tools like GraphAligner that efficiently align these reads to complex variation-aware references will play an increasingly important role in clinical genomics.

Future Perspectives and Concluding Remarks

The field of whole-genome alignment continues to evolve rapidly, driven by advances in sequencing technologies, computational methods, and the growing appreciation of genomic diversity. Graph-based genome representations are increasingly becoming standard for comparative genomics, better accommodating the extensive variation observed within and between species [13]. The integration of deep learning approaches promises to address some of the most computationally challenging aspects of phylogenetics and WGA, potentially revolutionizing how we analyze large-scale genomic datasets [14].

Future developments in WGA methodology will likely focus on improving scalability to accommodate the ever-increasing volume of genomic data while enhancing sensitivity for detecting complex variation. The combination of phylogenetics and population genetics within deep learning frameworks represents a particularly promising direction [14]. As these methods mature, they may significantly reduce the computational costs associated with traditional phylogenetic approaches while improving accuracy.

For the pharmaceutical industry and clinical research, ongoing efforts to diversify genomic references through projects like the Japanese population sequencing initiative [11] will be essential for realizing the full potential of precision medicine. Whole-genome alignment serves as the computational cornerstone that enables researchers to extract meaningful biological insights from these vast genomic resources, connecting sequence variation to function across the tree of life.

In conclusion, whole-genome alignment has established itself as an indispensable methodology in modern comparative genomics, with far-reaching applications in basic evolutionary research, pharmaceutical development, and clinical medicine. The continued refinement of WGA protocols and computational tools will undoubtedly yield new discoveries and enhance our understanding of genomic function and diversity across species and human populations.

In the era of genomics, the reconstruction of species evolutionary history is increasingly reliant on molecular data. However, a fundamental challenge persists: gene trees are not species trees [19]. The evolutionary history of individual genes often differs from the species' history due to biological processes such as incomplete lineage sorting (ILS), gene duplication and loss, and horizontal gene transfer [19] [20]. This article details the conceptual frameworks and practical protocols for inferring accurate species trees from gene tree data, with a specific focus on its application within whole-genome alignment research for identifying phylogenetic marker blocks.

Conceptual Frameworks: Understanding Gene Tree-Species Tree Discordance

Gene tree-species tree discordance arises from several evolutionary processes, each leaving a distinct signature on genomic data. The table below summarizes the primary causes and their implications for species tree inference.

Table 1: Core Conceptual Frameworks of Gene Tree-Species Tree Discordance

| Framework/Process | Core Principle | Impact on Gene Trees | Key Implication for Species Tree Inference |
|---|---|---|---|
| Multispecies Coalescent (MSC) [20] | Models the genealogical history of genes within a population genetics context. Ancestral polymorphisms can persist through speciation events. | Causes Incomplete Lineage Sorting (ILS), leading to topological differences between gene and species trees. | Methods must account for the probability distribution of gene trees within a species tree to be statistically consistent [20]. |
| Gene Duplication and Loss (DL) [19] | Genes duplicate; copies can be lost independently in different lineages. | Creates gene families of varying sizes. A gene tree contains speciation and duplication nodes. | Requires reconciliation models that map gene trees into the species tree, invoking duplications and losses to explain discordance [19] [21]. |
| Gene Transfer (T) [19] | Genes are horizontally transferred between species, replacing or adding to the recipient's genome. | Introduces topologies where a gene in one species is more closely related to genes from distantly related species. | Models must incorporate this process to avoid erroneous inferences, especially in prokaryotes [19]. |

These frameworks are not mutually exclusive. Genomic data often reflects the combined effects of multiple processes. For example, current estimates suggest that up to 30% of the human genome is more closely related to Gorilla than to Chimpanzee due to incomplete lineage sorting [19]. Modern probabilistic models aim to integrate these processes to improve the reliability of both gene tree and species tree reconstruction [19].

Methodological Approaches and Protocols

Species Tree Inference from Event-Labeled Gene Trees

A specialized case involves inferring a species tree from a gene tree where internal nodes are pre-labeled as representing either speciation or duplication events (e.g., derived from orthology analysis) [22]. The core mathematical insight is that the species tree must display all rooted triples (three-taxon statements) from the gene tree that are rooted in a speciation vertex and involve three distinct species [22]. The following protocol outlines this process.

Diagram 1: Species Tree from Event-Labeled Gene Trees

Experimental Protocol: From Orthology to Species Tree

Input Data Preparation: Begin with a gene tree where each internal node is labeled as a speciation or duplication event. This labeling can be derived from orthology clustering tools (e.g., OrthoMCL, ProteinOrtho) or preliminary reconciliation with a putative species tree [22].

Triple Extraction: Decompose the gene tree into all its constituent rooted triples (three-leaf subtrees). For a tree with n leaves, this generates \( \binom{n}{3} \) triples.

Triple Filtering: Identify and retain only those rooted triples that meet two criteria:

- The three leaves (genes) belong to three distinct species.

- The root node of the triple is labeled as a speciation event.

Consistency Check and Tree Building: Input the filtered set of triples into the BUILD algorithm [22]. This algorithm either:

- Constructs a species tree that displays all input triples, or

- Recognizes that the triple set is inconsistent, indicating potential errors in the gene tree or its event labels.
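The protocol above can be sketched in Python as follows. This is an illustrative sketch under stated assumptions, not the implementation from [22]: the nested-tuple tree encoding (`("S", child, ...)` for speciation, `("D", child, ...)` for duplication, plain strings for leaves named by species) and the function names are hypothetical. The `build` function follows the classic recursive scheme of connecting the two "inner" species of every triple and recursing on the connected components. Triples are collected directly at speciation nodes rather than by enumerating all \( \binom{n}{3} \) leaf triples and filtering, which yields the same filtered set.

```python
from itertools import combinations

def leaves(node):
    """Species labels at the tips of a subtree (duplicates allowed)."""
    if isinstance(node, str):
        return [node]
    return [sp for child in node[1:] for sp in leaves(child)]

def speciation_triples(node, out=None):
    """Collect triples (frozenset({a, b}), c), read as ab|c, whose root is a
    speciation node and whose three leaves come from distinct species."""
    if out is None:
        out = set()
    if isinstance(node, str):
        return out
    event, children = node[0], node[1:]
    if event == "S":
        for i, inner in enumerate(children):
            for j, outer in enumerate(children):
                if i == j:
                    continue
                for a, b in combinations(leaves(inner), 2):
                    for c in leaves(outer):
                        if len({a, b, c}) == 3:
                            out.add((frozenset((a, b)), c))
    for child in children:
        speciation_triples(child, out)
    return out

def build(species, triples):
    """Return a nested-tuple tree displaying all triples, or None if the
    triple set is inconsistent."""
    species = set(species)
    if len(species) == 1:
        return next(iter(species))
    # Keep only triples whose three species are all still present.
    relevant = [(ab, c) for ab, c in triples if ab <= species and c in species]
    parent = {s: s for s in species}

    def find(x):
        while parent[x] != x:
            x = parent[x]
        return x

    # For every triple ab|c, a and b must lie in the same child subtree.
    for ab, _ in relevant:
        a, b = tuple(ab)
        parent[find(a)] = find(b)
    groups = {}
    for s in species:
        groups.setdefault(find(s), set()).add(s)
    if len(groups) == 1:
        return None  # everything is forced together: no valid root split
    subtrees = []
    for group in sorted(groups.values(), key=sorted):
        sub = build(group, relevant)
        if sub is None:
            return None
        subtrees.append(sub)
    return tuple(subtrees)

# Hypothetical event-labeled gene tree: species d carries two paralogs.
gene_tree = ("S", ("S", ("S", "a", "b"), "c"), ("D", "d", "d"))
triples = speciation_triples(gene_tree)
species_tree = build(leaves(gene_tree), triples)
```

Returning `None` rather than raising keeps the inconsistent case easy to handle in a pipeline that loops over many gene families and flags those whose event labels need re-examination.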

Bayesian Phylogenetic Inference Protocol

For most genomic-scale analyses, a Bayesian framework is preferred as it incorporates uncertainty and provides a robust probabilistic inference of phylogeny. The following workflow and table detail a standardized protocol.

Diagram 2: Bayesian Phylogenetic Analysis Workflow

Table 2: Step-by-Step Bayesian Phylogenetic Protocol (Adapted from [23])

| Step | Protocol Description | Tools & Key Parameters |
|---|---|---|
| 1. Sequence Alignment | Perform robust multiple sequence alignment that accounts for uncertainty and evolutionary events like indels. | Tool: GUIDANCE2 with MAFFT. Parameters: For complex datasets, use Max-Iterate=1000 and localpair for sequences with local similarities. |
| 2. Format Conversion | Convert the final alignment to a format suitable for downstream analysis. | Tool: MEGA X, PAUP*. Action: Convert FASTA/PHYLIP to NEXUS format required by MrBayes. |
| 3. Model Selection | Automatically select the best-fit model of sequence evolution using statistical criteria. | Tool: ProtTest (proteins) or MrModeltest (nucleotides). Criterion: Akaike Information Criterion (AIC) or Bayesian Information Criterion (BIC). |
| 4. Bayesian Inference | Execute Markov Chain Monte Carlo (MCMC) sampling to approximate the posterior distribution of trees and parameters. | Tool: MrBayes. Parameters: Two independent runs of ≥ 2 million generations, sampling every 1000. Use model selected in Step 3. |
| 5. Diagnostics & Summary | Assess MCMC convergence and summarize the results. | Diagnostics: Ensure average standard deviation of split frequencies is < 0.01. Discard initial samples as burn-in. Output: Generate a majority-rule consensus tree with posterior clade probabilities. |

Machine Learning Integration for Phylogeny-Aware Prediction

Beyond tree inference, phylogenetic relationships are crucial for correcting confounding factors in predictive genomic models. This is particularly relevant in studies linking genotype to phenotype, such as antimicrobial resistance (AMR) prediction.

Application Note: Phylogeny-Aware AMR Prediction in M. tuberculosis

- Challenge: Standard machine learning (ML) models trained on bacterial genomic data often ignore evolutionary history, leading to spurious predictions. Mutations that are highly correlated due to shared ancestry (population structure) can be incorrectly identified as resistance markers [24].

- Solution: Incorporate a phylogeny-related parallelism score (PRPS) to pre-filter genetic features. The PRPS measures whether a genetic variant's distribution is correlated with the population structure of the sample set [24].

- Protocol:

- Construct a phylogenetic tree from core genome alignments of the bacterial strains.

- Calculate the PRPS for each genetic variant (e.g., SNP, indel).

- Filter out variants with a high PRPS, as they are likely linked to population structure rather than the AMR phenotype.

- Train the ML model (e.g., SVM, Random Forest) on the filtered feature set.

- Outcome: This approach reduces the number of features, increases model performance, and yields more biologically relevant candidate resistance markers by reducing false positives [24].

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Computational Tools for Gene Tree to Species Tree Inference

| Category | Tool Name | Primary Function & Application Note |
|---|---|---|
| Sequence Databases | NCBI GenBank, UniProt, Ensembl | Function: Central repositories for nucleotide and protein sequences. Note: Use Batch Entrez (NCBI) or ID mapping (UniProt) for efficient, large-scale sequence retrieval for genome-scale studies [25]. |
| Alignment & Model Selection | GUIDANCE2, MAFFT, ProtTest, MrModeltest | Function: Robust alignment and statistical model selection. Note: Automated model selection is critical for accurate branch length and topology estimation in downstream Bayesian inference [23]. |
| Species Tree Inference (MSC) | *BEAST, ASTRAL | Function: Infer species trees under the multispecies coalescent; *BEAST co-estimates gene trees and the species tree, whereas ASTRAL summarizes previously estimated gene trees into a species tree. Note: Essential for handling incomplete lineage sorting in multi-locus datasets [20]. |
| Reconciliation (DL) | Not specified in the cited sources | Function: Map gene trees onto a species tree, inferring duplications and losses. Note: Key for analyzing gene families from whole-genome data where copy number varies [19] [21]. |
| Bayesian Inference | MrBayes, BEAST2 | Function: Probabilistic phylogenetic inference using MCMC. Note: The gold standard for complex models, providing measures of uncertainty (posterior probabilities) [23]. |
| Whole-Genome Analysis | GATK, BWA | Function: SNP calling and read alignment for whole-genome re-sequencing data. Note: Used in studies that leverage genome-wide SNPs for high-resolution phylogenomics of closely related species [26]. |

The reconstruction of evolutionary histories, represented as phylogenetic trees, has been fundamentally transformed by the availability of whole-genome sequence data. While single-gene trees provide limited insights, the integration of information across entire genomes enables researchers to construct more robust and comprehensive phylogenetic landscapes—a "Forest of Life" that captures the complex evolutionary relationships among organisms. This paradigm shift towards genome-scale data analysis presents both unprecedented opportunities and significant computational and methodological challenges. The extraction of reliable phylogenetic blocks from whole-genome alignments forms the critical foundation for accurate tree construction, requiring sophisticated approaches to handle the scale and complexity of genomic information [27] [15].

Current phylogenetic methods face substantial hurdles in managing the ever-growing volume of genomic data. The exponential increase in genetic data intensifies computational and storage burdens, leading to substantial time constraints and a super-exponential rise in resource demands [27]. Furthermore, longer sequences may contain inconsistencies or noise that can lead to misleading results, complicating the tree inference process. This protocol addresses these challenges by integrating modern computational approaches with established phylogenetic principles, creating a standardized framework for constructing phylogenetic trees from genomic data within the context of whole-genome alignment extraction research.

Theoretical Background

Phylogenetic Tree Fundamentals

Phylogenetic trees are diagrammatic representations of evolutionary relationships among biological taxa based on their physical or genetic characteristics. These trees consist of nodes and branches, where nodes represent taxonomic units and branches depict estimated evolutionary relationships between these units. Trees contain two types of nodes: internal nodes (hypothetical taxonomic units, HTUs) and external nodes (leaf nodes representing operational taxonomic units, OTUs). The root node, the topmost internal node, symbolizes the most recent common ancestor of all leaf nodes and marks the evolutionary starting point [28].

Phylogenetic trees can be categorized into two primary types based on their topological structure. Rooted trees contain a root node from which the rest of the tree diverges, indicating explicit evolutionary direction. Unrooted trees lack a root node and only illustrate relationships between nodes without suggesting evolutionary direction. The evolutionary clade within a phylogenetic tree encompasses a node and all lineages stemming from it, representing a monophyletic group of organisms [28].

Multiple computational approaches exist for inferring phylogenetic trees from molecular data, each with distinct theoretical foundations, advantages, and limitations. These methods can be broadly classified into distance-based and character-based approaches, as summarized in Table 1.

Table 1: Common Phylogenetic Tree Construction Methods

| Algorithm | Principle | Hypothesis | Selection Criteria | Application Scope |
|---|---|---|---|---|
| Neighbor-Joining (NJ) | Minimal evolution: Minimizing total branch length | BME branch length estimation model | Constructs a single tree | Short sequences with small evolutionary distance [28] |
| Maximum Parsimony (MP) | Minimize evolutionary steps required to explain the dataset | No model required | Tree with smallest number of substitutions | High similarity sequences; difficult model scenarios [28] |
| Maximum Likelihood (ML) | Maximize probability of observing data given tree and model | Sites evolve independently; branches have different rates | Tree with maximum likelihood value | Distantly related sequences [28] |
| Bayesian Inference (BI) | Bayes' theorem to compute posterior probability of trees | Continuous-time Markov substitution model | Most frequently sampled tree in MCMC | Small number of sequences [29] [28] |

Distance-based methods, such as Neighbor-Joining (NJ), first convert molecular feature matrices into distance matrices representing evolutionary distances between species pairs, then apply clustering algorithms to infer phylogenetic relationships. NJ specifically employs an agglomerative clustering approach that builds trees by successively merging the closest pairs of nodes, resulting in a fast and efficient algorithm suitable for large datasets [28].
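The first stage of a distance-based method, turning aligned sequences into a pairwise distance matrix, can be sketched in Python. This sketch covers only the distance computation, not the NJ clustering itself. The Jukes-Cantor correction used here is one common choice and is an assumption of this example, as are the function names and the toy sequences.

```python
import math
from itertools import combinations

def p_distance(seq_a, seq_b):
    """Proportion of differing sites, ignoring columns with a gap in either sequence."""
    pairs = [(a, b) for a, b in zip(seq_a, seq_b) if a != "-" and b != "-"]
    if not pairs:
        raise ValueError("no comparable sites")
    return sum(a != b for a, b in pairs) / len(pairs)

def jukes_cantor(p):
    """Jukes-Cantor corrected distance: d = -3/4 * ln(1 - 4p/3)."""
    if p >= 0.75:
        raise ValueError("sequences are saturated; distance is undefined")
    return -0.75 * math.log(1 - 4 * p / 3)

def distance_matrix(alignment):
    """Symmetric dict-of-dicts of corrected distances between all taxa."""
    names = list(alignment)
    dist = {n: {m: 0.0 for m in names} for n in names}
    for a, b in combinations(names, 2):
        d = jukes_cantor(p_distance(alignment[a], alignment[b]))
        dist[a][b] = dist[b][a] = d
    return dist

# Toy alignment (hypothetical data).
toy = {
    "taxon1": "ACGTACGTAC",
    "taxon2": "ACGTACGTTT",
    "taxon3": "ACGTACGTAC",
}
matrix = distance_matrix(toy)
```

The corrected distance is always at least as large as the raw proportion of differences, because the correction accounts for multiple substitutions at the same site.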

Character-based methods include maximum parsimony (MP), maximum likelihood (ML), and Bayesian inference (BI). MP operates on the principle of Occam's razor, seeking the tree that requires the fewest evolutionary changes to explain the observed data. ML methods identify the tree that maximizes the probability of observing the sequence data given a specific evolutionary model. BI applies Bayesian statistics to compute the posterior probability of trees, incorporating prior knowledge about parameters and using Markov chain Monte Carlo (MCMC) sampling to approximate the posterior distribution [29] [28].

The complete process for constructing phylogenetic trees from genomic data involves multiple stages from sequence acquisition to tree visualization. The following workflow diagram illustrates the integrated pipeline for phylogenetic analysis:

Protocol: Bayesian Phylogenetic Analysis with MrBayes

Sequence Alignment and Quality Assessment

Objective: Generate robust multiple sequence alignments from genomic data and assess alignment quality.

Procedure:

Sequence Collection: Obtain homologous DNA or protein sequences through experimental data or public databases (GenBank, EMBL, DDBJ). Ensure sequence names contain only alphanumeric characters and underscores to avoid formatting issues [29].

Alignment with GUIDANCE2:

- Access the GUIDANCE2 server or command-line tool.

- Upload sequence files in FASTA format.

- Select MAFFT as the alignment tool with appropriate parameters:

  - For shorter sequences or rapid analyses: Use the `6mer` method.

  - For sequences with local similarities: Apply the `localpair` approach.

  - For longer sequences requiring global alignment: Implement the `genafpair` method.

- Execute alignment and obtain confidence scores [29].

Alignment Trimming:

- Precisely trim aligned sequences to remove unreliably aligned regions.

- Balance between removing noise and retaining genuine phylogenetic signal.

- Use automated trimming tools (e.g., TrimAl) or manual inspection.

Note: Default MAFFT parameters suit most datasets. For complex data, adjust the Max-Iterate parameter (0-1000 iterations) to optimize alignment [29].
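The trade-off in the trimming step (removing noise while keeping signal) can be illustrated with a simple gap-fraction filter in Python. This is a sketch of the general idea only. It does not reproduce TrimAl's algorithms, and the function name and the 0.5 threshold are assumptions chosen for illustration.

```python
def trim_gappy_columns(alignment, max_gap_fraction=0.5):
    """Drop alignment columns in which more than max_gap_fraction of the
    sequences carry a gap; return a new name -> sequence dict."""
    names = list(alignment)
    n_cols = len(alignment[names[0]])
    if any(len(alignment[n]) != n_cols for n in names):
        raise ValueError("all aligned sequences must have the same length")
    keep = [
        col for col in range(n_cols)
        if sum(alignment[n][col] == "-" for n in names) / len(names) <= max_gap_fraction
    ]
    return {n: "".join(alignment[n][col] for col in keep) for n in names}

# Toy alignment (hypothetical data): only the third column is mostly gaps.
toy = {"s1": "AC-GT", "s2": "A--GT", "s3": "ACTG-"}
trimmed = trim_gappy_columns(toy)
```

Lowering `max_gap_fraction` removes more columns and so more potential noise, at the cost of discarding sites that may still carry phylogenetic signal.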

Evolutionary Model Selection

Objective: Identify the optimal evolutionary model for phylogenetic inference using statistical criteria.

Procedure:

Format Conversion: Convert aligned sequences to appropriate formats using MEGA X or bioinformatics scripts:

- Convert FASTA/PHYLIP to NEXUS format for MrBayes compatibility.

- Ensure NEXUS files begin with the `#NEXUS` declaration [29].

Model Testing:

- For nucleotide data: Execute MrModeltest2 within PAUP*:

  - Copy the MrModelblock file to your working directory.

  - Execute it in PAUP* via `File > Execute`.

  - Use the generated `mrmodel.scores` file for analysis.

- For protein data: Run ProtTest (Java-dependent):

  - Navigate to the ProtTest directory in the command line.

  - Execute with appropriate parameters for your dataset.

- Select the best-fitting model using the Akaike Information Criterion (AIC) or Bayesian Information Criterion (BIC) [29].
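The two criteria named in the last step can be computed directly from each model's log-likelihood and number of free parameters: AIC = 2k - 2 ln L and BIC = k ln(n) - 2 ln L, where n is the number of alignment sites. The Python sketch below uses made-up log-likelihoods and parameter counts purely for illustration; they are not output from MrModeltest2 or ProtTest.

```python
import math

def aic(log_likelihood, n_params):
    """Akaike Information Criterion: lower is better."""
    return 2 * n_params - 2 * log_likelihood

def bic(log_likelihood, n_params, n_sites):
    """Bayesian Information Criterion: penalizes parameters more as n_sites grows."""
    return n_params * math.log(n_sites) - 2 * log_likelihood

def best_model(candidates, n_sites, criterion="AIC"):
    """Return the model name with the lowest score under the chosen criterion.
    candidates maps model name -> (log_likelihood, n_params)."""
    def score(item):
        lnl, k = item[1]
        return aic(lnl, k) if criterion == "AIC" else bic(lnl, k, n_sites)
    return min(candidates.items(), key=score)[0]

# Hypothetical fits for a 1000-site alignment (values are illustrative only).
candidates = {
    "JC": (-5200.0, 0),
    "HKY": (-5100.0, 4),
    "GTR+G": (-5090.0, 9),
}
best_by_aic = best_model(candidates, n_sites=1000, criterion="AIC")
best_by_bic = best_model(candidates, n_sites=1000, criterion="BIC")
```

With these toy numbers the two criteria disagree: AIC prefers the parameter-rich model, while the stronger penalty in BIC favors the simpler one. Such disagreement is common and is a reason to state in advance which criterion an analysis will use.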

Bayesian Tree Inference with MrBayes

Objective: Perform Bayesian phylogenetic inference using MrBayes under the selected evolutionary model.

Procedure:

MrBayes Setup:

- Download and install MrBayes (version 3.2.7a or later).

- Place NEXUS files in the MrBayes `bin` directory.

- Open a command line in this directory and launch MrBayes by typing `mb`.

Configure Analysis Parameters:

- Execute the following commands in MrBayes:
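As a hedged illustration of what such a command set might look like, the Python sketch below assembles a MrBayes block that could be appended to a NEXUS file. The run settings (two runs, 2 million generations, sampling every 1000) follow the Bayesian protocol table earlier in this article. The substitution model (`nst=6 rates=invgamma`, i.e. GTR+I+G), the four chains, and the 25% relative burn-in are placeholder assumptions that should be replaced by the model selected in the previous step and by settings suited to the dataset. The helper name `mrbayes_block` is hypothetical.

```python
def mrbayes_block(nst=6, rates="invgamma", ngen=2_000_000, samplefreq=1000,
                  nruns=2, nchains=4, burninfrac=0.25):
    """Return a MrBayes command block as text, ready to append to a NEXUS file."""
    lines = [
        "begin mrbayes;",
        "  set autoclose=yes nowarn=yes;",           # run without interactive prompts
        f"  lset nst={nst} rates={rates};",          # substitution model (placeholder)
        f"  mcmc ngen={ngen} samplefreq={samplefreq} nruns={nruns} nchains={nchains};",
        f"  sump relburnin=yes burninfrac={burninfrac};",  # summarize parameters
        f"  sumt relburnin=yes burninfrac={burninfrac};",  # summarize trees
        "end;",
    ]
    return "\n".join(lines) + "\n"

block = mrbayes_block()
```

The same commands, without the `begin mrbayes;` and `end;` wrapper, can also be typed one at a time at the MrBayes prompt after loading the alignment with `execute`.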

MCMC Diagnostics:

- Monitor average standard deviation of split frequencies (target <0.01).

- Check Potential Scale Reduction Factor (PSRF) values (should approach 1.0).

- Verify effective sample sizes (ESS > 200 for all parameters).

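- If convergence is not achieved, extend the runs with `mcmc append=yes ngen=500000` [29]. Here `ngen` is the total number of generations, so with `append=yes` it must be set higher than the generation count of the run being extended.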
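The first diagnostic, the average standard deviation of split frequencies (ASDSF), can be sketched in Python to make the quantity concrete. This is an illustration rather than MrBayes's own code: it assumes the sample standard deviation across runs and a minimum split frequency of 0.10 in at least one run, and MrBayes's exact conventions may differ. The split labels and frequencies are made up.

```python
from statistics import stdev

def asdsf(run_split_freqs, min_freq=0.10):
    """Average, over splits, of the standard deviation of each split's
    posterior frequency across independent runs.

    run_split_freqs: list of dicts (one per run) mapping split -> frequency.
    Splits below min_freq in every run are ignored (assumed convention)."""
    if len(run_split_freqs) < 2:
        raise ValueError("at least two runs are required")
    all_splits = set().union(*run_split_freqs)
    deviations = []
    for split in all_splits:
        freqs = [run.get(split, 0.0) for run in run_split_freqs]
        if max(freqs) >= min_freq:
            deviations.append(stdev(freqs))
    return sum(deviations) / len(deviations) if deviations else 0.0

# Two hypothetical runs on a four-taxon problem.
run1 = {"AB|CD": 0.98, "AC|BD": 0.02}
run2 = {"AB|CD": 0.96, "AC|BD": 0.04}
value = asdsf([run1, run2])
converged = value < 0.01
```

In this toy case the two runs differ by only 0.02 on the dominant split, yet the statistic is still above the 0.01 target, which shows how strict that threshold is for small numbers of splits.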

Advanced Approach: PhyloTune for Efficient Tree Updates

Objective: Implement the PhyloTune method to efficiently integrate new taxa into existing phylogenetic trees using pretrained DNA language models.

Procedure:

Model Setup:

- Obtain pretrained DNA language model (DNABERT).

- Fine-tune the model using taxonomic hierarchy information from your target phylogenetic tree.

Taxonomic Unit Identification:

- Utilize hierarchical linear probes (HLP) for each taxonomic rank.

- Simultaneously perform novelty detection and taxonomic classification.

- Identify the smallest taxonomic unit for new sequences [27].

High-Attention Region Extraction:

- Divide sequences into K equal regions.

- Calculate attention weights from the last transformer layer.

- Apply minority-majority voting to identify top M regions with highest attention scores.

- Extract these high-attention regions for subsequent analysis [27].
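The region-selection idea can be sketched in Python as follows. This is a loose interpretation, not the PhyloTune implementation from [27]: it assumes per-position attention weights are already available as plain lists, lets each sequence vote for its own top M regions, and keeps the M regions with the most votes. The voting rule, the tie-breaking by region index, and the function names are all assumptions.

```python
from collections import Counter

def region_scores(attention, k):
    """Mean attention in each of k near-equal contiguous regions of one sequence."""
    n = len(attention)
    bounds = [i * n // k for i in range(k + 1)]
    return [
        sum(attention[bounds[i]:bounds[i + 1]]) / max(1, bounds[i + 1] - bounds[i])
        for i in range(k)
    ]

def top_regions_by_vote(attention_per_seq, k, m):
    """Each sequence votes for its m highest-scoring regions; return the
    indices of the m regions with the most votes, in positional order."""
    votes = Counter()
    for attention in attention_per_seq:
        scores = region_scores(attention, k)
        ranked = sorted(range(k), key=lambda i: scores[i], reverse=True)
        votes.update(ranked[:m])
    winners = sorted(range(k), key=lambda i: (-votes[i], i))[:m]
    return sorted(winners)

# Hypothetical attention weights for three sequences of length 8.
attention_per_seq = [
    [0.1, 0.1, 0.9, 0.8, 0.2, 0.2, 0.7, 0.6],
    [0.5, 0.6, 0.9, 0.9, 0.1, 0.1, 0.2, 0.3],
    [0.0, 0.1, 0.6, 0.7, 0.1, 0.1, 0.9, 0.8],
]
selected = top_regions_by_vote(attention_per_seq, k=4, m=2)
```

The selected region indices would then be mapped back to sequence coordinates so that the same slices are extracted from every sequence before alignment.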

Targeted Subtree Construction:

- Perform multiple sequence alignment on high-attention regions using MAFFT.

- Construct subtrees using standard methods (ML, BI).

- Integrate updated subtrees into the main phylogenetic tree.

Note: PhyloTune significantly reduces computational requirements by focusing analysis on informative genomic regions and relevant subtrees, enabling efficient tree updates as new genomic data becomes available [27].

Data Visualization and Interpretation

Tree Visualization with ggtree

Objective: Create publication-quality visualizations of phylogenetic trees with comprehensive annotation capabilities.

Procedure:

Basic Tree Visualization:

- Install and load the ggtree package in R: `library(ggtree)`

- Import tree files (Newick, NEXUS) using `read.tree()` or `read.nexus()`

- Generate a basic tree plot: `ggtree(tree_object)`

Layout Selection:

- Choose appropriate layout based on data structure and presentation needs:

Tree Annotation:

- Add tip labels: `+ geom_tiplab()`

- Highlight clades: `+ geom_hilight(node=XX, fill="steelblue")`

- Add branch support values: `+ geom_nodelab(aes(label=label))`

- Map continuous traits: `+ geom_point(aes(color=trait_value))`

- Add metadata layers: `+ geom_facet(column="metadata_column")` [7]

Advanced Customization:

- Modify theme elements: `+ theme_tree()`

- Adjust branch colors: `+ aes(color=branch_length)`

- Scale branch lengths: `+ scale_x_continuous(limits=c(0, 0.1))` [7]

Color Scheme Implementation

Objective: Apply effective color schemes to enhance phylogenetic tree interpretation.

Procedure:

Define Color Palette:

- Create a consistent color palette for taxonomic groups or metadata categories.

- Ensure sufficient contrast between adjacent colors.

- Consider colorblind-friendly palettes.

Implementation in Nextstrain:

- Create a tab-separated values (TSV) file for custom color definitions:

- Reference the color file in the YAML configuration by setting `colors: "path/to/colors_updated.tsv"` under the `files` key [30].
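As a rough illustration of the color-definition step, the sketch below writes a colors file from a list of category values. It assumes the three-column, header-less, tab-separated layout (trait name, trait value, hex color) that Nextstrain color files commonly use, and it draws on the colorblind-friendly Okabe-Ito palette. The trait name `clade` and its values are hypothetical placeholders, and the layout should be checked against the documentation for the Nextstrain build in use.

```python
import csv
import io

# Okabe-Ito palette, widely recommended for colorblind-safe figures.
OKABE_ITO = [
    "#E69F00", "#56B4E9", "#009E73", "#F0E442",
    "#0072B2", "#D55E00", "#CC79A7",
]


def build_color_rows(trait, values, palette=OKABE_ITO):
    """Pair each category value with one palette color, in order."""
    if len(values) > len(palette):
        raise ValueError("more categories than colors in the palette")
    return [(trait, value, color) for value, color in zip(values, palette)]


def to_tsv(rows):
    """Serialize rows as tab-separated text without a header line."""
    buffer = io.StringIO()
    writer = csv.writer(buffer, delimiter="\t", lineterminator="\n")
    writer.writerows(rows)
    return buffer.getvalue()


if __name__ == "__main__":
    # Hypothetical trait and categories, for illustration only.
    rows = build_color_rows("clade", ["A", "B", "C"])
    print(to_tsv(rows), end="")
```

Generating the file from a fixed palette keeps category colors identical across related figures.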

Metadata Visualization:

- Map discrete metadata to node colors: `+ aes(color=metadata_variable)`

- Apply continuous color gradients: `+ scale_color_gradient(low="blue", high="red")`

- Use consistent color schemes across related figures [31].

Research Reagent Solutions

Table 2: Essential Research Reagents and Computational Tools

| Category | Item | Function | Example Tools/Formats |

|---|---|---|---|

| Sequence Alignment | Multiple Sequence Alignment Tools | Align homologous sequences for comparison | MAFFT, GUIDANCE2, MUSCLE [29] |

| Model Selection | Evolutionary Model Testers | Identify best-fitting substitution models | ProtTest, MrModeltest [29] |

| Tree Inference | Phylogenetic Algorithms | Construct trees from aligned sequences | MrBayes, RAxML, FastTree [27] [29] |

| Data Formats | Standardized File Formats | Enable tool interoperability | FASTA, PHYLIP, NEXUS, Newick [29] |

| Visualization | Tree Plotting Packages | Visualize and annotate phylogenetic trees | ggtree, iTOL, FigTree [7] |

| Language Models | DNA Language Models | Identify taxonomic units and informative regions | DNABERT, PhyloTune [27] |

Technical Considerations

Computational Requirements

The computational resources required for phylogenetic analysis vary significantly based on dataset size and methodological approach. For basic analyses, minimal requirements include a single-core CPU (≥2.0 GHz), 2 GB RAM, and 15 GB disk space. For larger genome-scale datasets, multi-core processors (>4 cores) and expanded RAM (≥8 GB) are strongly recommended to ensure computational efficiency [29].

Bayesian inference with MrBayes particularly benefits from parallel processing capabilities. The PhyloTune approach reduces computational burdens by targeting analysis to specific subtrees and informative genomic regions, making it suitable for updating large trees with new genomic data [27].

Method Selection Guidelines

Choose phylogenetic methods based on dataset characteristics and research objectives:

- For rapid analysis of large datasets: Neighbor-joining methods provide fast, reasonably accurate trees [28].

- For model-based inference with moderate datasets: Maximum likelihood offers statistical rigor with good computational efficiency [28].

- For incorporating uncertainty and prior knowledge: Bayesian inference provides posterior probabilities and credible intervals [29].

- For integrating new sequences into existing trees: PhyloTune enables efficient updates using DNA language models [27].

Quality Control and Validation

Implement robust validation procedures to ensure phylogenetic accuracy:

- Assess convergence: For Bayesian methods, monitor MCMC convergence using multiple diagnostics [29].

- Evaluate support: Calculate bootstrap support (ML) or posterior probabilities (BI) for tree nodes.

- Compare topologies: Use statistical tests (e.g., Shimodaira-Hasegawa test) to compare alternative tree hypotheses.

- Validate alignment quality: Use GUIDANCE2 scores to identify unreliably aligned regions [29].

This protocol provides a comprehensive framework for constructing phylogenetic trees from genomic data, with particular emphasis on whole-genome alignment extraction. By integrating traditional phylogenetic methods with innovative approaches like PhyloTune, researchers can efficiently analyze genome-scale data to reconstruct evolutionary relationships. The structured workflow—from sequence alignment to tree visualization—ensures reproducible and biologically meaningful results.

The "Forest of Life" concept emphasizes that modern phylogenetic analysis often involves constructing and comparing multiple trees from genomic data, rather than seeking a single true tree. The methods described here enable researchers to navigate this complex phylogenetic landscape, providing tools to extract evolutionary signals from whole-genome data and visualize phylogenetic relationships with clarity and precision. As genomic datasets continue to grow, the integration of machine learning approaches with established phylogenetic methods will become increasingly important for managing scale and complexity while maintaining biological accuracy.

Methodological Pipeline: From Raw Genomes to Phylogenetic Blocks

Within the context of whole-genome alignment extraction for phylogenetic blocks research, the selection of an appropriate sequencing technology is a critical foundational step. The fundamental division in the field lies between short-read and long-read sequencing technologies, each with distinct performance characteristics that directly impact the quality and completeness of phylogenetic analyses. This application note provides a detailed comparison of these platforms, focusing on their utility in generating accurate alignments for evolutionary studies, and offers structured protocols for their implementation.

Short-read technologies (e.g., Illumina) generate reads typically ranging from 50 to 300 base pairs (bp) through a sequencing-by-synthesis approach with fluorescently labelled nucleotides and reversible terminators [32] [33]. These platforms offer high throughput and base-level accuracy, but their limited read length creates inherent challenges for resolving complex genomic structures and repetitive elements [33] [3].

Long-read technologies encompass platforms from Pacific Biosciences (PacBio) and Oxford Nanopore Technologies (ONT). PacBio HiFi technology generates highly accurate (>99.9%) reads of 15–25 kilobases (kb) through a circular consensus sequencing approach [33] [34]. ONT technology can produce reads from 50 bp to over 4 megabases (Mb) by measuring changes in electrical current as DNA strands pass through protein nanopores. Because the method imposes no fixed upper limit on read length, it is uniquely suited to ultra-long reads; in practice, read length is bounded mainly by the integrity of the input DNA [33] [34].

The following table summarizes the core characteristics of each technology relevant to phylogenetic block extraction:

Table 1: Comparison of Short-Read and Long-Read Sequencing Technologies

| Feature | Short-Read Sequencing (Illumina) | Long-Read Sequencing (PacBio HiFi, ONT) |

|---|---|---|

| Typical Read Length | 50-300 bp [33] | 1 kb - >100 kb; typically 10-25 kb [33] [34] |

| Primary Technology | Sequencing-by-synthesis with reversible terminators [32] | PacBio: Circular Consensus Sequencing (CCS); ONT: Nanopore sensing [33] [34] |

| Typical Read Accuracy | >99.9% [35] | PacBio HiFi: >99.9% (consensus); ONT: ~98-99%+ [35] [34] |

| Variant Detection Strength | SNVs, small indels [35] | Structural Variations (SVs), large indels, complex variants [35] [34] |

| Performance in Repetitive Regions | Poor alignment accuracy due to ambiguous mapping of short fragments [35] [3] | Excellent; long reads span repetitive elements, enabling accurate placement [35] [34] |

| Phasing Capability | Limited to statistical inference or specialized assays | Direct haplotype phasing across long genomic stretches [34] |
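The accuracy figures in the table are often quoted as Phred quality scores, where Q = -10 · log10(error rate). The short sketch below converts between the two and estimates the expected number of errors per read. The read lengths used in the example are illustrative values chosen from the ranges in the table, not measurements.

```python
import math


def accuracy_to_phred(accuracy):
    """Convert per-base accuracy (e.g. 0.999) to a Phred quality score."""
    if not 0 <= accuracy < 1:
        raise ValueError("accuracy must be in [0, 1)")
    return -10 * math.log10(1 - accuracy)


def expected_errors(read_length, accuracy):
    """Expected number of erroneous bases in a read of the given length."""
    return read_length * (1 - accuracy)


if __name__ == "__main__":
    # Illustrative platform/read-length pairs.
    examples = [
        ("short read, 150 bp", 150, 0.999),
        ("HiFi read, 20 kb", 20_000, 0.999),
        ("ONT read, 20 kb", 20_000, 0.99),
    ]
    for label, length, acc in examples:
        q = accuracy_to_phred(acc)
        errs = expected_errors(length, acc)
        print(f"{label}: Q{q:.0f}, about {errs:.1f} expected errors per read")
```

The same per-base accuracy implies many more errors per read for a 20 kb read than for a 150 bp read, which is one reason the two platform classes are compared per base rather than per read.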

Table 2: Variant Detection Performance in Different Genomic Contexts [35]

| Variant Type | Short-Read Performance | Long-Read Performance |

|---|---|---|

| SNVs | High recall and precision in non-repetitive regions [35] | Similar high recall and precision in non-repetitive regions [35] |

| Small Indels (<10 bp) | Good recall and precision [35] | Good recall and precision [35] |

| Insertions (>10 bp) | Poor detection, especially in 10-50 bp range [35] | High sensitivity and accurate calling [35] |

| Structural Variations (SVs) | Significantly lower recall in repetitive regions; misses many small-to-intermediate SVs [35] | High recall and precision across all regions, including repetitive sequences [35] |

Workflow and Experimental Protocols

Core Workflow Diagram

The following diagram illustrates the general workflow for generating whole-genome alignments for phylogenetic research, highlighting key decision points where the choice of technology creates divergent paths.

Detailed Protocol: Library Preparation and Sequencing

3.2.1 Short-Read (Illumina) Library Preparation Protocol

- Nucleic Acid Fragmentation: Isolated DNA is mechanically or enzymatically sheared into fragments of a defined size distribution (e.g., 200-500 bp) optimal for cluster generation [32] [36].

- Adapter Ligation: Fragments are end-repaired and A-tailed, then ligated to platform-specific Y-shaped adapters carrying the P5 and P7 sequences. These adapters contain the sequences required for bridge amplification, sequencing primer binding sites, and index sequences for sample multiplexing [32] [36].

- Size Selection and Purification: Libraries are purified to remove adapter dimers and unligated fragments. Size selection (e.g., using SPRI beads) is performed to ensure a tight insert size distribution, which improves sequencing uniformity [32].

- Library Amplification: The adapter-ligated library is typically amplified via PCR (e.g., 4-10 cycles) to enrich for properly constructed fragments and provide sufficient mass for sequencing, especially when input DNA is limited [32] [36].

- Library Quantification: The final library is quantified using fluorometric methods (e.g., Qubit) and qPCR to ensure accurate loading onto the flow cell [32].

- Clonal Amplification and Sequencing: Libraries are loaded onto a flow cell where fragments undergo bridge amplification to form clonal clusters. Sequencing-by-synthesis with fluorescent reversible terminators is performed for the desired number of cycles (e.g., 2x150 bp paired-end) [32].
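When planning a paired-end run such as the 2x150 bp configuration mentioned above, the expected mean depth follows from the standard relation coverage = (number of reads × read length) / genome size. The sketch below applies it; the genome size and target depth in the example are assumed values for illustration and are not taken from the protocol.

```python
import math


def mean_coverage(n_read_pairs, read_length, genome_size):
    """Mean sequencing depth for a paired-end run (two reads per pair)."""
    return 2 * n_read_pairs * read_length / genome_size


def read_pairs_needed(target_coverage, read_length, genome_size):
    """Smallest number of read pairs that reaches the target mean depth."""
    return math.ceil(target_coverage * genome_size / (2 * read_length))


if __name__ == "__main__":
    # Assumed example: a 3 Gb genome sequenced to 30x with 2x150 bp reads.
    genome_size = 3_000_000_000
    pairs = read_pairs_needed(30, 150, genome_size)
    print(f"{pairs:,} read pairs")
    print(f"{mean_coverage(pairs, 150, genome_size):.1f}x mean coverage")
```

This is a mean over the whole genome. Duplicates, adapter content, and uneven coverage in repetitive or GC-extreme regions reduce the usable depth, so some headroom is usually planned.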

3.2.2 Long-Read (PacBio or ONT) Library Preparation Protocol

- Minimal DNA Fragmentation (Optional): For standard long-read libraries, DNA may be gently sheared to a target size (e.g., 15-20 kb for HiFi). For ultra-long reads (ONT), high-molecular-weight DNA is used with minimal fragmentation [33] [34]. DNA quality and integrity are paramount [34].

- Adapter Ligation:

- Size Selection (Optional): Libraries can be size-selected using the BluePippin or SageELF systems to enrich for longer fragments, which is crucial for improving assembly continuity and spanning large repeats.

- Library Amplification (Optional): PCR-free library preparation is standard for long-read sequencing to avoid amplification bias and maintain epigenetic modifications. However, PCR-based kits are available for very low-input samples [33].

- Sequencing:

- PacBio: The SMRTbell library is sequenced in zero-mode waveguides. For HiFi reads, the polymerase reads the circular template multiple times, and the subreads are collapsed into a highly accurate consensus sequence (CCS) [34].

- ONT: The library is loaded onto a flow cell (Flongle, MinION, PromethION). A voltage is applied, and strands of DNA are pulled through the nanopores. Basecalling (e.g., with Dorado) converts raw electrical signals (squiggles) into nucleotide sequences [34].

Bioinformatics Processing for Phylogenetic Block Extraction

Alignment and Processing Workflow