From Spiegelman to Nobel: The Definitive History and Future of Directed Evolution

Abstract

This article traces the revolutionary journey of directed evolution from its exploratory origins in Sol Spiegelman's Qβ replicase experiments to its maturation into a cornerstone of modern protein engineering, recognized by the 2018 Nobel Prize in Chemistry. Tailored for researchers, scientists, and drug development professionals, it provides a comprehensive analysis spanning foundational discoveries, key methodological breakthroughs, current troubleshooting challenges, and a comparative validation of its impact. The scope encompasses the transition from simple in vitro systems to the integration of advanced technologies like machine learning, microfluidics, and CRISPR, offering insights into how this powerful methodology continues to accelerate the development of novel enzymes, therapeutics, and biosynthetic pathways.

The Pioneering Experiments: Laying the Groundwork for Directed Evolution

The field of directed evolution, recognized by the 2018 Nobel Prize in Chemistry, did not emerge in a vacuum. Its conceptual roots are deeply embedded in principles observed and harnessed by humans for millennia. Selective breeding, also termed artificial selection, represents the earliest and most enduring practice of guiding biological evolution toward human-defined goals [1] [2]. For thousands of years, humans have consciously chosen plants and animals with desirable phenotypic traits for reproduction, thereby gradually but profoundly transforming wild species into the domesticated breeds and cultivars that sustain modern civilization [3]. This "unconscious" and "methodical" selection, as Charles Darwin categorized it, demonstrated that deliberate selection could effect substantial change over time [2].

Darwin himself relied heavily on this analogy, using the tangible results of artificial selection to argue for the plausibility of his theory of natural selection [2]. He saw domestication as a powerful model for understanding evolutionary change, a perspective that would eventually pave the way for bringing evolution into the laboratory. The critical transition in the mid-20th century was the move from selecting for visible traits in whole organisms to manipulating the molecular components of life in vitro. This shift set the stage for a new era of evolutionary experimentation, culminating in groundbreaking techniques that would allow scientists to evolve biomolecules directly, a process that would later be termed "directed evolution" [4] [5].

Selective Breeding: The Original Artificial Selection

Selective breeding is the process by which humans systematically develop particular phenotypic traits in organisms by choosing which individuals will reproduce [1]. Its history spans from prehistory to its establishment as a scientific practice.

Historical Development and Key Figures

The domestication of key species such as wheat, rice, and dogs began millennia ago, with significant advances documented by the Romans and later scholars [1]. However, Robert Bakewell, during the 18th-century British Agricultural Revolution, established selective breeding as a rigorous scientific practice [1]. His work with sheep (developing the New Leicester breed) and cattle (the Dishley Longhorn) demonstrated that methodical breeding could dramatically alter the size and form of livestock to meet market demands. The average weight of a slaughter bull, for instance, more than doubled from 370 pounds in 1700 to 840 pounds by 1786, largely due to Bakewell's influence [1].

Charles Darwin later formalized the concept, coining the term "selective breeding" and using it as a central analogy in On the Origin of Species to illustrate the power of selection [1] [2]. He distinguished between "methodical selection," driven by a predetermined standard, and "unconscious selection," which occurred without a specific intent to alter a breed [2]. This foundational work cemented the idea that selective pressure, whether artificial or natural, was a powerful mechanism for permanent change.

Underlying Genetic and Methodological Principles

At its core, selective breeding operates on the principle of manipulating heritable variation. Breeders selectively amplify desirable alleles by controlling mating pairs, often employing techniques such as inbreeding and linebreeding to fix traits [1]. A key concept is the prezygotic vs. postzygotic selection dichotomy [3]. In its "strong" form, artificial selection controls which individuals mate (prezygotic selection), leading to a dramatic acceleration of evolutionary change. In its "weaker" form, it involves selectively culling a population (postzygotic selection), allowing natural selection to act from an altered genetic baseline [3].

Table 1: Core Techniques in Traditional Selective Breeding

| Technique | Methodology | Primary Objective | Example Application |
|---|---|---|---|
| Methodical Selection | Systematic breeding according to a predetermined ideal or standard for the breed [2]. | To establish and maintain stable, predictable traits passed to the next generation [1]. | Breeding sheep for uniformly long, lustrous wool [1]. |
| Inbreeding | Mating of closely related individuals that share a high degree of genetic similarity [1]. | To "fix" or homogenize desired traits within a bloodline, creating "pure breeds" [1]. | Developing purebred dogs with highly consistent appearance and behavior. |
| Linebreeding | A milder form of inbreeding that mates individuals from the same ancestral line without direct sibling/parent mating. | To maintain a high concentration of a specific ancestor's genes while minimizing inbreeding depression. | Perpetuating the traits of a single outstanding bull across multiple generations of cattle. |

While powerful, selective breeding has limitations. It is generally slow, requiring many generations, and is constrained by the existing genetic variation within the species or closely related crossbreeds. Furthermore, single-trait breeding can be problematic, sometimes leading to unintended correlated consequences, such as roosters bred for fast growth losing their typical courtship behaviors [1].

The Transition to Laboratory Evolution

The 20th century saw the principles of selection move from the field into the controlled environment of the laboratory. This transition was marked by a shift in focus from whole organisms to individual genes and molecules, and from visible traits to specific biochemical functions.

Chemical Mutagenesis and Adaptive Evolution of Microbes

A pivotal step was the use of chemical mutagens to increase mutation rates in laboratory organisms, thereby accelerating the generation of diversity. An early example from 1964 involved using chemical mutagenesis on the bacterium Aerobacter aerogenes to induce a xylitol utilization phenotype, a study aimed at understanding how new metabolic functions evolve in nature [4]. These early adaptive evolution experiments demonstrated that selection pressures could be applied in a laboratory setting to isolate novel functions from a pool of random mutants, even without knowledge of the underlying genetic changes.

Spiegelman's In Vitro RNA Evolution Experiments

In the 1960s, a landmark series of experiments by Sol Spiegelman and colleagues bridged the gap between observing evolution and actively directing it in vitro [4] [5] [6]. Their work was radical: it removed the complexity of a living cell entirely.

Spiegelman's team isolated a self-replicating biological system—Qβ bacteriophage RNA and its replicase enzyme—in a test tube [4]. They subjected this RNA to serial transfers under the selective pressure of replication speed. In each transfer, only the fastest-replicating RNA molecules would be passed to the next tube. Over generations, the RNA population evolved into streamlined "Spiegelman's monsters"—molecules that had lost non-essential genomic segments and replicated far more rapidly than the ancestral viral RNA [4] [6]. This was a form of evolution stripped to its bare essentials: variation in RNA sequence, competition for replication resources, and heredity.

Table 2: Key Experimental Systems in Early Laboratory Evolution

| Experimental System | Evolving Entity | Selection Pressure | Key Outcome |
|---|---|---|---|
| Chemical Mutagenesis (Lerner et al., 1964) [4] | Bacterium (Aerobacter aerogenes) | Ability to utilize xylitol as a carbon source. | Demonstration that chemical mutagens could be used to generate new metabolic functions in living cells. |
| Spiegelman's Experiment (mid-1960s) [4] [5] | Qβ phage RNA | Speed of replication in vitro. | Proof that natural selection could operate on molecules outside of a cellular context, producing optimized "monsters." |
| Phage Display (Smith, 1985) [4] [5] | Peptides displayed on filamentous phage surface | Binding affinity to a target antibody. | Coupled genotype (viral DNA) with phenotype (displayed peptide), enabling selection for binding. |

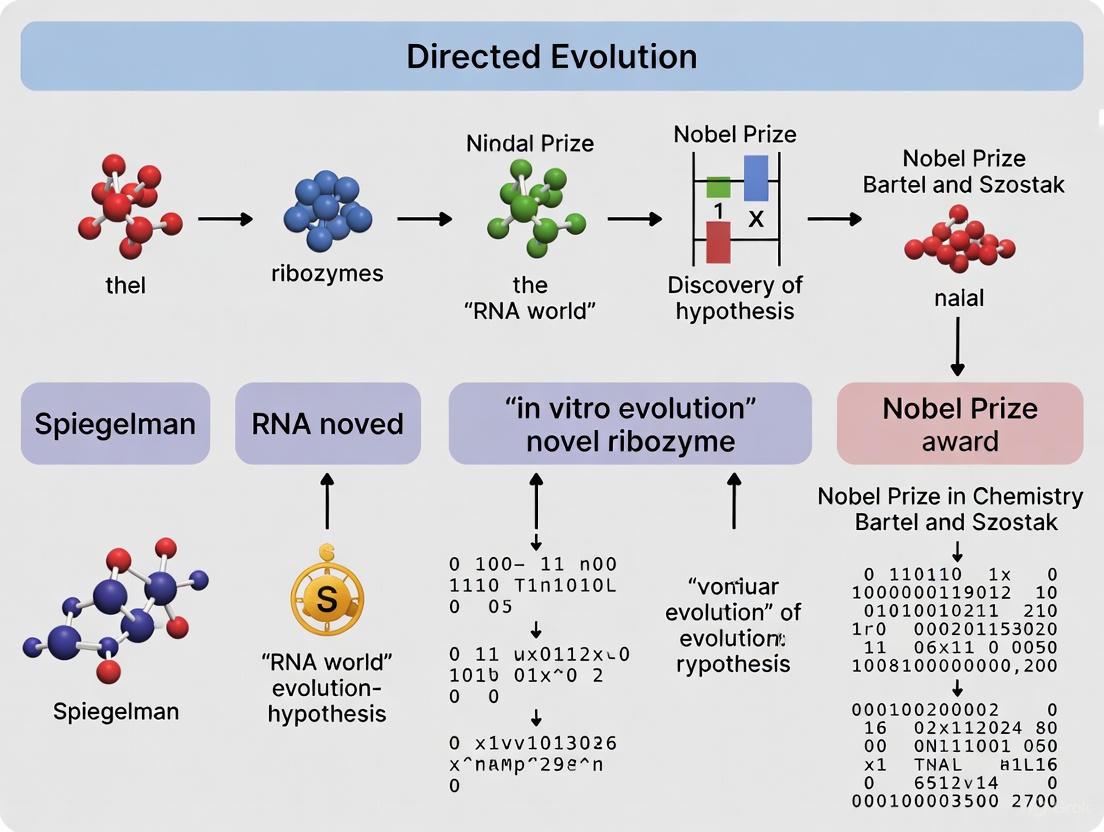

The following diagram illustrates the logical workflow of Spiegelman's experiment, highlighting the iterative cycle that became the blueprint for modern directed evolution.

Experimental Protocols of Key Pre-1990s Experiments

Detailed Protocol: Spiegelman's In Vitro RNA Evolution

Spiegelman's experiment provided a revolutionary protocol for evolving biomolecules in vitro. The methodology can be broken down into the following detailed steps [4] [5] [6]:

System Reconstitution:

- Purify the Qβ bacteriophage RNA genome and its corresponding RNA replicase enzyme. As an RNA-dependent RNA polymerase, Qβ replicase synthesizes RNA directly from an RNA template, with no DNA intermediate.

- Create a reaction mixture containing the purified Qβ RNA, Qβ replicase, a buffer optimized for the enzyme, and the four ribonucleoside triphosphates (ATP, GTP, CTP, UTP) as building blocks.

Incubation and Replication:

- Allow the reaction to proceed for a defined period. During this time, the replicase enzymes will use the original RNA molecules as templates to synthesize new RNA strands. The process is not perfectly faithful, leading to the introduction of random mutations and the generation of a heterogeneous library of RNA variants.

Selection via Serial Transfer:

- After the incubation period, take a small aliquot of the reaction mixture and transfer it into a fresh tube containing all the necessary components (replicase, nucleotides, buffer) but no RNA template.

- This transfer is the key selection step. Because the new reaction is initiated with only a subset of the RNA population from the previous tube, RNA molecules that replicated more quickly and produced more copies are statistically more likely to be represented in the aliquot and thus to "found" the next generation.

Iteration:

- Repeat the incubation and serial transfer steps over dozens of generations, keeping the selective pressure for replication speed constant throughout.

Analysis:

- Analyze the evolved RNA molecules after multiple rounds. Spiegelman found that the resulting "monsters" were much smaller than the original viral RNA, having lost genes that were essential for infection and viral coat protein production but unnecessary for replication in the optimized test tube environment. These minimalist RNAs were replication specialists in their specific environment.
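The protocol steps above can be illustrated with a toy simulation. This is a minimal sketch under stated assumptions, not a reconstruction of Spiegelman's data: the starting length (4,500 nt), floor (218 nt), and number of transfers (74) are taken from figures quoted elsewhere in this article, while the aliquot size, deletion probability, maximum deletion size, and the rule that a molecule's share of the aliquot is proportional to 1/length are all hypothetical choices made only for illustration.

```python
import random


def serial_transfer(start_length=4500, min_length=218, transfers=74,
                    aliquot=200, deletion_rate=0.1, max_deletion=500, seed=0):
    """Toy serial-transfer model (all rate parameters are assumed, not measured).

    Each RNA molecule is represented only by its length in nucleotides.
    """
    rng = random.Random(seed)
    population = [start_length] * aliquot
    history = [float(start_length)]  # mean length before any transfer

    for _ in range(transfers):
        # Incubation: copying is imperfect, so some templates yield
        # variants that have lost a random stretch of sequence.
        variants = []
        for length in population:
            if length > min_length and rng.random() < deletion_rate:
                length = max(min_length, length - rng.randint(1, max_deletion))
            variants.append(length)

        # Selection via serial transfer: shorter molecules finish more copies
        # per unit time, so their chance of landing in the aliquot is
        # modeled here as proportional to 1 / length (an assumption).
        weights = [1.0 / length for length in variants]
        population = rng.choices(variants, weights=weights, k=aliquot)
        history.append(sum(population) / len(population))

    return history, population


if __name__ == "__main__":
    history, final_population = serial_transfer()
    print(f"mean length at start: {history[0]:.0f} nt")
    print(f"mean length after {len(history) - 1} transfers: {history[-1]:.0f} nt")
```

The model keeps only the three ingredients the protocol relies on (imperfect copying, competition for a place in the aliquot, and inheritance of length), so the downward drift in mean length comes from those ingredients alone. It is not expected to reproduce the experimentally observed endpoint.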

The Scientist's Toolkit: Research Reagent Solutions for Early Evolution

Table 3: Essential Research Reagents in Early Evolutionary Experiments

| Reagent / Tool | Function in Experiment | Specific Example |
|---|---|---|
| Chemical Mutagens | To artificially increase the mutation rate in living cells, thereby accelerating the generation of genetic diversity for selection to act upon. | Nitrosoguanidine or ethyl methanesulfonate (EMS) used in bacterial adaptive evolution [4]. |
| Qβ Phage System | A simplified, self-replicating molecular system comprising the Qβ RNA genome and its replicase enzyme. Enabled the study of evolution decoupled from cellular processes. | Purified Qβ RNA and Qβ replicase formed the core of Spiegelman's in vitro evolution system [4] [5]. |
| Nucleoside Triphosphates (NTPs) | The fundamental building blocks (ATP, GTP, CTP, UTP) required for RNA synthesis by polymerase enzymes. | Provided the material for RNA replication in Spiegelman's experiments [4]. |
| Phage Display Vector | A genetically engineered filamentous phage (e.g., M13) that allows a foreign peptide to be expressed on its surface while encoding for that peptide in its DNA. | Enabled the physical linkage between a protein phenotype (binding) and its genetic code (DNA sequence) for efficient selection [4] [5]. |

The Bridge to Modern Directed Evolution

The pre-1990s work on selective breeding and early in vitro evolution established the core logic that would define modern directed evolution: the iterative cycle of diversification, selection, and amplification [4] [5]. Spiegelman's experiments, in particular, demonstrated that this cycle could be applied directly to molecules to solve a biochemical problem—in his case, faster replication.

The next major innovation was the development of phage display by George Smith in 1985 [4] [5]. This technology provided a robust method to physically link a protein (the phenotype) with the DNA that encodes it (the genotype). By displaying a library of random peptides on the surface of a bacteriophage and selecting for those that bound to a target antibody, researchers could then simply sequence the DNA of the bound phage to identify the functional peptide. This solved the critical problem of the "genotype-phenotype link" for proteins, which Spiegelman's system had inherently for RNA [5].

The following diagram illustrates how these precursor concepts and techniques provided the foundational pillars for the establishment of modern directed evolution as a formalized discipline in the 1990s.

These pioneering efforts collectively established that evolution was not just a historical process but a tool that could be wielded in the laboratory. They provided the conceptual framework and initial technical proofs-of-principle that would explode into the field of directed evolution in the 1990s with the advent of error-prone PCR and DNA shuffling, ultimately enabling the precise engineering of proteins and enzymes for science, industry, and medicine.

The field of directed evolution, now a cornerstone of modern protein engineering and biotechnology, traces its conceptual origins to a series of pioneering experiments conducted in the 1960s by molecular biologist Sol Spiegelman and his colleagues. Their work with the Qβ bacteriophage RNA replicase established the first controlled system to demonstrate evolutionary principles in a test tube, decoupled from living cellular processes. This groundbreaking research provided both a methodological framework and a theoretical foundation for the directed evolution approaches that would later revolutionize biological engineering. The significance of this line of research was underscored decades later, when the 2018 Nobel Prize in Chemistry was awarded for the development of directed evolution methods that build on the principles Spiegelman's experiments first demonstrated [5]. This technical guide examines Spiegelman's Qβ replicase experiments in detail, placing them within the broader historical context of directed evolution from its inception to its current applications in drug development and basic research.

Historical and Scientific Context

Molecular Biology in the 1960s

During the 1960s, molecular biology was undergoing revolutionary developments. The role of RNA as an intermediary between DNA and protein synthesis had only recently been discovered in 1961, the same year Spiegelman began studying bacteriophages at the University of Illinois at Urbana [7]. At this time, most known bacteriophages used DNA as their genetic material, but Spiegelman's lab identified and began working with an unusual phage called MS-2 that contained no DNA whatsoever, instead utilizing RNA as its genetic template [7]. This discovery led to the identification of another RNA phage, Qβ, which produced a highly specific RNA-dependent RNA polymerase (Qβ replicase) that would only replicate Qβ RNA, ignoring other RNA molecules [7]. This specificity made Qβ replicase an ideal candidate for studying the fundamental principles of RNA replication and evolution outside of cellular constraints.

Spiegelman's Experimental Vision

Spiegelman's innovative approach was to reconstitute the core components of RNA replication in an extracellular environment, creating a simplified system where evolutionary dynamics could be observed and manipulated directly. His experiments addressed a profoundly basic question about the fundamental nature of genetic molecules: "What will happen to the RNA molecules if the only demand made on them is the Biblical injunction, multiply, with the biological proviso that they do so as rapidly as possible?" [4]. This reductionist approach allowed Spiegelman to create what he termed an "extracellular Darwinian experiment" with a self-duplicating nucleic acid molecule [7], establishing a paradigm that would influence decades of subsequent research in evolutionary biology and molecular engineering.

Experimental Protocols and Methodologies

Core Experimental System

The foundation of Spiegelman's experimental system involved isolating the essential components for RNA replication:

- Qβ RNA: The natural genomic RNA from the Qβ bacteriophage, approximately 4,500 nucleotides in length, served as the initial template [8] [7].

- Qβ Replicase: The purified RNA-dependent RNA polymerase from Qβ phage-infected E. coli cells [7] [9].

- Reaction Solution: Contained free ribonucleotides (ATP, GTP, CTP, UTP) and necessary salts to support RNA synthesis [7] [8].

The initial experiments demonstrated that Qβ RNA could be faithfully replicated in this cell-free environment. When the RNA synthesized in vitro was introduced into host bacterial cells, it gave rise to infectious phage just as the natural RNA did, confirming that the replication process maintained biological functionality [7].

Serial Transfer Evolution Protocol

The key innovation that demonstrated evolutionary dynamics was the serial transfer experiment, described in detail in Spiegelman's 1967 paper "An extracellular Darwinian experiment with a self-duplicating nucleic acid molecule" [7]:

- Initial Replication: Qβ RNA was added to a solution containing Qβ replicase, nucleotides, and salts, allowing replication to proceed for a defined period [8] [7].

- Sample Transfer: A portion of the replicated RNA was transferred to a fresh tube containing new replication solution [8].

- Repetition: This transfer process was repeated multiple times (eventually reaching 74 transfers in published experiments) [7].

- Analysis: RNA from different transfer points was analyzed for size and sequence characteristics.

This protocol created a selective environment where replication speed was the primary determinant of evolutionary success, mimicking natural selection in a highly simplified laboratory setting.

Modern Adaptations and Extensions

Later researchers built upon Spiegelman's original protocol. Sumper and Luce of Manfred Eigen's laboratory demonstrated that under appropriate conditions, Qβ replicase could spontaneously generate self-replicating RNA from nucleotide building blocks without an initial template [8]. Eigen further refined this system, eventually producing minimal replicating RNAs of only 48-54 nucleotides—the minimum required for replication enzyme binding [8]. Contemporary research continues to utilize similar approaches, employing combinatorial selection methods to evolve RNAs that maintain specific coding functions while optimizing replicability [9].

Key Findings and Quantitative Results

Evolution of Minimal Replicons

Spiegelman's most striking finding was the progressive reduction in RNA size over serial transfers as molecules competed for rapid replication. The data from these experiments demonstrated a clear evolutionary trajectory toward minimalized replicons:

Table 1: Evolution of RNA Size Over Serial Transfers

| Transfer Generation | RNA Size (Nucleotides) | Relative Size (%) | Replication Efficiency |
|---|---|---|---|
| 0 (Original Qβ RNA) | 4,500 | 100% | Baseline |
| Intermediate transfers | ~1,500-3,000 | 33-67% | Increased |
| Generation 74 | 218 | 4.8% | Highly optimized |

This dramatic size reduction to only 218 nucleotides (the molecule dubbed "Spiegelman's Monster" in the scientific literature) represented a reduction of roughly 95% from the original Qβ RNA, consistent with the 4.8% relative size listed in the table above [8] [7]. The evolutionary pressure for replication speed had effectively eliminated all genetic information not essential for the replicase recognition and replication process itself [8] [7].
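As a quick arithmetic check, the relative size in the table can be recomputed from the two lengths it lists (4,500 and 218 nucleotides). The helper name `size_change` below is hypothetical and exists only for this illustration.

```python
def size_change(original_nt, evolved_nt):
    """Return (relative size, fractional reduction) for two RNA lengths."""
    relative_size = evolved_nt / original_nt
    reduction = 1.0 - relative_size
    return relative_size, reduction


relative_size, reduction = size_change(4500, 218)
print(f"relative size: {relative_size:.1%}")  # 4.8%
print(f"reduction:     {reduction:.1%}")      # 95.2%
```

The evolved molecule retains about one twentieth of the original sequence length.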

Mechanism of Evolutionary Selection

The experiments demonstrated that shorter RNA sequences replicated faster because they required less time for the replicase to synthesize, providing a selective advantage in the serial transfer environment [8] [7]. This finding directly confirmed that natural selection could operate on simple molecular systems without cellular machinery, supporting the hypothesis that evolutionary principles could have guided the development of early biological systems before the emergence of cellular life [7].

Technical and Methodological Details

Experimental Workflow

The following diagram illustrates the serial transfer process that formed the core of Spiegelman's evolutionary experiments:

Essential Research Reagents

The key components of Spiegelman's experimental system and their functions are detailed in the following table:

Table 2: Key Research Reagent Solutions in Spiegelman's Experiments

| Reagent | Composition/Type | Function in Experimental System |
|---|---|---|
| Qβ Replicase | RNA-dependent RNA polymerase from Qβ phage | Enzyme that catalyzes template-directed RNA synthesis [7] [9] |
| Bacteriophage Qβ RNA | Natural genomic RNA (initially 4,500 nt) | Template for replication; subject to evolutionary pressure [8] [7] |
| Nucleotide Mixture | ATP, GTP, CTP, UTP | Building blocks for RNA synthesis [8] [7] |
| Reaction Buffer | Salts and cofactors | Optimal enzymatic activity and RNA stability [8] [7] |
| Serial Transfer Apparatus | Test tubes and pipetting systems | Enables sequential generations of replication under selection [8] [7] |

Connection to Modern Directed Evolution

Technical Evolution from Spiegelman to Modern Methods

Spiegelman's work established the fundamental paradigm that would later be formalized as directed evolution: iterative rounds of diversification, selection, and amplification. The following diagram illustrates this conceptual lineage and technical progression:

Methodological Refinements and Expansions

While Spiegelman's system utilized natural mutation rates and selection pressures, modern directed evolution employs sophisticated techniques to enhance and direct the evolutionary process:

- Library Creation Methods: Error-prone PCR, DNA shuffling, and site-saturation mutagenesis enable controlled diversification of gene sequences [4] [5].

- Selection vs. Screening: Modern approaches use either selection systems (coupling desired function to survival) or high-throughput screening methods to identify improved variants [5].

- In vivo and In vitro Applications: Directed evolution now operates in living cells, in vitro translation systems, and compartmentalized environments such as water-in-oil emulsions [5].

The 2018 Nobel Prize in Chemistry was awarded to Frances Arnold for the directed evolution of enzymes and to George Smith and Gregory Winter for the phage display of peptides and antibodies. These methods put into practice the principles first demonstrated in Spiegelman's pioneering experiments [5].

Applications in Drug Discovery and Development

Directed Evolution as an Evolutionary Process

The development of pharmaceutical compounds shares fundamental similarities with biological evolution, as noted in contemporary drug discovery literature: "Drug development has features in common with evolution. The classification system of pharmacology echoes the taxonomy of flora and fauna. How certain compounds become successful medicines, from the myriad potential candidate molecules, involves a selection process with a high rate of attrition" [10]. This evolutionary perspective, first exemplified by Spiegelman's controlled system, has influenced how researchers approach the challenges of drug development.

Practical Impact on Therapeutic Development

Directed evolution methods derived from Spiegelman's foundational work have produced significant advances in therapeutics:

- Therapeutic Antibodies: Phage display technology, recognized in the 2018 Nobel Prize, enables engineering of antibodies with enhanced binding affinities and reduced immunogenicity [5].

- Engineered Enzymes: Evolution of enzymes for improved stability, substrate specificity, and catalytic efficiency under process conditions [4] [5].

- Novel Biocatalysts: Creation of enzymes with functions not found in nature, enabling synthesis of previously inaccessible chemical compounds [4].

Understanding Antibiotic Resistance

Laboratory evolution experiments, directly descended from Spiegelman's approach, have provided critical insights into bacterial antibiotic resistance mechanisms. Recent high-throughput evolution studies have revealed that "bacteria E. coli is equipped with only a limited number of strategies for antibiotic resistance," primarily involving inhibition of drug uptake systems and enhancement of drug efflux systems [11]. This understanding, gained through controlled evolutionary experiments, informs strategies to combat multidrug-resistant pathogens.

Contemporary Research and Future Directions

Modern Applications of Qβ Replicase Systems

Spiegelman's specific experimental system continues to inform contemporary research. Recent studies utilizing Qβ replicase focus on developing complex artificial self-replication systems for synthetic biology applications [9]. However, introducing additional genetic functions into replicating RNAs remains challenging because "the replicase requires strong secondary structures throughout the RNA, which are absent in most genes" [9]. Modern research addresses this limitation through combinatorial selection methods that simultaneously optimize RNA replicability and encoded gene function [9], directly extending Spiegelman's original approach.

Integration with Systems Biology

Contemporary evolution experiments increasingly combine laboratory evolution with multi-omics analyses to elucidate comprehensive evolutionary mechanisms. For example, a 2023 study of paraquat tolerance in E. coli integrated laboratory evolution with transcriptomics and modeling to identify "six interacting stress-tolerance mechanisms" [12]. This systems biology approach, enabled by advanced analytical technologies, provides a more comprehensive understanding of evolutionary processes than was possible in Spiegelman's era, while still relying on the fundamental principles he established.

Future Prospects

The future of directed evolution continues to build upon Spiegelman's foundational work, with emerging trends focusing on:

- Genome-scale Engineering: Evolution of entire pathways or genomes rather than individual proteins [4].

- Automated High-Throughput Systems: Robotic automation enabling parallel evolution experiments under hundreds of conditions [11].

- Predictive Evolutionary Modeling: Integrating machine learning with directed evolution to predict mutational effects and guide library design [5] [11].

Sol Spiegelman's Qβ replicase experiments established the conceptual and methodological foundation for directed evolution, demonstrating that evolutionary principles could be harnessed in controlled laboratory environments. His serial transfer experiments with self-replicating RNA molecules provided the first definitive evidence that Darwinian evolution could operate on simple molecular systems without cellular machinery. This insight has reverberated through decades of biological research, ultimately culminating in practical protein engineering methods recognized by the Nobel Prize. The evolutionary framework established by Spiegelman continues to guide both basic research into evolutionary mechanisms and applied biotechnology for drug development, therapeutic design, and synthetic biology. As directed evolution methods become increasingly sophisticated and integrated with systems biology and computational approaches, they continue to build upon the fundamental paradigm first established in Spiegelman's pioneering experiments with Qβ replicase.

Early In Vitro Selections and the Advent of Phage Display

The development of directed evolution as a paradigm in protein engineering represents a fundamental shift from rational design to iterative Darwinian principles in the laboratory. This journey began with Spiegelman's pioneering in vitro selections with Qβ replicase and culminated roughly five decades later in the 2018 Nobel Prize in Chemistry, awarded in part for the phage display of peptides and antibodies. Phage display, a technique that physically links genetic information to the functional proteins it encodes, has revolutionized therapeutic discovery and mechanistic enzymology. This whitepaper details the historical context, core principles, and detailed methodologies that bridge early in vitro selections to the modern application of phage display, providing a technical guide for its implementation in research and drug development.

The field of directed evolution (DE) is founded on mimicking natural selection in a controlled laboratory environment. The foundational principle requires three core components: 1) the introduction of genetic variation, 2) a selection pressure to identify fitness differences, and 3) a mechanism to ensure heredity, so that beneficial mutations are passed on [5]. The first successful application of this principle in a molecular system is attributed to Sol Spiegelman's experiments in the 1960s. In what was colloquially known as the "Spiegelman's Monster" experiment, Qβ replicase was used to evolve RNA molecules in vitro over serial transfers, selecting for variants with the fastest replication rates [5]. This demonstrated that biomolecules could be evolved independently of a living organism, establishing the core concept of in vitro selection.
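The three components named above (variation, selection, heredity) can be sketched as a short loop. This is an illustrative toy under loud assumptions: sequences are plain strings over a four-letter alphabet, and "fitness" is simply the number of positions matching a hypothetical target string, standing in for whatever assay a real experiment would use. The function names, mutation rate, and library sizes are invented for this sketch.

```python
import random

ALPHABET = "ACGU"  # assumed alphabet for the toy sequences


def fitness(seq, target):
    """Stand-in assay: count positions that match the hypothetical target."""
    return sum(a == b for a, b in zip(seq, target))


def mutate(seq, rate, rng):
    """Variation: redraw each position with probability `rate`."""
    return "".join(rng.choice(ALPHABET) if rng.random() < rate else base
                   for base in seq)


def directed_evolution(target, rounds=30, library_size=200, keep=20,
                       rate=0.05, seed=1):
    rng = random.Random(seed)
    parents = ["".join(rng.choice(ALPHABET) for _ in target)]
    best_per_round = []

    for _ in range(rounds):
        # 1) Variation: build a library of mutated copies of the parents.
        mutants = [mutate(rng.choice(parents), rate, rng)
                   for _ in range(library_size)]
        # Parents are carried over unchanged (a simplifying choice), so the
        # best score can never drop between rounds.
        library = parents + mutants
        # 2) Selection: rank variants by the assay and keep the top few.
        library.sort(key=lambda s: fitness(s, target), reverse=True)
        # 3) Heredity: survivors become the templates for the next round.
        parents = library[:keep]
        best_per_round.append(fitness(parents[0], target))

    return parents[0], best_per_round


if __name__ == "__main__":
    best, trace = directed_evolution("GGAUCCAUGGCAUGCAAGCU")
    print(best, trace[0], trace[-1])
```

In a laboratory setting the `fitness` call is the expensive step (a screen or selection), which is why library size and the number of survivors per round are the main practical design choices.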

The subsequent development of phage display by George P. Smith in 1985 provided a powerful and generalizable platform for directed evolution [13] [14]. Smith demonstrated that a foreign peptide could be displayed on the surface of a filamentous bacteriophage by fusing its encoding gene to a gene for a phage coat protein. Critically, this created a physical genotype-phenotype linkage: the displayed protein (phenotype) was physically connected to the genetic information (genotype) housed within the phage particle [15] [14]. This linkage made it possible to screen vast libraries of variants (typically >10^10 members) for desired binding properties and then immediately amplify and identify the selected clones. The technology was later advanced by Greg Winter, John McCafferty, and others for the display of functional antibody fragments, enabling the discovery of fully human therapeutic antibodies [13] [14]. The profound impact of this technology was recognized with the 2018 Nobel Prize, awarded jointly to Smith and Winter, as well as Frances Arnold for her parallel work on the directed evolution of enzymes [5].
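To put the quoted library size in perspective, the sketch below compares it with the number of possible random peptides of a given length, assuming the 20 standard amino acids at every position. The function names are hypothetical. The comparison is a theoretical upper bound only, since it ignores codon bias, stop codons, and the oversampling needed to actually observe every sequence.

```python
AMINO_ACIDS = 20          # standard amino acids per randomized position
LIBRARY_SIZE = 10 ** 10   # library size quoted in the text above


def sequence_space(length):
    """Number of distinct peptides of the given length."""
    return AMINO_ACIDS ** length


def longest_fully_covered(library_size):
    """Longest peptide length whose full sequence space fits in the library."""
    length = 0
    while sequence_space(length + 1) <= library_size:
        length += 1
    return length


if __name__ == "__main__":
    for n in (6, 7, 8, 12):
        print(f"{n}-mers: {sequence_space(n):.2e} possible sequences")
    print("longest fully covered length:", longest_fully_covered(LIBRARY_SIZE))
```

Under these assumptions a library of 10^10 members could at best contain every 7-residue peptide (20^7 is about 1.3 × 10^9) but not every 8-residue peptide (20^8 is about 2.6 × 10^10). Libraries of longer sequences therefore sample sequence space rather than exhaust it.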

Core Principles and Key Technological Advancements

The Fundamental Shift to In Vitro Selection

The advent of phage display addressed several key limitations inherent to in vivo antibody discovery methods, such as animal immunization.

Table 1: Comparison of In Vivo Immunization vs. In Vitro Phage Display

| Feature | In Vivo Immunization | In Vitro Phage Display |
|---|---|---|
| Target Scope | Limited to immunogenic, non-toxic antigens [13] | Virtually any target, including toxic and non-immunogenic antigens [13] |
| Control Over Selection | Limited control over epitope and antibody properties [13] | High control; can be tailored for specific epitopes, pH-dependent binding, or internalization [13] |
| Timeline | Time-intensive due to animal immune response [13] | Rapid process conducted entirely in vitro [13] |
| Antibody Format | Typically full-length IgG | Fragments (scFv, Fab, VHH) initially, reformatted to IgG [13] [15] |
| Key Advantage | Antibodies are naturally optimized for developability | Ability to target difficult antigens (GPCRs, specific conformations) [13] |

The Anatomy of a Phage Display System

The most commonly used phage for display is the M13 filamentous bacteriophage [14] [16]. Its structure is key to its utility:

- Major Coat Protein (pVIII): The most abundant protein, forming the shaft of the phage. It can display small peptides in high copy number (up to ~2,700 copies) [15] [16].

- Minor Coat Protein (pIII): Located at the tip of the phage in 3-5 copies, it is essential for infectivity. It is the preferred protein for displaying larger, more complex proteins like antibody fragments (scFv, Fab) as it is more tolerant of large insertions [15] [14] [16].

Two primary vector systems are used:

- Phage Vectors: The gene for the coat protein fusion is integrated directly into the phage genome.

- Phagemid Vectors: A more common system where the gene for the fusion is carried on a plasmid (phagemid). Upon infection with a helper phage, the phagemid is packaged into new virions, which display a mixture of wild-type and fusion coat proteins. This allows for monovalent display, which is crucial for selecting high-affinity binders and avoiding avidity effects [15] [17].

Experimental Protocols and Methodologies

The following section provides a detailed, step-by-step protocol for a typical antibody phage display selection campaign, known as biopanning.

Library Construction

The quality of the phage display library is paramount to success. Libraries can be constructed from natural sources (e.g., human B-cells) or be synthetically designed.

Protocol: Construction of a Synthetic scFv Phagemid Library

- Gene Synthesis and Assembly: Synthesize DNA encoding diverse single-chain variable fragment (scFv) sequences, typically with a (Gly4Ser)3 linker connecting the VH and VL domains [15]. The DNA is cloned into a phagemid vector, downstream of a secretion signal sequence and upstream of gene III (for pIII display) [13].

- Vector Preparation: The phagemid vector contains a bacterial origin of replication, an antibiotic resistance gene, and the phage origin of replication for packaging [15].

- High-Efficiency Transformation: The ligated phagemid DNA is introduced into an E. coli strain (e.g., TG1) via electroporation to achieve maximum library diversity [16]. The transformation is plated on selective antibiotic media.

- Library Amplification and Phage Rescue: The pooled bacterial colonies are grown and infected with a helper phage (e.g., M13KO7 or Hyperphage) [15]. The helper phage provides all the necessary proteins for phage replication and assembly. The resulting phage particles, displaying the scFv library on their surface and containing the corresponding phagemid DNA inside, are purified from the culture supernatant by precipitation with polyethylene glycol (PEG)/NaCl [13].

The Biopanning Process

Biopanning is an iterative affinity selection process used to isolate specific binders from a library.

Protocol: Solid-Phase Panning against an Immobilized Antigen

- Immobilization: Coat the wells of a microtiter plate with a purified target antigen (typically 10-100 µg/mL) in a suitable buffer overnight at 4°C [13] [18].

- Blocking: Block the wells with a blocking agent (e.g., 2-3% BSA or milk in PBS) for 1-2 hours at room temperature to prevent nonspecific phage binding.

- Incubation and Binding: Incubate the phage library (10^10 - 10^12 phage particles) in the blocked wells for 1-2 hours to allow binders to interact with the immobilized antigen.

- Washing: Remove unbound and weakly bound phages by extensive washing. Stringency is increased with each round of panning, often by adding detergents like Tween-20 to the wash buffer [16] [18].

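- Elution: Recover specifically bound phages by elution. Common approaches include a pH shift (an acidic or basic elution buffer, followed by neutralization) or competitive elution with an excess of soluble antigen.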

- Amplification: Infect an exponentially growing culture of E. coli with the eluted phages. Subsequent rescue with a helper phage amplifies the selected pool for the next panning round [13] [14].

- Iteration: The six steps above are typically repeated for 2-4 rounds to sufficiently enrich for high-affinity binders.
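Why a handful of rounds is usually enough can be seen from simple enrichment arithmetic. The sketch below assumes that, in each round, a fixed fraction of binders and a much smaller fixed fraction of non-binders survive washing and elution. The function name `pan` and all numerical values are hypothetical and chosen only for illustration; real recoveries vary with target, library, and wash stringency.

```python
def pan(binder_fraction, binder_recovery, background_recovery, rounds):
    """Return the fraction of binders in the pool after each panning round.

    binder_recovery: fraction of true binders recovered per round (assumed).
    background_recovery: fraction of non-binders carried over per round (assumed).
    Amplification between rounds is taken to preserve the pool composition.
    """
    fractions = [binder_fraction]
    f = binder_fraction
    for _ in range(rounds):
        kept_binders = f * binder_recovery
        kept_background = (1.0 - f) * background_recovery
        f = kept_binders / (kept_binders + kept_background)
        fractions.append(f)
    return fractions

# Hypothetical campaign: 1 binder per 10^7 clones, 1000-fold enrichment per round.
fractions = pan(binder_fraction=1e-7, binder_recovery=0.1,
                background_recovery=1e-4, rounds=4)
for round_number, fraction in enumerate(fractions):
    print(f"round {round_number}: binder fraction = {fraction:.3g}")
```

With a per-round enrichment factor of about 1000, binders that start at one in ten million dominate the pool after three to four rounds, which is consistent with the 2-4 rounds typically used.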

For more complex targets, alternative panning strategies are employed:

- Liquid Phase Panning: The antigen is biotinylated and captured onto streptavidin-coated magnetic beads after incubation with the phage library. This allows for selection in solution, which can preserve antigen conformation [13].

- Cell-Based Panning: Uses whole cells to select for binders against native cell-surface receptors in their natural conformation and membrane environment [13] [19].

Screening and Characterization of Output

After the final panning round, individual clones are characterized.

- Polyclonal Phage ELISA: A quick test to confirm enrichment by comparing the binding of phage from later rounds versus the original library.

- Monoclonal Screening: Individual bacterial colonies are picked, and their soluble antibody fragments (e.g., scFv or Fab) are produced for screening via ELISA against the target antigen.

- DNA Sequencing: The genes of positive clones are sequenced to identify unique binders and to check for convergent evolution, where multiple independent clones share similar sequences [16].

- Affinity and Kinetics Measurement: The binding affinity (KD) and kinetics (kon, koff) of lead hits are quantified using surface plasmon resonance (SPR) or bio-layer interferometry (BLI) [16].

- Further Engineering: Selected leads may undergo additional affinity maturation—a process of directed evolution where the lead antibody gene is mutagenized (e.g., via error-prone PCR) and subjected to further phage display selection to enhance affinity and specificity [13] [5].
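Two of the characterization steps above reduce to short calculations: collapsing sequenced clones into unique binders, and deriving the equilibrium dissociation constant from measured rate constants via KD = koff / kon. The sketch below uses made-up CDR-like sequences and rate constants; the function names are illustrative and not part of any instrument software.

```python
from collections import Counter

def unique_clones(sequences):
    """Return (sequence, count) pairs, most frequent first."""
    return Counter(sequences).most_common()

def dissociation_constant(kon, koff):
    """KD in M, from kon in 1/(M*s) and koff in 1/s."""
    if kon <= 0:
        raise ValueError("kon must be positive")
    return koff / kon

# Hypothetical sequencing output from picked colonies.
clones = ["ARDYW", "ARDYW", "GKTFS", "ARDYW", "GKTFS", "QQSYT"]
ranked = unique_clones(clones)

# Hypothetical SPR/BLI fit for the dominant clone.
kd = dissociation_constant(kon=1e5, koff=1e-4)
print(ranked)
print(f"KD = {kd:.2e} M")
```

A sequence recovered many times across independent colonies is a candidate for genuine enrichment, although amplification bias can produce the same pattern, so binding data remain the deciding evidence.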

The Scientist's Toolkit: Essential Research Reagents

Successful phage display experiments rely on a core set of reagents and materials.

Table 2: Key Reagent Solutions for Phage Display

| Reagent / Material | Function / Explanation |
|---|---|
| Phagemid Vector | A hybrid plasmid containing both bacterial and phage origins of replication; carries the gene for the antibody-coat protein fusion [15] [17]. |
| Helper Phage | Provides all necessary structural and replication proteins in trans for the packaging of the phagemid DNA into phage particles. Examples: M13KO7, Hyperphage [15]. |
| E. coli Strains | Suitable host for phage propagation. Requires the F pilus for M13 infection (e.g., TG1, XL1-Blue) [15]. |
| Selection Antibiotics | Maintains selection pressure for the phagemid (e.g., ampicillin) and helper phage (e.g., kanamycin) [15]. |
| PEG/NaCl | Polyethylene glycol and salt solution used to precipitate and concentrate phage particles from culture supernatants [13]. |
| Blocking Agents | Proteins or detergents (e.g., BSA, milk, Tween-20) used to coat surfaces and prevent nonspecific binding of phage during panning [18]. |
| Streptavidin-Coated Magnetic Beads | Essential for liquid-phase panning to capture biotinylated antigen-phage complexes [13]. |

Visualization of Workflows and Relationships

The original diagrams (not reproduced here) illustrate the core logical and experimental relationships in phage display and its historical context:

- Figure: The Directed Evolution Cycle

- Figure: The Phage Display Biopanning Workflow

- Figure: Phage Display Vector Systems

Impact and Quantitative Outcomes

The success of phage display is quantitatively demonstrated by its contribution to the therapeutic landscape. As of November 2022, 17 therapeutic antibodies or antibody-derived drugs discovered via phage display have received market approval, including multiple blockbuster drugs [13].

Table 3: Selected Phage Display-Derived Therapeutic Antibodies

| Generic Name (Product) | Target | First Approved Year | Primary Indication(s) | Contribution of Phage Display |
|---|---|---|---|---|
| Adalimumab (Humira) | TNFα | 2002 | Rheumatoid Arthritis | Humanization [13] |
| Ranibizumab (Lucentis) | VEGFA | 2006 | nAMD | Humanization, Affinity Maturation [13] |
| Belimumab (Benlysta) | BLyS | 2011 | Systemic Lupus Erythematosus | Initial Discovery [13] |
| Atezolizumab (Tecentriq) | PD-L1 | 2016 | Urothelial Carcinoma | Initial Discovery [13] |
| Caplacizumab (Cablivi) | vWF | 2018 | aTTP | Initial Discovery (VHH format) [13] |
| Faricimab (Vabysmo) | VEGFA, Ang2 | 2022 | nAMD, DME | Initial Discovery & Affinity Maturation [13] |

Abbreviations: nAMD (neovascular Age-related Macular Degeneration), aTTP (acquired Thrombotic Thrombocytopenic Purpura), DME (Diabetic Macular Edema), VHH (Single-domain antibody)

The advent of phage display stands as a pivotal achievement in the history of directed evolution, providing a robust and versatile in vitro platform for engineering biomolecules. By creating a direct physical link between genotype and phenotype, it solved a fundamental problem in molecular evolution, enabling the high-throughput screening of unimaginably diverse libraries. From its conceptual origins in Spiegelman's in vitro evolution of RNA to its current status as an industry-standard technology responsible for life-saving therapeutics, phage display exemplifies the power of applying evolutionary principles at the molecular level. As the technique continues to evolve with improved library design, novel screening methodologies, and integration with microfluidics and computational tools, its impact on basic research and drug development is poised to grow even further.

The field of protein engineering has undergone a fundamental transformation, shifting from a structure-based rational design approach to one that harnesses the power of evolutionary principles. Directed evolution (DE), the laboratory process that mimics natural selection to steer proteins toward user-defined goals, represents this paradigm shift in its most potent form [5]. This methodological revolution has not only expanded the toolkit available to researchers and drug development professionals but has also reframed our very understanding of protein sequence-function relationships.

Unlike rational design, which requires extensive knowledge of protein structure and mechanism, directed evolution requires no such a priori knowledge, instead relying on iterative rounds of diversification, selection, and amplification to discover functional enhancements that would be difficult or impossible to predict computationally [5] [20]. The 2018 Nobel Prize in Chemistry, awarded to Frances Arnold for the directed evolution of enzymes and to George Smith and Gregory Winter for phage display, cemented the importance of this approach for both basic and applied science [5] [6]. This review traces the historical trajectory of directed evolution, details its core methodologies and applications, and explores the cutting-edge integrations with machine learning that are defining the field's future.

Historical Foundations: From Spiegelman to the Nobel Prize

The conceptual roots of directed evolution extend back to the 1960s with Sol Spiegelman's pioneering experiments on RNA replication in vitro [5] [4] [6]. In what became known as the "Spiegelman's Monster" experiment, RNA molecules were subjected to selective pressure for faster replication in a test tube, demonstrating that evolutionary principles could be harnessed in a controlled, laboratory environment [5] [4]. This work provided an early example that evolution could be directed toward a specific goal—in this case, rapid replication—divorced from a living cellular context.

The 1980s witnessed a critical expansion of these principles with the development of phage display by George Smith [5] [4]. This technology allowed for the selection of binding peptides and proteins from libraries displayed on the surface of bacteriophages, enabling researchers to "fish" for proteins with desired binding properties [5]. Gregory Winter later adapted phage display for the evolution of therapeutic antibodies, leading to groundbreaking pharmaceutical applications [6].

The modern era of directed evolution, particularly for enzymes, was firmly established in the 1990s. The work of Frances Arnold and others demonstrated that repeated rounds of random mutagenesis and high-throughput screening could progressively improve enzyme properties, such as stability in harsh organic solvents [4]. A landmark 1993 study on subtilisin E demonstrated a 256-fold increase in activity in dimethylformamide after three rounds of evolution, powerfully illustrating the method's potential [4]. This period also saw the development of in vitro recombination methods, such as DNA shuffling by Willem Stemmer, which mimicked natural sexual recombination by breaking down and reassembling genes from different parents, allowing for the exploration of larger evolutionary jumps [5] [4]. The convergence of these techniques—random mutagenesis, recombination, and high-throughput screening—formed the robust methodological foundation that defines directed evolution today.

Table: Major Historical Milestones in Directed Evolution

| Year/Period | Key Development | Key Researchers | Significance |
|---|---|---|---|
| 1960s | In vitro RNA evolution | Sol Spiegelman | First demonstration of directed evolution in a test tube [5] |
| 1980s | Phage Display | George Smith | Enabled selection of binding proteins from vast libraries [5] |
| 1983-1985 | Invention and first publication of PCR | Kary Mullis, et al. | Provided a key tool for gene amplification and mutagenesis [20] |
| 1990s | Enzyme Directed Evolution | Frances Arnold | Established iterative random mutagenesis & screening for enzymes [4] |
| 1994 | DNA Shuffling | Willem Stemmer | Mimicked natural recombination to accelerate evolution [4] |
| 2018 | Nobel Prize in Chemistry | Arnold, Smith, Winter | International recognition of the field's impact [5] |

Core Principles and Methodologies of Directed Evolution

Directed evolution mimics the core principles of natural evolution—variation, selection, and heredity—but in a controlled, accelerated time frame focused on a single gene or pathway [5] [20]. The process is an iterative cycle, where the best variant from one round becomes the template for the next, leading to stepwise improvements.

The Directed Evolution Cycle

The standard directed evolution workflow consists of three fundamental steps: generating diversity, detecting fitness differences, and ensuring heredity.

Generating Diversity (Diversification)

The first step involves creating a library of gene variants. Multiple methods exist, each with distinct advantages:

- Error-Prone PCR: A standard PCR reaction under conditions that reduce fidelity, introducing random point mutations throughout the gene [5] [20].

- DNA Shuffling: Homologous genes (from different species or previous evolution experiments) are fragmented with DNase I and then reassembled in a primer-free PCR reaction. This creates chimeras that recombine beneficial mutations from different parents [4].

- Saturation Mutagenesis: A more targeted approach where specific codons are randomized to all possible amino acids, often focused on active sites or regions suspected to be important for function [5].

The choice of method depends on the desired diversity. Random mutagenesis is excellent for exploring local sequence space, while recombination can create larger jumps.
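The "larger jumps" made possible by recombination can be illustrated with a toy model of shuffling. The sketch below does not simulate DNase I fragmentation or primer-free reassembly; it assumes pre-aligned parents of equal length and simply switches template at randomly chosen crossover points. The function name and parent sequences are hypothetical.

```python
import random

def shuffle_genes(parents, n_crossovers, rng):
    """Build one chimera by switching between aligned parents at random points."""
    length = len(parents[0])
    if len(parents) < 2 or any(len(p) != length for p in parents):
        raise ValueError("need at least two aligned parents of equal length")
    # Distinct crossover positions, so every segment is at least one base long.
    points = sorted(rng.sample(range(1, length), n_crossovers))
    current = rng.randrange(len(parents))
    segments = []
    start = 0
    for end in points + [length]:
        segments.append(parents[current][start:end])
        # Template switch: continue on a different parent after each crossover.
        current = rng.choice([i for i in range(len(parents)) if i != current])
        start = end
    return "".join(segments)

rng = random.Random(7)
# Uniform parents make the origin of each segment visible in the output.
parents = ["A" * 12, "C" * 12]
chimera = shuffle_genes(parents, n_crossovers=3, rng=rng)
print(chimera)
```

Because each segment is inherited intact, mutations that arose in different parents can be combined in a single step, which point mutagenesis alone would reach only through several successive rounds.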

Detecting Fitness Differences (Selection/Screening)

A high-throughput assay is critical for identifying the rare, improved variants within a large library. The two primary strategies are selection and screening [5].

- Selection directly couples the desired protein function to the survival or replication of the host organism. For example, an enzyme that degrades a toxin will allow its host cell to survive in the toxin's presence [5]. Phage display is a powerful in vitro selection technique where binding to an immobilized target allows for the physical isolation of binding clones [5] [20].

- Screening involves assaying individual variants from the library to quantitatively measure activity (e.g., using a colorimetric or fluorescent assay). While typically lower in throughput than selection, screening provides rich, quantitative data on every variant tested [5]. Fluorescence-Activated Cell Sorting (FACS) is a common high-throughput screening method when the desired function can be linked to a fluorescent signal [20].

Ensuring Heredity (Amplification)

Once functional variants are isolated, their genes must be recovered—a concept known as the genotype-phenotype link [5]. In cellular systems, this is inherent, as the host cell contains the plasmid DNA. In in vitro systems, techniques like mRNA display physically link the protein to its mRNA template [5]. The genes of the best-performing variants are then amplified, typically via PCR, to provide the template for the next round of diversification [20].
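Taken together, the three steps form a simple loop, sketched below as a greedy walk in which the best variant of each round becomes the next parent. This is an illustration under strong assumptions rather than a laboratory protocol: diversification is limited to single substitutions, and the "assay" is a toy function that counts matches to an arbitrary target string. `TARGET`, `toy_fitness`, and the library size are hypothetical.

```python
import random

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"

def mutate(seq, rng):
    """Diversification: introduce one random amino acid substitution."""
    pos = rng.randrange(len(seq))
    new = rng.choice([a for a in AMINO_ACIDS if a != seq[pos]])
    return seq[:pos] + new + seq[pos + 1:]

def directed_evolution(parent, fitness, rounds, library_size, rng):
    """Iterate diversify -> screen -> carry the best variant forward."""
    best = parent
    trajectory = [fitness(best)]
    for _ in range(rounds):
        library = [mutate(best, rng) for _ in range(library_size)]
        top = max(library, key=fitness)        # screening step
        if fitness(top) > fitness(best):       # heredity: keep only improvements
            best = top
        trajectory.append(fitness(best))
    return best, trajectory

TARGET = "MKVLAT"  # hypothetical optimum, used only by the toy assay

def toy_fitness(seq):
    """Stand-in for a wet-lab assay: number of positions matching TARGET."""
    return sum(a == b for a, b in zip(seq, TARGET))

rng = random.Random(1)
best, trajectory = directed_evolution("AAAAAA", toy_fitness,
                                      rounds=20, library_size=200, rng=rng)
print(best, trajectory)
```

On this additive toy landscape the greedy walk improves steadily; on landscapes where mutations interact, the same strategy can stall at a local optimum.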

Table: Key Research Reagents and Solutions for Directed Evolution

| Reagent/Solution | Function in Workflow | Example Application |
|---|---|---|
| Kapa Biosystems PCR Reagents | High-fidelity or error-prone amplification of gene variants [20] | Gene library construction and amplification steps. |
| NNK Degenerate Codons | Saturation mutagenesis to randomize a single codon to all 20 amino acids. | Creating focused diversity at active site residues [21]. |
| Phage Display Vectors | Genetically fuse protein library to phage coat protein for display. | Selection of high-affinity antibodies or peptides [5] [20]. |
| Fluorogenic/Chromogenic Substrates | Generate a detectable signal (fluorescence/color) upon enzyme activity. | High-throughput microtiter plate-based screening [20]. |
| His-Tag Purification Systems | Rapid purification of recombinant proteins via affinity chromatography. | Isolating expressed variants for biochemical characterization [6]. |

Applications and Impact on Science and Industry

Directed evolution has become an indispensable tool in both basic research and industrial biotechnology, demonstrating remarkable success across several domains.

Protein Engineering for Industrial and Therapeutic Use

The most direct application of directed evolution is the optimization of proteins for practical use. Key successes include:

- Improving Protein Stability: Engineering enzymes to remain functional at high temperatures or in harsh organic solvents is vital for industrial biocatalysis [5] [4]. For instance, subtilisin E was evolved for enhanced activity in dimethylformamide, broadening its application in chemical synthesis [4].

- Altering Substrate Specificity: Directed evolution can "re-tool" enzymes to accept non-native substrates. A prominent example is the engineering of cytochrome P450 BM3 from Bacillus megaterium, which was transformed from a fatty acid hydroxylase into a catalyst for alkane hydroxylation and other novel chemistries [20].

- Affinity Maturation of Antibodies: Phage display, combined with directed evolution, is used to increase the binding affinity of therapeutic antibodies [5] [6]. This approach has led to drugs for diseases like metastatic cancer and inflammatory conditions [6].

Fundamental Studies in Enzyme Evolution

Beyond its engineering utility, directed evolution serves as a powerful experimental platform for investigating fundamental evolutionary principles [5] [22]. It allows researchers to test hypotheses about adaptive landscapes, the prevalence of epistasis (where the effect of one mutation depends on the presence of others), and the molecular mechanisms that underlie the emergence of new functions [22]. By analyzing the sequences and activities of variants across multiple rounds of evolution, scientists can map fitness landscapes and identify key residue positions that determine protein function [22] [23].
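Epistasis between two mutations can be quantified from four measurements: the wild type, each single mutant, and the double mutant. The sketch below uses one common convention, the deviation of the double mutant from the additive expectation on a log-fitness scale; other conventions exist, and the fitness values are invented for illustration.

```python
import math

def pairwise_epistasis(f_wt, f_a, f_b, f_ab):
    """Log-scale epistasis: zero when the two mutations combine multiplicatively.

    Positive values mean the double mutant is fitter than expected from the
    single mutants; negative values mean it is less fit than expected.
    """
    return math.log(f_ab) - math.log(f_a) - math.log(f_b) + math.log(f_wt)

# Hypothetical relative activities (wild type = 1.0).
no_epistasis = pairwise_epistasis(1.0, 2.0, 3.0, 6.0)
positive = pairwise_epistasis(1.0, 2.0, 3.0, 12.0)
negative = pairwise_epistasis(1.0, 2.0, 3.0, 1.5)
print(no_epistasis, positive, negative)
```

Repeating this calculation across pairs of positions is one simple way to see which residues interact and where a fitness landscape departs from additivity.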

The Modern Frontier: Integration with Machine Learning

While powerful, traditional directed evolution can be inefficient, often getting trapped in local optima on rugged fitness landscapes where mutations have strong epistatic interactions [21]. The latest paradigm shift involves the integration of machine learning (ML) to navigate these complex sequence spaces more intelligently.

A seminal advance is Active Learning-assisted Directed Evolution (ALDE), as demonstrated in a 2025 Nature Communications study [21]. ALDE is an iterative ML-assisted workflow that uses uncertainty quantification to decide which variants to test in each cycle. Unlike traditional DE, which screens large, random libraries, ALDE uses data from previous rounds to train a model that predicts sequence-fitness relationships. This model then prioritizes a small batch of the most promising variants for the next wet-lab experiment, effectively balancing exploration of new sequences with exploitation of known high-fitness regions [21].

The power of ALDE was demonstrated on a challenging problem: optimizing five epistatic residues in the active site of a protoglobin for a non-native cyclopropanation reaction. Whereas simple recombination of beneficial single mutations failed, ALDE converged on an optimal variant in just three rounds, increasing the yield of the desired product from 12% to 93% [21]. This approach is particularly effective for optimizing higher-order mutational combinations where epistasis is significant.

Other ML approaches involve learning protein fitness landscapes from existing deep mutational scanning data, which can even enable zero-shot predictions for new proteins [23]. These MLDE methods promise to significantly reduce the experimental burden of directed evolution and unlock engineering goals previously considered too complex.

The journey from rational design to the adoption of evolutionary principles marks a fundamental maturation in biological engineering. Directed evolution has proven itself as a powerful and general strategy for optimizing biomolecules, leading to tangible advances in medicine, industrial catalysis, and green chemistry. The field's history, from Spiegelman's Monster to the Nobel Prize, is a testament to the power of mimicking nature's core algorithm. Today, the paradigm is shifting once more. The integration of machine learning and active learning represents a new frontier, transforming directed evolution from a largely empirical, brute-force process into a more predictive and intelligent discipline. This synergy between evolutionary principles and computational intelligence promises to further accelerate the engineering of biological systems, enabling the development of novel therapeutics, sustainable materials, and biocatalysts for challenges yet unknown.

Historical Context: From Spiegelman to the Nobel Prize

Directed evolution (DE), the laboratory process that mimics natural selection to steer biological molecules toward user-defined goals, has revolutionized basic and applied biology [5]. Its origins can be traced to the 1960s and the seminal "Spiegelman's Monster" experiment, which demonstrated the evolution of RNA molecules in vitro under a selective pressure for faster replication [5] [4]. This established the core principle that evolution could be directed outside of living cells. The field expanded in the 1980s with techniques like phage display, which allowed for the selection of proteins with enhanced binding properties [5] [4].

Modern directed evolution came of age in the 1990s, moving beyond adaptive evolution of whole organisms to focus on engineering individual proteins through iterative rounds of mutagenesis and screening [4]. Landmark work, such as the evolution of subtilisin E for enhanced activity in organic solvents, demonstrated the power of repeated rounds of genetic diversification and activity screening [4]. The development of DNA shuffling by Willem Stemmer further accelerated progress by mimicking natural recombination, allowing beneficial mutations from different parent genes to be combined efficiently [24] [4]. The profound impact of these methodologies was formally recognized in 2018, when the Nobel Prize in Chemistry was awarded to Frances H. Arnold for the directed evolution of enzymes, and to George P. Smith and Sir Gregory P. Winter for the phage display of peptides and antibodies [5] [24]. This award cemented directed evolution as a cornerstone technology of modern biotechnology.

The Core Modern Workflow: An Iterative Cycle of Diversification and Selection

The modern directed evolution workflow functions as a two-part iterative engine, driving a population of proteins toward a desired functional goal through laboratory-accelerated evolution [24]. This process compresses geological timescales into weeks or months by intentionally accelerating mutation rates and applying a stringent, user-defined selection pressure [24]. The cycle consists of two fundamental steps: the generation of genetic diversity to create a library of protein variants, and the application of a high-throughput screen or selection to identify the rare improved variants [24] [4].

Step 1: Generating Diversity — Library Creation Strategies

The creation of a diverse library of gene variants is the foundational step that defines the explorable sequence space [24]. The method of diversification is a strategic choice that shapes the entire evolutionary search.

- Random Mutagenesis: Techniques like error-prone PCR (epPCR) introduce mutations across the entire gene. This is achieved by using a low-fidelity DNA polymerase (e.g., Taq polymerase), creating dNTP imbalances, and adding manganese ions (Mn²⁺) to the reaction [24]. The mutation rate is typically tuned to 1–5 base mutations per kilobase [24]. A key limitation is its bias toward transition mutations, which constrains the accessible sequence space [24].

- Recombination-Based Methods: DNA shuffling, or "sexual PCR," more closely mimics natural evolution by recombining beneficial mutations from multiple parent genes [4]. Homologous genes are fragmented with DNaseI and then reassembled in a primer-free PCR reaction, resulting in chimeric genes with novel combinations of mutations [24] [4]. Family shuffling, which uses homologous genes from different species, provides access to a broader and more functionally relevant sequence space [24].

- Focused/Semi-Rational Mutagenesis: When structural or functional knowledge is available, focused mutagenesis creates smaller, higher-quality libraries. Site-saturation mutagenesis comprehensively explores all 19 possible amino acid substitutions at a single, pre-identified "hotspot" residue, allowing for a deep, unbiased interrogation of its role [24].
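Site-saturation libraries are often built with a degenerate codon such as NNK (N = A/C/G/T, K = G/T). The sketch below enumerates the NNK codons against the standard genetic code and estimates how many clones must be sampled so that any given codon is seen at least once with a chosen probability. The coverage estimate assumes every codon is equally represented, which real libraries only approximate; the function names are illustrative.

```python
import math
from itertools import product

# Standard genetic code, codons ordered TTT, TTC, TTA, TTG, TCT, ... GGG.
BASES = "TCAG"
AMINO_ACIDS = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {"".join(c): AMINO_ACIDS[i]
               for i, c in enumerate(product(BASES, repeat=3))}

def nnk_codons():
    """All codons matching NNK: any base, any base, then G or T."""
    return ["".join(c) for c in product("ACGT", "ACGT", "GT")]

def clones_for_coverage(n_variants, coverage=0.95):
    """Clones to sample so a given variant appears at least once with
    probability `coverage`, assuming all variants are equally likely."""
    return math.ceil(math.log(1 - coverage) / math.log(1 - 1 / n_variants))

codons = nnk_codons()
encoded = [CODON_TABLE[c] for c in codons]
n_amino_acids = len(set(encoded) - {"*"})
n_stops = encoded.count("*")
n_clones = clones_for_coverage(len(codons))
print(len(codons), n_amino_acids, n_stops, n_clones)
```

The enumeration confirms that the 32 NNK codons encode all 20 amino acids with a single stop codon (TAG), and the coverage estimate gives roughly three times the number of codons, which is why a single 96-well plate is a common screening unit per saturated position.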

Step 2: Selection and Screening — Linking Genotype to Phenotype

This step is widely recognized as the primary bottleneck in directed evolution, as it involves identifying the rare improved variants from a vast library [24]. The power and throughput of the screening platform must match the size of the library [24]. A critical distinction exists between selection and screening.

- Selection: Selection establishes a direct link between the desired protein function and the survival or replication of the host organism [5] [24]. For example, in phage-assisted continuous evolution (PACE), the ability of a bacteriophage to reproduce is made dependent on the activity of the evolving enzyme [25]. Selections can handle immense libraries (up to 10¹⁵ variants) but are often difficult to design and can be prone to artifacts [5] [24].

- Screening: Screening involves the individual evaluation of every library member for the desired property [5] [24]. This can be done in vivo (in living cells) or in vitro (in cell-free systems) [5]. While throughput is lower than selection, screening provides quantitative data on every variant's performance [5]. Common platforms include colony screens on agar plates or assays in multi-well microtiter plates using colorimetric or fluorometric substrates read by a plate reader [24].

The genes encoding the best-performing variants are isolated and serve as the template for the next round of evolution, allowing beneficial mutations to accumulate over successive generations [24].

Quantitative Data and Methodologies

Table 1: Comparison of Major Genetic Diversification Methods in Directed Evolution.

| Method | Key Principle | Typical Library Size | Key Advantage | Key Limitation |
|---|---|---|---|---|
| Error-Prone PCR [24] | Random point mutations via low-fidelity PCR | 10³ - 10⁶ | Simple, requires no structural information | Mutation bias (prefers transitions); limited sequence coverage |
| DNA Shuffling [24] [4] | In vitro recombination of homologous genes | 10⁴ - 10⁸ | Combines beneficial mutations; mimics natural recombination | Requires high sequence homology (>70-75%) |
| Site-Saturation Mutagenesis [24] | All 20 amino acids tested at a targeted residue | 20 (per position) | Comprehensive exploration of a specific site; semi-rational | Requires prior knowledge to identify target residues |
| Phage Display [5] [4] | Selection of binding peptides/antibodies displayed on phage | 10⁷ - 10¹¹ | Extremely high throughput for binding interactions | Primarily suited for evolving binding affinity, not catalysis |

Detailed Experimental Protocol: Key Methodologies

Protocol 1: Error-Prone PCR (epPCR) [24]

- Reaction Setup: In a standard PCR reaction mixture, use a non-proofreading DNA polymerase (e.g., Taq polymerase).

- Introducing Errors: Add 0.1-0.5 mM MnCl₂ to the reaction. Deliberately create a dNTP imbalance (e.g., use 0.2 mM dGTP and dATP, and 1 mM dCTP and dTTP).

- Amplification: Run the PCR with optimized cycling conditions for the target gene.

- Cloning: Purify the mutated PCR product and clone it into an appropriate expression vector.

- Transformation: Transform the vector library into a bacterial host to create the variant library for screening.
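The mutational load such a protocol produces can be explored in silico before committing to library construction. The sketch below treats every base as mutating independently with a probability set by the chosen rate per kilobase, and applies an adjustable transition bias. The gene sequence is random, and the rate (3 per kb) and bias (0.7) are hypothetical values, not measured properties of any polymerase.

```python
import random

TRANSITIONS = {"A": "G", "G": "A", "C": "T", "T": "C"}

def error_prone_copy(gene, mutations_per_kb, transition_bias, rng):
    """Return (mutated_gene, n_mutations) for one simulated epPCR product."""
    per_base = mutations_per_kb / 1000.0
    copy = []
    n_mutations = 0
    for base in gene:
        if rng.random() < per_base:
            if rng.random() < transition_bias:
                new = TRANSITIONS[base]                       # purine<->purine etc.
            else:
                new = rng.choice([b for b in "ACGT"
                                  if b != base and b != TRANSITIONS[base]])
            copy.append(new)
            n_mutations += 1
        else:
            copy.append(base)
    return "".join(copy), n_mutations

rng = random.Random(42)
gene = "".join(rng.choice("ACGT") for _ in range(900))   # hypothetical 900-bp gene
library = [error_prone_copy(gene, 3.0, 0.7, rng) for _ in range(1000)]
mean_mutations = sum(n for _, n in library) / len(library)
unmutated = sum(1 for _, n in library if n == 0) / len(library)
print(f"mean mutations per gene: {mean_mutations:.2f}")
print(f"fraction unmutated: {unmutated:.2f}")
```

At 3 mutations per kilobase, a 900-bp gene carries about 2.7 mutations on average, and a small but non-negligible fraction of clones remains wild type, which is worth accounting for when sizing the screen.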

Protocol 2: Phage-Assisted Continuous Evolution (PACE) [25]

- Setup: Fresh bacterial host cells flow continuously through a fixed-volume vessel (the "lagoon"). The gene to be evolved (e.g., a bridge recombinase) is carried on the phage genome in place of a gene essential for phage propagation, and the host cells carry a plasmid on which expression of that essential gene depends on the activity of the evolving protein.

- Mutagenesis: A mutagenesis plasmid in the host cells raises the mutation rate, introducing random mutations into the gene of interest as the phage replicates.

- Selection: Bacteriophages infect these host cells. Only phages that carry an active variant of the protein trigger expression of the essential gene, allowing them to replicate and produce infectious progeny phage.

- Continuous Evolution: Progeny phage from active variants go on to infect fresh host cells entering the lagoon, while phages encoding inactive variants are washed out by dilution. This continuous process, running over days, allows for hundreds of rounds of evolution to occur without manual intervention.
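The selection pressure created by continuous dilution can be captured in a one-line idealised model: a phage lineage persists only if it propagates faster than it is diluted out. The sketch below assumes simple exponential propagation at a constant rate and a constant dilution rate; both rates are hypothetical numbers, and real systems are limited by host availability and other factors not modelled here.

```python
import math

def phage_fold_change(propagation_rate, dilution_rate, hours):
    """Net fold-change in titer under exponential propagation and dilution.

    Rates are per hour. Values above 1 mean the lineage persists;
    values below 1 mean it is being washed out.
    """
    return math.exp((propagation_rate - dilution_rate) * hours)

dilution = 1.0  # hypothetical: one vessel volume exchanged per hour
active = phage_fold_change(propagation_rate=2.0, dilution_rate=dilution, hours=24)
inactive = phage_fold_change(propagation_rate=0.2, dilution_rate=dilution, hours=24)
print(f"active variant:   {active:.3g}-fold")
print(f"inactive variant: {inactive:.3g}-fold")
```

In this picture the dilution rate acts as a tunable stringency threshold: raising it demands faster propagation, and therefore higher activity, for a variant to remain in the population.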

The Modern Toolkit: Machine Learning and Automation

The latest advancements in directed evolution integrate machine learning (ML) to overcome the challenge of navigating complex and epistatic fitness landscapes. Traditional DE can be inefficient when mutations have non-additive effects, often causing the experiment to become stuck at a local optimum [21].

Active Learning-assisted Directed Evolution (ALDE) is an iterative ML-assisted workflow that leverages uncertainty quantification to explore protein sequence space more efficiently [21]. In a typical ALDE cycle:

- An initial library of variants is synthesized and screened in the wet lab.

- The collected sequence-fitness data is used to train a supervised ML model.

- The model uses an acquisition function to rank all sequences in the design space and proposes a new batch of variants predicted to have high fitness.

- This new batch is synthesized and screened, and the new data is used to update the model for the next round [21].

This approach has been shown to optimize challenging objectives, such as enzyme stereoselectivity, much more efficiently than simple DE [21].
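
The cycle above can be sketched in a few lines. This is a minimal illustration, not the published ALDE implementation. The model (a bootstrap ensemble of ridge regressions), the upper-confidence-bound acquisition function, the reduced alphabet, and the synthetic `screen` function standing in for the wet lab are all assumptions chosen to keep the example small.

```python
import itertools
import numpy as np

rng = np.random.default_rng(0)
ALPHABET = "ACDEFG"   # reduced alphabet (assumption) to keep the design space small
N_SITES = 3
design_space = ["".join(s) for s in itertools.product(ALPHABET, repeat=N_SITES)]

def one_hot(seq):
    x = np.zeros(N_SITES * len(ALPHABET))
    for i, aa in enumerate(seq):
        x[i * len(ALPHABET) + ALPHABET.index(aa)] = 1.0
    return x

X_all = np.array([one_hot(s) for s in design_space])

# Synthetic stand-in for wet-lab screening: additive effects plus one epistatic pair.
additive = rng.normal(size=X_all.shape[1])

def screen(indices):
    seqs = [design_space[i] for i in indices]
    epistasis = np.array([1.5 if s[0] == "A" and s[2] == "G" else 0.0 for s in seqs])
    return X_all[indices] @ additive + epistasis

def fit_ridge(X, y, lam=1.0):
    return np.linalg.solve(X.T @ X + lam * np.eye(X.shape[1]), X.T @ y)

def predict_with_uncertainty(X, y, n_models=20):
    """Bootstrap ensemble: mean prediction and spread over the whole design space."""
    preds = []
    for _ in range(n_models):
        boot = rng.integers(0, len(y), size=len(y))
        preds.append(X_all @ fit_ridge(X[boot], y[boot]))
    preds = np.array(preds)
    return preds.mean(axis=0), preds.std(axis=0)

BATCH, ROUNDS, BETA = 24, 3, 1.0

# Step 1: initial random library, screened once.
screened = list(rng.choice(len(design_space), size=BATCH, replace=False))
fitness = list(screen(screened))
best_per_round = [max(fitness)]

for _ in range(ROUNDS):
    # Step 2: train on all data collected so far.
    mean, std = predict_with_uncertainty(X_all[screened], np.array(fitness))
    # Step 3: rank the design space with an upper-confidence-bound acquisition.
    score = mean + BETA * std
    score[screened] = -np.inf          # never re-propose screened variants
    batch = list(np.argsort(score)[-BATCH:])
    # Step 4: "screen" the new batch and add the data for the next round.
    screened += batch
    fitness += list(screen(batch))
    best_per_round.append(max(fitness))

print(best_per_round)
```

The `BETA` weight controls how strongly the loop favors uncertain regions over predicted high performers. Setting it to zero reduces the acquisition to greedy selection on the model mean.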

Similarly, the DeepDE algorithm uses iterative deep learning, training on a compact library of ~1,000 triple mutants in each round [26]. Using triple mutants as building blocks allows for the exploration of a much greater sequence space compared to single mutants. Applied to Green Fluorescent Protein (GFP), DeepDE achieved a 74.3-fold increase in activity in just four rounds of evolution [26].
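
To put the size of the triple-mutant space in perspective, the number of variants with exactly k substituted positions in a protein of length L is C(L, k) × 19^k. The short calculation below assumes L = 238, the length of wild-type Aequorea victoria GFP. The exact GFP variant and length used by DeepDE may differ.

```python
from math import comb

def n_mutants(length, order, alphabet=20):
    """Number of distinct variants with exactly `order` substituted positions."""
    return comb(length, order) * (alphabet - 1) ** order

GFP_LENGTH = 238  # assumed: wild-type avGFP length in residues

singles = n_mutants(GFP_LENGTH, 1)
triples = n_mutants(GFP_LENGTH, 3)

print(f"single mutants: {singles:,}")
print(f"triple mutants: {triples:,}")
print(f"fraction of triple-mutant space in a 1,000-variant library: {1000 / triples:.1e}")
```

Under these assumptions there are a few thousand single mutants but on the order of 10^10 triple mutants, so a library of ~1,000 variants samples only a tiny fraction of the space and the model must generalize from it.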

Essential Research Reagents and Materials

Table 2: Key Research Reagent Solutions for Directed Evolution Experiments.

| Reagent / Material | Function in Workflow | Specific Example / Note |
|---|---|---|
| Taq Polymerase [24] | Enzyme for error-prone PCR; lacks proofreading activity to introduce mutations. | Standard reagent for random mutagenesis via epPCR. |
| DNase I [24] [4] | Enzyme used to randomly fragment genes for DNA shuffling. | Creates small fragments (100-300 bp) for recombination. |
| NNK Degenerate Codon [21] | Primer design for site-saturation mutagenesis; encodes all 20 amino acids. | NNK (N=A/T/G/C; K=G/T) reduces stop codons to one. |
| Microtiter Plates [24] | High-throughput screening platform for assaying variant activity. | Typically 96- or 384-well format, used with plate readers. |
| Bridge RNA (bRNA) [25] | RNA guide in bridge recombination systems; specifies target and donor DNA. | Key component for next-generation genome editing tools. |
| Bacteriophage [25] | Viral vector for continuous evolution systems like PACE. | Links enzyme activity directly to viral propagation and survival. |
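
The NNK entry in the table can be checked by enumeration. The sketch below builds the standard genetic code from its conventional compact string representation and counts what the 32 NNK codons encode. The variable names are illustrative.

```python
from itertools import product

# Standard genetic code, codons ordered with bases T, C, A, G (first base varies slowest).
BASES = "TCAG"
AMINO = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {"".join(c): aa for c, aa in zip(product(BASES, repeat=3), AMINO)}

# NNK: N = A/C/G/T at positions 1 and 2, K = G/T at position 3.
nnk_codons = ["".join(c) for c in product("ACGT", "ACGT", "GT")]
encoded = [CODON_TABLE[c] for c in nnk_codons]

amino_acids = sorted(set(encoded) - {"*"})
stop_codons = [c for c in nnk_codons if CODON_TABLE[c] == "*"]

print(f"codons: {len(nnk_codons)}")
print(f"amino acids encoded: {len(amino_acids)}")
print(f"stop codons: {stop_codons}")
```

Compared with fully degenerate NNN (64 codons, three stops), NNK halves the codon count while retaining all 20 amino acids, which reduces the number of clones that must be screened per randomized site.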

Workflow and System Diagrams

Directed Evolution Cycle - The iterative, two-step process of diversification and selection.

Machine Learning Integration - The active learning loop for protein engineering.

The modern directed evolution workflow, built upon the foundational cycle of diversification and selection, has matured into a highly sophisticated and powerful engineering tool. From its origins in simple adaptive evolution and Spiegelman's in vitro RNA selection, the field has progressed through the development of critical methods like phage display, DNA shuffling, and PACE, culminating in Nobel Prize-winning recognition. Today, the integration of machine learning and active learning strategies is pushing the boundaries of what is possible, enabling researchers to efficiently navigate complex fitness landscapes and engineer proteins with novel, bespoke functions for therapeutics, industrial biocatalysis, and fundamental biological research. The continued refinement of these workflows promises to further accelerate the design of biological solutions to some of the world's most pressing challenges.

Methodological Revolution and Industrial Application

Directed evolution stands as a powerful methodology in protein engineering, mimicking the principles of natural selection in a laboratory setting to develop biomolecules with desired properties. This approach involves iterative rounds of diversification and selection, allowing researchers to evolve proteins or nucleic acids toward improved or novel functions without requiring extensive structural knowledge. The history of directed evolution traces a path from foundational experiments like Spiegelman's work with Qβ bacteriophage in the 1960s, which demonstrated the evolution of RNA molecules in cell-free systems, to its maturation into a standardized methodology that earned Frances Arnold the Nobel Prize in Chemistry in 2018 for pioneering enzymatic directed evolution [27] [28]. Two techniques have served as cornerstone technologies throughout this history: error-prone PCR (epPCR) for introducing random mutations and DNA shuffling for recombining beneficial mutations.

These methods have enabled groundbreaking advances across biotechnology, from engineering enzymes that catalyze non-biological reactions to developing therapeutic proteins with enhanced efficacy. This technical guide examines the principles, methodologies, and applications of these core techniques, providing researchers with the foundational knowledge to implement them effectively in protein engineering campaigns.

Historical Context: From Spiegelman to the Nobel Prize

The conceptual foundation for directed evolution was laid in the 1960s by Sol Spiegelman's experiments with the Qβ bacteriophage. Spiegelman demonstrated that RNA molecules could evolve in a cell-free system through serial transfer under selective pressure, resulting in optimized replicators. This established the fundamental principle that mutation and selection could be harnessed to shape biomolecules outside living cells.

The field matured significantly in the 1990s with the development of key laboratory techniques:

- 1992-1994: The introduction of error-prone PCR by Cadwell and Joyce, and its application to directed evolution by Frances Arnold, who used it to engineer a variant of subtilisin E with 256-fold higher activity in the organic solvent dimethylformamide (DMF) [29] [27].

- 1993: Oskar Kuipers' incorporation of deoxyinosine triphosphate (dITP) in epPCR to modulate mutation rates [29].

- 1994: Willem P.C. Stemmer's invention of DNA shuffling, which allowed in vitro homologous recombination of genes [29].

Frances Arnold's pioneering work demonstrated that iterative rounds of epPCR mutagenesis and screening could efficiently optimize enzyme properties, establishing a paradigm that would dominate protein engineering for decades [27]. Her Nobel Prize in 2018 recognized how these methods "brought new chemistry to life" by enabling the development of enzymes for environmentally-friendly synthesis processes, renewable fuel production, and pharmaceutical applications [27] [28].

Table 1: Historical Milestones in Directed Evolution

| Year | Development | Key Researchers | Significance |
|---|---|---|---|
| 1960s | Qβ phage evolution | Spiegelman | Demonstrated molecular evolution in cell-free systems |
| 1992-1994 | Error-prone PCR | Cadwell, Joyce, Arnold | Provided method for introducing random mutations |
| 1993 | dITP incorporation | Kuipers | Enhanced mutation diversity in epPCR |
| 1994 | DNA shuffling | Stemmer | Enabled recombination of beneficial mutations |
| 2018 | Nobel Prize | Arnold | Recognized directed evolution of enzymes |

Error-Prone PCR: Principles and Methodologies

Fundamental Mechanisms

Error-prone PCR is a random mutagenesis technique that deliberately introduces nucleotide substitutions during PCR amplification by reducing replication fidelity. Traditional PCR aims for perfect replication, while epPCR strategically introduces "controlled chaos" to generate molecular diversity [29]. This is achieved through several biochemical approaches:

- Low-fidelity DNA polymerases: Utilizing enzymes naturally prone to misincorporation [29]

- Biased nucleotide pools: Unequal concentrations of dNTPs to promote misincorporation [30]

- Metal ion cofactor manipulation: Adding manganese or altering magnesium concentrations to reduce fidelity [30]

- Nucleotide analogs: Incorporating mutagenic bases like deoxyinosine triphosphate (dITP) [29]

The mutation rate in epPCR can be controlled by varying the initial amount of template DNA and the number of amplification cycles, typically achieving 1-16 mutations per kilobase [30]. Modern commercial systems like the GeneMorph II Random Mutagenesis Kit employ engineered enzyme blends such as Mutazyme II to provide controlled mutation rates with minimal mutational bias, producing more uniform mutational spectra across all nucleotide bases [30].
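
A per-kilobase mutation rate translates into a distribution of mutation counts per clone. If mutations are assumed to occur independently (a simplification), the count per gene is approximately Poisson-distributed with mean rate × length. The sketch below uses a hypothetical 900 bp gene at 2 mutations/kb.

```python
from math import exp, factorial

def mutation_count_distribution(rate_per_kb, gene_length_bp, max_k=5):
    """Return the mean mutations per gene and P(k mutations) for k = 0..max_k.

    Assumes independent mutations, so counts follow a Poisson distribution.
    """
    lam = rate_per_kb * gene_length_bp / 1000.0
    probs = [exp(-lam) * lam**k / factorial(k) for k in range(max_k + 1)]
    return lam, probs

# Hypothetical example: 900 bp gene, 2 mutations per kb.
lam, probs = mutation_count_distribution(rate_per_kb=2.0, gene_length_bp=900)

print(f"mean mutations per gene: {lam:.2f}")
for k, p in enumerate(probs):
    print(f"P({k} mutations) = {p:.3f}")
```

This kind of estimate helps when choosing a target rate: too low and much of the library is unmutated parent sequence, too high and most clones carry several mutations, which raises the chance that a beneficial change is masked by a deleterious one.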

Inosine-Mediated epPCR

A specialized variant of epPCR uses deoxyinosine triphosphate (dITP) as a universal base. Inosine acts as a "wild card" nucleotide, pairing promiscuously with adenine, cytosine, or thymine in the first extension cycle. In subsequent cycles, inosine is preferentially replaced by guanine or cytosine, thereby increasing GC content and introducing focused mutations [29]. Besides diversifying the sequence pool, this can enhance the thermal stability and structural rigidity of the resulting nucleic acids (for example, aptamers), because the stronger GC base pairs support more stable secondary structures [29].

Table 2: Error-Prone PCR Method Comparison

| Method | Mechanism | Mutation Rate | Mutational Bias | Applications |
|---|---|---|---|---|
| Standard epPCR | Low-fidelity polymerases, biased dNTPs | 1-16/kb | Variable, often GC-rich | General protein engineering |
| Inosine-mediated | dITP incorporation | Variable | Favors G/C mutations | Increasing aptamer stability |
| Mutazyme II | Engineered polymerase blend | 1-16/kb | Uniform (A/T = G/C) | Comprehensive mutant libraries |

Experimental Protocol: Error-Prone PCR

The following protocol adapts established epPCR methods for creating mutant libraries [31] [30]:

Reaction Setup:

- Template DNA: 10-100 pg for high mutation rate (4-16/kb), 1-10 ng for low mutation rate (1-4/kb)

- Primers: 0.5 μM each forward and reverse primer

- dNTPs: 0.2 mM each dATP, dTTP, dGTP, dCTP (or biased concentrations for increased mutation rate)

- Magnesium chloride: 1.5-7 mM (optimize for target)

- Mutazyme II DNA polymerase: 1-2 units/μL

- Reaction buffer: As supplied with enzyme
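
The link between template amount and mutation rate comes from the number of template duplications: for a similar final yield, less starting template means more doublings, and each doubling is another opportunity for misincorporation. The sketch below assumes a final yield of about 1 µg, which is a hypothetical figure and not part of the protocol.

```python
from math import log2

def doublings(template_ng, product_ng):
    """Number of template duplications needed to reach the product yield."""
    if template_ng <= 0 or product_ng <= 0:
        raise ValueError("amounts must be positive")
    return log2(product_ng / template_ng)

PRODUCT_NG = 1000.0  # ASSUMED final yield (~1 ug); measure your own on a gel

# Template amounts spanning the ranges listed in the reaction setup.
templates = [("10 pg", 0.01), ("100 pg", 0.1), ("1 ng", 1.0), ("10 ng", 10.0)]
for label, template_ng in templates:
    print(f"{label:>6} template: ~{doublings(template_ng, PRODUCT_NG):.1f} doublings")
```

Template amount here refers to the mass of the target sequence itself, not the total mass of a plasmid that carries it.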

Thermocycling Conditions:

- Initial denaturation: 95°C for 2 minutes

- 25-35 cycles of:

  - Denaturation: 95°C for 30 seconds

  - Annealing: 55-65°C (primer-specific) for 30 seconds

  - Extension: 72°C for 1 minute/kb

- Final extension: 72°C for 10 minutes

Product Analysis:

- Verify amplification by agarose gel electrophoresis

- Purify PCR product using standard methods

- Clone into expression vector for screening

For targeted mutagenesis of specific domains, epPCR can be combined with overlap extension PCR to generate libraries with a "faithful" N-terminus and a mutagenized C-terminus, as demonstrated in studies of morbillivirus haemagglutinin [31].

Error-Prone PCR Workflow

DNA Shuffling: Principles and Methodologies

Fundamental Mechanisms

DNA shuffling, introduced by Willem P.C. Stemmer in 1994, represents a significant advancement over purely random mutagenesis by enabling the recombination of beneficial mutations from multiple parent sequences. This technique mimics sexual recombination by breaking down homologous genes into fragments and reassembling them through a primerless PCR, allowing the exchange of genetic material between different variants [32].

The standard DNA shuffling process involves:

- Random fragmentation of related genes using DNase I

- Recursive reassembly of fragments through primerless PCR

- Amplification of full-length chimeric genes using external primers

This approach allows the exploration of sequence space more efficiently than point mutagenesis alone, as beneficial mutations from different lineages can be combined while deleterious mutations can be eliminated.
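
The outcome of shuffling, chimeric genes that mix segments from several parents, can be mimicked in silico. The sketch below is a deliberately simplified stand-in: it assumes pre-aligned parents of equal length and switches templates at random positions, whereas real reassembly depends on fragment sizes and local homology. The parent sequences are invented for illustration.

```python
import random

def shuffle_parents(parents, n_crossovers, rng):
    """Build one chimera by copying from aligned, equal-length parents and
    switching to a different parent at randomly chosen crossover positions."""
    length = len(parents[0])
    if any(len(p) != length for p in parents):
        raise ValueError("toy model requires pre-aligned parents of equal length")
    points = sorted(rng.sample(range(1, length), n_crossovers))
    chimera, start = [], 0
    current = rng.randrange(len(parents))
    for point in points + [length]:
        chimera.append(parents[current][start:point])
        start = point
        # Template switch: continue copying from a different parent.
        current = rng.choice([i for i in range(len(parents)) if i != current])
    return "".join(chimera)

rng = random.Random(1)
parent_a = "ATGGCTAAAGGTGAACTGTTC"  # hypothetical parent sequences
parent_b = "ATGGCAAAAGGCGAATTGTTT"
parents = [parent_a, parent_b]

library = [shuffle_parents(parents, n_crossovers=2, rng=rng) for _ in range(5)]
for chimera in library:
    print(chimera)
```

Because every position in a chimera comes from one of the parents, this toy model recombines existing diversity without creating new point mutations, which is the conceptual distinction between shuffling and epPCR.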

Advanced DNA Shuffling Techniques

Several advanced DNA shuffling methods have been developed to address limitations of the original technique:

- StEP (Staggered Extension Process): Involves repeated short annealing/extension cycles to generate recombination between homologous sequences [33]

- RACHITT (Random Chimeragenesis on Transient Templates): Uses temporary templates to facilitate more controlled recombination

- SEP-DDS (Segmental error-prone PCR with Directed DNA Shuffling): Combines segmental mutagenesis with directed recombination to minimize negative mutations in large genes [34]

The SEP-DDS approach is particularly valuable for large genes, as it involves dividing the gene into small fragments, independently mutagenizing them, and then reassembling them in Saccharomyces cerevisiae, which has high recombination efficiency [34]. This method ensures more even distribution of mutations and reduces the frequency of reverse mutations common in traditional DNA shuffling.

Experimental Protocol: DNA Shuffling

The following protocol describes a simplified DNA shuffling method based on established procedures [32] [34]:

Template Preparation:

- Mix equimolar amounts (100-500 ng each) of homologous DNA sequences (>93% identity optimal)

- Adjust Mg²⁺ concentration to 1-2 mM
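
Before mixing parents, it can be useful to check how similar they are. The helper below computes percent identity for two sequences that are already aligned and of equal length. That precondition is an assumption of this sketch, and sequences of unequal length would first need a proper alignment tool. The example sequences are invented.

```python
def percent_identity(seq_a, seq_b):
    """Percent of identical positions between two pre-aligned, equal-length sequences."""
    if len(seq_a) != len(seq_b):
        raise ValueError("sequences must be aligned to the same length")
    if not seq_a:
        raise ValueError("sequences must not be empty")
    matches = sum(a == b for a, b in zip(seq_a, seq_b))
    return 100.0 * matches / len(seq_a)

# Hypothetical 20-nt parents differing at one position.
parent_1 = "ATGGCTAAAGGTGAACTGTT"
parent_2 = "ATGGCTAAAGGCGAACTGTT"

identity = percent_identity(parent_1, parent_2)
print(f"identity: {identity:.1f}%")
```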

Random Fragmentation:

- Add 0.015-0.03 units of DNase I per μL reaction

- Incubate at 15-25°C for 10-30 minutes

- Target fragment size: 10-50 bp

- Heat-inactivate DNase I at 80°C for 10 minutes

Primerless Reassembly:

- Assemble without primers using:

  - 100-200 ng fragmented DNA

  - 0.2 mM dNTPs

  - 2.5 mM MgCl₂

  - Thermostable DNA polymerase

- Thermocycling:

  - 94°C for 2 minutes

  - 40-60 cycles: 94°C for 30 seconds, 50-60°C for 30 seconds, 72°C for 30 seconds

  - 72°C for 5 minutes

Amplification of Full-Length Products:

- Add gene-specific primers to reassembled product

- Perform standard PCR to amplify full-length chimeric genes

- Clone into expression vector for screening

DNA Shuffling Workflow

Comparative Analysis and Technical Considerations

Strategic Selection of Methods