Genomic Selection in Predictive Breeding: Modern Methods, AI Integration, and Clinical Applications

Abstract

This article provides a comprehensive overview of the application of genomic selection (GS) in predictive breeding for biomedical and pharmaceutical research. It explores the foundational principles of GS, from traditional GBLUP and Bayesian methods to cutting-edge machine learning and deep learning approaches like LSTM networks. The content covers methodological implementation, including optimizing two-stage models and cross-performance prediction tools, while addressing key challenges such as computational efficiency, data integration, and model selection. Through comparative validation of statistical versus AI-driven models and examination of real-world frameworks like ABM-BOx, this resource offers scientists and drug development professionals actionable insights for enhancing genetic gain, accelerating breeding cycles, and improving target validation in therapeutic development.

The Genomic Selection Revolution: From Basic Principles to Transformative Potential

Genomic Selection (GS) is an advanced method in molecular breeding that exploits dense, genome-wide molecular markers to predict the genetic merit of individuals [1] [2]. In contrast to earlier methods that focused on a few significant markers, GS simultaneously estimates the effects of all markers across the entire genome [2]. The core output of a GS analysis is the Genomic Estimated Breeding Value (GEBV), which represents the sum of the effects associated with all marker alleles for a given individual, thereby capturing the combined contribution of all quantitative trait loci (QTL) to the breeding value [1] [3]. Since its conceptual proposal by Meuwissen, Hayes, and Goddard in 2001, GS has revolutionized animal and plant breeding by providing a powerful tool to accelerate genetic gain, particularly for complex, polygenic traits [1] [4].

Core Principles and Workflow

The fundamental principle of GS is the use of a large reference or training population (TP) that is both genotyped for genome-wide markers and phenotyped for the target traits [1] [5]. Statistical models are used to calibrate or "train" the relationship between the genotypic and phenotypic data in this TP. This calibrated model is then applied to a breeding population (BP)—individuals that have been genotyped but not phenotyped—to predict their GEBVs [1] [5]. Selection decisions are subsequently based on these GEBVs.
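The train-then-predict logic described above can be sketched in a few lines. This is a minimal illustration only, assuming ridge regression on marker effects as the statistical model and simulated toy data; the function names, the penalty `lam`, and the population sizes are hypothetical and not taken from the cited studies.

```python
import numpy as np

def train_marker_effects(Z_tp, y_tp, lam=1.0):
    """Ridge-regression estimate of marker effects from the training population (TP)."""
    mu = y_tp.mean()
    col_means = Z_tp.mean(axis=0)
    Zc = Z_tp - col_means                                  # centre marker columns
    m = Zc.shape[1]
    beta = np.linalg.solve(Zc.T @ Zc + lam * np.eye(m), Zc.T @ (y_tp - mu))
    return mu, col_means, beta

def predict_gebv(Z_bp, col_means, beta):
    """GEBV = sum over markers of (centred genotype x estimated marker effect)."""
    return (Z_bp - col_means) @ beta

# Toy data (assumption): 200 TP individuals, 20 BP candidates, 50 markers coded 0/1/2
rng = np.random.default_rng(0)
Z_tp = rng.integers(0, 3, size=(200, 50)).astype(float)
true_beta = rng.normal(0, 0.3, size=50)
y_tp = 10 + Z_tp @ true_beta + rng.normal(0, 1.0, size=200)
Z_bp = rng.integers(0, 3, size=(20, 50)).astype(float)    # genotyped, not phenotyped

mu, col_means, beta_hat = train_marker_effects(Z_tp, y_tp, lam=5.0)
gebv = predict_gebv(Z_bp, col_means, beta_hat)
top5 = np.argsort(gebv)[::-1][:5]                          # five highest-ranked candidates
```

Only the genotypes of the breeding population enter the prediction step, which is what allows selection before any phenotype is recorded.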

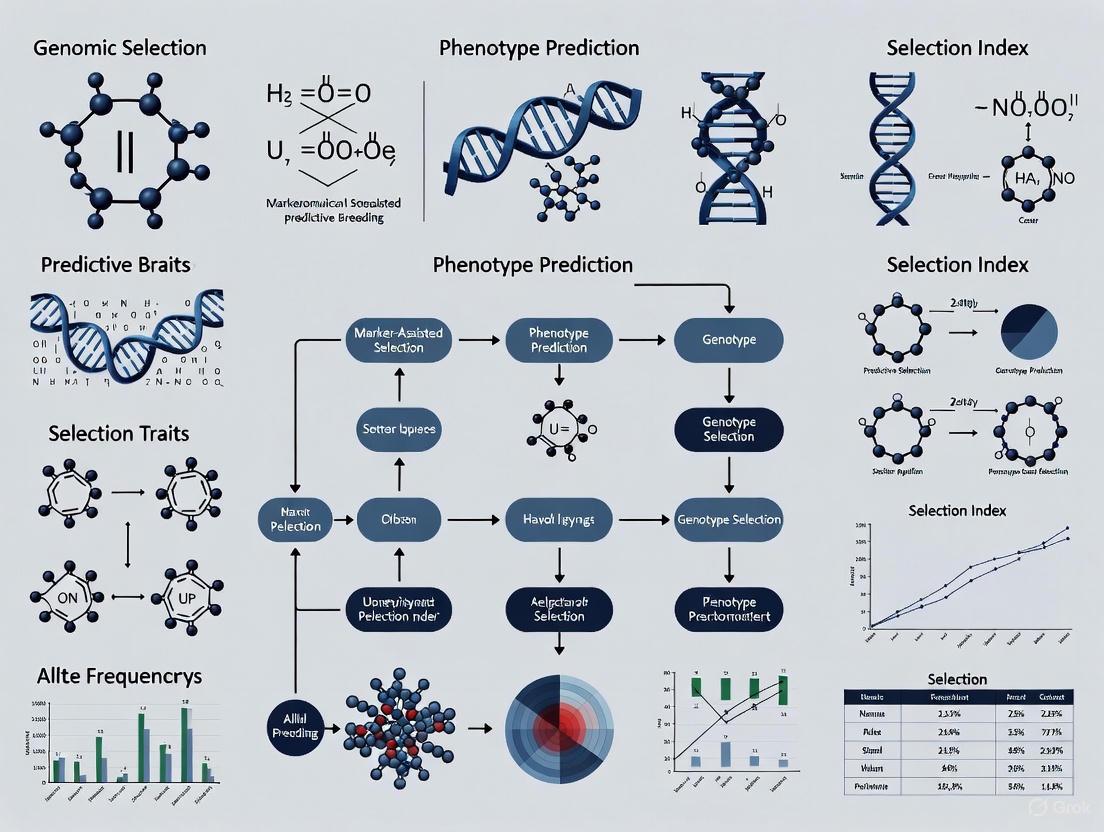

The following diagram illustrates the typical workflow for implementing genomic selection.

Advantages over Traditional Breeding Methods

GS offers significant advantages over conventional phenotypic selection (PS) and marker-assisted selection (MAS), which are summarized in the table below.

Table 1: Comparison of Genomic Selection with Traditional Breeding Methods

| Feature | Phenotypic Selection (PS) | Marker-Assisted Selection (MAS) | Genomic Selection (GS) |
|---|---|---|---|
| Basis of Selection | Direct measurement of phenotype [1] | Effects of a few pre-identified markers [4] | Genome-wide marker effects [1] [2] |
| Handling of Complex Traits | Less effective for low-heritability, complex traits [1] | Inefficient for polygenic traits controlled by many minor QTLs [1] [4] | Highly effective; captures both major and minor effect QTLs [1] [5] |
| Selection Accuracy | Environmentally sensitive, less reliable [1] | Can be inferior to PS if markers explain little genetic variance [1] | High and more reliable; less sensitive to environment [1] |
| Breeding Cycle Time | Long (5-12 years to develop a variety) [1] | Shorter than PS, but still requires phenotyping | Shortens cycles significantly (e.g., from 9 to 3 years) [4] |
| Cost & Labor | High (costly, labor-intensive phenotyping) [1] | Moderate | Can be lower, especially for expensive-to-measure traits [6] |

Key Factors Influencing Prediction Accuracy

The accuracy of GEBV predictions is paramount to the success of a GS program. This accuracy is not static and is influenced by several factors, as detailed in the table below.

Table 2: Key Factors Affecting Genomic Prediction Accuracy and Their Impacts

| Factor | Impact on GEBV Accuracy | Supporting Evidence |
|---|---|---|
| Training Population (TP) Size | Accuracy increases with TP size up to a point of diminishing returns, related to population dimensionality [7] [5]. | In pigs, a population with ~5,000 independent segments required ~5,000 animals for stable accuracy [7]. |
| Marker Density | Higher density generally improves accuracy, but sufficient density is determined by linkage disequilibrium (LD) decay [1] [5]. | In maize FSR studies, accuracy increased with marker density from 40% to 100% [5]. |
| Trait Heritability | Higher heritability traits yield higher prediction accuracies [7] [3]. | In a pig study, a growth trait (h²=0.21) had higher accuracy than a fitness trait (h²=0.06) [7]. |
| Relatedness between TP and BP | Accuracy is higher when the TP and BP are closely related, as LD patterns are more consistent [5]. | Biparental populations maximize this relationship, allowing accurate predictions with limited markers [5]. |
| Statistical Model | The choice of model (e.g., GBLUP, Bayesian methods) can impact accuracy, especially for traits with non-additive effects [5] [8]. | In dairy cattle, BLUP performed nearly as well as more complex methods for many traits [3]. |

Furthermore, GEBV accuracy is not permanent and can decay over generations due to factors like selection and recombination. The rate of decay is influenced by the quantity and quality of data in the TP [7].
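To make the interplay of training population size, heritability, and population dimensionality concrete, the sketch below uses a widely cited deterministic approximation, r = sqrt(N·h² / (N·h² + Me)), where N is the TP size and Me the number of independent chromosome segments. This formula is not taken from the sources cited here and is only a rough guide; the input values are borrowed from Table 2 purely for illustration.

```python
import math

def expected_accuracy(n_train, h2, m_e):
    """Approximate expected GEBV accuracy: sqrt(N*h2 / (N*h2 + Me))."""
    return math.sqrt(n_train * h2 / (n_train * h2 + m_e))

# Illustrative inputs (assumption): 5,000 animals and ~5,000 independent segments
acc_growth = expected_accuracy(5000, 0.21, 5000)    # growth trait, h2 = 0.21
acc_fitness = expected_accuracy(5000, 0.06, 5000)   # fitness trait, h2 = 0.06
acc_bigger_tp = expected_accuracy(10000, 0.21, 5000)
```

Under this approximation the lower-heritability trait needs a substantially larger TP to reach the same accuracy, which is consistent with the qualitative pattern in the table.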

The diagram below outlines the statistical relationships and data structures that underpin the genomic prediction models used to calculate GEBVs.

Experimental Protocols and Applications

Protocol 1: Implementing GS for Fusarium Stalk Rot (FSR) Resistance in Maize

This protocol, adapted from Showkath Babu et al. (2025), outlines the key steps for a GS study on a complex disease resistance trait [5].

- Population Development:
  - Generate Doubled Haploid (DH) populations from F1 or F2 generations of resistant × susceptible crosses to create completely homozygous lines for accurate phenotyping and genotyping [5].
- Phenotyping:
  - Evaluate the TP (DH lines) for FSR resistance in replicated trials across multiple environments.
  - Record disease severity scores or related metrics. This creates the phenotypic vector (y) for model training [5].
- Genotyping and Quality Control (QC):
- Model Training and Validation:
- GEBV Prediction and Selection:
  - Select the best-performing model based on prediction accuracy in the validation step.
  - Apply this model to the genotyped-only BP to calculate GEBVs for all candidates.
  - Select top-performing individuals based on their GEBVs for the next breeding cycle.

Protocol 2: Optimizing Cross Performance Using Genomic Predicted Cross Performance (GPCP)

For traits with significant non-additive (dominance) effects, such as in clonal crops, predicting the performance of specific crosses is more valuable than predicting the value of individual parents [9].

- Training Population and Model:
  - Develop a TP with both phenotypic records and genotype data.
  - Fit a model that includes both additive and directional dominance effects, such as: y = Xβ + Fθ + Za + Wd + ε, where F is a vector of inbreeding coefficients, θ is the inbreeding effect, a is the vector of additive effects, W is a matrix of heterozygosity indicators, and d is the vector of dominance effects [9].
- Estimate Effects and Predict Crosses:
  - Use the model to estimate the additive and dominance effects of all markers.
  - For any potential parental pair, predict the mean genetic value of their F1 progeny (the GPCP) by summing the expected additive and dominance contributions based on the parents' genotypes [9].
- Selection of Crosses:
  - Rank all possible parental combinations based on their GPCP.
  - Select and make the crosses with the highest predicted performance to form the next breeding generation [9].
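The cross-prediction step can be illustrated with a small sketch. It assumes diploid parents with genotypes coded 0/1/2, independent Mendelian transmission at each marker, and marker effects that have already been estimated; it omits the inbreeding term Fθ of the model above and is not the implementation of the GPCP tool itself. All function names and numbers are hypothetical.

```python
import numpy as np

def predicted_cross_mean(g1, g2, a, d):
    """Expected progeny value from parental genotypes (0/1/2) and marker effects."""
    p1, p2 = g1 / 2.0, g2 / 2.0                  # prob. each parent transmits the counted allele
    exp_dosage = p1 + p2                          # expected allele dosage in the progeny
    prob_het = p1 * (1 - p2) + p2 * (1 - p1)      # expected heterozygosity in the progeny
    return float(exp_dosage @ a + prob_het @ d)

def rank_crosses(G, a, d):
    """Score every pairwise cross and sort from best to worst."""
    n = G.shape[0]
    scores = [(predicted_cross_mean(G[i], G[j], a, d), i, j)
              for i in range(n) for j in range(i + 1, n)]
    return sorted(scores, reverse=True)

# Toy example (assumption): 3 candidate parents, 2 markers
G = np.array([[0.0, 2.0],
              [2.0, 0.0],
              [2.0, 2.0]])
a = np.array([1.0, 0.5])     # additive effects
d = np.array([0.4, 0.0])     # dominance effects
ranked = rank_crosses(G, a, d)   # list of (predicted mean, parent i, parent j)
```

In this toy case the cross between parents 1 and 2 ranks first because it fixes the favourable allele at the first marker, while the cross between parents 0 and 1 gains from heterozygosity but carries fewer favourable alleles overall.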

The Scientist's Toolkit: Essential Research Reagents and Materials

Table 3: Key Research Reagents and Solutions for Genomic Selection Studies

| Tool / Reagent | Function / Application | Examples / Notes |
|---|---|---|
| High-Density SNP Arrays | Genome-wide genotyping; provides the marker data matrix (Z) for analysis. | Illumina platforms (e.g., 50K SNP chip in dairy cattle [3]); flexible for species with reference genomes. |
| Genotyping-by-Sequencing (GBS) | Reduced-representation sequencing for cost-effective, high-throughput SNP discovery and genotyping. | Ideal for non-model species and large populations without a reference genome [1]. |
| DNA Extraction Kits | High-quality, high-molecular-weight DNA isolation from tissue samples (e.g., blood, leaf). | A critical first step; quality directly impacts genotyping success and data quality. |
| Phenotyping Equipment | Precise measurement of the trait of interest to create the phenotypic vector (y). | Can range from field scales (yield) to ELISA readers (disease titers [6]) to near-infrared spectroscopy (NIR) for quality traits. |
| Statistical Software | Fitting genomic prediction models, estimating effects, and calculating GEBVs. | Variety of specialized software available (e.g., sommer R package [9], AIREMLF90 [7], BreedBase [9]). |

Advanced Applications and Future Directions

GS is moving beyond predicting additive breeding values. The Genomic Predicted Cross Performance (GPCP) tool is a significant advancement for leveraging non-additive genetic effects, particularly dominance, to identify optimal parental combinations in hybrid and clonal breeding programs [9]. The integration of machine learning (ML) and deep learning (DL) models is another frontier, showing promise in handling complex, non-linear relationships in big genomic and phenotypic datasets [8]. Furthermore, efforts are underway to democratize GS through user-friendly software platforms and data management tools, making this powerful methodology accessible to a broader range of breeding programs [8].

Genomic Best Linear Unbiased Prediction (GBLUP) has established itself as a cornerstone method in genomic selection (GS), valued for its robustness and computational efficiency in predicting complex traits [10] [11]. Its widespread adoption in both animal and plant breeding programs is largely due to its solid theoretical foundation within the linear mixed model framework and its relatively straightforward implementation. GBLUP operates by estimating breeding values using a genomic relationship matrix derived from genome-wide markers, typically single nucleotide polymorphisms (SNPs) [11]. This approach has demonstrably accelerated genetic gains, particularly in major crop species, by enabling selection decisions earlier in the breeding cycle [12] [1].

However, the core strength of GBLUP is also the source of its primary limitation. The method implicitly assumes that all markers contribute equally to the total genetic variance of a trait [10]. This assumption is mathematically convenient and enhances computational stability, but it represents a significant oversimplification of biological reality. Many agriculturally important traits, including grain yield, disease resistance, and various quality attributes, are controlled by a complex genetic architecture comprising a mixture of loci with varying effect sizes [10] [1]. The equal variance assumption is most appropriate for highly polygenic traits governed by numerous loci with infinitesimally small effects. For traits influenced by a combination of major and minor effect genes, or those involving non-additive genetic interactions, this assumption can substantially limit predictive accuracy [10] [11].

This article examines the fundamental limitations of GBLUP's equal variance assumption, explores advanced statistical methods designed to overcome these constraints, and provides detailed protocols for implementing these next-generation genomic prediction approaches in predictive breeding research.

The GBLUP Framework and Its Core Assumption

Mathematical Foundation of GBLUP

The GBLUP model is typically formulated as:

y = Xβ + Zg + ε

Where:

- y is the vector of phenotypic observations

- X is the design matrix for fixed effects

- β is the vector of fixed effects

- Z is the design matrix for random genetic effects

- g is the vector of random additive genetic effects ~ N(0, Gσ²g)

- ε is the vector of residual errors ~ N(0, Iσ²ε)

The central component is the genomic relationship matrix (G), which captures the genetic covariance between individuals based on their marker profiles. The critical assumption is that all marker effects are drawn from the same normal distribution with a common variance, so every locus contributes equally, a priori, to the genetic variance σ²g [10].

Biological Scenarios Where the Equal Variance Assumption Fails

The table below outlines trait architectures where GBLUP's core assumption becomes problematic and describes the consequences for prediction accuracy.

Table 1: Trait Architectures Where GBLUP's Equal Variance Assumption is Limiting

| Trait Architecture | Description | Impact on GBLUP Performance |
|---|---|---|
| Oligogenic Architecture | Controlled by few genes with major effects amid many minor genes | Underestimates contributions of major genes, reducing accuracy for validation populations [10] |
| Traits with Selective Sweeps | Regions under strong selection show reduced diversity and different LD patterns | Misses localized genetic effects, limiting across-population portability [13] |
| Non-Additive Traits | Exhibits epistasis (gene-gene interactions) and dominance | Cannot capture interaction effects, potentially missing substantial genetic variance [14] [11] |
| Low-Heritability Traits | Phenotype strongly influenced by environmental factors | Struggles to distinguish true genetic signals from noise, yielding unstable predictions [10] |

Advanced Methods Overcoming GBLUP's Limitations

Methodological Spectrum for Genomic Prediction

Several advanced statistical approaches have been developed to address the limitations of the equal variance assumption. These methods can be broadly categorized into variable selection, Bayesian, and machine learning approaches.

Table 2: Comparison of Advanced Genomic Prediction Methods

| Method Category | Example Methods | Key Mechanism | Advantages | Limitations |
|---|---|---|---|---|
| Variable Selection | GA-GBLUP [10] | Uses genetic algorithms to select informative markers | Higher accuracy for oligogenic traits; Reduces dimensionality | Computationally intensive; Requires tuning |
| Bayesian Approaches | BayesA, BayesB, BayesC [13] | Uses prior distributions for marker variances | Allows different variance for each marker; Flexible modeling | Computationally demanding; Prior specification affects results |
| Machine Learning | Deep Learning (MLP) [11] | Neural networks capturing non-linear patterns | Models complex interactions; No pre-specified model | Requires large sample sizes; "Black box" interpretation |
| Hybrid Methods | Sparse GBLUP | Combines GBLUP with significant QTLs as fixed effects | Improves upon GBLUP for major genes | Depends on accurate QTL detection |

Detailed Protocol: Implementing GA-GBLUP for Trait-Specific Marker Selection

The GA-GBLUP method represents an innovative hybrid approach that combines the robustness of GBLUP with the variable selection capability of genetic algorithms [10]. Below is a detailed protocol for implementing this method:

Step-by-Step Procedure

Step 1: Data Preparation and Quality Control

- Genotype a training population using high-density SNP arrays or sequencing technologies

- Perform standard QC: remove markers with high missing rate (>10%), low minor allele frequency (<5%), and significant deviation from Hardy-Weinberg equilibrium

- Code genotypes numerically (e.g., 0, 1, 2 for homozygous reference, heterozygous, and homozygous alternative)

- Collect high-quality phenotypic records for the target trait(s), adjusting for fixed effects (e.g., year, location, replication) using BLUEs (Best Linear Unbiased Estimates)
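The marker-level filters in Step 1 can be sketched as follows. This is a minimal example assuming genotypes are stored as a NumPy array coded 0/1/2 with `NaN` for missing calls; the Hardy-Weinberg test and the BLUE adjustment of phenotypes are not shown, and the function name and toy data are hypothetical.

```python
import numpy as np

def qc_markers(geno, max_missing=0.10, min_maf=0.05):
    """Drop markers with a high missing rate or a low minor allele frequency."""
    missing_rate = np.isnan(geno).mean(axis=0)
    freq = np.nanmean(geno, axis=0) / 2.0            # frequency of the counted allele
    maf = np.minimum(freq, 1.0 - freq)
    keep = (missing_rate <= max_missing) & (maf >= min_maf)
    return geno[:, keep], keep

# Toy data (assumption): 20 individuals, 3 markers
col0 = np.tile([0.0, 1.0, 2.0, 1.0], 5)              # polymorphic, fully called
col1 = np.zeros(20)                                   # monomorphic, so MAF = 0
col2 = col0.copy()
col2[:5] = np.nan                                     # 25% missing calls
geno = np.column_stack([col0, col1, col2])

filtered, keep = qc_markers(geno)
```

Here only the first marker passes both thresholds.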

Step 2: Linkage Disequilibrium (LD)-based Dimension Reduction

- Calculate pairwise LD (r²) between adjacent markers using tools like PLINK or TASSEL

- Bin adjacent markers with LD r² > 0.8 to reduce computational complexity while preserving genetic information [10]

- Standardize the binned genotype matrix to have mean zero and variance one for each marker
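A possible sketch of the LD-based binning in Step 2 is given below. It assumes r² is approximated by the squared correlation of genotype dosages between adjacent markers, that markers are already ordered by position and are polymorphic, and that each bin is summarized by the mean dosage of its members; the source does not specify how bins are summarized, so that choice is an assumption.

```python
import numpy as np

def ld_bin_adjacent(geno, r2_threshold=0.8):
    """Greedily merge runs of adjacent markers whose pairwise r2 exceeds the threshold."""
    n, m = geno.shape
    bins, current = [], [0]
    for j in range(1, m):
        r = np.corrcoef(geno[:, j - 1], geno[:, j])[0, 1]
        if r ** 2 > r2_threshold:
            current.append(j)            # extend the current bin
        else:
            bins.append(current)         # close the bin and start a new one
            current = [j]
    bins.append(current)
    binned = np.column_stack([geno[:, b].mean(axis=1) for b in bins])
    binned = (binned - binned.mean(axis=0)) / binned.std(axis=0)   # mean 0, variance 1
    return binned, bins

# Toy data (assumption): markers 0 and 1 are in complete LD, marker 2 is not
col0 = np.array([0, 1, 2, 0, 1, 2, 0, 1], dtype=float)
col2 = np.array([2, 0, 1, 1, 2, 0, 0, 1], dtype=float)
geno = np.column_stack([col0, col0, col2])

binned, bins = ld_bin_adjacent(geno)
```

Dedicated tools such as PLINK or TASSEL would normally be used for the LD calculation on real data; the loop above only illustrates the binning logic.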

Step 3: Genetic Algorithm Configuration

- Initialize a population of 100-500 chromosomes, each representing a random subset of markers

- Define fitness functions based on:
  - R²: Predictive accuracy from cross-validation
  - HAT: Leverage of the relationship matrix for model stability [10]
  - AIC/BIC: Model fit with penalty for complexity
- Set genetic parameters:
  - Selection rate: 10-50% (proportion of chromosomes retained)
  - Crossover rate: 60-80% (probability of recombination)
  - Mutation rate: 1-5% (probability of random marker changes)
- Run for 50-200 generations or until convergence
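The configuration above can be illustrated with a compact genetic algorithm. This is a sketch under several assumptions, not the GAGBLUP implementation: only the cross-validated R² fitness is shown (HAT and AIC/BIC are omitted), ridge regression on the selected markers stands in for GBLUP, truncation selection with single-point crossover and bit-flip mutation is one of many possible designs, and the population size and generation count are kept far below the protocol's ranges so the toy example runs quickly.

```python
import numpy as np

def cv_r2(Z, y, mask, lam=1.0, k=5, seed=0):
    """Fitness: squared correlation between k-fold ridge predictions and phenotypes."""
    if mask.sum() == 0:
        return 0.0
    Zs = Z[:, mask]
    folds = np.random.default_rng(seed).permutation(len(y)) % k   # same folds for every call
    pred = np.empty(len(y))
    for f in range(k):
        tr, te = folds != f, folds == f
        mu = y[tr].mean()
        A = Zs[tr].T @ Zs[tr] + lam * np.eye(Zs.shape[1])
        pred[te] = mu + Zs[te] @ np.linalg.solve(A, Zs[tr].T @ (y[tr] - mu))
    return float(np.nan_to_num(np.corrcoef(pred, y)[0, 1]) ** 2)

def ga_select(Z, y, pop_size=20, n_gen=15, keep_rate=0.3,
              crossover_rate=0.7, mutation_rate=0.02, seed=0):
    rng = np.random.default_rng(seed)
    m = Z.shape[1]
    pop = rng.random((pop_size, m)) < 0.5                 # chromosomes = random marker subsets
    n_keep = max(2, int(keep_rate * pop_size))
    for _ in range(n_gen):
        fit = np.array([cv_r2(Z, y, c) for c in pop])
        parents = pop[np.argsort(fit)[::-1][:n_keep]]     # retain the fittest chromosomes
        children = []
        while len(children) < pop_size - n_keep:
            i, j = rng.choice(n_keep, size=2, replace=False)
            child = parents[i].copy()
            if rng.random() < crossover_rate:             # single-point crossover
                cut = rng.integers(1, m)
                child[cut:] = parents[j][cut:]
            flip = rng.random(m) < mutation_rate          # bit-flip mutation
            child[flip] = ~child[flip]
            children.append(child)
        pop = np.vstack([parents, np.array(children)])
    fit = np.array([cv_r2(Z, y, c) for c in pop])
    best = int(np.argmax(fit))
    return pop[best], float(fit[best])

# Toy data (assumption): 120 individuals, 30 markers, three large-effect loci
rng = np.random.default_rng(42)
Z = rng.integers(0, 3, size=(120, 30)).astype(float)
Z -= Z.mean(axis=0)
y = Z[:, [0, 5, 10]] @ np.array([1.5, -1.2, 1.0]) + rng.normal(0, 0.5, size=120)

best_mask, best_fit = ga_select(Z, y)
```

Because the retained parents are carried over unchanged and the cross-validation folds are fixed, the best fitness in this sketch cannot decrease from one generation to the next.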

Step 4: Model Building and Validation

- Extract the optimal marker set identified by the genetic algorithm

- Construct a genomic relationship matrix using only selected markers

- Fit the GBLUP model with the optimized relationship matrix

- Validate predictive ability using cross-validation (e.g., 5-fold or leave-one-family-out) [15]

Detailed Protocol: Deep Learning for Capturing Non-linear Genetic Patterns

Deep learning (DL) models offer a powerful alternative for capturing non-additive genetic effects that GBLUP cannot model effectively [11]. The following protocol describes implementation of a multilayer perceptron (MLP) for genomic prediction.

Step-by-Step Procedure

Step 1: Data Preprocessing

- Encode genotypes as continuous dosage values (0, 1, 2) or one-hot encoded vectors

- Standardize both genotype and phenotype data to zero mean and unit variance

- Split data into training (70%), validation (15%), and testing (15%) sets

- For small datasets (<1000 samples), employ k-fold cross-validation to maximize training efficiency [11]
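The partitioning described in Step 1 might look like the following sketch, which assumes individuals can be shuffled at random (no family or environment structure to respect); the helper names are hypothetical.

```python
import numpy as np

def split_indices(n, frac_train=0.70, frac_val=0.15, seed=0):
    """Random 70/15/15 split of individual indices into train, validation, and test."""
    idx = np.random.default_rng(seed).permutation(n)
    n_train = int(round(frac_train * n))
    n_val = int(round(frac_val * n))
    return idx[:n_train], idx[n_train:n_train + n_val], idx[n_train + n_val:]

def kfold_indices(n, k=5, seed=0):
    """(train, test) index pairs for k-fold cross-validation on small datasets."""
    idx = np.random.default_rng(seed).permutation(n)
    folds = np.array_split(idx, k)
    return [(np.concatenate(folds[:i] + folds[i + 1:]), folds[i]) for i in range(k)]

tr, va, te = split_indices(200)
folds = kfold_indices(200, k=5)
```

When close relatives are present, splitting by family rather than by individual gives a more honest estimate of how the model transfers to new material.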

Step 2: Network Architecture Design

- Implement an MLP with 2-5 hidden layers depending on dataset size and complexity

- Use 64-256 neurons in the first hidden layer, reducing by approximately 50% in subsequent layers

- Apply ReLU activation functions in hidden layers for efficient training

- Use linear activation in the output layer for continuous traits

- Incorporate dropout layers (rate: 0.2-0.5) to prevent overfitting

Step 3: Model Training and Optimization

- Compile model with Adam optimizer and learning rate of 0.001-0.0001

- Use mean squared error as loss function for continuous traits

- Implement early stopping with patience of 20-50 epochs based on validation loss

- Train for 100-1000 epochs with batch sizes of 16-64

- Employ learning rate reduction on plateau to refine convergence
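The early-stopping and learning-rate-reduction rules in Step 3 are framework-independent and can be sketched in plain Python. The class below is a hypothetical helper, not an API of TensorFlow or PyTorch (both provide their own callbacks or schedulers for this purpose); the patience values and the simulated loss curve are illustrative only.

```python
class PlateauController:
    """Tracks validation loss; halves the learning rate on plateaus and signals when to stop."""

    def __init__(self, lr=1e-3, patience_stop=20, patience_lr=5, factor=0.5, min_lr=1e-6):
        self.lr = lr
        self.patience_stop = patience_stop
        self.patience_lr = patience_lr
        self.factor = factor
        self.min_lr = min_lr
        self.best = float("inf")
        self.bad_epochs = 0        # epochs since the last improvement
        self.since_lr_cut = 0      # epochs without improvement since the last LR cut

    def update(self, val_loss):
        """Record one epoch's validation loss; return True if training should stop."""
        if val_loss < self.best:
            self.best = val_loss
            self.bad_epochs = 0
            self.since_lr_cut = 0
        else:
            self.bad_epochs += 1
            self.since_lr_cut += 1
            if self.since_lr_cut >= self.patience_lr:
                self.lr = max(self.lr * self.factor, self.min_lr)
                self.since_lr_cut = 0
        return self.bad_epochs >= self.patience_stop

# Simulated validation losses (assumption): improvement for 3 epochs, then a plateau
losses = [1.0, 0.8, 0.7] + [0.7] * 30
ctrl = PlateauController()
stopped_at = None
for epoch, loss in enumerate(losses):
    if ctrl.update(loss):
        stopped_at = epoch
        break
```

In a real training loop the optimizer's learning rate would be set to `ctrl.lr` after each call, and the weights from the epoch with the best validation loss would be restored after stopping.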

Step 4: Model Interpretation and Validation

- Calculate predictive ability as correlation between predicted and observed values

- Compare performance with GBLUP baseline on the same validation set

- Use permutation tests to assess significance of prediction accuracy

- For interpretability, implement gradient-based feature importance to identify influential markers
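A permutation test for predictive ability, as mentioned in Step 4, can be sketched as follows. It assumes a one-sided test on the Pearson correlation and exchangeable observations; the function name and the simulated predictions are hypothetical.

```python
import numpy as np

def permutation_pvalue(y_obs, y_pred, n_perm=1000, seed=0):
    """One-sided permutation p-value for the correlation between observed and predicted values."""
    rng = np.random.default_rng(seed)
    r_obs = np.corrcoef(y_obs, y_pred)[0, 1]
    r_null = np.array([np.corrcoef(rng.permutation(y_obs), y_pred)[0, 1]
                       for _ in range(n_perm)])
    p = (1 + np.sum(r_null >= r_obs)) / (n_perm + 1)     # add-one correction avoids p = 0
    return float(r_obs), float(p)

# Simulated example (assumption): predictions equal to phenotypes plus noise
rng = np.random.default_rng(7)
y_obs = rng.normal(size=100)
y_pred = y_obs + rng.normal(0, 0.3, size=100)
r_obs, p_value = permutation_pvalue(y_obs, y_pred)
```

The same function can be applied to both the MLP and the GBLUP baseline so that the two predictive abilities are judged against the same null distribution.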

Table 3: Research Reagent Solutions for Genomic Prediction Studies

| Tool/Resource | Function | Application Context |
|---|---|---|
| GAGBLUP R Package [10] | Implements GA-GBLUP with customizable fitness functions | Trait-specific marker selection for oligogenic traits |
| WOMBAT [16] | REML-based variance component estimation | Flexible mixed model analyses for quantitative genetics |
| TensorFlow/PyTorch [11] | Deep learning frameworks for building neural networks | Modeling non-linear genetic architectures and interactions |
| ASREML-R | Fits mixed models with variance structure estimation | Genomic prediction implementation in breeding programs |
| PLINK 2.0 | Whole-genome association analysis and data management | QC, LD calculation, and basic genomic analyses |
| GBLUP | Benchmark method assuming equal SNP effect variances | Baseline comparison for evaluating advanced methods |

GBLUP remains a valuable tool for genomic prediction, particularly for highly polygenic traits with predominantly additive genetic architecture. However, its assumption of equal SNP effect variances represents a significant limitation for traits with more complex genetic architectures. Methods like GA-GBLUP that perform trait-specific marker selection and deep learning approaches that capture non-linear patterns provide powerful alternatives that can significantly enhance prediction accuracy [10] [11].

The choice of method should be guided by the genetic architecture of the target trait, available sample size, and computational resources. For traits suspected to be governed by a mix of major and minor genes, GA-GBLUP offers a balanced approach that maintains the robustness of the GBLUP framework while allowing for differential marker contributions. For traits where non-additive effects are suspected to play an important role, deep learning methods provide the flexibility to capture these complex patterns, though they require careful tuning and validation.

As genomic selection continues to evolve, integrating these advanced prediction methods with high-throughput phenotyping and functional genomics data will further enhance our ability to accurately predict complex traits and accelerate genetic gain in breeding programs.

Genomic Selection (GS) has emerged as a transformative breeding strategy that uses genome-wide molecular markers to predict the genetic value of individuals for selection. Proposed by Meuwissen et al. in 2001, GS has fundamentally revised traditional breeding processes by shifting phenotyping to a role of generating data for building prediction models, thereby accelerating genetic gain [1] [12] [17]. This approach allows breeders to select candidates based on Genomic Estimated Breeding Values (GEBVs) derived from their genotypic data and a trained prediction model, significantly shortening breeding cycles and increasing selection intensity and accuracy [1] [12]. The core of GS lies in its four major steps: training population design, model building, prediction, and selection [17]. GS plays multiple roles in modern plant breeding, including turbocharging gene banks, parental selection, and candidate selection at various breeding cycle stages [17]. With growing evidence that GS improves genetic gains in plant breeding, research innovations have focused on enhancing prediction accuracy through advanced statistical models, optimized training populations, and incorporation of multi-omics data [12] [8].

Statistical Approaches for Genomic Prediction

Foundational Models and Methods

Statistical approaches form the foundation of genomic prediction, with genomic best linear unbiased prediction (GBLUP) standing as a benchmark method widely adopted in breeding programs [18] [19] [11]. GBLUP utilizes genomic markers within linear mixed models to produce accurate estimates of genetic values, particularly for traits predominantly influenced by additive genetic effects [11]. This method employs a genomic relationship matrix derived from marker data to replace the pedigree-based relationship matrix in traditional best linear unbiased prediction (BLUP) [20] [21]. The statistical foundation of GBLUP ensures reliability, scalability, and ease of interpretation, making it a cornerstone in both animal and plant breeding applications [11]. Another popular statistical approach is ridge regression, which applies L2-penalization to estimate marker effects and is equivalent to GBLUP when using a specific relationship matrix [21]. These linear models have demonstrated substantial effectiveness for many quantitative traits, especially those with additive genetic architectures.
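The equivalence between ridge regression on markers and GBLUP can be checked numerically. The sketch below assumes centred markers, an unscaled relationship matrix K = ZZᵀ, and the same shrinkage parameter λ in both formulations; with the usual scaled G matrix, λ is rescaled by the same constant. All data are simulated.

```python
import numpy as np

rng = np.random.default_rng(1)
n, m, lam = 30, 100, 10.0
Z = rng.integers(0, 3, size=(n, m)).astype(float)
Z -= Z.mean(axis=0)                       # centre marker columns
y = rng.normal(size=n)
y -= y.mean()

# Ridge regression: estimate m marker effects, then sum them per individual
beta_ridge = np.linalg.solve(Z.T @ Z + lam * np.eye(m), Z.T @ y)
g_ridge = Z @ beta_ridge

# GBLUP form: work directly with the n x n relationship matrix K = ZZ'
K = Z @ Z.T
g_gblup = K @ np.linalg.solve(K + lam * np.eye(n), y)

same = np.allclose(g_ridge, g_gblup)      # identical genomic values from both routes
```

The GBLUP route solves an n × n system rather than an m × m one, which is why it is usually the cheaper option when markers far outnumber individuals.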

Reproducing Kernel Hilbert Spaces (RKHS) represents a semi-parametric statistical method that has gained popularity in genomic prediction [19]. This approach uses kernel functions to capture complex patterns in the data, including certain non-linear relationships, while maintaining a tractable statistical framework. RKHS offers flexibility in modeling genetic architectures that deviate from strict additivity without requiring the extensive parameter tuning of machine learning methods. The method has proven particularly valuable for traits influenced by epistatic interactions or when dealing with population structures that complicate traditional linear models [19].

Experimental Protocol for GBLUP Implementation

Protocol Title: Implementation of Genomic Best Linear Unbiased Prediction for Genomic Selection

Principle: GBLUP predicts breeding values by utilizing a genomic relationship matrix (G-matrix) that quantifies the genetic similarity between individuals based on genome-wide markers, replacing the pedigree-based numerator relationship matrix in traditional BLUP.

Materials and Reagents:

- Genotypic data (SNP matrix) for training and validation populations

- Phenotypic records for the training population

- Computing environment with appropriate software (e.g., R, Python)

Procedure:

Data Preparation and Quality Control

- Format genotypic data as a matrix X of dimensions n × m, where n is the number of individuals and m is the number of markers

- Code markers as 0, 1, and 2 representing the number of reference alleles

- Remove markers with high missing rates (>10%) and low minor allele frequency (<5%)

- Impute missing genotypes using appropriate methods (e.g., mean imputation, k-nearest neighbors)

- Standardize the phenotype data by adjusting for fixed effects (e.g., location, year, replication)

Construction of Genomic Relationship Matrix (G)

- Calculate the genomic relationship matrix G using the following formula: G = (X − P)(X − P)ᵀ / [2∑p_i(1 − p_i)], where X is the genotype matrix, P is a matrix of allele frequencies whose i-th column contains 2p_i, and p_i is the frequency of the reference allele for marker i [21]

- Equivalently, in the notation of VanRaden (2008): G = WWᵀ / k, where W is the centered genotype matrix (w_ij = x_ij − 2p_i) and k = 2∑p_i(1 − p_i) [21]
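The VanRaden-style construction, with W and k as defined above, can be written in a few lines. This sketch assumes a complete (imputed) genotype matrix coded 0/1/2 and allele frequencies estimated from the same sample; the function name and toy data are hypothetical.

```python
import numpy as np

def vanraden_g(X):
    """Genomic relationship matrix G = WW'/k from an n x m genotype matrix coded 0/1/2."""
    p = X.mean(axis=0) / 2.0                  # allele frequency of the counted allele
    W = X - 2.0 * p                           # centred genotypes: w_ij = x_ij - 2 p_i
    k = 2.0 * np.sum(p * (1.0 - p))           # scaling constant
    return W @ W.T / k

# Toy data (assumption): 6 individuals, 40 markers
rng = np.random.default_rng(2)
X = rng.integers(0, 3, size=(6, 40)).astype(float)
G = vanraden_g(X)
```

Because frequencies are estimated from the sample itself, each row of G sums to zero here; using base-population frequencies instead would change the centring.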

Model Fitting

- Implement the mixed linear model: y = Xβ + Zu + ε where y is the vector of phenotypes, X is the design matrix for fixed effects, β is the vector of fixed effects, Z is the design matrix for random effects, u ~ N(0, Gσ²g) is the vector of genomic breeding values, and ε ~ N(0, Iσ²ε) is the residual vector [20]

- Estimate variance components (σ²g and σ²ε) using restricted maximum likelihood (REML)

- Solve the mixed model equations to obtain GEBVs for all individuals
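The model-fitting step can be illustrated with a direct solution for the fixed effects and GEBVs. This is a minimal sketch that treats the variance components as known; REML estimation is not shown and would normally be delegated to dedicated mixed-model software. The names `X_fixed`, `geno`, and the simulated values are assumptions, and unphenotyped individuals are handled by giving them a column in Z but no row.

```python
import numpy as np

def gblup(y, X_fixed, Z, G, var_g, var_e):
    """GLS estimate of fixed effects and BLUP of genomic values for given variance components."""
    V = var_g * (Z @ G @ Z.T) + var_e * np.eye(len(y))     # phenotypic covariance matrix
    Vinv_X = np.linalg.solve(V, X_fixed)
    Vinv_y = np.linalg.solve(V, y)
    beta = np.linalg.solve(X_fixed.T @ Vinv_X, X_fixed.T @ Vinv_y)
    u = var_g * G @ Z.T @ np.linalg.solve(V, y - X_fixed @ beta)
    return beta, u

# Simulated data (assumption): 60 genotyped individuals, the first 50 phenotyped
rng = np.random.default_rng(3)
n_all, n_phen, m = 60, 50, 200
geno = rng.integers(0, 3, size=(n_all, m)).astype(float)
p = geno.mean(axis=0) / 2.0
W = geno - 2.0 * p
G = W @ W.T / (2.0 * np.sum(p * (1.0 - p)))

true_g = W @ rng.normal(0, 0.1, size=m)
y = 5.0 + true_g[:n_phen] + rng.normal(0, 1.0, size=n_phen)
X_fixed = np.ones((n_phen, 1))                 # overall mean as the only fixed effect
Z = np.eye(n_all)[:n_phen]                     # links each record to its individual

beta_hat, gebv = gblup(y, X_fixed, Z, G, var_g=1.0, var_e=1.0)
```

The last ten entries of `gebv` are predictions for individuals without phenotypes, obtained purely through their genomic relationships with the training set.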

Model Validation

- Partition the data into training and validation sets using cross-validation or independent validation schemes

- Calculate prediction accuracy as the correlation between predicted GEBVs and observed phenotypes in the validation set

- Adjust the correlation by dividing by the square root of heritability to estimate the accuracy of genetic value prediction
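The validation steps can be combined into a short cross-validation routine. The sketch assumes random k-fold partitioning, a ridge-regression predictor as a stand-in for GBLUP, and a heritability value supplied by the user; the function names, `lam`, and the value h² = 0.7 are illustrative assumptions.

```python
import numpy as np

def ridge_fit_predict(Z_tr, y_tr, Z_te, lam=10.0):
    """Fit ridge regression on the training fold and predict the held-out fold."""
    mu, cm = y_tr.mean(), Z_tr.mean(axis=0)
    Zc = Z_tr - cm
    beta = np.linalg.solve(Zc.T @ Zc + lam * np.eye(Zc.shape[1]), Zc.T @ (y_tr - mu))
    return mu + (Z_te - cm) @ beta

def cross_validated_accuracy(Z, y, fit_predict, h2, k=5, seed=0):
    """Mean within-fold correlation (predictive ability) and its value divided by sqrt(h2)."""
    folds = np.random.default_rng(seed).permutation(len(y)) % k
    r = []
    for f in range(k):
        tr, te = folds != f, folds == f
        pred = fit_predict(Z[tr], y[tr], Z[te])
        r.append(np.corrcoef(pred, y[te])[0, 1])
    ability = float(np.mean(r))
    return ability, ability / np.sqrt(h2)

# Simulated data (assumption): 150 individuals, 40 markers
rng = np.random.default_rng(4)
Z = rng.integers(0, 3, size=(150, 40)).astype(float)
y = Z @ rng.normal(0, 0.3, size=40) + rng.normal(0, 1.0, size=150)

ability, accuracy = cross_validated_accuracy(Z, y, ridge_fit_predict, h2=0.7)
```

Dividing by the square root of heritability rescales the correlation with noisy phenotypes into an estimate of the correlation with the underlying genetic values, so the result depends directly on how well h² is known.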

Troubleshooting Tips:

- Low prediction accuracy may indicate insufficient training population size or poor relationship between training and validation populations

- Computational challenges with large G matrices can be addressed through partitioning or sparse matrix techniques

- Check for population stratification that may inflate prediction accuracy

Bayesian Methods in Genomic Selection

Theoretical Foundations and Model Variants

Bayesian methods represent a powerful paradigm for genomic prediction that incorporates prior knowledge about marker effects through specified prior distributions. These methods employ Markov Chain Monte Carlo (MCMC) techniques to estimate posterior distributions of parameters, allowing for flexible modeling of genetic architectures [20] [21]. The fundamental Bayesian linear model for genomic prediction can be represented as:

y = β₀ + XΓβ + Zu + ε [20]

where y is the vector of phenotypes, β₀ is the intercept, X is the genotype matrix, Γ is a diagonal matrix of indicator variables (for variable selection models), β is the vector of marker effects, Z is the design matrix for polygenic effects, u is the vector of polygenic effects, and ε is the residual error [20].

The Bayesian alphabet comprises several model variants differing primarily in their prior specifications. Key models include BayesA, which uses a scaled-t prior distribution for marker effects; BayesB, which incorporates both a scaled-t prior and indicator variables for variable selection; BayesC, which utilizes a mixture of a point mass at zero and a normal distribution; and Bayesian LASSO (BL), which applies a double exponential (Laplace) prior to induce shrinkage of marker effects [20] [21]. These methods effectively handle the "small n, large p" problem common in genomic prediction, where the number of markers (p) far exceeds the number of phenotypic observations (n) [20].

Experimental Protocol for Bayesian Analysis

Protocol Title: Implementation of Bayesian Methods for Genomic Prediction

Principle: Bayesian genomic prediction methods estimate marker effects by combining likelihood from the data with prior distributions that incorporate biological assumptions about genetic architecture, using MCMC sampling to approximate posterior distributions.

Materials and Reagents:

- Genotypic data (SNP matrix)

- Phenotypic measurements

- Computing environment with Bayesian GS software (e.g., BGLR, BayZ)

Procedure:

Data Preprocessing

- Code markers as 0, 1, 2 for the number of reference alleles

- Standardize genotype matrix to have mean zero and variance one

- Adjust phenotypes for fixed effects and experimental designs

- Divide data into training and validation sets

Prior Specification

- Select appropriate prior based on genetic architecture:
  - BayesA: Scaled-t prior for marker effects
  - BayesB: Mixture prior with point mass at zero and scaled-t distribution
  - BayesC: Mixture prior with point mass at zero and normal distribution
  - Bayesian LASSO: Double exponential (Laplace) prior
- Set hyperparameters for priors based on prior knowledge or estimate from data

Model Implementation

- Initialize chain with starting values for parameters

- Implement Gibbs sampling for conditional distributions when available

- Use Metropolis-Hastings algorithm for non-conjugate full conditionals

- Run multiple chains to assess convergence

MCMC Settings and Convergence Diagnostics

- Set chain length (typically 10,000-100,000 iterations)

- Determine burn-in period (typically 1,000-10,000 iterations)

- Set thinning rate to reduce autocorrelation

- Monitor convergence using Gelman-Rubin statistic, trace plots, and autocorrelation plots
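Burn-in, thinning, and the Gelman-Rubin diagnostic can be illustrated with a short sketch. It implements the basic potential scale reduction factor from several chains of one parameter (not the split-chain or rank-normalized variants used in some software), and the simulated chains are stand-ins for real MCMC output.

```python
import numpy as np

def gelman_rubin(chains):
    """Potential scale reduction factor for an array of shape (n_chains, n_samples)."""
    chains = np.asarray(chains, dtype=float)
    _, n = chains.shape
    B = n * chains.mean(axis=1).var(ddof=1)        # between-chain variance
    W = chains.var(axis=1, ddof=1).mean()          # mean within-chain variance
    var_hat = (n - 1) / n * W + B / n              # pooled posterior variance estimate
    return float(np.sqrt(var_hat / W))

def post_process(chain, burn_in=1000, thin=10):
    """Discard the burn-in samples and keep every `thin`-th draw afterwards."""
    return np.asarray(chain)[burn_in::thin]

# Simulated chains (assumption): four chains of 10,000 draws each
rng = np.random.default_rng(5)
good = rng.normal(0.0, 1.0, size=(4, 10000))                    # all chains agree
bad = good + np.array([0.0, 0.0, 5.0, 5.0])[:, None]            # two chains stuck elsewhere

r_good = gelman_rubin(np.array([post_process(c) for c in good]))
r_bad = gelman_rubin(np.array([post_process(c) for c in bad]))
```

Values close to 1 suggest the chains are sampling the same distribution, whereas values well above 1, as for the shifted chains here, indicate that longer runs or a reparameterization are needed.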

Posterior Inference and Prediction

- Calculate posterior means of marker effects from post-burn-in iterations

- Compute GEBVs for validation population: GEBV = Xβ̂, where X is the validation genotype matrix and β̂ is the vector of posterior mean marker effects

- Evaluate prediction accuracy as correlation between GEBVs and observed phenotypes in validation set
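The sampling and inference steps can be illustrated with a deliberately simplified Gibbs sampler. Note the assumptions: it uses a single normal prior with a common, fixed variance for all marker effects (Bayesian ridge regression), so it is not BayesA, BayesB, BayesC, or the Bayesian LASSO, which additionally sample marker-specific variances or inclusion indicators; the residual variance is also held fixed. The function name and simulated data are hypothetical.

```python
import numpy as np

def gibbs_ridge(X, y, var_b=1.0, var_e=1.0, n_iter=2000, burn_in=500, thin=5, seed=0):
    """Single-site Gibbs sampler for y = mu + X beta + e with beta_j ~ N(0, var_b)."""
    rng = np.random.default_rng(seed)
    y = np.asarray(y, dtype=float)
    n, p = X.shape
    mu, beta = y.mean(), np.zeros(p)
    resid = y - mu - X @ beta
    xtx = (X ** 2).sum(axis=0)
    kept = []
    for it in range(n_iter):
        resid += mu                                        # sample the intercept
        mu = rng.normal(resid.mean(), np.sqrt(var_e / n))
        resid -= mu
        for j in range(p):                                 # sample each marker effect in turn
            resid += X[:, j] * beta[j]
            c = xtx[j] + var_e / var_b
            beta[j] = rng.normal(X[:, j] @ resid / c, np.sqrt(var_e / c))
            resid -= X[:, j] * beta[j]
        if it >= burn_in and (it - burn_in) % thin == 0:
            kept.append(beta.copy())                       # retain thinned post-burn-in draws
    return np.mean(kept, axis=0)                           # posterior mean of marker effects

# Simulated data (assumption): 200 individuals, 5 standardized markers
rng = np.random.default_rng(6)
X = rng.normal(size=(200, 5))
true_beta = np.array([1.0, -0.5, 0.0, 0.8, 0.0])
y = 2.0 + X @ true_beta + rng.normal(0, 0.5, size=200)

beta_hat = gibbs_ridge(X, y, var_b=1.0, var_e=0.25)
gebv = X @ beta_hat                                        # GEBVs from posterior mean effects
```

Packages such as BGLR implement the full Bayesian alphabet with sampled variance components; the loop above only shows the mechanics of burn-in, thinning, and posterior averaging.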

Troubleshooting Tips:

- Lack of convergence may require longer chains or reparameterization

- High autocorrelation may necessitate increased thinning rates

- Computational intensity can be addressed by parallelization or faster algorithms like expectation-maximization (EM)

Machine Learning and Deep Learning Approaches

Algorithmic Diversity and Applications

Machine learning (ML) and deep learning (DL) represent non-parametric approaches to genomic prediction that offer tremendous flexibility to adapt to complex associations between genotype and phenotype [18] [8]. These methods excel at capturing nonlinear patterns and epistatic interactions without requiring explicit specification of the model form [18] [11]. Popular ML methods include random forests (RF), which construct multiple decision trees and aggregate their predictions; support vector regression (SVR), which maps input data into high-dimensional feature spaces; and gradient boosting methods (e.g., XGBoost, LightGBM), which sequentially build ensembles of weak learners to minimize prediction error [19].

Deep learning methods, particularly multilayer perceptrons (MLPs or feedforward neural networks), generalize artificial neural networks by stacking multiple processing layers [18] [11]. Each layer consists of interconnected nodes ("neurons") that receive input from the previous layer, apply an activation function, and pass the output to the next layer [18]. The "depth" of these networks enables them to learn hierarchical representations of the data, potentially capturing complex genetic architectures that challenge traditional methods [18]. For a univariate response, the MLP model with L hidden layers can be represented as:

$$Y_i = w_0^{0} + W_1^{0} x_i^{L} + \epsilon_i$$ [11]

where $x_i^{l} = g_l(w_0^{l} + W_1^{l} x_i^{l-1})$ for $l = 1, \ldots, L$, with $x_i^{0} = x_i$ (the input vector of markers for individual $i$), $g_l$ denotes the activation function for layer $l$, $w_0^{l}$ and $W_1^{l}$ represent the bias vector and weight matrix for hidden layer $l$, and $w_0^{0}$ and $W_1^{0}$ are the bias and weight vector for the output layer [11].
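The recursion above can be written directly as a forward pass. The sketch below is a NumPy illustration under assumed names (`mlp_predict`, `relu`) with hand-set weights; it only evaluates the model and does not train it.

```python
import numpy as np

def relu(z):
    """Rectified linear activation, applied element-wise."""
    return np.maximum(z, 0.0)

def mlp_predict(x, hidden, output, activation=relu):
    """Forward pass of an MLP with L hidden layers.

    x:      (n, p) marker matrix, one row per individual
    hidden: list of (w0, W1) pairs, one per hidden layer, where W1 has
            shape (units in this layer, units in the previous layer)
    output: (w0, W1) pair for the output layer, W1 of shape (units in layer L,)
    """
    h = x
    for w0, W1 in hidden:
        h = activation(w0 + h @ W1.T)      # x^l = g_l(w0^l + W1^l x^(l-1))
    w0_out, W1_out = output
    return w0_out + h @ W1_out             # linear output for a regression response

# One individual, two markers, one hidden layer with two neurons
x = np.array([[1.0, 2.0]])
hidden = [(np.zeros(2), np.array([[1.0, -1.0], [0.5, 0.5]]))]
output = (1.0, np.array([2.0, 3.0]))
y_hat = mlp_predict(x, hidden, output)     # array([5.5])
```

In this toy case the hidden pre-activations are (-1, 1.5); ReLU maps them to (0, 1.5), so the prediction is 1 + 3 × 1.5 = 5.5.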

Experimental Protocol for Deep Learning Implementation

Protocol Title: Implementation of Deep Learning for Genomic Prediction

Principle: Deep learning models learn complex mappings from genotypes to phenotypes through multiple layers of nonlinear transformations, automatically learning feature representations and potentially capturing epistatic interactions without explicit specification.

Materials and Reagents:

- Genotypic data (SNP matrix)

- Phenotypic measurements

- Computing environment with DL frameworks (e.g., TensorFlow, PyTorch, Keras)

- GPU acceleration (recommended for large networks)

Procedure:

Data Preparation and Preprocessing

- Encode markers as 0, 1, 2 and standardize to mean zero, variance one

- Standardize phenotypic values

- Split data into training, validation, and test sets (e.g., 70%-15%-15%)

- Implement data augmentation if needed (e.g., synthetic minority over-sampling)
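A practical detail in the preprocessing steps above is that standardization statistics should come from the training set only, so that no information leaks from the validation or test individuals. The following sketch shows one way to do this; the function name `split_and_standardize` and the simulated genotypes are assumptions for illustration.

```python
import numpy as np

def split_and_standardize(M, y, fractions=(0.70, 0.15, 0.15), seed=0):
    """Randomly split into train/val/test and standardize markers and
    phenotypes using means and standard deviations of the training set."""
    rng = np.random.default_rng(seed)
    n = M.shape[0]
    order = rng.permutation(n)
    n_train = int(round(fractions[0] * n))
    n_val = int(round(fractions[1] * n))
    idx = {
        "train": order[:n_train],
        "val": order[n_train:n_train + n_val],
        "test": order[n_train + n_val:],
    }
    m_mean = M[idx["train"]].mean(axis=0)
    m_sd = M[idx["train"]].std(axis=0)
    keep = m_sd > 0                        # drop markers monomorphic in training
    y_mean, y_sd = y[idx["train"]].mean(), y[idx["train"]].std()
    return {
        name: ((M[i][:, keep] - m_mean[keep]) / m_sd[keep], (y[i] - y_mean) / y_sd)
        for name, i in idx.items()
    }

# Simulated 0/1/2 genotypes for 100 individuals and 50 markers
rng = np.random.default_rng(7)
M = rng.binomial(2, 0.4, size=(100, 50)).astype(float)
y = rng.normal(size=100)
sets = split_and_standardize(M, y)
X_train, y_train = sets["train"]
```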

Network Architecture Design

- Determine number of hidden layers (typically 1-5 for genomic data)

- Specify number of neurons per layer (often 100-1000)

- Select activation functions (ReLU, sigmoid, or tanh for hidden layers; linear for regression output)

- Add regularization components (dropout, L1/L2 penalty)

Model Training and Hyperparameter Tuning

- Initialize weights (e.g., He or Xavier initialization)

- Select optimizer (Adam, RMSprop, or SGD with momentum)

- Set learning rate (typically 0.001-0.0001) and scheduling

- Determine batch size (32-256) and number of epochs

- Implement early stopping based on validation performance

- Use cross-validation for hyperparameter optimization
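Early stopping, as listed above, can be expressed independently of any deep learning framework. The sketch below assumes two user-supplied callables, `step` (runs one training epoch) and `val_loss` (returns the current validation loss); both names, and the `patience` and `min_delta` defaults, are hypothetical. In a real training loop the model weights from the best epoch would also be checkpointed.

```python
def train_with_early_stopping(step, val_loss, max_epochs=200, patience=10, min_delta=1e-4):
    """Run training epochs until the validation loss has not improved by
    at least min_delta for `patience` consecutive epochs.

    Returns (best_epoch, best_loss)."""
    best_loss, best_epoch, wait = float("inf"), -1, 0
    for epoch in range(max_epochs):
        step(epoch)                         # one pass over the training data
        loss = val_loss(epoch)              # evaluate on the validation set
        if loss < best_loss - min_delta:
            best_loss, best_epoch, wait = loss, epoch, 0
        else:
            wait += 1
            if wait >= patience:
                break                       # no recent improvement: stop
    return best_epoch, best_loss

# Synthetic validation curve that reaches its minimum at epoch 20
curve = lambda epoch: 1.0 + (epoch - 20) ** 2 / 400
best_epoch, best_loss = train_with_early_stopping(lambda e: None, curve, patience=5)
```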

Model Evaluation and Interpretation

- Evaluate final model on test set

- Calculate prediction accuracy metrics (correlation, mean squared error)

- Perform feature importance analysis using permutation methods or integrated gradients

- Visualize learned representations if using dimensionality reduction
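Permutation-based feature importance, mentioned in the evaluation steps above, needs only a prediction function. The sketch below is model-agnostic and uses mean squared error as the loss; the function name `permutation_importance` and the toy predictor are assumptions for illustration. With correlated markers (linkage disequilibrium), importance can be shared among neighbouring markers, so the scores are best read as a rough guide.

```python
import numpy as np

def permutation_importance(predict, X, y, n_repeats=10, seed=0):
    """Average increase in mean squared error when one marker column
    is shuffled, computed separately for every column of X."""
    rng = np.random.default_rng(seed)
    base = np.mean((predict(X) - y) ** 2)
    importance = np.zeros(X.shape[1])
    for j in range(X.shape[1]):
        Xp = X.copy()                       # only column j is disturbed
        for _ in range(n_repeats):
            Xp[:, j] = rng.permutation(X[:, j])
            importance[j] += np.mean((predict(Xp) - y) ** 2) - base
    return importance / n_repeats

# Toy model in which only the first marker matters
rng = np.random.default_rng(5)
X = rng.normal(size=(50, 4))
y = 3.0 * X[:, 0]
scores = permutation_importance(lambda A: 3.0 * A[:, 0], X, y)
```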

Troubleshooting Tips:

- Overfitting can be addressed with increased regularization, dropout, or early stopping

- Training instability may require learning rate adjustment or batch normalization

- Poor performance may necessitate architecture modifications or feature engineering

Comparative Analysis of Genomic Prediction Approaches

Performance Comparison Across Methods

Table 1: Comparison of Genomic Prediction Approaches

| Method Category | Specific Methods | Genetic Architecture Assumptions | Advantages | Limitations | Typical Prediction Accuracy* |

|---|---|---|---|---|---|

| Statistical | GBLUP, RR-BLUP | Additive effects, linear relationships | Computational efficiency, interpretability, stability | Limited ability to capture non-additive effects | 0.62 (mean across species) [19] |

| Bayesian | BayesA, BayesB, BayesC, BL | Various prior distributions for marker effects | Flexibility, ability to model different genetic architectures, variable selection | Computational intensity, convergence issues | Varies by trait and model [20] |

| Machine Learning | RF, SVR, XGBoost | Non-linear relationships, complex interactions | No distributional assumptions, handles complex patterns | Extensive hyperparameter tuning, black box nature | +0.014 to +0.025 over Bayesian methods [19] |

| Deep Learning | MLP, CNN, RNN | Complex non-linear and epistatic interactions | Automatic feature learning, handles high-dimensional data | Large data requirements, computational complexity | Comparable or superior to GBLUP in some studies [11] |

*Note: Prediction accuracy measured as Pearson's correlation between predicted and observed values

Factors Influencing Model Performance

Multiple factors influence the performance of genomic prediction models, with training population size and genetic diversity being particularly important [12]. The relationship between training population size and prediction accuracy follows a pattern of diminishing returns, with optimal size balancing resource allocation and prediction accuracy [12]. Other vital factors include marker density and distribution, level of linkage disequilibrium, genetic complexity of the target trait, heritability, and statistical methods employed [12]. Recent evidence suggests that no single method universally outperforms others across all traits and datasets. Rather, the optimal approach depends on the genetic architecture of the trait, population structure, and available data resources [12] [11].

For complex traits influenced by non-additive genetic effects, machine learning and deep learning methods often demonstrate advantages over linear models [11]. However, for traits with predominantly additive genetic architecture, traditional GBLUP and Bayesian methods remain competitive while offering greater computational efficiency and interpretability [11]. In practical breeding applications, the choice of method must consider not only prediction accuracy but also computational requirements, implementation complexity, and interpretability of results.

Table 2: Key Research Reagent Solutions for Genomic Selection

| Reagent/Resource | Function | Application Examples | Considerations |

|---|---|---|---|

| GBS (Genotyping-by-Sequencing) | Reduced-representation genotyping using restriction enzymes | SNP discovery in barley, common bean, maize [1] [22] [19] | Cost-effective but potential missing data due to non-random enzyme sites [22] |

| SNP Arrays | Targeted genotyping of predefined variants | Wheat, loblolly pine genotyping [19] | High data quality but limited to known variants, ascertainment bias |

| Whole Genome Sequencing | Comprehensive variant discovery across entire genome | High-resolution genomic prediction [1] | Highest information content but computationally demanding |

| EasyGeSe Database | Curated benchmarking datasets for method comparison | Multi-species model evaluation [19] | Standardized evaluation but may not capture all breeding scenarios |

| BGLR Statistical Package | Bayesian implementation of various GS models | Plant and animal breeding applications [21] | Flexible prior specification but MCMC computationally intensive |

| TensorFlow/PyTorch | Deep learning frameworks for custom model development | Neural networks for complex trait prediction [18] [11] | Maximum flexibility but requires programming expertise |

Integrated Workflow and Future Perspectives

Decision Framework for Method Selection

Future Directions and Integration with Multi-Omics

The future of genomic selection lies in integrating diverse data types and developing more sophisticated modeling approaches. Emerging trends include the incorporation of multi-omics data (transcriptomics, metabolomics, proteomics) with genomic information to improve prediction accuracy [12] [17]. Deep learning approaches are particularly suited for integrating these heterogeneous data types and capturing complex biological relationships [18] [8]. With the continuous decline in sequencing costs, whole-genome sequencing is becoming increasingly feasible for GS applications, potentially providing more comprehensive genetic information than traditional marker arrays [1].

The development of user-friendly software tools and data management resources is democratizing GS methodology, making advanced prediction models accessible to more breeding programs [8] [19]. Future advances in artificial intelligence are expected to further enhance GS through improved data processing, feature selection, and model optimization [21] [8]. As these technologies mature, GS will evolve toward more comprehensive models that optimize prediction accuracy while providing insights into biological mechanisms, ultimately accelerating the development of improved crop varieties to address global food security challenges.

Genomic selection (GS) has revolutionized predictive breeding by enabling the selection of superior genotypes based on genomic estimated breeding values (GEBVs), thereby accelerating genetic gain per unit time [23] [24]. The efficacy of a GS program hinges on the accuracy of these predictions, defined as the correlation between the true and estimated breeding values (rMG). This accuracy is not a fixed property but is influenced by several interdependent factors. Among these, trait heritability (h²), training population size (TPS), and marker density (MD) are widely recognized as three pivotal drivers [23] [25] [26]. Understanding their individual and interactive effects is crucial for breeders to design efficient, accurate, and cost-effective genomic selection workflows. This application note synthesizes recent research findings to provide a structured protocol for optimizing these key parameters within a predictive breeding framework.

Quantitative Analysis of Key Drivers

Empirical studies across diverse species provide quantitative insights into how each factor influences genomic prediction accuracy. The table below summarizes core findings from recent research.

Table 1: Impact of Key Drivers on Genomic Prediction Accuracy Across Species

| Species | Trait Heritability (h²) | Training Population Size (TPS) | Marker Density (MD) | Primary Findings | Citation |

|---|---|---|---|---|---|

| Tropical Maize | Variable (Six trait-environment combinations) | 50% of total population (~2000 lines) | ~200 SNPs | h² was the most important factor; MD was the least important. rMG increased with increases in h², TPS, and MD. | [23] |

| Soybean, Rice, Maize | Wide range of broad-sense heritability | 50:50 to 90:10 (Training:Testing ratio) | Subsets from full genome-wide markers | Accuracy improved with higher h². BayesB model performed best. A subset of significant markers (P<0.05) boosted accuracy. | [25] |

| Mud Crab | High (0.521 to 0.860 for growth traits) | 30 to 400 individuals | 0.5K to 33K SNPs | Accuracy plateaued after ~10K SNPs. Accuracy improved as TPS increased up to 400. Minimum of 150 samples & 10K SNPs recommended. | [26] |

| Whiteleg Shrimp | Moderate (0.321 for weight; 0.452 for length) | 200 individuals from 13 families | 0.05K to 23K SNPs | Prediction accuracy improved with MD but gains diminished after ~3.2K SNPs. Close genetic relationship between TP and validation set was critical. | [27] |

| Meat Rabbits | Not specified | 1,515 individuals | Imputed from low-coverage sequencing | Multi-trait GBLUP model improved prediction accuracy by >15% compared to single-trait models. | [28] |

| Hanwoo Cattle | Not specified | 18,269 animals | 50K vs. Imputed High-Density (HD) | HD genotypes gave only marginal (0.6-2%) accuracy gains over 50K for most carcass traits. | [29] |

Interplay and Relative Importance of Factors

While all three factors contribute to accuracy, their relative importance varies. A study on 22 bi-parental tropical maize populations concluded that trait heritability is the most influential factor, followed by training population size, with marker density being the least important for most traits [23]. This hierarchy underscores that no amount of genotyping can fully compensate for a poorly heritable trait or an inadequately sized training population.

The relationship between these factors and prediction accuracy is often non-linear. Gains in accuracy from increasing marker density or population size eventually plateau, indicating a point of diminishing returns. For instance, in mud crab, increasing marker density beyond 10K SNPs provided minimal improvement [26], and in whiteleg shrimp, the plateau occurred at around 3.2K SNPs [27]. Similarly, while accuracy increases with training population size, the marginal gain decreases as the size becomes very large [30].

Experimental Protocols for Parameter Optimization

This section outlines a generalizable, step-by-step protocol for empirically determining the optimal TPS and MD for a new breeding program or trait, based on common methodologies in the literature.

Protocol 1: Optimizing Training Population Size and Marker Density

Objective: To determine the minimal training population size and marker density required to achieve acceptable genomic prediction accuracy for a target trait.

Materials and Reagents:

- Plant/Animal Population: A large, genotyped, and phenotyped population of at least 500-1000 individuals.

- Genotypic Data: Genome-wide marker data (e.g., SNP array or sequencing data). A high-density set is ideal for down-sampling.

- Phenotypic Data: High-quality phenotypic records for the target trait(s) with estimated heritability.

- Computing Hardware: High-performance computing cluster or server.

- Software: R statistical environment with packages like rrBLUP, BGLR, or custom scripts for genomic prediction.

Workflow:

Data Preparation:

- Genotype Quality Control: Remove markers with a low minor allele frequency (e.g., MAF < 0.05) or a low call rate (e.g., < 90%). Impute the remaining missing genotypes using software like Beagle [26] [27].

- Phenotype Processing: Adjust phenotypes for fixed effects (e.g., location, year, sex) to obtain best linear unbiased estimates (BLUEs).
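The marker filtering step can be sketched as a boolean mask over marker columns. The example assumes genotypes coded as 0/1/2 allele counts with `np.nan` for missing calls; the function name `qc_filter` and the toy matrix are illustrative, and the thresholds simply mirror the example values given above.

```python
import numpy as np

def qc_filter(G, maf_min=0.05, call_rate_min=0.90):
    """Return a boolean mask of markers passing MAF and call-rate filters.

    G: (n_individuals, n_markers) float array of 0/1/2 allele counts,
       with np.nan marking missing genotype calls.
    """
    call_rate = 1.0 - np.isnan(G).mean(axis=0)
    freq = np.nanmean(G, axis=0) / 2.0      # allele frequency from observed calls
    maf = np.minimum(freq, 1.0 - freq)
    return (call_rate >= call_rate_min) & (maf >= maf_min)

# Ten individuals, four markers
G = np.zeros((10, 4))
G[5:, 1] = 2.0          # marker 1: allele frequency 0.5
G[:5, 2] = 1.0
G[8:, 2] = np.nan       # marker 2: call rate 0.8
G[0, 3] = 2.0           # marker 3: allele frequency 0.1
keep = qc_filter(G)     # marker 0 is monomorphic and fails the MAF filter
G_clean = G[:, keep]
```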

Experimental Design:

- Define TPS Levels: Create a series of training population sizes (e.g., 50, 100, 200, 400, 800 individuals) via random sampling from the full population.

- Define MD Levels: Create subsets of markers from the full set (e.g., 0.5K, 1K, 2K, 5K, 10K, All) by random selection. For a more even distribution, select one marker per LD block.

- Replication: Repeat each (TPS, MD) combination with multiple random samples (e.g., 10-50 iterations) to account for sampling variance.

Genomic Prediction and Validation:

- For each iteration of a (TPS, MD) combination, use a cross-validation scheme.

- Split the data into a training set (of the specified TPS) and a validation set (the remaining individuals).

- Train the chosen genomic prediction model (e.g., GBLUP, BayesB) using the training set's genotypes (at the specified MD) and phenotypes.

- Predict the GEBVs of the individuals in the validation set.

- Calculate the prediction accuracy as the correlation between the predicted GEBVs and the adjusted phenotypes in the validation set.

Data Analysis:

- For each (TPS, MD) combination, average the prediction accuracies across all iterations.

- Plot the accuracy against TPS for different MD levels, and against MD for different TPS levels.

- Identify the "elbow" points where increasing TPS or MD no longer provides a substantial gain in accuracy. These points represent cost-effective optima.
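The resampling design of Protocol 1 can be sketched as a nested loop over training sizes and marker densities. The example below uses a ridge-regression predictor in the spirit of RR-BLUP as a stand-in for whichever model is chosen; the function names, the assumed heritability used to set the shrinkage parameter, and the simulated data (independent markers, so no linkage disequilibrium) are all assumptions for illustration.

```python
import numpy as np

def ridge_predict(X_tr, y_tr, X_va, h2=0.5):
    """Ridge prediction solved in its n x n dual form; the penalty is set
    from an assumed heritability h2 and the summed marker variances."""
    mu_x, mu_y = X_tr.mean(axis=0), y_tr.mean()
    Z = X_tr - mu_x
    lam = (1.0 - h2) / h2 * Z.var(axis=0).sum()
    alpha = np.linalg.solve(Z @ Z.T + lam * np.eye(len(y_tr)), y_tr - mu_y)
    return mu_y + (X_va - mu_x) @ (Z.T @ alpha)

def accuracy_grid(X, y, tps_levels, md_levels, n_rep=10, h2=0.5, seed=0):
    """Mean prediction accuracy for every (TPS, MD) combination."""
    rng = np.random.default_rng(seed)
    n, p = X.shape
    grid = {}
    for tps in tps_levels:
        for md in md_levels:
            accs = []
            for _ in range(n_rep):
                ind = rng.permutation(n)
                tr, va = ind[:tps], ind[tps:]          # remaining individuals validate
                cols = rng.choice(p, size=min(md, p), replace=False)
                pred = ridge_predict(X[np.ix_(tr, cols)], y[tr], X[np.ix_(va, cols)], h2)
                accs.append(np.corrcoef(pred, y[va])[0, 1])
            grid[(tps, md)] = float(np.mean(accs))
    return grid

# Simulated population: 300 individuals, 400 markers, 20 causal loci
rng = np.random.default_rng(42)
X = rng.binomial(2, 0.5, size=(300, 400)).astype(float)
qtl = rng.choice(400, size=20, replace=False)
g = X[:, qtl] @ rng.normal(size=20)
y = g + rng.normal(scale=0.5 * g.std(), size=300)
grid = accuracy_grid(X, y, tps_levels=(50, 200), md_levels=(40, 400), n_rep=5, h2=0.8)
```

Plotting `grid` against TPS for each MD level (and the reverse) gives the curves from which the elbow points are read.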

The following workflow diagram illustrates this experimental procedure.

Protocol 2: Implementing a Targeted Training Population Optimization

Objective: To select a training population that maximizes prediction accuracy for a specific target set of breeding lines, potentially reducing phenotyping costs.

Materials and Reagents: (In addition to Protocol 1 materials)

- Test Set (TS): A defined set of genotyped, but not yet phenotyped, elite lines or families targeted for prediction.

- Software: R package STPGA (Selection of Training Populations with a Genetic Algorithm) or similar.

Workflow:

- Define Candidate and Target Sets: The genotyped and phenotyped individuals form the candidate set. The elite lines for which predictions are needed form the target test set (TS).

- Apply Optimization Algorithm: Use the STPGA package to select a subset from the candidate set that is genetically most representative of, or related to, the target TS. The optimization can be based on criteria such as the mean coefficient of determination (CDmean), which aims to minimize the prediction error variance for the TS [30].

- Validate and Compare: Compare the prediction accuracy obtained with this optimized, targeted training population (T-Opt) against that of a randomly selected training population of the same size (U-Opt). Studies consistently show T-Opt yields higher accuracy, especially with smaller training sizes [30].
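To make the idea of a targeted criterion concrete, the sketch below computes a simplified mean coefficient of determination for a target set and grows a training set by greedy forward selection. This is not the STPGA implementation or its genetic algorithm: the simplified CD formula ignores fixed effects, the greedy search is a swapped-in heuristic, and the names `mean_cd` and `greedy_training_set`, the shrinkage value `lam`, and the simulated relatives are all assumptions for illustration.

```python
import numpy as np

def mean_cd(G, train, targets, lam=1.0):
    """Simplified mean coefficient of determination of the targets given a
    training set: CD_i = g_i' (G_tt + lam I)^-1 g_i / G_ii, averaged over i.
    lam is the ratio of residual to genetic variance (assumed known)."""
    V = G[np.ix_(train, train)] + lam * np.eye(len(train))
    C = G[np.ix_(targets, train)]
    S = np.linalg.solve(V, C.T)
    num = np.einsum("ij,ji->i", C, S)
    return float(np.mean(num / np.diag(G)[targets]))

def greedy_training_set(G, candidates, targets, size, lam=1.0):
    """Add, one at a time, the candidate that most increases the mean CD."""
    chosen, pool = [], list(candidates)
    for _ in range(size):
        best = max(pool, key=lambda c: mean_cd(G, chosen + [c], targets, lam))
        chosen.append(best)
        pool.remove(best)
    return chosen

# Simulated example: 5 targets, 5 close relatives of them, 20 unrelated candidates
rng = np.random.default_rng(3)
base = rng.binomial(2, 0.5, size=(5, 500)).astype(float)
relatives = base.copy()
flip = rng.random(relatives.shape) < 0.05
relatives[flip] = rng.binomial(2, 0.5, size=int(flip.sum()))
unrelated = rng.binomial(2, 0.5, size=(20, 500)).astype(float)
M = np.vstack([base, relatives, unrelated])
p = M.mean(axis=0) / 2.0
Z = M - 2.0 * p
G = Z @ Z.T / (2.0 * np.sum(p * (1.0 - p)))     # genomic relationship matrix
targets, candidates = list(range(5)), list(range(5, 30))
chosen = greedy_training_set(G, candidates, targets, size=5)
```

In this toy setting the procedure is expected to favour the close relatives of the target lines over unrelated candidates, which is the behaviour a targeted design aims for.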

Advanced Applications and Integrated Strategies

Multi-Trait and Multi-Omics Models

For complex traits with low heritability, integrating information from correlated traits or other biological layers can significantly boost accuracy.

- Multi-Trait GBLUP: This model leverages genetic correlations between traits. In meat rabbits, a multi-trait model improved prediction accuracy by over 15% for all growth and slaughter traits compared to single-trait models [28]. This is particularly valuable when the primary trait is expensive or difficult to measure.

- Multi-Omics Integration: Incorporating transcriptomic, metabolomic, or proteomic data can provide a more comprehensive view of the biological pathways underlying a trait. Advanced model-based fusion methods for integrating these omics layers have shown consistent improvements in predictive accuracy for complex traits in maize and rice, moving beyond the limitations of genomics alone [31].

Model Selection

The choice of statistical model is another lever for optimizing accuracy. While GBLUP and related linear mixed models are computationally efficient, Bayesian models (e.g., Bayes B) that assume a proportion of markers have zero effect often perform better, especially for traits influenced by a few loci with large effects [25]. Studies in soybean, rice, and maize found that Bayes B consistently matched or outperformed other models [25]. Furthermore, using a subset of markers pre-selected for significant association with the trait (e.g., P < 0.05) within a Bayesian framework can further enhance prediction performance [25].

The Scientist's Toolkit

Table 2: Essential Research Reagents and Solutions for Genomic Selection

| Tool / Reagent | Function in GS Workflow | Example/Note |

|---|---|---|

| SNP Array / lcWGS | Genotyping platform to obtain genome-wide marker data. | Custom 50K SNP array in mud crab [26]; low-coverage Whole Genome Sequencing (lcWGS) in meat rabbits [28]. |

| Genotype Imputation Software | To infer missing genotypes and increase marker density cost-effectively. | Beagle [26] [27]; STITCH [28]. Crucial for leveraging low-coverage sequencing data. |

| Genomic Prediction Software | To train models and calculate GEBVs. | R packages: rrBLUP (for GBLUP/RR-BLUP) [27], BGLR (for Bayesian models) [25]. |

| Training Population Optimization Software | To select an optimal subset of individuals for phenotyping. | R package STPGA [30]. Uses algorithms like CDmean to maximize prediction accuracy for a target set. |

| Genomic Relationship Matrix (G-matrix) | A matrix quantifying the genetic similarity between all individuals based on markers. | Foundation of GBLUP models. Calculated from genotype data to capture additive genetic relationships [26]. |
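As an illustration of the G-matrix entry in the table above, the following sketch builds a genomic relationship matrix with the widely used VanRaden scaling and applies it in a basic GBLUP prediction. The function names, the assumed heritability `h2` used to set the variance ratio, and the simulated genotypes are assumptions for this example; packages such as rrBLUP estimate the variance components from the data instead.

```python
import numpy as np

def vanraden_g(M):
    """Genomic relationship matrix G = Z Z' / (2 * sum p_j (1 - p_j)),
    where Z holds 0/1/2 allele counts centered by twice the allele frequency."""
    p = M.mean(axis=0) / 2.0
    Z = M - 2.0 * p
    return Z @ Z.T / (2.0 * np.sum(p * (1.0 - p)))

def gblup_predict(G, y_train, train, test, h2=0.5):
    """GBLUP predictions for `test` individuals from phenotyped `train`
    individuals, with the variance ratio derived from an assumed h2."""
    lam = (1.0 - h2) / h2
    mu = y_train.mean()
    V = G[np.ix_(train, train)] + lam * np.eye(len(train))
    return mu + G[np.ix_(test, train)] @ np.linalg.solve(V, y_train - mu)

# Simulated genotypes: 60 individuals, 200 markers
rng = np.random.default_rng(11)
M = rng.binomial(2, 0.3, size=(60, 200)).astype(float)
y = M @ rng.normal(0.0, 0.2, size=200) + rng.normal(size=60)
G = vanraden_g(M)
train, test = np.arange(50), np.arange(50, 60)
pred = gblup_predict(G, y[train], train, test, h2=0.6)
```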

The successful implementation of genomic selection requires a balanced and strategic approach to its key drivers. Evidence consistently shows that investing in a sufficiently large and well-designed training population is paramount, often yielding greater returns than simply increasing marker density beyond a certain plateau. Trait heritability sets the upper limit for achievable accuracy. Breeders should first conduct pilot studies, as outlined in the protocols above, to establish population- and trait-specific optima for TPS and MD. Furthermore, embracing advanced strategies like multi-trait models and targeted training population design can unlock significant additional gains, particularly for challenging, low-heritability traits. By systematically optimizing these parameters, researchers and breeders can dramatically enhance the efficiency and predictive power of their genomic selection programs.

Genomic selection (GS) has emerged as a transformative technology for accelerating genetic gains in plant breeding and is now redefining paradigms in therapeutic development. This methodology uses genome-wide markers to calculate Genomic Estimated Breeding Values (GEBVs), enabling the selection of superior individuals based on genetic potential alone [12] [32]. The core principle involves building a statistical model that correlates marker data with phenotypic traits in a training population, then applying this model to a breeding population with only genotypic information available [33]. This approach has significantly reduced breeding cycles and improved selection intensity across biological domains. The convergence of large-scale biobanks, multi-omics data, and advanced computational methods now enables the systematic prioritization of therapeutic targets while predicting adverse effects and identifying drug repurposing opportunities [34]. This article details the practical application of genomic selection through structured protocols, comparative analyses, and implementation frameworks that bridge agricultural and biomedical research.

Genomic Selection in Crop Breeding: Applications and Protocols

Fundamental Principles and Key Optimization Factors

Genomic selection accuracy depends on multiple interconnected factors that must be optimized for successful implementation. The following table summarizes these critical elements and their impacts on prediction accuracy:

Table 1: Key Factors Influencing Genomic Prediction Accuracy in Plant Breeding

| Factor | Impact on Accuracy | Optimization Approach |

|---|---|---|

| Training Population Size & Diversity | Positively correlated up to optimum point (~2,000-4,000 individuals) [12] | Use optimization algorithms to balance genetic diversity with resource allocation [12] |

| Marker Density & Distribution | Higher density improves accuracy until linkage disequilibrium (LD) plateaus [12] | Select markers based on LD decay patterns; 5K-50K SNPs typically sufficient [32] |

| Trait Heritability | Direct positive correlation; highly influential for model performance [12] | Improve phenotyping protocols; use multi-environment trials to reduce error [12] |

| Genetic Architecture | Complex traits with non-additive effects reduce accuracy for simple models [12] | Select models that capture epistatic and dominance effects (e.g., RKHS, deep learning) [33] |

| Statistical Models | Varying performance based on genetic architecture [32] | Benchmark multiple methods; consider ensemble approaches [33] |

Implementation Workflow for Genomic Selection in Breeding Programs

The standard genomic selection pipeline involves sequential steps from population development to selection decisions. The following diagram illustrates this workflow:

Protocol: Implementing Genomic Selection for Grain Yield in Soybean

Application Note: This protocol outlines a complete genomic selection workflow optimized for soybean yield improvement, adaptable to other crops with modification.

Materials and Reagents:

- Plant material: 300 families with 50 individuals per family (15,000 total) [32]

- Genotyping platform: 6K SNP array or equivalent [32]

- Phenotyping equipment: Field trial infrastructure, yield measurement tools

- Statistical software: R with specialized packages (rrBLUP, BWGS, GBM, DNNGP) [33]

Procedure:

Training Population Development (Cycle 0)

Genotyping Protocol

- Extract DNA from young leaf tissue using standard CTAB methods

- Genotype all training individuals using 6K SNP array or sequence-based genotyping

- Perform quality control: remove markers with >10% missing data and minor allele frequency <0.05 [33]

- Impute missing genotypes using appropriate algorithms (e.g., BEAGLE, FILLIN)

Phenotyping Protocol

- Evaluate training population in replicated field trials across target environments (minimum 3 locations)

- Record yield measurements (tons per hectare) with proper experimental design [32]

- Collect relevant covariate data (flowering time, plant height) to correct for confounding effects

Model Training and Validation

- Randomly divide data into training (80%) and validation (20%) sets

- Implement multiple models: GBLUP, Bayesian methods (BayesA, BayesB), and random forest [32]

- Calculate prediction accuracy as correlation between predicted and observed values in validation set

- Select best-performing model for breeding value prediction

Breeding Application (Cycle 1+)

- Genotype new breeding candidates without phenotyping

- Calculate GEBVs using trained model

- Select top 5-10% individuals based on GEBVs as parents for next cycle [32]

- Repeat process for subsequent breeding cycles, updating model with new data every 2-3 cycles
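The selection step in the breeding application can be sketched as a simple truncation on GEBVs. The function name `select_parents` and the toy values are assumptions for illustration; real programs usually add constraints such as limits on relatedness among selected parents.

```python
def select_parents(gebv, fraction=0.10):
    """Return the IDs of the top `fraction` of candidates ranked by GEBV.

    gebv: dict mapping candidate ID to its genomic estimated breeding value.
    """
    if not 0 < fraction <= 1:
        raise ValueError("fraction must be in (0, 1]")
    k = max(1, round(fraction * len(gebv)))
    return sorted(gebv, key=gebv.get, reverse=True)[:k]

# Twenty hypothetical candidates with GEBVs 0.0, 1.0, ..., 19.0
gebv = {f"line{i}": float(i) for i in range(20)}
parents = select_parents(gebv, fraction=0.10)   # ['line19', 'line18']
```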

Troubleshooting:

- Low prediction accuracy: Increase training population size or improve phenotypic data quality

- Model overfitting: Use cross-validation and reduce model complexity

- Genetic gain plateau: Introduce new genetic diversity through wild relatives or interspecific crosses

Speed Breeding Integration for Accelerated Cycles

Speed breeding protocols dramatically reduce generation times, complementing genomic selection's statistical advantages:

Protocol: Speed Breeding for Spring Cereals [35]

- Growth Conditions: Extended photoperiod (22 hours light/2 hours dark)

- Light Intensity: 400-600 μmol/m²/s using full-spectrum LEDs

- Temperature Regime: 22°C day/17°C night

- Support Methods: Embryo culture 14-20 days after flowering to reduce seed maturation time

- Generation Output: 6 generations per year for spring wheat, barley, and chickpea [35]

Transition to Biomedical Applications: Drug Target Identification

Computational Framework for Therapeutic Target Discovery

Genomic selection principles have been successfully adapted to drug discovery, particularly through CRISPR screening and subtractive genomics. The following workflow illustrates the target identification pipeline:

Protocol: CRISPR Screening for Therapeutic Target Identification

Application Note: This protocol enables genome-wide functional screening to identify genes essential for disease processes, particularly in cancer and infectious diseases [36].

Materials and Reagents:

- sgRNA library: Genome-scale (e.g., Brunello, GeCKO v2) [36]

- Cell lines: Disease-relevant models (primary cells, organoids)

- CRISPR components: Cas9 nuclease, delivery system (lentiviral, nucleofection)

- Screening reagents: Selection antibiotics, cell culture media

- Sequencing platform: Next-generation sequencer for sgRNA quantification

Procedure:

Library Design and Preparation

- Select genome-wide sgRNA library targeting ~20,000 genes with 4-6 guides per gene

- Include non-targeting controls (minimum 500) for normalization [36]

- Package sgRNAs into lentiviral vectors and titrate the virus so that cells can be transduced at low MOI (<0.3), which keeps most transduced cells to a single sgRNA integration

Cell Transduction and Selection

- Transduce target cells at coverage of 500-1000x per sgRNA

- Apply antibiotic selection (e.g., puromycin) 48 hours post-transduction to remove untransduced cells

- Maintain minimum 500x coverage throughout experiment
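The coverage and MOI figures above translate into cell numbers through simple arithmetic. The sketch below assumes that lentiviral integrations per cell follow a Poisson distribution with mean equal to the MOI, which is a common approximation; the function name and default values are illustrative and take the lower end of the ranges given in the protocol.

```python
import math

def screen_cell_requirements(n_genes=20_000, guides_per_gene=4, n_controls=500,
                             coverage=500, moi=0.3):
    """Estimate library size and cell numbers for a pooled CRISPR screen,
    assuming Poisson-distributed integrations per cell."""
    library_size = n_genes * guides_per_gene + n_controls
    transduced_needed = library_size * coverage
    frac_transduced = 1.0 - math.exp(-moi)                  # at least one integration
    frac_single = moi * math.exp(-moi) / frac_transduced    # exactly one, among transduced
    cells_to_transduce = math.ceil(transduced_needed / frac_transduced)
    return {
        "library_size": library_size,
        "transduced_cells_needed": transduced_needed,
        "fraction_single_integration": frac_single,
        "cells_to_transduce": cells_to_transduce,
    }

plan = screen_cell_requirements()
# library_size = 20,000 x 4 + 500 = 80,500 sgRNAs
# transduced_cells_needed = 80,500 x 500 = 40,250,000 cells
```

Because only about a quarter of cells are transduced at an MOI of 0.3 under this approximation, the number of cells exposed to virus must be several times larger than the number of transduced cells required.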

Phenotypic Selection

- Apply relevant selective pressure: drug treatment, pathogen infection, or survival challenge

- Harvest genomic DNA at multiple timepoints (T0, T14, T28)

- Extract high-quality DNA using silica column methods

Sequencing and Analysis

- Amplify integrated sgRNAs with barcoded PCR primers

- Sequence on Illumina platform (minimum 100x read coverage per sgRNA in each sample)

- Align sequences to reference library using MAGeCK or similar tools [37]

- Identify significantly enriched/depleted sgRNAs using negative binomial models

- Validate hits through secondary screening with individual sgRNAs
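To illustrate what the analysis step measures, the sketch below normalizes sgRNA read counts to counts per million, computes log2 fold changes between two timepoints, and summarizes them per gene with the median. It is a deliberately simplified stand-in and not the negative binomial testing performed by MAGeCK; the function names, pseudocount, and toy counts are assumptions for illustration.

```python
import math
from statistics import median

def log2_fold_changes(counts_start, counts_end, pseudocount=1.0):
    """Per-sgRNA log2 fold change after counts-per-million normalization.

    counts_start, counts_end: dicts mapping sgRNA ID to raw read count."""
    total_start = sum(counts_start.values())
    total_end = sum(counts_end.values())
    lfc = {}
    for guide, c0 in counts_start.items():
        cpm0 = c0 / total_start * 1e6
        cpm1 = counts_end.get(guide, 0) / total_end * 1e6
        lfc[guide] = math.log2((cpm1 + pseudocount) / (cpm0 + pseudocount))
    return lfc

def gene_scores(lfc, guide_to_gene):
    """Median log2 fold change across the sgRNAs targeting each gene."""
    by_gene = {}
    for guide, value in lfc.items():
        by_gene.setdefault(guide_to_gene[guide], []).append(value)
    return {gene: median(values) for gene, values in by_gene.items()}

# Toy data: gene A is depleted, non-targeting (NT) controls are not
t0 = {"A_1": 100, "A_2": 100, "NT_1": 100, "NT_2": 100}
t28 = {"A_1": 10, "A_2": 20, "NT_1": 185, "NT_2": 185}
mapping = {"A_1": "A", "A_2": "A", "NT_1": "NT", "NT_2": "NT"}
scores = gene_scores(log2_fold_changes(t0, t28), mapping)
```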

Troubleshooting:

- Low library representation: Increase transduction coverage and viral titer

- High false-positive rate: Include more negative controls; use redundant sgRNAs

- Poor phenotype penetration: Optimize selection pressure and timing

Protocol: Subtractive Genomics for Novel Antibacterial Targets

Application Note: This computational protocol identifies essential, pathogen-specific proteins as novel drug targets against Bordetella pertussis, adaptable to other bacterial pathogens [38].

Materials and Reagents:

- Computational resources: Linux workstation with 16GB+ RAM

- Software: BLAST+, PSORTb, KEGG KAAS, DEG database

- Data: Complete bacterial genomes from EDGAR 3.0 or NCBI

Procedure:

Core Proteome Determination

- Retrieve 554 complete bacterial genomes from EDGAR 3.0 database [38]

- Identify proteins present in all strains (core proteome) using BLASTP (E-value <10^-5)

- Extract core proteins in FASTA format for subsequent analysis

Subcellular Localization Prediction

- Process core proteome through PSORTb 3.0 for localization prediction

- Retain cytoplasmic proteins for drug target consideration [38]

- Export membrane and extracellular proteins for vaccine candidate analysis

Human Non-Homology Filtering

- Perform PSI-BLAST search against human proteome (taxid: 9606)

- Remove proteins with significant similarity (E-value <0.005, identity >35%) [38]

- Confirm absence of similarity to human mitochondrial proteins via MITOMASTER

Essentiality and Pathway Analysis

- Compare retained proteins against Database of Essential Genes (DEG)

- Annotate metabolic pathways using KEGG Automatic Annotation Server

- Identify pathogen-specific pathways absent in humans

- Select targets with essential metabolic functions (e.g., amino acid biosynthesis)

Experimental Validation Prioritization

- Rank targets by essentiality score, conservation across strains, and "druggability"

- Select 5-10 top candidates for experimental validation [38]
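The successive filters of this protocol can be sketched as a small pipeline over per-protein annotation records. The `Protein` fields below are hypothetical placeholders for results that would come from BLASTP, PSORTb, PSI-BLAST, and DEG searches; the thresholds mirror those stated in the protocol, and the localization label is an assumed string.

```python
from dataclasses import dataclass
from typing import Optional

@dataclass
class Protein:
    pid: str
    in_core: bool                   # present in all strains (core proteome)
    localization: str               # predicted subcellular localization
    human_evalue: Optional[float]   # best hit against human proteome, None if no hit
    human_identity: float           # percent identity of that hit
    essential: bool                 # has a match in an essential-gene database

def is_human_homolog(p, max_evalue=0.005, min_identity=35.0):
    """Significant similarity: E-value below and identity above the thresholds."""
    return (p.human_evalue is not None
            and p.human_evalue < max_evalue
            and p.human_identity > min_identity)

def subtractive_filter(proteins):
    """Apply the filters in order and record how many proteins survive each."""
    stages = [
        ("core proteome", lambda p: p.in_core),
        ("cytoplasmic", lambda p: p.localization == "Cytoplasmic"),
        ("non-homologous to human", lambda p: not is_human_homolog(p)),
        ("essential", lambda p: p.essential),
    ]
    remaining, counts = list(proteins), {}
    for name, keep in stages:
        remaining = [p for p in remaining if keep(p)]
        counts[name] = len(remaining)
    return remaining, counts

# Five hypothetical proteins
proteins = [
    Protein("P1", True, "Cytoplasmic", None, 0.0, True),
    Protein("P2", True, "Cytoplasmic", 1e-30, 60.0, True),
    Protein("P3", True, "OuterMembrane", None, 0.0, True),
    Protein("P4", False, "Cytoplasmic", None, 0.0, True),
    Protein("P5", True, "Cytoplasmic", 0.5, 20.0, False),
]
candidates, counts = subtractive_filter(proteins)
```

Recording the count after each stage makes it easy to report how strongly each filter narrows the candidate list.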

Comparative Analysis of Genomic Prediction Models

Performance Benchmarking Across Domains

The selection of appropriate statistical models critically influences genomic prediction accuracy. The following table compares model performance across agricultural and biomedical applications:

Table 2: Comparative Performance of Genomic Prediction Models Across Domains

| Model Category | Specific Methods | Plant Breeding Accuracy* | Drug Discovery Application | Computational Requirements |

|---|---|---|---|---|

| Linear Mixed Models | GBLUP, rrBLUP | 0.42-0.58 [33] | Polygenic disease risk prediction [34] | Low to Moderate |

| Bayesian Methods | BayesA, BayesB, BayesC | 0.45-0.61 [32] | Target prioritization integrating multiple evidence lines [34] | Moderate to High |

| Machine Learning | Random Forest, SVM, GBM | 0.38-0.55 [33] [32] | Gene-drug interaction prediction [36] | Variable (GBM: Low; SVM: High) |

| Deep Learning | DNNGP | 0.51-0.64 [33] | Multi-omics data integration for target identification [36] | Very High |

| Specialized Methods | RKHS, MKRKHS | 0.48-0.63 (non-additive traits) [33] | Modeling complex gene networks in disease [34] | High |

*Accuracy ranges represent Pearson's correlation coefficients for various traits in maize and wheat [33] [32]

Integrated Research Toolkit

Successful implementation of genomic selection approaches requires specialized analytical tools and resources:

Table 3: Essential Research Reagent Solutions for Genomic Selection Applications

| Tool Category | Specific Tools | Application | Key Features | Access |

|---|---|---|---|---|

| Genomic Prediction Software | ShinyGS [33] | Plant breeding | 16 methods incl. Bayesian, machine learning; user-friendly interface | Docker container |

| | MAGeCK [37] | CRISPR screen analysis | Identifies positively/negatively selected sgRNAs; controls FDR | Open-source command-line tool (Python) |

| CRISPR Guide Design | CRISPOR [37] | gRNA design | Off-target prediction; supports 120 genomes | Web server |

| | Breaking CAS [37] | Off-target detection | Works with eukaryotic genomes in ENSEMBL | Web server |

| Variant Analysis | CrispRVariants [37] | Mutation characterization | Resolves individual mutant alleles; quantification | R/Bioconductor package |

| Sequence Analysis | Geneious [39] | General bioinformatics | Integrated sequence analysis and visualization | Commercial software |

| Specialized Analysis | ScreenBEAM [37] | CRISPR/RNAi screening | Bayesian evaluation of high-throughput data | R package |

Genomic selection methodologies have demonstrated remarkable versatility across biological domains, from accelerating crop improvement to redefining therapeutic target identification. The protocols and applications detailed herein provide a framework for researchers to implement these powerful approaches in their respective fields. As genomic technologies continue to advance, the integration of multi-omics data, artificial intelligence, and automated phenotyping will further enhance prediction accuracy and biological insight. The convergence of agricultural and biomedical applications highlights the fundamental unity of genomic science and its potential to address diverse challenges in food security and human health.

Implementation Frameworks and Advanced Modeling Techniques

Genomic selection (GS) has revolutionized animal and plant breeding by using genome-wide molecular markers to predict an individual's genetic merit, enabling earlier selection and accelerating genetic gain [40] [41]. The accuracy of Genomic Estimated Breeding Values (GEBVs) is paramount and hinges on the choice of statistical model, each embodying different assumptions about the underlying genetic architecture of traits [40] [42]. These methods can be broadly categorized into linear parametric models like Genomic Best Linear Unbiased Prediction (GBLUP) and non-linear parametric models known as the "Bayesian Alphabet" (e.g., BayesA, BayesB, BayesC, BayesR) [40] [43].

The core difference between these approaches lies in their prior assumptions regarding the distribution of marker effects. GBLUP assumes all markers contribute equally to the genetic variance, with effects following a normal distribution, making it ideal for traits controlled by many genes with small effects [40]. In contrast, Bayesian methods specify different prior distributions, allowing for variable selection and differing variances among markers, which is more suitable for traits influenced by a few genes with larger effects [40] [42]. This article provides a detailed protocol for applying these models in predictive breeding research, offering structured comparisons, experimental workflows, and practical toolkits for scientists.
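
To make the common-variance assumption concrete, the sketch below estimates marker effects by ridge regression, the marker-level formulation (rrBLUP) that is generally treated as equivalent to GBLUP. It is a minimal illustration under stated assumptions, not the procedure of the cited studies: the simulated genotypes, the function name `ridge_marker_effects`, and the penalty values are hypothetical, and the penalty `lam` stands in for the ratio of residual variance to per-marker variance, which would normally be estimated from the data.

```python
import numpy as np

def ridge_marker_effects(M, y, lam):
    """Ridge (rrBLUP-style) marker effects: solve (M'M + lam*I) beta = M'y.

    M   : individuals x markers matrix of allele dosages (0, 1, 2)
    y   : vector of phenotypes
    lam : shrinkage penalty, assumed known here (sigma2_e / sigma2_marker)
    """
    Mc = M - M.mean(axis=0)          # center each marker column
    yc = y - y.mean()                # center the phenotype
    p = Mc.shape[1]
    return np.linalg.solve(Mc.T @ Mc + lam * np.eye(p), Mc.T @ yc)

# Hypothetical simulated data: 200 individuals, 50 markers, all with small effects
rng = np.random.default_rng(0)
n, p = 200, 50
M = rng.integers(0, 3, size=(n, p)).astype(float)
true_beta = rng.normal(0.0, 0.2, size=p)
y = M @ true_beta + rng.normal(0.0, 1.0, size=n)

beta_light = ridge_marker_effects(M, y, lam=1.0)     # weak shrinkage
beta_heavy = ridge_marker_effects(M, y, lam=1000.0)  # strong shrinkage

# A GEBV is the sum of an individual's (centered) marker dosages times the effects
gebv = (M - M.mean(axis=0)) @ beta_light
```

Every marker receives the same penalty, so all effects are shrunk toward zero by the same rule. Bayesian alphabet models differ precisely in replacing this single penalty with marker-specific variances or with a point mass at zero.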

Selecting the appropriate model requires understanding how each performs under different genetic architectures and experimental conditions. The following tables summarize key performance metrics and the recommended application contexts for each model.

Table 1: Summary of Genomic Prediction Model Performance Across Studies

| Model | Key Assumptions | Reported Prediction Accuracy (Range/Example) | Best-Suited Trait Architecture |

|---|---|---|---|

| GBLUP | All markers have an effect; effects follow a normal distribution with common variance [40]. | Accuracy for carcass traits in pigs: 0.371 - 0.502 (ssGBLUP, an advanced variant) [41]. | Polygenic traits controlled by many small-effect QTLs [40]. |

| BayesA | All markers have an effect, but each has its own variance [40] [42]. | Performance varies significantly with genetic architecture; no single accuracy range provided. | Traits governed by many QTLs with a few having relatively larger effects [40]. |

| BayesB | A proportion of markers have zero effects; non-zero markers have different variances [40] [42]. | More persistent accuracy over generations for egg weight in chickens vs. GBLUP [42]. | Traits with a sparse genetic architecture, where few major QTLs explain much variance [40] [42]. |

| BayesCπ | A fraction of markers have zero effects; non-zero markers share a common variance; π is estimated from data [42]. | Used in dairy cattle studies with large sample sizes; specific accuracy not detailed here [44]. | Intermediate architecture; some major QTLs amidst many small-effect ones [42]. |

| BayesR | Marker effects follow a mixture of normal distributions, including some with zero effect [41] [42]. | -- | Powerful for mapping QTL precisely and for traits with a mix of effect sizes [42]. |

| Bayesian LASSO | A form of continuous shrinkage; many markers have very small (nearly zero) effects [40]. | Identified as less biased for GEBV estimation among Bayesian methods [40]. | Various architectures, provides a compromise between variable selection and shrinkage. |

Table 2: Impact of Experimental Factors on Genomic Prediction Accuracy

| Factor | Impact on Accuracy | Supporting Evidence |

|---|---|---|

| Trait Heritability | Accuracy increases with heritability, irrespective of sample size or marker density [40]. | Study on wheat, maize, and barley traits [40]. |

| Genetic Architecture | Bayesian methods excel for traits with few large-effect QTLs; GBLUP for traits with many small-effect QTLs [40]. | Analysis of nine actual and 54 simulated datasets [40]. |

| Marker Density | Improves accuracy in low-density panels; plateaus in medium-to-high-density scenarios [41]. | Pig study using imputed whole-genome sequence data [41]. |

| Training Population Size | Increasing training set size improves within-population prediction accuracy [45]. | Simulation study on beef cattle populations [45]. |

| Model Biases | GBLUP is the least biased; Bayesian Ridge Regression and Bayesian LASSO are less biased among Bayesian methods [40]. | Comparison of GEBV estimation across methods [40]. |
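
The joint effect of heritability and training population size can be illustrated with a widely used deterministic approximation, r = sqrt(N·h² / (N·h² + Mₑ)), where N is the training set size, h² the trait heritability, and Mₑ the effective number of independent chromosome segments. This formula is not taken from the studies cited in the table; it is included only as a rough sketch, and the value of `m_e` below is a hypothetical placeholder.

```python
import math

def expected_accuracy(n_train: int, h2: float, m_e: float) -> float:
    """Approximate expected prediction accuracy r = sqrt(N*h2 / (N*h2 + Me))."""
    return math.sqrt(n_train * h2 / (n_train * h2 + m_e))

# Explore how accuracy responds to heritability and training set size
# (m_e = 1000 is an assumed value chosen purely for illustration)
for h2 in (0.2, 0.5, 0.8):
    for n in (500, 2000, 8000):
        r = expected_accuracy(n, h2, m_e=1000)
        print(f"h2={h2:.1f}  N={n:5d}  expected r={r:.2f}")
```

The approximation reproduces the qualitative pattern in the table: accuracy rises with both heritability and training set size, with diminishing returns once N·h² is large relative to Mₑ.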

Experimental Protocols

Protocol 1: Five-Fold Cross-Validation for Model Comparison

This protocol outlines a standard method for evaluating and comparing the performance of GBLUP and Bayesian models, as applied in recent studies [40] [44].

1. Data Preparation:

- Phenotypic Data: Collect and correct phenotypes for non-genetic effects (e.g., contemporary group, age, farm) using a linear model to obtain adjusted phenotypes for analysis [41] [44].

- Genotypic Data: Perform quality control (QC) on genotype data. Standard filters include: individual call rate > 90%, SNP call rate > 90%, and minor allele frequency (MAF) > 5% [41]. Retain only autosomal markers.

2. Data Partitioning:

- Randomly divide the entire dataset (after QC) into five mutually exclusive subsets (folds) of approximately equal size [40] [44].

3. Model Training and Validation:

- Repeat the following for each of the 100 replications [40], drawing a new random five-fold partition (step 2) in each replication so that the replications differ:

  - Use each fold in turn as the validation population (20% of the data) and the remaining four folds (80%) as the training population to estimate marker effects and train the prediction model.

  - Predict GEBVs for the individuals in the validation fold from the trained model.

4. Accuracy Calculation:

- For each validation fold, calculate the Pearson's correlation coefficient between the observed phenotypic data (or corrected phenotypes) and the GEBVs [40].

- The final reported accuracy for a model is the mean correlation across all 100 replications and five folds.
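
The four steps above can be sketched in a few lines of NumPy. This is a simplified illustration under several assumptions that are not part of the protocol: genotypes are simulated, missing calls are mean-imputed after QC, a ridge-regression predictor stands in for GBLUP or a Bayesian model, and the demonstration runs 10 replications rather than 100 to keep it fast. The function names (`qc_filter`, `ridge_predict`, `repeated_kfold_accuracy`) are hypothetical.

```python
import numpy as np

def qc_filter(G, ind_call=0.90, snp_call=0.90, maf_min=0.05):
    """Apply call-rate and MAF filters to a dosage matrix (np.nan = missing)."""
    keep_ind = (~np.isnan(G)).mean(axis=1) > ind_call      # individual call rate
    G = G[keep_ind]
    snp_rate = (~np.isnan(G)).mean(axis=0)                 # SNP call rate
    freq = np.nanmean(G, axis=0) / 2.0                     # allele frequency
    maf = np.minimum(freq, 1.0 - freq)
    keep_snp = (snp_rate > snp_call) & (maf > maf_min)
    return G[:, keep_snp], keep_ind, keep_snp

def ridge_predict(M_train, y_train, M_test, lam):
    """Stand-in predictor: ridge regression on centered marker dosages."""
    mu = M_train.mean(axis=0)
    Xc = M_train - mu
    beta = np.linalg.solve(Xc.T @ Xc + lam * np.eye(Xc.shape[1]),
                           Xc.T @ (y_train - y_train.mean()))
    return y_train.mean() + (M_test - mu) @ beta

def repeated_kfold_accuracy(M, y, k=5, reps=100, lam=1.0, seed=0):
    """Mean Pearson correlation over reps x k validation folds."""
    rng = np.random.default_rng(seed)
    n = len(y)
    cors = []
    for _ in range(reps):
        folds = np.array_split(rng.permutation(n), k)      # new partition per replication
        for test_idx in folds:
            train_idx = np.setdiff1d(np.arange(n), test_idx)
            pred = ridge_predict(M[train_idx], y[train_idx], M[test_idx], lam)
            cors.append(np.corrcoef(y[test_idx], pred)[0, 1])
    return float(np.mean(cors))

# Hypothetical simulated data: 120 individuals, 300 SNPs, 2% missing calls
rng = np.random.default_rng(1)
G = rng.binomial(2, 0.3, size=(120, 300)).astype(float)
G[rng.random(G.shape) < 0.02] = np.nan
G_qc, keep_ind, keep_snp = qc_filter(G)

# Mean imputation of remaining missing calls (an assumption, not part of the protocol)
G_imp = np.where(np.isnan(G_qc), np.nanmean(G_qc, axis=0), G_qc)

effects = rng.normal(0.0, 0.3, size=G_imp.shape[1])
y = G_imp @ effects + rng.normal(0.0, 1.0, size=G_imp.shape[0])

acc = repeated_kfold_accuracy(G_imp, y, k=5, reps=10, lam=50.0)
print(f"mean cross-validated accuracy: {acc:.3f}")
```

To compare models, the same fold assignments would be reused for every model so that differences in accuracy reflect the models rather than the partitions; fixing `seed` achieves this here.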

Protocol 2: Implementing a Single-Step GBLUP (ssGBLUP) Analysis

This protocol details the application of ssGBLUP, which integrates both pedigree and genomic data to enhance prediction accuracy, as demonstrated in pig breeding [41].

1. Input Data Preparation:

- Phenotype File: Prepare a file containing individual IDs and corrected phenotypes.

- Genotype File: Prepare a file in PLINK raw format or similar, containing individual IDs and genotype dosages (0, 1, 2) for all QC-passed SNPs.

- Pedigree File: Prepare a file with individual, sire, and dam IDs, ensuring the pedigree is complete and consistent.

2. Relationship Matrix Construction:

- Construct the H matrix, which is a combined relationship matrix that uses genomic information for genotyped individuals and pedigree information for non-genotyped individuals [41]. This single matrix replaces the traditional pedigree-based (A) matrix.

3. Model Execution:

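- Use software that supports ssGBLUP, such as the BLUPF90 suite. (GCTA fits GBLUP from a genomic relationship matrix but, to our knowledge, does not build the combined H matrix required here.)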

- Fit the following model:

y = Xb + Zu + e

where y is the vector of corrected phenotypes, b is a vector of fixed effects with design matrix X, u is a vector of additive genetic effects assumed to follow u ~ N(0, Hσ²_u), Z is the design matrix linking records to individuals, and e is the vector of random residuals [41]. The residuals are conventionally assumed to follow e ~ N(0, Iσ²_e).

4. Output and Interpretation:

- The software will output GEBVs for all individuals in the pedigree.

- Model accuracy can be assessed via cross-validation as described in Protocol 1.
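
For readers who want to see what the software does internally, the sketch below builds the inverse of H from a toy pedigree and solves the mixed model equations for y = Xb + Zu + e. It is a teaching example under explicit assumptions rather than a replacement for production software: the pedigree, genotypes, phenotypes, and the variance ratio `lam` (σ²_e / σ²_u) are hypothetical, variance components are taken as known instead of being estimated, G follows the commonly used VanRaden formulation, and G is blended with 5% of the pedigree relationships to keep it invertible. The inverse uses the standard identity in which H⁻¹ equals A⁻¹ plus (G⁻¹ − A₂₂⁻¹) added to the block of genotyped individuals.

```python
import numpy as np

def pedigree_A(sire, dam):
    """Numerator relationship matrix by the tabular method.

    Individuals are indexed 0..n-1 with parents listed before offspring;
    -1 marks an unknown parent.
    """
    n = len(sire)
    A = np.zeros((n, n))
    for i in range(n):
        s, d = sire[i], dam[i]
        A[i, i] = 1.0 + (0.5 * A[s, d] if s >= 0 and d >= 0 else 0.0)
        for j in range(i):
            a = 0.0
            if s >= 0:
                a += 0.5 * A[j, s]
            if d >= 0:
                a += 0.5 * A[j, d]
            A[i, j] = A[j, i] = a
    return A

def vanraden_G(M):
    """Genomic relationship matrix from dosages (0, 1, 2)."""
    p = M.mean(axis=0) / 2.0
    W = M - 2.0 * p
    return W @ W.T / (2.0 * np.sum(p * (1.0 - p)))

def h_inverse(A, G, genotyped, blend=0.05):
    """H^-1 = A^-1 with (G^-1 - A22^-1) added to the genotyped block."""
    idx = np.asarray(genotyped)
    A22 = A[np.ix_(idx, idx)]
    Gb = (1.0 - blend) * G + blend * A22       # blending keeps G invertible
    H_inv = np.linalg.inv(A)
    H_inv[np.ix_(idx, idx)] += np.linalg.inv(Gb) - np.linalg.inv(A22)
    return H_inv

def solve_mme(y, X, Z, H_inv, lam):
    """Solve Henderson's mixed model equations; lam = sigma2_e / sigma2_u."""
    lhs = np.block([[X.T @ X, X.T @ Z],
                    [Z.T @ X, Z.T @ Z + lam * H_inv]])
    rhs = np.concatenate([X.T @ y, Z.T @ y])
    sol = np.linalg.solve(lhs, rhs)
    return sol[:X.shape[1]], sol[X.shape[1]:]

# Toy pedigree: animals 0 and 1 are founders; animal 5 comes from full sibs 2 x 3
sire = [-1, -1, 0, 0, 2, 2]
dam = [-1, -1, 1, 1, -1, 3]
A = pedigree_A(sire, dam)

genotyped = [3, 4, 5]                          # only three animals are genotyped
rng = np.random.default_rng(0)
M = rng.integers(0, 3, size=(3, 200)).astype(float)
H_inv = h_inverse(A, vanraden_G(M), genotyped)

y = np.array([10.2, 9.1, 11.4, 10.8])          # records on animals 2..5
X = np.ones((4, 1))                            # overall mean as the only fixed effect
Z = np.zeros((4, 6))
Z[np.arange(4), [2, 3, 4, 5]] = 1.0
b, u = solve_mme(y, X, Z, H_inv, lam=1.5)      # u holds GEBVs for all six animals
```

Because H links every animal in the pedigree, `u` contains a GEBV for the non-genotyped founders and for animals without records, which matches the output described in step 4.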

Workflow Visualization

The following diagram illustrates the critical decision points for selecting an appropriate genomic prediction model based on the known or hypothesized genetic architecture of the target trait.
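
The same decision logic can be written as a small lookup that mirrors the "Best-Suited Trait Architecture" column of Table 1. This is only an illustrative sketch: the architecture labels and the function name `suggest_model` are hypothetical, and the fallback to Bayesian LASSO for an unknown architecture is an assumption based on its description as a compromise between variable selection and shrinkage.

```python
# Hypothetical architecture labels mapped to the models recommended in Table 1
SUGGESTIONS = {
    "polygenic": "GBLUP",                 # many small-effect QTLs
    "many_qtl_some_larger": "BayesA",     # many QTLs, a few with larger effects
    "sparse_major_qtl": "BayesB",         # few major QTLs explain much variance
    "intermediate": "BayesCπ",            # some major QTLs amid many small ones
    "mixed_effect_sizes": "BayesR",       # mixture of effect sizes
}

def suggest_model(architecture: str) -> str:
    """Return a starting-point model for a hypothesized genetic architecture."""
    # Assumed fallback when the architecture is unknown or unlisted
    return SUGGESTIONS.get(architecture, "Bayesian LASSO")

for label in ("polygenic", "sparse_major_qtl", "unknown"):
    print(label, "->", suggest_model(label))
```

A suggestion of this kind is a starting point only; the cross-validation in Protocol 1 remains the way to confirm which model performs best on a given dataset.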

The Scientist's Toolkit

Successful implementation of genomic prediction requires a suite of computational tools and data resources. The following table lists essential "research reagents" for the field.

Table 3: Essential Research Reagents and Tools for Genomic Prediction

| Tool/Reagent | Function/Purpose | Application Example |

|---|---|---|

| SNP Chip (e.g., 50K) | High-throughput genotyping to obtain genome-wide marker data for individuals. | Standard platform for initial genotyping in pigs and cattle [41] [44]. |

| Whole Genome Sequence (WGS) Data | Provides a complete catalog of genetic variants; used for imputation and identifying functional variants. | Imputed from SNP chip data to create high-density marker sets for analysis [41] [44]. |

| PLINK | Software for comprehensive genotype data management and quality control (QC). | Used for filtering SNPs based on call rate and MAF [41]. |

| BLUPF90 Suite | Software for estimating breeding values using mixed models (GBLUP, ssGBLUP). | Used for genomic prediction and phenotype correction in pig studies [41]. |

| JWAS | Software implementing various Bayesian Alphabet models via Markov Chain Monte Carlo (MCMC). | Used for genomic evaluation with BayesCπ in dairy cattle [44]. |

| BGLR R Package | R package for Bayesian regression models, offering a wide range of priors for genomic prediction. | Flexible tool for implementing Bayesian models (BayesA, BayesB, BayesC, BL, etc.) [42]. |

| Functional Variants | SNPs identified via GWAS, RNA-seq, etc., presumed to be closer to causal mechanisms. | Can be used to build smaller, more predictive SNP panels, especially for percent traits in dairy cattle [44]. |

| Adjusted Phenotypes (y_c) | Phenotypic records corrected for significant non-genetic factors (fixed effects). | Serves as the input variable (y) in genomic prediction models to improve accuracy [41] [44]. |

The practice of genomic selection requires careful consideration of statistical models tailored to the biological and experimental context. GBLUP remains a robust, least-biased choice for complex, polygenic traits, while the Bayesian alphabet (BayesA, BayesB, BayesCπ, BayesR) offers powerful alternatives for traits influenced by a few large-effect QTLs and can map those QTLs more precisely. Future developments will likely focus on the integration of multi-omics data and functional annotations into these models to further enhance predictive accuracy and biological insight, solidifying the role of genomic prediction in accelerating genetic gain across breeding programs.

Application Notes on Machine Learning and Deep Learning Architectures in Genomic Selection

Genomic Selection (GS) has revolutionized predictive breeding by enabling the prediction of breeding values using genome-wide markers. The choice of statistical and machine learning architecture is paramount, as it directly influences the ability to model the complex genetic architecture of agronomic traits, which often involves additive, dominance, and epistatic effects [46]. This document provides a detailed overview of Support Vector Regression (SVR), Kernel Ridge Regression (KRR), Deep Neural Networks (DNN), Convolutional Neural Networks (CNN), Recurrent Neural Networks (RNN), and Long Short-Term Memory (LSTM) networks within the context of GS.