Harnessing Phylogenetic Signal for Predictive Modeling: Advanced Methods for Biomedical Research and Drug Development

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on integrating phylogenetic signal into predictive models. It explores the foundational concept that shared evolutionary history creates non-independence in biological data, a factor that, when accounted for, can dramatically improve prediction accuracy. We detail advanced methodological approaches, including Phylogenetically Informed Prediction (PIP), Phylogenetic Generalized Least Squares (PGLS), and new software tools for variance partitioning. The article systematically addresses common troubleshooting and optimization challenges, such as handling weak trait correlations and non-ultrametric trees. Finally, we present a rigorous validation and comparative framework, showcasing simulations and case studies that demonstrate a two- to three-fold performance improvement over traditional methods, with direct implications for predicting drug targets, understanding disease evolution, and tracing pathogen lineages.

The Why and What: Uncovering the Critical Role of Phylogenetic Signal in Biological Prediction

Defining Phylogenetic Signal and Its Impact on Trait Evolution

What is phylogenetic signal?

Phylogenetic signal is the tendency for related biological species to resemble each other more than they resemble species drawn at random from the same phylogenetic tree. In simpler terms, it is the pattern we observe when closely related species have more similar traits than distantly related species. When phylogenetic signal is high, closely related species exhibit similar trait values, and this biological similarity decreases as the evolutionary distance between species increases [1] [2].

Conversely, a trait shows low phylogenetic signal when it appears more similar in distantly related taxa than in close relatives (a pattern often resulting from convergent evolution), or when it varies randomly across a phylogeny [1]. This concept helps researchers understand the degree to which trait evolution is constrained by evolutionary history [2].

Measuring Phylogenetic Signal: Key Methods and Metrics

Several statistical methods have been developed to quantify phylogenetic signal. The table below summarizes the most common indices for both continuous and categorical traits [1].

| Statistic | Data Type | Evolutionary Model? | Statistical Framework / Test | Brief Description |
|---|---|---|---|---|
| Blomberg's K | Continuous | ✓ (Brownian Motion) | Permutation | Ratio of observed trait variance to the variance expected under Brownian motion [2]. |
| Pagel's λ | Continuous | ✓ (Brownian Motion) | Maximum Likelihood | Multiplicative parameter that transforms internal branch lengths of the phylogeny [2]. |
| Abouheif's C~mean~ | Continuous | ✗ (Autocorrelation) | Permutation | Based on autocorrelation to test for phylogenetic similarity [1]. |
| Moran's I | Continuous | ✗ (Autocorrelation) | Permutation | A spatial autocorrelation statistic adapted for phylogenetic analysis [1]. |
| D Statistic | Categorical | ✓ | Permutation | Measures phylogenetic signal for binary traits [1]. |
| δ Statistic | Categorical | ✓ | Bayesian / Likelihood | Uses Shannon entropy to measure signal between a categorical trait and a phylogeny [3]. |

Detailed Methodologies for Key Metrics

Blomberg's K [2]

- Goal: Measures the amount of observed trait variance relative to the trait variance expected under a Brownian motion (BM) model of evolution.

- Calculation: K is built from the ratio of two mean squared errors (MSEs), MSE0 / MSE, where MSE0 is the mean squared error of the tip data around the phylogenetic mean and MSE is the mean squared error from a generalized least-squares model that incorporates the phylogenetic variance-covariance matrix. This observed ratio is divided by the ratio expected under Brownian motion on the same tree, so that K = 1 corresponds to the BM expectation.

- Interpretation:

- K ≈ 0: Suggests no phylogenetic signal (close relatives are not more similar than distant ones).

- K ≈ 1: Indicates trait evolution follows a Brownian motion model.

- K > 1: Suggests close relatives are more similar than expected under Brownian motion.

- Significance Test: A p-value is obtained by randomizing the trait data across the tips of the phylogeny and calculating how often the randomized data produces a higher K value than the observed one.
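
The calculation and permutation test described above can be sketched in a few lines. This is a minimal illustration that assumes the phylogenetic variance-covariance matrix `C` is already available as a NumPy array; the function names and the four-species example values are hypothetical, and in practice the R packages listed later in this guide would normally be used.

```python
import numpy as np

def blomberg_k(x, C):
    """Blomberg's K for trait vector x and phylogenetic covariance matrix C."""
    x = np.asarray(x, dtype=float)
    C = np.asarray(C, dtype=float)
    n = len(x)
    inv_C = np.linalg.inv(C)
    ones = np.ones(n)
    # Phylogenetically weighted (GLS) estimate of the mean trait value.
    a_hat = (ones @ inv_C @ x) / (ones @ inv_C @ ones)
    resid = x - a_hat
    mse0 = (resid @ resid) / (n - 1)          # tip data around the phylogenetic mean
    mse = (resid @ inv_C @ resid) / (n - 1)   # GLS error that uses C
    # Value of MSE0 / MSE expected under Brownian motion on this tree.
    expected = (np.trace(C) - n / (ones @ inv_C @ ones)) / (n - 1)
    return (mse0 / mse) / expected

def k_permutation_p(x, C, n_perm=999, seed=0):
    """Shuffle trait values across tips and count how often K is at least as large."""
    rng = np.random.default_rng(seed)
    observed = blomberg_k(x, C)
    hits = sum(blomberg_k(rng.permutation(x), C) >= observed for _ in range(n_perm))
    return observed, (hits + 1) / (n_perm + 1)

# Hypothetical example: species 1-2 and species 3-4 are two sister pairs.
C_example = np.array([[1.0, 0.8, 0.0, 0.0],
                      [0.8, 1.0, 0.0, 0.0],
                      [0.0, 0.0, 1.0, 0.8],
                      [0.0, 0.0, 0.8, 1.0]])
x_example = np.array([1.0, 1.1, 3.0, 3.2])
k_obs, p_val = k_permutation_p(x_example, C_example)
```

With only four tips there are just 24 distinct permutations, so the p-value here is illustrative only; real analyses need many more species for the test to have any power.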

Pagel's λ [2]

- Goal: A maximum-likelihood-based measure of phylogenetic dependence.

- Calculation: The λ parameter is estimated by finding the value that best explains the trait variation at the tips. It works by transforming the off-diagonal values (the covariances between species) in the phylogenetic variance-covariance matrix.

- Interpretation:

- λ = 0: No phylogenetic signal. The internal branches of the tree are effectively set to zero, resulting in a star phylogeny.

- λ = 1: Strong phylogenetic signal, consistent with trait evolution under a Brownian motion model. The internal branch lengths are unchanged.

- 0 < λ < 1: Indicates an intermediate level of phylogenetic signal, consistent with an evolutionary process other than pure Brownian motion.

- Significance Test: Likelihood ratio tests are used to compare a model with the maximum-likelihood value of λ to models where λ is fixed at 0 or 1.
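
As a rough sketch of the steps above, the code below scales the off-diagonal covariances by λ, evaluates the Brownian-motion likelihood on a grid of λ values, and compares the best fit with λ = 0. The grid search, the function names, and the 1-degree-of-freedom chi-square reference are simplifying assumptions made for illustration; established packages use numerical optimizers instead.

```python
import math
import numpy as np

def lambda_transform(C, lam):
    """Scale the off-diagonal covariances of C by lam and keep the diagonal."""
    C = np.asarray(C, dtype=float)
    C_lam = C * lam
    np.fill_diagonal(C_lam, np.diag(C))
    return C_lam

def bm_log_likelihood(x, C):
    """Profile log-likelihood of a Brownian-motion model with covariance C."""
    x = np.asarray(x, dtype=float)
    n = len(x)
    inv_C = np.linalg.inv(C)
    ones = np.ones(n)
    a_hat = (ones @ inv_C @ x) / (ones @ inv_C @ ones)   # GLS mean
    resid = x - a_hat
    sigma2 = (resid @ inv_C @ resid) / n                 # ML rate estimate
    _, logdet = np.linalg.slogdet(C)
    return -0.5 * (n * math.log(2 * math.pi * sigma2) + logdet + n)

def fit_lambda(x, C, grid_size=101):
    """Return (best lambda, its log-likelihood, log-likelihood at lambda = 0)."""
    grid = np.linspace(0.0, 1.0, grid_size)
    loglik = [bm_log_likelihood(x, lambda_transform(C, lam)) for lam in grid]
    best = int(np.argmax(loglik))
    return float(grid[best]), loglik[best], loglik[0]

def lrt_p_value(loglik_full, loglik_null):
    """Likelihood ratio test against a chi-square with 1 degree of freedom."""
    stat = max(0.0, 2.0 * (loglik_full - loglik_null))
    return math.erfc(math.sqrt(stat / 2.0))
```

A call such as `fit_lambda(x, C)` followed by `lrt_p_value(ll_hat, ll_zero)` reproduces the "λ versus 0" comparison; the "λ versus 1" test would use the last grid value as the null instead.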

δ Statistic (for categorical traits) [3]

- Goal: Measures the degree of phylogenetic signal between a categorical trait (e.g., diet type, social structure) and a phylogeny.

- Calculation: Based on the concept of Shannon entropy from information theory. It exploits the uncertainty in the inferred ancestral states of the trait (calculated via maximum likelihood or Bayesian methods) to quantify the signal.

- Implementation: A recent re-implementation in Python allows for faster processing and can account for uncertainty in the phylogenetic tree topology itself, providing more robust estimates.

- Interpretation: Higher δ values indicate a stronger phylogenetic signal: when ancestral states can be inferred with little uncertainty (low entropy), the trait's distribution is highly dependent on the phylogeny.
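
The entropy ingredient mentioned in the calculation step can be illustrated on its own. The sketch below is not the δ statistic itself, which involves further steps described in [3]; it only computes the Shannon entropy of hypothetical ancestral-state probabilities at internal nodes, assuming those probabilities have already been produced by an ancestral state reconstruction.

```python
import math

def shannon_entropy(probs):
    """Shannon entropy (in nats) of one node's ancestral-state probabilities."""
    return -sum(p * math.log(p) for p in probs if p > 0)

def mean_node_entropy(node_probs):
    """Average entropy across internal nodes."""
    return sum(shannon_entropy(p) for p in node_probs) / len(node_probs)

# Hypothetical marginal probabilities for a 3-state trait at four internal nodes.
well_resolved = [[0.98, 0.01, 0.01], [0.01, 0.98, 0.01],
                 [0.97, 0.02, 0.01], [0.01, 0.01, 0.98]]
poorly_resolved = [[0.40, 0.30, 0.30], [0.34, 0.33, 0.33],
                   [0.50, 0.25, 0.25], [0.30, 0.40, 0.30]]

low_uncertainty = mean_node_entropy(well_resolved)
high_uncertainty = mean_node_entropy(poorly_resolved)
```

Lower entropy means the ancestral states are reconstructed with more confidence, which is the kind of information the δ statistic draws on.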

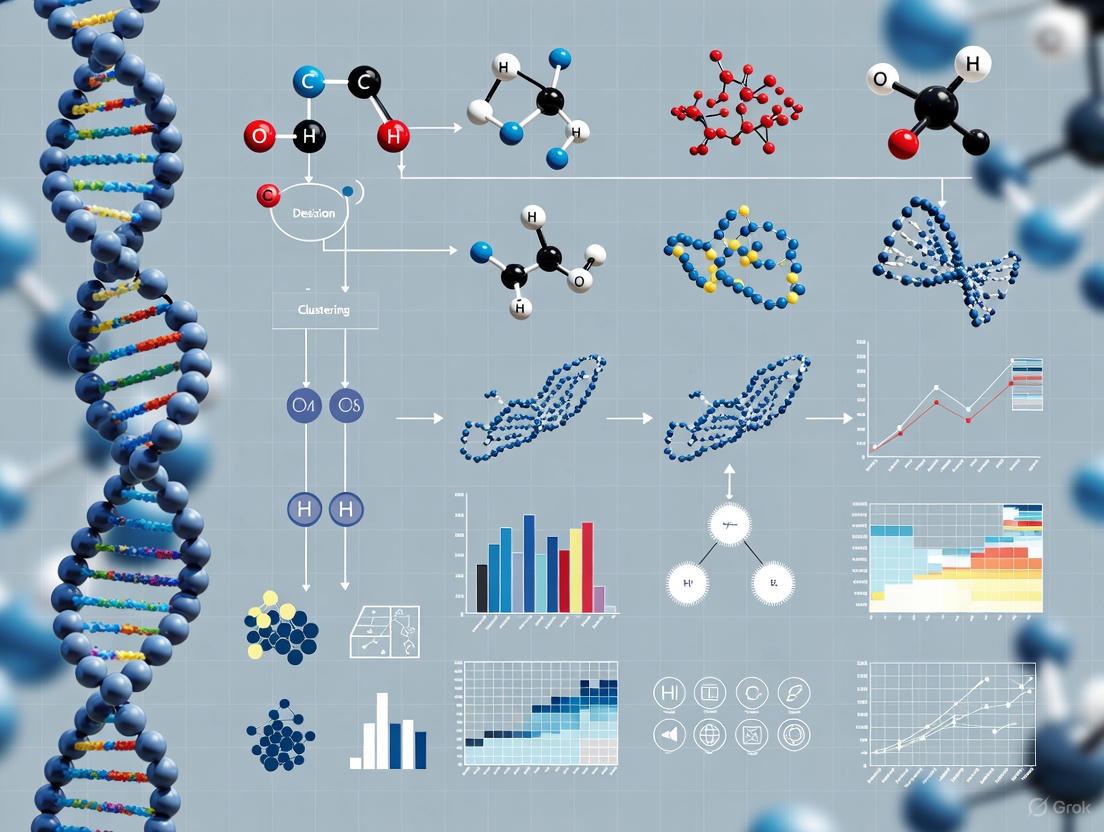

The following workflow diagram illustrates the decision-making process for selecting and applying these methods.

Troubleshooting Guide: Common Issues and Solutions

Problem 1: Inflated or Biased Estimates of Phylogenetic Signal

- Q: My estimate of phylogenetic signal seems too high. Could my phylogenetic tree be the problem?

- A: Yes, the quality of your phylogenetic tree can significantly impact your results, particularly for certain metrics.

- Polytomies (unresolved nodes): Phylogenies with many polytomies, especially deeper in the tree, can lead to inflated estimates of phylogenetic signal when using Blomberg's K [4]. Pagel's λ, however, has been shown to be strongly robust to this issue [4].

- Suboptimal Branch Lengths (Pseudo-chronograms): Using branch lengths that are not accurately calibrated (e.g., estimated via algorithms like BLADJ) can be a major source of error. This practice can lead to strong overestimation of phylogenetic signal (high rates of Type I errors) when using Blomberg's K, where you might incorrectly reject the null hypothesis of no signal [4]. Pagel's λ is again more robust to this problem [4].

- Solution:

- Where possible, use a fully resolved, time-calibrated phylogeny with accurate branch lengths.

- If you must use a tree with polytomies or estimated branch lengths, prioritize using Pagel's λ over Blomberg's K for more reliable results [4].

- For categorical traits, use the updated δ statistic, which can account for uncertainty in the tree topology by integrating over multiple possible trees from a Bayesian posterior distribution [3].

Problem 2: Non-Significant Results Despite Biological Expectation of Signal

- Q: I expect a trait to be phylogenetically conserved, but my analysis shows no significant signal. What could be wrong?

- A: Several factors can reduce the power to detect a phylogenetic signal.

- Labile Traits: The trait may truly be evolutionarily labile, with high rates of change or convergent evolution overwhelming the historical pattern [1] [2].

- Incorrect Evolutionary Model: The Brownian motion model assumed by K and λ may not fit your trait's actual evolutionary process. Explore other models of evolution (e.g., Ornstein-Uhlenbeck) that might be more appropriate [2].

- Low Statistical Power: This can be due to a small number of species in the phylogeny or a genuinely weak signal that your dataset is too small to detect.

- Solution:

- Visually inspect the distribution of your trait on the phylogeny. Does it look clustered?

- Check the fit of different evolutionary models to your data.

- Ensure your sample size (number of species) is sufficient for the analysis.

Problem 3: Handling Categorical Traits and Tree Uncertainty

- Q: How can I accurately measure phylogenetic signal for a categorical trait (like diet category) when I am unsure about the exact tree topology?

- A: Traditional methods for categorical data often ignore tree uncertainty, which can affect the results.

- Solution: Use the δ statistic with its modern implementation. This method [3]:

- Uses ancestral state reconstruction to infer trait evolution.

- Can incorporate a distribution of trees (e.g., from a Bayesian phylogenetic analysis) rather than a single tree, thus accounting for topological uncertainty.

- Provides a more accurate and confident assessment of phylogenetic signal for categorical data by averaging results over multiple plausible trees.

Problem 4: Discrepancies Between Different Metrics

- Q: I used both Blomberg's K and Pagel's λ on the same data and got conflicting results. Which one should I trust?

- A: This is not uncommon, as the two metrics measure signal in different ways and can have different sensitivities.

- Solution: Interpret the results in context.

- Pagel's λ is generally more robust to common issues like polytomies and poor branch-length information [4]. If your tree is not perfect, lean towards the λ result.

- Check the assumptions. Blomberg's K is a descriptive statistic tested via permutation, while λ is a model parameter estimated with maximum likelihood. If the Brownian motion model is a poor fit, λ might be less accurate.

- Consider your tree quality. The following table summarizes the recommended practices based on the findings of [4]:

| Phylogenetic Tree Condition | Impact on Blomberg's K | Impact on Pagel's λ | Recommendation |
|---|---|---|---|
| Fully Resolved, Accurate Branch Lengths | Reliable | Reliable | Either metric is suitable. |
| Polytomies (Unresolved Nodes) | Inflated signal estimates | Strongly robust | Prefer Pagel's λ. |
| Suboptimal Branch Lengths (Pseudo-chronograms) | Strong overestimation, high Type I error | Strongly robust | Prefer Pagel's λ. |

The Scientist's Toolkit: Essential Research Reagents & Materials

The table below lists key resources and tools used in phylogenetic signal analysis.

| Item / Resource | Function / Application |
|---|---|
| Ultrametric Phylogenetic Tree | A phylogenetic tree in which all tips are equidistant from the root, as in a time-calibrated tree of extant species whose branch lengths are proportional to time. Essential for calculating most phylogenetic signal metrics under a Brownian motion model [2]. |
| R Statistical Environment | The primary platform for phylogenetic comparative methods. Key packages include phytools, ape, caper, and geiger [4]. |
| Python (with Numba library) | An alternative environment for high-performance computing. The δ statistic has been re-implemented in Python for faster analysis of large genomic datasets [3]. |
| RevBayes | Bayesian software for phylogenetic inference. Used to generate posterior distributions of trees, which can then be used in analyses (like the δ statistic) to account for tree uncertainty [3]. |
| Phylocom | Software that includes the BLADJ algorithm for estimating node ages on a phylogeny. Its output ("pseudo-chronograms") should be used with caution as it can introduce bias [4]. |
| PastML Package | A tool for fast ancestral character reconstruction. It is used internally by the updated δ statistic implementation to infer ancestral states for categorical traits [3]. |

Experimental Best Practices and Protocols

- Define the Problem Clearly: Start by precisely defining the biological question and the trait you are investigating. This guides your choice of method and data collection strategy.

- Gather and Vet Your Phylogeny: The accuracy of your phylogeny is paramount. Prioritize using time-calibrated trees derived from molecular data over supertrees with many polytomies or estimated branch lengths. Always document the source and construction of your phylogeny.

- Clean and Prepare Trait Data: This is a critical step. Ensure your trait data (both continuous and categorical) is correctly coded, and check for errors. For continuous traits, test if they follow a normal distribution or need transformation.

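- Choose the Right Metric: Let your data type and tree quality guide you. Use Blomberg's K or Pagel's λ for continuous traits and the D or δ statistic for categorical traits, and prefer Pagel's λ when the tree contains polytomies or estimated branch lengths [4].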

- Test Multiple Methods and Models: Don't rely on a single metric. Compare results from K and λ for continuous traits. Explore if your data fits models of evolution beyond Brownian motion.

- Validate and Interpret: Always check the statistical significance of your signal. Remember that a significant phylogenetic signal does not imply a specific evolutionary process (e.g., it could be due to genetic drift or stabilizing selection) [1] [5]. Interpret your results within a broader biological context.

Core Concepts: Understanding Non-Independence

What is the fundamental problem of non-independence in comparative biology?

In comparative analyses across species or populations, data points are not statistically independent due to shared evolutionary history. This phylogenetic non-independence means that phenotypes measured in one species are influenced by and related to those in closely related species, violating a core assumption of standard statistical models. Consequently, treating related species as independent data points overestimates degrees of freedom and inflates false positive rates (Type I errors) [6].

How does phylogenetic non-independence differ from other statistical dependencies?

While other fields deal with non-independence through random effects or spatial autocorrelation, phylogenetic non-independence has unique characteristics. It arises specifically from patterns of shared common ancestry and can be complicated by additional processes like gene flow between populations. The expected covariance among traits is directly derived from the phylogenetic tree structure, distinguishing it from other dependency structures [6].
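
To make the link between tree structure and expected covariance concrete, the sketch below derives a covariance matrix from a small tree under a Brownian motion assumption, where the covariance between two species is proportional to the branch length they share between the root and their most recent common ancestor. The nested-tuple tree format and the function name are hypothetical conventions chosen for this example.

```python
def bm_covariance(tree):
    """Expected Brownian-motion covariance among tips (unit rate).

    A tip is (branch_length, "name"); a clade is (branch_length, [children]).
    Returns the tip names and the covariance matrix as nested lists.
    """
    cov = {}

    def visit(node, depth):
        length, payload = node
        depth += length
        if isinstance(payload, str):
            cov[(payload, payload)] = depth   # variance = root-to-tip distance
            return [payload]
        groups = [visit(child, depth) for child in payload]
        # Tips in different child clades share history only down to this node.
        for i, group in enumerate(groups):
            for other in groups[i + 1:]:
                for a in group:
                    for b in other:
                        cov[(a, b)] = cov[(b, a)] = depth
        return [tip for group in groups for tip in group]

    tips = visit(tree, 0.0)
    return tips, [[cov[(a, b)] for b in tips] for a in tips]

# Hypothetical tree ((A:1,B:1):2,C:3): A and B share 2 units of history.
example_tree = (0.0, [(2.0, [(1.0, "A"), (1.0, "B")]), (3.0, "C")])
tip_names, V = bm_covariance(example_tree)
# V == [[3.0, 2.0, 0.0], [2.0, 3.0, 0.0], [0.0, 0.0, 3.0]]
```

The off-diagonal entry for A and B is what a standard model silently treats as zero when it assumes the two species are independent observations.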

Why do standard predictive models fail when phylogenetic signal is present?

Standard models like ordinary least squares (OLS) regression fail because they assume all observations are independent. When phylogenetic signal exists, closely related species share similar trait values through common descent rather than through functional relationships. This creates pseudoreplication that standard models cannot detect, leading to spurious correlations and inflated confidence in results [6] [7].

Quantitative Evidence: The Performance Gap

Table 1: Performance Comparison of Predictive Modeling Approaches Across Simulation Studies

| Method | Prediction Error Variance (r = 0.25) | Error Variance Relative to PIP | Appropriate Context |
|---|---|---|---|
| Phylogenetically Informed Prediction (PIP) | 0.007 | Reference (1×) | All comparative contexts with known phylogeny |
| PGLS Predictive Equations | 0.033 | ≈4.7× larger | When only regression coefficients are used without phylogenetic position |
| OLS Predictive Equations | 0.03 | ≈4.3× larger | Inappropriate for phylogenetic data; produces spurious results |

Recent simulations demonstrate that phylogenetically informed predictions outperform predictive equations from both OLS and phylogenetic generalized least squares (PGLS) models: the variance of their prediction errors is approximately 4 to 4.7 times smaller. Notably, phylogenetically informed prediction using weakly correlated traits (r=0.25) performs better than predictive equations from strongly correlated traits (r=0.75) [7].

Table 2: Error Rates Associated with Different Modeling Approaches

| Method | False Positive Rate | Handling of Phylogenetic Signal | Degrees of Freedom |
|---|---|---|---|
| Standard OLS Models | Severely inflated | Ignored | Extreme overestimation |
| PGLS Models | Properly controlled | Explicitly modeled | Accurate estimation |
| Phylogenetically Informed Prediction | Properly controlled | Incorporated into predictions | Accurate estimation |

Methodological Solutions: Experimental Protocols

Protocol 1: Implementing Phylogenetically Informed Predictions

Purpose: To accurately predict unknown trait values while incorporating phylogenetic relationships.

Workflow:

- Phylogeny Acquisition: Obtain a well-supported phylogenetic tree for your taxa of interest

- Trait Data Collection: Compile known trait values for related species

- Model Specification: Use comparative methods that explicitly incorporate phylogenetic relationships

- Prediction Generation: Generate predictions that account for the phylogenetic position of taxa with unknown values

- Validation: Assess prediction accuracy using cross-validation or comparison with held-out data

Key Considerations: Phylogenetically informed predictions can be implemented using several statistical frameworks, including phylogenetic generalized least squares (PGLS), phylogenetic generalized linear mixed models (PGLMM), or Bayesian approaches. These methods explicitly model the phylogenetic covariance structure to produce accurate predictions [7].
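
The difference between a predictive equation and a phylogenetically informed prediction can be sketched under a PGLS framework. In the formulation assumed here, the prediction for a new taxon is the regression value plus the residuals of its relatives, weighted by how much evolutionary history it shares with each of them. All names and data values are hypothetical, and this is one common formulation rather than the specific procedure of [7].

```python
import numpy as np

def gls_fit(X, y, C):
    """PGLS coefficients for design matrix X, response y, and covariance C."""
    inv_C = np.linalg.inv(C)
    return np.linalg.solve(X.T @ inv_C @ X, X.T @ inv_C @ y)

def predictive_equation(x_new, beta):
    """Prediction from regression coefficients only (ignores phylogenetic position)."""
    return float(x_new @ beta)

def phylo_informed_prediction(x_new, c_new, X, y, C, beta):
    """Regression value plus phylogenetically weighted residuals of relatives.

    c_new holds the covariances between the new taxon and each known taxon.
    """
    resid = y - X @ beta
    return float(x_new @ beta + c_new @ np.linalg.solve(C, resid))

# Hypothetical data: four species with known values, arranged as two sister pairs.
C_known = np.array([[1.0, 0.8, 0.0, 0.0],
                    [0.8, 1.0, 0.0, 0.0],
                    [0.0, 0.0, 1.0, 0.8],
                    [0.0, 0.0, 0.8, 1.0]])
X_known = np.column_stack([np.ones(4), [1.0, 1.5, 3.0, 3.5]])  # intercept + predictor
y_known = np.array([2.1, 2.4, 5.2, 5.0])
beta_hat = gls_fit(X_known, y_known, C_known)

# Target species: close relative of species 1 (shares 0.9 with it, 0.8 with species 2).
x_target = np.array([1.0, 1.2])
c_target = np.array([0.9, 0.8, 0.0, 0.0])
from_equation = predictive_equation(x_target, beta_hat)
informed = phylo_informed_prediction(x_target, c_target, X_known, y_known, C_known, beta_hat)
```

When the target shares no history with any known species (`c_new` all zeros), the two predictions coincide; the closer the relatives, the more their residuals pull the informed prediction away from the regression line.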

Protocol 2: Evaluating Relative Importance of Phylogeny vs. Predictors

Purpose: To partition explained variance between phylogenetic history and ecological predictors.

Workflow:

- Model Fitting: Implement Phylogenetic Generalized Linear Models (PGLMs) with both phylogenetic and ecological predictors

- Variance Partitioning: Use hierarchical partitioning methods (e.g., phylolm.hp R package) to calculate likelihood-based R² contributions

- Signal Quantification: Estimate phylogenetic signal using metrics like Pagel's λ or Blomberg's K

- Importance Assessment: Distinguish unique versus shared explained variance between phylogeny and ecological predictors

Key Considerations: Traditional partial R² methods often fail to sum to total R² due to multicollinearity between phylogenetic and ecological predictors. The phylolm.hp package implements average shared variance partitioning specifically designed for phylogenetic models [8].
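
The idea of splitting shared variance evenly can be illustrated with simple arithmetic for the two-component case (phylogeny and a single predictor set). This sketch is not the phylolm.hp interface; it assumes the three R² values have already been obtained from a full model and two reduced models, and the numbers are hypothetical.

```python
def average_shared_partition(r2_full, r2_phylo_only, r2_predictor_only):
    """Split total R² into unique and shared parts, sharing the overlap equally."""
    unique_phylo = r2_full - r2_predictor_only   # lost when phylogeny is dropped
    unique_pred = r2_full - r2_phylo_only        # lost when the predictor is dropped
    shared = r2_full - unique_phylo - unique_pred
    return {
        "unique_phylogeny": unique_phylo,
        "unique_predictor": unique_pred,
        "shared": shared,
        "individual_phylogeny": unique_phylo + shared / 2,
        "individual_predictor": unique_pred + shared / 2,
    }

# Hypothetical R² values from a full model and two reduced models.
parts = average_shared_partition(r2_full=0.60, r2_phylo_only=0.45, r2_predictor_only=0.35)
```

By construction the two individual contributions add up to the total R², which is the property that partial R² values lack when phylogeny and predictors overlap.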

Research Reagent Solutions: Essential Tools for Phylogenetic Prediction

Table 3: Essential Computational Tools for Phylogenetic Comparative Methods

| Tool/Software | Primary Function | Implementation | Key Features |
|---|---|---|---|
| phylolm.hp | Variance partitioning in PGLMs | R package | Calculates individual R² for phylogeny and predictors, handles continuous and binary traits |
| phylopict | Phylogenetically informed prediction | Multiple implementations | Predicts unknown values using phylogenetic relationships and trait correlations |
| PGLS/PGLMM | Phylogenetic regression modeling | R packages (ape, nlme, etc.) | Incorporates phylogenetic covariance structure into regression frameworks |
| Bayesian Prediction | Probabilistic prediction of ancestral states | Software like BEAST, RevBayes | Samples predictive distributions for further analysis, applicable to extinct species |

Troubleshooting Common Experimental Issues

Why does my phylogenetic model show poor predictive performance despite high R²?

This often indicates overfitting, particularly when the number of parameters is large relative to sample size. In phylogenetic contexts, overfitting can occur when model complexity exceeds evolutionary information contained in the tree. Solutions include implementing penalization methods (LASSO, ridge regression), cross-validation, or reducing predictor dimensionality [9] [10].
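
Two of the remedies named above, ridge penalization and cross-validation, can be sketched together. The example below is a generic illustration that treats observations as exchangeable and therefore ignores phylogenetic covariance; the function names are hypothetical, and a real analysis would combine these ideas with a phylogenetic model.

```python
import numpy as np

def ridge_fit(X, y, penalty):
    """Closed-form ridge regression on centred data (intercept is not penalized)."""
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    x_mean, y_mean = X.mean(axis=0), y.mean()
    Xc, yc = X - x_mean, y - y_mean
    coef = np.linalg.solve(Xc.T @ Xc + penalty * np.eye(X.shape[1]), Xc.T @ yc)
    return coef, y_mean - x_mean @ coef

def loo_cv_error(X, y, penalty):
    """Leave-one-out mean squared prediction error for a given penalty."""
    X = np.asarray(X, dtype=float)
    y = np.asarray(y, dtype=float)
    errors = []
    for i in range(len(y)):
        keep = np.arange(len(y)) != i          # hold out observation i
        coef, intercept = ridge_fit(X[keep], y[keep], penalty)
        errors.append((y[i] - (X[i] @ coef + intercept)) ** 2)
    return float(np.mean(errors))

def choose_penalty(X, y, candidates=(0.0, 0.1, 1.0, 10.0)):
    """Pick the penalty with the lowest held-out error, not the best training fit."""
    return min(candidates, key=lambda penalty: loo_cv_error(X, y, penalty))
```

Selecting the penalty by held-out error rather than by training R² is what guards against the overfitting pattern described above.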

How can I handle missing phylogenetic relationships in my tree?

Unresolved nodes and polytomies can be accommodated using generalized least squares frameworks that incorporate incomplete phylogenetic information. For analyses across populations within species, alternative approaches like mixed models may be necessary as phylogeny-based methods alone may be insufficient due to gene flow [6].

What should I do when I detect significant phylogenetic signal in model residuals?

Significant phylogenetic signal in residuals indicates the model has not adequately accounted for evolutionary relationships. Consider alternative evolutionary models (e.g., Ornstein-Uhlenbeck, early burst), check for model misspecification, or evaluate whether additional phylogenetic predictors are needed [6] [8].

Advanced Applications and Future Directions

Can these methods predict traits for extinct species?

Yes, phylogenetically informed prediction has been successfully used to reconstruct traits in extinct species, including genomic and cellular traits in dinosaurs and feeding behaviors in hominins. Bayesian implementations are particularly valuable as they enable sampling of predictive distributions for further analysis [7].

How do I choose between different phylogenetic prediction frameworks?

The choice depends on your research question and data structure. For simple bivariate relationships, PGLS may suffice. For complex multivariate predictions or binary outcomes, PGLMM provides greater flexibility. Bayesian approaches are preferable when quantifying uncertainty in predictions is critical [7].

Troubleshooting Guides and FAQs

Frequently Asked Questions

1. Why should I use phylogenetically informed prediction instead of standard predictive equations?

Using predictive equations derived from standard (OLS) or phylogenetic (PGLS) regression is common, but this practice ignores the phylogenetic position of the predicted taxon. Research shows that phylogenetically informed predictions, which explicitly incorporate shared evolutionary history, can perform two to three times better than predictive equations from PGLS and OLS. In fact, using the phylogenetic relationship between two weakly correlated traits (r=0.25) can provide predictions that are as good as, or even better than, using predictive equations from strongly correlated traits (r=0.75) [7].

2. What can I do if my gene knockout yields no observable phenotype?

A lack of observable phenotype in a gene knockout does not mean the gene is non-functional. This is a common issue in functional genomics. Potential explanations and solutions include [11]:

- Explanation: The gene function is redundant with another gene.

- Solution: Test for phenotypes in a more diverse range of ecological or environmental contexts, as these can reveal phenotypes undetectable in standard laboratory conditions.

- Explanation: The gene's function is only critical under specific selective pressures.

- Solution: Conduct fitness assays in competitive or naturalistic environments to quantify the mutation's importance in evolutionary terms.

3. How can I partition the relative importance of phylogeny versus other predictors in my model?

Accurately separating the effects of shared ancestry from other ecological or trait-based predictors has been a persistent challenge. The phylolm.hp R package is designed specifically to solve this problem. It works by extending the concept of "average shared variance" to Phylogenetic Generalized Linear Models (PGLMs), calculating individual likelihood-based R² contributions for both phylogeny and each predictor. This allows for a nuanced quantification of their relative importance [8].

4. What are the key considerations for building a high-quality predictive model for microbial traits?

When predicting gene presence or function in microorganisms like ammonia-oxidizing archaea, the following steps are crucial [12]:

- Ensure a Strong Phylogenetic Signal: Confirm that the trait you are predicting displays significant phylogenetic conservatism.

- Use Appropriate Modeling Techniques: Methods like phylogenetic eigenvector mapping or ancestral state reconstruction have been shown to predict gene presence with high accuracy (>88%), sensitivity (>85%), and specificity (>80%).

- Validate with Environmental Data: Apply the predictive model to environmental sequencing data (e.g., from soil communities) to generate testable ecological hypotheses about microbial function.
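
The accuracy, sensitivity, and specificity figures quoted above are standard confusion-matrix summaries. As a small sketch with hypothetical presence/absence data, they can be computed from held-out genomes like this:

```python
def classification_metrics(observed, predicted):
    """Accuracy, sensitivity, and specificity for binary presence/absence calls."""
    pairs = list(zip(observed, predicted))
    tp = sum(1 for o, p in pairs if o and p)          # gene present, predicted present
    tn = sum(1 for o, p in pairs if not o and not p)  # gene absent, predicted absent
    fp = sum(1 for o, p in pairs if not o and p)
    fn = sum(1 for o, p in pairs if o and not p)
    return {
        "accuracy": (tp + tn) / len(pairs),
        "sensitivity": tp / (tp + fn),   # share of true presences recovered
        "specificity": tn / (tn + fp),   # share of true absences recovered
    }

# Hypothetical held-out genomes: 1 = gene present, 0 = gene absent.
observed = [1, 1, 1, 1, 0, 0, 0, 0]
predicted = [1, 1, 1, 0, 0, 0, 0, 1]
metrics = classification_metrics(observed, predicted)
# metrics == {"accuracy": 0.75, "sensitivity": 0.75, "specificity": 0.75}
```

Reporting all three matters for rare genes, where a model that always predicts absence can reach high accuracy with zero sensitivity.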

5. How much data is needed to train a viable predictive solution?

While requirements can vary, a general rule of thumb for training a robust predictive model is to have a dataset containing between 30,000 and 100,000 records. If more than 100,000 records are available, using the most recent 100,000 is often sufficient for effective training [13].

Troubleshooting Common Experimental Issues

Problem: Model predictions are inaccurate and have high error.

- Potential Cause 1: The model is overfitting the training data, meaning it learns the noise instead of the underlying pattern.

- Solution: Use techniques like cross-validation to assess how well your model generalizes to unseen data. Select a model that balances complexity with predictive performance, rather than simply picking the one with the lowest training error [14].

- Potential Cause 2: Insufficient or poor-quality training data.

- Solution: Inspect your training data for relevance, coverage, and noise. For genomic or ecological data, ensure the data encompasses adequate phylogenetic and environmental diversity. A trusted reference dataset can help identify knowledge gaps [15].

Problem: Failure to recapitulate a complex extinct phenotype (e.g., for de-extinction).

- Potential Cause: Relying solely on genomic DNA provides a static blueprint but misses dynamic gene expression information.

- Solution: Integrate transcriptome (RNA) data, if available. For instance, RNA sequencing from preserved specimens of the Thylacine provided critical data on which genes were expressed in specific tissues, creating a precise "edit list" for genome engineering that goes beyond the simple presence of a gene [16].

Problem: Difficulty in creating induced Pluripotent Stem Cells (iPSCs) for species with robust cancer suppression.

- Potential Cause: Some species, like elephants, have multiple copies of the TP53 tumor-suppressor gene, making their cells hyper-resistant to reprogramming and causing them to self-destruct.

- Solution: Researchers have successfully navigated the TP53 pathway by developing specific methods to inhibit its activity during the reprogramming process, allowing for the creation of stable elephant iPSCs. This was a major technical hurdle overcome in the Woolly Mammoth de-extinction project [16].

The table below summarizes key performance data from recent studies on phylogenetic prediction and functional trait imputation.

| Method | Use Case / Trait | Performance Metric | Result | Source |
|---|---|---|---|---|
| Phylogenetically Informed Prediction | Predicting continuous traits on ultrametric trees (r=0.25 simulation) | Variance in Prediction Error (σ²) | 0.007 | [7] |
| PGLS Predictive Equation | Predicting continuous traits on ultrametric trees (r=0.25 simulation) | Variance in Prediction Error (σ²) | 0.033 | [7] |
| Phylogenetic Eigenvector Mapping | Predicting gene presence in Ammonia-Oxidizing Archaea | Accuracy | >88% | [12] |
| Phylogenetic Eigenvector Mapping | Predicting gene presence in Ammonia-Oxidizing Archaea | Sensitivity | >85% | [12] |
| Phylogenetic Eigenvector Mapping | Predicting gene presence in Ammonia-Oxidizing Archaea | Specificity | >80% | [12] |

Experimental Protocols

Protocol 1: Phylogenetically Informed Prediction for Trait Imputation

This protocol is used to predict unknown trait values for species based on their phylogenetic relationships and trait correlations [7].

- Data Collection: Assemble a dataset of trait values for a set of species with a known phylogenetic relationship.

- Model Fitting: Fit a phylogenetic regression model (e.g., using PGLS) to the data for species with known values for both the predictor and target traits.

- Prediction Generation: For a species with an unknown target trait value, use phylogenetically informed prediction. This method integrates the phylogenetic correlation structure and the known trait values to generate a prediction and a prediction interval, rather than simply calculating a value from the regression equation.

- Validation: Validate the predictions by comparing them to held-out data or known values from fossils, if available.

Protocol 2: Predicting Gene Distribution from Phylogenetic Signal

This methodology predicts the presence or absence of specific genes in microbial lineages based on phylogenetic conservatism [12].

- Genome Curation: Compile a set of high-quality genomes or metagenome-assembled genomes (MAGs) for the microbial group of interest.

- Gene Annotation & Phylogeny: Annotate the presence/absence of target genes and construct a robust phylogenetic tree (e.g., based on a core gene like amoA for ammonia-oxidizing archaea).

- Signal Testing: Test for a significant phylogenetic signal in the distribution of each gene.

- Model Building & Prediction: Apply a predictive modeling technique, such as phylogenetic eigenvector mapping with elastic net regularization, to build a model that predicts gene presence from phylogenetic position.

- Environmental Application: Apply the predictive model to a community phylogeny derived from environmental sequencing to infer the functional potential of the community.
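
The notion of turning a phylogeny into numerical predictors can be sketched with a principal coordinate analysis of pairwise (patristic) distances. Note that this is a simpler, related technique swapped in for illustration and not phylogenetic eigenvector mapping as used in [12]; the function name and the distance values are hypothetical.

```python
import numpy as np

def phylo_eigenvectors(D, tol=1e-9):
    """Principal coordinates of a pairwise distance matrix D.

    Returns coordinates (one row per taxon) and their positive eigenvalues.
    """
    D = np.asarray(D, dtype=float)
    n = D.shape[0]
    J = np.eye(n) - np.ones((n, n)) / n      # centring matrix
    B = -0.5 * J @ (D ** 2) @ J              # double-centred squared distances
    vals, vecs = np.linalg.eigh(B)
    order = np.argsort(vals)[::-1]           # largest eigenvalue first
    vals, vecs = vals[order], vecs[:, order]
    keep = vals > tol                        # drop zero and negative axes
    return vecs[:, keep] * np.sqrt(vals[keep]), vals[keep]

# Hypothetical patristic distances for three taxa (A and B are close relatives).
D_example = np.array([[0.0, 2.0, 6.0],
                      [2.0, 0.0, 6.0],
                      [6.0, 6.0, 0.0]])
coords, eigenvalues = phylo_eigenvectors(D_example)
```

The resulting columns can serve as predictors in a regularized model (for example an elastic net), so that gene presence is predicted from phylogenetic position.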

The Scientist's Toolkit: Research Reagent Solutions

| Reagent / Solution | Function / Application | Field of Use |
|---|---|---|
| Phylogenetic Generalized Linear Models (PGLMs) | Statistical models that integrate phylogenetic relationships to control for shared ancestry when testing trait correlations. | Comparative Biology, Ecology, Evolution [8] |
| phylolm.hp R Package | A software tool that partitions the variance explained in a PGLM among predictors and phylogeny, quantifying their relative importance. | Ecology, Evolutionary Biology [8] |
| Multiplex CRISPR-Cas9 | A genome engineering technique that allows for the simultaneous editing of multiple gene loci in a single experiment. | Functional Genomics, De-extinction Biology [16] |
| Induced Pluripotent Stem Cells (iPSCs) | Somatic cells that have been reprogrammed to an embryonic-like state, capable of differentiating into any cell type. | Developmental Biology, Regenerative Medicine, De-extinction [16] |
| Primordial Germ Cells (PGCs) | Precursor cells to eggs and sperm. Can be edited in vitro and injected into surrogate embryos for the generation of gametes of a related species. | Avian De-extinction, Conservation Biology [16] |
| Phylogenetic Eigenvector Mapping | A technique that uses phylogenetic eigenvectors to model and predict trait distributions (e.g., gene presence) across a phylogeny. | Microbial Ecology, Functional Prediction [12] |

FAQs on Phylogenetic Signal

Q1: What is a phylogenetic signal, and why is it critical for my predictive models in drug development?

A phylogenetic signal is the tendency for closely related species to resemble each other more than they resemble species drawn at random from a phylogenetic tree [17]. In practical terms, it measures the statistical dependence in your data due to shared evolutionary history. Ignoring this signal in predictive models, such as those used to predict trait values or biological activities, can lead to false perceptions of precision, inflated statistical significance, and spurious results [7] [18]. For drug development, this could mean misjudging the efficacy or toxicity of a compound across different biological systems. Accounting for phylogenetic signal ensures your predictions are evolutionarily informed and more accurate.

Q2: I have a dataset with both continuous and discrete traits. Which method should I use to detect phylogenetic signal?

Most traditional methods are designed for only one type of trait. However, a new unified method, the M statistic, has been developed to detect phylogenetic signals in continuous traits, discrete traits, and even combinations of multiple traits [17]. This method uses Gower's distance to convert different types of traits into a comparable distance matrix, allowing you to test for a signal across your entire dataset with a single, consistent approach. The R package phylosignalDB facilitates these calculations [17].

Q3: My phylogenetic tree is not fully resolved and has uncertainty. How does this impact the quantification of phylogenetic signal?

Phylogenetic uncertainty, whether in tree topology or branch lengths, is a major source of error that can lead to overconfident and biased results [18]. When you use a single consensus tree for analysis, you assume this tree is correct, which is rarely the case. Bayesian methods that incorporate a distribution of trees (e.g., a posterior set of trees from MrBayes or BEAST) as a prior in your comparative analysis provide a more honest and precise estimation of parameters, including phylogenetic signal [18]. This approach propagates phylogenetic uncertainty into your final results, yielding more reliable confidence intervals.

Q4: How can I measure phylogenetic signal for non-Gaussian data, such as binomial or count data, in a Bayesian framework?

For non-Gaussian data (e.g., binomial, lognormal), the phylogenetic signal (often analogous to Pagel's λ or heritability, (h^2)) is typically estimated on the link (linear predictor) scale [19]. The formula (\lambda = V_a / (V_a + V_e)) is used, where:

- (V_a) is the variance attributable to the phylogeny.

- (V_e) is the residual variance.

The challenge lies in determining (V_e) for non-Gaussian families. For a Bernoulli distribution with a logit link, the residual variance on the link scale is often taken to be (\pi^2/3) [19], which is the variance of the standard logistic distribution. For other distributions like the negative binomial, you would need to consult the literature for the appropriate calculation of residual variance. The R package brms can be used for such models, though extracting (\lambda) requires post-processing [19].
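The post-processing step can be sketched as follows. This is a minimal illustration, assuming you have already exported posterior draws of the phylogenetic standard deviation from your fitted model (Bayesian packages such as brms typically report group-level effects as standard deviations, so they are squared here). The function names and the draw values are hypothetical.

```python
import math
import statistics

# Link-scale residual variance for a Bernoulli model with a logit link:
# the variance of the standard logistic distribution.
LOGIT_RESIDUAL_VARIANCE = math.pi ** 2 / 3


def link_scale_lambda(v_phylo, v_resid):
    """Return lambda = V_a / (V_a + V_e) on the link scale."""
    if v_phylo < 0 or v_resid <= 0:
        raise ValueError("variances must be non-negative (V_a) and positive (V_e)")
    return v_phylo / (v_phylo + v_resid)


def lambda_from_sd_draws(sd_draws, v_resid=LOGIT_RESIDUAL_VARIANCE):
    """Convert posterior draws of the phylogenetic SD into draws of lambda."""
    return [link_scale_lambda(sd ** 2, v_resid) for sd in sd_draws]


# Hypothetical posterior draws of the phylogenetic standard deviation.
sd_draws = [0.9, 1.2, 1.8]
lambda_draws = lambda_from_sd_draws(sd_draws)
lambda_median = statistics.median(lambda_draws)
```

Computing (\lambda) draw by draw, rather than from posterior means of the variances, keeps the uncertainty in (V_a) visible in the resulting summary.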

Q5: What does it mean if my model has a "poor performance" in describing the trait evolution, and what should I do?

A model with "poor performance" means its distributional assumptions are inconsistent with your observed data, making its conclusions unreliable [20]. This is often assessed via parametric bootstrapping or posterior predictive simulations [20]. A common reason for poor performance, especially in gene expression data, is the model's failure to account for heterogeneity in the evolutionary rate across the tree [20]. If your model performs poorly, you should:

- Consider using more complex models that allow for rate variation.

- Use model adequacy tools (e.g., the R package Arbutus) to diagnose specific failures [20].

Troubleshooting Guides

Issue 1: Poor Prediction Accuracy in Trait Imputation

Problem: Your phylogenetic generalized least squares (PGLS) model is producing inaccurate predictions for unknown trait values.

Diagnosis: This is a common issue when using simple predictive equations from regression models (OLS or PGLS), which ignore the specific phylogenetic position of the taxon being predicted [7].

Solution: Use phylogenetically informed prediction.

- Procedure: This method uses the full phylogenetic regression model—including the phylogenetic variance-covariance matrix—to predict missing values, rather than just the slope and intercept coefficients [7].

- Expected Outcome: Simulations show phylogenetically informed predictions outperform predictive equations from OLS and PGLS by two- to three-fold. Remarkably, using this method with two weakly correlated traits (r=0.25) can yield better predictions than using predictive equations from strongly correlated traits (r=0.75) [7].

- Recommendation: Always use phylogenetically informed prediction for imputing missing trait values or reconstructing ancestral states. The following workflow outlines this superior approach and a common suboptimal alternative for comparison.

Issue 2: Detecting Signal in Mixed-Type Trait Data

Problem: You need to test for phylogenetic signal in a dataset that includes a combination of continuous and discrete traits.

Diagnosis: Standard indices like Blomberg's K or Pagel's λ are designed for continuous data, while D and δ statistics are for discrete data. Using different methods hinders comparability [17].

Solution: Apply the unified M statistic.

- Procedure:

- Compute Trait Distance: Calculate the pairwise distance matrix for all species using Gower's distance, which can handle mixed data types [17].

- Compute Phylogenetic Distance: Obtain a pairwise distance matrix from your phylogenetic tree (e.g., patristic distance).

- Calculate M Statistic: The M statistic is computed by comparing the distances from the traits and the phylogeny, strictly adhering to the definition of phylogenetic signal [17].

- Significance Testing: Use a permutation test to assess whether the observed M statistic is significantly different from random.

- Tools: The R package phylosignalDB is designed for this calculation [17].

Issue 3: Accounting for Phylogenetic and Measurement Uncertainty

Problem: Your analysis lacks robustness because you are using a single fixed phylogeny, and your trait measurements contain error.

Diagnosis: Ignoring phylogenetic uncertainty and measurement error leads to overly narrow confidence intervals and inflated significance [18].

Solution: Implement a Bayesian framework that integrates over a distribution of trees and includes measurement error.

- Procedure:

- Obtain Tree Distribution: Generate a posterior distribution of phylogenetic trees (e.g., from BEAST or MrBayes) [18].

- Specify Model: In a Bayesian modeling environment (e.g., OpenBUGS, JAGS, or brms in R), specify your comparative model. The phylogenetic tree is treated as a random effect, with its variance-covariance matrix (Σ) sampled for each tree in the distribution.

- Incorporate Measurement Error: Include a data model that accounts for the standard error of your trait measurements. For example, if your measured trait value is (y_i) and its standard error is (se_i), you can model the true trait value as (y_{true,i} \sim N(y_i, se_i^2)) [21] [18].

- Outcome: This method provides parameter estimates (like regression coefficients and phylogenetic signal) that more accurately reflect the true uncertainty in your data [18].

Quantitative Data on Phylogenetic Signal

Table 1: Performance Comparison of Prediction Methods on Simulated Ultrametric Trees (n=100 taxa)

| Correlation Strength (r) | Prediction Method | Variance of Prediction Error (σ²) | Relative Performance vs. PIP |

|---|---|---|---|

| 0.25 | Phylogenetically Informed Prediction (PIP) | 0.007 | (Baseline) |

| 0.25 | PGLS Predictive Equation | 0.033 | ~4.7x worse |

| 0.25 | OLS Predictive Equation | 0.030 | ~4.3x worse |

| 0.75 | Phylogenetically Informed Prediction (PIP) | Data not shown | (Baseline) |

| 0.75 | PGLS Predictive Equation | 0.015 | ~2x worse (relative to PIP at r = 0.25) |

| 0.75 | OLS Predictive Equation | 0.014 | ~2x worse (relative to PIP at r = 0.25) |

Source: Adapted from [7]. Performance is measured by the variance of prediction errors; a smaller variance indicates better and more consistent accuracy. PIP was more accurate than PGLS and OLS predictive equations in 96.5-97.4% and 95.7-97.1% of simulated trees, respectively.
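The relative-performance column is a ratio of error variances, which can be checked directly. The short sketch below recomputes the ratios from the variances listed in Table 1, assuming the PIP variance at r = 0.25 (0.007) as the common baseline, since no PIP variance at r = 0.75 is listed in this table. The dictionary layout and names are illustrative.

```python
# Variances of prediction error as listed in Table 1 (n = 100 taxa).
prediction_error_variance = {
    ("PIP", 0.25): 0.007,
    ("PGLS equation", 0.25): 0.033,
    ("OLS equation", 0.25): 0.030,
    ("PGLS equation", 0.75): 0.015,
    ("OLS equation", 0.75): 0.014,
}

# Baseline assumption: PIP with weakly correlated traits (r = 0.25).
baseline = prediction_error_variance[("PIP", 0.25)]

# Fold difference = method variance / baseline variance.
fold_worse = {
    key: round(variance / baseline, 1)
    for key, variance in prediction_error_variance.items()
    if key[0] != "PIP"
}

for (method, r), fold in sorted(fold_worse.items()):
    print(f"{method} at r = {r}: about {fold}x the PIP error variance")
```

Read this way, the r = 0.75 rows restate the finding that PIP with weakly correlated traits still has roughly half the error variance of predictive equations built on strongly correlated traits.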

Table 2: Common Metrics for Quantifying Phylogenetic Signal in Continuous Traits

| Metric | Interpretation | Best For | Implementation Example |

|---|---|---|---|

| Blomberg's K | K = 1: Trait evolves as expected under Brownian Motion. K < 1: Close relatives are less similar than expected. K > 1: Close relatives are more similar than expected. | Quantifying signal relative to a Brownian Motion (BM) model. | toytree.pcm.phylogenetic_signal_k() in Python [21] |

| Pagel's λ | λ = 0: No phylogenetic signal (traits independent of phylogeny). λ = 1: Traits covary in direct proportion to their shared evolutionary history (as under BM). | Testing hypotheses about the strength of the phylogenetic signal; a multiplier of off-diagonal elements of the variance-covariance matrix. | toytree.pcm.phylogenetic_signal_lambda() in Python [21] |

| M Statistic | A value that strictly adheres to the definition of phylogenetic signal by comparing trait and phylogenetic distances. Can handle continuous, discrete, and multiple traits. | Unified analysis of datasets with mixed variable types. | phylosignalDB package in R [17] |

The Scientist's Toolkit: Essential Research Reagents & Software

Table 3: Key Software and Analytical Tools for Phylogenetic Signal Analysis

| Tool Name | Function | Use-Case Example |

|---|---|---|

| phylosignalDB (R package) | Detects phylogenetic signals for continuous, discrete, and multiple trait combinations using the M statistic [17]. | Analyzing a dataset of plant traits that includes both morphological measurements (continuous) and habitat types (discrete) [17]. |

| phylolm.hp (R package) | Partitions the variance explained in a Phylogenetic Generalized Linear Model (PGLM) among predictors, including phylogeny, to evaluate their relative importance [8]. | Determining whether phylogeny or environmental factors are the primary drivers of a trait like maximum tree height [8]. |

| Arbutus (R package) | Assesses the absolute performance (adequacy) of phylogenetic models of continuous trait evolution via parametric bootstrapping [20]. | Checking if a fitted Brownian Motion model adequately describes the evolution of gene expression levels across species [20]. |

| OpenBUGS / JAGS | Bayesian analysis software that allows for flexible model specification, enabling the incorporation of phylogenetic uncertainty and measurement error [18]. | Fitting a phylogenetic regression model using a posterior distribution of 100 trees from a Bayesian phylogenetic analysis [18]. |

| PhyKIT (toolkit) | A suite of functions for phylogenomic analyses, including summarizing information content and identifying genes with strong phylogenetic signal [22]. | Filtering a large set of genes to retain those with the strongest phylogenetic signal (e.g., high parsimony informative sites) for robust species tree inference [22]. |

| brms (R package) | Fits Bayesian multivariate response models with a wide range of distributional families, including phylogenetic random effects [19]. | Modeling a binomial trait (e.g., presence/absence of a disease) while accounting for phylogenetic non-independence among species [19]. |

Experimental Protocol: Quantifying Phylogenetic Signal with Blomberg's K

This protocol details the steps to quantify phylogenetic signal for a continuous trait using Blomberg's K, including a significance test and accounting for measurement error, as implemented in the toytree library [21].

Objective: To test if a continuous trait (e.g., body mass) exhibits a phylogenetic signal significantly different from random.

Step-by-Step Method:

Data Preparation:

- Phylogeny: Load your rooted, ultrametric phylogenetic tree with branch lengths.

- Trait Data: Prepare a vector of trait values for each tip in the tree. Ensure the order of species in the trait vector matches the order of tips in the tree.

- Measurement Error (Optional): If available, prepare a vector of standard errors for each trait value (e.g., from repeated measurements).

Initial Visualization and Inspection:

- Plot the tree and map the trait values onto the tips to visually inspect for potential phylogenetic structure.

Calculate Blomberg's K:

- Without measurement error: Use a function like toytree.pcm.phylogenetic_signal_k(tree, trait_data, nsims=0) to get the K statistic [21].

- With measurement error: Use the function and include the error argument: toytree.pcm.phylogenetic_signal_k(tree, data=trait_data, error=measurement_error, nsims=0) [21].

Perform Significance Testing via Permutation:

- To test the null hypothesis (no phylogenetic signal), run a permutation test. This shuffles the trait data across the tips and recalculates K many times to generate a null distribution.

- Use toytree.pcm.phylogenetic_signal_k(tree, trait_data, nsims=1000). The output will include a P-value, which is the proportion of permutations that generated a K value as extreme as your observed value [21].

Interpretation:

- A significant P-value (e.g., P < 0.05) indicates that the trait exhibits significant phylogenetic signal.

- Interpret the K value: K ~1 suggests evolution under a Brownian Motion model; K < 1 suggests traits are more similar across distantly related species; K > 1 suggests strong conservatism among close relatives [21].
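To make the calculation behind this protocol concrete, here is a library-independent sketch of Blomberg's K and its permutation test. It is not the toytree implementation: it assumes you already have the Brownian-motion variance-covariance matrix of the tree (shared branch lengths between tips), uses the standard ratio-of-mean-squared-errors form of K, and omits measurement error. The four-taxon matrix and trait values are made up for illustration.

```python
import random


def solve(a, b):
    """Solve the linear system a x = b by Gauss-Jordan elimination."""
    n = len(a)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                f = m[r][col] / m[col][col]
                m[r] = [u - f * v for u, v in zip(m[r], m[col])]
    return [m[i][n] / m[i][i] for i in range(n)]


def blomberg_k(vcv, x):
    """Blomberg's K: observed MSE0/MSE divided by its Brownian-motion expectation."""
    n = len(x)
    vinv_one = solve(vcv, [1.0] * n)              # V^-1 1
    sum_vinv = sum(vinv_one)                      # 1' V^-1 1
    root = sum(w * xi for w, xi in zip(vinv_one, x)) / sum_vinv  # phylogenetic mean
    resid = [xi - root for xi in x]
    vinv_resid = solve(vcv, resid)                # V^-1 (x - a)
    observed = sum(r * r for r in resid) / sum(r * w for r, w in zip(resid, vinv_resid))
    expected = (sum(vcv[i][i] for i in range(n)) - n / sum_vinv) / (n - 1)
    return observed / expected


def permutation_test(vcv, x, nsims=999, seed=1):
    """Shuffle trait values across tips; return observed K and a one-sided P-value."""
    rng = random.Random(seed)
    k_obs = blomberg_k(vcv, x)
    shuffled = list(x)
    hits = 0
    for _ in range(nsims):
        rng.shuffle(shuffled)
        if blomberg_k(vcv, shuffled) >= k_obs:
            hits += 1
    # The +1 terms count the observed arrangement as one of the permutations.
    return k_obs, (hits + 1) / (nsims + 1)


# Hypothetical ultrametric tree ((A,B),(C,D)): depth 1, sisters share 0.8.
vcv = [
    [1.0, 0.8, 0.0, 0.0],
    [0.8, 1.0, 0.0, 0.0],
    [0.0, 0.0, 1.0, 0.8],
    [0.0, 0.0, 0.8, 1.0],
]
trait = [1.0, 1.1, 5.0, 5.2]
k_value, p_value = permutation_test(vcv, trait)
```

With only four tips the permutation test has little power, so a real analysis needs many more taxa; the example is sized for readability only.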

The following workflow summarizes this protocol and the key decision points.

Frequently Asked Questions

Q1: What is the core advantage of using phylogenetically informed prediction over traditional predictive equations?

Phylogenetically informed prediction explicitly uses the evolutionary relationships between species (the phylogeny) to make predictions. Research demonstrates that this approach provides a 2- to 3-fold improvement in prediction performance compared to predictive equations derived from ordinary least squares (OLS) or phylogenetic generalized least squares (PGLS) models. In simulations with weakly correlated traits (r = 0.25), the variance of its prediction errors was about 4 to 4.7 times smaller, meaning predictions were consistently more accurate across thousands of simulations [7].

Q2: Can I use this method even when my trait data is only weakly correlated?

Yes. A key finding is that phylogenetically informed prediction using two weakly correlated traits (e.g., r = 0.25) can be roughly equivalent to, or even better than, using predictive equations from models with strongly correlated traits (r = 0.75). This highlights the powerful predictive signal contained within the phylogenetic tree itself [7].

Q3: Why are fossils and extinct taxa critical for accurate ancestral state reconstruction?

Analyses of primate biogeography show that ancestral range estimates for nodes older than the late Eocene become increasingly unreliable when based solely on extant species. Fossil data provide essential evidence of past geographical distributions that extant taxa alone cannot recover. Without fossils, inferences about the deep-time origins of major clades should be viewed with skepticism [23].

Q4: My data includes both continuous and discrete traits. Is there a unified method to detect phylogenetic signals for them?

Yes, newer methods like the M statistic are designed to handle both continuous and discrete traits, as well as combinations of multiple traits. This capability comes from using Gower's distance, which can convert different types of trait data into a single distance matrix for analysis [17].

Q5: How does taxonomic revision (e.g., species splitting) impact measures of evolutionary history at risk?

Splitting a single species into several new ones increases estimates of the evolutionary history (phylogenetic diversity) at risk. This is because the newly recognized species often have smaller ranges and potentially higher extinction risks, and the post-split phylogenetic tree contains more, but less evolutionarily distinct, species. Not acknowledging valid splits can lead to suboptimal conservation priorities [24].

Quantitative Performance Data

Table 1: Comparison of Prediction Method Performance on Ultrametric Trees (n=100 taxa) [7]

| Prediction Method | Correlation Strength (r) | Variance (σ²) of Prediction Error | Relative Performance vs. PIP |

|---|---|---|---|

| Phylogenetically Informed Prediction (PIP) | 0.25 | 0.007 | (Baseline) |

| OLS Predictive Equation | 0.25 | 0.030 | 4.3x worse |

| PGLS Predictive Equation | 0.25 | 0.033 | 4.7x worse |

| Phylogenetically Informed Prediction (PIP) | 0.75 | 0.002 | (Baseline) |

| OLS Predictive Equation | 0.75 | 0.014 | 7.0x worse |

| PGLS Predictive Equation | 0.75 | 0.015 | 7.5x worse |

Table 2: Accuracy Comparison Across Simulated Phylogenies [7]

| Comparison | Percentage of Trees Where PIP is More Accurate |

|---|---|

| PIP vs. PGLS Predictive Equations | 96.5% - 97.4% |

| PIP vs. OLS Predictive Equations | 95.7% - 97.1% |

Experimental Protocols

Protocol 1: Performing Phylogenetically Informed Prediction (PIP)

This protocol outlines the steps for a basic bivariate prediction using a phylogenetic tree and trait data [7].

- Data Preparation: Assemble a time-calibrated phylogeny that includes all taxa for which you have data (both known and unknown). Collect trait data for at least one predictor trait (X) and one target trait (Y). The target trait should have missing values for the taxa you wish to predict.

- Model Specification: Use a statistical framework that explicitly incorporates the phylogenetic variance-covariance matrix derived from your tree. This can be implemented in a Phylogenetic Generalized Least Squares (PGLS), a phylogenetic mixed model, or a Bayesian framework.

- Parameter Estimation: Fit the model to your data. The model will estimate the evolutionary relationship between traits X and Y while accounting for the non-independence of species due to shared ancestry.

- Prediction Generation: For a taxon with an unknown value of Y, the prediction is generated by combining the model's parameters with the taxon's known value of X and its phylogenetic position relative to all other species in the tree. This leverages information from closely related species.

- Uncertainty Quantification: Generate prediction intervals for each estimate. These intervals will logically increase with greater phylogenetic distance from species with known data.
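The widening of prediction intervals with phylogenetic distance follows from the conditional variance of a multivariate normal. The sketch below assumes a Brownian-motion model and, as a simplification, treats the regression coefficients and the rate parameter as known, so it understates the full uncertainty. The function names, the two-species covariance matrix, and the target covariances are hypothetical.

```python
import math


def solve(a, b):
    """Solve the linear system a x = b by Gauss-Jordan elimination."""
    n = len(a)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                f = m[r][col] / m[col][col]
                m[r] = [u - f * v for u, v in zip(m[r], m[col])]
    return [m[i][n] / m[i][i] for i in range(n)]


def prediction_half_width(vcv, cov_new, var_new, sigma2=1.0, z=1.96):
    """Half-width of an approximate 95% interval for a target tip.

    vcv     : covariance matrix among species with known trait values
    cov_new : covariances between the target and those species
    var_new : root-to-tip variance of the target
    """
    weights = solve(vcv, cov_new)                          # V^-1 v_h
    explained = sum(w * c for w, c in zip(weights, cov_new))
    conditional_variance = sigma2 * (var_new - explained)  # v_hh - v_h' V^-1 v_h
    return z * math.sqrt(conditional_variance)


# Two known species sharing half of their history (tree depth 1).
vcv = [[1.0, 0.5], [0.5, 1.0]]

# Target A: sister to the first species, sharing 0.9 of its history.
near = prediction_half_width(vcv, [0.9, 0.5], 1.0)

# Target B: branches off near the root, sharing only 0.2 with both.
far = prediction_half_width(vcv, [0.2, 0.2], 1.0)
```

In this toy case the interval for the distantly related target is more than twice as wide as the one for the close sister, which is the pattern step 5 describes.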

Protocol 2: Detecting Phylogenetic Signals with the M Statistic

This protocol describes how to use the M statistic to detect phylogenetic signals in continuous, discrete, or multiple trait combinations [17].

- Calculate Phylogenetic Distance: Compute a pairwise distance matrix for all species based on the phylogenetic tree. This matrix represents the evolutionary dissimilarity between species.

- Calculate Trait Distance: Compute a pairwise distance matrix for all species based on their trait data. For this, use Gower's distance, as it can handle a mix of continuous and discrete traits. This matrix represents the phenotypic dissimilarity.

- Compute the M Statistic: The M statistic is calculated by comparing the distances from the phylogeny and the traits. It strictly adheres to the definition of a phylogenetic signal as "the tendency for related species to resemble each other more than they resemble species drawn at random from the tree."

- Statistical Testing: Perform a permutation test to assess the significance of the M statistic. This typically involves randomly shuffling the trait values across the tips of the phylogeny many times and recalculating the M statistic for each shuffle to create a null distribution.

- Interpretation: A significant M statistic indicates the presence of a phylogenetic signal, meaning that closely related species are more similar in their traits than would be expected by chance.
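The trait-distance step of this protocol can be illustrated with a small Gower's distance calculation. This is a minimal sketch of the basic form of the metric (range-scaled absolute differences for numeric traits, simple mismatch for categorical traits, averaged with equal weights); it does not handle missing values or ordinal traits and is not the phylosignalDB implementation. The trait values are invented.

```python
def gower_distance_matrix(rows, kinds):
    """Pairwise Gower distances.

    rows  : one tuple of trait values per species
    kinds : "num" or "cat" for each trait column
    """
    n = len(rows)
    # Range of each numeric column, used to scale differences to [0, 1].
    ranges = []
    for j, kind in enumerate(kinds):
        if kind == "num":
            column = [row[j] for row in rows]
            ranges.append(max(column) - min(column))
        else:
            ranges.append(None)

    dist = [[0.0] * n for _ in range(n)]
    for a in range(n):
        for b in range(a + 1, n):
            total = 0.0
            for j, kind in enumerate(kinds):
                if kind == "num":
                    if ranges[j] > 0:
                        total += abs(rows[a][j] - rows[b][j]) / ranges[j]
                else:
                    total += 0.0 if rows[a][j] == rows[b][j] else 1.0
            dist[a][b] = dist[b][a] = total / len(kinds)
    return dist


# Hypothetical species with one continuous and one discrete trait.
traits = [
    (10.0, "forest"),
    (12.0, "forest"),
    (20.0, "desert"),
]
trait_distance = gower_distance_matrix(traits, ["num", "cat"])
```

Because every per-trait contribution lies between 0 and 1, continuous and discrete traits contribute on the same scale, which is what allows a single distance matrix to be compared against the phylogenetic distances.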

Experimental Workflow Diagrams

Diagram 1: Core workflow for phylogenetic prediction.

Diagram 2: Unified phylogenetic signal detection for mixed data types.

The Scientist's Toolkit

Table 3: Essential Research Reagents and Resources for Phylogenetic Prediction

| Item/Resource | Function & Application | Key Considerations |

|---|---|---|

| Time-Calibrated Phylogeny | The foundational scaffold representing evolutionary relationships and time. Used to compute the phylogenetic variance-covariance matrix. | Resolution and taxon sampling are critical. Incorporate fossil data for accurate deep-time inference [23]. |

| 'phylosignalDB' R Package | An R package designed to calculate the M statistic for detecting phylogenetic signals in continuous, discrete, and multiple trait combinations [17]. | Provides a unified method for various data types, improving comparability across studies. |

| Gower's Distance Metric | A versatile dissimilarity measure used to calculate trait distances from a mix of continuous and discrete (nominal, ordinal) variables [17]. | Essential for creating a single trait distance matrix when analyzing multi-format trait data. |

| Bayesian Evolutionary Models | Statistical framework for complex phylogenetic predictions, allowing for sampling from full predictive distributions and integration of uncertainty [7]. | Particularly useful for incorporating fossil data and for further analysis of predictive distributions. |

| DEC/+J Model Framework | A model (Dispersal-Extinction-Cladogenesis, with jump dispersal) used in historical biogeography to infer ancestral ranges and range evolution over phylogeny [23]. | Key for testing hypotheses about past geographical distributions and events like vicariance and sweepstakes dispersal. |

Building Robust Models: A Practical Guide to Phylogenetically Informed Prediction Methods

In phylogenetic comparative studies, a core challenge is accurately predicting unknown trait values—whether for imputing missing data, reconstructing ancestral states, or forecasting traits in unmeasured species. The central thesis of this methodological discussion is that explicitly accounting for phylogenetic signal is not merely a statistical formality but a fundamental requirement for generating accurate and evolutionarily meaningful predictions. For decades, researchers have commonly used predictive equations derived from regression models, but these approaches differ dramatically in how they handle the non-independence of species due to shared ancestry. This technical support center dives deep into the distinction between Phylogenetically Informed Prediction (PIP) and predictions from Phylogenetic Generalized Least Squares (PGLS), providing a structured guide to their application, troubleshooting, and implementation.

Conceptual Foundation and Key Differences

What is the fundamental mathematical difference between a PIP and a PGLS predictive equation?

The fundamental difference lies in how the phylogenetic position of the target species is incorporated.

- A PGLS predictive equation uses only the estimated regression coefficients (e.g., Y = β₀ + β₁X). It calculates a prediction based solely on the value of the predictor variable(s), essentially providing the value of Y at a given X on the phylogenetically corrected regression line [25].

- A Phylogenetically Informed Prediction (PIP) goes a step further by incorporating the phylogenetic covariance between the target species and all other species in the tree. It adjusts the prediction from the regression line by a weighted average of the residuals from related species. Formally, the prediction for a species h is given by Ŷₕ = (β₀ + β₁Xₕ) + εᵤ, where the crucial term εᵤ is derived from the phylogenetic variance-covariance matrix V [25].
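The distinction can be sketched numerically. The example below assumes a Brownian-motion covariance matrix V for the species with known values and a vector of covariances between the target and those species. It uses the standard best-linear-unbiased-prediction form, in which the adjustment is the target's covariances times V⁻¹ times the residuals, as one way to realize the εᵤ term; the function names, the toy tree, and the trait values are illustrative assumptions and not the implementation used in [25].

```python
def solve(a, b):
    """Solve the linear system a x = b by Gauss-Jordan elimination."""
    n = len(a)
    m = [row[:] + [b[i]] for i, row in enumerate(a)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                f = m[r][col] / m[col][col]
                m[r] = [u - f * v for u, v in zip(m[r], m[col])]
    return [m[i][n] / m[i][i] for i in range(n)]


def gls_fit(vcv, x, y):
    """GLS intercept and slope for y = b0 + b1 * x with error covariance vcv."""
    n = len(y)
    vinv_1 = solve(vcv, [1.0] * n)   # V^-1 1
    vinv_x = solve(vcv, x)           # V^-1 x
    # Normal equations (X' V^-1 X) beta = X' V^-1 y for X = [1, x].
    s11 = sum(vinv_1)
    s1x = sum(w * xi for w, xi in zip(vinv_1, x))
    sxx = sum(w * xi for w, xi in zip(vinv_x, x))
    t1 = sum(w * yi for w, yi in zip(vinv_1, y))
    tx = sum(w * yi for w, yi in zip(vinv_x, y))
    b0, b1 = solve([[s11, s1x], [s1x, sxx]], [t1, tx])
    return b0, b1


def predict_equation(b0, b1, x_new):
    """PGLS predictive equation: the regression line only."""
    return b0 + b1 * x_new


def predict_pip(vcv, x, y, cov_new, x_new):
    """Regression line plus a phylogenetically weighted sum of residuals."""
    b0, b1 = gls_fit(vcv, x, y)
    resid = [yi - (b0 + b1 * xi) for xi, yi in zip(x, y)]
    weights = solve(vcv, cov_new)    # V^-1 v_h
    adjustment = sum(w * r for w, r in zip(weights, resid))
    return b0 + b1 * x_new + adjustment


# Hypothetical tree ((A,B),(C,D)) of depth 1; sisters share 0.8 of their history.
vcv = [
    [1.0, 0.8, 0.0, 0.0],
    [0.8, 1.0, 0.0, 0.0],
    [0.0, 0.0, 1.0, 0.8],
    [0.0, 0.0, 0.8, 1.0],
]
x = [1.0, 2.0, 3.0, 4.0]
y = [2.1, 2.9, 6.2, 6.8]

# Target species: closest to D (shares 0.9), then C (0.8), unrelated to A and B.
cov_target = [0.0, 0.0, 0.8, 0.9]
b0, b1 = gls_fit(vcv, x, y)
equation_prediction = predict_equation(b0, b1, 4.5)
pip_prediction = predict_pip(vcv, x, y, cov_target, 4.5)
```

When the target shares no history with any measured species, the adjustment is zero and the two predictions coincide, which matches the case in which only the predictive equation can be used.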

How does this mathematical difference manifest in practical performance?

Simulation studies demonstrate that PIP consistently and significantly outperforms predictions based solely on PGLS coefficients. The table below summarizes the key performance advantages of PIP.

Table 1: Performance Comparison of PIP vs. PGLS Predictive Equations

| Performance Metric | PIP Performance | PGLS Predictive Equation Performance |

|---|---|---|

| Overall Accuracy | Two- to three-fold improvement in prediction error reduction [25]. | Higher prediction error due to ignoring phylogenetic position of the target. |

| Leveraging Weak Correlations | Can achieve accuracy with weakly correlated traits (r=0.25) similar to PGLS with strongly correlated traits (r=0.75) [25]. | Highly dependent on strong trait correlations for accurate predictions. |

| Handling Phylogenetic Uncertainty | Prediction intervals logically widen with increasing phylogenetic branch length to the target species [25]. | Does not naturally account for this source of uncertainty. |

| Biological Interpretation | Estimate is "pulled" towards the value of closely related sister taxa, reflecting evolutionary history [25]. | Provides a "one-size-fits-all" estimate for a given predictor value, ignoring evolutionary relationships. |

Implementation and Experimental Protocols

Workflow for Phylogenetically Informed Prediction

The following diagram outlines the logical workflow for conducting a phylogenetic prediction analysis, from data preparation to model selection and interpretation.

How do I implement PIP and PGLS predictions in R?

While specific code for PIP is model-dependent, the following protocol outlines the general steps and provides examples for fitting a base PGLS model, which is a foundational step for PIP.

Protocol: Basic Phylogenetic Regression and Prediction in R

Package Preparation: Load the necessary R packages.

Data and Tree Loading: Read your phylogenetic tree and trait data, ensuring names match.

Model Fitting - PGLS: Fit a phylogenetic regression model using Generalized Least Squares (GLS). The corBrownian correlation structure implies a Brownian motion model of evolution.

Advanced Note: The corPagel function can be used to fit a Pagel's lambda transformation, which can better model the strength of phylogenetic signal [26].

Making Predictions:

- PGLS Predictive Equation: Use the model coefficients directly.

- Phylogenetically Informed Prediction (PIP): This requires a function or package that can implement the PIP algorithm. This often involves adding the new species to the phylogeny and using a function designed for phylogenetic prediction (e.g., phylo.informed.pred or similar custom functions). The phytools package contains various functions for ancestral state reconstruction and prediction that can be adapted for this purpose.

Frequently Asked Questions (FAQs)

Q1: My dataset has multiple observations per species. Can I still use these methods?

Yes, but a standard PGLS or PIP that assumes one observation per species will not be appropriate. You will need a mixed model approach that can account for both phylogenetic non-independence and within-species variation. MCMCglmm is a powerful Bayesian package that can handle this complexity [27]. It allows you to include species (linked to the phylogeny via the pedigree argument) and specimen (or individual) as random effects, properly partitioning the variance.

Q2: When would I ever use a PGLS predictive equation instead of PIP?

The PGLS predictive equation might be considered only if the phylogenetic position of the target species is completely unknown, making it impossible to calculate the phylogenetic covariance term (εᵤ). However, in such a scenario, the prediction would be made with the understanding that it carries greater uncertainty and potential bias. PIP is the superior and recommended method whenever the phylogenetic relationships are known [25].

Q3: Beyond continuous traits, can these principles be applied to binary traits, like gene presence/absence?

Absolutely. The principle of phylogenetic conservatism extends to discrete traits, including gene content. A 2025 study on ammonia-oxidizing archaea successfully predicted the distribution of 18 different genes across a phylogeny using methods like phylogenetic eigenvector mapping and ancestral state reconstruction, achieving over 88% accuracy [12]. For such analyses, generalized linear models with a logistic (binomial) link function would be used within the phylogenetic framework.

Q4: How do I report phylogenetic predictions in a publication?

Always state clearly whether you used a PIP or a simple PGLS predictive equation. Report the phylogenetic regression model details (e.g., lambda, coefficients, R²) and, critically, provide prediction intervals around your estimates, not just point predictions. These intervals quantify the uncertainty and naturally increase with the phylogenetic distance from known data [25].

Troubleshooting Common Problems

Table 2: Common Errors and Solutions in Phylogenetic Prediction

| Problem | Likely Cause | Solution |

|---|---|---|

| Error: duplicate 'row.names' are not allowed (e.g., in caper) [27]. | The comparative data object expects one entry per species, but your dataset has multiple records per species. | Use a method that handles multiple observations, such as MCMCglmm, specifying species and individual as random effects [27]. |

| PGLS model fails to converge, especially with corPagel. | The optimization algorithm is struggling, often due to the scale of branch lengths or a poorly identified phylogenetic signal parameter (lambda). | Try rescaling your tree's branch lengths (e.g., tree$edge.length <- tree$edge.length * 100). Alternatively, fix lambda to 1 (Brownian motion) or 0 (no signal) as a sensitivity test [26]. |

| Predictions seem biologically implausible. | The evolutionary model (e.g., Brownian motion) may be a poor fit for your trait. High phylogenetic signal might be pulling predictions too strongly towards relatives. | Experiment with different evolutionary models (e.g., Ornstein-Uhlenbeck with corMartins). Validate predictions with any known hold-out data or fossil information if available [26]. |

| I need to partition the importance of phylogeny vs. predictors. | Standard regression R² does not correctly partition variance when predictors are phylogenetically correlated. | Use specialized packages like phylolm.hp, which performs hierarchical partitioning of the variance in Phylogenetic Generalized Linear Models (PGLMs) to quantify the unique contributions of phylogeny and each predictor [8]. |

Table 3: Key Software and Statistical Packages for Phylogenetic Prediction

| Tool / Package | Primary Function | Application Note |

|---|---|---|

| nlme / gls [26] | Fits PGLS models with various correlation structures. | The core workhorse for standard PGLS in R. Uses corBrownian, corPagel, etc. |

| phytools [28] | A vast toolkit for phylogenetic comparative methods. | Contains functions for visualizing, simulating data, and conducting various types of phylogenetic imputation and ancestral state reconstruction. |

| caper | Fits comparative models using phylogenetic independent contrasts (PICs). | Its comparative.data function is useful for data management, but it requires one observation per species [27]. |

| MCMCglmm [27] | Fits Bayesian phylogenetic mixed models. | Essential for complex data structures, including multiple observations per species, binary traits, and more. Has a steeper learning curve. |

| phylolm.hp [8] | Performs hierarchical partitioning of variance in PGLMs. | Answers the question: "How much unique variance does my predictor explain, controlling for phylogeny?" |

| Ultrametric Phylogenetic Tree | Input data specifying evolutionary relationships and divergence times. | The foundational "map" of shared ancestry. Required for all PIP and PGLS analyses. |

Implementing Bayesian Phylogenetic Prediction for Sampling Predictive Distributions

FAQs: Core Concepts and Setup

1. What is Bayesian Phylogenetic Prediction, and how does it differ from maximum likelihood methods?

Bayesian phylogenetic inference estimates the posterior probability of phylogenetic trees, which is the probability that a tree is correct given the genetic sequence data, a model of evolution, and prior beliefs [29]. Unlike maximum likelihood, which identifies a single "best" tree, Bayesian methods using Markov Chain Monte Carlo (MCMC) sampling produce a set of trees (a posterior distribution) with known probabilities [30]. This allows for direct probabilistic statements about trees and model parameters, such as "this clade has a 95% probability of being correct" [31].

2. Why should I use Bayesian methods for predicting trait distributions?

Phylogenetically informed prediction, which explicitly uses phylogenetic relationships, significantly outperforms predictive equations derived from ordinary least squares (OLS) or phylogenetic generalized least squares (PGLS) regression [7]. Simulations show that phylogenetically informed predictions can be 4 to 4.7 times more accurate (as measured by the variance of prediction errors) than calculations from OLS or PGLS predictive equations [7]. For weakly correlated traits (r=0.25), phylogenetically informed prediction performs as well as or better than predictive equations for strongly correlated traits (r=0.75) [7].

3. What types of data can I use for Bayesian phylogenetic prediction?

The most common data are DNA and amino acid sequence alignments [31]. However, models also exist for discrete morphological characters (using the Mk model or extensions) and continuous traits (using diffusion process models like the Wiener or Ornstein-Uhlenbeck processes) [31]. For species tree estimation, it is critical that sequences are orthologs [31].

4. How do I select an appropriate substitution model for my nucleotide data? Programs like jModelTest, ModelGenerator, or PartitionFinder can help select a model based on goodness-of-fit [31]. Fit is not the only consideration, however: model robustness also matters. For deep phylogenies, more complex models like GTR+Γ are often necessary, while for sequence divergences below 10%, simpler models like HKY+Γ often produce similar tree and branch length estimates [31]. It is generally considered more problematic to under-specify than to over-specify the model in Bayesian phylogenetics [31].

5. What does it mean to "sample predictive distributions," and why is it valuable? Sampling predictive distributions means using Bayesian methods, like MCMC, to generate a distribution of possible trait values for a taxon (including extinct or unmeasured species) based on its phylogenetic position and evolutionary models [7] [29]. This provides a full probabilistic assessment of uncertainty, going beyond a single point estimate. This approach has been used, for example, to reconstruct genomic and cellular traits in dinosaurs and to build large trait databases with phylogenetic imputation [7].
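As a rough illustration of what a sampled predictive distribution looks like, the sketch below draws trait values for an unmeasured species from its measured sister species under Brownian motion. It rests on strong simplifying assumptions that are not from the source: only two species, a flat prior on their common ancestor, and a known evolutionary rate `sigma2`. Under those assumptions the unmeasured value is normally distributed around the known value with variance `sigma2 * (t_known + t_target)`. A full Bayesian analysis would also sample the rate and the tree; all names and numbers here are hypothetical.

```python
import random
import statistics


def sample_predictions(x_known, t_known, t_target, sigma2, n_draws=20000, seed=1):
    """Draw trait values for an unmeasured species under Brownian motion.

    t_known and t_target are branch lengths from the common ancestor to the
    measured and unmeasured species, respectively.
    """
    rng = random.Random(seed)
    sd = (sigma2 * (t_known + t_target)) ** 0.5
    return [rng.gauss(x_known, sd) for _ in range(n_draws)]


def interval_95(draws):
    """Return the central 95% interval of the sampled values."""
    ordered = sorted(draws)
    lower = ordered[int(0.025 * len(ordered))]
    upper = ordered[int(0.975 * len(ordered)) - 1]
    return lower, upper


# Hypothetical close relative versus distant relative of the target species.
near = sample_predictions(x_known=2.0, t_known=1.0, t_target=1.0, sigma2=0.1)
far = sample_predictions(x_known=2.0, t_known=10.0, t_target=10.0, sigma2=0.1)

near_low, near_high = interval_95(near)
far_low, far_high = interval_95(far)
near_width = near_high - near_low
far_width = far_high - far_low

print("mean prediction (near):", round(statistics.mean(near), 2))
print("95% interval width, near relative:", round(near_width, 2))
print("95% interval width, far relative:", round(far_width, 2))
```

The point estimate is the same in both cases, but the interval is wider when the only measured relative is phylogenetically distant, which is the uncertainty a single point estimate hides.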

Troubleshooting Guides

Issue 1: MCMC Chain Won't Converge or Mixes Poorly

Symptoms:

- Low Effective Sample Size (ESS) values for key parameters (e.g., tree likelihood, branch lengths).

- Trace plots that show the parameter value drifting without stabilizing or getting stuck in one place.

- Multiple, independent MCMC runs sampling significantly different tree spaces.

Solutions:

- Adjust Proposal Mechanisms: Modify the "step size" of proposals in your MCMC algorithm. If steps are too large, the chain will reject too many proposals; if too small, it will get trapped in local optima [30].

- Use Metropolis-Coupled MCMC (MC³): Run multiple heated chains in parallel. This allows the main "cold" chain to occasionally jump between peaks in the posterior distribution, leading to better mixing, especially when the tree space has multiple local optima [29].

- Check Priors: Ensure your prior distributions are reasonable and not conflicting with the information in your data. Overly restrictive or misspecified priors can prevent convergence [31].

- Run Chains Longer: Sometimes, the solution is simply to run the MCMC analysis for more generations.
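The effect of proposal step size on mixing can be sketched with a toy random-walk Metropolis sampler. The example below targets a one-dimensional standard normal distribution rather than tree space, and the ESS estimator (chain length divided by one plus twice the sum of autocorrelations, truncated at the first non-positive value) is a simple textbook variant, not necessarily the one used by Tracer. Function names and step sizes are illustrative assumptions.

```python
import math
import random


def metropolis(step, n_steps=3000, seed=42):
    """Random-walk Metropolis sampler for a standard normal target.

    Returns the chain and the fraction of accepted proposals.
    """
    rng = random.Random(seed)
    x, accepted, chain = 0.0, 0, []
    for _ in range(n_steps):
        proposal = x + rng.uniform(-step, step)
        # Symmetric proposal, so the ratio is p(proposal) / p(x).
        log_ratio = 0.5 * (x * x - proposal * proposal)
        if rng.random() < math.exp(min(0.0, log_ratio)):
            x = proposal
            accepted += 1
        chain.append(x)
    return chain, accepted / n_steps


def effective_sample_size(chain, max_lag=300):
    """Estimate ESS as N / (1 + 2 * sum of positive autocorrelations)."""
    n = len(chain)
    mean = sum(chain) / n
    centered = [value - mean for value in chain]
    variance = sum(c * c for c in centered) / n
    if variance == 0.0:
        return 1.0  # the chain never moved
    rho_sum = 0.0
    for lag in range(1, max_lag + 1):
        acov = sum(centered[i] * centered[i + lag] for i in range(n - lag)) / n
        rho = acov / variance
        if rho <= 0.0:
            break
        rho_sum += rho
    return n / (1.0 + 2.0 * rho_sum)


results = {}
for step in (0.05, 2.5, 50.0):
    chain, accept_rate = metropolis(step)
    results[step] = {"accept": accept_rate, "ess": effective_sample_size(chain)}
    print(step, round(accept_rate, 3), round(results[step]["ess"], 1))
```

In this toy setting, very large steps are mostly rejected and very small steps are almost always accepted but explore slowly; both give a lower ESS than the intermediate step size.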

Issue 2: Inaccurate or Biased Trait Predictions

Symptoms:

- Predictions for unknown traits are consistently over- or under-estimated compared to known values.

- Prediction intervals do not reliably capture the true trait value when validated against species with known trait values.

Solutions:

- Incorporate Phylogeny Directly: Do not rely solely on predictive equations from PGLS or OLS regressions. Instead, use methods that explicitly include the phylogenetic position of the predicted taxon in the model [7]. The prediction interval should widen with increasing phylogenetic distance from species with known data.

- Verify Model Adequacy: Ensure your evolutionary model (e.g., Brownian motion, Ornstein-Uhlenbeck) is appropriate for your trait. Model misspecification can lead to biased predictions.

- Check for Phylogenetic Signal: Use tools like phylolm.hp in R to partition the variance explained by phylogeny versus other predictors. A strong phylogenetic signal indicates that prediction methods incorporating the tree should be used [8].

Issue 3: Model Is Non-Identifiable or Parameters Have High Variance

Symptoms:

- Very wide posterior distributions for parameters.

- Strong correlations between parameters (e.g., between divergence time and evolutionary rate).

- Warnings of non-identifiability from software.

Solutions:

- Simplify the Model: Remove unnecessary parameters. A model is non-identifiable if different parameter combinations make the same predictions about the data [31]. For example, the molecular distance d = r * t depends on both the rate r and time t; you cannot estimate both from a single pair of sequences without additional information [31].

- Add Informative Priors: If external data exists (e.g., fossil calibrations for divergence times), use it to define informed prior distributions, which can help pin down otherwise correlated parameters.

- Reparameterize: Sometimes, reparameterizing the model (e.g., using a compound parameter like d = r * t) can resolve identifiability issues.
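The rate and time confounding described above can be checked numerically. The sketch below uses the Jukes-Cantor (JC69) model as an assumed example, since the source does not name a model for this point, and follows the text in writing the distance as d = r * t. The site counts and parameter values are hypothetical.

```python
import math


def jc69_log_likelihood(rate, time, n_diff, n_sites):
    """Log-likelihood (up to a constant) of one sequence pair under JC69.

    The data enter only through the number of differing sites, and the
    parameters enter only through the product d = rate * time.
    """
    d = rate * time
    # Probability that a site differs between the two sequences under JC69.
    p_diff = 0.75 * (1.0 - math.exp(-4.0 * d / 3.0))
    return n_diff * math.log(p_diff) + (n_sites - n_diff) * math.log(1.0 - p_diff)


# Three very different (rate, time) pairs with the same product d = 0.1.
pairs = [(0.01, 10.0), (0.1, 1.0), (0.001, 100.0)]
log_likelihoods = [jc69_log_likelihood(r, t, n_diff=90, n_sites=1000) for r, t in pairs]
print(log_likelihoods)
```

All three parameter pairs give the same log-likelihood, so the data alone cannot separate rate from time. Estimating the compound parameter d, or adding a calibration prior on t, removes the ambiguity.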

Experimental Protocols and Data

Table 1: Performance Comparison of Prediction Methods on Simulated Data

This table summarizes the variance of prediction errors from simulations on ultrametric trees with 100 taxa, comparing phylogenetically informed prediction against predictive equations from OLS and PGLS [7].

| Prediction Method | Weak Correlation (r=0.25) | Moderate Correlation (r=0.50) | Strong Correlation (r=0.75) |
|---|---|---|---|
| Phylogenetically Informed Prediction | σ² = 0.007 | σ² = 0.004 | σ² = 0.002 |
| PGLS Predictive Equations | σ² = 0.033 | σ² = 0.017 | σ² = 0.015 |
| OLS Predictive Equations | σ² = 0.030 | σ² = 0.016 | σ² = 0.014 |
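The relative performance implied by Table 1 can be computed directly from the printed variances. The short sketch below does only that arithmetic; the dictionary layout and the helper `variance_ratio` are illustrative choices, and the numbers are copied from the table as shown.

```python
# Variance of prediction errors as printed in Table 1.
errors = {
    "PIP": {"weak": 0.007, "moderate": 0.004, "strong": 0.002},
    "PGLS": {"weak": 0.033, "moderate": 0.017, "strong": 0.015},
    "OLS": {"weak": 0.030, "moderate": 0.016, "strong": 0.014},
}


def variance_ratio(method, strength):
    """Error variance of a predictive equation relative to PIP."""
    return errors[method][strength] / errors["PIP"][strength]


for method in ("PGLS", "OLS"):
    for strength in ("weak", "moderate", "strong"):
        print(method, strength, round(variance_ratio(method, strength), 2))

# PIP with weakly correlated traits versus equations with strongly correlated traits.
pip_weak_beats_equations_strong = errors["PIP"]["weak"] < min(
    errors["PGLS"]["strong"], errors["OLS"]["strong"]
)
print(pip_weak_beats_equations_strong)
```

For the weak-correlation column the ratios are roughly 4.3 (OLS) and 4.7 (PGLS), and the final comparison confirms that the tabulated error variance for phylogenetically informed prediction at r=0.25 is lower than that of either predictive equation at r=0.75.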

Table 2: Key Software for Bayesian Phylogenetics and Prediction

This table lists essential software tools for conducting Bayesian phylogenetic analysis and prediction [31].

| Software | Primary Function | Brief Description |
|---|---|---|
| BEAST | Bayesian Evolutionary Analysis | Estimates trees, divergence times, phylodynamics, and species trees under complex models. |
| MrBayes | Bayesian Phylogenetic Inference | Implements a large number of models for nucleotide, amino acid, and morphological data. |
| RevBayes | Probabilistic Graphical Models | Provides a flexible language for building complex hierarchical Bayesian phylogenetic models. |
| Tracer | MCMC Diagnostics | Analyzes output from Bayesian MCMC runs to assess convergence and mixing (e.g., ESS values). |
| BPP | Species Tree & Delimitation | Implements species tree estimation and species delimitation under the multi-species coalescent. |
| phylolm.hp (R package) | Variance Partitioning | Calculates individual R² values for phylogeny and predictors in Phylogenetic Generalized Linear Models. |

Workflow and Signaling Pathways

Bayesian Phylogenetic Prediction Workflow

Logical Relationship of Bayesian Components

The Scientist's Toolkit

Table 3: Essential Research Reagents and Software Solutions

| Item | Type | Function |
|---|---|---|
| BEAST 2 | Software Package | A cross-platform program for Bayesian evolutionary analysis of molecular sequences; samples from posterior distributions of trees and model parameters. [31] |
| MrBayes | Software Package | A program for Bayesian inference of phylogenies using MCMC sampling; supports a wide range of evolutionary models. [31] [29] |
| Tracer | Software Tool | Visualizes and analyzes the MCMC output, allowing diagnosis of convergence (via ESS) and summarization of parameter distributions. [31] |
| jModelTest / PartitionFinder | Software Tool | Helps select the best-fit nucleotide substitution model for the data based on statistical criteria. [31] |
| phylolm.hp R Package | Software Library | Partitions the explained variance in a trait among phylogenetic history and other predictors in a PGLM. [8] |
| MCMC Algorithm | Computational Method | The core engine (e.g., Metropolis-Hastings) that samples parameter values and trees in proportion to their posterior probability. [31] [29] [30] |
| Phylogenetic Generalized Linear Model (PGLM) | Statistical Model | A regression framework that incorporates a phylogenetic variance-covariance matrix to account for non-independence of species data. [7] [8] |

Frequently Asked Questions (FAQs)

Q1: What is the primary function of the phylolm.hp R package?

The phylolm.hp package is designed to conduct hierarchical partitioning to calculate the individual contributions of phylogenetic signal (the phylogenetic tree) and each predictor variable towards the total R² in Phylogenetic Generalized Linear Models (PGLMs). It helps researchers disentangle the effects of shared evolutionary history from those of ecological or trait-based predictors in comparative analyses [8] [32].

Q2: My model has several correlated predictors. Can phylolm.hp handle multicollinearity?

Yes, a key feature of phylolm.hp is its ability to address the challenge of correlated predictors. It extends the concept of "average shared variance" to PGLMs, allowing it to partition the explained variance among predictors and phylogeny into both unique and shared components. This approach overcomes the limitations of traditional partial R² methods, which often fail to sum to the total R² due to multicollinearity [8] [33].
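The logic of assigning each source its unique variance plus an equal share of the overlap can be illustrated for the simplest case of two sources, phylogeny and a single predictor. This is a simplified sketch of the general idea, not the package's implementation, and the R² values are hypothetical.

```python
def partition_two_sources(r2_full, r2_phylo_only, r2_predictor_only):
    """Split a total R² between phylogeny and one predictor.

    Each source receives its unique contribution plus half of the variance
    shared between the two.
    """
    unique_predictor = r2_full - r2_phylo_only
    unique_phylo = r2_full - r2_predictor_only
    shared = r2_phylo_only + r2_predictor_only - r2_full
    return {
        "phylogeny": unique_phylo + shared / 2,
        "predictor": unique_predictor + shared / 2,
        "shared": shared,
    }


# Hypothetical R² values for the full model and the two reduced models.
parts = partition_two_sources(r2_full=0.60, r2_phylo_only=0.45, r2_predictor_only=0.30)
total = parts["phylogeny"] + parts["predictor"]
print(parts, total)
```

Because the shared component is divided rather than dropped or double-counted, the two individual contributions add up to the total R² of the full model.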

Q3: I have binary trait data (e.g., presence/absence). Is phylolm.hp suitable for this data type?

Absolutely. The package is compatible with models fitted using both phylolm (for continuous traits) and phyloglm (for binary traits). The functionality has been demonstrated in case studies involving both continuous and binary trait data, such as analyzing species invasiveness [8] [32].

Q4: How do I visualize the results of the hierarchical partitioning?

The package includes a dedicated plotting function, plot.phyloglmhp(). You can use it to create bar plots showing the individual effects (or their percentages) of variables and the phylogenetic signal. It can also generate plots for commonality analysis, providing a clear visual breakdown of the variance partitioning results [34] [35].

Q5: What is the difference between phylolm.hp and phyloglm.hp functions?

Both names refer to the same analysis. The documentation identifies phyloglm.hp as the function that performs hierarchical partitioning for both phylolm and phyloglm model objects, and the package manual describes the similarly named phylolm.hp function in identical terms, which suggests the two are equivalent in their core operation [32].

Troubleshooting Guides

Issue 1: Function phyloglm.hp() Not Found

Problem: After installing the phylolm.hp package, you receive an error that the function phyloglm.hp cannot be found.

Solutions:

- Check Installation: Ensure the package is correctly installed from CRAN using install.packages("phylolm.hp").

- Load Libraries: Verify you have loaded the required libraries. The function depends on the phylolm and rr2 packages.

- Check Function Name: The primary function for analysis is phyloglm.hp(), as per the package documentation [32].

Issue 2: Interpreting Commonality Analysis Output

Problem: The output of the commonality analysis is complex and difficult to interpret.

Solution:

- When you run phyloglm.hp(fit, commonality=TRUE), the result includes a commonality.analysis matrix. This matrix details the value and percentage of all commonality components (2^N - 1 for N predictors or matrices) [32].

- Each row in this matrix represents a unique combination of predictors and phylogeny. The values indicate the portion of the total R² that is attributed exclusively to the overlap of that specific combination of factors. For a simpler overview, focus on the Individual.R2 matrix first, which provides a more summarized view of individual contributions.
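The count of 2^N - 1 components follows from listing every non-empty combination of the variance sources. The sketch below enumerates them for three hypothetical sources; it illustrates the combinatorics only and does not reproduce the row labels or layout of the package output.

```python
from itertools import combinations


def commonality_components(sources):
    """List every non-empty combination of sources (2^N - 1 in total)."""
    return [
        combo
        for size in range(1, len(sources) + 1)
        for combo in combinations(sources, size)
    ]


# Hypothetical variance sources: the phylogeny plus two predictors.
sources = ["phylogeny", "temperature", "rainfall"]
components = commonality_components(sources)
for combo in components:
    label = "unique to" if len(combo) == 1 else "shared by"
    print(label, ", ".join(combo))
print(len(components))  # 7
```

With three sources there are three unique components, three pairwise overlaps, and one three-way overlap, which is why the matrix grows quickly as predictors are added.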

Issue 3: Grouping Predictors for Analysis

Problem: You want to assess the relative importance of groups of predictors (e.g., climatic variables vs. soil variables) rather than individual variables.

Solution:

- Use the iv argument in the phyloglm.hp() function. This argument takes a list where each element contains the names of variables belonging to a specific group.

- Example: Passing a list that defines a "climate" group and a "soil" group will calculate the combined individual R² contribution for each of the two groups [32].

Experimental Protocols & Workflows

Standard Workflow for Variance Partitioning with phylolm.hp

The following diagram illustrates the standard workflow for conducting variance partitioning analysis using the phylolm.hp package.

Step-by-Step Protocol:

- Data Preparation: Organize your data into a data frame where rows represent species and columns represent the response trait and predictor variables. Have your phylogenetic tree ready in "phylo" format [32].

- Model Fitting: Fit a phylogenetic model using the