How AI and Deep Learning Are Revolutionizing Evolutionary Genomics in 2025

Abstract

This article explores the transformative impact of artificial intelligence and deep learning on evolutionary genomics, a field at the intersection of computational biology and genetics. Aimed at researchers, scientists, and drug development professionals, it provides a comprehensive analysis of how these technologies are addressing long-standing challenges. The content covers foundational concepts, from processing the genomic data deluge to leveraging evolutionary constraints for variant interpretation. It delves into cutting-edge methodologies, including generative models for genome design and AI-powered tools for phylogenetic inference and rare disease diagnosis. The article also addresses critical troubleshooting aspects like model interpretability and data bias, and validates these approaches through comparative analysis of their performance in clinical and research settings. By synthesizing insights from recent breakthroughs and major conferences, this review serves as a strategic guide for leveraging AI to unlock new discoveries in evolution, disease mechanisms, and therapeutic development.

The New Frontier: AI for Decoding Evolutionary Patterns and Genomic Complexity

The field of genomics is experiencing a data explosion that has rendered traditional computational methods inadequate. The cost of sequencing a human genome has plummeted from millions of dollars to under $1,000, democratizing access but also releasing a data deluge that challenges conventional analysis pipelines [1]. By 2025, global genomic data is projected to reach 40 exabytes (40 billion gigabytes), a growth rate that threatens to outpace even Moore's Law and creates a critical computational bottleneck [1]. At this scale, and given the inherent complexity of genomic information, artificial intelligence is no longer an optional enhancement but an essential component of evolutionary genomics research.

Evolutionary genomics investigates patterns of genetic diversity between species and populations, and it plays a fundamental role in work ranging from theoretical evolutionary studies to practical applications in conservation genetics and biomedical sciences [2]. The application of AI, and particularly deep learning, to this domain is still in its infancy but has shown promising initial results for tasks including inference of demographic history, ancestry, natural selection, phylogeny, and species delimitation [2]. However, these applications face unique challenges, including identifying appropriate assumptions about evolutionary processes and determining how best to handle complex biological data types such as sequences, alignments, phylogenetic trees, and associated geographical or environmental data [2].

Quantitative Dimensions of the Genomic Data Deluge

Table 1: Scaling Challenges in Genomic Data Analysis

| Parameter | Traditional Scaling | Current Challenge | Projected Trend |
|---|---|---|---|
| Data Volume per Human Genome | ~100 GB [1] | Millions of genomes sequenced globally [1] | 40 exabytes by 2025 [1] |
| Sequencing Cost | Millions of dollars [1] | Under $1,000 [1] | Continuing to decrease |
| Computational Demand | Hours for variant calling [1] | Minutes with AI acceleration [1] | Near real-time analysis |
| Data Complexity | Single nucleotide variants | Structural variants, epigenomics, multi-omics integration [3] | Increasingly multi-modal data |

The exponential growth in genomic data generation has created several fundamental challenges that traditional bioinformatics approaches struggle to address. First, the sheer volume of data exceeds the processing capabilities of conventional computational infrastructure [1]. Second, the complexity of biological signals and prevalence of technical artifacts like amplification bias, batch effects, and sequencing errors create analytical hurdles that traditional computational tools often cannot overcome [3]. Third, the need to integrate multi-modal data sources - including genomics, transcriptomics, proteomics, epigenomics, and clinical information - requires sophisticated analytical approaches capable of identifying nonlinear patterns across diverse data types [3].

AI Architectures for Genomic Analysis

Core Machine Learning Paradigms

AI encompasses several nested approaches: all deep learning is machine learning, and all machine learning is artificial intelligence [1]. In genomic applications, different learning paradigms address specific analytical challenges:

- Supervised Learning: Models trained on labeled datasets where correct outputs are known, such as classifying genomic variants as "pathogenic" or "benign" after training on expertly curated examples [1].

- Unsupervised Learning: Models that work with unlabeled data to find hidden patterns or structures, useful for exploratory analysis like clustering patients into distinct subgroups based on gene expression profiles [1].

- Reinforcement Learning: AI agents that learn to make sequences of decisions in an environment to maximize cumulative reward, applicable to designing optimal treatment strategies or creating novel protein sequences [1].

Deep Learning Architectures in Genomics

Table 2: Deep Learning Architectures for Genomic Applications

| Architecture | Typical Applications | Advantages for Genomics | Specific Examples |
|---|---|---|---|
| Convolutional Neural Networks (CNNs) | Variant calling, sequence motif identification [1] [3] | Identify spatial patterns in sequence data | DeepVariant [1], DeepCRISPR [3] |
| Recurrent Neural Networks (RNNs) | Protein structure prediction, disease-linked variations [1] | Process sequential data where order matters | LSTM networks for long-range dependencies [1] |
| Transformer Models | Gene expression prediction, variant effect prediction [1] | Weigh importance of different input parts | Evo 2 [4], DNA language models [5] |
| Generative Models | Novel protein design, synthetic data generation [1] | Create new data resembling training set | GANs, VAEs for privacy-preserving data sharing [1] |

Application Note: AI-Driven Variant Prioritization with popEVE

Experimental Background and Principles

The popEVE model represents a significant advancement in addressing one of the most persistent challenges in clinical genomics: distinguishing the few disease-causing genetic variants from tens of thousands of benign alterations in an individual's genome [6]. This AI tool was developed by Harvard Medical School researchers to produce a continuous score for each variant indicating its likelihood of causing disease, effectively ranking variants by disease severity and providing a prioritized, clinically meaningful view of a person's genome [6].

popEVE builds upon the EVE model, which uses deep evolutionary information from different species to learn patterns of highly conserved mutations [6]. The innovation in popEVE comes from integrating two additional components: a large-language protein model that learns from amino acid sequences, and human population data capturing natural genetic variation [6]. This combination allows the model to reveal both how much a variant affects protein function and the importance of that variant for human physiology [6].

Experimental Protocol: Variant Prioritization with popEVE

Objective: To identify and prioritize likely pathogenic variants from whole genome sequencing data of patients with suspected genetic disorders.

Input Requirements:

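- Whole genome sequencing data as raw reads (FASTQ or unaligned BAM/CRAM), since the workflow below begins with read-level quality control and alignment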

- Reference genome (GRCh38 recommended)

- Population frequency data from gnomAD

- Clinical phenotype data using HPO terms

Methodology:

Data Preprocessing (Duration: 2-4 hours)

- Perform quality control on raw sequencing data using FastQC

- Align sequences to reference genome using BWA-MEM (STAR is a splice-aware aligner intended for RNA-seq reads, not whole genome DNA)

- Perform post-alignment processing including duplicate marking and base quality recalibration

Variant Calling (Duration: 3-5 hours)

- Generate GVCF files using GATK HaplotypeCaller for each sample

- Perform joint genotyping across all samples

- Filter variants based on quality metrics and annotate them using Ensembl VEP

popEVE Analysis (Duration: 1-2 hours)

- Extract missense and putative loss-of-function variants

- Submit variant set to popEVE web interface or API

- Download popEVE scores for all variants

- Flag variants with popEVE score > 0.7 as high-confidence pathogenic candidates

Validation and Interpretation (Duration: 2-3 hours)

- Correlate popEVE predictions with clinical presentation

- Perform segregation analysis in family members, if available

- Review literature for previously reported associations

- Consider functional validation using CRISPR-based approaches
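
The score-filtering step in the popEVE Analysis stage can be sketched as follows. This is a minimal illustration, not the tool's own interface: it assumes the downloaded scores arrive as a tab-separated file with hypothetical column names `variant` and `popeve_score`, and it applies the 0.7 cutoff stated in the protocol. The actual export format, and whether higher or lower scores indicate greater severity, should be confirmed against the popEVE documentation.

```python
import csv
import io

def filter_high_confidence(tsv_text, threshold=0.7,
                           id_col="variant", score_col="popeve_score"):
    """Return (variant, score) pairs whose score exceeds the threshold,
    sorted from highest to lowest score.

    Column names are hypothetical; adjust them to the actual export.
    """
    reader = csv.DictReader(io.StringIO(tsv_text), delimiter="\t")
    hits = []
    for row in reader:
        try:
            score = float(row.get(score_col, ""))
        except (TypeError, ValueError):
            continue  # skip variants without a numeric score
        if score > threshold:
            hits.append((row[id_col], score))
    return sorted(hits, key=lambda pair: pair[1], reverse=True)

# Made-up example rows, for illustration only
example = (
    "variant\tpopeve_score\n"
    "GENE1:p.Arg12Cys\t0.91\n"
    "GENE2:p.Ala45Thr\t0.35\n"
    "GENE3:p.Gly7Asp\tNA\n"
    "GENE4:p.Leu88Pro\t0.74\n"
)
prioritized = filter_high_confidence(example)
```

Rows without a numeric score are skipped rather than treated as benign, so unscored variants can be reviewed separately.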

Performance Characteristics: In validation studies, popEVE successfully distinguished between pathogenic and benign variants, discerned healthy controls from patients with severe developmental disorders, determined whether variants were likely to cause childhood versus adult-onset disease, and assessed whether alterations were inherited or occurred de novo [6]. Importantly, the model showed no ancestry bias and did not overpredict pathogenic variant prevalence [6]. When applied to approximately 30,000 previously undiagnosed patients with severe developmental disorders, popEVE enabled diagnosis in about one-third of cases and identified variants in 123 genes not previously linked to developmental disorders [6].

Application Note: Evolutionary Sequence Design with Evo 2

Experimental Background and Principles

Evo 2 represents a milestone in generative AI for biology, capable of predicting the form and function of proteins coded in the DNA of all domains of life [4]. This open-source tool was trained on a dataset that includes all known living species - humans, plants, bacteria, amoebas - and even some extinct species, totaling almost 9 trillion nucleotides [4]. Unlike its predecessor Evo 1, which was trained only on prokaryotic genomes, Evo 2 includes eukaryotes and features an expanded context window of up to 1 million nucleotides, enabling exploration of long-distance genetic interactions [4].

The fundamental principle behind Evo 2 is treating DNA as a language with its own grammar and syntax. The model learns patterns from evolutionary data and can autocomplete gene sequences, sometimes generating improvements or writing genes in novel ways not seen in natural evolutionary history [4]. This capability allows researchers to "speed up evolution" by steering toward mutations with useful functions, then testing these predictions in the lab using CRISPR and DNA synthesis technologies [4].

Experimental Protocol: Generative Gene Design with Evo 2

Objective: To design novel gene sequences with optimized functions for therapeutic applications.

Input Requirements:

- Target protein sequence or structural information

- Functional constraints or desired properties

- Evolutionary context or phylogenetic information

Methodology:

Sequence Preparation (Duration: 30 minutes)

- Define target functional constraints and desired properties

- Input partial gene sequence or homologous sequences as starting point

- Format input according to Evo 2 API specifications (FASTA format)

Generative Design (Duration: 1-2 hours)

- Access Evo 2 through web interface or local installation

- Set generation parameters (diversity, length, constraints)

- Run sequence generation with multiple iterations

- Collect candidate sequences with likelihood scores

Functional Prediction (Duration: 1 hour)

- Analyze generated sequences for structural properties

- Predict functional characteristics using integrated models

- Check for similarity to natural sequences

- Filter candidates based on design criteria

Experimental Validation (Duration: 2-4 weeks)

- Synthesize top candidate sequences commercially

- Clone into appropriate expression vectors

- Transfer into target cell lines (bacterial, yeast, or mammalian)

- Assess functional performance using relevant assays

- Iterate design based on experimental results
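
The "check for similarity to natural sequences" and "filter candidates" steps in the Functional Prediction stage could look something like the sketch below. It does not call Evo 2. The helper names, the 95% identity ceiling, and the GC-content window are all hypothetical choices for illustration, and the identity measure assumes the sequences have already been aligned to equal length.

```python
def gc_content(seq):
    """Fraction of G and C bases in a DNA sequence."""
    seq = seq.upper()
    return sum(base in "GC" for base in seq) / len(seq) if seq else 0.0

def identity(a, b):
    """Fraction of matching positions between two equal-length sequences."""
    if len(a) != len(b):
        raise ValueError("sequences must be pre-aligned to equal length")
    if not a:
        return 0.0
    return sum(x == y for x, y in zip(a.upper(), b.upper())) / len(a)

def filter_candidates(candidates, natural, max_identity=0.95,
                      gc_range=(0.3, 0.7)):
    """Keep candidates that are not near-copies of any natural sequence
    and whose GC content falls inside the allowed window."""
    kept = []
    for name, seq in candidates.items():
        closest = max(identity(seq, ref) for ref in natural)
        gc = gc_content(seq)
        if closest <= max_identity and gc_range[0] <= gc <= gc_range[1]:
            kept.append((name, round(closest, 3), round(gc, 3)))
    return kept

# Toy sequences, for illustration only
natural = ["ATGGCCAAGT"]
candidates = {
    "cand_1": "ATGGCCAAGT",   # identical to the natural sequence
    "cand_2": "ATGGCGAAAT",   # two substitutions
    "cand_3": "ATATATATAT",   # GC content too low
}
selected = filter_candidates(candidates, natural)
```

In practice the similarity check would more likely use an alignment-based search against a sequence database; position-wise identity is used here only to keep the example self-contained.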

Performance Characteristics: Evo 2 has demonstrated remarkable capability in distinguishing harmful from benign mutations and generating novel sequences with desired functions [4]. The model excels at discovery tasks, particularly predicting mutation pathogenicity and designing new genetic sequences with specific functions of interest [4]. The 1-million-nucleotide context window enables identification of long-distance genetic interactions that would be impossible to detect with shorter context windows [4].

The Scientist's Toolkit: Essential Research Reagents and Platforms

Table 3: Essential Research Reagents and Computational Platforms

| Resource Category | Specific Tools/Platforms | Primary Function | Application Context |
|---|---|---|---|
| AI-Assisted Design | Evo 2 [4], Benchling [3], Synthego CRISPR Design Studio [3] | Generative sequence design, experimental planning | Evolutionary sequence optimization, CRISPR guide design |
| Variant Analysis | popEVE [6], DeepVariant [1] [3], NVIDIA Parabricks [1] | Variant calling, pathogenicity prediction | Rare disease diagnosis, population genetics |
| Laboratory Automation | Tecan Fluent systems [3], Opentrons OT-2 [3], YOLOv8 QC [3] | Liquid handling, workflow automation, quality control | High-throughput screening, NGS library prep |
| Multi-Omics Integration | DNAnexus [3], Illumina BaseSpace [3], Galaxy [7] | Cloud-based analysis, pipeline execution | Integrated genomic, transcriptomic, proteomic analysis |
| Specialized AI Models | DeepCRISPR [3], R-CRISPR [3], AlphaFold [1] | Predictive modeling for specific applications | Gene editing optimization, protein structure prediction |

Implementation Challenges and Ethical Considerations

The integration of AI into evolutionary genomics presents several significant challenges that researchers must address. Data heterogeneity across platforms and experimental systems creates integration difficulties [3]. Model interpretability remains a barrier to clinical adoption, as black-box predictions are insufficient for diagnostic applications [3]. Ethical concerns regarding cognitive offloading, algorithmic biases, and privacy issues require ongoing attention [3].

Particularly in the context of evolutionary genomics, the convergence of AI and synthetic biology raises dual-use concerns and governance challenges [5]. The democratization of design tools could potentially reduce barriers to engineering concerning biological constructs, necessitating thoughtful oversight frameworks that balance safety with innovation [5]. Researchers should implement guidelines for responsible development based on principles of knowledge cultivation, accountability, transparency, and ethics [5].

Future Directions in AI-Driven Evolutionary Genomics

The future of AI in evolutionary genomics will likely focus on several key developments. Federated learning approaches will address data privacy concerns while enabling model training across institutions [3]. Interpretable AI methods will enhance clinical trust and adoption by making model decisions more transparent [3]. Unified frameworks for multi-modal data integration will enable more comprehensive biological understanding [3].

Emerging capabilities in generative AI for biological sequence design will accelerate protein engineering and therapeutic development [4]. The expanding application of large language models to biological sequences will uncover deeper patterns in evolutionary relationships [5]. Finally, increasingly automated discovery pipelines will integrate AI across the entire design-build-test-learn cycle, dramatically accelerating evolutionary genomics research [5].

The genomic data deluge has fundamentally transformed evolutionary genomics from a data-poor to a data-rich science. In this new paradigm, artificial intelligence has shifted from an optional enhancement to an essential piece of infrastructure. As the field continues to evolve, researchers who effectively leverage AI capabilities will lead discoveries in understanding evolutionary processes, diagnosing genetic diseases, and developing novel therapeutics.

The field of evolutionary genomics is being transformed by the application of artificial intelligence (AI), which provides powerful new methods for analyzing complex biological data. This shift is primarily driven by deep learning architectures—Convolutional Neural Networks (CNNs), Recurrent Neural Networks (RNNs), and Transformers—that can identify intricate patterns within massive genomic datasets [8]. These technologies are moving biology from a descriptive science to a predictive and engineering discipline, enabling researchers to connect genetic variations to phenotypic outcomes, reconstruct evolutionary histories, and predict protein structures with unprecedented accuracy [9] [10].

The integration of these AI architectures is particularly valuable in evolutionary studies because they can process the fundamental sequential nature of genomic information and model complex relationships across different biological scales. From analyzing DNA sequences that have evolved over millions of years to predicting the functional consequences of modern genetic variations, CNNs, RNNs, and Transformers each bring unique capabilities to address longstanding challenges in evolutionary biology and genomics [11] [12].

Core Architectural Foundations

Convolutional Neural Networks (CNNs)

CNNs are specialized deep learning architectures designed to process grid-like data through parameter sharing and spatial hierarchy. Their architecture makes them particularly well-suited for identifying conserved motifs and regulatory elements in genomic sequences, essentially functioning as sophisticated pattern detectors for evolutionary conservation studies [12] [13].

The fundamental operation of a CNN involves convolutional layers that scan filters across input data to detect local patterns, pooling layers that reduce spatial dimensions while retaining important features, and fully connected layers that perform final classification or regression tasks. In genomics, DNA sequences are typically encoded as one-hot matrices (where A=[1,0,0,0], C=[0,1,0,0], G=[0,0,1,0], T=[0,0,0,1]), allowing CNNs to identify transcription factor binding sites and other functional elements through their pattern recognition capabilities [12].
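
The one-hot encoding and the filter-scanning operation described above can be illustrated without any deep learning framework. The sketch below is a simplified, assumption-laden version: it uses a single hand-built filter for the motif "TATA" (a trained CNN would learn its filter weights), no padding, and a stride of one.

```python
ALPHABET = "ACGT"

def one_hot(seq):
    """Encode a DNA string as rows of 4 values in A, C, G, T order.
    Unknown bases (e.g. N) become all-zero rows."""
    return [[1 if base == letter else 0 for letter in ALPHABET]
            for base in seq.upper()]

def conv1d_scan(encoded, kernel):
    """Slide a (width x 4) filter along the sequence, no padding, stride 1.
    Returns one activation per window position."""
    width = len(kernel)
    scores = []
    for start in range(len(encoded) - width + 1):
        total = 0.0
        for offset in range(width):
            for channel in range(4):
                total += (encoded[start + offset][channel]
                          * kernel[offset][channel])
        scores.append(total)
    return scores

# Hypothetical filter that responds most strongly to the motif "TATA"
tata_filter = one_hot("TATA")
activations = conv1d_scan(one_hot("GGTATAGC"), tata_filter)
best_position = max(range(len(activations)), key=activations.__getitem__)
```

The activation peaks at the window where the sequence matches the filter exactly, which is the sense in which a convolutional filter acts as a motif detector.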

Recurrent Neural Networks (RNNs)

RNNs represent a class of neural networks designed for sequential data processing, making them naturally suited for analyzing biological sequences where temporal dynamics and long-range dependencies are important. Unlike feedforward networks, RNNs contain cyclic connections that allow them to maintain a "memory" of previous inputs in the sequence, which is crucial for understanding evolutionary relationships where context matters [13].

The Long Short-Term Memory (LSTM) and Gated Recurrent Unit (GRU) variants address the vanishing gradient problem in basic RNNs, enabling them to capture longer-range dependencies in protein sequences and phylogenetic data. This architecture is particularly valuable for tasks that involve modeling sequential evolution, such as predicting how gene sequences change over time or analyzing the temporal patterns of evolutionary selection pressures [11].

Transformer Architectures

Transformers represent a paradigm shift in sequence processing through their use of self-attention mechanisms, which allow them to weigh the importance of different positions in the input sequence when generating representations. This architecture processes all sequence elements in parallel rather than sequentially, enabling more efficient training on large genomic datasets while capturing global dependencies across entire sequences [11].

The key innovation in Transformers is the multi-head attention mechanism, which allows the model to jointly attend to information from different representation subspaces at different positions. This is particularly powerful in evolutionary genomics for identifying non-adjacent regulatory elements, understanding epistatic interactions between distant mutations, and modeling complex evolutionary relationships across entire genomes [11].
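
To make the attention mechanism concrete, here is a minimal pure-Python sketch of single-head scaled dot-product self-attention. Multi-head attention runs several such heads with separate projection matrices and concatenates their outputs. The toy input and the identity projection matrices are assumptions chosen so the result is easy to inspect; real models learn these weights.

```python
import math

def softmax(row):
    """Numerically stable softmax over one list of scores."""
    peak = max(row)
    exps = [math.exp(v - peak) for v in row]
    total = sum(exps)
    return [e / total for e in exps]

def matmul(a, b):
    """Multiply two matrices stored as lists of rows."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)]
            for row in a]

def self_attention(x, w_q, w_k, w_v):
    """Single-head scaled dot-product self-attention.
    x: (length x d_model); each weight matrix: (d_model x d_head).
    Returns (output, attention_weights)."""
    q, k, v = matmul(x, w_q), matmul(x, w_k), matmul(x, w_v)
    scale = math.sqrt(len(k[0]))
    scores = [[sum(qi * ki for qi, ki in zip(q_row, k_row)) / scale
               for k_row in k] for q_row in q]
    weights = [softmax(row) for row in scores]
    return matmul(weights, v), weights

# Three positions; positions 0 and 2 carry the same embedding
x = [[1.0, 0.0], [0.0, 1.0], [1.0, 0.0]]
identity_proj = [[1.0, 0.0], [0.0, 1.0]]
output, weights = self_attention(x, identity_proj, identity_proj, identity_proj)
```

Position 0 attends equally to itself and to position 2 even though they are not adjacent, which is how attention relates distant sites in a sequence.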

Table 1: Comparative Analysis of Core AI Architectures in Evolutionary Genomics

| Architecture | Core Mechanism | Evolutionary Applications | Strengths | Limitations |
|---|---|---|---|---|
| CNN | Convolutional filters & spatial hierarchy | Motif discovery, regulatory element prediction, sequence classification | Excellent local pattern detection, translation invariance, parameter efficiency | Limited long-range dependency modeling, fixed filter sizes |
| RNN | Sequential processing with memory gates | Phylogenetic inference, evolutionary sequence modeling, indel prediction | Natural handling of variable-length sequences, temporal dynamics modeling | Sequential processing limits parallelism, gradient instability in very long sequences |
| Transformer | Self-attention & parallel processing | Genome-scale sequence analysis, protein structure prediction, cross-species comparison | Global context capture, superior parallelism, state-of-the-art performance on many tasks | High computational requirements, extensive data needs for training |

Application Notes in Evolutionary Genomics

CNN Applications: Evolutionary Conservation and Regulatory Genomics

CNNs have revolutionized the identification of evolutionarily conserved elements and functional genomic regions. Their ability to detect spatial hierarchies in sequence data makes them ideal for pinpointing regulatory elements that have been preserved across species, providing insights into evolutionary constraints and adaptive evolution.

In practice, CNNs are deployed for transcription factor binding site prediction by training on chromatin immunoprecipitation sequencing (ChIP-seq) data, where they learn to recognize the subtle sequence patterns that define protein-DNA interactions across evolutionary timescales. They similarly excel at evolutionary constraint detection by identifying genomic regions with unusual mutation patterns that suggest purifying selection. The visualization of learned CNN filters often reveals sequence motifs corresponding to known regulatory elements, providing both predictive power and biological interpretability for understanding functional conservation [12].

For enhancer prediction and functional element discovery, CNNs analyze sequences flanking genes to identify signatures of regulatory potential, often discovering novel non-coding elements that have been conserved through evolution. These applications typically use architectures with multiple convolutional layers followed by fully connected layers, trained on validated regulatory elements from model organisms and then applied to less-characterized genomes to infer function based on evolutionary principles [8].

RNN Applications: Phylogenetics and Evolutionary Sequence Modeling

RNNs bring unique capabilities to evolutionary genomics through their inherent capacity for modeling sequential dependencies and temporal processes, making them particularly valuable for phylogenetic inference and evolutionary sequence analysis.

In phylogenetic tree construction, RNNs process multiple sequence alignments to model substitution probabilities along branches, capturing complex dependencies between sites that affect evolutionary rates. This approach often outperforms traditional phylogenetic methods when evolutionary processes involve context-dependent mutations or correlated evolution across sites. For ancestral sequence reconstruction, RNNs model the probabilistic relationships between modern sequences and their inferred ancestors, generating plausible ancient protein sequences for functional testing in experimental evolution studies [13].

RNNs also excel at evolutionary rate estimation by incorporating genomic features (GC content, recombination rate, chromatin accessibility) to predict site-specific evolutionary constraints across the genome. These models can identify signatures of positive selection, evolutionary conservation, and functional importance by learning from the patterns of molecular evolution across species comparisons. The sequential nature of RNNs makes them particularly adept at modeling insertion-deletion (indel) evolution, capturing the dependencies between neighboring sites that influence indel probabilities and length distributions throughout evolutionary history [11].

Transformer Applications: Genome-Scale Evolution and Protein Structure Prediction

Transformers have enabled groundbreaking advances in genome-scale evolutionary analysis and protein structure prediction through their ability to capture long-range dependencies and integrate information across entire sequences. Their attention mechanisms are particularly well-suited for identifying epistatic interactions and coordinating evolutionary signals across distributed genomic regions.

The protein structure prediction revolution exemplified by AlphaFold2 and its successors relies heavily on transformer-like attention mechanisms to coordinate information between residues that may be distant in sequence but proximate in three-dimensional space. These models use multiple sequence alignments of homologous proteins to detect evolutionary covariation signals that reveal structural constraints, effectively reading the evolutionary record to infer physical structure. This approach has demonstrated remarkable accuracy in protein folding problems that resisted solution for decades, creating new opportunities for evolutionary studies of protein function and stability [9] [11].

For whole-genome evolutionary analysis, transformers process complete chromosome sequences to identify coordinated evolution across loci, detect signatures of selective sweeps, and model population genetic processes. The self-attention mechanism allows these models to consider interactions between distant genomic regions that might evolve in concert due to structural or functional constraints. Similarly, in cross-species evolutionary genomics, transformers excel at aligning and comparing genomes from diverse organisms, identifying conserved regulatory programs, and reconstructing evolutionary trajectories of gene regulatory networks by attending to relevant sequence features across evolutionary timescales [10].

Table 2: Performance Metrics of AI Architectures on Evolutionary Genomics Tasks

| Application Domain | Architecture | Key Performance Metrics | Reported Performance | Baseline Comparison |
|---|---|---|---|---|
| Regulatory Element Prediction | CNN | AUPRC, Accuracy | AUPRC: 0.89-0.94 [12] | 15-30% improvement over position weight matrices |
| Variant Effect Prediction | CNN + RNN | AUC, Precision-Recall | AUC: 0.92-0.97 [8] | Superior to evolutionary conservation scores alone |
| Protein Structure Prediction | Transformer | RMSD, GDT_TS | RMSD: 1-2Å on many targets [9] | Revolutionized field, near-experimental accuracy |
| Evolutionary Rate Estimation | RNN | Correlation Coefficient | r: 0.75-0.85 with experimental measures [11] | 20-25% improvement over codon models |
| Phylogenetic Inference | RNN | Tree Accuracy, Likelihood | 15-30% more accurate trees for simulated data [13] | Better recovery of known topology with high divergence |

Experimental Protocols

Protocol 1: CNN for Evolutionary Constraint Detection

Objective: Identify evolutionarily constrained genomic elements using a convolutional neural network trained on multi-species sequence alignment data.

Materials:

- Genomic sequences from multiple species with phylogenetic relationships

- Functional genomic annotations (e.g., chromatin states, expression data)

- Computing resources with GPU acceleration

- Deep learning framework (TensorFlow or PyTorch)

Procedure:

Data Preparation:

- Obtain whole-genome multiple sequence alignments for at least 10 mammalian species

- Convert aligned sequences to one-hot encoding (A=[1,0,0,0], C=[0,1,0,0], G=[0,0,1,0], T=[0,0,0,1])

- Generate binary labels for constrained elements using phyloP or phastCons scores

- Partition data into training (70%), validation (15%), and test sets (15%)

Model Architecture:

- Input layer: 1000bp windows of one-hot encoded sequences

- Convolutional layer 1: 256 filters, size 12, ReLU activation

- Max pooling: size 2

- Convolutional layer 2: 128 filters, size 6, ReLU activation

- Global average pooling

- Fully connected layer: 64 units, ReLU activation

- Output layer: 1 unit, sigmoid activation for constraint classification
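
As a sanity check on the architecture listed above, the feature-map lengths and parameter counts can be worked out by hand. The sketch below assumes details the protocol does not specify: no padding, convolution stride 1, pooling stride equal to the pool size, bias terms on every layer, and no normalization layers.

```python
def conv_out_len(length, kernel, stride=1):
    """Output length of an unpadded 1-D convolution or pooling window."""
    return (length - kernel) // stride + 1

def conv_params(in_channels, out_channels, kernel):
    """Weights plus biases of a 1-D convolutional layer."""
    return in_channels * kernel * out_channels + out_channels

def dense_params(in_units, out_units):
    """Weights plus biases of a fully connected layer."""
    return in_units * out_units + out_units

length = 1000                                  # input window, 4 channels
length = conv_out_len(length, 12)              # conv 1   -> 989
length = conv_out_len(length, 2, stride=2)     # max pool -> 494
length = conv_out_len(length, 6)               # conv 2   -> 489

params = {
    "conv1": conv_params(4, 256, 12),    # 12,544
    "conv2": conv_params(256, 128, 6),   # 196,736
    "dense": dense_params(128, 64),      # 8,256
    "output": dense_params(64, 1),       # 65
}
total_params = sum(params.values())      # 217,601
```

Global average pooling collapses the 489 remaining positions into one value per filter, so the fully connected layer sees 128 inputs regardless of window length.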

Training:

- Initialize model with He initialization

- Use Adam optimizer with learning rate 0.001

- Implement early stopping with patience of 20 epochs

- Train for maximum 200 epochs with batch size 64
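
The stopping rule in the Training step (patience of 20 epochs, at most 200 epochs) can be expressed independently of any framework. The sketch below replays a list of per-epoch validation losses and reports where training would halt. It assumes that validation loss is the monitored quantity and that only a strict decrease counts as an improvement, neither of which the protocol states.

```python
def early_stopping_epoch(val_losses, patience=20, max_epochs=200):
    """Replay per-epoch validation losses and report when training stops.

    Returns (stop_epoch, best_epoch, best_loss), with epochs 1-indexed.
    """
    best_loss = float("inf")
    best_epoch = 0
    for epoch, loss in enumerate(val_losses[:max_epochs], start=1):
        if loss < best_loss:
            best_loss, best_epoch = loss, epoch   # new best: reset the wait
        elif epoch - best_epoch >= patience:
            return epoch, best_epoch, best_loss   # patience exhausted
    return min(len(val_losses), max_epochs), best_epoch, best_loss

# Synthetic curve: improves for 30 epochs, then plateaus at a worse value
losses = [(100 - i) / 100 for i in range(30)] + [0.80] * 100
stop_epoch, best_epoch, best_loss = early_stopping_epoch(losses)
```

With this synthetic curve the best model is the one from epoch 30, and training halts 20 epochs later, so the checkpoint to keep is the best one rather than the last.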

Interpretation:

- Apply gradient-based attribution methods (Saliency, Integrated Gradients)

- Visualize first-layer filters as sequence motifs

- Identify high-impact nucleotides contributing to constraint predictions

Protocol 2: RNN for Phylogenetic Inference

Objective: Infer phylogenetic relationships and evolutionary parameters from multiple sequence alignments using a recurrent neural network architecture.

Materials:

- Multiple sequence alignment data (DNA or protein)

- Known phylogenetic trees for model validation (optional)

- High-performance computing cluster with multiple GPUs

- Python with PyTorch and Biopython libraries

Procedure:

Data Preparation:

- Curate high-quality multiple sequence alignments with 10-50 taxa

- Partition data into training and validation sets using different gene families

- Encode sequences as one-hot vectors with gap characters

- Generate corresponding phylogenetic trees for supervised training
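
Partitioning by gene family, as the Data Preparation step calls for, means every alignment from a given family lands on the same side of the split, so the validation set contains no close relatives of training examples. A minimal sketch follows; the record layout, the 20% validation fraction, and the fixed seed are assumptions made for illustration.

```python
import random

def split_by_family(records, val_fraction=0.2, seed=0):
    """Split (alignment_id, family_id) records so that whole families
    go to either the training set or the validation set, never both."""
    families = sorted({family for _, family in records})
    rng = random.Random(seed)          # fixed seed for a reproducible split
    rng.shuffle(families)
    n_val = max(1, round(len(families) * val_fraction))
    val_families = set(families[:n_val])
    train = [rec for rec in records if rec[1] not in val_families]
    val = [rec for rec in records if rec[1] in val_families]
    return train, val

# Ten hypothetical alignments drawn from five gene families
records = [(f"aln_{i}", f"family_{i % 5}") for i in range(10)]
train, val = split_by_family(records)
```

A random split over individual alignments would instead scatter members of one family across both sets and tend to inflate validation accuracy.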

Model Architecture (LSTM-based):

- Input layer: One-hot encoded sequences with embedding

- Bidirectional LSTM layer: 256 units each direction

- Attention mechanism to weight important sequence regions

- Fully connected layers for branch length prediction

- Softmax output for topological probability distribution

Training Procedure:

- Use teacher forcing with scheduled sampling

- Implement gradient clipping (norm = 1.0)

- Employ learning rate scheduling with reduce-on-plateau

- Monitor both likelihood and topological accuracy metrics
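
Gradient clipping by norm, as listed in the Training Procedure, rescales all gradients together whenever their combined L2 norm exceeds the limit. The pure-Python sketch below shows the arithmetic on plain lists; in PyTorch the same idea is provided by `torch.nn.utils.clip_grad_norm_`, which operates on parameter tensors in place.

```python
import math

def clip_by_global_norm(grads, max_norm=1.0):
    """Rescale a list of gradient vectors so their joint L2 norm is at
    most max_norm. Returns (clipped_gradients, norm_before_clipping)."""
    total = math.sqrt(sum(g * g for vec in grads for g in vec))
    if total <= max_norm:
        return [list(vec) for vec in grads], total   # already within limit
    scale = max_norm / total
    return [[g * scale for g in vec] for vec in grads], total

# Two toy gradient vectors with a joint norm of 5
clipped, norm_before = clip_by_global_norm([[3.0, 0.0], [0.0, 4.0]])
norm_after = math.sqrt(sum(g * g for vec in clipped for g in vec))
```

Because every component is multiplied by the same factor, the direction of the update is preserved and only its magnitude is capped, which is what makes clipping useful against the exploding gradients that recurrent networks are prone to.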

Evaluation:

- Compare inferred trees to known phylogenies (Robinson-Foulds distance)

- Assess branch length correlation with established methods

- Perform bootstrap analysis for confidence estimation

- Compare computational efficiency with maximum likelihood methods
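
The Robinson-Foulds distance used in the Evaluation step counts the bipartitions (splits of the taxa induced by an internal branch) that appear in one tree but not the other. The sketch below is a minimal implementation for trees written as nested tuples of leaf names, a representation chosen here for brevity; established phylogenetics libraries offer tested implementations that read Newick files.

```python
def leaf_set(tree):
    """All leaf names under a node of a nested-tuple tree."""
    if isinstance(tree, str):
        return frozenset([tree])
    return frozenset().union(*(leaf_set(child) for child in tree))

def splits(tree):
    """Non-trivial bipartitions of the tree, treated as unrooted.
    Each split is stored as the side that excludes a fixed anchor leaf."""
    all_leaves = leaf_set(tree)
    anchor = min(all_leaves)
    found = set()

    def visit(node):
        if isinstance(node, str):
            return frozenset([node])
        clade = frozenset().union(*(visit(child) for child in node))
        side = all_leaves - clade if anchor in clade else clade
        if 1 < len(side) < len(all_leaves) - 1:   # skip trivial splits
            found.add(side)
        return clade

    visit(tree)
    return found

def robinson_foulds(tree_a, tree_b):
    """Number of splits present in exactly one of the two trees."""
    if leaf_set(tree_a) != leaf_set(tree_b):
        raise ValueError("trees must share the same taxa")
    return len(splits(tree_a) ^ splits(tree_b))

reference = ((("A", "B"), "C"), ("D", "E"))
inferred = ((("A", "C"), "B"), ("D", "E"))
distance = robinson_foulds(reference, inferred)
```

The two example trees agree on the split separating D and E from the rest but disagree on how A, B, and C are grouped, so each contributes one unmatched split.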

Protocol 3: Transformer for Protein Evolution Analysis

Objective: Analyze evolutionary patterns in protein families using transformer architectures to predict fitness landscapes and functional constraints.

Materials:

- Protein multiple sequence alignments from diverse organisms

- Experimental fitness data for model validation

- GPU cluster with substantial memory (≥32GB per GPU)

- Transformer implementation (PyTorch or JAX)

Procedure:

Data Preprocessing:

- Collect deep mutational scanning data for model training

- Generate multiple sequence alignments using Jackhmmer or MMseqs2

- Create position-specific scoring matrices (PSSMs)

- Partition data with consideration of evolutionary relationships
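
A position-specific scoring matrix summarizes each alignment column as log-odds scores for every residue. The sketch below is a simplified illustration that assumes a uniform background frequency, a pseudocount of one, and no sequence weighting. Production tools typically use empirical background frequencies and down-weight redundant sequences.

```python
import math

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"

def build_pssm(alignment, pseudocount=1.0):
    """Log-odds PSSM (base 2) from equal-length aligned sequences.
    Gaps and unknown characters are ignored.
    Returns one dict of residue -> score per alignment column."""
    length = len(alignment[0])
    if any(len(seq) != length for seq in alignment):
        raise ValueError("aligned sequences must have equal length")
    background = 1.0 / len(AMINO_ACIDS)      # uniform background (assumption)
    pssm = []
    for col in range(length):
        counts = {aa: pseudocount for aa in AMINO_ACIDS}
        for seq in alignment:
            residue = seq[col].upper()
            if residue in counts:
                counts[residue] += 1
        total = sum(counts.values())
        pssm.append({aa: math.log2((counts[aa] / total) / background)
                     for aa in AMINO_ACIDS})
    return pssm

# Toy alignment of four sequences, three columns
msa = ["MKV", "MRV", "MK-", "MKI"]
pssm = build_pssm(msa)
consensus = "".join(max(column, key=column.get) for column in pssm)
```

Positive scores mark residues seen more often than the background expects, so a fully conserved column such as the first one here yields a single strongly positive entry.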

Model Architecture:

- Input embeddings: sequence tokens + positional encoding

- Multi-head self-attention layers (8-12 heads)

- Position-wise feedforward networks

- Layer normalization and residual connections

- Output heads for fitness prediction and conservation scoring
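
The core operation in the architecture above is scaled dot-product self-attention. The sketch below shows a single head in plain Python, without the learned query, key, and value projections, multiple heads, or positional encodings that a real model would include; it is meant only to make the computation concrete.

```python
import math
from typing import List, Tuple

Matrix = List[List[float]]

def softmax(row: List[float]) -> List[float]:
    peak = max(row)  # subtract the maximum for numerical stability
    exps = [math.exp(x - peak) for x in row]
    total = sum(exps)
    return [e / total for e in exps]

def self_attention(queries: Matrix, keys: Matrix, values: Matrix) -> Tuple[Matrix, Matrix]:
    """Return (output, weights) for one head of scaled dot-product attention."""
    scale = math.sqrt(len(keys[0]))
    weights = [
        softmax([sum(q * k for q, k in zip(query, key)) / scale for key in keys])
        for query in queries
    ]
    output = [
        [sum(w * value[d] for w, value in zip(row, values)) for d in range(len(values[0]))]
        for row in weights
    ]
    return output, weights

# Three toy residue embeddings of dimension 2, used as queries, keys, and values alike.
embeddings = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
attended, attention_weights = self_attention(embeddings, embeddings, embeddings)
```

Because every position attends to every other position in one step, residues far apart in sequence can influence each other directly.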

Training Strategy:

- Pre-training on large protein sequence databases (UniRef)

- Fine-tuning on specific protein families with experimental data

- Masked language modeling objectives for unsupervised learning

- Multi-task learning combining fitness prediction and structure estimation
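
The masked language modeling objective listed above hides a subset of residues and asks the model to recover them. A minimal sketch of the masking step follows; the 15% rate, the `<mask>` token string, and the function name are assumptions for illustration, and refinements such as occasionally substituting random residues are omitted.

```python
import random
from typing import List, Tuple

MASK_TOKEN = "<mask>"  # hypothetical token string

def mask_tokens(tokens: List[str], mask_rate: float = 0.15, seed: int = 0) -> Tuple[List[str], List[int]]:
    """Replace a random subset of residues with a mask token.

    Returns the masked sequence and the masked positions, which serve as prediction targets.
    """
    rng = random.Random(seed)
    n_masked = max(1, round(mask_rate * len(tokens)))
    positions = sorted(rng.sample(range(len(tokens)), n_masked))
    masked = list(tokens)
    for pos in positions:
        masked[pos] = MASK_TOKEN
    return masked, positions

sequence = list("MKTAYIAKQRQISFVKSHFSRQ")  # arbitrary toy sequence
masked_sequence, target_positions = mask_tokens(sequence)
```

The training loss is then computed only at the masked positions, so no labels beyond the sequences themselves are needed.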

Interpretation and Analysis:

- Extract attention weights to identify co-evolving residues

- Generate mutational effect maps across protein positions

- Identify sectors of interacting residues through attention patterns

- Compare evolutionary predictions with experimental measurements
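
One way to turn an attention map into candidate co-evolving residue pairs is sketched below. It symmetrizes the map, applies an average product correction (a correction borrowed from covariation analysis and used here as an assumption, not something the protocol specifies), and ranks pairs that are not sequence neighbours. The attention values are invented for the example.

```python
from typing import List, Tuple

Matrix = List[List[float]]

def apc_corrected(attention: Matrix) -> Matrix:
    """Symmetrize an attention map, then subtract the average product correction."""
    n = len(attention)
    sym = [[0.5 * (attention[i][j] + attention[j][i]) for j in range(n)] for i in range(n)]
    row_sums = [sum(row) for row in sym]
    total = sum(row_sums)
    return [[sym[i][j] - row_sums[i] * row_sums[j] / total for j in range(n)] for i in range(n)]

def top_pairs(scores: Matrix, min_separation: int = 2, k: int = 3) -> List[Tuple[int, int]]:
    """Rank residue pairs by corrected score, skipping near-diagonal neighbours."""
    n = len(scores)
    pairs = [(i, j) for i in range(n) for j in range(i + min_separation, n)]
    return sorted(pairs, key=lambda p: scores[p[0]][p[1]], reverse=True)[:k]

# Hypothetical 4-residue attention map in which positions 1 and 3 attend to each other.
toy_attention = [
    [0.70, 0.10, 0.10, 0.10],
    [0.10, 0.50, 0.10, 0.30],
    [0.10, 0.10, 0.70, 0.10],
    [0.10, 0.50, 0.10, 0.30],
]
coupled = top_pairs(apc_corrected(toy_attention), k=1)
```

Attention weights are only a heuristic proxy for co-evolution, so pairs ranked this way are best treated as hypotheses to check against structures or experimental data.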

The Scientist's Toolkit

Table 3: Essential Research Reagent Solutions for AI-Driven Evolutionary Genomics

| Resource Category | Specific Tools/Databases | Primary Function | Application Examples |

|---|---|---|---|

| Genomic Data Resources | Ensembl, UCSC Genome Browser, NCBI Datasets | Provide reference genomes & evolutionary annotations | Training data for conservation models, phylogenetic context |

| Protein Databases | UniProt, Pfam, InterPro | Protein families, domains & functional annotations | Transformer pre-training, functional evolutionary analysis |

| Evolutionary Data | OrthoDB, TreeFam, PANTHER | Gene families, orthology assignments, phylogenetic trees | Ground truth for evolutionary model training |

| AI Frameworks | TensorFlow, PyTorch, JAX | Deep learning model development & training | Implementing custom architectures for evolutionary analysis |

| Specialized Libraries | Biopython, TensorFlow Genomics, PyTorch Geometric | Biological data processing & specialized layers | Handling sequence data, phylogenetic trees, protein structures |

| Visualization Tools | TensorBoard, BioViz, Archaeopteryx | Model interpretation & evolutionary data visualization | Analyzing attention weights, displaying phylogenetic trees |

| Computational Resources | GPU Clusters, Google Colab, AWS/Azure | High-performance computing for model training | Handling large genomic datasets and complex architectures |

The integration of CNN, RNN, and Transformer architectures into evolutionary genomics represents a fundamental shift in how researchers can interrogate and understand molecular evolution. Each architecture brings distinct strengths: CNNs for local pattern detection in sequences, RNNs for modeling temporal evolutionary processes, and Transformers for capturing long-range dependencies across genomes. As these technologies mature, they are increasingly moving from predictive tools to generative models that can design novel sequences and hypothesize evolutionary pathways, creating new opportunities for experimental validation and therapeutic development [10].

The future of AI in evolutionary biology will likely involve hybrid architectures that combine the strengths of these approaches while addressing current limitations in interpretability and data requirements. As these models become more sophisticated and integrated with emerging experimental technologies, they promise to unlock deeper insights into the evolutionary forces that have shaped biological diversity and continue to drive adaptation in natural populations and disease states. This integration positions evolutionary genomics to make increasingly significant contributions to fundamental biology, drug development, and our understanding of life's history and future trajectories.

Application Notes: Deciphering Evolutionary Signals with AI

The application of artificial intelligence (AI) and deep learning in evolutionary genomics is transforming our ability to interpret genetic signals across deep time. These technologies are enabling researchers to decode the functional meaning of genetic sequences, predict the form and function of biological elements, and detect the faintest traces of ancient life. By treating DNA as a biological language with its own grammar and syntax, AI models can read, interpret, and even generate genetic information, providing unprecedented insights into evolutionary processes spanning billions of years.

Key AI Applications in Evolutionary Genomics

Table: Core Applications of AI in Decoding Evolutionary Signals

| Application Area | AI Model/Tool | Primary Function | Evolutionary Scale |

|---|---|---|---|

| Generative Genomics | Evo 2 [4] | Generates novel, functional genetic sequences and predicts protein structures. | All domains of life (extant & extinct) |

| Ancient Biosignature Detection | Pyrolysis-GC-MS + Random Forest [14] [15] | Identifies molecular traces of life in ancient rocks using chemical fingerprint patterns. | >3.3 billion years |

| Variant Pathogenicity Prediction | popEVE [16] | Scores human genetic variants by disease likelihood and evolutionary constraint. | Modern human genomics |

| Remote Homology Detection | eHMMER [17] | Enhances detection of evolutionary relationships between distantly related protein sequences. | Deep evolutionary time |

| Gene Constraint Estimation | Demography-based SFS models [17] | Estimates selection pressure on genes using site frequency spectrum from population data. | Population evolutionary history |

Quantitative Performance of AI Models in Evolutionary Tasks

Table: Performance Benchmarks of Featured AI Models in Genomics

| Model | Reported Accuracy/Performance | Key Evolutionary Insight Enabled |

|---|---|---|

| Evo 2 [4] | Can process contexts of up to 1 million nucleotides; trained on ~9 trillion nucleotides from all known life. | Discerns harmful vs. beneficial mutations; predicts long-distance gene interactions. |

| Ancient Biosignature AI [14] [15] | Distinguishes biological from non-biological materials with >90% accuracy; detects photosynthesis signatures with 93% accuracy. | Extends detectable chemical record of life by ~1.6 billion years; evidence of photosynthesis 800 million years earlier than known. |

| popEVE [16] | Identified 123 novel genes linked to developmental disorders; 25 independently confirmed. | Provides a continuous spectrum of variant pathogenicity based on evolutionary and population data. |

| Demography-based Constraint Model [17] | Outperformed existing scores (AUPRC 0.196 vs. 0.157 for GeneBayes). | Enables comparison of fitness effects between missense and loss-of-function mutations across genes. |

Experimental Protocols

The following protocols detail the methodologies for key experiments that leverage AI to interpret billion-year-old genetic and molecular signals.

Protocol 1: Detecting Molecular Biosignatures in Ancient Rocks Using AI

Objective: To identify faint chemical traces of ancient life in Archean-aged rocks (≥2.5 billion years old) by pairing pyrolysis gas chromatography-mass spectrometry (Py-GC-MS) with supervised machine learning.

Principle: While original biomolecules degrade over geological time, the distribution of their molecular fragments retains diagnostic patterns indicative of a biological origin. A machine learning model is trained to recognize these subtle chemical "fingerprints" [14] [15].

Materials:

- Rock Samples: Archean sedimentary rocks (e.g., shale, chert).

- Control Samples: Modern plants, animals, fungi, carbon-rich meteorites, synthetic organic materials.

- Equipment: Pyrolysis unit coupled to a Gas Chromatograph-Mass Spectrometer (GC-MS).

- Software: Machine learning environment (e.g., R, Python with scikit-learn for Random Forest implementation).

Procedure:

- Sample Preparation and Analysis:

- Crush rock samples to a fine powder using a hydraulic crusher or mortar and pestle.

- For each sample (ancient and control), perform Py-GC-MS analysis. This thermally breaks down organic materials into smaller molecular fragments (volatiles) which are separated by the GC and identified by the MS, generating a complex chromatogram.

- Data Preprocessing and Feature Extraction:

- Process the raw Py-GC-MS data to align peaks across all samples.

- Integrate the peak areas for hundreds to thousands of distinct molecular fragments (e.g., aromatic hydrocarbons, alkanes, alkylbenzenes) to create a high-dimensional data matrix. Each sample is represented as a vector of relative fragment abundances.

- Model Training and Validation:

- Assemble a labeled training dataset from the control samples (e.g., "Biotic" for modern life, "Abiotic" for meteorites/synthetic materials).

- Train a Random Forest classifier or a similar supervised machine learning model on this dataset. The model learns the complex combinations of fragments that distinguish biotic from abiotic origins.

- Validate the model's performance using a held-out test set of control samples, confirming accuracy exceeds 90% [15].

- Inference on Ancient Samples:

- Input the chemical fragment data from the prepared ancient rock samples into the trained model.

- The model outputs a probability score (0% to 100%) for the sample being of biological origin. A score above a pre-defined threshold (e.g., 60%) is considered a strong indicator of past life [15].
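
The feature-extraction and thresholding steps above can be made concrete with a short sketch. It does not train a classifier: the peak areas and model probabilities are hypothetical placeholders, and the label given to below-threshold samples ("inconclusive") is an assumption for the example.

```python
from typing import Dict, List

def relative_abundances(peak_areas: List[float]) -> List[float]:
    """Scale integrated peak areas so that each sample vector sums to 1."""
    total = sum(peak_areas)
    if total <= 0:
        raise ValueError("Sample has no integrated signal")
    return [area / total for area in peak_areas]

def call_biogenicity(probability_biotic: float, threshold: float = 0.60) -> str:
    """Convert a classifier probability into a label using a pre-defined threshold."""
    return "likely biotic" if probability_biotic > threshold else "inconclusive"

# Hypothetical peak areas for three aligned fragments in two samples.
raw_samples: Dict[str, List[float]] = {
    "chert_01": [1200.0, 300.0, 500.0],
    "meteorite_ctrl": [100.0, 100.0, 800.0],
}
feature_matrix = {name: relative_abundances(areas) for name, areas in raw_samples.items()}

# Hypothetical outputs; in practice these come from the trained classifier.
model_probabilities = {"chert_01": 0.72, "meteorite_ctrl": 0.08}
calls = {name: call_biogenicity(p) for name, p in model_probabilities.items()}
```

Normalizing to relative abundances removes differences in total organic content between samples, so the classifier sees the shape of the fragment distribution rather than its magnitude.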

AI Integration: The Random Forest model is central to this protocol, as it can handle high-dimensional, noisy data and uncover non-linear relationships between molecular fragments that are imperceptible to manual analysis.

Protocol 2: Generative Protein Design and Functional Validation with Evo 2

Objective: To use a generative AI model to design novel protein sequences with desired functions and validate them experimentally.

Principle: Large language models trained on the evolutionary "language" of DNA sequences from thousands of species can generate new, functional sequences, including protein-coding genes, that may not exist in nature [4].

Materials:

- AI Model: Evo 2, an open-source generative AI model for biological sequences [4].

- Computational Resources: High-performance computing cluster with GPU acceleration (e.g., NVIDIA hardware).

- Wet-Lab Materials: DNA synthesizer, reagents for DNA synthesis, microbial cells (e.g., E. coli), cell culture media, gene editing technology (e.g., CRISPR-Cas9), and relevant functional assays (e.g., enzymatic, binding).

Procedure:

- Sequence Generation:

- Prompt Design: Provide Evo 2 with a starting sequence or a functional prompt (e.g., a partial gene sequence known to be associated with a specific function).

- Autocompletion: The model will autocomplete the sequence, generating novel genetic code. The output may closely resemble a known natural sequence or represent a new combination not seen in evolutionary history [4].

- In Silico Analysis and Filtering:

- Use integrated machine learning models within the Evo 2 framework to predict the structure and function of the generated sequences.

- Filter the list of candidate sequences based on predicted stability, solubility, and functional efficacy.

- DNA Synthesis and Cloning:

- Select the top-ranking generated sequences for experimental validation.

- Chemically synthesize the DNA sequences in vitro.

- Clone the synthesized DNA into an appropriate expression vector.

- Functional Validation:

- Introduce the vector into a host cell (e.g., via transformation of E. coli).

- Express the novel protein.

- Run functional assays specific to the desired protein activity (e.g., measure enzyme kinetics, ligand binding affinity, or antibiotic resistance) to confirm the AI-predicted function.
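
The in silico filtering step in this procedure amounts to thresholding and ranking candidates before committing to synthesis. The sketch below assumes each candidate already carries three predicted scores between 0 and 1; the field names, thresholds, and ranking rule are all hypothetical, since the protocol does not specify them.

```python
from dataclasses import dataclass
from typing import List

@dataclass
class Candidate:
    name: str
    stability: float   # hypothetical predicted score in [0, 1]
    solubility: float  # hypothetical predicted score in [0, 1]
    function: float    # hypothetical predicted score in [0, 1]

def shortlist(candidates: List[Candidate], min_score: float = 0.5, top_n: int = 2) -> List[Candidate]:
    """Drop candidates failing any threshold, then rank the rest by mean score."""
    passing = [c for c in candidates if min(c.stability, c.solubility, c.function) >= min_score]
    passing.sort(key=lambda c: (c.stability + c.solubility + c.function) / 3, reverse=True)
    return passing[:top_n]

generated = [
    Candidate("design_1", 0.9, 0.8, 0.7),
    Candidate("design_2", 0.95, 0.3, 0.9),  # fails the solubility threshold
    Candidate("design_3", 0.6, 0.7, 0.9),
    Candidate("design_4", 0.8, 0.9, 0.9),
]
selected = shortlist(generated)
```

Requiring every score to clear a minimum before ranking prevents one strong prediction from masking a property that would make the protein impossible to express or purify.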

AI Integration: Evo 2 acts as a generative engine that leverages patterns learned from the entire known tree of life to propose novel, viable biological sequences, dramatically accelerating the design-build-test cycle.

Protocol 3: Prioritizing Pathogenic Variants for Rare Disease Diagnosis with popEVE

Objective: To analyze a patient's genome and identify which genetic variants are most likely to cause a severe or lethal genetic disorder.

Principle: The popEVE model combines deep evolutionary information from across species with human population genetic data to score variants based on their functional impact and disease severity [16].

Materials:

- Input Data: A patient's whole genome or exome sequencing data (VCF file).

- Software Tools: popEVE online portal or local installation; standard bioinformatics tools for initial variant calling (e.g., GATK).

- Reference Data: Population frequency databases (e.g., gnomAD), clinical variant databases (e.g., ClinVar).

Procedure:

- Variant Calling:

- Process the raw sequencing data through a standard variant calling pipeline to generate a comprehensive list of genetic variants (single nucleotide variants, indels) for the patient.

- Variant Annotation:

- Annotate each variant with its predicted functional consequence (e.g., missense, loss-of-function) and its frequency in population databases.

- popEVE Analysis:

- Input the list of annotated variants into the popEVE model.

- popEVE will analyze each variant and assign a score indicating its likelihood of being pathogenic. This score is calibrated to be comparable across different genes.

- The model can further stratify variants based on predicted severity, such as those leading to childhood mortality versus adult-onset disease [16].

- Variant Triaging and Clinical Correlation:

- Generate a prioritized list of candidate variants, ranked from highest to lowest popEVE score.

- Cross-reference top-ranked variants with the patient's clinical phenotype.

- Use Sanger sequencing to confirm the presence of the top candidate variant(s) in the patient and to perform segregation analysis in the family.
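
The triaging logic in this procedure can be sketched as a filter on population frequency followed by a ranking on score. The record fields, the frequency cutoff, and the convention that a higher `score` means more likely pathogenic are assumptions made for this example; the sign convention and output format of the actual tool should be checked before adapting it.

```python
from typing import Dict, List

def triage(variants: List[Dict], max_allele_freq: float = 1e-4, top_n: int = 3) -> List[Dict]:
    """Keep rare variants and rank them from most to least likely pathogenic."""
    rare = [v for v in variants if v["allele_freq"] <= max_allele_freq]
    return sorted(rare, key=lambda v: v["score"], reverse=True)[:top_n]

# Hypothetical annotated variants; gene names and values are placeholders.
annotated = [
    {"id": "var_1", "gene": "GENE_A", "allele_freq": 0.0, "score": 0.97},
    {"id": "var_2", "gene": "GENE_B", "allele_freq": 0.02, "score": 0.99},  # too common
    {"id": "var_3", "gene": "GENE_C", "allele_freq": 5e-5, "score": 0.40},
    {"id": "var_4", "gene": "GENE_D", "allele_freq": 1e-5, "score": 0.85},
]
ranked = triage(annotated)
```

Filtering on frequency first reflects the expectation that a variant causing a severe early-onset disorder should be very rare in the general population.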

AI Integration: popEVE combines two models of evolutionary information, a generative model (EVE) that learns from deep evolutionary conservation and a protein language model that learns from sequence context, and calibrates their scores against human population variation so that variant impact can be compared across genes [16].

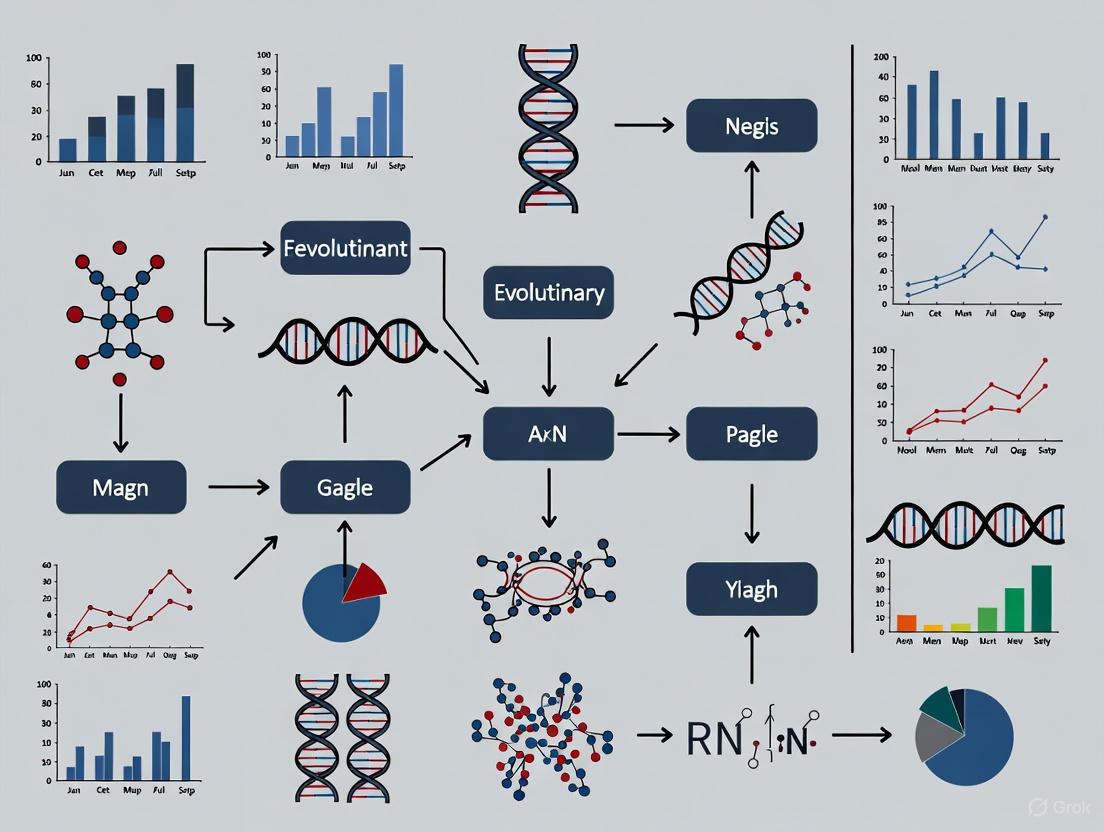

Signaling Pathways and Workflows

AI-Driven Discovery of Ancient Biosignatures

This diagram illustrates the integrated chemical and machine learning workflow for detecting traces of ancient life in billion-year-old rocks.

Generative AI for Protein Design and Validation

This workflow outlines the cycle of using a generative AI model like Evo 2 to design and experimentally test novel protein sequences.

AI for Pathogenic Variant Prioritization

This chart depicts the process of using the popEVE AI model to sift through thousands of genetic variants in a patient's genome to find the causative mutation for a rare disease.

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Tools and Reagents for AI-Driven Evolutionary Genomics

| Category | Item | Function/Description |

|---|---|---|

| Computational Models & Tools | Evo 2 [4] | Open-source generative AI for designing and predicting protein functions across all life. |

| Computational Models & Tools | popEVE [16] | AI model for scoring pathogenicity and disease severity of human genetic variants. |

| Computational Models & Tools | eHMMER [17] | Enhanced homology search tool that uses dynamic evolutionary models for sensitive remote homolog detection. |

| Data Sources | Genomic Datasets (e.g., gnomAD) [17] | Large-scale human population genomic data used for calibrating selection and constraint models. |

| Data Sources | Pfam Database [17] | Curated database of protein families used for training and benchmarking homology detection tools. |

| Laboratory & Analytical Equipment | Pyrolysis-GC-MS [14] [15] | Instrument for thermally decomposing samples and analyzing the molecular fragments; crucial for ancient biosignature studies. |

| Laboratory & Analytical Equipment | DNA Synthesizer [4] | Equipment for chemically synthesizing AI-designed DNA sequences for experimental validation. |

| Laboratory & Analytical Equipment | High-Performance Computing (HPC) / Cloud GPU [4] [18] | Essential computational infrastructure for training and running large AI models like Evo 2. |

| Validation Technologies | CRISPR-Cas9 [4] | Gene-editing system used to insert synthesized DNA into living cells for functional testing. |

| Validation Technologies | Functional Assays (e.g., enzymatic, binding) | Customized laboratory protocols to test the predicted function of an AI-generated protein or the impact of a genetic variant. |

The field of evolutionary genomics is undergoing a profound transformation, driven by the confluence of massive-scale sequencing initiatives and advanced artificial intelligence (AI) methodologies. The Earth BioGenome Project (EBP), a biological "moonshot" for the 21st century, aims to sequence all of Earth's eukaryotic biodiversity to create a comprehensive digital library of life [19]. This endeavor, alongside other major genomic resources, generates the complex, high-dimensional data that deep learning models are uniquely positioned to decipher. The integration of these large-scale datasets with AI is reshaping fundamental knowledge about genome evolution, function, and diversity, enabling researchers to move from descriptive observations to predictive modeling of evolutionary processes. This article provides a structured overview of key genomic datasets and repositories, details protocols for their utilization in AI-driven research, and discusses the ethical frameworks essential for responsible science, providing evolutionary biologists and genomic scientists with a practical toolkit for navigating this rapidly expanding field.

Major Genomic Data Repositories and Initiatives

Large-scale international consortia and curated databases form the backbone of modern evolutionary genomics research, providing the raw data necessary for training and testing deep learning models.

The Earth BioGenome Project (EBP)

The Earth BioGenome Project (EBP) represents one of the most ambitious biological undertakings, with the goal of sequencing, cataloging, and characterizing the genomes of all of Earth's eukaryotic biodiversity (estimated at approximately 1.8 million species) over a ten-year period [20] [19]. The project has moved from its initial phase to Phase II (2025-2030), which aims to sequence 150,000 species within four years, equivalent to roughly 3,000 reference-quality genomes per month [19]. As of late 2025, the EBP has grown into a global collaboration of more than 2,200 scientists in 88 countries and has amassed more than 4,300 high-quality genomes, covering more than 500 eukaryotic families [19]. The project operates as a network of affiliated projects, including national sequencing efforts, regional consortia, and taxon-focused initiatives, all united by common standards for data generation and sharing.

Table 1: Key Metrics of the Earth BioGenome Project

| Aspect | Phase I (2018-2024) | Phase II (2025-2030 Targets) |

|---|---|---|

| Goal | Establish standards, frameworks, and initial data | Scale sequencing to 150,000 species in 4 years |

| Genomes Produced | 4,300+ high-quality genomes | 3,000 genomes per month target |

| Cost per Genome | ~$28,000 (average) | ~$6,100 (target) |

| Key Innovations | Data standards, ethical frameworks | Portable "gBox" sequencing labs, enhanced automation |

The EBP is not merely a sequencing endeavor but aims to create a "digital library of life" that will serve as a foundational resource for biology, driving solutions for preserving biodiversity and sustaining human societies [20]. Initial results from the project have already yielded insights into the evolution of chromosomes in butterflies and moths, as well as the adaptation of Arctic reindeer to extreme environments [19]. The data generated follows the FAIR (Findable, Accessible, Interoperable, Reusable) principles and is contributed to the International Nucleotide Sequence Database Collaboration (INSDC) through its founder nodes (GenBank, European Nucleotide Archive, and DNA Database of Japan) or affiliated repositories [21].

Beyond the comprehensive EBP, numerous specialized databases provide curated genomic data tailored to specific research questions in evolutionary genomics. The National Center for Biotechnology Information (NCBI) provides a suite of databases that are indispensable for genomic research [22]. Key resources include:

- GenBank: The NIH genetic sequence database, an annotated collection of all publicly available DNA sequences [23].

- RefSeq: The Reference Sequence collection provides a comprehensive, integrated, non-redundant, well-annotated set of sequences that form a foundation for medical, functional, and diversity studies [23].

- Gene: Integrates information from a wide range of species about gene loci, including nomenclature, Reference Sequences, maps, pathways, variations, and phenotypes [22].

- dbVar: Database of genomic structural variation—insertions, deletions, duplications, inversions, mobile element insertions, translocations, and complex chromosomal rearrangements [23].

- Gene Expression Omnibus (GEO): A public functional genomics data repository supporting MIAME-compliant data submissions for array- and sequence-based data [22].

Among specialized resources, GenomeArk serves as a working space and repository for high-quality reference genomes generated by the EBP, the Vertebrate Genomes Project, and the Telomere-to-Telomere Consortium [24]. These assemblies are expertly curated before submission to public archives. The Tree of Sex Database compiles information on sex determination systems across the tree of life, with over 30,000 records, enabling large-scale comparative studies of sex chromosome evolution [25]. Similarly, specialized Karyotype Databases contain more than 8,000 records for amphibians, Coleoptera, and Polyneoptera, allowing researchers to investigate patterns of chromosome number evolution [25].

Table 2: Specialized Genomic Databases for Evolutionary Research

| Database Name | Primary Focus | Key Features | Relevance to Evolutionary Genomics |

|---|---|---|---|

| Tree of Sex Database | Sex determination systems | >30,000 records across tree of life | Study of sex chromosome evolution, transitions in sex determination |

| Karyotype Databases | Chromosome number/structure | >8,000 records for specific clades | Investigating chromosome evolution, fission/fusion events, genome organization |

| dbVar | Genomic structural variation | Insertions, deletions, inversions, etc. | Understanding large-scale genomic rearrangements and their evolutionary impact |

| GenomeArk | High-quality reference genomes | Expertly curated assemblies from multiple projects | Source of high-quality data for structural variant discovery and comparative genomics |

AI and Deep Learning Applications in Genomics

The application of artificial intelligence, particularly deep learning (DL), has become instrumental in extracting meaningful patterns from complex genomic data. Deep learning methods process information through mathematical operations (neurons) arranged in multiple connected layers (neural networks), enabling them to automatically extract features from raw, high-dimensional data [26]. This capability makes DL particularly well-suited for genomic applications, where relationships between sequence features and functional outcomes are often complex and non-linear.

Key Deep Learning Applications Across Genomic Subdisciplines

Deep learning has been successfully applied across virtually all areas of genomics, transforming how researchers analyze and interpret genetic information:

Variant Calling and Annotation: Traditional variant callers like GATK and SAMtools have been supplemented by DL approaches that offer improved accuracy. DeepVariant, developed by Google, treats mapped sequencing data as images and recasts variant calling as an image classification task, significantly improving the accuracy of single-nucleotide variant and indel detection [27]. Subsequent tools like DeepSV specialize in predicting long genomic deletions (>50 bp) from sequencing read images [27].

Gene Expression and Regulation: DL models can predict gene expression levels from histone modification data [27], identify transcriptional enhancers [27], and understand the effects of mutations on protein-RNA binding [27]. These applications help bridge the gap between genotype and phenotype by modeling the complex regulatory logic of the genome.

Epigenomics: Deep learning tools analyze epigenetic marks such as DNA methylation and histone modifications to understand their role in gene regulation and cellular identity. Dynamic Bayesian Networks (DBNs) can model complex time series of epigenetic data to uncover temporal relationships in gene regulation processes [26].

Disease Variant Prediction: DL models help classify the pathogenicity of missense mutations [27] and diagnose patients with rare genetic disorders [27]. These applications are particularly valuable for interpreting the clinical significance of variants of unknown significance (VUS) discovered through sequencing.

Pharmacogenomics: Deep learning approaches predict individual drug responses and synergy based on genomic profiles, moving toward personalized treatment strategies [27].

Table 3: Deep Learning Methods and Their Applications in Genomics

| Method | Type | Description | Genomics Applications |

|---|---|---|---|

| Convolutional Neural Networks (CNNs) | Deep Learning | Process data with grid-like topology; excel at feature detection | Variant calling (DeepVariant), sequence motif discovery, epigenomic feature identification |

| Recurrent Neural Networks (RNNs) | Deep Learning | Designed for sequential data; contain internal memory | DNA sequence annotation, time-series gene expression analysis |

| Dynamic Bayesian Networks (DBNs) | Machine Learning | Probabilistic graphical models with temporal extension | Gene regulation analysis, epigenetic data integration, protein sequencing [26] |

| Support Vector Machines (SVM) | Machine Learning | Finds optimal hyperplanes for classification in high-dimensional space | Cancer genomics classification, biomarker discovery [26] |

| Random Decision Forests (RDF) | Machine Learning | Ensemble of decision trees; averages their predictions | Genome-wide association studies, epistasis detection, pathway analysis [26] |

Experimental Protocol: Implementing Deep Learning for Variant Calling

The following protocol outlines a standard workflow for implementing deep learning approaches to identify genetic variants from next-generation sequencing (NGS) data, using tools like DeepVariant as an example:

Step 1: Data Acquisition and Preparation

- Obtain whole genome or whole exome sequencing data in BAM or CRAM format, aligned to a reference genome.

- Download corresponding reference genome sequence (FASTA) and annotation files (GTF/GFF).

- For supervised learning approaches, acquire validated variant calls (VCF files) for training and validation.

Step 2: Data Preprocessing and Formatting

- Convert aligned sequencing data into the format required by the DL model. For DeepVariant, this involves creating images of read alignments around candidate variant sites.

- Generate tensor representations or windowed sequences around regions of interest.

- Split data into training, validation, and test sets (typical ratio: 70%/15%/15%), ensuring chromosomal independence between sets to prevent data leakage.
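
The chromosome-independence requirement in the split above can be enforced by assigning whole chromosomes to partitions. This is a minimal sketch; the choice of held-out chromosomes and the function name are illustrative assumptions, and the resulting proportions will only approximate 70%/15%/15% because chromosomes differ in size.

```python
from typing import Dict, List, Set, Tuple

Site = Tuple[str, int]  # (chromosome, position)

def split_by_chromosome(sites: List[Site], val_chroms: Set[str], test_chroms: Set[str]) -> Dict[str, List[Site]]:
    """Assign every site on a chromosome to the same partition."""
    if val_chroms & test_chroms:
        raise ValueError("Validation and test chromosomes must not overlap")
    splits: Dict[str, List[Site]] = {"train": [], "val": [], "test": []}
    for chrom, pos in sites:
        if chrom in test_chroms:
            splits["test"].append((chrom, pos))
        elif chrom in val_chroms:
            splits["val"].append((chrom, pos))
        else:
            splits["train"].append((chrom, pos))
    return splits

# Toy candidate sites; held-out chromosomes are chosen arbitrarily for the example.
candidate_sites = [("chr1", 10_000), ("chr1", 25_000), ("chr2", 5_000), ("chr20", 7_500), ("chr21", 900)]
partitions = split_by_chromosome(candidate_sites, val_chroms={"chr21"}, test_chroms={"chr20"})
```

A random split at the level of individual sites would place neighbouring, highly correlated positions in both training and test sets and inflate the measured accuracy.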

Step 3: Model Selection and Configuration

- Choose an appropriate DL architecture based on the specific variant calling task:

- CNNs for image-based representation of read alignments

- RNNs/LSTMs for sequence-based approaches

- Hybrid architectures for integrating multiple data types

- Configure model hyperparameters (learning rate, batch size, optimizer settings) based on established benchmarks.

Step 4: Model Training and Validation

- Implement training with appropriate loss functions (e.g., categorical cross-entropy for classification) and evaluation metrics (precision, recall, F1-score).

- Use data augmentation (e.g., reverse-complementing sequences) and resampling techniques (e.g., synthetic minority oversampling) to address class imbalance.

- Perform k-fold cross-validation to assess model robustness and detect overfitting.
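
The evaluation metrics named in this step can be computed directly from a call set and a truth set. The sketch below represents each variant as a (chromosome, position, ref, alt) tuple, which is an assumption for illustration; dedicated benchmarking tools additionally handle genotype matching and equivalent representations of the same variant.

```python
from typing import Dict, Set, Tuple

Variant = Tuple[str, int, str, str]  # (chromosome, position, ref, alt)

def call_metrics(called: Set[Variant], truth: Set[Variant]) -> Dict[str, float]:
    """Precision, recall, and F1 for a set of variant calls against a truth set."""
    tp = len(called & truth)
    fp = len(called - truth)
    fn = len(truth - called)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return {"precision": precision, "recall": recall, "f1": f1}

# Toy data: three true positives, one false positive, one false negative.
truth_set = {("chr1", 100, "A", "G"), ("chr1", 200, "C", "T"), ("chr2", 50, "G", "A"), ("chr2", 75, "T", "C")}
call_set = {("chr1", 100, "A", "G"), ("chr1", 200, "C", "T"), ("chr2", 50, "G", "A"), ("chr3", 10, "A", "T")}
metrics = call_metrics(call_set, truth_set)
```

Accuracy alone is uninformative here because true variant sites are vastly outnumbered by non-variant sites, which is why precision and recall are reported instead.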

Step 5: Variant Calling and Post-processing

- Run the trained model on test data to generate initial variant calls.

- Apply quality filters and calibration based on validation performance.

- Combine DL-based calls with conventional caller results (e.g., GATK, SAMtools) using ensemble methods to improve overall accuracy, as demonstrated by Kumaran et al. [27].
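
One simple way to combine callers is majority voting over their call sets, sketched below. This is an illustrative stand-in and not necessarily the ensemble method used in the cited work; the caller names and the `min_votes` threshold are hypothetical.

```python
from collections import Counter
from typing import Dict, Set, Tuple

Variant = Tuple[str, int, str, str]  # (chromosome, position, ref, alt)

def consensus_calls(calls_by_caller: Dict[str, Set[Variant]], min_votes: int = 2) -> Set[Variant]:
    """Keep variants reported by at least `min_votes` callers."""
    votes = Counter(v for calls in calls_by_caller.values() for v in calls)
    return {variant for variant, n in votes.items() if n >= min_votes}

# Hypothetical outputs from three callers.
caller_outputs = {
    "deep_learning": {("chr1", 100, "A", "G"), ("chr1", 200, "C", "T")},
    "caller_b": {("chr1", 100, "A", "G"), ("chr2", 50, "G", "A")},
    "caller_c": {("chr1", 100, "A", "G"), ("chr1", 200, "C", "T"), ("chr3", 10, "A", "T")},
}
consensus = consensus_calls(caller_outputs)
```

Raising `min_votes` trades recall for precision, so the threshold is best chosen against the validation set rather than fixed in advance.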

Step 6: Functional Annotation and Interpretation

- Annotate called variants using databases like dbSNP, ClinVar, and gnomAD.

- Use interpretation tools to predict functional impact (e.g., SIFT, PolyPhen-2, CADD).

- Implement model interpretation techniques (e.g., SHAP, Integrated Gradients) to identify features driving variant classifications.

Diagram 1: Deep learning workflow for genomic variant calling, showing the sequential steps from data preparation to final variant annotation.

Data Management, Ethical Considerations, and Best Practices

The generation and analysis of genomic data at scale necessitates careful attention to data management, storage solutions, and ethical frameworks, particularly when working with Indigenous Peoples and Local Communities (IPLCs).

Data Storage and Management Solutions

Genomic datasets present significant challenges for storage and efficient access due to their massive size. The D4 (dense depth data dump) format has been developed specifically for quantitative genomics data to balance improved analysis speeds with file size requirements [28]. Unlike general-purpose formats like HDF5, D4 uses an adaptive encoding scheme that profiles a random sample of aligned sequence depth to determine an optimal encoding strategy [28]. For typical whole genome sequencing data with 30-fold coverage, more than 99% of observed depths fall between 0 and 63, enabling efficient encoding with just 6 bits per base [28]. The d4tools software suite provides utilities for creating D4 files from BAM, CRAM, and bigWig inputs, along with tools for statistical summaries and visualization [28].
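
The idea behind this encoding can be illustrated with a simplified sketch: sample the depths, pick the smallest bit width that covers almost all of them, store those values densely, and keep the rare outliers in a sparse side table. The code below is an illustration of that principle only, not the D4 file format or the d4tools implementation, and all names and the simulated depths are assumptions.

```python
import random
from typing import Dict, List, Tuple

def choose_bit_width(sampled_depths: List[int], coverage_target: float = 0.99, max_bits: int = 16) -> int:
    """Smallest k such that at least `coverage_target` of sampled depths fit in k bits."""
    for k in range(max_bits + 1):
        fitting = sum(1 for d in sampled_depths if d < (1 << k))
        if fitting / len(sampled_depths) >= coverage_target:
            return k
    return max_bits

def encode(depths: List[int], k: int) -> Tuple[List[int], Dict[int, int]]:
    """Dense k-bit codes plus a sparse table for depths outside the k-bit range."""
    primary: List[int] = []
    overflow: Dict[int, int] = {}
    for pos, depth in enumerate(depths):
        if depth < (1 << k):
            primary.append(depth)
        else:
            primary.append(0)       # placeholder; the true value lives in the sparse table
            overflow[pos] = depth
    return primary, overflow

def decode(primary: List[int], overflow: Dict[int, int]) -> List[int]:
    return [overflow.get(pos, code) for pos, code in enumerate(primary)]

rng = random.Random(0)
depths = [rng.randint(20, 40) for _ in range(1000)] + [500]  # simulated depths, one outlier
bit_width = choose_bit_width(rng.sample(depths, 200))
primary_table, sparse_table = encode(depths, bit_width)
```

With 6 bits per base instead of a fixed 32-bit integer, the dense table is roughly five times smaller before any further compression, while the sparse table keeps the representation lossless.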

Ethical Framework and Data Sharing Principles

The Earth BioGenome Project has established comprehensive guidelines for ethical data sharing, particularly emphasizing relationships with Indigenous Peoples and Local Communities (IPLCs) [21]. The EBP affirms that "the protection and conservation of biodiversity is of common interest to all humanity" and supports the establishment of "responsible procedures for the sharing and management of biodiversity genomic data that maximize openness while respecting international and national legislation and the rights of Indigenous Peoples and Local Communities" [21].

Key principles include:

- FAIR and CARE Principles: EBP requires that genome assemblies, raw data, and specimen metadata be shared in alignment with both FAIR (Findable, Accessible, Interoperable, Reusable) and CARE (Collective Benefit, Authority to Control, Responsibility, Ethics) principles [21].

- Access and Benefit-Sharing: EBP supports the ambitions of the Convention on Biological Diversity (CBD) and Nagoya Protocol, advocating for "open access policy for all digital sequence information (DSI)" while ensuring equitable sharing of benefits [21].

- Respect for Sovereignty and Rights: The project recognizes national sovereignty over biodiversity and the rights of IPLCs, acknowledging that open sharing through INSDC may be prohibited or delayed due to national laws, regulations, or agreements with communities [21].

- Equitable Capacity Building: EBP encourages highly resourced projects to support lower-resourced projects through funding, collaboration, technology transfer, training, and capacity building to reduce barriers to producing reference genomes that meet quality standards [21].

When partnering with IPLCs, biological samples or Traditional Knowledge must be ethically and legally obtained through engagement that "accommodate[s] the priorities, needs and preferences of the IPLCs in a clear and transparent manner" [21]. This includes respecting mutually agreed-upon research dissemination strategies and publication embargoes that protect community interests [21].

Diagram 2: Ethical framework for genomic data governance, showing the integration of FAIR, CARE, and TRUST principles into practical applications for responsible data management.

Essential Research Reagent Solutions

The following table outlines key computational tools and resources essential for conducting AI-driven evolutionary genomics research:

Table 4: Essential Research Reagent Solutions for AI-Driven Evolutionary Genomics

| Resource Category | Specific Tools/Platforms | Function/Purpose |
|---|---|---|
| Variant Calling Tools | DeepVariant, DeepSV, GATK, SAMtools | Identification of genetic variants from sequencing data |
| Data Formats | D4 format, BAM, CRAM, VCF, FASTA | Efficient storage and access of genomic data and variants |
| Cloud Computing Platforms | Amazon Web Services, Google Compute Engine, Microsoft Azure | Provide GPU resources for deep learning model training |
| Specialized Databases | Tree of Sex Database, Karyotype Databases, dbVar, GEO | Curated data for specific evolutionary questions |
| Programming Frameworks | TensorFlow, PyTorch, Keras | Implementation and training of deep learning models |
| Genomic Browsers/Viewers | Genome Data Viewer (GDV), UCSC Genome Browser | Visualization and exploration of genomic data |

The integration of large-scale genomic initiatives like the Earth BioGenome Project with advanced deep learning methodologies represents a paradigm shift in evolutionary genomics research. The resources, protocols, and ethical frameworks outlined in this article provide a roadmap for researchers to leverage these powerful tools and datasets effectively. As the field continues to evolve, several key challenges remain, including the need for more efficient data compression formats like D4, improved model interpretability, and the ongoing implementation of ethical guidelines that respect both open science principles and the rights of Indigenous Peoples and Local Communities. The rapid pace of advancement in both sequencing technologies and AI algorithms promises to further accelerate discoveries, enabling unprecedented insights into the patterns and processes of genome evolution across the tree of life.

From Theory to Therapy: AI Applications Redefining Genomic Analysis and Discovery

The emergence of generative artificial intelligence (AI) represents a paradigm shift in evolutionary genomics research, enabling machines to read, write, and think in the language of nucleotides [29]. Foundation models trained on biological sequences can now decode the patterns evolution has imprinted on DNA, RNA, and proteins over millions of years [29] [9]. The Evo model series, developed through a collaboration between Arc Institute, NVIDIA, Stanford University, UC Berkeley, and UC San Francisco researchers, stands at the forefront of this revolution [29] [30]. This application note examines the capabilities of Evo 2 and its predecessor, providing detailed protocols for leveraging these tools in genomic research and therapeutic development.

Evo 2 represents the largest publicly available AI model for biology to date, building upon the architecture and training methodologies established by Evo 1 [29] [31]. These models demonstrate how deep learning can harness evolutionary constraints to predict molecular function and design novel biological systems [32]. For researchers and drug development professionals, these tools offer unprecedented capabilities for identifying disease-causing mutations, designing targeted genetic therapies, and accelerating the development of precision medicines [33] [9].

Model Architecture and Technical Evolution

From Evo 1 to Evo 2: A Quantitative Comparison

The Evo model series uses the StripedHyena architecture, which addresses the difficulty standard Transformer models have with long genomic sequences [33]. This hybrid design interleaves gated convolutional (Hyena) operators with attention layers, allowing it to process long contexts efficiently (131,072 tokens in Evo 1 and up to roughly 1 million nucleotides in Evo 2) and to capture relationships between distant genomic regions [29] [33].
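To make the idea of "convolutional filters and gates" concrete, here is a toy sketch of a gated causal convolution in plain Python. It is an illustration only and not the StripedHyena implementation: the fixed filter `h`, the explicit `gate` values, and the function names are assumptions chosen for readability, whereas real models learn these quantities and evaluate long filters far more efficiently.

```python
def causal_conv(x, h):
    """Causal convolution: y[t] = sum_k h[k] * x[t - k], using only past inputs."""
    y = []
    for t in range(len(x)):
        acc = 0.0
        for k, weight in enumerate(h):
            if t - k >= 0:  # never look ahead of position t
                acc += weight * x[t - k]
        y.append(acc)
    return y


def gated_conv(x, gate, h):
    """Multiply the convolution output element-wise by a gate signal."""
    conv = causal_conv(x, h)
    return [g * c for g, c in zip(gate, conv)]


# Toy signal, a two-tap filter, and a hand-written gate (all illustrative).
signal = [1.0, 2.0, 3.0, 4.0]
taps = [1.0, 0.5]
gate = [1.0, 0.0, 1.0, 0.5]
print(gated_conv(signal, gate, taps))
```

The gate lets the layer suppress or pass the filtered signal position by position, which is the data-dependent behavior that plain convolution lacks.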

Table 1: Technical Specifications of Evo Model Generations

| Feature | Evo 1 | Evo 2 |
|---|---|---|
| Training Data | 300 billion nucleotides from prokaryotic genomes [33] | 8.8-9.3 trillion nucleotides from all domains of life [29] [33] |
| Species Coverage | 113,000 bacterial and archaeal genomes [34] | 128,000+ species including eukaryotes [29] [30] |
| Model Parameters | 7 billion [33] | 7 billion and 40 billion [33] |
| Context Length | 131,072 tokens [33] | Up to 1,048,576 tokens [33] |
| Architecture | StripedHyena (29 layers) [33] | StripedHyena 2 (up to 40B parameters) [29] [33] |
| Training Hardware | Not specified | 2,000+ NVIDIA H100 GPUs on DGX Cloud [29] [33] |
| Modalities | DNA, RNA, protein [33] | DNA, RNA, protein [33] |

Architectural Innovations

The StripedHyena architecture enables Evo 2 to process genetic sequences of up to 1 million nucleotides at once, representing a fundamental breakthrough in genomic AI [29]. This long context window allows researchers to explore interactions between genes that may not be physically close on the DNA molecule but collaborate functionally [34]. The architecture trains nearly three times faster than optimized transformer models, making large-scale genomic analysis computationally feasible [31].
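A short calculation shows why context length matters so much for architecture choice. Dense self-attention scores every token against every other token, so its cost grows with the square of the context length. The sketch below uses the two context lengths from Table 1; the helper name `attention_pairs` is hypothetical, and the numbers count pairwise scores for a single attention matrix rather than measured runtime or memory.

```python
def attention_pairs(context_len):
    """Number of pairwise scores in one dense self-attention matrix."""
    return context_len * context_len


EVO1_CONTEXT = 131_072      # tokens, from Table 1
EVO2_CONTEXT = 1_048_576    # tokens, from Table 1

length_ratio = EVO2_CONTEXT // EVO1_CONTEXT
pair_ratio = attention_pairs(EVO2_CONTEXT) // attention_pairs(EVO1_CONTEXT)

print(f"Context grows {length_ratio}x, dense attention scores grow {pair_ratio}x")
print(f"Pairwise scores at 1,048,576 tokens: {attention_pairs(EVO2_CONTEXT):,}")
```

An eightfold increase in context therefore implies a 64-fold increase in pairwise scores for dense attention, which is the scaling pressure that convolution-based operators are meant to relieve.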

Evo 2's training on over 128,000 whole genomes across all domains of life (bacteria, archaea, and eukaryotes) provides it with a generalist understanding of the tree of life [29] [30]. This cross-species generalization capability enables the model to identify patterns that experimental researchers would need years to uncover through traditional laboratory methods [30].

Research Applications and Performance Benchmarks

Predictive Capabilities in Disease Research

Evo 2 performs strongly at predicting the functional effects of genetic variation, achieving over 90% accuracy in distinguishing benign from pathogenic mutations in the BRCA1 gene associated with breast cancer risk [29] [31]. Unlike specialized variant effect predictors such as AlphaMissense, which scores missense variants in protein-coding regions, Evo 2 can predict the effects of both coding and non-coding mutations, making it state-of-the-art for comprehensive genomic analysis [31].

Table 2: Experimental Applications and Validation Methodologies

| Application Domain | Experimental Protocol | Validation Method | Performance Metrics |
|---|---|---|---|
| Variant Effect Prediction | In silico analysis of human gene variants [29] | Comparison to clinical databases and functional studies [31] | >90% accuracy for BRCA1 classification [29] [31] |
| Gene Essentiality Identification | Genome-wide analysis across species [33] | Comparison to experimental knockout studies [33] | State-of-the-art identification of essential genes [33] |
| Semantic Design | Prompt-based generation with functional context [32] | Growth inhibition assays for toxin-antitoxin systems [32] | High experimental success rates for novel proteins [32] |
| Regulatory Element Design | Generation of cell-type specific promoters [29] | Chromatin accessibility profiling in target cells [31] | Specific activity in desired cell types [29] |

Generative Capabilities for Synthetic Biology

Evo 2 enables "semantic design" of novel biological sequences by leveraging the model's understanding of genomic context and functional associations [32]. This approach allows researchers to generate novel genes with specified functions by providing genomic prompts that establish functional context. The model has successfully generated functional anti-CRISPR proteins and type II/III toxin-antitoxin systems, including de novo genes with no significant sequence similarity to natural proteins [32].

The generative process functions as a genomic "autocomplete" where researchers can input partial sequences or functional contexts, and Evo 2 generates novel sequences enriched for related functions [34] [32]. This capability has been validated through experimental testing, demonstrating that sequences generated by Evo achieve robust activity even without structural priors or task-specific fine-tuning [32].

Experimental Protocols

Protocol 1: Variant Pathogenicity Assessment

Principle: Evo 2 can distinguish between benign and pathogenic genetic mutations with high accuracy by leveraging its training across evolutionary sequences [29] [31].

Procedure:

- Input Preparation: Format the DNA sequence containing the variant of interest in FASTA format. Include at least 500 base pairs of flanking sequence context.

- Model Query: Use the Evo 2 API to compute likelihood scores for both reference and alternative alleles at the variant position.

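- Score Calculation: Compute the log-likelihood ratio (LLR) of the alternative sequence relative to the reference, LLR = log P(alt) - log P(ref). More negative LLR values mean the model finds the variant less plausible than the reference, which indicates a higher probability of pathogenicity.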

- Interpretation: Classify variants using validated thresholds established for genes of interest (e.g., BRCA1).

Validation: This protocol achieved over 90% accuracy on BRCA1 variants compared to clinical classifications [29] [31].
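The input preparation and scoring steps of Protocol 1 can be sketched as follows. This is a minimal illustration under stated assumptions, not the Evo 2 API: `toy_log_likelihood` is a hypothetical stand-in scorer (an independent-base model) used only so the example runs, and the 500 bp flank is applied on each side of the variant, which is one reading of the protocol. In practice `score_fn` would be replaced by a call that returns the model's sequence log-likelihood.

```python
import math


def make_alleles(reference, pos, ref_base, alt_base, flank=500):
    """Return (ref_window, alt_window) around 0-based position `pos`."""
    if reference[pos] != ref_base:
        raise ValueError("ref_base does not match the reference sequence")
    start = max(0, pos - flank)
    end = min(len(reference), pos + flank + 1)
    ref_window = reference[start:end]
    offset = pos - start  # index of the variant inside the window
    alt_window = ref_window[:offset] + alt_base + ref_window[offset + 1:]
    return ref_window, alt_window


def to_fasta(name, seq, width=60):
    """Format a sequence as a FASTA record with fixed-width lines."""
    lines = [f">{name}"]
    lines += [seq[i:i + width] for i in range(0, len(seq), width)]
    return "\n".join(lines)


def log_likelihood_ratio(score_fn, ref_window, alt_window):
    """LLR = log P(alt) - log P(ref); more negative means less plausible."""
    return score_fn(alt_window) - score_fn(ref_window)


def toy_log_likelihood(seq):
    """Hypothetical stand-in scorer (NOT Evo 2): independent base frequencies."""
    probs = {"A": 0.2, "C": 0.3, "G": 0.3, "T": 0.2}
    return sum(math.log(probs[base]) for base in seq)


# Synthetic reference and variant, for illustration only.
reference = "ACGT" * 300
ref_w, alt_w = make_alleles(reference, 602, "G", "A")
print(to_fasta("ref_window", ref_w).splitlines()[0], len(ref_w))
print(round(log_likelihood_ratio(toy_log_likelihood, ref_w, alt_w), 3))
```

Passing the scorer in as a function keeps the LLR logic independent of how likelihoods are obtained, so the same code works with a hosted API or a local model. The resulting LLR would then be compared against a gene-specific threshold, as the Interpretation step describes.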

Protocol 2: Semantic Design of Novel Genes

Principle: By leveraging the distributional hypothesis of gene function ("you shall know a gene by the company it keeps"), Evo can generate novel sequences with desired functions based on genomic context [32].

Procedure:

- Prompt Engineering: Identify and extract genomic sequences with known functional associations from databases. For toxin-antitoxin systems, prompt with known toxin sequences to generate novel antitoxins [32].

- Sequence Generation: Use Evo's generation API with appropriate sampling parameters (temperature=0.7-1.0, top_k=4-10) to generate diverse candidate sequences.

- In Silico Filtering: Filter generated sequences for protein-coding potential, novelty requirements (<70% sequence identity to known proteins), and predicted molecular interactions.

- Experimental Validation: Synthesize filtered sequences and test functionality using appropriate assays (e.g., growth inhibition for toxins [32]).
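The sampling parameters named in the Sequence Generation step (temperature and top_k) can be illustrated with a small, self-contained sampler. This sketch shows the general technique only: `toy_next_logits` is a hypothetical stand-in for a model's next-token scores, and the function names are illustrative rather than part of any Evo interface.

```python
import math
import random


def sample_top_k(logits, temperature=0.8, top_k=4, rng=None):
    """Sample one token from a dict of token -> logit using temperature and top-k."""
    if temperature <= 0:
        raise ValueError("temperature must be positive")
    rng = rng or random
    # Keep only the k highest-scoring tokens.
    top = sorted(logits.items(), key=lambda kv: kv[1], reverse=True)[:top_k]
    # Temperature rescales logits: lower values sharpen the distribution.
    scaled = [score / temperature for _, score in top]
    peak = max(scaled)
    weights = [math.exp(s - peak) for s in scaled]  # numerically stable softmax weights
    tokens = [tok for tok, _ in top]
    return rng.choices(tokens, weights=weights, k=1)[0]


def toy_next_logits(prefix):
    """Hypothetical model stand-in: mildly favors repeating the last base."""
    logits = {"A": 0.0, "C": 0.0, "G": 0.0, "T": 0.0}
    if prefix and prefix[-1] in logits:
        logits[prefix[-1]] += 1.0
    return logits


def generate(prompt, n_tokens, next_logits, temperature=0.8, top_k=4, seed=0):
    """Autoregressively extend `prompt` by `n_tokens` sampled tokens."""
    rng = random.Random(seed)
    seq = prompt
    for _ in range(n_tokens):
        seq += sample_top_k(next_logits(seq), temperature, top_k, rng)
    return seq


print(generate("ATG", 30, toy_next_logits, temperature=0.8, top_k=4))
```

Higher temperatures and larger top_k values yield more diverse candidates at the cost of more low-likelihood sequences, which is why the protocol pairs generation with a filtering step.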

Validation: This approach successfully generated functional anti-CRISPR proteins and toxin-antitoxin systems with high experimental success rates [32].
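The In Silico Filtering step of Protocol 2 can be approximated with a simple open-reading-frame check and a novelty filter. The sketch below makes several simplifying assumptions that should be stated plainly: it accepts only sequences that form one complete ATG-to-stop reading frame under the standard genetic code, and it uses `difflib.SequenceMatcher` as a crude similarity proxy rather than true alignment-based sequence identity, which a real pipeline would obtain from an alignment tool. Predicted molecular interactions are not covered here.

```python
from difflib import SequenceMatcher

# Standard genetic code, built from the conventional TCAG ordering.
_BASES = "TCAG"
_AMINOS = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {
    a + b + c: _AMINOS[16 * i + 4 * j + k]
    for i, a in enumerate(_BASES)
    for j, b in enumerate(_BASES)
    for k, c in enumerate(_BASES)
}


def translate_orf(dna):
    """Return the protein if `dna` is one complete ORF (ATG ... stop), else None."""
    dna = dna.upper()
    if len(dna) % 3 != 0 or not dna.startswith("ATG"):
        return None
    codons = [dna[i:i + 3] for i in range(0, len(dna), 3)]
    if any(codon not in CODON_TABLE for codon in codons):
        return None  # ambiguous or non-ACGT characters
    protein = "".join(CODON_TABLE[codon] for codon in codons)
    if not protein.endswith("*") or "*" in protein[:-1]:
        return None  # missing stop codon or premature stop
    return protein[:-1]


def similarity(a, b):
    """Crude similarity proxy in [0, 1]; NOT alignment-based identity."""
    return SequenceMatcher(None, a, b, autojunk=False).ratio()


def passes_filters(dna, known_proteins, max_similarity=0.70):
    """Keep sequences that encode an ORF and look novel against known proteins."""
    protein = translate_orf(dna)
    if protein is None:
        return False
    return all(similarity(protein, known) < max_similarity for known in known_proteins)


candidate = "ATGGCTAAATAA"  # encodes M-A-K followed by a stop codon
print(translate_orf(candidate))
print(passes_filters(candidate, known_proteins=["WWWWWW"]))
```

The 0.70 cutoff mirrors the novelty requirement in the protocol; because the proxy is not a true identity measure, the threshold would need recalibrating when a proper aligner is substituted.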

Protocol 3: Cell-Type Specific Regulatory Element Design

Principle: Evo 2 can design genetic elements that function specifically in target cell types by learning patterns of chromatin accessibility and gene regulation [29] [31].

Procedure:

- Target Definition: Identify cell type of interest (e.g., neurons, liver cells) and desired regulation pattern.

- Context Provision: Provide Evo 2 with known cell-type specific regulatory elements as context.

- Generation: Generate novel regulatory sequences using conditional sampling.

- Validation: Test designed sequences experimentally using reporter assays in the target cell type.

Application: This protocol enables design of gene therapies with reduced side effects through cell-type specific activity [29].

Workflow Visualization

Evo 2 Research Workflow Diagram. The visualization outlines the key stages in utilizing Evo 2 for genomic research, from input preparation through experimental validation.

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Research Resources for Evo 2 Applications

| Resource | Type | Function | Access Method |
|---|---|---|---|
| NVIDIA BioNeMo | Cloud Platform | Hosted Evo 2 API for sequence analysis and generation [33] | NVIDIA cloud services |
| Evo Designer | Web Interface | User-friendly interface for interactive sequence design [29] | Web browser access |
| StripedHyena 2 | Model Architecture | Open-source code for local implementation [33] | GitHub repository |
| OpenGenome2 | Training Dataset | 8.8 trillion nucleotides for model training [35] | HuggingFace dataset |
| SynGenome | AI-Generated Database | 120 billion base pairs of AI-generated sequences [32] | evodesign.org/syngenome |

Implementation Guide

API Integration Example

Evo 2 is accessible through the NVIDIA BioNeMo platform as a NIM microservice. Below is a basic implementation example for sequence generation:
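A minimal sketch of such a request, using only the Python standard library, might look like the following. The endpoint URL, the JSON field names (`sequence`, `num_tokens`, `top_k`, `temperature`), and the `NVIDIA_API_KEY` environment variable are assumptions for illustration and should be checked against the current NVIDIA documentation before use. The example builds the request without sending it, so it can be inspected safely.

```python
import json
import urllib.request

# ASSUMPTION: endpoint path for the hosted Evo 2 service; verify against NVIDIA's docs.
EVO2_GENERATE_URL = "https://health.api.nvidia.com/v1/biology/arc/evo2-40b/generate"


def build_generate_request(sequence, api_key, num_tokens=8, top_k=4,
                           temperature=0.7, url=EVO2_GENERATE_URL):
    """Build (but do not send) a POST request for sequence generation."""
    # ASSUMPTION: these JSON field names are illustrative, not confirmed by the source.
    payload = {
        "sequence": sequence,
        "num_tokens": num_tokens,
        "top_k": top_k,
        "temperature": temperature,
    }
    return urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


def generate(sequence, api_key, **kwargs):
    """Send the request and return the decoded JSON response."""
    request = build_generate_request(sequence, api_key, **kwargs)
    with urllib.request.urlopen(request, timeout=60) as response:
        return json.loads(response.read().decode("utf-8"))


# Inspect the request locally; no network call is made here.
demo = build_generate_request("ACTGACTGACTGACTG", api_key="YOUR_KEY_HERE")
print(demo.get_method(), demo.full_url)
print(json.loads(demo.data))

# To send it for real (requires a valid key):
#   import os
#   result = generate("ACTGACTGACTGACTG", os.environ["NVIDIA_API_KEY"])
```

Separating request construction from sending makes it easy to log or unit-test the payload, and to point `url` at a self-hosted NIM container instead of the hosted service.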

Local Implementation Requirements

For researchers requiring local deployment, the following specifications are necessary:

- GPU: Compute Capability 8.9+ (Ada/Hopper) for FP8 support [35]

- Software: CUDA 12.1+, cuDNN 9.3+, Python 3.12 [35]

- Memory: Significant VRAM for the 40B parameter model [33]
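A back-of-the-envelope calculation gives a lower bound on the memory requirement. The sketch below counts model weights only; activations, inference state, and framework overhead are excluded, so real usage will be higher. The precision choices shown (16-bit and 8-bit) are illustrative assumptions, and the helper name is hypothetical.

```python
def weight_memory_gb(n_params, bytes_per_param):
    """Memory for model weights alone, in gigabytes (10^9 bytes)."""
    return n_params * bytes_per_param / 1e9


MODEL_SIZES = {"7B": 7_000_000_000, "40B": 40_000_000_000}
PRECISIONS = {"16-bit": 2, "8-bit (FP8)": 1}  # bytes per parameter

for model, n_params in MODEL_SIZES.items():
    for label, n_bytes in PRECISIONS.items():
        print(f"{model} at {label}: {weight_memory_gb(n_params, n_bytes):.0f} GB")
```

At 16-bit precision the 40B model's weights alone come to 80 GB, so running it is likely to require multiple GPUs or reduced precision.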

Safety and Ethical Considerations

The Evo 2 development team implemented important safety measures, excluding pathogens that infect humans and other complex organisms from the training data [29]. The model is designed not to return productive answers to queries about these excluded pathogens [29]. These precautions, developed with ethics experts including Stanford Professor Tina Hernandez-Boussard and her lab, ensure responsible deployment while maintaining broad utility for legitimate research applications [29].

Future Directions