Mitigating Contamination in Metagenomic Antibiotic Resistance Gene Analysis: A Comprehensive Guide for Researchers

Accurate metagenomic analysis of Antibiotic Resistance Genes (ARGs) is paramount for public health surveillance and drug development, yet is critically threatened by contamination and analytical artifacts.

Mitigating Contamination in Metagenomic Antibiotic Resistance Gene Analysis: A Comprehensive Guide for Researchers

Abstract

Accurate metagenomic analysis of Antibiotic Resistance Genes (ARGs) is paramount for public health surveillance and drug development, yet is critically threatened by contamination and analytical artifacts. This article provides a comprehensive framework for researchers and scientists to identify, troubleshoot, and mitigate contamination throughout the ARG analysis pipeline. Covering foundational concepts, advanced methodologies like long-read sequencing and machine learning, optimization strategies for low-biomass samples, and rigorous validation techniques, this guide synthesizes the latest advancements to ensure the integrity and reliability of resistome data for biomedical and clinical applications.

Understanding the ARG Contaminome: Sources, Impacts, and Foundational Concepts

Troubleshooting Guides

Low ARG Detection Specificity in Complex Environmental Samples

Problem: Inconclusive or non-specific detection of Antibiotic Resistance Genes (ARGs) in complex environmental samples (e.g., wastewater, sediment), leading to unreliable abundance profiles.

Background: Metagenomic analysis of ARGs in environments like wastewater or agricultural runoff is complicated by high microbial diversity and the presence of chemical contaminants. These factors can interfere with DNA extraction, sequencing, and bioinformatic classification [1].

Solution: A multi-faceted approach is required to improve specificity:

- Enhanced Bioinformatic Filtering: Utilize updated and comprehensive ARG databases. The

SARG+database, for instance, expands upon common databases like CARD by including multiple sequence variants for a single ARG from different species, improving detection sensitivity and accuracy [2]. - Incorporate Abiotic Data: Analyze key environmental variables. Studies show that nutrients like Total Nitrogen (TN) and Total Phosphorus (TP) in water, as well as heavy metal contamination, can be key drivers of ARG co-selection and abundance. Correlating this data with metagenomic findings can validate ARG profiles [1] [3].

- Apply Health Risk Frameworks: Use frameworks that classify ARGs based on their human accessibility, mobility, pathogenicity, and clinical availability. This helps prioritize ARGs that pose a genuine health risk over ubiquitous environmental resistance genes [3].

Prevention:

- Implement strict quality control during DNA extraction and library preparation.

- Use a standardized metadata sheet to document all environmental parameters (e.g., pH, nutrient levels, antibiotic concentrations) to aid in later interpretation and normalization of data [4].

Inaccurate Host Attribution of ARGs

Problem: Difficulty in accurately linking detected ARGs to their specific bacterial host species, hindering risk assessment of pathogen transmission.

Background: Short-read sequencing technologies produce fragments that are often too short to unambiguously link an ARG to its host genome, especially when ARGs are located on mobile genetic elements (MGEs) [2] [5].

Solution: Leverage long-read sequencing technologies and advanced bioinformatic tools.

- Adopt Long-Read Sequencing: Platforms like Oxford Nanopore Technologies (ONT) and PacBio generate reads tens of thousands of bases long. These long reads can span ARGs and their surrounding genomic context, enabling more confident host attribution [2].

- Use Specialized Bioinformatics Tools: Employ tools like

Argo, which is designed for long-read metagenomic data. Instead of classifying each read individually,Argoclusters overlapping reads and assigns taxonomic labels collectively, significantly enhancing the accuracy of host identification at the species level [2]. - Track Mobile Genetic Elements (MGEs): Actively screen for MGEs (plasmids, integrons, transposons) in your analysis. The presence of an ARG near an MGE is a strong indicator of potential horizontal gene transfer [6].

Prevention:

- For critical studies requiring high-confidence host linkage, design your workflow around long-read sequencing from the start.

- In bioinformatic pipelines, use comprehensive taxonomic databases like GTDB for classification [2].

Background Contamination and Cross-Talk in Samples

Problem: High levels of background noise or suspected cross-contamination between samples, leading to inflated or false-positive ARG calls.

Background: Contamination can be introduced at multiple stages: during sample collection, DNA extraction, library preparation, or via bioinformatic artifacts during sequence classification [7]. Low-biomass samples are particularly vulnerable.

Solution: A rigorous experimental and analytical workflow is essential.

- Include Control Samples: Always process negative controls (e.g., blank extraction controls, no-template PCR controls) alongside your experimental samples. The sequences derived from these controls should be used to create a "background contaminant" profile that can be subtracted from your experimental data.

- Bioinformatic Decontamination: Use the data from your negative controls to filter out contaminant reads from your experimental samples. Tools and scripts are available that can subtract reads or taxa present in controls from the main dataset.

- Validate with Assembly: While read-based analysis is fast, assembly-based approaches can provide more accurate gene calls. Assemble reads into contigs and then annotate ARGs. This reduces the risk of false positives from short, non-specific matches [4] [5].

Prevention:

- Maintain separate pre- and post-PCR laboratories and use dedicated equipment.

- Use unique dual-indexed barcodes for each sample to minimize the risk of index hopping or cross-talk during multiplex sequencing.

Inconsistent ARG Abundance Quantification

Problem: ARG abundance values are inconsistent across different studies or when using different bioinformatic tools, making comparisons invalid.

Background: Different methods for normalizing ARG abundance (e.g., to 16S rRNA gene count, to number of cellular controls, to sequencing depth) can yield vastly different results [7] [5].

Solution: Standardize the normalization approach.

- Choose a Robust Normalization Method: A common and recommended practice is to normalize the number of ARG reads to the number of 16S rRNA gene copies in the sample. This controls for variations in bacterial biomass and sequencing depth [7].

- Use a Standardized Unit: Express results as "copy of ARG per copy of 16S rRNA gene" to ensure comparability [7].

- Pipeline Consistency: When comparing datasets, use the same bioinformatic pipeline and ARG database. Note that different databases (e.g., CARD, SARG) have different curation rules, which will affect counts [5].

Prevention:

- Clearly state the normalization method and database used in all publications and reports.

- When using a pipeline like

ARGem, take advantage of its integrated workflow to ensure consistency from raw read to final quantification [4].

Frequently Asked Questions (FAQs)

Q1: What are the key environmental factors that can confound ARG analysis, and how should I account for them? Environmental factors like nutrients (especially nitrogen and phosphorus), heavy metals, pH, and organic matter can significantly influence ARG abundance and distribution through co-selection pressure [1]. You should account for them by:

- Measuring these variables during sample collection.

- Incorporating them as metadata in your statistical models to determine their correlation with ARG profiles.

- Recognizing that these factors can be as significant a driver as antibiotic residues themselves [1] [3].

Q2: How can I distinguish between a 'high-risk' ARG and a benign environmental resistance gene? A 'high-risk' ARG is one that has a high potential to end up in a human pathogen. Use a metagenomic-based risk assessment framework that scores ARGs based on [3]:

- Human accessibility: Can the ARG be found in bacteria that infect humans?

- Mobility: Is the ARG associated with mobile genetic elements like plasmids?

- Pathogenicity: Is the ARG host a known pathogen?

- Clinical availability: Is the antibiotic to which it confers resistance used in clinical medicine? ARGs with high scores across these indicators, particularly multidrug resistance genes, pose the greatest health risk [3].

Q3: My analysis detected ARGs in a sample with no known antibiotic exposure. Is this contamination? Not necessarily. Antibiotic resistance is a natural phenomenon, and ARGs exist in pristine environments as part of the natural resistome [1] [6]. Their presence can be explained by:

- Co-selection from other pollutants, such as heavy metals, which can maintain ARGs in bacterial populations even in the absence of antibiotics [3].

- The ancient and ubiquitous nature of many resistance mechanisms [1].

- Background levels of antibiotics or other selective agents that were not measured.

Q4: What is the single most effective step to improve the accuracy of my metagenomic ARG analysis? While a robust workflow is essential, for complex environmental samples, performing sequence assembly prior to ARG annotation is highly recommended. While read-based analysis is faster, assembly into contigs provides longer sequences for annotation, which greatly improves the confidence and accuracy of ARG identification and reduces false positives [4] [5].

Experimental Protocols & Data Presentation

Standardized Metagenomic Workflow for ARG Analysis

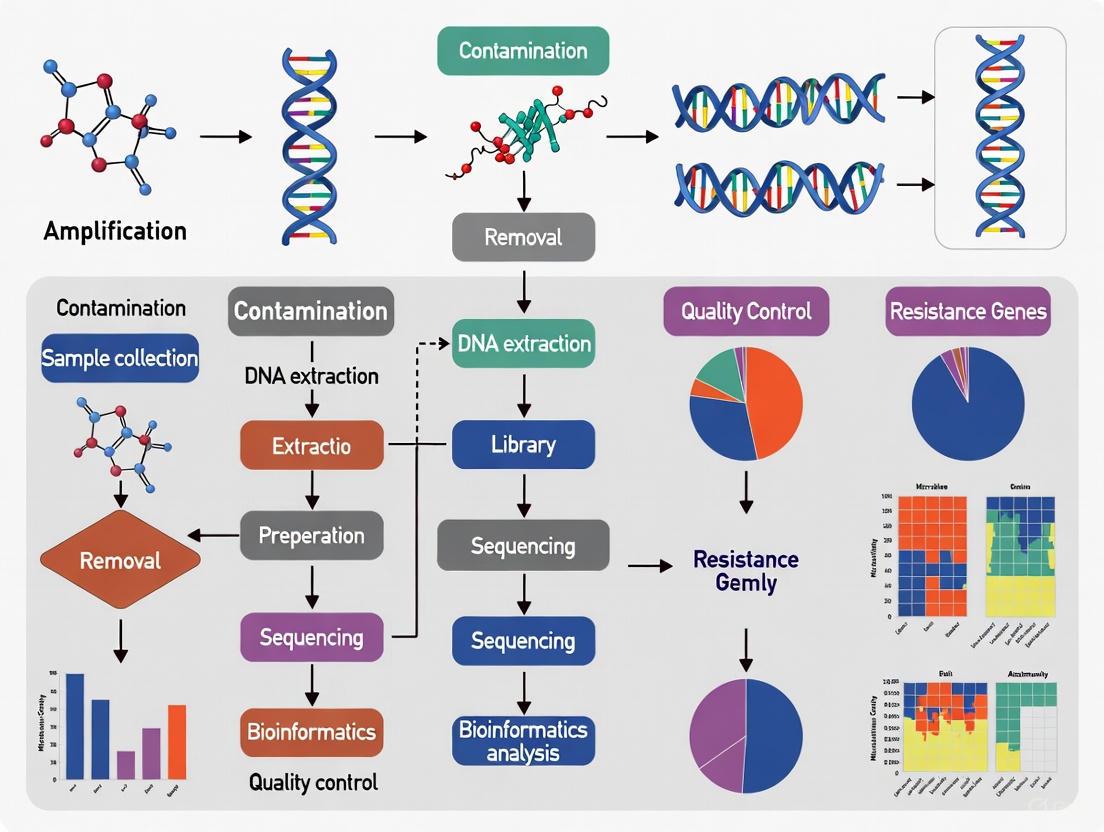

The following diagram outlines a robust workflow for metagenomic ARG analysis, integrating steps for contamination mitigation.

Diagram: Integrated ARG Analysis Workflow with Key Control Points.

Key Environmental Factors and Their Impact on ARG Abundance

Table: Key Environmental Drivers of ARG Abundance and Their Proposed Mechanisms [1] [3] [7].

| Environmental Factor | Observed Correlation with ARGs | Proposed Mechanism |

|---|---|---|

| Nutrients (N & P) | Strong positive correlation; Total Nitrogen (TN) identified as a major contributor [1]. | Nutrient pollution can enhance microbial growth and density, facilitating horizontal gene transfer and co-selection of ARGs. |

| Heavy Metals | Positive correlation with metals like Sb, Cu, Zn in mining areas [3]. | Co-selection where metal resistance genes (e.g., on same plasmid as ARGs) are selected for under metal stress. |

| pH | Significant correlation with tetracycline resistance genes (e.g., tetM) in soils [1]. | pH influences microbial community structure and the bioavailability of antibiotics and metals, indirectly shaping the resistome. |

| Antibiotic Residues | Direct selective pressure; water column antibiotics majorly affect sediment ARGs [1]. | Direct selection for bacteria possessing ARGs that confer resistance to the specific antibiotic present. |

| Mobile Genetic Elements | Strong co-occurrence network between ARGs and MGEs [6] [7]. | MGEs (plasmids, integrons, transposons) are the primary vectors for the horizontal transfer of ARGs between bacteria. |

Research Reagent Solutions

Table: Essential Tools and Databases for Contamination-Aware Metagenomic ARG Analysis.

| Reagent / Resource | Type | Primary Function in ARG Analysis |

|---|---|---|

| SARG+ [2] | ARG Database | A manually curated database that includes multiple variants per ARG from different species, improving detection accuracy and reducing false negatives. |

| GTDB [2] | Taxonomic Database | A comprehensive and quality-controlled taxonomic database used for accurate classification of microbial hosts, especially in long-read analysis. |

| ARGem Pipeline [4] | Bioinformatics Pipeline | A user-friendly, full-service pipeline that integrates ARG annotation with metadata capture and supports various visualizations, promoting reproducible and comparable results. |

| Argo Profiler [2] | Bioinformatics Tool | A tool specifically designed for long-read metagenomic data that uses read-overlapping and cluster-based classification to achieve highly accurate species-level host attribution of ARGs. |

| CARD / NDARO [2] | ARG Database | Widely used reference databases for antibiotic resistance. Often used in combination with other tools to ensure comprehensive ARG profiling. |

| Negative Control Samples | Wet-lab Control | Field and extraction blanks are processed alongside samples to identify and bioinformatically subtract laboratory and reagent-derived contaminants. |

| Unique Dual Indexes | Sequencing Reagent | Barcodes used during library preparation to minimize index hopping and cross-contamination between samples in a sequencing run. |

Troubleshooting Guides and FAQs

Environmental Cross-Talk

What is "environmental cross-talk" and how does it affect my metagenomic data?

Environmental cross-talk, or well-to-well contamination, occurs when genetic material from one sample inadvertently transfers to another during laboratory processing. This is not just background reagent contamination but represents a previously undocumented form of contamination where sequences from high-biomass samples appear in neighboring low-biomass samples [8]. This contamination primarily occurs during DNA extraction rather than PCR and is highest with plate-based methods compared to single-tube extraction [8]. The effect is most pronounced in low-biomass samples, where it can disproportionately impact alpha and beta diversity metrics and lead to incorrect ecological interpretations [8].

How significant is the distance effect for cross-contamination between samples?

Cross-contamination follows a distinct distance-decay relationship, with the highest rates occurring in immediately proximate wells [8]. Research has demonstrated that well-to-well contamination occurs primarily in neighboring samples, with rare events detected up to 10 wells apart [8]. The effect is more strongly distance-dependent for plate-based extractions than for manual single-tube methods [8].

Table 1: Well-to-Well Contamination Statistics by Extraction Method

| Extraction Method | Primary Contamination Source | Contamination Pattern | Distance Decay Relationship |

|---|---|---|---|

| Plate-Based Methods | DNA extraction process [8] | Highest in immediate neighbors, up to 10 wells away [8] | Stronger distance-decay effect [8] |

| Single-Tube Methods | DNA extraction process [8] | More dispersed pattern [8] | Weaker distance-decay effect [8] |

Recommended Protocol: Minimizing Environmental Cross-Talk

- Sample Randomization: Randomize samples across plates instead of grouping them by sample type or biomass. This prevents systematic bias from high-biomass samples contaminating entire groups of low-biomass samples [8].

- Biomass Grouping: When possible, process samples of similar biomasses together on the same plate [8].

- Physical Separation: Incorporate blank wells between samples, especially between high-biomass and low-biomass samples, to act as buffers [8].

- Method Selection: For critical low-biomass work, employ manual single-tube extractions or hybrid plate-based cleanups to reduce cross-talk compared to automated plate-based systems [8].

Laboratory Procedures

At which stage of my workflow is contamination most likely to occur?

Contamination can be introduced at virtually every stage, from sample collection to data analysis [9]. Major sources during laboratory procedures include:

- Sample Collection: Contamination from human operators, sampling equipment, and adjacent environments [9].

- DNA Extraction: This is a critical point for well-to-well contamination in plate-based formats [8]. It also introduces contaminants from reagents and kits [9].

- Library Preparation: While less frequent than during extraction, cross-contamination can also occur in this stage [8].

Human-derived contamination primarily comes from the laboratory personnel themselves. Sources include aerosol droplets from breathing or talking, as well as cells shed from clothing, skin, and hair [9]. Poor aseptic technique, such as talking over open samples, resting pipettes on benches, or wearing the same personal protective equipment (PPE) between different samples, are classic examples of lapses that lead to contamination [10].

Recommended Protocol: Contamination-Control During Lab Work

- Personal Protective Equipment (PPE): Wear appropriate PPE—including gloves, lab coats, goggles, and—for very low-biomass work—face masks and hair covers to create a barrier between the operator and the sample [9].

- Aseptic Technique: Use sterile, single-use consumables wherever possible. Decontaminate work surfaces and equipment with solutions like ethanol (to kill microbes) followed by a nucleic acid degrading solution like bleach (to remove DNA residues) before and after use [9].

- Workflow Design: Implement a one-way workflow where samples and reagents move from "clean" areas (e.g., sample preparation and PCR setup) to "dirty" areas (e.g., post-amplification analysis) without backtracking [10].

- Equipment Maintenance: Regularly clean and calibrate equipment. Use HEPA-filtered laminar flow hoods or biological safety cabinets for sensitive steps [10].

Reagent Microbiomes

What is meant by the "reagent microbiome"?

The "reagent microbiome" refers to the background microbial DNA present in the reagents and consumables (e.g., DNA extraction kits, plasticware, water, and PCR master mix) used in laboratory workflows [8]. This DNA is co-extracted and co-amplified with the target DNA from your sample, contributing a contaminant "noise" that can be particularly problematic in low-biomass studies where the contaminant signal can overwhelm the true biological signal [9].

How can I identify contaminants from my reagent microbiome?

The most effective method is the consistent use of negative controls (or "blanks") throughout your workflow. These controls should undergo the exact same processing as your samples—from DNA extraction to sequencing—but contain no template biological material [9]. The sequences identified in these negative controls represent your specific reagent and laboratory contaminant profile.

Table 2: Essential Controls for Contamination Identification

| Control Type | Composition | Purpose | What It Identifies |

|---|---|---|---|

| Negative Control (Blank) | No-template sample (e.g., sterile water) taken through entire workflow [9] | Defines the background contaminant profile | Reagent contaminants, laboratory environment contaminants [9] |

| Positive Control | Known community (Mock community) or single organism [9] | Verifies assay sensitivity and specificity | PCR inhibition, protocol failures, bioinformatic errors [9] |

| Sampling Control | Swab of air, PPE, or sampling equipment [9] | Identifies contamination introduced during sample collection | Contamination from the sampling environment or personnel [9] |

Recommended Protocol: Managing Reagent Microbiome Effects

- Batch Testing: If possible, test different lots of extraction kits to select those with the lowest background contamination for your specific application [11].

- Use Certified Kits: Choose DNA extraction kits that are manufactured according to high standards and are recommended for low-biomass work [11].

- Critical Interpretation: Be cautious with simplistic bioinformatic approaches that remove all taxa found in negative controls. This can be misleading, as sequences in blanks may be due to cross-talk from other samples rather than just reagent contaminants [8].

- Ultra-Clean Reagents: For extremely sensitive applications, reagents can be treated with UV sterilization or DNase to degrade contaminating DNA, or purchased as certified DNA-free [9].

The Scientist's Toolkit: Essential Research Reagents & Materials

Table 3: Key Reagent and Material Solutions for Mitigating Contamination

| Item | Function | Considerations for Low-Biomass Studies |

|---|---|---|

| PureLink Microbiome DNA Purification Kit (example) | DNA extraction from various sample types using a triple lysis approach (beads, heat, chemicals) for efficient microbial cell wall disruption [11] | Includes a clean-up buffer to remove inhibitors; manufacturers should follow high production standards to minimize kit-borne contaminants [11]. |

| Pre-sterilized Consumables | Single-use, DNA-free pipette tips, tubes, and plates act as physical barriers to contaminants [10]. | Eliminates variability and effort of in-house cleaning. Using plates with individual tube strips may reduce well-to-well contamination compared to fixed-well plates [8]. |

| DNA Degrading Solutions (e.g., Bleach, DNA Away) | Chemical sterilants used to decontaminate surfaces and equipment by degrading trace DNA [9] [10]. | Critical for removing cell-free DNA that remains after ethanol treatment or autoclaving. Use on lab benches, instruments, and reusable equipment [9]. |

| HEPA-Filtered Laminar Flow Hood/BSC | Provides a sterile, particle-free air environment for handling samples and setting up sensitive reactions like PCR [10]. | Protects against airborne contaminants and aerosols. Essential for processing low-biomass samples and setting up library preparations [10]. |

| Qubit Fluorometer | Provides highly accurate and specific quantification of DNA concentration using fluorescent dyes [11]. | More accurate for microbiome samples than spectrophotometers (e.g., NanoDrop), which can overestimate concentration due to contaminants [11]. |

The analysis of antibiotic resistance genes (ARGs) in hyper-eutrophic lakes reveals distinct profiles shaped by anthropogenic contamination. Below are consolidated findings from relevant case studies.

Table 1: ARG Distribution and Abundance in Hyper-Eutrophic Lakes

| Lake / Study | Predominant ARG Types (% of Total) | Primary Bacterial Hosts (Carrying ARGs) | Key Anthropogenic Influences |

|---|---|---|---|

| Lake Cajititlán, Mexico [12] | Multidrug (63.33%), Macrolides (11.55%), Aminoglycosides (8.22%), Glycopeptides (6.22%), Tetracyclines (4%) | Pseudomonas (144 genes), Stenotrophomonas (88 genes), Mycobacterium (54 genes) | Urban wastewater, agricultural and livestock runoff [12] |

| Chaohu Lake, China [13] | Multidrug, Bacitracin, Polymyxin, Macrolide-Lincosamide-Streptogramin (MLS), Aminoglycoside | Proteobacteria, Actinobacteria, Cyanobacteria, Firmicutes, Bacteroidetes | Wastewater treatment plants, hospitals, agricultural activity, pesticides, PPCPs [13] [14] |

| ~350 Canadian Lakes [15] | Vast diversity of naturally occurring ARGs, with significant impact from human activity. | Not Specified | Watershed agriculture/pasture, manure fertilizer, wastewater effluent, population density, number of hospitals [15] |

Table 2: Key Physicochemical Factors Influencing ARG Profiles

| Factor | Correlation/Influence on ARGs | Supporting Study |

|---|---|---|

| Total Phosphorus (TP) / PO₄-P | Strong positive correlation (0.4971 for TP, 0.5927 for PO₄-P). Key indicator of eutrophication's link to ARG abundance [13]. | Chaohu Lake [13] |

| Nutrients (Nitrogen) | Lesser, but measurable impact (Total Nitrogen: 0.0515) compared to phosphorus [13]. | Chaohu Lake [13] |

| Pesticides & PPCPs | Act as co-selectors for antibiotic resistance, facilitating ARG transfer even at sub-inhibitory concentrations [14]. | Chaohu Lake [14] |

| Trophic Status | Increasing eutrophication correlates with higher ARG abundance and diversity [15]. | Canadian Lakes Survey [15] |

Technical Support: Troubleshooting Contamination in Low-Biomass Metagenomics

Frequently Asked Questions (FAQs)

Q1: Our negative controls show high microbial biomass. What are the most likely sources of this contamination? A1: Contamination in low-biomass samples like oligotrophic lake water typically originates from:

- Reagents and Kits (Kitome): DNA extraction kits, polymerases, and water are common sources of contaminating microbial DNA. Different lots from the same manufacturer can have varying contaminant profiles [9] [16].

- Laboratory Environment: Human-associated microbiota from skin and hair, as well as aerosols from lab surfaces and air, can introduce contaminant DNA [9].

- Cross-Contamination: During sample processing, DNA can leak between adjacent samples in plates, or equipment used for multiple samples can be a vector if not properly decontaminated [9].

Q2: How can we distinguish true environmental ARG signals from contamination? A2: Distinguishing signal from noise requires a multi-pronged approach:

- Robust Negative Controls: Include "field blanks" (e.g., sterile water exposed to the sampling air) and "extraction blanks" (no-sample controls carried through DNA extraction) in every batch. The contaminant profiles from these controls should be bioinformatically subtracted from your actual samples [9].

- Statistical & Bioinformatic Decontamination: Use tools like

decontam(R package) which can identify contaminant sequences based on their higher prevalence in negative controls and/or their inverse correlation with DNA concentration [9]. - Consistency Across Replicates: True signals should be reproducible across your true sample replicates and correlate with expected environmental gradients [16].

Q3: Our metagenomic data is dominated by host (e.g., human) DNA from sampling. How can we mitigate this? A3: Host DNA depletion is critical for increasing the sequencing depth of your target microbiome.

- During Wet Lab: Use PPE (gloves, masks, coveralls) rigorously to minimize operator contamination. Decontaminate all sampling equipment with ethanol followed by a DNA-degrading solution (e.g., bleach, UV-C light) to remove residual DNA [9] [17].

- During Analysis: Bioinformatic host removal is a standard and essential step. After quality control, map your sequencing reads to a host reference genome (e.g., human GRCh38) and remove all matching reads before downstream assembly and analysis [17] [18].

Essential Methodologies & Protocols

Protocol 1: Contamination-Aware Sample Collection for Lake Water [9]

- Decontaminate Equipment: Treat samplers, bottles, and filters with 80% ethanol (to kill cells) followed by a nucleic acid degrading solution like 10% sodium hypochlorite (to remove DNA). Use DNA-free, single-use equipment where possible.

- Use Personal Protective Equipment (PPE): Wear gloves, masks, and clean suits to prevent contamination from the researcher.

- Collect Controls: Simultaneously collect multiple negative controls:

- Field Blank: Pass sterile, DNA-free water through a filter at the sampling site.

- Equipment Blank: Swab the sampling equipment.

- Air Blank: Leave an open, sterile Petri dish with a filter at the sampling site.

- Preserve Immediately: Flash-freeze filters in liquid nitrogen or at -80°C to halt biological activity.

Protocol 2: Bioinformatic Host DNA Removal and Quality Control [18] This protocol assumes you have paired-end metagenomic sequencing data.

- Quality Control (QC) & Trimming:

- Use

fastporTrimmomaticto remove adapter sequences and low-quality bases. - Run

FASTQCbefore and after trimming to visualize the quality of your data. - Code Example (fastp):

- Use

- Host Read Removal:

- Download a host reference genome (e.g., human GCF_000001405.26).

- Align your quality-filtered reads to the host genome using

Bowtie2and retain only the UNMAPPED reads. - Code Example (Bowtie2):

- The resulting

sample_nonhost.1.fastq.gzandsample_nonhost.2.fastq.gzfiles are your cleaned metagenomic data, ready for assembly and ARG analysis.

Protocol 3: Metagenome-Assembled Genome (MAG) Construction and ARG Profiling [18]

- Metagenomic Assembly: Assemble the cleaned, non-host reads into contigs using a metagenomic assembler like

MEGAHITormetaSPAdes.- Code Example (MEGAHIT):

- Binning: Group contigs into draft genomes (MAGs) using binning software like

metaWRAPbinning module. - Bin Refinement and Quality Check: Use

metaWRAPrefine module orDAS_Toolto obtain high-quality MAGs. Check completeness and contamination withCheckMorCheckM2. - Taxonomic Classification: Classify MAGs using

GTDB-Tk. - Functional Profiling & ARG Identification: Annotate genes on contigs or MAGs using

Prokka. Scan for ARGs using thestaramrtool or by aligning to databases like CARD (Comprehensive Antibiotic Resistance Database).

The Scientist's Toolkit: Key Research Reagent Solutions

Table 3: Essential Materials and Bioinformatics Tools for Metagenomic ARG Analysis

| Item / Solution | Function / Purpose | Example Tools / Brands |

|---|---|---|

| DNA-Free Collection Kits | Single-use, sterile filters and vessels to minimize contamination at source. | DNA-free water sampling kits, sterile disposable filter units [9]. |

| Nucleic Acid Degrading Solution | Destroys contaminating free DNA on equipment and surfaces post-ethanol decontamination. | Dilute sodium hypochlorite (bleach), commercial DNA removal solutions [9]. |

| High-Sensitivity DNA Extraction Kits | To extract maximum DNA from low-biomass samples while minimizing reagent-derived contaminant DNA. | QIAGEN DNeasy PowerWater Kit (used in Canadian Lake survey) [15]. |

| Sequence Data QC & Trimming | Assess read quality and remove adapters/ low-quality bases. | Fastp, FASTQC, Trimmomatic [17] [18]. |

| Host DNA Removal (Bioinformatic) | In-silico subtraction of host-associated reads to enrich for microbial data. | Bowtie2, BWA (for alignment to host genome) [17] [18]. |

| Metagenomic Assembler | Reconstructs longer DNA sequences (contigs) from short sequencing reads. | MEGAHIT, metaSPAdes [18]. |

| Metagenomic Binning Tool | Groups assembled contigs into draft genomes (MAGs) based on sequence composition and abundance. | metaWRAP, MaxBin2 [18]. |

| ARG Profiling Tool | Identifies and annotates antibiotic resistance genes from metagenomic data. | staramr (based on CARD, ResFinder, PointFinder) [18]. |

The Role of Mobile Genetic Elements (MGEs) in Misleading ARG Attribution

Troubleshooting Guide: Frequent Issues in Metagenomic ARG Analysis

Why am I detecting ARGs in samples where known resistant pathogens are absent?

This is a classic sign of MGE-mediated contamination or misattribution.

- Root Cause: ARGs are often embedded within Mobile Genetic Elements (MGEs) like plasmids, transposons, and integrons [19]. These elements can exist in a cell independently of the chromosome and are highly mobile between different bacterial species via Horizontal Gene Transfer (HGT) [19] [20].

- Solution:

- Re-analysis with Host Attribution: Use analytical tools that can link the detected ARG to its specific genetic context (e.g., is it on a plasmid or chromosome?) and to a taxonomic host [21].

- Profile MGE Co-occurrence: Actively map the abundance of MGEs in your sample. A strong correlation between the abundance of a specific ARG and specific MGEs is a strong indicator of potential HGT and misattribution [22] [20].

- Employ Long-Read Sequencing: Technologies like Oxford Nanopore or PacBio generate long sequences that can span an ARG and its adjacent genetic elements, providing clearer evidence of the host species and the mobile nature of the gene [21].

Why do I get inconsistent ARG host identification between replicate experiments?

Inconsistencies often stem from the dynamic nature of MGEs and limitations in short-read sequencing.

- Root Cause: MGEs can be gained or lost by bacterial cells without affecting their core genomic identity. Short-read metagenomic sequencing often produces fragmented data, making it difficult to correctly assemble the full context of an ARG, leading to variable host assignments across runs [21].

- Solution:

- Utilize Advanced Profiling Tools: Implement methods like Argo, which uses long-read overlapping to cluster ARG-containing reads before taxonomic assignment. This collective labeling of read clusters significantly improves the accuracy and consistency of host identification compared to per-read classification [21].

- Strict Contamination Control: Follow a strict experimental guideline to rule out technical contamination from reagents or lab equipment, which can introduce exogenous genetic material [23].

How can I distinguish a genuine chromosomal ARG from a plasmid-borne one?

Determining the genetic location is crucial for assessing the transfer risk of an ARG.

- Root Cause: Plasmids are a major type of MGE and primary vectors for the rapid dissemination of ARGs across bacterial populations [19] [20].

- Solution:

- Database Mapping: Map your metagenomic reads not only to ARG databases but also to dedicated plasmid databases (e.g., a decontaminated subset of RefSeq plasmid) [21].

- Contextual Analysis: Look for genetic signatures of plasmids or other MGEs in the sequences flanking the detected ARG. The presence of mobility genes (e.g., transposases, integrases) is a key indicator [22] [19].

The following table summarizes key types of Mobile Genetic Elements and their documented impact on ARG dissemination as observed in recent environmental metagenomic studies.

Table 1: Mobile Genetic Elements (MGEs) and Their Documented Role in ARG Dissemination

| MGE Type | Key Characteristics | Primary Mechanism in ARG Spread | Documented Findings |

|---|---|---|---|

| Plasmids [19] [20] | Extrachromosomal DNA elements; can be conjugative. | Conjugation between bacterial cells. | Carry a wide variety of bla (β-lactamase) and erm (macrolide resistance) genes; lead to co-selection of multiple resistance traits [19]. |

| Transposons (Tn) & Composite Transposons (ComTn) [19] [20] | DNA sequences that can move within the genome. | Transposition within a cell's DNA; can be carried by plasmids. | Frequently associated with ARGs; ComTn can mobilize nearby genes, facilitating their spread to other MGEs [20]. |

| Insertion Sequences (IS) [19] [20] | Simplest transposable elements (<3 kb); encode transposase. | Transposition; can inactivate genes or provide promoters. | High copy numbers in genomes; can mediate mobilization of adjacent genes, contributing to the formation of ComTn [19] [20]. |

| Integrative & Conjugative Elements (ICEs) [20] | Integrate into the chromosome but can excise and conjugate. | Conjugation, similar to plasmids. | Can carry intracellular transposing MGEs and ARGs, acting as a bridge for gene transfer between integrated and mobile states [20]. |

| Integrons [19] | Site-specific recombination systems that capture gene cassettes. | Capture and promote expression of antibiotic resistance genes. | Often located within transposons and plasmids; enable bacteria to rapidly acquire and stack multiple resistance genes [19]. |

Detailed Experimental Protocol: Tracking MGE-Mediated ARG Transfer

This protocol outlines a metagenomic approach to identify ARGs and their associated MGEs in complex environmental samples, helping to clarify their origins and dissemination pathways.

Sample Collection and DNA Extraction

- Sample Type: The protocol can be applied to water samples (e.g., from lakes or sewage) [22] [20] or other environmental matrices.

- Microbial Enrichment: Filter water samples through membranes to concentrate microbial biomass [22].

- DNA Extraction: Use a standard phenol-chloroform method or commercial kit to extract total microbial DNA [22]. The extraction should be optimized for high molecular weight DNA if long-read sequencing is planned.

Metagenomic Sequencing and Data Processing

- Sequencing Platform: Use either:

- Quality Control: Process raw reads with tools like Fastp to remove low-quality sequences and adapters [22].

- Assembly: For short-read data, assemble clean reads into contigs using MEGAHIT or similar assemblers [22].

Gene Prediction and Annotation

- Open Reading Frame (ORF) Prediction: Use Prodigal to predict protein-coding genes on the assembled contigs or directly on long reads [22].

- ARG Identification: Annotate ARGs using the DeepARG-LS model to achieve high accuracy and low false-negative rates [22]. The reference database SARG+, which consolidates and expands sequences from CARD, NDARO, and SARG, is recommended for comprehensive profiling [21].

- MGE Annotation: Annotate MGEs by aligning ORFs to a specialized database like the mobileOG-DB [22].

- MRG Annotation: Align ORFs to the BacMet database to identify metal resistance genes, which can be co-selected with ARGs [22].

Identification of ARG Hosts and Dissemination Risk

- Taxonomic Assignment: Assign taxonomy to ARG-containing sequences by aligning them to a curated database like GTDB using BLAST or minimap2 [22] [21].

- Correlation and Network Analysis: Use correlation algorithms (e.g., SparCC) to identify significant positive correlations between the abundance profiles of specific ARGs, MGEs, and bacterial taxa [20]. This helps identify potential hosts and vectors.

- Risk Assessment: Tools like MetaCompare can be used to assess the potential dissemination risk of ARGs based on the co-occurrence of acquired ARGs, human bacterial pathogens (HBPs), and MGEs [22].

Visualizing the Workflow for Contamination-Aware ARG Analysis

The following diagram illustrates the core logical workflow for conducting a metagenomic analysis that accounts for MGEs to prevent misattribution of ARGs.

The Scientist's Toolkit: Essential Research Reagents & Databases

Table 2: Key Bioinformatics Tools and Databases for ARG and MGE Analysis

| Resource Name | Type | Primary Function | Key Application in Mitigating Misattribution |

|---|---|---|---|

| DeepARG-LS [22] | Computational Tool / Model | Accurate annotation of antibiotic resistance genes from metagenomic data. | Reduces false positives/negatives in initial ARG detection, providing a more reliable foundation for analysis. |

| SARG+ [21] | Manually Curated Database | A comprehensive compendium of ARG protein sequences, expanded from CARD, NDARO, and SARG. | Includes ARG variants from multiple species, improving detection sensitivity and reducing misattribution due to sequence divergence. |

| mobileOG-DB [22] | Database | An integrated database of protein sequences for annotating Mobile Genetic Elements. | Allows for the systematic identification of MGEs in metagenomes, enabling the study of their correlation with ARGs. |

| BacMet [22] | Database | A database of experimentally verified biocide and metal resistance genes. | Identifying metal resistance genes helps reveal co-selection pressures that may maintain ARGs in the absence of direct antibiotic selection. |

| Argo [21] | Computational Profiler | Species-resolved ARG profiling from long-read metagenomes using read-overlapping and clustering. | Dramatically improves the accuracy of host identification by collectively labeling read clusters, directly addressing the misattribution problem. |

| GTDB (Genome Taxonomy Database) [21] | Database | A high-quality, standardized bacterial and archaeal taxonomy. | Provides a reliable reference for taxonomic classification, reducing errors in host assignment from sequence data. |

Impact of Contamination on Resistome Risk Assessment and Data Interpretation

Frequently Asked Questions (FAQs)

FAQ 1: What are the most common sources of contamination in metagenomic studies for antibiotic resistome risk assessment? Contamination can originate from multiple sources throughout the experimental workflow. Key sources include:

- Reagents and Kits: DNA extraction kits and polymerase enzymes often contain microbial DNA, creating a unique "kitome" background that varies between brands and even between lots of the same product [24] [16].

- Laboratory Environment: Contaminants can be introduced from laboratory surfaces, air, and equipment [9] [16].

- Sample Handling: Human skin of operators, improper sterile technique, and cross-contamination between samples during processing are significant sources [9] [16].

- Sequencing Process: Index hopping in multiplexed runs and well-to-well leakage during library preparation can cause cross-contamination [24].

FAQ 2: How does contamination specifically impact the assessment of antibiotic resistance gene (ARG) risk? Contamination skews risk assessment by distorting key metrics:

- False Positives: It can lead to the false detection of high-risk ARGs and human bacterial pathogens (HBPs), resulting in an overestimation of the resistome risk [24] [25].

- Distorted Abundance and Diversity: Contaminant sequences artificially inflate the perceived abundance and diversity of ARGs, complicating ecological interpretations [26] [27].

- Compromised Connectivity Analysis: Accurate tracking of ARG transfer between environmental and clinical settings depends on distinguishing true signals from background noise. Contamination obscures these pathways [27].

FAQ 3: What are the best practices for preventing and controlling contamination during sample collection and processing? A contamination-informed sampling design is critical [9]. Key practices include:

- Decontamination: Thoroughly decontaminate equipment and surfaces with 80% ethanol followed by a nucleic acid-degrading solution (e.g., bleach) [9].

- Personal Protective Equipment (PPE): Use gloves, lab coats, masks, and hair covers to minimize contamination from operators [9].

- Use of Controls: Always include negative controls (e.g., extraction blanks with molecular-grade water) and sampling controls (e.g., swabs of the air or sampling surfaces) in every run [9] [24].

- Reagent Quality: Use high-quality, trusted reagents and consider aliquoting to minimize repeated exposure [28].

FAQ 4: My negative controls show microbial signals. How should I handle this in my data analysis? The presence of signals in negative controls confirms the need for bioinformatic decontamination. You should:

- Profile the Contaminants: Use the negative controls to create a profile of the background "kitome" and laboratory contaminants [24].

- Apply Statistical Tools: Utilize specialized bioinformatics tools like

Decontam(which identifies contaminants based on their higher frequency in low-concentration samples and negative controls),SourceTracker, ormicroDeconto statistically identify and remove contaminant sequences from your dataset [24]. - Report the Process: Transparently report the contamination profiles and the decontamination steps taken in your methodology [9].

FAQ 5: Are some sequencing approaches more robust against contamination for resistome risk assessment? Emerging methods are being developed to improve accuracy. Long-read sequencing (e.g., Nanopore, PacBio) offers advantages by allowing for the analysis of ARGs, mobile genetic elements (MGEs), and their hosts without assembly, reducing chimeric artifacts [25]. Specifically, the Long-read based Antibiotic Resistome Risk Assessment Pipeline (L-ARRAP) has been developed to quantify ARG risk from long-read data, helping to distinguish true genetic linkages from spurious ones [25].

Quantitative Data on Contamination Impacts

Table 1: Documented Impacts of Contaminants on Resistome Profiles

| Contaminant Source | Observed Impact on Resistome Analysis | Study Context |

|---|---|---|

| DNA Extraction Reagents | Distinct background microbiota profiles found across commercial brands; patterns varied significantly between different lots of the same brand [24]. | Clinical mNGS for pathogen detection [24]. |

| Landfill Leachate (as an environmental contaminant) | Elevated levels of ARGs (e.g., sul, tet) and heavy metals found; metal pollution suggested to co-select for antibiotic resistance [26]. |

Metagenomic analysis of landfill leachates [26]. |

| Global Soil Resistome | Analysis showed soil shares 50.9% of its high-risk Rank I ARGs with human-associated habitats like feces and wastewater, highlighting connectivity and potential cross-contamination [27]. | Global meta-analysis of soil metagenomes [27]. |

Table 2: Key Metrics for High-Risk ARG (Rank I) Assessment [27]

| Metric | Definition | Interpretation |

|---|---|---|

| Relative Abundance | Copies of Rank I ARGs per 1000 cells. | Measures the prevalence of high-risk genes within a microbial community. |

| Occurrence Frequency | Proportion of samples in a set where a specific ARG is detected. | Indicates how widespread a high-risk ARG is across different samples. |

| Connectivity | Genetic overlap of ARGs with clinical pathogens, assessed through sequence similarity and phylogenetic analysis. | Evaluates the potential transfer risk of ARGs from the environment to human pathogens. |

Essential Experimental Protocols

Protocol 1: Implementing Negative and Process Controls

Purpose: To identify and account for background contamination introduced from reagents and the laboratory environment [9] [24].

Detailed Methodology:

- Extraction Blank: For each batch of DNA extractions, include a sample where molecular biology-grade water is used as the input instead of a biological sample. Process this blank identically to all other samples [24].

- Sampling Controls: During field sampling, include controls such as:

- An empty, sterile collection vessel exposed to the air.

- A swab of the air at the sampling site.

- A swab of the gloves or PPE of the sampler [9].

- Processing: Subject all controls to the entire downstream workflow, including DNA extraction, library preparation, and sequencing, alongside the actual samples.

- Analysis: Use the sequencing data from these controls to create a contaminant profile for your specific study, which can then be used for bioinformatic decontamination [24].

Protocol 2: Bioinformatics Decontamination withDecontam

Purpose: To statistically identify and remove contaminant sequences from metagenomic data [24].

Detailed Methodology:

- Data Input: Prepare a feature table (e.g., ASV or OTU table) and a corresponding table of sequence metadata.

- Identify Contaminants: Use the

Decontampackage in R with the "prevalence" method. This method identifies contaminants as sequences that are significantly more prevalent in negative control samples than in true biological samples. - Threshold Setting: Apply a user-defined probability threshold (e.g., 0.5) to classify features as contaminants.

- Data Filtering: Remove the identified contaminant sequences from the feature table and all downstream analyses.

- Validation: Compare the community composition and ARG profiles before and after decontamination to ensure realistic outcomes.

Protocol 3: Assessing ARG Risk with the L-ARRAP Pipeline

Purpose: To quantify the antibiotic resistome risk in metagenomic samples, particularly those from long-read sequencing platforms [25].

Detailed Methodology:

- Quality Control: Process raw long-reads (Nanopore/PacBio) with a tool like

Chopper, using parameters such as-q 10 -l 500to filter out low-quality and short reads [25]. - ARG and MGE Identification: Align quality-controlled reads to the SARG database for ARGs and the MobileOG-db for mobile genetic elements (MGEs). Use

Minimap2for ARGs andLASTfor MGEs, with thresholds of >75% identity and >90% coverage [25]. - Pathogen Identification: Annotate the taxonomy of reads using

Centrifugeand identify reads belonging to Human Bacterial Pathogens (HBPs) by comparing them to a curated database from WHO and ESKAPE pathogens [25]. - Calculate Risk Index: The Long-read based Antibiotic Resistome Risk Index (L-ARRI) is calculated by integrating the abundance of ARGs, their mobility potential (link to MGEs), and their association with pathogenic hosts [25].

Workflow Diagrams

Diagram 2: L-ARRAP Risk Assessment Pipeline for Long Reads

The Scientist's Toolkit: Essential Reagents & Solutions

Table 3: Key Research Reagent Solutions for Contamination Control

| Item | Function/Purpose | Key Considerations |

|---|---|---|

| Molecular Grade Water | Used for preparing solutions and as input for extraction blanks. Must be DNA-/RNA-free and nuclease-free [24]. | Verify sterility and the absence of microbial bioburden. Pre-filtration (0.1 µm) is a key quality indicator [24]. |

| DNA Decontamination Solutions | Used to remove contaminating DNA from surfaces and equipment before use. | Sodium hypochlorite (bleach), UV-C light, hydrogen peroxide, or commercial DNA removal solutions are effective [9]. |

| High-Fidelity Polymerases | Enzymes for PCR and library amplification with low levels of contaminating DNA. | Recombinant polymerases generally have lower contamination, but levels should be checked. Avoid enzymes with known viral contaminants [16]. |

| Spike-in Controls | Synthetic microbial communities (e.g., ZymoBIOMICS Spike-in Control) added to samples. | Serves as an internal positive control for extraction and sequencing efficiency, helping to distinguish technical failures from true negatives [24]. |

| Mycoplasma Prevention & Detection Kits | To prevent and detect mycoplasma contamination in cell cultures used for experiments. | Regular testing (every 1-2 months) is recommended. Use removal reagents and prevention sprays for contaminated cultures [28]. |

Advanced Profiling and Source Tracking: Cutting-Edge Methodologies for Accurate ARG Detection

Species-Resolved ARG Profiling with Long-Read Sequencing Technologies

Antimicrobial resistance (AMR) poses a critical global health threat, directly responsible for an estimated 1.14 million deaths worldwide in 2021 alone, with projections rising to 1.91 million by 2050 without concerted global action [21]. Environmental surveillance of antibiotic resistance genes (ARGs) is crucial for understanding and mitigating the spread of antimicrobial resistance. While metagenomic sequencing has revolutionized AMR surveillance by enabling culture-free analysis of complex microbial communities, traditional short-read technologies have faced significant limitations in linking detected ARGs to their specific microbial hosts—information indispensable for tracking transmission and assessing risk [21] [29].

Long-read sequencing technologies from platforms such as Oxford Nanopore Technologies (ONT) and PacBio have emerged as powerful solutions for overcoming these challenges. These technologies generate reads tens of thousands of bases in length, enabling them to span not only full-length ARGs but also their surrounding genomic context [21]. This contextual information dramatically increases the likelihood of correct taxonomic classification and provides insights into whether ARGs are located on chromosomes or mobile genetic elements—a critical distinction for assessing transmission risk [30].

This technical support center provides comprehensive guidance for researchers implementing long-read sequencing for species-resolved ARG profiling, with particular emphasis on mitigating contamination and analytical artifacts in metagenomic analysis. The protocols and troubleshooting guides below address common experimental and bioinformatic challenges to ensure accurate, reliable results.

Frequently Asked Questions (FAQs)

Q1: What are the primary advantages of long-read over short-read sequencing for ARG surveillance?

Long-read technologies provide two fundamental advantages for ARG profiling: (1) Enhanced host tracking: Reads spanning entire ARGs plus flanking regions enable more reliable taxonomic assignment to species level [21]; (2) Contextual information: Long reads can determine whether ARGs are located on chromosomes or mobile genetic elements like plasmids, informing mobility risk assessment [30]. Short-read approaches often fail to resolve these aspects due to fragmentation in complex genomic regions surrounding ARGs [29].

Q2: How does the Argo method improve accuracy in host identification compared to traditional approaches?

Argo employs a novel read-overlapping approach that clusters ARG-containing reads before taxonomic assignment, unlike tools like Kraken2 or Centrifuge that assign taxonomy to individual reads. By leveraging graph clustering of read overlaps and assigning taxonomic labels collectively to read clusters, Argo substantially reduces misclassifications that commonly occur with per-read methods, especially for ARGs prone to horizontal gene transfer that may appear across multiple species [21].

Q3: My metagenomic assemblies consistently break around ARG regions. Is this a technical issue?

Assembly fragmentation around ARGs is a recognized technical challenge, not necessarily an error in your workflow. ARGs are often surrounded by repetitive regions and mobile genetic elements, and nearly identical ARG variants can occur in multiple genomic contexts across different species. These factors create highly complex, branched assembly graphs that assemblers resolve by breaking them into shorter contigs [29]. Consider complementing assembly-based approaches with read-based methods like Argo for more accurate ARG quantification and host assignment [21] [29].

Q4: Can long-read sequencing detect resistance mechanisms beyond acquired ARGs, such as chromosomal mutations?

Yes. Recent advances enable long-read technologies to identify resistance-associated point mutations through haplotype phasing. For example, fluoroquinolone resistance mechanisms include both plasmid-mediated genes (qnrA, qnrB, qnrS) and chromosomal mutations in gyrA and parC genes. Specialized bioinformatic approaches can now uncover these strain-level SNPs directly from metagenomic data [30].

Q5: How can I distinguish genuine ARG hosts from false positives due to contamination?

Multiple strategies can mitigate false host assignments: (1) Implement read clustering approaches like Argo that reduce misclassification [21]; (2) Leverage DNA methylation signatures to link plasmids to their bacterial hosts based on common methylation patterns [30]; (3) Use coverage-based filters and read-pair consistency checks to eliminate chimeric neighborhoods, as implemented in tools like ARGContextProfiler [31].

Troubleshooting Guides

Common Experimental Challenges

Table 1: Troubleshooting Experimental Challenges

| Problem | Potential Causes | Solutions | Contamination Mitigation |

|---|---|---|---|

| Low ARG detection sensitivity | Inadequate DNA quantity/quality, low sequencing depth, inefficient library preparation | Use high molecular weight DNA extraction methods, increase sequencing depth, optimize library prep for complex samples | Include extraction controls to detect reagent contamination |

| Inaccurate host assignment | Sequencing errors, horizontal gene transfer events, database limitations | Apply adaptive identity cutoffs based on read quality, use clustering approaches like Argo, employ comprehensive databases like SARG+ [21] | Validate host assignments with complementary methods (e.g., methylation linking) [30] |

| Failure to detect plasmid-borne ARGs | Reference databases lacking plasmid sequences, incomplete assembly | Augment databases with plasmid sequences (e.g., RefSeq plasmid), implement methylation-based plasmid-host linking [30] | Use decontaminated plasmid databases to minimize false positives |

| Inconsistent results between replicates | Variable DNA extraction efficiency, sampling heterogeneity, sequencing batch effects | Standardize extraction protocols, increase biological replicates, randomize sequencing across batches | Monitor technical variation through process controls |

Bioinformatics and Analysis Challenges

Table 2: Troubleshooting Bioinformatics Challenges

| Problem | Diagnostic Steps | Solutions | Preventive Measures |

|---|---|---|---|

| Assembly fragmentation around ARGs | Check for repetitive regions flanking ARGs, assess coverage uniformity | Use specialized assemblers like Trinity or metaSPAdes, combine with read-based approaches [29] | Implement local assembly approaches or graph-based context extraction [31] |

| High false positive ARG calls | Verify alignment identity thresholds, check for regulator/housekeeping genes | Apply stringent identity cutoffs, use curated databases that exclude regulators and housekeeping genes [21] | Employ frameshift-aware alignment (DIAMOND) and filter non-bona fide ARGs [21] |

| Inability to resolve strain-level variation | Assess read length and coverage, check haplotype phasing capability | Implement strain haplotyping tools, leverage ultra-long phasing blocks [30] | Utilize technologies that maintain haplotype information (linked reads, phased sequencing) [32] |

| Chimeric genomic contexts | Examine assembly graph complexity, check for repetitive elements | Use ARGContextProfiler to validate contexts through read mapping and coverage consistency [31] | Apply graph-based approaches with multiple filters to eliminate chimeric paths |

Key Experimental Protocols

Argo Workflow for Species-Resolved ARG Profiling

The Argo methodology represents a significant advancement for accurate host tracking in long-read metagenomics [21]. The following protocol details its implementation:

Step 1: ARG Identification

- Begin with quality-controlled long reads from ONT or PacBio platforms

- Identify ARG-containing reads using DIAMOND's frameshift-aware DNA-to-protein alignment against the SARG+ database

- Set adaptive identity cutoff based on per-base sequence divergence derived from read overlaps

- Record ARGs with their precise coordinates on reads for downstream analysis

Step 2: Taxonomic Classification

- Map ARG-containing reads to the GTDB reference taxonomy database using minimap2's base-level alignment

- Generate candidate species labels for each read

- Aggregate labels into candidate species sets where each set contains at least one read

Step 3: Read Clustering

- Overlap ARG-containing reads to construct a sparse overlap graph representing pairwise identities

- Segment the graph into read clusters using the Markov Cluster (MCL) algorithm

- Refine taxonomic assignments on a per-cluster basis using greedy set covering

Step 4: Plasmid Detection

- Mark reads as "plasmid-borne" if they additionally map to a decontaminated subset of RefSeq plasmid database

- Exclude phage-associated ARGs as they rarely represent bona fide resistance genes

Argo Analysis Workflow

Methylation-Based Plasmid-Host Linking

This protocol leverages DNA modification detection in native long reads to associate plasmids with their bacterial hosts, addressing a key challenge in tracking ARG transmission [30]:

Step 1: Native DNA Sequencing

- Perform ONT sequencing without PCR amplification to preserve natural DNA modifications

- Use appropriate flow cells (R10 recommended) and chemistry (V14 or newer) for optimal modification detection

Step 2: Methylation Motif Detection

- Basecall raw signals with modified base detection enabled (e.g., Dorado with 5mC/6mA models)

- Identify methylation motifs using MicrobeMod or NanoMotif

- Generate per-sample methylation profiles for both chromosomal and plasmid reads

Step 3: Host-Linking Analysis

- Cluster plasmids and bacterial hosts based on shared methylation motifs

- Validate links using coverage correlation and sequence composition

- Resolve ambiguous assignments through manual inspection of motif conservation

Strain-Level Haplotyping for Point Mutation Detection

This protocol enables identification of resistance-conferring point mutations directly from metagenomic data [30]:

Step 1: Variant Calling

- Map long reads to reference genomes or MAGs using minimap2 or similar aligner

- Call variants with tools sensitive to metagenomic data characteristics

- Filter variants based on quality metrics and coverage thresholds

Step 2: Haplotype Phasing

- Phase variants into haplotypes using long-read connectivity

- Apply population genetics models to distinguish strains

- Validate phasing accuracy through comparison with isolate sequencing when available

Step 3: Mutation Annotation

- Annotate phased variants with functional prediction

- Cross-reference with known resistance mutations (e.g., gyrA for fluoroquinolone resistance)

- Correlate mutation patterns with ARG profiles for comprehensive resistome assessment

Research Reagent Solutions

Table 3: Essential Research Reagents and Databases

| Category | Resource | Function | Key Features | Contamination Control |

|---|---|---|---|---|

| ARG Databases | SARG+ [21] | Comprehensive ARG reference | Manually curated compendium from CARD, NDARO, SARG; excludes regulators/housekeeping genes | Deduplicated sequences; focused on bona fide ARGs |

| CARD [33] | ARG identification and annotation | Antibiotic Resistance Ontology; rigorous curation standards | Experimentally validated sequences only | |

| Taxonomy Databases | GTDB [21] | Taxonomic classification | Higher quality control than NCBI; better resolved taxonomy | Deprecated assemblies removed |

| NCBI RefSeq [21] | Reference sequences | Comprehensive collection including plasmids | Decontaminated subsets available | |

| Analysis Tools | Argo [21] [34] | Species-resolved ARG profiling | Read clustering approach; reduces misclassification | Adaptive identity cutoffs based on read quality |

| ARGContextProfiler [31] | Genomic context extraction | Assembly graph exploration; minimizes chimeric errors | Coverage-based filtering of false contexts | |

| NanoMotif [30] | Methylation motif detection | Plasmid-host linking via shared methylation patterns | Single-library approach reduces contamination risk | |

| Sequencing Technologies | ONT R10/V14 [30] | Long-read sequencing | Native DNA modification detection; improved accuracy | Preserves natural modification signatures |

| PacBio HiFi [32] | High-fidelity long reads | Circular consensus sequencing; high accuracy | Reduced systematic errors |

Advanced Technical Considerations

Mitigating Assembly Artifacts in ARG Regions

Metagenomic assemblies frequently break around ARGs due to their association with repetitive elements and multiple genomic contexts [29]. To address this:

Hybrid Assembly Approaches

- Combine long reads for scaffolding with short reads for base-level accuracy

- Use metaSPAdes or Trinity for improved recovery of ARG contexts compared to other assemblers

- Implement iterative assembly strategies focusing on ARG-containing regions

Graph-Based Context Extraction As implemented in ARGContextProfiler [31]:

- Extract ARG neighborhoods directly from assembly graphs before linearization

- Apply multiple filters (read-pair consistency, coverage variation) to eliminate chimeric paths

- Validate reconstructed contexts through independent read mapping

Quantitative Framework for ARG Source Tracking

Machine learning classification combined with comprehensive ARG profiling enables probabilistic source attribution [35]:

Implementation Steps

- Build reference ARG profiles from potential source environments (human/animal gut, wastewater, soil)

- Apply Bayesian classification tools (SourceTracker) to estimate source contributions in sink samples

- Identify specialist indicator ARGs specific to particular sources

- Validate predictions through artificial configurations with known source proportions

ARG Source Tracking Framework

Leveraging Tools like Argo for Enhanced Host Attribution and Read Clustering

Frequently Asked Questions (FAQs)

Q1: What is Argo and how does it improve upon existing methods for tracking Antibiotic Resistance Gene (ARG) hosts? Argo is a novel bioinformatics tool that uses long-read metagenomic sequencing to identify and quantify antibiotic resistance genes (ARGs) and accurately link them to their specific microbial hosts at the species level. Unlike short-read methods that often misattribute ARGs due to fragmented assemblies, Argo leverages long-read overlapping and graph-based clustering to collectively assign taxonomic labels to groups of reads, significantly enhancing the accuracy of host identification and providing superior resolution for tracking ARG transmission [21].

Q2: My analysis is plagued by high levels of contamination from off-target DNA. How can Argo help mitigate this? Argo's workflow includes a stringent, frameshift-aware alignment step to a manually curated ARG database called SARG+. This database excludes regulators, housekeeping genes, and ARGs from point mutations that are not direct indicators of antibiotic resistance. By using this focused database and applying adaptive identity cutoffs, Argo reduces false positives from non-target genetic material, ensuring that the reported ARGs are bona fide resistance genes and minimizing noise from contaminating DNA [21].

Q3: Why are my Argo results showing a low number of ARG-carrying reads, and what can I do to improve detection? Low ARG detection can stem from two main issues. First, check the quality of your long-read sequencing data, as highly diverse quality scores can affect alignment accuracy. Second, ensure you are using an appropriate and comprehensive reference database. Argo's SARG+ database is expanded to include multiple sequence variants for each ARG from a wide range of species, which increases detection sensitivity. Using a database with only single representative sequences per ARG can lead to underestimation [21].

Q4: What are the key computational requirements for running Argo effectively? Argo is designed to be computationally efficient by avoiding full metagenome assembly. However, processing complex environmental metagenomes with long reads requires substantial memory and processing power for the read overlapping and graph clustering steps. The tool's performance is optimized for long-read data (e.g., Oxford Nanopore or PacBio) and relies on a robust reference database built from GTDB, which encompasses over 500,000 assemblies [21].

Troubleshooting Guides

Problem: Inaccurate Host Attribution for ARGs

- Symptoms: ARGs are assigned to incorrect or implausible microbial species.

- Diagnosis: This is often caused by the inherent challenges of classifying individual reads that span horizontally transferred genes, which may be present in multiple species.

- Solution:

- Utilize Clustering: Argo's core innovation is its read-overlapping approach. Ensure this step is enabled, as it groups reads from the same genomic region, increasing the confidence of taxonomic assignment per cluster rather than per single read [21].

- Verify Database Composition: Confirm that the reference taxonomy database (e.g., GTDB) is comprehensive and includes the species present in your sample. A limited database will lead to misclassifications [21].

- Check for Plasmids: Use Argo's feature to mark reads as "plasmid-borne" if they map to a RefSeq plasmid database. This helps distinguish between chromosomal ARGs (which are firmly linked to a host) and those on mobile genetic elements [21].

Problem: High Computational Resource Usage or Slow Runtime

- Symptoms: The Argo pipeline runs very slowly or fails due to memory constraints.

- Diagnosis: The initial step of identifying ARG-containing reads from the entire metagenomic dataset is computationally intensive.

- Solution:

- Leverage Pre-filtering: Argo first uses DIAMOND's frameshift-aware alignment to filter for reads carrying ARGs. This significantly reduces the number of reads that undergo the more costly overlapping and clustering steps [21].

- Optimize Infrastructure: For large-scale studies, ensure the analysis is performed on a high-performance computing (HPC) cluster or a cloud instance with sufficient CPUs and RAM. Dynamic cluster distribution, as seen in other Argo projects, can help manage resource-intensive workloads, though this specific feature may not be directly ported to the bioinformatics tool Argo [36].

Problem: Integration with a Multi-Cluster or High-Availability Computing Environment

- Symptoms: Need to distribute Argo analyses across multiple computing clusters for scalability and reliability.

- Diagnosis: While the bioinformatics tool "Argo" itself is not described as a multi-cluster Kubernetes application, other tools in the Argo ecosystem (like Argo Workflows) are designed for this.

- Solution:

- Use Argo Workflows: Containerize the Argo analysis steps and define them as an Argo Workflow. This allows you to orchestrate the multi-step bioinformatics pipeline effectively [37].

- Employ KubeStellar: To distribute and synchronize these workflows across multiple Kubernetes clusters, use a tool like KubeStellar. It simplifies multi-cluster deployment by using binding policies to assign workloads (like your Argo analysis) to specific execution clusters, making the entire operation appear as a single, unified cluster [37].

Experimental Protocols & Workflows

Detailed Methodology for ARG Profiling with Argo

The following protocol is adapted from the benchmarking and validation studies performed with Argo [21].

Sample Preparation & Sequencing:

- Extract high-molecular-weight DNA from your environmental sample (e.g., soil, water, feces).

- Perform long-read sequencing using a platform such as Oxford Nanopore Technologies (ONT) or PacBio. Ensure you achieve sufficient sequencing depth for your sample complexity.

Data Preprocessing:

- Conduct basecalling and quality assessment of the raw sequencing data using platform-specific tools (e.g., Guppy for ONT).

- Perform adapter trimming and quality filtering to obtain clean, high-quality reads for downstream analysis.

ARG Identification with Argo:

- Input: Preprocessed long reads in FASTA/FASTQ format.

- Process: a. Argo aligns reads to the SARG+ protein database using DIAMOND's frameshift-aware DNA-to-protein alignment. b. An adaptive identity cutoff is calculated based on read overlaps to ensure stringency. c. Reads carrying at least one ARG are identified and their coordinates recorded.

Taxonomic Classification & Read Clustering:

- Base-Level Alignment: ARG-containing reads are mapped to the GTDB-based reference taxonomy database using

minimap2. - Read Overlapping and Graph Clustering: The core Argo process: a. An overlap graph is built from the ARG-containing reads. b. The graph is segmented into read clusters using the Markov Cluster (MCL) algorithm. Reads in the same cluster are presumed to originate from the same genomic region of a specific species.

- Taxonomic Label Assignment: Taxonomic labels are assigned collectively to each read cluster, refining the host attribution.

- Base-Level Alignment: ARG-containing reads are mapped to the GTDB-based reference taxonomy database using

Output and Analysis:

- Argo generates a comprehensive profile of ARGs and their associated host species.

- The output can be used for quantitative analysis of ARG abundance, diversity, and host associations in the context of your study.

Signaling Pathways and Workflow Diagrams

Argo Analysis Workflow

Multi-Cluster Execution Logic

Research Reagent Solutions and Key Materials

Table 1: Essential Research Reagents and Databases for Argo Analysis

| Item Name | Type/Format | Function in the Protocol | Key Notes |

|---|---|---|---|

| SARG+ Database | Protein Sequence Database | Core reference for identifying ARGs via alignment. | Manually curated; includes variants from CARD, NDARO, and SARG; excludes regulators and housekeeping genes [21]. |

| GTDB Release 09-RS220 | Genomic Taxonomy Database | Reference for taxonomic classification of ARG-containing read clusters. | Comprises 596,663 assemblies; provides better quality control and fewer annotation issues than NCBI RefSeq [21]. |

| RefSeq Plasmid Database | Sequence Database | Used to identify and mark plasmid-borne ARGs. | A decontaminated subset of 39,598 sequences; helps distinguish mobile from chromosomal ARGs [21]. |

| DIAMOND | Software Tool | Performs frameshift-aware DNA-to-protein alignment for initial ARG detection. | Faster than BLASTX; critical for efficiently filtering ARG-containing reads from large datasets [21]. |

| Minimap2 | Software Tool | Performs base-level alignment of reads to the GTDB reference database. | Generates candidate species labels for each read prior to clustering [21]. |

| MCL Algorithm | Software Algorithm (Markov Cluster) | Segments the read overlap graph into clusters representing single ARG-species pairs. | Key to Argo's accurate host attribution by grouping related reads [21]. |

Table 2: Argo Performance Metrics from Benchmarking Studies

| Metric | Short-Read Assembly & Classification | Per-Read Long-Read Classification | Argo (Cluster-based) |

|---|---|---|---|

| Host Misclassification Rate | High (due to fragmented contigs) | Moderate (challenging for individual reads) | Significantly Reduced [21] |

| Sensitivity in ARG Detection | Good (but can be variable) | Good | High (with SARG+ DB) [21] |

| Computational Intensity | High (assembly is costly) | Moderate | Moderate (avoids assembly) [21] |

| Resolution for HGT Tracking | Low | Moderate | High [21] |

Co-assembly Strategies to Improve Gene Recovery in Complex Metagenomes

Frequently Asked Questions (FAQs) on Metagenomic Co-assembly

1. When should I choose a co-assembly strategy over individual assembly? Co-assembly is particularly beneficial when your research goal is to create a comprehensive gene catalog from a set of related samples or to recover genomes from low-abundance microorganisms. By pooling sequencing data from multiple samples, the combined coverage for rare community members increases, making their sequences easier to assemble [38]. This approach has successfully recovered low-abundance genomes crucial for differentiating between healthy and disease states, such as in colorectal cancer studies [39]. However, for tracking strain-level variation across samples, individual assembly might be preferable to avoid merging data from closely related strains, which can fragment the assembly [38].

2. What are the main computational challenges of co-assembly, and how can I mitigate them? Co-assembly, especially of complex metagenomes from environments like soil or gut, is computationally intensive and can demand terabytes of memory [40] [41]. To mitigate this:

- Optimize Assembly Parameters: Using a reduced, optimized set of k-mers (e.g., 21, 29, 39, 59, 79, 99, 119) with assemblers like MEGAHIT can slash assembly time by half without sacrificing the quality or number of recovered Metagenome-Assembled Genomes (MAGs) [41].

- Leverage Workflow Managers: Use workflow managers like Nextflow or Snakemake, which are designed for scalable and reproducible analyses on high-performance computing (HPC) clusters or cloud environments [40].

- Utilize Web Platforms: For researchers without access to HPC, web-based platforms like KBase, MGnify, and Galaxy offer predefined workflows and computational resources [40].

3. My co-assembled bins have high contamination. How can I improve bin quality? High contamination in bins often results from the incorrect grouping of contigs from different organisms. To address this:

- Employ Multiple Binning Tools: Using several binning algorithms (e.g., MaxBin, MetaBAT, CONCOCT) and then consolidating their results with a tool like DASTool can significantly improve the quality of the final MAG set [39] [40].

- Incorporate Refinement Steps: Many pipelines include bin refinement steps that use differential coverage and sequence composition to remove contaminating contigs from bins [40].

- Leverage Long-Read Sequencing: If possible, using highly accurate long-read sequencing (e.g., PacBio HiFi) produces longer contigs, which drastically simplifies the binning process and reduces the chances of errors, resulting in more complete and less contaminated MAGs [42].

4. How can co-assembly strategies help in mitigating contamination in Antibiotic Resistance Gene (ARG) analysis? Co-assembly provides a more genomic context-aware approach to ARG analysis compared to read-based methods. By assembling longer contigs, you can more accurately link an ARG to its host genome and determine if it is located on a mobile genetic element (MGE) like a plasmid [6] [5]. This helps distinguish between ARGs in transient contaminants versus those entrenched in a resident microbial population. Furthermore, a robust co-assembly allows for the discovery of novel ARGs from previously uncultured organisms, providing a fuller picture of the environmental "resistome" [43] [6].

Troubleshooting Guides

Issue: Inefficient Assembly and Low Gene Recovery

Problem: The metagenomic assembly process is too slow, consumes excessive memory, and fails to recover a comprehensive set of genes, particularly from low-abundance community members.

Solution: Implement a mixed-assembly strategy and optimize k-mer selection.

Detailed Protocol:

- Perform Individual Sample Assemblies: Assemble each metagenomic sample individually using an assembler like MEGAHIT with a reduced set of k-mers (e.g., 21, 29, 39, 59, 79, 99, 119) to improve computational efficiency [41].

- Perform Co-assembly: Pool reads from all samples (or related groups of samples) and assemble them. Normalizing reads before co-assembly using a tool like

BBnormcan help manage complexity [38]. - Predict Genes: Use a gene prediction tool like