Navigating Molecular Dynamics Convergence: From Fundamental Challenges to Advanced Solutions in Drug Discovery

Abstract

This article provides a comprehensive guide to molecular dynamics (MD) simulation convergence, a critical challenge in computational drug discovery. It explores the foundational principles behind convergence issues, including sampling limitations and force field accuracy. The content details advanced methodological approaches, such as enhanced sampling and machine-learning-integrated simulations, for achieving robust convergence. A dedicated troubleshooting section offers practical strategies for optimizing simulations and diagnosing common problems. Finally, the article establishes a rigorous framework for validating convergence through statistical analysis and comparison with experimental data, empowering researchers to produce reliable, publication-ready results for target modeling, binding pose prediction, and lead optimization.

Understanding the Root Causes of MD Non-Convergence

Frequently Asked Questions (FAQs)

What does "convergence" mean in the context of Molecular Dynamics (MD) simulations?

In MD, convergence is achieved when a simulation has sampled a representative portion of the system's conformational space such that the measured properties have reached stable, plateaued values. A practical working definition is: given a trajectory of length T and a property Aᵢ measured from it, the property is considered equilibrated if the fluctuations of its running average, ⟨Aᵢ⟩(t), remain small for a significant portion of the trajectory after a convergence time t_c [1].

What is the difference between equilibration and convergence?

Equilibration is the initial process where a system relaxes from its starting coordinates towards a state of thermodynamic equilibrium. Convergence is the subsequent state where the simulation has sampled a sufficient number of configurations to reliably calculate the average values of the properties of interest. A system can be partially equilibrated (some properties are stable) but not fully converged if other properties, especially those dependent on infrequent events, are still changing [1].

Why hasn't my root mean square deviation (RMSD) value plateaued? Should I be concerned?

A non-plateauing RMSD can indicate that the system is still undergoing significant conformational drift and has not yet equilibrated. However, for systems with surfaces or interfaces, RMSD can be an unsuitable convergence metric. It is recommended to monitor multiple properties, such as energy, density profiles, or other system-specific observables, to get a comprehensive picture of convergence [1] [2].

My simulation energy is stable, but I am told my sampling is not converged. Is this possible?

Yes, this is a common and critical distinction. A stable potential energy indicates that the system may be thermally equilibrated. However, it could be trapped in a local energy minimum, failing to sample other relevant conformational states. Convergence of sampling for structural and dynamic properties often requires simulation timescales much longer than those needed for energy stabilization, especially for biomolecules [1] [3].

What does "ergodicity" mean, and why is it important?

Ergodicity is the principle that the time average of a property over an infinitely long simulation trajectory will equal the ensemble average over all possible states. In practice, for a simulation to be valid, it must be long enough to sample all the energetically relevant conformational states that contribute significantly to the property being measured. A failure to achieve ergodic sampling means the simulation results may not be representative of the true system behavior [1].

Troubleshooting Guides

Symptom: Suspected Non-Equilibration or Poor Sampling

| Observed Issue | Potential Causes | Diagnostic Steps | Solutions & Recommendations |
|---|---|---|---|
| Continuous drift in energy or RMSD | Insufficient equilibration time; starting structure far from the native basin. | 1. Plot the potential energy, temperature, and pressure as a function of time. 2. Calculate the running average of the RMSD to the starting structure [1]. | 1. Extend the equilibration procedure. 2. Ensure proper energy minimization before heating and pressurization. 3. Consider using the final frame of a previous, stable simulation as a new starting point. |
| High energy or system instability | Incorrect force field parameters; steric clashes; incorrect bonding. | 1. Check the log files for SHAKE failures or LINCS warnings. 2. Visualize the trajectory to locate atoms with high forces or unusual geometry. | 1. Re-run energy minimization until the maximum force is below an acceptable threshold. 2. Double-check the system topology and parameter assignment. 3. Review the protonation states of residues. |
| Property averages not reproducible | Inadequate sampling of conformational space; trajectory too short. | 1. Perform multiple independent simulations starting from different initial velocities [3]. 2. Use ensemble similarity methods (e.g., CES, DRES) to check whether different trajectory segments sample similar conformational space [4]. | 1. Aggregate data from multiple independent simulations to improve sampling [3]. 2. Extend the simulation time significantly, if possible. 3. Use enhanced sampling techniques to overcome high energy barriers. |
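Aggregating independent replicas also gives a simple uncertainty estimate. The sketch below is a minimal illustration, assuming each replica has already been reduced to a time series of one observable; the function name `replica_mean_and_sem` and the numbers are hypothetical. It treats each replica's time average as one independent sample and reports the standard error of the mean (SEM) across replicas.

```python
import math
import statistics


def replica_mean_and_sem(replica_series):
    """Combine independent replicas of one observable.

    Each replica contributes one time-averaged value. Replicas started from
    different initial velocities are treated as independent, so the spread of
    their averages gives an uncertainty estimate that does not depend on
    correlations within each trajectory.
    """
    if len(replica_series) < 2:
        raise ValueError("need at least two independent replicas")
    per_replica = [statistics.fmean(series) for series in replica_series]
    mean = statistics.fmean(per_replica)
    sem = statistics.stdev(per_replica) / math.sqrt(len(per_replica))
    return mean, sem, per_replica


# Synthetic example: three replicas of one observable (e.g., a distance in nm).
replicas = [
    [1.0, 1.2, 1.1, 1.3],
    [1.4, 1.5, 1.3, 1.4],
    [1.1, 1.0, 1.2, 1.1],
]
mean, sem, per_replica = replica_mean_and_sem(replicas)
print(f"mean = {mean:.3f} +/- {sem:.3f}")
```

With only a handful of replicas the SEM is itself noisy, so it complements, rather than replaces, checking each replica for equilibration.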

Symptom: Challenges in Assessing Convergence

| Observed Issue | Potential Causes | Diagnostic Steps | Solutions & Recommendations |
|---|---|---|---|
| Uncertain if a property is converged | Lack of a known target value; slow, undetected dynamics. | 1. Plot the cumulative average of the property as a function of simulation time; a converged property will show a stable plateau [1]. 2. For interfaces, use a tool like DynDen to track the convergence of linear partial density profiles [2]. | 1. Do not rely on a single metric; monitor several structural, energetic, and dynamic properties. 2. Compare the distribution of the property from the first and second halves of the trajectory. |
| Correlation analysis shows no meaningful networks | Insufficient sampling to accurately calculate cross-correlations. | Calculate the cross-correlation matrix $C_{ij}$ for atomic fluctuations and check whether the pattern stabilizes over different trajectory segments [5]. | Extend the simulation time. Accurate correlation analysis typically requires more sampling than simple structural averages. |
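One way to make the first-half versus second-half comparison quantitative is the two-sample Kolmogorov-Smirnov (KS) statistic D, the largest gap between the two empirical cumulative distributions. This is a swapped-in choice for illustration rather than a method prescribed by the cited sources, and the function names are hypothetical. Because MD samples are time-correlated, the textbook KS p-value does not apply; D is best read as a descriptive measure (0 means identical distributions, 1 means no overlap).

```python
def ks_statistic(sample_a, sample_b):
    """Two-sample Kolmogorov-Smirnov statistic D = max |F_a(x) - F_b(x)|."""
    a, b = sorted(sample_a), sorted(sample_b)
    n_a, n_b = len(a), len(b)
    i = j = 0
    d_max = 0.0
    while i < n_a and j < n_b:
        x = min(a[i], b[j])
        # Step both empirical CDFs past every value equal to x (handles ties).
        while i < n_a and a[i] <= x:
            i += 1
        while j < n_b and b[j] <= x:
            j += 1
        d_max = max(d_max, abs(i / n_a - j / n_b))
    return d_max


def compare_halves(series):
    """KS statistic between the first and second halves of a time series."""
    half = len(series) // 2
    return ks_statistic(series[:half], series[half:])


drifting = [0.01 * t for t in range(100)]        # steady drift: halves do not overlap
stationary = [(7 * t) % 10 for t in range(100)]  # same values recur in both halves
print(compare_halves(drifting), compare_halves(stationary))  # 1.0 0.0
```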

Quantitative Data on Convergence Timescales

The time required for convergence is highly system- and property-dependent. The following table summarizes findings from published long-timescale simulations.

Table 1: Empirical Convergence Timescales from MD Studies

| System Type | Property | Observed Convergence Timescale | Notes | Source |
|---|---|---|---|---|
| B-DNA Helix (d(GCACGAACGAACGAACGC)) | Structure & Dynamics (excluding termini) | ~1–5 μs | Structure and dynamics were essentially fully converged on this timescale, but terminal base pairs (fraying) were not. | [3] |
| General Biomolecules | Properties of biological interest (e.g., distances, angles) | Multi-microsecond trajectories | Convergence was observed for average structural properties, but not for transition rates to low-probability conformations. | [1] |
| General Biomolecules | Transition rates to low-probability conformations | Longer than multi-microsecond trajectories | Sampling rare events requires simulation timescales that extend beyond what is needed for average properties. | [1] |

Experimental Protocols for Convergence Analysis

Protocol 1: Assessing Convergence using Ensemble Similarity (via MDAnalysis)

This protocol uses the encore module in MDAnalysis to evaluate how similar conformational ensembles are from different parts of a trajectory [4].

1. Loading the Trajectory:

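2. Clustering Ensemble Similarity (CES): This method compares the full trajectory with windows of increasing length, each starting at the first frame. The conformations are clustered, and the Jensen-Shannon divergence between each window and the full trajectory is computed.

Because the final window is the full trajectory, the divergence always reaches zero at the end. A divergence that approaches zero well before the final window indicates that later frames are not sampling new conformational space, suggesting convergence [4].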

3. Dimensionality Reduction Ensemble Similarity: This method is similar but uses the similarity of low-dimensional projections (like Principal Component Analysis) instead of clusters.
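The growing-window idea behind CES can be illustrated without MDAnalysis. The sketch below is a simplified stand-in, not the encore implementation: it replaces clustering of full conformations with a fixed-grid histogram of a single 1-D observable (an assumption made for brevity), then computes the Jensen-Shannon divergence between each growing window and the full trajectory. All function names are hypothetical.

```python
import math


def histogram(values, lo, hi, n_bins):
    """Normalized histogram of `values` on a fixed grid spanning [lo, hi]."""
    width = (hi - lo) / n_bins
    counts = [0] * n_bins
    for v in values:
        k = min(int((v - lo) / width), n_bins - 1)  # put v == hi in the last bin
        counts[k] += 1
    return [c / len(values) for c in counts]


def js_divergence(p, q):
    """Jensen-Shannon divergence with natural logs (0 <= JS <= ln 2)."""
    m = [(pi + qi) / 2 for pi, qi in zip(p, q)]

    def kl(a):
        # Terms with a_i == 0 contribute nothing; m_i > 0 whenever a_i > 0.
        return sum(ai * math.log(ai / mi) for ai, mi in zip(a, m) if ai > 0)

    return 0.5 * kl(p) + 0.5 * kl(q)


def growing_window_js(series, window, n_bins=20):
    """JS divergence between series[:end] and the full series, for growing `end`."""
    lo, hi = min(series), max(series)
    if hi == lo:
        hi = lo + 1.0  # avoid a zero-width grid for a constant series
    full = histogram(series, lo, hi, n_bins)
    return [
        (end, js_divergence(histogram(series[:end], lo, hi, n_bins), full))
        for end in range(window, len(series) + 1, window)
    ]


# A trajectory that only visits a second state (value 1.0) in its second half.
late_visit = [0.0] * 50 + [1.0] * 50
for end, js in growing_window_js(late_visit, window=25):
    print(end, round(js, 3))
```

Here the divergence stays high until the windows reach the frames where the second state first appears, which is the signature of unconverged sampling; for a well-mixed trajectory it falls close to zero early.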

Protocol 2: Calculating a Dynamic Cross-Correlation Matrix (DCCM)

This protocol identifies networks of correlated motions, which is useful for understanding allosteric mechanisms. The analysis requires a well-sampled, converged trajectory [5].

1. Perform a Molecular Dynamics Simulation: Use a package like GROMACS, AMBER, or NAMD to simulate your system and generate a stable trajectory.

2. Calculate the Cross-Correlation Matrix:

Using the Bio3D package in R (or gmx covar in GROMACS, formerly g_covar), compute the cross-correlation matrix. The element C(i,j) for atoms i and j is calculated as:

$$
C_{ij} = \frac{\langle \Delta \vec{r}_i \cdot \Delta \vec{r}_j \rangle}{\sqrt{\langle \lVert \Delta \vec{r}_i \rVert^2 \rangle \, \langle \lVert \Delta \vec{r}_j \rVert^2 \rangle}}
$$

where $\Delta \vec{r}_i$ is the displacement of atom i from its mean position, and $\langle \cdots \rangle$ denotes the time average over the trajectory [5].

3. Interpret the Results:

- C(i,j) = 1: Perfectly correlated motion (same direction).

- C(i,j) = -1: Perfectly anti-correlated motion (opposite direction).

- C(i,j) = 0: Uncorrelated motion.

The resulting matrix can be visualized as a heatmap to identify communication pathways within the protein.
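The formula maps directly onto array operations. The sketch below is a minimal numpy version, assuming the frames have already been superimposed onto a common reference (otherwise global rotation and translation dominate the correlations) and that coordinates are supplied as an array of shape (n_frames, n_atoms, 3). The function name `dccm` is a local helper for illustration; in practice a dedicated tool such as Bio3D would normally be used.

```python
import numpy as np


def dccm(coords):
    """Dynamic cross-correlation matrix C_ij from fitted coordinates.

    coords: array of shape (n_frames, n_atoms, 3).
    Returns an (n_atoms, n_atoms) array with entries in [-1, 1].
    Atoms with zero fluctuation would cause a division by zero.
    """
    coords = np.asarray(coords, dtype=float)
    disp = coords - coords.mean(axis=0)  # delta r_i(t) = r_i(t) - <r_i>
    # <delta r_i . delta r_j>: dot product over xyz, averaged over frames
    cov = np.einsum("tik,tjk->ij", disp, disp) / coords.shape[0]
    norm = np.sqrt(np.diag(cov))         # sqrt(<|delta r_i|^2>)
    return cov / np.outer(norm, norm)


# Toy check: atoms 0 and 1 move together along x, atom 2 moves opposite.
s = np.array([-1.0, 0.0, 1.0])
coords = np.zeros((3, 3, 3))  # 3 frames, 3 atoms, xyz
coords[:, 0, 0] = s
coords[:, 1, 0] = 2.0 * s
coords[:, 2, 0] = -s
print(np.round(dccm(coords), 2))
```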

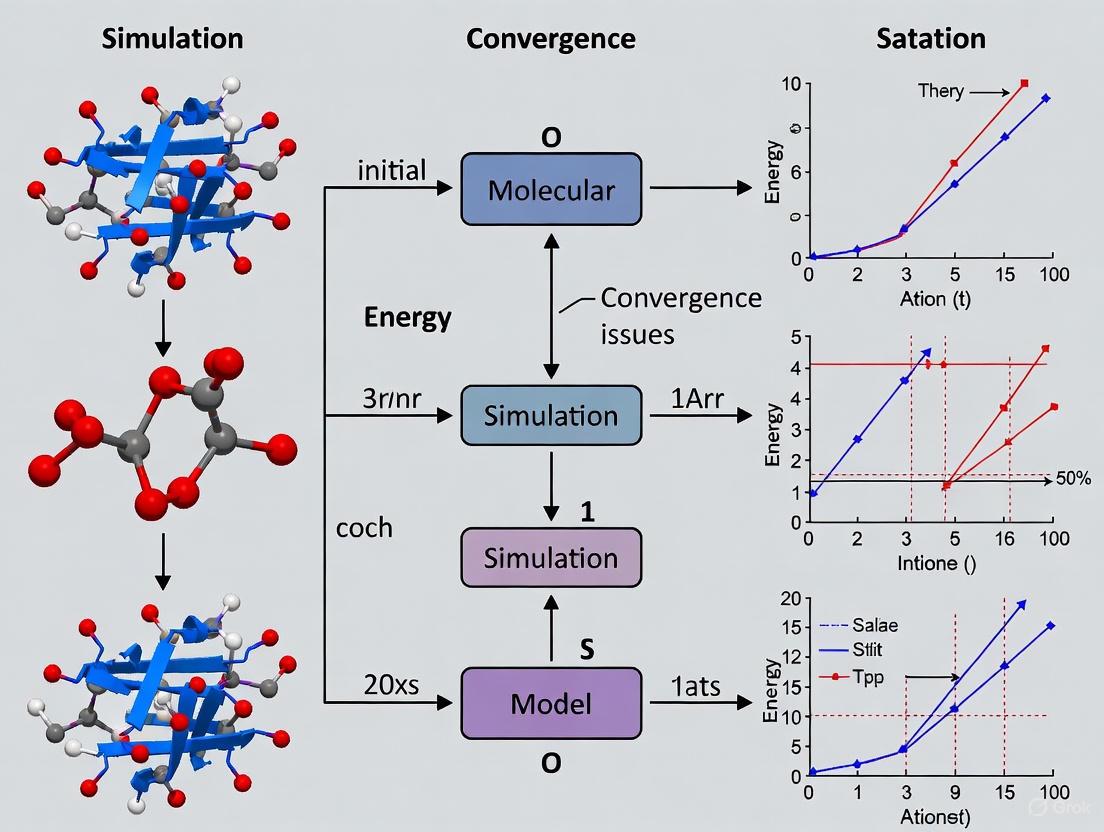

Workflow Visualization

Title: Convergence Assessment Workflow

The Scientist's Toolkit: Key Research Reagents & Software

Table 2: Essential Tools for Convergence Analysis

| Tool / Reagent | Type | Primary Function in Convergence Analysis |
|---|---|---|
| MDAnalysis [4] | Software Library (Python) | A versatile toolkit for analyzing MD trajectories, including specific methods for calculating ensemble similarity and convergence. |
| DynDen [2] | Software Tool (Python) | Specialized for assessing convergence in simulations featuring surfaces and interfaces by analyzing linear density profiles. |
| Bio3D [5] | Software Package (R) | Used for dynamic cross-correlation analysis (DCCM) and other essential analyses of biomolecular structure and dynamics. |
| AMBER/CHARMM [3] [6] | MD Simulation Suite | Widely used simulation packages that include tools for running simulations and performing basic analysis such as RMSD and energy monitoring. |
| GROMACS [5] [6] | MD Simulation Suite | A high-performance MD package with built-in commands (e.g., gmx rms and gmx energy, called g_rms and g_energy in older versions) for fundamental convergence checks. |
| Neural Network Potentials (NNPs) [7] | Computational Model | Next-generation force fields (e.g., eSEN, UMA) trained on massive quantum chemical datasets, offering high accuracy for the energy calculations that underpin reliable dynamics. |

Frequently Asked Questions (FAQs)

Q1: What are the most common signs that my molecular dynamics simulation has not reached equilibrium?

A simulation may not have reached equilibrium if key properties have not stabilized. Relying solely on the root mean square deviation (RMSD) plot is not recommended, as studies show no consensus among scientists on determining equilibrium from RMSD alone [8]. Better indicators include monitoring multiple metrics (e.g., energy, RMSF, hydrogen bonds) and checking whether their running averages have reached a plateau with small fluctuations for a significant portion of the trajectory [1].

Q2: Why are biologically important rare events, such as protein folding or conformational changes, so difficult to simulate?

These events are considered "rare" because the system dwells for long periods in metastable states, and transitions between them are infrequent. The dwell time in a stable state (t_dwell) is often much longer than the duration of the transition event itself (t_b). Conventional MD simulations are typically too short to observe these rare transitions spontaneously, creating a significant timescale gap [9].
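The size of this gap can be estimated with simple arithmetic if escapes from a metastable state are modeled as a Poisson process with mean dwell time t_dwell. That idealization is an assumption made here, not a claim of the cited source. Neglecting t_b (much shorter than t_dwell), a run of length T then contains on average T/t_dwell transitions, and the probability of observing at least one is 1 - exp(-T/t_dwell). The function names and numbers below are hypothetical.

```python
import math


def expected_transitions(sim_length, mean_dwell_time):
    """Expected number of escapes from the metastable state during the run."""
    return sim_length / mean_dwell_time


def prob_at_least_one_transition(sim_length, mean_dwell_time):
    """P(at least one escape), assuming exponentially distributed dwell times."""
    return 1.0 - math.exp(-sim_length / mean_dwell_time)


# Hypothetical numbers, both in microseconds: a 1 us run vs. a 1 ms mean dwell time.
run_us, dwell_us = 1.0, 1000.0
print(expected_transitions(run_us, dwell_us))  # 0.001
print(prob_at_least_one_transition(run_us, dwell_us))
```

Under these assumptions a single 1 μs trajectory has roughly a 0.1% chance of showing even one transition, which is why path sampling methods redirect effort toward the transitions themselves.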

Q3: What can I do if an ab initio MD simulation of a charged or magnetic system fails to converge electronically?

Electronic (SCF) convergence in such systems can be particularly challenging. A recommended strategy is to split the calculation into multiple steps. For magnetic systems with LDA+U, start with a non-magnetic calculation, then progress to a spin-polarized calculation with a small electronic minimization step (the VASP TIME tag), and finally introduce the LDA+U parameters [10]. For charged systems, ensuring sufficient empty bands (NBANDS) and choosing a suitable algorithm (ALGO) is often critical [10].

Q4: My simulation seems stuck in a local energy minimum. How can I encourage it to explore other states?

Path sampling approaches are specifically designed to address this problem. Methods such as Transition Path Sampling (TPS) or the Weighted Ensemble (WE) strategy focus computational effort on the transitions between states rather than on the long dwell times within a state. They allow you to generate an ensemble of transition pathways and calculate unbiased rate constants for events that would be impractical to simulate with conventional MD [9].

Q5: How can I directly compute the free-energy landscape of a process like lipid phase separation in membranes?

Measuring free energy directly with standard MD is challenging. A more effective approach is to combine coarse-grained MD with enhanced sampling protocols. This involves using a novel collective variable that describes the process, allowing you to probe the thermodynamics beyond just the sign of the free-energy change [11].

Troubleshooting Guides

Issue 1: Non-Convergence of Equilibrium Properties

Problem: The simulated trajectory does not reach a stable equilibrium, and calculated properties do not converge, potentially invalidating the results [1].

Diagnosis and Solutions:

| Step | Action | Technical Details / Parameters | Expected Outcome |
|---|---|---|---|
| 1 | Check Multiple Metrics | Monitor several properties: potential energy, RMSD, RMSF, number of hydrogen bonds, radius of gyration [1]. | A system is considered equilibrated when the running averages of these properties plateau and their fluctuations remain small [1]. |
| 2 | Avoid Sole Reliance on RMSD | Do not use RMSD plots as the sole indicator of convergence. Be aware that their interpretation is highly subjective and influenced by plot scaling and color [8]. | A more robust and objective assessment of convergence based on multiple lines of evidence. |
| 3 | Define a Convergence Time (t_c) | For a property A, find the time t_c after which the fluctuation of ⟨A⟩(t) around the final average ⟨A⟩(T) is small [1]. | A clear, quantitative criterion to define the production segment of your trajectory. |
| 4 | Understand Partial vs. Full Equilibrium | Recognize that some average properties may converge faster than others. Properties depending on low-probability regions of conformational space (e.g., transition rates, full free energy) take much longer to converge [1]. | Informed interpretation of results, acknowledging that some properties may be reliable while others are not. |

Issue 2: Sampling Rare Events and Complex Energy Landscapes

Problem: The process of interest (e.g., large conformational change, protein-ligand unbinding) occurs on timescales far exceeding the practical limits of conventional MD simulations [9].

Diagnosis and Solutions:

| Approach | Method | Key Principle | Best for |
|---|---|---|---|
| Path Sampling | Transition Path Sampling (TPS), Dynamic Importance Sampling (DIMS) [9]. | Uses Monte Carlo or biased dynamics to sample complete, continuous transition paths from state A to state B. | Generating mechanistic insights and the full sequence of intermediates for a known transition [9]. |
| Region-to-Region Sampling | Weighted Ensemble (WE), Adaptive Multilevel Splitting (AMS) [9]. | Splits and replicates unbiased trajectory segments that progress between predefined regions ("bins") of configuration space. | Efficiently calculating rate constants and sampling transitions where progress can be measured in stages [9]. |
| Interface-to-Interface Sampling | Transition Interface Sampling (TIS), Forward Flux Sampling (FFS) [9]. | Calculates the flux of trajectories through a series of interfaces between states A and B. | Obtaining accurate rate constants for rare events with a series of nested interfaces [9]. |
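The splitting and merging at the heart of the Weighted Ensemble strategy can be sketched in a few dozen lines. The example below is a toy, assuming a 1-D random walk as a stand-in for MD, unit-width bins along x as the progress coordinate, state A near x = 0, state B at x >= 10, and recycling of walkers that reach B back to A; all names and parameters are hypothetical. Merging keeps one of two walkers with probability proportional to its weight and splitting halves a walker's weight, so the total weight (probability) is conserved.

```python
import random


def propagate(x, rng, n_steps=10, step=0.5, drift=0.05):
    """Toy dynamics: a 1-D random walk with a weak drift back toward state A."""
    for _ in range(n_steps):
        x += rng.choice((-step, step)) - drift
        x = max(x, 0.0)  # reflecting wall on the A side
    return x


def resample_bin(walkers, target, rng):
    """Split or merge (x, weight) walkers in one bin to `target` copies."""
    walkers = sorted(walkers, key=lambda item: item[1])
    while len(walkers) > target:
        # Merge the two lightest walkers; keep one position, chosen by weight.
        (x1, w1), (x2, w2) = walkers[0], walkers[1]
        keep = x1 if rng.random() < w1 / (w1 + w2) else x2
        walkers = sorted(walkers[2:] + [(keep, w1 + w2)], key=lambda item: item[1])
    while len(walkers) < target:
        # Split the heaviest walker into two copies carrying half its weight.
        x, w = walkers.pop()
        walkers = sorted(walkers + [(x, w / 2), (x, w / 2)], key=lambda item: item[1])
    return walkers


def weighted_ensemble(n_iter=200, per_bin=4, b_edge=10.0, seed=1):
    """Run the toy WE loop; return per-iteration weight flux into B and final walkers."""
    rng = random.Random(seed)
    walkers = [(0.0, 1.0 / per_bin) for _ in range(per_bin)]  # all start in A
    flux_into_b = []
    for _ in range(n_iter):
        walkers = [(propagate(x, rng), w) for x, w in walkers]
        # Record the weight that reached B, then recycle those walkers to A.
        flux_into_b.append(sum(w for x, w in walkers if x >= b_edge))
        walkers = [(0.0 if x >= b_edge else x, w) for x, w in walkers]
        bins = {}
        for x, w in walkers:
            bins.setdefault(int(x), []).append((x, w))
        walkers = [wk for group in bins.values() for wk in resample_bin(group, per_bin, rng)]
    return flux_into_b, walkers


flux, final_walkers = weighted_ensemble()
# Once the flux has reached steady state, its mean per iteration estimates the
# A -> B rate in units of one iteration (n_steps dynamics steps).
print(sum(flux[100:]) / len(flux[100:]))
```

Production WE codes add much more (recycling to an initial-state distribution, adaptive binning, checkpointing); this sketch only shows the resampling logic.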

The following diagram illustrates the conceptual workflow shared by path sampling methods to overcome the timescale gap:

Issue 3: Electronic Convergence Failures in Ab Initio MD

Problem: The self-consistent field (SCF) procedure fails to converge to the electronic ground state, halting the simulation [10].

Diagnosis and Solutions:

| Symptom | Possible Cause | Solution |
|---|---|---|
| Convergence failure in charged systems or those with f-orbitals [10]. | Insufficient number of empty bands (NBANDS). | Increase NBANDS significantly. The default is often too low for such systems [10]. |
| Convergence failure in magnetic systems or when using meta-GGA functionals like MBJ [10]. | Inappropriate algorithm or time step. | Switch ALGO (e.g., to All). For LDA+U, use a small TIME (e.g., 0.05). For MBJ, start from a PBE-converged wavefunction [10]. |
| Oscillations in the charge density during SCF [10]. | Over-mixing of the charge density. | Reduce the mixing parameters (AMIX, BMIX). For magnetic systems, use linear mixing (BMIX=0.0001, BMIX_MAG=0.0001) [10]. |
| General convergence issues in a complex system. | System is too complex for the initial parameters. | Simplify the calculation: use a lower ENCUT, reduce k-points, set PREC=Normal. Once converged, gradually restore the settings [10]. |

The Scientist's Toolkit: Research Reagent Solutions

The following table details key software and methodological "reagents" essential for tackling convergence and sampling challenges.

| Tool / Method | Function | Key Use-Case |
|---|---|---|
| Path Sampling Software [9] | Publicly available packages (e.g., for WE, TPS, FFS) that implement advanced sampling algorithms. | Generating ensembles of transition paths and calculating rate constants for rare events beyond the reach of conventional MD. |
| Enhanced Sampling Collective Variables (CVs) [11] | A user-defined function of the atomic coordinates that describes the progress of a reaction or transition, used to bias the simulation. | Directly measuring the free-energy landscape of processes like lipid phase separation in bilayers. |
| Weighted Ensemble (WE) Strategy [9] | A "splitting" method that runs multiple trajectories in parallel, replicating those that make progress and pruning others. | Efficiently calculating rate constants and sampling binding/unbinding events or conformational changes. |
| Multi-step Electronic Convergence Protocol [10] | A predefined sequence of calculations with increasing complexity (e.g., PBE → MBJ, non-magnetic → LDA+U). | Achieving stable electronic convergence in difficult systems, such as those with magnetic properties or those using advanced functionals. |
| Equilibration Metrics Suite | A set of scripts/tools to compute multiple properties (RMSF, H-bonds, energy, etc.) and their running averages. | Providing a robust, multi-faceted assessment of whether a simulation has reached equilibrium, moving beyond RMSD alone [1] [8]. |

The workflow for a multi-step protocol to stabilize convergence in difficult systems, such as those with magnetic properties, is outlined below:
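A minimal sketch of such a staged protocol for a magnetic LDA+U system, written as Python that renders successive sets of VASP INCAR tags. The stage order and the ALGO = All, TIME = 0.05 settings follow the troubleshooting table above; everything else is an assumption: the function names are hypothetical, the baseline tags are supplied by the user, the required U parameters (LDAUL, LDAUU, LDAUJ) are deliberately left out, and copying the converged output of one stage into the next is not shown.

```python
def format_incar(tags):
    """Render a dict of INCAR tags as 'TAG = value' lines."""
    return "\n".join(f"{key} = {value}" for key, value in tags.items())


def staged_magnetic_ldau(base_tags):
    """Hypothetical three-stage schedule; run each stage after the previous converges."""
    stage_1 = dict(base_tags, ISPIN=1)                         # non-magnetic start
    stage_2 = dict(base_tags, ISPIN=2, ALGO="All", TIME=0.05)  # spin-polarized, small step
    stage_3 = dict(stage_2, LDAU=".TRUE.")                     # finally switch on LDA+U
    # NOTE: stage 3 still needs the LDAUL/LDAUU/LDAUJ values for your system.
    return [("non-magnetic", stage_1), ("spin-polarized", stage_2), ("LDA+U", stage_3)]


for name, tags in staged_magnetic_ldau({"PREC": "Normal"}):
    print(f"# {name}\n{format_incar(tags)}\n")
```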

The Impact of Force Field Inaccuracies on Sampling and Stability

Frequently Asked Questions (FAQs)

1. How can I determine if my MD simulation has reached true equilibrium and not just a local energy minimum?

A system can be in partial equilibrium where some properties have converged while others have not. A working definition is: given a trajectory of length T and a property Aᵢ, the property is considered "equilibrated" if the fluctuations of its running average ⟨Aᵢ⟩(t) remain small for a significant portion of the trajectory after some convergence time t_c (where 0 < t_c < T). If all individual properties are equilibrated, the system is considered fully equilibrated [12] [1].

Standard metrics include monitoring energy and root-mean-square deviation (RMSD) to see if they reach a plateau. However, be aware that a plateau does not guarantee true equilibrium; the system may be trapped in a deep local minimum from which it could escape in a longer simulation [12] [1].

2. Why do my simulations consistently misfold a protein, even with microsecond-long trajectories?

This is a classic sign of potential force field bias. When a simulated protein fails to fold, it can be unclear if the cause is insufficient sampling or inherent force field deficiencies. In one studied case, the human Pin1 WW domain failed to fold in >1 μs simulations because the force field itself was found to favor misfolded states over the native state [13]. This highlights that simply extending simulation time cannot correct for a force field that inaccurately represents the underlying energy landscape.

3. My binding affinity calculations are inaccurate, even with good sampling. Could the force field be the problem?

Yes, force field inaccuracies are a major limitation in reliably predicting protein-ligand binding thermodynamics. Despite advances in sampling, the simulation is only as accurate as the force field it uses [14]. Commonly used data for force field parametrization (like neat liquid properties or small molecule hydration free energies) may not adequately test performance for the complex interactions at a binding interface, leading to systematic errors in binding affinity and enthalpy calculations [14].

4. What is the difference between a fully converged simulation and one that is only partially converged?

- Full convergence/equilibrium requires that the simulation has thoroughly explored all physically allowed conformations, including low-probability regions of the conformational space (Ω). Properties that depend on the entire partition function, like absolute free energy and entropy, require this full exploration to converge [12] [1].

- Partial convergence is achieved when the system has sufficiently explored the high-probability regions of Ω. Average properties that depend mostly on these regions (such as distances, angles, or RMSD) can appear converged even when the simulation as a whole is not fully equilibrated [12] [1].

5. Are newer, data-driven force fields less prone to these inaccuracies?

Modern data-driven approaches aim to expand chemical space coverage and improve accuracy. For example, force fields like ByteFF are trained on expansive, diverse quantum mechanics (QM) datasets encompassing millions of molecular fragments and torsion profiles [15]. This approach seeks to overcome the limitations of traditional parametrization, which often relies on limited experimental data sets that may not probe all relevant interactions for biomolecular simulations, potentially leading to better accuracy across a wider range of drug-like molecules [14] [15].

Troubleshooting Guides

Problem: Sampling seems insufficient, and key biological properties do not converge.

Potential Cause: The simulation time is too short to adequately sample the relevant conformational space for the property of interest.

Solution:

- Check for Partial Convergence: First, verify if the lack of convergence is universal or specific. Calculate running averages for multiple properties (e.g., RMSD, radius of gyration, specific distances or angles). You may find that structurally relevant properties converge in multi-microsecond trajectories, while others (like transition rates to rare states) do not [12] [1].

- Extend Simulation Time: If biologically critical properties are unconverged, the primary solution is to run longer simulations. Recent studies analyze trajectories up to hundreds of microseconds to probe convergence [12].

- Validate with Experimental Data: Where possible, compare converged simulation averages with available experimental data (e.g., from NMR or spectroscopy) to build confidence in the model.

Diagram 1: Workflow for troubleshooting insufficient sampling.

Problem: Suspected force field bias leading to unrealistic structures or dynamics.

Potential Cause: The force field's parameters inaccurately represent the potential energy surface, favoring non-native or incorrect conformations.

Solution:

- Identify the Symptom: Clearly document the artifact, such as a protein consistently misfolding [13] or a host-guest binding enthalpy that deviates systematically from experiment [14].

- Compare with Robust Benchmarks: Calculate observable properties (e.g., binding free energies/enthalpies, conformational preferences) for a set of systems where reliable experimental or high-level QM data is available.

- Perform Free Energy Analysis: Use advanced methods like the "deactivated morphing" method [13] or free energy perturbation to quantitatively compare the stability of correct vs. incorrect states. This can confirm if the force field is biased.

- Consider Force Field Refinement or Replacement:

  - Parameter Adjustment: For specific interactions, sensitivity analysis can be used to tune parameters (e.g., Lennard-Jones terms) to match experimental binding data [14].

  - Switch Force Fields: If a systematic bias is confirmed, try a different, more modern force field. Consider ones that use data-driven approaches trained on large QM datasets for better general accuracy [15].

Problem: Inaccurate calculation of binding thermodynamics.

Potential Cause: The force field may not accurately describe the specific interactions or solvation effects critical for the binding process.

Solution:

- Use Simple Test Systems: Before simulating a complex protein-ligand system, test the force field on simpler host-guest systems. Their small size allows for more rigorous converged simulations and clearer comparison with experiment [14].

- Decompose the Energetics: Analyze the different energy components (van der Waals, electrostatic, solvation) contributing to the calculated binding affinity. A large error in one component can point to specific force field deficiencies [14] [16].

- Incorporate Binding Data in Parametrization: For advanced users, use sensitivity analysis to guide the adjustment of force field parameters. The derivatives of binding enthalpies with respect to force field parameters can be used to systematically improve agreement with experimental data [14].

Quantitative Data on Convergence and Accuracy

Table 1: Summary of Convergence Findings from Long-Timescale MD Simulations [12] [1]

| System Size/Type | Simulation Length | Convergence Status | Key Findings |
|---|---|---|---|
| Dialanine (22-atom toy model) | Multi-microsecond | Mixed Convergence | Even in this small system, not all properties reached convergence, challenging the assumption that small systems equilibrate quickly. |
| Larger Proteins | Up to 100 μs | Partial Equilibrium | Properties of primary biological interest (dependent on high-probability conformations) often converged. Properties relying on low-probability states (e.g., certain transition rates) did not. |

Table 2: Impact of Force Field Choice on Binding Enthalpy Calculation [14]

| Water Model | Mean Signed Error (MSE) of Computed Host-Guest Binding Enthalpy |
|---|---|
| TIP3P | -3.0 kcal/mol |
| TIP4P-Ew | -6.8 kcal/mol |

Implication: the two water models, both commonly used, produced significantly different and systematically erroneous binding enthalpies, highlighting the sensitivity of the results to force field choice.

Table 3: Performance of a Data-Driven Force Field (ByteFF) on QM Benchmarks [15]

| Benchmark Category | Description | ByteFF Performance |
|---|---|---|
| Relaxed Geometries | Accuracy in predicting optimized molecular structures. | State-of-the-art |
| Torsional Energy Profiles | Accuracy in modeling rotation around chemical bonds. | State-of-the-art |
| Conformational Energies/Forces | Accuracy in relative energies and forces between different molecular shapes. | State-of-the-art |

Experimental Protocols

Protocol 1: Checking for Equilibrium and Convergence in an MD Trajectory

Methodology:

- Run a long, unrestrained simulation starting from an energy-minimized and pre-equilibrated structure.

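- Extract multiple properties from the trajectory. These should include the potential energy, RMSD, radius of gyration, number of hydrogen bonds, and specific distances or angles relevant to your scientific question.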

- Calculate the running average for each property Aᵢ as a function of time, ⟨Aᵢ⟩(t).

- Analyze the running averages: A property is considered converged when its running average plateaus and the fluctuations around the final average ⟨Aᵢ⟩(T) become small and stable for a significant portion of the total simulation time T [12] [1].
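The running-average criterion can be applied mechanically once a tolerance is chosen. A minimal sketch, assuming one scalar property sampled per frame and a user-chosen absolute tolerance (what counts as "small" is system- and property-specific); the function names and the synthetic series are hypothetical. It scans backward from the end to find the earliest frame t_c after which ⟨A⟩(t) stays within the tolerance of ⟨A⟩(T), and reports the fraction of the trajectory that lies after t_c.

```python
def running_average(values):
    """<A>(t): mean of values[0..t] for every t."""
    averages, total = [], 0.0
    for n, v in enumerate(values, start=1):
        total += v
        averages.append(total / n)
    return averages


def convergence_time(values, tolerance):
    """Earliest frame t_c after which |<A>(t) - <A>(T)| stays within `tolerance`.

    Returns t_c and the fraction of the trajectory that lies after it.
    """
    averages = running_average(values)
    final = averages[-1]
    t_c = len(averages) - 1
    for t in range(len(averages) - 1, -1, -1):
        if abs(averages[t] - final) > tolerance:
            break
        t_c = t
    return t_c, (len(values) - t_c) / len(values)


# Hypothetical series: an initial transient (10 frames at 10.0), then a stable 0.0.
series = [10.0] * 10 + [0.0] * 90
t_c, fraction = convergence_time(series, tolerance=0.1)
print(t_c, fraction)  # 90 0.1
```

Here only the last 10% of the trajectory lies after t_c, so by the working definition this property would not yet count as equilibrated: the initial transient keeps biasing the cumulative average, which is why the equilibration segment is usually discarded before production averages are computed.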

Protocol 2: Using Sensitivity Analysis for Force Field Tuning

Methodology (as applied to host-guest binding enthalpy):

- Select a training set: Choose a small set of host-guest systems (e.g., cucurbit[7]uril with aliphatic guests) for which high-precision experimental binding enthalpies are available [14].

- Compute binding enthalpies: Using the unmodified force field, calculate the binding enthalpy (ΔH_bind) as the difference between the mean potential energy of the solvated complex and the sum of the mean potential energies of the separately solvated host and guest simulations [14].

- Perform sensitivity analysis: Evaluate the partial derivatives (∂ΔH_bind/∂p) of the binding enthalpy with respect to the target force field parameters (p), such as Lennard-Jones coefficients [14].

- Adjust parameters: Use the sensitivity information to predict parameter changes that will reduce the error between calculation and experiment.

- Validate: Run new binding calculations with the adjusted parameters on both the training set and a separate test set to assess improvement and transferability [14].
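The quantities in this protocol can be computed from energy time series. A minimal sketch, assuming each of the three simulations (complex, host, guest) provides per-frame potential energies U and, for a single parameter p, per-frame derivatives ∂U/∂p (how these are obtained depends on the simulation engine and is not shown). The derivative of an ensemble average uses the standard fluctuation formula d⟨U⟩/dp = ⟨∂U/∂p⟩ − (⟨U ∂U/∂p⟩ − ⟨U⟩⟨∂U/∂p⟩)/kT, which holds for canonical and isothermal-isobaric sampling. All function names are hypothetical, and the single-parameter Newton step is an illustration of "adjust parameters", not the cited procedure.

```python
import statistics


def binding_enthalpy(u_complex, u_host, u_guest):
    """Delta H_bind ~ <U>_complex - <U>_host - <U>_guest (PV term neglected)."""
    return (statistics.fmean(u_complex)
            - statistics.fmean(u_host)
            - statistics.fmean(u_guest))


def d_mean_energy_dp(u, du_dp, kT):
    """d<U>/dp = <dU/dp> - (<U dU/dp> - <U><dU/dp>) / kT."""
    mean_u = statistics.fmean(u)
    mean_d = statistics.fmean(du_dp)
    mean_ud = statistics.fmean([a * b for a, b in zip(u, du_dp)])
    return mean_d - (mean_ud - mean_u * mean_d) / kT


def d_binding_enthalpy_dp(complex_run, host_run, guest_run, kT):
    """Sensitivity of Delta H_bind to p; each run is a (u, du_dp) pair of series."""
    return (d_mean_energy_dp(*complex_run, kT)
            - d_mean_energy_dp(*host_run, kT)
            - d_mean_energy_dp(*guest_run, kT))


def first_order_update(p, dh_calc, dh_exp, dh_dp):
    """Hypothetical single-parameter Newton step toward the experimental value."""
    return p - (dh_calc - dh_exp) / dh_dp


# Toy data in kcal/mol; kT is about 0.596 kcal/mol at 300 K.
dh = binding_enthalpy([-10.0, -12.0], [-3.0, -3.0], [-2.0, -4.0])
print(dh)  # -5.0
```

With several host-guest systems and parameters, the same derivatives would feed a least-squares fit rather than a one-parameter step.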

Diagram 2: Force field refinement workflow using sensitivity analysis.

The Scientist's Toolkit: Key Research Reagents and Solutions

Table 4: Essential Resources for Studying Force Field Effects

| Tool / Resource | Function / Description | Relevance to Convergence & Accuracy |
|---|---|---|
| Long-Timescale MD Capability (Hardware/Software) | Enables multi-microsecond to millisecond simulations. | Essential for empirically testing convergence of various properties in biologically relevant systems [12] [1]. |
| Host-Guest Systems (e.g., CB7) | Simple, chemically well-defined models of molecular recognition. | Ideal testbeds for calculating converged binding thermodynamics and identifying force field errors without the complexity of full protein-ligand systems [14]. |
| Sensitivity Analysis Algorithm | Computes gradients of simulation averages with respect to force field parameters. | Allows data-driven, efficient force field optimization based on experimental observables such as binding enthalpies [14]. |
| Data-Driven Force Fields (e.g., ByteFF, Espaloma) | ML-based models parameterized on large, diverse QM datasets. | Aims to reduce inherent force field bias by providing broader and more accurate coverage of chemical space [15]. |
| Deactivated Morphing Method | A technique for calculating free energy differences between states. | Used to quantitatively diagnose force field bias, e.g., by showing that it favors a misfolded state over the native fold [13]. |

Insufficient Simulation Time and System Size Limitations

Frequently Asked Questions

How can I definitively know if my simulation has run long enough to be considered converged?

There is no single definitive test, but a combination of methods should be used. A system can be considered equilibrated when multiple properties, calculated as running averages, stop drifting and fluctuate around a stable value for a significant portion of the production trajectory. Crucially, you must monitor properties relevant to your scientific question, as different properties converge at different rates [1]. Relying only on basic metrics like potential energy and density is insufficient, as these often stabilize long before the system is truly equilibrated [17].

What is the practical consequence of using too large a time step? An excessively large time step can cause instability, making the simulation "blow up," or introduce significant energy drift, where the total energy is not conserved. This leads to physically unrealistic results. As an absolute upper bound, the time step must be less than half the period of the fastest vibration in your system (a C-H stretch has a period of roughly 11 fs, so the bound is ~5 fs); in practice, a considerably smaller fraction of the period is used. For all-atom simulations with flexible bonds, 1-2 fs is standard. Using constraints on bond vibrations involving hydrogen allows for a time step of 2-2.5 fs [18].
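The arithmetic behind these numbers can be checked directly from a vibrational wavenumber. This sketch assumes a typical C-H stretch of ~3000 cm^-1 and uses a practical fraction of 1/10 of the period as an assumed rule of thumb; both the function names and that fraction are illustrative, not taken from [18].

```python
SPEED_OF_LIGHT_CM_PER_S = 2.99792458e10


def vibrational_period_fs(wavenumber_cm1):
    """Period (fs) of a vibration with the given wavenumber (cm^-1)."""
    frequency_hz = SPEED_OF_LIGHT_CM_PER_S * wavenumber_cm1
    return 1e15 / frequency_hz


def timestep_limits_fs(wavenumber_cm1, practical_fraction=0.1):
    """Return (hard upper bound, practical suggestion) for the time step in fs.

    The hard bound is half the period; `practical_fraction` (assumed 1/10
    here) is a rule-of-thumb fraction of the period, not a universal rule.
    """
    period = vibrational_period_fs(wavenumber_cm1)
    return period / 2.0, period * practical_fraction


# Assumed typical C-H stretch wavenumber of ~3000 cm^-1.
upper_fs, practical_fs = timestep_limits_fs(3000.0)
# upper_fs is about 5.6 fs and practical_fs about 1.1 fs
```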

My simulation is too slow. What are my options for reaching longer time scales? Several strategies exist to extend the effective time scale of your simulations:

- Coarse-Graining: Represent groups of atoms as single, larger particles. This smooths the energy landscape, allowing for larger time steps (e.g., tens of femtoseconds rather than 1-2 fs) and faster dynamics [19].

- Enhanced Sampling Methods: Use techniques like metadynamics or replica exchange to actively push the system over energy barriers it would rarely cross in conventional MD.

- Neural Network Potentials (NNPs): Newly available pre-trained models, such as those trained on Meta's OMol25 dataset, can offer near-quantum-mechanical accuracy at a fraction of the computational cost of traditional ab initio MD, enabling longer and larger simulations [7].

Troubleshooting Guide

Symptom: Suspected Non-Equilibrium or Poor Sampling

| Observation | Potential Cause | Diagnostic Steps | Solution |
|---|---|---|---|
| Properties like RMSD, radius of gyration, or a specific distance/angle fail to plateau. | The simulation has not run long enough to escape the initial configuration or sample relevant states [1]. | 1. Plot the property as a running average vs. time. 2. Check whether the autocorrelation function of key properties decays to zero. | Extend simulation time. Use enhanced sampling if the barrier is high. |
| Radial Distribution Function (RDF) peaks are irregular, multi-peaked, or excessively noisy. | The system is not equilibrated; intermolecular interactions have not converged [17]. | Compare RDFs calculated from the first and second halves of the trajectory. If they differ significantly, the system is not equilibrated. | Significantly extend the simulation time. A study of asphalt systems found that RDFs can take orders of magnitude longer to converge than energy [17]. |
| High energy drift in an NVE (constant energy) simulation. | The time step is too large, or the integration algorithm is not behaving correctly [18]. | Monitor the total energy in an NVE simulation. A drift of more than 1 meV/atom/ps is a concern for publishable results [18]. | Reduce the time step. Ensure you are using a symplectic integrator such as Velocity Verlet. |
| The system gets stuck in a single conformational state. | The energy barrier between states is too high to cross on the simulated time scale. | Check time series of dihedral angles or other reaction coordinates for state transitions. | Implement an enhanced sampling method (e.g., metadynamics, umbrella sampling) to drive transitions. |
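The energy-drift diagnostic in the table can be made quantitative with a linear fit. This is a minimal sketch, assuming the total energy of the whole system is available in kJ/mol at times in ps; the function name is hypothetical, and the 1 meV/atom/ps threshold is the one quoted from [18].

```python
import numpy as np

# 1 eV = 96.485 kJ/mol, so 1 kJ/mol is about 10.364 meV.
MEV_PER_KJ_MOL = 1000.0 / 96.485


def energy_drift_mev_per_atom_ps(time_ps, total_energy_kj_mol, n_atoms):
    """Drift of the total energy in an NVE run, in meV/atom/ps.

    Fits a straight line to the total energy of the whole system (kJ/mol)
    versus time (ps) and normalises the slope by the number of atoms.
    """
    slope, _intercept = np.polyfit(time_ps, total_energy_kj_mol, 1)
    return slope * MEV_PER_KJ_MOL / n_atoms


# Synthetic example: a 1000-atom system drifting by 0.5 kJ/mol per ps.
t = np.linspace(0.0, 100.0, 1001)
e_tot = -5000.0 + 0.5 * t
drift = energy_drift_mev_per_atom_ps(t, e_tot, n_atoms=1000)
needs_attention = abs(drift) > 1.0  # 1 meV/atom/ps threshold from [18]
```

Fitting a slope rather than comparing first and last frames keeps the estimate insensitive to the normal short-time fluctuations of the total energy.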

Symptom: Instability and Crashes

| Observation | Potential Cause | Diagnostic Steps | Solution |
|---|---|---|---|
| Simulation crashes with "LINCS warning" or "SHAKE failure." | The time step is too large for the chosen model, or the system has extreme forces. | Check the log file for the step at which the crash occurs. Visualize that frame to look for unrealistic atomic overlaps (e.g., atoms too close). | 1. Short-term: Reduce the time step. 2. Long-term: Ensure proper system preparation via energy minimization and slow equilibration. Use constraints. |
| Pressure or temperature is far from the target value and won't stabilize. | The chosen thermostat/barostat is inappropriate, or its coupling constant is too strong/weak. | Plot temperature/pressure over time during equilibration. Check for oscillations or drift. | Adjust the coupling constant for the thermostat/barostat. Use a stochastic thermostat (e.g., Langevin) for better control. |

Quantitative Data on Convergence

The table below summarizes key metrics to monitor and their typical behavior, based on research into convergence issues [17] [1].

| Metric | Time to Converge (Relative) | What it Indicates | Is it a Good Indicator? |
|---|---|---|---|
| Density & Total Energy | Fast (ps-ns) | The system has found a locally stable packing and energy minimum. | No. These are necessary but not sufficient. They equilibrate long before the system is fully relaxed [17]. |
| Pressure | Slow (ns-µs) | The virial (related to forces) has stabilized. | Better than energy/density. Takes longer to converge, giving a more robust check [17]. |
| RMSD (to initial structure) | System Dependent | The structure has drifted from its starting point. | Use with caution. A plateau suggests that the system has settled into a metastable state, not necessarily that it has reached global equilibrium [1]. |
| Radial Distribution Function (RDF) | Very Slow (µs+) | The local structure and intermolecular interactions have stabilized. | Excellent. Studies show RDFs, especially between large molecules like asphaltenes, can require microsecond-plus timescales to converge [17]. |
| Autocorrelation Function (ACF) | System Dependent | The system has "forgotten" its initial state and is sampling freely. | Excellent. The decay of the ACF for key properties directly measures the correlation time and needed simulation length [1]. |
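The ACF row above can be turned into a number of effectively independent samples. This sketch computes the normalised ACF with an FFT and a statistical inefficiency g; truncating the sum at the first non-positive ACF value is one common choice and is an assumption here, as are the function names.

```python
import numpy as np


def autocorrelation(x):
    """Normalised autocorrelation function C(k) of a time series (C(0) = 1)."""
    x = np.asarray(x, dtype=float)
    x = x - x.mean()
    n = len(x)
    spectrum = np.fft.rfft(x, n=2 * n)  # zero-padding avoids circular wrap-around
    acov = np.fft.irfft(np.abs(spectrum) ** 2)[:n] / n
    return acov / acov[0]


def statistical_inefficiency(x):
    """g = 1 + 2 * sum_k C(k), truncated at the first non-positive C(k).

    g is roughly the number of frames per statistically independent sample,
    so the effective sample size is len(x) / g.
    """
    g = 1.0
    for c in autocorrelation(x)[1:]:
        if c <= 0.0:
            break
        g += 2.0 * c
    return g
```

For example, if g is about 20 for a 10,000-frame trajectory, only about 500 frames are effectively independent, and the simulation must be long compared with g frames for averages to be trustworthy.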

Experimental Protocols for Convergence Validation

Protocol 1: Verifying Equilibration

Aim: To determine if a production simulation is started from a truly equilibrated state. Methodology:

- Multiple Starts: Initiate 3-4 independent simulations from the same initial structure but with different random velocity seeds.

- Monitor Key Properties: In each simulation, track multiple structural and thermodynamic properties as running averages. Good choices include:

  - Potential Energy

  - Pressure

  - Radius of Gyration

  - Root-Mean-Square Deviation (RMSD) relative to a stable reference

  - Key distances or angles relevant to your hypothesis

- Statistical Comparison: After a fixed time, compare the average and fluctuation of each property across all independent runs. If the system is equilibrated, the values from different runs should overlap within their statistical uncertainty [20].
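The statistical comparison step can be sketched as a pairwise overlap test. This assumes each run's mean and standard error have already been computed (for example by block averaging, as in Protocol 2); the function name and the choice of z = 2 combined standard errors are illustrative assumptions.

```python
import itertools
import math


def runs_agree(means, std_errs, z=2.0):
    """Check that every pair of independent-run averages overlaps.

    Two runs "agree" if their means differ by no more than `z` times their
    combined standard error, sqrt(se_i**2 + se_j**2).
    """
    for (m1, s1), (m2, s2) in itertools.combinations(zip(means, std_errs), 2):
        if abs(m1 - m2) > z * math.hypot(s1, s2):
            return False
    return True
```

With many runs and many properties, a few pairwise disagreements at z = 2 are expected by chance, so a systematic offset of one run is more informative than a single marginal failure.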

Protocol 2: Block Averaging for Uncertainty Quantification

Aim: To reliably estimate the error bar (standard uncertainty) of a calculated observable from a single, long trajectory. Methodology:

- Calculate the Observable: Compute the property of interest (e.g., an angle) for every frame of the production trajectory.

- Divide into Blocks: Split the full time series into N consecutive blocks.

- Block Averages: Calculate the average of the observable within each block.

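- Check for Independence: Gradually increase the block size and, for each size, estimate the standard uncertainty of the mean as the standard deviation of the block averages divided by the square root of the number of blocks. This estimate grows with block size while consecutive blocks are still correlated; the correct value is reached when it stops changing (plateaus), which indicates that the blocks are long enough to be statistically uncorrelated [20].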
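The block-averaging procedure can be sketched in a few lines of NumPy. The function names are hypothetical, and the tail of the series that does not fill a whole block is simply discarded, which is one common convention.

```python
import numpy as np


def block_standard_error(x, block_size):
    """Standard error of the mean estimated from non-overlapping block averages."""
    x = np.asarray(x, dtype=float)
    n_blocks = len(x) // block_size
    if n_blocks < 2:
        raise ValueError("block_size too large: need at least two blocks")
    # Drop the tail that does not fill a whole block, then average each block.
    blocks = x[: n_blocks * block_size].reshape(n_blocks, block_size).mean(axis=1)
    return blocks.std(ddof=1) / np.sqrt(n_blocks)


def block_analysis(x, block_sizes):
    """Map each block size to its standard-error estimate; look for a plateau."""
    return {b: block_standard_error(x, b) for b in block_sizes}
```

For a correlated series, the estimate at block size 1 (the naive standard error) is too small; it rises with block size and levels off once blocks are longer than the correlation time. Very large blocks leave too few blocks, so the plateau value itself becomes noisy.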

The Scientist's Toolkit

| Category | Item / Technique | Function / Relevance |
|---|---|---|
| Simulation & Analysis Software | GROMACS, AMBER, NAMD, MDAnalysis, VMD | Standard packages for running simulations (GROMACS, AMBER, NAMD) and for analyzing and visualizing trajectories (e.g., calculating RMSD, RDFs). |
| Enhanced Sampling | Metadynamics, Replica Exchange MD (REMD) | Accelerates sampling of conformational space by helping the system overcome high energy barriers. Critical for studying rare events. |
| Advanced Potentials | Neural Network Potentials (NNPs), e.g., eSEN, UMA | Provides near-quantum-mechanical accuracy at a much lower computational cost, enabling more converged sampling for complex systems [7]. |
| Statistical Metrics | Autocorrelation Function (ACF), Block Averaging | Quantifies the correlation time in a time series, which is essential for determining the true statistical error of an averaged property [20] [1]. |

Workflow Diagrams

Diagram 1: Equilibration and Convergence Verification Workflow. This chart outlines the decision-making process for running a simulation and verifying that it has reached a sufficiently converged state for scientific analysis.

Diagram 2: The Sampling Problem in MD. This schematic illustrates why insufficient simulation time is a fundamental problem. Conventional MD may sample high-probability states (A, B) but fail to visit low-probability states (C) separated by high energy barriers, which enhanced sampling methods are designed to overcome.

Advanced Algorithms and Enhanced Sampling Techniques for Robust Convergence

Alchemical vs. Path-Based Methods for Binding Free Energy Calculations

Within molecular dynamics (MD) research, a significant challenge is ensuring simulations are long enough to reach thermodynamic equilibrium, so that computed properties are converged and reliable [1]. This issue of convergence is central to accurately predicting binding free energy, a crucial metric in drug discovery for quantifying the affinity of a potential drug for its biological target [21] [22]. Two primary classes of computational methods are employed to tackle this challenge: alchemical methods and path-based methods. This guide explores these techniques, providing troubleshooting advice and FAQs to help researchers navigate the common pitfalls associated with binding free energy calculations.

Core Principles at a Glance

The following table summarizes the fundamental differences between alchemical and path-based approaches.

| Feature | Alchemical Methods | Path-Based Methods |
|---|---|---|
| Basic Principle | Uses non-physical ("alchemical") intermediate states to compute free energy differences [23]. | Uses physical pathways connecting the bound and unbound states [21] [22]. |
| Typical Application | Relative Binding Free Energy (RBFE) calculations for congeneric series; Absolute Binding Free Energy (ABFE) [23] [24]. | Absolute binding free energy estimation; studying binding/unbinding mechanisms and pathways [21] [22]. |
| Sampling Domain | Alchemical parameter space (e.g., coupling parameter λ). | Physical configurational space along a reaction coordinate [21]. |
| Mechanistic Insight | Provides little to no insight into the binding pathway or mechanism [22]. | Provides atomistic details of the binding pathway, interactions, and mechanism [21] [22]. |
| Handling of Charged Ligands | Can be challenging; may require neutralization with counterions and longer simulation times [24]. | Can handle charged ligands using electrostatics-based collective variables to guide dissociation [21] [25]. |

Detailed Workflow Diagrams

The workflows for these methods involve distinct steps and decision points, as visualized below.

Alchemical Free Energy Calculations

Path-Based Free Energy Calculations

The Scientist's Toolkit: Essential Research Reagents and Solutions

The table below lists key methodological "reagents" and their functions in free energy calculations.

| Research Reagent | Function in Free Energy Calculations |
|---|---|
| Lambda (λ) Windows | Non-physical coupling parameter in alchemical methods that defines intermediate states for transforming one system into another [23] [24]. |
| Path Collective Variables (PCVs) | Collective variables that define a progression along a pre-computed physical path, used in steered MD simulations to guide binding/unbinding [21]. |
| Steered MD (SMD) | An enhanced sampling technique that applies a time-dependent biasing potential to collective variables to accelerate rare events like ligand unbinding [21]. |
| Bennett Acceptance Ratio (BAR) | A statistically optimal estimator for calculating free energy differences from equilibrium simulations conducted at different alchemical states [23]. |
| Crooks Fluctuation Theorem (CFT) | A nonequilibrium relation that connects the work distributions of forward and reverse processes to the free energy difference [21]. |
| Open Force Field (OpenFF) | An initiative to develop accurate, broadly applicable force fields for small molecules and biomolecules, critical for reliable energy evaluations [24]. |
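To make the BAR entry concrete, the following is a minimal sketch of the Bennett acceptance ratio solved by bisection, assuming reduced (kT) units: `w_forward` holds u1(x) - u0(x) for configurations sampled in state 0 and `w_reverse` holds u0(x) - u1(x) for configurations sampled in state 1. The function names are hypothetical; production work would normally rely on an established, tested package such as pymbar.

```python
import numpy as np


def _fermi(a):
    """1 / (1 + exp(a)), written with tanh so large |a| cannot overflow."""
    return 0.5 * (1.0 - np.tanh(0.5 * a))


def bar_delta_f(w_forward, w_reverse, tol=1e-10, max_iter=200):
    """Bennett acceptance ratio estimate of dF = F1 - F0 in units of kT.

    w_forward: u1(x) - u0(x) for configurations sampled in state 0.
    w_reverse: u0(x) - u1(x) for configurations sampled in state 1.
    Solves the BAR self-consistency condition by bisection.
    """
    w_f = np.asarray(w_forward, dtype=float)
    w_r = np.asarray(w_reverse, dtype=float)
    m = np.log(len(w_f) / len(w_r))

    def imbalance(df):
        # Monotonically increasing in df; its root is the BAR estimate.
        return _fermi(m + w_f - df).sum() - _fermi(-m + w_r + df).sum()

    lo, hi = -1.0, 1.0
    while imbalance(lo) > 0.0:  # root lies below lo: widen downwards
        lo *= 2.0
    while imbalance(hi) < 0.0:  # root lies above hi: widen upwards
        hi *= 2.0
    for _ in range(max_iter):
        mid = 0.5 * (lo + hi)
        if imbalance(mid) < 0.0:
            lo = mid
        else:
            hi = mid
        if hi - lo < tol:
            break
    return 0.5 * (lo + hi)
```

The same equation applies to nonequilibrium forward/reverse work values, where it is the maximum-likelihood estimator consistent with the Crooks fluctuation theorem.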

Troubleshooting Guides and FAQs

Frequently Asked Questions

Q1: My alchemical relative free energy calculation for a congeneric series shows poor convergence and high hysteresis. What could be the issue?

A: This is a common problem often stemming from inadequate sampling or incorrect lambda scheduling.

- Check Lambda Windows: Ensure you have a sufficient number of λ windows, especially in regions where the potential energy changes rapidly. Using an automated lambda scheduling algorithm can reduce guesswork and improve efficiency [24].

- Extend Simulation Time: For transformations involving charge changes or large structural perturbations, longer simulation times at each lambda window are often necessary to achieve convergence [24].

- Investigate Hydration: Inconsistent hydration environments around the ligands in the forward and reverse transformations can cause large hysteresis. Using techniques like Grand Canonical Monte Carlo (GCMC) can ensure consistent and adequate hydration of the binding site [24].

Q2: When should I choose a path-based method over an alchemical method?

A: The choice depends on your primary research goal.

- Choose alchemical methods when your main objective is to efficiently rank a series of chemically similar compounds (lead optimization) and you do not require mechanistic insights [22] [24].

- Choose path-based methods when you need to compute the absolute binding free energy for a diverse set of ligands, or when you want to gain atomistic insight into the binding/unbinding pathway, mechanisms, and key interactions [21] [22]. They are particularly useful for studying complex systems with significant conformational rearrangements, like the binding of Gleevec to Abl kinase [21].

Q3: How can I assess if my molecular dynamics simulation has reached equilibrium before starting free energy calculations?

A: Convergence is a critical but often overlooked prerequisite.

- Monitor Key Properties: Plot properties like the system potential energy and the root-mean-square deviation (RMSD) of the biomolecule over time. These should reach a stable plateau; such a plateau is necessary, but not sufficient, evidence of equilibration [1].

- Understand Partial Equilibrium: Be aware that a system can be in "partial equilibrium" where properties depending on high-probability regions (like average distances) may converge faster than properties requiring sampling of low-probability states (like free energies and entropies) [1]. For free energy calculations, which depend on thorough exploration of conformational space, ensuring adequate sampling is paramount.

Troubleshooting Common Problems

| Problem | Potential Causes | Solutions |
|---|---|---|
| Poor Convergence in Alchemical Calculations | 1. Insufficient sampling at specific λ windows. 2. Inadequate system equilibration. 3. Large conformational changes during transformation. | 1. Increase simulation time per window; use adaptive λ scheduling [24]. 2. Extend the equilibration protocol; monitor energy/RMSD plateaus [1]. 3. Introduce intermediate states or use a soft-core potential. |
| High Hysteresis in RBFE | 1. Inconsistent hydration between forward/reverse transformations. 2. Ligand charge changes not properly handled. | 1. Use hydration techniques such as grand canonical nonequilibrium candidate Monte Carlo (GCNCMC) or 3D-RISM to ensure consistent water placement [24]. 2. Neutralize charged ligands with counterions and run longer simulations [24]. |
| Path-Based Method Fails to Find a Low-Dissipation Path | 1. The initial "guess path" from ABMD is not the most probable pathway. 2. System size is large, leading to high work dissipation. | 1. Run multiple ABMD replicates and select the most statistically representative path as the guess [21]. 2. Employ a refined nonequilibrium strategy with path optimization algorithms to minimize dissipation [21]. |
| Large Error in Absolute Binding Free Energy vs. Experiment | 1. Force field inaccuracies, particularly for ligand torsions. 2. Incorrect protonation states of binding site residues. 3. Unaccounted protein conformational changes. | 1. Refine torsion parameters using QM calculations [24]. 2. Perform constant-pH simulations or careful pKa analysis before simulation. 3. Consider using path-based methods that can capture large rearrangements, or run longer replicas [21] [24]. |

Experimental Protocols

Protocol 1: Absolute Binding Free Energy Calculation via the Alchemical Double Decoupling Method (DDM)

This protocol outlines the steps for calculating the absolute binding free energy of a ligand by decoupling it from its environments [23] [25].

- System Setup: Prepare the protein-ligand complex in a solvated simulation box. Generate a second system containing only the ligand in a box of solvent.

- Restraint Application: Apply harmonic restraints to the ligand in the bound simulation. These restraints should limit the translational and rotational freedom of the ligand within the binding site but be weak enough to allow necessary fluctuations.

- Define Alchemical Pathway: Set up a series of λ windows (e.g., 12-20 windows) for both the bound and unbound (solvent) legs of the calculation. The transformation typically involves:

  - Turning off the electrostatic interactions of the ligand with its environment.

  - Turning off the van der Waals interactions of the ligand with its environment.

- Equilibration and Production: For each λ window in both the bound and unbound legs, run an energy minimization, followed by equilibration and a production MD simulation. The production run must be long enough to ensure proper sampling and convergence.

- Free Energy Analysis: Use the Bennett Acceptance Ratio (BAR) or the Multistate Bennett Acceptance Ratio (MBAR) to compute the free energy change for decoupling the ligand in the bound state (\( \Delta G_{\text{complex}} \)) and in the solvent (\( \Delta G_{\text{solv}} \)).

- Free Energy Calculation: The absolute binding free energy is calculated as \( \Delta G_{\text{bind}} = \Delta G_{\text{solv}} - \Delta G_{\text{complex}} + \Delta G_{\text{std}} \), where \( \Delta G_{\text{std}} \) is a correction term for the standard state concentration [23] [25].
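The bookkeeping for the two legs can be sketched as follows. This assumes each leg is reported as a list of free energy differences between adjacent λ windows with independent uncertainties, and that \( \Delta G_{\text{std}} \) has been obtained separately; the function names and all numbers in the example are hypothetical. When a whole leg is analysed with MBAR, MBAR's own uncertainty for the end-to-end difference is preferable to the quadrature sum used here.

```python
import math


def leg_free_energy(window_dgs, window_errs):
    """Total free energy of one leg from adjacent-window differences.

    Errors are added in quadrature, which assumes the per-window estimates
    are independent.
    """
    return sum(window_dgs), math.sqrt(sum(e * e for e in window_errs))


def ddm_binding_free_energy(dg_solv, err_solv, dg_complex, err_complex,
                            dg_std, err_std=0.0):
    """dG_bind = dG_solv - dG_complex + dG_std, with decoupling free energies."""
    dg = dg_solv - dg_complex + dg_std
    err = math.sqrt(err_solv ** 2 + err_complex ** 2 + err_std ** 2)
    return dg, err


# Hypothetical numbers in kcal/mol, for illustration only.
solv = leg_free_energy([4.0, 3.5, 2.5], [0.05, 0.05, 0.05])
cplx = leg_free_energy([6.0, 5.5, 4.5, 2.0], [0.1, 0.1, 0.1, 0.1])
dg_bind, err_bind = ddm_binding_free_energy(*solv, *cplx, dg_std=1.0)
```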

Protocol 2: Absolute Binding Free Energy Calculation via a Path-Based Nonequilibrium Approach

This protocol uses physical pathways and nonequilibrium dynamics to compute binding free energies [21].

- Generate Guess Unbinding Path: Starting from the crystallographic bound structure, run multiple Adiabatic Bias MD (ABMD) simulations. Use a collective variable (CV) such as a fictitious electrostatic potential or the Debye-Hückel interaction energy to promote gentle dissociation of the ligand from the protein. From these trajectories, select the most probable unbinding path as the "guess path."

- Optimize the Reference Path: Refine the guess path using path optimization algorithms (e.g., the Principal Path Algorithm and the Equidistant Waypoints Algorithm). The output is a smooth reference path composed of consecutive configurations that are equidistant in terms of mean-square-deviation (MSD).

- Define Path Collective Variables (PCVs): From the optimized reference path, define a PCV that measures the progress along the path (s) and the distance from the path (z).

- Run Bidirectional Nonequilibrium SMD: Perform multiple independent Steered MD (SMD) simulations that either pull the ligand from the bound to the unbound state ("unbinding") or from the unbound to the bound state ("binding"). These simulations use the PCV s as the steering coordinate.

- Calculate Work Distributions: For each nonequilibrium trajectory, calculate the work performed during the process (Jarzynski work, \( W_J \)).

- Compute Free Energy Surface: Apply the Crooks Fluctuation Theorem (CFT) to the distributions of work from the binding and unbinding simulations. This yields the Free Energy Surface (FES) along the path collective variable.

- Estimate Standard Binding Free Energy: The standard binding free energy is obtained by summing the binding free energy from the FES and a correction term for the standard concentration and the accessible ligand volume [21].
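The nonequilibrium estimators behind the last steps can be illustrated for a single pair of end states. This is a simplified sketch in kT units: it estimates one free energy difference, not the full FES along s used in [21]. The Gaussian crossing formula assumes forward and reverse work distributions of equal width, and the function names are hypothetical.

```python
import numpy as np


def jarzynski_delta_f(work):
    """Jarzynski estimate dF = -ln <exp(-W)>, with W and dF in units of kT."""
    a = -np.asarray(work, dtype=float)
    a_max = a.max()  # log-sum-exp shift keeps the exponentials finite
    return -(a_max + np.log(np.mean(np.exp(a - a_max))))


def crooks_gaussian_delta_f(w_forward, w_reverse):
    """Crooks crossing point assuming Gaussian forward/reverse work distributions
    of equal width: P_F(W) and P_R(-W) cross at dF = (<W_F> - <W_R>) / 2."""
    return 0.5 * (np.mean(w_forward) - np.mean(w_reverse))
```

The one-sided Jarzynski average is dominated by rare low-work trajectories and converges poorly when dissipation is large, which is one reason the protocol collects work in both directions and combines them through the CFT.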

Implementing Metadynamics and Path Collective Variables (PCVs)

Frequently Asked Questions (FAQs)

Path Collective Variable (PCV) Fundamentals

Q1: What are Path Collective Variables (PCVs) and when should I use them?

Path Collective Variables (PCVs) are computational tools designed to describe and simulate complex molecular transitions, such as conformational changes in proteins or chemical reactions. They are particularly valuable when studying activated processes that involve moving between two known stable states (e.g., state A and state B) across a complex free energy landscape. PCVs are advantageous when the transition requires many descriptors (collective variables), as they project this high-dimensional space onto a 1D progress parameter (often denoted as s) that tracks advancement along a path, and a distance parameter (z) that measures deviation from this path. This makes them ideal for tackling complex biomolecular transitions that are difficult to describe with just one or two traditional collective variables [26].
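The two path variables can be computed from the distances to the path frames using the commonly used definitions \( s = \sum_i i\, e^{-\lambda d_i} / \sum_i e^{-\lambda d_i} \) and \( z = -\frac{1}{\lambda} \ln \sum_i e^{-\lambda d_i} \), where \( d_i \) is the MSD to frame i. This sketch assumes the MSDs are already available; the function name is hypothetical.

```python
import numpy as np


def path_cv(msd_to_frames, lam):
    """Progress s (from 1 to N) and distance z from the MSDs to N path frames."""
    d = np.asarray(msd_to_frames, dtype=float)
    idx = np.arange(1, len(d) + 1)
    a = -lam * d
    a_max = a.max()
    w = np.exp(a - a_max)  # shifted weights; the shift cancels in s
    s = np.dot(idx, w) / w.sum()
    z = -(a_max + np.log(w.sum())) / lam
    return s, z
```

For a configuration equidistant from two frames, s lies halfway between their indices; as LAMBDA grows, s approaches the index of the nearest frame and z approaches the MSD to that frame, which is the behaviour behind the LAMBDA guidelines below.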

Q2: What are the key differences between MSD, DMSD, and CMAP for defining a path?

The choice of metric to measure distances between structures in path CVs is critical:

- MSD (Mean Squared Deviation): Suitable when the conformational change involves the collective motion of a large number of atoms. A common issue is setting the LAMBDA parameter incorrectly, which can cause the system to get "stuck" at integer values of s [27].

- DMSD (distance-based mean squared deviation): Often a better choice when the transition is governed by a specific subset of atoms or distances, as it can be more robust to irrelevant structural fluctuations.

- CMAP (contact map): Compares structures through a set of selected contacts, which is useful when the transition is characterized by the formation or breaking of specific contacts.

As a rule of thumb, RMSD-based PCVs (MSD) are good for large-scale collective motions, while contact distance-based or DRMSD-based PCVs are more appropriate when the transition is driven by specific, localized changes [27].

Implementation and Parameter Selection

Q3: How do I choose the correct LAMBDA parameter for my PCV?

The LAMBDA parameter controls the "tightness" of the path following. An incorrect value is a common source of sampling issues. Because LAMBDA multiplies an MSD, it should be inversely proportional to the mean squared deviation between neighboring frames.

- Standard Method: \( \lambda = \frac{2.3}{\langle \text{MSD between neighbors} \rangle} \), where the average MSD is calculated between consecutive frames in your path [27].

- Robust Method: To avoid high z values and poor sampling in regions where consecutive frames are farther apart, use \( \lambda = \frac{2.3}{\text{largest MSD between two adjacent frames}} \) [27]. This ensures the path is well-defined even at its most disparate points.
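Both rules can be computed from the path frames. This sketch assumes the frames are stored as an array of shape (n_frames, n_atoms, 3) and have already been optimally superimposed (PLUMED's MSD performs the alignment internally, this code does not); MSDs come out in the squared length units of the coordinates (nm² with PLUMED's default units). The factor 2.3 ≈ ln 10 means that a frame one average spacing away receives about one tenth of the weight of the nearest frame. Function names are hypothetical.

```python
import numpy as np


def adjacent_msds(frames):
    """MSD between consecutive, pre-aligned frames of shape (n_frames, n_atoms, 3)."""
    f = np.asarray(frames, dtype=float)
    diffs = f[1:] - f[:-1]
    # Squared displacement per atom, averaged over atoms, for each frame pair.
    return (diffs ** 2).sum(axis=2).mean(axis=1)


def path_lambda(frames, robust=True):
    """LAMBDA = 2.3 / (largest adjacent MSD) if robust, else 2.3 / (mean adjacent MSD)."""
    msd = adjacent_msds(frames)
    reference = msd.max() if robust else msd.mean()
    return 2.3 / reference
```

A large ratio between the largest and mean adjacent MSD is itself a warning sign that the path frames are unevenly spaced and may need to be resampled.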

Q4: What is the exploration-convergence trade-off in Metadynamics with suboptimal CVs?

When using suboptimal collective variables, a fundamental trade-off exists between how quickly the simulation explores new configurations and how quickly the bias potential converges to the underlying free energy surface.

- Fast Exploration: Using a rapidly changing bias (e.g., high hill deposition rate) can push the system to escape metastable states quickly and explore phase space efficiently. However, the free energy estimate will be slow to converge and potentially unreliable for long periods.

- Fast Convergence: Using a slower-updating bias focuses on obtaining an accurate free energy estimate, but at the cost of slower transitions between states. Methods like the OPES-explore variant, by contrast, are specifically designed to prioritize exploration when dealing with challenging, suboptimal CVs [28].

Analysis and Convergence

Q5: How can I assess the convergence of my Metadynamics simulation?

Convergence can be evaluated by monitoring the evolution of the bias potential and the reconstructed free energy.

- Time Evolution of the Bias: The bias potential should become quasi-static, meaning it changes very little over time.

- Free Energy Profile: The free energy profile calculated from the simulation should become stable and not shift significantly with further simulation time.

- Block Analysis: A more rigorous approach involves dividing the simulation trajectory into blocks, calculating the free energy for each block, and ensuring that the differences between blocks are small compared to the thermal energy (k_B T) [29].

- Multiple Walkers: Using multiple parallel simulations (walkers) that share a common bias potential can help improve and assess convergence more rapidly [26].
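For well-tempered metadynamics, the free energy can be reconstructed from the accumulated bias as \( F(s) = -\frac{\gamma}{\gamma - 1} V(s) + \text{const} \), which makes the "stable profile" check easy to quantify. This sketch assumes two bias snapshots evaluated on the same CV grid; the function names and the optional `mask` for well-sampled grid points are illustrative assumptions.

```python
import numpy as np


def wt_fes_from_bias(bias, biasfactor):
    """Free energy from a well-tempered metadynamics bias on a CV grid.

    Uses F(s) = -(gamma / (gamma - 1)) * V(s) + const and shifts the
    minimum to zero. `bias` and the result share the same energy units.
    """
    f = -(biasfactor / (biasfactor - 1.0)) * np.asarray(bias, dtype=float)
    return f - f.min()


def fes_change_in_kt(fes_a, fes_b, kt, mask=None):
    """Largest absolute difference between two FES snapshots, in units of kT.

    `mask` can restrict the comparison to well-sampled grid points.
    """
    diff = np.abs(np.asarray(fes_a, dtype=float) - np.asarray(fes_b, dtype=float))
    if mask is not None:
        diff = diff[np.asarray(mask)]
    return diff.max() / kt
```

Restricting the comparison to the low-free-energy, well-sampled region is usually sensible, because high-lying regions converge last and can dominate the maximum difference.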

Q6: How can I combine Metadynamics with other techniques to improve sampling?

Combining Metadynamics with other enhanced sampling methods can yield greater acceleration than either method alone.

- Stochastic Resetting (SR): This involves periodically stopping the simulation and restarting it from independent initial conditions. Combining SR with Metadynamics can lead to significant speedups, even when the CVs are suboptimal. The bias potential is typically reset to zero upon restart. The acceleration is most effective when the coefficient of variation (COV, standard deviation/mean) of the transition time distribution is greater than 1 [30].

- Machine Learning-Guided Sampling: Deep learning models are increasingly used to analyze MD trajectories, identify relevant features for CVs, or even calculate interatomic forces with high accuracy, which can improve the underlying model for Metadynamics simulations [31].
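The COV criterion for stochastic resetting can be checked from a set of observed transition (first-passage) times. This sketch uses the sample standard deviation; the function names are hypothetical, and with only a handful of transitions the COV estimate is itself noisy. The threshold of 1 corresponds to an exponential (memoryless) first-passage distribution, for which resetting gives no advantage.

```python
import numpy as np


def coefficient_of_variation(first_passage_times):
    """COV = standard deviation / mean of observed transition (first-passage) times."""
    t = np.asarray(first_passage_times, dtype=float)
    return t.std(ddof=1) / t.mean()


def resetting_may_help(first_passage_times):
    """Heuristic from the text: resetting tends to accelerate sampling when COV > 1."""
    return coefficient_of_variation(first_passage_times) > 1.0
```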

Troubleshooting Guides

PCV Simulation Issues

Problem: Replicas are "stuck" at discrete values of the path progress variable (s)

- Symptoms: The value of s remains fixed at integer values (e.g., 2.000, 3.000) and does not fluctuate smoothly.

- Primary Cause: The LAMBDA parameter is set too high for the spacing between frames. s then behaves almost like a step function that snaps to the index of the nearest frame, so it changes very little between frames and the bias cannot drive smooth progress along the path [27].

- Solution:

  - Recalculate LAMBDA using the robust method: \( \lambda = \frac{2.3}{\text{largest MSD between two adjacent frames}} \).

  - Ensure the frames defining your path are approximately equidistant in CV-space.

  - Visually inspect the initial path in the CV-space to identify and correct any large gaps between frames.

Problem: The simulation samples high values of the path distance variable (z)

- Symptoms: The value of z, which measures the deviation from the path, is consistently high (e.g., > 3-6 Ų), indicating the system is frequently far from the defined path.

- Primary Cause: The initial path may be a poor representation of the true transition mechanism, or the frames may be too sparse in some regions for the chosen LAMBDA [27].

- Solution:

  - Re-check LAMBDA against the frame spacing using the robust method above. Simply increasing LAMBDA does not lower z: as LAMBDA grows, z approaches the MSD to the nearest frame from below.

  - Consider using an adaptive path-CV method, such as path-metadynamics (PMD), which allows the path to iteratively update and refine itself based on the simulation data, converging to the minimum free energy path [26].

  - Add more frames to the path in regions where z is observed to be high.

Metadynamics Performance Issues

Problem: Slow exploration of phase space despite biasing

- Symptoms: The simulation remains trapped in a metastable state for an extended period, and transitions are rare.

- Primary Cause: The collective variables are suboptimal and do not fully capture all the slow modes of the transition [28].

- Solution:

  - Increase Hill Height/Deposition Rate: Temporarily use more aggressive biasing parameters to force the system to explore. Be aware this delays convergence.

  - Use an Exploratory Method: Switch to a method like OPES-explore, which is specifically designed to favor rapid exploration over fast convergence when CVs are suboptimal [28].

  - Combine with Stochastic Resetting: Implement stochastic resetting to periodically restart the simulation from different initial conditions, which can prevent the simulation from being trapped in a single minimum for too long [30].

  - Re-evaluate CVs: Consider using machine learning or other analysis techniques on short unbiased simulations to identify more descriptive collective variables [31].

Problem: Poor convergence of the free energy estimate

- Symptoms: The reconstructed free energy profile continues to drift significantly and does not stabilize over time.

- Primary Cause: The bias deposition rate is too high, preventing the system from equilibrating locally before the landscape changes [28].

- Solution:

  - Use Well-Tempered Metadynamics: This variant progressively lowers the height of new hills as bias accumulates at a given CV value, so the bias converges instead of growing indefinitely.

  - Lower the Hill Deposition Rate (Increase PACE): Add Gaussian hills less frequently to allow for more local equilibration.

  - Employ Multiple Walkers: Use multiple simulations that share a common bias potential. This improves the statistical quality of the bias and accelerates convergence [26].

  - Check CV Quality: Ensure your CVs can properly discriminate between the metastable states of interest.
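The tempering rule behind well-tempered metadynamics can be written as \( h = h_0 \exp\left(-V(s,t) / (k_B \Delta T)\right) \) with \( k_B \Delta T = (\gamma - 1) k_B T \). The sketch below evaluates it; the function name is hypothetical, and h0 = 1.2 kJ/mol and γ = 10 are simply picked from the ranges in Table 2.

```python
import math


def wt_hill_height(h0, bias_at_s, kt, biasfactor):
    """Height of the next Gaussian in well-tempered metadynamics.

    h = h0 * exp(-V(s, t) / (kB * dT)), with kB * dT = (biasfactor - 1) * kT.
    h0, bias_at_s and kt must share the same energy units (e.g. kJ/mol).
    """
    return h0 * math.exp(-bias_at_s / ((biasfactor - 1.0) * kt))


kt_300k = 2.494  # kJ/mol, approximately kB * 300 K
heights = [wt_hill_height(1.2, v, kt_300k, 10.0) for v in (0.0, 10.0, 20.0, 40.0)]
```

Because the decay scale is (γ - 1) kT, a larger bias factor keeps hills large for longer (more exploration), while a smaller one shrinks them sooner (faster convergence but slower barrier crossing).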

Key Experimental Parameters and Protocols

Parameter Tables for Simulation Setup

Table 1: Key Parameters for Path Collective Variable (PCV) Simulations

| Parameter | Description | Recommended Value/Guideline |
|---|---|---|
| LAMBDA | Controls the "stiffness" of the path. | \( \lambda = \frac{2.3}{\text{largest MSD between adjacent frames}} \) [27] |
| Number of Frames | Number of structures defining the path. | Enough to ensure approximately equidistant spacing in CV-space; typically 20-50+ for complex changes [27]. |
| Path Metric | Method to measure distance between frames. | MSD: Large collective motions. DMSD/Contact Maps: Localized changes [27]. |
| Sigma (s) | Gaussian width for biasing the progress variable s. | Based on the fluctuation of s in a short unbiased simulation; e.g., 0.2 [27]. |

Table 2: Key Parameters for Well-Tempered Metadynamics

| Parameter | Description | Recommended Value/Guideline |
|---|---|---|
| PACE | Number of MD steps between successive Gaussian hills. | 500-1000 steps. Lower for faster exploration, higher for better convergence [29]. |
| HEIGHT | Initial height of the Gaussian hills. | 1.0-1.2 kJ/mol is a common starting point [29]. |
| SIGMA | Width of the Gaussian hills for each CV. | ~1/2 to 1/3 of the CV's fluctuation in the metastable state [29]. |
| BIASFACTOR | Controls the bias tempering. | 8-20 for a ~5-25 \( k_B T \) barrier. Higher values increase exploration [29]. |
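The table's guidelines can be turned into a PLUMED `METAD` line programmatically. The keyword names below (ARG, PACE, HEIGHT, SIGMA, BIASFACTOR, TEMP, FILE) are PLUMED's METAD keywords, but the helper functions, the CV label `d`, and the defaults (mid-range values from the table) are hypothetical; check the generated line against the manual for your PLUMED version. SIGMA is estimated as a fraction of the CV's standard deviation in a short unbiased run, following the 1/2 to 1/3 guideline.

```python
import numpy as np


def sigma_from_unbiased(cv_series, divisor=3.0):
    """SIGMA as a fraction (1/divisor) of the CV's standard deviation in an unbiased run."""
    return float(np.std(cv_series, ddof=1)) / divisor


def metad_line(arg, sigma, pace=500, height=1.2, biasfactor=10, temp=300.0,
               label="metad", hills_file="HILLS"):
    """Build a single PLUMED METAD directive from the chosen parameters."""
    return (f"{label}: METAD ARG={arg} PACE={pace} HEIGHT={height} "
            f"SIGMA={sigma:.4g} BIASFACTOR={biasfactor} TEMP={temp:g} FILE={hills_file}")
```

For example, `metad_line("d", 0.05)` produces `metad: METAD ARG=d PACE=500 HEIGHT=1.2 SIGMA=0.05 BIASFACTOR=10 TEMP=300 FILE=HILLS`.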

Workflow Visualization

Title: Path-Metadynamics Adaptive Workflow

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software and Computational Tools

| Tool/Reagent | Function | Application Context |
|---|---|---|
| PLUMED | Library for enhanced sampling & CV analysis; core platform for MetaD/PCVs. | Defining CVs, applying biases, and analyzing simulations. Essential for all protocols [26] [29]. |
| PLUMED PESMD | PLUMED's internal engine for fast testing. | Prototyping and testing enhanced sampling setups without a full MD engine [26]. |
| OpenBPMD | Open-source Python implementation of Binding Pose MetaD. | Specifically for assessing protein-ligand binding pose stability [32]. |
| GROMACS | High-performance MD engine. | Running production MD simulations coupled with PLUMED [29]. |
| OPES-explore | An exploratory variant of the OPES method. | Preferred over standard MetaD for faster exploration with suboptimal CVs [28]. |
| AI2BMD | AI-based ab initio biomolecular dynamics system. | Providing highly accurate, ML-driven force fields for underlying dynamics [33]. |

Leveraging Neural Network Potentials (NNPs) for Accelerated Sampling

Troubleshooting Guides

Guide 1: Resolving NNP Instabilities and Poor Convergence in Molecular Dynamics

Problem: Molecular dynamics (MD) simulations become unstable or fail to converge, often characterized by unphysical atomic configurations, energy explosions, or unrealistic bond lengths.

| Possible Cause | Diagnostic Steps | Recommended Solution |
|---|---|---|
| Insufficient Training Data in High-Energy Regions | Check if the simulation is sampling bond-breaking/formation or phase transitions. Use uncertainty quantification (UQ) to identify regions with high prediction variance [34]. | Implement active learning (AL) with enhanced sampling (e.g., steered MD) to specifically sample rare events and add these configurations to the training set [34] [35]. |
| Poorly Calibrated Uncertainty | Compare the model's force uncertainty against the actual force error from DFT on a validation set. Poor calibration often shows underestimated uncertainties [36]. | Apply conformal prediction to recalibrate uncertainties, ensuring they reliably indicate true prediction errors and prevent exploration of unphysical regions [36]. |
| Lack of Stress Training | Evaluate the model's accuracy on properties that depend on derivatives of the energy with respect to strain, such as elastic constants. A model fitted only to energies and forces may reproduce these poorly [37]. | Incorporate stress terms into the model's loss function during training. Use transfer learning to fine-tune weights for a smoother potential energy surface [37]. |
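The stress-training remedy amounts to adding a stress term to the training loss. A minimal sketch, assuming a per-structure weighted squared-error loss with hypothetical weights `w_e`, `w_f`, `w_s` (real training codes choose, normalize and schedule these weights in their own ways):

```python
import numpy as np


def energy_force_stress_loss(e_pred, e_ref, f_pred, f_ref, s_pred, s_ref, n_atoms,
                             w_e=1.0, w_f=1.0, w_s=0.1):
    """Weighted squared-error loss for one structure.

    e_*: total energies; f_*: (n_atoms, 3) forces; s_*: (3, 3) stress tensors.
    The energy term is normalized per atom; the weights are hypothetical defaults.
    """
    loss_e = ((e_pred - e_ref) / n_atoms) ** 2
    loss_f = np.mean((np.asarray(f_pred) - np.asarray(f_ref)) ** 2)
    loss_s = np.mean((np.asarray(s_pred) - np.asarray(s_ref)) ** 2)  # stress term
    return w_e * loss_e + w_f * loss_f + w_s * loss_s
```

Because the stress is the strain derivative of the energy, penalizing it constrains the curvature of the learned surface along cell deformations, which energies and forces alone leave only weakly determined.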

Guide 2: Addressing Sampling Gaps for Rare Events

Problem: The NNP fails to accurately simulate rare but critical events like chemical reactions, nucleation, or phase transitions because these configurations are missing from the training data.

| Possible Cause | Diagnostic Steps | Recommended Solution |
|---|---|---|
| Sampling Bias of Conventional MD | Conventional MD runs stay in low-energy basins and may miss rare events or extrapolative regions. Analyze the collective variable (CV) space coverage [36]. | Use uncertainty-biased MD, where the simulation is biased by the NNP's energy uncertainty to simultaneously explore rare events and extrapolative regions [36]. |
| High Cost of Generating Reactive Data | Expensive ab initio MD (AIMD) is required to capture infrequent transitions [38]. | Employ Transition Tube Sampling (TTS). Generate candidate structures via normal mode expansions along a minimum energy path, then use active learning (e.g., Query by Committee) to select the most informative ones for DFT labeling [38]. |
| Inefficient Data Selection | The active learning loop selects too many similar, non-informative configurations. | In the AL framework, use batch selection algorithms that prioritize both informativeness (high uncertainty) and diversity of atomic structures to build a maximally diverse training set [36]. |
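One simple way to combine informativeness and diversity when selecting a batch is to pre-filter the most uncertain candidates and then apply greedy farthest-point sampling among them. The sketch assumes each candidate has a fixed-length descriptor vector and a scalar uncertainty; it is an illustrative scheme, not the specific batch-selection algorithm of [36], and `pool_factor` is a hypothetical tuning knob.

```python
import numpy as np


def select_diverse_batch(descriptors, uncertainties, batch_size, pool_factor=4):
    """Select a batch of structures that are both uncertain and mutually diverse.

    1. Keep the `pool_factor * batch_size` most uncertain candidates (informativeness).
    2. Greedy farthest-point sampling in descriptor space, seeded with the most
       uncertain candidate (diversity).
    """
    x = np.asarray(descriptors, dtype=float)
    u = np.asarray(uncertainties, dtype=float)
    pool = np.argsort(u)[::-1][: min(len(u), pool_factor * batch_size)]
    selected = [int(pool[0])]
    # distance from each pool member to its nearest already-selected structure
    min_dist = np.linalg.norm(x[pool] - x[pool[0]], axis=1)
    while len(selected) < min(batch_size, len(pool)):
        nxt = int(np.argmax(min_dist))
        if min_dist[nxt] == 0.0:  # only duplicates of selected structures remain
            break
        selected.append(int(pool[nxt]))
        min_dist = np.minimum(min_dist, np.linalg.norm(x[pool] - x[pool[nxt]], axis=1))
    return selected
```

With this rule a near-duplicate of an already chosen structure is skipped even if its own uncertainty is high, which is exactly the redundancy the diagnostic above describes.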

Frequently Asked Questions (FAQs)

Q1: What is the most reliable method to quantify uncertainty in NNPs for adaptive sampling? The best method depends on the trade-off between computational cost and quality. Ensemble-based methods (using multiple models) are widely used and provide a robust measure of uncertainty through prediction variance [39] [38]. For higher computational efficiency, gradient-based uncertainties offer a promising, ensemble-free alternative by leveraging the sensitivity of the model's output to its parameters [36]. For the highest quality uncertainty quantification, Bayesian Neural Networks (BNNs) using Markov Chain Monte Carlo (MCMC) sampling are superior but are computationally more demanding [40].
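For the ensemble approach, the uncertainty is the spread of the committee's predictions. A minimal NumPy sketch, assuming the predictions of the M models are already available as arrays; the maximum per-atom force deviation used here is one common force-uncertainty metric, not the only choice:

```python
import numpy as np


def committee_uncertainty(energies, forces):
    """Uncertainty estimates from a committee (ensemble) of M models.

    energies: (M,) total energies predicted by each model
    forces:   (M, N, 3) forces predicted by each model
    Returns (mean energy, energy std, mean forces, max per-atom force deviation), where the
    per-atom deviation is sqrt(mean over models of |F_m - <F>|^2).
    """
    e = np.asarray(energies, dtype=float)
    f = np.asarray(forces, dtype=float)
    f_mean = f.mean(axis=0)
    per_atom_dev = np.sqrt(((f - f_mean) ** 2).sum(axis=-1).mean(axis=0))
    return e.mean(), e.std(ddof=1), f_mean, per_atom_dev.max()
```

Taking the maximum over atoms makes the metric sensitive to a single badly described local environment, which is usually what triggers instabilities.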

Q2: How can I generate a robust training set for a reactive system without running prohibitively expensive AIMD? The Transition Tube Sampling (TTS) method is designed for this purpose [38]. It works by:

- Calculating a minimum energy path (MEP) for the reaction.

- Performing local normal mode expansions at several points along the MEP to generate a large pool of thermally distorted candidate geometries at negligible cost.

- Using an active learning protocol (like Query by Committee) to select the most critical candidate structures for subsequent DFT calculations.

This approach avoids a full AIMD simulation, creating a compact, high-quality training set that accurately describes the reaction pathway.
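The normal-mode step of TTS can be illustrated with classical harmonic sampling around a single MEP image. This is a sketch under stated assumptions: a Cartesian Hessian is available, each mode is populated according to its classical Boltzmann distribution, and soft or imaginary modes are simply left undisplaced; the published TTS procedure may treat these details differently.

```python
import numpy as np


def sample_normal_mode_geometries(x0, hessian, masses, kbt, n_samples, seed=0, cutoff=1e-8):
    """Classical harmonic sampling of thermally distorted geometries around x0.

    x0:      (N, 3) reference geometry, e.g. one image on the MEP
    hessian: (3N, 3N) Cartesian Hessian at x0
    masses:  (N,) atomic masses; all inputs in one consistent unit system
    Modes with mass-weighted eigenvalue <= cutoff (translations, rotations, or the
    imaginary mode at a saddle point) are not displaced (assumption).
    """
    rng = np.random.default_rng(seed)
    x0 = np.asarray(x0, dtype=float)
    inv_sqrt_m = 1.0 / np.sqrt(np.repeat(np.asarray(masses, dtype=float), 3))
    h_mw = np.asarray(hessian, dtype=float) * np.outer(inv_sqrt_m, inv_sqrt_m)
    omega2, modes = np.linalg.eigh(h_mw)           # eigenvalues are omega_k^2
    keep = omega2 > cutoff
    omega2, modes = omega2[keep], modes[:, keep]
    # Boltzmann distribution of each harmonic mode: Q_k ~ N(0, kBT / omega_k^2)
    q = rng.standard_normal((n_samples, omega2.size)) * np.sqrt(kbt / omega2)
    dx = (q @ modes.T) * inv_sqrt_m                # mass-weighted -> Cartesian displacement
    return (x0.reshape(-1) + dx).reshape(n_samples, -1, 3)
```

Each sample costs only a matrix product, which is why the candidate pool can be made large before the expensive DFT labeling is restricted to the structures the committee selects.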

Q3: My NNP is accurate on the test set but fails during long MD simulations. Why? This common issue often arises because the standard training paradigm minimizes the single-step prediction error but does not account for the temporal evolution and error accumulation in MD [41]. A solution is Dynamic Training (DT), which integrates the equations of motion directly into the training process. Instead of treating data points as isolated, the model is trained on subsequences of AIMD simulations, forcing it to learn the correct dynamics and remain stable over time [41].
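The core idea, scoring a model on how well its integrated trajectory follows a reference subsequence rather than on isolated frames, can be sketched as a rollout loss. This NumPy version only evaluates the loss with velocity Verlet; an actual Dynamic Training implementation would differentiate through the rollout with an automatic-differentiation framework to update the network weights. Here `force_fn` is a hypothetical stand-in for the NNP (positions in, forces out).

```python
import numpy as np


def rollout(force_fn, x0, v0, masses, dt, n_steps):
    """Velocity Verlet positions driven by force_fn (stand-in for an NNP)."""
    x = np.array(x0, dtype=float)
    v = np.array(v0, dtype=float)
    inv_m = 1.0 / np.asarray(masses, dtype=float)[:, None]
    f = force_fn(x)
    positions = [x.copy()]
    for _ in range(n_steps):
        v = v + 0.5 * dt * f * inv_m
        x = x + dt * v
        f = force_fn(x)
        v = v + 0.5 * dt * f * inv_m
        positions.append(x.copy())
    return np.stack(positions)


def rollout_loss(force_fn, ref_positions, v0, masses, dt):
    """Mean squared deviation between a model rollout and a reference (e.g. AIMD) subsequence."""
    ref = np.asarray(ref_positions, dtype=float)
    pred = rollout(force_fn, ref[0], v0, masses, dt, len(ref) - 1)
    return float(np.mean((pred - ref) ** 2))
```

Because errors made early in the subsequence propagate into every later frame, this loss penalizes exactly the error accumulation that single-step training ignores.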

Q4: How can I combine enhanced sampling techniques like metadynamics with NNPs? The combination is powerful for simulating rare events. A standard protocol is [39]:

- Train an initial NNP ensemble on a diverse dataset from short AIMD runs.

- Run metadynamics simulations using the NNP, biased by selected collective variables (CVs).

- Use an active learning framework (e.g., based on CUR matrix decomposition) to selectively sample representative structures—including rare transition states—from the metadynamics trajectory.

- Compute DFT-level energies and forces for these new structures and retrain the NNP.

This iterative process yields an NNP that is accurate across the sampled reaction landscape, enabling fast and reliable simulation of rare events.
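CUR-based selection picks the rows (structures) of a feature matrix that have high statistical leverage. A minimal deterministic sketch, assuming each structure is represented by a fixed-length descriptor vector; randomized sampling by leverage and iterative re-orthogonalization after each pick are common variants, and the framework in [39] may differ in detail:

```python
import numpy as np


def cur_select(features, n_select, rank=None):
    """Pick the structures (rows) with the largest statistical leverage scores.

    features: (n_structures, n_features) descriptor matrix
    The leverage of row i is the squared norm of row i of the top-`rank` left singular
    vectors of the centered feature matrix (deterministic, one-shot CUR-style selection).
    """
    x = np.asarray(features, dtype=float)
    x = x - x.mean(axis=0)  # centering is an assumption of this sketch
    u, _, _ = np.linalg.svd(x, full_matrices=False)
    k = rank if rank is not None else n_select
    leverage = (u[:, :k] ** 2).sum(axis=1)
    return np.argsort(leverage)[::-1][:n_select]
```

Structures that dominate the principal directions of the descriptor space, such as rare transition-state geometries far from the basin configurations, receive high leverage and are selected for DFT labeling.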

Experimental Protocols

Protocol 1: Uncertainty-Biased Molecular Dynamics for Uniformly Accurate Potentials

This methodology uses the NNP's own uncertainty to drive sampling toward under-explored regions of the configurational space [36].

- Initial Model Training: Train an initial NNP on a small, seed dataset of DFT calculations.

- Uncertainty Quantification: Implement an uncertainty metric. Gradient-based uncertainties are computationally efficient, while ensemble-based methods are a common alternative [36].

- Uncertainty Calibration: Use Conformal Prediction (CP) on a calibration set to rescale the raw uncertainties. This is critical to prevent the MD from exploring unphysical regions with extremely large errors [36].

- Biased MD Simulation: Run an MD simulation with a bias that lowers the potential energy in proportion to the calibrated energy uncertainty. This drives the simulation toward high-uncertainty regions and rare events.

- Active Learning Loop: When uncertainties exceed a threshold, select candidate configurations. Use batch selection algorithms to ensure diversity. Compute reference DFT data for these candidates and add them to the training set.

- Model Retraining: Retrain the NNP on the expanded dataset. Repeat the cycle from uncertainty quantification through retraining until the model achieves uniform accuracy across the relevant configurational space.
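The calibration and biasing steps can be sketched as follows, assuming per-structure uncertainties are already available from an ensemble or gradient-based method. The calibration is standard split conformal prediction on scores |error|/σ; the biased energy E_mean - τ·c·σ lowers the energy where the model is uncertain, with τ a hypothetical user-chosen strength. Running MD on it also requires the gradient of σ, which this sketch does not compute.

```python
import math

import numpy as np


def conformal_scale(errors_cal, sigma_cal, alpha=0.1):
    """Split-conformal factor c such that |error| <= c * sigma holds with probability
    >= 1 - alpha for a new exchangeable sample.

    Scores are |error| / sigma on a held-out calibration set; c is the
    ceil((n + 1)(1 - alpha))-th smallest score (infinite if n is too small).
    """
    scores = np.sort(np.abs(np.asarray(errors_cal, float)) / np.asarray(sigma_cal, float))
    k = math.ceil((scores.size + 1) * (1.0 - alpha))
    return float(scores[k - 1]) if k <= scores.size else math.inf


def uncertainty_biased_energy(e_mean, sigma_e, scale, tau):
    """Energy that drives sampling: E_mean - tau * (calibrated sigma); lower where uncertain."""
    return e_mean - tau * scale * sigma_e
```

Calibrating before biasing matters because an overconfident σ would let the bias push the system into regions where the true error is far larger than the model reports.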

Protocol 2: Active Learning for Rare Events using Steered MD

This protocol is designed to capture bond breaking and other rare events under mechanical loading [34] [35].

- Initial Sampling: Generate an initial set of configurations, potentially using enhanced sampling methods like steered molecular dynamics (SMD) to induce bond breaking or formation.

- Committee Model (C-NNP): Train a committee of NNPs on the initial data. The committee disagreement (standard deviation) serves as the uncertainty metric [38].

- Configurational and Uncertainty Screening: From new SMD trajectories, select candidate structures based on both high committee uncertainty and low configurational similarity to the existing training set.

- Data Augmentation and Retraining: Perform DFT calculations on the selected candidates. Add them to the training set and retrain the committee of NNPs.

- Validation: Validate the final model on targeted properties, such as activation energy for the rare event, aiming for errors below chemical accuracy (e.g., < 1 kcal mol⁻¹) [34] [35].
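The configurational and uncertainty screening step can be expressed as a two-part filter. This sketch assumes fixed-length descriptors and uses cosine similarity to the nearest training structure as the similarity measure; both the measure and the thresholds `sigma_min` and `sim_max` are illustrative choices, not those of [34] [35].

```python
import numpy as np


def screen_candidates(cand_desc, cand_sigma, train_desc, sigma_min, sim_max):
    """Indices of candidates with committee std >= sigma_min AND cosine similarity
    to every training structure <= sim_max."""
    c = np.asarray(cand_desc, dtype=float)
    t = np.asarray(train_desc, dtype=float)
    c = c / np.linalg.norm(c, axis=1, keepdims=True)
    t = t / np.linalg.norm(t, axis=1, keepdims=True)
    max_sim = (c @ t.T).max(axis=1)  # similarity to the closest training structure
    keep = (np.asarray(cand_sigma, dtype=float) >= sigma_min) & (max_sim <= sim_max)
    return np.flatnonzero(keep)
```

Requiring both conditions avoids spending DFT time on structures that are uncertain only because of noise near already-covered configurations, and on novel structures the committee already agrees on.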

Workflow Visualization

Active Learning Loop for NNP Development

Research Reagent Solutions

The following table lists key computational tools and datasets used in advanced NNP development for accelerated sampling.

| Item Name | Type | Function/Purpose |
|---|---|---|
| ANI-1x / ANI-2x | Pretrained NNP / Dataset | Provides general-purpose, transferable potentials for organic molecules: ANI-1x covers H, C, N, O, and ANI-2x adds S, F, Cl. Serves as a starting point for transfer learning [42]. |
| Open Catalyst (OC20/OC22) | Dataset | Contains over a million DFT relaxations (hundreds of millions of single-point calculations) for adsorbate-surface systems, essential for training NNPs on catalyst-adsorbate interactions [42]. |
| Materials Project (MPtrj) | Dataset | Relaxation trajectories compiled from the Materials Project's periodic DFT calculations on inorganic materials, useful for training NNPs on bulk systems and defects [42]. |
| Equivariant GNNs (e.g., NequIP) | NNP Architecture | A state-of-the-art neural network architecture that builds in rotational equivariance, leading to high data efficiency and accuracy [39] [40]. |
| Query by Committee (QbC) | Active Learning Algorithm | Uses a committee (ensemble) of models to select the most uncertain data points for labeling, improving training set efficiency [38]. |
| Conformal Prediction (CP) | Statistical Framework | Calibrates the model's uncertainty estimates, ensuring they are reliable indicators of true error and preventing unphysical sampling [36]. |

Structure-Preserving (Symplectic) Integrators for Long-Time-Step Stability

Frequently Asked Questions

Q1: My molecular dynamics simulations show non-physical energy drift over long time periods. Could my choice of numerical integrator be the cause?

Yes. Conventional, non-symplectic integrators can introduce numerical dissipation or energy drift, violating the conservation properties of the underlying Hamiltonian system [43]. Symplectic integrators are designed to preserve the geometric structure of Hamiltonian dynamics, leading to superior long-time energy behavior and stability, which is crucial for accurate molecular dynamics simulations [44].
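The difference is easy to see on a harmonic oscillator with unit mass and frequency (a toy assumption). The sketch integrates it with explicit Euler, which is not symplectic, and with velocity Verlet, the standard second-order symplectic integrator in MD:

```python
import numpy as np


def oscillator_energies(method, dt=0.05, n_steps=2000):
    """Total energy after each step for a unit harmonic oscillator (m = k = 1), x0 = 1, v0 = 0."""
    x, v = 1.0, 0.0
    energies = np.empty(n_steps)
    for i in range(n_steps):
        if method == "euler":      # explicit Euler: not symplectic
            x, v = x + dt * v, v - dt * x
        elif method == "verlet":   # velocity Verlet: symplectic
            v -= 0.5 * dt * x
            x += dt * v
            v -= 0.5 * dt * x
        else:
            raise ValueError(f"unknown method: {method}")
        energies[i] = 0.5 * (v * v + x * x)
    return energies
```

For explicit Euler the energy is multiplied by exactly 1 + (ωΔt)² at every step, so it drifts upward without limit; for velocity Verlet it only oscillates, and for this oscillator started at rest the deviation never exceeds (Δt)²/8.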

Q2: What is the fundamental difference between a symplectic integrator and a standard Runge-Kutta method?

Standard explicit Runge-Kutta methods (such as classical RK4) are constructed to control local truncation error and are not symplectic. Symplectic integrators, in contrast, preserve the symplectic two-form of Hamiltonian systems, so the numerical flow retains properties like phase-space volume conservation. The energy is then not exactly conserved at every time step, but its error stays bounded over long times instead of drifting, because the method nearly conserves a slightly modified ("shadow") Hamiltonian [43] [44].

Q3: For a given problem, how do I choose between Partitioned Runge-Kutta (PRK) and Runge-Kutta-Nyström (RKN) methods?

The choice often depends on your system's structure. PRK methods are applied directly to Hamilton's equations in phase space (coordinates and momenta) and are a general approach [43] [45]. RKN methods are a specialized development for second-order systems of the form dq/dt = v, dv/dt = g(t, q), which are common in molecular dynamics. RKN schemes can sometimes be more efficient, requiring fewer evaluations of the force function g for a given order of accuracy [43].

Q4: Can structure-preserving integrators be applied to dissipative systems?

Yes. The framework of the General Equation for the Nonequilibrium Reversible–Irreversible Coupling (GENERIC) allows for the design of integrators that preserve the thermodynamic structure of dissipative systems. These methods often involve splitting the reversible (often Hamiltonian/symplectic) and irreversible dynamics and applying appropriate structure-preserving methods to each part [44].

Troubleshooting Guides

Issue 1: Poor Long-Term Energy Behavior

Symptoms: The total energy of the system shows a significant upward or downward drift over time, rather than bounded fluctuations around a mean value.

Diagnosis and Solutions:

- Verify Integrator Symplecticity: Ensure you are using a confirmed symplectic scheme. Consult the literature for the coefficients of known symplectic methods, such as those from the Forest-Ruth or Yoshida families [43] [45].

- Check the Timestep Size: Even symplectic integrators have a stability limit, and excessively large timesteps degrade energy behavior or cause outright instability. Reduce the timestep and check whether the energy drift improves.

- Consider a Higher-Order Scheme: Lower-order symplectic schemes (2nd order) can exhibit more significant energy error. If computational cost allows, switch to a higher-order symplectic method (e.g., 4th or 6th order), which typically provides better energy conservation for a given timestep [43] [45].
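The Forest-Ruth/Yoshida fourth-order scheme can be built as a symmetric "triple-jump" composition of three velocity Verlet sub-steps with weights w1, w0, w1, where w1 = 1/(2 - 2^(1/3)) and w0 = -2^(1/3) w1. The sketch compares its maximum energy error with that of plain velocity Verlet on a unit harmonic oscillator, a toy test case chosen for illustration:

```python
W1 = 1.0 / (2.0 - 2.0 ** (1.0 / 3.0))  # outer sub-step weight
W0 = -(2.0 ** (1.0 / 3.0)) * W1         # negative middle sub-step weight (W0 + 2*W1 = 1)


def verlet_step(x, v, dt, accel):
    """One second-order velocity Verlet step."""
    v = v + 0.5 * dt * accel(x)
    x = x + dt * v
    v = v + 0.5 * dt * accel(x)
    return x, v


def yoshida4_step(x, v, dt, accel):
    """Fourth-order symplectic step: triple-jump composition of velocity Verlet."""
    for w in (W1, W0, W1):
        x, v = verlet_step(x, v, w * dt, accel)
    return x, v


def max_energy_error(step, dt, n_steps):
    """Largest |E - E0| for a unit harmonic oscillator started at x = 1, v = 0."""
    x, v = 1.0, 0.0
    worst = 0.0
    for _ in range(n_steps):
        x, v = step(x, v, dt, lambda q: -q)
        worst = max(worst, abs(0.5 * (x * x + v * v) - 0.5))
    return worst


if __name__ == "__main__":
    for name, step in (("Verlet", verlet_step), ("Yoshida-4", yoshida4_step)):
        print(name, max_energy_error(step, 0.05, 2000))
```

Each fourth-order step costs three Verlet sub-steps, so roughly three times the force evaluations, and the negative middle weight briefly steps backward in time; whether the scheme pays off depends on the accuracy needed per unit of compute.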

Issue 2: Numerical Instability with Large Timesteps

Symptoms: The simulation "blows up" or produces NaN values shortly after starting.

Diagnosis and Solutions:

- Determine Stability Region: Each explicit symplectic scheme has an associated stability region. The instability might occur because your timestep is outside this region. Consult stability analyses for your chosen method [45].

- Explore Different Schemes: Some symplectic schemes have larger stability domains than others. For example, within the family of four-stage fourth-order Partitioned Runge-Kutta schemes, different specific parameter sets (e.g., FR50, Y7, Y95, Y110) can have varying stability properties and error functionals. Testing alternative schemes from the same family can yield a more stable integrator for your specific problem [43].
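For velocity Verlet applied to a harmonic mode of angular frequency ω, linear stability requires ωΔt < 2. The toy check below (unit mass and frequency, an illustrative assumption) integrates just below and just above that threshold:

```python
def verlet_stays_bounded(omega_dt, n_steps=10_000, limit=1e3):
    """Velocity Verlet on a harmonic mode with omega = 1; True if |x| stays below `limit`."""
    dt = omega_dt          # with omega = 1, omega * dt equals dt
    x, v = 1.0, 0.0
    for _ in range(n_steps):
        v -= 0.5 * dt * x
        x += dt * v
        v -= 0.5 * dt * x
        if abs(x) > limit:
            return False   # amplitude grows geometrically: outside the stability interval
    return True
```

In MD, ω is set by the fastest motion in the system, typically bonds to hydrogen with periods of about 10 fs, which gives a stability bound of Δt < T/π, roughly 3 fs. Accurate sampling needs a timestep well below this bound, which is one reason bonds to hydrogen are often constrained.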