Navigating Molecular Dynamics Integration Errors: From Fundamentals to AI-Enhanced Solutions in Drug Discovery

Abstract

This article provides a comprehensive analysis of molecular dynamics integration algorithm errors, a critical yet often overlooked factor determining the reliability of simulations in biomedical research. It establishes a foundational understanding of numerical instabilities and their physical consequences, explores advanced and reactive algorithms pushing current boundaries, and offers a practical troubleshooting framework for optimizing simulation stability. Furthermore, it examines cutting-edge validation methodologies and the transformative role of machine learning in error detection and correction. Designed for researchers, scientists, and drug development professionals, this guide synthesizes traditional best practices with emerging AI-powered approaches to enhance the accuracy and predictive power of computational studies for drug solubility, protein-ligand interactions, and clinical translation.

The Roots of Instability: Core Principles and Common Pitfalls of MD Integrators

This section is written in the context of academic research on errors in molecular dynamics (MD) integration algorithms. It gives researchers, scientists, and drug development professionals practical troubleshooting guides and FAQs for common issues with the MD integration loop, the core algorithm that propagates the equations of motion in an MD simulation.

Troubleshooting Guides and FAQs

Frequently Asked Questions (FAQs)

1. What is the most common source of error in the Verlet integration algorithm? The most common source of error is the discretization error introduced by using a finite time step (Δt). The Verlet algorithm is time-reversible and numerically stable, but it only approximates the continuous equations of motion. The local truncation error in the positions is O(Δt⁴) per step, and these errors accumulate into a global error of O(Δt²). The accuracy of the simulation therefore depends strongly on using a sufficiently small time step [1].

2. My simulation exhibits a significant energy drift. What could be the cause? Energy drift, where the total energy of the system is not conserved, often points to an overly large time step. A large Δt increases the integration error and can push the integrator toward numerical instability. Try reducing your time step. Also make sure that all forces, particularly long-range electrostatic forces, are calculated with sufficient accuracy, because approximations in the force calculation can also cause energy drift [1].

3. Why is my simulation becoming unstable and "exploding"? This is typically a sign of numerical instability. The primary cause is a time step that is too large, leading to excessive forces and unrealistic atomic displacements. Immediately reduce the time step. Furthermore, check for physical impossibilities in your initial configuration, such as atomic overlaps, which generate extremely high forces at the start of a simulation [1].

4. How does the choice of time step (Δt) affect my simulation and its performance? The time step is a critical compromise between accuracy and computational cost. A smaller Δt yields higher accuracy but requires more simulation steps to cover the same physical time, increasing computational expense. A larger Δt improves performance but risks inaccuracy and instability. For all-atom simulations with explicit solvent, a time step of 1-2 femtoseconds is common [1].

5. What are the performance trade-offs in extreme-scale MD simulations? In large-scale simulations involving millions to billions of atoms, performance is limited by the calculation of non-bonded interactions. Bonded interactions scale linearly (O(N)) with the number of atoms, but non-bonded interactions in principle involve every pair of atoms. Van der Waals interactions can be reduced to O(N) cost using a cutoff, whereas full electrostatic calculations without special methods scale as O(N²). Methods like Particle Mesh Ewald (PME) reduce this to O(N log N). However, the FFTs they rely on introduce significant computational overhead and communication costs in parallel computing environments [2].
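The O(N) scaling of cutoff interactions comes from testing only nearby pairs. Below is a minimal sketch of one standard way to do this: a cell list in a cubic periodic box. It is a toy in pure Python, not the algorithm used by GENESIS or any other package, and the function names, box size, and cutoff are illustrative assumptions. It checks that the cell list finds exactly the same pairs as the O(N²) all-pairs loop.

```python
import itertools
import random


def min_image_dist2(a, b, box):
    """Squared distance between points a and b in a cubic periodic box (minimum image)."""
    d2 = 0.0
    for k in range(3):
        d = a[k] - b[k]
        d -= box * round(d / box)  # wrap the separation into [-box/2, box/2]
        d2 += d * d
    return d2


def count_pairs_all(positions, box, rc):
    """O(N^2) reference: test every pair against the cutoff."""
    rc2 = rc * rc
    return sum(
        1
        for i, j in itertools.combinations(range(len(positions)), 2)
        if min_image_dist2(positions[i], positions[j], box) < rc2
    )


def count_pairs_cell_list(positions, box, rc):
    """O(N) at fixed density: only test pairs in the same or adjacent cells."""
    ncell = int(box // rc)  # cells are at least rc wide
    if ncell < 3:
        raise ValueError("this sketch needs box >= 3 * rc")
    size = box / ncell

    def cell_of(p):
        return tuple(int(p[k] // size) % ncell for k in range(3))

    cells = {}
    for idx, p in enumerate(positions):
        cells.setdefault(cell_of(p), []).append(idx)

    rc2 = rc * rc
    count = 0
    for i, p in enumerate(positions):
        ci = cell_of(p)
        # Visit the 27 cells around particle i (periodic wrap-around).
        for off in itertools.product((-1, 0, 1), repeat=3):
            key = tuple((ci[k] + off[k]) % ncell for k in range(3))
            for j in cells.get(key, ()):
                if j > i and min_image_dist2(p, positions[j], box) < rc2:
                    count += 1  # j > i counts each unordered pair once
    return count


random.seed(0)
pts = [[random.uniform(0.0, 10.0) for _ in range(3)] for _ in range(300)]
print(count_pairs_all(pts, 10.0, 2.5), count_pairs_cell_list(pts, 10.0, 2.5))
```

Each particle inspects only the 27 surrounding cells, so the work per particle depends on the local density rather than on N. Long-range electrostatics cannot be truncated this way, which is why methods such as PME are needed.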

Troubleshooting Guide: Common MD Integration Errors

Issue: Unphysical Temperature Rise in an NVT Ensemble

- Symptoms: The system temperature consistently rises above the target value in a constant-temperature (NVT) simulation, even with a functional thermostat.

- Potential Causes & Solutions:

- Time Step Too Large: This is the most probable cause. The large time step leads to inaccurate integration and energy injection into the system.

- Action: Systematically reduce the time step (e.g., from 2 fs to 1 fs) and monitor the temperature stability.

- Incorrect Thermostat Coupling Parameter: A weak coupling to the thermostat (too large a time constant) may be unable to dissipate the excess energy.

- Action: Adjust the thermostat coupling parameter according to the documentation of your MD software (e.g., GENESIS, GROMACS, NAMD).

- Force Calculation Errors: Inaccurate or unstable forces, potentially from a poorly defined potential energy function, can cause drifts.

- Action: Double-check your force field parameters and system topology for errors.

Issue: Poor Parallel Scaling in Large-Scale Simulations

- Symptoms: Simulation performance does not improve as expected when using more CPU cores.

- Potential Causes & Solutions:

- Communication Overhead: The cost of communication between processors begins to dominate the computation time, especially in reciprocal-space calculations using PME [2].

- Action: Profile your MD software to identify the bottleneck. Consider using advanced parallelization schemes like the 2d_alltoall method in GENESIS, which minimizes the frequency of MPI communications [2].

- Load Imbalance: The computational load is not evenly distributed across all CPU cores.

- Action: Adjust the domain decomposition parameters in your MD software. Optimizing the number of grid points for PME calculations can also improve load balancing [2].

- I/O Bottleneck: Writing trajectory data to disk can stall parallel computation.

- Action: Use parallel I/O utilities and reduce the frequency of trajectory output. The performance optimization for extreme-scale MD in GENESIS includes effective parallel file I/O for this reason [2].

Issue: Failure to Sample Rare Events

- Symptoms: The simulation remains trapped in a metastable state and does not observe important conformational changes or transitions within a feasible simulation time.

- Potential Causes & Solutions:

- Intrinsic Timescale Limitation: The energy barrier for the transition is too high to be crossed in a standard MD simulation.

- Action: Employ enhanced sampling methods, such as metadynamics or uncertainty-biased MD. Uncertainty-biased MD, which uses the model's energy uncertainty to guide sampling, has been shown to effectively capture both rare events and extrapolative regions, leading to more uniformly accurate models [3].

Quantitative Data and Performance

Table 1: Performance Metrics for Extreme-Scale MD Simulations on the Fugaku Supercomputer

Data sourced from performance optimization of the GENESIS MD software on the Fugaku supercomputer [2].

| System Size (Number of Atoms) | Performance (ns/day) | Key Optimizations Enabled |
|---|---|---|
| 1.6 Billion | 8.30 | New real-space algorithm, 2d_alltoall FFT, optimized parallel I/O |
| Large System (unspecified) | Significantly improved | L1 cache prefetch, Structure of Array (SoA) for neighbor search, Array of Structure (AoS) for force evaluation |

Table 2: Impact of Time Step on Simulation Stability and Error

Data based on error analysis of the Verlet integration algorithm [1].

| Time Step (fs) | Local Truncation Error | Risk of Energy Drift | Risk of Instability | Recommended Use Case |
|---|---|---|---|---|
| 0.5 | Very Low | Very Low | Very Low | High-precision studies of fast vibrations |
| 1.0 | Low | Low | Low | Standard all-atom, explicit solvent MD |
| 2.0 | Moderate | Moderate | Moderate | Can be used with constraints on bonds involving H |
| > 2.0 | High | High | High | Not recommended for all-atom systems |

Experimental Protocols and Methodologies

Protocol 1: Setting Up a Standard MD Integration Loop using the Størmer-Verlet Algorithm

This protocol details the foundational steps for initiating a simulation with the Verlet integration method, which is widely used for its numerical stability and time-reversibility [1].

Initialization:

- Input: Initial particle positions (x₀) and velocities (v₀) at time t = 0.

- Parameter Setting: Define the time step (Δt) and the total number of simulation steps.

- Force Calculation: Compute the initial acceleration (a₀) from the potential energy function: a₀ = A(x₀).

First Step:

- Calculate the position at the first time step using a Taylor expansion: x₁ = x₀ + v₀Δt + ½a₀Δt² [1].

Main Integration Loop:

- For each subsequent time step (n = 1, 2, 3, ...), iterate using the core Verlet formula xₙ₊₁ = 2xₙ - xₙ₋₁ + aₙΔt², where aₙ = A(xₙ) is the acceleration computed from the force at position xₙ [1].
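The three steps of Protocol 1 translate almost line for line into code. The sketch below assumes a single coordinate and a user-supplied acceleration function `accel` that stands in for A(x). The names are illustrative, and a production code would work on arrays of 3N coordinates. The harmonic-oscillator check at the end compares the result with the exact solution cos(t).

```python
import math


def stormer_verlet(x0, v0, accel, dt, n_steps):
    """Position (Stormer) Verlet for one coordinate; returns [x_0, x_1, ..., x_n_steps]."""
    if n_steps < 1:
        raise ValueError("n_steps must be >= 1")
    a0 = accel(x0)                                # initialization: a_0 = A(x_0)
    xs = [x0, x0 + v0 * dt + 0.5 * a0 * dt * dt]  # first step: Taylor expansion
    for n in range(1, n_steps):
        a_n = accel(xs[n])                        # a_n = A(x_n)
        xs.append(2.0 * xs[n] - xs[n - 1] + a_n * dt * dt)  # main Verlet update
    return xs


# Harmonic oscillator with omega = 1: A(x) = -x, exact solution x(t) = cos(t).
for dt in (0.01, 0.005):
    steps = round(1.0 / dt)
    x_end = stormer_verlet(1.0, 0.0, lambda x: -x, dt, steps)[-1]
    print(dt, abs(x_end - math.cos(1.0)))
```

Halving Δt should shrink the final-position error by roughly a factor of four. That is the signature of the O(Δt²) global error of Verlet integration.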

Protocol 2: Uncertainty-Biased Molecular Dynamics for Active Learning

This advanced protocol uses the MLIP's uncertainty to bias the simulation and actively generate training data, which is crucial for creating uniformly accurate machine-learned interatomic potentials (MLIPs) [3].

Prerequisite: Train an initial MLIP on a starting dataset of atomic configurations with reference DFT energies and forces.

Configuration and Bias:

- Uncertainty Quantification: During the MD simulation, at each step, calculate the uncertainty of the MLIP's prediction. This can be an ensemble-based uncertainty or a more computationally efficient, gradient-based uncertainty [3].

- Uncertainty Calibration: Apply Conformal Prediction (CP) to calibrate the uncertainties. This critical step prevents the underestimation of force errors, which can lead to the exploration of unphysical configurations. CP rescales the uncertainties to ensure they are correlated with the actual prediction errors [3].

- Bias Application: Apply a bias force (and optionally, a bias stress) proportional to the gradient of the calibrated uncertainty. This bias forces the simulation to explore regions of high uncertainty (extrapolative regions) and rare events [3].
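The calibration step can be illustrated with split conformal prediction, one common way to rescale uncertainties. The exact procedure used in [3] may differ, so treat this sketch as an assumption rather than the cited method. Nonconformity scores |error|/σ are computed on held-out configurations that have reference forces. The finite-sample quantile of those scores then becomes a single factor q that multiplies every raw uncertainty. The function names are hypothetical.

```python
import math


def conformal_scale(abs_errors, sigmas, alpha=0.1):
    """Split-conformal factor q such that |error| <= q * sigma with ~(1 - alpha) coverage.

    abs_errors: |predicted force - reference force| on held-out calibration configurations.
    sigmas:     the model's raw (uncalibrated) uncertainties for the same configurations.
    """
    if not abs_errors or len(abs_errors) != len(sigmas):
        raise ValueError("need matching, non-empty calibration data")
    scores = sorted(abs(e) / s for e, s in zip(abs_errors, sigmas))  # nonconformity scores
    n = len(scores)
    rank = math.ceil((n + 1) * (1.0 - alpha))  # finite-sample corrected quantile rank
    if rank > n:
        return math.inf  # too few calibration points for this alpha
    return scores[rank - 1]


def calibrate(sigmas, q):
    """Rescale raw uncertainties with the conformal factor."""
    return [q * s for s in sigmas]


# Hypothetical calibration data (errors and uncertainties in the same force units).
q = conformal_scale([0.5, 1.2, 0.3, 2.0, 0.9], [1.0, 1.0, 0.5, 1.0, 1.0], alpha=0.2)
print(q, calibrate([0.4, 0.8], q))
```

The calibrated uncertainty q·σ is the quantity that would then enter the bias term. A value of q larger than 1 means the raw model was overconfident.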

Active Learning Loop:

- Run the uncertainty-biased MD simulation.

- When the atomic force uncertainty exceeds a predefined threshold, terminate the simulation and evaluate reference DFT energies and forces for the new configuration.

- Add this new data to the training set and retrain the MLIP.

- Repeat the process until the MLIP's accuracy is uniform across the relevant configurational space.

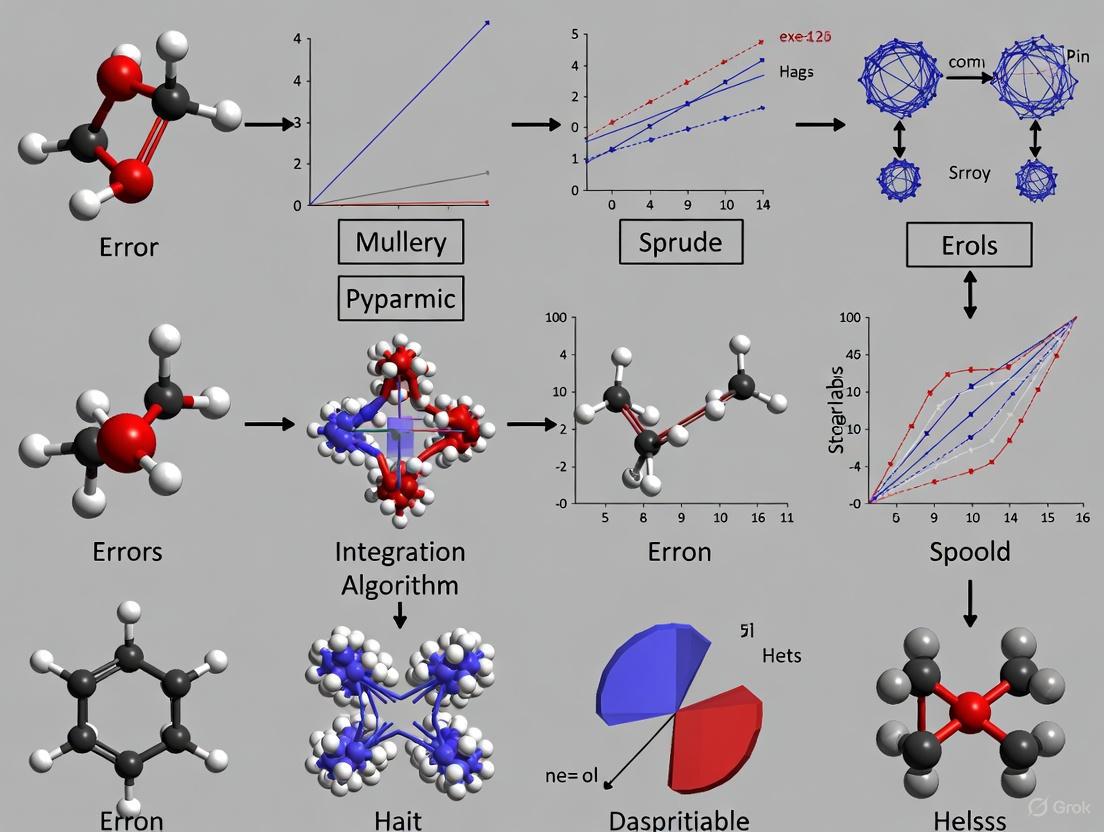

Algorithm Workflows

Diagram 1: Verlet Integration Loop

This diagram illustrates the core computational workflow of the Størmer-Verlet integration algorithm, the fundamental "MD Integration Loop." [1]

Diagram 2: Uncertainty-Biased Active Learning Cycle

This diagram shows the active learning cycle that uses uncertainty-biased MD to efficiently build training sets for Machine-Learned Interatomic Potentials (MLIPs). [3]

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Software and Computational Tools for MD Integration Research

| Item Name | Function / Role in Research |
|---|---|
| GENESIS MD Software | A molecular dynamics software package designed for extreme-scale simulations on supercomputers. It features optimized parallelization schemes, including the 1dalltoall and 2dalltoall for reciprocal-space calculations, enabling simulations of over a billion atoms [2]. |
| Machine-Learned Interatomic Potentials (MLIPs) | A class of potentials that use machine learning to model the potential energy surface with near-quantum accuracy but at a fraction of the computational cost. Essential for simulating large systems and long timescales [3]. |
| Uncertainty Quantification Metrics | Methods, such as gradient-based or ensemble-based uncertainties, used to estimate the reliability of MLIP predictions. They are critical for active learning and preventing simulations from entering unphysical regions of configurational space [3]. |
| Conformal Prediction (CP) | A statistical technique used to calibrate the uncertainties of an MLIP. It ensures that the predicted uncertainties are meaningful and correlate well with the actual errors in forces and energies, which is vital for robust active learning [3]. |
| Fugaku Supercomputer | A massively parallel supercomputer with an ARM CPU architecture (A64FX). Its architecture requires specific optimization of MD algorithms, such as cache prefetching and adjusted array structures, to achieve maximum performance for huge biological systems [2]. |

Frequently Asked Questions (FAQs)

Q1: My molecular dynamics (MD) simulation is unstable and "blows up." What is the most likely cause? Instability and simulation failure are often directly caused by using an inappropriately large time step for numerical integration [4]. If the time step is too large, the numerical integration becomes unstable, leading to unrealistic atom movements, overstretched bonds, and ultimately, simulation termination [4]. To resolve this, ensure you are using a time step suitable for your force field and that you have applied constraints to bonds involving hydrogen atoms. A time step of 2 fs is common for many systems with constrained bonds [4].

Q2: My simulation runs, but the results do not match experimental data. What should I investigate? This often points to errors in the system setup or force field selection, not a software bug [4]. Begin by validating your setup:

- Force Field: Ensure you are using a force field that is appropriate and parameterized for your specific molecules (e.g., proteins, lipids, ligands). Using an incorrect or outdated force field leads to inaccurate energetics and conformations [4].

- System Validation: Check simple observables from your simulation (e.g., RMSD, RMSF) against available experimental data, such as X-ray crystallography B-factors or NMR data [4].

- Sampling: A single short simulation may not be representative. Conduct multiple independent simulations with different initial velocities to ensure adequate sampling of conformational space [4].

Q3: What does the GROMACS error "particles communicated to PME rank are more than 2/3 times the cut-off" mean? This fatal error indicates that particles have moved too far between neighbor list updates, often because the system is not properly equilibrated or there is a sudden, large force spike [5]. The solution is to:

- Ensure thorough energy minimization and equilibration until energy, temperature, and pressure have stabilized [4] [5].

- Check for steric clashes or incorrect parameters in your starting structure [4].

- In some cases, reducing the number of MPI ranks or adjusting the domain decomposition may help [5].

Q4: How can I improve the accuracy of my numerical integration without slowing the simulation drastically? Numerical integration accuracy can be enhanced by:

- Reducing the step size: Halving the step size reduces the global truncation error of the composite trapezoidal rule by a factor of four [6].

- Using a higher-order algorithm: For the same step size, a higher-order method like Simpson's rule yields significantly higher accuracy than the trapezoidal rule [6]. In MD, the Verlet algorithm is preferred over simpler methods like Euler integration due to its better numerical stability and energy conservation properties [1].

Q5: What is the difference between local and global truncation error?

- Local Truncation Error: This is the error introduced by a single step of the numerical integration method, assuming the previous step was exact. It is typically expressed as a function of the step size (e.g., $O(h^{p+1})$) [7].

- Global Truncation Error: This is the accumulated error over the entire simulation interval, resulting from the propagation of local errors at each step. For a method with a local truncation error of $O(h^{p+1})$, the global error is generally $O(h^{p})$ [7].
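The relationship between the two error types can be checked numerically with forward Euler, a one-step method with p = 1. In this sketch the test problem y′ = y, y(0) = 1 is chosen for convenience and is not from the source. A single step has local error O(h²), so halving h cuts it by about 4. The accumulated error at t = 1 is O(h), so it only halves.

```python
import math


def euler(f, y0, t_end, n):
    """Forward Euler with n equal steps from t = 0 to t_end."""
    h = t_end / n
    t, y = 0.0, y0
    for _ in range(n):
        y += h * f(t, y)
        t += h
    return y


def f(t, y):
    """Test problem y' = y with exact solution y(t) = exp(t)."""
    return y


def local_error(h):
    """Error of ONE Euler step started from the exact value y(0) = 1."""
    return abs(euler(f, 1.0, h, 1) - math.exp(h))


def global_error(n):
    """Accumulated error at t = 1 after n steps of size h = 1/n."""
    return abs(euler(f, 1.0, 1.0, n) - math.e)


print(local_error(0.1) / local_error(0.05))  # close to 4: local error O(h^2) for p = 1
print(global_error(10) / global_error(20))   # close to 2: global error O(h)
```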

Troubleshooting Guides

Guide 1: Resolving Simulation Instabilities

Problem: Simulation crashes with errors related to particle movement, bond stretching, or energy non-conservation.

Diagnosis and Solution Protocol:

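Verify Time Step:

- Action: Confirm that the integration time step is appropriate for your force field. A time step of 2 fs is common when bonds involving hydrogen atoms are constrained [4].

- Rationale: An overly large time step makes the numerical integration unstable, producing unrealistic atom movements and overstretched bonds [4].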

Inspect Minimization and Equilibration:

- Action: Confirm that energy minimization has converged and that the equilibration phase (NVT and NPT) has allowed key properties (temperature, pressure, density, total energy) to reach stable plateaus before starting production runs [4].

- Rationale: Inadequate minimization fails to remove high-energy steric clashes, leading to forces that cause the simulation to "blow up" [4].

Check Starting Structure:

- Action: Use visualization software to inspect your initial configuration for missing atoms, steric clashes, or unrealistic geometries. Ensure protonation states are correct for the simulated pH [4].

- Rationale: A poorly prepared starting structure forces the simulation to begin from an unphysical, high-energy state [4].

Guide 2: Managing Numerical Integration Errors

Problem: The simulation runs but produces results with low accuracy, failing to reproduce known physical properties or experimental observables.

Diagnosis and Solution Protocol:

Quantify Truncation Error:

- Action: Understand that the global truncation error of your integration method is proportional to a power of your step size. For example, the composite trapezoidal rule has a global error of $O(h^2)$ [6] [8].

- Rationale: This knowledge allows you to estimate how much reducing the step size will improve accuracy. For instance, halving the step size in a method with $O(h^2)$ error should reduce the error by a factor of four [6].

Select an Appropriate Integration Algorithm:

- Action: For MD simulations, prefer second-order symplectic integrators, such as the Verlet algorithm or its variants (Leapfrog, Velocity Verlet), over first-order methods like Euler integration [1].

- Rationale: These algorithms provide good numerical stability, are time-reversible, and conserve the symplectic form on phase space, which is crucial for long-term energy conservation in physical systems [1].

Assess Sampling Adequacy:

- Action: Run multiple independent simulations with different initial random seeds. Do not rely on a single, short trajectory [4].

- Rationale: A single run may be trapped in a local energy minimum and not represent the true thermodynamic ensemble of the system. Multiple replicates provide better statistics and confidence in the results [4].
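The contrast drawn under "Select an Appropriate Integration Algorithm" between symplectic and non-symplectic integrators is easy to see on a harmonic oscillator. This sketch compares explicit Euler with velocity Verlet. The parameters m = k = 1, Δt = 0.1, and 1,000 steps are chosen purely for illustration.

```python
def explicit_euler_step(x, v, dt):
    a = -x  # harmonic force with m = k = 1
    return x + v * dt, v + a * dt


def velocity_verlet_step(x, v, dt):
    v_half = v + 0.5 * dt * (-x)          # half kick with a(x_n)
    x_new = x + dt * v_half               # drift
    v_new = v_half + 0.5 * dt * (-x_new)  # half kick with a(x_{n+1})
    return x_new, v_new


def final_energy(step, dt=0.1, n_steps=1000):
    x, v = 1.0, 0.0  # initial energy E_0 = 0.5
    for _ in range(n_steps):
        x, v = step(x, v, dt)
    return 0.5 * v * v + 0.5 * x * x


print(final_energy(explicit_euler_step))   # energy multiplied by (1 + dt^2) every step
print(final_energy(velocity_verlet_step))  # stays within O(dt^2) of 0.5
```

For this force, explicit Euler multiplies the energy by exactly (1 + Δt²) per step in exact arithmetic, so the energy grows without bound. Velocity Verlet keeps the energy in a narrow band around its initial value.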

Technical Foundation

Measures of Numerical Error

In numerical analysis, the quality of an approximation is assessed using specific error measures [9]. Suppose x is an exact value and x̃ is its approximation.

| Error Type | Definition | Interpretation |
|---|---|---|
| Absolute Error | $\lvert \tilde{x} - x \rvert$ | Magnitude of the error, relevant for correct decimal places [9]. |
| Relative Error | $\frac{\lvert \tilde{x} - x \rvert}{\lvert x \rvert}$ | Error relative to the value's size, relevant for significant digits [9]. |
| Backward Error | $\lvert f(\tilde{x}) \rvert$ for a problem $f(x) = 0$ | How much the problem must be perturbed for the approximation to be exact (e.g., the residual in linear systems) [9]. |

Order of Convergence

The efficiency of an iterative numerical method is often described by its order of convergence p [9]. If the error at step k is $E_k = \lvert x_k - x \rvert$, the method converges with order p if $E_{k+1} \approx C E_k^p$ for large k.

| Convergence Type | Order (p) | Relationship | Example Methods |
|---|---|---|---|
| Linear | 1 | $E_{k+1} \approx C E_k$ | Fixed-point iteration (typically) [9]. |
| Quadratic | 2 | $E_{k+1} \approx C E_k^2$ | Newton's method (on simple roots) [10] [9]. |
| Super-linear | $1 < p < 2$ | $E_{k+1} / E_k \to 0$ | Secant method [9]. |
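The orders in the table can be estimated from the iterates themselves, using p ≈ log(E_{k+1}/E_k) / log(E_k/E_{k-1}). This sketch applies the three example methods to f(x) = x² - 2, whose root √2 is known. The particular fixed-point map g(x) = x - (x² - 2)/4 and the starting points are illustrative choices.

```python
import math

ROOT = math.sqrt(2.0)  # exact root of f(x) = x^2 - 2


def iterate(g, x0, n):
    """Apply x_{k+1} = g(x_k) n times and return all iterates."""
    xs = [x0]
    for _ in range(n):
        xs.append(g(xs[-1]))
    return xs


def secant(x0, x1, n):
    """Secant method on f(x) = x^2 - 2; returns all iterates."""
    xs = [x0, x1]
    for _ in range(n):
        f0, f1 = x0 * x0 - 2.0, x1 * x1 - 2.0
        x0, x1 = x1, x1 - f1 * (x1 - x0) / (f1 - f0)
        xs.append(x1)
    return xs


def observed_order(xs):
    """p ~ log(E_{k+1}/E_k) / log(E_k/E_{k-1}) from the last three iterates."""
    e = [abs(x - ROOT) for x in xs[-3:]]
    return math.log(e[2] / e[1]) / math.log(e[1] / e[0])


newton = iterate(lambda x: x - (x * x - 2.0) / (2.0 * x), 1.0, 4)
fixed_point = iterate(lambda x: x - (x * x - 2.0) / 4.0, 1.0, 10)
sec = secant(1.0, 2.0, 5)

print(observed_order(fixed_point))  # about 1 (linear)
print(observed_order(newton))       # about 2 (quadratic)
print(observed_order(sec))          # between 1 and 2 (super-linear)
```

The iteration counts are deliberately small. Once the error reaches machine precision (about 1e-16), the logarithms stop being meaningful. The secant estimate is still pre-asymptotic here; it approaches the golden ratio, about 1.618, only as k grows.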

Truncation Error in Numerical Integration

When numerically solving ordinary differential equations or computing integrals, truncation error arises from discretizing a continuous function [6] [7]. The local truncation error, τ_n, is the error introduced in a single step. The global truncation error, e_n, is the cumulative error over all steps [7]. For a one-step numerical method with local truncation error $O(h^{p+1})$, the global truncation error is $O(h^p)$ [7].

Figure 1: A hierarchical diagram classifying different types of numerical errors encountered in computational simulations.

Figure 2: A workflow illustrating how local truncation error is introduced at the discretization step and accumulates into a global error over the course of a simulation.

Research Reagent Solutions

The following table lists software tools and their primary functions, which are essential for preparing and analyzing molecular dynamics simulations.

| Tool / Software | Primary Function in MD |
|---|---|
| GROMACS | A molecular dynamics package primarily designed for simulations of proteins, lipids, and nucleic acids. It is highly optimized for performance [5] [11]. |
| PDBFixer | A tool that can correct common problems in PDB files, such as adding missing atoms and residues [4]. |
| AMBER Tools | A suite of programs providing a complete environment for running molecular dynamics simulations, particularly with the AMBER force field [4]. |
| CHARMM-GUI | A web-based platform that provides a graphical interface for setting up complex molecular simulations, including system building and input generation [4]. |

Quantitative Error Bounds for Integration Methods

The error bounds for common numerical integration rules provide a quantitative way to predict and control error [8]. For a function f with a bounded second derivative |f''(x)| ≤ M on [a, b], and n subintervals of width Δx = (b-a)/n, the error bounds are as follows.

| Integration Method | Error Bound Formula | Key Dependence |
|---|---|---|
| Midpoint Rule | $\lvert E_M \rvert \le \frac{(b-a)^3}{24n^2} M$ [8] | $O(\Delta x^2)$ |
| Trapezoidal Rule | $\lvert E_T \rvert \le \frac{(b-a)^3}{12n^2} M$ [8] | $O(\Delta x^2)$ |
| Simpson's Rule | $\lvert E_S \rvert \le \frac{(b-a)^5}{180n^4} K$ | $O(\Delta x^4)$ |

Note: For Simpson's Rule, K is a bound on the fourth derivative of f on [a,b]. The above table shows that for a given n, higher-order methods like Simpson's Rule can offer substantially higher accuracy [6] [8].
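The bounds can be checked against a case with a known answer. This sketch integrates sin(x) over [0, π], whose exact value is 2 and for which M = 1 and K = 1. The test function and the choice n = 10, 20 are illustrative assumptions.

```python
import math


def midpoint(f, a, b, n):
    h = (b - a) / n
    return h * sum(f(a + (i + 0.5) * h) for i in range(n))


def trapezoid(f, a, b, n):
    h = (b - a) / n
    return h * (0.5 * (f(a) + f(b)) + sum(f(a + i * h) for i in range(1, n)))


def simpson(f, a, b, n):
    if n % 2:
        raise ValueError("Simpson's rule needs an even number of subintervals")
    h = (b - a) / n
    odd = sum(f(a + i * h) for i in range(1, n, 2))
    even = sum(f(a + i * h) for i in range(2, n, 2))
    return h / 3.0 * (f(a) + f(b) + 4.0 * odd + 2.0 * even)


# Test integral: int_0^pi sin(x) dx = 2, with |f''| <= M = 1 and |f''''| <= K = 1.
a, b, exact, M, K = 0.0, math.pi, 2.0, 1.0, 1.0
for n in (10, 20):
    rows = [
        ("midpoint", midpoint(math.sin, a, b, n), (b - a) ** 3 / (24 * n**2) * M),
        ("trapezoid", trapezoid(math.sin, a, b, n), (b - a) ** 3 / (12 * n**2) * M),
        ("simpson", simpson(math.sin, a, b, n), (b - a) ** 5 / (180 * n**4) * K),
    ]
    for name, approx, bound in rows:
        print(f"n={n:3d} {name:9s} error={abs(approx - exact):.2e} bound={bound:.2e}")
```

Each observed error sits below its bound. Doubling n cuts the midpoint and trapezoidal errors by about 4 and Simpson's error by about 16, matching the O(Δx²) and O(Δx⁴) dependence in the table.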

Frequently Asked Questions

Q1: Why is the leapfrog integrator the default choice in many molecular dynamics packages like GROMACS?

The leapfrog integrator is a default due to its combination of computational efficiency, numerical stability, and symplectic properties [12] [13]. As a second-order method, it offers greater accuracy than first-order Euler integration without additional computational cost [13]. Its symplectic nature means it better conserves the energy of Hamiltonian dynamical systems over long simulation periods, which is crucial for physical fidelity in molecular dynamics [13]. Furthermore, the algorithm is time-reversible, allowing forward integration and backward reversal to the starting position, a valuable property for consistency [13].

Q2: What specific stability limits must be considered when using the leapfrog integrator?

The primary stability limit for the leapfrog integrator is related to the time-step (Δt) and the highest frequencies present in the system. For stable oscillatory motion, the time-step must satisfy Δt < 2/ω, where ω is the highest angular frequency in the system [13]. Exceeding this limit makes the simulation unstable. In practice, the choice of the mass matrix M can influence these frequencies; an appropriate M can reduce the frequency spectrum, making the system easier to integrate [14].
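A few lines of code can check the Δt < 2/ω limit and show what it means in practice. The sketch below runs leapfrog on a single harmonic oscillator just below and just above the limit. It also converts a vibrational wavenumber into a time-step limit. The starting half-step velocity, the 200-step run length, and the example of about 3700 cm⁻¹ (roughly an O-H stretch) are illustrative assumptions.

```python
import math

C_CM_PER_FS = 2.99792458e-5  # speed of light in cm/fs


def leapfrog_max_amplitude(omega, dt, n_steps=200):
    """Largest |x| reached by leapfrog on x'' = -omega^2 x, starting from x_0 = 1."""
    x, v_half = 1.0, 0.0  # assumed start: x_0 = 1 and v_{-1/2} = 0
    max_abs_x = abs(x)
    for _ in range(n_steps):
        v_half += -omega**2 * x * dt  # v_{n+1/2} = v_{n-1/2} + a(x_n) dt
        x += v_half * dt              # x_{n+1} = x_n + v_{n+1/2} dt
        max_abs_x = max(max_abs_x, abs(x))
    return max_abs_x


def stability_limit_fs(wavenumber_cm):
    """Leapfrog limit dt < 2/omega for a vibration given as a wavenumber in cm^-1."""
    omega = 2.0 * math.pi * C_CM_PER_FS * wavenumber_cm  # angular frequency in rad/fs
    return 2.0 / omega


print(leapfrog_max_amplitude(1.0, 1.9))  # dt just below 2/omega: bounded oscillation
print(leapfrog_max_amplitude(1.0, 2.1))  # dt just above 2/omega: exponential blow-up
print(stability_limit_fs(3700.0))        # about 2.9 fs
```

The computed limit of about 2.9 fs is a stability boundary, not an accuracy target. Just below it the trajectory stays bounded but is badly distorted, which is why 1-2 fs time steps are used in practice.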

Q3: Our HMC simulations suffer from a high rejection rate. Could the integrator be the cause?

Yes, the integrator is a likely cause. Recent research provides strong evidence that the standard leapfrog method may not be optimal for Hamiltonian Monte Carlo (HMC) sampling [14]. Systematic numerical experiments show that alternative, easy-to-implement integrators from the same family can yield up to three times more accepted proposals for the same computational cost in high-dimensional target distributions [14]. This improvement stems from reduced expected energy error, which directly correlates with higher acceptance rates [14].

Q4: What is "energy drift," and how is it related to the pair-list buffering in MD simulations?

Energy drift is an unphysical gradual change in the total energy of a system over time. In molecular dynamics, one cause is the Verlet buffer scheme used for neighbor searching [12]. The pair list (used to compute non-bonded forces) is updated periodically, not every step. A buffer zone ensures all necessary atom pairs are included as they move between updates. If this buffer is too small, a particle pair outside the cut-off could move within range between list updates, causing a small, systematic energy error that accumulates as drift [12]. GROMACS can automatically determine the buffer size based on a user-defined tolerance for this energy drift [12].
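Two particles are enough to reproduce the failure mode described above. This brute-force, non-periodic sketch uses hypothetical function names and arbitrary distance units. It builds a pair list with radius r_list = r_cut + buffer, then reports which pairs have moved inside r_cut without being on the stale list. In GROMACS the buffer is instead chosen automatically from an energy-drift tolerance, set with the `verlet-buffer-tolerance` option.

```python
import itertools
import math


def build_pair_list(positions, r_list):
    """All pairs (i, j), i < j, closer than r_list = r_cut + buffer (brute force, no PBC)."""
    return {
        (i, j)
        for i, j in itertools.combinations(range(len(positions)), 2)
        if math.dist(positions[i], positions[j]) < r_list
    }


def missed_pairs(positions, pair_list, r_cut):
    """Pairs that are now inside the cut-off but absent from the stale pair list."""
    return [
        (i, j)
        for i, j in itertools.combinations(range(len(positions)), 2)
        if math.dist(positions[i], positions[j]) < r_cut and (i, j) not in pair_list
    ]


r_cut = 1.0
start = [(0.0, 0.0, 0.0), (1.05, 0.0, 0.0)]  # just outside the cut-off at list build time
later = [(0.0, 0.0, 0.0), (0.95, 0.0, 0.0)]  # moved inside before the next list update

print(missed_pairs(later, build_pair_list(start, r_cut), r_cut))        # no buffer: [(0, 1)]
print(missed_pairs(later, build_pair_list(start, r_cut + 0.1), r_cut))  # 0.1 buffer: []
```

Every missed pair is an interaction that is silently skipped until the next list update. Small, systematic omissions like this accumulate as energy drift.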

Troubleshooting Guides

Scenario 1: Unstable Simulation (Rapid Energy Blow-up)

Problem: Simulation crashes due to rapidly increasing energy, often accompanied by atoms flying apart.

Diagnosis and Solution:

This is typically caused by an excessively large integration time-step that violates the method's stability limits.

| Diagnostic Step | Action & Solution |
|---|---|
| Check Time-step | Reduce the time-step (Δt) significantly. Ensure it satisfies Δt < 2/ω_max, where ω_max is the highest vibration frequency (e.g., from bonds involving hydrogen atoms) [13]. |
| Verify Forces | Ensure the force calculation is correct. A buggy force routine can generate impossibly large accelerations, destabilizing any integrator. |
| Inspect Initialization | Confirm that initial velocities are reasonable and generated correctly, for instance, from a Maxwell-Boltzmann distribution [12]. |

Scenario 2: High Energy Drift in Microcanonical (NVE) Ensemble

Problem: The total energy shows a consistent upward or downward trend in an NVE simulation where it should be conserved.

Diagnosis and Solution:

This can indicate a too-aggressive simulation setup or algorithmic approximations.

| Diagnostic Step | Action & Solution |
|---|---|
| Analyze Drift Source | Determine if the drift comes from numerical integration or other approximations. Run a very short time-step simulation as a benchmark. |
| Adjust Pair-List Buffer | If using a Verlet-style neighbor list, increase the pair-list buffer size (rlist) or reduce the update frequency (nstlist). Using the automatic buffer tolerance in GROMACS is recommended [12]. |
| Consider Integrator Alternatives | For simulations requiring strict energy conservation, consider using a higher-order symplectic integrator or an extended leapfrog method that uses Lagrange multipliers to enforce an energy constraint, thus minimizing drift [15]. |
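For the "Analyze Drift Source" step, drift is easiest to compare across runs as a single number. A minimal sketch, assuming you have already extracted time and total-energy samples from an NVE run, fits a least-squares line and normalizes the slope per atom. The function name and the kJ/mol and ps units are assumptions, and the energy trace below is hypothetical. Running the fit on the production time step and on a short-time-step benchmark shows how much of the drift comes from the integrator.

```python
def energy_drift_per_atom(times_ps, total_energies, n_atoms):
    """Least-squares slope of total energy vs time, divided by the atom count.

    With energies in kJ/mol and times in ps, the result is in kJ/mol/ps per atom.
    """
    n = len(times_ps)
    if n < 2 or n != len(total_energies):
        raise ValueError("need at least two matching (time, energy) samples")
    t_mean = sum(times_ps) / n
    e_mean = sum(total_energies) / n
    cov = sum((t - t_mean) * (e - e_mean) for t, e in zip(times_ps, total_energies))
    var = sum((t - t_mean) ** 2 for t in times_ps)
    return cov / var / n_atoms


# Hypothetical NVE trace: energy creeping up by 0.5 kJ/mol per ps in a 100-atom system.
times = [0.0, 1.0, 2.0, 3.0, 4.0]
energies = [-5000.0, -4999.5, -4999.0, -4998.5, -4998.0]
print(energy_drift_per_atom(times, energies, 100))  # 0.005 kJ/mol/ps per atom
```

A regression slope is less sensitive to the normal short-time energy fluctuations than the difference between the first and last frames.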

Scenario 3: Low Acceptance Rate in HMC Sampling

Problem: Hamiltonian Monte Carlo sampling produces a high number of rejected proposals, reducing sampling efficiency.

Diagnosis and Solution:

A low acceptance rate is directly linked to large errors in the Hamiltonian (energy) introduced by the numerical integrator [14].

| Diagnostic Step | Action & Solution |
|---|---|
| Reduce Time-step | Decrease the integration time-step. This is the simplest fix but increases computational cost per proposal. |
| Switch Integrator | Replace the standard leapfrog integrator with a more accurate alternative from the same family. The integrator from Blanes et al. is designed to minimize expected energy error for a given computational cost and can significantly boost acceptance rates [14]. |
| Monitor Energy Error | For a given test configuration, track the change in Hamiltonian H after the integration trajectory. A large error confirms the integrator is the source of rejections. |

Experimental Protocols & Quantitative Data

Protocol: Systematic Comparison of Integrators in HMC

This protocol outlines how to compare the efficiency of different numerical integrators within an HMC framework, following the methodology of [14].

- Select Integrator Family: Choose a family of integrators for comparison, such as the two-parameter splitting family that includes leapfrog as a special case [14].

- Define Test Problems: Use a range of target distributions with high dimensionality (d), including a multivariate Gaussian, a Log-Gaussian Cox model, and a complex molecular system like the canonical distribution of alkane molecules [14].

- Fix Computational Budget: For a fair comparison, ensure each integrator operates under the same computational budget (e.g., total number of force evaluations).

- Measure Performance Metrics: Run each integrator and measure (a) the number of accepted proposals and (b) the expected energy error μ.

- Analyze Relationship: Confirm the theoretical relationship a ≈ 2Φ(−√(μ/2)) between the expected acceptance rate a and μ, where Φ is the standard normal cumulative distribution function [14].
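The relationship can be evaluated directly and checked against the Metropolis rule it summarizes. Each HMC proposal is accepted with probability min(1, e^(−ΔH)). Assume ΔH is approximately Gaussian with mean μ and variance 2μ, which is the assumption behind this kind of estimate. Averaging the acceptance probability then gives 2Φ(−√(μ/2)). The sketch below, with hypothetical function names, computes the prediction and compares it with a Monte Carlo average over synthetic ΔH samples.

```python
import math
import random


def normal_cdf(z):
    """Standard normal CDF, Phi(z)."""
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))


def predicted_acceptance(mu):
    """a ~ 2 * Phi(-sqrt(mu / 2)) for a mean energy error mu >= 0."""
    return 2.0 * normal_cdf(-math.sqrt(mu / 2.0))


def empirical_acceptance(delta_h_samples):
    """Mean Metropolis probability min(1, exp(-dH)) over recorded energy errors."""
    return sum(min(1.0, math.exp(-dh)) for dh in delta_h_samples) / len(delta_h_samples)


# Synthetic check under the assumption dH ~ Normal(mean mu, variance 2 * mu).
rng = random.Random(42)
mu = 1.0
samples = [rng.gauss(mu, math.sqrt(2.0 * mu)) for _ in range(100_000)]
print(predicted_acceptance(mu), empirical_acceptance(samples))
```

In a real comparison, `empirical_acceptance` would be fed the ΔH values recorded during sampling. A large gap from the prediction suggests that the Gaussian assumption does not hold for that target.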

Quantitative Integrator Performance

The following table summarizes key characteristics of the leapfrog integrator and a noted alternative, synthesizing information from the cited sources.

| Integrator | Global Error Order | Key Properties | Reported Performance vs. Leapfrog |
|---|---|---|---|
| Leapfrog (Verlet) | O(Δt²) [13] [16] | Symplectic, Time-reversible, Low computational cost per step [13] | Baseline (Default) |
| Blanes et al. Integrator | Higher-order variant | Symplectic, Minimizes expected energy error for Gaussian targets [14] | ~3x more accepted proposals in HMC for same computational cost in high-dimensional settings [14] |

Workflow Diagram: Integrator Decision Path

The diagram below outlines a logical workflow for diagnosing and resolving common issues related to the leapfrog integrator in molecular dynamics and HMC simulations.

The Scientist's Toolkit: Research Reagents & Computational Materials

| Item / Algorithm | Function / Role in Simulation |
|---|---|
| Leapfrog Integrator | The baseline symplectic integrator used to numerically solve Newton's equations of motion, updating particle positions and velocities in a staggered manner [12] [13]. |
| Maxwell-Boltzmann Distribution | Used to generate physically realistic initial velocities for all particles at the beginning of a simulation or during equilibration [12]. |
| Verlet Neighbor List | A critical performance optimization that creates a list of spatially close particle pairs for non-bonded force calculations, updated periodically to manage computational cost [12]. |
| Blanes et al. Integrator | An alternative symplectic integrator within the same family as leapfrog, designed to minimize energy error and shown to improve HMC acceptance rates [14]. |
| Yoshida Coefficients | A set of coefficients used to apply the leapfrog integrator multiple times with specific timesteps, generating a composite, higher-order integrator for increased accuracy [13]. |
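Yoshida's construction can be written as a wrapper around a leapfrog step. In this sketch the sub-step weights are w₁ = 1/(2 - 2^(1/3)) and w₀ = -2^(1/3)/(2 - 2^(1/3)), which sum to 1 over the three sub-steps. The harmonic-oscillator test and the time steps are illustrative choices. Halving Δt should reduce the error about 4-fold for leapfrog and about 16-fold for the composite fourth-order scheme.

```python
import math

CBRT2 = 2.0 ** (1.0 / 3.0)
W1 = 1.0 / (2.0 - CBRT2)     # outer sub-step weight (~1.351)
W0 = -CBRT2 / (2.0 - CBRT2)  # middle sub-step weight (~-1.702); 2*W1 + W0 = 1


def leapfrog_step(x, v, dt, accel):
    """One kick-drift-kick (velocity Verlet form of leapfrog) step."""
    v += 0.5 * dt * accel(x)
    x += dt * v
    v += 0.5 * dt * accel(x)
    return x, v


def yoshida4_step(x, v, dt, accel):
    """Fourth-order step: leapfrog sub-steps of W1*dt, W0*dt, W1*dt."""
    for w in (W1, W0, W1):
        x, v = leapfrog_step(x, v, w * dt, accel)
    return x, v


def position_error(step, dt, t_end=1.0):
    """Error in x(t_end) for x'' = -x, x(0) = 1, v(0) = 0 (exact x = cos t)."""
    x, v = 1.0, 0.0
    for _ in range(round(t_end / dt)):
        x, v = step(x, v, dt, lambda q: -q)
    return abs(x - math.cos(t_end))


for step in (leapfrog_step, yoshida4_step):
    ratio = position_error(step, 0.05) / position_error(step, 0.025)
    print(step.__name__, ratio)  # ~4 for leapfrog (2nd order), ~16 for Yoshida (4th order)
```

The higher order costs roughly three times the force evaluations of one leapfrog step (when forces are reused between sub-steps). It therefore pays off mainly when high accuracy is required.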

Troubleshooting Guide: Common Issues in Molecular Dynamics Simulations

FAQ: Why does my molecular dynamics simulation become unstable or "blow up"? This is often caused by an excessively large integration time step. If the time step is too large to resolve the fastest motions in the system, the total energy increases dramatically and the dynamics becomes unstable [17]. Systems with light atoms (such as hydrogen) or stiff bonds (such as those involving carbon) require a smaller time step (e.g., 1-2 fs) than the 5 fs that is often suitable for metallic systems [17].
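The link between light atoms and small time steps can be made concrete with a single harmonic bond. For velocity Verlet applied to a harmonic oscillator of mass m and force constant k, the trajectory stays bounded only when Δt < 2/ω = 2√(m/k), so lighter atoms, which vibrate faster, tighten the limit. The sketch below assumes reduced units and an isolated 1D oscillator with no other interactions; the function name and the "hydrogen-like"/"carbon-like" masses are illustrative.

```python
import math

def max_energy_ratio(dt, mass, k=1.0, n_steps=200):
    """Integrate a 1D harmonic bond with velocity Verlet and return max(E)/E0."""
    x, v = 1.0, 0.0                       # start stretched and at rest
    e0 = 0.5 * k * x * x
    a = -k * x / mass                     # initial acceleration
    e_max = e0
    for _ in range(n_steps):
        v += 0.5 * dt * a                 # half kick
        x += dt * v                       # drift
        a = -k * x / mass                 # acceleration at the new position
        v += 0.5 * dt * a                 # half kick
        e_max = max(e_max, 0.5 * mass * v * v + 0.5 * k * x * x)
    return e_max / e0

for mass in (1.0, 12.0):                  # "hydrogen-like" vs "carbon-like" mass
    limit = 2.0 * math.sqrt(mass / 1.0)   # stability limit 2*sqrt(m/k) with k = 1
    for dt in (1.9, 2.1):
        print(f"m={mass:4.1f} dt={dt} (limit {limit:.2f}): "
              f"max E/E0 = {max_energy_ratio(dt, mass):.3g}")
```

For m = 1 the run at Δt = 2.1 exceeds the limit and the energy grows by many orders of magnitude, while the same Δt is stable for the twelve-times heavier mass.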

FAQ: My simulation conserves energy in the NVE ensemble, but the temperature drifts. Is this an error? Not necessarily. In a constant-NVE (microcanonical) simulation, the integration algorithm conserves the total energy [17], not the temperature. Temperature is computed from the instantaneous kinetic energy, so it fluctuates as energy is exchanged between kinetic and potential terms; it is typically approximately constant but can change noticeably. Significant structural changes (for example, relaxation out of a strained starting geometry) or external work done on the system can lead to observable temperature changes [17].

FAQ: How do I know if my initial velocity distribution is correct? The velocities should be assigned randomly from a Maxwell-Boltzmann distribution corresponding to the desired simulation temperature [18]. For the speed v of a particle of mass m, this distribution is:

$$f(v) = 4\pi v^2 \left(\frac{m}{2\pi k_B T}\right)^{3/2} \exp\left(-\frac{m v^2}{2 k_B T}\right)$$

Most MD packages include commands to initialize velocities according to this distribution. An incorrect distribution can prevent the system from equilibrating to the correct thermodynamic state, or at least greatly lengthen equilibration.
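Most MD packages handle this internally, but the underlying recipe is short: each Cartesian velocity component is drawn from a Gaussian with variance k_B T/m, the center-of-mass motion is removed, and (optionally) the velocities are rescaled so that the instantaneous temperature matches the target exactly. The sketch below assumes reduced units (k_B = 1), counts 3N - 3 degrees of freedom after removing center-of-mass momentum, and uses a fixed seed for reproducibility; the function names and masses are illustrative, and the final rescaling is a common convenience rather than part of the distribution itself.

```python
import math
import random

def instantaneous_temperature(masses, vel, kb=1.0):
    """Kinetic temperature with 3N - 3 degrees of freedom (COM momentum removed)."""
    ke = sum(0.5 * m * sum(c * c for c in v) for m, v in zip(masses, vel))
    n_dof = 3 * len(masses) - 3
    return 2.0 * ke / (n_dof * kb)

def assign_maxwell_boltzmann(masses, temperature, kb=1.0, seed=12345):
    """Draw 3D velocities from a Maxwell-Boltzmann distribution at `temperature`."""
    rng = random.Random(seed)                  # record the seed for reproducibility
    vel = [[rng.gauss(0.0, math.sqrt(kb * temperature / m)) for _ in range(3)]
           for m in masses]
    # Remove the center-of-mass velocity so the system does not drift as a whole
    m_tot = sum(masses)
    v_cm = [sum(m * v[k] for m, v in zip(masses, vel)) / m_tot for k in range(3)]
    vel = [[v[k] - v_cm[k] for k in range(3)] for v in vel]
    # Optional: rescale so the instantaneous temperature equals the target
    scale = math.sqrt(temperature / instantaneous_temperature(masses, vel, kb))
    return [[scale * c for c in v] for v in vel]

masses = [1.0, 12.0, 16.0] * 100              # illustrative H/C/O-like masses
vel = assign_maxwell_boltzmann(masses, temperature=1.5)
print(instantaneous_temperature(masses, vel))  # 1.5 up to rounding
```

Running the same function with different seeds gives independent starting velocities for the replicate runs recommended in the protocol below.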

FAQ: Why do my simulation results show large variations with slightly different starting atomic positions? Some divergence between individual trajectories is expected, because MD dynamics is chaotic and small differences in initial conditions grow over time; ensemble averages, however, should agree within statistical error. Large variations in averaged properties can indicate that your system is trapped in a local energy minimum or that the starting geometry is physically unrealistic, leading to high initial forces. This sensitivity is a key aspect of error propagation from initial conditions. Energy minimization (or geometry optimization) should be performed before dynamics to relax the starting structure and avoid unphysical configurations that can cause instability or drift [17].
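Energy minimization with a force-based stopping criterion can be sketched as follows. This is a simple adaptive steepest-descent scheme (grow the step after an accepted move, shrink it after a rejected one), assuming a Lennard-Jones potential in reduced units with no cutoff and no periodic boundaries; it is an illustration, not the minimizer of any particular MD package, and all names are hypothetical. For a dimer the minimum should sit at r = 2^(1/6)σ with energy -ε.

```python
import math

def lj_energy_forces(pos, eps=1.0, sigma=1.0):
    """Total Lennard-Jones energy and per-atom forces (no cutoff, no PBC)."""
    n = len(pos)
    forces = [[0.0, 0.0, 0.0] for _ in range(n)]
    energy = 0.0
    for i in range(n):
        for j in range(i + 1, n):
            d = [pos[i][k] - pos[j][k] for k in range(3)]
            r2 = sum(c * c for c in d)
            sr6 = (sigma * sigma / r2) ** 3
            energy += 4.0 * eps * (sr6 * sr6 - sr6)
            fscale = 24.0 * eps * (2.0 * sr6 * sr6 - sr6) / r2   # -(dV/dr) / r
            for k in range(3):
                forces[i][k] += fscale * d[k]
                forces[j][k] -= fscale * d[k]
    return energy, forces

def steepest_descent(pos, f_tol=1e-4, step=0.01, max_iter=10000):
    """Move atoms along the forces until the largest force falls below f_tol."""
    energy, forces = lj_energy_forces(pos)
    for _ in range(max_iter):
        f_max = max(math.sqrt(sum(c * c for c in f)) for f in forces)
        if f_max < f_tol:
            break
        # Trial move: the atom with the largest force is displaced by `step`
        trial = [[p[k] + step * f[k] / f_max for k in range(3)]
                 for p, f in zip(pos, forces)]
        e_trial, f_trial = lj_energy_forces(trial)
        if e_trial < energy:              # accept and try a larger step next time
            pos, energy, forces = trial, e_trial, f_trial
            step *= 1.2
        else:                             # reject and shrink the step
            step *= 0.2
    return pos, energy, f_max

pos, energy, f_max = steepest_descent([[0.0, 0.0, 0.0], [1.5, 0.0, 0.0]])
r = math.dist(pos[0], pos[1])
print(f"r = {r:.5f} (expected {2 ** (1 / 6):.5f}), E = {energy:.5f}, Fmax = {f_max:.1e}")
```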

The table below summarizes critical initial conditions and their impact on error propagation.

| Parameter | Description | Common Values / Types | Impact on Error Propagation |
|---|---|---|---|
| Integration Time Step | The discrete time interval for solving equations of motion [17]. | 5 fs (metals), 1-2 fs (H, C bonds) [17]. | Too large a time step causes instability and energy drift (system "blows up") [17]. |
| Velocity Distribution | The initial velocities assigned to particles. | Maxwell-Boltzmann distribution at temperature T [18]. | Incorrect distribution prevents correct equilibration; fluctuations in initial velocities propagate, causing trajectory divergence. |
| Starting Geometry | The initial positions of all atoms in the system. | Crystal lattice, equilibrated structure, or model-built geometry. | Unphysical clashes or high-energy configurations lead to large initial forces, causing instability and trapping in local minima. |
| Ensemble Choice | The thermodynamic ensemble defining conserved quantities. | NVE (microcanonical), NVT (canonical) [17]. | NVE conserves energy but allows temperature fluctuation; NVT algorithms (e.g., Langevin, Nosé-Hoover) introduce their own error and sampling characteristics [17]. |

Experimental Protocol: Setting up a Robust MD Simulation

This protocol provides a general methodology for initializing and running a molecular dynamics simulation to minimize errors stemming from initial conditions [17].

1. System Preparation and Energy Minimization

- Objective: Obtain a low-energy, physically realistic starting geometry to avoid large initial forces.

- Procedure:

- Construct your initial molecular model (e.g., from a crystal structure or protein data bank file).

- Solvate the molecule in a water box and add necessary ions to neutralize the system charge.

- Perform energy minimization using a suitable algorithm (e.g., steepest descent, conjugate gradient) until the maximum force is below a chosen threshold. This step relaxes the structure and is crucial for stability [17].

2. System Equilibration

- Objective: Gently bring the system to the desired temperature and density.

- Procedure:

- Begin with a short simulation in the NVT ensemble (constant Number of particles, Volume, and Temperature) using a thermostat like Langevin or Nosé-Hoover to stabilize the temperature [17].

- Follow with a simulation in the NPT ensemble (constant Number of particles, Pressure, and Temperature) to adjust the system density to the correct value.

- Use a slightly smaller time step during equilibration if the system is unstable.

3. Initial Velocity Assignment

- Objective: Assign velocities that correspond to the target temperature.

- Procedure:

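- Use the software's command to generate velocities from a Maxwell-Boltzmann distribution [18]; in practice, velocities are generated at the start of the first dynamics run (the NVT equilibration in step 2).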

- Specify a random seed for the velocity assignment to ensure reproducibility. For multiple runs, use different seeds to assess the robustness of your results against variations in initial velocities.

4. Production Simulation

- Objective: Run the main simulation to collect data for analysis.

- Procedure:

- Switch to the desired production ensemble (typically NVE or NVT).

- Use the largest stable time step (determined from equilibration tests).

- Run for a sufficient duration to ensure proper sampling of the properties of interest.
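The NVT equilibration stage of step 2 can be illustrated with a Langevin thermostat. The sketch below uses the BAOAB splitting of Langevin dynamics (one common discretization, chosen here for illustration; MD packages implement several different Langevin integrators) on independent 1D harmonic oscillators in reduced units, starting from zero kinetic energy. The seed argument plays the role of the velocity seed in step 3: repeating the run with different seeds is a cheap robustness check. Function and parameter names are hypothetical.

```python
import math
import random

def baoab_mean_temperature(n=100, temperature=1.0, gamma=1.0, dt=0.1,
                           n_steps=2000, k=1.0, mass=1.0, seed=2024):
    """Langevin dynamics (BAOAB splitting) for n independent 1D harmonic oscillators.

    Every oscillator starts displaced and at rest; returns the kinetic temperature
    averaged over the second half of the run (kB = 1).
    """
    rng = random.Random(seed)                         # fixed seed -> reproducible run
    x = [1.0] * n
    v = [0.0] * n
    c1 = math.exp(-gamma * dt)                        # friction factor for the O step
    c2 = math.sqrt((1.0 - c1 * c1) * temperature / mass)  # matching noise amplitude
    t_sum, t_count = 0.0, 0
    for step in range(n_steps):
        for i in range(n):
            v[i] += 0.5 * dt * (-k * x[i] / mass)          # B: half kick
            x[i] += 0.5 * dt * v[i]                        # A: half drift
            v[i] = c1 * v[i] + c2 * rng.gauss(0.0, 1.0)    # O: friction + noise
            x[i] += 0.5 * dt * v[i]                        # A: half drift
            v[i] += 0.5 * dt * (-k * x[i] / mass)          # B: half kick
        if step >= n_steps // 2:                           # skip the equilibration half
            t_sum += mass * sum(vi * vi for vi in v) / n   # 1D equipartition: kB*T = m<v^2>
            t_count += 1
    return t_sum / t_count

for seed in (1, 2):
    print(f"seed {seed}: <T> = {baoab_mean_temperature(seed=seed):.3f} (target 1.0)")
```

The friction coefficient gamma sets how quickly the oscillators reach the target temperature; larger values equilibrate faster but perturb the dynamics more.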

The following workflow diagram illustrates the key stages of this protocol and their logical relationships.

The Scientist's Toolkit: Essential Research Reagents & Solutions

The table below lists key computational "reagents" and tools for managing initial conditions and errors.

| Item / Software Module | Function | Key Consideration |
|---|---|---|
| Velocity Verlet Integrator | Integrates Newton's equations of motion for the NVE ensemble [17]. | Provides good long-term energy conservation. Instability indicates the time step is too large [17]. |
| Langevin Thermostat | Maintains constant temperature (NVT) by adding friction and a stochastic force [17]. | Correctly samples the canonical ensemble. The friction coefficient is a key parameter [17]. |
| Nosé-Hoover Thermostat | Maintains constant temperature (NVT) using extended Lagrangian dynamics [17]. | A "chain" of thermostats (Nosé-Hoover chains) is often needed for reliable, ergodic sampling. Can show slow convergence if started far from the target temperature [17]. |
| Maxwell-Boltzmann Velocity Generator | Assigns initial atomic velocities from the correct statistical distribution [18]. | Essential for initializing a simulation at a specific temperature. The random seed should be recorded for reproducibility. |
| Energy Minimizer | Finds a local minimum energy configuration from the starting geometry [17]. | Critical for relaxing unphysical structures before dynamics to prevent instability. |
| Monte Carlo Barostat | Maintains constant pressure (e.g., in NPT simulations) by adjusting the simulation box size. | Important for equilibrating system density. Can be used in combination with a thermostat. |

In molecular dynamics (MD) simulations, numerical instability refers to the exponential growth of small errors in the numerical algorithm, which can cause the simulation to produce unrealistic results or fail completely. Unlike physical instability, which is a property of the problem being solved, numerical instability arises from the numerical method itself [19]. For MD simulations, which compute atomic trajectories by integrating Newton's equations of motion, energy drift serves as the primary and most reliable indicator of such instability.

In a perfectly conservative system with proper numerical integration, the total energy (sum of kinetic and potential energy) should fluctuate around a stable mean value. A persistent, directional change in this total energy constitutes energy drift, signaling that errors are accumulating systematically rather than randomly [20]. This guide provides researchers with practical methods for identifying, quantifying, and resolving numerical instability in MD simulations, with particular emphasis on energy drift analysis in the context of research on MD integration-algorithm errors.

Understanding the Core Concepts

What is Numerical Instability?

Numerical instability occurs when approximations and rounding errors in computational algorithms magnify instead of dampen, causing the deviation from the exact solution to grow exponentially [21] [19]. In MD simulations, this manifests as unphysical system behavior, including:

- Exponential growth in energy or forces

- Atomic clashes or bond over-extension

- Catastrophic simulation failure (e.g., "blowing up")

The opposite phenomenon, numerical stability, describes calculations that do not magnify approximation errors. An algorithm is considered numerically stable if it solves a nearby problem approximately, meaning it produces a solution that is close to the exact solution of a slightly different input problem [21].

Energy Drift as the Primary Indicator

In MD simulations, energy drift quantitatively measures the failure of the integration algorithm to conserve energy, making it the most sensitive indicator of numerical instability. As one researcher noted: "After 50,000 steps (1.0 fs time step, no shake, just brute force MD), the drift value is upwards of 0.07 (7%)" [20]. This drift occurs because:

- Truncation errors from discrete time steps accumulate systematically

- Roundoff errors from finite precision arithmetic compound over thousands of iterations

- Inaccurate force calculations introduce energy inconsistencies

Energy drift is typically defined as the relative change in total energy over time: drift = (E_pot(t) + E_kin(t) - E_0)/E_0, where E_0 is the initial total energy [20].

Force Field Parameterization Issues

Incorrect force field parameters represent a common source of energy drift in MD simulations.

Table 1: Force Field Parameterization Errors and Solutions

| Error Type | Impact on Energy Conservation | Diagnostic Approach | Solution |
|---|---|---|---|
| Missing parameters | Unphysical forces cause energy explosions | Check penalty scores in ParamChem output [22] | Develop missing parameters using Force Field Toolkit (ffTK) [22] |
| Incompatible force field | Systematic bias in energy calculations | Compare results across different force fields | Select force field parametrized for specific molecules [23] |
| Incorrect partial charges | Electrostatic energy discrepancies | Validate against quantum mechanical water-interaction profiles [22] | Use CHARMM-compatible charge derivation methods |

Integration Algorithm Problems

The numerical integrator itself can introduce instability if improperly configured.

- Time step too large: Excessive time steps amplify truncation errors. Test multiple step sizes (e.g., 0.5 fs vs 1.0 fs) and observe drift changes [20]

- Incompatible constraint algorithms: Using LINCS or SHAKE inappropriately with certain molecular systems [24]

- Explicit vs. implicit methods: Explicit methods (like standard Verlet) have stability limits, while implicit methods may require more computation but offer better stability [19] [25]

A researcher experiencing energy drift noted: "It doesn't appear to be the integrator, unless 0.5 fs isn't a small enough to notice the difference from 1.0 fs" [20], highlighting the importance of systematic testing.

Nonbonded Interaction Calculation Errors

Inaccurate calculation of long-range forces significantly contributes to energy drift.

- Poor neighbor list updating: Setting nstlist too high with the Verlet cutoff scheme [24]

- Inappropriate electrostatic treatment: Using cutoff methods instead of PME for charged systems [24]

- Incorrect van der Waals parameters: Leading to unphysical attraction or repulsion [22]

One user reported: "I haven't been able to run with PME, since I get a warning from GROMacs when trying to enable it" [24], indicating potential electrostatic calculation issues.

System Preparation and Topology Errors

Preparation errors introduce structural instabilities that manifest as energy drift.

- Missing bonds or angles in topology files cause structural instabilities [23]

- Atomic overlaps from poor initial configurations create huge initial forces [23]

- Incorrect periodic boundary conditions leading to artificial interactions [26]

- System non-neutrality causing electrostatic artifacts [24]

As noted in GROMACS documentation: "The vast majority of error messages generated by GROMACS are descriptive, informing the user where the exact error lies" [26], making careful attention to setup warnings crucial.

Quantitative Assessment of Energy Drift

Measuring and Calculating Energy Drift

Proper quantification of energy drift requires careful measurement protocols:

- Use sufficient sampling: Analyze at least 200,000 steps for reliable statistics [20]

- Exclude equilibration phase: "Drift in MD is indeed commonly defined as a rate of change of total energy. One should only make such measurements excluding equilibration time" [20]

- Normalize appropriately: Calculate per-atom drift for cross-system comparisons

Table 2: Energy Drift Benchmarks and Interpretation

| Drift Magnitude | Interpretation | Recommended Action |
|---|---|---|
| < 0.001 kBT/ns per atom | Excellent conservation | None needed |
| 0.001-0.01 kBT/ns per atom | Acceptable for most applications | Monitor and document |
| 0.01-0.1 kBT/ns per atom | Concerning, may affect results | Investigate and optimize parameters |
| > 0.1 kBT/ns per atom | Unacceptable, results unreliable | Immediate intervention required |

One study reported unacceptable "drift of 4*10^4 kBT/ns per atom, which is at least four orders of magnitude higher than acceptable reference values" [24].
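A per-atom drift rate in the units of Table 2 can be obtained from a least-squares slope of total energy against time. The sketch below assumes energies in kJ/mol and times in ps (GROMACS-style units; adjust for other packages) and assumes the equilibration portion has already been removed from the series. It maps the result onto the Table 2 bands; the function names, and the choice to assign exact band boundaries to the higher band, are illustrative. The synthetic energy series in the usage lines is made up to exercise the code, not taken from a simulation.

```python
KB_KJ_PER_MOL_K = 0.0083144626   # Boltzmann constant in kJ/(mol K)

def relative_drift(energies):
    """(E_final - E_initial) / E_initial, following the definition of drift above."""
    return (energies[-1] - energies[0]) / energies[0]

def drift_rate_per_atom(times_ps, energies_kj_mol, n_atoms, temperature_k):
    """Least-squares slope of total energy vs time, in kBT per ns per atom."""
    n = len(times_ps)
    t_mean = sum(times_ps) / n
    e_mean = sum(energies_kj_mol) / n
    sxx = sum((t - t_mean) ** 2 for t in times_ps)
    sxy = sum((t - t_mean) * (e - e_mean) for t, e in zip(times_ps, energies_kj_mol))
    slope_per_ns = (sxy / sxx) * 1000.0          # kJ/mol/ps -> kJ/mol/ns
    kbt = KB_KJ_PER_MOL_K * temperature_k
    return slope_per_ns / (kbt * n_atoms)

def classify_drift(rate):
    """Map |rate| (kBT/ns per atom) onto the bands of Table 2."""
    r = abs(rate)
    if r < 0.001:
        return "excellent"
    if r < 0.01:
        return "acceptable"
    if r < 0.1:
        return "concerning"
    return "unacceptable"

# Synthetic series: 1000 atoms at 300 K, energy rising linearly over 1 ns
n_atoms, temp = 1000, 300.0
times = [10.0 * i for i in range(101)]                     # 0 to 1000 ps
slope = 0.05 * KB_KJ_PER_MOL_K * temp * n_atoms / 1000.0   # kJ/mol per ps
energies = [-5.0e4 + slope * t for t in times]
rate = drift_rate_per_atom(times, energies, n_atoms, temp)
print(f"{rate:.4f} kBT/ns per atom -> {classify_drift(rate)}")  # 0.0500 kBT/ns per atom -> concerning
```

A regression slope is less sensitive to the noisy first and last frames than the two-point relative drift, which is why both are worth reporting.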

Diagnostic Workflow for Energy Drift

Follow this systematic approach to identify instability sources:

Diagram 1: Energy Drift Diagnostic Workflow

Experimental Protocols for Stability Analysis

Parameter Optimization Methodology

The Force Field Toolkit (ffTK) provides a systematic workflow for parameter development [22]:

Diagram 2: Parameter Optimization Workflow

Stability Threshold Determination

Establish numerical stability boundaries through systematic testing:

- Time step sensitivity analysis: Run simulations with decreasing time steps (2 fs, 1 fs, 0.5 fs) and measure drift

- Force field validation: "Parameters developed for a small test set of molecules using ffTK were comparable to existing CGenFF parameters in their ability to reproduce experimentally measured values for pure-solvent properties (<15% error from experiment)" [22]

- Buffer tolerance optimization: Adjust Verlet buffer tolerance (e.g., 0.0005) and neighbor list update frequency [24]
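One way to read a time-step scan is to estimate the apparent order of the energy error between successive runs. The helper below is a heuristic sketch, not a standard tool: it assumes the chosen error metric (for example, the RMS fluctuation of the total energy) behaves like C·Δt^p. For a second-order integrator such as velocity Verlet, an integrator-limited error should give p close to 2, whereas p well below 2 suggests a floor set by something else, such as force-field, cutoff, or precision issues. The input values in the usage lines are hypothetical numbers, not measurements.

```python
import math

def dt_scan_orders(dts, errors):
    """Apparent order p between successive runs, assuming error ~ C * dt**p."""
    pairs = sorted(zip(dts, errors), reverse=True)       # largest time step first
    return [math.log(e_a / e_b) / math.log(dt_a / dt_b)
            for (dt_a, e_a), (dt_b, e_b) in zip(pairs, pairs[1:])]

# Hypothetical energy-error values (kJ/mol) from runs at 2, 1 and 0.5 fs
integrator_limited = dt_scan_orders([2.0, 1.0, 0.5], [4.0, 1.0, 0.25])
floor_limited = dt_scan_orders([2.0, 1.0, 0.5], [4.0, 1.2, 0.9])
print([round(p, 2) for p in integrator_limited])   # [2.0, 2.0]
print([round(p, 2) for p in floor_limited])        # order collapses toward 0
```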

Research Reagent Solutions

Table 3: Essential Tools for Numerical Stability Research

| Tool/Resource | Primary Function | Application Context |
|---|---|---|
| Force Field Toolkit (ffTK) | CHARMM-compatible parameter development | Deriving missing parameters for novel molecules [22] |
| ParamChem Web Server | Automated parameter assignment by analogy | Initial parameter estimation and atom typing [22] |
| Double Precision GROMACS | High-precision arithmetic calculations | Reducing roundoff error in sensitive systems [24] |
| Verlet Cutoff Scheme | Neighbor searching with buffer tolerance | Controlling force calculation accuracy [24] |
| PME Electrostatics | Particle Mesh Ewald method | Accurate long-range electrostatic forces [24] |

Advanced Diagnostic Utilities

- Energy decomposition analysis: Isolate specific force contributions to drift

- Trajectory analysis tools: gmx rms, gmx msd, and gmx hbond for structural stability assessment [23]

- Quantum mechanical validation: Compare with target data from QM calculations [22]

Frequently Asked Questions

Q1: What constitutes acceptable energy drift in production simulations? Acceptable drift is system-dependent, but generally should be below 0.01 kBT/ns per atom. For precise thermodynamic calculations, stricter thresholds (< 0.001 kBT/ns per atom) are recommended. Always report drift metrics in publications for reproducibility [20].

Q2: How can I distinguish between physical instability and numerical instability? Physical fluctuations stay bounded around equilibrium and do not change qualitatively when the time step is reduced, whereas numerical instability exhibits exponential growth that depends on the time step. As explained in heuristic analysis: "When a numerical instability occurs and exhibits the character of increasing plus and minus values on successive time steps it can be cured by reducing the time-step size" [25].

Q3: Why does my simulation show energy drift even with small time steps? Small time steps address integrator stability but don't fix other issues like:

- Incorrect force field parameters [22]

- Poor system preparation (overlaps, missing atoms) [26]

- Inadequate nonbonded treatment [24]

Apply the energy-drift diagnostic workflow described earlier in this guide systematically.

Q4: When should I consider using double precision compilation? Double precision is recommended when:

- Single precision shows unacceptable drift even after parameter optimization

- Simulating highly charged systems with sensitive electrostatic balances

- Performing free energy calculations requiring high numerical accuracy [24]
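The effect of single-precision roundoff can be seen without an MD engine by accumulating many small increments, as happens when an energy or coordinate is updated over many steps. The sketch below emulates IEEE single precision by rounding through Python's struct module after every addition; this is an illustrative stand-in for a single-precision build, not a model of any specific MD code, and the function names are hypothetical.

```python
import struct

def to_float32(x):
    """Round a Python float (double) to the nearest IEEE-754 single-precision value."""
    return struct.unpack("f", struct.pack("f", x))[0]

def accumulate(increment, n, single=False):
    """Add `increment` n times, optionally rounding to single precision after each add."""
    total = 0.0
    inc = to_float32(increment) if single else increment
    for _ in range(n):
        total += inc
        if single:
            total = to_float32(total)
    return total

n, inc = 100_000, 1e-4          # exact result: 10.0
err32 = abs(accumulate(inc, n, single=True) - 10.0)
err64 = abs(accumulate(inc, n) - 10.0)
print(f"single-precision error: {err32:.2e}, double-precision error: {err64:.2e}")
```

In single precision the rounding error of each addition has a consistent sign within a range of magnitudes, so it accumulates systematically rather than averaging out, which is the same mechanism that turns roundoff into energy drift.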

Q5: How do I properly measure energy drift in NVE simulations? Use these guidelines:

- Exclude equilibration phase from analysis [20]

- Calculate both relative and absolute drift: relative drift = (E_current - E_initial)/E_initial and absolute drift = E_current - E_initial

- Report per-atom normalized values for comparison across systems

- Use sufficient sampling statistics (>200,000 steps) [20]

Advanced and Reactive Algorithms: Enhancing Accuracy for Complex Biomedical Systems

Classical Molecular Dynamics (MD) simulations have been fundamentally limited by their inability to simulate chemical reactions due to their use of harmonic bond potentials, which prevent bond dissociation. The Reactive INTERFACE Force Field (IFF-R) represents a transformative approach by replacing classical harmonic bond potentials with reactive, energy-conserving Morse potentials [27] [28]. This implementation enables bond breaking and formation while maintaining compatibility with established force fields like CHARMM, PCFF, OPLS-AA, and AMBER, and runs approximately 30 times faster than previous reactive simulation methods such as ReaxFF [27].

The Morse potential, V(r) = D_e(1 - e^{-a(r - r_e)})^2, where D_e is the dissociation energy, r_e is the equilibrium bond length, and a controls the potential width, provides a quantum-mechanically justified representation of bond dissociation that aligns with experimental measurements [29] [27]. Unlike a harmonic potential, whose energy grows without bound as the bond stretches, the Morse potential describes dissociation realistically: the energy levels off at a finite plateau, D_e above the minimum, at large separations (equivalently, zero if energies are measured relative to the separated atoms) [27] [30].
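The contrast between the two bond models is easy to check numerically. The sketch below implements the Morse form given above alongside a harmonic bond whose force constant is set to k_e = 2a²D_e, so the two curves share the same minimum and curvature at r_e. The parameter values are illustrative placeholders in kcal/mol and Å, not IFF-R parameters.

```python
import math

def morse(r, d_e, a, r_e):
    """Morse bond energy V(r) = D_e (1 - exp(-a (r - r_e)))^2."""
    return d_e * (1.0 - math.exp(-a * (r - r_e))) ** 2

def harmonic(r, k, r_0):
    """Harmonic bond energy U(r) = 0.5 k (r - r_0)^2."""
    return 0.5 * k * (r - r_0) ** 2

d_e, a, r_e = 80.0, 2.1, 1.53        # illustrative values only
k_e = 2.0 * a * a * d_e              # matches the Morse curvature at r_e

for r in (1.53, 1.58, 1.8, 2.5, 5.0):
    print(f"r = {r:4.2f} A  Morse = {morse(r, d_e, a, r_e):7.2f}  "
          f"harmonic = {harmonic(r, k_e, r_e):8.2f}  kcal/mol")
```

Near r_e the two energies agree closely; by 5 Å the Morse energy has saturated near D_e while the harmonic energy is thousands of kcal/mol.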

Table: Core Components of Reactive MD Implementation with Morse Potentials

| Component | Traditional Harmonic Force Fields | IFF-R with Morse Potentials | Key Parameters |
|---|---|---|---|
| Bond Potential | Harmonic: U = ½k(r - r₀)² | Morse: V(r) = D_e(1 - e^{-a(r - r_e)})^2 | D_e, a, r_e |
| Bond Dissociation | Not possible - infinite energy at large r | Realistic dissociation with finite energy | Dissociation energy D_e |
| Computational Cost | Low | ~30x faster than ReaxFF [27] | — |
| Compatibility | Specific to parameterized systems | Compatible with IFF, CHARMM, PCFF, OPLS-AA, AMBER [27] | — |
| Parameter Interpretation | Force constant k | Dissociation energy D_e, width parameter a | Typically a = 2.1 ± 0.3 Å⁻¹ [27] |

Diagram 1: Workflow for Implementing Reactive MD with Morse Potentials

Technical Support & Troubleshooting Guide

Frequently Asked Questions (FAQs)

Q1: My simulation becomes unstable when bonds break, leading to error messages about "lost atoms" or "missing bonds." What causes this and how can I resolve it?

A: This common issue occurs because bond dissociation releases significant energy locally, creating "hot spots" with excessively high velocities and forces [31]. When a bond breaks, the stored potential energy is converted to kinetic energy, causing rapid acceleration of atoms. Implement targeted velocity rescaling around the breakage site using LAMMPS's fix temp/rescale applied to a dynamic group of atoms near broken bonds [31]. Additionally, ensure proper handling of angle and dihedral terms connected to broken bonds, as these can exert sudden large forces when constituent bonds dissociate [31].
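The idea behind local velocity rescaling can be sketched outside LAMMPS. The function below is a simplified, hypothetical stand-in for applying fix temp/rescale to a dynamic group: it selects atoms within a cutoff radius of a bond-breaking site and scales only their velocities to a target temperature, and only when the local temperature lies outside a tolerance window. It assumes reduced units (k_B = 1), no periodic boundaries, and 3 degrees of freedom per selected atom; the coordinates and velocities in the usage lines are made up.

```python
import math

def rescale_hot_spot(pos, vel, masses, site, radius, t_target, window, kb=1.0):
    """Rescale velocities of atoms within `radius` of `site` to t_target.

    Acts only if the local temperature differs from t_target by more than
    `window`; atoms outside the radius are never modified.
    """
    group = [i for i, p in enumerate(pos) if math.dist(p, site) <= radius]
    if not group:
        return vel
    ke = sum(0.5 * masses[i] * sum(c * c for c in vel[i]) for i in group)
    t_local = 2.0 * ke / (3 * len(group) * kb)
    if t_local == 0.0 or abs(t_local - t_target) <= window:
        return vel
    scale = math.sqrt(t_target / t_local)
    new_vel = [list(v) for v in vel]
    for i in group:
        new_vel[i] = [scale * c for c in vel[i]]
    return new_vel

# Three "hot" atoms near a broken bond and one distant atom
pos = [[0.0, 0.0, 0.0], [1.0, 0.0, 0.0], [0.0, 1.2, 0.0], [8.0, 0.0, 0.0]]
vel = [[3.0, 0.0, 0.0], [-2.0, 1.0, 0.0], [0.0, -2.5, 1.0], [0.5, 0.5, 0.5]]
masses = [1.0] * 4
new_vel = rescale_hot_spot(pos, vel, masses, site=[0.5, 0.0, 0.0],
                           radius=2.0, t_target=1.0, window=0.1)
print(new_vel)
```

Rescaling only the local group removes the excess kinetic energy released by the broken bond without perturbing the rest of the system; in a real simulation the group would be re-selected every few steps as atoms move.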

Q2: How do I determine appropriate Morse parameters (D_e, a, r_e) for my specific molecular system?

A: Follow this systematic parameterization protocol [27]:

- Equilibrium distance (r_e): Use the same value as in your original harmonic force field or experimental crystal structure data

- Dissociation energy (D_e): Obtain from experimental thermochemical data or high-level quantum mechanical calculations (CCSD(T), MP2)

- Width parameter (a): Calculate using a = √(k_e/(2D_e)), where k_e is the harmonic force constant; this typically yields values of 2.1 ± 0.3 Å⁻¹ [27]

Refine parameters by validating against experimental vibrational frequencies from infrared and Raman spectroscopy [27].
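As a worked example of the width-parameter formula, illustrative values k_e = 700 kcal/(mol·Å²) and D_e = 80 kcal/mol (placeholders, not published IFF-R parameters) give a = √(700/160) ≈ 2.09 Å⁻¹, inside the typical 2.1 ± 0.3 Å⁻¹ range. One pitfall is the force-constant convention: the relation assumes U = ½k_e(r - r_e)², whereas CHARMM and AMBER write bond energies as K(r - r₀)² without the ½, so k_e = 2K for those force fields. The small helper below (a hypothetical name) makes the convention explicit.

```python
import math

def morse_width(k, d_e, half_convention=True):
    """Morse width parameter a = sqrt(k_e / (2 D_e)).

    half_convention=True : k is k_e in U = 0.5 * k_e * (r - r_e)^2
    half_convention=False: k is K in U = K * (r - r_e)^2 (CHARMM/AMBER form), so k_e = 2K
    """
    k_e = k if half_convention else 2.0 * k
    return math.sqrt(k_e / (2.0 * d_e))

a = morse_width(700.0, 80.0)                                # k_e given directly
a_charmm = morse_width(350.0, 80.0, half_convention=False)  # same bond written with K = k_e / 2
print(round(a, 3), round(a_charmm, 3))                      # 2.092 2.092
```

Feeding a CHARMM- or AMBER-style K into the formula as if it were k_e would underestimate a by a factor of √2 and soften the bond near equilibrium.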

Q3: After implementing Morse potentials, my system's equilibrium properties (density, elastic moduli) have changed. How can I maintain accuracy for non-reactive properties?

A: This indicates poor parameter transfer between harmonic and Morse potentials. The IFF-R approach specifically addresses this by designing the Morse potential to exactly match the harmonic potential near equilibrium [27] [28]. Verify that your Morse parameters satisfy the relationship k_e = 2a²D_e, where k_e is the original harmonic force constant. This ensures identical curvature at the potential minimum, preserving equilibrium structural and mechanical properties while enabling reactivity at larger separations [27].

Q4: Can I simulate both bond breaking AND bond formation with Morse potentials?

A: Yes, but with important methodological considerations. IFF-R enables bond breaking directly through Morse potentials. For bond formation, the approach uses template-based methods such as the REACTER toolkit, which provides rules for new bond creation under specific geometric and energetic criteria [27]. For comprehensive two-way reactivity, some researchers combine Morse potentials for dissociation with distance-based bonding criteria (similar to ReaxFF) for association, though this requires careful implementation to avoid unphysical results [27] [30].

Advanced Troubleshooting: Error Resolution Reference

Table: Common Implementation Errors and Solutions

| Error Message/Symptom | Root Cause | Solution Steps | Prevention Strategy |
|---|---|---|---|
| "lost atoms" or "missing bonds" | Excessive local velocities after bond breakage; insufficient communication cutoff [31] | 1. Implement local temperature rescaling; 2. Increase communication cutoff (e.g., 12 Å to 16 Å); 3. Reduce timestep during reactive phases | Use smaller timesteps (0.1-0.5 fs) for reactive simulations; implement energy redistribution |
| Instability during strain/deformation | Sudden angle/dihedral term collapse when bonds break [31] | 1. Use smooth angle term reduction near dissociation; 2. Implement dynamic constraint removal; 3. Apply gradual strain rates | Pre-process system to identify vulnerable angles/dihedrals; use incremental loading |
| Unphysical bond reformation | Insufficient separation criteria; lacking solvation effects | 1. Implement distance and orientation constraints; 2. Add reaction templates for specific chemistries; 3. Include environmental screening | Use larger cutoff distances for bond breaking; implement geometric criteria for new bonds |
| Energy conservation violations | Incomplete force field parameter transfer; incorrect Morse width parameter | 1. Verify k_e = 2a²D_e relationship; 2. Check consistency of all bonded terms; 3. Validate with single molecule tests | Thoroughly validate Morse implementation on small systems before scaling up |

Diagram 2: Temperature Stabilization Protocol After Bond Breaking

Essential Research Reagent Solutions

Table: Key Computational Tools for Reactive MD Implementation

| Tool/Resource | Function/Purpose | Implementation Notes | Availability |
|---|---|---|---|
| IFF-R Parameters | Morse potential parameters for diverse chemistries | Provides D_e, a, r_e for covalent bonds; compatible with multiple force fields [27] | Published supplementary materials [27] |
| LAMMPS fix bond/break | Automated bond dissociation when beyond cutoff distance | Triggers when Morse potential forces become negligible; requires careful cutoff selection [31] | Standard LAMMPS distribution |
| REACTER Toolkit | Template-based bond formation | Defines geometric and chemical criteria for new bond creation [27] | Specialized package (requires citation) |
| Temperature Rescaling Methods | Local energy dissipation after bond breakage | Prevents "hot spot" formation; critical for stability [31] | Custom LAMMPS scripts |
| PCFF-IFF-R | Reactive force field for polymers and organic systems | Specifically parameterized for epoxy networks, biomolecules [31] | Specialized parameter sets |

Experimental Protocol: Implementing Morse Potentials in LAMMPS

Step 1: System Preparation and Parameterization

- Begin with an equilibrated system using your standard force field

- Identify specific bonds requiring reactivity (typically lowest energy bonds dissociate first)

- Calculate Morse parameters: r_e (from the equilibrium structure), D_e (from experimental/QC data), and a = √(k_e/(2D_e)) [27]

- Validate parameters by comparing vibrational frequencies with experimental IR/Raman data [27]

Step 2: LAMMPS Implementation

Step 3: Temperature Stabilization Setup

Step 4: Validation and Production

- Run validation tests on small systems comparing to harmonic potential behavior at equilibrium

- Verify energy conservation in microcanonical ensemble

- Perform incremental strain tests to validate dissociation behavior

- Execute production runs with appropriate thermostatting and barostatting [31]
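The energy-conservation and dissociation checks of Step 4 can be rehearsed on a single Morse bond before moving to a full system. The sketch below integrates the bond length of a diatomic with velocity Verlet, assuming illustrative reduced-unit parameters (D_e = 1, a = 2, r_e = 1, reduced mass 1); it does not model LAMMPS's bond deletion, and the function names are hypothetical. With total energy below D_e the bond should stay bound; above D_e it should dissociate, while total energy stays conserved in both cases.

```python
import math

def morse_energy(r, d_e, a, r_e):
    return d_e * (1.0 - math.exp(-a * (r - r_e))) ** 2

def morse_force(r, d_e, a, r_e):
    """F = -dV/dr for V = D_e (1 - exp(-a (r - r_e)))^2."""
    e = math.exp(-a * (r - r_e))
    return -2.0 * d_e * a * e * (1.0 - e)

def run_nve_bond(v0, d_e=1.0, a=2.0, r_e=1.0, mu=1.0, dt=0.005, n_steps=4000):
    """Velocity Verlet on the bond length; returns (max |E - E0|, final r)."""
    r, v = r_e, v0                             # start at r_e with outward speed v0
    f = morse_force(r, d_e, a, r_e)
    e0 = 0.5 * mu * v * v + morse_energy(r, d_e, a, r_e)
    max_dev = 0.0
    for _ in range(n_steps):
        v += 0.5 * dt * f / mu                 # half kick
        r += dt * v                            # drift
        f = morse_force(r, d_e, a, r_e)        # force at the new bond length
        v += 0.5 * dt * f / mu                 # half kick
        e = 0.5 * mu * v * v + morse_energy(r, d_e, a, r_e)
        max_dev = max(max_dev, abs(e - e0))
    return max_dev, r

for v0 in (1.0, 1.6):                          # kinetic energy 0.5 and 1.28 vs D_e = 1
    dev, r_final = run_nve_bond(v0)
    print(f"v0={v0}: max |E-E0| = {dev:.1e}, final r = {r_final:.2f}")
```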

Successful implementation of reactive force fields with Morse potentials requires careful attention to parameter transfer, energy redistribution, and dynamic topology management. The IFF-R methodology provides a robust framework for incorporating reactivity while maintaining the accuracy and efficiency of classical MD simulations [27]. For researchers integrating these methods into thesis work on molecular dynamics integration algorithms, particular focus should be placed on: (1) validating energy conservation during bond dissociation events, (2) implementing localized temperature control to manage released energy, and (3) establishing rigorous criteria for both bond breaking and formation processes. Following the troubleshooting guidelines and experimental protocols outlined above will significantly enhance simulation stability and physical accuracy in reactive molecular dynamics investigations.

Troubleshooting Guide: Common Velocity Verlet Implementation Issues

Problem 1: Significant Energy Oscillations in a Solar System Simulation

- Observed Symptom: The total energy (kinetic + potential) of the system shows significant oscillations around a baseline, even though the orbits themselves appear stable and angular momentum is conserved [32].

- Root Cause Analysis: This is a known, expected behavior of the Velocity Verlet algorithm. As a symplectic integrator, it does not conserve the true physical energy of the system at every step but instead nearly conserves a modified (shadow) Hamiltonian that is very close to the true energy. The observed oscillations are a result of this property [32] [33]. Their amplitude scales as O(Δt²), where Δt is the time step [32].

- Solution:

- Verification: Confirm that the energy oscillations are bounded and do not show a long-term drift. A steady, unbounded increase or decrease in energy would indicate an implementation error, while bounded oscillations are characteristic of the algorithm [32] [33].

- Reduction: To reduce the oscillation amplitude, decrease the integration time step (Δt). Alternatively, implement a higher-order symplectic integrator, such as a composition method that uses the Velocity Verlet algorithm as a building block [32].
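Both checks (bounded oscillation and Δt² scaling of its amplitude) can be run on a two-body problem reduced to one relative coordinate. The sketch below assumes units with GM = 1, an eccentric bound orbit starting at perihelion, and velocity Verlet; all names are illustrative. Halving Δt should shrink the maximum energy deviation roughly fourfold, and the deviation over the second half of the run should be about the same as over the first half, with no systematic growth.

```python
import math

def kepler_energy_errors(dt, n_orbits=10, v0=1.2):
    """|E(t) - E0| at every step of a Kepler orbit (GM = 1) integrated with velocity Verlet."""
    x, y, vx, vy = 1.0, 0.0, 0.0, v0          # start at perihelion of an eccentric orbit

    def accel(px, py):
        r3 = (px * px + py * py) ** 1.5
        return -px / r3, -py / r3

    def energy(px, py, qx, qy):
        return 0.5 * (qx * qx + qy * qy) - 1.0 / math.hypot(px, py)

    e0 = energy(x, y, vx, vy)
    period = 2.0 * math.pi * (-1.0 / (2.0 * e0)) ** 1.5   # Kepler's third law
    ax, ay = accel(x, y)
    errors = []
    for _ in range(int(n_orbits * period / dt)):
        vx += 0.5 * dt * ax                    # half kick
        vy += 0.5 * dt * ay
        x += dt * vx                           # drift
        y += dt * vy
        ax, ay = accel(x, y)                   # acceleration at the new position
        vx += 0.5 * dt * ax                    # half kick
        vy += 0.5 * dt * ay
        errors.append(abs(energy(x, y, vx, vy) - e0))
    return errors

coarse = kepler_energy_errors(0.01)
fine = kepler_energy_errors(0.005)
half = len(coarse) // 2
print(f"max |dE| ratio, dt vs dt/2: {max(coarse) / max(fine):.2f}")
print(f"first half: {max(coarse[:half]):.2e}, second half: {max(coarse[half:]):.2e}")
```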

Problem 2: Particle Drifting Away or Leaving its Orbit

- Observed Symptom: A planet or particle in an n-body simulation drifts away from its expected orbit, often most noticeably at the point of closest approach and highest acceleration [34].

- Root Cause Analysis: This unphysical behavior can stem from several sources:

- Incorrect Initial Conditions: The system may have a non-zero net momentum, causing the entire system to drift [35].

- Singularities: With close approaches in gravitational potentials, the force calculation can become very large, leading to catastrophic numerical errors [34].

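- Sequential Update Error: Performing the update steps out of order, for example updating one body's position and velocity before the forces on the other bodies have been computed, so that forces are evaluated from a mix of old and new positions, can introduce significant errors in n-body systems [34].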

- Solution:

- Correct Initialization: Before starting the simulation, calculate the system's center-of-mass velocity and subtract it from all particles to ensure zero net momentum [35].

- Force Calculation: Ensure the force calculation is correct and that the algorithm sequence is properly implemented for multi-body interactions [34].

- Softening Parameter: For gravitational n-body problems, consider using a softening parameter in the force calculation to prevent forces from becoming infinite at very small distances.
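A softened force is a small change to the pairwise acceleration. The sketch below uses Plummer softening, a = -G m r/(|r|² + ε²)^(3/2), which is one common choice; the softening length ε is a free parameter that should be small compared with physically relevant separations, and the function name is illustrative.

```python
def softened_accel(dx, dy, m_other, g=1.0, eps=0.0):
    """Acceleration on a body displaced by (dx, dy) from a mass m_other.

    eps = 0 gives plain Newtonian gravity; eps > 0 applies Plummer softening,
    which keeps the acceleration finite as the separation goes to zero.
    """
    denom = (dx * dx + dy * dy + eps * eps) ** 1.5
    if denom == 0.0:
        raise ZeroDivisionError("coincident bodies without softening")
    return -g * m_other * dx / denom, -g * m_other * dy / denom

# Very close approach: Newtonian force explodes, softened force stays bounded
print(softened_accel(1e-6, 0.0, 1.0))             # magnitude about 1e12
print(softened_accel(1e-6, 0.0, 1.0, eps=0.01))   # magnitude about 1
# Large separation: softening changes the result only negligibly
print(softened_accel(10.0, 0.0, 1.0), softened_accel(10.0, 0.0, 1.0, eps=0.01))
```

Softening modifies the physics at short range, so it suits collisionless n-body problems; it should not be used where close encounters themselves are of interest.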

Problem 3: Unphysical Pendular Motion in Granular Flow Simulations

- Observed Symptom: In Discrete Element Method (DEM) simulations with large particle size ratios (R > 3), fine particles become trapped in unphysical oscillatory or pendular motion between larger particles [36].

- Root Cause Analysis: This is caused by a phase difference of Δt/2 between the position and velocity variables used in the force calculations within the standard Velocity Verlet scheme. This de-synchronization can lead to inaccurate calculations of tangential forces, such as static friction [36].

- Solution: Implement an improved Velocity Verlet algorithm (sometimes called synchronized_verlet). This variant synchronizes the position and velocity updates before the critical force calculations, ensuring physical accuracy even for large size ratios [36].

Frequently Asked Questions (FAQs)

Q1: Does the Velocity Verlet algorithm exactly conserve energy? A1: No, not exactly. In practice, Velocity Verlet exhibits small, bounded oscillations in the total energy around the true value [32] [33]. However, it is symplectic, meaning it conserves a modified Hamiltonian that is very close to the true energy, leading to excellent long-term stability and no significant energy drift for conservative systems [32].

Q2: Is Velocity Verlet stable with variable time steps? A2: No, not in its standard form. Using a variable time step can destabilize the Störmer/Leapfrog/Verlet method and break its symplectic property, which is key to its good energy conservation [37]. While a mathematically equivalent formulation exists, changing the time step adaptively generally makes the method non-symplectic [37].

Q3: Why is my simulation crashing when particles get too close? A3: This is often due to singularities in the potential function (e.g., the 1/r gravitational potential, whose force grows as 1/r², or the r⁻¹² repulsion of the Lennard-Jones potential). As particles approach each other, the calculated forces can become extremely large, causing numerical overflow and simulation failure [34]. This is a known limitation of many integrators, including Verlet, when dealing with singular potentials [34].

Q4: How does Velocity Verlet compare to the Runge-Kutta method? A4: While a detailed comparison table is beyond the scope of this guide, a key difference lies in their conservation properties. Velocity Verlet is symplectic and thus better for long-term energy conservation in Hamiltonian systems. Runge-Kutta methods (like RK4) are not symplectic but can have higher-order local accuracy. For "short" integration intervals, RK4 might be more precise, but for long-term stability in orbital mechanics or molecular dynamics, Velocity Verlet is often preferred [32].
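The trade-off can be seen on a harmonic oscillator integrated with a deliberately coarse step (ωΔt = 0.5). In the sketch below (illustrative names; unit mass and frequency), classical RK4 is more accurate over the first few steps, but its energy decays steadily, while velocity Verlet's energy error stays bounded at a few percent over the whole run.

```python
def energy(x, v):
    return 0.5 * (x * x + v * v)              # unit mass and frequency

def verlet_step(x, v, dt):
    v += 0.5 * dt * (-x)                      # half kick
    x += dt * v                               # drift
    v += 0.5 * dt * (-x)                      # half kick
    return x, v

def rk4_step(x, v, dt):
    """Classical fourth-order Runge-Kutta for x' = v, v' = -x."""
    k1x, k1v = v, -x
    k2x, k2v = v + 0.5 * dt * k1v, -(x + 0.5 * dt * k1x)
    k3x, k3v = v + 0.5 * dt * k2v, -(x + 0.5 * dt * k2x)
    k4x, k4v = v + dt * k3v, -(x + dt * k3x)
    x += dt / 6.0 * (k1x + 2.0 * k2x + 2.0 * k3x + k4x)
    v += dt / 6.0 * (k1v + 2.0 * k2v + 2.0 * k3v + k4v)
    return x, v

def max_rel_energy_error(step, dt=0.5, n_steps=10_000):
    """Largest |E/E0 - 1| over the run, starting from x = 1, v = 0."""
    x, v = 1.0, 0.0
    e0 = energy(x, v)
    worst = 0.0
    for _ in range(n_steps):
        x, v = step(x, v, dt)
        worst = max(worst, abs(energy(x, v) / e0 - 1.0))
    return worst

for n in (10, 10_000):
    print(f"{n:6d} steps: Verlet {max_rel_energy_error(verlet_step, n_steps=n):.4f}, "
          f"RK4 {max_rel_energy_error(rk4_step, n_steps=n):.4f}")
```

For this linear problem each RK4 step multiplies the energy by a factor slightly below 1, so the loss compounds over long runs; Verlet's error instead oscillates without accumulating.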

The following table summarizes key characteristics of the Velocity Verlet algorithm and its variants, as discussed in the troubleshooting guides.

Table 1: Velocity Verlet Algorithm Characteristics and Variants

| Algorithm | Energy Conservation Property | Time Step Stability | Key Advantage | Common Application Context |
|---|---|---|---|---|
| Standard Velocity Verlet | Bounded oscillations (O(Δt²)), no long-term drift (symplectic) [32] [33] | Requires fixed time step to remain symplectic [37] | Good long-term stability for conservative systems [32] | General molecular dynamics, n-body simulations [32] [34] |
| Improved/Synchronized Verlet | Improved physical accuracy for tangential forces [36] | Fixed time step | Corrects unphysical motion in large size-ratio systems [36] | Discrete Element Method (DEM) with large particle size ratios (R > 3) [36] |
| Composition Method (4th Order) | Reduced oscillation amplitude (higher-order symplectic) [32] | Fixed time step | Higher accuracy while preserving symplectic property [32] | High-precision orbital simulations |
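The fourth-order composition method in the last row can be built directly from Velocity Verlet. One standard construction is Yoshida's "triple jump", named here as an illustrative choice; the cited source may use a different composition. It applies three Velocity Verlet substeps of lengths w₁Δt, w₀Δt and w₁Δt, with w₁ = 1/(2 - 2^(1/3)) and w₀ = 1 - 2w₁. Each substep is symplectic, so the composition is symplectic too. The sketch compares the energy oscillation of both schemes on a unit harmonic oscillator at the same Δt.

```python
CBRT2 = 2.0 ** (1.0 / 3.0)
W1 = 1.0 / (2.0 - CBRT2)   # outer substep weight (> 1)
W0 = 1.0 - 2.0 * W1        # middle substep weight (negative, i.e. a backward substep)


def vv_step(q, p, h):
    """Velocity Verlet step for q'' = -q (unit mass and frequency)."""
    p_half = p - 0.5 * h * q
    q = q + h * p_half
    return q, p_half - 0.5 * h * q


def yoshida4_step(q, p, h):
    """Fourth-order symmetric composition of three Velocity Verlet substeps."""
    for w in (W1, W0, W1):
        q, p = vv_step(q, p, w * h)
    return q, p


def max_rel_energy_error(step, h=0.1, n_steps=10_000):
    q, p = 1.0, 0.0
    e0 = 0.5 * (q * q + p * p)
    worst = 0.0
    for _ in range(n_steps):
        q, p = step(q, p, h)
        worst = max(worst, abs(0.5 * (q * q + p * p) - e0) / e0)
    return worst


vv_err = max_rel_energy_error(vv_step)
y4_err = max_rel_energy_error(yoshida4_step)
print(f"Velocity Verlet max |dE/E|:       {vv_err:.2e}")
print(f"4th-order composition max |dE/E|: {y4_err:.2e}")
```

At equal Δt, the composition needs three force evaluations per step instead of one. A fair accuracy comparison should therefore be made at equal computational cost.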

Experimental Protocol: Implementing and Validating Velocity Verlet for a 2-Body System

This protocol outlines the steps to correctly implement the Velocity Verlet algorithm for a simple 2-body system, such as a star and a planet, and validate its energy conservation properties.

1. System Initialization

- Define Parameters: Set the gravitational constant (G), masses (m₁, m₂), and integration time step (Δt).

- Set Initial Conditions: Provide initial positions (x₁, y₁, x₂, y₂) and velocities (vx₁, vy₁, vx₂, vy₂) for the two bodies.

- Remove Center-of-Mass Velocity: Calculate and subtract the system's center-of-mass velocity from all bodies to prevent overall drift [35]. First compute vx_cm = (vx₁*m₁ + vx₂*m₂) / (m₁ + m₂), then set vx₁ = vx₁ - vx_cm and vx₂ = vx₂ - vx_cm (repeat for the y-components).

2. Initial Force Calculation

- Compute the distance vector between the two bodies: dx = x₁ - x₂, dy = y₁ - y₂.

- Calculate the distance: r = sqrt(dx² + dy²).

- Compute the force magnitude: F = G * m₁ * m₂ / r².

- Calculate the force components on each body: Fx₁ = -F * (dx / r), Fy₁ = -F * (dy / r), and Fx₂ = -Fx₁, Fy₂ = -Fy₁.

3. Velocity Verlet Integration Loop

For each time step n, perform the following sequence:

- Update Positions: x₁[n+1] = x₁[n] + vx₁[n] * Δt + 0.5 * (Fx₁[n] / m₁) * Δt² (repeat for y₁, x₂, y₂).

- Update Forces: Using the updated positions, calculate the new forces Fx₁[n+1], Fy₁[n+1], etc., as in the initialization.

- Update Velocities: vx₁[n+1] = vx₁[n] + 0.5 * (Fx₁[n] / m₁ + Fx₁[n+1] / m₁) * Δt (repeat for y₁, x₂, y₂).

4. Validation and Monitoring

- Calculate Energy: At each step, compute the total kinetic and potential energy.

- Monitor for Drift: Plot the total energy over time. Look for bounded oscillations rather than a steady increase or decrease [32] [33].

- Check Momentum: Verify that the total linear momentum of the system remains zero (or constant).
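The protocol can be sketched in plain Python as follows. The parameter values are illustrative assumptions, not values from the cited sources: G = 1, a 1 : 0.001 mass ratio, a circular relative orbit of radius 1, Δt = 10⁻³ and 20 000 steps. Instead of plotting, the script records the largest relative energy deviation and the final total momentum, so both validation checks in step 4 can be automated.

```python
import math

# Illustrative parameters (arbitrary units), not taken from the cited sources
G = 1.0
M1, M2 = 1.0, 1e-3       # "star" and "planet" masses
DT = 1e-3
N_STEPS = 20_000


def forces(x1, y1, x2, y2):
    """Step 2: gravitational force components on body 1 and body 2."""
    dx, dy = x1 - x2, y1 - y2
    r = math.sqrt(dx * dx + dy * dy)
    f = G * M1 * M2 / r ** 2
    fx1, fy1 = -f * dx / r, -f * dy / r   # body 1 is pulled toward body 2
    return fx1, fy1, -fx1, -fy1           # Newton's third law


def total_energy(x1, y1, x2, y2, vx1, vy1, vx2, vy2):
    kinetic = 0.5 * M1 * (vx1 ** 2 + vy1 ** 2) + 0.5 * M2 * (vx2 ** 2 + vy2 ** 2)
    potential = -G * M1 * M2 / math.hypot(x1 - x2, y1 - y2)
    return kinetic + potential


# Step 1: initial conditions for a circular relative orbit of radius 1
x1, y1, x2, y2 = 0.0, 0.0, 1.0, 0.0
vx1, vy1 = 0.0, 0.0
vx2, vy2 = 0.0, math.sqrt(G * (M1 + M2) / 1.0)

# Step 1 (cont.): remove the center-of-mass velocity
vx_cm = (vx1 * M1 + vx2 * M2) / (M1 + M2)
vy_cm = (vy1 * M1 + vy2 * M2) / (M1 + M2)
vx1, vx2, vy1, vy2 = vx1 - vx_cm, vx2 - vx_cm, vy1 - vy_cm, vy2 - vy_cm

# Step 2: initial forces and reference energy
fx1, fy1, fx2, fy2 = forces(x1, y1, x2, y2)
e0 = total_energy(x1, y1, x2, y2, vx1, vy1, vx2, vy2)
max_rel_dev = 0.0

# Step 3: Velocity Verlet loop
for _ in range(N_STEPS):
    x1 += vx1 * DT + 0.5 * (fx1 / M1) * DT ** 2
    y1 += vy1 * DT + 0.5 * (fy1 / M1) * DT ** 2
    x2 += vx2 * DT + 0.5 * (fx2 / M2) * DT ** 2
    y2 += vy2 * DT + 0.5 * (fy2 / M2) * DT ** 2

    nfx1, nfy1, nfx2, nfy2 = forces(x1, y1, x2, y2)

    vx1 += 0.5 * (fx1 + nfx1) / M1 * DT
    vy1 += 0.5 * (fy1 + nfy1) / M1 * DT
    vx2 += 0.5 * (fx2 + nfx2) / M2 * DT
    vy2 += 0.5 * (fy2 + nfy2) / M2 * DT
    fx1, fy1, fx2, fy2 = nfx1, nfy1, nfx2, nfy2

    # Step 4: monitor the energy deviation
    e = total_energy(x1, y1, x2, y2, vx1, vy1, vx2, vy2)
    max_rel_dev = max(max_rel_dev, abs((e - e0) / e0))

# Step 4: total linear momentum should remain (numerically) zero
px, py = M1 * vx1 + M2 * vx2, M1 * vy1 + M2 * vy2
print(f"max relative energy deviation: {max_rel_dev:.2e}")
print(f"final total momentum: ({px:.1e}, {py:.1e})")
```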

The workflow for this protocol is illustrated below:

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Computational Tools for Verlet Algorithm Research

| Tool / Resource | Function / Purpose | Relevance to Thesis Research |
|---|---|---|
| LAMMPS (MD Package) | A widely used open-source molecular dynamics simulator that implements various integrators, including Velocity Verlet and its improved variants [36]. | Provides a robust, tested platform for benchmarking custom implementations and studying algorithm performance on complex, many-body systems. |
| Conformal Prediction (CP) | A statistical technique for calibrating the uncertainties of machine-learned interatomic potentials (MLIPs) used in MD simulations [3]. | Crucial for research into algorithm errors when MLIPs are involved, as it helps distinguish true extrapolative regions from numerical artifacts. |
| Hierarchical Grid Algorithm | An advanced neighbor-detection algorithm for efficiently handling systems with large particle size ratios [36]. | Enables the study of Velocity Verlet's behavior in highly polydisperse systems, where standard implementations may fail [36]. |
| Automatic Differentiation (AD) | A technique to obtain exact numerical derivatives of functions specified by computer programs [3]. | Used in advanced uncertainty-biased MD to compute bias forces and bias stresses, enhancing configurational space exploration [3]. |
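To illustrate the conformal-prediction entry, the sketch below applies generic split-conformal calibration to a model's reported uncertainties σ. On a held-out calibration set, it finds the multiplier q such that |error| ≤ q·σ holds for a chosen fraction 1 - α of points. This is a textbook recipe, not necessarily the exact procedure used in [3]. It runs on synthetic data in which the reported σ underestimates the true error scale by a factor of two, an assumption made for demonstration.

```python
import math
import random


def conformal_multiplier(errors, sigmas, alpha=0.1):
    """Split-conformal factor q so that |error| <= q * sigma with probability >= 1 - alpha."""
    scores = sorted(abs(e) / s for e, s in zip(errors, sigmas))  # nonconformity scores
    n = len(scores)
    k = math.ceil((n + 1) * (1 - alpha))  # 1-based rank of the conformal quantile
    return scores[min(k, n) - 1]          # clamped if the calibration set is too small


def synthetic_predictions(rng, n):
    """Hypothetical data: reported sigmas are half the true error scale."""
    sigmas = [rng.uniform(0.05, 0.5) for _ in range(n)]
    errors = [rng.gauss(0.0, 2.0 * s) for s in sigmas]
    return errors, sigmas


def coverage(errors, sigmas, scale):
    """Fraction of points whose error lies inside +/- scale * sigma."""
    return sum(abs(e) <= scale * s for e, s in zip(errors, sigmas)) / len(errors)


rng = random.Random(0)
cal_err, cal_sig = synthetic_predictions(rng, 1000)
test_err, test_sig = synthetic_predictions(rng, 5000)

q = conformal_multiplier(cal_err, cal_sig, alpha=0.1)
raw_cov = coverage(test_err, test_sig, 1.645)   # naive 90 % Gaussian interval
cal_cov = coverage(test_err, test_sig, q)
print(f"multiplier q = {q:.2f}; coverage raw = {raw_cov:.3f}, calibrated = {cal_cov:.3f}")
```

The naive interval covers only about 60 % of test errors here. The calibrated multiplier restores roughly the requested 90 %.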

Troubleshooting Guide: Frequently Asked Questions

FAQ 1: How can I ensure the quality and sufficiency of my training data for ML-driven NAMD?

Answer: Generating a comprehensive, high-quality dataset is a foundational challenge. Best practices involve active learning to efficiently sample the configurational space. You should employ uncertainty-biased molecular dynamics, where simulations are biased towards regions where the machine-learned interatomic potential (MLIP) exhibits high predictive uncertainty. This method simultaneously captures rare events and extrapolative regions, which are crucial for developing a uniformly accurate potential [3]. Ensure your reference data comes from high-level electronic structure methods (like CASPT2 or ADC(2)) that can correctly describe excited-state phenomena, including bond breaking, conical intersections, and charge-transfer states [38] [39].
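The selection step of such an active-learning cycle can be sketched with a committee (ensemble) of models, using the spread of their predictions as the uncertainty. Committee disagreement is only one common uncertainty metric, and [3] may use a different one. The one-dimensional "models" below are hypothetical stand-ins. They agree inside a training region |x| ≤ 1 and diverge outside it.

```python
import statistics


def committee_std(models, x):
    """Uncertainty as the population standard deviation of committee predictions."""
    return statistics.pstdev([model(x) for model in models])


def select_for_labeling(models, candidates, threshold, max_new):
    """Return up to max_new candidates whose uncertainty exceeds threshold, most uncertain first."""
    scored = sorted(((committee_std(models, x), x) for x in candidates), reverse=True)
    return [x for s, x in scored if s > threshold][:max_new]


def make_model(a):
    # Hypothetical surrogate: exact (x**2) inside |x| <= 1, member-specific error outside
    return lambda x: x * x + a * max(0.0, abs(x) - 1.0) ** 2


committee = [make_model(a) for a in (0.8, 1.0, 1.2)]
candidates = [0.5 * i for i in range(-6, 7)]   # stand-ins for MD-sampled configurations
picked = select_for_labeling(committee, candidates, threshold=0.01, max_new=4)
print("send to reference electronic-structure calculation:", picked)
```

Only configurations outside the region where the committee agrees are sent for new reference calculations.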

FAQ 2: My ML model for properties like non-adiabatic couplings (NACs) is unstable. What could be wrong?

Answer: Instability often stems from the non-uniqueness of the quantum mechanical wavefunction phase. Properties such as NACs are derived from the wavefunction, which has an arbitrary phase; this can lead to sign changes in the reference data that are difficult for a model to learn [39]. To resolve this, implement a phase correction step during the pre-processing of your quantum chemical reference data. Before training, ensure the phases of the wavefunctions are consistent across all molecular geometries in your dataset. This often involves tracking the phase along a trajectory and correcting for sudden flips, leading to a smoother and more learnable target property for the ML model [39].
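A minimal version of this sign correction for real-valued quantities such as NAC vectors compares each frame with the previous, already-corrected frame. If their overlap is negative, the frame's sign is flipped. The sketch assumes the NACs are given as flat lists of components along a finely sampled trajectory. Many implementations instead decide the sign from wavefunction overlaps between consecutive geometries, so treat this as a simplified illustration.

```python
import math


def align_signs(frames):
    """Flip each frame's vector so its overlap with the previous corrected frame is non-negative."""
    aligned = [list(frames[0])]
    for vec in frames[1:]:
        overlap = sum(a * b for a, b in zip(aligned[-1], vec))
        aligned.append([-c for c in vec] if overlap < 0 else list(vec))
    return aligned


# Hypothetical smooth NAC vector rotating slowly along a trajectory
true_nacs = [[math.cos(0.1 * t), math.sin(0.1 * t), 0.2] for t in range(10)]
# Reference data with arbitrary sign flips at frames 3 and 7
raw_nacs = [[-c for c in v] if t in (3, 7) else v for t, v in enumerate(true_nacs)]
fixed = align_signs(raw_nacs)
print("signs restored:", fixed == true_nacs)
```

The overall sign of the first frame remains arbitrary; only consistency along the trajectory is enforced.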

FAQ 3: How can I improve the exploration of rare events in my active learning cycle?

Answer: Conventional molecular dynamics (MD) can struggle to sample rare but important events without prohibitively long simulation times. Instead of running unbiased MD, use an uncertainty-bias force during the MD simulation. This force is proportional to the gradient of the MLIP's uncertainty metric. By pushing the system towards less-explored, high-uncertainty regions of the configurational space, this technique promotes the discovery of both rare events and new extrapolative configurations more efficiently than unbiased MD or metadynamics [3]. For periodic systems, this approach can be extended by also applying a bias stress to explore different cell parameters [3].
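In its simplest form, the uncertainty bias adds a term pointing up the gradient of the uncertainty: F_total = F_phys + τ ∇σ, where τ > 0 controls the bias strength. The sketch below uses a hypothetical one-dimensional committee whose spread σ(x) plays the role of the MLIP uncertainty, together with a harmonic physical force. It takes the gradient by finite differences, whereas the cited work uses automatic differentiation for this step. The value of τ and the models are illustrative assumptions.

```python
import statistics


def make_model(a):
    # Hypothetical committee member: agrees with the others for |x| <= 1
    return lambda x: 0.5 * x * x + a * max(0.0, abs(x) - 1.0) ** 2


COMMITTEE = [make_model(a) for a in (0.8, 1.0, 1.2)]


def uncertainty(x):
    """Committee spread used as the uncertainty sigma(x)."""
    return statistics.pstdev([m(x) for m in COMMITTEE])


def biased_force(x, tau=0.5, h=1e-5):
    """Return (physical force -dU/dx for U = x**2 / 2, bias force tau * d(sigma)/dx)."""
    physical = -x
    bias = tau * (uncertainty(x + h) - uncertainty(x - h)) / (2.0 * h)
    return physical, bias


for x in (0.5, 1.5, 2.0, -2.0):
    physical, bias = biased_force(x)
    print(f"x = {x:+.1f}: physical = {physical:+.3f}, bias = {bias:+.3f}")
```

Inside the well-sampled region the bias vanishes. Outside it, the bias points away from the origin, toward higher uncertainty, partly counteracting the restoring physical force.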

FAQ 4: What are the main challenges in applying ML to full quantum dynamics?

Answer: While ML potentials are highly successful for mixed quantum-classical methods like trajectory surface hopping, their application to full quantum dynamics methods (e.g., MCTDH) is limited. The primary challenge is the curse of dimensionality. Full quantum dynamics methods explicitly treat the nuclear wavefunction, whose computational cost grows exponentially with the number of degrees of freedom. Currently, generating the massive amount of accurate quantum dynamics data required to train a general ML model for this purpose is computationally intractable [39]. Research therefore focuses on ML for on-the-fly NAMD, where ML predicts energies and forces for classical nuclei, rather than on learning the nuclear wavefunction itself [39].
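A naive estimate makes the exponential scaling concrete. A wavefunction stored on a direct-product grid with n points per degree of freedom and f degrees of freedom needs nᶠ complex amplitudes. The sketch assumes 10 grid points per degree of freedom and 16 bytes per double-precision complex amplitude. Methods such as MCTDH use more compact representations, so read the numbers as an illustration of the scaling, not as the actual memory needs of those methods.

```python
def grid_wavefunction_bytes(points_per_dof, n_dof, bytes_per_amplitude=16):
    """Memory for a wavefunction on a direct-product grid (complex128 amplitudes by default)."""
    return points_per_dof ** n_dof * bytes_per_amplitude


for n_dof in (3, 6, 9, 12, 30):
    print(f"{n_dof:2d} degrees of freedom: {grid_wavefunction_bytes(10, n_dof):.1e} bytes")
```

A nonlinear six-atom molecule already has 3 × 6 - 6 = 12 internal degrees of freedom. On this naive grid, that corresponds to about 16 TB.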

FAQ 5: How do I manage the complexity of analyzing large amounts of trajectory data from NAMD simulations?

Answer: The large volume of data from NAMD trajectories requires robust post-processing techniques. You should leverage machine learning-based dimensionality reduction and clustering methods. Techniques like Principal Component Analysis (PCA) or t-Distributed Stochastic Neighbor Embedding (t-SNE) can project high-dimensional trajectory data onto a lower-dimensional space, revealing the main structural evolution pathways [39]. Subsequently, clustering algorithms (e.g., k-means) can be used to group similar molecular configurations, helping to identify key metastable states and major nonradiative decay channels visited during the dynamics [39].
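A minimal version of this pipeline runs PCA through a singular value decomposition, then k-means on the projected coordinates, sketched below with NumPy. The synthetic "trajectory" stands in for real features such as internal coordinates: two noisy metastable states in a 30-dimensional feature space. The farthest-point initialization is one simple deterministic choice among several. In practice, a library such as scikit-learn would normally be used.

```python
import numpy as np


def pca_project(X, n_components=2):
    """Project centered data onto its leading principal axes (rows of Vt from the SVD)."""
    Xc = X - X.mean(axis=0)
    _, _, Vt = np.linalg.svd(Xc, full_matrices=False)
    return Xc @ Vt[:n_components].T


def kmeans(Y, k, n_iter=100):
    """Plain Lloyd's k-means with deterministic farthest-point initialization."""
    centers = [Y[0]]
    for _ in range(1, k):
        d2 = np.min([((Y - c) ** 2).sum(axis=1) for c in centers], axis=0)
        centers.append(Y[np.argmax(d2)])
    centers = np.array(centers)
    for _ in range(n_iter):
        dist2 = ((Y[:, None, :] - centers[None, :, :]) ** 2).sum(axis=2)
        labels = dist2.argmin(axis=1)
        new_centers = np.array([Y[labels == j].mean(axis=0) for j in range(k)])
        if np.allclose(new_centers, centers):
            break
        centers = new_centers
    return labels, centers


rng = np.random.default_rng(0)
state_a = rng.normal(0.0, 0.1, size=(200, 30))        # frames near metastable state A
state_b = rng.normal(0.0, 0.1, size=(200, 30)) + 1.0  # frames near metastable state B
frames = np.vstack([state_a, state_b])

projected = pca_project(frames, n_components=2)
labels, _ = kmeans(projected, k=2)
print("cluster sizes:", np.bincount(labels))
```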

Experimental Protocols & Data

Table 1: Comparison of Electronic Structure Methods for Excited-State Reference Data

| Method | Typical Accuracy | Computational Cost | Key Strengths | Key Limitations for NAMD |

|---|---|---|---|---|

| Time-Dependent DFT (TDDFT) | Moderate | Low | Good for large systems; widely available | Accuracy depends on functional; can fail for charge-transfer, double excitations [38] |

| Multiconfigurational (CASSCF) | Qualitative to Good | High | Correctly describes bond breaking, conical intersections | Lacks dynamic correlation, can yield biased energies [38] |

| Perturbation Theory (CASPT2) | High | Very High | Adds dynamic correlation to CASSCF; high accuracy | Computationally expensive; not always feasible for dynamics [38] |

| Algebraic Diagrammatic Construction (ADC) | Moderate to High | Moderate | Systematic improvement via order (e.g., ADC(2), ADC(3)) | Higher orders are computationally demanding [38] |

Table 2: Research Reagent Solutions: Key Computational Tools

| Item | Function | Technical Notes |

|---|---|---|

| High-Level Electronic Structure Code | Generates reference data for energies, forces, NACs. | Examples: OpenMolcas, ORCA, Gaussian. Essential for accurate excited-state PESs [38] [39]. |

| Machine-Learned Interatomic Potential (MLIP) | Acts as a surrogate quantum chemistry method for fast evaluation of PESs. | Can be a neural network or kernel-based model. Reduces computational cost of NAMD by orders of magnitude [39] [3]. |

| Molecular Dynamics Engine | Propagates nuclear trajectories according to forces. | Must be compatible with MLIPs and surface hopping algorithms (e.g., Newton-X, SHARC). |

| Active Learning Framework | Manages the iterative process of data generation and model training. | Uses uncertainty metrics to select new configurations for quantum chemistry calculations, improving dataset efficiency [3]. |

Workflow Visualization

Diagram 1: Active Learning for Robust ML Potentials

Diagram 2: ML-Enabled Nonadiabatic Molecular Dynamics Workflow

Frequently Asked Questions (FAQs)

FAQ 1: What are the key differences between descriptor-based and fingerprint-based machine learning models for predicting aqueous solubility?

Descriptor-based models rely on predefined physicochemical properties (e.g., molecular topological indices, ring count) [40]. These are explicit, interpretable features calculated from the molecular structure. In contrast, fingerprint-based models, such as Morgan fingerprints (ECFP4), use a hashing technique to represent a molecule's structure as a binary bit-string based on the presence of circular substructures around each atom [40]. This method does not require pre-defining chemical building blocks.

From a performance perspective, a comparative study using Random Forest regression on a dataset of over 8,400 compounds showed that the descriptor-based model achieved a higher coefficient of determination (R² = 0.88) and a lower root-mean-square error (RMSE = 0.64) on test data compared to the fingerprint model (R² = 0.81, RMSE = 0.80) [40]. However, the fingerprint model offers superior interpretability of molecular-level interactions and is more compatible with thermodynamic analysis [40].
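For reference, the two metrics quoted above can be computed as below, using the standard definitions in plain Python. R² is 1 - SS_res/SS_tot, the convention used by, for example, scikit-learn's `r2_score`. RMSE is expressed in the units of the predicted property. It is therefore only comparable between models evaluated on the same target and test set. The example values are hypothetical.

```python
import math


def rmse(y_true, y_pred):
    """Root-mean-square error, in the units of the target property."""
    return math.sqrt(sum((t - p) ** 2 for t, p in zip(y_true, y_pred)) / len(y_true))


def r_squared(y_true, y_pred):
    """Coefficient of determination, 1 - SS_res / SS_tot."""
    mean = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot


# Hypothetical measured vs. predicted values for four compounds
measured = [-2.0, -3.5, -1.2, -4.8]
predicted = [-2.3, -3.1, -1.0, -4.4]
print(f"R^2 = {r_squared(measured, predicted):.3f}, RMSE = {rmse(measured, predicted):.3f}")
```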

FAQ 2: What are the primary challenges in simulating protein folding using molecular dynamics (MD)?

The two main, interconnected challenges are sampling and force field accuracy [41].

- Sampling: Folding events for even small, fast-folding proteins can occur on timescales of microseconds. Observing even a single folding event requires long, computationally expensive simulations, and statistically meaningful data on folding pathways and mechanisms require many such events [41].

- Force Field Accuracy: The empirical potential energy function (force field) must correctly describe the relative energies of the native state, the unfolded ensemble, and all key intermediates and transition states along the folding pathway. Inaccuracies can stabilize non-native states or shift melting temperatures, as observed in simulations of model systems such as the Trp-cage miniprotein [41].
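The sampling cost follows directly from the integration time step, because each step requires a full force evaluation. The sketch assumes a 2 fs step, a common choice when bonds to hydrogen are constrained but not a value from [41]. Under that assumption, a single microsecond of simulation already requires 5 × 10⁸ steps.

```python
def integration_steps(simulated_time_s, time_step_s=2e-15):
    """Number of MD steps needed to cover simulated_time_s with a fixed time step."""
    return round(simulated_time_s / time_step_s)


for label, t in (("1 ns", 1e-9), ("1 us", 1e-6), ("100 us", 1e-4)):
    print(f"{label:>6}: {integration_steps(t):.1e} steps")
```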

FAQ 3: What methods are available to account for protein flexibility in molecular docking?