Navigating Protein Fitness Landscapes: From Adaptive Walks to Clinical Applications


Abstract

This article provides a comprehensive overview of protein fitness landscapes and the principles of adaptive walks, tailored for researchers and drug development professionals. It explores the foundational concepts of fitness landscapes and epistasis, details cutting-edge methodologies from deep mutational scanning to machine learning models, addresses key challenges like evolutionary traps and rugged landscapes, and validates approaches through comparative analysis of experimental and computational strategies. By synthesizing theoretical models with practical applications in protein engineering and viral evolution prediction, this resource aims to bridge fundamental evolutionary principles with biomedical innovation.

The Topography of Evolution: Mapping Protein Fitness Landscapes

The fitness landscape is a foundational concept in evolutionary biology, providing a powerful metaphor for understanding the relationship between genotype and fitness. First proposed by Sewall Wright in 1932, a fitness landscape is a mapping from a set of genotypes to fitness, where the genotypes are organized based on their mutational connectivity [1]. This framework allows researchers to conceptualize evolution as a navigational process across a topographic surface, where populations ascend fitness peaks through the combined actions of mutation and selection. While initially a theoretical construct, the fitness landscape concept has become an indispensable tool for interpreting empirical data on protein evolution [2] [3].

This whitepaper traces the conceptual development of fitness landscapes from Wright's original formulation to their modern applications in protein engineering and evolutionary analysis. We explore how theoretical frameworks have evolved to accommodate high-dimensional genotypic spaces and discuss state-of-the-art methodologies for visualizing and analyzing these complex landscapes. Within the context of ongoing research on protein fitness landscapes and adaptive walks, this review aims to equip researchers and drug development professionals with both the theoretical foundation and practical tools needed to leverage fitness landscape concepts in their work.

Historical Development and Theoretical Foundations

Wright's Original Formulation and Visualizations

Sewall Wright introduced the fitness landscape concept in 1932, proposing two distinct methods for its representation. For small genotypic spaces, he advocated plotting individual genotypes and connecting them with lines to denote possible mutational transitions [1]. The spatial arrangement in these diagrams was determined either by designating a wild-type reference and plotting other genotypes based on their mutational distance from it, or through ad-hoc arrangements designed to reveal qualitative features of the landscape.

For larger genotypic spaces, Wright proposed a topographical metaphor, suggesting that continuous surfaces could serve as heuristics for understanding evolutionary dynamics [1]. He created iconic diagrams depicting populations as localized on adaptive peaks, with selection driving populations upward and mutation enabling exploration of the fitness surface. Despite their profound influence on evolutionary thinking, these simplified representations were criticized for their lack of mathematical rigor, with Provine describing them as "unintelligible" and "meaningless in any precise sense" [1].

The Challenge of High-Dimensional Genotypic Spaces

A significant limitation of Wright's heuristic approach emerges when considering the actual dimensionality of genotypic spaces. While visualizations typically reduce landscapes to two or three dimensions, real biological systems operate in extremely high-dimensional spaces. For instance, even a modest protein with 100 amino acid positions represents a genotypic space of 20¹⁰⁰ possible sequences [1].
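The size of this space, and the number of single-substitution neighbors each sequence has, can be checked directly. The Python sketch below evaluates the two counting formulas: 20^L sequences of length L, and L × 19 one-mutant neighbors per sequence. The helper names are hypothetical.

```python
def sequence_space_size(length: int, alphabet_size: int = 20) -> int:
    """Number of distinct sequences of the given length (exact integer)."""
    return alphabet_size ** length


def one_mutant_neighbors(length: int, alphabet_size: int = 20) -> int:
    """Each position can change to any of the other (alphabet_size - 1) letters."""
    return length * (alphabet_size - 1)


size = sequence_space_size(100)
# Python converts the int to float for the 'e' format; 20^100 fits in float range
print(f"20^100 = {size:.3e} ({len(str(size))} digits)")
print("one-mutant neighbors of a 100-residue protein:", one_mutant_neighbors(100))
```

The count runs to 131 decimal digits (about 1.27 × 10¹³⁰), yet each sequence has only 1,900 one-mutant neighbors. This combination of an astronomically large space and high connectivity per genotype is the regime that Gavrilets' argument addresses.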

This high dimensionality fundamentally alters the structure of fitness landscapes. Gavrilets demonstrated that in high-dimensional spaces, each genotype has numerous mutational neighbors, creating extensive connected networks of high-fitness genotypes even when fitness is assigned randomly [1]. This contrasts sharply with the isolated fitness peaks that appear natural in low-dimensional visualizations. The implication is profound: while Wright's shifting balance theory emphasized the difficulty of traversing fitness valleys, high-dimensional landscapes typically feature interconnected ridges that facilitate evolutionary exploration without requiring passage through deep valleys [1].
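Gavrilets' point can be illustrated with a small percolation experiment. The procedure is to label each genotype of a binary hypercube "viable" at random with probability p, then measure the largest cluster of viable genotypes connected by single mutations. This is a sketch under simplifying assumptions: two alleles per site, fitness reduced to viable or inviable, and illustrative parameter values. It is not the analysis reported in [1].

```python
import random
from collections import deque


def largest_viable_component(n_loci, p_viable, seed=0):
    """Return (size of largest connected viable cluster, number of viable genotypes)."""
    rng = random.Random(seed)
    n_genotypes = 1 << n_loci  # genotypes are bit strings encoded as integers
    viable = [rng.random() < p_viable for _ in range(n_genotypes)]
    seen = [False] * n_genotypes
    best = 0
    for start in range(n_genotypes):
        if not viable[start] or seen[start]:
            continue
        # Breadth-first search over single-bit-flip neighbors that are also viable
        size = 0
        queue = deque([start])
        seen[start] = True
        while queue:
            g = queue.popleft()
            size += 1
            for locus in range(n_loci):
                nb = g ^ (1 << locus)
                if viable[nb] and not seen[nb]:
                    seen[nb] = True
                    queue.append(nb)
        best = max(best, size)
    return best, sum(viable)


for n in (4, 8, 14):
    largest, total = largest_viable_component(n, p_viable=0.3)
    print(f"L={n:2d}: largest cluster holds {largest}/{total} viable genotypes")
```

At a fixed viability probability, the largest cluster typically captures a growing share of the viable genotypes as the number of loci increases. The reason is that the connectivity threshold on the hypercube falls roughly as 1/L.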

Table: Evolution of Fitness Landscape Concepts

| Era | Key Concept | Representation Method | Limitations |
|---|---|---|---|
| Classical (1930s) | Isolated fitness peaks; adaptive valleys | Low-dimensional continuous surfaces; genotype networks | Heuristic, non-rigorous visualizations |
| Late 20th Century | Neutral networks; holey landscapes | Statistical descriptions; connectivity graphs | Difficulty of empirical validation |
| Modern (21st Century) | High-dimensional interconnected networks | Eigenvector projections; smoothed landscapes | Computational complexity; data scarcity |

Modern Frameworks for Fitness Landscape Visualization and Analysis

Random Walk-Based Dimensionality Reduction

Contemporary approaches address the visualization challenge through rigorous dimensionality reduction techniques. A particularly powerful method uses the eigenvectors of the transition matrix describing evolutionary dynamics under weak mutation [1]. In this framework, a population is modeled as taking a biased random walk on the fitness landscape, with natural selection influencing transition probabilities between genotypes.

The method creates a low-dimensional representation where genotypes are positioned based on their "evolutionary distance" rather than mere mutational proximity. This evolutionary distance is formalized as the "commute time" - the expected number of generations required for a population to evolve from genotype i to j and back again [1]. By plotting genotypes using coordinates derived from the eigenvectors of the transition matrix, this approach generates visualizations where Euclidean distance directly reflects evolutionary accessibility, with genotypes connected by neutral paths drawn close together despite potentially large mutational distances, and genotypes separated by fitness valleys positioned far apart despite minimal mutational separation [1].
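The following is a minimal sketch of this construction for a small biallelic landscape. It relies on several assumptions that are not taken from [1]:

- a Moran-type fixation probability;
- a mutation rate of one per site, so times are in units of the inverse mutation rate rather than generations;
- continuous time;
- a hypothetical three-site landscape.

For a reversible chain, take each nontrivial right eigenvector r_k of the rate matrix, normalized so that Σ π_i r_k(i)² = 1, and scale it by 1/√(−λ_k). The resulting coordinates have squared Euclidean distances equal to the commute times when all axes are kept.

```python
import numpy as np


def moran_fixation(s, n):
    """Fixation probability of one mutant with log-fitness advantage s (Moran, size n)."""
    if abs(s) < 1e-12:
        return 1.0 / n
    return (1.0 - np.exp(-s)) / (1.0 - np.exp(-n * s))


def build_rate_matrix(log_fitness, n_pop=20):
    """Weak-mutation rate matrix for a biallelic landscape keyed by '0'/'1' strings."""
    genotypes = sorted(log_fitness)
    index = {g: i for i, g in enumerate(genotypes)}
    f = np.array([log_fitness[g] for g in genotypes])
    q = np.zeros((len(genotypes), len(genotypes)))
    for g in genotypes:
        for site in range(len(g)):
            nb = g[:site] + ("1" if g[site] == "0" else "0") + g[site + 1:]
            i, j = index[g], index[nb]
            # Mutants arise at rate n_pop (mutation rate 1 per site) and fix with prob. p_fix
            q[i, j] = n_pop * moran_fixation(f[j] - f[i], n_pop)
    np.fill_diagonal(q, -q.sum(axis=1))
    # Detailed balance holds with pi proportional to exp((n_pop - 1) * f)
    pi = np.exp((n_pop - 1) * (f - f.max()))
    return genotypes, q, pi / pi.sum()


def commute_time_embedding(q, pi):
    """Coordinates whose squared Euclidean distances equal pairwise commute times."""
    d_half = np.sqrt(pi)
    sym = d_half[:, None] * q / d_half[None, :]  # D^(1/2) Q D^(-1/2) is symmetric
    eigvals, eigvecs = np.linalg.eigh((sym + sym.T) / 2.0)
    # eigh sorts ascending; the last eigenvalue is the zero (stationary) mode, so drop it
    eigvals, eigvecs = eigvals[:-1], eigvecs[:, :-1]
    right = eigvecs / d_half[:, None]  # right eigenvectors with sum(pi * r**2) = 1
    coords = right / np.sqrt(-eigvals)  # scale axis k by 1 / sqrt(-lambda_k)
    return coords[:, ::-1]  # slowest modes (lambda closest to 0) first


# Hypothetical three-site log-fitness landscape
fitness = {"000": 0.0, "001": 0.05, "010": -0.1, "100": 0.05,
           "011": 0.0, "101": 0.15, "110": -0.05, "111": 0.1}
genotypes, q, pi = build_rate_matrix(fitness)
coords = commute_time_embedding(q, pi)
for g, (x, y) in zip(genotypes, coords[:, :2]):
    print(g, f"{x:+.3f}", f"{y:+.3f}")
```

Plotting only the leading axes keeps the slowest evolutionary transitions, because those axes carry the largest 1/(−λ_k) weights. This is why genotypes separated by valleys end up far apart in the picture.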

Graph-Based Formulation of Fitness Landscapes

The mathematical foundation for modern landscape analysis treats the genotypic space as a graph G = (V, E), where:

- V represents the set of genotypes (vertices)

- E represents possible mutational transitions (edges)

- Each vertex v ∈ V has an associated fitness w(v)

This graph-based formulation enables the application of sophisticated analytical tools, including the graph Laplacian, which quantifies the smoothness of the fitness landscape when treated as a signal on the graph [3].
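The Laplacian measures smoothness through the identity wᵀLw = Σ_{(u,v)∈E} (w(u) − w(v))², where L = D − A is the degree matrix minus the adjacency matrix. The quadratic form is small when mutational neighbors have similar fitness. The Python sketch below checks this identity on a hypothetical two-site landscape; the node indices and fitness values are made up for illustration.

```python
import numpy as np


def graph_laplacian(n_nodes, edges):
    """Combinatorial Laplacian L = D - A of an undirected, unweighted graph."""
    adj = np.zeros((n_nodes, n_nodes))
    for u, v in edges:
        adj[u, v] = adj[v, u] = 1.0
    return np.diag(adj.sum(axis=1)) - adj


def dirichlet_energy(fitness, edges):
    """Sum of squared fitness differences across mutational edges."""
    return sum((fitness[u] - fitness[v]) ** 2 for u, v in edges)


# Hypothetical 2-site biallelic landscape: genotypes 00, 01, 10, 11 as nodes 0..3
edges = [(0, 1), (0, 2), (1, 3), (2, 3)]
w = np.array([1.0, 1.2, 0.4, 1.5])
lap = graph_laplacian(4, edges)
print(w @ lap @ w, dirichlet_energy(w, edges))  # equal up to floating-point rounding
```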

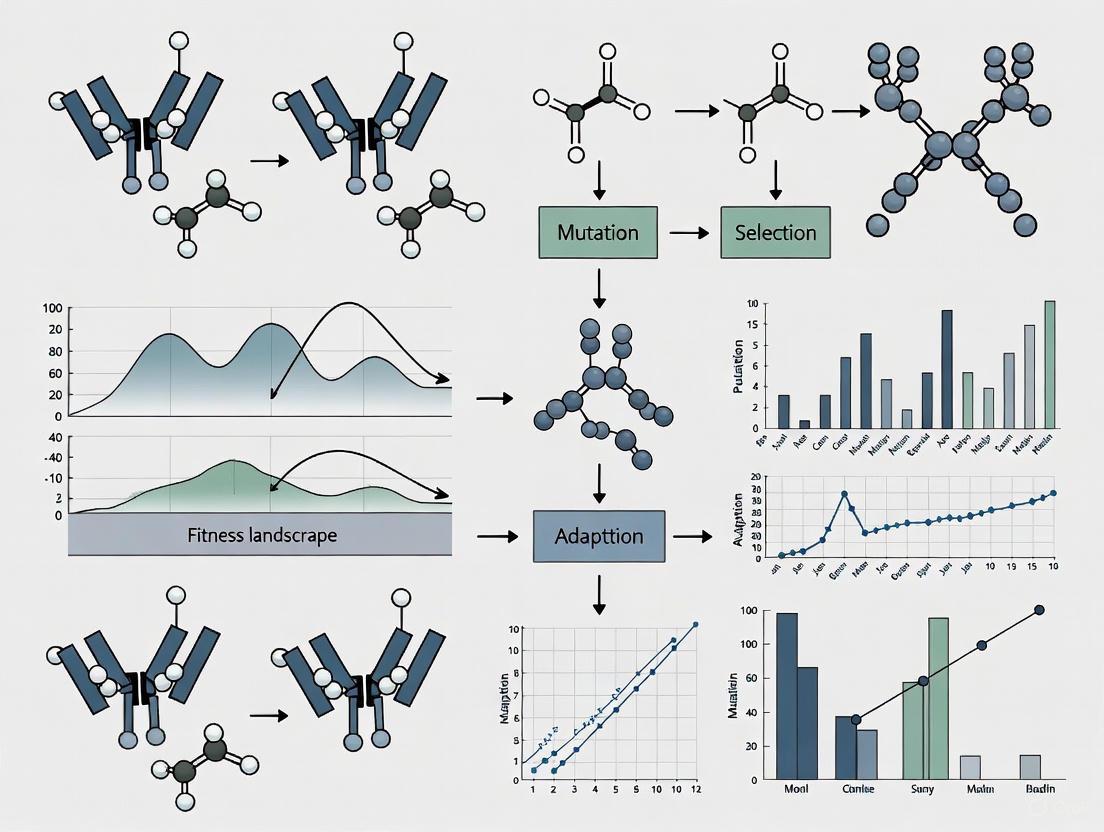

Diagram 1: Workflow for fitness landscape visualization showing the process from high-dimensional genotype space to interpretable evolutionary maps.

Fitness Landscapes in Protein Evolution Research

Empirical Protein Fitness Landscapes

Recent technological advances have enabled the empirical characterization of protein fitness landscapes, moving beyond theoretical models to data-driven analyses. Two landmark studies illustrate this progress:

1. E. coli Antitoxin Protein Landscape: This combinatorially complete landscape comprises fitness measurements for 7,882 antitoxin protein genotypes, with fitness quantified through microbial growth rates [2]. The comprehensive nature of this dataset enables rigorous analysis of mutational interactions and evolutionary trajectories.

2. Yeast tRNA Landscape: This landscape includes 4,176 transfer RNA genotypes in Saccharomyces cerevisiae, providing insights into RNA-protein interactions and their evolutionary constraints [2].

These empirical landscapes reveal several fundamental principles:

- Fitness landscapes contain extensive neutral networks that facilitate evolutionary exploration

- Epistatic interactions (where mutational effects depend on genetic background) are pervasive

- Certain regions of sequence space show enhanced evolutionary potential

Table: Characteristics of Empirical Fitness Landscapes

| Landscape Feature | E. coli Antitoxin Protein | Yeast tRNA |
|---|---|---|
| Number of Genotypes | 7,882 | 4,176 |
| Fitness Metric | Microbial growth rate | Functional competence |
| Combinatorial Completeness | Yes | Yes |
| Key Finding | Existence of evolvability-enhancing mutations | Connected neutral networks |
| Evolutionary Implications | Some mutations enhance potential for future adaptation | Structural constraints shape evolutionary paths |

Evolvability-Enhancing Mutations

A significant discovery from empirical landscape analysis is the existence of evolvability-enhancing mutations (EE mutations) - genetic changes that increase the likelihood that subsequent mutations will be adaptive [2]. Formally, a mutation from wild-type (wt) to mutant (m) is considered evolvability-enhancing if:

For neutral mutations (Δw = w(m) − w(wt) = 0):

$$\bar{w}(n_m) - \bar{w}(n_{wt}) > 0$$

For beneficial mutations (Δw > 0):

$$\bar{w}(n_m) - \bar{w}(n_{wt}) > \Delta w$$

where w̄(n_m) and w̄(n_wt) represent the mean fitness of the one-mutant neighbors of the mutant and wild-type genotypes, respectively [2].
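Rearranging the beneficial-case inequality gives w̄(n_m) − w(m) > w̄(n_wt) − w(wt). In words, a further mutation has a more favorable average effect when it occurs in the mutant than when it occurs in the wild type. This is why EE mutations shift the distribution of fitness effects. The Python sketch below applies the two criteria to a landscape stored as a dictionary. It is an illustration, not the analysis pipeline of [2]. Three choices in it are assumptions:

- the tolerance used to call a mutation neutral;
- skipping neighbors that are missing from the data;
- returning False for deleterious mutations.

```python
def mean_neighbor_fitness(genotype, fitness, alphabet):
    """Mean fitness of one-mutant neighbors present in the landscape (assumes >= 1)."""
    values = []
    for pos, current in enumerate(genotype):
        for letter in alphabet:
            if letter != current:
                nb = genotype[:pos] + letter + genotype[pos + 1:]
                if nb in fitness:  # assumption: unmeasured neighbors are skipped
                    values.append(fitness[nb])
    return sum(values) / len(values)


def is_evolvability_enhancing(wt, mut, fitness, alphabet, tol=1e-9):
    """Apply the neutral / beneficial EE criteria; deleterious mutations return False."""
    delta_w = fitness[mut] - fitness[wt]
    shift = (mean_neighbor_fitness(mut, fitness, alphabet)
             - mean_neighbor_fitness(wt, fitness, alphabet))
    if abs(delta_w) <= tol:  # treated as neutral
        return shift > 0
    if delta_w > 0:  # beneficial
        return shift > delta_w
    return False


landscape = {"00": 1.0, "01": 1.0, "10": 0.8, "11": 1.6}  # hypothetical fitness values
print(is_evolvability_enhancing("00", "01", landscape, alphabet="01"))  # True
```

Here the neighbors of 01 average 1.3, while the neighbors of 00 average 0.9, so the neutral mutation 00 → 01 counts as evolvability-enhancing. For protein data, pass the 20-letter amino acid alphabet instead of "01".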

These EE mutations constitute a small fraction of all mutations but significantly shift the distribution of fitness effects of subsequent mutations toward less deleterious outcomes and increase the incidence of beneficial mutations [2]. Populations that encounter EE mutations during adaptation can evolve to significantly higher fitness levels, suggesting these mutations may serve as evolutionary stepping stones across fitness landscapes.

Computational Methods for Protein Optimization

Smoothed Fitness Landscapes for Protein Engineering

The practical application of fitness landscape concepts to protein engineering faces significant challenges, including the combinatorial vastness of sequence space, experimental noise in fitness measurements, and the prevalence of local optima [3]. To address these limitations, researchers have developed computational approaches that intentionally smooth fitness landscapes to facilitate optimization.

The Gibbs sampling with Graph-based Smoothing (GGS) method represents protein sequences as nodes of a graph, with fitness values as node attributes. It then applies Tikhonov regularization, using the graph Laplacian, to smooth the fitness landscape [3]. This smoothing enforces the principle that similar sequences should have similar fitness values, and the resulting landscape is more amenable to gradient-based optimization.

The mathematical formulation defines the smoothed fitness ỹ as the minimizer of a penalized least-squares objective:

$$\tilde{y} = \arg\min_{\hat{y}} \left( \lVert y - \hat{y} \rVert_2^2 + \lambda\, \hat{y}^{\top} L \hat{y} \right)$$

where y is the vector of original fitness values, ŷ is a candidate vector of smoothed fitness values, λ ≥ 0 is a regularization parameter controlling the degree of smoothing, and L is the graph Laplacian matrix that encodes sequence similarity [3].
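The objective is quadratic in ŷ, and L is symmetric positive semidefinite. Setting the gradient −2(y − ŷ) + 2λLŷ to zero therefore gives the closed form ỹ = (I + λL)⁻¹y. The Python sketch below applies this to a few hypothetical sequences. It assumes an unweighted graph that links sequences at Hamming distance 1; the graph used in [3] may be constructed differently.

```python
import itertools

import numpy as np


def hamming_graph_laplacian(seqs):
    """Laplacian of the graph linking sequences that differ at exactly one position."""
    n = len(seqs)
    adj = np.zeros((n, n))
    for i, j in itertools.combinations(range(n), 2):
        if sum(a != b for a, b in zip(seqs[i], seqs[j])) == 1:
            adj[i, j] = adj[j, i] = 1.0
    return np.diag(adj.sum(axis=1)) - adj


def tikhonov_smooth(y, lap, lam):
    """Solve (I + lam * L) y_smooth = y, the minimizer of ||y - h||^2 + lam * h^T L h."""
    return np.linalg.solve(np.eye(len(y)) + lam * lap, y)


seqs = ["AA", "AC", "CA", "CC", "GA"]
y = np.array([1.0, 0.2, 0.9, 1.1, 3.0])  # hypothetical noisy fitness values
lap = hamming_graph_laplacian(seqs)
for lam in (0.0, 0.5, 5.0):
    print(lam, np.round(tikhonov_smooth(y, lap, lam), 3))
```

Larger λ pulls each value toward its neighbors' values, and λ = 0 returns the raw data. Because 1ᵀL = 0, the mean fitness is unchanged for every λ.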

Optimization in Smoothed Landscapes

After smoothing the landscape, the GGS method optimizes using Gibbs With Gradients (GWG). GWG uses the gradients of the fitness model to construct a discrete proposal distribution over mutations, in which mutations predicted to improve fitness receive higher probability [3]. This approach explores sequence space efficiently while progressively guiding sampling toward higher-fitness regions.
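The core of Gibbs With Gradients is a two-step move. First, a first-order Taylor expansion of the model scores every single-site substitution at once. Second, a Metropolis-Hastings step corrects for the biased proposal. The Python sketch below is not the GGS code. It assumes a hypothetical linear, site-independent fitness model. For that model, the gradient with respect to the one-hot encoding is just the weight matrix, so the Taylor estimate is exact; a neural network would instead supply its gradient through automatic differentiation. Tracking the best sequence seen is a convenience added here, not part of the sampler.

```python
import numpy as np


def flip_scores(weights, seq):
    """Taylor estimate of the fitness change for every (position, token) substitution.

    For the linear model f(x) = sum_i W[i, x_i], the gradient with respect to the
    one-hot input is W itself, so this estimate is exact."""
    grad = weights
    current = grad[np.arange(len(seq)), seq]
    return grad - current[:, None]


def proposal(weights, seq, temp):
    """GWG-style proposal: softmax of (estimated change in log-target) / 2 over all flips."""
    logits = flip_scores(weights, seq) / (2.0 * temp)
    logits[np.arange(len(seq)), seq] = -np.inf  # exclude "mutating" to the current token
    probs = np.exp(logits - logits.max())
    return probs / probs.sum()


def gwg_sample(weights, start, n_steps, temp=0.1, seed=0):
    """Metropolis-Hastings targeting p(x) ~ exp(f(x) / temp); also tracks the best sequence."""
    rng = np.random.default_rng(seed)

    def f(s):
        return weights[np.arange(len(s)), s].sum()

    seq = np.array(start)
    best = seq.copy()
    for _ in range(n_steps):
        q_fwd = proposal(weights, seq, temp)
        flat = int(rng.choice(q_fwd.size, p=q_fwd.ravel()))
        pos, tok = divmod(flat, weights.shape[1])
        new = seq.copy()
        new[pos] = tok
        q_rev = proposal(weights, new, temp)  # probability of proposing the reverse move
        log_accept = ((f(new) - f(seq)) / temp
                      + np.log(q_rev[pos, seq[pos]]) - np.log(q_fwd[pos, tok]))
        if np.log(rng.random()) < log_accept:
            seq = new
            if f(seq) > f(best):
                best = seq.copy()
    return best, f(best)


rng = np.random.default_rng(1)
weights = rng.normal(size=(8, 20))  # hypothetical 8-site, 20-token fitness model
start = np.zeros(8, dtype=int)
best, best_fit = gwg_sample(weights, start, n_steps=300)
print(best, round(float(best_fit), 3), "max possible:", round(float(weights.max(axis=1).sum()), 3))
```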

Diagram 2: GGS protein optimization workflow showing the integration of graph-based smoothing with discrete sampling methods.

This approach has demonstrated remarkable efficacy, achieving 2.5-fold fitness improvements over starting training sets in silico and significantly outperforming traditional methods in benchmarks using Green Fluorescent Protein (GFP) and Adeno-Associated Virus (AAV) datasets [3].

Experimental Protocols and Research Applications

Key Methodologies for Fitness Landscape Characterization

Combinatorially Complete Landscape Construction:

- Sequence Selection: Identify a wild-type sequence and define a mutational space of interest (typically focusing on specific residues or limited regions due to combinatorial explosion)

- Library Generation: Synthesize all possible combinatorial variants within the defined sequence space

- Fitness Assay: Measure fitness for each genotype using appropriate functional assays (e.g., microbial growth rates, fluorescence intensity, catalytic activity)

- Data Curation: Organize fitness measurements into a structured database with genotype-fitness pairs
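The library-generation step amounts to enumerating every residue combination at the chosen positions. The Python sketch below does this at the protein level only; the wild-type sequence and positions are hypothetical. Real libraries are built at the DNA level, often with degenerate codons such as NNK (32 codons encoding all 20 amino acids plus one stop codon), so the DNA library is larger than 20^k.

```python
import itertools

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"


def combinatorial_library(wild_type, positions, alphabet=AMINO_ACIDS):
    """Yield every variant carrying each combination of residues at the (0-based) positions."""
    for combo in itertools.product(alphabet, repeat=len(positions)):
        seq = list(wild_type)
        for pos, aa in zip(positions, combo):
            seq[pos] = aa
        yield "".join(seq)


wild_type = "MKVLDGATW"  # hypothetical sequence
library = list(combinatorial_library(wild_type, positions=(2, 4, 5)))
print(len(library), library[:3])
```

With four targeted sites, the same enumeration yields 20⁴ = 160,000 variants, which is the size of the GB1 landscape discussed later.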

Invasion Analysis Framework: The adaptive dynamics framework provides a mathematical approach for analyzing mutation invasion in fitness landscapes [4]. The methodology involves:

- Population Genetic Modeling: Describe species distributed across habitats with distinct selective environments

- Thermodynamic Parameterization: Model protein stability using temperature-dependent enthalpy and entropy contributions

- Invasion Fitness Calculation: Determine whether mutant genotypes can invade wild-type populations

- Evolutionary Trajectory Simulation: Model successive mutation fixation events to understand long-term evolutionary dynamics
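The thermodynamic and invasion steps can be illustrated with a deliberately simplified model. The sketch rests on the following assumptions:

- Folding follows a two-state model with ΔG_unfold(T) = ΔH − TΔS, ignoring heat-capacity changes.
- Fitness is proportional to the fraction of folded protein.
- In place of the habitat-structured model of [4], the population experiences each temperature for a fixed fraction of generations.

Under the last assumption, a rare mutant's long-run growth rate relative to the resident is the time-weighted average of the log fitness ratio. All parameter values are hypothetical.

```python
import math

R = 8.314  # gas constant, J / (mol K)


def fraction_folded(dh, ds, temp_k):
    """Two-state folding: dG_unfold = dH - T dS (heat-capacity change ignored)."""
    dg_unfold = dh - temp_k * ds
    return 1.0 / (1.0 + math.exp(-dg_unfold / (R * temp_k)))


def invasion_fitness(mutant, resident, temps, weights):
    """Long-run log growth rate of a rare mutant relative to the resident.

    Assumes temperature temps[k] is experienced for a fraction weights[k] of
    generations and that fitness is proportional to the fraction folded."""
    total = 0.0
    for temp, frac in zip(temps, weights):
        ratio = fraction_folded(*mutant, temp) / fraction_folded(*resident, temp)
        total += frac * math.log(ratio)
    return total


resident = (200e3, 600.0)  # hypothetical dH (J/mol), dS (J/(mol K)); Tm = dH/dS ~ 333 K
mutant = (210e3, 620.0)  # hypothetical stabilized variant; Tm ~ 339 K
temps = (310.0, 330.0)  # two thermal environments (K)
weights = (0.5, 0.5)  # fraction of generations spent in each
print(f"invasion fitness: {invasion_fitness(mutant, resident, temps, weights):+.4f}")
```

A positive value means the rare mutant increases in frequency and can invade. Repeating this test mutation by mutation gives a crude version of the trajectory-simulation step.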

The Scientist's Toolkit: Essential Research Reagents and Materials

Table: Key Research Reagents for Fitness Landscape Studies

| Reagent/Material | Function/Application | Example Use Cases |
|---|---|---|
| Combinatorial DNA Libraries | Systematic exploration of mutational space | Constructing all possible variants at targeted residues |
| High-Throughput Sequencing Platforms | Genotype identification and frequency tracking | Monitoring evolutionary dynamics in experimental populations |
| Fluorescence-Activated Cell Sorting (FACS) | Isolation of functional protein variants | GFP fitness landscape characterization |
| Microbial Growth Assays | Fitness quantification via growth rates | Antitoxin protein fitness measurements |
| Thermodynamic Stability Assays | Measuring protein folding stability | Characterizing thermal adaptation in proteins |
| Graph Analysis Software | Implementing dimensionality reduction algorithms | Constructing evolutionary accessibility maps |

The concept of the fitness landscape has evolved dramatically from Wright's original heuristic visualizations to become a rigorous framework for understanding and engineering molecular evolution. Modern approaches recognize the high-dimensional nature of genotypic spaces and leverage sophisticated mathematical tools to create meaningful low-dimensional representations that reflect evolutionary accessibility rather than mere mutational proximity. Empirical characterization of protein fitness landscapes has revealed fundamental principles, including the existence of evolvability-enhancing mutations that increase evolutionary potential. Concurrently, computational methods that intentionally smooth fitness landscapes have demonstrated remarkable efficacy in protein engineering applications, enabling the design of novel variants with significantly enhanced properties. As these approaches continue to mature, fitness landscape analysis promises to play an increasingly central role in basic evolutionary research and applied biotechnology, including therapeutic protein development and enzyme engineering for industrial applications.

The concept of a fitness landscape, first introduced by Sewall Wright, provides a powerful framework for understanding protein evolution [5] [6]. In this conceptual model, each point in a high-dimensional sequence space represents a unique protein variant, with the landscape's height corresponding to its fitness or functional proficiency [5] [7]. Protein evolution can then be visualized as an adaptive walk across this landscape, where populations accumulate beneficial mutations through a process of mutation and natural selection, moving toward fitness peaks [8] [6]. Theoretical models of adaptive walks, such as the Orr-Gillespie model, predict a pattern of diminishing returns, whereby populations farther from their fitness optimum take larger adaptive steps than those closer to their optimal configuration [8] [6].

The GB1 domain of streptococcal protein G has emerged as a quintessential model system for empirically characterizing these theoretical concepts [9] [5]. This small 56-amino-acid domain binds to the Fc region of immunoglobulin G (IgG) and possesses a well-defined structure featuring an α-helix packed against a four-stranded β-sheet [10] [11]. Its modest size, combined with its extensive characterization through high-throughput experiments, makes GB1 an ideal subject for mapping sequence-function relationships and testing fundamental principles of protein evolution [9] [5].

Comprehensive Mapping of the GB1 Fitness Landscape

The Combinatorial Four-Site Landscape

A landmark in experimental fitness landscape characterization was the comprehensive analysis of all 160,000 (20⁴) possible amino acid combinations at four key positions (V39, D40, G41, and V54) in GB1 [5]. These sites were chosen because they constitute an epistatic hotspot, containing 12 of the top 20 positively epistatic interactions among all pairwise interactions in GB1 [5]. This design let researchers move beyond traditional diallelic landscapes, which consider only two alleles per site, and explore all 20 amino acids at each of the four positions.

Table 1: Key Findings from the GB1 Four-Site Fitness Landscape Study

| Aspect Characterized | Finding | Implication |
|---|---|---|
| Beneficial Mutants | 2.4% of the 160,000 variants showed fitness > wild-type | The landscape contains numerous fitness peaks, not just a single optimum |
| Epistasis Prevalence | Widespread sign epistasis and reciprocal sign epistasis observed | Constrains evolutionary paths through sequence space |
| Direct Path Analysis | Only 1-12 selectively accessible direct paths found among 29 subgraphs | Evolutionary accessibility varies significantly between genotypes |
| Indirect Paths | Identified paths involving gain and subsequent loss of mutations | Circumvents evolutionary traps created by reciprocal sign epistasis |
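The "Direct Path Analysis" row counts direct paths between two genotypes. A direct path changes each differing site exactly once, and it is selectively accessible if fitness rises at every step. Genotypes differing at k sites are connected by k! direct paths, which is 24 for the four GB1 sites. The brute-force Python sketch below counts accessible paths. It assumes strict fitness increase as the accessibility criterion and a combinatorially complete landscape, so every intermediate genotype is present. The example landscapes are hypothetical.

```python
import itertools
import math


def accessible_direct_paths(start, end, fitness):
    """Count (accessible, total) shortest mutational paths from start to end."""
    diff_sites = [i for i, (a, b) in enumerate(zip(start, end)) if a != b]
    accessible = 0
    for order in itertools.permutations(diff_sites):  # each order is one direct path
        current = list(start)
        prev_fit = fitness[start]
        ok = True
        for site in order:
            current[site] = end[site]
            fit = fitness["".join(current)]
            if fit <= prev_fit:  # a non-increasing step blocks the path
                ok = False
                break
            prev_fit = fit
        accessible += ok
    return accessible, math.factorial(len(diff_sites))


additive = {"".join(b): sum(int(c) for c in b) for b in itertools.product("01", repeat=3)}
rse = {"00": 1.0, "01": 0.8, "10": 0.9, "11": 1.5}  # both single steps are downhill
print(accessible_direct_paths("000", "111", additive))
print(accessible_direct_paths("00", "11", rse))
```

On the additive landscape every path is accessible. In the second landscape the double mutant is the fittest genotype but cannot be reached directly, because both single mutations are deleterious. Indirect paths can escape this kind of trap.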

The research employed mRNA display coupled with Illumina sequencing to measure the fitness of all 160,000 variants in a single experiment [5]. The fitness metric incorporated both stability (the fraction of folded proteins) and function (binding affinity to IgG-Fc), providing a biologically relevant measure of protein performance [5]. This high-throughput approach revealed that while most mutants had reduced fitness compared to wild-type GB1, a significant proportion (2.4%) were beneficial, indicating multiple regions of high fitness in the localized landscape [5].

Experimental Methodology for High-Throughput Fitness Mapping

The mRNA display technique used in this comprehensive mapping involves several critical steps that enable accurate fitness quantification for thousands of variants in parallel:

Library Construction: A mutant library containing all possible amino acid combinations at the four target sites is generated through codon randomization, ensuring complete coverage of the sequence space [5].

In Vitro Selection: The protein variants are subjected to binding selection against IgG-Fc, during which functional binders are retained while non-functional variants are washed away [5].

Deep Sequencing: The relative frequency of each variant before and after selection is quantified using Illumina sequencing, allowing calculation of enrichment factors [5].

Fitness Calculation: The fitness of each variant is determined relative to wild-type GB1 by comparing the logarithmic ratios of sequence frequencies before and after selection, normalized to the wild-type sequence [5].
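The fitness calculation can be sketched as follows. Normalizing each variant's enrichment to that of the wild type cancels the total read counts, because (out_v/N_out)/(in_v/N_in) divided by (out_wt/N_out)/(in_wt/N_in) equals (out_v/in_v)/(out_wt/in_wt). Raw counts can therefore be used directly. The pseudocount, the variant names, and the counts are all hypothetical.

```python
import math


def relative_log_fitness(counts_in, counts_out, wt, pseudocount=0.5):
    """Log enrichment through selection, normalized to wild type (0 = wild-type-like)."""
    def log_enrichment(v):
        # Pseudocount (an assumption) avoids log(0) for variants lost during selection
        return math.log((counts_out.get(v, 0) + pseudocount) / (counts_in[v] + pseudocount))

    wt_log = log_enrichment(wt)
    return {v: log_enrichment(v) - wt_log for v in counts_in}


counts_in = {"VDGV": 1000, "AAAA": 800, "WWLA": 500}  # hypothetical pre-selection reads
counts_out = {"VDGV": 2000, "AAAA": 400, "WWLA": 3000}  # hypothetical post-selection reads
fit = relative_log_fitness(counts_in, counts_out, wt="VDGV")
print({v: round(x, 3) for v, x in fit.items()})
```

Exponentiating the values gives fitness as a linear ratio relative to wild type.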

This methodology provides a robust quantitative fitness measure that captures the combined effects of mutations on protein folding, stability, and binding function—key determinants of biological fitness in evolutionary contexts.

Neural Network Extrapolation in GB1 Fitness Landscapes

Machine Learning-Guided Landscape Exploration

Recent advances have combined empirical fitness mapping with machine learning to explore regions of the GB1 fitness landscape beyond experimentally characterized territories [9]. Neural network models trained on local sequence-function information (approximately 500,000 single and double mutants) can infer the complete fitness landscape and guide the search for high-fitness sequences through in silico design [9]. This approach represents a powerful methodology for extrapolating beyond the training data to identify novel functional sequences.

Table 2: Performance Comparison of Neural Network Architectures on GB1 Fitness Prediction

| Model Architecture | Key Characteristics | Extrapolation Performance | Design Preferences |
|---|---|---|---|
| Linear Model (LR) | Assumes additive effects of mutations | Poor performance due to inability to capture epistasis | Limited to local exploration near training data |
| Fully Connected Network (FCN) | Captures nonlinearities and epistasis | Excels at local extrapolation for designing high-fitness proteins | Prefers smooth landscape regions with prominent peaks |
| Convolutional Neural Network (CNN) | Parameter sharing across sequence; detects patterns | Can design folded but non-functional proteins at high mutation distances | Captures fundamental biophysical properties |
| Graph Convolutional Network (GCN) | Incorporates structural context | Best recall for identifying high-fitness 4-mutants | Leverages structural information for prediction |

Researchers systematically evaluated different neural network architectures by training them on the GB1 double mutant data and then using simulated annealing to optimize each model over sequence space, designing thousands of GB1 variants sampling increasingly distant regions (5-50 mutations from wild-type) [9]. The designs were experimentally validated using a high-throughput yeast display assay that simultaneously assessed variant foldability and IgG binding [9]. This rigorous experimental framework enabled direct comparison of each architecture's capacity for extrapolative protein design.
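Optimizing a trained model by simulated annealing can be sketched as a loop of random single-site moves with Boltzmann acceptance. Several parts of the sketch below are assumptions, not the protocol of [9]:

- the geometric cooling schedule;
- the move set;
- the rule that rejects moves taking a design more than a set number of mutations from wild type;
- the toy scoring function standing in for a neural network.

```python
import math
import random

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"


def hamming(a, b):
    return sum(x != y for x, y in zip(a, b))


def simulated_annealing(model, wild_type, max_mutations, n_steps=5000,
                        t_start=1.0, t_end=0.01, seed=0):
    """Maximize model(seq) with single-site moves, staying within max_mutations of wild type."""
    rng = random.Random(seed)
    current, current_score = wild_type, model(wild_type)
    best, best_score = current, current_score
    for step in range(n_steps):
        # Geometric cooling from t_start to t_end
        temp = t_start * (t_end / t_start) ** (step / max(1, n_steps - 1))
        pos = rng.randrange(len(current))
        aa = rng.choice(AMINO_ACIDS.replace(current[pos], ""))
        candidate = current[:pos] + aa + current[pos + 1:]
        if hamming(candidate, wild_type) > max_mutations:
            continue  # reject moves that leave the allowed mutational distance
        score = model(candidate)
        # Always accept improvements; accept worse moves with Boltzmann probability
        if score >= current_score or rng.random() < math.exp((score - current_score) / temp):
            current, current_score = candidate, score
            if score > best_score:
                best, best_score = candidate, score
    return best, best_score


target = "MKTWYVAKQHEL"  # hypothetical sequence the toy model rewards
wild_type = "MKTAYVAAQHGL"  # hypothetical starting sequence (3 differences from target)


def toy_model(seq):
    """Hypothetical stand-in for a trained network: counts matches to the target."""
    return sum(a == b for a, b in zip(seq, target))


best, score = simulated_annealing(toy_model, wild_type, max_mutations=2)
print(best, score, "mutations:", hamming(best, wild_type))
```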

Ensemble Methods for Robust Protein Design

A critical finding from this research was that individual neural networks exhibit significant prediction variance when extrapolating far from their training data, due to millions of parameters that remain unconstrained by the limited training examples [9]. To address this challenge, researchers implemented ensemble predictors (EnsM and EnsC) that combined predictions from 100 CNNs with different random initializations [9]. The ensemble approach returned either the median (EnsM) or the conservative lower 5th percentile (EnsC) prediction for each sequence, substantially improving the robustness of protein design compared to single models [9].
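Once member predictions are available, the two ensemble scores reduce to per-sequence order statistics. The Python sketch below assumes the predictions have already been collected into an (n_models × n_sequences) array; training the 100 CNNs is out of scope, and the simulated predictions are hypothetical. The lower percentile penalizes sequences on which the models disagree, which tends to happen far from the training data.

```python
import numpy as np


def ensemble_scores(predictions):
    """Median (EnsM-style) and lower 5th percentile (EnsC-style) across models."""
    preds = np.asarray(predictions, dtype=float)
    ens_median = np.median(preds, axis=0)
    ens_conservative = np.percentile(preds, 5, axis=0)
    return ens_median, ens_conservative


rng = np.random.default_rng(0)
# Hypothetical predictions from 100 models for two sequences:
# models agree on sequence 0 but disagree strongly on sequence 1
preds = np.column_stack([rng.normal(1.0, 0.05, 100), rng.normal(1.2, 1.0, 100)])
ens_m, ens_c = ensemble_scores(preds)
print("EnsM:", np.round(ens_m, 3), "EnsC:", np.round(ens_c, 3))
```

Under the conservative score, the sequence with consistent predictions ranks above the one with a slightly higher but uncertain mean.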

The experimental results demonstrated that while all model architectures could extrapolate to design functional proteins with 2.5-5× more mutations than present in the training data, performance decreased sharply with further extrapolation [9]. Simpler models like FCNs excelled at local extrapolation for designing high-fitness proteins, while more sophisticated CNNs could venture deeper into sequence space to design proteins that folded correctly but often lost function—suggesting these models captured fundamental biophysical properties related to protein folding even when functional details were inaccurate [9].

Visualization of Experimental and Computational Workflows

High-Throughput Fitness Landscape Characterization

Diagram: High-Throughput Fitness Mapping Workflow.

ML-Guided Protein Design and Validation

Diagram: ML-Guided Design and Validation Pipeline.

The Scientist's Toolkit: Essential Research Reagents and Methods

Table 3: Key Research Reagent Solutions for GB1 Fitness Landscape Studies

| Reagent/Method | Function/Application | Key Features |
|---|---|---|
| GB1 B1 Domain | Model protein for fitness landscape studies | 56 amino acids; IgG-binding; well-characterized structure [9] [11] |
| mRNA Display | High-throughput fitness quantification | Couples genotype to phenotype; enables deep sequencing readout [5] |
| Yeast Display | Experimental validation of designs | Simultaneously assesses protein folding and binding function [9] |
| Neural Network Ensembles | Robust fitness prediction | Combines multiple models to reduce prediction variance [9] |
| Simulated Annealing | In silico sequence optimization | Guided search through sequence space for high-fitness designs [9] |

The experimental characterization of GB1's high-dimensional fitness landscape has provided fundamental insights into the principles governing protein evolution. Indirect paths circumvent the evolutionary traps created by epistasis by temporarily gaining a mutation and later losing it [5]. Their discovery shows how proteins can navigate complex fitness landscapes and overcome rugged topography that would block adaptation if evolution were restricted to direct paths.

Furthermore, the integration of machine learning with empirical fitness mapping represents a paradigm shift in protein engineering [9] [12]. By demonstrating that neural networks can extrapolate from local fitness measurements to guide the design of novel functional sequences, this research establishes a framework for accelerated protein optimization that reduces experimental burden while expanding the explorable sequence space [9]. The finding that different neural network architectures capture distinct aspects of the fitness landscape suggests that hybrid approaches or carefully chosen ensembles may provide the most robust strategy for protein design applications.

The GB1 case study exemplifies how detailed empirical characterization of model systems can yield general principles that extend to broader protein engineering and evolutionary biology. As methods for fitness landscape mapping continue to advance, combining deeper mechanistic insights from biophysical studies with increasingly sophisticated computational models, our ability to predictively engineer proteins with novel functions will continue to improve, with significant implications for therapeutic development, enzyme engineering, and understanding the fundamental constraints on protein evolution.

Within the metaphorical fitness landscape, where genotype determines evolutionary fitness, epistasis—the interaction between mutations—plays a definitive role in sculpting the topography that guides adaptive evolution. This technical review focuses on two severe forms of epistasis, sign epistasis and reciprocal sign epistasis, which create evolutionary constraints by rendering mutational effects dependent on genetic background. We detail the mechanistic causes of these interactions, from signaling cascades to physical atomic interactions within proteins, and summarize quantitative evidence from experimental fitness landscapes. Furthermore, we provide protocols for measuring epistasis and discuss its profound implications for predicting evolutionary trajectories and combating antibiotic resistance. The evidence consolidated herein underscores that genetic interaction is not a peripheral phenomenon but a central architect of the rugged, multi-peaked fitness landscapes that define molecular evolution.

The concept of the fitness landscape, introduced by Sewall Wright, maps the relationship between genotype and evolutionary fitness, providing a powerful metaphor for visualizing adaptation as a "walk" across a topographic surface [8] [6]. Populations evolve by accumulating beneficial mutations, "walking" from low-fitness valleys towards higher-fitness peaks. A critical model describing this process is the adaptive walk model, which predicts a pattern of diminishing returns [8] [6]. According to this model, a population or gene starting far from its fitness optimum tends to fix mutations with large fitness effects initially. As it approaches a fitness peak, the fixed mutations have progressively smaller effects because fewer large-benefit mutations remain available [8] [6].
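Diminishing returns can be seen in a short simulation. The Python sketch below substitutes Fisher's geometric model for the adaptive walk models cited above. It also assumes that every beneficial mutation fixes, a strong-selection shortcut that ignores fixation probabilities. All parameter values are hypothetical.

```python
import numpy as np


def adaptive_walk(n_traits=5, start_distance=3.0, mut_sd=0.3, n_steps=20000, seed=0):
    """Adaptive walk in Fisher's geometric model; returns the gain of each fixed mutation.

    Log-fitness is -|z|^2 / 2, so the optimum is at the origin."""
    rng = np.random.default_rng(seed)
    z = np.zeros(n_traits)
    z[0] = start_distance
    log_w = -0.5 * z @ z
    gains = []
    for _ in range(n_steps):
        candidate = z + rng.normal(0.0, mut_sd, n_traits)
        new_log_w = -0.5 * candidate @ candidate
        if new_log_w > log_w:  # assumption: every beneficial mutation fixes
            gains.append(new_log_w - log_w)
            z, log_w = candidate, new_log_w
    return np.array(gains)


gains = adaptive_walk()
half = len(gains) // 2
print(f"{len(gains)} fixed mutations; mean gain in first half {gains[:half].mean():.3f}, "
      f"second half {gains[half:].mean():.3f}")
```

Early steps, taken far from the optimum, typically carry much larger fitness gains than late ones. This is the diminishing-returns pattern the model predicts.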

Strong evidence for this model comes from the study of gene age, which shows that younger genes, being further from their optimum, experience both a faster rate of adaptive evolution (ωa) and accumulate mutations with larger physicochemical effects compared to older genes [8] [6]. This walk, however, is not freeform. Its trajectory and ultimate destination are profoundly shaped by the topography of the landscape, a topography largely sculpted by epistasis.

Defining the Architects of Ruggedness: Sign and Reciprocal Sign Epistasis

Epistasis occurs when the effect of a mutation depends on its genetic background. The most severe forms create rugged landscapes with multiple peaks and valleys.

- Sign Epistasis: This occurs when a mutation is beneficial in one genetic background but deleterious in another. For example, Mutation A may be beneficial in the wild-type background but deleterious in a background that already contains Mutation B [13] [14].

- Reciprocal Sign Epistasis (RSE): This is a more extreme form in which each mutation changes sign in the background of the other. A typical case involves two mutations that are individually beneficial but whose combination is less fit than either single mutant, so that each mutation is deleterious in the background of the other [13] [14]. This interaction is a necessary condition for the existence of multiple local fitness peaks on a landscape, as it creates a fitness valley between two genotypes [13].

The table below categorizes the scenarios that lead to sign epistasis based on the effects of individual mutations.

Table 1: Categories of Sign Epistasis Based on Single Mutation Effects

| Single Mutation Effects | Condition for Sign Epistasis | Condition for Reciprocal Sign Epistasis (RSE) |
|---|---|---|
| Beneficial + Detrimental [14] | Double mutant (AB) is fitter than the single beneficial mutant (Ab) OR less fit than the single detrimental mutant (aB). | Not possible for this combination. |
| Beneficial + Beneficial [14] | Double mutant (AB) is less fit than at least one single mutant (i.e., less fit than the better of the two). | Double mutant (AB) is less fit than both single mutants. |
| Detrimental + Detrimental [14] | Double mutant (AB) is fitter than at least one single mutant (i.e., fitter than the worse of the two). | Double mutant (AB) is fitter than both single mutants. |
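The table's conditions follow from one rule: check whether each mutation's effect changes sign when the other mutation is present. The Python sketch below applies that rule to the four genotypes of a two-locus system. It assumes the fitness values are on a scale where the absence of epistasis means additivity, such as log fitness. It also treats a zero effect as no sign change.

```python
def classify_epistasis(w_ab, w_Ab, w_aB, w_AB, tol=1e-12):
    """Classify a two-locus interaction; lowercase = wild-type allele, uppercase = mutant."""
    a_alone, a_with_b = w_Ab - w_ab, w_AB - w_aB  # effect of A without / with B
    b_alone, b_with_a = w_aB - w_ab, w_AB - w_Ab  # effect of B without / with A
    a_flips = a_alone * a_with_b < 0
    b_flips = b_alone * b_with_a < 0
    if a_flips and b_flips:
        return "reciprocal sign"
    if a_flips or b_flips:
        return "sign"
    if abs((w_AB - w_ab) - (a_alone + b_alone)) > tol:
        return "magnitude"
    return "none"


print(classify_epistasis(1.0, 1.2, 1.3, 0.9))  # beneficial + beneficial, AB below both
print(classify_epistasis(1.0, 1.2, 0.8, 1.4))  # beneficial + detrimental, AB above Ab
```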

Mechanistic Causes of Epistasis

The manifestation of sign and reciprocal sign epistasis is not arbitrary; it arises from fundamental biological mechanisms.

Signaling Cascades and Gene Regulatory Networks

In hierarchical signaling cascades, mutations in upstream and downstream components can exhibit strong epistasis. A synthetic bacterial signaling cascade demonstrated that mutations affecting transcription factors' binding affinities can readily produce sign epistasis [14]. The network's architecture—whether a linear cascade or a system with feedback that produces a peaked response—predisposes it to these interactions. In one peaked-response network, over 50% of significant epistatic pairs showed sign epistasis, with beneficial mutation combinations frequently resulting in negative reciprocal sign epistasis [14].

Peaked Fitness Landscapes

Many biological systems exhibit non-monotonic, peaked relationships between a molecular trait (e.g., enzyme activity, gene expression level) and fitness [14]. Both insufficient and excessive activity can be detrimental. On such a landscape, two detrimental mutations that push the trait in opposite directions (one increasing, one decreasing activity) can, when combined, restore the trait to its optimal level. This results in sign epistasis: each mutation is detrimental on its own but beneficial in the background of the other [14]. This phenomenon is common in metabolic pathways, such as the arabinose utilization pathway [14].
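A toy calculation shows how a peaked trait-to-fitness map produces this pattern. The Python sketch below assumes a Gaussian map, with mutations that shift the trait additively and hypothetical effect sizes; [14] does not specify this functional form.

```python
import math


def peaked_fitness(trait, optimum=1.0, width=0.5):
    """Gaussian (non-monotonic) map from a molecular trait to fitness."""
    return math.exp(-((trait - optimum) ** 2) / (2 * width ** 2))


wt_trait = 1.0  # wild type sits at the optimum
up, down = 0.6, -0.6  # hypothetical mutations that raise or lower the trait additively

w_wt = peaked_fitness(wt_trait)
w_up = peaked_fitness(wt_trait + up)
w_down = peaked_fitness(wt_trait + down)
w_double = peaked_fitness(wt_trait + up + down)
print(f"wt={w_wt:.3f} up={w_up:.3f} down={w_down:.3f} double={w_double:.3f}")
```

Each mutation lowers fitness on its own, and the double mutant returns to the optimum. Because each mutation is beneficial in the other's background, this toy case is reciprocal sign epistasis, matching the "Detrimental + Detrimental" row of Table 1.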

Physical Atomic Interactions

Within proteins and protein complexes, direct physical interactions between atoms are a major source of specific, or idiosyncratic, epistasis. A classic example is the interaction between the enzyme barnase and its inhibitor, barstar. Individually, the mutations E76R (in barstar) and R59E (in barnase) are detrimental, but together they constitute a charge swap that restores a stable complex through newly formed salt bridges in the double mutant [14]. Similarly, in SARS-CoV-2, the Q498R mutation alone weakly reduces binding affinity for the ACE2 receptor, but combined with the N501Y mutation it enhances affinity by restoring salt bridges and creating new stabilizing interactions [14].

Quantitative Evidence from Experimental Fitness Landscapes

Empirical data from combinatorially complete fitness landscapes provide direct evidence for how epistasis shapes adaptation.

Antibody-Antigen Binding

A deep mutational scan of an antibody's binding affinity for fluorescein revealed that epistasis is a pervasive force. A simple additive model explained most of the variance in binding free energy, but a significant portion (25–35%) was attributable to epistatic interactions [15]. A large fraction of this epistasis was beneficial, and it served to both constrain and enlarge the set of evolutionary paths available during affinity maturation [15].

Table 2: Quantitative Analysis of Epistasis in an Antibody-Antigen System [15]

| Metric | CDR1H Domain | CDR3H Domain |
|---|---|---|
| Variance explained by additive (PWM) model | 62% | 58% |
| Approximate variance attributable to epistasis | 25–35% | 25–35% |
| Fraction of epistasis that is beneficial | Large fraction | Large fraction |

Experimental Evolution in Yeast

A rugged fitness landscape was empirically demonstrated during an evolution experiment with Saccharomyces cerevisiae. Adaptive mutations in the MTH1 and HXT6/HXT7 genes arose independently multiple times but remained mutually exclusive [13]. Fitness assays revealed that this was due to reciprocal sign epistasis: the double mutant had lower fitness than the wild type and than either single mutant [13]. This created a genuine fitness valley, forcing evolving lineages to choose one adaptive peak or the other and demonstrating how inter-genic interactions can create absolute barriers between adaptive solutions.

Table 3: Experimentally Evolved Mutations in Yeast Demonstrating RSE [13]

| Evolved Clone | Adaptive Mutations Identified | Fitness Effect of Single Mutation | Fitness Effect of Double Mutant (MTH1 + HXT6/7) |
|---|---|---|---|
| M1 | MTH1 | Beneficial | Lower than either single mutant and the wild type (reciprocal sign epistasis) |
| M4 | HXT6/HXT7 (amplification) | Beneficial | |
| M5 | HXT6/HXT7 (amplification), MTH1 | Beneficial | |

Evolvability-Enhancing Mutations

While epistasis often constrains evolution, certain mutations can enhance evolvability. Evolvability-enhancing (EE) mutations are defined as mutations that increase the likelihood that subsequent mutations are adaptive [2]. In the fitness landscape of a bacterial antitoxin protein, a small fraction of beneficial mutations were found to be EE mutations. These mutations shift the distribution of fitness effects (DFE) of subsequent mutations, reducing the incidence of deleterious mutations and increasing the incidence of beneficial ones [2]. Populations that encounter EE mutations during their adaptive walk can achieve significantly higher fitness, demonstrating that the genetic background itself can be tuned to facilitate future adaptation.
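One simple way to operationalize the EE idea on a combinatorially complete landscape is sketched below. It assumes a hypothetical three-site biallelic landscape and treats a beneficial mutation as evolvability-enhancing if it raises the fraction of beneficial mutations available at the remaining sites. This fraction-beneficial criterion is a simplification chosen here for illustration; the study in [2] characterizes EE mutations through shifts in the full distribution of fitness effects.

```python
# Hypothetical 3-site biallelic landscape (0 = ancestral allele, 1 = derived allele).
LANDSCAPE = {
    (0, 0, 0): 1.00,
    (1, 0, 0): 1.10,
    (0, 1, 0): 1.05,
    (0, 0, 1): 0.95,
    (1, 1, 0): 1.30,
    (1, 0, 1): 1.20,
    (0, 1, 1): 1.00,
    (1, 1, 1): 1.40,
}

def flip(genotype, site):
    """Return the genotype with the allele at `site` switched."""
    g = list(genotype)
    g[site] = 1 - g[site]
    return tuple(g)

def fraction_beneficial(landscape, background, sites):
    """Fraction of single mutations at `sites` that raise fitness in `background`."""
    w0 = landscape[background]
    effects = [landscape[flip(background, s)] - w0 for s in sites]
    return sum(e > 0 for e in effects) / len(effects)

def is_evolvability_enhancing(landscape, ancestor, site):
    """Simplified EE criterion: the mutation is beneficial AND it raises the
    fraction of beneficial mutations at the remaining sites."""
    mutant = flip(ancestor, site)
    if landscape[mutant] <= landscape[ancestor]:
        return False  # only beneficial mutations are candidates
    others = [s for s in range(len(ancestor)) if s != site]
    before = fraction_beneficial(landscape, ancestor, others)
    after = fraction_beneficial(landscape, mutant, others)
    return after > before

for site in range(3):
    print(site, is_evolvability_enhancing(LANDSCAPE, (0, 0, 0), site))
```

Here the mutation at site 0 is beneficial and makes both remaining mutations beneficial, so it qualifies; the mutation at site 1 is beneficial but leaves the share of beneficial follow-up mutations unchanged.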

Experimental Protocols for Characterizing Epistasis

Protocol: Measuring Epistasis in Protein-Binding Affinity Using Tite-Seq

Objective: To quantitatively map the fitness landscape of an antibody-antigen interaction and identify epistatic interactions between mutations.

Workflow Overview: The following diagram illustrates the key steps in this high-throughput protocol:

Key Steps:

- Library Generation: Create a mutant library targeting specific protein domains (e.g., CDR loops of an antibody). The library should include all single amino acid mutants and a large number of random double and triple mutants within the targeted stretches [15].

- Tite-Seq Assay:

  - Display the variant library on the surface of yeast cells.

  - Use fluorescence-activated cell sorting (FACS) to sort cells based on binding to a fluorescently tagged antigen across a range of concentrations.

  - Use high-throughput sequencing to count the variants in each sorted fraction.

- Data Analysis:

  - Calculate the dissociation constant (Kd) for each protein variant from the sequencing data and sort statistics [15].

  - Transform Kd values into binding free energy, F = ln(Kd) [15].

  - Construct a Position Weight Matrix (PWM) model from the single-mutant data. This model represents the additive expectation for the effect of any combination of mutations.

  - Calculate epistasis as the difference between the measured free energy of a multiple mutant and the value predicted by the additive PWM model: $\varepsilon = F_{\text{measured}} - F_{\text{PWM}}$ [15].

  - Use Z-scores to control for measurement noise and identify statistically significant epistasis.
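The data-analysis steps can be sketched in a few lines of Python. The example assumes that free energies F = ln(Kd) (in units of kT, with Kd in molar) have already been inferred for the wild type, the single mutants and one double mutant. The mutation labels `m1` to `m3`, their effects and their standard errors are hypothetical. The Z-score assumes independent Gaussian errors and ignores uncertainty in the wild-type value, which a full analysis would include.

```python
import math

# Hypothetical wild-type affinity: Kd = 1 nM, so F_WT = ln(Kd / 1 M) in units of kT.
F_WT = math.log(1e-9)

# Hypothetical single-mutant effects dF = F_single - F_WT (positive = weaker binding)
# and their measurement standard errors.
SINGLE_DF = {"m1": 0.8, "m2": -0.4, "m3": 1.5}
SINGLE_SE = {"m1": 0.2, "m2": 0.2, "m3": 0.3}

def pwm_prediction(mutations):
    """Additive (PWM) expectation: wild-type F plus the sum of single-mutant effects."""
    return F_WT + sum(SINGLE_DF[m] for m in mutations)

def epistasis(f_measured, mutations):
    """epsilon = F_measured - F_PWM."""
    return f_measured - pwm_prediction(mutations)

def epistasis_z(f_measured, se_measured, mutations):
    """Z-score of epsilon, assuming independent Gaussian errors on each measurement."""
    se_total = math.sqrt(se_measured ** 2 + sum(SINGLE_SE[m] ** 2 for m in mutations))
    return epistasis(f_measured, mutations) / se_total

# Hypothetical double mutant m1+m2 measured at F_WT + 1.2 (additive expectation: F_WT + 0.4).
f_double = F_WT + 1.2
eps = epistasis(f_double, ["m1", "m2"])
z = epistasis_z(f_double, 0.2, ["m1", "m2"])
print(f"epsilon = {eps:.2f} kT, Z = {z:.2f}")
```

Because a larger F means weaker binding, a positive ε here indicates that the double mutant binds less tightly than the additive PWM model predicts.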

Protocol: Identifying Reciprocal Sign Epistasis via Competitive Fitness Assays

Objective: To determine if two adaptive mutations exhibit reciprocal sign epistasis in a specific environment.

Workflow Overview: The logical process for constructing and testing genotypes is as follows:

Key Steps:

- Strain Construction: Using the ancestral (wild-type) genetic background, engineer four isogenic strains:

  - Wild type (ab)

  - Strain with only Mutation A (Ab)

  - Strain with only Mutation B (aB)

  - Double mutant strain with both mutations (AB) [13].

- Competitive Fitness Assays: Co-culture each of the four strains, including the wild type, with a differentially marked neutral reference strain (e.g., expressing a different fluorescent protein) in the environment of interest (e.g., a glucose-limited chemostat) [13].

- Fitness Calculation: Track the ratio of the test strain to the reference strain over multiple generations. The relative fitness is calculated from the exponential growth rate difference between the competing strains [13], which equals the slope of ln(test/reference) against time.

- Analysis for RSE: First confirm that each mutation is beneficial on its own, w(Ab) > w(ab) and w(aB) > w(ab). Then test for reciprocal sign epistasis by verifying that w(AB) < w(Ab) and w(AB) < w(aB). Together these relationships show that each mutation changes sign in the presence of the other: the double mutant is less fit than each of the single mutants, creating a fitness valley [13].
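The fitness calculation and the RSE check can be sketched as follows. The selection coefficient is taken as the least-squares slope of ln(test/reference) per generation, and relative fitness as w = 1 + s. The time courses are generated from assumed selection coefficients with no sampling noise, so they only illustrate the arithmetic; all values are hypothetical.

```python
import math

def selection_coefficient(generations, test_counts, reference_counts):
    """Least-squares slope of ln(test / reference) versus generations (per-generation s)."""
    y = [math.log(t / r) for t, r in zip(test_counts, reference_counts)]
    n = len(generations)
    mean_x = sum(generations) / n
    mean_y = sum(y) / n
    sxy = sum((x - mean_x) * (yi - mean_y) for x, yi in zip(generations, y))
    sxx = sum((x - mean_x) ** 2 for x in generations)
    return sxy / sxx

def is_reciprocal_sign_epistasis(w):
    """w maps 'ab' (wild type), 'Ab', 'aB' and 'AB' to relative fitness."""
    singles_beneficial = w["Ab"] > w["ab"] and w["aB"] > w["ab"]
    double_below_both = w["AB"] < w["Ab"] and w["AB"] < w["aB"]
    return singles_beneficial and double_below_both

# Hypothetical noise-free time courses generated from assumed selection coefficients.
GENERATIONS = [0, 5, 10, 15, 20]
TRUE_S = {"ab": 0.0, "Ab": 0.05, "aB": 0.08, "AB": -0.03}

fitness = {}
for genotype, s in TRUE_S.items():
    test = [1e4 * math.exp(s * g) for g in GENERATIONS]  # test strain grows at rate s relative to reference
    reference = [1e4] * len(GENERATIONS)
    fitness[genotype] = 1 + selection_coefficient(GENERATIONS, test, reference)

print(fitness, is_reciprocal_sign_epistasis(fitness))
```

With real data, replicate competitions and a regression over noisy counts would be used, and the RSE inequalities would be tested against the estimated confidence intervals.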

The Scientist's Toolkit: Key Research Reagents

Table 4: Essential Reagents and Tools for Fitness Landscape and Epistasis Research

| Reagent / Tool | Function / Application | Specific Example |
|---|---|---|
| Tite-Seq [15] | High-throughput measurement of protein-binding affinities (Kd) for thousands of variants. | Used to map the affinity landscape of the 4-4-20 antibody against fluorescein [15]. |
| Yeast Surface Display [15] | A platform for displaying protein variants on the yeast cell surface, enabling sorting based on binding. | Coupled with Tite-Seq for affinity-based sorting of antibody variant libraries [15]. |
| Combinatorially Complete Libraries [2] | A set of genotypes that includes all possible combinations of a defined set of mutations. | Essential for comprehensively evaluating epistatic interactions, as used in studies of an E. coli antitoxin protein and a yeast tRNA [2]. |
| McDonald-Kreitman (MK) Test Extensions [8] [6] | Population genetics method to estimate the rate of adaptive molecular evolution (ωa). | Used with software such as Grapes to show higher adaptive rates in young genes in Drosophila and Arabidopsis [8] [6]. |
| Phylostratigraphy [8] [6] | A bioinformatics method to infer gene age based on the phylogenetic distribution of homologs. | Used to categorize genes by age and test the adaptive walk model [8] [6]. |

Implications and Applications

Understanding sign and reciprocal sign epistasis is critical for applied fields. In drug development, particularly for antiviral and antibacterial therapies, epistasis can lead to resistance. A mutation that confers resistance to one drug may be deleterious on its own, but in combination with a second "permissive" mutation (a form of sign epistasis), resistance can emerge [14]. Predicting the evolution of drug resistance therefore requires knowledge of the epistatic interactions within the pathogen's genome. Furthermore, in protein engineering, efforts to improve function through iterative mutagenesis can be stymied by rugged landscapes. Identifying EE mutations or mapping epistatic networks can help design smarter mutagenesis strategies that avoid evolutionary dead ends and navigate toward optimal genotypes [2].

Adaptive Walks and the Diminishing Returns Pattern in Molecular Evolution

The concept of the fitness landscape, first introduced by Sewall Wright, provides a powerful framework for understanding evolutionary dynamics [8] [6]. In this metaphorical landscape, elevation corresponds to fitness, while the multidimensional horizontal axes represent the vast space of possible genetic sequences [16]. An adaptive walk describes the step-by-step process by which a population explores this landscape through the accumulation of beneficial mutations, moving toward fitness peaks [8] [6]. John Maynard Smith later adapted this concept specifically for protein evolution, visualizing it as a "walk" through the space of all possible amino acid sequences toward regions of higher function [6]. A modern formulation of this model, notably Allen Orr's extension of Fisher's geometric model, predicts a characteristic pattern of diminishing returns during adaptation, in which populations farther from their fitness optimum take larger steps than those closer to it [8] [6].

This whitepaper examines the theoretical foundations, experimental evidence, and practical implications of adaptive walks in molecular evolution, with particular focus on applications for drug development and protein engineering.

Theoretical Framework of Adaptive Walks

Fundamental Principles

Adaptive walk theory makes two key predictions about molecular evolution. First, sequences further from their fitness optimum (typically younger genes) should experience faster rates of adaptive evolution as they have more potential for improvement. Second, the evolutionary steps taken by these sub-optimal sequences should be larger, meaning mutations with stronger fitness effects are fixed early in the evolutionary process [8] [6]. This pattern arises because when a sequence is far from its optimum, many mutations of large effect are available and likely to be beneficial. As the sequence approaches its fitness peak, the remaining beneficial mutations tend to have progressively smaller effects—hence the diminishing returns [6].
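The diminishing-returns prediction can be explored with a small simulation of Fisher's geometric model. The sketch assumes a Gaussian fitness function centred on the optimum, isotropic Gaussian mutations, and a strong-selection, weak-mutation regime in which a beneficial mutation with selection coefficient s fixes with probability of roughly 2s. Every parameter value is an illustrative assumption rather than a setting from [8] or [6].

```python
import math
import random

def log_fitness(z):
    """Gaussian fitness in Fisher's geometric model, optimum at the origin: ln w = -|z|^2 / 2."""
    return -sum(x * x for x in z) / 2

def adaptive_walk(n_traits=5, start_distance=3.0, mutation_sd=0.3,
                  n_mutations=20000, seed=1):
    """Return the selection coefficients of fixed mutations and the final distance to the optimum."""
    rng = random.Random(seed)
    z = [start_distance] + [0.0] * (n_traits - 1)
    fixed_effects = []
    for _ in range(n_mutations):
        candidate = [x + rng.gauss(0.0, mutation_sd) for x in z]
        s = log_fitness(candidate) - log_fitness(z)
        # SSWM approximation: a beneficial mutation fixes with probability ~2s (capped at 1);
        # deleterious mutations are assumed never to fix.
        if s > 0 and rng.random() < min(1.0, 2 * s):
            z = candidate
            fixed_effects.append(s)
    return fixed_effects, math.sqrt(sum(x * x for x in z))

effects, final_distance = adaptive_walk()
k = max(1, len(effects) // 3)
print(f"{len(effects)} steps; mean s of first {k}: {sum(effects[:k]) / k:.4f}; "
      f"mean s of last {k}: {sum(effects[-k:]) / k:.4f}; final distance {final_distance:.2f}")
```

Comparing the mean s of the earliest and latest fixed mutations gives a quick check of the diminishing-returns pattern for a given parameter set; because the walk is stochastic, the exact numbers depend on the seed.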

Landscape Topology and Evolutionary Dynamics

The structure of the fitness landscape itself profoundly influences evolutionary trajectories. Landscapes range from smooth, single-peaked "Fujiyama" landscapes to highly rugged, multi-peaked "Badlands" landscapes [16]. The connectivity of the landscape, defined as the fraction of fitness levels accessible via a single mutation, plays a crucial role in determining whether populations can reach global fitness peaks or become trapped at local optima [17]. Computational studies have revealed a critical transition point in landscape connectivity—below a threshold value of approximately 1% of accessible fitness levels, populations almost always get trapped in local optima, while above this threshold, they reliably reach the global peak [17].

Table: Characteristics of Fitness Landscape Topologies

| Landscape Type | Epistasis | Accessible Paths | Probability of Reaching Global Peak | Typical Evolutionary Dynamics |
|---|---|---|---|---|
| Smooth (Fujiyama) | Minimal | Many | High | Predictable, gradual adaptation |
| Moderately Rugged | Moderate | Several | Moderate (depends on connectivity) | Variable, with some historical contingency |
| Highly Rugged (Badlands) | Extensive | Few | Low | Predominantly stuck at local optima |
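Landscape ruggedness can be tuned explicitly with Kauffman's NK model, a standard toy model that is not part of the studies cited above. In the sketch below, each of N biallelic sites contributes a random fitness value that depends on its own allele and on the K sites that follow it (cyclically). K = 0 gives a smooth, additive Fujiyama landscape, and increasing K adds epistasis and local optima. The random tables and the seed are arbitrary.

```python
import random
from itertools import product

def nk_landscape(n, k, seed=0):
    """Kauffman NK landscape: site i's contribution depends on itself and the next k sites (cyclic)."""
    rng = random.Random(seed)
    tables = [
        {alleles: rng.random() for alleles in product((0, 1), repeat=k + 1)}
        for _ in range(n)
    ]

    def fitness(g):
        return sum(tables[i][tuple(g[(i + j) % n] for j in range(k + 1))]
                   for i in range(n)) / n

    return {g: fitness(g) for g in product((0, 1), repeat=n)}

def count_local_optima(landscape):
    """Count genotypes with no single-mutant neighbour of strictly higher fitness."""
    count = 0
    for g, w in landscape.items():
        neighbours = (g[:i] + (1 - g[i],) + g[i + 1:] for i in range(len(g)))
        if all(landscape[nb] <= w for nb in neighbours):
            count += 1
    return count

for k in (0, 2, 4, 7):
    print(f"N=8, K={k}: {count_local_optima(nk_landscape(8, k))} local optima")
```

With K = 0 the landscape has a single optimum, matching the Fujiyama row of the table; larger K typically yields many local optima, the Badlands regime.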

Quantitative Evidence for the Diminishing Returns Pattern

Genomic Studies Across Taxa

Strong evidence for the adaptive walk model comes from large-scale genomic analyses comparing genes of different evolutionary ages. Using population genomic datasets from Arabidopsis and Drosophila, researchers estimated rates of adaptive (ωa) and nonadaptive (ωna) nonsynonymous substitutions across genes from different phylostrata (evolutionary age categories) [8] [6]. After controlling for confounding factors like protein length, gene expression levels, intrinsic disorder, and protein function, these studies found that gene age significantly impacts molecular adaptation rates [8] [6].

Younger genes exhibited significantly higher rates of adaptive substitution (ωa) than older genes, supporting the prediction that sequences further from their optimum adapt faster [8] [6]. Additionally, substitutions in young genes tended to involve amino acids with larger physicochemical differences, indicating they represent "larger steps" in the fitness landscape [8] [6].

Table: Correlation Between Gene Age and Evolutionary Parameters in Arabidopsis and Drosophila

| Evolutionary Parameter | Arabidopsis Correlation | Drosophila Correlation | Combined Significance | Biological Interpretation |
|---|---|---|---|---|
| ω (dN/dS) | 0.962* | 0.727* | p < 0.001 | Younger genes evolve faster |
| ωa (adaptive) | 0.733* | 0.636 | p < 0.01 | Younger genes have more adaptive substitutions |
| ωna (nonadaptive) | 0.848* | 0.697 | p < 0.01 | Younger genes experience less purifying selection |
| Physicochemical Effect | Positive correlation | Positive correlation | p < 0.05 | Younger genes undergo larger-effect mutations |

*p < 0.001, p < 0.01

Heterogeneity in Fitness Peak Topology

Recent high-throughput studies of orthologous green fluorescent proteins (GFPs) reveal substantial heterogeneity in fitness peak topography across related proteins [18]. While some GFP fitness peaks were sharp and epistatic, others were considerably flatter with minimal epistatic interactions [18]. This heterogeneity influences evolutionary potential—flat peaks correspond to mutationally robust proteins, while sharp peaks represent fragile genotypes with stronger epistatic constraints [18]. Interestingly, this variation in fitness peak architecture does not simply correlate with evolutionary distance, suggesting that the starting sequence significantly influences evolutionary trajectories and adaptive potential [18].

Experimental Methodologies for Studying Adaptive Walks

Laboratory Directed Evolution

Directed evolution applies iterative rounds of random mutation and artificial selection to explore protein fitness landscapes in the laboratory [16]. This approach has been successfully used to engineer proteins with novel functions, such as a recombinase that removes proviral HIV from host genomes, cytochrome P450 enzymes with new substrate specificities, and fluorescent proteins with enhanced properties [16].

A typical directed evolution workflow consists of:

- Library Generation: Creating genetic diversity through error-prone PCR or other mutagenesis methods

- Selection/Screening: Applying stringent conditions to identify improved variants

- Gene Recovery: Isolating beneficial mutations from selected variants

- Iteration: Repeating the process through multiple rounds [16] [19]
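The four steps above can be sketched as a loop over a toy landscape. The screen here is a hypothetical additive score (the fraction of positions matching an arbitrary target sequence), and random point mutagenesis stands in for error-prone PCR. Real screens are noisy and epistatic, so this illustrates only the control flow of an adaptive walk under artificial selection.

```python
import random

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"
TARGET = "MKTAYIAKQR"  # hypothetical optimum that defines the toy screen

def toy_screen(seq):
    """Toy assay readout: fraction of positions matching TARGET (no epistasis)."""
    return sum(a == b for a, b in zip(seq, TARGET)) / len(TARGET)

def mutagenize(seq, rng, rate):
    """Error-prone-PCR stand-in: each position is replaced by a random residue with probability `rate`."""
    return "".join(rng.choice(AMINO_ACIDS) if rng.random() < rate else aa for aa in seq)

def directed_evolution(parent, rounds=10, library_size=200, rate=0.1, seed=0):
    rng = random.Random(seed)
    history = [toy_screen(parent)]
    for _ in range(rounds):
        library = [mutagenize(parent, rng, rate) for _ in range(library_size)]  # library generation
        best = max(library, key=toy_screen)                                   # selection/screening
        if toy_screen(best) > toy_screen(parent):                             # recover the improved gene
            parent = best
        history.append(toy_screen(parent))                                    # next round starts here
    return parent, history

final, history = directed_evolution("AAAAAAAAAA")
print(final, history)
```

Keeping the parent when no library member beats it makes the recorded fitness non-decreasing, mirroring a walk that only accepts uphill steps.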

Figure 1: Directed Evolution Workflow for Exploring Adaptive Walks

Population Genomic Approaches

For studying natural evolutionary processes, researchers employ population genomic methods based on the McDonald-Kreitman (MK) framework [8] [6]. This approach uses polymorphism and divergence data to estimate the rate of adaptive molecular evolution (ωa) while accounting for slightly deleterious mutations by modeling the distribution of fitness effects (DFE) [8] [6].

Key steps in this methodology include:

- Phylostratigraphy: Determining gene age based on phylogenetic distribution using tools like BLAST [8] [6]

- Polymorphism Data Collection: Gathering within-species sequence variation [8] [6]

- Divergence Estimation: Calculating between-species sequence differences [8] [6]

- DFE Modeling: Using methods like Grapes to estimate adaptive and nonadaptive substitution rates [8] [6]
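The core arithmetic behind ωa can be illustrated with the classic MK estimator of the proportion of adaptive substitutions, α = 1 − (Ds·Pn)/(Dn·Ps), combined with ω = dN/dS as ωa = α·ω. This simple estimator is not the DFE-based method implemented in Grapes: slightly deleterious polymorphisms inflate Pn and bias α downward, which is exactly what DFE modeling corrects. The counts below are hypothetical.

```python
def mk_rates(dn, ds, pn, ps, n_sites, s_sites):
    """Classic MK-based estimates.

    dn, ds: nonsynonymous / synonymous fixed differences between species
    pn, ps: nonsynonymous / synonymous polymorphisms within a species
    n_sites, s_sites: numbers of nonsynonymous / synonymous sites
    """
    omega = (dn / n_sites) / (ds / s_sites)  # dN/dS
    alpha = 1 - (ds * pn) / (dn * ps)        # proportion of adaptive substitutions
    omega_a = alpha * omega                  # adaptive rate
    omega_na = omega - omega_a               # nonadaptive rate (equals pN/pS here)
    return {"omega": omega, "alpha": alpha, "omega_a": omega_a, "omega_na": omega_na}

print(mk_rates(dn=20, ds=20, pn=10, ps=20, n_sites=300, s_sites=100))
```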

Figure 2: Population Genomic Analysis of Adaptive Walks

The Scientist's Toolkit: Key Research Reagents and Solutions

Table: Essential Research Tools for Studying Adaptive Walks

| Reagent/Resource | Function | Example Applications | Key Considerations |
|---|---|---|---|
| Error-prone PCR Kits | Generate random mutagenesis libraries | Creating diverse variant populations for directed evolution | Control mutation rate (typically 1–4 mutations/gene) |
| Grapes Software | Estimate adaptive substitution rates (ωa) from genomic data | Population genomic analysis of natural selection | Accounts for slightly deleterious mutations via DFE modeling |
| Phylostratigraphy Pipelines | Determine gene age based on phylogenetic distribution | Classifying genes as young or old for comparative studies | Uses BLAST-based homology searches across taxa |
| Deep Mutational Scanning Platforms | High-throughput characterization of mutation effects | Mapping fitness landscapes of specific proteins | Requires efficient library construction and phenotyping |
| MK Test Frameworks | Detect positive selection from polymorphism and divergence | Population genomic studies of adaptation | Multiple extensions available for different evolutionary scenarios |

Implications for Drug Development and Protein Engineering

Predicting Evolutionary Trajectories in Pathogens

Understanding adaptive walks provides valuable insights for anticipating drug resistance evolution in pathogens. The diminishing returns pattern suggests that previously adapted pathogens (those closer to their fitness optimum) may evolve resistance through mutations of smaller effect, potentially leading to more gradual resistance development. Conversely, naive pathogens encountering new drugs may initially develop resistance through large-effect mutations [8] [6]. This knowledge can inform combination therapy design by identifying evolutionary trajectories with higher genetic constraints.

Engineering Therapeutic Proteins

In protein therapeutic development, the adaptive walk framework guides engineering strategies. For stabilizing existing proteins, small-step adaptive walks may be optimal, while for creating novel functions, larger steps may be necessary [16] [19]. The heterogeneity of fitness peaks observed across orthologous proteins [18] suggests that choosing the right starting template is crucial: some natural variants provide a better foundation for engineering than others because of their position in the fitness landscape.

Leveraging Epistatic Constraints

Epistasis (where the fitness effect of a mutation depends on genetic background) creates historical contingencies that shape adaptive walks [20] [21]. Understanding these constraints enables more predictive protein engineering by identifying evolutionarily accessible paths through sequence space [19] [21]. Recent computational approaches can now infer fitness landscapes from laboratory evolution data, allowing in silico prediction of future evolutionary trajectories [19].

Future Directions and Methodological Advances

Emerging technologies are pushing the boundaries of adaptive walk research. High-resolution fitness landscape mapping through deep mutational scanning now allows comprehensive characterization of epistatic interactions [20] [18]. Statistical learning frameworks that model evolutionary processes can infer fitness landscapes from time-series laboratory evolution data [19]. Additionally, high-dimensional landscape models with distance-dependent statistics provide more realistic frameworks for understanding how epistasis shapes evolutionary trajectories over long timescales [21].

These advances are progressively transforming adaptive walk theory from a conceptual framework to a predictive science with significant applications in drug development, protein engineering, and evolutionary forecasting.

The structure of fitness landscapes critically governs adaptive protein evolution. While direct adaptive paths are often blocked by epistatic interactions, evolutionary trajectories can circumvent these roadblocks through indirect paths that involve temporary fitness reductions or reversions. This technical review synthesizes recent advances in empirical characterization and computational modeling of these alternative evolutionary routes, highlighting how high-dimensionality in sequence space facilitates adaptation despite landscape ruggedness. We present quantitative comparisons of path accessibility, detailed experimental protocols for landscape mapping, and emerging applications in proactive therapeutic design.

The concept of fitness landscapes, introduced by Sewall Wright, provides a powerful framework for understanding evolutionary dynamics [22]. In protein evolution, these landscapes map genetic sequences to reproductive success, visualized as mountainous terrain where height corresponds to fitness. Adaptive walks represent the stepwise process by which populations ascend these landscapes through beneficial mutations [21].

The high dimensionality of protein sequence space ($20^L$ possible sequences for a protein of length $L$) creates extraordinary complexity. While traditional studies focused on diallelic landscapes ($2^L$ genotypes), recent technological advances now enable exploration of more complex sequence spaces [23]. A critical finding across these studies is that evolution frequently navigates around fitness valleys via indirect paths rather than being constrained to direct uphill trajectories, fundamentally changing our understanding of evolutionary constraints and possibilities.

Theoretical Framework: Epistasis and Evolutionary Accessibility

Types of Epistasis and Their Evolutionary Consequences

Epistasis—the interaction between mutations—creates the rugged topography that makes evolutionary paths inaccessible. The three primary forms have distinct implications:

- Magnitude Epistasis: The fitness effect of a mutation changes in magnitude but not sign across genetic backgrounds. This creates sloping landscapes without local peaks [22].

- Sign Epistasis: A mutation that is beneficial in one background becomes deleterious in another. This creates local fitness peaks and constrains evolutionary ordering [22].

- Reciprocal Sign Epistasis: The effect of each mutation changes sign in the presence of the other; for example, two mutations that are individually deleterious become beneficial in combination. This creates evolutionary "traps" that block all direct paths to higher fitness [23] [22].

Table: Classification and Consequences of Epistatic Interactions

| Epistasis Type | Definition | Impact on Accessibility | Landscape Analogy |
|---|---|---|---|
| Magnitude | Effect size changes without sign reversal | Mild constraint | Smooth incline |
| Sign | Beneficial mutation becomes deleterious in some backgrounds | Limits path number | Isolated peak |
| Reciprocal Sign | Mutations individually deleterious but beneficial together | Blocks all direct paths | Trapped valley |
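The categories in the table can be checked mechanically from the four fitness values of a two-locus system. The sketch below assumes an additive fitness scale with ab as the wild type (for multiplicative fitness, pass log-fitness values instead) and uses a small tolerance to decide whether the effect of a mutation changes in magnitude. The example fitness values are hypothetical.

```python
def classify_epistasis(w_ab, w_Ab, w_aB, w_AB, tol=1e-9):
    """Classify a two-locus interaction on an additive fitness scale (ab = wild type)."""
    a_in_b, a_in_B = w_Ab - w_ab, w_AB - w_aB  # effect of A without / with B
    b_in_a, b_in_A = w_aB - w_ab, w_AB - w_Ab  # effect of B without / with A
    a_flips = a_in_b * a_in_B < 0              # A changes sign depending on B
    b_flips = b_in_a * b_in_A < 0              # B changes sign depending on A
    if a_flips and b_flips:
        return "reciprocal sign"
    if a_flips or b_flips:
        return "sign"
    if abs(a_in_B - a_in_b) > tol:             # same sign, different size
        return "magnitude"
    return "none"

examples = {
    "additive": (1.0, 1.1, 1.1, 1.2),
    "magnitude": (1.0, 1.1, 1.1, 1.5),
    "sign": (1.0, 1.2, 1.1, 1.15),
    "reciprocal sign": (1.0, 0.9, 0.9, 1.2),
}
for label, w in examples.items():
    print(label, "->", classify_epistasis(*w))
```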

Direct Versus Indirect Evolutionary Paths

Direct paths reduce the Hamming distance to the destination sequence by exactly one mutation at every step, with fitness increasing monotonically along the way. In contrast, indirect paths may involve temporary increases in Hamming distance (for example, gaining a mutation that is later lost) or transient fitness reductions while ultimately reaching superior fitness peaks [23].
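For a combinatorially complete landscape, direct paths can be enumerated as the possible orders in which the differing sites are mutated. The sketch below counts the orders along which fitness strictly increases at every step. Genotypes are tuples of residues, so the same function works for diallelic and multi-allelic landscapes. The two hypothetical landscapes contrast a smooth additive case with a reciprocal-sign-epistasis trap. Indirect paths would require a search that also allows steps away from the destination, which this sketch does not attempt.

```python
from itertools import permutations

def accessible_direct_paths(landscape, start, end):
    """Count mutation orders from `start` to `end` along which fitness strictly increases."""
    sites = [i for i, (a, b) in enumerate(zip(start, end)) if a != b]
    count = 0
    for order in permutations(sites):
        genotype = list(start)
        previous = landscape[tuple(genotype)]
        for site in order:
            genotype[site] = end[site]  # one step closer to `end`
            current = landscape[tuple(genotype)]
            if current <= previous:
                break  # fitness fails to increase: this path is blocked
            previous = current
        else:
            count += 1  # every step was uphill
    return count

# Hypothetical two-site landscapes; ("a", "b") is the start, ("A", "B") the destination.
ADDITIVE = {("a", "b"): 1.0, ("A", "b"): 1.1, ("a", "B"): 1.1, ("A", "B"): 1.2}
TRAP = {("a", "b"): 1.0, ("A", "b"): 0.9, ("a", "B"): 0.9, ("A", "B"): 1.2}
for name, landscape in (("additive", ADDITIVE), ("reciprocal sign trap", TRAP)):
    print(name, accessible_direct_paths(landscape, ("a", "b"), ("A", "B")))
```

For a four-site subgraph such as those analyzed in GB1, the same function checks all 4! = 24 mutation orders.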

The theoretical foundation for understanding these paths emerges from population genetics models showing that stochastic tunneling enables populations to cross fitness valleys without the intermediate genotype ever fixing [24]. This process becomes significant when 2Nμ ≥ 1, where N is the effective population size and μ is the mutation rate per gene.

Empirical Evidence: The GB1 Protein Model System

Experimental Characterization of a Four-Site Landscape

A landmark study systematically characterized the fitness landscape of four amino acid sites (V39, D40, G41, V54) in the GB1 immunoglobulin-binding domain, encompassing all 160,000 ($20^4$) possible variants [23]. The experimental workflow coupled saturation mutagenesis with mRNA display and deep sequencing to measure relative fitness through selection for IgG-Fc binding.

Table: Quantitative Analysis of Path Accessibility in GB1 Landscape

| Path Type | Number of Accessible Paths | Percentage of Total | Key Characteristics |
|---|---|---|---|
| Direct Paths | 1–12 (across 29 subgraphs) | 4–50% per subgraph | Monotonic fitness increase |
| Indirect Paths | Significantly expanded | Not quantified | Mutation gain/loss cycles |
| Blocked by Reciprocal Sign Epistasis | 0 in many cases | Up to 95% in extreme cases | All direct paths inaccessible |

The research revealed that while reciprocal sign epistasis blocked many direct adaptation paths, these evolutionary traps could be circumvented through indirect trajectories involving gain and subsequent loss of mutations [23]. This alleviates evolutionary constraints and demonstrates that high-dimensional sequence space provides alternative routes that are invisible in simplified diallelic models.

Experimental Protocol: Comprehensive Fitness Landscape Mapping

Materials and Reagents:

- Codon-randomized oligonucleotide library covering target sites

- In vitro transcription/translation system

- mRNA display components (puromycin linkage, reverse transcription reagents)

- Selection matrix (e.g., IgG-Fc for GB1 binding studies)

- High-throughput sequencing platform (Illumina)

Methodological Workflow:

- Library Construction: Generate mutant library using codon randomization at target sites

- In vitro Selection: Couple genotype to phenotype through mRNA display and apply selective pressure

- Deep Sequencing: Quantify variant frequencies before and after selection via Illumina sequencing

- Fitness Calculation: Compute relative fitness from enrichment ratios ($f_{\text{post-selection}} / f_{\text{pre-selection}}$)

- Pathway Analysis: Identify accessible paths through combinatorial analysis of all variants
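The fitness-calculation step can be sketched as follows. The pseudocount (to avoid dividing by zero for variants that drop out after selection) and the normalization of each enrichment ratio to the wild-type ratio are common conventions assumed here. The read counts and the non-wild-type variants are hypothetical; "VDGV" is the wild-type residue combination at the four GB1 sites listed above.

```python
def relative_fitness(pre_counts, post_counts, wild_type, pseudocount=0.5):
    """Enrichment ratio (f_post / f_pre) of each variant, normalized to the wild type."""
    pre_total = sum(pre_counts.values())
    post_total = sum(post_counts.values())

    def enrichment(variant):
        f_pre = (pre_counts.get(variant, 0) + pseudocount) / pre_total
        f_post = (post_counts.get(variant, 0) + pseudocount) / post_total
        return f_post / f_pre

    wt = enrichment(wild_type)
    return {v: enrichment(v) / wt for v in pre_counts}

# Hypothetical read counts before and after selection for IgG-Fc binding.
pre = {"VDGV": 1000, "WDGV": 1000, "AAAA": 1000}
post = {"VDGV": 500, "WDGV": 1500}
fit = relative_fitness(pre, post, wild_type="VDGV")
print(fit)
```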

This high-throughput approach enables fitness measurement for thousands of variants in parallel, overcoming previous throughput limitations that restricted landscape analysis to small sequence subspaces [23].

Diagram: Direct vs. Indirect Evolutionary Paths. Direct paths maintain monotonic fitness increases but are often blocked by epistatic interactions. Indirect paths may involve temporary fitness reductions but circumvent evolutionary traps.

Computational Approaches for Mapping Evolutionary Paths

Protein Language Models and Fitness Prediction

Recent advances in protein language models (pLMs) like ESM-2 enable prediction of variant fitness from sequence alone. The CoVFit model, fine-tuned on SARS-CoV-2 spike protein variants, demonstrates how pLMs can capture epistatic effects and predict variant fitness with high accuracy (Spearman correlation: 0.990) [25].

These models learn evolutionary and structural constraints implicitly from large collections of natural protein sequences (ESM-2 is trained on individual, unaligned sequences rather than on multiple sequence alignments), allowing them to infer fitness landscapes without exhaustive experimental characterization. The multitask learning framework combines genotype-fitness data with deep mutational scanning measurements of antibody escape, enhancing predictive power for viral evolution [25].

Machine Learning-Assisted Directed Evolution

Machine learning-assisted directed evolution (MLDE) strategies significantly enhance navigation of rugged fitness landscapes. Comparative studies across 16 diverse protein landscapes demonstrate that MLDE provides the greatest advantage on landscapes challenging for conventional directed evolution, particularly those with fewer active variants and more local optima [26].

Table: Machine Learning Approaches for Fitness Landscape Navigation

| Method | Mechanism | Best-Suited Landscape Properties | Performance Advantage |
|---|---|---|---|
| MLDE | Supervised learning on sequence-fitness data | Moderate epistasis, identifiable patterns | 2-5x efficiency gain |
| Active Learning DE | Iterative model refinement with new data | High ruggedness, complex epistasis | 3-8x efficiency gain |
| Focused Training MLDE | Training sets enriched by zero-shot predictors | Sparse high-fitness regions | 5-10x efficiency gain |

Focused training using zero-shot predictors that leverage evolutionary, structural, and stability information consistently outperforms random sampling across diverse protein engineering tasks [26]. This approach is particularly valuable for navigating landscapes where beneficial combinations require specific mutations that are deleterious individually—precisely the scenario where indirect paths become essential.
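The difference between focused training and random sampling lies in how the training set is chosen, which is easy to express in code. The sketch below assumes a precomputed zero-shot score per variant (for example a sequence-model log-likelihood ratio, where higher means predicted fitter) and compares how many active variants each training set captures. The library, fitness values, scores and activity threshold are hypothetical, and the supervised model that MLDE would then train on the chosen set is omitted.

```python
import random

def focused_training_set(variants, zero_shot, n):
    """Focused sampling: the n variants with the highest zero-shot scores."""
    return sorted(variants, key=lambda v: zero_shot[v], reverse=True)[:n]

def random_training_set(variants, n, seed=0):
    """Baseline: n variants drawn uniformly at random without replacement."""
    return random.Random(seed).sample(variants, n)

def fraction_active(training_set, fitness, threshold):
    """Share of the training set whose measured fitness exceeds `threshold`."""
    return sum(fitness[v] > threshold for v in training_set) / len(training_set)

# Hypothetical two-site library with measured fitness and zero-shot scores.
FITNESS = {"AA": 0.10, "AC": 0.90, "CA": 0.80, "CC": 0.05, "AG": 0.00, "GA": 0.70}
ZERO_SHOT = {"AA": -2.0, "AC": -0.5, "CA": -0.7, "CC": -3.0, "AG": -4.0, "GA": -1.0}
variants = sorted(FITNESS)

focused = focused_training_set(variants, ZERO_SHOT, n=3)
baseline = random_training_set(variants, n=3)
print(focused, fraction_active(focused, FITNESS, 0.5))
print(baseline, fraction_active(baseline, FITNESS, 0.5))
```

When the zero-shot score correlates with fitness, the focused set contains more active variants, giving the downstream model more informative examples from the sparse high-fitness region.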

Research Reagent Solutions Toolkit

Table: Essential Research Reagents for Fitness Landscape Studies

| Reagent/Category | Function | Example Applications |
|---|---|---|
| Codon-Randomized Libraries | Generation of comprehensive variant libraries | Saturation mutagenesis at target sites [23] |
| mRNA Display Systems | In vitro coupling of genotype to phenotype | High-throughput fitness screening [23] |
| Deep Mutational Scanning | Parallel fitness assessment of thousands of variants | Epistasis mapping, path accessibility [25] |
| Potts Models/EVmutation | Statistical inference of epistatic interactions | Fitness prediction from sequence data [27] |
| Protein Language Models | Sequence-based fitness prediction | CoVFit for viral evolution prediction [25] |
| Stability Prediction Tools | Computational ΔΔG calculation | Biophysical fitness modeling [27] |

Applications in Viral Evolution and Therapeutic Design

Fitness Landscape Design for Viral Entrapment

The emerging field of fitness landscape design (FLD) aims to proactively shape evolutionary landscapes to constrain pathogen adaptation. For SARS-CoV-2, FLD algorithms can optimize antibody ensembles that force viral evolution into low-fitness trajectories, potentially enabling proactive vaccine design that preempts escape variants [27].

The biophysical model underlying this approach bridges fitness and binding affinities:

$F(s) \approx k_{\text{rep}} \, N_0^{-1} \, N_{\text{ent}} \, p_b(s)$

where $p_b(s)$ represents the binding probability to host receptors, modulated by antibody concentrations and binding free energies [27]. This quantitative framework allows computational optimization of antibody combinations that minimize viral fitness across potential escape variants.
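One way to see how antibodies enter this expression is sketched below. It assumes, for illustration only, that p_b(s) is the equilibrium occupancy of a single viral binding site by the receptor when antibodies compete for the same site, with free energies in kT and a 1 M standard state. The prefactors k_rep, N_0 and N_ent are left as placeholder values of 1, and all concentrations and free energies are hypothetical; the model in [27] may differ in its details.

```python
import math

def binding_probability(dg_receptor, receptor_conc, antibodies=()):
    """Equilibrium occupancy of a viral binding site by the host receptor.

    Free energies are in kT with a 1 M standard state, so c * exp(-dG) = c / Kd.
    `antibodies` holds (concentration, dG) pairs for antibodies assumed to compete
    with the receptor for the same site.
    """
    w_receptor = receptor_conc * math.exp(-dg_receptor)
    w_antibodies = sum(c * math.exp(-dg) for c, dg in antibodies)
    return w_receptor / (1.0 + w_receptor + w_antibodies)

def viral_fitness(p_b, k_rep=1.0, n_0=1.0, n_ent=1.0):
    """F(s) ~ k_rep * N_0^-1 * N_ent * p_b(s); the prefactors are placeholders."""
    return k_rep * n_ent * p_b / n_0

p_free = binding_probability(dg_receptor=-20.0, receptor_conc=1e-8)
p_neutralized = binding_probability(-20.0, 1e-8, antibodies=[(1e-8, -23.0)])
print(viral_fitness(p_free), viral_fitness(p_neutralized))
```

In this picture, an escape variant s lowers the antibodies' binding free energies' magnitude (weaker antibody binding) and so raises p_b(s); an antibody ensemble that keeps p_b(s) low across many such variants is the kind of target that fitness landscape design optimizes.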

Valley Crossing in Natural Evolution

Empirical studies of natural proteins reveal that fitness valley crossing occurs more frequently than classical models predict. Research on mammalian mitochondrial proteins indicates that genes encoding small protein motifs navigate fitness valleys of depth 2Ns ≳ 30 with probability P ≳ 0.1 on evolutionary timescales [24].

This surprising facility with valley crossing stems from the high-dimensionality of protein sequence space, which provides numerous alternative routes around evolutionary obstacles. The conventional picture of populations trapped on local fitness peaks requires revision in light of these findings about indirect path accessibility.

The dichotomy between direct and indirect evolutionary paths represents a fundamental principle in protein fitness landscape navigation. While epistatic interactions frequently block direct adaptive routes, evolutionary innovation proceeds through indirect paths that leverage the high-dimensional nature of sequence space. This understanding transforms our perspective on evolutionary constraints and opportunities, with significant implications for protein engineering, antiviral therapeutic design, and fundamental evolutionary biology.

Emerging methodologies in deep mutational scanning, protein language models, and fitness landscape design provide powerful tools for mapping these alternative routes and harnessing them for biomedical applications. The integration of computational prediction with experimental validation promises to unlock further insights into the topological features that govern evolutionary trajectories across diverse biological systems.

From Theory to Therapy: Methodological Advances in Landscape Navigation

The relationship between a protein's amino acid sequence and its function is one of the most fundamental questions in molecular biology and genetics. This relationship can be conceptualized as a protein fitness landscape, a high-dimensional map where each point in the space of all possible protein sequences is assigned a fitness value representing a measurable property such as catalytic activity, stability, or binding affinity [28]. In evolutionary theory, an adaptive walk describes the process by which a population evolves by "walking" through this fitness landscape towards sequences with higher fitness; such walks typically show a pattern of diminishing returns [8]. Populations further from their fitness optimum tend to take larger adaptive steps (mutations with stronger fitness effects), while those closer to the optimum fix mutations with smaller effects [8] [6].
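The diminishing-returns pattern can be reproduced with Fisher's geometric model, a standard abstraction that is assumed here rather than taken from the sources: a phenotype z sits at some distance from an optimum at the origin, and fitness is w(z) = exp(-|z|²/2). In the sketch, random mutations are proposed and every one that moves z closer to the optimum is fixed, a simplification that ignores fixation probabilities and drift. Because a mutation r can only be beneficial if |r| < 2|z|, the largest fixable step shrinks as the walk approaches the optimum.

```python
import numpy as np


def adaptive_walk(z0, n_mutations=5000, sigma=0.3, seed=0):
    """Greedy adaptive walk in Fisher's geometric model (ASSUMED model).

    Returns one (distance_before, step_size, log_fitness_gain) tuple per fixed mutation.
    Simplification: every beneficial mutation fixes; deleterious ones are lost.
    """
    rng = np.random.default_rng(seed)
    z = np.asarray(z0, dtype=float)
    steps = []
    for _ in range(n_mutations):
        r = rng.normal(0.0, sigma, size=z.shape)  # isotropic random mutation
        d_old = np.linalg.norm(z)
        d_new = np.linalg.norm(z + r)
        if d_new < d_old:  # closer to the optimum means higher fitness
            log_gain = (d_old**2 - d_new**2) / 2  # change in log w(z)
            steps.append((d_old, np.linalg.norm(r), log_gain))
            z = z + r
    return steps


steps = adaptive_walk([2.0] + [0.0] * 9)  # 10 traits, start at distance 2
print("mean step size, first 5 fixed mutations:", np.mean([s for _, s, _ in steps[:5]]))
print("mean step size, last 5 fixed mutations: ", np.mean([s for _, s, _ in steps[-5:]]))
```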

Deep Mutational Scanning (DMS) has emerged as a powerful experimental technique to empirically map these fitness landscapes at unprecedented resolution [29] [30]. By systematically quantifying the functional effects of tens of thousands of protein variants in a single experiment, DMS provides the high-throughput data necessary to visualize the structure of fitness landscapes and understand the constraints and potential trajectories of protein evolution [29] [19]. This whitepaper provides an in-depth technical guide to DMS methodology, its integration with computational approaches, and its applications in basic research and therapeutic development.

Technical Foundations of Deep Mutational Scanning

Core Principles and Workflow

Deep Mutational Scanning is a technique that combines high-diversity mutant library generation, functional selection, and next-generation sequencing to measure the functional consequences of thousands to millions of mutations in parallel [29] [30]. The core principle involves tracking the enrichment or depletion of individual variants before and after a functional selection pressure is applied.

A standard DMS experiment follows four key steps [30]:

- Library Generation: Creating a comprehensive library of genetic variants of the target protein.

- Functional Selection: Subjecting the library to a selection pressure that links genotype to phenotype.

- Deep Sequencing: Using next-generation sequencing (NGS) to count each variant in pre- and post-selection populations.

- Data Analysis & Fitness Scoring: Calculating enrichment scores to determine the functional effect of each mutation.
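The scoring step is often implemented as a log ratio of post- to pre-selection counts, normalized to wild type. The sketch below assumes that scheme with a pseudocount of 0.5 so that variants which drop out entirely do not cause division by zero; the variant names and counts are hypothetical, and dedicated analysis tools use more elaborate error models and replicate handling.

```python
import math


def enrichment_scores(pre_counts, post_counts, wt="WT", pseudocount=0.5):
    """Log2 enrichment of each variant relative to wild type (ASSUMED scoring scheme).

    score(v) = log2[(post_v + c) / (pre_v + c)] - log2[(post_wt + c) / (pre_wt + c)]
    Library-size normalization cancels once scores are expressed relative to WT.
    """
    def log_ratio(v):
        return math.log2((post_counts.get(v, 0) + pseudocount) / (pre_counts.get(v, 0) + pseudocount))

    wt_ratio = log_ratio(wt)
    return {v: log_ratio(v) - wt_ratio for v in pre_counts}


# Hypothetical counts: A23V is enriched relative to WT, the nonsense variant G45* is depleted.
pre = {"WT": 1000, "A23V": 1000, "G45*": 1000}
post = {"WT": 2000, "A23V": 4000, "G45*": 10}
print(enrichment_scores(pre, post))
```

Positive scores mark variants that outperform wild type under the selection, and strongly negative scores mark loss-of-function variants.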

The following diagram illustrates this workflow and its position within the broader cycle of fitness landscape research:

Key Methodologies for Mutant Library Generation

The foundation of any DMS experiment is a high-quality, diverse mutant library. The choice of library generation method significantly impacts the type and quality of the resulting fitness landscape data.

Table 1: Comparison of Mutant Library Generation Methods in DMS

| Method | Principle | Advantages | Limitations | Best Suited For |
|---|---|---|---|---|
| Error-Prone PCR [29] | Uses low-fidelity DNA polymerases to introduce random mutations during PCR amplification. | Relatively cheap and easy to perform; generates nucleotide-level mutations throughout the sequence [29]. | Mutations are not fully random because of polymerase biases; poorly suited to sampling all possible single amino acid substitutions [29]. | Directed evolution experiments; exploring random mutational space [29]. |
| Oligo Pools with NNN/NNS/NNK Codons [29] | Synthesizes oligonucleotides carrying NNN (any base at all three positions), NNS (G or C at the third position), or NNK (G or T at the third position) triplets at targeted codons. | Generates customized libraries with fewer biases; allows all 19 possible amino acid substitutions at each codon [29]. | More costly than error-prone PCR; requires careful design [29]. | Saturation mutagenesis for all single amino acid substitutions; user-defined variant libraries [29]. |
| Doped Oligo Synthesis [29] | Incorporates a defined percentage of mutations at each position during oligo synthesis. | Allows control over mutation rate and spectrum; can generate long mutant oligos (up to 300 nt) [29]. | Synthesis biases can occur; may still require sophisticated normalization [29]. | Focused libraries targeting specific regions with controlled diversity [29]. |
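The codon schemes in Table 1 differ in how many codons they use and how many stop codons they introduce. The short sketch below enumerates each degenerate triplet under the standard genetic code, using IUPAC codes (N = A/C/G/T, S = C/G, K = G/T), and counts codons, distinct amino acids, and stop codons.

```python
from itertools import product

# Standard genetic code, codons ordered TTT, TTC, TTA, TTG, TCT, ..., GGG.
BASES = "TCAG"
AMINO_ACIDS = "FFLLSSSSYY**CC*WLLLLPPPPHHQQRRRRIIIMTTTTNNKKSSRRVVVVAAAADDEEGGGG"
CODON_TABLE = {"".join(c): aa for c, aa in zip(product(BASES, repeat=3), AMINO_ACIDS)}

# IUPAC degenerate nucleotide codes used in the library designs.
IUPAC = {"A": "A", "C": "C", "G": "G", "T": "T", "N": "ACGT", "S": "CG", "K": "GT"}


def expand(pattern):
    """All concrete codons matched by a degenerate triplet such as 'NNK'."""
    return ["".join(p) for p in product(*(IUPAC[b] for b in pattern))]


def summarize(pattern):
    """Return (number of codons, distinct amino acids encoded, number of stop codons)."""
    encoded = [CODON_TABLE[c] for c in expand(pattern)]
    return len(encoded), len(set(encoded) - {"*"}), encoded.count("*")


for scheme in ("NNN", "NNS", "NNK"):
    print(scheme, summarize(scheme))
```

This reports 64 codons and 3 stops for NNN versus 32 codons and a single stop (TAG) for NNS and NNK, with all 20 amino acids covered in each case. Codon redundancy remains uneven, however: in NNK, leucine, arginine, and serine each have three codons while methionine and tryptophan have one.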

The Scientist's Toolkit: Essential Reagents and Materials

Table 2: Key Research Reagent Solutions for DMS Experiments

| Reagent/Material | Function in DMS Workflow | Key Considerations |
|---|---|---|
| Mutant DNA Library | Provides the genetic diversity for the experiment; the starting genotype pool. | Quality is paramount. Assess diversity and distribution by deep sequencing the input library to quantify biases [30]. |
| Selection System | Links each genetic variant (genotype) to a functional output (phenotype). | Stringency must be optimized: too strong a pressure retains only the top variants, while too weak a pressure fails to distinguish functional from non-functional variants [30]. |
| Next-Generation Sequencing Platform | Quantitatively counts the frequency of each variant before and after selection. | Requires sufficient sequencing depth to quantify even rare variants reliably; error rates must be managed [30]. |
| Unique Molecular Identifiers (UMIs) | Short, random DNA sequences attached to each initial DNA molecule. | Critical for robust error correction: UMIs allow reads to be collapsed computationally to correct for PCR and sequencing errors [30]. |
| Expression Vector & Cloning System | Hosts the mutant library for expression in the destination cells. | Must be compatible with the selection system and allow efficient library cloning and propagation [29]. |
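UMI-based error correction can be sketched as grouping reads by UMI, taking a per-position majority consensus within each group, and counting each consensus molecule once. The sketch assumes reads are already trimmed, aligned to equal length, and paired with an extracted UMI; the minimum of two reads per UMI is an arbitrary illustrative threshold, and errors in the UMIs themselves are not handled.

```python
from collections import Counter, defaultdict


def umi_consensus(reads, min_reads=2):
    """Collapse (umi, sequence) reads into one consensus sequence per UMI.
    ASSUMES reads sharing a UMI are aligned and of equal length."""
    groups = defaultdict(list)
    for umi, seq in reads:
        groups[umi].append(seq)
    consensus = {}
    for umi, seqs in groups.items():
        if len(seqs) < min_reads:
            continue  # too few reads to out-vote PCR or sequencing errors
        # Majority base at each position across the reads of this molecule.
        consensus[umi] = "".join(Counter(col).most_common(1)[0][0] for col in zip(*seqs))
    return consensus


def count_molecules(consensus):
    """Each UMI is one original molecule, so PCR duplicates are counted once."""
    return Counter(consensus.values())


# Hypothetical reads: one sequencing error (ACCTA) and one singleton UMI.
reads = [
    ("AAAT", "ACGTA"), ("AAAT", "ACGTA"), ("AAAT", "ACCTA"),
    ("CCGA", "ACGTA"), ("CCGA", "ACGTA"),
    ("GGTC", "TCGTA"), ("GGTC", "TCGTA"), ("GGTC", "TCGTA"),
    ("TTTT", "GGGGG"),
]
print(count_molecules(umi_consensus(reads)))
```

Counting molecules rather than reads keeps a variant that happened to be amplified heavily by PCR from appearing artificially abundant.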

Advanced Applications and Integration with Computational Models

Key Research Applications

DMS has moved from a niche method to a central tool in biotechnology and biomedical research, enabling several high-impact applications:

- Mapping Viral Evolution and Immune Escape: DMS data on immune-escape mutants of various SARS-CoV-2 variants have been instrumental in guiding vaccine design by predicting mutations that allow viruses to evade neutralizing antibodies [29].

- Classifying Human Disease Variants: Many human disease-related genetic variants of unknown significance have been systematically classified as either benign or detrimental using DMS, advancing the interpretation of clinical genetic data [29].

- Protein Engineering and Optimization: DMS provides a detailed roadmap for protein engineering, identifying mutations that enhance the stability, activity, or binding affinity of enzymes and therapeutic proteins such as antibodies [30] [28].

- Revealing Genetic Interactions and Epistasis: DMS has been used to uncover complex genetic interaction patterns both between genes and within the same gene, revealing the biophysical mechanisms underlying protein function and evolution [29].

- Multi-Environment Fitness Profiling: Performing DMS under different conditions (e.g., temperature) reveals how fitness landscapes shift with the environment, identifying condition-sensitive variants and challenging simplistic stability-activity trade-off assumptions [31].
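Pairwise epistasis is commonly quantified on a log-fitness scale as the deviation of a double mutant from the sum of its single-mutant effects, ε = s_AB - (s_A + s_B), with wild type scored as 0. The sketch below computes ε and classifies an interaction as magnitude, sign, or reciprocal sign epistasis. It assumes nonzero single-mutant effects and uses a small tolerance for "no epistasis"; the scores in the examples are hypothetical.

```python
def epistasis(s_a, s_b, s_ab):
    """Pairwise epistasis on a log-fitness scale, with wild type scored as 0."""
    return s_ab - (s_a + s_b)


def classify_epistasis(s_a, s_b, s_ab, tol=1e-9):
    """Classify a mutation pair from single (s_a, s_b) and double (s_ab) mutant scores.
    ASSUMES s_a and s_b are nonzero so that their signs are defined."""
    a_in_b = s_ab - s_b  # effect of A in the background carrying B
    b_in_a = s_ab - s_a  # effect of B in the background carrying A
    a_flips = (s_a > 0) != (a_in_b > 0)
    b_flips = (s_b > 0) != (b_in_a > 0)
    if a_flips and b_flips:
        return "reciprocal sign"  # both direct single-mutant paths are blocked
    if a_flips or b_flips:
        return "sign"
    if abs(epistasis(s_a, s_b, s_ab)) > tol:
        return "magnitude"
    return "none"


for scores in [(0.5, 0.3, 0.8), (0.5, 0.3, 1.5), (0.5, -0.2, 1.0), (-0.5, -0.4, 1.0)]:
    print(scores, round(epistasis(*scores), 3), classify_epistasis(*scores))
```

Reciprocal sign epistasis is the case that blocks direct adaptive paths, since each single mutant is deleterious on its own.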

Machine Learning and Fitness Landscape Modeling

The large-scale sequence-function data generated by DMS are ideal for training machine learning (ML) models to predict protein fitness, creating a powerful synergy between high-throughput experimentation and in silico design.

Supervised learning models, including deep neural networks like convolutional neural networks (CNNs) and transformers, learn the sequence-function mapping from DMS data [28]. These models can then extrapolate beyond the tested sequences to propose new, high-fitness variants through in silico optimization using search heuristics like hill climbing and genetic algorithms [28].
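The train-then-search idea can be sketched with a much simpler model than a CNN or transformer: a one-hot linear (ridge regression) model fitted to synthetic sequence-fitness data, followed by greedy hill climbing on the model's predictions. The four-letter alphabet, hidden target sequence, and additive fitness function are hypothetical. On real epistatic landscapes a linear model misses interactions, and hill climbing on any model can stall at a predicted local optimum.

```python
import numpy as np

ALPHABET = "ACDE"  # hypothetical 4-letter alphabet to keep the example small


def one_hot(seqs):
    """Encode equal-length sequences as flattened position-by-letter indicator vectors."""
    n_aa = len(ALPHABET)
    X = np.zeros((len(seqs), len(seqs[0]) * n_aa))
    for i, s in enumerate(seqs):
        for p, aa in enumerate(s):
            X[i, p * n_aa + ALPHABET.index(aa)] = 1.0
    return X


def fit_ridge(X, y, lam=1e-3):
    """Closed-form ridge regression: w = (X^T X + lam I)^-1 X^T y."""
    return np.linalg.solve(X.T @ X + lam * np.eye(X.shape[1]), X.T @ y)


def predict(w, seqs):
    return one_hot(seqs) @ w


def hill_climb(w, start):
    """Greedy in silico walk: move to the best predicted single mutant until none improves."""
    current = start
    best = predict(w, [current])[0]
    while True:
        mutants = [current[:p] + aa + current[p + 1:]
                   for p in range(len(current)) for aa in ALPHABET if aa != current[p]]
        scores = predict(w, mutants)
        i = int(np.argmax(scores))
        if scores[i] <= best:
            return current  # predicted local optimum
        current, best = mutants[i], scores[i]


# Synthetic "DMS" data: fitness = number of positions matching a hidden target.
rng = np.random.default_rng(0)
target = "DEACD"
train = ["".join(rng.choice(list(ALPHABET), size=len(target))) for _ in range(200)]
y = np.array([sum(a == b for a, b in zip(s, target)) for s in train], dtype=float)
w = fit_ridge(one_hot(train), y)
print(hill_climb(w, "AAAAA"))
```

On this noiseless additive data the walk should end at the hidden target; swapping `hill_climb` for a genetic algorithm changes only the search step, not the model.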

Active learning frameworks, such as Machine Learning-Assisted Directed Evolution (MLDE) and Bayesian Optimization (BO), implement an iterative design-test-learn cycle [28]. These approaches use an ML model to select the most informative sequences to test experimentally, dramatically reducing the screening burden required to find optimal proteins [28]. Recent advances, such as the μProtein framework, combine a deep learning model (μFormer) for mutational effect prediction with a reinforcement learning algorithm (μSearch) to navigate the fitness landscape efficiently, successfully designing high-functioning multi-point mutants for β-lactamase trained solely on single-mutation data [32].

The following diagram illustrates how these computational and experimental approaches integrate into a modern protein engineering workflow:

Deep Mutational Scanning has fundamentally transformed our ability to map protein fitness landscapes empirically, moving protein science from a paradigm of targeted, hypothesis-driven inquiry to one of comprehensive, data-rich exploration. By providing a high-throughput, quantitative readout of sequence-function relationships, DMS offers an unprecedented view of the adaptive walks that proteins can undertake. Its integration with machine learning creates a powerful, iterative feedback loop that accelerates the discovery and design of novel proteins with tailored functions. As DMS methodologies continue to mature—encompassing more complex multi-environment selections and more sophisticated library designs—their role in basic biological discovery, therapeutic antibody engineering, and enzyme optimization will only expand, solidifying DMS as an indispensable tool for modern biotechnology and evolutionary biology.

Machine Learning-Assisted Directed Evolution (MLDE) Strategies

The process of protein engineering is fundamentally a search for high-functioning sequences within a vast and complex fitness landscape. This landscape maps every possible protein sequence to a corresponding "fitness" value, representing a measurable property like catalytic activity, binding affinity, or thermostability [16] [28]. Directed Evolution (DE), a workhorse method in protein engineering, mimics natural selection by performing iterative cycles of mutagenesis and screening to identify improved variants. This process can be visualized as an adaptive walk across the fitness landscape, where each step moves towards a sequence of higher fitness [16].

However, the structure of the fitness landscape itself dictates the efficiency of this search. Landscapes can range from smooth, "Fujiyama"-like surfaces with a single peak to highly rugged, "Badlands"-like terrains rich in local optima and epistasis [16]. Epistasis—the non-additive, often unpredictable interaction between mutations—is a pervasive feature of these rugged landscapes and poses a significant challenge for traditional DE. A beneficial mutation in one sequence background may be neutral or even detrimental in another, causing simple greedy walks to become trapped on local fitness peaks [26] [16]. Machine Learning-Assisted Directed Evolution (MLDE) has emerged as a powerful strategy to overcome these limitations. By using ML models to learn the underlying sequence-function relationship, MLDE can navigate epistatic landscapes more efficiently, predicting high-fitness variants and drastically reducing the experimental screening burden [33] [28].
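Kauffman's NK model, which is not named in the sources but is a standard way to dial landscape ruggedness, makes the Fujiyama-to-Badlands range concrete: each of N sites contributes a random value that depends on its own state and on K other sites. The sketch below assumes adjacent (circular) interaction neighborhoods and uniform random contributions, and counts local optima by exhaustive enumeration for small N. With K = 0 the landscape is additive and has a single peak; with K = N - 1 it is uncorrelated and typically has many.

```python
import itertools

import numpy as np


def nk_landscape(n, k, seed=0):
    """Return a fitness function on binary genotypes of length n (ASSUMED NK variant).
    Site i's contribution depends on itself and the next k sites, wrapping around."""
    rng = np.random.default_rng(seed)
    tables = rng.random((n, 2 ** (k + 1)))  # one random lookup table per site

    def fitness(genotype):
        total = 0.0
        for i in range(n):
            idx = 0
            for j in range(k + 1):
                idx = (idx << 1) | genotype[(i + j) % n]  # bits of the interacting sites
            total += tables[i, idx]
        return total / n

    return fitness


def count_local_optima(n, fitness):
    """Exhaustively count genotypes fitter than all of their single-mutant neighbors."""
    genotypes = list(itertools.product((0, 1), repeat=n))
    f = {g: fitness(g) for g in genotypes}
    count = 0
    for g in genotypes:
        neighbors = (g[:i] + (1 - g[i],) + g[i + 1:] for i in range(n))
        if all(f[nb] < f[g] for nb in neighbors):
            count += 1
    return count


for k in (0, 2, 7):
    print(f"N=8, K={k}: {count_local_optima(8, nk_landscape(8, k))} local optima")
```

Each additional local optimum is a place where a greedy walk of the kind used in traditional DE can stop.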

Core MLDE Methodologies and Workflow

At its core, MLDE uses supervised machine learning to build a model that maps protein sequence representations (inputs) to experimentally measured fitness values (outputs). This model is trained on a relatively small, initially screened subset of a combinatorial library. Once trained, the model can predict the fitness of all unscreened variants in the library, guiding researchers toward the most promising candidates for further experimental validation [34] [35].
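A single MLDE round can be sketched as: screen a random training subset, fit a model, predict the entire combinatorial library, and send the top-ranked unscreened variants for validation. The sketch below assumes a three-site, 20-amino-acid library (8,000 variants) and uses a one-hot ridge regression in place of the more expressive models used in practice. The function `toy_measure` is a hypothetical stand-in for the wet-lab assay; its small pairwise term is something the additive model cannot represent.

```python
import itertools

import numpy as np

ALPHABET = "ACDEFGHIKLMNPQRSTVWY"  # the 20 standard amino acids


def one_hot(variants):
    n_aa = len(ALPHABET)
    X = np.zeros((len(variants), len(variants[0]) * n_aa))
    for i, v in enumerate(variants):
        for p, aa in enumerate(v):
            X[i, p * n_aa + ALPHABET.index(aa)] = 1.0
    return X


def mlde_round(library, measure, n_train=96, n_pick=10, lam=1.0, seed=0):
    """One MLDE round with an ASSUMED ridge model; returns (picks, screened training set)."""
    rng = np.random.default_rng(seed)
    train_idx = rng.choice(len(library), size=n_train, replace=False)
    train = [library[i] for i in train_idx]
    y = np.array([measure(v) for v in train], dtype=float)  # screen the training subset
    X = one_hot(train)
    y_mean = y.mean()
    w = np.linalg.solve(X.T @ X + lam * np.eye(X.shape[1]), X.T @ (y - y_mean))
    preds = one_hot(library) @ w + y_mean  # in silico prediction of every variant
    screened = set(train)
    ranked = [library[i] for i in np.argsort(-preds) if library[i] not in screened]
    return ranked[:n_pick], train


library = ["".join(p) for p in itertools.product(ALPHABET, repeat=3)]


def toy_measure(v):
    """Hypothetical assay: additive matches to 'WYF' plus a small W-Y interaction."""
    return sum(a == b for a, b in zip(v, "WYF")) + 0.5 * (v[0] == "W" and v[1] == "Y")


picks, train = mlde_round(library, toy_measure)
print(picks)
```

The 96-variant training set is an arbitrary choice here; the returned picks would be the next variants to measure experimentally.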

The Standard MLDE Workflow

The following diagram illustrates the foundational, single-round MLDE workflow:

Advanced MLDE Strategy: Active Learning

A more sophisticated, iterative approach involves Active Learning (ALDE), which creates a closed-loop design-test-learn cycle to refine the model with strategically chosen new data [26] [28]. The following diagram illustrates this adaptive process:
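One way to implement such a loop uses a bootstrap ensemble of ridge models as a cheap uncertainty estimate and an upper-confidence-bound (UCB) acquisition rule, mean + beta × standard deviation. This is an assumed design for illustration rather than the specific models or acquisition functions evaluated in [26] or [28]; the feature matrix, the linear `toy_measure` oracle, the batch size, and beta = 2 are all hypothetical.

```python
import numpy as np


def ucb_batch(X_pool, X_train, y_train, batch_size=8, n_models=10, beta=2.0, lam=1.0, seed=0):
    """Return indices into X_pool of the batch with the highest UCB scores.
    Uncertainty comes from a bootstrap ensemble of ridge regressions (ASSUMED design)."""
    rng = np.random.default_rng(seed)
    preds = []
    for _ in range(n_models):
        idx = rng.integers(0, len(y_train), size=len(y_train))  # bootstrap resample
        Xb, yb = X_train[idx], y_train[idx]
        w = np.linalg.solve(Xb.T @ Xb + lam * np.eye(Xb.shape[1]), Xb.T @ yb)
        preds.append(X_pool @ w)
    preds = np.array(preds)
    ucb = preds.mean(axis=0) + beta * preds.std(axis=0)  # exploitation + exploration
    return np.argsort(-ucb)[:batch_size]


def active_learning(X, measure, n_init=16, rounds=4, batch_size=8, seed=0):
    """Design-test-learn loop: each round refits on everything screened so far."""
    rng = np.random.default_rng(seed)
    screened = [int(i) for i in rng.choice(len(X), size=n_init, replace=False)]
    y = [measure(i) for i in screened]
    for r in range(rounds):
        pool = np.setdiff1d(np.arange(len(X)), screened)  # unscreened candidates
        picks = pool[ucb_batch(X[pool], X[screened], np.array(y), batch_size, seed=seed + r)]
        screened.extend(int(i) for i in picks)
        y.extend(measure(int(i)) for i in picks)  # "wet-lab" measurement of the new batch
    return screened, y


# Hypothetical toy problem: 200 candidate variants described by 5 features.
rng = np.random.default_rng(1)
X = rng.normal(size=(200, 5))
true_w = np.array([1.0, -0.5, 0.3, 0.0, 2.0])


def toy_measure(i):
    return float(X[i] @ true_w)


screened, y = active_learning(X, toy_measure)
print(f"best measured value after {len(y)} assays: {max(y):.2f}")
```

Raising `beta` shifts each batch toward variants the ensemble disagrees about; setting it to 0 reduces the loop to greedy exploitation of the mean prediction.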

Key MLDE Strategies and Performance Analysis

Recent systematic studies have evaluated multiple MLDE strategies across a diverse set of 16 protein fitness landscapes, encompassing both binding interactions and enzyme activities. The table below summarizes the core strategies and their performance characteristics [26].

Table 1: Summary of Core MLDE Strategies and Advantages

| Strategy | Core Principle | Key Advantage | Reported Performance Gain |
|---|---|---|---|
| Standard MLDE | Single-round training on a random library subset, followed by in silico prediction of the entire landscape. | Reduces screening burden compared with exhaustive screening; accounts for epistasis. | Up to 81-fold greater success rate in finding the global maximum compared with greedy DE on an epistatic landscape [34]. |