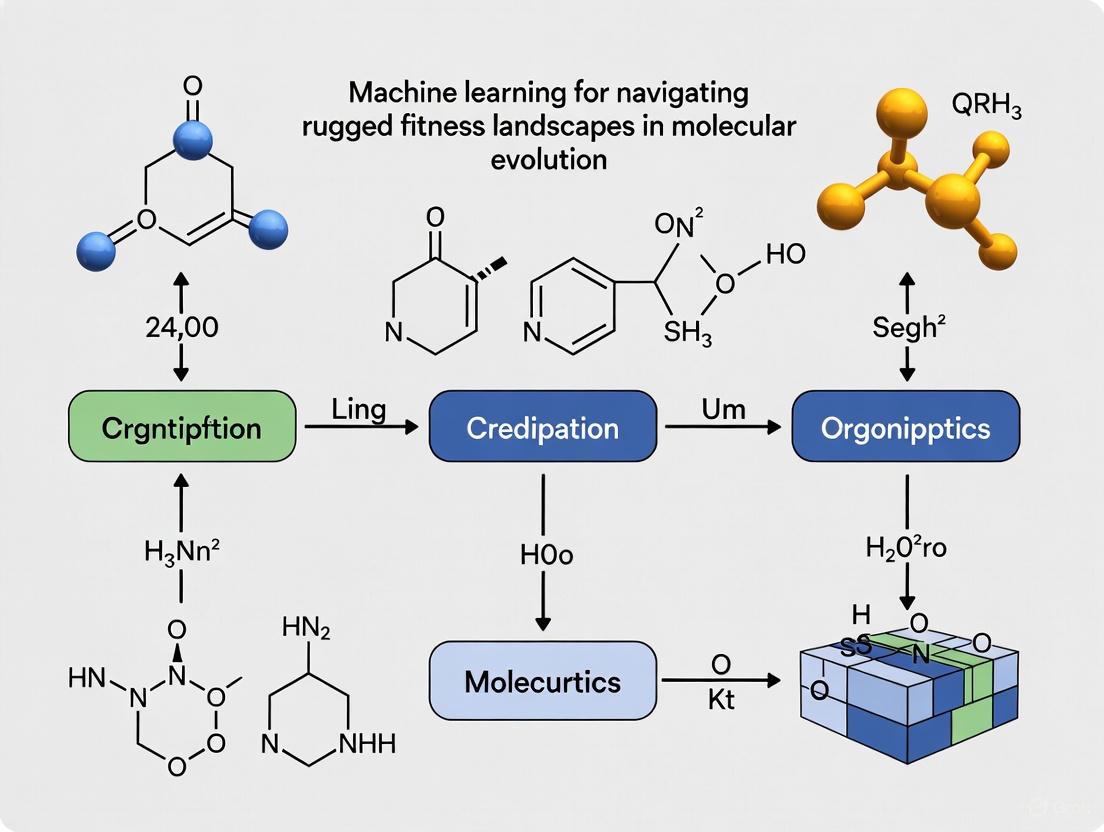

Navigating Rugged Fitness Landscapes: Machine Learning Strategies for Protein Engineering and Drug Development

Abstract

This article explores the transformative role of machine learning (ML) in navigating rugged fitness landscapes, a significant challenge in protein engineering and therapeutic development. Rugged landscapes, characterized by epistasis and numerous local optima, render traditional optimization methods inefficient. We survey foundational concepts, including key metrics of landscape ruggedness and neutrality. The review then details state-of-the-art ML methodologies, from unsupervised protein language models to supervised learning and active learning frameworks, highlighting their application in designing novel enzymes and optimizing protein functions. We further analyze performance determinants and troubleshooting strategies for ML models when faced with epistasis and sparse data. Finally, we present rigorous validation protocols and comparative analyses of ML approaches, offering researchers a comprehensive guide to leveraging ML for accelerated biomolecular design.

Understanding Rugged Fitness Landscapes: From Biological Concepts to ML Challenges

In protein science, a fitness landscape is a conceptual mapping that relates every possible genotype (e.g., a protein sequence) to its corresponding fitness or function [1]. Imagine a three-dimensional topography where the horizontal plane represents all possible protein sequences, and the vertical elevation represents the functional fitness of each sequence. The highest peaks correspond to sequences with optimal performance for a desired function, such as catalytic activity or binding affinity [2] [1]. The core challenge in protein engineering is to efficiently navigate these vast, high-dimensional landscapes to find these peaks.

This guide provides troubleshooting and best practices for researchers mapping these landscapes, with a special focus on integrating machine learning to traverse rugged terrains where mutations have complex, non-additive effects (epistasis) [3].

Frequently Asked Questions (FAQs)

Q1: What is the primary challenge in navigating fitness landscapes for protein engineering? The main challenge is the immensity, sparsity, and complexity of the sequence-performance landscape [4]. The number of possible sequences is astronomically large, functional variants are often rare, and the presence of epistasis creates a rugged landscape with many local optima, making simple hill-climbing approaches ineffective [3].

Q2: How can machine learning (ML) assist in directed evolution? Machine learning-assisted directed evolution (MLDE) uses models trained on experimental sequence-fitness data to predict high-performing variants [3]. This is more efficient than testing random mutants. Strategies include:

- Active Learning (ALDE): Iteratively selecting which variants to test next based on model predictions.

- Focused Training (ftMLDE): Using zero-shot predictors (based on evolutionary, structural, or stability knowledge) to select a smarter initial training set, which consistently outperforms random sampling [3].

Q3: On what type of fitness landscape does MLDE offer the greatest advantage? MLDE provides a greater advantage on landscapes that are more challenging for traditional directed evolution, particularly those with fewer active variants, more local optima, and higher ruggedness due to strong epistatic interactions [3].

Q4: What is the benefit of a high-resolution sequence-function map? A high-resolution map, which quantifies the performance of hundreds of thousands of variants, allows you to move beyond simply finding a good variant. It elucidates the specific role of each position and amino acid, revealing the complex sequence-function relationships that inform fundamental biology and improve future engineering efforts [5] [4].

Troubleshooting Guide: Common Experimental Pitfalls in Landscape Mapping

| Problem | Potential Cause | Solution |
|---|---|---|
| Poor Library Diversity | Limited mutational coverage in the initial variant library. | Use comprehensive library synthesis (e.g., covering all single/double mutants) [5] and employ error-correcting codes in DNA synthesis. |
| Selection Bottlenecks | Overly stringent selection pressure that causes convergence to a few dominant variants. | Apply moderate selection pressure to maintain library diversity and enable mapping of a wide range of variants [5]. |
| High Experimental Noise | Inaccurate fitness measurements from display methods (e.g., phage, yeast) due to expression biases or inefficient selection. | Include control selections for expression/folding; use deep sequencing with paired-end reads to minimize errors [5]; utilize high-throughput, high-integrity screens [4]. |
| Difficulty Modeling Epistasis | Rugged landscape with many local optima confounds machine learning models. | Combine focused training (ftMLDE) with active learning (ALDE); use ensemble models or models specifically designed to capture epistasis [3]. |

Experimental Protocol: High-Resolution Mapping via Phage Display

This protocol, adapted from a large-scale study of a WW domain, details how to generate a quantitative sequence-function map [5].

1. Key Research Reagent Solutions

| Reagent / Material | Function in the Experiment |
|---|---|
| T7 Bacteriophage System | A lytic phage used for protein display; ideal for complex folded domains as displayed proteins need not cross a membrane [5]. |
| Cognate Peptide Ligand | The target peptide (e.g., GTPPPPYTVG) used for selection; it is immobilized on beads to capture functional WW domain variants [5]. |
| DNA Sequencing Library | Prepared via PCR from the phage pool for high-throughput sequencing to link variant sequence to its abundance after selection [5]. |
| Illumina Paired-End Sequencing | Provides overlapping sequence reads to achieve a very low error rate (e.g., ~3 × 10⁻⁶), essential for confidently identifying rare variants [5]. |

2. Detailed Workflow

[Figure: core experimental cycle for generating a sequence-function map]

3. Quantitative Data Analysis

After sequencing, the enrichment ratio for each variant is calculated as: Enrichment = (Frequency in Selected Library) / (Frequency in Input Library) [5].
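The enrichment calculation can be sketched in Python as follows. This is a minimal sketch, assuming each library is available as a list of variant identifiers (one per sequencing read). The pseudocount is an added assumption, not part of the formula above; it avoids division by zero for variants absent from one library, and setting `pseudocount=0` recovers the plain ratio. The toy read counts are invented for illustration.

```python
from collections import Counter

def enrichment_ratios(input_reads, selected_reads, pseudocount=0.5):
    """Enrichment = (frequency in selected library) / (frequency in input library).

    input_reads / selected_reads: iterables of variant identifiers, one per read.
    pseudocount: hypothetical smoothing term (an assumption) added to every count.
    """
    in_counts = Counter(input_reads)
    sel_counts = Counter(selected_reads)
    variants = set(in_counts) | set(sel_counts)
    # Totals include the pseudocounts so that frequencies still sum to 1
    n_in = sum(in_counts.values()) + pseudocount * len(variants)
    n_sel = sum(sel_counts.values()) + pseudocount * len(variants)
    ratios = {}
    for v in variants:
        f_in = (in_counts[v] + pseudocount) / n_in
        f_sel = (sel_counts[v] + pseudocount) / n_sel
        ratios[v] = f_sel / f_in
    return ratios

# Toy example: a loss-of-binding mutant is depleted after selection
inp = ["WT"] * 50 + ["W17F"] * 50
sel = ["WT"] * 90 + ["W17F"] * 10
print(enrichment_ratios(inp, sel, pseudocount=0))
```

Ratios above 1 mark variants enriched by selection; in practice many pipelines work with log-transformed ratios so that enrichment and depletion are symmetric.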

The table below summarizes hypothetical data for key positions in a WW domain, illustrating how tolerance to mutation varies:

| Protein Position | Wild-Type Residue | Mutational Tolerance | Representative Mutation & Effect |
|---|---|---|---|
| 17 | Tryptophan (W) | Highly Intolerant | W17F: Severely diminishes binding [5]. |
| 39 | Tryptophan (W) | Highly Intolerant | W39F: Severely diminishes binding [5]. |
| Other | Variable | Permissive | Many substitutions show minimal effect on fitness [5]. |

Machine Learning Integration for Navigating Landscapes

Machine learning models are powerful tools for predicting fitness and guiding exploration.

[Figure: strategy for deploying ML in directed evolution]

Performance of MLDE Strategies

The table below summarizes findings from a systematic evaluation of MLDE across 16 protein fitness landscapes [3].

| MLDE Strategy | Key Principle | Relative Advantage |
|---|---|---|
| Standard MLDE | Train model on a randomly sampled dataset. | Consistently matches or exceeds traditional DE. |
| Focused Training (ftMLDE) | Enrich training set using zero-shot predictors. | Outperforms standard MLDE; more efficient use of experimental data. |
| Active Learning (ALDE) | Iteratively select informative variants for testing. | Provides the greatest advantage on the most challenging, rugged landscapes. |

The following NCBI tools support sequence and domain analysis [6]:

| Tool / Database | Function | URL / Access |
|---|---|---|
| Basic Local Alignment Search Tool (BLAST) | Finds regions of local similarity to infer functional/evolutionary relationships [6]. | https://blast.ncbi.nlm.nih.gov |
| Conserved Domain Search (CD-Search) | Identifies conserved protein domains present in a query sequence [6]. | https://www.ncbi.nlm.nih.gov/Structure/cdd/wrpsb.cgi |
| Multiple Sequence Alignment Viewer | Visualizes alignments to analyze conservation and variation [6]. | https://www.ncbi.nlm.nih.gov/projects/msaviewer/ |

FAQs on Rugged Fitness Landscapes and Epistasis

What are fitness landscapes and ruggedness?

In evolutionary biology, a fitness landscape is a concept used to visualize the relationship between genotypes (or protein sequences) and their reproductive success or "fitness." Imagine a map where the height represents fitness; peaks correspond to high-fitness variants, and valleys correspond to low-fitness variants. Landscape ruggedness refers to how many local peaks and valleys exist. A highly rugged landscape is like a jagged mountain range with many small peaks, making it difficult to find the highest global peak because evolution can get stuck on a lower, local optimum [7]. Ruggedness is primarily caused by epistasis [8].

What is epistasis?

Epistasis is a genetic interaction where the effect of one mutation depends on the presence or absence of other mutations in the genome [9] [10]. It is the biological reason behind landscape ruggedness. Think of it like this: a mutation that is beneficial in one genetic background can become neutral or even harmful in another, because of interactions between genes or, within a single protein, between residues.

- Statistical (Population Genetics) View: Epistasis describes any non-additive interaction between mutations. This includes both positive and negative interactions [9].

- Classical Genetics View: More specifically, it describes a situation where one mutation masks or suppresses the phenotypic effect of another mutation at a different locus [9] [10].
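To make the non-additive definition concrete, the sketch below scores pairwise epistasis as the deviation of a double mutant from the additive expectation, and checks for sign epistasis, where a mutation is beneficial in one background and deleterious in another. It assumes positive fitness values and a multiplicative null model (so values are log-transformed before testing additivity); that null model is a common convention but an assumption here, and the toy values are hypothetical.

```python
import math

def pairwise_epistasis(f_wt, f_a, f_b, f_ab, log_scale=True):
    """epsilon = f_ab - f_a - f_b + f_wt; zero means the two mutations act additively."""
    if log_scale:
        # Multiplicative null model (assumption): additivity is tested on log fitness
        f_wt, f_a, f_b, f_ab = (math.log(x) for x in (f_wt, f_a, f_b, f_ab))
    return f_ab - f_a - f_b + f_wt

def has_sign_epistasis(f_wt, f_a, f_b, f_ab):
    """True if either mutation is beneficial in one background and deleterious in the other."""
    a_sign_flips = (f_a - f_wt) * (f_ab - f_b) < 0
    b_sign_flips = (f_b - f_wt) * (f_ab - f_a) < 0
    return a_sign_flips or b_sign_flips

print(pairwise_epistasis(1.0, 2.0, 3.0, 6.0))  # ~0: multiplicative, no epistasis
print(has_sign_epistasis(1.0, 1.5, 1.5, 0.5))  # True: two beneficial singles combine badly
```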

What is higher-order epistasis?

While pairwise epistasis involves interactions between two mutations, higher-order epistasis involves complex, non-additive interactions between three or more mutations [10]. The impact of a single mutation cannot be predicted without knowing the state of several other positions in the sequence. Recent studies on proteins like TEM-1 β-lactamase have shown that higher-order epistasis is a major driver of evolutionary unpredictability, especially when adapting to novel environments (e.g., new antibiotics) [11] [12].
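Higher-order terms can be computed by inclusion-exclusion over all sub-combinations of a set of mutations, which generalizes the pairwise formula f_ab − f_a − f_b + f_wt. The sketch below assumes a fully measured combinatorial dataset stored as a dictionary keyed by `frozenset`s of hypothetical mutation labels, and computes epistasis relative to the wild-type background, which is one of several conventions in the literature.

```python
from itertools import combinations

def epistasis(fitness, mutations):
    """Order-k epistasis for the set `mutations` via inclusion-exclusion.

    fitness: dict mapping frozenset of mutation labels -> (log) fitness;
             frozenset() is the wild type, and every subset must be present.
    """
    s = sorted(mutations)
    total = 0.0
    for k in range(len(s) + 1):
        sign = (-1) ** (len(s) - k)
        for subset in combinations(s, k):
            total += sign * fitness[frozenset(subset)]
    return total

# Toy landscape: additive effects plus a purely three-way interaction term
effects = {"A": 0.5, "B": -0.2, "C": 0.1}
landscape = {
    frozenset(sub): sum(effects[m] for m in sub)
    for k in range(4) for sub in combinations("ABC", k)
}
landscape[frozenset("ABC")] += 1.0
print(epistasis(landscape, ["A", "B"]))       # ~0: no pairwise term
print(epistasis(landscape, ["A", "B", "C"]))  # ~1.0: the third-order term
```

Because the toy landscape is additive except for one three-way term, every pairwise value is zero while the third-order value is not; this is exactly the situation in which single and double mutants give no warning of what the triple mutant will do.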

Why is epistasis a major challenge in directed evolution?

Directed evolution (DE) is a powerful protein engineering method that mimics natural evolution by iteratively introducing mutations and selecting improved variants. This process is akin to hill-climbing on a fitness landscape.

- On a smooth landscape: Mutations have consistent, additive effects. DE can efficiently climb uphill to a fitness peak [13].

- On a rugged landscape: Epistasis causes the effect of a mutation to change depending on the genetic background. A beneficial mutation identified in one round can become deleterious when combined with new mutations in the next round, causing the process to get stuck at a low local peak instead of finding the highest global peak [13] [3]. This is a primary reason why DE can be inefficient and fail to find optimal protein variants.
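The failure mode can be reproduced in a few lines of Python. This is a minimal sketch of greedy (steepest-ascent) hill climbing over single-substitution neighbours, run on a hypothetical two-site landscape with reciprocal sign epistasis: each single mutant is worse than the starting sequence, but the double mutant is the global peak.

```python
def greedy_walk(start, fitness, alphabet):
    """Steepest-ascent walk: move to the best single mutant until none improves fitness."""
    current, path = start, [start]
    while True:
        neighbours = [current[:i] + aa + current[i + 1:]
                      for i in range(len(current))
                      for aa in alphabet if aa != current[i]]
        best = max(neighbours, key=fitness.__getitem__)
        if fitness[best] <= fitness[current]:
            return path  # local optimum: no single substitution helps
        current = best
        path.append(current)

# Hypothetical fitness values; "BB" is the global peak
toy = {"AA": 1.0, "BA": 0.8, "AB": 0.8, "BB": 2.0}
print(greedy_walk("AA", toy, "AB"))  # ['AA']: stuck, both single steps go downhill
print(greedy_walk("BA", toy, "AB"))  # ['BA', 'BB']
```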

How can machine learning help navigate rugged landscapes?

Machine learning (ML) models can learn the complex, context-dependent rules defined by epistasis from experimental data. Instead of making greedy, step-by-step decisions like traditional DE, ML models can predict the fitness of many untested variants, identifying combinations of mutations that would be missed by sequential approaches. Key ML strategies include:

- ML-assisted Directed Evolution (MLDE): Training a model on a screened library to predict and select high-fitness variants for testing in a single round [13] [3].

- Active Learning-assisted Directed Evolution (ALDE): An iterative process where a model is continuously updated with new experimental data, using uncertainty quantification to intelligently explore the sequence space and avoid local optima [13].

- Focused Training (ftMLDE): Using zero-shot predictors (based on evolutionary, structural, or stability knowledge) to pre-select a more informative training library, improving model performance on challenging landscapes [3].

Troubleshooting Guides for Experimental Challenges

Problem: Unpredictable Mutation Effects in Combinatorial Libraries

Symptoms: Beneficial single mutations, when recombined, do not produce additive fitness gains. Instead, the combined variant shows no improvement or even a severe loss of function.

Underlying Cause: Prevalent negative epistasis and sign epistasis, where the effect of a mutation changes sign (from beneficial to deleterious) in different genetic backgrounds [3].

Solutions:

- Shift from Greedy to Global Search: Avoid simple "best-hits" recombination.

- Implement MLDE: Construct a combinatorial library targeting key residues. Screen a subset, use the data to train an ML model, and have the model predict the best overall combinations from the entire sequence space [3].

- Utilize Focused Training: If possible, use a zero-shot predictor to design an initial library enriched with potentially high-fitness variants, giving your ML model a better starting point [3].

Experimental Protocol: MLDE for a Multi-Residue Combinatorial Library

- Step 1: Define Design Space. Select 3-5 functionally important, potentially epistatic residues based on structure or previous studies.

- Step 2: Generate Combinatorial Library. Use PCR-based mutagenesis with degenerate codons (e.g., NNK) to create a library covering all possible combinations at the selected sites.

- Step 3: Screen Initial Library. Assay a randomly sampled subset (e.g., hundreds to thousands) of variants for your target fitness metric (e.g., enzymatic activity, binding affinity).

- Step 4: Train ML Model. Use the sequence-fitness data to train a supervised regression model (e.g., based on Gaussian processes or neural networks).

- Step 5: Predict and Validate. Use the trained model to predict the fitness of all variants in the design space. Synthesize and test the top 50-100 predicted high-fitness variants to identify your final improved clone [3].
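Steps 4 and 5 can be sketched with NumPy. This is a minimal, hypothetical illustration that substitutes a one-hot ridge regression for the Gaussian-process or neural-network models named above, purely to keep the example short. A one-hot linear model is additive and cannot represent epistasis, so in practice you would swap in a non-additive model. Enumerating the full design space is cheap for three sites (20³ = 8,000 variants); at five sites (3.2 million variants), predictions should be computed in batches.

```python
import itertools
import numpy as np

AAS = "ACDEFGHIKLMNPQRSTVWY"
AA_INDEX = {a: i for i, a in enumerate(AAS)}

def one_hot(seqs):
    """Encode equal-length sequences as flattened one-hot vectors."""
    X = np.zeros((len(seqs), len(seqs[0]) * len(AAS)))
    for r, s in enumerate(seqs):
        for p, a in enumerate(s):
            X[r, p * len(AAS) + AA_INDEX[a]] = 1.0
    return X

def fit_ridge(X, y, alpha=1.0):
    """Closed-form ridge regression; centering leaves the intercept unpenalized."""
    x_mean, y_mean = X.mean(axis=0), y.mean()
    Xc = X - x_mean
    w = np.linalg.solve(Xc.T @ Xc + alpha * np.eye(X.shape[1]), Xc.T @ (y - y_mean))
    return w, y_mean - x_mean @ w

def mlde_top_k(train_seqs, train_fitness, n_sites, k=50, alpha=1.0):
    """Train on screened variants, score the whole design space, return the top k."""
    w, b = fit_ridge(one_hot(train_seqs), np.asarray(train_fitness, dtype=float), alpha)
    space = ["".join(c) for c in itertools.product(AAS, repeat=n_sites)]
    preds = one_hot(space) @ w + b
    top = np.argsort(preds)[::-1][:k]
    return [(space[i], float(preds[i])) for i in top]

# Toy usage with a made-up fitness function (number of W residues)
rng = np.random.default_rng(0)
train = ["".join(row) for row in rng.choice(list(AAS), size=(300, 3))]
fitness = [s.count("W") for s in train]
print(mlde_top_k(train, fitness, n_sites=3, k=5))
```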

Problem: Evolution is Stuck at a Local Fitness Peak

Symptoms: Sequential rounds of mutagenesis and screening no longer yield fitness improvements despite the known existence of higher-fitness sequences.

Underlying Cause: Traditional DE is a local search method that cannot traverse fitness valleys to reach higher peaks on a rugged landscape [13] [7].

Solutions:

- Adopt an Active Learning (ALDE) Workflow. This iterative approach balances exploration (testing uncertain variants) with exploitation (testing predicted high-fitness variants), allowing it to navigate around local optima [13].

- Leverage Uncertainty Quantification. Use ML models that provide uncertainty estimates for their predictions. Prioritize testing variants with high predicted fitness and high uncertainty, as they may lead to new, promising regions of the sequence space.

Experimental Protocol: ALDE Workflow

- Step 1: Initial Random Library. Generate and screen a small, random library from your design space.

- Step 2: Model Training & Proposal. Train an ML model on all data collected so far. Use an acquisition function (e.g., Upper Confidence Bound) to rank all sequences and propose the next batch of variants to test, focusing on those that are high-fitness, high-uncertainty, or both.

- Step 3: Iterative Looping. Synthesize and test the proposed batch. Add the new data to the training set and repeat Steps 2-3 until a satisfactory variant is found (typically 3-5 rounds) [13].
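The proposal step (Step 2) can be sketched as follows. This is a minimal sketch, assuming a bootstrap ensemble of ridge regressors as the uncertainty-aware model (the spread across ensemble members stands in for predictive uncertainty) and an Upper Confidence Bound score of mean + `beta` × standard deviation. The values of `beta`, the ensemble size, and the batch size are illustrative choices, not values from the cited study, and the features are assumed to be numeric sequence encodings such as one-hot vectors.

```python
import numpy as np

def ucb_batch(X_train, y_train, X_pool, batch_size=96, beta=2.0, n_models=10, alpha=1.0, seed=0):
    """Rank candidate variants by UCB = mean + beta * std over a bootstrap ridge ensemble."""
    rng = np.random.default_rng(seed)
    preds = []
    for _ in range(n_models):
        idx = rng.integers(0, len(y_train), size=len(y_train))  # bootstrap resample
        Xb, yb = X_train[idx], y_train[idx]
        x_mean, y_mean = Xb.mean(axis=0), yb.mean()
        Xc = Xb - x_mean
        w = np.linalg.solve(Xc.T @ Xc + alpha * np.eye(Xb.shape[1]), Xc.T @ (yb - y_mean))
        preds.append((X_pool - x_mean) @ w + y_mean)
    preds = np.asarray(preds)
    mu, sigma = preds.mean(axis=0), preds.std(axis=0)
    ucb = mu + beta * sigma  # high mean favours exploitation, high sigma favours exploration
    chosen = np.argsort(ucb)[::-1][:batch_size]
    return chosen, mu, sigma

# One round on synthetic data: propose 10 of 200 untested candidates
rng = np.random.default_rng(1)
X_train = rng.normal(size=(40, 5))
y_train = X_train @ np.arange(5.0) + rng.normal(scale=0.1, size=40)
X_pool = rng.normal(size=(200, 5))
chosen, mu, sigma = ucb_batch(X_train, y_train, X_pool, batch_size=10)
print(chosen)
```

In a full loop, the chosen variants are assayed, appended to `X_train` and `y_train`, removed from `X_pool`, and the function is called again for the next round. Raising `beta` shifts the batch toward exploration.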

Key Concepts and Data Visualization

Table: Comparing Protein Engineering Strategies on Rugged Landscapes

| Strategy | Core Principle | Advantage | Best Suited For |
|---|---|---|---|
| Traditional Directed Evolution (DE) | Greedy, step-wise hill-climbing | Simple, requires no model | Smooth landscapes with weak epistasis [13] |
| ML-assisted DE (MLDE) | One-shot model prediction after initial screening | More efficient than DE; can identify high-fitness combinations in a single round | Landscapes with moderate epistasis [3] |
| Active Learning-assisted DE (ALDE) | Iterative model retraining with smart exploration | Navigates ruggedness, escapes local optima | Highly rugged landscapes with strong higher-order epistasis [13] |
| Focused Training MLDE (ftMLDE) | Enriches initial data using zero-shot predictors | Boosts ML performance with less data | All landscapes, especially when screening budget is limited [3] |

Table: Quantitative Evidence of Epistasis and ML Performance

This table summarizes key findings from recent studies, highlighting the prevalence of epistasis and the performance gains offered by ML.

| Protein / System | Key Finding | Experimental Scale / Performance |
|---|---|---|
| TEM-1 β-lactamase [11] | Higher-order epistasis is extensive under selection with a novel antibiotic (aztreonam), creating a rugged landscape. | Over 8 million fitness measurements; landscape highly unpredictable. |
| ParPgb Protoglobin [13] | ALDE optimized 5 epistatic active-site residues for a cyclopropanation reaction, where DE failed. | In 3 rounds, improved product yield from 12% to 93%, exploring only ~0.01% of sequence space. |
| 16 Diverse Protein Landscapes [3] | MLDE strategies consistently matched or exceeded DE performance. Advantage was greatest on landscapes challenging for DE (few active variants, many local optima). | Systematic computational analysis across 16 landscapes. |
| NK Model [8] | The K parameter tunes landscape ruggedness. Higher K (more epistatic interactions) leads to more local peaks and shorter adaptive walks. | Theoretical model foundational to the field. |

The Scientist's Toolkit: Research Reagent Solutions

| Item | Function in Experiment |
|---|---|
| Combinatorial Mutant Library | A collection of protein variants containing all possible combinations of mutations at pre-selected residues. Essential for mapping epistatic interactions [11] [3]. |
| High-Throughput Screening Assay | A method to rapidly measure the fitness (e.g., enzymatic activity, binding affinity) of thousands of protein variants in parallel. Provides the essential data for training ML models [13] [3]. |
| Zero-Shot Predictors | Computational models (e.g., based on evolutionary coupling, structural stability, or language models) that estimate protein fitness without experimental data. Used for focused training (ftMLDE) to design smarter initial libraries [3]. |
| Epistatic Transformer Model | A specialized neural network architecture designed to isolate and quantify higher-order epistatic interactions in protein sequence-function data. Helps decipher the complex rules underlying landscape ruggedness [12]. |

Frequently Asked Questions

Q1: My optimization algorithm appears to have stalled. The fitness score is no longer improving despite continued iterations. Could I be in a flat fitness landscape region, and how can I confirm this?

A1: Yes, this is a classic symptom of a search algorithm navigating a flat region, or "neutral network," of the fitness landscape. To confirm, we recommend the following diagnostic protocol:

- Compute a Population Diversity Metric: Track the average genetic distance (e.g., Hamming distance for sequences) between individuals in your population over time. A sustained drop in diversity indicates the population is converging and may be trapped on a neutral network where mutations do not change fitness [14].

- Perform a Local Random Walk: Select your best-performing genotype and generate a set of single-step mutants. If a significant proportion (e.g., >5%) of these mutants show no significant change in fitness despite sequence variation, you are likely on a flat, neutral ridge [14]. This is quantified by measuring the mutational robustness of the genotype.
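Both diagnostics are straightforward to compute. The sketch below assumes sequences are equal-length strings and that `fitness_fn` is a hypothetical stand-in for your assay or model; the tolerance `tol` (the fitness change treated as "no significant change") is an assumption you should set from your measurement noise.

```python
from itertools import combinations

def hamming(a, b):
    """Number of positions at which two equal-length sequences differ."""
    return sum(x != y for x, y in zip(a, b))

def mean_pairwise_hamming(population):
    """Population diversity: average Hamming distance over all pairs."""
    pairs = list(combinations(population, 2))
    return sum(hamming(a, b) for a, b in pairs) / len(pairs) if pairs else 0.0

def mutational_robustness(seq, fitness_fn, alphabet, tol):
    """Fraction of single mutants whose fitness is within tol of the parent's."""
    f0 = fitness_fn(seq)
    mutants = [seq[:i] + a + seq[i + 1:]
               for i in range(len(seq)) for a in alphabet if a != seq[i]]
    neutral = sum(abs(fitness_fn(m) - f0) <= tol for m in mutants)
    return neutral / len(mutants)

# Toy check: only position 0 matters, so 2 of the 3 single mutants are neutral
print(mean_pairwise_hamming(["AAA", "AAB", "ABB"]))                            # 4/3
print(mutational_robustness("AAA", lambda s: float(s[0] == "A"), "AB", 0.01))  # 2/3
```

Tracking `mean_pairwise_hamming` once per generation gives the diversity curve described above; a robustness fraction well above your chosen threshold flags a neutral plateau.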

Q2: Are flat regions ultimately beneficial or detrimental for finding a global optimum in protein engineering?

A2: The impact of flat regions is nuanced and depends on your experimental strategy. The table below summarizes the characteristics and strategic implications based on recent research [14]:

| Characteristic | Impact on Search |
|---|---|
| Exploration | Beneficial. Neutrality allows a population to explore a wider genotypic space without fitness penalties, potentially discovering new paths to higher fitness peaks. |
| Predictive Modeling | Detrimental. Mutationally robust proteins from flatter peaks provide less informative data due to weaker epistatic interactions, leading to less accurate machine learning models for protein design [14]. |
| Algorithm Choice | Critical. Gradient-based methods can fail. Algorithms like evolutionary strategies that leverage neutral drift are often more effective for traversing these regions. |

Q3: For a real-world project engineering an amide synthetase, what is a proven experimental workflow to handle epistasis and neutrality?

A3: A successful ML-guided, cell-free framework has been demonstrated for engineering amide synthetases [15]. The workflow integrates high-throughput data generation with machine learning to navigate the sequence-function landscape efficiently, as detailed in the following protocol.

- Experimental Protocol:

  - Design: Select active site residues for mutagenesis based on structural data (e.g., all residues within 10 Å of the docked substrate).

  - Build: Use cell-free DNA assembly and PCR to generate a site-saturation mutagenesis library. This avoids cloning and enables the creation of sequence-defined variants in a day [15].

  - Test: Express mutant proteins using cell-free gene expression (CFE) and perform functional assays in parallel. The cited study evaluated 1,216 enzyme variants across 10,953 unique reactions [15].

  - Learn: Use the collected sequence-function data to train a supervised machine learning model (e.g., augmented ridge regression). This model predicts the fitness of higher-order mutants not explicitly tested [15].
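The augmented model in the Learn step can be sketched as ridge regression on one-hot sequence features with one extra column holding a zero-shot score for each variant. This is a simplified, hypothetical version: the published model's exact features and regularization may differ (for example, the zero-shot column is sometimes penalized less than the one-hot columns), and the zero-shot scores are assumed to be precomputed. The toy data below are random and serve only to show the call pattern.

```python
import numpy as np

def fit_augmented_ridge(X_onehot, zs_scores, y, alpha=1.0):
    """Ridge regression on [one-hot features | zero-shot score], one alpha for all columns."""
    X = np.column_stack([X_onehot, zs_scores])
    y = np.asarray(y, dtype=float)
    x_mean, y_mean = X.mean(axis=0), y.mean()
    Xc = X - x_mean
    w = np.linalg.solve(Xc.T @ Xc + alpha * np.eye(X.shape[1]), Xc.T @ (y - y_mean))
    return w, x_mean, y_mean

def predict_augmented_ridge(model, X_onehot, zs_scores):
    """Predict fitness for untested variants, e.g. higher-order mutants."""
    w, x_mean, y_mean = model
    X = np.column_stack([X_onehot, zs_scores])
    return (X - x_mean) @ w + y_mean

# Toy usage: random binary features and a synthetic zero-shot score (illustration only)
rng = np.random.default_rng(0)
X_onehot = rng.integers(0, 2, size=(100, 10)).astype(float)
zs = rng.normal(size=100)
y = 3.0 * zs + X_onehot[:, 0]
model = fit_augmented_ridge(X_onehot, zs, y, alpha=1e-8)
print(model[0][-1])  # weight learned for the zero-shot column
```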

Q4: How does the structure of a fitness peak itself affect the success of data-driven protein design?

A4: Research on green fluorescent protein (GFP) orthologues reveals that the "topography" of the fitness peak is critical. Counterintuitively, fragile proteins with sharp, epistatic fitness peaks yield more accurate machine learning predictions for new protein designs. In contrast, mutationally robust proteins with flatter peaks provide a dataset with weaker epistatic constraints, which leads to less reliable predictions when the model extrapolates to novel sequences [14]. Therefore, your starting template protein can significantly influence the outcome of a data-driven engineering campaign.

The Scientist's Toolkit: Research Reagent Solutions

The following table details key materials and computational tools used in the featured experiments for navigating fitness landscapes.

| Item / Solution | Function in Experiment |
|---|---|
| Cell-Free Gene Expression (CFE) System | Enables rapid synthesis and testing of thousands of protein variants without the need for live cells, drastically accelerating the "Build-Test" cycle [15]. |
| Linear DNA Expression Templates (LETs) | PCR-amplified linear DNA used directly in CFE systems. Simplifies and speeds up the expression of variant libraries compared to circular plasmid DNA [15]. |
| Augmented Ridge Regression ML Model | A supervised learning algorithm that integrates experimentally measured fitness data with evolutionary sequence information ("zero-shot" predictors) to accurately forecast the performance of untested enzyme variants [15]. |
| Gaussian Process (GP) Performance Predictor | In neural architecture search, a GP models the relationship between network design and performance, acting as a surrogate for expensive full training to efficiently navigate architectural search spaces [16]. |
| Pareto Optimal Reward Function | A multi-task objective function used in search algorithms to balance competing goals (e.g., model accuracy vs. inference latency), identifying the best compromises for a given hardware constraint [16]. |

Quantitative Landscape of Fitness and Search

The table below synthesizes key quantitative findings from recent studies on fitness landscapes and search algorithm performance.

| Metric | Value / Ratio | Context & Impact |
|---|---|---|
| Activity Improvement | 1.6x to 42x | Improvement shown by ML-predicted amide synthetase variants over the parent enzyme across nine pharmaceutical compounds [15]. |
| Library Throughput | 1,216 variants; 10,953 reactions | Scale of a single DBTL cycle for enzyme engineering, demonstrating the high-throughput capability of a cell-free, ML-guided platform [15]. |
| Contrast Ratio (Enhanced AAA) | 7:1 (normal text); 4.5:1 (large text) | Minimum WCAG guideline for enhanced visual contrast. Serves as an analogy for the sharpness required to distinguish a fitness peak from a neutral background [17] [18]. |
| Fitness Peak Heterogeneity | Sharp vs. Flat peaks | Observed in orthologous fluorescent proteins. Fragile proteins (sharp peaks) showed stronger epistasis and enabled more accurate ML-based design than robust ones (flat peaks) [14]. |

FAQs and Troubleshooting Guide

This guide addresses common challenges in fitness landscape analysis for machine learning, particularly in protein engineering and drug development.

Q1: My optimization algorithm stalls unexpectedly. How can I determine if the fitness landscape is too rugged?

- A: Algorithm stalling often indicates high ruggedness, characterized by many local optima. Quantify this using the Nearest-Better Network (NBN). A highly connected, complex NBN structure suggests many attraction basins, making it easy for algorithms to get trapped [19]. You can also use Gaussian Process (GP) regression; a model with a small length-scale parameter and poor goodness-of-fit on a sufficient sample size often indicates a rugged, hard-to-model landscape [20].

Q2: How can I confirm if a flat region in my search data is a neutral network versus a sign of poor algorithm performance?

- A: Use landscape visualization tools like the Nearest-Better Network (NBN). Vast, flat areas in the visualization where many solutions have identical or nearly identical fitness values confirm neutrality [19]. If you suspect neutrality, perform a neutral walk (a series of single-step moves, each to a neighbor of equal or nearly equal fitness) to measure the size and structure of the neutral network [19].
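A neutral walk can be sketched as below. This is a minimal sketch, assuming sequence data, single-substitution moves, and a tolerance `tol` for "equal fitness". Each step must also increase the Hamming distance from the start, a common convention (assumed here) that keeps the walk from circling back on itself. The returned step count estimates how far the neutral network extends from the starting sequence; `fitness_fn` is a hypothetical stand-in for your data.

```python
import random

def neutral_walk(start, fitness_fn, alphabet, tol=0.0, max_steps=1000, seed=0):
    """Walk through fitness-neutral single mutants, moving ever farther from start."""
    rng = random.Random(seed)
    f0 = fitness_fn(start)

    def dist(a, b):
        return sum(x != y for x, y in zip(a, b))

    current, steps = start, 0
    while steps < max_steps:
        d = dist(current, start)
        candidates = [current[:i] + a + current[i + 1:]
                      for i in range(len(current)) for a in alphabet if a != current[i]]
        # Keep moves that preserve fitness and strictly increase distance from the start
        candidates = [m for m in candidates
                      if abs(fitness_fn(m) - f0) <= tol and dist(m, start) > d]
        if not candidates:
            break
        current = rng.choice(candidates)
        steps += 1
    return steps, current

# Toy landscape where only the first residue matters: the walk can change the other 3
print(neutral_walk("AAAA", lambda s: float(s[0] == "A"), "AB"))  # (3, 'ABBB')
```

Averaging the step count over many random starting points gives the "neutral walk length" metric listed in Table 1.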

Q3: Why does my model fail to generalize when applied to a new protein fitness dataset?

- A: Generalization failure can stem from high epistasis (ruggedness) in the new landscape [21] [3]. Before deployment, characterize the new landscape's ruggedness using the metrics in Table 1. Machine learning models, especially those assuming simple additive effects, often struggle on highly epistatic landscapes. Consider using models specifically designed to capture non-additive interactions or employing focused training strategies that leverage zero-shot predictors [3].

Q4: What is the minimum sample size required for a reliable landscape analysis?

- A: There is no universal minimum, as it depends on problem dimensionality and complexity. Instead of guessing, use a data-driven approach: fit a regression model (like a GP) to your samples and use the goodness-of-fit to validate the approximation. Increase the sample size until the model's fit stabilizes, indicating a reasonably accurate landscape representation [20].

Q5: How can I choose the best machine learning-assisted directed evolution (MLDE) strategy for my project?

- A: Your choice should be guided by landscape attributes [3]. For landscapes with few active variants and many local optima, standard directed evolution (DE) performs poorly. In these cases, MLDE with active learning (ALDE) and focused training (ftMLDE) using zero-shot predictors provides a significant advantage. Assess your landscape's navigability using the metrics in Table 1 to inform your strategy selection [3].

Quantitative Metrics for Landscape Characterization

The table below summarizes key metrics for quantifying critical landscape characteristics.

Table 1: Key Metrics for Fitness Landscape Analysis

| Characteristic | Description | Key Quantitative Metrics & Signatures |
|---|---|---|
| Ruggedness | Measures the prevalence of local optima and the erratic nature of the fitness surface. High ruggedness, often from epistasis, hinders convergence [19] [3]. | NBN Graph Complexity: A highly interconnected NBN indicates many attraction basins [19]; GP Model Fit: Poor goodness-of-fit and a small length-scale in a GP model suggest ruggedness [20]; Epistasis Measurement: Quantify pairwise and higher-order epistatic interactions in the dataset [3]. |
| Neutrality | Exists when large regions of the genotype space have identical or very similar fitness values, causing search algorithms to stagnate [19]. | Neutral Walk Length: The average number of steps possible without changing fitness [19]; NBN Visualization: Identifies vast, flat regions in the fitness landscape [19]. |
| Ill-Conditioning | Indicates high sensitivity to small parameter changes. Ill-conditioned problems have long, narrow valleys in the fitness landscape, slowing convergence [19]. | Condition Number: A high condition number of the landscape's Hessian matrix (or covariance matrix in a model) is a direct metric [19]; Model-Based Distance: GP models can detect ill-conditioning as a specific problem characteristic that differentiates it from other landscapes [20]. |
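For continuous search spaces, the condition-number metric can be estimated numerically. This is a minimal sketch, assuming a smooth black-box objective `f` and a point `x` of interest (typically near an optimum). It estimates the Hessian with central finite differences; the step size `h` is an illustrative default that may need tuning for noisy objectives.

```python
import numpy as np

def numerical_hessian(f, x, h=1e-4):
    """Central finite-difference estimate of the Hessian of f at x."""
    x = np.asarray(x, dtype=float)
    n = len(x)
    H = np.zeros((n, n))
    for i in range(n):
        for j in range(n):
            e_i = np.zeros(n)
            e_i[i] = h
            e_j = np.zeros(n)
            e_j[j] = h
            H[i, j] = (f(x + e_i + e_j) - f(x + e_i - e_j)
                       - f(x - e_i + e_j) + f(x - e_i - e_j)) / (4 * h * h)
    return (H + H.T) / 2  # symmetrize to remove round-off asymmetry

def condition_number(H):
    """Ratio of largest to smallest singular value; large values mean long, narrow valleys."""
    return np.linalg.cond(H)

# Quadratic valley that is 10,000 times steeper in one direction than the other
H_true = np.diag([1.0, 1e4])

def f(v):
    return 0.5 * v @ H_true @ v

print(condition_number(numerical_hessian(f, [0.0, 0.0])))  # approximately 1e4
```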

Experimental Protocols for Metric Quantification

Protocol 1: Characterizing Landscapes with the Nearest-Better Network (NBN)

This protocol provides a visual and structural analysis of the fitness landscape [19].

- Sample Collection: Collect a set of candidate solutions $X = \{x_1, x_2, \ldots, x_n\}$ and evaluate their fitness $F = \{f(x_1), f(x_2), \ldots, f(x_n)\}$.

- Construct NBN Graph: For each solution $x_i$ in the sample, define its nearest-better solution $b(x_i)$ as the solution with the smallest Euclidean distance to $x_i$ that has a higher fitness. Each $x_i$ becomes a node, and a directed edge is drawn from $x_i$ to $b(x_i)$.

- Analyze Graph Structure: The resulting graph reveals landscape features:

  - Number of Roots/Trees: Only the best sample has no nearest-better solution, so local optima appear as nodes whose edge to $b(x_i)$ is unusually long. Removing these long edges splits the graph into trees; each root is a local optimum, and the number of trees indicates modality.

  - Tree Depth and Complexity: Deep, complex trees suggest ruggedness and large attraction basins.

  - Presence of Large, Flat Subgraphs: Indicates significant neutrality.
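The graph construction can be sketched in plain Python. This is a minimal sketch, assuming real-valued sample vectors and fitness maximization. The long-edge cut with factor `phi = 2.0` is borrowed from nearest-better clustering and is an assumption, not part of the protocol: because only the best sample lacks an outgoing edge, local optima show up as nodes whose nearest-better solution is unusually far away, and cutting those edges leaves one tree per attraction basin.

```python
import math

def nearest_better_network(X, f):
    """Map each sample index i to (j, distance), where j is its nearest better sample."""
    edges = {}
    for i, xi in enumerate(X):
        best = None
        for j, xj in enumerate(X):
            if f[j] > f[i]:
                d = math.dist(xi, xj)
                if best is None or d < best[1]:
                    best = (j, d)
        if best is not None:  # the overall best sample has no nearest-better solution
            edges[i] = best
    return edges

def basin_roots(edges, n, phi=2.0):
    """Cut edges longer than phi * mean edge length; each remaining root heads one basin."""
    mean_len = sum(d for _, d in edges.values()) / len(edges)
    kept = {i for i, (_, d) in edges.items() if d <= phi * mean_len}
    return [i for i in range(n) if i not in kept]

# Two separated clusters on a line, each with its own peak (indices 2 and 5)
X = [(0.0,), (0.1,), (0.2,), (10.0,), (10.1,), (10.2,)]
f = [1.0, 2.0, 3.0, 1.0, 2.0, 4.0]
print(basin_roots(nearest_better_network(X, f), len(X)))  # [2, 5]
```

For sequence data, `math.dist` could be replaced by a Hamming distance; the all-pairs loop is quadratic in the sample size, which is acceptable for a few thousand samples.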

[Figure: workflow for characterizing a landscape with the Nearest-Better Network]

Protocol 2: Comparing Problems using Gaussian Process (GP) Regression

This methodology uses flexible regression models to characterize and measure distances between problem landscapes [20].

- Data Sampling: For each problem instance, sample a set of points $X$ and their fitness values $y$.

- Model Fitting: Fit a Gaussian Process model to the data $(X, y)$. A GP is defined by a mean function and a covariance (kernel) function, which captures the smoothness and structure of the landscape.

- Model Validation: Use a goodness-of-fit measure (e.g., predictive log likelihood on a hold-out set) to ensure the GP is a reasonable approximation of the underlying problem. This also helps determine if the sample size is adequate [20].

- Calculate Problem Distance: To compare two problems, compute the symmetric Kullback-Leibler (KL) divergence between their fitted GP models. This provides a principled, quantitative distance measure in the space of problems, revealing their similarity or difference [20].
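The fitting and distance steps can be sketched with NumPy alone. This is a minimal sketch under several stated assumptions: an RBF kernel with unit signal variance, a fixed noise variance, fitness values standardized per problem (so the comparison reflects landscape shape rather than scale), a length-scale chosen by grid search over the log marginal likelihood, and a shared set of reference points `Z` at which the two GP posteriors are compared as multivariate normals. The source's exact procedure may differ. The fitted length-scale also serves as the ruggedness signal mentioned in the FAQ: wavier landscapes yield shorter length-scales.

```python
import numpy as np

def rbf(A, B, length_scale):
    """Squared-exponential kernel with unit signal variance."""
    d2 = ((A[:, None, :] - B[None, :, :]) ** 2).sum(axis=-1)
    return np.exp(-0.5 * d2 / length_scale**2)

def fit_gp(X, y, noise=1e-2, grid=np.logspace(-2, 1, 30)):
    """Standardize y, then pick the length-scale that maximizes the log marginal likelihood."""
    y = (y - y.mean()) / y.std()
    best = None
    for ls in grid:
        L = np.linalg.cholesky(rbf(X, X, ls) + noise * np.eye(len(X)))
        a = np.linalg.solve(L.T, np.linalg.solve(L, y))
        lml = -0.5 * y @ a - np.log(np.diag(L)).sum() - 0.5 * len(X) * np.log(2 * np.pi)
        if best is None or lml > best[0]:
            best = (lml, ls, L, a)
    _, ls, L, a = best
    return {"X": X, "length_scale": ls, "L": L, "alpha": a}

def posterior(gp, Z, jitter=1e-6):
    """Posterior mean and covariance of the fitted GP at reference points Z."""
    Ks = rbf(gp["X"], Z, gp["length_scale"])
    v = np.linalg.solve(gp["L"], Ks)
    cov = rbf(Z, Z, gp["length_scale"]) - v.T @ v + jitter * np.eye(len(Z))
    return Ks.T @ gp["alpha"], cov

def kl_gauss(m0, S0, m1, S1):
    """KL(N(m0, S0) || N(m1, S1)) for multivariate normals."""
    S1_inv = np.linalg.inv(S1)
    diff = m1 - m0
    logdet0 = np.linalg.slogdet(S0)[1]
    logdet1 = np.linalg.slogdet(S1)[1]
    return 0.5 * (np.trace(S1_inv @ S0) + diff @ S1_inv @ diff - len(m0) + logdet1 - logdet0)

def problem_distance(gp_a, gp_b, Z):
    """Symmetric KL divergence between two fitted GPs, evaluated on shared points Z."""
    ma, Sa = posterior(gp_a, Z)
    mb, Sb = posterior(gp_b, Z)
    return kl_gauss(ma, Sa, mb, Sb) + kl_gauss(mb, Sb, ma, Sa)

# Toy comparison of two 1-D problems sampled at the same points
rng = np.random.default_rng(0)
X = rng.uniform(-2, 2, size=(30, 1))
gp_wavy = fit_gp(X, np.sin(3 * X[:, 0]))
gp_bowl = fit_gp(X, X[:, 0] ** 2)
Z = np.linspace(-1.5, 1.5, 5)[:, None]
print(gp_wavy["length_scale"], gp_bowl["length_scale"])
print(problem_distance(gp_wavy, gp_bowl, Z))
```

The hold-out validation step is omitted here for brevity; comparing the predictive log likelihood on held-out samples across increasing sample sizes is one way to add it.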

The logical flow for this model-based analysis is shown below:

The Scientist's Toolkit: Essential Research Reagents and Solutions

Table 2: Key Research Reagents for Fitness Landscape Analysis

| Item | Function in Research |
|---|---|
| Exploratory Landscape Analysis (ELA) Features | A set of numerical metrics (e.g., dispersion, correlation length) used to describe problem characteristics for algorithm selection frameworks [20]. |
| Gaussian Process (GP) Regression Models | A flexible, non-parametric Bayesian model used to approximate the black-box objective function, characterize problem similarity, and validate sample size adequacy [20]. |
| Nearest-Better Network (NBN) | A visualization and graph-based tool that effectively captures landscape characteristics like ruggedness, neutrality, and ill-conditioning across various dimensionalities [19]. |
| Zero-Shot (ZS) Predictors | Machine learning models (e.g., based on evolutionary, structural, or stability knowledge) that predict fitness without experimental data. Used for focused training in MLDE to improve performance on challenging landscapes [3]. |
| NK Model Landscapes | A tunable, synthetic fitness landscape model where the parameter \( K \) controls the level of epistasis and ruggedness. Used for controlled benchmarking of optimization algorithms and ML models [21]. |
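For readers who want to generate such benchmarks, the sketch below builds a classic NK landscape with randomly chosen interaction partners. It uses binary genotypes for brevity, which is an assumption: protein-oriented variants use a 20-letter alphabet. It also counts local optima by exhaustive enumeration. This illustrates the standard construction, not the code used in [21], and `make_nk_landscape` and `count_local_optima` are hypothetical helper names.

```python
import random
from itertools import product


def make_nk_landscape(N, K, seed=0):
    """Kauffman NK landscape over binary genotypes of length N (0 <= K < N)."""
    rng = random.Random(seed)
    # Site i depends on itself plus K other randomly chosen sites.
    neighbours = [[i] + rng.sample([j for j in range(N) if j != i], K) for i in range(N)]
    # One random contribution table per site, indexed by the states of its K + 1 sites.
    tables = [{key: rng.random() for key in product((0, 1), repeat=K + 1)} for _ in range(N)]

    def fitness(genotype):
        return sum(tables[i][tuple(genotype[j] for j in neighbours[i])] for i in range(N)) / N

    return fitness


def count_local_optima(N, fitness):
    """Exhaustively count genotypes that no single bit flip can improve."""
    count = 0
    for g in product((0, 1), repeat=N):
        f = fitness(g)
        if all(fitness(g[:i] + (1 - g[i],) + g[i + 1:]) <= f for i in range(N)):
            count += 1
    return count
```

With \( K = 0 \), every site contributes independently, so the landscape has exactly one optimum. Raising \( K \) toward \( N - 1 \) typically multiplies the number of local optima, which is what makes the model useful for controlled ruggedness sweeps.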

Why Rugged Landscapes Challenge Traditional Directed Evolution and Computational Optimization

Fundamental Concepts: Fitness Landscapes and Ruggedness

What is a fitness landscape in protein engineering?

A fitness landscape is a conceptual mapping where every point in a high-dimensional space represents a unique protein sequence, and the "height" at that point corresponds to its functional performance or fitness. Navigating this landscape involves finding the highest peaks, which represent optimal sequences [22]. The ruggedness of a landscape describes how unpredictably fitness changes with sequence modifications. In highly rugged landscapes, small mutational steps can lead to dramatic fitness changes, creating many local optima (suboptimal peaks) and "fitness cliffs" where performance drops precipitously [23] [22].

How does epistasis contribute to landscape ruggedness?

Epistasis—the context-dependence of mutation effects—is the primary cause of ruggedness. When the effect of a mutation depends on the genetic background in which it occurs, it creates non-additive, unpredictable interactions between mutations [23] [22]. Research on the LacI/GalR transcriptional repressor family revealed "extremely rugged landscapes with rapid switching of specificity even between adjacent nodes," demonstrating how epistasis creates complex evolutionary paths where traditional stepwise approaches struggle [23].
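Epistasis between two mutations \( A \) and \( B \) is commonly quantified as the deviation of the double mutant from the additive expectation, \( \varepsilon = f_{AB} - f_A - f_B + f_{wt} \), with fitness on an additive (e.g., log) scale. The sketch below computes \( \varepsilon \) and flags sign epistasis, where a mutation's effect changes sign depending on the background. Reciprocal sign epistasis, where both mutations flip, is the form associated with multiple local peaks. The function names are hypothetical.

```python
def pairwise_epistasis(f_wt, f_a, f_b, f_ab):
    """Deviation of the double mutant from the additive expectation."""
    return f_ab - (f_a + f_b - f_wt)


def classify_epistasis(f_wt, f_a, f_b, f_ab):
    """Label the interaction by whether each mutation's effect flips sign on the other's background."""
    flips_a = (f_a - f_wt) * (f_ab - f_b) < 0  # effect of A alone vs. on the B background
    flips_b = (f_b - f_wt) * (f_ab - f_a) < 0  # effect of B alone vs. on the A background
    if flips_a and flips_b:
        return "reciprocal sign"
    if flips_a or flips_b:
        return "sign"
    return "magnitude or none"
```

Consider made-up values \( f_{wt} = 0.0 \), \( f_A = 0.5 \), \( f_B = 0.4 \), \( f_{AB} = -0.3 \). Here `pairwise_epistasis` gives about -1.2 and `classify_epistasis` returns `"reciprocal sign"`. Each mutation helps on its own but hurts on the other's background, which is the kind of pattern that traps stepwise approaches.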

Table: Characteristics of Smooth vs. Rugged Fitness Landscapes

| Feature | Smooth Landscape | Rugged Landscape |
|---|---|---|
| Epistasis | Minimal or additive effects | High, non-additive interactions |
| Topology | Single or few peaks | Many local optima |
| Predictability | High; gradual fitness changes | Low; fitness cliffs present |
| Evolutionary Paths | Continuous, accessible | Discontinuous, trapped in local optima |
| Example Systems | Many enzymes & binding proteins [23] | Transcriptional regulators, specific enzymes [23] [13] |

Troubleshooting Common Experimental Challenges

Why does my directed evolution experiment get stuck at suboptimal solutions?

This common problem is called premature convergence. It arises because traditional directed evolution behaves as a "greedy hill-climber": it accumulates beneficial mutations step by step. On a rugged landscape, it therefore becomes trapped at a local fitness peak and cannot cross the surrounding fitness valleys to reach potentially superior regions [13]. In one case, optimizing five epistatic residues in the active site of a protoglobin (ParPgb) failed with single-site saturation mutagenesis and recombination. Mutations that were beneficial individually became deleterious when combined [13].

Solution: Implement Active Learning-assisted Directed Evolution (ALDE). This machine learning approach uses uncertainty quantification to strategically explore the sequence space, balancing exploration of new regions with exploitation of known promising areas [13].

Why do my computational predictions fail on highly epistatic targets?

Machine learning models struggle with rugged landscapes because they cannot capture complex epistatic interactions without sufficient training data that adequately samples these interactions [22]. As landscape ruggedness increases, all models show degraded prediction performance for both interpolation and extrapolation [22].

Solution:

- Increase training data diversity: Sample across multiple mutational regimes rather than just local sequences [22].

- Use specialized encodings: Incorporate protein language model representations that may better capture latent evolutionary patterns [13].

- Implement ensemble methods: Combine models with different architectures to improve uncertainty quantification [13].

Table: ML Model Performance Degradation with Increasing Ruggedness (NK Model Analysis)

| Ruggedness (K value) | Interpolation Performance | Extrapolation Capacity | Recommended Approach |
|---|---|---|---|
| K=0-1 (Smooth) | High (R² > 0.8) | Extrapolates 3+ regimes | Standard regression models sufficient |
| K=2-3 (Moderate) | Moderate (R² = 0.5-0.8) | Extrapolates 1-2 regimes | Ensemble methods + uncertainty quantification |
| K=4-5 (Rugged) | Poor (R² < 0.5) | Fails at extrapolation | Active learning essential [22] |

Machine Learning Solutions for Rugged Landscapes

What machine learning approaches specifically address ruggedness?

Several ML strategies have demonstrated success on rugged protein fitness landscapes:

Active Learning-assisted Directed Evolution (ALDE): This iterative workflow combines batch Bayesian optimization with wet-lab experimentation. After initial library screening, a model trained on the data uses uncertainty quantification to select the next batch of variants to test. In one application, ALDE optimized a non-native cyclopropanation reaction in a protoglobin, improving product yield from 12% to 93% in just three rounds while exploring only ~0.01% of the design space [13].

µProtein Framework: This approach combines µFormer (a deep learning model for mutational effect prediction) with µSearch (a reinforcement learning algorithm). The framework successfully identified high-gain-of-function multi-point mutants for β-lactamase, surpassing the highest known activity level when trained solely on single mutation data [24].

Frequentist Uncertainty Quantification: Research indicates that for protein fitness optimization, frequentist uncertainty methods (like ensemble variance) often outperform Bayesian approaches in guiding exploration of rugged landscapes [13].

How do I choose the right ML architecture for my rugged landscape problem?

Model selection should be guided by landscape characteristics and data availability [22]:

- For sparsely sampled landscapes: Gradient-boosted trees (GBTs) and linear models show better robustness with limited data [22].

- For landscapes with local data: Neural networks with protein language model embeddings (like ESM) can capture complex epistatic patterns [13].

- For high-epistasis targets: Active learning with ensemble-based uncertainty quantification is essential [13].

Advanced Optimization Algorithms

What novel optimization algorithms show promise for rugged landscapes?

Octopus Inspired Optimization (OIO): This hierarchical metaheuristic mimics the octopus's neural architecture to unify centralized global exploration with parallelized local exploitation. The algorithm features a three-level structure: (1) "Individual" level for global strategy, (2) "Tentacle" level for regional search, and (3) "Sucker" level for local exploitation. OIO outperformed 15 competing metaheuristics on a real-world protein engineering benchmark and achieved top performance on the NK-Landscape benchmark, demonstrating its suitability for rugged landscapes [25].

Evolutionary Salp Swarm Algorithm (ESSA): This enhanced swarm intelligence algorithm incorporates distinct evolutionary search strategies and an advanced memory mechanism that stores both superior and inferior solutions. ESSA achieved optimization effectiveness values of 84.48%, 96.55%, and 89.66% for dimensions 30, 50, and 100 respectively, outperforming many existing optimizers on complex problems [26].

Experimental Protocols & Methodologies

Protocol: Active Learning-assisted Directed Evolution (ALDE)

Application: Optimizing five epistatic residues in Pyrobaculum arsenaticum protoglobin (ParPgb) for cyclopropanation reaction [13].

Step 1 - Define Combinatorial Space:

- Select 5 active-site residues (W56, Y57, L59, Q60, F89) based on structural proximity and known epistatic effects

- Design space encompasses 20⁵ (3.2 million) possible variants

Step 2 - Initial Library Construction:

- Use NNK degenerate codons for simultaneous mutation at all five positions

- Employ sequential PCR-based mutagenesis

- Screen initial random library to establish baseline sequence-fitness data

Step 3 - Active Learning Cycle:

- Train Model: Use sequence-fitness data to train supervised ML model (frequentist uncertainty recommended)

- Rank Variants: Apply acquisition function (e.g., upper confidence bound) to rank all sequences in design space

- Screen Batch: Test top N (typically 50-200) variants in wet lab using GC analysis for cyclopropanation yield and selectivity

- Iterate: Repeat until fitness plateaus or target achieved (typically 3-5 rounds)

Key Parameters:

- Batch size: 96-384 variants per cycle

- Fitness function: Difference between cis-2a and trans-2a cyclopropane product yields

- Model inputs: One-hot encoding or protein language model embeddings
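One round of the active learning cycle can be sketched as below. This is not the ALDE codebase [13]; it is a minimal stand-in that assumes:

- one-hot inputs;
- a bootstrap ensemble of ridge regressors as the frequentist uncertainty model;
- an upper-confidence-bound acquisition, computed as the ensemble mean plus `beta` times the ensemble standard deviation.

Function names, `n_models`, `beta` and `lam` are hypothetical. To keep the example fast, the usage replaces the full 20⁵ space with a smaller design space.

```python
import itertools

import numpy as np

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"


def design_space(n_positions):
    """All variants over n_positions sites (20**n_positions sequences)."""
    return ["".join(p) for p in itertools.product(AMINO_ACIDS, repeat=n_positions)]


def one_hot(seqs):
    """Flattened one-hot encoding: len(seq) * 20 features per variant."""
    index = {a: i for i, a in enumerate(AMINO_ACIDS)}
    X = np.zeros((len(seqs), len(seqs[0]) * len(AMINO_ACIDS)))
    for n, s in enumerate(seqs):
        for p, a in enumerate(s):
            X[n, p * len(AMINO_ACIDS) + index[a]] = 1.0
    return X


def fit_ridge(X, y, lam=1.0):
    """Closed-form ridge regression; an appended intercept column is not penalised."""
    Xb = np.hstack([X, np.ones((len(X), 1))])
    penalty = lam * np.eye(Xb.shape[1])
    penalty[-1, -1] = 0.0
    return np.linalg.solve(Xb.T @ Xb + penalty, Xb.T @ y)


def ensemble_ucb(X_train, y_train, X_pool, n_models=10, beta=2.0, seed=0):
    """UCB score from a bootstrap ensemble: mean + beta * std of member predictions."""
    rng = np.random.default_rng(seed)
    Xp = np.hstack([X_pool, np.ones((len(X_pool), 1))])
    preds = []
    for _ in range(n_models):
        boot = rng.integers(0, len(X_train), len(X_train))  # resample with replacement
        preds.append(Xp @ fit_ridge(X_train[boot], y_train[boot]))
    preds = np.array(preds)
    return preds.mean(axis=0) + beta * preds.std(axis=0)


def propose_batch(measured, pool, batch_size, **kw):
    """Rank unmeasured pool sequences by UCB and return the top batch for screening."""
    seqs = list(measured)
    X_train, y_train = one_hot(seqs), np.array([measured[s] for s in seqs])
    candidates = [s for s in pool if s not in measured]
    scores = ensemble_ucb(X_train, y_train, one_hot(candidates), **kw)
    return [candidates[i] for i in np.argsort(-scores)[:batch_size]]
```

Here `measured` maps each screened sequence to its fitness. After each wet-lab batch, add the new measurements and call `propose_batch` again, for the 3-5 rounds typical of the protocol above. Protein language model embeddings could replace `one_hot` without changing the rest of the loop.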

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Resources for Navigating Rugged Fitness Landscapes

| Resource/Tool | Function/Purpose | Application Example |
|---|---|---|
| ALDE Computational Framework | Open-source active learning platform for protein engineering | Optimizing epistatic enzyme active sites [13] |
| µProtein Framework | Combines deep learning (µFormer) with RL (µSearch) for sequence optimization | Multi-point mutant design from single mutation data [24] |
| NK Landscape Model | Tunable ruggedness benchmark for algorithm validation | Evaluating ML model performance on epistatic landscapes [22] |
| Octopus Inspired Optimization (OIO) | Hierarchical metaheuristic for complex optimization | Protein engineering benchmarks [25] |
| Dual-LLM Evaluation Framework | Objective fitness assessment for prompt engineering landscapes | Error detection tasks in fitness landscape analysis [27] |

FAQs: Addressing Specific Experimental Issues

How can I quantify the ruggedness of my specific protein fitness landscape?

Use autocorrelation analysis across mutational space [27]. Measure how fitness correlation decays with increasing mutational distance from a reference sequence. Smooth landscapes show gradual correlation decay, while rugged landscapes exhibit rapid decorrelation. For preliminary assessment, the NK model with fitted K parameter can provide a ruggedness estimate [22].
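One common way to operationalize this is random-walk autocorrelation. Record fitness along a walk of single point mutations, compute the autocorrelation \( r(s) \) at lag \( s \), and summarize it as a correlation length \( \ell = -1/\ln r(1) \). The sketch assumes a `fitness` callable, for example a trained surrogate model or a lookup table of measured variants; both are hypothetical here. Binning variant pairs by mutational distance from a reference sequence, as described above, is an alternative estimator. The function names are hypothetical.

```python
import math
import random

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"


def random_walk_fitness(fitness, start, steps, seed=0):
    """Fitness values recorded along a random walk of single point mutations."""
    rng = random.Random(seed)
    seq = list(start)
    values = [fitness("".join(seq))]
    for _ in range(steps):
        pos = rng.randrange(len(seq))
        seq[pos] = rng.choice([a for a in AMINO_ACIDS if a != seq[pos]])
        values.append(fitness("".join(seq)))
    return values


def autocorrelation(values, lag):
    """Empirical autocorrelation r(lag) of a fitness series."""
    n = len(values)
    mean = sum(values) / n
    var = sum((v - mean) ** 2 for v in values) / n
    cov = sum((values[t] - mean) * (values[t + lag] - mean) for t in range(n - lag)) / (n - lag)
    return cov / var


def correlation_length(values):
    """Correlation length -1 / ln r(1): larger values indicate a smoother landscape."""
    r1 = autocorrelation(values, 1)
    if r1 <= 0:
        return 0.0
    if r1 >= 1:
        return math.inf
    return -1.0 / math.log(r1)
```

On an additive (smooth) landscape, consecutive steps stay strongly correlated and the correlation length is long. On a landscape where every sequence has an independent random fitness, \( r(1) \) is close to zero.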

What are the practical limits for tackling rugged landscapes with current methods?

Current methods successfully handle combinatorial spaces of 5-8 residues (20⁵ ≈ 3.2 million to 20⁸ ≈ 25.6 billion variants) with strong epistasis. The µProtein framework demonstrated success designing 4-6 point mutants, while ALDE efficiently optimized 5 epistatic residues [13] [24]. Beyond 8 residues, computational requirements increase substantially, though hierarchical approaches like OIO show promise for scaling [25].

How critical is uncertainty quantification for ML-guided protein engineering?

Essential for rugged landscapes [13]. Standard prediction models without uncertainty estimates tend to overexploit and miss global optima. Frequentist approaches (ensemble variance) have outperformed Bayesian methods in practical protein engineering applications. Uncertainty guides exploration of promising but poorly characterized regions of sequence space.

Can I apply these approaches with limited initial fitness data?

Yes, but the strategy must adapt [22] [24]. With sparse data (tens to hundreds of labeled sequences):

- Start with random sampling or diverse sequence selection to maximize initial coverage

- Use simple models (linear regression, GBTs) that perform better with limited data

- Prioritize exploration in early active learning rounds

- Consider transfer learning from protein language models (ESM) to compensate for data scarcity [24]

What experimental throughput is needed to benefit from these ML approaches?

Successful implementations have used moderate throughput screens (96-384 variants per cycle) [13]. The key is iterative experimentation with ML guidance between rounds rather than massive parallel screening. Methods like ALDE achieve significant improvements with 3-5 rounds of screening (total 500-1500 variants), making them accessible to many academic labs [13].

Machine Learning Arsenal for Landscape Navigation: Models and Real-World Applications

Frequently Asked Questions (FAQs) and Troubleshooting Guides

FAQ 1: Model Selection and Performance

Q1: My zero-shot predictor performs well on one protein but poorly on another. What could be the cause?

Performance variation is common and can be attributed to several factors related to the target protein's properties and the model's design. Key factors to investigate include:

- Evolutionary Information Content: Proteins with shallow multiple sequence alignments (MSAs), such as orphan proteins or designed proteins, often pose a challenge for models that rely heavily on evolutionary information. In such cases, structure-aware or biophysics-based models may be more robust [28].

- Presence of Disordered Regions: Intrinsically disordered regions (IDRs) lack a fixed 3D structure. Predictions within these regions are generally less reliable for structure-based models and can also affect sequence-based models. If a significant portion of your assay covers IDRs, this could explain performance drops [29].

- Model Architecture and Modality: Ensure the model's training data and architecture match your protein's context. For example, using a model trained on monomeric structures to predict effects on a protein complex can lead to inaccuracies [29].

Q2: When should I use a structure-based model over a sequence-only pLM?

The choice depends on data availability, the biological context, and the specific task. The following table summarizes key considerations:

| Model Type | Best Use Cases | Advantages | Limitations / Considerations |
|---|---|---|---|
| Sequence-only pLM (e.g., ESM) | High-throughput screening where speed is critical; proteins without reliable structural data; tasks where evolutionary signals are strong. | Fast, MSA-free inference [30] [31]; consumes fewer computational resources than MSA-based methods [30]. | May lack detailed biophysical context [31] [32]; can struggle with orphan proteins or designed sequences [28]. |
| Structure-based Model (e.g., ESM-IF1, ProMEP) | Assessing mutations in ordered, structured regions; understanding effects mediated by long-range contacts or steric clashes; engineering tasks where stability is key. | Explicitly captures physical constraints and long-range interactions [29] [31]; often superior for stability prediction [29]. | Performance can be misled by predicted structures of disordered regions [29]; may require a structure (experimental or predicted) as input. |
| Multimodal Model (e.g., ProMEP, SI-pLM) | Maximizing prediction accuracy across diverse protein types and functions; applications requiring generalization from small datasets. | Integrates complementary information from sequence and structure [28] [31]; robust performance across various benchmarks [28] [31]. | More complex to implement and train; training requires both sequence and structure data. |

Q3: Are predicted protein structures from tools like AlphaFold2 sufficient for structure-based fitness prediction?

Yes, in many cases. Research shows that for many monomeric proteins, using AlphaFold2-predicted structures can lead to predictive performance that is comparable to or sometimes even better than using experimental structures. This is often because predicted structures provide a clean, single-chain context. However, for multimers or proteins with key conformational changes, the choice of structure is critical, and an experimental structure that matches the functional state of the protein assayed is preferable [29].

FAQ 2: Data Handling and Experimental Design

Q4: How does the type of fitness assay (e.g., activity, binding, stability) affect model performance?

Model performance is not uniform across all functional types. Stability assays are often predicted more accurately because they are directly linked to the protein's folding energy, a physical property that many models capture well. Predicting activity or binding, which can involve more complex and long-range epistatic effects, is generally more challenging. You should consult benchmark results, like those from ProteinGym, to understand the typical performance of a model for your specific function of interest [29].

Q5: How can I improve predictions for proteins with low MSA depth or high intrinsic disorder?

For proteins with low MSA depth, consider these approaches:

- Use MSA-free models: pLMs and structure-based models do not require building an MSA for inference, making them suitable for these cases [30] [31].

- Leverage structure-aware models: Models like SI-pLMs or ProMEP incorporate structural context, which is often more conserved than sequence, providing a signal even when evolutionary information is sparse [28] [31].

- Use biophysics-based models: Frameworks like METL are pretrained on synthetic data from molecular simulations, providing a biophysical foundation that is independent of evolutionary data [32].

For disordered regions, be cautious in interpreting results. Currently, no model excels at predicting fitness consequences within these regions. If possible, focus your experimental validation on predictions within ordered domains [29].

FAQ 3: Implementation and Advanced Applications

Q6: What is "focused training" and how can it enhance machine learning-assisted directed evolution (MLDE)?

Focused training (ftMLDE) is a strategy to improve the efficiency of MLDE by using a zero-shot predictor to select which variants to test experimentally for the initial training set. Instead of randomly sampling the vast sequence space, you use the zero-shot model to pre-screen and select variants that are predicted to be high-fitness. This enriches your training set with more informative, high-fitness sequences, allowing the supervised model to learn the fitness landscape more effectively with fewer experimental measurements [3].

Q7: How can I integrate a zero-shot predictor into a protein engineering campaign?

A robust workflow integrates computational prediction with experimental validation. The following diagram outlines a general protocol for using these models in practice.

Experimental Protocols

Protocol 1: Benchmarking a Zero-Shot Predictor on a Deep Mutational Scanning (DMS) Dataset

This protocol allows you to evaluate the performance of a zero-shot predictor against experimental data.

1. Objective: To calculate the correlation between model predictions and experimental fitness measurements for a set of protein variants.

2. Materials:

- DMS Dataset: A dataset containing protein variant sequences and their corresponding experimental fitness scores. Publicly available benchmarks like ProteinGym are excellent resources [29] [31].

- Computing Environment: A Python environment with necessary libraries (e.g., PyTorch, Hugging Face Transformers).

- Model: The zero-shot predictor you wish to evaluate (e.g., ESM-1v, Tranception, ProMEP).

3. Methodology:

   1. Data Preparation: Download and preprocess the DMS assay data from your chosen source. Ensure the variant sequences are in the correct format for the model.

   2. Model Inference: For each variant in the DMS dataset, use the model to compute a fitness score. For pLMs, this is often the log-likelihood ratio (or pseudo-log-likelihood ratio, PLLR) of the mutated sequence relative to the wild type [29] [31].

   3. Performance Calculation: Calculate the rank correlation (Spearman's ρ) between the model-predicted scores and the experimental fitness scores across all variants in the dataset. Spearman's ρ is the standard metric for this task because it assesses the monotonic relationship without assuming linearity [29] [31].

   4. Analysis: Compare the correlation coefficient against baseline models and published benchmarks to assess performance.
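Steps 2 and 3 can be sketched in plain Python.

- `mutant_score` assumes a hypothetical table `log_probs[i][aa]` of per-position log-probabilities (0-based positions) already extracted from a pLM. It also assumes mutant strings in a colon-separated format such as `A12G:K15R` with 1-based positions. It sums the log-probability differences between mutant and wild-type residues. This is a simplified wild-type-marginal-style score, not the exact scoring of any published model.
- `spearman_rho` computes Spearman's ρ as the Pearson correlation of average ranks, the same quantity that `scipy.stats.spearmanr` reports.

```python
import math


def mutant_score(log_probs, wt_seq, mutant):
    """Sum of log p(mutant aa) - log p(wild-type aa) over the mutated positions."""
    score = 0.0
    for m in mutant.split(":"):
        wt_aa, pos, mut_aa = m[0], int(m[1:-1]) - 1, m[-1]
        if wt_seq[pos] != wt_aa:
            raise ValueError(f"wild-type mismatch in {m}")
        score += log_probs[pos][mut_aa] - log_probs[pos][wt_aa]
    return score


def average_ranks(values):
    """1-based ranks, with tied values sharing the average of their ranks."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        for k in range(i, j + 1):
            ranks[order[k]] = (i + j) / 2 + 1
        i = j + 1
    return ranks


def spearman_rho(x, y):
    """Spearman's rho as the Pearson correlation of average ranks."""
    rx, ry = average_ranks(x), average_ranks(y)
    n = len(rx)
    mx, my = sum(rx) / n, sum(ry) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(rx, ry))
    sx = math.sqrt(sum((a - mx) ** 2 for a in rx))
    sy = math.sqrt(sum((b - my) ** 2 for b in ry))
    return cov / (sx * sy)
```

Scoring every variant with `mutant_score` and passing the scores and the measured fitness values to `spearman_rho` yields the per-assay ρ used for benchmarking.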

Protocol 2: Implementing Focused Training for ML-Assisted Directed Evolution

This protocol uses a zero-shot predictor to design a smart initial training set for a supervised model.

1. Objective: To efficiently explore a combinatorial protein landscape by training a supervised model on a training set enriched with high-fitness variants.

2. Materials:

- Protein Variant Library: A defined library of protein variants (e.g., all combinations of mutations at 3-4 specific residues).

- Zero-Shot Predictor: A model such as ESM-1v, EVE, or a stability predictor [3].

3. Methodology:

   1. In-silico Library Generation: Generate the sequences for all variants in your combinatorial library.

   2. Zero-Shot Screening: Use the zero-shot predictor to score every variant in the library.

   3. Focused Training Set Selection: Instead of random selection, choose the top N (e.g., 10-20%) of variants ranked by the zero-shot score for experimental testing. This is your "focused training set."

   4. Experimental Training: Synthesize and experimentally measure the fitness of the variants in the focused training set.

   5. Supervised Model Training: Train a supervised machine learning model (e.g., a regression model) on this experimentally characterized focused training set.

   6. Prediction and Design: Use the trained supervised model to predict the fitness of the entire in-silico library and select the top predicted candidates for the next round of experimental validation or final design [3].
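The six methodology steps map onto the following sketch. The zero-shot predictor and the assay are passed in as callables because both are placeholders here; a real campaign would call a model such as ESM-1v and a wet-lab screen. The ridge regression on one-hot features is an illustrative assumption, not the supervised model of [3]. So are `train_frac`, `top_k`, `lam` and the choice to return only unmeasured variants, and the function names are hypothetical.

```python
import itertools

import numpy as np

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"


def combinatorial_library(n_sites):
    """Step 1: every combination of the 20 amino acids at n_sites positions."""
    return ["".join(p) for p in itertools.product(AMINO_ACIDS, repeat=n_sites)]


def one_hot(seqs):
    """Flattened one-hot encoding: len(seq) * 20 features per variant."""
    index = {a: i for i, a in enumerate(AMINO_ACIDS)}
    X = np.zeros((len(seqs), len(seqs[0]) * len(AMINO_ACIDS)))
    for n, s in enumerate(seqs):
        for p, a in enumerate(s):
            X[n, p * len(AMINO_ACIDS) + index[a]] = 1.0
    return X


def focused_training_round(library, zero_shot, assay, train_frac=0.1, top_k=10, lam=1.0):
    # Steps 2-3: rank the whole library by zero-shot score and keep the top fraction.
    ranked = sorted(library, key=zero_shot, reverse=True)
    n_train = max(1, int(train_frac * len(library)))
    train = ranked[:n_train]
    # Step 4: experimental measurement (a stand-in callable here).
    y = np.array([assay(s) for s in train])
    # Step 5: ridge regression on one-hot features plus an intercept column
    # (the intercept is penalised too, for brevity).
    X = np.hstack([one_hot(train), np.ones((n_train, 1))])
    w = np.linalg.solve(X.T @ X + lam * np.eye(X.shape[1]), X.T @ y)
    # Step 6: predict the whole library and return the best variants not yet measured.
    pred = np.hstack([one_hot(library), np.ones((len(library), 1))]) @ w
    measured = set(train)
    picks = [library[i] for i in np.argsort(-pred) if library[i] not in measured][:top_k]
    return train, picks
```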

Research Reagent Solutions

This table outlines key computational tools and resources essential for working with unsupervised and zero-shot predictors.

| Resource Name | Type | Primary Function | Relevance to Research |
|---|---|---|---|
| ProteinGym Benchmark [29] [31] | Benchmark Suite | A collection of deep mutational scanning assays for evaluating fitness prediction models. | Serves as the standard for benchmarking new predictors across a diverse set of proteins and functions. |
| ESM Model Family [30] [33] | Protein Language Model (pLM) | A series of transformer-based pLMs (e.g., ESM-2, ESM-1v) for zero-shot variant effect prediction. | Provides state-of-the-art, MSA-free predictions. Can be used as a standalone predictor or for generating protein sequence embeddings. |
| ProMEP [31] | Multimodal Predictor | A model that integrates both sequence and structure context for zero-shot mutation effect prediction. | Offers high accuracy by combining multiple data modalities; is MSA-free and fast. |
| METL [32] | Biophysics-Based pLM | A framework that pretrains models on biophysical simulation data before fine-tuning on experimental data. | Excels in data-scarce scenarios and extrapolation tasks by incorporating fundamental biophysical principles. |
| ESM-IF1 [29] | Inverse Folding Model | A structure-based model that predicts amino acid sequences given a protein backbone. | Used for zero-shot fitness prediction by evaluating the likelihood of a sequence given its structure. |
| AlphaFold DB [31] | Structure Database | A repository of protein structures predicted by AlphaFold2. | Provides high-quality predicted structures for millions of proteins, enabling structure-based modeling where experimental structures are unavailable. |
| EVmutation [3] | Evolutionary Model | An MSA-based model that uses a Potts model to capture co-evolutionary signals for fitness prediction. | A strong baseline evolutionary model that captures pairwise residue constraints. |

Frequently Asked Questions (FAQs)

Q1: What are the primary strengths of CNNs, RNNs, and Transformers in the context of fitness and rehabilitation data?

- CNNs (Convolutional Neural Networks) excel at extracting local, spatial patterns from data. In fitness prediction, this makes them highly effective at analyzing the spatial relationships in body pose data (e.g., from Kinect or video frames) to classify specific exercises or movements; accuracies above 99% have been reported on some datasets [34].

- RNNs (Recurrent Neural Networks), particularly LSTMs and Bi-LSTMs, are designed for sequential data. They model temporal dependencies, making them ideal for analyzing time-series data from wearable sensors (like accelerometers) to track movement over time and predict metrics such as energy expenditure during a workout [35] [36].

- Transformers utilize attention mechanisms to weigh the importance of different parts of the input sequence, capturing long-range dependencies effectively. They are powerful for complex sequence modeling tasks but can be computationally intensive and sometimes prone to overfitting on smaller datasets, which can challenge their deployment in resource-constrained real-time applications [34] [37].

Q2: My model achieves high accuracy on training data but performs poorly on validation data. What could be the cause and how can I address it?

This is a classic sign of overfitting. The following strategies can help:

- Simplify your model architecture: A model that is too complex for the available data will memorize the training examples instead of learning general patterns. Consider reducing the number of layers or neurons in your network [37].

- Incorporate regularization techniques: Add Dropout layers, which randomly "drop out" a subset of neurons during training, preventing the model from becoming over-reliant on any specific neuron and thus improving generalization [37].

- Use more training data or data augmentation: If your dataset is small, the model may not see enough variety to learn robust features. Techniques like synonym replacement for text or other domain-specific augmentations for sensor data can help [37].

- Employ Early Stopping: Monitor the validation loss during training and halt the process when the validation loss stops improving, preventing the model from over-optimizing to the training data [37].
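Early stopping is simple to implement by hand. The class below is a minimal, framework-agnostic sketch; the class name and its `patience` and `min_delta` parameters are illustrative choices, not the API of any specific library. Frameworks ship equivalents, for example Keras's `tf.keras.callbacks.EarlyStopping`, which can also restore the best weights.

```python
import math


class EarlyStopping:
    """Stop when validation loss fails to improve by min_delta for `patience` epochs."""

    def __init__(self, patience=5, min_delta=0.0):
        self.patience = patience
        self.min_delta = min_delta
        self.best_loss = math.inf
        self.best_epoch = None
        self.epochs_without_improvement = 0

    def step(self, epoch, val_loss):
        """Record one epoch's validation loss; return True if training should stop."""
        if val_loss < self.best_loss - self.min_delta:
            self.best_loss = val_loss
            self.best_epoch = epoch
            self.epochs_without_improvement = 0
        else:
            self.epochs_without_improvement += 1
        return self.epochs_without_improvement >= self.patience
```

Call `step(epoch, val_loss)` once per epoch and break out of the training loop when it returns `True`. The weights saved at `best_epoch` are the ones to keep.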

Q3: How do I choose the right model architecture for my specific fitness prediction task?

The choice depends heavily on your data type and prediction goal. The following table summarizes key considerations:

Table: Model Selection Guide for Fitness Prediction Tasks

| Data Type | Prediction Goal | Recommended Architecture | Key Justification |
|---|---|---|---|
| Skeleton/Pose Data (e.g., from Kinect) | Exercise movement classification | CNN [34] | Superior at capturing spatial relationships between body joints. |
| Wearable Sensor Data (e.g., accelerometer time-series) | Energy consumption prediction | Hybrid CNN-Bi-LSTM [36] | CNN extracts local features, Bi-LSTM models bidirectional temporal dependencies. |
| Genomic/Proteomic Data | Predicting drug side effects or treatment response | Ensemble Methods (e.g., Random Forest, XGBoost) [38] [39] | Effective at integrating diverse biological features and handling structured data. |
| Sequential Data requiring long-range context | Complex activity recognition | Transformer [34] | Powerful attention mechanism captures dependencies across entire sequence. |

Q4: What are the common challenges when deploying these models for real-time fitness monitoring?

Deploying models for real-time use on mobile devices or embedded systems presents specific hurdles:

- Computational Complexity and Resource Intensiveness: Models like Transformers and large CNNs can demand substantial processing power and memory, which are limited on mobile devices [34] [36].

- Model Efficiency vs. Accuracy Trade-off: There is often a "trilemma" between accuracy, efficiency, and deployability. Achieving high performance on large models can come at the cost of battery life and speed, necessitating optimization for a balanced framework [36].

- Data Quality and Noise: Real-world sensor data from accelerometers is often noisy and contains outliers, which can severely impact model performance if not properly pre-processed [36].

Troubleshooting Guides

Poor Performance on Training Data

Symptoms: The model fails to learn meaningful patterns, resulting in low accuracy on both training and validation sets.

Potential Causes and Solutions:

- Inappropriate Learning Rate: A learning rate that is too high can cause the model to overshoot optimal solutions, while one that is too low leads to extremely slow training.

- Solution: Use a learning rate scheduler to adjust the rate during training, for example, by gradually reducing it as training progresses [37].

- Issues with Weight Initialization: Poorly initialized weights can hinder the training process.

- Solution: Employ standard initialization methods like Xavier or He initialization to ensure gradients flow smoothly through the network [37].

- Inadequate Model Capacity: A model that is too simple (e.g., not enough layers) may fail to capture the underlying complexity of the data (underfitting).

- Solution: Increase the model's complexity by adding more layers or increasing the number of neurons in the feed-forward networks [37].
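The learning-rate and initialization remedies can be made concrete with a few lines of plain Python. The sketch uses:

- a step-decay schedule, one of several common schedules (`drop` and `every` are illustrative values);
- the Xavier/Glorot uniform bound \( \sqrt{6/(\text{fan}_\text{in} + \text{fan}_\text{out})} \);
- the He normal standard deviation \( \sqrt{2/\text{fan}_\text{in}} \).

Weight matrices are laid out as `fan_out` rows by `fan_in` columns. In PyTorch, the same ideas are available as `torch.optim.lr_scheduler.StepLR`, `torch.nn.init.xavier_uniform_` and `torch.nn.init.kaiming_normal_`.

```python
import math
import random


def step_decay(initial_lr, epoch, drop=0.5, every=10):
    """Multiply the learning rate by `drop` once every `every` epochs."""
    return initial_lr * drop ** (epoch // every)


def xavier_uniform(fan_in, fan_out, rng=random):
    """Glorot/Xavier uniform init: U(-limit, limit) with limit = sqrt(6 / (fan_in + fan_out))."""
    limit = math.sqrt(6.0 / (fan_in + fan_out))
    return [[rng.uniform(-limit, limit) for _ in range(fan_in)] for _ in range(fan_out)]


def he_normal(fan_in, fan_out, rng=random):
    """He (Kaiming) normal init for ReLU layers: N(0, 2 / fan_in)."""
    std = math.sqrt(2.0 / fan_in)
    return [[rng.gauss(0.0, std) for _ in range(fan_in)] for _ in range(fan_out)]
```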

Model Produces Inconsistent Outputs

Symptoms: The model generates varying predictions for the same or very similar input data.

Potential Causes and Solutions:

- Instability in the Attention Mechanism (for Transformers/Attention Models): Unstable attention weights can lead to erratic focus on different parts of the input.

- Solution: Visualize the attention maps to identify unusual patterns. Adjust the initialization of attention weights and add normalization layers to the attention mechanism to stabilize training [37].

- Insufficient Data Preprocessing: Inconsistent data scaling or the presence of outliers can confuse the model.

- Solution: Implement rigorous data cleaning, including identifying outliers using methods like the three-sigma or boxplot method, and normalize features to a consistent scale like [0, 1] [36].

Experimental Protocols & Data

Protocol: Rehabilitation Exercise Classification with CNNs

This protocol is based on research that achieved state-of-the-art accuracy in classifying rehabilitation exercises using pose data [34].

- Objective: To accurately classify specific physical rehabilitation exercises from human pose data.

- Dataset: Benchmark datasets such as KIMORE and UI-PRMD, which contain skeleton data of exercises.

- Methodology:

- Feature Engineering: Represent each exercise frame as a 1D feature vector. This involves extracting comprehensive statistical features (mean, standard deviation, etc.) from the body joint coordinates.

- Model Training: Train a CNN model on these 1D feature vectors. The architecture is designed to learn spatial hierarchies of patterns from the pose data.

- Evaluation: Use cross-validation and report mean testing accuracy.

- Key Results: Table: Performance of CNN Model on Rehabilitation Datasets [34]

| Dataset | Model | Mean Testing Accuracy | Improvement vs. Previous Works |
|---|---|---|---|
| KIMORE | CNN | 93.08% | +0.75% |
| UI-PRMD | CNN | 99.70% | +0.10% |
| KIMORE (Disease Identification) | CNN | 89.87% | - |

Protocol: Energy Consumption Prediction with a Hybrid CNN-Bi-LSTM Model

This protocol details the construction of a robust model for predicting energy expenditure from sensor data [36].

- Objective: To accurately predict energy consumption during exercise training using accelerometer data.

- Dataset: PAMAP2 (Physical Activity Monitoring) dataset, which includes data from inertial measurement units (IMUs) and heart rate sensors.

- Methodology:

- Data Preprocessing:

- Identify and eliminate outliers using the three-sigma or boxplot method.

- Normalize the acceleration signal to the range [0,1].

- Segment the continuous data using a sliding window (e.g., 1-second window with 50% overlap).

- Feature Extraction:

- Extract time-domain features: mean, standard deviation, root mean square, and signal energy.

- Extract frequency-domain features: main frequency, spectral energy distribution, and spectral entropy.

- Perform feature selection using Pearson correlation coefficient or mutual information to retain features most relevant to energy consumption.

- Model Architecture & Training:

- Construct a model that integrates:

- CNN: For local feature extraction from the input sequences.

- Bi-LSTM: For modeling long-term, bidirectional temporal dependencies.

- Attention Mechanism: To dynamically assign importance to different time steps.

- Use ablation experiments to validate the contribution of each component (CNN, Bi-LSTM, Attention).

- Key Results: Table: Performance of Optimized CNN-Bi-LSTM-Attention Model [36]

| Evaluation Metric | Optimized Model Performance | Comparison with Outperformed Models (e.g., TCN, GRU-ATT, SST) |
|---|---|---|
| Mean Squared Error (MSE) | 0.273 | Significantly lower |
| R-Squared (R²) | 0.887 | Significantly higher |
| Standard Deviation | 0.046 | Lower (indicates better robustness) |
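The segmentation and feature-extraction steps of the energy-prediction protocol can be sketched as follows. The 100 Hz sampling rate and the synthetic 2 Hz signal are assumptions chosen for illustration. "Spectral energy distribution" is interpreted here as the normalized power spectrum, and the outlier removal and feature selection steps are not repeated.

```python
import numpy as np


def sliding_windows(x: np.ndarray, fs: float, win_s: float = 1.0, overlap: float = 0.5) -> np.ndarray:
    """Split a 1D signal into windows of win_s seconds with fractional overlap."""
    win = int(round(win_s * fs))
    step = max(1, int(round(win * (1 - overlap))))
    if len(x) < win:
        return np.empty((0, win))
    return np.stack([x[s:s + win] for s in range(0, len(x) - win + 1, step)])


def window_features(w: np.ndarray, fs: float) -> dict:
    """Time- and frequency-domain features for one window."""
    power = np.abs(np.fft.rfft(w - w.mean())) ** 2   # power spectrum, DC removed
    freqs = np.fft.rfftfreq(len(w), d=1.0 / fs)
    total = power.sum()
    p = power / total if total > 0 else np.zeros_like(power)  # spectral distribution
    nz = p[p > 0]
    return {
        "mean": w.mean(),
        "std": w.std(),
        "rms": np.sqrt(np.mean(w ** 2)),
        "energy": np.sum(w ** 2),
        "dominant_freq": freqs[np.argmax(power)],
        "spectral_entropy": -np.sum(nz * np.log2(nz)),  # near 0 for a pure tone
    }


fs = 100.0                                   # assumed sampling rate in Hz
t = np.arange(500) / fs                      # 5 s of data
acc = np.sin(2 * np.pi * 2.0 * t)            # synthetic 2 Hz movement
windows = sliding_windows(acc, fs)           # 1 s windows, 50 % overlap
feats = [window_features(w, fs) for w in windows]
```

Each dictionary becomes one row of the feature table. Correlation- or mutual-information-based selection would then run on the columns of that table.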

Architectures and Workflows

Data Processing Workflow for Sensor-Based Prediction

This diagram illustrates the step-by-step workflow for processing raw accelerometer data into features ready for model training, as described in the experimental protocol [36].

Title: Sensor Data Processing Workflow

Hybrid CNN-Bi-LSTM Model Architecture

This diagram outlines the architecture of a hybrid model that combines CNNs and Bi-LSTMs for time-series prediction, a structure reported to be effective for predicting energy consumption from sensor data [36].

Title: CNN-Bi-LSTM Model Architecture
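The attention stage of such a model can be sketched on its own. In this architecture, the Bi-LSTM emits one hidden vector per time step, and attention converts those vectors into a single weighted summary. The sketch assumes additive (tanh) scoring, which [36] may not use. Random matrices stand in for weights that a real model would learn.

```python
import numpy as np


def softmax(z: np.ndarray) -> np.ndarray:
    e = np.exp(z - z.max())  # subtract max for numerical stability
    return e / e.sum()


def attention_pool(H: np.ndarray, W: np.ndarray, v: np.ndarray):
    """Additive attention over time steps.

    H: (T, d) hidden states, e.g. concatenated forward/backward Bi-LSTM outputs.
    W: (a, d) projection and v: (a,) scoring vector (learned in a real model).
    Returns the context vector (d,) and the attention weights (T,).
    """
    scores = np.tanh(H @ W.T) @ v   # one scalar score per time step
    alpha = softmax(scores)         # non-negative weights summing to 1
    context = alpha @ H             # weighted sum of hidden states
    return context, alpha


rng = np.random.default_rng(1)
T, d, a = 50, 64, 16                # time steps, hidden size, attention size
H = rng.normal(size=(T, d))
W = rng.normal(size=(a, d)) * 0.1
v = rng.normal(size=a)
context, alpha = attention_pool(H, W, v)
```

Inspecting `alpha` shows which time steps dominate a prediction. This inspection is how attention contributes to interpretability in such models.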

The Scientist's Toolkit

Table: Essential Research Reagents and Resources for Fitness Prediction Research

| Item / Resource | Function / Purpose | Example Use Case |
|---|---|---|
| Benchmark Datasets (KIMORE, UI-PRMD) | Provides standardized skeleton and exercise data for training and validating classification models. | Comparing model performance (e.g., CNN, LSTM) on rehabilitation exercise classification [34]. |
| PAMAP2 Dataset | Contains multi-modal sensor data (IMU, heart rate) for physical activity monitoring, ideal for energy prediction tasks. | Developing and testing hybrid models (e.g., CNN-Bi-LSTM) for predicting energy consumption during exercise [36]. |
| CNN (Convolutional Neural Network) | Acts as a spatial feature extractor from structured data, such as body pose coordinates or formatted sensor readings. | Achieving high accuracy in classifying correct and incorrect exercise movements from pose data [34]. |
| Bi-LSTM (Bidirectional LSTM) | Models the temporal dependencies in sequential data in both forward and backward directions. | Capturing the comprehensive movement pattern over time from accelerometer data for energy prediction [36]. |
| Attention Mechanism | Allows the model to focus on the most relevant parts of the input sequence, improving interpretability and performance. | Dynamically weighting the importance of different time-steps in a sensor data sequence for more accurate prediction [36]. |
| Genetic Risk Score (GRS) | A score derived from machine learning analysis of genetic data to predict individual treatment response. | Identifying patients more likely to experience side effects (e.g., nausea) from GLP-1 obesity therapies in precision medicine [39]. |

In the realm of machine learning-driven scientific discovery, researchers often face the challenge of optimizing complex systems—such as protein fitness, drug candidate properties, or material performance—across vast, high-dimensional search spaces. These spaces are characterized by rugged fitness landscapes, where the relationship between input parameters and the desired output is highly non-linear, discontinuous, and influenced by epistasis (non-additive interactions between variables) [13]. Traditional high-throughput screening methods become prohibitively expensive and inefficient in such environments. This technical support article details the implementation of iterative Design-Build-Test-Learn (DBTL) cycles, powered by Active Learning (AL) and Bayesian Optimization (BO), to efficiently navigate these complex landscapes, a methodology central to modern research in fields from synthetic biology to drug discovery [40] [41].

The core principle involves an iterative feedback loop. Instead of testing every possible candidate, a machine learning model is used to guide experimentation. The model learns from accumulated data, designs new candidates predicted to have high fitness, and updates its understanding after new experimental results are obtained [13] [42]. This active learning paradigm, particularly when instantiated as Bayesian Optimization, enables researchers to maximize information gain and accelerate towards optimal solutions while minimizing the number of expensive experimental trials [43].

Core Concepts: FAQs for Researchers

FAQ 1: What are the fundamental components of a Bayesian Optimization loop in this context?

A Bayesian Optimization loop for navigating fitness landscapes consists of four key components:

- Probabilistic Surrogate Model: A model, typically a Gaussian Process (GP), that learns from all data collected so far to predict the fitness of untested candidates and, crucially, quantifies the uncertainty (variance) of its own predictions [43] [44].

- Acquisition Function: A utility function that uses the surrogate model's predictions (both mean and uncertainty) to score and rank all untested candidates. It automatically balances exploration (probing regions of high uncertainty) and exploitation (probing regions of high predicted fitness) [13] [44].

- Experimental Oracle: The "test" phase of the cycle. This is the often expensive and time-consuming experimental assay—a wet-lab measurement, a clinical trial, or a high-fidelity simulation—that provides the ground-truth fitness value for a candidate proposed by the acquisition function [42].

- Iterative Loop: The process is cyclic. The new experimental data is added to the training set, the surrogate model is retrained, and the cycle repeats, progressively refining the model's understanding of the landscape [13] [40].
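The four components can be wired together in a short toy loop. The sketch uses an exact Gaussian Process with an RBF kernel and a noise term as the surrogate, and UCB as the acquisition function. The experimental oracle is replaced by a hypothetical rugged 1D function. The kernel length scale, noise level, κ, and budget are fixed by hand for illustration. A real campaign would fit these hyperparameters and use a sequence encoding instead of a scalar `x`.

```python
import numpy as np


def rbf_kernel(A, B, length_scale=1.0):
    d2 = ((A[:, None, :] - B[None, :, :]) ** 2).sum(-1)
    return np.exp(-0.5 * d2 / length_scale ** 2)


def gp_posterior(X_train, y_train, X_test, noise=1e-2, length_scale=1.0):
    """Surrogate: exact GP regression with an RBF kernel and Gaussian noise."""
    K = rbf_kernel(X_train, X_train, length_scale) + noise * np.eye(len(X_train))
    Ks = rbf_kernel(X_train, X_test, length_scale)
    L = np.linalg.cholesky(K)
    alpha = np.linalg.solve(L.T, np.linalg.solve(L, y_train))
    mu = Ks.T @ alpha
    v = np.linalg.solve(L, Ks)
    var = 1.0 - (v ** 2).sum(0)          # RBF prior variance is 1
    return mu, np.sqrt(np.clip(var, 1e-12, None))


def oracle(x):
    """Hypothetical stand-in for the expensive assay (rugged 1D function)."""
    return np.sin(3 * x) + 0.5 * np.sin(11 * x) - 0.1 * x ** 2


rng = np.random.default_rng(0)
pool = np.linspace(-3, 3, 301)[:, None]               # untested candidates
idx = list(rng.choice(len(pool), 4, replace=False))   # initial random screen
y = [oracle(pool[i, 0]) for i in idx]

kappa = 2.0
for cycle in range(15):                                # iterative loop
    y_arr = np.array(y)
    y_std = (y_arr - y_arr.mean()) / (y_arr.std() + 1e-9)
    mu, sd = gp_posterior(pool[idx], y_std, pool, length_scale=0.3)
    acq = mu + kappa * sd                              # UCB acquisition
    acq[idx] = -np.inf                                 # never re-test a candidate
    nxt = int(np.argmax(acq))
    idx.append(nxt)
    y.append(oracle(pool[nxt, 0]))                     # "test" phase

best = idx[int(np.argmax(y))]
print(f"best x = {pool[best, 0]:.2f}, measured fitness = {max(y):.3f}")
```

The surrogate is retrained from scratch in every cycle on all data gathered so far, which matches the retraining step described in the list above.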

FAQ 2: How does Active Learning differ from standard supervised machine learning in this application?

Standard supervised learning in this domain typically involves training a model on a fixed, pre-existing dataset with the goal of achieving high predictive accuracy on a static test set. In contrast, Active Learning is an interactive, sequential process. The AL algorithm actively chooses which data points (i.e., which experimental conditions or candidates) would be most valuable to label (i.e., test experimentally) next. The goal is not just to build a good predictor, but to efficiently guide an experimental campaign towards a specific objective, such as finding the highest-fitness protein variant, with as few experimental cycles as possible [13] [45].

FAQ 3: What are common acquisition functions and when should I use them?

The choice of acquisition function is critical and depends on the specific challenges of your fitness landscape. The table below summarizes common functions and their applications.

Table 1: Common Acquisition Functions in Bayesian Optimization

| Acquisition Function | Mechanism | Best For | Considerations |
|---|---|---|---|
| Upper Confidence Bound (UCB) | Selects candidates maximizing mean prediction + κ × uncertainty (standard deviation). The κ parameter controls the exploration-exploitation trade-off. | Rugged landscapes with multiple potential optima; scenarios where exploration is critical to avoid local optima [44]. | Requires tuning of κ. A well-tuned UCB can identify high-fitness variants up to five times more efficiently than random sampling [44]. |
| Expected Improvement (EI) | Selects candidates with the highest expected improvement over the current best-observed fitness. | Primarily exploitation-focused optimization; efficiently climbing a single fitness peak. | Can get trapped in local optima if the landscape is very rugged and the initial samples are poor [13]. |
| Greedy / Probability of Improvement | Selects candidates with the highest predicted fitness (mean) or the highest probability of being better than the incumbent. | Simple, rapid optimization in smooth, convex landscapes. | Highly prone to becoming stuck in local optima and is not recommended for complex, epistatic landscapes [44]. |
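The acquisition functions in Table 1 can be written directly from a Gaussian posterior mean `mu` and standard deviation `sigma`. The sketch uses the textbook closed forms for PI and EI. The exploration offset `xi`, the default κ = 2, and the three candidate values are illustrative assumptions.

```python
import math

import numpy as np

_phi_cdf = np.vectorize(lambda z: 0.5 * (1.0 + math.erf(z / math.sqrt(2.0))))


def _phi_pdf(z):
    return np.exp(-0.5 * np.asarray(z) ** 2) / math.sqrt(2.0 * math.pi)


def ucb(mu, sigma, kappa=2.0):
    """Upper confidence bound: mean plus kappa standard deviations."""
    return mu + kappa * sigma


def probability_of_improvement(mu, sigma, best, xi=0.0):
    """P(f > best + xi) under a Gaussian posterior (sigma > 0 assumed)."""
    return _phi_cdf((mu - best - xi) / sigma)


def expected_improvement(mu, sigma, best, xi=0.0):
    """E[max(f - best - xi, 0)] under a Gaussian posterior (sigma > 0 assumed)."""
    imp = mu - best - xi
    z = imp / sigma
    return imp * _phi_cdf(z) + sigma * _phi_pdf(z)


mu = np.array([1.0, 0.8, 0.2])       # surrogate means for three candidates
sigma = np.array([0.05, 0.3, 0.5])   # surrogate standard deviations
best = 0.9                           # best fitness observed so far
for name, score in [("greedy", mu), ("UCB", ucb(mu, sigma)),
                    ("PI", probability_of_improvement(mu, sigma, best)),
                    ("EI", expected_improvement(mu, sigma, best))]:
    print(name, int(np.argmax(score)))
```

With these numbers, the greedy, PI, and EI rules all pick candidate 0, which has a high mean and low uncertainty. UCB with κ = 2 instead picks candidate 1, because its larger σ lifts its bound above the others.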

FAQ 4: My optimization is stuck in a local optimum. What strategies can help escape it?

Local optima are a fundamental challenge in rugged landscapes. To escape them:

- Increase Exploration: Adjust your acquisition function to favor uncertainty more heavily. For UCB, increase the κ parameter. This encourages the algorithm to probe less-explored regions of the space [44].

- Hybrid Strategies: Incorporate diversity- or novelty-based sampling into your acquisition function. This ensures a wider coverage of the sequence or parameter space, preventing over-concentration in one region [45].

- Batch Selection: Instead of proposing one candidate per cycle, propose a batch of candidates. Algorithms like batch Bayesian Optimization can explicitly optimize for both high fitness and diversity within a batch, allowing parallel exploration of multiple promising regions [13].

- Leverage Latent Space Exploration: Methods like LatProtRL use Reinforcement Learning in a low-dimensional latent space learned by a protein language model. This allows for larger, more exploratory steps in the sequence space, facilitating escape from local optima [42].
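One simple way to combine high fitness with diversity in a batch is a greedy rule with a minimum-distance constraint. The rule walks candidates in order of acquisition score and accepts one only if it differs from every already-chosen sequence at `min_dist` or more positions. This is a hypothetical heuristic for illustration, not the batch Bayesian Optimization algorithm of [13]. The sequences and scores are made up.

```python
from typing import List, Sequence


def hamming(a: str, b: str) -> int:
    """Number of positions at which two equal-length sequences differ."""
    return sum(x != y for x, y in zip(a, b))


def select_diverse_batch(seqs: Sequence[str], scores: Sequence[float],
                         batch_size: int, min_dist: int) -> List[str]:
    """Greedy batch: best-scoring sequences that differ by >= min_dist positions."""
    order = sorted(range(len(seqs)), key=lambda i: scores[i], reverse=True)
    batch: List[str] = []
    for i in order:
        if all(hamming(seqs[i], s) >= min_dist for s in batch):
            batch.append(seqs[i])
        if len(batch) == batch_size:
            break
    return batch


candidates = ["VDGFS", "VDGFA", "VDGYA", "WEGFS", "LKHIT"]  # hypothetical variants
acq_scores = [0.95, 0.94, 0.90, 0.70, 0.40]                # e.g. UCB values
batch = select_diverse_batch(candidates, acq_scores, batch_size=3, min_dist=2)
print(batch)
```

A pure top-3 selection would return three near-identical variants. The distance constraint instead skips "VDGFA" (one mutation away from "VDGFS") and admits "WEGFS", which probes a different region.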

Troubleshooting Common Experimental Issues

Issue: The model's recommendations are not yielding improved candidates after the first few cycles.

- Potential Cause 1: Model bias from a non-representative initial dataset. If the initial random screen does not capture sufficient diversity or any high-fitness sequences, the model may struggle to generalize.

- Solution: Increase the size and diversity of your initial library. If possible, use a diverse set of wild-type sequences or incorporate domain knowledge to seed the initial set. Leveraging pre-trained models (e.g., protein language models) can provide a strong, biologically-informed prior that helps even with little initial data [42] [44].

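- Potential Cause 2: Over-exploitation. The acquisition function is too greedy, so the algorithm keeps refining candidates around a local optimum.

- Solution: Shift the balance toward exploration, for example by increasing κ in UCB or replacing a greedy acquisition function with UCB, as described in FAQ 4.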

- Potential Cause 3: High experimental noise is obscuring the true fitness signal.

- Solution: Implement replicate experiments for key candidates to obtain more reliable fitness estimates. Consider using a Gaussian Process surrogate model with a built-in noise term to explicitly account for observational noise [43].

Issue: Handling high-dimensional combinatorial spaces (e.g., 5+ mutation sites) is computationally infeasible.

- Potential Cause: The number of possible variants grows exponentially with the number of dimensions ("combinatorial explosion"), making it impossible to model or search exhaustively.

- Solution: Use a latent space representation. Encode protein sequences or material compositions into a lower-dimensional, continuous vector using an autoencoder or a protein language model (pLM) like ESM [42] [44]. Perform the Bayesian Optimization in this compressed latent space, then decode the optimized vectors back into candidate sequences. This dramatically reduces the complexity of the search space.
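The combinatorial explosion and the encode/decode idea can both be illustrated briefly. The sketch swaps in PCA on one-hot encodings as a linear stand-in for the autoencoder or pLM embedding named above. Decoding takes the most likely residue at each position after projecting back. The random 200-sequence library and the 8-dimensional latent size are assumptions, and the Bayesian Optimization step in latent space is omitted.

```python
import numpy as np

AA = "ACDEFGHIKLMNPQRSTVWY"


def one_hot(seqs):
    """List of n equal-length strings -> (n, L*20) flattened one-hot matrix."""
    idx = {a: i for i, a in enumerate(AA)}
    X = np.zeros((len(seqs), len(seqs[0]), len(AA)))
    for n, s in enumerate(seqs):
        for pos, a in enumerate(s):
            X[n, pos, idx[a]] = 1.0
    return X.reshape(len(seqs), -1)


def fit_pca(X, dim):
    mean = X.mean(0)
    _, _, Vt = np.linalg.svd(X - mean, full_matrices=False)
    return mean, Vt[:dim]                 # principal axes, shape (dim, L*20)


def encode(X, mean, comps):
    return (X - mean) @ comps.T


def decode(Z, mean, comps, length):
    """Project back and take the most likely residue at each position."""
    recon = (Z @ comps + mean).reshape(len(Z), length, len(AA))
    return ["".join(AA[i] for i in row.argmax(-1)) for row in recon]


for k in (3, 4, 5):
    print(k, 20 ** k)                     # 8000, 160000, 3200000 possible variants

rng = np.random.default_rng(0)
library = ["".join(rng.choice(list(AA), 5)) for _ in range(200)]
X = one_hot(library)
mean, comps = fit_pca(X, dim=8)
Z = encode(X, mean, comps)                # 100-D one-hot -> 8-D latent
decoded = decode(Z, mean, comps, 5)       # latent points -> candidate sequences
```

A GP surrogate would be fitted on `Z` instead of the 100-dimensional one-hot vectors, and the optimized latent points would be passed through `decode`. A nonlinear encoder trained on real sequences would preserve far more structure than this linear toy.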

Issue: The experimental data is highly imbalanced, with very few positive hits.

- Potential Cause: Standard regression models will be biased towards the majority class (low-fitness candidates), performing poorly at identifying rare high-fitness variants.

- Solution: Implement pipelines like CILBO (Class Imbalance Learning with Bayesian Optimization). This involves using Bayesian Optimization not just for model hyperparameters, but also to find the best strategy for handling imbalance, such as optimal class weights or data sampling strategies [46]. This can significantly improve the model's ability to identify true positives.
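The two kinds of imbalance strategy mentioned above, class weighting and resampling, can be sketched as follows. The weights follow the common "balanced" heuristic w_c = n / (k · n_c), the same formula scikit-learn uses for `class_weight="balanced"`. Random oversampling is one possible sampling strategy. In a CILBO-style pipeline, Bayesian Optimization would search over choices like these instead of fixing them. The 5 % hit rate is made up.

```python
import numpy as np


def balanced_class_weights(y: np.ndarray) -> dict:
    """w_c = n_samples / (n_classes * n_c): rare classes get larger weights."""
    classes, counts = np.unique(y, return_counts=True)
    n, k = len(y), len(classes)
    return {int(c): n / (k * cnt) for c, cnt in zip(classes, counts)}


def random_oversample(X: np.ndarray, y: np.ndarray, rng) -> tuple:
    """Duplicate minority-class rows at random until every class matches the majority."""
    classes, counts = np.unique(y, return_counts=True)
    target = counts.max()
    keep = [np.arange(len(y))]
    for c, cnt in zip(classes, counts):
        if cnt < target:
            pool = np.flatnonzero(y == c)
            keep.append(rng.choice(pool, target - cnt, replace=True))
    idx = np.concatenate(keep)
    return X[idx], y[idx]


y = np.array([0] * 95 + [1] * 5)                  # hypothetical 5 % hit rate
X = np.arange(100).reshape(-1, 1).astype(float)   # placeholder features
weights = balanced_class_weights(y)               # hits weighted ~19x non-hits
Xb, yb = random_oversample(X, y, np.random.default_rng(0))
```

Weighting leaves the data unchanged and rescales the loss, whereas oversampling changes the data the model sees. Which choice helps more, and at what strength, is exactly the kind of setting a Bayesian search can tune.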

Essential Experimental Protocols

Protocol: Implementing an Active Learning-assisted Directed Evolution (ALDE) Cycle

This protocol is adapted from wet-lab studies optimizing epistatic residues in an enzyme active site [13].

- Define Design Space: Select k specific residues to mutate (e.g., 5 epistatic residues in an enzyme active site). The theoretical sequence space is 20^k, but the goal is to explore only a tiny fraction.

- Initial Library Construction & Screening (Build-Test):

- Build: Synthesize an initial library of variants using PCR-based mutagenesis with NNK degenerate codons to randomize the selected positions.