Navigating the Rugged Terrain: Modern Strategies to Overcome Epistasis in Protein Fitness Landscapes

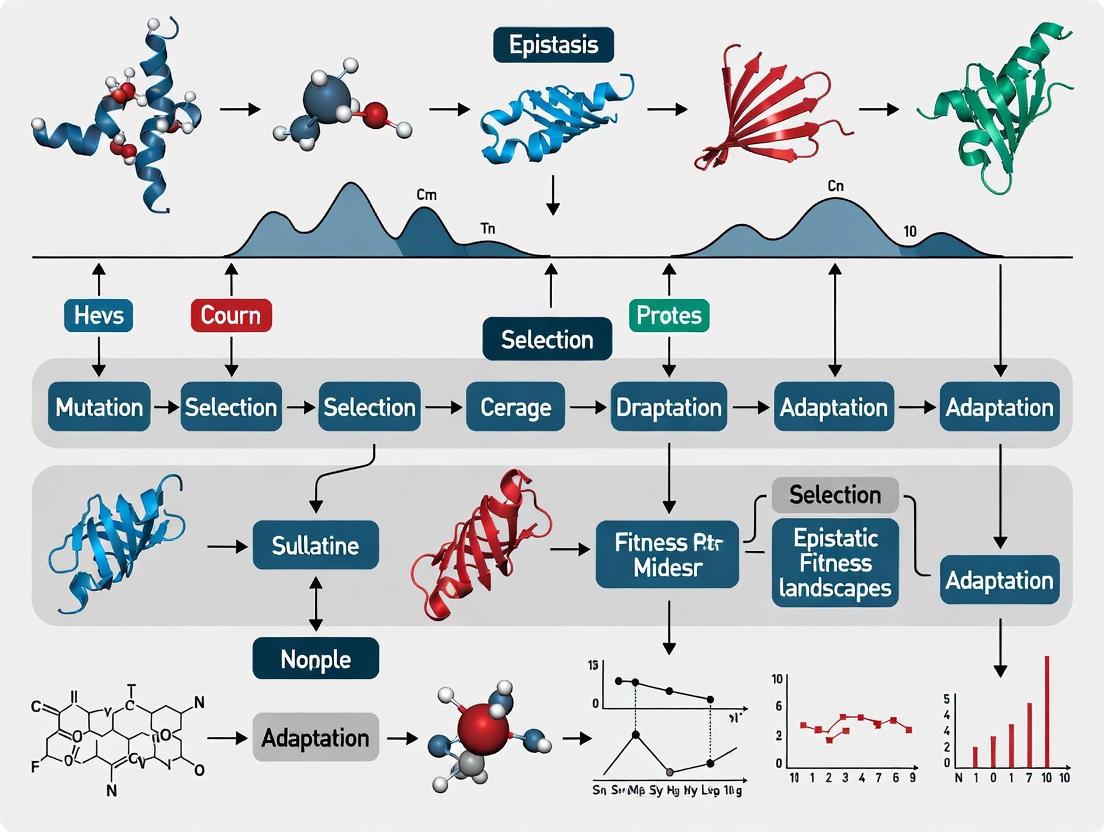



Abstract

Epistasis—the context-dependent effect of mutations—creates rugged fitness landscapes that challenge predictable protein evolution and engineering. This article synthesizes recent advances in understanding and overcoming epistasis, exploring its fluid and higher-order nature. We detail how machine learning models, including novel epistatic transformers and language models, are being deployed to predict mutational effects and guide directed evolution. The article provides a comparative analysis of methodological performance across diverse protein systems and offers practical troubleshooting strategies for navigating complex landscapes. Finally, we discuss validation frameworks and future directions, providing researchers and drug development professionals with a comprehensive toolkit for tackling epistasis in biomedical applications.

Understanding the Fundamental Challenge: The Fluid and Rugged Nature of Epistasis

Defining Epistasis and Fitness Landscape Ruggedness

Frequently Asked Questions (FAQs)

Fundamental Concepts

Q1: What is epistasis and why is it important in protein evolution? Epistasis is the phenomenon where the effect of a mutation on an organism's fitness depends on the genetic background in which it occurs [1]. In molecular terms, for proteins, this reflects physical interactions between residues that cause mutations to have non-additive effects on function [1] [2]. Epistasis is a major determinant in the emergence of novel protein function and shapes evolutionary trajectories by constraining or enlarging the set of possible evolutionary paths [1] [2].

Q2: What defines a "rugged" fitness landscape? A rugged fitness landscape is one characterized by many local fitness peaks and valleys, where adjacent sequences can have sharp, unpredictable changes in fitness [3]. This ruggedness arises primarily from epistatic interactions [3]. In contrast, smooth landscapes show gradual, predictable fitness changes between neighboring sequences, typically with fewer local optima [4] [5].

Q3: How do epistasis and ruggedness affect evolutionary predictability? High ruggedness makes evolutionary outcomes less predictable because populations can become trapped at suboptimal local fitness peaks, and evolutionary outcomes become strongly dependent on initial conditions and chance events [6]. Sign epistasis—where a mutation changes from beneficial to deleterious (or vice versa) depending on genetic background—creates particularly strong constraints on accessible evolutionary paths [6].

Experimental Challenges

Q4: Why is detecting epistasis so challenging in experimental studies? Epistasis detection faces a fundamental combinatorial explosion problem: the number of potential interactions increases exponentially with the number of genetic sites considered [7]. For example, searching for all possible 4-way interactions among thousands of genetic variants becomes computationally prohibitive. Additionally, measurement noise can be mistaken for epistasis if not properly controlled [1], and the apparent presence or magnitude of epistasis can depend on the chosen scale of measurement (e.g., additive on free energy versus additive on binding affinity) [1] [8].

Q5: How does landscape ruggedness impact machine learning predictions of fitness? Ruggedness dramatically reduces the predictive accuracy of machine learning models for sequence-fitness relationships [3] [9]. As landscape ruggedness increases, model performance decreases for both interpolation (predicting within training data regimes) and extrapolation (predicting beyond training data regimes) [3]. In highly rugged landscapes, even state-of-the-art models may fail completely at extrapolation tasks [3].

Table 1: Impact of Landscape Ruggedness on Machine Learning Prediction Performance

| Ruggedness Level (K value) | Interpolation R² | Extrapolation Capacity | Best-performing Model Type |
|---|---|---|---|
| Low (K=0) | ~0.9 | +3 mutational regimes | Gradient Boosted Trees |
| Moderate (K=2) | ~0.7 | +1 mutational regime | Neural Networks |
| High (K=4) | ~0.3 | Limited | Linear Models |
| Maximum (K=5) | ~0.1 | None | All models fail |

Data adapted from systematic evaluation using NK landscape models [3]
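To make the K parameter concrete, here is a minimal sketch of a binary NK landscape, assuming a circular neighbourhood (each site interacts with the next K sites) and uniform random contributions. The function names `make_nk_landscape` and `count_local_maxima` are illustrative and not taken from the cited benchmark [3].

```python
import itertools
import random

def make_nk_landscape(n, k, seed=0):
    """Return {genotype tuple: fitness} for a binary NK landscape.

    Assumption: site i interacts with the next k sites (circular layout),
    and each contribution is drawn uniformly from [0, 1).
    """
    rng = random.Random(seed)
    tables = [dict() for _ in range(n)]  # contribution tables, filled lazily

    def contribution(i, genotype):
        key = tuple(genotype[(i + j) % n] for j in range(k + 1))
        if key not in tables[i]:
            tables[i][key] = rng.random()
        return tables[i][key]

    return {
        g: sum(contribution(i, g) for i in range(n)) / n
        for g in itertools.product((0, 1), repeat=n)
    }

def count_local_maxima(landscape):
    """Count genotypes strictly fitter than all of their one-mutant neighbours."""
    peaks = 0
    for g, w in landscape.items():
        neighbours = (g[:i] + (1 - g[i],) + g[i + 1:] for i in range(len(g)))
        if all(w > landscape[nb] for nb in neighbours):
            peaks += 1
    return peaks

# N=6 is chosen here so that K=5 is the maximum possible value (K = N - 1).
for k in (0, 2, 5):
    peaks = count_local_maxima(make_nk_landscape(n=6, k=k, seed=1))
    print(f"K={k}: {peaks} local maxima")
```

With K=0 the landscape is purely additive and has a single peak. Larger K values typically produce more local maxima, which is the ruggedness that degrades model performance in the table above.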

Troubleshooting Guides

Problem: Inconsistent Epistatic Effects Across Genetic Backgrounds

Symptoms: The same pair of mutations shows different types of epistasis (positive, negative, or sign epistasis) when measured in different genetic backgrounds.

Explanation: This phenomenon, known as "fluid epistasis," occurs when higher-order interactions with the genetic background alter the relationship between two focal mutations [6]. For example, in the folA fitness landscape, a specific mutation pair (G→A at position 3 and T→C at position 7) exhibited positive epistasis in 12.7% of backgrounds, negative epistasis in 9.1%, and various forms of sign epistasis in 2.7% of backgrounds [6].

Solutions:

- Systematic Background Sampling: Measure epistatic effects across multiple defined genetic backgrounds rather than only in the wild-type

- Control for Background Fitness: Analyze whether epistatic patterns correlate more with background fitness than specific genotypes

- Higher-order Modeling: Use models that explicitly account for three-way and higher interactions

Fluid Epistasis Relationships: Higher-order interactions modulate how the genetic background determines epistatic type between two mutations.

Problem: Inability to Detect Biologically Relevant Epistasis

Symptoms: Known functional interactions from biological systems are not detected by statistical epistasis scans, or detected interactions lack biological interpretability.

Explanation: Traditional approaches often assume specific forms of epistasis (e.g., only pairwise) or struggle with computational constraints that limit search depth [7]. Biological systems frequently involve higher-order interactions that are missed by these methods [7].

Solutions:

- Incorporate Biological Priors: Focus search on functionally relevant regions (e.g., protein binding pockets, catalytic sites) [2]

- Use Multi-modal Approaches: Combine statistical methods with machine learning and biophysical modeling

- Leverage Known Interactions: Start with biologically established interactions as seeds for expanded searches [7]

Table 2: Comparison of Epistasis Detection Methods

| Method Category | Examples | Strengths | Limitations | Best For |
|---|---|---|---|---|
| Statistical Models | GLM, Case-only, Mixed models | Formal hypothesis testing, interpretable parameters | Combinatorial explosion, limited to low-order interactions | Well-characterized systems with clear priors |
| Machine Learning | MDR, GMDR, RPM, DNNs | Can detect complex patterns, handle high-dimensional data | Black box, requires large datasets, computational intensity | Exploratory analysis of high-throughput data |
| Biophysical Approaches | Rosetta design, energy calculations | Mechanistic insights, physically interpretable | Dependent on structural data, computational cost | Protein engineering, binding specificity studies |

Based on analysis of methods from GAW16 and recent reviews [8] [7]

Problem: Machine Learning Models Failing on Rugged Landscapes

Symptoms: Models trained on limited mutational regimes perform poorly when predicting effects of multiple mutations or in novel sequence contexts.

Explanation: Rugged landscapes dominated by epistasis violate the smoothness assumptions implicit in many machine learning approaches [3]. As epistasis increases, the sequence-fitness mapping becomes increasingly discontinuous and context-dependent [3].

Solutions:

- Stratified Training: Explicitly train on data spanning multiple mutational regimes

- Architecture Selection: Use models with demonstrated robustness to ruggedness (e.g., certain neural architectures)

- Landscape-aware Regularization: Incorporate ruggedness metrics into model training

- Transfer Learning: Pre-train on evolutionarily related systems before fine-tuning

Key Experimental Protocols

Protocol 1: Systematic Epistasis Measurement Using Tite-Seq

Purpose: Quantify epistatic contributions to antibody-antigen binding affinity with controlled measurement error [1].

Workflow:

- Library Construction: Generate all single mutants and random double/triple mutants within target regions (e.g., CDR1H and CDR3H for antibodies)

- Tite-Seq Implementation: Use yeast display with high-throughput sequencing across multiple antigen concentration gradients

- Affinity Calculation: Extract dissociation constant (Kd) for each variant from binding curves

- Additive Model Fitting: Construct Position Weight Matrix (PWM) model from single mutant data: \(F_{\mathrm{PWM}}(s) = F_{\mathrm{WT}} + \sum_i h_i(s_i)\), where \(F = \ln(K_d/c_0)\)

- Epistasis Quantification: Calculate epistasis as difference between measured and PWM-predicted binding free energies: \(\varepsilon = F_{\mathrm{measured}} - F_{\mathrm{PWM}}\)

- Noise Control: Compute Z-scores using replicate measurements and synonymous variants to distinguish true epistasis from measurement noise

Tite-Seq Epistasis Workflow: Systematic approach from library construction to controlled epistasis quantification.
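The additive-model and epistasis steps of this workflow can be sketched as follows. This is a simplified illustration, not the published Tite-Seq pipeline: the Kd values, the mutation labels, and the use of a single replicate standard deviation as the noise scale are all assumptions made for the example.

```python
import math
import statistics

def free_energy(kd, c0=1.0):
    """F = ln(Kd / c0); c0 is a reference concentration in the same units as Kd."""
    return math.log(kd / c0)

def fit_pwm(f_wt, single_mutant_f):
    """h_i(a) = F(single mutant carrying residue a at position i) - F_WT."""
    return {mutation: f - f_wt for mutation, f in single_mutant_f.items()}

def pwm_prediction(f_wt, pwm, mutations):
    """Additive prediction: F_WT plus the sum of the single-mutant effects."""
    return f_wt + sum(pwm[m] for m in mutations)

def epistasis_z(f_measured, f_pwm, noise_sd):
    """Return (epsilon, Z), where Z scales epsilon by the measurement noise."""
    eps = f_measured - f_pwm
    return eps, eps / noise_sd

# Hypothetical dissociation constants in molar units.
f_wt = free_energy(1e-9)
singles = {(28, "A"): free_energy(4e-9), (100, "G"): free_energy(2e-9)}
pwm = fit_pwm(f_wt, singles)
f_double = free_energy(3e-8)

# Assumption: noise is estimated from replicate measurements of one variant.
noise_sd = statistics.stdev(free_energy(k) for k in (0.9e-9, 1.0e-9, 1.1e-9))
eps, z = epistasis_z(f_double, pwm_prediction(f_wt, pwm, list(singles)), noise_sd)
print(f"epsilon = {eps:.3f}, Z = {z:.1f}")
```

A fuller treatment would also propagate the uncertainty of the single-mutant measurements into the Z-score, since the PWM prediction inherits their error.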

Protocol 2: Computational Design of Specificity Switches

Purpose: Engineer changes in ligand specificity while characterizing epistatic constraints [2].

Workflow:

- Binding Pocket Redesign: Use Rosetta or similar suite to redesign ligand-contacting residues for altered specificity

- Diverse Starting Poses: Generate multiple ligand docking orientations to account for binding mode uncertainty

- Variant Library Construction: Synthesize oligonucleotides encoding thousands of designed variants

- Pooled Functional Screening: Implement toggle screening (e.g., sort for DNA binding competence followed by induction response)

- Pathway Reconstruction: Synthesize all intermediate variants along evolutionary paths between start and end states

- Multi-parameter Fitness Mapping: Characterize fold induction, basal expression, maximum expression, and EC₅₀ for each variant

- Epistasis Classification: Identify specific vs. nonspecific epistasis based on physical proximity and functional effects

Research Reagent Solutions

Table 3: Essential Research Reagents and Computational Tools

| Reagent/Tool | Function | Application Examples | Key Features |
|---|---|---|---|
| Tite-Seq | High-throughput affinity measurement | Antibody-antigen binding [1] | Physical units (Kd), separates binding from expression |
| Rosetta Design Suite | Computational protein design | Ligand specificity switches [2] | Structure-based mutagenesis, energy-based scoring |
| NK Landscape Model | Tunable rugged landscape simulation | ML performance benchmarking [3] | Precisely controlled epistasis (K parameter) |
| Ancestral Sequence Reconstruction | Phylogenetic sampling of sequence space | LacI/GalR family analysis [4] | Evolutionarily diverse sequences, historical trajectories |
| Deep Mutational Scanning | Comprehensive variant phenotyping | folA landscape mapping [6] | Nearly complete sequence-space coverage |

Advanced Diagnostic Approaches

Method: Epistasis Formalism and Mathematical Representation

The relationship between genotype and phenotype with epistasis can be formally represented as:

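\[ y = \sum_{a} \beta_a \prod_{i} x_i^{a_i} \]

where \(y\) is the phenotype, \(x_i\) represents genetic variants, \(\beta_a\) are epistatic parameters, and the summation is over all combinations of variants up to a certain order [7]. Each combination \(a\) is indexed by exponents \(a_i \in \{0, 1\}\) that select which variants enter the product. This formulation reveals the combinatorial challenge: the number of parameters grows exponentially with the number of genetic sites considered.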
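A quick count shows how fast the number of epistatic parameters grows. The sketch below assumes biallelic sites, so each interaction of a given order corresponds to one subset of sites; the helper name `n_epistatic_terms` is illustrative.

```python
from math import comb

def n_epistatic_terms(n_sites, max_order):
    """Number of coefficients for interaction orders 1..max_order (biallelic sites)."""
    return sum(comb(n_sites, order) for order in range(1, max_order + 1))

# Five sites, all orders: 2^5 - 1 coefficients besides the intercept.
print(n_epistatic_terms(5, 5))

# One thousand variants: pairwise terms are manageable, four-way terms are not.
print(n_epistatic_terms(1000, 2))
print(comb(1000, 4))
```

The full model over n biallelic sites has 2^n coefficients including the intercept, which is why exhaustive searches for higher-order interactions quickly become impractical.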

Method: Ruggedness Quantification Metrics

Dirichlet Energy: Measures the average squared fitness difference between neighboring sequences—higher values indicate more rugged landscapes [9].

Number of Local Maxima: Direct count of genotypes fitter than all their one-mutant neighbors [6] [3].

Autocorrelation: Measures fitness similarity between sequences at different mutational distances—faster decay indicates higher ruggedness [9].

Fourier Spectrum: Decomposes fitness landscape into additive components—more high-frequency components indicate higher epistasis and ruggedness [9].
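Two of these metrics are simple enough to compute directly on a small, complete binary landscape. The sketch below assumes the landscape is stored as a dictionary from genotype tuples to fitness values, and it uses an unnormalized Dirichlet energy (the plain mean over neighbouring pairs), which may differ by a constant factor from the definition in [9].

```python
import itertools

def neighbours(g):
    """All genotypes one mutation away from a binary genotype tuple."""
    return [g[:i] + (1 - g[i],) + g[i + 1:] for i in range(len(g))]

def dirichlet_energy(landscape):
    """Mean squared fitness difference over all neighbouring pairs."""
    sq = [(w - landscape[nb]) ** 2
          for g, w in landscape.items() for nb in neighbours(g)]
    return sum(sq) / len(sq)

def neighbour_autocorrelation(landscape):
    """Pearson correlation of fitness between one-mutant neighbours."""
    pairs = [(w, landscape[nb])
             for g, w in landscape.items() for nb in neighbours(g)]
    n = len(pairs)
    mx = sum(x for x, _ in pairs) / n
    my = sum(y for _, y in pairs) / n
    cov = sum((x - mx) * (y - my) for x, y in pairs)
    vx = sum((x - mx) ** 2 for x, _ in pairs)
    vy = sum((y - my) ** 2 for _, y in pairs)
    return cov / (vx * vy) ** 0.5

# Two toy landscapes on 4 sites: purely additive versus parity (maximally rugged).
genotypes = list(itertools.product((0, 1), repeat=4))
additive = {g: sum(g) / len(g) for g in genotypes}
parity = {g: float(sum(g) % 2) for g in genotypes}

for name, land in (("additive", additive), ("parity", parity)):
    print(name, dirichlet_energy(land), neighbour_autocorrelation(land))
```

The additive landscape has low Dirichlet energy and positive neighbour correlation, while the parity landscape has high energy and perfectly anti-correlated neighbours.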

Fluid Epistasis describes how the effect of a genetic mutation can change dramatically depending on the genetic background in which it occurs. This phenomenon, driven by higher-order genetic interactions, makes evolutionary outcomes difficult to predict and presents significant challenges in protein engineering and evolutionary studies [6].

Key Characteristics:

- Background Dependence: The fitness effect of a mutation is not constant but shifts across different genetic backgrounds [6]

- Higher-Order Interactions: Interactions among multiple mutations collectively reshape the effect of any single mutation [6]

- Binary Nature: A small subset of mutations exhibits strong global epistasis, while most do not [6]

- Statistical Predictability: Despite idiosyncratic individual interactions, reproducible global statistical patterns emerge [6] [10]

Frequently Asked Questions (FAQs)

Q1: Why do my mutational effect measurements produce inconsistent results across different strain backgrounds?

A: You are likely observing fluid epistasis in action. Approximately 24% of natural variants show strain-specific fitness effects due to epistatic interactions [11]. This background dependence means a mutation beneficial in one strain may be neutral or deleterious in another. To address this:

- Characterize the distribution of fitness effects (DFE) across multiple genetic backgrounds

- Identify whether mutations fall into the category exhibiting strong global epistasis (minority) or weak/no epistasis (majority) [6]

- Use statistical models that account for background fitness as a predictor of mutational effects [10]

Q2: How can I predict evolutionary trajectories when epistasis makes effects so unpredictable?

A: While individual mutational effects may be unpredictable due to epistasis, statistical regularities emerge at the distribution level. Implement these approaches:

- Phenotypic DFE Prediction: Use the fitness of a genetic background to predict its distribution of fitness effects rather than individual mutation effects [6]

- Global Epistasis Models: Apply models where mutations have additive effects on an unobserved trait that maps nonlinearly to the observed phenotype [10]

- Pattern Recognition: Look for diminishing-returns epistasis, where beneficial mutations become less beneficial in fitter backgrounds [10]
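Diminishing-returns epistasis can be checked with a simple regression of a mutation's fitness effect on the fitness of the background it was measured in. The sketch below uses made-up numbers and an ordinary least-squares line; a negative slope is the signature described above.

```python
def fit_line(x, y):
    """Ordinary least-squares slope and intercept."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxx = sum((xi - mx) ** 2 for xi in x)
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    slope = sxy / sxx
    return slope, my - slope * mx

# Hypothetical data: effect of one mutation measured in five backgrounds.
background_fitness = [0.80, 0.90, 1.00, 1.10, 1.20]
mutation_effect = [0.12, 0.09, 0.06, 0.03, 0.00]

slope, intercept = fit_line(background_fitness, mutation_effect)
print(f"slope = {slope:.2f}, intercept = {intercept:.2f}")

def predicted_effect(fitness):
    """Predict the mutation's effect in a background of the given fitness."""
    return intercept + slope * fitness
```

A linear fit is only one possible form. Global epistasis models often use a nonlinear map from a latent additive trait to the observed phenotype, which this sketch does not attempt.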

Q3: What experimental strategies can circumvent evolutionary traps created by reciprocal sign epistasis?

A: Reciprocal sign epistasis occurs when two mutations are deleterious individually but beneficial together, creating evolutionary traps. Overcoming this requires:

- Indirect Paths: Explore evolutionary paths involving gain and subsequent loss of mutations rather than direct paths [12]

- Higher-Dimensional Exploration: Utilize the full 20-amino acid diversity at sites rather than binary approaches to discover bypass routes [12]

- Evolvability-Enhancing Mutations: Identify mutations that increase the likelihood that subsequent mutations are adaptive [13]

Troubleshooting Guides

Problem: Navigating Rugged Fitness Landscapes with Multiple Peaks

Symptoms: Experimental evolution populations become trapped at suboptimal fitness peaks; inability to reach global optimum despite extensive mutagenesis.

Diagnosis and Resolution:

| Step | Procedure | Expected Outcome |
|---|---|---|
| 1 | Map local fitness landscape around trapped genotype | Identify surrounding fitness values and epistatic interactions |
| 2 | Introduce evolvability-enhancing mutations (EE mutations) | Shift DFE toward less deleterious mutations and increased beneficial mutations [13] |
| 3 | Explore indirect paths with temporary fitness losses | Circumvent evolutionary traps via mutations that are later reverted [12] |
| 4 | Implement phased selection regimes | Alternate selection pressures to escape fitness valleys |

Validation: Sequence evolved populations to confirm different mutational pathways; measure fitness gains compared to direct paths.

Problem: Unpredictable Mutation Effects Across Genetic Backgrounds

Symptoms: Same mutation shows different fitness effects in closely related strains; inability to generalize mutation effects from model strains to field isolates.

Diagnosis and Resolution:

| Step | Procedure | Expected Outcome |
|---|---|---|
| 1 | Quantify fluid epistasis for target mutations | Measure how epistasis between mutations changes across backgrounds [6] |
| 2 | Classify mutations as strong/weak epistatic | Identify which mutations show consistent vs. background-dependent effects [6] |
| 3 | Build statistical epistasis models | Predict DFE from background fitness rather than individual mutation effects [6] [10] |
| 4 | Validate with precision editing | Confirm predictions using genome editing in multiple backgrounds [11] |

Validation: High correlation between predicted and measured DFEs across diverse genetic backgrounds.

Table 1: Epistasis Patterns in Experimental Fitness Landscapes

| System | Landscape Size | Functional Variants | Epistatic Variants | Key Finding |
|---|---|---|---|---|
| E. coli folA gene | ~260,000 variants | ~7% | Fluid epistasis in most pairs | >96% of interactions show no epistasis in non-functional backgrounds [6] |
| Protein GB1 (4 sites) | 160,000 variants | 2.4% beneficial | Prevalent sign epistasis | Indirect paths circumvent evolutionary traps [12] |
| S. cerevisiae natural variants | 1,826 variants | 31% affect fitness | 24% of non-neutral variants | Beneficial variants more likely epistatic than deleterious [11] |

Table 2: Types of Epistasis and Their Evolutionary Consequences

| Epistasis Type | Definition | Evolutionary Impact | Detection Method |
|---|---|---|---|
| Diminishing-returns | Beneficial mutations less beneficial in fitter backgrounds | Declining adaptability in evolving populations [10] | Fitness effect vs. background fitness correlation |
| Sign epistasis | Mutation effect changes sign between backgrounds | Constrains accessible evolutionary paths [12] | Reciprocal fitness measurements |
| Fluid epistasis | Pairwise epistasis changes with genetic background | Limits predictability of evolution [6] | Multi-background interaction mapping |
| Global epistasis | Mutational effects predictable from few variables | Enables statistical prediction of evolution [10] | Pattern analysis in high-throughput data |

Experimental Protocols

Protocol 1: Measuring Fluid Epistasis in Protein Variants

Purpose: Quantify how genetic interactions change across backgrounds in a targeted protein region.

Materials:

- CRISPEY-BAR vector or similar precision editing system [11]

- Diverse genetic backgrounds (≥4 strains with sufficient divergence)

- Deep sequencing capability

- Fitness assay system (growth competition, binding affinity, etc.)

Procedure:

- Variant Selection: Identify target sites with suspected epistatic interactions

- Library Construction: Clone guide-donor oligomers with unique barcodes into editing vector

- Multi-Background Editing: Transform and edit variant library into each genetic background

- Competition Experiments: Compete edited pools for ~60 generations with periodic sampling

- Fitness Calculation: Estimate fitness effects from barcode frequency changes

- Epistasis Quantification: Calculate interaction coefficients across backgrounds
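The fitness calculation step can be sketched as a regression of log barcode ratios on generations. This is a simplified illustration rather than the CRISPEY-BAR analysis pipeline: the read counts, the reference barcode, and the pseudocount of 0.5 are assumptions for the example.

```python
import math

def ols_slope(x, y):
    """Ordinary least-squares slope of y against x."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    sxy = sum((xi - mx) * (yi - my) for xi, yi in zip(x, y))
    sxx = sum((xi - mx) ** 2 for xi in x)
    return sxy / sxx

def barcode_fitness(variant_counts, reference_counts, generations, pseudocount=0.5):
    """Per-generation selection coefficient from barcode read counts.

    The estimate is the slope of ln(variant / reference) against generations.
    The pseudocount guards against zero counts (an assumption of this sketch).
    """
    log_ratio = [
        math.log((v + pseudocount) / (r + pseudocount))
        for v, r in zip(variant_counts, reference_counts)
    ]
    return ols_slope(generations, log_ratio)

# Hypothetical read counts sampled over roughly 60 generations.
generations = [0, 20, 40, 60]
variant = [1000, 1480, 2250, 3300]
reference = [1000, 1000, 1010, 990]

s = barcode_fitness(variant, reference, generations)
print(f"estimated selection coefficient per generation: {s:.4f}")
```

In practice, counts should first be normalized for sequencing depth at each time point, and replicate competitions give an error estimate for each coefficient.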

Data Analysis:

- Classify epistasis types (positive, negative, sign epistasis) for each pair

- Calculate fluidity as variance in epistasis type across backgrounds

- Identify mutations with strong global epistasis patterns
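The classification step can be sketched for one pair of mutations measured in several backgrounds. The fitness values are hypothetical, and because epistasis type is a categorical variable, "fluidity" is summarized here as the fraction of backgrounds that deviate from the most common type. That summary is an assumption of this sketch, not a definition from [6].

```python
from collections import Counter

def classify_epistasis(w00, w10, w01, w11, tol=1e-6):
    """Classify pairwise epistasis from the four genotype fitness values.

    w00: neither mutation, w10: only A, w01: only B, w11: both.
    """
    eps = w11 - w10 - w01 + w00
    if abs(eps) <= tol:
        return "none"
    a_flips = (w10 - w00) * (w11 - w01) < 0  # effect of A changes sign with B
    b_flips = (w01 - w00) * (w11 - w10) < 0  # effect of B changes sign with A
    if a_flips and b_flips:
        return "reciprocal sign"
    if a_flips or b_flips:
        return "sign"
    return "positive" if eps > 0 else "negative"

def fluidity(types):
    """Fraction of backgrounds whose epistasis type differs from the modal type."""
    counts = Counter(types)
    return 1 - counts.most_common(1)[0][1] / len(types)

# Hypothetical measurements of the same mutation pair in four backgrounds.
backgrounds = {
    "strain_1": (1.0, 1.1, 1.1, 1.3),
    "strain_2": (1.0, 1.1, 1.1, 1.2),
    "strain_3": (1.0, 0.9, 0.9, 1.2),
    "strain_4": (1.0, 1.1, 1.2, 1.15),
}
types = {name: classify_epistasis(*w) for name, w in backgrounds.items()}
print(types, fluidity(list(types.values())))
```

With real data the tolerance `tol` should reflect measurement error, for example a multiple of the replicate standard deviation.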

Protocol 2: Identifying Evolvability-Enhancing Mutations

Purpose: Discover mutations that increase potential for adaptive evolution.

Materials:

- Combinatorially complete or nearly complete fitness landscape data [13]

- Fitness measurements for all single mutants and their neighbors

- Computational resources for landscape analysis

Procedure:

- Landscape Characterization: Obtain fitness data for wild-type and all single mutants

- Neighbor Fitness Calculation: For each mutant m, compute mean fitness of all its 1-mutant neighbors

- EE Mutation Identification: Apply criteria for evolvability-enhancing mutations [13]:

- For beneficial mutations (Δw > 0): \(\bar{w}(n_m) - \bar{w}(n_{wt}) > \Delta w\)

- For neutral mutations (Δw = 0): \(\bar{w}(n_m) - \bar{w}(n_{wt}) > 0\)

- Validation: Confirm EE mutations shift DFE toward more adaptive mutations
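The identification criteria can be applied directly to a small, complete landscape. The sketch below assumes binary genotypes and a toy three-site landscape with invented fitness values. Deleterious mutations are simply reported as not evolvability-enhancing, because the criteria above cover only beneficial and neutral cases.

```python
def neighbours(g):
    """All genotypes one mutation away from a binary genotype tuple."""
    return [g[:i] + (1 - g[i],) + g[i + 1:] for i in range(len(g))]

def mean_neighbour_fitness(landscape, g):
    """Mean fitness of all one-mutant neighbours of genotype g."""
    nbs = neighbours(g)
    return sum(landscape[nb] for nb in nbs) / len(nbs)

def is_evolvability_enhancing(landscape, wt, mutant, neutral_tol=0.0):
    """Apply the beneficial/neutral criteria to a single mutant of wt."""
    dw = landscape[mutant] - landscape[wt]
    gain = mean_neighbour_fitness(landscape, mutant) - mean_neighbour_fitness(landscape, wt)
    if dw > neutral_tol:           # beneficial mutation
        return gain > dw
    if abs(dw) <= neutral_tol:     # neutral mutation
        return gain > 0
    return False                   # deleterious: outside the stated criteria

# Hypothetical three-site landscape.
landscape = {
    (0, 0, 0): 1.0, (1, 0, 0): 1.0, (0, 1, 0): 1.1, (0, 0, 1): 0.9,
    (1, 1, 0): 1.4, (1, 0, 1): 1.3, (0, 1, 1): 0.8, (1, 1, 1): 1.5,
}
wt = (0, 0, 0)
for mutant in neighbours(wt):
    print(mutant, is_evolvability_enhancing(landscape, wt, mutant))
```

With noisy measurements, `neutral_tol` would need to be set from the measurement error so that near-zero effects are treated as neutral.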

Applications:

- Protein engineering starting point selection

- Adaptive evolution strain design

- Understanding evolutionary potential constraints

Research Reagent Solutions

Table 3: Essential Research Tools for Epistasis Studies

| Reagent/System | Function | Application Examples |
|---|---|---|
| CRISPEY-BAR precision editing | High-throughput genome editing with barcode tracking | Measuring fitness effects of 1,826 natural variants across 4 yeast strains [11] |
| Deep mutational scanning | Comprehensive variant fitness profiling | Characterizing ~260,000 folA gene variants [6] |
| Combinatorially complete landscapes | All possible combinations of target sites | 160,000 GB1 protein variants; 4^4=256 DHFR variants [12] |
| Transposon mutagenesis libraries | Genome-wide loss-of-function fitness effects | Tracking how DFEs change during 10,000 generations of evolution [10] |


The Impact of Higher-Order Epistasis on Evolutionary Trajectories

FAQs: Understanding Higher-Order Epistasis in Experimental Evolution

Q1: What is higher-order epistasis and why does it matter for predicting evolutionary paths? Higher-order epistasis occurs when the effect of a mutation depends on interactions with two or more other mutations simultaneously. Unlike pairwise epistasis (interactions between two mutations), higher-order interactions make it impossible to predict evolutionary trajectories from the individual and paired effects of mutations alone. This creates profound unpredictability in evolution, as the effect of a mutation in an ancestral background cannot reliably predict its effect later in an evolutionary trajectory [14]. These interactions strongly shape which evolutionary paths are accessible and their probabilities, ultimately influencing evolutionary outcomes.

Q2: In practical terms, how prevalent is higher-order epistasis in empirical fitness landscapes? Higher-order epistasis is common across biological systems. Studies analyzing complete genotype-fitness maps find that statistically significant high-order epistasis appears in almost every published landscape [14] [15]. While its magnitude is generally smaller than additive and pairwise epistatic effects, it consistently makes detectable contributions to fitness variation. The contribution of epistasis to total fitness variation across different studied systems ranges from 6.0% to 32.2% [14].

Q3: What are the evolutionary consequences when higher-order epistasis is present? Higher-order epistasis profoundly influences evolutionary dynamics by:

- Altering trajectory accessibility: It can open and close evolutionary pathways, making some trajectories possible while blocking others [14].

- Creating historical contingency: The effect of a mutation depends on the specific previous substitutions in the genetic background [14].

- Generating evolutionary traps: Reciprocal sign epistasis can block direct adaptive paths, potentially requiring indirect paths with mutational reversions to circumvent these traps [16].

- Affecting drug resistance evolution: In pathogens, positive epistasis among resistance-associated mutations can alleviate fitness costs and create fitness valleys that prevent reversal of resistance, leading to persistent drug resistance even after drug withdrawal [17].

Q4: What experimental approaches can effectively detect and quantify higher-order epistasis? The most robust approach involves:

- Constructing complete genotype-fitness maps: Measuring fitness for all possible combinations of a set of mutations (e.g., all 32 combinations for 5 mutations) [14] [16].

- Statistical decomposition: Using Walsh polynomials or Fourier-Walsh transformation to decompose fitness variation into additive, pairwise, and higher-order components [14] [18].

- Scale linearization: Empirically determining a nonlinear scale for each map using power transforms before analysis to account for potential confounding effects [14].

This approach allows researchers to quantify the specific contribution of each order of epistasis to the total fitness variation.

Q5: How can researchers overcome evolutionary constraints imposed by epistasis? Experiments on protein fitness landscapes reveal that evolutionary traps created by epistasis can be circumvented through indirect paths in sequence space. These paths may involve gaining a mutation that paves the way for other beneficial mutations, followed by subsequent loss of the initial mutation once the epistatic constraint is overcome. The high dimensionality of protein sequence space (\(20^L\) sequences for a protein with L amino acid sites) provides many such alternative routes for adaptation that are not accessible through direct paths alone [16].

Troubleshooting Guides for Epistasis Experiments

Guide 1: Addressing Unpredictable Evolutionary Trajectories

Problem: Evolutionary trajectories in experiments deviate significantly from predictions based on individual mutation effects.

Explanation: This typically occurs when higher-order epistatic interactions influence mutational effects in different genetic backgrounds. The magnitude of epistasis, rather than its specific order, primarily predicts its effects on evolutionary trajectories [14].

Solution:

- Characterize complete genotype networks: Don't rely solely on individual mutations and pairs. Use experimental evolution to track trajectories across multiple genetic backgrounds [19].

- Account for nonlinear scales: Epistasis models assume mutational effects add, but they may combine multiplicatively or on other nonlinear scales. Empirically determine the proper scale for your system [14].

- Model higher-order terms: Incorporate third-order and higher epistatic coefficients in predictive models, as they can significantly alter evolutionary outcomes despite their smaller magnitude [14].

Guide 2: Managing Compensatory Evolution in Drug Resistance Studies

Problem: Drug resistance mutations persist despite their fitness costs, contrary to the expectation that they would disappear once drug selection pressure is removed.

Explanation: Positive epistasis among resistance mutations can create fitness landscapes where resistance mutations are maintained through compensatory effects. This produces fitness barriers that prevent reversion to susceptibility [17].

Solution:

- Map collateral sensitivity: Identify drugs to which resistant strains become hypersensitive, creating alternative treatment strategies [19].

- Monitor evolutionary landscapes: Use experimental evolution with sequential regimens that maximize collateral sensitivity while minimizing cross-resistance [20].

- Profile fitness trade-offs: Quantify the fitness costs of resistance mutations across multiple genetic backgrounds to identify which combinations might persist [17].

Table 1: Contributions of Different Epistatic Orders to Fitness Variation Across Experimental Systems

| Dataset | Additive (%) | Pairwise Epistasis (%) | Third-Order (%) | Fourth-Order (%) | Fifth-Order (%) | Total Epistasis (%) |
|---|---|---|---|---|---|---|
| I | 94.0 | 3.8 | 1.2 | 0.9 | 0.1 | 6.0 |
| II | * | * | * | * | * | * |
| IV | * | * | * | * | * | * |
| V | * | * | * | * | * | * |
| VI | * | * | * | * | * | 32.2 |

Note: Entries marked * are not reported here. The available values show substantial variation between systems, with total epistasis contributions ranging from 6.0% to 32.2% [14].

Table 2: Experimental Evolution Approaches for Studying Epistasis

| Method | Key Features | Applications | Limitations |
|---|---|---|---|
| Serial Batch Transfer | Repeated growth and transfer in liquid medium; adjustable selective pressure | Studying resistance dynamics in Candida species [19] | Simplified environment lacking host factors |
| Chemostat Culture | Continuous growth in controlled conditions; steady-state population dynamics | Fundamental evolutionary studies [19] | Technical complexity; may select for adherence mutants |
| In Vivo Experimental Evolution | Evolution in animal models; includes host-pathogen interactions | Studying resistance in clinically relevant conditions [19] | Lower selective pressure; ethical and cost considerations |
| High-Throughput Fitness Profiling | Deep mutational scanning of mutant libraries; thousands of genotypes | Mapping genetic interactions in HIV-1 protease [17] | Requires specialized sequencing and computational resources |

Experimental Protocols

Protocol 1: Constructing Complete Genotype-Fitness Maps

Purpose: To empirically measure a complete fitness landscape for a set of mutations, enabling detection and quantification of higher-order epistasis.

Materials:

- Library of variants covering all combinations of target mutations

- Appropriate selection system (antibiotics, fluorescence sorting, etc.)

- High-throughput sequencing capability

- Facilities for competitive fitness assays

Procedure:

- Generate mutant library: Create all possible combinations of L target mutations (\(2^L\) genotypes for biallelic sites) using codon randomization or synthetic DNA assembly [16].

- Measure fitness: For each genotype, determine fitness relative to a reference using either:

- Competitive growth assays: Mix genotypes and track frequency changes over time

- Direct fitness measurements: Measure growth rates or survival under selection

- Linearize the map: Empirically determine the proper power transformation to linearize the fitness scale and account for nonlinearity [14].

- Decompose epistasis: Apply Walsh transformation to partition fitness variance into additive, pairwise, third-order, fourth-order, and fifth-order components [14] [18].

- Validate significance: Use statistical testing to identify epistatic coefficients significantly different from zero, accounting for multiple comparisons.
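The decomposition step can be sketched with a direct Walsh transform on a complete biallelic map. This is a minimal illustration, assuming fitness values have already been placed on a linearized scale. The function names and the two-site example values are hypothetical, and the direct summation is only practical for small L.

```python
import itertools

def walsh_coefficients(landscape):
    """Walsh coefficients of a complete binary landscape {genotype tuple: fitness}.

    The coefficient for a subset of sites is the average of fitness weighted by
    +1 or -1 according to the parity of mutated sites within that subset.
    """
    n = len(next(iter(landscape)))
    size = 2 ** n
    coeffs = {}
    for subset in itertools.product((0, 1), repeat=n):
        total = 0.0
        for g, w in landscape.items():
            parity = sum(s & x for s, x in zip(subset, g)) % 2
            total += -w if parity else w
        coeffs[subset] = total / size
    return coeffs

def variance_by_order(coeffs):
    """Fraction of fitness variance attributed to each interaction order."""
    power = {}
    for subset, beta in coeffs.items():
        order = sum(subset)
        if order:  # order 0 is the mean and carries no variance
            power[order] = power.get(order, 0.0) + beta ** 2
    total = sum(power.values())
    return {order: p / total for order, p in sorted(power.items())}

# Hypothetical two-site map with positive pairwise epistasis.
two_site = {(0, 0): 1.0, (1, 0): 1.2, (0, 1): 1.1, (1, 1): 1.6}
coeffs = walsh_coefficients(two_site)
print(variance_by_order(coeffs))
```

For a purely additive map, all of the variance falls in order 1. Deciding whether the higher-order fractions in real data are significant still requires the replicate-based testing described in the final step.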

Troubleshooting:

- If library coverage is incomplete, use imputation methods or focus on well-sampled regions

- If measurement noise obscures signals, increase biological replicates and implement error models

- If transformation doesn't linearize the map, consider alternative scale transformations

Protocol 2: Experimental Evolution to Track Trajectories

Purpose: To observe evolutionary trajectories in real-time and identify how epistasis influences path accessibility.

Materials:

- Ancestral strain(s)

- Selective conditions (drug gradient, specific nutrients, etc.)

- Serial transfer equipment

- Genotyping or sequencing capability

Procedure:

- Initiate parallel lineages: Start multiple replicate populations from the same ancestor.

- Apply selection: Expose populations to constant or fluctuating selective pressure.

- Sample regularly: At each transfer, archive samples for later analysis.

- Monitor genotypic changes: Sequence populations at multiple time points to reconstruct evolutionary trajectories.

- Measure fitness effects: Isolate evolved mutants and measure their fitness in different genetic backgrounds.

- Identify interactions: Look for mutations whose effects change depending on the presence of other mutations.

Analysis:

- Calculate the probability of each observed trajectory

- Compare observed trajectories to expectations without epistasis

- Identify instances of historical contingency where mutation effects change along trajectories
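One way to frame the comparison against a no-epistasis expectation is to count how many direct mutational paths between an ancestor and an evolved genotype increase fitness at every step. The sketch below assumes a fully measured biallelic landscape; the function name and the toy fitness values are hypothetical.

```python
from itertools import permutations

def accessible_direct_paths(fitness, start, end):
    """Count direct paths from `start` to `end` with strictly increasing fitness.

    Returns (number_accessible, number_of_direct_paths).
    """
    diff_sites = [i for i, (a, b) in enumerate(zip(start, end)) if a != b]
    n_total = n_accessible = 0
    for order in permutations(diff_sites):  # one ordering of mutations = one path
        n_total += 1
        current = list(start)
        monotonic = True
        for site in order:
            nxt = current.copy()
            nxt[site] = end[site]
            if fitness[tuple(nxt)] <= fitness[tuple(current)]:
                monotonic = False  # this step is neutral or deleterious
                break
            current = nxt
        n_accessible += monotonic
    return n_accessible, n_total

# Hypothetical landscapes: additive vs. reciprocal sign epistasis
additive = {(0, 0): 1.0, (1, 0): 1.2, (0, 1): 1.3, (1, 1): 1.5}
reciprocal = {(0, 0): 1.0, (1, 0): 0.8, (0, 1): 0.9, (1, 1): 1.5}
paths_additive = accessible_direct_paths(additive, (0, 0), (1, 1))
paths_reciprocal = accessible_direct_paths(reciprocal, (0, 0), (1, 1))
```

In the additive case both orderings are accessible, while under reciprocal sign epistasis neither is. This is the situation in which indirect paths or valley crossing would be needed.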

Visualizations

Diagram 1: Epistasis Blocks Direct Paths but Indirect Paths Enable Adaptation

Diagram 2: Experimental Evolution Workflow for Epistasis Studies

Research Reagent Solutions

Table 3: Essential Research Reagents and Resources for Epistasis Studies

| Reagent/Resource | Function | Application Examples |

|---|---|---|

| Codon-Randomized Mutant Libraries | Generation of all amino acid combinations at target sites | Studying 160,000 variants across 4 sites in protein GB1 [16] |

| Fluorescent Protein Markers (GFP, RFP) | Strain labeling for competitive fitness measurements | Tracking population dynamics in experimental evolution [19] |

| DNA Barcoding Systems | High-throughput quantification of subpopulation sizes | Multiplexed fitness measurements using next-generation sequencing [19] |

| Antifungal/Antibiotic Resistance Markers | Selection and differentiation of strains | Studying drug resistance evolution in pathogens [17] [19] |

| Walsh Transformation Software | Decomposition of fitness landscapes into epistatic coefficients | Quantifying contributions of different epistatic orders [14] [18] |

| Experimental Evolution Platforms | Controlled environments for evolutionary studies | Chemostats, serial batch culture for long-term evolution [19] |

Welcome to this technical support center, designed as a resource for researchers investigating epistatic interactions within fitness landscapes, using genes like folA (dihydrofolate reductase, DHFR) as a primary model. Epistasis—where the effect of one mutation depends on the presence of other mutations—is a fundamental challenge in genetics, protein engineering, and drug development [21]. This guide provides targeted troubleshooting advice and detailed protocols to help you navigate the complexities of detecting, quantifying, and interpreting these interactions, framed within the broader goal of overcoming epistasis in protein fitness landscape research.

Section 1: Fundamental Concepts & FAQs

FAQ 1.1: What is the core difference between statistical and biological epistasis?

- Answer: It is crucial to distinguish between these two concepts, as a signal in one does not guarantee a signal in the other.

- Biological (or Compositional) Epistasis: Refers to the physical interaction between biomolecules within networks and pathways. It is a functional property of the system [22] [21]. An example is one mutated protein in a pathway masking the effect of another.

- Statistical Epistasis: Defined as a deviation from additivity in a mathematical model for a phenotype (e.g., a regression model) [22] [21]. It is a population-level measure and may not directly correspond to a physical interaction.

FAQ 1.2: Why is the folA/DHFR system a canonical model for studying epistasis?

- Answer: The DHFR enzyme, encoded by the folA gene in bacteria, is a well-established model due to its role in antimalarial and antibiotic drug resistance. Key reasons include:

- Proven Epistasis: Specific combinations of mutations in DHFR confer resistance to drugs like pyrimethamine, and the fitness effects of these mutations are highly dependent on genetic background and environmental conditions [23].

- Structural and Functional Knowledge: Its well-characterized structure and mechanism allow for biophysical interpretation of epistatic measurements.

- Evolutionary Relevance: Studying how mutations combine in DHFR helps predict paths of drug resistance evolution.

Section 2: Experimental Design & Troubleshooting

FAQ 2.1: Our genome-wide epistasis screen has a high false-positive rate. What are the key analysis parameters to check?

- Answer: High false-positive rates in screens like Genome-Wide Association Interaction Studies (GWAIS) often stem from inappropriate analytical choices. You must control for the following [22]:

- Effect Encoding: Using an additive encoding scheme for genotypes can elevate Type I error rates. Ensure your model is appropriate for the suspected interaction.

- Population Structure: Failure to correct for population stratification can create spurious association signals. Include principal components or a relatedness matrix as covariates.

- Linkage Disequilibrium (LD): High LD between distant SNPs may induce redundant epistasis signals. Apply appropriate LD pruning.

- Multiple Testing Correction: The dependencies between interaction test statistics affect standard correction methods (like Bonferroni). Use a permutation procedure or another method validated for epistasis screening.

FAQ 2.2: How can we improve the statistical power of our interaction screen?

- Answer: Consider implementing a multi-stage screening approach to reduce the multiple testing burden. An example protocol is summarized below [22]:

Protocol: Multi-Stage Epistasis Screening

- Stage 1 - Test H1 vs. Full Model: Test an intercept-only model (H1: g(p) = α) against the full model (HA: g(p) = α + β1*SNP1 + β2*SNP2 + β12*SNP1*SNP2).

- Stage 2 - Test H2 vs. Full Model: Test a main-effect model for SNP1 (H2: g(p) = α + β1*SNP1) against the full model.

- Stage 3 - Test H3 vs. Full Model: Test a main-effect model for SNP2 (H3: g(p) = α + β2*SNP2) against the full model.

- Stage 4 - Test H4 vs. Full Model: Test an additive model with both SNPs (H4: g(p) = α + β1*SNP1 + β2*SNP2) against the full model.

- Corrective Method: Maintain Type I error control using a static (pre-estimated number of tests) or adaptive (actual number of tests per stage) Bonferroni correction. If the phenotype can be described by a simpler model at any stage, the SNP pair is excluded, reducing the number of tests in subsequent stages.

The following workflow diagram illustrates this multi-stage protocol and the related concept of global epistasis analysis:

FAQ 2.3: The fitness effects of our mutations change with drug concentration. Is this normal?

- Answer: Yes, this is a well-documented phenomenon known as environmental modulation of epistasis. The concentration of an antimicrobial drug is a powerful environmental variable that can reshape the fitness landscape [23]. For instance, a mutation might be neutral in a no-drug environment but beneficial at a high drug concentration. Furthermore, the very pattern of global epistasis (e.g., diminishing returns) can shift as the environment changes.

Section 3: Data Analysis & Interpretation

FAQ 3.1: How do we quantify the strength and global nature of epistasis for a specific mutation?

- Answer: You can map any mutation based on two key quantitative metrics. For a focal mutation i and a set of genetic backgrounds B [23]:

- Strength of Epistasis: Calculated as Variance(Δfi) / Variance(f(B)).

- High value: The mutation's effect is highly variable and dependent on the genetic background.

- Low value: The mutation's effect is relatively constant (additive).

- Degree of Global Epistasis: Calculated as the R² of the regression of the mutation's fitness effect (Δfi) against the background fitness (f(B)).

- High R²: Epistasis is largely "global" and can be predicted from background fitness.

- Low R²: Epistasis is "idiosyncratic" and specific to particular genetic interactions.

The table below summarizes how to interpret these metrics:

Table: Interpreting Epistasis Metrics

| Strength of Epistasis (Variance Ratio) | Degree of Global Epistasis (R²) | Interpretation |

|---|---|---|

| Low (e.g., ~0) | High | Effects are mostly additive and predictable. |

| High (e.g., ~1 or above) | High | Strong, globally predictable epistasis (e.g., diminishing returns). |

| High (e.g., ~1 or above) | Low | Strong, idiosyncratic epistasis; difficult to predict from background fitness alone. |
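The two metrics can be computed directly from paired measurements of background fitness and mutational effect. The sketch below uses only the Python standard library; the function name and example values are hypothetical, and the regression is assumed to be a simple linear fit, for which R² equals the squared Pearson correlation.

```python
from statistics import mean, pvariance

def epistasis_metrics(background_fitness, effects):
    """Return (strength, r_squared, slope) for one focal mutation.

    background_fitness: f(B) for each background lacking the mutation
    effects: fitness effect of adding the mutation to each of those backgrounds
    """
    var_b = pvariance(background_fitness)
    var_e = pvariance(effects)
    mb, me = mean(background_fitness), mean(effects)
    cov = mean([(b - mb) * (e - me) for b, e in zip(background_fitness, effects)])
    strength = var_e / var_b      # variance ratio
    slope = cov / var_b           # negative slope suggests diminishing returns
    # R² is undefined when the effect never varies; report 0.0 in that case
    r_squared = cov ** 2 / (var_b * var_e) if var_e > 0 else 0.0
    return strength, r_squared, slope

# Hypothetical data: the effect shrinks linearly as background fitness rises
f_B = [0.2, 0.4, 0.6, 0.8, 1.0]
delta_f = [0.5, 0.4, 0.3, 0.2, 0.1]
strength, r_squared, slope = epistasis_metrics(f_B, delta_f)
```

In this example the effect is perfectly predicted by background fitness, so R² is 1 and the slope is negative, the signature of diminishing-returns global epistasis.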

FAQ 3.2: What is "global epistasis" and how can we model it?

- Answer: Global epistasis is a phenomenon where the fitness effect of a mutation correlates with the fitness of its genetic background, often following a simple linear relationship [23] [10]. The most common pattern is diminishing returns epistasis, where beneficial mutations have a smaller effect in fitter genetic backgrounds.

A typical workflow for analyzing global epistasis involves:

- Measuring the fitness f(B) of all genetic backgrounds lacking the focal mutation.

- Measuring the fitness effect Δfi of adding the focal mutation to each background.

- Plotting Δfi against f(B) and fitting a regression model (e.g., linear) to quantify the relationship.

Section 4: Advanced Applications & Reagents

FAQ 4.1: Can we use machine learning to predict fitness landscapes with epistasis?

- Answer: Yes, machine learning (ML) is a powerful tool for this task. However, the performance of different ML architectures is highly dependent on the ruggedness of the fitness landscape, which is governed by epistasis [24]. When selecting or evaluating an ML model, you must assess its performance against key metrics, including:

- Robustness to increasing epistasis/ruggedness.

- Ability to interpolate within the training domain.

- Ability to extrapolate to new, unseen genotypes (positional extrapolation).

FAQ 4.2: What are the essential reagents and computational tools for studying epistasis in a gene like folA?

- Answer: Below is a table of key solutions for constructing and analyzing epistatic interactions.

Table: Research Reagent Solutions for Epistasis Studies

| Item | Function/Description | Example/Application |

|---|---|---|

| Site-Directed Mutagenesis Kits | To systematically introduce specific point mutations into the gene of interest (e.g., folA). | Creating all single and combination mutants for a deep mutational scan. |

| Deep Mutational Scanning (DMS) Library | A comprehensive library of gene variants for high-throughput functional screening under selective pressure (e.g., with an antifolate drug) [25]. | Empirically mapping the fitness of thousands of variants in a single experiment. |

| Antifolate Drugs (Pyrimethamine, Cycloguanil) | Selective agents used to apply pressure and reveal fitness differences between DHFR variants [23]. | Modulating the environment to study how epistasis changes with drug dose. |

| Protein Language Models (e.g., ESM-2) | Pre-trained deep learning models that can predict the functional effects of protein sequences by learning evolutionary patterns [25]. | Tools like CoVFit can be adapted to predict fitness of folA variants and identify epistatic interactions from sequence alone. |

| Thermodynamic Models | Biophysical models that predict how mutations affect protein folding and ligand-binding stability, providing a mechanistic basis for observed epistasis [26]. | Interpreting why certain mutations show synergistic or antagonistic interactions. |

Statistical Patterns and the 'Binary' Nature of Epistatic Mutations

FAQs: Understanding Epistasis in Experimental Research

What is epistasis and why does it matter for my protein engineering work? Epistasis occurs when the effect of one mutation depends on the presence or absence of other mutations in the genetic background [27]. This is critical because it determines whether adaptive evolutionary paths are possible and predictable. In protein fitness landscapes, epistasis can create evolutionary traps where certain beneficial combinations are inaccessible via direct mutational paths, requiring indirect routes that temporarily lose fitness before gaining it later [16].

I've heard epistasis is "binary" - what does this mean for my experiments? Recent research on the E. coli folA gene landscape revealed that mutations can be classified into two distinct groups: a small fraction exhibit extremely strong patterns of global epistasis, while most mutations do not [28]. This "binary" nature means that in your experiments, you should anticipate that only a few key mutations will drive most of the complex epistatic interactions, while many others will have more additive effects.

How does the "fluidity" of epistasis affect my experimental predictions? Epistasis is "fluid" - the interaction between any two mutations can change dramatically depending on the genetic background [28]. For example, a pair of mutations might show positive epistasis in 26% of backgrounds, negative epistasis in 34%, and no epistasis in 32% across different genotypes [28]. This means predictions from one genetic context may not transfer to others.

What are the practical implications of high-order epistasis? Studies of 13 mutation pathways in fluorescent proteins show extensive high-order epistasis (interactions among three or more mutations) [29]. This means you cannot accurately predict phenotypes from pairwise interactions alone - you must consider higher-order interactions, especially when working with more than 2-3 mutations.

How can I overcome evolutionary traps caused by reciprocal sign epistasis? Research on GB1 protein demonstrates that while reciprocal sign epistasis blocks direct adaptive paths, proteins can circumvent these traps via indirect paths that involve gaining and then losing mutations [16]. This suggests exploring sequences beyond immediate Hamming distance neighbors may reveal accessible evolutionary paths.

Key Experimental Data on Epistatic Patterns

Table 1: Quantitative Patterns of Epistasis Across Protein Systems

| Protein System | Number of Variants Tested | Key Finding on Epistasis | Epistasis Order Observed | Accessible Paths |

|---|---|---|---|---|

| GB1 Protein [16] | 160,000 (20^4) | Indirect paths circumvent evolutionary traps | Up to 4th order | 1-12 of 24 direct paths accessible; indirect paths provide alternatives |

| eqFP611 Fluorescent Protein [29] | 8,192 (2^13) | Extensive high-order epistasis detected | Up to 13th order | Color switch requires specific cooperative mutations |

| TtgR Transcription Factor [30] | ~3,500 designed variants | Specific epistasis shapes inducer specificity | 4 mutations in binding pocket | Computational design identified functional combinations |

| E. coli folA (DHFR) [28] | ~260,000 sequences | "Binary" pattern: few mutations show strong epistasis | Up to 9th order | Highly navigable despite 514 fitness peaks |

Table 2: Fluidity of Epistasis in the folA Gene (9-bp region)

| Epistasis Type | Frequency in High Fitness Backgrounds | Frequency in Low Fitness Backgrounds |

|---|---|---|

| Positive Epistasis | 41% (median) | 21% (median) |

| Negative Epistasis | 23% (median) | 22% (median) |

| No Epistasis | 16% (median) | 30% (median) |

| Sign Epistasis | Relatively rare | 13% median for "Other Sign Epistasis" |

| Reciprocal Sign Epistasis | 0.67% (example pair) | 7.65% (example pair) |
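Frequencies like those in Table 2 come from classifying the same mutation pair in every available genetic background. The sketch below shows one way to do this for biallelic sites; the folA study works with nucleotide states, so the biallelic encoding, the tolerance `tol`, the function names, and the toy values are all simplifying assumptions.

```python
from collections import Counter
from itertools import product

def classify_pair(f00, f10, f01, f11, tol=1e-6):
    """Classify epistasis between mutations A and B in a single background."""
    eps = f11 - f10 - f01 + f00                 # deviation from additivity
    if abs(eps) <= tol:
        return "none"
    a_flips = (f10 - f00) * (f11 - f01) < 0     # effect of A changes sign with B
    b_flips = (f01 - f00) * (f11 - f10) < 0     # effect of B changes sign with A
    if a_flips and b_flips:
        return "reciprocal sign"
    if a_flips or b_flips:
        return "sign"
    return "positive" if eps > 0 else "negative"

def epistasis_frequencies(fitness, site_a, site_b, n_sites):
    """Fraction of backgrounds in which the pair shows each epistasis type."""
    others = [i for i in range(n_sites) if i not in (site_a, site_b)]
    counts = Counter()
    for background in product((0, 1), repeat=len(others)):
        def f(a, b):
            g = [0] * n_sites
            for i, allele in zip(others, background):
                g[i] = allele
            g[site_a], g[site_b] = a, b
            return fitness[tuple(g)]
        counts[classify_pair(f(0, 0), f(1, 0), f(0, 1), f(1, 1))] += 1
    total = sum(counts.values())
    return {kind: n / total for kind, n in counts.items()}

# Hypothetical 3-site landscape: the pair (0, 1) behaves differently
# depending on the allele at site 2
toy = {(0, 0, 0): 1.0, (1, 0, 0): 1.2, (0, 1, 0): 1.3, (1, 1, 0): 2.0,
       (0, 0, 1): 1.0, (1, 0, 1): 0.8, (0, 1, 1): 0.9, (1, 1, 1): 1.5}
freqs = epistasis_frequencies(toy, 0, 1, 3)
```

Here the same pair shows positive epistasis in one background and reciprocal sign epistasis in the other, a small-scale version of the fluidity described above. With real data, `tol` would normally be set from measurement noise.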

Experimental Protocols for Epistasis Mapping

High-Throughput Combinatorial Mutagenesis and Deep Sequencing

Purpose: To empirically characterize fitness landscapes and detect epistatic interactions across many variants [16] [29].

Procedure:

- Library Construction: Use codon randomization or iterative gene synthesis to generate all possible amino acid combinations at target sites. For the GB1 study, this created 160,000 (20^4) variants at four sites [16].

- Selection System: Couple protein variants to their encoding mRNA via mRNA display or express in cellular systems with selectable reporters [16] [29].

- Fitness Measurement: Apply selection pressure (e.g., binding affinity for GB1, fluorescence brightness for eqFP611) [16] [29].

- Deep Sequencing: Sequence library pre- and post-selection using Illumina sequencing to quantify variant frequencies [16].

- Fitness Calculation: Compute relative fitness from frequency changes: Fitness = (frequency_post-selection) / (frequency_pre-selection) [16].

- Epistasis Analysis: Calculate epistatic coefficients using mathematical transforms that decompose phenotypes into additive and interaction terms [29].
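The fitness calculation step can be sketched from raw read counts. The frequency ratio follows the formula in the procedure; normalizing to a reference variant and adding a pseudocount are common practices assumed here, not details taken from [16]. The function name and counts are hypothetical.

```python
def enrichment_fitness(pre_counts, post_counts, reference, pseudocount=0.5):
    """Post/pre frequency ratio per variant, scaled so the reference equals 1.

    pre_counts / post_counts: dicts mapping variant -> sequencing read count
    pseudocount: added to every count to avoid division by zero (assumption)
    """
    pre_total = sum(pre_counts.values())
    post_total = sum(post_counts.values())

    def ratio(variant):
        pre = (pre_counts.get(variant, 0) + pseudocount) / pre_total
        post = (post_counts.get(variant, 0) + pseudocount) / post_total
        return post / pre

    ref_ratio = ratio(reference)
    return {variant: ratio(variant) / ref_ratio for variant in pre_counts}

# Hypothetical read counts before and after selection
pre = {"WT": 100, "A": 100, "B": 200}
post = {"WT": 200, "A": 100, "B": 100}
fitness = enrichment_fitness(pre, post, reference="WT", pseudocount=0.0)
```

Variants with very low pre-selection counts give noisy ratios, so a minimum read-count filter is usually applied before any epistasis analysis.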

Computational Design of Epistatic Regions

Purpose: To engineer proteins with novel specificities by targeting epistatic regions [30].

Procedure:

- Pose Generation: Dock target ligand into binding pocket in multiple orientations (16 poses used for TtgR-resveratrol) [30].

- Rosetta Design: Redesign ligand-contacting residues with constrained backbone flexibility, generating thousands of design variants (~19,000 for TtgR) [30].

- Variant Curation: Apply scoring metric cutoffs to select promising variants (~3,500 for TtgR) [30].

- Pooled Screening: Express variant library in cells with reporter system (GFP for TtgR) and sort based on activity [30].

- Toggle Screening: Sequential sorting for desired properties (e.g., DNA binding competence followed by induction response) [30].

- Isolation and Validation: Isolate top performers and characterize dose-response curves for multiple inducers [30].

Visualization of Key Concepts

Epistasis in Protein Evolution Paths

Experimental Workflow for Fitness Landscape Mapping

Binary Nature of Mutational Effects

Research Reagent Solutions

Table 3: Essential Research Tools for Epistasis Studies

| Reagent/Resource | Function in Epistasis Research | Example Application |

|---|---|---|

| Combinatorial Mutagenesis Libraries | Generates full sequence space coverage | GB1 (20^4 variants) [16]; eqFP611 (2^13 variants) [29] |

| mRNA Display Technology | Links genotype to phenotype for in vitro selection | GB1 fitness measurements [16] |

| Fluorescence-Activated Cell Sorting (FACS) | High-throughput phenotyping and screening | eqFP611 brightness selection [29]; TtgR reporter assays [30] |

| Illumina Deep Sequencing | Quantifies variant frequencies pre-/post-selection | Fitness calculation for thousands of variants [16] [29] |

| Rosetta Software Suite | Computational protein design predicting functional variants | TtgR binding pocket redesign [30] |

| Chip DNA Synthesis | Synthesis of large variant libraries | TtgR 3,500 variant library [30] |

Computational Arsenal: Machine Learning and Modeling Approaches for Epistatic Landscapes

Troubleshooting Guide

Q1: My model's predictions are inaccurate for protein variants that are distant from the training sequences. How can I improve generalization?

A: This is a common challenge when epistatic interactions in distant regions of sequence space are not captured by the model. The solution is to incorporate higher-order epistasis.

- Root Cause: Models limited to additive or pairwise (2nd-order) epistasis often fail to generalize to genotypes with higher mutational load (greater Hamming distance from training data) because they miss critical interactions among three or more amino acid sites [31].

- Solution: Increase the model's epistatic order. Use an epistatic transformer with 3 layers of multi-head attention (MHA) to model interactions among up to 8 sites. Research shows that for distant genotypes, the contribution of higher-order epistasis (3-way and 4-way interactions and above) can become the dominant factor, accounting for over 60% of the explained epistatic variance in some cases [31].

- Verification: After retraining your higher-order model, bin your test genotypes by their mean Hamming distance to the training set. You should observe that the performance gap (in R²) between your 8th-order model and an additive model widens with increasing distance, confirming the importance of higher-order terms for generalization [31].

Q2: How can I determine if my dataset has significant higher-order epistasis, and what order of interactions I should model?

A: Systematically fit and compare a series of models with increasing epistatic complexity.

- Diagnostic Procedure: Fit your data using the epistatic transformer framework with M=1, 2, and 3 layers (corresponding to pairwise, 4th-order, and 8th-order specific epistasis). For each model, calculate the R² on a held-out test set [32] [33].

- Interpretation: Calculate the "percent epistatic variance" for each order. The gain in R² from the additive to the pairwise model shows the importance of 2nd-order epistasis. The further gain from the pairwise to the 4th-order model shows the contribution of 3rd- and 4th-order interactions. In applied studies, the contribution of higher-order epistasis (within the epistatic component) has been found to range from negligible to over 60%, depending on the protein [32] [33].

- Decision Point: If the performance gain from adding another layer (e.g., from 4th-order to 8th-order) is marginal, your landscape may be sufficiently described by lower-order terms. However, for multi-peak landscapes or tasks requiring prediction far from the training data, defaulting to a higher-order model is recommended [31].

Q3: The training process is computationally expensive and slow. Are there ways to improve efficiency?

A: Yes, computational demands are a known challenge. Consider architectural optimizations and hyperparameter tuning.

- Hyperparameter Search: The epistatic transformer architecture was designed with efficiency in mind. Use the Optuna framework for an automatic hyperparameter search to efficiently find an optimal configuration, preventing wasteful cycles on suboptimal setups [31].

- Alternative Architectures: Explore newer, more efficient architectures inspired by the epistasis problem. For example, the Lyra architecture combines state space models (for global epistasis) and gated convolutions (for local epistasis), achieving state-of-the-art performance with orders-of-magnitude fewer parameters and faster inference than standard transformers [34].

- Distributed Training: For very large-scale datasets (e.g., genome-wide association studies), a distributed transformer framework that partitions the key matrix has been shown to be effective. This approach allows the model to be scaled across multiple AI accelerators, making high-order epistasis detection in large datasets feasible [35].

Q4: How can I validate that the higher-order interactions captured by the model are biologically real?

A: Validation requires combining computational checks with experimental evidence.

- Computational Validation on Simulated Data: First, test your entire pipeline on a simulated fitness landscape with known, predefined higher-order interactions. The epistatic transformer has been shown to accurately recapitulate true variance components in such settings [31].

- Experimental Cross-Validation: If available, use a multi-peak fitness landscape for validation. For instance, a study on four orthologous green fluorescent proteins (GFPs) showed that models with higher-order epistasis generalized better across the different wild-type peaks. Train your model on data from some GFP orthologs and test its prediction accuracy on the others. Superior performance of the higher-order model indicates it captures genuine, transferrable biological constraints [31].

- Statistical Checks: Analyze the model's latent space ϕ(x). The structure of the inferred landscape should be consistent with population genetics principles and known biophysical properties of the protein [36].

Frequently Asked Questions (FAQs)

Q1: What is the fundamental difference between an epistatic transformer and a standard transformer?

A: The key difference is architectural modification to explicitly control the maximum order of specific epistasis. In a standard transformer, it's difficult to disentangle the orders of interaction. The epistatic transformer makes two critical changes [32] [33]:

- Bypassing with Raw Embeddings: In each MHA layer, the query (Q) and key (K) are generated from the previous layer's output, but the value (V) tensor is directly taken from the raw input embeddings (Z0). This prevents the model from implicitly creating interactions of unlimited order within a single layer.

- Simplified Layers: It removes components like LayerNorm and feedforward networks within the attention block. This streamlined design ensures that after M layers, the output embeddings contain specific epistatic interactions exactly up to order 2^M.
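The order-limiting idea can be checked numerically on a toy example. The NumPy sketch below is not the published implementation: it assumes a single attention head, unnormalized dot-product attention (no softmax), no residual connections, weights shared across layers, and random parameters. All names are hypothetical.

```python
import numpy as np
from itertools import product

rng = np.random.default_rng(0)
L, d = 3, 4                        # toy sizes: 3 biallelic sites, embedding width 4
E = rng.normal(size=(L, 2, d))     # site- and allele-specific raw embeddings
Wq, Wk, Wv = (rng.normal(size=(d, d)) for _ in range(3))
w_out = rng.normal(size=d)

def latent_phenotype(x, n_layers=1):
    """Latent phenotype for genotype x (integer array of 0/1 alleles)."""
    z0 = E[np.arange(L), x]        # raw embeddings Z0, shape (L, d)
    z = z0
    for _ in range(n_layers):
        q, k = z @ Wq, z @ Wk      # Q and K come from the previous layer's output
        v = z0 @ Wv                # V is always built from the raw embeddings
        z = (q @ k.T) @ v          # plain attention, no LayerNorm or feedforward
    return float((z @ w_out).sum())

genotypes = list(product((0, 1), repeat=L))

def third_order_coefficient(n_layers):
    """Walsh coefficient of the three-way interaction among all three sites."""
    signed = [(-1) ** sum(g) * latent_phenotype(np.array(g), n_layers)
              for g in genotypes]
    return sum(signed) / len(genotypes)

one_layer = third_order_coefficient(1)
two_layers = third_order_coefficient(2)
```

Under these assumptions, one layer yields only terms that depend on at most two sites, so the three-way coefficient vanishes up to floating-point error. A second layer multiplies two such pairwise terms and the three-way coefficient becomes nonzero.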

Q2: Can this architecture be applied to genetic data beyond protein sequences (e.g., for GWAS)?

A: Yes, the transformer architecture is highly adaptable. A distributed transformer framework has been successfully applied to genome-wide association studies (GWAS) to detect high-order epistasis between Single Nucleotide Polymorphisms (SNPs). This method partitions the SNP data and uses a combination of attention scores and gradient calculations to identify interacting SNP combinations up to the 8th order, outperforming other deep learning models like MLPs and CNNs on several benchmark diseases [35].

Q3: How does the model distinguish between "specific epistasis" and "non-specific (global) epistasis"?

A: The model uses a compartmentalized structure defined by the equation f(x) = g(ϕ(x)) [32] [33].

- ϕ(x): This is the latent phenotype, modeled by the core transformer blocks. It is an additive sum of independent amino acid effects and all specific epistatic interactions up to a chosen order (see Eq. 2 in [33]).

- g: This is a final, monotonic nonlinear activation function (e.g., a sigmoid) that maps the latent phenotype ϕ(x) to the actual measurement scale of the observed function. This single nonlinearity captures the global epistasis that applies uniformly across all sequences.

This clear separation allows researchers to directly attribute improvements in model performance to the specific epistatic interactions within ϕ(x).
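A small numerical example shows why the separation matters. The sketch assumes a purely additive latent phenotype and a sigmoid for g; the effect sizes and intercept are hypothetical.

```python
import math

def g(phi):
    """Monotonic nonlinearity mapping latent phenotype to the measured scale."""
    return 1.0 / (1.0 + math.exp(-phi))

effects = (1.5, 1.0)   # hypothetical additive effects of two mutations
intercept = -1.0       # hypothetical latent phenotype of the wild type

def phi(x):
    """Additive latent phenotype: no specific epistasis by construction."""
    return intercept + sum(e * allele for e, allele in zip(effects, x))

def pairwise_epistasis(f):
    """Deviation from additivity for the double mutant under the map f."""
    return f((1, 1)) - f((1, 0)) - f((0, 1)) + f((0, 0))

eps_latent = pairwise_epistasis(phi)
eps_observed = pairwise_epistasis(lambda x: g(phi(x)))
```

The latent scale shows no interaction, while the measured scale shows negative epistasis produced entirely by the saturating nonlinearity. Fitting g separately keeps this effect from being mistaken for a specific interaction between the two sites.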

Experimental Protocols & Workflows

Key Experiment: Quantifying Epistatic Order in a Protein Dataset

Objective: To systematically measure the contribution of pairwise and higher-order epistasis to the function of a protein using the epistatic transformer.

Detailed Methodology [31] [32] [33]:

- Data Preparation: Start with a combinatorial mutagenesis dataset (e.g., for protein GRB-1 or AAV2-Capsid). Randomly split the data, using 80% for training and 20% for testing. Perform multiple replicates (e.g., 3-5) with different random splits.

- Model Training Series:

- Train an additive model (a simplified version with no MHA layers).

- Train a pairwise epistasis model (M=1 MHA layer).

- Train a 4th-order epistasis model (M=2 MHA layers).

- Train an 8th-order epistasis model (M=3 MHA layers).

- All models should include a final nonlinear function g to account for global epistasis.

- Model Evaluation & Analysis:

- Calculate the R² for each model on the held-out test set.

- Compute the percent epistatic variance for each order:

- Pairwise: (R²_pairwise - R²_additive) / (1 - R²_additive)

- 3rd & 4th-order: (R²_4way - R²_pairwise) / (1 - R²_additive)

- 5th to 8th-order: (R²_8way - R²_4way) / (1 - R²_additive)

- The sum of these percentages gives the share of the variance left unexplained by the additive model that specific epistasis accounts for.
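The three formulas above translate directly into a few lines of code. The R² values in the example are hypothetical placeholders, not results from any of the cited studies.

```python
def percent_epistatic_variance(r2_additive, r2_pairwise, r2_4way, r2_8way):
    """Percent of non-additive variance attributed to each block of orders."""
    unexplained = 1.0 - r2_additive   # variance the additive model misses
    return {
        "pairwise": 100 * (r2_pairwise - r2_additive) / unexplained,
        "3rd-4th": 100 * (r2_4way - r2_pairwise) / unexplained,
        "5th-8th": 100 * (r2_8way - r2_4way) / unexplained,
    }

# Hypothetical test-set R² values for the four models
pev = percent_epistatic_variance(0.60, 0.80, 0.88, 0.90)
total_specific = sum(pev.values())
```

With these placeholder numbers, pairwise terms account for half of the variance the additive model misses and higher orders for a further quarter. Averaging over the replicate train/test splits gives a sense of how stable such estimates are.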

The workflow for this key experiment is summarized in the following diagram:

Key Workflow: Generalization to Distant Genotypes

Objective: To evaluate the necessity of higher-order epistasis for predicting the function of protein variants that are far away in sequence space from the training data.

Detailed Methodology [31]:

- Data Preparation: Use a dataset with wide sequence diversity (e.g., AAV2-Capsid or cgreGFP). Sample a small fraction (e.g., 20%) of genotypes randomly for training. The remaining 80% will be the test pool.

- Binning by Distance: For each genotype in the test pool, calculate its mean Hamming distance to the entire training set. Bin the test genotypes into discrete distance classes based on this value.

- Model Training & Prediction: Train both an additive model and an 8th-order epistatic transformer model on the small training sample. Use these models to predict the phenotypes for all test genotypes.

- Performance Analysis:

- For each distance class, calculate the test R² for both the additive and the 8th-order model.

- Plot the R² values against the distance classes. The gap between the two curves represents the variance explained by all orders of specific epistasis for that distance.

- Furthermore, the "percent epistatic variance" attributable to pairwise vs. higher-order interactions can be decomposed at different distances.
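The binning and per-bin evaluation can be sketched as follows. The helper names, the bin width, and the rule of skipping bins with fewer than two genotypes are assumptions; sequences are assumed to be aligned and of equal length, and the example data are hypothetical.

```python
def hamming(a, b):
    """Number of positions at which two equal-length sequences differ."""
    return sum(x != y for x, y in zip(a, b))

def mean_hamming_to_training(seq, training):
    return sum(hamming(seq, t) for t in training) / len(training)

def r_squared(y_true, y_pred):
    mean_y = sum(y_true) / len(y_true)
    ss_res = sum((t - p) ** 2 for t, p in zip(y_true, y_pred))
    ss_tot = sum((t - mean_y) ** 2 for t in y_true)
    return 1.0 - ss_res / ss_tot if ss_tot > 0 else float("nan")

def r2_by_distance(test_seqs, y_true, y_pred, training, bin_width=1.0):
    """Test R² per distance class; bins with fewer than two genotypes are skipped."""
    bins = {}
    for seq, t, p in zip(test_seqs, y_true, y_pred):
        b = int(mean_hamming_to_training(seq, training) // bin_width)
        truths, preds = bins.setdefault(b, ([], []))
        truths.append(t)
        preds.append(p)
    return {b: r_squared(t, p) for b, (t, p) in sorted(bins.items()) if len(t) > 1}

# Hypothetical sequences, measurements, and predictions from one model
training = ["AAAA", "AAAB"]
test_seqs = ["AABA", "AABB", "ABBA", "ABBB", "BBBA"]
y_true = [1.0, 2.0, 1.0, 3.0, 5.0]
y_pred = [1.0, 2.0, 1.5, 2.5, 4.0]
per_bin = r2_by_distance(test_seqs, y_true, y_pred, training)
```

Running the same function on predictions from the additive and the 8th-order model gives the two curves to compare. Computing the mean distance against the full training set is quadratic in dataset size, so large training sets may need subsampling.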

Table 1: Performance Comparison of Epistatic Models on Protein Datasets

| Protein Dataset | Additive Model R² | Pairwise Model R² | 4th-order Model R² | 8th-order Model R² | % Epistatic Variance from Higher-Orders |

|---|---|---|---|---|---|

| GRB-1 | Data not provided in sources, but the analysis follows this pattern. The percent epistatic variance from higher-orders is calculated from the R² values. | ||||

| AAV2-Capsid | Data not provided in sources, but the analysis follows this pattern. The percent epistatic variance from higher-orders is calculated from the R² values. | ||||

| Simulated Landscape | High R² achievable, but model fails to recapitulate true higher-order variance components. | Good R², but only captures up to 2nd-order interactions. | Better R², captures up to 4th-order interactions. | Best R², aligns with ground-truth variance components. | Up to 100% of the epistatic variance in a simulated 8th-order landscape [31]. |

Table 2: Detection Power of Deep Learning Models for High-Order Epistasis (on Simulated Genetic Data) [35]

| Interaction Order | Proposed Framework (Attention + Gradients) | Transformer (Attention Only) | CNN (Saliency Maps) | MLP (Layerwise Relevance) |

|---|---|---|---|---|

| 2nd Order | ~99% (Additive Model) | ~90% | ~85% | ~75% |

| 5th Order | ~75% (Multiplicative Model) | ~44% | ~30% | <10% |

| 8th Order | Maintains significant detection power | Performance declines | Performance declines severely | Not reported |

The Scientist's Toolkit: Key Research Reagents & Solutions

Table 3: Essential Components for an Epistatic Transformer Study

| Item / Reagent | Function / Description | Example or Note |

|---|---|---|

| Combinatorial Mutagenesis Dataset | Provides the sequence-function data for training and testing the model. Must be large enough to support complex model fitting. | AAV2-Capsid, GRB-1, cgreGFP, and other datasets from 10 large-scale protein studies were used [31]. |

| Epistatic Transformer Software | The core machine learning architecture for modeling fixed-order epistasis. | Custom implementation based on a modified transformer. Key features: modified MHA and removal of LayerNorm/softmax [32] [33]. |

| Hyperparameter Optimization Framework | Automates the search for the best model configuration, saving time and computational resources. | Optuna was used in the original study [31]. |

| Multi-Peak Fitness Landscape Data | Serves as a rigorous benchmark for testing model generalization and transferability across distinct sequence regions. | Data from four orthologous green fluorescent proteins (avGFP, amacGFP, ppluGFP2, cgreGFP) [31]. |

| Distributed Computing Resources | Enables training on very large datasets (e.g., full genomes) by parallelizing computations. | A distributed transformer framework was scaled across AI accelerators for GWAS-scale data [35]. |

Protein Language Models (e.g., ESM-2, CoVFit) for Fitness Prediction

What are Protein Language Models (PLMs) and how are they relevant to fitness prediction?

Protein Language Models (PLMs), such as ESM-2 and CoVFit, are a class of artificial intelligence models that apply transformer architectures—similar to those powering large language models like ChatGPT—to the "language" of proteins. Instead of words, these models are trained on extensive datasets of protein sequences composed of the 20 amino acids. They learn the underlying patterns and "grammar" that govern protein structure and function, allowing them to predict protein properties directly from their amino acid sequence alone [37]. For fitness prediction, a PLM can be fine-tuned to estimate the relative reproductive success (fitness) of a protein variant, such as a viral spike protein, based solely on its sequence. This enables researchers to rapidly identify high-risk variants or design optimized proteins without requiring resource-intensive experimental measurements for every new sequence [25].

What is epistasis and why is it a central challenge?

Epistasis occurs when the effect of one mutation depends on the presence or absence of other mutations in the same protein [12]. This interaction makes the fitness landscape rugged, creating evolutionary traps where direct paths to higher fitness are blocked. A specific and powerful type is reciprocal sign epistasis, where two mutations are individually deleterious but become beneficial when combined. This phenomenon severely constrains the number of accessible evolutionary paths a protein can take to reach a high-fitness state [12]. Overcoming epistasis is therefore critical for accurately predicting fitness and understanding protein evolution.
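As a rough illustration of these definitions, the sketch below computes pairwise epistasis as the deviation of a double mutant from the additive expectation and labels the interaction type. It assumes fitness is measured on a scale where independent effects add (for example, log fitness). The function names and example values are hypothetical and are not taken from the cited studies.

```python
def pairwise_epistasis(f_wt, f_a, f_b, f_ab):
    """Deviation of the double mutant from the additive expectation."""
    observed = f_ab - f_wt
    expected = (f_a - f_wt) + (f_b - f_wt)
    return observed - expected


def classify_epistasis(f_wt, f_a, f_b, f_ab, tol=1e-9):
    """Label a two-mutation interaction as additive, magnitude, sign,
    or reciprocal sign epistasis."""
    if abs(pairwise_epistasis(f_wt, f_a, f_b, f_ab)) <= tol:
        return "additive"
    # Effect of each mutation in the wild-type and in the mutant background.
    a_in_wt, a_in_b = f_a - f_wt, f_ab - f_b
    b_in_wt, b_in_a = f_b - f_wt, f_ab - f_a
    a_flips = a_in_wt * a_in_b < 0  # sign of A's effect depends on B
    b_flips = b_in_wt * b_in_a < 0  # sign of B's effect depends on A
    if a_flips and b_flips:
        return "reciprocal sign"
    if a_flips or b_flips:
        return "sign"
    return "magnitude"


# Hypothetical values: both single mutants are deleterious,
# but the double mutant is fitter than the wild type.
print(classify_epistasis(f_wt=1.0, f_a=0.5, f_b=0.6, f_ab=1.5))
```

In the reciprocal sign case, both one-step paths from the wild type to the double mutant pass through a less fit intermediate, which is what blocks direct adaptive walks.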

Frequently Asked Questions (FAQs)

Q: How can PLMs like ESM-2 account for epistasis when previous statistical models could not? Traditional statistical models often represented fitness as a simple linear combination of individual mutation effects, ignoring interactions between mutations [25]. In contrast, PLMs like ESM-2 are context-aware: during training, they learn how the identity of an amino acid at one position influences the role of amino acids at other positions. This allows the model to capture the higher-order interactions that constitute epistasis and to predict a variant's overall fitness more accurately from its complete sequence [25].

Q: My model's fitness predictions are inaccurate for newly emerging variants. What could be wrong? This is a common issue when a model encounters sequences that are too divergent from those in its training set. Solutions include:

- Domain Adaptation: First, perform additional pre-training of a general-purpose PLM (like ESM-2) on a large corpus of sequences relevant to your specific problem. For example, the developers of CoVFit created ESM-2Coronaviridae by further training ESM-2 on spike protein sequences from 1,506 coronaviruses, which significantly improved its performance on SARS-CoV-2 variants [25].

- Multi-task Learning: Fine-tune your model not just on fitness data, but also on related functional data. CoVFit was simultaneously trained on both variant fitness data and deep mutational scanning (DMS) data measuring antibody escape, which informed the model about key functional constraints and improved generalization [25].

Q: What does an "evolvability-enhancing mutation" mean in the context of a fitness landscape? An evolvability-enhancing (EE) mutation is a mutation that, while often beneficial itself, also alters the genetic background in a way that increases the likelihood that subsequent mutations will be adaptive [13]. In other words, it "smooths" the local fitness landscape, making it easier for evolution to find further improvements. These mutations shift the distribution of fitness effects for future mutations, reducing the incidence of deleterious changes and increasing the incidence of beneficial ones [13]. Identifying such mutations with PLMs can help predict evolutionary trajectories.

Q: How can I validate that my PLM's predictions are capturing real biology and not just artifacts?

- Cross-validation: Use a rigorous k-fold cross-validation scheme (e.g., five-fold) on your experimental data to ensure the model is not overfitting [25].

- Benchmarking: Correlate your model's predictions with held-out experimental data. CoVFit, for instance, achieved a high Spearman's rank correlation (0.990) on test data, indicating it could accurately rank variant fitness [25].

- Experimental Follow-up: Perform targeted experiments on a subset of model-predicted high-fitness or low-fitness variants to confirm the predictions.
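The cross-validation and benchmarking steps above can be sketched in plain Python. This is a minimal illustration, not the evaluation code used for CoVFit: the helper names are hypothetical, and in practice a library routine such as scipy.stats.spearmanr would normally replace the hand-written rank correlation.

```python
import random


def average_ranks(values):
    """1-based ranks; tied values share the mean of their positions."""
    order = sorted(range(len(values)), key=lambda i: values[i])
    ranks = [0.0] * len(values)
    i = 0
    while i < len(order):
        j = i
        while j + 1 < len(order) and values[order[j + 1]] == values[order[i]]:
            j += 1
        mean_rank = (i + j) / 2 + 1
        for k in range(i, j + 1):
            ranks[order[k]] = mean_rank
        i = j + 1
    return ranks


def pearson(x, y):
    """Pearson correlation; undefined (raises) if either input is constant."""
    n = len(x)
    mx, my = sum(x) / n, sum(y) / n
    cov = sum((a - mx) * (b - my) for a, b in zip(x, y))
    vx = sum((a - mx) ** 2 for a in x)
    vy = sum((b - my) ** 2 for b in y)
    if vx == 0 or vy == 0:
        raise ValueError("correlation is undefined for constant input")
    return cov / (vx * vy) ** 0.5


def spearman(predicted, measured):
    """Spearman's rank correlation between predictions and measurements."""
    return pearson(average_ranks(predicted), average_ranks(measured))


def k_fold_indices(n_samples, k=5, seed=0):
    """Yield (train_indices, test_indices) for k shuffled folds."""
    idx = list(range(n_samples))
    random.Random(seed).shuffle(idx)
    folds = [idx[i::k] for i in range(k)]
    for i, test in enumerate(folds):
        train = [j for f, fold in enumerate(folds) if f != i for j in fold]
        yield train, test


# Usage: train on `train`, predict on `test`, then score each fold with
# spearman(predictions, measurements) and report the spread across folds.
for train, test in k_fold_indices(10, k=5):
    print(len(train), len(test))
```

Random folds can overstate performance when near-identical variants fall on both sides of a split, so grouping related sequences into the same fold is usually the safer choice.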

Troubleshooting Common Experimental and Computational Issues

Problem: Poor Generalization to Unseen Regions of Sequence Space

- Symptoms: The model performs well on variants similar to the training set but fails on highly mutated or newly emerged variants.

- Solutions:

  - Implement Domain Adaptation: As done with ESM-2Coronaviridae, continue pre-training your base PLM on a broad, domain-specific sequence database before fine-tuning on your fitness data [25].

  - Incorporate Diverse Data Types: Adopt a multi-task learning framework during fine-tuning. Leverage auxiliary data, such as DMS measurements on antibody escape or binding affinity, to provide the model with a richer understanding of functional constraints [25].

Problem: Model is a "Black Box" and Predictions are Unexplainable

- Symptoms: Difficulty understanding which features or mutations the model is using for its predictions, reducing trust in the results.

- Solutions:

  - Use Interpretability Tools: Apply techniques like sparse autoencoders. These tools can decompose the model's internal representations into individual, human-interpretable features (or "neurons") that often correspond to specific biological concepts like protein function or family [38].

  - Feature Analysis: Analyze the model's attention maps to see which amino acid positions it deems most important when making a prediction for a given sequence.

Problem: Handling of Indirect Evolutionary Paths and Epistatic Traps

- Symptoms: The model correctly identifies a high-fitness variant but cannot find a viable mutational path to it because all direct paths are blocked by deleterious intermediate steps.

- Solutions:

  - Model the Full Landscape: Acknowledge that evolution can take indirect paths involving reversions or "gatekeeper" mutations. In the GB1 protein landscape, while direct paths were often blocked, many indirect paths that involved gaining and then losing a mutation allowed access to high-fitness peaks [12].

  - In-Silico Directed Evolution: Use your trained PLM to simulate evolutionary walks, exploring not just single-step mutants but also multiple mutations and potential reversions to discover accessible paths [36].
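The idea of searching for indirect paths can be illustrated on a toy landscape. In the sketch below, a dictionary of hypothetical fitness values stands in for the predictions of a trained model, genotypes are binary tuples (0 = wild-type residue, 1 = mutant residue), and a step counts as accessible only if it strictly increases fitness. These are simplifying assumptions and do not reproduce the GB1 analysis.

```python
def neighbors(genotype):
    """All genotypes one site change away, including reversions."""
    for i, state in enumerate(genotype):
        yield genotype[:i] + (1 - state,) + genotype[i + 1:]


def accessible_paths(fitness, start, target):
    """Enumerate paths from start to target in which every step raises
    fitness. Strictly increasing fitness rules out cycles, so the search
    always terminates."""
    paths = []

    def walk(path):
        current = path[-1]
        if current == target:
            paths.append(path)
            return
        for nxt in neighbors(current):
            if fitness[nxt] > fitness[current]:
                walk(path + [nxt])

    walk([start])
    return paths


def is_direct(path, start, target):
    """A direct path uses exactly as many steps as there are differing sites."""
    return len(path) - 1 == sum(a != b for a, b in zip(start, target))


# Hypothetical landscape: sites 1 and 2 show reciprocal sign epistasis,
# while a third site offers a detour that is gained and later lost.
fitness = {
    (0, 0, 0): 1.0, (1, 0, 0): 0.5, (0, 1, 0): 0.6, (1, 1, 0): 2.0,
    (0, 0, 1): 1.1, (1, 0, 1): 1.3, (0, 1, 1): 0.7, (1, 1, 1): 1.6,
}
start, target = (0, 0, 0), (1, 1, 0)
paths = accessible_paths(fitness, start, target)
direct = [p for p in paths if is_direct(p, start, target)]
print(f"{len(paths)} accessible path(s), {len(direct)} of them direct")
for p in paths:
    print(" -> ".join("".join(map(str, g)) for g in p))
```

Exhaustive enumeration only scales to a handful of sites. For realistic sequence lengths, a sampled or greedy walk over model predictions is the more practical variant of the same idea.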

Experimental Protocols & Workflows

Protocol 1: Building a Fitness Prediction Model with ESM-2

This protocol outlines the steps for fine-tuning a general-purpose ESM-2 model to predict protein fitness.

Data Preparation:

- Compile a dataset of protein sequences (e.g., spike protein variants) and their corresponding experimentally measured fitness values (e.g., relative effective reproduction number) or functional scores.

- Format sequences in FASTA format. Split data into training, validation, and test sets, ensuring no significant sequence similarity between splits.
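One way to keep similar sequences out of different splits is to cluster first and then assign whole clusters to a split. The sketch below is a minimal version that assumes equal-length variants and uses Hamming distance with single-linkage clustering. The function names, the distance threshold, and the split fractions are illustrative choices, and a dedicated clustering tool is typically used for larger or more divergent sequence sets.

```python
import random


def hamming(a, b):
    """Number of differing positions between two equal-length sequences."""
    return sum(x != y for x, y in zip(a, b))


def cluster_sequences(seqs, max_dist=1):
    """Single-linkage clusters: any two sequences within max_dist of each
    other end up in the same cluster (union-find over all pairs)."""
    parent = list(range(len(seqs)))

    def find(i):
        while parent[i] != i:
            parent[i] = parent[parent[i]]  # path compression
            i = parent[i]
        return i

    for i in range(len(seqs)):
        for j in range(i + 1, len(seqs)):
            if hamming(seqs[i], seqs[j]) <= max_dist:
                parent[find(i)] = find(j)

    clusters = {}
    for i in range(len(seqs)):
        clusters.setdefault(find(i), []).append(i)
    return list(clusters.values())


def cluster_split(seqs, fractions=(0.8, 0.1, 0.1), max_dist=1, seed=0):
    """Assign whole clusters to train/validation/test index lists."""
    clusters = cluster_sequences(seqs, max_dist)
    random.Random(seed).shuffle(clusters)
    targets = [f * len(seqs) for f in fractions]
    splits = [[] for _ in fractions]
    for cluster in clusters:
        # Fill whichever split is currently furthest below its target size.
        k = max(range(len(splits)), key=lambda s: targets[s] - len(splits[s]))
        splits[k].extend(cluster)
    return splits


seqs = ["AAAA", "AAAT", "AATT", "CCCC", "CCCG", "GGGG"]
train, val, test = cluster_split(seqs)
print(train, val, test)
```

The all-pairs comparison is quadratic in the number of sequences, so this form is only suitable for small libraries.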

Model Setup:

- Load a pre-trained ESM-2 model and its associated alphabet using the torch.hub interface or the esm.pretrained module.

Sequence Encoding and Fine-Tuning:

- Use the batch converter to tokenize sequences and convert them into model-ready inputs.

- Add a regression head (a linear layer) on top of the pre-trained model to predict a continuous fitness score.

- Fine-tune the entire model on your fitness dataset using a mean-squared error loss function.

Protocol 2: A Multi-Task Learning Framework for Enhanced Prediction (CoVFit Method)

This protocol describes the advanced methodology used to develop CoVFit, which combines fitness prediction with functional data.

Domain Adaptation (Optional but Recommended):

- Perform continued pre-training of ESM-2 on a large, curated dataset of protein sequences from your family of interest (e.g., Coronaviridae spike proteins) to create a domain-specialized model [25].

Multi-Task Learning Setup:

- Architecture: Use the domain-adapted model as a shared backbone. Attach two separate prediction heads:

  - Head 1 (Fitness Prediction): A regression head for predicting variant fitness.

  - Head 2 (Functional Prediction): A head for predicting auxiliary properties, such as antibody escape scores from DMS data [25].

- Training: Jointly train the model on both tasks. The loss function is a weighted sum of the fitness prediction loss and the functional prediction loss. This forces the model to learn representations that capture both overall fitness and key biophysical constraints.
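The weighted-sum objective can be written out as a small, framework-free sketch. It assumes mean-squared error for both heads and treats a missing label as None, since fitness and DMS measurements generally come from different samples. The weights and function names are hypothetical, and the source does not specify the loss terms or weighting used in CoVFit.

```python
def masked_mse(predictions, targets):
    """Mean-squared error over samples that have a label (target not None)."""
    pairs = [(p, t) for p, t in zip(predictions, targets) if t is not None]
    if not pairs:
        return 0.0  # no labels for this task in the batch
    return sum((p - t) ** 2 for p, t in pairs) / len(pairs)


def multitask_loss(fit_pred, fit_true, func_pred, func_true,
                   w_fit=1.0, w_func=0.5):
    """Weighted sum of the fitness loss and the auxiliary functional loss."""
    fitness_loss = masked_mse(fit_pred, fit_true)
    functional_loss = masked_mse(func_pred, func_true)
    return w_fit * fitness_loss + w_func * functional_loss


# Hypothetical batch: sample 0 has a fitness label only,
# sample 1 has a DMS escape score only.
loss = multitask_loss(
    fit_pred=[1.0, 2.0], fit_true=[1.0, None],
    func_pred=[0.0, 1.0], func_true=[None, 3.0],
)
print(loss)
```

In a deep learning framework the same structure applies, with both heads sharing gradients through the backbone. The relative weight is usually tuned on the validation set.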

The workflow for this protocol is visualized below:

Workflow: Overcoming Epistatic Barriers in Evolution

This diagram illustrates how evolvability-enhancing mutations can enable access to high-fitness regions via indirect paths, circumventing evolutionary traps caused by epistasis.

Data Presentation

Table 1: Key Quantitative Results from Featured Studies

This table summarizes core performance metrics and findings from major PLM and fitness landscape studies.

| Study / Model | Key Metric | Result / Value | Biological Insight |
|---|---|---|---|
| CoVFit (2025) [25] | Spearman's Correlation (Fitness Prediction) | 0.990 | Demonstrates high accuracy in ranking variant fitness from sequence alone. |
| CoVFit (2025) [25] | Number of Fitness Elevation Events Identified | 959 | Applied to SARS-CoV-2 evolution until late 2023. |
| GB1 Protein Landscape (2016) [12] | Accessible Direct Paths to Peak (in one subgraph) | 1 out of 24 | Highlights the severe constraint imposed by reciprocal sign epistasis. |
| EE Mutations Study (2023) [13] | Incidence of Evolvability-Enhancing (EE) Mutations | Small fraction of all mutations | Suggests EE mutations are rare but can pivot evolutionary trajectories. |

A curated list of key software, models, and data resources for protein fitness prediction research.

| Resource Name | Type | Function / Application | Reference / Source |
|---|---|---|---|
| ESM-2 | Protein Language Model | General-purpose foundational model for sequence representation; base for fine-tuning. | Meta FAIR [39] |
| CoVFit | Specialized PLM | Predicts SARS-CoV-2 variant fitness from spike protein sequences. | TheSatoLab/GitHub [40] |
| Deep Mutational Scanning (DMS) Data | Experimental Dataset | Maps the functional effects of thousands of mutations; used for multi-task learning. | Cao et al., 2022 [25] |
| Sparse Autoencoders | Interpretability Tool | Decomposes PLM representations into human-understandable features to explain predictions. | Gujral et al., 2025 [38] |
| DHFR Laboratory Evolution Data | Experimental Dataset | A time-series dataset of protein sequences from directed evolution; used for inferring fitness landscapes. | D'Costa et al., 2023 [36] |

Machine Learning-Assisted Directed Evolution (MLDE) Workflows

Frequently Asked Questions (FAQs)

Q1: What is the main challenge epistasis presents for traditional directed evolution? Epistasis, where the effect of a mutation depends on its genetic background, creates rugged and complex fitness landscapes. This non-additivity makes evolutionary paths unpredictable and can cause traditional directed evolution to get stuck in local fitness peaks, hindering the discovery of optimally functional proteins [41] [2].

Q2: How can machine learning (ML) models help overcome epistasis in protein engineering? ML models learn the sequence-function relationship from experimental data. They can predict the effect of unexplored mutations, including those with strong epistatic interactions, and identify beneficial combinations of mutations that would be difficult to find through random screening alone. This allows researchers to navigate around epistatic roadblocks [42] [32].

Q3: My ML model performs well on the training data but fails to predict the function of distant sequences. Could epistasis be the cause? Yes. If your training data only samples a local region of sequence space, the model may not have encountered the specific higher-order epistatic interactions present in distant sequences. Incorporating higher-order epistasis into your model and expanding training data to cover more diverse sequences can improve generalization [32].

Q4: What are some advanced ML architectures specifically designed to capture epistasis? Beyond standard regression models, newer architectures like the "epistatic transformer" have been developed. This model uses a modified transformer architecture where the number of attention layers explicitly controls the maximum order of epistasis (e.g., pairwise, four-way, eight-way) the network can fit, allowing for systematic study of these complex interactions [32].

Q5: How can I control for avidity effects in yeast surface display to obtain accurate affinity measurements? Multivalency/avidity effects can lead to overestimation of binding affinity. Using a yeast-titratable display (YTD) system allows for tight transcriptional control over the number of proteins displayed on the yeast surface. By titrating down the display level, you can minimize avidity effects and obtain more accurate monovalent equilibrium dissociation constant (KD) measurements [43].
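Once avidity has been minimized, titration data are commonly interpreted with a monovalent 1:1 binding model, in which the fraction bound equals [L] / (KD + [L]). The sketch below fits KD to normalized titration data by a simple grid search. It assumes the signal has already been background-subtracted and scaled to the 0-1 range, and the function names and concentration range are illustrative, not part of the YTD method.

```python
def fraction_bound(ligand_conc, kd):
    """Monovalent 1:1 binding isotherm (concentrations in molar units)."""
    return ligand_conc / (kd + ligand_conc)


def fit_kd(ligand_concs, fractions, exponents=None):
    """Grid search over log-spaced KD values, minimizing squared error."""
    if exponents is None:
        # Candidate KD values from 1e-12 M to 1e-3 M, 20 points per decade.
        exponents = [e / 20 for e in range(-240, -59)]
    best_kd, best_sse = None, float("inf")
    for exponent in exponents:
        kd = 10.0 ** exponent
        sse = sum((fraction_bound(c, kd) - f) ** 2
                  for c, f in zip(ligand_concs, fractions))
        if sse < best_sse:
            best_kd, best_sse = kd, sse
    return best_kd


# Synthetic, noise-free titration generated with KD = 10 nM.
concs = [1e-10, 1e-9, 1e-8, 1e-7, 1e-6]
signal = [fraction_bound(c, 1e-8) for c in concs]
print(fit_kd(concs, signal))
```

If residual avidity is present, the data will deviate systematically from this curve and the fitted KD will appear tighter than the true monovalent affinity.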

Troubleshooting Common Experimental Issues