Optimizing Evolutionary Algorithms for Advanced Protein Prediction: A Guide for Computational Biologists

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on optimizing evolutionary algorithm (EA) parameters to enhance protein prediction accuracy. It explores the foundational principles of EAs in structural bioinformatics, examines cutting-edge methodological applications from protein-ligand docking to inverse folding, and details systematic strategies for hyperparameter tuning and troubleshooting. By synthesizing recent advances and validating EA performance against other state-of-the-art methods, this review serves as a critical resource for improving computational efficiency and predictive power in protein science, with significant implications for accelerating drug discovery and biomedical research.

The Evolutionary Algorithm Blueprint for Protein Prediction

Proteins are dynamic entities that exist as ensembles of interconverting conformations, rather than single, static structures. These dynamic conformations are fundamental to their biological function, from enzymatic catalysis to signal transduction [1]. For researchers, the central challenge is efficiently navigating the vast, high-dimensional conformational space—the universe of all possible spatial arrangements of a protein's atoms—to identify biologically relevant structures. This space is astronomically large; a systematic search is computationally prohibitive [2]. Evolutionary Algorithms (EAs) offer a powerful, bio-inspired solution to this problem by mimicking natural selection to efficiently sample this landscape and locate low-energy, functional conformations.

FAQ: Understanding Evolutionary Algorithms in Protein Science

Q1: What are Evolutionary Algorithms, and why are they suited for protein conformation problems?

Evolutionary Algorithms (EAs) are a class of population-based, stochastic optimization techniques inspired by the principles of biological evolution. They are particularly suited for navigating protein conformational space because the underlying optimization problem is NP-hard in many formulations: no efficient exact algorithm is known, and brute-force enumeration is computationally infeasible for all but the smallest proteins [3]. EAs handle this complexity by maintaining a diverse population of candidate conformations and using genetic operators like mutation and crossover to iteratively evolve this population towards regions of lower energy and higher biological relevance.
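The loop described in this answer can be sketched in a few lines. The example below is a minimal illustration, not any published method: a conformation is assumed to be a list of torsion angles, `toy_energy` is a made-up stand-in for a real energy function, and the operator settings (`sigma`, `rate`, tournament size) are arbitrary.

```python
import math
import random

def toy_energy(angles):
    """Hypothetical energy: zero when every angle is 0, positive otherwise."""
    return sum(1.0 - math.cos(a) for a in angles)

def mutate(angles, rng, sigma=0.3, rate=0.2):
    """Perturb each angle with probability `rate` using Gaussian noise."""
    return [a + rng.gauss(0.0, sigma) if rng.random() < rate else a for a in angles]

def crossover(parent_a, parent_b, rng):
    """One-point crossover: prefix from one parent, suffix from the other."""
    cut = rng.randrange(1, len(parent_a))
    return parent_a[:cut] + parent_b[cut:]

def tournament(population, energies, rng, k=3):
    """Return the lowest-energy individual among k randomly drawn candidates."""
    picks = rng.sample(range(len(population)), k)
    return population[min(picks, key=lambda i: energies[i])]

def evolve(n_angles=10, pop_size=40, generations=100, seed=0):
    """Run the EA and return the best energy seen in each generation."""
    rng = random.Random(seed)
    population = [[rng.uniform(-math.pi, math.pi) for _ in range(n_angles)]
                  for _ in range(pop_size)]
    history = []
    for _ in range(generations):
        energies = [toy_energy(ind) for ind in population]
        best = min(range(pop_size), key=lambda i: energies[i])
        history.append(energies[best])
        # Elitism: the current best individual survives unchanged.
        next_population = [population[best]]
        while len(next_population) < pop_size:
            child = crossover(tournament(population, energies, rng),
                              tournament(population, energies, rng), rng)
            next_population.append(mutate(child, rng))
        population = next_population
    history.append(min(toy_energy(ind) for ind in population))
    return history
```

Because of the elitism step, the best energy in `history` can never increase from one generation to the next. In a real application the energy call dominates the runtime, so the population size and number of generations are usually chosen around the cost of that call.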

Q2: How do EAs handle the prediction of multiple conformational states, a key limitation of some AI methods?

While deep learning tools like AlphaFold have revolutionized static structure prediction, capturing multiple conformational states remains a challenge [1] [4]. EAs address this by simultaneously evolving multiple populations, each guided towards a different potential energy basin. For instance, the M-SADA algorithm uses a multiple population-based EA to sample distinct conformational states for multidomain proteins. It combines homologous and analogous templates with inter-domain distances predicted by deep learning, successfully assembling two highly distinct conformational states for 40.3% of tested proteins [5].

Q3: A common experimental issue is the algorithm getting trapped in local energy minima, yielding non-native structures. How can this be troubleshooted?

Stagnation in local minima often indicates a lack of genetic diversity or insufficient exploration pressure. The following strategies can mitigate this:

- Implement Diversity-Preserving Mechanisms: Introduce multi-objectivization, where sequence diversity itself is an explicit optimization goal alongside energy minimization. This helps the algorithm explore a wider region of sequence space, which can correlate with broader conformational sampling [6] [7].

- Employ Hybrid "Memetic" Operators: Combine global evolutionary search with local gradient-based minimization. This approach, as seen in the SIfTER algorithm, allows each candidate solution to fully relax into its nearest local minimum, providing a more accurate energy evaluation and preventing the population from being trapped in high-energy regions [8].

- Adjust Variation Operators: Design novel variation operators that are specifically tailored to protein geometry to ensure that newly generated conformations are physically plausible, thereby improving the efficiency of the search [9].

Q4: How can external biological knowledge be integrated to improve the accuracy of EA predictions?

Incorporating domain-specific knowledge significantly constrains the search space and enhances biological relevance. A key method is the use of a Functional Similarity-Based Protein Translocation Operator (FS-PTO). This mutation operator uses Gene Ontology (GO) annotations to probabilistically guide the search. Proteins with high functional similarity are more likely to be grouped together, steering the algorithm towards functionally coherent and thus more biologically plausible complexes [3]. Additionally, EAs can be initialized using experimentally determined structural fragments or templates to seed the population with promising starting conformations [8].

Experimental Protocols & Methodologies

Protocol 1: Multi-State Assembly of Multidomain Proteins using M-SADA

This protocol outlines the process for predicting multiple conformational states of a multidomain protein, as implemented in the M-SADA algorithm [5].

- Input Preparation: Gather the amino acid sequences and predicted individual domain structures (e.g., from AlphaFold2).

- Energy Function Construction: Build multiple knowledge-based energy functions by combining information from:

  - Homologous Templates: Structures with high sequence similarity.

  - Analogous Templates: Structures with low sequence similarity but high structural similarity.

  - Predicted Inter-Domain Distances: From deep learning models.

- Multi-Population EA Initialization: Initialize separate populations for each targeted conformational state.

- Evolutionary Sampling:

  - Crossover: Exchange structural domains or sub-structures between candidate solutions in the population.

  - Mutation: Perturb domain orientations and linker conformations.

  - Selection: Select candidates for the next generation based on a fitness function that includes the constructed energy terms and structural similarity metrics.

- Model Selection & Validation: Select final models from each population cluster based on lowest energy and highest confidence. Validate using metrics like TM-score (where a TM-score > 0.90 indicates a high-quality model).
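The multi-population idea in this protocol can be illustrated with a toy sketch. It is not the M-SADA implementation: a "conformation" is reduced to a short list of inter-domain distances, each state gets its own hypothetical energy built from a made-up target, and variation is mutation only.

```python
import random

def make_energy(target):
    """Hypothetical per-state energy: squared deviation from target distances."""
    return lambda conf: sum((c - t) ** 2 for c, t in zip(conf, target))

def evolve_population(energy, dim, rng, pop_size=30, generations=60, sigma=0.5):
    """Evolve one population under its own energy and return its best member."""
    population = [[rng.uniform(0.0, 40.0) for _ in range(dim)] for _ in range(pop_size)]
    for _ in range(generations):
        # Each child is a Gaussian perturbation of a randomly chosen parent.
        children = [[x + rng.gauss(0.0, sigma) for x in rng.choice(population)]
                    for _ in range(pop_size)]
        # (mu + lambda) selection: keep the best pop_size of parents and children.
        population = sorted(population + children, key=energy)[:pop_size]
    return population[0]

def sample_states(targets, seed=0):
    """One independent population per targeted state, each with its own energy."""
    rng = random.Random(seed)
    return [evolve_population(make_energy(t), len(t), rng) for t in targets]
```

Calling `sample_states([[10.0, 12.0], [30.0, 28.0]])` returns one representative per state. The point of the design is that no single population has to hold both basins at once.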

Protocol 2: Mapping Mutation-Induced Landscape Changes with SIfTER

This protocol details how to map the conformational energy landscape of a protein and its mutants to understand functional changes, using the SIfTER algorithm [8].

- Data Curation: Collect all available experimental structures (from the PDB) for the wild-type and mutant protein sequences.

- Define Search Space: Use a dimensionality reduction technique (like Principal Component Analysis) on the collective experimental structures to identify the dominant reaction coordinates (modes of motion). This defines a reduced, biologically relevant search space.

- Conformational Sampling with EA:

  - The population is initialized with the experimental structures.

  - A memetic EA performs global sampling across the defined low-dimensional space.

  - Each new conformation generated by the EA undergoes local energy minimization (a "memetic" step) using a physical or knowledge-based force field.

- Energy Landscape Reconstruction: Calculate the potential energy for each sampled conformation. Project these energies back onto the low-dimensional space to reconstruct a continuous energy landscape.

- Comparative Analysis: Juxtapose the landscapes of wild-type and mutant proteins. Differences in the depth, location, and connectivity of energy minima reveal the mechanistic impact of the mutation on protein function and dynamics.
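A compact sketch of the first three steps is given below. It is an illustration under strong simplifying assumptions, not the SIfTER code: structures are flattened coordinate lists, only the first principal component is kept (found by power iteration instead of a full PCA), and the energy passed to `memetic_search` is any one-dimensional function the caller supplies.

```python
import random

def first_principal_component(structures, iterations=200):
    """Power iteration on the covariance of flattened coordinates."""
    n, dim = len(structures), len(structures[0])
    mu = [sum(s[j] for s in structures) / n for j in range(dim)]
    centered = [[x - m for x, m in zip(s, mu)] for s in structures]
    v = [1.0 / dim ** 0.5] * dim  # assumes this start is not orthogonal to the answer
    for _ in range(iterations):
        # w = C v, computed as sum_k (x_k . v) x_k without forming C explicitly.
        proj = [sum(a * b for a, b in zip(row, v)) for row in centered]
        w = [sum(p * row[j] for p, row in zip(proj, centered)) for j in range(dim)]
        norm = sum(x * x for x in w) ** 0.5
        if norm == 0.0:
            break
        v = [x / norm for x in w]
    return mu, v

def project(structures, mu, v):
    """Reduced coordinate of each structure along the principal component."""
    return [sum((x - m) * c for x, m, c in zip(s, mu, v)) for s in structures]

def local_minimize(energy, z, step=0.05, iterations=200, h=1e-4, max_move=0.2):
    """Memetic step: gradient descent with a numerical derivative and a capped move."""
    for _ in range(iterations):
        grad = (energy(z + h) - energy(z - h)) / (2 * h)
        z -= max(-max_move, min(max_move, step * grad))
    return z

def memetic_search(energy, seeds, rng, generations=30, sigma=1.0):
    """Global Gaussian moves in the reduced space, each followed by local minimization."""
    population = [local_minimize(energy, z) for z in seeds]
    for _ in range(generations):
        children = [local_minimize(energy, rng.choice(population) + rng.gauss(0.0, sigma))
                    for _ in range(len(population))]
        population = sorted(population + children, key=energy)[:len(seeds)]
    return population
```

For example, with the hypothetical double-well energy `lambda z: (z * z - 1.0) ** 2 + 0.3 * z` and seeds that all start in the shallower well, the global moves let the population cross the barrier while the local step relaxes each candidate before it is scored.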

Performance & Algorithm Comparison

The table below summarizes quantitative data and key characteristics of several evolutionary algorithms used in protein structure research.

Table 1: Comparison of Evolutionary Algorithms in Protein Research

| Algorithm Name | Primary Application | Key Features | Reported Performance / Output |
|---|---|---|---|
| M-SADA [5] | Multi-state multidomain protein assembly | Multi-population EA; Combines homologous/analogous templates & deep learning distances | 40.3% of proteins assembled with 2 distinct states (TM-score > 0.90); Best model TM-score 0.913 on 296 proteins. |
| SIfTER [8] | Mapping conformational landscapes of wild-type and mutant proteins | Memetic EA; Uses experimental structures to define search space; Multiscale optimization | Elucidated distinct activation mechanisms for H-Ras mutants G12V and Q61L by comparing energy landscapes. |
| USPEX [9] | De novo tertiary structure prediction | Global optimization with novel variation operators; Interfaces with Tinker & Rosetta for energy evaluation | Predicted structures with close or lower energy than Rosetta Abinitio for proteins up to 100 residues. |
| FS-PTO EA [3] | Detecting protein complexes in PPI networks | Multi-objective EA; Gene Ontology-based mutation operator (FS-PTO) | Outperformed state-of-the-art methods in identifying protein complexes, especially in noisy PPI networks. |
| EvoIF [7] | Protein fitness prediction (DMS assays) | Lightweight model; Integrates within-family (MSA) and cross-family (Inverse Folding) evolutionary profiles | State-of-the-art performance on ProteinGym (217 assays, >2.5M mutants) using only 0.15% of training data. |

The Scientist's Toolkit: Essential Research Reagents

Table 2: Key Databases and Software for EA-Driven Protein Research

| Resource Name | Type | Function in EA Workflow | Relevance |
|---|---|---|---|
| ATLAS [1] | Molecular Dynamics Database | Provides MD simulation trajectories for ~2000 proteins; used for initial sampling, validation, or constructing training sets. | Foundation for understanding dynamic conformations. |
| GPCRmd [1] | Specialized MD Database | Focuses on GPCR family; essential for studying dynamics of membrane proteins and drug target identification. | Provides specialized, high-quality conformational data. |
| PDB [1] [8] | Structural Database | Source of experimental structures for initializing EA populations and validating final predicted models. | The primary repository of ground-truth structural data. |
| Tinker / Rosetta [9] | Molecular Modeling Suite | Provides force fields and energy functions for relaxing candidate structures and evaluating their fitness within the EA. | Critical for the accurate energy evaluation of conformations. |
| Foldseek [7] | Structural Similarity Search | Used to find analogous templates (structural homologs) for constructing informed energy functions in methods like M-SADA. | Enables leverage of evolutionary information from structure. |

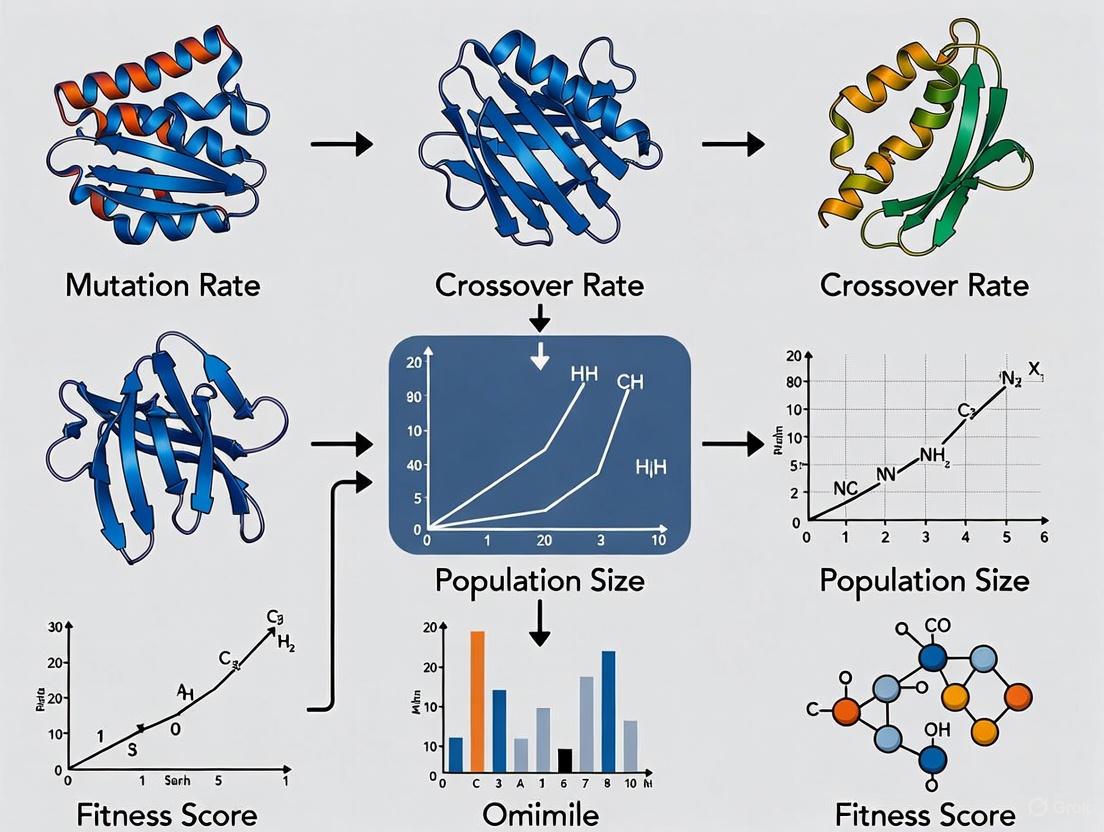

Core Workflow Visualization

The following diagram illustrates the generalized logic and workflow of an Evolutionary Algorithm applied to protein conformational sampling.

Troubleshooting Common Parameterization Issues

Optimizing EA parameters is critical for success. The table below lists common issues and evidence-based tuning strategies.

Table 3: Troubleshooting Guide for EA Parameter Optimization

| Problem | Potential Causes | Recommended Solutions & Parameter Adjustments |
|---|---|---|
| Premature Convergence | Population lacks diversity; selection pressure too high. | Increase population size. Introduce multi-objectivization (e.g., optimize for both energy and structural diversity) [6] [7]. Adjust selection operator to be less greedy. |
| Slow or Stalled Convergence | Poor exploration; inefficient variation operators. | Tune mutation rates (increase for more exploration). Design domain-specific variation operators for proteins [9]. Hybridize with local search (memetic algorithms) [8]. |
| Non-Biological or Clashing Structures | Energy function inaccuracies; unphysical moves. | Incorporate knowledge-based terms into the energy function. Use gradient-based local minimization (memetic) to relax structures [8]. Apply stricter constraints based on known structures. |
| Failure to Sample Multiple States | Search is biased towards a single, dominant energy minimum. | Implement multiple populations with different guidance [5]. Use niching techniques to maintain sub-populations in different regions of conformational space. |

Frequently Asked Questions (FAQs)

1. What are the core components of an Evolutionary Algorithm (EA) for protein modeling? An Evolutionary Algorithm for protein modeling is built on three core components: a population of candidate protein conformations, a fitness function that evaluates the energy or quality of each structure, and genetic operators (mutation and crossover) that explore the conformational space. The goal is to evolve the population towards low-energy, native-like structures through iterative application of selection, variation, and fitness evaluation [10] [11].

2. Which metaheuristics are most effective for navigating the vast protein conformational space? Several metaheuristics have proven effective for Protein Structure Prediction (PSP). Empirical analyses and benchmark studies highlight the following algorithms [10]:

- Genetic Algorithms (GA): Effective for sampling protein conformations by applying selection, crossover, and mutation to a population of structures.

- Particle Swarm Optimization (PSO): Useful for optimizing protein structures, with variants demonstrating success in multi-objective refinement by simultaneously optimizing different energy functions [11].

- Differential Evolution (DE): A robust and versatile optimizer for continuous parameter spaces, making it well-suited for refining atomic coordinates. It has been shown to outperform other EAs in many applications, including PSP [11].

3. How is fitness typically defined in protein structure refinement EAs? Fitness is most commonly defined using physics-based or knowledge-based energy functions. The central hypothesis is that the native protein conformation corresponds to the state with the lowest free energy.

- Full-Atom Energy Models: For detailed refinement, functions like the Rosetta Ref2015 score are used. This is a weighted sum of ~19 energy terms that capture interactions between non-bonded atom pairs, electrostatics, solvation, and torsional preferences [11].

- Multi-Objective Optimization: Some approaches use multiple fitness functions simultaneously. For example, a PSO-based refiner successfully optimized protein models by concurrently minimizing three different energy functions: RWplus, Rosetta, and CHARMM [11].

4. What are common challenges when applying EAs to protein modeling and how can they be troubleshooted?

- Challenge: Premature Convergence. The algorithm gets stuck in a local minimum, yielding a suboptimal protein structure.

  - Troubleshooting: Increase population diversity by using a larger population size or implementing diversity-preservation mechanisms. Hybrid memetic algorithms that combine global search (like DE) with powerful local search (like the Rosetta Relax protocol) can more effectively escape local minima [11].

- Challenge: High Computational Cost. The evaluation of fitness functions for thousands of protein conformations is computationally intensive.

Experimental Protocols & Methodologies

Protocol 1: Memetic Algorithm for Protein Structure Refinement

This protocol combines Differential Evolution (DE) with the Rosetta Relax protocol for full-atom refinement of protein structures [11].

- Initialization: Generate an initial population of protein models. These can be output structures from deep learning predictors like AlphaFold2 or RoseTTAFold.

- Representation: Represent each protein conformation in full-atom detail, using Cartesian coordinates or dihedral angles.

- Fitness Evaluation: Calculate the fitness of each individual using the Rosetta Ref2015 full-atom energy function.

- Differential Evolution Cycle:

  - Mutation: For each target individual in the population, create a mutant vector by combining other randomly selected individuals.

  - Crossover: Mix the parameters of the mutant vector with the target individual to produce a trial individual.

  - Selection: Evaluate the fitness of the trial individual. If it is better than the target individual, it replaces the target in the next generation.

- Local Search (Memetic Component): Integrate the Rosetta Relax protocol into the DE cycle. This can be applied to every new individual, or to the best individuals after a set number of generations. Rosetta Relax performs local optimization of side-chain and backbone positions to minimize the energy.

- Termination: Repeat from the Fitness Evaluation step until a convergence criterion is met (e.g., a maximum number of generations or no improvement in the best fitness).
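The cycle above can be sketched as a DE/rand/1/bin loop with a local search applied to every trial. This is a generic illustration, not the implementation from [11]: `sphere_energy` is a toy stand-in for a full-atom score, `local_relax` is a placeholder for a relax protocol (it only accepts small random moves that lower the energy), and `f`, `cr`, and the population size are arbitrary choices.

```python
import random

def sphere_energy(x):
    """Toy stand-in for a full-atom energy (assumption: lower is better)."""
    return sum(v * v for v in x)

def local_relax(x, energy, rng, tries=20, sigma=0.05):
    """Placeholder local search: keep small random moves that lower the energy."""
    best, best_e = x, energy(x)
    for _ in range(tries):
        cand = [v + rng.gauss(0.0, sigma) for v in best]
        e = energy(cand)
        if e < best_e:
            best, best_e = cand, e
    return best

def memetic_de(energy, dim=6, pop_size=20, generations=50, f=0.5, cr=0.9, seed=0):
    """DE/rand/1/bin with a local search on each trial; returns best, its energy, history."""
    rng = random.Random(seed)
    pop = [[rng.uniform(-5.0, 5.0) for _ in range(dim)] for _ in range(pop_size)]
    fit = [energy(ind) for ind in pop]
    history = [min(fit)]
    for _ in range(generations):
        for i in range(pop_size):
            # Mutation: combine three distinct individuals other than the target.
            a, b, c = rng.sample([j for j in range(pop_size) if j != i], 3)
            j_rand = rng.randrange(dim)  # guarantees at least one mutant parameter
            # Binomial crossover between the mutant vector and the target.
            trial = [pop[a][k] + f * (pop[b][k] - pop[c][k])
                     if (rng.random() < cr or k == j_rand) else pop[i][k]
                     for k in range(dim)]
            trial = local_relax(trial, energy, rng)  # memetic component
            e = energy(trial)
            # Greedy selection: the trial replaces the target only if not worse.
            if e <= fit[i]:
                pop[i], fit[i] = trial, e
        history.append(min(fit))
    best = min(range(pop_size), key=lambda i: fit[i])
    return pop[best], fit[best], history
```

Applying the local search to every trial is the most expensive variant. Restricting it to the best individuals every few generations, as the protocol also allows, trades some accuracy in the energy estimate for runtime.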

Protocol 2: Multi-Objective Refinement using Particle Swarm Optimization

This protocol uses a decomposition-based multi-objective PSO to balance different energy functions [11].

- Problem Decomposition: Transform the multi-objective problem into a set of single-objective subproblems using a set of weight vectors. Each subproblem is a weighted sum of the three energy functions: RWplus, Rosetta, and CHARMM.

- Swarm Initialization: Initialize a swarm of particles, where each particle represents a protein conformation and is assigned to a specific subproblem.

- Fitness Evaluation: For each particle, compute the weighted sum of the three energy functions based on its assigned subproblem's weight vector.

- PSO Flight: Update the velocity and position of each particle based on its personal best position and the best position found in its neighborhood.

- Neighborhood Selection: The neighborhood for a particle is defined by the several closest weight vectors, promoting diversity.

- Termination: The swarm evolves until a maximum number of iterations is reached, providing a set of refined models that represent different trade-offs between the energy functions.
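The decomposition and neighborhood steps can be made concrete with a short sketch. It assumes three objectives whose values are already on comparable scales (in practice the three energy functions would need normalizing), uses a simplex lattice for the weight vectors, and applies the textbook PSO update. The coefficients `inertia`, `c1`, and `c2` are illustrative defaults, not values from [11].

```python
import random

def simplex_weights(divisions):
    """All weight vectors (w1, w2, w3) with wi = k/divisions and w1 + w2 + w3 = 1."""
    return [(i / divisions, j / divisions, (divisions - i - j) / divisions)
            for i in range(divisions + 1) for j in range(divisions + 1 - i)]

def neighborhoods(weights, size):
    """For each subproblem, indices of the `size` closest weight vectors (itself first)."""
    def dist2(a, b):
        return sum((x - y) ** 2 for x, y in zip(a, b))
    return [sorted(range(len(weights)), key=lambda j: dist2(w, weights[j]))[:size]
            for w in weights]

def scalarize(energies, weight):
    """Weighted-sum fitness of one conformation for one subproblem."""
    return sum(w * e for w, e in zip(weight, energies))

def pso_step(position, velocity, personal_best, neighborhood_best, rng,
             inertia=0.7, c1=1.5, c2=1.5):
    """Standard PSO velocity and position update for a single particle."""
    new_v = [inertia * v + c1 * rng.random() * (pb - x) + c2 * rng.random() * (nb - x)
             for x, v, pb, nb in zip(position, velocity, personal_best, neighborhood_best)]
    new_x = [x + v for x, v in zip(position, new_v)]
    return new_x, new_v
```

With `simplex_weights(4)` there are 15 subproblems. Each particle takes its `neighborhood_best` from the particles assigned to the weight vectors listed in its neighborhood, which keeps nearby trade-offs cooperating while distant ones stay diverse.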

Quantitative Data on Algorithm Performance

Table 1: Summary of Metaheuristic Applications in Protein Modeling

| Metaheuristic Algorithm | Application Context | Reported Outcome | Key Reference |
|---|---|---|---|
| Differential Evolution (DE) | Full-atom structure refinement combined with Rosetta Relax (Memetic Algorithm) | Better sampling of the energy landscape and lower-energy conformations compared to Rosetta Relax alone in the same runtime. | [11] |
| Particle Swarm Optimization (PSO) | Multi-objective refinement using RWplus, Rosetta, and CHARMM energy functions | A decomposition-based version showed better diversity and convergence than a prior multi-objective version. | [11] |
| Genetic Algorithm (GA) | General Protein Structure Prediction (PSP) | Included among 15 metaheuristics shown to be effective for navigating the vast conformational space of proteins. | [10] |

Workflow Visualization

Research Reagent Solutions

Table 2: Key Software and Data Resources for EA-based Protein Modeling

| Resource Name | Type | Function in EA-based Modeling | Reference |
|---|---|---|---|
| Rosetta Software Suite | Software Environment | Provides the fitness function (e.g., Ref2015 energy score) and local search protocols (e.g., Rosetta Relax) for full-atom refinement. | [11] |
| AlphaFold Protein Structure Database (AFDB) | Database | Source of high-quality initial protein models that can be used as starting points for the population in a refinement EA. | [12] |
| Protein Data Bank (PDB) | Database | Repository of experimentally solved protein structures used for validation, and for deriving knowledge-based energy terms for fitness functions. | [13] [11] |
| Foldseek | Software Tool | Enables fast, efficient structural comparisons and clustering, useful for analyzing population diversity or for structure-based fitness metrics. | [12] |

Frequently Asked Questions

Q1: What are the main computational models that use evolutionary information for protein design? Several powerful models leverage evolutionary data. Direct Coupling Analysis (DCA) uses a statistical energy model derived from Multiple Sequence Alignments (MSAs) to capture co-evolutionary signals and predict fitness. The model's evolutionary Hamiltonian energy correlates well with experimental protein stability [14]. Latent Space Models, trained using Variational Auto-Encoders (VAEs), project protein sequences into a continuous low-dimensional space. This representation captures evolutionary relationships and enables the learning of complex fitness landscapes, overcoming some limitations of DCA by modeling higher-order epistasis [15]. Finally, modern Protein Language Models (pLMs), trained via Masked Language Modeling (MLM), implicitly learn the fitness landscape. The log-odds scores they produce can be interpreted as fitness estimates, framing natural evolution as an implicit reward-maximization process [7].

Q2: Why might my designed protein sequences be unstable, even with a good fitness score? Instability can arise from several issues:

- Inadequate MSA Depth: The accuracy of models like DCA is highly dependent on the quantity and diversity of homologous sequences in the MSA. Performance deteriorates significantly with MSAs containing fewer than 30 homologs [16]. A shallow MSA fails to capture the necessary co-evolutionary constraints.

- Ignoring Key Contacts: Designs based solely on single-site amino acid propensities often fail because they miss critical epistatic (residue-residue) interactions. One study found that none of the 43 sequences designed using only single-site propensities for a WW domain folded correctly [14].

- Overlooking Structural Context: Modern predictors like AlphaFold and RoseTTAFold can struggle with proteins whose structures are dictated by interactions with other domains or ligands, or with intrinsically disordered regions. Always check the per-residue confidence (pLDDT) and predicted aligned error (PAE) to identify low-confidence, and potentially unstable, regions [16] [17].

Q3: How can I efficiently search ultra-large combinatorial chemical spaces for drug discovery? Exhaustive screening of billion-member libraries is computationally prohibitive. Evolutionary Algorithms (EAs) like REvoLd offer an efficient alternative by exploiting the combinatorial nature of "make-on-demand" libraries. Instead of docking every molecule, REvoLd uses an evolutionary protocol with selection, crossover, and mutation operators to iteratively optimize ligands within the RosettaLigand flexible docking framework. This approach can improve hit rates by factors of 869 to 1622 compared to random selection, exploring the space with only a few thousand docking calculations [18].

Q4: My evolutionary algorithm is converging too quickly to a suboptimal solution. How can I improve exploration? Premature convergence is a common challenge. Consider these strategies:

- Adaptive Genetic Operators: Instead of fixed crossover and mutation probabilities, implement operators that adapt based on an individual's performance. For example, probabilities can be adjusted according to non-dominated layer levels, granting superior individuals more genetic opportunities while maintaining diversity [19].

- Enhanced Mutation Steps: Introduce mutation steps that promote exploration. REvoLd, for instance, uses a mutation that switches single fragments to low-similarity alternatives, causing significant changes to small parts of otherwise promising molecules [18].

- Dynamic Scoring: Use a dynamic scoring mechanism for decision variables that is recalculated each iteration based on the current population's non-dominated layers, rather than relying on a static, initial score [19].

Troubleshooting Guides

Issue: Poor Correlation Between Predicted and Experimental Fitness

| Potential Cause | Diagnostic Steps | Recommended Solution |
|---|---|---|
| Low-quality MSA | Check the number of effective sequences in your MSA. | Use iterative search tools (e.g., Jackhmmer) to build a deeper, more diverse MSA. If homologs are scarce, consider latent space models or pLMs that leverage broader sequence context [14] [15] [7]. |
| Overfitting to phylogeny | DCA models can be inflated by indirect phylogenetic correlations instead of direct structural couplings [15]. | Ensure the DCA implementation includes pseudo-likelihood optimization to disentangle direct from indirect effects [14]. |
| Insufficient model complexity | The model may fail to capture higher-order epistasis critical for fitness. | Transition from a pairwise Potts model (DCA) to a more flexible latent space model (VAE) or a large pLM, which can capture higher-order interactions [15] [7]. |
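The "number of effective sequences" check in the first row can be estimated with a simple reweighting scheme. The sketch below assumes the common convention of down-weighting sequences that are at least 80% identical to one another. The threshold is an assumption here, not a value taken from the cited studies, and the MSA is assumed to be a list of equal-length aligned strings.

```python
def identity(a, b):
    """Fraction of aligned columns where two equal-length sequences agree."""
    return sum(x == y for x, y in zip(a, b)) / len(a)

def effective_sequences(msa, threshold=0.8):
    """Sum of weights 1/n_i, where n_i counts sequences >= threshold identical to i."""
    total = 0.0
    for seq in msa:
        n_similar = sum(identity(seq, other) >= threshold for other in msa)
        total += 1.0 / n_similar  # n_similar >= 1 because seq always matches itself
    return total
```

For instance, an alignment of three near-identical sequences plus one unrelated sequence counts as roughly two effective sequences, not four. The pairwise comparison is quadratic in the number of sequences, so large alignments are usually clustered or subsampled first.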

Issue: Evolutionary Algorithm Fails to Find High-Fitness Sequences

| Potential Cause | Diagnostic Steps | Recommended Solution |
|---|---|---|
| Loss of population diversity | Monitor the genetic diversity of the population over generations. | Introduce a diversity penalty in the selection criteria. Implement mechanisms like the "second round of crossover and mutation" in REvoLd, which allows less-fit individuals a chance to improve and contribute genetic material [18]. |
| Inefficient genetic operators | Standard operators may not effectively explore the sparse sequence space. | For large-scale sparse problems, use algorithms like SparseEA-AGDS that employ adaptive genetic operators and dynamic scoring to focus search on the most promising decision variables [19]. |
| Rugged fitness landscape | The algorithm gets trapped in local optima. | Perform multiple independent runs with different random seeds. As with REvoLd, this strategy seeds different evolutionary paths and can unveil diverse high-scoring motifs [18]. |

Experimental Protocols & Data

Protocol 1: Designing Proteins Using a Co-evolutionary Fitness Landscape

This methodology uses DCA to guide Monte Carlo simulations for generating novel, stable protein sequences [14].

- Construct MSA: For your target wild-type sequence (e.g., PDB: 2FS1, 1PGA, 3THK), generate a deep Multiple Sequence Alignment using an iterative tool like Jackhmmer.

- Learn DCA Model: From the MSA, infer the parameters of the Potts model (single-site propensities $h_i(X)$ and coupling parameters $J_{ij}(X,Y)$) using a pseudo-likelihood optimization procedure.

- Define Evolutionary Energy: Calculate the Evolutionary Hamiltonian energy (EEH) for a sequence $x$ as $E_{EH}(x) = -\ln P(x)$, where $P(x)$ is the probability from the Potts model.

- Run Monte Carlo Sampling:

  - Start from a random or wild-type sequence.

  - In each iteration, propose a mutation to a randomly chosen residue.

  - Accept or reject the mutation based on the change in $E_{EH}$ using the Metropolis criterion.

  - To enhance sequence diversity, add a penalty term proportional to sequence similarity to the wild-type during sampling.

- Select Designs: Choose final sequences based on low $E_{EH}$, low sequence identity to wild-type (<50-80%), and low pairwise identity between designs (<85%).
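The sampling step of this protocol can be sketched as a Metropolis loop over a Potts energy. The code is a toy under stated assumptions, not the pipeline from [14]: sequences are lists of integer residue codes, the parameters come from the hypothetical helper `random_potts` instead of an MSA, the energy equals $-\ln P(x)$ only up to the constant $\ln Z$, and the `temperature` and `penalty` values are free choices.

```python
import math
import random

def potts_energy(seq, h, J):
    """E(x) = -sum_i h_i(x_i) - sum_{i<j} J_ij(x_i, x_j); equals -ln P(x) up to ln Z."""
    e = -sum(h[i][a] for i, a in enumerate(seq))
    for i in range(len(seq)):
        for j in range(i + 1, len(seq)):
            e -= J[i][j][seq[i]][seq[j]]
    return e

def random_potts(length, q, rng, scale=0.5):
    """Hypothetical parameters for testing; a real model is inferred from an MSA."""
    h = [[rng.gauss(0.0, scale) for _ in range(q)] for _ in range(length)]
    J = [[[[rng.gauss(0.0, scale) for _ in range(q)] for _ in range(q)]
          for _ in range(length)] for _ in range(length)]
    return h, J

def metropolis_design(wild_type, h, J, q, rng, steps=2000, temperature=1.0, penalty=0.0):
    """Single-site Metropolis sampling with an optional similarity-to-wild-type penalty."""
    def objective(seq):
        similarity = sum(a == b for a, b in zip(seq, wild_type)) / len(seq)
        return potts_energy(seq, h, J) + penalty * similarity
    current = list(wild_type)
    current_obj = objective(current)
    for _ in range(steps):
        proposal = list(current)
        proposal[rng.randrange(len(current))] = rng.randrange(q)
        delta = objective(proposal) - current_obj
        # Metropolis criterion: always accept downhill, sometimes accept uphill.
        if delta <= 0 or rng.random() < math.exp(-delta / temperature):
            current, current_obj = proposal, current_obj + delta
    return current
```

Recomputing the full energy for every proposal keeps the sketch short. Since a single-site change only affects the terms involving that site, a production sampler would normally compute the energy difference from those terms alone.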

The workflow for this protocol is summarized in the diagram below:

Protocol 2: Integrating Evolutionary Profiles for Fitness Prediction (EvoIF)

The EvoIF framework integrates multiple evolutionary signals for accurate, data-efficient fitness prediction [7].

- Input Representation: For a wild-type sequence and its structure, generate the mutant sequence, assuming the backbone structure remains unchanged.

- Extract Within-Family Profile: Perform a sequence similarity search (e.g., with BLAST) or a structure similarity search (e.g., with Foldseek) to retrieve homologous sequences. Use these to build a Multiple Sequence Alignment and create an evolutionary profile.

- Extract Cross-Family Profile: Pass the protein structure through a pre-trained Inverse Folding model (e.g., ProteinMPNN) to obtain likelihoods for all possible amino acids at each position. This provides a structural-evolutionary profile.

- Model Fusion: Input the sequence and structure into a lightweight neural network backbone. Fuse the within-family and cross-family evolutionary profiles via a compact transition block.

- Fitness Prediction: Use the fused model to calculate log-odds scores, which serve as the fitness estimate for the mutant.
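The final scoring step reduces to a log-odds calculation once a per-position amino acid profile is available. The sketch below shows only that arithmetic. The `fuse_profiles` function is a hypothetical stand-in (a renormalized geometric mixture) for the learned fusion network described above, and profiles are assumed to be lists of dictionaries mapping amino acids to strictly positive probabilities.

```python
import math

def fuse_profiles(within_family, cross_family, alpha=0.5):
    """Hypothetical fusion: per-position geometric mixture of two profiles, renormalized."""
    fused = []
    for p_msa, p_if in zip(within_family, cross_family):
        raw = {aa: (p_msa[aa] ** alpha) * (p_if[aa] ** (1.0 - alpha)) for aa in p_msa}
        total = sum(raw.values())
        fused.append({aa: value / total for aa, value in raw.items()})
    return fused

def log_odds_score(profile, wild_type, mutations):
    """Sum over mutated positions of log p(mutant residue) - log p(wild-type residue)."""
    score = 0.0
    for pos, new_aa in mutations:
        probs = profile[pos]
        score += math.log(probs[new_aa]) - math.log(probs[wild_type[pos]])
    return score
```

A score below zero means the profile considers the mutant residue less likely than the wild-type residue at that position. Summing over positions treats the mutated sites as independent, which is a simplification for multi-site mutants.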

Quantitative Data from Co-evolutionary Design

The table below shows experimental results for proteins designed using the co-evolutionary fitness landscape (DCA) method, demonstrating that sequences with lower evolutionary energy (EEH) generally have higher stability [14].

| Protein & Variant | Sequence Identity to WT (%) | Evolutionary Energy (EEH, kBT) | Melting Temp. (Tm, °C) |
|---|---|---|---|
| GA WT (2fs1) | 100% | -127.1 | 86 |
| GA Seq1 | 79% | -129.0 | 86 |
| GA Seq2 | 54% | -117.0 | 63 |
| GA Seq3 | 50% | -114.7 | 73 |
| GA Seq5 | 50% | -111.5 | 59 |
| GB WT (1pga) | 100% | -106.5 | 77 |
| GB Seq1 | 75% | -94.2 | 73 |
| GB Seq2 | 75% | -93.9 | 75 |
| SH3 WT (3thk) | 100% | -72.3 | 70 |
| SH3 Seq1 | 45% | -96.4 | 64 |
| SH3 Seq3 | 48% | -97.9 | 63 |
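The relationship between evolutionary energy and melting temperature in the table can be checked per protein family with a Pearson correlation. The values below are copied from the table. With only three to five points per family, the coefficients are indicative at best.

```python
def pearson(pairs):
    """Pearson correlation coefficient for a list of (x, y) pairs."""
    n = len(pairs)
    mean_x = sum(x for x, _ in pairs) / n
    mean_y = sum(y for _, y in pairs) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in pairs)
    var_x = sum((x - mean_x) ** 2 for x, _ in pairs)
    var_y = sum((y - mean_y) ** 2 for _, y in pairs)
    return cov / (var_x * var_y) ** 0.5

# (EEH in kBT, Tm in degrees C), wild type first, as listed in the table.
TABLE = {
    "GA": [(-127.1, 86), (-129.0, 86), (-117.0, 63), (-114.7, 73), (-111.5, 59)],
    "GB": [(-106.5, 77), (-94.2, 73), (-93.9, 75)],
    "SH3": [(-72.3, 70), (-96.4, 64), (-97.9, 63)],
}

def correlations(table=TABLE):
    """Per-family correlation between evolutionary energy and melting temperature."""
    return {family: pearson(pairs) for family, pairs in table.items()}
```

A negative coefficient means that lower energy goes with higher melting temperature. For the values in the table this holds within the GA and GB families, while the three SH3 entries give a positive coefficient, because the wild type has both the highest energy and the highest melting temperature of the three.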

The Scientist's Toolkit

| Research Reagent / Tool | Function in Research |
|---|---|
| Jackhmmer [14] | An iterative sequence search tool used to build deep and diverse Multiple Sequence Alignments (MSAs) from a query sequence, which is fundamental for DCA and other co-evolutionary analyses. |
| Direct Coupling Analysis (DCA) [14] [15] | A computational method that analyzes MSAs to infer direct, epistatic interactions between amino acid residues. It is used to build statistical fitness landscapes for protein design and contact prediction. |
| Variational Auto-Encoder (VAE) [15] | A deep learning architecture used to learn a continuous, low-dimensional latent space representation of protein sequences. This latent space captures evolutionary relationships and complex fitness landscapes. |
| RosettaLigand [18] | A flexible molecular docking protocol within the Rosetta software suite that allows for full ligand and receptor flexibility, used for accurate binding affinity predictions in virtual screening. |
| Inverse Folding Models (e.g., ProteinMPNN) [7] | Models that predict amino acid sequences compatible with a given protein backbone structure. They provide cross-family structural-evolutionary constraints for fitness prediction. |
| Evolutionary Algorithm Frameworks (e.g., REvoLd, SparseEA-AGDS) [19] [18] | Software implementations of evolutionary algorithms tailored for specific search spaces, such as ultra-large chemical libraries or large-scale sparse protein sequences, enabling efficient optimization. |

Frequently Asked Questions (FAQs)

1. What is the fundamental difference between using energy minimization and structural accuracy as an optimization goal?

Energy minimization approaches operate on Anfinsen's dogma, which states that a protein's native structure corresponds to its thermodynamic ground state—the conformation with the lowest Gibbs free energy [20]. Methods like the Rosetta ab initio protocol use search algorithms guided by a physics-based energy function to find this state [20]. In contrast, structural accuracy goals, often pursued by deep learning methods like AlphaFold2, aim to directly predict a structure that is as close as possible to the experimentally resolved native structure, typically using learned patterns from known structures [20].

2. My energy-minimized models have low energy scores but poor structural accuracy when compared to the native fold. What could be wrong?

This is a common issue indicating a potential problem with the energy function or the search algorithm's ability to escape local minima. The force field might be inaccurate or incomplete, failing to properly balance different energy terms (e.g., van der Waals, electrostatics, solvation) [20] [21]. Furthermore, the high-dimensional energy landscape of proteins is fraught with local minima, and the search algorithm may have become trapped in one that does not correspond to the global minimum (the native state) [20]. You may need to refine your force field weights or incorporate more sophisticated search strategies, like memetic algorithms that combine global and local search [20].

3. How can I improve the physical realism and structural accuracy of a low-resolution model generated by an evolutionary algorithm?

A two-step refinement protocol is often effective. First, rebuild the main-chain atoms from your Cα trace using a knowledge-based look-up table to ensure proper backbone geometry. Second, add side-chain atoms from a rotamer library and perform a full-atomic energy minimization using a composite physics- and knowledge-based force field. This process optimizes both global topology and local atomic geometry, addressing issues like unphysical bond lengths, angles, and steric clashes [21]. Tools like ModRefiner are designed specifically for this purpose [21].

4. For predicting protein complexes, what specific challenges should I consider when defining the optimization goal?

Predicting complexes introduces the critical challenge of accurately modeling inter-chain interactions alongside intra-chain folding. Relying solely on sequence-based co-evolutionary signals can be insufficient for complexes like antibody-antigen pairs, where such signals may be weak or absent [22]. Your optimization goal must therefore incorporate structural complementarity between chains. Advanced methods now use deep learning to predict interaction probability and structural similarity from sequence, which helps construct better paired multiple sequence alignments for significantly improved complex structure prediction [22].

Troubleshooting Guides

Problem: Inconsistent Performance of Evolutionary Algorithm

- Symptoms: The algorithm converges to different structures with varying energy levels and accuracies across multiple runs on the same protein sequence.

- Possible Causes:

- Poor Parameter Tuning: The parameters for the evolutionary algorithm (e.g., mutation rate, crossover rate, population size) are not optimal for the protein's conformational landscape [20] [3].

- Insufficient Diversity: The population converges prematurely to a local minimum, lacking the genetic diversity to explore the search space effectively [20].

- Solutions:

- Implement Niching: Introduce a crowding method or other niching technique to maintain population diversity and explore multiple minima simultaneously [20].

- Adopt a Memetic Strategy: Hybridize your global evolutionary search with a local search operator. For example, combine Differential Evolution with the Rosetta fragment replacement technique to refine trial solutions, leading to more consistent convergence to low-energy states [20].

- Leverage Biological Knowledge: Incorporate a domain-specific mutation operator. For instance, in protein complex detection, a Gene Ontology-based mutation operator can translocate proteins based on functional similarity, guiding the search more effectively [3].

Problem: High RMSD in Refined Models

- Symptoms: After full-atom refinement, your model has a high Root-Mean-Square Deviation (RMSD) from the experimental reference structure, despite a good energy score.

- Possible Causes:

- Over-Relaxation: The refinement process may over-optimize the local physical geometry, causing the model to drift away from the correct global topology [21].

- Inadequate Restraints: The energy function used during refinement may lack sufficient long-range or global restraints to maintain the overall fold.

- Solutions:

- Use Multi-Source Restraints: During refinement, include a spatial restraint term in your energy function. This term should penalize deviations from the pairwise Cα distances in your initial, globally correct model [21].

- Two-Step Energy Minimization: Employ a protocol like that in ModRefiner, which first focuses on building a physically plausible backbone while keeping Cα positions restrained, and then refines side-chain rotamers and backbone atoms together [21].
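The restraint idea in the first solution can be sketched in a few lines. This is a minimal illustration, not ModRefiner's actual energy term: the flat-bottom harmonic form, the `weight` and `tolerance` values, and all function names are assumptions made for the example.

```python
import numpy as np

def pairwise_ca_distances(coords: np.ndarray) -> np.ndarray:
    """Return the (N, N) matrix of Ca-Ca distances for (N, 3) coordinates."""
    diff = coords[:, None, :] - coords[None, :, :]
    return np.linalg.norm(diff, axis=-1)

def ca_restraint_penalty(coords, reference, weight=1.0, tolerance=0.5):
    """Flat-bottom harmonic penalty on pairwise Ca distances.

    Deviations from the reference distances smaller than `tolerance`
    (in angstroms) cost nothing; larger ones are penalized quadratically.
    Both default values are illustrative assumptions.
    """
    d_model = pairwise_ca_distances(coords)
    d_ref = pairwise_ca_distances(reference)
    excess = np.maximum(np.abs(d_model - d_ref) - tolerance, 0.0)
    upper = np.triu_indices(len(coords), k=1)  # count each residue pair once
    return weight * float(np.sum(excess[upper] ** 2))

def restrained_energy(coords, reference, physics_energy, weight=1.0):
    """Total score: a user-supplied physics energy plus the restraint term."""
    return physics_energy(coords) + ca_restraint_penalty(coords, reference, weight)
```

Because the penalty depends only on internal distances, rigid-body translation or rotation of the model costs nothing, while distortions of the global topology are penalized.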

Experimental Protocols & Data

Table 1: Key Metrics for Evaluating Optimization Goals

| Metric | Measures | Ideal For Goal | Tool / Method |

|---|---|---|---|

| Rosetta Score | Weighted sum of energy terms (steric clash, van der Waals, H-bonding, etc.) | Energy Minimization | Rosetta Software Suite [20] |

| Root-Mean-Square Deviation (RMSD) | Average distance between atoms of superimposed structures | Structural Accuracy | PyMOL, MODELLER |

| Template Modeling Score (TM-Score) | Global topological similarity (scale 0-1; >0.5 same fold) | Structural Accuracy | TM-score program |

| Global Distance Test (GDT-TS) | Average percentage of Cα atoms within 1, 2, 4, and 8 Å of the native structure after superposition | Structural Accuracy | CASP assessment |

| Steric Clash Score | Number of atom pairs closer than sum of van der Waals radii | Physical Realism | MolProbity, ModRefiner [21] |

| Ramachandran Outliers | Percentage of residues in disallowed backbone torsion regions | Physical Realism | MolProbity, PROCHECK |
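As an illustration of how the RMSD entry in Table 1 is computed, the sketch below superimposes two coordinate sets with the Kabsch algorithm before measuring the deviation. It assumes the two structures are already matched atom by atom (for example, Cα atoms in the same residue order); the function name is chosen for this example.

```python
import numpy as np

def kabsch_rmsd(P: np.ndarray, Q: np.ndarray) -> float:
    """RMSD between two (N, 3) coordinate sets after optimal superposition."""
    # Remove translation by centering both sets on their centroids.
    P_c = P - P.mean(axis=0)
    Q_c = Q - Q.mean(axis=0)
    # Covariance matrix and its singular value decomposition.
    H = P_c.T @ Q_c
    U, _, Vt = np.linalg.svd(H)
    # Guard against an improper rotation (reflection).
    d = 1.0 if np.linalg.det(Vt.T @ U.T) >= 0 else -1.0
    R = Vt.T @ np.diag([1.0, 1.0, d]) @ U.T
    # Rotate P onto Q and measure the remaining deviation.
    P_rot = P_c @ R.T
    return float(np.sqrt(np.mean(np.sum((P_rot - Q_c) ** 2, axis=1))))
```

RMSD is sensitive to a few badly placed regions, which is why it is usually reported together with TM-score or GDT-TS.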

Protocol: Memetic Algorithm for Protein Structure Prediction

This protocol outlines a hybrid approach combining Differential Evolution (DE) with the Rosetta fragment replacement technique, as described by Varela and Santos [20].

Initialization:

- Representation: Encode a protein conformation in each individual of the population using a coarse-grained model (e.g., dihedral angles φ and ψ for each residue).

- Population Seeding: Generate the initial population by running the first stage of the Rosetta ab initio protocol to create partially folded and diverse starting conformations.

Evolutionary Cycle:

- Differential Evolution: For each generation, create new trial vectors (conformations) through DE operations of mutation and crossover.

- Mutation: For a target vector ( \vec{x}_i ), generate a mutant vector ( \vec{v}_i ) using: ( \vec{v}_i = \vec{x}_{r1} + F \cdot (\vec{x}_{r2} - \vec{x}_{r3}) ), where ( \vec{x}_{r1}, \vec{x}_{r2}, \vec{x}_{r3} ) are three distinct randomly selected population members and ( F ) is a scaling factor.

- Crossover: Create a trial vector ( \vec{u}_i ) by mixing parameters from the target vector ( \vec{x}_i ) and the mutant vector ( \vec{v}_i ) based on a crossover probability.

Local Search (Fragment Replacement):

- Apply the Rosetta fragment replacement technique as a local search operator to both the newly created trial vector ( \vec{u}_i ) and the existing population.

- For a segment of the protein, select a small fragment (3-9 residues) from a library of resolved structures based on sequence similarity.

- Use the Metropolis criterion to decide whether to replace the current conformational angles with the fragment's angles. Always accept changes that lower the energy, and occasionally accept those that increase it to escape local minima [20].
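The acceptance rule in the last step can be written compactly. The sketch below is a generic Metropolis test, not Rosetta code; it assumes the `temperature` argument is expressed in the same units as the energy score, and the function name is illustrative.

```python
import math
import random

def metropolis_accept(e_current, e_candidate, temperature, rng=random):
    """Return True if a candidate move should be accepted.

    Moves that lower (or keep) the energy are always accepted; moves that
    raise it by `delta` are accepted with probability exp(-delta / temperature).
    """
    delta = e_candidate - e_current
    if delta <= 0:
        return True
    return rng.random() < math.exp(-delta / temperature)
```

A higher temperature accepts more uphill moves and favors exploration; lowering it makes the local search behave more like strict descent.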

Selection:

- Evaluate the fitness (e.g., Rosetta score) of the refined trial vector ( \vec{u}_i ).

- If the trial vector has a better (lower) energy than the target vector, it replaces the target vector in the next generation.
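The mutation, crossover, and selection steps of one generation can be sketched as below. This is a minimal DE/rand/1/bin illustration on dihedral-angle vectors, not the implementation from [20]: the local search step is omitted, the energy function is supplied by the caller, and the default `F` and `CR` values, the exclusion of the target from the random picks, and the angle wrapping are assumptions of this example.

```python
import numpy as np

def wrap_angles(x):
    """Map angles in degrees back onto the interval [-180, 180)."""
    return (x + 180.0) % 360.0 - 180.0

def de_generation(pop, energies, energy_fn, F=0.5, CR=0.9, rng=None):
    """Run one DE generation and return the new population and energies.

    pop      : (n, dim) array of dihedral angles in degrees
    energies : (n,) array with the current energy of each individual
    """
    rng = np.random.default_rng() if rng is None else rng
    n, dim = pop.shape
    new_pop, new_e = pop.copy(), energies.copy()
    for i in range(n):
        # Three distinct members, all different from the target i.
        r1, r2, r3 = rng.choice([j for j in range(n) if j != i], size=3, replace=False)
        mutant = wrap_angles(pop[r1] + F * (pop[r2] - pop[r3]))
        # Binomial crossover; one position is always taken from the mutant.
        mask = rng.random(dim) < CR
        mask[rng.integers(dim)] = True
        trial = np.where(mask, mutant, pop[i])
        # Greedy selection: keep the trial only if its energy is lower.
        e_trial = energy_fn(trial)
        if e_trial < energies[i]:
            new_pop[i], new_e[i] = trial, e_trial
    return new_pop, new_e
```

Because selection is greedy per individual, no member's energy can get worse from one generation to the next.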

Multi-Stage Fitness Evaluation:

- Run the evolutionary process for a series of stages (e.g., three stages). In each stage, use a progressively more detailed Rosetta score function that incorporates additional energy terms, guiding the population toward a physically realistic and low-energy conformation [20].

Workflow Visualization

Decision Workflow: Energy Minimization vs. Structural Accuracy

The Scientist's Toolkit: Research Reagent Solutions

Table 2: Essential Software and Databases for Protein Prediction Research

| Item | Type | Function / Application |

|---|---|---|

| Rosetta | Software Suite | A comprehensive platform for macromolecular modeling. Used for ab initio structure prediction, docking, and design via energy minimization protocols [20]. |

| AlphaFold-Multimer | Software / Algorithm | A deep learning method specifically designed for predicting the 3D structures of protein complexes, extending the capabilities of AlphaFold2 [22]. |

| ModRefiner | Software / Algorithm | A program for constructing and refining full-atom protein structures from Cα traces using a two-step, atomic-level energy minimization to improve physical realism [21]. |

| PDB (Protein Data Bank) | Database | The single worldwide archive of experimental structural data of biological macromolecules. Used for templates, fragment libraries, and method benchmarking [21]. |

| UniProt/UniRef | Database | A comprehensive resource for protein sequence and functional information. Used for constructing deep multiple sequence alignments (MSAs) critical for deep learning methods [22]. |

| CASP Results | Benchmark Dataset | Data from the Critical Assessment of protein Structure Prediction, the community-wide experiment to assess the state of the art in structure prediction. Essential for method comparison [20]. |

Advanced EA Implementations in Modern Protein Science

Ultra-Large Library Screening with REvoLd for Drug Discovery

REvoLd Technical Support Center

This guide provides solutions to common technical and methodological challenges researchers may encounter when using the RosettaEvolutionaryLigand (REvoLd) algorithm for ultra-large library screening in drug discovery projects.

Frequently Asked Questions (FAQs)

Q1: My REvoLd runs converge too quickly on suboptimal molecules. How can I improve the exploration of the chemical space?

A1: This is often caused by excessive selective pressure. To promote diversity:

- Modify Selection Pressure: Introduce non-deterministic selectors. The TournamentSelector or RouletteSelector can allow worse-scoring individuals a chance to reproduce, helping the algorithm escape local minima [23].

- Increase Crossover Rate: Boost the number of crossovers between fit molecules to enforce more variance and recombination of promising ligand scaffolds [18].

- Utilize Mutation Steps: Implement the low-similarity fragment mutation, which keeps well-performing parts of a molecule intact but makes significant changes to small parts, and the reaction-switching mutation, which explores different combinatorial reaction groups [18].
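To see why a tournament reduces selective pressure, consider the generic sketch below. It is not REvoLd's TournamentSelector implementation; the function name, the tournament size `k`, and the convention that lower scores are better (as with Rosetta energies) are assumptions for illustration.

```python
import random

def tournament_select(population, scores, k=3, rng=random):
    """Pick one parent by a k-way tournament (lower score wins).

    k individuals are drawn without replacement and the best of them is
    returned, so a mediocre individual can still win when the top scorers
    are not drawn. Smaller k means weaker selective pressure.
    """
    contenders = rng.sample(range(len(population)), k)
    winner = min(contenders, key=lambda i: scores[i])
    return population[winner]
```

With `k` equal to the population size this degenerates into purely elitist selection, and with `k=1` it becomes uniform random selection.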

Q2: How should I configure the initial population size and number of generations for a typical screen?

A2: Based on benchmark studies, the following parameters provide a good balance between efficiency and exploration [18]:

- Initial Population Size: 200 randomly created ligands. This provides sufficient variety to initiate the optimization process without excessive runtime costs.

- Generations: 30 generations of optimization. Good solutions often appear after ~15 generations, but discovery rates typically flatten around generation 30.

- Population Carryover: Allowing 50 individuals to advance to the next generation performs best, balancing effectiveness and noise reduction.

Q3: The algorithm is not finding the absolute best-scoring molecule in my defined space. Is this a flaw?

A3: No, this is expected and often desirable behavior. REvoLd is a meta-heuristic designed to find numerous promising compounds rather than a single global optimum. The "rugged" scoring landscape can trap runs in local minima, which in practice enriches for a diverse set of viable hit candidates for further experimental testing [18].

Q4: What is the best strategy to obtain a large and diverse set of hits?

A4: Instead of running one optimization for a very long time, perform multiple independent runs [18]. Each run, seeded with a different random starting population, will likely converge on different high-scoring molecular motifs, thereby uncovering a broader range of chemical scaffolds.

Troubleshooting Guide

| Problem | Potential Cause | Solution |

|---|---|---|

| Poor enrichment in final generation | Initial population is too small or homogeneous. | Increase the initial population size from 200 to a larger number to capture more starting diversity [18]. |

| Low diversity among top hits | Over-reliance on the ElitistSelector or insufficient mutation. | Incorporate the TournamentSelector and increase the frequency of the low-similarity fragment mutation step [18] [23]. |

| Algorithm fails to improve fitness over generations | Reproduction steps are not creating meaningful variants. | Enable a second round of crossover and mutation that includes lower-fitness individuals to help refine them [18]. |

| High computational time per docking evaluation | Using the full RosettaLigand protocol with 150 complexes per molecule by default. | For initial screening, consider reducing the number of generated complexes per molecule, though this may affect scoring accuracy [23]. |

Key Experimental Protocols and Workflows

Standard REvoLd Screening Protocol

The following workflow outlines a standard procedure for a REvoLd screening campaign against a specific protein target [18] [23] [24].

1. Target Preparation

- Structure Refinement: Refine the target protein structure using molecular dynamics (MD) simulations to account for flexibility and generate a more physiologically relevant model [24].

- Binding Site Identification: Perform blind docking across the protein surface to identify potential binding pockets if the active site is not known [24].

2. REvoLd Configuration and Execution

- Define Chemical Space: Specify the make-on-demand library (e.g., Enamine REAL space) by providing the required reaction and fragment list files [23].

- Set Evolutionary Parameters:

- Population size: 200

- Generations: 30

- Individuals advancing: 50

- Use a combination of selection operators (Elitist, Tournament) and mutation operators (fragment swap, reaction change) [18].

- Run Optimization: Execute multiple independent REvoLd runs to maximize scaffold diversity.

3. Hit Analysis and Expansion

- Cluster and Select: Manually select top-scoring molecules from the final generations, prioritizing diverse chemotypes [24].

- Derivative Screening: Use identified hit compounds as input for a subsequent round of REvoLd to sample analogous regions of the chemical space for improved derivatives [24].

Performance Benchmarking Data

The table below summarizes the documented performance of REvoLd on five different drug targets, demonstrating its strong enrichment capabilities [18] [23].

| Performance Metric | Result / Value | Experimental Context |

|---|---|---|

| Hit Rate Improvement | 869- to 1,622-fold over random selection | Benchmark against five drug targets [18] [23]. |

| Total Molecules Docked per Target | ~49,000 to ~76,000 | Sum of unique molecules docked across 20 independent runs per target [18]. |

| Generations to Convergence | 15-30 generations | Good solutions often emerge within 15 generations, with discovery rates flattening around 30 [18]. |

Workflow Diagram: REvoLd Algorithm

The diagram below illustrates the core iterative cycle of the REvoLd evolutionary algorithm.

The Scientist's Toolkit: Essential Research Reagents & Materials

The table below lists key resources required to implement a REvoLd-based screening campaign.

| Item | Function / Description | Relevance to Experiment |

|---|---|---|

| Make-on-Demand Library (e.g., Enamine REAL) | Defines the synthetically accessible chemical space of fragments and reactions for REvoLd to explore. | Core component. The algorithm's reproduction steps are strictly confined to molecules enumerable from this library [18] [23]. |

| Rosetta Software Suite | Provides the REvoLd application and the underlying RosettaLigand docking protocol. | Essential software platform. Required for running the evolutionary algorithm and performing flexible protein-ligand docking [18] [25]. |

| Prepared Protein Structure (PDB file) | The 3D structure of the drug target, ideally refined via MD simulations. | The target for docking. Structure quality and conformational relevance are critical for predicting valid binding poses [24]. |

| High-Performance Computing (HPC) Cluster | A computing environment with many CPUs/cores. | Necessary for practical runtime. Docking thousands of molecules with full flexibility is computationally intensive [18]. |

Multi-Objective EAs for Complex Detection in PPI Networks

Frequently Asked Questions (FAQs)

FAQ 1: What are the primary advantages of using a Multi-Objective Evolutionary Algorithm (MOEA) over single-objective approaches for protein complex detection?

Single-objective optimization methods often rely on a single fitness function, such as network density, which can overlook biologically significant but topologically sparse complexes [26]. MOEA frameworks address this by simultaneously optimizing multiple, often conflicting, objectives. This typically includes:

- Topological Objectives: Such as maximizing the internal density of a cluster or the closeness centrality of its nodes [27].

- Biological Objectives: Such as maximizing the functional similarity of proteins within a cluster, often measured using Gene Ontology (GO) semantic similarity [28] [27].

This multi-faceted approach allows MOEAs to identify complexes that are not only densely connected but also functionally coherent, leading to more biologically relevant predictions [28].
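The core of any multi-objective comparison is Pareto dominance. The sketch below shows one topological objective (internal density) and a simple non-dominated filter; it assumes both objectives are maximized and is far simpler than the ranking used by full MOEAs such as NSGA-II. All function names are illustrative.

```python
def internal_density(cluster, edges):
    """Fraction of possible intra-cluster edges that are present."""
    n = len(cluster)
    if n < 2:
        return 0.0
    inside = sum(1 for u, v in edges if u in cluster and v in cluster)
    return 2.0 * inside / (n * (n - 1))

def dominates(a, b):
    """True if objective vector a is no worse than b everywhere and better somewhere."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))

def pareto_front(points):
    """Return the objective vectors that no other vector dominates."""
    return [p for p in points if not any(dominates(q, p) for q in points)]
```

For example, with objective vectors of the form (density, GO similarity), a sparse but functionally coherent cluster scoring (0.5, 0.8) survives alongside a dense but incoherent one scoring (0.9, 0.2), because neither dominates the other.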

FAQ 2: How can I incorporate biological knowledge, like Gene Ontology, into my MOEA to improve the quality of predicted complexes?

A highly effective method is to integrate GO knowledge directly into the evolutionary operators of the algorithm. For instance, you can design a Functional Similarity-Based Protein Translocation Operator (a specialized mutation operator) that guides the search based on GO semantic similarity [28]. This operator promotes the grouping of proteins with high functional similarity, enhancing the biological coherence of the detected complexes. The GO-based semantic similarity serves as a key objective function, ensuring that proteins within a predicted complex share common biological functions [27].
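A much simplified version of such a translocation operator is sketched below. It is a hypothetical illustration, not the operator from [28]: the rule used here (move one random protein to the cluster with which it has the highest mean similarity) and the lookup table `sim`, keyed by `frozenset` protein pairs, are assumptions of this example.

```python
import random

def mean_similarity(protein, cluster, sim):
    """Mean pairwise similarity between a protein and the other cluster members."""
    others = [p for p in cluster if p != protein]
    if not others:
        return 0.0
    return sum(sim.get(frozenset((protein, o)), 0.0) for o in others) / len(others)

def translocate(clusters, sim, rng=random):
    """Move one randomly chosen protein to its most functionally similar cluster.

    clusters : list of sets of protein identifiers
    sim      : dict mapping frozenset({a, b}) to a similarity in [0, 1]
    Returns a new list of clusters; the input is left unmodified.
    """
    clusters = [set(c) for c in clusters]
    src = rng.choice([i for i, c in enumerate(clusters) if c])
    protein = rng.choice(sorted(clusters[src]))
    best = max(range(len(clusters)),
               key=lambda i: mean_similarity(protein, clusters[i], sim))
    if best != src:
        clusters[src].discard(protein)
        clusters[best].add(protein)
    return [c for c in clusters if c]  # drop clusters that became empty
```

Applied repeatedly, the operator pulls functionally misplaced proteins toward clusters whose members share their annotations, while leaving well-placed proteins where they are.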

FAQ 3: My PPI network data is known to be noisy, with both false positive and false negative interactions. How can I make my MOEA more robust to such noise?

MOEAs can be evaluated for robustness by testing them on artificially perturbed networks. The following table summarizes a protocol for such an evaluation, demonstrating that algorithms incorporating biological knowledge (e.g., GO similarity) maintain higher performance under noise [28]:

Table: Evaluating MOEA Robustness to Network Noise

| Noise Introduction | Evaluation Method | Expected Outcome for a Robust MOEA |

|---|---|---|

| Randomly remove a percentage of edges (simulate false negatives) [28]. | Compare the quality (e.g., F1-score) of complexes detected from the original and perturbed networks against a gold-standard dataset [28]. | Performance metrics remain relatively stable or degrade less significantly compared to methods that rely solely on topology. |

| Randomly add a percentage of non-existent edges (simulate false positives) [28]. | As above. | The algorithm can still recover true complexes, as biological objectives help to filter out spurious connections. |
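The two perturbations in the table can be generated with a helper like the one below. It is a minimal sketch that treats the network as an undirected edge list; the function name and the choice to express added edges as a fraction of the original edge count are assumptions. Enumerating all non-edges is quadratic in the number of proteins, so very large networks would need a sampling-based variant.

```python
import itertools
import random

def perturb_network(nodes, edges, remove_frac=0.0, add_frac=0.0, seed=0):
    """Return a noisy copy of an undirected network as a set of frozenset edges.

    remove_frac : fraction of true edges to delete (simulated false negatives)
    add_frac    : spurious edges to add, as a fraction of the original edge
                  count (simulated false positives)
    """
    rng = random.Random(seed)
    edge_set = {frozenset(e) for e in edges}
    n_remove = round(remove_frac * len(edge_set))
    n_add = round(add_frac * len(edge_set))
    # Sort before sampling so that results are reproducible for a given seed.
    ordered = sorted(edge_set, key=sorted)
    kept = set(rng.sample(ordered, len(ordered) - n_remove))
    non_edges = [frozenset(p) for p in itertools.combinations(sorted(nodes), 2)
                 if frozenset(p) not in edge_set]
    added = set(rng.sample(non_edges, min(n_add, len(non_edges))))
    return kept | added
```

Running the detection algorithm on several perturbed copies (different seeds, increasing noise fractions) and plotting the chosen metric against the noise level gives a simple robustness curve.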

FAQ 4: What are the key metrics and benchmarks I should use to validate the protein complexes predicted by my MOEA?

Validation should be performed against known reference complexes from databases like the Munich Information Center for Protein Sequences (MIPS) or the Saccharomyces Genome Database (SGD) [28] [26]. Common performance metrics include:

- Maximum Matching Ratio (MMR): Measures the best one-to-one mapping between predicted and reference complexes [26].

- F1-Score: The harmonic mean of precision (fraction of predicted complexes that match real ones) and recall (fraction of real complexes that are matched) [26].

- Geometric Accuracy (Acc): The geometric mean of clustering-wise sensitivity (Sn) and positive predictive value (PPV), so it reflects both the completeness and the correctness of the prediction [26].
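Precision, recall, and F1 all require a rule for deciding when a predicted complex "matches" a reference complex. The sketch below uses an overlap score with a cutoff; the specific score (squared intersection divided by the product of the sizes) and the default `threshold=0.2` are assumptions of this example, so check the matching rule used by the benchmark you compare against.

```python
def overlap_score(a, b):
    """Overlap between two complexes: |A & B|^2 / (|A| * |B|)."""
    a, b = set(a), set(b)
    if not a or not b:
        return 0.0
    inter = len(a & b)
    return inter * inter / (len(a) * len(b))

def precision_recall_f1(predicted, reference, threshold=0.2):
    """Complex-level precision, recall, and F1 under an overlap cutoff."""
    matched_pred = sum(
        any(overlap_score(p, r) >= threshold for r in reference) for p in predicted
    )
    matched_ref = sum(
        any(overlap_score(p, r) >= threshold for p in predicted) for r in reference
    )
    precision = matched_pred / len(predicted) if predicted else 0.0
    recall = matched_ref / len(reference) if reference else 0.0
    total = precision + recall
    f1 = 2 * precision * recall / total if total else 0.0
    return precision, recall, f1
```

Reporting the threshold alongside the scores matters, because a looser cutoff inflates both precision and recall.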

FAQ 5: My MOEA is computationally expensive on large human PPI networks. What strategies can I use to improve its efficiency?

To enhance efficiency, consider the following:

- Heuristic Initialization: Initialize the population with densely connected subgraphs or biclusters from the network's adjacency matrix instead of purely random individuals, giving the algorithm a better starting point [27].

- Objective Function Selection: Choose objective functions that are informative but computationally less intensive. For example, using a combination of internal density and a GO-based similarity measure has proven effective [27].

- Fitness Evaluation Optimization: Focus on optimizing the calculation of the most expensive objectives, such as pre-computing GO semantic similarities where possible.

Troubleshooting Guides

Issue 1: The algorithm converges to solutions that are topologically dense but lack functional coherence.

- Problem: The topological objectives are dominating the search, overpowering the biological objectives.

- Solution:

- Review Objective Functions: Ensure your biological objective (e.g., average GO semantic similarity within a cluster) is properly formulated and its value range is comparable to that of your topological objectives.

- Adjust Operator Bias: Increase the influence of biological knowledge in your evolutionary operators. Implement or strengthen a mutation operator that translocates proteins based on their functional similarity to the core of a cluster [28].

- Parameter Tuning: Adjust the weights or ranks in the multi-objective selection process to give higher priority to the biological coherence of clusters.

Issue 2: Poor overlap between predicted complexes and known reference complexes.

- Problem: The predicted complexes do not match the gold-standard data well, as measured by low precision or recall.

- Solution:

- Verify Data Preprocessing: Ensure your PPI network is properly filtered. Use functional similarity scores (e.g., TCSS) to remove interactions with low reliability [26].

- Incorporate Contrast Patterns: Use a supervised approach to discover "emerging patterns" – combinations of topological features that sharply distinguish true complexes from random subgraphs. An integrative score based on these patterns can then guide the complex detection process, improving accuracy [26].

- Benchmark Against Multiple Methods: Compare your results not just against one, but several state-of-the-art methods (e.g., MCODE, MCL, ClusterONE) to identify specific weaknesses in your approach [28] [26].

Issue 3: High computational time required for fitness evaluation, especially with GO semantic similarity.

- Problem: Calculating semantic similarity for all protein pairs in a large population is a performance bottleneck.

- Solution:

- Pre-computation: Pre-compute the GO semantic similarity for all protein pairs in the entire PPI network before starting the evolutionary algorithm. Store the results in a lookup table for fast retrieval during fitness evaluation.

- Approximate Measures: For very large networks, consider using faster, approximate measures of functional similarity.

- Parallelization: Design your fitness evaluation function to be parallelized, as calculating the fitness of individuals in a population is an embarrassingly parallel task.
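The pre-computation strategy can be sketched as follows. The `similarity_fn` argument stands in for whatever GO semantic similarity measure you use; it is a placeholder supplied by the caller, and the function names are illustrative.

```python
from itertools import combinations

def precompute_similarity(proteins, similarity_fn):
    """Evaluate the similarity measure once per unordered protein pair.

    Returns a dict keyed by frozenset({a, b}) so that lookups do not
    depend on the order of the two proteins.
    """
    return {
        frozenset(pair): similarity_fn(*pair)
        for pair in combinations(sorted(proteins), 2)
    }

def cluster_coherence(cluster, table):
    """Mean pairwise similarity inside a cluster, using only table lookups."""
    pairs = list(combinations(sorted(cluster), 2))
    if not pairs:
        return 0.0
    return sum(table[frozenset(p)] for p in pairs) / len(pairs)
```

The table holds one entry per protein pair, so memory grows quadratically with network size. For large networks it may be preferable to store only pairs that share an edge or that exceed a minimum similarity, and to treat missing pairs as zero.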

Experimental Protocols & Workflows

Protocol 1: Standard Workflow for a Multi-Objective EA in Complex Detection

The following diagram illustrates the core workflow of a typical MOEA for protein complex detection.

MOEA for Complex Detection Workflow

Protocol 2: Validation and Robustness Testing Protocol

This protocol outlines the steps for rigorously validating predicted complexes and testing the algorithm's robustness to noise, a critical step for benchmarking against other methods [28] [26].

Table: Key Parameters for Robustness Evaluation

| Parameter | Description | Typical Values / Method |

|---|---|---|

| Gold-Standard Datasets | Known protein complexes used for validation. | MIPS catalog, SGD complexes [28] [26]. |

| Performance Metrics | Quantitative measures for comparing predicted and known complexes. | Maximum Matching Ratio (MMR), F1-Score, Geometric Accuracy (Acc) [26]. |

| Noise Simulation | Method to artificially corrupt the PPI network. | Randomly remove 5-20% of edges (false negatives); Randomly add 5-20% of non-existent edges (false positives) [28]. |

| Comparison Algorithms | Other complex detection methods used for benchmarking. | MCODE, MCL, ClusterONE, CMC, RNSC [26] [27]. |

Complex Validation and Benchmarking Process

The Scientist's Toolkit: Research Reagent Solutions

Table: Essential Resources for MOEA-based Protein Complex Detection Research

| Resource / Reagent | Type | Function in Research | Example Sources / Tools |

|---|---|---|---|

| PPI Network Data | Data | The primary input data representing protein interactions as a graph. | HPRD, DIP, STRING [27] [26] [29]. |

| Gold-Standard Complexes | Data | A curated set of known complexes used for training (in supervised methods) and validation. | MIPS complex catalogue, SGD [28] [26]. |

| Gene Ontology (GO) Database | Data | Provides functional annotations for proteins; used for calculating semantic similarity. | Gene Ontology Consortium [28] [27]. |

| GO Semantic Similarity Measure | Algorithm | Quantifies the functional similarity between two proteins based on their GO annotations. | Relevance measure [27]. |

| Multi-Objective Evolutionary Algorithm | Algorithm | The core optimization framework for detecting complexes. | NSGA-II [27]. |

| Complex Validation Metrics | Metric | Quantitative measures to assess the quality of predicted complexes. | Maximum Matching Ratio (MMR), F1-Score, Geometric Accuracy [26]. |

| Benchmarking Algorithms | Software | Other complex detection methods used for performance comparison. | MCODE, MCL, ClusterONE, CMC [26] [27]. |

This technical support center provides guidance for researchers implementing a Memetic Algorithm (MA) that combines Differential Evolution (DE) with the Rosetta Relax protocol for protein structure refinement. This hybrid approach addresses a critical step in computational biology: improving the quality of initial protein structure models (e.g., from deep learning tools like AlphaFold2) by optimizing the positions of amino acid atoms to resolve atomic collisions and achieve lower-energy, more biologically accurate conformations [11] [30]. The process is framed as a complex optimization problem within a vast conformational space, where the MA aims to synergistically combine DE's global search capabilities with Rosetta Relax's potent local exploitation of problem-specific knowledge [11] [31].

The following diagram illustrates the high-level workflow and logical relationship between the core components of the Relax-DE algorithm.

Frequently Asked Questions (FAQs) and Troubleshooting Guides

FAQ 1: What is the core advantage of this memetic approach over using Rosetta Relax alone?

The primary advantage is more effective sampling of the protein energy landscape. While Rosetta Relax is a powerful local search heuristic, embedding it within the Differential Evolution framework provides a robust global search strategy. This combination helps avoid getting trapped in local energy minima and explores a wider range of low-energy conformations. Empirical results demonstrate that this memetic approach can obtain better energy-optimized refined structures within the same runtime compared to the standard Rosetta Relax protocol [11] [30].

FAQ 2: My refinement process is not converging to lower-energy structures. What could be wrong?

Poor convergence often stems from an imbalance between global exploration (DE) and local exploitation (Rosetta Relax). Use the table below to diagnose and troubleshoot common convergence issues.

Table: Troubleshooting Guide for Convergence and Performance Issues

| Problem | Potential Causes | Recommended Solutions |

|---|---|---|

| Stagnation in Early Generations | DE parameters too aggressive, causing loss of diversity. | Reduce the differential weight (F parameter) to ~0.5; increase population size. |

| No Improvement Despite Sampling | Ineffective local search; Rosetta Relax not adequately refining individuals. | Increase the number of minimization cycles within the Rosetta Relax protocol. |

| Excessive Runtime | Rosetta Relax is computationally expensive, limiting the number of DE generations. | Apply the local Rosetta Relax operator selectively, not to every individual in the DE population [11]. |

| Structurally Unreasonable Output | Energy function may be dominated by a single term (e.g., fa_rep for atomic clashes). | Ensure the full Rosetta Ref2015 energy function with all 19 weighted terms is used for a balanced physical and knowledge-based potential [11]. |

FAQ 3: How do I integrate the Rosetta Relax protocol into the Differential Evolution loop?

Rosetta Relax acts as a local search operator applied to individuals (protein conformations) within the DE population. A standard practice is to apply it to the best-performing individuals after each generation or to a subset of offspring before selection. The key is to use Rosetta Relax to "polish" the structures found by DE, driving them to the nearest local minimum on the energy landscape, which is defined by the Rosetta Ref2015 all-atom energy function [11].
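The integration pattern can be sketched as a DE loop in which only the best few individuals are polished after each generation. This is an illustrative skeleton, not the Relax-DE implementation from [11]: `local_refine` is a toy hill climber standing in for Rosetta Relax (which is not called here), individuals are plain real-valued vectors, individuals are updated in place within a generation, and all parameter defaults are assumptions.

```python
import numpy as np

def local_refine(x, energy_fn, step=0.1, iters=20, rng=None):
    """Placeholder local search: accept random perturbations that lower the energy."""
    rng = np.random.default_rng() if rng is None else rng
    best, e_best = x.copy(), energy_fn(x)
    for _ in range(iters):
        cand = best + rng.normal(scale=step, size=best.shape)
        e_cand = energy_fn(cand)
        if e_cand < e_best:
            best, e_best = cand, e_cand
    return best, e_best

def memetic_de(energy_fn, pop, generations=30, F=0.5, CR=0.9, refine_top=2, seed=0):
    """DE global search with local refinement of the `refine_top` best individuals."""
    rng = np.random.default_rng(seed)
    pop = pop.copy()
    energies = np.array([energy_fn(x) for x in pop])
    n, dim = pop.shape
    for _ in range(generations):
        for i in range(n):
            r1, r2, r3 = rng.choice([j for j in range(n) if j != i], 3, replace=False)
            mutant = pop[r1] + F * (pop[r2] - pop[r3])
            mask = rng.random(dim) < CR
            mask[rng.integers(dim)] = True  # take at least one gene from the mutant
            trial = np.where(mask, mutant, pop[i])
            e_trial = energy_fn(trial)
            if e_trial < energies[i]:
                pop[i], energies[i] = trial, e_trial
        # Expensive local search is applied selectively, not to everyone.
        for i in np.argsort(energies)[:refine_top]:
            pop[i], energies[i] = local_refine(pop[i], energy_fn, rng=rng)
    best = int(np.argmin(energies))
    return pop[best], float(energies[best])
```

The `refine_top` parameter controls the balance discussed in the troubleshooting table: raising it spends more of the budget on local exploitation, while lowering it leaves more runtime for additional DE generations.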

FAQ 4: Are there alternative memetic or evolutionary approaches for refinement in Rosetta?

Yes, the IterativeHybridize protocol is another genetic-algorithm-inspired refinement method available within Rosetta. While the Relax-DE method uses DE as its global sampler, IterativeHybridize uses a different selection and sampling strategy, with its HybridizeMover as the core structural operator for crossover and mutation. It also incorporates concepts from Conformational Space Annealing (CSA) to manage structural diversity [32]. Comparing the performance of your Relax-DE implementation against IterativeHybridize on your target proteins is an excellent validation step [32].

The following workflow provides a detailed, step-by-step methodology for implementing the core Relax-DE experiment as described in the primary literature [11].

The Scientist's Toolkit: Research Reagent Solutions

This table details the essential software tools, libraries, and data required to implement and run the Relax-DE protein structure refinement protocol.

Table: Essential Research Reagents and Resources

| Item Name | Type | Function/Purpose | Acquisition/Usage Notes |

|---|---|---|---|

| Rosetta Software Suite | Software Environment | Provides the Rosetta Relax protocol and the Ref2015 full-atom energy function for local minimization and scoring [11] [32]. | Licensed from the University of Washington; required for the local search component. |

| Differential Evolution Library | Algorithmic Code | Implements the global search operations (mutation, crossover, selection). | Can be implemented from scratch (e.g., Python, C++) or using libraries like SciPy. |

| Initial Structural Models | Data | The starting 3D protein models to be refined. | Often generated by AI predictors like AlphaFold2 or RoseTTAFold [11] [33]. |

| Protein Data Bank (PDB) | Database | Source of experimentally-solved "native" structures for benchmarking and validating refinement performance [11] [13]. | Publicly available; used to calculate metrics like GDT-TS or RMSD. |

| Fragment Libraries | Data | Used by some Rosetta protocols for conformational sampling [32]. | Generated for a target sequence using the Rosetta fragment_picker application [32]. |

Frequently Asked Questions (FAQs)

Q1: What is the primary advantage of using Evolutionary Algorithms (EAs) for the Inverse Protein Folding Problem (IFP)?

Evolutionary Algorithms (EAs), particularly Multi-Objective Genetic Algorithms (MOGAs), excel in exploring the vast sequence space to discover novel protein sequences that fold into a target structure. A key advantage is their ability to simultaneously optimize multiple, often competing, objectives. For instance, one study implemented a MOGA that concurrently optimizes for secondary structure similarity and sequence diversity. This approach, known as diversity-as-objective (DAO) multi-objectivization, allows the algorithm to search more deeply and broadly in the sequence solution space, preventing premature convergence and generating a diverse set of viable sequence solutions for a single target structure [6].

Q2: When using EAs for sequence design, my designs are stable but often lack biological function. How can I preserve function during optimization?

This is a common challenge, as over-optimizing for structural stability can disrupt precise functional motifs. The issue arises because standard inverse folding does not inherently incorporate functional constraints. To address this, you should integrate functional information directly into the evolutionary fitness function or the initial sequence sampling. Modern approaches, including advanced machine learning models, suggest several strategies:

- Incorporate Evolutionary Information: Use Multiple Sequence Alignments (MSA) to inform the EA. The MSA contains evolutionary constraints that can guide the algorithm toward sequences that are not only stable but also functionally competent [34].

- Include Multiple Structural States: If your protein's function relies on conformational dynamics (e.g., an allosteric protein or a binding protein that undergoes induced fit), optimize sequences against multiple backbone conformations. This prevents the EA from overspecializing on a single, static structure and helps preserve the dynamic behavior essential for function [34].

- Explicitly Model Functional Sites: For enzymes or binding proteins, fix the identities or physico-chemical properties of catalytically essential residues or binding pocket residues during the evolutionary optimization process. An alternative is to add a term to the fitness function that rewards the preservation of these critical residues [35] [34].
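The third strategy above (fixing functional residues, or penalising their loss) can be sketched as follows. This is a minimal illustration under stated assumptions: the names `mutate`, `functional_penalty`, and `fitness`, the penalty weight, and the `stability_score` callable are all hypothetical placeholders, not part of any cited method.

```python
import random

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"

def mutate(seq, rate, fixed_positions, rng):
    """Point-mutate `seq` but never change indices in `fixed_positions`."""
    out = list(seq)
    for i, aa in enumerate(seq):
        if i in fixed_positions:
            continue  # catalytic or binding residue: keep as is
        if rng.random() < rate:
            out[i] = rng.choice([x for x in AMINO_ACIDS if x != aa])
    return "".join(out)

def functional_penalty(seq, required, weight=1.0):
    """Penalty proportional to the number of required residues that were lost.

    `required` maps a 0-based position to the residue expected there.
    """
    lost = sum(1 for pos, aa in required.items() if seq[pos] != aa)
    return weight * lost

def fitness(seq, stability_score, required, weight=5.0):
    """Stability minus a soft penalty for disrupted functional sites.

    `stability_score` is a user-supplied callable (hypothetical here).
    """
    return stability_score(seq) - functional_penalty(seq, required, weight)
```

Hard fixing (via `fixed_positions`) guarantees the motif survives, while the soft penalty lets the search trade a residue away if the stability gain is large enough; which one is appropriate depends on how well the functional site is understood.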

Q3: How do I balance the trade-off between exploration (diversity) and exploitation (fitness) in my EA parameters?

Balancing exploration and exploitation is critical for the success of an EA. The DAO strategy explicitly treats diversity as an objective to be maximized alongside fitness [6]. Beyond this, parameter tuning is essential:

- Population Size: A larger population promotes diversity but increases computational cost.

- Crossover and Mutation Rates: A higher crossover rate facilitates the exploitation of good building blocks, while a higher mutation rate promotes exploration of new sequences.

- Selection Pressure: Implement selection mechanisms like tournament or roulette-wheel selection with carefully tuned pressure. Too much pressure leads to premature convergence; too little slows optimization.

The table below summarizes key parameters and their effect on the exploration-exploitation balance [6]:

| Parameter | Effect on Exploration | Effect on Exploitation | Recommendation for IFP |
|---|---|---|---|
| Population Size | Increases | Decreases | Use a large size (hundreds to thousands) to maintain diverse sequence pools. |
| Mutation Rate | Increases | Decreases | Set to a moderate-to-high level (e.g., 0.01-0.1 per residue) to encourage novelty. |
| Crossover Rate | Decreases | Increases | Use a high rate to effectively combine stable structural motifs. |
| Selection Pressure | Decreases | Increases | Apply moderate pressure (e.g., tournament size of 3-5) to avoid early convergence. |
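The effect of tournament size on selection pressure can be seen in a few lines. The sketch below is illustrative only: the helper names and the toy fitness values (0 to 99) are assumptions, and the tournament draws contenders with replacement for simplicity.

```python
import random

def tournament_select(population, fitnesses, k, rng):
    """Return the fittest of k individuals drawn at random (with replacement)."""
    contenders = [rng.randrange(len(population)) for _ in range(k)]
    winner = max(contenders, key=lambda i: fitnesses[i])
    return population[winner]

def mean_selected_fitness(fitnesses, k, trials=2000, seed=0):
    """Average fitness of the selected parents for a given tournament size."""
    rng = random.Random(seed)
    indices = list(range(len(fitnesses)))
    picks = [tournament_select(indices, fitnesses, k, rng) for _ in range(trials)]
    return sum(fitnesses[i] for i in picks) / trials

toy_fitnesses = list(range(100))
pressure_by_k = {k: mean_selected_fitness(toy_fitnesses, k) for k in (1, 3, 7)}
```

With `k = 1` selection is uniform at random; as `k` grows, the mean fitness of selected parents moves toward the population maximum, which is the mechanism behind the recommendation to keep `k` moderate.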

Q4: What are the key metrics to validate sequences designed by my EA for the target structure?

Validation should occur at multiple levels, from fast in silico checks to experimental verification.

- In Silico Validation:

  - Tertiary Structure Prediction: Use tools like I-TASSER or AlphaFold2 to predict the 3D structure of your designed sequence [6] [36].

  - Structure Similarity: Compare the predicted model to your original target structure using metrics like TM-Score (values >0.5 suggest similar folds; >0.8 indicate a functionally similar structure) and GDT_TS [6] [37].

  - Secondary Structure Annotation: Verify that the secondary structure elements (alpha-helices, beta-sheets) of the predicted model match the target using tools like DSSP [6].

- Experimental Validation:

  - Thermal Stability (∆Tm): Measure the melting temperature. A significant increase (e.g., ∆Tm ≥ 10 °C) indicates successful stabilization [35] [34].

  - Functional Assays: Perform activity assays specific to the protein's function (e.g., enzyme kinetics, binding affinity measurements) to confirm that function is retained or enhanced [34].
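For the structure-similarity check, it can help to see how a TM-Score is assembled from per-residue distances. The sketch below evaluates the standard TM-Score sum for one fixed superposition; it assumes the model has already been aligned and superimposed on the target, whereas dedicated tools also search for the superposition that maximises the score. The function name and the 0.5 Å floor on d0 for short chains are assumptions made here for robustness.

```python
def tm_score_from_distances(distances, target_length):
    """TM-Score for one given superposition.

    `distances` holds the distance (in Angstrom) between each aligned
    residue pair; unaligned target residues simply contribute nothing.
    """
    if target_length <= 21:
        d0 = 0.5  # assumed floor; the cube-root formula is not usable here
    else:
        d0 = max(0.5, 1.24 * (target_length - 15) ** (1.0 / 3.0) - 1.8)
    total = sum(1.0 / (1.0 + (d / d0) ** 2) for d in distances)
    return total / target_length

perfect = tm_score_from_distances([0.0] * 100, 100)
half_aligned = tm_score_from_distances([0.0] * 50, 100)
```

Because the sum is normalised by the target length, a model that covers only half of the target perfectly still scores 0.5, which is why the metric is less sensitive to chain length than RMSD.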

Troubleshooting Guides

Issue 1: Poor Sequence Diversity and Premature Convergence

Problem: Your EA converges quickly on a single, sub-optimal sequence variant, failing to explore the full solution space.

Solutions:

- Implement Multi-Objectivization: Reformulate the single-objective problem (e.g., maximize stability) into a multi-objective one. A proven method is the Diversity-as-Objective (DAO) approach, where you explicitly optimize for both structural fitness and sequence diversity. This forces the algorithm to maintain a Pareto front of diverse, high-quality solutions [6].

- Adjust Algorithmic Parameters: Increase the mutation rate and population size. Decrease the selection pressure by using a less aggressive selection scheme [6].

- Use Niching Techniques: Integrate methods like fitness sharing or crowding to prevent any single sequence variant from dominating the population too quickly. This promotes the formation of stable sub-populations (niches) around different local optima in the fitness landscape [6].
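As one example of the niching techniques mentioned above, classic fitness sharing divides each raw fitness by a niche count, so that crowded regions of sequence space are discounted. This is a minimal sketch under assumptions: Hamming distance as the sequence metric, a triangular sharing kernel, positive fitness values that are being maximised, and hypothetical names throughout.

```python
def hamming(a, b):
    """Number of differing positions between two equal-length sequences."""
    return sum(x != y for x, y in zip(a, b))

def shared_fitness(population, raw_fitness, sigma_share, alpha=1.0):
    """Divide each raw fitness by its niche count.

    Sequences closer than `sigma_share` mutations share a niche; the
    niche count is at least 1 because every sequence counts itself.
    """
    shared = []
    for i, seq_i in enumerate(population):
        niche_count = 0.0
        for seq_j in population:
            d = hamming(seq_i, seq_j)
            if d < sigma_share:
                niche_count += 1.0 - (d / sigma_share) ** alpha
        shared.append(raw_fitness[i] / niche_count)
    return shared

toy_population = ["AAAA", "AAAA", "AAAC", "WWWW"]
toy_shared = shared_fitness(toy_population, [10.0, 10.0, 10.0, 10.0], sigma_share=2)
```

In the toy population all four sequences have the same raw fitness, but the isolated `WWWW` keeps its full score while the three near-duplicates are penalised, which gives the isolated variant a better chance of surviving selection.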

Issue 2: Computationally Expensive Fitness Evaluation

Problem: The energy or fitness calculation for each candidate sequence is slow, severely limiting the number of generations and population size you can realistically evaluate.

Solutions:

- Utilize Fast Approximations: During the main evolutionary loop, use fast, approximate energy functions or statistical potentials. Reserve more accurate but slower physics-based calculations (like those in CHARMM) only for the final refinement of top-ranked candidates [6].

- Leverage Machine Learning Potentials: Train or use pre-trained deep neural networks as surrogate fitness functions. These models, once trained, can evaluate sequence fitness orders of magnitude faster than traditional physics-based calculations [36].

- Parallelize Fitness Evaluations: The fitness evaluation of individuals in a population is an "embarrassingly parallel" problem. Distribute these computations across multiple CPU/GPU cores or an HPC cluster to drastically reduce wall-clock time [6].
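The parallelisation advice above can be sketched with the standard-library `concurrent.futures` module. The helper name, the cache, and the toy fitness are assumptions for illustration. The example uses a thread pool, which suits fitness functions that wait on an external program; for CPU-bound pure-Python scoring, a `ProcessPoolExecutor` offers the same `map` interface and would be the usual choice.

```python
from concurrent.futures import ThreadPoolExecutor

def evaluate_population(population, fitness_fn, executor, cache=None):
    """Score every sequence, evaluating each distinct sequence only once."""
    cache = {} if cache is None else cache
    # dict.fromkeys removes duplicates while preserving order.
    todo = [s for s in dict.fromkeys(population) if s not in cache]
    for seq, score in zip(todo, executor.map(fitness_fn, todo)):
        cache[seq] = score
    return [cache[s] for s in population]

calls = []

def toy_fitness(seq):
    # Placeholder for an expensive energy calculation (assumption).
    calls.append(seq)
    return seq.count("A")

with ThreadPoolExecutor(max_workers=4) as pool:
    scores = evaluate_population(["AAC", "ACC", "AAC"], toy_fitness, pool)
```

Passing the same `cache` dictionary across generations avoids re-scoring sequences that survive unchanged, which often matters as much as the parallelism itself.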

Issue 3: Designed Sequences Are Stable but Misfolded or Non-Functional

Problem: Your designed sequences express well and are thermally stable, but structural validation reveals they are misfolded or lack the intended biological activity.

Solutions:

- Integrate Negative Design: Your fitness function must not only stabilize the desired target state (positive design) but also destabilize competing, misfolded states (negative design). This can be implicitly achieved by using evolutionary guidance. Filter candidate mutations based on natural sequence variation from homologous proteins (MSA), as evolution has already selected against aggregation-prone and misfolding motifs [35].

- Incorporate Co-evolutionary Data: Use direct coupling analysis (DCA) on a multiple sequence alignment to identify evolutionarily coupled residue pairs. Adding a term to your fitness function that rewards the preservation of these couplings can strongly guide the EA toward native-like, functional folds [38].

- Validate with Folding Models: Incorporate a step where candidate sequences are passed through a protein folding model like AlphaFold2 or ESMFold. Use the predicted TM-Score relative to the target structure as a critical filter or an additional fitness objective before selecting sequences for experimental testing [37].
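The MSA-based filtering described under negative design can be sketched as a per-position whitelist of residues observed in homologs. The function names, the gap character `-`, and the frequency threshold are assumptions made for this illustration; a production pipeline would typically also weight sequences to reduce redundancy.

```python
from collections import Counter

def allowed_residues(msa, min_freq=0.05):
    """Per-column sets of residues seen in at least `min_freq` of homologs.

    `msa` is a list of equal-length aligned sequences; '-' marks a gap.
    """
    n = len(msa)
    allowed = []
    for column in zip(*msa):
        counts = Counter(aa for aa in column if aa != "-")
        allowed.append({aa for aa, c in counts.items() if c / n >= min_freq})
    return allowed

def is_mutation_allowed(position, new_aa, allowed):
    """Accept a mutation only if evolution has sampled it at this position."""
    return new_aa in allowed[position]

toy_msa = ["MKV", "MRV", "MKI", "M-V"]
strict = allowed_residues(toy_msa, min_freq=0.5)
lenient = allowed_residues(toy_msa, min_freq=0.25)
```

Raising `min_freq` makes the design more conservative; lowering it admits rarer substitutions at the cost of weaker evolutionary support.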

Experimental Protocols & Workflows

Protocol 1: MOGA with Diversity-as-Objective for Inverse Folding

This protocol outlines the methodology for using a Multi-Objective Genetic Algorithm to design protein sequences for a target structure [6].

1. Input Preparation:

- Obtain the target protein's 3D backbone structure (e.g., from PDB).

- Annotate its secondary structure using a tool like DSSP.

2. Algorithm Initialization:

- Representation: Encode a protein sequence as a string of characters (amino acids).

- Initialization: Generate a random population of sequences or seed it with fragments of natural sequences.

- Parameter Setting: Set population size (e.g., 1000), number of generations (e.g., 500), crossover rate (e.g., 0.8), and mutation rate (e.g., 0.05).

3. Fitness Evaluation (Multi-Objective): For each individual in the population, calculate two primary fitness objectives:

- Objective 1: Secondary Structure Similarity. Measure how well the sequence's predicted secondary structure (using a tool like PSIPRED) matches the target's.

- Objective 2: Sequence Diversity. Score how different the individual is from the rest of the current population (e.g., its average Hamming distance to the other sequences).

4. Evolutionary Loop:

- Selection: Apply a multi-objective selection method (e.g., NSGA-II) to select parents based on the Pareto front of the two objectives.

- Crossover: Perform crossover (e.g., single-point) on parent sequences to produce offspring.

- Mutation: Introduce point mutations in the offspring sequences.

- Replacement: Form a new population from the best parents and offspring.

5. Validation and Output:

- Select a subset of the best-performing sequences from the final Pareto front.

- Perform tertiary structure prediction (e.g., with I-TASSER) for these sequences.

- Validate by comparing the predicted model's tertiary structure and secondary structure annotation to the original target [6].
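The two objectives and the Pareto-based selection in this protocol can be sketched as below. This is a simplified illustration, not the published MOGA: it extracts only the first non-dominated front, whereas NSGA-II additionally ranks later fronts and uses crowding distance. All function names are hypothetical, and the predicted secondary-structure strings are assumed to come from an external predictor such as PSIPRED.

```python
def hamming(a, b):
    """Number of differing positions between two equal-length sequences."""
    return sum(x != y for x, y in zip(a, b))

def ss_similarity(predicted_ss, target_ss):
    """Objective 1: fraction of positions whose secondary-structure label matches."""
    return sum(p == t for p, t in zip(predicted_ss, target_ss)) / len(target_ss)

def diversity_scores(population):
    """Objective 2: mean Hamming distance of each sequence to all the others."""
    n = len(population)
    return [
        sum(hamming(population[i], population[j]) for j in range(n) if j != i) / (n - 1)
        for i in range(n)
    ]

def dominates(a, b):
    """True if `a` is no worse than `b` everywhere and better somewhere (maximising)."""
    return all(x >= y for x, y in zip(a, b)) and any(x > y for x, y in zip(a, b))

def pareto_front(objectives):
    """Indices of individuals that no other individual dominates."""
    return [
        i for i, a in enumerate(objectives)
        if not any(dominates(b, a) for j, b in enumerate(objectives) if j != i)
    ]

toy_objectives = [(0.9, 1.0), (0.5, 3.0), (0.4, 2.0), (0.9, 0.5)]
front = pareto_front(toy_objectives)
```

In the toy data, the first two individuals form the front: one has the best structure match, the other the highest diversity, and neither can be improved on one objective without losing on the other.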

Figure: MOGA for Inverse Folding Workflow

Protocol 2: Integrating Evolutionary Algorithms with Inverse Folding Feedback

This protocol describes an advanced workflow that uses a folding model's feedback to iteratively improve an inverse folding process, inspired by Direct Preference Optimization (DPO) techniques [37].

1. Setup:

- Have a target protein structure ready.

- Choose a base inverse folding model (e.g., a trained EA or a neural network like ProteinMPNN).

- Choose a protein folding model (e.g., AlphaFold2, ESMFold).

2. Sequence Sampling and Folding:

- Use the inverse folding model to generate a diverse set of candidate sequences for the target structure.

- For each candidate sequence, use the folding model to predict its 3D structure.

3. Preference Pair Generation:

- Calculate the TM-Score between each predicted structure and the original target structure.

- For a given target, rank the sequences and create pairwise preference data: (chosen_sequence, rejected_sequence) based on their TM-Scores.

4. Model Optimization:

- Use a preference optimization algorithm (like DPO) to fine-tune the inverse folding model. The objective is to increase the probability of generating "chosen" sequences over "rejected" ones.

- This process can be repeated for multiple rounds, creating a self-improving loop where the inverse folding model learns to produce sequences that are more likely to fold correctly according to the folding model [37].
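Step 3 of this protocol, turning TM-Scores into preference pairs, can be sketched as follows. The function name and the `margin` threshold are assumptions introduced here; the margin simply discards pairs whose scores are too close to express a meaningful preference. The fine-tuning step itself is model-specific and is not shown.

```python
from itertools import combinations

def build_preference_pairs(tm_scores, margin=0.05):
    """Return (chosen, rejected) pairs from a mapping of sequence to TM-Score.

    A pair is kept only if the chosen sequence beats the rejected one
    by at least `margin`.
    """
    ranked = sorted(tm_scores.items(), key=lambda kv: kv[1], reverse=True)
    pairs = []
    # combinations() on the ranked list always yields (higher, lower) order.
    for (seq_hi, tm_hi), (seq_lo, tm_lo) in combinations(ranked, 2):
        if tm_hi - tm_lo >= margin:
            pairs.append((seq_hi, seq_lo))
    return pairs

toy_scores = {"SEQ_A": 0.90, "SEQ_B": 0.60, "SEQ_C": 0.58}
toy_pairs = build_preference_pairs(toy_scores)
```

Here `SEQ_B` and `SEQ_C` differ by only 0.02, so no preference is recorded between them, while both are rejected relative to `SEQ_A`.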

Figure: DPO Feedback Loop for Inverse Folding

Research Reagent Solutions

The table below lists key computational tools and resources essential for conducting research in Evolutionary Algorithms for Inverse Protein Folding.

| Resource Name | Type | Primary Function in Research |
|---|---|---|
| I-TASSER Suite [6] | Software Suite | Protein structure prediction for validating designed sequences. |
| CHARMM [6] | Molecular Dynamics | Detailed energy minimization and dynamics calculations for final sequence refinement. |
| ESM Protein Language Model [34] | Machine Learning Model | Provides evolutionary-informed sequence embeddings to guide design towards functional regions. |
| AlphaFold2 / ESMFold [37] | Folding Model | Provides fast, accurate in silico feedback on whether a designed sequence will fold into the target structure. |
| DSSP [6] | Algorithm | Annotates protein secondary structure from 3D coordinates, used for fitness calculation. |
| NSGA-II [6] | Algorithm | A multi-objective optimization algorithm for managing trade-offs like stability vs. diversity. |
| ProteinMPNN [39] [37] | Inverse Folding Model | A neural network-based inverse folding model; can be used as a baseline or within a hybrid EA/ML workflow. |
| Direct Coupling Analysis (DCA) [38] | Analytical Method | Infers evolutionarily coupled residues from MSAs to constrain the EA's search space. |

Technical Support Center

Frequently Asked Questions (FAQs)