Optimizing Selection Conditions in Directed Evolution: A Strategic Guide for Biomedical Researchers

Abstract

This article provides a comprehensive guide for researchers and drug development professionals on optimizing selection conditions in directed evolution. It covers the foundational principles of fitness landscapes and genotype-phenotype linkage, explores advanced methodological frameworks including machine learning-assisted and continuous evolution systems, and details strategies for troubleshooting common pitfalls like false positives and parasite variants. Through comparative analysis of empirical case studies across enzyme engineering and therapeutic protein development, we validate optimization techniques that enhance selection efficiency, improve functional outcomes, and accelerate the development of novel biologics and biotherapeutics.

The Foundation of Fitness: Understanding Landscapes and Selection Principles

FAQs: Navigating Fitness Landscapes in Directed Evolution

How does the relationship between genotype, phenotype, and fitness impact my directed evolution experiment?

The path from a protein's sequence (genotype) to its observable function, such as catalytic activity (phenotype), and finally to its overall performance in your screen (fitness) is often non-linear [1]. Even if your genotype-phenotype landscape is smooth, a selection pressure that favors an intermediate level of phenotypic expression (neither too much nor too little of an activity) can create a rugged fitness landscape with multiple peaks [1]. The variants you select on fitness may therefore not be those with the most extreme phenotypic values, but those that are "just right" for the selection conditions you set. Assuming that a higher measured phenotype always means higher fitness can be misleading.
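A toy model can make this concrete. The sketch below is illustrative only and is not taken from [1]: it assumes four mutations with equal, additive effects on a phenotype (a smooth genotype-phenotype map) and a hypothetical Gaussian fitness function that rewards an intermediate phenotype. The names `EFFECTS`, `OPTIMUM`, and `WIDTH` are invented for the example.

```python
from itertools import product
import math

# Hypothetical additive effects of four mutations on a phenotype (arbitrary units).
EFFECTS = [1.0, 1.0, 1.0, 1.0]
OPTIMUM = 2.0  # assumed phenotype value favored by the selection
WIDTH = 1.0    # assumed tolerance of the selection around the optimum


def phenotype(genotype):
    """Smooth, additive genotype-phenotype map."""
    return sum(e * g for e, g in zip(EFFECTS, genotype))


def fitness(genotype):
    """Gaussian fitness that peaks at an intermediate phenotype."""
    return math.exp(-((phenotype(genotype) - OPTIMUM) ** 2) / (2 * WIDTH ** 2))


def neighbors(genotype):
    """All genotypes exactly one mutation away."""
    for i in range(len(genotype)):
        flipped = list(genotype)
        flipped[i] = 1 - flipped[i]
        yield tuple(flipped)


def local_peaks():
    """Genotypes strictly fitter than every single-mutant neighbor."""
    return [g for g in product((0, 1), repeat=len(EFFECTS))
            if all(fitness(g) > fitness(n) for n in neighbors(g))]


peaks = local_peaks()
print(len(peaks), "fitness peaks:", peaks)
```

In this toy setting the phenotype has a single maximum (all four mutations present), yet every double mutant is a separate fitness peak, and the variant with the highest phenotype is not among them.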

What are fitness "seascapes" and why are they important for directed evolution?

A fitness landscape is a static metaphor, while a seascape models how the adaptive topography changes over time or across different environments [2]. In practice, your selection conditions (like temperature, substrate concentration, or presence of an inhibitor) define the landscape. If you alter these conditions between rounds, you are effectively changing the seascape, which can help escape local fitness peaks and discover variants with more robust or novel functions [2]. This is crucial for engineering proteins that need to function in fluctuating environments, such as therapeutic enzymes.

How can I optimize my selection conditions to efficiently find improved variants?

Optimizing selection conditions is a critical, non-trivial task [3]. A systematic approach involves using Design of Experiments (DoE) to screen and benchmark key parameters, such as cofactor concentration (e.g., Mg²⁺), substrate concentration, and reaction time, using a small, focused protein library [3]. This allows you to identify parameter combinations that maximize the recovery of desired variants while minimizing the enrichment of "parasite" variants that thrive under non-optimal conditions without performing the desired function [3]. The goal is to shape the fitness landscape such that the highest peaks correspond to your truly desired protein functions.
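As a minimal sketch of the first DoE step, the snippet below enumerates a full factorial design over three selection parameters. The factor names and levels are hypothetical placeholders rather than values from [3]; a real campaign might prefer a fractional or response-surface design generated with dedicated DoE software.

```python
from itertools import product

# Hypothetical factors and levels; substitute the parameters and ranges
# relevant to your own selection system.
FACTORS = {
    "mg_mM": [1.0, 5.0],
    "substrate_uM": [10.0, 100.0],
    "reaction_min": [15, 60],
}


def full_factorial(factors):
    """Return one dict per run, covering every combination of factor levels."""
    names = list(factors)
    return [dict(zip(names, levels)) for levels in product(*factors.values())]


design = full_factorial(FACTORS)
for run_id, condition in enumerate(design, start=1):
    print(run_id, condition)
```

With two levels for each of three factors this yields eight selection conditions, each of which would be applied to the same small, focused library.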

My library is large, but I can only screen a fraction of it. How does this affect my search?

While generating large diversity is possible, the real bottleneck is often linking genotype to phenotype with a high-throughput screen or selection [4]. The power and throughput of your screening method must match the library size [4]. Selections, where survival or replication is tied to function, can handle immense libraries but may be prone to artifacts and provide less quantitative data [4]. Screening, where you individually assess each variant, gives rich data but has lower throughput. A robust strategy often combines both, using small-scale screening to inform the design of larger-scale selections. Furthermore, iterative deep learning approaches have shown that even limited screening of ~1,000 variants per round can guide evolution efficiently if the right "building blocks," like triple mutants, are used to explore a broader sequence space [5].

Experimental Protocols for Landscape Analysis

Protocol 1: Establishing a Baseline Genotype-Phenotype Map via Site-Saturation Mutagenesis

This protocol is used to deeply explore the functional contribution of specific amino acid positions, often identified as hotspots from prior random mutagenesis [4].

- Target Selection: Identify one or a few key residues based on structural knowledge or previous data.

- Library Construction: Perform site-saturation mutagenesis at the target codon(s) using degenerate primers to encode all 19 possible alternative amino acids [4]. This is typically done via inverse PCR on the plasmid containing the parent gene.

- Transformation: Transform the ligated library into a high-efficiency E. coli strain via electroporation to maximize library diversity.

- Phenotypic Screening: Culture individual clones and assay for the target phenotype (e.g., enzymatic activity) using a high-throughput method in 96- or 384-well plates with colorimetric or fluorometric substrates [4].

- Data Analysis: Sequence variants and plot phenotypic value against genotype to construct a local genotype-phenotype map for the targeted region.
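When planning the screening step, it helps to estimate how many clones must be picked so that a given codon is likely to be sampled. The sketch below assumes an NNK degenerate codon (32 codons, an assumption not stated in the protocol) and that every codon is equally frequent in the library, which real libraries only approximate. The function names are illustrative.

```python
import math


def coverage(n_codons, n_clones):
    """Probability that one specific codon appears at least once among the clones."""
    return 1 - (1 - 1 / n_codons) ** n_clones


def clones_needed(n_codons, target=0.95):
    """Smallest number of clones giving at least the target per-codon coverage."""
    return math.ceil(math.log(1 - target) / math.log(1 - 1 / n_codons))


NNK_CODONS = 32  # assumed degeneracy: N = A/C/G/T, K = G/T

one_site = clones_needed(NNK_CODONS)        # single randomized position
two_sites = clones_needed(NNK_CODONS ** 2)  # two positions randomized together
print(one_site, two_sites)
```

The required screening effort grows steeply with the number of positions randomized together, which is one reason the protocol restricts itself to one or a few residues.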

Protocol 2: An Iterative Deep Learning-Guided Directed Evolution Workflow

This modern protocol, as exemplified by the DeepDE algorithm, uses machine learning to efficiently navigate the fitness landscape [5].

- Initial Library Creation: Generate a compact but diverse training library of approximately 1,000 protein variants. Using triple mutants as building blocks, instead of singles or doubles, allows for exploration of a much greater sequence space per round [5].

- Phenotyping and Training: Screen the entire library for the desired activity to obtain phenotypic data. Use this data to train a deep learning model to predict function from sequence.

- In Silico Prediction and Selection: The trained model predicts the fitness of a vast number of in silico variants. A new set of candidates is selected based on the model's predictions.

- Iterative Rounds: The newly selected variants are synthesized, tested experimentally, and the resulting data is used to re-train and refine the model for the next round. This cycle repeats until the performance target is met [5].
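The loop structure of such a workflow can be sketched in a few lines. The example below is not the DeepDE algorithm: it replaces the deep learning model with a simple additive lookup model (the average score per position and residue), and the wet-lab screen with a hypothetical `toy_assay` that counts matches to a hidden target sequence. All function names, library sizes, and sequences are assumptions chosen for illustration.

```python
import random
from collections import defaultdict

AMINO_ACIDS = "ACDEFGHIKLMNPQRSTVWY"


def toy_assay(seq, hidden_optimum):
    """Hypothetical stand-in for a wet-lab measurement: counts matching residues."""
    return sum(a == b for a, b in zip(seq, hidden_optimum))


def triple_mutant(parent, rng):
    """Mutate three distinct, randomly chosen positions of the parent."""
    seq = list(parent)
    for pos in rng.sample(range(len(seq)), 3):
        seq[pos] = rng.choice([a for a in AMINO_ACIDS if a != seq[pos]])
    return "".join(seq)


def fit_additive_model(measured):
    """Average score observed for each (position, residue) pair."""
    totals, counts = defaultdict(float), defaultdict(int)
    for seq, score in measured.items():
        for key in enumerate(seq):
            totals[key] += score
            counts[key] += 1
    return {key: totals[key] / counts[key] for key in totals}


def predict(model, seq, default):
    """Sum of per-site averages; unseen (position, residue) pairs get the default."""
    return sum(model.get(key, default) for key in enumerate(seq))


def run_campaign(parent, hidden_optimum, rounds=4, screen_size=50,
                 pool_size=500, seed=0):
    rng = random.Random(seed)
    measured = {parent: toy_assay(parent, hidden_optimum)}
    # Round 0: screen a random training library of triple mutants.
    for _ in range(screen_size):
        seq = triple_mutant(parent, rng)
        measured[seq] = toy_assay(seq, hidden_optimum)
    history = [max(measured.values())]
    for _ in range(rounds):
        model = fit_additive_model(measured)             # (re)train on all data
        default = sum(measured.values()) / len(measured)
        best = max(measured, key=measured.get)
        # Propose in silico candidates and keep only unscreened ones.
        pool = dict.fromkeys(triple_mutant(best, rng) for _ in range(pool_size))
        pool = [s for s in pool if s not in measured]
        ranked = sorted(pool, key=lambda s: predict(model, s, default), reverse=True)
        for seq in ranked[:screen_size]:                 # "synthesize and test"
            measured[seq] = toy_assay(seq, hidden_optimum)
        history.append(max(measured.values()))
    return history


history = run_campaign("A" * 12, "MKTLYIAKQRQI")
print(history)  # best measured score after the initial library and each round
```

The point of the sketch is the cycle of screen, train, predict, and re-screen; the surrogate model and acquisition rule would be replaced by whatever the chosen method prescribes.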

Research Reagent Solutions

The following table details key materials and their functions for directed evolution experiments focused on fitness landscape analysis.

| Item | Function in Experiment |
|---|---|
| Error-Prone PCR (epPCR) Kit | A modified PCR protocol that uses low-fidelity polymerases and manganese ions (Mn²⁺) to introduce random mutations across the gene of interest, creating diverse genotype libraries [4]. |
| High-Efficiency Competent Cells | Essential for achieving large library sizes after mutagenesis or gene shuffling, ensuring maximum sequence diversity is captured for screening [3]. |
| Colorimetric/Fluorometric Substrates | Enable high-throughput phenotypic screening by producing a detectable signal (color or fluorescence) proportional to enzyme activity in microtiter plate assays [4]. |
| Family Shuffling Templates | A set of homologous genes from different species. Used in recombination-based methods to access a broader, nature-approved region of sequence space by shuffling beneficial mutations [4]. |
| Saturation Mutagenesis Primers | Degenerate primers designed to randomize specific codons, allowing for the exhaustive exploration of all possible amino acids at a targeted position [4]. |

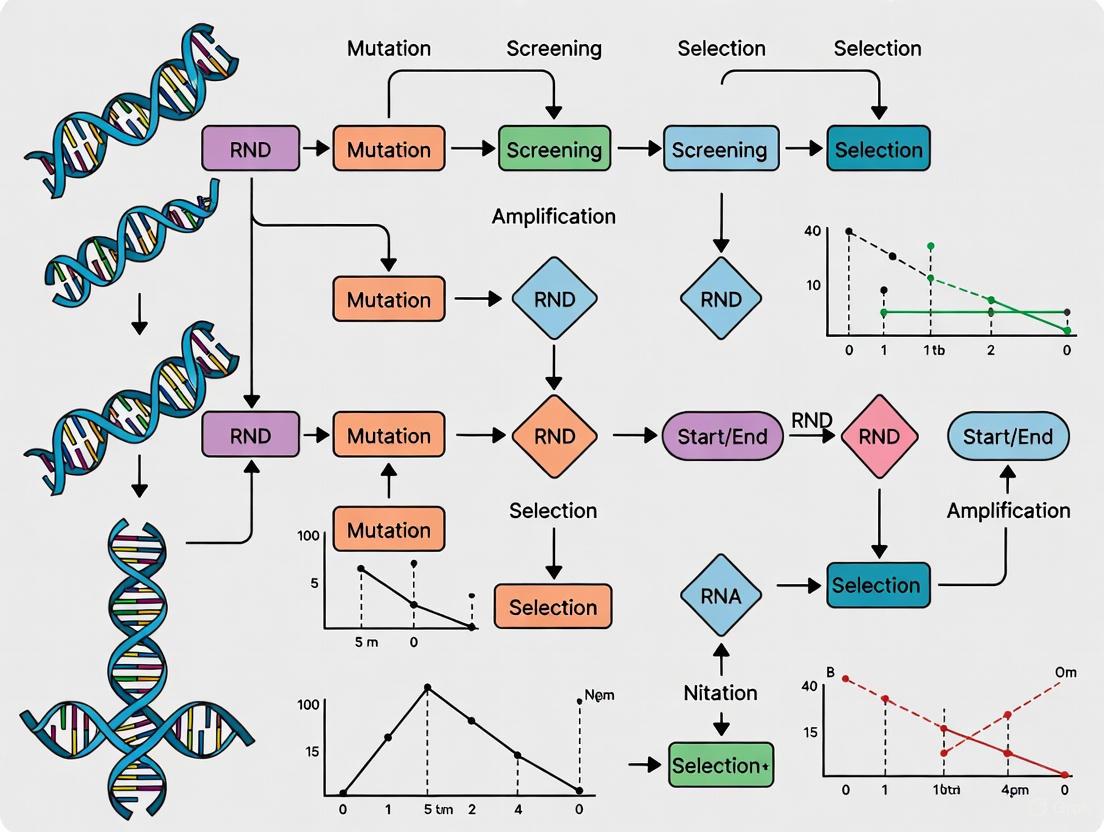

Workflow Diagram: Directed Evolution on a Fitness Landscape

The diagram below illustrates the iterative cycle of directed evolution, conceptualized as a walk on a dynamic fitness seascape.

The Critical Role of Genotype-Phenotype Linkage in Selection

Frequently Asked Questions (FAQs)

1. What is a genotype-phenotype linkage and why is it critical in directed evolution? The genotype is an organism's full hereditary information (its DNA), while the phenotype is its actual observed physical properties and functional traits, such as binding or catalytic activity [6] [7]. A genotype-phenotype linkage is a method that physically connects a protein (phenotype) to the gene that encodes it (genotype) [8]. This linkage is the fundamental practical consideration in directed evolution because it allows researchers to select a protein based on its desired function and then amplify the underlying DNA for subsequent rounds of mutation and selection, mimicking natural evolution in a laboratory setting [7].

2. What are the main methods for establishing this linkage? The primary methods can be classified into three categories [8]:

- Cell-based methods: These include techniques like phage display, where the protein is displayed on the surface of a virus (bacteriophage) that contains the gene for that protein [7].

- In vitro compartmentalization (IVC): This method uses water-in-oil emulsions to create microscopic compartments, effectively acting as artificial cells. Each compartment contains a single gene and the products it encodes, linking genotype and phenotype by physical isolation [7].

- Display technologies: Methods like ribosome display and mRNA display create a physical link between the protein and its mRNA or DNA gene in a test tube, without using cells [7].

3. When should I choose an in vitro method (like ribosome or mRNA display) over a cell-based method (like phage display)? In vitro methods offer several advantages, particularly when working with very large libraries (>10^12 members) or challenging proteins. The following table summarizes the key differences to guide your selection:

| Parameter | Cell-Based Methods (e.g., Phage Display) | In Vitro Methods (e.g., Ribosome/mRNA Display) |
|---|---|---|
| Typical Library Size | Typically limited to < 10^12 members due to transformation efficiency [7] | Can exceed 10^14 members, as no cellular transformation is needed [7] |
| Selection Conditions | Limited to physiological conditions compatible with cell survival [7] | Highly flexible; allows for non-physiological conditions (e.g., extreme pH, temperature, solvents) [7] |
| Protein Toxicity | Problematic; proteins toxic to the host cell cannot be efficiently displayed [7] | Not an issue, as no living cells are used [7] |
| Desired Activity | Well-suited for binding selections (panning) [7] | Best suited for binding selections; mRNA display can also be adapted for some catalytic functions [7] |

4. What are some common issues when working with ribosome display? A common challenge is the instability of the non-covalent ternary complex (mRNA-ribosome-protein). This can be mitigated by working at low temperatures (often 0-4°C), using high magnesium ion concentrations to stabilize the ribosome, and ensuring your mRNA template lacks a stop codon to prevent the ribosome from releasing the complex [7].

5. How can I improve my success with in vitro compartmentalization (IVC)? For IVC, the uniformity and stability of your emulsion are critical. Ensure you use a consistent and vigorous emulsification procedure. The droplet size should be small (around 2 μm diameter) to achieve a high degree of compartmentalization, ensuring that most droplets contain no more than one gene [7].
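The "no more than one gene per droplet" condition can be checked with Poisson statistics before preparing an emulsion. The sketch below assumes perfectly uniform spherical droplets (real emulsions are polydisperse) and random partitioning of genes; the 50 µL aqueous phase and 10^9 gene copies are hypothetical inputs, not values from [7].

```python
import math


def droplet_volume_litres(diameter_um):
    """Volume of a spherical droplet, converted from cubic micrometres to litres."""
    radius_um = diameter_um / 2
    volume_um3 = (4 / 3) * math.pi * radius_um ** 3
    return volume_um3 * 1e-15  # 1 µm³ = 1e-15 L


def poisson_pmf(k, lam):
    """Probability of exactly k genes in a droplet when the mean is lam."""
    return lam ** k * math.exp(-lam) / math.factorial(k)


def occupancy(genes, aqueous_volume_ul, diameter_um=2.0):
    """Droplet count, mean genes per droplet, and occupancy fractions."""
    n_droplets = (aqueous_volume_ul * 1e-6) / droplet_volume_litres(diameter_um)
    lam = genes / n_droplets
    p0, p1 = poisson_pmf(0, lam), poisson_pmf(1, lam)
    return {
        "droplets": n_droplets,
        "mean_genes_per_droplet": lam,
        "empty": p0,
        "single": p1,
        "multiple": 1 - p0 - p1,
        "single_among_occupied": p1 / (1 - p0),
    }


# Hypothetical experiment: 1e9 gene copies in 50 µL of aqueous phase.
stats = occupancy(genes=1e9, aqueous_volume_ul=50)
for name, value in stats.items():
    print(f"{name}: {value:.3g}")
```

Lowering the gene-to-droplet ratio raises the share of occupied droplets that hold a single gene, at the cost of more empty droplets.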

Troubleshooting Guides

Problem 1: Low Diversity in Selected Output

Potential Causes and Solutions:

- Cause: Inefficient linkage formation. The connection between the protein and its gene is broken during selection.

- Solution: For ribosome display, verify stabilization conditions (Mg²⁺ concentration, temperature). For mRNA display, check the efficiency of the puromycin linkage reaction [7].

- Cause: Incomplete removal of non-binders. High background noise can mask the selection of weak but desirable binders.

- Solution: Increase the number and stringency of wash steps. Include competitive elution (adding a known ligand to displace specific binders) in addition to non-specific elution (e.g., low pH) to isolate target-specific clones.

- Cause: Library bias. The initial genetic library may have limited diversity for the target.

- Solution: Use high-fidelity polymerases for amplification steps in which no additional mutations are intended. To create a more diverse starting pool, consider a different diversification strategy (e.g., error-prone PCR, DNA shuffling).

Problem 2: No Output or Very Low Output After Selection

Potential Causes and Solutions:

- Cause: Low library quality or quantity. The initial DNA library may be too small or contain a high proportion of non-functional sequences.

- Solution: Quantify your DNA library accurately. Check the integrity of the library by agarose gel electrophoresis. For cell-based methods, ensure the transformation efficiency is sufficient.

- Cause: Harsh selection conditions. The conditions may be too stringent, inactivating the proteins or disrupting the genotype-phenotype link.

- Solution: Gradually increase selection stringency over multiple rounds. For the first round, use milder conditions (e.g., shorter incubation time, higher target concentration) to enrich for any binders.

- Cause: Failure in amplification step. After selection, the recovered DNA cannot be amplified for the next round.

- Solution: For cell-based methods, ensure your competent cells are highly efficient. For in vitro methods, use a robust PCR protocol, potentially adding DMSO (2-8%) to assist in amplifying GC-rich templates [9].

Problem 3: High Background of Non-Specific Binders

Potential Causes and Solutions:

- Cause: Non-specific binding to the solid support.

- Solution: Include a pre-clearing or negative selection step by incubating the library with the support material (e.g., the beads or plate) without the target present. Use a high concentration of a non-specific blocking agent (e.g., BSA, skim milk) during incubation and washing steps.

- Cause: "Sticky" proteins in the library.

- Solution: Incorporate counter-selection strategies. For example, if selecting for binders to a specific protein, first remove binders to a closely related but undesired protein.

The Scientist's Toolkit: Key Research Reagent Solutions

The following table details essential materials and their functions for establishing robust genotype-phenotype linkages.

| Reagent / Material | Function in Experiment |
|---|---|
| In Vitro Transcription/Translation (IVT) System | A cell-free extract containing all necessary components (ribosomes, tRNAs, enzymes) to synthesize proteins from DNA or mRNA templates. Essential for all in vitro display technologies [7]. |
| Puromycin-Linker | A key reagent for mRNA display. This DNA oligonucleotide, covalently linked to puromycin, is hybridized to the mRNA. The puromycin molecule enters the ribosome and forms a covalent bond with the nascent protein, creating a stable mRNA-protein fusion [7]. |
| Magnetic Beads (Streptavidin) | Coated with streptavidin, these beads are used to immobilize biotinylated target molecules. They are the solid support of choice for many panning experiments due to easy and rapid separation using a magnet. |
| Emulsification Detergent (e.g., Abil WE 01) | A critical component for creating stable water-in-oil emulsions for In Vitro Compartmentalization (IVC). It stabilizes the microscopic aqueous droplets that act as artificial cells [7]. |
| DpnI Restriction Enzyme | Used in site-directed mutagenesis protocols to digest the methylated parental DNA template after PCR, enriching for the newly synthesized mutated DNA [9]. |
| High-Efficiency Competent Cells | Essential for transforming DNA libraries into bacterial hosts for cell-based methods like phage display. High efficiency is required to maintain library diversity [9]. |

Experimental Workflows for Key Linkage Technologies

The following diagrams illustrate the core workflows for two major in vitro genotype-phenotype linkage methods.

Diagram Title: Ribosome Display Workflow

Diagram Title: mRNA Display Workflow

In directed evolution, the selection step is where the evolutionary pressure is applied, determining which protein variants are enriched for subsequent rounds of evolution. The efficiency and success of an entire campaign hinge on the careful optimization of three key selection parameters: stringency, which defines the selective pressure; throughput, which determines the number of variants that can be assessed; and recovery, which ensures that improved variants are successfully captured. Balancing these interdependent parameters is a common challenge that requires a strategic approach. This guide provides troubleshooting advice and foundational methodologies to help researchers navigate these critical aspects of selection optimization.

Troubleshooting Common Selection Challenges

FAQ: How do I balance stringency and throughput in my selections?

- Challenge: Increasing selection stringency (e.g., higher antibiotic concentration, shorter induction time) often reduces the number of surviving clones, thereby reducing throughput and potentially losing valuable variants.

- Solution:

- Staggered Stringency: Perform parallel selections at low, medium, and high stringency. This approach captures a broad range of variant improvements without prematurely discarding moderately improved clones [10].

- Progressive Stringency: Use lower stringency in initial rounds to enrich for a larger pool of variants, then gradually increase stringency in subsequent rounds to isolate the top performers.

- Leverage FACS: When available, use Fluorescence-Activated Cell Sorting (FACS) to decouple stringency (set by gating on fluorescence intensity) from throughput, as it can sort up to 10^8 variants per day [11].

FAQ: My selection yields very few colonies. What could be wrong?

- Challenge: Low recovery after a selection round, resulting in an insufficient number of variants to maintain library diversity.

- Solution:

- Troubleshoot the Cause:

- Excessive Stringency: The selection pressure may be too high. Titrate the selective agent (e.g., antibiotic, substrate concentration) to find a level that allows for adequate colony growth.

- Low Transformation Efficiency: If using an in vivo system, ensure high-quality competent cells and optimal transformation protocols. For viral systems like VLVs, confirm high transduction efficiency [12].

- Toxic Gene Expression: If the selected trait itself is toxic to the host, consider using a tightly regulated, inducible promoter to control expression only during the selection window.

- Optimize Recovery: Use larger culture volumes or multiple selection plates to increase the absolute number of cells recovered. Ensure that the growth conditions (media, temperature, aeration) are optimal for the host organism.

FAQ: How can I ensure my selection is enriching for the desired function and not for "cheaters"?

- Challenge: Variants that bypass the intended selection pressure (e.g., by downregulating the selection system, acquiring genomic mutations, or losing the genetic element) can dominate the pool, leading to false positives [12].

- Solution:

- Counter-Selection Systems: Implement strategies to actively eliminate unedited or non-functional cells. The SELECT method, for example, uses DNA damage-induced promoters to kill unedited cells, achieving up to 100% editing efficiency and reducing background noise [13].

- Genotype-Phenotype Linkage: Use systems that physically link the gene to its encoded protein product, such as phage display or yeast surface display, making it harder for cheaters to propagate without the functional gene [11].

- Validate Hits: Always sequence the genetic material of selected variants to confirm that the improved function is linked to mutations in the gene of interest and not to host genomic mutations.

Essential Experimental Protocols for Selection Optimization

Protocol 1: Method for Analyzing Selection Parameters Using Design of Experiments (DoE)

This protocol, adapted from current research, provides a systematic framework for understanding the impact of selection conditions [10].

- Define Key Variables: Identify the critical factors to test (e.g., antibiotic concentration, induction time, temperature, substrate concentration).

- Design the Experiment: Use a statistical DoE approach (e.g., a factorial design) to create a set of selection conditions that efficiently explores the interaction between these variables.

- Perform Selections: Subject a small, well-characterized library (e.g., a mock library with known ratios of active/inactive variants) to each set of conditions.

- Analyze Output with NGS: Sequence the output populations from each condition using Next-Generation Sequencing (NGS). The required sequencing coverage for accurate identification of enriched mutants is higher than for genome assembly [10].

- Evaluate Efficiency and Fidelity: Analyze the NGS data to determine:

- Enrichment Efficiency: How effectively were the known functional variants enriched?

- Library Diversity: Was a diverse set of functional sequences recovered, or did the selection collapse to a few clones?

- Fidelity: Did the selection enrich for variants with the desired function, or did other mutations (cheaters) dominate?

- Identify Optimal Conditions: Select the set of conditions that provides the best balance of high enrichment efficiency and high library diversity for your specific directed evolution goal.
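The evaluation step can be sketched as a comparison of read counts before and after selection. The code below is a minimal illustration, not the analysis pipeline of [10]: it computes a log2 enrichment per variant (with a pseudocount to avoid division by zero) and the Shannon entropy of the output as a simple diversity measure. The variant names and read counts are invented for the mock-library example.

```python
import math


def frequencies(counts, variants, pseudocount=0.5):
    """Read-count frequencies with a pseudocount added to every variant."""
    total = sum(counts.get(v, 0) + pseudocount for v in variants)
    return {v: (counts.get(v, 0) + pseudocount) / total for v in variants}


def log2_enrichment(input_counts, output_counts, pseudocount=0.5):
    """log2(output frequency / input frequency) for every variant seen."""
    variants = sorted(set(input_counts) | set(output_counts))
    f_in = frequencies(input_counts, variants, pseudocount)
    f_out = frequencies(output_counts, variants, pseudocount)
    return {v: math.log2(f_out[v] / f_in[v]) for v in variants}


def shannon_diversity(counts):
    """Shannon entropy (bits) of the read-count distribution."""
    total = sum(counts.values())
    return -sum((c / total) * math.log2(c / total)
                for c in counts.values() if c > 0)


# Hypothetical mock library: two known active variants and one inactive variant.
input_reads = {"active_A": 100, "active_B": 100, "inactive": 800}
output_reads = {"active_A": 450, "active_B": 450, "inactive": 100}

enrichment = log2_enrichment(input_reads, output_reads)
print(enrichment)
print("output diversity (bits):", shannon_diversity(output_reads))
```

Positive scores for the known active variants and a negative score for the inactive one indicate that the condition enriches the intended function; an output entropy near zero would signal collapse onto a single clone.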

Protocol 2: Bacterial Selection for PAM-Relaxed Cas12a Variants

This protocol outlines the directed evolution workflow used to generate Cas12a variants with relaxed PAM requirements, demonstrating a robust in vivo selection strategy [14].

- Library Generation: Create a library of LbCas12a variants with random mutations in the PAM-interacting (PI) and wedge (WED) domains using error-prone PCR. Aim for a mutation rate of 6–9 nucleotides per kilobase [14].

- Dual-Plasmid Selection System:

- Expression Plasmid: Clone the Cas12a variant library into a chloramphenicol-resistant (CAM⁺) vector under an inducible promoter.

- Selection Plasmid: Use an ampicillin-resistant (Amp⁺) plasmid encoding a lethal gene (e.g., ccdB) and a target sequence adjacent to a non-canonical PAM. The lethal gene should be under a tightly regulated, inducible promoter (e.g., pBAD with arabinose) [14].

- Transformation and Selection:

- Co-transform the library and selection plasmid into competent E. coli.

- Plate the transformed bacteria on agar plates containing chloramphenicol, arabinose (to induce the lethal gene), and the Cas12a inducer.

- Only cells expressing a functional Cas12a variant that cleaves the lethal gene target will survive.

- Isolation and Validation: Isolate plasmids from surviving colonies and sequence the LbCas12a gene to identify beneficial mutations. These hits can be subjected to further rounds of evolution or characterization in mammalian systems.

Key Signaling Pathways and Workflows in Selection Systems

The following diagrams illustrate the core logic and experimental workflows of advanced selection systems discussed in this guide.

Directed Evolution Workflow

SELECT Method Counter-Selection Logic

The Scientist's Toolkit: Key Research Reagent Solutions

Table 1: Essential reagents and their functions in directed evolution selection systems.

| Reagent / Tool | Function in Selection | Example Use Case |
|---|---|---|
| Error-Prone PCR | Generates random point mutations within a gene of interest to create genetic diversity [11]. | Creating initial library of LbCas12a variants to evolve new PAM specificity [14]. |
| DNA Shuffling | Recombines fragments from homologous genes to rapidly combine beneficial mutations [11]. | Accelerating the evolution of beta-lactamase resistance by recombining mutations from different lineages. |
| Phage/Yeast Display | Provides a physical link between a protein variant (phenotype) and its encoding gene (genotype), allowing for efficient library screening [11]. | Evolution of therapeutic antibodies with high affinity for a specific antigen. |
| Fluorescence-Activated Cell Sorting (FACS) | Enables high-throughput screening and sorting of millions of cells based on a fluorescent signal linked to protein function [11]. | Isolating enzyme variants with improved activity from a library of >10^7 clones. |
| Counter-Selection Markers (e.g., ccdB, sacB) | Genes that are lethal to the host under specific conditions; their disruption signifies successful editing or function [13]. | In the SELECT system, killing unedited cells to ensure high-fidelity editing efficiency [13]. |
| Chimeric Virus-like Vesicles (VLVs) | A stable mammalian directed evolution platform that links protein function to viral propagation, enabling evolution in mammalian cells [12]. | Evolving a tetracycline transactivator (tTA) for enhanced doxycycline responsiveness within a native mammalian environment [12]. |

Troubleshooting Guides & FAQs

Fluorescence-Activated Cell Sorting (FACS) Troubleshooting

FAQ: What are the most common issues encountered during FACS experiments and how can they be resolved?

FACS is a powerful technique for analyzing and isolating cell populations based on fluorescence and physical characteristics. The table below summarizes frequent problems, their causes, and solutions to help optimize your experiments [15] [16] [17].

Table 1: Common FACS Issues and Troubleshooting Guide

| Problem | Possible Causes | Recommended Solutions [16] |
|---|---|---|
| Weak or No Fluorescent Signal | Degraded antibodies, low antibody concentration, low antigen expression, antigen internalization, or incompatible laser/PMT settings. | Titrate antibodies; use bright fluorochromes (e.g., PE, APC) for low-expression targets; store antibodies properly in the dark; optimize staining conditions at 4°C; check instrument laser and PMT settings [16] [17]. |
| High Background / Non-Specific Staining | Excess unbound antibodies, Fc receptor-mediated binding, high auto-fluorescence, or dead cells in the sample. | Include Fc receptor blocking; add viability dyes (e.g., PI, 7-AAD); wash cells thoroughly; use an unstained control to subtract auto-fluorescence; use fluorochromes that emit in the red channel [16] [17]. |
| High Fluorescence Intensity | Antibody concentration too high, high PMT voltage, or under-compensated signal. | Titrate antibodies to find optimal concentration; reduce PMT voltage; check and adjust compensation using MFI alignment [16]. |
| Abnormal Scatter Profiles | Lysed/damaged cells, bacterial contamination, incorrect instrument settings, or presence of dead cells/debris. | Optimize sample preparation to avoid cell lysis; use fresh, healthy cells to set FSC/SSC; sieve cells to remove debris; ensure proper sterile technique [16]. |
| Low Event Rate | Low cell count, sample clumping, or a clogged sample injection tube. | Ensure cell concentration is at least 1x10⁶/ml; sieve cells to remove clumps; unclog the system per manufacturer's protocol (e.g., running bleach and dH₂O) [16]. |
| High Event Rate | Overly concentrated sample or air in the flow cell. | Dilute the sample to the correct concentration; refer to the instrument manual to address air in the flow cell [16]. |

Experimental Protocol: Addressing High Background Staining

- Block Fc Receptors: Incubate cells with an Fc blocking agent, BSA, or FBS prior to antibody incubation [16].

- Include Controls: Use an unstained control to set baselines and an isotype control to identify non-specific Fc-mediated binding [16] [17].

- Wash Thoroughly: Include adequate wash steps after each antibody incubation, potentially with detergents like Tween or Triton X in the buffer to remove unbound antibodies [16].

- Viability Staining: Incorporate a viability dye to gate out dead cells during analysis, as they often bind antibodies non-specifically [16] [17].

FACS Troubleshooting Workflow

Growth Coupling in Directed Evolution Troubleshooting

FAQ: How can selection conditions be optimized to minimize parasites and false positives in growth-coupled directed evolution?

Growth coupling links a host cell's survival or growth to the activity of a desired enzyme, creating a powerful selection pressure. A key challenge is the emergence of "parasites" – variants that grow without performing the desired function – and false positives [3].

Experimental Protocol: Optimizing Selection Conditions using Design of Experiments (DoE)

This pipeline allows for systematic screening and benchmarking of selection parameters before committing large libraries [3].

- Define Factors and Ranges: Identify critical selection parameters (e.g., substrate concentration, cofactor concentration like Mg²⁺/Mn²⁺, selection time, additive concentration) and their experimental ranges [3].

- Employ a Small, Focused Library: Use a small, well-defined mutant library (e.g., a site-saturation mutagenesis library targeting active site residues) for initial screening. This makes the process efficient and cost-effective [3].

- Execute DoE and Analyze Outputs: Run the DoE and analyze selection outputs (responses). Key metrics include:

- Recovery Yield: The number of variants recovered.

- Variant Enrichment: The frequency of known active variants.

- Variant Fidelity: The balance between synthesis efficiency and accuracy, which can indicate a desired shift in polymerase/exonuclease equilibrium [3].

- Iterate and Scale: Use the optimized selection parameters for subsequent rounds of evolution with larger, more complex libraries [3].
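Once the DoE runs are complete, a first-pass analysis is to estimate the main effect of each factor on a response. The sketch below assumes a two-level design and uses invented numbers for a hypothetical response, `parasite_fraction`; it is not the analysis used in [3], and it ignores interaction terms that a proper DoE package would also fit.

```python
def level_mean(runs, factor, level, response):
    """Mean response over all runs in which the factor sits at the given level."""
    values = [r[response] for r in runs if r[factor] == level]
    return sum(values) / len(values)


def main_effects(runs, factors, response):
    """Mean response at each factor's high level minus the mean at its low level."""
    effects = {}
    for factor in factors:
        low = min(r[factor] for r in runs)
        high = max(r[factor] for r in runs)
        effects[factor] = (level_mean(runs, factor, high, response)
                           - level_mean(runs, factor, low, response))
    return effects


# Hypothetical 2x2 design: Mn2+ concentration and selection time versus the
# fraction of recovered variants classified as parasites.
RUNS = [
    {"mn_mM": 0.0, "selection_h": 1, "parasite_fraction": 0.05},
    {"mn_mM": 0.0, "selection_h": 4, "parasite_fraction": 0.15},
    {"mn_mM": 1.0, "selection_h": 1, "parasite_fraction": 0.25},
    {"mn_mM": 1.0, "selection_h": 4, "parasite_fraction": 0.35},
]

effects = main_effects(RUNS, ["mn_mM", "selection_h"], "parasite_fraction")
print(effects)
```

In this invented data set, adding Mn²⁺ raises the parasite fraction more than extending the selection time does, so the cofactor would be the first parameter to tighten.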

Table 2: Addressing Common Growth Coupling Challenges

| Challenge | Impact on Selection | Mitigation Strategy |
|---|---|---|
| Selection Parasites | Variants recover by using alternative substrates (e.g., cellular dNTPs) or pathways, not the desired function. | Carefully control substrate and cofactor concentrations to favor the desired activity; use a DoE approach to find conditions that minimize background growth [3]. |
| Low Recovery Yield | Insufficient number of variants recovered for subsequent rounds. | Optimize factors like selection time and nutrient availability using the DoE pipeline to improve yield without increasing parasites [3]. |
| Poor Fidelity in Polymerase Selections | Active variants exhibit high error rates, which is undesirable for many applications. | Analyze the polymerase/exonuclease balance by measuring fidelity; adjust cofactors (e.g., Mg²⁺/Mn²⁺ ratio) to select for high-fidelity variants [3]. |

Display Technologies Troubleshooting

FAQ: What are the key technical considerations when choosing a display technology for a directed evolution campaign?

The choice of display technology (e.g., phage display, yeast display, ribosome display) is critical. Reference [18] does not address troubleshooting of these molecular display systems directly; the considerations below instead concern the electronic display hardware of the instruments used for screening and sorting [18].

Key Considerations for Display System Performance:

When setting up a display system, the physical display module (screen) can impact usability and detection. Consider these specs to ensure reliable interaction with your system for screening and sorting [18]:

- Outdoor Visibility/Readability: If your workflow involves ambient light, consider the display's performance in such conditions. Technologies like reflective LCD (RLCD) or E-paper perform well under high ambient light without consuming excess power [18].

- Power Draw: Battery life is critical for portable devices. Lower power draw extends operational time. E-paper and front-lit reflective LCDs (LCD 2.0) are top performers for low power consumption [18].

- Response Time (Refresh Rate): For applications requiring dynamic visual feedback, a fast response time is necessary to display high-quality video graphics without lag [18].

- Operating Temperature: Ensure the display technology can function within the temperature range of your lab or any specialized environmental chambers you use [18].

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Essential Reagents for Selection Modalities

| Reagent / Material | Function in Experiment | Application Context |

|---|---|---|

| Viability Dyes (e.g., PI, 7-AAD) | Distinguishes live cells from dead cells during analysis, reducing background from non-specific binding [16] [17]. | FACS |

| Fc Receptor Blocking Reagent | Blocks non-specific antibody binding to Fc receptors on cells, reducing background staining and improving signal-to-noise ratio [16] [17]. | FACS |

| Bright Fluorochromes (e.g., PE, APC) | Provides strong signal amplification, ideal for detecting low-abundance antigens or when a signal needs to be distinguished from cellular auto-fluorescence [16]. | FACS |

| Brefeldin A | A Golgi transport blocker used in intracellular cytokine staining to prevent secretion and allow protein accumulation within the cell [16]. | FACS |

| 2′F-rNTPs (2′-deoxy-2′-α-fluoro nucleoside triphosphate) | Xenobiotic nucleic acid (XNA) substrates used to select for engineered polymerases with novel activity against non-natural substrates [3]. | Growth Coupling / Directed Evolution |

| Front-lit Reflective LCD (LCD 2.0) | A display technology that provides high resolution and quick refresh rate with ultra-low power consumption by using ambient light, ideal for portable or battery-operated screening devices [18]. | Display Technologies |

Advanced Selection Frameworks and Their Real-World Applications

Frequently Asked Questions (FAQs)

FAQ 1: What is the key advantage of using Active Learning-assisted Directed Evolution (ALDE) over traditional Directed Evolution (DE)?

ALDE more efficiently navigates complex protein fitness landscapes, especially when mutations exhibit non-additive, or epistatic, behavior. Traditional DE can be inefficient, often getting stuck at local optima. In contrast, ALDE uses an iterative machine learning workflow that leverages uncertainty quantification to explore the vast sequence space more deliberately. In a practical application, ALDE optimized an enzyme for a non-native cyclopropanation reaction, improving the product yield from 12% to 93% in just three rounds of experimentation, a scenario that was challenging for standard DE methods [19].

FAQ 2: My Bayesian Optimization (BO) performance is poor. What are the common pitfalls?

Three common pitfalls can cause poor BO performance [20]:

- Incorrect Prior Width: Using an inappropriate prior for your Gaussian Process surrogate model can misguide the search.

- Over-smoothing: This often results from a misspecified kernel lengthscale, causing the model to overlook important, sharp features of the objective function.

- Inadequate Acquisition Function Maximization: Failing to properly optimize the acquisition function itself can lead to suboptimal point selection.
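To make the over-smoothing pitfall concrete, the following minimal sketch evaluates a squared-exponential (RBF) kernel at a fixed distance for several lengthscales. The choice of kernel, the function name `rbf_kernel`, and the distance value are illustrative assumptions; the source does not specify which kernel is used.

```python
import math

def rbf_kernel(distance, lengthscale, amplitude=1.0):
    """Squared-exponential prior covariance between two points `distance` apart."""
    return amplitude ** 2 * math.exp(-distance ** 2 / (2.0 * lengthscale ** 2))

# Hypothetical distance between two variants in some numeric sequence encoding.
distance = 2.0
for lengthscale in (0.5, 2.0, 10.0):
    corr = rbf_kernel(distance, lengthscale)
    print(f"lengthscale={lengthscale}: prior correlation={corr:.3f}")
```

With a long lengthscale the prior treats the two variants as almost perfectly correlated, so a sharp fitness difference between them is smoothed away; with a very short lengthscale the model barely generalizes between them at all.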

FAQ 3: Why does Bayesian Optimization often perform poorly in high-dimensional problems (e.g., >20 dimensions)?

BO's performance challenges in high dimensions are primarily due to the curse of dimensionality [21]. The volume of the search space grows exponentially with the number of dimensions, making it extremely difficult to model the objective function accurately with a limited number of samples. The "20 dimensions" rule is a practical observation; performance degradation is gradual, not a strict threshold. Success in higher dimensions often requires making structural assumptions, such as that the problem has a lower intrinsic dimensionality or that only a sparse subset of dimensions is relevant [21].
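The exponential growth described above can be illustrated with a short calculation. This sketch assumes a simple grid with a fixed number of levels per dimension (the helper `grid_points` is hypothetical); real search spaces are rarely gridded this way, but the scaling is the same.

```python
def grid_points(levels_per_dim, n_dims):
    """Number of points needed to cover a grid with the given levels in every dimension."""
    return levels_per_dim ** n_dims

for n_dims in (2, 5, 10, 20):
    print(f"{n_dims} dimensions, 10 levels each: {grid_points(10, n_dims):.1e} points")

# The same arithmetic applies to sequence space: 5 sites with 20 amino acids each.
print(grid_points(20, 5))
```

A few hundred experimental measurements cover a vanishing fraction of such a space, which is why structural assumptions such as sparsity are needed.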

FAQ 4: How can I identify and handle errors in my training data for ML-assisted directed evolution?

Unreliable model behavior is often traced to errors in training data, such as missing, incorrect, noisy, or biased values [22]. A holistic approach involves [22]:

- Identification: Use data attribution frameworks like influence functions or Shapley values to identify training points most responsible for model predictions and errors [22].

- Debugging: Understand how errors propagate through the different stages of your ML pipeline.

- Learning from Imperfection: Instead of attempting to repair all errors (which can be expensive and introduce new errors), use methods that reason about the model's reliability in the presence of this uncertainty [22].

FAQ 5: What is the role of the acquisition function in Bayesian Optimization?

The acquisition function is a heuristic that guides the BO algorithm by determining the next best point to evaluate. It uses the surrogate model's predictions and uncertainty to balance exploration (probing uncertain regions) and exploitation (concentrating on areas known to have high performance). Common acquisition functions include [20] [23]:

- Expected Improvement (EI): Selects the point with the highest expected improvement over the current best observation.

- Probability of Improvement (PI): Selects the point with the highest probability of improving upon the current best.

- Upper Confidence Bound (UCB): Selects the point based on a weighted sum of the predicted mean and uncertainty.
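The three acquisition functions listed above can be written compactly when the surrogate returns a Gaussian prediction (mean and standard deviation) for each candidate. The sketch below uses the standard closed forms for a maximization problem; the function names and the two candidate predictions are hypothetical values chosen for illustration.

```python
import math

def normal_pdf(z):
    return math.exp(-0.5 * z * z) / math.sqrt(2.0 * math.pi)

def normal_cdf(z):
    return 0.5 * (1.0 + math.erf(z / math.sqrt(2.0)))

def expected_improvement(mu, sigma, best):
    """Expected amount by which a candidate exceeds the current best."""
    if sigma <= 0.0:
        return max(mu - best, 0.0)
    z = (mu - best) / sigma
    return (mu - best) * normal_cdf(z) + sigma * normal_pdf(z)

def probability_of_improvement(mu, sigma, best):
    """Probability that a candidate exceeds the current best."""
    if sigma <= 0.0:
        return 1.0 if mu > best else 0.0
    return normal_cdf((mu - best) / sigma)

def upper_confidence_bound(mu, sigma, beta):
    """Predicted mean plus an uncertainty bonus weighted by sqrt(beta)."""
    return mu + math.sqrt(beta) * sigma

best_so_far = 0.85                       # hypothetical best measured fitness
safe = (0.90, 0.05)                      # hypothetical (mean, std): confident, small gain
risky = (0.70, 0.40)                     # hypothetical (mean, std): uncertain, possibly large gain
for name, (mu, sigma) in (("safe", safe), ("risky", risky)):
    print(name,
          round(expected_improvement(mu, sigma, best_so_far), 4),
          round(probability_of_improvement(mu, sigma, best_so_far), 4),
          round(upper_confidence_bound(mu, sigma, beta=4.0), 4))
```

With these made-up numbers, PI prefers the safe candidate, while EI and UCB (at beta = 4) prefer the uncertain one, which illustrates how the choice of acquisition function shifts the exploration-exploitation balance.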

Troubleshooting Guides

Problem: BO is Not Finding Better Variants

Possible Causes and Solutions:

| Cause | Diagnostic Steps | Solution |

|---|---|---|

| Incorrect Prior Width [20] | Review the kernel amplitude and lengthscale of your Gaussian Process. Check if the model uncertainty is too low/high. | Adjust the GP prior to better reflect your knowledge of the protein fitness landscape. |

| Over-smoothing [20] | Check if the model is failing to capture the ruggedness of your fitness data. | Tune the kernel lengthscale to prevent the model from smoothing out important epistatic effects. |

| Poor Acquisition Maximization [20] | Verify if the internal optimization of the acquisition function is converging properly. | Use a more robust optimizer for the acquisition function and consider multiple restarts. |

| High-Dimensional Search Space [21] | Check the number of dimensions (mutations) you are optimizing. | Simplify the problem by focusing on a sparse subset of key residues or using a dimensionality reduction technique. |

Problem: My Model Performance is Unstable or Poor

Possible Causes and Solutions:

| Cause | Diagnostic Steps | Solution |

|---|---|---|

| Harmful Data Errors [22] | Use data valuation methods (e.g., Data Shapley, influence functions) to identify mislabeled or out-of-distribution data points in your training set. | Clean or remove the identified harmful data points, or use methods like confident learning to account for label noise [22]. |

| Inadequate Model for Epistasis | Analyze if your model architecture (e.g., linear model) can capture complex, non-linear interactions between mutations. | Switch to a more expressive model or use a protein language model-based representation that can better capture epistasis [19]. |

| Insufficient Initial Data | Evaluate model performance across different sizes of initial random libraries. | Ensure you start with a sufficiently large and diverse initial library to build a reasonable initial model. |

Experimental Protocols & Data

Table 1: Recent experimental implementations and their outcomes

| Method / Tool | Target System | Key Innovation | Experimental Rounds | Result / Fold Improvement | Citation |

|---|---|---|---|---|---|

| ALDE (Active Learning-assisted Directed Evolution) | ParPgb enzyme (5 epistatic residues) | Batch BO with wet-lab experimentation, leveraging uncertainty quantification. | 3 rounds | Yield improved from 12% to 93% for a cyclopropanation reaction [19]. | [19] |

| DeepDE (Iterative deep learning) | Green Fluorescent Protein (GFP) | Uses triple mutants as building blocks; trained on ~1,000 mutants per round. | 4 rounds | 74.3-fold increase in activity over baseline [5]. | [5] |

| MADGUI (Graphical User Interface) | General process optimization | User-friendly GUI for active learning and BO, requires no coding. | N/A | Provides an accessible platform for optimal experiment design [24]. | [24] |

Table 2: Comparison of Data Importance Quantification Methods

Based on "Navigating Data Errors in Machine Learning Pipelines" [22].

| Method | Core Principle | Scalability | Key Utility |

|---|---|---|---|

| Influence Functions | Traces model prediction back to training data to find the most responsible points. | Moderate (requires gradients/Hessians) | Understanding model behavior, debugging, detecting dataset errors [22]. |

| Data Shapley | Equitably values each training point based on its contribution to predictor performance. | Computationally expensive | More powerful than leave-one-out; identifies outliers and valuable data [22]. |

| Beta Shapley | A generalization of Data Shapley by relaxing the efficiency axiom. | Improved over standard Shapley | A unified and noise-reduced data valuation framework [22]. |

| Confident Learning | Estimates uncertainty in dataset labels by characterizing label errors. | Good | Identifies label errors in datasets; used to clean data prior to training [22]. |
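As an illustration of how Data Shapley flags a harmful training point, the sketch below computes exact Shapley values by enumerating every ordering of a five-point training set. Everything here is an assumption made for the example: the labels, the trivial mean predictor, and the utility (negative squared error against a validation target of 1.0). Exact enumeration scales factorially, which is why the table lists the method as computationally expensive and why practical implementations approximate it.

```python
from itertools import permutations

def utility(labels):
    """Negative squared error of a mean predictor against a validation target of 1.0."""
    prediction = sum(labels) / len(labels) if labels else 0.0
    return -(prediction - 1.0) ** 2

def exact_data_shapley(train_labels):
    """Average marginal contribution of each training point over all orderings."""
    n = len(train_labels)
    values = [0.0] * n
    orderings = list(permutations(range(n)))
    for order in orderings:
        subset = []
        previous = utility(subset)
        for idx in order:
            subset.append(train_labels[idx])
            current = utility(subset)
            values[idx] += current - previous
            previous = current
    return [v / len(orderings) for v in values]

# Hypothetical training labels; the last point is deliberately mislabeled.
train_labels = [1.0, 1.1, 0.9, 1.0, 5.0]
values = exact_data_shapley(train_labels)
for label, value in zip(train_labels, values):
    print(f"label={label}: Shapley value={value:+.3f}")
```

The mislabeled point receives the lowest (negative) value because adding it to any subset moves the prediction away from the validation target.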

Workflow Visualization

Diagram 1: Active Learning-Assisted Directed Evolution (ALDE) Workflow

Diagram 2: The Bayesian Optimization Cycle

The Scientist's Toolkit: Research Reagent Solutions

Table 3: Key Materials and Computational Tools

Essential resources for implementing ML-assisted directed evolution from the cited literature.

| Item / Resource | Function / Description | Example Use Case / Note |

|---|---|---|

| PROTEUS Platform [25] | A biotech platform for evolving molecules (proteins, antibodies) inside mammalian cells. | Reported to shorten evolution that would otherwise take years or decades, for applications such as switching off genetic diseases [25]. |

| ALDE Codebase [19] | A computational package for running the Active Learning-assisted Directed Evolution workflow. | Available at https://github.com/jsunn-y/ALDE; integrates with wet-lab screening data [19]. |

| MADGUI [24] | A user-friendly Graphical User Interface (GUI) for active learning and Bayesian optimization. | Built for users with no programming knowledge; accelerates discovery of optimal solutions [24]. |

| ParPgb (Pyrobaculum arsenaticum) [19] | A protoglobin scaffold used as an engineering target for non-native carbene transfer reactions. | Chosen for its high thermostability and ability to perform novel chemistries [19]. |

| cleanlab [22] | An open-source Python library for confident learning and estimating label errors in datasets. | Used to find label errors and improve model accuracy by cleaning data prior to training [22]. |

Frequently Asked Questions (FAQs)

Q1: What are the fundamental differences between PACE and orthogonal replication systems?

A1: While both are continuous evolution platforms, they are architecturally and operationally distinct, as summarized in the table below.

| Feature | Phage-Assisted Continuous Evolution (PACE) | Orthogonal Replication Systems (e.g., OrthoRep) |

|---|---|---|

| Core Principle | Links protein function to the infectivity of the M13 bacteriophage. [26] | Uses an error-prone, dedicated DNA polymerase to replicate a separate plasmid independently of the host genome. [27] |

| Host Organism | Primarily Escherichia coli. [26] | Primarily Saccharomyces cerevisiae (yeast). [27] |

| Mutation Mechanism | Error-prone replication of the phage genome in a mutator host cell. [26] | Engineered, targeted mutagenesis by an orthogonal DNA polymerase (e.g., TP-DNAP1). [27] |

| Key Advantage | Extremely fast generational turnover (as short as 1-2 hours) with minimal researcher intervention. [26] | Stable, targeted mutagenesis of a specific plasmid without altering the host genome, enabling long-term evolution. [27] |

Q2: My PACE experiment is not producing any selection phage (SP). What could be wrong?

A2: A lack of SP output typically indicates a failure in the core selection circuit. The following checklist can help diagnose the issue.

- Check the Accessory Plasmid (AP): Ensure the AP is present and functional. It must supply the pIII protein only in response to the desired activity of your protein of interest (POI). Confirm that the selection circuit on the AP is correctly configured and that the inducer (if any) is working. [26]

- Verify the Selection Phage (SP) Construction: Confirm that the gene for the POI has correctly replaced the pIII gene (gIII) in the SP. If the SP still contains a functional gIII, it will propagate regardless of your POI's activity, invalidating the selection. [26]

- Troubleshoot the Lagoon Culture: The lagoon must receive a continuous inflow of fresh host cells at a high, constant density, and the SP persists only if it replicates faster than it is diluted out. Check that the lagoon dilution rate is calibrated so that the phage is not washed out, and that the host cells are healthy and stably maintain the AP. [26]
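The washout check in the last item can be reasoned about with a deliberately simplified model in which the phage population grows exponentially at a net rate equal to its replication rate minus the lagoon dilution rate. The function names and all numbers below are hypothetical, and the model ignores host-cell dynamics and infection kinetics; it is a sanity check, not a description of the system in [26].

```python
import math

def dilution_rate(flow_ml_per_h, lagoon_volume_ml):
    """Lagoon volumes exchanged per hour."""
    return flow_ml_per_h / lagoon_volume_ml

def phage_fold_change(replication_rate_per_h, dilution_per_h, hours):
    """Fold change in phage titer under simple exponential growth minus dilution."""
    return math.exp((replication_rate_per_h - dilution_per_h) * hours)

d = dilution_rate(flow_ml_per_h=40.0, lagoon_volume_ml=40.0)   # hypothetical setup
for rate in (0.5, 2.0):                                        # hypothetical replication rates
    change = phage_fold_change(rate, d, hours=10.0)
    status = "persists" if change >= 1.0 else "washes out"
    print(f"replication rate {rate}/h at dilution {d}/h: {status}")
```

If a population that should be active is predicted to wash out at the current flow rate, lowering the dilution rate or reducing selection stringency are the two levers this simple picture suggests.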

Q3: How stable are orthogonal replication systems across different host strains, and what could cause instability?

A3: Systems like OrthoRep are generally highly stable across a wide range of yeast strains, including common lab strains (BY4741, W303–1A), industrial strains (CEN.PK2-1C), and diploids. [27] However, a primary historical source of instability was traced to a toxin/antitoxin (TA) system naturally encoded on the wild-type orthogonal plasmid. Critical Note: In any OrthoRep application, this TA system is replaced by your gene of interest. Therefore, if you are using a properly engineered OrthoRep plasmid, instability from this source should not occur, and the system should be broadly compatible. [27]

Q4: What are "parasite" variants in directed evolution, and how can I minimize their emergence?

A4: Selection parasites are variants that are enriched not by performing the desired function, but by exploiting an alternative, often easier, pathway to survive the selection pressure. [3] For example, a polymerase intended to incorporate xenobiotic nucleic acids (XNAs) might be selected for its ability to use low levels of endogenous dNTPs present in the system instead. [3] To minimize parasites, you must rigorously optimize your selection conditions (e.g., substrate concentration, cofactors, time) to strongly favor the desired activity and de-select the parasitic one. [3]

Troubleshooting Guides

PACE: Low Mutant Diversity or Stagnant Evolution

This issue arises when the population lacks sufficient genetic diversity to find a solution or becomes trapped on a local fitness peak.

- Problem: Evolution has stalled; the population seems homogeneous.

- Solution: Increase the mutation rate by using a mutator plasmid (MP) that expresses an error-prone DNA polymerase. Titrate the mutagenesis rate, as excessively high rates can lead to a population collapse. [26]

- Solution: Implement a "tunable" selection circuit. If the selection pressure is too strong too early, it can eliminate all but a few variants before beneficial mutations arise. Consider starting with a weaker selection and gradually increasing its stringency over time. [26]

Orthogonal Replication: Low Mutation Rate or No Evolution

When the orthogonal plasmid does not mutate at the expected rate, the evolution process grinds to a halt.

- Problem: The gene of interest (GOI) on the orthogonal plasmid is not accumulating mutations.

- Solution: Verify the expression of the error-prone orthogonal DNA polymerase. In OrthoRep, the mutagenic TP-DNAP1 must be expressed in trans from the host genome. Confirm that its gene is present and functional. [27]

- Solution: Confirm the replication status of the orthogonal plasmid. The system relies on the orthogonal polymerase being the sole replicator for the target plasmid. Ensure that host polymerases are not interfering with its replication, which would bypass the mutagenic process. [27]

- Solution: Measure the baseline mutation rate using a fluctuation assay with a reporter gene (e.g., leu2 reversion). Compare your measured rate to the expected rate for your specific orthogonal polymerase variant (e.g., ~10⁻⁹ substitutions per base for wild-type TP-DNAP1 vs. ~10⁻⁶ for an error-prone variant). [27]
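One classical way to turn fluctuation-assay counts into a rate is the p0 method, which uses the fraction of parallel cultures that contain no mutants. The sketch below assumes this estimator (the source does not say which one was used), and the helper name, culture counts, and cell numbers are hypothetical. It also ignores plasmid copy number and other corrections that a real OrthoRep measurement may require.

```python
import math

def p0_mutation_rate(cultures_without_mutants, total_cultures,
                     cells_per_culture, target_sites=1):
    """Estimate mutations per target site per cell division with the p0 method.

    m = -ln(p0) is the expected number of mutation events per culture;
    dividing by the final cell number and by the number of sites whose
    substitution gives the phenotype yields a per-site rate.
    """
    p0 = cultures_without_mutants / total_cultures
    if not 0.0 < p0 < 1.0:
        raise ValueError("p0 must be strictly between 0 and 1 for this estimator")
    events_per_culture = -math.log(p0)
    return events_per_culture / (cells_per_culture * target_sites)

# Hypothetical assay: 18 of 36 cultures show no revertants, 1e6 cells per culture.
rate = p0_mutation_rate(18, 36, cells_per_culture=1e6)
print(f"estimated rate: {rate:.2e} per site per division")
```

The estimate can then be compared with the order of magnitude expected for the polymerase variant in use.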

General: Optimizing Selection Conditions to Reduce False Positives

This guide uses Design of Experiments (DoE) to systematically optimize selection parameters, a method applicable to various directed evolution platforms, including emulsion-based ones. [3]

- Step 1: Define Factors and Responses. Identify key adjustable factors (e.g., Mg²⁺/Mn²⁺ concentration, nucleotide chemistry and concentration, selection time). Define the measurable responses (e.g., recovery yield, enrichment of desired variants, variant fidelity). [3]

- Step 2: Screen with a Small Library. Use a small, focused mutant library to test a matrix of different factor combinations. This allows for efficient benchmarking of how selection parameters influence outcomes without the cost of running a full-scale evolution experiment. [3]

- Step 3: Analyze and Iterate. Analyze the selection outputs to identify the conditions that maximize your desired response (e.g., highest enrichment of true positives, lowest recovery of parasites). Use these optimized conditions for subsequent, larger-scale evolution rounds. [3]

Orthogonal Replication Mutation Rates Across Host Strains

The per-base substitution rates for the OrthoRep system are consistent across various S. cerevisiae strains, confirming its general applicability. [27]

| Host Strain | Orthogonal DNAP | Mutation Rate (subs/base) |

|---|---|---|

| BY4741 | Wild-type TP-DNAP1 | 1.23 × 10⁻⁹ |

| BY4741 | Error-prone TP-DNAP1-4-3 | 4.48 × 10⁻⁶ |

| CEN.PK2-1C | Wild-type TP-DNAP1 | 2.01 × 10⁻⁹ |

| CEN.PK2-1C | Error-prone TP-DNAP1-4-3 | 3.36 × 10⁻⁶ |

| W303-1A | Wild-type TP-DNAP1 | 1.73 × 10⁻⁹ |

| W303-1A | Error-prone TP-DNAP1-4-3 | 2.71 × 10⁻⁶ |

Key Selection Parameters for Polymerase Engineering

Based on a study optimizing selection conditions for DNA/XNA polymerase engineering, the following parameters are critical to monitor and control. [3]

| Parameter Category | Specific Factors | Impact on Selection |

|---|---|---|

| Cofactors | Mg²⁺ and/or Mn²⁺ concentration | Shapes polymerase activity and fidelity; influences cooperative interplay between polymerase and exonuclease domains. [3] |

| Substrates | Nucleotide chemistry (dNTPs vs. XNTPs) and concentration | Directly selects for enzymes that utilize desired substrates; low concentration can favor "parasite" variants. [3] |

| Reaction Conditions | Selection time, PCR additives | Alters stringency; longer time or specific additives can favor variants with higher processivity or stability. [3] |

The Scientist's Toolkit: Essential Research Reagents

This table details key reagents and materials required to establish and run continuous evolution systems.

| Reagent / Material | Function in Experiment | Example / Note |

|---|---|---|

| Mutator Plasmid (MP) | Expresses error-prone DNA polymerase components in the host to elevate the mutation rate of the target gene in PACE. [26] | For example, MP6, which carries mutagenesis-promoting genes including the dominant-negative proofreading subunit dnaQ926. |

| Accessory Plasmid (AP) | In PACE, encodes the essential pIII protein under the control of a selection circuit linked to the protein's activity. [26] | The AP is the "brain" of the selection, linking survival to function. |

| Selection Phage (SP) | The engineered M13 phage where the gene of interest replaces the pIII gene. Its propagation is dependent on the POI's function. [26] | The vehicle for the evolving gene. |

| Orthogonal DNA Polymerase | A dedicated polymerase that replicates only a specific plasmid, not the host genome. Error-prone versions drive targeted evolution. [27] | TP-DNAP1 in the yeast OrthoRep system. |

| Orthogonal Plasmid | The specialized plasmid that is replicated by the orthogonal DNA polymerase. It carries the gene(s) to be evolved. [27] | The p1 plasmid in the OrthoRep system. |

| Host Cells | The organism that houses the continuous evolution system. Must be compatible with all system components. | E. coli for PACE; S. cerevisiae for OrthoRep. [27] [26] |

Design of Experiments (DoE) for Systematic Selection Parameter Screening

Frequently Asked Questions (FAQs)

FAQ 1: What is the primary purpose of a Screening Design of Experiments (DOE) in directed evolution?

The primary purpose of a screening DOE is to efficiently identify the few significant factors—such as cofactor concentration, substrate concentration, or selection time—from a long list of potential variables that influence your selection output [3] [28] [29]. It is an economical experimental plan that focuses on determining the relative significance of main effects when you are dealing with many potential factors [29].

FAQ 2: When should I use a screening DOE in my directed evolution pipeline?

A screening DOE is particularly useful in several scenarios [28]:

- When you are dealing with a process that involves a large number of potential factors and running a full factorial DOE would be impractical.

- When your goal is to quickly identify the most significant variables affecting a fitness metric (e.g., enzymatic activity or yield) so you can focus resources on them.

- As a preparation for a subsequent optimization DOE, where you will find the optimal levels for the critical factors identified during screening.

FAQ 3: What are the main limitations of screening designs?

While efficient, screening DOEs have limitations [28]:

- They often confound interactions with main effects, meaning you might miss important information about how factors influence each other.

- They are generally not able to detect quadratic or higher-order effects.

- Their efficiency comes at the cost of reduced information compared to a full factorial design.

FAQ 4: How do I choose the right type of screening design?

The choice depends on the number of factors and the need to detect interactions [28]:

- 2-Level Fractional Factorial Designs: Common for screening; they estimate main effects while confounding interactions. The resolution of the design (e.g., Resolution III or IV) indicates the degree of this confounding [29].

- Plackett-Burman Designs: Useful for investigating a large number of factors with a very small number of runs, but they assume interactions are negligible [28].

- Definitive Screening Designs: A more advanced option that can estimate main effects, two-way interactions, and quadratic effects in a single experiment [28].
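To show where the run savings and the confounding in a 2-level fractional factorial come from, the sketch below builds a half fraction by setting the last factor equal to the product of the others. This generator choice and the helper names are assumptions for illustration; statistical software typically offers several generators per design.

```python
from itertools import product
from math import prod

def full_factorial(k):
    """All 2^k combinations of coded levels -1 and +1."""
    return list(product((-1, 1), repeat=k))

def half_fraction(k):
    """2^(k-1) runs: the last factor's level is the product of the other k-1 levels."""
    return [base + (prod(base),) for base in product((-1, 1), repeat=k - 1)]

for k in (3, 4, 5):
    print(f"{k} factors: full factorial {len(full_factorial(k))} runs, "
          f"half fraction {len(half_fraction(k))} runs")

for run in half_fraction(3):
    print(run)
```

For three factors this generator (C = AB) gives a Resolution III design, so the main effect of C cannot be separated from the A×B interaction; for four factors (D = ABC) the design is Resolution IV, where main effects are clear of two-factor interactions but those interactions are aliased with each other.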

FAQ 5: What are the critical best practices for conducting a successful screening DOE?

Key practices include [28]:

- Eliminate Noise: Control for known sources of variation to prevent contamination of your results.

- Clear Objectives: Understand if you are only interested in main effects or if interactions are likely to be important.

- Sequential Experimentation: Be prepared to revisit and refine your screening DOE using techniques like "folding" to increase resolution if interactions are suspected.

Troubleshooting Guides

Problem 1: Inconclusive or No Significant Factors Identified

Potential Causes and Solutions:

- Cause: Factor levels set too close together. The high and low levels you chose for your factors may not be sufficiently different to produce a detectable effect on the response.

- Solution: In your next experiment, select more extreme (but still realistic) high and low levels for each factor based on process knowledge [30].

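- Cause: Excessive background noise. Uncontrolled variables may be obscuring the signal from the factors you are testing.

- Solution: Control known sources of variation, as recommended in FAQ 5 [28], and consider replicating runs so that factor effects can be distinguished from run-to-run noise.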

- Cause: The selected factors genuinely do not impact the response.

- Solution: Re-evaluate your initial hypothesis and consider screening a different set of parameters.

Problem 2: Results Are Not Reproducible at Scale

Potential Causes and Solutions:

- Cause: Assembly or configuration errors during testing. This is a common issue where units are not built according to the specified experimental design [31].

- Solution: Verify each experimental unit (e.g., each reaction mix or plate) as it is assembled. Use visual aids and checklists to ensure each configuration matches the design matrix [31].

- Cause: Laboratory test conditions do not accurately reflect real-world performance.

- Solution: Validate your test conditions. Ensure that the selection pressures and screening environment used in the DOE are a meaningful simulation of the final application [31].

Problem 3: Suspected Factor Interactions Were Missed

Potential Causes and Solutions:

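- Cause: Use of a low-resolution screening design. Resolution III designs confound main effects with two-factor interactions [28] [29].

- Solution: Increase the resolution by folding the design, as described in FAQ 5, or follow up with a design that can estimate interactions, such as a definitive screening design [28].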
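The "folding" technique mentioned in FAQ 5 can be sketched for the smallest case: a three-factor Resolution III half fraction (generator C = AB, an assumption chosen for illustration) is combined with its mirror image, in which every factor sign is reversed. The helper names are hypothetical.

```python
from itertools import product

def half_fraction():
    """2^(3-1) Resolution III design with generator C = A*B."""
    return [(a, b, a * b) for a, b in product((-1, 1), repeat=2)]

def fold_over(design):
    """Append the mirror image of every run (all factor signs reversed)."""
    return design + [tuple(-level for level in run) for run in design]

def column_dot(design, column_1, column_2):
    """Dot product of two contrast columns; zero means they can be estimated separately."""
    return sum(column_1(run) * column_2(run) for run in design)

original = half_fraction()
combined = fold_over(original)

# In the original fraction, column C is identical to the A*B interaction column.
aliased = column_dot(original, lambda r: r[2], lambda r: r[0] * r[1])
# After folding, the two columns are orthogonal.
separated = column_dot(combined, lambda r: r[2], lambda r: r[0] * r[1])
print(aliased, separated)  # prints: 4 0
```

In this small case the folded design is the complete 2^3 factorial; with more factors, folding a Resolution III design frees the main effects from two-factor interactions at the cost of doubling the number of runs.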

Quantitative Data on Common Screening Designs

The table below summarizes key characteristics of different screening design types to aid in selection.

Table 1: Comparison of Common Screening Design of Experiments (DOE) Types

| Design Type | Primary Use | Typical Resolution | Key Advantage | Key Limitation |

|---|---|---|---|---|

| 2-Level Fractional Factorial [28] | Screening many factors | III, IV, or V [29] | Highly efficient; requires only a fraction of the full factorial runs. | Confounds (aliases) interactions with main effects or other interactions. |

| Plackett-Burman [28] | Screening a very large number of factors | III | Extremely low number of runs for the factors investigated. | Assumes all interactions are negligible; not suitable if interactions are present. |

| Definitive Screening [28] | Screening with potential for curvilinear effects | N/A | Can estimate main effects, interactions, and quadratic effects. | Requires more runs than a Plackett-Burman design. |

Experimental Workflow & Protocol

The following diagram illustrates a generalized workflow for executing a screening DOE in the context of directed evolution.

Figure 1: Screening DOE workflow for directed evolution.

Detailed Protocol for a 2-Factor Screening DOE

This protocol outlines the steps for a basic 2-factor, 2-level full factorial design, which is a foundational building block for more complex fractional factorial designs [30].

1. Define the Problem and Metrics:

- Objective: Understand the effect of Mg2+ concentration and nucleotide chemistry on the recovery yield of active polymerase variants in a compartmentalized self-replication (CSR) selection [3].

- Response Variable: Recovery yield (a quantitative measure).

2. Select Factors and Levels:

- Code the factors as -1 (low level) and +1 (high level).

- Factor A: Mg2+ Concentration: -1 = 2 mM, +1 = 8 mM.

- Factor B: Nucleotide Chemistry: -1 = dNTPs, +1 = 2′F-rNTPs [3].

3. Create a Design Matrix:

- The matrix specifies the experimental conditions for each run.

Table 2: Design Matrix and Hypothetical Results for a 2-Factor Polymerase Selection DOE

| Experiment # | Mg2+ Conc. (Coded) | Nucleotide (Coded) | Mg2+ Conc. (Actual) | Nucleotide (Actual) | Recovery Yield (Response) |

|---|---|---|---|---|---|

| 1 | -1 | -1 | 2 mM | dNTPs | 21% |

| 2 | -1 | +1 | 2 mM | 2′F-rNTPs | 42% |

| 3 | +1 | -1 | 8 mM | dNTPs | 51% |

| 4 | +1 | +1 | 8 mM | 2′F-rNTPs | 57% |

4. Execute Experiments and Analyze Data:

- Run the experiments in a randomized order to avoid bias [30].

- Calculate the main effect of each factor [30]:

- Effect of Mg2+: (Y3 + Y4)/2 - (Y1 + Y2)/2 = (51 + 57)/2 - (21 + 42)/2 = 22.5%

- Effect of Nucleotide: (Y2 + Y4)/2 - (Y1 + Y3)/2 = (42 + 57)/2 - (21 + 51)/2 = 13.5%

- This analysis shows that, under these conditions, increasing Mg2+ concentration has a larger positive effect on recovery yield than switching from dNTPs to 2′F-rNTPs (22.5 vs. 13.5 percentage points).
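The main-effect arithmetic can be reproduced directly from the design matrix in Table 2. The sketch below also computes the two-factor interaction contrast, which is not part of the original protocol and is added here only as an illustration; the helper name `effect` is hypothetical. With a single unreplicated run per condition, none of these estimates comes with a measure of statistical significance.

```python
# (Mg2+ coded level, nucleotide coded level, recovery yield in %), from Table 2.
runs = [
    (-1, -1, 21.0),
    (-1, +1, 42.0),
    (+1, -1, 51.0),
    (+1, +1, 57.0),
]

def effect(runs, contrast):
    """Mean response where the contrast is +1 minus mean response where it is -1."""
    high = [y for *levels, y in runs if contrast(*levels) > 0]
    low = [y for *levels, y in runs if contrast(*levels) < 0]
    return sum(high) / len(high) - sum(low) / len(low)

mg_effect = effect(runs, lambda a, b: a)
nucleotide_effect = effect(runs, lambda a, b: b)
interaction = effect(runs, lambda a, b: a * b)

print(mg_effect)          # 22.5
print(nucleotide_effect)  # 13.5
print(interaction)        # -7.5
```

The negative interaction contrast in these hypothetical data indicates that the benefit of 2′F-rNTPs is smaller at the high Mg2+ level (57 vs. 51) than at the low level (42 vs. 21).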

Research Reagent Solutions

The table below lists key materials and their functions in establishing selection parameters for directed evolution, particularly for enzyme engineering.

Table 3: Essential Research Reagents for Selection Parameter Screening

| Reagent / Material | Function in Directed Evolution | Example Application |

|---|---|---|

| Metal Cofactors (e.g., Mg2+, Mn2+) | Essential for catalytic activity of many enzymes; concentration can dramatically influence activity and fidelity [3]. | Optimizing polymerase performance in CSR selections [3]. |

| Nucleotide Analogues (e.g., 2′F-rNTPs) | Unnatural substrates used to select for polymerases with novel or enhanced activities, such as XNA synthesis [3]. | Engineering XNA polymerases for biotechnological applications [3]. |

| PCR Additives | Chemical additives that can alter enzyme stability, processivity, or specificity during selection pressure [3]. | Fine-tuning selection stringency in emulsion-based screens [3]. |

| Emulsification Agents | Enable compartmentalization of individual variants in water-in-oil emulsions, creating a strong genotype-phenotype link [3]. | Implementing CSR and other ultra-high-throughput screening platforms [3]. |

This section provides a detailed guide to the Active Learning-assisted Directed Evolution (ALDE) workflow, from establishing your initial library to analyzing the final results. The diagram below illustrates the iterative, closed-loop nature of the process.

Workflow Diagram Title: ALDE Iterative Optimization Cycle

Step-by-Step Protocol

- Define the Combinatorial Design Space: The case study focused on five epistatic residues (W56, Y57, L59, Q60, F89) in the active site of the Pyrobaculum arsenaticum protoglobin (ParPgb) starting variant ParLQ (W59L Y60Q). This creates a theoretical design space of 20^5 (3.2 million) possible variants [19].

- Generate Initial Library: Synthesize an initial library of variants mutated at all five positions. Use sequential rounds of PCR-based mutagenesis with NNK degenerate codons to introduce diversity [19].

- Screen for Fitness: Express and screen the library variants using a relevant biochemical assay. The primary fitness objective for the cyclopropanation case study was defined as the difference between the yield of the desired cis-cyclopropane product (cis-2a) and the yield of the trans product (trans-2a) from the reaction of 4-vinylanisole and ethyl diazoacetate [19].

- Train the Machine Learning Model: Use the collected sequence-fitness data to train a supervised machine learning model. This model learns to map protein sequences to the fitness objective. Employ frequentist uncertainty quantification for robust performance [19].

- Rank and Select New Variants: Apply an acquisition function, such as the Upper Confidence Bound (UCB), to the trained model. This function ranks all sequences in the design space, balancing the exploration of uncertain regions with the exploitation of regions predicted to have high fitness [19] [32].

- Iterate the Process: The top-ranked variants from the acquisition function are synthesized and screened in the next wet-lab round (Round 2). The new data is then used to retrain the model, and the cycle repeats until a variant with satisfactory performance is identified [19].
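The loop described in the steps above can be sketched on a toy problem. This is not the ALDE codebase: the reduced alphabet, the `toy_assay` function standing in for the wet-lab screen, the site-wise additive model, and the bootstrap ensemble used for uncertainty are all assumptions chosen to keep the example small and dependency-free.

```python
import itertools
import math
import random
import statistics

ALPHABET = "ACDE"   # hypothetical reduced alphabet (the real space is 20^5 variants)
N_SITES = 3

def toy_assay(variant):
    """Hypothetical stand-in for the wet-lab screen, with one epistatic pair."""
    score = sum(0.1 * ALPHABET.index(res) for res in variant)
    if variant[0] == "D" and variant[2] == "E":
        score += 1.0   # only the combination is strongly beneficial
    return score

def fit_additive(data):
    """Site-wise additive model: global mean plus per-site residue offsets."""
    mean = statistics.fmean(y for _, y in data)
    offsets = {}
    for site in range(N_SITES):
        for res in ALPHABET:
            ys = [y for v, y in data if v[site] == res]
            offsets[(site, res)] = statistics.fmean(ys) - mean if ys else 0.0
    return lambda v: mean + sum(offsets[(i, r)] for i, r in enumerate(v))

def fit_ensemble(data, n_models, rng):
    """Bootstrap ensemble; the spread of its predictions serves as the uncertainty."""
    return [fit_additive(rng.choices(data, k=len(data))) for _ in range(n_models)]

def ucb_scores(models, candidates, beta):
    scores = {}
    for v in candidates:
        preds = [model(v) for model in models]
        scores[v] = statistics.fmean(preds) + math.sqrt(beta) * statistics.pstdev(preds)
    return scores

def run_alde(n_rounds=3, batch_size=8, beta=1.0, seed=0):
    rng = random.Random(seed)
    space = ["".join(p) for p in itertools.product(ALPHABET, repeat=N_SITES)]
    # Initial library: a random sample of the design space.
    measured = {v: toy_assay(v) for v in rng.sample(space, batch_size)}
    for _ in range(n_rounds):
        models = fit_ensemble(list(measured.items()), n_models=20, rng=rng)
        untested = [v for v in space if v not in measured]
        scores = ucb_scores(models, untested, beta)
        batch = sorted(untested, key=scores.get, reverse=True)[:batch_size]
        measured.update({v: toy_assay(v) for v in batch})   # "screen" the new batch
    return measured

measured = run_alde()
best = max(measured, key=measured.get)
print(f"tested {len(measured)} of {len(ALPHABET) ** N_SITES} variants; best so far: {best}")
```

The additive model here cannot represent epistasis, so it is a placeholder only; in practice the model and sequence encoding would be chosen for their ability to capture non-additive effects and to give reliable uncertainty estimates, as discussed in the troubleshooting FAQs.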

Troubleshooting Common Experimental Issues

FAQ 1: My initial library screening shows no significant improvement over the parent sequence. Should I continue?

- Answer: Yes, you should continue. A lack of significantly improved single mutants is a classic signature of a rugged fitness landscape with strong epistasis, where the beneficial effect of a mutation depends on the genetic background. This is precisely the scenario where ALDE provides the most value over traditional methods. In the ParPgb case, single-site saturation mutagenesis (SSM) also showed no promising single mutants, and simple recombination of the "best" single mutants failed. However, after three rounds of ALDE, a high-performance variant was successfully identified [19].

FAQ 2: How do I choose an acquisition function, and what is the UCB parameter (β)?

- Answer: The Upper Confidence Bound (UCB) is a popular acquisition function. It is defined as \( \alpha(\mathfrak{p}) = \mu(\mathfrak{p}) + \sqrt{\beta}\,\sigma(\mathfrak{p}) \), where \( \mu \) is the predicted fitness, \( \sigma \) is the model's uncertainty, and \( \beta \) sets how strongly uncertainty is weighted [32].

- High β (>0): Prioritizes exploration (sampling uncertain regions). This helps the model learn about under-explored areas of the sequence space and can escape local optima.

- Low β (~0): Prioritizes exploitation (sampling from high-fitness predicted regions). This acts like a greedy search.

- Recommendation: Theory suggests a dynamically adjusted β, but a practical sweet spot often lies at a smaller, non-zero value (e.g., \( \beta = 0.2\beta_t^* \), a fraction of the theoretically prescribed value \( \beta_t^* \)). Start with a balanced approach and adjust based on experimental progress [32].
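
The effect of β on ranking can be seen in a few lines. The sketch below implements the UCB formula as stated above. The three candidate variants and their predicted means and uncertainties are hypothetical values chosen only to show how the ordering flips.

```python
import math

def ucb(mu, sigma, beta):
    """Upper Confidence Bound: predicted fitness plus sqrt(beta) times uncertainty."""
    return mu + math.sqrt(beta) * sigma

# Hypothetical model outputs: name -> (predicted fitness, uncertainty)
candidates = {
    "variant_A": (0.60, 0.05),  # high prediction, well characterised
    "variant_B": (0.45, 0.40),  # lower prediction, very uncertain
    "variant_C": (0.55, 0.15),
}

def rank(cands, beta):
    """Return candidate names sorted from highest to lowest UCB score."""
    return sorted(cands, key=lambda name: ucb(*cands[name], beta), reverse=True)

greedy = rank(candidates, beta=0.0)   # pure exploitation
explore = rank(candidates, beta=4.0)  # uncertainty weighted by sqrt(4) = 2
```

With β = 0 the ranking follows the predicted fitness alone (A, C, B). With β = 4 the highly uncertain variant B moves to the top (B, C, A).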

FAQ 3: Why is uncertainty quantification (UQ) critical in ALDE, and which method should I use?

- Answer: UQ is essential for balancing exploration and exploitation. Without a measure of uncertainty, the model can over-exploit its initial predictions and get stuck in a local optimum. The ALDE study found that frequentist UQ methods performed more consistently than typical Bayesian approaches in their experimental and computational tests. Always ensure your chosen ML model and sequence encoding can provide reliable uncertainty estimates [19].
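
One common frequentist-style way to obtain an uncertainty estimate is to train an ensemble on bootstrap resamples and use the spread of its predictions. The sketch below illustrates that idea with a deliberately simple additive model. It is an assumption for illustration and is not claimed to match the models or encodings used in [19]; the sequences and fitness values are hypothetical.

```python
import random
import statistics
from collections import defaultdict

def fit_additive(data):
    """Toy model: global mean plus a mean offset per (position, residue)."""
    global_mean = statistics.fmean(f for _, f in data)
    buckets = defaultdict(list)
    for seq, fitness in data:
        for pos, res in enumerate(seq):
            buckets[(pos, res)].append(fitness - global_mean)
    offsets = {key: statistics.fmean(vals) for key, vals in buckets.items()}
    return global_mean, offsets

def predict(model, seq):
    """Sum the learned offsets; residues never seen in training contribute 0."""
    global_mean, offsets = model
    return global_mean + sum(offsets.get((p, r), 0.0) for p, r in enumerate(seq))

def bootstrap_ensemble(data, n_models=50, seed=0):
    """Fit one model per bootstrap resample (sampling with replacement)."""
    rng = random.Random(seed)
    return [fit_additive(rng.choices(data, k=len(data))) for _ in range(n_models)]

def predict_with_uncertainty(ensemble, seq):
    """Return (mean prediction, standard deviation across ensemble members)."""
    preds = [predict(m, seq) for m in ensemble]
    return statistics.fmean(preds), statistics.stdev(preds)

# Hypothetical sequence-fitness measurements
measurements = [("AVL", 10.0), ("AVF", 12.0), ("LVL", 30.0),
                ("LVF", 35.0), ("AFL", -5.0), ("LFL", 8.0)]
ensemble = bootstrap_ensemble(measurements)
mu, sigma = predict_with_uncertainty(ensemble, "LVL")
```

The returned `mu` and `sigma` are the two quantities an acquisition function such as UCB consumes. A toy additive model like this one cannot capture epistasis, so it only demonstrates the mechanics of ensemble-based uncertainty.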

FAQ 4: My model predictions and experimental results are inconsistent after the first ALDE round. What could be wrong?

- Answer: This is common when the initial dataset is too small for the model to learn the complex epistatic relationships. Ensure your initial library is sufficiently diverse and large enough to provide a meaningful signal. Because ALDE retrains on each round's data, model accuracy typically improves as data accumulates, so proceeding to the next round is usually the right call [19].

Key Reagents and Experimental Materials

Table 1: Essential Research Reagent Solutions for ALDE

| Reagent / Material | Function / Description | Application in ALDE Case Study |
|---|---|---|
| NNK Degenerate Codons | Allows for the incorporation of all 20 amino acids at a targeted position during mutagenesis. | Used to build the initial combinatorial library for the five active-site residues [19]. |
| PCR Mutagenesis Kit | A commercial kit for efficient site-directed or combinatorial mutagenesis. | Used for sequential rounds of PCR-based mutagenesis to generate variant libraries [19]. |
| ParPgb Parent Scaffold | The protoglobin (ParPgb) starting variant ParLQ (W59L Y60Q). | The protein scaffold to be engineered for improved cyclopropanation activity [19]. |
| Substrates: 4-Vinylanisole & Ethyl Diazoacetate (EDA) | The olefin and carbene precursor, respectively, for the non-native cyclopropanation reaction. | Used in the high-throughput screening assay to measure variant fitness [19]. |
| Gas Chromatography (GC) | An analytical technique for separating and quantifying chemical compounds in a mixture. | Used to screen variants for yield and diastereoselectivity of the cyclopropanation products [19]. |

The following table summarizes the quantitative outcomes from the ALDE case study, demonstrating its efficiency and effectiveness.

Table 2: Summary of Key Experimental Data and Results from the ALDE Case Study [19]

| Metric | Starting Point (ParLQ) | After 3 Rounds of ALDE | Notes |
|---|---|---|---|
| Total Yield of Product | ~40% | 93% (of a desired product) | The yield for a specific desired product increased from an initial 12% to 93% [19]. |
| Diastereomeric Ratio (cis:trans) | 1:3 (preferring trans) | 14:1 (preferring cis) | A dramatic reversal and improvement in stereoselectivity for the cis product [19]. |
| Fitness Objective | Low / Negative | Highly Optimized | The objective was defined as (cis yield - trans yield) [19]. |
| Sequence Space Explored | - | ~0.01% of the total 3.2M design space | Demonstrates high sample efficiency [19]. |
| Key Mutations Identified | W59L, Y60Q (parent) | Specific combination of mutations at W56, Y57, L59, Q60, F89 | The optimal combination was not predictable from single-mutant data, highlighting epistasis [19]. |
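
A few of the figures in Table 2 can be checked with simple arithmetic. The sketch below assumes the 3.2M design space is the full combinatorial space of five sites with 20 amino acids each, which is consistent with the five active-site residues listed. The derived variant count is a back-of-the-envelope estimate from the table's rounded "~0.01%", not a number reported in the source.

```python
# Five targeted sites, 20 possible amino acids at each site.
design_space = 20 ** 5            # 3,200,000 possible sequences

# 0.01% is one part in 10,000.
approx_variants_screened = design_space // 10_000   # 320

def cis_fraction(cis, trans):
    """Convert a cis:trans diastereomeric ratio into the fraction that is cis."""
    return cis / (cis + trans)

start_cis = cis_fraction(1, 3)    # 1:3 ratio  -> 0.25 of product is cis
final_cis = cis_fraction(14, 1)   # 14:1 ratio -> about 0.93 of product is cis
```

Exploring roughly 0.01% of the space therefore corresponds to screening on the order of a few hundred variants.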

Precise replacement or repair of entire genes in human cells remains a significant challenge in modern genome editing. While technologies like CRISPR or base editors can change individual DNA letters with high precision, they are poorly suited for inserting long DNA fragments, such as full-length genes. Insertion strategies that rely on double-stranded DNA breaks can also lead to unwanted mutations, low efficiency in certain cell types, or larger genomic rearrangements [33].

For many genetic diseases caused by numerous different mutations within the same gene, developing individual therapies for each variant is impractical. Bridge recombinases present a promising solution: these enzymes combine a recombinase protein with a bridge RNA (bRNA) molecule that guides precise recombination without breaking both DNA strands. This enables safer insertion of large DNA fragments [33]. This case study explores the application of the E. coli Orthogonal Replicon (EcORep) system, a novel directed evolution platform, to optimize bridge recombinases for therapeutic gene replacement, with a specific proof-of-concept focusing on Alpha-1 Antitrypsin Deficiency (A1ATD) caused by mutations in the SERPINA1 gene [33].

Key Concepts and Definitions

Bridge Recombinases: A class of genome editing enzymes that perform precise DNA exchange using a recombinase protein guided by a bridge RNA (bRNA), which binds both the genomic target and a donor DNA fragment [33].

Directed Evolution: A laboratory technique that mimics natural selection to engineer biomolecules with improved properties. It involves iterative cycles of creating genetic diversity and selecting variants with enhanced function [4].

EcORep (E. coli Orthogonal Replicon): A directed evolution system that uses a special DNA replicon inside E. coli with a high mutation rate, allowing for continuous mutagenesis and enrichment of protein variants with improved activity [33].

Fitness Landscape: A conceptual mapping of protein sequences (genotypes) to a quantitative measure of fitness, such as enzymatic activity or thermostability. Directed evolution is essentially a guided walk across this landscape [3].

Off-Target Effects: Unintended modifications at DNA locations other than the desired target site, a key safety concern for any gene editing therapeutic [34].

Technical FAQs and Troubleshooting Guide

FAQ 1: What is the core principle behind using EcORep for evolving bridge recombinases?

The EcORep system establishes a direct link between a bridge recombinase's function and its own replication. The gene encoding the bridge recombinase is placed on a special, high-mutation-rate replicon in E. coli. Variants with higher recombination activity are selectively enriched over time because their enhanced function allows the replicon to propagate more efficiently. This creates a self-sustaining cycle of continuous evolution where improved enzyme variants "survive" and dominate the population [33].
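
The enrichment logic can be illustrated with a simple two-population model. This is a sketch under a strong simplifying assumption that is not taken from [33]: an improved variant replicates by a constant factor `advantage` per generation relative to the rest of the population. The function name and parameters are hypothetical.

```python
def enriched_fraction(p0, advantage, generations):
    """Population fraction of an improved variant after a number of generations.

    p0          -- starting fraction of the improved variant (0 to 1)
    advantage   -- relative replication factor per generation (1.0 = no advantage)
    generations -- number of generations elapsed
    """
    weight = p0 * advantage ** generations
    return weight / (weight + (1.0 - p0))

# A variant starting at 0.1% of the population with a 1.5-fold advantage
trajectory = [enriched_fraction(0.001, 1.5, g) for g in range(0, 31, 5)]
```

Under this model even a rare variant comes to dominate within a few tens of generations, while a variant with no advantage (`advantage=1.0`) stays at its starting fraction.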

FAQ 2: Our bridge recombinase evolution campaign has stalled, with no fitness improvement after several rounds. What could be wrong?

Stalling in a directed evolution campaign often indicates that the experiment is trapped at a local fitness peak or is being hindered by epistasis (non-additive interactions between mutations). We recommend the following troubleshooting steps [3] [19]:

- Alter Selection Pressure: Gradually increase the stringency of your selection conditions. For example, if you are selecting for recombination efficiency, reduce the induction time for the donor DNA or the amount of the donor template.

- Diversify the Library: The initial library may be exhausted. Introduce a new round of diversity using a different mutagenesis method (e.g., switch from error-prone PCR to targeted saturation mutagenesis of identified hotspot residues) to escape the local optimum [4].

- Check for Parasitic Variants: Ensure that your selection logic is not enriching for "cheater" variants that propagate without performing the desired recombination function. Validate the functional output of enriched variants using a secondary, low-throughput assay [3].

FAQ 3: We are observing high background noise in our selection system. How can we optimize conditions to reduce it?

High background is a common issue that can mask the signal from genuinely improved variants. Systematic optimization of selection parameters is crucial. A robust strategy involves using Design of Experiments (DoE) to screen multiple factors simultaneously [3].

Table: Key Parameters to Optimize for Reducing Background in EcORep Selection

| Parameter | Effect on Background | Suggested Adjustment |
|---|---|---|
| Donor DNA Concentration | High concentrations can lead to non-specific recombination or increase survival of non-functional clones. | Titrate to find the minimum concentration that allows functional selection. |
| Induction Time & Strength | Overly long or strong induction can increase noise from leaky expression. | Shorten induction time or use weaker inducers. |
| Cofactor Concentration (e.g., Mg²⁺) | Can influence enzyme fidelity and cleavage/ligation equilibrium. | Optimize concentration to favor precise recombination over non-specific nicking [3]. |
| Host Cell Physiology | The health and metabolic state of the E. coli host can affect replication dynamics. | Use a well-defined growth medium and control cell density at induction. |
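
A Design of Experiments screen over these parameters can start from a full factorial layout, in which every combination of factor levels is one run. The sketch below is illustrative only: the factor names mirror the table, but the level labels are hypothetical placeholders to be replaced with real concentrations and times.

```python
from itertools import product

# Hypothetical levels for each factor; substitute real values for your system.
factors = {
    "donor_dna": ["low", "medium", "high"],
    "induction_time": ["short", "long"],
    "inducer_strength": ["weak", "strong"],
    "mg_concentration": ["low", "high"],
}

# Full factorial design: one run per combination of levels.
runs = [dict(zip(factors, levels)) for levels in product(*factors.values())]

for i, run in enumerate(runs[:3], start=1):
    print(i, run)
```

This layout gives 3 × 2 × 2 × 2 = 24 runs. When that is too many to test, a fractional design that samples a structured subset of these combinations is a common alternative.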

FAQ 4: What sequencing coverage is sufficient for accurately identifying enriched variants from an EcORep experiment?

Whole-genome sequencing is commonly run at around 30x per-base coverage, but targeted sequencing of a directed evolution library is better described in reads per variant, and its requirements differ. Research indicates that significantly enriched mutants can be identified precisely and accurately even at relatively modest coverage. A minimum of 50x coverage per variant is a good starting point; for confident detection of rare (<1%) beneficial mutants in a complex library, aim for 100-200x coverage. This balances cost against the risk of false positives and false negatives [3].
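
These coverage targets translate into a read budget with simple arithmetic. The sketch below assumes that read counts for a variant follow a Poisson distribution, which is a common simplification and not a claim from [3]. The library size of 10,000 variants and the detection threshold are hypothetical.

```python
import math

def reads_required(n_variants, coverage_per_variant):
    """Total reads needed for a target average coverage per variant."""
    return n_variants * coverage_per_variant

def prob_at_least(k, expected_reads):
    """Poisson probability of observing at least k reads for one variant."""
    below = sum(
        math.exp(-expected_reads) * expected_reads ** i / math.factorial(i)
        for i in range(k)
    )
    return 1.0 - below

library_size = 10_000                              # hypothetical library
budget_50x = reads_required(library_size, 50)      # 500,000 reads
budget_200x = reads_required(library_size, 200)    # 2,000,000 reads

# Chance that a variant with an expected 50 reads is seen at least 10 times
p_detect = prob_at_least(10, 50)
```

Variants present well below the average frequency receive proportionally fewer expected reads, which is why rare mutants call for the higher end of the coverage range.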

FAQ 5: How do we assess the safety of an evolved bridge recombinase for therapeutic applications?

Safety profiling is a multi-step process. A key component is comprehensive off-target analysis. The FDA recommends using multiple methods to measure off-target editing events, including genome-wide analysis [34].

- Biochemical Methods (e.g., CIRCLE-seq, CHANGE-seq): Use purified genomic DNA and the evolved recombinase in a test tube. These methods are highly sensitive and can reveal a broad spectrum of potential off-target sites, but may overestimate risk as they lack cellular context [34].

- Cellular Methods (e.g., GUIDE-seq, DISCOVER-seq): Performed in living cells, these techniques capture off-target effects in the context of native chromatin and DNA repair pathways, providing biologically relevant insights. They are essential for validating the clinical relevance of off-target sites identified by biochemical methods [34].

Experimental Protocols

Protocol: Establishing a Baseline EcORep Selection

This protocol outlines the steps to initiate a directed evolution campaign for a bridge recombinase using the EcORep system, based on the work of the iDEC 2025 team [33].

Objective: To establish a functional selection system in E. coli for enriching active bridge recombinase variants.

Materials:

- Plasmid: EcORep replicon vector carrying the gene for the parent bridge recombinase.

- Host Strain: Specified E. coli strain (e.g., 10-beta competent E. coli).

- Selection Cassette: A DNA construct containing the donor sequence and a reporter or selection marker flanked by the target sequences for the bridge recombinase.

- Growth Media: LB media supplemented with appropriate antibiotics.

Procedure:

- Transformation: Transform the EcORep plasmid encoding the bridge recombinase into the competent E. coli host strain.

- Culture Growth: Inoculate a primary culture and grow to mid-log phase.

- Selection Induction: Introduce the selection cassette (via transformation or induction) and provide conditions that induce the expression of the bridge recombinase and its bridge RNA.

- Outgrowth: Allow cells to recover and grow for a specified period (e.g., 4-16 hours) to enable replication of the EcORep plasmid in successful recombinants.

- Harvest and Analysis: Harvest the cells. Extract plasmids and subject the bridge recombinase gene to sequencing to monitor the emergence of new variants. The functional output can be validated by a secondary assay, such as PCR to check for cassette flipping [33].
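
For the Harvest and Analysis step, one simple way to monitor which variants are emerging is to compare each variant's frequency before and after selection. The sketch below computes a log2 enrichment score from read counts. The variant names, the counts, and the pseudocount of 0.5 are hypothetical, and this is one common scoring convention, not the analysis described in [33].

```python
import math

def log2_enrichment(pre_counts, post_counts, pseudocount=0.5):
    """Log2 ratio of post-selection to pre-selection frequency per variant.

    The pseudocount avoids division by zero for variants missing from one sample.
    """
    pre_total = sum(pre_counts.values())
    post_total = sum(post_counts.values())
    scores = {}
    for variant in set(pre_counts) | set(post_counts):
        pre_freq = (pre_counts.get(variant, 0) + pseudocount) / pre_total
        post_freq = (post_counts.get(variant, 0) + pseudocount) / post_total
        scores[variant] = math.log2(post_freq / pre_freq)
    return scores

# Hypothetical read counts before and after a selection round
pre = {"parent": 900, "mutant_1": 50, "mutant_2": 50}
post = {"parent": 450, "mutant_1": 500, "mutant_2": 50}

scores = log2_enrichment(pre, post)
ranked = sorted(scores, key=scores.get, reverse=True)
```

Positive scores indicate enrichment and negative scores indicate depletion. Enriched variants should still be confirmed with the secondary functional assay, since a high score alone does not rule out parasitic variants.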

Protocol: Validating Gene Replacement in Human Cells

After evolving a promising bridge recombinase variant, its function must be validated in a therapeutically relevant human cell model.

Objective: To confirm that an evolved bridge recombinase can precisely insert a healthy copy of the SERPINA1 gene into its natural genomic location in human cells.

Materials:

- Cell Line: Human hepatocyte line (e.g., HepG2).

- Editing Components: Lipid nanoparticles (LNPs) or viral vectors delivering the evolved bridge recombinase protein/mRNA and its specific bRNA.